Overcoming the OOD Generalization Challenge in Molecular Property Prediction: Methods, Benchmarks, and Future Frontiers


Abstract

Accurately predicting molecular properties for out-of-distribution (OOD) compounds is a critical frontier in accelerating drug discovery and materials science. This article explores the fundamental challenges, current methodological solutions, and rigorous validation frameworks for OOD generalization. We examine why machine learning models often fail when extrapolating beyond their training data. We then survey techniques ranging from transductive learning (including bilinear transduction) to invariant representation learning and semantic frameworks. The article also analyzes emerging benchmarks such as BOOM, which show that even the top-performing models exhibit average OOD errors about three times larger than their in-distribution errors. Finally, we discuss optimization strategies and future directions for researchers and development professionals seeking to build more robust, generalizable predictive models in biochemical domains.

The OOD Generalization Problem: Why Molecular Discovery Requires Moving Beyond IID Assumptions

Frequently Asked Questions

1. What are the primary types of Out-of-Distribution (OOD) generalization in molecular property prediction? The two principal paradigms are Extrapolation in Property Range and Extrapolation in Chemical Space [1] [2]. The first involves predicting property values that lie outside the range seen in the training data. The second involves making predictions for molecular structures or chemistries that are not represented in the training set [3].

2. My model performs well on a scaffold-split test set. Does this mean it can generalize well to truly novel chemistries? Not necessarily. Recent benchmarks indicate that traditional scaffold splits, based on the Bemis-Murcko framework, often do not pose a significant challenge to modern ML models, and performance on such splits can be strongly correlated with in-distribution (ID) performance [4]. More rigorous splitting strategies, such as those based on chemical similarity clustering, present a harder challenge and are a better indicator of true OOD generalization [4].

3. Why does my model, which excels at interpolation, fail dramatically on OOD tasks? Standard machine learning models, including deep learning, often rely on spurious correlations and statistical patterns present in the training data. When the test distribution shifts—either in property value or input space—these correlations break down, leading to poor performance [2] [5] [3]. This is a fundamental challenge for empirical risk minimization.

4. Can I trust a model that shows strong in-distribution performance to also perform well out-of-distribution? The relationship between ID and OOD performance is not guaranteed. While a strong positive correlation may exist for some OOD split strategies (e.g., scaffold splits), this correlation can be weak or non-existent for more challenging splits (e.g., cluster splits) [4]. Therefore, model selection based solely on ID performance is unreliable for OOD applications.

5. Does increasing the size of my training data always improve OOD generalization? No. Contrary to what typical neural scaling laws would suggest, increasing training set size or training time can yield only marginal improvement, or even degrade performance, on genuinely challenging OOD tasks [3]. This highlights that simply adding more data from the same distribution does not teach the model the underlying causal mechanisms needed for extrapolation.

Troubleshooting Guides

Issue 1: Poor Performance on High-Value Property Prediction

Problem: Your model fails to identify molecules with property values (e.g., catalytic activity, binding affinity) that are higher than any seen in the training set [1].

Diagnosis: The model is likely struggling with extrapolation in the property range. Standard regression models are often biased towards predicting values close to the mean of the training data.

Solutions:

- Adopt a Transductive Approach: Implement methods like Bilinear Transduction or the Multi-Anchor Latent Transduction (MALT) framework. These techniques reparameterize the prediction problem by learning how property values change as a function of molecular differences, rather than predicting absolute values from new molecules directly [1] [6].

- Reframe as a Classification Task: Instead of regression, set a threshold within the in-distribution range to classify high-value samples, which can be more robust for identifying extremes [1].
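Below is a minimal sketch of the classification reframing. It assumes the threshold is placed at a quantile of the training targets. The 0.9 quantile and the helper names are illustrative choices, not values taken from [1].

```python
def quantile(values, q):
    # Linear-interpolation quantile of a list of numbers (0 <= q <= 1).
    s = sorted(values)
    pos = q * (len(s) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(s) - 1)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def high_value_labels(train_targets, q=0.9):
    # Hypothetical helper: the threshold lies inside the training range,
    # so both classes (0 = ordinary, 1 = high-value) are observed during training.
    threshold = quantile(train_targets, q)
    labels = [1 if y >= threshold else 0 for y in train_targets]
    return threshold, labels
```

You can then train any binary classifier on these labels and rank new candidates by their predicted probability of belonging to the high-value class.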

Experimental Protocol: Evaluating Property Range Extrapolation

- Data Splitting: Sort your dataset by the target property value. Use the lower 80% of values for training and the upper 20% for testing. This ensures the test set contains property values outside the training support [1] [7].

- Model Training: Train your chosen model (e.g., a standard graph neural network) on the training set.

- Benchmarking: Compare against a transductive method like MALT [6].

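- Evaluation Metrics: Report OOD MAE together with extrapolative precision and recall for the high-value test molecules (Table 1).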

Table 1: Example Performance Comparison for Property Range Extrapolation (Bulk Modulus Prediction)

| Model | OOD MAE | Extrapolative Precision | Recall |
|---|---|---|---|
| Ridge Regression (Baseline) | 12.5 | 0.15 | 0.10 |
| CrabNet | 11.8 | 0.18 | 0.12 |
| Bilinear Transduction | 9.1 | 0.27 | 0.30 |
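The property-range split and the two ranking metrics in Table 1 can be sketched in plain Python as follows. The exact definitions of extrapolative precision and recall in [1] may differ from these. Here, both are assumed to be computed over the top-k candidates ranked by predicted value, against a fixed property threshold. The function names are hypothetical.

```python
def property_range_split(targets, train_fraction=0.8):
    # Rank molecules by target value: the lowest train_fraction form the training set,
    # the remainder (values at or above the training maximum) form the OOD test set.
    order = sorted(range(len(targets)), key=lambda i: targets[i])
    n_train = int(round(train_fraction * len(targets)))
    return order[:n_train], order[n_train:]


def extrapolative_precision_recall(y_true, y_pred, threshold, k):
    # Assumed definitions: rank candidates by predicted value and keep the top k.
    # Precision = share of those k whose true value exceeds `threshold`;
    # recall = share of all truly above-threshold candidates that made the top k.
    top_k = sorted(range(len(y_pred)), key=lambda i: y_pred[i], reverse=True)[:k]
    true_hits = {i for i, y in enumerate(y_true) if y > threshold}
    found = sum(1 for i in top_k if i in true_hits)
    precision = found / k if k else 0.0
    recall = found / len(true_hits) if true_hits else 0.0
    return precision, recall
```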

Issue 2: Failure to Generalize to Novel Molecular Scaffolds

Problem: Your model's accuracy drops significantly when predicting properties for molecules with core structures (scaffolds) not present in the training data [4].

Diagnosis: The model has overfitted to specific structural motifs in the training data and cannot generalize to new chemical spaces.

Solutions:

- Leverage Quantum Mechanical Descriptors: Use a dataset like QMex to provide fundamental physics-based features. Combine this with an Interactive Linear Regression (ILR) model that incorporates interactions between QM descriptors and categorical structural information. This enhances interpretability and extrapolative performance, especially with small datasets [2].

- Utilize Advanced Molecular Representations: Move beyond simple composition or fingerprints. Use word-embedding-derived material vectors created from scientific literature, which can capture latent knowledge and improve predictions for compositionally complex molecules [8].

- Employ Robust OOD Detection: Use frameworks like PGR-MOOD to detect when a query molecule is OOD. This allows you to flag predictions that may be unreliable [9].
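To make the ILR idea concrete, the sketch below builds one design-matrix row. Each QM descriptor is multiplied by a one-hot encoding of a categorical structural label. An ordinary linear regression on these features can therefore learn a separate descriptor slope for each category. This is an assumed, simplified reading of the interaction terms described in [2], not the published ILR formulation. The descriptor values and category names are hypothetical.

```python
def interaction_features(descriptors, category, categories):
    # descriptors: QM descriptor values for one molecule (hypothetical inputs).
    # category: the molecule's structural class; categories: all possible classes.
    one_hot = [1.0 if c == category else 0.0 for c in categories]
    # Descriptor x category products: nonzero only in the block of the molecule's own
    # category, which lets a linear model fit category-specific descriptor slopes.
    interactions = [d * h for h in one_hot for d in descriptors]
    # Row layout: main effects, category indicators, then interaction terms.
    return list(descriptors) + one_hot + interactions
```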

Experimental Protocol: Evaluating Chemical Space Extrapolation

- Data Splitting:

  - Scaffold Split: Group molecules by their Bemis-Murcko scaffolds. Assign entire scaffolds to either training or test sets [4].

  - Cluster Split: Generate molecular fingerprints (e.g., ECFP4), perform K-means clustering, and assign entire clusters to training or test sets. This is a more challenging and realistic OOD test [4].

- Model Training: Train models using representations that encode physical knowledge, such as QM descriptors [2] or literature-derived embeddings [8].

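- Evaluation Metrics: Compare MAE on the scaffold and cluster splits, and check how strongly ID performance correlates with OOD performance across models (Table 2).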

Table 2: Performance of Models on Different Chemical Space Splits (Example)

| Model | Scaffold Split (MAE) | Cluster Split (MAE) | ID vs. OOD Correlation (Pearson r) |
|---|---|---|---|
| Random Forest | 0.85 | 1.52 | ~0.9 (Scaffold) / ~0.4 (Cluster) |
| Message-Passing GNN | 0.78 | 1.48 | ~0.9 (Scaffold) / ~0.4 (Cluster) |
| QMex-ILR | - | - | Improved extrapolation reported [2] |
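Both splits reduce to the same operation: assign whole groups, never individual molecules, to one side or the other. The sketch below assumes scaffold SMILES or ECFP4 bit vectors were computed beforehand, for example with RDKit's `MurckoScaffold.MurckoScaffoldSmiles` and Morgan fingerprints of radius 2. It uses a plain Lloyd's K-means in place of a library implementation. The greedy group assignment and the function names are illustrative assumptions.

```python
import random


def group_split(group_keys, test_fraction=0.2, seed=0):
    # group_keys[i] is molecule i's scaffold SMILES or cluster ID. Whole groups are
    # shuffled and moved into the test set until it holds at least test_fraction
    # of the molecules, so no group appears on both sides.
    groups = {}
    for idx, key in enumerate(group_keys):
        groups.setdefault(key, []).append(idx)
    keys = list(groups)
    random.Random(seed).shuffle(keys)
    target = test_fraction * len(group_keys)
    train, test = [], []
    for key in keys:
        (test if len(test) < target else train).extend(groups[key])
    return sorted(train), sorted(test)


def kmeans(vectors, k, iters=20, seed=0):
    # Lloyd's K-means with squared Euclidean distance on fingerprint bit vectors.
    rng = random.Random(seed)
    centers = [list(v) for v in rng.sample(vectors, k)]
    labels = [0] * len(vectors)
    for _ in range(iters):
        # Assignment step: nearest centre for every vector.
        labels = [min(range(k), key=lambda c: sum((a - b) ** 2 for a, b in zip(v, centers[c])))
                  for v in vectors]
        # Update step: move each centre to the mean of its members.
        for c in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == c]
            if members:  # keep the old centre if a cluster empties out
                centers[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels
```

For a cluster split, pass the output of `kmeans(fingerprints, k)` to `group_split`. For a scaffold split, pass the list of scaffold SMILES directly.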

Issue 3: Model Shows Systemic Bias Against Certain Element Classes

Problem: Your model makes consistently poor predictions (e.g., systematic overestimation or underestimation) for molecules containing specific elements, such as H, F, or O, when they are left out of training [3].

Diagnosis: The model has learned element-specific biases from the training data and cannot handle the chemical dissimilarity introduced when these elements are absent during training.

Solutions:

- Bias Diagnosis with SHAP: Use SHAP (SHapley Additive exPlanations) analysis to quantify the contribution of compositional versus structural features to model predictions. This identifies if poor performance stems from chemistry or geometry [3].

- Incorporate Physical Heuristics: Use domain knowledge to apply post-hoc corrections or to design models that explicitly account for known chemical behaviors of problematic elements.

Experimental Protocol: Diagnosing Elemental Bias

- Task Creation: Perform a leave-one-element-out evaluation. For each element X, train a model on all materials not containing X and test it exclusively on materials that do contain X [3].

- Model Training & Evaluation: Train multiple models (e.g., RF, GNNs) and evaluate them on these tasks using R².

- SHAP Analysis:

  - Train a correction model to predict the error of the primary model.

  - Compute the mean absolute SHAP values for compositional and structural features for the correction model.

  - A dominance of compositional feature contributions indicates a chemical origin for the bias [3].
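The leave-one-element-out task creation can be sketched as follows. It assumes each material is identified by a simple formula string. Element symbols are extracted with a regular expression, an illustrative simplification rather than the parsing used in [3]. The function names are hypothetical.

```python
import re

# An element symbol is one capital letter optionally followed by one lowercase letter.
ELEMENT = re.compile(r"[A-Z][a-z]?")


def elements_in(formula):
    # Only the presence of each element matters here, so counts and parentheses are ignored.
    return set(ELEMENT.findall(formula))


def leave_one_element_out(formulas, element):
    # Train on every entry that lacks `element`; test only on entries that contain it.
    train = [i for i, f in enumerate(formulas) if element not in elements_in(f)]
    test = [i for i, f in enumerate(formulas) if element in elements_in(f)]
    return train, test
```

Looping `leave_one_element_out` over every element present in the dataset yields one train/test task per element.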

The Scientist's Toolkit

Table 3: Essential Resources for OOD Molecular Property Prediction Research

| Item | Function | Example/Reference |
|---|---|---|
| BOOM Benchmark | Provides systematic benchmarks for evaluating OOD performance on molecular property prediction tasks. | [7] |
| QMex Descriptor Dataset | A set of quantum mechanical descriptors to improve model interpretability and extrapolative performance on small experimental datasets. | [2] |
| MatEx | An open-source implementation for materials extrapolation, providing a transductive approach to OOD property prediction. | [1] |
| Bilinear Transduction Algorithm | A method that improves extrapolation by learning how properties change as a function of material differences. | [1] |
| PGR-MOOD Framework | An OOD detection method for molecular graphs that uses prototypical graph reconstruction to identify out-of-distribution samples. | [9] |
| Word-Embedding Vectors | Representations of materials derived from scientific literature mining, used to enhance predictive models for complex compositions. | [8] |
| SHAP (SHapley Additive exPlanations) | A game-theoretic method to explain the output of any machine learning model, crucial for diagnosing sources of OOD error. | [3] |

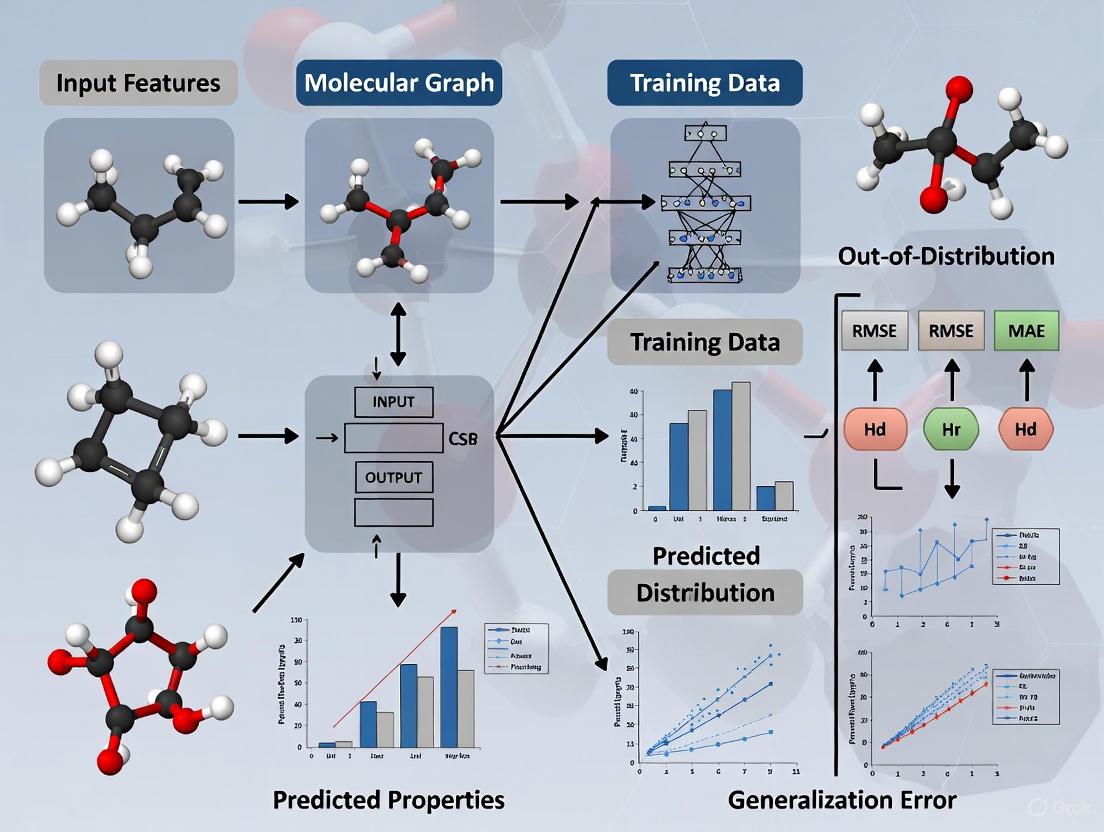

Experimental Workflow Visualization

The following diagram illustrates a robust workflow for developing and evaluating models for OOD generalization, integrating the troubleshooting steps and solutions discussed above.

Workflow for OOD Model Development and Evaluation

The Critical Importance of OOD Prediction for Real-World Molecular Discovery Pipelines

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Why does my molecular property prediction model, which has excellent in-distribution (ID) performance, fail to identify high-performing candidate molecules during virtual screening?

A1: This is a classic symptom of poor Out-of-Distribution (OOD) generalization. Molecule discovery is inherently an OOD prediction problem, as identifying novel, high-performing molecules requires extrapolating to property values or chemical structures outside the training data's distribution [1] [10]. Models are often trained and selected based on ID performance, which does not guarantee their ability to extrapolate. One study found that even top-performing models can exhibit an average OOD error three times larger than their ID error [10]. To address this, you should benchmark your models on specifically designed OOD splits that hold out high or low property values.

Q2: What is the difference between OOD generalization on inputs (chemical space) versus outputs (property values), and why does it matter for my discovery pipeline?

A2: These are two distinct but critical types of extrapolation [1]:

- Input Space (Chemical Structure): Generalizing to unseen classes of molecules, novel scaffolds, or functional groups not present in the training data.

- Output Space (Property Values): Generalizing to predict property values that fall outside the range of values seen during training.

Both are crucial for discovery. Evaluating only input-space generalization can effectively reduce to an interpolation problem if the test molecules still fall within the training data's representation space [1]. For discovering high-performance materials, output-space extrapolation is often the primary goal. Your pipeline's success depends on clearly defining which type of OOD generalization matters most for your campaign and evaluating it accordingly.

Q3: I am using a large chemical foundation model. Should I expect it to have strong OOD generalization capabilities by default?

A3: Not necessarily. Current benchmarks indicate that existing chemical foundation models do not yet show strong OOD extrapolation capabilities across a wide range of tasks [10]. While they offer promise for limited-data scenarios through transfer and in-context learning, their OOD performance is not guaranteed. Factors such as the diversity of the pre-training data, the pre-training objectives, and the model architecture all significantly impact OOD generalization. You should perform your own OOD evaluation on your target property rather than assuming strong baseline performance.

Q4: How can I handle dataset shift when applying a model trained on one dataset (e.g., computational data) to another (e.g., experimental data)?

A4: Dataset shift is a common source of OOD data that degrades model performance. One effective strategy is to implement a reject option [11]. The Out-of-Distribution Reject Option for Prediction (ODROP) method involves a two-stage process:

- OOD Detection: An OOD detection model scores how much a new test sample diverges from the training data distribution.

- Reject Prediction: Samples identified as OOD beyond a certain threshold are rejected, and the primary model abstains from making a prediction for them. This method has been shown to improve AUROC metrics on real-world health data by rejecting OOD samples, and it can be applied without modifying your existing pre-trained predictive model [11].
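A two-stage reject option can be wrapped around an existing predictor without retraining it. In the sketch below, a per-feature z-score distance from the training data stands in for the learned detector; ODROP in [11] reports a VAE-based method. The scoring rule, the threshold, and the function names are all assumptions for illustration.

```python
import math


def fit_ood_scorer(X_train):
    # Stand-in OOD detector (not the VAE used by ODROP): per-feature mean and std.
    cols = list(zip(*X_train))
    means = [sum(c) / len(c) for c in cols]
    # Fall back to 1.0 for constant features to avoid division by zero.
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
            for c, m in zip(cols, means)]

    def score(x):
        # Root-mean-square z-score: larger means further from the training distribution.
        return math.sqrt(sum(((v - m) / s) ** 2 for v, m, s in zip(x, means, stds)) / len(x))

    return score


def predict_with_reject(model, score, X, threshold):
    # Stage 1: score each sample. Stage 2: abstain (None) when the score exceeds
    # the threshold; otherwise call the unchanged pre-trained model.
    return [model(x) if score(x) <= threshold else None for x in X]
```

In practice, the threshold could be set from the distribution of training-set scores (for example, a high quantile), trading prediction coverage against reliability.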

Troubleshooting Common Experimental Issues

| Problem Symptom | Potential Root Cause | Recommended Solution |
|---|---|---|
| High ID accuracy, poor real-world screening performance | Model fails at output space (property value) extrapolation. | Implement a transductive model like Bilinear Transduction [1]; benchmark on OOD splits [10]. |
| Model performs poorly on molecules with novel substructures | Model fails at input space (chemical) extrapolation. | Use models with high inductive bias for specific properties [10]; explore domain generalization algorithms [12]. |
| Inconsistent model performance across different design cycles | Distribution shift between iterative experimental cycles. | Apply domain generalization (DG) methods and leverage ensembling for robustness [12]. |
| Unreliable predictions on external datasets | Dataset shift due to different data sources or measurement instruments. | Deploy an OOD reject option (ODROP) to filter out-of-domain samples before prediction [11]. |
| High variance in OOD performance across tasks | Over-reliance on a single model architecture. | Test a diverse suite of models (e.g., GNNs, Transformers, traditional ML); performance is task-dependent [10]. |

Key Experimental Protocols & Data

Protocol 1: Benchmarking OOD Generalization for Molecular Properties

This protocol, based on the BOOM benchmark, evaluates a model's ability to extrapolate to tail-end property values [10].

1. Objective: Systematically assess the OOD generalization performance of molecular property prediction models.

2. Materials:

- Datasets: Standard molecular datasets like QM9 (for isotropic polarizability, HOMO-LUMO gap, etc.) or others (e.g., 10k Dataset for density).

- Models: A range of models from Random Forests (with RDKit features) to Graph Neural Networks (GNNs) and Transformers (ChemBERTa, MolFormer).

3. OOD Splitting Procedure:

- Fit a Kernel Density Estimator (KDE) with a Gaussian kernel to the distribution of the target property values for the entire dataset.

- Calculate the probability of each molecule given its property value using the KDE model.

- Assign the molecules with the lowest 10% of probability scores to the OOD test set. This captures the tails of the property value distribution.

- Randomly sample from the remaining molecules (e.g., 10%) to create an In-Distribution (ID) test set.

- Use the rest for training and validation.

4. Evaluation:

- Compare Mean Absolute Error (MAE) or other regression metrics on the ID test set versus the OOD test set.

- A robust model should maintain low error on both sets. A large performance gap indicates poor OOD generalization.
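The KDE-based split and the ID-versus-OOD comparison can be sketched in plain Python as follows. The bandwidth rule (the normal-reference rule of thumb, 1.06·σ·n^(-1/5)), the fractions, and the function names are assumptions. BOOM's own implementation may choose them differently [10].

```python
import math
import random


def gaussian_kde(values, bandwidth=None):
    # One-dimensional Gaussian kernel density estimate over the target values.
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    # Assumed default bandwidth: normal-reference rule of thumb.
    h = bandwidth or 1.06 * std * n ** (-1 / 5)
    norm = 1.0 / (n * h * math.sqrt(2 * math.pi))

    def density(y):
        return norm * sum(math.exp(-0.5 * ((y - v) / h) ** 2) for v in values)

    return density


def kde_ood_split(targets, ood_fraction=0.1, id_test_fraction=0.1, seed=0):
    # Returns (train, id_test, ood_test) index lists.
    density = gaussian_kde(targets)
    # Lowest-density property values (the tails of the distribution) go to the OOD test set.
    order = sorted(range(len(targets)), key=lambda i: density(targets[i]))
    n_ood = int(round(ood_fraction * len(targets)))
    ood_test, rest = order[:n_ood], order[n_ood:]
    # A random sample of the remaining molecules forms the ID test set.
    random.Random(seed).shuffle(rest)
    n_id = int(round(id_test_fraction * len(targets)))
    id_test, train = rest[:n_id], rest[n_id:]
    return sorted(train), sorted(id_test), sorted(ood_test)


def mae(y_true, y_pred):
    # Mean absolute error, computed separately on the ID and OOD test sets.
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)
```

Train a model on `train`, then compute `mae` on `id_test` and `ood_test` separately to measure the ID/OOD gap. The top BOOM model showed an average OOD error about three times its ID error [10].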

Protocol 2: Implementing a Transductive Model for OOD Extrapolation

This protocol details the use of a Bilinear Transduction model to improve extrapolation to high-target property values [1].

1. Objective: Train a predictor that extrapolates zero-shot to property value ranges higher than those in the training data.

2. Core Idea: Reparameterize the prediction problem. Instead of predicting a property value from a new material's representation, the model learns to predict how the property value changes based on the difference in representation space between a known training example and the new sample.

3. Methodology:

- Input: During inference, a prediction for a new candidate molecule is made based on a chosen training example and the representation difference between that training example and the new candidate.

- Training: The model is trained to learn these analogical input-target relations from the training set.

4. Evaluation:

- Extrapolative Precision: Measures the fraction of true top OOD candidates correctly identified among the model's top predictions. The Bilinear Transduction method has been shown to improve this precision by 1.5x for molecules [1].

- Recall of high-performing candidates: This method can boost the recall of top OOD candidates by up to 3x compared to baseline models [1].
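The reparameterization can be sketched as a bilinear form in the representation difference and the anchor. In the published approach, both inputs pass through learned embeddings. This sketch assumes identity embeddings (plus a constant term), so the bilinear weights can be fitted by ridge least squares in plain Python. The nearest-anchor rule at inference, the ridge value, and all function names are illustrative assumptions.

```python
import itertools


def _augment(v):
    # Append a constant 1 so the bilinear form can also express terms linear in each argument.
    return list(v) + [1.0]


def _pair_features(x_new, x_anchor):
    # Flattened outer product of [x_new - x_anchor, 1] and [x_anchor, 1]. The prediction
    # [dx, 1]^T W [x_anchor, 1] is linear in these features, so W can be fitted by least squares.
    delta = _augment([a - b for a, b in zip(x_new, x_anchor)])
    anchor = _augment(x_anchor)
    return [d * a for d in delta for a in anchor]


def _solve(A, b):
    # Gaussian elimination with partial pivoting for a small square system A w = b.
    n = len(A)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        w[r] = (M[r][n] - sum(M[r][c] * w[c] for c in range(r + 1, n))) / M[r][r]
    return w


def fit_bilinear_transduction(X, y, ridge=1e-8):
    # Training pairs: every ordered (target i, anchor j) pair of training samples;
    # the model learns y_i from the difference x_i - x_j and the anchor x_j.
    F, t = [], []
    for i, j in itertools.permutations(range(len(X)), 2):
        F.append(_pair_features(X[i], X[j]))
        t.append(y[i])
    k = len(F[0])
    # Ridge-regularised normal equations: (F^T F + ridge * I) w = F^T t.
    A = [[sum(f[p] * f[q] for f in F) + (ridge if p == q else 0.0) for q in range(k)]
         for p in range(k)]
    b = [sum(f[p] * ti for f, ti in zip(F, t)) for p in range(k)]
    return _solve(A, b)


def predict_transductive(w, x_new, X_train, n_anchors=3):
    # Assumed anchor rule: average the predictions made from the nearest training samples.
    anchors = sorted(X_train, key=lambda a: sum((p - q) ** 2 for p, q in zip(x_new, a)))[:n_anchors]
    preds = [sum(wi * fi for wi, fi in zip(w, _pair_features(x_new, a))) for a in anchors]
    return sum(preds) / len(preds)
```

Testing on a linear toy target only confirms that the pairing, fitting, and anchor-based inference fit together. Any linear model would extrapolate such a target just as well, so a linear toy does not demonstrate the method's benefit.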

Quantitative Performance Comparison of Models on OOD Tasks

The following table summarizes key quantitative findings from recent OOD studies in molecules and materials. This data can serve as a baseline for evaluating your own models.

| Model / Method | Task / Domain | In-Distribution (ID) Performance | Out-of-Distribution (OOD) Performance | Key Metric |
|---|---|---|---|---|
| Bilinear Transduction [1] | Solid-state Materials | Low MAE (see reference) | 1.8x improvement in extrapolative precision | MAE (ID); extrapolative precision, recall (OOD) |
| Bilinear Transduction [1] | Molecules | Low MAE (see reference) | 1.5x improvement in extrapolative precision | MAE (ID); extrapolative precision, recall (OOD) |
| Top Performing Model (BOOM) [10] | Molecular Property Prediction | Low MAE | OOD error 3x larger than ID error | Mean Absolute Error |
| ODROP (VAE method) [11] | Diabetes Onset Prediction (Health Data) | AUROC: ~0.80 (on training domain) | AUROC: 0.90 (after rejecting 31.1% OOD data) | AUROC |

Workflow Visualizations

Diagram 1: OOD Reject Option (ODROP) Workflow

Diagram 2: OOD Benchmarking via Property Splitting

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" – models, representations, and algorithms – essential for building robust OOD prediction pipelines.

| Tool Name | Type | Primary Function in OOD Research | Key Consideration |
|---|---|---|---|
| Bilinear Transduction [1] | Algorithm | Enables zero-shot extrapolation to higher property value ranges by learning from analogical differences. | A transductive method that shows consistent improvement in precision and recall for OOD extremes. |
| Kernel Density Estimation (KDE) [10] | Statistical Method | Used to create meaningful OOD splits for benchmarking by identifying low-probability samples from the property value distribution. | Provides a more nuanced split than simple value thresholds, especially for non-unimodal distributions. |
| Graph Neural Networks (GNNs) [10] | Model Architecture | Learns property-structure relationships directly from molecular graphs. Can have high inductive bias suitable for certain OOD tasks. | Performance varies; architectures include invariant GNNs (permutation), equivariant GNNs (E(3)), and more. |
| Chemical Transformers (ChemBERTa, MolFormer) [10] | Model Architecture | Foundation models pre-trained on large chemical corpora (e.g., SMILES) for transfer learning. | Current versions may not generalize strongly OOD by default and require careful evaluation. |
| Deep Ensembles [13] [12] | Uncertainty Method | Improves predictive performance and uncertainty quantification on OOD data by combining multiple models. | Shown to be effective for "far-OOD" detection and is a robust baseline for domain generalization. |
| DomainBed Framework [12] | Benchmarking Tool | Provides a standardized environment for evaluating domain generalization algorithms across multiple domains (design cycles). | Adapted for therapeutic antibody design, useful for testing robustness to distribution shifts. |

Troubleshooting Guide: OOD Generalization in Molecular Property Prediction

This guide addresses common failure modes and solutions when machine learning models for molecular property prediction encounter out-of-distribution (OOD) samples.

FAQ: OOD Generalization Challenges

Q1: Why do our models perform well during validation but fail to identify promising drug candidates during virtual screening?

This failure often stems from a fundamental mismatch between the model's training data and the chemical space being explored during discovery. Molecular discovery is inherently an OOD prediction problem, as finding novel, high-performing molecules requires extrapolating beyond known chemical space and property values [1] [10]. Models optimized for in-distribution (ID) performance often struggle with OOD generalization, with one large-scale benchmark showing average OOD error can be 3x larger than ID error [10]. This performance drop occurs because standard training assumes independent and identically distributed data, while real-world discovery involves compounds with novel scaffolds or extreme property values not seen during training.

Q2: What types of OOD splitting strategies pose the greatest challenge for molecular property prediction models?

The difficulty of OOD generalization strongly depends on how the OOD data is defined and split. The table below summarizes common splitting strategies and their impact on model performance:

| Splitting Strategy | Description | Impact on Model Performance |
|---|---|---|
| Random Split | Data randomly divided into training/test sets | Represents best-case performance; models typically perform well |
| Scaffold Split | Test set contains different molecular frameworks (Bemis-Murcko scaffolds) | Moderate challenge; some performance degradation but models often generalize reasonably well [14] [15] |
| Cluster-Based Split | Test set from distinct chemical clusters (via UMAP/K-means + ECFP4 fingerprints) | Most challenging; causes significant performance drop for both classical ML and GNN models [14] [15] |
| Property Value Split | Test set contains molecules with property values at distribution tails | Critical for discovery; models struggle to predict extremes beyond training range [1] [10] |

Q3: Can we use in-distribution performance as a reliable indicator for OOD generalization capability?

The relationship between ID and OOD performance is complex and depends heavily on the splitting strategy. While a strong positive correlation exists for scaffold splitting (Pearson's r ∼ 0.9), this correlation weakens significantly for cluster-based splitting (Pearson's r ∼ 0.4) [14] [15]. This nuanced relationship means model selection based solely on ID performance may not yield optimal OOD generalization, particularly for challenging OOD scenarios.
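You can check this relationship on your own benchmark in a few lines. Collect each model's ID and OOD error, compute Pearson's r across models, and see whether the best ID model is also the best OOD model. The model names and MAE values below are hypothetical.

```python
import math


def pearson_r(xs, ys):
    # Pearson correlation coefficient between two equal-length sequences.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def id_ood_agreement(id_mae, ood_mae):
    # id_mae / ood_mae map model name -> MAE on the ID and OOD test sets.
    models = sorted(id_mae)
    r = pearson_r([id_mae[m] for m in models], [ood_mae[m] for m in models])
    best_id = min(models, key=id_mae.get)
    best_ood = min(models, key=ood_mae.get)
    return r, best_id, best_ood


# Hypothetical results: the best ID model is not the best OOD model.
id_mae = {"rf": 0.50, "gnn": 0.40, "transformer": 0.45}
ood_mae = {"rf": 1.20, "gnn": 1.50, "transformer": 1.10}
r, best_id, best_ood = id_ood_agreement(id_mae, ood_mae)
```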

Q4: How does experimental error in training data impact model reliability for OOD prediction?

Experimental uncertainty fundamentally limits predictive performance. For solubility prediction, reported experimental errors range from 0.17 to 0.6 log units. At an error of 0.6 log units, the maximum achievable correlation is approximately Pearson's r = 0.77 [16]. This ceiling propagates through model development, and no increase in architectural complexity can raise it.
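The classical attenuation argument shows why measurement noise caps correlation. Suppose measured = true + independent noise. Then even a perfect predictor of the true values reaches at most σ_true / sqrt(σ_true² + σ_noise²). The sketch below assumes this error model. The ceiling depends on the spread of true values in the dataset, so the sketch is not meant to reproduce the 0.77 figure from [16].

```python
import math


def max_pearson_r(true_sd, noise_sd):
    # Assumed error model: measurement = true value + independent noise with sd noise_sd.
    # A perfect predictor of the true values then correlates with the measurements at
    # true_sd / sqrt(true_sd**2 + noise_sd**2), which is the ceiling for any model.
    return true_sd / math.sqrt(true_sd ** 2 + noise_sd ** 2)
```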

Troubleshooting OOD Failure Modes

Problem: Poor extrapolation to high-value property ranges during virtual screening

Explanation: Models fail to identify molecules with property values beyond the training distribution, which is crucial for discovering high-performance materials or drug candidates [1].

Solution: Implement transductive learning approaches like Bilinear Transduction that reparameterize the prediction problem to focus on how property values change as a function of material differences rather than predicting absolute values from new materials [1].

Experimental Protocol: Bilinear Transduction for OOD Property Prediction

- Representation: Encode molecular structures as stoichiometry-based representations or molecular graphs

- Training: Learn to predict property values based on known training examples and differences in representation space

- Inference: For new candidates, predict properties based on chosen training examples and their differences from target samples

- Validation: Use kernel density estimation to quantify alignment between predicted and ground truth OOD distributions [1]

Performance: This approach improves extrapolative precision by 1.8× for materials and 1.5× for molecules, boosting recall of high-performing candidates by up to 3× [1]

OOD Failure Diagnosis and Solution Workflow

Problem: Model overconfidence on novel molecular scaffolds

Explanation: Despite the common belief that scaffold splitting poses a major OOD challenge, recent evidence shows that both classical ML and GNN models often generalize reasonably well to novel scaffolds [14]. The more significant failure occurs with cluster-based splits that isolate chemically distinct populations.

Solution:

- Implement more challenging evaluation using chemical similarity clustering (UMAP/K-means with ECFP4 fingerprints)

- Focus development on improving performance for these most challenging OOD scenarios [14]

Problem: Toxicity prediction failures in preclinical development

Explanation: Approximately 56% of drug candidates fail due to safety problems, often detected too late in preclinical animal studies. This represents a critical OOD generalization failure where models cannot predict adverse effects for novel compound classes [17].

Solution: Implement integrative AI platforms like SAFEPATH that combine cheminformatics and bioinformatics:

- Cheminformatics: Machine learning models predicting proteome-wide binding at different concentrations and species

- Bioinformatics: Causal knowledge graphs mapping pathways using diverse omics databases [18]

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Resource | Function | Application Context |
|---|---|---|
| BOOM Benchmark | Standardized framework for evaluating OOD generalization | Benchmarking model performance across 10+ molecular properties and 140+ model-task combinations [10] |
| Bilinear Transduction | Transductive learning algorithm for OOD prediction | Improving extrapolation to high-value property ranges in materials and molecules [1] |
| Kernel Density Estimation | Non-parametric method for estimating probability densities | Identifying tail-end samples for OOD test set creation [10] |
| SAFEPATH | Integrative AI platform combining cheminformatics and bioinformatics | Predicting toxicity mechanisms and redesigning failed drug candidates [18] |
| Therapeutic Data Commons | Curated benchmark resources for molecular machine learning | Accessing standardized ADMET and bioactivity prediction datasets [14] |
| Cluster-Based Splitting | Method using chemical similarity to create challenging OOD tests | Realistic model evaluation using UMAP/K-means + ECFP4 fingerprints [14] |

Molecular Property Prediction Model Development Workflow

Welcome to the OOD Generalization Technical Support Center

This resource is designed for researchers and scientists tackling the challenge of out-of-distribution (OOD) generalization in molecular property prediction. Here you will find troubleshooting guides and FAQs to help you diagnose and address the common issue where model performance significantly degrades on novel chemical data.

Troubleshooting Guide: Diagnosing OOD Generalization Failures

Problem: My molecular property prediction model shows a significant performance drop (e.g., a 3x increase in error) when evaluating on out-of-distribution compounds.

| Troubleshooting Step | Key Questions to Ask | Common Symptoms & Solutions |
|---|---|---|
| 1. Diagnose the Data Split | Was OOD defined by input (chemical structure) or output (property value)? How was the OOD test set constructed? [10] | Symptom: Unclear OOD criteria lead to contaminated evaluation. Solution: Adopt a rigorous splitting method, such as using a Kernel Density Estimator on the target property values to assign the lowest 10% probability samples to the OOD test set [10]. |
| 2. Analyze Model Architecture | Is the model architecture appropriate for the complexity of the property? Does it have sufficient inductive bias? [10] | Symptom: High error on OOD tasks involving simple, specific properties. Solution: For such tasks, use deep learning models with high inductive bias. Graph Neural Networks (GNNs) like Chemprop can be a good starting point [10]. |
| 3. Check Pre-training & Foundation Models | Was the model pre-trained? On what data? Does it use in-context learning? [10] | Symptom: Current chemical foundation models do not show strong OOD extrapolation despite in-context learning capabilities [10]. Solution: Do not rely solely on foundation models for OOD tasks without extensive benchmarking on your specific property. |
| 4. Review Hyperparameter Optimization | Was hyperparameter optimization (HPO) performed with an OOD validation set? [10] | Symptom: Model is overfitted to the in-distribution data due to HPO that only maximizes ID performance. Solution: Perform extensive ablation studies and HPO with a separate OOD validation split to guide the model selection towards better generalization [10]. |
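ID-driven and OOD-driven model selection diverge as soon as the two validation errors disagree. In this sketch, the configuration names and MAE values are hypothetical. The selection rule simply picks the minimum validation MAE on the chosen split.

```python
def select_config(results, criterion="ood"):
    # results: {config_name: {"id_val_mae": float, "ood_val_mae": float}}
    # Pick the configuration with the lowest validation MAE on the chosen split.
    key = "ood_val_mae" if criterion == "ood" else "id_val_mae"
    return min(results, key=lambda name: results[name][key])


# Hypothetical HPO results: the deeper model wins on ID data but loses on OOD data.
results = {
    "deep": {"id_val_mae": 0.30, "ood_val_mae": 1.40},
    "shallow": {"id_val_mae": 0.35, "ood_val_mae": 1.05},
}
```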

Frequently Asked Questions (FAQs)

Q1: Our team's benchmark shows that even the top-performing model has an average OOD error that is 3x larger than its in-distribution error. Is this normal? Yes, unfortunately, this is a common and significant finding in current research. A large-scale benchmarking study (BOOM) that evaluated over 140 model-and-task combinations found that no existing model achieved strong OOD generalization across all molecular property prediction tasks. The top-performing model in that study still exhibited this 3x average error increase on OOD data, highlighting that OOD generalization remains a major, unsolved frontier challenge in the field [7] [10].

Q2: What is the fundamental difference between an "OOD split" and a standard random "test split"? The key difference lies in how the test data relates to the training data.

- A standard random test split assumes that the test data is drawn from the same distribution as the training data (in-distribution). It evaluates a model's ability to interpolate or handle seen variations.

- An OOD split is deliberately constructed so that the test data is fundamentally different from the training data. In the context of molecular property prediction, a robust method is to define OOD with respect to the target property values, placing molecules with property values on the tail ends of the overall distribution into the OOD test set. This evaluates a model's ability to extrapolate, which is essential for genuine molecular discovery [10].

Q3: We are using a large, pre-trained chemical foundation model. Why is it still failing on our specific OOD task? While chemical foundation models with transfer and in-context learning are promising for data-limited scenarios, current evidence suggests they have not yet solved the OOD extrapolation problem. The BOOM benchmark found that present-day foundation models do not demonstrate strong OOD generalization capabilities across the board [10]. Their performance can be influenced by factors like the diversity of the pre-training data, the specific pre-training tasks, and the architectural alignment with the target property.

Q4: How can we systematically evaluate and improve our model's OOD performance? A robust methodology involves several key steps, many of which are formalized in the BOOM benchmark [10]:

- Standardized OOD Splitting: Implement a consistent, property-based OOD splitting method (e.g., KDE on targets) for your dataset.

- Architecture Auditing: Benchmark a variety of models, from traditional GNNs to modern transformers, as their OOD performance can vary significantly across different chemical tasks [10].

- Ablation Studies: Systematically analyze how OOD performance is impacted by components like pre-training data, hyperparameters, and molecular representations.

- Focus on OOD during HPO: Use an OOD validation set, not just an ID validation set, to guide hyperparameter optimization and model selection.

Experimental Protocol: Establishing a Benchmark for OOD Performance

This protocol outlines the methodology for creating standardized OOD benchmarks, as used in the BOOM study, to ensure consistent and comparable evaluation of molecular property prediction models [10].

Objective: To generate training, in-distribution (ID) test, and out-of-distribution (OOD) test splits for molecular property datasets that rigorously test a model's extrapolation capabilities.

Materials:

- Datasets: Standard molecular property datasets such as QM9 (containing ~133k small molecules with CHONF atoms) or the 10k Dataset (with ~10k experimentally synthesized CHON molecules) [10].

- Software: A computational environment with Python and scientific libraries (e.g., scikit-learn) for density estimation.

Methodology:

- Data Preprocessing: Load the dataset and extract the molecular structures (e.g., SMILES strings) and their associated numerical property values for the target property (e.g., HOMO-LUMO gap, polarizability).

- OOD Splitting via Kernel Density Estimation (KDE): a. Fit a kernel density estimator (with a Gaussian kernel) to the distribution of the target property values for the entire dataset. b. Use the fitted KDE to calculate the probability (density) of each molecule's property value. c. Rank all molecules based on their calculated probability, from lowest to highest.

- Splitting: a. OOD Test Set: Assign the molecules with the lowest probabilities (e.g., the lowest 10% for QM9, or the lowest 1000 molecules for the 10k dataset) to the OOD test split. These represent the "tail ends" of the property distribution. b. ID Test Set: From the remaining pool of molecules (those not in the OOD set), randomly select a subset (e.g., 10% for QM9, 5% for 10k) for the ID test set. c. Training Set: The remaining molecules are used for model training and fine-tuning.

This workflow creates a clear separation where the OOD test set contains molecules with property values that are least likely under the training distribution, directly testing extrapolation.
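A minimal NumPy sketch of the KDE split is shown below. It is not the BOOM code: the bandwidth rule (a normal-reference rule of thumb), the helper names `gaussian_kde_1d` and `kde_ood_split`, and the choice to express the ID test fraction relative to the full dataset are all assumptions. scikit-learn's `KernelDensity` or SciPy's `gaussian_kde` could replace the hand-rolled estimator.

```python
import numpy as np


def gaussian_kde_1d(values, bandwidth=None, chunk=256):
    """Evaluate a 1-D Gaussian KDE fitted on `values` at each of those values."""
    x = np.asarray(values, dtype=float)
    n = x.size
    if bandwidth is None:
        # Normal-reference rule of thumb (an assumption; BOOM's bandwidth may differ)
        bandwidth = 1.06 * x.std(ddof=1) * n ** (-1 / 5)
    norm = n * bandwidth * np.sqrt(2 * np.pi)
    density = np.empty(n)
    # Process query points in chunks so memory stays O(chunk * n), not O(n^2)
    for start in range(0, n, chunk):
        z = (x[start:start + chunk, None] - x[None, :]) / bandwidth
        density[start:start + chunk] = np.exp(-0.5 * z ** 2).sum(axis=1) / norm
    return density


def kde_ood_split(y, n_ood, id_test_frac, seed=0):
    """Return (train_idx, id_test_idx, ood_idx) following the KDE protocol (sketch)."""
    y = np.asarray(y, dtype=float)
    density = gaussian_kde_1d(y)
    order = np.argsort(density, kind="stable")  # lowest density (tails) first
    ood_idx = order[:n_ood]
    # Shuffle the remaining pool, then carve out the random ID test set
    rest = np.random.default_rng(seed).permutation(order[n_ood:])
    n_id = int(round(id_test_frac * y.size))  # assumed: fraction of the full dataset
    return rest[n_id:], rest[:n_id], ood_idx
```

For a QM9-style setting, `n_ood` would be about 10% of the dataset; for the 10k dataset, `n_ood=1000` with `id_test_frac=0.05`.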

The Scientist's Toolkit: Research Reagent Solutions for OOD Benchmarking

This table details the key "research reagents"—in this context, model architectures and data representations—essential for conducting a thorough investigation into OOD generalization.

| Item / Solution | Function / Description | Key Considerations for OOD |
|---|---|---|
| Random Forest (RDKit) [10] | A baseline model using chemically-informed molecular descriptors as input to a Random Forest regressor. | Serves as a crucial performance baseline. Its performance can help gauge the complexity of the OOD task. |
| Graph Neural Networks (GNNs) [10] | Models (e.g., Chemprop, TGNN) that operate directly on the graph structure of a molecule, encoding atoms and bonds. | Offer high inductive bias; can perform well on OOD tasks with simple, specific properties. Permutation-invariant [10]. |
| Equivariant GNNs (e.g., EGNN, MACE) [10] | Advanced GNNs that incorporate 3D molecular geometry (atom positions, distances) and are equivariant to rotations/translations. | Provide E(3)-equivariance. May capture finer geometric determinants of properties, potentially aiding OOD generalization [10]. |
| Transformer Models (e.g., ChemBERTa, MolFormer) [10] | Large models pre-trained on vast chemical corpora (e.g., PubChem) using SMILES string representations of molecules. | Offer transfer learning. Current evidence shows they do not consistently solve OOD extrapolation, making them important to benchmark [10]. |
| Kernel Density Estimation (KDE) Splitting [10] | A statistical method for creating rigorous OOD test splits based on the tail-ends of the property value distribution. | Critical for producing a reliable benchmark. Avoids ad-hoc splitting methods that can lead to contaminated or non-representative OOD evaluations. |

Model Selection Framework for OOD Tasks

Selecting the right model for an OOD task is non-trivial. The following diagram outlines a decision framework based on current research findings to guide researchers. No single model is best for all scenarios; the choice depends on the property complexity and available data [10].

Frequently Asked Questions (FAQs)

1. What are "activity cliffs" and why are they a problem for molecular property prediction?

Activity cliffs (ACs) occur when structurally similar molecules exhibit significantly different biological activities [19] [20] [21]. This creates sharp discontinuities in the structure-activity relationship (SAR) landscape that are difficult for machine learning (ML) models, particularly Graph Neural Networks (GNNs), to capture accurately [20]. When the latent space of a model is primarily optimized for structural similarity, it tends to place these structurally similar molecules close together, leading to poor predictions when their activities are vastly different [20] [22].

2. How do dataset biases impact the real-world performance of my models?

Dataset biases can severely limit a model's ability to generalize, especially to out-of-distribution (OOD) data. The BOOM benchmark study found that even top-performing models exhibited an average OOD error 3x larger than their in-distribution error [7]. Common biases include:

- Representation Bias: Training and test sets may not share the same distribution of chemical scaffolds or property values [1].

- Size Bias: Performance on activity cliff molecules is highly dataset-dependent, particularly in low-data scenarios [22].

- Splitting Bias: Random splits can produce artificially optimistic performance estimates by allowing information leakage between train and test sets [1] [22].

3. What is "structural entanglement" and how does it relate to activity cliffs?

Structural entanglement refers to the phenomenon where a model's latent space confounds structural similarity with activity similarity. In standard GNNs, the close embedding of structurally similar molecules makes it difficult to resolve cases where small structural changes lead to large activity differences—the very definition of activity cliffs [20]. This entanglement results in latent spaces that are not optimally organized for activity prediction tasks [19] [20].

4. Are some ML models better at handling activity cliffs than others?

According to comprehensive benchmarking, classical machine learning methods with engineered molecular descriptors often outperform more complex deep learning approaches on activity cliff prediction [22]. Graph-based models have shown particular difficulty with these challenging cases [22]. However, newer approaches specifically designed to address activity cliffs, such as AC-informed contrastive learning (ACANet), have demonstrated significant improvements in capturing these difficult relationships [19] [20].

5. How can I properly benchmark my model's performance on activity cliffs?

Specialized tools like MoleculeACE (Activity Cliff Estimation) have been developed specifically for this purpose [22]. This Python tool allows you to:

- Calculate standard performance metrics (e.g., RMSE) on your entire test set

- Specifically evaluate performance on activity cliff molecules (RMSE_cliff)

- Use predefined or custom definitions of activity cliffs

Proper benchmarking should enforce similar proportions of activity cliff compounds in both training and test sets through stratified splitting strategies [22].
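To make the RMSE versus RMSE_cliff comparison concrete, here is a minimal sketch that flags cliff compounds from a precomputed similarity matrix. It is not MoleculeACE's API; the function names are hypothetical, and the thresholds (similarity of at least 0.9 and a potency gap above 1 log unit, i.e. more than 10-fold) are assumptions to be checked against the cliff definition you actually use.

```python
import numpy as np


def cliff_mask(similarity, y, sim_threshold=0.9, activity_threshold=1.0):
    """Flag compounds that form at least one activity-cliff pair (hypothetical helper).

    similarity: (n, n) precomputed structural similarity in [0, 1]
    y: activities on a log scale (e.g. pKi), so 1.0 means a 10-fold difference
    """
    sim = np.asarray(similarity, dtype=float)
    y = np.asarray(y, dtype=float)
    gap = np.abs(y[:, None] - y[None, :])
    pairs = (sim >= sim_threshold) & (gap > activity_threshold)
    np.fill_diagonal(pairs, False)  # a compound is never its own cliff partner
    return pairs.any(axis=1)


def rmse(y_true, y_pred):
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


def cliff_report(y_true, y_pred, mask):
    """Overall RMSE, RMSE restricted to cliff compounds, and the gap between them."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    overall = rmse(y_true, y_pred)
    on_cliffs = rmse(y_true[mask], y_pred[mask]) if mask.any() else float("nan")
    return {"rmse": overall, "rmse_cliff": on_cliffs, "gap": on_cliffs - overall}
```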

Troubleshooting Guides

Issue: Poor Performance on Out-of-Distribution (OOD) Data

Symptoms:

- Model performs well on validation data but poorly on new chemical series or property ranges

- Inability to identify high-performing candidates outside training distribution

- Predictions fail to extrapolate to higher property value ranges than seen in training

Diagnosis and Solutions:

Table: Methods for Improving OOD Generalization

| Method | Key Principle | Best For | Reported Improvement |
|---|---|---|---|
| Bilinear Transduction [1] | Reparameterizes prediction based on material differences rather than absolute values | Extrapolating to higher property value ranges | 1.5× better extrapolative precision for molecules; up to 3× boost in recall of high-performing OOD candidates [1] |
| AC-informed Contrastive Learning (ACANet) [19] [20] | Introduces activity cliff awareness through triplet loss in latent space | Datasets with prevalent activity cliffs; bioactivity prediction | 7.16% average improvement on LSSNS datasets; 6.59% on HSSMS datasets [20] |
| Transductive Learning Approaches [1] | Leverages analogical input-target relations in training and test sets | Virtual screening of large candidate databases | Improves extrapolative precision by 1.8× for materials, 1.5× for molecules [1] |

Step-by-Step Protocol: Implementing AC-Informed Contrastive Learning

Triplet Mining: For each batch during training, identify high-value activity cliff triplets (HV-ACTs) consisting of:

- Anchor (A): A reference compound

- Positive (P): Structurally similar to A with similar activity

- Negative (N): Structurally similar to A but with significantly different activity

Parameter Setup: Define cliff cut-off parameters:

- Cliff lower (cl): Minimum activity difference for meaningful cliffs

- Cliff upper (cu): Maximum activity difference to focus on

Loss Calculation: Compute the combined ACA loss function:

- Standard regression loss (MAE or MSE)

- Triplet Soft Margin (TSM) loss with unique margins calculated from ground truth labels

- Balance with tunable hyperparameter α:

L_ACA = L_regression + α * L_TSM

Training Monitoring: Track the number of mined HV-ACTs throughout training; successful AC-awareness should gradually reduce this number as the latent space becomes better organized [20].
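The four steps above can be sketched in NumPy as follows. This is an illustration, not the ACANet implementation: the function names are hypothetical, and the mining rule (positives with an activity gap below `cl`, negatives with a gap between `cl` and `cu`, both above a structural-similarity threshold), the label-derived margin, and the softplus form of the soft-margin triplet term are all assumptions. In practice the embeddings would come from a GNN and the loss would be computed in an autodiff framework.

```python
import numpy as np


def mine_triplets(y, emb, sim, cl, cu, sim_threshold=0.9):
    """Hypothetical HV-ACT miner: returns (anchor, positive, negative) index triplets."""
    y, emb, sim = (np.asarray(v, dtype=float) for v in (y, emb, sim))
    n = len(y)
    dist = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=-1)
    triplets = []
    for a in range(n):
        similar = (sim[a] >= sim_threshold) & (np.arange(n) != a)
        gap = np.abs(y - y[a])
        positives = np.where(similar & (gap < cl))[0]  # similar structure, similar activity
        negatives = np.where(similar & (gap >= cl) & (gap <= cu))[0]  # similar structure, cliff
        for p in positives:
            for q in negatives:
                margin = gap[q] - gap[p]  # assumed label-derived margin
                # Keep only "high-value" triplets that still violate the margin
                if dist[a, p] - dist[a, q] + margin > 0:
                    triplets.append((a, int(p), int(q)))
    return triplets


def aca_loss(pred, target, emb, triplets, alpha=1.0):
    """L_ACA = L_regression (MAE here) + alpha * soft-margin triplet term (sketch)."""
    pred, target, emb = (np.asarray(v, dtype=float) for v in (pred, target, emb))
    l_reg = float(np.mean(np.abs(pred - target)))
    if not triplets:
        return l_reg
    a, p, q = np.array(triplets).T
    d_ap = np.linalg.norm(emb[a] - emb[p], axis=1)
    d_an = np.linalg.norm(emb[a] - emb[q], axis=1)
    margin = np.abs(target[a] - target[q]) - np.abs(target[a] - target[p])
    l_tsm = float(np.mean(np.logaddexp(0.0, d_ap - d_an + margin)))  # softplus
    return l_reg + alpha * l_tsm
```

Logging `len(mine_triplets(...))` once per epoch gives the HV-ACT count used for training monitoring.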

Issue: Model Fails to Distinguish Structurally Similar Compounds with Different Activities

Symptoms:

- High error rates on matched molecular pairs (MMPs) with large activity differences

- Latent space clusters compounds primarily by structure rather than activity

- Poor performance in lead optimization where small structural changes matter

Diagnosis and Solutions:

Experimental Protocol: Evaluating Activity Cliff Sensitivity

Data Preparation:

Model Assessment:

- Calculate overall RMSE on the entire test set

- Compute RMSE_cliff specifically on activity cliff molecules

- Compare the performance gap between these metrics

Benchmarking:

- Compare against traditional ECFP methods, which show natural advantages for MMP-cliff prediction [23]

- Evaluate across multiple datasets to account for dataset-specific biases

Table: Performance Comparison on Activity Cliff Prediction

| Model Type | Overall RMSE | RMSE on Activity Cliffs | Performance Gap |
|---|---|---|---|
| Traditional ECFP [23] [22] | Competitive | Lower than deep learning methods | Smaller gap |
| Graph Neural Networks [20] [22] | Variable | Higher error rates | Larger gap, especially without AC-awareness |
| AC-informed GNNs (ACANet) [20] | Improved | Significantly better than AC-agnostic models | Reduced gap by better latent space organization |

Experimental Protocols & Workflows

ACANet Experimental Workflow

ACANet Workflow for Molecular Property Prediction

OOD Property Prediction Using Bilinear Transduction

OOD Prediction via Bilinear Transduction

Research Reagent Solutions

Table: Essential Tools and Resources for Molecular Property Prediction Research

| Resource | Type | Primary Function | Access |
|---|---|---|---|
| ACNet [23] | Dataset | Large-scale benchmark for activity cliff prediction with 400K+ MMPs across 190 targets | GitHub |
| MoleculeACE [22] | Python Tool | Benchmarking model performance on activity cliffs with customizable definitions | GitHub |
| MatEx [1] | Algorithm | Open-source implementation for OOD property prediction using bilinear transduction | GitHub |
| ACANet [19] [20] | Framework | AC-informed contrastive learning compatible with any GNN architecture | Code available with publication |
| BOOM Benchmark [7] | Evaluation Framework | Systematic benchmarking of OOD molecular property prediction across 140+ model/task combinations | GitHub |

Advanced Architectures and Techniques for Improved OOD Generalization

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is the core conceptual difference between Bilinear Transduction and traditional regression models for property prediction?

A1: Traditional regression models learn to predict a property value directly from a new material's representation. In contrast, Bilinear Transduction reparameterizes the problem. It does not predict property values for new candidates directly. Instead, it learns how property values change as a function of the difference in representation space between a known training example and the new sample. Predictions are made based on a chosen training example and the representation-space difference between it and the new sample [1] [24].
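A minimal sketch of this reparameterization, assuming fixed vector representations: the property difference Δy is modeled as bilinear in the representation difference Δx and the anchor's representation (plus a bias feature), fitted by least squares on random training pairs, and each prediction averages `y_anchor + Δy` over several anchors. This toy linear-feature version is written for illustration and is not the MatEx implementation; all names are hypothetical.

```python
import numpy as np


def _pair_features(delta, anchor):
    """Flattened outer product, so a linear fit on it is bilinear in (delta, anchor)."""
    anchor = np.hstack([anchor, np.ones((anchor.shape[0], 1))])  # bias lets W use delta alone
    return np.einsum("ni,nj->nij", delta, anchor).reshape(len(delta), -1)


def fit_bilinear(X, y, n_pairs=2000, seed=0):
    """Fit dy ~ delta^T W [anchor, 1] on random (sample, anchor) pairs from training data."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    rng = np.random.default_rng(seed)
    i = rng.integers(0, len(X), n_pairs)  # sample playing the role of the "new" material
    j = rng.integers(0, len(X), n_pairs)  # anchor / reference sample
    F = _pair_features(X[i] - X[j], X[j])
    w, *_ = np.linalg.lstsq(F, y[i] - y[j], rcond=None)
    return w


def predict_bilinear(w, X_train, y_train, X_new, n_anchors=10, seed=0):
    """Average y_anchor + predicted dy over several randomly chosen training anchors."""
    X_train, y_train = np.asarray(X_train, dtype=float), np.asarray(y_train, dtype=float)
    X_new = np.asarray(X_new, dtype=float)
    rng = np.random.default_rng(seed)
    preds = np.zeros(len(X_new))
    for _ in range(n_anchors):
        j = rng.integers(0, len(X_train), len(X_new))
        preds += y_train[j] + _pair_features(X_new - X_train[j], X_train[j]) @ w
    return preds / n_anchors
```

Because a linear toy target would also be extrapolated by ordinary linear regression, a check on such data only confirms the mechanics of pairing, fitting, and anchoring; the gains reported for this approach come from real datasets and learned representations.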

Q2: My model performs well on in-distribution (ID) data but fails on out-of-distribution (OOD) samples. Is this normal?

A2: Yes, this is a common and documented challenge. Classical machine learning models face significant difficulties in extrapolating property predictions through regression. A comprehensive benchmark study (BOOM) found that even top-performing models exhibited an average OOD error 3x larger than their in-distribution error. This highlights that strong ID performance does not guarantee OOD generalization [7].

Q3: What are the practical benefits of improved OOD extrapolation for drug development?

A3: Enhancing extrapolative capabilities improves the precision of screening large candidate databases. This identifies more promising compounds and molecules with exceptional properties, which can guide synthesis and computational efforts. In practice, this translates to reduced time and resource expenditure on low-potential candidates, thereby accelerating the discovery of viable materials and molecules [1] [24] [25].

Q4: How is "extrapolation" defined in the context of this method?

A4: In materials science, extrapolation can refer to the domain (materials space) or the range (property values) of the predictive function. Bilinear Transduction specifically addresses extrapolation in the output material property values, aiming to predict values that fall outside the range observed in the training data [1] [24].

Q5: I am getting inconsistent results when applying the method to different datasets. What could be the cause?

A5: The performance of extrapolation methods can be sensitive to the dataset's characteristics. The BOOM benchmark found that no single model achieves strong OOD generalization across all molecular property prediction tasks. Performance can be influenced by factors like the specific property being predicted, dataset size, and the chemical diversity of the molecules. It is recommended to benchmark the method on your specific task and dataset [7].

Performance Data and Experimental Protocols

Quantitative Performance of Bilinear Transduction

Table 1: Mean Absolute Error (MAE) for OOD Predictions on Solid-State Materials [24]

| Dataset | Property | Ridge Regression | MODNet | CrabNet | Bilinear Transduction |
|---|---|---|---|---|---|
| AFLOW | Bulk Modulus (GPa) | 74.0 ± 3.8 | 93.06 ± 3.7 | 59.25 ± 3.2 | 47.4 ± 3.4 |
| AFLOW | Debye Temperature (K) | 0.45 ± 0.03 | 0.62 ± 0.03 | 0.38 ± 0.02 | 0.31 ± 0.02 |
| AFLOW | Shear Modulus (GPa) | 0.69 ± 0.03 | 0.78 ± 0.04 | 0.55 ± 0.02 | 0.42 ± 0.02 |
| Matbench | Band Gap (eV) | 6.37 ± 0.28 | 3.26 ± 0.13 | 2.70 ± 0.13 | 2.54 ± 0.16 |
| Matbench | Yield Strength (MPa) | 972 ± 34 | 731 ± 82 | 740 ± 49 | 591 ± 62 |
| MP | Bulk Modulus (GPa) | 151 ± 14 | 60.1 ± 3.9 | 57.8 ± 4.2 | 45.8 ± 3.9 |

Table 2: Extrapolative Precision for Identifying Top 30% of OOD Candidates [1]

Bilinear Transduction demonstrated a significant boost in the recall of high-performing OOD candidates—up to 3x for materials and 2.5x for molecules—compared to non-transductive baselines. It also improved extrapolative precision by 1.8x for materials and 1.5x for molecules [1] [24].

Detailed Experimental Protocol

Objective: Train and evaluate the Bilinear Transduction model for zero-shot extrapolation to higher property value ranges than present in the training data.

Materials and Datasets:

- Solids Data: Use established benchmarks such as AFLOW, Matbench, and the Materials Project (MP). These cover various property classes (electronic, mechanical, thermal) and provide material compositions and property values [1] [24].

- Molecules Data: Use datasets from MoleculeNet (e.g., ESOL, FreeSolv, Lipophilicity, BACE) which provide molecular graphs (as SMILES strings) and associated property values [1].

Baseline Models:

- For solids, compare against Ridge Regression, MODNet, and CrabNet [24].

- For molecules, compare against Random Forest (RF) and Multi-Layer Perceptron (MLP) using RDKit descriptors [1].

Methodology:

- Data Splitting: Partition the data into training, in-distribution (ID) validation, and OOD test sets. The held-out set should consist of an ID validation set and an OOD test set of equal size. The OOD test set contains property values outside the range of the training data [1].

- Model Training:

- Inference:

- For a new test sample, select a reference sample from the training set.

- Compute the difference in the representation space between the test sample and the reference sample (ΔX).

- Use the trained model to predict the property difference (Δy).

- The final property prediction for the test sample is y_reference + Δy.

- Evaluation:

- Primary Metric: Calculate Mean Absolute Error (MAE) on the OOD test set [24].

- Extrapolative Precision: Measure the ability to identify the top 30% of OOD candidates. This is computed as the ratio of correctly predicted top OOD candidates to the total number of predicted top candidates [1].

- Recall: Evaluate the improvement in recalling high-performing OOD candidates.
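One way to compute these two metrics is sketched below. The exact definitions in [1] may differ; here it is assumed that a true top candidate has a true value above the top-30% cutoff of `y_true`, and that a predicted top candidate has a predicted value above that same cutoff. The function name is hypothetical.

```python
import numpy as np


def extrapolative_precision_recall(y_true, y_pred, top_frac=0.3):
    """Precision and recall for flagging top candidates among OOD test samples (sketch)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    cutoff = np.quantile(y_true, 1.0 - top_frac)  # value separating the true top fraction
    true_top = y_true > cutoff
    pred_top = y_pred > cutoff
    hits = np.sum(true_top & pred_top)
    # Precision: correctly predicted top candidates / all predicted top candidates
    precision = hits / pred_top.sum() if pred_top.any() else 0.0
    # Recall: correctly predicted top candidates / all true top candidates
    recall = hits / true_top.sum() if true_top.any() else 0.0
    return float(precision), float(recall)
```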

The Scientist's Toolkit

Table 3: Key Research Reagents and Computational Tools

| Item Name | Type | Function / Description | Key Feature |
|---|---|---|---|
| AFLOW Database | Data | Provides material compositions & properties from high-throughput calculations [1]. | Standardized benchmark for solid-state materials. |
| Matbench | Data | An automated leaderboard for benchmarking ML algorithms on solid material properties [1]. | Contains diverse composition-based regression tasks. |
| Materials Project (MP) | Data | A database of computed materials properties and crystal structures [1]. | Includes formation energy, band structure, and elastic properties. |
| MoleculeNet | Data | A benchmark collection for molecular property prediction [1]. | Covers multiple properties like solubility and binding affinity. |
| RDKit | Software | Open-source cheminformatics toolkit [1]. | Generates molecular descriptors from SMILES strings. |
| MatEx | Software | Open-source implementation of the Materials Extrapolation method [1]. | Available on GitHub for reproducibility. |

Workflow and Conceptual Diagrams

Bilinear Transduction Workflow

OOD Generalization Challenge

Contents

- FAQ: Core Concepts

- Troubleshooting Common Experimental Issues

- Experimental Protocols & Benchmarking

- Workflow & Model Architecture Diagrams

- Research Reagent Solutions

FAQ: Core Concepts

Q1: What is the fundamental difference between E(3)-equivariance and invariance in the context of GNNs?

E(3)-equivariance is a property where the model's internal representations and outputs transform predictably (covariantly) under rotations, translations, and inversions in 3D Euclidean space. For example, if the input molecular structure is rotated, the predicted Hamiltonian matrix transforms according to the Wigner D-matrix [26]. In contrast, E(3)-invariance means the model's outputs are unchanged by these transformations. Invariance is typically desired for scalar properties like energy, while equivariance is crucial for modeling directional quantities like forces or Hamiltonian operators [26] [27].

Q2: Why are equivariant GNNs particularly important for molecular property prediction?

Equivariant GNNs inherently respect the physical symmetries of molecular systems. This means they can learn more effectively from limited data, generalize better to unseen configurations, and produce more physically plausible predictions. By explicitly building in knowledge of geometric transformations, these models avoid having to learn these symmetries from data, leading to improved data efficiency and robustness, which is critical for accurate quantum mechanical calculations like predicting DFT Hamiltonians [26].

Q3: What is Out-of-Distribution (OOD) generalization, and why is it a challenge in molecular research?

OOD generalization refers to a model's ability to make accurate predictions on data that falls outside the distribution of its training set. In molecular property prediction, this is crucial for discovering new materials and molecules with exceptional, previously unobserved properties [1]. Models often struggle with OOD data because they can learn spurious correlations present in the training data that do not hold more broadly. This is a significant challenge as the ultimate goal of computational research is often to venture beyond known chemical space [1] [7].

Q4: How can I assess my model's OOD generalization capability?

A robust method is to use systematic benchmarks like BOOM (Benchmarking Out-Of-distribution Molecular property predictions), which evaluates models on property-based OOD tasks [7]. Key performance metrics to monitor include:

- OOD Mean Absolute Error (MAE): Compare this to the in-distribution MAE; a large gap indicates poor OOD generalization.

- Extrapolative Precision: The fraction of the model's predicted top-performing OOD candidates that are truly top performers [1].

- Kernel Density Estimation (KDE) Overlap: Measures how well the predicted distribution of OOD targets aligns with the ground truth distribution [1].
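The KDE overlap can be computed, for example, as the overlap coefficient between the two estimated densities, the integral of min(p_true, p_pred), which is 1 for identical distributions and 0 for disjoint ones. Whether [1] uses exactly this definition is an assumption; the bandwidth rule and the function names below are illustrative.

```python
import numpy as np


def kde_on_grid(samples, grid):
    """Gaussian KDE of `samples` evaluated on `grid` (normal-reference bandwidth, assumed)."""
    x = np.asarray(samples, dtype=float)
    h = 1.06 * x.std(ddof=1) * x.size ** (-1 / 5)
    z = (grid[:, None] - x[None, :]) / h
    return np.exp(-0.5 * z ** 2).sum(axis=1) / (x.size * h * np.sqrt(2 * np.pi))


def kde_overlap(y_true, y_pred, n_grid=2048):
    """Overlap coefficient: integral of min(p_true, p_pred); 1 = identical, 0 = disjoint."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    both = np.concatenate([y_true, y_pred])
    pad = 4 * both.std()  # extend the grid so the density tails are covered
    grid = np.linspace(both.min() - pad, both.max() + pad, n_grid)
    dx = grid[1] - grid[0]
    p, q = kde_on_grid(y_true, grid), kde_on_grid(y_pred, grid)
    return float(np.minimum(p, q).sum() * dx)  # Riemann sum on a uniform grid
```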

Troubleshooting Common Experimental Issues

Issue 1: Poor Model Performance on OOD Property Values

- Symptoms: High error on data with property values outside the training range, despite good in-distribution performance.

- Investigation Steps:

- Verify the Data Split: Ensure your training and test splits are separated by property value ranges, not just randomly. The OOD test set should contain property values above the training maximum or below the training minimum [1].

- Benchmark Against Simple Models: Compare your model's OOD performance against a strong baseline like Ridge Regression, which can be surprisingly robust [1].

- Analyze Error Patterns: Plot predicted vs. true values. If predictions plateau or fail to extend into OOD ranges, the model is likely interpolating rather than extrapolating.

- Potential Solutions:

- Leverage Transductive Methods: Implement approaches like Bilinear Transduction, which reparameterizes the problem to learn how property values change as a function of material differences, rather than predicting values from new materials directly [1].

- Incorporate Physical Priors: Use models that embed geometric and physical quantities (e.g., forces, vectors) into the message passing itself, as in SEGNNs, to ground the model in physical reality [27].
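The predicted-versus-true check under Issue 1 can be backed by a simple numerical diagnostic: compare how often the true OOD targets and the model's OOD predictions fall outside the training target range. The function name and the 50% flagging threshold are arbitrary choices for this sketch, not an established test.

```python
import numpy as np


def extrapolation_check(y_train, y_ood_true, y_ood_pred):
    """Compare how often the truth vs. the model leaves the training target range."""
    lo, hi = np.min(y_train), np.max(y_train)
    true_vals = np.asarray(y_ood_true, dtype=float)
    pred_vals = np.asarray(y_ood_pred, dtype=float)
    frac_true = float(np.mean((true_vals < lo) | (true_vals > hi)))
    frac_pred = float(np.mean((pred_vals < lo) | (pred_vals > hi)))
    # Heuristic flag: predictions leave the training range far less often than the truth
    return {"frac_true_outside": frac_true, "frac_pred_outside": frac_pred,
            "likely_interpolating": frac_true > 0 and frac_pred < 0.5 * frac_true}
```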

Issue 2: Model Fails to Respect 3D Symmetries

- Symptoms: The model's predictions for a molecule's properties change inconsistently when the molecule is rotated or translated.

- Investigation Steps:

- Perform Symmetry Tests: Create a set of rotated and translated copies of a single molecule and pass them through the model. Check if scalar properties remain invariant and directional quantities transform equivariantly [26].

- Inspect the Architecture: Confirm that all layers of your network are strictly equivariant. The use of non-equivariant operations (e.g., standard MLPs on vector features) will break the overall equivariance.

- Potential Solutions:

- Adopt a Fully Equivariant Framework: Use established E(3)-equivariant architectures like the one presented in DeepH-E3, which ensures all internal features transform equivariantly under the Euclidean group, even with spin-orbit coupling [26].

- Use Steerable Features: Implement models that use steerable feature fields, which are capable of representing not just scalars and vectors, but other geometric objects that transform consistently under symmetry operations [27].
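The symmetry test under Issue 2 can be scripted as below. The sketch assumes a model that maps an (n, 3) coordinate array to a scalar energy and per-atom force vectors; `toy_energy_forces` is a hypothetical stand-in (a pairwise 1/r potential) used only to show a model that passes. It checks proper rotations and translations; reflections could be tested the same way with a matrix of determinant -1.

```python
import numpy as np


def random_rotation(rng):
    """Uniformly random 3x3 proper rotation via QR decomposition."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))  # fix column signs so the distribution is uniform
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]  # flip one axis to turn a reflection into a rotation
    return q


def toy_energy_forces(pos):
    """Toy pairwise 1/r energy (invariant) and its forces -dE/dx (equivariant)."""
    diff = pos[:, None, :] - pos[None, :, :]  # (n, n, 3), r_i - r_j
    dist = np.linalg.norm(diff, axis=-1)
    np.fill_diagonal(dist, np.inf)  # exclude self-interaction
    energy = 0.5 * np.sum(1.0 / dist)
    forces = np.sum(diff / dist[..., None] ** 3, axis=1)
    return energy, forces


def check_symmetry(model, pos, n_trials=5, seed=0, atol=1e-8):
    rng = np.random.default_rng(seed)
    e0, f0 = model(pos)
    for _ in range(n_trials):
        rot, shift = random_rotation(rng), rng.normal(size=3)
        e1, f1 = model(pos @ rot.T + shift)  # rotate each row vector, then translate
        if not np.isclose(e0, e1, atol=atol):
            return False  # scalar output is not invariant
        if not np.allclose(f0 @ rot.T, f1, atol=atol):
            return False  # vector output does not rotate with the input
    return True
```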

Issue 3: High Computational Cost and Long Training Times

- Symptoms: Training on even moderately sized molecular datasets is prohibitively slow.

- Investigation Steps:

- Profile the Code: Identify computational bottlenecks. A common culprit is the use of overly complex message-passing steps or inefficient tensor operations.

- Check Graph Connectivity: Highly connected graphs (e.g., from a large radial cutoff) increase the number of messages that need to be computed and aggregated.

- Potential Solutions:

- Implement Sampling Strategies: For large-scale graphs, use sampling methods like those in GraphSAGE (node sampling) or ClusterGCN (subgraph sampling) to reduce memory and computation load [28].

- Leverage Locality: Exploit the nearsightedness of electronic matter by using a localized atomic orbital basis and a sensible radial cutoff for defining interactions in the graph [26].
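The effect of the radial cutoff on message count, and a simple cap on neighbors per node, can be sketched as follows. The all-pairs distance computation is O(n²) and only suitable for small molecules (a k-d tree or cell list scales better), and the per-node cap is a simplified, single-layer version of GraphSAGE-style neighbor sampling; both helpers are hypothetical.

```python
import numpy as np


def radius_graph(pos, cutoff):
    """Directed edge list (2, E) connecting every pair of atoms closer than `cutoff`."""
    pos = np.asarray(pos, dtype=float)
    dist = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)
    adj = dist < cutoff
    np.fill_diagonal(adj, False)  # no self-loops
    src, dst = np.nonzero(adj)
    return np.stack([src, dst])


def sample_neighbors(edge_index, max_per_node, seed=0):
    """Keep at most `max_per_node` randomly chosen incoming edges per node (sketch)."""
    rng = np.random.default_rng(seed)
    _, dst = edge_index
    keep = []
    for node in np.unique(dst):
        incoming = np.nonzero(dst == node)[0]
        if len(incoming) > max_per_node:
            incoming = rng.choice(incoming, size=max_per_node, replace=False)
        keep.extend(incoming.tolist())
    return edge_index[:, np.sort(np.array(keep, dtype=int))]
```

Counting `radius_graph(pos, cutoff).shape[1]` for a few cutoffs shows directly how quickly the number of messages grows with the radius.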

Experimental Protocols & Benchmarking

Table 1: OOD Performance Benchmark on Solid-State Properties (MAE)

| Property (Dataset) | Ridge Regression [1] | MODNet [1] | CrabNet [1] | Bilinear Transduction [1] |
|---|---|---|---|---|
| Bulk Modulus (AFLOW) | 15.2 | 16.8 | 14.9 | 13.1 |
| Shear Modulus (AFLOW) | 11.5 | 12.1 | 10.8 | 9.7 |
| Debye Temperature (AFLOW) | 63.4 | 65.2 | 60.1 | 55.3 |
| Band Gap (Matbench) | 0.41 | 0.39 | 0.38 | 0.35 |

| Aspect | Finding | Implication for Researchers |
|---|---|---|
| Overall OOD Performance | No single model achieved strong OOD generalization across all tasks; top models had OOD error ~3x larger than in-distribution error. | OOD generalization remains an open challenge; performance claims should be verified on dedicated OOD benchmarks. |
| Inductive Bias | Models with high geometric inductive bias (e.g., equivariant GNNs) performed well on OOD tasks with simple, specific properties. | Prioritize architecturally constrained models for problems with clear physical symmetries. |
| Foundation Models | Current chemical foundation models did not show strong OOD extrapolation capabilities. | Transfer and in-context learning alone may not solve OOD problems. |
| Critical Factors | OOD performance is highly sensitive to data generation, pre-training, model architecture, and molecular representation. | Holistic experimental design is necessary; no single factor guarantees OOD success. |

Protocol: Evaluating OOD Generalization for Molecular Property Prediction

- Data Curation: Select a dataset (e.g., from MoleculeNet) and a target property. Identify the property value range of interest for discovery, which should be excluded from the training data.

- OOD Split: Partition the dataset such that the test set contains only molecules with property values outside a specified range (e.g., the top and bottom 10% of values). This ensures an output-based OOD evaluation [1] [7].

- Model Training: Train your chosen equivariant GNN (and baseline models) exclusively on the in-distribution training set.

- Zero-Shot Evaluation: Evaluate the trained models on the held-out OOD test set without any fine-tuning.

- Metrics Calculation: Calculate OOD-specific metrics:

- OOD MAE: Mean Absolute Error on the OOD test set.

- Extrapolative Precision: For the top 30% of test samples with the highest property values, calculate the fraction that are correctly identified [1].

- Recall@k: The fraction of true top-performing OOD candidates found in the model's top-k predictions.
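The split and the OOD-versus-ID error comparison can be sketched as follows, assuming the 10% tails on each side from the OOD Split example; the helper names are hypothetical.

```python
import numpy as np


def tail_split(y, tail_frac=0.10):
    """Boolean OOD mask: molecules in the bottom or top `tail_frac` of the target values."""
    y = np.asarray(y, dtype=float)
    lo, hi = np.quantile(y, [tail_frac, 1.0 - tail_frac])
    return (y < lo) | (y > hi)


def mae(y_true, y_pred):
    return float(np.mean(np.abs(np.asarray(y_true, float) - np.asarray(y_pred, float))))


def ood_id_ratio(y_id, pred_id, y_ood, pred_ood):
    """OOD MAE divided by ID MAE; values above 1 mean the model degrades off-distribution."""
    return mae(y_ood, pred_ood) / mae(y_id, pred_id)
```

Training then uses `~mask` (minus an ID test subset), and the zero-shot OOD evaluation uses `mask`.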

Workflow & Model Architecture Diagrams

E(3)-Equivariant GNN Workflow

OOD Generalization Challenge

Research Reagent Solutions

Table 3: Essential Computational Tools for E(3)-Equivariant GNN Research

| Item / "Reagent" | Function / Purpose | Key Considerations |
|---|---|---|
| Equivariant Model Architectures (e.g., SEGNN [27], DeepH-E3 [26]) | Core model frameworks that guarantee E(3)-equivariance by construction using steerable features and equivariant operations. | Choice depends on the target output (Hamiltonian, energy, forces) and the need to handle spin-orbit coupling. |
| OOD Benchmarking Suites (e.g., BOOM [7], MatEx [1]) | Standardized datasets and evaluation protocols to rigorously test model performance on out-of-distribution molecular and materials property prediction tasks. | Critical for validating real-world applicability and moving beyond optimistic in-distribution metrics. |
| Transductive Prediction Methods (e.g., Bilinear Transduction [1]) | Algorithms that reparameterize the prediction problem to improve extrapolation to OOD property values by learning from input-target relations. | Can be applied on top of existing model architectures to enhance OOD performance. |
| Message Passing with Geometric Features [27] | A method to incorporate covariant geometric information (e.g., position, force) and physical quantities directly into the message functions of a GNN. | Grounds the model in physical reality, improving data efficiency and generalization. |

Troubleshooting Guide: Frequently Asked Questions

FAQ: Why does my model perform poorly on molecules with property values outside the training range?

Answer: This is a fundamental challenge known as Out-of-Distribution (OOD) property prediction. Traditional machine learning models, including many transformer-based approaches, struggle to extrapolate to property values beyond those seen during training [1]. This occurs because models often learn to interpolate within the training distribution but fail to generalize to unseen property ranges.

Solution: Consider implementing transductive approaches like Bilinear Transduction, which reframes the prediction problem. Instead of predicting property values directly from new materials, it learns how property values change as a function of material differences [1]. This method has improved extrapolative precision by 1.5× for molecules and 1.8× for materials, and boosted recall of high-performing candidates by up to 3× [1].

Experimental Protocol for Bilinear Transduction:

- Representation: Encode molecular structures using stoichiometry-based representations or graph embeddings

- Training: Learn the function that maps differences in representation space to differences in property values

- Inference: Predict properties for new candidates based on known training examples and their representation differences

- Validation: Use scaffold-based splits to ensure OOD evaluation with unique molecular scaffolds absent from training data
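The training and inference steps above can be sketched in NumPy. This is a minimal illustration, not the MatEx implementation: it assumes fixed linear embeddings φ(v) = ψ(v) = [v, 1] instead of the learned networks used in [1], picks anchors as the k nearest training points, and all function names are hypothetical. The key idea it shows is the reparameterization: the model sees (difference to an anchor, anchor) pairs, and the bilinear score φ(Δx)ᵀ W ψ(x′) is linear in W, so least squares can fit it.

```python
import numpy as np


def pair_features(delta, anchor):
    """Flattened outer product phi(delta) x psi(anchor), with phi(v) = psi(v) = [v, 1].

    The bilinear score phi(delta)^T W psi(anchor) is linear in the entries of W,
    so W can be fitted with ordinary least squares on these features.
    """
    ones = np.ones((delta.shape[0], 1))
    phi = np.hstack([delta, ones])
    psi = np.hstack([anchor, ones])
    return np.einsum("ni,nj->nij", phi, psi).reshape(len(delta), -1)


def fit_bilinear(X, y, n_pairs=2000, seed=0):
    """Training: regress y_i from (x_i - x_j, x_j) over random training pairs."""
    rng = np.random.default_rng(seed)
    i = rng.integers(0, len(X), n_pairs)  # targets whose property we regress
    j = rng.integers(0, len(X), n_pairs)  # anchors the prediction is conditioned on
    F = pair_features(X[i] - X[j], X[j])
    w, *_ = np.linalg.lstsq(F, y[i], rcond=None)
    return w


def predict_transductive(w, X_train, X_new, k=5):
    """Inference: predict each new point from its k nearest training anchors, then average."""
    preds = []
    for x in X_new:
        nearest = np.argsort(np.linalg.norm(X_train - x, axis=1))[:k]
        anchors = X_train[nearest]
        preds.append(np.mean(pair_features(x - anchors, anchors) @ w))
    return np.array(preds)


# Toy usage: train on representations in [0, 1]^3, query far outside that box.
rng = np.random.default_rng(1)
coef = np.array([2.0, -1.0, 0.5])
X_train = rng.uniform(0.0, 1.0, size=(200, 3))
y_train = X_train @ coef + 0.3
X_ood = rng.uniform(1.5, 2.0, size=(20, 3))
w = fit_bilinear(X_train, y_train)
y_ood_pred = predict_transductive(w, X_train, X_ood)
```

On this noise-free linear toy problem an ordinary linear regressor would also extrapolate correctly; the sketch only demonstrates the mechanics of predicting from anchor-relative differences, not the accuracy gains reported in [1] on real data.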

FAQ: How can I improve inference speed for large-scale molecular property prediction?

Answer: Transformer architectures face computational bottlenecks due to their self-attention mechanism, which scales quadratically with sequence length [29]. This becomes particularly problematic with long SMILES strings in large chemical databases.

Solution: Explore alternative architectures like Structured State Space Sequence Models (SSMs), such as the Mamba-based foundation model [29]. These models combine characteristics of RNNs and CNNs to achieve linear or near-linear scaling with sequence length while maintaining competitive performance.
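Why an SSM scales linearly can be seen in a minimal NumPy sketch of a linear time-invariant state space layer with a diagonal state matrix. This is a simplifying assumption, not the Mamba block from [29]: Mamba additionally makes the SSM parameters input-dependent ("selective") and uses a hardware-aware parallel scan. Function names are hypothetical. The recurrent scan visits each token once with a fixed-size state (O(L·N) for sequence length L and state size N), whereas self-attention compares every pair of tokens (O(L²)). The second function computes the same outputs through the equivalent convolution kernel, which is the CNN-like view of the same model; it is written in quadratic form only to check the equivalence.

```python
import numpy as np


def ssm_scan(x, A, B, C):
    """RNN-like view: h_t = A*h_{t-1} + B*x_t, y_t = C.h_t, one pass over the sequence.

    x: (L,) scalar input sequence; A, B, C: (N,) with A the diagonal of the state matrix.
    """
    h = np.zeros_like(A)
    y = np.empty(len(x))
    for t, x_t in enumerate(x):
        h = A * h + B * x_t  # fixed-size state update, independent of t
        y[t] = C @ h         # linear readout
    return y


def ssm_kernel_conv(x, A, B, C):
    """CNN-like view: y_t = sum_k K[k] * x[t-k] with kernel K[k] = sum_n C_n A_n^k B_n."""
    L = len(x)
    K = np.array([np.sum(C * A**k * B) for k in range(L)])
    return np.array([np.dot(K[: t + 1][::-1], x[: t + 1]) for t in range(L)])


rng = np.random.default_rng(0)
x = rng.normal(size=64)
A = rng.uniform(0.1, 0.9, size=8)  # |A| < 1 keeps the recurrence stable
B = rng.normal(size=8)
C = rng.normal(size=8)
y_scan = ssm_scan(x, A, B, C)
y_conv = ssm_kernel_conv(x, A, B, C)
```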

Table 1: Performance and Speed Comparison of Architecture Types

| Architecture | Inference Time (HOMO prediction, 10M samples) | GPU Usage | Benchmark Performance | Best Use Cases |
|---|---|---|---|---|
| Transformer-based | 20,606.76 s | Higher | Comparable to SOTA | Standard molecular properties |
| Mamba-based (SSM) | 9,735.64 s (~53% less time, ≈2.1× faster) | Lower | State-of-the-art on 3/6 classification tasks | Long SMILES strings, high-throughput screening |
| Bilinear Transduction | Varies by implementation | Moderate | 1.5× (molecules) to 1.8× (materials) higher OOD extrapolative precision | Out-of-distribution property prediction |

FAQ: What are the current limitations of chemical foundation models for OOD generalization?

Answer: Systematic benchmarking through the BOOM (Benchmarking Out-Of-distribution Molecular property predictions) initiative reveals that even the top-performing models exhibit an average OOD error 3× larger than in-distribution performance [7]. No existing models achieve strong OOD generalization across all tasks, indicating this remains a significant frontier challenge in chemical ML development.

Critical Limitations Identified:

- Data Generation Bias: Models trained on patented chemical spaces (such as USPTO reaction datasets) can generalize poorly beyond that chemistry, which limits their usefulness in practical applications [30]

- Architecture Constraints: Transformer-based models are limited by finite context windows and inability to incorporate information outside this window [29]

- Representation Gaps: Discrepancies between molecular graph representations and SMILES-based sequential representations hinder robust learning [31]

FAQ: How can I assess my model's OOD generalization capabilities?

Answer: Implement comprehensive benchmarking protocols that explicitly test extrapolation to unseen property values and molecular scaffolds.

Experimental Protocol for OOD Benchmarking:

- Data Splitting: Use scaffold-based splits where test sets contain unique Bemis-Murcko scaffolds absent from training data [29]

- Property Range Testing: Explicitly evaluate on property values outside the training distribution range [1]

- Metrics: Track both in-distribution and OOD performance separately, with emphasis on extrapolative precision and recall of high-performing candidates

- Baseline Comparison: Compare against multiple baseline methods including Random Forests, Multi-Layer Perceptrons, and state-of-the-art models like CrabNet [1]
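The splitting and metric steps of this protocol can be sketched in plain NumPy. The function names, the 90th-percentile cutoff, and the threshold-based precision/recall definition are illustrative assumptions rather than the exact BOOM or MatEx protocols. The scaffold split groups molecules by a precomputed key; in practice that key would typically come from RDKit's `MurckoScaffold.MurckoScaffoldSmiles` (module `rdkit.Chem.Scaffolds`), which returns an empty string for acyclic molecules. Plain strings are used here so the sketch runs without RDKit.

```python
import numpy as np


def property_range_split(y, quantile=0.9):
    """Hold out the top (1 - quantile) fraction of property values as the OOD test set."""
    cutoff = np.quantile(y, quantile)
    is_test = y > cutoff
    return np.where(~is_test)[0], np.where(is_test)[0]


def group_split(groups, test_frac=0.2, seed=0):
    """Assign whole groups (e.g. Bemis-Murcko scaffold SMILES) to train or test,
    so no scaffold appears on both sides of the split."""
    rng = np.random.default_rng(seed)
    unique = np.array(sorted(set(groups)))
    rng.shuffle(unique)
    n_test = max(1, int(round(test_frac * len(unique))))
    test_groups = set(unique[:n_test])
    is_test = np.array([g in test_groups for g in groups])
    return np.where(~is_test)[0], np.where(is_test)[0]


def extrapolative_precision_recall(y_true, y_pred, threshold):
    """Precision/recall of flagging candidates whose true value exceeds `threshold`."""
    actual = y_true > threshold
    flagged = y_pred > threshold
    tp = np.sum(actual & flagged)
    precision = tp / flagged.sum() if flagged.any() else 0.0
    recall = tp / actual.sum() if actual.any() else 0.0
    return precision, recall


# Toy usage with made-up values.
y = np.arange(100, dtype=float)
train_idx, test_idx = property_range_split(y, quantile=0.9)

scaffolds = ["c1ccccc1", "C1CCCCC1", "c1ccncc1", "C1CCOC1", ""] * 20  # "" = acyclic
tr, te = group_split(scaffolds, test_frac=0.2)

prec, rec = extrapolative_precision_recall(
    np.array([1.0, 5.0, 9.0, 10.0]), np.array([2.0, 8.0, 7.0, 11.0]), threshold=6.0
)
```

Reporting `prec` and `rec` on the held-out high-value range, alongside the usual in-distribution error, keeps the ID and OOD results separate as the protocol recommends.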

Table 2: Key Benchmark Datasets for OOD Evaluation

| Dataset | Domain | Sample Size | Properties Measured | OOD Evaluation Method |
|---|---|---|---|---|
| MoleculeNet | Molecules | 600-4,200 samples per dataset | Solubility, lipophilicity, binding affinity | Scaffold splitting, property range testing |
| AFLOW | Solid-state materials | ~300-14,000 samples per dataset | Band gap, bulk modulus, thermal conductivity | Property value extrapolation |
| Matbench | Materials | Varies by task | Formation energy, yield strength, refractive index | Composition-based OOD testing |
| BRS (Broad Reaction Set) | Chemical reactions | 20 generic templates | Reaction products | Generic template application |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Resources

| Resource Name | Type | Primary Function | Access |
|---|---|---|---|
| BOOM Benchmark | Evaluation framework | Systematic OOD performance assessment | GitHub Repository |
| MatEx (Materials Extrapolation) | Prediction method | Bilinear Transduction for OOD property prediction | GitHub Repository |
| OSMI-SSM-336M | Foundation model | Mamba-based architecture for molecular tasks | Research implementation |
| ProPreT5 | Transformer model | Chemical reaction product prediction | Research implementation |
| BRS Dataset | Data resource | Generic reaction templates for broader chemical space exploration | Research dataset |

Experimental Workflows and Methodologies

Key Experimental Considerations

Data Curation Best Practices:

- Source Diversity: Utilize datasets from multiple sources (experimental and computational) to mitigate dataset-specific biases [1]

- Template Generality: For reaction prediction, consider generic reaction templates (like Broad Reaction Set) rather than highly specific patented reactions [30]

- Explicit OOD Splits: Implement scaffold-based splits and property range partitions explicitly during dataset creation [7]

Model Selection Guidelines:

- For Long Sequences: Prioritize Mamba-based architectures for long SMILES strings due to linear scaling [29]

- For OOD Extrapolation: Implement Bilinear Transduction methods when predicting properties outside training ranges [1]

- For Low-Data and Multi-task Settings: Leverage pre-trained chemical foundation models (e.g., ChemBERTa, MoLFormer) when labeled data is scarce [32]

Performance Optimization:

- Inference Speed: For large-scale screening, consider architectures that scale linearly with sequence length, such as SSMs, instead of quadratic self-attention [29]

- Carbon Efficiency: Factor in computational efficiency and CO₂ emissions in model selection [29]

- Validation Rigor: Go beyond standard random train/test splits with explicit OOD tests, such as scaffold, similarity-cluster, and property-range splits
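The carbon-efficiency criterion can be made concrete with the standard operational estimate: energy drawn (power × time, scaled by data-centre overhead) multiplied by the carbon intensity of the electricity grid. The sketch below applies it to the inference times from Table 1. The 300 W average draw and 0.4 kg CO₂/kWh grid intensity are hypothetical placeholders, not measured values, and using the same power draw for both architectures is an assumption (Table 1 reports different GPU usage), so the resulting ratio simply mirrors the time ratio.

```python
def inference_co2_kg(seconds, avg_power_w, grid_kg_per_kwh, pue=1.0):
    """Operational CO2 estimate in kg: energy drawn (kWh) x grid carbon intensity.

    pue: data-centre power usage effectiveness (1.0 = no facility overhead).
    """
    energy_kwh = avg_power_w * seconds / 3.6e6 * pue  # W*s = J; 1 kWh = 3.6e6 J
    return energy_kwh * grid_kg_per_kwh


# Hypothetical inputs: 300 W average draw, 0.4 kg CO2/kWh grid intensity.
POWER_W, GRID = 300.0, 0.4
co2_transformer = inference_co2_kg(20_606.76, POWER_W, GRID)
co2_mamba = inference_co2_kg(9_735.64, POWER_W, GRID)
print(f"Transformer: {co2_transformer:.2f} kg CO2, Mamba: {co2_mamba:.2f} kg CO2")
```

Measured power draw and the local grid intensity should replace the placeholders before such numbers inform model selection.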

The field of transformer-based foundation models in chemical domains continues to evolve rapidly, with OOD generalization remaining a significant challenge. By implementing the troubleshooting strategies, experimental protocols, and benchmarking approaches outlined above, researchers can better navigate current limitations while contributing to the development of more robust and generalizable chemical AI systems.

The discovery of new, high-performing materials and molecules fundamentally depends on identifying candidates with property values that fall outside the bounds of known data. However, machine learning (ML) models, which are increasingly central to accelerating discovery, often struggle with out-of-distribution (OOD) generalization, where they must make accurate predictions for these novel candidates. This challenge is acute in molecular property prediction (MPP), where a model's failure to extrapolate can lead to missed opportunities in drug and material design [1] [7]. The core of the problem lies in the complex entanglement of molecular functional groups. This often leads to inconsistent semantics, where molecules sharing what appear to be identical invariant substructures can exhibit drastically different properties, severely confusing ML models [33].

To address this, Consistent Semantic Representation Learning (CSRL) has been proposed as a multi-view framework. CSRL targets OOD molecular property prediction by encouraging the semantic information (the underlying meaning related to a molecule's function or property) to be represented consistently across different molecular data views. By modeling the correlation between this consistent semantic information and molecular properties in a dedicated semantic space, CSRL aims to make predictions robust to the distribution shifts that degrade traditional models [33].

This technical support center is designed to help researchers, scientists, and drug development professionals implement and troubleshoot the CSRL framework in their own OOD generalization experiments.

Key Concepts: CSRL Framework and Components

The CSRL framework is designed to learn molecular representations that remain consistent and reliable even when the model encounters data outside its training distribution. Its architecture primarily consists of two key modules [33]:

- Semantic Uni-Code (SUC) Module: This module addresses the inconsistent mapping of semantic information across molecular representation forms (e.g., molecular graph vs. fingerprint). It does so by correcting mismatched embeddings so that the different forms share a unified embedding.

- Consistent Semantic Extractor (CSE) Module: This module uses non-semantic information as training labels to guide a discriminator's learning process. This helps suppress the model's reliance on non-semantic, or "spurious," information that may be present in the different molecular representation embeddings, forcing it to focus on the consistent, core semantics.
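The goal of a shared, view-consistent embedding can be illustrated with a generic cross-view contrastive (InfoNCE) loss, which rewards each molecule's graph-view embedding for matching its own SMILES-view embedding more closely than those of other molecules. This is a swapped-in, widely used alignment technique, not the SUC or CSE module of [33], whose exact objectives are not given here; the function names and the temperature of 0.1 are assumptions.

```python
import numpy as np


def l2_normalize(z):
    return z / np.linalg.norm(z, axis=1, keepdims=True)


def cross_view_infonce(z_graph, z_smiles, temperature=0.1):
    """Symmetric InfoNCE: row i of one view should match row i of the other view."""
    a, b = l2_normalize(z_graph), l2_normalize(z_smiles)
    logits = a @ b.T / temperature  # (n, n) scaled cosine similarities

    def diag_cross_entropy(m):
        m = m - m.max(axis=1, keepdims=True)  # numerical stability
        log_probs = m - np.log(np.exp(m).sum(axis=1, keepdims=True))
        return -np.mean(np.diag(log_probs))

    return 0.5 * (diag_cross_entropy(logits) + diag_cross_entropy(logits.T))


rng = np.random.default_rng(0)
z_graph = rng.normal(size=(16, 32))
z_aligned = z_graph + 0.05 * rng.normal(size=(16, 32))  # same semantics, small view noise
z_unrelated = rng.normal(size=(16, 32))                  # views disagree
loss_aligned = cross_view_infonce(z_graph, z_aligned)
loss_unrelated = cross_view_infonce(z_graph, z_unrelated)
```

A low loss only indicates that the two views agree; it does not by itself remove spurious, non-semantic information shared by both views, which is the part the CSE module is described as handling.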

The Scientist's Toolkit: Essential Components for a CSRL Experiment

Table 1: Key research reagents and computational tools for implementing CSRL.

| Item Name | Type | Primary Function in CSRL |
|---|---|---|
| Molecular Graphs | Data Representation | Provides a structured view of the molecule (atoms as nodes, bonds as edges) for model input [34]. |
| SMILES Strings | Data Representation | Provides a sequential, text-based line notation of the molecular structure. |
| RDKit | Software Library | Used to generate molecular descriptors and convert SMILES strings into featurized molecular graphs [34]. |
| Semantic Uni-Code (SUC) | Algorithmic Module | Corrects embedding inconsistencies between different molecular representations (e.g., graph vs. SMILES) [33]. |
| Consistent Semantic Extractor (CSE) | Algorithmic Module | Extracts the core, invariant semantics by suppressing reliance on non-semantic information in the embeddings [33]. |
| Graph Neural Network (GNN) | Model Architecture | A common backbone for learning from the graph-based view of a molecule [34]. |

Diagram 1: High-level workflow of the CSRL framework.

Frequently Asked Questions (FAQs)

Q1: What is "inconsistent semantics" in the context of molecular property prediction, and why is it a problem for OOD generalization?

Inconsistent semantics occurs when molecules that share identical invariant substructures, as identified by a model, exhibit drastically different properties. This is often due to the complex entanglement of molecular functional groups and the presence of "activity cliffs." This inconsistency confounds models that try to learn simple structure-property relationships. For OOD generalization, where a model encounters entirely new molecular scaffolds or property ranges, this problem is magnified, leading to highly inaccurate predictions. The CSRL framework directly addresses this by learning to map different molecular representations to a unified semantic space where this inconsistency is minimized [33].
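Activity cliffs can be located in a dataset before training with the Structure-Activity Landscape Index, SALI(i, j) = |y_i - y_j| / (1 - sim(i, j)), where sim is the Tanimoto similarity of the two fingerprints; large values flag near-identical structures with very different properties. The sketch below assumes fingerprints are already computed as binary vectors (for example Morgan bits from RDKit) and uses toy data; the function names and the 0.8 similarity cutoff are assumptions, and this diagnostic is not part of CSRL itself.

```python
import numpy as np


def tanimoto(a, b):
    """Tanimoto similarity between two binary fingerprint vectors."""
    inter = np.logical_and(a, b).sum()
    union = np.logical_or(a, b).sum()
    return inter / union if union else 1.0


def sali_pairs(fps, y, min_sim=0.8):
    """Return (i, j, similarity, SALI) for similar pairs, sorted by descending SALI.

    Pairs with identical fingerprints (sim == 1) are skipped because SALI is
    undefined there; such pairs should be inspected separately.
    """
    out = []
    for i in range(len(fps)):
        for j in range(i + 1, len(fps)):
            sim = tanimoto(fps[i], fps[j])
            if min_sim <= sim < 1.0:
                out.append((i, j, sim, abs(y[i] - y[j]) / (1.0 - sim)))
    return sorted(out, key=lambda t: t[3], reverse=True)


# Toy 10-bit fingerprints: molecules 0 and 1 are near-identical but differ in property.
fps = np.array([
    [1, 1, 1, 1, 1, 1, 1, 1, 1, 0],
    [1, 1, 1, 1, 1, 1, 1, 1, 1, 1],
    [0, 0, 0, 0, 0, 0, 0, 0, 1, 1],
], dtype=bool)
y = np.array([5.0, 9.0, 5.1])
cliffs = sali_pairs(fps, y, min_sim=0.8)
```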

Q2: My model performs well on the validation set (in-distribution) but fails to identify true high-performing candidates during screening. How can CSRL help?

This is a classic symptom of poor OOD extrapolation. Traditional models are typically trained to minimize error on data drawn from the training distribution, which does not guarantee performance on the extreme, high-value tails of the property distribution. CSRL is designed for this scenario: by learning consistent semantic representations that are invariant to distribution shifts, it aims to improve extrapolative precision, i.e., the fraction of candidates predicted to be high-performing that truly are high-performing. For reference, a different OOD method, Bilinear Transduction, improved extrapolative precision by 1.8× for materials and 1.5× for molecules, and boosted the recall of high-performing candidates by up to 3× [1].

Q3: What are the most common molecular "views" used in a multi-view framework like CSRL?

The multi-view approach leverages different representations of the same molecular object. The most common views are:

- Molecular Graph View: The molecule is represented as a graph with atoms as nodes and bonds as edges, often with features for atoms (e.g., degree, formal charge) and bonds (e.g., conjugation, stereo configuration) [34].

- SMILES String View: The Simplified Molecular Input Line Entry System (SMILES) provides a text-based, sequential representation of the molecule [34].

Other views can include molecular fingerprints (such as Morgan fingerprints) or 3D conformers, which provide complementary information for the model to learn a more comprehensive representation.

Q4: Are there any publicly available benchmarks to evaluate my CSRL model's OOD performance?