Parallel Hyperparameter Optimization for Chemical Models: Accelerating Drug Discovery and Materials Development


Abstract

This article provides a comprehensive guide to parallel hyperparameter optimization (HPO) for chemical and molecular property prediction models. Aimed at researchers and drug development professionals, it covers foundational concepts, explores advanced methodologies like Bayesian optimization and Hyperband, and addresses practical challenges in high-throughput experimentation. The content includes comparative analyses of optimization techniques, real-world case studies from pharmaceutical process development and nanomaterial synthesis, and best practices for validating and benchmarking model performance to achieve robust, efficient, and scalable AI-driven discovery.

The Critical Role of Hyperparameter Optimization in Chemical AI

Defining Hyperparameters vs. Model Parameters in Chemical Contexts

In computational chemistry and machine learning (ML)-based chemical model development, distinguishing between model parameters and hyperparameters is fundamental. Model parameters are the internal variables of a model that are learned directly from the training data. In contrast, model hyperparameters are external configurations whose values are set before the learning process begins and govern how the model is trained [1] [2]. This distinction is critical for the development of robust quantitative structure-property relationship (QSPR) models, force fields, and reaction property predictors. Within the context of parallel hyperparameter optimization, understanding this dichotomy allows researchers to efficiently distribute computational resources to find the optimal model configurations.

Conceptual Definitions and Distinctions

Model Parameters

Model parameters are the intrinsic variables of a model that are estimated or learned by optimizing an objective function against the training data [1]. These are not set manually but are the outcome of a training process using algorithms like Gradient Descent or Adam [1]. In chemical models, parameters define the specific behavior of a trained model and are stored as part of the model itself for making predictions.

Examples in Chemical Models:

- Force Field Parameters: In semi-empirical methods like ReaxFF, parameters include bond order corrections, dissociation energies, and van der Waals radii, which are tuned so the model accurately approximates the energy of the system under study [3].

- Weight Coefficients in QSPR Models: In a machine learning model linking molecular descriptors to a property like solubility, the weights assigned to each descriptor are model parameters [4].

- Cluster Centroids: In chemical clustering algorithms, the coordinates of the final cluster centroids are the model parameters [2].

Model Hyperparameters

Hyperparameters are configuration variables that control the process of learning model parameters. They are set prior to training and remain unchanged during the training process itself [1] [2]. The choice of hyperparameters significantly impacts the efficiency of the optimization process and the quality of the final model parameters obtained [1].

Examples in Chemical Models:

- Learning Rate: The step size used in optimization algorithms like gradient descent to update model parameters; crucial for stable convergence in training neural network potentials [1] [2].

- Architecture Choices: The number of hidden layers in a neural network used for spectral prediction or the number of decision trees in a Random Forest model for toxicity classification [1] [2].

- Number of Clusters (k): In unsupervised learning for chemical space analysis, the 'k' in k-means clustering is a hyperparameter [1].

- Force Field Optimization Settings: When using a tool like ParAMS for parametrization, the choice of optimizer (e.g., CMA-ES) and its associated settings are hyperparameters for the parametrization process itself [3].

Table 1: Core Differences Between Model Parameters and Hyperparameters

| Aspect | Model Parameters | Model Hyperparameters |
|---|---|---|
| Origin | Learned automatically from the training data [1] [2] | Set manually by the researcher before training [1] [2] |
| Role | Required for making predictions on new data [1] | Required for estimating the model parameters effectively [1] |
| Determination | Estimated via optimization algorithms (e.g., Gradient Descent) [1] | Determined via hyperparameter tuning (e.g., Grid Search) [1] [5] |
| Examples in Chemistry | Weights in a QSPR model, bond force constants in a force field [4] [3] | Learning rate, number of layers in a NN, number of clusters in chemical space analysis [1] [2] |
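The distinction in Table 1 can be made concrete with a deliberately small sketch. The example below is an illustration, not taken from any of the cited tools: it fits a one-descriptor linear model by gradient descent on synthetic data. The names `LEARNING_RATE`, `N_EPOCHS`, and `fit_linear` are hypothetical. The learning rate and epoch count are fixed before training (hyperparameters), whereas `w` and `b` are produced by training (model parameters).

```python
import random

# Hyperparameters: chosen before training, never updated by the fit itself.
LEARNING_RATE = 0.05
N_EPOCHS = 2000


def fit_linear(xs, ys, learning_rate, n_epochs):
    """Fit y = w * x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0  # model parameters: learned from the data
    n = len(xs)
    for _ in range(n_epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Synthetic "descriptor -> property" data drawn from y = 2x + 1 plus small noise.
rng = random.Random(0)
xs = [i / 10 for i in range(30)]
ys = [2.0 * x + 1.0 + rng.gauss(0, 0.01) for x in xs]

w, b = fit_linear(xs, ys, LEARNING_RATE, N_EPOCHS)
print(f"learned parameters: w={w:.3f}, b={b:.3f}")
```

Changing `LEARNING_RATE` or `N_EPOCHS` changes how (and whether) the fit converges, but only `w` and `b` are stored with the trained model and used for prediction.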

Quantitative Data and Optimization Methods

Performance Comparison of Hyperparameter Optimization Methods

A comparative analysis of hyperparameter optimization methods for predicting heart failure outcomes provides a valuable benchmark for their application in chemical model development. The study evaluated Grid Search (GS), Random Search (RS), and Bayesian Search (BS) across several machine learning algorithms [5].

Table 2: Comparison of Hyperparameter Optimization Method Performance

| Optimization Method | Key Principle | Computational Efficiency | Best For |
|---|---|---|---|
| Grid Search (GS) | Brute-force evaluation of all combinations in a defined hyperparameter space [5] | Low; becomes prohibitively expensive with many hyperparameters [5] | Small, well-understood hyperparameter spaces |
| Random Search (RS) | Random sampling of hyperparameter combinations from defined distributions [5] | Moderate; more efficient than GS for large spaces [5] | Larger hyperparameter spaces where random sampling is sufficient |
| Bayesian Search (BS) | Builds a probabilistic model to intelligently select the most promising hyperparameters to evaluate next [5] | High; requires fewer evaluations to find good configurations [5] | Complex, high-dimensional hyperparameter spaces common in chemical models |

Although this benchmark comes from clinical rather than chemical data, its findings are a useful reference point for chemical modeling. In the study, Bayesian Search was the most computationally efficient method, consistently requiring less processing time than Grid Search or Random Search. After 10-fold cross-validation, Random Forest models optimized with these methods showed the greatest robustness, with an average AUC improvement of 0.03815 [5].
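To make the difference in evaluation budgets tangible, the following sketch contrasts Grid Search and Random Search on a toy problem. The function `validation_error` is a hypothetical stand-in for a cross-validated model error, and the grid values are arbitrary choices. Bayesian Search is not shown here because it needs a surrogate model.

```python
import itertools
import random


def validation_error(n_estimators, max_depth):
    """Hypothetical stand-in for a cross-validated error; minimum at (300, 12)."""
    return ((n_estimators - 300) / 100) ** 2 + ((max_depth - 12) / 5) ** 2


def grid_search(grid):
    """Evaluate every combination in the grid; return (best_config, n_evals)."""
    configs = [dict(zip(grid, values)) for values in itertools.product(*grid.values())]
    best = min(configs, key=lambda c: validation_error(**c))
    return best, len(configs)


def random_search(bounds, n_trials, seed=0):
    """Sample n_trials configurations uniformly from integer ranges."""
    rng = random.Random(seed)
    configs = [
        {name: rng.randint(low, high) for name, (low, high) in bounds.items()}
        for _ in range(n_trials)
    ]
    best = min(configs, key=lambda c: validation_error(**c))
    return best, len(configs)


grid = {"n_estimators": [100, 200, 300, 400, 500], "max_depth": [5, 10, 15, 20, 25, 30]}
bounds = {"n_estimators": (100, 500), "max_depth": (5, 30)}

grid_best, grid_evals = grid_search(grid)
rand_best, rand_evals = random_search(bounds, n_trials=15)
print("grid:  ", grid_evals, "evaluations ->", grid_best)
print("random:", rand_evals, "evaluations ->", rand_best)
```

The grid costs 5 x 6 = 30 evaluations and can only return values that lie on the grid, while the random search uses a fixed budget of 15 and can land anywhere in the ranges. Adding a third hyperparameter multiplies the grid cost but leaves the random-search budget unchanged.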

Protocol: Hyperparameter Optimization for a QSPR Model

This protocol outlines the steps for performing parallel Bayesian hyperparameter optimization to build a QSPR model for predicting reaction yields, using a tool like DOPtools [4].

1. Define the Model and Hyperparameter Search Space:

- Select an Algorithm: Choose a model such as Support Vector Machine (SVM), Random Forest (RF), or a Neural Network.

- Define Hyperparameter Bounds: Specify the ranges for key hyperparameters. For an RF model, this would include:

  - n_estimators: [100, 500] (number of trees)
  - max_depth: [5, 30] (maximum depth of trees)
  - min_samples_split: [2, 10] (minimum samples to split a node)

2. Prepare the Training Data:

- Calculate Descriptors: Use DOPtools or a similar platform to compute a unified set of chemical descriptors (e.g., electronic, topological, or structural) for all molecules/reactions in your dataset [4].

- Curate the Dataset: Assemble a dataset containing the calculated descriptors as features and the experimentally measured reaction yields as the target variable.

3. Configure the Bayesian Optimization:

- Choose a Surrogate Model: Typically a Gaussian Process (GP).

- Select an Acquisition Function: Common choices are Expected Improvement (EI) or Upper Confidence Bound (UCB). This function guides the search for the next hyperparameter set to evaluate.

- Set the Parallel Workers: Configure the number of parallel processes (e.g., 8 or 16 workers) to run simultaneous model trainings.

4. Run the Iterative Optimization Loop:

- Initialization: Start by randomly evaluating a few (e.g., 10) hyperparameter configurations.

- Parallel Evaluation: The master node distributes different hyperparameter sets to each worker. Each worker trains an RF model with its assigned hyperparameters and evaluates its performance using a metric like Mean Absolute Error (MAE) via cross-validation.

- Model Update: The results from all workers are collected. The surrogate model is updated with the new (hyperparameters, performance) data points.

- Next Candidate Selection: The acquisition function, using the updated surrogate model, proposes the next batch of promising hyperparameter sets for evaluation.

- Termination: Repeat the Parallel Evaluation, Model Update, and Next Candidate Selection steps until a stopping criterion is met (e.g., a maximum number of iterations or no significant improvement over several iterations).

5. Validation:

- Train a final model on the entire training set using the best-found hyperparameters.

- Evaluate the final model's performance on a held-out test set to estimate its generalization error.
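The control flow of the optimization loop in step 4 can be sketched with the standard library alone. This is a structural illustration under stated assumptions, not the DOPtools workflow. `cross_validated_mae` is a hypothetical placeholder for training a Random Forest and scoring it by cross-validation. `propose_batch` replaces the Gaussian Process and acquisition function with a simple perturbation of the best configuration so far, so the sketch is a local random search rather than true Bayesian optimization.

```python
import random
from concurrent.futures import ThreadPoolExecutor

BOUNDS = {"n_estimators": (100, 500), "max_depth": (5, 30), "min_samples_split": (2, 10)}


def cross_validated_mae(config):
    """Hypothetical placeholder for training an RF and returning its CV MAE."""
    return (
        abs(config["n_estimators"] - 350) / 100
        + abs(config["max_depth"] - 18) / 10
        + abs(config["min_samples_split"] - 4) / 4
    )


def random_config(rng):
    return {name: rng.randint(lo, hi) for name, (lo, hi) in BOUNDS.items()}


def propose_batch(history, batch_size, rng):
    """Stand-in for the acquisition step: perturb the best configuration so far.

    A Bayesian optimizer would instead refit a surrogate on `history` and
    maximize an acquisition function such as EI or UCB.
    """
    best_config, _ = min(history, key=lambda item: item[1])
    batch = []
    for _ in range(batch_size):
        candidate = {}
        for name, (lo, hi) in BOUNDS.items():
            span = max(1, (hi - lo) // 5)
            value = best_config[name] + rng.randint(-span, span)
            candidate[name] = min(hi, max(lo, value))
        batch.append(candidate)
    return batch


def optimize(n_workers=8, n_init=10, n_rounds=5, seed=0):
    rng = random.Random(seed)
    history = []
    batch = [random_config(rng) for _ in range(n_init)]  # initialization
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        for _ in range(n_rounds + 1):
            scores = list(pool.map(cross_validated_mae, batch))  # parallel evaluation
            history.extend(zip(batch, scores))  # record results for the next proposal
            batch = propose_batch(history, n_workers, rng)  # next candidates
    return min(history, key=lambda item: item[1]), history


(best_config, best_mae), history = optimize()
print(best_config, round(best_mae, 3))
```

Threads keep the sketch portable. For CPU-bound model training, a process pool or a cluster scheduler would usually be the better fit.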

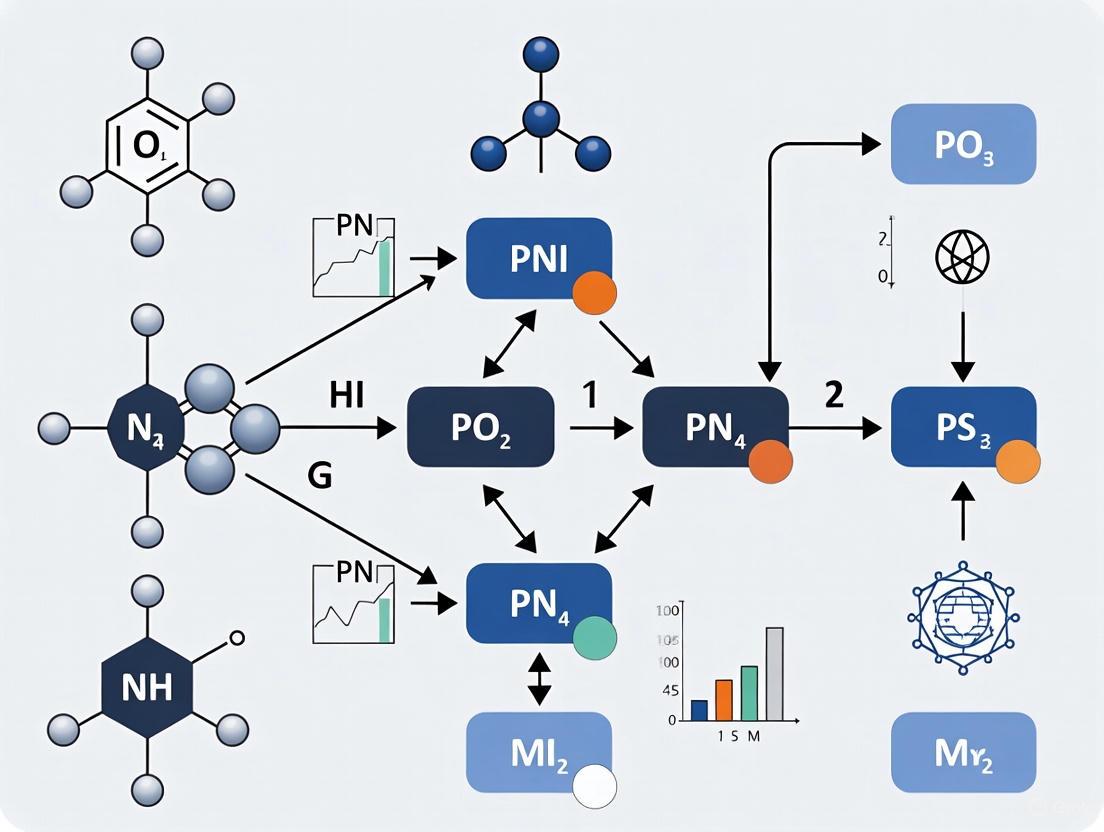

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Chemical Model Development and Hyperparameter Optimization

| Tool / Solution | Function | Application Context |
|---|---|---|
| DOPtools | A Python library for calculating chemical descriptors and performing hyperparameter optimization for QSPR models [4]. | Provides a unified API for descriptors compatible with scikit-learn, especially suited for modeling reaction properties [4]. |
| ParAMS | A dedicated parametrization tool designed for tuning the parameters of semi-empirical models like ReaxFF, DFTB, and GFN-xTB [3]. | Used for force field development by minimizing the loss between model predictions and reference training data [3]. |
| Scikit-learn | A comprehensive machine learning library for Python that includes implementations of models, hyperparameter optimizers (GS, RS), and evaluation metrics. | Building and validating baseline QSPR models and performing standard hyperparameter tuning. |
| Bayesian Optimization Libraries (e.g., Scikit-Optimize, Ax) | Provide frameworks for implementing Bayesian hyperparameter search, including parallelizable algorithms. | Efficiently navigating high-dimensional hyperparameter spaces for complex models like neural networks. |
| Training Data (from DFT/MD/Experiment) | High-quality reference data used to fit or train the models [3]. | Serves as the ground truth for the parametrization process; can include energies, forces, bond distances, spectral properties, etc. [3]. |

In modern chemical and drug discovery research, machine learning (ML) models have become indispensable for tasks ranging from molecular property prediction and de novo molecule design to chemical reaction optimization [6] [7]. The performance of these models is critically dependent on their hyperparameters—the configuration settings that govern the learning process itself. These include structural parameters like the number of layers in a neural network and algorithmic parameters such as learning rate [8]. Hyperparameter Optimization (HPO) is the systematic process of finding the optimal combination of these settings to maximize predictive accuracy or other performance metrics. However, traditional sequential HPO methods, which evaluate hyperparameter configurations one after another, are becoming prohibitive for computational chemistry applications. This application note examines the fundamental limitations of sequential HPO and makes the case for a transition to parallel optimization frameworks, which offer the computational efficiency and scalability required for contemporary chemical informatics research.

The challenge is particularly acute in chemical workflows because training a single model often involves complex computations on large molecular datasets. When this is coupled with a vast hyperparameter search space, sequential HPO can require days or even weeks to complete, creating a significant bottleneck in the research lifecycle [8]. This note provides a quantitative analysis of this bottleneck, outlines detailed protocols for implementing parallel HPO, and presents a toolkit for researchers to integrate these methods into their own chemical model development pipelines.

The Bottleneck: Limitations of Sequential HPO

Sequential HPO methods, such as standard Bayesian Optimization, face several critical limitations when applied to chemical ML problems. Their fundamental failure mode stems from their inability to leverage distributed computational resources effectively.

Quantitative Analysis of Sequential vs. Parallel HPO

The following table summarizes a comparative analysis of HPO approaches based on recent benchmarking studies in chemical domains [8] [9].

Table 1: Performance Comparison of HPO Strategies in Chemical Workflows

| HPO Method | Search Strategy | Execution | Time Efficiency | Optimality Guarantees | Scalability to High Dimensions |
|---|---|---|---|---|---|
| Grid Search | Exhaustive | Parallel | Very Poor | High (within grid) | Poor |
| Random Search | Random | Parallel | Poor | Low | Medium |
| Sequential Bayesian Optimization | Adaptive, Model-based | Sequential | Medium | High | Medium |
| Hyperband | Adaptive, Multi-fidelity | Parallel | High | Medium | High |
| Parallel Bayesian Optimization (e.g., q-NEHVI) | Adaptive, Model-based | Massively Parallel | High | High | High |

Root Causes of Sequential HPO Failure

- Combinatorial Explosion of Search Spaces: Chemical models often involve complex hyperparameter spaces encompassing architectural choices, feature representations, and learning parameters. Exploring these spaces sequentially is computationally intractable [6] [8].

- Underutilization of HPC Infrastructure: Modern research laboratories employ high-performance computing (HPC) clusters with multiple CPUs/GPUs. Sequential HPO leaves these resources idle for the majority of the optimization runtime, leading to poor resource utilization and extended time-to-solution [8] [10].

- Incompatibility with High-Throughput Experimentation (HTE): The paradigm of chemical research is shifting towards highly parallel automated platforms, such as 96-well HTE systems for reaction optimization. Sequential HPO cannot keep pace with the data generation capabilities of these platforms, creating a decision-making bottleneck [9].

Parallel HPO Algorithms: Mechanisms and Advantages

Parallel HPO algorithms overcome these limitations by evaluating multiple hyperparameter configurations simultaneously. Two primary strategies have proven effective for chemical workflows.

Multi-Fidelity Optimization with Hyperband

The Hyperband algorithm accelerates HPO by dynamically allocating resources to the most promising configurations through a multi-fidelity approach [8]. It uses low-fidelity approximations (e.g., training for a few epochs or on a subset of data) to quickly weed out poor performers, only investing full computational resources in the most promising candidates. This makes it exceptionally computationally efficient and well-suited for initial broad searches in large hyperparameter spaces common in chemical problems.
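The resource-allocation idea can be illustrated with successive halving, the subroutine that Hyperband runs inside each of its brackets. The sketch below is a simplified illustration. `validation_loss` is a hypothetical learning curve, and the budget values (1 to 81 epochs, reduction factor 3) are arbitrary choices.

```python
import math
import random


def validation_loss(config, epochs):
    """Hypothetical learning curve: the loss decays with epochs toward a floor
    that depends on how far the learning rate is from 1e-3."""
    floor = abs(math.log10(config["lr"]) + 3.0)
    return floor + 1.0 / epochs


def successive_halving(configs, min_epochs=1, max_epochs=81, eta=3):
    """Keep the best 1/eta of the configurations at each rung and give the
    survivors eta times more epochs."""
    survivors = list(configs)
    epochs = min_epochs
    rungs = []  # (epochs per config, number of configs) at each rung
    while True:
        scored = sorted(survivors, key=lambda c: validation_loss(c, epochs))
        rungs.append((epochs, len(scored)))
        if len(scored) == 1 or epochs >= max_epochs:
            return scored[0], rungs
        survivors = scored[: max(1, len(scored) // eta)]
        epochs = min(max_epochs, epochs * eta)


rng = random.Random(0)
candidates = [{"lr": 10 ** rng.uniform(-5, -1)} for _ in range(27)]
best, rungs = successive_halving(candidates)
print(best, rungs)
```

Within a rung, every configuration can be trained independently, which is where the parallelism comes from. In this toy curve the ranking never changes with more epochs. Real learning curves can cross, which is why Hyperband hedges by running several brackets with different starting budgets.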

Massively Parallel Bayesian Optimization

For complex chemical optimization tasks with multiple competing objectives (e.g., maximizing yield while minimizing cost), advanced Parallel Bayesian Optimization methods like q-Noisy Expected Hypervolume Improvement (q-NEHVI) are highly effective [9]. These algorithms use a probabilistic model to guide the parallel selection of multiple experiments in each batch, efficiently balancing the exploration of uncertain regions of the search space with the exploitation of known promising areas. The Minerva framework demonstrates the power of this approach, successfully navigating reaction spaces with up to 530 dimensions and identifying optimal conditions in massively parallel 96-well HTE campaigns [9].

Experimental Protocols for Parallel HPO in Chemical Workflows

Protocol 1: HPO for a Molecular Property Prediction DNN

This protocol outlines the steps for optimizing a Deep Neural Network (DNN) for predicting properties like melting index or glass transition temperature using the Hyperband algorithm via KerasTuner [8].

Table 2: Key Research Reagent Solutions for Molecular Property Prediction

| Reagent / Tool | Function in the Workflow |
|---|---|
| ChEMBL Database | Provides curated bioactivity data for training molecular property prediction models [6]. |
| RDKit | Generates molecular descriptors and fingerprints from chemical structures for feature representation [6]. |
| KerasTuner with Hyperband | Executes the parallel multi-fidelity HPO process for the DNN architecture and training parameters [8]. |
| TensorFlow/PyTorch | Provides the backend deep learning framework for building and training the DNN models. |

Procedure:

- Data Preparation and Featurization: Curate a dataset of molecules and their target properties from a source like ChEMBL [6]. Use RDKit to compute molecular features (e.g., ECFP fingerprints, molecular weight, logP) to create the input feature matrix.

- Define the Search Space: Construct a parameterized DNN builder function that defines the hyperparameter search space:

  - Number of hidden layers: Int('num_layers', 2, 5)
  - Units per layer: Int('units', 32, 256)
  - Learning rate: Choice('lr', [1e-2, 1e-3, 1e-4])

- Initialize and Run Hyperband: Configure the KerasTuner Hyperband tuner. Set the objective to val_mean_squared_error, max_epochs to 100, and factor to 3. Execute the search using the .search() method on the training data.

- Model Evaluation: Retrieve the top hyperparameter configurations with tuner.get_best_hyperparameters(). Train the final model on the full training set using the best-found configuration and evaluate its performance on a held-out test set.
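To see what the settings max_epochs = 100 and factor = 3 imply, the sketch below computes the bracket schedule from the published Hyperband algorithm: how many configurations each bracket starts with, and how many epochs each one initially receives. This is an independent calculation in plain Python, and the helper name `hyperband_brackets` is hypothetical. KerasTuner's internal bookkeeping may round or organize brackets differently.

```python
def hyperband_brackets(max_epochs=100, factor=3):
    """Return (bracket index s, initial configs n, initial epochs r) for each
    bracket, following the schedule of the published Hyperband algorithm."""
    # Largest s with factor**s <= max_epochs, found with integer arithmetic
    # to avoid floating-point error in a logarithm.
    s_max = 0
    while factor ** (s_max + 1) <= max_epochs:
        s_max += 1
    brackets = []
    for s in range(s_max, -1, -1):
        # n = ceil((s_max + 1) * factor**s / (s + 1)) via integer ceiling division
        n = -(-(s_max + 1) * factor**s // (s + 1))
        r = max_epochs / factor**s
        brackets.append((s, n, r))
    return brackets


for s, n, r in hyperband_brackets():
    print(f"bracket s={s}: start {n} configs at about {r:.1f} epochs each")
```

The most aggressive bracket starts 81 configurations at roughly one epoch each, while the most conservative bracket trains 5 configurations for the full 100 epochs.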

Protocol 2: Multi-Objective Reaction Optimization with Parallel Bayesian Optimization

This protocol details the use of a framework like Minerva for optimizing chemical reactions, such as a Ni-catalyzed Suzuki coupling, with multiple objectives [9].

Table 3: Key Research Reagent Solutions for Reaction Optimization

| Reagent / Tool | Function in the Workflow |
|---|---|
| High-Throughput Experimentation (HTE) Robotic Platform | Enables highly parallel execution of reaction experiments in microtiter plates (e.g., 96-well format) [9]. |
| Bayesian Optimization Library (e.g., BoTorch/Ax) | Provides the algorithmic backend (e.g., q-NEHVI acquisition function) for proposing parallel batches of experiments [9]. |
| Sobol Sequence Generator | Used for generating a space-filling, quasi-random initial set of experiments to seed the optimization process [9]. |
| Gaussian Process (GP) Regressor | Serves as the probabilistic surrogate model that predicts reaction outcomes and their uncertainty for untested conditions [9]. |

Procedure:

- Define the Reaction Search Space: In collaboration with a chemist, define a discrete combinatorial set of plausible reaction conditions, including categorical variables (e.g., solvent, ligand, additive) and continuous variables (e.g., temperature, concentration).

- Initial Experimentation: Use Sobol sampling to select an initial batch of 24-96 diverse reaction conditions that are spread across the defined search space. Execute these reactions on the HTE platform and obtain outcome measurements (e.g., yield, selectivity).

- Iterative Optimization Loop: For a predetermined number of iterations (e.g., 4-6 cycles): (a) Model Training: train a multi-output Gaussian Process model on all data collected so far; (b) Candidate Generation: use the q-NEHVI acquisition function to select the next batch of experimental conditions that maximizes the expected hypervolume improvement of the Pareto front over the objectives (e.g., yield and selectivity); (c) Experiment Execution: run the proposed batch of reactions on the HTE platform.

- Analysis and Validation: Identify the Pareto-optimal set of conditions from the final dataset. Validate the top-performing conditions by running reproducibility experiments at a larger scale.
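The final analysis step, identifying the Pareto-optimal set, reduces to a dominance check. The sketch below assumes two objectives that are both maximized, and the outcome values are hypothetical numbers for illustration only. A condition is kept if no other condition is at least as good on both objectives and strictly better on one.

```python
def pareto_front(points):
    """Return the points not dominated by any other (both objectives maximized)."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and (q[0] > p[0] or q[1] > p[1])
            for q in points
        )
        if not dominated:
            front.append(p)
    return front


# Hypothetical (yield %, selectivity %) outcomes for six reaction conditions.
outcomes = [(76, 92), (80, 85), (60, 95), (70, 90), (80, 80), (55, 60)]
print(pareto_front(outcomes))  # [(76, 92), (80, 85), (60, 95)]
```

The quadratic scan is adequate for plate-sized datasets. For much larger candidate sets, a sort-based sweep would scale better.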

Workflow Visualization

The following diagram illustrates the core logical difference between the sequential and parallel HPO workflows, highlighting the efficiency gain.

Figure 1: Sequential vs. Parallel HPO Logic

The architecture of a full parallel HPO system, integrating a master optimizer with distributed worker nodes, is shown below.

Figure 2: Parallel HPO System Architecture

The Scientist's Toolkit

Table 4: Essential Software and Computational Tools for Parallel HPO

| Tool Name | Type | Primary Function | Key Application in Chemical Workflows |
|---|---|---|---|
| KerasTuner | Python Library | Hyperparameter Tuning | Provides easy-to-use implementations of Hyperband and other tuners for DNNs in drug discovery [8]. |
| Optuna | Python Library | Hyperparameter Optimization | Enables parallel HPO with state-of-the-art algorithms like Bayesian Optimization with Hyperband (BOHB) [11]. |
| Ax/BoTorch | Python Library | Adaptive Experimentation | Implements parallel, multi-objective Bayesian Optimization (e.g., q-NEHVI) for complex reaction spaces [9]. |
| Apache Spark | Distributed Computing Framework | Large-Scale Data Processing | Manages and preprocesses large molecular datasets (e.g., from HTS) in memory across a cluster [10]. |
| MPI (Message Passing Interface) | Parallel Computing Standard | Fine-Grained Parallelism | Enables high-performance, custom parallel algorithms for molecular dynamics or complex simulations [10]. |
| Paddy | Python Library (Evolutionary Algorithm) | Chemical Optimization | Offers an alternative, biologically-inspired evolutionary optimization algorithm for chemical spaces [12]. |

Application Note: Navigating the Optimization Landscape in Chemical AI

The integration of artificial intelligence (AI) and machine learning (ML) into chemical research, particularly in drug discovery and molecular property prediction, represents a paradigm shift. Central to the performance of these AI models is the process of hyperparameter optimization (HPO). However, the path to identifying optimal model configurations is fraught with significant challenges, including high-dimensional search spaces, complex multi-modal data landscapes, and the prohibitive cost of model evaluations. This note details these challenges and presents structured protocols and solutions for researchers engaged in the development of chemical models.

Quantifying the Core Challenges

The challenges of HPO in chemical AI are not merely theoretical; they have direct, measurable impacts on research efficiency and outcomes. The following table summarizes key quantitative findings from recent research.

Table 1: Quantitative Evidence of HPO Challenges and Solutions in Chemical AI

| Challenge / Solution Area | Quantitative Evidence | Source/Context |
|---|---|---|
| Cost of Model Training | Training a 7B parameter model requires 80k-130k GPU hours, with an estimated cost of $410k-$688k. | Language Model Training [13] |
| HPO Performance Improvement | Memoization-aware BO (EEIPU) evaluated 103% more hyperparameter candidates and increased the validation metric by 108% more than other algorithms. | Machine Learning, Vision, and Language Pipelines [13] |
| Multi-objective Optimization Performance | An ML-driven Bayesian optimization campaign for a nickel-catalysed Suzuki reaction achieved a yield of 76% and selectivity of 92%, outperforming chemist-designed experiments. | Chemical Reaction Optimization with Minerva [9] |
| High-Dimensional Search | Optimization workflows have been successfully scaled to handle high-dimensional reaction search spaces of 530 dimensions. | In-silico Benchmarking [9] |

Detailed Experimental Protocol: Memoization-Aware Bayesian Optimization

The following protocol is adapted from research on reducing hyperparameter tuning costs in ML, vision, and language model pipelines [13]. It is highly relevant for complex chemical AI pipelines involving sequential stages, such as data preprocessing, model training, and distillation.

Objective: To significantly reduce the computational cost and time of hyperparameter tuning for multi-stage AI pipeline training by leveraging memoization (caching).

Key Research Reagent Solutions:

- Software Framework: A pipeline caching system that stores outputs of intermediate stages keyed by their hyperparameter prefixes.

- Optimization Algorithm: The Expected-Expected Improvement Per Unit-cost (EEIPU) acquisition function, an extension of Bayesian Optimization.

- Computing Infrastructure: GPU clusters, required for training large models like those in the T5 family.

Methodology:

Pipeline Decomposition and Instrumentation:

- Deconstruct the model training pipeline into distinct, sequential stages (e.g., data preprocessing, teacher model fine-tuning, student model distillation).

- Instrument the pipeline code to save the output (e.g., processed datasets, model checkpoints) of each stage to a cache, uniquely identified by the hyperparameters governing all preceding stages.
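A minimal sketch of such prefix-keyed caching is shown below. It is an illustration under stated assumptions, not the implementation from [13]. The stage names, `run_stage`, and the in-memory dictionary cache are hypothetical, and a real system would persist datasets and checkpoints to disk. Each cache key combines the hyperparameters of a stage with those of every stage before it.

```python
STAGES = ["preprocess", "teacher", "student"]
run_log = []  # records which stages were actually executed


def run_stage(name, upstream, params):
    """Hypothetical stage body; a real pipeline would train or transform here."""
    run_log.append(name)
    return f"{upstream}|{name}{sorted(params.items())}"


def run_pipeline(config, cache):
    """Run stages in order, reusing any output whose hyperparameter prefix is cached."""
    output, prefix = "raw", ()
    for name in STAGES:
        # Key = hyperparameters of this stage and of every stage before it.
        prefix += ((name, tuple(sorted(config[name].items()))),)
        if prefix not in cache:
            cache[prefix] = run_stage(name, output, config[name])
        output = cache[prefix]
    return output


cache = {}
base = {"preprocess": {"max_len": 128}, "teacher": {"lr": 1e-4}, "student": {"lr": 1e-3}}
variant = {**base, "student": {"lr": 3e-4}}  # differs only in the last stage

run_pipeline(base, cache)
run_pipeline(variant, cache)
print(run_log)  # ['preprocess', 'teacher', 'student', 'student']
```

The second run re-executes only the student stage, because its preprocessing and teacher prefix is already cached.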

Surrogate and Cost Model Training:

- Quality Surrogate: Fit a Gaussian Process (GP) surrogate model to predict the final pipeline performance metric (e.g., accuracy, yield) based on the hyperparameters.

- Cost Surrogate: Fit a second GP model to predict the natural logarithm of the total pipeline execution time, ln c(x), based on the hyperparameters.

Candidate Selection with EEIPU:

- For a new hyperparameter candidate x, the EEIPU acquisition function calculates EEIPU(x) = EI(x) / c_predicted(x).

- EI(x) is the standard Expected Improvement from the quality surrogate.

- c_predicted(x) is the predicted cost, which is dynamically discounted if x's hyperparameter prefix matches a cached intermediate stage. For example, if a candidate shares the same data preprocessing and teacher model hyperparameters as a cached run, only the student distillation stage needs to be executed, drastically reducing its effective cost.

Iterative Evaluation and Cache Population:

- The BO algorithm selects the candidate with the highest EEIPU value for evaluation.

- The pipeline is executed starting from the latest cached stage, and the new results are used to update the GP models and the cache.

- This process repeats until the computational budget is exhausted.
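The effect of the cache discount on candidate ranking can be shown with a simplified calculation. The sketch below uses the closed-form Expected Improvement for a Gaussian posterior and divides it by a point estimate of the remaining cost. This is a simplification for illustration: the method in [13] works with GP cost surrogates and their uncertainty, and the per-stage costs, the `epsilon` floor, and the function names here are hypothetical.

```python
import math


def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization under a Gaussian posterior N(mu, sigma^2)."""
    if sigma <= 0:
        return max(0.0, mu - best)
    z = (mu - best) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2 * math.pi)
    cdf = 0.5 * (1 + math.erf(z / math.sqrt(2)))
    return (mu - best) * cdf + sigma * pdf


def discounted_cost(stage_costs, n_cached_stages, epsilon=1e-3):
    """Predicted cost of the stages that still have to run; cached stages are free."""
    remaining = sum(stage_costs[n_cached_stages:])
    return max(remaining, epsilon)  # floor avoids division by zero


def eeipu(mu, sigma, best, stage_costs, n_cached_stages):
    ei = expected_improvement(mu, sigma, best)
    return ei / discounted_cost(stage_costs, n_cached_stages)


# Two candidates with identical predicted quality; the second reuses two cached stages.
stage_costs = [2.0, 10.0, 4.0]  # hypothetical predicted hours per stage
fresh = eeipu(mu=0.82, sigma=0.05, best=0.80, stage_costs=stage_costs, n_cached_stages=0)
cached = eeipu(mu=0.82, sigma=0.05, best=0.80, stage_costs=stage_costs, n_cached_stages=2)
print(f"fresh={fresh:.5f} cached={cached:.5f} ratio={cached / fresh:.1f}")
```

With 12 of the 16 predicted hours already cached, the second candidate scores four times higher, so the optimizer prefers it even though both candidates promise the same improvement.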

The logical flow of this protocol is visualized below.

Detailed Experimental Protocol: Multi-objective Reaction Optimization

This protocol is based on the "Minerva" framework for highly parallel, multi-objective reaction optimization using automated high-throughput experimentation (HTE) [9].

Objective: To efficiently navigate a high-dimensional space of reaction conditions (e.g., solvents, catalysts, ligands, temperatures) to simultaneously optimize multiple objectives such as yield and selectivity.

Key Research Reagent Solutions:

- Automation Platform: A robotic HTE system capable of conducting reactions in 24, 48, or 96-well plate formats.

- Analytical Tools: HPLC or LC-MS for high-throughput analysis of reaction outcomes (yield, selectivity).

- Software & Algorithms: The Minerva framework, implementing scalable multi-objective acquisition functions (e.g., q-NParEgo, TS-HVI).

Methodology:

Search Space Definition:

- Define a discrete combinatorial set of plausible reaction conditions, incorporating domain knowledge to filter out impractical combinations (e.g., temperatures exceeding solvent boiling points).
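Building and filtering such a discrete space is straightforward to sketch. The example below is an illustration rather than Minerva code. The solvent list, ligand labels, and temperature levels are placeholders, and the boiling points are approximate values at atmospheric pressure. It enumerates all combinations and drops those whose temperature exceeds the solvent's boiling point.

```python
import itertools

# Illustrative values; boiling points are approximate, at atmospheric pressure.
SOLVENT_BOILING_POINT_C = {"THF": 66, "ethanol": 78, "toluene": 111, "DMF": 153}
LIGANDS = ["L1", "L2", "L3"]  # placeholder ligand labels
TEMPERATURES_C = [40, 60, 80, 100, 120]


def build_search_space():
    """Enumerate all combinations and keep only the physically plausible ones."""
    space = []
    for solvent, ligand, temp in itertools.product(
        SOLVENT_BOILING_POINT_C, LIGANDS, TEMPERATURES_C
    ):
        if temp > SOLVENT_BOILING_POINT_C[solvent]:
            continue  # domain-knowledge filter: above the solvent boiling point
        space.append({"solvent": solvent, "ligand": ligand, "temperature_C": temp})
    return space


space = build_search_space()
total = len(SOLVENT_BOILING_POINT_C) * len(LIGANDS) * len(TEMPERATURES_C)
print(f"{len(space)} of {total} combinations kept")
```

Filtering before optimization means the acquisition function never spends a well on a condition a chemist would rule out at a glance.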

Initial Exploration:

- Use quasi-random Sobol sampling to select an initial batch of experiments (e.g., one 96-well plate). This maximizes the coverage of the reaction condition space to increase the chance of discovering promising regions.

Model Training and Batch Selection:

- Train a Gaussian Process (GP) regressor on the collected experimental data to predict reaction outcomes and their uncertainties for all possible conditions.

- Use a scalable multi-objective acquisition function (like q-NParEgo) to evaluate all conditions and select the next batch of experiments that best balances exploration (high uncertainty) and exploitation (high predicted performance). The hypervolume metric is used to gauge the quality of the identified Pareto front.

Iterative Campaign:

- The newly selected batch of reactions is executed on the HTE platform, and their outcomes are analyzed.

- The new data is added to the training set, and the cycle of model training, batch selection, and experiment execution repeats for a set number of iterations or until performance converges.
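The hypervolume metric used to track campaign progress can be computed exactly for two objectives with a simple sweep. The sketch below assumes both objectives are maximized and that every point is at least as good as the reference point on both objectives. The outcome values are hypothetical, and `hypervolume_2d` is an illustrative helper rather than part of Minerva.

```python
def hypervolume_2d(points, reference=(0.0, 0.0)):
    """Area dominated by `points` and bounded below by `reference` (maximization)."""
    # Sweep from the highest to the lowest first objective, adding the new
    # horizontal strip contributed by each point that raises the second objective.
    ordered = sorted(points, key=lambda p: p[0], reverse=True)
    area, best_second = 0.0, reference[1]
    for first, second in ordered:
        if second > best_second:
            area += (first - reference[0]) * (second - best_second)
            best_second = second
    return area


# Hypothetical (yield %, selectivity %) results after two iterations.
iteration_1 = [(76, 92), (80, 85), (60, 95)]
iteration_2 = iteration_1 + [(70, 90), (90, 90)]

print(hypervolume_2d(iteration_1))  # 7512.0
print(hypervolume_2d(iteration_2))  # 8432.0
```

A dominated result such as (70, 90) leaves the hypervolume unchanged, while a result that extends the Pareto front, such as (90, 90), increases it. A plateau across iterations is one practical signal that the campaign has converged.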

The workflow for this closed-loop optimization is summarized in the following diagram.

Advanced Techniques for Specific Challenges

Tackling Multi-modal Molecular Landscapes

Chemical AI often involves learning from multiple data modalities, such as 2D molecular graphs, 3D conformers, fingerprints, and textual descriptions. The Multimodal Fusion with Relational Learning (MMFRL) framework addresses the challenge of integrating these diverse data sources, even when some are unavailable during downstream tasks [14].

Protocol Summary:

- Pre-training: Multiple replicas of a Graph Neural Network (GNN) are pre-trained, each dedicated to a specific molecular modality (e.g., 2D graph, 3D structure, NMR spectrum).

- Fusion Strategies: The pre-trained models are fused for downstream fine-tuning. The protocol systematically investigates:

- Early Fusion: Combining raw or low-level modal data before model input.

- Intermediate Fusion: Integrating features at intermediate layers of the GNN, allowing dynamic interaction between modalities. This was found to be the most effective strategy in many tasks.

- Late Fusion: Combining the final predictions of models trained on individual modalities.

- Relational Learning: A modified relational learning loss is used during pre-training to capture complex, continuous relationships between molecular instances in the feature space, going beyond simple positive/negative pair comparisons.

Optimizing Graph Neural Networks for Cheminformatics

The performance of Graph Neural Networks (GNNs) for molecular property prediction is highly sensitive to their architecture and hyperparameters [15]. Neural Architecture Search (NAS) and HPO are crucial but computationally expensive.

Protocol Summary:

- Search Space Definition: Define a search space encompassing GNN architectural choices (e.g., number of layers, message-passing mechanisms, activation functions) and training hyperparameters (e.g., learning rate, dropout).

- Optimization Algorithms: Employ efficient search strategies such as:

- Bayesian Optimization: To model the relationship between GNN configurations and performance.

- Evolutionary Algorithms: Enhanced population-based meta-heuristics (e.g., variants of Cheetah Optimizer) have shown success in navigating this complex, high-dimensional space [16].

- Multi-fidelity Methods: Use techniques like Hyperband to early-stop poorly performing trials, drastically reducing the computational cost of the search.

Integration with High-Throughput Experimentation (HTE) and Automated Labs

High-Throughput Experimentation (HTE) represents a paradigm shift in chemical research, enabling the parallel execution of numerous experiments through miniaturization, automation, and robotics. This approach has become indispensable in pharmaceutical development, where it dramatically accelerates the optimization of chemical reactions and processes. HTE replaces traditional round-bottom flasks with vial arrays in 96-well plates, operated by robots within controlled environments, significantly reducing reagent consumption, environmental impact, and human error while freeing researchers for higher-level tasks [17].

The integration of machine learning (ML), particularly Bayesian optimization, with HTE platforms has created a powerful synergy for autonomous experimentation. This combination allows intelligent, data-driven guidance of experimental campaigns, efficiently navigating complex parameter spaces that would be intractable with traditional one-factor-at-a-time approaches. These integrated systems form the core of emerging self-driving laboratories (SDLs), which aim to fully automate the research cycle from hypothesis to experimental execution and analysis [9] [18] [19].

Core Concepts and Optimization Frameworks

Bayesian Optimization in Chemical HTE

Bayesian optimization (BO) provides a statistical framework for global optimization of expensive black-box functions, making it ideally suited for guiding HTE campaigns where each experimental measurement is costly and time-consuming. BO operates by building a probabilistic surrogate model of the objective function (e.g., reaction yield or selectivity) and using an acquisition function to balance exploration of uncertain regions with exploitation of known promising areas [19].

Key components of the BO framework include:

- Surrogate Models: Typically Gaussian Processes (GPs) that provide mean and uncertainty predictions across the parameter space

- Acquisition Functions: Strategies such as Expected Improvement (EI) or Upper Confidence Bound (UCB) that guide the selection of next experiments

- Initial Design: Often using space-filling designs like Sobol sequences to initially explore the parameter space before BO begins [9] [19]

For HTE applications, specialized BO algorithms have been developed to handle the unique challenges of chemical experimentation, including mixed parameter types (continuous, discrete, categorical), multi-objective optimization, and experimental constraints [19].

Scalable Multi-Objective Acquisition Functions

Traditional BO approaches face computational limitations when applied to large-scale HTE with multiple competing objectives. Recent advancements have addressed these challenges through more scalable acquisition functions:

- q-NParEgo: Extends the ParEGO algorithm for parallel batch evaluation

- Thompson Sampling with Hypervolume Improvement (TS-HVI): Provides scalable multi-objective optimization

- q-Noisy Expected Hypervolume Improvement (q-NEHVI): Handles noisy observations common in experimental data [9]

These approaches enable efficient optimization of multiple objectives simultaneously, such as maximizing yield while minimizing cost or impurity formation, which is essential for pharmaceutical process development.
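To make the scalarization idea behind ParEGO-style methods concrete, the sketch below applies an augmented Chebyshev scalarization with randomly drawn weights, which collapses several objectives into one value that a single-objective acquisition function could then optimize. It assumes all objectives are minimized and already normalized to [0, 1]; the function names, the weight-sampling scheme, and rho = 0.05 are illustrative choices, not the implementation used in [9].

```python
import random


def sample_weights(n_objectives, rng):
    """Draw a random weight vector on the simplex (weights sum to 1)."""
    raw = [rng.expovariate(1.0) for _ in range(n_objectives)]
    total = sum(raw)
    return [w / total for w in raw]


def augmented_chebyshev(objectives, weights, rho=0.05):
    """Scalarize normalized objectives (all minimized, each in [0, 1])."""
    weighted = [w * f for w, f in zip(weights, objectives)]
    # The max term targets the worst weighted objective; the small
    # sum term breaks ties between otherwise equal candidates.
    return max(weighted) + rho * sum(weighted)


# Illustrative example: yield of 0.76 recast as a loss (1 - yield),
# plus a normalized impurity level of 0.10.
example = augmented_chebyshev([1.0 - 0.76, 0.10], [0.5, 0.5])

rng = random.Random(0)
weights = sample_weights(2, rng)
```

Drawing a fresh weight vector for each batch member is what lets a scalarization approach spread a parallel batch across different regions of the Pareto front.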

Quantitative Performance Data

Table 1: Performance Metrics of ML-Driven HTE Optimization in Pharmaceutical Applications

| Application | Traditional Method Yield/Selectivity | ML-Driven HTE Yield/Selectivity | Time Savings | Experimental Efficiency |

|---|---|---|---|---|

| Ni-catalyzed Suzuki Reaction | Not achieved [9] | 76% yield, 92% selectivity [9] | Significant [9] | Search space of 88,000 conditions explored [9] |

| Pharmaceutical Process Development (Ni-catalyzed Suzuki) | Baseline [9] | >95% yield and selectivity [9] | 4 weeks vs. 6 months [9] | High [9] |

| Pharmaceutical Process Development (Buchwald-Hartwig) | Baseline [9] | >95% yield and selectivity [9] | Accelerated [9] | High [9] |

| Direct Arylation Reaction | 25.2% yield (Traditional BO) [20] | 60.7% yield (Reasoning BO) [20] | Not specified | Enhanced sample efficiency [20] |

Table 2: Automated Powder Dosing Performance with CHRONECT XPR System

| Performance Metric | Specification/Range | Application Context |

|---|---|---|

| Powder Dispensing Range | 1 mg - several grams [17] | Pharmaceutical HTE [17] |

| Low Mass Dosing Accuracy (<10 mg) | <10% deviation from target [17] | Catalyst, organic materials dosing [17] |

| High Mass Dosing Accuracy (>50 mg) | <1% deviation from target [17] | Pharmaceutical HTE [17] |

| Component Dosing Heads | Up to 32 standard heads [17] | Library synthesis [17] |

| Dispensing Time (1 component) | 10-60 seconds [17] | Varies by compound properties [17] |

Experimental Protocols

Protocol 1: ML-Driven Reaction Optimization in 96-Well Plate Format

Objective: Optimize reaction yield and selectivity for a nickel-catalyzed Suzuki coupling using Bayesian optimization-guided HTE [9].

Materials and Equipment:

- Automated liquid handling system

- 96-well reaction plate

- Inert atmosphere glovebox

- Powder dosing robot (e.g., CHRONECT XPR)

- LC/MS or HPLC for analysis

Procedure:

1. Experimental Design Space Definition:

   - Define categorical variables: ligand library (15 options), solvent library (12 options), base library (10 options)

   - Define continuous variables: catalyst loading (0.5-5 mol%), temperature (40-100°C), concentration (0.05-0.2 M)

   - Apply constraint filtering to exclude impractical combinations (e.g., temperatures exceeding solvent boiling points)

2. Initial Experimental Design:

   - Generate initial batch of 96 experiments using Sobol sequence sampling

   - Ensure diverse coverage of the parameter space by maximizing spread between experimental conditions

3. Automated Reaction Execution:

   - Program liquid handler for solvent and base addition according to experimental design

   - Utilize powder dosing robot for accurate solid dispensing (catalyst, ligands, substrates)

   - Seal reaction plates and transfer to heated agitator blocks for specified time and temperature

4. Reaction Analysis and Data Processing:

   - Quench reactions automatically

   - Perform dilution and injection via automated LC/MS system

   - Process chromatographic data to calculate yield and selectivity metrics

5. Bayesian Optimization Loop:

   - Train Gaussian Process regressor on all collected experimental data

   - Evaluate acquisition function across all possible reaction conditions

   - Select next batch of 96 experiments maximizing expected improvement

   - Repeat steps 3-5 for 3-5 optimization cycles or until convergence

Validation:

- Confirm optimal conditions in larger-scale (mmol) reactions

- Compare performance against traditional OFAT optimization approaches [9]
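The design-space definition and constraint filtering described in this protocol can be sketched as a discrete combinatorial set with a feasibility filter. The small libraries below are placeholders (the protocol itself uses 15 ligands, 12 solvents, and 10 bases), the boiling points are approximate values included only for illustration, and `is_practical` is a hypothetical helper.

```python
from itertools import product

# Approximate solvent boiling points in °C, for illustration only.
SOLVENT_BP_C = {"THF": 66, "ethanol": 78, "toluene": 111, "DMF": 153}
LIGANDS = ["L1", "L2", "L3"]            # placeholder ligand labels
BASES = ["K3PO4", "Cs2CO3"]
TEMPERATURES_C = [40, 60, 80, 100]
CATALYST_LOADINGS_MOL_PCT = [0.5, 2.0, 5.0]


def is_practical(condition):
    """Reject conditions whose temperature exceeds the solvent boiling point."""
    _ligand, solvent, _base, temperature, _loading = condition
    return temperature <= SOLVENT_BP_C[solvent]


# Full combinatorial space, then the filtered subset the optimizer may propose.
all_conditions = list(
    product(LIGANDS, SOLVENT_BP_C, BASES, TEMPERATURES_C, CATALYST_LOADINGS_MOL_PCT)
)
feasible = [c for c in all_conditions if is_practical(c)]
print(len(all_conditions), len(feasible))
```

Filtering before optimization means the acquisition function is only ever evaluated on conditions that could actually be run on the plate.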

Protocol 2: Self-Driving Laboratory Implementation for Electrochemical Optimization

Objective: Autonomous optimization of oxidation potential for metal complexes using an SDL platform integrated with the Atlas BO library [19].

Materials and Equipment:

- Cyclic voltammetry apparatus with automation interface

- Liquid handling robot for sample preparation

- Atlas Bayesian optimization library

- ChemOS 2.0 or similar SDL orchestration software

Procedure:

1. Parameter Space Configuration:

   - Define search space: metal center, ligand architecture, solvent composition, electrolyte concentration

   - Set objective function: maximize oxidation potential with constraints on reversibility

2. Atlas BO Setup:

   - Initialize with expected improvement acquisition function

   - Configure mixed-parameter handling for categorical and continuous variables

   - Set up asynchronous evaluation to accommodate variable experiment durations

3. Autonomous Experimentation Cycle:

   - SDL software receives candidate experiments from Atlas

   - Automated system prepares solutions with specified compositions

   - Cyclic voltammetry measurements performed autonomously

   - Data processed to extract oxidation potentials and reversibility metrics

   - Results fed back to Atlas for model updates and next candidate selection

4. Convergence Monitoring:

   - Track hypervolume improvement over iterations

   - Set termination criteria based on diminishing returns or budget exhaustion

Validation:

- Compare final optimized complexes with known compounds from the literature

- Validate electrochemical properties through manual reproduction [19]
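The asynchronous evaluation pattern in this protocol, where a new experiment is launched as soon as any instrument slot frees up, can be sketched with Python's standard `concurrent.futures` module. This does not use the Atlas or ChemOS APIs: `suggest` and `run_experiment` are hypothetical stand-ins for the optimizer and the measurement, and the response function is made up.

```python
import random
import time
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


def suggest(history, rng):
    """Stand-in for the optimizer: proposes a random electrolyte concentration (M)."""
    return {"electrolyte_conc": rng.uniform(0.05, 0.5)}


def run_experiment(params):
    """Stand-in measurement with a variable duration and a made-up response."""
    time.sleep(0.01 + 0.02 * params["electrolyte_conc"])
    return -(params["electrolyte_conc"] - 0.3) ** 2


def run_campaign(budget=12, n_workers=4, seed=0):
    rng = random.Random(seed)
    history = []   # completed (params, result) pairs
    pending = {}   # future -> params for experiments still running
    submitted = 0
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        while submitted < budget or pending:
            # Keep every worker busy while budget remains.
            while submitted < budget and len(pending) < n_workers:
                params = suggest(history, rng)
                pending[pool.submit(run_experiment, params)] = params
                submitted += 1
            # Block only until the first running experiment finishes.
            done, _ = wait(list(pending), return_when=FIRST_COMPLETED)
            for future in done:
                history.append((pending.pop(future), future.result()))
    return history


history = run_campaign()
```

Because results are folded into `history` as they arrive, a real optimizer in place of `suggest` would always propose the next candidate from the most recent data rather than waiting for a full batch.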

Workflow Visualization

ML-Driven HTE Optimization Workflow

Self-Driving Laboratory Architecture

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for HTE Implementation

| Tool/Category | Specific Examples | Function & Application |

|---|---|---|

| Optimization Software | Minerva [9], Atlas [19], Katalyst [21] | ML-driven experimental design and Bayesian optimization for reaction screening |

| Powder Dosing Systems | CHRONECT XPR [17], Quantos [17] | Automated solid dispensing for catalysts, reagents, and additives in milligram to gram quantities |

| Liquid Handling Robots | Minimapper [17], Flexiweigh [17] | Precise solvent and liquid reagent addition in multi-well plate formats |

| Reaction Platforms | 96-well plates [9], Miniblock-XT [17] | Parallel reaction execution with temperature control and agitation |

| Analytical Integration | Automated LC/UV/MS [21], NMR [21] | High-throughput analysis with data processing and interpretation |

| Data Management | SURF Format [9], Scispot [22] | Structured data capture, storage, and export for AI/ML applications |

| Specialized Libraries | Ligand libraries, solvent collections [9] | Pre-curated chemical space exploration for reaction optimization |

Advanced Parallel HPO Algorithms and Their Chemical Applications

Bayesian Optimization with Gaussian Processes for Molecular Property Prediction

The discovery and development of molecules with tailored properties are fundamental to advancements in pharmaceuticals, materials science, and chemical products. This process often requires navigating vast molecular spaces, a task complicated by the high cost of experiments or simulations and the complex, black-box nature of property functions. Bayesian Optimization (BO) has emerged as a powerful, data-efficient machine learning framework for guiding this exploration, with Gaussian Processes (GPs) serving as a cornerstone for its probabilistic surrogate models [23]. Within the broader context of parallel hyperparameter optimization for chemical models, BO provides a robust strategy for the global optimization of expensive-to-evaluate functions, making it exceptionally suited for molecular property prediction and optimization campaigns.

This article details the application notes and protocols for implementing BO with GPs in molecular property prediction. It provides a structured overview of the core components, a detailed experimental workflow, a summary of key reagent solutions, and a performance benchmark of available software platforms.

Core Components of Bayesian Optimization

A Bayesian Optimization cycle is built upon two key components: a surrogate model for probabilistic predictions and an acquisition function to guide the selection of subsequent experiments.

Gaussian Process as a Surrogate Model

The Gaussian Process is a non-parametric probabilistic model that defines a distribution over functions. A GP is completely specified by its mean function, (m(\mathbf{x})), and its covariance (kernel) function, (k(\mathbf{x}, \mathbf{x}')). For a set of input molecules represented by their feature vectors (\mathbf{X} = \{\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_n\}) and their measured properties (\mathbf{y} = \{y_1, y_2, \ldots, y_n\}), the GP prior is:

[ f(\mathbf{X}) \sim \mathcal{GP}(m(\mathbf{X}), k(\mathbf{X}, \mathbf{X})) ]

The kernel function (k) is crucial as it encodes assumptions about the smoothness and structure of the objective function. The choice of kernel depends on the nature of the molecular search space and the property being modeled. The predictive distribution for a new molecular candidate (\mathbf{x}_*) is Gaussian, providing both an expected property value (the mean, (\mu(\mathbf{x}_*))) and a measure of uncertainty (the variance, (\sigma^2(\mathbf{x}_*))) [23]. This uncertainty quantification is vital for the balance between exploration and exploitation in BO. For enhanced performance, particularly in multi-objective settings or when dealing with correlated properties, advanced GP variants like Multi-Task GPs (MTGPs) and Deep GPs (DGPs) can be employed [24].
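For reference, the predictive mean and variance mentioned above have a standard closed form. The expressions below assume Gaussian observation noise with variance \sigma_n^2 (a symbol introduced here, not defined in the text), and write \mathbf{K} = k(\mathbf{X}, \mathbf{X}) for the kernel matrix of the evaluated molecules and \mathbf{k}_* = k(\mathbf{X}, \mathbf{x}_*) for the kernel vector between them and the new candidate:

```latex
\begin{aligned}
\mu(\mathbf{x}_*) &= m(\mathbf{x}_*) + \mathbf{k}_*^{\top}
  \left(\mathbf{K} + \sigma_n^2 \mathbf{I}\right)^{-1}
  \left(\mathbf{y} - m(\mathbf{X})\right), \\
\sigma^2(\mathbf{x}_*) &= k(\mathbf{x}_*, \mathbf{x}_*) - \mathbf{k}_*^{\top}
  \left(\mathbf{K} + \sigma_n^2 \mathbf{I}\right)^{-1} \mathbf{k}_* .
\end{aligned}
```

The variance shrinks toward zero near evaluated molecules and returns to the prior value k(\mathbf{x}_*, \mathbf{x}_*) far from them, which is what allows the acquisition function to distinguish explored from unexplored regions.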

Acquisition Functions

The acquisition function, (\alpha(\mathbf{x})), uses the surrogate model's predictions to quantify the utility of evaluating a candidate molecule (\mathbf{x}). It balances the trade-off between exploration (probing regions of high uncertainty) and exploitation (probing regions with high predicted performance). The candidate with the maximum acquisition function value is selected for the next evaluation. Common acquisition functions include:

- Expected Improvement (EI): Selects the point with the highest expected improvement over the current best observation [25].

- Upper Confidence Bound (UCB): Selects the point that maximizes a weighted sum of the predicted mean and uncertainty, (\alpha(\mathbf{x}) = \mu(\mathbf{x}) + \kappa \sigma(\mathbf{x})), where (\kappa) controls the exploration-exploitation balance [25].

- q-Noisy Expected Hypervolume Improvement (q-NEHVI): A state-of-the-art acquisition function for multi-objective optimization that is scalable to large batch sizes, making it suitable for parallel experimentation [9].
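The two single-objective acquisition functions above have simple closed forms when the surrogate's prediction at a candidate is Gaussian. The following sketch computes them for a maximization problem using only the standard library; the function names and the optional margin `xi` are illustrative assumptions, and the candidate values are made up.

```python
import math


def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best, xi=0.0):
    """Closed-form EI for maximization, given predictive mean and std."""
    if sigma <= 0.0:
        # No uncertainty: improvement is deterministic.
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * normal_cdf(z) + sigma * normal_pdf(z)


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB: predicted mean plus kappa times predicted std."""
    return mu + kappa * sigma


# Made-up predictions (mean, std) for three candidate molecules.
candidates = {"A": (0.70, 0.02), "B": (0.60, 0.20), "C": (0.50, 0.05)}
best_so_far = 0.68
ei_choice = max(candidates, key=lambda k: expected_improvement(*candidates[k], best_so_far))
ucb_choice = max(candidates, key=lambda k: upper_confidence_bound(*candidates[k]))
```

In this toy example both criteria prefer the uncertain candidate B over the slightly better but well-characterized candidate A, which illustrates how predictive uncertainty drives exploration.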

Protocol for Molecular Property Prediction and Optimization

This protocol outlines the steps for running a Bayesian Optimization campaign to discover molecules with optimal properties, such as gas adsorption in Metal-Organic Frameworks (MOFs) or electronic band gaps.

The protocol follows the iterative cycle of Feature Adaptive Bayesian Optimization (FABO), which integrates dynamic feature selection into the standard BO loop [25].

Step-by-Step Procedure

Step 1: Define the Molecular Search Space and Initial Representation

- Objective: Construct a diverse but relevant set of candidate molecules and their numerical representations.

- Procedure:

- Source a Database: Obtain a database of molecules or materials (e.g., the QMOF database with ~8,437 materials for band gap optimization or the CoRE-MOF database with ~9,525 materials for gas adsorption) [25].

- Define a Complete Feature Pool: Represent each molecule using a comprehensive set of features. For MOFs, this should include:

- Chemical Features: Use Revised Autocorrelation Calculations (RACs) to capture atomic properties (e.g., electronegativity, identity) across the crystal graph [25].

- Geometric/Pore Features: Calculate pore geometry descriptors, such as pore limiting diameter, largest cavity diameter, and void fraction [25].

- Apply Constraints: Filter out molecules with impractical or unsafe characteristics based on domain knowledge (e.g., unstable structures, incompatible solvents) [9].

Step 2: Initial Data Collection via Sampling

- Objective: Select an initial, diverse set of molecules to build the first surrogate model.

- Procedure:

Step 3: Iterative Bayesian Optimization Cycle

Substep 3.1: Adaptive Feature Selection (FABO)

- Objective: Dynamically identify the most informative features from the full pool to optimize the representation for the current task [25].

- Procedure:

- Use a feature selection method such as Maximum Relevancy Minimum Redundancy (mRMR) or Spearman ranking on all data collected so far.

- mRMR selects features that have high relevance to the target property while being minimally redundant with each other [25].

- Select a compact set of features (e.g., 5-40 features) for the subsequent modeling step.
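A minimal sketch of the Spearman-ranking option is shown below: each feature is scored by the absolute rank correlation with the target, and the top k features are kept. This illustrates only the ranking variant, not mRMR and not the FABO implementation from [25]; the feature names and values are made up.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(a, b):
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0.0 or var_b == 0.0:
        return 0.0  # a constant column carries no rank information
    return cov / math.sqrt(var_a * var_b)


def spearman(a, b):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(average_ranks(a), average_ranks(b))


def select_top_features(features, target, k):
    """Return the k feature names with the largest |Spearman rho|."""
    scores = {name: abs(spearman(col, target)) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Made-up data for five evaluated materials.
target = [1.0, 2.0, 3.0, 4.0, 5.0]
features = {
    "void_fraction": [0.1, 0.2, 0.4, 0.5, 0.9],
    "pore_limiting_diameter": [5.0, 4.0, 3.0, 2.0, 1.0],
    "noise": [3.0, 1.0, 4.0, 1.0, 5.0],
}
selected = select_top_features(features, target, k=2)
```

Re-running the selection on all data collected so far at every cycle is what makes the representation adaptive.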

Substep 3.2: Update the Surrogate Model

- Objective: Train a Gaussian Process model to learn the relationship between the adapted molecular features and the target property.

- Procedure:

- Use the adapted feature set to represent the evaluated molecules.

- Train the GP model. Optimize the kernel hyperparameters (e.g., length scales, noise variance) by maximizing the marginal log-likelihood.

- The trained model will provide predictions ((\mu(\mathbf{x}))) and uncertainties ((\sigma(\mathbf{x}))) for all unevaluated molecules in the database.

Substep 3.3: Propose the Next Experiment(s)

- Objective: Use an acquisition function to select the most promising molecule(s) for the next round of evaluation.

- Procedure for Parallel Optimization:

- For multi-objective problems (e.g., maximizing yield while minimizing cost), use a scalable acquisition function like q-NParEgo or Thompson Sampling with Hypervolume Improvement (TS-HVI) [9].

- For single-objective problems, use Expected Improvement (EI) or Upper Confidence Bound (UCB).

- Optimize the acquisition function over the entire search space to find the batch of molecules (e.g., 24, 48, or 96) that maximizes it.

Substep 3.4: Data Labeling and Loop Closure

- Objective: Obtain new data and update the dataset.

- Procedure:

- Perform the experiment or simulation for the newly selected molecules.

- Add the new {molecule features, property value} pairs to the growing dataset.

- Return to Substep 3.1 unless a convergence criterion is met (e.g., a molecule with a property exceeding a target threshold is found, the experimental budget is exhausted, or performance plateaus).

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and experimental "reagents" essential for executing a Bayesian Optimization campaign for molecular property prediction.

Table 1: Key Research Reagent Solutions for Bayesian Optimization Campaigns

| Item Name | Function/Description | Application Example |

|---|---|---|

| Molecular Databases | Pre-computed collections of molecular structures and properties serving as the search space. | QMOF database (DFT-calculated band gaps) [25]; CoRE-MOF database (gas adsorption properties) [25]. |

| Feature Descriptors | Numerical representations of molecular structure and chemistry. | Revised Autocorrelation Calculations (RACs) for MOF chemistry [25]; Pore geometry descriptors (PLD, LCD) [25]. |

| Gaussian Process Model | A probabilistic surrogate model that predicts molecular properties and quantifies uncertainty. | Predicts properties like CO2 uptake or band gap; uncertainty estimates guide the acquisition function [23] [25]. |

| Acquisition Function | An optimization policy that balances exploration and exploitation to suggest the next experiments. | q-NParEgo for scalable multi-objective optimization [9]; Expected Improvement (EI) for single-objective tasks [25]. |

| High-Throughput Experimentation (HTE) | Automated robotic platforms for highly parallel synthesis and testing of chemical reactions. | Enables efficient evaluation of large batch suggestions from BO (e.g., 96-well plates) [9]. |

Performance Benchmarking and Software Tools

The performance of a BO campaign is typically evaluated using metrics like the hypervolume of the Pareto front (for multi-objective problems) or the best-achieved value over iterations (for single-objective problems). Studies have shown that BO can significantly outperform traditional and human-driven approaches. For instance, in a 96-well HTE campaign for a nickel-catalyzed Suzuki reaction, an ML-driven BO workflow identified conditions with 76% yield and 92% selectivity, whereas chemist-designed plates failed to find successful conditions [9].
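For two objectives, the hypervolume metric reduces to the area dominated by the Pareto front relative to a reference point. The sketch below computes it for two maximized objectives; the function names and data points are illustrative assumptions (only the 76% yield and 92% selectivity pair echoes the result quoted above).

```python
def pareto_front(points):
    """Non-dominated subset for two objectives, both maximized."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and q != p for q in points
        )
        if not dominated:
            front.append(p)
    return sorted(set(front))


def hypervolume_2d(points, ref):
    """Area dominated by the Pareto front and bounded by the reference point."""
    front = [p for p in pareto_front(points) if p[0] > ref[0] and p[1] > ref[1]]
    # Sweep from the largest first objective; the second objective then increases.
    front.sort(key=lambda p: p[0], reverse=True)
    area, prev_y = 0.0, ref[1]
    for x, y in front:
        # Each point adds a horizontal strip above the previous height.
        area += (x - ref[0]) * (y - prev_y)
        prev_y = y
    return area


# (yield, selectivity) pairs as fractions; all but the first are made up.
observations = [(0.76, 0.92), (0.90, 0.50), (0.60, 0.95), (0.50, 0.40)]
hv = hypervolume_2d(observations, ref=(0.0, 0.0))
```

Tracking this value after each batch gives a single curve that rises whenever a batch extends the Pareto front, which makes it a convenient convergence signal.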

The table below summarizes selected software packages that facilitate the implementation of BO with GPs, highlighting their key features for chemical applications.

Table 2: Benchmarking of Bayesian Optimization Software Packages

| Package Name | Key Features | License | Suitability for Chemical Data |

|---|---|---|---|

| BoTorch [26] | GP-based models, Multi-objective & Batch optimization, Built on PyTorch. | MIT | High; modular framework designed for modern research, including chemistry. |

| Ax [26] | Modular framework built on BoTorch, supports adaptive trials. | MIT | High; user-friendly interface for structuring optimization experiments. |

| Dragonfly [26] | Multi-fidelity optimization, handles diverse parameter types. | Apache | High; suitable for complex chemical search spaces with mixed variables. |

| Minerva [9] | Custom framework for highly parallel (96-well) multi-objective reaction optimization. | Open Source | Specific; designed for integration with HTE and pharmaceutical process development. |

| GPyOpt [26] | GP models, Parallel optimization. | BSD | Moderate; accessible but may lack some advanced features of newer libraries. |

Bayesian Optimization with Gaussian Processes provides a powerful, principled framework for navigating the complex landscape of molecular property prediction. Its key advantage lies in data efficiency, often identifying high-performing molecules or optimal reaction conditions in an order of magnitude fewer experiments than traditional methods [23]. The integration of adaptive representation, as in the FABO framework, further enhances its robustness by automatically tailoring molecular features to the optimization task at hand [25]. When combined with high-throughput experimentation, BO enables highly parallel, automated discovery campaigns, dramatically accelerating research timelines in drug development and functional materials design [9].

The Hyperband Algorithm for Resource-Efficient Nanomaterial Synthesis Optimization

The optimization of nanomaterial synthesis presents a significant challenge in materials science and chemical engineering, requiring careful balancing of multiple interdependent parameters to achieve desired material properties. Traditional optimization methods like one-factor-at-a-time (OFAT) approaches prove inadequate for navigating these complex, high-dimensional search spaces efficiently. Within the broader context of parallel hyperparameter optimization for chemical models, the Hyperband algorithm emerges as a powerful resource-allocation strategy that can dramatically accelerate nanomaterial development timelines. By dynamically allocating computational and experimental resources to the most promising synthesis conditions, Hyperband addresses the critical need for efficient optimization in resource-constrained research environments.

Hyperband frames the hyperparameter optimization problem as a pure-exploration, non-stochastic, infinite-armed bandit problem, treating each configuration as an arm that can be pulled by allocating resources [27]. This approach is particularly valuable in nanomaterial synthesis where evaluating every possible parameter combination is prohibitively expensive and time-consuming. The algorithm's intelligent early-stopping mechanism enables researchers to quickly eliminate underperforming synthesis pathways while continuing to invest resources in promising candidates, mirroring successful applications in chemical reaction optimization where machine learning has outperformed traditional experimentalist-driven methods [9].

Theoretical Foundation of Hyperband

Core Algorithmic Principles

Hyperband operates on two fundamental concepts: successive halving and bracketed exploration. Successive halving allocates a predetermined budget uniformly across a set of hyperparameter configurations [27]. Once that budget is spent, the algorithm ranks the configurations by their performance metric and discards the worst performers: the bottom half in classic successive halving, or all but the top 1/η in Hyperband's generalization. The survivors are trained further with an increased budget, and the process repeats until only one configuration remains.

The key innovation of Hyperband lies in addressing the fundamental limitation of pure successive halving: the uncertainty in determining whether to begin with many configurations evaluated with minimal resources or fewer configurations with more substantial resources. Hyperband solves this dilemma by considering multiple different brackets, each with varying trade-offs between the number of configurations and resources allocated per configuration [27]. The algorithm begins with the most aggressive bracket (many configurations with minimal resources) for maximum exploration and progressively moves toward more conservative allocations, ultimately culminating in a bracket equivalent to classical random search.

Mathematical Formulation

The Hyperband algorithm requires two primary input parameters:

- R: The maximum amount of resources that can be allocated to any single configuration

- η: The reduction factor; each successive halving round keeps only the top 1/η of configurations and discards the rest

These parameters determine the number of brackets: with (s_{\max} = \lfloor \log_{\eta} R \rfloor), Hyperband runs (s_{\max} + 1) brackets. Bracket (i) starts (n_i) configurations with (R / \eta^{i}) units of resource each, so its first round costs (n_i R / \eta^{i}); the (n_i) are chosen so that every bracket consumes roughly the same total budget, ((s_{\max} + 1) R) [27]. In practice, η is typically set to 3 or 4, and the original Hyperband paper notes that results are relatively insensitive to this choice; its theoretical bounds are optimized at η = e ≈ 2.718.
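To see how R and η translate into a concrete schedule, the sketch below enumerates the brackets and their successive halving rounds, following the bracket-sizing rule of the original Hyperband paper [27]. The function name and the returned dictionary layout are illustrative assumptions.

```python
def hyperband_brackets(R, eta):
    """Enumerate Hyperband brackets for integer R and integer eta >= 2."""
    # s_max = floor(log_eta(R)), computed with integers to avoid float error.
    s_max = 0
    while eta ** (s_max + 1) <= R:
        s_max += 1

    brackets = []
    for s in range(s_max, -1, -1):
        # Initial number of configurations: ceil((s_max + 1) * eta^s / (s + 1)).
        numerator = (s_max + 1) * eta ** s
        n = -(-numerator // (s + 1))
        # Initial resource per configuration.
        r = R / eta ** s
        # Rounds of successive halving: (configurations, resource each).
        rungs = [(n // eta ** i, r * eta ** i) for i in range(s + 1)]
        brackets.append({"s": s, "n": n, "r": r, "rungs": rungs})
    return brackets


schedule = hyperband_brackets(R=81, eta=3)
for bracket in schedule:
    print(bracket["s"], bracket["rungs"])
```

With R = 81 and η = 3 this yields five brackets, ranging from 81 configurations started at 1 unit of resource each down to 5 configurations that each receive the full 81 units, the latter being equivalent to plain random search.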

Workflow Implementation

The implementation of Hyperband for nanomaterial synthesis optimization follows a structured workflow that integrates computational intelligence with experimental validation. The diagram below illustrates this process:

Diagram 1: Hyperband workflow for nanomaterial synthesis optimization

Algorithm Initialization

The Hyperband workflow begins with defining the synthesis parameter space, which may include continuous variables (temperature, concentration, reaction time), categorical variables (precursor types, solvent selection), and constrained parameters (pH ranges, pressure conditions). This initialization phase is critical, as it establishes the boundaries within which the optimization will occur. Following successful approaches in chemical reaction optimization, parameter spaces should be constrained by practical process requirements and domain knowledge to automatically filter impractical conditions [9].

The algorithm then iterates through different brackets, beginning with the most aggressive (many configurations with minimal resources) and progressing to more conservative allocations. For each bracket, Hyperband:

- Samples n_i random configurations from the parameter space

- Applies successive halving to eliminate underperforming configurations

- Allocates increasing resources to the top performers

- Repeats until one configuration remains per bracket

Resource Allocation and Early Stopping

In the context of nanomaterial synthesis, "resources" can be defined as reaction time, material quantities, characterization intensity, or computational budget. The early-stopping mechanism is particularly valuable for time-intensive synthesis procedures, as it prevents wasted effort on unpromising parameter combinations. This approach mirrors the resource allocation strategies used in photothermal membrane distillation optimization, where machine learning identified optimal operating conditions across different membrane areas [28].

Experimental Protocol for Nanomaterial Synthesis Optimization

Parameter Space Definition

The first critical step in implementing Hyperband for nanomaterial synthesis is comprehensively defining the parameter space. The table below outlines a representative parameter space for quantum dot synthesis:

Table 1: Exemplary parameter space for quantum dot synthesis optimization

| Parameter | Type | Range/Options | Constraint Handling |

|---|---|---|---|

| Reaction temperature | Continuous | 150-350°C | Linked to solvent boiling points |

| Precursor concentration | Continuous | 0.01-0.5 M | Limited by solubility |

| Injection rate | Continuous | 1-20 mL/min | Equipment constraints |

| Ligand type | Categorical | Oleic acid, Oleylamine, TOPO | Chemical compatibility |

| Solvent selection | Categorical | Octadecene, Squalane, Oleyl alcohol | Temperature constraints |

| Reaction time | Continuous | 5-120 minutes | Practical limitations |

| Precursor ratio | Continuous | 0.1-10.0 | Stoichiometric constraints |

Following established practices in chemical ML, the parameter space should be represented as a discrete combinatorial set of plausible conditions with automatic filtering of impractical combinations [9].

Implementation Framework

The implementation of Hyperband requires three core functions:

get_hyperparameter_configuration(): Returns independent random samples from the parameter space, typically using uniform distributions across defined ranges while respecting constraints [27].

run_then_return_val_loss(config, resource): Executes the synthesis and characterization process with the given parameter configuration and allocated resources, returning a quantitative performance metric.

top_k(configs, losses, K): Identifies the top K performing configurations based on their validation losses for advancement to the next resource tier.

For nanomaterial synthesis, the validation loss function should be carefully designed to capture multiple objectives, potentially incorporating yield, size distribution, optical properties, and cost considerations, similar to the multi-objective optimization approaches used in pharmaceutical process development [9].
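The three functions can be wired together into a compact Hyperband loop, sketched below. The synthesis step is replaced by a made-up analytic loss so the example runs without hardware; the parameter ranges, the extra `rng` argument, and the loss shape are all assumptions for illustration, not a model of any real synthesis.

```python
import random


def get_hyperparameter_configuration(n, rng):
    """Sample n random synthesis configurations (hypothetical ranges)."""
    return [
        {"temperature_c": rng.uniform(150, 350),
         "precursor_conc_m": rng.uniform(0.01, 0.5)}
        for _ in range(n)
    ]


def run_then_return_val_loss(config, resource):
    """Toy stand-in for synthesis + characterization.

    The loss decays toward a configuration-dependent floor as more
    resource (e.g., reaction time) is invested.
    """
    floor = ((config["temperature_c"] - 260) / 100) ** 2 \
        + (config["precursor_conc_m"] - 0.2) ** 2
    return floor + 1.0 / (1.0 + resource)


def top_k(configs, losses, k):
    """Return the k configurations with the lowest losses."""
    ranked = sorted(zip(losses, range(len(configs))))
    return [configs[i] for _, i in ranked[:k]]


def hyperband(R, eta, seed=0):
    rng = random.Random(seed)
    s_max = 0
    while eta ** (s_max + 1) <= R:
        s_max += 1
    best_config, best_loss = None, float("inf")
    for s in range(s_max, -1, -1):          # most aggressive bracket first
        n = -(-(s_max + 1) * eta ** s // (s + 1))
        r = R / eta ** s
        configs = get_hyperparameter_configuration(n, rng)
        for i in range(s + 1):              # successive halving rounds
            n_i = n // eta ** i
            r_i = r * eta ** i
            losses = [run_then_return_val_loss(c, r_i) for c in configs]
            for loss, config in zip(losses, configs):
                if loss < best_loss:
                    best_config, best_loss = config, loss
            # Keep the top 1/eta (at least one) for the next round.
            configs = top_k(configs, losses, max(n_i // eta, 1))
    return best_config, best_loss


best_config, best_loss = hyperband(R=81, eta=3)
```

In a laboratory setting, `run_then_return_val_loss` is the only function that touches hardware, so the evaluations inside each round can be dispatched to a plate or reactor array in parallel.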

Workflow Integration

The experimental workflow integrates Hyperband with automated synthesis and characterization platforms:

Diagram 2: Experimental workflow integrating Hyperband with automated synthesis platforms

Comparative Performance Analysis

Benchmarking Against Alternative Methods

The performance of Hyperband has been extensively evaluated against alternative optimization approaches across multiple domains. The table below summarizes key performance comparisons:

Table 2: Performance comparison of optimization algorithms

| Optimization Method | Theoretical Basis | Parallelization Capability | Resource Efficiency | Best-Suited Applications |

|---|---|---|---|---|

| Hyperband | Successive halving + multi-armed bandit | High | Excellent | Resource-intensive syntheses, early-stage exploration |

| Bayesian Optimization | Gaussian processes, acquisition functions | Moderate (limited by acquisition function complexity) [9] | Good | Low-dimensional spaces, expensive evaluations |

| Random Search | Uniform random sampling | High | Moderate | Initial screening, simple spaces |

| Grid Search | Exhaustive combinatorial | High | Poor | Very small parameter spaces |

| Genetic Algorithms | Evolutionary operations | High | Moderate | Complex multimodal landscapes |

| irace | Iterated racing, statistical testing | Moderate | Good | Algorithm configuration, stochastic optimization [29] |

In controlled benchmarks, Hyperband has demonstrated particular strength in scenarios where different configurations exhibit varying convergence rates, allowing it to quickly identify promising candidates while minimizing resource expenditure on poor performers [27] [30].

Nanomaterial-Specific Performance Metrics

When applied to nanomaterial synthesis, Hyperband demonstrates significant advantages in resource utilization:

- Resource savings: 3-5x reduction in experimental resources compared to grid search

- Time acceleration: 2-4x faster optimization timeline compared to Bayesian optimization in high-dimensional spaces

- Success rate: Comparable or superior identification of optimal conditions with 60-80% fewer full resource evaluations

These efficiency gains align with results observed in chemical reaction optimization, where machine learning approaches significantly accelerated process development timelines, in one case achieving in 4 weeks what previously required 6 months of development [9].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of Hyperband for nanomaterial synthesis requires integration with appropriate experimental infrastructure. The following toolkit outlines essential components:

Table 3: Research reagent solutions for nanomaterial synthesis optimization

| Category | Specific Examples | Function in Synthesis | Compatibility Notes |
|---|---|---|---|
| Metal precursors | Cadmium oxide, Zinc acetate, Lead oleate | Source of inorganic component | Determine reaction temperature requirements |
| Chalcogenide sources | Elemental sulfur, Selenium, Tellurium in TOP | Anion precursor | Reactivity varies with source |
| Solvents | 1-Octadecene, Diphenyl ether, Oleyl alcohol | Reaction medium | Boiling point constrains temperature range |
| Ligands | Oleic acid, Oleylamine, Trioctylphosphine oxide | Surface stabilization, size control | Strongly influence growth kinetics |
| Reducing agents | Trioctylphosphine, Superhydride | Control precursor reactivity | Impact nucleation behavior |
| Shape controllers | Hexadecyltrimethylammonium bromide, Tetradecylphosphonic acid | Anisotropic growth promotion | Specific to nanocrystal morphology |

This toolkit provides the foundational materials system for implementing the Hyperband optimization framework, with each component representing a categorical variable in the optimization space. The selection should be guided by domain knowledge and chemical compatibility constraints, similar to the approach used in pharmaceutical process development where solvent selection adheres to safety and environmental guidelines [9].
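To make the idea of "each component as a categorical variable" concrete, the sketch below encodes the toolkit as a search space, draws a random configuration, and one-hot encodes it for use by a downstream model. The dictionary keys and function names are hypothetical; the option lists simply mirror the examples in the table, and no chemical compatibility filtering is applied here.

```python
import random

# Categorical search space mirroring the toolkit table (key names are assumed).
SEARCH_SPACE = {
    "metal_precursor": ["cadmium oxide", "zinc acetate", "lead oleate"],
    "chalcogenide_source": ["elemental sulfur", "selenium", "tellurium in TOP"],
    "solvent": ["1-octadecene", "diphenyl ether", "oleyl alcohol"],
    "ligand": ["oleic acid", "oleylamine", "trioctylphosphine oxide"],
    "reducing_agent": ["trioctylphosphine", "superhydride"],
    "shape_controller": [
        "hexadecyltrimethylammonium bromide",
        "tetradecylphosphonic acid",
    ],
}


def sample_configuration(rng):
    """Draw one option per category, uniformly at random."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}


def one_hot(config):
    """Flatten a configuration into a 0/1 vector, one slot per option."""
    vector = []
    for name, options in SEARCH_SPACE.items():
        vector.extend(1.0 if config[name] == option else 0.0 for option in options)
    return vector


config = sample_configuration(random.Random(0))
encoded = one_hot(config)
print(config)
print(encoded)
```

In practice a compatibility check (for example, rejecting solvent and temperature combinations that exceed the boiling point) would sit between sampling and evaluation; that step is omitted because it depends on domain rules not given here.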

Advanced Implementation Considerations

Multi-Objective Optimization

Nanomaterial synthesis typically involves balancing multiple competing objectives such as yield, size distribution, optical properties, and cost. Hyperband is formulated for a single objective, but it can be extended to multi-objective scenarios by integrating approaches such as q-NParEGO, Thompson sampling with hypervolume improvement (TS-HVI), or q-Noisy Expected Hypervolume Improvement (q-NEHVI) [9]. These methods enable simultaneous optimization of multiple criteria while maintaining Hyperband's resource efficiency.
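Several of the methods named above score candidates by hypervolume improvement. As a rough illustration of that quantity only (not of the TS-HVI or q-NEHVI algorithms themselves), the sketch below computes the hypervolume of a two-objective front under maximization and the gain from adding one candidate. The objective pairs and reference point are made-up numbers.

```python
def hypervolume_2d(points, reference):
    """Area dominated by `points` above `reference`, both objectives maximized."""
    r1, r2 = reference
    # Only points that beat the reference in both objectives contribute.
    useful = [(y1, y2) for y1, y2 in points if y1 > r1 and y2 > r2]
    useful.sort(key=lambda p: p[0], reverse=True)
    area, best_y2 = 0.0, r2
    for y1, y2 in useful:
        if y2 > best_y2:
            # Add the horizontal strip this point contributes beyond earlier ones.
            area += (y1 - r1) * (y2 - best_y2)
            best_y2 = y2
    return area


def hypervolume_improvement(candidate, points, reference):
    """Increase in hypervolume if `candidate` were added to `points`."""
    return hypervolume_2d(points + [candidate], reference) - hypervolume_2d(
        points, reference
    )


# Hypothetical (objective 1, objective 2) pairs, e.g. yield vs. a quality score.
front = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0)]
reference = (0.0, 0.0)
print(hypervolume_2d(front, reference))
print(hypervolume_improvement((2.5, 2.5), front, reference))
print(hypervolume_improvement((1.0, 1.0), front, reference))
```

A dominated candidate yields zero improvement, which is what lets hypervolume-based acquisition functions ignore regions that cannot extend the current trade-off front.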

Parallelization and High-Throughput Experimentation

The inherent batch structure of Hyperband makes it particularly suitable for integration with high-throughput experimentation (HTE) platforms. Unlike traditional Bayesian optimization approaches that struggle with large parallel batch sizes due to exponential complexity scaling [9], Hyperband can efficiently manage parallel evaluation of dozens of synthesis conditions simultaneously. This capability aligns with the trend toward automated chemical HTE systems that enable highly parallel execution of numerous reactions [9].

Integration with Machine Learning Models

For enhanced performance, Hyperband can be combined with surrogate models that predict synthesis outcomes based on parameter configurations. This hybrid approach uses the rapid early-stopping capability of Hyperband for broad exploration while employing more sophisticated models for fine-tuning promising regions. Such integration has demonstrated success in photothermal membrane distillation optimization, where gradient boosting and random forest models effectively predicted system performance across different operating conditions [28].

The Hyperband algorithm represents a transformative approach to nanomaterial synthesis optimization, offering significant advantages in resource efficiency and acceleration of development timelines. By combining bracketed exploration with successive halving, Hyperband addresses the fundamental challenge of allocating limited experimental resources across high-dimensional parameter spaces. The methodology is particularly valuable in the context of parallel hyperparameter optimization for chemical models, where it enables more thorough exploration of synthesis conditions within practical constraints.
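The combination of bracketed exploration and successive halving can be made concrete by listing how many configurations each bracket starts with and how much resource each rung receives. The sketch below follows the schedule from the original Hyperband formulation; the values `max_resource=81` and `eta=3` are illustrative defaults, and the function name is an assumption.

```python
def hyperband_schedule(max_resource=81, eta=3):
    """Return, per bracket, a list of (n_configs, resource_per_config) rungs."""
    # Largest s such that eta**s <= max_resource, computed with integers
    # to avoid floating-point error in logarithms.
    s_max = 0
    while eta ** (s_max + 1) <= max_resource:
        s_max += 1

    brackets = []
    for s in range(s_max, -1, -1):
        # Initial number of configurations: ceil((s_max + 1) * eta**s / (s + 1)).
        n = -(-(s_max + 1) * eta**s // (s + 1))
        r = max_resource / eta**s  # initial resource per configuration
        rungs = [(n // eta**i, r * eta**i) for i in range(s + 1)]
        brackets.append(rungs)
    return brackets


brackets = hyperband_schedule()
for rungs in brackets:
    print(rungs)
```

The first bracket starts many configurations on a small resource and prunes aggressively, while the last bracket runs a handful of configurations at full resource. In a synthesis setting, "resource" might be reaction time or number of replicate wells, which is a modelling choice left to the experimenter.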

As automated synthesis and characterization platforms continue to advance, Hyperband's capacity for highly parallel optimization will become increasingly valuable. Future developments may include tighter integration with large language models for code evolution [29] and enhanced multi-objective handling for complex material property optimization. By adopting Hyperband and related resource-efficient optimization strategies, researchers can dramatically accelerate the development of novel nanomaterials with tailored properties and functionalities.

Asynchronous Parallel Surrogate Optimization for Hydrology and Pollutant Forecasting

The application of deep learning (DL) models, such as recurrent neural networks (RNNs), to hydrological forecasting has become increasingly prevalent. However, a significant challenge persists in determining appropriate hyperparameters for these models. Hyperparameter optimization (HPO) for DL models in hydrological forecasting is characterized by a highly multi-modal search space: several distinct hyperparameter combinations can yield good solutions. Furthermore, the evaluation runtime can vary dramatically between hyperparameter combinations, in some cases by a factor of 7 to 10. These characteristics make traditional methods such as random search ineffective at finding the global optimum, and they make synchronous parallel optimization methods inefficient in their use of parallel computing resources [31].

To address these challenges, Asynchronous Parallel Surrogate Optimization presents a sophisticated solution. This approach incorporates advanced surrogate sampling strategies to improve both sampling quality and parallel runtime efficiency. By leveraging estimated evaluation accuracy and runtime from surrogate models, these methods maximize computational resource utilization while maintaining high solution quality, proving particularly effective for complex forecasting tasks such as streamflow and various water pollutants [31].
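The cost of synchronous batching under uneven runtimes can be seen with a small scheduling simulation. This is a sketch under simplifying assumptions: the runtimes are invented (one slow evaluation, 10 times longer, per batch of four), the evaluation order is fixed, and no surrogate model is involved.

```python
import heapq


def synchronous_makespan(runtimes, workers):
    """Each batch of `workers` jobs waits for its slowest member."""
    total = 0.0
    for start in range(0, len(runtimes), workers):
        total += max(runtimes[start : start + workers])
    return total


def asynchronous_makespan(runtimes, workers):
    """Each job starts as soon as any worker becomes free."""
    free_at = [0.0] * workers  # time at which each worker becomes idle
    heapq.heapify(free_at)
    for runtime in runtimes:
        earliest = heapq.heappop(free_at)
        heapq.heappush(free_at, earliest + runtime)
    return max(free_at)


# Hypothetical runtimes: one slow evaluation in every batch of four.
runtimes = [10, 1, 1, 1, 1, 10, 1, 1, 1, 1, 10, 1, 1, 1, 1, 10]
sync_time = synchronous_makespan(runtimes, workers=4)
async_time = asynchronous_makespan(runtimes, workers=4)
print(sync_time, async_time)
```

In this toy case the asynchronous schedule finishes in less than half the wall-clock time because fast evaluations no longer wait for the slowest one in their batch.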

The following tables summarize key quantitative findings from the application of asynchronous parallel surrogate optimization methods in hydrology.

Table 1: Forecasting Performance after Hyperparameter Optimization (HPO)

| Forecasting Target | Kling-Gupta Efficiency (KGE) | Performance Note |
|---|---|---|
| Streamflow | 0.8795 | High forecasting accuracy achieved [31] |
| Total Dissolved Phosphorus (TDP) | 0.8475 | High forecasting accuracy achieved [31] |
| Particulate Phosphorus (PP) | 0.7545 | Good forecasting accuracy achieved [31] |
| Total Suspended Solids (TSS) | 0.6728 | Satisfactory forecasting accuracy achieved [31] |
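For readers unfamiliar with the metric in the table above, the sketch below computes the Kling-Gupta Efficiency in its commonly used 2009 form, KGE = 1 - sqrt((r - 1)^2 + (alpha - 1)^2 + (beta - 1)^2), where r is the linear correlation, alpha the ratio of standard deviations, and beta the ratio of means between simulated and observed series. Whether [31] uses this exact variant is an assumption, and the sample series are invented.

```python
import math


def kling_gupta_efficiency(simulated, observed):
    """KGE in the 2009 formulation; 1.0 indicates a perfect match."""
    n = len(observed)
    mean_s = sum(simulated) / n
    mean_o = sum(observed) / n
    std_s = math.sqrt(sum((s - mean_s) ** 2 for s in simulated) / n)
    std_o = math.sqrt(sum((o - mean_o) ** 2 for o in observed) / n)
    cov = sum((s - mean_s) * (o - mean_o) for s, o in zip(simulated, observed)) / n

    r = cov / (std_s * std_o)  # correlation component
    alpha = std_s / std_o      # variability ratio
    beta = mean_s / mean_o     # bias ratio
    return 1.0 - math.sqrt((r - 1) ** 2 + (alpha - 1) ** 2 + (beta - 1) ** 2)


observed = [1.0, 2.0, 4.0, 3.0, 5.0]         # made-up observations
perfect = list(observed)
doubled = [2.0 * value for value in observed]  # right shape, wrong magnitude
print(kling_gupta_efficiency(perfect, observed))
print(kling_gupta_efficiency(doubled, observed))
```

The doubled series is perfectly correlated with the observations yet scores poorly, which illustrates why KGE is preferred over correlation alone as an HPO objective.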

Table 2: Computational Efficiency of ASONN vs. Other Methods

| Optimization Method | Computational Efficiency | Key Feature |
|---|---|---|
| ASONN (Asynchronous Parallel Surrogate) | Up to 60% faster than previous asynchronous methods | Handles runtime variations efficiently [31] |
| MO-ASMOCH (Surrogate-based) | Achieved comparable Pareto-optimal solutions with only 1,150 model evaluations vs. 10,000 for NSGA-II | Significantly outperforms NSGA-II in computational efficiency [32] |
| Traditional Synchronous Parallel | Lower efficiency due to idle time waiting for slowest evaluation | Inefficient resource use with variable runtimes [31] |

Experimental Protocols

Core Workflow for HPO in Hydrology

The diagram below illustrates the logical workflow of the Asynchronous Parallel Surrogate Optimization process.

Protocol 1: ASONN for Streamflow and Pollutant Forecasting

This protocol details the application of the ASONN method for forecasting streamflow and water pollutants like Total Dissolved Phosphorus (TDP) and Total Suspended Solids (TSS) [31].

- Primary Objective: To efficiently identify optimal hyperparameters for RNNs (or other DL models) that maximize forecasting accuracy (e.g., Kling-Gupta Efficiency) for hydrological targets, while managing large variations in model evaluation runtime.

- Materials and Software:

  - Computing Infrastructure: A high-performance computing (HPC) cluster or multi-core workstation.

  - Software Libraries:

    - Python with scientific computing stacks (e.g., NumPy, SciPy).

    - Deep Learning frameworks (e.g., TensorFlow, PyTorch).

    - Surrogate optimization libraries (e.g., BoTorch, Ax, or custom ASONN implementation).

  - Hydrological Data: Pre-processed time-series data for streamflow and target pollutants.

- Procedure:

  - Problem Formulation:

    - Define the hyperparameter search space (e.g., number of layers, hidden units, learning rate).

    - Specify the objective function: Kling-Gupta Efficiency (KGE) for forecasting accuracy.

  - Initial Sampling:

    - Use a space-filling design like Sobol sampling to select an initial set of hyperparameter combinations for evaluation. This ensures broad exploration of the search space at the start [9].

  - Build Surrogate Models:

    - Construct two surrogate models:

      - A performance surrogate (e.g., Gaussian Process or Radial Basis Function) to predict the KGE value for any given hyperparameter set.

      - A runtime surrogate to estimate the evaluation time for a hyperparameter set.

  - Asynchronous Iteration Loop:

    - Whenever a computational worker becomes available:

      - The acquisition function (e.g., Expected Improvement), informed by the surrogates, selects the most promising hyperparameter set to evaluate next.

      - The selected hyperparameter set is dispatched to the free worker for model training and validation.

      - Upon completion, the results are used to update the surrogate models.

  - Termination:

    - The process repeats until a stopping criterion is met, such as a predefined number of evaluations, exhaustion of time budget, or convergence in objective function improvement.

- Expected Outcomes: Application of this protocol to cases of streamflow, TDP, PP, and TSS forecasting has achieved KGE values of 0.8795, 0.8475, 0.7545, and 0.6728, respectively. The ASONN method accelerates the HPO process by up to 60% compared to previous asynchronous methods [31].
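The asynchronous loop in the procedure above can be sketched with Python's standard `concurrent.futures` module. This is a structural illustration only, not the ASONN algorithm from [31]: the objective is a toy function standing in for model training, the "surrogates" are nearest-neighbour lookups of score and runtime, and the scoring rule in `propose` is a placeholder for a real acquisition function. All function names and constants are assumptions.

```python
import math
import random
import time
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


def train_and_score(cfg):
    """Toy stand-in for training a model; returns (score, runtime in seconds)."""
    log_lr, units = cfg
    start = time.perf_counter()
    time.sleep(0.001 * units / 16)  # larger models take longer to evaluate
    score = 1.0 - 0.1 * (log_lr + 3.0) ** 2 - ((units - 64) / 128) ** 2
    return score, time.perf_counter() - start


def random_config(rng):
    return (rng.uniform(-5.0, -1.0), rng.choice([16, 32, 64, 128]))


def distance(a, b):
    return math.hypot(a[0] - b[0], (a[1] - b[1]) / 32.0)


def propose(history, rng, n_candidates=64):
    """Placeholder acquisition: favour high predicted score, novelty, low runtime."""
    best_cfg, best_value = None, -math.inf
    for _ in range(n_candidates):
        cand = random_config(rng)
        # Nearest evaluated point acts as both performance and runtime surrogate.
        near_cfg, pred_score, pred_runtime = min(
            history, key=lambda h: distance(cand, h[0])
        )
        value = pred_score + 0.1 * distance(cand, near_cfg) - 0.5 * pred_runtime
        if value > best_value:
            best_cfg, best_value = cand, value
    return best_cfg


def async_optimize(n_workers=4, budget=20, seed=0):
    rng = random.Random(seed)
    history = []  # (config, score, runtime) for every finished evaluation
    submitted = 0
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        pending = {}
        # Initial design: fill every worker with a random configuration.
        while submitted < min(n_workers, budget):
            cfg = random_config(rng)
            pending[pool.submit(train_and_score, cfg)] = cfg
            submitted += 1
        while pending:
            # Wake up as soon as any single worker finishes.
            done, _ = wait(pending, return_when=FIRST_COMPLETED)
            for future in done:
                cfg = pending.pop(future)
                score, runtime = future.result()
                history.append((cfg, score, runtime))  # "update the surrogates"
                if submitted < budget:
                    new_cfg = propose(history, rng)
                    pending[pool.submit(train_and_score, new_cfg)] = new_cfg
                    submitted += 1
    return max(history, key=lambda h: h[1]), history


best, history = async_optimize(n_workers=3, budget=12, seed=1)
print(best)
```

The key design point is that a new configuration is proposed the moment one worker frees up, using whatever results are available, rather than waiting for a whole batch. A production setup would replace the nearest-neighbour lookups with fitted surrogates (for example a Gaussian Process) and the threads with cluster jobs.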

Protocol 2: Multi-Objective Optimization for Nonpoint Source Pollution

This protocol employs the MO-ASMOCH (Multi-Objective Adaptive Surrogate Modeling-based Optimization for Constrained Hybrid Problems) method for optimizing Best Management Practices (BMPs), a problem involving mixed discrete-continuous variables [32].

- Primary Objective: To find cost-effective BMP deployment strategies that minimize pollutant loads (Total Nitrogen - TN, Total Phosphorus - TP) in a watershed, using a fraction of the computational effort required by traditional methods.

- Materials and Software:

  - Hydrological Model: The distributed Soil and Water Assessment Tool (SWAT) model.

  - Optimization Tool: Implementation of the MO-ASMOCH algorithm.

  - Watershed Data: Geospatial, land use, soil, and climate data for the target watershed.

- Procedure:

  - Model and Objective Setup:

    - Configure the SWAT model for the study watershed.

    - Define objectives: e.g., Minimize TN load, Minimize TP load, and Minimize implementation cost.

    - Define decision variables: both discrete (e.g., choice of BMPs per sub-basin) and continuous (e.g., extent of BMP implementation).

  - Initial Evaluation:

    - Run the SWAT model for an initial set of BMP scenarios (decision variable combinations) selected via a space-filling design.

  - Surrogate-Assisted Optimization:

    - MO-ASMOCH constructs surrogate models (response surfaces) to approximate the relationship between BMP decisions and model outputs (TN, TP, cost).

    - The algorithm iteratively proposes new candidate BMP scenarios by optimizing the multi-objective acquisition function over the surrogates.

    - The SWAT model is run only for the most promising candidate scenarios, and the results are used to refine the surrogates.

  - Termination and Analysis:

    - The process stops after a predefined budget (e.g., 1,150 model evaluations). The output is a Pareto-optimal front, representing the trade-offs between the different objectives.

- Expected Outcomes: This method has demonstrated the ability to achieve comparable Pareto-optimal solutions to the NSGA-II algorithm using only about 11.5% of the model evaluations (1,150 vs. 10,000). In one application, the largest reduction scenario identified could reduce TN and TP loads by 18.3% and 20.7%, respectively, at a specified cost [32].
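The Pareto-optimal front that this protocol outputs is simply the set of evaluated scenarios that no other scenario beats on every objective. A minimal sketch of that filtering step for minimization objectives follows; the (TN load, TP load, cost) tuples are invented for illustration and are not results from [32].

```python
def dominates(a, b):
    """True if `a` is no worse than `b` everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points):
    """Return the non-dominated subset of `points` (all objectives minimized)."""
    return [
        p
        for p in points
        if not any(dominates(q, p) for q in points if q is not p)
    ]


# Hypothetical scenarios: (TN load, TP load, implementation cost), arbitrary units.
scenarios = [
    (100.0, 50.0, 0.0),   # no BMPs: highest loads, zero cost
    (90.0, 45.0, 10.0),
    (85.0, 40.0, 25.0),
    (92.0, 47.0, 12.0),   # worse than the second scenario on every objective
    (82.0, 39.0, 60.0),
]
front = pareto_front(scenarios)
print(front)
```

This brute-force filter is quadratic in the number of scenarios, which is acceptable for budgets on the order of a thousand evaluations.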

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational and Modeling Tools

| Tool / Component | Function / Description | Application Context |
|---|---|---|
| Gaussian Process (GP) | A probabilistic model used as a surrogate to predict the performance and runtime of hyperparameter sets. | Core component of Bayesian Optimization in HPO [31] [19]. |
| Radial Basis Function (RBF) | A type of surrogate model used to approximate the expensive-to-evaluate objective function. | Surrogate-assisted optimization [31]. |
| Acquisition Function | Guides the search by balancing exploration (trying uncertain areas) and exploitation (refining known good areas). | Decision-making in sequential design [19]. |
| Sobol Sequence | A quasi-random number generator for generating space-filling initial samples of the parameter space. | Initial design phase [9]. |
| Atlas Library | A Python library providing state-of-the-art Bayesian optimization algorithms tailored for experimental sciences. | Facilitating various BO strategies like mixed-parameter and multi-objective optimization [19]. |
| Kling-Gupta Efficiency (KGE) | A comprehensive metric for evaluating the performance of hydrological models. | Objective function for hydrological forecasting HPO [31]. |
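The exploration and exploitation balance attributed to the acquisition function in the table above can be illustrated with Expected Improvement, the example named in Protocol 1. The sketch below gives the standard closed form for a Gaussian predictive distribution under maximization; `mu` and `sigma` are assumed to come from a surrogate such as a Gaussian Process, and the numeric inputs are invented.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected Improvement over `best` for a Gaussian prediction N(mu, sigma^2).

    `xi` is an optional margin that encourages exploration.
    """
    gain = mu - best - xi
    if sigma <= 0.0:
        # No predictive uncertainty: improvement is deterministic.
        return max(gain, 0.0)
    z = gain / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return gain * cdf + sigma * pdf


best_kge = 0.80  # hypothetical best KGE observed so far
certain_but_modest = expected_improvement(mu=0.81, sigma=0.01, best=best_kge)
uncertain = expected_improvement(mu=0.78, sigma=0.10, best=best_kge)
print(certain_but_modest, uncertain)
```

The second candidate has a lower predicted mean yet a higher Expected Improvement because of its larger uncertainty, which is the exploration side of the trade-off.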