Parallel Tempering Monte Carlo: Advanced Sampling for Complex Thermodynamic Properties in Drug Discovery



Abstract

This article provides a comprehensive examination of Parallel Tempering (PT) Monte Carlo, a powerful enhanced sampling technique crucial for calculating thermodynamic properties in complex molecular systems. We explore the foundational principles of PT, detailing its mechanism for overcoming energy barriers that trap conventional simulations. The content covers practical methodological implementation and diverse applications, from biomolecular folding to material design, while addressing key troubleshooting and optimization strategies for improving algorithmic efficiency. Finally, we present validation frameworks and performance comparisons with alternative sampling methods, highlighting PT's critical role in accelerating drug discovery and biomedical research through reliable free energy estimation and landscape exploration.

Understanding Parallel Tempering: Foundations for Overcoming Sampling Barriers

FAQ: Troubleshooting Parallel-Tempering Monte Carlo Simulations

FAQ 1: My PTMC simulation appears "stuck," with replicas failing to explore diverse low-energy states. What is the fundamental cause and solution?

The primary cause is that the low-temperature replicas have become trapped in local metastable states, separated by high energy barriers that local Metropolis moves cannot overcome [1]. The solution is to leverage Parallel Tempering itself. PTMC addresses this by running multiple replicas in parallel at a series of temperatures [2]. High-temperature replicas can cross these energy barriers and explore the configuration space more freely. The global 'swap' moves then allow these high-temperature configurations to be exchanged down to the low-temperature replicas of interest, effectively rescuing them from traps and restoring ergodicity [1].

FAQ 2: How do I diagnose and fix low acceptance rates for replica exchange swaps?

Low swap acceptance rates typically indicate poor overlap in the energy distributions of adjacent replicas [2]. To diagnose this, you should plot the energy histograms for each temperature. If the histograms for neighboring temperatures do not sufficiently overlap, swap moves will be rejected. The most common fix is to adjust your temperature ladder. You may need to add more replicas to decrease the temperature spacing in the problematic region, ensuring that for any two adjacent temperatures, their energy distributions have significant overlap [2].
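As a rough illustration of the histogram check described above, the sketch below bins the energy samples of two neighboring replicas on a shared grid and sums the bin-wise minimum of the normalized histograms. The function name `histogram_overlap` and the default of 20 bins are assumptions made for this example, not part of any cited package; a value near 0 suggests the two temperatures are too far apart, and a value near 1 suggests nearly identical distributions.

```python
def histogram_overlap(energies_a, energies_b, n_bins=20):
    """Overlap coefficient (0..1) of two energy samples on a shared bin grid."""
    lo = min(min(energies_a), min(energies_b))
    hi = max(max(energies_a), max(energies_b))
    if hi == lo:
        return 1.0  # all samples identical: the distributions coincide
    width = (hi - lo) / n_bins

    def normalized_hist(samples):
        counts = [0] * n_bins
        for e in samples:
            # Clamp the right edge into the last bin.
            idx = min(int((e - lo) / width), n_bins - 1)
            counts[idx] += 1
        return [c / len(samples) for c in counts]

    hist_a = normalized_hist(energies_a)
    hist_b = normalized_hist(energies_b)
    # Sum of bin-wise minima: shared probability mass of the two histograms.
    return sum(min(a, b) for a, b in zip(hist_a, hist_b))
```

Applied to every adjacent pair in the ladder, this gives a quick way to locate the temperature region where extra replicas may be needed.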

FAQ 3: What thermodynamic properties can be reliably calculated from a well-converged PTMC simulation, and how?

A well-converged PTMC simulation provides a robust ensemble that allows for precise calculation of thermodynamic properties that are difficult to compute in the canonical ensemble. A key example is the specific heat (Cv) [2]. The heat capacity can be calculated from the fluctuations in the potential energy. PTMC improves the precision of this calculation because it samples both low and high energy configurations effectively, leading to a more accurate estimation of these fluctuations [3] [2].
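The fluctuation route mentioned above can be sketched in a few lines using the standard canonical relation C_v = (⟨E²⟩ − ⟨E⟩²) / (k_B T²). The function name and the reduced-unit default `k_B = 1.0` are assumptions for illustration. If only potential energies are recorded, the result is the configurational contribution to the heat capacity.

```python
def heat_capacity(energies, temperature, k_B=1.0):
    """Estimate C_v from energy samples collected at one temperature.

    Uses C_v = (<E^2> - <E>^2) / (k_B * T^2).
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    samples = list(energies)
    n = len(samples)
    mean_e = sum(samples) / n
    # Population variance of the energy (the fluctuation term).
    var_e = sum((e - mean_e) ** 2 for e in samples) / n
    return var_e / (k_B * temperature ** 2)
```

Evaluating this for each replica's energy series yields one point of the heat capacity curve per temperature in the ladder.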

Troubleshooting Guide: Common PTMC Issues and Solutions

Table 1: Diagnosing and resolving common problems in Parallel Tempering Monte Carlo simulations.

| Symptom | Likely Cause | Corrective Action |

|---|---|---|

| Low replica swap acceptance rates [2] | Insufficient overlap in energy distributions between adjacent temperatures [2]. | Add more replicas to reduce ∆T between neighbors; optimize temperature spacing. |

| Simulation is non-ergodic; low-T replicas trapped in metastable states [1]. | High energy barriers prevent sampling of relevant configuration space. | Verify temperature range is sufficiently high; utilize more replicas to improve "lateral" diffusion [1] [2]. |

| Poor convergence of thermodynamic averages (e.g., specific heat). | Inadequate sampling across all energy basins; simulation not converged. | Increase the number of Monte Carlo steps; validate convergence with multiple random seeds. |

| Specific heat peak does not align with expected transition temperature. | Temperature ladder too coarse around the transition region. | Concentrate more replicas in the suspected critical temperature region for finer resolution [3]. |

Experimental Protocol: PTMC for Structural Transitions in Colloidal Clusters

This protocol is adapted from a study investigating structural transitions in charged colloidal clusters characterized by short-range attraction and long-range repulsion (SALR) [3].

1. System Definition and Interaction Potential

- Model: The system consists of a cluster of N colloidal particles.

- Total Energy: The energy of the cluster is given by a sum of pair potentials, where the sum runs over all unique pairs of particles [3]:

V_cluster = ∑ [V_a(r_i) + V_r(r_i)]

- Attractive Potential (V_a): Modeled using a Morse potential for short-range depletion attraction [3]:

V_a(r_i) = ε_M * exp[ρ(1 - r_i/σ)] * (exp[ρ(1 - r_i/σ)] - 2)

- Repulsive Potential (V_r): Modeled using a Yukawa function for long-range screened electrostatic repulsion [3]:

V_r(r_i) = ε_Y * exp[-κσ(r_i/σ - 1)] / (r_i/σ)

- Parameters (in reduced units): For a type II SALR potential, use ρ = 30, ε_M = 2.0, σ = 1, κσ = 0.5, and ε_Y = 1.0 [3].
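A minimal sketch of the pair potential defined above, using the quoted reduced-unit parameters. The function names and the representation of a cluster as a list of 3D coordinate tuples are assumptions for illustration; no cutoff or neighbor list is applied, so the cost scales quadratically with the number of particles.

```python
import math
from itertools import combinations

# Type II SALR parameters in reduced units, as quoted in the text.
RHO, EPS_M, SIGMA, KAPPA_SIGMA, EPS_Y = 30.0, 2.0, 1.0, 0.5, 1.0

def morse(r):
    """Short-range attraction: eps_M * x * (x - 2), x = exp[rho * (1 - r/sigma)]."""
    x = math.exp(RHO * (1.0 - r / SIGMA))
    return EPS_M * x * (x - 2.0)

def yukawa(r):
    """Long-range screened repulsion: eps_Y * exp[-kappa*sigma*(s - 1)] / s, s = r/sigma."""
    s = r / SIGMA
    return EPS_Y * math.exp(-KAPPA_SIGMA * (s - 1.0)) / s

def cluster_energy(positions):
    """Sum V_a + V_r over all unique pairs of particle positions."""
    total = 0.0
    for a, b in combinations(positions, 2):
        r = math.dist(a, b)
        total += morse(r) + yukawa(r)
    return total
```

At contact (r = σ) the Morse term equals −ε_M and the Yukawa term equals ε_Y, so a dimer at that separation has energy −1.0 with these parameters.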

2. Parallel Tempering Simulation Setup

- Replicas: Run M parallel simulations (replicas), each at a different temperature. (M denotes the number of replicas, to avoid confusion with the N particles in the cluster.)

- Local Moves: For each replica at its specific temperature, perform standard Metropolis Monte Carlo steps (e.g., random particle displacements) to sample the local configuration space [1].

- Swap Moves: Periodically propose a swap of configurations between two replicas at adjacent temperatures, T_i and T_j. Accept this swap with a probability based on the Metropolis criterion [1] [2]:

p_accept = min(1, exp[(E_i - E_j) * (1/kT_i - 1/kT_j)])

where E_i and E_j are the energies of the configurations at temperatures T_i and T_j, respectively.
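The swap criterion above translates directly into code. The sketch below is an illustration only; the function name and the default `k_B = 1.0` (reduced units) are assumptions. It guards the exponential so that favorable swaps return exactly 1 without risking overflow.

```python
import math

def swap_acceptance(e_i, e_j, t_i, t_j, k_B=1.0):
    """p_accept = min(1, exp[(E_i - E_j) * (1/kT_i - 1/kT_j)])."""
    beta_i = 1.0 / (k_B * t_i)
    beta_j = 1.0 / (k_B * t_j)
    exponent = (e_i - e_j) * (beta_i - beta_j)
    # A non-negative exponent means the swap is always accepted.
    return 1.0 if exponent >= 0 else math.exp(exponent)
```

For example, with T_i = 1 and T_j = 2, a swap is always accepted when the hotter replica currently holds the lower-energy configuration, and is accepted with probability exp(−0.5) when E_i = −2 and E_j = −1.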

3. Data Collection and Analysis

- Thermodynamic Properties: Calculate the heat capacity as a function of temperature from the energy fluctuations collected during the simulation. Peaks or shoulders in the heat capacity curve serve as thermodynamic signatures of structural transitions or cluster dissociation [3].

- Structural Analysis: Classify sampled configurations by their energy and structure (e.g., low-energy Bernal spirals, intermediate-energy "beaded-necklace" motifs, high-energy linear dissociative structures) to correlate with features in the heat capacity plot [3].

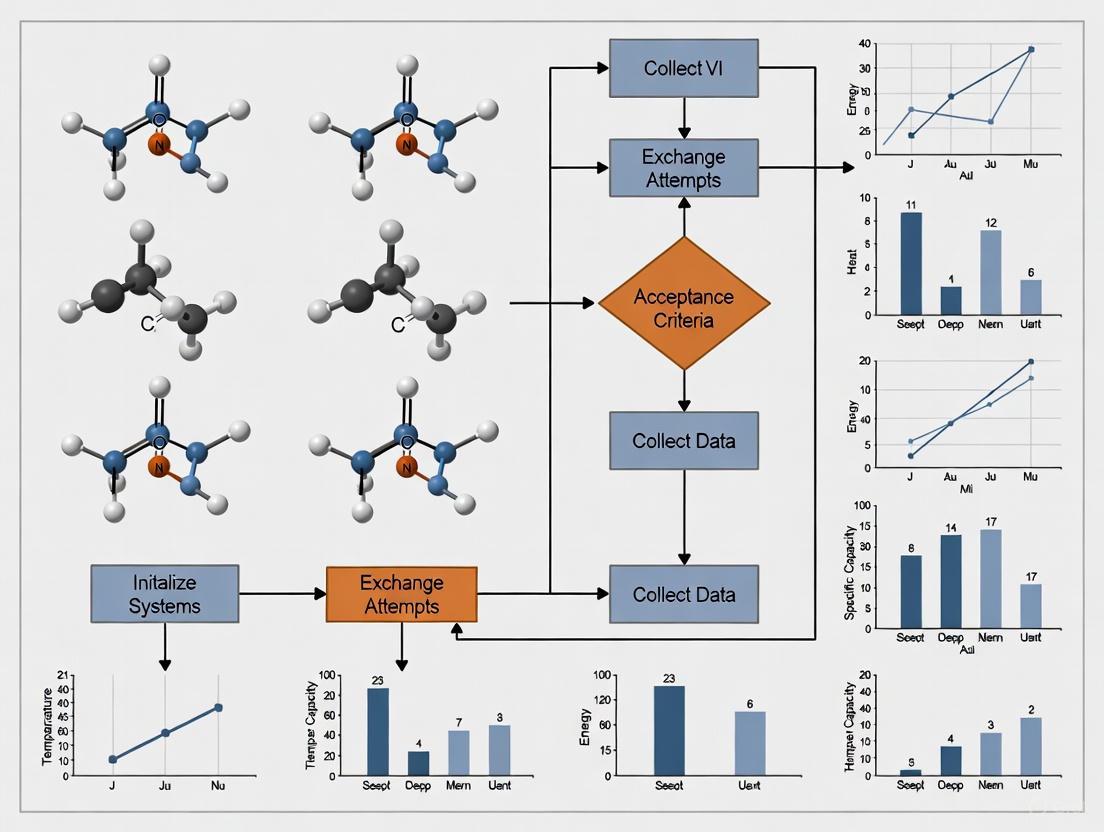

The following workflow diagram summarizes the core PTMC algorithm:

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key components and their functions in a PTMC study of colloidal clusters. [3]

| Item | Function in the Experiment |

|---|---|

| Morse Potential | Models the short-range attractive component of the effective pair potential, representing depletion forces between colloidal particles. |

| Yukawa Potential | Models the long-range repulsive component of the pair potential, representing screened electrostatic repulsion between charged colloids. |

| Temperature Ladder | The carefully chosen set of temperatures for each replica; enables effective configuration swapping and prevents trapping. |

| Metropolis Criterion | The probabilistic rule used to accept or reject both local configuration moves and global replica swap moves. |

| Heat Capacity (Cv) Calculation | The key thermodynamic property calculated from energy fluctuations; used to identify structural transitions and dissociation events. |

The logical relationship between the system, methodology, and analysis in a PTMC study is shown below:

Frequently Asked Questions

Q1: The exchange rate between my replicas is too low. What parameters should I adjust to improve it? A low exchange rate often indicates poor overlap in the energy distributions of adjacent replicas. To address this:

- Optimize the Temperature Ladder: A geometric series (constant ratio between temperatures) is a common but often suboptimal starting point [4]. The number of required replicas scales with the square root of the system's degrees of freedom [5]. Adjust the temperatures so that the exchange probability falls between 20% and 40%. The acceptance probability for a swap between replicas i and j is given by:

p = min(1, exp( (E_i - E_j) * (β_i - β_j) ))

where E is the energy and β = 1/kT [6] [7]. You may need to derive a custom temperature ladder through preliminary runs [4].

- Increase Sampling: Ensure you run enough Monte Carlo steps between exchange attempts to allow each replica to adequately sample its current temperature. Insufficient sampling can lead to poor energy histogram overlap [8].

- Focus on a Subsystem: For large systems like proteins, consider partial or local replica exchange methods (PREMD/LREMD). These methods only replicate a subset of atoms (e.g., a ligand or protein loop), drastically reducing the number of replicas needed for efficient exchange [5].

Q2: How do I choose the number of replicas and the temperature range for my system? The goal is to have a sufficient number of replicas to ensure a continuous "annealing path" from the highest to the lowest temperature.

- Number of Replicas: This is system-dependent. As a rule of thumb, it should be proportional to the square root of the number of degrees of freedom f in your system, O(f^(1/2)) [5]. For instance, a system with 100 degrees of freedom might require around 10 replicas. The `emcee` library uses an exponential ladder where the highest temperature is T_max = (√2)^(N_temps-1) * T_min [9].

- Temperature Range: The lowest temperature is typically your target (e.g., 300 K for room temperature). The highest temperature should be high enough to flatten the energy landscape, allowing the system to escape deep local minima. A good check is that the highest-temperature replica explores a distribution close to the prior [9]. For a multi-modal Gaussian, the highest temperature should result in an effective Gaussian width that is comparable to the separation between the modes [9].
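The two ladder constructions mentioned in this FAQ can be sketched as follows. Both function names are hypothetical helpers written for this example; they are not the API of `emcee` or any other package. The first spaces temperatures by a constant ratio between a chosen T_min and T_max, and the second fixes the ratio at √2 so that T_max follows from the number of temperatures.

```python
import math

def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures with a constant ratio between neighbors, from t_min to t_max."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** k for k in range(n_replicas)]

def sqrt2_ladder(t_min, n_temps):
    """Ladder with ratio sqrt(2): T_max = sqrt(2)**(n_temps - 1) * t_min."""
    return [t_min * math.sqrt(2.0) ** k for k in range(n_temps)]
```

A ladder produced this way is only a starting point; the swap acceptance rates measured in a short preliminary run indicate where temperatures should be added or moved.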

Q3: My parallel tempering simulation is computationally slow. What are potential bottlenecks and optimization strategies? Performance issues can arise from several areas:

- Synchronization Overhead: In traditional parallel tempering, all replicas must synchronize before an exchange. The entire simulation therefore proceeds at the pace of the slowest replica, which is often the lowest-temperature one, where compact configurations make energy evaluations more costly [4].

- Alternative Algorithm: Consider using Simulated Tempering, where a single simulation performs a random walk in temperature space. This avoids synchronization delays and runs at the average speed across temperatures [4].

- Communication Costs: If running on multiple nodes, the cost of swapping configurations (or temperatures) can be high. For large state spaces, it can be more efficient to swap temperatures between chains rather than the states themselves [6].

- System-Specific Potentials: The cost of evaluating your energy function (e.g., for complex neural network potentials or look-up tables [8]) is a major factor. Profile your code to identify if the bottleneck is in the energy calculation or the parallel tempering mechanics themselves.

Q4: How can I ensure my simulation has sampled all relevant low-energy states, especially when studying phase transitions? Inadequate sampling of configurational space is a common challenge.

- Monitor Replica Trajectories: Track the path of a single replica as it travels through temperatures over time (demultiplexing) [7]. A healthy simulation will show replicas freely diffusing from the highest to the lowest temperatures and back.

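- Perform Structural Analysis: Implement post-processing analysis tools, for example common-neighbor analysis (CNA) to identify local crystal structures such as FCC and HCP in the saved configurations [8].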

- Use Multi-Histogram Analysis: Re-weight energy histograms collected at all temperatures to compute the partition function and derive accurate thermodynamic properties like entropy and heat capacity across the entire temperature range [8].
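The replica-trajectory check from the first item of this list can be quantified by counting round trips in temperature space. The sketch below assumes that demultiplexing has already produced, for one replica, the sequence of temperature indices it occupied (0 for the coldest, `n_temps - 1` for the hottest); the function name and this input format are assumptions for illustration.

```python
def count_round_trips(temp_indices, n_temps):
    """Count coldest -> hottest -> coldest excursions of a single replica."""
    top = n_temps - 1
    trips = 0
    heading = None  # "up" after touching the bottom, "down" after then touching the top
    for idx in temp_indices:
        if idx == 0:
            if heading == "down":
                trips += 1  # returned to the coldest level after visiting the hottest
            heading = "up"
        elif idx == top and heading == "up":
            heading = "down"
    return trips
```

A replica that never completes a round trip over a long run is a sign that some region of the ladder blocks diffusion.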

Q5: Can parallel tempering be applied to protein sequence design, and how is the "energy" defined? Yes, parallel tempering is a viable alternative to simulated annealing for protein design [7].

- Energy Function: In this context, the "energy" is a scoring function that you seek to minimize. This is often a composite score from a structure prediction tool like ESMFold, which can incorporate confidence in structure prediction (pLDDT), globularity, and the minimization of surface hydrophobic residues [7].

- Workflow: Starting from random amino acid sequences, multiple replicas undergo Monte Carlo sampling at different temperatures. Sequences are mutated and accepted/rejected based on the Metropolis criterion. Replica exchanges allow promising sequences to migrate to lower temperatures, facilitating a continuous flow of design candidates [7].

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational tools and their functions used in parallel-tempering experiments.

| Research Reagent | Function / Explanation |

|---|---|

| Extended Lennard-Jones Potentials | Models atomic interactions in simple systems like bulk argon or neon clusters [8]. |

| Embedded-Atom Models (EAM) | A more complex potential for metallic systems, providing a better description of bonding [8]. |

| Atomic Neural Network Potentials | A machine-learning potential offering high accuracy and computational efficiency; often has a Fortran interface [8]. |

| ESMFold | A machine learning tool used in protein design to predict 3D structure from sequence and calculate a design score used as the "energy" [7]. |

| Multi-Histogram Method | A post-processing technique that combines energy data from all temperatures to compute the partition function and accurate thermodynamic properties [8]. |

| Common-Neighbor Analysis (CNA) | A structural analysis method used to identify local crystal structures (e.g., FCC, HCP) in atomic systems during phase transitions [8]. |

Experimental Protocol: A Standard Workflow for Parallel Tempering

This protocol outlines a typical workflow for studying thermodynamic properties using the Parallel-Tempering Monte Carlo method.

1. System and Parameter Initialization

- Define the System: Specify the interaction potential (e.g., Lennard-Jones), boundary conditions (e.g., periodic), and the thermodynamic ensemble (e.g., NVT or NPT) [8].

- Set up Replicas: Choose the number of replicas (M), the temperature range (T_min to T_max), and initialize the configurations (e.g., random particle positions) [8] [7].

- Define the Temperature Ladder: Create an array of temperatures, T[1] ... T[M]. An exponential ladder is a common starting point [9].

2. Monte Carlo Sampling Cycle

Each replica performs a standard Monte Carlo simulation at its assigned temperature for a fixed number of steps (N_cycles_between_swap).

- Perturb: Propose a new configuration, often via particle displacement, volume change, or spin flip [8] [10].

- Evaluate: Calculate the change in energy, ΔE.

- Accept/Reject: Apply the Metropolis criterion: p_accept = min(1, exp(-β ΔE)), where β = 1/kT [7].

3. Replica Exchange Cycle

After the sampling cycle, attempt to swap configurations between adjacent-temperature replicas.

- Select Pair: Choose a pair of adjacent replicas, i and j, at temperatures T_i and T_j [6].

- Calculate Acceptance Probability: Compute the probability for swapping their configurations: p_swap = min(1, exp( (β_i - β_j) * (E_i - E_j) )) [6] [7].

- Accept/Reject Swap: Draw a random number R ~ Uniform(0,1). If R < p_swap, exchange the configurations of replicas i and j [6].

4. Data Collection and Post-Processing

- Collect Data: At intervals, store energies, configurations, radial distribution functions, and other observables for each replica [8].

- Post-Process: After the simulation, use methods like multi-histogram analysis to compute thermodynamic properties across all temperatures [8]. Perform structural analysis on saved configurations to understand sampled states [8].
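The four stages of this protocol can be put together in a compact, serial sketch. Everything system-specific here is an assumption chosen for illustration: a hypothetical one-dimensional double-well potential stands in for a molecular energy function, reduced units with k = 1 are used, and the step size, sweep counts, and alternating even/odd pairing of swap attempts are arbitrary choices. A production code would run the replicas in parallel rather than in a loop.

```python
import math
import random

def energy(x):
    """Hypothetical 1D double well: minima at x = -1 and x = +1, barrier of 5 at x = 0."""
    return 5.0 * (x * x - 1.0) ** 2

def parallel_tempering(temps, n_sweeps=2000, steps_per_sweep=10, step=0.5, seed=0):
    """Return (samples of the coldest replica, overall swap acceptance rate)."""
    rng = random.Random(seed)
    xs = [-1.0] * len(temps)          # every replica starts in the left well
    betas = [1.0 / t for t in temps]  # beta = 1/kT with k = 1
    cold_samples = []
    tried = accepted = 0
    for sweep in range(n_sweeps):
        # Sampling cycle: local Metropolis moves in each replica.
        for r, beta in enumerate(betas):
            for _ in range(steps_per_sweep):
                trial = xs[r] + rng.uniform(-step, step)
                d_e = energy(trial) - energy(xs[r])
                if d_e <= 0 or rng.random() < math.exp(-beta * d_e):
                    xs[r] = trial
        # Exchange cycle: adjacent pairs, alternating (0,1),(2,3),... and (1,2),...
        for i in range(sweep % 2, len(temps) - 1, 2):
            j = i + 1
            exponent = (betas[i] - betas[j]) * (energy(xs[i]) - energy(xs[j]))
            tried += 1
            if exponent >= 0 or rng.random() < math.exp(exponent):
                xs[i], xs[j] = xs[j], xs[i]
                accepted += 1
        # Data collection: record the coldest replica once per sweep.
        cold_samples.append(xs[0])
    return cold_samples, accepted / max(tried, 1)
```

With a ladder such as `[0.2, 0.5, 1.25, 3.0]`, the barrier is 25 kT for the coldest replica, so local moves alone would rarely cross it; the swaps are what allow the coldest replica to reach the second well.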

Parallel Tempering Workflow and Exchange Mechanics

The following diagram illustrates the core mechanics of the parallel tempering algorithm, showing the interaction between replicas at different temperatures.

Core Concepts and Definitions

What is the fundamental mathematical object that defines a pMCMC algorithm?

The operation of a broad class of pMCMC algorithms can be described by a Markovian kernel of the form:

$$P_{\alpha,S,\mathcal{V}}(q, d\tilde{q}) = \sum_{j=0}^{p} \int_{Y} \alpha_j(q, v)\, \delta_{\Pi_1 \circ S_j(q, v)}(d\tilde{q})\, \mathcal{V}(q, dv)$$

In this formulation, q is the current state, 𝒱(q, dv) is the multiproposal mechanism that generates a cloud of proposals, the S_j are involutive operators acting on an extended phase space, Π₁ is the projection onto the first component (e.g., Π₁(q, v) = q), and the α_j determine the acceptance probabilities for each proposed state in the cloud. This formulation generalizes the reversible single-proposal Metropolis-Hastings algorithm to a multiproposal setting [11].

How is the "Work Function" conceptualized in the extended phase space formalism?

Within the extended phase space formalism, the "work" of the algorithm is governed by the interplay between the multiproposal mechanism (𝒱) and the involutive operators (S_j). The acceptance probabilities α_j are not arbitrary but must be carefully derived to satisfy detailed balance conditions with respect to the target probability distribution μ. The general criteria that ensure ergodicity and reversibility require that these components are chosen such that the resulting Markov chain is unbiased and reversible, fulfilling the condition:

$$P_{\alpha,S,\mathcal{V}}(q, d\tilde{q})\, \mu(dq) = P_{\alpha,S,\mathcal{V}}(\tilde{q}, dq)\, \mu(d\tilde{q})$$

This ensures the chain preserves the target measure μ [11].

Acceptance Probability Formulations

What is the general form of the acceptance probability for a state swap in Parallel Tempering?

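For a replica exchange (or parallel tempering) simulation involving two replicas with configurations c_i and c_j at temperatures T_i and T_j (with corresponding inverse temperatures β_i = 1/(kT_i) and β_j = 1/(kT_j)), the probability for accepting a swap of their configurations is given by:

$$p_{\text{swap}} = \min\left(1, \exp\left[(\beta_i - \beta_j)(E_i - E_j)\right]\right)$$

where E_i and E_j are the energies of configurations c_i and c_j. This is equivalent to:

$$p_{\text{swap}} = \min\left(1, \exp\left[(E_i - E_j)\left(\frac{1}{kT_i} - \frac{1}{kT_j}\right)\right]\right)$$

This formulation ensures that detailed balance is satisfied [2] [10].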
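The detailed-balance claim can be checked with a short derivation, sketched here under the usual assumption that the replicas are independent, so the joint stationary distribution is a product of Boltzmann factors. Writing E_i = E(c_i) and E_j = E(c_j), the ratio of the joint weight after the swap to the weight before it is:

$$
\begin{aligned}
\pi(c_i, c_j) &\propto \exp\left[-\beta_i E_i - \beta_j E_j\right], \\
\frac{\pi(c_j, c_i)}{\pi(c_i, c_j)} &= \frac{\exp\left[-\beta_i E_j - \beta_j E_i\right]}{\exp\left[-\beta_i E_i - \beta_j E_j\right]} = \exp\left[(\beta_i - \beta_j)(E_i - E_j)\right].
\end{aligned}
$$

Because the swap proposal is symmetric, accepting with the minimum of 1 and this ratio is the Metropolis rule for the joint system, which is why the exponent takes the form (β_i − β_j)(E_i − E_j).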

What are the necessary and sufficient conditions for the acceptance probabilities in a general multiproposal pMCMC algorithm?

For the general multiproposal kernel P_{α,S,𝒱} to be reversible with respect to the target measure μ, the acceptance probabilities α_j must be chosen to satisfy a generalized detailed balance condition. Theorem 2.2, Corollary 2.4, Corollary 2.6, Theorem 2.10, and Theorem 2.11 in the foundational literature provide specific criteria on α_j, S_j, and 𝒱 to guarantee reversibility. For a conditionally independent proposal structure, these conditions simplify, but the general case requires that the acceptance probabilities are set to counteract the bias introduced by the proposal mechanism and the involutions, ensuring that the stationary distribution of the Markov chain is the desired target μ [11].

Table: Key Components of the pMCMC Mathematical Framework

| Component | Mathematical Symbol | Role in the Algorithm |

|---|---|---|

| Target Distribution | μ | The high-dimensional probability distribution from which we want to obtain samples. |

| Current State | q | The state of the Markov chain at the current iteration. |

| Multiproposal Mechanism | 𝒱(q, dv) | A kernel that generates a cloud of p+1 proposals (including the current state) based on q. |

| Involutive Operators | S_j | A set of maps on the extended space X × Y that are involutions (S_j ∘ S_j = Identity). |

| Projection Operator | Π₁ | The map that takes an element of the extended space (q, v) and returns the state q. |

| Acceptance Probabilities | α_j(q, v) | The probability of transitioning to the state Π₁ ∘ S_j(q, v); must be chosen to satisfy detailed balance. |

Experimental Protocols and Implementation

What is a standard protocol for running a Parallel Tempering simulation? A typical protocol, as implemented in the PROFASI software, involves these steps [4]:

- System Definition: Define the system of interest, such as a peptide sequence (e.g., `*KLVFFAE*`).

- Replica Initialization: Choose the number of replicas (e.g., 4), which determines the level of parallelization.

- Temperature Ladder: Define the temperature range, typically with a minimum (`tmin`) and maximum (`tmax`) temperature (e.g., 274 Kelvin to 374 Kelvin). Temperatures can be distributed in a geometric series or read from a pre-computed file for optimal performance.

- Execution: Run the simulation using an MPI command, for example:

`mpirun -np 4 profasi_run.mex -S ParTemp -ac 1 "*KLVFFAE*" -tmin "274 Kelvin" -tmax "374 Kelvin" -ncyc 10000`

- Swapping: The simulation runs each replica at its assigned temperature, occasionally proposing swaps of configurations between adjacent temperatures based on the Metropolis-Hastings acceptance probability.

How does one implement a multiproposal pMCMC algorithm like mpCN for high-dimensional problems? The multiproposal pCN (mpCN) algorithm is derived from the general pMCMC framework and is designed for high- and infinite-dimensional target measures that are absolutely continuous with respect to a Gaussian base measure [11]. The implementation involves:

- Proposal Cloud: At the current state q, generate a cloud of multiple proposals {q'_0, q'_1, ..., q'_p}. The proposal mechanism should be tailored to the Gaussian reference measure.

- Acceptance Step: Calculate the acceptance probability for each proposal in the cloud using formulas derived from the general pMCMC reversibility theorems. These probabilities will depend on the log-likelihoods (or energies) of the current and proposed states.

- Selection: Select the next state from the cloud of proposals based on the calculated acceptance probabilities.

- Parallelization: Leverage modern high-performance computing architectures (e.g., GPUs) to evaluate the target density and its gradients for all proposals in the cloud simultaneously, as the multiproposal structure is naturally parallelizable.
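For orientation, the sketch below shows one step of the standard single-proposal pCN algorithm, which is the building block that mpCN extends. It is not the multiproposal scheme of [11], whose acceptance formulas are not reproduced here. The assumptions are a target proportional to exp(−Φ(q)) times a standard Gaussian reference measure with identity covariance, a step parameter `beta` in (0, 1], and a user-supplied potential `phi`; all names are chosen for illustration.

```python
import math
import random

def pcn_step(q, phi, beta, rng):
    """One single-proposal pCN step for a target exp(-phi(q)) * N(0, I).

    Proposal: q' = sqrt(1 - beta^2) * q + beta * xi, with xi ~ N(0, I).
    Acceptance: min(1, exp(phi(q) - phi(q'))).
    Returns (next_state, accepted).
    """
    if not 0.0 < beta <= 1.0:
        raise ValueError("beta must lie in (0, 1]")
    shrink = math.sqrt(1.0 - beta * beta)
    proposal = [shrink * qi + beta * rng.gauss(0.0, 1.0) for qi in q]
    # The Gaussian reference terms cancel, so only phi enters the ratio.
    log_alpha = phi(q) - phi(proposal)
    if log_alpha >= 0 or rng.random() < math.exp(log_alpha):
        return proposal, True
    return q, False
```

Because the proposal preserves the Gaussian reference measure, the acceptance ratio depends only on Φ, which is what keeps the method usable as the dimension grows. A multiproposal variant would draw several such proposals per step and select among them.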

Troubleshooting Common Issues

What is the recommended target acceptance rate for swap moves in Parallel Tempering, and how can it be achieved?

For parallel tempering, a common target acceptance probability for swaps between chains is 0.234 [12]. Achieving this target often requires careful tuning of the temperature ladder. If the acceptance rate is too low, the temperature increments between adjacent replicas are likely too large, preventing sufficient overlap in their energy distributions. If the acceptance rate is too high, the increments are too small, reducing the efficiency of sampling as chains are too similar. Modern implementations like the one in BEAST2's CoupledMCMC can automatically tune the temperature difference (Delta Temperature) during a run to achieve the desired target acceptance probability [12].

Why might a Parallel Tempering simulation run slower than a simulated tempering simulation? In a Parallel Tempering implementation, all replicas must be kept in sync, so the overall progress of the simulation is limited by the speed of the slowest replica. In molecular simulations, lower-temperature replicas (which often have more compact structures) can run much slower than high-temperature ones because their energy calculations are more expensive. Consequently, the parallel tempering simulation runs only as fast as the slowest, low-temperature replica. In contrast, Simulated Tempering visits all temperatures in a single chain, so its speed is approximately the average speed across all temperatures [4].

How can one diagnose and address poor sampling in multimodal distributions? Poor sampling of multiple modes is a classic problem that pMCMC and Parallel Tempering aim to solve. If certain modes are underrepresented:

- Problem: The temperature ladder may not provide a smooth enough pathway for chains to escape local modes. The highest temperature might not be high enough to allow free exploration of the state space.

- Solution: Increase the number of replicas to create a finer temperature ladder, ensuring better overlap in energy distributions. Extend the maximum temperature to ensure the hottest chain can freely cross all energy barriers. The general pMCMC framework, with its multiproposal mechanisms, can also be more effective than single-proposal methods or running multiple independent chains, as it allows communication between proposals/chains, helping to traverse difficult geometries [11].

Table: Troubleshooting Guide for Common Parallel Tempering Issues

| Problem | Potential Causes | Diagnostic Checks | Solutions |

|---|---|---|---|

| Low Swap Acceptance | Temperature ladder is too sparse; energy distributions have insufficient overlap. | Check calculated acceptance rate is << 0.234. Plot energy histograms for adjacent temperatures. | Increase the number of chains/replicas. Manually optimize the temperature ladder (e.g., using a "tfile"). |

| Slow Simulation Speed | The slowest replica (often the coldest) is a computational bottleneck. | Check for load imbalance; profile time spent per replica. | Use an optimized proposal mechanism for low-T replicas. Consider Simulated Tempering if parallel speedup is insufficient [4]. |

| Poor Mixing in a Specific Replica | The replica is trapped in a local metastable state. | Check time series of energy and order parameters for a single replica; look for high autocorrelation. | Ensure the temperature ladder facilitates "lateral movement." Verify proposal distributions (e.g., step size) are appropriate for that temperature. |

| Failure to Sample All Modes | High-T chains are not hot enough to overcome energy barriers; no pathway for modes to communicate. | Check if samples from all chains are confined to a single mode. | Increase the maximum temperature. Add more chains to the ladder. Consider a multiproposal pMCMC algorithm designed for multimodal targets [11]. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Parallel Tempering and pMCMC Research

| Tool / Resource | Type | Primary Function | Key Feature / Use Case |

|---|---|---|---|

| MPI (Message Passing Interface) | Library | Enables inter-process communication for running parallel replicas across multiple CPUs or nodes. | Essential for implementing Parallel Tempering, as it allows replicas to run simultaneously and communicate for swap moves [4] [10]. |

| PROFASI | Software Package | A Monte Carlo simulation package for studying protein folding and biophysical properties. | Includes built-in implementations of Parallel Tempering and Simulated Tempering for complex biomolecular systems [4]. |

| BEAST2 with CoupledMCMC | Software Package (BEAST2 Package) | A platform for Bayesian evolutionary analysis via MCMC. | Provides a Parallel Tempering implementation that automatically tunes the temperature difference to a target acceptance rate, simplifying setup [12]. |

| GPU with TensorFlow/CUDA | Hardware/Software | Provides massive parallelization for computationally intensive tasks. | Ideal for scaling pMCMC algorithms that leverage thousands of proposals per step, as the multiproposal structure maps naturally to GPU architectures [11]. |

| Preconditioned Crank-Nicolson (pCN) | Algorithm | A proposal mechanism for MCMC sampling in high- and infinite-dimensional spaces. | The basis for the novel multiproposal pCN (mpCN) algorithm, which is derived from the general pMCMC framework for targets with a Gaussian base measure [11]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why is my Parallel Tempering Monte Carlo (PTMC) simulation failing to achieve convergence in the calculated free energy? Convergence failure in PTMC often stems from poor overlap between the energy distributions of adjacent replicas, preventing efficient random walks in temperature space [2]. To address this, ensure an optimal temperature distribution; the feedback-optimized algorithm can generate an optimal set of simulation temperatures to improve replica round-trip time and enhance sampling [13]. Furthermore, for alchemical free energy calculations, a critical best practice is to inspect for good phase space overlap for all pairs of adjacent lambda states [14].

FAQ 2: How can I identify structural transitions from my PTMC simulation data? Structural transitions can be identified by calculating thermodynamic properties like the heat capacity from the PTMC simulations. Peaks or shoulders on the heat-capacity curve serve as thermodynamic signatures of these transitions [3]. For instance, in the study of charged colloidal clusters, the dissociation transition was characterized by a peak in the heat capacity at T=0.20 (in reduced units) and the appearance of linear structures [3].

FAQ 3: What are the best practices for analyzing alchemical free energy calculations? A robust analysis of alchemical free energy calculations should consist of several key stages [14]:

- Subsampling the data to retain only uncorrelated samples for analysis.

- Calculating free energy differences using multiple estimators (e.g., from Thermodynamic Integration and Free Energy Perturbation) along with their statistical errors.

- Producing textual and graphical outputs of the computed data.

- Inspecting the data for convergence, identifying the equilibrated portion of the simulation, and verifying good phase space overlap between adjacent states.

FAQ 4: My ligand-binding free energy calculation is inaccurate. What sampling issues could be the cause? Inadequate sampling of ligand conformational, translational, and orientational degrees of freedom in the binding site is a common source of error. A proven methodology to enhance convergence is to use restraining potentials. The absolute binding free energy can be decomposed into steps where biasing potentials restraining the ligand's translation, orientation, and conformation (e.g., based on RMSD relative to the bound state) are turned on and off. The effect of these restraints must be rigorously unbiased in the final calculation [15].

Troubleshooting Guides

Issue 1: Poor Replica Exchange in Parallel Tempering

Problem: Low acceptance probability for swaps between replicas at different temperatures, leading to simulations that are "stuck" in metastable states and fail to sample the global energy landscape effectively [2].

Diagnosis and Solutions

- Check Temperature Spacing: The acceptance probability for a swap between two replicas at temperatures T_i and T_j is given by [2]:

min(1, exp((E_i - E_j)(1/kT_i - 1/kT_j)))

If the energy histograms of adjacent replicas do not sufficiently overlap, the acceptance rate will be low.

- Solution: Optimize the set of temperatures. Use a feedback-optimized algorithm that adjusts temperatures to minimize the replica round-trip time in temperature space, ensuring efficient diffusion of replicas from the lowest to the highest temperature and back [13].

- Verify Number of Replicas: Using too few replicas forces wider temperature spacing, reducing overlap. Using too many is computationally inefficient.

- Solution: Select the optimal number of replicas for your system. For a lattice homopolymer study, this was done empirically by measuring the replica's round-trip time for different chain lengths [13].

Issue 2: High Variance in Alchemical Free Energy Estimates

Problem: Large statistical errors in the computed free energy differences between the end states of an alchemical transformation.

Diagnosis and Solutions

- Diagnosis: Insufficient Overlap between λ States: The transformation pathway between states is divided into many intermediate λ states. If the conformational spaces of adjacent λ states do not overlap sufficiently, the free energy estimate will be noisy and inaccurate [14].

- Solution: Increase the number of intermediate λ states, particularly in regions where the system's energy changes rapidly. For complex transformations, use a λ-vector that controls different interaction types (e.g., Coulomb and Lennard-Jones) separately [14].

- Diagnosis: Inadequate Sampling of Ligand Pose: In binding free energy calculations, the ligand may not sufficiently sample the bound pose.

- Solution: Apply a restraining potential to the ligand's conformation, based on the root-mean-square deviation (RMSD) relative to the known bound structure, to enforce sampling relevant to the complexed state [15].

The tables below consolidate key parameters and results from referenced studies to serve as a benchmark for your experiments.

Table 1: SALR Potential Parameters for Colloidal Cluster Simulations [3]

| Parameter | Description | Value |

|---|---|---|

| ρ | Controls range of attractive interaction | 30 |

| ϵ_M | Well depth of Morse potential | 2.0 |

| σ | Diameter of colloidal particles | 1.0 |

| κσ | Inverse Debye length (screened electrostatic) | 0.5 |

| ϵ_Y | Strength of Yukawa repulsion | 1.0 |

Table 2: Thermodynamic Signatures in SALR Clusters [3]

| Event | Thermodynamic Signature | Structural Characteristic |

|---|---|---|

| Dissociation | Peak in heat-capacity curve | Appearance of linear and branched structures |

| Structural Transition | Peak or shoulder in heat-capacity curve | Unrolling of Bernal spiral to "beaded-necklace" motifs |

Experimental Protocols

Detailed Methodology: PTMC for Thermodynamic Properties

This protocol outlines the use of Parallel Tempering Monte Carlo to calculate thermodynamic properties like heat capacity and identify structural transitions, as applied to charged colloidal clusters [3].

System Definition:

- Model the cluster energy as a sum of effective pairwise potentials. The total energy is given by V_cluster = Σ [V_a(r_i) + V_r(r_i)], where V_a is an attractive component and V_r is a repulsive component [3].

- For a type II SALR system, use a short-range Morse function for attraction (V_a) and a long-range Yukawa function for repulsion (V_r) [3].

- Initialize the system with N colloidal particles.

Parameter Setup:

- Replica Configuration: Choose a set of temperatures, optimized for good energy overlap between adjacent replicas [13].

- MC Parameters: Define the number of MC steps, equilibration period, and sampling frequency.

Simulation Execution:

- Run N copies (replicas) of the system in parallel, each at a different temperature [2].

- For each replica, perform standard Monte Carlo sampling (e.g., particle displacement moves) at its assigned temperature.

- Periodically attempt a swap of configurations between two replicas at adjacent temperatures, T_i and T_j. Accept the swap with probability p = min(1, exp((E_i - E_j)(1/kT_i - 1/kT_j))) [2].

Data Analysis:

- Heat Capacity Calculation: Within the canonical ensemble (NVT), compute the heat capacity per particle from the energy fluctuations obtained from the simulations [3].

- Structural Analysis: Monitor structural metrics (e.g., radius of gyration, end-to-end distance) to characterize the clusters present at different temperatures [3] [13]. Correlate changes in these metrics with features in the heat-capacity curve.

Workflow: PTMC for Free Energy

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item | Function in the Experiment |

|---|---|

| Parallel Tempering Algorithm | Enhances sampling by allowing replicas at different temperatures to exchange configurations, helping simulations escape metastable states and converge on the global energy landscape [2]. |

| SALR Potential (Morse + Yukawa) | An effective pairwise potential used to model charged colloidal systems; describes the competition between Short-Range Attraction and Long-Range Repulsion that leads to self-assembly of anisotropic structures [3]. |

| Alchemical λ Pathway | Defines a series of intermediate, non-physical states to gradually transform one system into another (e.g., decoupling a ligand from its environment), enabling free energy calculations between end states with little phase space overlap [14]. |

| Restraining Potentials | Biasing potentials applied to a ligand's translation, orientation, or conformation (RMSD) in binding free energy calculations; they improve sampling efficiency and convergence, and their effect is removed analytically in the final result [15]. |

| Free Energy Perturbation (FEP) | A method for estimating free energy differences from simulations by analyzing the energy differences between adjacent states in the alchemical pathway [14]. |

| Thermodynamic Integration (TI) | A method for estimating free energy differences by computing the ensemble average of the derivative of the system's Hamiltonian with respect to the coupling parameter λ [14]. |
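For reference, the two estimators in the table can be written in their standard textbook forms. The notation is an assumption introduced here for illustration: U_0 and U_1 are the potential energies of the two states being compared, H(λ) is the λ-dependent Hamiltonian, and the subscript on an angle bracket names the state in which the average is sampled.

```latex
% Free Energy Perturbation (exponential averaging between adjacent states)
\Delta F_{0 \to 1} = -k_B T \, \ln \left\langle \exp\!\left( -\frac{U_1 - U_0}{k_B T} \right) \right\rangle_{0}

% Thermodynamic Integration (integrate the mean derivative along the pathway)
\Delta F = \int_{0}^{1} \left\langle \frac{\partial H(\lambda)}{\partial \lambda} \right\rangle_{\lambda} \, d\lambda
```

Both expressions make the overlap requirement visible: the FEP average is dominated by configurations that are rare in state 0 when the states differ strongly, and the TI integrand needs enough λ points to resolve regions where it changes rapidly.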

Frequently Asked Questions (FAQs)

Q1: My parallel tempering simulation is not achieving good exchange rates between replicas. What could be wrong? Poor exchange rates often stem from an insufficient overlap in the energy distributions of adjacent replicas. This is frequently caused by a poorly chosen temperature set. Ensure your temperatures are spaced to provide a consistent exchange probability (e.g., 20-30%) across all replica pairs. If temperatures are too far apart, the energy distributions will not overlap enough for exchanges to occur. You can diagnose this by plotting the potential energy distributions for each replica; they should significantly overlap with their neighbors [3].

Q2: How can I identify a structural transition from my simulation data? Structural transitions are often signaled by peaks or shoulders in the heat capacity (Cv) curve as a function of temperature [3]. Calculate the heat capacity from the fluctuations in the potential energy. A sharp peak typically indicates dissociation of the cluster at higher temperatures, while more subtle shoulders can signify internal structural rearrangements, such as the unrolling of a Bernal spiral into a beaded-necklace motif [3].

Q3: What is the practical difference between interpolation and extrapolation in this context? Interpolation estimates unknown values within the range of your existing data, such as estimating energy for a temperature between two known simulation points. This is generally reliable. Extrapolation attempts to predict values outside the known range, which is riskier and not recommended for constructing interpolating distributions, as it can lead to mathematically unstable and unreliable results [16] [17].

Q4: My simulation is sampling unrealistic, high-energy structures. How can I guide it better? This can happen if the temperature of a replica is too high, leading to sampling in the dissociative regime. Focus on the lower-temperature replicas, which sample the low-energy structures of interest, such as spherical shapes, Bernal spirals, and beaded-necklace motifs [3]. Also, verify the parameters of your interaction potential to ensure they accurately reflect the physical system you are modeling [3].

Troubleshooting Guides

Problem: Poor Replica Exchange Efficiency A low acceptance rate for swaps between adjacent replicas slows down convergence and hampers the exploration of the energy landscape.

- Diagnosis:

- Calculate and plot the acceptance rate for swaps between each pair of adjacent temperatures.

- Plot the potential energy distributions for all replicas. Look for gaps between the distributions of neighboring replicas.

- Solutions:

- Retune the Temperature Ladder: The most common solution is to adjust the temperatures of your replicas. Use a formula that spaces temperatures geometrically, as the energy variance often increases with temperature. If a geometric progression is insufficient, consider adaptive schemes that dynamically adjust temperatures to achieve uniform exchange rates.

- Increase the Number of Replicas: If the energy landscape is particularly rough, you may need to add more replicas to reduce the energy gap between adjacent temperatures, thereby increasing overlap.
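The diagnosis above (looking for gaps between neighboring energy distributions) can be made quantitative. The sketch below uses a simple histogram overlap coefficient, which is one possible metric chosen here for illustration rather than the one used in the cited work; the synthetic Gaussian energies stand in for real replica output.

```python
import numpy as np

def histogram_overlap(energies_a, energies_b, bins=50):
    """Overlap coefficient of two energy samples (0 = disjoint, 1 = identical)."""
    lo = min(np.min(energies_a), np.min(energies_b))
    hi = max(np.max(energies_a), np.max(energies_b))
    edges = np.linspace(lo, hi, bins + 1)       # shared bins for both samples
    pa, _ = np.histogram(energies_a, bins=edges)
    pb, _ = np.histogram(energies_b, bins=edges)
    pa = pa / pa.sum()
    pb = pb / pb.sum()
    return float(np.minimum(pa, pb).sum())

rng = np.random.default_rng(0)
cold = rng.normal(-10.0, 1.0, 5000)       # synthetic energies, colder replica
neighbor = rng.normal(-9.0, 1.0, 5000)    # nearby temperature: strong overlap
distant = rng.normal(0.0, 1.0, 5000)      # far temperature: almost no overlap
overlap_near = histogram_overlap(cold, neighbor)
overlap_far = histogram_overlap(cold, distant)
```

A pair with an overlap near zero is a natural place to insert an additional replica or to narrow the temperature gap.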

Problem: Failure to Observe Expected Structural Transitions The heat capacity curve is flat and does not show the expected peaks or shoulders that indicate thermodynamic events.

- Diagnosis:

- Check if your temperature range is wide enough. The dissociation transition, for instance, might occur at a higher temperature than you are simulating [3].

- Ensure your simulation has run for enough Monte Carlo steps to adequately sample the relevant configurations.

- Solutions:

- Extend the Temperature Range: Add replicas at higher temperatures to capture dissociation events and at lower temperatures to better resolve low-energy structural transitions.

- Increase Sampling: Run the simulation for longer. Use the integrated autocorrelation time of the energy to estimate the required simulation length.

- Analyze Configurations: Manually inspect snapshots from replicas at different temperatures to classify the structures (e.g., spherical, spiral, linear). This can confirm if a transition is occurring even if the Cv signal is weak [3].
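The integrated autocorrelation time mentioned under "Increase Sampling" can be estimated directly from an energy time series. This is a minimal sketch, assuming a simple truncation rule (stop summing at the first non-positive autocorrelation); more careful windowing schemes exist, and the AR(1) test series is synthetic.

```python
import numpy as np

def integrated_autocorr_time(x, max_lag=None):
    """Estimate tau_int = 1 + 2 * sum_t rho(t), truncated at the first rho <= 0."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    n = len(x)
    max_lag = max_lag or n // 4
    var = np.dot(x, x) / n
    tau = 1.0
    for lag in range(1, max_lag):
        rho = np.dot(x[:-lag], x[lag:]) / ((n - lag) * var)
        if rho <= 0.0:
            break
        tau += 2.0 * rho
    return tau

rng = np.random.default_rng(1)
white = rng.normal(size=20000)            # uncorrelated samples
ar1 = np.empty(20000)                     # correlated samples, AR(1) with phi = 0.9
ar1[0] = 0.0
for i in range(1, len(ar1)):
    ar1[i] = 0.9 * ar1[i - 1] + rng.normal()
tau_white = integrated_autocorr_time(white)
tau_ar1 = integrated_autocorr_time(ar1)
```

The number of effectively independent samples is roughly the series length divided by tau_int, so a run should span many multiples of tau_int at the temperature of interest.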

Problem: Inaccurate Interpolation of Data Points When using interpolation to analyze results (e.g., for free energy calculations), the interpolated values are inaccurate or show oscillatory behavior.

- Diagnosis:

- This is a classic sign of applying an inappropriate interpolation method for your data's characteristics.

- Solutions:

- Choose the Correct Method: Select an interpolation technique suited to your data:

- Linear Interpolation: Best for data where you expect a constant rate of change between points. Simple and stable [17].

- Polynomial Interpolation: Can capture more complex, non-linear relationships but can suffer from runaway oscillations (Runge's phenomenon) if the degree is too high [16].

- Spline Interpolation: Often the best compromise, as it fits piecewise low-degree polynomials, creating smooth curves without excessive oscillation [16] [17].

- Cross-Validate: Use techniques like leave-one-out cross-validation (LOOCV) to empirically assess the error of your interpolation method on your specific dataset [16].

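The cross-validation step can be sketched for the simplest case, linear interpolation. The helper below is illustrative (its name and the two synthetic data sets are assumptions); it leaves out each interior point in turn and measures how well the remaining points predict it.

```python
import numpy as np

def loocv_rmse_linear(x, y):
    """Leave-one-out RMSE of linear interpolation over interior points."""
    errors = []
    for i in range(1, len(x) - 1):        # endpoints would require extrapolation
        x_rest = np.delete(x, i)
        y_rest = np.delete(y, i)
        y_pred = np.interp(x[i], x_rest, y_rest)
        errors.append(y_pred - y[i])
    return float(np.sqrt(np.mean(np.square(errors))))

temps = np.linspace(0.10, 0.40, 13)
linear_data = 2.0 * temps - 1.0           # constant slope: linear interpolation is exact
curved_data = np.exp(-1.0 / temps)        # strongly curved: linear interpolation errs
rmse_linear = loocv_rmse_linear(temps, linear_data)
rmse_curved = loocv_rmse_linear(temps, curved_data)
```

The same loop can wrap a spline or polynomial fit in place of `np.interp`, which makes it possible to compare methods on the same data set.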

Experimental Protocols & Methodologies

Table 1: Key Parameters for a SALR Colloidal Cluster PTMC Simulation

| Parameter | Description | Example Value / Method |

|---|---|---|

| Interaction Potential | A sum of pairwise SALR potentials [3]. | V(r) = V_a(r) + V_r(r) |

| Attractive Component (V_a) | Models depletion attraction; often a Morse potential [3]. | ϵ_M * [e^(ρ(1-r/σ)) * (e^(ρ(1-r/σ)) - 2)] with ϵ_M=2.0, ρ=30, σ=1 [3] |

| Repulsive Component (V_r) | Models screened electrostatic repulsion; often a Yukawa potential [3]. | (ϵ_Y * e^(-κσ(r/σ - 1)))/(r/σ) with ϵ_Y=1.0, κσ=0.5 [3] |

| Number of Replicas | Number of parallel simulations at different temperatures. | 8-64, depending on system size and temperature range. |

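| Temperature Set | The temperatures for each replica. | Spaced for good energy overlap between neighbors, e.g., T = 0.10, 0.15, 0.20, ..., 0.40 (uniform 0.05 increments in this example; a geometric progression is a common alternative) [3]. |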

| Monte Carlo Steps | Total steps per replica for equilibration and production. | Typically millions to tens of millions. |

| Exchange Attempt Frequency | How often to attempt a swap between replicas. | Every 100-1000 Monte Carlo steps. |

Protocol 1: Setting Up a Basic PTMC Simulation for Thermodynamic Analysis

- System Definition: Define the cluster size (number of particles, N) and the interaction potential with its specific parameters (e.g., M30+Y1.0) [3].

- Replica Initialization: Choose the number of replicas and a set of temperatures. Initialize particle positions for each replica, which could be random, a lattice, or a known low-energy structure.

- Equilibration: Run each replica independently for a sufficient number of Monte Carlo steps to reach equilibrium at its respective temperature. Monitor energies to confirm stability.

- Production Run with Exchanges: Run the parallel tempering simulation, periodically attempting swaps of configurations between adjacent-temperature replicas based on the Metropolis criterion.

- Data Collection: Record the potential energy, configuration snapshots, and acceptance rates for swaps throughout the production run.
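The steps of Protocol 1 can be sketched end to end on a toy problem. This is a deliberately simplified illustration, not the colloidal-cluster setup of [3]: it assumes a single coordinate in a one-dimensional double-well potential, reduced units with k = 1, and arbitrary values for the barrier height, step size, and temperature ladder. For brevity the separate equilibration stage is omitted and the initial sweeps are not discarded.

```python
import math
import random

def energy(x, barrier=8.0):
    """Toy double-well potential with minima at x = -1 and x = +1."""
    return barrier * (x * x - 1.0) ** 2

def run_ptmc(temps, n_sweeps=20000, swap_every=10, step=0.5, seed=0):
    rng = random.Random(seed)
    xs = [-1.0 for _ in temps]            # every replica starts in the left well
    es = [energy(x) for x in xs]
    tried = [0] * (len(temps) - 1)
    done = [0] * (len(temps) - 1)
    cold_samples = []
    for sweep in range(n_sweeps):
        # Local exploration: one Metropolis displacement move per replica.
        for r, t in enumerate(temps):
            x_new = xs[r] + rng.uniform(-step, step)
            e_new = energy(x_new)
            if e_new <= es[r] or rng.random() < math.exp(-(e_new - es[r]) / t):
                xs[r], es[r] = x_new, e_new
        # Communication: attempt swaps between adjacent temperatures.
        if sweep % swap_every == 0:
            for r in range(len(temps) - 1):
                tried[r] += 1
                delta = (es[r] - es[r + 1]) * (1.0 / temps[r] - 1.0 / temps[r + 1])
                if delta >= 0.0 or rng.random() < math.exp(delta):
                    xs[r], xs[r + 1] = xs[r + 1], xs[r]
                    es[r], es[r + 1] = es[r + 1], es[r]
                    done[r] += 1
        cold_samples.append(xs[0])        # data collection at the lowest temperature
    rates = [d / t for d, t in zip(done, tried)]
    return cold_samples, rates

temps = [0.5, 1.0, 2.0, 4.0, 8.0]
cold_samples, swap_rates = run_ptmc(temps)
fraction_right = sum(x > 0 for x in cold_samples) / len(cold_samples)
```

Because the two wells are symmetric, the coldest replica should visit both of them even though its local moves rarely cross the barrier; `fraction_right` staying near 0 would indicate that the swaps are not doing their job.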

Protocol 2: Calculating Heat Capacity and Identifying Transitions

- Energy Time Series: From the production run, extract the time series of potential energy for each replica.

- Heat Capacity Calculation: Calculate the heat capacity (Cv) at each temperature (T) from the energy fluctuations within the canonical ensemble: Cv = (〈E²〉 - 〈E〉²) / (kT²), where E is the potential energy, k is Boltzmann's constant, and angle brackets denote ensemble averages [3]. Divide by the number of particles N to obtain the heat capacity per particle.

- Plot and Analyze: Plot Cv as a function of T. Identify peaks (signaling dissociation) and shoulders (signaling internal structural transitions) [3].

- Structural Analysis: Correlate features in the Cv curve with structural changes by analyzing saved configuration snapshots from temperatures around the feature.
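The fluctuation formula in Protocol 2 translates into a few lines of code. The sketch below assumes reduced units with k = 1 and uses synthetic Gaussian energies with a known variance in place of a real production run; the function name is illustrative.

```python
import numpy as np

def heat_capacity_per_particle(energies, temperature, n_particles, k=1.0):
    """C_v / N from potential-energy fluctuations in the canonical ensemble."""
    energies = np.asarray(energies, dtype=float)
    variance = energies.var()             # <E^2> - <E>^2
    return variance / (k * temperature ** 2 * n_particles)

# Synthetic check: energies with variance 4.0 at T = 0.5 for N = 10 particles.
rng = np.random.default_rng(0)
sample = rng.normal(loc=-50.0, scale=2.0, size=200_000)
cv = heat_capacity_per_particle(sample, temperature=0.5, n_particles=10)
```

For this synthetic input the expected value is 4.0 / (0.5² × 10) = 1.6. Applying the function to each replica's energy series in turn gives the Cv(T) curve to plot.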

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for SALR Colloidal Simulations

| Item | Function in the Simulation / Experiment |

|---|---|

| Effective Pair Potential (SALR) | The fundamental model describing the isotropic interaction between colloidal particles, combining short-range attraction and long-range repulsion [3]. |

| Morse Potential Parameters (ϵ_M, ρ, σ) | Controls the depth, range, and core diameter of the short-range attractive part of the interaction [3]. |

| Yukawa Potential Parameters (ϵ_Y, κ) | Controls the strength and inverse screening length of the long-range repulsive tail, modeling electrostatic effects in a solvent [3]. |

| Parallel Tempering Algorithm | The enhanced sampling method that facilitates escape from local energy minima by allowing replicas at different temperatures to exchange configurations [3]. |

| Global Optimization Algorithm (e.g., Evolutionary Algorithm) | Used to find the low-energy (e.g., global minimum) structures of the cluster at T=0 K, providing a reference for structures observed in finite-temperature simulations [3]. |

Workflow and System Visualization

PTMC Simulation Workflow for SALR Clusters

SALR Potential Components and Parameters

Implementing Parallel Tempering: Methods and Real-World Applications

Frequently Asked Questions

1. What is the primary purpose of the communication phase in Parallel Tempering? The communication phase, involving replica swap attempts, is crucial for preventing the simulation from becoming trapped in local energy minima. It allows configurations sampled at high temperatures, which can explore the energy landscape more freely, to propagate down to the lower-temperature replicas. This facilitates tunneling through energy barriers and enables a more thorough exploration of the configuration space, which is essential for achieving ergodic sampling in complex, multimodal systems [1] [2].

2. My simulation exhibits low swap acceptance rates between adjacent replicas. What is the most likely cause? Low swap acceptance rates are typically caused by insufficient overlap in the energy distributions of neighboring replicas [2] [18]. This occurs when the temperature spacing in your replica ladder is too wide. To correct this, you should adjust your temperature set to ensure that the difference in average energies between adjacent replicas is approximately equal to the square root of the sum of their energy variances, which generally targets an acceptance rate of 20-30% [18].

3. How does the local exploration phase contribute to the overall sampling? During the local exploration phase, each replica undergoes independent Markov chain Monte Carlo (MCMC) sampling (e.g., using Metropolis-Hastings updates) at its assigned fixed temperature [1] [18]. This phase is responsible for detailed exploration of the local energy basin. The high-temperature replicas broadly survey the landscape, while the low-temperature replicas refine the sampling in deep energy minima, providing high-resolution detail for the target thermodynamic distribution [2] [18].

4. Can Parallel Tempering be used for global optimization, not just sampling? Yes. By using the Boltzmann distribution \( p(x) \propto \exp(-E(x)/kT) \), the modes of the probability density correspond to the minima of the energy function \( E(x) \) [19]. After running Parallel Tempering, the configuration with the lowest energy sampled at the coldest temperature provides an approximation of the global minimum [19]. The algorithm acts as a "super simulated annealing that does not need restart" [2].

Troubleshooting Guides

Problem: Poor Mixing at Low Temperatures

Symptoms: The lowest-temperature replica becomes stuck in a single metastable state for long periods, with a noticeable lack of improvement in the sampled energy and high autocorrelation times.

Solutions:

- Verify Temperature Spacing: Ensure the energy histogram overlap between the coldest replica and the next one is sufficient. The swap acceptance probability is given by \( p = \min\left(1, \exp\left(\Delta\beta \, \Delta E\right)\right) \), where \( \Delta\beta = 1/(kT_i) - 1/(kT_{i+1}) \) and \( \Delta E = E_i - E_{i+1} \) [1] [2] [18].

- Increase Number of Replicas: Adding more replicas between the current temperature extremes allows for smoother diffusion of configurations from high to low temperatures [18].

- Check Local Moves: Ensure the local MCMC move set (e.g., particle displacement magnitude) is appropriately tuned for each temperature to achieve a good local acceptance rate (~40-60%) [3].
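Tuning the local move size toward the ~40-60% acceptance window can be automated with a simple feedback rule. The following sketch is one possible heuristic; the function name, the multiplicative factor of 1.1, and the example counts are assumptions made for illustration. Such adjustments are normally confined to the equilibration stage so that the production run uses a fixed move set.

```python
def tune_step(step, accepted, attempted, low=0.4, high=0.6, factor=1.1):
    """Return an adjusted displacement size based on the recent acceptance rate."""
    rate = accepted / attempted
    if rate < low:
        return step / factor      # too many rejections: take smaller steps
    if rate > high:
        return step * factor      # nearly everything accepted: take larger steps
    return step                   # already inside the target window

step_after_low = tune_step(0.5, accepted=20, attempted=100)
step_after_high = tune_step(0.5, accepted=80, attempted=100)
step_in_band = tune_step(0.5, accepted=50, attempted=100)
```

Each replica keeps its own step size, since the appropriate displacement usually grows with temperature.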

Problem: The Simulation Fails to Converge

Symptoms: Thermodynamic averages, such as mean energy or heat capacity, do not stabilize even after long runtimes.

Solutions:

- Extended Equilibration: Discard a larger portion of the initial sampling data as equilibration. Monitor the time evolution of energies to identify a stable plateau.

- Diagnose Replica Flow: Track the "round-trip time" for a configuration to travel from the lowest temperature to the highest and back. Slow round-trips indicate inefficient mixing and may require more replicas or adjusted temperatures [18].

- Re-initialize Configurations: Start from different, randomized initial conditions to verify that the simulation converges to the same average properties, thus testing for ergodicity.
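The round-trip diagnostic can be computed from the temperature index that a single replica occupies after each swap step. The sketch below assumes such an index series is available (for example from a replica exchange log); the function name and the short example trajectory are illustrative.

```python
def count_round_trips(temp_indices, n_temps):
    """Count completed lowest -> highest -> lowest trips for one replica."""
    trips = 0
    state = "idle"                # no direction until the bottom rung is first visited
    for idx in temp_indices:
        if idx == 0:
            if state == "down":   # returned to the bottom after reaching the top
                trips += 1
            state = "up"
        elif idx == n_temps - 1 and state == "up":
            state = "down"
    return trips

trajectory = [0, 1, 2, 3, 2, 3, 2, 1, 0, 1, 0, 1, 2, 3, 2, 1, 0]
round_trips = count_round_trips(trajectory, n_temps=4)
mean_round_trip_time = len(trajectory) / round_trips if round_trips else float("inf")
```

A replica whose count stays at zero over a long run is effectively confined to part of the ladder, which points to a bottleneck between two specific temperatures.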

Problem: Computational Cost is Prohibitively High

Symptoms: The simulation runtime, driven by running many replicas in parallel, is too long for the available resources.

Solutions:

- Optimize Replica Count: The number of replicas needed scales approximately with the square root of the system's degrees of freedom. Use the minimum number that still provides adequate swap acceptance [18].

- Parallelization Efficiency: Ensure the code is efficiently parallelized so that the computational cost of the local exploration phase scales linearly with the number of replicas. The communication overhead for swaps should be minimal.

- Non-Reversible Algorithms: Consider implementing a non-reversible swap scheme (alternating between even-odd and odd-even neighbor pairs), which has been shown to provide up to a 42% improvement in efficiency under optimal conditions [20].
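The alternating even-odd and odd-even pairing described in the last item can be expressed as a small scheduling helper. This sketch only selects which neighbor pairs are attempted in each communication round; the acceptance test for each pair is unchanged. The function name and zero-based replica indexing are assumptions.

```python
def swap_pairs(n_replicas, round_index):
    """Neighbor pairs to attempt in a given communication round.

    Even rounds pair (0,1), (2,3), ...; odd rounds pair (1,2), (3,4), ...
    """
    start = round_index % 2
    return [(i, i + 1) for i in range(start, n_replicas - 1, 2)]

even_round = swap_pairs(6, round_index=0)
odd_round = swap_pairs(6, round_index=1)
```

Because the pairs within one round never share a replica, all swaps of a round can be attempted simultaneously.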

Experimental Protocol & Parameters

The following methodology is adapted from a study investigating structural transitions in charged colloidal clusters, a system with a SALR (short-range attraction, long-range repulsion) potential [3].

Interaction Potential

The total energy of a cluster of \( N \) particles is given by a sum of pairwise potentials: \[ V_{\text{cluster}} = \sum_{i=1}^{N(N-1)/2} \left[ V_a(r_i) + V_r(r_i) \right] \]

- Attractive Component (\( V_a \)): Modeled with a Morse potential for short-range depletion attraction. \[ V_a(r_i) = \epsilon_M \left( e^{\rho (1 - r_i/\sigma)} \left( e^{\rho (1 - r_i/\sigma)} - 2 \right) \right) \]

- Repulsive Component (\( V_r \)): Modeled with a Yukawa potential for screened electrostatic repulsion. \[ V_r(r_i) = \epsilon_Y \frac{e^{-\kappa \sigma (r_i / \sigma - 1)}}{r_i / \sigma} \]
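The pair potential above is straightforward to evaluate in code. The sketch below uses the parameter values quoted in this article (ϵ_M = 2.0, ρ = 30, σ = 1.0, ϵ_Y = 1.0, κσ = 0.5) as defaults; the function names and the two-particle test geometry are illustrative assumptions.

```python
import math

def morse(r, eps_m=2.0, rho=30.0, sigma=1.0):
    """Short-range attraction: eps_M * x * (x - 2) with x = exp(rho * (1 - r/sigma))."""
    x = math.exp(rho * (1.0 - r / sigma))
    return eps_m * x * (x - 2.0)

def yukawa(r, eps_y=1.0, kappa_sigma=0.5, sigma=1.0):
    """Long-range screened electrostatic repulsion."""
    s = r / sigma
    return eps_y * math.exp(-kappa_sigma * (s - 1.0)) / s

def cluster_energy(positions):
    """Sum V_a + V_r over all N(N-1)/2 distinct particle pairs."""
    total = 0.0
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            r = math.dist(positions[i], positions[j])
            total += morse(r) + yukawa(r)
    return total

dimer = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]     # two particles at contact, r = sigma
e_contact = cluster_energy(dimer)
e_far = cluster_energy([(0.0, 0.0, 0.0), (5.0, 0.0, 0.0)])
```

At contact the Morse term contributes -ϵ_M = -2.0 and the Yukawa term +ϵ_Y = 1.0, giving a net pair energy of -1.0. At a separation of 5σ the attraction has decayed to essentially zero, so only the repulsive tail remains.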

Default PTMC Parameters (Colloidal Clusters)

The table below summarizes key parameters and their functions from a typical application [3].

| Parameter | Symbol | Value/Type | Function |

|---|---|---|---|

| Potential Type | - | SALR (Type II) | Models competition between short-range attraction and long-range repulsion [3]. |

| Morse Well Depth | \( \epsilon_M \) | 2.0 (reduced) | Controls the strength of the attractive interaction [3]. |

| Morse Range | \( \rho \) | 30 | Governs the range of attraction; higher values mean shorter range [3]. |

| Yukawa Strength | \( \epsilon_Y \) | 1.0 (reduced) | Determines the magnitude of the repulsive charge interaction [3]. |

| Inverse Debye Length | \( \kappa \) | \( 0.5 / \sigma \) | Controls the screening length for the repulsive Yukawa potential [3]. |

| Particle Diameter | \( \sigma \) | 1.0 (reduced) | Defines the length scale of the system [3]. |

| Local Move Sampler | - | Metropolis-Hastings MCMC | Performs local exploration within a single replica [3] [19]. |

| Analysis Metric | - | Heat Capacity vs. Temperature | Identifies thermodynamic signatures of structural transitions and dissociation [3]. |

Workflow Visualization

PTMC High-Level Workflow: This diagram illustrates the two-phase structure of the Parallel Tempering Monte Carlo algorithm, highlighting the cycle between local exploration and global communication.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in PTMC Simulation |

|---|---|

| Replica Ladder | A set of simulations at different temperatures (T1 < T2 < ... < TN); the lowest (T1) targets the distribution of interest, while higher temperatures enable barrier crossing [2] [18]. |

| Local MCMC Sampler | The algorithm (e.g., Metropolis-Hastings) that performs stochastic moves (e.g., particle displacements) to explore the local energy landscape of a single replica [1] [19]. |

| Swap Acceptance Criterion | The Metropolis-based rule, \( p = \min(1, \exp((E_i - E_j)(1/kT_i - 1/kT_j))) \), that determines whether to exchange configurations between two replicas, preserving detailed balance [2] [18]. |

| SALR Potential | An effective pair potential with competing Short-Range Attraction and Long-Range Repulsion, used to model systems like charged colloids and proteins [3]. |

| Heat Capacity Curve | A key thermodynamic property \( C_v \) plotted against temperature; its peaks and shoulders signal structural transitions or dissociation events in the cluster [3]. |

| Convergence Diagnostic | Metrics (e.g., round-trip time, stability of averages) used to determine if the simulation has sufficiently sampled the configuration space [18]. |

Frequently Asked Questions

How many replicas do I need for my system, and how should I space the temperatures? The number of replicas required depends on the system's size and complexity, and the temperature range you need to cover. The temperatures should be spaced to achieve a swap acceptance rate between 20% and 40%, with around 30% often being optimal for efficient sampling [21]. Using too few replicas, or temperatures that are too far apart, will result in low swap rates and poor sampling. Using too many is computationally inefficient.

What is the consequence of a poorly chosen temperature ladder? A poorly chosen temperature ladder can lead to two main problems [21]:

- Low Acceptance Rate (<20%): The replicas do not swap states effectively. The high-temperature replicas cannot help the low-temperature ones escape local energy minima, defeating the purpose of parallel tempering.

- Poor Temperature Space Traversal: Individual replicas may become trapped within a small subset of temperatures, preventing the system from fully exploring the configuration space.

Are there methods to find a good temperature set automatically? Yes, adaptive algorithms exist that dynamically adjust the temperature ladder during sampling. Some aim for uniform acceptance rates between adjacent replicas [22], while more advanced methods use policy gradient reinforcement learning to optimize the temperature spacings to minimize the integrated autocorrelation time of the sampler [22].

What is a simple way to generate an initial temperature ladder? For a first attempt, a geometric temperature distribution (constant ratio between adjacent temperatures) is a common starting point [4] [22]. Online calculators and pre-processing tools are also available, where you input the number of particles, temperature range, and target acceptance probability to generate a proposed temperature set [21].

Troubleshooting Guide

Symptom: Low swap acceptance rate between replicas.

- Diagnosis: The potential energy distributions of adjacent replicas do not have enough overlap. The temperature spacing is likely too wide [23] [21].

- Solution: Increase the number of replicas to reduce the temperature difference between neighbors. Alternatively, if you cannot add more replicas, reduce the maximum temperature (though this may reduce the ability to cross large energy barriers).

Symptom: A replica is trapped in a single temperature or a small subset of temperatures.

- Diagnosis: The replica is not successfully walking through temperature space. This can occur if the swap frequency is too low or if the temperature spacing is non-optimal [21].

- Solution: First, ensure your temperature ladder is well-tuned for a ~30% acceptance rate. If the problem persists, you may need to increase the frequency of swap attempts between replicas.

Symptom: The simulation fails to find the global energy minimum, getting stuck in a local minimum.

- Diagnosis: The highest temperature in your ladder may not be high enough to allow the system to overcome large energy barriers [23].

- Solution: Increase the maximum temperature (Tmax). As a guideline, the highest-temperature replica should be able to freely traverse the entire energy landscape [23] [21].

Experimental Protocols

Protocol 1: Setting Up a Basic Parallel Tempering Simulation This protocol outlines the steps for a typical parallel tempering simulation using a geometric temperature spacing, as might be implemented in software like LAMMPS or PROFASI [4] [21].

- Define System and Range: Identify your system and define the lower (Tmin) and upper (Tmax) temperature bounds.

- Estimate Replica Count: Use the formula for geometric spacing or an online calculator to estimate the number of replicas N needed for a ~30% acceptance rate.

- Generate Temperatures: Calculate the temperature for replica i using the formula: \( T_i = T_{\min} \times \left( \frac{T_{\max}}{T_{\min}} \right)^{\frac{i-1}{N-1}} \).

- Configure Simulation: Assign each of the N parallel processes one temperature from the ladder.

- Set Swap Frequency: Choose a frequency for attempting replica swaps (e.g., every 100-1000 Monte Carlo steps or MD steps).

- Run and Monitor: Execute the simulation and monitor the acceptance rates between all adjacent replica pairs.
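The temperature-generation step of Protocol 1 can be sketched as a short helper. The function name is illustrative, and the example range (0.10 to 0.40 with five replicas) is chosen only to show the constant ratio between neighbors; it is not a recommendation for any particular system.

```python
def geometric_ladder(t_min, t_max, n_replicas):
    """T_i = T_min * (T_max / T_min) ** ((i - 1) / (N - 1)) for i = 1..N (N >= 2)."""
    ratio = t_max / t_min
    # The loop index runs from 0 to N-1, which corresponds to i - 1 in the formula.
    return [t_min * ratio ** (i / (n_replicas - 1)) for i in range(n_replicas)]

ladder = geometric_ladder(t_min=0.10, t_max=0.40, n_replicas=5)
neighbor_ratios = [ladder[k + 1] / ladder[k] for k in range(len(ladder) - 1)]
```

Every entry of `neighbor_ratios` equals 4^(1/4) ≈ 1.414 for this example, which is the defining property of a geometric ladder.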

Protocol 2: Adaptive Temperature Spacing Using Acceptance Rates This methodology, based on contemporary research, dynamically adjusts the temperature ladder to achieve uniform acceptance rates [22].

- Initialization: Start with a preliminary temperature set (e.g., a geometric distribution).

- Equilibration Run: Perform a short parallel tempering simulation.

- Measure Acceptance Rates: Calculate the acceptance rate for swaps between every pair of adjacent temperatures.

- Adjust Temperatures: Apply an adaptive algorithm. A common heuristic is to move temperatures closer if their swap rate is too low and push them apart if the rate is too high [22].

- Iterate: Repeat steps 2-4 until the acceptance rates between all adjacent replicas are approximately equal and within the 20-40% target range.

- Production Run: Use the optimized temperature set for a long, production-level simulation.
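One way to picture the "Adjust Temperatures" step is a feedback update on the logarithmic gaps between neighboring temperatures. The sketch below is a simple heuristic written for illustration, not the specific algorithm of [22]: the gain, the target rate, and the choice to hold Tmin and Tmax fixed are all assumptions.

```python
import math

def adapt_ladder(temps, rates, target=0.3, gain=0.5):
    """One feedback step on the log-spacings; the endpoints stay fixed."""
    gaps = [math.log(temps[i + 1] / temps[i]) for i in range(len(temps) - 1)]
    # Shrink gaps whose swap rate is below the target, widen those above it.
    new_gaps = [g * math.exp(gain * (r - target)) for g, r in zip(gaps, rates)]
    # Rescale so the total span, and therefore T_max, is unchanged.
    scale = sum(gaps) / sum(new_gaps)
    new_temps = [temps[0]]
    for g in new_gaps:
        new_temps.append(new_temps[-1] * math.exp(g * scale))
    return new_temps

old = [1.0, 2.0, 4.0, 8.0]
new = adapt_ladder(old, rates=[0.05, 0.30, 0.60])
```

In this example the first pair swaps too rarely, so its temperatures move closer together, while the last pair swaps more often than needed and is pushed apart. Repeating the measure-and-adjust cycle drives the rates toward a common value.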

The table below summarizes the key parameters for these protocols.

| Parameter | Protocol 1: Basic Setup | Protocol 2: Adaptive Setup |

|---|---|---|

| Number of Replicas | Estimated via calculation [21] | Determined adaptively during initialization |

| Temperature Spacing | Geometric series [4] [22] | Dynamically adjusted to uniform acceptance [22] |

| Target Acceptance Rate | ~30% [21] | Uniform across all pairs (e.g., 20-40%) [22] |

| Swap Frequency | Every 100-1000 steps [21] | Every 100-1000 steps |

| Key Advantage | Simple to implement | Improved sampling efficiency |

Workflow Diagram

The following diagram illustrates the logical workflow for setting up and troubleshooting a parallel tempering simulation.

The Scientist's Toolkit

The table below details key computational reagents and their functions for setting up parallel tempering simulations.

| Research Reagent | Function / Purpose |

|---|---|

| MPI (Message Passing Interface) | Enables parallel computation by managing communication between different replicas running on multiple processors [4] [21]. |

| Temperature Ladder File | A text file containing a sorted list of temperatures (or inverse temperatures) for the replicas, allowing for custom, non-geometric spacing [4]. |

| Langevin Thermostat | A common thermostat used in molecular dynamics simulations to maintain each replica at its specific target temperature [21]. |

| Replica Exchange Log File | The master log file that records the temperature index of each replica at every swap step, essential for analyzing temperature traversal and acceptance rates [21]. |

| Geometric Spacing Calculator | An online or script-based tool that calculates a geometric temperature series given a target temperature range and acceptance probability [21]. |

Troubleshooting Common PTMC Simulation Issues

Q1: My simulation is trapped in a local energy minimum and cannot sample the full configuration space. What should I do?

A: This is a fundamental challenge that Parallel Tempering Monte Carlo (PTMC) is designed to address. The issue arises from a rough energy landscape with high barriers between low-energy states [24].

- Solution: Verify that your temperature ladder is properly configured. The temperatures should be spaced closely enough to ensure a high acceptance probability for replica exchanges (typically 20-30%). If exchanges are too infrequent, add more replicas or adjust the temperature range. Also, ensure that the highest temperature is sufficient to overcome the largest energy barriers in your system [24].

Q2: How can I identify structural transitions or dissociation events from my PTMC data?

A: Thermodynamic properties like heat capacity are excellent indicators. Calculate the heat capacity as a function of temperature from your simulation data [3].

- Solution: Peaks or shoulders in the heat capacity curve often signify thermodynamic events. A strong peak at higher temperatures typically indicates cluster dissociation, while features at lower temperatures can signal internal structural transitions, such as a Bernal spiral unrolling into a beaded-necklace structure [3]. Monitor the populations of different structural motifs (e.g., spherical, spiral, linear) across temperatures to correlate with heat capacity features.

Q3: I want to construct a kinetic model of conformational dynamics, but some states are poorly sampled at the temperature of interest. Can PTMC data help?

A: Yes. A key advantage of PTMC is that data from all temperatures can be integrated. While standard analysis uses data only from the temperature of interest, advanced reweighting techniques allow you to use information from all replicas [25].

- Solution: Implement dynamical reweighting methods. These techniques permit the estimation of transition probabilities and rates at a target temperature by reweighting short trajectory segments from all simulated temperatures, thereby reducing statistical uncertainty and providing estimates for transitions not observed at the temperature of interest [25].

Q4: The acceptance rate for replica exchanges is very low. What parameters can I adjust?

A: A low acceptance rate defeats the purpose of parallel tempering. The primary factor is the overlap in potential energy distributions between adjacent replicas [24].

- Solution: Adjust your temperature ladder. You may need to increase the number of replicas to decrease the temperature spacing between them, especially over temperature ranges where the system's energy changes rapidly. Alternatively, for some systems, tempering in other Hamiltonian parameters (e.g., interaction strengths) might be more efficient.
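One way to check energy-distribution overlap before committing to a ladder is to estimate the expected swap acceptance from short trial runs. The sketch below is an assumption-laden illustration: the function name `estimate_swap_acceptance` is hypothetical, the energy samples from the two replicas are treated as independent, and the standard Metropolis swap criterion min(1, exp[(β_low - β_high)(E_low - E_high)]) is used.

```python
import math
import random

def estimate_swap_acceptance(energies_low, energies_high, T_low, T_high,
                             k_B=1.0, n_pairs=10000, seed=0):
    """Monte Carlo estimate of the mean swap acceptance between two replicas.

    Draws random pairs (E_low, E_high) from the two samples and averages
    min(1, exp[(beta_low - beta_high) * (E_low - E_high)]).
    """
    rng = random.Random(seed)
    d_beta = 1.0 / (k_B * T_low) - 1.0 / (k_B * T_high)
    total = 0.0
    for _ in range(n_pairs):
        e_low = rng.choice(energies_low)
        e_high = rng.choice(energies_high)
        exponent = d_beta * (e_low - e_high)
        # Non-negative exponents are always accepted; avoids overflow in exp.
        total += 1.0 if exponent >= 0 else math.exp(exponent)
    return total / n_pairs

# Hypothetical Gaussian energy samples standing in for two trial runs.
rng = random.Random(42)
low = [rng.gauss(-100.0, 5.0) for _ in range(2000)]
high = [rng.gauss(-90.0, 5.0) for _ in range(2000)]
print(estimate_swap_acceptance(low, high, T_low=1.0, T_high=1.1))
```

If the estimate for a given pair falls well below the target acceptance window, inserting an intermediate temperature between that pair is usually the first thing to try.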

Thermodynamic Property Analysis Table

The following table summarizes key thermodynamic properties and structural features that can be extracted from PTMC simulations to study transitions, as demonstrated in studies of molecular and colloidal systems.

| Property to Calculate | Technical Method of Calculation | Interpretation of Results | Representative Example from Research |
|---|---|---|---|
| Heat Capacity (Cv) | Fluctuations in potential energy: ( C_v = \frac{ \langle E^2 \rangle - \langle E \rangle^2 }{k_B T^2} ) | Peaks indicate major thermodynamic events like dissociation; shoulders suggest subtler structural transitions [3]. | Charged colloidal clusters show a dissociation peak at T=0.20 (reduced units) and lower-T shoulders for spiral-to-necklace transitions [3]. |
| Potential Energy | Direct average of the total energy from sampled configurations at each temperature: ( \langle E \rangle ) | A smooth, monotonic increase with temperature is expected. Sharp jumps can coincide with phase transitions. | Used to compute other derived properties like heat capacity and to monitor the equilibration of the simulation [3]. |
| State Populations | Decompose configuration space into metastable states (e.g., spherical, spiral, necklace) and calculate fractional population at each T [25]. | Reveals temperature-dependent stability of different structures. A crossover in population indicates a structural transition. | In SALR clusters, low-energy Bernal spirals are populated at low T, while linear, dissociated structures dominate at high T [3]. |
| Radial Distribution Function (RDF) | Average density of particles as a function of distance from a reference particle: ( g(r) ) | Reveals changes in short-range order and packing, useful for identifying melting or structural rearrangements. | Not explicitly detailed in results, but a standard analysis for structural changes in clusters and liquids. |

Essential Experimental Protocols

Protocol 1: Setting up a PTMC Simulation for a Biomolecular System

This protocol outlines the steps for simulating a peptide like Met-enkephalin [24].

- System Preparation: Obtain or generate the initial atomic coordinates for the molecule. Place it in a simulated solvent box and add counterions if necessary.

- Parameter Selection:

- Number of Replicas (M): Typically 8-64, depending on the system size and temperature range.

- Temperature Ladder (( T_1 \dots T_M )): Choose a maximum temperature high enough to overcome major barriers (e.g., 600K for peptides). Space temperatures to achieve a 20-30% replica exchange acceptance rate [24].

- Energy Function: Use a realistic all-atom force field (e.g., AMBER, CHARMM). The total energy is typically ( E_{tot} = E_{es} + E_{vdW} + E_{hb} + E_{tors} ), covering electrostatics, van der Waals, hydrogen bonding, and torsion terms [24].

- Simulation Execution:

- Each replica is simulated in parallel at its assigned temperature using standard Monte Carlo (or Molecular Dynamics) updates.

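- After a fixed number of steps (e.g., 100-1000), attempt a swap between the configurations of adjacent replicas (e.g., at ( T_i ) and ( T_{i+1} )). The swap is accepted with probability ( A = \min\left(1, \exp\left[(\beta_i - \beta_{i+1})(E(C_i) - E(C_{i+1}))\right]\right) ), where ( \beta = 1/(k_B T) ) and ( C_i ) is the configuration currently held at ( T_i ) [24]. With this sign convention, a swap that moves the lower-energy configuration to the lower temperature is always accepted.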

- Data Harvesting: Save the trajectory of configurations for each replica for subsequent analysis.
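The exchange step of this protocol can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in [24]: the function name `attempt_swaps` is hypothetical, the acceptance rule is the standard Metropolis swap criterion min(1, exp[(β_i - β_{i+1})(E_i - E_{i+1})]), and alternating between even and odd pairs (the `offset` argument) is one common scheduling choice among several.

```python
import math
import random

def attempt_swaps(configs, energies, betas, rng, offset=0):
    """Attempt swaps between adjacent replicas (i, i+1), i = offset, offset+2, ...

    configs[i] and energies[i] belong to the replica at inverse temperature
    betas[i]. Lists are modified in place; returns (accepted, attempted).
    """
    accepted = attempted = 0
    for i in range(offset, len(betas) - 1, 2):
        attempted += 1
        exponent = (betas[i] - betas[i + 1]) * (energies[i] - energies[i + 1])
        # Accept with probability min(1, exp(exponent)).
        if exponent >= 0 or rng.random() < math.exp(exponent):
            configs[i], configs[i + 1] = configs[i + 1], configs[i]
            energies[i], energies[i + 1] = energies[i + 1], energies[i]
            accepted += 1
    return accepted, attempted

# Hypothetical usage: four replicas with placeholder configurations.
rng = random.Random(0)
temperatures = [300.0, 350.0, 420.0, 600.0]
k_B = 0.0019872  # kcal/(mol K); assumes energies in kcal/mol
betas = [1.0 / (k_B * T) for T in temperatures]
configs = ["c0", "c1", "c2", "c3"]
energies = [-50.0, -52.0, -40.0, -35.0]
print(attempt_swaps(configs, energies, betas, rng, offset=0))
print(configs)
```

Between calls, each replica would be advanced with its own local Monte Carlo or Molecular Dynamics updates. Alternating `offset=0` and `offset=1` on successive exchange attempts lets every adjacent pair be tried in turn.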

Protocol 2: Analyzing Structural Transitions in Colloidal Clusters

This protocol is adapted from studies of charged colloidal clusters with SALR potentials [3].

- Interaction Potential Definition: Use an effective pair potential that captures the essential physics. A common choice is the sum of a short-range Morse attraction and a long-range Yukawa repulsion:

- Total Energy: ( V_{cluster} = \sum_{i=1}^{N(N-1)/2} [V_a(r_i) + V_r(r_i)] ), where the sum runs over all particle pairs and ( r_i ) is the separation of pair ( i )

- Morse Attraction: ( V_a(r_i) = \epsilon_M e^{\rho (1 - r_i/\sigma)} (e^{\rho (1 - r_i/\sigma)} - 2) )

- Yukawa Repulsion: ( V_r(r_i) = \epsilon_Y \frac{e^{-\kappa \sigma (r_i / \sigma - 1)}}{r_i / \sigma} )

- Parameters (example): ( \rho = 30 ), ( \epsilon_M = 2.0 ), ( \sigma = 1.0 ), ( \kappa \sigma = 0.5 ), ( \epsilon_Y = 1.0 ) (reduced units) [3].

- Global Optimization: Perform a separate global optimization (e.g., using evolutionary algorithms) to identify the lowest-energy structures (e.g., Bernal spirals, beaded-necklaces).

- PTMC Simulation: Run a PTMC simulation as in Protocol 1, but for the cluster system.

- Post-Processing:

- Calculate the heat capacity as a function of temperature.

- Collect all saved configurations and classify them into structural families (e.g., based on their similarity to the global minima found in the Global Optimization step).

- Plot the population of each structural family versus temperature to identify transition points corresponding to features in the heat capacity curve [3].
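The Morse plus Yukawa pair potential defined at the start of this protocol can be written down directly. The following is a minimal sketch: the function names are hypothetical, the default arguments are the example parameters quoted above (reduced units), and no cutoff or periodic boundary handling is included.

```python
import math
from itertools import combinations

def morse_attraction(r, eps_M=2.0, rho=30.0, sigma=1.0):
    """Short-range Morse attraction V_a(r)."""
    x = math.exp(rho * (1.0 - r / sigma))
    return eps_M * x * (x - 2.0)

def yukawa_repulsion(r, eps_Y=1.0, kappa_sigma=0.5, sigma=1.0):
    """Long-range Yukawa repulsion V_r(r)."""
    s = r / sigma
    return eps_Y * math.exp(-kappa_sigma * (s - 1.0)) / s

def cluster_energy(positions):
    """Total SALR energy: sum of V_a + V_r over all N(N-1)/2 particle pairs."""
    total = 0.0
    for p, q in combinations(positions, 2):
        r = math.dist(p, q)
        total += morse_attraction(r) + yukawa_repulsion(r)
    return total

# Hypothetical test geometry: a dimer at the Morse minimum r = sigma.
dimer = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
print(cluster_energy(dimer))
```

At r = σ the Morse term equals -ε_M and the Yukawa term equals ε_Y, which gives a quick sanity check on any implementation before it is used inside a PTMC run.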

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Computational Tool | Function in PTMC Research |
|---|---|
| Effective SALR Potential | Models the competing short-range attraction and long-range repulsion in colloidal systems, enabling the study of self-assembly into anisotropic structures like Bernal spirals [3]. |
| All-Atom Force Field | Provides a realistic energy function ( E_{tot} ) for biomolecules, accounting for electrostatic, van der Waals, hydrogen-bonding, and torsional interactions, which create the rough energy landscape PTMC aims to navigate [24]. |
| Markov State Model (MSM) | A framework for building a kinetic model of biomolecular dynamics from many short simulation trajectories, which can be enhanced using data reweighted from PTMC simulations [25]. |
| Dynamical Reweighting Algorithm | A computational technique that integrates data from all temperatures in a PT simulation to construct more accurate kinetic models at a specific temperature of interest [25]. |

Frequently Asked Questions (FAQs)

1. What is the primary advantage of combining Parallel Tempering Monte Carlo with electronic structure calculations? This integration enables the efficient exploration of complex energy landscapes and accurate determination of thermodynamic properties for materials and molecular systems. PTMC enhances sampling across energetic barriers by simulating multiple replicas at different temperatures, while electronic structure methods provide the essential energy evaluations with quantum mechanical accuracy [3] [26].

2. My PTMC simulation is not converging. What could be wrong? Poor convergence often stems from an improperly chosen temperature set. Ensure temperatures span a sufficiently wide range to facilitate random walks between low-energy states and fully disordered configurations. For biomolecular systems, a typical range might be 270K to 370K, while materials science applications may require different ranges based on the energy scales involved [27].

3. How do I determine the appropriate number of replicas for my system? The number of replicas depends on system size and complexity. Start with 8-16 replicas, ensuring sufficient overlap between energy distributions at adjacent temperatures. Monitor acceptance ratios for replica exchanges; optimal values typically range between 20-40% [27] [26].

4. Can machine learning accelerate these integrated calculations? Yes, machine learning frameworks like MALA can dramatically accelerate electronic structure calculations by predicting electronic properties using neural networks trained on DFT data. This approach can achieve up to three orders of magnitude speedup, enabling simulations of systems with over 100,000 atoms that would be infeasible with conventional DFT [28] [29].

5. How do I verify the numerical accuracy of my electronic structure computations? Compare results across multiple electronic structure codes with different numerical implementations. For all-electron codes, total energies should agree within 10⁻⁶ using identical exchange-correlation functionals. Monitor convergence thresholds for density (typically 10⁻⁶) and total energy (10⁻⁸ Hartree/Rydberg) [30].

Troubleshooting Guides

Poor Replica Exchange Acceptance Rates

Symptoms:

- Replicas become trapped at specific temperatures

- Low acceptance rate for configuration exchanges between adjacent temperatures

Solutions:

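- Adjust Temperature Spacing: Use a geometric progression (constant ratio between neighbouring temperatures) as a starting point for the temperature distribution, then refine it where acceptance is low. An example eight-replica ladder is 270K, 280K, 295K, 310K, 325K, 340K, 355K, 370K [27]; note that this particular set is closer to evenly spaced than strictly geometric.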

- Increase Replica Count: Add more replicas to reduce gaps between temperature levels

- Modify Exchange Attempt Frequency: Adjust the `T_update_interval` parameter if using PROFASI, or the equivalent setting in other packages [27]
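A geometric ladder keeps the ratio between neighbouring temperatures constant, so it is fully determined by the two endpoints and the replica count. The sketch below uses a hypothetical helper name, `geometric_ladder`, and borrows the 270K to 370K range with eight replicas from the example above purely for illustration.

```python
def geometric_ladder(T_min, T_max, n_replicas):
    """Return n_replicas temperatures from T_min to T_max with a constant ratio."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    if not 0 < T_min < T_max:
        raise ValueError("require 0 < T_min < T_max")
    ratio = (T_max / T_min) ** (1.0 / (n_replicas - 1))
    return [T_min * ratio ** k for k in range(n_replicas)]

# Illustrative endpoints and replica count.
ladder = geometric_ladder(270.0, 370.0, 8)
print([round(T, 1) for T in ladder])
```

A geometric ladder gives roughly uniform acceptance only when the heat capacity is approximately constant over the range. Near a transition, where the energy changes rapidly with temperature, extra replicas usually have to be inserted by hand or by an adaptive scheme.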

Inaccurate Energy Evaluations

Symptoms:

- Erratic thermodynamic averages

- Unphysical structural transitions in heat-capacity curves [3]

Solutions:

- Verify Electronic Structure Parameters:

- For all-electron codes: Check basis set completeness and convergence parameters [30]

- Ensure consistent treatment of exchange-correlation functionals across all replicas

- Validate Energy Differences: Compare energy differences between structures with multiple codes when possible [30]

Memory and Performance Issues in Large Systems

Symptoms:

- Excessive computational time for energy evaluations

- Memory limitations with increasing system size

Solutions:

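- Implement Machine Learning Acceleration: Replace direct DFT energy evaluations with a trained surrogate such as MALA, which predicts electronic properties using neural networks trained on DFT data [28] [29]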

- Optimize Parallelization:

- Use multiplexing when available to enhance replica exchange efficiency [27]

- Distribute replicas across CPU cores with appropriate load balancing

Experimental Protocols

Standard Protocol for PTMC with Electronic Structure Calculations

System Preparation:

- Define the atomic system and initial configuration

- Select appropriate interaction potential or electronic structure method

- For colloidal systems, use established SALR potentials with Morse and Yukawa terms [3]

Temperature Setup:

- Determine temperature range covering relevant physical processes

- Create temperature set with geometric progression

- Verify sufficient overlap between adjacent distributions

Simulation Execution:

- Initialize all replicas with different configurations

- Set convergence criteria for electronic structure calculations

- Run parallel simulation with periodic replica exchange attempts

Data Collection:

- Monitor replica trajectories and exchange statistics

- Calculate thermodynamic observables (heat capacity, structural metrics)

- Verify convergence through multiple independent runs
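One simple exchange statistic to monitor is the number of round trips each replica makes through the temperature ladder. The sketch below is an illustrative assumption about how such a diagnostic might be coded: the function name `count_round_trips` is hypothetical, and the input is taken to be the sequence of temperature indices (0 = lowest, n_temps - 1 = highest) visited by one replica at successive exchange attempts.

```python
def count_round_trips(temp_indices, n_temps):
    """Count complete lowest -> highest -> lowest excursions of one replica."""
    top = n_temps - 1
    trips = 0
    heading_up = None  # None until the replica first reaches the lowest temperature
    for idx in temp_indices:
        if idx == 0:
            if heading_up is False:
                # Returned to the bottom after having reached the top.
                trips += 1
            heading_up = True
        elif idx == top and heading_up:
            heading_up = False
    return trips

# Hypothetical index trajectory for one replica on a three-temperature ladder.
trajectory = [0, 1, 2, 1, 0, 1, 2, 2, 1, 0]
print(count_round_trips(trajectory, n_temps=3))
```

A replica that records few or no round trips over a long run is effectively stuck in part of the ladder, which points to a bottleneck between two adjacent temperatures even when the average acceptance rate looks reasonable.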

Specialized Protocol for Large-Scale Systems with Machine Learning

Training Phase:

- Generate reference data with DFT calculations on small systems (e.g., 256 atoms)

- Train neural networks to map atomic environments to local density of states [28]

- Validate model predictions against held-out DFT data

Production Phase:

- Apply trained ML model to predict electronic structure in PTMC

- Calculate energies and forces from predicted electronic properties

- Perform PTMC sampling with ML-accelerated energy evaluations

Computational Setups for Different System Types

Table 1: Representative Parameter Sets for Various Applications

| System Type | Temperature Range | Replicas | Energy Model (Potential or Electronic Structure Method) | Key Parameters |
|---|---|---|---|---|
| Colloidal Clusters [3] | Range spanning the dissociation peak near T=0.20 (reduced units) | 8-16 | SALR potential (Morse + Yukawa) | ρ=30, εM=2.0, εY=1.0 |
| Protein Folding [27] | 270K-370K | 8 | Force field or QM/MM | loglevel=10, numcycles=5M |
| Large Materials [28] [29] | System-dependent | 8-32 | MALA ML-DFT | Bispectrum descriptors, neural network inference |
| Metallic Systems [30] | Varies with lattice parameter | 8-16 | All-electron DFT (Wien2k/FPLO) | Rkmax=4-12, PBE functional |

Research Reagent Solutions

Table 2: Essential Computational Tools and Their Functions

| Tool/Software | Primary Function | Application Context |
|---|---|---|
| PROFASI [27] | Parallel Tempering implementation | Biomolecular simulations with replica exchange |
| MALA [28] [29] | Machine learning for electronic structure | Large-scale materials simulations beyond DFT limits |
| Wien2k [30] | All-electron DFT calculations | High-accuracy electronic structure reference data |
| FPLO [30] | Full-potential local-orbital method | Efficient all-electron calculations with precision |