Particle Swarm Optimization for Carbon Cluster Structures: From Theory to Biomedical Applications

Abstract

This article explores the application of Particle Swarm Optimization (PSO) for determining the stable structures of carbon clusters (Cn). As a powerful global optimization algorithm inspired by swarm intelligence, PSO effectively navigates the complex potential energy surfaces of these clusters to identify global minimum energy structures, which are critical for understanding their properties. We cover foundational concepts, detailed methodologies, common challenges with optimization strategies, and rigorous validation techniques. By comparing PSO with other global optimization methods and highlighting its integration with machine learning and quantum calculations, this review provides researchers and drug development professionals with a comprehensive guide to leveraging PSO for material design and biomedical applications, such as drug discovery and the development of new nanomaterials.

Understanding Carbon Clusters and the Power of Swarm Intelligence

Technical Troubleshooting Guides

This section addresses common experimental challenges in carbon cluster research, with a specific focus on investigations utilizing Particle Swarm Optimization (PSO).

FAQ 1: Why does my PSO simulation for carbon cluster structures converge on unrealistic or physically unstable configurations?

This is a common issue often related to the formulation of the fitness function or inadequate sampling of the complex potential energy surface.

- Issue: PSO convergence on unrealistic carbon clusters.

- Explanation: The stability of carbon clusters is highly sensitive to bonding hybridization (sp, sp2, sp3) and geometry. An inaccurate fitness function fails to properly penalize high-energy structures. Furthermore, standard PSO can get trapped in local minima on the complex energy landscape of carbon systems [1].

- Solution:

- Refine the Fitness Function: Incorporate multiple stability criteria beyond just total energy. Use a weighted fitness function that combines terms such as the binding energy per atom, the HOMO-LUMO gap, and the fragmentation energy [2].

- Implement an Advanced PSO Variant: Use a more robust algorithm like the Integrated Learning PSO based on Clustering (ILPSO-C). This algorithm dynamically divides the population into subpopulations and includes a "stalled activation strategy" to help the search escape local optima, which is crucial for finding the true global minimum structure [3].

- Validate with Known Data: Benchmark your algorithm's output against well-established, small carbon clusters (e.g., C60, graphene flakes) whose properties are known from literature to verify the fitness function's accuracy [4] [1].

FAQ 2: How can I account for the formation of different carbon cluster hybridizations (sp, sp2, sp3) in my computational model?

The resulting hybridization is not an input but an output determined by simulation conditions and cluster size.

- Issue: Controlling for sp/sp2/sp3 hybridization in carbon clusters.

- Explanation: The hybridization state is emergent and depends on factors like cluster size and formation pathway. Theoretical studies show that initial aggregation density is a primary factor; low-density aggregation favors structures with mixed sp and sp2 hybridization, while high-density conditions lead to sp2-dominant molecules resembling polycyclic aromatic hydrocarbons (PAHs) or fullerene fragments [4].

- Solution:

- Systematic Composition Search: For a given cluster size (Cn), use the PSO algorithm to perform a global search for the lowest energy structure without presupposing hybridization. Tools like evolutionary algorithms (e.g., USPEX) coupled with Density Functional Theory (DFT) are commonly used for this purpose to map the energy landscape [2].

- Analyze Resultant Geometry: Once stable structures are identified, analyze their bond lengths and angles to determine the dominant hybridization state.

- Infer from Precursor Conditions: In experimental models, correlate computational initial conditions (like atomic density) with the resulting hybridization states observed in the simulations [4].
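The geometry analysis step above can be sketched as a simple neighbour count. This is a minimal illustration, not a definitive classifier: the 1.8 Å cutoff, the function names, and the mapping from coordination number to a hybridization label are all assumptions made for the example, and bond angles should be checked as well before assigning a hybridization state.

```python
import math

def coordination_numbers(coords, cutoff=1.8):
    """Count neighbours within `cutoff` (angstrom, assumed value) for every atom."""
    counts = []
    for i, a in enumerate(coords):
        n = 0
        for j, b in enumerate(coords):
            if i != j and math.dist(a, b) <= cutoff:
                n += 1
        counts.append(n)
    return counts

# Heuristic labels only; this mapping is an illustrative assumption.
LABELS = {1: "terminal", 2: "sp-like", 3: "sp2-like", 4: "sp3-like"}

def classify(coords, cutoff=1.8):
    """Attach a heuristic label to each atom based on its neighbour count."""
    return [LABELS.get(n, "other") for n in coordination_numbers(coords, cutoff)]

# Toy geometry: a linear C3 chain with 1.3 angstrom spacing.
chain = [(0.0, 0.0, 0.0), (1.3, 0.0, 0.0), (2.6, 0.0, 0.0)]
print(classify(chain))
```

A count-based label is only a first screen; a two-coordinate atom in a strongly bent arrangement, for example, would still be reported as "sp-like" here.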

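FAQ 3: Why do the predicted properties of my carbon clusters differ from experimental measurements?

Discrepancies often arise from approximations in the computational method and the idealized nature of simulations versus real-world experiments.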

- Issue: Discrepancy between predicted and experimental cluster properties.

- Explanation: Computational methods (like DFT) use functional approximations that can slightly shift predicted vibrational frequencies. Moreover, simulations often model perfect, isolated clusters at 0 K, whereas experiments may detect imperfect structures or clusters interacting with a substrate or solvent [4].

- Solution:

- Apply Scaling Factors: Use standard frequency scaling factors for your specific DFT functional to align theoretical IR spectra with experimental data.

- Model Imperfections: Be aware that imperfect, "messy" carbon nanostructures predicted by theory can have IR spectra that closely resemble those of perfect fullerenes (e.g., C70), leading to potential misidentification. Focus on the pattern of peaks rather than exact wavenumbers [4].

- Use Multiple Validation Metrics: Do not rely on a single property. Compare a suite of properties such as stability (from mass spectrometry), optical absorption, and structural data (from electron microscopy) to validate your models robustly [5].
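The scaling and pattern-matching advice above might look like the following sketch. The scaling factor of 0.96, the 15 cm⁻¹ tolerance, and all frequencies are placeholder values chosen for illustration; the appropriate factor depends on the functional and basis set and should be taken from a published tabulation.

```python
def scale_frequencies(freqs_cm1, factor):
    """Multiply harmonic frequencies (cm^-1) by an empirical scaling factor."""
    return [f * factor for f in freqs_cm1]

def match_peaks(predicted, observed, tol=15.0):
    """Pair each predicted peak with the nearest observed peak within `tol` cm^-1."""
    pairs = []
    for p in predicted:
        nearest = min(observed, key=lambda o: abs(o - p))
        if abs(nearest - p) <= tol:
            pairs.append((p, nearest))
    return pairs

harmonic = [1000.0, 1500.0]          # made-up computed frequencies
observed = [955.0, 1450.0, 1700.0]   # made-up experimental peaks
scaled = scale_frequencies(harmonic, 0.96)  # 0.96 is a placeholder factor
pairs = match_peaks(scaled, observed)
print(pairs)
```

Counting how many predicted peaks find a partner gives a rough measure of pattern agreement, which is more robust than comparing individual wavenumbers.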

Experimental Protocols & Workflows

This section outlines a standard methodology for studying carbon clusters, integrating computational prediction with experimental synthesis and validation.

Detailed Protocol: Prediction, Synthesis, and Characterization of Carbon Clusters

Objective: To computationally identify stable carbon cluster structures using an optimization algorithm and guide their experimental synthesis and characterization.

Part A: Computational Prediction of Stable Structures using PSO

Problem Definition:

- Define the search space for your carbon cluster, Cn, specifying the range of n (number of carbon atoms) to be investigated.

- Define the position of each "particle" in the swarm as a specific atomic configuration of the cluster, and its velocity as the displacement applied to that configuration at each iteration.

Fitness Evaluation:

- For each particle's structure, perform a quantum chemical calculation (e.g., DFT) to compute its total energy.

- Calculate the fitness score using a multi-objective function:

Fitness = f(Binding Energy, HOMO-LUMO Gap, Fragmentation Energy)[2].

Swarm Optimization:

- Allow the particles to update their positions (structures) based on the standard PSO rules or a more advanced variant like ILPSO-C [3].

- Iterate until the population converges on a structure (or set of structures) with the highest fitness score, indicating a stable, low-energy configuration.

Structure Analysis:

- Analyze the geometric parameters (bond lengths, angles, ring types) and electronic properties of the predicted stable clusters.
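The multi-objective fitness from the Fitness Evaluation step could be written as a weighted sum, as in the sketch below. The source only states that fitness is a function of binding energy, HOMO-LUMO gap, and fragmentation energy; the linear form, the weights, and the function name are assumptions, and in practice the terms may need normalizing to comparable scales.

```python
def weighted_fitness(binding_energy, homo_lumo_gap, fragmentation_energy,
                     weights=(0.6, 0.2, 0.2)):
    """Combine three stability descriptors (all in eV) into one score.

    Higher is better, matching the protocol's "highest fitness score".
    The weights are illustrative assumptions, not recommended values.
    """
    w_bind, w_gap, w_frag = weights
    return (w_bind * binding_energy
            + w_gap * homo_lumo_gap
            + w_frag * fragmentation_energy)

# Two made-up candidates: (binding energy, gap, fragmentation energy)
candidate_a = weighted_fitness(7.0, 1.5, 5.0)
candidate_b = weighted_fitness(6.5, 0.5, 4.0)
print(candidate_a, candidate_b)
```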

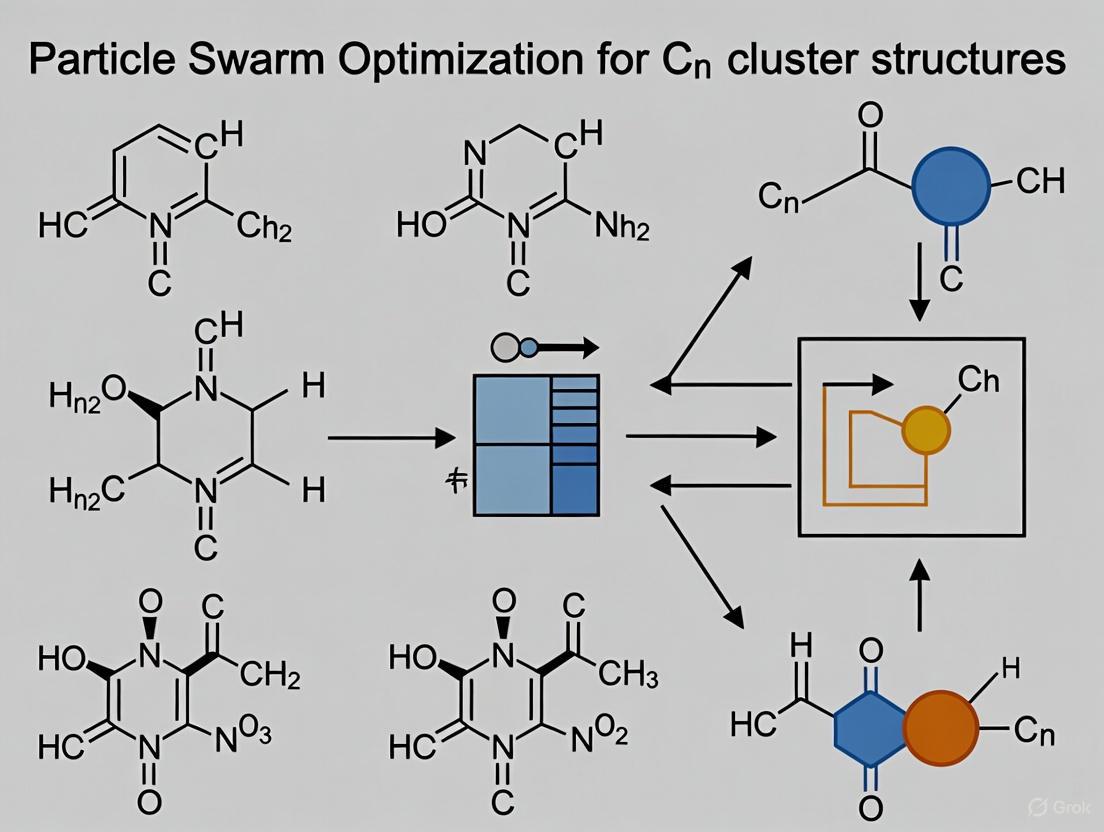

The following workflow diagrams the integrated computational and experimental process for carbon cluster research.

Part B: Experimental Synthesis and Characterization

Synthesis via Methane Pyrolysis [1]:

- Principle: Decompose methane gas at high temperatures to produce hydrogen and solid carbon clusters: CH4 → 2H2 + C.

- Procedure: Introduce high-purity CH4 into a high-temperature tube furnace (typically 1000-1500°C). Control the reaction time and temperature to influence the size and morphology of the resulting carbon clusters.

- Safety: Use appropriate gas handling equipment and high-temperature safety protocols. Ensure proper ventilation for H2 gas.

Extraction from Coal [1]:

- Principle: Use liquid phase exfoliation (LPE) with alkali metal intercalation to break down coal into graphene quantum dots and nanosheets, which are specific types of carbon clusters.

- Procedure: Mix and heat powdered coal with an alkali metal (e.g., potassium) in a solvent. The intercalation expands the coal layers, facilitating exfoliation into nano-sized carbon materials via sonication.

Characterization Techniques:

- Mass Spectrometry: Determines the mass-to-charge ratio of clusters, identifying "magic number" clusters with exceptional stability [5].

- Infrared (IR) Spectroscopy: Provides a fingerprint of the cluster's bonding and structure by measuring vibrational modes. Compare directly with computationally predicted spectra [4].

- Transmission Electron Microscopy (TEM): Used to visualize the morphology and size of the synthesized carbon clusters or nanoparticles [1].

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and their functions in carbon cluster research.

| Reagent/Material | Function in Carbon Cluster Research | Key Considerations |

|---|---|---|

| High-Purity Graphite | A common precursor for synthesizing carbon nanotubes and graphene via exfoliation or CVD methods [1]. | Purity and crystallinity affect the quality and defect density of the resulting nanomaterials. |

| Coal Feedstock | Abundant, low-cost carbon source for the environmentally friendly extraction of graphene quantum dots and nanosheets via LPE [1]. | The rank and composition of coal influence the yield and type of carbon nanomaterial produced. |

| Methane (CH₄) | Feedstock for methane pyrolysis, a method to produce hydrogen and various carbon materials, including carbon black and more ordered structures [1]. | Reaction temperature and catalyst use are critical for controlling the structure of the carbon co-product. |

| Alkali Metals (e.g., K) | Used as an intercalation agent to assist in the liquid phase exfoliation of layered carbon sources like coal or graphite [1]. | Highly reactive; requires handling in an inert atmosphere (e.g., glovebox). |

| Computational Reagents: DFT Functionals | The "reagents" in computational chemistry used to calculate the energy and electronic properties of candidate cluster structures [2] [5]. | The choice of functional (e.g., PBE) impacts the accuracy of predicted geometries and energies. |

| Reference Carbon Clusters (e.g., C₆₀) | Well-characterized clusters used as benchmarks to validate both computational methods and experimental synthesis pathways [4]. | Commercially available; serve as a standard for comparing stability and spectral features. |

Quantitative Data on Carbon Clusters

Key quantitative metrics for evaluating carbon cluster stability and properties are summarized below. These are critical for defining fitness functions in PSO.

| Metric | Formula / Description | Interpretation |

|---|---|---|

| Binding Energy per Atom | E_bind = [n*E(C) - E(Cn)] / n, where E(C) is the energy of a single C atom and E(Cn) is the total energy of the cluster. | A higher (more positive) value indicates a more stable cluster relative to its constituent atoms. |

| HOMO-LUMO Gap | Energy difference between the Highest Occupied and Lowest Unoccupied Molecular Orbitals. | A larger gap generally signifies higher kinetic stability and chemical inertness. |

| Fragmentation Energy | Energy required to split the cluster into two smaller fragments, Cn → Ck + Cn-k: E_frag = E(Ck) + E(Cn-k) - E(Cn). | Indicates the cluster's resistance to fragmentation; higher values mean greater stability. |

| Second-Order Energy Difference (Δ²E) | Δ²E(Cn) = E(Cn+1) + E(Cn-1) - 2*E(Cn) | A peak in Δ²E for a specific cluster size n identifies it as a "magic number" cluster with exceptional stability. |
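The formulas in the table translate directly into a few helper functions. The sketch below assumes total energies are stored in a dictionary keyed by cluster size; the function names and the energy values are made up for illustration and are not computed results.

```python
def binding_energy_per_atom(e_atom, e_cluster, n):
    """E_bind = [n*E(C) - E(Cn)] / n."""
    return (n * e_atom - e_cluster) / n

def fragmentation_energy(energies, n, k):
    """Energy needed for Cn -> Ck + Cn-k: E(Ck) + E(Cn-k) - E(Cn)."""
    return energies[k] + energies[n - k] - energies[n]

def second_difference(energies, n):
    """Second-order difference: E(Cn+1) + E(Cn-1) - 2*E(Cn)."""
    return energies[n + 1] + energies[n - 1] - 2.0 * energies[n]

# Made-up total energies (eV) for C1..C5, for illustration only.
toy = {1: 0.0, 2: -6.0, 3: -13.5, 4: -19.0, 5: -26.0}

print(binding_energy_per_atom(toy[1], toy[3], 3))
print(fragmentation_energy(toy, 4, 2))
print({n: second_difference(toy, n) for n in (2, 3, 4)})
```

With these toy numbers the second difference is positive at n = 3 and negative at n = 4, which is the kind of peak the table associates with a "magic number" size.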

The Challenge of Global Optimization on Complex Potential Energy Surfaces

Within computational chemistry and materials science, determining the global minimum energy structure of a cluster of atoms (Cₙ) represents a significant challenge. The potential energy surface (PES) of such systems is typically characterized by a vast, high-dimensional, and rugged landscape filled with numerous local minima. This technical support document, framed within the broader thesis research on "Particle swarm optimization for Cₙ cluster structures," provides targeted troubleshooting guides and FAQs to assist researchers in overcoming common experimental hurdles in this domain.

Particle Swarm Optimization (PSO) is a powerful population-based metaheuristic inspired by the collective behavior of bird flocking or fish schooling [6] [7]. Its application to global optimization on complex PESs involves simulating a swarm of "particles," where each particle represents a candidate cluster structure. These particles navigate the high-dimensional search space, iteratively adjusting their positions based on their own experience and the swarm's collective knowledge to locate the global minimum energy configuration [8].

Researcher FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

FAQ 1: What makes PSO particularly suitable for locating global minima on complex Cₙ PESs compared to other optimization algorithms?

PSO offers several advantages for this specific problem. Its population-based nature allows it to explore a wide area of the PES concurrently, reducing the chance of becoming trapped in a single local minimum. The algorithm efficiently balances exploration (searching new regions) and exploitation (refining known good areas) through its velocity update mechanism [7]. Furthermore, PSO is a derivative-free algorithm, making it highly suitable for complex PESs where analytical gradients are difficult or expensive to compute. Its simplicity, ease of implementation, and relatively few tuning parameters also contribute to its popularity for navigating high-dimensional, rugged landscapes [6] [8].

FAQ 2: How do I handle the discrete nature of atomic positions when using PSO, which is inherently a continuous optimization algorithm?

While standard PSO operates in a continuous space, the problem of cluster optimization often involves discrete or permutation-related configurations. In such cases, researchers typically employ modified PSO variants. A common approach is to use a real-valued PSO for optimization but map the continuous particle position to a specific cluster structure for energy evaluation. For problems inherently requiring discrete solutions, Binary PSO variants exist, which interpret particle positions as probabilities for a variable to be 0 or 1 [7]. For Cₙ cluster structures, the continuous representation is often retained to describe atomic coordinates, with the objective function (e.g., from a quantum chemistry calculation) handling the discrete nature of atomic interactions.

FAQ 3: My PSO simulation appears to be converging prematurely to a local minimum. What strategies can I employ to enhance global exploration?

Premature convergence is a common challenge. Several strategies can mitigate this:

- Parameter Adjustment: Increase the inertia weight (w) to promote exploration [7]. Alternatively, use a time-decreasing inertia weight, starting high (e.g., 0.9) and reducing it to a lower value (e.g., 0.4) over iterations.

- Topology Modification: Switch from a global best (gBest) topology to a local best (lBest) topology. In an lBest topology, a particle is influenced only by a subset of its neighbors, which helps maintain swarm diversity and delays premature convergence [7].

- Hybridization: Incorporate a local search operator or a mutation strategy, similar to those in evolutionary algorithms. For instance, an adaptive Gaussian mutation can be applied to a subset of particles to help them escape local optima [9].

- Re-initialization: Trigger a partial re-initialization of the swarm if stagnation is detected for a certain number of iterations.
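Two of the strategies above, the time-decreasing inertia weight and stagnation-triggered re-initialization, can be sketched as small helpers. The linear schedule, the patience of 20 iterations, and the tolerance are assumptions for illustration; other schedules and thresholds are equally valid.

```python
def inertia_weight(iteration, max_iterations, w_start=0.9, w_end=0.4):
    """Linearly decrease w from w_start (first iteration) to w_end (last)."""
    frac = iteration / max(1, max_iterations - 1)
    return w_start - (w_start - w_end) * frac

def is_stagnant(best_history, patience=20, tol=1e-8):
    """True if the best energy improved by less than `tol` over `patience` steps.

    `best_history` holds the swarm's best (lowest) energy after each iteration.
    """
    if len(best_history) <= patience:
        return False
    return best_history[-patience - 1] - best_history[-1] < tol

print(inertia_weight(0, 100), inertia_weight(99, 100))
print(is_stagnant([1.0] * 30))
```

When `is_stagnant` returns true, a driver loop could re-randomize a fraction of the particles while keeping the stored best positions.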

FAQ 4: What are the essential components of a fitness function for Cₙ cluster structure optimization?

The fitness function is critical and is typically the total energy of the cluster system. The key components are:

- Energy Calculation: The total potential energy of the cluster, computed using empirical potentials (e.g., Lennard-Jones, Gupta) or, for higher accuracy, quantum mechanical methods like Density Functional Theory (DFT). This is the primary term to be minimized.

- Constraints: Penalty terms to handle physical constraints. For example, a penalty can be added if atoms get too close, preventing unphysically high repulsive energies, or to enforce a specific cluster size.

- Optional Descriptors: In multi-objective formulations, other descriptors like stability, electronic properties, or symmetry measures can be included [10] [9].
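A fitness function with an energy term and a close-contact penalty might be structured as follows. The Lennard-Jones potential in reduced units is used only as a cheap stand-in (it is not a carbon potential), and the minimum distance `r_min` and the penalty scale are assumed values.

```python
import math

def lennard_jones(coords, epsilon=1.0, sigma=1.0):
    """Total Lennard-Jones energy of a list of (x, y, z) positions."""
    energy = 0.0
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            r = math.dist(coords[i], coords[j])
            sr6 = (sigma / r) ** 6
            energy += 4.0 * epsilon * (sr6 * sr6 - sr6)
    return energy

def fitness(coords, r_min=0.7, penalty=1.0e3):
    """Energy to minimize, with a penalty instead of an energy call when atoms overlap."""
    violation = 0.0
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            r = math.dist(coords[i], coords[j])
            if r < r_min:
                violation += r_min - r
    if violation > 0.0:
        # Screen the structure out before it reaches the energy calculator.
        return penalty * (1.0 + violation)
    return lennard_jones(coords)

dimer = [(0.0, 0.0, 0.0), (2.0 ** (1.0 / 6.0), 0.0, 0.0)]
clash = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0)]
print(fitness(dimer), fitness(clash))
```

Checking distances first also protects an expensive calculator from configurations for which it may fail outright, such as coincident atoms.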

Troubleshooting Common Experimental Issues

Issue 1: Poor Convergence or Stagnating Performance

- Symptoms: The algorithm's best fitness value does not improve over many iterations, or the swarm diversity collapses rapidly.

- Potential Causes and Solutions:

- Cause: Incorrectly set PSO parameters (e.g., too low inertia, high social component).

- Solution: Refer to Table 1 for parameter effects and tuning guidance. Perform a parametric sweep on a smaller, simpler system to find robust values.

- Cause: The swarm size is too small for the complexity of the PES.

- Solution: Increase the swarm size. A typical range is 20-50 particles, but this may need to be larger for high-dimensional Cₙ clusters [7].

- Cause: Lack of diversity in the initial population.

- Solution: Improve the initialization scheme. Instead of purely random structures, consider initializing with a mix of random geometries and known stable motifs for the material.

Issue 2: Unphysically High Energy or Unstable Cluster Structures

- Symptoms: The computed energy for candidate structures is abnormally high, or the optimization frequently produces fragmented or disordered clusters.

- Potential Causes and Solutions:

- Cause: Inadequate handling of boundary conditions, allowing atoms to drift too far apart.

- Solution: Implement a boundary condition strategy. Common methods include: (1) Absorbing Walls: If a particle leaves the boundary, its position is clamped to the boundary, and velocity is set to zero. (2) Reflecting Walls: The particle is reflected back into the search space, and its velocity component is reversed. (3) Invisible Walls: Particles that leave the feasible space are assigned a very poor fitness, naturally driving the swarm away from the boundaries [7].

- Cause: The potential energy model or calculator is failing for certain atomic configurations.

- Solution: Implement sanity checks in the fitness function to detect and penalize unphysical configurations before passing them to the energy calculator.
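The three boundary strategies named above can each be expressed per coordinate. This is a sketch under stated assumptions: the function names are hypothetical, and the reflecting rule assumes the overshoot is smaller than the box width.

```python
import math

def absorbing_wall(x, v, lo, hi):
    """Clamp the coordinate to the wall and zero its velocity component."""
    if x < lo:
        return lo, 0.0
    if x > hi:
        return hi, 0.0
    return x, v

def reflecting_wall(x, v, lo, hi):
    """Mirror the coordinate back inside and reverse its velocity component.

    Assumes the overshoot is smaller than (hi - lo).
    """
    if x < lo:
        return 2.0 * lo - x, -v
    if x > hi:
        return 2.0 * hi - x, -v
    return x, v

def invisible_wall_fitness(position, lo, hi, energy_fn):
    """Return a very poor fitness (infinity) for any out-of-bounds position."""
    if any(c < lo or c > hi for c in position):
        return math.inf
    return energy_fn(position)

print(absorbing_wall(5.5, 1.0, -5.0, 5.0))
print(reflecting_wall(5.5, 1.0, -5.0, 5.0))
```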

Issue 3: Prohibitively Long Computation Time

- Symptoms: A single PSO run takes an impractically long time to complete, hindering research progress.

- Potential Causes and Solutions:

- Cause: The energy evaluation (e.g., a DFT calculation) is computationally expensive.

- Solution: Use a multi-fidelity approach. Begin optimization with a fast, empirical potential to locate promising regions, then switch to a higher-fidelity method for final refinement. Also, ensure energy evaluations are parallelized across the swarm.

- Cause: The number of PSO iterations or swarm size is too high.

- Solution: Set rational stopping criteria, such as a maximum number of iterations, a target fitness value, or convergence based on a lack of improvement over a set number of steps [7].
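Parallel evaluation across the swarm can be sketched with Python's standard `concurrent.futures` module. The helper name `evaluate_swarm` is hypothetical. A thread pool is assumed here because it suits the case where each energy call waits on an external program (for example a DFT code launched as a subprocess); for CPU-bound pure-Python energy functions, `ProcessPoolExecutor` would be the more suitable choice.

```python
from concurrent.futures import ThreadPoolExecutor

def evaluate_swarm(positions, energy_fn, max_workers=4):
    """Evaluate `energy_fn` for every particle concurrently.

    Results are returned in the same order as `positions`.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(energy_fn, positions))

# Toy usage with a trivial stand-in "energy" (sum of squared coordinates).
swarm = [[0.0, 1.0], [2.0, 2.0], [3.0, 0.0]]
energies = evaluate_swarm(swarm, lambda x: sum(c * c for c in x))
print(energies)
```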

Table 1: Key PSO Parameters and Tuning Guidelines for PES Optimization

| Parameter | Description | Effect on Search | Recommended Tuning for Cₙ PES |

|---|---|---|---|

| Swarm Size | Number of particles in the swarm. | Small swarms converge fast but risk premature convergence. Large swarms explore better but are computationally costly. | Start with 20-50; increase for larger clusters (n) or more complex PESs [7]. |

| Inertia Weight (w) | Controls the momentum of the particle. | High w (~0.9) favors exploration. Low w (~0.4) favors exploitation. | Use a time-decreasing strategy (e.g., from 0.9 to 0.4) to transition from global to local search [7]. |

| Cognitive Coefficient (c₁) | Weight for the particle's own best experience. | High c₁ promotes independent exploration and diversity. | Typically set between 1.5-2.0. Increase if swarm converges prematurely [7]. |

| Social Coefficient (c₂) | Weight for the swarm's best experience. | High c₂ promotes convergence to the global best. | Typically set between 1.5-2.0, often equal to c₁. Increase to accelerate convergence [7]. |

| Velocity Clamping (vₘₐₓ) | Limits the maximum particle velocity per dimension. | Prevents particles from overshooting and destabilizing. | Set to a fraction (e.g., 10-20%) of the dynamic range of each search dimension [7]. |

Experimental Protocols & Workflows

Standard Protocol for PSO-based Cₙ Cluster Optimization

This protocol provides a detailed methodology for a standard PSO run aimed at finding the global minimum energy structure of a Cₙ cluster.

1. Problem Definition and Initialization:

- Define Search Space: Determine the bounds for atomic coordinates. This is typically a cubic box with a side length proportional to the cluster size to allow sufficient space for exploration.

- Initialize Swarm: For each of the S particles, generate an initial position vector. This is often done by randomly placing n atoms within the defined search space. Velocities are initialized to zero or with small random values.

- Set Hyperparameters: Choose the swarm size (S), inertia weight (w), cognitive and social coefficients (c₁, c₂), and maximum iterations based on guidelines in Table 1.

2. Energy Evaluation:

- For each particle's position (a candidate cluster structure), compute the total potential energy using a chosen method (e.g., an empirical potential or DFT). This energy value is the particle's fitness.

3. Main PSO Loop:

- Update Personal and Global Bests: Compare each particle's current fitness with its personal best (pBest). If the current position is better, update pBest. Identify the best pBest in the swarm as the global best (gBest).

- Update Velocities and Positions: For each particle i, update its velocity v[i] and position x[i] using the standard PSO equations [7]:

v[i] = w * v[i] + c₁ * r₁ * (pBest[i] - x[i]) + c₂ * r₂ * (gBest - x[i])

x[i] = x[i] + v[i]

(Here, r₁ and r₂ are random vectors between 0 and 1.)

- Apply Boundary Conditions: Check and adjust any particles that have moved outside the predefined search space using one of the methods described in the troubleshooting guide.

- Re-evaluate Fitness: Compute the energy for the new particle positions.

4. Termination and Analysis:

- The loop repeats until a stopping criterion is met (e.g., maximum iterations, convergence threshold).

- The final gBest position is reported as the predicted global minimum energy structure, which must be carefully validated.
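The four protocol steps above can be put together in a compact, dependency-free sketch. Several choices here are assumptions for illustration: a Lennard-Jones potential in reduced units stands in for a carbon potential or DFT, the inertia weight decreases linearly from 0.9 to 0.4, velocities are clamped to 20% of the box length, and absorbing walls handle the boundaries. All function and variable names are hypothetical.

```python
import random

def lj_energy(x, n_atoms):
    """Lennard-Jones energy (reduced units) of a flat [x0, y0, z0, x1, ...] vector."""
    energy = 0.0
    for i in range(n_atoms):
        for j in range(i + 1, n_atoms):
            r2 = sum((x[3 * i + k] - x[3 * j + k]) ** 2 for k in range(3))
            r2 = max(r2, 1e-12)   # guard against coincident atoms
            s6 = 1.0 / r2 ** 3    # (sigma / r)^6 with sigma = 1
            energy += 4.0 * (s6 * s6 - s6)
    return energy

def pso_cluster(n_atoms=3, swarm_size=30, iterations=200, box=2.0,
                c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    dim, half = 3 * n_atoms, box / 2.0
    v_max = 0.2 * box  # velocity clamp: 20% of the box length
    # Step 1: random positions inside the cubic box, zero velocities.
    xs = [[rng.uniform(-half, half) for _ in range(dim)] for _ in range(swarm_size)]
    vs = [[0.0] * dim for _ in range(swarm_size)]
    # Step 2: initial energy evaluation and best positions.
    pbest = [x[:] for x in xs]
    pbest_e = [lj_energy(x, n_atoms) for x in xs]
    g = min(range(swarm_size), key=lambda i: pbest_e[i])
    gbest, gbest_e = pbest[g][:], pbest_e[g]
    history = [gbest_e]
    # Step 3: main loop.
    for t in range(iterations):
        w = 0.9 - 0.5 * t / max(1, iterations - 1)  # linear decrease 0.9 -> 0.4
        for i in range(swarm_size):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                v = (w * vs[i][d] + c1 * r1 * (pbest[i][d] - xs[i][d])
                     + c2 * r2 * (gbest[d] - xs[i][d]))
                v = max(-v_max, min(v_max, v))
                x = xs[i][d] + v
                if x < -half or x > half:  # absorbing wall
                    x, v = max(-half, min(half, x)), 0.0
                xs[i][d], vs[i][d] = x, v
        for i in range(swarm_size):  # re-evaluate, then update pBest
            e = lj_energy(xs[i], n_atoms)
            if e < pbest_e[i]:
                pbest[i], pbest_e[i] = xs[i][:], e
        g = min(range(swarm_size), key=lambda i: pbest_e[i])
        if pbest_e[g] < gbest_e:
            gbest, gbest_e = pbest[g][:], pbest_e[g]
        history.append(gbest_e)
    # Step 4: report gBest; it still needs independent validation.
    return gbest, gbest_e, history

best_x, best_e, history = pso_cluster()
print(f"lowest energy found: {best_e:.4f}")
```

For three Lennard-Jones atoms the energy cannot fall below -3 in reduced units (each of the three pairs contributes at least -1), which gives a convenient reference for judging how close a run has come.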

The following workflow diagram visualizes this iterative process:

Protocol for a Multi-Objective PSO (MOPSO) for Stable Cluster Design

For problems involving multiple conflicting objectives, such as minimizing energy while maximizing stability or a specific electronic property, a multi-objective approach is required.

1. Extended Initialization:

- Define Multiple Objectives: Formally define all objective functions (e.g., f₁(S) = energy(M(S)), f₂(S) = -HOMO-LUMO gap for stability) [10] [9].

- Initialize an Archive: Create an external archive to store non-dominated solutions (the Pareto front).

2. Modified Main Loop:

- Fast Non-Dominated Sorting: Rank particles in the swarm based on Pareto dominance [9].

- Update Leaders: Select a global guide for each particle from the non-dominated solutions in the archive, often using a crowding distance measure to promote diversity.

- Velocity & Position Update: Proceed similarly to standard PSO, but using the selected leader.

- Update Archive: Add newly found non-dominated solutions to the archive and remove any that become dominated.

3. Result:

- The output is not a single solution but a set of Pareto-optimal solutions, representing the best trade-offs between the defined objectives.
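The Pareto dominance test and the archive update at the heart of this protocol can be sketched in a few lines. Objective vectors are assumed to be tuples in which every entry is minimized, here (energy, negative HOMO-LUMO gap) to match the example objectives above; the function names and numbers are illustrative only.

```python
def dominates(a, b):
    """True if objective vector `a` Pareto-dominates `b` (all objectives minimized)."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))

def update_archive(archive, candidate):
    """Return a new archive of non-dominated vectors after offering `candidate`."""
    if any(dominates(member, candidate) or member == candidate for member in archive):
        return archive  # candidate is dominated or already present
    survivors = [m for m in archive if not dominates(candidate, m)]
    return survivors + [candidate]

# Made-up (energy, -gap) pairs offered one at a time.
archive = []
for point in [(-10.0, -1.0), (-12.0, -0.5), (-9.0, -0.8), (-12.0, -1.2)]:
    archive = update_archive(archive, point)
print(archive)
```

A full MOPSO would additionally bound the archive size and use a crowding measure when picking leaders, which this sketch omits.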

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential computational "reagents" and tools required for conducting PSO-based research on Cₙ cluster structures.

Table 2: Essential Research Reagents & Computational Tools

| Item / Tool | Function / Description | Application in Cₙ PSO Research |

|---|---|---|

| Potential Energy Function | A mathematical function describing the interaction between atoms. | The core of the fitness evaluation. Can be an empirical potential (e.g., Tersoff, REBO for carbon) for speed, or a quantum mechanical method (DFT) for accuracy. |

| PSO Algorithm Core | The software implementation of the PSO logic. | Drives the optimization process. Can be custom code (e.g., in Python, MATLAB) or a library (e.g., PySwarms). Must be adaptable to the problem. |

| Geometry Analysis Scripts | Codes for analyzing cluster properties. | Used to validate results. Calculate properties like bond lengths, coordination numbers, radial distribution functions, and common symmetry metrics. |

| Visualization Software | Tools for rendering atomic structures. | Critical for interpreting and validating the final cluster geometries (e.g., VMD, Ovito, Jmol). |

| High-Performance Computing (HPC) Cluster | A network of computers for parallel processing. | Essential for computationally expensive energy evaluations (like DFT). Allows parallel evaluation of the entire particle swarm. |

Core Principles and Algorithm FAQ

Frequently Asked Questions

Q1: What is the core biological inspiration behind Particle Swarm Optimization? Particle Swarm Optimization (PSO) is a computational method inspired by the collective intelligence and social behavior observed in nature, such as bird flocking or fish schooling. The algorithm simulates how individuals in a group share information to benefit the collective, allowing the swarm to find optimal locations in a search space [11] [12] [13].

Q2: What are the key components and parameters of the PSO algorithm I need to configure? The PSO algorithm relies on a few key components and parameters that control the swarm's behavior. Proper configuration is crucial for balancing the exploration of the search space and the exploitation of known good areas. The core parameters are summarized in the table below [11] [12].

| Parameter | Description | Role and Impact on Optimization |

|---|---|---|

| Inertia Weight (w) | Influences the particle's momentum by retaining a fraction of its previous velocity. | A higher weight promotes global exploration, while a lower weight favors local exploitation and fine-tuning [11]. |

| Cognitive Coefficient (c1) | Determines the influence of a particle's own best-known position (pBest). | Higher values encourage particles to return to their personal best areas, fostering individual learning [11]. |

| Social Coefficient (c2) | Determines the influence of the swarm's best-known position (gBest). | Higher values pull particles toward the global best, promoting social learning and collaboration [11]. |

| Swarm Size | The number of particles (candidate solutions) in the swarm. | Larger swarms can explore more space but increase computational cost. A common starting point is 20-40 particles [11]. |

| Position (x) | A vector that represents a candidate solution in the search-space. | The algorithm's goal is to find the position that yields the best value for the objective function [12]. |

| Velocity (v) | A vector that determines the direction and speed of a particle's movement. | It is updated each iteration based on the inertia, cognitive, and social components [12]. |

Q3: What is the basic procedure for running a PSO simulation? A standard PSO workflow can be visualized as a cyclic process of initialization, evaluation, and update, as shown in the following workflow.

The corresponding mathematical update equations are:

- Velocity Update: v[t+1] = w * v[t] + c1 * r1 * (pBest[t] - x[t]) + c2 * r2 * (gBest[t] - x[t]) [11]

- Position Update: x[t+1] = x[t] + v[t+1] [11]

Here, r1 and r2 are random numbers between 0 and 1, which introduce a stochastic element to the search [12].

Troubleshooting Common Experimental Issues

Frequently Asked Questions

Q4: My PSO simulation is converging to a local optimum, not the global one. How can I improve exploration? Premature convergence is a common challenge. You can address it by:

- Adjusting Parameters: Temporarily increase the Inertia Weight (w) and the Cognitive Coefficient (c1) to encourage more exploration and individual particle movement [11].

- Changing Swarm Topology: Switch from a global best (gbest) topology to a local best (lbest) topology, such as a ring topology. This slows down information propagation and helps prevent the entire swarm from prematurely clustering around a single local optimum [11] [12].

- Using Adaptive PSO: Implement an Adaptive PSO (APSO) variant that can automatically adjust parameters like the inertia weight during the run to balance exploration and exploitation based on feedback from the search process [12] [14].
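In a ring (lbest) topology, each particle takes its guide from its immediate neighbours rather than from the whole swarm. A minimal sketch of the neighbour lookup follows; the function name and the neighbourhood radius of 1 are assumptions.

```python
def ring_neighbourhood_best(pbest_energies, i, radius=1):
    """Index of the lowest-energy personal best among particle i and its ring neighbours."""
    n = len(pbest_energies)
    neighbours = [(i + k) % n for k in range(-radius, radius + 1)]
    return min(neighbours, key=lambda j: pbest_energies[j])

# Made-up personal-best energies for a swarm of five particles.
energies = [5.0, 3.0, 4.0, 1.0, 2.0]
guides = [ring_neighbourhood_best(energies, i) for i in range(len(energies))]
print(guides)
```

In the velocity update, the position of the returned neighbour's personal best would replace gBest for that particle, so the best structure spreads around the ring over several iterations instead of attracting every particle at once.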

Q5: The optimization process is too slow. How can I improve convergence speed? Slow convergence can be mitigated by:

- Parameter Tuning: Reducing the inertia weight can help focus the search and accelerate convergence in later stages. Ensuring your social and cognitive coefficients are well-balanced is also key [11].

- Swarm Size: While a larger swarm explores more, it also costs more. For initial tests, try a moderate swarm size (e.g., 20-40 particles) [11].

- Hybrid Algorithms: Consider combining PSO with a local search method. The global search capability of PSO can be used to find promising regions, after which an efficient local search algorithm can quickly find the precise local optimum [15].

Q6: How do I handle complex, real-valued optimization of molecular structures with PSO? For optimizing molecular structures like carbon clusters, the standard PSO algorithm operates in a continuous search space defined by atomic coordinates (R^(3N)). The key is to define a suitable objective function, such as the potential energy of the molecular system, which the PSO algorithm will then minimize [16] [17]. The experimental setup for such a problem requires specific reagents and computational tools, as outlined below.

Research Reagent Solutions for Carbon Cluster Studies

| Item Name | Function / Explanation |

|---|---|

| Harmonic (Hooke's) Potential | A model potential energy function used to represent atomic bonds as springs, providing a computationally efficient way to approximate interatomic forces and calculate the system's energy during the initial global optimization stage [17]. |

| Density Functional Theory (DFT) | A high-accuracy computational method used for calculating the electronic structure of atoms, molecules, and solids. It is often used for final, precise energy evaluations and geometry refinements after PSO has identified a candidate low-energy structure [16] [17]. |

| Basin-Hopping (BH) Algorithm | A metaheuristic global optimization method used for performance comparison and validation of the structures found by the PSO algorithm [17]. |

| Global Minimum Search | The primary objective of applying PSO in this context is to find the atomic configuration with the lowest possible potential energy, which corresponds to the most stable structure of the cluster [17]. |
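A harmonic (Hooke's law) potential of the kind listed in the table can be written as a sum of spring terms over bonded pairs. The sketch below is a generic form, not the specific parameterization used in [17]: the force constant `k`, the equilibrium length `r0`, and the explicit bond list are all assumed inputs.

```python
import math

def harmonic_energy(coords, bonds, k=1.0, r0=1.4):
    """Sum of 0.5 * k * (r - r0)^2 over the bonded atom pairs in `bonds`.

    `k` and `r0` are placeholder values; `bonds` is a list of (i, j) index pairs.
    """
    energy = 0.0
    for i, j in bonds:
        r = math.dist(coords[i], coords[j])
        energy += 0.5 * k * (r - r0) ** 2
    return energy

# Toy three-atom geometry: one bond at its rest length, one stretched by 0.2.
coords = [(0.0, 0.0, 0.0), (1.4, 0.0, 0.0), (1.4, 1.6, 0.0)]
bonds = [(0, 1), (1, 2)]
print(harmonic_energy(coords, bonds))
```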

Experimental Protocol: PSO for Cn Cluster Global Minimum Search

This protocol outlines the methodology for using Particle Swarm Optimization to find the global minimum energy structure of a carbon cluster (C~n~), as applied in recent research [16] [17].

1. Objective Definition

- Goal: Find the atomic coordinates that represent the global minimum on the Potential Energy Surface (PES) for a given carbon cluster C~n~.

- Search Space: A hyperdimensional space R^3N, where N is the number of atoms in the cluster. Each particle's position vector in the swarm represents a complete set of 3D coordinates for all N atoms.
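As a minimal sketch of the objective described above, the function below evaluates a pairwise harmonic (Hooke's law) energy for a flat position vector in R^3N. The force constant `k_spring`, the equilibrium distance `r0`, and the choice to connect every pair of atoms with a spring are illustrative assumptions, not parameters taken from [17].

```python
import numpy as np

def harmonic_energy(x, k_spring=1.0, r0=1.3):
    """Pairwise harmonic energy of a cluster given as a flat vector of length 3N.

    k_spring and r0 are placeholder values; linking all atom pairs
    with springs is also an assumption made for this sketch.
    """
    coords = np.asarray(x, dtype=float).reshape(-1, 3)   # (N, 3) atomic positions
    i, j = np.triu_indices(coords.shape[0], k=1)         # every unique atom pair
    dist = np.linalg.norm(coords[i] - coords[j], axis=1)
    return 0.5 * k_spring * np.sum((dist - r0) ** 2)

# Two atoms placed r0 apart sit at the minimum of this model.
dimer = np.array([0.0, 0.0, 0.0, 1.3, 0.0, 0.0])
print(harmonic_energy(dimer))
```

Any function with the same signature (flat coordinate vector in, scalar energy out) could be swapped in, which is what makes it possible to replace this cheap model with a DFT single-point call later.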

2. Algorithm Initialization

- Swarm Generation: Initialize a population of S particles (e.g., 20-50). Each particle is assigned a random position within the defined boundaries of the search space.

- Velocity Initialization: Initialize each particle's velocity vector randomly within a specified range.

- Personal Best (pBest): Set each particle's initial position as its personal best.

- Global Best (gBest): Identify the particle with the lowest energy and set its position as the global best for the swarm.
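The initialization steps above might be sketched as follows. The box half-width `box`, the velocity limit `v_max`, and the placeholder objective `toy_energy` are hypothetical choices for illustration only; a real run would pass in a physical energy function.

```python
import numpy as np

def toy_energy(x):
    # Placeholder objective: squared distance from the origin (not a chemical model).
    return float(np.sum(np.asarray(x) ** 2))

def init_swarm(n_particles, n_atoms, energy_fn, box=3.0, v_max=0.5, seed=0):
    rng = np.random.default_rng(seed)
    dim = 3 * n_atoms
    pos = rng.uniform(-box, box, size=(n_particles, dim))      # random positions inside the box
    vel = rng.uniform(-v_max, v_max, size=(n_particles, dim))  # random initial velocities
    pbest_pos = pos.copy()                                     # pBest starts at the initial position
    pbest_val = np.array([energy_fn(p) for p in pos])
    g = int(np.argmin(pbest_val))                              # lowest-energy particle defines gBest
    return pos, vel, pbest_pos, pbest_val, pbest_pos[g].copy(), pbest_val[g]

pos, vel, pbest_pos, pbest_val, gbest_pos, gbest_val = init_swarm(30, 4, toy_energy)
print(pos.shape, gbest_val)
```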

3. Iterative Optimization Loop

Repeat the following steps until the maximum number of iterations is reached or convergence is achieved.

- Energy Evaluation: For each particle, calculate the potential energy of the molecular configuration it represents. For a fast preliminary search, use a Harmonic Potential. For higher accuracy, the PSO code can be interfaced with Density Functional Theory (DFT) software such as Gaussian to run single-point energy calculations [16].

- Update pBest and gBest: Compare the current energy of each particle with its pBest and the swarm's gBest. Update these values if a better (lower energy) position is found.

- Update Velocity and Position: Apply the standard PSO update equations to move each particle through the search space.
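One pass through the loop above can be written as a single function. This is a sketch under assumed parameter values (`w=0.7`, `c1=c2=1.5`) and uses a simple sphere function as a stand-in objective; neither is taken from the cited work.

```python
import numpy as np

def pso_step(pos, vel, pbest_pos, pbest_val, energy_fn, rng, w=0.7, c1=1.5, c2=1.5):
    """One PSO iteration: evaluate, update pBest/gBest, then move the particles."""
    energy = np.array([energy_fn(p) for p in pos])
    improved = energy < pbest_val                       # particles that found a lower energy
    pbest_pos = np.where(improved[:, None], pos, pbest_pos)
    pbest_val = np.where(improved, energy, pbest_val)
    gbest_pos = pbest_pos[np.argmin(pbest_val)]         # best position seen by the swarm
    r1 = rng.random(pos.shape)
    r2 = rng.random(pos.shape)
    vel = w * vel + c1 * r1 * (pbest_pos - pos) + c2 * r2 * (gbest_pos - pos)
    pos = pos + vel
    return pos, vel, pbest_pos, pbest_val

# Usage on a stand-in objective (sum of squares, minimum at the origin).
rng = np.random.default_rng(1)
pos = rng.uniform(-2, 2, size=(20, 6))
vel = np.zeros_like(pos)
pbest_pos, pbest_val = pos.copy(), np.full(20, np.inf)
sphere = lambda x: float(np.sum(x ** 2))
for _ in range(100):
    pos, vel, pbest_pos, pbest_val = pso_step(pos, vel, pbest_pos, pbest_val, sphere, rng)
print(pbest_val.min())
```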

4. Validation and Analysis

- Structure Comparison: Compare the final gBest structure (lowest energy found) with known structures from literature or databases.

- Method Benchmarking: Validate the result by comparing it against structures found using other global optimization methods like Basin-Hopping (BH) [17].

- DFT Refinement: Use the PSO-found structure as a starting point for a final, precise geometry optimization using DFT to obtain accurate energy and structural parameters.

The relationship between the PSO search and the energy landscape it navigates is crucial for understanding its performance, as illustrated in the following diagram.

Why PSO is Suited for Carbon Cluster Structure Prediction

Frequently Asked Questions (FAQs)

Q1: Why is Particle Swarm Optimization (PSO) particularly effective for finding the global minimum structures of carbon clusters compared to other methods?

PSO is well suited to carbon cluster structure prediction because it efficiently navigates the complex, high-dimensional Potential Energy Surface (PES) in search of the global minimum (GM). The PES of carbon clusters has a vast number of local minima, and that number grows exponentially with the number of atoms [17] [18]. Local optimization methods (e.g., steepest descent) get trapped in the nearest local minimum. PSO instead uses a population-based search that balances broad exploration of the search space with focused exploitation of promising regions [14] [16]. Particles in the swarm share information and collectively follow the best-known positions (pbest and gbest), which allows the algorithm to cross energy barriers and, in the cited studies, reach the GM at a lower computational cost than the methods it was compared against [17] [14].

Q2: My PSO simulation for a C10 cluster is converging to a local minimum too quickly. How can I improve the exploration of the search space?

Premature convergence is often linked to an improper balance between exploration and exploitation. You can address this by:

- Adjusting the Inertia Weight (w): Use a dynamically decreasing inertia weight. Start with a higher value (e.g., 0.9-1.2) to encourage global exploration and gradually reduce it to facilitate local exploitation and convergence [19] [20].

- Modifying Cognitive (c1) and Social (c2) Parameters: Temporarily increase the cognitive parameter c1 to make particles rely more on their own best-known position, enhancing exploration. Alternatively, implement adaptive parameter control strategies [19].

- Implementing Chaotic Maps: Replace the standard random number generators in the velocity update with chaotic maps (e.g., Singer, sinusoidal). These maps provide better ergodicity and randomness, helping the swarm escape local minima more effectively [19].
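A dynamically decreasing inertia weight can be as simple as a linear ramp. The sketch below assumes a linear schedule and an end value of 0.4; other decay shapes are equally possible, and the function name is hypothetical.

```python
def inertia_weight(iteration, max_iter, w_start=0.9, w_end=0.4):
    """Linearly decrease the inertia weight from w_start to w_end over the run."""
    frac = iteration / max(1, max_iter - 1)   # 0.0 at the first iteration, 1.0 at the last
    return w_start - (w_start - w_end) * frac

schedule = [inertia_weight(t, 5) for t in range(5)]
print(schedule)
```

The returned value would be passed as `w` to the velocity update at each iteration, so early iterations keep more momentum and later ones settle around the best-known positions.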

Q3: The energy evaluations using DFT in my PSO loop are computationally very expensive. Are there any strategies to reduce this cost?

Yes, integrating a two-stage local search (2LS) method can significantly reduce computational cost. Instead of performing a full, expensive local search (e.g., using BFGS) for every particle update, the algorithm first performs a short, cheap local search. Only those solutions that show improved energy in the first stage proceed to the second stage for a full local optimization. This approach focuses computational resources on the most promising candidates and can lead to a threefold acceleration in finding the optimal solution [19].
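The gating idea behind a two-stage local search can be illustrated with a toy example. This sketch makes several assumptions that are not from [19]: plain gradient descent with a numerical gradient stands in for BFGS, "improved energy" is interpreted as beating a caller-supplied `reference_energy` (for example the particle's pbest energy), and the step counts are arbitrary.

```python
import numpy as np

def numerical_gradient(f, x, h=1e-6):
    grad = np.zeros_like(x)
    for i in range(x.size):
        step = np.zeros_like(x)
        step[i] = h
        grad[i] = (f(x + step) - f(x - step)) / (2 * h)   # central difference
    return grad

def descend(f, x, n_steps, lr=0.1):
    """Plain gradient descent, a stand-in for a real local optimizer such as BFGS."""
    x = np.array(x, dtype=float)
    for _ in range(n_steps):
        x = x - lr * numerical_gradient(f, x)
    return x, f(x)

def two_stage_local_search(f, x, reference_energy, short_steps=3, full_steps=200):
    """Run a short search first; continue to the full search only if it beats the reference."""
    x_short, e_short = descend(f, x, short_steps)
    if e_short >= reference_energy:
        return x_short, e_short, False        # rejected after the cheap stage
    x_full, e_full = descend(f, x_short, full_steps)
    return x_full, e_full, True

f = lambda x: float(np.sum((x - 1.0) ** 2))   # toy objective with its minimum at (1, 1)
x0 = np.array([3.0, 3.0])
print(two_stage_local_search(f, x0, reference_energy=5.0)[1:])
print(two_stage_local_search(f, x0, reference_energy=1.0)[1:])
```

The saving comes from the rejected branch: candidates that fail the cheap test never pay for the full optimization.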

Experimental Protocol: PSO for Carbon Cluster (Cn) Global Minimum Search

This protocol outlines the key steps for employing a modified PSO algorithm to locate the global minimum energy structure of small carbon clusters (Cn, n=3–6, 10), as demonstrated in recent literature [17] [14] [16].

1. Algorithm Initialization

- Swarm Generation: Initialize a population (swarm) of particles. Each particle represents a candidate structure for the carbon cluster, with its position in 3N-dimensional space (where N is the number of atoms) corresponding to the atomic coordinates [17].

- Parameter Setting: Set the PSO control parameters. Common initial values are an inertia weight w of 0.9, and cognitive (c1) and social (c2) parameters both set to 2.0. The swarm size is typically chosen based on the system's complexity [14] [19].

2. Iterative Optimization Loop

The core of the algorithm proceeds iteratively until a convergence criterion is met (e.g., no improvement in gbest for a set number of iterations).

- Energy Evaluation: For each particle's position, calculate the potential energy. This can be done using a fast, approximate potential (like a harmonic/Hookeian potential) for initial screening, or directly with a more accurate method like Density Functional Theory (DFT) for higher precision [17] [14].

- Update Personal and Global Bests (pbest and gbest): Compare the current energy of each particle to its best-known energy (pbest). Update pbest and its position if the current energy is lower. Then, identify the best pbest energy and position in the entire swarm as the global best (gbest) [17].

- Update Velocity and Position: For each particle, update its velocity and position using the standard PSO equations:

v_i^{new} = w * v_i^{current} + c1 * r1 * (pbest_i - x_i) + c2 * r2 * (gbest - x_i)

x_i^{new} = x_i^{current} + v_i^{new}

where r1 and r2 are random numbers between 0 and 1.

3. Structure Refinement and Validation

- Local Optimization: Once the PSO algorithm converges, perform a final local geometry optimization on the putative global minimum structure using a high-level quantum chemical method (e.g., DFT in Gaussian 09/16) to refine the structure and energy [17].

- Validation: Compare the optimized bond lengths, angles, and energy with experimental data (e.g., from X-ray diffraction) and results from other global optimization methods like Basin-Hopping (BH) to validate the prediction [17].

Table 1: Comparison of Global Optimization Methods for Carbon Clusters

| Method | Type | Key Mechanism | Suitability for Carbon Clusters |

|---|---|---|---|

| Particle Swarm Optimization (PSO) | Stochastic, Population-based | Social learning via pbest and gbest; updates velocity/position [17] [18]. | High; efficient in high-dimensional space; low computational cost; good for small clusters (C3-C10) [17] [14]. |

| Genetic Algorithm (GA) | Stochastic, Population-based | Natural selection via crossover, mutation, and selection [18]. | Medium; can be slower than PSO due to complex operations like crossover [14]. |

| Basin-Hopping (BH) | Stochastic | Transforms PES into a staircase of local minima; uses Monte Carlo steps to jump between basins [17] [18]. | Medium-High; effective but can be computationally expensive compared to PSO for some systems [17] [14]. |

| Simulated Annealing (SA) | Stochastic | Mimics annealing process; accepts worse solutions with a probability that decreases over "temperature" [18]. | Medium; can be less efficient than population-based methods for complex landscapes [14]. |

Table 2: Optimized Structural Parameters for Selected Carbon Clusters

| Cluster | Putative Global Minimum Structure | Key Bond Length(s) (Å) | Notes / Reference Method |

|---|---|---|---|

| C3 | Linear | ~1.30 (C-C) [17] | Validated against BH/DFT calculations [17]. |

| C4 | Linear / Rhombus | Varies by structure | PSO successfully finds both linear and cyclic isomers [14]. |

| C5 | Linear / Cyclic | Varies by structure | PSO identifies the linear structure as the global minimum [14]. |

| C6 | Linear / Cyclic | Varies by structure | PSO is effective for locating the cyclic ground state [14]. |

| C10 | Cyclic | Varies by structure | Modified PSO with DFT integration reliably finds the stable cyclic ring [14] [16]. |

Research Reagent Solutions

Table 3: Essential Computational Tools for PSO-based Structure Prediction

| Item | Function in the Experiment | Example / Note |

|---|---|---|

| PSO Algorithm Code | The core optimizer that drives the global search for the minimum energy structure. | Custom code in Fortran 90 or Python [17]. Modified versions integrate with DFT software [14] [16]. |

| Quantum Chemistry Software | Provides accurate energy and force calculations for a given molecular geometry. | Gaussian 09/16 [17] [14]. Used for single-point energy calculations and final structure refinement. |

| Approximate Potential | A fast, less accurate potential used for initial screening to reduce computational cost. | Harmonic (Hooke's law) potential between atoms [17]. |

| Local Optimizer | A local search algorithm used to refine particles to the nearest local minimum on the PES. | BFGS (Broyden–Fletcher–Goldfarb–Shanno) algorithm [19]. |

| Visualization Software | Used to visualize and analyze the predicted cluster geometries. | VESTA, Avogadro, or GaussView [17]. |

Workflow Diagram

PSO Workflow for Carbon Clusters

Key Metrics for Evaluating Cluster Stability and Algorithm Performance

Frequently Asked Questions

What are the most reliable metrics for evaluating cluster stability, and how do they differ?

Several metrics are essential for evaluating cluster stability, each providing unique insights. The Silhouette Score measures how similar an object is to its own cluster compared to other clusters, with scores ranging from -1 to 1; values near 1 indicate well-separated clusters [21]. The Davies-Bouldin Index calculates the average similarity between each cluster and its most similar one, where lower values indicate better cluster separation [21]. The Calinski-Harabasz Index assesses the ratio of between-cluster dispersion to within-cluster dispersion, and higher scores signify tight, well-separated clusters [21]. For comparing clusterings against a ground truth, the Adjusted Rand Index measures the similarity between two data clusterings, with a score of 1 indicating perfect agreement [21]. In time-series clustering, the Cluster Over-Time Stability Evaluation (CLOSE) toolkit specifically examines the temporal stability of clusters, which is vital for data where the temporal aspect is important [22].

How can I assess clustering results when no ground truth labels are available?

When true labels are unknown, you must rely on internal validation metrics that evaluate the clustering based on the data's intrinsic properties. The Silhouette Score, Davies-Bouldin Index, and Calinski-Harabasz Index are all prominent examples of internal measures [21]. Furthermore, stability-based methods like the Proportion of Ambiguously Clustered Pairs (PAC) and Element-Centric Clustering Similarity (ECS) are particularly powerful. PAC uses consensus clustering to measure how often pairs of elements are co-clustered across multiple iterations; a lower PAC value indicates a more stable clustering [23]. ECS provides a per-observation measure of clustering similarity by analyzing a cluster-induced element graph, which helps avoid pitfalls of other similarity measures [23].

My PSO-clustering algorithm converges prematurely. What strategies can improve its performance?

Premature convergence in PSO is a known challenge, often caused by a rapid loss of population diversity [24]. Effective strategies to address this include:

- Hybrid Algorithms: Combine PSO with other methods. The HPE-PSOC algorithm uses a hybrid population replacement mechanism that integrates K-means, Gaussian Distribution Estimation, and XK-means into the PSO evolutionary process. This maintains population diversity and prevents premature convergence [24].

- Adaptive PSO (APSO): Implement adaptive mechanisms that control parameters like inertia weight and acceleration coefficients during runtime. APSO can also act on the globally best particle to help it jump out of local optima [12] [25].

- Improved Initialization: Generate the initial population of particles using a method like K-means preliminary partitioning followed by random sampling within clusters. This expands the coverage and improves the distribution quality of initial solutions [24].

What is a common pitfall in cluster validation, and how can it be avoided?

A critical pitfall is relying on a single metric or a single run of a clustering algorithm. Different metrics capture different aspects of cluster quality (e.g., separation, compactness, stability), and a single run may not be representative, especially for stochastic algorithms like PSO.

To avoid this, adopt a comprehensive validation strategy:

- Use multiple internal validation metrics (Silhouette Score, Davies-Bouldin, etc.) to get a balanced view [21].

- Employ stability assessment methods like PAC to evaluate the robustness of your clusters across multiple algorithm runs or subsamples of your data [23].

- For PSO-based clustering, run the algorithm multiple times with different random seeds and compare the consistency of the results using the mentioned metrics.

Troubleshooting Guides

Problem: Inconsistent Clustering Results Across Successive PSO Runs

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Premature Convergence | Plot the objective function value over iterations. A flatline early on suggests premature convergence. | Implement a hybrid algorithm like HPE-PSOC [24] or use Adaptive PSO (APSO) to maintain swarm diversity [12]. |

| Poor Parameter Tuning | Systematically vary PSO parameters (inertia weight, cognitive/social coefficients) and observe the variance in outcomes. | Use meta-optimization techniques to tune PSO parameters or employ parameter sets recommended in literature for clustering tasks [12] [25]. |

| Algorithm Sensitivity | Calculate stability metrics like Element-Centric Consistency across multiple runs [23]. | Increase the number of independent runs and use consensus clustering to derive a final, stable result. |

Problem: Poor Cluster Quality Metrics (e.g., Low Silhouette Score)

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incorrect Number of Clusters (k) | Calculate metrics like the Silhouette Score or PAC for a range of k values and plot them to find the optimum [21] [23]. | Use the k value that corresponds to a local optimum in the metric (e.g., a peak for Silhouette Score, a valley for PAC). |

| Presence of Noise/Outliers | Perform a visual check using dimensionality reduction (e.g., PCA) and check for points that are distant from all clusters. | Use clustering algorithms robust to noise, such as DBSCAN [26], or incorporate an outlier detection method like the one enabled by the CLOSE toolkit [22]. |

| Unsuitable Distance Metric | Evaluate the data's inherent geometry. Standard Euclidean distance may not work for all data shapes. | Experiment with alternative metrics like DTW for time series [22] or use algorithms designed for non-flat geometries, like Spectral Clustering [26]. |

Quantitative Data on Clustering Metrics

Table 1: Characteristics of Key Clustering Evaluation Metrics

| Metric | Optimal Value | Score Range | Requires Ground Truth? | Primary Use Case |

|---|---|---|---|---|

| Silhouette Score [21] | Maximize (→1) | -1 to 1 | No | General cluster cohesion and separation |

| Davies-Bouldin Index [21] | Minimize (→0) | 0 to ∞ | No | Cluster compactness and separation |

| Calinski-Harabasz Index [21] | Maximize | 0 to ∞ | No | Identifying optimal k with variance ratio |

| Adjusted Rand Index [21] | Maximize (→1) | -1 to 1 | Yes | Comparing against a known benchmark |

| PAC [23] | Minimize (→0) | 0 to 1 | No | Measuring clustering stability |

| Element-Centric Similarity [23] | Maximize (→1) | 0 to 1 | No | Per-element comparison of clusterings |

Table 2: Performance of PSO in a Practical Application (VLC Localization) [27]

| Localization Method | Average Error (at 1m height) | Key Finding |

|---|---|---|

| Trilateration without PSO | 11.7 cm | Baseline method shows significant error. |

| Trilateration with PSO | 0.5 cm | Adding PSO reduces the average error by over 95% relative to the baseline. |

Experimental Protocols

Protocol 1: Evaluating Cluster Stability Using the Proportion of Ambiguously Clustered Pairs (PAC)

Objective: To determine the most stable number of clusters (k) for a dataset using the PAC metric [23].

1. Input: A dataset (if large, first create a geometric sketch of <1000 elements).

2. Consensus Clustering: For a candidate k, perform a large number (e.g., n_reps = 100) of hierarchical clusterings, each on a resampled or perturbed version of the data.

3. Compute Co-clustering Rates: For each pair of elements, record the proportion of times they were assigned to the same cluster across all n_reps iterations. This creates a consensus matrix.

4. Calculate PAC: The PAC is the fraction of element pairs whose co-clustering rate is between a lower (e.g., 0.1) and upper (e.g., 0.9) bound. A low PAC indicates that most pairs are either always or never clustered together, implying stability.

5. Convergence Check: Ensure the PAC value has stabilized by plotting it against the number of iterations.

6. Iterate: Repeat steps 2-5 for a range of k values.

7. Interpretation: The k with the lowest PAC value in the resulting landscape is considered the most stable.
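The consensus matrix and PAC calculation can be sketched in a few lines. This example assumes every repetition labels the same full set of elements (no subsampling) and treats the bounds as strict inequalities; both are simplifying assumptions, and the function names are hypothetical rather than the ClustAssess API.

```python
import numpy as np

def consensus_matrix(labelings):
    """Fraction of runs in which each pair of elements shares a cluster."""
    labelings = np.asarray(labelings)                        # shape (n_reps, n_elements)
    same = labelings[:, :, None] == labelings[:, None, :]    # (n_reps, n, n) co-membership
    return same.mean(axis=0)

def pac(consensus, lower=0.1, upper=0.9):
    """Proportion of element pairs with an ambiguous co-clustering rate."""
    i, j = np.triu_indices(consensus.shape[0], k=1)          # count each unordered pair once
    rates = consensus[i, j]
    return float(np.mean((rates > lower) & (rates < upper)))

# Four hypothetical clustering runs over four elements.
runs = [[0, 0, 1, 1],
        [0, 0, 1, 1],
        [1, 1, 0, 0],
        [0, 0, 0, 1]]
print(pac(consensus_matrix(runs)))
```

In this toy input, three of the six pairs have co-clustering rates of 0.25 or 0.75, so half of the pairs count as ambiguous.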

Stability Assessment with PAC

Protocol 2: Integrating PSO with K-means for Enhanced Clustering (HPE-PSOC)

Objective: To cluster data using an enhanced PSO algorithm that mitigates premature convergence and handles empty clusters effectively [24].

- Initialization: Use K-means for a preliminary data partitioning. Then, generate the initial PSO population by applying random sampling within each K-means cluster. This produces high-quality, diverse initial particles.

- PSO Evolution: Run the standard PSO algorithm, where each particle's position represents a set of cluster centroids.

- Hybrid Population Replacement: Periodically, replace poorly performing particles in the swarm. Generate new particles by adaptively combining information from:

  - The personal best (Pbest) and global best (Gbest) of the swarm.

  - Centroids from a K-means run on the current data.

  - Centroids from a Gaussian Distribution Estimation.

  - Centroids from XK-means.

  This mechanism maintains diversity.

- Empty Cluster Correction: If a particle's solution contains an empty cluster, activate the dynamic correction strategy. This typically involves splitting the worst-performing (largest or least compact) existing cluster to reassign points to the empty one.

- Termination: Repeat until convergence (e.g., no significant improvement in the objective function) or a maximum number of iterations is reached.

- Validation: Evaluate the final Gbest solution using internal metrics like Silhouette Score or Davies-Bouldin Index.
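To make the particle encoding in this protocol concrete, the sketch below decodes a particle into k centroids, scores it, and reports any empty clusters. Using the within-cluster sum of squared distances as the fitness is an assumption for illustration; the source does not state which objective HPE-PSOC uses, and `clustering_fitness` is a hypothetical name.

```python
import numpy as np

def clustering_fitness(particle, data, k):
    """Within-cluster sum of squared distances for a particle encoding k centroids."""
    centroids = np.asarray(particle, dtype=float).reshape(k, -1)
    d2 = ((data[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)   # (n_points, k)
    labels = d2.argmin(axis=1)                                           # nearest centroid per point
    sse = float(d2[np.arange(len(data)), labels].sum())
    empty = [c for c in range(k) if not np.any(labels == c)]             # clusters with no points
    return sse, labels, empty

data = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0]])
good = np.array([0.0, 0.5, 10.0, 10.5])     # two centroids, one per visible group
bad = np.array([0.0, 0.5, 100.0, 100.0])    # second centroid attracts no points
print(clustering_fitness(good, data, 2))
print(clustering_fitness(bad, data, 2)[2])
```

A non-empty `empty` list is the condition that would trigger the empty cluster correction step.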

Enhanced PSO Clustering Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for PSO and Cluster Analysis

| Tool / Solution | Function / Role | Key Feature / Application |

|---|---|---|

| CLOSE Toolkit [22] | Evaluates temporal stability of clusters in time-series data. | Enables parameter selection and outlier detection for time-dependent clustering. |

| ClustAssess R Package [23] | Assesses clustering robustness via PAC and Element-Centric Similarity. | Optimized for single-cell RNA-seq data but generalizable to other domains. |

| Scikit-learn Library [26] | Provides implementations of classic clustering algorithms (K-means, DBSCAN) and evaluation metrics. | Industry-standard for practical machine learning; includes Silhouette Score and others. |

| HPE-PSOC Algorithm [24] | An enhanced PSO algorithm for clustering with hybrid population replacement. | Addresses premature convergence and empty clusters, outperforming standard PSO. |

| Adaptive PSO (APSO) [12] [25] | A PSO variant with automatic control of inertia and acceleration coefficients. | Improves search efficiency and helps the swarm escape local optima. |

Implementing PSO for Carbon Cluster Structure Prediction: A Step-by-Step Guide

This guide provides troubleshooting and methodological support for researchers applying Particle Swarm Optimization (PSO) to the challenge of predicting global minimum energy structures for carbon (Cn) clusters.

Frequently Asked Questions & Troubleshooting

1. Why is my PSO simulation for C₅ clusters converging to a high-energy structure too quickly?

This is a classic sign of premature convergence, where the swarm loses diversity and gets trapped in a local minimum. To address this:

- Adjust the Inertia Weight: Increase the inertia weight (w) to promote global exploration of the potential energy surface (PES). A higher value (e.g., 0.9) encourages particles to explore new areas, while a lower value (e.g., 0.4) focuses the search locally [11] [12].

- Review Your Social and Cognitive Coefficients: If the social coefficient (c2) is too high compared to the cognitive coefficient (c1), particles will rush toward the first good solution found. Try increasing c1 to strengthen the pull of a particle's own best-found position (pBest), fostering more individual exploration [11].

- Change the Swarm Topology: Using a global best (gbest) topology, where all particles communicate, can cause information to spread too quickly. Switch to a local best (lbest) topology, like a "ring" where particles only communicate with immediate neighbors. This slows convergence and improves exploration [11] [12].
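A ring (lbest) topology only changes which "best" position each particle is attracted to. The following sketch assumes a neighborhood of one neighbor on each side plus the particle itself; the neighborhood width and the function name are illustrative choices.

```python
import numpy as np

def ring_local_best(pbest_pos, pbest_val):
    """For each particle, return the best pBest among itself and its two ring neighbors."""
    n = len(pbest_val)
    idx = np.arange(n)
    neighborhood = np.stack([(idx - 1) % n, idx, (idx + 1) % n], axis=1)   # (n, 3) indices
    best_in_hood = neighborhood[idx, np.argmin(pbest_val[neighborhood], axis=1)]
    return pbest_pos[best_in_hood]

pbest_val = np.array([5.0, 1.0, 4.0, 3.0, 2.0])
pbest_pos = np.arange(5, dtype=float)[:, None]   # position i simply stores its own index
lbest = ring_local_best(pbest_pos, pbest_val)
print(lbest.ravel())
```

In the velocity update, row i of `lbest` takes the place of gBest for particle i, so a good solution spreads around the ring one neighbor at a time instead of reaching the whole swarm at once.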

2. The optimization is taking too long and does not converge. How can I improve its efficiency?

This indicates excessive exploration and a lack of exploitation. The following adjustments can help:

- Use an Adaptive Inertia Weight: Instead of a fixed value, implement a strategy where the inertia weight starts high (e.g., 0.9) to encourage broad exploration and gradually decreases over iterations (e.g., to 0.4) to fine-tune the solution in later stages [12].

- Combine Solvers for Cost Reduction: For computationally expensive evaluations, like those using Density Functional Theory (DFT), use a multi-fidelity approach. First, run the PSO using a faster, less accurate method to find a promising region of the PES. Then, validate the final optimized structure with a high-accuracy solver [28] [29].

3. How should I handle position updates for my Cn cluster atoms in 3D space?

In cluster structure prediction, a particle's position is a vector of all atomic coordinates in 3D space (∈ R³ⁿ). The standard update rule is [11] [12] [30]:

x_{new} = x_{current} + v_{new}

Ensure that after updating the position, you evaluate the new structure's energy using your chosen objective function (e.g., a harmonic potential or a DFT calculation) [17]. The update is a straightforward addition of the position and velocity vectors.

4. What is a good starting point for the number of particles and iterations?

There is no universal setting, but a balanced starting point is often 20-40 particles for 1000-2000 iterations [11]. The complexity of the Cn cluster (value of n) and the ruggedness of its potential energy surface will influence the required resources. Start with these values and adjust based on observed convergence behavior.

PSO Algorithm Parameters and Their Roles

The performance of PSO is highly dependent on the correct tuning of its parameters. The table below summarizes the key parameters and their effect on the search for the global minimum of a carbon cluster.

| Parameter | Symbol | Typical Range/Value | Function in Cn Cluster Optimization |

|---|---|---|---|

| Inertia Weight [11] [12] | w | 0.4 - 0.9 | Balances exploration (high value) and exploitation (low value) of the potential energy surface. |

| Cognitive Coefficient [11] [30] | c1 | 1.5 - 2.0 | Controls a particle's attraction to its own best-found structure (pBest). |

| Social Coefficient [11] [30] | c2 | 1.5 - 2.0 | Controls a particle's attraction to the swarm's best-found structure (gBest or lBest). |

| Number of Particles [11] | S | 20 - 50 | The number of candidate cluster structures in the swarm. A larger swarm explores more but is costlier. |

| Swarm Topology [11] [12] | - | Global (star) or Local (ring) | Controls information flow. Global topologies converge faster; local topologies are better for exploration. |

Core PSO Workflow for Cluster Optimization

The following diagram illustrates the iterative workflow of the PSO algorithm for optimizing cluster structures. Each particle represents a full atomic configuration of a carbon cluster, and its "fitness" is typically the potential energy of that configuration, which the algorithm seeks to minimize.

Experimental Protocol: PSO with Harmonic Potential for Cn Clusters

This protocol outlines the steps for using a PSO algorithm to find the global minimum energy structure of a carbon cluster (e.g., C₄ or C₅) using a harmonic (Hookean) potential, as described in [17].

1. Objective: To locate the molecular structure of a Cₙ cluster associated with the global minimum of its potential energy surface.

2. Methodology:

- Potential Energy Function: A harmonic potential is used to model the interactions between carbon atoms. In this model, atoms are treated as rigid spheres connected by springs, with the restoring force following Hooke's Law [17].

- Algorithm: A modified PSO algorithm implemented in Fortran 90 [17].

3. Procedure:

- Step 1: Initialization.

- Define the number of atoms (n) in the Cₙ cluster.

- Initialize a swarm of particles. Each particle's position (x_i) is a vector in R^{3n} space, representing the 3D coordinates of all n atoms in a cluster structure. Positions are initialized randomly within the search-space boundaries [12] [17].

- Initialize each particle's velocity (v_i) randomly to direct its initial movement [11].

- Step 2: Fitness Evaluation.

- For each particle (cluster structure) in the swarm, calculate the total potential energy based on the harmonic potential function. This energy value is the particle's fitness, which the PSO aims to minimize [17].

- Step 3: Update Personal and Global Bests.

- Compare each particle's current energy to the energy of its pBest (the best structure it has found so far). If the current structure has lower energy, update pBest [11] [12].

- Find the particle with the lowest energy in the entire swarm. If this energy is lower than the swarm's gBest, update gBest to this new best-known structure [11] [12].

- Step 4: Update Velocity and Position.

- For each particle i, update its velocity using the standard velocity update equation [11] [12] [30]:

v_i^{new} = w * v_i^{current} + c1 * r1 * (pBest_i - x_i) + c2 * r2 * (gBest - x_i)

where r1 and r2 are random numbers between 0 and 1.

- Update the particle's position (the atomic coordinates of the cluster) using [11] [12]:

x_i^{new} = x_i^{current} + v_i^{new}

- Step 5: Iteration and Convergence.

- Repeat Steps 2-4 until a stopping criterion is met. This is typically a maximum number of iterations or when the gBest energy shows no significant improvement over many iterations [11].

- Step 6: Validation.

- The structure corresponding to the final gBest is the predicted global minimum. This structure should be validated, for example, by comparing its geometry and relative energy to those obtained from more accurate methods like Basin-Hopping (BH) or DFT calculations [17].
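Steps 1-5 of this procedure can be combined into a short end-to-end run. The sketch below is written in Python rather than the Fortran 90 used in [17], and it is not the authors' modified algorithm: the all-pairs harmonic model, the values of `k_spring` and `r0`, the PSO parameters, and the stall-based stopping rule (`patience`, `tol`) are all assumptions chosen for illustration.

```python
import numpy as np

def harmonic_energy(x, k_spring=1.0, r0=1.3):
    # All-pairs harmonic model; k_spring and r0 are illustrative, not fitted values.
    c = x.reshape(-1, 3)
    i, j = np.triu_indices(len(c), k=1)
    d = np.linalg.norm(c[i] - c[j], axis=1)
    return 0.5 * k_spring * np.sum((d - r0) ** 2)

def pso_minimize(energy_fn, n_atoms, n_particles=30, max_iter=500,
                 w=0.7, c1=1.5, c2=1.5, patience=100, tol=1e-10, seed=0):
    rng = np.random.default_rng(seed)
    dim = 3 * n_atoms
    # Step 1: random positions and velocities; initial pBest and gBest
    x = rng.uniform(-2.0, 2.0, (n_particles, dim))
    v = rng.uniform(-0.5, 0.5, (n_particles, dim))
    pbest_x = x.copy()
    pbest_e = np.array([energy_fn(p) for p in x])
    g = np.argmin(pbest_e)
    gbest_x, gbest_e = pbest_x[g].copy(), pbest_e[g]
    stall = 0
    for _ in range(max_iter):
        # Step 4: velocity and position update
        r1, r2 = rng.random(x.shape), rng.random(x.shape)
        v = w * v + c1 * r1 * (pbest_x - x) + c2 * r2 * (gbest_x - x)
        x = x + v
        # Step 2: fitness evaluation of the new structures
        e = np.array([energy_fn(p) for p in x])
        # Step 3: update personal and global bests
        better = e < pbest_e
        pbest_x[better], pbest_e[better] = x[better], e[better]
        g = np.argmin(pbest_e)
        if pbest_e[g] < gbest_e - tol:
            gbest_x, gbest_e, stall = pbest_x[g].copy(), pbest_e[g], 0
        else:
            stall += 1
        # Step 5: stop once gBest has not improved for `patience` iterations
        if stall >= patience:
            break
    return gbest_x, gbest_e

best_x, best_e = pso_minimize(harmonic_energy, n_atoms=3)
print(best_e)
print(best_x.reshape(-1, 3))
```

For three atoms this toy model has its minimum at an equilateral triangle with side `r0`, which is a property of the all-pairs spring assumption and not a statement about real C₃. The returned coordinates would still need the validation described in Step 6.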

Research Reagent Solutions: Computational Tools

The table below lists key computational "reagents" used in a typical PSO-based cluster structure search.

| Tool / Component | Function in the Workflow |

|---|---|

| PSO Algorithm [17] | The core optimization engine that drives the search for the global minimum energy structure. |

| Harmonic (Hookean) Potential [17] | A computationally efficient model to approximate the potential energy of a cluster configuration during the PSO search. |

| Basin-Hopping (BH) Algorithm [17] | An alternative metaheuristic global optimization method used to cross-check and validate results obtained from PSO. |

| Density Functional Theory (DFT) [17] | A high-accuracy quantum mechanical method used for final validation of the optimized cluster structure's energy and properties. |

| Fortran 90 / Python [17] | Programming languages commonly used to implement the PSO and BH algorithms, respectively. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why is my PSO simulation for carbon clusters stalling in a high-energy local minimum?

A: This is a common challenge when the objective function landscape is complex. To address this:

- Verify Potential Parameters: Ensure the force constant (k) and equilibrium bond length (r₀) in your harmonic potential accurately represent the interatomic interactions for your specific system (e.g., C-C bonds). Using generic parameters can create a misleading energy landscape [17].

- Adjust PSO Parameters: Implement an adaptive inertia weight strategy. Allowing the inertia weight to decrease non-linearly or based on the swarm's phenotypic entropy helps the search transition from global exploration to local exploitation, preventing premature convergence [31].

- Incorporate Stochasticity: Use random acceleration factors (c₁, c₂) in your velocity update equation to help the swarm escape local minima [32].

Q2: How do I validate that my harmonically optimized cluster structure is a viable precursor for DFT calculation?

A: Validation is crucial before proceeding to computationally expensive DFT.

- Compare with Known Data: Perform a sanity check by comparing the geometrically optimized structure and its relative energy with those obtained from other global optimization methods like the Basin-Hopping (BH) algorithm [17].

- Check Structural Integrity: Inspect the resulting cluster for unphysical bond lengths or angles, which indicate poor potential function parameters. The harmonic potential should produce structures that are reasonable approximations of known configurations [17].

- Use a Stepping-Stone Approach: The structure obtained from the PSO with a harmonic potential should be considered an initial guess. Its primary purpose is to be close enough to the global minimum on the Potential Energy Surface (PES) to ensure efficient and correct convergence in DFT [17] [18].

Q3: What are the primary causes of inconsistent results between repeated PSO runs for the same cluster?

A: Inconsistencies typically stem from the stochastic nature of PSO.

- Insufficient Population Diversity: If the initial swarm lacks diversity, each run may collapse prematurely into whichever local minimum lies closest to its starting positions, so repeated runs return different structures. Techniques like sinusoidal chaos mapping for initial particle generation can improve initial coverage of the search space [31].

- Lack of Convergence Criteria: Ensure your stopping criteria are robust, based on both the stability of the gbest fitness value over iterations and the swarm's phenotypic entropy, rather than a simple iteration limit [31].

- Random Number Generation: Use a high-quality pseudo-random number generator with a reproducible seed for debugging purposes to track the source of variability.
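Two of these points, a stagnation-based stopping test and a seeded generator, are easy to illustrate. The sketch below covers only the gbest-stability half of the criterion (the entropy half is omitted); the function name, window length, and tolerance are hypothetical.

```python
import random


def has_stagnated(gbest_history, window=50, tol=1e-6):
    """True when the best energy improved by less than tol over the last `window` iterations."""
    if len(gbest_history) <= window:
        return False
    return gbest_history[-window - 1] - gbest_history[-1] < tol


# A dedicated, seeded generator makes a run repeatable while debugging.
rng_a = random.Random(12345)
rng_b = random.Random(12345)
same_stream = [rng_a.random() for _ in range(3)] == [rng_b.random() for _ in range(3)]
```

Recording the seed alongside each result makes it possible to replay any run that produced an unexpected structure.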

Q4: When moving from a harmonic to a DFT potential, my cluster geometry changes significantly. Why does this happen?

A: This is expected due to the fundamental differences in the potential functions.

- Potential Function Limitations: A simple harmonic potential is a crude approximation that does not account for electronic effects, bond breaking/formation, angular strain, or van der Waals interactions. Density Functional Theory, while also an approximation, uses an exchange-correlation functional (e.g., LDA or a GGA such as PBE) to model the many-body quantum mechanical interactions of electrons, leading to a more physically accurate result [33] [18].

- Confirmation with DFT Frequency Calculation: After DFT geometry optimization, always perform a frequency calculation. The absence of imaginary frequencies confirms the structure is a true local minimum on the DFT-level PES, not a saddle point [34].
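The frequency check reduces to a simple test once the vibrational frequencies have been extracted from the DFT output. The sketch below assumes the common convention of reporting imaginary modes as negative wavenumbers; the function names and the sample values are illustrative only.

```python
def imaginary_modes(frequencies_cm1):
    """Return the modes reported as negative wavenumbers (i.e., imaginary frequencies)."""
    return [f for f in frequencies_cm1 if f < 0.0]


def is_true_minimum(frequencies_cm1):
    """A stationary point is a local minimum only if no mode is imaginary."""
    return len(imaginary_modes(frequencies_cm1)) == 0


# Made-up frequency lists, not results for any real cluster.
minimum_like = [63.2, 63.2, 1200.5, 2040.1]
saddle_like = [-150.3, 63.2, 1200.5, 2040.1]
```

One negative entry indicates a first-order saddle point; displacing the geometry along that mode and re-optimizing is the usual next step.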

Troubleshooting Common Experimental Issues

Problem: The PSO algorithm converges too quickly, yielding poor results.

- Potential Cause: The inertia weight (ω) might be too low, or the cognitive (c₁) and social (c₂) parameters are unbalanced.

- Solution: Implement a dynamic parameter adjustment strategy. Classify the swarm's state (e.g., exploration, exploitation, convergence) using a metric like phenotypic entropy and adapt parameters accordingly. For instance, increase inertia weight to promote exploration when the swarm is too cohesive [31].
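One way to picture this adjustment is to measure how spread out the swarm's fitness values are and nudge the inertia weight accordingly. The sketch below is a simplified stand-in, not the method of [31]: it assumes the entropy is a Shannon entropy over an equal-width histogram of fitness values, and the thresholds and step size are hypothetical.

```python
import math


def fitness_entropy(fitness_values, n_bins=10):
    """Shannon entropy of the fitness histogram (an assumed stand-in for phenotypic entropy)."""
    lo, hi = min(fitness_values), max(fitness_values)
    if hi == lo:
        return 0.0  # every particle has the same fitness: fully cohesive swarm
    counts = [0] * n_bins
    for f in fitness_values:
        idx = min(int((f - lo) / (hi - lo) * n_bins), n_bins - 1)
        counts[idx] += 1
    n = len(fitness_values)
    return -sum((c / n) * math.log(c / n) for c in counts if c > 0)


def adapt_inertia(w, entropy, n_bins=10, low=0.3, high=0.7, step=0.05,
                  w_min=0.4, w_max=0.9):
    """Raise w when the swarm is too cohesive, lower it when it is very spread out."""
    normalized = entropy / math.log(n_bins)
    if normalized < low:
        w += step
    elif normalized > high:
        w -= step
    return min(max(w, w_min), w_max)
```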

Problem: The DFT single-point energy calculation fails to converge after PSO pre-optimization.

- Potential Cause: The initial structure from PSO may still contain atoms that are too close, leading to unreasonably high forces in the DFT solver.

- Solution: Add a simple repulsive term to the harmonic potential that penalizes very short interatomic distances. Additionally, check the input parameters for your DFT code (e.g., VASP, Gaussian 09), such as the SCF convergence criteria and smearing settings [33] [34].
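A short-range repulsive term might look like the following. This is only a sketch: the inverse-power wall, the function name, and the parameters `r_core` and `eps` are assumptions chosen so that the penalty vanishes continuously at `r_core`.

```python
def pair_energy(r, k=1.0, r0=1.4, r_core=1.0, eps=10.0):
    """Harmonic pair term plus a steep repulsive wall below r_core (illustrative form)."""
    if r <= 0.0:
        return float("inf")  # coincident atoms are never acceptable
    energy = 0.5 * k * (r - r0) ** 2
    if r < r_core:
        # Penalty is zero at r == r_core and grows rapidly as r shrinks.
        energy += eps * ((r_core / r) ** 12 - 1.0)
    return energy
```

Because the extra term is zero at and beyond `r_core`, it leaves the harmonic landscape near the equilibrium bond length unchanged and only discourages overlapping atoms.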

Problem: The predicted cluster structure does not match known experimental data (e.g., from X-ray diffraction).

- Potential Cause 1: The harmonic potential is insufficient to describe the complex bonding in the cluster.

- Action: Consider using a more sophisticated potential (e.g., Morse potential) for the PSO stage, or validate your findings at a higher level of theory (e.g., DFT with hybrid functionals) if computationally feasible [18].

- Potential Cause 2: The PSO algorithm did not find the true global minimum.

- Action: Increase the swarm size and the number of generations. Use competitive PSO or clustering-based variants to improve the robustness of the global search [32].
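For reference, the Morse potential mentioned under Potential Cause 1 has the standard form V(r) = Dₑ(1 - exp(-a(r - rₑ)))². The sketch below implements it with placeholder parameters; the default values of `d_e`, `a`, and `r_e` are illustrative and not fitted to carbon.

```python
import math


def morse(r, d_e=1.0, a=2.0, r_e=1.4):
    """Morse pair potential: zero at r_e, approaching d_e at large separation."""
    return d_e * (1.0 - math.exp(-a * (r - r_e))) ** 2
```

Unlike the harmonic form, this potential is asymmetric (compression costs more than stretching) and levels off at the dissociation energy, so it does not penalize distant atom pairs without bound.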

Detailed Methodology: PSO with Harmonic Potential for Cluster Optimization

The following protocol outlines the hybrid approach for finding the global minimum structure of carbon clusters (Cₙ) using a harmonic potential in PSO, followed by DFT refinement [17] [34].

Problem Formulation:

- Objective Function: Define the total potential energy of the cluster as the sum of pairwise harmonic potentials:

E_total = Σᵢⱼ ½ kᵢⱼ (rᵢⱼ - r₀ᵢⱼ)²

where kᵢⱼ is the force constant, rᵢⱼ is the distance between atoms i and j, and r₀ᵢⱼ is the equilibrium bond length [17].

- Search Space: The search takes place in the 3N-dimensional space R³ᴺ, where N is the number of atoms. Each particle's position vector holds the 3D coordinates of all atoms in the cluster.
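The problem formulation can be written down directly as a function of a flat 3N-component position vector. The sketch below simplifies the formula by using a single force constant `k` and equilibrium length `r0` for every pair; both values and the function name are assumptions for illustration.

```python
import math
from itertools import combinations


def harmonic_energy(position, k=1.0, r0=1.4):
    """Sum of pairwise harmonic terms for a flat [x1, y1, z1, x2, ...] vector.

    A single k and r0 are used for all pairs, which is a simplification
    of the pair-specific k_ij and r0_ij in the text.
    """
    atoms = [tuple(position[i:i + 3]) for i in range(0, len(position), 3)]
    return sum(0.5 * k * (math.dist(a, b) - r0) ** 2
               for a, b in combinations(atoms, 2))


# Equilateral triangle with side r0: every pair sits at its equilibrium length.
triangle = [0.0, 0.0, 0.0, 1.4, 0.0, 0.0, 0.7, 1.4 * math.sqrt(3) / 2, 0.0]
```

With one shared `r0`, clusters of more than four atoms cannot place every pair at its equilibrium distance, so the minimum energy is generally above zero for larger N.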

PSO Algorithm Initialization (Fortran 90/Python):

- Swarm Setup: Initialize a population of particles. Each particle is assigned a random position and velocity within the defined search space [17] [31].

- Parameter Tuning: Set the PSO parameters. While fixed values can work, dynamic adjustment is superior [31].

- Inertia weight (ω): Start with a higher value (~0.9) for exploration and decrease it over iterations to ~0.4 for exploitation. A sinusoidal chaos mapping can be used for this dynamic adjustment [31].

- Acceleration coefficients (c₁, c₂): Common to set c₁ = c₂ = 2.0, but they can be adapted based on the swarm's state [32].
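The initialization step can be sketched as follows. The box half-width, the velocity bound, and the function name are hypothetical; a real run would choose them from the expected cluster size.

```python
import random


def init_swarm(n_particles, n_atoms, box=3.0, v_max=0.5, seed=0):
    """Random positions in [-box, box] and velocities in [-v_max, v_max] for each particle."""
    rng = random.Random(seed)
    dim = 3 * n_atoms  # each particle encodes all atomic coordinates
    positions = [[rng.uniform(-box, box) for _ in range(dim)]
                 for _ in range(n_particles)]
    velocities = [[rng.uniform(-v_max, v_max) for _ in range(dim)]
                  for _ in range(n_particles)]
    return positions, velocities
```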

Iterative Optimization Loop:

- Fitness Evaluation: For each particle, calculate E_total using the harmonic potential function.

- Update Personal and Global Bests (pbest, gbest): Compare the current energy with the particle's historical best (pbest) and the swarm's best (gbest). Update these values if a lower energy is found [17].

- State Classification (Advanced): Use a K-means clustering technique to group particles by their fitness. Calculate the population's phenotypic entropy to classify the swarm's state into one of four categories: convergence, exploitation, escape, or exploration. This classification informs adaptive parameter changes [31].

- Velocity and Position Update: Update each particle's velocity and position using the standard PSO equations [17] [31]:

v_i(t+1) = ω * v_i(t) + c₁ * r₁ * (pbest_i - x_i(t)) + c₂ * r₂ * (gbest - x_i(t))

x_i(t+1) = x_i(t) + v_i(t+1)

- Termination: The loop continues until a maximum number of iterations is reached or the gbest position shows no significant improvement.
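Putting the loop together, a compact and deliberately basic version might look like this. It is a sketch under stated assumptions: one shared `k` and `r0` for all pairs, a linear inertia schedule from 0.9 to 0.4, velocity clamping at `v_max` (added here for numerical stability, not mentioned in the protocol), and no state classification step.

```python
import math
import random
from itertools import combinations


def energy(x, k=1.0, r0=1.4):
    """Pairwise harmonic energy of a flat coordinate vector (single k, r0 assumed)."""
    atoms = [tuple(x[i:i + 3]) for i in range(0, len(x), 3)]
    return sum(0.5 * k * (math.dist(a, b) - r0) ** 2
               for a, b in combinations(atoms, 2))


def pso(n_atoms=3, n_particles=20, n_iter=300, c1=2.0, c2=2.0, v_max=0.5, seed=1):
    """Basic global-best PSO; returns the best position and the gbest energy history."""
    rng = random.Random(seed)
    dim = 3 * n_atoms
    x = [[rng.uniform(-2.0, 2.0) for _ in range(dim)] for _ in range(n_particles)]
    v = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in x]
    pbest_e = [energy(p) for p in x]
    g = min(range(n_particles), key=lambda i: pbest_e[i])
    gbest, gbest_e = pbest[g][:], pbest_e[g]
    history = [gbest_e]
    for t in range(n_iter):
        w = 0.9 - 0.5 * t / max(n_iter - 1, 1)  # linear decrease 0.9 -> 0.4
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel = (w * v[i][d]
                       + c1 * r1 * (pbest[i][d] - x[i][d])
                       + c2 * r2 * (gbest[d] - x[i][d]))
                v[i][d] = max(-v_max, min(v_max, vel))  # clamp to avoid blow-up
                x[i][d] += v[i][d]
            e = energy(x[i])
            if e < pbest_e[i]:
                pbest[i], pbest_e[i] = x[i][:], e
                if e < gbest_e:
                    gbest, gbest_e = x[i][:], e
        history.append(gbest_e)
    return gbest, history
```

Calling `gbest, history = pso(n_atoms=4)` returns the best geometry found and a non-increasing energy trace that can be fed to a stagnation test or plotted to judge convergence.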

DFT Refinement (Gaussian 09/VASP):

- Structure Transfer: Use the gbest geometry from PSO as the initial input for DFT software.

- Geometry Optimization: Perform a full DFT-based geometry optimization to relax the structure to the nearest local minimum on the true quantum-mechanical PES. Common settings include [17] [34]:

- Functional: B3LYP or PBE.

- Basis Set: 6-31G for C atoms.

- Task: Perform Opt (geometry optimization) and Freq (frequency calculation) to confirm a true minimum.
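The structure transfer step amounts to writing the gbest coordinates into the DFT code's input format. The sketch below builds a minimal Gaussian-style input using the settings listed above; the function name, the title text, and the default charge and multiplicity (0 and 1) are assumptions, and the appropriate spin state should be checked for each cluster.

```python
def gaussian_input(position, route="# B3LYP/6-31G Opt Freq",
                   title="PSO gbest geometry", charge=0, multiplicity=1):
    """Format a flat [x1, y1, z1, ...] carbon geometry (angstrom) as a Gaussian input."""
    lines = [route, "", title, "", f"{charge} {multiplicity}"]
    for i in range(0, len(position), 3):
        x, y, z = position[i:i + 3]
        lines.append(f"C {x:12.6f} {y:12.6f} {z:12.6f}")
    lines.append("")  # blank line terminates the molecule specification
    return "\n".join(lines) + "\n"


# Illustrative two-atom geometry, not an optimized structure.
text = gaussian_input([0.0, 0.0, 0.0, 1.3, 0.0, 0.0])
```

Resource directives such as memory or checkpoint file names are site-specific and are left out of this sketch.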

Table 1: Comparison of Optimization Methods for Cluster Structures

| Method | Computational Cost | Typical Application | Key Advantage | Key Limitation |
|---|---|---|---|---|
| PSO with Harmonic Potential | Low | Initial structure screening of Cₙ, WOₙ clusters [17] | Fast exploration of configurational space; good for generating candidate structures [17] | Relies on accuracy of classical potential; energy values are not quantum-mechanically accurate [17] |
| Basin-Hopping (BH) | Medium | Global optimization of clusters and molecules [17] [18] | Effectively transforms the PES for easier exploration via Monte Carlo jumps [17] | Performance can be sensitive to the choice of step size for perturbations [18] |
| Density Functional Theory (DFT) | High | Final structure refinement, electronic property calculation [17] [33] | Provides quantum-mechanically accurate energies, structures, and properties [33] | Computationally expensive; requires a good initial guess to find the global minimum [18] |

Table 2: Essential Research Reagent Solutions for Computational Experiments

| Item / Software | Function / Purpose | Example in Workflow |
|---|---|---|
| CALYPSO Code | Structure prediction using PSO and other evolutionary algorithms. | Used to generate initial candidate structures for CoKₙ clusters by evolving a population of structures over generations [34]. |
| VASP / Gaussian 09 | First-Principles DFT Codes for electronic structure calculation. | Used for final geometry optimization and single-point energy calculations after PSO pre-optimization [17] [34]. |
| Harmonic (Hookean) Potential | A simple classical potential function for initial structure optimization. | Serves as the fast, low-cost objective function E_total = ½k(r - r₀)² in the PSO loop to find approximate global minima [17]. |
| PBE / B3LYP Functional | Exchange-Correlation Functionals within DFT. | Defines the approximation for electron-electron interactions in DFT calculations (e.g., PBE in VASP, B3LYP in Gaussian) [33] [34]. |
| Grid Ranking Mechanism | Maintains diversity in multi-objective PSO. | Helps select leading particles from different regions of the objective space to prevent premature convergence [32]. |

Workflow and Relationship Visualizations

The following diagram illustrates the logical workflow for the hybrid PSO-DFT approach to cluster structure prediction.

This diagram shows the key logical relationships between the concepts of the Potential Energy Surface (PES), optimization algorithms, and the objective function.

FAQs & Troubleshooting for PSO in Cluster Structure Research

This guide addresses common challenges researchers face when applying Particle Swarm Optimization (PSO) to determine the geometric and energetic properties of carbon clusters (Cn). The methodologies are framed within the context of a broader thesis on this topic.

FAQ 1: How can I improve my algorithm's ability to find the global minimum and avoid premature convergence on local optima?

Answer: Premature convergence is a common issue where the algorithm gets trapped in a local minimum. Implementing enhanced PSO variants with specific strategies has proven effective.

- Recommended Solution: Adopt a Ternary Historical Memory-based Robust Clustered PSO (THM-RCPSO) framework [35].

- Experimental Protocol:

- Cluster Initialization: Divide the initial particle swarm into multiple sub-swarms (clusters). Each cluster represents a group of candidate structures for the Cn cluster.

- Parallel Local Search: Each cluster conducts independent local searches to identify regional optima (low-energy structures).

- Information Exchange: Clusters periodically communicate and share information on their best-found solutions to iteratively refine the global best structure.

- Ternary Historical Memory: Implement a memory mechanism that records and compares the best solutions from three different search strategies (e.g., standard PSO, gradient descent, and Lévy flight). The best solution from this memory bank guides the swarm, balancing local exploitation and global exploration [35].

- Expected Outcome: This method significantly improves convergence speed, solution accuracy, and robustness, particularly for complex, multimodal potential energy surfaces (PES) of larger clusters [35].
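The clustering and information-exchange steps of this protocol can be pictured with a generic multi-swarm sketch. This is not an implementation of THM-RCPSO from [35]: the round-robin partition, the function names, and the dictionary-based memory are all assumptions made for illustration.

```python
def split_into_subswarms(n_particles, n_clusters):
    """Assign particle indices to sub-swarms in round-robin order."""
    return [list(range(c, n_particles, n_clusters)) for c in range(n_clusters)]


def exchange_best(energies, subswarms):
    """Return each sub-swarm's best particle index and the overall best index."""
    local_best = [min(group, key=lambda i: energies[i]) for group in subswarms]
    global_best = min(local_best, key=lambda i: energies[i])
    return local_best, global_best


def pick_from_memory(memory):
    """Choose the lowest-energy entry among strategies.

    memory: dict mapping a strategy name to an (energy, position) tuple.
    """
    name = min(memory, key=lambda s: memory[s][0])
    return name, memory[name]
```

In a full implementation each sub-swarm would run its own velocity updates between exchanges, and the memory would be refreshed whenever one of the strategies finds a lower energy.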

FAQ 2: My PSO runs are yielding inconsistent results for the same cluster system. How can I improve consistency and reliability?

Answer: Inconsistency often stems from high sensitivity to initial conditions or random parameters. Enhancing the algorithm's robustness is key.

- Recommended Solution: Integrate elite decentralization and crossbar strategies [36].

- Experimental Protocol:

- Elite Decentralization: During the search process, intentionally disperse the population's elite particles (those with the lowest energies) to explore different regions of the PES. This prevents the entire swarm from collapsing into a single, potentially local, optimum [36].

- Crossbar Strategy: Employ a crossover technique (similar to genetic algorithms) between different particles. This generates new candidate solutions by combining structural features of existing ones, enhancing the algorithm's ability to escape local minima and explore new configurations [36].

- Dynamic Parameters: Introduce a dynamic exponential factor to control the trade-off between exploration and exploitation adaptively throughout the optimization process [36].

- Expected Outcome: The upgraded algorithm demonstrates higher global optimization capability, greater consistency (lower standard deviation across multiple runs), and proficiency in handling complex optimization landscapes [36].
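The dispersal and crossover ideas can be illustrated with two small helpers. These are generic operators standing in for the strategies of [36], whose exact form is not given here: Gaussian perturbation of the lowest-energy particles and arithmetic blending of two position vectors are assumptions, as are the function names.

```python
import random


def disperse_elites(positions, energies, n_elite, sigma, rng):
    """Return perturbed copies of the n_elite lowest-energy particles."""
    elite = sorted(range(len(positions)), key=lambda i: energies[i])[:n_elite]
    return [[x + rng.gauss(0.0, sigma) for x in positions[i]] for i in elite]


def arithmetic_crossover(parent_a, parent_b, rng):
    """Blend two position vectors with one random mixing coefficient."""
    alpha = rng.random()
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(parent_a, parent_b)]
```

Blending raw Cartesian coordinates ignores atom ordering and cluster orientation, so a production code would normally align or cut-and-splice structures before recombining them.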

FAQ 3: For large-scale cluster systems, the computational cost is prohibitively high. Are there efficient protocols for systems like C150?

Answer: Standard PSO can be inefficient for large-scale problems. Leveraging clustered PSO approaches designed for large-scale scenarios is essential.

- Recommended Solution: Utilize a Robust Clustered PSO (RCPSO) framework, which has been tested successfully on large-scale instances with 150 vessels (analogous to complex systems with 150 interacting entities) [35].

- Experimental Protocol:

- Decomposition: Treat the large cluster system as a set of interacting subsystems.

- Clustered Optimization: Apply the THM-RCPSO protocol from FAQ 1, where each cluster works on optimizing a part of the system or a specific conformational subspace.