Performance Comparison of Validated Clinical Prediction Models: A Guide for Biomedical Researchers

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of comparing validated clinical prediction models.

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of comparing validated clinical prediction models. It covers the foundational principles of prediction models, best practices in methodology and application, strategies for troubleshooting and optimizing model performance, and robust frameworks for external validation and comparative analysis. Using real-world case studies, such as the comparison of C-AKI prediction models, the article synthesizes current methodologies to equip scientists with the knowledge to evaluate, select, and implement the most reliable models for clinical and biomedical research.

Understanding Clinical Prediction Models: Core Concepts and Development Lifecycle

Defining Clinical Prediction Models and Their Role in Biomedical Research

Clinical Prediction Models (CPMs) are quantitative tools that use a combination of patient characteristics to estimate the probability of a current disease (diagnostic) or a future health outcome (prognostic) for an individual [1] [2]. In the era of precision medicine, CPMs are pivotal for transforming raw patient data into objective, personalized risk assessments that can inform clinical decisions, guide resource allocation, and shape drug development strategies [2].

The development and validation of these models are active areas of research, with an estimated 248,431 articles on CPM development published across all medical fields by 2024, a number that continues to grow rapidly [3]. This guide provides a comparative analysis of validated CPMs, detailing their performance, underlying methodologies, and the essential tools for their evaluation.

Core Concepts and Applications of Clinical Prediction Models

Definition and Function

A Clinical Prediction Model is a parametric, semi-parametric, or non-parametric mathematical model that estimates the probability of a health outcome based on a patient's known features [2]. The core function of a CPM is to move beyond relative risk metrics (like Odds Ratios or Hazard Ratios) to provide an absolute risk or probability of an outcome, thereby offering a more direct and actionable insight for patient care [2].
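The step from relative measures to an absolute risk can be illustrated with a logistic regression model, one common parametric form. The sketch below is illustrative only: the intercept, coefficients, and predictor names are hypothetical values chosen for the example and do not come from any model discussed in this article.

```python
import math


def predicted_risk(intercept, coefficients, predictors):
    """Absolute risk from a logistic model: 1 / (1 + exp(-linear predictor))."""
    linear_predictor = intercept + sum(
        coefficients[name] * value for name, value in predictors.items()
    )
    return 1.0 / (1.0 + math.exp(-linear_predictor))


# Hypothetical log odds ratios, for illustration only.
INTERCEPT = -3.0
COEFFICIENTS = {"age_per_decade": 0.40, "hypertension": 0.70}

patient = {"age_per_decade": 6.5, "hypertension": 1}
risk = predicted_risk(INTERCEPT, COEFFICIENTS, patient)

# The odds ratio is a relative measure; the predicted risk is an absolute one.
odds_ratio_hypertension = math.exp(COEFFICIENTS["hypertension"])
print(f"Odds ratio for hypertension: {odds_ratio_hypertension:.2f}")
print(f"Predicted absolute risk for this patient: {risk:.1%}")
```

The odds ratio describes how a single predictor shifts the odds, whereas the predicted risk combines the intercept (baseline risk) with all predictors for one individual, which is the quantity a clinician can act on.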

Classification and Applications

CPMs are generally categorized based on their clinical objective, which dictates their research design and application [2].

- Diagnostic Models: Used to determine the likelihood of a current disease. They are typically developed using cross-sectional study designs and require a reliable "gold standard" for diagnosis [2].

- Prognostic Models: Used to predict the risk of a future outcome (e.g., recurrence, death, complication) over a specific time frame in patients with a known condition. Prospective cohort studies are the most common design for these models [2].

The applications of CPMs span the entire disease prevention and management spectrum, from primary prevention (e.g., the Framingham cardiovascular risk score) to tertiary prevention (e.g., prognostic models for cancer survival) [2]. They provide a scientific basis for health education, early diagnosis, and personalized rehabilitation plans [2].

Comparative Performance of Modeling Approaches

The predictive analytics landscape is evolving from traditional regression-based models to include advanced machine learning (ML) and artificial intelligence (AI) approaches. The table below compares the performance of various modeling techniques across different clinical forecasting tasks.

Table 1: Performance Comparison of Clinical Forecasting Models on Diverse Medical Tasks

| Model Type | Model Name | NSCLC Dataset (Scaled MAE) | ICU Dataset (Scaled MAE) | Alzheimer's Dataset (Scaled MAE) | Key Characteristics |
|---|---|---|---|---|---|
| LLM-based | DT-GPT | 0.55 | 0.59 | 0.47 | Processes all patient variables simultaneously; enables zero-shot forecasting [4]. |
| Traditional ML | LightGBM | 0.57 | 0.60 | - | A gradient boosting framework effective for tabular data [4]. |
| Deep Learning | Temporal Fusion Transformer (TFT) | - | - | 0.48 | Designed to capture temporal relationships and known inputs [4]. |
| Channel-Independent LLM | Time-LLM | 0.62 | 0.64 | - | Processes each clinical time series separately, limiting variable interaction modeling [4]. |
| Baseline LLM (No Fine-tuning) | BioMistral-7B | 1.03 | 0.83 | 1.21 | Demonstrates poor performance and "hallucination" without clinical data fine-tuning [4]. |

MAE: Mean Absolute Error. A lower scaled MAE indicates better performance. Scaled MAE is normalized by the standard deviation of the data, so a value below 1 means the forecasting error is smaller than the natural variability in the data, as is the case for DT-GPT on all three datasets [4].
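As a rough illustration of the metric, the sketch below computes a scaled MAE by dividing the mean absolute error by the sample standard deviation of the observed values. This is an assumption about the normalization; the exact procedure in [4] (for example, per-variable standardization before averaging) may differ, and the numbers are made up.

```python
import statistics


def scaled_mae(observed, predicted):
    """Mean absolute error divided by the standard deviation of the observations."""
    if len(observed) != len(predicted) or len(observed) < 2:
        raise ValueError("need two equally long sequences with at least 2 values")
    mae = sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)
    spread = statistics.stdev(observed)  # sample standard deviation
    if spread == 0:
        raise ValueError("observed values have zero variance")
    return mae / spread


# Made-up laboratory values and forecasts, for illustration only.
observed = [1.0, 2.0, 3.0, 4.0, 5.0]
forecast = [1.5, 2.5, 2.5, 4.5, 4.5]
mean_only = [statistics.mean(observed)] * len(observed)

model_error = scaled_mae(observed, forecast)
baseline_error = scaled_mae(observed, mean_only)
print(f"Scaled MAE of forecast:      {model_error:.3f}")
print(f"Scaled MAE of mean baseline: {baseline_error:.3f}")
```

Comparing against a naive forecast that always predicts the mean gives a reference point for how much of the natural variability a model actually explains.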

Recent advancements show that fine-tuned Large Language Models (LLMs), such as the Digital Twin-Generative Pretrained Transformer (DT-GPT), can outperform state-of-the-art machine learning models in forecasting clinical trajectories [4]. DT-GPT reduces the scaled MAE by 3.4% on a non-small cell lung cancer (NSCLC) dataset and by 1.3% on an intensive care unit (ICU) dataset compared to the next best model [4]. A key advantage of LLM-based models over "channel-independent" models is their ability to process all patient variables together, thereby capturing crucial biological correlations [4].

Essential Experimental Protocols for Model Validation

A critically important yet often overlooked aspect of CPM research is rigorous validation. A model's performance in the development dataset is usually optimistic and does not reflect its real-world accuracy [1]. The following protocols outline the standard stages of model evaluation.

Internal Validation

Objective: To estimate the model's performance in the underlying population from which the development data was drawn and correct for over-optimism [1].

- Methods: Resampling methods like bootstrapping or k-fold cross-validation are preferred. Data splitting (hold-out validation) is generally advised against as it is inefficient, especially with small to moderate sample sizes [1].

- Performance Metrics: The process involves calculating apparent performance (on the development data) and then correcting for "optimism" to obtain a more realistic internal performance estimate [1].
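The optimism correction described above can be sketched with a bootstrap loop. The example below is a minimal illustration under stated assumptions: the data are simulated (one true predictor and three noise predictors), the logistic model is fitted with a crude gradient-ascent routine standing in for a proper fitting function, and only the C-statistic is corrected. In practice an established statistical package would be used.

```python
import math
import random


def predict(weights, x):
    """Predicted risk from weights [intercept, b1, b2, ...]."""
    z = weights[0] + sum(w * v for w, v in zip(weights[1:], x))
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(rows, outcomes, steps=100, rate=0.5):
    """Crude gradient-ascent logistic fit (stand-in for a real fitting routine)."""
    weights = [0.0] * (len(rows[0]) + 1)
    for _ in range(steps):
        gradient = [0.0] * len(weights)
        for x, y in zip(rows, outcomes):
            residual = y - predict(weights, x)
            gradient[0] += residual
            for j, value in enumerate(x):
                gradient[j + 1] += residual * value
        weights = [w + rate * g / len(rows) for w, g in zip(weights, gradient)]
    return weights


def c_statistic(risks, outcomes):
    """Share of event/non-event pairs in which the event has the higher risk."""
    events = [r for r, y in zip(risks, outcomes) if y == 1]
    non_events = [r for r, y in zip(risks, outcomes) if y == 0]
    score = sum(1.0 if e > n else 0.5 if e == n else 0.0
                for e in events for n in non_events)
    return score / (len(events) * len(non_events))


def optimism_corrected_c(rows, outcomes, n_boot=30, seed=0):
    """Return (apparent, mean optimism, optimism-corrected) C-statistic."""
    rng = random.Random(seed)
    model = fit_logistic(rows, outcomes)
    apparent = c_statistic([predict(model, x) for x in rows], outcomes)
    differences = []
    n = len(rows)
    while len(differences) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]  # sample with replacement
        boot_rows = [rows[i] for i in idx]
        boot_y = [outcomes[i] for i in idx]
        if len(set(boot_y)) < 2:  # need both outcome classes to fit and score
            continue
        boot_model = fit_logistic(boot_rows, boot_y)
        c_boot = c_statistic([predict(boot_model, x) for x in boot_rows], boot_y)
        c_orig = c_statistic([predict(boot_model, x) for x in rows], outcomes)
        differences.append(c_boot - c_orig)  # optimism of this bootstrap model
    optimism = sum(differences) / n_boot
    return apparent, optimism, apparent - optimism


# Simulated development data: only the first predictor carries signal.
data_rng = random.Random(42)
rows = [[data_rng.gauss(0, 1) for _ in range(4)] for _ in range(120)]
outcomes = [1 if data_rng.random() < 1 / (1 + math.exp(-x[0])) else 0 for x in rows]

apparent, optimism, corrected = optimism_corrected_c(rows, outcomes)
print(f"apparent C = {apparent:.3f}, optimism = {optimism:.3f}, corrected C = {corrected:.3f}")
```

Each bootstrap model is scored both on its own resample and on the original data; the average gap between the two is the optimism estimate that is subtracted from the apparent performance.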

External Validation

Objective: To evaluate the model's predictive accuracy in new data from a different population, time period, or setting [1]. This is the cornerstone of establishing model generalizability and trustworthiness.

- Methods: Applying the model—using the exact same mathematical formula and coefficients—to a completely independent dataset [1] [5].

- Performance Metrics: A comprehensive assessment includes:

  - Discrimination: The ability to differentiate between those with and without the outcome, typically measured by the C-statistic (AUC) [1].

  - Calibration: The agreement between predicted risks and observed outcomes, assessed with a calibration plot and quantified by the calibration slope (ideal value = 1) and calibration-in-the-large (ideal value = 0) [1].

  - Overall Accuracy: Measured by metrics like the Brier score [1].
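Three of these measures can be computed in a few lines, as the sketch below shows for the C-statistic, the Brier score, and calibration-in-the-large. It assumes binary outcomes and predicted risks strictly between 0 and 1, uses a made-up eight-patient dataset, and estimates calibration-in-the-large as the intercept of a logistic model with the logit of the predicted risk as an offset (slope fixed at 1), solved by Newton's method.

```python
import math


def logit(p):
    return math.log(p / (1.0 - p))


def expit(z):
    return 1.0 / (1.0 + math.exp(-z))


def c_statistic(risks, outcomes):
    """Share of event/non-event pairs in which the event has the higher risk."""
    events = [r for r, y in zip(risks, outcomes) if y == 1]
    non_events = [r for r, y in zip(risks, outcomes) if y == 0]
    score = sum(1.0 if e > n else 0.5 if e == n else 0.0
                for e in events for n in non_events)
    return score / (len(events) * len(non_events))


def brier_score(risks, outcomes):
    """Mean squared difference between predicted risk and observed outcome."""
    return sum((r - y) ** 2 for r, y in zip(risks, outcomes)) / len(risks)


def calibration_in_the_large(risks, outcomes, iterations=25):
    """Intercept `a` solving sum(y - expit(a + logit(p))) = 0."""
    a = 0.0
    for _ in range(iterations):
        fitted = [expit(a + logit(r)) for r in risks]
        score = sum(y - f for y, f in zip(outcomes, fitted))
        information = sum(f * (1.0 - f) for f in fitted)
        a += score / information  # Newton step for a one-parameter model
    return a


# Made-up validation data, for illustration only.
risks = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
outcomes = [0, 0, 1, 0, 1, 0, 1, 1]

c_stat = c_statistic(risks, outcomes)
brier = brier_score(risks, outcomes)
citl = calibration_in_the_large(risks, outcomes)
print(f"C-statistic = {c_stat:.4f}, Brier score = {brier:.3f}, CITL = {citl:.3f}")
```

A negative calibration-in-the-large indicates that predicted risks are too high on average, and a positive value that they are too low; the calibration slope additionally requires estimating a coefficient on the logit of the predicted risk.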

One systematic review found that only 27% of implemented models had undergone external validation, highlighting a significant gap in the field [5].

Impact Assessment and Implementation

Objective: To determine whether the use of the model in a clinical setting actually improves patient outcomes or decision-making [5].

- Methods: This is often evaluated through randomized controlled trials (RCTs) comparing clinician decisions and patient outcomes with and without the model's guidance [5].

- Implementation: Successful models are often integrated into clinical workflows via Hospital Information Systems (HIS) or web applications [5].

Model Updating

Objective: To maintain or restore a model's predictive performance after it has degraded over time or in a new setting [5].

- Methods: Techniques range from simple recalibration (adjusting the model's intercept or baseline risk) to more complex model refitting (re-estimating some or all predictor coefficients) [5]. A review showed that only 13% of implemented models had been updated, indicating a need for more proactive model maintenance [5].
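Logistic recalibration, one of the simpler updating techniques, can be sketched as a two-parameter logistic regression of the observed outcome on the logit of the original predicted risk. The example below is a minimal illustration on simulated data in which the original model is deliberately miscalibrated; the sample size, seed, and the "true" intercept and slope are arbitrary assumptions for the demonstration.

```python
import math
import random


def logit(p):
    return math.log(p / (1.0 - p))


def expit(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_recalibration(risks, outcomes, iterations=25):
    """Newton-Raphson fit of outcome ~ intercept + slope * logit(original risk)."""
    xs = [logit(r) for r in risks]
    a, b = 0.0, 1.0  # start from "no change" to the original model
    for _ in range(iterations):
        g_a = g_b = h_aa = h_ab = h_bb = 0.0
        for x, y in zip(xs, outcomes):
            f = expit(a + b * x)
            w = f * (1.0 - f)
            g_a += y - f
            g_b += (y - f) * x
            h_aa += w
            h_ab += w * x
            h_bb += w * x * x
        det = h_aa * h_bb - h_ab * h_ab
        a += (h_bb * g_a - h_ab * g_b) / det
        b += (h_aa * g_b - h_ab * g_a) / det
    return a, b


# Simulated new setting: true risks are lower and less extreme than predicted.
rng = random.Random(7)
original_risks = [rng.uniform(0.05, 0.95) for _ in range(400)]
outcomes = [1 if rng.random() < expit(-0.8 + 0.6 * logit(p)) else 0
            for p in original_risks]

intercept, slope = fit_recalibration(original_risks, outcomes)
updated_risks = [expit(intercept + slope * logit(p)) for p in original_risks]

observed_rate = sum(outcomes) / len(outcomes)
print(f"intercept = {intercept:.2f}, slope = {slope:.2f}")
print(f"mean original risk = {sum(original_risks) / len(original_risks):.3f}")
print(f"mean updated risk  = {sum(updated_risks) / len(updated_risks):.3f}")
print(f"observed event rate = {observed_rate:.3f}")
```

Because the updated risk is a monotone transformation of the original risk when the fitted slope is positive, the ranking of patients, and therefore the C-statistic, is unchanged; only calibration is adjusted.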

The Scientist's Toolkit: Key Reagents and Materials

The following tools and concepts are fundamental for researchers developing, validating, and implementing clinical prediction models.

Table 2: Essential Research Reagent Solutions for CPM Development and Validation

| Item Name | Category | Function in Research |
|---|---|---|
| R Statistical Software | Software Platform | An open-source environment for statistical computing and graphics, essential for implementing model development, validation, and visualization techniques [2]. |
| Validation Dataset | Data Resource | An independent dataset, distinct from the development data, used for the critical process of external validation to test model generalizability [1]. |
| TRIPOD Statement | Reporting Guideline | The "Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis" guidelines, which ensure complete and reproducible reporting of prediction model studies [1]. |
| PROBAST Tool | Quality Assessment Tool | The "Prediction model Risk Of Bias Assessment Tool," used to critically appraise the methodology and risk of bias in prediction model studies [5]. |
| Nomogram | Visualization Tool | A graphical representation of a prediction model that allows for manual approximation of an individual's risk based on their predictor values [6]. |
| Algorithmic Fairness Framework | Conceptual Framework | A set of principles and tools, such as the GUIDE framework, used to identify and mitigate bias and ensure equitable model performance across racial and demographic subgroups [7]. |

Clinical Prediction Models represent a powerful fusion of clinical medicine and data science, offering a pathway to more personalized and effective patient care. The field is characterized by a proliferation of new models, with nearly 250,000 development articles estimated to have been published to date [3]. However, the true test of a model's value lies not in its development but in its rigorous validation and demonstrated clinical utility.

The current evidence shows a shift towards more complex AI-based models like DT-GPT, which show promise in forecasting patient trajectories with high accuracy. Nevertheless, core principles of rigorous validation—including internal and external validation, calibration assessment, and impact analysis—remain the bedrock of trustworthy model research. As the field advances, a greater focus on addressing algorithmic bias, ensuring model fairness, and maintaining models through updates will be crucial for the ethical and effective integration of CPMs into biomedical research and clinical practice.

In modern clinical research and drug development, multivariable prediction models are indispensable tools for estimating the probability of a specific disease being present (diagnostic models) or a particular event occurring in the future (prognostic models) [8]. These models, which integrate multiple patient characteristics, symptoms, and test results, inform critical decision-making processes throughout the clinical pathway—from referral for further testing and treatment initiation to risk stratification in clinical trials [8]. The methodological rigor with which these models are developed and validated directly impacts their reliability and ultimate utility in real-world healthcare settings.

The pathway from initial model conception to clinically implementable tool follows a structured pipeline encompassing development, validation, and reporting phases. Prior to the establishment of formal reporting guidelines, the field suffered from significant deficiencies in methodological conduct and transparent reporting [8]. Numerous systematic reviews have demonstrated that poor reporting and serious methodological shortcomings—including inadequate handling of missing data, use of small datasets, and lack of proper validation—were commonplace, leading to prediction models that were rarely implemented in clinical practice [8]. The PROGRESS (Prognosis Research STRategy) framework laid important groundwork for understanding different types of prognosis research and their interrelationships. This article examines the evolution of methodological standards from foundational concepts to the comprehensive TRIPOD (Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis) statement, which provides a structured framework for ensuring the development and validation of clinically valuable prediction models.

The TRIPOD Framework: Components and Specifications

The TRIPOD Initiative developed a set of evidence-based recommendations to address the poor quality of reporting in prediction model studies [8]. The resulting TRIPOD Statement is a checklist of 22 essential items that aim to improve the transparency and completeness of reporting for studies that develop, validate, or update a diagnostic or prognostic prediction model [9]. This guideline was specifically designed for multivariable prediction models for individual prognosis or diagnosis, distinguishing it from related guidelines focused on observational studies (STROBE), tumor markers (REMARK), or diagnostic accuracy studies (STARD) [8].

Core Principles of TRIPOD

The TRIPOD statement emphasizes several fundamental principles that underpin reliable prediction model studies. First, it explicitly covers both diagnostic and prognostic prediction models across all medical domains and considers all types of predictors [8]. Second, it places significant emphasis on validation studies—a critical phase often neglected in early prediction research. Third, it addresses the reporting of studies that evaluate the incremental value of specific predictors beyond established predictors or existing models [8]. The guideline categorizes studies into model development, model validation (with or without updating), or a combination of both, with specific reporting recommendations for each study type [8].

The TRIPOD Checklist: Essential Reporting Items

The TRIPOD checklist encompasses items across several domains, including title and abstract, introduction, methods, results, discussion, and other information. Each item specifies the essential elements that should be reported to enable critical appraisal and replication. For example, the title and abstract should identify the study as developing and/or validating a multivariable prediction model and state the target population and outcome to be predicted [8]. The methods section should clearly describe the study design, participant eligibility, sources of data, and handling of missing data [8]. The results should report the model's performance in terms of discrimination and calibration, while the discussion should address limitations and potential clinical applicability [8].

Table 1: Key Components of the TRIPOD Reporting Guideline

| Component Category | Key Reporting Elements | Purpose & Importance |
|---|---|---|
| Title & Abstract | Identification as development/validation study; target population & outcome | Ensures appropriate identification and indexing of prediction model studies |
| Introduction | Explanation of study rationale & objectives; specific research goals | Provides context and establishes scientific and clinical relevance |
| Methods | Source of data, participant eligibility, statistical analysis methods | Enables assessment of methodological rigor and potential biases |
| Results | Participant flow, model specification, performance measures | Allows judgment of model validity and potential usefulness |
| Discussion | Interpretation of results, limitations, implications for practice & research | Places findings in context and guides appropriate implementation |
| Other Information | Funding sources, conflicts of interest, data availability | Supports evaluation of potential biases and facilitates replication |

Evolution to TRIPOD+AI and Extensions

Responding to the rapid integration of artificial intelligence and machine learning in prediction modeling, the TRIPOD framework has been updated to create TRIPOD+AI [10]. This extension provides updated guidance for reporting clinical prediction models that use regression or machine learning methods, addressing unique considerations such as complex model architectures, hyperparameter tuning, and computational requirements [10]. Additional specialized extensions have also been developed, including TRIPOD-SRMA for systematic reviews and meta-analyses of prediction model studies, TRIPOD-Cluster for studies using clustered data, and TRIPOD-LLM for studies utilizing large language models [10].

Experimental Protocols in Model Development and Validation

Model Development Studies

Development studies aim to derive a new prediction model by selecting relevant predictors and combining them statistically into a multivariable model [8]. The protocol for model development must begin with a clear definition of the study objective, target population, and outcome to be predicted. Researchers should explicitly specify eligibility criteria for participants and provide detailed descriptions of data sources, including the study design, settings, locations, and dates of data collection [8]. Candidate predictors should be clearly defined and measured, with appropriate handling of missing data explicitly described.

Statistical analysis methods require particular attention in the protocol. Researchers should specify the type of model (e.g., logistic regression, Cox regression), the approach to model building (including predictor selection procedures), and how continuous predictors were handled [8]. The protocol should also describe how the model's performance will be assessed in terms of discrimination (ability to distinguish between different outcomes) and calibration (agreement between predicted and observed outcomes) [8]. Most critically, development studies must include plans for internal validation to quantify optimism in the model's performance using techniques such as bootstrapping or cross-validation [8]. Overfitting—which occurs when there are too few outcome events relative to the number of candidate predictors—can be addressed through shrinkage methods or penalization techniques [8].

Model Validation Studies

Validation studies represent a crucial step in the prediction model pipeline, evaluating the performance of an existing model in new participant data [8]. The validation protocol requires clear specification of the model being validated and the study population, with particular attention to similarities and differences from the development population. Researchers should describe how predictions were obtained for individuals in the validation dataset using the original model's specifications [8].

The validation protocol must detail how performance measures will be calculated, including both discrimination and calibration metrics. Importantly, the protocol should anticipate the possibility of poor performance and specify plans for model updating if needed [8]. Updating methods may include simple recalibration (adjusting the baseline risk or predictor effects) or more extensive model revision [8]. Validation can take several forms, including temporal validation (using data from a later period), geographic validation (using data from different locations), or validation in different but related populations [8].

Table 2: Comparison of Model Development and Validation Study Protocols

| Protocol Component | Development Study | Validation Study |
|---|---|---|
| Primary Objective | Derive new model by selecting and weighting predictors | Evaluate performance of existing model in new data |
| Data Requirements | Dataset with predictors and outcomes for model building | Independent dataset with predictors and outcomes |
| Statistical Methods | Model building techniques (e.g., regression, machine learning), internal validation (bootstrapping, cross-validation) | Calculation of performance measures (discrimination, calibration), model updating if needed |
| Key Outputs | Model equation/algorithm, apparent performance, optimism-corrected performance | Performance measures in new data, comparison with development performance |
| Common Pitfalls | Overfitting, predictor selection bias, optimistic performance estimates | Spectrum bias, transportability issues, insufficient sample size |
| Reporting Standards | TRIPOD Development Checklist | TRIPOD Validation Checklist |

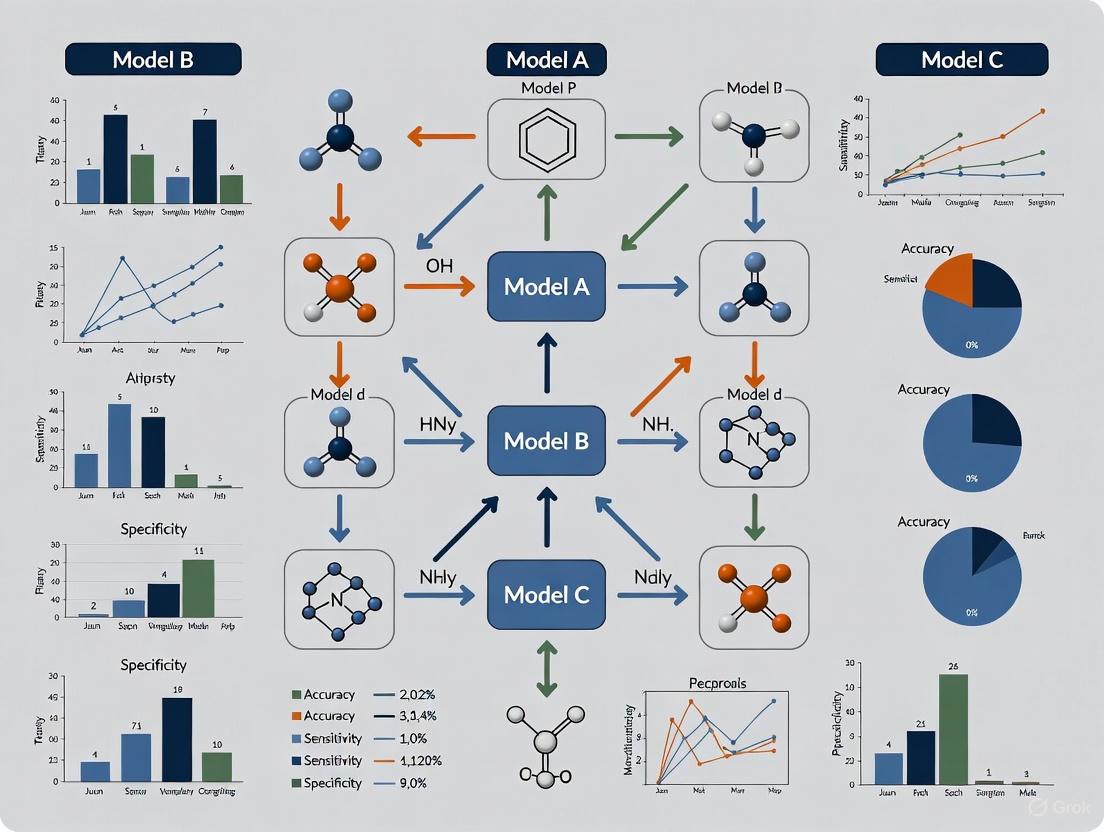

Visualization of the Prediction Model Pipeline

The following diagram illustrates the complete prediction model development and validation pipeline, from initial conceptualization through to implementation and monitoring, highlighting key decision points and methodological considerations at each stage.

Prediction Model Development and Validation Pipeline

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of prediction model studies requires careful consideration of methodological tools and resources. The following table details essential components of the methodological toolkit for researchers conducting prediction model studies according to TRIPOD standards.

Table 3: Essential Methodology Toolkit for Prediction Model Research

| Research Component | Function & Purpose | Implementation Considerations |
|---|---|---|
| Study Protocol | Detailed plan outlining objectives, methods, and analysis plans | Should be developed before study initiation; registered in public repositories when possible |
| Data Collection Tools | Standardized forms for predictor and outcome assessment | Must ensure consistent measurement across sites and over time; electronic data capture preferred |
| Statistical Software | Platforms for model development, validation, and analysis | R, Python, Stata, SAS; should include packages for advanced validation methods (e.g., bootstrapping) |
| Internal Validation Methods | Techniques to quantify optimism in model performance | Bootstrapping, cross-validation; essential for all development studies |
| Performance Measures | Metrics to evaluate model discrimination and calibration | Discrimination: C-statistic, AUC; Calibration: calibration slope, intercept, plots |
| TRIPOD Checklist | Reporting guideline for transparent documentation | Should be completed during manuscript preparation; many journals now require it |

Comparative Performance of Reporting Standards

The implementation of structured reporting guidelines has significantly improved the quality and transparency of prediction model research. The following table compares key aspects of reporting and methodology before and after the introduction of standardized frameworks like TRIPOD.

Table 4: Evolution of Reporting Standards in Prediction Model Research

| Aspect | Pre-Standardized Reporting | Post-TRIPOD Implementation |
|---|---|---|
| Completeness of Reporting | Generally poor with insufficient information on patient data, statistical methods, and validation [8] | Structured reporting with essential details on development, validation, and model performance |
| Handling of Missing Data | Often poorly described or inappropriately handled [8] | Explicit description of missing data and appropriate statistical handling methods |
| Internal Validation | Frequently omitted, leading to optimistic performance estimates [8] | Standard inclusion of bootstrapping or cross-validation to quantify optimism |
| Model Specification | Often incomplete, preventing replication or implementation [8] | Clear presentation of full model equation or algorithm for replication |
| Performance Measures | Selective reporting of only favorable metrics | Comprehensive reporting of discrimination, calibration, and clinical utility |
| External Validation | Rarely performed, limiting assessment of generalizability [8] | Recognition as essential step before clinical implementation |

The evolution from PROGRESS to TRIPOD represents significant maturation in the methodology and reporting of clinical prediction models. The structured framework provided by TRIPOD has addressed critical deficiencies in transparent reporting, while the recent TRIPOD+AI extension ensures relevance in the era of machine learning and artificial intelligence [10]. For researchers, scientists, and drug development professionals, adherence to these guidelines ensures that developed models can be adequately assessed for risk of bias and potential usefulness, ultimately facilitating the implementation of robust prediction tools in clinical practice and drug development. The continued refinement of these standards, coupled with increased adoption by researchers and journals, promises to enhance the quality and clinical impact of prediction model research moving forward.

Cisplatin-associated acute kidney injury (C-AKI) represents a major dose-limiting complication of cisplatin chemotherapy, occurring in 20-30% of treated patients and significantly impacting treatment continuity, prognosis, and healthcare costs [11] [12]. Accurate pre-therapy risk stratification is crucial for implementing preventive measures and personalizing patient management. Two prominent clinical prediction models—the Motwani model (2018) and the Gupta model (2024)—have been developed for this purpose, but their performance characteristics differ substantially [11].

This case study provides an objective comparison of these competing models, focusing on their validation in a Japanese cohort. We examine their architectural differences, predictive performance, and clinical utility to inform researchers and clinicians about their appropriate application in diverse populations.

Model Architectures and Defining Characteristics

The Motwani and Gupta models differ fundamentally in their predictor variables, outcome definitions, and intended clinical use, reflecting their development in distinct clinical contexts and patient populations.

Predictor Variables and Scoring Systems

The Motwani model employs a parsimonious set of four readily available clinical variables, favoring simplicity and ease of clinical implementation [13]. In contrast, the Gupta model incorporates a more comprehensive panel of predictors, including hematological parameters and serum magnesium levels, aiming to capture a broader spectrum of pathophysiology [11].

Table 1: Comparison of Model Architectures and Scoring Systems

| Characteristic | Motwani Model [11] [13] | Gupta Model [11] |
|---|---|---|
| Definition of AKI | Increase in serum creatinine ≥ 0.3 mg/dL within 14 days | Increase in serum creatinine ≥ 2.0-fold or RRT initiation within 14 days |
| AKI Severity Targeted | Mild AKI (aligns with KDIGO stage 1) | Severe AKI (KDIGO stage ≥ 2) |
| Predictor Variables | Age, Cisplatin dose, Hypertension, Serum albumin | Age, Cisplatin dose, Diabetes, Smoking, Hypertension, Hemoglobin, WBC count, Serum albumin, Serum magnesium |
| Age Points | ≤60: 0; 61-70: 1.5; >70: 2.5 | ≤45: 0; 46-60: 2.5; 61-70: 3.5; >70: 4.5 |
| Cisplatin Dose Points | ≤100 mg: 0; 101-150 mg: 1; >150 mg: 3 | ≤50 mg: 0; 51-75 mg: 2; 76-100 mg: 2.5; 101-125 mg: 3; 126-150 mg: 5; 151-200 mg: 7.5; >200 mg: 9.5 |
| Hypertension Points | 2 points | 1 point |
| Albumin Points | ≤3.5 g/dL: 2 points; >3.5 g/dL: 0 | <3.3 g/dL: 1.5; 3.3-3.8 g/dL: 1; >3.8 g/dL: 0 |
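To show how such a points-based system is applied, the sketch below transcribes the Motwani point values exactly as they appear in the table above. It is an illustration, not a clinical tool: the function name is hypothetical, the handling of non-integer ages and doses at band boundaries is an assumption, and the values should be checked against the original publications [11] [13] before any use. The table does not give the mapping from total points to predicted probability, so that step is omitted, and the Gupta score is not implemented because the table lists points for only some of its predictors.

```python
def motwani_points(age_years, cisplatin_dose_mg, hypertension, albumin_g_dl):
    """Total Motwani points, using the point values from the table above."""
    points = 0.0

    # Age: <=60 -> 0; 61-70 -> 1.5; >70 -> 2.5
    if age_years > 70:
        points += 2.5
    elif age_years > 60:
        points += 1.5

    # Cisplatin dose: <=100 mg -> 0; 101-150 mg -> 1; >150 mg -> 3
    if cisplatin_dose_mg > 150:
        points += 3.0
    elif cisplatin_dose_mg > 100:
        points += 1.0

    # History of hypertension: 2 points
    if hypertension:
        points += 2.0

    # Serum albumin: <=3.5 g/dL -> 2; >3.5 g/dL -> 0
    if albumin_g_dl <= 3.5:
        points += 2.0

    return points


# Hypothetical patients, for illustration only.
low_risk_total = motwani_points(50, 80, False, 4.0)
mid_risk_total = motwani_points(65, 120, True, 3.2)
max_total = motwani_points(75, 200, True, 3.0)
print(low_risk_total, mid_risk_total, max_total)
```

Under these table values the total ranges from 0 to 9.5 points, which illustrates why the score is easy to compute at the bedside from four routinely available variables.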

Outcome Definitions and Clinical Implications

A critical distinction lies in their AKI definitions. The Motwani model targets a milder creatinine elevation (≥0.3 mg/dL), while the Gupta model identifies more severe kidney damage (≥2-fold creatinine increase or need for renal replacement therapy) [11]. This fundamental difference dictates their clinical applications: the Motwani model may be suited for broad monitoring, whereas the Gupta model is designed to flag patients at risk for clinically significant nephrotoxicity requiring intervention.
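The practical effect of the two outcome definitions can be made concrete with a small sketch that labels the same creatinine trajectory under each definition. The function names are hypothetical, the peak value is assumed to be the highest serum creatinine measured within the 14-day window, and the rise is rounded to two decimals to avoid floating-point artifacts at the 0.3 mg/dL boundary.

```python
def motwani_event(baseline_scr, peak_scr):
    """Motwani outcome: serum creatinine rise of at least 0.3 mg/dL."""
    return round(peak_scr - baseline_scr, 2) >= 0.3


def gupta_event(baseline_scr, peak_scr, rrt_started=False):
    """Gupta outcome: at least a 2.0-fold creatinine rise or RRT initiation."""
    return rrt_started or (peak_scr / baseline_scr) >= 2.0


# Hypothetical patients: (baseline, peak) serum creatinine in mg/dL.
mild_rise = (0.8, 1.2)    # +0.4 mg/dL, 1.5-fold
severe_rise = (0.8, 1.7)  # +0.9 mg/dL, about 2.1-fold

mild_labels = (motwani_event(*mild_rise), gupta_event(*mild_rise))
severe_labels = (motwani_event(*severe_rise), gupta_event(*severe_rise))
print("mild rise   -> Motwani event, Gupta event:", mild_labels)
print("severe rise -> Motwani event, Gupta event:", severe_labels)
```

A patient with a modest creatinine rise counts as an event for the Motwani definition but not for the Gupta definition, so the two models are trained and evaluated against different event rates and different clinical targets.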

Experimental Validation: Methodology and Performance

Validation Cohort and Experimental Protocol

A recent retrospective single-center study provided a direct external validation and comparison of both models in a Japanese population, a setting distinct from their original development cohorts [11] [14] [12].

- Data Source: The study utilized data from 1,684 patients who received cisplatin at Iwate Medical University Hospital between April 2014 and December 2023 [11] [12].

- Patient Selection: Inclusion criteria encompassed adults receiving cisplatin within the study period. Patients on daily or weekly regimens, those with missing renal function data, or those receiving treatment at other institutions were excluded [11].

- Outcome Measures: The study evaluated two endpoints: (1) C-AKI, defined as a ≥0.3 mg/dL increase or a ≥1.5-fold rise in serum creatinine from baseline, and (2) Severe C-AKI, defined as a ≥2.0-fold increase or initiation of renal replacement therapy [11] [12].

- Model Performance Metrics: The validation assessed three key aspects [11] [12]:

  - Discrimination: The ability to distinguish between patients who did and did not develop AKI, measured by the Area Under the Receiver Operating Characteristic Curve (AUROC).

  - Calibration: The agreement between predicted probabilities and observed outcomes, assessed via calibration plots and metrics.

  - Clinical Utility: The net benefit of using the model to guide decisions, evaluated via Decision Curve Analysis (DCA).
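The net benefit underlying Decision Curve Analysis can be sketched with the standard formula: true positives per patient minus false positives per patient weighted by the odds of the decision threshold. The example below uses a made-up eight-patient dataset and is not a reproduction of the analysis in [11] [12]; a full decision curve would repeat the calculation across a range of thresholds.

```python
def net_benefit(risks, outcomes, threshold):
    """Net benefit of treating patients whose predicted risk >= threshold."""
    n = len(risks)
    true_pos = sum(1 for r, y in zip(risks, outcomes) if r >= threshold and y == 1)
    false_pos = sum(1 for r, y in zip(risks, outcomes) if r >= threshold and y == 0)
    return true_pos / n - (false_pos / n) * threshold / (1.0 - threshold)


def net_benefit_treat_all(outcomes, threshold):
    """Net benefit of the default strategy of treating every patient."""
    prevalence = sum(outcomes) / len(outcomes)
    return prevalence - (1.0 - prevalence) * threshold / (1.0 - threshold)


# Made-up predicted risks and observed outcomes, for illustration only.
risks = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
outcomes = [0, 0, 1, 0, 1, 0, 1, 1]

for threshold in (0.2, 0.5):
    model_nb = net_benefit(risks, outcomes, threshold)
    all_nb = net_benefit_treat_all(outcomes, threshold)
    # Treating no one always has a net benefit of 0.
    print(f"threshold {threshold:.1f}: model {model_nb:.3f}, treat all {all_nb:.3f}, treat none 0.000")
```

A model is clinically useful at a given threshold only if its net benefit exceeds both default strategies, treating everyone and treating no one.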

Figure: Workflow of the external validation study.

Comparative Performance Results

The validation study revealed key differences in how the models performed in the Japanese cohort, particularly regarding the type of AKI being predicted.

Table 2: Performance Metrics in Japanese Validation Cohort [11] [12]

| Performance Metric | C-AKI Outcome | Motwani Model | Gupta Model | P-value |
|---|---|---|---|---|
| Discrimination (AUROC) | Any C-AKI (≥0.3 mg/dL or ≥1.5-fold) | 0.613 | 0.616 | 0.84 |
| Discrimination (AUROC) | Severe C-AKI (≥2.0-fold or RRT) | 0.594 | 0.674 | 0.02 |
| Calibration | Initial Performance | Poor | Poor | - |
| Calibration | After Recalibration | Improved | Improved | - |
| Clinical Utility (DCA) | Severe C-AKI | Lower Net Benefit | Higher Net Benefit | - |

For predicting the broader C-AKI definition, both models demonstrated similar, modest discriminatory power (AUROC ~0.61). However, for predicting severe C-AKI, the Gupta model showed significantly superior discrimination (AUROC 0.674) compared to the Motwani model (AUROC 0.594) [11] [12]. Both models initially exhibited poor calibration, systematically over- or under-estimating the risk in the Japanese population. This highlights a common challenge in translating prediction models across geographic and ethnic populations. However, simple logistic recalibration successfully improved the agreement between predictions and observed outcomes for both models [11].

Discussion and Clinical Implications

Interpretation of Varied Outcomes

The superior performance of the Gupta model for severe AKI is likely multifactorial. First, its outcome definition (severe AKI) is more specific and clinically consequential, which can be easier to predict accurately. Second, its inclusion of additional predictors like low hemoglobin, elevated white blood cell count, and hypomagnesemia may capture a wider array of pathophysiological processes leading to significant kidney damage [11]. Hypomagnesemia, in particular, is a known risk factor for cisplatin nephrotoxicity [11] [12].

The observed miscalibration in both models before adjustment underscores the necessity of external validation and model updating before implementation in new populations. Differences in baseline risk, clinical practices (e.g., hydration protocols), or genetic backgrounds can affect model performance [11] [15]. The successful recalibration in this study demonstrates that these models can be adapted for local use with appropriate statistical refinement.

Table 3: Key Reagents and Methodological Solutions for C-AKI Prediction Research

| Item / Solution | Function / Application in C-AKI Research |

|---|---|

| Electronic Health Record (EHR) Data | Primary data source for retrospective model development and validation, providing demographics, lab values, and drug administration records [11] [16]. |

| Regression-Based Imputation | Statistical technique for handling missing data in predictor variables (e.g., lab values) to preserve sample size and generalizability in retrospective analyses [11] [12]. |

| Logistic Recalibration | A model-updating method to adjust the intercept and slope of a pre-existing model, improving calibration for a new target population without altering its discriminative ability [11]. |

| Decision Curve Analysis (DCA) | A statistical method to evaluate the clinical utility of prediction models by quantifying net benefit across different decision thresholds, balancing true and false positives [11] [12]. |

| R Statistical Software | Open-source environment used for comprehensive statistical analysis, including model validation, recalibration, and performance visualization [11]. |

This direct comparison reveals that the choice between the Motwani and Gupta prediction models is not one of overall superiority but of contextual application.

- The Motwani model, with its simpler architecture, may be advantageous for broad surveillance of mild creatinine elevations.

- The Gupta model is demonstrably more effective for identifying patients at high risk for severe, clinically significant kidney injury, making it more suitable for triggering intensive preventive strategies.

The critical finding from the Japanese validation study is that neither model should be applied directly to new populations without local validation and recalibration [11] [12]. Future work should focus on prospective validation of the recalibrated models and exploration of novel biomarkers to enhance predictive performance further, ultimately enabling more personalized and safer cisplatin chemotherapy.

Best Practices for Model Development, Validation, and Implementation

In clinical prediction model research, internal validation is a critical step to ensure that a model's performance is not overly optimistic and that it can generalize beyond the specific dataset used for its development. When developing models that estimate the risk of clinical deterioration, cancer survival probabilities, or other health outcomes, researchers must accurately assess predictive accuracy to avoid potentially harmful decisions in clinical practice [1]. Internal validation techniques provide a means to estimate how well a model will perform on unseen data from the same underlying population, using only the development dataset itself.

The fundamental challenge in prediction model development is overfitting, where a model learns patterns specific to the development dataset that do not generalize to new patients [1]. This is particularly problematic in clinical settings, where models developed on small or idiosyncratic samples may fail in broader application. Internal validation methods help researchers detect and correct for this over-optimism before proceeding to external validation in completely independent datasets [1].

Among the various internal validation approaches, cross-validation and bootstrapping have emerged as two of the most widely used and recommended techniques. These resampling methods provide more reliable performance estimates than simple data splitting, especially when working with limited clinical datasets that are costly to obtain and often restricted by privacy concerns [17] [18]. This guide provides a comprehensive comparison of these two fundamental techniques, their methodological foundations, implementation protocols, and performance characteristics in the context of clinical prediction model research.

Cross-Validation: Concepts and Workflow

Core Principles and Variants

Cross-validation is a resampling technique that systematically partitions the available data into complementary subsets to train and validate models [19]. The fundamental principle involves iteratively holding out a subset of data for validation while using the remaining data for model training, then averaging performance metrics across all iterations to produce a robust estimate of model performance [20].

The most common variants of cross-validation include:

- k-Fold Cross-Validation: The dataset is randomly divided into k equal-sized folds (typically 5 or 10). For each iteration, one fold is held out as the validation set while the remaining k-1 folds are used for training. This process repeats k times, with each fold serving as the validation set exactly once [19] [20].

- Stratified k-Fold Cross-Validation: This approach maintains the same distribution of target classes in each fold as in the complete dataset, which is particularly important for imbalanced clinical outcomes [19] [17].

- Leave-One-Out Cross-Validation (LOOCV): A special case of k-fold CV where k equals the number of observations in the dataset. Each iteration uses a single observation as the validation set and the remainder as training data [19].

- Nested Cross-Validation: Employs two layers of cross-validation—an inner loop for hyperparameter tuning and model selection, and an outer loop for performance estimation. This approach reduces optimistic bias in performance estimates but increases computational demands [17] [18].

Methodological Protocol

The standard k-fold cross-validation workflow follows these methodological steps [19] [20]:

1. Data Preparation: Clean the dataset, handle missing values, and perform necessary preprocessing. For clinical data with multiple records per patient, implement subject-wise splitting to ensure all records from a single patient remain in either training or validation sets to prevent data leakage [17] [18].

2. Fold Generation: Randomly partition the dataset into k mutually exclusive folds of approximately equal size. For classification problems with imbalanced outcomes, use stratified sampling to maintain outcome proportions across folds [17].

3. Iterative Training and Validation: For each fold i (where i = 1 to k):

   - Designate fold i as the validation set

   - Combine the remaining k-1 folds to form the training set

   - Train the model using the training set

   - Calculate performance metrics by applying the trained model to the validation set

4. Performance Aggregation: Compute the final performance estimate by averaging the metrics obtained from all k iterations.
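
The workflow above can be sketched in a few lines of standard-library Python. This is a minimal illustration rather than a production implementation: the helper names (`k_fold_indices`, `cross_validate`) and the toy "predict the training mean" model are assumptions introduced only for this example.

```python
import random
from statistics import mean

def k_fold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and deal them into k near-equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def cross_validate(X, y, fit, score, k=5, seed=0):
    """Run k-fold CV for any fit/score pair; return fold scores and their mean."""
    folds = k_fold_indices(len(X), k, seed)
    scores = []
    for i, val_idx in enumerate(folds):
        # Training set = every fold except fold i
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        model = fit([X[j] for j in train_idx], [y[j] for j in train_idx])
        scores.append(score(model, [X[j] for j in val_idx], [y[j] for j in val_idx]))
    return scores, mean(scores)

# Toy model (hypothetical): predict the training-set mean; score = negative MSE.
def fit_mean(X_train, y_train):
    return mean(y_train)

def neg_mse(model, X_val, y_val):
    return -mean((yi - model) ** 2 for yi in y_val)

X = [[float(i)] for i in range(20)]
y = [float(i % 2) for i in range(20)]
fold_scores, cv_estimate = cross_validate(X, y, fit_mean, neg_mse, k=5)
```

Passing `fit` and `score` as callables keeps the resampling logic independent of the model, so the same loop can wrap a regression model, a tree ensemble, or any other estimator.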

The following diagram illustrates the k-fold cross-validation workflow:

Clinical Research Considerations

In clinical prediction research, several domain-specific factors influence cross-validation implementation:

- Subject-wise vs. Record-wise Splitting: When working with electronic health records containing multiple encounters per patient, subject-wise validation ensures all records from an individual remain in either training or validation sets. This prevents optimistic bias from models learning patient-specific patterns rather than generalizable clinical relationships [17] [18].

- Temporal Validation: For clinical prediction models where temporal factors matter, variations such as rolling cross-validation ensure the model is always validated on future time points relative to training data, simulating real-world deployment conditions [21].

- Stratification for Rare Outcomes: Many clinical outcomes are rare (e.g., ≤1% incidence). Stratified cross-validation ensures each fold contains representative cases of the outcome, preventing folds with zero positive cases that would make validation impossible [17].
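
Subject-wise splitting and stratification can be combined. The sketch below assigns patients, not individual records, to folds, and spreads patients who ever experienced the outcome across folds. The function name `subject_wise_folds` and the "ever positive" stratification rule are assumptions made for illustration; they are not taken from the cited studies.

```python
import random
from collections import defaultdict

def subject_wise_folds(patient_ids, outcomes, k=5, seed=0):
    """Return a fold number per record so that each patient sits in one fold only.

    Patients are stratified by whether they ever had the outcome, then dealt
    round-robin into folds so that rare events are spread as evenly as possible.
    """
    ever_positive = defaultdict(bool)
    for pid, y in zip(patient_ids, outcomes):
        ever_positive[pid] = ever_positive[pid] or bool(y)

    rng = random.Random(seed)
    fold_of_patient = {}
    next_fold = 0
    for stratum in (True, False):  # deal outcome-positive patients first
        patients = sorted(p for p, pos in ever_positive.items() if pos == stratum)
        rng.shuffle(patients)
        for pid in patients:
            fold_of_patient[pid] = next_fold % k
            next_fold += 1
    return [fold_of_patient[pid] for pid in patient_ids]

# Eight encounters from six hypothetical patients; p1 and p3 have repeat visits.
patient_ids = ["p1", "p1", "p2", "p3", "p3", "p4", "p5", "p6"]
outcomes = [0, 1, 0, 0, 0, 1, 0, 0]
folds = subject_wise_folds(patient_ids, outcomes, k=2, seed=3)
```

In scikit-learn, `GroupKFold` offers the same subject-wise guarantee when patient identifiers are passed as `groups`.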

Bootstrapping: Concepts and Workflow

Core Principles and Variants

Bootstrapping is a resampling technique that draws samples with replacement from the original dataset to create multiple bootstrap datasets [19] [22]. Unlike cross-validation, which divides data without overlap, bootstrapping creates new datasets of the same size as the original by randomly selecting observations with replacement, meaning some observations may appear multiple times while others may not appear at all in a given bootstrap sample [19] [23].

The primary variants of bootstrapping for model validation include:

- Standard Bootstrap Validation: Involves drawing multiple bootstrap samples, fitting a model to each, and evaluating performance on both the bootstrap sample and the original dataset to estimate optimism [23].

- Out-of-Bag (OOB) Validation: Leverages the fact that each bootstrap sample contains, on average, approximately 63.2% of the distinct original observations, with the remaining ~36.8% (the out-of-bag observations) serving as a natural validation set [19] [22].

- Optimism Correction Bootstrap: A refined approach that calculates the difference between performance on bootstrap samples and the original data, then averages these differences to produce a bias-corrected performance estimate [23] [24].

- .632 and .632+ Bootstrap: Advanced methods that weight the original and out-of-bag estimates to correct for the bias in standard bootstrap validation, with .632+ specifically designed to handle situations with high overfitting [24].
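
The 63.2% figure and the weights in the .632 estimator come from the same limit. The equations below are a short sketch in notation introduced here for illustration: $\overline{\mathrm{err}}$ is the apparent (resubstitution) error, $\widehat{\mathrm{Err}}_{\mathrm{oob}}$ the out-of-bag error, and $\hat{\gamma}$ the no-information error rate. The .632+ form is shown in its basic version, without the truncation rules used in practice.

```latex
\[
\Pr(\text{observation } i \text{ appears in a bootstrap sample})
  = 1 - \left(1 - \tfrac{1}{n}\right)^{n}
  \;\longrightarrow\; 1 - e^{-1} \approx 0.632
  \quad (n \to \infty)
\]

\[
\widehat{\mathrm{Err}}_{.632}
  = 0.368\,\overline{\mathrm{err}} + 0.632\,\widehat{\mathrm{Err}}_{\mathrm{oob}}
\]

\[
\widehat{\mathrm{Err}}_{.632+}
  = (1 - \hat{w})\,\overline{\mathrm{err}} + \hat{w}\,\widehat{\mathrm{Err}}_{\mathrm{oob}},
  \qquad
  \hat{w} = \frac{0.632}{1 - 0.368\,\hat{R}},
  \qquad
  \hat{R} = \frac{\widehat{\mathrm{Err}}_{\mathrm{oob}} - \overline{\mathrm{err}}}
                 {\hat{\gamma} - \overline{\mathrm{err}}}
\]
```

When there is no overfitting ($\hat{R} = 0$), $\hat{w} = 0.632$ and the two estimators coincide; as $\hat{R}$ approaches 1, $\hat{w}$ approaches 1 and the estimate leans entirely on the out-of-bag error.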

Methodological Protocol

The bootstrap validation workflow with optimism correction follows these steps [23]:

1. Bootstrap Sample Generation: Draw a bootstrap sample from the original dataset by randomly selecting n observations with replacement (where n is the total sample size).

2. Model Training: Fit the model to the bootstrap sample.

3. Performance Calculation: Calculate the performance metric of interest (e.g., Somers' D, c-index) for:

   - The model applied to the bootstrap sample (training performance)

   - The model applied to the original dataset (test performance)

4. Optimism Estimation: Compute the difference between the training and test performance metrics.

5. Iteration: Repeat steps 1-4 a large number of times (typically 200-1000 iterations).

6. Bias Correction: Calculate the average optimism across all bootstrap samples and subtract this from the apparent performance of the model fitted on the original dataset.
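
Written compactly, the procedure above amounts to the following, where the notation is introduced here for illustration: $D$ is the original dataset, $D^{*}_{b}$ the $b$-th bootstrap sample, $M_{b}$ the model fitted to $D^{*}_{b}$, $M$ the model fitted to $D$, and $\theta(\cdot,\cdot)$ the chosen performance metric of a model evaluated on a dataset.

```latex
\[
o_{b} = \theta\!\left(M_{b}, D^{*}_{b}\right) - \theta\!\left(M_{b}, D\right),
\qquad b = 1, \dots, B
\]

\[
\bar{o} = \frac{1}{B} \sum_{b=1}^{B} o_{b},
\qquad
\theta_{\text{corrected}} = \theta\!\left(M, D\right) - \bar{o}
\]
```

For a metric where higher is better (such as the c-index), $\bar{o}$ is usually positive, so the corrected estimate is lower than the apparent performance $\theta(M, D)$.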

The following diagram illustrates the bootstrap validation workflow:

Clinical Research Considerations

Bootstrapping offers particular advantages in clinical research contexts:

- Small Sample Sizes: Bootstrapping is particularly valuable when working with small datasets (n < 200), such as rare disease studies, where data splitting or cross-validation would create impractically small training or validation sets [1] [21].

- Variance Estimation: Unlike cross-validation, bootstrapping provides direct estimates of the variability in performance metrics, enabling construction of confidence intervals around performance estimates [19] [24].

- Optimism Correction: The bootstrap optimism correction method specifically addresses the overfitting inherent in model development, providing a more realistic estimate of how the model will perform on new patient data [23].

Comparative Analysis: Performance and Applications

Direct Comparison of Key Characteristics

The table below summarizes the fundamental differences between cross-validation and bootstrapping for internal validation of clinical prediction models:

| Aspect | Cross-Validation | Bootstrapping |

|---|---|---|

| Definition | Splits data into k subsets (folds) for training and validation [19] | Samples data with replacement to create multiple bootstrap datasets [19] |

| Data Partitioning | Mutually exclusive subsets; each observation in test set exactly once per cycle [19] | Sampling with replacement; creates overlapping training sets with omitted out-of-bag samples [19] [22] |

| Typical Applications | Model evaluation, hyperparameter tuning, model selection [19] [20] | Uncertainty estimation, small sample studies, optimism correction [19] [23] |

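| Bias-Variance Tradeoff | Slight pessimistic bias that shrinks as k grows; variance can be high with small samples or with LOOCV [19] [24] | Out-of-bag estimates tend toward lower variance but can be pessimistically biased in small samples; the .632 and .632+ corrections target this bias [22] [24] |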

| Computational Demand | Requires k model fits; manageable for most k values (typically 5-10) [19] | Typically requires 200-1000 model fits; more computationally intensive [23] |

| Recommended Dataset Size | Medium to large datasets [21] | Small datasets (n < 200) [21] |

| Performance Estimate Stability | Can have high variance with small samples or small k [19] | Provides stable estimates with sufficient bootstrap samples [19] |

Empirical Performance Comparison

Research studies have compared the performance of these methods in various clinical and statistical scenarios:

- Error Estimation Accuracy: Simulation studies indicate that repeated k-fold cross-validation (particularly 5 or 10 folds) and the bootstrap .632+ method generally provide the most accurate estimates of prediction error, with no single method dominating across all scenarios [24].

- Bias and Variance Characteristics: Leave-one-out cross-validation and 10-fold cross-validation typically demonstrate low bias but can have high variance. In contrast, bootstrap methods (particularly out-of-bag validation) tend to have lower variance but may exhibit higher bias, especially with small sample sizes and strong signal-to-noise ratios [22] [24].

- Small Sample Performance: In small sample settings common in clinical studies, the .632+ bootstrap method generally performs well, though it may show slight underestimation bias when outcome events are very rare [24].

- Computational Efficiency: For models with complex fitting procedures or large datasets, k-fold cross-validation (especially with k=5 or 10) is often more computationally efficient than bootstrap methods requiring hundreds of iterations [19] [24].

Guidelines for Method Selection in Clinical Research

Based on empirical evidence and methodological considerations:

- For Medium to Large Datasets (n > 200): Use 5- or 10-fold cross-validation, repeated multiple times (50-100) for precise estimates. This approach balances bias and variance while maintaining computational feasibility [24] [21].

- For Small Datasets (n < 200): Prefer bootstrapping methods, particularly the optimism-corrected bootstrap or .632+ bootstrap, which provide more stable performance estimates in data-limited scenarios [23] [21].

- For High-Dimensional Data (e.g., genomics, imaging): Cross-validation is generally preferred as bootstrapping may overfit due to repeated sampling of the same individuals [21].

- For Uncertainty Quantification: When confidence intervals for performance metrics are needed, bootstrapping provides direct estimates of variability without additional computational burden [19] [24].

- For Computational Efficiency: With computationally intensive models or very large datasets, k-fold cross-validation requires fewer model fits than comprehensive bootstrap validation [19].

Experimental Protocols and Implementation

Detailed k-Fold Cross-Validation Protocol

For clinical prediction model development, the following detailed protocol ensures rigorous internal validation using k-fold cross-validation:

Data Preprocessing and Partitioning:

- Determine the appropriate k value (typically 5 or 10 for medium-sized datasets)

- For clustered data (multiple observations per patient), implement cluster-level partitioning

- For time-series data, use temporal splitting to avoid future information leakage

- For imbalanced outcomes, implement stratified partitioning

Iteration and Model Training:

- For each fold, train an identical model specification on the training folds

- Fit all preprocessing steps (imputation, scaling, feature selection) on each training set only, then apply the fitted transformations unchanged to the corresponding validation fold

- For hyperparameter tuning, use nested cross-validation within the training folds only

Performance Metrics Calculation:

- Calculate discrimination metrics (c-statistic/AUC, Dxy) for each validation fold

- Calculate calibration metrics (calibration slope, calibration-in-the-large) for each validation fold

- Record overall performance metrics (Brier score, R²) as appropriate

Results Aggregation:

- Compute mean and standard deviation of performance metrics across all folds

- Calculate confidence intervals using appropriate methods (e.g., percentile method or t-based intervals)

The following Python code illustrates a basic implementation using scikit-learn:
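
A minimal sketch of such an implementation is given below. It assumes a binary outcome and uses a synthetic dataset from `make_classification` as a stand-in for real clinical data; the choice of predictors, event rate, and logistic regression model are illustrative assumptions only.

```python
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a clinical dataset with a roughly 10% event rate.
X, y = make_classification(
    n_samples=600, n_features=8, n_informative=4, weights=[0.9, 0.1], random_state=0
)

# Keeping preprocessing inside the pipeline means it is re-fitted on each
# training split, so nothing is learned from the held-out fold.
model = make_pipeline(
    SimpleImputer(strategy="median"),
    StandardScaler(),
    LogisticRegression(max_iter=1000),
)

# Stratified folds preserve the outcome proportion in every fold.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
results = cross_validate(model, X, y, cv=cv, scoring=["roc_auc", "neg_brier_score"])

auc_per_fold = results["test_roc_auc"]
brier_per_fold = -results["test_neg_brier_score"]  # flip sign back to a Brier score
print(f"AUROC: {auc_per_fold.mean():.3f} (SD {auc_per_fold.std(ddof=1):.3f})")
print(f"Brier: {brier_per_fold.mean():.3f} (SD {brier_per_fold.std(ddof=1):.3f})")
```

For clustered data with several records per patient, swapping `StratifiedKFold` for `GroupKFold` and passing patient identifiers through the `groups` argument of `cross_validate` keeps each patient within a single fold.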

Detailed Bootstrap Validation Protocol

For bootstrap validation with optimism correction, implement the following protocol:

Bootstrap Iteration Setup:

- Determine the number of bootstrap samples (B = 200-1000)

- Initialize arrays to store performance metrics

Bootstrap Loop:

- For each bootstrap iteration:

  - Draw a bootstrap sample with replacement from the original data

  - Fit the model to the bootstrap sample

  - Calculate apparent performance on the bootstrap sample

  - Calculate test performance on the original dataset

  - Compute optimism as the difference: apparent performance - test performance

Optimism Correction:

- Compute average optimism across all bootstrap samples

- Calculate bias-corrected performance: original apparent performance - average optimism

The following R code illustrates bootstrap validation for a logistic regression model:
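
The R listing is not reproduced in this text. As a stand-in, the sketch below shows the same optimism-correction procedure in Python with scikit-learn; it is not the original code. The function name `optimism_corrected_auc` and the synthetic dataset are assumptions made for illustration. In R, the `validate()` function of the `rms` package listed in the table below serves a comparable purpose.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score

def optimism_corrected_auc(X, y, n_boot=200, seed=0):
    """Return (apparent AUC, mean optimism, optimism-corrected AUC)."""
    rng = np.random.default_rng(seed)
    n = len(y)

    # Apparent performance: fit and evaluate on the full original data.
    full_model = LogisticRegression(max_iter=1000).fit(X, y)
    apparent = roc_auc_score(y, full_model.predict_proba(X)[:, 1])

    optimism = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)  # sample n rows with replacement
        if len(np.unique(y[idx])) < 2:
            continue  # skip degenerate resamples containing one outcome class
        model = LogisticRegression(max_iter=1000).fit(X[idx], y[idx])
        boot_auc = roc_auc_score(y[idx], model.predict_proba(X[idx])[:, 1])
        orig_auc = roc_auc_score(y, model.predict_proba(X)[:, 1])
        optimism.append(boot_auc - orig_auc)

    mean_optimism = float(np.mean(optimism))
    return float(apparent), mean_optimism, float(apparent) - mean_optimism

# Small synthetic dataset standing in for a development cohort.
X, y = make_classification(n_samples=150, n_features=10, n_informative=3, random_state=1)
apparent, mean_optimism, corrected = optimism_corrected_auc(X, y, n_boot=200)
print(f"Apparent AUC {apparent:.3f}, optimism {mean_optimism:.3f}, corrected {corrected:.3f}")
```

Any model-building step that used the outcome (variable selection, tuning) would need to be repeated inside the loop for the optimism estimate to reflect the full modelling process.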

Research Reagent Solutions

The table below details key computational tools and their functions for implementing internal validation techniques:

| Tool/Software | Primary Function | Implementation Notes |

|---|---|---|

| scikit-learn (Python) | Machine learning pipeline with built-in cross-validation | Provides KFold, StratifiedKFold, cross_val_score, and cross_validate functions for comprehensive CV [20] |

| caret (R) | Classification and regression training | Unified interface for model training with built-in cross-validation and bootstrap resampling |

| rms (R) | Regression modeling strategies | Includes validate() function for bootstrap validation of model performance metrics [23] |

| boot (R) | Bootstrap resampling | General framework for bootstrap methods with extensive configuration options [23] |

| pymc3 (Python) | Bayesian modeling | Supports posterior predictive checks and Bayesian cross-validation methods [21] |

| tidymodels (R) | Modular modeling framework | Modern approach to modeling with consistent resampling interface for CV and bootstrap |

Cross-validation and bootstrapping represent two fundamentally different approaches to internal validation, each with distinct strengths and optimal application scenarios in clinical prediction research. Cross-validation, particularly in its k-fold and stratified variants, provides an efficient approach for model evaluation and selection in medium to large datasets. Bootstrapping, with its various bias-correction techniques, offers robust performance estimation particularly valuable in small-sample clinical studies and when uncertainty quantification is essential.

For clinical researchers developing prediction models, the choice between these methods should be guided by dataset characteristics, research objectives, and computational resources. When feasible, implementing both approaches can provide complementary insights into model performance and stability. Regardless of the chosen method, rigorous internal validation represents a crucial step in developing clinically useful prediction models that generalize beyond the development dataset and can be trusted to inform patient care decisions.

The integration of clinical prediction models (CPMs) into healthcare systems represents a paradigm shift toward data-driven medicine. These models, which estimate the risk of current diagnostic or future prognostic events for individuals, are increasingly transitioning from research artifacts to tools that actively shape patient care [1] [25]. Successful implementation hinges on a fundamental principle: a model's predictive performance is not an intrinsic property but is highly dependent on the specific population and clinical setting in which it is deployed [1] [25]. This comparison guide examines the pathways and considerations for implementing CPMs across two primary domains—hospital systems and web applications—framed within the critical context of performance validation across different environments.

The pipeline for CPM implementation traditionally begins with model development and internal validation, progresses through external validation in new data, and may culminate in impact assessment studies before clinical deployment [25]. Whether models are developed using traditional regression techniques or advanced machine learning (ML) and artificial intelligence (AI) approaches, the core requirement for robust validation remains unchanged [26] [25]. With nearly 60% of U.S. hospitals expected to adopt AI-assisted predictive tools by 2025, understanding the nuances of implementation across different platforms is increasingly important [27].

Comparative Analysis of Implementation Platforms

Performance Metrics Across Implementation Environments

Table 1: Comparative Performance of CPMs in Different Clinical Settings

| Implementation Platform | Model Type | Target Population | Key Performance Metrics | Reported Outcomes |

|---|---|---|---|---|

| Hospital System (Integrated EMR) | AI-based risk prediction for colorectal cancer surgery | Patients undergoing elective colorectal cancer surgery [28] | AUROC: 0.79 (validation set); Comprehensive Complication Index >20: 19.1% vs 28.0% (personalized vs standard care) [28] | 37% reduction in medical complications; cost-effective in short-term modeling [28] |

| Hospital System (Clinical Workflow) | Prediction model for cisplatin-associated AKI | Japanese patients receiving cisplatin therapy [14] | AUROC: 0.616 (Gupta) vs 0.613 (Motwani); Severe AKI: 0.674 vs 0.594 [14] | Required recalibration for local population; improved net benefit after recalibration [14] |

| Web Application (Decision Support) | QRISK cardiovascular risk model | UK primary care population [25] | Not reported in the cited source; performance is known to vary by population | Intended for risk calculation in primary care; performance population-dependent [25] |

| Registry-Based Approach | AI-based decision support for perioperative care | Danish colorectal cancer patients [28] | AUROC: 0.82 (development), 0.77 (internal validation) [28] | Scalable approach using readily available registry data [28] |

Methodological Frameworks for Implementation

Table 2: Implementation Methodologies and Validation Approaches

| Implementation Framework Component | Hospital System Applications | Web Application Platforms |

|---|---|---|

| Validation Requirements | External validation in local patient population essential; recalibration often needed [14] | Targeted validation for intended user population; may require multiple validations for different settings [25] |

| Data Integration | Integration with Electronic Medical Records (EMRs); requires data mapping and extraction pipelines [28] | API-based connectivity to health systems; potentially lighter integration burden |

| Regulatory Considerations | FDA/EMA approval for clinical decision support systems; institutional review board approval [26] | Varied regulatory oversight depending on functionality and claims; data privacy compliance (HIPAA, PIPEDA) [29] |

| Implementation Workflow | Embedded in clinical workflow at point of care; often part of order sets or clinical pathways [28] | Accessed on-demand by clinicians; may support patient-facing functionality |

| Scalability Considerations | Institution-specific deployment; may require local customization | Broad accessibility; easier updates but requires validation across diverse settings [25] |

Experimental Protocols for Model Validation

Protocol 1: External Validation and Recalibration

The external validation protocol follows the methodology outlined by Saito et al. in their validation of cisplatin-associated acute kidney injury (C-AKI) prediction models [14]. This protocol is specifically designed to evaluate whether models developed in one population (typically from published literature or different healthcare systems) maintain their predictive performance when applied to a new target population.

Population Definition and Data Collection: The validation cohort comprised 1,684 patients treated with cisplatin at a single Japanese university hospital, with C-AKI defined as either a ≥0.3 mg/dL increase in serum creatinine or a ≥1.5-fold rise from baseline. Severe C-AKI was defined as a ≥2.0-fold increase or renal replacement therapy initiation [14]. This careful population definition is crucial for "targeted validation" – estimating model performance within the specific intended population and setting [25].

Performance Assessment: Researchers evaluated discrimination using the area under the receiver operating characteristic curve (AUROC), calibration through calibration plots, and clinical utility via decision curve analysis (DCA). The Gupta and Motwani models showed similar discrimination for C-AKI (AUROC 0.616 vs. 0.613) but differed for severe C-AKI (0.674 vs. 0.594) [14].
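
Two of these measures are simple enough to compute directly. The sketch below, in standard-library Python, computes the AUROC as the proportion of event/non-event pairs that the model ranks correctly, and the net benefit at a single decision threshold as used in decision curve analysis. The toy outcomes and risks are invented for illustration and are unrelated to the study data.

```python
def auroc(y_true, risk):
    """Probability that a random event case gets a higher risk than a random non-event."""
    pos = [r for y, r in zip(y_true, risk) if y == 1]
    neg = [r for y, r in zip(y_true, risk) if y == 0]
    # Ties count as half a concordant pair.
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

def net_benefit(y_true, risk, threshold):
    """Net benefit = TP/n - FP/n * pt / (1 - pt) at decision threshold pt."""
    n = len(y_true)
    tp = sum(1 for y, r in zip(y_true, risk) if r >= threshold and y == 1)
    fp = sum(1 for y, r in zip(y_true, risk) if r >= threshold and y == 0)
    return tp / n - fp / n * threshold / (1 - threshold)

# Hypothetical outcomes (1 = event) and predicted risks for eight patients.
y_true = [0, 0, 1, 0, 1, 1, 0, 1]
risk = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.35, 0.9]

print(auroc(y_true, risk))             # 0.875
print(net_benefit(y_true, risk, 0.5))  # 0.375
```

A decision curve repeats the net-benefit calculation over a range of thresholds and compares the model against the "treat all" and "treat none" strategies; "treat none" has a net benefit of zero by definition.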

Recalibration Procedure: When both models exhibited poor initial calibration, researchers applied logistic recalibration to adapt them to the local population. This process re-estimates the model's intercept and slope on the logit scale to better align predicted probabilities with observed outcomes in the new setting, significantly improving clinical utility as measured by DCA [14].
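
Logistic recalibration can be sketched as a two-parameter logistic regression of the observed outcome on the logit of the original predicted risk. The standard-library implementation below uses Newton-Raphson and is a minimal illustration under that assumption, not the code used in the study; the function names and the grouped toy data are hypothetical.

```python
import math

def logit(p):
    return math.log(p / (1 - p))

def expit(z):
    return 1 / (1 + math.exp(-z))

def fit_logistic_recalibration(y, p_old, n_iter=25):
    """Newton-Raphson fit of logit(P(y=1)) = a + b * logit(p_old)."""
    x = [logit(p) for p in p_old]
    a, b = 0.0, 1.0  # start from the original model (no adjustment)
    for _ in range(n_iter):
        mu = [expit(a + b * xi) for xi in x]
        # Gradient of the log-likelihood with respect to (a, b)
        g0 = sum(yi - mi for yi, mi in zip(y, mu))
        g1 = sum((yi - mi) * xi for yi, mi, xi in zip(y, mu, x))
        # Entries of the 2x2 information matrix (negative Hessian)
        w = [mi * (1 - mi) for mi in mu]
        h00 = sum(w)
        h01 = sum(wi * xi for wi, xi in zip(w, x))
        h11 = sum(wi * xi * xi for wi, xi in zip(w, x))
        det = h00 * h11 - h01 * h01
        a += (h11 * g0 - h01 * g1) / det
        b += (h00 * g1 - h01 * g0) / det
    return a, b

def recalibrate(p_old, a, b):
    return [expit(a + b * logit(p)) for p in p_old]

# Toy cohort in which the original model overestimates risk:
# predicted 20%, 50%, 80% versus observed 10%, 30%, 60%.
p_old = [0.2] * 10 + [0.5] * 10 + [0.8] * 10
y = [1] * 1 + [0] * 9 + [1] * 3 + [0] * 7 + [1] * 6 + [0] * 4

a, b = fit_logistic_recalibration(y, p_old)
p_new = recalibrate(p_old, a, b)
```

Because the transformation is monotone whenever the fitted slope is positive, the ranking of patients is unchanged, which is why recalibration improves calibration without altering the AUROC. Fixing `b` at 1 and estimating only `a` gives the simpler calibration-in-the-large update.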

Protocol 2: Stepwise Implementation Framework

The stepwise implementation framework demonstrated by the Danish colorectal cancer study provides a comprehensive protocol for integrating AI-based prediction models into clinical practice [28]. This approach systematically progresses from model development to impact assessment.

Registry-Based Model Development: The initial phase utilized national registry data from 18,403 patients undergoing curative-intent surgery for colorectal cancer. This large-scale data enabled identification of challenges related to clinical outcomes and supported robust model development with internal validation [28].

External Validation and Clinical Integration: The model underwent external validation using a retrospective clinical cohort of 806 patients from a single center. This step is critical for assessing performance in real-world clinical data before implementation [28]. The implementation used a predefined risk stratification system with four clinical risk groups (A, B, C, D) based on predicted 1-year mortality (≤1%, >1-≤5%, >5-≤15%, >15%) [28].
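
The risk stratification rule is easy to express in code. The sketch below maps a predicted 1-year mortality to one of the four groups using the cut-offs quoted above; that the letters A to D correspond to ascending risk is an assumption based on the order in which the thresholds are listed, and the function name is hypothetical.

```python
def risk_group(predicted_mortality):
    """Map a predicted 1-year mortality (as a proportion, 0-1) to group A-D.

    Cut-offs follow the text: <=1%, >1-<=5%, >5-<=15%, >15%.
    Assumes A is the lowest-risk group and D the highest.
    """
    if not 0.0 <= predicted_mortality <= 1.0:
        raise ValueError("predicted_mortality must be a proportion between 0 and 1")
    if predicted_mortality <= 0.01:
        return "A"
    if predicted_mortality <= 0.05:
        return "B"
    if predicted_mortality <= 0.15:
        return "C"
    return "D"

# Hypothetical predictions for four patients
groups = [risk_group(p) for p in (0.004, 0.03, 0.15, 0.22)]
print(groups)  # ['A', 'B', 'C', 'D']
```

Defining the boundaries in one place makes it explicit which group a patient on a threshold (for example exactly 5%) falls into, which matters when the groups trigger different perioperative pathways.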

Impact Assessment: The final phase evaluated clinical outcomes in a prospective cohort of 194 patients receiving personalized perioperative treatment based on model predictions. Researchers compared comprehensive complication indices and medical complication rates between the personalized treatment group and standard-care historical controls, demonstrating significant improvements in outcomes [28].

Visualization of Implementation Workflows

Clinical Prediction Model Implementation Pathway

[Diagram: CPM Implementation Pathway]

Model Validation and Integration Workflow

[Diagram: Validation & Integration Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Clinical Prediction Model Implementation

| Tool/Resource | Function in Implementation | Application Context |

|---|---|---|

| TRIPOD+AI Reporting Guidelines [26] [30] | Standardized reporting framework for prediction model studies; ensures transparent documentation of development and validation processes | Essential for publication and critical appraisal of model performance; required for study reproducibility |

| PROBAST Risk of Bias Tool [25] | Quality assessment instrument for evaluating potential biases in prediction model studies | Critical for systematic reviews of prediction models; helps identify methodological limitations |

| ColorBrewer & Data Color Picker [31] | Color palette selection tools for creating accessible data visualizations | Important for developing clinician-friendly interfaces and dashboards; ensures colorblind-accessible displays |

| Chroma.js Color Palette Helper [31] | Advanced color palette generation with built-in color blindness simulation | Useful for testing visualization accessibility in clinical decision support interfaces |

| Good Machine Learning Practice (GMLP) [26] | Framework of principles for responsible ML development in healthcare | Guides ethical implementation with emphasis on diverse data, transparency, and ongoing monitoring |

| Precondition-Postcondition Framework [29] | Healthcare-specific implementation framework based on software engineering concepts | Helps bridge gaps between model performance and clinical implementation through "required clinical parameters" and "expected clinical output" |

| Fairness Assessment Metrics [30] | Statistical measures to evaluate algorithmic bias across demographic groups | Critical for ensuring equitable model performance across diverse patient populations |

The implementation of clinical prediction models represents a complex interplay between statistical performance, clinical workflow integration, and ongoing validation. The evidence from recent studies indicates that successful implementation requires more than just a well-performing model; it demands careful attention to the specific context of deployment and continuous monitoring of real-world impact [28] [25] [14].

Hospital system implementations offer the advantage of deep integration with clinical workflows and electronic health records, enabling automated risk stratification at the point of care. The Danish colorectal cancer study demonstrates how this approach can yield significant clinical improvements, with a 37% reduction in medical complications when using personalized treatment pathways based on model predictions [28]. However, this implementation model requires substantial institutional investment, information technology resources, and rigorous local validation to ensure models perform adequately in the specific patient population [14].

Web application platforms provide greater accessibility and potentially lower implementation barriers, particularly for smaller healthcare organizations or research settings. These platforms can more easily disseminate models across multiple institutions but face challenges in maintaining consistent performance across diverse populations [25]. The concept of "targeted validation" becomes particularly crucial for web applications, as their broader reach necessitates careful assessment of performance in each distinct setting where they are deployed [25].

A critical consideration across all implementation platforms is the emerging focus on algorithmic fairness. As noted in recent research, "algorithmic fairness" requires that models do not produce biased or discriminatory outcomes, particularly against specific groups or populations [30]. This necessitates rigorous assessment of model performance across demographic subgroups and proactive mitigation of biases that could exacerbate healthcare disparities [30]. The finding that models like the Framingham cardiovascular risk score have shown differential performance across racial and ethnic groups underscores the importance of these fairness considerations [30].

Ultimately, the choice between hospital system integration and web application deployment depends on multiple factors, including the specific clinical use case, available technical infrastructure, required workflow integration, and resources for ongoing maintenance and validation. What remains constant across both approaches is the fundamental requirement for robust validation in the intended population and setting, continuous monitoring of real-world performance, and careful attention to the ethical implications of algorithm-guided clinical care.

Addressing Model Limitations: Recalibration, Updating, and Overcoming Bias

For clinical prediction models (CPMs), the work does not end with development and validation. Healthcare environments change: patient demographics evolve, clinical practices shift, and medical technology is updated, so models need proactive maintenance. Model updating, the process of refining an existing prediction model to maintain or improve its performance in a new setting or over time, is a crucial methodology for keeping CPMs fit for purpose. Despite its importance, a recent systematic review found that only 13% of clinically implemented models have undergone any form of updating, indicating a significant gap in current practice [5].

Using outdated or miscalibrated models can have severe consequences: incorrect risk assessments, suboptimal treatment decisions, and ultimately patient harm. This is particularly critical in fields like oncology and cardiology, where prediction models directly influence high-stakes therapeutic decisions. For instance, in the case of cisplatin-associated acute kidney injury (C-AKI), applying a model to a new population without updating resulted in poor calibration, though its discriminatory ability remained acceptable [11]. This section compares model updating strategies, offering methodological guidance and empirical evidence to support researchers, scientists, and drug development professionals in maintaining the validity and clinical utility of their prediction models over time.

When to Update: Identifying the Need for Model Revision

Determining the optimal timing for model updating is both an art and a science. While periodic updates at predetermined intervals represent one approach, a more nuanced strategy involves monitoring performance metrics to identify signs of deterioration. The degradation of model performance, often termed "calibration drift," occurs as the relationship between predictors and outcomes evolves due to changes in the underlying population or healthcare processes [32].

Several indicators suggest that a model may require updating. A noticeable decline in discrimination, measured by metrics such as the Area Under the Receiver Operating Characteristic Curve (AUROC), signals that the model is becoming less capable of distinguishing between patients who experience the outcome and those who do not. More commonly, models exhibit miscalibration, where predicted probabilities systematically diverge from observed outcomes. This can manifest as overestimation or underestimation of risk across the entire spectrum (calibration-in-the-large) or in specific risk ranges [33]. For example, when the PTP2019 model for chest pain assessment was applied to a Colombian cohort, it underestimated the probability of coronary artery disease by 59%, representing a significant calibration issue that necessitated updating [34].

The context of model deployment also influences updating decisions. Major structural changes in healthcare systems, updates to electronic health record platforms, shifts in clinical guidelines, or the emergence of new treatment modalities can all precipitate the need for model refinement [32]. Additionally, when extending a model to a new population with different characteristics or prevalence rates, updating becomes essential to ensure transportability. The "three triggers" for model updating can be summarized as: (1) statistical evidence of performance degradation, (2) significant changes in the clinical environment or population, and (3) planned expansion to new settings or populations.

How to Update: A Spectrum of Methodological Approaches

Model updating strategies exist on a continuum of complexity and intrusiveness, ranging from simple adjustments to extensive revisions. The choice of method depends on factors such as the availability of new data, the extent of performance degradation, and the resources available for model refinement.

Recalibration Methods

Recalibration represents the least intrusive updating approach, adjusting the model's baseline risk or coefficient scaling without altering the underlying predictor-outcome relationships. Intercept recalibration modifies only the model's baseline hazard or intercept term to align overall predicted probabilities with observed outcome rates in the new population. This approach preserves the original model's relative risk orderings while correcting for systematic over- or under-prediction [35]. Logistic recalibration takes this a step further by adjusting both the intercept and the overall slope of the linear predictor, effectively applying a uniform scaling factor to all coefficients [35]. This method addresses both systematic miscalibration and issues with the overall strength of predictor effects in the new setting.
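In symbols, the two recalibration methods can be sketched as follows. The notation is introduced here for illustration and is not taken from the cited sources: LP denotes the original model's linear predictor (its intercept plus the sum of coefficient-weighted predictors), and the hatted p is the updated predicted probability.

```latex
\begin{align*}
\text{Intercept recalibration:}\quad
  & \operatorname{logit}\bigl(\hat{p}_{\text{new}}\bigr) = \alpha + \mathrm{LP} \\
\text{Logistic recalibration:}\quad
  & \operatorname{logit}\bigl(\hat{p}_{\text{new}}\bigr) = \alpha + \beta\,\mathrm{LP}
\end{align*}
```

Here α corrects the baseline risk and β rescales all original coefficients by a common factor. Setting α = 0 and β = 1 recovers the original model, while an estimated β below 1 indicates that the original predictions were too extreme for the new population.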

The effectiveness of recalibration was demonstrated in the C-AKI prediction study, where both the Motwani and Gupta models exhibited poor initial calibration when applied to a Japanese population. After simple recalibration, their performance significantly improved, highlighting the value of this straightforward approach even for models developed in different countries [11].

Model Revision and Extension

When recalibration proves insufficient, more extensive updating may be necessary. Model revision involves re-estimating some or all of the original predictor coefficients while retaining the same set of variables [35]. This approach acknowledges that not only the baseline risk but also the relative importance of predictors may differ in the new context. Model extension introduces new predictors not included in the original model, potentially capturing additional prognostic information or accounting for novel risk factors that have emerged since the initial development [33]. This strategy is particularly valuable when scientific advancements have identified previously unrecognized predictors or when implementing the model in settings with additional available data.

For situations involving multiple existing models, meta-model approaches such as stacked regression offer a sophisticated updating framework. These methods combine predictions from several existing CPMs, weighting them according to their performance in the new dataset [35]. The hybrid method extends this concept by integrating stacked regression with covariate-specific revisions, effectively leveraging information from multiple source models while allowing for population-specific adjustments [35].
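The core idea of stacking can be illustrated with a minimal sketch in plain Python: the linear predictors of two existing models are entered as covariates in a new logistic regression, and the fitted coefficients act as the stacking weights. Everything here is an assumption made for the example, including the helper names (`solve`, `fit_logistic`) and the synthetic data. Published stacked-regression approaches commonly constrain the weights to be non-negative; that constraint is omitted here for brevity, so this is not the method of the cited work.

```python
import math
import random


def solve(A, b):
    """Solve the linear system A x = b by Gaussian elimination with pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[pivot] = M[pivot], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x


def fit_logistic(X, y, n_iter=100, tol=1e-10):
    """Unpenalized logistic regression by Newton-Raphson; X rows start with 1."""
    k = len(X[0])
    beta = [0.0] * k
    for _ in range(n_iter):
        p = [1.0 / (1.0 + math.exp(-sum(b * v for b, v in zip(beta, row))))
             for row in X]
        score = [sum((yi - pi) * row[j] for yi, pi, row in zip(y, p, X))
                 for j in range(k)]
        info = [[sum(pi * (1 - pi) * row[i] * row[j] for pi, row in zip(p, X))
                 for j in range(k)] for i in range(k)]
        step = solve(info, score)
        beta = [b + s for b, s in zip(beta, step)]
        if max(abs(s) for s in step) < tol:
            break
    return beta


# Synthetic data (assumption): model 1 tracks the true risk closely,
# model 2 is a noisier version of the same signal.
random.seed(1)
truth = [random.gauss(-1.0, 1.0) for _ in range(3000)]
lp1 = [t + random.gauss(0.0, 0.5) for t in truth]
lp2 = [t + random.gauss(0.0, 1.5) for t in truth]
y = [1 if random.random() < 1.0 / (1.0 + math.exp(-t)) else 0 for t in truth]

# Stacking: regress the outcome on both models' linear predictors.
X = [[1.0, a, b] for a, b in zip(lp1, lp2)]
intercept, w1, w2 = fit_logistic(X, y)
print("intercept:", round(intercept, 3), "w1:", round(w1, 3), "w2:", round(w2, 3))
```

With data generated this way, the fit should place more weight on the first model's linear predictor, since it is the less noisy of the two. In practice a statistical package would replace the hand-written solver.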

Table 1: Comparison of Clinical Prediction Model Updating Methods

| Method | Key Features | Data Requirements | Complexity | Best Use Cases |
|---|---|---|---|---|
| Intercept Recalibration | Adjusts only baseline risk; preserves relative predictor effects | Outcome prevalence in new population | Low | Overall risk over/under-prediction with preserved discrimination |
| Logistic Recalibration | Adjusts intercept and slope of linear predictor | Individual-level data for linear predictor calculation | Low to Moderate | Uniform miscalibration across risk spectrum |
| Model Revision | Re-estimates some or all predictor coefficients | Individual-level data with original predictors | Moderate | Changing relationships between predictors and outcome |
| Model Extension | Adds new predictors to existing model | Individual-level data including new variables | Moderate to High | Availability of novel, informative predictors |
| Stacked Regression | Combines multiple existing models with optimal weights | Individual-level data; multiple existing models | High | Multiple relevant source models with varying performance |
| Hybrid Method | Combines model stacking with covariate-specific revisions | Individual-level data; multiple existing models | High | Complex scenarios with multiple models and evolving predictor effects |

Comparative Performance of Updating Strategies

Empirical evidence supports the strategic application of model updating methods across diverse clinical scenarios. Research has demonstrated that the relative performance of different updating strategies depends critically on the sample size of the new dataset and the degree of heterogeneity between the development and implementation populations [35].

When the available sample size for updating is small (typically < 100 events), simpler approaches like intercept recalibration and model stacking tend to outperform more complex methods. These approaches make efficient use of limited information while avoiding overfitting. In contrast, with larger sample sizes (> 200 events), more extensive revision methods or even de novo model development may become feasible and potentially more effective [35].

The clinical context also influences the choice of updating strategy. In the C-AKI prediction study, researchers found that while both the Motwani and Gupta models required calibration adjustments for use in a Japanese population, their discriminatory performance for severe C-AKI differed significantly, with the Gupta model demonstrating superior performance (AUROC 0.674 vs. 0.594) [11]. This finding suggests that model selection before updating is crucial, as some models may have inherently better underlying structure for certain outcomes or populations.

A systematic comparison of updating methods in a full-scale track beam test, although from an engineering domain, offers methodological insights that may carry over to clinical models. The study found that the optimal updating approach depended on whether static or dynamic responses were targeted, with dynamic weight methods outperforming equal weight approaches by more effectively balancing multiple performance criteria [36]. An analogous trade-off arises in clinical settings, where models must simultaneously maintain calibration across multiple subgroups or outcomes.

Experimental Protocols for Model Updating

Implementing a robust model updating protocol requires systematic assessment of model performance and application of appropriate statistical methods. The following workflow outlines a comprehensive approach to model evaluation and updating:

Diagram Title: Clinical Prediction Model Updating Workflow

Performance Assessment Protocol

The initial phase involves comprehensive evaluation of model performance in the target population:

Data Collection: Assemble a representative dataset from the target population, ensuring complete capture of all predictor variables and outcomes specified in the original model. Clearly define outcome endpoints consistent with the original development study [11] [34].

Discrimination Assessment: Calculate the C-statistic (AUROC) to evaluate the model's ability to distinguish between patients who do and do not experience the outcome. Compare this to the performance reported in the original development study [11] [33].

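Calibration Assessment: Assess the agreement between predicted probabilities and observed outcomes using calibration plots, the calibration slope (ideal value 1), and calibration-in-the-large (ideal intercept 0). Formal goodness-of-fit tests (for example, the Hosmer-Lemeshow test) can be used to test for significant differences between predicted and observed risks [11] [33].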

Clinical Utility Evaluation: Perform decision curve analysis to evaluate the net benefit of the model across a range of clinically relevant decision thresholds [11] [33].
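The discrimination, calibration-in-the-large, and clinical utility steps above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the procedure used in the cited studies: the function names (`auroc`, `observed_expected_ratio`, `net_benefit`) and the eight-patient toy dataset are assumptions made for the example. The calibration slope is omitted because it requires fitting a logistic regression of the outcome on the linear predictor.

```python
# Illustrative sketch only: function names and toy data are hypothetical.

def auroc(y, p):
    """C-statistic: share of event/non-event pairs ranked correctly (ties count 0.5)."""
    events = [pi for yi, pi in zip(y, p) if yi == 1]
    non_events = [pi for yi, pi in zip(y, p) if yi == 0]
    concordant = sum((e > n) + 0.5 * (e == n) for e in events for n in non_events)
    return concordant / (len(events) * len(non_events))


def observed_expected_ratio(y, p):
    """Observed events divided by expected events (the sum of predicted risks)."""
    return sum(y) / sum(p)


def net_benefit(y, p, threshold):
    """Net benefit of treating patients whose predicted risk is >= threshold."""
    n = len(y)
    tp = sum(1 for yi, pi in zip(y, p) if pi >= threshold and yi == 1)
    fp = sum(1 for yi, pi in zip(y, p) if pi >= threshold and yi == 0)
    return tp / n - fp / n * threshold / (1 - threshold)


# Toy validation data: observed outcomes and the model's predicted risks.
y = [0, 0, 1, 0, 1, 1, 0, 1]
p = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]

print("AUROC:", auroc(y, p))
print("O/E ratio:", observed_expected_ratio(y, p))
print("Net benefit at 0.5 (model):", net_benefit(y, p, 0.5))
print("Net benefit at 0.5 (treat all):", net_benefit(y, [1.0] * len(y), 0.5))
```

On this toy data the AUROC is 0.75 and the net benefit at a 0.5 threshold is 0.25, compared with 0 for a treat-all strategy. An O/E ratio above 1 would indicate that the model underestimates risk, and a ratio below 1 that it overestimates risk. A full decision curve repeats the net benefit calculation across a range of thresholds.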

Updating Implementation Protocol

Based on the performance assessment, implement the appropriate updating method:

Intercept Recalibration: Fit a logistic regression model in which the original model's linear predictor enters as an offset (that is, with its coefficient fixed at 1), so that only a new intercept is estimated. The new intercept aligns the mean predicted risk with the observed outcome rate in the new dataset [35].

Logistic Recalibration: Fit a logistic regression model with the original linear predictor as the only covariate, allowing both the intercept and slope to be freely estimated. This adjusts for both overall risk miscalibration and issues with the overall strength of the predictor effects [35].

Model Revision: Refit the model with the original predictors, re-estimating some or all coefficients. Variable selection methods may be applied to identify predictors requiring revision, particularly when sample size is limited [35].

Model Extension: Incorporate new predictors alongside the original variables, using penalized regression or other methods to prevent overfitting when adding multiple new terms [33] [35].
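The two recalibration steps above can be sketched with a small Newton-Raphson fit in plain Python. This is a minimal sketch under stated assumptions, not a reference implementation: the function names (`expit`, `recalibrate`) and the synthetic cohort are hypothetical, and in practice a statistical package's logistic regression (with an offset term for the intercept-only case) would normally be used instead.

```python
import math
import random


def expit(z):
    """Inverse logit."""
    return 1.0 / (1.0 + math.exp(-z))


def recalibrate(lp, y, fit_slope, n_iter=100, tol=1e-10):
    """Fit logit(p) = a + b * lp by Newton-Raphson.

    fit_slope=False keeps b fixed at 1 (intercept recalibration, lp as offset);
    fit_slope=True estimates both a and b (logistic recalibration).
    """
    a, b = 0.0, 1.0
    for _ in range(n_iter):
        p = [expit(a + b * x) for x in lp]
        w = [pi * (1.0 - pi) for pi in p]
        g_a = sum(yi - pi for yi, pi in zip(y, p))  # score for the intercept
        h_aa = sum(w)
        if fit_slope:
            g_b = sum((yi - pi) * x for yi, pi, x in zip(y, p, lp))
            h_ab = sum(wi * x for wi, x in zip(w, lp))
            h_bb = sum(wi * x * x for wi, x in zip(w, lp))
            det = h_aa * h_bb - h_ab * h_ab
            step_a = (h_bb * g_a - h_ab * g_b) / det
            step_b = (h_aa * g_b - h_ab * g_a) / det
        else:
            step_a, step_b = g_a / h_aa, 0.0
        a, b = a + step_a, b + step_b
        if max(abs(step_a), abs(step_b)) < tol:
            break
    return a, b


# Synthetic cohort (assumption): the original linear predictor is too extreme
# for the new population (true slope 0.7) and its baseline is shifted.
random.seed(0)
lp = [random.gauss(-1.0, 1.0) for _ in range(2000)]
y = [1 if random.random() < expit(-0.5 + 0.7 * x) else 0 for x in lp]

a_int, _ = recalibrate(lp, y, fit_slope=False)
a_log, b_log = recalibrate(lp, y, fit_slope=True)
print("Intercept recalibration: a =", round(a_int, 3))
print("Logistic recalibration:  a =", round(a_log, 3), "b =", round(b_log, 3))
```

Updated predictions are then `expit(a_int + lp)` or `expit(a_log + b_log * lp)`. In both cases the fitted model reproduces the observed number of events in the updating dataset, which is what corrects calibration-in-the-large.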

Table 2: Research Reagent Solutions for Model Updating Studies

| Tool/Resource | Function | Application Context |
|---|---|---|
| R Statistical Software | Open-source environment for statistical computing and graphics | Primary platform for implementing model updating procedures, performance assessment, and visualization |
| Python Scikit-learn | Machine learning library with comprehensive model evaluation tools | Alternative platform for updating implementations, particularly for machine learning-based prediction models |
| PROBAST (Prediction Model Risk of Bias Assessment Tool) | Structured tool for assessing methodological quality of prediction model studies | Critical for evaluating existing models before selection for updating or implementation |
| TRIPOD (Transparent Reporting of a Multivariable Prediction Model) | Reporting guideline for prediction model studies | Ensures comprehensive reporting of updating studies, enhancing reproducibility and critical appraisal |