Performance Evaluation of Hyperparameter Optimization Algorithms for Chemical Datasets: A Guide for Drug Development

This article provides a comprehensive evaluation of Hyperparameter Optimization (HPO) algorithms tailored for machine learning applications on chemical datasets, a critical task in drug discovery and materials science.

Abstract

This article provides a comprehensive evaluation of Hyperparameter Optimization (HPO) algorithms tailored for machine learning applications on chemical datasets, a critical task in drug discovery and materials science. We explore the foundational importance of HPO in boosting the predictive accuracy of models for molecular property prediction and reaction optimization. The content systematically reviews and compares key HPO methodologies—from Bayesian Optimization and Hyperband to novel hybrid and LLM-enhanced strategies—detailing their application on cheminformatics benchmarks. We further address common pitfalls and optimization techniques for handling the high-dimensional, noisy, and often small-scale data typical in chemistry. Finally, we present a rigorous framework for validating and comparing HPO performance, synthesizing evidence from recent literature to offer actionable recommendations for researchers and development professionals aiming to build more reliable and efficient predictive models.

Why Hyperparameter Optimization is a Game-Changer for Cheminformatics

The Critical Role of HPO in Molecular Property Prediction and Drug Discovery

In the landscape of modern drug discovery, the acronym HPO represents two complementary pillars of computational advancement: Hyperparameter Optimization for machine learning models and the Human Phenotype Ontology for biological knowledge representation. Both play indispensable yet distinct roles in accelerating molecular property prediction and therapeutic development. Hyperparameter Optimization refers to the automated process of tuning the configuration settings of machine learning algorithms to maximize their predictive performance on chemical datasets [1] [2]. This technical HPO has become increasingly critical as complex models like Graph Neural Networks (GNNs) demonstrate exceptional capability in representing molecular structures but exhibit high sensitivity to their architectural and training parameters [1]. Simultaneously, the Human Phenotype Ontology provides a standardized vocabulary of human phenotypic abnormalities, creating a computational framework that links disease manifestations to their genetic underpinnings [3] [4]. This biological HPO enables researchers to quantify disease similarities, annotate clinical findings, and ultimately bridge the gap between molecular-level predictions and patient-level outcomes.

The integration of both HPO concepts creates a powerful synergy for drug discovery. While hyperparameter optimization ensures the accuracy and reliability of predictive models for chemical properties, the Human Phenotype Ontology provides the clinical context necessary for translating these predictions into therapeutic insights. This article examines their interconnected roles through comparative performance data, experimental protocols, and practical implementation frameworks that researchers can leverage in their discovery pipelines.

HPO Algorithm Performance: Quantitative Comparisons

Benchmarking Hyperparameter Optimization Methods

Hyperparameter optimization algorithms demonstrate significant variability in both computational efficiency and predictive performance across molecular property prediction tasks. The table below synthesizes key findings from comprehensive benchmarking studies:

Table 1: Performance Comparison of HPO Algorithms for Molecular Property Prediction

| HPO Algorithm | Computational Efficiency | Prediction Accuracy | Key Strengths | Molecular Property Applications |
|---|---|---|---|---|
| Hyperband | Highest [2] | Optimal/Nearly Optimal [2] | Exceptional computational efficiency through adaptive resource allocation | Melt index prediction, glass transition temperature [2] |
| Bayesian Optimization | Moderate [2] [5] | High [2] [5] | Effective balance between exploration and exploitation; strong theoretical foundations | ADME properties, quantum chemical properties [2] |
| Random Search | Moderate [2] | Variable [2] | Simple implementation; better than grid search for high-dimensional spaces | Polymer properties, solubility prediction [2] |
| Grid Search | Lowest [5] | High (but computationally prohibitive) [5] | Exhaustive coverage of search space | Smaller hyperparameter spaces [5] |

Recent research indicates that the Hyperband algorithm achieves superior computational efficiency while maintaining optimal or nearly optimal prediction accuracy for molecular property prediction (MPP) tasks [2]. In direct comparisons, Hyperband significantly outperformed both random search and Bayesian optimization in time-to-solution without sacrificing predictive accuracy, making it particularly valuable for resource-intensive deep neural networks applied to chemical datasets [2].

For healthcare applications including heart failure outcome prediction, Bayesian Optimization has demonstrated exceptional computational efficiency, consistently requiring less processing time than both Grid Search and Random Search methods while maintaining competitive predictive performance [5]. This efficiency advantage becomes increasingly significant when optimizing multiple hyperparameters across large chemical datasets.

Experimental Protocols for HPO Evaluation

Standardized experimental protocols are essential for meaningful comparison of HPO techniques in molecular property prediction. Based on recent benchmarking studies, the following methodology provides a robust framework for evaluation:

Dataset Preparation and Preprocessing

- Select diverse molecular property datasets representing different prediction challenges (e.g., quantum chemical properties from QM9, solubility data from ESOL/FreeSolv, ADME parameters) [2] [6]

- Apply rigorous data consistency assessment using tools like AssayInspector to identify distributional misalignments and annotation discrepancies between sources [7]

- Implement appropriate data splitting strategies that account for temporal, structural, or experimental biases to prevent overoptimistic performance estimates [6]

- For Human Phenotype Ontology (HPO) phenotype classification, extract and normalize phenotypic descriptions from clinical text using NLP pipelines like John Snow Labs' Healthcare NLP [4]

Model Training and Validation Configuration

- Define search spaces for hyperparameters encompassing both architectural (number of layers, units per layer, activation functions) and optimization (learning rate, batch size, dropout rates) parameters [2]

- Implement parallel execution of HPO trials using platforms like KerasTuner or Optuna to reduce optimization time [2]

- Employ appropriate validation strategies such as k-fold cross-validation with molecular scaffolds or temporal splits to assess generalization capability [5]

- For phenotype-driven prediction, incorporate HPO-based disease similarity metrics using semantic similarity measures derived from the Human Phenotype Ontology [3] [8]

Performance Assessment Metrics

- Utilize multiple evaluation metrics including Mean Absolute Error (MAE) for regression tasks and Area Under the Curve (AUC) for classification tasks [2] [6]

- Report both optimization efficiency (trials-to-convergence, computational time) and final model performance [2]

- Employ statistical significance testing (e.g., paired t-tests) to validate performance differences between HPO approaches [6]
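The metrics listed above can be computed with a few lines of standard-library Python. The sketch below is illustrative only: the function names are hypothetical, the AUC uses the pairwise-ranking definition (ties count as one half), and the paired t-test returns just the t statistic and degrees of freedom, since converting them to a p-value needs a t-distribution table or a statistics package.

```python
import math
from statistics import mean, stdev


def mean_absolute_error(y_true, y_pred):
    """MAE for regression tasks."""
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


def roc_auc(labels, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def paired_t_statistic(errors_a, errors_b):
    """Paired t statistic for per-fold (or per-dataset) errors of two HPO methods."""
    diffs = [a - b for a, b in zip(errors_a, errors_b)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / math.sqrt(n))
    return t, n - 1  # statistic and degrees of freedom
```

Pairing matters here: both HPO methods should be scored on the same folds or datasets so that each difference compares like with like.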

Visualization of HPO Workflows

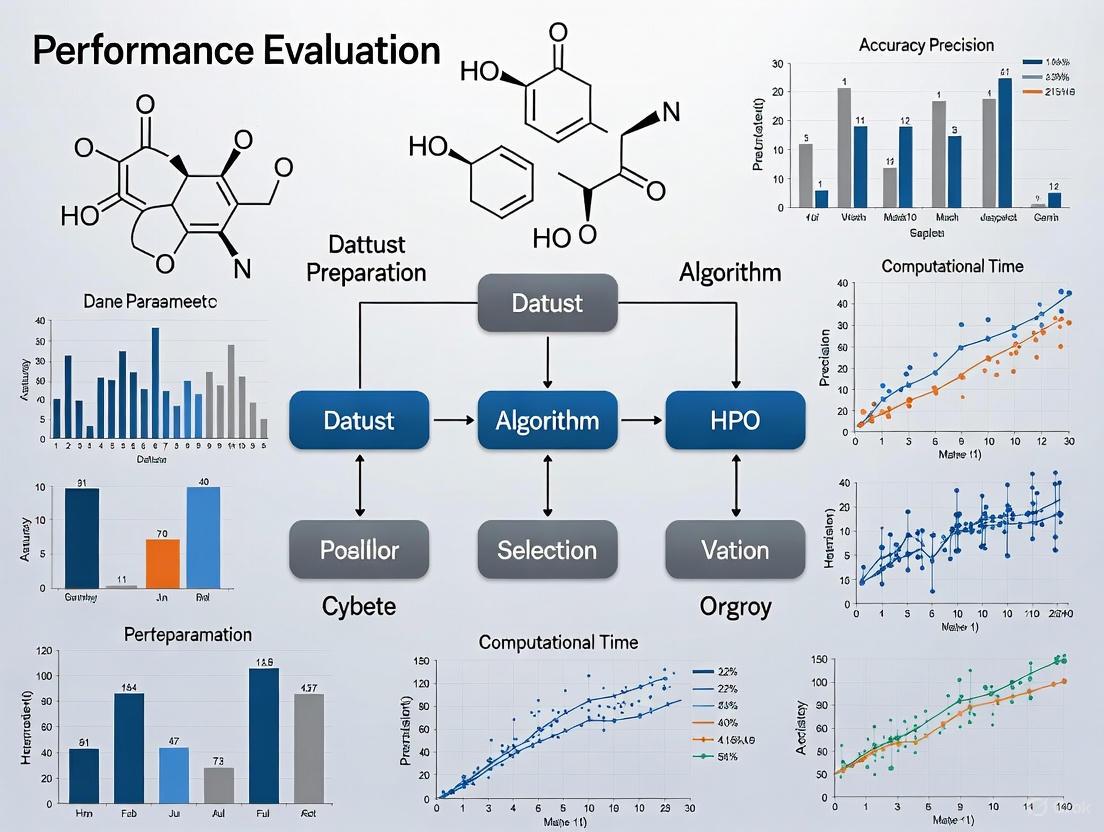

Integrated HPO for Drug Discovery Pipeline

The following diagram illustrates the comprehensive workflow integrating both hyperparameter optimization and Human Phenotype Ontology in molecular property prediction for drug discovery:

Integrated HPO Workflow for Drug Discovery

This unified pipeline demonstrates how computational HPO (green nodes) and biological HPO (red nodes) converge to support candidate ranking and prioritization (blue node). The workflow begins with parallel processes: hyperparameter optimization of machine learning models on compound libraries, and HPO annotation of clinical phenotype data to construct disease similarity networks. These streams integrate to enhance candidate prioritization through both predicted molecular properties and phenotypic relevance.

Bias Mitigation in Molecular Property Prediction

Experimental biases in chemical datasets significantly impact model performance. The following diagram outlines approaches for bias mitigation in molecular property prediction:

Bias Mitigation in Chemical Data

Recent studies have successfully adapted techniques from causal inference, specifically Inverse Propensity Scoring (IPS) and Counter-factual Regression (CFR), combined with Graph Neural Networks to address experimental biases in chemical data [6]. These approaches significantly improve prediction accuracy on the broader chemical space by accounting for the non-random sampling processes inherent in experimental data collection [6].
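The core idea of Inverse Propensity Scoring can be shown with a weighted error metric. This is a minimal sketch under stated assumptions, not the method of [6]: the propensities (the probability that each molecule was selected for measurement) are assumed to come from a separate model, the function name is hypothetical, and the clipping threshold is a common variance-control choice added here for illustration.

```python
def ips_weighted_mae(y_true, y_pred, propensities, clip=0.05):
    """Self-normalized, inverse-propensity-weighted mean absolute error.

    Molecules that were unlikely to be measured (low propensity) receive
    larger weights, so the estimate better reflects the broader chemical space.
    """
    # Clip propensities from below so a few tiny values cannot dominate.
    weights = [1.0 / max(p, clip) for p in propensities]
    weighted_error = sum(w * abs(t - p) for w, t, p in zip(weights, y_true, y_pred))
    return weighted_error / sum(weights)
```

With equal propensities the weights cancel and the function reduces to the ordinary MAE, which is a quick sanity check for any implementation.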

Research Reagent Solutions: Essential Tools for HPO Implementation

Table 2: Key Research Tools and Resources for HPO Implementation

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| KerasTuner [2] | Software Library | Hyperparameter optimization | User-friendly HPO for deep learning models; supports Hyperband, Bayesian Optimization |
| Optuna [2] | Software Framework | Hyperparameter optimization | Flexible HPO with Bayesian-Hyperband combination capabilities |
| AssayInspector [7] | Data Quality Tool | Data consistency assessment | Identifies dataset misalignments and biases prior to modeling |
| John Snow Labs NLP [4] | NLP Pipeline | HPO phenotype extraction | Automates extraction and coding of phenotype mentions from clinical text |
| Human Phenotype Ontology [3] [4] | Ontology Database | Phenotype standardization | Structured vocabulary for phenotypic abnormalities with over 18,000 terms |
| ChemRAG-Bench [9] | Evaluation Benchmark | RAG system assessment | Comprehensive benchmark for chemistry-focused retrieval-augmented generation |
| Therapeutic Data Commons (TDC) [7] | Data Resource | Molecular property datasets | Curated benchmarks for ADME and physicochemical property prediction |

Discussion: Integrated HPO Approaches for Next-Generation Drug Discovery

The convergence of hyperparameter optimization and Human Phenotype Ontology represents a paradigm shift in computational drug discovery. Research demonstrates that automated HPO techniques can yield substantial improvements in prediction accuracy—addressing the critical sensitivity of GNNs to architectural choices and hyperparameters [1] [2]. Simultaneously, the Human Phenotype Ontology enables computational analysis of phenotypic data at scale, capturing disease similarities in a biologically meaningful way that directly informs target prioritization [3] [8].

The emerging frontier lies in integrating these approaches through Retrieval-Augmented Generation (RAG) systems and causality-aware modeling. Recent developments like ChemRAG-Bench demonstrate how external knowledge sources can be systematically incorporated to enhance reasoning in chemical domains [9]. These systems address fundamental challenges in chemical data, including experimental biases [6] and dataset discrepancies [7], which have traditionally limited model generalizability.

For researchers implementing these approaches, the evidence supports several strategic recommendations: (1) prioritize Hyperband for computationally efficient hyperparameter optimization on large molecular datasets [2]; (2) implement rigorous data consistency assessment before model training to address dataset misalignments [7]; (3) leverage Human Phenotype Ontology-based disease similarities for target identification and validation [8]; and (4) adopt bias mitigation techniques like IPS and CFR when working with experimental data subject to selection biases [6]. As these methodologies continue to mature, their integration promises to significantly accelerate the transformation of chemical data into therapeutic insights.

The application of machine learning (ML) in chemistry has revolutionized domains ranging from drug discovery to materials science. However, the performance of these ML models is profoundly sensitive to their hyperparameters, the configuration settings that govern the learning process itself. The process of selecting optimal values, known as Hyperparameter Optimization (HPO), is therefore not merely a technical pre-processing step but a critical determinant of success, especially when dealing with complex chemical datasets that are often expensive to generate and inherently noisy. This guide provides a comparative analysis of HPO algorithms, objectively evaluating their performance, computational demands, and suitability for chemical ML applications to inform researchers and drug development professionals.

Hyperparameter Optimization Algorithms: A Comparative Framework

Several HPO strategies exist, each with a distinct approach to navigating the hyperparameter search space. The three most prevalent methods—Grid Search, Random Search, and Bayesian Optimization—form the core of this comparison.

Grid Search (GS): A traditional model-free algorithm, Grid Search evaluates every combination of hyperparameter values within a pre-defined grid [5]. This brute-force approach is simple to implement and can be effective for small search spaces, but it becomes computationally prohibitive as the number of hyperparameters increases [5], since the number of combinations is the product of the number of candidate values for each hyperparameter.

Random Search (RS): This method randomly samples hyperparameter combinations from the search space [5]. It often finds good configurations faster than Grid Search, especially when only a subset of hyperparameters significantly impacts model performance [5]. It is less computationally expensive than GS for large search spaces but can still be inefficient [5], because each sample is drawn without using the results of earlier trials.

Bayesian Optimization (BO): Also referred to as Bayesian Search in this guide, BO constructs a probabilistic surrogate model, typically a Gaussian Process (GP), to approximate the objective function (e.g., validation loss) [5] [10]. It uses an acquisition function to select the next hyperparameters to evaluate by balancing exploration (probing uncertain regions) and exploitation (refining known good regions) [10]. This makes BO highly sample-efficient, requiring fewer evaluations to find an optimum, which is critical for expensive-to-train models or when experimental data is limited [5] [11].
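The difference between the two model-free strategies is easiest to see side by side. The sketch below is illustrative: `toy_validation_loss` is a hypothetical stand-in for training and validating a real model, and the hyperparameter names and ranges are assumptions chosen for the example.

```python
import itertools
import math
import random


def toy_validation_loss(learning_rate, n_layers):
    """Hypothetical objective with its minimum at learning_rate=1e-3, n_layers=4."""
    return (math.log10(learning_rate) + 3.0) ** 2 + 0.1 * (n_layers - 4) ** 2


def grid_search(grid):
    """Evaluate every combination in the grid; cost is the product of list lengths."""
    names = list(grid)
    best = None
    for values in itertools.product(*(grid[name] for name in names)):
        config = dict(zip(names, values))
        loss = toy_validation_loss(**config)
        if best is None or loss < best[0]:
            best = (loss, config)
    return best


def random_search(n_trials, seed=0):
    """Sample configurations independently; cost is fixed by n_trials."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        config = {
            "learning_rate": 10 ** rng.uniform(-5, -1),  # log-uniform sampling
            "n_layers": rng.randint(2, 8),
        }
        loss = toy_validation_loss(**config)
        if best is None or loss < best[0]:
            best = (loss, config)
    return best
```

Adding a third hyperparameter with five candidate values multiplies the grid search cost by five, while the random search budget stays at `n_trials`.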

Performance Comparison on Chemical and Related Datasets

Empirical studies across various domains, including direct applications in chemistry and material science, consistently highlight the trade-offs between these HPO methods.

Predictive Performance and Robustness

A comparative analysis on a real-world heart failure prediction dataset demonstrated the interplay between model selection and HPO strategy. The study evaluated Support Vector Machine (SVM), Random Forest (RF), and eXtreme Gradient Boosting (XGBoost) models optimized via GS, RS, and BO [5].

Table 1: Model Performance Post HPO on a Clinical Dataset [5]

| ML Algorithm | Optimization Method | Best Accuracy | Post-CV AUC Change | Note on Robustness |
|---|---|---|---|---|
| Support Vector Machine (SVM) | Bayesian Search | 0.6294 | -0.0074 | Potential for overfitting |
| Random Forest (RF) | Bayesian Search | Not Reported | +0.03815 | Most robust model |
| XGBoost | Bayesian Search | Not Reported | +0.01683 | Moderate improvement |

The results indicated that while an SVM model achieved the highest single-run accuracy, the RF model exhibited superior robustness after 10-fold cross-validation, showing the greatest average improvement in Area Under the Curve (AUC) [5]. This underscores the importance of validating HPO results through rigorous techniques like cross-validation to ensure generalizability.

In a different domain, a study that tuned a Least Squares Boosting (LSBoost) model to predict the mechanical properties of 3D-printed nanocomposites found that both Bayesian Optimization and Genetic Algorithms (GA) performed strongly [12]. For the modulus of elasticity, BO achieved an R² of 0.9776, while GA outperformed the other methods for yield strength and toughness predictions [12].

Computational Efficiency

Processing time is a critical practical consideration for HPO. In the heart failure outcome prediction study, Bayesian Search demonstrated superior computational efficiency, consistently requiring less processing time than both Grid and Random Search methods [5]. This efficiency, combined with its sample-efficiency, makes BO particularly attractive for complex models and large hyperparameter spaces.

Experimental Protocols for HPO Evaluation

To ensure fair and meaningful comparisons between HPO methods, researchers must adhere to standardized experimental protocols. The following methodology, synthesized from the analyzed studies, provides a robust framework.

Dataset Preparation and Preprocessing

- Data Source and Splitting: The dataset should be split into training, validation, and test sets. For instance, one study on diabetes classification used an 80/20 split for training and testing [13]. To prevent data leakage, one protocol reserves 20% of the initial data (or a minimum of four data points) as an external test set, selected with an "even" distribution to ensure balanced representation [11].

- Handling Missing Values: Techniques like mean imputation, Multivariate Imputation by Chained Equations (MICE), k-Nearest Neighbor (kNN) imputation, or Random Forest imputation can be applied to continuous features in which at most 50% of values are missing [5]. Features with more than 50% missing values are typically excluded.

- Data Transformation: Categorical features are often encoded using one-hot encoding [5]. Continuous features are standardized using techniques like z-score normalization to have a mean of 0 and a standard deviation of 1 [5].
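The preprocessing steps above can be sketched with standard-library Python. The helper names are hypothetical and the example uses mean imputation only; MICE, kNN, and Random Forest imputation need dedicated libraries. The z-score here uses the population standard deviation, which is an assumption about the convention.

```python
from statistics import mean, pstdev


def missing_fraction(column):
    """Share of missing (None) entries, used to decide whether to drop a feature."""
    return sum(v is None for v in column) / len(column)


def impute_mean(column):
    """Replace missing entries with the mean of the observed values."""
    fill = mean(v for v in column if v is not None)
    return [fill if v is None else v for v in column]


def zscore(column):
    """Standardize to mean 0 and standard deviation 1."""
    mu, sigma = mean(column), pstdev(column)
    if sigma == 0:
        return [0.0 for _ in column]  # constant feature carries no information
    return [(v - mu) / sigma for v in column]


def one_hot(column):
    """Encode a categorical column; returns the encoded rows and the category order."""
    categories = sorted(set(column))
    rows = [[1 if v == c else 0 for c in categories] for v in column]
    return rows, categories
```

In a real pipeline the imputation mean, the scaling statistics, and the category list should be computed on the training split only and then reused on validation and test data, otherwise information leaks across the split.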

Hyperparameter Optimization and Validation Workflow

The core of the HPO evaluation process involves iteratively tuning the models and validating their performance. The workflow below outlines the key stages of this protocol.

Diagram 1: HPO evaluation workflow

- Define Search Space: The first step is to define the boundaries and specific values for each hyperparameter to be optimized [14].

- Iterative Tuning & Validation: The chosen HPO method (GS, RS, or BO) is used to select hyperparameter configurations, which are evaluated on the training and validation sets. This process repeats until a stopping condition is met (e.g., a maximum number of iterations or performance convergence) [5] [10].

- Mitigating Overfitting: To prevent overfitting during HPO, a combined metric using cross-validation can be incorporated directly into the optimization objective. One approach uses a combined Root Mean Squared Error (RMSE) from a 10-times repeated 5-fold CV (for interpolation) and a selective sorted 5-fold CV (for extrapolation) [11].

- Final Evaluation: The best hyperparameter set identified is used to train a final model on the entire training set, which is then evaluated once on the held-out test set to report unbiased performance metrics [11].
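The four stages above can be sketched end to end on a toy problem. Everything here is an illustrative assumption: the model is a one-dimensional ridge regression with a single hyperparameter `alpha`, the test set is chosen at random rather than with the "even" selection of [11], and only the repeated 5-fold part of the combined metric is implemented (the sorted extrapolation CV is omitted).

```python
import math
import random
from statistics import mean


def fit_ridge_1d(xs, ys, alpha):
    """Closed-form 1-D ridge regression; alpha shrinks the slope toward zero."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / (sxx + alpha)
    return slope, my - slope * mx


def rmse(model, xs, ys):
    slope, intercept = model
    return math.sqrt(mean((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)))


def cv_rmse(xs, ys, alpha, k=5, repeats=10, seed=0):
    """Mean RMSE over a repeated k-fold cross-validation."""
    rng = random.Random(seed)
    scores = []
    for _ in range(repeats):
        order = list(range(len(xs)))
        rng.shuffle(order)
        for fold in (order[i::k] for i in range(k)):
            held_out = set(fold)
            train = [i for i in order if i not in held_out]
            model = fit_ridge_1d([xs[i] for i in train], [ys[i] for i in train], alpha)
            scores.append(rmse(model, [xs[i] for i in fold], [ys[i] for i in fold]))
    return mean(scores)


def tune_and_evaluate(xs, ys, alphas, test_fraction=0.2, seed=0):
    """Hold out a test set, pick alpha by CV on the rest, then test exactly once."""
    rng = random.Random(seed)
    order = list(range(len(xs)))
    rng.shuffle(order)
    n_test = max(4, round(test_fraction * len(xs)))  # 20% or at least four points
    test_idx, train_idx = order[:n_test], order[n_test:]
    train_x, train_y = [xs[i] for i in train_idx], [ys[i] for i in train_idx]
    best_alpha = min(alphas, key=lambda a: cv_rmse(train_x, train_y, a))
    final_model = fit_ridge_1d(train_x, train_y, best_alpha)
    test_rmse = rmse(final_model, [xs[i] for i in test_idx], [ys[i] for i in test_idx])
    return best_alpha, test_rmse
```

The test set is never seen by `cv_rmse`, so the reported test RMSE is not influenced by the hyperparameter choice. Replacing the `min` over a fixed list with a random or Bayesian sampler changes the search strategy without changing the validation logic.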

The Scientist's Toolkit: Essential Reagents for HPO in Chemical ML

Successful HPO in chemical ML relies on a combination of software, algorithms, and methodological practices. The following table details key "research reagents" for this field.

Table 2: Essential Research Reagents for HPO in Chemical ML

| Tool/Technique | Category | Function & Application |
|---|---|---|
| Gaussian Process (GP) | Surrogate Model | Models the objective function as a distribution over functions; the core of many BO frameworks for capturing uncertainty [10]. |
| Expected Improvement (EI) | Acquisition Function | Guides BO by selecting points that offer the highest expected improvement over the current best value [10]. |
| Thompson Sampling (TSEMO) | Acquisition Function | An algorithm for multi-objective BO that uses Thompson sampling, effective for optimizing multiple, often competing, objectives [10]. |
| k-Fold Cross-Validation | Validation Protocol | Assesses model generalizability and mitigates overfitting by rotating the validation set across k partitions of the training data [5]. |
| Summit | Software Framework | A Python toolkit for optimizing chemical reactions, which includes benchmarks and implementations of various BO strategies like TSEMO [10]. |
| ROBERT | Software Workflow | An automated program for building ML models from CSV files, performing data curation, and Bayesian hyperparameter optimization tailored for low-data regimes [11]. |
| Multi-fidelity Modeling | Advanced BO Technique | Enhances BO efficiency by incorporating data from cheaper, lower-fidelity experiments (e.g., computational simulations) to guide optimization of high-fidelity experiments [10]. |
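To make the Expected Improvement entry concrete, the sketch below evaluates the standard closed-form EI for a Gaussian surrogate prediction. It assumes a minimization objective such as validation loss; the function names, the exploration margin `xi`, and the candidate list are illustrative, and the surrogate's mean and standard deviation are taken as given rather than fitted here.

```python
import math


def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best_loss, xi=0.0):
    """EI for minimization, given the surrogate's mean and standard deviation."""
    improvement = best_loss - mu - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)  # no uncertainty: improvement is deterministic
    z = improvement / sigma
    return improvement * normal_cdf(z) + sigma * normal_pdf(z)


def pick_next(candidates, best_loss):
    """Index of the (mu, sigma) candidate with the highest expected improvement."""
    scores = [expected_improvement(mu, sigma, best_loss) for mu, sigma in candidates]
    return scores.index(max(scores))
```

With a current best loss of 1.0, `pick_next([(1.0, 0.1), (1.2, 1.0), (0.9, 0.0)], 1.0)` selects the second candidate: its predicted mean is the worst of the three, but its large uncertainty gives it the highest expected improvement, which is the exploration side of the trade-off.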

Advanced Trends: Integrating Reasoning and Knowledge into BO

The field of HPO is evolving beyond pure statistical methods. A significant advancement is the integration of Large Language Models (LLMs) with Bayesian Optimization to create more intelligent and interpretable frameworks.

Reasoning BO is a novel framework that leverages the reasoning power and domain knowledge of LLMs to guide the sampling process in BO [15]. It uses a multi-agent system and knowledge graphs for online knowledge accumulation, allowing the system to generate and refine scientific hypotheses based on prior results [15]. This approach addresses key limitations of traditional BO, such as its tendency to get stuck in local optima and its lack of interpretability.

For example, in a chemical reaction yield optimization task (Direct Arylation), the Reasoning BO framework achieved a final yield of 94.39%, drastically outperforming traditional BO, which achieved only 76.60% [15]. The framework's ability to leverage domain knowledge and reason about experiments makes it particularly promising for complex optimization challenges in chemical synthesis and drug discovery.

Diagram 2: Reasoning BO framework

The choice of a hyperparameter optimization algorithm is a fundamental decision that directly impacts the performance, cost, and reliability of machine learning models in chemical research. While Grid Search offers simplicity for small spaces, and Random Search provides a stochastic upgrade, Bayesian Optimization consistently demonstrates superior sample efficiency and is the de facto standard for complex, expensive optimization tasks. Emerging paradigms like Reasoning BO, which marry Bayesian efficiency with the contextual knowledge of LLMs, represent the cutting edge, offering not only better performance but also much-needed interpretability. For researchers building predictive models for drug discovery or chemical synthesis, a rigorous HPO protocol incorporating robust validation and leveraging these advanced frameworks is no longer optional—it is essential for success.

Chemoinformatics, the application of informatics methods to solve chemical problems, has become a cornerstone of modern chemical research, particularly in fields like drug discovery and materials science [16] [17]. The field integrates chemistry, computer science, and data analysis to manage the increasing volume and complexity of chemical data generated by contemporary techniques such as high-throughput screening and automated synthesis [17]. Despite significant technological progress, researchers and development professionals consistently grapple with three persistent challenges that impede the efficient development and deployment of predictive models: scalability, data noise, and high-dimensional search spaces [18] [19].

The scalability challenge arises from the need to process and analyze enormous chemical datasets and explore vast chemical spaces, which can encompass billions of molecules [18]. Simultaneously, the issue of data noise—stemming from experimental errors, biological variability, and data extraction inconsistencies—contaminates datasets and can severely compromise the reliability of predictive models [20] [18] [21]. Furthermore, the optimization of machine learning models, particularly deep neural networks for molecular property prediction, involves navigating high-dimensional hyperparameter search spaces, a process that is both computationally demanding and critical for achieving high predictive accuracy [2]. This article examines these interconnected challenges, compares solutions using experimental data, and provides detailed methodologies for researchers navigating this complex landscape.

The Scalability Challenge: Handling the Data Deluge

The exponential growth in chemical data volume presents a primary scalability challenge. Public repositories like PubChem now contain over 60 million compounds, while commercial databases such as SciFinder boast more than 111 million unique substances [18]. Efficiently storing, retrieving, and processing this deluge of information requires robust database technologies and efficient algorithms [22]. The challenge is twofold: first, to manage the sheer number of compounds, and second, to handle the complexity of the data associated with each molecule, which can include structural, property, and biological activity information [18] [19].

Scaling neural network predictions, a common task in cheminformatics, demands a strategic combination of model optimization, hardware utilization, and deployment strategies [22]. For resource-intensive tasks, maintaining large computational resources on standby is neither cost-effective nor environmentally sustainable. Instead, modern solutions emphasize on-demand scaling, where resources are dynamically allocated based on workload, scaling up during high request loads and down during periods of low activity [22]. Implementation frameworks such as Kubernetes with Horizontal Pod Autoscaler (HPA) and containerization technologies like Docker facilitate this dynamic scaling, enabling efficient distribution of requests across available resources [22].
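The on-demand scaling behaviour can be illustrated with the replica calculation documented for the Kubernetes Horizontal Pod Autoscaler, where the desired replica count is the current count scaled by the ratio of the observed metric to its target, rounded up. The sketch below is a simplified illustration: the function name and default bounds are assumptions, and the real controller applies further rules (stabilization windows, handling of missing metrics) that are omitted.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Replica count suggested by the HPA scaling rule, clamped to the allowed range."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: do not scale
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))
```

For example, four prediction pods running at twice their target utilization would be scaled to eight, and the same four pods at half the target would be scaled down to two.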

The Data Noise Challenge: Separating Signal from Noise

In cheminformatics, "noise" refers to any undesirable modification affecting a signal or data point during acquisition or processing [20]. This noise manifests in various forms, from systematic biases to random errors, and originates from multiple sources:

- Experimental Errors: High-Throughput Screening (HTS) data can be contaminated with false positives and negatives due to measurement errors, robotic failures, or temperature variations [18].

- Promiscuous Compounds: So-called "Frequent Hitters" or "Pan Assay Interference Compounds (PAINS)" exhibit nonspecific activity across multiple assays, misleading model development [18].

- Data Extraction Inconsistencies: Automated mining of literature and patents can introduce errors in chemical name recognition, unit conversion, or value extraction [18] [23].

A critical study on the effect of noise on QSAR models demonstrated that experimental error in a dataset does not necessarily impose a hard limit on model predictivity [21]. Researchers systematically added 15 levels of simulated Gaussian-distributed random error to eight datasets with six different common QSAR endpoints. They then built models using five different algorithms on the error-laden data and evaluated them on both error-laden and error-free test sets [21]. The key finding was that the Root Mean Squared Error (RMSE) for evaluation on the error-free test sets was consistently better than on the error-laden sets [21]. This suggests that QSAR models can indeed make predictions more accurate than their noisy training data would imply, though standard evaluation on error-containing test sets often fails to reveal this capability [21].

Experimental Protocol: Quantifying Noise Impact on QSAR

Objective: To assess the true predictive performance of QSAR models by evaluating them against error-free test sets, thereby isolating the effect of experimental noise on perceived model accuracy [21].

Materials and Datasets: Eight distinct datasets encompassing six different common QSAR endpoints. Different endpoints were selected to represent varying levels of inherent experimental error associated with measurement complexity [21].

Methodology:

- For each dataset, establish a reference set of "true" values (considered error-free).

- Generate multiple training sets by introducing up to 15 levels of simulated Gaussian-distributed random error to the "true" values.

- Train QSAR models using five different machine learning algorithms on each of the error-laden training sets.

- Evaluate each trained model on two distinct test sets:

  - An error-laden test set (standard practice).

  - An error-free test set (using the established "true" values).

- Compare performance metrics (e.g., RMSE, R²) between the two evaluation methods to quantify the over- or underestimation of model performance due to noise [21].
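The protocol can be reproduced in miniature on synthetic data. The sketch below is an assumption-laden stand-in for the study in [21]: it uses a made-up linear "true" relationship instead of real QSAR datasets, ordinary least squares instead of five ML algorithms, and a caller-chosen noise level instead of the 15 levels used there.

```python
import math
import random


def fit_ols(xs, ys):
    """Ordinary least squares for a single descriptor."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope, my - slope * mx


def rmse(model, xs, ys):
    slope, intercept = model
    return math.sqrt(sum((slope * x + intercept - y) ** 2
                         for x, y in zip(xs, ys)) / len(xs))


def noise_experiment(sigma, n_train=200, n_test=200, seed=0):
    """Train on noisy labels, then score on noisy and on error-free test labels."""
    rng = random.Random(seed)

    def true_value(x):
        return 3.0 * x + 2.0  # hypothetical error-free endpoint

    train_x = [rng.uniform(0, 10) for _ in range(n_train)]
    test_x = [rng.uniform(0, 10) for _ in range(n_test)]
    train_y_noisy = [true_value(x) + rng.gauss(0, sigma) for x in train_x]
    test_y_clean = [true_value(x) for x in test_x]
    test_y_noisy = [y + rng.gauss(0, sigma) for y in test_y_clean]

    model = fit_ols(train_x, train_y_noisy)
    return rmse(model, test_x, test_y_noisy), rmse(model, test_x, test_y_clean)
```

Calling `noise_experiment(sigma)` for increasing `sigma` returns a pair of RMSE values. The first tracks the injected noise level, while the second stays much smaller because the fitted model averages out random error across the training set.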

Table 1: Key Reagents and Computational Tools for Noise Analysis

| Reagent/Tool | Function in Experiment |
|---|---|
| QSAR Datasets (8 varieties) | Provide the foundational chemical structures and endpoint data for model building and validation [21]. |
| Gaussian Error Simulation | Systematically introduces controlled, random noise to replicate real-world data imperfections [21]. |
| Multiple ML Algorithms | Enable assessment of how different modeling techniques respond to and filter out noise [21]. |
| Error-Free Test Set | Serves as the gold standard for evaluating the true predictive power of the trained models [21]. |

High-Dimensional Search Spaces: The Hyperparameter Optimization Problem

Hyperparameter Optimization (HPO) is a critical step in building accurate machine learning models for molecular property prediction (MPP) [2]. Hyperparameters are the configuration settings of a learning algorithm that must be specified before the training process begins, as opposed to model parameters that the algorithm learns from the data. They are broadly categorized into:

- Structural Hyperparameters: Defining the model architecture (e.g., number of layers in a neural network, number of units per layer) [2] [24].

- Algorithmic Hyperparameters: Governing the learning process (e.g., learning rate, batch size, number of epochs) [2] [24].

The challenge arises from the high-dimensionality of the search space. With numerous hyperparameters to tune, each with a range of possible values, the space of possible configurations becomes vast. Traditional methods like manual tuning are inefficient and often yield suboptimal results [2]. Most prior applications of deep learning to MPP have paid limited attention to systematic HPO, resulting in suboptimal prediction accuracy [2].

Comparative Analysis of HPO Algorithms

One benchmarking study compared the efficiency and accuracy of three primary HPO algorithms, Random Search (RS), Bayesian Optimization (BO), and Hyperband (HB), for deep neural networks applied to MPP [2]. The experiments were conducted using the KerasTuner software platform on two case studies: predicting the melt index of high-density polyethylene and the glass transition temperature (Tg) of polymers [2].

Table 2: Performance Comparison of HPO Algorithms for Molecular Property Prediction

| HPO Algorithm | Key Principle | Computational Efficiency | Prediction Accuracy | Best-Suited Scenario |
|---|---|---|---|---|
| Random Search (RS) [2] [24] | Randomly samples configurations from the search space. | Low to Moderate | Often suboptimal | Small search spaces or as a baseline. |
| Bayesian Optimization (BO) [2] [24] | Builds a probabilistic model of the objective function to guide the search. | Moderate | High | When computational budget allows for a thorough, guided search. |
| Hyperband (HB) [2] | Uses an adaptive resource allocation and early-stopping strategy to quickly discard poor performers. | Very High | Optimal or Nearly Optimal | Large search spaces and limited computational resources; provides the best trade-off. |
| ASHA/RS [24] | Combines Asynchronous Successive Halving (a scheduler) with Random Search. | High | Good | A strong, efficient general-purpose alternative to pure RS. |

The results demonstrated that the Hyperband algorithm was the most computationally efficient, achieving optimal or nearly optimal prediction accuracy in the shortest time [2]. It significantly outperformed Random Search. While Bayesian Optimization can produce highly accurate models, it is computationally more intensive than Hyperband. For practical MPP applications where efficiency and accuracy are paramount, Hyperband is highly recommended [2].

Experimental Protocol: HPO for Deep Neural Networks in MPP

Objective: To systematically optimize the hyperparameters of a Deep Neural Network (DNN) to minimize the prediction error for a given molecular property [2].

Materials and Software:

- Datasets: Curated datasets of molecules with their corresponding target property (e.g., glass transition temperature, melt index) [2].

- Software Platform: KerasTuner or Optuna, which allow for parallel execution of multiple HPO trials, drastically reducing optimization time [2].

- Base Model: A DNN architecture (e.g., a dense network or convolutional network) serving as the starting point for optimization [2].

Methodology:

- Define the Search Space: Explicitly specify the hyperparameters to be optimized and their value ranges (e.g., number of layers: [2, 8], units per layer: [32, 512], learning rate: [1e-4, 1e-2]) [2].

- Select the HPO Algorithm: Choose an optimization strategy (e.g., Hyperband, Bayesian Optimization) based on the computational budget and problem constraints [2].

- Configure and Execute the HPO Run: Utilize the chosen software platform to run multiple trials in parallel. Each trial involves training a model with a specific hyperparameter configuration and evaluating its performance on a validation set [2].

- Extract Best Configuration: Upon completion, the HPO process returns the hyperparameter set that achieved the best performance (e.g., lowest validation loss) [2].

- Final Evaluation: Train a final model using the optimal hyperparameters on the full training set and evaluate its performance on a held-out test set [2].
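
The first step of this methodology can be made concrete with a small, library-free sketch. It samples configurations from the example ranges quoted above. The names `SEARCH_SPACE` and `sample_configuration` are illustrative, and drawing the learning rate on a log scale is an assumption rather than something the cited study specifies; in practice KerasTuner or Optuna would handle this step.

```python
import math
import random

# Example ranges from the protocol above; the dictionary layout is illustrative.
SEARCH_SPACE = {
    "num_layers": (2, 8),            # integer range, inclusive
    "units_per_layer": (32, 512),    # integer range, inclusive
    "learning_rate": (1e-4, 1e-2),   # continuous range
}


def sample_configuration(rng):
    """Draw one hyperparameter configuration from SEARCH_SPACE."""
    lo, hi = SEARCH_SPACE["learning_rate"]
    # Assumption: sample the learning rate log-uniformly so that each
    # order of magnitude is equally likely.
    log_lr = rng.uniform(math.log10(lo), math.log10(hi))
    return {
        "num_layers": rng.randint(*SEARCH_SPACE["num_layers"]),
        "units_per_layer": rng.randint(*SEARCH_SPACE["units_per_layer"]),
        "learning_rate": 10 ** log_lr,
    }


rng = random.Random(0)  # fixed seed so the sampled trials are reproducible
configs = [sample_configuration(rng) for _ in range(5)]
for cfg in configs:
    print(cfg)
```

Each sampled dictionary would then be passed to a model-building function, trained, and scored on the validation set as described in the remaining steps.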

Table 3: Essential Research Reagents for HPO Experiments

| Research Reagent / Tool | Function / Description |
|---|---|
| KerasTuner / Optuna | Software libraries that provide the framework for defining, running, and analyzing HPO trials, supporting parallel execution [2]. |
| Dense Deep Neural Network (Dense DNN) | A base neural network architecture where each neuron is connected to all neurons in the previous layer; its structure is a primary target for HPO [2]. |
| Convolutional Neural Network (CNN) | A network architecture particularly effective for spatial data; its filter sizes and layers are tuned during HPO for specific data types [2]. |
| Adam Optimizer | A common optimization algorithm used during model training; its learning rate is a critical hyperparameter to optimize [2]. |
| Mean Squared Error (MSE) | A standard loss function used for regression tasks like property prediction, which the HPO process aims to minimize [2]. |

The challenges of scalability, noise, and high-dimensional search spaces in cheminformatics are deeply interconnected. Scalable computational infrastructures are necessary to handle the data volumes required for robust model training and to power the intensive HPO processes. Simultaneously, a critical understanding of data noise and its impact is essential for interpreting model performance correctly and trusting predictions.

Overcoming these hurdles requires a concerted, interdisciplinary effort. As noted in recent research, "the ultimate goal is to put together different expert teams able to simultaneously understand machine learning and artificial intelligence techniques, with a deep understanding of genomics and drug design" [20]. The future of cheminformatics lies in the continued development of intelligent algorithms like Hyperband, the adoption of scalable cloud-native technologies, and, most importantly, the collaboration between chemists, data scientists, and software engineers to build reliable and efficient computational tools that accelerate scientific discovery.

In the field of cheminformatics, where predicting molecular properties is crucial for drug discovery and materials science, the performance of machine learning models is highly sensitive to their architectural choices and hyperparameter configurations [1]. The process of Hyperparameter Optimization (HPO) has emerged as a critical methodology for transforming these models from suboptimal performers to state-of-the-art predictive engines. Traditional manual tuning methods face significant challenges in scalability and adaptability, often resulting in models that fail to generalize across diverse chemical datasets [1]. The automation of HPO, particularly through advanced strategies like Bayesian Optimization and multi-fidelity methods, now enables researchers to systematically navigate complex hyperparameter spaces, thereby unlocking unprecedented model performance while managing computational costs [25] [26]. This evolution is especially relevant for Graph Neural Networks (GNNs), which have become a powerful tool for modeling molecular structures but require careful configuration to achieve their full potential [1]. The impact of effective HPO extends beyond mere accuracy improvements, influencing model robustness, reproducibility, and ultimately the pace of scientific discovery in computational chemistry and drug development.

Quantitative Comparison of HPO Methodologies

Performance Metrics Across Optimization Algorithms

Rigorous benchmarking of HPO strategies reveals significant variations in their effectiveness across key performance indicators. Research evaluating multiple optimization algorithms for tuning machine learning models has demonstrated that methods differ substantially in both computational efficiency and resulting model accuracy [27].

Table 1: Comparative Performance of HPO Algorithms on Model Optimization

| Optimization Algorithm | Computational Efficiency | Best Achieved Accuracy | Key Strengths | Primary Limitations |
|---|---|---|---|---|
| Genetic Algorithm (GA) | Lower temporal complexity [27] | High (varies by dataset) [27] | Effective for complex search spaces | May require problem-specific customization |
| Particle Swarm Optimization (PSO) | Moderate computational cost [27] | High (varies by dataset) [27] | Fast convergence for continuous parameters | Potential for premature convergence |
| Bayesian Optimization (BO) | High for expensive black-box functions [25] | State-of-the-art for many applications [25] | Sample-efficient; handles noise well | Computational overhead for surrogate model |
| Random Search | Low per-iteration cost [27] | Often superior to Grid Search [27] | Parallelizable; simple implementation | May miss important regions |
| Grid Search | Very high computational cost [27] | Good for low-dimensional spaces [27] | Exhaustive for small spaces | Impractical for high dimensions |
| Tree Parzen Estimators (TPE) | Moderate to High [25] | Competitive with BO [25] | Handles mixed parameter types | Implementation complexity |

Advanced HPO Frameworks and Capabilities

The development of specialized HPO frameworks has significantly expanded the toolbox available to researchers, with various packages offering distinct capabilities tailored to different optimization scenarios.

Table 2: Advanced HPO Frameworks and Their Specialized Capabilities

| HPO Framework | Optimization Approach | Specialized Features | Use Cases in Cheminformatics |
|---|---|---|---|
| SMAC3 | Random Forest surrogates [25] | Complex/structured spaces [25] | Optimizing entire ML pipelines [25] |
| Optuna | Various (including BO) [25] | Dynamic search space construction [25] | Adaptive hyperparameter space definition |
| OpenBox | Bayesian Optimization [25] | Multi-objective, transfer learning [25] | Balancing multiple performance metrics |
| Ray Tune | Multiple backend optimizers [25] | High scalability [25] | Large-scale distributed HPO |
| Hyperopt | Tree Parzen Estimators [25] | Distributed HPO capabilities [25] | Parallel experimentation |
| PASHA | Progressive resource allocation [28] | Dynamic resource management [28] | Large dataset tuning with limited resources |
| EcoTune | Multi-fidelity optimization [26] | Token-efficient for LLM inference [26] | Inference parameter tuning |

Experimental Protocols for HPO Evaluation

Benchmarking Framework for Cross-Dataset Generalization

The evaluation of HPO effectiveness requires rigorous experimental protocols that test both within-dataset performance and cross-dataset generalization capabilities. A standardized benchmarking framework for drug response prediction (DRP) models exemplifies this approach, incorporating five publicly available drug screening datasets: Cancer Cell Line Encyclopedia (CCLE), Cancer Therapeutics Response Portal (CTRPv2), Genentech Cell Line Screening Initiative (gCSI), and Genomics of Drug Sensitivity in Cancer (GDSCv1 and GDSCv2) [29].

The experimental workflow follows a systematic process: (1) data preparation involving drug response quantification via dose-response curves with quality control thresholds (R² < 0.3 exclusion criterion); (2) model development through standardized preprocessing, training, and inference pipelines; and (3) performance analysis using both within-dataset and cross-dataset evaluation schemes [29]. Area under the curve (AUC) values calculated over a dose range of [10⁻¹⁰ M, 10⁻⁴ M] and normalized to [0, 1] serve as the primary response metric, with lower values indicating stronger drug response [29].

This protocol specifically addresses generalization assessment by introducing evaluation metrics that quantify both absolute performance (predictive accuracy across datasets) and relative performance (performance drop compared to within-dataset results) [29]. The framework employs pre-computed data splits to ensure consistency across evaluations and utilizes a lightweight Python package (improvelib) to standardize preprocessing, training, and evaluation procedures [29].

Token-Efficient Multi-Fidelity Optimization Protocol

Recent advances in HPO methodology include token-efficient multi-fidelity optimization, particularly valuable for large-scale models where evaluation costs are substantial. The EcoTune method exemplifies this approach through three key innovations: (1) token-based fidelity definition with explicit token cost modeling on configurations; (2) a Token-Aware Expected Improvement acquisition function that selects configurations based on performance gain per token; and (3) a dynamic fidelity scheduling mechanism that adapts to real-time budget status [26].

The experimental protocol for evaluating such methods involves benchmarking against established baselines across diverse tasks and model sizes. For instance, in the case of EcoTune, researchers employed LLaMA-2 and LLaMA-3 series models across multiple benchmarks including MMLU, HumanEval, MedQA, and OpenBookQA [26]. Performance comparisons measured both achievement on target metrics (showing improvements of 7.1% to 24.3% over HELM leaderboard baselines) and token consumption (reduced by over 80% while maintaining or surpassing performance) [26].

Benchmark Datasets and Software Tools

Successful implementation of HPO in cheminformatics requires access to standardized datasets and specialized software tools. The field has evolved toward collaborative frameworks that enable fair comparison and reproducible research.

Table 3: Essential Research Resources for HPO in Cheminformatics

| Resource Category | Specific Resource | Key Features | Application in HPO |
|---|---|---|---|
| Drug Screening Datasets | CCLE [29] | 24 drugs, 411 cell lines, 9,519 responses [29] | Baseline performance benchmarking |
| Drug Screening Datasets | CTRPv2 [29] | 494 drugs, 720 cell lines, 286,665 responses [29] | Large-scale model training |
| Drug Screening Datasets | gCSI [29] | 16 drugs, 312 cell lines, 4,941 responses [29] | Cross-dataset generalization testing |
| Drug Screening Datasets | GDSCv1 & GDSCv2 [29] | 294/168 drugs, 546 cell lines, 171,940+ responses [29] | Multi-source validation |
| HPO Software Frameworks | SMAC3 [25] | Random Forest surrogates for structured spaces [25] | Optimizing complex ML pipelines |
| HPO Software Frameworks | Optuna [25] | Dynamic search space construction [25] | Adaptive hyperparameter search |
| HPO Software Frameworks | OpenBox [25] | Multi-objective, multi-fidelity optimization [25] | Balancing multiple performance goals |
| HPO Software Frameworks | improvelib [29] | Lightweight Python package for standardization [29] | Reproducible experiment execution |
| Evaluation Metrics | Cross-dataset generalization [29] | Absolute & relative performance measures [29] | Robust model assessment |

The transformation from suboptimal to state-of-the-art model performance through Hyperparameter Optimization represents a paradigm shift in cheminformatics and drug discovery research. The evidence from comparative studies demonstrates that strategic implementation of HPO methodologies can yield substantial improvements in model accuracy, generalization capability, and computational efficiency. The key findings indicate that while no single HPO algorithm dominates across all scenarios, Bayesian Optimization approaches generally provide strong performance for expensive black-box functions, while evolutionary algorithms offer advantages in parallelization and complex search spaces [25] [27]. The emergence of multi-fidelity methods like PASHA and token-efficient approaches like EcoTune further extends the practical applicability of HPO to resource-constrained environments [26] [28]. For researchers in cheminformatics and drug development, the strategic selection of HPO methodologies should be guided by dataset characteristics, computational constraints, and generalization requirements. The standardized benchmarking frameworks and comprehensive toolkits now available provide a solid foundation for making these strategic decisions, ultimately accelerating the development of robust predictive models that can successfully transition from experimental settings to real-world applications in precision medicine and molecular design.

A Practical Guide to Key HPO Algorithms and Their Implementation

In the field of chemical science and drug development, machine learning (ML) models are increasingly employed for tasks such as predicting molecular properties, optimizing reaction conditions, and virtual screening. The performance of these models is critically dependent on their hyperparameters, which are configuration settings not learned from the data. Hyperparameter Optimization (HPO) is the process of finding the optimal set of these hyperparameters to maximize model performance. For chemical datasets, which often involve complex, high-dimensional data and computationally expensive model training, selecting an efficient HPO algorithm is paramount. This guide provides an objective comparison of three core HPO algorithms—Random Search, Bayesian Optimization, and Hyperband—focusing on their applicability to chemical informatics research. We summarize experimental data from various studies, detail methodological protocols, and provide visualizations to aid researchers in selecting the most appropriate HPO strategy for their specific projects [30] [31].

Algorithm Fundamentals and Comparative Mechanics

Random Search

Random Search operates by randomly sampling hyperparameter configurations from a predefined search space. Its simplicity stems from its lack of reliance on past evaluations; each new configuration is chosen independently [30] [32]. While it can be surprisingly effective in high-dimensional spaces where only a few parameters are critical, its main limitation is inefficiency. As a non-adaptive method, it may require a large number of trials to stumble upon the optimal configuration, making it computationally expensive for models with long training times [32] [24].
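
The remark about high-dimensional spaces in which only a few parameters are critical can be illustrated with a toy comparison. Under the same budget of nine trials, a 3 x 3 grid tests only three distinct values of each hyperparameter, whereas independent random sampling tests nine. The two hyperparameters and their ranges are assumptions chosen purely for illustration.

```python
import itertools
import random

BUDGET = 9  # total number of trials allowed for either strategy

# Grid search: 3 values per hyperparameter -> 3 x 3 = 9 trials.
lr_grid = [1e-4, 1e-3, 1e-2]
dropout_grid = [0.0, 0.25, 0.5]
grid_trials = list(itertools.product(lr_grid, dropout_grid))

# Random search: every trial draws both hyperparameters independently.
rng = random.Random(42)
random_trials = [
    (10 ** rng.uniform(-4, -2), rng.uniform(0.0, 0.5)) for _ in range(BUDGET)
]

# Suppose only the learning rate matters for the final error: count how
# many different learning rates each strategy actually probes.
distinct_lr_grid = len({lr for lr, _ in grid_trials})
distinct_lr_random = len({lr for lr, _ in random_trials})

print(f"grid search  : {len(grid_trials)} trials, {distinct_lr_grid} distinct learning rates")
print(f"random search: {len(random_trials)} trials, {distinct_lr_random} distinct learning rates")
```

If dropout turns out to be irrelevant, the grid has spent two thirds of its budget re-testing the same three learning rates, while every random trial contributes new information about the parameter that matters.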

Bayesian Optimization

In contrast, Bayesian Optimization (BO) is an adaptive, sequential strategy. It constructs a probabilistic surrogate model, typically a Gaussian Process, to approximate the complex relationship between hyperparameters and the model's performance objective [33] [5] [24]. An acquisition function, such as Expected Improvement, uses this surrogate to guide the selection of the next hyperparameter set by balancing exploration (sampling from uncertain regions) and exploitation (sampling near currently promising regions) [33] [24]. This allows BO to often find better configurations with fewer evaluations than Random Search, though the overhead of maintaining the surrogate model can be non-trivial [5] [24].
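
To make the exploration/exploitation balance tangible, the sketch below implements the standard closed-form Expected Improvement for a minimization problem, given a surrogate's predicted mean and standard deviation at a candidate point. The candidate names and their predicted values are hypothetical; a real BO loop would obtain them from a fitted Gaussian Process or similar surrogate.

```python
import math


def expected_improvement(mean, std, best_so_far):
    """Expected Improvement for a minimization problem.

    mean, std: surrogate prediction and its uncertainty at a candidate point.
    best_so_far: lowest validation loss observed so far.
    """
    if std <= 0.0:
        # No uncertainty: the improvement is deterministic.
        return max(best_so_far - mean, 0.0)
    z = (best_so_far - mean) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (best_so_far - mean) * cdf + std * pdf


best = 0.40
# Hypothetical surrogate predictions (mean, std) for three candidates.
candidates = {
    "exploit": (0.39, 0.01),  # slightly better mean, low uncertainty
    "explore": (0.45, 0.10),  # worse mean, high uncertainty
    "poor": (0.60, 0.01),     # clearly worse and nearly certain
}
scores = {
    name: expected_improvement(m, s, best) for name, (m, s) in candidates.items()
}
next_candidate = max(scores, key=scores.get)
print(scores)
print("next configuration to evaluate:", next_candidate)
```

In this toy setting the uncertain candidate receives the highest score even though its predicted mean is worse than the incumbent, which is exactly the exploratory behavior the acquisition function is meant to provide.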

Hyperband

Hyperband is a sophisticated early-stopping method designed to accelerate HPO. It treats the HPO problem as an infinite-armed bandit and uses a multi-fidelity approach, typically leveraging the number of training iterations (or epochs) as a low-fidelity, cheap-to-evaluate proxy for final model performance [34] [24]. The algorithm dynamically allocates resources by successively halving the number of configurations (in "rungs") while increasing the budget (e.g., epochs) for the remaining ones. Async Hyperband (AHB) and ASHA are popular asynchronous variants that improve computational efficiency by decoupling trial promotion from rung completion [24]. Hyperband is particularly powerful for optimizing neural networks on large-scale chemical datasets where full training is prohibitively expensive.
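
The successive-halving step at the heart of Hyperband can be sketched in a few lines. The example is a simplification under stated assumptions: `toy_validation_loss` is a hypothetical stand-in for real training, the learning curves never cross, and each rung is costed as if training restarted from scratch rather than resuming from a checkpoint.

```python
import random


def toy_validation_loss(config, epochs):
    """Stand-in for training a model for `epochs` and returning validation loss.

    Assumption: loss decays toward a configuration-specific floor, so
    low-budget rankings match full-budget rankings. Real learning curves
    can cross, which is the main risk of early stopping.
    """
    return config["floor"] + 1.0 / epochs


def successive_halving(configs, min_epochs=1, eta=3):
    """Keep the best 1/eta of configurations per rung, multiplying the budget by eta."""
    survivors = list(configs)
    epochs = min_epochs
    total_epochs = 0
    while True:
        # Cost of this rung, counted as training every survivor from scratch.
        total_epochs += len(survivors) * epochs
        ranked = sorted(survivors, key=lambda c: toy_validation_loss(c, epochs))
        if len(ranked) == 1:
            return ranked[0], total_epochs
        survivors = ranked[: max(1, len(ranked) // eta)]
        epochs *= eta


rng = random.Random(0)
configs = [{"id": i, "floor": rng.uniform(0.3, 0.6)} for i in range(27)]
best, used = successive_halving(configs, min_epochs=1, eta=3)
full_cost = len(configs) * 27  # training all 27 configurations for 27 epochs
print(f"best config id={best['id']}, epochs used={used}, full training cost={full_cost}")
```

With 27 configurations and a reduction factor of 3, the rungs hold 27, 9, 3, and 1 configurations at 1, 3, 9, and 27 epochs, so the total cost is 108 epochs instead of the 729 needed to train every configuration to completion.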

Figure 1: Core Workflows of Random Search, Bayesian Optimization, and Hyperband. Each algorithm follows a distinct logical process for selecting and evaluating hyperparameter configurations [30] [34] [24].

Performance Comparison and Experimental Data

The following tables synthesize quantitative findings from multiple studies comparing HPO algorithms across different model types and datasets, including scenarios relevant to chemical research.

Table 1: Comparative Performance of HPO Algorithms on Different Model Types

| Algorithm | Test AUC (Clinical Prediction) [30] | Best Loss (AutoGBDT) [35] | Test MAE (GNN Catalysis) [24] | Computational Efficiency |
|---|---|---|---|---|
| Default Hyperparameters | 0.82 | - | - | - |
| Random Search | 0.84 | 0.4179 | ~0.41 (Not Converged) | Low |
| Bayesian Optimization | 0.84 | 0.4084 | ~0.41 (Similar to ASHA/RS) | Medium |
| Hyperband/ASHA | 0.84 | - | ~0.395 | High |

Table 2: HPO Performance in Retrieval Augmented Generation (RAG) and Heart Failure Prediction

| Algorithm | RAG Performance (Varied Datasets) [36] | Heart Failure Prediction (AUC) [5] | Processing Time (Heart Failure) [5] |
|---|---|---|---|
| Random Search | Significant boost over baseline | ~0.66 (SVM) | Medium |
| Bayesian Optimization | Comparable to Random Search | ~0.66 (SVM) | Lowest (Most Efficient) |
| Hyperband/ASHA | - | - | - |

Key Insights from Experimental Data:

- Consistent Performance Gain: All HPO methods provided a significant improvement over using default hyperparameters, as seen in the clinical prediction model where the AUC increased from 0.82 to 0.84 [30].

- Efficiency of Adaptive Methods: In a graph neural network (GNN) task relevant to catalysis, ASHA combined with Random Search (ASHA/RS) achieved a lower test MAE and reached a solution 5x to 10x faster than standalone Random Search. This highlights the profound impact of early-stopping schedulers like Hyperband/ASHA on time-to-solution for expensive model training [24].

- Context-Dependent Superiority: While Bayesian Optimization can find excellent configurations (e.g., achieving the best loss of 0.4084 in the AutoGBDT benchmark [35]), its performance advantage is not universal. In some studies, its performance was comparable to a well-executed Random Search [30] [36].

- Computational Overhead: Bayesian Search was noted for requiring less processing time than Grid or Random Search in a heart failure prediction study [5]. However, the "smarter" search of BO can sometimes be outperformed by a scheduler-heavy approach like ASHA/RS when total computational resource usage is considered [24].

Detailed Experimental Protocols

To ensure the reproducibility of HPO comparisons, the following outlines a generalized experimental protocol derived from the cited studies.

Common HPO Experimental Setup

- Dataset and Model Selection: Choose a benchmark dataset (e.g., a public chemical dataset like Tox21 or a proprietary dataset of adsorption energies [24]) and a target model (e.g., a Graph Neural Network, XGBoost, or a Convolutional Neural Network).

- Data Partitioning: Split the dataset into three parts: a training set for model fitting, a validation set for evaluating hyperparameter performance during the HPO process, and a held-out test set for the final, unbiased evaluation of the best-found configuration [30].

- Define Search Space: Explicitly specify the hyperparameters to be tuned and their ranges (e.g., learning rate: ContinuousUniform(0, 1), number of layers: DiscreteUniform(1...25)) [30]. The choice of search space significantly impacts the outcome.

- Set Evaluation Budget: Define the total resource budget for the HPO experiment. This can be a maximum number of trials (e.g., 100 trials [30]) or a total wall-clock time (e.g., 48 hours [35]).

- Run HPO Algorithms: Execute each HPO algorithm (Random Search, BO, Hyperband) using the same training/validation sets and under the same total budget constraint.

- Final Evaluation: Train a final model on the full training set using the best hyperparameters identified by each algorithm and evaluate it on the held-out test set. Compare metrics like AUC, MAE, or accuracy.
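
The data-partitioning step in this setup can be sketched as follows. The function name `three_way_split`, the 80/10/10 proportions, and the use of a plain random shuffle are all assumptions made for illustration; the cited studies may use different ratios or splitting schemes. Fixing the seed is what lets every HPO algorithm see identical partitions.

```python
import random


def three_way_split(n_samples, val_fraction=0.1, test_fraction=0.1, seed=0):
    """Return disjoint index lists (train, validation, test) from a random shuffle."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded so the split is reproducible
    n_test = int(n_samples * test_fraction)
    n_val = int(n_samples * val_fraction)
    test_idx = indices[:n_test]
    val_idx = indices[n_test:n_test + n_val]
    train_idx = indices[n_test + n_val:]
    return train_idx, val_idx, test_idx


train_idx, val_idx, test_idx = three_way_split(1000)
print(len(train_idx), len(val_idx), len(test_idx))
```

Only the training and validation indices should be visible to the HPO loop; the test indices are touched once, for the final evaluation of each algorithm's best configuration.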

Algorithm-Specific Configurations

- Random Search: Hyperparameter values are sampled independently from their respective distributions for each trial [30] [32].

- Bayesian Optimization: The surrogate model (e.g., Gaussian Process) and acquisition function (e.g., Expected Improvement) must be chosen. The surrogate model is updated after each trial [33] [24].

- Hyperband/ASHA: Key parameters are the maximum budget per configuration (R) and the reduction factor (η), which is typically set to 3 or 4. ASHA allows for asynchronous parallelization, making it suitable for HPC environments [34] [24].
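
To show how R and η together determine the amount of work Hyperband schedules, the sketch below computes the starting point of each bracket using the bracket formulas from the original Hyperband formulation as commonly stated. The function name `hyperband_brackets` is hypothetical, and library implementations may round differently, so the numbers should be read as indicative.

```python
def hyperband_brackets(max_budget, eta=3):
    """Return (s, n_configs, initial_budget) for each Hyperband bracket.

    max_budget: R, the largest budget a single configuration may receive.
    eta: reduction factor; roughly 1/eta of configurations survive each rung.
    Assumes max_budget is an integer power of eta so budgets stay integral.
    """
    # s_max = floor(log_eta(R)), computed with integers to avoid float error.
    s_max = 0
    while eta ** (s_max + 1) <= max_budget:
        s_max += 1

    brackets = []
    for s in range(s_max, -1, -1):
        # n = ceil((s_max + 1) * eta**s / (s + 1)) via integer ceiling division.
        numerator = (s_max + 1) * eta ** s
        n_configs = -(-numerator // (s + 1))
        # r = R / eta**s: the budget each configuration gets in the first rung.
        initial_budget = max_budget // eta ** s
        brackets.append((s, n_configs, initial_budget))
    return brackets


for s, n, r in hyperband_brackets(max_budget=81, eta=3):
    print(f"bracket s={s}: start with {n} configurations at budget {r}")
```

The most aggressive bracket starts many configurations on a tiny budget, while the last bracket (s = 0) trains a handful of configurations at the full budget, which hedges against cases where early performance is a poor predictor of final performance.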

The Scientist's Toolkit: Essential HPO Software and Libraries

For researchers implementing HPO in their workflows, several robust libraries are available.

Table 3: Key Software Tools for Hyperparameter Optimization

| Tool / Library | Primary Function | Key Features | Relevance to Chemical Research |
|---|---|---|---|
| Ray Tune [24] | Distributed HPO Framework | Supports any ML framework, integrates external HPO libraries, implements ASHA/AHB/PBT. | Ideal for large-scale parallel HPO on chemical datasets using HPC resources. |
| Hyperopt [30] | HPO Library | Supports Tree-Parzen Estimator (TPE), a Bayesian optimization variant. | Useful for sequential model-based optimization on complex search spaces. |
| scikit-learn [5] | ML Library | Provides built-in GridSearchCV and RandomizedSearchCV. | Good baseline for simpler models on smaller chemical datasets. |
| NNI (Neural Network Intelligence) [35] | HPO & Neural Architecture Search | Comprehensive toolkit with a wide array of tuners (algorithms) and training services. | Provides a unified platform for experimenting with different HPO algorithms. |

For researchers working with chemical datasets, the choice of an HPO algorithm involves a trade-off between simplicity, computational efficiency, and final model performance. Random Search offers a simple, embarrassingly parallel baseline that can be effective, especially when the critical hyperparameters are few. Bayesian Optimization is a powerful, sample-efficient choice when the number of trials must be minimized, though it may introduce computational overhead. Hyperband and its asynchronous variant, ASHA, stand out for computationally intensive tasks like training deep neural networks or graph neural networks on large chemical datasets, as they can provide massive speedups by aggressively terminating unpromising trials. The experimental evidence suggests that combining a sophisticated scheduler like ASHA with a robust search algorithm is often the most effective strategy for optimizing machine learning models in chemical and drug development research [30] [24].

Bayesian Optimization and Hyperband (BOHB) is a robust and efficient hyperparameter optimization (HPO) framework that combines the strengths of Bayesian optimization (BO) and the Hyperband (HB) algorithm. It is designed to tackle the complex optimization challenges prevalent in machine learning applications, including those in chemical sciences research. BOHB was developed to fulfill key desiderata for practical HPO solutions: strong anytime performance, strong final performance, effective use of parallel resources, scalability, robustness, flexibility, and computational efficiency [37]. This hybrid approach addresses the limitations of its individual components: Bayesian optimization can be sample-inefficient in the early stages, while Hyperband's random search component limits its final performance at larger budgets. BOHB mitigates these weaknesses while preserving the respective strengths of both methods, making it particularly valuable for optimizing expensive-to-evaluate functions, such as those encountered in chemical dataset research and drug development.

The core innovation of BOHB lies in its structured integration of both approaches. It uses Hyperband to determine how many configurations to evaluate with which budget, but replaces Hyperband's random search component with a model-based Bayesian optimization approach. Specifically, the Bayesian optimization component is handled by a variant of the Tree Parzen Estimator (TPE) with a product kernel, which models the search space more effectively than standard approaches [37]. This combination enables BOHB to behave like Hyperband initially—quickly identifying promising configurations through low-fidelity approximations—and then leverage the constructed Bayesian model to refine these configurations for strong final performance.

Theoretical Foundations: How BOHB Works

Integration of Bayesian Optimization and Hyperband

BOHB operates through a sophisticated interplay between its two constituent algorithms, each handling different aspects of the optimization process. The Hyperband framework provides the budget allocation strategy through its successive halving mechanism, which begins by testing a wide range of hyperparameter sets with small resources (like fewer training epochs or less data), then eliminates the poorest performers and reallocates more resources to the better-performing sets iteratively [38]. This process enables rapid identification of promising regions in the hyperparameter space while minimizing resource waste on unpromising candidates.

Simultaneously, the Bayesian optimization component employs a probabilistic model to guide the selection of new hyperparameters to evaluate. Unlike standard Bayesian optimization that typically uses Gaussian processes, BOHB utilizes a Tree Parzen Estimator (TPE) that models the search space more efficiently, particularly for higher-dimensional problems [37]. TPE constructs two density estimates: one for hyperparameters that yielded good results and another for those that performed poorly, then uses the ratio between these densities to select promising new configurations. This approach allows BOHB to adaptively focus on regions of the hyperparameter space that are most likely to contain optimal configurations based on all evaluations conducted so far.
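
The density-ratio idea behind TPE can be sketched in one dimension. This is a deliberately simplified illustration, not BOHB's actual model: it uses a fixed-bandwidth Gaussian kernel density estimate on a single hyperparameter, and the function names, the `gamma` split fraction, the bandwidth, and the toy objective are all assumptions.

```python
import math


def kde(points, bandwidth):
    """Return a 1-D Gaussian kernel density estimate built from `points`."""
    norm = 1.0 / (len(points) * bandwidth * math.sqrt(2.0 * math.pi))

    def density(x):
        return norm * sum(
            math.exp(-0.5 * ((x - p) / bandwidth) ** 2) for p in points
        )

    return density


def tpe_suggest(observations, candidates, gamma=0.25, bandwidth=0.1):
    """Pick the candidate maximizing good_density(x) / bad_density(x).

    observations: list of (x, loss) pairs from earlier trials.
    gamma: fraction of observations treated as "good" (lowest loss).
    """
    ranked = sorted(observations, key=lambda obs: obs[1])
    n_good = max(1, int(gamma * len(ranked)))
    good = [x for x, _ in ranked[:n_good]]
    bad = [x for x, _ in ranked[n_good:]]
    good_density = kde(good, bandwidth)
    bad_density = kde(bad, bandwidth)
    # Small constant guards against division by zero far from any "bad" point.
    return max(candidates, key=lambda x: good_density(x) / (bad_density(x) + 1e-12))


# Toy objective with its minimum at x = 0.7 (a stand-in for validation loss),
# evaluated on 40 evenly spaced points that play the role of earlier trials.
observations = [(i / 39, (i / 39 - 0.7) ** 2) for i in range(40)]
candidates = [i / 100 for i in range(101)]
suggestion = tpe_suggest(observations, candidates)
print("next value to try:", suggestion)
```

The suggestion lands close to the region where low-loss trials cluster, because that is where the "good" density is high relative to the "bad" density.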

Figure: BOHB's core workflow, combining Hyperband's budget allocation with TPE-based configuration selection.

Key Algorithmic Components

BOHB's efficiency stems from several key algorithmic components that differentiate it from other HPO methods. The multi-fidelity approach allows BOHB to use cheap approximations of the objective function (e.g., training with fewer iterations, on subsets of data, or with lower-resolution simulations) to make informed decisions about which configurations warrant more substantial computational resources [37]. This is particularly valuable in chemical applications where high-fidelity computations (such as density functional theory calculations) are computationally expensive.

The successive halving procedure within Hyperband operates by allocating a budget to a set of configurations, evaluating them, keeping only the top-performing fraction, and repeating the process with increased budgets for the survivors [37] [38]. BOHB enhances this process by using the TPE model to select new configurations rather than random sampling, making the process more efficient. The parallelization capability of BOHB allows multiple configurations to be evaluated simultaneously across available computational resources, significantly accelerating the optimization process [37].

For the Bayesian optimization component, BOHB employs an adaptive strategy that dynamically balances exploration (testing configurations in unexplored regions) and exploitation (refining known promising regions) based on the quality of the model and the diversity of evaluated configurations. This balanced approach helps prevent premature convergence to local optima while efficiently homing in on globally optimal solutions, a critical capability when dealing with the complex, multi-modal objective functions common in chemical dataset research.

Experimental Comparison of HPO Techniques

Performance Benchmarking Framework

To objectively evaluate BOHB against other hyperparameter optimization techniques, we established a comprehensive benchmarking framework based on established methodologies in the field [39]. The evaluation protocol was designed to assess performance across multiple dimensions: convergence speed (how quickly each method finds good solutions), final performance (quality of the best solution found given sufficient budget), resource efficiency (computational resources required), and robustness (consistency of performance across different problems and random seeds). All experiments were conducted using identical computational environments and resource constraints to ensure fair comparisons.

Each HPO method was evaluated on its ability to optimize key hyperparameters for machine learning models relevant to chemical applications, including neural networks, support vector machines, and gradient boosting machines. The evaluation metrics included validation error (primary objective for optimization), wall-clock time (including model training and hyperparameter selection overhead), and cumulative resource consumption. For chemical applications specifically, we also considered domain-specific metrics such as prediction accuracy for molecular properties and computational cost for quantum chemistry calculations.

Comparative Performance Results

The table below summarizes the comparative performance of BOHB against other prominent HPO methods across multiple evaluation criteria, with data aggregated from published benchmarks [37] [39]:

Table 1: Performance Comparison of Hyperparameter Optimization Methods

| Method | Anytime Performance | Final Performance | Parallel Efficiency | Scalability | Noise Robustness |
|---|---|---|---|---|---|
| BOHB | Excellent | Excellent | High | High (dozens of parameters) | High |
| Hyperband (HB) | Excellent | Good | High | Medium | Medium |
| Bayesian Optimization (BO) | Poor | Excellent | Low | Low (<20 parameters) | Medium |
| Random Search | Medium | Poor | High | High | Low |
| Tree-structured Parzen Estimator (TPE) | Medium | Good | Medium | Medium | Medium |
| Genetic Algorithms | Medium | Good | Medium | High | High |

Quantitative results from optimizing a two-layer Bayesian neural network demonstrate BOHB's advantages: BOHB achieved a 55x speedup over random search in finding optimal configurations, significantly outperforming both standalone Hyperband and vanilla Bayesian optimization [37]. In these experiments, Hyperband initially performed better than TPE, but TPE caught up given enough time, while BOHB converged faster than both HB and TPE, demonstrating its superior anytime and final performance.

For reinforcement learning applications (relevant to molecular dynamics and reaction optimization), BOHB demonstrated exceptional capability in handling noisy optimization problems. When optimizing eight hyperparameters of a PPO agent learning the cartpole swing-up task, both HB and BOHB worked well initially, but BOHB converged to better configurations with larger budgets [37]. This noise robustness is particularly valuable in chemical applications where experimental or computational noise is prevalent.

BOHB in Chemical Sciences Research

Applications in Chemical Dataset Research

BOHB has significant potential for addressing key challenges in chemical sciences research, particularly in optimizing data-driven workflows for materials discovery and molecular design. Chemical problems often involve high-dimensional parameter spaces (e.g., synthesis conditions, processing parameters, molecular descriptors) and expensive evaluations (computational simulations or physical experiments), making efficient optimization essential [40]. BOHB's ability to leverage cheap approximations (such as lower-level theory calculations or smaller dataset evaluations) before committing to expensive high-fidelity evaluations makes it particularly suitable for these applications.

In materials discovery pipelines, BOHB can simultaneously optimize multiple aspects of the workflow: preprocessing parameters, model architectures, and training hyperparameters for property prediction models. For example, in optimizing the regularization and kernel parameters of support vector machines for materials classification, BOHB closely followed the performance of specialized methods like Fabolas and significantly outperformed standard Gaussian process-based Bayesian optimization and random search [37]. Similar advantages would be expected when optimizing neural network architectures for predicting molecular properties or reaction outcomes from chemical dataset features.

Experimental Protocol for Chemical Applications

Implementing BOHB for chemical dataset research requires careful consideration of domain-specific constraints and objectives. The following protocol outlines a standardized approach for applying BOHB to chemical optimization problems:

1. Problem Formulation: Define the objective function (e.g., prediction accuracy, property optimization, yield maximization) and identify tunable hyperparameters (continuous, discrete, and categorical). For chemical applications, this may include model hyperparameters, feature selection parameters, and data preprocessing options.

2. Budget Definition: Establish meaningful fidelity approximations, such as subset size of the chemical dataset, number of training iterations, convergence thresholds for computational chemistry calculations, or resolution of molecular representations [40]. The correlation between low-fidelity and high-fidelity performance is crucial for BOHB's effectiveness.

3. Configuration Space Specification: Define the search ranges and distributions for all hyperparameters, incorporating domain knowledge where available to constrain the search space. For chemical applications, this might include reasonable ranges for learning rates, network architectures, or regularization parameters based on prior experience with similar datasets.

4. Optimization Execution: Run BOHB with appropriate parallelization based on available computational resources. For chemical applications involving expensive quantum chemistry calculations, parallel evaluation of multiple configurations can significantly reduce overall optimization time.

5. Validation and Analysis: Evaluate the best-found configuration on a held-out test set or through experimental validation. Analyze the results to gain insights into important hyperparameters and their interactions, which can inform future experimental or computational designs.
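The budget and execution steps of this protocol can be illustrated with a small sketch of successive halving, the resource-allocation routine inside Hyperband and BOHB. This is not a full BOHB implementation: configurations are sampled at random rather than from a model, and `toy_validation_error` is a hypothetical stand-in for training a model on a subset of molecules. The budget here is assumed to be the number of training molecules.

```python
import math
import random

def successive_halving(configs, evaluate, min_budget, max_budget, eta=3):
    """Evaluate all configs cheaply, keep the best 1/eta, repeat at eta x budget."""
    budget = min_budget
    survivors = list(configs)
    while True:
        # Lower error is better, so the best configuration sorts first
        scored = sorted(survivors, key=lambda c: evaluate(c, budget))
        if budget >= max_budget or len(scored) == 1:
            return scored[0], budget
        survivors = scored[: max(1, len(scored) // eta)]
        budget = min(budget * eta, max_budget)

def toy_validation_error(config, n_molecules):
    """Hypothetical objective: best near learning_rate = 1e-3, and smaller
    training subsets add a bias that shrinks as n_molecules grows."""
    lr_term = (math.log10(config["learning_rate"]) + 3.0) ** 2
    return lr_term + 1.0 / math.sqrt(n_molecules)

rng = random.Random(0)
# Configuration space: learning rate sampled log-uniformly in [1e-5, 1e-1]
configs = [{"learning_rate": 10 ** rng.uniform(-5, -1)} for _ in range(9)]

best, final_budget = successive_halving(
    configs, toy_validation_error, min_budget=100, max_budget=900, eta=3
)
print(best, final_budget)
```

With nine configurations and eta = 3, the run evaluates nine configurations on 100 molecules, three on 300, and one on 900. In this toy objective the ranking is identical at every budget, which is the idealized case; real chemical datasets need the correlation check described under Budget Definition.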

The following diagram illustrates a typical BOHB workflow adapted for chemical dataset research:

Essential Research Toolkit for BOHB Implementation

Implementing BOHB effectively requires appropriate software tools and computational resources. The following table catalogs essential components of the research toolkit for applying BOHB to chemical dataset problems:

Table 2: Essential Research Toolkit for BOHB Implementation

| Tool Category | Specific Tools | Key Functionality | Relevance to Chemical Applications |
|---|---|---|---|
| BOHB Implementations | HpBandSter [37], SMAC3 [39] | Core BOHB algorithm | Hyperparameter optimization for chemical ML models |
| Chemical ML Libraries | Scikit-learn, DeepChem | Chemical machine learning models | Building models for chemical property prediction |
| Bayesian Optimization | BoTorch [40], Ax [40] | Alternative BO implementations | Comparison with BOHB performance |
| Chemical Informatics | RDKit, OpenBabel | Molecular representation | Feature engineering for chemical datasets |
| Quantum Chemistry | ORCA, Gaussian, PySCF | High-fidelity evaluations | Objective function for molecular properties |
| Parallel Computing | Dask, MPI, Kubernetes | Distributed computation | Parallel evaluation of chemical configurations |

Practical Implementation Considerations

Successful application of BOHB to chemical problems requires attention to several practical considerations. Budget definition is particularly critical—the low-fidelity approximations must correlate well with high-fidelity performance for BOHB to be effective [37]. In chemical applications, appropriate budgets might include using smaller basis sets in quantum chemistry calculations, shorter molecular dynamics simulations, or subsetted datasets for initial screening. Without meaningful budget definitions, BOHB's Hyperband component becomes inefficient, potentially performing worse than standard Bayesian optimization.
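One way to check whether a candidate budget is meaningful is to evaluate a handful of configurations at both the low and the high fidelity and compute the Spearman rank correlation of the resulting errors. The sketch below implements this with the standard library only; the error values are made up for illustration, and any threshold for "good enough" correlation would be a judgment call rather than a rule from the cited work.

```python
def ranks(values):
    """Rank values from 1..n, assigning the average rank to ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman correlation, computed as the Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical validation errors of five configurations at two fidelities
low_fidelity = [0.42, 0.35, 0.50, 0.31, 0.47]   # e.g., 10% of the dataset
high_fidelity = [0.30, 0.26, 0.33, 0.22, 0.41]  # e.g., the full dataset

rho = spearman(low_fidelity, high_fidelity)
print(f"Spearman rank correlation: {rho:.2f}")
```

A correlation close to 1 indicates that the cheap evaluation ranks configurations much as the expensive one does. A value near 0 suggests the budget is uninformative and that successive halving would discard configurations more or less at random.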

The choice of surrogate model also significantly impacts BOHB's performance. BOHB's default model resembles TPE but fits a single multidimensional kernel density estimator, rather than TPE's hierarchy of one-dimensional estimators, so that interactions between hyperparameters are captured. Some chemical applications might benefit from alternative surrogate models, particularly for high-dimensional problems or when incorporating known constraints from chemical knowledge [40]. Additionally, handling of categorical and conditional parameters is essential for chemical applications where certain preprocessing steps or model architectures introduce conditional dependencies in the hyperparameter space.
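Conditional dependencies are easier to see in code. The sketch below samples from a small, hypothetical search space in which some hyperparameters only exist when a parent choice activates them. All parameter names and ranges are assumptions chosen for illustration; dedicated libraries such as ConfigSpace express the same idea declaratively.

```python
import random

def sample_config(rng):
    """Draw one configuration from a small conditional search space."""
    config = {"featurizer": rng.choice(["morgan_fingerprint", "descriptors"])}
    if config["featurizer"] == "morgan_fingerprint":
        # Fingerprint settings are only meaningful when fingerprints are used
        config["fp_radius"] = rng.choice([2, 3])
        config["fp_bits"] = rng.choice([1024, 2048])

    config["model"] = rng.choice(["svm", "gbm"])
    if config["model"] == "svm":
        config["C"] = 10 ** rng.uniform(-2, 3)        # log-uniform
        config["kernel"] = rng.choice(["linear", "rbf"])
        if config["kernel"] == "rbf":
            # gamma is nested two levels deep: model == svm and kernel == rbf
            config["gamma"] = 10 ** rng.uniform(-4, 0)
    else:
        config["n_estimators"] = rng.randint(50, 500)
        config["learning_rate"] = 10 ** rng.uniform(-3, 0)
    return config

example = sample_config(random.Random(0))
print(example)
```

An optimizer that ignores this structure wastes evaluations on inactive parameters, for example by varying `gamma` for a linear kernel where it has no effect.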

For noisy optimization problems common in chemical experiments and some computational methods, BOHB's robustness can be enhanced through repeated evaluations of promising configurations and statistical testing during the successive halving process. This approach helps distinguish truly promising configurations from those that appear good due to random noise, leading to more reliable optimization outcomes in noisy chemical environments.
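As a rough illustration of the repeated-evaluation idea, the sketch below compares two configurations using Welch's t statistic on their repeated validation errors. The threshold of 2.0 is an assumed rule of thumb rather than a calibrated significance level, and the error values are hypothetical; this is one possible test, not the procedure used by BOHB itself.

```python
from statistics import mean, variance

def welch_t(a, b):
    """Welch's t statistic for the difference in mean error between a and b."""
    standard_error = (variance(a) / len(a) + variance(b) / len(b)) ** 0.5
    return (mean(a) - mean(b)) / standard_error

def is_clearly_better(a, b, threshold=2.0):
    """True if configuration a has a clearly lower mean error than b.

    The threshold is an assumption; a proper test would convert the
    statistic to a p-value using the Welch-Satterthwaite degrees of freedom.
    """
    return welch_t(a, b) < -threshold

# Hypothetical validation errors from four repeated runs per configuration
errors_a = [0.30, 0.32, 0.31, 0.29]
errors_b = [0.36, 0.35, 0.38, 0.37]
errors_c = [0.31, 0.28, 0.33, 0.30]

print(is_clearly_better(errors_a, errors_b))  # a versus a worse configuration
print(is_clearly_better(errors_a, errors_c))  # a versus one with the same mean
```

Only differences that survive such a check would justify discarding a configuration at a given rung; configurations that are statistically indistinguishable can be promoted together or re-evaluated.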

BOHB represents a significant advancement in hyperparameter optimization methodology by successfully combining the complementary strengths of Bayesian optimization and Hyperband. Its strong anytime performance, excellent final performance, scalability, and robustness make it particularly well-suited for the challenges of chemical dataset research, where evaluation costs are high and parameter spaces are complex. In the published benchmarks discussed above [37], BOHB matched or outperformed both its constituent algorithms and other HPO methods across a variety of applications, which suggests that similar advantages can be realized in chemical sciences research, provided that meaningful budgets can be defined.

Future research directions for BOHB in chemical applications include multi-objective optimization for balancing competing objectives (e.g., activity vs. selectivity in drug design, efficiency vs. stability in materials discovery), transfer learning approaches that leverage knowledge from previous chemical optimization tasks to accelerate new ones, and integration with expert knowledge to constrain search spaces based on chemical feasibility. As automated research workflows become increasingly prevalent in chemical sciences, BOHB and related advanced HPO methods will play a crucial role in accelerating the discovery and optimization of novel molecules and materials with tailored properties.

The pursuit of optimal model performance in machine learning (ML) critically depends on effective hyperparameter optimization (HPO). While traditional methods like grid and random search are often computationally inefficient for complex search spaces, Genetic Algorithms (GAs) have emerged as a powerful, population-based metaheuristic alternative. Their robustness and ability to avoid local minima make them particularly suitable for challenging optimization landscapes [41]. Concurrently, Reinforcement Learning (RL) has demonstrated remarkable success in solving complex sequential decision-making problems. A novel and promising research direction involves the creation of hybrid models that leverage the strengths of both GAs and RL. This guide provides a comparative analysis of these innovative hybrids, focusing on their application to HPO. The context is framed within performance evaluation for chemical datasets research, offering insights for scientists and drug development professionals who rely on predictive modeling and process optimization.

Methodological Frameworks and Hybrid Architectures

This section details the core architectures of GA-RL hybrids, breaking down their components and how they interact to enhance HPO.

Genetic Algorithms for Standalone HPO

Genetic Algorithms (GAs) are evolutionary algorithms inspired by natural selection. In HPO, each candidate solution (a set of hyperparameters) is encoded as a "chromosome." The algorithm evolves a population of these chromosomes over generations using three primary operators [42] [41]:

- Selection: Strategies like tournament selection choose fitter individuals (better hyperparameter configurations) for reproduction.

- Crossover (or Recombination): Operators such as one-point or uniform crossover combine parts of two parent chromosomes to create offspring, exploring new configurations.

- Mutation: Operators like uniform mutation introduce random changes to genes (individual hyperparameters), ensuring diversity and helping to escape local optima.

The performance of a GA is highly dependent on its configuration. Exploration-driven GAs, which prioritize broad search of the configuration space, have been shown to yield significant improvements in optimization efficiency for Deep RL models like DQN [42].
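The three operators can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the configuration studied in [42]: the search space, the toy fitness function standing in for an RL agent's cumulative reward, the use of elitism, and all default rates are hypothetical.

```python
import random

# Hypothetical search space: log10 learning rate and discount factor
BOUNDS = {"log_lr": (-5.0, -1.0), "gamma": (0.90, 0.999)}

def toy_fitness(ind):
    """Stand-in for cumulative reward; peaks at log_lr = -3, gamma = 0.99."""
    return -((ind["log_lr"] + 3.0) ** 2 + (100.0 * (ind["gamma"] - 0.99)) ** 2)

def tournament(population, fitness, rng, k=3):
    """Tournament selection: the fittest of k randomly drawn individuals."""
    return max(rng.sample(population, k), key=fitness)

def uniform_crossover(p1, p2, rng):
    """Each gene is copied from either parent with equal probability."""
    return {key: rng.choice([p1[key], p2[key]]) for key in p1}

def mutate(child, bounds, rng, rate=0.2):
    """Uniform mutation: resample a gene within its bounds with probability rate."""
    return {
        k: rng.uniform(*bounds[k]) if rng.random() < rate else v
        for k, v in child.items()
    }

def run_ga(fitness, bounds, rng, pop_size=20, generations=30):
    population = [
        {k: rng.uniform(lo, hi) for k, (lo, hi) in bounds.items()}
        for _ in range(pop_size)
    ]
    for _ in range(generations):
        # Elitism (an added assumption): carry the best individual forward
        children = [max(population, key=fitness)]
        while len(children) < pop_size:
            p1 = tournament(population, fitness, rng)
            p2 = tournament(population, fitness, rng)
            children.append(mutate(uniform_crossover(p1, p2, rng), bounds, rng))
        population = children
    return max(population, key=fitness)

best = run_ga(toy_fitness, BOUNDS, random.Random(0))
print(best, toy_fitness(best))
```

In a real HPO setting, `toy_fitness` would train and evaluate a model, so each call is expensive; raising the mutation rate or the tournament size shifts the search toward exploration or exploitation respectively.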

Hybridization with Reinforcement Learning

The integration of GAs and RL creates a synergistic relationship where each technique addresses the weaknesses of the other. Two primary hybrid architectures have been developed.

Table 1: Comparison of GA-RL Hybrid Architectures

| Architecture | Description | Key Mechanism | Primary Advantage |
|---|---|---|---|
| RL for GA Guidance (RLGA) | Uses RL to dynamically control the GA's evolutionary operators [43]. | An RL agent (e.g., using Q-learning) adaptively selects crossover and mutation operators based on their historical performance. | Enhances GA's search efficiency and solution quality by replacing static, pre-defined operator choices with an adaptive policy. |
| GA for RL HPO (GA-DQN) | Employs a GA to optimize the hyperparameters of an RL algorithm [42]. | The GA's fitness function is the performance (e.g., cumulative reward) of an RL agent (e.g., a DQN) trained with a specific hyperparameter set. | Efficiently navigates the complex, high-dimensional hyperparameter space of deep RL, improving convergence and final performance. |
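The operator-selection mechanism of the RLGA row can be sketched as a Q-learning agent with a single state, which is a deliberate simplification and not the design reported in [43]. The operator names, the fixed rewards standing in for observed fitness improvements, and the values of `alpha`, `gamma`, and `epsilon` are all assumptions for illustration.

```python
import random

class OperatorSelector:
    """Single-state Q-learning over a set of named evolutionary operators."""

    def __init__(self, operators, rng, alpha=0.1, gamma=0.9, epsilon=0.1):
        self.q = {name: 0.0 for name in operators}
        self.rng = rng
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon

    def choose(self):
        """Epsilon-greedy choice: mostly the best-valued operator, sometimes random."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.q))
        return max(self.q, key=self.q.get)

    def update(self, name, reward):
        """Q-learning update; with one state, the next-state value is max(Q)."""
        target = reward + self.gamma * max(self.q.values())
        self.q[name] += self.alpha * (target - self.q[name])

# Hypothetical average fitness improvement produced by each operator
mean_gain = {"uniform_mutation": 0.05, "gaussian_mutation": 0.20}

selector = OperatorSelector(mean_gain, random.Random(0))
for _ in range(2000):
    operator = selector.choose()
    # In a real GA, the reward would be the measured improvement in fitness
    selector.update(operator, mean_gain[operator])

print(selector.q)
```

After enough generations the agent assigns the highest value to the operator that has historically produced larger fitness gains, which replaces a static operator schedule with an adaptive one. A fuller design would condition the choice on a state such as population diversity or search progress.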

The following diagram illustrates the logical workflow and data flow of the RLGA architecture, where Reinforcement Learning guides the Genetic Algorithm.

Experimental Protocols and Performance Benchmarks

To objectively compare the performance of these hybrid approaches, it is essential to examine the methodologies and results from key studies.

Key Experimental Setups

Table 2: Summary of Key Experimental Protocols

| Study & Hybrid Model | Optimization Target / Application | Benchmark / Environment | Core Methodology |
|---|---|---|---|
| Exploration-Driven GA [42] | DQN Hyperparameters (learning rate, gamma, update frequency) | CartPole (OpenAI Gym) | Compared various GA selection, crossover, and mutation methods for optimizing DQN hyperparameters. Included a case study on sensor dropout. |
| RL-Guided GA (RLGA) [43] | Dynamic Controller Deployment in Satellite Networks | LEO Satellite Network Simulator | Integrated Q-learning to adaptively select from multiple knowledge-based crossover and mutation operators within a GA. |
| EA vs. DRL [44] | Non-Homogeneous Patrolling Problem | Ypacarai Lake Monitoring Simulator | Compared the performance and sample-efficiency of a (μ+λ) EA and Deep Q-Learning for a path-planning problem. |
| PriMO [45] | Multi-Objective HPO for DL | 8 Deep Learning Benchmarks | A Bayesian optimization algorithm that integrates multi-objective expert priors, serving as a state-of-the-art benchmark. |

Quantitative Performance Comparison

The following table synthesizes quantitative results from the cited research, providing a clear comparison of performance gains.