Preventing Overfitting in Chemical Machine Learning: A Practical Guide to Robust Hyperparameter Tuning


Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to prevent overfitting during hyperparameter optimization of chemical machine learning models. Covering foundational concepts to advanced validation strategies, we explore the unique challenges of low-data regimes in chemistry, present automated workflows and tools like ROBERT and DeepMol, and detail robust evaluation protocols. A special focus is given to troubleshooting common pitfalls and implementing rigorous comparative assessments to ensure models generalize effectively to new chemical space, ultimately enhancing the reliability of computational predictions in biomedical research.

Understanding Overfitting: Core Concepts and Chemistry-Specific Challenges

Defining Overfitting and Underfitting in Chemical ML Contexts

Frequently Asked Questions (FAQs)

Q1: What are overfitting and underfitting in the context of chemical machine learning?

A1: Overfitting and underfitting describe two fundamental ways a model can fail to learn correctly from chemical data.

- Overfitting occurs when a model learns the training data too well, including its noise and random fluctuations. It performs exceptionally on training data but generalizes poorly to new, unseen data [1] [2] [3]. In chemical ML, this might mean a model memorizes experimental artifacts in its training set instead of the underlying structure-property relationships.

- Underfitting occurs when a model is too simple to capture the underlying trends or patterns in the data. It performs poorly on both the training data and new data [4] [3]. For instance, a linear model might underfit when trying to predict a complex, non-linear chemical reaction yield.

The goal is a well-fitted model that accurately captures the dominant patterns from the training data and applies them effectively to new data [3].

Q2: How can I quickly diagnose if my model is overfit or underfit?

A2: The most straightforward diagnostic method is to compare the model's performance on training data versus a held-out testing (validation) set [4] [3].

| Condition | Training Error | Testing Error |
|---|---|---|
| Well-Fitted | Low | Low |
| Overfitting | Low | Significantly High [3] |
| Underfitting | High | High [4] |

For models that are trained iteratively, such as the LSTM networks used for time-series forecasting of chemical processes, monitoring learning curves is also effective. An overfit model will show training loss still decreasing while validation loss begins to increase after a certain point [1] [3].
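The train-versus-test comparison can be sketched in a few lines of scikit-learn. The data below are synthetic stand-ins for a descriptor matrix and a noisy property, and `train_test_rmse` is a hypothetical helper name introduced only for this illustration.

```python
import numpy as np
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor


def train_test_rmse(model, X, y, test_size=0.2, seed=0):
    """Fit on a training split and return (train RMSE, test RMSE)."""
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=test_size, random_state=seed
    )
    model.fit(X_tr, y_tr)
    rmse_tr = float(np.sqrt(mean_squared_error(y_tr, model.predict(X_tr))))
    rmse_te = float(np.sqrt(mean_squared_error(y_te, model.predict(X_te))))
    return rmse_tr, rmse_te


# Synthetic data (assumption): one descriptor, a non-linear trend, added noise
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 1))
y = np.sin(X[:, 0]) + rng.normal(scale=0.3, size=200)

# An unconstrained tree can memorize the training set (overfitting candidate)
deep = train_test_rmse(DecisionTreeRegressor(random_state=0), X, y)
# A single-split tree is too simple for a sine-shaped trend (underfitting candidate)
stump = train_test_rmse(DecisionTreeRegressor(max_depth=1, random_state=0), X, y)

for name, (tr, te) in {"deep tree": deep, "stump": stump}.items():
    print(f"{name}: train RMSE = {tr:.3f}, test RMSE = {te:.3f}")
```

A near-zero training error paired with a much larger test error matches the "Overfitting" row of the table; similar, high errors on both splits match the "Underfitting" row.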

Q3: My dataset for a new catalyst is small. How can I prevent overfitting?

A3: Small datasets are highly susceptible to overfitting. Several strategies can help:

- Regularization (L1/L2): These techniques penalize overly complex models by adding a constraint to the loss function, discouraging the model from relying too heavily on any single feature [5] [3].

- Simplify the Model: Reduce the number of model parameters. For neural networks, this means removing layers or reducing the number of units per layer [5].

- Early Stopping: Halt the training process when the performance on the validation set stops improving and begins to degrade [5].

- Data Augmentation: Artificially increase the size and diversity of your training set by creating modified versions of existing data. In chemical ML, this could involve adding noise to spectral data or using domain knowledge to generate plausible virtual compounds [2] [5].
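As one illustration of combining a simpler model with early stopping, the sketch below uses scikit-learn's `GradientBoostingRegressor`, which can hold out an internal validation fraction and stop adding trees once the validation score stalls. The dataset is synthetic and the specific settings are assumptions chosen for the example, not recommended values.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor

# Synthetic small dataset (assumption): 60 samples, 5 descriptors, 2 informative
rng = np.random.default_rng(1)
X = rng.normal(size=(60, 5))
y = X[:, 0] - 0.5 * X[:, 1] + rng.normal(scale=0.5, size=60)

model = GradientBoostingRegressor(
    n_estimators=500,         # upper bound on boosting rounds
    max_depth=2,              # shallow trees keep each learner simple
    learning_rate=0.05,
    validation_fraction=0.2,  # internal validation split used for early stopping
    n_iter_no_change=10,      # stop after 10 rounds without validation improvement
    random_state=0,
)
model.fit(X, y)

# n_estimators_ reports how many rounds were kept after early stopping
print("boosting rounds used:", model.n_estimators_)
```

With very small datasets the internal validation split is itself tiny, so the stopping point can be noisy; repeating the fit with different seeds gives a sense of how stable it is.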

Q4: What are the best practices for hyperparameter tuning to avoid these issues?

A4: Hyperparameter tuning is critical for finding the balance between underfitting and overfitting [3]. Best practices include:

- Use Automated Search: Move beyond manual tuning. Employ frameworks like Optuna with Bayesian optimization to efficiently navigate the hyperparameter space [6].

- Apply Robust Validation: Use K-fold cross-validation to obtain a more reliable estimate of model performance and ensure tuning is not based on a lucky split of the data [2] [5].

- Implement Nested Cross-Validation: For a rigorous protocol that prevents information leakage between tuning and evaluation, use nested cross-validation. An outer loop assesses generalization, while an inner loop performs the hyperparameter tuning [1] [3].

Troubleshooting Guides

Problem: Model Performance is Poor on Both Training and Test Data (Potential Underfitting)

Symptoms:

- High and similar error rates for both training and test sets [4].

- The model fails to capture known, complex relationships in the data.

Actionable Steps:

| Step | Action | Technical Details |
|---|---|---|
| 1 | Increase Model Complexity | Switch from a linear to a non-linear algorithm (e.g., Random Forest, Gradient Boosting, or a deeper neural network) [3]. |
| 2 | Enhance Feature Engineering | Add more informative features, create interaction terms, or include polynomial features to help the model capture underlying patterns [3]. |
| 3 | Reduce Regularization | Decrease the strength of L1 (Lasso) or L2 (Ridge) regularization parameters. Regularization penalizes complexity; reducing it allows a more complex fit [3]. |
| 4 | Increase Training Time | Train for more epochs (iterations) to allow the model more time to learn from the data [3]. |

Problem: Model Performs Excellently on Training Data but Fails on New Data (Potential Overfitting)

Symptoms:

- Very low training error but high test error [3].

- The model's decision boundaries are overly complex and specific to the training set.

Actionable Steps:

| Step | Action | Technical Details |
|---|---|---|
| 1 | Gather More Data | Increase the size of the training dataset. This is often the most effective solution [2] [5]. |
| 2 | Apply Regularization | Introduce or increase the strength of L1/L2 regularization to constrain the model [5] [3]. For neural networks, use Dropout, which randomly ignores units during training to prevent co-adaptation [5]. |
| 3 | Simplify the Model | Reduce the number of parameters. In neural networks, remove layers or units. In tree-based models, reduce the maximum depth [5]. |
| 4 | Perform Feature Selection | Identify and use only the most important features to prevent the model from learning from noise [5]. |
| 5 | Use Early Stopping | Monitor validation loss during training and stop when it no longer improves [5]. |

Experimental Protocols & Workflows

Protocol for Evaluating Model Generalization

This protocol provides a robust methodology for assessing whether a model is overfit or underfit during hyperparameter tuning.

Title: Nested Cross-Validation Workflow

Detailed Methodology:

- Data Partitioning: Split the entire chemical dataset (e.g., molecular structures and associated properties) into a Training Set (e.g., 80%) and a held-out Testing Set (e.g., 20%). The test set must only be used for the final evaluation [5] [3].

- Outer Loop (Evaluation): On the training set, initiate a cross-validation cycle (e.g., 5-fold). In each fold:

  - Further split the training data into a learning set and a validation set.

  - Pass the learning set to the inner loop.

  - Score the tuned model returned by the inner loop on the outer validation set; the average over all outer folds estimates the generalization error of the whole tuning procedure.

- Inner Loop (Hyperparameter Tuning): Using only the learning set from the outer loop, perform a second, independent cross-validation (e.g., 3-fold) to tune the model's hyperparameters (e.g., learning rate, regularization strength). This prevents bias in the error estimate [1] [3].

- Final Model Evaluation: Repeat the hyperparameter search on the entire original training set, train a final model with the selected hyperparameters, and evaluate it once on the untouched Testing Set to obtain an unbiased estimate of its generalization error [3].
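The protocol above can be sketched with scikit-learn by wrapping a `GridSearchCV` (inner loop) inside `cross_val_score` (outer loop). The data are synthetic, and the Ridge model and its alpha grid are placeholder assumptions; any estimator and search strategy could take their place.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import (
    GridSearchCV, KFold, cross_val_score, train_test_split
)
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumption): 100 samples, 8 descriptors, 2 informative
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 8))
y = 2.0 * X[:, 0] - X[:, 1] + rng.normal(scale=0.5, size=100)

# Step 1: hold out a test set that is touched only once, at the very end
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

pipe = make_pipeline(StandardScaler(), Ridge())
param_grid = {"ridge__alpha": [0.01, 0.1, 1.0, 10.0, 100.0]}

inner_cv = KFold(n_splits=3, shuffle=True, random_state=0)  # tuning
outer_cv = KFold(n_splits=5, shuffle=True, random_state=1)  # evaluation

# Inner loop: the search object tunes alpha on whatever data it is given
search = GridSearchCV(
    pipe, param_grid, cv=inner_cv, scoring="neg_root_mean_squared_error"
)

# Outer loop: each outer fold re-runs the full search on its learning set
nested_scores = cross_val_score(
    search, X_train, y_train, cv=outer_cv,
    scoring="neg_root_mean_squared_error",
)
nested_rmse = float(-nested_scores.mean())

# Final model: tune on the whole training set, then evaluate once on the test set
search.fit(X_train, y_train)
test_rmse = float(np.sqrt(np.mean((search.predict(X_test) - y_test) ** 2)))

print(f"nested CV RMSE: {nested_rmse:.3f}")
print(f"held-out test RMSE: {test_rmse:.3f}, best params: {search.best_params_}")
```

Placing the scaler inside the pipeline means it is refit on each learning set, so no statistics from validation or test rows reach the model during tuning.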

The Scientist's Toolkit: Key Research Reagents

This table details essential computational "reagents" and their functions for developing robust chemical ML models.

| Research Reagent | Function & Explanation |
|---|---|
| L1 / L2 Regularization | Prevents overfitting by adding a penalty to the loss function. L1 (Lasso) can shrink feature coefficients to zero, performing feature selection. L2 (Ridge) shrinks all coefficients toward zero without setting them exactly to zero, which reduces model complexity [5] [3]. |
| Dropout | A regularization technique for neural networks where randomly selected neurons are ignored during training. This prevents units from co-adapting too much and forces the network to learn more robust features [5]. |
| K-Fold Cross-Validation | A resampling procedure used to evaluate models on limited data samples. The data is partitioned into K subsets; the model is trained on K-1 folds and validated on the remaining fold. This process is repeated K times [2] [5]. |
| Bayesian Optimization (e.g., Optuna) | A powerful framework for hyperparameter tuning. It builds a probabilistic model of the function mapping hyperparameters to model performance and uses it to select the most promising hyperparameters to evaluate next [6]. |
| Data Augmentation | The process of artificially expanding the training dataset by creating modified copies of existing data. For chemical data, this could include adding noise to instrumental readings or applying symmetry operations to molecular structures [2] [5]. |
| Ensemble Methods (Bagging/Boosting) | Combines multiple models to improve generalizability. Bagging (e.g., Random Forests) trains models in parallel to reduce variance. Boosting (e.g., XGBoost) trains models sequentially, where each new model corrects errors of the previous one [2] [3]. |
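The different behavior of the L1 and L2 penalties listed in the table can be seen on a small synthetic example. The data and penalty strengths below are assumptions chosen so the contrast is visible; they are not tuned values.

```python
import numpy as np
from sklearn.linear_model import Lasso, Ridge
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumption): 10 standardized descriptors, only 2 carry signal
rng = np.random.default_rng(0)
X = StandardScaler().fit_transform(rng.normal(size=(40, 10)))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.3, size=40)

lasso = Lasso(alpha=0.5).fit(X, y)   # L1 penalty
ridge = Ridge(alpha=10.0).fit(X, y)  # L2 penalty

# L1 sets some coefficients exactly to zero; L2 only shrinks them
n_zero_lasso = int(np.sum(lasso.coef_ == 0.0))
n_zero_ridge = int(np.sum(ridge.coef_ == 0.0))
print("coefficients set to zero by Lasso:", n_zero_lasso)
print("coefficients set to zero by Ridge:", n_zero_ridge)

# A stronger L2 penalty gives a smaller coefficient vector overall
weak = np.linalg.norm(Ridge(alpha=0.01).fit(X, y).coef_)
strong = np.linalg.norm(Ridge(alpha=100.0).fit(X, y).coef_)
print(f"Ridge coefficient norm: alpha=0.01 -> {weak:.2f}, alpha=100 -> {strong:.2f}")
```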

The Bias-Variance Tradeoff in Molecular Property Prediction

Troubleshooting Guide: FAQs on Bias and Variance

FAQ: My model performs excellently on training data but fails on new experimental molecules. What is happening? This is a classic sign of overfitting (high variance), where your model has learned the noise and specific patterns in the training data rather than the underlying generalizable relationships [7]. It often occurs when the model is too complex for the amount of available data, causing it to perform poorly on any new, unseen data [8]. To resolve this, you must reduce model variance.

- Primary Solution: Make fuller use of the available data with cross-validation (CV), which rotates every data point through both training and validation. A robust method involves using a combined RMSE from different CV types to evaluate both interpolation and extrapolation performance during hyperparameter optimization [9].

- Alternative Solutions:

- Apply Regularization: Techniques like L1 (Lasso) or L2 (Ridge) regularization penalize model complexity.

- Simplify the Model: Use fewer parameters or a less complex algorithm (e.g., switch from a deep neural network to a Random Forest).

- Curate Features: Reduce the number of molecular descriptors to only the most predictive ones to avoid fitting to irrelevant information [8].

FAQ: My model is consistently inaccurate, even on the training data. How can I improve it? This indicates underfitting (high bias), meaning your model is too simple to capture the underlying trends in the data [7].

- Primary Solution: Increase model complexity. This can be done by adding more relevant features (e.g., advanced electronic or steric molecular descriptors), choosing a more powerful algorithm (e.g., a neural network over linear regression), or reducing the constraints of regularization [9].


FAQ: I have a very small dataset of chemical reactions. Can I still use complex, non-linear models? Yes, but it requires a carefully designed workflow to mitigate overfitting. Traditionally, Multivariate Linear Regression (MVL) is preferred for small datasets due to its robustness [9]. However, non-linear models can perform on par with or even outperform MVL if properly tuned.

- Primary Solution: Employ automated workflows that integrate Bayesian Hyperparameter Optimization with an objective function specifically designed to penalize overfitting. The objective should incorporate metrics for both interpolation (standard CV) and extrapolation (sorted CV) performance [9].

- Key Consideration: Using pre-set, sensible hyperparameters can sometimes yield similar performance to full-scale optimization while reducing computational effort by orders of magnitude, thus also minimizing the risk of overfitting the hyperparameter search itself [11].

FAQ: After extensive hyperparameter tuning, my model's performance on the test set got worse. Why? This can result from overfitting by hyperparameter optimization. When you perform a vast number of tuning experiments on a fixed test set, you may inadvertently select parameters that work well for that specific test set partition but do not generalize [11].

- Primary Solution: Use nested cross-validation, where an inner loop is used for hyperparameter tuning and an outer loop is used for unbiased performance evaluation. This prevents information from the validation/test sets from leaking into the model selection process [9].

- Alternative Solution: Validate the final model on a completely independent, external dataset that was never used during the training or tuning phases [8].

Diagnostic Table: Identifying Model Issues

The table below summarizes key indicators and solutions for bias and variance problems in molecular property prediction.

| Observed Symptom | Likely Cause | Key Performance Metric | Recommended Solution |
|---|---|---|---|
| High error on training and new data | High Bias (Underfitting) | Low R² on training data [10] | Increase model complexity; Add more predictive features [7] |
| Large gap between training and test error | High Variance (Overfitting) | Large RMSE difference between CV and test set [9] | Apply regularization; Use more data; Simplify model [7] |
| Good performance on internal test set, poor performance on external validation | Overfitting on the test set | High cuRMSE/standard RMSE discrepancy [11] | Use nested cross-validation; Validate on a true external set [8] |
| Model performance is highly sensitive to small changes in the training data | High Variance | High standard deviation in repeated CV runs [9] | Use ensemble methods such as bagging; Get more data |

Experimental Protocols for Robust Models

Protocol 1: Automated Workflow for Low-Data Regimes

This protocol is designed for building non-linear models with datasets smaller than 50 data points [9].

- Data Curation: Clean and standardize molecular structures (e.g., using SMILES standardization). Remove duplicates and handle missing values.

- Train-Test Split: Reserve a minimum of 20% of the data (or at least 4 points) as an external test set. Use an "even" split to ensure the test set is representative of the target value range [9].

- Hyperparameter Optimization: Use Bayesian Optimization to tune model parameters. The key is to use a combined objective function that accounts for both interpolation and extrapolation:

- Interpolation RMSE: Calculated using a 10-times repeated 5-fold cross-validation.

- Extrapolation RMSE: Assessed via a selective sorted 5-fold CV, where data is sorted by the target value and partitioned.

- The objective function to minimize is the combined RMSE of these two components [9].

- Model Selection & Evaluation: Select the model with the best combined RMSE score. Finally, evaluate its performance only once on the held-out external test set.
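A minimal sketch of such a combined objective is given below. It is not ROBERT's implementation: the function names are hypothetical, and it assumes that the two components are simply averaged and that the extrapolation term is the worse RMSE of the lowest-target and highest-target partitions, following the description of the combined metric in the ROBERT protocol later in this guide.

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import RepeatedKFold, cross_val_score
from sklearn.tree import DecisionTreeRegressor


def interpolation_rmse(model, X, y, seed=0):
    """Mean fold RMSE from a 10-times repeated 5-fold cross-validation."""
    cv = RepeatedKFold(n_splits=5, n_repeats=10, random_state=seed)
    scores = cross_val_score(
        model, X, y, cv=cv, scoring="neg_root_mean_squared_error"
    )
    return float(-scores.mean())


def extrapolation_rmse(model, X, y, n_splits=5):
    """Sort by target, hold out the lowest and highest partitions in turn,
    and return the worse of the two RMSE values."""
    order = np.argsort(y)
    folds = np.array_split(order, n_splits)
    rmses = []
    for held_out in (folds[0], folds[-1]):
        train = np.setdiff1d(order, held_out)
        fitted = clone(model).fit(X[train], y[train])
        pred = fitted.predict(X[held_out])
        rmses.append(float(np.sqrt(mean_squared_error(y[held_out], pred))))
    return max(rmses)


def combined_rmse(model, X, y):
    """Average of the interpolation and extrapolation terms (assumed weighting)."""
    return 0.5 * (interpolation_rmse(model, X, y) + extrapolation_rmse(model, X, y))


# Synthetic linear data (assumption): 30 points, one descriptor
rng = np.random.default_rng(0)
X = np.linspace(0.0, 10.0, 30).reshape(-1, 1)
y = 2.0 * X[:, 0] + rng.normal(scale=0.1, size=30)

scores = {
    "linear": combined_rmse(LinearRegression(), X, y),
    "tree": combined_rmse(DecisionTreeRegressor(random_state=0), X, y),
}
print(scores)
```

On this toy trend the tree cannot predict beyond the target range it was trained on, so the extrapolation term penalizes it heavily; that is the behavior the combined objective is meant to expose during tuning.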

Protocol 2: Bayesian Optimization for Hyperparameter Tuning

Bayesian Optimization has been shown to provide higher performance and reduced computation time compared to methods like Grid Search [10]. It is particularly useful for optimizing expensive-to-evaluate functions, such as training complex neural networks.

- Define the Search Space: Specify the hyperparameters to optimize and their value ranges (e.g., learning rate, number of layers, dropout rate).

- Select a Surrogate Model: A common choice is a Gaussian Process (GP) regressor, which models the function mapping hyperparameters to the model's performance [12].

- Choose an Acquisition Function: This function decides the next set of hyperparameters to evaluate by balancing exploration (trying uncertain areas) and exploitation (refining known good areas). For multi-objective optimization (e.g., yield and selectivity), scalable functions like q-NParEgo or TS-HVI are recommended for large batch sizes [12].

- Iterate: Repeat the process of evaluating the model, updating the surrogate, and using the acquisition function until performance converges or the experimental budget is exhausted.
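The four steps can be illustrated with a bare-bones loop built from scikit-learn's Gaussian Process regressor and an expected-improvement acquisition function. This is a single-objective toy sketch, not the multi-objective setup with q-NParEgo or TS-HVI: the `objective` function is a hypothetical stand-in for an expensive train-and-validate run, and the kernel, candidate grid, and iteration count are assumptions.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_alpha):
    """Hypothetical validation error as a function of one hyperparameter."""
    return (log_alpha - 1.0) ** 2 + 0.5


def expected_improvement(mu, sigma, best, xi=0.01):
    """Expected improvement for minimization."""
    sigma = np.maximum(sigma, 1e-12)
    z = (best - mu - xi) / sigma
    return (best - mu - xi) * norm.cdf(z) + sigma * norm.pdf(z)


# Step 1: search space, discretized into candidate values
candidates = np.linspace(-4.0, 4.0, 161).reshape(-1, 1)

# A few random evaluations to initialize the surrogate
rng = np.random.default_rng(0)
X_obs = rng.uniform(-4.0, 4.0, size=(3, 1))
y_obs = np.array([objective(x[0]) for x in X_obs])

for _ in range(12):
    # Step 2: fit the surrogate model to all evaluations so far
    gp = GaussianProcessRegressor(
        kernel=Matern(nu=2.5), alpha=1e-6, normalize_y=True, random_state=0
    )
    gp.fit(X_obs, y_obs)
    # Step 3: the acquisition function picks the next point to evaluate
    mu, sigma = gp.predict(candidates, return_std=True)
    x_next = candidates[np.argmax(expected_improvement(mu, sigma, y_obs.min()))]
    # Step 4: evaluate, append, and repeat
    X_obs = np.vstack([X_obs, x_next])
    y_obs = np.append(y_obs, objective(x_next[0]))

best_x = float(X_obs[np.argmin(y_obs), 0])
print(f"best hyperparameter value found: {best_x:.2f}, error: {y_obs.min():.3f}")
```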

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Technique | Function in the Workflow | Application Context |
|---|---|---|
| Bayesian Optimization | Efficiently navigates hyperparameter space to find optimal model settings with fewer evaluations [10] [12]. | Hyperparameter tuning for any ML model, especially when model training is computationally expensive. |
| Cross-Validation (CV) | Provides a robust estimate of model performance and generalization by repeatedly splitting the training data [8]. | Model validation and selection, particularly critical in low-data regimes. |
| Nested Cross-Validation | Prevents optimistic performance estimates by separating hyperparameter tuning (inner loop) from performance estimation (outer loop), so the data used to score a model never influenced its selection [9]. | Obtaining an unbiased estimate of a model's performance when extensive hyperparameter tuning is required. |
| TransformerCNN | A representation learning method that uses Natural Language Processing on SMILES strings; can provide higher accuracy than graph-based methods with less computational time [11]. | Molecular property prediction from SMILES strings. |
| Double-Hybrid DFT | A quantum chemical method that can be parameterized to have low variance; its systematic error (bias) can be corrected using a low-bias reference [13]. | Generating accurate reference data for molecular electronic properties (e.g., singlet-triplet gaps) when experimental data is scarce. |

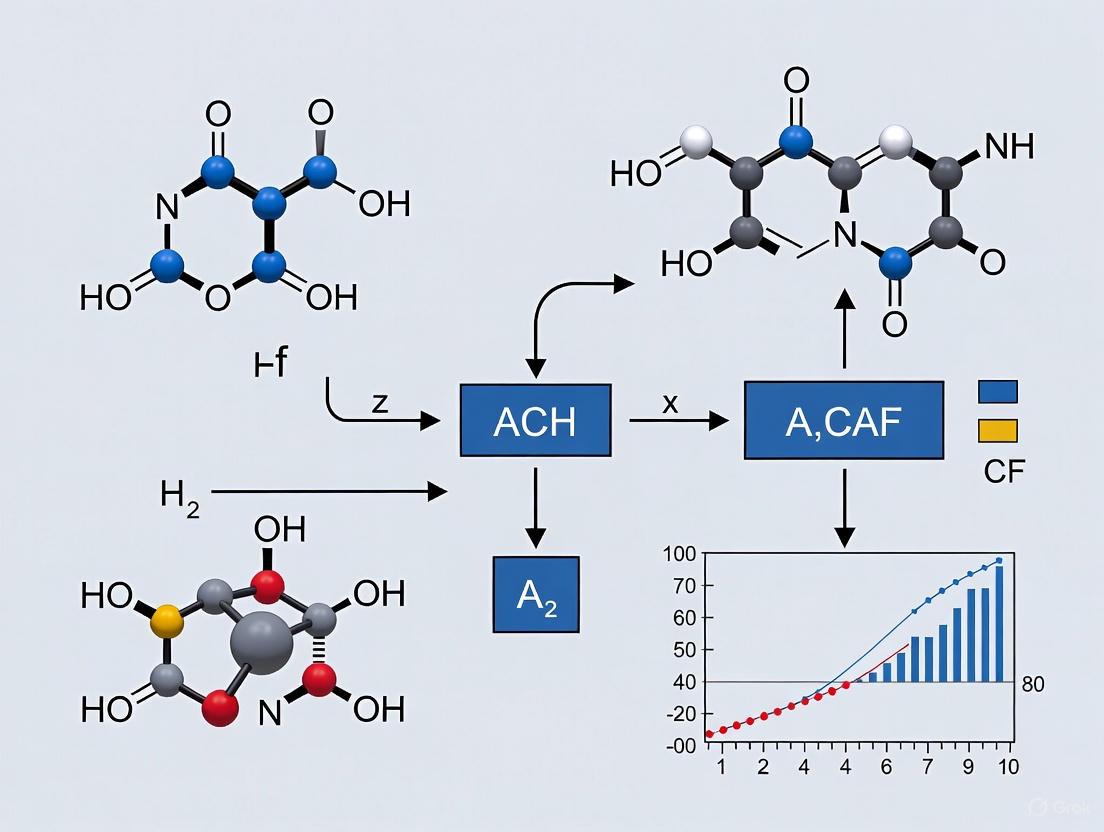

Workflow Visualization

Diagram 1: Bias-Variance Tradeoff in Model Complexity

Diagram 2: Low-Data ML Workflow with Overfitting Control

Why Low-Data Regimes in Chemistry are Particularly Vulnerable

Troubleshooting Guide: Common Issues in Low-Data Chemical ML

Why is my model's performance excellent during training but poor on new experimental data?

This is a classic sign of overfitting, where your model has memorized noise and specific patterns in your training data rather than learning the underlying chemical relationships. In low-data regimes, the risk of this is significantly higher because the model has fewer examples to learn from [14] [15].

Diagnosis Checklist:

- Check if the performance metrics (e.g., RMSE, R²) on your training data are significantly better than on your validation or test set.

- Perform a y-scrambling (or y-randomization) test. If a model trained on data with a randomly shuffled target variable performs similarly to your original model, it indicates your model is learning noise, not a real signal [15].

- Use cross-validation and compare the performance across different data splits. High variance in cross-validation scores is a red flag [11].
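The y-scrambling check from the list above can be sketched as follows. The helper name `y_scrambling`, the synthetic data, and the choice of Ridge regression with 20 shuffles are assumptions made for illustration.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, cross_val_score


def y_scrambling(model, X, y, n_shuffles=20, seed=0):
    """Return the cross-validated R² on the real targets and on shuffled targets."""
    rng = np.random.default_rng(seed)
    cv = KFold(n_splits=5, shuffle=True, random_state=seed)
    real = cross_val_score(model, X, y, cv=cv, scoring="r2").mean()
    shuffled = [
        cross_val_score(model, X, rng.permutation(y), cv=cv, scoring="r2").mean()
        for _ in range(n_shuffles)
    ]
    return float(real), np.asarray(shuffled)


# Synthetic data (assumption): 40 samples, 4 descriptors, 2 informative
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 4))
y = 2.0 * X[:, 0] - X[:, 1] + rng.normal(scale=0.3, size=40)

real_r2, shuffled_r2 = y_scrambling(Ridge(alpha=1.0), X, y)
print(f"real R2: {real_r2:.2f}")
print(f"shuffled R2: mean {shuffled_r2.mean():.2f}, max {shuffled_r2.max():.2f}")
```

A model that has learned a real signal should score clearly above every shuffled run; if the shuffled scores approach the real one, the apparent performance is likely an artifact.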

Solutions:

- Incorporate Extrapolation Metrics: During hyperparameter optimization, use an objective function that evaluates performance on both interpolation (standard cross-validation) and extrapolation (e.g., sorted cross-validation based on target value). This helps select models that generalize beyond the immediate training data [14] [15].

- Bayesian Hyperparameter Optimization: Utilize Bayesian optimization with a combined objective function that actively penalizes overfitting, rather than simply maximizing training performance [14] [9].

- Simplify the Model: If using non-linear models, increase regularization strength or consider switching to a simpler model like linear regression, which can be more robust with very small datasets [14].

Hyperparameter optimization is computationally expensive and isn't improving my model. What am I doing wrong?

Extensive hyperparameter optimization (HPO) in a low-data context can sometimes lead to overfitting the validation set [11]. You might be fine-tuning the model to perform well on a specific, small validation split, which does not translate to general performance.


Solutions:

- Use Efficient HPO Algorithms: For faster and more effective results, consider the Hyperband algorithm, which has been shown to provide optimal or nearly optimal results with greater computational efficiency compared to some Bayesian optimization methods [16].

- Limit the Search Space: Do not optimize an excessively large number of hyperparameters simultaneously. Focus on the most impactful ones [11].

- Consider Pre-set Parameters: In some cases, using a set of sensible, pre-optimized hyperparameters can yield similar performance to a full HPO, while reducing computational effort by orders of magnitude. This can serve as a strong baseline [11].

I have fewer than 50 data points. Are non-linear models completely unusable?

No, but they require careful handling. Traditionally, multivariate linear regression (MVL) is preferred in low-data scenarios due to its simplicity and lower risk of overfitting [14] [15]. However, recent research demonstrates that properly regularized non-linear models can compete with or even outperform linear models [14] [17].

Diagnosis Checklist:

- You are defaulting to linear models without testing non-linear alternatives.

- Your non-linear models (e.g., Neural Networks, Random Forest) show high variance in cross-validation.

Solutions:

- Adopt Automated Workflows: Use ready-to-use tools like the ROBERT software, which incorporates automated workflows designed specifically for low-data regimes. These workflows integrate the overfitting mitigation strategies mentioned above [15] [9].

- Benchmark Algorithms: Systematically compare MVL against non-linear algorithms like Neural Networks and Gradient Boosting on your dataset. Evidence shows that for datasets with 18-44 data points, non-linear models can perform on par with or better than linear regression when properly tuned [14].

- Leverage Multi-Task Learning (MTL): If you have data for several related properties, techniques like Adaptive Checkpointing with Specialization (ACS) can be used to train a multi-task graph neural network. This allows the model to learn from correlations between tasks, dramatically reducing the required labeled data per property and enabling accurate predictions with as few as 29 samples [18].

Performance of ML Algorithms in Low-Data Chemical Research

The table below summarizes quantitative benchmarking data on the performance of different machine learning algorithms across eight diverse chemical datasets, ranging in size from 18 to 44 data points [14].

| Dataset Size (Data Points) | Algorithm(s) | Key Performance Metric (Scaled RMSE) | Vulnerability if Misapplied |
|---|---|---|---|
| 18–44 (across 8 studies) | Neural Networks (NN) & Gradient Boosting (GB) | Performed as well as or better than Linear Regression (MVL) in 5/8 cases [14]. | High overfitting without proper regularization and extrapolation checks [15]. |
| 18–44 (across 8 studies) | Multivariate Linear (MVL) | Robust baseline performance; best in 3/8 cases [14]. | Potential underfitting, failing to capture complex, non-linear chemical relationships [14]. |
| 18–44 (across 8 studies) | Random Forest (RF) | Best in only 1/8 cases; limitations in extrapolation [14]. | Poor performance predicting outside the range of training data [14]. |

Experimental Protocol: Hyperparameter Tuning to Prevent Overfitting

This protocol details the methodology for using the ROBERT automated workflow, designed to enable reliable use of non-linear models in low-data regimes [15] [9].

Objective

To optimize hyperparameters for machine learning models in a way that explicitly minimizes overfitting and improves generalization, particularly for interpolation and extrapolation.

Materials/Software Requirements

- Software: ROBERT software package [15] [9].

- Input Data: A curated CSV file containing molecular descriptors and target property values.

- Computing Environment: Standard academic computing resources are sufficient for the low-data regime [11].

Step-by-Step Procedure

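Data Preparation and Splitting:

- Curate the input data, then reserve an external test set (at least 20% of the data, or a minimum of 4 points) using an "even" split so that the test set covers the range of target values [9].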

Defining the Hyperparameter Optimization Objective:

- The key to this protocol is the use of a combined Root Mean Squared Error (RMSE) as the objective function for Bayesian optimization [15]. This combined metric is calculated as follows:

- Interpolation RMSE: Derived from a 10-times repeated 5-fold cross-validation on the training/validation data.

- Extrapolation RMSE: Assessed via a selective sorted 5-fold CV. The data is sorted by the target value (y) and split; the highest RMSE from the top and bottom partitions is used [15].

- The two RMSE values are averaged to form the final "combined RMSE" that the optimizer seeks to minimize.


Execution of Bayesian Optimization:

- Execute the ROBERT workflow. It will iteratively propose hyperparameter sets, train models, and evaluate them using the combined RMSE metric.

- The process continues for a predefined number of iterations, with the Bayesian algorithm focusing on regions of the hyperparameter space that yield models with lower combined RMSE and thus lower overfitting [15].

Model Selection and Evaluation:

- Upon completion, the model with the best (lowest) combined RMSE score is selected.

- The final model's performance is evaluated on the held-out external test set that was reserved in Step 1 [9].

Visualization of Workflow

The following diagram illustrates the logical flow and key components of the hyperparameter optimization workflow designed to prevent overfitting.

The Scientist's Toolkit: Key Research Reagents & Solutions

This table lists essential computational "reagents" and their functions for building robust machine learning models in low-data regimes.

| Tool / Solution | Function & Explanation |
|---|---|
| ROBERT Software | An automated workflow tool that performs data curation, hyperparameter optimization (using the combined RMSE metric), model selection, and generates comprehensive evaluation reports, reducing human bias [15] [9]. |
| Combined RMSE Metric | The core objective function that measures a model's performance on both interpolation (standard CV) and extrapolation (sorted CV), directly targeting overfitting during optimization [14] [15]. |
| Bayesian Optimization | An efficient strategy for navigating the hyperparameter space. It is used within ROBERT to iteratively find parameter sets that minimize the combined RMSE [14] [9]. |
| Adaptive Checkpointing (ACS) | A training scheme for multi-task graph neural networks that mitigates "negative transfer" by saving task-specific model checkpoints, allowing accurate predictions with as few as 29 labeled samples per property [18]. |
| Hyperband Algorithm | A hyperparameter optimization algorithm that is highly computationally efficient, providing optimal or near-optimal accuracy much faster than some other methods, which is crucial for iterative research [16]. |

FAQ: Identifying and Troubleshooting Overfitting

What are the definitive signs that my solubility model is overfitting?

An overfit model exhibits a significant performance gap between training and validation data. Key indicators include:

- High Training Accuracy, Low Testing Accuracy: The model achieves a very high R² or low error on the training data but performs poorly on unseen test or validation data [2] [19].

- Failure to Generalize: The model makes inaccurate predictions for new data points that fall within the same feature space as the training data but were not part of the training set. For example, a model trained to predict solubility might fail if the new pressure and temperature conditions are slightly different from the training set, even if they are within the same range [2] [19].

- Complex, Unjustified Predictions: The model's predictions for solubility might show unrealistic, highly complex fluctuations in response to small changes in temperature or pressure that are not supported by the underlying physics [19].

Our drug solubility model uses only 45 data points. Are we at high risk of overfitting?

Yes, a small dataset is a primary risk factor for overfitting [2] [20]. With only a limited number of data samples, the machine learning model may memorize the noise and specific characteristics of the training data instead of learning the general underlying relationship between input features (like temperature and pressure) and solubility. To mitigate this, you should employ techniques such as cross-validation and consider using simpler models or regularization to constrain the model's complexity [2] [20].

What is the most effective method to detect overfitting during model development?

K-fold cross-validation is a highly effective and standard method for detecting overfitting [2] [19]. The process involves:

- Splitting the entire training dataset into K equally sized subsets (folds).

- Training the model K times, each time using a different fold as the validation set and the remaining K-1 folds as the training set.

- Calculating the performance metrics for each of the K validation folds. A large variance in performance scores across the different folds, or a validation error that is consistently much higher than the training error, indicates that the model is overfitting and is not generalizing well [2].
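The three steps above map directly onto scikit-learn's `cross_validate`, which can return training and validation scores for every fold. The example uses synthetic data and a deliberately flexible 1-nearest-neighbor model (both assumptions) so that the train/validation gap is easy to see.

```python
import numpy as np
from sklearn.model_selection import KFold, cross_validate
from sklearn.neighbors import KNeighborsRegressor

# Synthetic data (assumption): 45 points, two scaled inputs
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(45, 2))
y = np.sin(3.0 * X[:, 0]) + X[:, 1] + rng.normal(scale=0.2, size=45)

cv = KFold(n_splits=5, shuffle=True, random_state=0)
result = cross_validate(
    KNeighborsRegressor(n_neighbors=1),  # memorizes every training point
    X, y, cv=cv,
    scoring="neg_root_mean_squared_error",
    return_train_score=True,
)
train_rmse = -result["train_score"]
val_rmse = -result["test_score"]

for k, (tr, va) in enumerate(zip(train_rmse, val_rmse), start=1):
    print(f"fold {k}: train RMSE = {tr:.3f}, validation RMSE = {va:.3f}")
print(f"validation RMSE: mean {val_rmse.mean():.3f}, std {val_rmse.std():.3f}")
```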

How can ensemble methods help prevent overfitting in our chemical models?

Ensemble methods, such as bagging and boosting, combine multiple weaker models to create a more robust and accurate final model [2] [19].

- Bagging (Bootstrap Aggregating): This method (e.g., Random Forest) trains multiple models in parallel on different random subsets of the training data. It reduces variance and overfitting by averaging the results, which helps to cancel out errors [19] [21].

- Boosting: This method (e.g., AdaBoost, Gradient Boosting) trains models sequentially, where each new model focuses on correcting the errors made by the previous ones. While powerful, boosting must be carefully managed as it can sometimes lead to overfitting on the training data if the number of iterations is too high [22] [19]. Studies have shown that boosted versions of models like K-Nearest Neighbors (KNN) can achieve high accuracy (R² > 0.99) when properly optimized, demonstrating their utility in solubility prediction [22].

Experimental Protocol: A Tale of Two Solubility Models

This protocol analyzes a published study on predicting the solubility of the drug Letrozole in supercritical CO₂ to illustrate a robust methodology that mitigates overfitting [22].

Objective

To predict the solubility of Letrozole using temperature and pressure as inputs, and to evaluate the generalizability of K-Nearest Neighbors (KNN) and its ensemble versions.

Materials and Dataset

- Dataset: 45 experimental data points for Letrozole solubility across temperatures (308-338 K) and pressures (12.2-35.5 MPa) [22].

- Software: Python 3.11 with scikit-learn, NumPy, and Matplotlib libraries [22].

Methodology and Workflow

The following diagram illustrates the experimental workflow designed to prevent overfitting.

Detailed Experimental Steps

Data Pre-processing:

- Normalization: Input features (temperature and pressure) were normalized using a min-max scaler to ensure that variables with larger scales did not disproportionately influence the model [22].

- Outlier Removal: The Isolation Forest algorithm was used to identify and remove anomalous data points that could mislead the model during training [22].

Data Splitting: The cleaned dataset was split into a training set (80% of data) and a hold-out test set (20% of data). The test set was kept completely separate and was only used for the final evaluation to provide an unbiased assessment of generalization [22].

Model Training and Hyperparameter Optimization:

- Three model types were selected: standard KNN, AdaBoost-KNN, and Bagging-KNN [22].

- The Golden Eagle Optimizer (GEOA) was used to systematically tune the hyperparameters of each model, rather than relying on manual tuning which can introduce bias. This meta-heuristic algorithm helps find a robust set of parameters that improve model performance [22].

Model Evaluation and Overfitting Check:

- The final optimized models were evaluated on the unseen test set.

- Performance metrics from the training and test sets were compared. A close alignment in performance indicates a well-generalized model, while a large discrepancy signals overfitting.
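The steps above can be sketched with scikit-learn as follows. This is a hedged illustration, not the study's code: the 45 data points are synthetic placeholders (not the Letrozole measurements), the hyperparameters are fixed by hand because the Golden Eagle Optimizer is not part of scikit-learn, and the `contamination` value is an assumption. The scaler is placed inside a pipeline so that it is fitted on the training split only.

```python
import numpy as np
from sklearn.ensemble import AdaBoostRegressor, BaggingRegressor, IsolationForest
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler

rng = np.random.default_rng(0)

# Synthetic placeholder data: temperature (K), pressure (MPa), and a made-up response.
T = rng.uniform(308, 338, 45)
P = rng.uniform(12.2, 35.5, 45)
X = np.column_stack([T, P])
y = 0.02 * (T - 300) + 0.1 * P + rng.normal(0, 0.05, 45)

# Outlier removal: keep only points the Isolation Forest labels as inliers (+1).
inlier = IsolationForest(contamination=0.05, random_state=0).fit_predict(X) == 1
X, y = X[inlier], y[inlier]

# Hold out 20% as a test set that is touched only for the final comparison.
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.2, random_state=0)

# Hand-picked settings (assumption); the study tuned these with a meta-heuristic optimizer.
models = {
    "KNN": KNeighborsRegressor(n_neighbors=3),
    "AdaBoost-KNN": AdaBoostRegressor(KNeighborsRegressor(n_neighbors=3),
                                      n_estimators=20, random_state=0),
    "Bagging-KNN": BaggingRegressor(KNeighborsRegressor(n_neighbors=3),
                                    n_estimators=20, random_state=0),
}

gaps = {}
for name, model in models.items():
    pipe = make_pipeline(MinMaxScaler(), model)  # scaler is fitted on training data only
    pipe.fit(X_tr, y_tr)
    r2_train = r2_score(y_tr, pipe.predict(X_tr))
    r2_test = r2_score(y_te, pipe.predict(X_te))
    gaps[name] = r2_train - r2_test
    print(f"{name:13s} R2 train={r2_train:.3f}  R2 test={r2_test:.3f}  gap={gaps[name]:.3f}")
```

A small train/test gap suggests good generalization; a large positive gap is the overfitting signal described in the evaluation step.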

Results and Analysis

The table below summarizes the performance of the three models, highlighting the key differences between training and testing performance that are critical for diagnosing overfitting.

Table: Performance Comparison of Solubility Prediction Models for Letrozole [22]

| Model | R-squared (R²) Training (Typical) | R-squared (R²) Testing (Reported) | Key Indicator of Generalization |

|---|---|---|---|

| KNN | Very High (e.g., >0.99) | 0.9907 | Good generalization, minimal overfitting |

| AdaBoost-KNN | Very High (e.g., >0.99) | 0.9945 | Best generalization, high accuracy on new data |

| Bagging-KNN | Very High (e.g., >0.99) | 0.9938 | Excellent generalization, robust performance |

Case Study Insight: In this instance, all three models, particularly the ensemble methods, showed excellent performance on the test set, indicating that the workflow (including data pre-processing, train-test splitting, and hyperparameter optimization) successfully minimized overfitting. The AdaBoost-KNN model demonstrated the highest predictive accuracy on unseen data [22]. This successful outcome contrasts with a scenario of overfitting, which would be characterized by near-perfect training scores (e.g., R² = 0.999) but significantly lower test scores (e.g., R² < 0.9).

The Scientist's Toolkit: Essential Reagents for Robust ML Models

Table: Key Solutions for Preventing Overfitting in Chemical ML Models

| Research Reagent / Solution | Function in Preventing Overfitting |

|---|---|

| K-Fold Cross-Validation [2] [19] | A data resampling procedure that thoroughly tests model generalizability by using different subsets of data for training and validation in multiple rounds, providing a reliable performance estimate. |

| Golden Eagle Optimizer (GEOA) [22] | A bio-inspired optimization algorithm used for automated and effective hyperparameter tuning, helping to find a model configuration that generalizes well rather than just memorizes training data. |

| Ensemble Methods (e.g., Bagging, Boosting) [22] [2] [19] | Machine learning techniques that combine multiple base models to reduce variance (Bagging) and bias (Boosting), leading to a more stable and accurate final model. |

| Hold-Out Test Set [22] [20] | A portion of the dataset (e.g., 20%) that is completely withheld from the model training process. It serves as the ultimate benchmark for assessing real-world performance and detecting overfitting. |

| Regularization (L1/L2) [19] [20] | A technique that adds a penalty term to the model's loss function to discourage complexity by constraining the size of model coefficients, effectively simplifying the model. |

| Isolation Forest [22] | An algorithm used for anomaly detection during data pre-processing to identify and remove outliers that could otherwise force the model to learn spurious and non-generalizable patterns. |

| Data Augmentation [20] | A strategy to artificially expand the size and diversity of the training dataset by creating modified versions of existing data points, helping the model learn more invariant patterns. |

The Critical Role of Data Quality and Curation in Mitigation

Frequently Asked Questions (FAQs)

Q1: Why is my highly-tuned model failing on new chemical compounds despite excellent validation scores?

A1: This is a classic sign of overfitting due to inadequate data curation. If your training data contains duplicates, inconsistencies, or experimental artifacts, hyperparameter optimization (HPO) can simply learn these flaws rather than the underlying chemistry. One study found that a kinetic solubility dataset contained over 37% duplicated measurements due to different standardization procedures, which severely biases performance estimates [11].

Q2: Should I prioritize collecting more data or improving my existing dataset quality?

A2: Improve quality first. In a study predicting Normal Boiling Point (NBP), models trained on a smaller, rigorously curated dataset from DIPPR 801 significantly outperformed models trained on a larger, uncurated public dataset. The curated dataset provided better accuracy, reduced bias, and improved generalization [23].

Q3: Does advanced hyperparameter optimization always lead to better models?

A3: Not necessarily. Research shows that aggressive HPO does not always result in better models and can itself become a source of overfitting. In some solubility prediction tasks, using pre-set hyperparameters yielded similar performance to extensive HPO while reducing computational effort by a factor of roughly 10,000 [11].

Q4: What is the most common data-related mistake in chemical ML pipelines?

A4: Neglecting systematic data deduplication across aggregated sources. Molecules often appear multiple times with different SMILES representations (e.g., with/without stereochemistry, ionized/neutral forms) or slightly different experimental values. Failing to account for this creates data leakage and over-optimistic performance estimates [11].

Troubleshooting Guides

Problem: Model Performance Deteriorates on Real-World Compounds

Symptoms

- High training accuracy but poor performance on new, external test sets.

- Significant performance drop when moving from benchmark datasets to proprietary compounds.

- Model predictions are inconsistent with established chemical principles.

Diagnosis and Solutions

| Step | Action | Expected Outcome |

|---|---|---|

| 1. Check for Data Leakage | Audit your dataset for structural duplicates using standardized InChI keys and value-based deduplication (merging records with differences <0.5 log units) [11]. | Eliminates artificially inflated performance by ensuring compound independence. |

| 2. Assess Data Provenance | Review experimental protocols for training data. Filter for consistent conditions (e.g., temperature 25±5°C, pH 7±1 for solubility). Remove data from non-standard protocols [11]. | Creates a more coherent and reliable dataset, reducing "noise" the model might learn. |

| 3. Validate Against Quality Benchmarks | Test your model on a small, high-quality internal set of compounds with reliably measured properties. | Provides an unbiased estimate of true generalization performance and identifies specific failure modes. |

| 4. Simplify the Model | Try training with pre-set hyperparameters or a less complex model architecture. | If performance remains similar, it suggests previous HPO was overfitting to data artifacts. A robust model should not rely on excessive tuning [11]. |

Problem: Hyperparameter Optimization is Inefficient and Unstable

Symptoms

- HPO results vary significantly between different random seeds.

- The best hyperparameter set performs no better than reasonable defaults.

- The optimization process requires massive computational resources.

Diagnosis and Solutions

| Step | Action | Expected Outcome |

|---|---|---|

| 1. Pre-Curate Your Data | Before any tuning, apply rigorous data cleaning: remove duplicates, standardize structures, and handle outliers. | A cleaner dataset provides a more stable and meaningful signal for the HPO algorithm to exploit, improving consistency. |

| 2. Choose an Efficient HPO Method | Move beyond Grid Search. Use Bayesian Optimization (e.g., with Optuna) which can find optimal parameters 6.77 to 108.92 times faster than Grid or Random Search [24]. | Drastically reduces computational cost and time while achieving equal or better performance. |

| 3. Implement Early Stopping | Use a framework that supports aggressive pruning to halt unpromising trials early in the training process [25]. | Saves substantial computational resources by focusing only on hyperparameter sets that show potential. |

| 4. Use a Robust Validation Scheme | Employ K-Fold Cross-Validation and select hyperparameters on validation-fold performance, keeping the test set untouched until the final evaluation. Never tune hyperparameters based solely on training metrics [26]. | Provides a more reliable estimate of generalization and reduces the risk of overfitting to a single validation split. |

Quantitative Evidence: Data Quality vs. Model Performance

The following table summarizes key experimental findings from recent studies that quantify the impact of data curation on machine learning models in chemistry.

Table 1: Impact of Data Curation on Model Performance in Chemical Property Prediction

| Study Focus | Dataset Description | Curation Method | Key Experimental Result |

|---|---|---|---|

| Normal Boiling Point (NBP) Prediction [23] | Larger set: 5277 entries from the public SPEED DB. Smaller set: rigorously curated DIPPR 801 DB. | Rigorous evaluation of experimental values, agreement with vapor pressure curves, removal of physically implausible values (e.g., Cl₂ NBP listed as 993 K vs. actual 239 K). | The model trained on the smaller, curated set outperformed the model trained on the larger, uncurated set in accuracy, bias, and generalization, demonstrating that data quality trumps quantity. |

| Solubility Prediction [11] | Seven thermodynamic/kinetic solubility datasets (e.g., AQUA, ESOL, CHEMBL) | SMILES standardization, deduplication, removal of metal-containing compounds, inter-dataset curation with weighting based on source quality. | Hyperparameter optimization offered no consistent advantage over using pre-set parameters, suggesting HPO can overfit to noise in insufficiently curated data. |

| Kinetic Solubility Data [11] | KINECT dataset from OCHEM (164k+ records) | Identification and merging of 24,199 duplicate records originating from the same original PubChem assay but processed differently. | Over 37% duplication rate was identified. Failure to deduplicate would lead to highly biased and optimistic model validation. |

Experimental Protocol: A Data-Centric Workflow for Robust Chemical ML

This section provides a detailed, step-by-step methodology for a data curation and model training experiment, as cited in the research.

Objective: To systematically evaluate the impact of data curation and hyperparameter optimization on the generalization performance of a solubility prediction model.

Materials & Computational Setup:

- Software: Python with libraries for cheminformatics (e.g., RDKit), machine learning (e.g., Scikit-learn, PyTorch, ChemProp, TransformerCNN), and hyperparameter optimization (e.g., Optuna).

- Hardware: Access to GPU resources is recommended for efficient deep learning and HPO.

Procedure:

Data Acquisition and Versioning:

- Collect raw solubility data from multiple public sources (e.g., AqSolDB, CHEMBL, OCHEM).

- Use a data version control system (e.g., DVC or lakeFS) to create a branch for the experiment, preserving the original raw data [27].

Data Curation and Cleaning:

- Standardization: Standardize all SMILES strings using a tool like MolVS to a consistent representation (e.g., neutral form, specific tautomer) [11].

- Deduplication: Identify and merge duplicates using InChI keys. If multiple values exist for the same molecule, merge them if the difference is within experimental error (e.g., < 0.5 log units) or apply a weighting scheme [11].

- Filtering: Remove compounds that do not meet the experimental criteria of your study (e.g., remove metal-organics, compounds measured at non-standard pH/temperature) [11].

- Validation: Manually inspect a sample of curated data and check for obvious errors (e.g., implausible property values). Commit the final cleaned dataset to your version control system.
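The deduplication step can be illustrated with a small pure-Python sketch. It assumes each record already carries a structure key (for example an InChI key computed after standardization); the keys and values below are hypothetical placeholders. Records that disagree by 0.5 log units or more are set aside for review here, which is one possible policy and not necessarily the one used in the cited study.

```python
from collections import defaultdict
from statistics import mean


def deduplicate(records, tolerance=0.5):
    """Merge (key, value) records that share a structure key.

    Values for the same key are averaged when they agree within `tolerance`
    (log units); otherwise the key is reported as a conflict for manual review.
    """
    groups = defaultdict(list)
    for key, value in records:
        groups[key].append(value)

    merged, conflicts = {}, {}
    for key, values in groups.items():
        if max(values) - min(values) < tolerance:
            merged[key] = mean(values)
        else:
            conflicts[key] = values
    return merged, conflicts


# Hypothetical records: (structure key, log-solubility value).
records = [
    ("KEY-A", -2.10), ("KEY-A", -2.30),   # agree within 0.5 -> merged
    ("KEY-B", -4.00),                     # single measurement -> kept
    ("KEY-C", -1.00), ("KEY-C", -2.20),   # disagree by 1.2 -> flagged
]
merged, conflicts = deduplicate(records)
print("merged:", merged)
print("conflicts:", conflicts)
```

Running the deduplication before any data splitting matters: a duplicate that lands in both the training and test sets is a direct source of leakage.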

Data Splitting:

- Split the curated dataset into training, validation, and test sets using a scaffold split to assess the model's ability to generalize to novel chemical structures, which is more challenging than a random split.
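A scaffold split keeps every molecule that shares a scaffold in the same subset. The sketch below assumes the scaffold of each molecule has already been computed (for instance as a Bemis-Murcko scaffold string from a cheminformatics toolkit) and uses single-letter placeholder labels. The function name `scaffold_split` and the 80/10/10 fractions are illustrative choices.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train/valid/test index lists.

    Groups are placed largest-first, so common scaffolds end up in training
    and rarer scaffolds fill the validation and test sets.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Sort by descending group size; ties broken by first index for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Placeholder scaffold labels for ten molecules.
scaffolds = ["A"] * 5 + ["B"] * 3 + ["C", "D"]
train, valid, test = scaffold_split(scaffolds)
print("train:", train)
print("valid:", valid)
print("test: ", test)
```

Because groups are never divided, the realized split sizes only approximate the requested fractions, especially on small datasets with a few dominant scaffolds.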

Model Training with HPO vs. Pre-sets:

- HPO Arm: Use a Bayesian optimization tool (e.g., Optuna) to tune the hyperparameters of a graph-based model (e.g., ChemProp) on the training set. Use the validation set for guidance.

- Pre-set Arm: Train the same model architecture on the same training set using a set of pre-defined, reasonable hyperparameters from the literature.

- Alternative Model: Train a TransformerCNN model (which uses an NLP-based representation of SMILES) with its pre-set hyperparameters for comparison [11].

Evaluation:

- Evaluate all trained models on the held-out test set.

- Record key performance metrics (e.g., RMSE, R²) and compare the results between the HPO-tuned model, the pre-set model, and the alternative TransformerCNN model.

The entire workflow is summarized in the following diagram:

The Scientist's Toolkit: Key Research Reagents & Solutions

This table details essential computational tools and their functions for implementing a data-centric machine learning pipeline in chemical research.

Table 2: Essential Tools for Data-Centric Chemical Machine Learning

| Tool / Solution | Category | Primary Function | Relevance to Mitigating Overfitting |

|---|---|---|---|

| MolVS [11] | Cheminformatics | Standardizes chemical structures (SMILES) to a consistent representation. | Preprocessing. Reduces noise by ensuring each unique molecule has a single, canonical representation, preventing false duplicates. |

| InChI Key | Cheminformatics | Provides a standardized unique identifier for chemical substances. | Deduplication. The definitive method for identifying and merging duplicate molecular records across different datasets. |

| lakeFS / DVC [27] | Data Version Control | Manages and versions datasets, enabling reproducible data pipelines and experiment branching. | Reproducibility & Governance. Allows isolation of preprocessing steps and rollback, ensuring experiments are based on a consistent, auditable data state. |

| Optuna [25] [24] | Hyperparameter Optimization | A Bayesian optimization framework that supports efficient searching and pruning of trials. | Efficient HPO. Reduces computational cost and the risk of overfitting the validation set by intelligently exploring the hyperparameter space. |

| TransformerCNN [11] | Model Architecture | A neural network using NLP-based representation of SMILES strings. | Alternative Representation. Cited as providing superior results with less tuning, potentially bypassing overfitting issues associated with graph-based methods. |

| Scikit-learn | Machine Learning | Provides tools for data splitting, preprocessing, baseline models, and validation. | Pipeline Foundation. Offers robust, standardized implementations for cross-validation and metrics, preventing evaluation errors. |

Practical Strategies and Automated Tools for Robust Hyperparameter Tuning

In the data-driven landscape of modern chemistry, machine learning (ML) models are powerful tools for accelerating discovery. However, their effectiveness, particularly with small datasets common in chemical research, is often limited by overfitting—a condition where a model memorizes training data noise rather than learning generalizable patterns, leading to poor performance on new, unseen data [28] [9]. Automated ML (AutoML) workflows like DeepMol and ROBERT are specifically designed to overcome this challenge. They provide robust, automated pipelines that integrate advanced hyperparameter optimization and regularization techniques to build models that are both accurate and reliable [29] [9]. This guide provides a technical overview and troubleshooting support for using these platforms.

Tool Comparison: DeepMol vs. ROBERT

The table below summarizes the core characteristics of DeepMol and ROBERT to help you understand their different approaches to preventing overfitting.

| Feature | DeepMol | ROBERT |

|---|---|---|

| Primary Focus | General-purpose AutoML for computational chemistry & drug discovery [29] [30] | Non-linear models in low-data regimes [9] |

| Core Anti-Overfitting Strategy | End-to-end pipeline optimization; automated hyperparameter tuning via Optuna [29] | Custom objective function combining interpolation & extrapolation performance [9] |

| Key Technical Implementation | Explores 140+ models, 34 feature extraction methods, and 14 scaling/selection methods [29] | Bayesian optimization using a combined RMSE metric from 10x 5-fold CV and sorted 5-fold CV [9] |

| Supported Learning Tasks | Regression, Classification (binary, multi-class, multi-label), Multi-task [29] | Regression (as applied in low-data scenarios) [9] |

| User Interface | Python-based framework; modular for custom pipelines [30] | Automated software; generates a comprehensive PDF report [9] |

The Scientist's Toolkit: Essential Components for Robust Chemical ML

| Item Category | Specific Examples | Function in Preventing Overfitting |

|---|---|---|

| Hyperparameter Optimizers | Bayesian Optimization [9] [16], Hyperband [16] | Automates the search for model configurations that generalize well, avoiding manual over-tuning. |

| Regularization Techniques | L1/L2 Regularization [31] [28], Dropout [31] [28] | Penalizes model complexity to prevent the model from becoming overly complex and fitting noise. |

| Data Splitting Strategies | Sorted 5-Fold CV (for extrapolation) [9] | Specifically tests the model's ability to predict data outside the range of the training set. |

| Validation Metrics | Combined RMSE [9] | Provides a holistic view of model performance on both familiar and new data domains. |

| Molecular Featurization | Morgan Fingerprints, Mol2Vec [30] | Creates meaningful numerical representations of molecules that capture relevant chemical features. |

Troubleshooting Guides and FAQs

Problem 1: My Model Has a Large Gap Between Training and Validation Performance

This is a classic sign of overfitting, where your model performs well on training data but poorly on validation or test data [28].

- Check 1: Verify Your Optimization Objective

- DeepMol: Ensure you are using the AutoML functionality, which is designed to select pipelines that generalize well. Manually check if your custom pipeline has appropriate complexity for your dataset size [29].

- ROBERT: Confirm the workflow is using the default combined RMSE metric. This is central to its design for low-data regimes, as it explicitly penalizes models that overfit during the hyperparameter optimization phase [9].

- Solution 1: Increase Regularization

- All Models: Explore increasing the strength of L1 or L2 regularization [28] [32].

- Neural Networks: In DeepMol, when building a KerasModel, you can add Dropout layers or increase the dropout rate. This randomly "drops out" neurons during training, forcing the network to learn more robust features [31] [30].

- Solution 2: Tune Critical Hyperparameters

- For Tree-Based Models (like Random Forest in DeepMol's SklearnModel), reduce the maximum depth of the trees (max_depth). This limits the complexity of the model [28].

- For Neural Networks, reduce the number of hidden layers or neurons, or employ early stopping to halt training once validation performance stops improving [31] [28].

Problem 2: Poor Performance on New Data Despite Good Validation Scores

This can occur if the validation set is not representative of real-world data variability or the model is overfitted to the validation set.

- Check 1: Assess Data Splitting Strategy

- ROBERT: It uses a systematic "even" distribution split for the external test set to ensure balanced representation. Verify your input data's target values (y) are well-distributed [9].

- DeepMol: When using a SingletaskStratifiedSplitter for classification, ensure it is appropriate. For regression, consider alternative splitters if your data has a skewed distribution [30].

- Check 2: Evaluate Extrapolation Capability

- ROBERT: Its core strength is testing extrapolation via sorted 5-fold CV. Check the report's "ROBERT score," which includes an evaluation of the model's performance on the highest and lowest data folds [9].

- DeepMol: Manually implement a similar evaluation by sorting your data by the target value and testing the model's performance on the extreme ranges to see if performance drops.

- Solution: Incorporate Domain Knowledge

- Ensure your molecular descriptors (features) are chemically meaningful. Using irrelevant features increases the risk of learning spurious correlations. DeepMol offers various feature selection methods (e.g., LowVarianceFS) to remove non-informative features [30].

Problem 3: Long Training Times for Hyperparameter Optimization

Hyperparameter optimization (HPO) can be computationally expensive, especially with large search spaces [16].

- Check: HPO Algorithm Selection

- DeepMol: It uses Optuna, which supports various algorithms. For faster convergence, ensure you are using an efficient search algorithm like Bayesian optimization over a pure grid search [29].

- Solution 1: Leverage Efficient HPO Methods

- Recent studies suggest that the Hyperband algorithm can be significantly more computationally efficient than other methods while delivering optimal or near-optimal accuracy for molecular property prediction tasks [16].

- Solution 2: Adjust Search Scope

Problem 4: My Small Chemical Dataset (<50 Data Points) is Not Producing Reliable Models

Low-data regimes are inherently challenging and highly susceptible to overfitting [9].

- Check: Confirm Tool Suitability

- ROBERT: This tool was specifically designed and benchmarked on datasets with 18 to 44 data points. It is likely the more suitable choice for this scenario [9].

- DeepMol: While powerful, ensure you are using its full AutoML capabilities rather than a single, complex model. Let the AutoML engine find a simple, well-regularized pipeline [29].

- Solution: Adopt a specialized low-data workflow.

- Use ROBERT for its built-in methodology. Its use of a combined RMSE metric that explicitly penalizes poor extrapolation performance and its rigorous scoring system are tailored for small datasets [9].

- If using DeepMol, be extra conservative: use stronger regularization, a very small model architecture, and consider using a larger fraction of data for validation during the AutoML process.

Experimental Protocols for Key Studies

Protocol 1: Benchmarking DeepMol's AutoML Engine

This protocol is based on the rigorous experimental framework used to validate DeepMol [29].

- Data Preparation: Load your molecular dataset (e.g., ADMET properties from the TDC repository) using DeepMol's CSVLoader or SDFLoader [29] [30].

- Standardization: Apply a molecular standardizer (e.g., BasicStandardizer, ChEMBLStandardizer) to ensure structural consistency and validity [29] [30].

- Featurization: Convert molecules into numerical features using a featurizer like MorganFingerprint [30].

- AutoML Configuration: Initialize the AutoML class. The engine will automatically explore a vast configuration space, including:

- Data Standardization: 3 methods.

- Feature Extraction: 4 options encompassing 34 methods.

- Scaling & Selection: 14 methods.

- Models & Ensembles: 140 options [29].

- Optimization & Training: Run the AutoML experiment for a specified number of trials. The system, powered by Optuna, will iteratively train models, evaluate them on a validation set, and feed the results back to guide the search for the optimal pipeline [29].

- Evaluation: The best-performing pipeline is automatically selected and can be used for predictions on new, unseen data [29].

Protocol 2: Evaluating Models in Low-Data Regimes with ROBERT

This protocol summarizes the workflow used to benchmark ROBERT on small chemical datasets [9].

- Input Data: Provide a CSV file with your chemical data and descriptors.

- Automated Workflow: Execute ROBERT via a command line. The software automatically performs:

- Data curation and splitting (80/20 split with an "even" distribution).

- Hyperparameter Optimization: Uses Bayesian optimization with a custom objective function.

- Objective Function Calculation: The key to preventing overfitting is the combined RMSE, which is the average of:

- Interpolation RMSE: From a 10-times repeated 5-fold cross-validation.

- Extrapolation RMSE: From a selective sorted 5-fold CV, which assesses performance on the highest and lowest folds of data [9].

- Model Selection: The model with the best (lowest) combined RMSE is selected.

- Reporting & Scoring: ROBERT generates a PDF report containing performance metrics, cross-validation results, and a proprietary ROBERT score (on a scale of 10). This score evaluates predictive ability, overfitting, prediction uncertainty, and robustness against spurious models [9].

Workflow Diagrams

DeepMol Automated ML Pipeline

ROBERT Hyperparameter Optimization for Low-Data

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of Bayesian Optimization over traditional methods like Grid Search in chemical ML?

Bayesian Optimization (BO) uses a smarter, probabilistic approach to hyperparameter tuning. Instead of blindly testing combinations like Grid Search (exhaustive) or Random Search (random), BO builds a surrogate model of the objective function and uses an acquisition function to intelligently select the most promising hyperparameters to evaluate next. This allows it to find optimal configurations with far fewer evaluations, saving significant computational time and resources [33] [34] [35]. This is particularly crucial in chemistry where model training can be expensive.
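The surrogate-plus-acquisition loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions: the `objective` function is an invented stand-in for a validation error as a function of log10(learning rate), the surrogate is a scikit-learn Gaussian Process, and Expected Improvement is evaluated on a fixed grid of candidates. Production tools such as Optuna use their own samplers and handle mixed search spaces.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern


def objective(log_lr):
    """Toy stand-in for a validation error versus log10(learning rate)."""
    return (log_lr + 2.0) ** 2 + 0.1 * np.sin(5 * log_lr)


def expected_improvement(mu, sigma, best):
    """Expected Improvement for minimization."""
    sigma = np.maximum(sigma, 1e-9)  # avoid division by zero at sampled points
    improvement = best - mu
    z = improvement / sigma
    return improvement * norm.cdf(z) + sigma * norm.pdf(z)


rng = np.random.default_rng(0)
candidates = np.linspace(-4.0, 0.0, 201).reshape(-1, 1)

# Start from three random evaluations.
X = rng.uniform(-4.0, 0.0, size=(3, 1))
y = np.array([objective(x[0]) for x in X])

for _ in range(10):
    # Fit the surrogate to everything evaluated so far.
    gp = GaussianProcessRegressor(kernel=Matern(nu=2.5), normalize_y=True,
                                  alpha=1e-6, random_state=0)
    gp.fit(X, y)
    mu, sigma = gp.predict(candidates, return_std=True)

    # Evaluate the candidate with the highest expected improvement next.
    x_next = candidates[np.argmax(expected_improvement(mu, sigma, y.min()))]
    X = np.vstack([X, x_next])
    y = np.append(y, objective(x_next[0]))

best_idx = int(np.argmin(y))
print(f"evaluations: {len(y)}, best log10(lr): {X[best_idx, 0]:.2f}, best value: {y[best_idx]:.3f}")
```

Only 13 objective evaluations are spent here, whereas a grid search over the same 201 candidates would need 201. That difference is what makes the approach attractive when each evaluation means training a model.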

FAQ 2: How can I prevent overfitting during the hyperparameter optimization process itself?

Overfitting during optimization, sometimes called "overtuning," occurs when an HPO method over-optimizes to the noise in the validation set, resulting in a configuration that does not generalize to unseen test data [36] [11]. Mitigation strategies include:

- Using Robust Validation: Employ repeated cross-validation (e.g., 10x 5-fold CV) instead of a single hold-out set to get a more stable performance estimate [9] [36].

- Incorporating Extrapolation Metrics: Design your objective function to penalize overfitting explicitly. One effective method is to use a combined metric that averages performance from both interpolation (standard CV) and extrapolation (sorted CV) tasks [9].

- Being Wary of Over-Optimization: In some low-data chemical ML scenarios, hyperparameter optimization may not provide a significant advantage over using a set of sensible pre-set parameters, drastically reducing computational cost and the risk of overfitting [11].

FAQ 3: Why is my tree-based model (like Random Forest) performing poorly on extrapolation tasks despite high validation scores?

Tree-based models are inherently limited in their ability to extrapolate beyond the range of values seen in the training data [9] [37]. If your chemical dataset requires predicting properties for molecules outside the training domain, this can lead to large errors. To address this:

- Algorithm Selection: Consider using models better suited for extrapolation, such as Neural Networks with appropriate regularization [9].

- Objective Function Design: As mentioned in FAQ 2, using an optimization objective that includes an extrapolation term (e.g., from a sorted CV) can guide the HPO process to select models that are more robust in these scenarios [9].
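The extrapolation limit of tree-based models is easy to reproduce. The sketch below uses a synthetic, noise-free linear trend and trains on the lower part of the range only; a plain linear regression serves as a simple comparator here (an assumption for illustration, not a recommendation from the cited work).

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression

# Synthetic trend: y = 2x + 1, trained on x <= 8 and tested on x > 8.
x = np.linspace(0, 10, 50).reshape(-1, 1)
y = 2 * x.ravel() + 1
train = x.ravel() <= 8

rf = RandomForestRegressor(n_estimators=100, random_state=0).fit(x[train], y[train])
lin = LinearRegression().fit(x[train], y[train])

rf_pred = rf.predict(x[~train])
lin_pred = lin.predict(x[~train])

print("largest training target:   ", round(float(y[train].max()), 2))
print("largest true test target:  ", round(float(y[~train].max()), 2))
print("largest forest prediction: ", round(float(rf_pred.max()), 2))
print("largest linear prediction: ", round(float(lin_pred.max()), 2))
```

Each tree predicts an average of training targets, so the forest cannot output a value above the largest target it was trained on, while the linear model continues the trend. A sorted cross-validation exposes this behavior before the model meets out-of-range compounds.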

Troubleshooting Guides

Problem 1: The optimization process is taking too long and not converging.

Description: The hyperparameter tuning is consuming excessive computational resources without yielding a satisfactory model configuration.

Solution:

- Step 1: Switch to Bayesian Optimization. Replace Grid Search or Random Search with Bayesian Optimization, which is designed to find good parameters with fewer iterations [33] [35].

- Step 2: Narrow the Search Space. Use domain knowledge to define more realistic hyperparameter ranges. For instance, instead of a wide range for max_depth in a decision tree, limit it based on your dataset size and complexity [33] [34].

- Step 3: Use a Faster Surrogate Model. While Gaussian Processes (GPs) are common, for high-dimensional or mixed parameter spaces, Random Forest or Tree-structured Parzen Estimator (TPE) surrogates can be faster [33].

- Step 4: Reduce Validation Overhead. If using cross-validation, consider a lower number of folds (e.g., 3-fold instead of 10-fold) for the optimization phase, and only perform rigorous validation on the final candidate model [36].

Problem 2: The optimized model performs well in validation but fails on new, external test data.

Description: The model shows signs of overfitting, likely due to overtuning on the validation set.

Solution:

- Step 1: Re-assess Your Objective Function. Ensure your optimization score is a reliable estimator of generalization. Implement a combined metric that evaluates both interpolation and extrapolation performance, as shown in recent chemical ML workflows [9].

- Step 2: Check for Data Leakage. Verify that the test set was completely held out and not used during the optimization process. The optimization should only use the training and validation splits [9] [11].

- Step 3: Analyze Overtuning. Compare the performance of your optimized model with a default model configuration. If the default model performs similarly or better on the true test set, you may be a victim of overtuning [36] [11].

- Step 4: Increase Regularization. During optimization, include strong regularization hyperparameters (e.g., L1/L2 penalties, dropout rates) and allow the HPO process to tune them to higher values to prevent overfitting [9] [38].

Experimental Protocols & Data

Table 1: Comparative Performance of Hyperparameter Optimization Methods on a Heart Failure Dataset

This table summarizes results from a study comparing optimization methods across different ML algorithms for a clinical dataset [35].

| Optimization Method | Algorithm | Best Accuracy | AUC Score | Computational Efficiency |

|---|---|---|---|---|

| Grid Search (GS) | Support Vector Machine (SVM) | 0.6294 | >0.66 | Low (High processing time) |

| Random Search (RS) | Random Forest (RF) | - | Robustness: +0.03815* | Medium |

| Bayesian Search (BS) | eXtreme Gradient Boosting (XGBoost) | - | Improvement: +0.01683* | High (Consistently less time) |

| Bayesian Search (BS) | Random Forest (RF) | - | - | High (Consistently less time) |

*Average AUC improvement after 10-fold cross-validation.

Table 2: Impact of Hyperparameter Optimization on Solubility Prediction Models

This table is based on a study that questioned the necessity of extensive HPO for graph-based solubility prediction models, highlighting the risk of overfitting [11].

| Dataset | Model | With HPO (cuRMSE) | With Pre-set Hyperparameters (cuRMSE) | Computational Effort |
|---|---|---|---|---|
| AQUA | ChemProp | ~0.90 | ~0.90 | ~10,000x reduction |
| ESOL | AttentiveFP | ~1.00 | ~1.05 | ~10,000x reduction |
| PHYSP | TransformerCNN | 0.79 | - | Used pre-set, outperformed others |

Protocol: Hyperparameter Optimization with Overfitting Control

This methodology is adapted from a state-of-the-art workflow for chemical ML in low-data regimes [9].

- Data Splitting: Reserve 20% of the initial data (or a minimum of four data points) as an external test set. This set must be locked away and only used for the final evaluation.

- Define the Search Space: Specify the hyperparameters and their ranges (e.g., learning rate: [0.001, 0.1], number of layers: [2, 4, 6]).

- Configure the Objective Function: Instead of a simple validation score, use a combined RMSE:

  - Interpolation RMSE: Calculated using a 10-times repeated 5-fold cross-validation on the training/validation data.

  - Extrapolation RMSE: Calculated using a sorted 5-fold CV, where data is partitioned based on the target value to assess performance on out-of-domain samples.

  - The final objective to minimize is the average of these two RMSE values.

- Run Bayesian Optimization: Use a surrogate model (e.g., Gaussian Process) and an acquisition function (e.g., Expected Improvement) to optimize the combined RMSE over multiple iterations.

- Final Evaluation: Train a model with the best-found hyperparameters on the entire training/validation set and evaluate it once on the held-out external test set.
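The combined objective described in this protocol can be sketched with scikit-learn. This is an illustrative reading of the steps above, not the ROBERT implementation: the helper names, the `Ridge` model, and the synthetic data are assumptions, and the extrapolation term is assumed to hold out only the lowest and highest target partitions and keep the worse of the two scores.

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import Ridge
from sklearn.model_selection import RepeatedKFold


def rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2)))


def interpolation_rmse(model, X, y, n_splits=5, n_repeats=10, seed=0):
    """Mean RMSE over a repeated k-fold CV (10 x 5-fold by default)."""
    cv = RepeatedKFold(n_splits=n_splits, n_repeats=n_repeats, random_state=seed)
    scores = []
    for train_idx, val_idx in cv.split(X):
        fitted = clone(model).fit(X[train_idx], y[train_idx])
        scores.append(rmse(y[val_idx], fitted.predict(X[val_idx])))
    return float(np.mean(scores))


def extrapolation_rmse(model, X, y, n_splits=5):
    """Sorted k-fold: hold out the lowest and the highest target partition in
    turn, train on the remaining data, and return the worse RMSE."""
    order = np.argsort(y)                   # indices sorted by target value
    folds = np.array_split(order, n_splits)
    scores = []
    for held_out in (folds[0], folds[-1]):  # bottom and top partitions
        train_idx = np.setdiff1d(order, held_out)
        fitted = clone(model).fit(X[train_idx], y[train_idx])
        scores.append(rmse(y[held_out], fitted.predict(X[held_out])))
    return max(scores)


def combined_rmse(model, X, y):
    """Objective to minimize: average of interpolation and extrapolation RMSE."""
    return 0.5 * (interpolation_rmse(model, X, y) + extrapolation_rmse(model, X, y))


# Synthetic stand-in for a small training/validation set (test set already removed).
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 3))
y = X @ np.array([1.5, -2.0, 0.5]) + rng.normal(scale=0.1, size=40)
score = combined_rmse(Ridge(alpha=1.0), X, y)
print(f"combined RMSE: {score:.3f}")
```

In a Bayesian optimization loop, `combined_rmse` would be the value returned for each candidate hyperparameter set. The external test set never enters this function.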

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Bayesian Optimization Workflow in Chemical ML

| Item | Function / Description | Examples / Notes |
|---|---|---|
| Surrogate Model | A probabilistic model that approximates the expensive black-box objective function. It predicts performance and uncertainty for unseen hyperparameters. | Gaussian Process (GP), Random Forest, Tree-structured Parzen Estimator (TPE) [33] [37]. |
| Acquisition Function | A function that guides the search by balancing exploration (high uncertainty) and exploitation (high predicted performance) to select the next hyperparameters to evaluate. | Expected Improvement (EI), Upper Confidence Bound (UCB) [33] [39]. |
| Objective Function | The function to be optimized. In chemical ML, this should be designed to measure generalization and control overfitting. | Combined RMSE (Interpolation + Extrapolation) [9], weighted cuRMSE [11]. |
| Resampling Strategy | The method used to validate model performance during optimization, providing the estimate for the objective function. | Repeated K-Fold Cross-Validation, Hold-Out Validation, Sorted K-Fold (for extrapolation) [9] [36]. |
| Automated ML Workflow | Software that integrates data curation, hyperparameter optimization, and model evaluation into a reproducible pipeline. | ROBERT software [9], other AutoML platforms. |
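To make the interplay between surrogate model and acquisition function concrete, here is a minimal sketch of Expected Improvement for a minimization objective such as RMSE. It assumes the surrogate returns a Gaussian prediction (mean `mu`, standard deviation `sigma`) for each candidate. The function name, the `xi` exploration offset, and the candidate values are illustrative and not taken from any specific library.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected Improvement when minimizing.

    mu, sigma: surrogate mean and standard deviation at a candidate point.
    best: lowest objective value observed so far.
    xi: optional offset that encourages exploration.
    """
    improvement = best - mu - xi
    if sigma <= 0.0:
        # No predictive uncertainty: the improvement is deterministic.
        return max(improvement, 0.0)
    z = improvement / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)  # standard normal density
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))         # standard normal CDF
    return improvement * cdf + sigma * pdf


# Hypothetical surrogate predictions (mean RMSE, std) for two hyperparameter sets.
candidates = {"a": (0.90, 0.01), "b": (0.95, 0.20)}
best_so_far = 0.92
pick = max(candidates, key=lambda k: expected_improvement(*candidates[k], best_so_far))
print(pick)
```

In this example the candidate with the worse predicted mean but larger uncertainty receives the higher EI, which is the exploration side of the trade-off described in the table.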

Implementing Effective Regularization Techniques for Neural Networks

Troubleshooting Guides and FAQs

This technical support resource addresses common challenges researchers face when applying neural networks to chemical machine learning, particularly in data-limited scenarios like hyperparameter tuning for chemical property prediction.

Troubleshooting Guide: Overcoming Overfitting

Problem: My model performs well on training data but generalizes poorly to new chemical data or external test sets.

Symptoms:

- High accuracy on training data but significantly lower accuracy on validation/test sets [40] [41]

- Validation loss stops decreasing or begins increasing while training loss continues to decrease [42]

- Model fails to predict properties for new molecular structures outside training distribution [9]

Solutions:

- Apply regularization techniques

- Optimize the training process

- Improve data quality and quantity

Frequently Asked Questions

Q1: Which regularization technique should I prioritize for small chemical datasets (<100 samples)?

For small datasets common in chemical ML, combine multiple approaches:

- Start with L2 regularization and dropout in fully connected layers [43]

- Implement early stopping with patience based on validation performance [40]

- Use data augmentation through SMILES randomization or molecular fingerprint variations [9]

- Consider Bayesian hyperparameter optimization with objective functions that penalize overfitting [9]
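Early stopping with patience, mentioned above, can be sketched independently of any deep learning framework. The helper below is a hypothetical illustration: it takes a recorded list of per-epoch validation losses and reports which epoch would be kept and where training would halt. The `min_delta` threshold is an added assumption.

```python
def early_stopping_epoch(val_losses, patience=10, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve by more than
    `min_delta` for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    wait = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, wait = loss, epoch, 0  # new best: reset counter
        else:
            wait += 1
            if wait >= patience:
                return best_epoch, epoch                   # stop here, keep best weights
    return best_epoch, len(val_losses) - 1                 # ran to the last epoch


# Hypothetical validation curve that improves late.
losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.74, 0.6]
print(early_stopping_epoch(losses, patience=3))
print(early_stopping_epoch(losses, patience=10))
```

With a short patience the late improvement is never reached, which is why patience is itself worth choosing with the dataset size and noise level in mind.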

Q2: How can I detect if my hyperparameter optimization is causing overfitting?

Monitor these warning signs:

- Large performance gap between cross-validation and external test set results [11]

- Model requires excessively complex hyperparameter configurations [11]

- Small changes in data cause significant changes in optimal hyperparameters [11]

- Hyperparameter optimization provides minimal improvement over sensible defaults despite substantial computational cost [11]

Q3: What's the most effective way to regularize neural networks for molecular property prediction?

Based on recent benchmarking studies [9]:

- For datasets under 50 data points, neural networks with proper regularization can perform on par with or outperform multivariate linear regression

- Combined regularization approaches work best: dropout + weight decay + early stopping

- Bayesian hyperparameter optimization with objective functions that account for both interpolation and extrapolation performance significantly reduces overfitting

- Transfer learning from larger chemical datasets can provide strong regularization for target tasks with limited data

Regularization Techniques Comparison

Table 1: Regularization Methods for Chemical Machine Learning

| Technique | Mechanism | Best For | Chemical ML Considerations |
|---|---|---|---|
| L1 (Lasso) | Adds absolute value of weights to loss function; promotes sparsity [45] [43] | High-dimensional data; feature selection [43] | Identifying most relevant molecular descriptors; reducing feature space |
| L2 (Ridge) | Adds squared magnitude of weights to loss function; shrinks weights [45] [43] | Small datasets; correlated features [43] | Handling multicollinear molecular descriptors; general-purpose regularization |
| Dropout | Randomly disables neurons during training [40] [42] | Deep networks; overparameterized models [42] | Preventing overfitting to specific functional groups or structural patterns |
| Early Stopping | Halts training when validation performance stops improving [40] [43] | All network types; simple implementation [42] | Conserving computational resources during hyperparameter optimization |
| Data Augmentation | Creates modified versions of training samples [40] [44] | Image-based tasks; insufficient data [43] | SMILES enumeration; conformational variations; synthetic data generation |
| Batch Normalization | Normalizes layer inputs; acts as regularizer [42] [43] | Deep networks; unstable training [42] | Stabilizing training with diverse molecular representations |

Table 2: Regularization Parameters and Typical Values

| Technique | Key Hyperparameter | Typical Range | Optimization Method |
|---|---|---|---|
| L1/L2 | Regularization strength (λ) | 0.0001-0.1 [45] | Bayesian optimization [9] |
| Dropout | Drop probability | 0.2-0.5 [40] | Grid search or random search |
| Early Stopping | Patience (epochs) | 5-20 [40] | Based on dataset size and complexity |
| Data Augmentation | Augmentation intensity | Task-dependent [44] | Manual tuning based on domain knowledge |
| Elastic Net | L1/L2 ratio (α) | 0.2-0.8 [45] | Bayesian optimization [9] |
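As a sketch of how λ and α from the table interact, the snippet below computes an elastic net penalty for a weight vector. The exact form is an assumption: conventions differ between libraries (scikit-learn's `ElasticNet`, for example, calls the overall strength `alpha` and the mixing ratio `l1_ratio`, and halves the L2 term), so check the definition used by your framework before transferring values.

```python
def elastic_net_penalty(weights, lam, alpha):
    """Penalty = lam * (alpha * ||w||_1 + (1 - alpha) * ||w||_2^2).

    lam: overall regularization strength (lambda in the table).
    alpha: L1/L2 mixing ratio; 1.0 is pure L1, 0.0 is pure L2.
    """
    l1 = sum(abs(w) for w in weights)
    l2 = sum(w * w for w in weights)
    return lam * (alpha * l1 + (1.0 - alpha) * l2)


weights = [1.0, -2.0, 0.5]  # hypothetical model weights
for alpha in (0.0, 0.5, 1.0):
    print(alpha, elastic_net_penalty(weights, lam=0.01, alpha=alpha))
```

The penalty is added to the data-fitting loss during training, so larger λ values pull the weights more strongly toward zero.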

Experimental Protocols

Protocol 1: Evaluating Regularization Effectiveness in Low-Data Regimes

Based on: ROBERT Software Benchmarking Study [9]

Objective: Systematically compare regularization techniques for neural networks using chemical datasets of 18-44 data points.

Methodology:

- Data Preparation:

  - Reserve 20% of the data (minimum 4 points) as an external test set with an even distribution of target values

  - Apply identical molecular descriptors for both linear and non-linear models

  - Use steric and electronic descriptors following Cavallo et al. methodology

- Hyperparameter Optimization:

  - Implement Bayesian optimization with a combined RMSE metric

  - Calculate the interpolation term using 10-times repeated 5-fold CV

  - Include a selective sorted 5-fold CV for extrapolation assessment

  - Use the higher of the two RMSE values from the top and bottom partitions as the extrapolation term

- Model Assessment:

  - Compare scaled RMSE (RMSE as a percentage of the target value range) across techniques

  - Evaluate using 10× 5-fold CV to mitigate splitting effects and human bias

  - Apply a scoring system (0-10 scale) assessing predictive ability, overfitting, prediction uncertainty, and detection of spurious predictions

Key Findings: Properly regularized neural networks performed as well as or better than multivariate linear regression in 5 of 8 benchmark datasets, demonstrating their viability in low-data chemical ML applications.
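Two pieces of Protocol 1 lend themselves to a short sketch: choosing a test set whose targets are spread evenly over the value range, and expressing RMSE as a percentage of that range. The selection rule below (evenly spaced positions along the sorted targets, keeping the two extremes in training) is an assumption made for illustration and not necessarily the rule used in [9]. Function names are hypothetical.

```python
import numpy as np


def even_test_split(y, test_fraction=0.2, min_test=4):
    """Pick test indices at evenly spaced positions along the sorted targets."""
    y = np.asarray(y, dtype=float)
    n_test = max(min_test, int(round(test_fraction * len(y))))
    if n_test > len(y) - 2:
        raise ValueError("dataset too small for the requested test set")
    order = np.argsort(y)
    # Interior positions only, so the extreme targets stay in the training set.
    positions = np.linspace(0, len(y) - 1, n_test + 2)[1:-1].round().astype(int)
    test_idx = order[positions]
    train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
    return train_idx, test_idx


def scaled_rmse(y_true, y_pred, y_range=None):
    """RMSE as a percentage of the target value range."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    if y_range is None:
        y_range = y_true.max() - y_true.min()
    return float(100.0 * np.sqrt(np.mean((y_true - y_pred) ** 2)) / y_range)


y_demo = np.arange(20.0)  # hypothetical targets
train_idx, test_idx = even_test_split(y_demo)
print(sorted(y_demo[test_idx]))
print(scaled_rmse([0.0, 10.0], [1.0, 9.0]))
```

Scaling by the target range makes errors comparable across datasets whose properties are measured in different units or magnitudes.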

Protocol 2: Hyperparameter Optimization Without Overfitting

Based on: Solubility Prediction Study [11]

Objective: Determine whether hyperparameter optimization provides significant benefits over preset parameters for chemical property prediction.

Methodology:

- Dataset Curation:

  - Collect seven thermodynamic and kinetic solubility datasets

  - Apply rigorous data cleaning: SMILES standardization, duplicate removal, protocol filtering

  - Implement "inter-dataset curation" with weighting to avoid overrepresentation

  - Assign dataset quality weights (e.g., AQUA, PHYSP, ESOL: 1.0; OCHEM: 0.85)

- Model Comparison:

  - Compare computationally intensive hyperparameter optimization vs preset parameters

  - Evaluate TransformerCNN against graph-based methods (ChemProp, AttentiveFP)

  - Use identical statistical measures (RMSE and cuRMSE) for all models to ensure a fair comparison

  - Assess computational requirements and performance tradeoffs

- Statistical Evaluation:

  - Use traditional RMSE and weighted cuRMSE for performance assessment

  - Conduct pairwise comparisons across all datasets and methods

  - Evaluate significance of differences using consistent statistical measures

Key Findings: Hyperparameter optimization did not always yield better models and sometimes led to overfitting. Preset parameters achieved similar performance with approximately 10,000× reduction in computational effort.
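The idea of weighting records by dataset quality can be illustrated with a generic weighted RMSE. This is only a sketch of the weighting principle: the exact definition of cuRMSE in [11] is not reproduced here, and the function name and example values are hypothetical.

```python
import math


def weighted_rmse(y_true, y_pred, weights):
    """Weighted RMSE: sqrt(sum(w * err^2) / sum(w)).

    Generic formulation for illustration; not the cuRMSE definition from [11].
    """
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sq_err = sum(w * (t - p) ** 2 for t, p, w in zip(y_true, y_pred, weights))
    return math.sqrt(weighted_sq_err / total_weight)


# Hypothetical records: the second comes from a lower-quality source (weight 0.85).
y_true = [1.0, 2.0]
y_pred = [1.0, 4.0]
print(weighted_rmse(y_true, y_pred, [1.0, 1.0]))
print(weighted_rmse(y_true, y_pred, [1.0, 0.85]))
```

Down-weighting a record reduces the influence of its error on the reported score, so errors on lower-quality measurements count for less.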

The Scientist's Toolkit

Table 3: Essential Research Reagents for Regularization Experiments

| Tool/Resource | Function | Application Notes |
|---|---|---|
| ROBERT Software | Automated ML workflow with hyperparameter optimization and regularization [9] | Implements combined RMSE metric for interpolation/extrapolation performance |
| Bayesian Optimization | Efficient hyperparameter search method [9] | Reduces overfitting risk during optimization; incorporates regularization terms |
| Cross-Validation Framework | Model performance assessment [9] | 10× repeated 5-fold CV for interpolation; sorted CV for extrapolation testing |
| Data Curation Pipeline | SMILES standardization and duplicate removal [11] | Critical for avoiding overfitting to duplicated molecular representations |
| Molecular Descriptors | Steric and electronic features [9] | Consistent descriptor sets enable fair regularization technique comparisons |
| Weighting Scheme | Inter-dataset curation [11] | Prevents overrepresentation of frequently measured compounds |
| Performance Metrics | Scaled RMSE, cuRMSE [9] [11] | Enables meaningful comparison across different regularization approaches |

Leveraging Combined Metrics to Penalize Interpolation and Extrapolation Errors

Frequently Asked Questions

1. What is the difference between interpolation and extrapolation, and why does it matter for my chemical model?

Answer: In machine learning, interpolation occurs when you make a prediction for a data point that falls within the bounds of your training dataset. Extrapolation happens when you try to predict for a point outside the training data range [46]. This is critical in chemistry because if your model is used to predict the properties of a new molecule that is very different from your training set (an extrapolation), it is much more likely to be inaccurate. Properly assessing a model's performance in both scenarios is key to ensuring its reliability in real-world applications like drug discovery [9].
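A crude first check for extrapolation is to test whether a new sample falls outside the per-descriptor range of the training set. The sketch below assumes numeric descriptor matrices and uses a hypothetical helper name. It is a simple bounding-box proxy only, since a point inside every individual range can still be far from all training molecules.

```python
import numpy as np


def outside_training_range(X_train, X_new):
    """Return a boolean array: True where a new sample has at least one
    descriptor value below the training minimum or above the training maximum."""
    X_train = np.asarray(X_train, dtype=float)
    X_new = np.asarray(X_new, dtype=float)
    lower = X_train.min(axis=0)
    upper = X_train.max(axis=0)
    return np.any((X_new < lower) | (X_new > upper), axis=1)


# Hypothetical two-descriptor example.
X_train = [[0.0, 0.0], [1.0, 2.0]]
X_new = [[0.5, 1.0],   # inside both descriptor ranges: interpolation
         [1.5, 1.0]]   # first descriptor exceeds the training maximum: extrapolation
flags = outside_training_range(X_train, X_new)
print(flags)
```

Predictions flagged this way deserve extra scrutiny, for example by reporting them separately from in-range predictions.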

2. My model has excellent cross-validation scores but performs poorly on new, diverse compounds. What is happening?