QSAR Techniques in Modern Drug Discovery: From Foundational Principles to AI-Driven Applications

Abstract

This article provides a comprehensive overview of Quantitative Structure-Activity Relationship (QSAR) modeling, a cornerstone computational method in chemical and pharmaceutical research. Tailored for researchers, scientists, and drug development professionals, it explores the evolution of QSAR from its foundational principles to the integration of advanced artificial intelligence and machine learning. The scope encompasses core methodologies, diverse applications in drug design and toxicology, strategies for model optimization and troubleshooting, and rigorous validation frameworks. By synthesizing current trends and future directions, this review serves as a guide for developing robust, predictive QSAR models to accelerate efficient and ethical therapeutic discovery.

The Foundations of QSAR: From Historical Principles to Core Concepts

Quantitative Structure-Activity Relationship (QSAR) is a computational modeling approach that mathematically correlates chemical structures with biological activity [1]. These models are founded on the principle that variations in molecular structure lead to predictable changes in biological response, enabling researchers to predict the activity of new, untested compounds [2]. In QSAR, a set of "predictor" variables (molecular descriptors) is related to the potency of a "response" variable (biological activity), typically using regression or classification techniques [1]. This methodology has become a cornerstone in modern drug discovery, toxicology, and environmental risk assessment, allowing for the efficient prioritization of promising drug candidates and reducing the reliance on extensive laboratory testing [3] [2].

The fundamental equation of a QSAR model can be expressed as:

Activity = f(physicochemical properties and/or structural properties) + error

where the "error" term includes both model bias and observational variability [1]. Related terms include Quantitative Structure-Property Relationships (QSPR), which model chemical properties as the response variable, and specialized variants such as QSTR (toxicity), QSPkR (pharmacokinetics), and QSBR (biodegradability) [1].

Core Principles and Historical Development

The conceptual foundation of QSAR was established in the 19th century. In 1868, Crum-Brown and Fraser first proposed that the physiological action of a substance was a function of its chemical composition and constitution [3]. However, it was not until 1964 that Hansch and Fujita formulated the first truly predictive mathematical QSAR tools, correlating biological activity with electronic, hydrophobic, and steric properties of phenyl substituents [4] [3]. This was complemented the same year by the Free-Wilson approach, which identified important positional features in a molecular scaffold [3].

The Hansch equation represents a landmark in QSAR development, establishing that a molecule's biological activity is primarily determined by its hydrophobic, steric, and electronic properties [4]. The classic form of the Hansch equation is:

log(1/C) = aπ + bσ + cE_s + k

where C is the molar concentration of the compound that produces a standard biological response, π represents the hydrophobic substituent constant, σ represents the electronic Hammett constant, and E_s represents Taft's steric constant [5] [4].

Subsequent developments introduced pseudo-3D steric features in the 1970s, followed by true three-dimensional QSAR approaches in the 1980s, such as Comparative Molecular Field Analysis (CoMFA) in 1988, which revolutionized the field by considering the spatial distribution of molecular properties [5].

Essential Steps in QSAR Modeling

The development of a robust QSAR model follows a systematic workflow comprising several critical stages [1] [2]:

Data Preparation and Curation

The initial phase involves compiling a high-quality dataset of chemical structures and their associated biological activities from reliable sources [2]. Key steps include:

- Data Cleaning: Removing duplicate, ambiguous, or erroneous entries; standardizing chemical structures (e.g., removing salts, normalizing tautomers, handling stereochemistry) [2]

- Activity Data Preparation: Converting all biological activities to a common unit and scale (typically the negative logarithm of a half-maximal molar concentration, e.g., pIC₅₀ or pEC₅₀) to ensure comparability [4] [6]

- Handling Missing Values: Employing appropriate techniques such as removal or imputation for compounds with missing data [2]
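The curation steps above can be sketched in a few lines of Python. This is a minimal illustration, not a full pipeline: the record layout, the helper names `to_pic50` and `curate`, and the choice to average replicate measurements on the log scale are assumptions made for the example, and the string keys stand in for structures that have already been standardized.

```python
import math

def to_pic50(ic50_nm):
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    if ic50_nm is None or ic50_nm <= 0:
        return None  # missing or non-physical value
    return -math.log10(ic50_nm * 1e-9)

def curate(records):
    """Drop records without a usable activity and merge duplicate structures.

    `records` is a list of (structure_key, ic50_nm) pairs; structure_key is a
    hypothetical stand-in for a standardized structure identifier.
    """
    grouped = {}
    for structure_key, ic50_nm in records:
        pic50 = to_pic50(ic50_nm)
        if pic50 is not None:
            grouped.setdefault(structure_key, []).append(pic50)
    # Replicates are averaged on the log scale (an assumption of this sketch)
    return {key: sum(vals) / len(vals) for key, vals in grouped.items()}

raw = [("CCO", 1000.0), ("CCO", 10.0), ("c1ccccc1", None), ("CCN", 50.0)]
clean = curate(raw)
print(clean)
```

Removal is used here for the missing value; imputation would be the alternative named in the list above.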

Molecular Descriptor Calculation and Selection

Molecular descriptors are numerical representations that quantify structural, physicochemical, and electronic properties of molecules [2]. A diverse set of descriptors should be calculated using software tools such as PaDEL-Descriptor, Dragon, or RDKit [2]. Common descriptor classes include:

Table 1: Categories of Molecular Descriptors Used in QSAR Modeling

| Descriptor Category | Description | Examples |

|---|---|---|

| Constitutional | Elementary molecular properties | Molecular weight, atom counts, bond counts, hydrogen bond donors/acceptors [4] [2] |

| Topological | Molecular connectivity and branching patterns | Molecular connectivity indices, Kier & Hall indices, graph-theoretical descriptors [5] [2] |

| Geometric | 3D molecular geometry | Molecular surface area, solvent-accessible surface area, molecular volume [5] [2] |

| Electronic | Electrical characteristics of molecules | Hammett constants (σ), dipole moment, HOMO/LUMO energies, partial atomic charges [5] [4] |

| Hydrophobic | Molecular partitioning behavior | Octanol-water partition coefficient (logP), hydrophobic substituent constants (π) [5] [4] |

Feature selection techniques are then applied to identify the most relevant descriptors, reduce dimensionality, and prevent overfitting. Common methods include filter methods (e.g., correlation analysis), wrapper methods (e.g., genetic algorithms), and embedded methods (e.g., LASSO regression) [2].
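As a rough illustration of a filter method, the sketch below drops constant descriptors and then removes any descriptor that is nearly collinear with one already kept. The descriptor names and values are invented for the example, and the 0.95 threshold and the keep-the-first-seen rule are assumptions, not a recommendation from the cited sources.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def filter_descriptors(table, threshold=0.95):
    """Return descriptor names kept after variance and correlation filtering.

    `table` maps descriptor name -> list of values (one per compound).
    """
    kept = []
    for name, values in table.items():
        if max(values) == min(values):
            continue  # zero variance: carries no information
        if any(abs(pearson(values, table[k])) > threshold for k in kept):
            continue  # nearly redundant with a descriptor already kept
        kept.append(name)
    return kept

# Hypothetical descriptor table for five compounds
descriptors = {
    "MolWt": [180.2, 151.2, 206.3, 194.2, 138.1],
    "HeavyAtoms": [13, 11, 15, 14, 10],   # tracks MolWt closely
    "LogP": [1.2, 0.5, 3.5, -0.1, 2.3],
    "NumRings": [1, 1, 1, 1, 1],          # constant across the set
}
print(filter_descriptors(descriptors))
```

Wrapper and embedded methods differ in that they involve the model itself, so they should be run inside the cross-validation loop to avoid optimistic bias.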

Model Building and Validation

The curated dataset is typically split into training, validation, and external test sets [2]. Various algorithms can be employed for model construction:

- Linear Methods: Multiple Linear Regression (MLR), Partial Least Squares (PLS) [3] [2]

- Non-linear Methods: Support Vector Machines (SVM), Artificial Neural Networks (ANN), Random Forest [3] [2]

Model validation is crucial to assess predictive performance and robustness [1] [7]. The OECD principles for QSAR validation state that a model intended for regulatory use should be associated with (1) a defined endpoint, (2) an unambiguous algorithm, (3) a defined domain of applicability, (4) appropriate measures of goodness-of-fit, robustness, and predictivity, and (5) a mechanistic interpretation when possible [4].

Table 2: Key Validation Parameters for QSAR Models

| Validation Type | Parameter | Description | Acceptance Criteria |

|---|---|---|---|

| Internal Validation | q² (LOO-CV) | Cross-validated correlation coefficient | q² > 0.5 considered good [6] |

| Internal Validation | R² | Coefficient of determination for training set | Closer to 1.0 indicates better fit [6] |

| External Validation | Q²ext | Predictive squared correlation coefficient for test set | Q²ext > 0.5 indicates good predictive power [1] |

| External Validation | RMSEP | Root Mean Square Error of Prediction | Lower values indicate better performance [8] |

| Randomization Test | R²scramble | Measures chance correlation | Should be significantly lower than model R² [6] |
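To make the parameters in Table 2 concrete, here is a small sketch that computes R², leave-one-out q², Q²ext, and RMSEP for a one-descriptor linear model. The data are invented, the single-descriptor model is chosen only to keep the code short, and Q²ext is computed about the training-set mean, which is one of several definitions in use.

```python
import math

def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return slope, my - slope * mx

def r_squared(y_obs, y_pred, ref_mean):
    """1 - SS_res / SS_tot, with SS_tot taken about `ref_mean`."""
    ss_res = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    ss_tot = sum((o - ref_mean) ** 2 for o in y_obs)
    return 1.0 - ss_res / ss_tot

def rmse(y_obs, y_pred):
    """Root mean square error between observed and predicted values."""
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(y_obs, y_pred)) / len(y_obs))

def q2_loo(x, y):
    """Leave-one-out q2: each compound is predicted by a model fit without it."""
    preds = []
    for i in range(len(x)):
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        preds.append(slope * x[i] + intercept)
    return r_squared(y, preds, sum(y) / len(y))

# Hypothetical descriptor (x) and activity (y) values
x_train = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
y_train = [5.1, 5.4, 6.1, 6.4, 7.0, 7.4]
x_test, y_test = [0.8, 1.8, 2.8], [5.3, 6.3, 7.2]

slope, intercept = fit_line(x_train, y_train)
train_mean = sum(y_train) / len(y_train)
r2 = r_squared(y_train, [slope * v + intercept for v in x_train], train_mean)
q2 = q2_loo(x_train, y_train)
test_pred = [slope * v + intercept for v in x_test]
q2_ext = r_squared(y_test, test_pred, train_mean)
rmsep = rmse(y_test, test_pred)
print(f"R2={r2:.3f} q2={q2:.3f} Q2ext={q2_ext:.3f} RMSEP={rmsep:.3f}")
```

For an ordinary least-squares model q² cannot exceed the training R², so a large gap between the two is a common warning sign of overfitting.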

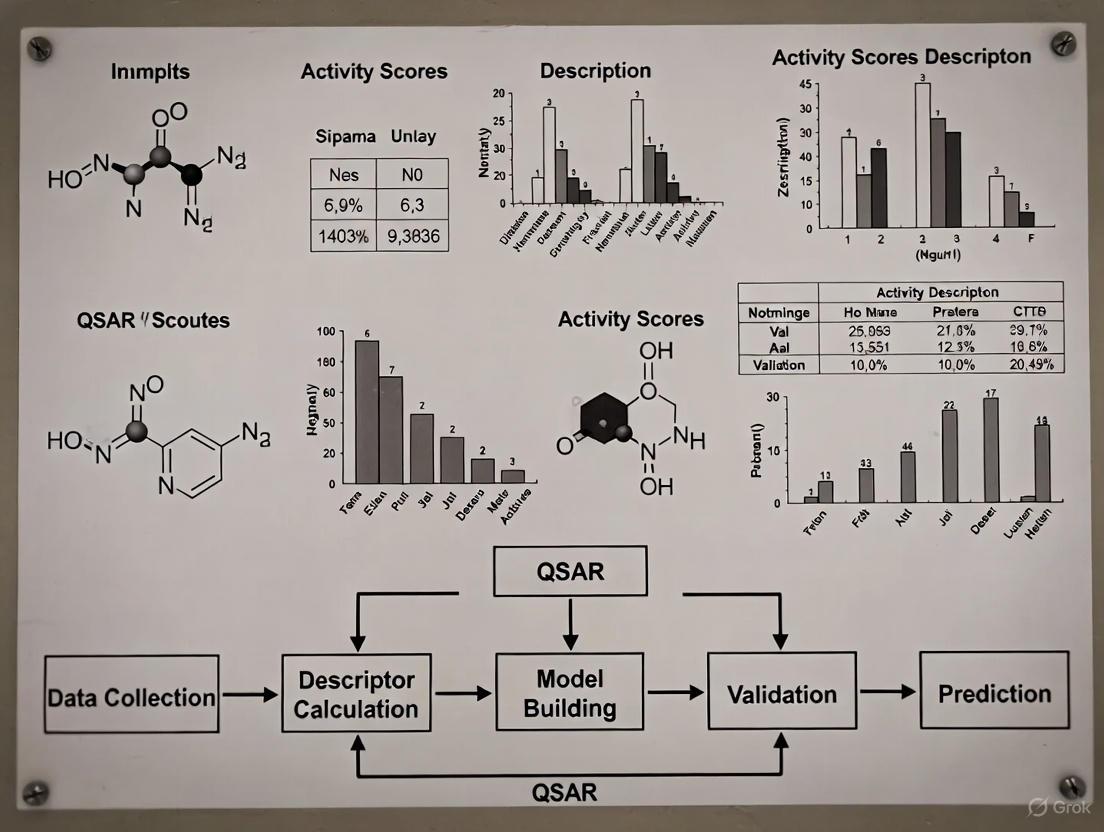

The following workflow diagram illustrates the complete QSAR modeling process:

Types of QSAR Approaches

2D-QSAR

Traditional 2D-QSAR methods utilize molecular descriptors derived from two-dimensional molecular representations, without considering spatial orientation [5]. The primary approaches include:

- Hansch Analysis: Establishes linear relationships between biological activity and physicochemical parameters (hydrophobicity, electronic, and steric properties) [5] [4]

- Free-Wilson Analysis: Uses indicator variables to represent the presence or absence of specific substituents at particular molecular positions [5]

2D-QSAR advantages include computational efficiency, no need for molecular alignment, and easier interpretation. Limitations include inability to capture three-dimensional steric and electronic effects crucial for receptor binding [5].

3D-QSAR

3D-QSAR methods incorporate the three-dimensional structures of molecules and their spatial property distributions [5]. Key methodologies include:

- Comparative Molecular Field Analysis (CoMFA): Analyzes steric and electrostatic interaction fields around aligned molecules [1] [5] [6]

- Comparative Molecular Similarity Indices Analysis (CoMSIA): Extends CoMFA to include additional fields such as hydrophobic, hydrogen bond donor, and acceptor fields [5] [6]

The 3D-QSAR workflow involves:

- Molecular Modeling and Conformational Analysis: Generating low-energy conformations [6]

- Molecular Alignment: Superimposing molecules based on their common pharmacophoric features [6]

- Interaction Field Calculation: Placing probe atoms on grid points around molecules to compute steric, electrostatic, and other relevant fields [6]

- Partial Least Squares (PLS) Analysis: Correlating field values with biological activity [6]

The following diagram illustrates the comparative 3D-QSAR methodology:

Advanced QSAR Approaches

Recent advancements have introduced several specialized QSAR methodologies:

- HQSAR (Hologram QSAR): Utilizes molecular fragment fingerprints and does not require molecular alignment, offering advantages in speed and simplicity [8]

- GQSAR (Group-Based QSAR): Considers fragment-based descriptors and their interactions to capture nonlinear effects [1]

- q-RASAR: A hybrid approach merging QSAR with similarity-based read-across techniques [1]

Application Notes: Case Studies

HQSAR for Predicting Gas Chromatography Retention Indices of Essential Oils

A recent study demonstrated the application of HQSAR to predict gas chromatography retention indices (RI) for 60 plant essential oil components [8]. The optimized HQSAR model achieved significant predictive performance with the following parameters:

Table 3: Validation Parameters for Essential Oil RI Prediction Model [8]

| Validation Method | Parameter | Value | Interpretation |

|---|---|---|---|

| External Test Set | RMSEP | 40.45 | Good predictive accuracy |

| External Test Set | R²pred | 0.984 | Excellent external predictivity |

| External Test Set | CCC | 0.968 | Strong agreement |

| External Test Set | MRE | 2.20% | Low relative error |

| Leave-One-Out CV | RMSECV | 72.56 | Moderate internal consistency |

| Leave-One-Out CV | MRE | 4.17% | Acceptable relative error |

The optimal model parameters were: fragment size = 1-4 atoms, fragment distinction = "C, Ch" (connections and chirality), and hologram length = 199 [8]. Molecular contribution maps revealed that aromatic compounds with hydroxyl groups attached to alkyl chains showed increased RI values, while aliphatic compounds with long alkyl chains also exhibited higher RI values [8].

AI-Powered QSAR for HGFR Inhibitor Development in Cancer Therapy

Artificial intelligence has revolutionized QSAR modeling through machine learning algorithms that automatically extract complex features from molecular structures [9]. In developing Hepatocyte Growth Factor Receptor (HGFR) inhibitors for cancer treatment, AI-powered QSAR models have demonstrated:

- Higher Predictive Accuracy: Ability to capture nonlinear relationships between molecular structure and inhibitory activity [9]

- Accelerated Screening: Rapid virtual screening of large compound libraries to identify potential HGFR inhibitors [9]

- Toxicity Prediction: Prediction of compound toxicity profiles to prioritize safer drug candidates [9]

- Structural Optimization: Guidance for medicinal chemists to optimize lead compounds based on structure-activity relationships [9]

Challenges in AI-powered QSAR include data quality dependence, model interpretability issues, and the need for experimental validation [9].

Table 4: Essential Resources for QSAR Modeling

| Resource Category | Examples | Function/Application |

|---|---|---|

| Descriptor Calculation Software | PaDEL-Descriptor, Dragon, RDKit, Mordred [2] | Generate molecular descriptors from chemical structures |

| Cheminformatics Platforms | DataWarrior, OpenBabel, ChemAxon [3] [2] | Chemical structure visualization, data analysis, and property calculation |

| 3D-QSAR Software | SYBYL (for CoMFA/CoMSIA), Schrödinger [6] | Perform 3D-QSAR analyses including molecular alignment and field calculation |

| Statistical Analysis Tools | R packages (pls, caret, randomForest), Python (scikit-learn) [3] [2] | Model building, validation, and statistical analysis |

| Chemical Databases | PubChem, ChEMBL, ZINC [2] | Sources of chemical structures and associated biological activity data |

| Model Validation Tools | QSAR Model Reporting Format (QMRF), Applicability Domain Assessment Tools [1] [4] | Standardized model reporting and reliability assessment |

Experimental Protocols

Protocol 1: Developing a 2D-QSAR Model Using Multiple Linear Regression

Purpose: To create a predictive 2D-QSAR model for a series of compounds with known biological activity.

Materials and Reagents:

- Set of chemical structures with associated biological activities (IC₅₀, EC₅₀, Ki, etc.)

- Molecular descriptor calculation software (e.g., PaDEL-Descriptor)

- Statistical analysis software (e.g., R, Python with scikit-learn)

Procedure:

- Data Preparation:

  - Curate a dataset of 30-50 compounds with consistent biological activity measurements

  - Convert activity values to a logarithmic scale (e.g., pIC₅₀ = -log₁₀ IC₅₀, with IC₅₀ expressed in mol/L)

  - Apply Lipinski's "Rule of Five" filters if developing drug-like compounds [4]

- Descriptor Calculation and Preprocessing:

  - Calculate molecular descriptors using selected software

  - Remove descriptors with zero variance, and one descriptor from each pair with high pairwise correlation (|r| > 0.95)

  - Standardize remaining descriptors (mean=0, variance=1)

- Dataset Division:

  - Randomly split data into training set (70-80%) and external test set (20-30%)

  - Ensure both sets represent similar chemical space and activity ranges

- Model Development:

  - Perform stepwise regression or genetic algorithm-based feature selection

  - Build MLR model using training set: Activity = β₀ + β₁D₁ + β₂D₂ + ... + βₙDₙ

  - Validate model using leave-one-out cross-validation

- Model Validation:

  - Apply model to external test set

  - Calculate R²pred, RMSEP, and other validation metrics

  - Perform Y-randomization test to confirm model significance
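The modeling and validation steps of Protocol 1 can be sketched end to end with the standard library alone. Everything below is illustrative: the data are synthetic (two already-standardized descriptors and an activity generated from assumed coefficients 0.8 and -0.5 plus noise), the normal-equation solver is a teaching device rather than what a statistics package would use, and feature selection is omitted.

```python
import random

def solve(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def fit_mlr(X, y):
    """Least-squares coefficients [b0, b1, ...] via the normal equations."""
    rows = [[1.0] + list(r) for r in X]  # prepend intercept column
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(p)]
    return solve(xtx, xty)

def predict(coef, X):
    return [coef[0] + sum(c * v for c, v in zip(coef[1:], r)) for r in X]

def r2(y_obs, y_pred, ref_mean=None):
    ref = sum(y_obs) / len(y_obs) if ref_mean is None else ref_mean
    ss_res = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    return 1.0 - ss_res / sum((o - ref) ** 2 for o in y_obs)

# Synthetic data set: 40 compounds, 2 descriptors (assumed values)
rng = random.Random(0)
X = [[rng.gauss(0, 1), rng.gauss(0, 1)] for _ in range(40)]
y = [5.0 + 0.8 * d1 - 0.5 * d2 + rng.gauss(0, 0.2) for d1, d2 in X]

# Random 75/25 split into training and external test sets
idx = list(range(40))
rng.shuffle(idx)
train, test = idx[:30], idx[30:]
X_tr, y_tr = [X[i] for i in train], [y[i] for i in train]
X_te, y_te = [X[i] for i in test], [y[i] for i in test]

coef = fit_mlr(X_tr, y_tr)
r2_train = r2(y_tr, predict(coef, X_tr))

# Leave-one-out cross-validation on the training set
loo = []
for i in range(len(X_tr)):
    c = fit_mlr(X_tr[:i] + X_tr[i + 1:], y_tr[:i] + y_tr[i + 1:])
    loo.append(predict(c, [X_tr[i]])[0])
q2 = r2(y_tr, loo)

# External prediction, referenced to the training-set mean
r2_pred = r2(y_te, predict(coef, X_te), ref_mean=sum(y_tr) / len(y_tr))

# Y-randomization: refit on shuffled activities and record the apparent fit
scrambled = []
for _ in range(20):
    y_perm = y_tr[:]
    rng.shuffle(y_perm)
    scrambled.append(r2(y_perm, predict(fit_mlr(X_tr, y_perm), X_tr)))

print(f"R2={r2_train:.3f} q2={q2:.3f} R2pred={r2_pred:.3f} "
      f"max scrambled R2={max(scrambled):.3f}")
```

A model passes the randomization check when the scrambled R² values sit well below the real one. In practice a library such as scikit-learn would replace the hand-written solver.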

Protocol 2: Conducting a 3D-QSAR CoMFA/CoMSIA Study

Purpose: To develop a 3D-QSAR model using CoMFA/CoMSIA methodologies.

Materials and Reagents:

- Set of ligand structures with known 3D configurations

- Molecular modeling software with CoMFA/CoMSIA capabilities (e.g., SYBYL)

- Hardware capable of molecular mechanics calculations

Procedure:

- Ligand Preparation:

  - Generate 3D structures for all compounds

  - Perform energy minimization using appropriate force fields

  - Conduct conformational analysis to identify lowest energy conformers

- Molecular Alignment:

  - Identify common structural framework or pharmacophore

  - Align molecules using atom-based or field-based fitting methods

  - Verify alignment quality through visual inspection

- Field Calculation:

  - Create a 3D grid box encompassing all aligned molecules

  - Set grid spacing to 2.0 Å (the customary starting value; a finer grid such as 1.0 Å can be tested at higher computational cost)

  - Calculate steric (Lennard-Jones) and electrostatic (Coulombic) fields using appropriate probe atoms (commonly an sp³ carbon carrying a +1 charge)

- CoMSIA Field Calculation (Optional):

  - Calculate additional similarity fields: hydrophobic, hydrogen bond donor, hydrogen bond acceptor

  - Use Gaussian distance functions with default attenuation factor (α=0.3)

- Partial Least Squares (PLS) Analysis:

  - Apply PLS regression to correlate field values with biological activity

  - Use cross-validation to determine optimal number of components

  - Generate statistical parameters (q², R², standard error)

- Contour Map Analysis:

  - Visualize steric and electrostatic contour maps

  - Interpret regions where specific molecular features enhance or diminish activity

  - Use insights for rational molecular design
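The field-calculation steps can be illustrated with a toy implementation. This is a sketch under stated assumptions, not a reproduction of any package: the Lennard-Jones parameters `eps` and `r_min` are placeholders rather than force-field values, the symmetric ±30 kcal/mol truncation is an assumption of this example, and the function names are hypothetical.

```python
import math

COULOMB_K = 332.06  # kcal*Angstrom/(mol*e^2): converts q1*q2/r to kcal/mol
CUTOFF = 30.0       # kcal/mol truncation applied to field energies (assumed)

def comfa_fields(point, atoms, probe_charge=1.0, eps=0.1, r_min=3.4):
    """Steric (Lennard-Jones 12-6) and electrostatic (Coulomb) energy at a grid point.

    `atoms` is a list of (x, y, z, partial_charge). `eps` and `r_min` are
    placeholder parameters, not taken from a real force field.
    """
    def clip(energy):
        return max(-CUTOFF, min(CUTOFF, energy))

    steric = electrostatic = 0.0
    for x, y, z, q in atoms:
        r = max(math.dist(point, (x, y, z)), 1e-3)  # avoid division by zero
        ratio = (r_min / r) ** 6
        steric += eps * (ratio ** 2 - 2.0 * ratio)
        electrostatic += COULOMB_K * probe_charge * q / r
    return clip(steric), clip(electrostatic)

def comsia_index(point, atoms, probe_value=1.0, alpha=0.3):
    """CoMSIA-style similarity index: Gaussian-weighted sum of an atomic property.

    `atoms` is a list of (x, y, z, property_value).
    """
    total = 0.0
    for x, y, z, prop in atoms:
        dist_sq = sum((a - b) ** 2 for a, b in zip(point, (x, y, z)))
        total -= probe_value * prop * math.exp(-alpha * dist_sq)
    return total

def grid(lo, hi, spacing=2.0):
    """Regular lattice of probe positions spanning the cube [lo, hi]^3."""
    n = int((hi - lo) / spacing) + 1
    axis = [lo + i * spacing for i in range(n)]
    return [(x, y, z) for x in axis for y in axis for z in axis]

# Hypothetical two-atom "molecule" with opposite partial charges
molecule = [(0.0, 0.0, 0.0, -0.4), (1.5, 0.0, 0.0, 0.4)]
points = grid(-4.0, 4.0)
field_matrix_row = [comfa_fields(p, molecule) for p in points]
print(len(points), field_matrix_row[0])
```

Each aligned molecule contributes one such row of field values, and the resulting matrix is what the PLS step regresses against activity. The Gaussian form has no singularity at the atomic positions, so it needs no truncation.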

Troubleshooting Tips:

- Poor alignment: Try different alignment rules or field-fit methods

- Low q² value: Check for outliers, reconsider molecular alignment, or adjust grid parameters

- Overfitting: Reduce number of components in PLS analysis or increase sample size

QSAR modeling represents a powerful computational approach that quantitatively links molecular structure to biological activity, serving as an indispensable tool in modern drug discovery and chemical risk assessment [1] [3] [7]. The methodology has evolved significantly from its origins in classical 2D-QSAR to sophisticated 3D and AI-powered approaches capable of capturing complex structure-activity relationships [5] [9]. Successful implementation requires careful attention to data quality, appropriate descriptor selection, rigorous validation, and clear definition of the model's applicability domain [1] [4] [2]. As QSAR methodologies continue to advance through integration with artificial intelligence and federated learning approaches [10] [9], their impact on accelerating chemical discovery while reducing laboratory testing requirements is expected to grow substantially.

The development of Quantitative Structure-Activity Relationships (QSAR) represents a pivotal advancement in modern chemistry and drug discovery, enabling researchers to mathematically correlate the structural features of compounds with their biological activity. This paradigm shifted pharmaceutical research from a purely empirical endeavor to a rational, predictive science. The journey began with fundamental physical organic chemistry principles established by Hammett and evolved through the pioneering work of Hansch and others, who recognized that these principles could be systematically applied to biological systems. These methodologies form the historical cornerstone of contemporary computer-aided drug design (CADD), providing a quantitative framework that continues to underpin modern virtual screening and lead optimization strategies [11]. The core premise—that molecular behavior can be predicted from quantifiable structural parameters—has expanded into a sophisticated interdisciplinary field, integrating computational chemistry, statistics, and biology to accelerate the development of new therapeutic agents.

The Hammett Equation: The Pioneering QSAR Model

In 1937, Louis Plack Hammett introduced a groundbreaking quantitative model that forever changed how chemists analyze substituent effects on reaction rates and equilibria. The Hammett equation formalized the relationship between chemical structure and reactivity for meta- and para-substituted benzoic acid derivatives, establishing the first robust linear free-energy relationship (LFER) [12].

Mathematical Formulation and Parameters

The Hammett equation is elegantly simple yet powerfully predictive:

\[ \log\left(\frac{K}{K_0}\right) = \sigma\rho \]

or for reaction rates:

\[ \log\left(\frac{k}{k_0}\right) = \sigma\rho \]

Where:

- \( K \) and \( k \) are the equilibrium constant and rate constant for a substituted compound

- \( K_0 \) and \( k_0 \) are the corresponding values for the unsubstituted reference compound (where the substituent is hydrogen)

- \( \sigma \) (sigma) is the substituent constant, a quantitative measure of a substituent's electron-withdrawing or electron-donating ability

- \( \rho \) (rho) is the reaction constant, which indicates the sensitivity of a given reaction to substituent effects [12] [13]

Experimental Protocol: Determining Substituent Constants

Principle: The substituent constant (σ) quantifies the electronic effect of a substituent relative to hydrogen. By convention, σ values are determined using the ionization of benzoic acids in water at 25°C as the model reaction, with ρ set to 1.0 [12].

Procedure:

- Prepare benzoic acid derivatives: Synthesize or obtain high-purity meta- and para-substituted benzoic acids with various substituents (e.g., -NO₂, -CN, -Cl, -CH₃, -OCH₃).

- Measure acid dissociation constants: Determine the acid dissociation constant (Kₐ) for each substituted benzoic acid in aqueous solution at 25°C using potentiometric titration or UV-spectrophotometric methods.

- Calculate substituent constants: For each substituent, calculate σ using the equation: \[ \sigma_X = \log(K_X / K_H) \] where \( K_X \) is the acid dissociation constant for the substituted benzoic acid and \( K_H \) is the constant for unsubstituted benzoic acid.

- Tabulate values: Compile σₘ (meta) and σₚ (para) values for each substituent. Electron-withdrawing groups have positive σ values, while electron-donating groups have negative σ values [12] [13].
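The calculation in the third step is a one-liner, shown here in both its Kₐ and pKₐ forms. The pKₐ values in the usage lines are illustrative numbers chosen to be roughly consistent with tabulated constants, not measured data, and the function names are hypothetical.

```python
import math

def sigma_from_ka(ka_x, ka_h):
    """Hammett substituent constant: sigma_X = log10(K_X / K_H)."""
    return math.log10(ka_x / ka_h)

def sigma_from_pka(pka_x, pka_h):
    """Equivalent form, since pKa = -log10(Ka)."""
    return pka_h - pka_x

# Illustrative pKa values (assumed for the example)
PKA_BENZOIC = 4.20
examples = {"p-NO2": 3.42, "m-Cl": 3.83, "p-OCH3": 4.47}
for name, pka in examples.items():
    print(name, round(sigma_from_pka(pka, PKA_BENZOIC), 2))
```

A substituent that makes the acid stronger (lower pKₐ) comes out with a positive σ, matching the sign convention stated in the last step.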

Table 1: Selected Hammett Substituent Constants

| Substituent | σₘ (meta) | σₚ (para) |

|---|---|---|

| -N(CH₃)₂ | -0.211 | -0.83 |

| -NH₂ | -0.161 | -0.66 |

| -OCH₃ | +0.115 | -0.268 |

| -CH₃ | -0.069 | -0.170 |

| -H | 0.000 | 0.000 |

| -F | +0.337 | +0.062 |

| -Cl | +0.373 | +0.227 |

| -Br | +0.393 | +0.232 |

| -CF₃ | +0.43 | +0.54 |

| -CN | +0.56 | +0.66 |

| -NO₂ | +0.710 | +0.778 |

Source: [12]

Advanced Applications: σ⁺ and σ⁻ Constants

The standard Hammett equation has limitations when substantial resonance interactions occur between the substituent and reaction center. Extended substituent constants were developed to address these cases:

- σₚ⁻ constants: Used when a negative charge develops at a position in direct conjugation with the aromatic ring (e.g., ionization of phenols) [12] [13]

- σₚ⁺ constants: Applied when a positive charge develops in direct conjugation with the ring (e.g., SN1 reactions of cumyl chlorides) [12]

The selection between σ, σ⁺, and σ⁻ depends on the reaction mechanism and should be guided by the quality of the linear correlation in the Hammett plot [13].

Protocol: Conducting a Hammett Analysis

Objective: Determine the reaction constant (ρ) for a new reaction and gain insight into its mechanism.

Procedure:

- Select substituents: Choose a series of 8-12 meta- and para-substituted aromatic compounds with diverse electronic properties (covering both electron-donating and -withdrawing groups).

- Measure kinetic or equilibrium data: For the reaction of interest, determine rate constants (k) or equilibrium constants (K) for each substituted compound under identical conditions.

- Plot and calculate ρ: Plot log(k/k₀) or log(K/K₀) against the appropriate σ values. The slope of the resulting line provides the ρ value.

- Interpret results:

  - ρ > 0: Negative charge is built (or positive charge is lost) in the rate-determining step

  - ρ < 0: Positive charge is built (or negative charge is lost)

  - |ρ| ≈ 0: Little charge development in the transition state

  - Large |ρ|: Significant charge development that is highly sensitive to substituent effects [12] [13]
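The plotting step amounts to a straight-line fit, sketched below. The σₚ values are taken from the table of substituent constants above, while the log(k/k₀) values are hypothetical and serve only to show the arithmetic.

```python
def hammett_fit(sigmas, log_ratios):
    """Least-squares line log(k/k0) = rho * sigma + intercept.

    Returns (rho, intercept, r_squared).
    """
    n = len(sigmas)
    mx, my = sum(sigmas) / n, sum(log_ratios) / n
    sxy = sum((s - mx) * (v - my) for s, v in zip(sigmas, log_ratios))
    sxx = sum((s - mx) ** 2 for s in sigmas)
    syy = sum((v - my) ** 2 for v in log_ratios)
    rho = sxy / sxx
    return rho, my - rho * mx, (sxy * sxy) / (sxx * syy)

# para-substituents: NO2, CN, Cl, H, CH3, OCH3
sigma_p = [0.778, 0.66, 0.227, 0.0, -0.170, -0.268]
# Hypothetical measured log(k/k0) values for the reaction of interest
log_k_ratio = [1.60, 1.29, 0.47, 0.0, -0.31, -0.55]

rho, intercept, r_sq = hammett_fit(sigma_p, log_k_ratio)
print(f"rho={rho:.2f} intercept={intercept:.3f} r2={r_sq:.4f}")
```

With these made-up data the slope is close to +2, which under the interpretation rules above would point to negative charge building up in the rate-determining step. A poor r² would instead suggest trying σ⁺ or σ⁻ in place of σ.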

Figure 1: Workflow for conducting a Hammett analysis to determine the reaction constant ρ and gain mechanistic insights.

Hansch Analysis: Extending QSAR to Biological Systems

In the early 1960s, Corwin Hansch and Toshio Fujita revolutionized drug discovery by extending Hammett's principles to biological systems. They recognized that biological activity depends not only on electronic effects but also on lipophilicity and steric properties [14] [15] [11]. This multidimensional approach marked the formal beginning of modern QSAR as a discipline bridging chemistry and biology.

The Hansch Equation: A Multivariate Approach

The fundamental Hansch equation incorporates multiple physicochemical parameters:

\[ \log(1/C) = a(\log P)^2 + b(\log P) + c\sigma + dE_s + k \]

Where:

- \( C \) is the molar concentration of compound producing a standard biological response (e.g., ED₅₀, IC₅₀)

- \( \log P \) is the logarithm of the octanol-water partition coefficient, measuring lipophilicity

- \( \sigma \) is the Hammett electronic constant

- \( E_s \) is the Taft steric parameter

- \( a, b, c, d, k \) are coefficients determined by multiple regression analysis; \( a \) is typically negative, giving a parabolic dependence of activity on \( \log P \) [15] [11]
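One consequence of the quadratic term is worth spelling out: when the fitted coefficient \( a \) is negative, the equation predicts an optimum lipophilicity. Holding \( \sigma \) and \( E_s \) fixed and differentiating with respect to \( \log P \) gives the following (the subscript "opt" is notation introduced here for illustration):

```latex
\begin{align*}
\frac{\partial \log(1/C)}{\partial \log P} &= 2a \log P + b = 0 \\
\log P_{\mathrm{opt}} &= -\frac{b}{2a}, \qquad a < 0
\end{align*}
```

For example, with hypothetical fitted values \( a = -0.25 \) and \( b = 1.0 \), the predicted optimum is \( \log P_{\mathrm{opt}} = -1.0 / (2 \times -0.25) = 2.0 \).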

Key Physicochemical Parameters in Hansch Analysis

Table 2: Essential Physicochemical Parameters in Hansch Analysis

| Parameter | Symbol | Description | Experimental Determination |

|---|---|---|---|

| Lipophilicity | log P | Logarithm of the octanol-water partition coefficient for the whole molecule | Shake-flask method; HPLC retention time |

| Lipophilic Substituent Constant | π | π = log P_X - log P_H, measuring the lipophilicity of a substituent relative to hydrogen | Derived from partition coefficient measurements |

| Electronic Effect | σ | Hammett constant measuring electron-withdrawing or -donating ability | Based on ionization of substituted benzoic acids |

| Steric Effect | E_s | Taft steric parameter based on acid-catalyzed hydrolysis of esters | E_s = log(k_X) - log(k_CH₃) |

| Molar Refractivity | MR | Measure of molecular volume and polarizability | MR = [(n²-1)/(n²+2)] × (MW/d), where n = refractive index, MW = molecular weight, and d = density |

Source: [15]

Protocol: Developing a Hansch Equation

Objective: Derive a quantitative model relating biological activity to physicochemical parameters for a series of analogous compounds.

Procedure:

- Compound selection: Assemble a congeneric series of 15-20 compounds with systematic structural variation. Ensure the biological activity data (e.g., IC₅₀, ED₅₀) is obtained under consistent experimental conditions.

- Parameter calculation: For each compound, calculate or obtain values for relevant physicochemical parameters (typically log P, π, σ, E_s, MR).

- Regression analysis: Perform multiple linear regression with log(1/C) as the dependent variable and the physicochemical parameters as independent variables.

- Model validation: Evaluate the model using statistical measures: correlation coefficient (r), standard deviation (s), and Fisher criterion (F). Use cross-validation to assess predictive power.

- Interpretation and prediction: Interpret the significance of each parameter in the final equation and use the model to predict the activity of new analogs [15] [11].

Example Application: A classic example is the Hansch equation for the adrenergic blocking activity of β-haloaryl amines: \[ \log(1/C) = 1.22\pi - 1.59\sigma + 7.89 \] This indicated that activity increased with higher lipophilicity (positive π coefficient) and electron-donating character (negative σ coefficient) [15].
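To see how such an equation is used for prediction, the sketch below evaluates it for a few single substituents. The π and σ inputs are commonly tabulated aromatic substituent constants used here purely as illustrative inputs; they are not the data set from the original study, and the function name is hypothetical.

```python
def predicted_log_inv_c(pi, sigma):
    """Evaluate log(1/C) = 1.22*pi - 1.59*sigma + 7.89 for one substituent."""
    return 1.22 * pi - 1.59 * sigma + 7.89

# (pi, sigma) pairs; illustrative inputs, see the note above
substituents = {
    "H": (0.00, 0.000),
    "Cl": (0.71, 0.227),
    "CH3": (0.56, -0.170),
    "NO2": (-0.28, 0.778),
}
for name, (pi, sigma) in substituents.items():
    print(f"{name}: predicted log(1/C) = {predicted_log_inv_c(pi, sigma):.2f}")
```

The methyl analog scores highest of the four because it is both lipophilic and electron-donating, while the nitro analog is penalized on both terms. That is the qualitative reading of the coefficient signs.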

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for Hammett and Hansch Analysis

| Category | Specific Items | Function and Application |

|---|---|---|

| Reference Compounds | Benzoic acid, substituted benzoic acids, phenols, anilines | Standard compounds for determining substituent constants and validating methods |

| Solvent Systems | High-purity water, n-octanol, buffers at various pH values | Partition coefficient measurements and equilibrium constant determinations |

| Analytical Instruments | pH meter with combination electrode, UV-Vis spectrophotometer, HPLC system | Quantifying concentrations, determining pKₐ values, and analyzing compound purity |

| Computational Tools | Statistical software (R, Python with scikit-learn), molecular descriptor calculation tools (PaDEL, Dragon) | Performing regression analysis and calculating molecular descriptors |

| Chemical Libraries | Substituted benzoic acids, phenethylamines, or other congeneric series | Providing structurally related compounds with systematic variation for QSAR studies |

The Free-Wilson Model: A Complementary Approach

Concurrent with Hansch's work, Free and Wilson developed an alternative approach that relies solely on the presence or absence of specific substituents. The Free-Wilson model operates on the principle of additivity, where the biological activity of a compound equals the sum of contributions from its parent structure and substituents [11].

Mathematical Formulation

The Free-Wilson model can be expressed as:

\[ \log(1/C) = \mu + \sum_{i} a_{ij} \]

Where:

- \( \mu \) is the overall average biological activity

- \( a_{ij} \) is the contribution of substituent j at position i

- The summation includes all substituent positions in the molecule [15] [11]

Protocol: Implementing Free-Wilson Analysis

Procedure:

- Define molecular framework: Identify the common parent structure and relevant substitution positions.

- Create indicator matrix: Construct a data matrix where each column represents a specific substituent at a specific position, coded as 1 (present) or 0 (absent).

- Regression analysis: Perform multiple regression analysis with log(1/C) as the dependent variable and the indicator matrix as independent variables.

- Interpret substituent contributions: The regression coefficients represent the activity contribution of each substituent at each position.
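The indicator matrix from the second step can be built as follows. This sketch drops the hydrogen columns so that the unsubstituted parent acts as the reference, which is the Fujita-Ban variant rather than the original formulation; the position labels, substituents, and function name are hypothetical.

```python
def indicator_matrix(compounds, reference="H"):
    """Build Free-Wilson indicator columns from per-position substituents.

    `compounds` is a list of dicts such as {"R1": "Cl", "R2": "H"}.
    Columns for the reference substituent are omitted to avoid linear
    dependence among the columns. Returns (column_labels, rows of 0/1).
    """
    columns = sorted(
        {(pos, sub) for c in compounds for pos, sub in c.items() if sub != reference}
    )
    rows = [[1 if c.get(pos) == sub else 0 for pos, sub in columns] for c in compounds]
    return columns, rows

# Hypothetical congeneric series with two substitution positions
series = [
    {"R1": "H", "R2": "H"},
    {"R1": "Cl", "R2": "H"},
    {"R1": "H", "R2": "CH3"},
    {"R1": "Cl", "R2": "CH3"},
]
columns, rows = indicator_matrix(series)
print(columns)
for row in rows:
    print(row)
```

Regressing log(1/C) on these columns plus an intercept then yields one coefficient per (position, substituent) pair, with the intercept estimating the activity of the reference compound.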

Advantages and Limitations:

- Advantages: No need for measured physicochemical parameters; simple implementation; effective for congeneric series.

- Limitations: Cannot predict activity for compounds with new substitution patterns not included in the original dataset; assumes strict additivity of substituent effects [15].

Modern Applications and Future Perspectives

The principles established by Hammett and Hansch continue to evolve, finding applications in diverse areas of chemical and pharmaceutical research.

Contemporary QSAR in Drug Discovery

Modern QSAR has expanded significantly beyond its origins, now incorporating advanced machine learning algorithms and complex molecular descriptors. Key applications include:

- Anti-cancer drug development: QSAR models have been successfully applied to design novel breast cancer therapeutics, optimizing compounds against specific targets like hormone receptors and kinase enzymes [11].

- Toxicology prediction: Regulatory agencies increasingly use QSAR models to predict endocrine disruption potential, including thyroid hormone system disruption, reducing reliance on animal testing [16].

- Environmental chemistry: Predicting the environmental fate and ecotoxicity of chemicals for risk assessment [17].

Emerging Trends and Integration with Modern Approaches

Figure 2: Evolution from classical QSAR foundations to modern computational approaches.

The field continues to advance rapidly with several emerging trends:

- Machine learning and AI: Advanced algorithms including random forests, support vector machines, and deep neural networks now handle complex, high-dimensional descriptor spaces [17].

- Generative models: AI-driven generative models can design novel molecular structures with desired properties, going beyond prediction to de novo molecular design [17].

- Integrated drug discovery: QSAR is now one component of comprehensive drug discovery pipelines that incorporate structural biology, molecular dynamics, and systems pharmacology [11].

The journey from Hammett constants to Hansch analysis represents more than historical progression—it embodies the evolution of chemical thinking from qualitative observation to quantitative prediction. Hammett's insight that substituent effects could be quantified and generalized across reactions laid the essential groundwork for Hansch's revolutionary extension of these principles to biological systems. Together, these approaches established the fundamental paradigm that molecular properties and activities can be predicted from quantifiable structural parameters, a concept that continues to drive computational chemistry and drug discovery today. As QSAR methodologies continue to evolve with artificial intelligence and machine learning, the foundational principles established by these pioneers remain remarkably relevant, providing the conceptual framework for contemporary predictive toxicology and rational drug design. The integration of these classical approaches with modern computational power ensures their continued utility in addressing the complex challenges of 21st-century pharmaceutical research and chemical safety assessment.

QSAR Protocols for Predictive Toxicology and Drug Discovery

Quantitative Structure-Activity Relationship (QSAR) modeling represents a computational approach that correlates chemical structure with biological activity or physicochemical properties using mathematical models [1] [3]. These models enable researchers to predict the activity of new compounds based on their molecular descriptors, thereby accelerating drug discovery and toxicological assessment while reducing reliance on costly experimental screening [18] [19]. The fundamental QSAR equation takes the form: Activity = f(physicochemical properties and/or structural properties) + error, where the function relates molecular descriptors to a quantifiable biological response [1].

The key objectives of QSAR modeling align with three critical needs in pharmaceutical research: enabling accurate prediction of biological activities and properties for unsynthesized compounds; rationalizing mechanisms of action through identification of structurally-relevant features that drive bioactivity; and significantly reducing costs and time requirements by prioritizing the most promising candidates for experimental validation [18] [19] [20]. Modern QSAR implementations have evolved from classical statistical approaches to incorporate artificial intelligence (AI) and machine learning (ML), dramatically enhancing predictive capabilities across diverse chemical spaces [18] [20].

Experimental Protocols

Comprehensive QSAR Model Development Workflow

The development of robust QSAR models follows a systematic protocol encompassing data collection, descriptor calculation, model construction, and validation [1]. The principal steps include:

Phase 1: Data Set Selection and Preparation

- Compound Curation: Acquire bioactivity data (e.g., IC₅₀, Ki, LD₅₀) from public databases such as ChEMBL or proprietary sources. A minimum of 20 compounds is recommended for reliable modeling, though larger datasets (hundreds to thousands of compounds) improve model robustness [19] [3].

- Chemical Structure Standardization: Convert all structures to standardized representations (e.g., SMILES, InChI) and remove duplicates. Apply necessary corrections for tautomers, stereochemistry, and protonation states [19].

- Dataset Division: Split the curated dataset into training (≈80%) and test (≈20%) sets using appropriate methods such as random sampling, stratified sampling based on activity distribution, or more advanced techniques like Butina clustering or UMAP splitting to ensure chemical diversity representation [21].
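As an illustration of the activity-stratified option above, the following minimal sketch bins compounds by activity and draws the test set from every bin. The function name `stratified_split`, the bin count, and the toy pIC₅₀ values are assumptions made for this example, not part of the cited protocols.

```python
import random

def stratified_split(activities, test_fraction=0.2, n_bins=4, seed=0):
    """Return (train_idx, test_idx), sampling the test set from each activity bin."""
    rng = random.Random(seed)
    # Sort compound indices by activity, then cut into roughly equal-sized bins.
    order = sorted(range(len(activities)), key=lambda i: activities[i])
    bin_size = -(-len(order) // n_bins)  # ceiling division
    train_idx, test_idx = [], []
    for start in range(0, len(order), bin_size):
        bin_members = order[start:start + bin_size]
        rng.shuffle(bin_members)
        n_test = max(1, round(len(bin_members) * test_fraction))
        test_idx.extend(bin_members[:n_test])
        train_idx.extend(bin_members[n_test:])
    return sorted(train_idx), sorted(test_idx)

# Toy pIC50 values for 20 hypothetical compounds.
pic50 = [4.1, 4.3, 4.8, 5.0, 5.2, 5.5, 5.9, 6.0, 6.1, 6.4,
         6.6, 6.9, 7.0, 7.2, 7.5, 7.7, 8.0, 8.3, 8.8, 9.1]
train_idx, test_idx = stratified_split(pic50)
```

With 20 compounds and four bins, each bin contributes one compound to the test set, so both weak and potent compounds are represented in the held-out data.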

Phase 2: Molecular Descriptor Calculation and Selection

- Descriptor Computation: Calculate molecular descriptors using software tools such as DRAGON, PaDEL, RDKit, or Mordred [20]. Descriptors span multiple dimensions:

  - 1D descriptors: Molecular weight, atom counts, bond counts

  - 2D descriptors: Topological indices, connectivity indices, molecular fingerprints

  - 3D descriptors: Molecular surface area, volume, conformational energies

  - Quantum chemical descriptors: HOMO-LUMO energies, dipole moments, electrostatic potential surfaces [20]

- Descriptor Filtering: Apply feature selection methods including LASSO regression, recursive feature elimination (RFE), mutual information ranking, or stepwise regression to identify the most relevant descriptors and reduce dimensionality [20].

Phase 3: Model Construction and Training

- Algorithm Selection: Choose appropriate modeling techniques based on dataset characteristics:

  - Classical methods: Multiple Linear Regression (MLR), Partial Least Squares (PLS), Principal Component Regression (PCR) for approximately linear structure-activity relationships with a limited number of descriptors [1] [3]

  - Machine learning methods: Random Forests (RF), Support Vector Machines (SVM), k-Nearest Neighbors (kNN) for nonlinear relationships [19] [20]

  - Deep learning methods: Graph Neural Networks (GNNs), SMILES-based transformers for large, complex datasets [18] [20]

- Hyperparameter Optimization: Tune model parameters using grid search, random search, or Bayesian optimization with cross-validation to prevent overfitting [19] [21].

Phase 4: Model Validation and Performance Assessment

- Internal Validation: Assess model robustness using cross-validation techniques (e.g., leave-one-out, k-fold) and calculate Q² (cross-validated R²) [1] [3].

- External Validation: Evaluate predictive performance on the held-out test set that was not used during model training [1].

- Statistical Metrics: Compute multiple validation metrics including R² (coefficient of determination), MSE (mean squared error), and ROC-AUC for classification models [19] [22].

- Applicability Domain: Define the chemical space where the model provides reliable predictions based on the training set characteristics [1].
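The relationship between R² and the leave-one-out Q² can be made concrete with a one-descriptor linear model. This is a minimal sketch using one common definition, Q² = 1 - PRESS/SS_tot; the function names and the logP and pIC₅₀ values are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b

def r_squared(xs, ys):
    """Goodness of fit on the data used to build the model."""
    a, b = fit_line(xs, ys)
    my = sum(ys) / len(ys)
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    return 1 - ss_res / sum((y - my) ** 2 for y in ys)

def q_squared_loo(xs, ys):
    """Q2 = 1 - PRESS / SS_tot, each compound predicted by a model that never saw it."""
    my = sum(ys) / len(ys)
    press = 0.0
    for i in range(len(xs)):
        a, b = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (a + b * xs[i])) ** 2
    return 1 - press / sum((y - my) ** 2 for y in ys)

logp = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5]    # hypothetical descriptor values
pic50 = [5.1, 5.4, 6.0, 6.2, 6.9, 7.0, 7.6, 7.8]   # hypothetical activities
r2 = r_squared(logp, pic50)
q2 = q_squared_loo(logp, pic50)
```

For a least-squares model Q² cannot exceed R², and a large gap between the two is a typical sign of overfitting.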

Phase 5: Model Interpretation and Deployment

- Feature Importance Analysis: Identify influential molecular descriptors using permutation importance, SHAP (SHapley Additive exPlanations), or LIME (Local Interpretable Model-agnostic Explanations) [20].

- Mechanistic Interpretation: Relate significant descriptors to biological mechanisms (e.g., hydrophobicity influencing membrane permeability, electronic properties affecting protein binding) [16] [3].

- Predictive Implementation: Deploy validated models for virtual screening of compound libraries to prioritize synthesis and testing [19].

Table 1: Key Validation Parameters for QSAR Models

| Validation Type | Key Metrics | Acceptance Criteria | Purpose |
|---|---|---|---|
| Internal Validation | Q² (cross-validated R²), R² | Q² > 0.5, R² > 0.6 | Assess model robustness and stability |
| External Validation | Predictive R², RMSE | R²ₑₓₜ > 0.6 | Evaluate performance on unseen data |
| Y-Scrambling | R², Q² of scrambled models | Significantly lower than original | Verify absence of chance correlation |
| Applicability Domain | Leverage, distance metrics | Compounds within domain boundaries | Define reliable prediction scope |
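The leverage-based applicability domain check listed in the table can be sketched for the simplest case of one descriptor plus an intercept. The warning threshold h* = 3(p + 1)/n is a convention often used with Williams plots; the descriptor values and the function name below are hypothetical.

```python
def leverage(train_x, query_x):
    """Leverage of a query compound for a model with an intercept and one descriptor."""
    n = len(train_x)
    mean_x = sum(train_x) / n
    sxx = sum((x - mean_x) ** 2 for x in train_x)
    return 1 / n + (query_x - mean_x) ** 2 / sxx

train_logp = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5]   # hypothetical training descriptors
h_star = 3 * (1 + 1) / len(train_logp)                   # warning leverage for p = 1 descriptor

inside = leverage(train_logp, 2.8) <= h_star    # close to the training data
outside = leverage(train_logp, 9.0) <= h_star   # far outside the training range
```

A query compound whose leverage exceeds h* lies outside the descriptor range covered by the training set, so its prediction is an extrapolation and should be treated with caution.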

Integrated QSAR-TNKS2 Inhibitor Identification Protocol

This protocol exemplifies the application of QSAR modeling for target-specific inhibitor identification, as demonstrated in a recent study on tankyrase 2 (TNKS2) inhibitors for colorectal cancer [19]:

Step 1: Bioactivity Data Retrieval

- Source TNKS2 inhibitors from ChEMBL database (Target ID: CHEMBL6125)

- Curate a dataset of 1,100 compounds with experimentally determined IC₅₀ values

- Standardize structures and remove compounds with missing or inconsistent activity data [19]
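IC₅₀ values are usually converted to a logarithmic scale before modeling, and a classification model additionally needs active/inactive labels. The sketch below shows the standard pIC₅₀ conversion; the 1 µM activity cutoff (pIC₅₀ of 6) and the function names are assumptions for illustration and are not taken from the cited study.

```python
import math

def pic50_from_nm(ic50_nm):
    """Convert an IC50 given in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    return 9.0 - math.log10(ic50_nm)

def label_active(ic50_nm, threshold_pic50=6.0):
    """Binary label for classification; the threshold is a project-specific choice."""
    return pic50_from_nm(ic50_nm) >= threshold_pic50

potent = pic50_from_nm(50.0)       # 50 nM
weak = pic50_from_nm(25000.0)      # 25 µM
labels = [label_active(50.0), label_active(25000.0)]
```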

Step 2: Descriptor Calculation and Feature Selection

- Compute 2D and 3D molecular descriptors using appropriate software

- Apply random forest-based feature selection to identify the most relevant descriptors

- Retain top descriptors based on importance ranking for model construction [19]

Step 3: Random Forest QSAR Model Development

- Implement random forest classification with optimized hyperparameters

- Train model using the curated training set (80% of data)

- Validate model performance using 5-fold cross-validation [19]

Step 4: Virtual Screening and Compound Prioritization

- Apply trained QSAR model to screen virtual compound libraries

- Rank compounds based on predicted TNKS2 inhibitory activity

- Select top candidates for further computational validation [19]

Step 5: Computational Validation of Hits

- Perform molecular docking studies to evaluate binding modes and interactions with TNKS2 binding site

- Conduct molecular dynamics simulations (100-200 ns) to assess complex stability

- Analyze binding free energies using MM-PBSA/GBSA methods

- Evaluate ADMET properties to ensure drug-likeness [19]

Step 6: Experimental Verification

- Synthesize or procure top-ranked compounds (1-5 candidates)

- Conduct in vitro TNKS2 inhibition assays to validate computational predictions

- Perform cell-based assays to assess efficacy in relevant cancer cell lines [19]

Data Presentation and Analysis

Performance Comparison of QSAR Modeling Algorithms

Table 2: Comparative Performance of Machine Learning Algorithms in QSAR Modeling

| Algorithm | MSE Range | R² Range | Best Suited Applications | Interpretability |
|---|---|---|---|---|
| Ridge Regression | 3540-3618 [22] | 0.93-0.94 [22] | Linear relationships, multicollinear descriptors | Medium |
| Lasso Regression | 3540-3618 [22] | 0.93-0.94 [22] | Feature selection, high-dimensional data | Medium |
| Random Forest | 6485 [22] | 0.66-0.98 [19] [22] | Complex nonlinear relationships, noisy data | Medium-High |
| Gradient Boosting | 1495-4488 [22] | 0.57-0.92 [22] | Imbalanced datasets, hierarchical features | Medium |
| Support Vector Machines | Variable | Variable | High-dimensional datasets, clear margin separation | Low |
| Graph Neural Networks | Variable | Variable | Large diverse chemical spaces, structure-activity learning | Low-Medium |

Consensus Modeling for Predictive Toxicology

Recent advances in predictive toxicology have demonstrated the value of consensus approaches for improving prediction reliability:

Conservative Consensus Model (CCM) for Acute Oral Toxicity [23]

- Objective: Combine predictions from multiple QSAR models (CATMoS, VEGA, TEST) to generate health-protective estimates of rat acute oral toxicity (LD₅₀)

- Methodology: Assign the most conservative (lowest) predicted LD₅₀ value from individual models as the CCM output

- Performance: The CCM showed the lowest under-prediction rate (2%) compared with the individual models (TEST: 20%, CATMoS: 10%, VEGA: 5%), ensuring protective classification for hazard assessment

- Application: Particularly valuable under conditions of uncertainty where experimental data are limited or absent [23]
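The conservative consensus rule described above reduces to taking the minimum predicted LD₅₀ across models. The following sketch assumes predictions are already expressed in mg/kg and that a model may return no value for a compound (represented as `None`); that missing-value handling, the function name, and the numbers are illustrative assumptions.

```python
def conservative_consensus(predictions_mg_per_kg):
    """Return the lowest available LD50 (most toxic estimate) and the model that gave it."""
    available = {m: v for m, v in predictions_mg_per_kg.items() if v is not None}
    if not available:
        raise ValueError("no model produced a prediction")
    model = min(available, key=available.get)
    return available[model], model

# Hypothetical predictions for one chemical; TEST gives no prediction here.
preds = {"CATMoS": 620.0, "VEGA": 480.0, "TEST": None}
ccm_ld50, source_model = conservative_consensus(preds)
```

Because the lowest LD₅₀ always wins, the consensus can only be as toxic as or more toxic than any single model's estimate, which is what makes the approach health-protective at the cost of more over-prediction.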

Signaling Pathways and Workflow Visualization

Diagram 1: Wnt Signaling Pathway and TNKS2 Inhibition

Diagram 2: QSAR Model Development Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for QSAR Modeling

| Tool/Reagent | Category | Function | Access |
|---|---|---|---|
| ChEMBL Database | Bioactivity Data | Curated database of bioactive molecules with drug-like properties | https://www.ebi.ac.uk/chembl/ [19] |
| DRAGON Software | Descriptor Calculation | Computes >5,000 molecular descriptors covering structural, topological, and quantum chemical features | Commercial [20] |
| PaDEL-Descriptor | Descriptor Calculation | Open-source software for calculating 2D and 3D molecular descriptors and fingerprints | Open Source [20] |
| RDKit | Cheminformatics | Open-source toolkit for cheminformatics and machine learning with Python integration | Open Source [20] |
| QSARINS | Model Development | Software for MLR-based QSAR model development with comprehensive validation tools | Commercial [20] |
| ChemProp | Deep Learning | Message-passing neural networks for molecular property prediction | Open Source [21] |
| Mordred | Descriptor Calculation | Calculates >1,800 molecular descriptors with Python API | Open Source [21] |
| GNINA | Structure-Based Modeling | Deep learning-based molecular docking and scoring function | Open Source [21] |
| OECD QSAR Toolbox | Regulatory Assessment | Software to group chemicals and fill data gaps for regulatory purposes | Free for Use [24] |

Advanced Applications and Regulatory Considerations

AI-Enhanced QSAR in Modern Drug Discovery

The integration of artificial intelligence with QSAR modeling has transformed drug discovery pipelines through several key advancements:

Deep Learning Architectures

- Graph Neural Networks (GNNs): Operate directly on molecular graphs, capturing atomic interactions and structural patterns without manual descriptor engineering [18] [20]

- Transformer Models: Process SMILES strings to learn complex molecular representations and predict activities with state-of-the-art accuracy [18] [20]

- Multimodal Approaches: Combine structural information with biological context (e.g., protein sequences, binding pockets) for target-specific activity prediction [21]

Explainable AI (XAI) Integration

- SHAP Analysis: Quantifies the contribution of individual molecular features to model predictions, enabling mechanistic interpretation [20]

- Attention Mechanisms: In transformer models, attention weights highlight structurally important regions influencing bioactivity [21]

- Counterfactual Explanations: Generates modified structures to illustrate minimal changes that significantly alter predicted activity [21]

Regulatory Frameworks and Validation Standards

The Organisation for Economic Co-operation and Development (OECD) has established principles for validating QSAR models for regulatory use [24]:

OECD QSAR Assessment Framework (QAF)

- Purpose: Provides guidance for regulatory assessment of (Q)SAR predictions to establish confidence in computational approaches [24]

- Key Principles:

  - Scientific Basis: A defined endpoint and an unambiguous algorithm

  - Applicability Domain: A clear description of the chemical space covered by the model

  - Internal Performance: Appropriate measures of goodness-of-fit and robustness

  - External Predictivity: Demonstration of performance on unseen data

  - Mechanistic Interpretation: Provided where possible [24]

- Impact: Facilitates regulatory acceptance of QSAR models as valid alternatives to animal testing for chemical hazard assessment [24]

Validation Best Practices

- Data Quality: Ensure experimental data from reliable sources with consistent protocols

- Model Transparency: Document all modeling steps, parameters, and descriptor definitions

- Uncertainty Quantification: Provide confidence estimates for individual predictions

- Independent Verification: External validation by third parties when possible [1] [24]

The pursuit of novel chemical entities, particularly in pharmaceutical research, is a complex, costly, and time-consuming endeavor, with an estimated duration of up to 14 years and a cost exceeding one billion USD per molecule [11]. Quantitative Structure-Activity Relationship (QSAR) modeling serves as a cornerstone computational methodology in this landscape, providing a powerful framework for predicting the biological activity and properties of compounds from their chemical structures alone [1] [25] [26]. By establishing a mathematical relationship between molecular descriptors and a biological endpoint, QSAR models enable the prioritization of synthesis and testing, thereby accelerating discovery and reducing reliance on extensive animal testing [2] [25]. The reliability and predictive power of any QSAR study hinge entirely on three fundamental pillars: a curated dataset, informative molecular descriptors, and a robust mathematical model [27] [25]. This article delineates these essential components, providing detailed protocols and resources to guide the development of validated QSAR models.

The Dataset: Foundation of the Model

A high-quality dataset is the indispensable bedrock of a reliable QSAR model. The principle that "similar molecules have similar activities" (Structure-Activity Relationship, SAR) underpins QSAR, although it does not always hold: the SAR paradox refers to cases in which similar molecules do not have similar activities [1]. The model's predictive ability is constrained by the chemical space and data quality of its training set [27] [11].

Data Collection and Curation

The initial phase involves gathering structural information and associated biological activity data from reliable public and proprietary sources. Key public repositories include ChEMBL, PubChem, and CCRIS [28] [29]. For specialized endpoints, such as genotoxicity, targeted literature searches using text-mining tools like the BioBERT large language model can significantly expand dataset coverage [28].

Protocol: Data Preparation Workflow

- Dataset Collection: Compile chemical structures (e.g., as SMILES strings) and their associated biological activities (e.g., IC₅₀, MIC₉₀) from trusted sources [2] [29].

- Data Cleaning and Standardization:

- Handling Missing Values and Inconsistencies: Identify and address missing data through removal or imputation techniques. Resolve conflicting experimental records by applying predefined regulatory criteria or expert review [28].

- Data Normalization and Splitting: Partition the cleaned dataset into training, validation, and external test sets, then scale molecular descriptors to zero mean and unit variance using statistics computed from the training set alone. The external test set must be reserved for final model assessment and remain entirely independent of the model training and selection process [2].
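One way to keep the external test set independent during normalization is to compute the scaling statistics on the training set only and reuse them for every other partition. The sketch below illustrates this for a single descriptor; the function names and molecular weight values are hypothetical.

```python
from statistics import mean, pstdev

def fit_scaler(train_values):
    """Learn mean and standard deviation from the training set only."""
    mu = mean(train_values)
    sigma = pstdev(train_values)
    return mu, (sigma if sigma > 0 else 1.0)  # guard against constant descriptors

def apply_scaler(values, scaler):
    """Standardize any partition with previously learned statistics."""
    mu, sigma = scaler
    return [(v - mu) / sigma for v in values]

train_mw = [180.2, 250.3, 310.4, 410.5]   # hypothetical molecular weights (training set)
test_mw = [275.0, 520.1]                  # hypothetical molecular weights (external test set)

scaler = fit_scaler(train_mw)
train_scaled = apply_scaler(train_mw, scaler)
test_scaled = apply_scaler(test_mw, scaler)   # reuses training statistics, no refitting
```

The scaled test values will generally not have zero mean or unit variance, and that is expected: refitting the scaler on the test set would leak information about it into the evaluation.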

Table 1: Exemplary Dataset Construction for a Genotoxicity Endpoint [28]

| Endpoint | Source | Initial Data Points | After Curation | Positive:Negative Ratio |
|---|---|---|---|---|
| Micronucleus in vitro | Public DBs & PubMed (BioBERT) | 20,000 Abstracts | 894 Organic Chemicals | 70% : 30% |
| Micronucleus in vivo (Mouse) | Public DBs & PubMed (BioBERT) | 20,000 Abstracts | 1,222 Organic Chemicals | 32% : 68% |

Addressing Data Imbalance

Imbalanced datasets, where one activity class is underrepresented, are a common challenge that can lead to biased models. Techniques such as data balancing during model construction or the use of ensemble models that combine multiple individual models can mitigate this issue and improve predictive performance for the minority class [28].
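Random oversampling is one simple balancing technique: minority-class records are duplicated until the classes are the same size. The sketch below is a minimal illustration with hypothetical feature tuples and labels; it is not the balancing scheme of the cited study, and in practice it would be applied to the training set only.

```python
import random

def random_oversample(records, seed=0):
    """Duplicate minority-class records at random until all classes are the same size."""
    rng = random.Random(seed)
    by_class = {}
    for features, label in records:
        by_class.setdefault(label, []).append((features, label))
    target = max(len(members) for members in by_class.values())
    balanced = []
    for members in by_class.values():
        extra = [rng.choice(members) for _ in range(target - len(members))]
        balanced.extend(members + extra)
    return balanced

# Hypothetical training records: 7 positives (label 1) and 3 negatives (label 0).
records = [((i,), 1) for i in range(7)] + [((i,), 0) for i in range(7, 10)]
balanced = random_oversample(records)
```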

Figure 1: Data Curation and Preparation Workflow

Molecular Descriptors: Quantifying Chemical Structure

Molecular descriptors are numerical representations of a molecule's structural, physicochemical, and electronic properties [2]. They translate chemical information into a quantitative format that statistical and machine learning algorithms can process. The accuracy and relevance of descriptors directly determine a model's predictive power and stability [27].

Types of Molecular Descriptors

Descriptors can be categorized based on their dimensionality and the nature of the properties they encode [26].

Table 2: Categorization of Common Molecular Descriptors

| Dimension | Descriptor Type | Description | Examples |
|---|---|---|---|
| 1D | Constitutional | Describe atom and bond counts, molecular weight. | Molecular Weight, Number of H-Bond Donors/Acceptors [26] |
| 2D | Topological | Based on molecular graph theory, encoding connectivity. | Molecular Connectivity Indices (χ), Wiener Index (W) [26] |
| 2D/3D | Fragment-Based | Account for contributions of specific substituents/substructures. | Hydrophobicity (π), Hammett σ constant [1] [26] |
| 3D | Geometric & Field-Based | Derived from 3D structure, representing shape and interaction fields. | Molecular Volume, CoMFA/CoMSIA fields, WHIM descriptors [1] [26] |
| Text-Based | String Representations | Use linear string notations of the molecular structure. | SMILES (Simplified Molecular-Input Line-Entry System) [30] |
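To show how a topological descriptor turns a molecular graph into a number, the sketch below computes the Wiener index, the sum of shortest-path bond counts over all pairs of heavy atoms, from a hand-written adjacency list. The graph encoding and function name are assumptions for illustration; dedicated toolkits derive the graph from the structure file.

```python
from collections import deque

def wiener_index(adjacency):
    """Sum of shortest-path bond counts over all unordered pairs of heavy atoms."""
    total = 0
    for source in adjacency:
        # Breadth-first search gives topological distances from this atom.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            atom = queue.popleft()
            for neighbor in adjacency[atom]:
                if neighbor not in dist:
                    dist[neighbor] = dist[atom] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
    return total // 2  # each pair was counted from both ends

# Hydrogen-suppressed carbon skeletons of two C4H10 isomers.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1], 1: [0, 2, 3], 2: [1], 3: [1]}

w_linear = wiener_index(n_butane)      # 10
w_branched = wiener_index(isobutane)   # 9
```

The two isomers share the same molecular weight and atom counts, so 1D descriptors cannot tell them apart, whereas the Wiener index decreases with branching.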

Descriptor Calculation and Selection

Protocol: From Chemical Structure to Descriptor Set

- Input Structure Preparation: Provide standardized molecular structures, typically as SMILES strings or in an SDF format [29].

- Descriptor Calculation: Use specialized software to compute a wide array of descriptors.

  - Software Tools: PaDEL-Descriptor, Dragon, RDKit, and Mordred are widely used to generate hundreds to thousands of descriptors [2].

  - Specialized Descriptors: For specific tasks, custom descriptors like the Local atom-based stochastic quadratic indices (LQI), weighted by atomic properties, can be calculated [29].

- Feature Selection: A critical step to avoid overfitting and improve model interpretability.

The Mathematical Model: From Data to Prediction

The mathematical model serves as the bridge between molecular structure and biological activity. The choice of algorithm depends on the complexity of the relationship, dataset size, and desired model interpretability [27] [2].

Model Building and Validation

Protocol: QSAR Model Development Workflow

- Algorithm Selection: Choose appropriate linear or non-linear machine learning methods.

- Model Training and Validation:

  - Training Set: Used to build the model.

  - Internal Validation: Assess model robustness via k-fold cross-validation or leave-one-out (LOO) cross-validation [1] [2].

  - External Validation: The definitive test of predictive power, performed on the held-out external test set that was not used in any model building steps [1] [2].

- Ensemble Modeling: To enhance predictive performance and stability, build a comprehensive ensemble by combining multiple individual models created with different algorithms, descriptors, or data samples [28] [30].
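The simplest ways of combining individual models are averaging for regression and majority voting for classification. The sketch below assumes that each model has already produced predictions for the same ordered list of compounds; the model outputs and function names are hypothetical.

```python
from statistics import mean

def ensemble_regression(predictions_per_model):
    """Average the predictions of several models, compound by compound."""
    return [mean(values) for values in zip(*predictions_per_model)]

def ensemble_vote(labels_per_model):
    """Majority vote over binary labels (1 = active); ties count as inactive."""
    return [1 if sum(votes) * 2 > len(votes) else 0 for votes in zip(*labels_per_model)]

# Hypothetical pIC50 predictions from three models for three compounds.
rf_pred = [6.2, 7.1, 5.0]
svm_pred = [6.0, 7.5, 5.4]
pls_pred = [6.4, 6.8, 5.2]
consensus = ensemble_regression([rf_pred, svm_pred, pls_pred])

# Hypothetical active/inactive calls from three classifiers for the same compounds.
votes = ensemble_vote([[1, 0, 1], [1, 1, 0], [0, 1, 0]])
```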

Table 3: Common Algorithms for QSAR Modeling [2] [30]

| Algorithm | Type | Key Characteristics | Typical Input |
|---|---|---|---|
| Multiple Linear Regression (MLR) | Linear | Simple, highly interpretable. Prone to overfitting with many descriptors. | Selected 1D/2D Descriptors |
| Partial Least Squares (PLS) | Linear | Handles multicollinearity well. Robust for many descriptors. | Many 1D/2D/3D Descriptors |
| Random Forest (RF) | Non-linear (Ensemble) | High performance, handles non-linearity, provides feature importance. | Molecular Fingerprints (ECFP) |
| Support Vector Machines (SVM) | Non-linear | Effective in high-dimensional spaces, robust to overfitting. | Various Descriptor Types |
| Neural Networks (NN) | Non-linear | Highly flexible, can learn complex patterns. Less interpretable. | Descriptors or SMILES strings |

Figure 2: QSAR Model Building and Validation Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

The following table details key software and computational resources essential for conducting modern QSAR studies.

Table 4: Key Research Reagents and Software Solutions for QSAR

| Item Name | Type | Function in QSAR Protocol |
|---|---|---|
| ChEMBL / PubChem | Database | Public repositories for obtaining chemical structures and associated bioactivity data for model training [28] [29]. |
| RDKit | Software Library | An open-source toolkit for cheminformatics, used for descriptor calculation, fingerprint generation, and molecular standardization [28] [30]. |
| PaDEL-Descriptor | Software | Calculates a comprehensive set of molecular descriptors and fingerprints directly from chemical structures [2]. |
| Scikit-learn | Software Library | A Python library providing a wide range of machine learning algorithms (e.g., RF, SVM, PLS) and model validation utilities [30]. |
| Keras/TensorFlow | Software Library | Deep learning frameworks used to build and train complex neural network models, including end-to-end models from SMILES [30]. |
| BioBERT | Software Model | A pre-trained language model for biomedical text mining, used to automate the extraction of experimental results from scientific literature [28]. |

The Similarity Principle and the SAR Paradox

The Similarity Principle and the SAR Paradox represent two foundational, yet seemingly contradictory, concepts in quantitative structure-activity relationship (QSAR) research and modern drug discovery. The Similarity Principle, often considered a core tenet of cheminformatics, posits that structurally similar molecules are likely to exhibit similar biological activities [1] [31]. This principle provides the fundamental justification for using molecular descriptors, fingerprints, and similarity metrics to predict the activity of new compounds based on known data.

Conversely, the SAR Paradox highlights the critical limitation of this principle by demonstrating that structurally similar molecules can, in fact, exhibit dramatically different biological activities [1] [31]. This paradox presents a significant challenge in drug discovery, where minor structural modifications—sometimes as simple as a single atom substitution—can lead to unexpected and drastic changes in potency, creating what are known as "activity cliffs" in the structure-activity landscape [32].

This application note examines these competing concepts within the framework of QSAR techniques, providing researchers with practical methodologies to navigate and leverage this dichotomy for more effective drug development.

Theoretical Foundation

The Similarity Principle in QSAR

The Similarity Principle provides the philosophical and mathematical basis for most QSAR modeling approaches. In practice, this principle is operationalized through molecular descriptors and similarity metrics that quantify molecular characteristics for predictive modeling [1]. QSAR models relate a set of "predictor" variables (X), consisting of physico-chemical properties or theoretical molecular descriptors, to the potency of a biological response variable (Y) [1].

The mathematical foundation of QSAR typically follows the form: Activity = f(physicochemical properties and/or structural properties) + error [1]

This approach encompasses various methodologies including:

- 2D-QSAR: Uses computed chemical descriptors quantifying electronic, geometric, or steric properties [1]

- 3D-QSAR: Applies force field calculations requiring three-dimensional structures (e.g., CoMFA) [1]

- Fragment-based QSAR: Utilizes group contribution methods where molecular fragments are analyzed [1]

- Graph-based QSAR: Uses molecular graphs directly as input

The SAR Paradox: Limitations of Similarity

The SAR Paradox reveals situations where the Similarity Principle fails, presenting significant challenges in drug discovery. This paradox is exemplified by "activity cliffs": regions in chemical space where small structural changes result in large potency differences [32]. Maggiora's provocative statement that "Similarity, like pornography, is difficult to define, but you know it when you see it" highlights the subjective nature of molecular similarity and its relationship to biological activity [32].

The underlying challenge stems from the fact that different types of activity and property (e.g., reactivity, biotransformation, solubility, target activity) may depend on different molecular features, meaning that "similarity" must be defined differently for each of them [1] [31].

Table 1: Key Concepts in Similarity and SAR Paradox

| Concept | Definition | Implications for Drug Discovery |
|---|---|---|
| Similarity Principle | Structurally similar molecules have similar biological activities [31] | Foundation for predictive modeling, virtual screening, and lead optimization |
| SAR Paradox | Not all similar molecules have similar activities [1] [31] | Challenges prediction accuracy; requires careful model validation |
| Activity Cliffs | Sharp changes in activity with small structural modifications [32] | Creates hotspots for optimization but risks misleading SAR trends |
| Similarity-Activity Landscape | Graphical representation of SAR heterogeneity [32] | Identifies smooth regions, cliffs, and chemical bridges in SAR |

Experimental Protocols and Methodologies

Protocol 1: Similarity-Based SAR (SIBAR) Analysis

The SIBAR approach addresses the SAR Paradox by using similarity calculations to predict activity while accounting for the limitations of simple similarity measures.

Materials and Reagents:

- Compound dataset with known biological activities

- Chemical computing software (e.g., RDKit, OpenBabel)

- Molecular descriptor calculation package

- Statistical analysis software (R, Python with scikit-learn)

Procedure:

- Reference Compound Selection: Select a highly diverse reference compound set representing the chemical space of interest [33]

- Similarity Calculation: Calculate similarity values between each test compound and all reference compounds using appropriate molecular fingerprints (e.g., ECFP, FCFP, SubstructureCount) [33] [34]

- Descriptor Generation: Use the similarity values as SIBAR descriptors for subsequent analysis [33]

- Model Building: Apply Partial Least Squares (PLS) analysis or other machine learning techniques to build predictive models [33]

- Validation: Perform internal and external validation using cross-validation procedures and external test sets [33]

Application Notes: The SIBAR approach has demonstrated good predictivity for challenging ADME properties like P-glycoprotein inhibition, where traditional QSAR methods often fail due to high structural diversity of ligands [33].
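The core of the SIBAR procedure, turning similarities to a reference set into a descriptor vector, can be sketched as follows. Fingerprints are represented here as plain Python sets of on-bit indices, which is a simplifying assumption; the bit values, function names, and reference set are hypothetical, and real fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def sibar_descriptors(compound_fp, reference_fps):
    """One descriptor per reference compound: the similarity of the query to it."""
    return [tanimoto(compound_fp, ref) for ref in reference_fps]

# Hypothetical reference set of three structurally diverse compounds.
reference_fps = [{1, 2, 3, 4}, {3, 4, 5, 6}, {7, 8, 9}]
query_fp = {1, 2, 3, 5}

descriptors = sibar_descriptors(query_fp, reference_fps)   # [0.6, 0.333..., 0.0]
```

The resulting vectors, one per compound, then serve as the input matrix for PLS or another learning method.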

Protocol 2: Activity Cliff Identification Using SALI

This protocol provides a method to identify and quantify activity cliffs in SAR datasets, directly addressing the SAR Paradox.

Materials and Reagents:

- Curated dataset of compounds with measured biological activities (IC50, Ki, etc.)

- Chemical structure standardization tools

- Similarity calculation software

- SALI calculation script or package

Procedure:

- Data Curation: Collect and standardize molecular structures and associated activity data [35]

- Similarity Matrix Calculation: Compute pairwise molecular similarities using appropriate fingerprints (e.g., Tanimoto coefficient on ECFP4 fingerprints) [32]

- SALI Calculation: For each compound pair (i, j), calculate the Structure-Activity Landscape Index (SALI) using the formula:

SALIᵢⱼ = |Aᵢ - Aⱼ| / (1 - Sᵢⱼ)

Where Aᵢ and Aⱼ are activity values, and Sᵢⱼ is the similarity value [32]

- Cliff Identification: Identify activity cliffs as compound pairs with high SALI values (typically above a defined threshold)

- Visualization: Create similarity-activity landscape maps to visualize cliffs and smooth regions [32]

Application Notes: High SALI values indicate activity cliffs where small structural changes (low 1-Sᵢⱼ) result in large activity changes (high |Aᵢ - Aⱼ|). These regions are critical for understanding key molecular interactions but challenging for predictive modeling [32].
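The SALI formula can be applied directly once pairwise similarities are available. In the sketch below the similarities are given as a precomputed dictionary, and pairs with identical fingerprints (Sᵢⱼ = 1), for which the index is undefined, are mapped to infinity when their activities differ; that convention, the compound names, and all values are assumptions for illustration.

```python
def sali(act_i, act_j, similarity):
    """SALI = |A_i - A_j| / (1 - S_ij); undefined when S_ij = 1."""
    if similarity >= 1.0:
        # Assumed convention: identical fingerprints with different activities -> infinite cliff.
        return float("inf") if act_i != act_j else 0.0
    return abs(act_i - act_j) / (1.0 - similarity)

# Hypothetical pIC50 values and pairwise Tanimoto similarities.
activities = {"cpd_A": 8.5, "cpd_B": 5.5, "cpd_C": 5.0}
similarity = {("cpd_A", "cpd_B"): 0.80, ("cpd_A", "cpd_C"): 0.15, ("cpd_B", "cpd_C"): 0.20}

sali_values = {
    (i, j): sali(activities[i], activities[j], s) for (i, j), s in similarity.items()
}
top_pair = max(sali_values, key=sali_values.get)   # strongest activity cliff candidate
```

Here the pair cpd_A/cpd_B combines high similarity with a three log unit potency difference and therefore receives by far the highest SALI value.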

Protocol 3: Machine Learning Classification for SAR Analysis

Modern machine learning approaches can navigate the SAR Paradox by handling complex, non-linear relationships in structural data.

Materials and Reagents:

- Balanced and imbalanced compound datasets

- Machine learning environment (Python with scikit-learn, TensorFlow)

- Molecular fingerprinting capabilities (ECFP, FCFP, SubstructureCount)

- Model validation frameworks

Procedure:

- Data Preparation: Curate inhibitors from databases like ChEMBL, applying appropriate activity thresholds [34]

- Fingerprint Generation: Compute multiple fingerprint types (e.g., SubstructureCount, ECFP, FCFP) to represent molecular structures [34] [36]

- Data Balancing: Apply oversampling or undersampling techniques to address class imbalance [34]

- Model Training: Train multiple machine learning models (Random Forest, Deep Neural Networks, etc.) using different fingerprint representations [34] [36]

- Model Validation: Perform rigorous internal validation (cross-validation) and external validation using test sets [34] [35]

- Feature Importance Analysis: Use methods like Gini importance (mean decrease in impurity, for Random Forest) to identify structural features critical for activity [34]

Application Notes: In recent studies, Random Forest with SubstructureCount fingerprints demonstrated excellent performance for predicting PfDHODH inhibitory activity, with Matthews correlation coefficient (MCC) values exceeding 0.76 in external validation [34]. Feature importance analysis revealed that nitrogenous groups, fluorine atoms, oxygenation features, aromatic moieties, and chirality significantly influenced inhibitory activity [34].
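The Matthews correlation coefficient is computed from the four cells of the confusion matrix and remains informative for imbalanced classes. The sketch below uses hypothetical counts, not results from the cited study.

```python
import math

def matthews_corrcoef(tp, tn, fp, fn):
    """MCC = (TP*TN - FP*FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN))."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

# Hypothetical external test set: 50 actives and 50 inactives.
mcc = matthews_corrcoef(tp=40, tn=45, fp=5, fn=10)
```

MCC ranges from -1 to 1, with 0 corresponding to random guessing, so the 85% accuracy of this toy example translates into an MCC of roughly 0.70.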

Visualization and Workflows

Diagram 1: Integrated workflow for SAR analysis that addresses both the Similarity Principle and SAR Paradox through multiple pathways.

Research Reagents and Computational Tools

Table 2: Essential Research Reagent Solutions for SAR Studies

| Reagent/Software Tool | Function/Purpose | Application Context |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit | Calculation of molecular descriptors and fingerprints [37] [36] |
| ECFP/FCFP Fingerprints | Circular topological fingerprints | Molecular similarity calculations and machine learning feature generation [36] |
| SubstructureCount Fingerprints | Fragment-based molecular representation | QSAR model building with interpretable features [34] |
| Random Forest Algorithm | Ensemble machine learning method | Robust classification and regression for QSAR modeling [34] [36] |
| Deep Neural Networks (DNN) | Advanced machine learning approach | Capturing complex non-linear structure-activity relationships [36] |
| ChEMBL Database | Public repository of bioactive molecules | Source of curated training and test data for QSAR models [34] [37] |

Data Analysis and Validation Protocols

QSAR Model Validation Framework

Robust validation is essential for reliable QSAR models, particularly given the challenges posed by the SAR Paradox.

Procedure:

- Internal Validation: Perform cross-validation (leave-one-out, leave-many-out) to assess model robustness [35]

- External Validation: Split data into training and test sets to evaluate predictive performance on new compounds [35]

- Statistical Parameters: Calculate multiple validation metrics rather than relying on a single statistic such as r² [35]

- Y-Scrambling: Verify absence of chance correlations by randomizing response variables [35]

- Applicability Domain Assessment: Define chemical space where models can make reliable predictions [35]
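
The Y-scrambling step in the procedure above can be sketched as follows. This is a minimal illustration that assumes a one-descriptor least-squares model and hypothetical data; the function names `r_squared` and `y_scramble` are illustrative, and a real study would refit the full QSAR model on each scrambled response vector.

```python
import random
from statistics import mean

def r_squared(x, y):
    """r² of a one-descriptor least-squares fit of y on x."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return (sxy * sxy) / (sxx * syy)

def y_scramble(x, y, n_rounds=200, seed=0):
    """Refit the model on randomly permuted responses and collect r²."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        y_perm = y[:]
        rng.shuffle(y_perm)  # break the structure-activity link
        scores.append(r_squared(x, y_perm))
    return scores

# Hypothetical descriptor values and activities (illustrative only).
x = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0]
y = [4.1, 4.6, 4.9, 5.6, 5.8, 6.5, 6.7, 7.4, 7.6, 8.2]

true_r2 = r_squared(x, y)
scrambled = y_scramble(x, y)
# Fraction of scrambled models that match or beat the real one.
fraction_as_good = sum(s >= true_r2 for s in scrambled) / len(scrambled)
print(f"r² = {true_r2:.3f}, mean scrambled r² = {mean(scrambled):.3f}")
```

If the scrambled models score nearly as well as the real one, the original correlation is likely a chance result.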

Application Notes: Studies have shown that the coefficient of determination (r²) alone is insufficient to establish the validity of a QSAR model [35]. The predictive squared correlation coefficient (r₀²) between observed and predicted values of the test set should be close to the r² of the training set, with |r₀² - r'₀²| < 0.3 indicating good predictivity; here r'₀² denotes the same coefficient computed with the observed and predicted values exchanged [35].
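
The |r₀² - r'₀²| < 0.3 check can be illustrated with a short sketch. It assumes one common formulation in which r₀² and r'₀² are coefficients of determination for zero-intercept regressions in the two directions (observed on predicted, and predicted on observed); the exact definitions used in [35] may differ, and the data are hypothetical.

```python
from statistics import mean

def r2_pearson(obs, pred):
    """Squared Pearson correlation between observed and predicted values."""
    mo, mp = mean(obs), mean(pred)
    sop = sum((o - mo) * (p - mp) for o, p in zip(obs, pred))
    soo = sum((o - mo) ** 2 for o in obs)
    spp = sum((p - mp) ** 2 for p in pred)
    return sop ** 2 / (soo * spp)

def r2_through_origin(y, x):
    """Coefficient of determination for the zero-intercept fit y ≈ k·x."""
    k = sum(a * b for a, b in zip(y, x)) / sum(b * b for b in x)
    my = mean(y)
    ss_res = sum((a - k * b) ** 2 for a, b in zip(y, x))
    ss_tot = sum((a - my) ** 2 for a in y)
    return 1.0 - ss_res / ss_tot

# Hypothetical test-set pIC50 values (illustrative only).
obs = [5.2, 6.1, 6.8, 7.4, 8.0, 5.9]
pred = [5.0, 6.3, 6.6, 7.7, 7.8, 6.1]

r2 = r2_pearson(obs, pred)
r0_sq = r2_through_origin(obs, pred)        # observed regressed on predicted
r0_sq_prime = r2_through_origin(pred, obs)  # predicted regressed on observed
passes = abs(r0_sq - r0_sq_prime) < 0.3
print(f"r² = {r2:.3f}, r0² = {r0_sq:.3f}, r'0² = {r0_sq_prime:.3f}, pass = {passes}")
```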

Table 3: Comparative Performance of Machine Learning Methods in SAR Modeling

| Modeling Method | Training Set Size | r² (Training) | R²pred (Test) | Key Advantages |
|---|---|---|---|---|
| Random Forest | 6069 compounds | ~0.90 | ~0.90 | High accuracy, robust with diverse data [36] |
| Deep Neural Networks | 6069 compounds | ~0.90 | ~0.90 | Feature weighting, handles complexity [36] |
| Partial Least Squares | 6069 compounds | ~0.65 | ~0.65 | Traditional, interpretable [36] |
| Multiple Linear Regression | 6069 compounds | ~0.65 | ~0.65 | Simple, fast computation [36] |
| Random Forest | 303 compounds | ~0.84 | ~0.84 | Maintains performance with small datasets [36] |
| Deep Neural Networks | 303 compounds | ~0.94 | ~0.94 | Superior with limited training data [36] |

Advanced Integration Approaches

Hybrid Methods for SAR Navigation

Emerging approaches combine multiple methodologies to better address both the Similarity Principle and SAR Paradox:

q-RASAR Framework: This hybrid method merges traditional QSAR with similarity-based read-across techniques, enhancing predictive capability while providing mechanistic interpretation [1].

Matched Molecular Pair Analysis (MMPA): Coupled with QSAR models, MMPA helps identify activity cliffs by systematically analyzing small structural changes and their dramatic effects on activity [1].

Conformal Prediction: This recent QSAR approach provides information on prediction certainty, helping researchers make more informed decisions despite the uncertainties introduced by the SAR Paradox [37].
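
To make the idea of prediction certainty concrete, the sketch below shows a split (inductive) conformal procedure for a regression QSAR model. It assumes absolute residuals on a held-out calibration set as the nonconformity score; the function name `conformal_halfwidth` and all values are hypothetical, and [37] may use a different variant.

```python
import math

def conformal_halfwidth(calib_obs, calib_pred, alpha=0.2):
    """Half-width of a split-conformal interval at confidence 1 - alpha."""
    scores = sorted(abs(o - p) for o, p in zip(calib_obs, calib_pred))
    n = len(scores)
    # 1-based rank of the finite-sample quantile; the small offset guards
    # against floating-point round-up in (n + 1) * (1 - alpha).
    rank = math.ceil((n + 1) * (1 - alpha) - 1e-9)
    if rank > n:
        return math.inf  # too few calibration compounds for this confidence
    return scores[rank - 1]

# Hypothetical calibration-set pIC50 values (illustrative only).
calib_obs = [5.1, 6.0, 6.4, 7.2, 7.9, 5.5, 6.8, 7.0, 6.2]
calib_pred = [5.3, 5.7, 6.5, 7.6, 7.8, 5.0, 6.9, 6.4, 6.2]

q = conformal_halfwidth(calib_obs, calib_pred, alpha=0.2)
new_pred = 6.6  # model prediction for a new compound
interval = (new_pred - q, new_pred + q)
print(f"80% prediction interval: {interval[0]:.2f} to {interval[1]:.2f}")
```

The interval width is driven by how well the model performed on the calibration compounds, so a poorly calibrated model yields visibly wide intervals rather than falsely confident point predictions.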

Diagram 2: Relationship between the Similarity Principle and SAR Paradox, showing how they drive different aspects of SAR analysis and method development.

The Similarity Principle and SAR Paradox together form a complementary framework that guides modern QSAR research. While the Similarity Principle provides the foundation for predictive modeling, the SAR Paradox highlights its limitations and drives the development of more sophisticated methods. Successful navigation of this landscape requires:

- Multiple Modeling Approaches: Employing various descriptors, machine learning algorithms, and validation techniques

- Activity Cliff Awareness: Systematically identifying and analyzing regions where the Similarity Principle fails

- Robust Validation: Implementing rigorous statistical validation beyond simple correlation coefficients

- Hybrid Methods: Leveraging integrated approaches like q-RASAR that combine the strengths of different methodologies
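
Activity cliff awareness can be approached by scanning a dataset for pairs of compounds that are structurally similar yet differ strongly in activity. The sketch below is one simple way to do this, assuming fingerprints are given as sets of on-bit indices; the thresholds (`min_sim`, `min_delta`), compound names, and values are illustrative assumptions rather than recommendations from the cited sources.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def find_activity_cliffs(compounds, min_sim=0.7, min_delta=2.0):
    """Return pairs that are similar in structure but far apart in activity."""
    cliffs = []
    for (n1, fp1, act1), (n2, fp2, act2) in combinations(compounds, 2):
        sim = tanimoto(fp1, fp2)
        delta = abs(act1 - act2)  # difference in pIC50 units
        if sim >= min_sim and delta >= min_delta:
            cliffs.append((n1, n2, round(sim, 2), round(delta, 2)))
    return cliffs

# Hypothetical compounds: (name, fingerprint on-bits, pIC50).
compounds = [
    ("cpd_A", {1, 4, 7, 9, 12, 15, 20, 23}, 8.1),
    ("cpd_B", {1, 4, 7, 9, 12, 15, 20, 31}, 5.6),
    ("cpd_C", {2, 5, 8, 11, 14, 40, 41, 42}, 6.0),
]
cliffs = find_activity_cliffs(compounds)
print(cliffs)
```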

By acknowledging both the power of similarity-based prediction and its limitations, researchers can develop more reliable QSAR models that account for the complex reality of chemical-biological interactions, ultimately accelerating the drug discovery process while respecting the fundamental complexities of molecular recognition.

Within the framework of quantitative structure-activity relationship (QSAR) research, a model's reliability is determined not only by its statistical performance but also by a clear definition of its purpose and boundaries. A good QSAR model is a predictive tool grounded in two foundational principles: a defined endpoint and a well-characterized applicability domain (AD) [25] [38]. The defined endpoint gives the model a clear objective, while the applicability domain delineates the chemical space within which its predictions are reliable [39]. These principles are paramount for the transparency and regulatory acceptance of QSAR models, and they guide researchers and drug development professionals in optimizing lead compounds and predicting properties efficiently [40] [25]. This document outlines detailed protocols and application notes for establishing these critical components.

Defined Endpoint: The Cornerstone of QSAR Modeling

Concept and Significance

The defined endpoint is the specific biological activity or physicochemical property that a QSAR model is built to predict [25]. It is the model's unambiguous objective. A clearly defined endpoint is crucial because it determines the selection of experimental data, guides the choice of molecular descriptors, and forms the basis for all subsequent validation [40]. Without a precise endpoint, a model lacks direction and its predictions become unreliable and uninterpretable.

Protocol for Endpoint Definition and Data Preparation

Protocol 1: Establishing a Defined Endpoint and Curating a Robust Dataset

- Objective: To define a clear modeling endpoint and assemble a high-quality, curated dataset for QSAR model development.

Materials: A set of compounds with experimentally measured biological activities (e.g., IC₅₀, EC₅₀) or properties; chemical structure representation software (e.g., for generating SMILES strings or molecular graphs); data curation tools.

Procedure:

- Endpoint Specification: Clearly state the endpoint to be modeled. This must be a consistent, quantitative measure, such as pIC₅₀ (negative logarithm of the half-maximal inhibitory concentration) or logP (partition coefficient) [25].

- Data Collection: Gather experimental data from reliable, peer-reviewed sources or in-house databases. The data should originate from a consistent experimental protocol to ensure comparability [3].

- Data Curation:

  - Structure Standardization: Convert all chemical structures into a standardized format (e.g., canonical SMILES) [3].

  - Error Detection: Identify and rectify errors in structures and associated endpoint values.

  - Duplicate Removal: Eliminate duplicate entries, ensuring each unique chemical structure is represented only once.

  - Outlier Examination: Carefully inspect the dataset for outliers arising from experimental or transcription errors that may skew the model [40].

- Dataset Division: Split the curated dataset into a training set (typically 70-80%) for model construction and a test set (20-30%) for external validation [3]. This split should ensure the test set is representative of the chemical space covered by the training set.
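
The curation and splitting steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions: structures are already canonical SMILES (in practice produced by a toolkit such as RDKit), IC₅₀ values are in nanomolar, duplicates are merged by averaging their pIC₅₀ (one possible policy among several), and the split is purely random. The function names and records are hypothetical.

```python
import math
import random
from collections import defaultdict
from statistics import mean

def to_pic50(ic50_nm):
    """Convert IC50 in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    return 9.0 - math.log10(ic50_nm)

def curate(records):
    """Drop unusable entries and merge duplicate structures by mean pIC50."""
    grouped = defaultdict(list)
    for smiles, ic50_nm in records:
        if ic50_nm is None or ic50_nm <= 0:
            continue  # no usable measurement
        grouped[smiles].append(to_pic50(ic50_nm))
    return sorted((smi, mean(vals)) for smi, vals in grouped.items())

def split(dataset, test_fraction=0.2, seed=42):
    """Random train/test split; returns (train, test)."""
    rng = random.Random(seed)
    shuffled = dataset[:]
    rng.shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

# Hypothetical records: (canonical SMILES, IC50 in nM).
records = [
    ("CCO", 1000.0),
    ("CCO", 100.0),      # duplicate structure, second measurement
    ("c1ccccc1", 10.0),
    ("CCN", None),       # missing measurement
    ("CC(=O)O", 50000.0),
    ("CCCC", 250.0),
    ("CCOC", 1.0),
]
curated = curate(records)
train_set, test_set = split(curated)
print(len(curated), len(train_set), len(test_set))
```

A random split does not by itself guarantee that the test set is representative of the training set's chemical space; cluster-based or activity-stratified splitting may be preferable for small or skewed datasets.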

Applicability Domain: Ensuring Reliable Predictions

Concept and Regulatory Importance

The Applicability Domain (AD) is the "physico-chemical, structural, or biological space, knowledge or information on which the training set of the model has been developed, and for which it is applicable to make predictions for new compounds" [39]. It is a critical tool for estimating the uncertainty of a prediction based on the similarity of a new compound to the training set molecules [41]. The OECD mandates the definition of an AD as one of its five principles for validating QSAR models for regulatory purposes [38] [39]. A model should only be used for prediction if a query compound falls within its AD, as extrapolation beyond this domain leads to unreliable predictions [42] [41].

Table 1: Common Types of Applicability Domain and Their Characteristics

| AD Type | Description | Common Metrics | Advantages | Limitations |
|---|---|---|---|---|
| Range-Based | Defines AD based on the min-max range of each descriptor in the training set. | Bounding Box | Simple, easy to implement. | Does not account for correlation between descriptors; can define overly large, sparse regions. |
| Distance-Based | Assesses the similarity of a new compound to its nearest neighbors in the training set. | Euclidean Distance, Mahalanobis Distance, Tanimoto Distance [42] [39] | Intuitive; based on the similarity principle. | Performance depends on the distance metric and descriptor scaling. |
| Geometric | Defines a geometrical boundary encompassing the training set data points. | Convex Hull, Leverage [41] [39] | Precisely defines the interpolation space. | Computationally intensive for high-dimensional data. |
| Probability-Density Based | Models the underlying probability distribution of the training set data. | Probability Density Function | Statistically robust. | Complex to implement; requires a large training set. |
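
As an illustration of the simplest entry in the table, the sketch below implements a range-based (bounding box) check. The descriptor columns (molecular weight, logP, hydrogen-bond acceptor count) and all values are hypothetical.

```python
def fit_bounding_box(train_descriptors):
    """Record the (min, max) range of each descriptor in the training set."""
    columns = zip(*train_descriptors)
    return [(min(col), max(col)) for col in columns]

def in_bounding_box(query, box):
    """True if every descriptor of the query lies within the training range."""
    return all(lo <= value <= hi for value, (lo, hi) in zip(query, box))

# Hypothetical training descriptors: [molecular weight, logP, H-bond acceptors].
train = [
    [250.3, 2.1, 3],
    [310.4, 3.5, 5],
    [198.2, 1.2, 2],
    [402.5, 4.8, 6],
]
box = fit_bounding_box(train)

inside = in_bounding_box([300.0, 3.0, 4], box)   # all descriptors in range
outside = in_bounding_box([300.0, 6.2, 4], box)  # logP exceeds training maximum
print(inside, outside)
```

Because each descriptor is checked independently, a query can pass this test while sitting in an empty corner of descriptor space, which is the limitation noted in the table.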

The Critical Role of the Applicability Domain

In QSAR, prediction error has been robustly demonstrated to increase as the distance (e.g., Tanimoto distance on molecular fingerprints) between a query molecule and the nearest training set molecule increases [42]. This underscores the molecular similarity principle: similar molecules are likely to have similar activities [42] [1]. Consequently, defining the AD is not an optional step but a necessity for identifying when a prediction transitions from reliable interpolation to uncertain extrapolation.
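
A distance-based check of this kind can be sketched as follows, assuming fingerprints are represented as sets of on-bit indices. The fingerprints are hypothetical, and the cut-off of 0.6 is an illustrative assumption, not a value taken from [42].

```python
def tanimoto_distance(a, b):
    """1 - Tanimoto similarity for fingerprints given as sets of on-bits."""
    union = len(a | b)
    similarity = len(a & b) / union if union else 0.0
    return 1.0 - similarity

def nearest_training_distance(query_fp, training_fps):
    """Distance from the query to its closest training-set compound."""
    return min(tanimoto_distance(query_fp, fp) for fp in training_fps)

# Hypothetical fingerprints (illustrative only).
training_fps = [
    {1, 2, 3, 4, 5, 6},
    {2, 3, 4, 8, 9, 10},
    {11, 12, 13, 14},
]
near_query = {1, 2, 3, 4, 5, 7}
far_query = {1, 20, 21, 22}

d_near = nearest_training_distance(near_query, training_fps)
d_far = nearest_training_distance(far_query, training_fps)

AD_CUTOFF = 0.6  # assumed threshold; tune against observed prediction error
for name, d in (("near_query", d_near), ("far_query", d_far)):
    status = "inside AD" if d <= AD_CUTOFF else "outside AD"
    print(f"{name}: distance = {d:.3f} ({status})")
```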

Table 2: Impact of Distance from Training Set on QSAR Prediction Error [42]

| Mean Squared Error (MSE) on log IC₅₀ | Typical Error in IC₅₀ | Interpretation for Lead Optimization |
|---|---|---|
| 0.25 | ~3x | Sufficiently accurate to support hit discovery and lead optimization. |
| 1.0 | ~10x | Can distinguish potent leads from inactives, but reduced precision. |
| 2.0 | ~26x | Generally insufficient for reliable decision-making in lead optimization. |
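
The fold errors in the table appear to follow from taking the square root of the MSE (the RMSE, in log₁₀ units) and converting it back to a concentration ratio as 10^RMSE. A short check of that arithmetic, with an illustrative function name:

```python
import math

def typical_fold_error(mse_log10):
    """Fold error in IC50 implied by an MSE on log10(IC50): 10 ** RMSE."""
    return 10 ** math.sqrt(mse_log10)

fold_errors = {mse: typical_fold_error(mse) for mse in (0.25, 1.0, 2.0)}
for mse, fold in fold_errors.items():
    print(f"MSE = {mse:<4} -> RMSE = {math.sqrt(mse):.2f} log units -> ~{fold:.1f}x")
```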

Protocol for Determining the Applicability Domain

Protocol 2: Determining the Applicability Domain using the Standardization Approach

- Objective: To identify outliers in the training set and define the AD for reliable prediction of test set compounds.

Materials: The optimized QSAR model's training set; the pool of molecular descriptors used in the final model; software for basic statistical calculations (e.g., MS Excel) or standalone AD tools (e.g., "Applicability domain using standardization approach").

Procedure:

- Descriptor Standardization: For each descriptor \( i \) used in the model, standardize the values for all training set compounds using the formula \( S_{ki} = \frac{X_{ki} - \bar{X}_i}{\sigma_i} \), where \( S_{ki} \) is the standardized value of descriptor \( i \) for compound \( k \), \( X_{ki} \) is the original descriptor value, \( \bar{X}_i \) is the mean of descriptor \( i \) in the training set, and \( \sigma_i \) is its standard deviation [41].

- Calculate Standardization Value: For each training set compound \( k \), compute the standardized value \( S_k \) as the square root of the sum of its squared standardized descriptors: \( S_k = \sqrt{\sum_i S_{ki}^2} \).

- Set Threshold for Training Set: Establish a threshold for identifying outliers in the training set. A common threshold is a standardized value \( S_k \) of 3 [41]. Any training compound with \( S_k > 3 \) is considered an outlier and should be investigated and potentially removed to refine the model's AD.

- Apply to Test Set: For a new test or query compound, standardize its descriptors using the training set's mean (\( \bar{X}_i \)) and standard deviation (\( \sigma_i \)).

- Calculate Test Compound \( S_k \): Compute \( S_k \) for the test compound using the formula from Step 2.
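
The steps listed so far can be sketched as below. This is a minimal illustration of the calculation as described in this protocol, not of any particular software tool. It assumes the sample standard deviation (the protocol does not say which estimator is meant) and non-constant descriptors; the function names and descriptor values are hypothetical.

```python
import math
from statistics import mean, stdev

def fit_standardizer(train):
    """Per-descriptor (mean, standard deviation) from the training set."""
    columns = zip(*train)
    # Assumes no descriptor is constant, otherwise stdev would be zero.
    return [(mean(col), stdev(col)) for col in columns]

def s_value(compound, params):
    """S_k: root of the summed squared standardized descriptors."""
    return math.sqrt(
        sum(((x - mu) / sd) ** 2 for x, (mu, sd) in zip(compound, params))
    )

# Hypothetical training set: two descriptors per compound.
train = [
    [1.0, 10.0], [2.0, 12.0], [3.0, 11.0],
    [4.0, 13.0], [5.0, 14.0], [3.0, 12.0],
]
params = fit_standardizer(train)

# Flag training compounds above the threshold of 3.
train_s = [s_value(c, params) for c in train]
train_outliers = [i for i, s in enumerate(train_s) if s > 3.0]

# Standardize query compounds with the training-set mean and deviation.
query_close = s_value([3.5, 12.5], params)
query_far = s_value([9.0, 20.0], params)
print(train_outliers, round(query_close, 2), round(query_far, 2))
```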