Query-Based Molecular Optimization: A Framework for Accelerating AI-Driven Drug Discovery

Abstract

This article provides a comprehensive guide to implementing query-based molecular optimization (QMO), an AI framework that accelerates the design of novel molecules and materials. Aimed at researchers and drug development professionals, we explore the foundational principles of QMO, which decouples molecular representation learning from guided property search. The piece details methodological workflows for optimizing properties like binding affinity and solubility, addresses key challenges such as high-dimensional chemical spaces and data sparsity, and validates performance against state-of-the-art methods through real-world case studies, including the optimization of SARS-CoV-2 inhibitors and antimicrobial peptides. The conclusion synthesizes key takeaways and discusses future directions for integrating these frameworks into biomedical research.

What is Query-Based Molecular Optimization? Laying the Groundwork for AI-Driven Discovery

Defining the Molecular Optimization Challenge in Drug Discovery

Molecular optimization represents a pivotal stage in the drug discovery pipeline, situated between the initial identification of a lead compound and preclinical testing [1]. The fundamental challenge lies in modifying a lead molecule to enhance its key properties—such as binding affinity, solubility, or reduced toxicity—while rigorously preserving its core structural features and other essential characteristics [1]. This delicate balancing act requires navigating a chemical space of staggering proportions; for a peptide sequence of just 60 amino acids, the number of possible variants approaches the number of atoms in the known universe [2]. The pharmaceutical industry faces immense pressure to reduce attrition rates, shorten development timelines, and increase translational predictivity, driving the adoption of advanced computational approaches to manage this complexity [3].
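To make the scale concrete, the count of 60-residue peptides over the 20 standard amino acids can be computed directly. The comparison value of roughly 10^80 atoms in the observable universe is a commonly quoted order-of-magnitude estimate and is an assumption here, not a figure taken from the cited source.

```python
import math

# Number of distinct 60-residue peptides over the 20 standard amino acids.
ALPHABET_SIZE = 20
PEPTIDE_LENGTH = 60
n_sequences = ALPHABET_SIZE ** PEPTIDE_LENGTH

# Assumed order-of-magnitude estimate for atoms in the observable universe.
ATOMS_IN_UNIVERSE_LOG10 = 80

log10_sequences = math.log10(n_sequences)
print(f"log10(number of sequences) = {log10_sequences:.2f}")
print(f"orders of magnitude below the assumed atom count: "
      f"{ATOMS_IN_UNIVERSE_LOG10 - log10_sequences:.2f}")
```

The sequence count comes out near 10^78, within about two orders of magnitude of the assumed atom count, which is why exhaustive enumeration is not an option.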

The transition from a promising lead molecule to a viable drug candidate demands careful optimization of multiple, often competing, properties simultaneously. A lead molecule might demonstrate promising biological activity but suffer from poor solubility, suboptimal pharmacokinetics, or undesirable toxicity profiles [1]. The molecular optimization process addresses these deficiencies through strategic structural modifications while maintaining the structural core responsible for its initial therapeutic activity. This process is formally defined as: given a lead molecule x with properties p₁(x),...,pₘ(x), generate a molecule y with properties p₁(y),...,pₘ(y) satisfying pᵢ(y) ≻ pᵢ(x) for i=1,2,...,m, and sim(x,y) > δ, where δ represents a similarity threshold [1]. Maintaining structural similarity preserves crucial pharmacological properties while exploring chemical space for improved characteristics.
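The formal definition can be read as a simple acceptance test on a candidate molecule. The sketch below is one possible encoding of it, assuming each property comes with a known direction of improvement (the symbol ≻ means "better than", which may be higher or lower depending on the property). The function name, the property names, and the numeric values are illustrative and do not come from the cited work.

```python
def is_successful_optimization(lead_props, cand_props, higher_is_better,
                               similarity, delta):
    """Return True if the candidate improves every property and stays similar.

    lead_props / cand_props: dicts mapping property name -> value.
    higher_is_better: dict mapping property name -> bool (direction of "better").
    similarity: sim(x, y) between lead and candidate; delta: similarity threshold.
    """
    for name, lead_value in lead_props.items():
        cand_value = cand_props[name]
        if higher_is_better[name]:
            improved = cand_value > lead_value
        else:
            improved = cand_value < lead_value
        if not improved:
            return False
    return similarity > delta


# Illustrative values only (not taken from any dataset).
lead = {"qed": 0.55, "toxicity": 0.70}
candidate = {"qed": 0.71, "toxicity": 0.40}
direction = {"qed": True, "toxicity": False}
accepted = is_successful_optimization(lead, candidate, direction,
                                      similarity=0.52, delta=0.4)
print(accepted)
```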

Current Methodologies in AI-Aided Molecular Optimization

Artificial intelligence has revolutionized molecular optimization approaches, enabling researchers to navigate the vast chemical space more efficiently than traditional methods. Current AI-aided methodologies can be broadly categorized based on their operational spaces and optimization strategies, each with distinct advantages and limitations as summarized in Table 1.

Table 1: Comparison of AI-Aided Molecular Optimization Approaches

| Category | Representative Methods | Molecular Representation | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Discrete Space Optimization | STONED, MolFinder, GCPN, MolDQN [1] | SELFIES, SMILES, Molecular Graphs [1] | Direct structural interpretation; No training data required for some methods [1] | High computational cost for property evaluation; Sequential optimization struggles with multi-objective tasks [1] |
| Continuous Latent Space Optimization | VAE+BO, VAE+GA, Mol-CycleGAN [1] | Continuous latent vectors [2] | Efficient exploration in continuous space; Smooth property landscapes [2] [1] | Decoder collapse issues; Generated molecules may lack diversity [1] |
| Query-Based Frameworks | QMO (Query-based Molecular Optimization) [2] [4] | SMILES, Latent representations [2] [5] | Decouples representation learning from optimization; Compatible with black-box property predictors [2] [4] | Dependent on quality of pre-trained encoder-decoder [2] |

The Query-Based Molecular Optimization (QMO) Framework

The QMO framework introduces a novel approach that decouples molecular representation learning from the optimization process itself [2] [4]. This method employs an encoder-decoder architecture, where an encoder transforms molecular sequences into continuous latent representations, and a corresponding decoder maps these latent vectors back to molecular sequences [2] [5]. The optimization occurs in this continuous latent space guided by external property predictors that evaluate sequences at the molecular level rather than their latent representations [2]. This architecture enables QMO to leverage existing property predictors and black-box evaluators—including physics-based simulators, informatics tools, or experimental data—without requiring retraining for new optimization tasks [4] [5].

QMO employs zeroth-order optimization, a technique that performs efficient mathematical optimization using only function evaluations rather than gradient calculations [2]. This approach is particularly valuable when working with discrete molecular sequences and black-box property predictors where gradient computation is infeasible [2]. The framework supports two practical optimization scenarios: (1) optimizing molecular similarity while satisfying desired chemical properties, and (2) optimizing chemical properties while respecting similarity constraints [5]. This flexibility makes it suitable for diverse drug discovery applications, from improving binding affinity while maintaining similarity to lead compounds to reducing toxicity while preserving antimicrobial activity [4].

Application Notes: QMO Protocol for Molecular Optimization

Experimental Workflow for Query-Based Molecular Optimization

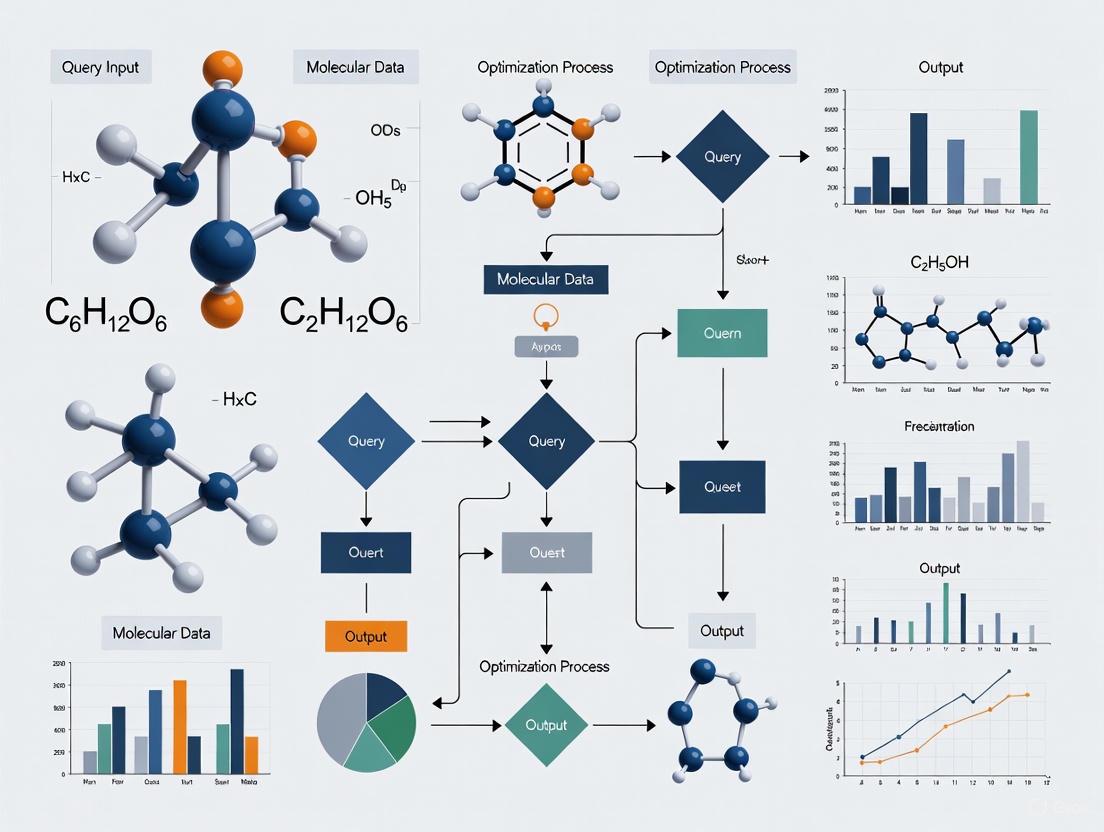

The following diagram illustrates the complete QMO experimental workflow, from molecular encoding through iterative optimization to validation:

Step-by-Step Protocol for Molecular Optimization Using QMO

Molecular Representation and Encoder-Decoder Training

Purpose: To create a continuous latent space representation of molecular structures that enables efficient optimization [2] [5].

Procedure:

- Molecular Representation: Represent molecules as sequences, using SMILES strings for small organic molecules or amino acid character strings for peptides [2]. For SMILES representations, ensure standardized formatting and validity checking.

- Encoder-Decoder Selection: Implement a pre-trained encoder-decoder framework. Suitable architectures include deterministic autoencoders (AE) and variational autoencoders (VAE) [5].

- Latent Space Dimension: Set the latent dimension d to a fixed size (typically 128-512 dimensions) universal to all sequences [5].

- Validation: Verify reconstruction accuracy and latent space continuity by sampling and decoding random latent vectors to ensure generated molecules are valid and diverse.

Note: While training a custom encoder-decoder is possible, QMO is designed to work with any pre-trained encoder-decoder framework, significantly reducing implementation time [2].

Property Predictor Selection and Configuration

Purpose: To establish accurate evaluation metrics for guiding the optimization toward desired molecular properties [2] [5].

Procedure:

- Identify Target Properties: Select one or more properties for optimization (e.g., binding affinity, solubility, toxicity, drug-likeness).

- Choose Prediction Models: Implement appropriate predictive models for each property:

- For drug-likeness: Quantitative Estimate of Drug-likeness (QED) [2].

- For solubility: Penalized logP, i.e., the octanol-water partition coefficient (logP) penalized for poor synthetic accessibility and large rings [2].

- For binding affinity: Machine learning models trained on IC₅₀ values or free energy calculations [2].

- For toxicity: Specialized toxicity predictors (e.g., for antimicrobial peptides) [4].

- Similarity Metric Implementation: Implement Tanimoto similarity based on Morgan fingerprints [1]: sim(x,y) = fp(x)·fp(y) / [‖fp(x)‖² + ‖fp(y)‖² − fp(x)·fp(y)], where fp(x) denotes the Morgan fingerprint vector of molecule x and ‖·‖ is the Euclidean norm.

- Constraint Thresholding: Define minimum similarity constraints (typically δ ≥ 0.4) and target property thresholds based on lead molecule baseline values [1].
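For binary fingerprints, the similarity formula above reduces to the number of shared on-bits divided by the number of bits set in either fingerprint. The sketch below shows that computation with fingerprints stored as Python sets of on-bit indices. The bit indices are made up for illustration, and in practice the sets would come from a cheminformatics toolkit's Morgan fingerprint routine.

```python
def tanimoto(fp_x, fp_y):
    """Tanimoto similarity between two binary fingerprints given as sets of on-bit indices."""
    shared = len(fp_x & fp_y)                # fp(x) . fp(y)
    total = len(fp_x) + len(fp_y) - shared   # |fp(x)|^2 + |fp(y)|^2 - fp(x) . fp(y)
    # Two empty fingerprints are treated as identical here (a convention, not a rule).
    return shared / total if total else 1.0


# Hypothetical on-bit indices for a lead molecule and one candidate.
fp_lead = {3, 17, 42, 101, 256}
fp_candidate = {3, 17, 42, 300}
sim = tanimoto(fp_lead, fp_candidate)
print(f"similarity = {sim:.3f}, passes delta=0.4: {sim >= 0.4}")
```

Here three bits are shared out of six set in total, so the similarity is 0.5 and the candidate clears a threshold of δ = 0.4.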

Query-Based Optimization Execution

Purpose: To efficiently explore the latent space and identify optimized molecular structures satisfying all constraints [2] [5].

Procedure:

- Latent Representation: Encode the lead molecule to obtain its latent representation vector z [5].

- Perturbation Generation: Apply random perturbations to z to generate neighboring points in latent space using neighborhood sampling [2] [5].

- Candidate Decoding: Decode perturbed latent vectors to generate candidate molecular sequences [5].

- Property Evaluation: Query all property predictors and similarity metrics for each candidate molecule [2].

- Loss Calculation: Compute loss values measuring the differences between property predictions and target constraints [5].

- Selection and Iteration: Select candidates with the most favorable loss values and use their latent representations as new starting points [2].

- Convergence Check: Repeat the perturbation, decoding, evaluation, loss-calculation, and selection steps until molecules satisfying all constraints are identified or the maximum number of iterations is reached [5].

Critical Parameters:

- Number of random perturbations per iteration: 50-100

- Similarity constraint threshold (δ): 0.4-0.8

- Maximum iterations: 100-500

- Perturbation magnitude: Adaptive based on success rate
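The procedure and parameters above amount to a perturb, decode, query, and select loop. The sketch below is a minimal toy version of that loop, not the reference implementation: `decode` and `loss_of_sequence` are hypothetical stand-ins for a trained decoder and a black-box evaluator, and halving `sigma` after an unproductive round is just one assumed way to make the perturbation magnitude adaptive.

```python
import random


def decode(z):
    """Stand-in decoder: a real one would return a SMILES or peptide string."""
    return tuple(round(v, 2) for v in z)


def loss_of_sequence(seq):
    """Stand-in black-box loss evaluated on the decoded sequence (lower is better)."""
    target = (1.0, -2.0, 0.5)
    return sum((s - t) ** 2 for s, t in zip(seq, target))


def query_based_search(z0, n_perturbations=50, max_iters=100, sigma=0.3,
                       tolerance=1e-3, seed=0):
    rng = random.Random(seed)
    z = list(z0)
    current_loss = loss_of_sequence(decode(z))
    for _ in range(max_iters):
        if current_loss <= tolerance:       # convergence check
            break
        # Perturb the current latent point to get neighboring candidates.
        candidates = [[v + rng.gauss(0.0, sigma) for v in z]
                      for _ in range(n_perturbations)]
        # Decode each candidate and query the evaluator on the decoded sequence.
        scored = [(loss_of_sequence(decode(c)), c) for c in candidates]
        best_loss, best_z = min(scored, key=lambda pair: pair[0])
        if best_loss < current_loss:
            z, current_loss = best_z, best_loss   # new starting point
        else:
            sigma *= 0.5                          # shrink the search radius
    return z, current_loss


z_final, final_loss = query_based_search([0.0, 0.0, 0.0])
print(decode(z_final), final_loss)
```

Note that the evaluator only ever sees decoded sequences, never the latent vectors, which is what lets an arbitrary black-box predictor be plugged in.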

Validation and Output

Purpose: To verify optimization success and prepare optimized molecules for experimental testing.

Procedure:

- In Silico Validation: Assess optimized molecules using external predictors not used during optimization [4].

- Structural Analysis: Visually inspect key structural changes between lead and optimized molecules.

- Diversity Check: Ensure multiple distinct optimized candidates are generated for experimental consideration.

- Output Final Molecules: Select top candidates for synthesis and experimental validation.

Table 2: Essential Research Reagent Solutions for QMO Implementation

| Resource Category | Specific Tools & Resources | Function in QMO Protocol | Key Features & Considerations |
|---|---|---|---|
| Molecular Representations | SMILES [2], SELFIES [1], Molecular Graphs [1] | Standardized representation of chemical structures | SMILES for small organic molecules; Amino acid sequences for peptides [2] |
| Encoder-Decoder Frameworks | Deterministic Autoencoder (AE) [5], Variational Autoencoder (VAE) [5] | Learning continuous latent representations of molecules | Pre-trained models available; VAE provides better latent space organization [5] |
| Property Predictors | AutoDock [3], SwissADME [3], QED [2], Toxicity predictors [4] | Evaluating molecular properties for optimization guidance | Compatibility with sequence-level input crucial for QMO [2] |
| Similarity Metrics | Tanimoto Similarity [1], Morgan Fingerprints [1] | Quantifying structural conservation during optimization | Tanimoto similarity with Morgan fingerprints is gold standard [1] |
| Optimization Algorithms | Zeroth-order Optimization [2], Bayesian Optimization [6] | Efficient search in latent space without gradients | Zeroth-order optimization enables black-box function evaluation [2] |

Performance Benchmarks and Case Studies

QMO has demonstrated significant performance improvements across multiple molecular optimization tasks, particularly in challenging real-world discovery scenarios beyond standard benchmarks. Table 3 summarizes quantitative performance data across different optimization tasks.

Table 3: QMO Performance Across Molecular Optimization Tasks

| Optimization Task | Lead Molecules | Key Constraints | Success Rate | Performance Improvement |
|---|---|---|---|---|
| Drug-Likeness (QED) Optimization [2] [4] | 800 molecules | Tanimoto similarity ≥ 0.4 | 92.9% | At least 15% higher than other methods [4] |
| Solubility (Penalized logP) Optimization [2] [4] | 800 molecules | Tanimoto similarity ≥ 0.4 | Not specified | ~30% relative improvement over other methods [4] |
| SARS-CoV-2 Mpro Inhibitor Binding Affinity [2] [4] | 23 existing inhibitors | High structural similarity | Not specified | Improved binding free energy while maintaining similarity [4] |
| Antimicrobial Peptide Toxicity Reduction [2] [4] | 150 toxic AMPs | High sequence similarity | 71.8% | Reduced toxicity with conserved antimicrobial activity [4] |

Case Study: Optimizing SARS-CoV-2 Main Protease Inhibitors

Background: During the COVID-19 pandemic, rapid optimization of existing inhibitor molecules for SARS-CoV-2 Main Protease (Mpro) represented an urgent priority for therapeutic development [2].

QMO Application:

- Lead Molecules: 23 existing Mpro inhibitors with confirmed but suboptimal activity [4].

- Optimization Target: Improve binding affinity (pIC₅₀ > 7.5) while maintaining high structural similarity to leverage existing knowledge and manufacturing pipelines [2].

- Property Predictors: Binding affinity predictors trained on IC₅₀ values; Tanimoto similarity with Morgan fingerprints [2].

- Results: QMO successfully identified optimized variants with improved predicted binding free energy while maintaining structural similarity to lead compounds [4]. Docking analysis confirmed improved binding poses, with one example showing enhanced interactions between dipyridamole derivatives and Mpro active site [2].

Significance: This application demonstrated QMO's capability to address real-world discovery challenges with therapeutic relevance, particularly valuable during public health emergencies requiring rapid response [2].

Case Study: Reducing Antimicrobial Peptide Toxicity

Background: Antimicrobial resistance represents a critical global health threat, with antimicrobial peptides (AMPs) offering promising alternatives to conventional antibiotics [2]. However, many potent AMPs exhibit unacceptable toxicity levels [4].

QMO Application:

- Lead Molecules: 150 known toxic AMPs [4].

- Optimization Target: Reduce predicted toxicity while maintaining high sequence similarity to preserve antimicrobial activity [2].

- Property Predictors: Toxicity classifiers; Sequence similarity metrics [2].

- Results: QMO achieved a 71.8% success rate in generating less toxic variants [4]. External validation using state-of-the-art toxicity predictors not employed during optimization confirmed the reduced toxicity of QMO-optimized sequences [4].

Significance: This case highlights QMO's effectiveness in multi-property optimization, balancing toxicity reduction with structural conservation to maintain desired biological activity [2].

Technical Considerations and Implementation Challenges

Molecular Representation Selection

The choice of molecular representation significantly impacts QMO performance. SMILES representations offer simplicity and compatibility with existing natural language processing architectures but can generate invalid structures [2]. SELFIES representations guarantee 100% validity but may limit structural diversity [1]. Molecular graphs explicitly capture structural relationships but require more complex encoder-decoder architectures [1]. For most applications, SMILES representations offer a practical balance of simplicity, compatibility, and performance, provided they are paired with robust validity checking.

Encoder-Decoder Training Considerations

While QMO can utilize pre-trained encoder-decoder models, practitioners must ensure these models provide high-fidelity reconstruction and meaningful latent space organization [5]. Variational autoencoders (VAEs) typically outperform deterministic autoencoders by generating more structured latent spaces with smoother property gradients [5]. Training should utilize diverse chemical libraries relevant to the optimization domain, with appropriate regularization to prevent overfitting and ensure latent space continuity [1].

Multi-Property Optimization Strategies

Real-world molecular optimization typically requires balancing multiple property improvements simultaneously [1]. QMO addresses this through constraint-based optimization, where certain properties must satisfy minimum thresholds while others are optimized [5]. For complex multi-property optimization, a phased approach often proves effective: first optimizing the most critical property with relaxed constraints on secondary properties, then performing refinement cycles to address additional properties [2].

The molecular optimization challenge in drug discovery represents a critical bottleneck in therapeutic development, requiring sophisticated approaches to balance multiple property improvements with structural conservation. The Query-based Molecular Optimization (QMO) framework addresses this challenge through a novel architecture that decouples representation learning from optimization, enabling efficient exploration of chemical space using existing property predictors and similarity constraints. As demonstrated across diverse applications—from SARS-CoV-2 inhibitor refinement to antimicrobial peptide detoxification—QMO provides researchers with a powerful protocol for accelerating the development of optimized therapeutic candidates with enhanced properties and maintained structural integrity.

Core Principles of the Query-Based Molecular Optimization (QMO) Framework

Query-based Molecular Optimization (QMO) is a generic AI framework designed to optimize existing lead molecules by efficiently searching for variants with more desirable properties. The core challenge in molecular optimization lies in navigating the prohibitively large chemical space; for instance, the number of possible 60-amino-acid peptides already approaches the number of atoms in the known universe [2]. QMO addresses this by decoupling the process into two main components: (1) learning continuous latent representations of molecules using a deep generative autoencoder, and (2) performing an efficient guided search within this latent space using feedback from external property evaluators [2] [4]. This separation reduces problem complexity and allows the framework to leverage existing property prediction models directly. QMO is distinguished from prior methods by its use of zeroth-order optimization, a technique that performs efficient mathematical optimization using only function evaluations (queries), without requiring gradient information from the property predictors [2] [7]. This enables the optimization of properties evaluated by "black-box" functions, such as physics-based simulators or proprietary prediction APIs, which is a common scenario in real-world discovery problems.

Core Principles and Architectural Components

The QMO framework operates on several foundational principles that contribute to its efficiency and versatility in molecular optimization tasks.

Principle 1: Decoupling Representation Learning from Guided Search. QMO is not a single, monolithic model. Instead, it is designed to work with any pre-trained encoder-decoder architecture that can learn meaningful continuous latent representations of molecules [2] [5]. This plug-in approach allows researchers to use state-of-the-art generative models for representation learning while keeping the optimization logic consistent.

Principle 2: Query-Based Guided Search via Zeroth-Order Optimization. The optimization process does not rely on gradients from the property predictors. Instead, it performs iterative updates in the latent space by querying the property evaluators with decoded candidate sequences [2] [4]. This makes it particularly suitable for optimizing properties where the functional relationship between the molecular structure and the property is complex, non-differentiable, or handled by a black-box evaluator.

Principle 3: Direct Utilization of Sequence-Level Property Evaluations. The property evaluators used for guidance operate on the decoded molecular sequence (e.g., SMILES string or amino acid sequence), not on the latent representation itself [4]. This allows QMO to incorporate a wide range of existing and well-established property prediction tools, simulators, and expert knowledge without modification.

Principle 4: Unified Handling of Multi-Property and Similarity Constraints. The framework formally supports two practical optimization scenarios: (i) optimizing molecular similarity while satisfying desired chemical property thresholds, and (ii) optimizing chemical properties while respecting molecular similarity constraints [5]. Multiple properties and constraints can be incorporated into a single loss function that guides the search process.

The architectural workflow of QMO can be visualized as a three-phase process, encompassing representation learning, iterative query-based search, and final candidate selection.

Experimental Protocols for Key Applications

The QMO framework has been validated across several molecular optimization tasks, from standard benchmarks to real-world discovery challenges. The following protocols detail its application.

Protocol 1: Optimizing Drug-Likeness (QED) under Similarity Constraints

This protocol describes the process for optimizing the Quantitative Estimate of Drug-likeness (QED) of small organic molecules, a common benchmark task [2] [4].

- Objective: Given a lead molecule, generate an optimized molecule with a higher QED score while maintaining a Tanimoto similarity above a specified threshold (δ).

- Lead Molecule Representation: Input molecules are represented as SMILES strings [2] [5].

- Encoder-Decoder Setup: A pre-trained autoencoder (e.g., a deterministic AE or VAE) is used. The encoder maps the SMILES string to a latent vector z, and the decoder reconstructs the SMILES string from z [5].

- Property Evaluators: A QED calculator and a Tanimoto similarity calculator, corresponding to the QED score and similarity threshold named in the objective.

- Optimization Configuration:

- Loss Function: The loss is designed to maximize QED subject to the similarity constraint. For example: Loss = -QED_score + λ * max(0, δ - Similarity), where λ is a penalty weight [5].

- Zeroth-Order Optimization: The latent vector z is iteratively perturbed. For each perturbation, the candidate is decoded, its QED and similarity are queried, and the loss is computed. The best candidate is selected to update z for the next iteration [2] [4].

- Output: An optimized SMILES string with improved QED and similarity ≥ δ.
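The penalized loss in this protocol is small enough to write out directly. The sketch below evaluates it for three hypothetical candidates. The penalty weight `lam=10.0` and the (QED, similarity) pairs are illustrative assumptions, not values from the cited work.

```python
def qed_similarity_loss(qed_score, similarity, delta=0.4, lam=10.0):
    """Loss = -QED + lam * max(0, delta - similarity); lower is better."""
    return -qed_score + lam * max(0.0, delta - similarity)


# Illustrative candidates as (QED, similarity) pairs; the numbers are made up.
candidates = {
    "A": (0.80, 0.55),   # good QED, satisfies the similarity constraint
    "B": (0.92, 0.30),   # highest QED, but violates similarity >= 0.4
    "C": (0.70, 0.45),   # satisfies the constraint, lower QED
}
losses = {name: qed_similarity_loss(q, s) for name, (q, s) in candidates.items()}
best = min(losses, key=losses.get)
print(losses, "->", best)
```

Candidate B has the highest QED but loses to A once the similarity penalty is applied. This is the intended effect of the hinge term: it contributes nothing when the constraint holds and grows linearly with the size of the violation.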

Protocol 2: Optimizing SARS-CoV-2 Main Protease Inhibitor Binding Affinity

This protocol applies QMO to a real-world discovery problem: improving the binding affinity of existing drug candidates for the SARS-CoV-2 Mpro target [2] [4].

- Objective: Given a known Mpro inhibitor (lead molecule), generate an optimized molecule with higher predicted binding affinity (pIC₅₀ > 7.5) while maximizing similarity to the lead.

- Lead Molecule Representation: SMILES strings of known inhibitors (e.g., Dipyridamole) [2].

- Encoder-Decoder Setup: A pre-trained SMILES autoencoder is used to obtain latent representations.

- Property Evaluators:

- Binding Affinity Predictor: A pre-trained machine learning model that predicts pIC₅₀ (the negative base-10 logarithm of the half-maximal inhibitory concentration, IC₅₀, expressed in mol/L) from the molecular structure [2]. Experimental IC₅₀ values can also be used if available.

- Similarity Calculator: Tanimoto similarity calculator [2].

- Optional Drug-likeness Check: A QED predictor can be added to ensure optimized molecules retain drug-like properties [2].

- Optimization Configuration:

- Loss Function: A composite loss that penalizes low binding affinity and low similarity. For example: Loss = -pIC₅₀ - μ * Similarity, where μ is a tuning parameter [2] [5].

- Search Process: The zeroth-order optimizer searches the latent space for vectors that, when decoded, yield molecules with high binding affinity and high similarity.

- Validation: The optimized molecules should be validated through external docking simulations or wet-lab experiments to confirm improved binding free energy [2] [4].
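Two small calculations help when working with this protocol: converting between IC₅₀ and pIC₅₀, and evaluating the composite loss. The sketch below assumes IC₅₀ is supplied in nanomolar. The weight `mu=1.0` and the example inputs are illustrative, not values from the cited work.

```python
import math


def pic50_from_ic50_nM(ic50_nM):
    """pIC50 = -log10(IC50 in mol/L); the input is IC50 in nanomolar."""
    return -math.log10(ic50_nM * 1e-9)


def affinity_similarity_loss(pic50, similarity, mu=1.0):
    """Loss = -pIC50 - mu * similarity; lower is better."""
    return -pic50 - mu * similarity


# IC50 (in nM) at which pIC50 equals the 7.5 target used in this protocol.
threshold_nM = 10 ** (9 - 7.5)
print(f"pIC50 > 7.5 corresponds to IC50 < {threshold_nM:.1f} nM")

print(pic50_from_ic50_nM(100.0))            # a hypothetical 100 nM inhibitor
print(affinity_similarity_loss(7.8, 0.6))   # hypothetical candidate
```

The pIC₅₀ target of 7.5 therefore corresponds to an IC₅₀ below about 31.6 nM.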

Quantitative Performance Data

The performance of QMO on standard benchmark tasks demonstrates its effectiveness compared to other methods. The following tables summarize key quantitative results.

Table 1: Performance on Drug-Likeness (QED) Optimization Task [2] [5]

| Similarity Constraint (δ) | Success Rate of QMO | Success Rate of Next Best Method | Key Result |
|---|---|---|---|
| 0.4 | ~93% | <78% | QMO achieves at least 15% higher success rate. |
| 0.5 | ~83% | Data not available | Robust performance under stricter constraints. |
| 0.6 | ~63% | Data not available | Maintains strong performance at high similarity. |

Table 2: Performance on Penalized logP Optimization Task [2] [5]

| Similarity Constraint (δ) | Average Improvement in Penalized logP (QMO) | Average Improvement in Penalized logP (Next Best Method) |
|---|---|---|
| 0.0 | ~3.5 | ~1.8 |
| 0.2 | ~2.9 | ~1.7 |
| 0.4 | ~2.1 | ~1.4 |
| 0.6 | ~1.1 | Data not available |

Table 3: Performance on Real-World Discovery Tasks [2] [4]

| Optimization Task | Lead Molecules | Key Metric | QMO Performance |
|---|---|---|---|
| SARS-CoV-2 Mpro Inhibitor Binding Affinity | 23 | Molecules with improved affinity & high similarity | Successfully generated molecules meeting pIC₅₀ > 7.5 |
| Antimicrobial Peptide (AMP) Toxicity | 150 | Success Rate in Reducing Toxicity | ~72% of lead molecules optimized |

The Scientist's Toolkit: Research Reagent Solutions

Implementing the QMO framework requires a set of computational tools and reagents. The following table details the essential components.

Table 4: Essential Research Reagents and Tools for QMO Implementation

| Tool / Reagent Name | Type/Function | Role in the QMO Framework | Example & Notes |
|---|---|---|---|
| SMILES/SELFIES Strings | Molecular Representation | Represents the molecule as a sequence for the encoder. | Standardized text-based representation of molecular structure [2] [8]. |
| Autoencoder (AE) | Deep Learning Model | Learns the continuous latent space of molecules; comprises the encoder and decoder. | Can be a deterministic AE, Variational Autoencoder (VAE), or other architectures [2] [5]. |
| Property Prediction APIs | Black-box Evaluator | Provides the properties (QED, pIC₅₀, etc.) for a given sequence to guide the search. | Can be QED calculators, docking software, or pre-trained ML models like toxicity classifiers [2] [4]. |
| Similarity Calculator | Evaluation Metric | Computes structural similarity (e.g., Tanimoto) between original and optimized molecules. | Typically based on Morgan fingerprints [2] [5]. |
| Zeroth-Order Optimizer | Optimization Algorithm | Drives the guided search in latent space using only function queries. | Implements algorithms for gradient-free optimization [2] [4]. |

The Query-Based Molecular Optimization framework establishes a powerful and versatile paradigm for accelerating molecular discovery. Its core principles—decoupling representation learning from guided search, leveraging zeroth-order optimization for efficient querying, and directly utilizing sequence-level property evaluations—make it uniquely suited for complex, real-world optimization problems where property predictors are sophisticated but non-differentiable black boxes. The provided protocols and performance data demonstrate that QMO consistently outperforms existing methods on standard benchmarks and shows high success rates in challenging discovery scenarios, such as optimizing SARS-CoV-2 inhibitors and antimicrobial peptides. As a generic AI framework, QMO holds significant promise for broader application in optimizing other classes of materials, including inorganic compounds and polymers, thereby offering a robust tool for the scientific community.

Query-based Molecule Optimization (QMO) represents a paradigm shift in computational molecular design by fundamentally decoupling the process of learning molecular representations from the guided search for optimized compounds [2]. This separation creates a modular, efficient, and powerful framework for drug discovery and materials science. Traditional approaches often intertwine these components, requiring retraining for new optimization tasks and limiting flexibility. In contrast, QMO's architecture allows researchers to leverage pre-trained, general-purpose molecular representations and apply them to diverse optimization challenges with multiple constraints, from improving binding affinity to reducing toxicity [2] [5].

The critical advantage of this decoupling lies in its data efficiency and practical applicability. By exploiting latent representations learned from abundant unlabeled molecular data, QMO minimizes the need for expensive property-labeled datasets. Simultaneously, its guided search mechanism directly incorporates specialized property predictors and similarity metrics, enabling precise optimization toward specific therapeutic goals [2]. This framework has demonstrated superior performance across multiple challenging tasks, including optimizing SARS-CoV-2 main protease inhibitors for higher binding affinity and improving antimicrobial peptides toward lower toxicity while preserving desired characteristics [2].

Theoretical Foundation and Mechanism of Action

Core Architectural Principles

The QMO framework operates on several foundational principles that enable its effectiveness. First, it employs a continuous latent space learned by an encoder-decoder model, typically a variational autoencoder (VAE), which maps discrete molecular sequences (e.g., SMILES strings or amino acid sequences) to continuous vector representations [2] [9]. This transformation from discrete to continuous space is crucial as it enables efficient optimization through gradient-free mathematical techniques that would be impossible to apply directly to discrete molecular structures.

Second, QMO utilizes external guidance mechanisms through property prediction models and evaluation metrics that operate directly on the molecular sequence level [2] [5]. These predictors provide the "query" function that evaluates candidate molecules during optimization. By keeping these evaluators separate from the representation learning component, the framework maintains flexibility—different property predictors can be swapped in or out without modifying the underlying molecular representation.

Third, the framework implements zeroth-order optimization for guided search in the latent space [2]. This mathematical approach enables gradient-like optimization using only function evaluations (queries), making it suitable for working with black-box property predictors where gradient information is unavailable or difficult to compute. The optimizer perturbs latent vectors and evaluates the corresponding decoded molecules, gradually moving toward regions of the latent space that yield molecules with improved properties.

Mathematical Formulation

The QMO optimization process can be formally expressed as solving the continuous optimization problem in latent space [2]:

\[ \min_{z \in \mathbb{R}^d} L(\text{Decode}(z); S) \]

where \(z\) represents a point in the \(d\)-dimensional latent space, \(\text{Decode}(z)\) is the molecule sequence decoded from \(z\), and \(L\) is a loss function that incorporates multiple property predictors and similarity metrics relative to reference molecules \(S\). This formulation transforms the inherently discrete molecular optimization problem into a tractable continuous optimization task while maintaining the ability to evaluate candidates using discrete-sequence property predictors.
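One common way to attack such a problem with queries alone is a randomized finite-difference gradient estimate followed by a descent step. The sketch below is a generic zeroth-order method of that kind, not a transcription of the procedure in [2]. Here `objective` is a toy stand-in for L(Decode(z); S), and the smoothing radius `beta`, query count `n_queries`, and step size `lr` are illustrative choices.

```python
import math
import random


def objective(z):
    """Stand-in for L(Decode(z); S): any black-box function of z (lower is better)."""
    return sum((v - 1.0) ** 2 for v in z)


def zeroth_order_gradient(f, z, beta=0.1, n_queries=20, rng=random):
    """Estimate the gradient of f at z from function values only."""
    d = len(z)
    f_z = f(z)
    grad = [0.0] * d
    for _ in range(n_queries):
        # Draw a random direction and normalize it to unit length.
        u = [rng.gauss(0.0, 1.0) for _ in range(d)]
        norm = math.sqrt(sum(c * c for c in u))
        u = [c / norm for c in u]
        # Finite difference of f along u, scaled by the dimension d.
        f_pert = f([zi + beta * ui for zi, ui in zip(z, u)])
        scale = d * (f_pert - f_z) / (beta * n_queries)
        grad = [g + scale * ui for g, ui in zip(grad, u)]
    return grad


def zeroth_order_descent(f, z0, lr=0.1, n_steps=100, seed=0):
    """Plain gradient descent driven by the query-based gradient estimate."""
    rng = random.Random(seed)
    z = list(z0)
    for _ in range(n_steps):
        g = zeroth_order_gradient(f, z, rng=rng)
        z = [zi - lr * gi for zi, gi in zip(z, g)]
    return z


z_start = [0.0, 0.0, 0.0, 0.0]
z_final = zeroth_order_descent(objective, z_start)
print(objective(z_start), "->", objective(z_final))
```

Each descent step costs `n_queries + 1` evaluations of the objective, so the query budget, rather than gradient computation, is the resource to manage when the evaluator is an expensive simulator.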

Experimental Protocols

Protocol 1: Representation Learning with Molecular Autoencoders

Objective: Train an encoder-decoder model to learn meaningful continuous representations of molecules in a latent space.

Materials:

- Dataset: Large collection of unlabeled molecular structures (e.g., 250,000+ molecules from public databases) [10]

- Software: Deep learning framework (e.g., PyTorch, TensorFlow) with RDKit cheminformatics package

- Hardware: GPU-accelerated computing environment

Procedure:

Data Preparation:

- Collect and curate molecular structures in SMILES format [11]

- Standardize molecular representation (e.g., normalize tautomers, remove salts)

- Split dataset into training (80%), validation (10%), and test sets (10%)

Model Architecture Selection:

- Implement a sequence-to-sequence model with encoder and decoder components [2]

- For small molecules: Use transformer-based architecture processing SMILES strings [11]

- For peptides: Use recurrent neural networks processing amino acid sequences [2]

- Set latent space dimensionality (typically 128-512 dimensions) based on molecular complexity [2]

Training Configuration:

- Initialize model with appropriate pre-trained weights if available

- Set reconstruction loss function (e.g., cross-entropy for sequence generation)

- For variational autoencoders: Add Kullback-Leibler divergence term to encourage smooth latent space [9]

- Train for 100-500 epochs with early stopping based on validation reconstruction accuracy

Validation:

- Measure reconstruction accuracy on test set

- Evaluate latent space smoothness through interpolation between known active molecules

- Assess property predictability from latent representations using auxiliary tasks
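The 80/10/10 split from the data preparation step can be sketched with the standard library alone. The function name, the seed, and the placeholder SMILES strings below are assumptions for illustration; a real pipeline would first standardize structures with a cheminformatics toolkit.

```python
import random


def split_dataset(smiles_list, train_frac=0.8, valid_frac=0.1, seed=0):
    """Shuffle reproducibly, then cut into train / validation / test."""
    items = list(smiles_list)
    random.Random(seed).shuffle(items)
    n_train = int(len(items) * train_frac)
    n_valid = int(len(items) * valid_frac)
    train = items[:n_train]
    valid = items[n_train:n_train + n_valid]
    test = items[n_train + n_valid:]  # whatever remains
    return train, valid, test


# Placeholder alcohols of increasing chain length (illustrative only).
molecules = ["C" * (i + 1) + "O" for i in range(100)]
train, valid, test = split_dataset(molecules)
print(len(train), len(valid), len(test))
```

A purely random split is the simplest choice; when the goal is to measure generalization to new chemotypes, a split grouped by scaffold may be more informative.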

Troubleshooting Tips:

- If reconstruction accuracy is poor, increase model capacity or check for data quality issues

- If latent space is discontinuous, adjust the weight of the KL divergence term in VAE training

- For specialized molecular classes (e.g., peptides, inorganic compounds), consider domain-specific tokenization [9]
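The KL divergence term and its weight, mentioned in the training configuration and troubleshooting tips above, can be written out explicitly for a diagonal Gaussian posterior. This is a minimal sketch in plain Python; the function names and the `kl_weight` parameter are illustrative, and in practice the same expression would be computed on tensors inside the chosen deep learning framework.

```python
import math


def kl_divergence(mu, logvar):
    """KL( N(mu, diag(exp(logvar))) || N(0, I) ) for one latent vector."""
    return -0.5 * sum(
        1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar)
    )


def vae_loss(reconstruction_loss, mu, logvar, kl_weight=1.0):
    """Reconstruction term plus weighted KL regularizer."""
    return reconstruction_loss + kl_weight * kl_divergence(mu, logvar)


# A posterior equal to the prior contributes no KL penalty.
print(kl_divergence([0.0, 0.0], [0.0, 0.0]))
# Shifting one mean by 1 (unit variance) costs 0.5 nats.
print(kl_divergence([1.0], [0.0]))
# Lowering kl_weight favors reconstruction over latent-space regularity.
print(vae_loss(2.0, [1.0], [0.0], kl_weight=0.1))
```

Raising `kl_weight` pushes encodings toward the prior and tends to give a smoother latent space at some cost in reconstruction accuracy; lowering it does the opposite.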

Protocol 2: Guided Search for Molecular Optimization

Objective: Optimize lead molecules for improved properties while satisfying constraints using QMO guided search.

Materials:

- Pre-trained autoencoder from Protocol 1

- Property predictors: Machine learning models or simulation tools for evaluating target properties [2] [12]

- Reference molecules: Lead compounds to be optimized

- Software: QMO implementation with zeroth-order optimization capabilities

Procedure:

Initialization:

- Encode reference lead molecule \( m_0 \) to obtain latent representation \( z_0 \)

- Define property objectives and constraints (e.g., improve binding affinity >10x, maintain similarity >0.7)

- Configure loss function \( L \) combining property terms and similarity metrics [2]

Search Configuration:

- Set zeroth-order optimization parameters (step size, perturbation radius, query budget) [2]

- Define convergence criteria (max iterations, minimal improvement threshold)

- For multi-property optimization: Weight individual property terms based on priority

Iterative Optimization:

- Generate candidate latent vectors by perturbing the current best \( z \) [2]

- Decode candidates to molecular structures using the pre-trained decoder

- Evaluate properties of decoded molecules using property predictors [5]

- Compute loss function values for all candidates

- Select promising candidates for next iteration based on loss values

- Repeat until convergence or query budget exhaustion

Validation and Selection:

- Cluster optimized molecules to select diverse candidates

- Verify chemical validity and synthetic accessibility

- Apply additional filters (e.g., toxicity, pharmacokinetics) if available [10]

- Select top candidates for experimental validation

Troubleshooting Tips:

- If optimization stagnates, increase perturbation radius or adjust loss function weights

- If generated molecules are invalid, check decoder performance or adjust latent space constraints

- For slow property evaluation, implement batch processing and parallel computation
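The iterative optimization steps of this protocol can be sketched end to end on a toy problem. Everything below is a stand-in chosen so the example runs without a trained model: `decode` snaps a latent vector to a coarse grid instead of generating a molecule, `property_score` and `similarity` are hypothetical evaluators, and the hyperparameters are illustrative rather than recommended values.

```python
import math
import random

REFERENCE = (0.0, 0.0, 0.0)  # decoded lead "molecule" (toy)
TARGET = (2.0, -1.0, 0.5)    # where the toy property peaks


def decode(z):
    """Toy decoder: snap a latent vector to a coarse grid of 'molecules'."""
    return tuple(round(zi, 1) for zi in z)


def property_score(mol):
    """Toy black-box predictor; higher is better, maximum 0 at TARGET."""
    return -sum((m - t) ** 2 for m, t in zip(mol, TARGET))


def similarity(mol):
    """Toy similarity to REFERENCE, in (0, 1]."""
    return math.exp(-0.1 * sum((m - r) ** 2 for m, r in zip(mol, REFERENCE)))


def loss(z, threshold=-0.05, sim_weight=0.1):
    """Hinge penalty on the property minus a reward for staying similar."""
    mol = decode(z)
    return max(threshold - property_score(mol), 0.0) - sim_weight * similarity(mol)


def guided_search(z0, steps=300, beta=0.3, num_queries=20, step_size=0.05, seed=0):
    """Zeroth-order descent in latent space, tracking the best point seen."""
    rng = random.Random(seed)
    z, dim = list(z0), len(z0)
    best_z, best_loss = list(z), loss(z)
    for _ in range(steps):
        base = loss(z)
        grad = [0.0] * dim
        for _ in range(num_queries):
            u = [rng.gauss(0.0, 1.0) for _ in range(dim)]
            norm = math.sqrt(sum(x * x for x in u))
            u = [x / norm for x in u]
            diff = loss([zi + beta * ui for zi, ui in zip(z, u)]) - base
            grad = [g + diff * ui for g, ui in zip(grad, u)]
        scale = dim / (beta * num_queries)
        z = [zi - step_size * scale * g for zi, g in zip(z, grad)]
        current = loss(z)
        if current < best_loss:
            best_z, best_loss = list(z), current
    return decode(best_z), best_loss


best_mol, best_loss = guided_search([0.0, 0.0, 0.0])
print(best_mol, property_score(best_mol), similarity(best_mol))
```

Because the decoder is discrete, the perturbation radius `beta` has to be large enough for perturbed points to decode to different candidates; this is the toy analogue of the advice to increase the perturbation radius when optimization stagnates.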

Protocol 3: SARS-CoV-2 Main Protease Inhibitor Optimization

Objective: Improve binding affinity of existing SARS-CoV-2 Mpro inhibitors while maintaining similarity and drug-like properties.

Materials:

- Reference inhibitors: Known Mpro inhibitor structures (e.g., dipyridamole analogs) [2]

- Binding affinity predictor: Machine learning model or docking software for pIC50 prediction [2] [12]

- Similarity metric: Tanimoto similarity based on molecular fingerprints [2]

- Drug-likeness evaluator: QED (Quantitative Estimate of Drug-likeness) calculator [2]

Procedure:

Setup:

- Encode reference inhibitor molecules to latent space

- Define optimization goal: Maximize pIC50 (>7.5) with similarity constraint (>0.7) [2]

- Configure composite loss function: \( L = \lambda_1 \cdot \text{affinity\_loss} + \lambda_2 \cdot \text{similarity\_loss} + \lambda_3 \cdot \text{QED\_penalty} \)

Execution:

- Run QMO guided search with 10,000-50,000 query budget [2]

- Monitor progress through per-iteration best candidate evaluation

- Store all promising candidates for post-processing

Analysis:

Validation Results: QMO-generated Mpro inhibitors showed substantial improvement over original compounds, with maintained similarity and improved binding affinity confirmed through docking studies [2].
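The composite loss from the setup step can be sketched as a weighted sum of three terms. The protocol does not specify how each term is computed, so the hinge forms below are an assumption; the weights in `lambdas` and the `qed_threshold` value are hypothetical, while the pIC50 and similarity thresholds follow the optimization goal stated above.

```python
def composite_loss(pic50, similarity, qed,
                   lambdas=(1.0, 1.0, 0.5),
                   pic50_target=7.5,
                   sim_threshold=0.7,
                   qed_threshold=0.6):
    """Weighted sum of hinge penalties; zero when every goal is met."""
    lambda_1, lambda_2, lambda_3 = lambdas
    affinity_loss = max(pic50_target - pic50, 0.0)        # want pIC50 >= target
    similarity_loss = max(sim_threshold - similarity, 0.0)  # want similarity >= threshold
    qed_penalty = max(qed_threshold - qed, 0.0)           # want QED >= threshold
    return (lambda_1 * affinity_loss
            + lambda_2 * similarity_loss
            + lambda_3 * qed_penalty)


# A candidate meeting all three goals incurs no loss.
print(composite_loss(pic50=8.18, similarity=0.72, qed=0.72))
# An unoptimized lead is penalized only for its affinity shortfall.
print(composite_loss(pic50=5.91, similarity=1.0, qed=0.9))
```

With hinge terms the loss stops rewarding a property once its threshold is reached, which keeps the search from trading away similarity for affinity gains beyond the stated goal.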

Research Reagent Solutions

Table 1: Essential Research Reagents and Computational Tools for QMO Implementation

| Category | Specific Tool/Resource | Function in QMO Pipeline | Implementation Notes |
|---|---|---|---|
| Molecular Representation | SMILES/SELFIES [11] | String-based molecular representation | Standardizes molecular input for encoder |
| Molecular Representation | Graph Neural Networks [9] | Learns structural molecular representations | Captures atom-bond relationships explicitly |
| Molecular Representation | Variational Autoencoders [2] [9] | Learns continuous latent space of molecules | Enables smooth interpolation and sampling |
| Property Prediction | Random Forest/QSAR Models | Predicts molecular properties from structure | Fast approximation for high-throughput screening |
| Property Prediction | Molecular Docking (e.g., AutoDock, GNINA) [12] | Predicts binding affinity and poses | Provides structural insights for optimization |
| Property Prediction | AQFEP [12] | Absolute free energy perturbation | Physics-based binding affinity calculation |
| Similarity Assessment | Tanimoto Similarity [2] | Measures molecular similarity using fingerprints | Maintains structural relevance to lead compounds |
| Similarity Assessment | Molecular Fingerprints (ECFP) [11] | Encodes molecular substructures as binary vectors | Enables rapid similarity computation |
| Optimization Engine | Zeroth-order Optimization [2] | Gradient-free optimization in latent space | Works with black-box property evaluators |
| Optimization Engine | Bayesian Optimization [12] | Probabilistic global optimization | Sample-efficient for expensive evaluations |

Experimental Validation and Benchmarking

Performance on Standard Benchmarks

Table 2: QMO Performance on Molecular Optimization Benchmarks

| Optimization Task | Similarity Constraint | QMO Performance | Baseline Performance | Improvement |
|---|---|---|---|---|
| QED Optimization | τ = 0.4 | Success Rate: ~92% | JT-VAE: ~77% | +15 percentage points [2] |
| QED Optimization | τ = 0.6 | Success Rate: ~85% | JT-VAE: ~70% | +15 percentage points [2] |
| Penalized logP | τ = 0.4 | Improvement: +4.78 | JT-VAE: +3.08 | +1.70 absolute [2] |
| Penalized logP | τ = 0.6 | Improvement: +2.02 | JT-VAE: +1.76 | +0.26 absolute [2] |
| SARS-CoV-2 Mpro | τ > 0.7 | pIC50 > 7.5 achieved | N/A (Novel task) | Significant affinity improvement [2] |
| Antimicrobial Peptides | Sequence similarity | 72% success rate | N/A (Novel task) | Substantial toxicity reduction [2] |

Application-Specific Results

Table 3: QMO Optimization Results for SARS-CoV-2 Mpro Inhibitors

| Original Molecule | Optimized Molecule | Similarity | Original pIC50 | Optimized pIC50 | QED |

|---|---|---|---|---|---|

| Dipyridamole | QMO-Compound-1 | 0.72 | 5.91 | 8.18 | 0.72 [2] |

| Compound A | QMO-Compound-2 | 0.75 | 6.12 | 7.93 | 0.68 [2] |

| Compound B | QMO-Compound-3 | 0.69 | 5.87 | 8.24 | 0.71 [2] |

| Compound C | QMO-Compound-4 | 0.71 | 6.04 | 7.87 | 0.65 [2] |
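Because pIC50 is the negative base-10 logarithm of the IC50 in molar units, a difference in pIC50 translates directly into a fold change in potency. The short sketch below applies that definition to the values in Table 3; the helper names are illustrative.

```python
def pic50_to_ic50_molar(pic50):
    """Invert pIC50 = -log10(IC50 [M])."""
    return 10.0 ** (-pic50)


def fold_improvement(pic50_original, pic50_optimized):
    """Ratio IC50_original / IC50_optimized implied by two pIC50 values."""
    return 10.0 ** (pic50_optimized - pic50_original)


# (name, original pIC50, optimized pIC50) as listed in Table 3
rows = [
    ("Dipyridamole", 5.91, 8.18),
    ("Compound A", 6.12, 7.93),
    ("Compound B", 5.87, 8.24),
    ("Compound C", 6.04, 7.87),
]

for name, original, optimized in rows:
    ic50_nm = pic50_to_ic50_molar(optimized) * 1e9  # molar -> nanomolar
    print(f"{name}: {fold_improvement(original, optimized):.0f}-fold, "
          f"optimized IC50 ~ {ic50_nm:.1f} nM")
```

For the dipyridamole row, a gain of 2.27 pIC50 units corresponds to a predicted potency improvement of roughly two orders of magnitude.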

Workflow Visualization

Figure: QMO Framework Workflow

Figure: Zeroth-Order Optimization Process

Molecular representation serves as the foundational bridge between chemical structures and their predicted biological, chemical, or physical properties, forming a cornerstone of modern computational chemistry and drug design [11]. It involves translating molecules into mathematical or computational formats that algorithms can process to model, analyze, and predict molecular behavior [11]. The evolution of these representations—from simple, human-readable strings to sophisticated, machine-learned embeddings—has been a critical driver in advancing artificial intelligence (AI)-assisted drug discovery. Effective representation is a key prerequisite for developing machine learning (ML) and deep learning (DL) models, enabling critical tasks such as virtual screening, activity prediction, and molecular optimization [11].

This article explores the journey of molecular representation methods, detailing their transition from classical rule-based formats to modern AI-driven continuous embeddings. Furthermore, it provides practical protocols for implementing these representations within a query-based molecular optimization (QMO) framework, a powerful AI approach for accelerating the discovery of novel molecules and materials [4].

Classical Molecular Representation Methods

Traditional molecular representation methods rely on explicit, rule-based feature extraction derived from chemical and physical properties [11]. These methods have laid a strong foundation for numerous computational approaches in drug discovery.

String-Based Representations

String-based notations provide a compact and efficient way to encode chemical structures.

- SMILES (Simplified Molecular-Input Line-Entry System): Introduced in 1988, SMILES represents a molecular structure as a line of text using a short set of grammar rules [11] [13]. Atoms are represented by their atomic symbols (e.g., C, N, O), double bonds by '=', and branches are depicted using parentheses. For example, the SMILES for acetic acid is "CC(=O)O". Despite its widespread use, SMILES has inherent limitations, including an inability to capture the full complexity of molecular interactions and a susceptibility to generating invalid strings due to syntax errors like unbalanced parentheses [11] [13].

- DeepSMILES and SELFIES: Developed to address SMILES' syntactic limitations, DeepSMILES resolves most issues related to long-term dependencies, such as unbalanced ring identifiers and parentheses [13]. SELFIES (Self-referencing embedded strings) is a more robust alternative designed to guarantee 100% chemical validity, ensuring that every string corresponds to a valid molecular graph [13] [1].
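The two syntax failures mentioned above, unbalanced parentheses and unpaired ring identifiers, can be detected with a few lines of string processing. The checker below is a deliberately simplistic sketch: it is not a SMILES parser, it says nothing about valence or chemical validity, and the function name is illustrative.

```python
def has_balanced_syntax(smiles):
    """Check branch parentheses and ring-closure labels only."""
    depth = 0
    open_rings = set()
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if ch == "[":
            # Skip bracket atoms; digits inside are isotopes, H counts, charges.
            close = smiles.find("]", i)
            if close == -1:
                return False
            i = close + 1
            continue
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
        elif ch == "%":
            # Two-digit ring-closure label such as %12
            label = smiles[i + 1:i + 3]
            if len(label) != 2 or not label.isdigit():
                return False
            open_rings ^= {label}
            i += 3
            continue
        elif ch.isdigit():
            open_rings ^= {ch}  # first occurrence opens, second closes
        i += 1
    return depth == 0 and not open_rings


for s in ["CC(=O)O", "CC(=O", "C1CCCCC1", "C1CCCC", "[NH4+]"]:
    print(s, has_balanced_syntax(s))
```

A decoder that emits SMILES character by character can fail either check, which is part of the motivation for representations such as DeepSMILES and SELFIES.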

Molecular Descriptors and Fingerprints

These methods encode molecular structures using predefined rules derived from quantifiable properties or substructural information.

- Molecular Descriptors: These are numerical values that quantify a molecule's physical or chemical properties, such as molecular weight, hydrophobicity (LogP), or topological indices [11] [14].

- Molecular Fingerprints: These typically encode substructural information as binary strings or numerical vectors [11]. A prominent example is the Extended-Connectivity Fingerprint (ECFP), which represents local atomic environments in a compact and efficient manner, making it invaluable for similarity search and virtual screening [11] [14].
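Similarity search on binary fingerprints usually relies on the Tanimoto coefficient, the number of shared on-bits divided by the number of bits set in either fingerprint. The sketch below computes it on plain Python sets of bit indices; the bit positions are made up for illustration, and returning 0.0 for two empty fingerprints is a convention chosen here rather than a standard.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient |A & B| / |A | B| over sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    if union == 0:
        return 0.0  # convention chosen for this sketch
    return len(a & b) / union


# Hypothetical on-bit indices for a lead and two variants.
lead = {3, 17, 42, 128, 511}
close_analog = {3, 17, 42, 128, 900}
distant_molecule = {5, 61, 700}

print(tanimoto(lead, close_analog))      # 4 shared bits out of 6 in the union
print(tanimoto(lead, distant_molecule))  # no shared bits
```

In a real pipeline the bit sets would come from a fingerprint generator such as ECFP, and a threshold on this score would serve as the similarity constraint.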

Table 1: Comparison of Classical Molecular Representation Methods

| Representation Type | Format | Key Features | Primary Applications | Key Limitations |

|---|---|---|---|---|

| SMILES | String | Human-readable, compact | QSAR, molecular generation | Syntax errors, invalid outputs |

| DeepSMILES | String | Resolves ring/branch syntax | Molecular generation | Semantically incorrect strings possible |

| SELFIES | String | Guarantees 100% validity | Robust molecular generation | Less human-readable |

| Molecular Descriptors | Numerical Vector | Quantifies physchem properties | QSAR, similarity search | Predefined, may miss complex features |

| Molecular Fingerprints | Binary/Numerical Vector | Encodes substructures | Similarity search, virtual screening | Predefined, fixed resolution |

Modern AI-Driven Molecular Representations

Advances in AI have ushered in a new era of molecular representation, shifting from predefined rules to data-driven learning paradigms [11]. These methods leverage DL models to directly extract and learn intricate features from molecular data, enabling a more sophisticated understanding of molecular structures and their properties.

Language Model-Based Representations

Inspired by natural language processing (NLP), models such as Transformers have been adapted for molecular representation by treating molecular sequences (e.g., SMILES or SELFIES) as a specialized chemical language [11]. These models tokenize molecular strings at the atomic or substructure level. Each token is mapped into a continuous vector, or embedding, and these vectors are then processed by architectures like Transformers or BERT [11]. For instance, models like ChemBERTa are pre-trained on millions of SMILES strings using techniques like masked language modeling, learning to generate context-aware embeddings that capture rich semantic information about the molecule [15].
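Atom-level tokenization of a SMILES string can be sketched with a single regular expression. The pattern below is a simplified assumption that covers bracket atoms, the two-letter halogens Cl and Br, ring-closure labels, and common bond and branch symbols; production tokenizers for chemical language models typically use a more complete pattern or a learned subword vocabulary.

```python
import re

# Order matters: bracket atoms and two-letter symbols before single letters.
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+\\/().:~@*$]"
)


def tokenize(smiles):
    """Split a SMILES string into tokens; reject unrecognized characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"could not tokenize: {smiles!r}")
    return tokens


def build_vocab(token_lists):
    """Map each distinct token to an integer id (0 reserved for padding)."""
    vocab = {"<pad>": 0}
    for tokens in token_lists:
        for tok in tokens:
            vocab.setdefault(tok, len(vocab))
    return vocab


corpus = ["CC(=O)O", "ClCCBr", "C[C@@H](N)C(=O)O"]
tokenized = [tokenize(s) for s in corpus]
vocab = build_vocab(tokenized)
print(tokenized[0])
print([vocab[t] for t in tokenized[0]])
```

The integer ids are what an embedding layer would consume; each id is mapped to a continuous vector before being processed by the Transformer.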

Graph-Based Representations

Graph-based methods offer a more natural representation of molecules, where atoms are represented as nodes and bonds as edges [11]. Graph Neural Networks (GNNs) operate directly on this structure, learning to aggregate information from a node's neighbors to create meaningful representations for atoms and the entire molecule [11]. The Junction Tree Variational Autoencoder (JT-VAE) is a notable example that first decomposes a molecular graph into a junction tree of chemical substructures (functional groups, rings) and then encodes both the tree and the original graph into latent embeddings, effectively capturing hierarchical structural information [1] [16].

Fragment-Based and Multiscale Representations

Fragment-based approaches aim to strike a balance between atomic-level detail and molecular-level efficiency. The t-SMILES (tree-based SMILES) framework is a recent innovation that describes molecules using SMILES-type strings obtained by performing a breadth-first search on a full binary tree formed from a fragmented molecular graph [13]. This method uses chemical fragments as the basic vocabulary, significantly reducing the search space compared to atom-based techniques and providing fundamental insights into molecular recognition [13]. Systematic evaluations show that t-SMILES models can achieve 100% theoretical validity and generate highly novel molecules, outperforming state-of-the-art SMILES-based models on various benchmarks [13].

Continuous Latent Embeddings via Deep Generative Models

Deep generative models, such as Variational Autoencoders (VAEs) and autoencoder-based neural machine translation models, can learn continuous, low-dimensional representations of molecules. These models map discrete molecular structures into a continuous latent space, where mathematical operations can be performed.

- Variational Autoencoders (VAEs): A VAE consists of an encoder and a decoder. The encoder transforms a molecular representation (e.g., a SMILES string) into a probability distribution in a continuous latent space. A latent vector is then sampled from this distribution, and the decoder reconstructs the original molecule from it [15]. The latent space serves as a continuous embedding, where nearby points tend to correspond to molecules with similar structures and properties [15].

- Neural Machine Translation (NMT) Autoencoders: Models like MolAI employ an autoencoder based on an encoder-decoder architecture, often using LSTM or GRU units, to translate SMILES strings into a continuous latent vector and back again [15]. The model is trained to minimize the reconstruction error, forcing the latent space to capture the essential information needed to recreate the molecule. This approach has been scaled to train on hundreds of millions of compounds, generating robust molecular descriptors useful for downstream prediction tasks [15].
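Two operations that a continuous latent space makes possible are sampling around an encoded molecule and interpolating between two encodings. The sketch below shows both on plain Python lists; the encoder outputs `mu` and `logvar` are made-up numbers, and in a real model the resulting vectors would be passed to the decoder.

```python
import math
import random


def sample_latent(mu, logvar, rng):
    """Reparameterized sample z = mu + sigma * eps, with eps ~ N(0, I)."""
    return [
        m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
        for m, lv in zip(mu, logvar)
    ]


def interpolate(z_a, z_b, t):
    """Linear interpolation between two latent vectors, t in [0, 1]."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]


rng = random.Random(0)
mu = [0.5, -1.0, 2.0]         # hypothetical encoder mean
logvar = [-2.0, -2.0, -2.0]   # hypothetical encoder log-variance

z = sample_latent(mu, logvar, rng)
path = [interpolate([0.0, 0.0], [2.0, 4.0], t) for t in (0.0, 0.5, 1.0)]
print(z)
print(path)
```

Decoding the points along `path` is one way to inspect latent-space smoothness: in a well-regularized space, neighboring points should decode to structurally related molecules.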

Table 2: Comparison of Modern AI-Driven Molecular Representation Methods

| Method | Underlying Technology | Molecular Input | Representation Output | Key Advantage |

|---|---|---|---|---|

| Language Models | Transformers, BERT | SMILES, SELFIES | Context-aware token embeddings | Captures semantic meaning from string |

| Graph Networks | GNNs, JT-VAE | Molecular Graph | Atom/Molecule embeddings | Naturally represents topology |

| Fragment Methods | t-SMILES | Fragmented Graph | SMILES-type string from tree | Multiscale, reduces search space |

| Latent Embeddings | VAE, NMT Autoencoder | SMILES, Graph | Continuous latent vector | Enables interpolation & optimization |

Application Note: Molecular Representation in a Query-Based Optimization Framework

Query-based molecular optimization (QMO) is an AI framework designed to efficiently identify optimal molecular variants from a vast search space by leveraging learned molecular representations [4]. The integration of advanced molecular representations is pivotal to its success. The following workflow diagram illustrates the QMO process.

Protocol: Implementing QMO with Continuous Latent Embeddings

This protocol details the steps for optimizing a lead molecule using the QMO framework with a pre-trained VAE.

Objective: To optimize a lead molecule for improved binding affinity against a target protein while maintaining a high degree of structural similarity.

Materials and Reagents:

- Lead Molecule: Provided as a SMILES string.

- Pre-trained Model: A VAE or NMT autoencoder (e.g., MolAI) pre-trained on a large chemical database (e.g., ZINC, ChEMBL).

- Computational Environment: Python with libraries such as TensorFlow/PyTorch, RDKit, and NumPy.

- Property Evaluators: External black-box functions, which could be physics-based simulators (e.g., for binding free energy), informatics models, or experimental data pipelines [4].

Procedure:

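Molecular Representation and Latent Space Mapping

- Encode the lead molecule's SMILES string with the pre-trained encoder to obtain its latent embedding \( z_{lead} \).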

Define Search Space and Constraints

- The search space is defined as the continuous region in the latent space surrounding \( z_{lead} \).

- Set a similarity constraint (e.g., Tanimoto similarity > 0.7) to ensure the optimized molecule remains structurally similar to the lead [1].

Query-Based Guided Search

- Initialize the search with the candidate embedding \( z = z_{lead} \).

- Repeat for a predefined number of iterations:

a. Sampling: Use random neighborhood sampling around the current candidate embedding \( z \) to generate a batch of new latent vectors \( z_{candidate} \) [4].

b. Decoding: Decode each \( z_{candidate} \) using the VAE decoder to generate its corresponding molecular sequence (SMILES) [4] [15].

c. Evaluation: For each decoded candidate molecule, query the external evaluators to predict its properties (e.g., binding affinity, solubility) [4].

d. Selection and Update: Based on the feedback from the evaluators, select the best-performing candidate embeddings. Use a zeroth-order optimization technique to update the search towards regions of the latent space that yield molecules with improved properties [4].

Output and Validation

- Decode the final, optimized latent vector to obtain the SMILES of the optimized molecule.

- Validate the optimized molecule by ensuring it is chemically valid and meets all predefined property and similarity constraints.
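The sampling, decoding, evaluation, and selection steps of this protocol can be sketched as a simple loop. To keep the example self-contained, the version below replaces the zeroth-order update with plain best-of-batch selection, and uses toy stand-ins for the decoder, the affinity evaluator, and the similarity metric; all names and parameter values are hypothetical.

```python
import math
import random

TARGET = (1.0, -0.5)  # where the toy affinity peaks


def decode(z):
    """Toy decoder: snap a latent vector to a coarse grid of 'molecules'."""
    return tuple(round(zi, 1) for zi in z)


def affinity(mol):
    """Toy black-box evaluator; higher is better."""
    return -sum((m - t) ** 2 for m, t in zip(mol, TARGET))


def similarity(mol, lead_mol):
    """Toy similarity in (0, 1]; equals 1 when mol == lead_mol."""
    distance = math.sqrt(sum((m - l) ** 2 for m, l in zip(mol, lead_mol)))
    return 1.0 / (1.0 + distance)


def neighborhood_search(z_lead, iterations=100, batch_size=16,
                        radius=0.2, min_similarity=0.5, seed=0):
    rng = random.Random(seed)
    lead_mol = decode(z_lead)
    z_best, best_score = list(z_lead), affinity(lead_mol)
    for _ in range(iterations):
        # a. sample a batch of latent vectors around the current candidate
        batch = [[zi + rng.gauss(0.0, radius) for zi in z_best]
                 for _ in range(batch_size)]
        for z_cand in batch:
            mol = decode(z_cand)                  # b. decode
            if similarity(mol, lead_mol) < min_similarity:
                continue                          # reject constraint violations
            score = affinity(mol)                 # c. evaluate
            if score > best_score:                # d. select and update
                z_best, best_score = z_cand, score
    return decode(z_best), best_score


z_lead = [0.0, 0.0]
best_mol, best_score = neighborhood_search(z_lead)
print(best_mol, best_score, similarity(best_mol, decode(z_lead)))
```

In this toy setup the unconstrained optimum lies just outside the similarity region, so the search settles near the boundary of the constraint, which mirrors the trade-off between property gain and structural similarity in real lead optimization.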

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Key Resources for Molecular Representation and Optimization

| Category | Item/Software | Function/Description | Example Use Case |

|---|---|---|---|

| Representation Libraries | RDKit | Open-source cheminformatics toolkit; generates descriptors, fingerprints, and handles SMILES. | Converting SMILES to molecular graph, calculating Morgan fingerprints. |

| Deep Learning Frameworks | TensorFlow, PyTorch | Platforms for building and training deep learning models. | Implementing and training a VAE or a Graph Neural Network. |

| Pre-trained Models | ChemBERTa, MolAI | Models pre-trained on large chemical datasets, providing ready-to-use molecular embeddings. | Generating contextual embeddings for a set of molecules for a QSAR model. |

| Optimization Algorithms | Zeroth-Order Optimization, Genetic Algorithms | Search strategies for navigating complex spaces where gradients are not available. | Guiding the search in the latent space in the QMO framework [4]. |

| Evaluation & Simulation | Molecular Dynamics Simulators, QSAR Models | Black-box evaluators to predict molecular properties. | Providing feedback on binding affinity or toxicity during optimization [4]. |

| Benchmark Datasets | ZINC, ChEMBL, QM9 | Large, publicly available databases of chemical compounds. | Pre-training representation models or benchmarking optimization algorithms. |

The evolution of molecular representation from deterministic strings to learned, continuous embeddings has reshaped computational drug discovery. Modern AI-driven representations, including those from language models, graph networks, and deep generative models, capture relationships between molecular structure and function that predefined descriptors can miss. When integrated into frameworks like Query-based Molecular Optimization, these representations let researchers search chemical space in a continuous latent space guided by external evaluators, which can shorten the path to new molecules and materials.

In query-based molecular optimization (QMO), black-box evaluators are external functions that assess molecular sequences and return a property score without exposing their internal mechanics [5] [2]. They provide the guidance needed to steer the optimization process toward molecules with desired characteristics. These evaluators act as objective functions: the optimization framework navigates chemical space by querying them for feedback on each proposed molecular structure [4] [2]. The QMO framework decouples the representation learning process from the property-guided search, allowing researchers to incorporate diverse evaluation sources, from physics-based simulators to experimental data, without retraining the core model [5] [2].
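One way to picture a black-box evaluator is as a callable that takes a molecular sequence and returns a score, wrapped so that queries are counted and repeated sequences are served from a cache. The class below is a hypothetical sketch of that interface, not part of any QMO release; `oxygen_fraction` is a toy scoring function standing in for a real predictor or simulator.

```python
class BudgetExceeded(RuntimeError):
    """Raised when the evaluator has used up its query budget."""


class BlackBoxEvaluator:
    """Wrap any sequence -> score function with caching and a query budget."""

    def __init__(self, score_fn, budget):
        self._score_fn = score_fn
        self._cache = {}
        self.budget = budget
        self.num_queries = 0

    def __call__(self, sequence):
        if sequence in self._cache:
            return self._cache[sequence]  # repeated candidates cost nothing
        if self.num_queries >= self.budget:
            raise BudgetExceeded(f"query budget of {self.budget} exhausted")
        self.num_queries += 1
        score = self._score_fn(sequence)
        self._cache[sequence] = score
        return score


def oxygen_fraction(smiles):
    """Toy property: fraction of characters that are 'O'."""
    return smiles.count("O") / len(smiles)


evaluator = BlackBoxEvaluator(oxygen_fraction, budget=2)
print(evaluator("CCO"), evaluator("CCO"), evaluator.num_queries)
```

Because the optimizer only ever calls `evaluator(sequence)`, the scoring function can be swapped for a docking run or an assay lookup without touching the search code.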

Types of Black-Box Evaluators

Black-box evaluators in molecular optimization can be categorized into three primary types based on their underlying methodology and data sources.

Table 1: Classification of Black-Box Evaluators in Molecular Optimization

| Evaluator Type | Description | Common Examples | Key Advantages |

|---|---|---|---|

| Predictive Models | Machine learning models or fast computed scoring functions that estimate molecular properties from structure | Quantitative Estimate of Drug-likeness (QED), Penalized logP, Toxicity predictors [2] [1] | Fast evaluation, high throughput, cost-effective |

| Physics-Based Simulators | Computational methods based on physical principles and molecular mechanics | Molecular docking simulations, Molecular Dynamics (MD), Quantum Mechanics (QM) calculations [17] [18] | High accuracy, physical interpretability, no training data required |

| Experimental Data Sources | Direct empirical measurements from wet-lab experiments or databases | Binding affinity (IC50) values, antimicrobial activity assays, solubility measurements [2] | Ground truth data, high reliability, directly relevant to real-world performance |

Predictive Models

Predictive models represent the most frequently deployed black-box evaluators in molecular optimization frameworks [1] [19]. They are typically machine learning models trained on existing chemical datasets, or fast computed scoring functions, that estimate molecular properties of interest. For instance, in the QMO framework, such evaluators are used to assess drug-likeness (QED), penalized logP (a lipophilicity-based score often used as a solubility-related benchmark), and toxicity [2]. These evaluators operate directly on molecular sequences or structures, providing rapid property assessments that guide the optimization process [5]. Their key advantage lies in the speed of evaluation, enabling the screening of thousands of candidate molecules in the time that would be required for a single physical simulation or experimental test.

Physics-Based Simulators

Physics-based simulators employ fundamental physical principles to evaluate molecular properties and behaviors [17]. These include molecular docking simulations for predicting protein-ligand interactions, molecular dynamics (MD) for studying conformational changes and binding stability, and quantum mechanical (QM) calculations for determining electronic properties and reaction energies [17] [18]. In the QMO framework for optimizing SARS-CoV-2 main protease inhibitors, docking simulations were used to evaluate the binding free energy of candidate molecules [2]. While computationally intensive, these methods provide high accuracy and valuable insights into molecular interactions without requiring extensive training datasets.

Experimental Data Sources

Experimental data serves as the most reliable form of black-box evaluation, providing ground truth measurements from actual laboratory experiments [2]. This can include IC50 values from binding assays, toxicity measurements from cell-based assays, or solubility data from physicochemical characterization [2]. When available, these data sources can be directly incorporated into the optimization loop or used to validate candidates identified through computational screening. The integration of experimental data creates a closed-loop optimization system that progressively improves molecular designs based on empirical evidence.

Quantitative Performance of Black-Box Evaluators in QMO

The effectiveness of black-box evaluators is demonstrated through their performance in various molecular optimization tasks. The following table summarizes key results from QMO implementations across different optimization challenges.

Table 2: Performance Metrics of QMO with Various Black-Box Evaluators

| Optimization Task | Evaluator Type | Key Metric | Performance Result | Reference |

|---|---|---|---|---|

| Drug-likeness (QED) optimization | Predictive Models (QED predictor) | Success rate | ~93% success rate, ≥15% higher than other methods | [4] [2] |

| Solubility optimization | Predictive Models (Penalized logP) | Property improvement | Absolute improvement of 1.7 in penalized logP | [2] |

| SARS-CoV-2 Mpro inhibitor optimization | Physics-Based Simulators (Docking) | Binding affinity improvement | Improved binding free energy while maintaining high similarity | [4] [2] |

| Antimicrobial peptide optimization | Predictive Models (Toxicity predictors) | Success rate | ~72% of lead molecules optimized for reduced toxicity | [4] [2] |

| Multi-property optimization | Hybrid Evaluators | Consistency with external validation | High consistency with state-of-the-art predictors not used in QMO | [2] |

Implementation Protocols for Black-Box Evaluators

Protocol 1: Integration of Predictive Models in QMO Pipeline

Objective: Implement machine learning-based property predictors as black-box evaluators in a query-based molecular optimization framework.

Materials and Reagents:

- Pre-trained molecular property prediction models (e.g., QED, toxicity, solubility predictors)

- Molecular representation converter (SMILES to molecular fingerprints)

- Latent representation model (autoencoder or variational autoencoder)

- Query-based optimization algorithm (zeroth-order optimization)

Procedure:

1. Molecular Encoding: Encode input molecules into a continuous latent representation using a pre-trained encoder model [5] [2].

2. Latent Space Perturbation: Apply random perturbations to the latent representation to generate candidate molecular variants [5].

3. Sequence Decoding: Decode the perturbed latent vectors back to molecular sequences using the decoder component [5].

4. Property Prediction: Convert decoded sequences to appropriate molecular representations (e.g., Morgan fingerprints) and input to pre-trained property predictors [2] [1].

5. Guidance and Selection: Use predicted property scores to calculate loss values and guide the selection of perturbations for the next iteration [5] [2].

6. Convergence Check: Repeat steps 2-5 until property constraints are satisfied or query budget is exhausted.

Validation: Compare optimized molecules with original leads using similarity metrics (e.g., Tanimoto similarity) and ensure property improvement aligns with predictor confidence levels.

Protocol 2: Molecular Docking as Black-Box Evaluator for Binding Affinity Optimization

Objective: Utilize molecular docking simulations to evaluate and optimize protein-ligand binding affinity in a QMO framework.

Materials and Reagents:

- Protein structure file (PDB format)

- Molecular docking software (AutoDock Vina, Glide, or similar)

- Ligand preparation toolkit (Open Babel, RDKit)

- Scoring function for binding affinity prediction

Procedure:

- Protein Preparation: Prepare the protein structure by removing water molecules, adding hydrogen atoms, and assigning partial charges [17] [2].

- Binding Site Definition: Identify and define the binding pocket coordinates based on known ligand positions or computational prediction.

- Ligand Preparation: Convert candidate molecules from QMO decoding to 3D structures and optimize their geometry using molecular mechanics force fields [17].

- Docking Execution: Perform molecular docking of prepared ligands into the defined binding site using appropriate sampling parameters [2].

- Pose Scoring: Evaluate and rank docking poses based on scoring functions to predict binding affinity [18] [2].

- Result Integration: Return the best docking score to the QMO framework as the evaluation metric for the candidate molecule.

- Iterative Refinement: Use docking scores to guide the latent space search toward molecules with improved predicted binding affinity.

Validation: Validate top-ranked optimized molecules through more rigorous binding free energy calculations (e.g., MM/PBSA) or comparison with experimental binding data where available.
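When a docking score is returned to the optimizer, it has to be turned into a loss, and it is sometimes useful to read the score on a dissociation-constant scale through the thermodynamic relation ΔG = RT ln(Kd). The sketch below does both under strong assumptions: docking scores are empirical estimates rather than rigorous free energies, so the converted Kd is only an order-of-magnitude guide, and the target of -9.0 kcal/mol and the function names are hypothetical.

```python
import math

R_KCAL_PER_MOL_K = 1.987204e-3  # gas constant in kcal/(mol*K)


def delta_g_to_kd(delta_g_kcal, temperature_k=298.15):
    """Dissociation constant (molar) implied by dG = RT ln(Kd)."""
    return math.exp(delta_g_kcal / (R_KCAL_PER_MOL_K * temperature_k))


def docking_loss(score_kcal, target_kcal=-9.0):
    """Hinge loss: zero once the score is at or below the target."""
    return max(score_kcal - target_kcal, 0.0)


for score in (-7.5, -8.0, -10.0):
    print(score, docking_loss(score), f"{delta_g_to_kd(score):.2e} M")
```

More negative scores indicate stronger predicted binding, so the loss decreases as the score approaches the target and stays at zero beyond it.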

Workflow Visualization: Black-Box Evaluators in Query-Based Molecular Optimization

The following diagram illustrates the integration of various black-box evaluators within the query-based molecular optimization framework:

Figure 1: QMO workflow integrating multiple black-box evaluators. The process begins with encoding an input molecule into latent space, followed by perturbation and decoding to generate candidate molecules. These candidates are evaluated by various black-box evaluators, whose outputs guide subsequent searches until constraints are met [5] [4] [2].

Essential Research Reagents and Computational Tools

Table 3: Key Research Reagent Solutions for Implementing Black-Box Evaluators

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Molecular Representation | SMILES, SELFIES, Molecular Graphs | Standardized molecular encoding | Foundation for all evaluator types [2] [1] |
| Property Predictors | QED, Penalized logP, Toxicity Classifiers | Rapid property estimation | High-throughput screening in QMO [2] [19] |
| Docking Software | AutoDock Vina, Glide, GOLD | Protein-ligand binding affinity prediction | Structure-based optimization [17] [2] |
| Simulation Platforms | GROMACS, AMBER, NAMD | Molecular dynamics simulations | Conformational analysis and binding stability [17] [18] |
| Quantum Chemistry | Gaussian, ORCA, DFT-based codes | Electronic structure calculations | Reaction mechanism and property prediction [17] [18] |
| Experimental Assays | Binding Assays (IC50), Toxicity Tests, Solubility Measurements | Empirical property validation | Ground truth verification [2] |
| Optimization Algorithms | Zeroth-order Optimization, Bayesian Optimization | Efficient search in latent space | Navigation of chemical space [5] [2] |

Implementing QMO: A Step-by-Step Guide to Workflows and Real-World Applications

Molecular optimization is a critical step in drug discovery, focused on improving the properties of lead molecules while preserving their core structural features [1]. The exploration of vast chemical spaces for optimal candidates has been revolutionized by artificial intelligence (AI), particularly through encoder-decoder models and latent space exploration techniques [1] [19]. These architectural components enable researchers to transform discrete, complex molecular structures into continuous, navigable latent representations, thereby accelerating the identification of novel compounds with enhanced pharmaceutical properties.

Encoder-decoder frameworks learn meaningful lower-dimensional representations of molecules, capturing essential chemical and structural features in a latent space. Subsequent optimization strategies—including reinforcement learning, Bayesian optimization, and diffusion processes—navigate this continuous space to discover molecules with improved target properties while maintaining structural similarity to the original lead compound [1]. This approach has demonstrated significant potential in various applications, from single-property enhancement to complex multi-objective optimization tasks required for real-world drug development.

Core Architectural Components

Encoder-Decoder Foundation Models

Encoder-decoder models serve as fundamental architectural components for molecular representation learning. These models are typically pre-trained on large-scale molecular databases to learn generalizable chemical representations before being fine-tuned for specific optimization tasks.

The SMI-TED289M model family represents a significant advancement in this domain, featuring transformer-based encoder-decoder architectures pre-trained on 91 million carefully curated molecular sequences from PubChem [20]. This family includes two primary variants: a base model with 289 million parameters and a Mixture-of-OSMI-Experts (MoE-OSMI) configuration characterized by a composition of 8 × 289M parameters [20]. The architectural innovation includes a novel pooling function that differs from standard max or mean pooling techniques, enabling accurate SMILES reconstruction while preserving molecular properties.

These models support diverse applications including property prediction, reaction outcome prediction, and molecular generation. Extensive benchmarking across 11 MoleculeNet datasets demonstrates that SMI-TED289M matches or exceeds existing approaches in both classification and regression tasks [20]. The learned representations exhibit compositional structure in the embedding space: they support few-shot learning and separate molecules by chemically relevant features. This structure is attributed to the decoder-based reconstruction objective used during pre-training.

Latent Space Representations

The latent space in encoder-decoder models provides a continuous, lower-dimensional representation of molecular structures where optimization occurs. This space transforms discrete molecular representations (SMILES, SELFIES, or molecular graphs) into continuous vectors that capture essential chemical features and relationships.

Table 1: Evaluation of Latent Space Properties in Generative Models

| Model Architecture | Reconstruction Rate | Validity Rate | Continuity Assessment |
|---|---|---|---|
| VAE (Logistic Annealing) | Significant performance loss due to posterior collapse | Moderate | Limited continuity with higher variance noise |
| VAE (Cyclical Annealing) | Good reconstruction performance | Good | Smooth continuity with σ=0.1 noise variance |
| MolMIM Model | High reconstruction performance | High | Excellent continuity across multiple noise variances |

The quality of latent space representations critically impacts optimization effectiveness [21]. Key properties include:

- Reconstruction capability: The ability to accurately reconstruct original molecules from latent representations

- Validity rate: The percentage of decoded latent vectors that correspond to valid molecular structures

- Continuity: Smooth transitions in structural similarity when latent vectors are perturbed, enabling efficient optimization
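
The three properties above can be measured with a few lines of code once an encoder, decoder, and validity check are available. The sketch below is a minimal illustration under stated assumptions: `encode`, `decode`, `is_valid`, and `similarity` are hypothetical callables supplied by the user, molecules are assumed to be canonicalized so that string equality is meaningful, and the toy character-level codec exists only to make the example runnable.

```python
import random
from typing import Callable, List, Sequence

Latent = List[float]


def reconstruction_rate(molecules: Sequence[str],
                        encode: Callable[[str], Latent],
                        decode: Callable[[Latent], str]) -> float:
    """Fraction of molecules recovered exactly after an encode/decode round trip."""
    hits = sum(1 for m in molecules if decode(encode(m)) == m)
    return hits / len(molecules)


def validity_rate(latents: Sequence[Latent],
                  decode: Callable[[Latent], str],
                  is_valid: Callable[[str], bool]) -> float:
    """Fraction of latent vectors that decode to a valid structure."""
    return sum(1 for z in latents if is_valid(decode(z))) / len(latents)


def continuity_score(molecules: Sequence[str],
                     encode: Callable[[str], Latent],
                     decode: Callable[[Latent], str],
                     similarity: Callable[[str, str], float],
                     sigma: float, rng: random.Random) -> float:
    """Mean similarity between each molecule and the decoding of its noised latent."""
    total = 0.0
    for m in molecules:
        noisy = [x + rng.gauss(0.0, sigma) for x in encode(m)]
        total += similarity(m, decode(noisy))
    return total / len(molecules)


# Toy stand-ins (hypothetical): one latent dimension per character.
def toy_encode(s: str) -> Latent:
    return [float(ord(c)) for c in s]


def toy_decode(z: Latent) -> str:
    return "".join(chr(min(max(round(x), 32), 126)) for x in z)


def toy_is_valid(s: str) -> bool:
    return all(c in "CNO" for c in s)


def toy_similarity(a: str, b: str) -> float:
    return sum(x == y for x, y in zip(a, b)) / max(len(a), len(b))


mols = ["CCO", "CCN", "CNC"]
recon = reconstruction_rate(mols, toy_encode, toy_decode)
valid = validity_rate([toy_encode(m) for m in mols], toy_decode, toy_is_valid)
smooth = continuity_score(mols, toy_encode, toy_decode, toy_similarity,
                          sigma=0.0, rng=random.Random(0))
```

Sweeping `sigma` over several values and plotting `continuity_score` gives a simple picture of how quickly structural similarity decays as latent vectors are perturbed.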

Research indicates that training modifications such as cyclical annealing for Variational Autoencoders (VAEs) significantly improve these latent space properties compared to standard training approaches [21].
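
To make the idea of cyclical annealing concrete, the sketch below shows one common linear form of the schedule for the KL-divergence weight in the VAE loss: the weight ramps from zero to its maximum during the first part of each cycle, holds there, and then resets. The source does not specify the schedule used in [21], so the function name and the parameters `n_cycles`, `ramp_fraction`, and `max_weight` are assumptions chosen for illustration.

```python
def cyclical_kl_weight(step: int, total_steps: int, n_cycles: int = 4,
                       ramp_fraction: float = 0.5,
                       max_weight: float = 1.0) -> float:
    """KL weight at a given training step under a linear cyclical schedule.

    ramp_fraction must be greater than zero.
    """
    cycle_length = total_steps / n_cycles
    position = (step % cycle_length) / cycle_length  # progress within the cycle, in [0, 1)
    if position < ramp_fraction:
        return max_weight * position / ramp_fraction  # linear ramp-up
    return max_weight                                 # hold at the maximum


# Weights over a short run: four ramps, each followed by a plateau.
schedule = [cyclical_kl_weight(s, total_steps=1000) for s in range(1000)]
```

Resetting the weight to zero at the start of each cycle gives the decoder repeated opportunities to rely on the latent code, which is the usual motivation for using this schedule against posterior collapse.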

Molecular Optimization Methodologies

Latent Space Exploration Strategies

Multiple strategies have been developed for navigating molecular latent spaces to identify optimized compounds. These approaches transform molecular optimization into a continuous space exploration problem rather than discrete structural modifications.

Reinforcement Learning in Latent Space: The MOLRL framework exemplifies this approach by using Proximal Policy Optimization (PPO) to navigate the latent space of pre-trained generative models [21]. Because the method operates directly on latent representations, it does not require chemical rules to be defined explicitly when designing molecules computationally. The reinforcement learning agent explores regions of the latent space that correspond to molecules with desired properties, and its reward function is shaped to steer the search toward the target chemical properties.

Bayesian Optimization for Sample Efficiency: Conditional Latent Space Molecular Scaffold Optimization (CLaSMO) integrates a Conditional Variational Autoencoder (CVAE) with Latent Space Bayesian Optimization (LSBO) to strategically modify molecules while preserving similarity to the original input [22]. This approach frames molecular optimization as constrained optimization, improving sample efficiency—a crucial consideration for resource-limited applications where property evaluations are computationally expensive.

Multi-Objective Pareto Learning: The MLPS approach addresses the fundamental challenge of optimizing multiple conflicting objectives in molecular design [23]. This methodology employs an encoder-decoder model to transform discrete chemical space into continuous latent space, then uses local Bayesian optimization models to search for locally optimal solutions within predefined trust regions. A global Pareto set learning model then learns the mapping between direction vectors in objective space and the entire Pareto set in the continuous latent space.

Text-Guided and Diffusion-Based Optimization

Recent advancements incorporate textual descriptions and diffusion processes to guide molecular optimization without relying on external property predictors.

The TransDLM approach leverages a transformer-based diffusion language model for text-guided multi-property molecular optimization [16]. This method uses standardized chemical nomenclature as semantic representations of molecules and implicitly embeds property requirements into textual descriptions, mitigating error propagation during the diffusion process. By fusing detailed textual semantics with specialized molecular representations, TransDLM integrates diverse information sources to guide precise optimization while balancing structural retention and property enhancement.

Diffusion models progressively add noise to molecular representations and then learn to reverse this process through denoising, which allows them to generate optimized molecular structures [16] [19]. These approaches have been reported to produce high-quality molecular candidates while maintaining structural constraints.
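
The noising half of this process can be illustrated with the standard Gaussian forward process used in DDPM-style models. This is a sketch under assumptions: the source does not state which noise schedule or representation TransDLM uses, so the linear schedule, its endpoints, and the function names below are illustrative. The learned reverse (denoising) network is omitted because it requires a trained model.

```python
import math
import random
from typing import List


def linear_beta_schedule(n_steps: int, beta_start: float = 1e-4,
                         beta_end: float = 0.02) -> List[float]:
    """Per-step noise variances that increase linearly over the process."""
    if n_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (n_steps - 1)
    return [beta_start + i * step for i in range(n_steps)]


def cumulative_alphas(betas: List[float]) -> List[float]:
    """Running product of (1 - beta_t): the fraction of signal variance retained."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def noise_sample(x0: List[float], t: int, alpha_bars: List[float],
                 rng: random.Random) -> List[float]:
    """Sample x_t directly from x_0: sqrt(a_bar) * x0 + sqrt(1 - a_bar) * noise."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]


betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
rng = random.Random(0)
embedding = [0.5, -1.2, 0.3]                              # toy molecular embedding
slightly_noised = noise_sample(embedding, 10, alpha_bars, rng)
nearly_noise = noise_sample(embedding, 999, alpha_bars, rng)
```

By the final step almost none of the original signal remains, which is why generation can start from pure noise once the reverse process has been learned.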

Experimental Protocols and Applications

Benchmarking Performance Evaluation

Rigorous evaluation protocols assess the performance of encoder-decoder models and latent space exploration methods across diverse molecular optimization tasks.

Table 2: Performance Comparison of Molecular Optimization Methods

| Method | Optimization Approach | Key Advantages | Representative Results |
|---|---|---|---|
| SMI-TED289M | Encoder-decoder pre-training | State-of-the-art performance across 11 MoleculeNet datasets | Superior results in 4/6 classification and 5/5 regression tasks |
| MOLRL | Latent space reinforcement learning | Architecture-agnostic optimization; handles continuous high-dimensional spaces | Comparable or superior to state-of-the-art on benchmark optimization tasks |
| CLaSMO | Latent space Bayesian optimization | Remarkable sample efficiency; preserves molecular similarity | State-of-the-art in docking score and multi-property optimization |
| TransDLM | Diffusion language model | Reduces error propagation; text-guided optimization | Surpasses SOTA in optimizing ADMET properties while maintaining structural similarity |
| MLPS | Multi-objective Pareto learning | Handles conflicting objectives; enables preference-based exploration | State-of-the-art across various multi-objective scenarios |

Evaluation Metrics and Protocols:

- Property Prediction Accuracy: Assessed using benchmark datasets like MoleculeNet with standardized train/validation/test splits [20]

- Structural Similarity Maintenance: Measured via Tanimoto similarity of Morgan fingerprints between original and optimized molecules [1]

- Multi-objective Optimization Performance: Evaluated using Pareto front analysis and hypervolume indicators [23]

- Reconstruction Capability: Tested on MOSES benchmarking dataset with scaffold test sets to assess generation of novel molecular scaffolds [20]
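
Two of the metrics listed above reduce to short formulas. The sketch below is illustrative rather than a reference implementation: fingerprints are assumed to be available as sets of on-bit indices (in practice a cheminformatics toolkit would generate the Morgan fingerprints), the value returned for two empty fingerprints is a convention chosen here, and the hypervolume routine handles only two objectives that are both maximized against a user-chosen reference point.

```python
from typing import Iterable, Set, Tuple


def tanimoto(fp_a: Set[int], fp_b: Set[int]) -> float:
    """Tanimoto similarity: shared on-bits divided by the union of on-bits."""
    union = len(fp_a | fp_b)
    if union == 0:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(fp_a & fp_b) / union


def hypervolume_2d(points: Iterable[Tuple[float, float]],
                   reference: Tuple[float, float]) -> float:
    """Area dominated by the points and bounded below by the reference point."""
    rx, ry = reference
    # Only points that beat the reference in both objectives contribute.
    pts = sorted((p for p in points if p[0] > rx and p[1] > ry),
                 key=lambda p: p[0], reverse=True)
    area, best_y = 0.0, ry
    for x, y in pts:
        if y > best_y:  # dominated points add no new area
            area += (x - rx) * (y - best_y)
            best_y = y
    return area


similarity = tanimoto({1, 2, 3}, {2, 3, 4})
front_area = hypervolume_2d([(3.0, 1.0), (2.0, 2.0), (1.0, 3.0), (1.0, 1.0)],
                            reference=(0.0, 0.0))
```

A larger hypervolume indicates a front that is both closer to the ideal point and more spread out, which is why it is commonly reported alongside Pareto front plots.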

Application-Specific Implementation Protocols

Protocol 1: Single-Property Optimization with Similarity Constraints

This protocol details the widely adopted benchmark task of improving penalized logP (pLogP) while maintaining structural similarity [21]:

- Initialization: Encode the source molecule into its latent representation using a pre-trained encoder

- Optimization Setup: Define the objective function combining pLogP improvement and similarity constraint

- Latent Space Navigation: Employ reinforcement learning (PPO) or Bayesian optimization to explore the latent space

- Decoding and Validation: Decode promising latent vectors to molecular structures and validate chemical correctness

- Iterative Refinement: Repeat the Latent Space Navigation and Decoding and Validation steps until convergence or until the termination criteria are satisfied
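
The Optimization Setup step can be made concrete with a penalized objective that rewards a higher property value and charges a cost only when similarity drops below a threshold. This is a minimal sketch under assumptions: the hinge-style penalty is one common way to encode the constraint, and `min_similarity`, `penalty_weight`, and the candidate values are hypothetical numbers chosen for illustration, not results from any benchmark.

```python
from typing import List, Tuple


def constrained_loss(property_value: float, similarity: float,
                     min_similarity: float = 0.4,
                     penalty_weight: float = 10.0) -> float:
    """Loss to minimize: negative property plus a hinge penalty on similarity."""
    violation = max(min_similarity - similarity, 0.0)  # zero when the constraint holds
    return -property_value + penalty_weight * violation


def select_best(candidates: List[Tuple[str, float, float]]) -> str:
    """Return the name of the candidate with the lowest constrained loss."""
    return min(candidates, key=lambda c: constrained_loss(c[1], c[2]))[0]


# (name, pLogP, Tanimoto similarity to the source molecule): toy values.
candidates = [
    ("A", 2.0, 0.60),  # satisfies the constraint
    ("B", 4.0, 0.10),  # best property, but far from the source molecule
    ("C", 2.5, 0.45),  # satisfies the constraint with a better property than A
]
best = select_best(candidates)
```

Here candidate B has the highest raw property value but loses to C once the similarity penalty is applied, which is the behavior the constraint is meant to enforce.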

Protocol 2: Multi-Objective Molecular Optimization

For complex optimization tasks with multiple conflicting objectives [23]:

- Objective Definition: Specify multiple target properties and their relative importance or constraints

- Latent Space Mapping: Transform molecular structures to continuous latent representations using encoder-decoder models

- Local Optimization: Employ Bayesian optimization within trust regions to identify local optima

- Pareto Set Learning: Train a global model to learn the mapping between preference vectors and the Pareto set

- Solution Generation: Generate diverse molecules across the Pareto front for decision-maker evaluation
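
The final step presents non-dominated candidates to a decision maker. The sketch below shows a generic non-dominated filter followed by a simple preference-weighted pick. It is not the Pareto set learning model of [23]: all objectives are assumed to be maximized, the weighted sum is only one way to express a preference (it cannot reach non-convex regions of a front), and the objective values are toy numbers.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def dominates(a: Point, b: Point) -> bool:
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))


def pareto_front(points: Sequence[Point]) -> List[Point]:
    """Keep the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def pick_by_preference(front: Sequence[Point], weights: Sequence[float]) -> Point:
    """Choose the front member with the highest preference-weighted sum."""
    return max(front, key=lambda p: sum(w * x for w, x in zip(weights, p)))


# (objective 1, objective 2) for decoded candidates: toy values.
scores = [(0.9, 0.2), (0.5, 0.5), (0.2, 0.9), (0.4, 0.4), (0.1, 0.1)]
front = pareto_front(scores)
favor_first = pick_by_preference(front, weights=(1.0, 0.0))
favor_second = pick_by_preference(front, weights=(0.0, 1.0))
```

The pairwise filter costs quadratic time in the number of candidates, which is acceptable for the small batches typically decoded per iteration.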

Protocol 3: Scaffold-Constrained Optimization

For real-world drug discovery scenarios requiring specific molecular scaffolds [21] [22]:

- Scaffold Definition: Identify the core molecular scaffold that must be preserved

- Conditional Latent Space Encoding: Utilize conditional variational autoencoders to incorporate scaffold constraints

- Focused Exploration: Navigate latent regions corresponding to the specified scaffold

- Property Enhancement: Optimize target properties while maintaining the scaffold structure through constrained optimization

- Synthetic Accessibility Assessment: Evaluate practical synthesizability of generated molecules

Visualization of Workflows

Encoder-Decoder Molecular Optimization Framework

Multi-Objective Pareto Learning Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function | Representative Implementation |
|---|---|---|---|
| SMILES/Tokens | Data Representation | String-based molecular encoding | SMI-TED289M tokenization [20] |
| Molecular Fingerprints | Feature Extraction | Structural similarity calculation | Morgan fingerprints for Tanimoto similarity [1] |
| Pre-trained Encoder-Decoder | Foundation Model | Molecular representation learning | SMI-TED289M family [20] |
| Reinforcement Learning | Optimization Algorithm | Latent space navigation | MOLRL with PPO [21] |
| Bayesian Optimization | Optimization Algorithm | Sample-efficient latent space search | CLaSMO framework [22] |
| Diffusion Models | Generation Framework | Iterative denoising for molecule generation | TransDLM [16] |