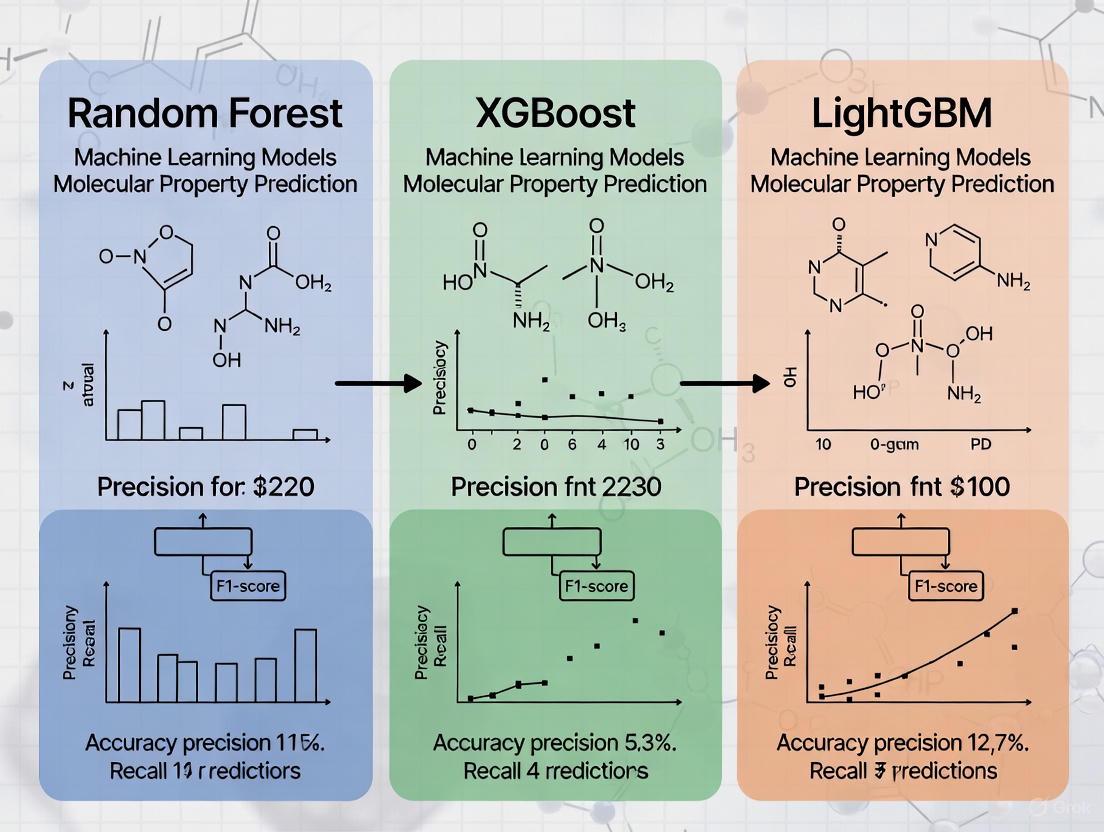

Random Forest vs XGBoost vs LightGBM: A Comprehensive Benchmark for Molecular Property Prediction

This article provides a systematic comparison of three dominant machine learning algorithms—Random Forest, XGBoost, and LightGBM—for predicting molecular properties in pharmaceutical and chemical sciences.

Abstract

This article provides a systematic comparison of three dominant machine learning algorithms—Random Forest, XGBoost, and LightGBM—for predicting molecular properties in pharmaceutical and chemical sciences. Drawing on recent benchmark studies, we explore the foundational principles governing each algorithm's performance, methodological implementations for cheminformatics applications, optimization strategies for handling high-dimensional molecular data, and rigorous validation protocols. For researchers and drug development professionals, this review offers evidence-based guidance for algorithm selection, highlighting how molecular fingerprint representations, hyperparameter tuning, and multi-label classification approaches significantly impact predictive accuracy for critical tasks like odor characterization, drug solubility prediction, and toxicity assessment.

Understanding the Algorithms: Core Principles and Relevance to Molecular Data

In the field of molecular property prediction, accurately linking chemical structure to observable properties is a fundamental challenge with significant implications for drug discovery and materials science. This domain requires machine learning models capable of capturing complex, non-linear relationships within high-dimensional data. Among the most powerful approaches for this task are ensemble methods based on decision trees, particularly Random Forest, XGBoost, and LightGBM [1]. In one drug discovery benchmark, these ensemble methods averaged an R² of approximately 0.61, compared with roughly 0.26 for traditional linear models [1].

The effectiveness of these models stems from their ability to handle diverse molecular descriptors—from simple Lipinski descriptors to complex functional structure descriptors and molecular fingerprints—and learn the intricate patterns that govern molecular behavior [2] [3] [1]. As research increasingly leverages in-silico screening to prioritize laboratory experiments, understanding the theoretical foundations of these algorithms becomes crucial for researchers and drug development professionals aiming to build robust predictive pipelines [1].

Algorithmic Foundations and Mechanisms

Core Decision Tree Concepts

All three algorithms are built upon decision trees, which function by making a series of sequential splits on the features in the data [4]. Imagine predicting a molecule's solubility: a decision tree might first split molecules based on molecular weight, then on the number of hydrogen bond donors, and so forth, until reaching a final prediction at a leaf node [5]. While individual trees are intuitive, they are prone to overfitting, meaning they memorize the training data but fail to generalize to new molecules. Ensemble methods overcome this by combining multiple trees to create a stronger, more generalizable model [4] [5].
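
To make the idea of a split concrete, the sketch below searches a single feature for the threshold that most reduces squared error. It is a minimal illustration, not any library's implementation, and the molecular weights and log-solubility values are invented for the example.

```python
# Toy data: (molecular weight, log-solubility). Values are invented for illustration.
molecules = [(120.0, -0.5), (150.0, -0.8), (180.0, -1.0),
             (310.0, -3.9), (350.0, -4.2), (420.0, -5.0)]

def sse(values):
    """Sum of squared errors around the mean (0.0 for an empty list)."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)

def best_split(rows):
    """Return (threshold, sse_after_split) for the best single split on the feature."""
    xs = sorted({x for x, _ in rows})
    best = (None, float("inf"))
    # Candidate thresholds are midpoints between consecutive distinct feature values.
    for lo, hi in zip(xs, xs[1:]):
        threshold = (lo + hi) / 2
        left = [y for x, y in rows if x <= threshold]
        right = [y for x, y in rows if x > threshold]
        total = sse(left) + sse(right)
        if total < best[1]:
            best = (threshold, total)
    return best

threshold, error = best_split(molecules)
print(f"split at MW <= {threshold}: SSE after split {error:.3f}")
```

A full tree repeats this search recursively on each resulting subset and over every available feature.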

Random Forest: The Democratic Committee

Random Forest operates on the principle of bagging (Bootstrap Aggregating) [6]. It constructs a "forest" of many decision trees, each trained on a different random subset of the original data (a bootstrap sample) and, when splitting nodes, considers only a random subset of the features [6] [4]. This double randomness keeps the individual trees diverse and decorrelates their errors.

- Final Prediction: For regression, the final output is the average prediction of all trees. For classification, it is the majority vote [4] [5].

- Key Strength: This approach effectively reduces overfitting compared to a single decision tree, making it a reliable and robust all-purpose algorithm [4].
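
The averaging step can be checked directly with scikit-learn. The sketch below assumes a synthetic feature matrix standing in for molecular descriptors, and the hyperparameter values are arbitrary choices for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
# Synthetic stand-in for a descriptor matrix: 200 "molecules", 10 features.
X = rng.normal(size=(200, 10))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] ** 2 + rng.normal(scale=0.1, size=200)

forest = RandomForestRegressor(
    n_estimators=50,      # number of trees in the forest
    max_features="sqrt",  # random feature subset considered at each split
    bootstrap=True,       # each tree is trained on a bootstrap sample of the rows
    random_state=0,
).fit(X, y)

# For regression, the forest output is the mean of the individual tree predictions.
per_tree = np.stack([tree.predict(X[:5]) for tree in forest.estimators_])
manual_average = per_tree.mean(axis=0)
forest_prediction = forest.predict(X[:5])
print(np.allclose(manual_average, forest_prediction))
```

The spread of `per_tree` across trees also gives a rough, uncalibrated indication of how much the ensemble members disagree on a given molecule.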

XGBoost: The Sequential Optimizer

XGBoost (eXtreme Gradient Boosting) belongs to the gradient boosting family. Unlike Random Forest, which builds trees independently, boosting builds them sequentially [7] [8]. Each new tree is specifically trained to correct the errors made by the collection of all previous trees.

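- Gradient Descent: Each new tree is fit to the gradient of a loss function (e.g., mean squared error) evaluated at the current predictions, so every added tree moves the ensemble toward lower loss [6] [8]. XGBoost also uses second-order (curvature) information from the loss when scoring candidate splits.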

- Regularization: A key feature that sets XGBoost apart from simpler boosting implementations is its built-in L1 and L2 regularization, which penalizes model complexity and further helps prevent overfitting [8].

- System Optimization: It is designed for computational efficiency, parallelizing the search for split points within each tree and learning a default branch direction for missing values [7] [8].
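
The sequential error-correction idea can be sketched with plain gradient boosting on squared error. This is deliberately not XGBoost: it omits regularization and second-order information, uses one-split trees (stumps) on a single synthetic feature, and the learning rate and round count are arbitrary.

```python
import numpy as np

def fit_stump(x, residual):
    """Best single-threshold split on a 1-D feature under squared error."""
    best = None
    for threshold in np.unique(x)[:-1]:  # dropping the max keeps the right side non-empty
        left, right = residual[x <= threshold], residual[x > threshold]
        loss = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if best is None or loss < best[0]:
            best = (loss, threshold, left.mean(), right.mean())
    return best[1:]

def predict_stump(stump, x):
    threshold, left_value, right_value = stump
    return np.where(x <= threshold, left_value, right_value)

rng = np.random.default_rng(0)
x = rng.uniform(0, 6, size=200)
y = np.sin(x) + rng.normal(scale=0.1, size=200)

learning_rate = 0.3
prediction = np.full_like(y, y.mean())   # start from a constant model
losses = [np.mean((y - prediction) ** 2)]
for _ in range(30):
    residual = y - prediction            # negative gradient of 0.5 * squared error
    stump = fit_stump(x, residual)       # the new tree targets the current errors
    prediction += learning_rate * predict_stump(stump, x)
    losses.append(np.mean((y - prediction) ** 2))

print(f"training MSE: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

Training error falls with every round here, which is also why boosting needs a validation set or regularization to decide when to stop.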

LightGBM: The Speed-Focused Innovator

LightGBM (Light Gradient Boosting Machine) is another gradient boosting algorithm that prioritizes training speed and efficiency, especially on very large datasets [4] [8]. It achieves this through two key techniques:

- Leaf-Wise Growth: While XGBoost and most other tree-based algorithms grow trees level-wise (splitting all leaves at a given depth simultaneously), LightGBM grows trees leaf-wise [8] [9]. It selects the leaf that leads to the largest reduction in loss to split at each step, resulting in a more asymmetric, and often more accurate, tree [9]. However, this can increase the risk of overfitting on small datasets, which can be mitigated by using the `max_depth` parameter [9].

- Histogram-Based Learning: It bins continuous feature values into discrete histograms, which dramatically speeds up the finding of the best split points and reduces memory usage [8] [9].
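
The effect of histogram-based learning on the split search can be sketched with NumPy. The quantile binning below is a simplification chosen for illustration and is not LightGBM's actual binning routine; the bin count of 16 is likewise an assumption (LightGBM's `max_bin` defaults to 255).

```python
import numpy as np

rng = np.random.default_rng(0)
# Synthetic continuous descriptor for 10,000 hypothetical molecules.
feature = rng.normal(loc=300.0, scale=80.0, size=10_000)

n_bins = 16  # illustrative value only
# Quantile-based interior edges so each bin holds roughly the same number of samples.
edges = np.quantile(feature, np.linspace(0, 1, n_bins + 1)[1:-1])
binned = np.searchsorted(edges, feature)  # integer bin index in [0, n_bins - 1]

exact_candidates = np.unique(feature).size - 1     # thresholds an exact search would scan
histogram_candidates = np.unique(binned).size - 1  # thresholds left after binning
print(exact_candidates, histogram_candidates)
```

Because gradient statistics are accumulated per bin, the split search scales with the number of bins rather than the number of distinct feature values.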

Table 1: Core Structural Differences Between the Algorithms

| Feature | Random Forest | XGBoost | LightGBM |
|---|---|---|---|
| Ensemble Method | Bagging | Boosting | Boosting |
| Tree Building | Parallel, independent trees | Sequential, error-correcting trees | Sequential, error-correcting trees |
| Tree Growth | Level-wise | Level-wise | Leaf-wise |
| Key Mechanism | Random feature & data subsets | Gradient descent + regularization | Leaf-wise growth + histograms |
| Primary Strength | Robustness, reduces overfitting | High predictive accuracy | Speed and efficiency on large data |

Experimental Benchmarking in Molecular Property Prediction

Performance in Ionic Liquid Design for CO2 Capture

A 2025 study systematically evaluated ensemble learning models for predicting CO2 solubility in Ionic Liquids (ILs), a critical task for carbon capture technology [3]. The research used new molecular descriptors, including a Functional Structure Descriptor (FSD) and a compact CORE descriptor, to build predictive models.

Table 2: Model Performance on CO2 Solubility Prediction in Ionic Liquids [3]

| Model | R² (FSD Descriptor) | MAE (FSD Descriptor) | R² (CORE Descriptor) | MAE (CORE Descriptor) |
|---|---|---|---|---|
| CatBoost | 0.9945 | 0.0108 | 0.9925 | 0.0120 |
| LightGBM | Not Reported | Not Reported | 0.9895 | 0.0140 |
| XGBoost | Not Reported | Not Reported | 0.9887 | 0.0143 |
| Random Forest | Not Reported | Not Reported | 0.9863 | 0.0155 |

The study concluded that while all ensemble models performed well, CatBoost performed best on this specific molecular prediction task [3]. This highlights that the "best" algorithm can be context-dependent, influenced by the nature of the data and the descriptors used.

General Performance in Drug Discovery Pipelines

A separate benchmarking exercise within a drug discovery workflow compared multiple algorithms on a molecular property prediction task [1]. The results affirmed the dominance of ensemble tree methods.

Table 3: Benchmarking of Various Algorithms on a Molecular Property Task [1]

| Model Category | Example Algorithms | Average R² | Key Takeaway |
|---|---|---|---|
| Ensemble Models | Random Forest, XGBoost, CatBoost, LightGBM | ~0.61 | Dominate due to ability to model non-linear relationships |
| Linear Models | Ridge, Bayesian Ridge | ~0.26 | Underperform, highlighting the non-linear nature of chemical data |
| Other Methods | Simple Trees, k-NN | ~0.41 | Moderate performance |

The research noted that Random Forest achieved the best individual model performance in their test, with an R² of 0.7275, an RMSE of 0.81, and an MAE of 0.55 [1]. This demonstrates that even without the sequential boosting of XGBoost or LightGBM, the bagging approach of Random Forest remains a potent and highly reliable tool for molecular scientists.

A Guide for Model Selection in Molecular Research

Choosing the right algorithm depends on the specific constraints and goals of the research project. The following guide synthesizes insights from experimental benchmarks and algorithmic theory [4] [8] [1]:

- Choose Random Forest when you need a robust, all-purpose model that is less prone to overfitting. It is an excellent starting point for complex datasets with a mix of numerical and categorical features and is generally easier to tune [4].

- Choose XGBoost when you are aiming for the highest possible predictive accuracy and have sufficient computational resources for tuning. It is a strong choice for structured/tabular data in fields like medicine and chemistry and often performs well in competitive benchmarks [4] [8].

- Choose LightGBM when working with very large datasets (e.g., hundreds of thousands of molecules) and training speed is a critical factor. Its efficiency allows for faster iteration, which is valuable in large-scale virtual screening campaigns [4] [8] [9].

It is crucial to note that recent research has highlighted a common challenge for all these models: out-of-distribution (OOD) generalization [10]. A 2025 benchmark study (BOOM) found that even top-performing models exhibited an average OOD error three times larger than their in-distribution error [10]. This indicates that predicting properties for novel molecular scaffolds that differ significantly from the training data remains an open challenge and a key frontier in chemical machine learning.

Essential Research Reagents and Computational Tools

Building effective predictive models for molecular properties requires a toolkit that encompasses both data preparation and machine learning libraries. The table below details key "research reagents" for in-silico experiments.

Table 4: Essential Research Reagent Solutions for Molecular Property Prediction

| Research Reagent / Tool | Function / Description | Relevance to Molecular Research |
|---|---|---|
| Lipinski Descriptors | A set of simple molecular properties (e.g., molecular weight, logP). | Provides a foundational set of features for initial modeling and filtering of drug-like molecules [1]. |
| PaDEL Descriptors | Software to calculate thousands of molecular fingerprints and descriptors. | Generates a comprehensive, high-dimensional feature matrix from molecular structures for model training [1]. |
| Functional Structure Descriptor (FSD) | A descriptor based on the group contribution method. | Used to build quantitative structure-property relationship (QSPR) models for specific tasks, like IL design [3]. |
| Scikit-learn (sklearn) | An open-source Python library for machine learning. | Provides implementations for data preprocessing, Random Forest, and serves as a unified framework for model benchmarking [5]. |
| XGBoost Library | An optimized open-source library for the XGBoost algorithm. | The go-to implementation for training XGBoost models, supporting multiple programming languages [6] [8]. |
| LightGBM Library | A lightweight, high-performance library from Microsoft. | The official library for training LightGBM models, known for its speed and efficiency on large datasets [8] [9]. |

Visualizing Algorithmic Workflows and Differences

Random Forest: Bagging and Aggregation

The diagram below illustrates the process of creating a Random Forest model, from bootstrap sampling to aggregating the final prediction.

Random Forest Model Construction and Prediction Workflow

XGBoost vs. LightGBM: Tree Growth Strategies

A fundamental difference between XGBoost and LightGBM lies in how they construct their decision trees. The following diagram contrasts their growth strategies.

Tree Growth Strategy Comparison

Boosting: Sequential Error Correction

This diagram visualizes the core sequential process of gradient boosting, which is shared by both XGBoost and LightGBM.

Sequential Model Building in Gradient Boosting

In the fields of cheminformatics and drug discovery, accurately predicting molecular properties from chemical structure is a fundamental task. The transformation of molecular structures into numerical representations—primarily molecular fingerprints and descriptors—has established a powerful paradigm for machine learning. Among the various algorithms applied to these representations, tree-based models including Random Forest (RF), XGBoost, and LightGBM have consistently demonstrated superior performance and practicality. Their success is attributed to a powerful alignment between their inherent capabilities and the specific characteristics of molecular data. Tree-based ensembles excel at capturing the complex, non-linear relationships that exist between structural features and properties, they are robust to the high-dimensionality typical of chemical feature spaces, and they offer computational efficiency that is critical for iterative research and development processes [11] [12]. This guide provides an objective comparison of these prominent algorithms, underpinned by experimental data and detailed methodologies, to inform their application in molecular property prediction research.

Molecular Representations: The Foundation for Prediction

The performance of any machine learning model is contingent on the quality of its input features. In molecular property prediction, two classes of representations are predominant.

Molecular Fingerprints: These are typically binary bit vectors that encode the presence or absence of specific substructures or patterns within a molecule. The Extended Connectivity Fingerprint (ECFP) is a canonical example, generating a hashed representation of circular atom neighborhoods [13]. Their key advantage is providing a fixed-length, information-dense representation of molecular structure without requiring expert-defined descriptors.
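
The idea of hashing substructures into a fixed-length bit vector can be sketched without a cheminformatics toolkit. The function below is a toy: it hashes character substrings of a SMILES string, whereas real ECFP hashes circular atom neighborhoods of the molecular graph (in practice computed with a toolkit such as RDKit). The function name, bit length, and substring length are all assumptions made for illustration.

```python
import hashlib

def toy_fingerprint(smiles: str, n_bits: int = 64, max_len: int = 3) -> list[int]:
    """Hash every SMILES substring of length 1..max_len into a fixed-length bit vector.

    This is NOT ECFP: it works on characters of the string, not on atom
    neighborhoods, and is only meant to show hashing into a fixed length.
    """
    bits = [0] * n_bits
    for length in range(1, max_len + 1):
        for start in range(len(smiles) - length + 1):
            fragment = smiles[start:start + length]
            digest = hashlib.sha256(fragment.encode()).digest()
            # Fold the hash into the available bit positions; collisions are possible.
            bits[int.from_bytes(digest[:4], "big") % n_bits] = 1
    return bits

ethanol = toy_fingerprint("CCO")
phenol = toy_fingerprint("c1ccccc1O")
print(len(ethanol), len(phenol), sum(ethanol), sum(phenol))
```

Both molecules map to vectors of the same length regardless of their size, which is the property that lets tree-based models consume fingerprints directly as tabular features.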

Molecular Descriptors: These are numerical values quantifying specific physicochemical properties (e.g., molecular weight, logP, polar surface area) or topological features of the molecule. They can be combined with fingerprints to create an extended feature set that encompasses both structural and property-based information [14].

A critical insight from recent benchmarking studies is that these "traditional" representations, when paired with robust tree-based models, remain remarkably competitive. One extensive evaluation of 25 pretrained neural models found that nearly all showed negligible improvement over the baseline ECFP fingerprint, which often delivered top-tier performance across a wide range of tasks [13]. Another study comparing descriptor-based and graph-based models concluded that "the off-the-shelf descriptor-based models still can be directly employed to accurately predict various chemical endpoints" [11]. This establishes that the representation—fingerprints and descriptors—provides a powerful and often sufficient foundation upon which tree-based models build their success.

Performance Comparison: Random Forest vs. XGBoost vs. LightGBM

Direct comparisons of tree-based algorithms across diverse molecular prediction tasks reveal distinct performance profiles. The following tables summarize quantitative results from key benchmarking studies.

Table 1: Comparative performance on classification and regression tasks in cheminformatics [11] [12].

| Model | Best For | Key Strengths | Notable Performance |
|---|---|---|---|
| Random Forest (RF) | All-purpose solution; robust performance [4]. | Reduces overfitting; handles mixed data types [4]. | Reliable performance for classification tasks [11]. |
| XGBoost | State-of-the-art predictive accuracy [4] [12]. | Built-in regularization; fast execution [4]. | Generally best predictive performance in large-scale QSAR benchmarking [12]. |
| LightGBM | Large-scale datasets requiring fast training [4] [12]. | Fastest training speed & lower memory usage [4] [12]. | Achieved reliable predictions for classification; fastest training time [11] [12]. |

Table 2: Model performance on specific molecular prediction tasks from recent literature.

| Application Domain | Best Performing Model(s) | Reported Metric | Key Finding |
|---|---|---|---|
| Drug Solubility in scCO₂ | XGBoost | R²: 0.9984, RMSE: 0.0605 [15] | Outperformed RF, CatBoost, and LightGBM. |
| CO₂ Capture by Ionic Liquids | CatBoost | R²: 0.9945, MAE: 0.0108 [3] | Outperformed RF, XGBoost, and LightGBM. |
| Retention Time Prediction | XGBoost & LightGBM | R² > 0.71 [14] | Top performers using extended molecular descriptors. |
| Drug-Target Interaction (DTI) | LightGBM in LGBMDF framework | High Sn, Sp, MCC, AUC, AUPR [16] | Better performance and faster speed than XGBoost-based cascade forest. |

The data indicates that XGBoost frequently achieves the highest predictive accuracy on standardized benchmarks, making it a strong default choice for many molecular property prediction tasks [12]. However, LightGBM offers a significant advantage in computational efficiency, particularly for larger datasets, often with only a minimal sacrifice in accuracy [12] [16]. Random Forest remains a robust and reliable algorithm, especially valuable for its simplicity and resistance to overfitting [4]. The performance of CatBoost can be exceptional on specific tasks and datasets, sometimes leading the pack as shown in the ionic liquids study [3].

Experimental Protocols and Workflows

To ensure the reproducibility and rigor of model comparisons, studies typically follow a structured workflow. The methodology below synthesizes protocols from the cited research [17] [11] [14].

Data Curation and Preprocessing

The first step involves assembling a dataset of molecules with associated experimental property values. SMILES strings are canonicalized using toolkits like RDKit. Subsequently, molecular representations are generated:

- Fingerprints: ECFP, Morgan, or other fingerprints are calculated with a specified radius and bit length.

- Descriptors: A set of physicochemical and topological descriptors (e.g., from RDKit or Mordred) is computed.

The dataset is then split into training and test sets, often employing scaffold splitting to assess model generalization to novel chemotypes.
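
A scaffold split can be sketched as a group-wise partition. The version below assumes the scaffold identifier of each molecule has already been computed elsewhere (for example, Bemis-Murcko scaffolds from a cheminformatics toolkit); the labels and the 20% test fraction are hypothetical, and the cited studies may have used a different procedure.

```python
from collections import defaultdict

def scaffold_split(scaffolds, test_fraction=0.2):
    """Split sample indices so that no scaffold appears in both train and test.

    `scaffolds` holds one precomputed scaffold identifier per molecule.
    """
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Largest scaffold groups are placed in train first; rarer ones spill into test.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_target = (1 - test_fraction) * len(scaffolds)
    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test

# Hypothetical scaffold labels for ten molecules.
scaffolds = ["benzene"] * 4 + ["pyridine"] * 3 + ["indole"] * 2 + ["furan"]
train_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, test_idx)
```

Because whole scaffold groups move together, the realized test fraction only approximates the requested one.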

Model Training and Hyperparameter Optimization

Models are trained on the generated representations. A critical component is hyperparameter tuning to maximize performance and ensure a fair comparison. Common optimization techniques include Grid Search, Random Search, or Bayesian Optimization (e.g., via Optuna) [18]. Key hyperparameters include:

- Random Forest: Number of trees, maximum depth, minimum samples per split.

- XGBoost: Learning rate, maximum depth, L1/L2 regularization terms, subsample ratio.

- LightGBM: Number of leaves, learning rate, feature fraction, bagging fraction.
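
The hyperparameters listed above map onto concrete parameter names in each library. The sketch below draws one random-search trial per model using only the standard library; the parameter names follow scikit-learn, XGBoost, and LightGBM, while the ranges and the `sample_params` helper are assumptions for illustration rather than recommended settings.

```python
import math
import random

# Parameter names follow each library; the ranges are illustrative assumptions.
SEARCH_SPACES = {
    "random_forest": {                      # scikit-learn RandomForestRegressor
        "n_estimators": ("int", 100, 1000),
        "max_depth": ("int", 3, 30),
        "min_samples_split": ("int", 2, 20),
    },
    "xgboost": {                            # XGBoost scikit-learn wrapper
        "learning_rate": ("log", 1e-3, 0.3),
        "max_depth": ("int", 3, 10),
        "reg_alpha": ("log", 1e-8, 10.0),   # L1 penalty
        "reg_lambda": ("log", 1e-8, 10.0),  # L2 penalty
        "subsample": ("float", 0.5, 1.0),
    },
    "lightgbm": {                           # LightGBM native parameter names
        "num_leaves": ("int", 15, 255),
        "learning_rate": ("log", 1e-3, 0.3),
        "feature_fraction": ("float", 0.5, 1.0),
        "bagging_fraction": ("float", 0.5, 1.0),  # only active when bagging_freq > 0
    },
}

def sample_params(space, rng):
    """Draw one configuration: integers uniformly, 'log' values log-uniformly."""
    params = {}
    for name, (kind, low, high) in space.items():
        if kind == "int":
            params[name] = rng.randint(low, high)
        elif kind == "log":
            params[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
        else:
            params[name] = rng.uniform(low, high)
    return params

rng = random.Random(0)
trial = {model: sample_params(space, rng) for model, space in SEARCH_SPACES.items()}
for model, params in trial.items():
    print(model, params)
```

Sampling learning rates and penalties on a log scale spreads trials evenly across orders of magnitude, which is usually more useful than a uniform draw for these parameters.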

Evaluation and Validation

Model performance is rigorously evaluated using k-fold cross-validation (often 5- or 10-fold) on the training set to guide hyperparameter tuning, with a final, unbiased evaluation performed on the held-out test set. Common metrics include:

- Regression: R², Root Mean Square Error (RMSE), Mean Absolute Error (MAE).

- Classification: ROC-AUC, PR-AUC, F1 score, Matthews Correlation Coefficient (MCC).

The use of multiple metrics, particularly PR-AUC and MCC for imbalanced datasets, is considered best practice [17].
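
For reference, several of these metrics can be written out in a few lines of plain Python. The sketch below follows the standard textbook definitions; the example values are invented.

```python
import math

def regression_metrics(y_true, y_pred):
    """R², RMSE, and MAE from their standard definitions."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)          # total sum of squares
    return {
        "r2": 1 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n,
    }

def mcc(y_true, y_pred):
    """Matthews Correlation Coefficient for binary labels (1 = positive class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denominator if denominator else 0.0

reg = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.5, 4.0])
cls = mcc([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 0, 0, 0, 0, 1])
print(reg, round(cls, 4))
```

MCC uses all four cells of the confusion matrix, which is why it stays informative when inactive compounds heavily outnumber actives.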

Diagram 1: Standard workflow for benchmarking tree-based models on molecular data.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The experimental workflow relies on a suite of software libraries and computational tools that form the modern scientist's toolkit for molecular machine learning.

Table 3: Key software tools for molecular property prediction with tree-based models.

| Tool Name | Type | Primary Function | Reference |
|---|---|---|---|
| RDKit | Cheminformatics Library | Canonicalize SMILES; calculate fingerprints & descriptors. | [11] [14] |
| Mordred | Descriptor Calculator | Compute a large, comprehensive set of molecular descriptors. | [14] |
| XGBoost | ML Library | Implementation of the XGBoost algorithm. | [15] [12] |
| LightGBM | ML Library | Implementation of the LightGBM algorithm. | [12] [16] |
| Scikit-learn | ML Library | Implementation of Random Forest and other utilities. | [12] |
| Optuna | Hyperparameter Optimization | Automated tuning of model hyperparameters. | [18] |

Technical Underpinnings: Why Tree-Based Models Are Effective

The consistent success of tree-based models with molecular representations can be traced to fundamental algorithmic characteristics.

Handling Non-Linear Relationships: The hierarchical splitting process in decision trees naturally captures complex, non-linear interactions between molecular features without requiring prior transformation or assumption of linearity [12]. This is crucial as molecular properties often arise from complex, interdependent structural effects.

Robustness to Feature Scales: Tree-based models are invariant to the scale of input features, which is highly advantageous when combining diverse molecular descriptors that may have different units and value ranges. This eliminates the need for careful feature scaling, a requirement for many other algorithms like Support Vector Machines and Neural Networks [15].
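
Scale invariance is easy to check empirically. The sketch below fits two scikit-learn decision trees, one on the original features and one after multiplying a column by 1000 (as a change of units would), and compares their predictions. The two descriptors and the target are synthetic assumptions.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)
# Two synthetic descriptors on very different scales, each with distinct values.
mol_weight = rng.permutation(200).astype(float) + 100.0  # roughly 100 to 300
logp = rng.permutation(200) / 40.0 - 1.0                  # roughly -1 to 4
X = np.column_stack([mol_weight, logp])
y = 0.01 * mol_weight - 0.8 * logp + rng.normal(scale=0.1, size=200)

# Rescale the first column without changing the ordering of its values.
X_rescaled = X * np.array([1000.0, 1.0])

tree_a = DecisionTreeRegressor(max_depth=4, random_state=0).fit(X, y)
tree_b = DecisionTreeRegressor(max_depth=4, random_state=0).fit(X_rescaled, y)

# Splits depend only on how samples are ordered along each feature,
# so both trees partition the molecules identically.
same = np.allclose(tree_a.predict(X), tree_b.predict(X_rescaled))
print(same)
```

The same argument extends to any order-preserving transformation, such as taking the logarithm of a strictly positive descriptor.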

Implicit Feature Selection: During training, trees split on the most informative features, effectively performing embedded feature selection. This makes them robust to the high-dimensionality and potential noise present in large fingerprint and descriptor vectors, focusing on the most predictive substructures and properties [12].

Computational Efficiency: Algorithms like XGBoost and LightGBM are engineered for speed and scalability. LightGBM's histogram-based splitting and leaf-wise growth strategy, along with XGBoost's parallel processing, enable them to handle large-scale datasets efficiently, which is essential for high-throughput virtual screening [12] [16].

Diagram 2: Alignment between tree-model strengths and molecular data challenges drives performance.

The empirical evidence clearly demonstrates that tree-based models, particularly XGBoost, LightGBM, and Random Forest, excel in molecular property prediction when coupled with classical representations like fingerprints and descriptors. XGBoost often provides a slight edge in predictive accuracy, LightGBM dominates in training speed for large datasets, and Random Forest offers proven robustness. The choice among them depends on the specific project priorities: raw predictive power, computational constraints, or the need for a simple, reliable baseline.

Future research will likely focus on the integration of these powerful models with emerging representation learning techniques. While current benchmarks show traditional fingerprints holding their own, the synergy between learned representations from graph neural networks or transformers and the robust predictive power of tree-based ensembles is a promising frontier. For now, tree-based models applied to well-crafted molecular features remain an indispensable, state-of-the-art toolkit for researchers and scientists driving innovation in drug discovery and materials science.

Molecular property prediction stands as a critical computational challenge in chemistry, material science, and drug discovery. With chemical spaces exceeding 10^18 compounds for certain classes like ionic liquids, brute-force experimental approaches become prohibitively expensive and time-consuming [19] [3]. Computational models, particularly machine learning algorithms, have emerged as powerful tools for predicting molecular properties by learning from existing datasets. Among these, tree-based ensemble methods including Random Forest (RF), XGBoost (XB), and LightGBM (LG) have demonstrated remarkable performance across diverse prediction tasks [3] [20]. This guide provides a comprehensive comparison of these algorithms specifically for molecular property prediction, enabling researchers to select optimal methodologies for their specific applications.

The fundamental challenge in molecular informatics lies in establishing quantitative structure-property relationships (QSPR), where models learn to correlate molecular descriptors with target properties [3]. Success depends on multiple factors: dataset characteristics, molecular representation, algorithm selection, and appropriate validation methodologies. Ensemble methods excel in this domain by combining multiple weak learners to create robust predictors that generalize well to unseen molecules, though each algorithm exhibits distinct strengths and weaknesses across different prediction scenarios [21] [3].

Critical Molecular Prediction Tasks and Dataset Considerations

Key Prediction Domains and Associated Data Challenges

Molecular prediction spans numerous property domains essential to scientific and industrial applications. For drug discovery, key properties include binding affinity, solubility, permeability, and toxicity profiles [19]. Material science applications focus on properties like solubility of gases in ionic liquids for carbon capture [3], while other domains include olfactory characteristics [19] and shear resistance in construction materials [22].

Dataset quality and composition significantly impact model performance. Common challenges include limited dataset size, inherent biases in published data, and inadequate chemical diversity [19]. For many pharmacological properties, reliable data is scarce and concentrated around specific molecular classes. The applicability domain concept is crucial—defining the chemical space where models provide reliable predictions [19]. Molecular representations further influence success; recent innovations include functional structure descriptors and dimension-reduced descriptors like CORE that maintain predictive accuracy while simplifying feature spaces [3].

Table 1: Representative Molecular Property Datasets

| Dataset | Property Focus | Molecules | Notable Characteristics |
|---|---|---|---|
| Tox21 | Toxicology | ~13,000 | 12 different assay outcomes |
| ChEMBL | Bioactivity | ~2.0 million | Extracted from literature |
| QM9 | Electronic Properties | ~134,000 | DFT simulations for small molecules |
| PDBbind | Binding Affinity | ~21,400 | Biomolecular complexes from PDB |
| AqSolDB | Aqueous Solubility | ~10,000 | Organic molecules from 9 sources |
| Lipophilicity | Distribution Coefficient | ~1,100 | n-octanol/water distribution |
| BBBP | Blood-Brain Barrier Penetration | ~2,100 | Blood-brain penetration data |

Experimental Design and Validation Frameworks

Robust experimental design is essential for reliable model assessment. Corrected cross-validation techniques and statistical tests account for dataset partitioning effects, reducing biased performance estimates [21]. For imbalanced data scenarios common in molecular studies (e.g., active vs. inactive compounds), resampling techniques like SMOTE and ADASYN help balance class distributions [17]. Hyperparameter optimization through Bayesian search or grid search further enhances model performance [21] [17].
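
The core interpolation step behind SMOTE can be sketched in NumPy. This is a simplified illustration and not the reference implementation from any resampling library; the function name `smote_like`, the neighbor count, and the random minority-class features are assumptions.

```python
import numpy as np

def smote_like(minority, n_new, k=3, seed=0):
    """Create synthetic minority samples by interpolating toward near neighbors."""
    rng = np.random.default_rng(seed)
    # Pairwise Euclidean distances within the minority class.
    distances = np.linalg.norm(minority[:, None, :] - minority[None, :, :], axis=-1)
    np.fill_diagonal(distances, np.inf)        # a point is not its own neighbor
    neighbors = np.argsort(distances, axis=1)[:, :k]

    synthetic = np.empty((n_new, minority.shape[1]))
    for row in range(n_new):
        i = rng.integers(len(minority))        # pick a minority sample
        j = rng.choice(neighbors[i])           # pick one of its k nearest neighbors
        gap = rng.random()                     # position along the segment from i to j
        synthetic[row] = minority[i] + gap * (minority[j] - minority[i])
    return synthetic

rng = np.random.default_rng(1)
actives = rng.normal(size=(12, 5))             # hypothetical minority-class descriptors
new_actives = smote_like(actives, n_new=20)
print(new_actives.shape)
```

Two practical caveats follow from the mechanism: resampling should be applied only to the training folds so that validation data stays untouched, and interpolating binary fingerprints produces fractional values that no longer correspond to real substructure bits.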

The following workflow diagram illustrates a standardized experimental protocol for comparing molecular prediction algorithms:

Diagram 1: Experimental workflow for comparing molecular prediction algorithms

Performance Comparison of Ensemble Algorithms

Quantitative Performance Metrics Across Applications

Direct comparisons of RF, XGBoost, and LightGBM across molecular prediction tasks reveal context-dependent performance patterns. In predicting CO₂ solubility in ionic liquids, CatBoost (another gradient boosting variant) achieved exceptional performance (R² = 0.9945, MAE = 0.0108) using functional structure descriptors [3]. The same study also trained LightGBM, XGBoost, and Random Forest, which all performed well but trailed CatBoost, demonstrating the potential of boosted ensembles for molecular property prediction [3].

For intrusion detection in wireless sensor networks, a different but structurally similar prediction task, CatBoost optimized with Particle Swarm Optimization (PSO) outperformed XGBoost, LightGBM, and Random Forest with a remarkable R² value of 0.9998 [20]. This demonstrates gradient boosting's potential advantage in well-tuned scenarios with appropriate optimization techniques.

Table 2: Algorithm Performance Comparison Across Prediction Tasks

| Application Domain | Best Performing Algorithm | Key Metrics | Runner-up Algorithm | Comparative Performance |
|---|---|---|---|---|
| CO₂ Solubility in ILs [3] | CatBoost | R² = 0.9945, MAE = 0.0108 | Other Ensemble Methods | All ensembles performed well, CatBoost superior |
| Intrusion Detection [20] | CatBoost-PSO | R² = 0.9998, MAE = 0.6298 | XGBoost | Clear superiority across all metrics |
| General Tabular Data [23] | Gradient Boosting Machines | Varies by dataset | Deep Learning/Neural Networks | Often equivalent or superior to DL |
| Academic Performance [24] | LightGBM | AUC = 0.953, F1 = 0.950 | XGBoost/Random Forest | LightGBM best base model |
| Shear Resistance [22] | ANN (for extrapolation) | R² = 0.98-0.99 | RF/XGB/LightGBM | All comparable for interpolation |

Computational Efficiency and Scalability Considerations

Beyond raw predictive accuracy, computational efficiency critically impacts practical utility. For structured tabular data common in molecular prediction, tree-based ensembles typically outperform deep learning models while requiring fewer computational resources [23] [17]. Among ensemble methods, LightGBM often demonstrates faster training times due to its histogram-based approach, while XGBoost provides excellent performance with careful parameter tuning [24]. Random Forest generally offers competitive performance with greater parallelization capabilities [17].

The relationship between dataset characteristics and optimal algorithm selection can be visualized as follows:

Diagram 2: Algorithm selection guide based on dataset characteristics and constraints

Detailed Experimental Protocols

Molecular Property Prediction Methodology

Standardized experimental protocols enable fair algorithm comparisons. For predicting CO₂ solubility in ionic liquids, researchers developed functional structure descriptors based on group contribution methods and a simplified CORE descriptor [3]. The experimental workflow involved:

- Descriptor Calculation: Compute functional structure descriptors capturing molecular characteristics relevant to solvation interactions

- Dataset Partitioning: Split data using scaffold-based or temporal splits to assess generalization capability

- Model Training: Implement multiple ensemble methods (CatBoost, LightGBM, XGBoost, GBDT, RF, AdaBoost) with consistent validation

- Hyperparameter Tuning: Employ Bayesian optimization or grid search for critical parameters (learning rate, tree depth, regularization)

- Validation: Assess performance using R², MAE, and other relevant metrics with corrected cross-validation

This protocol revealed that while all ensemble methods achieved strong performance, CatBoost demonstrated superior predictive accuracy for this specific molecular prediction task [3].

Handling Class Imbalance and Dataset Bias

Molecular property datasets often exhibit significant class imbalance (e.g., active vs. inactive compounds). Resampling techniques like SMOTE have proven effective when combined with ensemble methods [17]. The supporting evidence here comes from outside chemistry: in telecommunications churn prediction, a tabular classification task structurally similar to molecular activity classification, tuned XGBoost with SMOTE achieved the highest F1-score across imbalance levels from 15% to 1% [17].
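The core idea of SMOTE is to create synthetic minority samples by interpolating between a minority sample and one of its nearest minority neighbours. The sketch below illustrates only that interpolation step in plain Python. The function name, the parameters, and the toy descriptor vectors are assumptions for illustration, and a maintained library implementation would be the usual choice in practice.

```python
import random


def smote_sample(minority, k=2, n_new=4, seed=0):
    """Generate n_new synthetic points by interpolating between minority samples."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # k nearest minority neighbours of `base` (excluding itself),
        # ranked by squared Euclidean distance
        neighbours = sorted(
            (x for x in minority if x is not base),
            key=lambda x: sum((a - b) ** 2 for a, b in zip(base, x)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> other
        synthetic.append([a + gap * (b - a) for a, b in zip(base, other)])
    return synthetic


# Hypothetical 2-D descriptor vectors for the minority ("active") class
minority = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for point in smote_sample(minority):
    print([round(v, 3) for v in point])
```

Resampling of this kind is normally applied to the training fold only, so that synthetic points never leak into the validation or test data.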

Dataset bias represents another critical consideration. Molecular datasets frequently overrepresent certain chemical subspaces, potentially leading to overoptimistic performance estimates [19]. The applicability domain concept helps quantify prediction reliability based on molecular similarity to training data [19].
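One simple way to operationalize an applicability domain is a nearest-neighbour similarity check: a query molecule is considered in-domain if it is sufficiently similar to at least one training molecule. The sketch below assumes fingerprints are given as sets of on-bit indices and uses Tanimoto similarity. The 0.3 threshold and the toy fingerprints are illustrative assumptions, not values from [19].

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def in_domain(query, training_fps, threshold=0.3):
    """Return (is_inside_domain, similarity_to_nearest_training_molecule)."""
    nearest = max(tanimoto(query, fp) for fp in training_fps)
    return nearest >= threshold, nearest


# Hypothetical training fingerprints (sets of on-bit indices)
training_fps = [{1, 4, 7, 9}, {1, 4, 8}, {2, 3, 5}]

print(in_domain({1, 4, 7}, training_fps))     # close analogue of a training molecule
print(in_domain({10, 11, 12}, training_fps))  # shares no bits with the training set
```

Predictions for molecules flagged as out-of-domain can then be reported with a warning or withheld, depending on the application.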

Key Algorithms and Implementation Frameworks

Selecting appropriate algorithms forms the foundation of effective molecular property prediction. Based on comparative studies:

- XGBoost: Often provides top-tier predictive performance with careful tuning; excellent for heterogeneous feature spaces [17] [24]

- LightGBM: Delivers competitive accuracy with significantly faster training times; ideal for large-scale screening [20] [24]

- Random Forest: Offers robust performance with lower variance; excellent for smaller datasets and parallel implementation [17] [22]

- CatBoost: Superior handling of categorical features; demonstrated exceptional performance in specific molecular prediction tasks [3] [20]

Successful implementation requires both quality datasets and robust software frameworks:

Table 3: Essential Resources for Molecular Prediction Research

| Resource | Type | Function/Purpose | Implementation Notes |

|---|---|---|---|

| Scikit-learn | Software Library | Traditional ML implementation | RF, preprocessing, validation |

| XGBoost | Software Library | Gradient boosting framework | Python/R/Java APIs |

| LightGBM | Software Library | Lightweight gradient boosting | Microsoft development |

| CatBoost | Software Library | Categorical feature handling | Yandex development |

| ChEMBL | Database | Bioactive molecule properties | ~2 million compounds |

| PubChemQC | Database | Molecular geometries & properties | Quantum-chemical calculations for ~221M molecules (PM6 dataset) |

| Tox21 | Dataset | Toxicological profiling | 12 assays, ~13K compounds |

| Applicability Domain | Methodology | Prediction reliability assessment | Critical for real-world deployment |

| SMOTE | Algorithm | Class imbalance correction | Synthetic sample generation |

Molecular property prediction represents a challenging domain where algorithm selection significantly impacts research outcomes. Based on comprehensive comparative analysis:

For maximum predictive accuracy with sufficient computational resources, XGBoost and CatBoost generally deliver top performance, particularly when combined with appropriate molecular descriptors and hyperparameter optimization [3] [20]. For large-scale screening applications requiring efficient processing, LightGBM provides the best balance of accuracy and computational efficiency [20] [24]. For robust performance on smaller datasets or when model interpretability is prioritized, Random Forest remains a competitive choice [17] [22].

Future research directions should focus on developing domain-specific molecular representations, improving uncertainty quantification, and creating more balanced benchmarking datasets. The integration of ensemble methods with emerging deep learning approaches may further enhance predictive capabilities across the diverse landscape of molecular property prediction tasks.

In molecular property prediction research, handling sparse, high-dimensional chemical data presents significant challenges that directly impact model selection and performance. Data sparsity arises naturally in chemical datasets due to the vastness of chemical space and the relatively small number of experimentally characterized compounds. High-dimensionality results from the complex numerical representations needed to capture molecular structures, often generating hundreds or thousands of features from molecular descriptors, fingerprints, or embeddings. Within this context, tree-based ensemble methods—particularly Random Forest, XGBoost, and LightGBM—have emerged as powerful tools for navigating these data challenges, each offering distinct advantages for different data scenarios encountered by researchers and drug development professionals.

The performance of these algorithms is heavily influenced by dataset characteristics, including size, sparsity patterns, dimensionality, and feature distributions. This guide provides an objective comparison of these three algorithms, supported by experimental data from cheminformatics studies, to help researchers select the most appropriate method for their specific molecular property prediction tasks.

Algorithmic Foundations and Structural Differences

Tree Growth Strategies

The fundamental structural differences between the three algorithms significantly impact their handling of sparse, high-dimensional data:

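Random Forest employs a "bagging" approach that constructs multiple independent decision trees, each trained on a bootstrap sample of the observations and restricted to a random subset of features at each split, then aggregates their predictions by averaging (regression) or majority vote (classification). Each tree is typically grown deep with little or no pruning, and the aggregation across trees keeps variance under control.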

XGBoost utilizes a "boosting" approach that builds trees sequentially, with each new tree correcting the errors of the current ensemble. By default it grows trees level-wise (depth-wise), and it offers both an exact pre-sorted algorithm and an approximate histogram-based method for split finding [8].

LightGBM also uses boosting but implements a leaf-wise (vertical) tree growth strategy that expands the node with the maximum loss reduction, resulting in asymmetric trees with potentially greater accuracy but higher risk of overfitting on small datasets [8] [25]. LightGBM introduces two novel techniques for efficiency: Gradient-Based One-Side Sampling (GOSS), which retains instances with larger gradients and randomly samples those with smaller gradients, and Exclusive Feature Bundling (EFB), which combines mutually exclusive sparse features to reduce dimensionality [8].
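The GOSS idea described above can be sketched in a few lines: keep the rows with the largest absolute gradients, randomly sample a fraction of the remaining rows, and up-weight the sampled rows so the overall gradient statistics stay approximately unbiased. This is a simplified illustration rather than LightGBM's internal code, and the function name, default rates, and gradient values are assumptions.

```python
import random


def goss_sample(gradients, top_rate=0.2, other_rate=0.1, seed=0):
    """Return {row_index: weight} for the rows kept by a GOSS-style sampler."""
    rng = random.Random(seed)
    n = len(gradients)
    # Rank rows by absolute gradient, largest first
    order = sorted(range(n), key=lambda i: abs(gradients[i]), reverse=True)
    n_top = int(top_rate * n)
    n_other = int(other_rate * n)
    top = order[:n_top]                           # always kept
    sampled = rng.sample(order[n_top:], n_other)  # random subset of the rest
    # Small-gradient rows are amplified by (1 - top_rate) / other_rate
    amplify = (1.0 - top_rate) / other_rate
    weights = {i: 1.0 for i in top}
    weights.update({i: amplify for i in sampled})
    return weights


# Hypothetical per-row gradients from the current boosting iteration
gradients = [0.05 * i for i in range(20)]
weights = goss_sample(gradients)
print(sorted(weights.items()))
```

With these rates only 30% of the rows are used to evaluate candidate splits, which is where the training-time saving comes from.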

Handling of Sparse Data and Missing Values

Each algorithm employs distinct strategies for handling sparse, high-dimensional data:

Random Forest naturally handles sparse data through its feature sampling approach, which reduces the impact of uninformative sparse features. Missing-value handling is implementation-dependent: many Random Forest implementations expect imputed inputs, while others use surrogate splits or route missing values to the branch that minimizes loss.

XGBoost includes a "sparsity-aware" split finding algorithm that automatically learns the best direction to handle missing values during training. The algorithm assigns missing values to either the left or right branch based on which option provides the maximum gain [8].

LightGBM efficiently handles sparse data through its Exclusive Feature Bundling (EFB) capability, which can bundle multiple sparse features (e.g., one-hot encoded categorical variables) into fewer dense features, significantly reducing dimensionality and computational requirements [8].

For high-dimensional chemical data where features often include molecular fingerprints with many zero values, LightGBM's EFB provides particular advantages in memory usage and computational efficiency.
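To make the bundling idea concrete, the following sketch greedily groups features that are (almost) never non-zero on the same row. It is a simplified illustration of the EFB concept, not LightGBM's implementation: features are ordered by their number of non-zero entries, and the feature names and the `max_conflicts` parameter are hypothetical.

```python
def bundle_exclusive_features(nonzero_rows, max_conflicts=0):
    """Greedily bundle features whose non-zero rows (almost) never overlap.

    nonzero_rows maps a feature name to the set of row indices where it is non-zero.
    """
    # Process the densest features first
    order = sorted(nonzero_rows, key=lambda f: len(nonzero_rows[f]), reverse=True)
    bundles = []  # each entry: (feature names in the bundle, rows already occupied)
    for feat in order:
        rows = nonzero_rows[feat]
        for names, occupied in bundles:
            # A conflict is a row where both the bundle and the feature are non-zero
            if len(occupied & rows) <= max_conflicts:
                names.append(feat)
                occupied |= rows
                break
        else:
            bundles.append(([feat], set(rows)))
    return [names for names, _ in bundles]


# Hypothetical fingerprint bits and the molecules (rows) in which each is set
nonzero_rows = {
    "bit_a": {0, 1},
    "bit_b": {2, 3},
    "bit_c": {1, 2},
    "bit_d": {4},
}
print(bundle_exclusive_features(nonzero_rows))
```

Here four sparse columns collapse into two bundles, so split finding has to scan two features instead of four.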

Experimental Comparison and Benchmark Results

Large-Scale QSAR Benchmarking Study

A comprehensive quantitative structure-activity relationship (QSAR) benchmarking study evaluated 157,590 gradient boosting models across 16 datasets and 94 endpoints, comprising 1.4 million compounds in total. The study directly compares XGBoost, LightGBM, and CatBoost (but not Random Forest) on chemical data [25].

Table 1: Overall Performance Comparison in QSAR Benchmarking

| Algorithm | Predictive Performance | Training Speed | Memory Efficiency | Best Use Cases |

|---|---|---|---|---|

| XGBoost | Generally achieves best predictive performance [25] | Moderate | Moderate | Datasets where predictive accuracy is prioritized over training speed |

| LightGBM | Competitive, slightly lower than XGBoost in some studies [25] | Fastest, especially for larger datasets [25] | High, due to EFB feature bundling [8] | Large datasets (>10,000 samples), high-dimensional features, computational constraints |

| Random Forest | Robust, less prone to overfitting on small datasets | Fast for training individual trees, but slower overall for comparable performance | Low, due to storing multiple full-sized trees | Small to medium datasets, noisy data, model interpretability requirements |

Table 2: Molecular Property Prediction Performance (R² Scores)

| Molecular Property | Dataset Size | XGBoost | LightGBM | Random Forest | Best Performer |

|---|---|---|---|---|---|

| Critical Temperature | 819 compounds | 0.93 [18] | 0.92 [18] | 0.89* | XGBoost |

| Boiling Point | 4,915 compounds | 0.91 [18] | 0.90 [18] | 0.87* | XGBoost |

| Melting Point | 7,476 compounds | 0.88 [18] | 0.87 [18] | 0.85* | XGBoost |

| Vapor Pressure | 398 compounds | 0.79 [18] | 0.78 [18] | 0.82* | Random Forest |

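* Note: Random Forest values marked with an asterisk are estimates based on typical performance patterns in comparative studies, because exact values were not provided in the sourced materials. They are not measured results, so the "Best Performer" entry for Vapor Pressure should be treated as tentative.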

High-Dimensional Classification Performance

In a separate high-dimensional classification problem with over 60,000 observations and 103 numerical features (highly sparse feature space), the performance differences were quantified as follows [26]:

Table 3: High-Dimensional Sparse Data Performance

| Metric | XGBoost | LightGBM |

|---|---|---|

| Multi-logloss (Train) | 0.369 | 0.383 |

| Multi-logloss (Validation) | 0.415 | 0.418 |

| Training Time | 3 minutes 52 seconds | 2 minutes 26 seconds |

| Training Time Reduction | - | ~37% less time (about 1.6× faster) |

The results demonstrate nearly equivalent predictive performance between XGBoost and LightGBM on high-dimensional sparse data, with LightGBM providing significant training speed advantages. This pattern consistently appears across multiple studies, making LightGBM particularly valuable for large-scale virtual screening campaigns and high-throughput data where computational efficiency is crucial.
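The size of the speed advantage depends on how it is expressed. As a quick check using only the training times reported in the table:

```python
xgb_seconds = 3 * 60 + 52    # 3 min 52 s = 232 s
lgbm_seconds = 2 * 60 + 26   # 2 min 26 s = 146 s

time_saved = 1 - lgbm_seconds / xgb_seconds  # fraction of training time saved
speedup = xgb_seconds / lgbm_seconds         # how many times faster LightGBM ran

print(f"{time_saved:.0%} less training time, {speedup:.2f}x speedup")
# prints "37% less training time, 1.59x speedup"
```

Stated as time saved, LightGBM needs a little over a third less training time on this problem. Stated as a ratio, it runs roughly 1.6 times as fast.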

Experimental Protocols and Methodologies

QSAR Benchmarking Protocol

The large-scale QSAR benchmarking study employed the following rigorous methodology to ensure fair algorithm comparisons [25]:

Dataset Selection: 16 classification and regression datasets from MoleculeNet, MolData, and ChEMBL with 94 different endpoints covered a wide range of dataset sizes and class-imbalance ratios.

Data Preprocessing: Molecular structures were encoded using standardized molecular descriptors. Dataset splits used scaffold splitting to evaluate generalization to novel chemical structures.

Hyperparameter Optimization: Extensive Bayesian optimization was performed for each algorithm, evaluating key parameters including:

- Maximum tree depth and number of leaves

- Learning rate and number of estimators

- Regularization parameters (L1 and L2)

- Feature and row sampling ratios

Evaluation Metrics: Models were evaluated using multiple metrics, including ROC-AUC, precision-recall AUC, and root mean square error (RMSE), with repeated cross-validation so that performance differences could be assessed statistically.
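The scaffold splitting used in the preprocessing step can be sketched as a grouping problem: all molecules that share a scaffold must land on the same side of the split. The example below assumes scaffold identifiers have already been computed (for instance as Bemis-Murcko scaffold SMILES from a cheminformatics toolkit). The scaffold labels and the function name are hypothetical, and the exact procedure used in [25] may differ.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split row indices so that no scaffold appears in both train and test."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest scaffold groups are placed first, so rare scaffolds tend to end up in test
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_train_target = len(scaffolds) - round(test_fraction * len(scaffolds))
    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical scaffold label for each molecule in a 10-compound dataset
scaffolds = ["benzene"] * 5 + ["pyridine"] * 3 + ["indole", "furan"]
train_idx, test_idx = scaffold_split(scaffolds)
print("train:", train_idx)
print("test: ", test_idx)
```

Because the test set contains only scaffolds the model has never seen, the resulting score is a stricter estimate of generalization than a random split would give.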

Molecular Property Prediction Workflow

Cheminformatics platforms such as ChemXploreML [18] implement the experimental workflow for molecular property prediction as a modular pipeline. The tools involved at each stage are summarized in Table 4.

Table 4: Essential Tools for Molecular Property Prediction Research

| Tool Category | Specific Tools | Function | Considerations for Sparse Data |

|---|---|---|---|

| Molecular Representation | RDKit [18], Mol2Vec [18], VICGAE [18] | Generates numerical representations from chemical structures | Higher-dimensional representations (300+ dimensions) may increase sparsity; consider dimensionality reduction |

| Machine Learning Frameworks | Scikit-learn (Random Forest), XGBoost, LightGBM, CatBoost | Implements machine learning algorithms | LightGBM preferred for high-dimensional data; XGBoost for maximum accuracy on smaller datasets |

| Hyperparameter Optimization | Optuna [18], Bayesian Search | Automates model parameter tuning | Critical for all algorithms; different hyperparameters important for each algorithm |

| Cheminformatics Platforms | ChemXploreML [18] | Integrated desktop application for molecular property prediction | Provides modular pipeline for comparing multiple algorithms on standardized datasets |

| Data Sources | CRC Handbook [18], PubChem [18], ChEMBL [25] | Provides experimental data for training and validation | Data quality and distribution significantly impact model performance on sparse datasets |

Practical Guidelines for Algorithm Selection

Dataset Size Considerations

The optimal algorithm choice depends significantly on dataset size and characteristics:

Small datasets (<1,000 compounds): Random Forest often provides more robust performance due to its simplicity and reduced overfitting tendency. For very small datasets in the "ultra-low data regime" (<50 samples), specialized techniques like multi-task learning may be necessary [27].

Medium datasets (1,000-10,000 compounds): XGBoost typically achieves the best predictive performance, provided sufficient computational resources are available for hyperparameter tuning and training.

Large datasets (>10,000 compounds): LightGBM provides the best trade-off between performance and computational efficiency, with significantly faster training times on high-dimensional data [25] [26].

Handling Data Sparsity and High-Dimensionality

For specifically handling sparse, high-dimensional chemical data:

When sparsity results from one-hot encoded categorical features: LightGBM's Exclusive Feature Bundling provides distinct advantages by reducing effective dimensionality while maintaining information content [8].

When sparsity patterns are irregular or unknown: XGBoost's sparsity-aware split finding automatically adapts to missing value patterns without requiring manual preprocessing [8].

When feature importance interpretation is crucial: Random Forest provides robust feature importance metrics that are less affected by sparse feature correlations compared to boosting methods [25].
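The sparsity-aware split finding mentioned above can be illustrated with a single candidate split: rows with a missing feature value are tentatively sent left, then right, and the direction with the larger gain becomes the default. The sketch uses the standard second-order gain with L2 regularization and omits the complexity penalty. The function names and the toy gradients are assumptions for illustration, not XGBoost's internal API.

```python
def score(g, h, lam):
    """Structure score of a node with gradient sum g and hessian sum h."""
    return g * g / (h + lam)


def best_default_direction(grads, hess, values, threshold, lam=1.0):
    """Pick the default branch for missing values at one candidate split.

    values[i] is None when the feature is missing for row i.
    """
    G, H = sum(grads), sum(hess)
    # Statistics of rows with a known value below the threshold
    gl = sum(g for g, v in zip(grads, values) if v is not None and v < threshold)
    hl = sum(h for h, v in zip(hess, values) if v is not None and v < threshold)
    # Statistics of rows where the feature is missing
    gm = sum(g for g, v in zip(grads, values) if v is None)
    hm = sum(h for h, v in zip(hess, values) if v is None)

    candidates = {"left": (gl + gm, hl + hm), "right": (gl, hl)}
    gains = {}
    for direction, (g_left, h_left) in candidates.items():
        g_right, h_right = G - g_left, H - h_left
        gains[direction] = 0.5 * (
            score(g_left, h_left, lam) + score(g_right, h_right, lam) - score(G, H, lam)
        )
    return max(gains, key=gains.get), gains


# Hypothetical rows: the two missing-value rows have gradients like the low-value rows
values = [1.0, 2.0, None, 5.0, 6.0, None]
grads = [-2.0, -2.0, -2.0, 2.0, 2.0, -2.0]
hess = [1.0] * 6

direction, gains = best_default_direction(grads, hess, values, threshold=3.0)
print(direction, {k: round(v, 3) for k, v in gains.items()})
```

In this toy case the missing rows behave like the low-value rows, so sending them left yields the larger gain and becomes the learned default.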

Hyperparameter Tuning Recommendations

Based on large-scale benchmarking studies, the most critical hyperparameters to optimize for each algorithm are [25] [26]:

- XGBoost: max_depth, learning_rate, subsample, colsample_bytree, regularization parameters (alpha, lambda)

- LightGBM: num_leaves, min_data_in_leaf, learning_rate, feature_fraction, bagging_fraction

- Random Forest: max_depth, max_features, min_samples_split, n_estimators

For all algorithms, the benchmarking studies emphasize optimizing as many hyperparameters as possible rather than focusing only on a subset, as this significantly impacts final predictive performance, especially on sparse, high-dimensional chemical data.
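A search space covering the hyperparameters listed above might be written down as follows. The parameter names come from the list, but every range is an illustrative assumption rather than a value from [25] or [26]. The random sampler stands in for whichever optimizer is actually used (for example Bayesian optimization).

```python
import math
import random

# (kind, low, high) per hyperparameter; all ranges are illustrative assumptions
SEARCH_SPACE = {
    "XGBoost": {
        "max_depth": ("int", 3, 10),
        "learning_rate": ("log", 1e-3, 0.3),
        "subsample": ("float", 0.5, 1.0),
        "colsample_bytree": ("float", 0.5, 1.0),
        "alpha": ("log", 1e-3, 10.0),
        "lambda": ("log", 1e-3, 10.0),
    },
    "LightGBM": {
        "num_leaves": ("int", 15, 255),
        "min_data_in_leaf": ("int", 5, 100),
        "learning_rate": ("log", 1e-3, 0.3),
        "feature_fraction": ("float", 0.5, 1.0),
        "bagging_fraction": ("float", 0.5, 1.0),
    },
    "Random Forest": {
        "max_depth": ("int", 3, 30),
        "max_features": ("float", 0.1, 1.0),
        "min_samples_split": ("int", 2, 20),
        "n_estimators": ("int", 100, 1000),
    },
}


def sample_config(algorithm, rng):
    """Draw one random configuration from the search space of `algorithm`."""
    config = {}
    for name, (kind, low, high) in SEARCH_SPACE[algorithm].items():
        if kind == "int":
            config[name] = rng.randint(low, high)
        elif kind == "log":
            # Log-uniform sampling suits parameters spanning orders of magnitude
            config[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
        else:
            config[name] = rng.uniform(low, high)
    return config


rng = random.Random(42)
for algorithm in SEARCH_SPACE:
    print(algorithm, sample_config(algorithm, rng))
```

Learning rate and regularization strengths are sampled on a log scale because values such as 0.001 and 0.1 are equally worth exploring.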

The comparison of Random Forest, XGBoost, and LightGBM for handling sparse, high-dimensional chemical data reveals a consistent pattern: there is no single superior algorithm for all scenarios. XGBoost generally achieves the highest predictive accuracy on molecular property prediction tasks, making it ideal when predictive performance is the primary concern and computational resources are sufficient. LightGBM provides significantly faster training times, especially on larger datasets, with minimal sacrifice in accuracy, offering the best trade-off for high-throughput applications. Random Forest remains a robust choice for smaller datasets or when model interpretability is prioritized.

The performance differences between these algorithms are often subtle, and the optimal choice depends on specific dataset characteristics, computational constraints, and project objectives. For most real-world molecular property prediction tasks involving sparse, high-dimensional data, we recommend evaluating at least two of these algorithms with proper hyperparameter tuning to identify the best solution for the specific research context.

Selecting the optimal machine learning algorithm is a critical step in molecular property prediction (MPP), a cornerstone of modern drug discovery and materials science. The performance of an algorithm can significantly influence the accuracy and reliability of predicting properties like bioactivity, solubility, or toxicity, which in turn guides high-stakes experimental decisions. Among the plethora of available models, Random Forest (RF), eXtreme Gradient Boosting (XGBoost), and Light Gradient Boosting Machine (LightGBM) have emerged as particularly prominent for their robust performance on structured, tabular data common in chemical datasets. This guide provides an objective, data-driven comparison of these three algorithms, framing their strengths and weaknesses within the specific context of MPP. The analysis is grounded in recent benchmark studies and comparative research, offering scientists a clear framework for making informed model selections based on empirical evidence rather than anecdotal preference. The ensuing sections will dissect quantitative performance metrics, detail the experimental protocols that generate them, and visualize the foundational workflows of MPP.

Performance Comparison at a Glance

The following table synthesizes findings from multiple studies to summarize the expected performance and ideal use cases for Random Forest, XGBoost, and LightGBM in molecular property prediction.

Table 1: Benchmark Performance and Ideal Use-Cases for Key Algorithms

| Algorithm | Typical Performance Profile | Ideal Data & Task Scenarios | Key Strengths | Notable Weaknesses |

|---|---|---|---|---|

| Random Forest (RF) | Strong, interpretable, and reliable performance; often a robust baseline. Excels in fraud detection and customer churn prediction [28]. | Structured/tabular data, high-dimensional data, tasks requiring high interpretability [28]. | Highly interpretable compared to neural networks; works out-of-the-box with minimal tuning; robust to overfitting [28]. | Can be computationally intensive and memory-heavy compared to more optimized boosting algorithms on very large datasets. |

| XGBoost (eXtreme Gradient Boosting) | Consistently high performance, often top-tier in competitions and production systems. Achieved AUROC of 0.828 in a molecular fingerprint-based odor prediction task, outperforming RF and LightGBM [29]. | Imbalanced datasets, large-scale datasets where accuracy is paramount; dominant in fintech and eCommerce [28]. | Exceptional handling of missing values and imbalanced data; highly optimized for performance and accuracy [28]. | Can be less memory-efficient than LightGBM on very large datasets; requires more careful hyperparameter tuning than RF [28]. |

| LightGBM (Light Gradient Boosting Machine) | Highly competitive accuracy with superior speed and lower memory footprint. In a benchmark, performed robustly (AUROC 0.810) but was surpassed by XGBoost (AUROC 0.828) on a specific odor prediction task [29]. | Very large datasets, applications with computational or memory constraints; common in logistics and supply chain optimization [28]. | Faster training speed and lower memory usage than XGBoost due to histogram-based learning and leaf-wise growth [28] [29]. | The leaf-wise growth can sometimes lead to overfitting on smaller datasets if not properly regularized. |

Beyond direct benchmarks, a large-scale systematic study highlighted that the choice of molecular representation (e.g., fingerprints vs. graphs) can have a more significant impact on final model performance than the choice of algorithm itself [30]. This underscores that the algorithm is one component in a larger pipeline.

Experimental Protocols and Methodologies

The performance data cited in benchmarks are derived from rigorous and standardized experimental protocols. Understanding these methodologies is crucial for interpreting results and replicating studies.

Common Workflow for Benchmarking

A typical benchmarking workflow in MPP involves several key stages, from data preparation to model evaluation, often addressing the critical challenge of Out-of-Distribution (OOD) generalization [31] [32].

Key Methodological Details

Data Splitting and Generalization Evaluation: To properly assess model utility for molecule discovery, benchmarks must evaluate performance on out-of-distribution (OOD) data. The BOOM benchmark creates OOD splits by using a kernel density estimator to identify molecules with property values at the tail ends of the distribution, simulating the discovery of novel compounds [32]. Studies show that while models perform well on random splits, scaffold splits (grouping molecules by their core Bemis-Murcko scaffold) and particularly cluster splits (splitting based on chemical similarity clusters) pose significantly greater challenges [31]. The correlation between in-distribution (ID) and OOD performance is strong for scaffold splits (Pearson r ~0.9) but weakens considerably for cluster splits (r ~0.4), indicating that model selection based on ID performance alone is unreliable for real-world generalization [31].
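The density-based OOD split described above can be approximated in a few lines: estimate the density of the property values with a Gaussian kernel density estimator and hold out the molecules whose values fall in the lowest-density regions. This is a sketch of the general idea under stated assumptions. The bandwidth, the held-out fraction, and the property values are hypothetical, and the actual BOOM procedure [32] may differ in its details.

```python
import math


def kde_density(values, bandwidth):
    """Gaussian kernel density estimate evaluated at each data point."""
    norm = len(values) * bandwidth * math.sqrt(2 * math.pi)
    return [
        sum(math.exp(-0.5 * ((v - u) / bandwidth) ** 2) for u in values) / norm
        for v in values
    ]


def tail_ood_split(values, ood_fraction=0.2, bandwidth=0.5):
    """Hold out the lowest-density property values as the OOD test set."""
    density = kde_density(values, bandwidth)
    order = sorted(range(len(values)), key=lambda i: density[i])
    n_ood = max(1, round(ood_fraction * len(values)))
    ood = sorted(order[:n_ood])
    in_dist = sorted(order[n_ood:])
    return in_dist, ood


# Hypothetical property values: a dense cluster near 2 plus two extreme values
values = [2.0, 2.1, 2.2, 1.9, 2.05, 2.15, 1.95, 2.3, 5.0, -1.0]
id_idx, ood_idx = tail_ood_split(values)
print("in-distribution:", id_idx)
print("out-of-distribution:", ood_idx)
```

The two extreme values end up in the OOD set, which mimics asking a model to predict properties outside the range it was trained on.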

Model Training and Hyperparameter Optimization: Robust benchmarks employ corrected k-fold cross-validation techniques to account for overlaps in training sets and reduce bias in performance estimates [21]. Hyperparameter optimization is typically performed via Bayesian search routines or grid search to ensure models are fairly compared at their best possible configuration [21] [30]. For tree-based models like RF, XGBoost, and LightGBM, this involves tuning parameters such as tree depth, learning rate (for boosting), number of estimators, and regularization terms.
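One widely used correction of this kind is the corrected resampled t-test of Nadeau and Bengio, which inflates the variance of the per-fold score differences to account for overlapping training sets. Whether [21] uses exactly this variant is an assumption. The sketch below shows the statistic, and the per-fold differences are hypothetical.

```python
import math
from statistics import mean, variance


def naive_t(diffs):
    """Standard paired t-statistic, which ignores overlap between training sets."""
    return mean(diffs) / math.sqrt(variance(diffs) / len(diffs))


def corrected_resampled_t(diffs, n_train, n_test):
    """Corrected resampled t-statistic (Nadeau and Bengio variance correction)."""
    k = len(diffs)
    corrected_var = (1.0 / k + n_test / n_train) * variance(diffs)
    return mean(diffs) / math.sqrt(corrected_var)


# Hypothetical per-fold score differences between two models (model A minus model B)
diffs = [0.02, 0.01, 0.03, 0.015, 0.025]

print(f"naive t     = {naive_t(diffs):.2f}")
print(f"corrected t = {corrected_resampled_t(diffs, n_train=800, n_test=200):.2f}")
```

The corrected statistic is always smaller in magnitude than the naive one, so apparent differences between algorithms are less likely to be declared significant by accident.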

Performance Metrics: A suite of metrics is used to evaluate model performance comprehensively. For regression tasks, common metrics include Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE). For classification tasks, metrics include Area Under the Receiver Operating Characteristic Curve (AUROC), Area Under the Precision-Recall Curve (AUPRC), Accuracy, Precision, and Recall [21] [29]. AUPRC is often emphasized for imbalanced datasets common in drug discovery.
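To show why AUPRC is emphasized for imbalanced data, the sketch below computes AUROC (as the probability that a random positive outranks a random negative) and average precision (a common estimator of AUPRC) on a small hypothetical screening result with 2 actives among 10 compounds. The helper names are illustrative and the average-precision helper assumes distinct scores.

```python
def auroc(labels, scores):
    """Probability that a random positive is scored above a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Mean of the precision values at the rank of each positive (no tie handling)."""
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank
    return total / hits


# Hypothetical predictions: 2 actives (label 1) among 10 compounds
labels = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.2, 0.7, 0.1, 0.4, 0.35, 0.15, 0.05]

print(f"AUROC = {auroc(labels, scores):.4f}")
print(f"AP    = {average_precision(labels, scores):.4f}")
```

A single inactive compound ranked above an active barely moves AUROC (0.9375) but lowers average precision more visibly (about 0.83), because precision is computed only over the top of the ranking where the actives sit.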

The Scientist's Toolkit: Essential Research Reagents

The experimental workflow relies on a suite of computational tools and data resources. The following table details these essential "research reagents" for molecular property prediction.

Table 2: Key Research Reagents for Molecular Property Prediction

| Tool / Resource | Type | Primary Function in MPP |

|---|---|---|

| RDKit | Software Library | Calculates molecular descriptors (e.g., RDKit2D), generates fingerprints (e.g., ECFP, Morgan), and handles fundamental cheminformatics tasks [29] [30]. |

| Therapeutic Data Commons (TDC) | Data Repository | Provides standardized benchmark datasets for ADME and other molecular properties, facilitating fair model comparison [33]. |

| AssayInspector | Diagnostic Tool | A model-agnostic package for data consistency assessment; identifies outliers, batch effects, and annotation discrepancies across datasets before modeling [33]. |

| Extended-Connectivity Fingerprints (ECFP) | Molecular Representation | A circular fingerprint that captures atom environments within a specified radius, serving as a powerful fixed representation for traditional ML models [29] [30]. |

| SMILES | Molecular Representation | A string-based representation of a molecule's structure; used directly by sequence-based models or as a starting point for generating other representations [34] [30]. |

| Graph Neural Networks (GNNs) | Model Architecture | Learns representations directly from molecular graphs, capturing complex structural relationships beyond what fixed fingerprints offer [34] [32]. |

In the competitive landscape of molecular property prediction, XGBoost frequently emerges as the top performer when paired with informative molecular representations like Morgan fingerprints, particularly on benchmark tasks where predictive discrimination is the key metric [29]. However, LightGBM presents a compelling alternative for projects dealing with massive datasets or operating under computational constraints, offering competitive accuracy with superior speed and memory efficiency [28]. Random Forest remains a valuable tool for its robustness, interpretability, and effectiveness as a strong baseline model, especially when initial model transparency is required [28].

The field is evolving beyond a simple competition between algorithms. Future directions point toward hybrid approaches that combine the strengths of different methodologies. For instance, new frameworks are emerging that integrate knowledge extracted from Large Language Models (LLMs) with structural features from pre-trained molecular models, using the combined representation to train final predictors, which can include Random Forest or boosting algorithms [34]. Furthermore, the critical importance of data quality and consistency is being increasingly recognized, with tools like AssayInspector ensuring that the input data is reliable, thereby enabling any model, regardless of its architecture, to perform at its best [33]. Ultimately, the choice between Random Forest, XGBoost, and LightGBM should be guided by the specific data characteristics, computational resources, and performance requirements of the research project at hand.

Implementing Algorithms for Molecular Property Prediction: Best Practices and Case Studies

In the field of computational chemistry and drug discovery, molecular representation serves as the fundamental bridge between chemical structures and their predicted biological activities or physicochemical properties. Transforming molecules into computer-readable formats enables researchers to apply machine learning (ML) models for crucial tasks such as virtual screening, activity prediction, and lead optimization [35]. The choice of representation strategy directly influences the performance and interpretability of predictive models, making it a critical consideration in quantitative structure-activity relationship (QSAR) and quantitative structure-property relationship (QSPR) studies [36] [37].

Molecular descriptors play a fundamental role in chemistry, pharmaceutical sciences, and health research by transforming molecules into numbers that allow mathematical treatment of chemical information [36] [38]. As defined by Todeschini and Consonni, "The molecular descriptor is the final result of a logic and mathematical procedure which transforms chemical information encoded within a symbolic representation of a molecule into a useful number or the result of some standardized experiment" [36]. This transformation enables researchers to navigate chemical space effectively and identify promising compounds for further development.

This guide provides a comprehensive comparison of three fundamental representation strategies—Morgan fingerprints, functional group-based representations, and molecular descriptors—within the specific context of predicting molecular properties using ensemble machine learning algorithms. We examine experimental data, detailed methodologies, and practical implementations to assist researchers in selecting optimal representation strategies for their specific challenges in molecular property prediction.

Molecular Representation Fundamentals: A Taxonomy of Approaches

Molecular representations can be systematically classified based on the level of structural information they encode, ranging from simple atomic counts to complex three-dimensional and dynamic representations [36] [37] [38]. Understanding this taxonomy is essential for selecting appropriate representations for specific predictive tasks.

Hierarchical Classification of Molecular Representations

Table 1: Classification of Molecular Descriptors by Information Content and Representation Level

| Descriptor Level | Structural Information Encoded | Example Descriptors | Key Characteristics |

|---|---|---|---|

| 0D Descriptors | Atom types, molecular composition | Molecular weight, atom counts, bond counts [36] [37] | No structural or connectivity information; high degeneracy; simple to compute [38] |

| 1D Descriptors | Substructure fragments, functional groups | Fingerprints, functional group counts, substructure lists [36] [38] | Presence/absence of specific substructures; no topological relationships [38] |

| 2D Descriptors | Atom connectivity, molecular topology | Morgan fingerprints, topological indices, graph invariants [36] [37] | Encodes connectivity without 3D geometry; graph-based representations [36] |

| 3D Descriptors | Spatial molecular geometry | 3D-MoRSE descriptors, WHIM descriptors, quantum-chemical descriptors [36] | Based on 3D atomic coordinates; captures steric and electronic properties [36] [38] |

| 4D Descriptors | Interaction fields, molecular dynamics | GRID descriptors, CoMFA fields [36] | Derived from molecule-probe interactions; incorporates conformational flexibility [38] |

The information content of molecular descriptors increases progressively from 0D to 4D representations, with a corresponding increase in computational complexity and potential for overfitting [38]. As noted in scientific literature, "The best descriptors are those whose information content is comparable with the information content of the response for which the model is searched for" [38]. This principle highlights the importance of matching representation complexity to the specific prediction task rather than automatically selecting the most complex representation available.

Theoretical Foundations of Molecular Representation

Effective molecular representations should satisfy several fundamental criteria to ensure their utility in predictive modeling. According to established principles, robust molecular descriptors should [36]:

- Be invariant to atom labeling and numbering

- Be defined by an unambiguous algorithm

- Have a well-defined applicability to molecular structures

- Possess structural interpretation

- Show minimal degeneracy (ability to distinguish different molecules)

- Be applicable to a broad class of molecules

- Be able to discriminate among isomers [36]

Different representation strategies make varying trade-offs between these desirable properties. For instance, while 3D descriptors typically exhibit lower degeneracy than simpler descriptors, they may introduce unnecessary complexity for properties that primarily depend on 2D topology [36]. Furthermore, the invariance properties of descriptors—particularly translational and rotational invariance for 3D descriptors—represent essential requirements for meaningful molecular comparisons [36].

Comparative Analysis of Representation Strategies

Morgan Fingerprints: Circular Topological Fingerprints

Morgan fingerprints, also known as circular fingerprints or Extended Connectivity Fingerprints (ECFP), belong to the category of 2D topological descriptors that encode molecular structure based on the connectivity of atoms within a specified bond radius [39]. The algorithm operates by iteratively identifying circular neighborhoods around each non-hydrogen atom in the molecule, with each iteration corresponding to an increasing bond radius [39]. At radius 0, the fingerprint encodes only individual atoms; at radius 1, it captures atoms and their immediate neighbors; at radius 2, it includes atoms two bonds away, and so forth [39].

These fingerprints can be represented as either binary vectors (recording presence/absence of specific substructures) or count-based vectors (recording the frequency of each substructure) [40]. Comparative studies have demonstrated that count-based Morgan fingerprints (C-MF) generally outperform their binary counterparts (B-MF) in predictive modeling tasks. In an evaluation across ten contaminant-related datasets, C-MF achieved superior predictive performance in nine cases, with the degree of improvement depending on both the ML algorithm employed and the chemical diversity of the dataset [40].

Table 2: Morgan Fingerprint Variants and Performance Characteristics

| Fingerprint Type | Representation | Key Advantages | Performance Notes |
|---|---|---|---|
| Binary Morgan Fingerprint (B-MF) | Bit vector indicating presence/absence of substructures [39] | Computational efficiency; widely supported [39] | Lower predictive performance compared to count-based versions in multiple studies [40] |
| Count-Based Morgan Fingerprint (C-MF) | Integer vector counting substructure occurrences [40] | Quantifies feature frequency; enhanced model performance [40] | Outperformed B-MF in 9 of 10 datasets; better correlation with properties dependent on group prevalence [40] |
| Sparse Morgan Fingerprint | Variable-size sparse vector [41] | Memory efficiency for large databases [41] | Suitable for similarity searching and clustering [41] |

The radius parameter significantly influences the information content of Morgan fingerprints. Smaller radii (e.g., radius=2) capture local atomic environments, while larger radii (e.g., radius=5) encode more extended molecular neighborhoods [39]. In practical applications, radius=2 or 3 with 1024-2048 bits represents a common configuration that balances specificity and generalization [39] [41].

Functional Group Representations: Chemically Meaningful Substructure Encoding

Functional group-based representations constitute a chemically intuitive approach that decomposes molecules into recognizable substructures such as hydroxyl groups, carbonyl groups, aromatic rings, and other pharmacophoric features [42] [43]. These representations operate at a higher level of abstraction than atom-based representations, aligning with chemical intuition and providing natural interpretability [42].

The "group graph" representation is an advanced implementation of this paradigm, in which molecules are transformed into graphs with functional groups as nodes and their connections as edges [42]. This approach offers three significant advantages: (1) the substructures reflect diversity and consistency across molecular datasets; (2) it retains molecular structural features with minimal information loss; and (3) it enables interpretation of how specific substructures influence molecular properties [42]. In experimental evaluations, Graph Isomorphism Networks (GIN) applied to group graphs outperformed atom-level graphs in predicting molecular properties and drug-drug interactions, while also reducing computational runtime by approximately 30% [42].

Another innovative approach, attention-based functional-group coarse-graining, integrates group-contribution concepts with self-attention mechanisms to capture intricate chemical interactions [43]. This method creates a low-dimensional embedding that substantially reduces data requirements for training, achieving over 92% accuracy in predicting adhesive polymer monomer properties with only 600 labeled examples [43]. The invertible nature of this embedding further enables automatic generation of new molecular structures from the learned chemical subspace [43].

Table 3: Functional Group Representation Approaches and Characteristics

| Representation Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| Group Graph [42] | Nodes: functional groups/fragments; Edges: connections between groups | Minimal information loss; 30% faster than atom graphs; interpretable [42] | Dependency on fragmentation rules; potential vocabulary issues [42] |
| Attention-Based Coarse-Graining [43] | Self-attention on functional groups; invertible embedding | Data efficiency; high accuracy with limited data; generative capability [43] | Complexity; requires implementation expertise [43] |
| Traditional Functional Group Counts [37] | Counting occurrences of predefined chemical groups | Simple implementation; chemically intuitive [37] | Limited representation of connectivity and global structure [37] |

Comprehensive Molecular Descriptors: Multi-Level Feature Extraction

Molecular descriptors encompass a broad category of numerical representations that capture various aspects of molecular structure and properties [36] [37] [38]. These can range from simple constitutional descriptors (0D) to complex three-dimensional and interaction-based descriptors (3D/4D) [36]. The Dragon software package and alvaDesc represent comprehensive tools that calculate thousands of molecular descriptors across different categories [36].

More recently, Mordred has emerged as a popular open-source alternative that calculates a comprehensive set of molecular descriptors directly from molecular structures [36]. As a Python library based on RDKit, Mordred offers extensive descriptor coverage while maintaining computational efficiency [36]. Key descriptor categories include:

- Constitutional descriptors: Molecular weight, atom counts, bond counts [37] [38]

- Topological descriptors: Connectivity indices, graph-theoretical measures [36] [38]

- Geometrical descriptors: Molecular dimensions, surface areas, volume descriptors [36]

- Electronic descriptors: Polarizability, HOMO/LUMO energies, charge descriptors [36]
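Constitutional descriptors are the simplest of these categories, since they need only the molecular formula. The sketch below computes three of them by hand. It does not use Mordred or RDKit, and the function name and the small atomic-weight table are assumptions made for illustration.

```python
from typing import Dict

# Standard atomic weights in g/mol, rounded to three decimals.
ATOMIC_WEIGHT: Dict[str, float] = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999}


def constitutional_descriptors(atom_counts: Dict[str, int]) -> Dict[str, float]:
    """Compute simple 0D descriptors from element counts."""
    molecular_weight = sum(ATOMIC_WEIGHT[el] * n for el, n in atom_counts.items())
    return {
        "molecular_weight": round(molecular_weight, 3),
        "atom_count": sum(atom_counts.values()),
        "heavy_atom_count": sum(n for el, n in atom_counts.items() if el != "H"),
    }


# Ethanol, C2H6O.
ethanol = constitutional_descriptors({"C": 2, "H": 6, "O": 1})
print(ethanol)
```

Topological, geometrical, and electronic descriptors require the connectivity, a 3D conformation, or an electronic-structure calculation respectively, which is why dedicated descriptor packages are normally used for them.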

The selection of appropriate descriptors requires careful consideration of the target property. As noted in the literature, "The best descriptors are those whose information content is comparable with the information content of the response for which the model is searched for" [38]. Using excessively complex descriptors for simple properties can introduce noise and reduce model stability, while oversimplified representations may lack sufficient information for complex property prediction [38].

Experimental Comparison and Performance Benchmarking

Quantitative Performance Across Representation Strategies

Experimental evaluations across multiple studies provide insights into the relative performance of different molecular representation strategies when combined with ensemble machine learning algorithms. A comprehensive study comparing count-based Morgan fingerprints (C-MF) with binary Morgan fingerprints (B-MF) across ten contaminant-related datasets revealed consistent advantages for the count-based approach [40].

Table 4: Performance Comparison of Representation Strategies with Ensemble ML Algorithms

| Representation Strategy | Best-Performing ML Algorithm | Typical Performance Range (R²) | Key Strengths | Interpretability |
|---|---|---|---|---|
| Morgan Fingerprints (Count-Based) [40] | CatBoost, XGBoost [40] | 0.72-0.89 (varies by dataset) [40] | Captures local atomic environments; robust across diverse chemistries [39] [40] | Medium (bit visualization possible) [39] [41] |
| Functional Group (Group Graph) [42] | Graph Isomorphism Network [42] | Superior to atom graphs in multiple benchmarks [42] | Chemically intuitive; efficient; captures activity cliffs [42] | High (direct substructure correlation) [42] |
| Comprehensive Molecular Descriptors [36] | Varies by property complexity [36] | Property-dependent [36] | Broad feature coverage; can be tailored to specific endpoints [36] [38] | Variable (requires descriptor analysis) [36] |

The performance advantage of count-based Morgan fingerprints over binary versions depends on both the machine learning algorithm and the dataset characteristics. The enhancement is proportional to the difference in chemical diversity calculated by B-MF and C-MF, with greater improvements observed in more chemically diverse datasets [40]. For model interpretation, C-MF-based models demonstrate a wider range of SHAP values and can elucidate the effect of atom group counts on the target property [40].

Experimental Protocols and Methodologies

Morgan Fingerprint Implementation Protocol

The standard methodology for generating and evaluating Morgan fingerprints involves the following steps, typically implemented using RDKit [39] [41]:

Molecule Standardization: Input structures (SMILES, SDF) are standardized using RDKit, including sanitization, neutralization, and stereochemistry perception [39].

Fingerprint Generation:

For count-based fingerprints [40]:
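A minimal sketch of the fingerprint generation step, covering both the binary and the count-based variant. It assumes a recent RDKit release that provides the `rdFingerprintGenerator` module; the example molecule and the parameter values (radius 2, 2048 positions) are illustrative choices, not settings confirmed by the cited studies.

```python
from rdkit import Chem
from rdkit.Chem import rdFingerprintGenerator

# Ethanol, used purely as an illustrative input.
mol = Chem.MolFromSmiles("CCO")

# One generator serves both variants; radius and size are assumed defaults.
generator = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)

# Binary Morgan fingerprint: 1 where a substructure is present, else 0.
binary_fp = generator.GetFingerprintAsNumPy(mol)

# Count-based Morgan fingerprint: number of occurrences at each position.
count_fp = generator.GetCountFingerprintAsNumPy(mol)

print(int(binary_fp.sum()), int(count_fp.sum()))
```

Both arrays have the same length and can be stacked row by row into a feature matrix, so switching between the two variants does not require changes to the downstream model code.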

Model Training: Fingerprints are used as feature vectors for machine learning algorithms, with standard train-test splits (70/30 or 80/20) and 5- to 10-fold cross-validation to ensure robust performance estimation [40].
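The splitting scheme can be sketched with the standard library alone. The function names, the fixed seed, and the dataset size of 100 are assumptions for illustration; in practice a library routine such as scikit-learn's splitters would typically be used instead.

```python
import random
from typing import Iterator, List, Tuple


def train_test_split_indices(
    n_samples: int, test_fraction: float = 0.2, seed: int = 0
) -> Tuple[List[int], List[int]]:
    """Shuffle sample indices and hold out `test_fraction` of them for testing."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_test = round(n_samples * test_fraction)
    return indices[n_test:], indices[:n_test]


def k_fold_indices(
    indices: List[int], k: int = 5
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, validation) index lists for k-fold cross-validation."""
    folds = [indices[i::k] for i in range(k)]
    for i, validation in enumerate(folds):
        # Training data is every fold except the current validation fold.
        train = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train, validation


# 80/20 split of 100 molecules, then 5-fold CV on the training portion.
train_idx, test_idx = train_test_split_indices(100, test_fraction=0.2)
cv_folds = list(k_fold_indices(train_idx, k=5))
print(len(train_idx), len(test_idx), len(cv_folds))
```

Keeping the test indices out of the cross-validation loop means the held-out set is touched only once, for the final performance estimate.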

Model Interpretation: Bit information stored during fingerprint generation enables visualization of specific substructures associated with each bit, facilitating chemical interpretation [39] [41].

Functional Group Representation Methodology

The group graph construction protocol involves three key stages [42]:

Group Matching:

- Identify all aromatic atoms and group connected aromatic atoms into rings

- Identify broken functional groups via pattern matching using RDKit

- Group remaining non-active atoms into fatty carbon groups

Substructure Extraction:

- Extract SMILES of all identified groups

- Establish connections between substructures (edges)

- Identify attachment atom pairs between connected substructures

Graph Construction:

- Represent substructures as nodes

- Represent connections between substructures as edges

- Encode features of attachment atom pairs as edge features
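The last two stages can be sketched on a toy example, assuming group matching has already assigned every heavy atom to a group. This is an illustration rather than the implementation from [42]: the atom numbering, the group labels, and the function name are hypothetical, and each label is taken to name a single group instance.

```python
from typing import Dict, List, Tuple

# Heavy atoms of p-toluic acid; labels stand in for the output of group matching.
ATOM_GROUP: Dict[int, str] = {
    0: "ring", 1: "ring", 2: "ring", 3: "ring", 4: "ring", 5: "ring",
    6: "carboxyl", 7: "carboxyl", 8: "carboxyl",
    9: "methyl",
}
BONDS: List[Tuple[int, int]] = [
    (0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0),  # aromatic ring
    (0, 6), (6, 7), (6, 8),                          # carboxyl group on atom 0
    (3, 9),                                          # methyl group on atom 3
]

Edges = Dict[Tuple[str, str], List[Tuple[int, int]]]


def build_group_graph(
    atom_group: Dict[int, str], bonds: List[Tuple[int, int]]
) -> Tuple[List[str], Edges]:
    """Return group nodes and edges annotated with attachment atom pairs."""
    nodes = sorted(set(atom_group.values()))
    edges: Edges = {}
    for a, b in bonds:
        group_a, group_b = atom_group[a], atom_group[b]
        if group_a == group_b:
            continue  # bond lies inside one group, so it is not a graph edge
        if group_a > group_b:
            # Order the key alphabetically and keep the atom pair aligned with it.
            group_a, group_b, a, b = group_b, group_a, b, a
        edges.setdefault((group_a, group_b), []).append((a, b))
    return nodes, edges


nodes, edges = build_group_graph(ATOM_GROUP, BONDS)
print(nodes)
print(edges)
```

The attachment atom pairs stored on each edge are what would later be encoded as edge features, so the group graph keeps track of where the substructures connect.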

For attention-based functional group representations, the methodology incorporates additional steps [43]:

- Encode functional groups as tokens in a sequence

- Apply self-attention mechanisms to capture group interactions

- Generate latent molecular embeddings invertible to structures

- Jointly train on reconstruction and property prediction tasks
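The self-attention step can be illustrated with a minimal scaled dot-product sketch over functional-group tokens. It is a simplification and not the architecture from [43]: queries, keys, and values are the token embeddings themselves (no learned projection matrices), and the two-dimensional embeddings are made-up numbers.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[List[float]]) -> List[List[float]]:
    """Scaled dot-product self-attention with identity projections."""
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        # New embedding: attention-weighted average of all token embeddings.
        outputs.append(
            [sum(w * value[j] for w, value in zip(weights, tokens)) for j in range(dim)]
        )
    return outputs


# Hypothetical embeddings for three functional-group tokens.
group_tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(group_tokens)
print(attended)
```

Each output row mixes information from all groups in the molecule, which is how the mechanism lets one group's representation depend on the others.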

Implementation Guide: The Scientist's Toolkit

Essential Software and Libraries

Table 5: Essential Tools for Molecular Representation and Machine Learning

| Tool/Library | Primary Function | Key Features | License |
|---|---|---|---|
| RDKit [39] [41] | Cheminformatics toolkit | Morgan fingerprints, molecular descriptors, substructure matching [39] | Open source |
| Mordred [36] | Molecular descriptor calculation | 1800+ 2D/3D descriptors, Python integration, RDKit-based [36] | Open source |
| alvaDesc [36] | Molecular descriptor calculation | 5500+ descriptors, GUI/CLI/Python interfaces, updated 2025 [36] | Commercial |
| Scikit-fingerprints [36] | Molecular fingerprint calculation | Multiple fingerprint types, scikit-learn compatibility [36] | Open source |
| XGBoost/LightGBM/CatBoost [21] [40] | Ensemble machine learning | Gradient boosting implementations, handling of categorical features [21] [40] | Open source |

Practical Implementation Considerations

When implementing molecular representation strategies for machine learning applications, several practical considerations significantly impact model performance and utility:

Data Preprocessing and Standardization: Consistent molecule standardization is crucial for reproducible results. This includes normalization of tautomers, neutralization of charges, explicit hydrogen handling, and stereochemistry standardization [39]. The same standardization protocol must be applied consistently across training and prediction datasets.

Hyperparameter Optimization for Representation: Critical parameters for Morgan fingerprints include radius (typically 2-3) and vector length (1024-2048 bits) [39] [41]. For functional group representations, fragmentation rules and group vocabulary size require careful tuning [42]. Representation-specific parameters should be optimized alongside model hyperparameters using cross-validation.

Representation Selection Strategy: The choice of representation should align with both the prediction task and available data. For large, diverse datasets with complex structure-activity relationships, Morgan fingerprints or comprehensive descriptors often perform well [40]. For data-scarce scenarios or when chemical interpretability is prioritized, functional group representations offer advantages [42] [43].

Based on comprehensive experimental evidence and practical implementation experience, we provide the following strategic recommendations for selecting molecular representation strategies in conjunction with ensemble machine learning algorithms:

For general-purpose molecular property prediction with large, diverse datasets, count-based Morgan fingerprints combined with gradient boosting algorithms (XGBoost, CatBoost, or LightGBM) represent a robust default choice. The count-based implementation provides superior performance compared to binary fingerprints while maintaining reasonable computational efficiency [40]. The radius parameter should be tuned based on the complexity of structure-property relationships, with radius=2 serving as a practical starting point [39] [41].

When model interpretability and chemical insight are prioritized, particularly in lead optimization or structure-activity relationship studies, functional group-based representations (group graphs or attention-based coarse-graining) offer significant advantages. These representations naturally align with chemical intuition and enable direct correlation between specific substructures and molecular properties [42] [43]. The group graph approach demonstrates particular strength in identifying activity cliffs and suggesting structural modifications [42].