Reducing Computational Cost in Chemistry ML: Strategies for Faster, Cheaper Drug Discovery



Abstract

This article provides a comprehensive guide for researchers and drug development professionals on reducing the computational cost of machine learning (ML) in chemistry. It explores the foundational need for cost-effective ML, driven by the high expense of quantum mechanical calculations and the growing market for AI in drug discovery. The piece details cutting-edge methodological approaches, including low-scaling quantum mechanics, efficient geometric deep learning architectures, and knowledge-enhanced models. It further offers practical troubleshooting and optimization techniques, such as white-box ML and active learning, and concludes with validation strategies through case studies and performance benchmarks, demonstrating how streamlined ML pipelines can accelerate biomedical research.

The High Stakes of Computation: Why Cost Reduction is Critical in Chemistry ML

Frequently Asked Questions

FAQ 1: What makes simulating catalysts with transition metals so computationally expensive?

Transition metals have partially filled d-orbitals that allow them to easily exchange electrons with other molecules [1]. This property makes their electronic structure "multireference" in nature, meaning they cannot be accurately described by a single electronic configuration. High-level quantum chemical methods required to model this are prohibitively slow, making the simulation of catalytic dynamics under realistic conditions extremely costly [1].

FAQ 2: Why is the "gold standard" of quantum chemistry, CCSD(T), not used for most practical applications?

Coupled cluster with single, double, and perturbative triple excitations (CCSD(T)) is considered the gold standard for accuracy, but its cost rises steeply with system size (formally as the seventh power of the number of basis functions) [2]. It therefore becomes impractical for large molecules such as pharmaceuticals or complex materials, creating a significant bottleneck for high-accuracy studies [2].

FAQ 3: What is the primary source of cost when using quantum algorithms on quantum computers?

For quantum phase estimation, a key algorithm for finding molecular energies, a major cost comes from approximating the time evolution operator, e^{iĤt}, using techniques like Trotterization [3]. The number of quantum gates required to achieve a desired accuracy can be immense. Furthermore, to be useful for chemistry, these algorithms require millions of physical qubits to model industrially relevant systems, a scale far beyond current hardware [4].

FAQ 4: How does the choice of basis set affect the cost of a quantum chemistry calculation?

The number of spin orbitals (N) in a molecule grows with the size of the basis set. For a system with η electrons, the number of possible configurations scales as the binomial coefficient C(N, η), which grows very quickly with both N and η [3]. This explosion in possible states is a fundamental reason why exact calculations on classical computers become intractable for even moderately sized molecules.
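To make the growth of C(N, η) concrete, the short sketch below evaluates the binomial coefficient for a few sizes. The sizes and the half-filling choice (η = N/2) are illustrative assumptions, not values from the cited work.

```python
from math import comb


def n_configurations(n_spin_orbitals: int, n_electrons: int) -> int:
    """Number of ways to place n_electrons in n_spin_orbitals, i.e. C(N, eta)."""
    return comb(n_spin_orbitals, n_electrons)


# Hypothetical system sizes at half filling (eta = N / 2).
for n in (10, 20, 40, 80):
    print(f"N = {n:3d}, eta = {n // 2:3d}: {n_configurations(n, n // 2):.3e} configurations")
```

Doubling the number of spin orbitals multiplies the configuration count by many orders of magnitude, which is why enlarging the basis set quickly puts exact treatments out of reach.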

FAQ 5: What are "quantum-inspired" algorithms and how do they help with cost?

Quantum-inspired algorithms are techniques designed for quantum computers that are instead run on classical computers [4]. They can sometimes solve specific problems more efficiently than traditional classical methods, offering a way to explore the potential of quantum approaches without needing access to fragile and expensive quantum hardware. However, they cannot fully replicate a true quantum computer [4].

Data Tables: Scaling of Computational Cost

Table 1: Computational Resource Estimates for Quantum Simulation of a Model System (Homogeneous Electron Gas)

| Method | Trotter Error Bound Method | Relative Gate Count | Key Application Insight |
|---|---|---|---|
| Trotter-based Phase Estimation | Previous Methods | Baseline | Resource estimates were often overestimated, making algorithms seem more costly than necessary [3]. |
| Trotter-based Phase Estimation | New Factorized Bounds (Cholesky) | ~13x lower [3] | Tighter error bounds allow for more economical use of quantum hardware, especially at half-filling [3]. |
| Qubitization | N/A | Varies with density | Scales more favorably in high-electron-density regimes compared to Trotter methods [3]. |

Table 2: Qubit Requirements for Industrial Chemistry Problems on a Quantum Computer

| Target System | Example | Estimated Physical Qubits Required | Classical Computing Challenge |
|---|---|---|---|
| Nitrogen-fixing Enzyme | Iron-molybdenum cofactor (FeMoco) | ~2.7 million (2021 estimate) [4] | Strongly correlated electrons make these systems extremely difficult for classical methods like DFT to model accurately [4]. |
| Metabolic Enzyme | Cytochrome P450 | Similar to FeMoco [4] | Modeling the reaction mechanisms of these large metalloenzymes is currently infeasible with exact quantum methods on classical computers [4]. |

Experimental Protocols for Cost Reduction

Protocol 1: Utilizing the Weighted Active Space Protocol (WASP) for Catalyst Dynamics

- Objective: To simulate the dynamics of transition metal catalysts with multireference accuracy at a fraction of the computational cost [1].

- Methodology:

- Generate Reference Data: Use a high-level multireference quantum chemistry method (like MC-PDFT) to calculate consistent wave function labels (energies and forces) for a set of sampled molecular geometries along a reaction pathway [1].

- Train Machine-Learned Potential: Employ the WASP framework to train a machine-learned interatomic potential on the generated reference data. WASP ensures label consistency by creating a unique wave function for a new geometry as a weighted combination of wave functions from known, similar structures [1].

- Run Molecular Dynamics: Use the trained machine-learned potential to run fast molecular dynamics simulations, capturing catalytic behavior under realistic conditions of temperature and pressure [1].

- Outcome: This protocol can reduce simulation time from months to minutes while maintaining the accuracy of the high-level quantum method [1].

Protocol 2: Applying the AIQM1 Hybrid Method for Organic Molecules

- Objective: To compute ground-state energies and geometries of diverse organic compounds at near-CCSD(T) accuracy with the speed of semiempirical methods [2].

- Methodology:

- Energy Calculation: The total energy in AIQM1 is a sum of three components: $E_{\mathrm{AIQM1}} = E_{\mathrm{SQM}} + E_{\mathrm{NN}} + E_{\mathrm{disp}}$ [2].

- SQM Baseline: Calculate the base energy ($E_{\mathrm{SQM}}$) using a modified semiempirical quantum mechanical (SQM) Hamiltonian (ODM2*) [2].

- Neural Network Correction: Apply a neural network ($E_{\mathrm{NN}}$) trained to correct the SQM energy towards a higher level of theory (DFT or coupled cluster) [2].

- Dispersion Correction: Add a state-of-the-art dispersion correction term ($E_{\mathrm{disp}}$) to properly describe long-range interactions [2].

- Outcome: This method provides a general-purpose tool for rapidly and accurately investigating chemical compounds, as demonstrated by its ability to determine geometries of challenging systems like polyyne molecules and fullerene C60 [2].

Protocol 3: Using Δ-Machine Learning to Select Quantum Chemistry Methods

- Objective: To intelligently select the most computationally efficient quantum chemical method that will still deliver a result within a desired accuracy threshold [5].

- Methodology:

- Train ∆-ML Models: Train machine learning models on a diverse dataset of molecular interactions. The models learn to predict the error of a given quantum chemistry method relative to the CCSD(T)/CBS gold standard [5].

- Predict Method Performance: For a new molecular system, use the trained ∆-ML models to predict the errors of various candidate quantum chemistry methods.

- Select Optimal Method: Choose the method that is predicted to meet the required accuracy (e.g., error < 0.1 kcal/mol) with the lowest computational cost [5].

- Outcome: This framework allows researchers to bypass costly benchmarking and directly identify reliable and efficient computational methods, dramatically reducing the overall computational cost of projects [5].
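The selection step of this protocol can be sketched in a few lines. This is a minimal illustration, not the cited framework: the `Candidate` class, the method names, and all error and cost numbers are hypothetical placeholders standing in for the outputs of trained ∆-ML models.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    name: str               # label of a quantum chemistry method (hypothetical)
    predicted_error: float  # ML-predicted |error| vs. the reference, in kcal/mol
    relative_cost: float    # cost in any consistent unit (e.g., CPU hours)


def select_method(candidates: List[Candidate], tolerance: float) -> Optional[Candidate]:
    """Return the cheapest candidate whose predicted error is below the tolerance."""
    acceptable = [c for c in candidates if c.predicted_error < tolerance]
    if not acceptable:
        return None  # no method is predicted to be accurate enough
    return min(acceptable, key=lambda c: c.relative_cost)


# Hypothetical predictions for one molecular system.
candidates = [
    Candidate("method_A", predicted_error=0.45, relative_cost=1.0),
    Candidate("method_B", predicted_error=0.08, relative_cost=12.0),
    Candidate("method_C", predicted_error=0.03, relative_cost=150.0),
]
choice = select_method(candidates, tolerance=0.1)
print(choice.name if choice else "no candidate meets the tolerance")
```

Returning `None` when nothing qualifies keeps the failure explicit, so a workflow can fall back to a higher-level method instead of silently accepting an inaccurate one.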

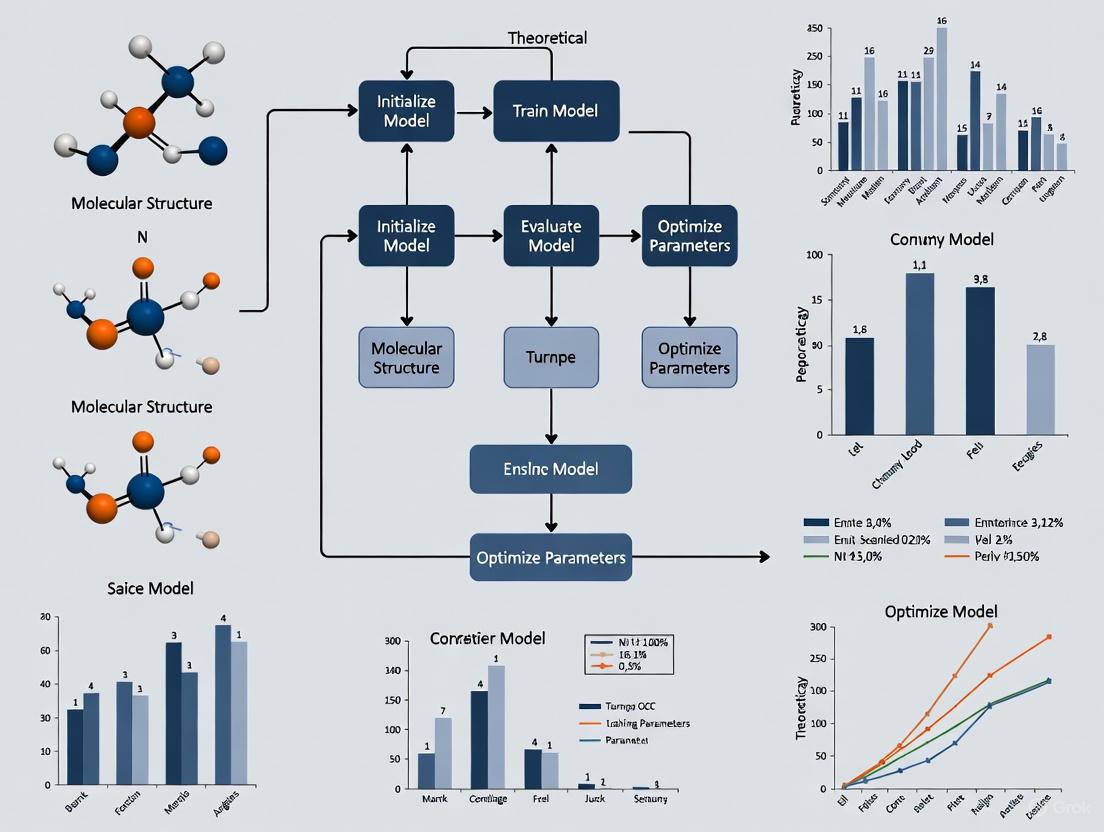

Visualizing the Computational Cost Bottleneck

Diagram 1: The Fundamental Accuracy vs. Cost Trade-off

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Software and Algorithmic "Reagents" for Managing Computational Cost

| Tool / Method | Function | Applicable Scenario |
|---|---|---|
| Weighted Active Space Protocol (WASP) | Integrates multireference quantum chemistry with machine learning to simulate catalytic dynamics accurately and quickly [1]. | Studying transition metal catalysts and reaction dynamics. |
| AIQM1 | A hybrid AI-quantum mechanical method that provides coupled-cluster level accuracy at semiempirical speed for neutral, closed-shell organic molecules [2]. | Rapid screening and accurate geometry optimization of organic compounds. |
| Δ-Machine Learning (∆-ML) Ensembles | Predicts the error of a quantum chemistry method relative to a gold standard, enabling optimal method selection for a given accuracy target [5]. | Choosing the most efficient computational method for calculating intermolecular interactions. |
| Trotter Error Bounds (e.g., Cholesky) | Provides tighter estimates of the error in quantum algorithms, reducing the number of quantum gates required for a simulation [3]. | Optimizing resource requirements for quantum simulations of chemical systems on future quantum hardware. |
| GPU-Accelerated Libraries (e.g., cuQuantum) | Drastically speeds up the simulation of quantum circuits and molecular dynamics on classical hardware [6]. | Running high-fidelity simulations of quantum systems or molecular dynamics. |

Technical Support Center: FAQs & Troubleshooting Guides

This support center provides targeted assistance for researchers and scientists working on computational cost reduction in chemistry-focused machine learning (ML) tuning. The guides below address common technical issues, with protocols and solutions framed within our core thesis: maximizing research efficiency and model performance while minimizing resource expenditure.

Frequently Asked Questions (FAQs)

Q1: Our AI model performs well in training but fails catastrophically in real-world deployment. What could be the cause and how can we fix it?

This is a classic sign of underspecification, where models learn spurious correlations from the training data that do not generalize [7].

- Diagnosis: Check for significant performance drops between validation sets and a small, curated test set that mirrors the real-world application. A large discrepancy indicates underspecification.

- Solution:

- Improve Data Quality: Incomplete, inconsistent, or outdated datasets are a primary cause [7]. Implement rigorous data validation and continuous monitoring of data sources.

- Data Harmonization: Variations in lab protocols create "batch effects" that can mislead models. Standardize data recording and storage where possible [8].

- Increase Data Diversity: Ensure your training data covers the full spectrum of biological and chemical scenarios your model will encounter. Utilize federated data access to safely learn from distributed datasets without moving sensitive information [8].

Q2: Training our link prediction model on a large biological knowledge graph is computationally prohibitive, taking over 14 days. How can we reduce this time?

This issue stems from computational inefficiency in the model architecture and infrastructure [9].

- Diagnosis: Profile your code to identify bottlenecks. Common issues include inefficient data loading, non-optimized model layers, or hardware limitations.

- Solution:

- Leverage Cloud Infrastructure: Migrate to a cloud-based system (e.g., AWS) designed for high-performance computing to gain scalability [9].

- Optimize the AI Model: Research and benchmark more efficient model architectures suitable for graph data. This can make the system over 50 times faster and reduce training time by a factor of 20 [9].

- Implement a Continuous Loop: Instead of retraining from scratch, implement a continuous training and inference loop that updates models incrementally as new data arrives [9].

Q3: How can we enforce monotonicity for individual features in our Explainable Boosting Machine (EBM) to align with known biological relationships?

Enforcing domain knowledge like monotonicity improves model trust and performance.

- Diagnosis: Examine the learned graphs for a specific feature. If the relationship is known to be strictly increasing or decreasing (e.g., drug dose and efficacy), but the graph is not, monotonicity constraints are needed.

- Solution:

- During Training: Set the `monotone_constraints` parameter in the `ExplainableBoostingClassifier` or `ExplainableBoostingRegressor` constructor. This parameter is a list of integers (e.g., `[1, -1, 0]`) to enforce increasing, decreasing, or no monotonicity for each corresponding feature [10].

- Post-Training (Recommended): Use postprocessing (e.g., isotonic regression) on each graph output. You can call the `monotonize` method on the EBM object. This is often preferred as it prevents the model from compensating for constraints by learning non-monotonic effects in other, correlated features [10].
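To show what isotonic post-processing does to a single feature's learned graph, here is a minimal pure-Python sketch of the pool-adjacent-violators algorithm. It is an illustration of the idea only, not the EBM library's own implementation, and the `graph_scores` values are made up.

```python
def isotonic_increasing(values, weights=None):
    """Least-squares non-decreasing fit via pool-adjacent-violators (PAV)."""
    if weights is None:
        weights = [1.0] * len(values)
    blocks = []  # each block is [mean, total_weight, n_points]
    for value, weight in zip(values, weights):
        blocks.append([float(value), float(weight), 1])
        # Merge backwards while the last two blocks violate monotonicity.
        while len(blocks) > 1 and blocks[-2][0] > blocks[-1][0]:
            mean2, w2, n2 = blocks.pop()
            mean1, w1, n1 = blocks.pop()
            total = w1 + w2
            blocks.append([(mean1 * w1 + mean2 * w2) / total, total, n1 + n2])
    fitted = []
    for mean, _, n_points in blocks:
        fitted.extend([mean] * n_points)
    return fitted


# Hypothetical per-bin scores of one feature (e.g., dose) with a spurious dip.
graph_scores = [1.0, 3.0, 2.0, 4.0]
print(isotonic_increasing(graph_scores))
```

The dip between the second and third bins is replaced by their average, so the adjusted graph never decreases while staying as close as possible to the original scores.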

Q4: Our deep learning models for large-scale proteomics analysis yield noisy representations and fail to group patients into coherent clusters. What is the solution?

This indicates that conventional analytical methods are insufficient for the complexity and noise level of your data [9].

- Diagnosis: Confirm that the data has been properly ingested, curated, and harmonized. Standard clustering methods may fail if the data is not "AI-ready."

- Solution:

- Employ a Foundational Model: Utilize a foundational model pre-trained on large biological datasets. This model can build a robust, lower-noise representation of patients based on proteomic readings, which can then be clustered effectively [9].

- Leverage Explainability: Use explainable AI (XAI) techniques on the model's outputs to determine which proteins are responsible for the identified clusters, transforming noisy data into actionable scientific insights and biomarkers [9].

Troubleshooting Guide: Parameter-Efficient Fine-Tuning (PEFT)

Problem: Fine-tuning large language models (LLMs) for chemical tasks (e.g., molecular property prediction) is too slow and requires excessive GPU memory, making research iteration costly.

Objective: Achieve performance comparable to full fine-tuning while dramatically reducing computational costs.

Detailed Methodology:

This protocol outlines the use of Low-Rank Adaptation (LoRA), a leading PEFT method. LoRA freezes the pre-trained model weights and injects trainable rank-decomposition matrices into each layer of the Transformer architecture, reducing the number of trainable parameters to roughly 0.1% to 3% of the full model [11].
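The arithmetic behind LoRA can be sketched without any deep learning library. The example below assumes the standard formulation, in which a frozen weight matrix W receives an update (alpha / r) · B · A; the 768 × 768 layer size and the tiny 2 × 2 matrices are hypothetical values chosen for illustration.

```python
def lora_param_fraction(d_out: int, d_in: int, r: int) -> float:
    """Trainable LoRA parameters for one weight matrix, as a fraction of its size."""
    full = d_out * d_in        # parameters in the frozen matrix W
    lora = r * (d_out + d_in)  # parameters in B (d_out x r) plus A (r x d_in)
    return lora / full


def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(w, b, a, alpha: float, r: int):
    """Fold the low-rank update into W: W' = W + (alpha / r) * B @ A."""
    scale = alpha / r
    delta = matmul(b, a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


# Hypothetical 768 x 768 projection layer adapted with rank 8.
print(f"{lora_param_fraction(768, 768, 8):.2%} of the layer's parameters are trainable")

# Tiny rank-1 merge example with made-up numbers.
w = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0], [2.0]]
a = [[0.5, -1.0]]
print(merge_lora(w, b, a, alpha=2.0, r=1))
```

Because the merged matrix has the same shape as the original weight, a merged model runs exactly one matrix product per layer at inference time, as the unadapted model does.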

Step-by-Step Experimental Protocol:

Task and Data Formulation:

- Define your specific task (e.g., classifying molecules as toxic/non-toxic).

- Prepare a high-quality dataset of 1,000-5,000 examples formatted as input-output pairs (e.g., SMILES string -> binary label).

- Perform an 80/20 train/validation split. Clean noise, handle missing values, and remove duplicates [11].

Model and Tool Setup:

- Base Model: Select a pre-trained model (e.g., `ChemBERTa` for molecular data).

- Libraries: Use the `transformers`, `datasets`, and `peft` libraries from Hugging Face.

- Quantization (Optional): For extreme memory constraints, use `bitsandbytes` for 4-bit quantization (QLoRA), which allows fine-tuning a 13B parameter model on a 16 GB GPU [11].

LoRA Configuration:

- Key parameters to set in the `LoraConfig` are:

- `r` (rank): The rank of the low-rank matrices. Start with 8.

- `lora_alpha`: The scaling parameter. Start with 16.

- `lora_dropout`: Dropout probability; start with 0.1.

- `target_modules`: The layers to apply LoRA to (e.g., `["q_proj", "v_proj"]` in many Transformer models).

Training Loop:

- Use a learning rate between 1e-4 and 2e-4, which is typically effective for fine-tuning [11].

- Use the `SFTTrainer` from the `trl` library for simplified training.

- Monitor both training and validation loss continuously to detect overfitting.

Evaluation and Deployment:

- Evaluate the model on a held-out test set.

- For deployment, the LoRA matrices can be merged into the base model weights, so the adapted model adds no inference latency relative to the base model [11].

Expected Outcome: A fine-tuned model that achieves >95% of the performance of a fully fine-tuned model, while using drastically less memory and time, directly contributing to computational cost reduction.

Visual Guide to PEFT Decision-Making: This workflow helps you select the most efficient fine-tuning strategy for your project constraints.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential tools and materials for conducting efficient AI-driven drug discovery research, as featured in the case studies and guides above.

| Item Name | Function & Application in Cost-Reduction Research |
|---|---|
| Parameter-Efficient Fine-Tuning (PEFT) | A collection of methods (e.g., LoRA, QLoRA) that adapt large models to new tasks by updating <3% of parameters, slashing compute time and memory needs [11]. |
| Foundational Models | Large models pre-trained on vast biological datasets (protein sequences, compounds). They provide a powerful starting point for specific tasks, improving data efficiency, especially with small datasets [8]. |
| Federated Data Access | A security-compliant framework that allows AI models to learn from distributed data sources (e.g., different hospitals) without the data ever leaving its secure source, enabling research on otherwise inaccessible data [8]. |
| Knowledge Graphs | A data structure that stores and organizes extensive knowledge from multiple sources in a graph format. AI can perform link prediction on it to discover novel drug targets and generate repurposing hypotheses [9]. |
| Digital Twin Generator | An AI-driven model that creates a digital simulation of a patient's disease progression. This allows for smaller, more efficient clinical trials with reliable control arms, drastically cutting trial costs and duration [12]. |
| Explainable Boosting Machines (EBMs) | An interpretable ML model that uses modern bagging and boosting techniques while remaining highly accurate and graphable, crucial for validating model decisions against domain knowledge [10]. |

For researchers in computational chemistry and drug development, the high computational cost of machine learning (ML) and quantum chemistry calculations is a major bottleneck. It can extend critical R&D timelines from weeks to months, directly impacting project Return on Investment (ROI) by delaying time-to-market and increasing development expenses [13] [14]. This technical support center provides targeted guidance to help you overcome these hurdles, offering troubleshooting advice, clear protocols, and essential tools to optimize your computational workflows, reduce costs, and accelerate your research.

Quantifying the Impact: Computational Cost Reduction in Research

The table below summarizes key quantitative data from recent studies, demonstrating the significant advances in reducing computational costs for chemical research.

Table 1: Impact of Advanced Computational Methods on Research Efficiency

| Method / Technology | Key Performance Improvement | Application Area | Source |
|---|---|---|---|
| Variational Reaction Path Optimization | 50-70% reduction in computational cost vs. NEB method | Finding transition states in chemical reactions | [15] |
| Yet Another Reaction Program (YARP) | Nearly 100-fold reduction in computational cost; improved reaction coverage | Automated prediction of reaction outcomes and material stability | [14] |
| Quantum Machine Learning (QML) Cost Models | 10% to 90% reduction in CPU time overhead for job scheduling | Predicting wall times for quantum chemistry tasks | [16] |
| AI in R&D (General Business Context) | Average ROI of 3.5X on investment; top performers achieve 8X | General AI-driven business insights and workflows | [17] |

Frequently Asked Questions (FAQs) and Troubleshooting

1. Our transition state searches are consuming too much computational time and resources. What are more efficient alternatives?

The Nudged Elastic Band (NEB) method is a common but computationally expensive approach. A reliable and efficient alternative is the Variational Reaction Path Optimization method [15].

- Problem Solved: This method focuses intensively on the region around the transition state, requiring only about 3 images compared to the large number needed for NEB. Its variational principle (minimizing an objective function) also leads to more efficient convergence [15].

- Implementation: A program implementing this method is available on GitHub (`github.com/shin1koda/dmf`) and is designed to be used with the Atomic Simulation Environment (ASE) [15].

2. How can we broadly and accurately screen material stability without prohibitive costs?

Conventional methods often force researchers to use intuition to narrow the scope of reactions due to high computational costs, which can lead to missed important reactions [14].

- Solution: Implement the Yet Another Reaction Program (YARP) automated computational method [14].

- Methodology: YARP uses a mixed-fidelity approach. It uses inexpensive models to form approximate solutions before refining them with more expensive, accurate models. This strategy achieves a nearly 100-fold reduction in cost without a loss in accuracy, enabling broader and more reliable reaction coverage [14].

3. Our ML model training for quantum chemistry tasks is inefficient, leading to high overhead. How can we improve scheduling?

Inefficient job scheduling, where computational jobs are treated indiscriminately, wastes significant resources [16].

- Solution: Use Quantum Machine Learning (QML) models to predict the computational cost (wall time) of your quantum chemistry tasks [16].

- Protocol: After training on thousands of molecular systems, these QML models can systematically predict the wall times for single-point, geometry optimization, and transition state calculations. Using these predictions for job scheduling can reduce CPU time overhead by 10% to 90% [16].

4. How do we justify the high initial investment in advanced computing infrastructure for R&D?

Justifying large investments requires connecting them to tangible returns and strategic advantage [13] [17].

- ROI Justification:

- Direct Returns: A market study shows AI investments now deliver an average return of 3.5X, with top companies seeing 8X returns [17].

- Intangible Benefits: Beyond direct financial gains, consider the strategic ROI of accelerated time-to-market. Products that ship six months late can be 33% less profitable over time. Faster computation directly addresses this risk [13] [18].

- Portfolio Strategy: Frame investments using a stage-gate model: start with small, low-cost pilot projects to demonstrate value before scaling up, effectively balancing risk and reward [13] [19].

Experimental Protocols for Key Cited Experiments

Protocol 1: Variational Transition State Search

This protocol outlines the use of a variational method for finding transition states, as an efficient alternative to the NEB method [15].

1. Objective: To find the transition state (TS) between a known reactant and product with high reliability and reduced computational cost.

2. Prerequisites:

- Known initial and final states (reactant and product geometries).

- Python environment with the necessary computational library installed from `github.com/shin1koda/dmf`.

- Atomic Simulation Environment (ASE).

3. Step-by-Step Methodology:

- Step 1: Set up the reactant and product structures in your ASE-compatible workflow.

- Step 2: Initialize the reaction path optimizer. The key difference from NEB is that the path is represented with a minimal number of images (as low as 3), focused on the TS region.

- Step 3: Define the variational objective function, which is the line integral of the exponential of the energy along the path. The optimizer will work to minimize this function.

- Step 4: Run the optimization. The variational principle ensures efficient convergence to the reaction path that passes through the transition state.

- Step 5: Validate the identified transition state by confirming it has a single imaginary frequency and connects to the correct reactant and product.
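The objective described in Step 3 can be illustrated numerically. The sketch below is not the implementation from the cited program: the two-dimensional model energy surface, the scale factor `beta`, and the trapezoidal discretization are all assumptions made for illustration. It evaluates the line integral of exp(beta · E) along two candidate paths between the same two minima.

```python
import math


def model_energy(x: float, y: float) -> float:
    """Hypothetical double-well surface: minima at (-1, 0) and (1, 0), saddle at (0, 0)."""
    return (x * x - 1.0) ** 2 + 2.0 * y * y


def path_objective(points, energy, beta: float = 1.0) -> float:
    """Trapezoidal estimate of the line integral of exp(beta * E) along a path."""
    total = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        segment = math.hypot(x1 - x0, y1 - y0)
        f0 = math.exp(beta * energy(x0, y0))
        f1 = math.exp(beta * energy(x1, y1))
        total += 0.5 * (f0 + f1) * segment
    return total


n = 50
xs = [-1.0 + 2.0 * i / n for i in range(n + 1)]
straight = [(x, 0.0) for x in xs]        # passes through the saddle point
detour = [(x, 1.0 - x * x) for x in xs]  # climbs to higher energy on the way

print("straight path:", path_objective(straight, model_energy))
print("detour path:  ", path_objective(detour, model_energy))
```

Because the exponential weights high-energy regions heavily, the path through the saddle point scores lower than the detour. Minimizing such an objective therefore pulls the path toward the transition state region.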

4. Key Technical Parameters:

- Cost Reduction: This method reduces the total computational cost by 50-70% compared to the standard NEB method [15].

Protocol 2: High-Coverage Reaction Prediction with YARP

This protocol describes using YARP for low-cost, high-coverage automated reaction prediction, crucial for assessing material stability [14].

1. Objective: To predict a wide range of possible reaction outcomes for a given material or set of reactants, minimizing the risk of missing critical degradation pathways.

2. Prerequisites:

- SMILES strings or molecular structures of the starting materials.

- Access to the YARP computational method.

3. Step-by-Step Methodology:

- Step 1: Input the molecular system of interest into YARP.

- Step 2: The algorithm first uses fast, inexpensive molecular mechanics or low-level quantum mechanical models to rapidly screen thousands of potential reaction pathways and generate approximate reaction coordinates.

- Step 3: YARP then automatically selects the most promising candidate reactions from the low-cost screen for refinement with more accurate, high-level ab initio methods (e.g., DFT).

- Step 4: The final output is a list of characterized reactions with their energetics, providing a comprehensive view of the system's reactivity.

4. Key Technical Parameters:

- Cost Reduction: Achieves a nearly 100-fold reduction in computational cost relative to state-of-the-art methods that use high-level calculations for all reactions [14].

- Coverage: The low cost allows for vastly improved reaction coverage, reducing the chance of erroneous conclusions from missed reactions [14].
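The mixed-fidelity idea in Steps 2 and 3 can be sketched as a two-stage screen. This is a generic illustration and not YARP's actual code: the function names, reaction labels, barrier estimates, and cost figures are hypothetical.

```python
def mixed_fidelity_screen(candidates, cheap_barrier, accurate_barrier, keep: int):
    """Rank every candidate with the cheap model, then refine only the best `keep`."""
    shortlist = sorted(candidates, key=cheap_barrier)[:keep]
    return {reaction: accurate_barrier(reaction) for reaction in shortlist}


def cost_ratio(n_candidates: int, keep: int, cheap_cost: float, accurate_cost: float) -> float:
    """Cost of refining everything divided by the cost of the two-stage screen."""
    all_accurate = n_candidates * accurate_cost
    two_stage = n_candidates * cheap_cost + keep * accurate_cost
    return all_accurate / two_stage


# Hypothetical barrier estimates (kcal/mol) from a cheap and an accurate model.
cheap = {"rxn_a": 35.0, "rxn_b": 12.0, "rxn_c": 50.0, "rxn_d": 18.0}
accurate = {"rxn_a": 33.0, "rxn_b": 14.5, "rxn_c": 48.0, "rxn_d": 16.0}

refined = mixed_fidelity_screen(list(cheap), cheap.get, accurate.get, keep=2)
print(refined)

# Hypothetical costs: 1000 candidates, accurate model 1000x more expensive per call.
print(f"speed-up: {cost_ratio(1000, 10, 1.0, 1000.0):.1f}x")
```

The achievable saving depends on how many candidates survive the cheap screen and on the cost gap between the two levels of theory, so the printed ratio should be read as arithmetic on made-up inputs rather than a benchmark.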

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Tools for Cost-Effective Chemistry ML Research

| Tool Name | Type | Primary Function | Relevance to Cost Reduction |
|---|---|---|---|
| Variational Reaction Path Code [15] | Software Library | Efficiently finds transition states by optimizing a variational objective function. | Reduces cost 50-70% vs. NEB by using fewer images and a more efficient search principle. |
| YARP (Yet Another Reaction Program) [14] | Automated Workflow | Predicts reaction outcomes and material stability with broad coverage. | Mixed-fidelity approach cuts cost nearly 100-fold, enabling comprehensive screening. |
| QML Wall Time Predictor [16] | Machine Learning Model | Predicts the computational cost (wall time) of quantum chemistry tasks. | Improves job scheduling efficiency, reducing CPU time overhead by 10-90%. |
| Atomic Simulation Environment (ASE) [15] | Software Platform | A Python suite for setting up, running, and analyzing atomistic simulations. | Provides a common, flexible environment for integrating and using efficient tools like the variational path code. |
| Amazon SageMaker / TensorFlow [17] | ML Development Platform | Managed (SageMaker) and open-source (TensorFlow) environments for building, training, and deploying ML models. | SageMaker can reduce development time; TensorFlow offers control for cost optimization by experienced teams. |

Workflow Visualizations

Diagram 1: Efficient vs. Traditional Transition State Search

Diagram 2: Mixed-Fidelity Reaction Screening Workflow (YARP)

Frequently Asked Questions

Q: What are the most significant computational costs when running machine learning for chemistry applications?

Q: My quantum chemistry calculations are too slow for large-scale ML training. What can I do?

- A: You can use machine learning to create surrogate models that approximate the results of the expensive quantum calculations. These models are trained on a dataset of existing calculations and can predict properties like energy and forces orders of magnitude faster. Techniques like active learning can also help minimize the number of expensive computations needed by intelligently selecting the most informative data points to run [22].
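One common way to pick "the most informative data points" is query-by-committee: several surrogate models predict each candidate, and the candidates on which they disagree most are sent to the expensive calculation. The sketch below is a minimal illustration under that assumption; the three committee members are toy functions, not trained models.

```python
from statistics import pvariance


def select_most_uncertain(candidates, committee, n_select: int = 1):
    """Return the candidates with the largest variance across committee predictions."""
    def disagreement(x):
        return pvariance([model(x) for model in committee])

    return sorted(candidates, key=disagreement, reverse=True)[:n_select]


# Toy committee: three hypothetical surrogates that differ slightly in slope.
committee = [
    lambda x: 1.0 * x,
    lambda x: 1.1 * x,
    lambda x: 0.9 * x,
]

# Hypothetical one-dimensional descriptors of unlabeled molecules.
pool = [0.5, 2.0, -3.0, 1.0]
print(select_most_uncertain(pool, committee, n_select=2))
```

In a real workflow the selected candidates would be labeled with the quantum chemistry method, added to the training set, and the committee retrained before the next selection round.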

Q: How can I predict and optimize the resource usage of my computational chemistry jobs on a supercomputer?

- A: Machine learning strategies can be developed to predict the execution time and optimal runtime parameters (like the number of nodes and tile sizes) for computations such as Coupled Cluster (CCSD) methods. This helps answer key user inquiries, such as the configuration for the shortest execution time or the cheapest run in terms of node-hours [23].

Q: Beyond raw computation, what other factors contribute to the overall cost of an ML project in chemistry?

- A: Significant costs often come from data acquisition and preparation, which includes generating, cleaning, and annotating datasets. Furthermore, ongoing support and maintenance for model retraining and deployment, as well as cloud infrastructure and integration costs, contribute substantially to the total expense [20].

Q: How can I reduce energy consumption and improve yield in chemical manufacturing using ML?

- A: White-box machine learning can optimize processes in real-time. It can recommend operational adjustments to reduce energy usage (e.g., by running processes at lower temperatures) and to maximize yield and recovery of raw materials, directly cutting production costs [24].

Quantitative Cost Metrics and Factors

The tables below summarize key cost factors and estimates for machine learning initiatives in scientific domains.

Table 1: Primary Cost Factors in Machine Learning Projects

| Cost Factor | Description | Impact |
|---|---|---|
| Solution Complexity | Complexity of the ML model and its performance, responsiveness, and compliance needs [20]. | High |
| Data Preparation | Costs associated with acquiring, cleaning, and labeling training data [20]. | High |
| Model Training Approach | Choice between supervised, unsupervised, or reinforcement learning, and use of pre-trained models [20]. | Medium |
| Cloud Infrastructure | Ongoing costs for computing, storage, and data transfer [20] [25]. | Medium |
| Support & Maintenance | Ongoing costs for model retraining, monitoring, and updates, which can be 25%-75% of initial development resources [20]. | Medium-High |

Table 2: Example Machine Learning Project Cost Estimates

| Project Type | Team Effort | Estimated Cost (Based on Central European Rates) | Key Cost Drivers |
|---|---|---|---|
| Emotion Recognition Solution | 350 hours [20] | ~$26,000 [20] | Research, testing multiple neural networks, model fine-tuning. |
| Exploratory Stage (Feasibility Study) | ~500-600 hours [20] | $39,000 - $51,000 [20] | Team of business analyst, data engineer, ML engineer, and project manager. |
| Annual Cloud (Simpler Solution) | N/A | ~$1,500 - $3,600 /year [20] | Lower-dimensional data, fewer virtual CPUs. |
| Annual Cloud (Complex Deep Learning) | N/A | >$120,000 /year [20] | High latency requirements, complex algorithms. |

Experimental Protocols for Cost Estimation and Reduction

Protocol 1: ML-Based Resource Prediction for Chemistry Computations

This methodology uses machine learning to forecast the resources needed for massively parallel chemistry computations, helping users optimize for speed or cost before running jobs on supercomputers [23].

- Data Collection: Collect historical experimental data, including execution times for various problem sizes, numbers of compute nodes, and application-specific parameters like tile sizes [23].

- Model Training and Evaluation: Train a suite of ML models on the collected data and compare them on accuracy metrics such as Mean Absolute Percentage Error (MAPE, lower is better). Gradient Boosting (GB) regression often performs best for this task [23].

- Addressing User Inquiries:

- For the Shortest-Time Question (STQ), use the model to predict the execution time for different configurations and select the one with the minimum time [23].

- For the Budget Question (BQ), use the model to find the configuration that minimizes the total node-hours (number of nodes × execution time) [23].

- Active Learning for Data-Scarce Scenarios: When historical data is limited and expensive to generate, employ active learning. Techniques like uncertainty sampling or query-by-committee can select the most informative data points to run next, maximizing model accuracy with a minimal number of experiments [23].
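The two user questions above reduce to two different minimisations over the same set of predictions. The following is a minimal sketch under stated assumptions: `predicted_runtime_hours` is a hypothetical toy cost model standing in for a trained regressor (for example Gradient Boosting), and the node counts and tile sizes are invented for illustration.

```python
def predicted_runtime_hours(nodes, tile_size):
    """Hypothetical stand-in for a trained regressor's prediction.

    Toy cost model: parallel work shrinks with node count, communication
    overhead grows with it, and tiles far from an assumed sweet spot of 80
    are penalised.
    """
    work = 120.0 / nodes
    overhead = 0.02 * nodes
    tile_penalty = 0.5 * abs(tile_size - 80) / 80
    return work + overhead + tile_penalty

# Candidate configurations: (number of nodes, tile size).
configs = [(n, t) for n in (8, 16, 32, 64, 128) for t in (40, 80, 120)]

# Shortest-Time Question (STQ): minimise predicted wall-clock time.
stq = min(configs, key=lambda c: predicted_runtime_hours(*c))

# Budget Question (BQ): minimise node-hours = nodes * predicted time.
bq = min(configs, key=lambda c: c[0] * predicted_runtime_hours(*c))

print("STQ choice (nodes, tile):", stq)
print("BQ choice (nodes, tile):", bq)
```

In this toy model the two questions pick different node counts: the fastest run uses many nodes, while the cheapest run uses few, which is the trade-off the protocol is meant to expose before a job is submitted.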

The workflow for this protocol is illustrated below.

Protocol 2: Developing a Surrogate Model for Quantum Calculations

This protocol outlines creating a fast machine learning model to approximate slow, expensive quantum chemistry calculations, enabling their large-scale use [22].

- Generate Training Data: Perform a set of high-fidelity but computationally expensive quantum chemistry calculations (e.g., DFT) on a representative set of molecules to obtain target properties (energy, forces, etc.) [22] [21].

- Create Molecular Descriptors: Convert the chemical structures of the molecules into computer-readable vectors (descriptors). These can range from simple molecular weights to more complex representations that capture electronic structure [21].

- Train the Surrogate Model: Use the molecular descriptors as input features and the quantum chemistry results as labels to train a supervised machine learning model. This model learns the mapping from structure to property [22] [21].

- Validate the Model: Test the trained model on a held-out set of molecules not seen during training to ensure it generalizes well and provides accurate predictions.

- Deploy for Prediction: Use the validated surrogate model to rapidly predict properties for new molecules, bypassing the need for the expensive quantum calculations.
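The five steps above can be sketched end to end on a toy problem. Everything in this example is an illustrative assumption: a Morse potential stands in for the expensive quantum calculation, the inverse bond length is a hypothetical one-dimensional descriptor, and a k-nearest-neighbour average plays the role of the surrogate model. A real workflow would use DFT labels, richer descriptors, and a stronger regressor.

```python
import math
import random

def expensive_reference(r):
    """Stand-in for a costly QM calculation: Morse potential (arbitrary units)."""
    d_e, a, r_e = 4.7, 1.9, 0.74
    return d_e * (1.0 - math.exp(-a * (r - r_e))) ** 2 - d_e

def descriptor(r):
    """Hypothetical descriptor: inverse interatomic distance."""
    return 1.0 / r

def knn_predict(train, x, k=3):
    """Surrogate model: average the labels of the k nearest training descriptors."""
    nearest = sorted(train, key=lambda pair: abs(pair[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)

# Steps 1-2: generate labelled data and descriptors.
random.seed(0)
geometries = [random.uniform(0.5, 2.5) for _ in range(200)]
data = [(descriptor(r), expensive_reference(r)) for r in geometries]

# Steps 3-4: "train" on 160 points, validate on 40 held-out points.
train, held_out = data[:160], data[160:]
mae = sum(abs(knn_predict(train, x) - y) for x, y in held_out) / len(held_out)
print(f"Held-out mean absolute error: {mae:.3f}")

# Step 5: predict for a new geometry without calling the expensive function.
print("Surrogate prediction at r = 1.0:", knn_predict(train, descriptor(1.0)))
```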

The workflow for building and using a surrogate model is as follows.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Tools for Chemistry ML Research

| Tool / Solution | Function in Research |

|---|---|

| Gradient Boosting (GB) | A powerful machine learning model for regression tasks, shown to be highly effective in predicting computational chemistry application execution times and optimal parameters [23]. |

| Active Learning | A strategy to minimize expensive data generation costs by intelligently selecting the most informative data points to run next, improving model accuracy with fewer experiments [23] [22]. |

| White-Box Machine Learning | ML models that provide interpretable results, allowing engineers to understand the reasoning behind recommendations for process optimization, such as reducing energy use or improving yield [24]. |

| Chemical Language Models (CLMs) | Pre-trained transformer-based models (e.g., variants of BERT, GPT) that are adapted for chemical SMILES data, useful for tasks like molecular property prediction and de novo molecular design [26]. |

| Scikit-Learn, TensorFlow, Keras | Common, open-source programming libraries used to easily implement a wide variety of machine learning algorithms [21]. |

| MLatom | A software package designed for computational chemists. It provides interfaces to common machine learning algorithms and molecular descriptor generators without requiring an extensive programming background [21]. |

Efficient by Design: Core Algorithms and Architectures for Low-Cost Chemistry ML

Leveraging Low-Scaling Quantum Mechanics (QM) Methods for Large Systems

This technical support center provides troubleshooting guides and FAQs for researchers employing low-scaling Quantum Mechanics (QM) methods, particularly in conjunction with machine learning (ML), to reduce computational costs in chemical research and drug development.

Frequently Asked Questions (FAQs)

What does "low-scaling" mean in the context of quantum simulations? "Low-scaling" refers to algorithms whose computational cost (in time and memory) grows polynomially with system size, rather than exponentially. This makes simulating large molecules or complex materials feasible on classical computers, or on near-term quantum devices with limited qubits [27]. For example, a new approach using the truncated Wigner approximation (TWA) allows some quantum dynamics problems to be solved on a laptop in hours instead of requiring a supercomputer [27].
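To make the difference between polynomial and exponential growth concrete, the sketch below compares two idealised cost functions. The cubic exponent and base 2 are illustrative assumptions with arbitrary units, not measured costs of any specific method.

```python
def polynomial_cost(n, power=3):
    """Idealised polynomial cost, e.g. O(N^3)."""
    return n ** power

def exponential_cost(n, base=2):
    """Idealised exponential cost, e.g. O(2^N)."""
    return base ** n

# Smallest system size (above 1) at which the exponential cost overtakes the cubic one.
crossover = next(n for n in range(2, 100)
                 if exponential_cost(n) > polynomial_cost(n))
print("Exponential cost overtakes cubic cost at N =", crossover)

for n in (10, 20, 40, 80):
    ratio = exponential_cost(n) / polynomial_cost(n)
    print(f"N = {n:3d}: exponential / cubic cost ratio = {ratio:.3e}")
```

Beyond the crossover the ratio grows without bound, which is why a method that is affordable for a small molecule can become unusable for a slightly larger one.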

My Variational Quantum Eigensolver (VQE) optimization is stuck. What can I do? This is a common problem known as a "barren plateau," where gradients vanish, making optimization difficult [28]. To mitigate this:

- Use a pre-trained model: Leverage transfer learning. Machine learning models, such as Graph Attention Networks (GAT) or SchNet, can be trained to predict good initial parameters for your quantum circuit, replacing random initialization [29] [30].

- Simplify your ansatz: Choose a more hardware-efficient circuit design or one that incorporates molecular symmetries, like the Separable Pair Ansatz (SPA) [29].

- Adopt a hybrid approach: Use a classical deep neural network (DNN) as the optimizer instead of a memoryless classical optimizer. The DNN can learn from previous optimization runs on other molecules, improving efficiency and noise resilience [30].

How can I model chemical dynamics, not just static properties? While many quantum algorithms focus on ground-state energy, new methods are emerging for dynamics. A semiclassical method like the truncated Wigner approximation (TWA) has been extended to model dissipative spin dynamics, where particles interact with their environment [27]. Furthermore, researchers at the University of Sydney have demonstrated the first quantum simulation of chemical dynamics, modeling how a molecule's structure evolves over time [4].

My quantum resource requirements are too high for practical use. Any advice? Algorithmic improvements are rapidly reducing resource needs. You can:

- Investigate new algorithms: Recent papers describe techniques like "spectrum amplification" and "improved tensor factorization" that significantly cut the cost of Hamiltonian simulation [31].

- Use system-adapted circuits: Design your quantum circuits based on the molecular graph of your specific system, which can reduce the number of unnecessary operations [29].

- Check vendor roadmaps: A NERSC analysis shows that qubit and gate requirements for key scientific problems have dropped sharply, and hardware capabilities are projected to rise steeply. What seems infeasible today may be practical within a few years [32].

When will quantum computers be truly useful for my drug discovery research? Practical use for scientific workloads is projected within the next 5-10 years [32]. Current applications are nascent but growing. For example, 16-qubit computers have been used to find potential cancer drug inhibitors, and others have simulated the folding of a 12-amino-acid protein chain [4]. The focus for now should be on developing and testing algorithms and workflows on current hardware and simulators to be prepared for more powerful machines.

Troubleshooting Guides

Issue 1: Initial Quantum Circuit Parameters Lead to Poor Convergence

This guide addresses the challenge of initializing parameters for variational quantum algorithms like the Variational Quantum Eigensolver (VQE).

Detailed Methodology for a Machine Learning Transfer Learning Protocol

The following protocol uses a classical machine learning model to predict optimal initial parameters for a quantum circuit, reducing convergence time and improving reliability [29].

Workflow: ML-Parameterized Quantum Circuit

Step-by-Step Procedure:

Data Generation (Steps A1-A4): For a training set of molecules (e.g., 230,000 linear H4 configurations) [29]:

- A1. Generate 3D Coordinates: Use a tool like quanti-gin [29] to create diverse molecular geometries. Apply constraints to avoid unrealistic structures (e.g., inter-atomic distances between 0.5 and 2.5 Å).

- A2. Estimate Optimal Chemical Graph: From the coordinates, compute a perfect matching graph that represents the most likely chemical bonds, using scaled Euclidean distances as edge weights [29].

- A3. Construct Circuit Ansatz: Build the quantum circuit, for instance, the Separable Pair Ansatz (SPA), based on the chemical graph from the previous step [29].

- A4. Run Full VQE Optimization: For each molecule, run a complete, computationally expensive VQE to find the true optimal parameters (θ). This data serves as the ground truth for training.
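Step A2 can be illustrated with a brute-force minimum-weight perfect matching on a small hydrogen chain. This is a sketch under assumptions: it uses raw Euclidean distances as edge weights (the scaling applied in [29] is not reproduced here), the coordinates are invented, and exhaustive search is only practical for a handful of atoms.

```python
import math

def min_weight_perfect_matching(points):
    """Return (total_weight, pairs) for the cheapest way to pair up all atoms."""
    def solve(remaining):
        if not remaining:
            return 0.0, []
        first, rest = remaining[0], remaining[1:]
        best = (math.inf, [])
        # Try pairing the first unmatched atom with every other unmatched atom.
        for idx, partner in enumerate(rest):
            cost, pairs = solve(rest[:idx] + rest[idx + 1:])
            cost += math.dist(points[first], points[partner])
            if cost < best[0]:
                best = (cost, [(first, partner)] + pairs)
        return best
    return solve(list(range(len(points))))

# Hypothetical linear H4 geometry (coordinates in angstrom along the x-axis).
h4 = [(0.0, 0.0, 0.0), (0.8, 0.0, 0.0), (2.0, 0.0, 0.0), (2.8, 0.0, 0.0)]
weight, bonds = min_weight_perfect_matching(h4)
print("Matched atom pairs:", bonds)
print(f"Total matching weight: {weight:.2f}")
```

The matching pairs the two short H-H contacts, which is the kind of graph a pair-based ansatz such as the SPA in step A3 would be built from.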


Model Training (Step B):

- Input Features: Atomic coordinates and the perfect matching graph structure [29].

- Model Choice: Train a model such as a Graph Attention Network (GAT) or SchNet, both of which suit molecular data [29].

- Output Target: The model learns to map the molecular structure to the optimal VQE parameters (θ) found in Step A4.

Deployment & Execution (Steps C-F):

- C. New Target Molecule: Present the geometry of a new, larger molecule (e.g., H12) not seen during training.

- D. Apply Model: Use the trained model to predict the initial parameters (θ′) for this new molecule.

- E. Initialize Circuit: Use these ML-predicted parameters to initialize the quantum circuit, providing a much better starting point than random initialization.

- F. Final Optimization: Proceed with the standard VQE optimization. The refinement from a near-optimal starting point will require significantly fewer iterations and be less prone to failure [29] [30].
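The benefit of steps E and F can be illustrated classically. The sketch below is not a VQE: it runs plain gradient descent on a hypothetical periodic landscape and counts iterations from a poor starting point versus a near-optimal one. The landscape, the learning rate, and the offsets used to mimic "random" and "ML-predicted" initial parameters are all assumptions.

```python
import math

def energy(theta, target):
    """Toy periodic landscape with its minimum (zero) at theta == target."""
    return sum(1.0 - math.cos(t - s) for t, s in zip(theta, target))

def gradient(theta, target):
    return [math.sin(t - s) for t, s in zip(theta, target)]

def iterations_to_converge(theta, target, lr=0.1, tol=1e-6, max_iter=10_000):
    """Plain gradient descent; returns the number of steps until energy < tol."""
    theta = list(theta)
    for step in range(max_iter):
        if energy(theta, target) < tol:
            return step
        grad = gradient(theta, target)
        theta = [t - lr * g for t, g in zip(theta, grad)]
    return max_iter

optimal = [0.3, -1.2, 2.0, 0.7]            # pretend optimum from a full optimisation
cold_start = [t + 2.5 for t in optimal]    # stand-in for a poor initial guess
warm_start = [t + 0.1 for t in optimal]    # stand-in for an ML-predicted guess

cold_steps = iterations_to_converge(cold_start, optimal)
warm_steps = iterations_to_converge(warm_start, optimal)
print("Iterations from poor start:", cold_steps)
print("Iterations from near-optimal start:", warm_steps)
```

On real hardware each iteration costs many circuit evaluations, so a reduction in iteration count translates directly into fewer quantum executions.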

Issue 2: Classical QM Simulation is Too Slow for Desired System Size

This guide covers applying low-scaling semiclassical methods to bypass the high cost of full quantum simulations.

Detailed Methodology for Truncated Wigner Approximation (TWA)

The TWA is a semiclassical technique that approximates quantum dynamics by using a statistical ensemble of classical trajectories, offering a computationally affordable alternative [27].

Workflow: Semiclassical Quantum Dynamics

Step-by-Step Procedure:

Define System (Step P1): Clearly define the quantum system and its Hamiltonian, including any dissipative terms (e.g., interactions with an external environment) [27].

Apply TWA Formalism (Step P2): Use a pre-computed conversion table (as provided in recent research [27]) to map the quantum operators of your system onto a set of classical stochastic differential equations. This step avoids the need to derive the complex math from scratch.

Initialization (Step P3): Sample the initial conditions for your classical variables not from a single point, but from a distribution that represents your initial quantum state, known as the Wigner distribution.

Dynamics (Step P4): Propagate a large ensemble of these classical trajectories forward in time. Each trajectory is independent, making this step highly parallelizable.

Analysis (Step P5): Calculate the observable of interest (e.g., magnetization, correlation function) for each trajectory at the final time. The average of this observable over the entire ensemble of trajectories provides an approximation of the quantum mechanical expectation value.
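Steps P3 to P5 can be sketched for a system where the answer is known. The example below is a toy, not the dissipative spin model of [27]: it treats a closed harmonic oscillator with unit mass and frequency (ħ = 1), for which the TWA happens to be exact, and it skips the operator-to-equation mapping of step P2 by writing Hamilton's equations directly. The initial state is assumed to be a coherent state, whose Wigner function is a Gaussian with variance 1/2 in both position and momentum.

```python
import math
import random

def twa_mean_position(x0, t_final, n_traj=2000, dt=0.005, seed=1):
    """Ensemble-averaged <x(t)> for a unit-mass, unit-frequency oscillator."""
    rng = random.Random(seed)
    sigma = math.sqrt(0.5)  # width of the coherent-state Wigner function (hbar = 1)
    n_steps = round(t_final / dt)
    total = 0.0
    for _traj in range(n_traj):
        # P3: sample initial conditions from the Wigner distribution.
        x = rng.gauss(x0, sigma)
        p = rng.gauss(0.0, sigma)
        # P4: propagate one classical trajectory (leapfrog, force = -x).
        for _step in range(n_steps):
            p -= 0.5 * dt * x
            x += dt * p
            p -= 0.5 * dt * x
        # P5: accumulate the observable at the final time.
        total += x
    return total / n_traj

estimate = twa_mean_position(x0=2.0, t_final=1.0)
exact = 2.0 * math.cos(1.0)  # quantum result for a coherent state
print(f"TWA estimate of <x(t=1)>: {estimate:.3f}")
print(f"Exact value:              {exact:.3f}")
```

Because the trajectories never interact, the outer loop can be split across cores or nodes without communication; the remaining statistical error shrinks as the ensemble grows.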

Research Reagent Solutions

The table below catalogs key computational tools and methods used in modern low-scaling QM and quantum-accelerated chemistry research.

| Item Name | Function & Application |

|---|---|

| Separable Pair Ansatz (SPA) [29] | A robust, system-adapted quantum circuit design. Used as a parameterized ansatz in VQE for electronic structure problems, known for good performance with fewer parameters. |

| Truncated Wigner Approximation (TWA) [27] | A semiclassical physics method. Used for efficient simulation of quantum dynamics on classical hardware, extended to model open quantum systems with dissipation. |

| SchNet [29] | A deep neural network architecture for molecular modeling. Used to predict quantum circuit parameters from molecular geometry, enabling transfer learning. |

| Graph Attention Network (GAT) [29] | A graph neural network using attention mechanisms. Used to model molecules as graphs (atoms=nodes, bonds=edges) to predict molecular properties or circuit parameters. |

| Variational Quantum Eigensolver (VQE) [4] [30] | A hybrid quantum-classical algorithm. Used to find ground-state energies of molecules on near-term quantum hardware by varying circuit parameters. |

| Deep Neural Network (DNN) Optimizer [30] | A classical AI optimizer in hybrid workflows. Replaces traditional optimizers in VQE, learning from previous runs to improve efficiency and resist quantum noise. |

| pUCCD-DNN Ansatz [30] | A hybrid quantum-classical ansatz. Combines a physically-motivated trial wavefunction (pUCCD) with DNN optimization for highly accurate energy calculations. |

The following tables summarize key performance metrics and resource estimates from recent research, aiding in method selection and project planning.

Table 1: Machine Learning Models for Parameter Prediction (Based on data from [29])

This table compares ML models for predicting VQE parameters, evaluated on hydrogen chain (Hn) datasets.

| Model | Training Dataset | Model Parameters | Key Input Features | Demonstrated Transferability |

|---|---|---|---|---|

| Graph Attention Network (GAT) | 230k linear H4 | ~302,625 | Euclidean distance matrix with angles | Good performance on small systems. |

| Linear SchNet | 230k linear H4 | ~28,273 | Euclidean distance matrix with angles | Reduced-parameter model for efficient training. |

| Mixed SchNet | 1k H4 + 2k H6 | ~472,450 | Coordinates reordered by perfect matching graph | Yes. Systematically transfers to larger molecules (e.g., H12). |

Table 2: Algorithmic Scaling & Resource Projections (Synthesized from [4] [32])

This table compares the scaling and hardware requirements for different computational chemistry methods.

| Method / System Type | Computational Scaling | Estimated Qubits for FeMoco | Key Challenges |

|---|---|---|---|

| Classical (e.g., DFT) | Polynomial (e.g., O(N³)) | Not Applicable | Accuracy limited by approximations for complex systems. |

| Early Quantum Algorithms | Exponential (but reduced cost) | ~2.7 million (2021 estimate) | High qubit/gate counts were prohibitive. |

| Improved Quantum Algorithms | Exponential (significantly reduced) | ~100,000 (recent estimate) | Error correction and qubit quality remain critical. |

| Semiclassical (e.g., TWA) [27] | Low-scaling (Polynomial) | Not Applicable | Accuracy depends on system and suitability of approximation. |

Frequently Asked Questions

Q1: What are the main advantages of using Physics-Informed Geometric Deep Learning (PI-GDL) over traditional data-driven models in chemical and molecular research? PI-GDL offers two primary advantages critical for computational chemistry: superior data efficiency and enhanced physical consistency. By integrating physical laws directly into the model's loss function or architecture, these models can learn reliably from small datasets, reducing the need for expensive quantum chemistry calculations or molecular dynamics simulations [33] [34]. Furthermore, they ensure predictions adhere to known physical constraints and respect the underlying geometric structure of molecular systems, leading to more interpretable and physically plausible results [35] [36].

Q2: My model fails to generalize to unseen molecular geometries or graph topologies. What steps can I take? This is a common challenge indicating the model may be overfitting to the specific geometries in the training set. The solution is to use an architecture that is inherently geometry-aware.

- Solution: Implement a framework that explicitly encodes the molecular geometry. For instance, you can use a Shape Encoding Network (SEN), like a Variational Auto-Encoder (VAE), to compress irregular molecular geometries into a latent representation. This latent vector is then concatenated with spatial coordinates and used as input to a physics-informed network, enabling it to handle a wide variety of non-parametric shapes seen during training [37]. Frameworks like PI-GANO (Physics-Informed Geometry-Aware Neural Operator) are specifically designed for this purpose [38].

Q3: How can I enforce boundary conditions or physical constraints in my molecular model? Hard enforcement of boundary conditions can be achieved through a dedicated Boundary Constraining Network (BCN). The BCN is trained to map spatial coordinates (especially those on the boundary) to their known values. The outputs of the BCN and the main physics-informed network are then combined to ensure the boundary conditions are exactly satisfied throughout training, rather than just being encouraged through a soft penalty in the loss function [37].
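One common way to combine the two outputs so that the boundary values hold exactly is to add a boundary-matching function to the main network's output multiplied by a factor that vanishes on the boundary. The sketch below assumes that construction on the 1D domain [0, 1]; it may differ in detail from the scheme in [37]. The analytic `boundary_extension`, `distance_factor`, and `raw_network` are hypothetical stand-ins for trained networks.

```python
import math

def boundary_extension(x, left=1.0, right=3.0):
    """Stand-in for the BCN output: equals the known values at x = 0 and x = 1."""
    return left * (1.0 - x) + right * x

def distance_factor(x):
    """Vanishes on the boundary of [0, 1] and is positive inside."""
    return x * (1.0 - x)

def raw_network(x):
    """Placeholder for an unconstrained network output (any function works)."""
    return math.sin(5.0 * x) + 0.3

def constrained_solution(x):
    """Boundary values hold by construction, whatever raw_network returns."""
    return boundary_extension(x) + distance_factor(x) * raw_network(x)

print("u(0)   =", constrained_solution(0.0))
print("u(1)   =", constrained_solution(1.0))
print("u(0.5) =", constrained_solution(0.5))
```

Since the constraint is built into the output, no boundary term is needed in the loss, which removes one of the weights that would otherwise have to be tuned.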

Q4: The physics-informed loss function causes unstable training and poor convergence. How can I mitigate this? Training instability often arises from an imbalanced loss landscape. You can address this by:

- Loss Weighting: Applying adaptive weighting schemes to different terms in the loss function (e.g., data loss, PDE residual loss, boundary condition loss) to prevent one term from dominating the gradient updates [33].

- Curriculum Learning: Start training on simpler physical regimes or smoother sections of the data before progressively introducing more complex scenarios [33].

- Gradient Pathology: Be aware that the gradients from PDE residual losses can become pathological, making optimization difficult. Using specialized training techniques can help overcome this [34].

Q5: For a new molecular property prediction task, how do I choose between a Graph Neural Network (GNN) and a Neural Operator? The choice depends on the scope of your problem.

- Use a Graph Neural Network (GNN) when you are working with a collection of discrete molecular graphs and want to make a prediction for each individual graph (e.g., predicting the binding affinity of a specific protein-ligand complex) [35] [39].

- Use a Neural Operator when you want to learn a mapping between infinite-dimensional function spaces. This is powerful for learning the solution to a family of partial differential equations (PDEs) across different parameters or geometries. For example, a neural operator can learn to predict the continuous pressure field in a fluid flow for any vessel shape within a class, without re-training [38].

Troubleshooting Guides

Problem: Model exhibits high accuracy on training data but poor performance on test data, especially for out-of-distribution molecules.

- Potential Cause 1: The model is overfitting to the training geometries and has not learned a generalizable inductive bias.

- Diagnosis: Check if performance drops significantly on test molecules with larger sizes or different topological features compared to the training set.

- Fix: Adopt a framework designed for generalization. PAMNet, for instance, explicitly models both local (e.g., bond vibrations) and non-local (e.g., electrostatic) interactions inspired by molecular mechanics. This physics-informed bias helps the model generalize more effectively across different molecular systems and sizes [35].

- Potential Cause 2: The physics-based regularisation in the loss function is too weak.

Problem: Training process is computationally expensive and consumes too much memory.

- Potential Cause 1: Inefficient modeling of interactions in large molecular graphs.

- Diagnosis: The model slows down considerably with an increase in the number of atoms or nodes.

- Fix: Use a framework like PAMNet that reduces expensive operations by separately processing local and non-local interactions. This leads to significantly better time and memory efficiency compared to standard GNNs while maintaining high accuracy [35].

- Potential Cause 2: Use of a "vanilla" Physics-Informed Neural Network (PINN) for a complex geometry.

- Diagnosis: Training is slow due to a large number of collocation points needed to capture irregular molecular surfaces.

- Fix: Implement a geometry-aware method like GAPINN or PI-GANO. These frameworks use a compact latent representation of the geometry, which simplifies the learning task for the network and can reduce the computational overhead [38] [37].

Problem: Model violates known physical laws (e.g., energy conservation) in its predictions.

- Potential Cause: The physical equations are only softly enforced via the loss function, and the model is finding a "cheat" that minimizes the loss without fully satisfying the physics.

- Diagnosis: Manually inspect the model's predictions for unphysical behavior, such as negative densities or implausible energy values.

- Fix:

- Hard Enforcement: Where possible, redesign the network architecture to inherently satisfy physical constraints. For example, output a positive quantity by using a softplus activation function on the final layer.

- Enhanced Loss Function: Add more specific penalty terms to the loss function that directly punish the violation of the conserved quantity.

- Automatic Differentiation: Ensure the PDE residuals are calculated using automatic differentiation, which provides exact gradients, rather than approximate numerical methods, leading to more precise enforcement of physical laws [37] [34].
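The difference between automatic differentiation and a numerical approximation can be seen with a few lines of forward-mode AD based on dual numbers. This is an illustrative sketch only: frameworks such as PyTorch use reverse-mode autograd, and the `Dual` class and toy function `u` here are hypothetical.

```python
class Dual:
    """Number of the form value + deriv * eps, with eps**2 == 0."""

    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    def __add__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)

    __radd__ = __add__

    def __mul__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        # Product rule carried along with the value.
        return Dual(self.value * other.value,
                    self.value * other.deriv + self.deriv * other.value)

    __rmul__ = __mul__

def u(x):
    """Toy 'network output': u(x) = x^3 + 2x."""
    return x * x * x + 2 * x

x0 = 2.0
autodiff = u(Dual(x0, 1.0)).deriv          # derivative propagated exactly
h = 1e-3
finite_diff = (u(x0 + h) - u(x0)) / h      # forward finite difference
exact = 3 * x0 ** 2 + 2

print("Automatic differentiation:", autodiff)
print("Finite difference:        ", finite_diff)
print("Analytic derivative:      ", exact)
```

The finite-difference value carries a truncation error that depends on the step size `h`, whereas the dual-number result matches the analytic derivative.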

Performance Benchmarking of PI-GDL Frameworks

The table below summarizes the quantitative performance of several key frameworks as reported in their respective studies, providing a basis for comparison.

| Framework | Key Innovation | Reported Accuracy/Efficiency Gains |

|---|---|---|

| PAMNet [35] | Physics-informed bias for local/non-local molecular interactions. | Outperforms state-of-the-art baselines in accuracy and efficiency on small molecule properties, RNA 3D structures, and protein-ligand binding affinity tasks. |

| PI-GANO [38] | Neural operator generalizing across PDE parameters & domain geometries. | Demonstrates accuracy and efficiency in solving parametric PDEs on variable geometries; reduces need for costly finite element data. |

| GAPINN [37] | VAE for geometry encoding + PINN for solving PDEs on irregular shapes. | Accurately models laminar flow (Re=500) in irregular vessels; purely physics-driven training without simulation data. |

| Physics-Informed GNN for Power Systems [36] | Applies GNNs with physics-informed loss for state estimation. | Achieves high accuracy in state estimation under high sensor failure rates and noise; generalizes to unseen grid topologies. |

Experimental Protocol: Implementing a PI-GANO-like Framework for Molecular Systems

This protocol outlines the key steps for creating a physics-informed, geometry-aware model for molecular simulations, adapting the PI-GANO framework for chemical applications.

1. Problem Formulation:

- Objective: Learn a surrogate model for a molecular property (e.g., solvation energy field) that maps from a 3D molecular structure to a continuous physical field, generalizing across different molecular geometries.

- Governing Equations: Identify the underlying PDEs, such as the Poisson-Boltzmann equation for solvation or the Navier-Stokes equations for fluid flow around a molecule.

2. Data Preparation & Geometry Encoding:

- Input: A set of 3D molecular structures (e.g., from PDB files).

- Shape Encoding Network (SEN): Train a Variational Auto-Encoder (VAE) to compress the molecular surface or density map into a low-dimensional latent vector z. This vector captures the essential geometric features [37].

3. Network Architecture and Training:

- Model Pipeline: The architecture follows a sequence where a geometry latent vector is combined with spatial coordinates to produce a physical field prediction.

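- Loss Function: The total loss $L_{\text{total}}$ is a weighted sum of multiple objectives:

- $L_{\text{data}} = \mathrm{MSE}(u_{\text{pred}}, u_{\text{data}})$ (if supervised data is available)

- $L_{\text{physics}} = \mathrm{MSE}\big(f(u_{\text{pred}}, \partial u/\partial x, \ldots),\, 0\big)$ (the PDE residual)

- $L_{\text{BC}} = \mathrm{MSE}(u_{\text{pred}}, u_{\text{BC}})$ (boundary conditions)

- $L_{\text{total}} = \lambda_{\text{data}} L_{\text{data}} + \lambda_{\text{physics}} L_{\text{physics}} + \lambda_{\text{BC}} L_{\text{BC}}$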

4. Validation:

- Validate the model's predictive accuracy on a held-out test set of molecular geometries.

- Crucially, test its ability to generalize to novel molecular structures not seen during training.

- Verify that the predicted fields satisfy the governing PDEs by numerically checking the residuals.
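The weighted loss from step 3 and the residual check from step 4 can be sketched on a toy problem. Everything here is an assumption for illustration: the governing equation is the ODE u'(x) + u(x) = 0 with u(0) = 1 rather than a molecular PDE, the derivative is supplied analytically as a stand-in for automatic differentiation, and the weights are arbitrary.

```python
import math

def mse(values, targets):
    return sum((v - t) ** 2 for v, t in zip(values, targets)) / len(values)

def total_loss(u, du_dx, data, collocation, weights):
    """L_total = w_data * L_data + w_physics * L_physics + w_bc * L_BC."""
    xs, ys = zip(*data)
    l_data = mse([u(x) for x in xs], ys)
    # Residual f = u' + u should vanish at every collocation point.
    residuals = [du_dx(x) + u(x) for x in collocation]
    l_physics = mse(residuals, [0.0] * len(residuals))
    # Boundary condition u(0) = 1.
    l_bc = mse([u(0.0)], [1.0])
    w_data, w_physics, w_bc = weights
    return w_data * l_data + w_physics * l_physics + w_bc * l_bc

data = [(x, math.exp(-x)) for x in (0.2, 0.5, 0.9)]   # synthetic "measurements"
collocation = [i / 10 for i in range(11)]
weights = (1.0, 1.0, 10.0)                            # hypothetical lambda values

# Candidate 1: the exact solution u = exp(-x).
exact_loss = total_loss(lambda x: math.exp(-x), lambda x: -math.exp(-x),
                        data, collocation, weights)
# Candidate 2: a linear guess that meets the boundary condition but not the ODE.
linear_loss = total_loss(lambda x: 1.0 - x, lambda x: -1.0,
                         data, collocation, weights)

print("Loss for exact solution:", exact_loss)
print("Loss for linear guess:  ", linear_loss)
```

The linear guess satisfies the boundary condition, so its loss comes entirely from the data and physics terms; this is the kind of breakdown worth logging per term when tuning the weights.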

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table lists key computational "reagents" essential for building and experimenting with PI-GDL models.

| Item / Tool | Function / Purpose |

|---|---|

| Automatic Differentiation (e.g., PyTorch autograd) | Calculates exact derivatives of the network output with respect to its inputs, which is essential for computing the residuals of differential equations in the physics loss [34]. |

| Geometric Deep Learning Library (e.g., PyTorch Geometric) | Provides pre-built, optimized modules for Graph Neural Networks (GNNs), making it easier to construct models that operate on molecular graphs [35]. |

| Shape Encoder (e.g., VAE, PointNet) | Encodes complex, irregular molecular geometries into a fixed-length, low-dimensional latent representation, enabling generalization across shapes [40] [37]. |

| Collocation Points | A set of spatial points (within the domain and on boundaries) where the PDE residuals are evaluated and minimized during the physics-informed training process [34]. |

| Differentiable Parameter | A model parameter (e.g., a reaction rate or diffusion coefficient) that is treated as trainable and can be discovered jointly with the network weights during training [34]. |

Troubleshooting Guides and FAQs

Common Experimental Challenges and Solutions

FAQ: My knowledge-enhanced model fails to generalize to new molecular structures. What could be wrong?

- Problem Diagnosis: This is often caused by the model's over-reliance on data-driven patterns from its training set without a fundamental understanding of chemical principles. It indicates poor integration of domain knowledge, leading to failures when encountering molecules outside the training distribution.

- Solution:

- Inspect Knowledge Integration: Verify that your knowledge graph or shape representation is not simply appended but deeply fused with the neural network's learning process. For instance, in frameworks like KANO, an element-guided graph augmentation explores microscopic atomic associations without violating molecular semantics [41].

- Implement Functional Prompts: During fine-tuning, use functional prompts based on fundamental chemical knowledge (e.g., functional groups) to bridge the gap between pre-training tasks and specific downstream predictions. This evokes task-related knowledge from the pre-trained model [41].

- Expand Knowledge Coverage: Construct or integrate a comprehensive knowledge base of molecular substructures. This provides a foundational prior, extending the model's effective coverage of chemical space and improving reasoning on atypical cases [42].

FAQ: The process of generating molecules is computationally expensive and slow due to reliance on docking simulations. How can I speed this up?

- Problem Diagnosis: Traditional atom-by-atom generation methods often require frequent, time-consuming docking simulations or costly experimental data to evaluate generated molecules [43].

- Solution:

- Adopt a Shape-Centric Generation Paradigm: Implement a two-stage generation process, such as that used by ShapeGen. First, a shape sketching stage selects molecular shapes that complement the target protein pocket. Second, a shape filling stage uses a pre-trained model to convert the shape into a concrete molecular structure. This constrains the design space efficiently and minimizes the need for docking simulations, which become an optional post-processing step rather than a core part of the generation loop [43].

- Leverage Pre-trained Models: Utilize a pre-trained generative model for the shape filling stage. This model can be trained on large-scale, unlabeled datasets, reducing dependency on expensive labeled data [43].

FAQ: My molecular property prediction model performs well on common compounds but poorly on rare or complex ones. How can I improve its robustness?

- Problem Diagnosis: Purely data-driven models can be biased toward common local atomic patterns in the training data, lacking the global perspective needed for complex molecules [43] [41].

- Solution:

- Incorporate Global Shape Information: Enhance your model with global molecular shape descriptors. For example, the ShapePred model uses electrostatic potential (ESP) as a source of global information. ESP provides a 3D map of electrical potential around a molecule, which is determined by the entire molecular ensemble and offers insight into charge distribution [43].

- Use Equivariant Networks: For 3D shape data represented as point clouds or graphs, employ equivariant graph neural networks to extract molecular representations that are invariant to rotation and translation [43].

- Apply Contrastive Learning with Knowledge-Guided Augmentation: Replace generic graph augmentations (like random node dropping) with element-guided augmentations that use a knowledge graph to create chemically meaningful positive pairs for contrastive learning, preserving molecular semantics [41].

FAQ: The large language model (LLM) I am using for molecular tasks lacks chemical knowledge and provides inaccurate evaluations. How can I address this?

- Problem Diagnosis: LLMs have inherent limitations in their coverage of the chemical structure space and often function poorly as reward models for domain-specific tasks like matching molecules to spectral data [42].

- Solution:

- Build an External Knowledge Base: Construct a molecular substructure knowledge base that the LLM can query during reasoning. This supplements the LLM's internal knowledge [42].

- Develop a Specialized Reward Scorer: Design a dedicated molecule-spectrum scorer that acts as an external reward model. This scorer, comprising a molecule encoder and a spectrum encoder, evaluates the alignment between molecular structures and spectral data, providing accurate guidance for tree-search-based reasoning processes [42].

- Integrate a Knowledge-Enhanced Reasoning Framework: Plug your LLM into a framework like K-MSE, which uses Monte Carlo Tree Search (MCTS). This framework leverages the external knowledge base and the specialized scorer to guide the LLM's reasoning, enabling it to explore and optimize molecular structures effectively [42].

Experimental Protocols and Data

Protocol: Implementing a Shape-Based Molecular Generation Pipeline (Based on ShapeGen)

Objective: To generate high-quality drug molecules for a given protein pocket with reduced reliance on labeled data and docking simulations.

Methodology:

- Shape Sketching: Prioritize and select suitable molecular shapes that exhibit favorable interactions (shape complementarity) with the target binding pocket. This stage is parameter-free and relies on the biological principle that shape dictates bioactivity [43].

- Shape Filling: Employ a pre-trained generative model to translate the selected molecular shape into a concrete, atomically-detailed molecular structure. This model is trained on large-scale, unlabeled molecular datasets [43].

- Optional Post-Processing: Use docking simulations as a final filtering step to validate and rank the generated candidate molecules. This minimizes the number of costly simulations performed [43].

Table 1: Performance Comparison of Molecular Generation Methods

| Method | Key Approach | Reliance on Labeled Data | Reliance on Docking | Generation Quality |

|---|---|---|---|---|

| ShapeGen [43] | Shape sketching & filling | Low | Low (Optional post-step) | High |

| Traditional Methods [43] | Atomic-level generation | High (for supervised learning) | High (during generation) | Variable |

Protocol: Enhancing Molecular Property Prediction with Global Shape (Based on ShapePred)

Objective: To accurately predict molecular properties by integrating local atomic information with global molecular shape.

Methodology:

- Feature Extraction:

- Representation Learning: Process the 3D graph using an equivariant graph neural network to learn a rotation- and translation-invariant representation of the molecule [43].

- Prediction: Combine the learned atomic and shape representations for the final property prediction task.

Table 2: ShapePred Performance on Molecule Prediction Datasets

| Model | Key Features | Number of Datasets Evaluated | Performance |

|---|---|---|---|

| ShapePred [43] | Local atomic info + Global ESP (shape) | 11 | Strong performance across all |

Protocol: Knowledge-Enhanced Contrastive Learning for Molecules (Based on KANO)

Objective: To pre-train a molecular graph encoder using contrastive learning guided by fundamental chemical knowledge.

Methodology:

- Knowledge Graph Construction: Build an element-oriented knowledge graph (ElementKG) containing elements, their attributes, and functional groups [41].

- Element-Guided Augmentation:

- For a given molecule, identify its element types.

- Retrieve relationships between these elements from ElementKG to form an element relation subgraph.

- Link element entities in this subgraph to their corresponding atoms in the original molecular graph to create an augmented graph. This establishes associations between atoms of the same type that are not directly bonded [41].

- Contrastive Pre-training: Train a graph encoder by maximizing the agreement (via a contrastive loss) between the embeddings of the original molecular graph and its knowledge-augmented version [41].
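
The contrastive objective in the last step can be sketched in a few lines. The source does not specify the exact loss form, so the example assumes an InfoNCE-style loss: each molecule's embedding and the embedding of its knowledge-augmented graph form the positive pair, and the other augmented graphs in the batch act as negatives. The function names and the temperature value are illustrative.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def info_nce_loss(original, augmented, temperature=0.1):
    """Mean InfoNCE loss over a batch.

    original[i] and augmented[i] are a positive pair; every other
    augmented[j] in the batch serves as a negative for original[i].
    """
    orig = [normalize(v) for v in original]
    aug = [normalize(v) for v in augmented]
    losses = []
    for i, z in enumerate(orig):
        logits = [sum(a * b for a, b in zip(z, z_aug)) / temperature
                  for z_aug in aug]
        # Numerically stable log-sum-exp over all candidates.
        m = max(logits)
        log_denominator = m + math.log(sum(math.exp(l - m) for l in logits))
        losses.append(log_denominator - logits[i])
    return sum(losses) / len(losses)

# Hypothetical 2-D embeddings for a batch of two molecules.
graph_emb = [[1.0, 0.0], [0.0, 1.0]]
aligned = info_nce_loss(graph_emb, [[0.9, 0.1], [0.1, 0.9]])
shuffled = info_nce_loss(graph_emb, [[0.1, 0.9], [0.9, 0.1]])
print(aligned < shuffled)
```

The loss is small when each molecule sits close to its own augmented view and far from the others, which is the agreement the pre-training step maximizes.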

Workflow Visualizations

Diagram Title: Knowledge-Enhanced Molecular Structure Elucidation with MCTS

Diagram Title: Two-Stage ShapeGen Workflow for Molecule Generation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Knowledge-Enhanced Molecular Modeling

| Item | Function in the Experiment |

|---|---|

| Element-Oriented Knowledge Graph (ElementKG) | A structured repository of fundamental chemical knowledge (elements, attributes, functional groups) used to guide model pre-training and augmentation [41]. |

| Electrostatic Potential (ESP) Map | A 3D representation of the electrical potential around a molecule, providing global shape information that complements local atomic data for property prediction [43]. |

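| Equivariant Graph Neural Network | A type of neural network designed to process 3D graph data (like molecular shapes) whose internal features transform consistently under rotations and translations, so that scalar predictions are invariant to how the molecule is oriented, ensuring robust feature learning [43]. |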

| Functional Prompt | A fine-tuning technique that uses prompts derived from functional group knowledge to bridge the gap between pre-training and downstream tasks, improving prediction accuracy [41]. |

| Molecule-Spectrum Scorer | A specialized reward model comprising molecular and spectral encoders that evaluates the alignment between a proposed molecule and input spectral data, guiding reasoning processes [42]. |

| Molecular Substructure Knowledge Base | An external database of common molecular substructures and their descriptions, used to supplement LLMs' knowledge for more accurate structure elucidation [42]. |

Harnessing Large-Scale Public Datasets (e.g., Open Molecules 2025) for Pre-Trained Models

The Open Molecules 2025 (OMol25) dataset represents a milestone in quantum chemical data for machine learning, enabling the development of pre-trained models that dramatically reduce computational costs in molecular simulations.

Table 1: OMol25 Dataset Quantitative Profile

| Attribute | Specification | Significance for Computational Cost Reduction |

|---|---|---|

| Total Calculations | >100 million DFT calculations [44] [45] | Pre-trained models avoid repeating billions of CPU-hours [45] |

| Computational Cost | ~6 billion CPU core-hours [46] [45] | ML potentials offer ~10,000x speedup over DFT [45] |

| Unique Molecular Systems | ~83 million [44] [46] | Broad coverage reduces need for expensive target-specific data generation |

| Maximum System Size | Up to 350 atoms [44] [45] | Enables simulation of biologically/pharmaceutically relevant molecules |

| Element Coverage | 83 elements [44] | Eliminates cost of generating data for rare or heavy elements |

| Primary Method | ωB97M-V/def2-TZVPD level of theory [44] | Provides high-accuracy training target for ML potentials |
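
A back-of-the-envelope calculation puts the figures in Table 1 in perspective. It treats the ~10,000x speedup as uniform across systems, which is a simplifying assumption, and because the dataset holds more than 100 million calculations, the per-calculation average is an upper bound.

```python
# Figures taken from Table 1.
total_core_hours = 6e9        # ~6 billion CPU core-hours
total_calculations = 100e6    # >100 million DFT calculations (lower bound)
speedup = 1e4                 # ~10,000x speedup of ML potentials over DFT

# Average DFT cost per calculation (upper bound, since the count is a lower bound).
avg_core_hours_per_calc = total_core_hours / total_calculations

# Naive equivalent cost of one ML-potential evaluation, assuming a uniform speedup.
ml_core_hours_per_calc = avg_core_hours_per_calc / speedup
ml_seconds_per_calc = ml_core_hours_per_calc * 3600

print(avg_core_hours_per_calc)  # core-hours per DFT calculation
print(ml_seconds_per_calc)      # core-seconds per ML evaluation
```

Under these assumptions, a DFT calculation that averages about 60 core-hours corresponds to roughly 20 core-seconds with an ML potential.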

Table 2: Comparison with Other Representative Chemistry Datasets

| Dataset | Size | Key Focus | Notable Features |

|---|---|---|---|

| OMol25 (2025) | >100M calculations [44] | General chemical diversity & large systems [44] [45] | Explicit solvation, variable charge/spin, metal complexes [44] |

| QCML (2025) | 33.5M DFT, 14.7B semi-empirical calculations [47] | Small molecules (≤8 heavy atoms) [47] | Hierarchical data, multipole moments, Kohn-Sham matrices [47] |

| PubChemQC | 86M molecules [47] | Equilibrium structures from PubChem [47] | B3LYP/6-31G*//PM6 level of theory [47] |

| ANI-1 | >20M conformations [47] | Conformational diversity [47] | Organic molecules with H, C, N, O atoms [47] |

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What are the licensing terms for using OMol25 and its pre-trained models? The OMol25 dataset is available under a CC-BY-4.0 license. However, the pre-trained model checkpoints are governed by the FAIR Chemistry License, which includes specific restrictions on prohibited uses (e.g., military applications, illegal drug development, and harassment) [48]. Always review these terms before deployment.

Q2: How does using the OMol25 pre-trained model reduce computational costs for my specific research? Training a machine learning interatomic potential (MLIP) from scratch requires massive computational resources. By starting with a model pre-trained on OMol25, whose DFT data took roughly 6 billion CPU core-hours to generate, you bypass this initial cost. The resulting MLIP can provide near-DFT accuracy at approximately 10,000 times the speed, making high-accuracy simulations of large systems feasible on standard computing resources [45].

Q3: My molecule contains a rare element. Will the OMol25 model work? The OMol25 dataset includes 83 elements from across the periodic table, significantly improving the likelihood of coverage for rare elements compared to older datasets limited to organic components [44]. For the best performance, check the dataset's elemental coverage and consider fine-tuning the model on a small set of custom calculations for your specific system of interest.

Q4: I am getting physically unsound results (e.g., energy drift in dynamics). What should I do? This is a common issue when ML potentials extrapolate beyond their training domain.

- Run the provided evaluations: The OMol25 release includes comprehensive evaluation benchmarks. Use them to diagnose known failure modes [45].

- Check your system's similarity: Ensure the chemical motifs in your target system are reasonably represented in the OMol25 data distribution (e.g., bond types, coordination environments).

- Fine-tune with targeted data: A small amount of additional DFT data (10-100 calculations) on representative configurations from your project can often correct these inaccuracies and improve physical soundness.
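
Before and after fine-tuning, it helps to put a number on the energy drift. The sketch below fits a straight line to the total energy of a constant-energy (NVE) trajectory and reports the slope per atom. The function name, the units, and the sample trajectory are illustrative assumptions, and what counts as acceptable drift depends on the project.

```python
def energy_drift(times_ps, total_energies_ev, n_atoms):
    """Least-squares slope of total energy vs. time, in eV per atom per ps."""
    n = len(times_ps)
    mean_t = sum(times_ps) / n
    mean_e = sum(total_energies_ev) / n
    cov = sum((t - mean_t) * (e - mean_e)
              for t, e in zip(times_ps, total_energies_ev))
    var = sum((t - mean_t) ** 2 for t in times_ps)
    return cov / var / n_atoms

# Hypothetical NVE trajectory of a 20-atom system: total energy rises steadily.
times = [0.0, 1.0, 2.0, 3.0, 4.0]
energies = [-100.00, -99.98, -99.96, -99.94, -99.92]

drift = energy_drift(times, energies, n_atoms=20)
print(f"{drift:.4f} eV/atom/ps")
```

Tracking this single number across model versions shows whether fine-tuning actually improved physical soundness or merely lowered the static test error.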

Troubleshooting Guide: Common Experimental Issues

Problem: Inaccurate Force/Energy Predictions on Target System

This indicates a potential domain mismatch between your application and the model's training data.

| Step | Action | Principle |

|---|---|---|

| 1. Diagnosis | Run the model on the OMol25 evaluation benchmarks. If it passes, the issue is likely domain shift. | Systematically isolate the problem to the model itself versus your specific use case [45]. |

| 2. Data Collection | Generate a small (50-100 structures), targeted dataset of your molecules using DFT. Include both equilibrium and non-equilibrium geometries. | Create a relevant dataset for fine-tuning, following OMol25's principle of including diverse configurations [44]. |

| 3. Fine-tuning | Continue training the pre-trained model on your new, small dataset using a low learning rate. | Leverage transfer learning; the model adapts its general knowledge to your specific problem without forgetting foundational chemistry [49] [50]. |

| 4. Validation | Validate the fine-tuned model on a held-out set of your DFT data and simple MD simulations. | Ensure the model improves on your target without losing generalizability or becoming unstable [45]. |

Problem: High Memory Usage When Modeling Large Systems

The OMol25 baseline models are designed to handle systems up to 350 atoms, but memory can be a constraint.

Table: Memory Management Strategies

| Strategy | Implementation | Benefit |

|---|---|---|

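| Adjust Model Inference | Compare the available model variants (e.g., direct vs. conserving eSEN checkpoints) and choose the one whose footprint fits your hardware [48]. | Model variants trade memory footprint against speed and energy conservation. Conserving variants obtain forces by differentiating the energy, which generally needs more memory than direct force prediction. |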

| Batch Size | Reduce the batch size during inference or training. | Decreases peak memory usage at the cost of slower processing. |

| Hardware | Utilize a GPU with more VRAM. | Directly addresses hardware limitation, but has an associated cost. |
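
The batch-size strategy can be made concrete by capping the total number of atoms per batch rather than the number of structures, since memory use in graph-based potentials grows with the atoms (and neighbor edges) processed at once. The helper below is a hypothetical, framework-independent sketch, and the budget of 400 atoms is an arbitrary illustrative value.

```python
def batch_by_atom_budget(atom_counts, max_atoms_per_batch):
    """Group structure indices so each batch holds at most max_atoms_per_batch atoms.

    Structures are kept in their original order (greedy packing).
    """
    batches, current, current_atoms = [], [], 0
    for idx, n_atoms in enumerate(atom_counts):
        if n_atoms > max_atoms_per_batch:
            raise ValueError(f"structure {idx} alone exceeds the atom budget")
        # Start a new batch when adding this structure would exceed the budget.
        if current and current_atoms + n_atoms > max_atoms_per_batch:
            batches.append(current)
            current, current_atoms = [], 0
        current.append(idx)
        current_atoms += n_atoms
    if current:
        batches.append(current)
    return batches

# Hypothetical atom counts for five structures.
atom_counts = [350, 120, 80, 200, 40]
batches = batch_by_atom_budget(atom_counts, max_atoms_per_batch=400)
print(batches)
```

Lowering the budget reduces peak memory at the cost of more batches, mirroring the trade-off listed in the table.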

Experimental Protocols for Cost-Effective Model Benchmarking

Protocol 1: Fine-tuning a Pre-trained Model for a Specific Molecular Class

Objective: Adapt the universal OMol25 model to accurately simulate electrolyte molecules for battery research, using minimal computational resources.

Materials & Computational Setup:

- Base Model: Pre-trained eSEN model checkpoint from the OMol25 Hugging Face repository [48].

- Target Data: A subset of the electrolyte structures within OMol25, or a custom dataset of 100-500 electrolyte configurations with DFT-calculated energies and forces.

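- Software: An MLIP framework that can load the chosen checkpoint. The eSEN checkpoints are released for the FAIR Chemistry fairchem library [48]; other common frameworks (e.g., MACE, NequIP) use their own architectures and checkpoint formats.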

- Hardware: A single modern GPU (e.g., NVIDIA A100 or similar).

Methodology:

- Data Preparation:

- Extract your target electrolyte structures and properties. Split the data into training (80%), validation (10%), and test (10%) sets.

- Ensure the data is in the format required by your chosen MLIP framework.
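
The 80/10/10 split can be sketched as follows. The function name and the fixed seed are illustrative choices, and the structure IDs stand in for whatever records your MLIP framework expects.

```python
import random

def split_dataset(items, train_frac=0.8, val_frac=0.1, seed=0):
    """Shuffle items reproducibly and split them into train/validation/test lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Hypothetical IDs for 500 electrolyte configurations.
structure_ids = list(range(500))
train, val, test = split_dataset(structure_ids)
print(len(train), len(val), len(test))
```

If the dataset contains several conformations of the same molecule, consider splitting by molecule rather than by configuration, so that near-duplicates do not end up on both sides of the split and inflate test accuracy.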

Model Initialization:

- Load the weights from a pre-trained OMol25 model checkpoint (e.g., esen_sm_direct_all.pt) [48]. This initializes the model with a robust understanding of general chemistry.

Fine-tuning Loop:

- Freezing (Optional): For very small target datasets (< 50 structures), consider freezing the lower layers of the model and only training the final output layers. This prevents overfitting.

- Full Fine-tuning: For larger target datasets, use a low learning rate (e.g., 10–100x smaller than the initial training rate) to update all model weights.

- Monitoring: Track the loss on the validation set throughout training. Implement early stopping if the validation loss fails to improve for a predetermined number of epochs.
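
The monitoring rule can be expressed independently of any training framework. The sketch below scans a sequence of per-epoch validation losses and reports where training would stop and which epoch's weights to keep. The function name, the patience of 3 epochs, and the loss values are illustrative assumptions.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve by more than
    min_delta for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    # Patience never ran out: training used every epoch.
    return len(val_losses) - 1, best_epoch

# Hypothetical validation losses; the last value is never reached.
val_losses = [0.50, 0.40, 0.35, 0.36, 0.37, 0.38, 0.30]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=3)
print(stop_epoch, best_epoch)
```

In practice the checkpoint from the best epoch is the one to keep, not the weights at the stopping epoch.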

Validation and Testing:

- Evaluate the fine-tuned model on the held-out test set to assess its prediction accuracy (Mean Absolute Error for energy and forces).

- Run a short molecular dynamics simulation (e.g., 10 ps) and confirm that it remains stable and physically realistic, which is critical for production use [45].
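
The test-set metrics can be computed with two small helpers. Normalizing the energy error per atom is an assumption made here so that systems of different sizes are comparable; the source only specifies Mean Absolute Error for energy and forces. All names and sample values are illustrative.

```python
def energy_mae_per_atom(pred_energies, ref_energies, atom_counts):
    """Mean absolute energy error, normalized by the number of atoms in each structure."""
    errors = [abs(p - r) / n
              for p, r, n in zip(pred_energies, ref_energies, atom_counts)]
    return sum(errors) / len(errors)

def force_mae(pred_forces, ref_forces):
    """Mean absolute error over every Cartesian force component.

    Forces are nested as [structure][atom][x, y, z].
    """
    diffs = [abs(p - r)
             for pred_struct, ref_struct in zip(pred_forces, ref_forces)
             for pred_atom, ref_atom in zip(pred_struct, ref_struct)
             for p, r in zip(pred_atom, ref_atom)]
    return sum(diffs) / len(diffs)

# Hypothetical predictions and DFT references (eV and eV/Angstrom).
e_mae = energy_mae_per_atom([-10.0, -20.5], [-10.2, -20.0], atom_counts=[2, 5])
f_mae = force_mae(
    [[[0.1, 0.0, 0.0], [-0.1, 0.0, 0.0]]],
    [[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]],
)
print(e_mae, f_mae)
```

Comparing these numbers for the pre-trained and the fine-tuned model on the same held-out set shows how much the fine-tuning step actually gained.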

Table: Key Resources for Leveraging OMol25

| Resource Name | Type | Function & Utility | Access/Source |

|---|---|---|---|

| OMol25 Dataset | Primary Data | Core training dataset with 100M+ DFT calculations for foundational model training or fine-tuning [44] [45]. | Hugging Face [48] |

| Baseline Model Checkpoints (eSEN) | Pre-trained Model | Ready-to-use models (e.g., direct/conserving) provide a starting point for inference or transfer learning, saving billions of CPU-hours [48]. | Hugging Face [48] |

| OMol25 Evaluations | Benchmark Suite | Standardized challenges to objectively measure model performance on chemically relevant tasks, fostering trust and comparison [45]. | Included with dataset release [44] |

| ORCA Quantum Chemistry Package | Software | High-performance quantum chemistry code used to generate the OMol25 dataset; essential for generating new reference calculations [46]. | https://orcaforum.kofo.mpg.de/ |