Refining Semi-Empirical Hamiltonian Parameters: From Foundational Theory to Advanced Applications in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the refinement of semi-empirical Hamiltonian parameters. It covers the foundational principles of popular methods like PM7 and GFN-xTB, details modern parameterization workflows that integrate high-level ab initio data and machine learning, and addresses common pitfalls in parameter optimization for complex systems like organic solids and metal-containing compounds. A strong emphasis is placed on validation protocols, comparing method performance against experimental and high-fidelity computational benchmarks for properties critical to biomolecular simulation, such as non-covalent interactions and reaction barriers. The goal is to equip scientists with the knowledge to select, apply, and refine these computationally efficient methods for reliable predictions in drug design and materials science.

The Principles and Evolution of Semi-Empirical Quantum Methods

Frequently Asked Questions

1. What is the fundamental difference between the ZDO and NDDO approximations?

The Zero Differential Overlap (ZDO) and Neglect of Diatomic Differential Overlap (NDDO) are both central approximations used to reduce computational cost in semi-empirical quantum chemistry. The key difference lies in their scope [1] [2]:

- ZDO (Zero Differential Overlap): This is the more drastic approximation. It neglects the differential overlap between any two different atomic orbitals, whether they sit on the same atom or on different atoms. As a result, every two-electron integral (μν|λσ) is set to zero unless μ = ν and λ = σ.

- NDDO (Neglect of Diatomic Differential Overlap): This is a more nuanced approximation and a restricted form of ZDO. Within NDDO, only differential overlap between atomic orbitals centered on different atoms is neglected. Monoatomic differential overlap is retained, so every integral (μν|λσ) in which μ and ν share an atom and λ and σ share an atom (possibly a different one) is kept and is calculated or parameterized. Modern semi-empirical models like MNDO, AM1, PM3, and PM7 are based on the NDDO approximation [1] [2].
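To make the difference in scope concrete, the sketch below counts the two-electron integrals (μν|λσ) that survive each approximation, given only which atom each basis function sits on. It is an illustrative toy: it evaluates no integrals, ignores permutational symmetry, and the function name `count_retained_integrals` is hypothetical.

```python
from itertools import product


def count_retained_integrals(orbital_atoms):
    """Count two-electron integrals (mu nu|lambda sigma) kept by each scheme.

    orbital_atoms[i] is the index of the atom that basis function i sits on.
    Permutational symmetry is ignored: every ordered index quadruple counts once.
    """
    n = len(orbital_atoms)
    counts = {"full": 0, "nddo": 0, "zdo": 0}
    for mu, nu, lam, sig in product(range(n), repeat=4):
        counts["full"] += 1
        # ZDO: each charge distribution must involve a single orbital.
        if mu == nu and lam == sig:
            counts["zdo"] += 1
        # NDDO: each charge distribution only has to stay on a single atom.
        if orbital_atoms[mu] == orbital_atoms[nu] and orbital_atoms[lam] == orbital_atoms[sig]:
            counts["nddo"] += 1
    return counts


# Water in a minimal valence basis: 2s, 2px, 2py, 2pz on O (atom 0), one 1s on each H.
print(count_retained_integrals([0, 0, 0, 0, 1, 2]))
# {'full': 1296, 'nddo': 324, 'zdo': 36}
```

Even for this six-function example NDDO keeps a quarter of the integrals and ZDO fewer than 3%, and the gap widens quickly with system size because the full count grows as the fourth power of the number of basis functions.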

2. My NDDO-based calculation (e.g., AM1, PM3) for a sterically crowded molecule shows excessive repulsion and poor thermochemical predictions. What could be the cause and how can I address it?

This is a known limitation of several NDDO-based methods. For instance, MNDO is characterized by "overestimation of repulsion in sterically crowded systems," and AM1's modified core repulsion function can lead to "non-physical attractive forces" [2]. To troubleshoot:

- Diagnosis: Confirm the issue by comparing results against a higher-level ab initio method or experimental data for a similar, less crowded molecule.

- Mitigation: Consider using a more modern parameterization. The PM3 method was noted for having slightly better thermochemical accuracy than AM1, though it can introduce other non-physical attractions [2]. The PDDG/PM3 model was specifically developed to provide a better description of van der Waals interactions and has shown improved accuracy for heats of formation and intermolecular complexes [2].

3. When should I use the Slater-Koster formalism versus NDDO-based methods?

The choice depends on your system and the properties of interest.

- Slater-Koster Tight-Binding: This formalism, originating from solid-state physics, is highly efficient for generating electronic energy bands in periodic materials like crystals and semiconductors [3]. It is often used as a post-processing step for plane-wave pseudopotential calculations to generate system-specific tight-binding models [3].

- NDDO-based Methods (MNDO, AM1, PM3): These are primarily designed for quantum chemical calculations on molecular systems. They are parameterized to predict molecular properties such as heats of formation, dipole moments, ionization potentials, and geometries [1] [2]. They are particularly useful for large organic molecules where ab initio methods are too expensive.
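As a minimal illustration of the Slater-Koster idea, the sketch below builds two-center s and p hopping elements from the direction cosines (l, m, n) of the bond and four bond integrals (V_ssσ, V_spσ, V_ppσ, V_ppπ), following the standard Slater-Koster table. It then produces the band of a one-dimensional chain with one s orbital per site, E(k) = ε_s + 2V_ssσ cos(ka). The numbers are placeholders rather than fitted parameters, and the function names are hypothetical.

```python
import math


def sk_sp_elements(l, m, n, v_sss, v_sps, v_pps, v_ppp):
    """Two-centre Slater-Koster elements <orbital on atom 1|H|orbital on atom 2>.

    (l, m, n) are the direction cosines of the vector from atom 1 to atom 2.
    Sign convention: swapping which atom carries the s orbital flips the s-p sign.
    """
    return {
        ("s", "s"): v_sss,
        ("s", "x"): l * v_sps,
        ("s", "y"): m * v_sps,
        ("s", "z"): n * v_sps,
        ("x", "x"): l * l * v_pps + (1 - l * l) * v_ppp,
        ("y", "y"): m * m * v_pps + (1 - m * m) * v_ppp,
        ("z", "z"): n * n * v_pps + (1 - n * n) * v_ppp,
        ("x", "y"): l * m * (v_pps - v_ppp),
        ("x", "z"): l * n * (v_pps - v_ppp),
        ("y", "z"): m * n * (v_pps - v_ppp),
    }


def chain_band(eps_s, v_sss, a, nk=9):
    """E(k) for a 1D chain, one s orbital per site, nearest-neighbour hopping only."""
    ks = [-math.pi / a + 2 * math.pi / a * i / (nk - 1) for i in range(nk)]
    return [(k, eps_s + 2 * v_sss * math.cos(k * a)) for k in ks]


# A p-p bond along x: E_xx reduces to V_pp_sigma.
print(sk_sp_elements(1.0, 0.0, 0.0, -1.0, 1.5, 2.0, -0.5)[("x", "x")])  # 2.0
for k, e in chain_band(eps_s=0.0, v_sss=-1.0, a=1.0, nk=5):
    print(f"k = {k:+.3f}  E = {e:+.3f}")
```

In a real tight-binding model the bond integrals are functions of interatomic distance fitted to higher-level calculations; here they are constants only to keep the geometry dependence visible.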

4. Can semi-empirical methods describe excited states and electronic spectra?

Yes, but the accuracy depends on the specific method and parameterization. Some semi-empirical methods were developed primarily for this purpose [1]:

- Methods for π-electrons: The Pariser-Parr-Pople (PPP) method can provide good estimates of π-electronic excited states when well-parameterized [1] [4].

- All-valence electron methods: ZINDO is a well-known method designed for calculating excited states and predicting electronic spectra [1]. The combination of the OM2 method with multi-reference configuration interaction (MRCI) is also noted as an important tool for excited state molecular dynamics [1].

Troubleshooting Common Computational Issues

| Issue / Symptom | Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|---|
| Poor hydrogen bonding description | Known limitation in early NDDO methods (e.g., MNDO) [2]. | Compare calculated dimer geometry (e.g., water dimer) with reference data. | Switch to a method with a modified core repulsion function (e.g., AM1, PM3) or a specifically parameterized method like PDDG/PM3 [2]. |
| Excessive repulsion in crowded molecules | Overestimation of core-core repulsions in NDDO methods [2]. | Analyze the potential energy surface and compare conformational energies with a higher level of theory. | Use a method with reparameterized core repulsion (e.g., PM3, AM1) or apply the PDDG modification [2]. |
| Erratic accuracy for new element combinations | Parameters are not transferable; the system lies outside the method's parameterization set [1] [2]. | Validate on a small test set with known properties for the uncommon bonds. | Use a less empirical method such as NOTCH, or a broadly parameterized method such as PM6/PM7 if parameters exist for the elements [1]. |
| Incorrect prediction of reaction barriers | Semi-empirical barriers can carry large, system-dependent errors: overestimation is frequently reported [2], while some barriers, such as enzymatic hydride transfers, are underestimated [10] [11]. | Calculate the reaction profile for a known benchmark reaction. | Use the method only for initial screening; compute final barriers with higher-level ab initio or DFT methods. |

Research Reagent Solutions: Key Software and Hamiltonians

This table details essential computational "reagents" – the software and theoretical models that are foundational for research in this field.

| Item Name | Function / Application | Key Reference |
|---|---|---|
| MOPAC | A primary software platform for the development and use of the MNDO family of models (MNDO, AM1, PM3) [3]. | [3] |
| xTB | Software implementation for the modern GFN family of semi-empirical methods, heavily used for conformational sampling of large molecules [3]. | [3] |
| DFTB+ | Software for Density Functional Tight Binding (DFTB) methods, often used as a low-cost approximation to DFT [3]. | [3] |
| NDDO Hamiltonian | The underlying formalism for popular methods like MNDO, AM1, and PM3. It simplifies the Hartree-Fock equations by neglecting diatomic differential overlap [1] [2]. | [1] [2] |
| Slater-Koster Formalism | A tight-binding formalism using atomic orbitals to describe electronic energy bands in crystals; widely used in physics for solid-state materials [3]. | [3] |
| Pariser-Parr-Pople (PPP) Hamiltonian | A semi-empirical Hamiltonian for π-electron systems, useful for developing new computational approaches and understanding conjugated polymers [4]. | [4] |

Workflow for Method Selection and Diagnosis

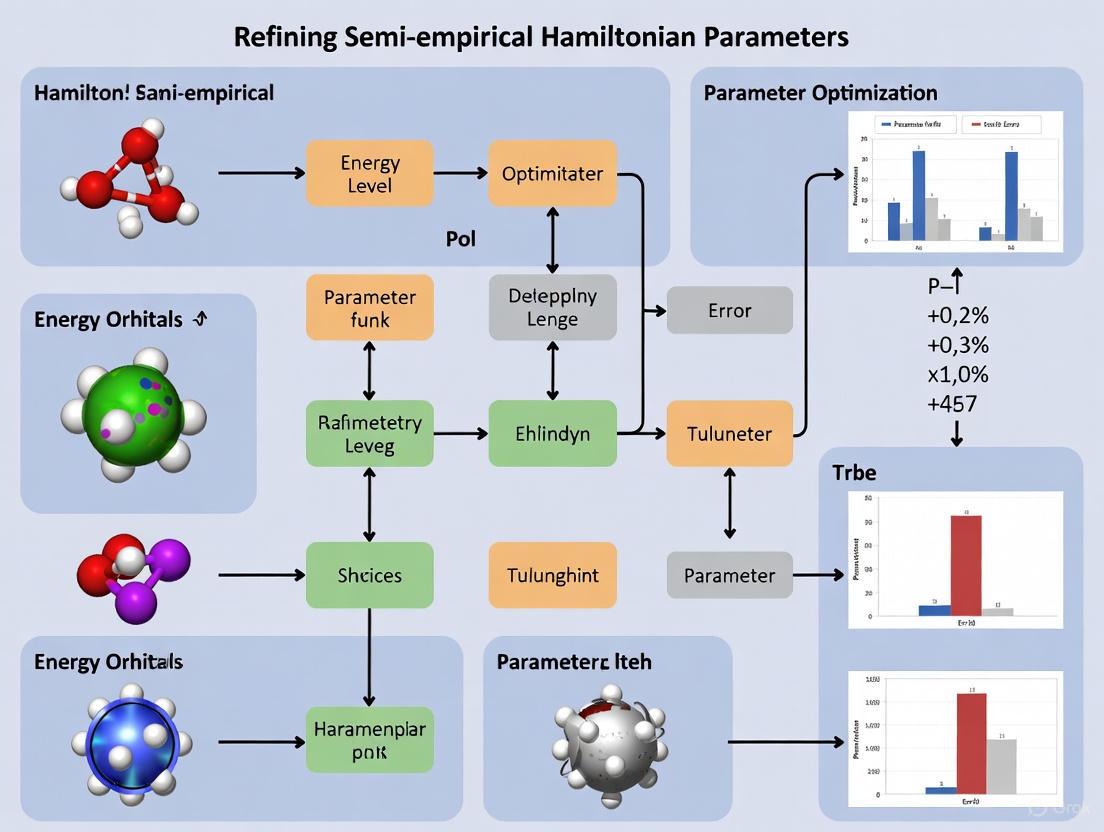

The following diagram outlines a logical workflow for selecting and diagnosing issues with semi-empirical methods based on your research problem.

Comparison of Core Approximations and Their Impact

This table provides a structured comparison of the core approximations discussed, highlighting their theoretical foundations and the resulting implications for computational research.

| Approximation | Theoretical Basis & Key Simplification | Primary Domain | Implications for Research |
|---|---|---|---|
| ZDO (Zero Differential Overlap) | Neglects all electron repulsion integrals involving differential overlap between different AOs. Drastically reduces integral number [1] [4]. | Foundation for many methods. | Greatest computational speed, but can lack physical accuracy. Inspired the development of more refined approximations [4]. |
| NDDO (Neglect of Diatomic Differential Overlap) | A restricted ZDO approximation that neglects differential overlap only between AOs on different atoms, so one-center integrals and two-center integrals of the type (μ_A ν_A \| λ_B σ_B) are retained. More physically realistic than simple ZDO [2]. | Quantum chemistry (MNDO, AM1, PM3). | Allows for better description of molecular properties but requires extensive parameterization. Known issues with H-bonds and repulsion in some implementations [2]. |
| Slater-Koster Formalism | Uses atomic orbitals to construct Hamiltonian matrix elements for crystals. Parameters (hopping integrals) are fitted to data or higher-level calculations [3]. | Solid-state physics (tight-binding). | Highly efficient for periodic systems and band structure calculation. Less directly applicable to general molecular quantum chemistry [3]. |

Semiempirical quantum chemical (SQC) methods are a class of computational models that use tailored approximations and empirical parameterizations to drastically reduce computational cost while maintaining a reasonable level of accuracy for studying large chemical systems [5] [6]. These methods originated soon after the discovery of quantum mechanics, when scientists sought to perform quantum mechanical calculations before computers were powerful enough for accurate ab initio methods [5]. The core idea is to simplify the equations of quantum mechanics by neglecting certain terms (such as some electron repulsion integrals) and parameterizing others to fit experimental data or higher-level theoretical results [6]. This makes them significantly faster than density functional theory (DFT) or wave function-based methods, allowing researchers to study systems comprising hundreds to thousands of atoms [7] [6].

Historical Development and Key Methodologies

The Foundations: Neglect of Differential Overlap Approximations

The development of SQC methods is rooted in the "neglect of differential overlap" approximation, which drastically reduces the number of electron repulsion integrals that need to be computed [8] [6].

- CNDO (Complete Neglect of Differential Overlap) was developed by Pople's group and was designed to roughly mimic minimal basis ab initio calculations [8].

- INDO (Intermediate Neglect of Differential Overlap) retains some one-center differential overlap terms, offering improved accuracy over CNDO [8].

- NDDO (Neglect of Diatomic Differential Overlap) provides a more sophisticated approximation and serves as the foundation for the widely used MNDO and AM1 methods [8].

The Dewar Group Methods: MNDO and AM1

The work of the Dewar group led to practical SQC methods optimized to reproduce molecular structures and energetics [8].

- MNDO (Modified Neglect of Diatomic Overlap) was parameterized for main group elements and represented a significant step forward in accuracy for organic molecules [8] [6].

- AM1 (Austin Model 1) refined MNDO by modifying the core-core repulsion function, which improved the treatment of hydrogen bonding and reduced MNDO's excessive repulsion between nonbonded atoms at close (van der Waals) distances [7] [6]. Measured by citation count, AM1 was the most successful of the early SQM methods [5].
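The AM1 change can be written compactly. The sketch below follows the published MNDO form, Z_A Z_B (s_A s_A|s_B s_B)[1 + exp(-α_A R) + exp(-α_B R)], plus AM1's atom-specific Gaussian terms (Z_A Z_B / R) Σ_k a_k exp(-b_k (R - c_k)²), as we understand them. To stay self-contained it replaces the two-center integral (s_A s_A|s_B s_B) by the point-charge limit e²/R, which is only valid at large separation (real implementations evaluate it from a multipole model). It also omits MNDO's special N-H/O-H handling, and the Gaussian parameters in the example are invented for illustration, not taken from any published AM1 set.

```python
import math

E2 = 14.399645  # e^2 / (4 pi eps0) in eV * Angstrom


def mndo_core_core(za, zb, r, alpha_a, alpha_b, gamma_ss=None):
    """MNDO-type core-core repulsion in eV; r in Angstrom.

    gamma_ss is the (ss|ss) two-centre integral. If omitted, the point-charge
    limit E2 / r is used: an approximation made here for brevity only.
    """
    if gamma_ss is None:
        gamma_ss = E2 / r
    return za * zb * gamma_ss * (1.0 + math.exp(-alpha_a * r) + math.exp(-alpha_b * r))


def am1_core_core(za, zb, r, alpha_a, alpha_b, gauss_a, gauss_b, gamma_ss=None):
    """AM1: MNDO term plus Gaussians (a_k, b_k, c_k) on each atom."""
    base = mndo_core_core(za, zb, r, alpha_a, alpha_b, gamma_ss)
    gsum = sum(a * math.exp(-b * (r - c) ** 2) for a, b, c in gauss_a)
    gsum += sum(a * math.exp(-b * (r - c) ** 2) for a, b, c in gauss_b)
    return base + za * zb / r * gsum


# Illustrative only: a negative Gaussian centred at 1.5 A softens the repulsion there.
for r in (1.0, 1.5, 2.0, 3.0):
    m = mndo_core_core(4, 1, r, 3.0, 2.5)
    a = am1_core_core(4, 1, r, 3.0, 2.5, gauss_a=[(-0.5, 5.0, 1.5)], gauss_b=[])
    print(f"R = {r:.1f} A  MNDO-like = {m:7.3f} eV  AM1-like = {a:7.3f} eV")
```

Because the Gaussians are local in R, they let a parameterization reshape the repulsion near specific distances (for example, hydrogen-bond contacts) without disturbing the long-range Coulomb limit.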

The PMx Family and DFTB Developments

Further refinements led to more advanced parameterizations and theoretical frameworks.

- PM3 (Parametric Method 3) and PM6 introduced more empirical parameters into the NDDO framework to improve accuracy [7] [6]. PM7 further corrected PM6 using classical potentials [7].

- DFTB (Density Functional Tight-Binding) methods are derived from a series expansion of the DFT energy expression with respect to a reference electron density, representing a different theoretical approach from NDDO-based methods [9].

- GFN-xTB (Geometry, Frequency, and Noncovalent interactions eXtended Tight Binding) is a modern family of DFTB-type methods parametrized for all elements up to atomic number 86; its GFN2-xTB variant includes anisotropic effects via multipolar contributions [7] [9].
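For orientation, the second-order (DFTB2) total energy that results from this expansion is commonly written as below. Here Ĥ⁰ is the Hamiltonian built from the reference density, n_i are orbital occupations, Δq_A are atomic charge fluctuations, γ_AB is a distance-dependent function interpolating between an on-site (Hubbard-like) term and 1/R_AB, and E^rep_AB is a fitted pairwise repulsion. DFTB3 adds a third-order term in the charge fluctuations.

$$
E_{\mathrm{DFTB2}} = \sum_{i}^{\mathrm{occ}} n_i \,\langle \psi_i \,|\, \hat{H}^{0} \,|\, \psi_i \rangle
+ \frac{1}{2} \sum_{A,B} \gamma_{AB}\, \Delta q_A \, \Delta q_B
+ \sum_{A<B} E^{\mathrm{rep}}_{AB}(R_{AB})
$$

$$
E_{\mathrm{DFTB3}} = E_{\mathrm{DFTB2}} + \frac{1}{3} \sum_{A,B} \Gamma_{AB}\, \Delta q_A^{2}\, \Delta q_B
$$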

Table 1: Historical Evolution of Key Semiempirical Methods

| Method | Development Era | Theoretical Basis | Key Improvements/Features |
|---|---|---|---|
| CNDO/INDO | 1960s-70s | Neglect of Differential Overlap | Early approximations mimicking minimal basis ab initio calculations [8] |
| MNDO | 1970s | NDDO | Parameterized for main group elements and thermochemistry [8] [6] |
| AM1 | 1980s | NDDO | Modified core-core repulsion; improved hydrogen bonding [7] [6] |
| PM3/PM6/PM7 | late 1980s-2010s | NDDO | Additional empirical parameters; improved accuracy for broader chemistry [7] [6] |
| DFTB2/DFTB3 | 1990s-2010s | DFT Tight-Binding | Second- and third-order expansions of DFT energy [9] |
| GFN-xTB | 2010s | DFTB-type | Anisotropic atom-pairwise interactions; parametrized for all elements up to Z = 86 [7] [9] |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Computational Tools for Semiempirical Research

| Software Tool | Primary Function | Common Applications |
|---|---|---|
| MOPAC | Implementation of MNDO family models (AM1, PM3, PM6, PM7) | Predicting heat of formation, equilibrium geometry of molecules [5] |
| DFTB+ | Platform for Density Functional Tight Binding methods | Generic low-cost approximation of DFT calculations [5] |
| xTB | Implementation of GFN-xTB methods | Heavy use with CREST for conformational sampling of molecules [5] |
| ORCA | General quantum chemistry package with SQM support | Structure optimizations, spectroscopic property calculations [8] |
| AMBER | Molecular dynamics package with SQM/MM capabilities | Enzymatic reaction simulations with QM/MM methods [10] |

Performance Benchmarking and Quantitative Comparisons

Modern semiempirical methods are routinely evaluated across diverse chemical domains to assess their accuracy and limitations.
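A benchmark of this kind usually reduces each method's deviations from a reference to a few summary statistics. The helper below is a hedged sketch (the name `error_stats` is hypothetical) that computes the mean signed error, mean absolute error, RMSE, and maximum absolute error. For conformational energies it can first shift both data sets so that the conformer lowest in the reference data is the zero of both scales, since only relative energies are meaningful there.

```python
import math


def error_stats(predicted, reference, relative=False):
    """Summary error statistics between two equal-length lists of energies.

    If relative=True, both lists are shifted so that the conformer that is
    lowest in the *reference* data sits at zero on both scales.
    """
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty lists of equal length")
    if relative:
        i0 = min(range(len(reference)), key=reference.__getitem__)
        predicted = [p - predicted[i0] for p in predicted]
        reference = [r - reference[i0] for r in reference]
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    return {
        "mse": sum(errors) / n,                       # mean signed error (bias)
        "mae": sum(abs(e) for e in errors) / n,       # mean absolute error
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
        "max": max(abs(e) for e in errors),
    }


# Hypothetical conformer energies (kcal/mol) from a semi-empirical method vs. a reference.
print(error_stats([10.0, 11.2, 12.9], [0.0, 1.0, 2.5], relative=True))
```

Reporting the signed error alongside the MAE helps separate a systematic shift, which reparameterization can often remove, from scatter.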

Table 3: Performance Comparison of Modern Semiempirical Methods for Drug Discovery Applications [7]

| Method | Conformational Energies | Intermolecular Interactions | Tautomer/Protonation States | Relative Computational Cost |
|---|---|---|---|---|
| AM1 | Moderate | Poor to Moderate | Moderate | Low |
| PM6 | Moderate | Moderate | Moderate | Low |
| PM7 | Moderate to Good | Good | Good | Low |
| GFN1-xTB | Good | Good | Good | Medium |
| GFN2-xTB | Good | Good | Good | Medium |
| DFTB3 | Moderate | Moderate | Moderate to Good | Medium |
| AIQM1 (QM/Δ-MLP) | Excellent | Excellent | Excellent | High |
| QDπ (QM/Δ-MLP) | Excellent | Excellent | Excellent | High |

Recent benchmarking studies reveal important limitations. For liquid water simulations, most SQC methods with original parameters poorly describe static and dynamic properties due to overly weak hydrogen bonds [9]. Specifically, AM1 and PM6 produce a "far too fluid water with highly distorted hydrogen bond kinetics," while GFN2-xTB tends to overstructure water [9]. However, reparameterized versions like PM6-fm can quantitatively reproduce water's static and dynamic features [9].

Troubleshooting Guides and FAQs

Frequently Asked Questions: Method Selection and Applications

Q: Which semiempirical method should I choose for studying enzymatic reactions with QM/MM? A: For enzymatic QM/MM studies, GFN2-xTB and PM7 are good starting points due to their balanced performance for organic molecules and noncovalent interactions [7]. However, for critical applications like hydride transfer reactions, where barriers are often underestimated, consider using optimized parameters specifically trained for your enzymatic system [10] [11]. Recent research demonstrates that multi-objective evolutionary strategies can significantly improve GFN2-xTB performance for specific enzymes like dihydrofolate reductase (DHFR) and crotonyl-CoA carboxylase/reductase (CCR) [10].

Q: Why does my geometry optimization fail with ZINDO/S? A: ZINDO/S is parameterized specifically for electronic excitation calculations and lacks an accurate representation of nuclear repulsion [8]. The ORCA manual explicitly warns that using ZINDO/S for geometry optimizations "will lead to disastrous results" [8]. Instead, use ZINDO/1 or ZINDO/2 for geometry optimizations, and reserve ZINDO/S for excited state property calculations [8].

Q: How can I improve semiempirical method accuracy for my specific system? A: Parameter optimization is the most direct approach. Implement a multi-objective evolutionary strategy that targets ab initio or DFT-reference potential energy surfaces, atomic charges, and gradients [10]. For condensed phase systems, include free energy validation through minimum free energy path calculations [10]. The two-stage optimization process (initial training on reaction path data followed by refinement with targeted additional geometries) has proven effective for enzymatic systems while minimizing computational cost [11].

Q: Why are my liquid water simulations inaccurate with standard semiempirical parameters? A: Most standard SQC parameterizations describe liquid water poorly [9]. AM1, PM6, and DFTB2 underestimate hydrogen bond strength, which results in overly fluid water with distorted hydrogen bond kinetics, whereas GFN2-xTB errs in the opposite direction and overstructures the liquid [9]. Use specifically reparameterized methods like PM6-fm, which has been optimized for water and can quantitatively reproduce its static and dynamic features [9].

Troubleshooting Common Computational Issues

Problem: Unphysical molecular geometries or bond lengths during optimization

- Potential Cause: Incorrect method selection for geometry optimization (e.g., using spectroscopic methods instead of structure-optimized methods)

- Solution: Use methods specifically parameterized for structures (AM1, PM3, PM6, PM7 for main group elements; ZINDO/1 for transition metals) rather than spectroscopic methods (ZINDO/S) [8]

- Advanced Fix: Modify core repulsion parameters in the NDDO framework using the `%ndoparas` block in ORCA to adjust specific element interactions [8]

Problem: Systematic error in activation energy barriers for enzymatic reactions

- Potential Cause: Intrinsic limitations of generic parameters for specific chemical environments

- Solution: Implement Hamiltonian optimization through a multi-objective evolutionary strategy targeting reference potential energy surfaces [10]

- Workflow:

  - Generate a training set from reaction path data at the DFT level

  - Optimize parameters using an evolutionary algorithm targeting energies, charges, and gradients

  - Validate with minimum free energy path calculations [10]

Problem: Inaccurate description of hydrogen bonding in biomolecular systems

- Potential Cause: Underlying method limitations in capturing noncovalent interactions

- Solution: Use modern methods with better hydrogen bonding treatment (PM7, GFN2-xTB) or specialized reparameterizations (PM6-fm for water) [7] [9]

- Alternative: Apply dispersion corrections (e.g., D3H4X for PM6) or use hybrid QM/ML approaches (AIQM1, QDπ) for higher accuracy [7]

Diagram 1: Computational Workflows in Semiempirical Research

Advanced Protocols and Methodologies

Protocol: Multi-objective Evolutionary Strategy for Hamiltonian Optimization

This protocol describes the methodology for optimizing semiempirical Hamiltonian parameters for specific enzymatic systems [10].

Materials and Software Requirements:

- Quantum chemistry software (ORCA, Gaussian, or similar) for reference calculations

- QM/MM simulation package (AMBER with GFN2-xTB API support)

- Python environment with evolutionary algorithm implementation

- Training set data (IRC calculations, geometry scans at DFT level)

Procedure:

- Training Set Generation:

  - Perform intrinsic reaction coordinate (IRC) calculations for target reactions at the DFT level (e.g., M06-2X-D3/def2-TZVP)

  - Conduct geometry scans around the reaction center

  - Extract potential energy surface data, atomic charges, and gradients

- Multi-objective Optimization:

  - Initialize a population of parameter sets

  - Evaluate objective functions (deviation from reference energies, charges, gradients)

  - Apply evolutionary operators (selection, crossover, mutation)

  - Iterate until convergence criteria are met

- Validation:

  - Compute minimum free energy paths using the Adaptive String Method

  - Compare activation barriers and reaction energies to the DFT reference

  - Validate on secondary systems to ensure transferability

Troubleshooting Tips:

- If optimization converges slowly, adjust mutation rates or population size

- If parameters overfit, include more diverse geometries in training set

- For poor transferability, incorporate multiple chemical environments in training [10]
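The optimization loop in this protocol can be prototyped before a real QM/MM engine is connected. The sketch below is a toy (μ+λ) evolution strategy with Pareto-rank selection (rank = number of individuals that dominate a candidate, similar in spirit to Fonseca-Fleming ranking) over two objectives: energy RMSE and gradient RMSE. It is not the implementation used in [10]. The Morse-like `model_energy` is a hypothetical stand-in for a semi-empirical calculation, and all names, population sizes, and mutation settings are illustrative assumptions. Atomic charges would enter as a third objective in the same way.

```python
import math
import random


# HYPOTHETICAL stand-in for a semi-empirical energy: a Morse curve with
# parameters (d, a, r0). A real workflow would call the QM/MM engine here.
def model_energy(params, r):
    d, a, r0 = params
    return d * (1.0 - math.exp(-a * (r - r0))) ** 2


def model_gradient(params, r):
    d, a, r0 = params
    e = math.exp(-a * (r - r0))
    return 2.0 * d * a * (1.0 - e) * e


def objectives(params, ref):
    """(energy RMSE, gradient RMSE) against reference tuples (r, E, dE/dr)."""
    n = len(ref)
    e_err = math.sqrt(sum((model_energy(params, r) - e) ** 2 for r, e, _ in ref) / n)
    g_err = math.sqrt(sum((model_gradient(params, r) - g) ** 2 for r, _, g in ref) / n)
    return (e_err, g_err)


def dominates(f1, f2):
    """True if f1 is no worse than f2 in every objective and better in at least one."""
    return all(a <= b for a, b in zip(f1, f2)) and any(a < b for a, b in zip(f1, f2))


def pareto_rank(fits):
    """Rank = number of individuals dominating this one (0 = Pareto front)."""
    return [sum(dominates(other, f) for other in fits) for f in fits]


def evolve(ref, start, generations=60, mu=12, lam=24, sigma=0.1, seed=0):
    rng = random.Random(seed)
    # Initial population: the starting parameters plus randomly perturbed copies.
    pop = [list(start)] + [[p * (1.0 + rng.gauss(0.0, 0.3)) for p in start]
                           for _ in range(mu - 1)]
    for _ in range(generations):
        children = []
        for _ in range(lam):
            p1, p2 = rng.sample(pop, 2)
            child = [x if rng.random() < 0.5 else y for x, y in zip(p1, p2)]  # uniform crossover
            child = [x + rng.gauss(0.0, sigma * abs(x) + 1e-3) for x in child]  # Gaussian mutation
            children.append(child)
        union = pop + children  # (mu + lambda): parents compete with offspring
        fits = [objectives(p, ref) for p in union]
        ranks = pareto_rank(fits)
        # Sort by Pareto rank, break ties by summed error, keep the best mu.
        order = sorted(range(len(union)), key=lambda i: (ranks[i], sum(fits[i])))
        pop = [union[i] for i in order[:mu]]
    return pop


# Synthetic "reference" data generated from known parameters.
true_params = (4.0, 1.5, 1.1)
ref = [(r, model_energy(true_params, r), model_gradient(true_params, r))
       for r in [0.8 + 0.1 * i for i in range(17)]]
final = evolve(ref, start=[3.0, 1.0, 1.0])
best = min(final, key=lambda p: sum(objectives(p, ref)))
print(best, objectives(best, ref))
```

Keeping parents in the selection pool (elitism) guarantees that the best summed error never increases between generations; the Pareto ranking keeps trade-off solutions alive when energy and gradient errors pull the parameters in different directions.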

Protocol: Liquid Water Simulations with Reparameterized Methods

This protocol describes approaches for accurate liquid water simulations using reparameterized semiempirical methods [9].

Materials:

- Molecular dynamics simulation package (CP2K, AMBER, or similar)

- Reparameterized method files (PM6-fm, AM1-W, or DFTB2-iBi parameters)

- Initial water configuration (e.g., equilibrated cubic water box)

Procedure:

- Method Selection:

  - For quantitative water properties: Use PM6-fm (force-matched reparameterization)

  - For slightly overstructured water: Use DFTB2-iBi

  - Avoid standard AM1, PM6, or GFN-xTB parameters for pure water simulations

- Simulation Setup:

  - Implement periodic boundary conditions

  - Set the temperature to 300 K and, for fixed-volume runs, the density close to the experimental value (about 0.997 g/cm³ at 300 K)

  - Use the NVT or NPT ensemble depending on the property of interest

- Analysis:

  - Calculate radial distribution functions (O-O, O-H, H-H)

  - Determine hydrogen bond lifetimes via time correlation functions

  - Compute the diffusion coefficient from the mean squared displacement

Troubleshooting:

- If water appears "too fluid": Check method parameters; standard methods underestimate H-bonding [9]

- If simulation crashes: Verify parameter file compatibility with simulation package

- If properties deviate from experimental: Ensure sufficient equilibration and sampling time [9]
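For the diffusion analysis in this protocol, the Einstein relation gives D from the long-time slope of the mean squared displacement, MSD(t) ≈ 6Dt in three dimensions. The sketch below assumes unwrapped coordinates (periodic-image jumps already removed), stored as a list of frames, each a list of (x, y, z) tuples, and averages over all particles and time origins. The function names are hypothetical. In practice one fits only the linear, diffusive part of the curve and may also need a finite-box-size correction.

```python
def mean_squared_displacement(frames, max_lag):
    """MSD(lag) for lag = 0..max_lag, averaged over particles and time origins.

    frames: list of frames; each frame is a list of (x, y, z) tuples with
    unwrapped coordinates, one tuple per particle in a fixed order.
    """
    if max_lag >= len(frames):
        raise ValueError("max_lag must be smaller than the number of frames")
    msd = []
    for lag in range(max_lag + 1):
        total, count = 0.0, 0
        for t0 in range(len(frames) - lag):
            for p0, p1 in zip(frames[t0], frames[t0 + lag]):
                total += sum((b - a) ** 2 for a, b in zip(p0, p1))
                count += 1
        msd.append(total / count)
    return msd


def diffusion_coefficient(times, msd):
    """Least-squares slope of MSD versus time, divided by 6 (3D Einstein relation)."""
    n = len(times)
    t_mean = sum(times) / n
    m_mean = sum(msd) / n
    cov = sum((t - t_mean) * (m - m_mean) for t, m in zip(times, msd))
    var = sum((t - t_mean) ** 2 for t in times)
    return cov / var / 6.0
```

D comes out in the units of the inputs (for example, Å²/ps if positions are in Å and times in ps). Averaging over many time origins reduces noise, but the longest lags rest on few origins and are best left out of the fit.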

Diagram 2: Hamiltonian Refinement and Application Strategy

The field of semiempirical quantum chemistry continues to evolve with several promising directions. Hybrid quantum mechanical/machine learning potentials (QM/Δ-MLPs) like AIQM1 and QDπ represent the cutting edge, combining the physical foundation of SQM methods with the accuracy of machine learning corrections [7]. These approaches perform exceptionally well for tautomers and protonation states relevant to drug discovery [7].

There is also growing interest in tighter integration between ab initio and semiempirical quantum mechanics through more flexible theoretical frameworks and modular software components [5]. This unification could enable more systematic improvement of SQM methods while maintaining their computational efficiency.

The historical trajectory from MNDO and AM1 to modern PMx and GFN-xTB methods demonstrates continuous progress in balancing computational efficiency with accuracy. As parameter optimization strategies become more sophisticated and integration with machine learning advances, semiempirical methods are poised to remain indispensable tools for studying large molecular systems in drug discovery, materials science, and biochemistry.

Troubleshooting Guides and FAQs

Data Sourcing and Curation

Q: What are the primary sources of high-quality reference data for training semi-empirical Hamiltonian parameters? A: The highest-quality reference data comes from two main sources:

- Experimental thermochemical data, particularly standard enthalpies of formation (ΔfH°) determined via high-precision combustion calorimetry [12].

- High-level ab initio quantum chemical methods, such as the W1X-1 composite method, which can achieve chemical accuracy (mean absolute deviation < 4 kJ mol⁻¹) and provide benchmark-quality data, especially for molecules where experimental data is unreliable or unavailable [12].

Q: How can I identify and handle inconsistent experimental data in my training set? A: Inconsistent data, a known issue in organosilicon thermochemistry, can be identified and handled through a specific protocol [12]:

- Benchmarking: Use a consistent set of high-level ab initio calculations (e.g., W1X-1) to generate a benchmark dataset for a range of molecules [12].

- Comparison: Compare existing experimental data against this computational benchmark to assess accuracy and pinpoint outliers [12].

- Curation: Systematically flag or remove experimental values that show significant, unexplained deviations from the high-level reference data. Literature reviews often note which experimental datasets have been repeatedly criticized for potential systematic errors [12].
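The comparison and curation steps can be scripted. The sketch below flags an experimental ΔfH° when its deviation from the high-level benchmark is both larger than an absolute tolerance and a robust outlier relative to the median residual (using the scaled median absolute deviation). The 8 kJ mol⁻¹ tolerance, the factor k = 3, and the function name are illustrative assumptions, not values from [12]; flagged entries still need a literature check before removal.

```python
import statistics


def flag_inconsistent(experimental, benchmark, abs_tol=8.0, k=3.0):
    """Return molecules whose experimental value looks inconsistent.

    experimental, benchmark: dicts mapping molecule name -> Delta_f H (kJ/mol).
    A molecule is flagged if |exp - benchmark| > abs_tol AND its residual lies
    more than k robust standard deviations (1.4826 * MAD) from the median residual.
    """
    common = sorted(set(experimental) & set(benchmark))
    if not common:
        return []
    resid = {m: experimental[m] - benchmark[m] for m in common}
    med = statistics.median(resid.values())
    mad = statistics.median(abs(r - med) for r in resid.values())
    robust_sd = 1.4826 * mad  # converts MAD to a standard deviation for normal data
    return [m for m in common
            if abs(resid[m]) > abs_tol and abs(resid[m] - med) > k * robust_sd]


# Hypothetical values (kJ/mol): molecule "E" disagrees with the benchmark by 30 kJ/mol.
exp_data = {"A": -100.0, "B": -200.0, "C": -300.0, "D": -400.0, "E": -150.0}
bench = {"A": -101.0, "B": -199.0, "C": -302.0, "D": -401.0, "E": -180.0}
print(flag_inconsistent(exp_data, bench))  # ['E']
```

Using the median and MAD rather than the mean and standard deviation keeps a single bad experimental value from inflating the spread and hiding itself.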

Parameterization and Methodology

Q: My semi-empirical method performs poorly on compounds similar to those it was trained on. What could be wrong? A: This is a classic sign of over-fitting or an unbalanced training set. To troubleshoot [1]:

- Action 1: Verify your parameterization dataset includes a diverse and representative set of molecular structures, including various functional groups and element combinations relevant to your application domain.

- Action 2: Ensure the method is not overly fitted to a small subset of molecules. Use a hold-out validation set to test predictive performance on molecules not used in training.

- Action 3: Cross-check against established databases (e.g., NIST, Active Thermochemical Tables) to ensure your target properties, like enthalpies of formation, are accurate and consistent [13].
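A hold-out set that happens to miss a chemical class gives an overly optimistic picture. The sketch below is a hedged example of a stratified split: molecules are grouped by a user-supplied class label (for example, functional group or element combination), a fraction of each class is held out, and singleton classes stay in training. The function name and the default fraction are assumptions.

```python
import random
from collections import defaultdict


def stratified_holdout(molecules, classes, holdout_fraction=0.2, seed=0):
    """Split molecules into (train, test) so every multi-member class is in both."""
    by_class = defaultdict(list)
    for mol, cls in zip(molecules, classes):
        by_class[cls].append(mol)
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    train, test = [], []
    for cls in sorted(by_class):
        members = sorted(by_class[cls])
        rng.shuffle(members)
        if len(members) > 1:
            # At least one test molecule, but always leave one for training.
            n_test = min(max(1, round(holdout_fraction * len(members))), len(members) - 1)
        else:
            n_test = 0
        test.extend(members[:n_test])
        train.extend(members[n_test:])
    return train, test
```

Comparing errors on the training and hold-out sets for each class separately (rather than pooled) makes it easier to see which chemistry the parameters have overfitted.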

Q: What is the fundamental workflow for refining semi-empirical parameters using reference data? A: The standard workflow involves a cyclic process of calculation, comparison, and adjustment, as visualized below.

Q: How do I calculate thermochemical properties for large, flexible molecules where high-level ab initio methods are too expensive? A: For large molecules like long-chain alkanes, a conformational search is critical for an accurate entropy and free energy calculation. Do not rely on a single minimum-energy conformer [13]. Follow this integrated protocol:

- Conformational Search: Use a force-field-based method (e.g., Global-MMX) to perform an extensive search of the molecular conformational space [13].

- Quantum Chemical Calculation: Perform DFT calculations on all unique, low-energy conformers identified in the search [13].

- Boltzmann Averaging: Apply Boltzmann statistics to the calculated thermochemical properties of the conformers to obtain the final, temperature-dependent property for the flexible molecule [13].
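The Boltzmann-averaging step can be sketched as follows, assuming conformer free energies G_i in kJ mol⁻¹ from the DFT step. The weights are p_i = exp(-(G_i - G_min)/RT) / Σ_j exp(-(G_j - G_min)/RT); the ensemble free energy is G_min - RT ln Σ_j exp(-(G_j - G_min)/RT); and the conformational mixing entropy is -R Σ_i p_i ln p_i. The function name is hypothetical, and symmetry-equivalent conformers must each appear in the list (or be counted with their multiplicity) for the weights to be correct.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol K)


def boltzmann_ensemble(free_energies, properties, temperature=298.15):
    """Boltzmann weights, weighted property, ensemble G, and mixing entropy."""
    rt = R_KJ * temperature
    g_min = min(free_energies)
    # Shift by g_min before exponentiating to avoid overflow/underflow.
    factors = [math.exp(-(g - g_min) / rt) for g in free_energies]
    z = sum(factors)
    weights = [f / z for f in factors]
    average = sum(w * p for w, p in zip(weights, properties))
    g_ensemble = g_min - rt * math.log(z)
    s_mix = -R_KJ * sum(w * math.log(w) for w in weights if w > 0.0)
    return {"weights": weights, "average": average, "G": g_ensemble, "S_mix": s_mix}


# Hypothetical conformers: relative G (kJ/mol) and some property (e.g., a dipole, D).
print(boltzmann_ensemble([0.0, 2.0, 5.0], [1.8, 2.4, 3.1]))
```

The ensemble G is always at or below the lowest-conformer value, which is why relying on a single minimum-energy conformer overestimates the free energy of a flexible molecule.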

The following diagram illustrates this multi-step framework.

Validation and Performance

Q: What strategies can I use to validate my newly parameterized semi-empirical method? A: A robust validation goes beyond the training set. Implement this multi-faceted approach:

- Strategy 1: Predict thermochemical properties for a hold-out set of molecules not used in training. Compare the results against high-level ab initio or reliable experimental data [12] [14].

- Strategy 2: Test the method's performance on property types not directly fitted, such as dipole moments, ionization potentials, or vibrational frequencies, to assess its transferability [1].

- Strategy 3: For systems with known chemical intuition (e.g., strain energy, conjugation effects), verify that the method reproduces these expected trends and does not produce unphysical results [14].

Q: My computational calculations are running out of memory or are too slow. How can I optimize performance? A: Performance issues in quantum chemical calculations can often be mitigated by adjusting numerical algorithms and parallelization strategies [15].

- Symptom: Running out of memory.

  - For general calculations: Disable `store_grids` in the algorithm parameters. Consider disabling `store_basis_on_grid` (this may impact speed) [15].

  - For large molecules/bulk systems: If using a diagonalization solver, set the `density_matrix_method` to `DiagonalizationSolver(processes_per_kpoint=2)`. This reduces memory per MPI process [15].

  - For device configurations: Set the `storage_strategy` in the `SelfEnergyCalculator` to `StoreOnDisk` to reduce memory usage [15].

- Symptom: Calculation is too slow.

  - For molecules/bulk systems: Set `store_basis_on_grid` to `True` for a speed increase (requires more memory). Limit the number of empty bands (`bands_above_fermi_level`) in the calculation, as including all bands significantly slows down the simulation without improving accuracy [15].

  - Parallelization: When running in parallel over k-points, use the `processes_per_kpoint` parameter to allow for extra levels of parallelization beyond the number of k-points [15].

Essential Data and Methods Reference

Table 1: Key High-Level Ab Initio Methods for Benchmark Data Generation

| Method | Description | Typical Accuracy (MAD) | Best For | Key Reference |
|---|---|---|---|---|
| W1X-1 | A high-level composite method; often used for generating benchmark-quality thermochemical data. | Can achieve chemical accuracy (< 4 kJ mol⁻¹) | Standard enthalpies of formation for molecules up to ~35 atoms [12]. | [12] |
| CBS-QB3 | A complete basis set method; offers a good balance between accuracy and computational cost. | Comparable to W1X-1 for some systems, at lower cost [12]. | Larger molecules where W1X-1 is prohibitive; validation [12]. | [12] |

Table 2: Common Semi-Empirical Method Families and Their Primary Fitting Targets

| Method Family | Examples | Primary Fitting Targets & Application Notes |
|---|---|---|
| NDDO-based | MNDO, AM1, PM3, PM6, PM7 | Targets: Experimental heats of formation, dipole moments, ionization potentials, and molecular geometries. This is the most common family [1]. |
| Spectroscopy-focused | ZINDO, SINDO | Targets: Electronically excited states; primarily used for predicting electronic spectra [1]. |
| Recent Advances | GFNn-xTB, NOTCH | Targets: GFNn-xTB is suited for geometries, vibrational frequencies, and non-covalent interactions. NOTCH is less empirical and designed for broad applicability [1]. |

| Resource / Solution | Function / Description | Relevance to Research |
|---|---|---|
| High-Level Ab Initio Codes (e.g., Molpro, Gaussian) | Software packages that implement high-accuracy composite methods (e.g., W1X-1, CBS-QB3) to generate reference data [12]. | Provides the "ground truth" benchmark data used to train and validate new semi-empirical parameters [12]. |
| Semi-Empirical Packages (e.g., MOPAC, CP2K) | Software implementing semi-empirical methods (e.g., AM1, PM6, DFTB) that are the target for parameter refinement [1]. | The platform where new parameters are implemented and tested for performance and accuracy. |
| Thermochemical Databases (e.g., NIST, ATcT, Burcat's database) | Curated collections of experimental and computed thermochemical data, such as standard enthalpies of formation [13]. | Used for constructing training and validation sets, and for identifying inconsistencies in experimental data [12] [13]. |
| Conformational Search Tools (e.g., PCMODEL/GMMX) | Software that uses force fields and Monte Carlo techniques to explore the conformational space of flexible molecules [13]. | Essential for obtaining accurate entropic contributions and free energies for large, flexible molecules during training set creation [13]. |
| Group Additivity Parameters | A set of contributions for molecular groups that allow fast estimation of thermochemical parameters [12]. | Useful for quick sanity checks on calculated or experimental data and for estimating properties of very large molecules [12]. |

Frequently Asked Questions (FAQs)

Q1: What is a common cause of poor transferability in a newly parameterized model? Poor transferability often arises from an insufficiently diverse training set. If the training data (e.g., molecular configurations) does not adequately represent the chemical space of the target application, the model's parameters will be overfitted and perform poorly on new systems [16].

Q2: How can I balance high model accuracy with maintaining physical interpretability? Using a physics-based model form, like a Semiempirical Quantum Chemical (SEQC) Hamiltonian, as the foundation for parameterization allows for high accuracy while retaining interpretability. The model learns from data but remains constrained to a physically meaningful functional form, unlike a "black box" neural network [16].

Q3: What is a practical strategy for managing a high-dimensional parameter space? A Global Sensitivity Analysis (GSA) can identify which parameters have the strongest influence on your model's output. You can then choose to optimize only the most sensitive parameters, which reduces computational cost and the risk of overfitting while still significantly improving model skill [17].

Q4: My model optimization is converging to different parameter sets. Is this a problem? Not necessarily. This phenomenon, known as equifinality, is common in complex models. What matters is that the alternative parameter sets all produce a similar, high level of model performance for the assimilated variables, and that the optimized parameters show little inter-correlation ("uncorrelated equifinality"), which indicates that the data constrain them independently [17].

Q5: How do I know if my parameter set is robust? A robust parameter set should demonstrate portability, meaning it performs well on data not used in training (a test set) and, ideally, on related but distinct systems (far-transfer). Testing on a hold-out dataset or a more complex system is crucial for validation [16].

Troubleshooting Guides

Issue 1: Catastrophic Forgetting in Sequential Parameter Learning

Problem: When learning parameters for a new task (e.g., a new class of molecules), the model loses performance on previously learned tasks.

Solution: Implement a parameter isolation strategy.

- Description: This method allocates distinct, task-specific sub-networks within a larger model. To balance stability of old tasks with plasticity for the new one, the sub-network for the current task can be decomposed into task-general and task-specific parameter spaces. The general parameters (which may overlap with old tasks) are updated with constraints to aid new learning without interfering excessively with old knowledge. The old task sub-networks remain frozen [18].

- Protocol:

- Identify Sub-network: For the new task, select a sub-network from the main model.

- Decompose Parameters: Split the sub-network's parameters into task-general (shared) and task-specific (unique) spaces.

- Constrained Training: Update the general parameter space with a higher regularization factor to minimize interference while training on the new task data.

- Freeze Previous Sub-networks: Keep the sub-networks ("experts") and classifiers of all previous tasks frozen.
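The update rule behind this protocol can be sketched in a few lines. The sketch below is a minimal illustration under stated assumptions, not the method of [18]: parameters are a flat list, each task is described by two hypothetical boolean masks (task-specific and task-general entries), and the constraint on shared entries is modeled as an L2 penalty of strength `lam` that pulls them back toward their values before the new task. Entries covered by neither mask belong to earlier tasks and are never touched.

```python
from typing import List, Sequence


def isolated_update(
    params: List[float],
    grad: Sequence[float],
    previous: Sequence[float],
    specific_mask: Sequence[bool],
    general_mask: Sequence[bool],
    lr: float = 0.1,
    lam: float = 5.0,
) -> List[float]:
    """Return parameters after one gradient step restricted to the current task.

    specific_mask: entries owned only by the new task (updated freely).
    general_mask:  shared entries (updated, but pulled toward `previous`).
    All remaining entries belong to earlier tasks and stay frozen.
    """
    new = list(params)
    for i, g in enumerate(grad):
        if specific_mask[i]:
            new[i] = params[i] - lr * g
        elif general_mask[i]:
            # Penalty lam/2 * (p - p_prev)^2 adds lam * (p - p_prev) to the gradient.
            new[i] = params[i] - lr * (g + lam * (params[i] - previous[i]))
    return new


if __name__ == "__main__":
    # Toy new-task loss: sum(p^2), whose gradient is 2p.
    p = [1.0, 1.0, 1.0]  # [frozen old-task entry, shared entry, new-task entry]
    prev = list(p)
    for _ in range(200):
        grad = [2.0 * x for x in p]
        p = isolated_update(p, grad, prev, [False, False, True], [False, True, False])
    print(p)  # frozen entry unchanged; shared entry settles between 0 and 1
```

Raising `lam` plays the role of the "higher regularization factor" in the Constrained Training step: the larger it is, the closer shared parameters stay to their old-task values.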

Issue 2: Low Accuracy Despite Extensive Training Data

Problem: The model fails to achieve target accuracy, even when trained on a large dataset.

Solution: Re-evaluate the flexibility and form of your model.

- Description: The model's functional form itself may be the limitation. A model with low flexibility cannot capture the full complexity of the data, no matter how much training data is used. Beyond a certain training-set size, known as the model's "saturation point," additional data no longer improve accuracy [16].

- Protocol:

- Check for Saturation: Perform a learning curve analysis by training the model on increasing subsets of your data.

- Diagnose: If accuracy plateaus, the model form itself is the bottleneck.

- Refine the Model: Increase the model's flexibility. In the context of SEQC, this could mean replacing fixed parameters with one-dimensional functions (e.g., splines) for interatomic interactions, providing more degrees of freedom to learn from data [16].
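The saturation check in this protocol can be automated once you have test errors from models retrained on nested subsets of increasing size. The sketch below is a minimal illustration. The relative-improvement threshold `rel_tol` (2% here) is an assumption you should tune. The learning-curve numbers are hypothetical.

```python
from typing import Optional, Sequence


def saturation_point(
    sizes: Sequence[int], errors: Sequence[float], rel_tol: float = 0.02
) -> Optional[int]:
    """Return the training-set size after which every further increase improves
    the test error by less than `rel_tol` (relative), or None if the error is
    still improving at the largest size."""
    if len(sizes) != len(errors) or len(sizes) < 2:
        raise ValueError("need matching sizes/errors with at least two points")
    # gains[j] = relative error reduction going from sizes[j] to sizes[j + 1]
    gains = [(errors[j] - errors[j + 1]) / errors[j] for j in range(len(errors) - 1)]
    # Walk back from the largest size while the gains stay below the threshold.
    k = len(gains)
    while k > 0 and gains[k - 1] < rel_tol:
        k -= 1
    return None if k == len(gains) else sizes[k]


if __name__ == "__main__":
    sizes = [1000, 2000, 4000, 8000, 16000]
    test_rmse = [10.0, 6.0, 4.0, 3.95, 3.94]  # hypothetical learning curve, kcal/mol
    print(saturation_point(sizes, test_rmse))  # → 4000
```

A non-`None` result suggests that adding data is no longer the right lever and that the model form (for example, fixed parameters versus spline functions) should be revisited.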

Issue 3: High Computational Cost of Parameter Optimization

Problem: Optimizing all parameters in a complex model is computationally prohibitive.

Solution: Employ a strategic, sensitivity-based parameter selection.

- Description: Instead of optimizing all parameters, use a Global Sensitivity Analysis (GSA) to identify a smaller subset of parameters that control the majority of the model's behavior. This can dramatically reduce the dimensionality of the optimization problem [17].

- Protocol:

- Perform GSA: Conduct a global sensitivity analysis (e.g., using variance-based methods) on your model's parameters.

- Rank Parameters: Rank parameters by their "Total effect" sensitivity indices, which capture both their main and interaction effects.

- Select Subset: Choose a subset of the most sensitive parameters for optimization. Research suggests that optimizing a subset based on "Total effects" can achieve similar performance gains to optimizing all parameters at a fraction of the cost [17].
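A variance-based GSA can be sketched with the standard sampling scheme of two random matrices A and B plus the hybrid matrices in which one column of A is taken from B. The sketch below uses the Saltelli (2010) first-order estimator and the Jansen (1999) total-effect estimator. It samples parameters uniformly on [0, 1] and leaves the mapping onto physical parameter ranges to the model function, which is an assumption of this sketch. In practice `model` would wrap a full semi-empirical evaluation, such as the AUE over a training set.

```python
import random
from statistics import pvariance
from typing import Callable, List, Sequence, Tuple


def sobol_indices(
    model: Callable[[List[float]], float],
    n_params: int,
    n_samples: int = 4096,
    seed: int = 0,
) -> Tuple[List[float], List[float]]:
    """Estimate first-order ("main") and total-effect Sobol indices."""
    rng = random.Random(seed)
    A = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    B = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    fA = [model(x) for x in A]
    fB = [model(x) for x in B]
    var_y = pvariance(fA + fB)
    first, total = [], []
    for i in range(n_params):
        # Rows of A with column i taken from B.
        fABi = [model(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(A, B)]
        # Saltelli et al. (2010) first-order estimator.
        s1 = sum(fb * (fab - fa) for fa, fb, fab in zip(fA, fB, fABi)) / (n_samples * var_y)
        # Jansen (1999) total-effect estimator.
        st = sum((fa - fab) ** 2 for fa, fab in zip(fA, fABi)) / (2 * n_samples * var_y)
        first.append(s1)
        total.append(st)
    return first, total


def rank_by_total_effect(total: Sequence[float]) -> List[int]:
    """Parameter indices sorted from most to least influential."""
    return sorted(range(len(total)), key=lambda i: total[i], reverse=True)


if __name__ == "__main__":
    # Toy model: parameter 0 dominates, parameter 2 has no effect at all.
    toy = lambda x: 4.0 * x[0] + 1.0 * x[1] + 0.0 * x[2]
    s1, st = sobol_indices(toy, n_params=3)
    print(rank_by_total_effect(st))
```

The cost is `n_samples * (n_params + 2)` model evaluations, which is why GSA is described as expensive. The ranking from `rank_by_total_effect` is what you would use to choose the subset of parameters to optimize.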

Experimental Protocols for Parameter Space Refinement

Protocol 1: Density-Functional Based Tight Binding Machine Learning (DFTBML) Parameterization

This protocol details the process for training a high-accuracy, interpretable SEQC model using a machine learning approach [16].

1. Objective: To determine the optimal parameters for a DFTB Hamiltonian by directly training them on high-quality ab initio data, achieving accuracy comparable to Density Functional Theory (DFT) while maintaining a physically meaningful model form.

2. Materials & Computational Setup:

- Training Data: A curated set of molecular configurations with associated reference energies (e.g., the ANI-1CCX dataset for organic molecules). Ensure no empirical formula overlap between training and test sets.

- Software: A differentiable programming framework (e.g., PyTorch [16]) for model implementation and optimization.

- Model Form: The SKF-DFTB model form, where atomic orbital energies are constants and Hamiltonian matrix elements, overlap integrals, and repulsive potentials are one-dimensional functions of interatomic distance.

3. Step-by-Step Procedure:

- Step 1: Data Preparation. Split the data by unique empirical formulas into training, validation, and testing sets to ensure genuine generalization [16].

- Step 2: Function Representation. Represent the one-dimensional electronic functions (H, S) using fifth-order splines and repulsive potentials (R) using third-order splines. Define their distance ranges based on the distribution of pairwise distances in the training data.

- Step 3: Precomputation. In a precompute phase, calculate and store all information that is independent of the model parameters to drastically speed up the training loop.

- Step 4: Model Training. Optimize all model parameters by minimizing an L2 loss function between the model's predicted energies and the reference ab initio energies. Use the validation set for early stopping.

- Step 5: Model Export. Export the final trained parameters in the standard Slater-Koster File (SKF) format, making them usable in various computational chemistry packages.
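Step 1 is where data leakage most easily creeps in, because many configurations share one formula. The sketch below shows a minimal version of a formula-grouped split. It represents each configuration as a plain list of element symbols, which is a hypothetical format. It writes the formula in Hill order, shuffles the unique formulas with a fixed seed, and assigns whole formula groups to a split. Note that `fractions` refers to the share of formulas, not of configurations.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List, Sequence, Tuple

Molecule = List[str]  # hypothetical representation: list of element symbols


def formula(symbols: Sequence[str]) -> str:
    """Molecular formula in Hill order (C, then H, then alphabetical)."""
    counts = Counter(symbols)
    if "C" in counts:
        order = ["C"] + (["H"] if "H" in counts else [])
        order += sorted(el for el in counts if el not in ("C", "H"))
    else:
        order = sorted(counts)
    return "".join(el + (str(counts[el]) if counts[el] > 1 else "") for el in order)


def split_by_formula(
    molecules: Sequence[Molecule],
    fractions: Tuple[float, float] = (0.8, 0.1),
    seed: int = 0,
) -> Dict[str, List[Molecule]]:
    """Assign whole formula groups to train/val/test so no formula is shared."""
    groups: Dict[str, List[Molecule]] = defaultdict(list)
    for mol in molecules:
        groups[formula(mol)].append(mol)
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)
    n_train = round(fractions[0] * len(keys))
    n_val = round(fractions[1] * len(keys))
    parts = {
        "train": keys[:n_train],
        "val": keys[n_train:n_train + n_val],
        "test": keys[n_train + n_val:],  # remaining formulas go to the test set
    }
    return {name: [m for k in ks for m in groups[k]] for name, ks in parts.items()}
```

Splitting on formulas rather than on individual configurations means that every conformer of a given formula lands in the same set. This is the "genuine generalization" condition described in Step 1.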

4. Data Interpretation:

- Success is achieved when the root-mean-square error (RMSE) on the independent test set approaches chemical accuracy (1-3 kcal/mol) and is comparable to the target level of theory (e.g., DFT).

- Performance saturation with increasing training data size indicates the model form's intrinsic limits have been reached [16].

Protocol 2: Multi-Variable Data Assimilation for Biogeochemical Parameters

This protocol outlines a framework for optimizing a large number of parameters (e.g., 95) in a complex model using diverse observational data [17].

1. Objective: To constrain a high-dimensional parameter space using a rich, multi-variable dataset, reducing model uncertainty and producing a robust, portable parameter set.

2. Materials & Data Requirements:

- Observational Data: A comprehensive suite of contemporaneous and co-located observations (e.g., from BGC-Argo floats measuring 20+ biogeochemical metrics).

- Model: The numerical model to be optimized (e.g., the PISCES biogeochemical model in a 1D configuration).

- Optimization Algorithm: An iterative Importance Sampling (iIS) algorithm or similar Bayesian inference method.

3. Step-by-Step Procedure:

- Step 1: Global Sensitivity Analysis (GSA). Perform a GSA on all model parameters to identify those with the strongest influence on the model outputs. This is computationally expensive but critical for strategic optimization [17].

- Step 2: Strategy Selection. Choose an optimization strategy:

- Main Effects: Optimize only the top parameters identified by their main (direct) effects.

- Total Effects: Optimize a larger subset of parameters identified by their total effects (including interaction effects).

- All-Parameters: Simultaneously optimize all model parameters (recommended for a comprehensive uncertainty quantification) [17].

- Step 3: Parameter Optimization. Assimilate the multi-variable dataset using the chosen strategy (e.g., with iIS) to find the posterior distributions of the parameters.

- Step 4: Validation. Assess the optimized model's skill by calculating the Normalized Root Mean Square Error (NRMSE) reduction against a held-out portion of the data. Test the portability of the parameter set to independent data or different locations.
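The idea behind iterative importance sampling (Step 3) can be illustrated for a single parameter. The sketch below is a generic adaptive importance sampler and may differ from the iIS implementation used in [17]. At each iteration it draws samples from a Gaussian proposal and weights each sample by prior × likelihood / proposal. It then re-centers the proposal on the weighted moments. The proposal is deliberately widened by a factor `widen` (an assumption) so its tails stay broader than the posterior. The Gaussian prior and the toy linear model are illustrative only.

```python
import math
import random
from typing import Callable, Tuple


def log_normal(x: float, mu: float, sigma: float) -> float:
    return -0.5 * ((x - mu) / sigma) ** 2 - math.log(sigma) - 0.5 * math.log(2 * math.pi)


def iterative_importance_sampling(
    log_likelihood: Callable[[float], float],
    prior_mu: float,
    prior_sigma: float,
    n_samples: int = 2000,
    n_iter: int = 6,
    widen: float = 1.5,
    seed: int = 0,
) -> Tuple[float, float]:
    """Return the posterior mean and standard deviation of one parameter."""
    rng = random.Random(seed)
    mu, sigma = prior_mu, prior_sigma  # the first proposal is the prior itself
    post_mu, post_sd = prior_mu, prior_sigma
    for _ in range(n_iter):
        thetas = [rng.gauss(mu, sigma) for _ in range(n_samples)]
        logw = [
            log_normal(t, prior_mu, prior_sigma) + log_likelihood(t) - log_normal(t, mu, sigma)
            for t in thetas
        ]
        m = max(logw)  # subtract the maximum before exponentiating to avoid overflow
        w = [math.exp(lw - m) for lw in logw]
        total = sum(w)
        post_mu = sum(wi * t for wi, t in zip(w, thetas)) / total
        post_var = sum(wi * (t - post_mu) ** 2 for wi, t in zip(w, thetas)) / total
        post_sd = max(math.sqrt(post_var), 1e-12)
        mu, sigma = post_mu, widen * post_sd  # adapt the proposal for the next round
    return post_mu, post_sd


if __name__ == "__main__":
    # Toy observations y = theta * x + noise with unit noise.
    xs = [1.0, 2.0, 3.0, 4.0, 5.0]
    ys = [2.5 * x + d for x, d in zip(xs, [0.3, -0.2, 0.1, -0.4, 0.2])]
    loglik = lambda t: sum(-0.5 * (y - t * x) ** 2 for x, y in zip(xs, ys))
    print(iterative_importance_sampling(loglik, prior_mu=0.0, prior_sigma=2.0))
```

With many parameters the same loop runs over parameter vectors, and the posterior spread it returns is the "reduced uncertainty" referred to under Data Interpretation.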

4. Data Interpretation:

- A successful optimization will show a significant reduction (e.g., 54-56%) in NRMSE across the assimilated variables [17].

- The posterior parameter distributions should show reduced uncertainty and minimal inter-correlation, indicating the data provide orthogonal constraints ("uncorrelated equifinality") [17].
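The NRMSE reduction quoted above can be computed per variable and then averaged. The sketch below normalizes the RMSE by the standard deviation of the observations. This is one common convention; [17] may normalize differently, for example by the range or the mean. The variable names and values in the test are hypothetical.

```python
import math
from typing import Dict, Sequence, Tuple


def nrmse(pred: Sequence[float], obs: Sequence[float]) -> float:
    """RMSE divided by the (population) standard deviation of the observations."""
    n = len(obs)
    rmse = math.sqrt(sum((p - o) ** 2 for p, o in zip(pred, obs)) / n)
    mean = sum(obs) / n
    std = math.sqrt(sum((o - mean) ** 2 for o in obs) / n)
    return rmse / std


def nrmse_reduction(
    prior_pred: Sequence[float], post_pred: Sequence[float], obs: Sequence[float]
) -> float:
    """Fractional NRMSE reduction after optimization (0.55 means 55%)."""
    return 1.0 - nrmse(post_pred, obs) / nrmse(prior_pred, obs)


def mean_reduction(
    variables: Dict[str, Tuple[Sequence[float], Sequence[float], Sequence[float]]]
) -> float:
    """Average reduction over variables; each maps to (prior_pred, post_pred, obs)."""
    values = [nrmse_reduction(*triple) for triple in variables.values()]
    return sum(values) / len(values)
```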

Experimental Workflow Visualization

The following diagram illustrates the high-level workflow for refining parameters in a semi-empirical Hamiltonian, integrating strategies from the troubleshooting guides and protocols.

Diagram 1: Parameter Space Refinement Workflow.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational and data "reagents" essential for parameter space refinement experiments.

| Item Name | Function & Purpose | Key Characteristics |
|---|---|---|
| High-Quality Training Dataset [16] | Provides the reference data for parameter optimization. | Includes diverse molecular configurations; reference energies from high-level ab initio methods (e.g., CCSD(T)*/CBS); split by empirical formula to prevent data leakage. |
| Differentiable Model Framework [16] | Enables efficient computation of gradients for all model parameters with respect to a loss function. | Implemented in frameworks like PyTorch or TensorFlow; allows for seamless integration of model prediction and parameter update steps. |
| Global Sensitivity Analysis (GSA) Tool [17] | Identifies which model parameters have the greatest influence on outputs, guiding optimization efforts. | Calculates variance-based sensitivity indices (e.g., Sobol indices); distinguishes between "Main" and "Total" effects to capture parameter interactions. |
| Iterative Importance Sampling (iIS) [17] | A Bayesian optimization algorithm used to find posterior distributions of parameters by assimilating observational data. | Efficiently explores high-dimensional parameter spaces; provides estimates of parameter uncertainty and model prediction spread. |
| Slater-Koster File (SKF) Format [16] | A standard file format for distributing the parameters of semi-empirical quantum chemical methods. | Ensures interoperability; allows trained models to be used in various computational chemistry packages (e.g., DFTB+). |

Modern Parameterization Workflows and Software Tools

Frequently Asked Questions (FAQs)

FAQ 1: What is Average Unsigned Error (AUE), and why is it critical for validating semiempirical methods?

The Average Unsigned Error (AUE) is a key metric used to quantify the average magnitude of errors between a property predicted by a computational method (like a semiempirical Hamiltonian) and its reference value, which can be from high-level ab initio calculations or experimental data [19]. It is calculated as the average of the absolute values of these differences. AUE is critical for validation because it provides a single, easily interpretable number that summarizes the accuracy of a method for a given property, such as heat of formation (∆Hf) or molecular geometry (bond lengths, angles) [19]. A lower AUE indicates a more accurate and reliable parameterization.
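AUE is the same quantity often called mean absolute error (MAE). The sketch below computes it alongside two companions that are commonly reported with it. The mean signed error exposes systematic bias that AUE hides, and the RMSE weights large outliers more heavily. The ∆Hf numbers in the test are hypothetical.

```python
import math
from typing import List, Sequence


def _errors(pred: Sequence[float], ref: Sequence[float]) -> List[float]:
    if len(pred) != len(ref) or not pred:
        raise ValueError("pred and ref must be non-empty and of equal length")
    return [p - r for p, r in zip(pred, ref)]


def aue(pred: Sequence[float], ref: Sequence[float]) -> float:
    """Average unsigned error: mean of |pred - ref|."""
    e = _errors(pred, ref)
    return sum(abs(x) for x in e) / len(e)


def mean_signed_error(pred: Sequence[float], ref: Sequence[float]) -> float:
    """Mean of (pred - ref); a value far from zero indicates systematic bias."""
    e = _errors(pred, ref)
    return sum(e) / len(e)


def rmse(pred: Sequence[float], ref: Sequence[float]) -> float:
    """Root-mean-square error; penalizes large deviations more than AUE."""
    e = _errors(pred, ref)
    return math.sqrt(sum(x * x for x in e) / len(e))
```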

FAQ 2: My optimized protein structures have unrealistically high "clashscores." What parameter is likely responsible, and how can I fix it?

High clashscores often result from inadequate description of long-range, weak van der Waals (vdW) repulsive interactions in the Hamiltonian [20]. In methods like PM6-D3H4, the absence of a repulsive force between non-bonded, non-interacting atoms allows them to be pulled too close during optimization. Solution: Implement a modified core-core repulsion function. A recent approach adds a repulsive term to the diatomic core-core parameter \(c_{A,B}\). You can optimize parameters for this new function using a training set that includes proxy systems for vdW repulsion, forcing the method to learn the correct physical behavior at contact distances [20].

FAQ 3: How can I optimize parameters for a specific enzymatic reaction without losing transferability to other systems?

This is a delicate balance. A multi-objective evolutionary strategy is effective. This approach optimizes parameters against multiple target properties simultaneously (e.g., potential energy surfaces, atomic charges, and gradients from DFT references) [11] [21]. To maintain transferability:

- First Stage: Optimize parameters using high-quality reference data from a representative model reaction (e.g., a hydride transfer in a specific enzyme like CCR) [11] [21].

- Second Stage: Refine these parameters by incorporating a targeted set of additional training geometries from a second enzymatic system (e.g., DHFR) [11] [21]. This two-stage process aims to deliver accuracy for your systems of interest while grounding the parameters in broader chemical data to preserve general applicability [11] [21].

FAQ 4: What are the most efficient algorithms for navigating a high-dimensional parameter space in Hamiltonian optimization?

For high-dimensional and computationally expensive optimizations, modern strategies favor Bayesian Optimization (BO) and Evolutionary Strategies.

- Bayesian Optimization (BO): Builds a probabilistic surrogate model of the objective function (e.g., AUE) to intelligently select the most promising parameter sets to evaluate next, leading to faster convergence [22]. It is particularly useful when each evaluation (e.g., a full protein geometry optimization) is very costly.

- Multi-Objective Evolutionary Strategy: A population-based method inspired by natural selection. It is highly effective for exploring complex parameter spaces and is well-suited for optimizing multiple, potentially competing objectives simultaneously, such as minimizing AUE for both geometry and reaction barriers [11] [21].

Troubleshooting Guide

| Symptom | Possible Cause | Diagnostic Steps | Solution |
|---|---|---|---|
| High AUE in heats of formation (∆Hf) for organic solids. | Parameterization focused only on gas-phase molecules; poor description of long-range electrostatics in periodic systems [19]. | Compare AUEs for gas-phase molecules vs. crystalline solids. A large discrepancy points to a solid-state issue. | Modify the NDDO formalism to ensure electron-electron, electron-nuclear, and nuclear-nuclear interaction terms converge exactly to point charge values at distances beyond 7.0 Å [19]. |
| Unrealistically low activation energy barriers in QM/MM simulations. | The Hamiltonian incorrectly describes the potential energy surface around the transition state [11]. | Calculate the minimum free energy path (MFEP) and compare the barrier height to high-level (e.g., DFT) reference data [11]. | Re-optimize parameters using a multi-objective strategy targeting the reproduction of the ab initio reference potential energy surface along the reaction path [11] [21]. |
| Poor prediction of protein-ligand interaction energies. | Inaccurate modeling of the diverse non-covalent interactions (e.g., dispersion, halogen bonding) in the binding site [20]. | Check for unrealistic atom clashes in the optimized protein-ligand complex and analyze energy component breakdowns. | Start from a method proven for PLI (e.g., PM6-D3H4) and expand the training set to include diverse protein-ligand complexes and their interaction energies [20]. |
| Parameter optimization fails to converge or converges to a poor solution. | The training set is too narrow, contains conflicting data, or the optimization algorithm is stuck in a local minimum. | Audit the training set: Remove high-energy, non-biochemically relevant species and add small-system proxies for specific interactions (e.g., vdW repulsion) [20]. | Use a broader, more biochemically relevant training set [20]. Switch to a global optimization algorithm like an evolutionary strategy, which is better at escaping local minima [11]. |
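The first solution in this table asks that two-center interaction terms reach their exact point-charge values beyond 7.0 Å. The sketch below shows one way to enforce such a limit. It is hypothetical and does not reproduce PM7's actual functional form. It starts from a Klopman-Ohno-like damped term, \(e^2/\sqrt{r^2 + \rho^2}\), and blends it smoothly into \(e^2/r\) between an assumed onset `r_on` = 5.0 Å and the 7.0 Å threshold from the table. Only that threshold comes from the source; `rho` and `r_on` are illustrative.

```python
import math

COULOMB_EV_ANGSTROM = 14.399645  # e^2 / (4 pi eps0) in eV * Angstrom


def gamma_switched(r: float, rho: float, r_on: float = 5.0, r_off: float = 7.0) -> float:
    """Hypothetical two-center repulsion (eV) that equals the point-charge
    value e^2 / r exactly for r >= r_off (distances in Angstrom)."""
    damped = COULOMB_EV_ANGSTROM / math.sqrt(r * r + rho * rho)  # Klopman-Ohno-like form
    if r <= r_on:
        return damped
    point_charge = COULOMB_EV_ANGSTROM / r
    if r >= r_off:
        return point_charge
    t = (r - r_on) / (r_off - r_on)
    s = t * t * (3.0 - 2.0 * t)  # smoothstep: 0 at r_on, 1 at r_off, zero slope at both ends
    return (1.0 - s) * damped + s * point_charge
```

Because the switching function has zero slope at both ends, the blended term and its first derivative are continuous. This matters for geometry optimizations in periodic solids, where many pairs sit near the cutoff.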

Experimental Protocols & Data Presentation

Protocol 1: Reparameterization to Reduce Geometric AUE and Clashscores

Objective: To optimize core-core repulsion parameters to minimize structural AUE and reduce atom-clash artifacts in protein structures [20].

Methodology:

- Select a Training Set: Choose a set of high-resolution protein crystal structures with low experimental clashscores.

- Define Proxy Systems: For critical non-bonded atom pairs (e.g., H···O, C···C), create simple molecular dimers (e.g., two water molecules for H···O). Calculate the reference interaction energy at a large separation (e.g., 50 Å) and at the vdW contact distance using a high-level ab initio method. The energy difference is your repulsion proxy [20].

- Modify the Core-Core Function: Implement a new repulsive term in the core-core interaction function. For example, the modified diatomic parameter \(c'_{A,B}\) can be written as \(c'_{A,B} = c_{A,B} + a\,e^{-b(r - c)^2}\), where \(a\), \(b\), and \(c\) are the new element-pair-specific parameters to be optimized and \(r\) is the interatomic distance [20].

- Optimize Parameters: Use an optimization algorithm (e.g., evolutionary strategy) to find the parameters \(a\), \(b\), and \(c\) that minimize the AUE of the training set protein geometries and the error in the proxy repulsion energies.

- Validation: Validate the new parameter set on a separate set of protein structures not included in the training. Check for improved clashscores and maintained or improved accuracy in bond lengths and angles.
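The two formula-level pieces of this protocol are the proxy repulsion energy and the Gaussian-modified parameter \(c'_{A,B}\). The sketch below writes both out and adds one illustrative sub-step. For fixed width \(b\) and center \(c\), the amplitude \(a\) enters linearly, so its least-squares value has a closed form. The sketch assumes the proxy data have already been converted into target corrections \(c'_{A,B} - c_{A,B}\) at a few distances. That conversion depends on how \(c_{A,B}\) enters your core-core energy, and it is not shown. The full protocol would optimize \(a\), \(b\), and \(c\) together, for example with an evolutionary strategy.

```python
import math
from typing import Sequence


def proxy_repulsion(e_contact: float, e_far: float) -> float:
    """Repulsion proxy: dimer energy at vdW contact minus energy at large separation."""
    return e_contact - e_far


def modified_cab(r: float, c_ab: float, a: float, b: float, c: float) -> float:
    """c'_{A,B}(r) = c_{A,B} + a * exp(-b * (r - c)**2)."""
    return c_ab + a * math.exp(-b * (r - c) ** 2)


def fit_amplitude(r_points: Sequence[float], targets: Sequence[float], b: float, c: float) -> float:
    """Closed-form least-squares amplitude a for fixed b and c.

    `targets` are hypothetical corrections c' - c_{A,B} at each distance.
    Minimizing sum_k (a * g_k - t_k)^2 gives a = sum(g t) / sum(g^2).
    """
    g = [math.exp(-b * (r - c) ** 2) for r in r_points]
    return sum(gi * t for gi, t in zip(g, targets)) / sum(gi * gi for gi in g)
```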

Protocol 2: Multi-Objective Optimization for Enzymatic Reaction Barriers

Objective: To refine Hamiltonian parameters for accurate prediction of potential and free energy surfaces in enzymatic QM/MM simulations [11] [21].

Methodology:

- Generate Reference Data: For a target enzymatic reaction (e.g., hydride transfer in DHFR), compute the intrinsic reaction coordinate (IRC) and associated potential energy surface, atomic charges, and gradients using a high-level DFT method [11] [21].

- Define Objective Functions: Set up multiple objective functions to be minimized simultaneously:

- AUE between semiempirical and DFT potential energies.

- AUE between atomic charges.

- AUE between energy gradients.

- Run Multi-Objective Evolutionary Optimization: Employ an evolutionary algorithm to search the parameter space. The algorithm generates populations of parameter sets, evaluates them against all objectives, and uses selection, crossover, and mutation to evolve improved parameter sets over generations [11].

- Validation via Free Energy: Use the optimized parameters in QM/MM simulations to calculate the Minimum Free Energy Path (MFEP) and the activation free energy barrier. Compare this to the DFT-derived barrier to confirm improvement [11] [21].
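The evolutionary step can be sketched with the ingredients named above: a population, blend crossover, Gaussian mutation, and selection on several objectives at once. The sketch below is a minimal illustration, not the algorithm of [11]. It ranks the merged parent and child population by Pareto fronts (non-dominated sorting) and breaks ties within a front by the summed objectives. A full method such as NSGA-II would use crowding distance instead. The two toy objectives stand in for, for example, energy AUE and charge AUE against DFT. All names and settings are illustrative.

```python
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Objective = Callable[[Sequence[float]], float]


def dominates(fa: Sequence[float], fb: Sequence[float]) -> bool:
    """True if fa is no worse than fb in every objective and better in at least one."""
    return all(x <= y for x, y in zip(fa, fb)) and any(x < y for x, y in zip(fa, fb))


def pareto_ranks(scores: Sequence[Tuple[float, ...]]) -> List[int]:
    """Non-dominated sorting: rank 0 is the Pareto front, rank 1 the next front, etc."""
    ranks = [0] * len(scores)
    remaining = set(range(len(scores)))
    rank = 0
    while remaining:
        front = {
            i for i in remaining
            if not any(dominates(scores[j], scores[i]) for j in remaining if j != i)
        }
        for i in front:
            ranks[i] = rank
        remaining -= front
        rank += 1
    return ranks


def evolve(
    objectives: Sequence[Objective],
    x0: Sequence[float],
    sigma: float = 0.3,
    pop_size: int = 16,
    generations: int = 50,
    seed: int = 0,
) -> List[Vector]:
    rng = random.Random(seed)

    def evaluate(x: Sequence[float]) -> Tuple[float, ...]:
        return tuple(f(x) for f in objectives)

    pop = [[xi + rng.gauss(0.0, sigma) for xi in x0] for _ in range(pop_size)]
    for _ in range(generations):
        children = []
        for _ in range(pop_size):
            p1, p2 = rng.sample(pop, 2)
            w = rng.random()
            # Blend crossover followed by Gaussian mutation.
            children.append([w * a + (1 - w) * b + rng.gauss(0.0, sigma) for a, b in zip(p1, p2)])
        merged = pop + children
        scores = [evaluate(x) for x in merged]
        ranks = pareto_ranks(scores)
        # Elitist selection: best Pareto rank first, ties broken by summed objectives.
        order = sorted(range(len(merged)), key=lambda i: (ranks[i], sum(scores[i])))
        pop = [merged[i] for i in order[:pop_size]]
    return pop


if __name__ == "__main__":
    f1 = lambda x: (x[0] - 1.0) ** 2 + x[1] ** 2  # stand-in for, e.g., energy AUE
    f2 = lambda x: (x[0] + 1.0) ** 2 + x[1] ** 2  # stand-in for, e.g., charge AUE
    for x in evolve([f1, f2], x0=[2.0, 1.5])[:3]:
        print([round(v, 3) for v in x], round(f1(x), 3), round(f2(x), 3))
```

In real use each objective evaluation is a full semi-empirical scan along the reference IRC, so `pop_size` and `generations` are typically limited by compute rather than by convergence.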

Quantitative Data from Literature

Table 1: Reported AUE Reductions from Parameter Optimization in Semiempirical Methods

| Method / Version | Property | System Type | AUE Before Optimization | AUE After Optimization | % Reduction | Citation |
|---|---|---|---|---|---|---|
| PM7 vs PM6 | Heat of Formation (∆Hf) | Simple Gas-Phase Organics | (Baseline PM6) | ~10% lower than PM6 | ~10% | [19] |
| PM7 vs PM6 | Bond Lengths | Simple Gas-Phase Organics | (Baseline PM6) | ~5% lower than PM6 | ~5% | [19] |
| PM7 vs PM6 | Heat of Formation (∆Hf) | Organic Solids | (Baseline PM6) | 60% lower than PM6 | 60% | [19] |
| PM7 vs PM6 | Geometry (Overall) | Organic Solids | (Baseline PM6) | 33.3% lower than PM6 | 33.3% | [19] |
| PM7-TS vs PM7 | Reaction Barrier Heights | Simple Organic Reactions | 10.8 kcal/mol | 3.8 kcal/mol | ~65% | [19] |

Workflow Visualization

Diagram Title: High-Level Parameter Optimization Workflow

Diagram Title: Systematic Troubleshooting for High AUE

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Software and Computational Tools for Parameter Optimization

| Tool Name | Type | Primary Function in Optimization | Reference |
|---|---|---|---|
| MOPAC | Software | The classic platform for developing and using MNDO-family semiempirical methods (e.g., PM6, PM7). Used for calculating heats of formation and equilibrium geometries. | [23] |
| xTB (with GFN families) | Software | Implementation of the modern GFN-xTB methods. Heavily used for conformational sampling and as a base for re-parameterization. | [23] |
| DFTB+ | Software | Implementation of the Density Functional Tight-Binding (DFTB) method. Used as a low-cost approximation to DFT. | [23] |
| Multi-Objective Evolutionary Algorithm | Algorithm | An optimization strategy that evolves a population of parameter sets to simultaneously improve multiple, competing objectives (e.g., energy, charge, and gradient accuracy). | [11] [21] |
| Bayesian Optimization (BO) | Algorithm | An efficient global optimization algorithm that uses a surrogate model to guide the search for optimal hyperparameters, ideal for expensive objective functions. | [22] [24] |
| Adaptive String Method (ASM) | Method | A technique used in QM/MM simulations to calculate the Minimum Free Energy Path (MFEP), crucial for validating optimized parameters against reaction barriers. | [11] [21] |

Frequently Asked Questions (FAQs)

Q1: My semi-empirical calculations are not converging or are producing unrealistic energies for a large protein-ligand system. What are the first steps I should take?

A1: Begin by systematically checking the following, as inaccurate parameters or improper system setup are common causes:

- Parameter Transferability: Verify that the Hamiltonian parameters you are using were fitted for all the atom types present in your system, especially any metal ions or unusual functional groups. Using parameters outside their intended chemical space is a primary source of error [25] [26].

- Coordinate and Bonding Check: Manually inspect the input crystal structure, paying close attention to the protonation states of residues, the assignment of bond orders in the ligand, and the overall geometry. Use a tool like Monster to validate the atomic coordinate file and identify any improbable non-covalent interactions [27].

- Control Calculation: Perform a single-point energy calculation on a smaller, well-defined fragment of your system (e.g., the active site with the ligand and key residues). If this fails, the issue is likely with the specific parameters or the chemical setup of that fragment.

Q2: How can I use experimental crystal structure data to validate and improve the description of non-covalent interactions in my semi-empirical method?

A2: Experimental crystal structures are a critical benchmark for evaluating theoretical models. You can:

- Quantify Interactions: Use computational tools like QTAIM (Quantum Theory of Atoms in Molecules) and IGMH (Independent Gradient Model based on Hirshfeld partitioning) on your experimental crystal structure. These methods can identify and quantify key non-covalent interactions such as C–H···F contacts and C–H···π interactions [28].

- Compare with Calculations: Run your semi-empirical method on the geometry from the crystal structure. Compare the non-covalent interaction patterns and energies it predicts against the QTAIM/IGMH analysis. Systematic discrepancies highlight areas where the Hamiltonian parameters may need refinement to better capture the true physical interactions [28] [29].

- Leverage Hirshfeld Surface Analysis: Generate Hirshfeld surfaces for your crystal structure. This provides a visual and quantitative map of all intermolecular interactions, which can be directly compared to the electron density properties predicted by your model [29].

Q3: What does it mean that a machine learning model can "dynamically parameterize" a semi-empirical Hamiltonian, and how does this help with troubleshooting?

A3: Traditional semi-empirical methods use a single, static set of parameters for each atom type. A dynamically parameterized Hamiltonian, such as in the HIPNN+SEQM (Hierarchical Interacting Particle Neural Network + Semi-Empirical Quantum Mechanics) approach, uses a neural network to predict Hamiltonian parameters that change based on the atom's local chemical environment [25].

- This helps troubleshooting by:

- Improving Transferability: The model becomes more accurate when applied to large or new chemical systems not present in the training data, reducing a major source of error [25].

- Providing Interpretability: The neural network's adjustments to parameters like "orbital energy" can be interpreted through the lens of established chemical concepts like atomic hybridization and bonding, giving you insight into why a particular calculation might be failing [25].
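The difference between static and dynamic parameters can be made concrete with a toy model. In the sketch below, a simple linear function of a smooth coordination number plays the role of the neural network, so that each atom's orbital energy \(U_{ss}\) shifts with its local environment. Every number here is hypothetical and chosen only for illustration: the base values, slopes, reference distance, and counting-function steepness. None of them come from HIPNN+SEQM or any published parameter set, and the real model learns a far richer mapping.

```python
import math
from typing import List, Sequence, Tuple

Atom = Tuple[str, Tuple[float, float, float]]  # (element, xyz in Angstrom)

# Hypothetical static values and environment sensitivities (illustrative only).
BASE_U_SS = {"H": -11.0, "C": -52.0, "O": -97.0}  # eV
SLOPE = {"H": 0.5, "C": 1.2, "O": 2.0}  # eV per unit of coordination number
R_REF = 1.6  # Angstrom, hypothetical reference contact distance


def coordination_numbers(atoms: Sequence[Atom], k: float = 16.0) -> List[float]:
    """Smooth neighbour count: each pair adds 1 / (1 + exp(-k * (R_REF / r - 1)))."""
    cn = [0.0] * len(atoms)
    for i, (_, xi) in enumerate(atoms):
        for j, (_, xj) in enumerate(atoms):
            if i != j:
                r = math.dist(xi, xj)
                cn[i] += 1.0 / (1.0 + math.exp(-k * (R_REF / r - 1.0)))
    return cn


def environment_parameters(atoms: Sequence[Atom]) -> List[float]:
    """Per-atom U_ss = static value + environment-dependent correction."""
    cn = coordination_numbers(atoms)
    return [BASE_U_SS[el] + SLOPE[el] * c for (el, _), c in zip(atoms, cn)]
```

An isolated atom recovers its static value, while a bonded atom's parameter shifts in proportion to its neighbor count. Inspecting such shifts per atom is the kind of interpretability described in A3.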

Troubleshooting Guides

Guide 1: Systematic Troubleshooting of Failed Energy Calculations

Follow this logical pathway to diagnose and resolve common calculation failures.

Guide 2: Resolving Issues with Non-Covalent Interaction Predictions

If your model poorly reproduces experimental observables linked to non-covalent forces (e.g., binding affinities, conformational stability), follow this guide.

Step 1: Benchmark Against Advanced Reference Data

- Action: Perform a QTAIM or IGMH analysis on the high-resolution experimental crystal structure relevant to your system. These methods quantify the strength and nature of non-covalent interactions [28].

- Rationale: This provides a "ground truth" reference against which to compare your semi-empirical method's predictions.

Step 2: Identify the Specific Discrepancy

- Action: Compare the key interactions identified by QTAIM/IGMH (e.g., strong C–H···F contacts, diffuse C–H···π networks) with those from your calculation. Note which interactions are missing, too weak, or too strong in the theoretical model [28] [29].
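Step 2 can be turned into a reproducible table by comparing per-contact energies from the benchmark analysis with those from your model. The sketch below assumes you already have such per-contact estimates in kcal/mol, with negative values meaning stabilizing. It assumes that contacts are keyed by hypothetical string labels and that the reference energies are nonzero. The 20% tolerance is an assumption. Contacts the model finds but the benchmark does not are flagged as spurious.

```python
from typing import Dict


def classify_contacts(
    reference: Dict[str, float],
    model: Dict[str, float],
    rel_tol: float = 0.2,
) -> Dict[str, str]:
    """Label each contact as ok / too weak / too strong / missing / spurious.

    Energies are stabilization energies in kcal/mol (negative = attractive);
    reference energies are assumed to be nonzero.
    """
    report: Dict[str, str] = {}
    for key, e_ref in reference.items():
        if key not in model:
            report[key] = "missing"
            continue
        ratio = model[key] / e_ref  # > 1 means the model binds more strongly
        if ratio < 1.0 - rel_tol:
            report[key] = "too weak"
        elif ratio > 1.0 + rel_tol:
            report[key] = "too strong"
        else:
            report[key] = "ok"
    for key in model:
        if key not in reference:
            report[key] = "spurious"
    return report
```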

Step 3: Refine Hamiltonian Parameters

- Action: The discrepancies identified in Step 2 serve as direct targets for Hamiltonian refinement.

- Methodology: Employ a parameterization strategy that uses simulation techniques to systematically improve how the Hamiltonian reproduces energy components, particularly those responsible for non-covalent interactions [26]. The goal is to optimize parameters so the model's description of electron density aligns with the benchmark QTAIM/IGMH data.

Data and Protocol Tables

Table 1: Computational Tools for Analyzing Crystal Structures and Interactions

| Tool Name | Primary Function | Application in Troubleshooting | Key Reference / Source |
|---|---|---|---|
| QTAIM (Quantum Theory of Atoms in Molecules) | Quantifies bond paths and properties at bond critical points to characterize interactions. | Validates the strength and type of non-covalent interactions predicted by a theoretical model against a crystal structure benchmark. | [28] [29] |
| IGMH (Independent Gradient Model based on Hirshfeld) | Visualizes and quantifies non-covalent interactions in real space; highlights directionality. | Identifies key stabilizing contacts (e.g., C–H···F) and contrasts diffuse vs. directional interactions in a system. | [28] |
| Monster | Infers and classifies non-bonding interactions in macromolecular structures from coordinate files. | Provides an initial, rapid validation of input coordinates and a checklist of expected interactions to be modeled correctly. | [27] |
| HIPNN+SEQM | A machine learning model that dynamically generates environment-dependent Hamiltonian parameters. | Improves accuracy and transferability for large systems; parameter changes are interpretable based on chemical environment. | [25] |

| Item | Function / Relevance | Brief Explanation / Troubleshooting Tip |
|---|---|---|
| High-Resolution Crystal Structure | Experimental reference data. | Serves as the fundamental benchmark for validating and refining computational models of non-covalent interactions. |
| Semi-Empirical Parameter Set | Defines the Hamiltonian for the calculation. | Ensure the set is designed for your specific atom types. Dynamic parameterization can overcome transferability issues [25]. |
| Protocol Repositories (e.g., Bio-protocol, Current Protocols) | Source of reliable experimental and computational methods. | Provides established troubleshooting guides and expected results for standard techniques, helping to isolate user error [30]. |
| Validated Atomic Coordinate File (ACF) | Input for computational analysis. | Use tools to check for proper protonation, geometry, and absence of clashes. A faulty ACF is a common source of failure [27]. |

Experimental Protocol: Utilizing QTAIM/IGMH to Benchmark a Semi-Empirical Hamiltonian

Objective: To evaluate and refine the performance of a semi-empirical Hamiltonian by comparing its prediction of non-covalent interactions against a benchmark analysis of an experimental crystal structure.

Methodology:

Select and Prepare the Benchmark System:

- Obtain a high-resolution crystal structure (e.g., from the Protein Data Bank or Cambridge Structural Database) relevant to your research, such as a protein-ligand complex or organic cocrystal.

- Prepare the structure for analysis by adding hydrogen atoms using a program like WHAT IF [27] to ensure correct protonation states.

Perform Reference Analysis on the Crystal Structure:

- Subject the prepared crystal structure to a QTAIM analysis to calculate electron density-derived properties at bond critical points.

- In parallel, perform an IGMH analysis on the same structure to visualize and quantify the non-covalent interaction networks, noting the strength and spatial directionality of key contacts like C–H···F or C–H···π interactions [28].

Run the Semi-Empirical Calculation:

- Using the exact same geometry from the crystal structure, perform a single-point energy calculation with your semi-empirical method of choice.

Compare and Analyze Discrepancies:

- Compare the non-covalent interactions identified by the semi-empirical method's electron density with the QTAIM/IGMH benchmark.

- Tabulate the differences in interaction energy, presence/absence of specific contacts, and overall stabilization energy. This table becomes the quantitative basis for refinement.

Refine Hamiltonian Parameters:

- Use a systematic parameterization procedure, such as simulation techniques that minimize the difference between the semi-empirical and benchmark energy components [26].

- Focus the refinement on improving the simulation of electron repulsion integrals, which are fundamental to representing total electronic and core-core repulsion energies [26].

- Iterate until the semi-empirical model's description of non-covalent interactions aligns closely with the QTAIM/IGMH benchmark.

In the realm of computational chemistry and drug development, semi-empirical quantum mechanical methods provide an essential balance between computational cost and accuracy. Software packages like MOPAC, xtb, and DFTB+ leverage carefully parameterized Hamiltonian models to enable the study of large molecular systems, including proteins, nanomaterials, and complex solvated environments, which would be prohibitively expensive with purely ab initio methods. These built-in parameter sets are not static; they represent the culmination of decades of research, fitted to both experimental data and high-level theoretical references, covering properties such as heats of formation, geometric data, ionization potentials, and dipole moments [31] [32]. The central thesis of modern research in this field is the ongoing refinement of these semi-empirical Hamiltonian parameters, aiming to bridge the accuracy gap with more computationally intensive methods without sacrificing the interpretability and speed that make semi-empirical approaches so valuable.

This technical support center is designed to assist researchers, scientists, and drug development professionals in navigating the practical challenges of using these powerful tools. The following sections provide troubleshooting guides, FAQs, and detailed protocols to help you effectively leverage built-in parameters and explore the frontier of parameter refinement in your research.

Comparative Analysis of Core Software Packages

The table below summarizes the core features and parameter sets of the three primary software packages discussed in this guide.

Table 1: Comparison of MOPAC, xtb, and DFTB+ Software Features

| Feature | MOPAC | xtb | DFTB+ |
|---|---|---|---|
| Primary Method | NDDO-based Semi-empirical [32] | Extended Tight-Binding (GFNn-xTB) [33] | Density Functional Tight-Binding (DFTB) [34] |
| Built-in Parameter Sets | AM1, PM3, PM6, PM7 [32] [35] | GFN1-xTB, GFN2-xTB [33] | Non-SCC, SCC, DFTB3 [36] |
| Key Specialization | Biomolecules & Thermochemistry [31] | General-purpose, including non-covalent interactions [33] | Versatile, including materials and periodic systems [34] [36] |
| Solvation Models | COSMO [31] [32] | COSMO, CPCM-X [33] | Several implicit models [36] |
| Periodic Systems | Basic support (Gamma point) [32] | Supported via DFTB+ & others [33] | Full support with arbitrary k-point sampling [36] |
| Unique Tools | MOZYME (linear-scaling for enzymes) [31] | GFN-FF (force field), DIPRO [33] | Electron transport (NEGF), EMD, CI finder [34] [36] |
| License | Apache 2.0 (Open Source) [32] | LGPL (Open Source) [34] | LGPL (Open Source) [34] |

The Scientist's Toolkit: Essential Research Reagents and Software Solutions

In computational research, "reagents" refer to the fundamental software components and parameter sets that form the basis of your experiments.

Table 2: Key Research Reagent Solutions in Semi-Empirical Quantum Chemistry

| Item | Function & Explanation |
|---|---|
| Semi-Empirical Parameter Sets (e.g., PM7, GFN2-xTB) | These are the core "reagents." They are pre-fitted collections of atomic parameters (e.g., orbital energies, Slater orbital exponents) that define the Hamiltonian. They replace expensive integrals with approximations, granting speed while maintaining quantum mechanical treatment [31] [35] [33]. |
| Implicit Solvation Models (e.g., COSMO, CPCM) | These models act as a "reagent" for simulating solution-phase environments. They replace explicit solvent molecules with a dielectric continuum, dramatically reducing computation cost for studying solvation effects, pKa, and redox potentials [31] [33]. |
| Repulsive Potentials (DFTB, xTB) | In DFTB and xTB, these are pairwise functions that account for internuclear repulsion and corrections for the incomplete electronic Hamiltonian. They are critical for obtaining accurate geometries and energies and are a primary target for parameterization [16]. |
| Dispersion Correction Schemes (e.g., D3, D4) | These are "add-on reagents" that account for long-range van der Waals interactions, which are often poorly described in base semi-empirical methods. They are essential for modeling non-covalent interactions in drug binding and supramolecular chemistry [36]. |
| Linear-Scaling Solvers (e.g., MOZYME) | This algorithmic "reagent" enables the study of very large systems (e.g., proteins with 15,000 atoms) by reducing the computational scaling of the self-consistent field procedure. It is crucial for applying semi-empirical methods to biological systems [31] [32]. |

Troubleshooting Common Software and Parameterization Issues

Frequently Asked Questions (FAQs)

Q1: My geometry optimization of a large protein is failing or running extremely slowly in MOPAC. What should I check? A: This is a common issue. First, ensure you are using the MOZYME keyword, which enables the linear-scaling algorithm designed specifically for large systems like proteins [31] [32]. Second, verify that your input structure has a reasonable initial geometry and all bonds are correctly assigned. MOZYME requires the identification of a Lewis structure to initialize the calculation, which can fail for systems with unusual bonding or unphysical initial coordinates [31].
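A small Python helper can prepare a MOZYME input and catch the most common geometry problem before MOPAC runs. This is a sketch under stated assumptions: PM7 as the Hamiltonian, Cartesian coordinates in Å, a net charge you supply (MOZYME's Lewis-structure step must be consistent with it), and an illustrative 0.6 Å threshold for flagging atoms that are implausibly close. The function names are hypothetical.

```python
import math
from itertools import combinations


def close_contacts(atoms, threshold=0.6):
    """Return index pairs (i, j) of atoms closer than `threshold` angstrom.

    `atoms` is a list of (symbol, x, y, z) tuples. Cost is quadratic in atoms.
    """
    bad = []
    for (i, a), (j, b) in combinations(enumerate(atoms), 2):
        if math.dist(a[1:], b[1:]) < threshold:
            bad.append((i, j))
    return bad


def mopac_mozyme_input(atoms, charge, title="MOZYME geometry optimization"):
    """Build MOPAC input text: keyword line, title line, comment line, geometry.

    The '1' after each Cartesian coordinate flags it for optimization.
    """
    lines = [f"PM7 MOZYME CHARGE={charge}", title, ""]
    for symbol, x, y, z in atoms:
        lines.append(f"{symbol:<2} {x:12.6f} 1 {y:12.6f} 1 {z:12.6f} 1")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    water = [("O", 0.000, 0.000, 0.000),
             ("H", 0.957, 0.000, 0.000),
             ("H", -0.240, 0.927, 0.000)]
    problems = close_contacts(water)
    if problems:
        print("Check geometry before running MOPAC:", problems)
    else:
        print(mopac_mozyme_input(water, charge=0))
```

The pairwise check is quadratic in the number of atoms, so for a system of around 15,000 atoms it is slow in pure Python. A cell list or KD-tree is the usual remedy.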

Q2: When calculating vibrational frequencies with xtb, I get unrealistic low-frequency modes. What could be the cause? A: Imaginary frequencies (conventionally printed as negative values) and spuriously low real frequencies usually stem from two sources. First, the geometry used for the frequency calculation may not be fully optimized to a true minimum on the potential energy surface; re-run the geometry optimization with tighter convergence criteria (e.g., --gfn 2 --opt extreme). Second, ensure you are using an appropriate Hamiltonian for your system; for example, GFN2-xTB generally provides more accurate geometries and frequencies than GFN1-xTB for organic molecules [33].
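Once the frequencies have been extracted from the xtb output (the parsing step is not shown), a short check separates the two failure modes described above. The thresholds are illustrative assumptions, not xtb defaults, and the function names are hypothetical.

```python
def classify_modes(freqs_cm1, zero_tol=1.0, soft_cutoff=50.0):
    """Group harmonic frequencies (cm^-1) for a quick diagnostic.

    Imaginary modes are conventionally printed as negative numbers. Values
    within +/- zero_tol are treated as projected translations/rotations.
    Both thresholds are illustrative assumptions, not xtb defaults.
    """
    imaginary = [f for f in freqs_cm1 if f < -zero_tol]
    soft = [f for f in freqs_cm1 if zero_tol < f < soft_cutoff]
    return {"imaginary": imaginary, "soft": soft, "is_minimum": not imaginary}


def suggest_action(report):
    """Map the diagnostic to the two remedies discussed in the FAQ."""
    if report["imaginary"]:
        return "re-optimize with tighter criteria (e.g. --opt extreme) and recompute"
    if report["soft"]:
        # Soft modes can be genuine (e.g. methyl torsions); inspect them first
        return "inspect soft modes; if unphysical, reconsider the Hamiltonian"
    return "no obvious problem"


if __name__ == "__main__":
    report = classify_modes([0.0] * 6 + [-120.5, 12.0, 300.0])
    print(report, "->", suggest_action(report))
```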

Q3: Can I use DFTB+ to simulate a chemical reaction in an explicit solvent environment? A: Although DFTB+ on its own primarily uses implicit solvation models [36], it supports QM/MM (Quantum Mechanics/Molecular Mechanics) coupling. This lets you treat the reacting part of the system with DFTB (QM) while embedding it in a shell of explicit solvent molecules modeled with a force field (MM). The setup is more demanding, but unlike an implicit continuum it can describe specific solute-solvent interactions, such as hydrogen bonds to the reacting species [36].

Q4: My calculation involving a lanthanide ion fails in MOPAC. Are these elements supported? A: Yes, but with a specific approach. For lanthanides from Ce to Yb, MOPAC represents them as "sparkles," which are parameterized ions without electrons, designed to mimic the electrostatic and steric influence of the ion [32]. You must use the appropriate sparkle model, such as Sparkle/PM6, for these elements. Note that the electronic structure of the lanthanide itself is not calculated [32].

Q5: What is the difference between the "static" parameters in standard DFTB and the "dynamic" parameters in machine-learning approaches? A: Static parameters in standard DFTB (and other SEQM methods) are fixed values, optimized to reproduce reference data for a wide range of molecules [16]. They are transferable but can lack accuracy for specific systems. Dynamic parameters, as used in emerging machine-learning approaches like HIPNN+SEQM, are not fixed. Instead, they are predicted on the fly by a neural network that considers the local chemical environment of each atom [25]. This allows the Hamiltonian to adapt to specific bonding situations (e.g., changes in hybridization), often leading to significantly improved accuracy while retaining the physical interpretability of the model. DFTBML uses machine learning differently: it refits the DFTB functions against high-level reference data but exports them as standard Slater-Koster files, so its parameters are static once training is complete [16].

Workflow for Troubleshooting Calculations

The following diagram outlines a logical pathway for diagnosing and resolving common issues encountered during semi-empirical calculations.

Diagram 1: Troubleshooting Calculation Failures

Experimental Protocols for Parameter Refinement Research

A key area of modern research is the refinement of semi-empirical parameters to improve accuracy and transferability. The following protocol details a methodology for refining Hamiltonian parameters using machine learning, as demonstrated in recent literature [25] [16].

Protocol: Machine-Learning Assisted Refinement of DFTB Parameters (DFTBML)

Objective: To train a physics-based DFTB model to reproduce high-level ab initio data (e.g., CCSD(T)/CBS or DFT) for molecular energies and forces, thereby creating a more accurate yet interpretable model.

Materials (Software):

- DFTB+ software package (as a library or for final validation) [34] [16].

- Python (v3.8+) with PyTorch for building and training the model [16].

- Training Dataset: A curated set of molecular configurations with reference energies and forces. The ANI-1CCX dataset is a suitable example for organic molecules (C, H, N, O) [16].

Methodology:

Data Preparation and Preprocessing:

- Divide the dataset into training, validation, and test sets, ensuring no molecular formulas overlap between sets to test generalizability.

- Analyze the distribution of interatomic distances in the training data to define the appropriate range for the spline functions used in the model.
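Both preprocessing steps can be sketched in plain Python. The sketch assumes each configuration is a dict with hypothetical keys `formula`, `symbols` and `coords` (Å); the split fractions and random seed are arbitrary choices. Whole formula groups are assigned to a single split, so no molecular formula appears in two sets. The element-pair distance ranges give the interval over which the splines need to be defined.

```python
import math
import random
from collections import defaultdict
from itertools import combinations


def split_by_formula(configs, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole formula groups to train/val/test so no formula spans two sets."""
    groups = defaultdict(list)
    for cfg in configs:
        groups[cfg["formula"]].append(cfg)
    formulas = sorted(groups)                 # deterministic order before shuffling
    random.Random(seed).shuffle(formulas)
    n = len(formulas)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    parts = (formulas[:n_train],
             formulas[n_train:n_train + n_val],
             formulas[n_train + n_val:])
    return [[cfg for f in part for cfg in groups[f]] for part in parts]


def pair_distance_ranges(configs):
    """Min/max interatomic distance per element pair, e.g. ('C', 'H')."""
    ranges = {}
    for cfg in configs:
        atoms = zip(cfg["symbols"], cfg["coords"])
        for (a, pa), (b, pb) in combinations(atoms, 2):
            key = tuple(sorted((a, b)))
            r = math.dist(pa, pb)
            lo, hi = ranges.get(key, (math.inf, -math.inf))
            ranges[key] = (min(lo, r), max(hi, r))
    return ranges
```

Note that the fractions apply to formulas, not configurations, so the number of configurations per split can deviate from 80/10/10 when some formulas have many more conformers than others.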

Model Definition (DFTBML Form):

- The model retains the standard DFTB Hamiltonian form but replaces fixed parameters with flexible functions.

- Atomic Orbital Energies: Train these as constants.

- Electronic Functions (H, S): Represent these one-dimensional functions of interatomic distance using fifth-order splines with a large number (e.g., 100) of knots for flexibility.

- Repulsive Potential (R): Represent this using third-order splines.

- Apply strong regularization and boundary conditions (e.g., forcing functions and derivatives to zero at long range) to prevent non-physical behavior [16].
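The regularization and boundary conditions can be expressed as penalty terms added to the training loss. The sketch below is a generic illustration. It assumes the function is stored as values on a uniform knot grid. The squared second-difference (curvature) penalty, the soft long-range boundary penalty, and the weights are all assumptions; they are not necessarily the exact terms used in DFTBML [16], which may enforce some conditions as hard constraints instead.

```python
def curvature_penalty(values, spacing):
    """Sum of squared finite-difference second derivatives on a uniform grid."""
    return sum(((values[i - 1] - 2.0 * values[i] + values[i + 1]) / spacing**2) ** 2
               for i in range(1, len(values) - 1))


def boundary_penalty(values, spacing, n_tail=3):
    """Penalize nonzero values and slopes over the last n_tail knots,
    pushing the function and its derivative toward zero at long range."""
    tail = values[-n_tail:]
    slopes = [(tail[i + 1] - tail[i]) / spacing for i in range(len(tail) - 1)]
    return sum(v * v for v in tail) + sum(s * s for s in slopes)


def regularized_loss(data_loss, values, spacing, w_curv=1e-3, w_bound=1.0):
    """Data loss plus weighted smoothness and boundary terms (weights assumed)."""
    return (data_loss
            + w_curv * curvature_penalty(values, spacing)
            + w_bound * boundary_penalty(values, spacing))
```

In a training loop, the same expressions would be written with tensor operations so that gradients flow back to the spline coefficients.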

Model Training:

- The optimization objective is to minimize the loss function, typically a weighted sum of L2 losses on the total molecular energy and atomic forces.

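$$
L = \frac{1}{N_{\mathrm{prop}}} \sum \left( E_{\mathrm{pred}} - E_{\mathrm{ref}} \right)^2 + w_{\mathrm{force}} \, \frac{1}{N_{\mathrm{prop}}} \sum \left| \mathbf{F}_{\mathrm{pred}} - \mathbf{F}_{\mathrm{ref}} \right|^2
$$

- Use a standard optimizer like Adam or L-BFGS. Training is considered complete when the error on the validation set no longer decreases.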
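The loss above and the stopping rule can be written as follows. This is a minimal sketch. It assumes the energy term is averaged over molecules and the force term over atoms (squared norm of each force-vector error). The patience-based `EarlyStopping` class is a common convention, not part of the cited protocol.

```python
def energy_force_loss(e_pred, e_ref, f_pred, f_ref, w_force=1.0):
    """Weighted sum of mean squared energy error and mean squared force error.

    Forces are sequences of (fx, fy, fz) per atom; each atom contributes
    |F_pred - F_ref|^2.
    """
    e_term = sum((p - r) ** 2 for p, r in zip(e_pred, e_ref)) / len(e_ref)
    f_term = sum(sum((pc - rc) ** 2 for pc, rc in zip(fp, fr))
                 for fp, fr in zip(f_pred, f_ref)) / len(f_ref)
    return e_term + w_force * f_term


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

Keep the parameters from the epoch with the best validation loss rather than those from the epoch at which training stopped.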

Model Validation and Deployment:

- Validate the final trained model on the held-out test set of molecules.

- The output of the training process is a set of Slater-Koster (SKF) files. These can be used directly in the standard DFTB+ software to perform calculations on new systems, ensuring the model's practicality and transferability [16].
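To use trained SKF files in DFTB+, the `SlaterKosterFiles` block of the input must point at them. The sketch below generates a minimal `dftb_in.hsd` under these assumptions: files named like `C-H.skf` in a hypothetical `./dftbml_skf/` directory, a geometry file `geo.gen`, and an SCC calculation. The keywords follow the DFTB+ manual as understood here, so verify them against the manual for your DFTB+ version.

```python
# Highest angular momentum shell per element (minimal valence basis assumed)
MAX_ANG_MOM = {"H": "s", "C": "p", "N": "p", "O": "p"}


def dftb_hsd(elements, skf_dir="./dftbml_skf/", geometry="geo.gen"):
    """Return dftb_in.hsd text that reads <skf_dir><A>-<B>.skf files."""
    ang = "\n".join(f'    {el} = "{MAX_ANG_MOM[el]}"' for el in elements)
    return f"""Geometry = GenFormat {{
  <<< "{geometry}"
}}

Hamiltonian = DFTB {{
  SCC = Yes
  SlaterKosterFiles = Type2FileNames {{
    Prefix = "{skf_dir}"
    Separator = "-"
    Suffix = ".skf"
  }}
  MaxAngularMomentum {{
{ang}
  }}
}}
"""


if __name__ == "__main__":
    print(dftb_hsd(["C", "H", "N", "O"]))
```

Write the returned text to `dftb_in.hsd` in the working directory and run `dftb+` there. Because the trained model is just a set of SKF files, no other change to a standard DFTB+ workflow is needed.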

The following diagram visualizes this integrated workflow, showing how machine learning enhances the traditional parameterization process.

Diagram 2: ML-Driven Hamiltonian Refinement Workflow

Advanced Topics: Integrating Machine Learning with Semi-Empirical Frameworks

The field is rapidly evolving with the integration of machine learning to create more powerful and intelligent computational tools. Two prominent approaches are:

Dynamically Responsive Hamiltonians (e.g., HIPNN+SEQM): This approach uses a deep neural network (Hierarchical Interacting Particle Neural Network) to predict the parameters of a semi-empirical Hamiltonian (like PM3) dynamically based on the local chemical environment of each atom [25]. The HIPNN acts as an encoder, learning from atomic positions and producing parameters such as orbital energies and core-core repulsion terms. These dynamic parameters are then fed into a semi-empirical engine (e.g., PYSEQM) which performs a self-consistent field calculation to obtain the molecular properties. This method retains the interpretability of the SEQM framework (as the parameters have physical meaning) while achieving high accuracy and transferability, even to much larger systems than those in the training set [25].
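The core idea can be illustrated with a toy model: a Hamiltonian parameter recomputed from each atom's environment instead of read from a table. This sketch makes several assumptions. It uses a DFT-D3-style coordination number as the only descriptor, and a hand-set linear correction stands in for the neural network. The parameter values are hypothetical, and nothing here reproduces HIPNN+SEQM or PYSEQM.

```python
import math

# Pyykkoe single-bond covalent radii in angstrom (as used by DFT-D3)
COV_RADII = {"H": 0.32, "C": 0.75, "N": 0.71, "O": 0.63}

STATIC_U = {"H": -11.4, "C": -50.0}  # hypothetical static orbital energies, eV
SLOPE = {"H": -0.3, "C": 0.8}        # hypothetical "learned" response to CN, eV


def coordination_numbers(symbols, coords, k1=16.0, k2=4.0 / 3.0):
    """Smooth neighbor count per atom using the DFT-D3 counting function."""
    cn = [0.0] * len(symbols)
    for i in range(len(symbols)):
        for j in range(len(symbols)):
            if i == j:
                continue
            r = math.dist(coords[i], coords[j])
            r_cov = k2 * (COV_RADII[symbols[i]] + COV_RADII[symbols[j]])
            cn[i] += 1.0 / (1.0 + math.exp(-k1 * (r_cov / r - 1.0)))
    return cn


def dynamic_orbital_energies(symbols, coords):
    """Per-atom parameter = static value + environment-dependent correction.

    In HIPNN+SEQM a neural network plays the role of this linear correction.
    """
    cn = coordination_numbers(symbols, coords)
    return [STATIC_U[s] + SLOPE[s] * c for s, c in zip(symbols, cn)]


if __name__ == "__main__":
    a = 0.629  # angstrom; gives C-H = 1.09 angstrom in a tetrahedral CH4
    ch4 = [(0.0, 0.0, 0.0), (a, a, a), (-a, -a, a), (-a, a, -a), (a, -a, -a)]
    print(dynamic_orbital_energies(["C", "H", "H", "H", "H"], ch4))
```

An isolated carbon atom returns its static value, while the carbon in methane receives a shifted value. That shift is the environment dependence a static parameter set cannot express. In a real model, these per-atom parameters would then enter the SCF calculation.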

Physics-Informed Machine Learning (e.g., DFTBML): This method, detailed in the protocol above, keeps the functional form of the DFTB Hamiltonian rigid but uses machine learning to optimize its one-dimensional function parameters (Slater-Koster files) against high-quality reference data [16]. It sacrifices some of the extreme flexibility of a dynamic Hamiltonian for a guaranteed physics-based model form. The result is a model that is inherently interpretable, can be distributed as standard SKF files, and requires less training data than typical black-box machine learning models.

These approaches represent the current frontier of research on refining Hamiltonian parameters, moving beyond static parameter sets towards models that are both accurate and physically grounded.

Troubleshooting Guide: Common Parameterization Issues

This guide addresses frequent challenges researchers encounter when parameterizing biomolecular systems and drug-like molecules.

Table 1: Troubleshooting Common Parameterization Problems

| Problem Symptom | Possible Cause | Recommended Solution |
|---|---|---|
| Poor prediction of hydration free energies or liquid properties [37] | Inconsistent or non-polarizable force field parameters; Lack of environment-specificity. | Derive environment-specific charges and Lennard-Jones parameters directly from quantum mechanical calculations using atoms-in-molecule electron density partitioning [37]. |