Regularization Techniques for Chemical Machine Learning: Overcoming Overfitting in Drug Discovery and Materials Science

Abstract

This article provides a comprehensive guide to regularization techniques tailored for chemical machine learning applications. Aimed at researchers, scientists, and drug development professionals, it explores foundational concepts from L1/L2 penalization to advanced methods like topological regularization and early stopping. The content bridges theoretical understanding with practical implementation, covering applications in predictive modeling, drug repositioning, and reaction optimization. It further delivers crucial troubleshooting advice for low-data regimes and hyperparameter tuning, and concludes with a comparative analysis of model performance and validation strategies to ensure robust, generalizable models that accelerate biomedical innovation.

Why Regularization is Essential for Robust Chemical ML Models

FAQs: Understanding Overfitting in Chemical ML

What is overfitting, and why is it a particular concern in chemical data science? Overfitting occurs when a machine learning model fits its training data too closely, learning both the underlying patterns and the random noise or irrelevant information within the dataset [1] [2]. As a result, the model performs exceptionally well on its training data but fails to generalize to new, unseen data [3]. In chemical data science, this is a critical challenge because experimental data is often scarce, difficult, and expensive to produce [4]. Building a model that cannot make reliable predictions on new molecular structures or reaction conditions defeats the core purpose of using ML to accelerate discovery and promote sustainability [4].

How can I tell if my chemical model is overfitted? A clear sign of overfitting is high performance on the training dataset but significantly lower performance on a hold-out test set or new experimental data [1] [5]. For instance, if your model's training accuracy is 99.9% but its test accuracy is only 45%, it is likely overfitted [5]. Low error rates on training data coupled with high error rates on test data are good indicators [1]. Techniques like k-fold cross-validation are essential for a more robust assessment of model generalizability [1] [4].

What is the difference between overfitting and underfitting? Overfitting and underfitting represent two ends of an undesirable spectrum. An overfitted model is too complex, capturing noise in the training data and resulting in high variance in its predictions [1] [2]. In contrast, an underfitted model is too simple and fails to capture the underlying dominant trend in the data, leading to high bias and inaccurate predictions even on training data [1] [2]. The goal is to find a well-fitted model that balances bias and variance—the "sweet spot" [1] [3].

What is "target leakage," and how does it relate to overfitting? Target leakage occurs when information that would not be available at the time of prediction inadvertently finds its way into the training dataset [5]. This causes the model to "cheat" and can result in unrealistically high, "too good to be true" accuracy, which does not hold up in real-world deployment [5] [6]. While not overfitting in the strictest sense, it shares the same consequence: a model that generalizes poorly.

Can non-linear models be used in low-data chemical regimes without overfitting? Yes, recent research demonstrates that non-linear models like neural networks can perform on par with or even outperform traditional multivariate linear regression (MVL) in low-data scenarios, but only when they are properly tuned and regularized [4]. This requires specialized workflows that incorporate techniques like Bayesian hyperparameter optimization with objective functions designed to penalize overfitting in both interpolation and extrapolation tasks [4].

Troubleshooting Guides

Guide 1: Diagnosing an Overfit Model

Follow this workflow to systematically identify overfitting in your chemical ML project.

Step-by-Step Procedure:

- Split Your Data: Before training, reserve a portion of your chemical dataset (e.g., 20%) as an external test set. This data must not be used in any part of model training or tuning [4] [7].

- Train Your Model: Train your model on the remaining 80% of the data (the training set).

- Evaluate Performance: Calculate relevant performance metrics (e.g., RMSE, R²) for both the training set and the held-out test set.

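- Compare Metrics: Compare the training and test metrics. Low training error with much higher test error indicates overfitting; high error on both sets indicates underfitting; similarly low error on both sets indicates a well-fitted model.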
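The comparison in the last step can be sketched in a few lines. This is a minimal illustration, not a prescribed procedure: the function names `rmse` and `diagnose_fit`, and the thresholds `acceptable_rmse` and `gap_ratio`, are assumptions introduced here and should be chosen for the property being modeled.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error between two 1-D sequences."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def diagnose_fit(train_rmse, test_rmse, acceptable_rmse, gap_ratio=1.5):
    """Classify a model from its training and test errors.

    acceptable_rmse and gap_ratio are illustrative, user-chosen thresholds
    (assumptions of this sketch, not values taken from the article).
    """
    if train_rmse > acceptable_rmse:
        return "underfit"      # the model cannot even describe its training data
    if test_rmse > gap_ratio * train_rmse:
        return "overfit"       # fine on training data, much worse on unseen data
    return "well-fitted"

print(diagnose_fit(train_rmse=0.1, test_rmse=0.9, acceptable_rmse=0.5))
```

A single train/test comparison can be noisy on small datasets, so the same check is often repeated across cross-validation folds.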

Guide 2: Implementing Regularization Techniques to Prevent Overfitting

Regularization techniques are essential for preventing overfitting, especially for complex models and small chemical datasets [8]. The following table summarizes key regularization methods.

| Technique | Core Principle | Ideal Use Case in Chemical ML |

|---|---|---|

| L1 (Lasso) | Adds a penalty equal to the absolute value of coefficients. Can shrink less important features to zero [8]. | Feature selection; identifying the most critical molecular descriptors from a large pool [8]. |

| L2 (Ridge) | Adds a penalty equal to the square of the coefficients. Shrinks coefficients but rarely zeroes them out [8]. | Handling multicollinearity; when electronic and steric descriptors are highly correlated [8]. |

| Elastic Net | Combines L1 and L2 penalties. Balances feature selection and coefficient shrinkage [8]. | Datasets with many correlated features where pure Lasso might be unstable [8]. |

| Dropout | Randomly "drops" neurons during neural network training, preventing over-reliance on any single node [8]. | Training deep learning models on complex chemical data (e.g., spectral analysis, molecular property prediction) [8]. |

| Early Stopping | Halts training when validation performance stops improving and begins to degrade [1] [7]. | All iterative training processes; a simple and effective first line of defense against overfitting [1]. |

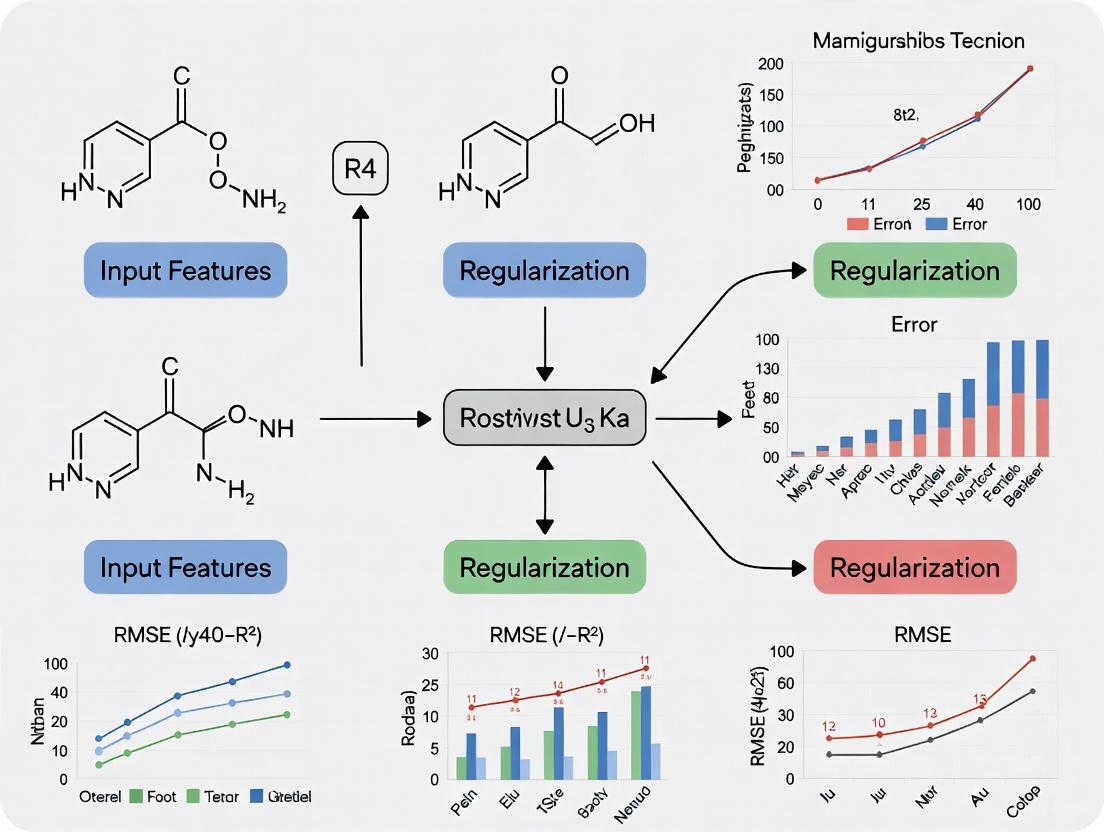

The diagram below illustrates how to integrate these techniques into a robust chemical ML workflow.

Experimental Protocol for Hyperparameter Optimization with Regularization:

This protocol is based on workflows used to successfully apply non-linear models to small chemical datasets [4].

- Objective Function Definition: Define a combined metric for Bayesian optimization that accounts for both interpolation and extrapolation performance. For example:

- Interpolation Score: Use a 10-times repeated 5-fold cross-validation (10× 5-fold CV) on the training/validation data [4].

- Extrapolation Score: Use a selective sorted 5-fold CV, where data is sorted by the target value and the highest RMSE from the top and bottom partitions is used [4].

- Combined RMSE: The objective function for the optimizer is the average of the interpolation and extrapolation RMSE scores [4].

- Bayesian Optimization: Use a Bayesian optimization algorithm to systematically explore the hyperparameter space. This includes tuning regularization-specific parameters like:

- L1/L2/ElasticNet regularization strength (`alpha`, `l1_ratio`).

- Dropout rates for neural networks.

- The number of layers and units per layer to control model complexity [7].

- Final Model Selection: Select the model and hyperparameter set that minimizes the combined RMSE objective function. This model is then evaluated on the completely held-out external test set for a final, unbiased assessment of its performance [4].
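The combined objective described in this protocol can be sketched as follows. This is a rough illustration under stated assumptions, not the implementation used in [4]: a closed-form ridge regression stands in for the model being tuned, a small grid over `alpha` stands in for the Bayesian optimizer, the data are synthetic, and all function names are hypothetical.

```python
import numpy as np

def ridge_fit_predict(X_tr, y_tr, X_te, alpha):
    """Closed-form ridge regression on centred data; returns predictions for X_te."""
    x_mean, y_mean = X_tr.mean(axis=0), y_tr.mean()
    Xc = X_tr - x_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X_tr.shape[1]), Xc.T @ (y_tr - y_mean))
    return (X_te - x_mean) @ w + y_mean

def fold_rmse(X, y, train_idx, test_idx, alpha):
    pred = ridge_fit_predict(X[train_idx], y[train_idx], X[test_idx], alpha)
    return float(np.sqrt(np.mean((y[test_idx] - pred) ** 2)))

def interpolation_rmse(X, y, alpha, n_repeats=10, k=5, seed=0):
    """Mean RMSE over n_repeats shuffled k-fold cross-validations."""
    rng = np.random.default_rng(seed)
    scores = []
    for _ in range(n_repeats):
        folds = np.array_split(rng.permutation(len(y)), k)
        for i in range(k):
            train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
            scores.append(fold_rmse(X, y, train_idx, folds[i], alpha))
    return float(np.mean(scores))

def extrapolation_rmse(X, y, alpha, k=5):
    """Sorted k-fold CV: hold out the lowest and the highest target partition
    in turn and keep the worse of the two RMSE values."""
    folds = np.array_split(np.argsort(y), k)
    scores = []
    for i in (0, k - 1):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        scores.append(fold_rmse(X, y, train_idx, folds[i], alpha))
    return max(scores)

def combined_rmse(X, y, alpha):
    """Average of the interpolation and extrapolation scores."""
    return 0.5 * (interpolation_rmse(X, y, alpha) + extrapolation_rmse(X, y, alpha))

# Synthetic low-data example (30 points, 4 descriptors); values are made up.
rng = np.random.default_rng(1)
X = rng.normal(size=(30, 4))
y = X @ np.array([1.5, 0.0, -2.0, 0.5]) + rng.normal(scale=0.3, size=30)

alphas = [0.01, 0.1, 1.0, 10.0, 100.0]
scores = {a: combined_rmse(X, y, a) for a in alphas}
best_alpha = min(scores, key=scores.get)
print("best alpha:", best_alpha)
```

In a real workflow the grid would be replaced by the optimizer of choice, and the selected configuration would still be scored once on the external test set, which plays no role in any of the functions above.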

The following table summarizes benchmark results from a recent study that evaluated linear and non-linear models on eight diverse chemical datasets with limited data points (ranging from 18 to 44 data points) [4]. The performance is measured using scaled Root Mean Squared Error (RMSE) expressed as a percentage of the target value range, which allows for easier comparison across different datasets.

Table: Model Performance (Scaled RMSE %) on Low-Data Chemical Tasks [4]

| Dataset | Size (Data Points) | Multivariate Linear Regression (MVL) | Random Forest (RF) | Gradient Boosting (GB) | Neural Network (NN) |

|---|---|---|---|---|---|

| A | 19 | ~40% | ~55% | ~50% | ~35%* |

| B | 20 | ~22% | ~50% | ~35% | ~27% |

| C | 22 | ~30% | ~50% | ~35% | ~20%* |

| D | 21 | ~37% | ~45% | ~37% | ~30%* |

| E | 27 | ~25% | ~37% | ~27% | ~20%* |

| F | 44 | ~17% | ~27% | ~20% | ~15%* |

| G | 19 | ~37% | ~45% | ~32%* | ~35% |

| H | 44 | ~20% | ~30% | ~22% | ~15%* |

Note: An asterisk (*) indicates the best-performing model for that specific dataset. This data demonstrates that properly regularized non-linear models can be competitive with or even outperform traditional linear models in low-data chemical research.
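For reference, a scaled RMSE of this kind can be computed as below. This is a minimal sketch: the function name is hypothetical, and it assumes the range is taken from the observed target values passed in, which the text does not specify.

```python
import numpy as np

def scaled_rmse_percent(y_true, y_pred):
    """RMSE expressed as a percentage of the observed target range."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    target_range = y_true.max() - y_true.min()
    if target_range == 0:
        raise ValueError("target range is zero; scaled RMSE is undefined")
    rmse = np.sqrt(np.mean((y_true - y_pred) ** 2))
    return float(100.0 * rmse / target_range)

# Made-up values: errors of 1 unit on a target spanning 10 units.
example = scaled_rmse_percent([0.0, 5.0, 10.0], [1.0, 5.0, 9.0])
print(round(example, 2))
```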

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" and their functions for building robust, generalizable chemical machine learning models.

Table: Essential Tools for Mitigating Overfitting in Chemical ML

| Tool / Technique | Function & Explanation |

|---|---|

| K-Fold Cross-Validation | A resampling procedure used to evaluate a model on limited data. It provides a more reliable estimate of model performance than a single train/test split by using all data for both training and validation in rounds [1] [3]. |

| Bayesian Hyperparameter Optimization | An efficient strategy for navigating the hyperparameter space. It builds a probabilistic model of the objective function to direct the search toward hyperparameters that improve a custom metric (e.g., a combined RMSE that penalizes overfitting) [4]. |

| Data Augmentation | Artificially increasing the size and diversity of the training set by creating modified copies of existing data. In chemistry, this could involve adding noise to spectral data or generating similar molecular structures [7] [8]. |

| Ensemble Methods (Bagging) | A technique that combines predictions from multiple models (e.g., decision trees) to improve generalizability and reduce variance. Training on random subsets of data with replacement helps stabilize predictions [1] [3]. |

| Automated ML Workflows (e.g., ROBERT) | Software that automates the entire ML pipeline, from data curation and feature selection to hyperparameter optimization and model interpretation. This reduces human bias and systematically incorporates overfitting prevention measures [4]. |

| Combined Validation Metric | A custom objective function, like the one used in ROBERT, that explicitly optimizes for generalization by averaging performance across both standard cross-validation (interpolation) and sorted cross-validation (extrapolation) tasks [4]. |

Frequently Asked Questions (FAQs)

What is regularization and why is it crucial for chemical ML models? Regularization is a set of methods for reducing overfitting in machine learning models by adding a penalty to the loss function during training to discourage excessive model complexity [9]. For chemical ML researchers, this is vital because it increases a model's generalizability—its ability to produce accurate predictions on new, unseen molecular datasets or experimental conditions, which is essential for reliable drug discovery and materials design [9]. It trades a marginal decrease in training accuracy for significantly improved performance on test data, ensuring your model learns underlying chemical patterns rather than memorizing noise [10] [9].

My model performs perfectly on training data but fails on new molecular structures. What is happening? This is the classic symptom of overfitting [11]. Your model has likely become too complex and has learned not only the underlying patterns in your training data but also the noise and specific idiosyncrasies within it [10] [11]. In the context of chemical ML, this means it may have memorized specific structural features in your training set rather than learning the generalizable relationships between structure and activity or property [12].

How do I choose between L1 (Lasso) and L2 (Ridge) regularization? The choice depends on your dataset and goal [10] [13].

- Use L1 (Lasso) when you suspect many features are irrelevant and you want a sparse model that performs feature selection [10] [9]. For example, when working with high-dimensional chemical descriptors and you need to identify the most impactful molecular features for a particular property [14] [13].

- Use L2 (Ridge) when you want to keep all features but control the size of their coefficients, which is particularly useful when features are highly collinear [10] [9]. This is common in spectroscopy or quantum chemistry data where many parameters may be correlated.

- Use Elastic Net, a hybrid of L1 and L2, when you have high dimensionality and correlated features and want the benefits of both feature selection and coefficient shrinkage [10] [9].

Are there regularization techniques specific to deep learning models in computational chemistry? Yes. For deep neural networks used in tasks like molecular property prediction or quantum chemistry simulation, specific techniques include:

- Dropout: Randomly "dropping out" neurons during training to prevent the network from relying too heavily on any single node and forcing it to learn more robust features [10] [9].

- Early Stopping: Halting the training process when performance on a validation set stops improving, which prevents the model from over-optimizing on the training data [10] [9].

- Batch Normalization: Normalizing the inputs to each layer, which stabilizes and accelerates training while also acting as a regularizer [10].

Can I use regularization even if my dataset is small? Yes, in fact, regularization is especially important with small datasets, which are common in experimental chemistry and drug discovery where data generation is costly and time-consuming [15]. Techniques like L1/L2 regularization and data augmentation are highly recommended in low-data regimes to prevent overfitting. However, care must be taken not to set the regularization parameter too high, as this can lead to underfitting, where the model becomes too simple to capture the true underlying chemical relationships [9].

Troubleshooting Guides

Problem: High Variance and Model Overfitting

Symptoms

- Excellent performance on training data (e.g., low Mean Squared Error) but poor performance on testing/validation data [11].

- The model fails to predict properties for new, unseen molecular structures accurately.

- Large discrepancy between training and validation error metrics.

Solutions

- Apply L2 (Ridge) Regularization

- Concept: Adds a penalty equal to the sum of the squared coefficients (L2 norm) to the loss function. This discourages any single feature from having an excessively large weight, smoothing the model's predictions [9] [11].

- Implementation:

- When to Use: Ideal for datasets with many correlated features, such as in quantum chemical descriptor sets [10].
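A minimal sketch of the L2 approach above, using the closed-form ridge solution in NumPy. The data are synthetic, `fit_ridge` is a name introduced here, and the penalty is added to the sum of squared errors rather than the mean, so `alpha` is on a different scale than in a formulation based on MSE.

```python
import numpy as np

def fit_ridge(X, y, alpha):
    """Return (weights, intercept) minimising ||y - Xw - b||^2 + alpha * ||w||^2."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean            # centring leaves the intercept unpenalised
    A = Xc.T @ Xc + alpha * np.eye(X.shape[1])
    w = np.linalg.solve(A, Xc.T @ yc)
    return w, float(y_mean - x_mean @ w)

rng = np.random.default_rng(0)
base = rng.normal(size=(40, 1))
# Two nearly identical (collinear) descriptors plus one independent descriptor.
X = np.hstack([base, base + 0.01 * rng.normal(size=(40, 1)), rng.normal(size=(40, 1))])
y = 2.0 * base[:, 0] + 0.5 * X[:, 2] + 0.1 * rng.normal(size=40)

w_weak, _ = fit_ridge(X, y, alpha=1e-8)        # effectively unregularised
w_ridge, _ = fit_ridge(X, y, alpha=1.0)
print("near-unregularised weights:", np.round(w_weak, 2))
print("ridge weights:             ", np.round(w_ridge, 2))
```

With collinear descriptors the unregularised weights on the first two columns tend to be large and unstable, while the ridge fit spreads the effect across both; in practice a library implementation would normally be used and `alpha` tuned by cross-validation.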

- Implement Early Stopping for Deep Learning Models

- Concept: Monitors the model's performance on a validation set and stops training when performance begins to degrade, preventing the model from over-optimizing on the training data [10] [9].

- Implementation (Pseudocode):

- Best Practice: Use a "patience" parameter to avoid stopping prematurely due to noise in the validation loss [13].
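The early stopping logic with a patience parameter can be sketched without any deep learning framework. The class name, the `min_delta` option, and the validation losses below are illustrative assumptions; in real training the loop body would run one epoch of optimisation and a validation pass.

```python
class EarlyStopping:
    """Track validation loss and signal when training should stop."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience        # epochs to wait after the last improvement
        self.min_delta = min_delta      # smallest decrease that counts as improvement
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch     # a real loop would also checkpoint the weights here
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience

# Hypothetical validation losses: improve for four epochs, then degrade.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.53, 0.56, 0.60]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break

print("stopped at epoch", stopped_at, "best epoch", stopper.best_epoch)
```

After stopping, the weights saved at `best_epoch` are the ones usually kept, not those from the final epoch.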

Problem: Model Interpretability and Feature Selection

Symptoms

- Difficulty identifying which molecular descriptors or features are most important for the prediction.

- Model contains many features with non-zero coefficients, making it hard to interpret.

Solutions

- Apply L1 (Lasso) Regularization

- Concept: Adds a penalty equal to the sum of the absolute values of the coefficients (L1 norm). This can drive the coefficients of less important features to exactly zero, effectively performing feature selection [14] [9].

- Implementation:

- Outcome: Creates a simpler, more interpretable model that highlights the most critical features, such as key molecular fragments influencing bioactivity [14].
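To make the zeroing behaviour concrete, here is a small coordinate-descent Lasso in NumPy. It is a sketch under assumptions: the objective is (1/2n)·||y − Xw||² + α·Σ|w|, which differs from an MSE-based form by a constant factor on the error term, the data are synthetic, and the function names are introduced here. A library implementation would normally be preferred.

```python
import numpy as np

def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold; exactly 0 inside [-threshold, threshold]."""
    return np.sign(value) * max(abs(value) - threshold, 0.0)

def fit_lasso(X, y, alpha, n_iter=200):
    """Coordinate descent for (1 / 2n) * ||y - Xw||^2 + alpha * ||w||_1.

    Assumes the columns of X are standardised and y is centred (no intercept).
    """
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    col_sq = (X ** 2).sum(axis=0) / n_samples
    for _ in range(n_iter):
        for j in range(n_features):
            residual = y - X @ w + X[:, j] * w[j]    # residual with feature j left out
            rho = X[:, j] @ residual / n_samples
            w[j] = soft_threshold(rho, alpha) / col_sq[j]
    return w

# Synthetic data: only descriptors 0 and 3 influence the target.
rng = np.random.default_rng(0)
X = rng.normal(size=(60, 8))
X = (X - X.mean(axis=0)) / X.std(axis=0)
true_w = np.array([3.0, 0.0, 0.0, -2.0, 0.0, 0.0, 0.0, 0.0])
y = X @ true_w + 0.1 * rng.normal(size=60)
y = y - y.mean()

w = fit_lasso(X, y, alpha=0.3)
selected = np.flatnonzero(w)
print("selected descriptor indices:", selected)
```

The surviving coefficients are biased toward zero by roughly `alpha`, which is why a selected subset is sometimes refit without the penalty.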

Problem: Managing Complex Deep Learning Models

Symptoms

- Very deep neural networks (e.g., Graph Neural Networks for molecules) show signs of overfitting.

- Training is unstable or slow.

Solutions

- Use Dropout Regularization
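The mechanics of dropout can be illustrated with plain NumPy. This sketch shows the common "inverted dropout" variant, in which the kept activations are rescaled during training so nothing changes at inference time; the function name and the array shapes are assumptions for illustration, and deep learning frameworks provide their own dropout layers.

```python
import numpy as np

def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero a random fraction `rate` of units and rescale the rest."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return activations                       # inference uses the full network
    keep_prob = 1.0 - rate
    mask = rng.random(activations.shape) < keep_prob
    return activations * mask / keep_prob        # rescaling keeps the expected value unchanged

rng = np.random.default_rng(0)
hidden = np.ones((4, 1000))                      # stand-in for a hidden-layer output
dropped = dropout(hidden, rate=0.3, rng=rng)
print("fraction zeroed:", float(np.mean(dropped == 0.0)))
```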

Table 1: Comparison of Key Regularization Techniques

| Technique | Mathematical Penalty | Primary Effect | Best For Chemical ML Tasks |

|---|---|---|---|

| L1 (Lasso) | λ × Σ\|w_i\| | Feature selection & sparsity | Identifying critical molecular descriptors [14] |

| L2 (Ridge) | λ × Σ(w_i)² | Shrinks all coefficients | Modeling with correlated quantum chemical features [9] |

| Elastic Net | λ₁ × Σ\|w_i\| + λ₂ × Σ(w_i)² | Balances sparsity and shrinkage | High-dimensional data with correlated features [9] |

| Dropout | N/A | Randomly ignores neurons during training | Deep Neural Networks for property prediction [10] |

| Early Stopping | N/A | Stops training when validation performance degrades | All models, especially when training time is long [9] |

Experimental Protocols and Data

Protocol: Applying Lasso Regularization for Feature Selection in Molecular Property Prediction

This protocol is based on a study applying Lasso to mitigate overfitting in air quality prediction models, a methodology directly transferable to chemical datasets [14].

1. Data Preparation

- Data Collection: Gather a dataset of molecular structures and their associated target property (e.g., solubility, activity). For scale, the cited air quality study used 40,172 data points collected over 10 years [14].

- Feature Engineering: Compute molecular descriptors (e.g., molecular weight, logP, topological indices) or use learned representations (e.g., from graph neural networks).

- Data Splitting: Split data randomly into training (70%) and testing (30%) sets. Use a fixed random state (e.g., `random_state=21`) for reproducibility [16].

2. Model Training with Hyperparameter Tuning

- Objective: Minimize the loss function Loss = MSE + α * Σ|w|, where α (alpha, equivalent to λ) is the regularization strength [11].

- Hyperparameter Optimization: Use Bayesian optimization or grid search to find the optimal α value. The search should minimize the mean squared error (MSE) estimated by cross-validation on the training data, so that the chosen α does not simply reward fitting the training set [16] [15].

- Validation: Employ five-fold cross-validation during optimization to robustly assess model performance and prevent overfitting [16].

3. Model Evaluation and Feature Analysis

- Performance Metrics: Calculate R-squared (R²), Mean Absolute Error (MAE), and Root Mean Square Error (RMSE) on the test set [16] [14].

- Feature Analysis: Examine the model coefficients. Features with coefficients shrunk to zero are considered less important. The remaining features are your selected, most impactful descriptors [14].
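The splitting and evaluation steps of this protocol can be sketched as below. The helper names, the descriptor names, and the numeric values are hypothetical; the seed of 21 mirrors the fixed random state mentioned above, although a NumPy generator will not reproduce the exact split of any other library.

```python
import numpy as np

def split_indices(n_samples, test_fraction=0.3, seed=21):
    """Random train/test index split with a fixed seed for reproducibility."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_test = int(round(n_samples * test_fraction))
    return order[n_test:], order[:n_test]

def regression_metrics(y_true, y_pred):
    """R², MAE and RMSE for a set of predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residuals = y_true - y_pred
    ss_res = float(np.sum(residuals ** 2))
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "mae": float(np.mean(np.abs(residuals))),
        "rmse": float(np.sqrt(np.mean(residuals ** 2))),
    }

def selected_features(coefficients, names, tol=1e-8):
    """Names of features whose coefficient was not shrunk to (numerically) zero."""
    return [name for name, c in zip(names, coefficients) if abs(c) > tol]

train_idx, test_idx = split_indices(100)
metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
kept = selected_features([0.8, 0.0, -0.3, 0.0], ["MolWt", "TPSA", "logP", "NumRings"])
print(metrics, kept)
```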

Table 2: Key Performance Metrics from a Lasso Regularization Study [14]

| Predicted Pollutant | R² Score with Lasso | Interpretation in Chemical Context |

|---|---|---|

| PM2.5 | 0.80 | Model explains 80% of variance; good for a key target property. |

| PM10 | 0.75 | Model explains 75% of variance; reasonable performance. |

| SO₂ | 0.65 | Model explains 65% of variance; may indicate challenging relationship. |

| NO₂ | 0.55 | Model explains 55% of variance; significant unexplained variance. |

| CO | 0.45 | Model explains 45% of variance; poor for a primary output. |

| O₃ | 0.35 | Model explains 35% of variance; relationship is difficult to capture. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Regularization in Chemical ML

| Tool / Technique | Function | Application in Chemical ML |

|---|---|---|

| L1 Regularization (Lasso) | Performs feature selection by shrinking less important coefficients to zero. | Identifying critical molecular descriptors or fragments affecting a property [14] [11]. |

| L2 Regularization (Ridge) | Shrinks all coefficients to handle multicollinearity and prevent large weights. | Stabilizing models trained on correlated quantum chemical features [9]. |

| Elastic Net | Combines L1 and L2 penalties for both feature selection and coefficient shrinkage. | Ideal for high-dimensional molecular data with correlated features [9]. |

| Bayesian Optimization | Efficiently tunes hyperparameters (like α) using a probabilistic model. | Optimizing regularization strength and other model parameters with limited computational budget [16] [15]. |

| k-Fold Cross-Validation | Robust model validation by splitting data into k subsets. | Providing a reliable estimate of model generalizability on new chemical entities [16]. |

| SHAP Sensitivity Analysis | Explains model predictions by quantifying feature importance. | Interpreting complex ML models to gain chemical insights [16]. |

Workflow and Conceptual Diagrams

Regularization Technique Selection Workflow

Impact of Regularization on Model Behavior

In the field of chemical machine learning (ML) and drug development, researchers often work with high-dimensional data, including molecular structures, protein targets, and gene expression profiles. This data landscape presents significant challenges with overfitting, where models perform well on training data but fail to generalize to new, unseen data [17]. Regularization provides a crucial set of techniques to prevent overfitting by constraining model complexity, thereby improving generalization performance [11]. For pharmaceutical researchers building predictive models for drug discovery, drug testing, and drug repurposing, mastering regularization is essential for developing robust, reliable models that can accelerate the drug development pipeline [18].

The regularization toolkit encompasses various methods that operate through different mechanisms. Explicit regularization techniques, such as L1, L2, and Elastic Net, add penalty terms to the model's loss function to constrain parameter values [19]. Implicit techniques, including Dropout and Early Stopping, modify the training process itself to prevent overfitting without explicitly changing the objective function [19]. This technical support center provides a comprehensive guide to implementing these techniques specifically for chemical ML applications, with troubleshooting guides, FAQs, and experimental protocols tailored to drug development professionals.

Core Regularization Techniques: Mechanisms and Mathematical Foundations

Explicit Regularization Methods

L1 Regularization (Lasso) L1 regularization, also known as Lasso (Least Absolute Shrinkage and Selection Operator), adds a penalty term proportional to the absolute value of the model's coefficients [11]. In mathematical terms, for a standard linear regression model, the L1-regularized objective function becomes:

Loss = MSE + α * Σ|w|

Where MSE represents the mean squared error, 'w' represents the model's coefficients, and 'α' is the regularization strength hyperparameter [11]. The distinctive characteristic of L1 regularization is its tendency to drive some coefficients exactly to zero, effectively performing feature selection [20] [19]. This is particularly valuable in chemical ML where researchers often work with thousands of molecular descriptors but seek to identify the most predictive subset [21].

L2 Regularization (Ridge) L2 regularization, or Ridge regression, adds a penalty term proportional to the square of the model's coefficients [17]. The modified objective function becomes:

Loss = MSE + α * Σ|w|²

Unlike L1 regularization, L2 regularization shrinks all coefficients toward zero but does not set any to exactly zero [19]. This approach is particularly effective for handling multicollinearity (highly correlated features), which is common in chemical data where multiple molecular descriptors may capture similar structural properties [22]. L2 regularization tends to produce more stable models with better generalization performance when most features contribute to the prediction [20].

Elastic Net Regularization Elastic Net combines both L1 and L2 regularization penalties, offering a balanced approach [19]. The objective function incorporates both penalty terms:

Loss = MSE + α * [ρ * Σ|w| + (1-ρ) * Σ|w|²]

Where ρ is a mixing parameter that controls the balance between L1 and L2 regularization [19]. Elastic Net is particularly useful when working with chemical data that contains groups of correlated features, as it can select entire groups while providing the stability benefits of L2 regularization [22].
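The three objective functions above differ only in the penalty term, which a single function can make explicit. This is a small sketch with made-up numbers; `penalised_loss` is a name introduced here, with `rho=1.0` giving the L1 form and `rho=0.0` the L2 form.

```python
import numpy as np

def penalised_loss(w, X, y, alpha, rho):
    """MSE + alpha * (rho * sum|w| + (1 - rho) * sum w^2)."""
    mse = np.mean((y - X @ w) ** 2)
    l1 = np.sum(np.abs(w))
    l2 = np.sum(w ** 2)
    return float(mse + alpha * (rho * l1 + (1.0 - rho) * l2))

X = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
y = np.array([1.0, 2.0, 3.0])
w = np.array([1.0, 2.0])      # fits y exactly, so only the penalty contributes

lasso_loss = penalised_loss(w, X, y, alpha=0.1, rho=1.0)   # 0.1 * (1 + 2)
ridge_loss = penalised_loss(w, X, y, alpha=0.1, rho=0.0)   # 0.1 * (1 + 4)
enet_loss = penalised_loss(w, X, y, alpha=0.1, rho=0.5)    # 0.1 * (0.5 * 3 + 0.5 * 5)
print(lasso_loss, ridge_loss, enet_loss)
```

Note that libraries may scale the error or penalty terms differently (for example by factors of 1/2), so a given `alpha` is not directly comparable across implementations.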

Table 1: Comparison of Explicit Regularization Techniques

| Technique | Mathematical Formulation | Key Characteristics | Best Use Cases in Chemical ML |

|---|---|---|---|

| L1 (Lasso) | Loss = MSE + α * Σ\|w\| | Produces sparse models; drives irrelevant feature weights to zero | Feature selection from high-dimensional molecular descriptors; identifying key molecular properties |

| L2 (Ridge) | Loss = MSE + α * Σ\|w\|² | Shrinks all weights toward zero without eliminating any; handles multicollinearity | Modeling with correlated molecular features; QSAR models with multiple relevant descriptors |

| Elastic Net | Loss = MSE + α * [ρ * Σ\|w\| + (1-ρ) * Σ\|w\|²] | Balances L1 sparsity and L2 stability | Datasets with correlated feature groups; when unsure between L1/L2 approaches |

Implicit Regularization Methods

Dropout Regularization Dropout is a regularization technique commonly used in deep neural networks, particularly relevant for complex chemical ML models such as graph neural networks for molecular property prediction [20] [21]. During training, Dropout randomly "drops out" (temporarily removes) a proportion of neurons from the network at each iteration, forcing the network to learn robust features that aren't dependent on specific neurons [20]. This approach prevents complex co-adaptations of neurons to training data, effectively simulating the training of an ensemble of multiple neural networks with different architectures [20]. In drug synergy prediction models like SynerGNet, Dropout helps prevent overfitting to specific molecular patterns in the training data, enhancing generalization to novel drug combinations [21].

Early Stopping Early Stopping regularizes models by monitoring performance on a validation set during training and halting the process when validation error begins to increase, indicating overfitting [20] [17]. This technique is particularly valuable in chemical ML where training data may be limited, and models can quickly memorize training examples rather than learning generalizable patterns [21]. For neural networks training on molecular datasets, Early Stopping prevents the model from continuing to minimize training error at the expense of validation performance [19]. Implementation typically involves setting aside a validation set and establishing a patience parameter—how many epochs to wait after validation performance plateaus or worsens before stopping training [20].

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q: How do I choose between L1 and L2 regularization for my molecular property prediction model? A: The choice depends on your dataset characteristics and modeling goals. Use L1 regularization when you have high-dimensional data with many molecular descriptors but believe only a subset is truly relevant [19] [22]. L1 will help identify the most important features. Choose L2 regularization when you have correlated features (common in molecular descriptors) and want to retain all features while reducing overfitting [22]. For example, in QSAR modeling, if you're starting with thousands of molecular fingerprints but expect only dozens to be relevant for a specific biological activity, L1 would be appropriate. If you have a curated set of molecular properties that are all theoretically relevant but correlated, L2 would be better suited.

Q: Why is my regularized model performing poorly on both training and validation data? A: This indicates underfitting, likely due to excessive regularization strength [23] [22]. When the regularization parameter (λ or α) is set too high, the model becomes overly constrained and cannot capture the underlying patterns in the data. Reduce the regularization parameter systematically while monitoring validation performance. Additionally, ensure your model has sufficient capacity to learn the relationships in your chemical data—if using an overly simple model with strong regularization, consider increasing model complexity while maintaining moderate regularization.

Q: How should I preprocess chemical data before applying regularization? A: Feature scaling is crucial before applying L1 or L2 regularization [22]. Since regularization penalties are applied uniformly to all coefficients, features on different scales would be penalized disproportionately. Standardize continuous features (e.g., molecular weight, logP) to have zero mean and unit variance. For categorical features (e.g., functional group presence, scaffold type), use appropriate encoding schemes such as one-hot encoding [22]. For molecular structures represented as graphs, consider using graph normalization techniques before applying Dropout in graph neural networks.
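A minimal preprocessing sketch for the scaling and encoding steps described in this answer. The descriptor values and scaffold labels are invented for illustration, and the helper names are assumptions; the important detail is that the scaling statistics come from the training set only and are then reused for the test set.

```python
import numpy as np

def fit_scaler(X_train):
    """Column means and standard deviations computed on the training set only."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std[std == 0.0] = 1.0          # leave constant columns unscaled instead of dividing by zero
    return mean, std

def apply_scaler(X, mean, std):
    return (X - mean) / std

def one_hot(labels):
    """One-hot encode a list of category labels; returns (matrix, category order)."""
    categories = sorted(set(labels))
    index = {c: i for i, c in enumerate(categories)}
    encoded = np.zeros((len(labels), len(categories)))
    for row, label in enumerate(labels):
        encoded[row, index[label]] = 1.0
    return encoded, categories

# Hypothetical descriptor values: columns are molecular weight and logP.
X_train = np.array([[180.2, 1.2], [302.4, 3.1], [151.2, 0.5], [250.3, 2.4]])
X_test = np.array([[206.3, 3.5]])

mean, std = fit_scaler(X_train)
X_train_scaled = apply_scaler(X_train, mean, std)
X_test_scaled = apply_scaler(X_test, mean, std)    # reuse training statistics

scaffold_onehot, scaffold_names = one_hot(["indole", "benzene", "indole", "pyridine"])
print(scaffold_names)
```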

Q: Can I combine multiple regularization techniques in my drug synergy prediction model? A: Yes, combining regularization techniques often yields better performance [19] [21]. For example, in deep learning models for drug synergy prediction like SynerGNet, researchers commonly use both Dropout and L2 regularization (weight decay) simultaneously [21]. The combination addresses different aspects of overfitting: L2 constrains weight magnitudes while Dropout prevents co-adaptation of neurons. Similarly, you might combine Early Stopping with any of the explicit regularization methods to provide multiple safeguards against overfitting.

Troubleshooting Common Experimental Issues

Problem: Inconsistent performance across different random seeds with L1 regularization Solution: L1 regularization can be unstable with correlated features, potentially selecting different feature subsets across runs [22]. This is particularly problematic in chemical ML where molecular descriptors are often correlated. To address this:

- Use Elastic Net regularization with the mixing parameter ρ set close to 1 (mostly L1) to maintain sparsity while improving stability [19]

- Ensemble multiple L1-regularized models trained with different random seeds

- Perform bootstrap analysis to identify frequently selected features across multiple runs
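The bootstrap idea in the last bullet can be sketched as follows. This is an illustrative example on synthetic data with two deliberately correlated descriptors; the compact coordinate-descent Lasso and the function names are assumptions made to keep the example self-contained, and a library Lasso could be substituted.

```python
import numpy as np

def fit_lasso(X, y, alpha, n_iter=100):
    """Coordinate descent for (1 / 2n) * ||y - Xw||^2 + alpha * ||w||_1 (centred data)."""
    n, p = X.shape
    w = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    for _ in range(n_iter):
        for j in range(p):
            rho = X[:, j] @ (y - X @ w + X[:, j] * w[j]) / n
            w[j] = np.sign(rho) * max(abs(rho) - alpha, 0.0) / col_sq[j]
    return w

def selection_frequency(X, y, alpha, n_bootstrap=50, seed=0):
    """Fraction of bootstrap resamples in which each feature gets a non-zero weight."""
    rng = np.random.default_rng(seed)
    counts = np.zeros(X.shape[1])
    for _ in range(n_bootstrap):
        idx = rng.integers(0, len(y), size=len(y))    # sample rows with replacement
        Xb = X[idx] - X[idx].mean(axis=0)
        yb = y[idx] - y[idx].mean()
        counts += fit_lasso(Xb, yb, alpha) != 0.0
    return counts / n_bootstrap

# Synthetic data: descriptors 0 and 1 are highly correlated, the rest are noise.
rng = np.random.default_rng(3)
x0 = rng.normal(size=50)
X = np.column_stack([x0, x0 + 0.1 * rng.normal(size=50), rng.normal(size=(50, 4))])
y = 2.0 * x0 + 0.3 * rng.normal(size=50)

freq = selection_frequency(X, y, alpha=0.2)
print(np.round(freq, 2))
```

Features that are selected in most resamples are more trustworthy than those that appear only occasionally; for a correlated pair, the frequencies show how often the selection switches between the two.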

Problem: Validation loss increases immediately when using Dropout Solution: A high Dropout rate can introduce excessive noise, preventing the model from learning [20] [21]:

- Start with a lower Dropout rate (0.1-0.3) and gradually increase if the model shows signs of overfitting

- Use different Dropout rates for different layers—lower rates for layers capturing fundamental chemical features, higher rates for later combinatorial layers

- Combine with a lower learning rate and longer training to accommodate the noisier training process

Problem: Early Stopping terminates training too early Solution: This occurs when the validation loss has random fluctuations that trigger stopping prematurely [20]:

- Increase the patience parameter—the number of epochs to wait before stopping after validation plateaus

- Apply smoothing to the validation loss (e.g., moving average) to reduce the impact of random fluctuations

- Use a learning rate schedule that reduces when validation performance plateaus, then monitor for further improvements before stopping
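The effect of patience and smoothing on a noisy validation curve can be checked with a short pure-Python sketch. The loss values are invented, and `moving_average` and `stopping_epoch` are hypothetical helper names.

```python
def moving_average(values, window):
    """Trailing moving average; early entries average over the values seen so far."""
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1):i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

def stopping_epoch(val_losses, patience, window=1):
    """Epoch at which patience-based early stopping would halt, or None if it never triggers."""
    best, waited = float("inf"), 0
    for epoch, loss in enumerate(moving_average(val_losses, window)):
        if loss < best:
            best, waited = loss, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch
    return None

# Hypothetical noisy validation curve that is still trending downward.
noisy = [1.00, 0.80, 0.85, 0.83, 0.60, 0.66, 0.64, 0.45, 0.50, 0.48, 0.30]

raw_stop = stopping_epoch(noisy, patience=2, window=1)       # triggered by noise
patient_stop = stopping_epoch(noisy, patience=3, window=1)   # more patience
smooth_stop = stopping_epoch(noisy, patience=2, window=3)    # smoothed curve
print(raw_stop, patient_stop, smooth_stop)
```

On this curve the unsmoothed check with a patience of 2 halts at epoch 3, while either a longer patience or a three-epoch moving average lets training continue through the fluctuations.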

Problem: Regularized model fails to generalize to new chemical scaffolds Solution: This indicates dataset bias where training data lacks sufficient diversity [21]:

- Apply data augmentation specific to chemical structures (e.g., SMILES enumeration, adding similar compounds) [21]

- Use transfer learning by pre-training on larger chemical databases then fine-tuning with regularization on your specific dataset

- Incorporate domain knowledge through additional regularization terms that penalize chemically implausible relationships

Experimental Protocols and Methodologies

Protocol: Implementing Regularization for QSAR Modeling

Objective: Build a robust QSAR model with appropriate regularization to predict compound activity while generalizing to novel chemical structures.

Materials and Reagents:

Table 2: Research Reagent Solutions for Regularization Experiments

| Reagent/Resource | Function | Example Specifications |

|---|---|---|

| Molecular Dataset | Provides features (molecular descriptors) and labels (activity) | Curated chemical compounds with experimentally measured activity values; should include training, validation, and test sets with diverse scaffolds |

| Descriptor Calculator | Generates molecular features from chemical structures | Software such as RDKit, Dragon, or custom descriptors; should produce standardized, meaningful molecular representations |

| Regularization Implementation | Provides algorithmic framework for regularized models | Scikit-learn, TensorFlow, PyTorch, or specialized chemical ML libraries with regularization capabilities |

| Hyperparameter Optimization Tool | Identifies optimal regularization parameters | Grid search, random search, or Bayesian optimization implemented with cross-validation |

| Model Evaluation Framework | Assesses model performance and generalization | Metrics appropriate for chemical ML: RMSE, MAE, R² for regression; AUC, balanced accuracy for classification; with scaffold splitting |

Methodology:

- Data Preparation: Calculate molecular descriptors (e.g., topological, electronic, geometric) for all compounds. Split data using scaffold-based splitting to ensure training and test sets contain distinct chemical scaffolds, providing a rigorous test of generalization [21].

- Feature Preprocessing: Remove near-constant descriptors, then scale remaining features to zero mean and unit variance to ensure regularization penalties apply equally across features [22].

- Initial Model Training: Train baseline models without regularization to establish performance benchmarks and identify overfitting (large gap between training and validation performance).

- Regularization Selection: Based on data characteristics:

- For high-dimensional descriptor spaces (>1000 descriptors), start with L1 regularization to identify relevant features

- For moderate-dimensional spaces with correlated descriptors, use L2 regularization

- When uncertain, implement Elastic Net with a grid search over both α and ρ parameters

- Hyperparameter Tuning: Use k-fold cross-validation with the same scaffold-based splitting strategy to optimize regularization parameters. Search over a logarithmic scale (e.g., 10^-5 to 10^2 for α) to identify the value that minimizes validation error.

- Model Validation: Evaluate the final regularized model on the held-out test set with novel scaffolds. Perform additional validation using external datasets or temporal splitting if available.
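The scaffold-based splitting used in the data preparation and tuning steps can be sketched as below. This is one common heuristic (largest scaffold families go to training first), written under the assumption that a scaffold identifier for each compound has already been computed with a cheminformatics toolkit. The function name and the toy scaffold labels are illustrative.

```python
from collections import defaultdict

def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Split compound indices so that no scaffold appears in more than one subset.

    scaffolds: one scaffold identifier per compound (e.g., a scaffold SMILES
    string computed beforehand; this function does not parse structures).
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest scaffold families first, so rarer scaffolds land in valid/test.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test

# Toy example: ten compounds from four scaffold families.
toy_scaffolds = ["A"] * 6 + ["B"] * 2 + ["C", "D"]
train_idx, valid_idx, test_idx = scaffold_split(toy_scaffolds)
print(train_idx, valid_idx, test_idx)
```

Because whole scaffold families are assigned together, the subset sizes only approximate the requested fractions. For cross-validation, the same grouping idea applies: assign entire scaffold groups to folds rather than individual compounds.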

Protocol: Regularized Deep Learning for Drug Synergy Prediction

Objective: Implement a regularized graph neural network to predict synergistic drug combinations against cancer cell lines.

Methodology:

- Graph Construction: Construct feature-rich graphs by integrating heterogeneous molecular and cellular attributes into human protein-protein interaction networks, as done in SynerGNet [21]. Use drug-protein association scores as drug features rather than relying solely on SMILES representations.

- Data Augmentation: Apply advanced synergy data augmentation by replacing drugs in a pair with chemically and pharmacologically similar compounds based on Drug Action/Chemical Similarity (DACS) metric [21]. This artificially expands training data while preserving biological relevance.

- Architecture Design: Implement a graph neural network with multiple graph convolutional layers to capture intricate relationships between drugs and cell lines. Include attention mechanisms to identify crucial proteins involved in biomolecular interactions.

- Regularization Strategy: Apply strong regularization techniques including:

- Dropout between graph convolutional layers (rate: 0.3-0.5)

- L2 weight decay on network parameters (λ: 10^-6 to 10^-4)

- Early Stopping with patience of 20-50 epochs based on validation loss

- Progressive Training: Gradually integrate augmented data into training instead of incorporating all available data at once. This allows observation of model adaptability to incremental changes and prevents sudden performance degradation [21].

- Evaluation: Use balanced accuracy, AUC, and false positive rate as metrics. Compare against non-regularized baselines and existing methods to quantify improvement.
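The evaluation metrics named in the last step follow directly from confusion-matrix counts. A minimal sketch, assuming binary labels where 1 marks a synergistic pair; the function names and example labels are illustrative and not taken from SynerGNet.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels encoded as 0/1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn

def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity (TPR) and specificity (TNR)."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    return 0.5 * (tp / (tp + fn) + tn / (tn + fp))

def false_positive_rate(y_true, y_pred):
    """Share of true negatives that were predicted positive."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    return fp / (fp + tn)

# Imbalanced toy example: 2 positive pairs, 8 negative pairs.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 0, 0, 0, 1, 0]

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(accuracy, balanced_accuracy(y_true, y_pred), false_positive_rate(y_true, y_pred))
```

Here plain accuracy is 0.8, while balanced accuracy is 0.6875 because only half of the positives are recovered. This is why balanced accuracy is the more informative metric on imbalanced synergy data.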

Implementation Workflows and Visualization

Regularization Technique Selection Workflow

Chemical ML Regularization Implementation Workflow

Advanced Applications in Drug Development

Regularization techniques play a critical role in addressing specific challenges in pharmaceutical ML. In drug synergy prediction, strong regularization enables models to capture genuine biological relationships rather than memorizing training data patterns [21]. For example, in SynerGNet, regularization combined with data augmentation led to a 5.5% increase in balanced accuracy and a 7.8% decrease in false positive rate compared to models trained only on original data [21].

In de novo molecular design, regularization in generative models helps balance exploration of novel chemical space with exploitation of known bioactive scaffolds. L2 regularization in variational autoencoders can produce smoother latent spaces where similar molecules cluster together, facilitating optimization of lead compounds.

For multi-task learning in drug discovery, where models simultaneously predict multiple properties (e.g., activity, toxicity, solubility), carefully tuned regularization helps share information across related tasks while preventing negative transfer. Elastic Net regularization is particularly valuable here, as it can identify features relevant to all tasks versus those specific to individual tasks.

The FDA's increasing attention to AI in drug development underscores the importance of robust, regularized models. With CDER having reviewed over 500 submissions with AI components from 2016 to 2023, proper regularization demonstrates a commitment to model generalizability and reliability in regulatory contexts [24].

For researchers in chemistry and drug development, the scarcity of reliable, high-quality data is a major obstacle to building robust machine learning (ML) models. This issue is particularly acute in fields like molecular property prediction and reaction optimization, where data collection is often costly and time-consuming [25]. In these low-data regimes, models are highly susceptible to overfitting, where they memorize noise and specific patterns in the training data rather than learning the underlying chemical relationships, leading to poor performance on new, unseen data [4] [26].

Regularization encompasses a set of techniques designed to prevent overfitting by intentionally simplifying the model or penalizing complexity. The core trade-off is a slight decrease in training accuracy for a significant gain in generalizability—the model's ability to make accurate predictions on novel data, which is the ultimate goal of most scientific applications [9]. For chemical ML researchers working with small datasets, mastering regularization is not just an advanced technique; it is a critical skill for ensuring that their digital tools are reliable and predictive.

Troubleshooting Guides & FAQs

This section addresses common problems encountered when applying ML to small chemical datasets.

Frequently Asked Questions

Q: My model performs perfectly on training data but fails on new molecules. What is happening?

- A: This is a classic sign of overfitting. Your model has become too complex and has learned the training data, including its noise, by heart. To fix this, employ regularization techniques such as L2 regularization (Ridge Regression) to shrink model parameters or implement early stopping during the model training process to halt before it starts memorizing the data [26] [9].

Q: Why should I consider non-linear models for my small dataset instead of sticking with traditional linear regression?

- A: Recent research demonstrates that properly regularized non-linear models (e.g., Neural Networks) can perform on par with or even outperform linear regression on small chemical datasets. The key is using rigorous workflows that mitigate overfitting, for example Bayesian hyperparameter optimization with an objective function that explicitly penalizes overfitting during model selection [4].

Q: I have multiple related properties to predict but each has very little data. What can I do?

- A: Multi-Task Learning (MTL) is a powerful strategy for this scenario. MTL allows a model to learn several tasks simultaneously, leveraging common information across tasks to improve generalization. However, beware of Negative Transfer (NT), where learning one task harms another. Use specialized methods like Adaptive Checkpointing with Specialization (ACS) to counteract NT and protect task-specific performance [25].

Q: My dataset is not just small, it's also imbalanced (e.g., few active compounds vs. many inactive ones). How can regularization help?

- A: While not regularization in the traditional sense, techniques like synthetic data generation can address data scarcity and imbalance. Methods like Generative Adversarial Networks (GANs) can create synthetic data points to balance the dataset and expose the model to a wider range of scenarios, which acts as a form of regularization by improving generalization [27] [28].

Troubleshooting Common Experimental Issues

Problem: High Variance in Cross-Validation Results

- Symptoms: Model performance metrics fluctuate wildly between different data splits.

- Solutions:

- Use repeated cross-validation (e.g., 10x 5-fold CV) to get a more stable estimate of performance [4].

- Increase the regularization strength (e.g., a higher λ parameter in L2 regularization) to constrain the model.

- Ensure your training/test split is systematic and "even" to avoid biased splits, especially in tiny datasets [4].
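Repeated cross-validation, as suggested in the first solution, can be sketched with the standard library alone. This is a minimal illustration: the function name is hypothetical, and established ML libraries offer equivalent utilities that you would typically use in practice.

```python
import random

def repeated_kfold_indices(n_samples, n_splits=5, n_repeats=10, seed=0):
    """Yield (train_indices, test_indices) for repeated shuffled k-fold CV."""
    rng = random.Random(seed)
    for _ in range(n_repeats):
        order = list(range(n_samples))
        rng.shuffle(order)  # a fresh shuffle for every repeat
        folds = [order[i::n_splits] for i in range(n_splits)]
        for k in range(n_splits):
            test = folds[k]
            train = [i for j, fold in enumerate(folds) if j != k for i in fold]
            yield train, test

# 10x 5-fold CV on a small dataset of 23 compounds gives 50 train/test splits.
splits = list(repeated_kfold_indices(23))
print(len(splits), len(splits[0][0]), len(splits[0][1]))
```

Averaging a metric over all 50 splits, and reporting its standard deviation, gives a steadier performance estimate than a single 5-fold run on a dataset this small.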

Problem: Model Fails to Extrapolate

- Symptoms: The model performs poorly on data points outside the range of the training set.

- Solutions:

- Incorporate an extrapolation metric directly into the hyperparameter optimization objective. The ROBERT framework, for instance, uses a combined RMSE from interpolation and extrapolation CV folds [4].

- Note that tree-based models (e.g., Random Forest) are inherently weak at extrapolation; neural networks with appropriate regularization may be a better choice for such tasks [4].

Problem: Negative Transfer in Multi-Task Learning

- Symptoms: The performance on one or more tasks is worse when trained jointly compared to training them independently.

- Solutions:

- Implement the ACS training scheme, which saves model checkpoints specifically when each task's validation loss is at a minimum, effectively shielding tasks from detrimental parameter updates from other tasks [25].

Key Regularization Techniques: Protocols and Data

This section provides a detailed look at core regularization methods, complete with experimental protocols.

Core Regularization Techniques for Chemical ML

The table below summarizes the primary regularization methods relevant to low-data chemical research.

Table 1: Essential Regularization Techniques for Low-Data Regimes

| Technique | Mechanism | Best For | Key Hyperparameter(s) |

|---|---|---|---|

| L2 (Ridge) Regularization [26] [9] | Adds a penalty equal to the sum of the squared coefficients. Shrinks weights but does not zero them out. | Linear models, preventing overfitting when features are correlated. | λ (penalty strength) |

| L1 (Lasso) Regularization [9] [29] | Adds a penalty equal to the sum of the absolute coefficients. Can shrink coefficients to zero, performing feature selection. | High-dimensional data, automated feature selection in linear models. | λ (penalty strength) |

| Early Stopping [26] [9] | Halts the training process once performance on a validation set stops improving. | Iterative models like Neural Networks and Gradient Boosting. | Patience (number of epochs to wait before stopping) |

| Dropout [26] [9] | Randomly "drops out" a fraction of neurons during each training step in a neural network. | Neural Networks, forcing the network to learn redundant representations. | Dropout rate |

| Multi-Task Learning (MTL) [25] | Shares representations between related tasks, encouraging the model to learn generalizable features. | Predicting multiple molecular properties with limited data for each. | Model architecture (shared vs. task-specific layers) |

| Bayesian Hyperparameter Optimization [4] | Systematically tunes model hyperparameters using an objective function that explicitly penalizes overfitting. | Any complex model in low-data regimes, ensuring robust model selection. | Objective function definition (e.g., combined RMSE) |

Experimental Protocol: Implementing a Regularized Non-Linear Workflow

The following protocol is adapted from the ROBERT software workflow, which is designed to enable the use of non-linear models in low-data regimes [4].

Table 2: Key Reagents & Computational Tools

| Item | Function/Description |

|---|---|

| ROBERT Software | An automated program for ML model development that performs data curation, hyperparameter optimization, and model evaluation [4]. |

| Bayesian Optimization | A strategy for finding the optimal hyperparameters of a model by building a probabilistic model and using it to select the most promising parameters [4]. |

| Combined RMSE Metric | An objective function that averages performance from both interpolation (standard CV) and extrapolation (sorted CV) to penalize overfitting [4]. |

Workflow Diagram: Regularized Non-Linear Model Development

Step-by-Step Protocol:

Data Curation & Splitting:

- Start with a curated CSV file of your chemical data (e.g., reaction yields, property values).

- Reserve 20% of the data (or a minimum of 4 data points) as an external test set. Use an "even" split to ensure the test set is representative of the target value range. This prevents data leakage and ensures a fair final evaluation [4].

Define the Optimization Objective:

- Implement a combined RMSE as the objective function for hyperparameter tuning. This metric is calculated as:

- Interpolation RMSE: Perform a 10-times repeated 5-fold cross-validation on the training/validation data.

- Extrapolation RMSE: Perform a sorted 5-fold CV. Sort the data by the target value (y), partition it, and take the higher of the two RMSE values obtained when the top and bottom partitions are held out.

- The final objective score is the average of the interpolation and extrapolation RMSE. This directly penalizes models that overfit and fail to extrapolate [4].

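The combined objective described above can be sketched as follows. This follows my reading of the description in this protocol and is not ROBERT's actual code. The helper names, the toy `fit_line` and `fit_mean` models, and the synthetic data are assumptions made for illustration.

```python
import math
import random

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def fold_rmse(fit, X, y, train_idx, test_idx):
    """Fit on the training indices and return the RMSE on the held-out indices."""
    model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
    return rmse([y[i] for i in test_idx], [model(X[i]) for i in test_idx])

def combined_rmse(fit, X, y, n_splits=5, n_repeats=10, seed=0):
    n = len(y)
    rng = random.Random(seed)
    # Interpolation: mean RMSE over repeated shuffled k-fold CV.
    interp_scores = []
    for _ in range(n_repeats):
        order = list(range(n))
        rng.shuffle(order)
        folds = [order[i::n_splits] for i in range(n_splits)]
        for k, test_idx in enumerate(folds):
            train_idx = [i for j, fold in enumerate(folds) if j != k for i in fold]
            interp_scores.append(fold_rmse(fit, X, y, train_idx, test_idx))
    interpolation = sum(interp_scores) / len(interp_scores)
    # Extrapolation: sort by target, hold out the lowest and highest partitions,
    # and keep the worse (higher) of the two RMSE values.
    by_target = sorted(range(n), key=lambda i: y[i])
    size = n // n_splits
    extrap_scores = []
    for held_out in (by_target[:size], by_target[-size:]):
        held = set(held_out)
        train_idx = [i for i in by_target if i not in held]
        extrap_scores.append(fold_rmse(fit, X, y, train_idx, held_out))
    return 0.5 * (interpolation + max(extrap_scores))

def fit_line(xs, ys):
    """Toy model: one-descriptor least-squares line."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = sum((x - mx) * (v - my) for x, v in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return lambda x: my + slope * (x - mx)

def fit_mean(xs, ys):
    """Toy model that cannot extrapolate: always predicts the training mean."""
    mean = sum(ys) / len(ys)
    return lambda x: mean

X = [float(i) for i in range(20)]
y = [2.0 * x + 1.0 for x in X]
score_line = combined_rmse(fit_line, X, y)
score_mean = combined_rmse(fit_mean, X, y)
print(score_line, score_mean)
```

On this synthetic linear data the line model scores close to zero, while the mean-only model is penalized heavily by the extrapolation term. This is the behaviour the combined metric is meant to reward and punish, respectively.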

Execute Bayesian Hyperparameter Optimization:

- For each candidate non-linear algorithm (e.g., Neural Networks, Random Forest), run a Bayesian optimization routine.

- The optimizer will iteratively explore the hyperparameter space, using the combined RMSE to guide its search toward models that are both accurate and generalizable.

Model Selection & Final Evaluation:

- Select the model and hyperparameter set that achieved the lowest combined RMSE during optimization.

- Train this model on the entire training/validation set and perform a final, single evaluation on the held-out external test set to report its real-world performance.

Advanced Strategies: Multi-Task Learning & Specialization

When predicting multiple molecular properties, Multi-Task Learning (MTL) is a powerful regularization strategy that uses the shared information across tasks to improve generalization. However, task imbalance can lead to Negative Transfer (NT). The Adaptive Checkpointing with Specialization (ACS) method effectively mitigates this [25].

Workflow Diagram: ACS for Multi-Task Learning

Protocol Summary for ACS:

- Architecture: Use a Graph Neural Network (GNN) as a shared backbone to learn general molecular representations, with separate Multi-Layer Perceptron (MLP) "heads" for each specific property prediction task [25].

- Training & Checkpointing: During the joint training process, continuously monitor the validation loss for each task individually. Whenever a task achieves a new minimum validation loss, save (checkpoint) the combination of the shared backbone and that task's specific head [25].

- Outcome: At the end of training, you obtain a specialized model for each task, protected from the negative interference of other tasks while still benefiting from the shared representations learned during training. This approach has been shown to enable accurate predictions with as few as 29 labeled samples for a given property [25].
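The checkpointing step can be sketched as simple bookkeeping. This illustrates only the idea of saving a backbone-plus-head snapshot at each task's best validation loss. It is not the published ACS implementation, and the class name, task names, state dictionaries, and loss values are all hypothetical.

```python
import copy

class PerTaskCheckpointer:
    """Keep, for every task, the model state from its best validation epoch."""

    def __init__(self, task_names):
        self.best_loss = {task: float("inf") for task in task_names}
        self.checkpoints = {}

    def update(self, val_losses, backbone_state, head_states):
        """Snapshot backbone + head for each task that reached a new minimum."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.checkpoints[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved

# Simulated joint training: the "states" are placeholders for real parameters.
history = {
    "toxicity": [0.90, 0.70, 0.80, 0.85],    # best at epoch 1, then degrades
    "solubility": [1.00, 0.90, 0.60, 0.50],  # keeps improving to epoch 3
}
ckpt = PerTaskCheckpointer(history.keys())
for epoch in range(4):
    backbone = {"epoch": epoch}
    heads = {task: {"epoch": epoch} for task in history}
    ckpt.update({task: curve[epoch] for task, curve in history.items()}, backbone, heads)

print({task: c["backbone"]["epoch"] for task, c in ckpt.checkpoints.items()})
```

In this toy run the toxicity task keeps its epoch-1 snapshot even though joint training continues to epoch 3 for the benefit of the solubility task.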

In the context of chemical machine learning (ML) and drug discovery, regularization encompasses a suite of techniques designed to control model complexity by adding information, thereby solving ill-posed problems and preventing overfitting [29]. For researchers developing predictive models for molecular properties, activity, or toxicity, overfitting poses a significant threat to the real-world applicability of their results. The core aim of regularization is to improve model generalizability—the ability of a model to maintain performance when applied to new, unseen data, such as a different chemical space or an external validation cohort [29] [30].

This technical guide connects the theory of regularization to practical experimental protocols, providing troubleshooting advice to help you, the biomedical researcher, build more robust and reliable ML models.

Frequently Asked Questions (FAQs)

1. What is the fundamental trade-off addressed by regularization? Regularization explicitly manages the trade-off between model fit and model complexity [29]. A model that fits the training data too closely (overfitting) will learn noise and spurious correlations specific to that dataset, leading to poor performance on new data. Regularization penalizes complexity, encouraging simpler, more generalizable models.

2. Why should I use regularization for a chemical language model (CLM) in drug discovery? CLMs, when combined with reinforcement learning (RL), are powerful tools for de novo molecule generation [31]. Without regularization, an RL-trained CLM can quickly over-optimize for the reward function, potentially generating molecules that score highly but are synthetically infeasible or possess undesirable chemical properties. Regularization helps maintain reasonable chemistry by keeping the model's policy close to a prior trained on known, valid chemical structures [31].
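One way to express "staying close to the prior" numerically is to subtract a Kullback-Leibler (KL) divergence penalty from the reward. The sketch below assumes per-step token probability distributions are available for both the current policy and the prior. The weight `beta`, the function names, and the three-token distributions are illustrative assumptions, not values from [31].

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same tokens.

    Assumes every q_i is strictly positive wherever p_i is positive.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def shaped_reward(task_reward, policy_steps, prior_steps, beta=0.1):
    """Task reward minus beta times the KL divergence summed over generation steps."""
    penalty = sum(kl_divergence(p, q) for p, q in zip(policy_steps, prior_steps))
    return task_reward - beta * penalty

# Two generation steps over a toy vocabulary of three tokens.
prior_steps = [[0.5, 0.3, 0.2], [0.5, 0.3, 0.2]]
drifted_steps = [[0.9, 0.05, 0.05], [0.9, 0.05, 0.05]]

print(shaped_reward(1.0, prior_steps, prior_steps))    # no drift, no penalty
print(shaped_reward(1.0, drifted_steps, prior_steps))  # drift lowers the reward
```

A larger `beta` keeps generated molecules closer to the prior's chemistry at the cost of slower reward optimization, so it is a hyperparameter to tune rather than a fixed constant.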

3. Can regularization help if my training data for a toxicity model is imbalanced? Yes. Data imbalance is a common issue in computational toxicology, where the number of inactive compounds vastly outnumbers the actives. Techniques like focal loss have been explored to address this imbalance directly. Furthermore, artificial data augmentation can be used to address data imbalance, allowing the model to learn from newly generated compounds [32].
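For reference, binary focal loss can be written in a few lines. This is a minimal sketch of the standard formulation, FL = -α_t (1 - p_t)^γ log(p_t). The defaults γ = 2 and α = 0.25 are commonly used starting values, assumed here rather than taken from [32], and the function name is illustrative.

```python
import math

def binary_focal_loss(y_true, p_pred, gamma=2.0, alpha=0.25, eps=1e-12):
    """Mean binary focal loss.

    y_true: labels (1 = active/toxic, 0 = inactive).
    p_pred: predicted probability of the positive class.
    gamma: focusing parameter; larger values down-weight easy examples more.
    alpha: weight on the positive class (1 - alpha on the negative class).
    """
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p_t = p if y == 1 else 1.0 - p           # probability of the true class
        alpha_t = alpha if y == 1 else 1.0 - alpha
        total += -alpha_t * (1.0 - p_t) ** gamma * math.log(max(p_t, eps))
    return total / len(y_true)

# An easy inactive (predicted 0.1) versus a missed active (predicted 0.1).
easy_negative = binary_focal_loss([0], [0.1])
hard_positive = binary_focal_loss([1], [0.1])
print(easy_negative, hard_positive)
```

The well-classified inactive compound contributes almost nothing to the loss, while the missed active dominates it. This is how focal loss shifts the model's attention toward the rare class.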

4. We are developing a clinical prediction model. Which regularization method is best for external validation? A recent large-scale study on healthcare data suggests that L1 (LASSO) and ElasticNet regularization generally provide the best discriminative performance (AUC) upon external validation [30]. However, if your goal is a parsimonious model with better calibration and high interpretability, L0-based methods like Iterative Hard Thresholding (IHT) or the Broken Adaptive Ridge (BAR) may be advantageous, as they significantly reduce model complexity [30].

5. Does regularization always work for improving out-of-domain generalization? Not always. Research has shown that regularization can sometimes overregularize, inadvertently suppressing causal features along with spurious ones [33]. Its effectiveness depends on the specific data and the nature of the "shortcuts" or spurious correlations the model is learning. It is not a guaranteed solution and requires careful evaluation.

Troubleshooting Guides

Problem 1: Model Performance Drops Significantly on External Validation Data

Symptoms: High accuracy on internal train/test splits, but poor performance when the model is applied to data from a different institution, experimental batch, or chemical series.

Potential Causes and Solutions:

Cause: The model has overfit to technical noise or spurious correlations in the training data.

- Solution A: Implement Sharpness-Aware Minimization (SAM) or Mixup regularization. In Human Activity Recognition tasks, these were among the best-performing regularizers for Out-of-Distribution (OOD) robustness [34].

- Solution B: Apply Distributionally Robust Optimization (DRO) methods, such as Invariant Risk Minimization (IRM) or Variance-Risk Extrapolation (V-REx). These add penalty terms to the training loss so that the model learns representations that are invariant across multiple source domains [34].

Cause: High collinearity among features (e.g., correlated molecular descriptors or healthcare codes) leads to unstable feature selection.

- Solution: Switch from L1 (LASSO) to ElasticNet regularization. ElasticNet combines L1 and L2 penalties, which helps in selecting entire groups of correlated features rather than picking one randomly, leading to more stable and generalizable models [30].
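Of the regularizers mentioned in Solution A, Mixup is the simplest to illustrate. The sketch below assumes numeric feature vectors and numeric (or one-hot) labels. The Beta parameter `alpha=0.2` is an illustrative choice, and the function name is hypothetical.

```python
import random

def mixup_pair(x1, y1, x2, y2, alpha=0.2, rng=random):
    """Blend two training examples with a Beta(alpha, alpha) mixing weight.

    Returns the mixed features, the mixed label, and the mixing weight lam.
    """
    lam = rng.betavariate(alpha, alpha)
    x_mix = [lam * a + (1.0 - lam) * b for a, b in zip(x1, x2)]
    y_mix = lam * y1 + (1.0 - lam) * y2
    return x_mix, y_mix, lam

# Mix an inactive compound (label 0) with an active one (label 1).
rng = random.Random(0)
x_mix, y_mix, lam = mixup_pair([0.0, 1.0], 0.0, [1.0, 3.0], 1.0, rng=rng)
print(x_mix, y_mix, lam)
```

Interpolating raw molecular descriptors does not necessarily correspond to a real molecule, so in chemical ML Mixup is often applied to learned representations instead. Treat that as a design choice to validate on your own data.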

Problem 2: Reinforcement Learning-Driven Molecule Generation Produces Invalid or Impractical Structures

Symptoms: A CLM optimized with RL generates molecules with high predicted reward but invalid structures, unrealistic chemistry, or poor synthetic accessibility.

Potential Causes and Solutions:

Cause: The RL policy has diverged too far from the foundational chemical space of the pre-trained model.

- Solution: Strengthen policy regularization via reward shaping. Incorporate a term in the reward function that penalizes the Kullback–Leibler (KL) divergence between the current policy and the pre-trained prior policy. This encourages the model to explore but remain within a region of plausible chemistry [31].

Cause: The reward function is sparse, and the gradient estimates have high variance.

- Solution: Use a baseline in the REINFORCE algorithm to reduce the variance of gradient estimates. A moving-average baseline (MAB) or a leave-one-out baseline (LOO) can stabilize training [31].
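Both baselines mentioned above reduce to a few lines of arithmetic on a batch of rewards. This is a minimal sketch with hypothetical names and made-up rewards. The momentum value of 0.9 is an assumption, not a setting reported in [31].

```python
def leave_one_out_advantages(rewards):
    """Subtract from each reward the mean reward of the other samples in the batch."""
    n = len(rewards)
    total = sum(rewards)
    return [r - (total - r) / (n - 1) for r in rewards]

class MovingAverageBaseline:
    """Baseline that tracks an exponential moving average of batch mean rewards."""

    def __init__(self, momentum=0.9):
        self.momentum = momentum
        self.value = None

    def advantages(self, rewards):
        batch_mean = sum(rewards) / len(rewards)
        if self.value is None:
            self.value = batch_mean  # initialize from the first batch
        advantages = [r - self.value for r in rewards]
        self.value = self.momentum * self.value + (1 - self.momentum) * batch_mean
        return advantages

# Rewards for three molecules sampled in one batch (made-up values).
batch_rewards = [1.0, 0.0, 0.5]
loo = leave_one_out_advantages(batch_rewards)
mab = MovingAverageBaseline()
first = mab.advantages(batch_rewards)
print(loo, first)
```

In a REINFORCE update, each sample's log-likelihood gradient is weighted by its advantage instead of its raw reward. This leaves the expected gradient unchanged while reducing its variance.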

Problem 3: Deep Learning Model for Image-Based Screening is Overfitting

Symptoms: The training loss continues to decrease, but the validation loss stagnates or begins to increase.

Potential Causes and Solutions:

Cause: The model architecture is too complex for the available dataset.

- Solution A: Incorporate DropBlock regularization. Unlike standard dropout, which removes random neurons, DropBlock removes contiguous regions of feature maps, which is more effective for convolutional layers handling spatial data [35].

- Solution B: Use early stopping. Monitor the validation performance and halt training when it begins to degrade. This is a simple but highly effective form of regularization [29] [35].

- Solution C: Leverage transfer learning. Start with a pre-trained model (e.g., on ImageNet) and fine-tune it on your specific biomedical images. This has been shown to improve performance and convergence compared to training from scratch [35].

Protocol 1: Comparing Penalization Methods for Clinical Prediction Models

This protocol is based on a large-scale empirical study comparing regularization methods for logistic regression on electronic health record data [30].

- Objective: To identify the regularization method that provides the best discrimination and calibration for models validated externally.

- Datasets: Data from 5 US claims and EHR databases mapped to the OMOP-CDM.

- Study Population: Patients with pharmaceutically treated major depressive disorder (MDD).

- Prediction Tasks: 21 different binary outcomes (e.g., suicide and suicidal ideation, acute liver injury, fracture) occurring 1 day to 1 year after index MDD diagnosis.

- Features: Age, sex, and binary indicators for conditions, drug ingredients, procedures, and observations from the year prior to index.

- Algorithms Compared: L1 (LASSO), L2 (Ridge), ElasticNet, Adaptive L1, Adaptive ElasticNet, Broken Adaptive Ridge (BAR), Iterative Hard Thresholding (IHT).

- Validation: Models were trained on one database and externally validated on the other four.

Table 1: Summary of Regularization Method Performance in Healthcare Prediction Models (Adapted from [30])

| Regularization Method | Key Characteristic | Internal Discrimination (AUC) | External Discrimination (AUC) | Model Complexity (Number of Features) |

|---|---|---|---|---|

| L1 (LASSO) | Promotes sparsity; selects features. | High | High | Medium |

| ElasticNet | Mix of L1 & L2; handles correlated groups. | High | High | Larger than L1 |

| L2 (Ridge) | Shrinks coefficients but does not select. | Medium | Medium | All features |

| BAR | L0 approximation; seeks best subset. | Slightly less discriminative | Slightly less discriminative | Lowest |

| IHT | L0 approximation; specifies max features. | Slightly less discriminative | Slightly less discriminative | Lowest |

- Key Findings: L1 and ElasticNet were superior for discrimination. BAR and IHT offered the best internal calibration with significantly fewer features, favoring interpretability and parsimony [30].

Protocol 2: Joint Regularization and Calibration in Deep Ensembles

This protocol is based on recent research into optimizing deep ensembles for both performance and uncertainty quantification [36].

- Objective: To assess the impact of jointly tuning regularization parameters on ensemble performance and calibration.

- Models: Deep ensembles for image classification or molecular property prediction.

- Key Tuned Parameters:

- Weight Decay: A form of L2 regularization applied to the model weights.

- Temperature Scaling: A post-processing method to calibrate the confidence of the model's predictions.

- Early Stopping: Halting training based on validation performance.

- Methodology:

- Compare individual tuning (each model tuned separately) vs. joint tuning (all models tuned together as an ensemble).

- Propose a partially overlapping holdout strategy to enable joint evaluation while maximizing data for training.

- Key Finding: Jointly tuning the ensemble generally matches or improves performance over individual tuning, with significant variation across tasks and metrics [36].
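Temperature scaling, one of the tuned parameters above, can be sketched for a single model as follows. This is a minimal illustration that picks the temperature by grid search on held-out logits. The grid, function names, and toy logits are assumptions, and it does not reproduce the joint-tuning procedure of [36].

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def nll(logit_rows, labels, temperature):
    """Mean negative log-likelihood of the true labels at a given temperature."""
    log_probs = [math.log(softmax(row, temperature)[y]) for row, y in zip(logit_rows, labels)]
    return -sum(log_probs) / len(labels)

def fit_temperature(logit_rows, labels, grid=None):
    """Pick the temperature on a grid that minimizes validation NLL."""
    grid = grid or [0.5 + 0.1 * i for i in range(46)]  # 0.5 to 5.0
    return min(grid, key=lambda t: nll(logit_rows, labels, t))

# Overconfident validation logits: about 98% confidence but only 3 of 4 correct.
val_logits = [[4.0, 0.0], [4.0, 0.0], [4.0, 0.0], [0.0, 4.0]]
val_labels = [0, 0, 1, 1]

best_t = fit_temperature(val_logits, val_labels)
print(best_t, softmax(val_logits[0]), softmax(val_logits[0], best_t))
```

A fitted temperature above 1 softens the probabilities without changing which class is predicted, so accuracy is unaffected while calibration improves.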

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Regularization in Chemical ML

| Tool / Technique | Function | Application Context in Biomedical Research |

|---|---|---|

| ElasticNet Regression | Performs variable selection and stabilizes estimates via a mix of L1 & L2 penalties. | Developing clinical prediction models with correlated features from EHRs [30]. |

| REINFORCE Algorithm | A policy gradient RL algorithm for optimizing sequential decision processes. | Fine-tuning Chemical Language Models (CLMs) for de novo molecule generation with property-based rewards [31]. |

| Sharpness-Aware Minimization (SAM) | An optimizer that seeks parameters in neighborhoods of uniformly low loss ("flat minima"). | Improving the out-of-distribution generalization of models, e.g., in image-based histology classification [34]. |

| Mixup | A data augmentation technique that creates new samples via linear interpolation of inputs and labels. | Regularizing models to be more robust to outliers and spurious correlations in training data [34]. |

| Invariant Risk Minimization (IRM) | A framework for learning causal features that are invariant across multiple environments. | Mitigating dataset-specific biases (e.g., from a specific lab's protocols) in biomarker discovery [34]. |

| Broken Adaptive Ridge (BAR) | An iterative method that approximates L0 penalization (best subset selection). | Creating highly interpretable and parsimonious models for clinical deployment where simplicity is key [30]. |

Workflow and Conceptual Diagrams

Diagram 1: Adaptive Graph Regularization for Drug Side Effect Prediction

The following diagram illustrates the workflow of a sparse structure learning model with adaptive graph regularization, a method proposed for predicting drug side effects by fusing multiple types of drug data [37].

Workflow for Predicting Drug Side Effects

Diagram 2: Regularization Decision Logic for Biomedical ML

This diagram provides a logical pathway for selecting an appropriate regularization strategy based on the specific problem context in biomedical research.

Regularization Strategy Selection Logic

Implementing Regularization Methods in Pharmaceutical and Materials Research

In the realm of chemical machine learning (ML), where datasets are often characterized by a high number of molecular descriptors, catalyst properties, or reaction conditions, overfitting presents a significant challenge to model reliability. Regularization techniques are indispensable statistical methods used to mitigate this by preventing models from becoming overly complex and tailoring them too closely to training data noise [38] [39]. For researchers and drug development professionals, selecting the appropriate regularization method is crucial for building robust, interpretable, and predictive models that can accurately guide experimental design, such as in catalyst development or compound screening [40]. This technical support center provides a detailed guide on the three primary penalization techniques—L1 (Lasso), L2 (Ridge), and Elastic Net regression—framed within the specific context of chemical ML research.

Understanding the Core Penalization Techniques

L1 Regularization: Lasso Regression

Lasso (Least Absolute Shrinkage and Selection Operator) regression adds an L1 penalty term, the sum of the absolute values of the model's coefficients scaled by λ, to the loss function [38] [41]. Its primary strength lies in its ability to perform automatic feature selection by driving the coefficients of less important features exactly to zero [41] [42]. This is particularly valuable in chemical ML where you might start with a large number of potential molecular descriptors and need to identify the most influential ones. However, a key limitation is its behavior with highly correlated features; it tends to arbitrarily select one feature from a correlated group and discard the others, which can lead to model instability [41].

- Mathematical Formulation: The loss function minimized by Lasso regression is:

`Loss = RSS + λ * Σ|βⱼ|`

Where `RSS` is the Residual Sum of Squares, `λ` (lambda) is the regularization parameter controlling penalty strength, and `Σ|βⱼ|` is the sum of the absolute values of the coefficients [41].
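To see why the L1 penalty produces exact zeros, consider the simplest case of one descriptor and no intercept. Minimizing the loss above then has a closed-form solution based on soft-thresholding. The sketch below assumes exactly that single-feature setting, and the function names and data are illustrative.

```python
def soft_threshold(z, t):
    """Shrink z toward zero by t, returning exactly 0.0 when |z| <= t."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0

def lasso_1d(xs, ys, lam):
    """Minimizer of sum((y - b*x)^2) + lam*|b| for a single feature, no intercept."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return soft_threshold(sxy, lam / 2.0) / sxx

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # generated with a true coefficient of 2

for lam in (0.0, 14.0, 56.0, 100.0):
    print(lam, lasso_1d(xs, ys, lam))
```

The coefficient falls from 2.0 to 1.5 and then becomes exactly 0.0 once `lam / 2` reaches the feature's correlation with the target. This is the mechanism behind Lasso's feature selection.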

L2 Regularization: Ridge Regression

Ridge regression adds an L2 penalty term, the sum of the squared coefficients scaled by λ, to the loss function [38] [39]. Unlike Lasso, it does not perform feature selection; instead, it shrinks all coefficients towards zero but, in general, never exactly to zero [38] [43]. This makes it well suited to handling multicollinearity, a common scenario in chemical data where descriptors like molecular weight and surface area might be correlated [39] [43]. By shrinking all coefficients, Ridge regression stabilizes the model and tends to spread the effect of correlated predictors across them rather than assigning it to a single one [38].

- Mathematical Formulation: The Ridge regression loss function is:

$$\text{Loss} = \text{RSS} + \lambda \sum_{j} \beta_j^2$$

Here, Σβⱼ² represents the sum of the squared coefficients [39].
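The stabilizing effect on correlated descriptors can be sketched with the closed-form Ridge solution, β = (XᵀX + λI)⁻¹Xᵀy, which minimizes the loss above when the data are centered and no intercept is fitted. The two synthetic descriptors below are assumptions made for illustration (one is nearly a copy of the other); they are not taken from any dataset in this guide.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form minimizer of RSS + lam * sum(beta**2), no intercept."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

rng = np.random.default_rng(0)
x1 = rng.normal(size=50)
x2 = x1 + 0.01 * rng.normal(size=50)      # nearly collinear with x1
X = np.column_stack([x1, x2])
X = (X - X.mean(axis=0)) / X.std(axis=0)  # standardize the descriptors
y = x1 + x2 + 0.1 * rng.normal(size=50)
y = y - y.mean()                          # center the target

beta_ols = ridge_fit(X, y, lam=0.0)       # lam = 0 reduces to ordinary least squares
beta_ridge = ridge_fit(X, y, lam=1.0)
print("OLS coefficients:  ", beta_ols)
print("Ridge coefficients:", beta_ridge)
```

For two standardized, nearly identical columns, the penalty mostly suppresses the poorly determined difference between the two coefficients, so the Ridge estimates end up much closer to each other than the unpenalized ones.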

Combined Regularization: Elastic Net Regression

Elastic Net regression is a hybrid approach that combines both L1 and L2 penalty terms into the loss function [44] [45]. This combination allows it to leverage the strengths of both parent techniques: it can perform feature selection like Lasso while maintaining stability with correlated groups of features like Ridge [45] [46]. It is particularly powerful in chemical ML applications dealing with "wide" data, where the number of features (e.g., spectroscopic data points) far exceeds the number of observations (e.g., experimental runs) [45].

- Mathematical Formulation: The Elastic Net loss function is:

$$\text{Loss} = \text{RSS} + \lambda \left[ (1 - \alpha) \sum_{j} \beta_j^2 + \alpha \sum_{j} |\beta_j| \right]$$

The key hyperparameter α (or `l1_ratio` in some libraries) controls the mix between L1 and L2 penalties. When α = 1, it is equivalent to Lasso, and when α = 0, it is equivalent to Ridge [45].
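As a quick check of the formula, the following sketch evaluates the Elastic Net loss exactly as written above and confirms the two limiting cases. The function name `elastic_net_loss` and the tiny data arrays are hypothetical and serve only as an illustration.

```python
import numpy as np

def elastic_net_loss(X, y, beta, lam, alpha):
    """RSS + lam * [(1 - alpha) * sum(beta**2) + alpha * sum(|beta|)]."""
    rss = np.sum((y - X @ beta) ** 2)
    l1 = np.sum(np.abs(beta))
    l2 = np.sum(beta ** 2)
    return rss + lam * ((1.0 - alpha) * l2 + alpha * l1)

# Tiny hypothetical example: 3 observations, 2 descriptors.
X = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
y = np.array([1.0, 2.0, 3.0])
beta = np.array([1.0, -2.0])

print(elastic_net_loss(X, y, beta, lam=2.0, alpha=1.0))  # pure L1 (Lasso) penalty
print(elastic_net_loss(X, y, beta, lam=2.0, alpha=0.0))  # pure L2 (Ridge) penalty
print(elastic_net_loss(X, y, beta, lam=2.0, alpha=0.5))  # equal mix
```

Note on naming: in scikit-learn's `ElasticNet`, the overall strength λ is called `alpha` and the mixing parameter α is called `l1_ratio`. Its objective is also scaled differently (the RSS is divided by twice the number of samples and the L2 term carries a factor of ½), so numerical values of λ do not transfer one-to-one between this formula and that library.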

Table 1: Core Characteristics of L1, L2, and Elastic Net Regularization

| Feature | L1 (Lasso) Regression | L2 (Ridge) Regression | Elastic Net Regression |
|---|---|---|---|
| Penalty Term | Absolute value of coefficients (Σ\|βⱼ\|) [38] | Squared value of coefficients (Σβⱼ²) [38] | Mix of absolute and squared values [45] |
| Effect on Coefficients | Can shrink coefficients to exactly zero [41] | Shrinks coefficients close to zero, but not exactly [39] | Can shrink coefficients to zero and shrinks others [45] |
| Feature Selection | Yes (automatic) [42] | No [39] | Yes [45] |
| Handling Multicollinearity | Handles some, but may arbitrarily drop one feature from a correlated pair [41] | Excellent; stabilizes coefficient estimates [39] [43] | Very good; more robust than Lasso alone [45] |
| Best Use Case in Chemical ML | Identifying key catalyst descriptors from a large initial set [40] | Modeling with highly correlated reaction condition parameters [39] | High-dimensional data with many correlated features, e.g., genetic or spectroscopic data [45] |

Frequently Asked Questions (FAQs) & Troubleshooting

FAQ 1: My model's performance is highly unstable when I retrain it with slightly different data. Which regularization technique should I use?

- Problem: High model variance and instability, often due to multicollinearity among features.

- Solution: Ridge Regression (L2) is specifically designed to address this. Its penalty term shrinks coefficients and reduces their variance, leading to a more stable model that is less sensitive to minor fluctuations in the training data [39] [43]. This is common when using correlated physicochemical properties as features.

- Troubleshooting Step: Check for correlated features in your dataset using a correlation matrix. If many features show high correlation, Ridge is a strong candidate.
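The correlation check in the troubleshooting step can be sketched as follows. The helper name `correlated_pairs`, the 0.9 cutoff, and the synthetic descriptor columns are all assumptions chosen for illustration; an appropriate cutoff depends on the dataset.

```python
import numpy as np

def correlated_pairs(X, names, threshold=0.9):
    """Return (name_i, name_j, r) for feature pairs with |Pearson r| >= threshold."""
    corr = np.corrcoef(X, rowvar=False)  # columns are features
    pairs = []
    n_features = corr.shape[0]
    for i in range(n_features):
        for j in range(i + 1, n_features):
            if abs(corr[i, j]) >= threshold:
                pairs.append((names[i], names[j], float(corr[i, j])))
    return pairs

# Synthetic stand-in for physicochemical descriptors (hypothetical values).
rng = np.random.default_rng(0)
mol_weight = rng.normal(300.0, 50.0, size=100)
surface_area = 0.5 * mol_weight + rng.normal(0.0, 2.0, size=100)  # tracks mol_weight
logp = rng.normal(2.0, 1.0, size=100)                             # independent
X = np.column_stack([mol_weight, surface_area, logp])

pairs = correlated_pairs(X, ["mol_weight", "surface_area", "logP"])
for a, b, r in pairs:
    print(f"{a} vs {b}: r = {r:.3f}")
```

If this list is long, the coefficient instability described above is a likely consequence, and Ridge (or Elastic Net) is worth trying before removing descriptors by hand.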

FAQ 2: I have hundreds of molecular descriptors but believe only a few are truly important. How can I identify them?

- Problem: The need for feature selection to improve model interpretability and efficiency in high-dimensional spaces.

- Solution: Lasso Regression (L1) is ideal for this purpose. By driving the coefficients of irrelevant descriptors to zero, it automatically performs feature selection, leaving you with a simpler, more interpretable model that highlights the most impactful features [41] [42].

- Troubleshooting Step: Use a path plot to visualize how coefficients change as the regularization strength (λ) increases. This helps in understanding the order in which features are selected or dropped.
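A minimal sketch of computing such a path with scikit-learn's `lasso_path` is given below. The data are synthetic (only the first two of eight descriptors carry signal), which is an assumption made for illustration. Note that scikit-learn calls the regularization strength `alpha` rather than λ, and that `lasso_path` does not fit an intercept, so the target is centered first.

```python
import numpy as np
from sklearn.linear_model import lasso_path
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_samples, n_features = 60, 8
X = StandardScaler().fit_transform(rng.normal(size=(n_samples, n_features)))
true_coefs = np.array([3.0, -2.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0])
y = X @ true_coefs + 0.5 * rng.normal(size=n_samples)
y = y - y.mean()

# alphas are returned from strongest to weakest penalty;
# coefs has shape (n_features, n_alphas).
alphas, coefs, _ = lasso_path(X, y)

# Largest penalty at which each descriptor has a nonzero coefficient.
entry_alpha = {}
for j in range(n_features):
    nonzero = np.flatnonzero(coefs[j] != 0)
    entry_alpha[j] = alphas[nonzero[0]] if nonzero.size else None

for j, a in sorted(entry_alpha.items(), key=lambda kv: -(kv[1] or 0.0)):
    print(f"descriptor {j}: enters the model at alpha = {a}")
```

Plotting each row of `coefs` against `np.log10(alphas)` with Matplotlib gives the path plot itself; descriptors that enter at larger penalties are the ones Lasso treats as most important.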

FAQ 3: Lasso is randomly selecting one feature from a group I know to be important, and Ridge keeps all of them. Is there a middle ground?

- Problem: Lasso's instability with groups of correlated but relevant features.

- Solution: Elastic Net Regression is the recommended solution. By combining L1 and L2 penalties, it can select groups of correlated features without arbitrarily excluding them, providing a better balance between selection and shrinkage [45] [46].

- Troubleshooting Step: Tune the `l1_ratio` parameter. Start with a value of 0.5 and use cross-validation to find the optimal balance for your specific dataset.
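One way to carry out this tuning is scikit-learn's `ElasticNetCV`, which cross-validates over a list of `l1_ratio` values and a grid of penalty strengths at the same time. The sketch below uses synthetic data with a group of three correlated descriptors; the data, the candidate `l1_ratio` list, and the choice of 5 folds are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)
n_samples = 80
base = rng.normal(size=n_samples)
# Three correlated descriptors that all matter, plus five irrelevant ones.
group = np.column_stack([base + 0.1 * rng.normal(size=n_samples) for _ in range(3)])
noise = rng.normal(size=(n_samples, 5))
X = np.hstack([group, noise])
y = group.sum(axis=1) + 0.5 * rng.normal(size=n_samples)

candidate_ratios = [0.1, 0.3, 0.5, 0.7, 0.9, 1.0]
model = make_pipeline(
    StandardScaler(),
    ElasticNetCV(l1_ratio=candidate_ratios, cv=5),
)
model.fit(X, y)

enet = model.named_steps["elasticnetcv"]
print("selected l1_ratio:", enet.l1_ratio_)
print("selected alpha:   ", enet.alpha_)
print("coefficients:     ", np.round(enet.coef_, 3))
```

Inspecting the first three coefficients shows whether the correlated group was kept together or reduced to a single representative at the selected `l1_ratio`.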

FAQ 4: How do I choose the right value for the regularization parameter lambda (λ)?

- Problem: The performance of regularized models is highly sensitive to the value of λ.

- Solution: Cross-validation is the standard and most reliable method. Techniques like k-fold cross-validation are used to test a range of λ values and select the one that gives the best predictive performance on held-out validation data [39] [41] [43].

- Troubleshooting Step: Plot the cross-validated error against the log of λ. Choose the value of λ that minimizes the error, or the most regularized model within one standard error of the minimum (the "one-standard-error" rule for a simpler model).
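The one-standard-error rule is not built into scikit-learn's `LassoCV`, but it can be derived from the per-fold errors that the estimator stores in `mse_path_`. The following is a sketch under stated assumptions: synthetic data, 5 folds, and the standard error estimated from the spread across folds.

```python
import numpy as np
from sklearn.linear_model import LassoCV
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(2)
X = StandardScaler().fit_transform(rng.normal(size=(60, 10)))
y = 2.0 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(size=60)

cv_model = LassoCV(cv=5).fit(X, y)

# mse_path_ has shape (n_alphas, n_folds): one CV error per penalty and fold.
n_folds = cv_model.mse_path_.shape[1]
mean_mse = cv_model.mse_path_.mean(axis=1)
se_mse = cv_model.mse_path_.std(axis=1, ddof=1) / np.sqrt(n_folds)

best = np.argmin(mean_mse)
threshold = mean_mse[best] + se_mse[best]

# Most regularized model whose mean CV error is within one SE of the minimum.
alpha_1se = cv_model.alphas_[mean_mse <= threshold].max()

print("lambda at minimum CV error:", cv_model.alpha_)
print("lambda by one-SE rule:     ", alpha_1se)
```

Plotting `mean_mse` with error bars `se_mse` against `np.log10(cv_model.alphas_)` reproduces the validation curve described in the troubleshooting step.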

Experimental Protocols & Implementation

This section provides a practical, code-driven guide to implementing these techniques, using a chemical research context.

Protocol for Lasso Regression in Python

The following protocol is adapted for a scenario such as predicting catalyst yield based on compositional and reaction descriptors [41] [42].

1. Data Preprocessing:

- Rationale: Standardization (scaling to mean=0, std=1) ensures that the penalty term λ is applied uniformly to all coefficients, so that no feature is penalized more or less heavily simply because of its units or natural scale [41].

2. Model Training with Cross-Validation:

3. Model Evaluation and Interpretation:
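The three steps above can be sketched end to end with scikit-learn. This is a minimal illustration, not the original protocol's code: the dataset is a synthetic stand-in for catalyst data (100 runs, 20 descriptors on very different scales, of which only the first three affect the hypothetical yield), and the 80/20 split and 5-fold cross-validation are assumed settings.

```python
import numpy as np
from sklearn.linear_model import LassoCV
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a catalyst dataset (hypothetical).
rng = np.random.default_rng(42)
Z = rng.normal(size=(100, 20))
scales = rng.uniform(0.1, 100.0, size=20)   # descriptors in different units
X = Z * scales
y = 5.0 * Z[:, 0] - 3.0 * Z[:, 1] + 2.0 * Z[:, 2] + 0.5 * rng.normal(size=100)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Steps 1 and 2: standardize, then fit Lasso with cross-validated lambda.
# The pipeline fits the scaler on the training split only.
model = make_pipeline(StandardScaler(), LassoCV(cv=5, random_state=0))
model.fit(X_train, y_train)

# Step 3: evaluate on the held-out split and inspect the selected descriptors.
y_pred = model.predict(X_test)
lasso = model.named_steps["lassocv"]
selected = np.flatnonzero(lasso.coef_)
test_r2 = r2_score(y_test, y_pred)

print(f"chosen lambda (alpha_): {lasso.alpha_:.4f}")
print(f"test RMSE: {mean_squared_error(y_test, y_pred) ** 0.5:.3f}")
print(f"test R^2:  {test_r2:.3f}")
print("indices of descriptors with nonzero coefficients:", selected)
```

Because the coefficients are reported for standardized descriptors, their magnitudes can be compared directly as a rough ranking of influence.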

Workflow Diagram: Regularization Technique Selection

The following diagram outlines the logical decision process for choosing between Lasso, Ridge, and Elastic Net in a chemical ML workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software Tools and Packages for Regularization in Chemical ML Research

| Tool / Package | Function | Chemical ML Application Example |
|---|---|---|
| scikit-learn (Python) | Provides Lasso, Ridge, ElasticNet, and their cross-validation counterparts (LassoCV, etc.) for easy implementation and tuning [42]. | Building predictive models for reaction yield or catalyst activity from descriptor data [40]. |
| glmnet (R) | A highly efficient package for fitting Lasso, Ridge, and Elastic Net models with built-in cross-validation [41]. | Statistical analysis and visualization of the relationship between catalyst composition and performance. |
| StandardScaler | A preprocessing module to standardize features to mean=0 and variance=1, which is a critical step before regularization [41]. | Ensuring that catalyst descriptors (e.g., particle size, binding energy) are on a comparable scale. |
| Cross-Validation | A technique (e.g., GridSearchCV or LassoCV) to objectively tune the hyperparameter λ and prevent overfitting during model selection [43]. | Robustly estimating the performance of a model predicting drug solubility from molecular fingerprints. |
| Matplotlib / Seaborn | Libraries for creating path plots and validation curves to visualize the effect of λ and diagnose model behavior [41]. | Visualizing how the importance of chemical descriptors changes with regularization strength. |

Advanced Concepts: The Bias-Variance Tradeoff

All regularization techniques operate on the fundamental principle of the bias-variance tradeoff [39] [43]. In machine learning:

- Bias is the error from erroneous assumptions in the model. High bias can cause underfitting.

- Variance is the error from sensitivity to small fluctuations in the training set. High variance can cause overfitting.

Regularization intentionally introduces a small amount of bias into the model by penalizing coefficients. In return, it achieves a significant reduction in variance. This results in a model that is less complex, more stable, and generalizes better to new, unseen data [39]. The hyperparameter λ directly controls this trade-off: a larger λ increases bias but decreases variance, and vice-versa [43]. The goal is to find the λ that minimizes the total error, which is the sum of bias², variance, and irreducible error.
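For reference, the decomposition mentioned above can be written out explicitly. The form below assumes squared-error loss and data generated as y = f(x) + ε, with zero-mean noise of variance σ²; the expectation is taken over training sets and noise, and the fitted model is written as f̂.

$$\mathbb{E}\big[(y - \hat{f}(x))^2\big] = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2} + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}} + \underbrace{\sigma^2}_{\text{irreducible error}}$$

Increasing λ moves error from the variance term into the bias term, while the σ² term is unaffected by any choice of model or penalty.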

In chemical machine learning research, where datasets are often small and high-dimensional, preventing overfitting is paramount to developing reliable models for tasks like predicting molecular properties or reaction outcomes. Regularization techniques are essential tools in this endeavor. This guide focuses on two powerful, algorithm-specific regularization strategies: Dropout for neural networks and the inherent ensemble methods in Tree-Based Algorithms like Random Forests. Understanding their mechanics and application is critical for researchers and drug development professionals building robust, generalizable models.

# FAQs on Regularization Fundamentals

Q1: What is the fundamental difference between how Dropout and Random Forests achieve regularization?

While both techniques introduce randomness to improve generalization, their underlying mechanisms are distinct.

| Feature | Dropout (Neural Networks) | Random Forests (Tree-Based Methods) |
|---|---|---|
| Core Mechanism | Randomly "drops" (deactivates) neurons during training. [47] [48] | Builds multiple trees on random subsets of data and features (Bagging). [47] [49] |
| Model Output | A single, averaged neural network. [48] [49] | An explicit ensemble (forest) of decision trees. [47] |
| Training Process | Iterative, sequential weight updates with different subnetworks. [49] | Embarrassingly parallel; each tree is independent. [49] |
| Primary Goal | Prevent co-adaptation of features by forcing redundant representations. [47] [48] | Reduce variance by averaging predictions from diverse, decorrelated trees. [47] |

Q2: How can I apply dropout regularization to a neural network for predicting chemical properties?

Implementing dropout in modern machine learning libraries is straightforward. Here is a conceptual example using a deep neural network for a regression task, such as predicting reaction yields:
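The original listing is not reproduced here, so the block below is a framework-free NumPy sketch of such a network's forward pass, written under stated assumptions: 16 hypothetical input descriptors, two hidden layers, a dropout rate of 0.3, and untrained random weights. Deep learning libraries provide the same operation as a ready-made layer (for example `torch.nn.Dropout` in PyTorch or `keras.layers.Dropout` in Keras).

```python
import numpy as np

def dropout(a, rate, rng, training):
    """Inverted dropout: zero a random fraction `rate` of activations during
    training and rescale the survivors so the expected activation is unchanged.
    At inference time the activations pass through untouched."""
    if not training or rate == 0.0:
        return a
    keep = rng.random(a.shape) >= rate
    return a * keep / (1.0 - rate)

def forward(X, params, rates, rng, training):
    """MLP regressor: (linear -> ReLU -> dropout) per hidden layer, linear output."""
    a = X
    for (W, b), rate in zip(params[:-1], rates):
        a = np.maximum(a @ W + b, 0.0)        # ReLU activation
        a = dropout(a, rate, rng, training)   # dropout after the activation
    W_out, b_out = params[-1]
    return a @ W_out + b_out

rng = np.random.default_rng(0)
sizes = [16, 32, 16, 1]   # descriptors -> hidden -> hidden -> predicted yield
params = [
    (rng.normal(scale=0.1, size=(m, n)), np.zeros(n))
    for m, n in zip(sizes[:-1], sizes[1:])
]
rates = [0.3, 0.3]        # one dropout rate per hidden layer

X = rng.normal(size=(5, 16))
train_out = forward(X, params, rates, rng, training=True)    # stochastic
eval_out_1 = forward(X, params, rates, rng, training=False)  # deterministic
eval_out_2 = forward(X, params, rates, rng, training=False)
print(np.array_equal(eval_out_1, eval_out_2))
```

The `training` flag matters in practice: dropout must be switched off when predicting on validation or test compounds, which frameworks handle through a train/evaluation mode.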

In this architecture, dropout layers are strategically inserted after activation functions in hidden layers. During each training iteration, a random subset of neurons is ignored, forcing the network to learn more robust features. [48] [50] This is crucial in low-data chemical regimes to prevent the model from memorizing noise. [4]

Q3: My model is still overfitting. How do I choose the right dropout rate?

There is no universal optimal dropout rate; it is a hyperparameter that requires tuning. The following table provides best practices and a tuning strategy.

| Layer Type | Suggested Dropout Rate | Rationale |
|---|---|---|
| Input Layer | 0.1 - 0.2 [47] | Prevents the removal of too many input features/descriptors at once. |
| Hidden Layers | 0.2 - 0.5 [47] [48] [50] | Higher rates combat overfitting in deeper, more complex networks. |

Systematic Tuning Protocol:

- Start Low: Begin with a low dropout rate (e.g., 0.1 or 0.2). [50]

- Monitor Validation Loss: Use a separate validation set or cross-validation to track performance. [48]

- Iterate Gradually: Slowly increase the dropout rate until the gap between training and validation performance (a sign of overfitting) is minimized. [50]

- Avoid Underfitting: Excessively high dropout rates (e.g., >0.5) can prevent the model from learning, leading to underfitting. [48]

For chemical datasets, which are often small, integrating this tuning into a broader Bayesian hyperparameter optimization framework that uses a combined validation score (accounting for both interpolation and extrapolation performance) is highly recommended. [4]

Q4: Are tree-based methods like Random Forests better than neural networks for small chemical datasets?

Not necessarily. The performance depends heavily on proper tuning and regularization. While multivariate linear regression (MVL) has been the traditional choice for low-data scenarios due to its simplicity, recent studies show that properly regularized non-linear models can be competitive.

A benchmark on eight diverse chemical datasets (ranging from 18 to 44 data points) demonstrated that when neural networks (NN) and gradient boosting (GB) were tuned with an objective function that penalized overfitting in both interpolation and extrapolation, they could perform on par with, or even outperform, MVL. [4] Random Forests (RF), while robust, may be limited in their ability to extrapolate beyond the training data range, a crucial consideration for some chemical applications. [4] The key takeaway is that with automated, careful hyperparameter optimization, non-linear models are valuable tools even in low-data regimes. [4]
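The extrapolation limitation of Random Forests can be illustrated with a small synthetic example. It is not drawn from the cited benchmark: the linear trend, the 40 training points, and the query values are assumptions. Because each tree predicts an average of training targets, the forest's output cannot exceed the largest target value seen during training.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
X_train = rng.uniform(0.0, 10.0, size=(40, 1))
y_train = 2.0 * X_train[:, 0] + rng.normal(scale=0.5, size=40)

# Query points well outside the training range of 0 to 10.
X_new = np.array([[15.0], [20.0]])

rf = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_train, y_train)
lin = LinearRegression().fit(X_train, y_train)

rf_pred = rf.predict(X_new)
lin_pred = lin.predict(X_new)

print("largest training target:", y_train.max())
print("random forest predictions:", rf_pred)
print("linear model predictions: ", lin_pred)
```

The forest's predictions plateau near the upper end of the training targets, whereas the linear model continues the trend. Whether that continuation is chemically meaningful is a separate question, but the example shows why extrapolation performance deserves its own check during model selection.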

# Troubleshooting Guide: Common Experimental Issues

Problem: High Variance in Model Performance During Cross-Validation

Potential Cause: The model is highly sensitive to the specific train-validation split, which is common in small datasets. [4]

Solutions:

- Use Repeated Cross-Validation: Implement a 10x repeated 5-fold CV to get a more stable estimate of performance and reduce the effect of a lucky/unlucky split. [4]