Residual Plots for Regression Diagnostics: A Practical Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide to using residual plots for validating regression models in biomedical and pharmaceutical research. It covers foundational principles, from the definition of residuals to their role in checking model assumptions such as linearity, homoscedasticity, and normality. The guide then explores advanced methodological applications, including partial residual plots for covariate analysis in Model-Based Meta-Analysis (MBMA) and diagnostics for Generalized Linear Models (GLMs). A dedicated troubleshooting section outlines how to identify and correct common issues such as heteroscedasticity, non-linearity, and outliers. Finally, it discusses validation frameworks and compares diagnostic tools to ensure model robustness, helping researchers build reliable models for drug development and clinical analysis.

Understanding Residuals: The Foundation of Regression Model Diagnostics

In statistical modeling, particularly within regression analysis, a residual is defined as the difference between an observed value and the value predicted by a model [1]. This fundamental concept serves as a critical diagnostic measure for assessing model quality and accuracy. The mathematical expression for a residual is straightforward: Residual = Observed Value - Predicted Value [1] [2]. When a model's predictions are perfectly accurate, all residuals equal zero. In practice, however, residuals are almost never zero, and their magnitude and pattern provide valuable insights into model performance [1].

The analysis of residuals is particularly crucial in scientific fields such as pharmaceutical development, where predictive models must be rigorously validated to ensure reliability and regulatory compliance. For researchers and scientists, residual analysis transcends mere error calculation; it forms the basis for diagnosing model adequacy, verifying statistical assumptions, and guiding model improvement efforts [3] [4]. By systematically examining residuals, professionals can determine whether their models sufficiently capture the underlying relationships in the data or require refinement to account for more complex patterns.

Mathematical Definition and Calculation

Core Formula and Interpretation

The mathematical foundation for residuals is expressed through the formula:

\[ d = y - \hat{y} \]

Where:

- \( d \) represents the residual

- \( y \) represents the observed value from actual data

- \( \hat{y} \) represents the predicted value from the regression model [5]

The direction and magnitude of residuals provide immediate feedback on model performance. A positive residual indicates that the observed value exceeds the predicted value, meaning the model has underestimated the actual measurement. Conversely, a negative residual signifies that the observed value falls below the predicted value, indicating overestimation by the model [1] [5]. The absolute value of the residual reflects the magnitude of this prediction error, with values closer to zero representing more accurate predictions.

Practical Calculation Example

The following table illustrates a simplified calculation of residuals using hypothetical data from a linear regression model predicting pharmaceutical product stability:

| Observation | Observed Value (y) | Predicted Value (ŷ) | Residual (y - ŷ) |
|---|---|---|---|
| 1 | 50.2 | 48.5 | +1.7 |
| 2 | 47.8 | 49.1 | -1.3 |
| 3 | 52.1 | 53.0 | -0.9 |
| 4 | 55.5 | 54.2 | +1.3 |
| 5 | 49.3 | 50.8 | -1.5 |

Table 1: Example residual calculations for a regression model

This tabular representation of residuals allows researchers to quickly identify both the direction and magnitude of prediction errors across observations. In the example above, the model appears to be slightly overestimating for observations 2, 3, and 5, while underestimating for observations 1 and 4. The systematic calculation and examination of these residuals forms the basis for more advanced diagnostic procedures [2].
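
The arithmetic in Table 1 can be reproduced in a few lines of Python. This is a minimal sketch that copies the hypothetical values from the table; the helper name residuals() is illustrative and not taken from any library.

```python
# Observed and predicted values copied from Table 1 (hypothetical stability data).
observed = [50.2, 47.8, 52.1, 55.5, 49.3]
predicted = [48.5, 49.1, 53.0, 54.2, 50.8]


def residuals(y, y_hat):
    """Return y - y_hat for each observation."""
    return [obs - pred for obs, pred in zip(y, y_hat)]


res = residuals(observed, predicted)
for i, r in enumerate(res, start=1):
    # Positive residual: the model under-predicted; negative: it over-predicted.
    if r > 0:
        direction = "under-predicted"
    elif r < 0:
        direction = "over-predicted"
    else:
        direction = "exact"
    print(f"observation {i}: residual {r:+.1f} ({direction})")

mean_residual = sum(res) / len(res)
sum_sq = sum(r * r for r in res)
print(f"mean residual = {mean_residual:+.2f}, sum of squared residuals = {sum_sq:.2f}")
```

For these hypothetical predictions the mean residual is -0.14 and the sum of squared residuals is 9.33; the sum of squared residuals is the quantity on which summary measures such as R-squared are built.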

The Role of Residuals in Model Diagnostics

Assessing Model Quality and Assumptions

Residuals serve as primary indicators for evaluating whether a regression model adequately represents the data. The core assumption in linear regression is that residuals should be randomly distributed with constant variance and no discernible patterns [4]. When this ideal condition is met, it suggests that the model has successfully captured the underlying relationship between variables. However, when residuals exhibit systematic patterns, they reveal deficiencies in the model that require attention [1] [3].

Statistical measures such as R-squared derive directly from the residuals. The R-squared statistic quantifies the proportion of variance in the dependent variable explained by the model and is calculated as \( R^2 = 1 - SS_{res}/SS_{tot} \), where \( SS_{res} \) is the sum of squared residuals and \( SS_{tot} \) is the total sum of squares of the observed values around their mean [1]. A higher R-squared value indicates that the residuals are small relative to the total variance, suggesting a better model fit. Other summary measures, such as the residual standard error, are likewise computed from the residuals.
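
As a sketch of how R-squared follows from the residuals, the snippet below fits a straight line to a small hypothetical dataset with NumPy's least-squares solver and applies \( R^2 = 1 - SS_{res}/SS_{tot} \). The data and the function name r_squared() are assumptions for illustration.

```python
import numpy as np


def r_squared(y, y_hat):
    """R^2 = 1 - SS_res / SS_tot, built from the residuals y - y_hat."""
    y = np.asarray(y, dtype=float)
    resid = y - np.asarray(y_hat, dtype=float)
    ss_res = np.sum(resid**2)              # sum of squared residuals
    ss_tot = np.sum((y - y.mean()) ** 2)   # total sum of squares around the mean
    return 1.0 - ss_res / ss_tot


# Hypothetical data fitted with a straight line by ordinary least squares.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
y = np.array([2.1, 3.9, 6.2, 7.8, 10.1, 12.2])
X = np.column_stack([np.ones_like(x), x])      # design matrix with an intercept column
beta, *_ = np.linalg.lstsq(X, y, rcond=None)   # OLS coefficients
y_hat = X @ beta

print(f"R^2 = {r_squared(y, y_hat):.4f}")
```

For a simple linear regression with an intercept, this value equals the squared Pearson correlation between x and y, which makes a convenient sanity check.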

Identifying Common Model Problems

Residual analysis can reveal several specific problems in regression models:

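- Systematic Bias: When residuals are centered away from zero, overall or within a range of fitted values or a subgroup, the model is consistently over- or under-predicting the observed values there [1]. Because OLS residuals from a model with an intercept average exactly zero, such bias usually shows up locally rather than in the overall mean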

- Non-Linearity: Curved patterns in residual plots suggest that the relationship between variables may not be linear, requiring polynomial terms or transformations [2] [4]

- Heteroscedasticity: When the spread of residuals changes systematically with the predicted values, it violates the constant variance assumption [2] [3]

- Autocorrelation: When residuals display correlated patterns, particularly in time-series data, it indicates that errors are not independent [1]

Each of these patterns provides diagnostically valuable information that can guide researchers in refining their models to better represent the underlying data structure [3] [4].

Experimental Protocols for Residual Analysis

Protocol 1: Comprehensive Residual Analysis Workflow

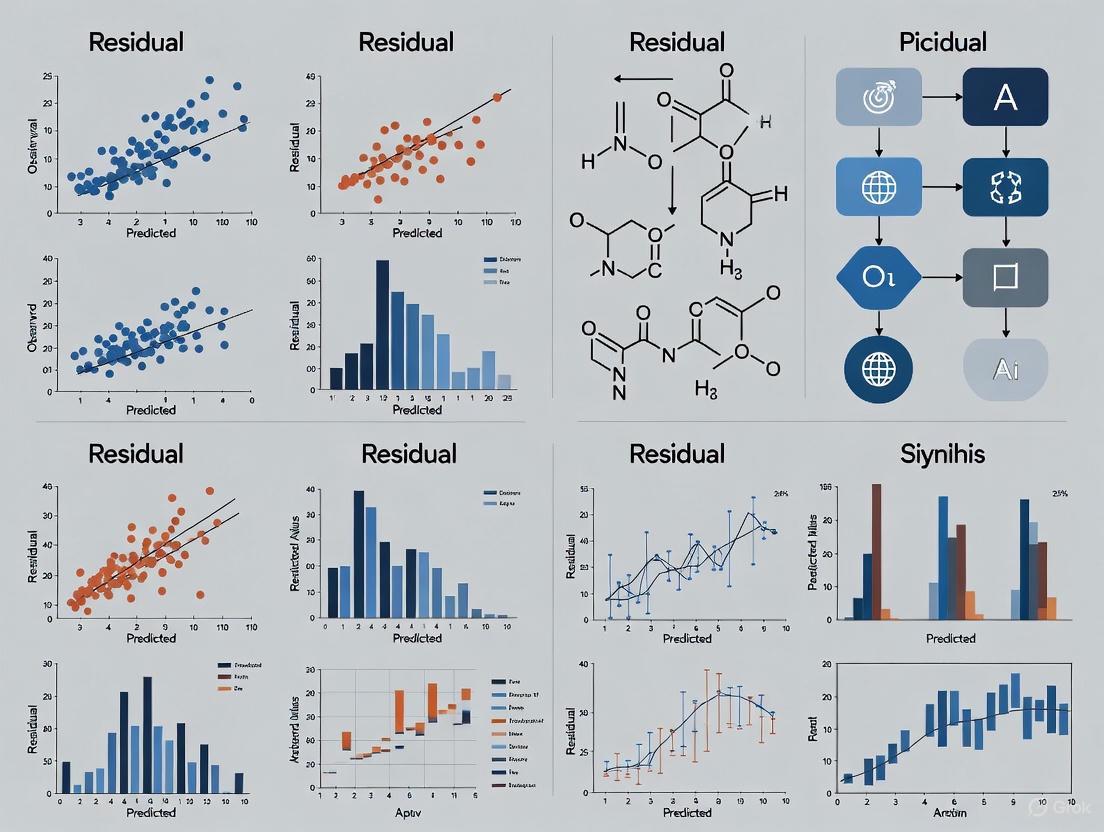

Figure 1: Comprehensive workflow for residual analysis in regression diagnostics

Protocol 2: Residual Plot Interpretation Guide

Objective: Systematically interpret residual plots to identify specific model deficiencies and appropriate remedial actions.

Procedure:

- Generate Residual vs. Fitted Plot: Plot residuals on the y-axis against predicted values on the x-axis [4]

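- Interpret Pattern: A random scatter around zero supports linearity and constant variance; a curved (e.g., U-shaped) pattern suggests non-linearity; a funnel shape suggests heteroscedasticity [4]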

- Generate Normal Q-Q Plot: Plot sample quantiles against theoretical normal quantiles [4]

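- Assess Normality: Points lying close to the reference line support the normality assumption; S-shaped or curved departures, particularly in the tails, indicate non-normal residuals [4]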

- Check for Influential Points: Identify observations with high leverage and large residuals using Cook's distance [4]

Remedial Actions Based on Diagnostic Results:

| Pattern Detected | Proposed Solution | Application Context |
|---|---|---|
| Non-linearity | Add polynomial terms, use splines, or apply Generalized Additive Models (GAMs) [6] | When theoretical basis suggests curved relationships |
| Heteroscedasticity | Transform response variable, use weighted least squares, or apply variance-stabilizing transformations [6] | When variability changes with predicted values |
| Non-normality | Apply Box-Cox transformation to response variable [3] | When statistical inference requires normal errors |
| Outliers & influential points | Investigate data quality, consider robust regression techniques [3] [4] | When certain observations disproportionately influence results |

Table 2: Diagnostic patterns and corresponding remedial actions for residual analysis
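
To make the Box-Cox entry in Table 2 concrete, the sketch below chooses the transformation parameter λ by a grid search over the profile log-likelihood of a linear model, using NumPy only. The grid, the hypothetical data, and the helper names are assumptions; in practice R users would typically use MASS::boxcox(), and scipy.stats.boxcox() offers a single-sample (no covariates) version in Python.

```python
import numpy as np


def boxcox(y, lam):
    """Box-Cox transform of strictly positive y; lam = 0 gives the natural log."""
    return np.log(y) if abs(lam) < 1e-12 else (y**lam - 1.0) / lam


def boxcox_profile_loglik(y, X, lam):
    """Profile log-likelihood of lam for the model boxcox(y, lam) = X @ beta + normal error."""
    z = boxcox(y, lam)
    beta, *_ = np.linalg.lstsq(X, z, rcond=None)
    rss = np.sum((z - X @ beta) ** 2)
    n = len(y)
    # (lam - 1) * sum(log y) is the log-Jacobian of the transformation; it makes
    # likelihoods computed on differently transformed scales comparable.
    return -0.5 * n * np.log(rss / n) + (lam - 1.0) * np.sum(np.log(y))


def choose_lambda(y, X, grid=None):
    """Return the grid value of lam with the largest profile log-likelihood."""
    if grid is None:
        grid = np.linspace(-2.0, 2.0, 81)  # step of 0.05
    logliks = [boxcox_profile_loglik(y, X, lam) for lam in grid]
    return grid[int(np.argmax(logliks))]


# Hypothetical response that grows exponentially with x, with small multiplicative noise.
x = np.arange(1.0, 21.0)
y = np.exp(1.0 + 0.3 * x + 0.1 * (-1.0) ** np.arange(20))
X = np.column_stack([np.ones_like(x), x])
lam = choose_lambda(y, X)
print(f"selected lambda = {lam:.2f}")  # a value near 0 points to a log transform
```

Because the hypothetical response grows exponentially with x, the selected λ lands at or near 0, i.e., the log transform. Box-Cox requires a strictly positive response.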

Visualization and Interpretation of Residual Plots

Standard Diagnostic Plots for Regression

The most effective approach to residual analysis involves examining multiple complementary visualizations. Statistical software typically generates four key diagnostic plots that together provide a comprehensive assessment of model adequacy [4]:

- Residuals vs. Fitted Plot: Reveals patterns in residuals relative to prediction magnitude, highlighting non-linearity or heteroscedasticity [4]

- Normal Q-Q Plot: Assesses the normality assumption by comparing residual distribution to theoretical normal distribution [4]

- Scale-Location Plot: Displays the square root of the absolute standardized residuals against fitted values to better detect heteroscedasticity [4]

- Residuals vs. Leverage Plot: Identifies influential observations that disproportionately affect regression results [4]

Each plot addresses different model assumptions, and together they form a powerful diagnostic toolkit for researchers validating regression models.

Decision Framework for Residual Plot Interpretation

Figure 2: Diagnostic decision framework for interpreting residual plots

Applications in Pharmaceutical and Scientific Research

Residual Solvent Analysis in Drug Development

In pharmaceutical research, the term "residual" takes on additional specialized meaning in the context of residual solvent analysis. This application involves quantifying volatile organic compounds that remain in active pharmaceutical ingredients (APIs) and drug products after manufacturing [7] [8]. Regulatory guidelines such as ICH Q3C and USP <467> establish strict limits for these residuals based on their toxicity profiles, classifying solvents into three categories [7]:

- Class 1 solvents: Known human carcinogens or environmental hazards that should be avoided

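- Class 2 solvents: Solvents that must be limited because of inherent toxicity, such as non-genotoxic animal carcinogens, possible causative agents of irreversible toxicity (e.g., neurotoxicity or teratogenicity), or solvents suspected of other significant but reversible toxicities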

- Class 3 solvents: Compounds with low toxic potential subject to less stringent limits

The analytical methods for residual solvent detection primarily use headspace gas chromatography (GC), with mass spectrometry (GC-MS) used for confirmatory identification, to achieve the sensitivity and specificity required for regulatory compliance [7]. This application shows how the term "residual analysis" extends beyond statistical modeling into critical quality control processes in pharmaceutical manufacturing.

Research Reagent Solutions for Residual Analysis

| Reagent/Instrument | Function in Residual Analysis | Application Context |
|---|---|---|
| Headspace Gas Chromatograph (GC) | Separates and quantifies volatile residual solvents [7] | Pharmaceutical impurity profiling according to USP <467> |
| Mass Spectrometer (GC-MS) | Provides definitive identification of residual compounds [7] | Confirmatory testing and unknown peak identification |
| Statistical Software (R, Python) | Generates diagnostic plots and calculates residual statistics [4] | Regression model validation across scientific disciplines |
| Reference Standards | Enables calibration and quantification of specific residuals [7] | Method validation and compliance with regulatory guidelines |

Table 3: Essential research tools for residual analysis in pharmaceutical and scientific applications

Advanced Topics in Residual Analysis

Specialized Residual Types and Their Applications

Beyond ordinary residuals, several specialized residual types enhance diagnostic capabilities for specific analytical scenarios:

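- Studentized Residuals: Residuals \( e_i \) divided by an estimate of their standard deviation that leaves observation i out of the variance estimate, \( e_i / (s_{(i)}\sqrt{1 - h_{ii}}) \) (externally studentized residuals), making them comparable across observations and useful for outlier detection [3]

- Standardized Residuals: Residuals divided by their estimated standard deviation from the full fit, \( e_i / (s\sqrt{1 - h_{ii}}) \) (sometimes called internally studentized residuals), facilitating comparison across different models and datasets [2]

- PRESS Residuals: Calculation approach used in cross-validation where each residual is computed from a model fitted without that observation; for ordinary least squares they equal \( e_i / (1 - h_{ii}) \), so no refitting is needed

Here \( h_{ii} \) is the leverage (hat value) of observation i, \( s \) is the residual standard error of the full fit, and \( s_{(i)} \) is the residual standard error with observation i deleted. Terminology varies between textbooks and software packages.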

These specialized residuals address specific diagnostic needs, such as identifying influential observations or comparing model performance across different measurement scales.
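
All of these residual types can be computed from a single OLS fit. The following NumPy sketch (function name and data are hypothetical) obtains the leverages \( h_{ii} \), the diagonal of the hat matrix, from a QR decomposition of the design matrix and uses the standard identities for the deleted variance and the PRESS residuals, so no refitting is needed.

```python
import numpy as np


def residual_types(X, y):
    """Ordinary, standardized, externally studentized, and PRESS residuals for an OLS fit.

    X is the n-by-p design matrix (include a column of ones for an intercept).
    """
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta                                        # ordinary residuals
    Q, _ = np.linalg.qr(X)                                  # reduced QR; assumes full column rank
    h = np.sum(Q**2, axis=1)                                # leverages h_ii
    s2 = e @ e / (n - p)                                    # residual variance s^2
    standardized = e / np.sqrt(s2 * (1 - h))                # internally studentized
    s2_del = ((n - p) * s2 - e**2 / (1 - h)) / (n - p - 1)  # s_(i)^2 without refitting
    studentized = e / np.sqrt(s2_del * (1 - h))             # externally studentized
    press = e / (1 - h)                                     # leave-one-out prediction errors
    return e, standardized, studentized, press


# Hypothetical data with one suspicious final observation.
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0])
y = np.array([1.1, 2.0, 2.9, 4.2, 5.1, 5.8, 7.2, 12.0])
X = np.column_stack([np.ones_like(x), x])
e, standardized, studentized, press = residual_types(X, y)
for name, vals in [("ordinary", e), ("standardized", standardized),
                   ("studentized", studentized), ("PRESS", press)]:
    print(f"{name:>12}: {np.round(vals, 2)}")
```

Refitting the model without each observation in turn reproduces the PRESS and studentized values, which is a useful check on the identities. In R, rstandard() and rstudent() return the standardized and externally studentized versions for an lm fit.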

Addressing Violations of Regression Assumptions

When residual analysis reveals violations of regression assumptions, researchers can employ several advanced techniques to remedy these issues:

- Weighted Least Squares: Addresses heteroscedasticity by assigning different weights to observations based on their variance [6]

- Generalized Additive Models (GAMs): Accommodates non-linear relationships through smooth functions without requiring specific parametric forms [6]

- Robust Regression Techniques: Reduces the influence of outliers using alternative estimation methods less sensitive to extreme values

The appropriate remedial approach depends on the specific pattern identified through residual analysis and the theoretical understanding of the underlying phenomena being modeled.
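
Weighted least squares can be sketched by rescaling each row of the regression by the square root of its weight and then solving an ordinary least-squares problem. The example below assumes, hypothetically, that the error standard deviation is proportional to concentration, so the weights are \( 1/x^2 \); in practice, weights should reflect the variance structure actually seen in the residual plots. statsmodels offers the same fit as sm.WLS(y, X, weights=w).

```python
import numpy as np


def weighted_least_squares(X, y, w):
    """Minimize sum_i w_i * (y_i - X_i @ beta)^2 by rescaling each row by sqrt(w_i)."""
    sw = np.sqrt(np.asarray(w, dtype=float))
    beta, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return beta


# Hypothetical calibration-style data whose error spread grows with concentration.
conc = np.array([1.0, 2.0, 5.0, 10.0, 20.0, 50.0, 100.0, 200.0])
signal = 0.5 + 2.0 * conc + 0.05 * conc * (-1.0) ** np.arange(8)
X = np.column_stack([np.ones_like(conc), conc])

beta_ols = weighted_least_squares(X, signal, np.ones_like(conc))  # equal weights = OLS
beta_wls = weighted_least_squares(X, signal, 1.0 / conc**2)       # assumes SD proportional to conc
print("OLS (intercept, slope):", np.round(beta_ols, 4))
print("WLS (intercept, slope):", np.round(beta_wls, 4))
```

With equal weights the function reproduces OLS; with \( 1/x^2 \) weights the more precise low-concentration points carry more influence on the fitted line.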

Residuals, defined as the differences between observed and predicted values, serve as fundamental diagnostic tools in regression analysis and quality control processes across scientific disciplines. Through systematic calculation, visualization, and interpretation of residuals, researchers can validate model assumptions, identify deficiencies, and guide model improvement efforts. The protocols and frameworks presented in this document provide comprehensive guidance for implementing residual analysis in both statistical modeling and specialized applications such as pharmaceutical residual solvent testing. As regulatory requirements and analytical methodologies continue to evolve, the principles of residual analysis remain essential for ensuring the validity and reliability of scientific models and manufacturing processes.

The Critical Role of Residuals in Checking Model Assumptions

In statistical regression analysis, a residual is the difference between an observed value and the value predicted by a model [1]. Represented by the formula Residual = Observed - Predicted, these seemingly simple values form the cornerstone of model diagnostics, providing critical insights into whether a statistical model adequately represents the underlying data [4] [1]. For researchers and scientists in drug development, residual analysis is not merely a statistical formality; it is an essential practice for validating analytical methods, ensuring regulatory compliance, and building models that can reliably inform critical decisions from drug discovery to clinical trials [9].

The core premise of residual analysis is that if a model is correctly specified, the residuals should exhibit no systematic patterns: they should look like random noise scattered around zero [9]. Conversely, patterns in the residuals signal that the model has failed to capture some essential characteristic of the data. By examining residuals carefully, researchers can verify key model assumptions (linearity, normality, independence, and constant variance, or homoscedasticity) and identify outliers or influential points that could disproportionately skew the results [3] [10]. This process turns residuals from simple errors into a powerful diagnostic tool, guiding scientists toward more robust, reliable, and interpretable models.

Key Diagnostic Plots and Their Interpretation

Visual inspection of residuals is the most effective method for diagnosing model adequacy. The following plots, typically generated in tandem, provide a multi-faceted view of model performance and assumption violations.

Residuals vs. Fitted Values Plot

This plot displays residuals on the y-axis against the model's predicted (fitted) values on the x-axis [4]. Its primary purpose is to check the assumptions of linearity and homoscedasticity.

- Ideal Pattern: A random scatter of points around the horizontal line at zero (residual=0), with no discernible systematic patterns [4] [9].

- Problematic Patterns and Interpretations:

- A U-shaped or curved pattern indicates non-linearity. The model has failed to capture a non-linear relationship in the data, suggesting that a quadratic term, transformation, or a different non-linear model might be more appropriate [4] [10].

- A funnel-shaped pattern (where the spread of residuals increases or decreases with the fitted values) indicates heteroscedasticity, a violation of the constant variance assumption. This can lead to inefficient estimates and invalid inference [3].

Normal Q-Q Plot

The Normal Quantile-Quantile (Q-Q) plot assesses whether the residuals follow a normal distribution [4]. It plots the sorted residuals against the theoretically expected values from a normal distribution.

- Ideal Pattern: The points follow the dashed 45-degree reference line closely, with minor deviations at the tails [4].

- Problematic Patterns and Interpretations:

- Systematic deviations from the line, particularly an S-shape or curves at the ends, indicate departures from normality. This can affect the validity of confidence intervals and p-values [3].

Scale-Location Plot

Also known as the Spread-Location plot, this graph shows the square root of the absolute standardized residuals against the fitted values [4]. It is another powerful tool for detecting heteroscedasticity.

- Ideal Pattern: A horizontal line with randomly spread points, indicating that the spread (variance) of the residuals is constant across the range of fitted values [4].

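- Problematic Patterns and Interpretations:

- An upward or downward trend in the points (or in the smoothing line) indicates that the spread of the residuals changes with the fitted values, i.e., heteroscedasticity [4] [3].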
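
The Scale-Location coordinates can be computed directly. This NumPy sketch (hypothetical data and function name) returns the fitted values and \( \sqrt{|r_i|} \), where \( r_i \) are the standardized residuals, and summarizes the trend with the slope of a straight line fitted by np.polyfit; plotting software would normally draw a smoother instead.

```python
import numpy as np


def scale_location(X, y):
    """Return (fitted, sqrt(|standardized residuals|)) for a Scale-Location plot."""
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    fitted = X @ beta
    e = y - fitted
    Q, _ = np.linalg.qr(X)
    h = np.sum(Q**2, axis=1)            # leverages
    s = np.sqrt(e @ e / (n - p))        # residual standard error
    return fitted, np.sqrt(np.abs(e / (s * np.sqrt(1 - h))))


# Hypothetical funnel-shaped data: the error magnitude grows with x.
x = np.arange(1.0, 41.0)
y = 2.0 + 3.0 * x + 0.5 * x * (-1.0) ** np.arange(40)
X = np.column_stack([np.ones_like(x), x])
fitted, spread = scale_location(X, y)
trend = np.polyfit(fitted, spread, 1)[0]  # slope of a straight-line trend through the points
print(f"trend in sqrt|standardized residual| per unit fitted value: {trend:.4f}")
```

A clearly positive slope, as in this deliberately funnel-shaped example, is the numerical counterpart of an upward-trending Scale-Location plot.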

Residuals vs. Leverage Plot

This plot helps identify influential observations that have a disproportionate impact on the regression model's results [4]. It plots residuals against leverage, often with contours of Cook's distance.

- Ideal Pattern: All points are clustered closely together, well within the boundaries of Cook's distance lines (typically shown as red dashed lines) [4].

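- Problematic Patterns and Interpretations:

- Points in the upper or lower right corner, especially beyond the Cook's distance lines, are influential cases whose removal would noticeably alter the model's results [4] [11].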

Table 1: Summary of Key Diagnostic Residual Plots

| Plot Type | Primary Assumption Checked | Ideal Pattern | Common Violations & Implications |
|---|---|---|---|
| Residuals vs. Fitted | Linearity & Homoscedasticity | Random scatter around zero | Curve: Non-linearity. Funnel: Non-constant variance (Heteroscedasticity) [4] [3] |
| Normal Q-Q | Normality of Errors | Points on the diagonal line | S-shape/Curves: Non-normal residuals; impacts significance tests [4] [3] |
| Scale-Location | Homoscedasticity | Horizontal line with random spread | Upward/Downward trend: Non-constant variance [4] [3] |
| Residuals vs. Leverage | Influence & Outliers | Points clustered inside Cook's distance lines | Points in top/bottom right: Influential cases that alter model results [4] [11] |

Advanced Diagnostic Techniques

Beyond the four standard plots, several advanced techniques offer deeper insights, particularly in complex modeling scenarios common in pharmaceutical research.

Partial Residual Plots

Partial Residual Plots (PRPs) are invaluable for diagnosing the functional form of a specific predictor in a multiple regression model after accounting for the effects of all other covariates [12]. They help answer whether the relationship between a predictor and the outcome is linear or requires transformation.

In a recent application for a Model-based Meta-Analysis (MBMA) of antidepressant treatments, PRPs were used to visualize the dose-response relationship for Venlafaxine while normalizing for other effects like placebo response and baseline score [12]. This provided a "like-to-like" comparison, revealing how well the model captured the dose-effect relationship independently of other variables. PRPs are particularly useful when dealing with large numbers of studies, where traditional forest plots become unwieldy [12].
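
For an ordinary linear model, the partial residuals for predictor \( x_j \) are the full-model residuals plus that predictor's fitted component, \( e_i + b_j x_{ij} \). The sketch below uses hypothetical dose and baseline-score values and a hypothetical helper name; it does not reproduce the model-specific normalization used in the MBMA application cited above [12]. In R, car::crPlots() draws such component-plus-residual plots.

```python
import numpy as np


def partial_residuals(X, y, j):
    """Component-plus-residual values e + b_j * X[:, j] for column j of design matrix X."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta  # residuals from the full model
    return e + beta[j] * X[:, j], beta[j]


# Hypothetical trial-level data: response depends on dose and on baseline score.
dose = np.array([0.0, 37.5, 75.0, 150.0, 225.0, 0.0, 75.0, 150.0, 225.0, 37.5])
baseline = np.array([24.0, 26.0, 25.0, 27.0, 23.0, 28.0, 22.0, 25.0, 26.0, 24.0])
noise = 0.3 * (-1.0) ** np.arange(10)
response = -2.0 - 0.02 * dose - 0.3 * (baseline - 25.0) + noise
X = np.column_stack([np.ones_like(dose), dose, baseline])

pr_dose, b_dose = partial_residuals(X, response, j=1)
# Plotting pr_dose against dose shows the dose effect with baseline adjusted for;
# curvature in that scatter would suggest the linear dose term is misspecified.
print("dose coefficient:", round(b_dose, 4))
print("partial residuals:", np.round(pr_dose, 3))
```

A straight line fitted through the partial residuals against \( x_j \) has slope exactly \( b_j \), so it is curvature around that line that signals a misspecified functional form.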

Identifying Outliers and Influential Points

Not all outliers are influential. It is crucial to distinguish between them using specific diagnostic statistics:

- Outliers: Observations where the response value is unusual given its covariate pattern. These can be detected using studentized residuals. A common rule is that absolute studentized residuals greater than 3 may be considered outliers [3] [11].

- Leverage: Points with an unusual combination of predictor values (far from the average covariate pattern). Leverage is measured by the hat value. A common cutoff is 2p/n, twice the average hat value, where p is the number of estimated coefficients (including the intercept) and n is the number of observations [11].

- Influence: Roughly, the combination of being an outlier and having high leverage. Influential points, if removed, cause a substantial change in the model coefficients. Cook's distance is a key metric, with values greater than 4/n often flagged for investigation [4] [11].

Table 2: Diagnostics for Unusual Observations

| Diagnostic | Statistic | What It Identifies | Common Cut-off Guideline |
|---|---|---|---|
| Outlier | Studentized Residual | Observation with an unusual response value | Absolute value > 3 [11] |
| Leverage | Hat Value | Observation with extreme predictor values | > 2p/n [11] |
| Influence | Cook's Distance | Observation that significantly changes model coefficients | > 4/n [4] |
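
The cut-offs in Table 2 can be applied programmatically. This NumPy sketch (hypothetical data and function name) computes hat values, externally studentized residuals, and Cook's distance for an OLS fit, taking p to be the number of columns in the design matrix, including the intercept. The rules are screening guidelines, so flagged points are candidates for investigation rather than automatic removal.

```python
import numpy as np


def flag_unusual(X, y):
    """Apply the rule-of-thumb cutoffs from Table 2 to an OLS fit.

    p is the number of columns of X (estimated coefficients, including the intercept).
    """
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta
    Q, _ = np.linalg.qr(X)
    h = np.sum(Q**2, axis=1)                        # hat values (leverage)
    s2 = e @ e / (n - p)
    r = e / np.sqrt(s2 * (1 - h))                   # standardized residuals
    t = r * np.sqrt((n - p - 1) / (n - p - r**2))   # externally studentized residuals
    cooks = r**2 * h / (p * (1 - h))                # Cook's distance
    flags = {
        "outlier": np.flatnonzero(np.abs(t) > 3),
        "high_leverage": np.flatnonzero(h > 2 * p / n),
        "influential": np.flatnonzero(cooks > 4 / n),
    }
    return flags, t, h, cooks


# Hypothetical data: ten well-behaved points plus one far-out x value that breaks the trend.
x = np.array([1.0, 2, 3, 4, 5, 6, 7, 8, 9, 10, 25])
y = 2.0 + 0.5 * x + 0.1 * (-1.0) ** np.arange(11)
y[10] -= 8.0
X = np.column_stack([np.ones_like(x), x])
flags, t, h, cooks = flag_unusual(X, y)
print(flags)
```

Here the isolated point at x = 25 has high leverage and, because it breaks the trend, is also flagged as an outlier and as influential. In R, hatvalues(), rstudent(), and cooks.distance() return the same three quantities for an lm fit.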

Experimental Protocols for Residual Analysis

This section provides a detailed, step-by-step protocol for conducting a comprehensive residual analysis, suitable for inclusion in a method validation report.

Protocol: Comprehensive Residual Analysis for Linear Model Validation

1. Purpose and Scope

To provide a standardized methodology for evaluating the adequacy of a linear regression model by examining its residuals. This protocol verifies key statistical assumptions and identifies potential model misspecifications, ensuring the reliability of inferences drawn from the model. It is applicable during analytical method validation, calibration curve assessment, and clinical data analysis.

2. Materials and Software Requirements

- Dataset with observed and predictor variables.

- Statistical software (e.g., R, Python with StatsModels, SAS, SPSS).

- The plot.lm() function in R is specifically designed for this purpose [4].

3. Step-by-Step Procedure

Step 1: Model Fitting

- Fit the proposed linear regression model to your dataset using standard procedures (e.g., lm() in R).

Step 2: Generate Diagnostic Plots

- Execute the appropriate command to produce the four core diagnostic plots. In R, calling plot() on the fitted model object (plot(fitted_model)) generates them sequentially [4].

- To view all four plots simultaneously in R, set a 2 × 2 plotting layout with par(mfrow = c(2, 2)) before calling plot(fitted_model).

Step 3: Systematic Visual Inspection

- Residuals vs. Fitted Plot: Check for a random scatter of points and the absence of U-shaped or funnel-shaped patterns [4].

- Normal Q-Q Plot: Assess how closely the points adhere to the diagonal line. Note any systematic deviations [4].

- Scale-Location Plot: Verify that the points form a roughly horizontal band and that the red smoothing line is flat [4].

- Residuals vs. Leverage Plot: Identify any points with high leverage and/or high influence, particularly those outside the Cook's distance contours [4].

Step 4: Quantitative Validation (Supplementary)

- Perform formal statistical tests to complement visual inspection:

  - Normality: Shapiro-Wilk test on the residuals.

  - Heteroscedasticity: Breusch-Pagan test.

  - Independence: Durbin-Watson test for time-series data.
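
The Durbin-Watson and Breusch-Pagan statistics are simple functions of the residuals. The sketch below computes both with NumPy on simulated (hypothetical) data; the Breusch-Pagan version shown is Koenker's studentized form, and the p-value line assumes a single predictor, so the reference distribution is chi-square with 1 degree of freedom. Library equivalents include durbin_watson in statsmodels.stats.stattools, het_breuschpagan in statsmodels.stats.diagnostic, and scipy.stats.shapiro for the Shapiro-Wilk test.

```python
import math

import numpy as np


def durbin_watson(e):
    """Durbin-Watson statistic for time-ordered residuals (about 2 = no lag-1 autocorrelation)."""
    e = np.asarray(e, dtype=float)
    return np.sum(np.diff(e) ** 2) / np.sum(e**2)


def breusch_pagan_lm(X, e):
    """Koenker's studentized Breusch-Pagan statistic: n * R^2 of e^2 regressed on X.

    Under constant variance it is approximately chi-square with X.shape[1] - 1
    degrees of freedom (X must include an intercept column).
    """
    u = np.asarray(e, dtype=float) ** 2
    g, *_ = np.linalg.lstsq(X, u, rcond=None)
    r2 = 1.0 - np.sum((u - X @ g) ** 2) / np.sum((u - u.mean()) ** 2)
    return len(u) * r2


# Hypothetical time-ordered data whose spread grows over time.
rng = np.random.default_rng(0)
x = np.arange(1.0, 61.0)
y = 1.0 + 0.5 * x + rng.normal(scale=0.05 * x)
X = np.column_stack([np.ones_like(x), x])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
e = y - X @ beta

dw = durbin_watson(e)
lm = breusch_pagan_lm(X, e)
p_value = math.erfc(math.sqrt(max(lm, 0.0) / 2))  # chi-square(1 df) upper tail; one predictor only
print(f"Durbin-Watson = {dw:.2f}, Breusch-Pagan LM = {lm:.2f}, p = {p_value:.4f}")
```

Durbin-Watson values near 2 suggest no lag-1 autocorrelation, while values toward 0 or 4 suggest positive or negative autocorrelation; a small Breusch-Pagan p-value points to heteroscedasticity.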

Step 5: Documentation and Interpretation

- Document all plots and test results.

- For any assumption violation, propose and investigate remedial measures (e.g., data transformation, weighted regression, non-linear terms, robust regression) [9].

- Clearly state the final conclusion regarding model adequacy.

Workflow Visualization

The following diagram illustrates the logical workflow for the residual analysis protocol.

The Scientist's Toolkit: Essential Reagents and Software

For researchers embarking on residual analysis, the following tools and statistical "reagents" are essential for conducting a robust diagnostic evaluation.

Table 3: Essential Research Reagent Solutions for Residual Analysis

| Tool Category | Specific Item / Software | Function and Application in Diagnostics |
|---|---|---|
| Statistical Software | R with stats & car packages [11] [10] | The base R plot.lm() function generates the four core plots. The car package provides enhanced diagnostic functions like influencePlot() and residualPlots() [11]. |
| Statistical Software | Python (StatsModels, scikit-learn) [10] | Provides comprehensive regression diagnostics and residual analysis capabilities through libraries like StatsModels. |
| Statistical Software | SAS, SPSS, MATLAB [10] | Enterprise and commercial software with robust procedures for regression diagnostics and residual analysis. |
| Diagnostic Metrics | Studentized Residuals [3] [11] | Standardized residuals used to detect outliers (unusually large differences between observed and predicted values). |
| Diagnostic Metrics | Hat Values (Leverage) [11] | Identifies observations with extreme or unusual combinations of predictor variables. |
| Diagnostic Metrics | Cook's Distance [4] [3] [11] | A composite measure that quantifies the influence of a single observation on the entire set of regression coefficients. |

Application in Pharmaceutical Research and Regulatory Compliance

In the pharmaceutical industry, residual analysis transcends theoretical statistics and becomes a matter of quality and regulatory rigor. Regulatory agencies like the FDA and EMA require stringent validation of analytical methods used in drug development and manufacturing [9]. Residual plots serve as a critical component of this validation, providing visual and quantitative evidence that a method is fit for its intended purpose.

During analytical method validation, residual plots are used to:

- Confirm Linearity: A random scatter of residuals around zero in a calibration curve reinforces that a linear model is appropriate over the specified concentration range. Systematic deviations suggest the range may be too broad or a non-linear model is needed [9].

- Detect Non-constant Variance: Heteroscedasticity in a bioanalytical assay, for example, can lead to unreliable quantification at certain concentration levels. Identifying this through a residual plot allows scientists to apply weighted regression or other corrections to ensure the method's accuracy and precision across its entire range [9].

- Identify Outliers: An outlier in a clinical trial data analysis or an analytical run could indicate sample contamination, measurement error, or an instrumental anomaly. Pinpointing these points for investigation is crucial before a method can be fully validated and implemented in routine quality control [9].

The inclusion of residual plots and their interpretation in validation reports enhances transparency and demonstrates a commitment to statistical rigor, which is highly valued during regulatory reviews and inspections [9].

In the context of regression model diagnostics research, residual analysis serves as a fundamental methodology for verifying model assumptions and assessing model adequacy. Residuals, defined as the differences between observed values and model-predicted values, contain valuable information about why a model may not fit well [2]. Diagnostic plots transform this information into visual patterns, enabling researchers to detect violations of statistical assumptions that could compromise analytical conclusions. For researchers and drug development professionals, these diagnostics are particularly crucial as they ensure the validity of models used in critical applications such as dose-response modeling, pharmacokinetic studies, and clinical trial data analysis.

The regression framework assumes a linear relationship between predictors and the response variable, independent and normally distributed errors with constant variance, and no influential outliers disproportionately affecting the model [13] [4]. Violations of these assumptions can lead to biased parameter estimates, inaccurate confidence intervals, and compromised predictive validity. This article systematically examines four primary diagnostic plots: Residuals vs. Fitted, Normal Q-Q, Scale-Location, and Residuals vs. Leverage, providing comprehensive protocols for their implementation and interpretation within pharmaceutical research contexts.

Theoretical Foundations of Residual Analysis

Residual Calculation and Properties

In linear regression analysis, residuals are mathematically defined as:

\[ e_i = y_i - \hat{y}_i \]

where \( y_i \) represents the observed value and \( \hat{y}_i \) represents the predicted value for the i-th observation [2]. The diagnostic power of residuals stems from their relationship to the unobservable error term; while errors represent the deviation from the true population regression line, residuals represent the deviation from the estimated sample regression line.

A fundamental property of residuals in ordinary least squares (OLS) regression is that they are orthogonal to every column of the design matrix and hence to the fitted values; when the model includes an intercept term, this means they also sum to zero and have zero covariance with the fitted values [14]. This theoretical foundation ensures that residuals behave in predictable ways when model assumptions are satisfied, allowing systematic deviations from these patterns to indicate assumption violations.
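
These properties are easy to confirm numerically. In this minimal NumPy sketch with hypothetical data, the residuals from a model with an intercept sum to zero and are orthogonal to the fitted values and to each column of the design matrix, while the residuals from a fit through the origin do not sum to zero.

```python
import numpy as np

# Hypothetical predictor and response values.
x = np.array([0.5, 1.0, 2.0, 3.5, 5.0, 8.0])
y = np.array([1.2, 1.9, 3.1, 4.4, 6.3, 9.0])

X = np.column_stack([np.ones_like(x), x])  # model with an intercept
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
fitted = X @ beta
e = y - fitted

print(np.isclose(e.sum(), 0.0))      # residuals sum to zero
print(np.isclose(e @ fitted, 0.0))   # orthogonal to the fitted values
print(np.allclose(X.T @ e, 0.0))     # orthogonal to every column of X

# Without an intercept, the sum-to-zero property generally fails.
X0 = x[:, None]
b0, *_ = np.linalg.lstsq(X0, y, rcond=None)
e0 = y - X0 @ b0
print(round(float(e0.sum()), 3))
```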

Assumptions of Linear Regression

The validity of linear regression inference depends on several critical assumptions:

- Linearity: The relationship between predictors and response is linear

- Independence: Errors are statistically independent

- Homoscedasticity: Constant variance of errors across all predictor levels

- Normality: Errors follow a normal distribution

Diagnostic plots essentially operationalize the verification of these assumptions, with each plot targeting specific potential violations [13] [4]. For drug development researchers, understanding these assumptions is crucial when modeling biological phenomena where violation risks are substantial, such as in saturated response effects, heterogeneous population responses, or assay measurement limitations.

Key Diagnostic Plots

Residuals vs. Fitted Values Plot

Purpose and Interpretation

The Residuals vs. Fitted plot graphically displays the predicted values (\( \hat{y} \)) on the horizontal axis against the residuals (\( e_i \)) on the vertical axis [13]. This plot primarily addresses the assumptions of linearity and homoscedasticity (constant variance).

In a well-specified model, this plot should show:

- Residuals randomly scattered around zero (the reference line)

- No discernible systematic patterns

- Constant spread across all fitted values

- No prominent outliers [13]

Table 1: Patterns in Residuals vs. Fitted Plots and Their Interpretations

| Pattern Observed | Likely Cause | Implications for Model |
|---|---|---|
| Random scatter around zero | Assumptions met | No action needed |
| U-shaped or inverted U-shaped curve | Non-linear relationship | Model misspecification; add quadratic terms |
| Funnel or cone shape | Heteroscedasticity | Non-constant variance; transformations needed |
| One or two points far from the rest | Outliers | Investigate influential points |

Protocol for Implementation

Protocol 1: Creating and Interpreting Residuals vs. Fitted Plot

- Model Fitting: Fit your regression model using standard software (R, Python, SAS)

- Extract Values: Obtain fitted values and residuals from the model object

- Create Scatterplot: Plot fitted values on x-axis against residuals on y-axis

- Add Reference Line: Include horizontal line at y=0 for visual reference

- Assess Patterns: Examine for non-linearity, non-constant variance, or outliers

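In R, after fitting a model (fit <- lm(y ~ x, data)), the plot can be generated with plot(fit, which = 1).

In Python using statsmodels, fit the model with fit = sm.OLS(y, sm.add_constant(x)).fit() (after import statsmodels.api as sm); fit.fittedvalues and fit.resid then supply the x- and y-coordinates for a scatter plot.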
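
Without a plotting library, the steps of Protocol 1 can still be checked numerically. This NumPy sketch (hypothetical data; the helper name is illustrative) fits a straight line to a deliberately curved relationship and summarizes the residuals in thirds of the fitted-value range; the matplotlib call in the final comment is one way to draw the actual plot.

```python
import numpy as np


def residuals_vs_fitted(X, y):
    """Fit OLS and return (fitted values, residuals): the x and y coordinates of the plot."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    fitted = X @ beta
    return fitted, y - fitted


# Hypothetical curved relationship deliberately fitted with a straight line.
x = np.linspace(0.0, 10.0, 21)
y = 1.0 + 0.5 * x + 0.2 * x**2
X = np.column_stack([np.ones_like(x), x])
fitted, resid = residuals_vs_fitted(X, y)

# Text summary instead of a plot: mean residual in thirds of the fitted-value range.
order = np.argsort(fitted)
for label, idx in zip(["low", "middle", "high"], np.array_split(order, 3)):
    print(f"{label:>6}: mean residual {resid[idx].mean():+.3f}")

# With matplotlib installed, the plot itself would be:
#   plt.scatter(fitted, resid); plt.axhline(0.0, linestyle="--")
```

Positive mean residuals at both ends and a negative mean in the middle correspond to the U-shaped pattern in the table above, which points to a missing quadratic term.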

Normal Q-Q Plot

Purpose and Interpretation

The Normal Quantile-Quantile (Q-Q) plot assesses whether residuals follow a normal distribution [15] [4]. It compares the quantiles of the residual distribution against the theoretical quantiles of a normal distribution with the same mean and variance.

Interpretation guidelines:

- Points following the diagonal line suggest normality

- Systematic deviations indicate non-normality

- S-shaped curves indicate heavy or light tails relative to a normal distribution

- C-shaped curves indicate skewness [15]

Table 2: Common Q-Q Plot Patterns and Distributional Issues

| Pattern in Q-Q Plot | Distribution Issue | Corrective Actions |
|---|---|---|
| Points follow reference line | Normal distribution | No action needed |
| S-shaped curve | Heavy or light tails | Transform response variable |
| Convex bow: both ends above the line (sample quantiles on the vertical axis) | Right skew | Log or square root transformation |
| Concave bow: both ends below the line (sample quantiles on the vertical axis) | Left skew | Reflection then transformation |
| Few points deviate at ends | Outliers | Investigate data quality |

Protocol for Implementation

Protocol 2: Creating and Interpreting Normal Q-Q Plots

- Sort Residuals: Arrange residuals in ascending order

- Calculate Theoretical Quantiles: Generate corresponding quantiles from standard normal distribution

- Create Scatterplot: Plot theoretical quantiles against observed residual quantiles

- Add Reference Line: Include the line of perfect agreement (y = x), which applies when the residuals have been standardized

- Assess Distribution: Evaluate deviation from reference line

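In R, plot(fit, which = 2) draws the Q-Q plot of standardized residuals; qqnorm(resid(fit)) followed by qqline(resid(fit)) performs the same check on the raw residuals.

In Python, statsmodels provides sm.qqplot(results.resid, line="s"), where sm is statsmodels.api and results is the fitted model.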
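The quantities behind a normal Q-Q plot are simple to compute directly. The sketch below assumes NumPy and uses the standard library's statistics.NormalDist for normal quantiles: it standardizes the residuals, sorts them, and pairs them with standard normal quantiles at plotting positions (i - 0.5)/n. That plotting-position rule is one common convention (R's ppoints(), used by qqnorm(), switches to (i - 3/8)/(n + 1/4) when n ≤ 10), and the function name qq_points is hypothetical.

```python
import numpy as np
from statistics import NormalDist


def qq_points(resid):
    """Return (theoretical, sample) quantile pairs for a normal Q-Q plot.

    Residuals are standardized to mean 0 and SD 1, so the reference line is y = x
    when sample quantiles go on the vertical axis.
    """
    r = np.asarray(resid, dtype=float)
    n = r.size
    sample = np.sort((r - r.mean()) / r.std(ddof=1))
    probs = (np.arange(1, n + 1) - 0.5) / n  # plotting positions
    std_normal = NormalDist(mu=0.0, sigma=1.0)
    theoretical = np.array([std_normal.inv_cdf(p) for p in probs])
    return theoretical, sample


rng = np.random.default_rng(1)
# Hypothetical residuals: one roughly normal set, one right-skewed set.
theo_n, samp_n = qq_points(rng.normal(size=200))
theo_s, samp_s = qq_points(rng.exponential(size=200) - 1.0)

# Correlation between the two quantile sets: close to 1 suggests near-normality.
corr_normal = np.corrcoef(theo_n, samp_n)[0, 1]
corr_skewed = np.corrcoef(theo_s, samp_s)[0, 1]
```

For the right-skewed set, both ends of the sorted sample sit above the y = x line (a convex bow), which is how right skew shows up on a Q-Q plot with sample quantiles on the vertical axis.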

The following diagram illustrates the systematic workflow for creating and interpreting Normal Q-Q plots:

Scale-Location Plot

Purpose and Interpretation

Also known as the Spread-Location plot, this diagnostic tool specifically assesses the assumption of homoscedasticity (constant variance) [4]. Instead of plotting raw residuals, it displays the square root of the absolute standardized residuals against fitted values.

Interpretation guidelines:

- Horizontal line with randomly scattered points indicates constant variance

- Upward or downward sloping pattern indicates heteroscedasticity

- The presence of a non-flat smooth line (often added to the plot) indicates changing variance across fitted values

Protocol for Implementation

Protocol 3: Creating and Interpreting Scale-Location Plots

- Standardize Residuals: Calculate standardized or studentized residuals

- Transform Values: Compute square root of absolute standardized residuals

- Create Scatterplot: Plot fitted values against transformed residuals

- Add Smooth Line: Include a loess or similar smooth line to visualize trend

- Assess Pattern: Evaluate whether the smooth line is approximately horizontal

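In R, plot(fit, which = 3) draws the Scale-Location panel, including a smooth trend line.

In Python, statsmodels exposes the standardized residuals as results.get_influence().resid_studentized_internal; plotting the square root of their absolute values against results.fittedvalues reproduces this panel.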
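A NumPy sketch of the Scale-Location computation follows, assuming a design matrix X with an intercept column. It derives the hat values hᵢᵢ, the standardized residuals rᵢ = eᵢ/(s√(1 - hᵢᵢ)), and the plotted values √|rᵢ|. A loess smoother is omitted; as a crude, hypothetical stand-in, the slope of a straight line fitted with np.polyfit summarizes the trend. The data, in which the error SD grows with x, are simulated.

```python
import numpy as np


def scale_location(X, y):
    """Return fitted values and sqrt(|standardized residuals|)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    fitted = X @ beta
    resid = y - fitted
    # Hat values h_ii = x_i' (X'X)^-1 x_i, computed without forming the n x n hat matrix.
    h = np.einsum("ij,jk,ik->i", X, XtX_inv, X)
    s = np.sqrt(resid @ resid / (n - p))  # residual standard error
    r_std = resid / (s * np.sqrt(1.0 - h))
    return fitted, np.sqrt(np.abs(r_std))


rng = np.random.default_rng(7)
x = np.linspace(1.0, 10.0, 150)
y = 3.0 + 2.0 * x + rng.normal(scale=0.4 * x)  # hypothetical: SD grows with x
X = np.column_stack([np.ones_like(x), x])
fitted, sqrt_abs_r = scale_location(X, y)

# Slope of a straight-line trend: clearly positive here, near zero under constant variance.
trend_slope = np.polyfit(fitted, sqrt_abs_r, 1)[0]
```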

Residuals vs. Leverage Plot

Purpose and Interpretation

This plot identifies influential observations that disproportionately affect the regression results [4]. It displays standardized residuals against leverage, with contours of Cook's distance, a measure of influence.

Key concepts:

- Leverage: Measures how extreme an observation is in the predictor space

- Influence: Combines leverage and residual size to measure impact on parameter estimates

- Cook's Distance: Quantifies how much regression coefficients change if a case is omitted

Interpretation guidelines:

- Points in upper/lower right corners are potentially influential

- Cases outside Cook's distance contours warrant investigation

- The plot helps distinguish between outliers and influential points

Protocol for Implementation

Protocol 4: Creating and Interpreting Residuals vs. Leverage Plots

- Calculate Leverage: Obtain leverage values (hat values) from model

- Compute Influence Measures: Calculate Cook's distance for each observation

- Create Scatterplot: Plot standardized residuals against leverage

- Add Contour Lines: Include Cook's distance contours (typically 0.5 and 1.0)

- Identify Influential Points: Flag observations beyond contour lines

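In R, plot(fit, which = 5) draws this panel with Cook's distance contours at 0.5 and 1 by default.

In Python, sm.graphics.influence_plot(results) plots studentized residuals against leverage, sizing each point by its Cook's distance instead of drawing contours.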
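The numbers behind the Residuals vs. Leverage plot can be computed directly. The sketch below, assuming NumPy and a design matrix with an intercept column, returns leverage hᵢᵢ, standardized residuals, and Cook's distance via Dᵢ = (eᵢ²/(p·MSE))·(hᵢᵢ/(1 - hᵢᵢ)²). It then checks that closed form against the definition by refitting the model without each case in turn. The function names and the data, including one planted point far out in x, are hypothetical.

```python
import numpy as np


def leverage_and_cooks(X, y):
    """Leverage, standardized residuals and Cook's distance for an OLS fit."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    resid = y - X @ (XtX_inv @ X.T @ y)
    h = np.einsum("ij,jk,ik->i", X, XtX_inv, X)  # hat-matrix diagonal
    mse = resid @ resid / (n - p)
    r_std = resid / np.sqrt(mse * (1.0 - h))
    cooks = (resid**2 / (p * mse)) * (h / (1.0 - h) ** 2)
    return h, r_std, cooks


def cooks_by_refitting(X, y):
    """Cook's distance from its definition: squared shift of all fitted values
    when case i is dropped, scaled by p * MSE of the full fit."""
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    fitted = X @ beta
    mse = np.sum((y - fitted) ** 2) / (n - p)
    out = np.empty(n)
    for i in range(n):
        keep = np.arange(n) != i
        beta_i, *_ = np.linalg.lstsq(X[keep], y[keep], rcond=None)
        out[i] = np.sum((fitted - X @ beta_i) ** 2) / (p * mse)
    return out


# Hypothetical data: 20 points near a line plus one remote, off-trend point.
x = np.append(np.arange(1.0, 21.0), 40.0)
y = 2.0 + 0.5 * x + 0.3 * np.sin(x)
y[-1] = 5.0  # the line would predict about 22 at x = 40
X = np.column_stack([np.ones_like(x), x])

h, r_std, cooks = leverage_and_cooks(X, y)
beyond_05, beyond_1 = cooks > 0.5, cooks > 1.0  # the contour levels R draws by default
```

The leverages sum to p (here 2), and only the planted point lies beyond the Cook's distance contours.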

Integrated Diagnostic Workflow

The following diagram presents a comprehensive workflow for regression diagnostics, integrating all four primary diagnostic plots:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Regression Diagnostics

| Tool/Software | Primary Function | Application Context |
|---|---|---|
| R Statistical Software | Comprehensive regression analysis | Primary analysis platform for complex models |
| Python (statsmodels) | Flexible statistical modeling | Integration with machine learning pipelines |
| SAS PROC REG | Enterprise-level regression | Clinical trial analysis (pharma industry) |
| JMP Interactive Visualization | Exploratory data analysis | Rapid model prototyping and diagnostics |
| MATLAB Statistics Toolbox | Computational mathematics | Engineering-based modeling applications |

For drug development researchers, these tools support the diagnostic protocols above across different analytical contexts. R provides the most comprehensive suite of diagnostic functions through its base graphics and packages such as car and ggplot2 [4]. Python's statsmodels offers similar diagnostic capabilities and combines readily with scikit-learn in machine learning workflows. SAS remains prevalent in pharmaceutical regulatory submissions, while JMP provides interactive capabilities valuable for exploratory analyses during early research phases.

Advanced Applications in Pharmaceutical Research

Case Study: Dose-Response Modeling

In dose-response studies, diagnostic plots play a crucial role in validating model assumptions. The Residuals vs. Fitted plot can detect non-linear response patterns that might indicate alternative functional forms (e.g., Emax models instead of linear models). The Scale-Location plot can identify variance heterogeneity across dose levels, common when higher doses produce more variable biological responses.
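To illustrate the Residuals vs. Fitted signal described here, the sketch below generates a noise-free response from an Emax model, E = E0 + Emax·D/(ED50 + D), with hypothetical parameter values, fits a straight line by ordinary least squares, and inspects the residuals along dose. Negative residuals at both ends and positive ones in between form the inverted-U curvature that points toward a non-linear form. Fitting the Emax model itself would need non-linear least squares (for example scipy.optimize.curve_fit), which is not shown.

```python
import numpy as np

# Hypothetical Emax parameters (Hill coefficient fixed at 1); no noise, for clarity.
E0, EMAX, ED50 = 0.0, 100.0, 10.0
dose = np.linspace(0.0, 100.0, 11)  # 0, 10, ..., 100
effect = E0 + EMAX * dose / (ED50 + dose)

# Misspecified model: straight line in dose, fitted by ordinary least squares.
X = np.column_stack([np.ones_like(dose), dose])
beta, *_ = np.linalg.lstsq(X, effect, rcond=None)
fitted = X @ beta
resid = effect - fitted

# Residual signs along increasing dose: negative at both ends, positive in between.
signs = np.sign(resid).astype(int)
```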

Case Study: Pharmacokinetic Data Analysis

Pharmacokinetic (PK) data often exhibit heteroscedasticity where measurement error increases with concentration levels. Diagnostic plots help identify this pattern, guiding appropriate variance-stabilizing transformations or weighted regression approaches. The Normal Q-Q plot is particularly valuable for assessing distributional assumptions in population PK models.

Protocol for Model Remediation

Protocol 5: Addressing Identified Diagnostic Issues

Non-linearity (Residuals vs. Fitted plot):

- Add polynomial terms

- Apply non-linear transformation to predictors

- Implement spline regression or generalized additive models

Heteroscedasticity (Scale-Location plot):

- Apply variance-stabilizing transformations (log, square root)

- Use weighted least squares regression

- Implement generalized linear models with appropriate variance structure

Non-normality (Normal Q-Q plot):

- Transform response variable (Box-Cox transformation)

- Use robust regression methods

- Apply non-parametric approaches

Influential Observations (Residuals vs. Leverage plot):

- Verify data quality for influential cases

- Consider robust regression techniques

- Report results with and without influential points
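Of the heteroscedasticity remedies listed, weighted least squares is easy to sketch: each squared residual is weighted by wᵢ = 1/σᵢ², which is equivalent to scaling the rows of X and y by √wᵢ and running ordinary least squares. The sketch assumes the error SD is known to be proportional to x, a simplification (in practice the variance function is usually estimated); the data and the wls_fit name are hypothetical. statsmodels offers the same estimator as sm.WLS(y, X, weights=w).

```python
import numpy as np


def wls_fit(X, y, weights):
    """Weighted least squares: minimize sum_i w_i * (y_i - x_i' b)^2.

    Equivalent to ordinary least squares on rows scaled by sqrt(w_i).
    """
    sw = np.sqrt(np.asarray(weights, dtype=float))
    beta, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return beta


# Hypothetical data whose error SD is proportional to x (assumed known here).
rng = np.random.default_rng(42)
x = np.linspace(1.0, 10.0, 200)
sigma = 0.3 * x
y = 2.0 + 0.5 * x + rng.normal(scale=sigma)
X = np.column_stack([np.ones_like(x), x])

beta_ols, *_ = np.linalg.lstsq(X, y, rcond=None)
beta_wls = wls_fit(X, y, weights=1.0 / sigma**2)  # weights = 1 / variance

# Residuals scaled by the assumed SD should have roughly constant spread near 1.
scaled_resid = (y - X @ beta_wls) / sigma
```

Both beta_ols and beta_wls estimate the same line; the benefit of weighting is more precise estimates and trustworthy standard errors, not a different target.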

Diagnostic plots constitute an essential methodology for verifying regression model assumptions in pharmaceutical research. The integrated workflow presented—encompassing Residuals vs. Fitted, Normal Q-Q, Scale-Location, and Residuals vs. Leverage plots—provides a comprehensive approach to model validation. For drug development professionals, these diagnostics offer critical insights into model adequacy, guiding appropriate model refinement and ensuring the validity of analytical conclusions that underpin regulatory decisions and scientific understanding.

The protocols and implementation guidelines presented in this article provide researchers with practical tools for incorporating rigorous diagnostic assessment into their analytical workflows, ultimately enhancing the reliability and interpretability of regression models in drug development contexts.

Residual analysis is a fundamental diagnostic procedure in regression modeling, serving to validate the core assumptions that underpin the reliability of a model's inferences and predictions [3]. For researchers and scientists in drug development, where models often inform critical decisions, ensuring that a regression model is an accurate representation of the underlying data is paramount. A residual, defined as the difference between an observed value and the value predicted by the model (Residual = Observed – Predicted), contains valuable information about the model's deficiencies [2]. A healthy residual plot is one where these residuals display a random scatter around zero and maintain constant variance (homoscedasticity) across all levels of the prediction [4] [3]. This application note details the quantitative criteria and experimental protocols for identifying such a plot, thereby confirming that a model is well-specified for its intended purpose in scientific research.

Characteristics of a Healthy Residual Plot

A residual plot that confirms model adequacy exhibits two primary characteristics: random scatter and constant variance. These features indicate that the model has successfully captured the underlying systematic relationship in the data, leaving only unpredictable, random error in the residuals.

Visual Characteristics

- Random Scatter: The residuals should be randomly dispersed above and below the horizontal line at zero, with no discernible patterns, curves, or trends [2] [4].

- Constant Variance (Homoscedasticity): The vertical spread of the residuals should be approximately the same across the entire range of fitted values. The cloud of points should not fan out (funnel shape) or narrow in a systematic way [4] [3].

Quantitative Assessment Criteria

The following table summarizes the key features and their quantitative interpretations for a healthy residual plot.

Table 1: Quantitative Criteria for Assessing a Healthy Residual Plot

| Assessment Feature | Quantitative Measure | Interpretation in a Healthy Plot |
|---|---|---|
| Mean of Residuals | Mean (μ) of all residuals | Should be approximately zero [2]. |
| Distribution of Residuals | Standard Deviation (σ) of residuals | Should be relatively small and consistent across the range of fitted values [2]. |
| Residual Pattern | Durbin-Watson statistic, plots of residuals vs. predictors | No significant autocorrelation; no clear patterns in any residual vs. predictor plot [3]. |
| Variance Homogeneity | Breusch-Pagan or White test, Scale-Location plot | Statistical tests for heteroscedasticity are non-significant (p > 0.05); red line in Scale-Location plot is roughly horizontal [4] [3]. |
| Normality of Errors | Shapiro-Wilk test, Normal Q-Q plot | For valid inference, residuals should be approximately normal; points in Q-Q plot closely follow the 45-degree reference line [4]. |
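Two of the numerical checks in the table above, the residual mean and the Durbin-Watson statistic d = Σ(eᵢ - eᵢ₋₁)²/Σeᵢ² (numerator summed from i = 2), take only a few lines. This is a minimal NumPy sketch with hypothetical function names; statsmodels provides the same statistic as statsmodels.stats.stattools.durbin_watson. The residual mean is zero, up to rounding, for any least squares fit that includes an intercept, so that check is informative mainly for models without one. The Durbin-Watson statistic assumes the residuals are in a meaningful order (for example run order or time).

```python
import numpy as np


def durbin_watson(resid):
    """d = sum_{i=2..n} (e_i - e_{i-1})^2 / sum_{i=1..n} e_i^2, between 0 and 4."""
    e = np.asarray(resid, dtype=float)
    return float(np.sum(np.diff(e) ** 2) / np.sum(e**2))


def residual_summary(resid):
    """Mean, SD and Durbin-Watson statistic of a residual series in observation order."""
    e = np.asarray(resid, dtype=float)
    return {"mean": float(e.mean()), "sd": float(e.std(ddof=1)), "durbin_watson": durbin_watson(e)}


# Hypothetical residual series.
rng = np.random.default_rng(3)
white = rng.normal(size=500)                # independent errors: d near 2
trending = np.cumsum(rng.normal(size=500))  # strong positive autocorrelation: d near 0
summary_white = residual_summary(white)
summary_trend = residual_summary(trending)
```

An alternating series such as [1, -1, 1, -1] gives d = 12/4 = 3, toward the negative-autocorrelation end of the 0 to 4 range.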

Experimental Protocol for Residual Plot Analysis

This protocol provides a step-by-step methodology for generating and diagnosing residual plots, suitable for validating regression models in scientific research.

Workflow for Residual Analysis

The following diagram illustrates the logical workflow for conducting a residual analysis to diagnose a regression model.

Step-by-Step Procedure

Protocol 1: Generation and Assessment of a Residual vs. Fitted Plot

Purpose: To visually and quantitatively assess the linearity and homoscedasticity assumptions of a regression model.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Model Fitting: Fit your linear regression model to the experimental dataset.

- Residual Calculation: For every observation i in your dataset, calculate the residual eᵢ = Observed Valueᵢ - Predicted Valueᵢ [2].

- Plot Generation: Create a scatter plot, known as the Residuals vs. Fitted plot:

  - X-axis: The predicted (fitted) values from the model.

  - Y-axis: The calculated residuals.

  - Add a horizontal reference line at Y = 0 [4].

- Visual Diagnosis: Examine the plot for the characteristics described under "Characteristics of a Healthy Residual Plot" above.

  - Healthy Indication: Residuals are randomly scattered around the zero line with no systematic patterns and with constant spread.

  - Unhealthy Indications:

    - Non-linearity: A curved pattern (e.g., U-shaped or inverted U-shaped) in the residuals suggests the relationship between a predictor and the outcome is not linear [2] [4].

    - Heteroscedasticity: A funnel-shaped pattern (increasing or decreasing spread of residuals along the x-axis) indicates non-constant variance [2] [3].

    - Outliers: Points that lie exceptionally far from the zero line may be outliers; check whether they also exert disproportionate influence on the model, for example with Cook's distance [3].

Protocol 2: Supplemental Diagnostic Plots

Purpose: To further evaluate the normality and homoscedasticity assumptions.

Procedure:

- Normal Q-Q Plot:

  - Generation: Plot the quantiles of the standardized residuals against the quantiles of a theoretical normal distribution.

  - Assessment: If the residuals are normally distributed, the points will closely follow the 45-degree reference line. Systematic deviations from the line indicate skewness or heavy tails [4].

- Scale-Location Plot:

  - Generation: Plot the square root of the absolute standardized residuals (on the Y-axis) against the fitted values (on the X-axis).

  - Assessment: A healthy model will show a roughly horizontal trend line with randomly scattered points. Any clear slope indicates heteroscedasticity; an upward slope means the spread of the residuals grows with the fitted values [4] [3].

Remedial Workflow for Unhealthy Plots

When a residual plot reveals a violation of assumptions, a systematic approach to remediation is required.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Statistical Model Diagnostics

| Tool or Reagent | Function in Residual Analysis |
|---|---|
| Statistical Software (R/Python) | Provides the computational environment for fitting regression models, calculating residuals, and generating the suite of diagnostic plots (e.g., using plot(lm()) in R) [4]. |
| Residual vs. Fitted Plot | The primary diagnostic tool for visually assessing the linearity and constant variance assumptions of the regression model [2] [4]. |
| Normal Q-Q Plot | A graphical tool to assess the validity of the normality assumption of the regression errors [4]. |
| Scale-Location Plot | A specialized plot used to detect heteroscedasticity (non-constant variance) more effectively than the standard residuals vs. fitted plot [4] [3]. |
| Influence Measures (Cook's Distance) | A statistical metric used to identify influential observations that have a disproportionate impact on the regression model's coefficients; points with Cook's D > 4/n may require investigation [4] [3]. |
| Variance Stabilizing Transformations | Mathematical transformations (e.g., log, square root) applied to the response variable to correct for heteroscedasticity [2] [3]. |

Linking Residual Analysis to Model Validity in Scientific Inference

Residual analysis is a fundamental diagnostic technique used to evaluate the validity and adequacy of statistical regression models. A residual is defined as the difference between an observed value and the value predicted by a regression model (eᵢ = yᵢ - ŷᵢ). These residuals contain valuable information about model performance and potential violations of regression assumptions [3]. The primary goal of residual analysis is to validate whether the key assumptions of a regression model are met, ensuring the reliability of statistical inferences and predictions [3]. For researchers in scientific fields, particularly drug development, thorough residual analysis is crucial for establishing model robustness and drawing meaningful conclusions from experimental data.

Core Principles and Purpose

Residual analysis serves as a critical link between a fitted model and scientific inference by providing diagnostic tools to assess model quality. Its core purposes include [3]:

- Evaluating Model Assumptions: Validating the assumptions of linearity, normality, homoscedasticity, and independence of errors.

- Identifying Model Inadequacies: Detecting systematic patterns that suggest model misspecification or omitted variables.

- Detecting Influential Observations: Recognizing outliers and leverage points that disproportionately impact model parameters.

- Guiding Model Improvement: Informing data transformations, variable selection, and alternative modeling approaches.

Table 1: Key Characteristics of Residuals in Model Diagnostics

| Characteristic | Definition | Diagnostic Importance |
|---|---|---|
| Magnitude | Absolute difference between observed and predicted values | Indicates overall model precision and prediction error |
| Pattern | Systematic structure in residual distribution | Reveals violations of model assumptions |
| Distribution | Statistical distribution of residual values | Assesses normality assumption and identifies outliers |
| Leverage | How far an observation's predictor values lie from the bulk of the data (the hat value hᵢᵢ) | Identifies observations with the potential to exert disproportionate influence on the fit |

Diagnostic Methods and Protocols

Graphical Residual Analysis Techniques

Visual inspection of residuals provides intuitive diagnostics for model adequacy. The following protocols outline key graphical methods:

Protocol 1: Residuals vs. Fitted Values Plot

- Purpose: Assess linearity assumption and detect heteroscedasticity

- Procedure:

  - Plot residuals on vertical axis against fitted values on horizontal axis

  - Add horizontal reference line at zero

  - Examine pattern of points around reference line

- Interpretation: Random scatter indicates adequate model; funnel shape suggests heteroscedasticity; curved pattern indicates non-linearity [3]

Protocol 2: Normal Q-Q Plot

- Purpose: Evaluate normality assumption of residuals

- Procedure:

  - Order residuals from smallest to largest

  - Plot ordered residuals against theoretical quantiles of normal distribution

  - Add 45-degree reference line for perfect normality

- Interpretation: Points following reference line support normality assumption; systematic deviations indicate violation [3]

Protocol 3: Scale-Location Plot

- Purpose: Detect heteroscedasticity (non-constant variance)

- Procedure:

  - Calculate square root of absolute standardized residuals

  - Plot these values against fitted values

  - Add smoothing line to visualize trend

- Interpretation: Horizontal smoothing line indicates constant variance; sloping line suggests heteroscedasticity [3]

Protocol 4: Residuals vs. Predictor Variables

- Purpose: Identify omitted variable relationships and pattern misspecification

- Procedure:

  - Plot residuals against each predictor variable in model

  - Plot residuals against potential predictors not included in model

  - Examine for systematic patterns

- Interpretation: Random scatter indicates adequate specification; systematic patterns suggest missing terms or transformations [3]

Quantitative Diagnostic Measures

Numerical diagnostics complement graphical methods by providing objective measures of model adequacy:

Table 2: Quantitative Measures for Residual Analysis

| Measure | Calculation | Interpretation | Threshold |
|---|---|---|---|
| Studentized residuals (internally studentized) | rᵢ = eᵢ/(s√(1 - hᵢᵢ)), where hᵢᵢ is the leverage and s the residual standard error | Identifies outliers | Absolute values > 3 indicate potential outliers |
| Cook's distance | Dᵢ = (eᵢ²/(p·MSE))·(hᵢᵢ/(1 - hᵢᵢ)²) | Measures influence of a single observation | Values > 4/n indicate influential points |
| DFFITS | Standardized change in the predicted value when observation i is deleted | Measures effect on fitted values | Absolute values > 2√(p/n) suggest high influence |
| DFBETAS | Standardized change in each parameter estimate when observation i is deleted | Assesses effect on each coefficient | Absolute values > 2/√n indicate influential observations |
| Durbin-Watson | d = Σ(eᵢ - eᵢ₋₁)²/Σeᵢ², with the numerator summed from i = 2 | Tests autocorrelation in residuals | Values near 2 suggest no autocorrelation; values toward 0 or 4 suggest positive or negative autocorrelation |

Here n is the number of observations and p the number of estimated parameters, including the intercept.
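The formulas in the table can be collected into one function. The NumPy sketch below assumes an OLS fit with an intercept column in X and uses the standard leave-one-out identities, s₍ᵢ₎² = ((n - p)s² - eᵢ²/(1 - hᵢᵢ))/(n - p - 1) and β̂ - β̂₍ᵢ₎ = (X′X)⁻¹xᵢeᵢ/(1 - hᵢᵢ), so no refitting is needed; it also applies the rule-of-thumb thresholds from the table. The function name, dictionary keys, and the data with one planted outlier are hypothetical. In statsmodels, results.get_influence() exposes comparable quantities.

```python
import numpy as np


def influence_measures(X, y):
    """Leave-one-out diagnostics for OLS via closed-form identities."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    e = y - X @ (XtX_inv @ X.T @ y)
    h = np.einsum("ij,jk,ik->i", X, XtX_inv, X)  # leverage
    s2 = e @ e / (n - p)
    r_internal = e / np.sqrt(s2 * (1.0 - h))
    s2_del = ((n - p) * s2 - e**2 / (1.0 - h)) / (n - p - 1)  # s_(i)^2
    t_external = e / np.sqrt(s2_del * (1.0 - h))
    dffits = t_external * np.sqrt(h / (1.0 - h))
    delta_beta = (X @ XtX_inv) * (e / (1.0 - h))[:, None]  # row i: beta - beta_(i)
    dfbetas = delta_beta / np.sqrt(s2_del[:, None] * np.diag(XtX_inv)[None, :])
    cooks = (e**2 / (p * s2)) * (h / (1.0 - h) ** 2)
    flags = {
        "outlier": np.abs(r_internal) > 3,
        "cooks": cooks > 4 / n,
        "dffits": np.abs(dffits) > 2 * np.sqrt(p / n),
        "dfbetas": np.any(np.abs(dfbetas) > 2 / np.sqrt(n), axis=1),
    }
    return {"leverage": h, "r_internal": r_internal, "t_external": t_external,
            "dffits": dffits, "dfbetas": dfbetas, "cooks": cooks, "flags": flags}


# Hypothetical data: 12 points near a line, with observation 7 shifted upward.
x = np.arange(1.0, 13.0)
noise = np.array([0.3, -0.2, 0.1, 0.4, -0.5, 0.2, -0.1, 0.3, -0.4, 0.1, 0.2, -0.2])
y = 1.0 + 2.0 * x + noise
y[7] += 6.0
X = np.column_stack([np.ones_like(x), x])
m = influence_measures(X, y)
```

With only 12 observations, |rᵢ| cannot exceed √(n - p) ≈ 3.16, so the internal "> 3" rule barely has room to fire; the externally studentized residual for observation 7 is far larger, which is one reason it is often preferred for outlier screening.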

Experimental Workflow for Comprehensive Residual Analysis

The following workflow provides a systematic protocol for conducting residual analysis in scientific research:

Common Residual Patterns and Interpretations

Understanding residual patterns is essential for diagnosing model deficiencies:

Research Reagent Solutions for Statistical Diagnostics

Table 3: Essential Tools for Comprehensive Residual Analysis

| Tool Category | Specific Solutions | Application in Residual Analysis | Key Features |
|---|---|---|---|
| Statistical Software | R Statistical Environment, Python (statsmodels, scikit-learn), SAS, MATLAB | Primary platforms for calculating residuals and creating diagnostic plots | Comprehensive regression diagnostics, customizable plotting capabilities, statistical testing functions |
| Specialized Diagnostic Packages | R: car, lmtest, MASS; Python: statsmodels, scipy.stats | Enhanced diagnostic tests and visualization capabilities | Specific tests for heteroscedasticity (Breusch-Pagan), normality (Shapiro-Wilk), and influential points |
| Visualization Tools | ggplot2 (R), matplotlib/seaborn (Python), commercial visualization software | Creation of publication-quality diagnostic plots | High-resolution graphics, customizable themes, multiple plot arrangements |
| Influence Diagnostics | Cook's distance calculation, DFFITS, DFBETAS algorithms | Identification of influential observations and outliers | Automated detection of problematic data points, threshold-based flagging systems |

Advanced Diagnostic Protocols

Protocol for Detecting Heteroscedasticity

Heteroscedasticity (non-constant variance) violates regression assumptions and requires specific diagnostic approaches:

Objective: Identify and quantify non-constant variance in residuals.

Procedure:

- Calculate standardized residuals from the fitted model

- Create a scale-location plot (√|standardized residuals| vs. fitted values)

- Perform the Breusch-Pagan or White test for heteroscedasticity

- Calculate confidence intervals for variance estimates across ranges of fitted values

Interpretation: Significant test results (p < 0.05) indicate heteroscedasticity; a sloping trend in the scale-location plot, or a funnel in the residuals vs. fitted plot, provides visual confirmation.

Remedial Actions: Weighted least squares, variance-stabilizing transformations (log, square root), generalized linear models [3]
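The Breusch-Pagan step can be sketched in NumPy. The version below is Koenker's studentized variant: regress the squared OLS residuals on the same regressors and compare LM = n·R² with a chi-square distribution on p - 1 degrees of freedom. To avoid a SciPy dependency, the chi-square tail probability uses the closed forms available for integer degrees of freedom. Function names and the simulated data are hypothetical; statsmodels provides a tested implementation as het_breuschpagan in statsmodels.stats.diagnostic.

```python
import math

import numpy as np


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square(df) variable, integer df >= 1 (no SciPy)."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        return math.exp(-half) * sum(half**j / math.factorial(j) for j in range(df // 2))
    tail = math.erfc(math.sqrt(half))
    for j in range(1, (df - 1) // 2 + 1):
        tail += math.exp(-half) * half ** (j - 0.5) / math.gamma(j + 0.5)
    return tail


def breusch_pagan(X, y):
    """Koenker's studentized Breusch-Pagan test.

    Regress squared OLS residuals on the same regressors; LM = n * R^2 is
    approximately chi-square with (p - 1) df under constant variance.
    X must contain an intercept column.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    e2 = (y - X @ beta) ** 2
    gamma, *_ = np.linalg.lstsq(X, e2, rcond=None)
    aux_resid = e2 - X @ gamma
    r2 = 1.0 - (aux_resid @ aux_resid) / np.sum((e2 - e2.mean()) ** 2)
    lm = n * r2
    return lm, chi2_sf(lm, p - 1)


rng = np.random.default_rng(11)
x = np.linspace(1.0, 10.0, 200)
y = 2.0 + 0.5 * x + rng.normal(scale=0.5 * x)  # hypothetical: SD proportional to x
X = np.column_stack([np.ones_like(x), x])
lm_stat, p_value = breusch_pagan(X, y)
```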

Protocol for Identifying Influential Observations

Influential observations disproportionately affect parameter estimates and require careful assessment:

Objective: Detect observations with undue influence on regression results.

Procedure:

- Calculate leverage values (hat matrix diagonals) for all observations

- Compute Cook's distance for each observation

- Calculate DFBETAS for each observation's effect on each parameter

- Compute DFFITS for each observation's effect on predictions

Interpretation (n = number of observations, p = number of estimated parameters):

- Leverage > 2p/n indicates high leverage

- Cook's D > 4/n indicates high influence

- |DFBETAS| > 2/√n suggests parameter influence

- |DFFITS| > 2√(p/n) suggests prediction influence

Decision Framework: Investigate influential points for data quality issues; consider robust regression techniques when there is no justification for excluding them [3]
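Where exclusion is not justified, robust regression is one option. As an illustration, the sketch below implements Huber M-estimation by iteratively reweighted least squares in NumPy: residuals larger than c·scale (c = 1.345, a conventional tuning constant) are downweighted by c·scale/|eᵢ|, with the scale re-estimated each iteration from the normalized median absolute deviation. It is a simplified sketch with hypothetical names and data; statsmodels' sm.RLM is the more complete route. Huber weights limit the pull of large residuals but do not protect against high-leverage points.

```python
import numpy as np


def huber_irls(X, y, c=1.345, max_iter=100, tol=1e-8):
    """Huber M-estimate of regression coefficients via IRLS.

    Weights: w_i = 1 if |e_i| / scale <= c, else c * scale / |e_i|.
    Scale: normalized median absolute deviation (MAD / 0.6745) of the residuals.
    Returns the coefficients and the weights used in the final weighted fit.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)  # start from OLS
    w = np.ones(len(y))
    for _ in range(max_iter):
        e = y - X @ beta
        scale = np.median(np.abs(e - np.median(e))) / 0.6745
        if scale == 0.0:
            break
        u = np.abs(e) / scale
        w = c / np.maximum(u, c)  # 1 where u <= c, c/u where u > c
        sw = np.sqrt(w)
        beta_new, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
        converged = np.max(np.abs(beta_new - beta)) < tol
        beta = beta_new
        if converged:
            break
    return beta, w


# Hypothetical data: points near y = 2 + 0.5x, with one large vertical outlier.
x = np.arange(1.0, 21.0)
y = 2.0 + 0.5 * x + 0.3 * np.sin(x)
y[18] += 15.0
X = np.column_stack([np.ones_like(x), x])

beta_ols, *_ = np.linalg.lstsq(X, y, rcond=None)
beta_rob, weights = huber_irls(X, y)
```

Reporting beta_ols next to beta_rob, together with the weight assigned to the suspect case, is one way to present results with and without the influence of that point.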

Residual analysis provides the critical link between statistical models and valid scientific inference. By systematically applying the diagnostic protocols and methodologies outlined in this document, researchers can verify model assumptions, identify deficiencies, and implement appropriate remedial measures. Combining graphical techniques with quantitative diagnostics creates a comprehensive framework for model validation, which is particularly important in regulated research environments such as drug development, where the validity of inferences directly affects decision-making. Careful residual analysis helps ensure that statistical models not only fit the observed data but also support reliable predictions and scientific conclusions.

Creating and Interpreting Diagnostic Plots in Practice

Step-by-Step Guide to Generating Standard Residual Plots

Residual plots are fundamental graphical tools used in regression diagnostics to assess the adequacy of statistical models and validate key assumptions. Within pharmaceutical research and drug development, these plots are indispensable for verifying analytical methods, ensuring compliance with regulatory standards, and guaranteeing the reliability of data used in critical decision-making processes [9]. A residual is the difference between an observed value and the value predicted by a regression model. Visualizing these residuals helps scientists identify patterns indicating model shortcomings, such as non-linearity, non-constant variance, or the presence of outliers, which might otherwise compromise the integrity of scientific conclusions [2] [16].

This guide provides a structured, step-by-step protocol for generating and interpreting standard residual plots, contextualized for the rigorous demands of regulatory-grade research.

Quantitative Foundation of Residuals

Before generating plots, a clear understanding of the underlying quantitative data is essential. The core data for residual analysis is derived from the model's predictions and the corresponding discrepancies.

Observations, Predictions, and Residuals: For a given dataset, each observation has an actual (observed) value, a predicted value from the regression model, and a residual calculated as Residual = Observed - Predicted [2]. The following table illustrates this data structure using a simplified example from an analytical calibration study.

Table 1: Example Data Structure for Residual Calculation from a Calibration Curve

| Standard Concentration (μg/mL) | Observed Response | Predicted Response | Residual (Observed - Predicted) |
|---|---|---|---|
| 10.0 | 104.5 | 102.1 | 2.4 |
| 25.0 | 251.2 | 249.8 | 1.4 |
| 50.0 | 499.8 | 505.3 | -5.5 |
| 75.0 | 740.1 | 745.1 | -5.0 |
| 100.0 | 1005.5 | 1000.6 | 4.9 |
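To make the table concrete, the sketch below (NumPy assumed) recomputes the residual column from the observed and predicted responses, then refits an unweighted ordinary least squares line to the same five standards. The residuals in the table sum to -1.8, whereas residuals from an OLS fit with an intercept always sum to zero, so the predicted column should be read as illustrative rather than as the output of such a fit.

```python
import numpy as np

# Values transcribed from the table above.
conc = np.array([10.0, 25.0, 50.0, 75.0, 100.0])  # standard concentration, μg/mL
observed = np.array([104.5, 251.2, 499.8, 740.1, 1005.5])
predicted_table = np.array([102.1, 249.8, 505.3, 745.1, 1000.6])

# Residual = Observed - Predicted, row by row.
resid_table = observed - predicted_table

# Unweighted OLS refit with an intercept: its residuals sum to zero by construction.
X = np.column_stack([np.ones_like(conc), conc])
beta, *_ = np.linalg.lstsq(X, observed, rcond=None)
resid_ols = observed - X @ beta
```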

The residuals from a well-specified model are expected to be randomly scattered around zero. Key assumptions tied to these residuals include linearity of the relationship, constant variance (homoscedasticity), normality, and independence [17] [18].

Step-by-Step Experimental Protocol

This protocol outlines the process for generating and analyzing residual plots, using R as the primary statistical environment.

Software and Reagent Solutions

Table 2: Essential Research Tools for Residual Plot Analysis

| Tool Name | Type/Function |
|---|---|
| R Statistical Software | Open-source environment for statistical computing and graphics. |
| RStudio IDE | Integrated development environment that simplifies coding and visualization in R. |
| ggplot2 & ggfortify Packages | ggplot2 provides the plotting system; ggfortify adds autoplot() methods that draw the standard diagnostic panels for lm objects. |
| broom Package | R package whose augment() function returns fitted values and residuals (.fitted, .resid) alongside the original data. |
| Validated Analytical Dataset | Experimental data from a calibrated method (e.g., concentration-response data). |

Procedure

Step 1: Fit the Regression Model. Fit a simple linear regression to the experimental data with lm(), using concentration as the predictor and instrument response as the outcome.

Step 2: Generate the Residuals vs. Fitted Values Plot. This is the primary plot for checking homoscedasticity and linearity; in base R it is plot(fit, which = 1), where fit is the object returned by lm(). A random scatter of points around the horizontal line at zero indicates the assumptions are met.

Step 3: Generate the Normal Q-Q Plot (plot(fit, which = 2)). This plot assesses the normality of the residuals. Points that closely follow the reference line indicate that the residuals are approximately normally distributed.

Step 4: Generate the Scale-Location Plot (plot(fit, which = 3)). Also known as the Spread-Location plot, it is used to check the assumption of homoscedasticity. A roughly horizontal trend line with randomly spread points indicates constant variance.

Step 5: Generate the Residuals vs. Leverage Plot (plot(fit, which = 5)). This plot helps identify influential data points that disproportionately affect the regression results. Calling plot(fit) without which draws these four panels in sequence, and autoplot(fit) from ggfortify draws the same panels with ggplot2.

Workflow Visualization

The logical sequence from model fitting to diagnostic checking is encapsulated in the following workflow. This diagram provides a high-level overview of the standard operating procedure for residual diagnostics.

Interpretation and Diagnostic Guidelines

Correct interpretation is critical. The following table catalogues common residual plot patterns, their diagnostic implications, and potential remedial actions for researchers.

Table 3: Diagnostic Guide for Interpreting Residual Plots

| Observed Pattern | Diagnostic Interpretation | Potential Corrective Actions |
|---|---|---|
| Random Scatter around the zero line | The model assumptions of linearity and homoscedasticity are likely met [2]. | No action required; the model is adequate. |
| A distinct U-shaped or curved pattern | Non-linearity: The model may not correctly capture the true functional form of the relationship [2] [19]. | Consider adding polynomial terms, transforming variables (e.g., log, square root), or using a non-linear model. |
| Funnel or fan shape (increasing/decreasing spread) | Heteroscedasticity: Non-constant variance of the residuals [2] [19] [9]. | Apply a variance-stabilizing transformation (e.g., log) to the response variable or use weighted least squares regression. |
| A point far removed from the random cloud | Potential Outlier: An observation with a large residual [19] [18]. | Investigate the data point for measurement error. If no error is found, analyze the model with and without the point. |
| Points deviating from the diagonal in Q-Q plot | Non-normality: The residuals are not normally distributed [17]. | Apply a transformation to the response variable or check for missing predictors. |

Regulatory Considerations in Pharmaceutical Sciences

In drug development, regulatory frameworks from the FDA and EMA mandate rigorous analytical method validation [9]. Residual plots serve as objective evidence during this process.

- Linearity of Calibration Curves: A random residual plot is strong supporting evidence that a calibration curve is linear across its specified range, a key parameter in bioanalytical method validation [9].

- Demonstrating Control: Systematically patterned residuals can indicate a lack of control over the analytical procedure. A random pattern supports the claim that the method is robust and produces reliable results [9].

- Documentation and Submission: Residual plots and any actions taken based on their interpretation should be thoroughly documented in validation reports submitted to regulatory agencies to demonstrate statistical rigor [9].

By adhering to this structured protocol for generating and interpreting standard residual plots, researchers and scientists in drug development can ensure their regression models are valid, their analytical methods are sound, and their data meets the highest standards of quality and regulatory compliance.

Interpreting Residuals vs. Fitted Plots for Linearity and Homoscedasticity

Within the broader thesis on advanced regression diagnostics, this document establishes standardized protocols for interpreting residuals versus fitted plots, fundamental tools for verifying the core assumptions of linearity and homoscedasticity in regression analysis. The application notes provide a structured framework for researchers and scientists, particularly in drug development, to diagnose model inadequacies, thereby ensuring the reliability of inferences drawn from regression models. The methodologies outlined are critical for validating analytical models used in pharmacokinetics, dose-response analysis, and other quantitative research applications.

Residual plots serve as a primary diagnostic tool for assessing the validity of linear regression models, which are extensively used in statistical analysis across scientific disciplines. A residual is defined as the difference between an observed value and the value predicted by the model (Residual = Observed – Predicted) [2]. The residuals versus fitted plot is a scatterplot with residuals on the vertical axis and fitted values (predicted values) on the horizontal axis [13] [20]. This plot is indispensable for detecting violations of the assumptions of linearity (that the relationship between predictors and the outcome is linear) and homoscedasticity (that the variance of the residuals is constant) [21]. This protocol details the interpretation of these plots within the context of rigorous model diagnostics.

Theoretical Framework and Key Concepts

Characteristics of a Well-Behaved Residual Plot

An ideal residuals vs. fitted plot indicates that the regression model's assumptions are met. The key characteristics are [13] [20]:

- Random Scatter: The residuals bounce randomly around the residual = 0 line, suggesting the linearity assumption is reasonable.

- Horizontal Band: The residuals roughly form a horizontal band around the residual = 0 line, indicating constant variance of the error terms (homoscedasticity).

- No Outliers: No single residual stands out markedly from the overall random pattern.

Table 1: Key Characteristics of an Ideal Residuals vs. Fitted Plot

| Characteristic | Description | Implied Assumption |
|---|---|---|
| Random Scatter | Residuals are randomly dispersed above and below zero. | Linearity |
| Constant Spread | The vertical spread of residuals is consistent across all fitted values. | Homoscedasticity |
| No Influential Points | Absence of points with extreme residual or fitted values. | No outliers |

Homoscedasticity and Heteroscedasticity

- Homoscedasticity signifies that the variance of the errors remains constant across all levels of the independent variable(s) [22]. This is a crucial assumption for the reliability of statistical inferences (e.g., p-values, confidence intervals) derived from the model.

- Heteroscedasticity occurs when the variability of the residuals is not constant, often appearing as a fan-shaped pattern in the residual plot [22] [23]. It does not bias ordinary least squares (OLS) coefficient estimates, but it makes them less precise (inefficient) and biases the conventional standard errors, which can lead to incorrect p-values, confidence intervals, and inferences [23].

The following diagram illustrates the logical workflow for interpreting a residuals vs. fitted plot, guiding the user from initial pattern recognition to final diagnosis.

Experimental Protocol: Interpretation and Diagnosis

Protocol 3.1: Visual Inspection of Residuals vs. Fitted Plots

Purpose: To diagnose potential violations of linearity and homoscedasticity in a fitted regression model through visual analysis.

Materials and Software:

- A fitted linear regression model object (e.g., an lm object in R).

- Statistical software (e.g., R, Python with statsmodels, Stata).

- The dataset used to fit the model.

Procedure:

- Generate the Plot: Using your statistical software, plot the model's residuals on the y-axis against the corresponding fitted (predicted) values on the x-axis. Ensure a horizontal line at residual=0 is displayed for reference [4].

- Assess Linearity: Observe the overall distribution of points relative to the residual=0 line.

  - Acceptable: Residuals are randomly dispersed above and below zero without a discernible systematic pattern [13] [4].

  - Violation Indicated: A clear curved pattern (e.g., U-shaped or inverted U-shaped) is present. This suggests that a non-linear relationship between a predictor and the outcome variable has not been captured by the model [2] [4].

- Assess Homoscedasticity: Observe the vertical spread of the residuals across the range of fitted values.

  - Acceptable (Homoscedastic): The spread of the residuals remains approximately constant from left to right, forming a horizontal band [13] [21].

  - Violation Indicated (Heteroscedastic): The spread of residuals systematically increases or decreases with the fitted values, forming a funnel or fan shape [22] [23].

- Check for Outliers: Identify any points that fall far outside the overall cloud of residuals. These may be outliers or influential points requiring further investigation [4].

Troubleshooting: For small datasets, avoid over-interpreting minor twists and turns in the plot, as humans naturally seek patterns in randomness [13] [20].

Protocol 3.2: Quantitative Confirmation of Visual Diagnoses

Purpose: To use formal statistical tests to confirm patterns suspected in the visual inspection.

Materials and Software:

- The same fitted model and dataset from Protocol 3.1.

- Software capable of performing heteroscedasticity tests (e.g., statsmodels in Python, the lmtest package in R).

Procedure for Heteroscedasticity:

- Breusch-Pagan Test: This test regresses the squared residuals on the independent variables.

  - Interpretation: A significant p-value (e.g., p < 0.05) provides statistical evidence against homoscedasticity, supporting the visual diagnosis of heteroscedasticity [22].

- Goldfeld-Quandt Test: This test compares the variances of residuals from two different segments of the data (e.g., low vs. high fitted values).

  - Interpretation: A significant p-value (e.g., p < 0.05) suggests heteroscedasticity is present [22].

Procedure for Non-Linearity:

- Lack-of-Fit Test: If replicate data are available, a lack-of-fit test can be used to formally test for non-linearity by comparing the linear model to a more complex model that fits the means of the replicates.
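With replicates, the residual sum of squares of the straight-line fit (SSE) splits into pure error (SSPE, the scatter of replicates around their own level means) and lack of fit (SSLF = SSE − SSPE). With n observations, c distinct predictor levels, and 2 fitted parameters, F = [SSLF/(c − 2)] / [SSPE/(n − c)]. The sketch below implements this classical test with numpy and SciPy; the helper name lack_of_fit_test and the simulated dose-response data are hypothetical, chosen only to show a curved mean being detected.

```python
import numpy as np
from scipy import stats

def lack_of_fit_test(x, y):
    """Lack-of-fit F-test for a straight-line fit; requires replicate y values at repeated x levels."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = y.size
    slope, intercept = np.polyfit(x, y, deg=1)
    sse = np.sum((y - (intercept + slope * x)) ** 2)  # residual SS of the linear model
    levels = np.unique(x)
    c = levels.size
    # Pure-error SS: scatter of replicates around their own level means.
    sspe = sum(np.sum((y[x == lv] - y[x == lv].mean()) ** 2) for lv in levels)
    sslf = sse - sspe                                  # lack-of-fit SS
    df_lf, df_pe = c - 2, n - c                        # 2 parameters: intercept + slope
    f_stat = (sslf / df_lf) / (sspe / df_pe)
    p_value = stats.f.sf(f_stat, df_lf, df_pe)         # upper-tail F probability
    return f_stat, p_value

# Simulated data: 6 dose levels x 4 replicates with a curved (logarithmic) mean.
rng = np.random.default_rng(0)
dose = np.repeat([1.0, 2.0, 4.0, 6.0, 8.0, 10.0], 4)
response = 10 * np.log(dose) + rng.normal(scale=0.5, size=dose.size)
f_stat, p_value = lack_of_fit_test(dose, response)
print(f"Lack-of-fit F = {f_stat:.2f}, p = {p_value:.3g}")
```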

Table 2: Diagnostic Patterns and Remedial Actions

| Pattern in Plot | Diagnosis | Potential Remedial Actions |
|---|---|---|
| Random Scatter | Assumptions met; no major issues detected. | None required. Proceed with interpretation. |
| Curved Pattern | Non-linearity; the model form is incorrect. | Add polynomial terms (e.g., x²) [2] [23]; apply a non-linear transformation to the predictor or outcome variable [2]; use a generalized additive model (GAM). |
| Funnel Shape | Heteroscedasticity; non-constant variance. | Transform the outcome variable (e.g., log(Y)) [2] [23]; use weighted least squares regression [22] [23]; use robust standard errors (e.g., Huber-White estimators) [23]. |
| Outlier(s) | Potential influential points. | Investigate data points for errors; use Cook's distance to quantify influence [4]; consider robust regression techniques. |

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential analytical "reagents" required for conducting thorough residual diagnostics.

Table 3: Essential Tools for Regression Diagnostics

| Tool / Solution | Function / Purpose |
|---|---|
| Residuals vs. Fitted Plot | Primary visual tool for detecting non-linearity and heteroscedasticity [13] [20]. |
| Scale-Location Plot | A variant of the residual plot that shows the square root of the absolute standardized residuals against the fitted values, making trends in spread easier to detect [22] [4]. |
| Normal Q-Q Plot | Assesses the normality assumption of the residuals, which is important for the validity of hypothesis tests [2] [4] [21]. |
| Breusch-Pagan Test | A formal statistical test for the presence of heteroscedasticity [22]. |
| Cook's Distance | Identifies influential data points that have a disproportionate impact on the regression model's coefficients [4]. |
| Variance Inflation Factor (VIF) | Diagnoses multicollinearity (high correlation among predictor variables), which does not affect the residuals but can destabilize coefficient estimates [21]. |

The residuals versus fitted plot is an indispensable, first-line diagnostic for validating regression models. Mastery of its interpretation is non-negotiable for ensuring the integrity of scientific conclusions, especially in high-stakes fields like drug development. This protocol provides a standardized, actionable framework for researchers to diagnose and remediate common model violations, thereby strengthening the analytical foundation of their work. Future research within the broader thesis will explore automated interpretation algorithms and advanced diagnostic techniques for complex model architectures.

Using Normal Q-Q Plots to Assess the Normality of Errors

Within the broader context of research on residual plots for regression model diagnostics, assessing the normality of errors is a critical step in validating the inferential foundation of linear models. The assumption of normally distributed errors underpins the exact validity of p-values, confidence intervals, and hypothesis tests for regression coefficients, particularly in small samples [4]. Non-normal errors do not bias ordinary least squares coefficient estimates, but they can distort these inferences and reduce statistical power, potentially compromising the reliability of scientific conclusions, particularly in high-stakes fields like drug development [24]. Among the available diagnostic tools, the Normal Quantile-Quantile (Q-Q) plot provides a powerful graphical method for evaluating this normality assumption, offering advantages over purely numerical tests by revealing the nature and extent of departures from normality [25] [26].

This protocol details the theoretical principles, practical implementation, and nuanced interpretation of Normal Q-Q plots for diagnosing error distributions in regression analysis, providing researchers with a standardized framework for model diagnostics.

Theoretical Foundations of the Normal Q-Q Plot

A Normal Q-Q plot is a graphical technique that compares the quantiles of an observed distribution (typically regression residuals) to the quantiles of a theoretical normal distribution [26]. If the residuals are normally distributed, the points fall approximately along a straight reference line. The plot relies on quantiles, the cut points that divide a distribution into intervals of equal probability (e.g., percentiles, quartiles) [26].

The underlying statistical principle involves plotting the sorted standardized residuals against the theoretically expected z-scores from a standard normal distribution. The resulting pattern allows researchers to visually assess the Gaussian fit of their model's errors. While formal statistical tests for normality exist, the Q-Q plot's strength lies in its ability to visually communicate not just whether a distribution deviates from normality, but how it deviates, revealing characteristics such as skewness, kurtosis, and the presence of outliers [25] [26]. This makes it an indispensable tool for exploratory model diagnostics, guiding subsequent model refinement strategies.
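The construction described above can be written out directly: sort the standardized residuals and pair them with normal quantiles evaluated at plotting positions. The sketch below assumes numpy and SciPy; the plotting positions (i − 0.5)/n are one common convention (others, such as Blom's, differ slightly), the helper name normal_qq_points is hypothetical, and the residuals are simulated. The correlation between the paired values is used only as a compact summary of how straight the Q-Q plot is.

```python
import numpy as np
from scipy import stats

def normal_qq_points(residuals):
    """Return (theoretical z-scores, sorted standardized residuals) for a Normal Q-Q plot."""
    r = np.sort(np.asarray(residuals, dtype=float))
    n = r.size
    standardized = (r - r.mean()) / r.std(ddof=1)  # puts points on the same scale as the y = x line
    probs = (np.arange(1, n + 1) - 0.5) / n        # plotting positions (one common convention)
    theoretical = stats.norm.ppf(probs)            # expected standard-normal quantiles
    return theoretical, standardized

# Simulated residuals: one normal set, one right-skewed set with mean zero.
rng = np.random.default_rng(7)
samples = {
    "normal": rng.normal(size=300),
    "skewed": rng.exponential(size=300) - 1.0,
}

qq_corr = {}
for label, res in samples.items():
    theo, samp = normal_qq_points(res)
    qq_corr[label] = np.corrcoef(theo, samp)[0, 1]  # close to 1 when points hug a straight line
    print(f"{label}: Q-Q correlation = {qq_corr[label]:.4f}, "
          f"largest standardized residual = {samp[-1]:.2f}")
```

Plotting samp against theo for the skewed set shows the upper tail bending above the reference line, the visual signature of right skew.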

Workflow for Q-Q Plot Analysis in Regression Diagnostics

The following workflow diagram outlines the systematic process of using Q-Q plots for diagnosing normality of errors in regression models, from model fitting to interpretation and remedial actions.

Protocol: Implementation and Interpretation

Software-Specific Implementation

The following table summarizes the core functions and packages for creating Normal Q-Q plots across common statistical software environments.

Table 1: Software Implementation for Normal Q-Q Plots

| Software | Core Function/Package | Key Syntax Example | Reference Line Command |
|---|---|---|---|
| R Stats | qqnorm(), qqplot() | qqnorm(residuals) | qqline(residuals, col="red") |
| R ggplot2 | stat_qq(), stat_qq_line() | ggplot(data, aes(sample=residuals)) + stat_qq() | Add stat_qq_line() |
| Python statsmodels | statsmodels.api.qqplot() | sm.qqplot(residuals, line='q') | line='q' (line through the quartiles) for raw residuals; line='45' only for standardized residuals or with fit=True |
| Python SciPy | scipy.stats.probplot() | scipy.stats.probplot(residuals, dist="norm", plot=plt) | fit=True parameter (the default) |
| Minitab | Stat > Quality Tools > Normal Plot | GUI-based workflow | Automatically generated |

Step-by-Step Protocol for Residual Analysis:

Model Fitting and Residual Extraction: After fitting your regression model (e.g., using Ordinary Least Squares), extract the residuals. While raw residuals can be used, standardized residuals (e.g., Studentized or Pearson residuals) are generally preferred as they are normalized by their standard error, providing a more stable variance [27] [28].

Plot Generation: Generate the Normal Q-Q plot using the appropriate function for your software environment. Ensure a reference line is added, which represents perfect normality [26].

Visual Inspection: Systematically examine the plot. Look for whether the points adhere closely to the reference line. Pay particular attention to the behavior at both tails of the distribution, as deviations often manifest most prominently there [26].

Interpretation of Common Patterns

Interpreting Q-Q plots requires understanding the diagnostic implications of specific patterns. The following table catalogs common deviations and their statistical meanings.

Table 2: Interpretation Guide for Q-Q Plot Patterns