Revolutionizing Drug Discovery: A Guide to Active Learning with Graph Neural Networks for Efficient Chemical Space Exploration

Abstract

The exploration of vast chemical spaces for drug and materials discovery is fundamentally bottlenecked by the high cost and time of traditional experimental and computational methods. This article details how the integration of Active Learning (AL) with Graph Neural Networks (GNNs) creates a powerful, data-efficient paradigm to overcome these challenges. We cover the foundational principles of GNNs for representing molecular structures and the iterative AL cycle for targeted data acquisition. The scope extends to methodological frameworks that combine uncertainty quantification with multi-objective optimization, addressing key challenges like model reliability and explainability. Through validation across diverse applications—from photosensitizer design to materials discovery—and comparative analysis with conventional techniques, we demonstrate how this synergy accelerates the discovery of novel therapeutic and functional materials while significantly reducing resource expenditure. This guide provides researchers and development professionals with the strategic insights needed to implement these cutting-edge computational approaches.

Laying the Groundwork: Why GNNs and Active Learning are Revolutionizing Chemical Exploration

The Challenge of Vast Chemical Spaces in Drug and Materials Discovery

The exploration of chemical space, estimated to contain up to 10^60 small molecules, represents one of the most significant challenges in modern drug and materials discovery [1]. This vastness renders traditional experimental screening methods fundamentally incapable of comprehensive exploration, necessitating sophisticated computational approaches that can efficiently navigate this expansive landscape. The concept of the biologically relevant chemical space (BioReCS) further complicates this challenge, as it encompasses molecules with biological activity—both beneficial and detrimental—spanning diverse application areas from drug discovery to agrochemistry [2].

Artificial intelligence, particularly geometric deep learning, has emerged as a transformative technology for addressing this challenge. Graph neural networks (GNNs) have demonstrated remarkable success in molecular property prediction by directly operating on molecular graphs, capturing detailed connectivity and spatial relationships between atoms [3] [4]. However, accurate prediction alone is insufficient for efficient exploration. The integration of active learning paradigms with GNNs creates a powerful framework for balancing exploration with exploitation, systematically guiding the search toward promising regions of chemical space while quantifying prediction uncertainty to avoid misleading results [4].

This Application Note outlines structured protocols and experimental frameworks that leverage active learning with graph neural networks to address the fundamental challenge of vast chemical spaces in discovery science. We present quantitative comparisons of emerging methodologies, detailed experimental protocols for implementation, and visual workflows that illustrate the integration of these technologies into cohesive research strategies.

Quantitative Comparison of Advanced GNN Architectures

Recent advancements in GNN architectures have significantly enhanced their capability to model molecular structures and properties. The integration of Kolmogorov-Arnold networks (KANs) with traditional GNN frameworks represents a particularly promising development. The table below summarizes the performance improvements achieved by KA-GNNs across seven molecular benchmarks compared to conventional GNNs:

Table 1: Performance comparison of KA-GNN variants against conventional GNNs

| Model Architecture | Average Prediction Accuracy (%) | Parameter Efficiency | Interpretability Enhancement | Key Innovation |
|---|---|---|---|---|
| KA-GCN [3] | 84.7 | High | Medium | Fourier-based KAN modules in node embedding and message passing |
| KA-GAT [3] | 86.2 | Medium | High | Incorporates edge embeddings with attention mechanisms |
| Conventional GCN [3] | 79.3 | Medium | Low | Standard graph convolutional operations |
| Conventional GAT [3] | 80.5 | Medium | Medium | Attention-based message passing |
| RG-MPNN [5] | 82.1 | Medium | High | Integrates pharmacophore information via reduced-graph pooling |

The superior performance of KA-GNNs stems from their foundation in the Kolmogorov-Arnold representation theorem, which enables them to replace fixed activation functions with learnable univariate functions, offering enhanced expressivity and parameter efficiency [3]. The integration of Fourier-series-based univariate functions within KA-GNNs further enhances function approximation capabilities, allowing the models to effectively capture both low-frequency and high-frequency structural patterns in molecular graphs [3].
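To make the idea of a learnable univariate function concrete, the sketch below evaluates a truncated Fourier series whose coefficients would be the trainable parameters in a KAN-style layer. It is only an illustration under stated assumptions, not the KA-GNN implementation from [3]: the class name `FourierUnivariate`, the number of harmonics, and the coefficient values are all hypothetical.

```python
import math


class FourierUnivariate:
    """phi(x) = sum over k = 1..K of a_k * cos(k * x) + b_k * sin(k * x).

    In a KAN-style layer the coefficients a_k and b_k would be trained;
    here they are fixed, illustrative values (an assumption of this sketch).
    """

    def __init__(self, cos_coeffs, sin_coeffs):
        if len(cos_coeffs) != len(sin_coeffs):
            raise ValueError("need one cosine and one sine coefficient per harmonic")
        self.cos_coeffs = list(cos_coeffs)
        self.sin_coeffs = list(sin_coeffs)

    def __call__(self, x):
        total = 0.0
        pairs = zip(self.cos_coeffs, self.sin_coeffs)
        for k, (a_k, b_k) in enumerate(pairs, start=1):
            total += a_k * math.cos(k * x) + b_k * math.sin(k * x)
        return total


# Low harmonics describe smooth trends; higher harmonics add fine detail.
phi = FourierUnivariate(cos_coeffs=[1.0, 0.0, 0.25], sin_coeffs=[0.0, 0.5, 0.0])
value_at_zero = phi(0.0)  # only the cosine terms contribute at x = 0
```

Replacing a fixed activation such as ReLU with a function of this form is what lets each connection learn its own shape, at the cost of 2K coefficients per function.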

Beyond architectural improvements, the incorporation of domain knowledge into GNN architectures has demonstrated significant benefits. The RG-MPNN model, which hierarchically integrates pharmacophore information into message-passing neural networks through pharmacophore-based reduced-graph pooling, has shown consistent performance improvements across eleven benchmark datasets and ten kinase datasets [5]. This approach demonstrates that augmenting GNNs with chemical prior knowledge can enhance both predictive accuracy and model interpretability by highlighting chemically meaningful substructures.

Active Learning Integration with GNNs

Uncertainty Quantification Frameworks

The integration of uncertainty quantification (UQ) with directed message passing neural networks (D-MPNNs) represents a critical advancement for reliable molecular design across expansive chemical spaces. This approach addresses the fundamental challenge of domain shift, where models trained on limited chemical datasets often fail to maintain predictive accuracy when applied to novel molecular scaffolds [4].

Table 2: Comparison of uncertainty quantification methods in GNNs for molecular optimization

| UQ Method | Implementation Approach | Computational Efficiency | Optimization Success Rate (%) | Best Suited Applications |
|---|---|---|---|---|
| Probabilistic Improvement Optimization (PIO) [4] | Quantifies likelihood of exceeding property thresholds | High | 78.5 | Multi-objective optimization, threshold-based design |
| Expected Improvement [4] | Balances exploration and exploitation based on expected gain | Medium | 72.3 | Single-objective optimization |
| Gaussian Process Regression [4] | Non-parametric Bayesian approach with inherent UQ | Low (O(n³) scaling) | 68.7 | Small datasets, well-characterized chemical spaces |
| Ensemble Methods [4] | Multiple model instances with variance analysis | Medium | 70.2 | General-purpose applications |

The Probabilistic Improvement Optimization (PIO) method has demonstrated particular efficacy in molecular design benchmarks, enhancing optimization success in most cases and supporting more reliable exploration of chemically diverse regions [4]. In multi-objective tasks, PIO proves especially advantageous, balancing competing objectives and outperforming uncertainty-agnostic approaches by quantifying the likelihood that candidate molecules will exceed predefined property thresholds [4].
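The threshold-based idea can be sketched in a few lines. The example below assumes that the surrogate returns a Gaussian predictive distribution (mean and standard deviation) for each property and that the objectives are independent, so the joint probability is a simple product. Both are assumptions of this sketch rather than details confirmed by [4], and the function names, property names, and numbers are hypothetical.

```python
import math


def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prob_exceeds(mean, std, threshold):
    """P(property > threshold) under a Gaussian predictive distribution."""
    if std <= 0.0:
        # Degenerate case: treat the prediction as exact.
        return 1.0 if mean > threshold else 0.0
    return 1.0 - normal_cdf((threshold - mean) / std)


def joint_prob_improvement(predictions, thresholds):
    """Product of per-objective probabilities (independence is assumed)."""
    prob = 1.0
    for name, threshold in thresholds.items():
        mean, std = predictions[name]
        prob *= prob_exceeds(mean, std, threshold)
    return prob


# Two hypothetical candidates with identical means but different uncertainty.
thresholds = {"potency": 7.0, "solubility": -4.0}
confident = {"potency": (7.2, 0.1), "solubility": (-3.5, 0.2)}
uncertain = {"potency": (7.2, 1.5), "solubility": (-3.5, 2.0)}

score_confident = joint_prob_improvement(confident, thresholds)
score_uncertain = joint_prob_improvement(uncertain, thresholds)
```

Both candidates have the same predicted means, yet the confident one scores higher because less of its probability mass falls below the thresholds. An uncertainty-agnostic ranking would not separate them.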

Active Learning Workflow Protocol

Protocol 1: Active Learning with UQ-Enhanced GNNs for Molecular Optimization

Materials and Reagents:

- Chemical Dataset: Curated molecular structures with associated property data (e.g., ChEMBL, PubChem)

- Software: Chemprop with D-MPNN implementation [4] or KA-GNN codebase [3]

- Computational Resources: GPU-accelerated computing environment with sufficient memory for GNN training

Experimental Procedure:

Initial Model Training

- Prepare molecular structures in SMILES format and convert to graph representations

- Initialize D-MPNN or KA-GNN architecture with appropriate hyperparameters

- Train initial surrogate model on available labeled data (typically 10-20% of total data)

- Implement uncertainty quantification method (recommend PIO for multi-objective tasks)

Active Learning Loop

- Generate or select candidate molecules from chemical space (e.g., using genetic algorithms)

- Use trained surrogate model to predict properties and associated uncertainties

- Apply acquisition function (e.g., probabilistic improvement) to select informative candidates

- Evaluate selected candidates using expensive computational methods (e.g., FEP+, docking) or experiments

- Augment training data with newly evaluated candidates

- Fine-tune surrogate model on expanded dataset

Termination and Validation

- Continue iterations until performance plateaus or computational budget exhausted

- Validate final candidates using rigorous experimental assays

- Analyze explored chemical space diversity using molecular descriptors

Technical Notes:

- For multi-objective optimization, maintain separate surrogate models for each property of interest

- Implement early stopping based on validation performance to prevent overfitting

- Balance exploration and exploitation by adjusting acquisition function parameters throughout the process
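The protocol above can be sketched end to end on a toy problem. The example below is a stand-in under loud assumptions: the "chemical space" is a grid of numbers, the expensive evaluation is a cheap analytic `oracle`, the surrogate is a bootstrap ensemble of 1-nearest-neighbor predictors rather than a D-MPNN, and the acquisition function is an upper-confidence-bound score (mean plus `beta` times standard deviation) instead of PIO. All names and parameter values are hypothetical.

```python
import random
import statistics


def oracle(x):
    """Stand-in for an expensive evaluation (e.g., docking or an assay)."""
    return -(x - 0.7) ** 2


def fit_ensemble(labeled, n_models, rng):
    """Bootstrap ensemble: each member is a resample of the labeled pairs."""
    return [[rng.choice(labeled) for _ in labeled] for _ in range(n_models)]


def predict(ensemble, x):
    """Mean and spread of the members' 1-nearest-neighbor predictions."""
    preds = [min(member, key=lambda pair: abs(pair[0] - x))[1] for member in ensemble]
    return statistics.mean(preds), statistics.pstdev(preds)


def active_learning(pool, n_initial=3, n_rounds=5, batch_size=2, beta=1.0, seed=0):
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # Initial model training: label a small random subset.
    labeled = [(x, oracle(x)) for x in unlabeled[:n_initial]]
    unlabeled = unlabeled[n_initial:]

    for _ in range(n_rounds):
        ensemble = fit_ensemble(labeled, n_models=10, rng=rng)
        # Score every remaining candidate with the acquisition function.
        scores = {}
        for x in unlabeled:
            mean, std = predict(ensemble, x)
            scores[x] = mean + beta * std
        batch = sorted(unlabeled, key=scores.get, reverse=True)[:batch_size]
        # Evaluate the selected candidates and augment the training data.
        labeled.extend((x, oracle(x)) for x in batch)
        unlabeled = [x for x in unlabeled if x not in batch]
    return labeled


pool = [i / 50 for i in range(51)]
labeled = active_learning(pool)
best_x, best_y = max(labeled, key=lambda pair: pair[1])
```

Raising `beta` shifts the selection toward candidates the ensemble disagrees on (exploration); lowering it favors candidates with high predicted values (exploitation).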

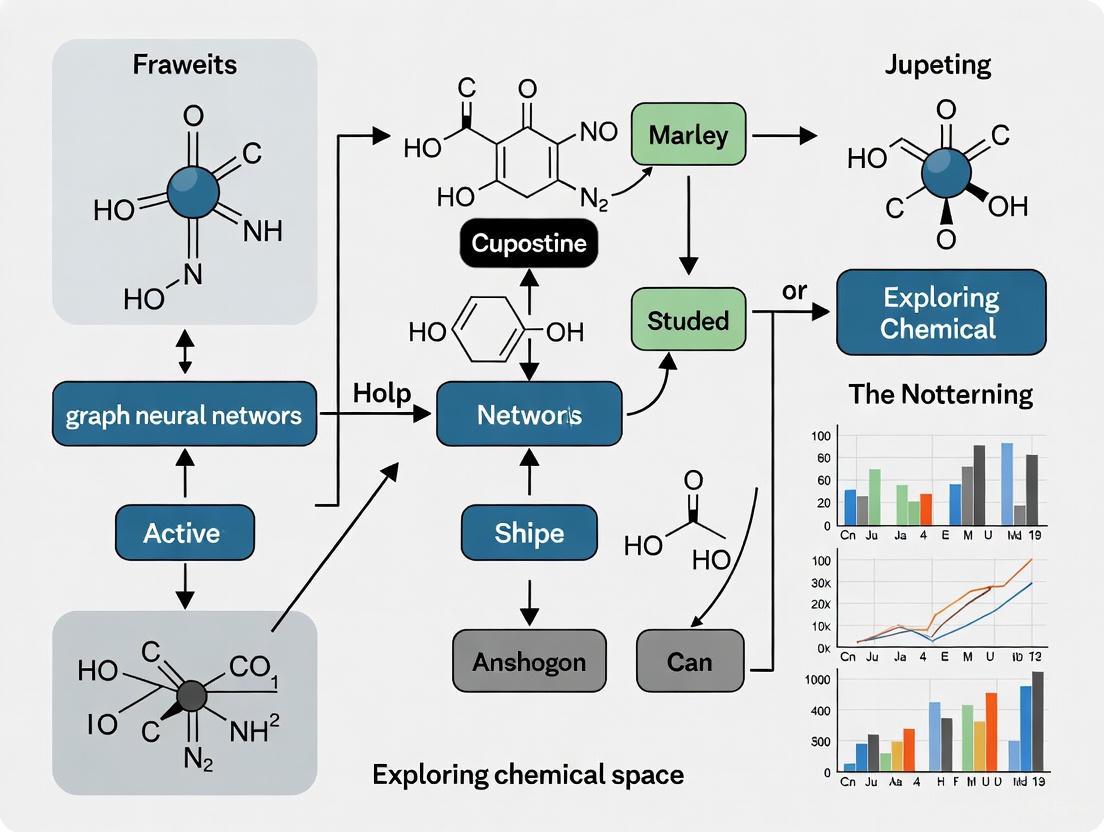

The following diagram illustrates the iterative active learning workflow for molecular optimization:

Multi-Objective Optimization Frameworks

STELLA Protocol for Fragment-Based Exploration

The STELLA (Systematic Tool for Evolutionary Lead optimization Leveraging Artificial intelligence) framework provides a metaheuristics-based approach for extensive fragment-level chemical space exploration with balanced multi-parameter optimization [6]. This method combines an evolutionary algorithm with a clustering-based conformational space annealing method and leverages deep learning models for accurate prediction of pharmacological properties.

Protocol 2: STELLA Implementation for De Novo Molecular Design

Materials and Reagents:

- Seed Molecules: Initial lead compounds or fragment libraries

- Fragment Library: Curated collection of molecular fragments with synthetic accessibility filters

- Property Prediction Models: Pre-trained models for key molecular properties (e.g., QED, docking scores)

Experimental Procedure:

Initialization Phase

- Input seed molecule(s) as starting point for exploration

- Generate initial pool of molecules using FRAGRANCE mutation operator

- Optionally add user-defined molecules to increase initial diversity

Evolutionary Optimization Loop

- Molecule Generation: Create variants through:

  - FRAGRANCE mutation

  - Maximum common substructure (MCS)-based crossover

  - Trimming operations to maintain drug-likeness

- Scoring: Evaluate generated molecules using objective function incorporating:

  - Docking scores (e.g., GOLD PLP Fitness)

  - Quantitative Estimate of Drug-likeness (QED)

  - Additional user-defined properties

- Clustering-based Selection:

  - Cluster all generated molecules with distance cutoff

  - Select molecules with best objective scores from each cluster

  - Iteratively select next-best molecules if target number not met

Progressive Refinement

- Gradually reduce distance cutoff in clustering step across iterations

- Transition selection criteria from maintaining structural diversity to optimizing objective function

- Terminate when convergence criteria met or maximum iterations reached

Technical Notes:

- Weight objective function components appropriately for specific design goals

- Adjust mutation and crossover rates to balance exploration and exploitation

- Implement synthetic accessibility filters to ensure practical utility of results
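The clustering-based selection step and its shrinking distance cutoff can be illustrated with a toy sketch. This is not STELLA's implementation from [6]: it assumes a greedy leader-style clustering, a one-dimensional descriptor in place of fingerprint distances, and hypothetical molecule names and scores.

```python
def cluster_select(molecules, cutoff, n_select):
    """Pick the best-scoring molecule per cluster, then fill by score.

    molecules: list of (name, descriptor, score); higher score is better.
    Molecules are visited from best to worst score. One that lies farther
    than `cutoff` from every existing cluster leader starts a new cluster,
    so each leader is the best scorer of its cluster.
    """
    ranked = sorted(molecules, key=lambda mol: mol[2], reverse=True)
    leaders = []
    for mol in ranked:
        if all(abs(mol[1] - lead[1]) > cutoff for lead in leaders):
            leaders.append(mol)
    selected = leaders[:n_select]
    # If there are fewer clusters than slots, add the next-best molecules.
    for mol in ranked:
        if len(selected) >= n_select:
            break
        if mol not in selected:
            selected.append(mol)
    return selected


molecules = [
    ("A", 0.10, 0.9),
    ("B", 0.12, 0.8),
    ("C", 0.50, 0.7),
    ("D", 0.55, 0.6),
    ("E", 0.90, 0.5),
]

# Early iteration: a large cutoff enforces structural diversity.
early = [name for name, _, _ in cluster_select(molecules, cutoff=0.2, n_select=3)]
# Late iteration: a small cutoff lets the objective score dominate.
late = [name for name, _, _ in cluster_select(molecules, cutoff=0.01, n_select=3)]
```

With the large cutoff the selection spans three separate regions (A, C, E); with the small cutoff it collapses to the three top scorers (A, B, C). This mirrors the transition from diversity to optimization described in the Progressive Refinement step.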

In comparative studies, STELLA generated 217% more hit candidates with 161% more unique scaffolds and achieved more advanced Pareto fronts compared to REINVENT 4, demonstrating superior performance in both efficient exploration of chemical space and multi-parameter optimization [6].

Foundation Models for Chemical Space Navigation

Recent advances in foundation models for chemistry offer promising approaches for navigating vast chemical spaces. The MIST (Molecular Insight SMILES Transformers) family of models, with up to 1.8 billion parameters trained on 6 billion molecules, represents a significant step toward general-purpose molecular representation learning [1]. These models use a novel tokenization scheme (Smirk) that comprehensively captures nuclear, electronic, and geometric information, enabling effective fine-tuning for over 400 structure-property relationships [1].

[Figure: hierarchical structure of the MIST foundation model and its application to molecular property prediction]

Research Reagent Solutions

Table 3: Essential computational tools and resources for active learning in chemical space exploration

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| KA-GNN Framework [3] | Graph Neural Network Architecture | Molecular property prediction with enhanced expressivity | Drug discovery, materials design |
| Chemprop with D-MPNN [4] | Software Package | Molecular property prediction with uncertainty quantification | Active learning, molecular optimization |
| STELLA [6] | Metaheuristics Framework | Fragment-based chemical space exploration and multi-parameter optimization | De novo molecular design, lead optimization |
| MIST Models [1] | Foundation Models | General-purpose molecular representation learning | Transfer learning across multiple chemical domains |
| Schrödinger Active Learning [7] | Commercial Platform | Machine learning-guided molecular docking and free energy calculations | Ultra-large library screening, lead optimization |
| Tartarus [4] | Benchmarking Platform | Evaluation of molecular design algorithms across multiple domains | Method validation, performance comparison |
| FRAGRANCE [6] | Mutation Operator | Fragment-based molecular generation in STELLA framework | Chemical space exploration, scaffold hopping |

The integration of active learning methodologies with advanced graph neural network architectures represents a paradigm shift in addressing the fundamental challenge of vast chemical spaces in drug and materials discovery. The protocols and frameworks outlined in this Application Note provide structured approaches for leveraging these technologies to efficiently navigate biologically relevant chemical space while balancing multiple optimization objectives.

The quantitative comparisons demonstrate that emerging approaches—including KA-GNNs, UQ-enhanced D-MPNNs, metaheuristic frameworks like STELLA, and foundation models such as MIST—offer significant advantages over conventional methods in both prediction accuracy and exploration efficiency. By implementing these protocols and utilizing the associated research reagents, discovery scientists can accelerate the identification of novel molecular entities with optimized properties while reducing the computational and experimental resources required.

As these methodologies continue to evolve, the integration of active learning with increasingly sophisticated molecular representations promises to further compress discovery timelines and expand the accessible regions of chemical space for therapeutic and materials applications.

The pursuit of efficient molecular representation is fundamental to advancements in materials science and drug discovery. Traditional methods, such as molecular fingerprints or string-based representations like SMILES, often face challenges with high dimensionality, information loss, and limited generalization capabilities [8]. Graph Neural Networks (GNNs) have emerged as a transformative solution by directly modeling the inherent graph structure of molecules, where atoms naturally represent nodes and chemical bonds represent edges [9] [10]. This structural congruence provides GNNs with a powerful inductive bias for processing molecular data.

Over the past five years, GNNs have revolutionized computational drug design by accurately modeling molecular structures and their interactions with binding targets [11]. They enable end-to-end learning, automatically extracting rich, fine-grained representations that capture information about atoms, chemical bonds, multi-order adjacencies, and molecular topology, thereby eliminating the need for extensive manual feature engineering [8]. This capability is crucial for active learning frameworks in chemical space research, where models must intelligently select the most informative data points to optimize experimental resources and accelerate the discovery process.

Core Applications in Chemistry and Drug Discovery

GNNs are being deployed across the entire drug discovery and materials development pipeline. Their applications can be broadly categorized into several key areas, each contributing to a significant acceleration of the research process.

Molecular Property Prediction: GNNs trained on high-quality experimental data can accurately predict key molecular properties such as the Kováts retention index, normal boiling point, and mass spectra [12]. By learning from molecular graphs, these models establish complex structure-property relationships, providing "instant" predictions for properties like formation energies, band gaps, and mechanical properties that would otherwise require costly simulations or laboratory experiments [13] [9]. This capability is vital for virtual screening of large chemical databases.

De Novo Molecule Generation: GNN-based generative models can design novel molecular structures with desired properties, dramatically expanding the explorable chemical space [10]. These approaches can be unconstrained, prioritizing structural diversity; constrained, incorporating specific functional groups or substructures relevant to desired properties; or ligand-protein-based, designed to generate molecules that interact with specific protein targets [10]. This application is particularly powerful for the initial candidate selection phase in drug discovery.

Drug-Target and Drug-Drug Interaction Prediction: Predicting the interactions between drugs and their biological targets or between multiple drugs in combination therapies is a critical challenge. GNNs can model these complex relationships as network problems, achieving state-of-the-art performance in predicting binding affinities and synergistic or adverse drug-drug interactions [11] [10]. This helps in identifying effective combination therapies and mitigating potential safety issues early in the development process.

Table 1: Key Application Areas of GNNs in Drug Discovery

| Application Area | Primary Task | Impact |
|---|---|---|
| Molecular Property Prediction [12] [9] | Regression or classification of molecular properties (e.g., toxicity, solubility) from structure. | Reduces need for extensive experimental validation; enables high-throughput virtual screening. |
| Molecule Generation [10] | Designing novel molecular structures with specified constraints or properties. | Accelerates early-stage candidate discovery; expands explorable chemical space. |
| Interaction Prediction [11] [10] | Predicting drug-target binding affinity or drug-drug interactions (synergistic/adverse). | Improves efficacy and safety profiling of drug candidates and combination therapies. |

Quantitative Performance of GNN Models

The effectiveness of GNNs is demonstrated through their state-of-the-art performance on a wide range of benchmark datasets. The following table summarizes the reported accuracy of various GNN architectures and approaches for predicting different molecular properties, highlighting their utility in quantitative structure-property relationship (QSPR) modeling.

Table 2: Performance of GNNs on Molecular Property Prediction Tasks

| Model / Architecture | Dataset / Property | Reported Performance | Key Feature |
|---|---|---|---|
| M3GNet [13] | Materials property prediction (Formation energy) | MAE ~0.03 eV/atom (on test set) | Interatomic potential for molecules and crystals. |
| MEGNet [13] | QM9 (Internal Energy U) | MAE ~0.012 eV/atom (on test set) | Includes global state attribute. |
| Invariant GNN [12] | Kováts Retention Index | Accurate models trained with experimental data. | Uses graph representations with high-quality data. |
| GNN General [9] | Various molecular properties | Outperformed conventional ML models. | Learns internal representations end-to-end. |

Experimental Protocols for Molecular Property Prediction

This section provides a detailed, step-by-step protocol for training and applying a GNN model to predict molecular properties, a cornerstone task in chemical space research. The protocol is based on established practices and the workflow implemented in libraries like MatGL [13].

Data Preparation and Graph Conversion

- Data Collection: Assemble a dataset of molecular structures and their corresponding experimentally measured or quantum-chemically computed target properties (e.g., boiling point, solubility, formation energy). The data should be formatted as a list of `Structure` or `Molecule` objects (from Pymatgen or RDKit) and a parallel list of target property `labels` [13].

- Graph Conversion: Convert each molecular structure into a graph representation `G = (V, E)`, where `V` is the set of atoms (nodes) and `E` is the set of chemical bonds (edges). This is typically done using a graph converter.

  - Node Features (`h_v^0`): Initialize node feature vectors for each atom. Common features include atomic number, atom type, hybridization state, formal charge, and valence (e.g., one-hot encoded or as continuous values) [9].

  - Edge Features (`h_e^0`): Initialize edge feature vectors for each bond. Common features include bond type (single, double, triple), bond length, and stereochemistry [9].

  - Cutoff Radius: Define a cutoff radius (e.g., 5 Å) to determine the connectivity between atoms for periodic systems or when explicit bonds are not defined. Atoms within this distance are connected by an edge [13].

- Dataset Creation: Utilize a dedicated data pipeline like `MGLDataset` (from MatGL) to handle the processing, loading, and caching of the graph data. This dataset class efficiently batches the graphs and their associated labels for training [13].
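The cutoff-radius rule in the graph conversion step can be sketched without any chemistry library. The example below is a simplified stand-in, not the MatGL graph converter: the function name `build_graph` is hypothetical, and the water coordinates are approximate values chosen for illustration.

```python
import math


def build_graph(atoms, cutoff):
    """Connect every pair of atoms that lies within `cutoff` angstroms.

    atoms: list of (element, (x, y, z)) tuples.
    Returns the node labels and a list of directed edges (i, j, distance).
    """
    nodes = [element for element, _ in atoms]
    edges = []
    for i, (_, pos_i) in enumerate(atoms):
        for j, (_, pos_j) in enumerate(atoms):
            if i == j:
                continue
            distance = math.dist(pos_i, pos_j)
            if distance <= cutoff:
                edges.append((i, j, distance))
    return nodes, edges


# Approximate geometry of a water molecule (angstroms).
water = [
    ("O", (0.000, 0.000, 0.000)),
    ("H", (0.757, 0.586, 0.000)),
    ("H", (-0.757, 0.586, 0.000)),
]

nodes, bonded_edges = build_graph(water, cutoff=1.2)  # only the O-H contacts
_, all_edges = build_graph(water, cutoff=5.0)         # also the H-H contact
```

A small cutoff recovers the covalent O-H connectivity, while a 5 Å cutoff links every atom pair in a molecule this small. The interatomic distance stored on each edge is one natural edge feature.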

Model Architecture and Training

- Model Selection: Choose a GNN architecture suitable for your task. For a standard property prediction task, an invariant MPNN is often sufficient.

  - Message Passing Phase (`K` steps): For each step `t = 0...K-1`, the network performs [9]:

    - Message Function (`M_t`): For each node `v`, a message `m_v^(t+1)` is computed by aggregating information from its neighbors `w ∈ N(v)`. The function `M_t` operates on the node features `h_v^t`, `h_w^t`, and the edge feature `e_vw`.

    - Update Function (`U_t`): The node's feature vector is updated to `h_v^(t+1)` by combining its current state `h_v^t` with the aggregated message `m_v^(t+1)`, typically using a learned neural network.

  - Readout / Pooling Phase: After `K` message passing steps, a graph-level representation `y` is obtained by pooling the final node embeddings `{h_v^K | v ∈ G}` from the entire graph using a permutation-invariant function `R(⋅)`, such as a sum, mean, or a more sophisticated operation like Set2Set [13] [9].

  - Output Layer: The pooled graph-level representation is passed through a final multilayer perceptron (MLP) to produce the predicted property value (for regression) or class probabilities (for classification) [13].

- Training Loop:

  - Loss Function: For regression tasks, use Mean Absolute Error (MAE) or Mean Squared Error (MSE). For classification, use Cross-Entropy loss.

  - Optimization: Use a standard optimizer like Adam. Leverage a training wrapper like PyTorch Lightning (integrated in MatGL) to streamline the training process, manage logging, and enable early stopping based on validation performance [13].

- Active Learning Integration: In an active learning cycle, the trained model is used to predict properties on a large, unlabeled pool of molecules. The molecules for which the model is most uncertain (or those predicted to have high desired properties) are selected for the next round of experimental measurement or simulation. Their new labels are then added to the training set, and the model is retrained, creating a feedback loop that efficiently explores the chemical space [11].
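The two selection rules mentioned in the active learning step (most uncertain, or highest predicted value) differ only in which prediction column is ranked. The sketch below assumes the model returns a `(mean, std)` pair per molecule. The function name `select_batch`, the strategy labels, and the prediction values are hypothetical.

```python
def select_batch(predictions, batch_size, strategy="uncertainty"):
    """Rank pool molecules and return the identifiers of the top batch.

    predictions: dict mapping a molecule identifier to (mean, std).
    strategy: "uncertainty" ranks by std, "greedy" ranks by predicted mean.
    """
    column = {"greedy": 0, "uncertainty": 1}.get(strategy)
    if column is None:
        raise ValueError(f"unknown strategy: {strategy!r}")
    ranked = sorted(
        predictions,
        key=lambda mol_id: predictions[mol_id][column],
        reverse=True,
    )
    return ranked[:batch_size]


# Hypothetical model output for four pool molecules (SMILES -> (mean, std)).
pool_predictions = {
    "CCO": (0.2, 0.05),
    "c1ccccc1": (0.9, 0.10),
    "CC(=O)O": (0.5, 0.40),
    "CCN": (0.4, 0.30),
}

to_explore = select_batch(pool_predictions, batch_size=2, strategy="uncertainty")
to_exploit = select_batch(pool_predictions, batch_size=2, strategy="greedy")
```

The two batches overlap only partly, which is the practical reason many workflows blend both criteria instead of committing to one.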

Model Evaluation and Deployment

- Validation: Evaluate the trained model on a held-out test set to assess its generalization performance using metrics like MAE or Root Mean Squared Error (RMSE).

- Inference: Use the model's convenient `predict_structure` method (as provided in MatGL) to make predictions on new, unseen molecular structures directly from their `Structure` or `Molecule` object [13].
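For reference, the two regression metrics named in the validation step can be computed as follows. This is a plain-Python sketch with made-up numbers; in practice the same quantities are usually taken from the training framework's built-in metrics.

```python
import math


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between targets and predictions."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def root_mean_squared_error(y_true, y_pred):
    """Square root of the average squared difference."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    squared = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    return math.sqrt(squared / len(y_true))


# Illustrative held-out targets and predictions with one large error.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 8.0]

mae = mean_absolute_error(y_true, y_pred)
rmse = root_mean_squared_error(y_true, y_pred)
```

Here the single outlier gives an MAE of 1.0 but an RMSE of 2.0, which shows why reporting both metrics helps reveal whether the error is spread evenly or concentrated in a few molecules.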

Successful implementation of GNNs for molecular representation requires a suite of software tools and datasets. The following table details key "research reagents" for this field.

Table 3: Essential Tools and Resources for GNN-based Molecular Research

| Tool / Resource | Type | Function in Research |
|---|---|---|
| MatGL (Materials Graph Library) [13] | Software Library | An open-source, "batteries-included" library built on Deep Graph Library (DGL) and Pymatgen. Provides implementations of models like M3GNet and MEGNet, pre-trained foundation potentials, and tools for training property prediction models and interatomic potentials. |
| DGL (Deep Graph Library) [13] | Software Library | A foundational library for implementing GNNs. Known for high memory efficiency and speed, which is critical for training on large molecular graphs. |
| Pymatgen [13] | Software Library | A robust Python library for materials analysis. Used extensively for manipulating structural objects (Molecules and Crystals) and converting them into graph representations for input to GNN models. |
| Benchmark Datasets (e.g., QM9, Materials Project) [9] | Data | Curated datasets containing thousands to millions of molecular or crystal structures with associated quantum-mechanical or experimental properties. Essential for training and benchmarking model performance. |
| Message Passing Neural Network (MPNN) Framework [9] | Conceptual Model | A general framework that describes most modern GNNs used in chemistry. It breaks down the GNN operation into a message function, update function, and readout function, providing a blueprint for model development. |

Graph Neural Networks represent a paradigm shift in computational molecular representation. Their natural alignment with the graph structure of molecules allows them to overcome the limitations of traditional fingerprint and string-based methods, leading to more expressive, adaptive, and multipurpose representations [8]. As reviewed in these application notes, GNNs are already delivering significant acceleration in critical tasks like property prediction, molecule generation, and interaction modeling within drug discovery pipelines [11] [10]. The ongoing development of open-source libraries like MatGL, combined with the integration of active learning strategies, positions GNNs as a cornerstone technology for the efficient and intelligent exploration of vast chemical spaces, ultimately accelerating the design of novel materials and therapeutics.

Graph Neural Networks (GNNs) represent a transformative class of machine learning models specifically designed to operate on graph-structured data, making them particularly suited for chemical applications. In molecular graphs, atoms naturally constitute nodes and chemical bonds form edges, creating an inherent structural representation that traditional neural network architectures struggle to process effectively [9]. This direct alignment between molecular structure and graph representation has positioned GNNs as powerful tools across diverse chemical domains, from drug discovery and materials science to catalytic reaction prediction [11] [9].

The fundamental advantage of GNNs lies in their ability to learn from the complete topological information of molecules, capturing complex relationships that determine chemical properties and reactivity patterns. Unlike traditional machine learning approaches that rely on pre-defined molecular descriptors or fingerprints, GNNs automatically learn informative molecular representations through message passing and feature transformation operations [9] [14]. This capability is revolutionizing computational chemistry by enabling more accurate property prediction, accelerating molecular design, and providing insights into chemical phenomena that were previously computationally prohibitive to model.

Core Architectural Frameworks

Graph Convolutional Networks (GCNs)

Graph Convolutional Networks (GCNs) serve as a foundational architecture that generalizes convolutional operations from regular grids (like images) to irregular graph structures [15]. In chemical contexts, GCNs operate on node features (atom properties) and adjacency matrices (bond connectivity) to generate meaningful molecular representations. The core operation involves feature propagation and transformation, where each node aggregates feature information from its neighboring nodes, followed by a non-linear transformation [15].

The mathematical formulation of a graph convolution layer can be represented as:

\[H^{(l+1)} = \sigma\left(\hat{D}^{-\frac{1}{2}}\hat{A}\hat{D}^{-\frac{1}{2}}H^{(l)}W^{(l)}\right)\]

Where \(\hat{A} = A + I\) is the adjacency matrix with self-connections, \(\hat{D}\) is the diagonal degree matrix of \(\hat{A}\), \(H^{(l)}\) contains node features at layer \(l\), \(W^{(l)}\) is a trainable weight matrix, and \(\sigma\) is a non-linear activation function [15]. This normalization strategy ensures numerical stability while propagating features across the graph. For molecular property prediction, multiple GCN layers are typically stacked to capture increasingly complex chemical environments, followed by global pooling operations to generate graph-level embeddings that feed into downstream prediction layers [15].
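A single graph convolution can be traced by hand on a three-atom chain. The sketch below uses plain Python lists for transparency (a real model would use a tensor library), takes ReLU as the activation, and uses an identity weight matrix. The function names, the toy graph, and the feature values are assumptions of this example.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def gcn_layer(adjacency, features, weights):
    """One layer: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    n = len(adjacency)
    # Add self-connections: A_hat = A + I.
    a_hat = [
        [adjacency[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
        for i in range(n)
    ]
    # Degrees of A_hat give the symmetric normalization factors.
    inv_sqrt_deg = [1.0 / math.sqrt(sum(row)) for row in a_hat]
    normalized = [
        [inv_sqrt_deg[i] * a_hat[i][j] * inv_sqrt_deg[j] for j in range(n)]
        for i in range(n)
    ]
    propagated = matmul(matmul(normalized, features), weights)
    return [[max(0.0, value) for value in row] for row in propagated]


# Three-atom chain 0-1-2 with two-dimensional one-hot style features.
adjacency = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
weights = [[1.0, 0.0], [0.0, 1.0]]  # identity, so only propagation is visible

output = gcn_layer(adjacency, features, weights)
```

After one layer each terminal atom carries a share of the central atom's feature, and the two terminal atoms receive identical embeddings because their neighborhoods are equivalent.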

Graph Attention Networks (GAT/GATv2)

Graph Attention Networks (GATs) introduce an attention mechanism that assigns learned importance weights to neighboring nodes during feature aggregation [16]. Unlike GCNs with fixed normalization based on node degrees, GATs compute attention coefficients between connected nodes, enabling the model to focus on more relevant chemical neighbors when updating node representations. The attention mechanism for a single head is computed as:

\[\alpha_{ij} = \frac{\exp(\text{LeakyReLU}(\mathbf{a}^T[W\mathbf{h}_i \| W\mathbf{h}_j]))}{\sum_{k \in \mathcal{N}(i)} \exp(\text{LeakyReLU}(\mathbf{a}^T[W\mathbf{h}_i \| W\mathbf{h}_k]))}\]

Where \(\alpha_{ij}\) represents the attention coefficient between nodes \(i\) and \(j\), \(W\) is a shared weight matrix, \(\mathbf{a}\) is a learnable attention vector, \(\|\) denotes concatenation, and \(\mathcal{N}(i)\) is the neighborhood of node \(i\) [16]. Multi-head attention is commonly employed to stabilize learning and capture different aspects of molecular interactions [16].
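The attention coefficients for one node can be computed directly from the definition. The sketch below is a single-head, plain-Python illustration with made-up features, an identity weight matrix, and a hand-picked attention vector. The LeakyReLU slope of 0.2 and all names are assumptions of this example.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def attention_coefficients(node, neighbors, features, weight, attn_vector):
    """Softmax-normalized attention of `node` over its neighbors."""

    def transform(h):
        # Apply the shared weight matrix W to a feature vector.
        return [sum(w * x for w, x in zip(row, h)) for row in weight]

    wh_i = transform(features[node])
    scores = {}
    for j in neighbors:
        concat = wh_i + transform(features[j])  # [W h_i || W h_j]
        scores[j] = leaky_relu(sum(a * c for a, c in zip(attn_vector, concat)))

    # Subtract the maximum score for a numerically stable softmax.
    max_score = max(scores.values())
    exp_scores = {j: math.exp(s - max_score) for j, s in scores.items()}
    total = sum(exp_scores.values())
    return {j: value / total for j, value in exp_scores.items()}


features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
weight = [[1.0, 0.0], [0.0, 1.0]]
attn_vector = [0.5, 0.5, 1.0, -1.0]

alpha = attention_coefficients(0, [1, 2], features, weight, attn_vector)
```

The coefficients sum to one over the neighborhood, and neighbor 2 receives the larger weight because its raw score is higher. In a trained model these weights are what allow certain atoms or functional groups to dominate the update.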

GATv2 is an improved formulation that addresses the static attention limitation of the original GAT by applying the learnable attention vector after the non-linearity rather than before it, resulting in more dynamic and expressive attention patterns [16]. In chemical applications, this enables more nuanced modeling of molecular interactions where certain atomic neighbors or functional groups may disproportionately influence molecular properties regardless of topological distance.

Message Passing Neural Networks (MPNNs)

The Message Passing Neural Network (MPNN) framework provides a generalized abstraction that encompasses many spatial-based GNN architectures, including GCN and GAT variants [17] [9]. MPNNs operate through two primary phases: a message passing phase and a readout phase. During message passing, node representations are iteratively updated by aggregating "messages" from neighboring nodes over multiple steps, effectively capturing higher-order chemical environments.

The MPNN formulation can be summarized as:

[\mathbf{m}_v^{(t+1)} = \sum_{w \in \mathcal{N}(v)} M_t(\mathbf{h}_v^{(t)}, \mathbf{h}_w^{(t)}, \mathbf{e}_{vw})]

[\mathbf{h}_v^{(t+1)} = U_t(\mathbf{h}_v^{(t)}, \mathbf{m}_v^{(t+1)})]

Where (\mathbf{m}_v^{(t+1)}) is the message for node (v) at step (t+1), (M_t) is the message function, (\mathcal{N}(v)) denotes the neighbors of (v), (\mathbf{h}_v^{(t)}) is the node feature of (v) at step (t), (\mathbf{e}_{vw}) represents edge features between (v) and (w), and (U_t) is the update function [9]. After (T) message passing steps, a readout function generates a graph-level representation:

[\mathbf{y} = R(\{\mathbf{h}_v^{(T)} \mid v \in G\})]

Where (R) is a permutation-invariant readout function such as sum, mean, or more sophisticated Set2Set aggregation [9]. The flexibility in defining message, update, and readout functions makes MPNN highly adaptable to diverse chemical tasks, from molecular property prediction to reaction optimization.
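
The message, update, and readout phases can be sketched as follows. This is a toy instance under stated assumptions: the message and update functions are single linear maps followed by tanh, their weights are shared across steps, the readout is a plain sum, and the graph, bond features, and weight matrices are hypothetical.

```python
import numpy as np

def mpnn_forward(h, edges, e_feat, W_msg, W_upd, T=3):
    """h: (N, d) node states; edges: directed (v, w) pairs;
    e_feat: {(v, w): (d_e,) array}. Returns a (d,) graph-level vector."""
    for _ in range(T):
        m = np.zeros_like(h)
        for v, w in edges:
            # Message function (assumed form): tanh of a linear map of [h_v, h_w, e_vw]
            m[v] += np.tanh(W_msg @ np.concatenate([h[v], h[w], e_feat[(v, w)]]))
        # Update function (assumed form): tanh of a linear map of [h_v, m_v]
        h = np.tanh(np.concatenate([h, m], axis=1) @ W_upd.T)
    return h.sum(axis=0)  # sum readout, invariant to node ordering

rng = np.random.default_rng(0)
d, d_e = 4, 2
W_msg = rng.normal(scale=0.5, size=(d, 2 * d + d_e))
W_upd = rng.normal(scale=0.5, size=(d, 2 * d))
h0 = rng.normal(size=(3, d))

# Hypothetical 3-atom chain; each bond is stored in both directions.
bonds = {(0, 1): np.array([1.0, 0.0]), (1, 2): np.array([0.0, 1.0])}
edges = list(bonds) + [(w, v) for v, w in bonds]
e_feat = {**bonds, **{(w, v): f for (v, w), f in bonds.items()}}

y = mpnn_forward(h0, edges, e_feat, W_msg, W_upd, T=3)
```

Relabeling the atoms leaves `y` unchanged up to floating-point rounding, which is the permutation invariance the readout is meant to provide.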

Quantitative Performance Comparison

Table 1: Comparative Performance of GNN Architectures in Chemical Applications

| Architecture | Key Mechanism | Chemical Application Example | Performance Metric | Advantages | Limitations |

|---|---|---|---|---|---|

| GCN [15] [9] | Spectral graph convolution with degree normalization | Molecular property prediction from 2D structure | R² ~0.65-0.70 on QM9 dataset | Computational efficiency, simplicity | Fixed neighbor weighting, limited expressivity |

| GAT [18] [16] | Attention-weighted neighbor aggregation | Focus on key functional groups in drug discovery | ~5-10% improvement over GCN on toxicity prediction | Dynamic attention, interpretability | Higher computational cost, parameter sensitivity |

| GATv2 [16] | Dynamic attention after non-linearities | Molecular property prediction with complex interactions | ~3-5% improvement over GAT on challenging targets | More expressive attention | Increased complexity, training instability risk |

| MPNN [18] [17] | Generalized message passing framework | Cross-coupling reaction yield prediction | R² = 0.75 on a heterogeneous cross-coupling reaction dataset [18] | Framework flexibility, state-of-the-art performance | Architecture design complexity, computational cost |

| ABT-MPNN [17] | Atom-bond transformer with attention | Multi-property prediction for drug candidates | Outperforms baselines on 9/9 benchmark datasets [17] | Enhanced interpretability, bond-level attention | Significant implementation complexity |

Table 2: GNN Performance in Specific Chemical Tasks

| Application Domain | Best Performing Architecture | Comparative Performance | Dataset Characteristics | Key Factors for Success |

|---|---|---|---|---|

| Cross-coupling Reaction Yield Prediction [18] | MPNN | R² = 0.75 (highest among tested architectures) | Heterogeneous datasets (Suzuki, Sonogashira, etc.) | Effective handling of diverse reaction types |

| Molecular Property Prediction [17] | ABT-MPNN | Outperforms or comparable to SOTA on 9 datasets | Diverse QSPR/QSAR tasks | Atom-bond attention, multi-level representation |

| Energetic Materials Design [19] | Neural Network Potentials (NNPs) | DFT-level accuracy for structure and mechanics | C, H, N, O-based HEMs | Transfer learning with minimal DFT data |

| Zintl Phase Discovery [20] | GNN with bonding insights | 90% precision vs. 40% for M3GNet | >90,000 hypothetical phases | Incorporation of domain knowledge (ionic bonding) |

Experimental Protocols

Protocol: Implementing MPNN for Reaction Yield Prediction

This protocol outlines the methodology for implementing Message Passing Neural Networks to predict yields in cross-coupling reactions, based on the approach that achieved state-of-the-art performance (R² = 0.75) in recent research [18].

Materials and Software Requirements:

- Python 3.8+

- DeepChem or PyTorch Geometric library

- RDKit for molecular processing

- Dataset of reaction SMILES with corresponding yields

Step-by-Step Procedure:

Data Preparation and Preprocessing:

- Compile heterogeneous dataset encompassing various cross-coupling reactions (Suzuki, Sonogashira, Cadiot-Chodkiewicz, Ullmann-type, Buchwald-Hartwig)

- Convert reaction SMILES to molecular graphs with reaction centers annotated

- Represent atoms as nodes with features: atomic number, formal charge, hybridization, ring membership

- Represent bonds as edges with features: bond type, conjugation, stereochemistry

Model Architecture Configuration:

- Implement message passing with a learned message function (M_t), parameterized as a multi-layer perceptron

- Set message passing steps T = 3-5 to capture sufficient molecular context

- Use edge-conditioned convolution for bond feature integration

- Implement the update function (U_t) with gated recurrent units (GRUs) to help mitigate oversmoothing

Readout and Prediction Head:

- Implement Set2Set readout for permutation-invariant graph-level representation

- Stack two fully connected layers with ReLU activation and dropout (p=0.2)

- Final linear layer outputs continuous yield prediction

Training Protocol:

- Initialize model with Xavier uniform initialization

- Optimize using Adam with learning rate 0.001, β₁ = 0.9, β₂ = 0.999

- Implement early stopping with patience of 50 epochs monitoring validation loss

- Train with mini-batch size 32-128 depending on GPU memory
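
The early stopping rule in the training protocol can be sketched independently of any deep learning framework. The class below is a hypothetical helper, not part of DeepChem or PyTorch Geometric; the short loss sequence and the patience of 3 are toy values chosen only to keep the trace readable (the protocol itself uses a patience of 50).

```python
class EarlyStopping:
    """Stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=50, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss       # improvement: reset the counter
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1       # no improvement this epoch
        return self.bad_epochs >= self.patience

# Toy validation curve; in practice each value comes from a validation pass.
stopper = EarlyStopping(patience=3)
val_losses = [1.0, 0.8, 0.81, 0.82, 0.79, 0.80, 0.80, 0.80, 0.5]
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss):
        stopped_at = epoch
        break
```

In a real training loop the model weights from the best epoch would typically be checkpointed and restored after stopping.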

Interpretation and Analysis:

- Apply integrated gradients method to determine contribution of input descriptors

- Visualize atomic contributions to identify key structural features influencing yield

Protocol: Active Learning Integration with GNNs

This protocol describes the integration of active learning with GNNs for efficient chemical space exploration, based on recently developed batch active learning methods that significantly reduce experimental costs [21].

Materials and Software Requirements:

- Pre-trained GNN model on relevant chemical domain

- Pool of unlabeled molecular compounds

- Bayesian optimization framework

- Sanofi's COVDROP or COVLAP implementation [21]

Step-by-Step Procedure:

Initial Model Setup:

- Start with pre-trained GNN on available labeled data or transfer learning from related chemical domain

- For cold start, use diverse random sampling to collect initial batch (1-5% of pool)

Uncertainty Estimation:

- For COVDROP: Use Monte Carlo dropout (10-20 forward passes) to estimate epistemic uncertainty

- For COVLAP: Apply Laplace approximation to obtain posterior distribution over model parameters

- Compute predictive variance for each unlabeled sample

Batch Selection Optimization:

- Construct covariance matrix C between predictions on unlabeled samples

- Use a greedy algorithm to select the submatrix (C_B) of size (B \times B) with maximal determinant

- This maximizes joint entropy, balancing uncertainty and diversity

- Batch size B typically set to 30 for drug discovery applications [21]
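
The batch selection step can be illustrated with a simple greedy log-determinant search. This is a sketch of the general idea, not the COVDROP or COVLAP implementation from [21]: the function name, the jitter term, and the random matrix standing in for Monte Carlo dropout predictions are all assumptions.

```python
import numpy as np

def greedy_max_det(C, batch_size, jitter=1e-6):
    """Greedily pick indices whose covariance submatrix has maximal log-determinant."""
    n = C.shape[0]
    C = C + jitter * np.eye(n)          # keeps submatrices positive definite
    selected = []
    for _ in range(batch_size):
        best_i, best_val = None, -np.inf
        for i in range(n):
            if i in selected:
                continue
            idx = selected + [i]
            _, logdet = np.linalg.slogdet(C[np.ix_(idx, idx)])
            if best_i is None or logdet > best_val:
                best_i, best_val = i, logdet
        selected.append(best_i)
    return selected

# Toy covariance: samples 0 and 1 are uncertain but nearly redundant.
C_toy = np.array([[4.0, 3.9, 0.0], [3.9, 4.0, 0.0], [0.0, 0.0, 1.0]])
batch_toy = greedy_max_det(C_toy, batch_size=2)

# Stand-in for 20 stochastic forward passes over a pool of 50 molecules.
rng = np.random.default_rng(0)
mc_preds = rng.normal(size=(20, 50))
C = np.cov(mc_preds, rowvar=False)      # (50, 50) covariance between predictions
batch = greedy_max_det(C, batch_size=5)
```

In the toy case the search takes sample 0 and then prefers the uncorrelated sample 2 over the more uncertain but redundant sample 1, which is the uncertainty and diversity trade-off described above. The brute-force recomputation of each determinant is kept for clarity; incremental updates would be needed for large pools.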

Iterative Active Learning Cycle:

- Select batch using above method and obtain labels (experimental or computational)

- Augment training set with newly labeled compounds

- Fine-tune GNN on expanded dataset with reduced learning rate (1/10 of initial)

- Repeat until performance plateaus or experimental budget exhausted

Performance Validation:

- Monitor root mean square error (RMSE) on hold-out validation set

- Compare against random selection and baseline methods (k-means, BAIT)

- Evaluate sample efficiency as iterations to target performance

The Scientist's Toolkit

Table 3: Essential Research Tools and Resources for GNN Implementation in Chemistry

| Tool/Resource | Type | Function | Application Example | Availability |

|---|---|---|---|---|

| RDKit [15] | Cheminformatics Library | Molecular graph generation from SMILES | Convert chemical structures to graph representation | Open source |

| PyTorch Geometric [15] | Deep Learning Library | GNN implementation and training | Implement MPNN, GCN, GAT architectures | Open source |

| DeepChem [21] | Drug Discovery Platform | End-to-end molecular ML pipelines | Active learning integration for property prediction | Open source |

| OGB (Open Graph Benchmark) [9] | Benchmark Datasets | Standardized performance evaluation | Compare architecture performance on molecular tasks | Open source |

| COVDROP/COVLAP [21] | Active Learning Methods | Batch selection for efficient experimentation | Reduce experimental costs in lead optimization | Research implementation |

| Integrated Gradients [18] | Interpretability Method | Feature attribution for model predictions | Identify atomic contributions to reaction yield | Open source implementations |

| DP-GEN [19] | Neural Potential Generator | Automated training data generation for NNPs | Accelerate materials simulation with DFT accuracy | Open source |

The evolution of GNN architectures from foundational GCNs to sophisticated MPNN frameworks has fundamentally transformed computational chemistry research. The comparative performance data demonstrates that while MPNNs currently achieve state-of-the-art results for complex chemical prediction tasks like reaction yield optimization, the optimal architecture choice remains application-dependent [18] [17]. The integration of attention mechanisms, as exemplified by GAT and ABT-MPNN, provides not only performance improvements but also valuable interpretability that aligns with chemical intuition [17] [16].

The emerging paradigm of active learning with GNNs represents a powerful methodology for efficient chemical space exploration, potentially reducing experimental costs by strategically selecting the most informative compounds for testing [21]. Future developments will likely focus on multi-modal approaches that combine structural graph representations with additional data types such as spectroscopic information, reaction conditions, and synthetic accessibility constraints [14]. As GNN methodologies continue to mature, their integration with experimental workflows will play an increasingly crucial role in accelerating the discovery and optimization of novel molecules and materials with tailored properties.

In the field of chemical science and drug discovery, navigating the vastness of chemical space represents a fundamental challenge. The number of possible small molecules is estimated to be on the order of 10^60, making exhaustive experimental investigation impossible [1]. Traditional machine learning approaches for molecular property prediction rely on labeled training data, which is often sparse, scarce, and expensive to generate, leading to models with poor generalization capabilities [1]. Within this context, active learning has emerged as a powerful framework to maximize model performance while minimizing labeling costs by intelligently selecting the most informative samples for annotation [22].

Active learning operates through an iterative cycle of prediction, acquisition, and expansion. This strategic approach is particularly valuable in chemical research where experimental validation through wet lab experimentation or density functional theory (DFT) calculations remains time-consuming and computationally expensive [19] [1]. When combined with graph neural networks (GNNs)—which provide a natural representation for molecular structures by treating atoms as nodes and bonds as edges—active learning creates a powerful paradigm for accelerating materials discovery and drug development [13] [11].

The integration of active learning with GNNs is revolutionizing drug design processes by accurately modeling molecular structures and interactions with binding targets. Over the past five years, GNNs have emerged as transformative tools that significantly speed up drug discovery through improved predictive accuracy, reduced development costs, and fewer late-stage failures [11]. This application note details the protocols and methodologies for implementing active learning cycles within GNN frameworks specifically for chemical space exploration.

The Active Learning Framework

Core Cycle and Mathematical Foundation

The active learning framework for chemical space research follows a structured, iterative process consisting of three core phases: prediction, acquisition, and expansion. In the prediction phase, a GNN model is trained on initially labeled molecular data to predict properties of interest. In the acquisition phase, this model is used to evaluate unlabeled molecules and select the most informative candidates for experimental validation based on a defined acquisition function. In the expansion phase, the newly acquired labeled data is incorporated into the training set to improve the model for the next cycle [22].

Formally, let the dataset ( D ) be divided into a labeled set ( L ) and a pool of unlabeled data ( U ). Each sample belongs to a class ( y ), with ( c ) classes in total. The acquisition function selects a subset of samples from ( U ) to be labeled and transferred to ( L ), incurring a labeling cost. For a molecule ( x ), a GNN with parameters ( \theta ) generates a feature vector ( f ) and a softmax probability distribution ( p ), whose components ( p_i ) represent the model's confidence in each of the ( c ) possible classes [22].

Table 1: Performance Comparison of Acquisition Functions in Chemical Research

| Acquisition Function | Key Principle | Performance Notes | Computational Complexity |

|---|---|---|---|

| Entropy | Selects samples with highest predictive uncertainty | Outperforms other methods in 72.5% of acquisition steps; superior for general settings [22] | Low |

| Margin | Focuses on difference between top two predicted probabilities | Generally outperformed by entropy in comprehensive evaluations [22] | Low |

| Query-by-Committee | Leverages disagreements between ensemble models | Can be computationally expensive without consistent performance gains [22] | High |

| CoreSet | Aims to maximize spatial coverage in feature space | Performance highly dependent on dataset characteristics [22] | Medium |
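
To make the difference between the entropy and margin criteria concrete, the sketch below scores a small hypothetical pool with both. The molecule names and probability vectors are invented for illustration, and expressing the margin score as one minus the margin (so that higher means more informative) is a convention assumed here.

```python
import math

def entropy_score(p):
    """Shannon entropy of a predicted class distribution (natural log)."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def margin_score(p):
    """One minus the gap between the two largest probabilities."""
    top2 = sorted(p, reverse=True)[:2]
    return 1.0 - (top2[0] - top2[1])

# Hypothetical softmax outputs for three unlabeled molecules.
pool = {
    "mol_a": [0.98, 0.01, 0.01],   # confident prediction
    "mol_b": [0.50, 0.50, 0.00],   # tie between two classes
    "mol_c": [0.40, 0.30, 0.30],   # spread over all classes
}
by_entropy = sorted(pool, key=lambda m: entropy_score(pool[m]), reverse=True)
by_margin = sorted(pool, key=lambda m: margin_score(pool[m]), reverse=True)
```

Entropy ranks `mol_c` first because its probability mass is spread over all three classes, while margin ranks `mol_b` first because its top two classes are tied, so the two criteria can disagree on which compound to label next.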

Workflow Visualization

[Figure: iterative active learning cycle for GNNs in chemical space research, covering the prediction, acquisition, and expansion phases.]

Experimental Protocols

Protocol 1: Implementing the Base Active Learning Cycle

Purpose: To establish a foundational active learning workflow for molecular property prediction using graph neural networks.

Materials and Reagents:

- Initial seed compounds: 50-100 molecules with experimentally validated properties

- Unlabeled compound library: 10,000-100,000 molecules from databases such as ChEMBL or Enamine REALSpace

- GNN framework: MatGL, PyTorch Geometric, or Deep Graph Library

- Computational resources: GPU-enabled workstation or computing cluster

Procedure:

Data Preparation:

- Convert molecular representations (SMILES, SDF) into graph representations using tools from MatGL or PyTorch Geometric [13]

- For each molecule, create nodes (atoms) with feature vectors encoding element type, hybridization, and valence state

- Create edges (bonds) with features encoding bond type, distance, and conjugation status

Initial Model Training:

- Initialize a GNN architecture such as Message Passing Neural Network (MPNN) or Materials Graph Network (MEGNet) [13] [23]

- Configure training parameters: learning rate (0.001-0.01), batch size (32-128), number of epochs (100-500)

- Train the model on the initial labeled dataset using mean squared error for regression or cross-entropy for classification tasks

Acquisition Phase:

- Use the trained model to predict properties for all compounds in the unlabeled pool

- Calculate entropy scores for each prediction: ( H(x) = -\sum_{i=1}^{c} p_i \log p_i ), where ( p_i ) is the predicted probability for class ( i ) [22]

- Rank unlabeled compounds by descending entropy score

- Select the top 50-100 compounds for experimental validation based on budget constraints

Expansion Phase:

- Submit selected compounds for experimental testing or DFT calculation

- Incorporate newly acquired labels into the training dataset

- Retrain the model on the expanded dataset

Evaluation:

Troubleshooting:

- If model performance plateaus, consider increasing the acquisition batch size or diversifying the acquisition strategy

- If computational costs are prohibitive, implement early stopping or reduce model complexity

- For small molecular datasets, apply data augmentation through molecular graph transformations
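
The overall prediction, acquisition, and expansion cycle of this protocol can be written as a small framework-agnostic loop. Everything below is a hypothetical skeleton: `fit`, `score`, and `oracle` are placeholders for GNN training, the acquisition function, and experimental or DFT labeling, and the toy usage replaces them with a distance-based stand-in on one-dimensional "molecules".

```python
def active_learning_loop(labeled, pool, oracle, fit, score, batch_size, n_cycles):
    """labeled: {x: y}; pool: list of unlabeled x. Returns (model, labeled)."""
    labeled, pool = dict(labeled), list(pool)
    model = fit(labeled)                       # prediction phase: initial model
    for _ in range(n_cycles):
        if not pool:
            break
        # Acquisition phase: rank the pool and take the top-scoring batch.
        ranked = sorted(pool, key=lambda x: score(model, x), reverse=True)
        batch = ranked[:batch_size]
        # Expansion phase: label the batch, grow the training set, retrain.
        for x in batch:
            labeled[x] = oracle(x)
            pool.remove(x)
        model = fit(labeled)
    return model, labeled

# Toy stand-ins: the "model" is just the sorted labeled inputs, and the
# score is the distance to the nearest labeled point.
toy_pool = [i / 10 for i in range(1, 11)]
model, final_labeled = active_learning_loop(
    labeled={0.0: 0.0},
    pool=toy_pool,
    oracle=lambda x: x ** 2,
    fit=lambda lab: sorted(lab),
    score=lambda m, x: min(abs(x - z) for z in m),
    batch_size=2,
    n_cycles=3,
)
```

Because the whole batch is chosen from a single ranking, the first cycle here picks two neighboring points, which illustrates why the troubleshooting notes suggest diversifying the acquisition strategy.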

Protocol 2: Explanation-Guided Active Learning for Activity Cliffs

Purpose: To enhance both predictive accuracy and interpretability in activity cliff prediction through explanation-supervised active learning.

Background: Activity cliffs (ACs) are pairs of structurally similar compounds with significantly different binding affinities, posing challenges for traditional QSAR models [23]. The ACES-GNN framework integrates explanation supervision into GNN training to address this challenge.

Materials and Reagents:

- Activity cliff dataset: Curated from ChEMBL or other sources with measured potency values (Ki or EC50)

- Similarity metrics: Extended Connectivity Fingerprints (ECFPs), scaffold similarity, SMILES Levenshtein distance

- GNN model: MPNN or other explainable architecture

- Attribution method: Integrated gradients or other gradient-based approach

Procedure:

Activity Cliff Identification:

- Calculate pairwise molecular similarities using ECFPs (radius=2, length=1024) with Tanimoto coefficient [23]

- Identify AC pairs as molecules with structural similarity >90% and potency difference ≥10-fold

- Label a molecule as an AC molecule if it forms an AC relationship with at least one other molecule
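
The identification criteria above can be sketched without any cheminformatics dependency by treating each fingerprint as a set of on-bit indices. The fingerprints, potency values, and function names below are hypothetical; in practice the bit sets would be derived from ECFPs computed with a toolkit such as RDKit.

```python
from itertools import combinations

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def find_activity_cliffs(fps, potency, sim_cutoff=0.9, fold_cutoff=10.0):
    """Return (AC pairs, AC molecules) for similarity > cutoff and >= fold potency gap."""
    pairs = []
    for a, b in combinations(sorted(fps), 2):
        sim = tanimoto(fps[a], fps[b])
        high, low = max(potency[a], potency[b]), min(potency[a], potency[b])
        if sim > sim_cutoff and high / low >= fold_cutoff:
            pairs.append((a, b))
    ac_molecules = {m for pair in pairs for m in pair}
    return pairs, ac_molecules

# Hypothetical fingerprints and potencies (same units, e.g. Ki in nM).
fps = {
    "m1": set(range(20)),
    "m2": set(range(21)),           # differs from m1 by a single bit
    "m3": set(range(100, 120)),     # unrelated scaffold
}
potency = {"m1": 5.0, "m2": 500.0, "m3": 5.0}
ac_pairs, ac_molecules = find_activity_cliffs(fps, potency)
```

The pairwise loop is quadratic in the number of compounds, which is acceptable for per-target datasets but would need indexing or clustering for large libraries.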

Ground-Truth Explanation Generation:

- For each AC pair, identify uncommon substructures attached to shared scaffolds

- Assign ground-truth atom-level feature attributions such that the sum of uncommon atomic contributions preserves the direction of activity difference [23]

- Validate that ( (\Phi(\psi(M_{uncom}^i)) - \Phi(\psi(M_{uncom}^j)))(y_i - y_j) > 0 ) for each AC pair

Model Training with Explanation Supervision:

- Implement a multi-task learning objective combining property prediction and explanation alignment

- Configure loss function: ( L_{total} = \alpha L_{pred} + \beta L_{expl} ), where ( L_{pred} ) is prediction loss and ( L_{expl} ) is explanation fidelity loss

- Train the model with both activity data and explanation supervision
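
A minimal numeric sketch of the combined objective and the direction check is given below. The source does not specify the functional form of the explanation loss, so mean squared error between predicted and ground-truth atom attributions is an assumption here, as are the function names, default weights, and toy values.

```python
def mse(pred, true):
    return sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true)

def total_loss(y_pred, y_true, attr_pred, attr_true, alpha=1.0, beta=0.5):
    """Weighted sum of prediction loss and explanation loss (both MSE, assumed)."""
    l_pred = mse(y_pred, y_true)
    l_expl = mse(attr_pred, attr_true)
    return alpha * l_pred + beta * l_expl

def direction_preserved(attr_sum_i, attr_sum_j, y_i, y_j):
    """True if summed uncommon-atom attributions point the same way as the potency gap."""
    return (attr_sum_i - attr_sum_j) * (y_i - y_j) > 0

# Toy values: one molecule with two atoms carrying attributions.
loss = total_loss(
    y_pred=[1.0], y_true=[0.0],
    attr_pred=[0.5, 0.0], attr_true=[0.5, 1.0],
)
ok = direction_preserved(attr_sum_i=0.8, attr_sum_j=-0.2, y_i=7.5, y_j=5.0)
```

Raising `beta` relative to `alpha` puts more weight on matching the ground-truth attributions, which is the adjustment referred to in the troubleshooting notes for poor explanation quality.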

Active Learning with Explanation-Guided Acquisition:

- After initial training, use the model to predict on unlabeled compounds

- Calculate both predictive uncertainty and explanation confidence for each sample

- Prioritize compounds with high uncertainty and chemically meaningful attribution patterns

- Expand training set with newly acquired compounds and their validated explanations

Validation:

- Evaluate predictive performance on held-out AC compounds

- Assess explanation quality through chemist evaluation or alignment with known SAR

- Measure correlation between prediction improvement and explanation accuracy

Troubleshooting:

- If explanation quality is poor, adjust the weighting between prediction and explanation losses

- For datasets with limited ACs, apply transfer learning from related targets

- If model attributions highlight chemically irrelevant features, incorporate structural constraints

Table 2: Research Reagent Solutions for Active Learning in Chemical Space

| Reagent / Resource | Function | Example Sources / implementations |

|---|---|---|

| MatGL Library | Extensible graph deep learning library with pre-trained models for materials science [13] | Python package: matgl |

| MEGNet Models | Pre-trained graph networks for molecular and crystal property prediction [13] | MatGL model zoo |

| M3GNet Potential | Foundation potential for energy, force, and stress predictions [13] | matgl.apps.pes |

| ReSolved Dataset | DFT-computed reduction potentials for diverse organic molecules [24] | ChemRxiv supplementary |

| Activity Cliff Benchmark | Curated datasets for AC prediction and explanation [23] | ChEMBL-based repositories |

| Smirk Tokenizer | Advanced tokenization capturing nuclear, electronic, and geometric features [1] | MIST model codebase |

| Enamine REALSpace | Large library of synthetically accessible organic molecules for pretraining [1] | Enamine database |

Advanced Applications and Case Studies

Case Study: Accelerating Energetic Materials Discovery with EMFF-2025

The EMFF-2025 neural network potential exemplifies how active learning principles can be applied to discover and optimize high-energy materials (HEMs) with C, H, N, and O elements. By leveraging transfer learning with minimal data from DFT calculations, researchers achieved DFT-level accuracy in predicting structures, mechanical properties, and decomposition characteristics of 20 HEMs [19].

Implementation Details:

- Used Deep Potential Generator (DP-GEN) framework for automated active learning

- Incorporated small amounts of new training data from structures not in existing databases

- Achieved mean absolute errors within ± 0.1 eV/atom for energy and ± 2 eV/Å for force predictions

- Discovered surprising similarity in high-temperature decomposition mechanisms across different HEMs

Impact: The approach enabled large-scale exploration of chemical space for HEMs while dramatically reducing computational costs compared to traditional DFT methods [19].

Case Study: Inverse Design of Redox-Active Molecules

A multi-solvent GNN was trained on approximately 20,000 reduction potentials of chemically diverse organic redox-active molecules (the "ReSolved" dataset). When coupled with an evolutionary algorithm, this framework enabled inverse design of synthetically accessible candidate molecules with target reduction potentials for battery applications [24].

Methodological Innovations:

- Message passing GNN architecture with set transformer readout

- Generalization capability to previously unseen solvents

- Mean absolute error of approximately 0.2 eV for reduction potential prediction

- Active learning cycle focusing on diverse regions of redox chemical space

Visualization of Explanation-Guided Active Learning

[Figure: specialized ACES-GNN workflow for explanation-guided active learning in activity cliff prediction.]

Active learning represents a paradigm shift in how researchers navigate chemical space, transforming the discovery process from one of exhaustive screening to intelligent exploration. When integrated with graph neural networks, the prediction-acquisition-expansion cycle enables rapid identification of promising compounds and materials while minimizing resource-intensive experimental validation. The protocols outlined in this application note provide researchers with practical methodologies for implementing these approaches across diverse chemical domains—from drug discovery to energy materials.

Future developments in this field will likely focus on several key areas: multi-objective acquisition functions that balance multiple property optimizations simultaneously, improved uncertainty quantification for better sample selection, and tighter integration with automated experimental platforms for closed-loop discovery systems. As foundation models like MIST continue to expand their coverage of chemical space, the potential for transfer learning and few-shot active learning will further accelerate materials innovation and drug development [1].

The integration of explanation-guided learning, as demonstrated in the ACES-GNN framework, points toward a future where active learning systems not only identify promising candidates but also provide chemically intuitive rationales for their selections, fostering greater collaboration between artificial intelligence and human expertise in the pursuit of scientific discovery.

The exploration of chemical space represents one of the most significant challenges in modern drug discovery and materials science, with an estimated (10^{60}) synthetically accessible organic molecules potentially existing. This vastness renders exhaustive experimental investigation impossible, creating a critical need for computational approaches that can intelligently navigate this space. Graph Neural Networks have emerged as powerful tools for molecular representation and property prediction by natively processing molecular graph structures, where atoms constitute nodes and chemical bonds form edges [25]. Concurrently, Active Learning provides a framework for iterative model improvement by selectively querying the most informative data points. The integration of GNNs with AL creates a synergistic partnership that significantly accelerates molecular discovery campaigns while reducing resource consumption.

This combination addresses fundamental limitations in both approaches: GNNs alone require large, labeled datasets that can be expensive to acquire, while AL strategies need informative molecular representations to effectively select candidates. When unified, GNN-AL systems achieve unprecedented efficiency by focusing computational and experimental resources on the most promising regions of chemical space. Recent advances have demonstrated the practical implementation of this synergy across diverse applications, from optimizing organic electronic materials to designing novel therapeutic compounds with tailored multi-property profiles [26] [27].

Application Notes: GNN-AL Implementation Frameworks and Performance

Molecular Representation Strategies for AL

Effective molecular representation forms the foundation for successful GNN-AL integration. Multiple representation strategies have been developed, each with distinct advantages for active learning scenarios:

Graph Representations: Molecular graphs directly encode atomic connectivity, with GNNs using message-passing mechanisms to learn topological features. The Direct Inverse Design Generator (DIDgen) approach leverages the differentiable nature of GNNs to optimize molecular graphs directly toward target properties through gradient ascent, effectively inverting the prediction process to become a generator [26].

Geometric Representations: E(n)-Equivariant GNNs incorporate 3D molecular coordinates and demonstrate superior performance on geometry-sensitive properties like partition coefficients (log K~ow~, log K~aw~), achieving MAEs of 0.18-0.25 in benchmark studies [28]. This equivariance ensures consistent predictions regardless of molecular orientation.

Hybrid Representations: The FP-GNN architecture couples graph-based representations with traditional molecular fingerprints, combining local atomic environment information with global molecular features to enhance predictive robustness, particularly for toxicity and bioavailability predictions [29].

Uncertainty Quantification Methods for Acquisition Functions

Uncertainty quantification represents the critical bridge between GNN predictors and AL acquisition functions. Several UQ methods have been successfully implemented in GNN-AL frameworks:

Probabilistic Improvement Optimization: This approach quantifies the probability that candidate molecules will exceed predefined property thresholds, effectively balancing exploration and exploitation in chemical space navigation. Implementation with directed message-passing neural networks has demonstrated significantly improved optimization success rates in both single-objective and multi-objective molecular design tasks [27].

Ensemble-based Uncertainty: Multiple GNN instances with varied initializations provide uncertainty estimates through prediction variance, enabling the selection of molecules where model consensus is low, indicating regions where additional training data would be most beneficial.

Bayesian GNNs: These models maintain distributions over network weights, naturally capturing epistemic uncertainty in predictions, though at increased computational cost compared to ensemble methods.
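
As an illustration of how ensemble predictions can feed a probability-of-improvement score, the sketch below assumes a Gaussian predictive distribution whose mean and standard deviation come from the ensemble members. The ensemble outputs, threshold, and function names are hypothetical and are not taken from [27].

```python
import math
from statistics import mean, stdev

def probability_of_improvement(mu, sigma, threshold):
    """P(y > threshold) for y ~ N(mu, sigma^2)."""
    if sigma == 0:
        return 1.0 if mu > threshold else 0.0
    z = (mu - threshold) / sigma
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

# Hypothetical predictions from three independently initialized GNNs.
ensemble_preds = {
    "mol_a": [0.70, 0.72, 0.71],   # members agree: low uncertainty
    "mol_b": [0.55, 0.95, 0.75],   # members disagree: high uncertainty
}
threshold = 0.8
pi = {
    name: probability_of_improvement(mean(p), stdev(p), threshold)
    for name, p in ensemble_preds.items()
}
```

Both molecules have mean predictions below the threshold, yet `mol_b` receives a much higher score because the ensemble disagreement leaves a realistic chance that its true value exceeds it. This is how uncertainty steers the search toward exploration.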

Table 1: Performance Comparison of GNN-AL Frameworks on Molecular Design Tasks

| Framework | GNN Architecture | AL Strategy | Success Rate | Time per Molecule | Diversity Metric |

|---|---|---|---|---|---|

| DIDgen [26] | Graph Attention Network | Gradient Ascent | Comparable or better than state-of-the-art | 2.1-12.0 seconds | Highest diversity |

| PIO-UQ [27] | D-MPNN | Probabilistic Improvement | 15-30% improvement over baseline | Not reported | Balanced exploration |

| FP-GNN [29] | GAT + Fingerprints | Uncertainty Sampling | ROC-AUC: 0.807-0.892 on bioactivity | Not reported | Moderate diversity |

Multi-property Optimization with GNN-AL

Real-world molecular design typically requires simultaneous optimization of multiple, often competing properties. GNN-AL systems demonstrate particular advantage in these multi-objective scenarios:

Weighted Sum Approaches: Transform multi-objective problems into single-objective using weighted sums, with AL guiding the search toward Pareto-optimal frontiers.

Probabilistic Improvement Optimization: Particularly effective for multi-property optimization, PIO naturally balances competing objectives by quantifying the joint probability of satisfying multiple property constraints [27]. This approach has demonstrated superior performance compared to weighted scalarization methods, which often over-emphasize single properties at the expense of others.

Constraint-based Optimization: AL strategies can incorporate hard constraints (e.g., synthetic accessibility, structural alerts) during candidate selection, ensuring generated molecules satisfy practical requirements alongside target properties.
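
The contrast between weighted-sum scalarization and a joint-probability criterion can be seen in a two-property toy example. The sketch assumes Gaussian predictive distributions and independence between properties, so the joint probability is a simple product; the property names, thresholds, weights, and candidate values are hypothetical.

```python
import math

def prob_above(mu, sigma, threshold):
    """P(y > threshold) for y ~ N(mu, sigma^2), with sigma > 0."""
    return 0.5 * (1.0 + math.erf((mu - threshold) / (sigma * math.sqrt(2.0))))

def joint_probability(preds, thresholds):
    """preds: {property: (mu, sigma)}; properties are assumed independent."""
    p = 1.0
    for prop, (mu, sigma) in preds.items():
        p *= prob_above(mu, sigma, thresholds[prop])
    return p

def weighted_sum(preds, weights):
    """Scalarize by a weighted sum of predicted means."""
    return sum(weights[prop] * mu for prop, (mu, _) in preds.items())

thresholds = {"potency": 0.6, "solubility": 0.6}
weights = {"potency": 0.5, "solubility": 0.5}
cand_x = {"potency": (1.00, 0.1), "solubility": (0.30, 0.1)}  # excels on one, fails the other
cand_y = {"potency": (0.64, 0.1), "solubility": (0.64, 0.1)}  # moderately good on both

ws_x, ws_y = weighted_sum(cand_x, weights), weighted_sum(cand_y, weights)
jp_x, jp_y = joint_probability(cand_x, thresholds), joint_probability(cand_y, thresholds)
```

The weighted sum slightly favors `cand_x` even though it is very unlikely to meet the solubility threshold, whereas the joint probability clearly favors `cand_y`, mirroring the over-emphasis problem attributed to scalarization above.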

Experimental Protocols

Protocol 1: Direct Inverse Design with GNNs

This protocol implements the DIDgen approach for generating molecules with target electronic properties through gradient-based optimization of molecular graphs [26].

Materials and Reagents

- Pre-trained GNN property predictor (e.g., HOMO-LUMO gap prediction model trained on QM9 dataset)

- Initial molecular graph (random initialization or existing molecule)

- Computational environment with PyTorch/TensorFlow and RDKit

- DFT validation setup (optional)

Procedure

GNN Predictor Preparation: Train or load a pre-trained GNN model for the target property prediction. The model should use molecular graphs as input with explicit adjacency matrix (A) and feature matrix (F) representations.

Graph Construction with Constraints:

- Initialize a weight vector w~adj~ for the upper triangular elements of the adjacency matrix

- Apply sloped rounding function [x]~sloped~ = [x] + a(x-[x]) to maintain gradient flow through discrete bond orders

- Construct symmetric adjacency matrix with zero diagonal

- Enforce valence constraints through penalty terms and gradient blocking when valence exceeds 4
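
The graph construction step can be sketched in NumPy as follows. The sketch reads the sloped rounding function as the rounded value plus a small linear term, `round(x) + a * (x - round(x))`, which is an interpretation rather than a confirmed detail of [26]. The function names and toy weights are hypothetical, and valence penalties and non-negativity of bond orders are not handled.

```python
import numpy as np

def sloped_round(x, a=0.1):
    """Rounded value plus a small slope, so the derivative w.r.t. x is `a`
    almost everywhere instead of zero. Note that np.round sends exact
    halves to the nearest even integer."""
    r = np.round(x)
    return r + a * (x - r)

def build_adjacency(w_adj, n_atoms, a=0.1):
    """Fill the strict upper triangle from w_adj, then symmetrize."""
    A = np.zeros((n_atoms, n_atoms))
    iu = np.triu_indices(n_atoms, k=1)   # pairs (0,1), (0,2), (1,2), ... in row order
    A[iu] = sloped_round(w_adj, a)
    return A + A.T                       # symmetric with zero diagonal

# Hypothetical weights for a 3-atom graph: bonds (0,1), (0,2), (1,2).
w_adj = np.array([1.2, 0.1, 1.9])
A = build_adjacency(w_adj, n_atoms=3)
valence = A.sum(axis=1)                  # approximate sum of bond orders per atom
```

In a differentiable framework the same expression would be applied to trainable tensors so that gradients of the predicted property can flow back to `w_adj`.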

Feature Matrix Construction:

- Define atomic identities based on node valence (sum of bond orders)

- Use additional weight matrix w~fea~ to differentiate elements with identical valence states

Gradient Ascent Optimization:

- Fix GNN weights and compute property prediction for current graph

- Calculate gradient of target property with respect to w~adj~ and w~fea~

- Update molecular graph parameters via gradient ascent

- Apply valence and chemical validity constraints after each update

- Iterate until property prediction reaches target range or convergence

Validation: Verify generated molecules through DFT calculation or empirical validation models

Troubleshooting Tips

- If optimization produces invalid molecules, increase valence penalty strength

- If optimization stagnates, adjust learning rate or sloped rounding parameter 'a'

- For diversity, initiate optimization from different starting molecules

Protocol 2: Uncertainty-Guided Molecular Optimization

This protocol implements uncertainty-quantified GNNs with active learning for efficient molecular optimization, based on the PIO framework [27].

Materials and Reagents

- Directed MPNN with uncertainty quantification capabilities

- Initial molecular dataset (e.g., ZINC subset, QM9)

- Property prediction oracle (computational or experimental)

- Genetic algorithm framework for candidate proposal

Procedure

Initial Model Training:

- Train D-MPNN on initial labeled molecular dataset

- Implement uncertainty quantification method (ensemble, Bayesian, or dropout-based)

Active Learning Loop:

- Generate candidate molecules using genetic algorithm operations (mutation, crossover)

- Compute property predictions and uncertainty estimates for all candidates

- Calculate acquisition function scores (e.g., probability of improvement)

- Select top candidates based on acquisition scores

- Evaluate selected candidates using property oracle (computational or experimental)

- Add newly labeled molecules to training set

- Retrain D-MPNN on expanded dataset

Multi-property Optimization:

- For multiple objectives, compute joint probability of improvement across all properties

- Alternatively, use constrained optimization with AL focusing on constraint satisfaction

Termination: Continue iterations until performance plateaus or resource limits reached
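The acquisition step of this loop can be sketched in plain Python. The example below scores candidates by probability of improvement under a Gaussian assumption, using the mean and standard deviation of an ensemble's predictions, and multiplies per-property probabilities for the multi-objective case. All function names are hypothetical, and treating properties as independent is a simplifying assumption of this sketch, not a statement about the PIO framework in [27].

```python
import math
from statistics import mean, stdev

def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_improvement(mu, sigma, threshold):
    """P(property > threshold) for a Gaussian prediction N(mu, sigma^2)."""
    if sigma <= 0.0:
        return 1.0 if mu > threshold else 0.0
    return normal_cdf((mu - threshold) / sigma)

def joint_probability_of_improvement(mus, sigmas, thresholds):
    """Product of per-property probabilities (assumes independent properties)."""
    return math.prod(
        probability_of_improvement(m, s, t)
        for m, s, t in zip(mus, sigmas, thresholds)
    )

def select_top_k(candidates, ensemble_predictions, threshold, k):
    """Rank candidates by PI computed from ensemble mean and spread."""
    scored = []
    for name, preds in zip(candidates, ensemble_predictions):
        score = probability_of_improvement(mean(preds), stdev(preds), threshold)
        scored.append((score, name))
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]

# Each inner list holds one candidate's predictions from a two-member ensemble.
predictions = [[0.9, 1.1], [2.0, 2.2], [0.1, 0.2]]
print(select_top_k(["a", "b", "c"], predictions, threshold=1.0, k=2))
```

A candidate whose predicted mean sits exactly at the threshold receives a score of 0.5 regardless of its uncertainty, which is why probability of improvement is often paired with a threshold set slightly above the current best value.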

Validation Methods

- Compare optimized molecules against known actives/leads

- Assess property prediction accuracy on hold-out test sets

- Evaluate synthetic accessibility and novelty of proposed molecules

Protocol 3: Hybrid Representation with FP-GNN

This protocol implements the FP-GNN architecture for enhanced molecular property prediction, suitable for active learning scenarios requiring robust representations [29].

Materials and Reagents

- Molecular dataset with annotated properties

- Fingerprint generation tools (RDKit, OpenBabel)

- Graph neural network framework (PyTorch Geometric, DGL)

- Hyperparameter optimization setup (Hyperopt, Optuna)

Procedure

Molecular Representation Generation:

- Graph Representation: Convert molecules to graphs with atom features (type, hybridization, valence) and bond features (type, conjugation)

- Fingerprint Representation: Generate multiple fingerprint types:

- MACCS keys (167-bit structural keys)

- PubChem fingerprints (881-bit substructure keys)

- ErG fingerprints (2D pharmacophore representation)

FP-GNN Architecture Implementation:

- GNN Stream: Implement graph attention network with multi-head attention mechanism

- Node feature update: \( h_i^{(l+1)} = \|_{k=1}^{K} \sigma\left(\sum_{j \in \mathcal{N}(i)} \alpha_{ij}^{k} W^{k} h_j^{(l)}\right) \)

- Attention coefficients: \( \alpha_{ij} = \frac{\exp\left(\text{LeakyReLU}\left(a^T [W h_i \| W h_j]\right)\right)}{\sum_{k \in \mathcal{N}(i)} \exp\left(\text{LeakyReLU}\left(a^T [W h_i \| W h_k]\right)\right)} \)

- Fingerprint Stream: Implement fully connected network for fingerprint processing

- Fusion Layer: Concatenate graph and fingerprint representations before final prediction layer
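As a rough illustration of the attention coefficients used in the GNN stream, the following pure-Python sketch computes the softmax-normalized weights for a single attention head over one atom's neighbors. The helper names, the LeakyReLU slope of 0.2, and the toy inputs are assumptions for this example; a real implementation would rely on a library layer (for instance a graph attention layer in PyTorch Geometric or DGL) rather than hand-written loops.

```python
import math

def leaky_relu(x, slope=0.2):
    """LeakyReLU with an assumed negative slope of 0.2."""
    return x if x > 0 else slope * x

def matvec(W, h):
    """Multiply matrix W (list of rows) by vector h."""
    return [sum(w * x for w, x in zip(row, h)) for row in W]

def attention_coefficients(i, neighbors, h, W, a):
    """Return alpha_ij for each j in neighbors (single attention head).

    h: node feature vectors, W: shared weight matrix,
    a: attention vector applied to the concatenation [W h_i || W h_j].
    """
    wh_i = matvec(W, h[i])
    scores = []
    for j in neighbors:
        concat = wh_i + matvec(W, h[j])  # list concatenation
        scores.append(leaky_relu(sum(ak * ck for ak, ck in zip(a, concat))))
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Toy graph: node 0 with neighbors 1 and 2, one-dimensional features.
features = [[1.0], [1.0], [3.0]]
alphas = attention_coefficients(0, [1, 2], features, W=[[1.0]], a=[0.0, 1.0])
print(alphas)  # the neighbor with the larger transformed feature gets more weight
```

The coefficients always sum to one over the neighborhood, so each head produces a weighted average of transformed neighbor features before the nonlinearity is applied.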

Hyperparameter Optimization:

- Optimize GNN parameters: attention heads, hidden dimensions, dropout rates

- Optimize fingerprint network: hidden layers, activation functions

- Use Bayesian optimization with Tree-structured Parzen Estimator

Active Learning Integration:

- Use ensemble of FP-GNN models for uncertainty estimation

- Select candidates maximizing acquisition function (e.g., UCB, Thompson sampling)

- Retrain model with newly acquired labels
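The two acquisition functions named in the Active Learning Integration step can be sketched as follows. The upper confidence bound (UCB) adds a multiple of the ensemble spread to the ensemble mean; the Thompson-style variant draws one ensemble member at random and picks its top-ranked candidate, which treats ensemble members as approximate posterior samples. The function names, the weight `beta`, and the use of the population standard deviation are illustrative assumptions rather than details from [29].

```python
import random
from statistics import mean, pstdev

def ucb_scores(ensemble_predictions, beta=1.0):
    """UCB = ensemble mean + beta * ensemble standard deviation."""
    return [mean(p) + beta * pstdev(p) for p in ensemble_predictions]

def ucb_select(candidates, ensemble_predictions, batch_size, beta=1.0):
    """Pick the batch_size candidates with the highest UCB score."""
    scores = ucb_scores(ensemble_predictions, beta)
    ranked = sorted(zip(scores, candidates), reverse=True)
    return [name for _, name in ranked[:batch_size]]

def thompson_select(candidates, ensemble_predictions, batch_size, rng):
    """For each slot, sample one ensemble member and take its best candidate."""
    n_members = len(ensemble_predictions[0])
    remaining = list(range(len(candidates)))
    chosen = []
    while remaining and len(chosen) < batch_size:
        member = rng.randrange(n_members)
        best = max(remaining, key=lambda idx: ensemble_predictions[idx][member])
        chosen.append(candidates[best])
        remaining.remove(best)
    return chosen

# Rows: candidates; columns: predictions from three ensemble members.
preds = [[1.0, 1.0, 1.0], [0.5, 1.5, 1.0], [0.2, 0.2, 0.2]]
names = ["m1", "m2", "m3"]
print(ucb_select(names, preds, batch_size=2))
print(thompson_select(names, preds, batch_size=2, rng=random.Random(0)))
```

In this toy example `m1` and `m2` share the same mean prediction, but UCB ranks `m2` first because the ensemble disagrees about it, which is the exploration behavior the protocol relies on.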

Performance Validation

- Benchmark against GNN-only and fingerprint-only baselines

- Evaluate on diverse molecular property datasets (e.g., MoleculeNet)

- Assess calibration and uncertainty quantification quality

Visualization of GNN-AL Workflows

GNN-AL Active Learning Cycle

Molecular Representation Strategies

Table 2: Key Research Reagents and Computational Tools for GNN-AL Implementation

| Category | Item | Specification/Version | Function/Purpose |
|---|---|---|---|
| Software Libraries | PyTorch Geometric | 2.0+ | Graph neural network implementation and molecular graph processing |
| Software Libraries | RDKit | 2022.09+ | Cheminformatics toolkit for molecular manipulation and fingerprint generation |
| Software Libraries | DeepGraph | 0.2.5+ | Graph representation learning for large-scale molecular datasets |
| Software Libraries | Hyperopt | 0.2.7+ | Bayesian hyperparameter optimization for model tuning |
| Benchmark Datasets | QM9 | ~134k molecules | Quantum chemical properties for model training and validation [26] [28] |
| Benchmark Datasets | ZINC | 10M+ compounds | Drug-like molecules for virtual screening and optimization |
| Benchmark Datasets | MoleculeNet | Multiple datasets | Standardized benchmark for molecular property prediction |
| Benchmark Datasets | LIT-PCBA | 15 targets, 7844 actives | Bioactivity data for validation [29] |
| GNN Architectures | Graph Isomorphism Network | Custom implementation | Powerful graph representation with theoretical guarantees [28] |
| GNN Architectures | E(n)-Equivariant GNN | Custom implementation | Geometric learning with 3D coordinate integration [28] |
| GNN Architectures | Graphormer | Custom implementation | Transformer architecture adapted for graph structures [28] |
| GNN Architectures | Directed MPNN | Custom implementation | Message passing with directional information for improved UQ [27] |
| Experimental Validation | Density Functional Theory | Gaussian16, ORCA | Quantum chemical validation of predicted molecular properties [26] |
| Experimental Validation | Automated Synthesis Platforms | Custom implementations | Robotic systems for experimental validation of designed molecules |

Integrating Graph Neural Networks with Active Learning frameworks marks a shift in computational molecular design toward data-efficient navigation of complex chemical spaces. The protocols and applications detailed in this work demonstrate measurable improvements in optimization success rates, diversity of generated compounds, and reduction in resource requirements compared to traditional approaches. Uncertainty quantification methods, particularly probabilistic improvement optimization, provide a mathematically grounded approach to balancing exploration and exploitation in molecular discovery campaigns.

Future developments in this field will likely focus on several key areas: (1) improved integration of synthetic accessibility constraints to ensure generated molecules are practically realizable; (2) development of federated learning approaches to leverage distributed chemical data while preserving privacy; (3) incorporation of multi-fidelity data to combine expensive high-fidelity computations with cheaper approximate measurements; and (4) enhanced interpretability methods to extract chemically meaningful insights from GNN-AL decision processes. As these technologies mature, we anticipate their increasing adoption across industrial and academic research environments, accelerating the discovery of novel materials and therapeutic agents with tailored properties.

Building and Deploying an AL-GNN Pipeline: From Theory to Practice

The design of high-performance molecules for applications such as photosensitizers in clean energy technologies presents a formidable challenge due to the vastness of the chemical space and the computational limitations of traditional quantum chemistry methods [30]. A Unified Active Learning (AL) framework that systematically integrates semi-empirical quantum calculations with adaptive molecular screening strategies offers a powerful solution to accelerate molecular discovery [30]. This framework is particularly potent when combined with Graph Neural Networks (GNNs), which have revolutionized molecular property prediction by leveraging graph-based representations that provide full access to atomic-level information [9] [23]. By iteratively selecting the most informative data points for labeling, active learning addresses critical bottlenecks of data scarcity and inefficient resource allocation, enabling a more efficient exploration of high-dimensional chemical spaces while respecting synthetic constraints [30]. The following sections detail the core components, experimental protocols, and practical implementations of such a unified framework, providing a structured workflow for researchers in chemical and drug development fields.

Core Components of the Unified AL Framework

A unified Active Learning framework for chemical space research is built upon four tightly coupled components that form an iterative discovery loop. The integration of these components enables the efficient navigation of vast molecular design spaces.

1. Chemical Space Definition and Dataset Preparation: The foundation of any AL workflow is a chemically diverse and relevant molecular library. This involves curating a large collection of molecular structures, typically represented as Simplified Molecular-Input Line-Entry System (SMILES) strings or chemical graphs. The initial library is often constructed by merging public molecular datasets and expert-designed scaffolds to ensure broad coverage of photophysical characteristics. Standardization tools like RDKit are used to normalize stereochemistry and tautomer states, often utilizing Morgan fingerprint clustering for this purpose [30].

2. Surrogate Model for Property Prediction: At the heart of the AL framework is a surrogate model that predicts molecular properties with millisecond inference times, replacing expensive quantum simulations. The directed message-passing neural network (D-MPNN) from the Chemprop framework is a leading choice for this role due to its strong performance in molecular property prediction [30]. These GNNs operate on a message-passing paradigm, where node (atom) information is propagated as messages through edges (bonds) to neighboring nodes, allowing the model to learn molecular representations that include the local chemical environment [9]. The surrogate model is trained to predict key photophysical properties, such as the lowest singlet (S1) and triplet (T1) excitation energies, which are critical for photosensitizer performance [30].

3. Acquisition Function and Selection Strategy: This component defines the algorithm for prioritizing which unlabeled molecules should undergo costly computational or experimental validation. Unlike conventional methods that treat all molecules equally, AL algorithms dynamically identify the most informative candidates—typically those with high prediction uncertainty or high potential to improve model performance. A hybrid acquisition strategy that combines ensemble-based uncertainty estimation with a physics-informed objective function has demonstrated superior performance, enabling a balanced approach between exploring broad chemical regions and exploiting promising molecular subspaces [30].

4. Validation and Model Update Loop: The selected molecules undergo targeted validation through quantum-chemical calculations (e.g., xTB-sTDA or TD-DFT) or experiments. The newly acquired data is then used to retrain and update the surrogate model, initiating another cycle of prediction and selection. This iterative process continues until a predefined stopping criterion is met, such as a performance target or exhaustion of resources. This closed-loop system ensures continuous improvement of the predictive model with optimally acquired data [30].
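The way these four components interlock can be shown with a toy closed loop in plain Python. Everything in the sketch is a stand-in chosen for illustration: molecules are reduced to a single hypothetical descriptor value, the `oracle` function replaces the quantum-chemical calculation, and the surrogate is a bootstrap ensemble of nearest-neighbor predictors rather than a D-MPNN. Only the control flow (train, score, select, label, retrain) mirrors the framework described above.

```python
import random
from statistics import mean, pstdev

def oracle(x):
    """Hypothetical expensive evaluation (stands in for xTB-sTDA or TD-DFT)."""
    return -(x - 0.7) ** 2

def fit_ensemble(labeled, n_models, rng):
    """Toy surrogate: each member is a 1-nearest-neighbor model on a bootstrap resample."""
    items = list(labeled.items())
    return [[rng.choice(items) for _ in items] for _ in range(n_models)]

def predict(ensemble, x):
    """Return the ensemble mean and spread; the spread serves as the uncertainty."""
    preds = [min(member, key=lambda p: abs(p[0] - x))[1] for member in ensemble]
    return mean(preds), pstdev(preds)

def active_learning(pool, n_seed=3, rounds=4, batch=2, beta=1.0, seed=0):
    rng = random.Random(seed)
    pool = list(pool)
    rng.shuffle(pool)
    # Component 1: chemical space definition and an initial labeled seed set
    labeled = {x: oracle(x) for x in pool[:n_seed]}
    unlabeled = pool[n_seed:]
    for _ in range(rounds):  # stopping criterion: fixed budget of rounds
        if not unlabeled:
            break
        # Component 2: (re)train the surrogate on all labels gathered so far
        ensemble = fit_ensemble(labeled, n_models=5, rng=rng)

        # Component 3: acquisition trades off predicted value and uncertainty
        def score(x):
            mu, sigma = predict(ensemble, x)
            return mu + beta * sigma

        picked = sorted(unlabeled, key=score, reverse=True)[:batch]
        # Component 4: validate the picks and feed the labels back
        for x in picked:
            labeled[x] = oracle(x)
            unlabeled.remove(x)
    return labeled

descriptor_pool = [i / 20 for i in range(21)]
results = active_learning(descriptor_pool)
print(len(results), max(results.values()))
```

Swapping in a real surrogate, oracle, or acquisition function changes only the three helper functions; the loop itself stays the same, which is the main practical benefit of keeping the components loosely coupled.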

Workflow Visualization

The logical relationship and data flow between these core components are visualized in the following workflow diagram:

Experimental Protocols and Methodologies

ML-xTB Calibration Pipeline

The ML-xTB pipeline provides a cost-effective method for generating quantum-chemical data at near-DFT accuracy but at approximately 1% of the computational cost [30]. This protocol is essential for creating the large-scale labeled datasets required for training the surrogate model.

Step 1: Initial Seed Generation: Curate a diverse set of 50,000 molecules from public databases (e.g., PubChemQC, QMspin) and expert-designed scaffolds (e.g., porphyrins, phthalocyanines). Standardize SMILES strings using RDKit, with stereochemistry and tautomer states normalized via Morgan fingerprint clustering (radius = 2, 1024 bits) [30].

Step 2: xTB-sTDA High-Throughput Calculations: Perform geometry optimization and excited-state calculation for each molecule using the GFN2-xTB method combined with the simplified Tamm–Dancoff approximation (sTDA). Calculate critical energy levels using:

Step 3: Machine Learning Calibration: Train a 10-model ensemble of Chemprop Message Passing Neural Networks (Chemprop-MPNN) to correct systematic errors between the xTB-sTDA and TD-DFT calculations for S1 and T1 excitations separately. The multitask loss function minimized during training is:

Active Learning Protocol

A standardized AL protocol ensures reproducible and efficient exploration of the chemical space.