ROBERT Software for Chemical Hyperparameter Optimization: A New Paradigm for Low-Data ML


Abstract

This article provides a comprehensive evaluation of the ROBERT software, an automated workflow designed to enable robust non-linear machine learning in low-data chemical research. Tailored for researchers, scientists, and drug development professionals, we explore its foundational principles for mitigating overfitting, detail its methodological application from data input to model generation, and offer best practices for troubleshooting and optimization. Furthermore, we present a validation and comparative analysis against traditional linear models, benchmarking its performance on diverse chemical datasets. This guide aims to equip scientists with the knowledge to leverage ROBERT for accelerating discovery in areas like drug design and materials science, transforming data-limited scenarios from a challenge into an opportunity.

The Challenge and Promise of Non-Linear ML in Low-Data Chemistry

In the data-driven landscape of modern chemical research, a pervasive challenge persists: the prevalence of small datasets. While large-scale, million-data-point initiatives like Open Molecules 2025 (OMol25) and QeMFi capture headlines, the day-to-day reality for many chemists involves working with datasets containing merely dozens to hundreds of data points [1] [2] [3]. This guide objectively evaluates the performance of the ROBERT software's automated workflow, specifically designed for such low-data regimes, against traditional and alternative machine learning approaches.

The Small Data Reality in Chemistry

The nature of chemical experimentation inherently limits dataset sizes. The synthesis and characterization of novel compounds, catalyst testing, or reaction optimization are often time-consuming and resource-intensive processes. Consequently, datasets in the range of 18 to 50 data points are common in many research scenarios, from optimizing synthetic reactions to predicting material properties [1].

In these low-data scenarios, Multivariate Linear Regression (MVLR) has traditionally been the model of choice for chemists due to its simplicity, robustness, and reduced risk of overfitting [1]. However, this reliance on linear models potentially overlooks complex, non-linear relationships inherent in chemical systems. Non-linear algorithms like Random Forests (RF), Gradient Boosting (GB), and Neural Networks (NN) have been viewed with skepticism in low-data contexts, primarily due to concerns about overfitting and lack of interpretability [1].

ROBERT's Automated Workflow for Small Data

The ROBERT software introduces a ready-to-use, automated workflow specifically engineered to overcome the challenges of applying non-linear machine learning to small chemical datasets [1]. Its core innovation lies in an optimization process designed to mitigate overfitting.

Core Methodology and Overfitting Mitigation

ROBERT employs Bayesian hyperparameter optimization with a uniquely designed objective function. This function explicitly accounts for a model's performance in both interpolation and extrapolation tasks, ensuring the selected model generalizes well beyond its training data [1].

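The workflow can be summarized as follows. First, the data are curated and part of the dataset is held out as an external test set. Next, Bayesian optimization tunes each non-linear model against the combined interpolation and extrapolation objective. Finally, the selected model is evaluated, and the results are compiled into a report covering performance metrics, feature importance, and outlier detection [1].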

Experimental Benchmarking: ROBERT vs. Traditional Models

The effectiveness of this approach was benchmarked on eight diverse chemical datasets from published studies (e.g., Liu, Milo, Doyle, Sigman, Paton), with sizes ranging from 18 to 44 data points [1]. The performance of ROBERT-tuned non-linear models was compared against traditional MVLR.

Table 1: Benchmarking Performance on External Test Sets (Models Ranked by Scaled RMSE)

| Dataset | Size (data points) | MVLR (Linear) | Neural Network (ROBERT) | Gradient Boosting (ROBERT) | Random Forest (ROBERT) |
|---|---|---|---|---|---|
| A | ~20 | Baseline | Best Result | Intermediate | Intermediate |
| B | ~20 | Baseline | Intermediate | Intermediate | Intermediate |
| C | ~20 | Baseline | Intermediate | Intermediate | Best Result |
| D | ~20 | Best Result | Intermediate | Intermediate | Intermediate |
| E | ~40 | Baseline | Competitive | Competitive | Competitive |
| F | ~40 | Baseline | Best Result | Intermediate | Intermediate |
| G | ~40 | Baseline | Intermediate | Best Result | Intermediate |
| H | ~40 | Baseline | Best Result | Intermediate | Intermediate |

Note: Scaled RMSE is expressed as a percentage of the target value range. "Best Result" indicates the model achieving the lowest error for that dataset. "Competitive" indicates performance on par with the best model. Baseline performance is set by MVLR [1].

The results demonstrate that properly tuned non-linear models can compete with or even surpass the performance of traditional linear regression in low-data regimes. ROBERT's automated workflow enabled non-linear models to deliver the best performance on 5 out of 8 datasets [1].
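Scaled RMSE, as used in these comparisons, divides the root mean squared error by the range of the target values and multiplies by 100. The minimal sketch below uses only the Python standard library; the function names are hypothetical, and whether the range is taken from the evaluated points or from the full dataset is an assumption exposed through the optional `y_range` argument.

```python
import math
from typing import Optional, Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean squared error between observed and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def scaled_rmse(y_true: Sequence[float], y_pred: Sequence[float],
                y_range: Optional[float] = None) -> float:
    """RMSE as a percentage of the target range.

    By default the range comes from y_true; pass y_range to scale by the range
    of the full dataset instead (which reference range is used is an assumption).
    """
    if y_range is None:
        y_range = max(y_true) - min(y_true)
    if y_range <= 0:
        raise ValueError("target range must be positive")
    return 100.0 * rmse(y_true, y_pred) / y_range
```

For example, predictions that miss by 1, 0, and 1 on targets spanning 0 to 10 give an RMSE of about 0.82, i.e. a scaled RMSE of about 8.2%. Expressing the error relative to the range makes datasets with very different units comparable.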

The Scientist's Toolkit: Key Solutions for Small-Data Research

Table 2: Essential Research Reagents for Chemical Machine Learning

| Tool / Reagent | Function & Application | Example in Use |
|---|---|---|
| Automated HPO Software (e.g., ROBERT) | Mitigates overfitting in small datasets via Bayesian optimization that balances interpolation and extrapolation performance [1]. | ROBERT's objective function combines 10× 5-fold CV (interpolation) and sorted CV (extrapolation) RMSE. |
| Specialized Chemical Datasets | Provides benchmark data for training and validating models on specific chemical properties or systems [3] [4]. | QeMFi dataset provides multi-fidelity quantum chemical properties. CheMixHub benchmarks mixture properties [3] [4]. |
| Hyperparameter Optimization Algorithms | Efficiently searches the hyperparameter space to find optimal model configurations, superior to manual or grid search [5]. | Hyperband algorithm is recommended for its computational efficiency and accuracy in molecular property prediction [5]. |
| Model Interpretation Frameworks | Provides feature importance and model diagnostics to build trust and extract chemical insights, even from complex models [1]. | ROBERT generates a comprehensive PDF report with performance metrics, feature importance, and outlier detection [1]. |
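Hyperband, listed in the table above, is built on successive halving: many configurations start with a small training budget, and only the best fraction is promoted to larger budgets. The sketch below implements a single successive-halving bracket rather than full Hyperband (which runs several brackets with different starting budgets); the toy loss function and the `lr` values are hypothetical.

```python
from typing import Callable, Dict, List


def successive_halving(
    configs: List[Dict[str, float]],
    evaluate: Callable[[Dict[str, float], int], float],
    min_budget: int = 1,
    eta: int = 3,
) -> Dict[str, float]:
    """Return the configuration that survives successive halving.

    Each round scores every survivor at the current budget (e.g. epochs),
    keeps the best 1/eta fraction (lowest loss), and multiplies the budget by eta.
    """
    survivors = list(configs)
    budget = min_budget
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget))
        survivors = ranked[: max(1, len(survivors) // eta)]
        budget *= eta
    return survivors[0]


# Toy example: loss depends on a hypothetical learning rate "lr" and shrinks with budget.
def toy_loss(config: Dict[str, float], budget: int) -> float:
    return (config["lr"] - 0.1) ** 2 + 1.0 / budget


candidates = [{"lr": v} for v in [0.001, 0.01, 0.05, 0.08, 0.1, 0.2, 0.3, 0.5, 1.0]]
best = successive_halving(candidates, toy_loss)  # {"lr": 0.1}
```

The saving comes from spending the largest budgets only on the few configurations that already looked promising at small budgets.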

Performance Analysis and Practical Implementation

Critical Insights from Benchmarking

- Neural Networks Excel with Tuning: When optimized through ROBERT's workflow, Neural Networks matched or outperformed MVLR in half of the benchmarked datasets (D, E, F, H) during cross-validation and delivered the best external test set performance on datasets A, F, and H [1]. This challenges the notion that NNs are inherently unsuitable for small data.

- Tree-Based Models and Extrapolation: The study noted that Random Forests achieved the best result in only one case. This is potentially a consequence of the explicit extrapolation term in the objective function, as tree-based models are known to have limitations when predicting outside the training data range [1].

- The Overfitting Barrier is Addressable: The primary hurdle for non-linear models in low-data regimes—overfitting—can be effectively managed through a carefully designed optimization objective that penalizes poor generalization, as demonstrated by ROBERT's methodology [1].
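The extrapolation weakness of tree-based models follows from how they predict: every leaf outputs an average of training targets, so no prediction can leave the range of targets seen during training. The pure-Python sketch below fits a hypothetical one-split regression tree (a stump, standing in for a full Random Forest) and an ordinary least-squares line to the same linear toy data, then queries both far outside the training range.

```python
from typing import List, Tuple

Stump = Tuple[float, float, float]  # (threshold, left_leaf_mean, right_leaf_mean)


def fit_stump(x: List[float], y: List[float]) -> Stump:
    """Depth-1 regression tree: choose the split that minimises squared error."""
    pairs = sorted(zip(x, y))
    best = None
    for i in range(1, len(pairs)):
        left = [v for _, v in pairs[:i]]
        right = [v for _, v in pairs[i:]]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        sse = sum((v - lm) ** 2 for v in left) + sum((v - rm) ** 2 for v in right)
        if best is None or sse < best[0]:
            best = (sse, (pairs[i - 1][0] + pairs[i][0]) / 2, lm, rm)
    _, threshold, lm, rm = best
    return threshold, lm, rm


def predict_stump(model: Stump, xq: float) -> float:
    threshold, left_mean, right_mean = model
    # Each leaf returns a mean of training targets, so output stays within their range.
    return left_mean if xq <= threshold else right_mean


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = a + b*x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = sum((u - mx) * (v - my) for u, v in zip(x, y)) / sum((u - mx) ** 2 for u in x)
    return my - b * mx, b


# Toy data following y = 2x on x = 1..10; query far outside at x = 50.
x = [float(i) for i in range(1, 11)]
y = [2.0 * xi for xi in x]
stump_pred = predict_stump(fit_stump(x, y), 50.0)  # never exceeds max(y) = 20
a, b = fit_line(x, y)
line_pred = a + b * 50.0  # extends the linear trend (100.0 here)
```

An objective that scores extrapolation explicitly will therefore tend to penalize tree ensembles on data like this, which is consistent with Random Forests rarely winning in the benchmark.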

A Practical Workflow for Researchers

Implementing a robust machine learning strategy for a small chemical dataset involves a systematic process, from data preparation to model deployment, with a focus on validation.

The benchmarking data clearly indicates that the choice between linear and non-linear models in low-data regimes is no longer a foregone conclusion. While Multivariate Linear Regression remains a robust and reliable baseline, the automated workflow implemented in ROBERT provides a statistically sound pathway to harness the power of non-linear models like Neural Networks and Gradient Boosting, often with superior results [1].

The "data dilemma" in chemistry is not an insurmountable barrier to sophisticated machine learning. Instead, it necessitates specialized tools and methodologies that prioritize generalization and rigorous validation. ROBERT's performance in low-data scenarios positions it as a valuable addition to the chemist's toolbox, enabling more powerful and accurate predictive modeling even with the small datasets that are a norm in experimental chemical research.

In the field of chemical research, where data-driven methodologies are transforming drug discovery and materials science, multivariate linear regression (MVL) has long been the standard for analyzing small datasets due to its simplicity and robustness [6]. However, the inherent complexity of molecular properties and reaction outcomes often exhibits non-linear relationships that linear models cannot adequately capture. This limitation has fueled the exploration of advanced non-linear machine learning algorithms, which promise higher predictive accuracy but introduce challenges related to overfitting, interpretability, and hyperparameter sensitivity in low-data scenarios commonly encountered in chemical research [6].

The emergence of specialized software like ROBERT represents a significant advancement for researchers and drug development professionals seeking to harness the power of non-linear models without extensive machine learning expertise. By integrating automated workflows that systematically address overfitting through sophisticated hyperparameter optimization, these tools are making non-linear approaches more accessible and reliable for chemical applications [6]. This guide provides an objective comparison of ROBERT against other optimization methods, supported by experimental data and detailed protocols to inform selection for chemical research applications.

Hyperparameter Optimization Landscape: Tool Comparison

The performance of non-linear models is highly dependent on proper hyperparameter configuration. Various optimization tools have been developed, each with distinct approaches and strengths. The table below summarizes key hyperparameter optimization tools relevant to chemical informatics research.

Table 1: Comparison of Hyperparameter Optimization Tools and Platforms

| Tool Name | Primary Optimization Algorithm(s) | Key Features | Framework Support | Best Use Cases |
|---|---|---|---|---|
| ROBERT | Bayesian Optimization with combined RMSE metric [6] | Automated workflow for small chemical datasets; specialized for low-data regimes (<50 points) [6] | Custom implementation for chemical datasets | Chemical property prediction with limited data |
| Ray Tune | Ax/Botorch, HyperOpt, Bayesian Optimization [7] | Distributed tuning; integrates multiple optimization libraries; scales without code changes [7] | PyTorch, TensorFlow, XGBoost, Scikit-Learn [7] | Large-scale hyperparameter optimization across diverse models |
| Optuna | Tree-structured Parzen Estimator (TPE), Grid Search, Random Search [7] [8] | Define-by-run API; efficient pruning algorithms; visual analysis [7] | Any ML framework [7] | General machine learning with need for early stopping |
| HyperOpt | Tree of Parzen Estimators, Adaptive TPE [7] | Bayesian optimization; handles awkward search spaces [7] | PyTorch, TensorFlow, Scikit-Learn [7] | Complex search spaces with conditional parameters |
| Bayesian Search (General) | Gaussian Processes, Tree-Parzen Estimation [9] [10] | Builds surrogate model; uses acquisition function to guide search [9] | Varies by implementation | Optimization when computational resources are limited |

ROBERT distinguishes itself through its specialized design for chemical applications with small datasets, incorporating domain-specific validation techniques that account for both interpolation and extrapolation performance—a critical consideration in molecular property prediction [6]. Unlike general-purpose tools, ROBERT's optimization process explicitly addresses overfitting through a combined RMSE metric that evaluates performance across different cross-validation strategies, making it particularly valuable for the low-data scenarios common in early-stage chemical research [6].

Performance Benchmarking: Experimental Data and Results

ROBERT Performance in Low-Data Chemical Applications

Recent benchmarking studies demonstrate the effectiveness of properly tuned non-linear models in chemical applications. When evaluated on eight diverse chemical datasets ranging from 18 to 44 data points, ROBERT's automated non-linear workflows achieved performance competitive with or superior to traditional multivariate linear regression [6].

Table 2: Performance Comparison of Modeling Approaches on Chemical Datasets

| Dataset (Size) | Best Performing Algorithm | 10× 5-Fold CV Performance (Scaled RMSE) | External Test Set Performance (Scaled RMSE) | ROBERT Score (/10) Comparison |
|---|---|---|---|---|
| Liu (A) - 19 points | Non-linear (NN) [6] | Comparable to MVL | Outperformed MVL [6] | MVL superior [6] |
| Milo (B) - 21 points | MVL [6] | MVL superior | MVL superior [6] | MVL superior [6] |
| Sigman (C) - 25 points | Non-linear (NN) [6] | Comparable to MVL | Outperformed MVL [6] | Non-linear superior [6] |
| Paton (D) - 26 points | Non-linear (NN) [6] | Outperformed MVL | Comparable to MVL [6] | Non-linear superior [6] |
| Sigman (E) - 30 points | Non-linear (NN) [6] | Outperformed MVL | Comparable to MVL [6] | Non-linear superior [6] |
| Doyle (F) - 32 points | Non-linear (NN) [6] | Outperformed MVL | Outperformed MVL [6] | Non-linear superior [6] |
| Sigman (G) - 44 points | Non-linear (NN) [6] | Comparable to MVL | Outperformed MVL [6] | Non-linear superior [6] |
| Sigman (H) - 44 points | Non-linear (NN) [6] | Outperformed MVL | Outperformed MVL [6] | Comparable to MVL [6] |

The results reveal that non-linear models, when properly optimized using ROBERT's workflow, matched or exceeded MVL performance in five of the eight datasets for cross-validation and external test set predictions [6]. Under ROBERT's more comprehensive scoring system—which evaluates predictive ability, overfitting, prediction uncertainty, and robustness—non-linear algorithms still performed as well as or better than MVL in five examples, demonstrating their viability for chemical applications [6].

General Hyperparameter Optimization Method Comparisons

Beyond chemical-specific applications, broader studies have compared hyperparameter optimization methods across various tasks. In heart failure outcome prediction, Bayesian Search demonstrated superior computational efficiency compared to Grid Search and Random Search, while Random Forest models optimized with Bayesian methods showed the greatest robustness after 10-fold cross-validation [9].

A comprehensive comparison of tuning methods for extreme gradient boosting models in clinical prediction found that all hyperparameter optimization methods provided similar gains in model discrimination (AUC improved from 0.82 to 0.84) and calibration compared to default parameters [10]. This suggests that for datasets with large sample sizes, modest feature counts, and strong signal-to-noise ratios, the choice of optimization method may be less critical than for the challenging low-data scenarios common in chemical research.

Experimental Protocols and Methodologies

ROBERT's Hyperparameter Optimization Workflow

ROBERT employs a sophisticated Bayesian optimization process specifically designed to mitigate overfitting in small datasets [6]. The methodology incorporates:

Combined RMSE Metric: The objective function combines interpolation performance (assessed via 10-times repeated 5-fold cross-validation) with extrapolation capability (evaluated through selective sorted 5-fold CV where data is partitioned based on target value) [6].

Data Splitting Strategy: To prevent data leakage, 20% of the initial data (minimum four points) is reserved as an external test set with an "even" distribution to ensure balanced representation of target values [6].

Bayesian Optimization: Using the combined RMSE metric, ROBERT systematically explores the hyperparameter space, iteratively refining configurations to minimize overfitting while maintaining predictive performance [6].
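The two cross-validation schemes behind the combined RMSE differ only in how validation folds are drawn. The standard-library sketch below builds 10× repeated 5-fold splits (interpolation) and target-sorted folds (extrapolation), scores a model on each, and averages the two errors. It is an illustrative approximation, not ROBERT's code: reading "selective" as validating only on the lowest- and highest-target folds and keeping the worse of those two errors is an assumption, and the one-variable least-squares model is a hypothetical stand-in for the tuned algorithms.

```python
import math
import random
from typing import List, Sequence, Tuple

Fold = Tuple[List[int], List[int]]  # (training indices, validation indices)


def repeated_kfold(n: int, k: int = 5, repeats: int = 10, seed: int = 0) -> List[Fold]:
    """Interpolation folds: shuffle, split into k folds, repeat `repeats` times."""
    rng = random.Random(seed)
    folds: List[Fold] = []
    for _ in range(repeats):
        idx = list(range(n))
        rng.shuffle(idx)
        for j in range(k):
            val = idx[j::k]
            folds.append(([i for i in idx if i not in val], val))
    return folds


def sorted_extreme_folds(y: Sequence[float], k: int = 5) -> List[Fold]:
    """Extrapolation folds: sort by target, cut into k contiguous blocks and
    validate only on the lowest and highest blocks (assumed 'selective' rule)."""
    n = len(y)
    order = sorted(range(n), key=lambda i: y[i])
    blocks = [order[j * n // k:(j + 1) * n // k] for j in range(k)]
    return [([i for i in order if i not in val], val) for val in (blocks[0], blocks[-1])]


def cv_rmse(x, y, folds, fit, predict) -> float:
    """Pool squared errors over all validation points of the given folds."""
    sq, count = 0.0, 0
    for train, val in folds:
        model = fit([x[i] for i in train], [y[i] for i in train])
        for i in val:
            sq += (predict(model, x[i]) - y[i]) ** 2
            count += 1
    return math.sqrt(sq / count)


def combined_rmse(x, y, fit, predict) -> float:
    """Average of interpolation RMSE and (worst-case) extrapolation RMSE."""
    interpolation = cv_rmse(x, y, repeated_kfold(len(y)), fit, predict)
    extrapolation = max(cv_rmse(x, y, [fold], fit, predict) for fold in sorted_extreme_folds(y))
    return (interpolation + extrapolation) / 2


def fit_line(xs, ys):
    """Hypothetical stand-in model: one-variable least squares, y = a + b*x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((u - mx) * (v - my) for u, v in zip(xs, ys)) / sum((u - mx) ** 2 for u in xs)
    return my - b * mx, b


def predict_line(model, xi):
    a, b = model
    return a + b * xi
```

In a Bayesian optimization loop, `combined_rmse` would be the value minimized for each candidate hyperparameter set, so a configuration cannot score well by interpolating alone.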

The workflow automatically performs data curation, hyperparameter optimization, model selection, and evaluation, generating a comprehensive PDF report with performance metrics, cross-validation results, feature importance, and outlier detection [6].

Standard Hyperparameter Optimization Algorithms

For context, the following algorithms are widely used for hyperparameter optimization in general machine learning:

Tree-Structured Parzen Estimator (TPE): A Bayesian optimization approach that splits the evaluated configurations at a loss threshold and builds two density functions: l(x) for configurations with low observed loss and g(x) for those with high loss [8]. Because the Expected Improvement (EI) criterion increases monotonically with the ratio l(x)/g(x), TPE selects the candidate configuration that maximizes l(x)/g(x) [8].

Random Search: Involves random sampling of hyperparameters from defined distributions, often more efficient than grid search for high-dimensional spaces [9] [10].

Grid Search: Exhaustively evaluates all combinations of predefined hyperparameter values, comprehensive but computationally expensive for large search spaces [9].
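The practical difference between the two strategies lies in how configurations are generated. In the sketch below (a hypothetical search space and parameter names), grid search enumerates all 3 × 3 × 4 = 36 combinations, while random search draws any requested number of configurations and samples the continuous parameters on a log scale, so every draw tries a new learning-rate and regularization value.

```python
import itertools
import random
from typing import Any, Dict, List

# Hypothetical search space for a small neural network (values are illustrative).
GRID: Dict[str, List[Any]] = {
    "hidden_units": [4, 8, 16],
    "learning_rate": [0.001, 0.01, 0.1],
    "alpha": [0.0, 0.01, 0.1, 1.0],
}


def grid_search_configs(grid: Dict[str, List[Any]]) -> List[Dict[str, Any]]:
    """Grid search: every combination of the listed values (3 * 3 * 4 = 36 here)."""
    keys = list(grid)
    return [dict(zip(keys, combo)) for combo in itertools.product(*(grid[k] for k in keys))]


def random_search_configs(n: int, seed: int = 0) -> List[Dict[str, Any]]:
    """Random search: n independent draws; continuous values sampled on a log scale."""
    rng = random.Random(seed)
    return [
        {
            "hidden_units": rng.choice([4, 8, 16]),
            "learning_rate": 10 ** rng.uniform(-3, -1),
            "alpha": 10 ** rng.uniform(-3, 0),
        }
        for _ in range(n)
    ]
```

The grid grows multiplicatively with every added hyperparameter, whereas the random-search budget is simply `n`, which is why random sampling tends to scale better to high-dimensional spaces.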

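Bayesian methods such as TPE share a common loop: evaluate an initial set of configurations, fit a probabilistic model of the objective to the results, use an acquisition criterion to choose the next configuration, evaluate it, and repeat until the evaluation budget is exhausted. ROBERT applies Bayesian optimization in this way, and TPE is the default single-objective sampler in Optuna.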
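The TPE selection rule can be sketched in pure Python for a single continuous hyperparameter. This is a simplified illustration, not Optuna's sampler: it assumes a fixed Gaussian bandwidth, a quantile split controlled by a hypothetical `gamma` parameter, and candidates drawn from l(x), keeping the one with the largest l(x)/g(x) ratio.

```python
import math
import random
from typing import Callable, List, Tuple


def parzen_density(x: float, centers: List[float], bandwidth: float) -> float:
    """Equal-weight mixture of Gaussians centred on observed configurations."""
    norm = 1.0 / (len(centers) * bandwidth * math.sqrt(2.0 * math.pi))
    return norm * sum(math.exp(-0.5 * ((x - c) / bandwidth) ** 2) for c in centers)


def tpe_minimize(objective: Callable[[float], float], low: float, high: float,
                 n_init: int = 10, n_iter: int = 30, gamma: float = 0.25,
                 n_candidates: int = 24, seed: int = 0) -> Tuple[float, float]:
    """Return the (x, loss) pair with the lowest observed loss."""
    rng = random.Random(seed)
    history: List[Tuple[float, float]] = []
    for _ in range(n_init):  # random warm-up evaluations
        x = rng.uniform(low, high)
        history.append((x, objective(x)))
    bandwidth = 0.1 * (high - low)  # fixed bandwidth: a simplification
    for _ in range(n_iter):
        ranked = sorted(history, key=lambda t: t[1])
        n_good = max(1, int(gamma * len(ranked)))
        good = [x for x, _ in ranked[:n_good]]  # defines l(x): low-loss configurations
        bad = [x for x, _ in ranked[n_good:]]   # defines g(x): all other configurations
        # Sample candidates from l(x), clip them to the bounds, keep the best l/g ratio.
        candidates = [min(high, max(low, rng.gauss(rng.choice(good), bandwidth)))
                      for _ in range(n_candidates)]
        nxt = max(candidates, key=lambda c: parzen_density(c, good, bandwidth)
                  / (parzen_density(c, bad, bandwidth) + 1e-300))
        history.append((nxt, objective(nxt)))
    return min(history, key=lambda t: t[1])
```

For example, `tpe_minimize(lambda x: (x - 2.0) ** 2, -5.0, 5.0)` concentrates its later evaluations near x = 2. Real implementations adapt the bandwidth and handle categorical and conditional parameters, which this sketch omits.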

ROBERT's Specialized Scoring System

ROBERT employs a comprehensive 10-point scoring system to evaluate model quality, emphasizing aspects critical to chemical applications:

Predictive Ability and Overfitting (8 points): Incorporates evaluation of 10× 5-fold CV performance, external test set performance, difference between these metrics to detect overfitting, and extrapolation capability using sorted CV [6].

Prediction Uncertainty (1 point): Assesses the average standard deviation of predictions across CV repetitions [6].

Robustness Validation (1 point): Evaluates RMSE differences after data modifications including y-shuffling and one-hot encoding, using a baseline error from y-mean tests to identify potentially flawed models [6].

This multi-faceted evaluation approach ensures selected models demonstrate not only predictive accuracy but also reliability and generalizability—essential characteristics for chemical research applications.
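The y-shuffling and y-mean checks can be illustrated with a one-descriptor least-squares model. The sketch below compares leave-one-out RMSE on the real targets, on randomly permuted targets, and for a predictor that always returns the mean: a model that has learned a genuine relationship should clearly beat the y-mean baseline on real data and lose that advantage once y is shuffled. Leave-one-out CV, the linear model, and all function names are simplifying assumptions; ROBERT's actual point thresholds and its one-hot encoding test are not reproduced here.

```python
import math
import random
from typing import List


def fit_predict_line(x_train: List[float], y_train: List[float], x_test: List[float]) -> List[float]:
    """Ordinary least squares for y = a + b*x, returning predictions for x_test."""
    n = len(x_train)
    mx, my = sum(x_train) / n, sum(y_train) / n
    sxx = sum((xi - mx) ** 2 for xi in x_train)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x_train, y_train)) / sxx
    return [my - b * mx + b * xi for xi in x_test]


def loo_rmse(x: List[float], y: List[float]) -> float:
    """Leave-one-out RMSE, a simple stand-in for ROBERT's CV scheme."""
    sq = 0.0
    for i in range(len(y)):
        pred = fit_predict_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:], [x[i]])[0]
        sq += (pred - y[i]) ** 2
    return math.sqrt(sq / len(y))


def y_mean_rmse(y: List[float]) -> float:
    """Baseline error of always predicting the mean target."""
    m = sum(y) / len(y)
    return math.sqrt(sum((v - m) ** 2 for v in y) / len(y))


def y_shuffle_rmse(x: List[float], y: List[float], seed: int = 0) -> float:
    """Refit after permuting y; a sound model should get much worse."""
    y_shuffled = list(y)
    random.Random(seed).shuffle(y_shuffled)
    return loo_rmse(x, y_shuffled)
```

If `y_shuffle_rmse` comes out close to `loo_rmse` on the real targets, the model's apparent accuracy does not depend on the true descriptor-target pairing, which flags a potentially flawed model.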

Research Reagent Solutions: Essential Tools for Chemical ML

Implementing effective non-linear models in chemical research requires a suite of computational tools and frameworks. The table below details key "research reagents" for hyperparameter optimization in chemical informatics.

Table 3: Essential Research Reagent Solutions for Chemical Machine Learning

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Specialized Chemical ML Platforms | ROBERT | Automated workflow for small chemical datasets; combines interpolation and extrapolation CV [6] | Molecular property prediction with limited data |
| General HPO Frameworks | Optuna, Ray Tune, HyperOpt [7] | General-purpose hyperparameter optimization with various algorithms | Extensible HPO for diverse ML models |
| Bayesian Optimization Libraries | Ax/Botorch, BayesianOptimization [7] | Bayesian optimization methods for efficient parameter search | Sample-efficient optimization for expensive model evaluations |
| Chemical Descriptors | Steric/electronic descriptors, molecular fingerprints [6] | Represent molecular structures as machine-readable features | Featurization for chemical predictive modeling |
| Model Interpretation | SHAP, partial dependence plots [6] [8] | Explain model predictions and feature importance | Understanding chemical relationships captured by non-linear models |

Implementation Guidelines and Best Practices

When to Choose Non-Linear Models

Based on experimental evidence, non-linear models implemented through ROBERT are particularly advantageous when:

- Working with datasets containing 20-50 data points where traditional non-linear models typically overfit [6]

- Facing complex, non-linear relationships between molecular features and target properties [6]

- Extrapolation capability is required beyond the training data distribution [6]

- Interpretation of feature importance is needed alongside prediction [6]

For very small datasets (<15 points), multivariate linear regression may remain preferable due to its lower variance, though ROBERT's specialized workflow can still provide benefits through its rigorous overfitting mitigation [6].

Optimization Strategy Recommendations

For chemical datasets <50 samples: ROBERT's combined RMSE metric and specialized scoring system provide the most robust approach to prevent overfitting while capturing non-linear relationships [6].

For larger chemical datasets: Consider complementing ROBERT with general-purpose frameworks like Optuna or Ray Tune that offer distributed optimization capabilities and advanced algorithms like Tree-structured Parzen Estimators [7] [8].

When interpretability is critical: Utilize ROBERT's integrated interpretation tools or supplement with SHAP-based analysis to understand feature importance and model behavior [6] [8].
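For small descriptor sets, the Shapley values that SHAP approximates can be computed exactly by enumerating every coalition of features, which makes the idea concrete. The sketch below is not the `shap` library's API: it assumes features outside a coalition are replaced by baseline values (for example, training-set means), which is one of several conventions, and its cost doubles with each added feature. For a linear model, the attribution of feature i reduces to its coefficient times (x_i - baseline_i).

```python
import itertools
import math
from typing import Callable, List, Sequence


def shapley_values(f: Callable[[Sequence[float]], float],
                   x: Sequence[float], baseline: Sequence[float]) -> List[float]:
    """Exact Shapley attributions of f(x) - f(baseline) over all feature coalitions."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions S of this size.
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for coalition in itertools.combinations(others, size):
                with_i = [x[j] if (j in coalition or j == i) else baseline[j] for j in range(n)]
                without_i = [x[j] if j in coalition else baseline[j] for j in range(n)]
                phi[i] += weight * (f(with_i) - f(without_i))
    return phi
```

The attributions always sum to f(x) - f(baseline), so each descriptor's share of a prediction can be read directly; for interacting features, such as a product of two descriptors, the joint effect is split between them.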

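In short, the choice of optimization strategy follows from dataset size and research goals: for very small datasets, MVL remains a strong baseline; for chemical datasets under about 50 points, ROBERT's combined RMSE workflow is the recommended route; for larger datasets, general-purpose frameworks such as Optuna or Ray Tune can complement it; and when interpretability is critical, ROBERT's interpretation tools or SHAP-based analysis should be added.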

The experimental evidence demonstrates that non-linear models, when properly optimized using specialized tools like ROBERT, offer substantial untapped potential beyond traditional linear regression for chemical research applications. By addressing the critical challenge of overfitting in low-data regimes through sophisticated Bayesian optimization and comprehensive validation strategies, ROBERT enables researchers to leverage the superior pattern recognition capabilities of non-linear algorithms while maintaining robustness and interpretability.

The benchmarking results confirm that non-linear models can perform on par with or outperform multivariate linear regression in the majority of chemical datasets when proper hyperparameter optimization is applied. For researchers and drug development professionals working with small to moderate-sized chemical datasets, incorporating ROBERT's automated non-linear workflows provides a valuable addition to the computational toolbox, potentially unlocking more accurate predictions of molecular properties and reaction outcomes that advance discovery while promoting sustainability through digitalization.

In the field of chemical research, data-driven methodologies are transforming how scientists explore chemical spaces and predict molecular properties. However, many research scenarios are characterized by limited data availability, with datasets often containing only 18 to 44 data points [6]. In these low-data regimes, multivariate linear regression (MVL) has traditionally been the preferred method due to its simplicity, robustness, and reduced risk of overfitting. In contrast, more complex non-linear machine learning algorithms like random forests (RF), gradient boosting (GB), and neural networks (NN) have been met with skepticism despite their proven effectiveness with large datasets, primarily due to concerns about interpretability and tendency to overfit small datasets [6].

The ROBERT software introduces a paradigm shift for these challenging scenarios. Its core innovation lies in a ready-to-use, automated workflow specifically engineered to overcome the traditional limitations of non-linear models in low-data environments. Through specialized overfitting mitigation techniques and Bayesian hyperparameter optimization, ROBERT enables researchers to leverage the power of non-linear algorithms without the traditional drawbacks, potentially unlocking deeper insights from their valuable but limited experimental data [6].

ROBERT's Engine: Automated Workflows to Combat Overfitting

Core Architectural Innovations

ROBERT's effectiveness in low-data regimes stems from several key architectural innovations specifically designed to address the vulnerabilities of complex models with limited training data:

Dual-Objective Optimization: The system employs a specialized combined Root Mean Squared Error (RMSE) metric that evaluates model performance across both interpolation and extrapolation scenarios. This metric is calculated by averaging results from a 10-times repeated 5-fold cross-validation (assessing interpolation) and a selective sorted 5-fold cross-validation (assessing extrapolation) [6].

Bayesian Hyperparameter Tuning: Instead of manual tuning, ROBERT utilizes Bayesian optimization to systematically explore the hyperparameter space, using the combined RMSE score as its objective function. This iterative process consistently reduces overfitting while maximizing validation performance [6].

Structured Data Segregation: To prevent data leakage, the workflow automatically reserves 20% of the initial data (with a minimum of four data points) as an external test set. This test set is split using an "even" distribution method to ensure balanced representation of target values across the prediction range [6].
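One way to read the "even" test-set selection is to sort the data by target value and take test points at evenly spaced positions along that order, so the test set spans low, middle, and high targets. The sketch below follows that reading; the function name, the ceiling rounding of 20%, and the exact spacing rule are assumptions rather than ROBERT's implementation.

```python
import math
from typing import List, Sequence, Tuple


def even_test_split(y: Sequence[float], test_fraction: float = 0.2,
                    min_test: int = 4) -> Tuple[List[int], List[int]]:
    """Return (train_indices, test_indices) with test points spread over the target range."""
    n = len(y)
    n_test = max(min_test, math.ceil(test_fraction * n))  # rounding rule assumed
    if not 2 <= n_test < n:
        raise ValueError("need at least 2 test points and at least 1 training point")
    order = sorted(range(n), key=lambda i: y[i])  # indices from lowest to highest target
    step = (n - 1) / (n_test - 1)  # > 1 whenever n_test < n, so positions are distinct
    test = sorted(order[round(j * step)] for j in range(n_test))
    test_set = set(test)
    train = [i for i in range(n) if i not in test_set]
    return train, test
```

With 20 points this reserves 4 test points that include both the lowest and the highest target, so the external test set probes the edges of the range as well as its middle.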

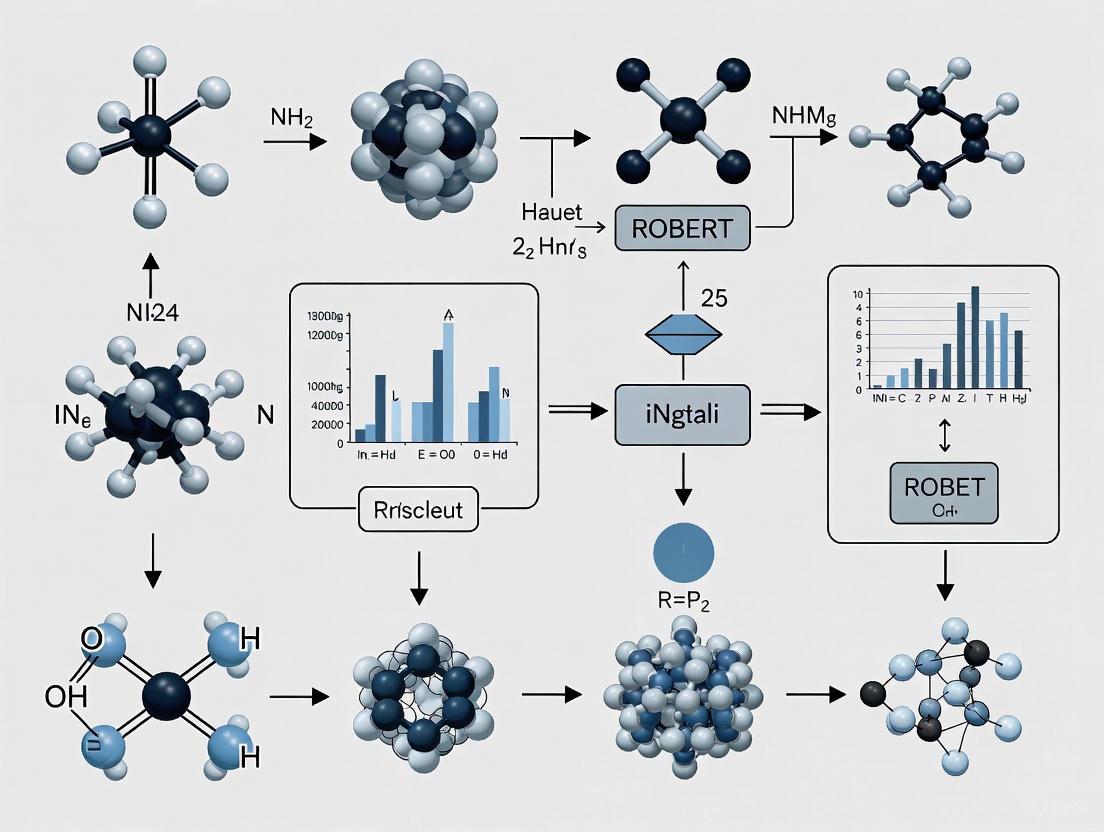

Visualizing the ROBERT Workflow

The diagram below illustrates ROBERT's automated workflow for mitigating overfitting in low-data regimes:

ROBERT's automated workflow for low-data chemical modeling [6].

Benchmarking Performance: ROBERT Versus Traditional Approaches

Experimental Design and Methodology

The effectiveness of ROBERT's automated non-linear workflows was rigorously evaluated against traditional multivariate linear regression (MVL) using eight diverse chemical datasets with sizes ranging from 18 to 44 data points [6]. These datasets were sourced from established chemical studies by Liu, Milo, Doyle, Sigman, and Paton [6]. To ensure fair comparisons, the study employed identical molecular descriptors for both linear and non-linear models across all datasets.

The benchmarking protocol incorporated several key methodological elements:

Performance Metrics: Evaluation used scaled RMSE, expressed as a percentage of the target value range, to facilitate interpretation of model performance relative to prediction scales [6].

Robust Validation: Instead of relying on single train-validation splits, which can introduce bias, the study employed 10-times repeated 5-fold cross-validation to mitigate splitting effects and provide more reliable performance estimates [6].

Algorithm Comparison: Three non-linear algorithms (Random Forests, Gradient Boosting, and Neural Networks) were compared against traditional MVL, with all non-linear models undergoing ROBERT's specialized Bayesian hyperparameter optimization [6].

Quantitative Performance Comparison

The table below summarizes the key performance findings from the benchmarking study across the eight chemical datasets:

Table 1: Performance comparison of ROBERT-optimized models versus multivariate linear regression

| Dataset | Data Points | Best Performing Algorithm | Key Performance Findings |
|---|---|---|---|
| A | - | Non-linear algorithm | Non-linear algorithms achieved best external test set performance [6] |
| B | - | - | RF limitations observed for extrapolation [6] |
| C | - | Non-linear algorithm | Non-linear algorithms achieved best external test set performance [6] |
| D | 21 | Neural Network | NN performed as well as or better than MVL [6] |
| E | - | Neural Network | NN performed as well as or better than MVL [6] |
| F | - | Neural Network | NN performed as well as or better than MVL; non-linear algorithms achieved best external test set performance [6] |
| G | - | Non-linear algorithm | Non-linear algorithms achieved best external test set performance [6] |
| H | 44 | Neural Network | NN performed as well as or better than MVL; non-linear algorithms achieved best external test set performance [6] |

The results demonstrated that properly tuned non-linear models can compete with or surpass traditional linear regression even in low-data scenarios. Specifically, Neural Networks performed as well as or better than MVL in half of the examples (datasets D, E, F, and H), while non-linear algorithms overall achieved superior performance on external test sets in five of the eight datasets (A, C, F, G, and H) [6].

Comprehensive Evaluation Scoring

To provide a more critical assessment of model quality, the researchers developed a comprehensive scoring system on a scale of ten. The results under this more restrictive evaluation further supported the inclusion of non-linear workflows:

Table 2: ROBERT evaluation scoring system components and weightings

| Evaluation Component | Maximum Points | Assessment Focus |
|---|---|---|
| Predictive Ability & Overfitting | 8 points | Scaled RMSE on cross-validation and external test set, overfitting detection, and extrapolation capability [6] |
| Prediction Uncertainty | 1 point | Average standard deviation of predictions across cross-validation repetitions [6] |
| Robustness Validation | 1 point | Y-shuffling and one-hot encoding tests to detect spurious correlations [6] |
| Overall Performance | 10 points (total) | Non-linear algorithms matched or exceeded MVL scores in 5 of 8 datasets [6] |

Under this scoring framework, non-linear algorithms performed as well as or better than MVL in five examples (C, D, E, F, and G), aligning with previous findings and further validating their inclusion alongside traditional linear models in low-data regimes [6].

Implementing automated machine learning workflows for chemical research requires both software tools and conceptual frameworks. The table below outlines key resources mentioned in the research:

Table 3: Essential research reagents and computational tools for chemical machine learning

| Tool/Resource | Type | Function/Application |
|---|---|---|
| ROBERT Software | Software Platform | Automated workflow for chemical ML with data curation, hyperparameter optimization, and model evaluation [6] |
| Bayesian Optimization | Algorithm | Hyperparameter tuning method that systematically explores parameter space to minimize overfitting [6] |
| DOPtools | Python Library | Descriptor calculation and model optimization platform, providing unified API for chemical descriptors [11] |
| Steric & Electronic Descriptors | Molecular Descriptors | Structural and electronic property descriptors used for training both linear and non-linear models [6] |
| Combined RMSE Metric | Evaluation Metric | Objective function accounting for both interpolation and extrapolation performance during optimization [6] |

Interpretation and Practical Implementation

Model Interpretability and Real-World Validation

Beyond raw performance metrics, the study addressed the critical concern of model interpretability in chemical applications. Using example H from the Sigman dataset [6], researchers evaluated whether non-linear models could capture chemically meaningful relationships similar to their linear counterparts. The findings revealed that properly tuned non-linear models maintained comparable interpretability to MVL models while potentially capturing more complex, non-linear relationships in the data [6].

For real-world validation, the study included de novo predictions to assess how well models generalized to genuinely novel cases not represented in the training data. This analysis demonstrated that the non-linear workflows could effectively identify underlying chemical patterns rather than merely memorizing training examples [6].

Implementation Considerations

The benchmarking results revealed several important practical considerations for researchers implementing these workflows:

Algorithm Selection: Neural Networks consistently demonstrated the strongest performance among non-linear algorithms in low-data scenarios, particularly after ROBERT's optimization [6].

Extrapolation Limitations: Random Forests showed limitations in extrapolation tasks, though this was mitigated by the inclusion of extrapolation terms during hyperparameter optimization [6].

Dataset Size Boundaries: While effective for datasets as small as 18 points, the non-linear workflows showed even stronger performance advantages as dataset sizes increased beyond 50 data points [6].

ROBERT's core innovation represents a significant advancement in data-driven chemical research. By developing automated workflows that specifically address the overfitting and interpretability concerns associated with non-linear models in low-data regimes, the software successfully enables chemists to move beyond the traditional constraints of linear regression.

The experimental evidence demonstrates that properly tuned and regularized non-linear models can perform on par with or outperform traditional multivariate linear regression across diverse chemical datasets. This capability effectively expands the chemist's toolbox, providing more powerful digital instruments for studying complex chemical relationships even when experimental data is limited.

As data-driven methodologies continue to transform chemical discovery, automated workflows like those implemented in ROBERT promise to play an increasingly vital role in helping researchers extract maximum insight from precious experimental data, ultimately accelerating discovery while promoting sustainable research practices through enhanced digitalization.

In machine learning, the performance of a model hinges on two distinct kinds of quantities: model parameters and hyperparameters. Understanding their roles is fundamental, especially in specialized fields like chemical research, where the ROBERT software employs advanced hyperparameter optimization to enable robust non-linear modeling even in low-data regimes [6]. This guide compares the two concepts, supported by experimental data from cheminformatics.

Conceptual Breakdown: Definitions and Roles

What Are Model Parameters?

Model parameters are internal variables that the machine learning model learns autonomously from the training data. They are essential for making predictions on new, unseen data [12] [13].

- Examples: The coefficients (weights) and bias (intercept) in a linear regression model, or the weights and biases of the neurons in a neural network [12] [14].

- Key Characteristic: They are not set manually but are learned during the training process through optimization algorithms like Gradient Descent or Adam [12].

What Are Hyperparameters?

Hyperparameters are external configuration variables that govern the training process itself. They are set prior to the commencement of training and are not learned from the data [12] [13].

- Examples: The learning rate for gradient descent, the number of layers in a neural network, the number of trees in a random forest, or the number of epochs (passes through the training data) [12] [14].

- Key Characteristic: They are set manually or determined via systematic hyperparameter optimization (HPO) and directly control how the model parameters are estimated [12].

Table 1: Core Differences Between Model Parameters and Hyperparameters

| Feature | Model Parameters | Model Hyperparameters |
|---|---|---|
| Purpose | Used for making predictions on new data [12]. | Used for estimating the model parameters [12]. |
| How they are determined | Learned from data by optimization algorithms [12]. | Set manually or via tuning methods before training [12]. |
| Dependence | Internal to the model and dependent on the training dataset [14]. | External configurations, often common across similar models [15]. |
| Examples | Weights (coefficients), biases [14]. | Learning rate, number of epochs, number of hidden layers [12] [14]. |
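To make the distinction concrete, here is a minimal plain-Python sketch of a one-descriptor linear model trained by gradient descent. The class name, its defaults and the toy data are hypothetical; the point is only that `learning_rate` and `n_epochs` are fixed before `fit()` runs, while `weight` and `bias` are produced by training.

```python
from dataclasses import dataclass, field


@dataclass
class LinearRegressionGD:
    """One-descriptor linear model trained by batch gradient descent (illustrative)."""

    # Hyperparameters: chosen before training and never changed by fit()
    learning_rate: float = 0.05
    n_epochs: int = 2000
    # Model parameters: excluded from __init__ and learned from the data in fit()
    weight: float = field(default=0.0, init=False)
    bias: float = field(default=0.0, init=False)

    def fit(self, xs, ys):
        n = len(xs)
        for _ in range(self.n_epochs):
            residuals = [self.weight * x + self.bias - y for x, y in zip(xs, ys)]
            # Gradients of the mean squared error with respect to each parameter
            grad_w = 2.0 / n * sum(r * x for r, x in zip(residuals, xs))
            grad_b = 2.0 / n * sum(residuals)
            self.weight -= self.learning_rate * grad_w
            self.bias -= self.learning_rate * grad_b
        return self

    def predict(self, xs):
        return [self.weight * x + self.bias for x in xs]


# Toy data following y = 2x + 1; the hyperparameters are set by hand here
model = LinearRegressionGD(learning_rate=0.05, n_epochs=2000).fit([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])
print(round(model.weight, 3), round(model.bias, 3))  # learned parameters: 2.0 1.0
```

Changing `learning_rate` or `n_epochs` changes how, and whether, the parameters converge, which is why such settings are tuned rather than learned.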

Hyperparameter Optimization in Chemical Research: The ROBERT Software Case

In chemical machine learning, researchers often work with small datasets, where traditional non-linear models are prone to overfitting. The ROBERT software exemplifies how sophisticated hyperparameter optimization can overcome these challenges [6].

Experimental Protocol for Low-Data Regimes

ROBERT's workflow is designed to maximize model generalizability with limited data points [6]:

- Data Reservation: A holdout test set (20% of initial data) is created using an "even" distribution split to ensure a balanced representation of target values and prevent data leakage [6].

- Hyperparameter Optimization: A Bayesian optimization process is used to find the optimal hyperparameters [6].

- Objective Function: The optimization uses a combined Root Mean Squared Error (RMSE) metric as its objective. This metric is calculated from different cross-validation (CV) methods to rigorously test generalization [6]:
  - Interpolation Performance: Assessed via a 10-times repeated 5-fold CV.
  - Extrapolation Performance: Assessed via a selective sorted 5-fold CV, which partitions data based on the target value to test performance on extreme values [6].

- Model Selection and Evaluation: The model with the best combined RMSE is selected and its performance is finally evaluated on the reserved external test set [6].

The following diagram illustrates this workflow:

Comparative Performance Analysis: Linear vs. Non-Linear Models

A study benchmarking ROBERT on eight diverse chemical datasets (ranging from 18 to 44 data points) compared the performance of traditional Multivariate Linear Regression (MVL) against tuned non-linear models, including Random Forests (RF), Gradient Boosting (GB), and Neural Networks (NN) [6].

The results, measured by scaled RMSE (expressed as a percentage of the target value range), demonstrate the impact of effective hyperparameter optimization:

Table 2: Model Performance Comparison on Chemical Datasets [6]

| Dataset | Dataset Size (Data Points) | Best Performing Model(s) | Key Finding |
|---|---|---|---|
| A, C, F, G, H | 19-44 | Non-linear algorithms (RF, GB, NN) | Non-linear models achieved the best results on external test sets [6]. |
| D, E, F, H | 21-44 | Neural Networks (NN) | NN performed as well as or better than MVL in 10× 5-fold CV in half of the benchmarked examples [6]. |
| Overall (ROBERT Score) | 18-44 | Non-linear algorithms (datasets C, D, E, F, G) | In a more critical evaluation scoring predictive ability, overfitting, and robustness, non-linear models performed as well as or better than MVL in 5 out of 8 examples [6]. |
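Because these benchmark results are expressed as scaled RMSE, the following sketch shows one way to compute it. The function name is hypothetical, and whether the range is taken from the evaluated points or from the whole dataset is an assumption, exposed here as the optional `y_range` argument.

```python
import math


def scaled_rmse(y_true, y_pred, y_range=None):
    """RMSE expressed as a percentage of the target-value range.

    By default the range is taken from y_true; pass y_range explicitly to
    scale by the range of the full dataset instead (an assumption about
    which range a given benchmark uses).
    """
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equally long")
    rmse = math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))
    if y_range is None:
        y_range = max(y_true) - min(y_true)
    if y_range <= 0:
        raise ValueError("target range must be positive")
    return 100.0 * rmse / y_range


# Errors of 1, 1 and 2 units on targets spanning 20 units: RMSE = sqrt(2), about 7.1 % of the range
print(f"{scaled_rmse([0, 10, 20], [1, 9, 22]):.1f} %")
```

Scaling by the range lets errors on yields, selectivities or energies be compared on one percentage axis.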

Critical Consideration: Overfitting from HPO

While HPO is powerful, it is not a panacea. A study on solubility prediction showed that an extensive hyperparameter optimization of graph-based models did not always yield better models than using a set of sensible pre-set hyperparameters, likely due to overfitting on the validation metrics [16]. This finding highlights the importance of rigorous validation and suggests that in some cases, simpler approaches can save significant computational resources (up to 10,000 times faster) with comparable performance [16].
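The validation-overfitting effect reported in [16] can be illustrated with a toy simulation that is not taken from that study: every configuration below has the same true RMSE, but validation scores are noisy, so simply keeping the minimum rewards noise. The function name, the Gaussian noise model and its magnitude are assumptions.

```python
import random


def best_observed_score(n_configs, true_rmse=1.0, noise_sd=0.1, seed=0):
    """Lowest validation RMSE among n_configs configurations that are all equally good.

    Each configuration's validation score is its true RMSE plus Gaussian noise,
    a deliberately simplified stand-in for the variance of a small validation set.
    """
    rng = random.Random(seed)
    scores = [rng.gauss(true_rmse, noise_sd) for _ in range(n_configs)]
    return min(scores)


for n in (1, 10, 100, 1000):
    # The true error is 1.0 for every configuration, yet the best observed score keeps falling
    print(n, round(best_observed_score(n), 3))
```

The best observed score drifts further below the true value as more configurations are tried, which is one reason a test set left untouched during tuning remains necessary.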

Table 3: Key Software and Tools for Hyperparameter Optimization and Modeling

| Tool / Resource | Function | Application Context |
|---|---|---|
| ROBERT Software | Automated workflow for data curation, hyperparameter optimization, and model evaluation. Mitigates overfitting via a combined RMSE objective [6]. | Non-linear ML for low-data chemical datasets [6]. |
| DOPtools | A Python library for calculating chemical descriptors and performing hyperparameter optimization for QSPR models [11]. | Building and validating QSPR models, especially for reaction properties [11]. |
| Bayesian Optimization | A class of HPO methods that uses probabilistic models to efficiently find optimal hyperparameters [6] [10]. | Preferred for optimizing complex models like NNs and GNNs where the search space is large [6] [17]. |
| Graph Neural Networks (GNNs) | A powerful neural network architecture for modeling graph-structured data, such as molecular structures [17]. | Molecular property prediction in cheminformatics [17]. |

Model parameters and hyperparameters serve distinct but complementary roles in machine learning. Parameters are the model's learned knowledge, while hyperparameters control the learning process. In chemical research, tools like ROBERT software demonstrate that advanced hyperparameter optimization is critical for leveraging the power of non-linear models in data-limited scenarios, often allowing them to perform on par with or surpass traditional linear models. However, practitioners must remain vigilant about overfitting, as the optimization process itself can sometimes lead to models that do not generalize well. The choice and tuning of hyperparameters remain as much an art as a science, underpinning the development of reliable and predictive models in scientific discovery.

The adoption of machine learning (ML) for chemical hyperparameter optimization represents a paradigm shift in cheminformatics and drug discovery. While promising significant acceleration in research timelines, these methods face legitimate skepticism regarding two fundamental challenges: overfitting and interpretability. Overfitting raises concerns about whether optimized conditions translate from virtual screens to real-world laboratories, while interpretability questions whether these models can provide chemically intuitive insights beyond black-box predictions.

This guide evaluates automated optimization approaches, focusing on ROBERT (Refiner and Optimizer of a Bunch of Existing Regression Tools) and related ML-driven reaction-optimization workflows in chemical research. We present comparative experimental data and detailed methodologies that address researcher skepticism, showing how modern ML workflows confront these challenges through robust validation and explainable AI techniques. The analysis situates ROBERT within the broader ecosystem of chemical optimization tools, giving scientists a transparent framework for assessment.

Methodological Framework: Experimental Protocols for Rigorous Evaluation

Benchmarking Strategies and Performance Metrics

To ensure fair comparison and mitigate overfitting concerns, rigorous benchmarking protocols are essential. The following methodologies are employed in high-quality optimization research:

In Silico Benchmarking with Virtual Datasets: To comprehensively assess algorithm performance without exhaustive laboratory experimentation, practitioners create emulated virtual datasets. This process involves training ML regressors on existing experimental data (e.g., from Torres et al.'s EDBO+ dataset), then using these models to predict outcomes for a broader range of conditions beyond the original experimental scope. This expansion creates larger-scale virtual datasets suitable for robust benchmarking of high-throughput experimentation (HTE) campaigns [18].

Hypervolume Metric for Multi-Objective Optimization: For reactions with multiple competing objectives (e.g., maximizing yield while minimizing cost), performance is quantified using the hypervolume metric. This calculates the volume of objective space enclosed by the set of reaction conditions identified by an algorithm, measuring both convergence toward optimal objectives and solution diversity. The hypervolume percentage of algorithm-identified conditions is compared against the best conditions in the reference benchmark dataset [18].

Simulation Mode for Cost Reduction: To address the computational expense of hyperparameter tuning, recent research has developed simulation modes that replay previously recorded tuning data, lowering hyperparameter optimization costs by two orders of magnitude (100x reduction) while maintaining evaluation rigor [19] [20].
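For two maximized objectives, the hypervolume metric described above reduces to the area of the union of rectangles spanned between each identified condition and a reference point. The sketch below assumes exactly two objectives (yield and selectivity, in %) and a reference point at the origin; the cited study may use more objectives and a different reference point, and the numbers are made up for illustration.

```python
def hypervolume_2d(points, ref=(0.0, 0.0)):
    """Area of objective space dominated by `points` and bounded below by `ref`.

    Both objectives (e.g. yield and selectivity, in %) are maximised.
    """
    # Keep only points that improve on the reference point in both objectives
    pts = sorted(
        (p for p in points if p[0] > ref[0] and p[1] > ref[1]),
        key=lambda p: p[0],
        reverse=True,
    )
    area, best_second = 0.0, ref[1]
    for first, second in pts:
        # Sweeping from high to low first objective, only points that raise the
        # best second objective seen so far add new (non-dominated) area
        if second > best_second:
            area += (first - ref[0]) * (second - best_second)
            best_second = second
    return area


def hypervolume_percent(found, benchmark_best, ref=(0.0, 0.0)):
    """Hypervolume of algorithm-found conditions relative to the benchmark's best set."""
    return 100.0 * hypervolume_2d(found, ref) / hypervolume_2d(benchmark_best, ref)


# Illustrative (yield %, selectivity %) pairs, not data from the cited study
found = [(80, 50), (50, 90), (40, 40)]  # (40, 40) is dominated and adds nothing
benchmark_best = [(90, 95)]
print(hypervolume_2d(found))  # 6000.0
print(round(hypervolume_percent(found, benchmark_best), 1))
```

Because dominated points add no area, the metric rewards both reaching high objective values and spreading solutions along the trade-off front.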

Architectural Approach of ML-Driven Reaction Optimization

For reaction-condition optimization, the ML workflow reported in [18] integrates several key components:

Discrete Combinatorial Condition Space: Reaction parameters are represented as a discrete combinatorial set of potential conditions comprising reagents, solvents, and temperatures deemed chemically plausible. This allows automatic filtering of impractical conditions (e.g., temperatures exceeding solvent boiling points) [18].

Bayesian Optimization with Gaussian Processes: The system typically employs Gaussian Process regressors to predict reaction outcomes and their uncertainties across the condition space. This probabilistic approach naturally quantifies prediction uncertainty, helping prevent overconfidence in extrapolations [18].

Adaptive Acquisition Functions: Functions such as q-NParEgo, Thompson sampling with hypervolume improvement (TS-HVI), and q-Noisy Expected Hypervolume Improvement (q-NEHVI) balance exploration of unknown regions with exploitation of known promising conditions, enabling efficient navigation of high-dimensional chemical spaces [18].
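The discrete condition space with chemically motivated filtering can be sketched with `itertools.product`. The solvent, ligand and base lists are hypothetical placeholders (boiling points are approximate), and real campaigns such as the 88,000-condition Suzuki space are far larger; the filter keeps a temperature only if it does not exceed the solvent's boiling point.

```python
from itertools import product

# Hypothetical screening options; boiling points are approximate values in degrees C
SOLVENT_BP_C = {"THF": 66, "2-MeTHF": 80, "toluene": 111, "DMAc": 165}
LIGANDS = ["L1", "L2", "L3"]  # placeholder ligand labels
BASES = ["K3PO4", "K2CO3"]
TEMPERATURES_C = [40, 60, 80, 100]


def build_condition_space(solvent_bp, ligands, bases, temperatures):
    """Enumerate every combination, dropping temperatures above the solvent's boiling point."""
    return [
        {"solvent": s, "ligand": lig, "base": b, "temperature_C": t}
        for s, lig, b, t in product(solvent_bp, ligands, bases, temperatures)
        if t <= solvent_bp[s]
    ]


space = build_condition_space(SOLVENT_BP_C, LIGANDS, BASES, TEMPERATURES_C)
full = len(SOLVENT_BP_C) * len(LIGANDS) * len(BASES) * len(TEMPERATURES_C)
print(f"{len(space)} of {full} combinations are kept")  # 78 of 96
```

Filtering before optimization means the surrogate model and acquisition function never spend experiments on physically impractical conditions.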

The diagram below illustrates the iterative optimization workflow implemented in systems like ROBERT for chemical reaction optimization:

Comparative Performance Analysis: Quantitative Results

Optimization Efficiency Across Methodologies

The table below summarizes quantitative performance data reported for ML-driven optimization systems, alongside traditional HTE, across multiple benchmark studies:

| Optimization Method | Performance Improvement | Batch Size Capability | Search Space Dimensionality | Key Applications |
|---|---|---|---|---|
| ML-driven HTE workflow [18] | 76% AP yield, 92% selectivity in Ni-catalyzed Suzuki reaction [18] | 96-well parallel processing [18] | 88,000+ conditions [18] | Nickel-catalyzed Suzuki coupling, pharmaceutical API synthesis |
| Hyperparameter-tuned auto-tuner | 94.8% average improvement with limited tuning; 204.7% with meta-strategies [19] [20] | Not specified | Complex auto-tuning search spaces [19] | GPU software optimization, scientific computing |
| Traditional chemist-driven HTE | Failed to find successful conditions in challenging transformations [18] | 24-96 well plates [18] | Limited by experimental design [18] | Standard reaction screening |
| RASDA (HPC HPO) | Outperforms ASHA by a 1.9x runtime factor [21] | 1,024 GPUs in parallel [21] | Terabyte-scale datasets [21] | Computational fluid dynamics, additive manufacturing |

Multi-Objective Optimization Performance

For pharmaceutical applications where multiple objectives must be balanced simultaneously, the following comparative results demonstrate the capability of advanced optimization systems:

| Optimization Approach | Conditions Reaching >95% Yield/Selectivity | Time to Identification | Experimental Efficiency |
|---|---|---|---|
| ML-driven workflow [18] | Multiple conditions for both Ni-Suzuki and Buchwald-Hartwig [18] | 4 weeks vs. 6 months traditional [18] | 1,632 HTE reactions with open data [18] |
| Traditional process development | Limited to narrower condition ranges [18] | 6-month campaign typical [18] | Broader but less focused screening |

Addressing Overfitting: Methodologies and Experimental Validation

Robustness Measures in Chemical Optimization

Advanced chemical optimization platforms incorporate multiple strategies to prevent overfitting and ensure generalizability:

Chemical Noise Integration: Modern ML workflows are specifically designed to accommodate experimental noise and variability inherent in chemical systems. This robustness to chemical noise ensures that identified optimal conditions remain stable despite normal experimental variance [18].

High-Dimensional Space Navigation: Unlike traditional approaches that may overfit to limited parameter combinations, the ML workflow reported in [18] maintained performance across high-dimensional search spaces (up to 530 dimensions in benchmarks), exploring complex interactions between multiple parameters without collapsing to local optima [18].

Experimental Verification: The most significant protection against overfitting comes from experimental validation. In one pharmaceutical case study, conditions identified through ML optimization were experimentally confirmed to achieve >95% yield and selectivity and were then translated into improved process conditions at scale [18].

Case Study: Ni-Catalyzed Suzuki Reaction Optimization

A rigorous test compared an ML-driven approach [18] against traditional chemist-designed HTE plates for a challenging nickel-catalyzed Suzuki reaction with 88,000 possible conditions:

- Traditional Approach: Two chemist-designed HTE plates failed to find successful reaction conditions despite expert curation [18].

- ML Optimization: The algorithmic approach identified conditions achieving 76% area-percent (AP) yield and 92% selectivity by efficiently navigating the complex reaction landscape [18].

- Unexpected Discovery: The ML approach uncovered productive regions with unexpected chemical reactivity that traditional design had overlooked, demonstrating its ability to identify non-intuitive but effective condition combinations without overfitting to established chemical heuristics [18].

Interpretability Strategies: From Black Box to Chemical Insights

Explainable AI Components in Chemical ML

While ML models can function as black boxes, sophisticated platforms incorporate multiple interpretability features:

Uncertainty Quantification: Gaussian Process regressors naturally provide uncertainty estimates alongside predictions, allowing chemists to distinguish between well-supported and speculative recommendations. This probabilistic framing helps researchers assess the confidence level for any suggested condition [18].

Condition Space Visualization: By representing the reaction condition space as a discrete combinatorial set, these systems enable mapping of performance landscapes across defined parameter combinations, revealing structure-activity relationships within the constraint space [18].

Acquisition Transparency: The logic behind experiment selection is explicitly defined by the acquisition function's balance between exploration and exploitation, making the strategic reasoning transparent rather than opaque [18].
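The acquisition functions named earlier (q-NParEgo, TS-HVI, q-NEHVI) are multi-objective and beyond a short example, so this sketch swaps in the simpler single-objective upper confidence bound (UCB) to show how an explicit formula makes the exploration/exploitation trade-off inspectable. The candidate labels and the predicted means and standard deviations are hypothetical stand-ins for a surrogate model's output, such as a Gaussian Process posterior.

```python
def ucb_scores(means, stds, beta):
    """Upper confidence bound: predicted mean plus beta times predicted uncertainty."""
    return [m + beta * s for m, s in zip(means, stds)]


def select_next(candidates, means, stds, beta=2.0):
    """Return the candidate with the highest UCB score, together with that score."""
    scores = ucb_scores(means, stds, beta)
    best = max(range(len(candidates)), key=lambda i: scores[i])
    return candidates[best], scores[best]


# Hypothetical surrogate predictions (posterior mean and standard deviation of yield, %)
candidates = ["well-characterized condition", "unexplored condition"]
means = [70.0, 60.0]
stds = [2.0, 10.0]

print(select_next(candidates, means, stds, beta=0.0))  # pure exploitation
print(select_next(candidates, means, stds, beta=2.0))  # uncertainty bonus favours exploration
```

A single parameter, `beta`, controls how much weight the uncertainty estimate receives, so the reason a condition was chosen can be read directly from its score.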

Research Reagent Solutions for Experimental Validation

The table below details essential research reagents and their functions in automated chemical optimization platforms, enabling experimental validation of computational predictions:

| Reagent Category | Specific Examples | Function in Optimization | Implementation Considerations |
|---|---|---|---|
| Non-Precious Metal Catalysts | Nickel-based catalysts [18] | Earth-abundant alternative to precious metals | Cost reduction, sustainability alignment |
| Ligand Libraries | Diverse phosphine ligands, N-heterocyclic carbenes [18] | Fine-tuning catalyst activity and selectivity | Structural diversity for exploration |
| Solvent Systems | Pharmaceutical guideline-compliant solvents [18] | Medium effects, solubility optimization | Compliance with safety and environmental guidelines |
| Automated HTE Platforms | 96-well reaction blocks, solid-dispensing robots [18] | Highly parallel experiment execution | Miniaturized scales for cost efficiency |

The experimental evidence indicates that modern chemical optimization platforms like ROBERT address core skepticism through methodological rigor rather than avoidance. In the related field of GPU software auto-tuning, the 94.8% performance improvement from basic hyperparameter tuning and the 204.7% improvement from meta-strategies provide quantitative evidence that properly configured optimizers deliver substantial gains over default configurations [19] [20].

For pharmaceutical researchers, the translation of algorithmically identified conditions to successful scale-up in API synthesis is the most compelling validation. The documented cases in which ML-optimized conditions achieved >95% yield and selectivity in both Ni-catalyzed Suzuki and Pd-catalyzed Buchwald-Hartwig reactions, together with a 4-week development timeline versus traditional 6-month campaigns, demonstrate that these approaches can overcome overfitting concerns and deliver practically impactful results [18].

While interpretability challenges remain an active research area, the integration of uncertainty quantification, transparent acquisition strategies, and experimental validation creates a framework for building scientific trust. As these systems evolve, their ability to navigate complex chemical spaces while providing chemically intuitive insights will determine their broader adoption across drug development pipelines.

A Step-by-Step Guide to Implementing ROBERT for Chemical Prediction

The integration of machine learning (ML) into chemical research has created a pressing need for tools that automate the complex process of developing predictive models. This need is particularly acute in the low-data regimes common in chemical experimentation, where traditional ML approaches risk overfitting and require meticulous tuning. ROBERT addresses it with an automated workflow that turns raw chemical data, provided as CSV files of descriptors or SMILES strings, into comprehensive, publication-quality PDF reports through a single command line [1] [22]. This automation reduces human intervention and bias in model selection while maintaining scientific rigor.

Chemical researchers traditionally rely on multivariate linear regression (MVL) for small datasets due to its simplicity and robustness, while often viewing non-linear algorithms with skepticism over interpretability and overfitting concerns [1]. ROBERT challenges this paradigm by demonstrating that properly tuned and regularized non-linear models can perform on par with or outperform linear regression even in data-limited scenarios with just 18-44 data points [1]. This capability positions ROBERT as a valuable tool in the chemist's digital toolbox for accelerating discovery while promoting sustainability through digitalization.

ROBERT's Automated Workflow Architecture

End-to-End Processing Pipeline

ROBERT implements a sophisticated multi-stage workflow that systematically transforms raw input data into validated predictive models with comprehensive documentation. The architecture employs specialized processing at each stage to ensure robustness, particularly for the small datasets common in chemical research.

Figure 1: ROBERT's automated workflow from CSV input to PDF report generation, featuring specialized hyperparameter optimization with combined interpolation and extrapolation validation.

The workflow begins with Data Curation, where ROBERT processes input CSV databases containing either molecular descriptors or SMILES strings. This initial stage prepares the data for subsequent analysis through standardization and feature processing. A critical design element is the immediate reservation of 20% of the initial data (minimum four data points) as an external test set with "even" distribution to prevent data leakage and ensure balanced representation of target values [1].
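ROBERT's exact implementation of the "even" split is not described here, so the following is only a hypothetical sketch of the idea: sort the points by target value and take test points at evenly spaced positions along that order, reserving `max(4, round(0.2 * n))` points. The function name, argument names and toy targets are assumptions.

```python
def even_test_split(y, test_fraction=0.2, min_test=4):
    """Return (train_idx, test_idx) with test points spread evenly over the sorted targets.

    Hypothetical sketch: the number of test points is
    max(min_test, round(test_fraction * n)), and they are taken at evenly
    spaced positions along the target values sorted from low to high.
    """
    n = len(y)
    n_test = max(min_test, round(test_fraction * n))
    if n_test >= n:
        raise ValueError("dataset too small for the requested test set")
    order = sorted(range(n), key=lambda i: y[i])
    step = n / n_test
    # Centre of each of n_test equal-width bins along the sorted order
    positions = [int(step * k + step / 2) for k in range(n_test)]
    test = sorted(order[p] for p in positions)
    test_set = set(test)
    train = [i for i in range(n) if i not in test_set]
    return train, test


# Twenty toy target values: the test set samples low, middle and high values alike
y = [3.1, 7.4, 0.2, 9.9, 5.5, 1.8, 6.6, 8.3, 2.7, 4.0,
     9.1, 0.9, 7.0, 3.6, 5.0, 8.8, 2.2, 6.1, 4.7, 1.1]
train_idx, test_idx = even_test_split(y)
print(sorted(y[i] for i in test_idx))  # [1.1, 3.6, 6.1, 8.8]
```

Compared with a purely random split, this keeps a small test set from landing entirely in one region of the target range.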

The core of ROBERT's innovation lies in the Hyperparameter Optimization phase, which employs Bayesian optimization with a specialized objective function designed to minimize overfitting. This function combines interpolation performance (assessed via 10-times repeated 5-fold cross-validation) with extrapolation capability (evaluated through selective sorted 5-fold CV where data is partitioned based on target values) [1]. This dual approach is particularly valuable for chemical applications where models must often predict beyond the training data range.
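The combined objective can be sketched in plain Python. This is not ROBERT's code: the single-descriptor least-squares model, the helper names, averaging fold RMSEs for the interpolation term and the equal weighting of the two terms are assumptions. The extrapolation term follows the description given later in this article, keeping the higher RMSE of the lowest and highest sorted partitions.

```python
import math
import random
from statistics import mean


def rmse(y_true, y_pred):
    return math.sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


def fit_line(xs, ys):
    """Least-squares line on one descriptor; returns a predict(xs) function."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    intercept = my - slope * mx
    return lambda new_xs: [slope * x + intercept for x in new_xs]


def fold_rmse(xs, ys, fit, test_idx):
    """Train on everything outside test_idx and return the RMSE on test_idx."""
    held_out = set(test_idx)
    train = [i for i in range(len(xs)) if i not in held_out]
    predict = fit([xs[i] for i in train], [ys[i] for i in train])
    return rmse([ys[i] for i in test_idx], predict([xs[i] for i in test_idx]))


def repeated_kfold_rmse(xs, ys, fit, k=5, repeats=10, seed=0):
    """Interpolation term: mean fold RMSE over `repeats` shuffled k-fold splits."""
    rng = random.Random(seed)
    scores = []
    for _ in range(repeats):
        idx = list(range(len(xs)))
        rng.shuffle(idx)
        scores.extend(fold_rmse(xs, ys, fit, idx[f::k]) for f in range(k))
    return mean(scores)


def sorted_cv_rmse(xs, ys, fit, k=5):
    """Extrapolation term: sort by target, hold out the lowest or highest fold, keep the worse RMSE."""
    order = sorted(range(len(ys)), key=lambda i: ys[i])
    n = len(order)
    bottom, top = order[: n // k], order[n - n // k:]
    return max(fold_rmse(xs, ys, fit, bottom), fold_rmse(xs, ys, fit, top))


def combined_rmse(xs, ys, fit):
    # Assumption: equal weighting of the interpolation and extrapolation terms
    return (repeated_kfold_rmse(xs, ys, fit) + sorted_cv_rmse(xs, ys, fit)) / 2


def fit_mean(xs, ys):
    """Baseline that ignores the descriptor and always predicts the training mean."""
    m = mean(ys)
    return lambda new_xs: [m] * len(new_xs)


xs = [float(i) for i in range(20)]
ys = [3.0 * x + 2.0 + (0.5 if i % 2 else -0.5) for i, x in enumerate(xs)]
print(round(combined_rmse(xs, ys, fit_line), 2), round(combined_rmse(xs, ys, fit_mean), 2))
```

In a Bayesian optimization loop, a score like this would be computed for each proposed hyperparameter set and driven down; a model that interpolates well but fails at the extremes is penalized by the extrapolation term.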

During Model Selection and Validation, ROBERT evaluates multiple algorithm types including multivariate linear regression (MVL), random forests (RF), gradient boosting (GB), and neural networks (NN). The selection is based on the optimization results, with the best-performing model advancing to final validation using the held-out test set.

The final PDF Report Generation produces comprehensive documentation including performance metrics, cross-validation results, feature importance analyses, outlier detection, and implementation guidelines to ensure reproducibility and transparency [1].

Advanced Hyperparameter Optimization Strategy

ROBERT's hyperparameter optimization represents a significant advancement for low-data chemical applications. Traditional HPO methods often struggle with small datasets, but ROBERT's Bayesian optimization approach with a combined RMSE metric specifically addresses this challenge [1]. The optimization process iteratively explores the hyperparameter space, consistently reducing the combined RMSE score to ensure the resulting model minimizes overfitting as much as possible [1].

This approach differs fundamentally from conventional HPO methods used in molecular property prediction. While other studies have compared random search, Bayesian optimization, and hyperband algorithms—with some recommending hyperband for its computational efficiency [5]—ROBERT specifically tailors its optimization for the challenges of small chemical datasets. Similarly, while hybrid bio-optimized algorithms like GFLFGOA-SSA have shown promise for hyperparameter tuning in other domains [23], ROBERT implements a more specialized approach for chemical applications.

Performance Comparison with Traditional Methods

Benchmarking Methodology and Experimental Design

ROBERT's performance was rigorously evaluated against traditional multivariate linear regression using eight diverse chemical datasets ranging from 18 to 44 data points, originally studied by various research groups including Liu, Milo, Doyle, Sigman, and Paton [1]. To ensure fair comparisons, the same molecular descriptors were used for both linear and non-linear models across all datasets (A-H). Performance was assessed using scaled Root Mean Squared Error (RMSE) expressed as a percentage of the target value range, which helps interpret model performance relative to the prediction range [1].

The evaluation methodology employed 10-times repeated 5-fold cross-validation to mitigate splitting effects and human bias, with external test sets selected using a systematic method that evenly distributes y-values across the prediction range [1]. This comprehensive approach provides robust performance estimates while specifically testing generalization capabilities through held-out test sets.

Comparative Performance Results

Table 1: Performance comparison of ROBERT's tuned non-linear models versus traditional multivariate linear regression (MVL) across eight chemical datasets

| Dataset | Data Points | Better Model, 10× 5-fold CV Scaled RMSE | Better Model, External Test Set Scaled RMSE | Performance Advantage |
|---|---|---|---|---|
| A | 19 | MVL | Non-linear | Non-linear better on test set |
| B | 18 | MVL | MVL | MVL better |
| C | 44 | MVL | Non-linear | Non-linear better on test set |
| D | 21 | NN (equal or better) | MVL | Mixed (NN better in CV) |
| E | 44 | NN (equal or better) | MVL | Mixed (NN better in CV) |
| F | 36 | NN (equal or better) | Non-linear | Non-linear better |
| G | 44 | MVL | Non-linear | Non-linear better on test set |
| H | 44 | NN (equal or better) | Non-linear | Non-linear better |

The benchmarking results demonstrate that ROBERT's non-linear neural network models perform competitively with traditional multivariate linear regression across diverse chemical applications [1]. In half of the datasets (D, E, F, and H), NN models performed as well as or better than MVL in cross-validation, with sizes ranging from 21 to 44 data points. More significantly, for external test set predictions—which better reflect real-world generalization—non-linear algorithms achieved the best results in five of the eight examples (A, C, F, G, and H) [1].

Notably, random forests—widely popular in chemical applications—yielded the best results in only one case, likely due to the inclusion of an extrapolation term during hyperparameter optimization that exposes tree-based models' limitations for predicting beyond the training data range [1]. This finding highlights the importance of ROBERT's specialized optimization approach for chemical applications where extrapolation is often required.

Comprehensive Model Evaluation Framework

ROBERT's generated PDF report also includes a score out of 10 that summarizes the evaluation of each algorithm [1]. The score is based on three key aspects:

- Predictive Ability and Overfitting (up to 8 points): Evaluates 10× 5-fold CV performance, external test set performance, the difference between these metrics to detect overfitting, and extrapolation capability using sorted CV.

- Prediction Uncertainty (1 point): Assesses the average standard deviation of predictions across CV repetitions.

- Robustness Validation (1 point): Identifies potentially flawed models by evaluating RMSE differences after data modifications like y-shuffling and one-hot encoding, plus baseline error comparison.

This comprehensive evaluation framework ensures models are assessed not just on predictive accuracy but also on generalization capability, consistency, and robustness—critical considerations for reliable chemical applications.
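The y-shuffling and baseline comparisons in the robustness criterion can be sketched as follows. This is not ROBERT's scoring code: the leave-one-out CV, the single-descriptor line model, the function names and the returned dictionary are assumptions, and neither the one-hot-encoding test nor ROBERT's thresholds are reproduced.

```python
import math
import random
from statistics import mean


def rmse(y_true, y_pred):
    return math.sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


def fit_line(xs, ys):
    """Least-squares line on one descriptor; returns a scalar predict(x) function."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    return lambda x: slope * x + (my - slope * mx)


def loo_rmse(xs, ys):
    """Leave-one-out RMSE of the line model."""
    preds = []
    for i in range(len(xs)):
        predict = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        preds.append(predict(xs[i]))
    return rmse(ys, preds)


def robustness_check(xs, ys, seed=0):
    """Compare the model's CV error with a y-shuffled control and a mean-only baseline."""
    shuffled = ys[:]
    random.Random(seed).shuffle(shuffled)
    return {
        "model": loo_rmse(xs, ys),
        "y_shuffled": loo_rmse(xs, shuffled),
        "mean_baseline": rmse(ys, [mean(ys)] * len(ys)),
    }


xs = [float(i) for i in range(12)]
ys = [2.0 * x + (0.5 if i % 2 else -0.5) for i, x in enumerate(xs)]
report = robustness_check(xs, ys)
# A trustworthy model should beat both controls by a clear margin
print({name: round(value, 2) for name, value in report.items()})
```

If the y-shuffled error were close to the model's own error, the apparent fit would likely reflect chance correlations rather than a real structure-property relationship.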

Key Research Reagent Solutions

Table 2: Essential computational tools and methods for chemical machine learning research

| Research Reagent | Type | Function in Workflow | ROBERT Implementation |
|---|---|---|---|
| Bayesian Optimization | Hyperparameter Tuning Algorithm | Efficiently explores hyperparameter space to maximize model performance | Uses combined RMSE objective to minimize overfitting in low-data regimes [1] |
| Combined RMSE Metric | Validation Metric | Balances interpolation and extrapolation performance during model selection | Incorporates 10× 5-fold CV and sorted CV for extrapolation testing [1] |
| Repeated Cross-Validation | Validation Protocol | Provides robust performance estimates while mitigating data splitting bias | Implements 10-times repeated 5-fold CV for reliable metrics [1] |
| Molecular Descriptors | Chemical Features | Encodes structural and electronic properties for model training | Accepts both custom descriptors and generates from SMILES strings [1] |
| Automated Report Generation | Documentation System | Creates comprehensive, reproducible research documentation | Generates PDF with metrics, validation, feature importance, and guidelines [1] |

Experimental Protocols and Methodologies

Hyperparameter Optimization Implementation

ROBERT's hyperparameter optimization employs Bayesian optimization with a specifically designed objective function that addresses the unique challenges of small chemical datasets [1]. The implementation includes:

- Objective Function: Combined RMSE calculated from different cross-validation methods, evaluating both interpolation (10× 5-fold CV) and extrapolation (selective sorted 5-fold CV) capabilities [1]

- Optimization Algorithm: Bayesian optimization iteratively explores the hyperparameter space to minimize the combined RMSE score [1]

- Regularization Integration: Built-in regularization techniques automatically applied to prevent overfitting, particularly crucial for non-linear models with limited data

- Algorithm Coverage: Comprehensive tuning for multiple algorithm types including neural networks, random forests, and gradient boosting machines

This approach differs from other HPO methodologies in chemical applications, such as hyperband—which has been recommended for molecular property prediction due to computational efficiency [5]—by specifically prioritizing generalization over pure computational speed for small datasets.
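The regularization bullet above does not specify the form of the penalty. As a hedged illustration of why regularization strength is itself a hyperparameter, this sketch uses closed-form ridge (L2) regression on one descriptor; the function name is hypothetical, and ridge is a swapped-in example that says nothing about the penalties ROBERT applies to its NN, RF or GB models.

```python
from statistics import mean


def ridge_slope(xs, ys, alpha):
    """Slope of a one-descriptor ridge regression with an unpenalised intercept.

    Minimises sum((y - b - w*x)**2) + alpha * w**2, whose solution on centred
    data is w = Sxy / (Sxx + alpha).
    """
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / (sxx + alpha)


xs, ys = [0, 1, 2, 3, 4], [1, 3, 5, 7, 9]
for alpha in (0.0, 10.0, 30.0):
    # Larger alpha (a hyperparameter) shrinks the learned slope (a parameter) toward zero
    print(alpha, ridge_slope(xs, ys, alpha))
```

With few data points, a moderate penalty trades a little bias for much lower variance, which is the kind of balance a tuned regularization hyperparameter is meant to strike.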

Validation and Testing Protocols

ROBERT implements rigorous validation protocols to ensure reliable performance estimates:

- Data Splitting: Systematic reservation of 20% of the data (minimum of 4 points) as an external test set, with test points evenly distributed across the range of target values [1]

- Cross-Validation Strategy: 10-times repeated 5-fold CV for robust performance estimation, mitigating variance from random data splitting [1]

- Extrapolation Testing: Selective sorted 5-fold CV, in which the data are sorted by target value and partitioned into folds; the higher of the RMSEs obtained for the top and bottom partitions is retained [1]

- Overfitting Assessment: Direct comparison of cross-validation versus test set performance to detect overfitting patterns
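The "even distribution" test split can be pictured with a short sketch. The rule assumed here is to rank points by target value, cut the ranking into as many equal-width bins as there are test points, and take the point at the centre of each bin. The function name `even_split` and this exact selection rule are hypothetical; ROBERT's own splitting code may choose points differently.

```python
from typing import List, Sequence, Tuple


def even_split(y: Sequence[float], test_frac: float = 0.2,
               min_test: int = 4) -> Tuple[List[int], List[int]]:
    """Return (train_idx, test_idx), spreading test points evenly over the
    sorted target values. Illustrative only; not ROBERT's own code."""
    n = len(y)
    n_test = max(min_test, round(test_frac * n))    # 20%, but never fewer than 4
    if n_test >= n:
        raise ValueError("dataset too small for the requested test set")
    order = sorted(range(n), key=lambda i: y[i])    # indices ranked by target
    step = n / n_test                               # width of each rank bin (> 1)
    # Take the point at the centre of each of the n_test equal-width rank bins
    picks = [order[int(step * (j + 0.5))] for j in range(n_test)]
    test_idx = sorted(picks)
    chosen = set(test_idx)
    train_idx = [i for i in range(n) if i not in chosen]
    return train_idx, test_idx


train_idx, test_idx = even_split([float(v) for v in range(20)])
print(test_idx)  # [2, 7, 12, 17]
```

Because the test points are drawn from across the whole target range, the external test set probes low, middle, and high values rather than a random cluster.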

ROBERT's automated workflow represents a significant advancement for machine learning applications in chemical research, particularly for the low-data regimes common in experimental studies. By providing a systematic approach that transforms CSV input into comprehensive PDF reports through a single command line, ROBERT substantially reduces the barrier to implementing sophisticated machine learning techniques while maintaining scientific rigor.

The benchmarking results demonstrate that properly tuned non-linear models can compete with or outperform traditional multivariate linear regression even with small datasets of 18-44 data points [1]. This capability, combined with the automated workflow that minimizes human intervention and bias, positions ROBERT as a valuable tool for accelerating chemical discovery and promoting sustainability through digitalization.

Future developments in this field may incorporate emerging hyperparameter optimization techniques like hyperband [5] or hybrid bio-optimized algorithms [23], but must maintain focus on the unique challenges of small chemical datasets. ROBERT's current implementation provides a robust foundation for chemical machine learning applications, making advanced modeling techniques accessible to researchers without extensive computational backgrounds while ensuring reproducible, publication-quality results.

In the field of chemical research, particularly in data-scarce environments such as drug development, the processes of data curation and preparation are foundational to successful machine learning (ML) outcomes. Data curation involves the organization, annotation, and integration of data collected from various sources, ensuring its value is maintained over time and remains available for reuse and preservation [24]. In chemical ML, where datasets are often small and hyperparameter optimization is crucial, the quality of curated data directly determines a model's ability to generalize and provide reliable predictions. The ROBERT software exemplifies how automated, principled data management can transform these preparatory stages into a strategic advantage for researchers and scientists.

ROBERT's Automated Data Curation and Hyperparameter Optimization Workflow

ROBERT provides a fully automated pipeline specifically designed for the challenges of chemical data in low-data regimes. The software performs comprehensive data curation, hyperparameter optimization, model selection, and evaluation, generating a complete PDF report to ensure reproducibility and transparency [1]. This end-to-end automation significantly reduces human intervention and potential biases in model development.

A key innovation in ROBERT's approach is its specialized handling of hyperparameter optimization, the process of systematically searching for the optimal settings of a machine learning algorithm. For small chemical datasets, such as the benchmarked sets of 18 to 44 data points, ROBERT employs Bayesian optimization with an objective function designed to address overfitting in both interpolation and extrapolation scenarios [1]. This is particularly important in small-data chemical research, where non-linear models have traditionally been viewed with skepticism because of overfitting risks.

Experimental Workflow for Low-Data Chemical Applications

The following diagram illustrates ROBERT's integrated workflow for data curation and hyperparameter optimization:

[Figure: ROBERT's Automated Workflow for Chemical Data]

Performance Comparison: ROBERT vs. Traditional Methodologies

Experimental Protocol and Benchmarking Methodology

The effectiveness of ROBERT's automated workflow was rigorously evaluated against traditional multivariate linear regression (MVL)—the prevailing method in low-data chemical research [1]. The benchmarking study utilized eight diverse chemical datasets ranging from 18 to 44 data points, originally studied by various research groups (Liu, Milo, Doyle, Sigman, and Paton). For consistency, the same molecular descriptors used in the original publications were employed to train both linear and non-linear models.

The evaluation protocol incorporated:

- 10× repeated 5-fold cross-validation to assess interpolation performance while mitigating splitting effects and human bias

- External test set validation with 20% of data (minimum 4 points) reserved using systematic "even distribution" splitting

- Scaled RMSE, expressed as a percentage of the target value range, for interpretability

- Novel scoring system evaluating predictive ability, overfitting, prediction uncertainty, and robustness against spurious predictions
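Scaling the RMSE by the target range makes errors comparable across datasets measured in different units. A one-function sketch follows. It assumes the range is taken as max minus min over a reference set of targets (here the argument `y_all`, for example the full dataset). That choice of reference range is an assumption made for illustration.

```python
import math
from typing import Sequence


def scaled_rmse(y_true: Sequence[float], y_pred: Sequence[float],
                y_all: Sequence[float]) -> float:
    """RMSE as a percentage of the target range (max - min) of y_all."""
    err = math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))
    span = max(y_all) - min(y_all)
    return 100.0 * err / span


# Two test predictions, each off by 2 units, on targets spanning 0 to 50 units.
print(scaled_rmse([10.0, 20.0], [12.0, 18.0], [0.0, 25.0, 50.0]))  # 4.0
```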

Comparative Performance Results

Table 1: Performance Comparison Across Chemical Datasets

| Dataset | Size | Best Performing Model | Key Finding |
|---|---|---|---|
| A | 19 points | Non-linear algorithm | Best external test set prediction |
| B | 21 points | MVL | Traditional method prevailed |
| C | 21 points | Non-linear algorithm | Best external test set prediction |
| D | 21 points | Neural Network | Matched or outperformed MVL |
| E | 26 points | Neural Network | Matched or outperformed MVL |
| F | 27 points | Neural Network | Matched or outperformed MVL |
| G | 44 points | Non-linear algorithm | Best external test set prediction |
| H | 44 points | Neural Network | Matched or outperformed MVL |

Table 2: Algorithm Performance Summary

| Algorithm | Performance Strengths | Limitations |
|---|---|---|
| Multivariate Linear Regression (MVL) | Traditional standard; Robust in small data; Intuitive interpretability | Limited complexity capture; Less flexible for non-linear relationships |
| Neural Networks (NN) | Best performance in 4/8 datasets; Effective capture of underlying chemistry | Requires careful regularization; Computational intensity |
| Random Forests (RF) | Widespread use in chemistry | Limited extrapolation capability; Best in only 1/8 cases |
| Gradient Boosting (GB) | Competitive performance | Sensitive to hyperparameter settings |

The results demonstrated that properly tuned non-linear models, particularly neural networks, performed equivalently to or outperformed traditional MVL in half of the benchmarked examples [1]. Furthermore, non-linear algorithms achieved the best external test set predictions in five of the eight datasets, demonstrating superior generalization capability when properly regularized through ROBERT's optimized workflow.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Chemical ML

| Reagent/Resource | Function in Chemical ML |
|---|---|
| ROBERT Software | Automated data curation, hyperparameter optimization, and model evaluation for chemical datasets [1] |
| Bayesian Optimization | Efficient hyperparameter search method that balances exploration and exploitation in parameter space [25] |
| Molecular Descriptors | Steric and electronic parameters that quantify chemical structures for machine learning algorithms [1] |
| Cross-Validation Protocols | Methods for robust performance estimation, particularly 10× repeated 5-fold CV for reliable interpolation assessment [1] |
| Nested Cross-Validation | Advanced validation technique for reducing bias in performance estimation while conducting hyperparameter optimization [26] |

Implications for Drug Development and Chemical Research

The experimental evidence demonstrates that automated workflows like ROBERT can effectively enable the use of sophisticated non-linear models even in data-limited scenarios common in early-stage drug development. By integrating data curation with specialized hyperparameter optimization that actively combats overfitting, chemical researchers can leverage more complex algorithms without traditional concerns about generalization performance.

The performance benchmarks indicate that neural networks, when properly regularized through ROBERT's combined RMSE metric and Bayesian optimization, can capture underlying chemical relationships as effectively as linear models while potentially offering superior predictive accuracy. This expands the toolbox available to drug development professionals, providing additional options for predicting molecular properties, reaction outcomes, and biological activities even when limited experimental data is available.

Furthermore, the automated nature of these workflows makes advanced machine learning approaches more accessible to chemical researchers who may not possess specialized expertise in data science or machine learning, potentially accelerating discovery cycles in pharmaceutical research and development.

The integration of machine learning (ML) into chemical research has introduced powerful new capabilities for accelerating discovery. A critical, yet often complex, component of building effective ML models is hyperparameter optimization (HPO), the process of systematically selecting the best configuration of a model's settings. In computational and experimental chemistry, where datasets can be small and evaluations costly, the choice of HPO technique is not merely a technical detail but a decisive factor in the success of an ML project. HPO can be framed as a black-box optimization problem. The algorithm is configured with a candidate set of hyperparameters, evaluated (often with a resampling method such as cross-validation), and scored. This cycle repeats until the best-performing configuration is found [27].
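The configure, evaluate, and score cycle can be sketched in a few lines of Python. This hypothetical example makes two simplifications. First, it uses plain random search as the search strategy; a Bayesian optimizer would replace only the sampling step. Second, its model is a one-descriptor ridge regression whose penalty `alpha` is the single hyperparameter. The 5-fold CV RMSE serves as the black-box objective. All function names are illustrative.

```python
import math
import random


def ridge_fit_predict(x_train, y_train, x_test, alpha):
    """1-D ridge regression on centred data; alpha is the penalty (hyperparameter)."""
    mx = sum(x_train) / len(x_train)
    my = sum(y_train) / len(y_train)
    sxx = sum((a - mx) ** 2 for a in x_train)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x_train, y_train))
    slope = sxy / (sxx + alpha)            # larger alpha shrinks the slope toward 0
    return [my + slope * (a - mx) for a in x_test]


def cv_rmse(x, y, alpha, k=5):
    """Black-box objective: k-fold CV RMSE using contiguous folds in the given order."""
    n = len(y)
    sq_err = 0.0
    for f in range(k):
        test = list(range(f * n // k, (f + 1) * n // k))
        held_out = set(test)
        train = [i for i in range(n) if i not in held_out]
        preds = ridge_fit_predict([x[i] for i in train], [y[i] for i in train],
                                  [x[i] for i in test], alpha)
        sq_err += sum((y[i] - p) ** 2 for i, p in zip(test, preds))
    return math.sqrt(sq_err / n)


def random_search(x, y, n_trials=30, seed=0):
    """Repeat the configure -> evaluate -> score cycle and keep the best configuration."""
    rng = random.Random(seed)
    best_alpha, best_score = None, math.inf
    for _ in range(n_trials):
        alpha = 10 ** rng.uniform(-3, 3)   # 1) configure: sample a candidate penalty
        score = cv_rmse(x, y, alpha)       # 2) evaluate with cross-validation
        if score < best_score:             # 3) score and keep the best so far
            best_alpha, best_score = alpha, score
    return best_alpha, best_score
```

On noiseless linear data, such a search should settle on a small penalty, since any shrinkage of the slope only adds error.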

Bayesian optimization (BO) has emerged as a particularly powerful statistical method for this task, especially suited for the challenges of chemical data. It applies a sequential model-based strategy to find the global optimum of a function where evaluations are expensive, a common scenario in chemistry when each "evaluation" could represent a complex quantum chemistry calculation or a physical experiment [28] [29]. This guide provides an objective evaluation of the ROBERT software, a tool specifically crafted to bridge the implementation gap and make ML, and particularly Bayesian HPO, more accessible to the chemical community.

Demystifying Bayesian Optimization

The Core Principles

At its heart, Bayesian optimization is an active learning approach that uses Bayes' theorem to model an unknown objective function—such as the accuracy of a predictive model or the yield of a chemical reaction. The algorithm balances the exploration of uncertain regions of the parameter space with the exploitation of areas known to perform well [29]. This is achieved through two key components:

- A surrogate model, typically a Gaussian Process (GP), which estimates the posterior distribution of the objective function based on observed data. This model provides a probabilistic prediction of the function's value and the uncertainty around that prediction at any given point.

- An acquisition function, which uses the surrogate's predictions (mean and variance) to decide the most promising point to evaluate next. Common acquisition functions include Expected Improvement (EI) and Upper Confidence Bound (UCB) [28].
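The two acquisition functions named above can be written directly from the surrogate's posterior mean `mu` and standard deviation `sigma`. The sketch below uses the maximization convention. To minimize a score such as RMSE, one would apply it to the negated score. The exploration offset `xi` and weight `kappa` are conventional tuning constants chosen here for illustration.

```python
import math


def norm_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, f_best: float, xi: float = 0.01) -> float:
    """EI for maximisation: expected amount by which a candidate beats f_best."""
    if sigma <= 0.0:
        return max(mu - f_best - xi, 0.0)   # no uncertainty: improvement is deterministic
    imp = mu - f_best - xi
    z = imp / sigma
    return imp * norm_cdf(z) + sigma * norm_pdf(z)


def upper_confidence_bound(mu: float, sigma: float, kappa: float = 2.0) -> float:
    """UCB: optimistic estimate; larger kappa favours exploration."""
    return mu + kappa * sigma


# An uncertain candidate (mu=0.9, sigma=0.5) can outrank a confident one that
# merely matches the incumbent (mu=1.0, sigma=0.01): this is exploration at work.
print(expected_improvement(0.9, 0.5, 1.0) > expected_improvement(1.0, 0.01, 1.0))  # True
```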

The Bayesian Optimization Workflow

The BO process is iterative and can be visualized as a continuous cycle of learning and suggestion, making it ideal for guiding experimental campaigns with limited budgets.
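The learning-and-suggestion cycle can be illustrated with a self-contained one-dimensional loop. The sketch makes several simplifying assumptions. The surrogate is a zero-mean Gaussian process on centred targets. It uses an RBF kernel with a fixed length scale of 0.2 and a small noise jitter. Candidates are scored with the upper confidence bound over a fixed grid on [0, 1]. The toy objective is a stand-in for an experiment or a cross-validation run. A production tool would also fit the kernel hyperparameters and search continuous or higher-dimensional spaces.

```python
import math
import random


def rbf(a, b, length=0.2):
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def cholesky(m):
    """Lower-triangular L with L @ L.T == m (m symmetric positive definite)."""
    n = len(m)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = m[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(max(s, 1e-12)) if i == j else s / L[j][j]
    return L


def solve_lower(L, b):
    """Forward substitution: solve L z = b."""
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z


def solve_upper_t(L, z):
    """Back substitution: solve L^T x = z."""
    n = len(z)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def fit_gp(xs, ys, noise=1e-6):
    """Return a function x -> (posterior mean, posterior std) of a GP surrogate."""
    y_mean = sum(ys) / len(ys)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    weights = solve_upper_t(L, solve_lower(L, [y - y_mean for y in ys]))

    def predict(x):
        k_star = [rbf(x, a) for a in xs]
        mu = y_mean + sum(k * w for k, w in zip(k_star, weights))
        v = solve_lower(L, k_star)
        var = max(1.0 - sum(t * t for t in v), 1e-12)   # prior variance rbf(x, x) = 1
        return mu, math.sqrt(var)

    return predict


def bayes_opt(objective, n_init=3, n_iter=10, kappa=2.0, seed=0):
    """Maximise a 1-D objective on [0, 1] with a GP surrogate and UCB acquisition."""
    rng = random.Random(seed)
    xs = [rng.random() for _ in range(n_init)]        # initial design
    ys = [objective(x) for x in xs]
    grid = [i / 200 for i in range(201)]              # candidate points
    for _ in range(n_iter):
        predict = fit_gp(xs, ys)                      # 1) update the surrogate

        def ucb(g):
            mu, sd = predict(g)
            return mu + kappa * sd

        x_next = max(grid, key=ucb)                   # 2) acquisition suggests a point
        xs.append(x_next)
        ys.append(objective(x_next))                  # 3) run the "experiment"
    i_best = max(range(len(ys)), key=ys.__getitem__)
    return xs[i_best], ys[i_best]


best_x, best_y = bayes_opt(lambda x: -(x - 0.7) ** 2)
```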

ROBERT: Bridging the ML Gap for Chemists

Software Philosophy and Design

ROBERT is a software platform designed to close the substantial implementation gap that has prevented the widespread adoption of ML protocols in computational and experimental chemistry. Its core philosophy is to make sophisticated ML, including Bayesian HPO, accessible to chemists of all programming skill levels while still achieving results comparable to those of field experts [30]. A key feature that simplifies its use in chemistry is the ability to initiate ML workflows directly from SMILES strings, the standard line notation for representing molecular structures. This removes a significant technical barrier, allowing researchers to focus on their chemical questions rather than on data preprocessing.

Capabilities and Workflow

ROBERT provides an integrated environment for tackling common chemistry problems. The typical workflow for a researcher involves defining a chemical dataset (often via SMILES strings), selecting a target property to model or optimize, and allowing ROBERT to manage the complex process of model training and HPO. The software's benchmarking on diverse chemical studies containing between 18 and 4,149 entries demonstrates its flexibility in handling both the very small datasets common in early-stage experimental work and larger computational datasets [30]. A real-world validation of its practicality involved the discovery of new luminescent Pd complexes using a modest dataset of only 23 points, a scenario frequently encountered in laboratory settings where data is scarce and precious [30].

Comparative Performance Analysis

Benchmarking Framework and Methodology