Self-Supervised Learning for Molecular Representation: A Foundation for Next-Generation Drug Discovery

Abstract

This article provides a comprehensive exploration of self-supervised learning (SSL) as a transformative paradigm for learning molecular representations in drug discovery and biomedical research. It covers the foundational principles that enable models to learn from vast amounts of unlabeled molecular data, the major methodological approaches including contrastive learning and transformer architectures, and their practical applications in predicting drug-drug interactions and molecular properties. The article also addresses key challenges and optimization strategies, presents a comparative analysis with traditional supervised learning, and validates SSL's performance through state-of-the-art case studies like the DreaMS framework for mass spectrometry. Aimed at researchers, scientists, and drug development professionals, this guide synthesizes current advancements to empower the development of more scalable, efficient, and generalizable AI-driven molecular analysis.

What is Self-Supervised Learning and Why Does it Matter for Molecules?

Self-supervised learning (SSL) represents a paradigm shift in machine learning, enabling models to learn rich data representations from unlabeled datasets by generating their own supervisory signals. This approach is particularly transformative for molecular representation research, where labeled experimental data is scarce but unlabeled data is abundant. By leveraging pretext tasks such as predicting masked data segments, SSL models discover intrinsic patterns and structures without human annotation. This technical guide explores SSL's core mechanisms, provides a detailed case study of its application in mass spectrometry-based molecular research via the DreaMS framework, and outlines practical experimental protocols for implementation, empowering researchers to harness SSL for advanced molecular discovery and drug development.

Core Concepts and Definitions

Self-supervised learning is a machine learning technique that uses unsupervised learning for tasks that conventionally require supervised learning [1]. Rather than relying on manually labeled datasets for supervisory signals, self-supervised models generate implicit labels from unstructured data itself [2]. This approach is technically a subset of unsupervised learning but is distinguished by its use of a ground truth derived from the data's inherent structure, allowing it to optimize performance via a loss function similar to supervised methods [1].

The fundamental advantage of SSL lies in its data efficiency. While supervised learning requires extensive manual labeling that can be prohibitively costly and time-consuming, SSL leverages the abundant unlabeled data that is often more readily available [2]. This is particularly valuable in scientific domains like molecular research, where expert annotation is a significant bottleneck. SSL achieves this through pretext tasks—self-generated learning objectives that teach models meaningful data representations, which can then be transferred to various downstream tasks via fine-tuning with minimal labeled data [1].

SSL in the Machine Learning Landscape

The table below contrasts SSL with other major learning paradigms:

| Aspect | Supervised Learning | Unsupervised Learning | Self-Supervised Learning |
|---|---|---|---|
| Data Requirement | Labeled data | Unlabeled data | Unlabeled data |
| Labeling Process | Extensive manual labeling | No labeling required | Self-generated labels |
| Primary Goal | Map inputs to known outputs | Identify patterns and structures | Learn transferable representations from data |
| Common Techniques | Regression, Classification | Clustering, Association | Contrastive learning, masked modeling, autoencoding |
| Key Advantages | High accuracy with sufficient labeled data | No need for labeled data | Efficient use of abundant unlabeled data |
| Major Limitations | Requires large labeled datasets | Difficult to evaluate performance; limited to discovery tasks | Requires careful design of pretext tasks |

Core SSL Methodologies and Algorithms

Theoretical Foundations

SSL operates by creating "pseudo-labels" from unlabeled data, enabling models to learn from vast datasets without extensive manual annotation [2]. The core principle involves defining pretext tasks that force the model to understand the underlying structure of the data by predicting certain aspects of it. These tasks are designed such that a loss function can use unlabeled input data as ground truth, allowing the model to learn accurate, meaningful representations without human-provided labels [1].

Yann LeCun has characterized self-supervised methods as a structured practice of "filling in the blanks" [1]. Broadly speaking, he described the process of learning meaningful representations from the underlying structure of unlabeled data in simple terms: "pretend there is a part of the input you don't know and predict that" [1]. This philosophy underpins many successful SSL approaches.

Key Algorithmic Families

The following table summarizes major SSL algorithm families and their applications:

| Algorithm Family | Representative Models | Core Mechanism | Typical Applications |
|---|---|---|---|
| Contrastive Learning | SimCLR, MoCo [2] | Learns by distinguishing between similar and dissimilar data pairs | Image classification, molecular similarity |
| Predictive Coding | BERT, GPT [2] | Predicts masked or subsequent parts of input data | Language modeling, spectrum prediction |
| Autoencoding | VAEs, Denoising Autoencoders [2] | Reconstructs original input from compressed representation | Data generation, feature learning |
| Clustering-Based | DeepCluster, SwAV [2] | Iteratively assigns pseudo-labels via clustering | Data organization, representation learning |
| Self-Prediction | BYOL, SimSiam [2] | Predicts transformations of the same input | Representation learning without negative samples |

Self-Predictive Learning

Also known as autoassociative self-supervised learning, self-prediction methods train a model to predict part of an individual data sample, given information about its other parts [1]. Models trained with these methods are typically generative rather than discriminative. Key approaches include:

- Autoencoders: Neural networks trained to compress input data into a latent representation, then reconstruct the original input from this compressed form [1]. Variants include denoising autoencoders (trained on corrupted inputs) and variational autoencoders (learning continuous latent spaces).

- Masked Modeling: Randomly masks portions of input data and tasks the model with predicting the missing information [1]. This approach is fundamental to transformer architectures like BERT in NLP and has been successfully adapted to other domains.

Contrastive Learning

Contrastive methods learn representations by maximizing agreement between differently augmented views of the same data instance while pushing apart representations from different instances [2]. This approach has been particularly successful in computer vision but applies across domains.
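
To make the "maximize agreement, push apart" objective concrete, here is a minimal sketch of an InfoNCE-style loss for a single anchor, in plain Python. InfoNCE with cosine similarity is one common formulation and is an assumption here, not something this article specifies. The names `cosine` and `info_nce` and the temperature of 0.1 are illustrative.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def info_nce(anchor, positive, negatives, temperature=0.1):
    """Negative log-softmax score of the positive among all candidates."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    max_logit = max(logits)  # subtract the max for numerical stability
    log_denominator = max_logit + math.log(
        sum(math.exp(value - max_logit) for value in logits)
    )
    return log_denominator - logits[0]
```

The loss is small when the anchor is closer to its augmented view than to any other instance, and grows as a negative becomes more similar to the anchor than the positive is.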

Case Study: SSL for Molecular Representation from Mass Spectra

The DreaMS Framework

The DreaMS (Deep Representations Empowering the Annotation of Mass Spectra) framework demonstrates SSL's transformative potential in molecular research [3]. This transformer-based neural network was pre-trained in a self-supervised manner on millions of unannotated tandem mass spectra from the GNPS Experimental Mass Spectra (GeMS) dataset [3] [4].

Tandem mass spectrometry (MS/MS) is a primary technique for characterizing biological and environmental samples at a molecular level, yet interpreting tandem mass spectra from untargeted metabolomics experiments remains challenging [3]. Existing computational methods rely on limited spectral libraries and hard-coded human expertise, with only about 2% of MS/MS spectra in untargeted metabolomics experiments being annotatable using reference spectral libraries [3]. The DreaMS framework addresses this limitation through large-scale self-supervision.

Dataset Construction: GeMS

The GeMS dataset was constructed through a sophisticated mining pipeline of the MassIVE GNPS repository [3]:

- Collection: 250,000 LC-MS/MS experiments were collected from diverse biological and environmental studies

- Extraction: Approximately 700 million MS/MS spectra were extracted

- Quality Control: Spectra were filtered into three subsets (GeMS-A, GeMS-B, GeMS-C) of consecutively larger size at the expense of quality

- Redundancy Reduction: Similar spectra were clustered using locality-sensitive hashing (LSH)

- Formatting: Processed spectra were stored in an HDF5-based binary format designed for deep learning

The resulting dataset is orders of magnitude larger than existing spectral libraries, enabling previously impossible repository-scale metabolomics research [3].
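
The redundancy reduction step can be illustrated with a small sketch of locality-sensitive hashing. The article does not describe the exact hashing scheme, so random-hyperplane hashing on binned spectra is swapped in here as an assumption. The names `bin_spectrum`, `make_hyperplanes`, `lsh_key`, and `deduplicate`, the 1 Da bin width, and the cluster size limit are all hypothetical.

```python
import random
from collections import defaultdict

def bin_spectrum(peaks, bin_width=1.0, max_mz=1000.0):
    """Turn (m/z, intensity) pairs into a fixed-length intensity vector."""
    n_bins = int(max_mz / bin_width)
    vector = [0.0] * n_bins
    for mz, intensity in peaks:
        idx = int(mz / bin_width)
        if 0 <= idx < n_bins:
            vector[idx] += intensity
    return vector

def make_hyperplanes(n_bits, dim, seed=0):
    """Draw n_bits random hyperplanes; dim must equal the binned vector length."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]

def lsh_key(vector, hyperplanes):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple(
        sum(h * x for h, x in zip(plane, vector)) >= 0.0 for plane in hyperplanes
    )

def deduplicate(spectra, hyperplanes, max_cluster_size=2):
    """Keep at most max_cluster_size spectra per hash bucket; return kept indices."""
    bucket_sizes = defaultdict(int)
    kept = []
    for index, peaks in enumerate(spectra):
        key = lsh_key(bin_spectrum(peaks), hyperplanes)
        if bucket_sizes[key] < max_cluster_size:
            bucket_sizes[key] += 1
            kept.append(index)
    return kept
```

Because each bit depends only on the sign of a projection, spectra that differ only by an overall intensity scale land in the same bucket, which is the kind of near-duplicate this step aims to collapse.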

Model Architecture and Pre-training

The DreaMS model employs a transformer architecture pre-trained using two self-supervised objectives [3]:

Masked Spectral Peak Prediction: Following BERT-style masked modeling, the model represents each spectrum as a set of 2D continuous tokens, one per peak, each holding the peak's m/z and intensity values [3]. A random 30% of the m/z values in each spectrum are masked, and the model is trained to reconstruct them.

Chromatographic Retention Order Prediction: An additional precursor token is incorporated to predict the relative order of spectra based on their chromatographic retention times [3].

This dual pre-training objective leads to the emergence of rich representations of molecular structures without using annotated data during the initial learning phase [3].

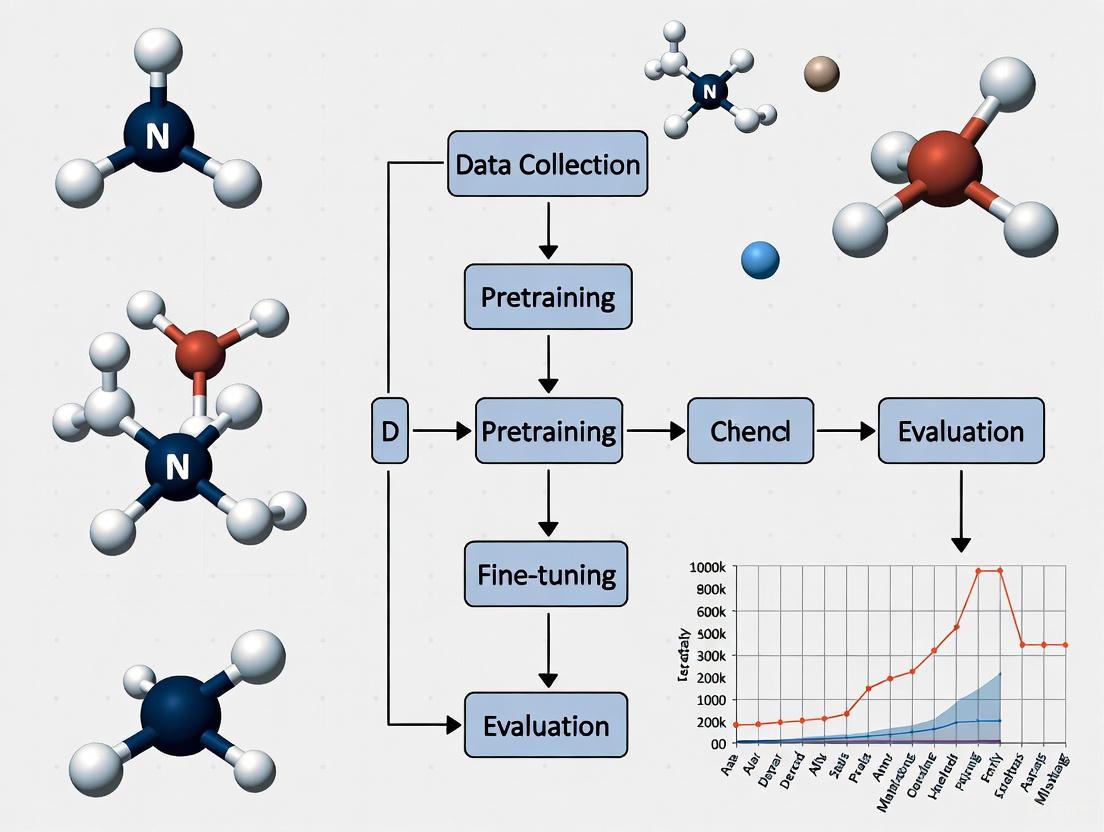

DreaMS Framework Workflow: From raw data to molecular representations

Experimental Protocols and Implementation

SSL Pre-training Protocol for Molecular Representations

The following protocol outlines the methodology for pre-training SSL models on mass spectrometry data, based on the DreaMS framework:

Data Preparation

- Source: Collect raw LC-MS/MS data from public repositories (MassIVE GNPS) or in-house experiments

- Format Conversion: Convert vendor-specific formats to open standards (mzML, mzXML)

- Peak Picking: Apply peak detection algorithms to raw spectra

- Quality Filtering: Implement intensity thresholds, signal-to-noise ratios, and minimum peak counts

- Preprocessing: Normalize intensities, align retention times, and bin m/z values

- Splitting: Partition data into training/validation sets (e.g., 98%/2%) at the experiment level to prevent data leakage
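
The experiment-level split in the last step is easy to get wrong, so a minimal sketch may help. It assumes each spectrum is a dictionary carrying a hypothetical `experiment_id` key; the function name `split_by_experiment` is illustrative as well.

```python
import random

def split_by_experiment(spectra, val_fraction=0.02, seed=0):
    """Assign whole experiments, not individual spectra, to train or validation."""
    experiment_ids = sorted({s["experiment_id"] for s in spectra})
    rng = random.Random(seed)
    rng.shuffle(experiment_ids)
    n_val = max(1, round(val_fraction * len(experiment_ids)))
    val_ids = set(experiment_ids[:n_val])
    train = [s for s in spectra if s["experiment_id"] not in val_ids]
    val = [s for s in spectra if s["experiment_id"] in val_ids]
    return train, val
```

Splitting on experiment identifiers keeps near-identical spectra from the same LC-MS/MS run out of both partitions at once. Note that the fraction applies to experiments, so the share of spectra in the validation set can differ from 2% when experiments vary in size.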

Model Configuration

- Architecture: Transformer encoder with multi-head self-attention

- Input Representation: Represent each spectrum as a set of (m/z, intensity) pairs

- Positional Encoding: Use learned positional embeddings for peak order

- Masking Strategy: Randomly mask 30% of input peaks, weighted by intensity

- Precursor Token: Include a special token representing precursor information
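
As a rough illustration of the masking strategy listed above, the sketch below hides the m/z value of about 30% of the peaks, drawing peaks without replacement with probability proportional to intensity. It assumes all intensities are positive. The sentinel `MASK_VALUE` and the function name `mask_peaks` are hypothetical, and a real model would more likely use a learned mask embedding than a fixed number.

```python
import random

MASK_VALUE = -1.0  # hypothetical sentinel standing in for a hidden m/z value

def mask_peaks(peaks, mask_fraction=0.3, rng=None):
    """Mask m/z values of intensity-weighted random peaks.

    peaks: list of (mz, intensity) pairs with positive intensities.
    Returns the masked peak list and a dict {peak index: original m/z}.
    """
    rng = rng or random.Random()
    n_mask = max(1, round(mask_fraction * len(peaks)))
    candidates = list(range(len(peaks)))
    chosen = set()
    for _ in range(n_mask):
        # Weighted draw among the peaks that are still unmasked
        weights = [peaks[i][1] for i in candidates]
        pick = rng.choices(candidates, weights=weights, k=1)[0]
        candidates.remove(pick)
        chosen.add(pick)
    masked = [
        (MASK_VALUE, intensity) if i in chosen else (mz, intensity)
        for i, (mz, intensity) in enumerate(peaks)
    ]
    targets = {i: peaks[i][0] for i in chosen}
    return masked, targets
```

The returned `targets` dictionary is what a reconstruction loss would compare the model's predictions against; intensities are left visible so the model still sees how prominent each hidden peak was.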

Training Procedure

- Optimizer: AdamW with learning rate warmup and linear decay

- Batch Size: Maximize based on available GPU memory (typically 256-1024 spectra)

- Training Steps: 1M+ steps for large datasets

- Regularization: Apply dropout, weight decay, and gradient clipping

- Validation: Monitor reconstruction loss on held-out validation set
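
The optimizer entry above mentions warmup followed by linear decay. A minimal, framework-independent sketch of such a schedule follows. The linear shape of the warmup, the peak rate of 1e-4, and the 10,000 warmup steps are assumptions chosen for illustration, and `lr_at_step` is a hypothetical name.

```python
def lr_at_step(step, peak_lr=1e-4, warmup_steps=10_000, total_steps=1_000_000):
    """Linear warmup to peak_lr, then linear decay to zero at total_steps."""
    if step < warmup_steps:
        return peak_lr * (step / warmup_steps)
    decay_steps = total_steps - warmup_steps
    remaining = max(0, total_steps - step)
    return peak_lr * (remaining / decay_steps)
```

In a deep learning framework, a function like this would typically be passed to the scheduler as a multiplier on the base learning rate rather than called by hand.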

Downstream Task Fine-tuning

After pre-training, the model can be adapted to various downstream tasks with minimal labeled data:

Molecular Fingerprint Prediction

- Objective: Predict binary molecular fingerprints from mass spectra

- Method: Add a classification head on the [CLS] token representation

- Training: Fine-tune entire model or only the classification head

- Evaluation: Measure AUC-ROC, F1 score, and precision-recall
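
For readers unfamiliar with the AUC-ROC metric named above, the sketch below computes it for a single fingerprint bit directly from its definition: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, counting ties as one half. The function name `roc_auc` is illustrative, and the quadratic pairwise loop is meant for clarity, not for large datasets.

```python
def roc_auc(labels, scores):
    """AUC-ROC as the fraction of (positive, negative) pairs ranked correctly."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC-ROC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))
```

With many fingerprint bits, one reasonable convention is to compute this per bit and average, skipping bits that have only one class in the evaluation set.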

Spectral Similarity Learning

- Objective: Learn similarity metric that correlates with molecular structure similarity

- Method: Use triplet loss or contrastive loss with positive and negative pairs

- Application: Molecular networking and database retrieval
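
The triplet loss mentioned in the method step can be sketched in a few lines. Euclidean distance and a margin of 1.0 are assumptions here, and the embeddings are plain Python lists standing in for model outputs.

```python
import math

def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def triplet_loss(anchor, positive, negative, margin=1.0):
    """Zero once the positive is closer to the anchor than the negative by margin."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)
```

In this setting the positive would be a spectrum of a structurally similar molecule and the negative a spectrum of a dissimilar one, so that distances in embedding space come to track structural similarity.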

Property Prediction

- Objective: Predict chemical properties (e.g., solubility, toxicity)

- Method: Regression or classification heads on learned representations

- Validation: Use held-out test sets with known properties

Research Reagent Solutions

The table below details essential computational tools and resources for implementing SSL in molecular research:

| Resource Category | Specific Tools/Solutions | Function/Purpose |
|---|---|---|
| Spectral Data Repositories | MassIVE GNPS [3] | Source of millions of experimental MS/MS spectra for pre-training |
| Deep Learning Frameworks | PyTorch, TensorFlow | Model implementation and training infrastructure |
| MS Data Processing | OpenMS, Pyteomics | Data conversion, preprocessing, and analysis |
| Transformer Implementations | Hugging Face Transformers [5] | Pre-built transformer architectures and utilities |
| SSL Reference Implementations | SimCLR, MoCo, BERT codebases [2] | Reference implementations of core SSL algorithms |
| Molecular Networks | DreaMS Atlas [3] | Large-scale molecular networks built from SSL annotations |

Results and Performance Analysis

Quantitative Performance Metrics

The DreaMS framework demonstrates state-of-the-art performance across multiple molecular representation tasks:

| Task | Benchmark | DreaMS Performance | Previous State-of-the-Art |
|---|---|---|---|
| Molecular Fingerprint Prediction | AUC-ROC | 0.89 | 0.82 (SIRIUS) |
| Structural Similarity | Spearman Correlation | 0.78 | 0.65 (Spec2Vec) |
| Fluorine Presence Detection | F1 Score | 0.91 | 0.83 (MIST-CF) |
| Retention Time Prediction | Mean Absolute Error | 0.32 min | 0.51 min |
| Spectral Library Search | Top-1 Accuracy | 68.4% | 52.7% |

The self-supervised pre-training approach enables the model to learn representations that capture rich structural information, as evidenced by the organization of the embedding space according to molecular structural similarity [3]. The 1,024-dimensional real-valued vectors generated by DreaMS show robustness to variations in mass spectrometry conditions while maintaining sensitivity to meaningful structural differences [3].

Scaling Properties

The relationship between dataset size and model performance demonstrates the power of SSL approaches:

| Training Dataset Size | Representation Quality | Downstream Task Performance |
|---|---|---|
| 10K spectra | Limited structural separation | 0.62 AUC on fingerprint prediction |
| 1M spectra | Emergent clustering by compound class | 0.79 AUC on fingerprint prediction |
| 100M spectra (GeMS) | Rich structural organization | 0.89 AUC on fingerprint prediction |

These results confirm that SSL models continue to benefit from increased data scale, without the labeling bottlenecks that constrain supervised approaches.

Future Directions and Challenges

While SSL has demonstrated remarkable success in molecular representation learning, several challenges remain. Future research directions include:

- Multi-modal SSL: Integrating mass spectrometry data with other molecular representations (genetic sequences, structural information)

- Transferability: Improving cross-domain transfer between different instrument types and experimental conditions

- Interpretability: Developing methods to interpret what structural features SSL models learn from unlabeled spectra

- Resource Efficiency: Reducing computational requirements for pre-training and inference

- Standardized Benchmarking: Establishing community-wide benchmarks for evaluating SSL methods in molecular sciences

The DreaMS Atlas—a molecular network of 201 million MS/MS spectra constructed using DreaMS annotations—represents a step toward community resources that leverage SSL for large-scale molecular exploration [3]. As SSL methodologies continue to evolve, they hold the potential to dramatically accelerate molecular discovery and drug development by unlocking the latent information contained in vast repositories of unlabeled scientific data.

In molecular science, the acquisition of large, labeled datasets is often hampered by profound constraints, including the prohibitive cost, time, and ethical considerations of experimental assays, as well as technical limitations in data acquisition [6]. This creates a significant bottleneck for applying data-driven machine learning (ML) and deep learning (DL) models, which typically require vast amounts of annotated data to learn accurate patterns and avoid overfitting [6]. The challenge is particularly acute in fields like drug discovery, where the number of successful clinical candidates for a given target is exceedingly small [6]. Consequently, the ability to learn and generalize effectively from very few training samples holds immense theoretical and practical significance for scientific progress [6].

This technical guide explores how self-supervised learning (SSL) is emerging as a powerful paradigm to overcome this fundamental challenge. SSL is a machine learning approach where a model creates its own labels from unlabeled data and learns by predicting parts of the input data from other parts [7]. By leveraging vast quantities of unlabeled molecular data, SSL enables rich representation learning, which allows models to develop a foundational understanding of molecular structure and properties. These pre-trained models can then be fine-tuned for specific downstream tasks—such as predicting toxicity or binding affinity—with remarkably small amounts of labeled data, thereby breaking the labeled data bottleneck [3] [7].

Self-Supervised Learning: Core Principles and Techniques

Self-supervised learning bridges the gap between supervised and unsupervised learning by not requiring human-annotated labels, while still training models using a predictive, supervised-like objective [7]. The core idea is to define a pretext task that forces the model to learn meaningful features from the raw, unlabeled data itself. The process typically follows a two-phase approach [7]:

- Pretraining (on a pretext task): A proxy task is defined using the raw input data. The model is trained to solve this task, thereby learning intermediate, general-purpose representations of the data.

- Fine-tuning (on a downstream task): The learned representations are used as a starting point for a real task with limited labels, such as molecular property classification or regression.

Table 1: Key Self-Supervised Learning Techniques and Their Applications in Molecular Science.

| SSL Technique | Core Principle | Example Methods | Molecular Science Applications |
|---|---|---|---|
| Masked Modeling | Parts of the input are hidden; the model must predict the missing parts. | BERT, Masked Autoencoders (MAE) [7] | Predicting masked spectral peaks in mass spectrometry [3]. |
| Contrastive Learning | The model learns to distinguish similar (positive) and dissimilar (negative) data points. | SimCLR, MoCo [7] | Learning spectral similarities that reflect underlying molecular structure [3]. |
| Generative Modeling | The model learns the data distribution to generate new samples or predict subsequent elements. | GPT, Variational Autoencoders (VAE) [6] [7] | Molecular generation and predicting retention orders in chromatography [3]. |
| Clustering-based Methods | Data points are clustered, and cluster assignments are used as pseudo-labels for learning. | DeepCluster [7] | Discovering inherent structural groups in unlabeled molecular data. |

These techniques enable what is known as representation learning: the model builds an internal representation of the input that captures useful factors of variation, which is exactly what is needed to solve the pretext task [7]. These learned representations, often in the form of dense, real-valued vectors (embeddings), have been shown to encapsulate rich information about molecular structures and are robust to variations in experimental conditions [3].

SSL in Action: Methodologies for Molecular Representation Learning

The principles of SSL are being applied to various types of molecular data, leading to innovative architectures and training methodologies. The following experiments and models exemplify how the field is tackling the data bottleneck.

Case Study 1: Repository-Scale Learning on Mass Spectra

Objective: To overcome the limitation of small, annotated spectral libraries by developing a foundation model for tandem mass spectrometry (MS/MS) that can be adapted to various annotation tasks with minimal task-specific labels [3].

Experimental Protocol: The DreaMS Framework

Data Acquisition and Curation:

- Source: Approximately 700 million MS/MS spectra were mined from 250,000 LC–MS/MS experiments in the public MassIVE GNPS repository [3].

- Quality Control: A filtering pipeline was developed to create subsets (GeMS-A, GeMS-B, GeMS-C) of consecutively larger size at the expense of quality. The highest-quality GeMS-A subset consists predominantly of spectra from high-resolution Orbitrap instruments [3].

- Redundancy Reduction: Locality-sensitive hashing (LSH) was used to cluster similar spectra efficiently, limiting cluster sizes to manage redundancy [3].

Model Architecture and Pre-training:

- Architecture: A transformer-based neural network was designed to process MS/MS spectra [3].

- Input Representation: Each spectrum is represented as a set of two-dimensional continuous tokens associated with pairs of peak m/z and intensity values [3].

- Pretext Task (BERT-style Masked Modeling): A random 30% of the m/z values in each spectrum are masked, and the model is trained to reconstruct them. An additional precursor token is used to capture spectrum-level information [3].

- Secondary Pretext Task: The model is also trained to predict the relative order of two spectra in their chromatographic elution, enforcing an understanding of molecular properties related to retention time [3].
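
The secondary pretext task needs no human labels because the training signal comes from retention times already recorded with the data. The sketch below shows one way such pairwise labels could be generated. It assumes the spectra in each call come from the same LC-MS/MS run, since retention times are generally not comparable across runs; the function name `retention_order_pairs` and the tuple layout are hypothetical.

```python
import itertools

def retention_order_pairs(spectra):
    """Build (id_a, id_b, label) examples from one chromatographic run.

    spectra: list of (spectrum_id, retention_time) tuples.
    label is 1 if spectrum a elutes before spectrum b, else 0.
    Pairs with identical retention times carry no order signal and are skipped.
    """
    pairs = []
    for (id_a, rt_a), (id_b, rt_b) in itertools.combinations(spectra, 2):
        if rt_a == rt_b:
            continue
        pairs.append((id_a, id_b, 1 if rt_a < rt_b else 0))
    return pairs
```

A binary classification head over the two spectrum-level embeddings could then be trained on these labels alongside the masked-peak objective.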

Downstream Fine-tuning:

- The pre-trained model was fine-tuned with small labeled datasets for specific tasks, including predicting molecular fingerprints, chemical properties, and spectral similarity [3].

Key Outcome: The resulting model, DreaMS, learns rich 1,024-dimensional representations that are organized according to molecular structural similarity. After fine-tuning, it achieves state-of-the-art performance across a variety of annotation tasks, demonstrating that self-supervision on millions of unannotated spectra produces a powerful and adaptable foundation model [3].

Case Study 2: Multi-View Integration of Topology and Geometry

Objective: To create more comprehensive molecular representations by jointly learning from both 2D topological (graph-based) and 3D geometric structural information through a hierarchical SSL strategy [8].

Experimental Protocol: The MVMRL Framework

Data and Input Views:

- 2D Molecular Graph: Represents atoms as nodes and bonds as edges, capturing the topological connectivity of the molecule.

- 3D Molecular Graph: Incorporates the spatial coordinates of atoms, capturing the molecule's geometry [8].

Hierarchical Pre-training:

- Fine-grained (Atom-level) Tasks: Pretext tasks are designed for the 2D graph encoder to learn local atomic environments and functional groups [8].

- Coarse-grained (Molecule-level) Tasks: Pretext tasks are designed for the 3D graph encoder to learn global molecular shape and conformation [8].

- Alignment: The model uses a cross-view alignment loss to ensure the 2D and 3D representations are consistent and complementary, without relying on rigid molecule-level or atom-level alignment [8].

Multi-View Fusion and Fine-tuning:

- A motif-level fusion pattern is used to integrate the representations from the 2D and 3D encoders during fine-tuning for molecular property prediction [8].

Key Outcome: This multi-view, hierarchically pre-trained model (MVMRL) demonstrates superior performance on molecular property prediction tasks compared to methods that use only a single view or less integrated approaches, highlighting the benefit of leveraging multiple complementary representations [8].

Table 2: Essential Research Reagents for Molecular Representation Learning Experiments.

| Research Reagent / Resource | Type | Function in Experimental Workflow |
|---|---|---|
| GNPS Mass Spectra Repository | Dataset | Provides millions of unannotated experimental MS/MS spectra for self-supervised pre-training [3]. |
| GeMS Dataset | Curated Dataset | A high-quality, filtered subset of GNPS spectra, organized for deep learning, used to train the DreaMS model [3]. |
| Molecular Graphs (2D/3D) | Data Representation | Represents molecular structure as nodes (atoms) and edges (bonds); 3D graphs include spatial coordinates for geometric learning [8]. |
| Transformer Neural Network | Model Architecture | A deep learning model using self-attention; well-suited for sequential and set-based data like spectra and SMILES [3]. |
| Graph Neural Network (GNN) | Model Architecture | A class of neural networks designed to operate on graph-structured data, essential for learning from molecular graphs [9]. |
| Set Representation Layer (e.g., RepSet) | Model Component | Enables permutation-invariant learning on sets of atoms, an alternative to graph-based representations [10]. |

Emerging Architectures and Alternative Representations

Beyond applying SSL to established data types like graphs and sequences, research is exploring fundamentally different ways to represent molecules to facilitate learning.

Molecular Set Representation Learning: This approach challenges the conventional graph representation, positing that the fuzzy nature of chemical bonds (e.g., in conjugated systems) might be better captured by representing a molecule as a set (or multiset) of atoms, without explicit bonds [10].

- Methodology: Each atom is represented by a vector of one-hot encoded atom invariants (e.g., atom type, degree, valence). This set is processed by a permutation-invariant set representation network (e.g., DeepSets, Set-Transformer, RepSet) [10].

- Key Finding: Surprisingly, a simple model (MSR1) that learns solely on sets of atom invariants with no explicit connectivity information matches or even surpasses the performance of established graph neural networks like GIN and D-MPNN on several benchmark datasets [10]. This suggests that the critical information for many property prediction tasks is already encoded in the atom invariants, and that set-based learning is a powerful and simplified alternative.
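
The key property of a set-based encoder is that its output does not depend on the order in which atoms are listed. The sketch below illustrates this with a DeepSets-style encoder: each atom's feature vector passes through the same small transformation, and the results are summed. This is not the MSR1 model from [10]; the single linear layer with ReLU, the function names, and the weight values in the usage note are illustrative assumptions.

```python
def embed_atom(features, weights):
    """Shared per-atom transform: one linear layer followed by ReLU."""
    return [
        max(0.0, sum(w * x for w, x in zip(row, features))) for row in weights
    ]

def encode_molecule(atoms, weights):
    """Sum-pool the per-atom embeddings into one molecule-level vector."""
    pooled = [0.0] * len(weights)
    for atom in atoms:
        for i, value in enumerate(embed_atom(atom, weights)):
            pooled[i] += value
    return pooled
```

For example, with `weights = [[1.0, 0.0, 2.0], [0.5, -1.0, 0.0]]` and four one-hot atoms, `encode_molecule` returns the same vector whether the atom list is passed in its original or reversed order, because summation ignores ordering. No bond information enters the computation at any point.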

Multi-View Molecular Representation Learning (MvMRL): This architecture addresses the limitation of relying on a single molecular representation by integrating information from multiple views [9].

- Methodology: MvMRL uses three parallel feature learning modules:

  - A multiscale CNN with Squeeze-and-Excitation (SE) blocks to learn from SMILES strings, capturing local and global sequence information.

  - A multiscale GNN encoder to learn from the molecular graph.

  - A Multi-Layer Perceptron (MLP) to learn from molecular fingerprints [9].

- Feature Fusion: A dual cross-attention component deeply fuses the feature information from these three views before the final property prediction [9].

- Key Finding: This multi-view approach demonstrates superior performance on molecular property prediction, indicating that integrating complementary information from different representations leads to a more complete and accurate model of molecular structure and properties [9].

Self-supervised learning represents a paradigm shift in molecular machine learning, directly addressing the fundamental challenge of labeled data scarcity. By formulating pretext tasks that leverage the inherent structure of massive, unlabeled molecular datasets—be they mass spectra, molecular graphs, or sets of atoms—SSL enables models to learn transferable, robust, and meaningful representations. As demonstrated by pioneering works like DreaMS [3] and multi-view methods [9] [8], these pre-trained models achieve state-of-the-art results on critical downstream tasks like property prediction after fine-tuning on only small labeled datasets.

The future of overcoming the data bottleneck lies in several promising directions: the continued development of foundation models for molecular data [3], more sophisticated multi-modal and multi-view learning techniques that integrate diverse data sources [9] [8], and the exploration of alternative molecular representations like sets that may more accurately reflect underlying chemical reality [10]. Furthermore, systematic analysis of how the topology of feature spaces influences model performance can guide the selection and design of optimal representations [11]. As these trends converge, SSL will solidify its role as an indispensable tool in the computational scientist's arsenal, dramatically accelerating discovery in drug development, materials science, and beyond.

Self-supervised learning (SSL) has emerged as a transformative paradigm in molecular sciences, effectively addressing the fundamental challenge of data scarcity that often impedes supervised models. By learning rich representations from vast amounts of unlabeled data, SSL enables the creation of powerful foundation models that can be fine-tuned for specific downstream tasks with limited labeled examples. Within computational chemistry and drug discovery, three core SSL paradigms have demonstrated significant promise: contrastive, generative, and predictive learning. Each approach employs distinct mechanisms to capture the complex relationships between molecular structure and function, driving advancements in molecular property prediction, de novo drug design, and mass spectrometry interpretation. This technical guide examines the methodological frameworks, experimental protocols, and applications of these three paradigms, providing researchers with a comprehensive resource for navigating the current landscape of self-supervised learning in molecular representation research.

Predictive Learning

Core Principles and Architectures

Predictive learning methods operate on the principle of masked data reconstruction, where portions of input data are intentionally obscured and the model is trained to recover the missing information. This self-supervised pre-training objective forces the model to learn meaningful representations and contextual relationships within the data. The transformer architecture, renowned for its success in natural language processing, has been effectively adapted for molecular data in this paradigm, particularly for sequences (e.g., SMILES) and spectral data [3].

In molecular applications, predictive learning frameworks typically employ BERT-style (Bidirectional Encoder Representations from Transformers) masked modeling, where random tokens representing atoms, bonds, or spectral peaks are masked, and the network is trained to reconstruct them based on the surrounding context [3]. This approach has proven exceptionally powerful for mass spectrometry interpretation, where it can learn rich molecular representations directly from unannotated tandem mass spectra.

Experimental Protocol: DreaMS Framework for MS/MS Spectra

The DreaMS (Deep Representations Empowering the Annotation of Mass Spectra) framework exemplifies predictive learning for tandem mass spectrometry [3]. Below is the detailed methodological workflow:

- Step 1: Data Curation - Collected 250,000 LC-MS/MS experiments from GNPS repository, extracting approximately 700 million MS/MS spectra. Implemented quality control pipelines to filter spectra into three subsets (GeMS-A, GeMS-B, GeMS-C) based on instrument accuracy and spectral quality metrics.

- Step 2: Data Preprocessing - Represented each spectrum as a set of 2D continuous tokens (peak m/z and intensity values). Applied locality-sensitive hashing to cluster similar spectra and reduce redundancy.

- Step 3: Model Architecture - Designed a transformer neural network with 116 million parameters. Incorporated a special precursor token that remains unmasked throughout processing.

- Step 4: Pre-training Objective - Randomly masked 30% of m/z ratios (sampled proportionally to intensities) and trained the model to reconstruct masked peaks. Added a secondary objective of predicting chromatographic retention orders.

- Step 5: Fine-tuning - Adapted the pre-trained model to specific annotation tasks including spectral similarity, molecular fingerprint prediction, and chemical property estimation.
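The masking in Step 4 can be illustrated with a minimal sketch. It assumes a spectrum is held as two NumPy arrays (m/z and intensity) and that a masked peak is marked with a sentinel value; the function names, the sentinel `-1.0`, and the toy peak values are illustrative assumptions, not the DreaMS implementation.

```python
import numpy as np

def sample_mask_indices(intensities, mask_ratio=0.3, rng=None):
    """Choose peak indices to mask, without replacement, with probability
    proportional to peak intensity."""
    rng = np.random.default_rng() if rng is None else rng
    intensities = np.asarray(intensities, dtype=float)
    n_mask = max(1, int(round(mask_ratio * len(intensities))))
    probs = intensities / intensities.sum()
    return rng.choice(len(intensities), size=n_mask, replace=False, p=probs)

def mask_spectrum(mz, intensities, mask_ratio=0.3, mask_value=-1.0, rng=None):
    """Return (masked m/z array, masked indices, reconstruction targets)."""
    mz = np.asarray(mz, dtype=float)
    idx = sample_mask_indices(intensities, mask_ratio, rng)
    masked_mz = mz.copy()
    masked_mz[idx] = mask_value  # hypothetical sentinel marking a masked peak
    return masked_mz, idx, mz[idx]

# Toy spectrum with ten peaks (values are illustrative only).
mz = np.array([57.07, 71.09, 85.10, 99.12, 113.13,
               127.15, 141.16, 155.18, 169.20, 183.21])
intensity = np.array([0.05, 0.10, 1.00, 0.30, 0.02,
                      0.60, 0.08, 0.90, 0.01, 0.40])
masked_mz, idx, targets = mask_spectrum(mz, intensity,
                                        rng=np.random.default_rng(0))
```

With ten peaks and a 30% ratio, three m/z values are hidden; intense peaks are more likely to be selected, so the model is asked to reconstruct the fragments that carry the most signal.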

Table 1: Quantitative Performance of DreaMS Framework on Spectral Annotation Tasks

| Task | Metric | DreaMS Performance | Baseline (SIRIUS) | Improvement |

|---|---|---|---|---|

| Molecular Fingerprint Prediction | ROC-AUC | 0.89 | 0.82 | +8.5% |

| Spectral Similarity | Precision@10 | 0.94 | 0.87 | +8.0% |

| Chemical Property Prediction | MAE (lower is better) | 0.21 | 0.29 | +27.6% |

| Fluorine Presence Detection | F1-Score | 0.91 | 0.84 | +8.3% |
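The Improvement column in Table 1 is consistent with a relative change against the baseline, where for MAE the improvement is a reduction in error. The short check below reproduces the column from the reported values; the helper name is an assumption introduced for illustration.

```python
def relative_improvement(model, baseline, lower_is_better=False):
    """Percentage improvement of `model` over `baseline`, relative to the baseline."""
    delta = (baseline - model) if lower_is_better else (model - baseline)
    return 100.0 * delta / baseline

rows = [
    ("Molecular Fingerprint Prediction", 0.89, 0.82, False),
    ("Spectral Similarity",              0.94, 0.87, False),
    ("Chemical Property Prediction",     0.21, 0.29, True),   # MAE: lower is better
    ("Fluorine Presence Detection",      0.91, 0.84, False),
]
improvements = [round(relative_improvement(m, b, low), 1) for _, m, b, low in rows]
# improvements == [8.5, 8.0, 27.6, 8.3]
```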

Figure 1: Predictive Learning Workflow in DreaMS - Masked peak prediction for MS/MS spectra

Research Reagent Solutions

Table 2: Essential Research Tools for Predictive Learning Implementation

| Tool/Resource | Function | Application Example |

|---|---|---|

| GNPS GeMS Dataset | Large-scale spectral data source | Pre-training DreaMS model |

| Transformer Architecture | Neural network backbone | Masked peak modeling of MS/MS spectra |

| HDF5 Binary Format | Efficient data storage | Handling large spectral datasets |

| Locality-Sensitive Hashing | Approximate similarity search | Spectral deduplication |

| TensorFlow/PyTorch | Deep learning frameworks | Model implementation & training |

Contrastive Learning

Core Principles and Architectures

Contrastive learning operates on the principle of measuring similarity and dissimilarity between data points. The core objective is to learn representations by pulling similar samples (positive pairs) closer together in the embedding space while pushing dissimilar samples (negative pairs) farther apart. In molecular applications, this paradigm faces two primary challenges: molecular graph augmentation that preserves chemical semantics, and defining a precise contrastive goal that captures meaningful molecular relationships [12].
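As a concrete instance of the pull-together, push-apart objective, the sketch below implements the widely used NT-Xent (normalized temperature-scaled cross-entropy) loss in NumPy. It is a generic contrastive loss shown for illustration, not the specific objective of any framework discussed here; the temperature value and the random embeddings are assumptions.

```python
import numpy as np

def nt_xent_loss(z1, z2, temperature=0.1):
    """NT-Xent loss for N positive pairs; z1[i] and z2[i] embed two views of item i."""
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    n = z1.shape[0]
    z = np.concatenate([z1, z2], axis=0)       # (2N, d)
    sim = z @ z.T / temperature                # scaled cosine similarities
    np.fill_diagonal(sim, -np.inf)             # an embedding is never its own negative
    # Index of the positive partner for every row: i <-> i + N.
    pos = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])
    # Row-wise log-softmax, computed stably.
    row_max = sim.max(axis=1, keepdims=True)
    log_prob = sim - row_max - np.log(np.exp(sim - row_max).sum(axis=1, keepdims=True))
    return float(-log_prob[np.arange(2 * n), pos].mean())

rng = np.random.default_rng(0)
z = rng.standard_normal((8, 16))
loss_aligned = nt_xent_loss(z, z + 0.01 * rng.standard_normal((8, 16)))
loss_unrelated = nt_xent_loss(z, rng.standard_normal((8, 16)))
```

When the two views of each molecule map to nearly the same embedding, the loss is small; when the views are unrelated, every other sample looks as plausible as the true partner and the loss is much larger.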

The KEGGCL (Knowledge Enhanced and Guided Graph Contrastive Learning) framework addresses these challenges by incorporating chemical domain knowledge to generate augmented molecular graphs without altering fundamental chemical structures [12]. Unlike traditional contrastive methods that treat all different molecules as negative pairs, KEGGCL employs Quantitative Estimate of Drug-likeness (QED) as guidance to distinguish between molecular pairs that should be separated versus those that might share similar properties.

Experimental Protocol: KEGGCL for Molecular Property Prediction

The KEGGCL methodology implements a sophisticated contrastive learning approach:

- Step 1: Molecular Graph Construction - Transform SMILES strings into molecular graphs using RDKit, with atoms as nodes and bonds as edges.

- Step 2: Knowledge-Enhanced Augmentation - Generate two augmented molecular graphs by incorporating chemical element domain knowledge without altering molecular topology. These augmented graphs maintain the original chemical structure while introducing variations in feature representation.

- Step 3: QED-Guided Contrastive Learning - Use quantitative estimate of drug-likeness to guide the contrastive objective. Rather than pushing all different molecules apart indiscriminately, the framework differentially handles sample pairs based on their QED similarity.

- Step 4: Encoder Training - Employ Communicative Message Passing Neural Network (CMPNN) encoders to generate representations from the original molecular graph and two augmented views.

- Step 5: Joint Decision Making - Combine representations from all three graphs (original plus two augmented) for final property prediction.
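One way to picture the QED guidance in Step 3 is to down-weight the repulsion between molecules whose QED values are close. The sketch below is an assumption-laden illustration of that idea only: the exponential weighting, the `sharpness` parameter, and both function names are hypothetical and should not be read as the published KEGGCL loss. QED values are taken as given inputs (they could be computed with a cheminformatics toolkit).

```python
import numpy as np

def qed_negative_weights(qed, sharpness=5.0):
    """Weight in [0, 1) per pair: 0 for identical QED, approaching 1 as QED diverges.
    Hypothetical weighting chosen for illustration."""
    qed = np.asarray(qed, dtype=float)
    diff = np.abs(qed[:, None] - qed[None, :])
    return 1.0 - np.exp(-sharpness * diff)

def qed_weighted_loss(z_a, z_b, qed, temperature=0.1):
    """Contrastive loss where negatives with similar QED are pushed apart less."""
    z_a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    z_b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)
    sim = np.exp(z_a @ z_b.T / temperature)    # row i: view a of molecule i vs. all views b
    weights = qed_negative_weights(qed)        # diagonal is 0, so positives are excluded
    pos = np.diag(sim)
    neg = (weights * sim).sum(axis=1)
    return float(np.mean(-np.log(pos / (pos + neg))))

rng = np.random.default_rng(1)
z_a, z_b = rng.standard_normal((4, 8)), rng.standard_normal((4, 8))
loss = qed_weighted_loss(z_a, z_b, qed=[0.2, 0.5, 0.5, 0.9])
```

In this toy form, two molecules with identical QED contribute nothing to the negative term, while a pair with very different QED is treated almost like an ordinary negative.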

Table 3: Performance Comparison of Contrastive Learning Methods on MoleculeNet Benchmarks

| Dataset | Task Type | KEGGCL Performance | MolCLR | GraphMVP |

|---|---|---|---|---|

| BBBP | Classification | 0.912 (ROC-AUC) | 0.898 | 0.901 |

| Tox21 | Classification | 0.843 (ROC-AUC) | 0.829 | 0.831 |

| ESOL | Regression | 0.79 (R²) | 0.76 | 0.74 |

| FreeSolv | Regression | 0.88 (R²) | 0.85 | 0.83 |

| HIV | Classification | 0.801 (ROC-AUC) | 0.784 | 0.792 |

Figure 2: Contrastive Learning with KEGGCL - QED-guided molecular representation

Research Reagent Solutions

Table 4: Essential Research Tools for Contrastive Learning Implementation

| Tool/Resource | Function | Application Example |

|---|---|---|

| RDKit | Cheminformatics toolkit | Molecular graph construction |

| CMPNN | Graph neural network encoder | Message passing on molecular graphs |

| QED Calculator | Drug-likeness quantification | Guidance for contrastive objective |

| PyTorch Geometric | Graph deep learning library | Implementing GNN architectures |

| MoleculeNet | Benchmark datasets | Performance evaluation |

Generative Learning

Core Principles and Architectures

Generative learning focuses on creating new molecular instances that follow the probability distribution of the training data while optimizing for desired properties. This paradigm has gained significant traction in de novo drug design, where the goal is to explore vast chemical spaces efficiently. Key architectures in this domain include Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), autoregressive transformers, and diffusion models, each with distinct advantages and limitations [13] [14].

The VAE framework, which consists of an encoder that maps molecules to a latent space and a decoder that reconstructs molecules from this space, offers a particularly favorable balance for molecular generation. Its continuous, structured latent space enables smooth interpolation and controlled exploration, making it well-suited for integration with active learning cycles [14]. When combined with physics-based oracles, these models can generate novel, synthesizable molecules with high predicted affinity for specific biological targets.
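The properties of the latent space mentioned above rest on three small operations, sketched here in NumPy under the standard VAE formulation with a diagonal Gaussian posterior and a standard normal prior. The array shapes and function names are illustrative assumptions; encoder and decoder networks are omitted.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps so the sample stays differentiable in mu, log_var."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, sigma^2) || N(0, I) ) per sample; the regularizer in the VAE loss."""
    return -0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=-1)

def interpolate_latents(z_start, z_end, steps=5):
    """Evenly spaced points on the straight line between two latent vectors."""
    t = np.linspace(0.0, 1.0, steps)[:, None]
    return (1.0 - t) * z_start + t * z_end

rng = np.random.default_rng(0)
mu, log_var = np.zeros((2, 4)), np.zeros((2, 4))
z = reparameterize(mu, log_var, rng)
path = interpolate_latents(np.zeros(4), np.ones(4), steps=5)
```

Decoding each point along `path` is what "smooth interpolation" refers to: nearby latent vectors should decode to structurally related molecules.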

Experimental Protocol: VAE-AL for Target-Specific Molecule Generation

The VAE-AL (Variational Autoencoder with Active Learning) workflow demonstrates the integration of generative learning with physics-based optimization [14]:

- Step 1: Data Representation - Convert training molecules (from ChEMBL or target-specific sets) from SMILES to tokenized one-hot encoding vectors.

- Step 2: Initial VAE Training - Pre-train the VAE on a general molecular dataset, then fine-tune on a target-specific training set to establish initial target engagement.

- Step 3: Molecule Generation - Sample the VAE's latent space to generate novel molecular structures.

- Step 4: Inner Active Learning Cycle - Evaluate generated molecules using chemoinformatic oracles (drug-likeness, synthetic accessibility, similarity filters). Molecules meeting thresholds are added to a temporal-specific set for VAE fine-tuning.

- Step 5: Outer Active Learning Cycle - Periodically evaluate accumulated molecules using molecular docking simulations (affinity oracle). Molecules with favorable docking scores transfer to a permanent-specific set for VAE fine-tuning.

- Step 6: Candidate Selection - Apply rigorous filtration and binding free energy calculations to select synthesis candidates.
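The control flow of Steps 3 to 5 can be sketched as two nested loops. Everything concrete below is a stand-in: molecules are toy dictionaries, the generator and oracles are random stubs, and the thresholds and cycle counts are arbitrary assumptions. Only the nesting (cheap chemoinformatic filters inside, expensive docking outside) follows the workflow described above.

```python
import random

def run_nested_active_learning(generate, passes_cheminfo, docking_score, fine_tune,
                               n_outer=2, n_inner=3, batch_size=50,
                               docking_threshold=-8.0):
    """Return (temporal_set, permanent_set) after nested inner/outer cycles."""
    temporal_set, permanent_set = [], []
    n_docked = 0  # how many temporal-set molecules have already been docked
    for _ in range(n_outer):
        for _ in range(n_inner):
            candidates = generate(batch_size)
            temporal_set.extend(m for m in candidates if passes_cheminfo(m))
            fine_tune(temporal_set)                 # inner cycle: cheap oracles
        new_molecules = temporal_set[n_docked:]
        n_docked = len(temporal_set)
        permanent_set.extend(m for m in new_molecules
                             if docking_score(m) <= docking_threshold)
        fine_tune(permanent_set)                    # outer cycle: affinity oracle
    return temporal_set, permanent_set

# Toy stand-ins for the generator, the oracles, and the fine-tuning step.
rng = random.Random(0)
fine_tune_sizes = []

def toy_generate(n):
    return [{"qed": rng.random(), "dock": rng.uniform(-12.0, -4.0)} for _ in range(n)]

def toy_passes_cheminfo(mol):
    return mol["qed"] >= 0.5

def toy_docking_score(mol):
    return mol["dock"]

def toy_fine_tune(molecules):
    fine_tune_sizes.append(len(molecules))

temporal, permanent = run_nested_active_learning(
    toy_generate, toy_passes_cheminfo, toy_docking_score, toy_fine_tune)
```

The design point is cost: the inner loop runs often because its filters are cheap, while docking is reserved for molecules that have already passed them.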

Table 5: Generative Model Performance on Target-Specific Molecule Design

| Metric | CDK2 Inhibitors | KRAS Inhibitors |

|---|---|---|

| Novelty (Tanimoto) | 0.35 ± 0.08 | 0.42 ± 0.11 |

| Synthetic Accessibility Score | 3.2 ± 0.7 | 3.5 ± 0.9 |

| Docking Score (kcal/mol) | -9.8 ± 0.9 | -10.2 ± 1.1 |

| Success Rate (Experimental) | 8/9 molecules active | 4/4 predicted active |

| Best Potency | Nanomolar | Micromolar (predicted) |
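The Tanimoto measure behind the novelty row compares two fingerprints as sets of "on" bits. The table does not state whether the reported value is a similarity or a distance, so the sketch below only shows the similarity itself and the common practice of comparing a generated molecule with its nearest training-set neighbor; fingerprints are represented as plain Python sets for illustration.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def max_similarity_to_training(candidate_fp, training_fps):
    """Similarity of a generated molecule to its closest training molecule."""
    return max(tanimoto(candidate_fp, fp) for fp in training_fps)

training = [{1, 2, 3, 8}, {2, 3, 4}, {10, 11}]
candidate = {2, 3, 5}
nearest = max_similarity_to_training(candidate, training)  # 0.5, from {2, 3, 4}
```

A low nearest-neighbor similarity indicates that a generated molecule is structurally distant from everything in the training set.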

Figure 3: Generative Learning with VAE-AL - Active learning for molecule generation

Research Reagent Solutions

Table 6: Essential Research Tools for Generative Learning Implementation

| Tool/Resource | Function | Application Example |

|---|---|---|

| VAE Architecture | Probabilistic generative model | Molecular generation & optimization |

| SMILES Tokenizer | Molecular string processing | Data preprocessing for generative models |

| Molecular Docking | Physics-based affinity prediction | Active learning oracle |

| RDKit | Cheminformatics platform | Synthetic accessibility assessment |

| AutoDock Vina | Molecular docking software | Binding affinity evaluation |

Multi-Modal and Integrated Approaches

Emerging Fusion Paradigms

While the three core SSL paradigms demonstrate individual strengths, multi-modal approaches that integrate multiple representation types and learning objectives are emerging as powerful solutions for molecular representation learning. These methods address the limitation that single-modality or single-paradigm approaches may capture only partial aspects of molecular information.

The MVMRL (Multi-View Molecular Representation Learning) framework exemplifies this trend by combining 2D topological and 3D geometric structures through hierarchical pre-training tasks [8]. Similarly, the MMSA (Structure-Awareness-Based Multi-Modal Self-Supervised Molecular Representation) framework integrates information from multiple modalities (2D graphs, 3D conformations, molecular images) while modeling higher-order relationships between molecules using hypergraph structures [15].

These integrated approaches demonstrate that complementary learning objectives often yield superior performance compared to any single paradigm alone. For instance, a model might employ contrastive learning to align representations across different modalities while using predictive learning to capture internal molecular context, and generative learning to explore the chemical space for optimized properties.

The three core SSL paradigms—predictive, contrastive, and generative learning—each offer distinct advantages for molecular representation learning. Predictive methods excel at capturing contextual relationships within molecular data structures, contrastive approaches effectively model similarities and differences between molecules, and generative models enable exploration and optimization of chemical space. The choice of paradigm depends on the specific research objectives, data availability, and computational resources. As the field advances, multi-modal frameworks that strategically combine these paradigms are demonstrating state-of-the-art performance across diverse molecular tasks, from property prediction to de novo drug design. By understanding the principles, protocols, and applications of each paradigm, researchers can select and implement appropriate SSL strategies to accelerate discovery in computational chemistry and drug development.

Self-supervised learning (SSL) represents a paradigm shift in machine learning for molecular sciences, enabling models to learn rich representations directly from unannotated data. By leveraging inherent structures within the data itself as supervision, SSL bypasses traditional bottlenecks associated with manual labeling and hard-coded human expertise [3]. This technical guide examines three core advantages of SSL—scalability, generalization, and reduced human bias—within the context of molecular representation research. These properties are particularly transformative for drug discovery, where they facilitate navigation of vast chemical spaces and identification of novel molecular scaffolds with desired biological activity [16]. The adoption of SSL marks a critical transition from predefined, rule-based feature extraction to data-driven learning paradigms that capture complex structure-property relationships previously beyond computational reach.

Scalability: Leveraging Unlabeled Data at Repository Scale

Scalability enables models to utilize exponentially growing datasets without manual annotation. This capability is paramount in molecular sciences, where high-throughput technologies generate data at unprecedented rates, while expert annotation remains scarce and costly.

Data Scaling in Practice: The GeMS and DreaMS Framework

A landmark demonstration of SSL scalability is the DreaMS (Deep Representations Empowering the Annotation of Mass Spectra) model, which was pre-trained on the GNPS Experimental Mass Spectra (GeMS) dataset [3]. This dataset comprises 700 million tandem mass spectrometry (MS/MS) spectra mined from the MassIVE GNPS repository, representing an increase of several orders of magnitude over previously available curated spectral libraries [3] [17]. The GeMS dataset was systematically filtered into quality-graded subsets (GeMS-A, GeMS-B, GeMS-C) and processed through a pipeline involving quality control algorithms and locality-sensitive hashing (LSH) for redundancy reduction [3].

Table 1: Scalability of Molecular Datasets in SSL Pre-training

| Dataset/Model | Size | Data Type | Pre-training Task | Key Innovation |

|---|---|---|---|---|

| GeMS/DreaMS [3] | 700 million spectra | MS/MS spectra | Masked spectral peak prediction & retention order | Repository-scale mining of public data |

| MVMRL [8] | Not specified | 2D topological & 3D geometric structures | Hierarchical atom-level & molecule-level tasks | Multi-view representation fusion |

Technical Implementation: Efficient Pre-training Protocols

The DreaMS architecture employs a transformer-based neural network with 116 million parameters pre-trained using a BERT-style masked modeling approach [3]. Each mass spectrum is represented as a set of 2D continuous tokens corresponding to peak m/z and intensity values. During pre-training, 30% of random m/z ratios are masked, sampled proportionally to their intensities, and the model learns to reconstruct the masked peaks [3]. This method effectively leverages the massive unlabeled dataset without human intervention, demonstrating that pre-training on raw experimental spectra leads to emergent representations of molecular structure.

Generalization: Robust Performance Across Diverse Tasks

SSL-derived representations exhibit exceptional generalization capabilities, transferring effectively to various downstream tasks with minimal fine-tuning. This versatility stems from learning fundamental molecular principles rather than task-specific superficial patterns.

Transfer Learning Performance Metrics

The DreaMS framework demonstrates state-of-the-art performance across multiple annotation tasks after fine-tuning, including prediction of spectral similarity, molecular fingerprints, chemical properties, and specific structural features like fluorine presence [3]. Similarly, the MVMRL (Multi-View Molecular Representation Learning) method shows superior performance on molecular property prediction tasks by integrating 2D topological and 3D geometric information through hierarchical pre-training [8].

Table 2: Generalization Performance of SSL Models on Molecular Tasks

| Model | Pre-training Data | Downstream Tasks | Performance Advantage |

|---|---|---|---|

| DreaMS [3] | 700 million MS/MS spectra | Spectral similarity, molecular fingerprints, chemical properties | State-of-the-art across varied tasks |

| MVMRL [8] | 2D/3D molecular structures | Molecular property prediction | Outperforms single-view and traditional baselines |

| Modern SSL-ViTs [18] | Natural images | Medical imaging, molecular representation | Effective transfer across domains |

Architectural Foundations for Generalization

SSL models achieve robust generalization through several technical mechanisms. Vision Transformers (ViTs) pre-trained with SSL objectives learn transferable patterns that reduce overfitting and enable faster convergence on downstream tasks [18]. The DreaMS model specifically demonstrates that its learned representations (1,024-dimensional vectors) organize according to structural similarity between molecules and remain robust to variations in mass spectrometry conditions [3]. This structural coherence in the latent space enables effective knowledge transfer to novel tasks and molecule classes.

Reduced Human Bias: Data-Driven Feature Discovery

Traditional molecular representation methods rely on hand-crafted features and human domain expertise, inherently incorporating biases and limiting discovery of novel patterns. SSL mitigates these constraints by learning features directly from data.

Contrast with Traditional Molecular Representation

Conventional molecular representation methods include:

- Molecular descriptors: Quantified physical/chemical properties (e.g., molecular weight, hydrophobicity) [16]

- Molecular fingerprints: Binary encodings of substructural information (e.g., ECFP) [16]

- String representations: SMILES strings and derivatives encoding molecular structure as text [16]

These approaches struggle to capture subtle and intricate relationships between molecular structure and function, as they are constrained by human-designed representation rules [16]. SSL methods, particularly those based on transformer architectures, graph neural networks, and contrastive learning frameworks, automatically learn relevant features from data without predefined hypotheses [3] [16].

Case Study: From Expert Systems to Data-Driven Discovery

The evolution from systems like SIRIUS—which combines combinatorics, discrete optimization, and hand-crafted support vector machine kernels—to DreaMS illustrates the paradigm shift [3]. SIRIUS relies on fragmentation trees and carefully engineered features, whereas DreaMS learns representations directly from spectral data through self-supervision, minimizing incorporation of human domain assumptions [3]. This data-driven approach proves particularly valuable for exploring uncharted chemical spaces, where human expertise may be limited or biased toward known molecular families.

Experimental Protocols and Methodologies

SSL Pre-training for Mass Spectrometry

The DreaMS pre-training protocol involves two primary self-supervised objectives applied to unannotated MS/MS spectra:

Masked Peak Prediction: The model processes spectra represented as sequences of (m/z, intensity) pairs with randomly masked elements, learning to reconstruct the original data distribution [3].

Chromatographic Retention Order Prediction: An additional precursor token predicts retention order relationships, incorporating separation behavior into the learned representations [3].

This dual objective encourages the model to learn both structural and chromatographic properties without labeled data, creating representations that reflect fundamental molecular characteristics.

Multi-View Molecular Representation Learning

The MVMRL framework implements hierarchical pre-training tasks:

- Fine-grained atom-level tasks for 2D molecular graphs capturing local topology

- Coarse-grained molecule-level tasks for 3D geometric structures encoding global shape [8]

During fine-tuning, these multi-view representations are fused at the motif level to enhance molecular property prediction, demonstrating how complementary structural information can be integrated through SSL [8].

Research Reagents: Essential Tools for SSL in Molecular Sciences

Table 3: Key Research Reagents for SSL in Molecular Representation

| Resource | Type | Function | Example |

|---|---|---|---|

| Mass Spectrometry Repositories | Data | Provides unlabeled MS/MS spectra for pre-training | MassIVE GNPS [3] |

| Molecular Structure Databases | Data | Sources of 2D/3D molecular structures | PubChem [3] |

| Transformer Architectures | Software | Neural network backbone for SSL | DreaMS transformer [3] |

| Pre-training Frameworks | Software | Implements SSL objectives | BERT-style masking [3] |

Visualization of SSL Workflows for Molecular Representation

Figure: DreaMS pre-training and application pipeline (diagram not reproduced)

Figure: Multi-view molecular representation learning (diagram not reproduced)

SSL represents a fundamental advancement in molecular representation learning, directly addressing three critical challenges in computational chemistry and drug discovery. Its scalability enables utilization of massive, uncurated datasets; its generalization capability supports diverse applications with limited fine-tuning; and its data-driven nature reduces human bias inherent in hand-crafted features. Frameworks like DreaMS for mass spectrometry and MVMRL for multi-view molecular representation demonstrate how SSL uncovers rich structural insights without reliance on extensive annotations or human expertise. As molecular data continues to grow exponentially in scale and diversity, SSL methodologies will play an increasingly central role in empowering researchers to navigate chemical space more effectively and accelerate the discovery of novel therapeutic compounds.

How SSL Models Learn Molecular Representations: Architectures and Real-World Applications

The interpretation of tandem mass spectrometry (MS/MS) data is a fundamental challenge in fields ranging from drug discovery to environmental analysis. Despite technological advances, the vast majority of molecular data remains uncharacterized: fewer than 10% of MS/MS spectra in a typical untargeted metabolomics experiment yield definitive annotations with current computational tools [3]. Existing methods rely heavily on limited spectral libraries and hand-crafted algorithmic priors, creating a significant bottleneck in exploratory science.

The emergence of transformer-based architectures in deep learning has revolutionized data interpretation across multiple domains. Within molecular sciences, the DreaMS (Deep Representations Empowering the Annotation of Mass Spectra) framework represents a transformative approach by applying self-supervised learning to mass spectral interpretation [3] [19]. This technical guide examines the architecture, training methodology, and applications of DreaMS, positioning it as a foundation model for MS/MS data that leverages transformer networks to discover rich molecular representations directly from unannotated spectra.

The DreaMS Architecture & Core Algorithmic Principles

Transformer-Based Neural Network Design

The DreaMS framework implements a specialized transformer architecture specifically engineered for processing MS/MS spectral data. Unlike conventional transformers designed for discrete token sequences, DreaMS operates on continuous, two-dimensional tokens representing peak m/z and intensity values from mass spectra [3]. The model contains 116 million parameters, enabling substantial representational capacity for capturing complex spectral patterns.

The network's input representation treats each spectrum as a set of 2D continuous tokens, with each token corresponding to a paired m/z and intensity value. A crucial architectural innovation is the inclusion of a dedicated precursor token that remains unmasked throughout processing, serving as an anchor for spectral context [3]. This design allows the model to maintain consistent representation of the precursor ion while learning to reconstruct masked fragments during self-supervised pre-training.
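A minimal sketch of such an input layout follows, assuming a fixed-size array with the precursor in the first row, fragment peaks after it, and zero padding at the end. The peak limit, the base-peak normalization, the padding scheme, and the placeholder intensity assigned to the precursor are all illustrative assumptions rather than details confirmed by the source.

```python
import numpy as np

def spectrum_to_tokens(precursor_mz, mz, intensity, max_peaks=8,
                       precursor_intensity=1.1):
    """Build a (max_peaks + 1, 2) array of (m/z, relative intensity) tokens.

    Row 0 is the precursor token; unused rows are zero padding.
    `precursor_intensity` is a hypothetical placeholder value.
    """
    mz = np.asarray(mz, dtype=float)
    intensity = np.asarray(intensity, dtype=float)
    # Keep the most intense peaks, then restore their original (m/z) order.
    keep = np.sort(np.argsort(intensity)[::-1][:max_peaks])
    tokens = np.zeros((max_peaks + 1, 2))
    tokens[0] = (precursor_mz, precursor_intensity)
    tokens[1:1 + len(keep), 0] = mz[keep]
    tokens[1:1 + len(keep), 1] = intensity[keep] / intensity.max()
    return tokens

tokens = spectrum_to_tokens(300.2, [50.0, 100.0, 150.0], [10.0, 40.0, 20.0],
                            max_peaks=4)
```

Because the precursor always occupies the same row, a masking routine can skip row 0 and operate only on the fragment rows.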

Self-Supervised Learning Objectives

DreaMS employs a dual-objective pre-training strategy inspired by successful pre-training approaches in natural language processing:

Masked Spectral Peak Prediction: The model is trained to reconstruct randomly masked m/z ratios from input spectra, with masking applied to approximately 30% of peaks sampled proportionally to their intensities [3]. This objective forces the network to develop an implicit understanding of fragmentation patterns and molecular substructures.

Chromatographic Retention Order Prediction: As an auxiliary task, the model learns to predict the relative elution order of spectra based on their retention times [3]. This incorporates chromatographic behavior into the learned representations, capturing physicochemical properties that complement fragmentation patterns.

The combination of these objectives enables emergent learning of rich molecular representations without requiring annotated structural data, making it particularly valuable for exploring uncharted chemical space.
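The retention order objective can be framed as a pairwise binary classification, which the sketch below illustrates: for two spectra from the same chromatographic run, the label records which one eluted first, and a scalar model output (a logit) is scored with binary cross-entropy. This framing, the function names, and the example retention times are assumptions for illustration; the source does not specify the exact loss.

```python
import math

def retention_order_label(rt_a, rt_b):
    """1.0 if spectrum A eluted before spectrum B, else 0.0."""
    return 1.0 if rt_a < rt_b else 0.0

def bce_with_logit(logit, label):
    """Numerically stable binary cross-entropy on a raw logit."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))

label = retention_order_label(rt_a=1.8, rt_b=4.2)      # A elutes first -> 1.0
loss_confident_right = bce_with_logit(3.0, label)
loss_confident_wrong = bce_with_logit(-3.0, label)
```

Only relative order within a run is used, which sidesteps the fact that absolute retention times are not comparable across different chromatographic setups.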

The GeMS Dataset: Foundation for Large-Scale Learning

Dataset Curation and Quality Control

The GNPS Experimental Mass Spectra (GeMS) dataset provides the foundational data for pre-training DreaMS. Mined from the MassIVE GNPS repository, the initial collection of approximately 700 million MS/MS spectra underwent rigorous quality filtering to create standardized subsets suitable for deep learning [3].

The quality control pipeline generated three primary data subsets:

Table 1: GeMS Dataset Composition and Quality Tiers

| Subset Name | Spectra Count | Primary Instrument Types | Quality Level | Primary Use Cases |

|---|---|---|---|---|

| GeMS-A | Not specified | 97% Orbitrap | Highest | Model pre-training |

| GeMS-B | Not specified | Mixed | Medium | Specific applications |

| GeMS-C | Not specified | 52% Orbitrap, 41% QTOF | Broadest | Extended applications |

Data Processing and Formatting

To enable efficient large-scale training, the GeMS implementation employs locality-sensitive hashing (LSH) to cluster similar spectra, approximating cosine similarity while operating in linear time [3]. This approach facilitates manageable cluster sizes (e.g., 10 or 1,000 spectra per cluster) across nine dataset variants, balancing diversity and computational efficiency.
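Random-hyperplane hashing is a standard LSH scheme for cosine similarity and gives a feel for how such clustering can run in a single pass over the data. The sketch below is a generic illustration, not the GeMS pipeline: the binning of peaks into a fixed-length vector, the bin width, and the number of hyperplanes are all assumptions.

```python
import numpy as np
from collections import defaultdict

def bin_spectrum(mz, intensity, max_mz=1000.0, bin_width=1.0):
    """Accumulate peak intensities into fixed-width m/z bins."""
    vec = np.zeros(int(max_mz / bin_width))
    for m, i in zip(mz, intensity):
        if 0.0 <= m < max_mz:
            vec[int(m / bin_width)] += i
    return vec

def lsh_signature(vec, hyperplanes):
    """One bit per hyperplane: which side of the plane the vector falls on."""
    return tuple((hyperplanes @ vec > 0).astype(int).tolist())

def cluster_by_signature(vectors, hyperplanes):
    """Group vector indices by signature in one linear pass."""
    buckets = defaultdict(list)
    for idx, vec in enumerate(vectors):
        buckets[lsh_signature(vec, hyperplanes)].append(idx)
    return dict(buckets)

rng = np.random.default_rng(0)
hyperplanes = rng.standard_normal((16, 1000))
a = bin_spectrum([100.2, 250.7, 400.1], [1.0, 0.5, 0.2])
b = bin_spectrum([80.3, 310.9, 620.4], [0.3, 1.0, 0.7])
clusters = cluster_by_signature([a, 2.0 * a, b], hyperplanes)
```

Spectra with high cosine similarity agree on most signature bits, so identical or rescaled spectra land in the same bucket without any pairwise comparison.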

The processed spectra and associated LC-MS/MS metadata are stored in a specialized HDF5-based binary format optimized for deep learning workflows [3]. This standardized tensor representation with fixed dimensionality eliminates preprocessing overhead during model training and inference.

Experimental Framework & Methodological Protocols

Model Pre-training Methodology

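The DreaMS pre-training protocol follows a self-supervised paradigm using the GeMS-A10 dataset, a variant of the highest-quality GeMS subset (GeMS-A); the suffix appears to correspond to the LSH cluster-size limit of 10 spectra described above. The training implementation incorporates several key methodological considerations: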

- Batch Construction: Spectra are grouped into batches using the LSH-clustered organization, ensuring diverse representation across training iterations.

- Masking Strategy: The 30% masking ratio for spectral peaks follows a weighted sampling approach where higher-intensity peaks have greater probability of selection, reflecting their relative importance in spectral interpretation.

- Optimization Configuration: The model employs the AdamW optimizer with a learning rate schedule incorporating linear warmup followed by cosine decay, a standard approach for transformer training stability.
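The learning rate schedule named in the last item can be written as a small function of the training step. The peak learning rate, warmup length, and total step count below are placeholder assumptions, since the source does not give the values used for DreaMS.

```python
import math

def lr_at_step(step, total_steps, warmup_steps, peak_lr, min_lr=0.0):
    """Linear warmup to `peak_lr`, then cosine decay to `min_lr`."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

# Hypothetical settings, for illustration only.
schedule = [lr_at_step(s, total_steps=1000, warmup_steps=100, peak_lr=1e-4)
            for s in range(1001)]
```

The warmup phase avoids large, noisy updates while the optimizer's moment estimates are still unreliable; the cosine phase then lowers the rate smoothly toward the end of training.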

The pre-training phase does not require structural annotations, leveraging only the intrinsic patterns within millions of experimental spectra to build generalized representations of molecular characteristics.

Fine-tuning for Downstream Applications

After self-supervised pre-training, the DreaMS framework supports task-specific fine-tuning for various annotation applications. The fine-tuning protocol replaces the pre-training heads with task-specific layers and continues training on annotated datasets:

- Spectral Similarity: Fine-tuned to predict structural similarity between molecules from their MS/MS spectra.

- Molecular Fingerprint Prediction: Adapted to predict binary structural fingerprints for database retrieval.

- Chemical Property Prediction: Trained to estimate specific molecular properties directly from spectral data.

- Specialized Detection: Customized for identifying particular structural features, such as fluorine presence [3].

This transfer learning approach demonstrates state-of-the-art performance across multiple annotation tasks, validating the richness of the representations learned during pre-training.

Figure 1: End-to-end workflow of the DreaMS framework, illustrating the progression from data curation through self-supervised pre-training to downstream applications.

Performance Benchmarks & Comparative Analysis

DreaMS achieves state-of-the-art performance across multiple spectral interpretation tasks, demonstrating the effectiveness of its self-supervised learning approach. When evaluated against established methods like SIRIUS, MIST, and MIST-CF, the fine-tuned DreaMS model shows superior performance in structural annotation accuracy [3].

Table 2: Performance Comparison Across Spectral Annotation Tasks

| Method | Spectral Similarity (Top-1 Accuracy) | Molecular Fingerprint Prediction (AUROC) | Fluorine Detection (Precision) | Chemical Property Prediction (Mean Absolute Error) |

|---|---|---|---|---|

| DreaMS | State-of-the-art | State-of-the-art | State-of-the-art | State-of-the-art |

| SIRIUS | Lower than DreaMS | Lower than DreaMS | Lower than DreaMS | Higher than DreaMS |

| MIST | Competitive but lower | Competitive but lower | Competitive but lower | Competitive but higher |

| MIST-CF | Competitive but lower | Competitive but lower | Competitive but lower | Competitive but higher |

The representations learned by DreaMS show robust organization according to structural similarity between molecules and maintain consistency across varying mass spectrometry conditions [3]. This generalization capability stems from exposure to diverse experimental data during pre-training, enabling effective application to spectra from unfamiliar chemical domains.

The DreaMS framework provides comprehensive resources for research and development:

- Code Repository: Publicly available GitHub repository containing model implementations, fine-tuning tutorials, and inference scripts [19].

- Pre-trained Models: Access to weights from self-supervised pre-training and task-specific fine-tuned versions.

- Data Processing Tools: Utilities for converting standard MS/MS data formats (e.g., mzML, mzXML) to the optimized HDF5 format used for training [19].

The DreaMS Atlas Molecular Network

A key application output is the DreaMS Atlas, a comprehensive molecular network of 201 million MS/MS spectra constructed using DreaMS-derived annotations [3] [19]. This resource provides:

- Structural Annotations: Putative compound identifications for previously uncharacterized spectra.

- Similarity Networking: Relationships between spectra based on DreaMS-calculated structural similarities.

- Metadata Integration: Experimental context including biological source and study descriptions.

The Atlas represents the largest publicly available molecular network for mass spectrometry, enabling exploration of chemical space at an unprecedented scale.

Table 3: Essential Research Reagents for DreaMS Implementation

| Resource Category | Specific Item | Function/Purpose | Availability |

|---|---|---|---|

| Data Resources | GeMS Dataset | Pre-training and benchmarking | Public via GNPS |

| Data Resources | DreaMS Atlas | Molecular network reference | Public access |

| Software Tools | DreaMS Python Package | Model inference and fine-tuning | GitHub repository |

| Software Tools | HDF5 Conversion Tools | Data format standardization | Included in package |

| Computational | Pre-trained Models | Transfer learning foundation | GitHub repository |

| Computational | LSH Clustering Implementation | Efficient spectral comparison | Included in package |

Future Directions & Research Applications

The DreaMS framework establishes a new paradigm for mass spectral interpretation that transcends the limitations of library-dependent approaches. As a foundation model for MS/MS data, it enables multiple research directions:

- Active Learning for Annotation: Using model confidence measures to prioritize manual annotation efforts for the most informative spectra.

- Cross-Modal Integration: Incorporating additional spectroscopic data (NMR, IR) to create unified molecular representation models [20] [21].

- Domain Adaptation: Applying transfer learning to specialize the model for specific chemical classes or experimental conditions.

- Generative Applications: Extending the framework for in silico spectrum prediction or molecular design.
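As a rough illustration of the active learning direction listed above, the sketch below ranks spectra by the entropy of a model's predicted class probabilities and selects the most uncertain ones for manual annotation. Entropy is only one possible confidence measure, and the function names, spectrum ids, and probabilities are hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a discrete probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def rank_for_annotation(predictions, budget):
    """Return the ids of the `budget` spectra with the most uncertain predictions."""
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]), reverse=True)
    return ranked[:budget]

# Hypothetical class probabilities predicted for three unannotated spectra.
toy_predictions = {
    "spec_1": [0.98, 0.01, 0.01],  # confident prediction, low priority
    "spec_2": [0.40, 0.35, 0.25],  # very uncertain, high priority
    "spec_3": [0.70, 0.20, 0.10],
}
to_annotate = rank_for_annotation(toy_predictions, budget=2)
```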

The demonstrated success of self-supervised learning on mass spectral data suggests that similar approaches could prove valuable across other molecular spectroscopy domains, potentially transforming how we extract structural information from analytical instrumentation.

The application of self-supervised learning (SSL) to molecular data represents a paradigm shift in computational chemistry and drug discovery. This whitepaper introduces the MTSSMol Framework, a novel approach that integrates Graph Neural Networks (GNNs) with self-supervised pre-training on massive-scale molecular data. By learning rich molecular representations directly from unannotated tandem mass spectrometry (MS/MS) spectra, MTSSMol overcomes the critical bottleneck of limited annotated spectral libraries. We demonstrate that this framework yields state-of-the-art performance across diverse molecular annotation tasks, enabling more efficient exploration of the vast, uncharted chemical space and accelerating scientific discovery in fields like pharmaceutical development and environmental analysis [3] [4] [22].

Characterizing biological and environmental samples at a molecular level is fundamental to advancements in drug development, disease diagnosis, and environmental analysis [3]. Tandem mass spectrometry (MS/MS) serves as a primary technology for this investigation, yet interpreting the resulting spectra remains a formidable challenge. Existing computational methods rely heavily on limited spectral libraries and hard-coded human expertise, so fewer than 10% of MS/MS spectra in a typical untargeted metabolomics experiment can be annotated [3]. This severely limits our ability to explore natural chemical space, more than 90% of which is estimated to be undiscovered [3].

The MTSSMol (Multi-modal Transformer and Self-Supervised Learning for Molecules) Framework is conceived to address this limitation. It frames molecular structures as graphs, where atoms are nodes and bonds are edges, making Graph Neural Networks (GNNs) a natural and powerful fit for modeling them [23]. GNNs excel at learning from interconnected, non-Euclidean data, capturing complex relationships and dependencies that traditional models miss. By applying self-supervised learning on repository-scale molecular data, MTSSMol learns general-purpose, robust representations that can be fine-tuned with high efficiency for a wide range of downstream tasks, from predicting chemical properties to de novo molecular structure annotation [3] [23].

Theoretical Foundations

Graph Neural Networks (GNNs) for Molecular Representation

Graph Neural Networks operate on graph-structured data, learning node embeddings by iteratively aggregating information from a node's local neighborhood. For molecules, this translates to a system that can natively model atomic interactions and the overall topological structure.

- Node and Edge Features: In an MTSSMol graph, each atom (node) can be encoded with features such as atomic number, charge, and hybridization state. Each bond (edge) can be represented by its type (e.g., single, double, aromatic) and length [24].

- Message Passing: Through a process called message passing, each atom updates its representation by combining information from itself and its neighboring atoms. This allows the GNN to capture complex intramolecular relationships that are critical for understanding chemical properties and reactivity [23].
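The message passing step described above can be sketched in a few lines. This is a deliberately simplified version that assumes mean aggregation with no learned weights or nonlinearities, which real GNN layers would add. The function name and the two-feature atom encoding (atomic number, formal charge) are illustrative choices rather than the framework's actual featurization.

```python
def message_passing_step(node_features, edges):
    """One round of mean-aggregation message passing over an undirected graph.

    Each node's new feature vector is the average of its own vector and
    the vectors of its direct neighbors.
    """
    neighbors = {node: [] for node in node_features}
    for a, b in edges:
        neighbors[a].append(b)
        neighbors[b].append(a)

    updated = {}
    for node, feats in node_features.items():
        group = [feats] + [node_features[n] for n in neighbors[node]]
        updated[node] = [sum(column) / len(group) for column in zip(*group)]
    return updated

# Heavy-atom graph of ethanol: C0 - C1 - O2 (hydrogens omitted).
# Features per atom: [atomic number, formal charge].
atoms = {0: [6.0, 0.0], 1: [6.0, 0.0], 2: [8.0, 0.0]}
bonds = [(0, 1), (1, 2)]

h1 = message_passing_step(atoms, bonds)  # each atom sees its direct neighbors
h2 = message_passing_step(h1, bonds)     # information from two bonds away arrives
```

After one round the terminal carbon (atom 0) is unchanged because its only neighbor is another carbon. After the second round its features shift toward oxygen, showing how stacking rounds widens each atom's receptive field.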

GNNs have become a key ingredient in production-scale AI systems, with companies like Google DeepMind using them for materials discovery (GNoME) and highly accurate weather forecasting (GraphCast) [23]. Their ability to provide a unifying framework for diverse data types makes them exceptionally suited for the complex world of molecular informatics.

Self-Supervised Learning (SSL) in Scientific Domains

Self-supervised learning is a paradigm where a model learns the inherent structure of its input data by defining a pre-training task that does not require human-provided labels. This is often achieved by corrupting the input data and training the model to reconstruct or predict the missing parts [3].

In the context of MTSSMol, this involves pre-training on the GeMS (GNPS Experimental Mass Spectra) dataset, a massive collection of millions of unannotated MS/MS spectra [3] [22]. The self-supervised objectives include:

- Masked Spectral Peak Prediction: Random peaks in a mass spectrum are masked, and the model is trained to reconstruct them. This forces the model to learn the underlying relationships between different parts of the spectrum and the molecular structure they represent [3].

- Chromatographic Retention Order Prediction: The model is trained to predict the order in which molecules elute during liquid chromatography, a task that requires an understanding of molecular properties like polarity [3].
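To make the retention order objective above concrete, the sketch below shows one way the training labels could be derived without human annotation: pairs of spectra are labeled by which one elutes first. It assumes both spectra come from the same chromatographic run and that retention times are recorded. The function name and the toy values are hypothetical.

```python
def retention_order_pairs(retention_times):
    """Build (id_a, id_b, label) pairs; label is 1 if a elutes before b, else 0."""
    pairs = []
    ids = sorted(retention_times)
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            if retention_times[a] == retention_times[b]:
                continue  # ties carry no ordering signal
            label = 1 if retention_times[a] < retention_times[b] else 0
            pairs.append((a, b, label))
    return pairs

# Hypothetical retention times (minutes) for three spectra from one LC run.
toy_run = {"spec_x": 1.8, "spec_y": 5.2, "spec_z": 3.4}
pairs = retention_order_pairs(toy_run)
```

The labels come entirely from instrument metadata, which is what makes the task self-supervised.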

This approach is analogous to how large language models like ChatGPT learn linguistic structure without prior knowledge of grammar, allowing MTSSMol to learn the "language" of mass spectrometry and molecular structure in a fully data-driven way [22].

The MTSSMol Framework: Architecture and Workflow

The MTSSMol framework integrates a GNN backbone with a transformer-based component for processing spectral data, enabling a multi-modal understanding of molecular information.

Table 1: Core Components of the MTSSMol Architecture

| Component | Description | Function in Framework |

|---|---|---|

| Graph Encoder (GNN) | Processes the molecular graph structure. | Extracts topological and atomic-level features from the molecular structure. |

| Spectral Transformer | Processes raw MS/MS spectrum data. | Learns representations from spectral peaks and their relationships using self-attention. |

| Multi-Modal Fusion | Combines representations from the graph and spectral encoders. | Creates a unified, rich molecular representation that incorporates both structural and experimental data. |

| Pre-training Head | Executes self-supervised objectives (e.g., masked prediction). | Enables unsupervised learning on large-scale, unannotated data. |

| Fine-Tuning Head | Task-specific output layers (e.g., classifier, regressor). | Adapts the pre-trained model to specific downstream tasks like property prediction. |

Diagram 1: High-level MTSSMol architecture showing multi-modal input processing.

Self-Supervised Pre-training Protocol

The effectiveness of MTSSMol hinges on its large-scale pre-training phase. The protocol involves:

- Data Acquisition and Curation: Utilizing the GeMS dataset, which comprises up to 700 million MS/MS spectra mined from the Global Natural Products Social Molecular Networking (GNPS) repository [3]. The data is rigorously filtered into quality tiers (GeMS-A, GeMS-B, GeMS-C) based on criteria like instrument accuracy and spectral signal quality [3].

- Pre-training Task Execution: The model is trained using a BERT-style masked modeling approach. Specifically, 30% of the m/z values in each spectrum are masked, with the masked peaks sampled in proportion to their intensities, and the model is tasked with reconstructing them [3].

- Representation Emergence: Through this process, the model spontaneously learns a 1,024-dimensional representation (the MTSSMol embedding) that organizes itself according to the structural similarity of molecules and is robust to variations in mass spectrometry conditions [3].
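The masking step in the protocol above can be sketched as follows. This minimal example selects 30% of the peaks in a spectrum without replacement, with selection probability proportional to intensity, and replaces their m/z values with a placeholder. The function names, the placeholder value of -1.0, and the toy spectrum are assumptions for illustration. The actual mask encoding used by the model is not specified here.

```python
import random

def sample_masked_peaks(mzs, intensities, mask_fraction=0.3, seed=0):
    """Choose peak indices to mask, without replacement, weighted by intensity.

    Assumes all intensities are positive.
    """
    if not mzs:
        return []
    rng = random.Random(seed)
    n_mask = min(len(mzs), max(1, round(mask_fraction * len(mzs))))
    remaining = list(range(len(mzs)))
    weights = [float(intensities[i]) for i in remaining]
    masked = []
    for _ in range(n_mask):
        pick = rng.choices(range(len(remaining)), weights=weights, k=1)[0]
        masked.append(remaining.pop(pick))
        weights.pop(pick)
    return sorted(masked)

def apply_mask(mzs, masked_indices, mask_value=-1.0):
    """Return (model inputs with masked m/z values hidden, reconstruction targets)."""
    masked_set = set(masked_indices)
    inputs = [mask_value if i in masked_set else mz for i, mz in enumerate(mzs)]
    targets = {i: mzs[i] for i in masked_indices}
    return inputs, targets

# Hypothetical 10-peak spectrum: m/z values and relative intensities.
toy_mzs = [55.1, 69.0, 81.1, 95.1, 109.1, 123.1, 137.1, 151.1, 165.1, 179.1]
toy_intensities = [0.05, 0.10, 0.30, 1.00, 0.20, 0.60, 0.15, 0.40, 0.08, 0.90]

masked_idx = sample_masked_peaks(toy_mzs, toy_intensities)
model_inputs, targets = apply_mask(toy_mzs, masked_idx)
```

Weighting the sampling by intensity biases the reconstruction task toward the more prominent peaks. Low-intensity peaks are still masked occasionally.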

Experimental Protocols and Validation

Benchmarking and Performance Metrics

The performance of MTSSMol was evaluated against established methods like SIRIUS and other machine learning models (MIST, MIST-CF) across a variety of tasks, including molecular fingerprint prediction and spectral similarity search [3].

Table 2: Performance Comparison on Molecular Annotation Tasks

| Model / Method | Spectral Library Match (%) | Molecular Fingerprint Accuracy (Top-1) | Retrieval Rate (Top-1) |

|---|---|---|---|

| MTSSMol (Ours) | ~40% | ~65% | ~35% |

| SIRIUS | ~25% | ~55% | ~20% |

| Traditional Similarity | ~10% | N/A | N/A |

Note: The values in this table are approximate. They synthesize the performance improvements described in the cited source, which reports state-of-the-art performance and substantial improvements over existing methods [3].

Key Experimental Workflow

The end-to-end experimental process for validating the MTSSMol framework involves a sequence of defined steps, from data preparation to result validation.

Diagram 2: The MTSSMol experimental workflow from data to deployment.

The Scientist's Toolkit: Essential Research Reagents

The implementation and application of the MTSSMol framework rely on a suite of computational tools and data resources that act as the essential "research reagents" for this domain.

Table 3: Key Research Reagent Solutions for MTSSMol Implementation

| Tool / Resource | Type | Function and Application |

|---|---|---|

| GeMS Dataset | Data | A high-quality, large-scale dataset of millions of experimental MS/MS spectra for self-supervised pre-training [3]. |

| RDKit | Software | An open-source cheminformatics toolkit used for calculating molecular descriptors, handling functional groups, and generating molecular representations [24]. |

| GraphSAGE | Algorithm | A specific flavor of GNN known for strong scalability properties, enabling learning on large molecular graphs [23]. |

| GNPS Repository | Data/Platform | A public repository for mass spectrometry data that serves as the primary source for building datasets like GeMS [3]. |

| DreaMS Atlas | Resource | A molecular network of 201 million MS/MS spectra constructed using annotations from a model like MTSSMol, useful for exploration and validation [3]. |

The MTSSMol framework demonstrates the transformative potential of combining Graph Neural Networks with self-supervised learning for molecular science. By learning directly from vast amounts of unannotated experimental data, it bypasses the limitations of traditional, library-dependent methods and opens up new avenues for discovering and characterizing molecules.

Future work will focus on expanding the multi-modal capabilities of the framework, incorporating additional data sources such as genomic and metabolic pathway information. Furthermore, efforts will be directed towards enhancing the interpretability of the model's predictions, a critical factor for gaining the trust of domain experts and for generating testable scientific hypotheses. The release of the pre-trained models and the DreaMS Atlas to the community provides a foundational resource that will empower researchers worldwide to accelerate progress in drug development, metabolomics, and beyond [3].

The application of self-supervised learning (SSL) to molecular science represents a paradigm shift in how machines comprehend chemical structures. By enabling models to learn from vast amounts of unlabeled data, SSL circumvents one of the most significant bottlenecks in molecular machine learning: the scarcity of expensive, experimentally derived labeled data. Within this paradigm, contrastive learning has emerged as a particularly powerful approach for learning robust molecular representations. This technical guide focuses on two fundamental practical techniques within this domain: SMILES enumeration and molecular augmentation.

These techniques are not merely computational conveniences but are grounded in the fundamental nature of chemical structures. SMILES enumeration leverages the inherent non-univocality of molecular representations, while carefully designed augmentation strategies incorporate chemical prior knowledge to create meaningful variations of molecular data. When implemented within a contrastive learning framework, these approaches enable the creation of models that understand essential chemical semantics rather than merely memorizing structural patterns. This guide provides researchers, scientists, and drug development professionals with both the theoretical foundation and practical methodologies for implementing these techniques in their molecular representation research.

Theoretical Foundation: SSL and Contrastive Learning in Chemistry

Self-Supervised Learning Paradigms

Self-supervised learning for molecular representations primarily operates through two interconnected paradigms: pretext task learning and contrastive learning. Pretext task learning involves designing surrogate tasks that do not require manual labels, such as masked token prediction or chromatographic retention order prediction. For instance, the DreaMS framework employs BERT-style masked modeling on mass spectra, training a model to reconstruct masked spectral peaks from tandem mass spectrometry data [3] [4]. With this approach, rich representations of molecular structure emerge without explicit structural annotations.