Semi-Automated Validation of Clinical Prediction Models: Methods, Applications, and Future Directions

Abstract

The adoption of clinical prediction models in practice is hindered by the time-consuming and complex nature of traditional manual validation. This article explores the emerging paradigm of semi-automated validation as a solution to increase the efficiency, accessibility, and frequency of model evaluation. Drawing on recent evidence from oncology, psychiatry, and critical care, we detail the methodological approaches, including specialized platforms and AutoML frameworks. We critically evaluate performance compared to manual methods, address key challenges like bias mitigation and algorithmic hallucination, and outline best practices for implementation. Aimed at researchers, scientists, and drug development professionals, this review synthesizes current evidence to guide the development of robust, reliable, and clinically useful prediction tools.

The Urgent Need for Validation: Why Semi-Automation is Transforming Clinical Prediction

The Critical Role of Validation in Clinical Prediction Models

Clinical prediction models aim to forecast future health outcomes to support medical decision-making. However, their value depends entirely on demonstrating robust performance beyond the data used for their creation [1]. Validation is the process of evaluating a prediction model's performance and ensuring its reliability, generalizability, and transportability to new patient populations and clinical settings [2]. For semi-automated surveillance systems—such as those for surgical site infections (SSIs) or hospital-induced delirium—proper validation is particularly critical as these models directly impact patient safety and resource allocation [3] [4].

Without rigorous validation, prediction models may appear accurate during development but fail in clinical practice due to overfitting, population differences, or temporal drift [1]. This document outlines comprehensive validation protocols to establish credibility for clinical prediction models within semi-automated research frameworks.

Key Validation Concepts and Terminology

Table 1: Essential Validation Concepts in Clinical Prediction Models

| Term | Explanation |
|---|---|
| Discrimination | Model's ability to distinguish between different outcome classes (e.g., SSI vs. no SSI) [1]. |
| Calibration | Agreement between predicted probabilities and observed outcomes [1]. |
| Overfitting | Model performs well on training data but fails to generalize to new data [1]. |
| Internal Validation | Assessment of model reproducibility using data from the same underlying population [2]. |
| External Validation | Evaluation of model transportability to different populations, settings, or time periods [2]. |
| Temporal Validity | Algorithm performance consistency over time at the development setting [2]. |
| Geographical Validity | Generalizability to different institutions or locations [2]. |
| Domain Validity | Generalizability across different clinical contexts or patient demographics [2]. |

Types of Validation and Assessment Protocols

Internal Validation Techniques

Internal validation provides optimism-corrected performance estimates using the development data. Key methodologies include:

- Bootstrapping: Creating multiple resampled datasets with replacement to assess model stability and correct optimism [1]

- k-fold Cross-Validation: Partitioning data into k subsets, iteratively training on k-1 folds and validating on the remaining fold [2]

- Split-sample Validation: Randomly dividing data into development and validation sets [1]

Protocol 1: Bootstrap Validation for Optimism Correction

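1. Draw a bootstrap sample (n' = n) with replacement from the original dataset.

2. Develop the model in the bootstrap sample using the identical modeling strategy.

3. Evaluate that model in the bootstrap sample (bootstrap performance) and in the original dataset (test performance).

4. Calculate optimism as bootstrap performance minus test performance.

5. Repeat steps 1-4 at least 200 times and average the optimism estimates.

6. Subtract the mean optimism from the apparent performance of the original model to obtain optimism-corrected performance statistics.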
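The bootstrap procedure above can be sketched in Python with scikit-learn. This is a minimal illustration, not a reference implementation: the plain logistic regression stands in for whatever modeling strategy was actually used, AUROC is the only metric corrected, and the synthetic data and the function name `optimism_corrected_auc` are assumptions introduced here.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score


def optimism_corrected_auc(X, y, n_boot=200, seed=0):
    """Bootstrap optimism correction for AUROC."""
    rng = np.random.default_rng(seed)
    n = len(y)

    def fit(X_fit, y_fit):
        # Placeholder for the full modeling strategy (selection, tuning, etc.).
        return LogisticRegression(max_iter=1000).fit(X_fit, y_fit)

    # Apparent performance: model developed and evaluated on the same data.
    apparent = roc_auc_score(y, fit(X, y).predict_proba(X)[:, 1])

    optimism = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)      # resample with replacement
        if len(np.unique(y[idx])) < 2:        # skip one-class resamples
            continue
        model = fit(X[idx], y[idx])           # redevelop in the bootstrap sample
        auc_boot = roc_auc_score(y[idx], model.predict_proba(X[idx])[:, 1])
        auc_orig = roc_auc_score(y, model.predict_proba(X)[:, 1])
        optimism.append(auc_boot - auc_orig)  # optimism of this replicate

    mean_optimism = float(np.mean(optimism))
    return float(apparent), mean_optimism, float(apparent - mean_optimism)


# Synthetic development data, for illustration only.
rng = np.random.default_rng(42)
X_demo = rng.normal(size=(300, 5))
logit = 0.8 * X_demo[:, 0] - 0.5 * X_demo[:, 1]
y_demo = rng.binomial(1, 1 / (1 + np.exp(-logit)))

apparent_auc, mean_opt, corrected_auc = optimism_corrected_auc(X_demo, y_demo)
print(f"apparent={apparent_auc:.3f} optimism={mean_opt:.3f} corrected={corrected_auc:.3f}")
```

The same loop can correct calibration slope or Brier score by swapping the metric, provided every data-driven modeling step is repeated inside `fit`.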

External Validation Frameworks

External validation assesses model transportability to new settings and comprises three distinct generalizability types [2]:

Temporal Validation: Assesses performance over time at the development institution using a "waterfall" design where development time windows are repeatedly increased [2].

Geographical Validation: Evaluates generalizability across different institutions using leave-one-site-out validation where the model is developed on all but one location and tested on the left-out site [2].

Domain Validation: Tests generalizability across clinical contexts, medical settings, or patient demographics [2].
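The leave-one-site-out design described for geographical validation can be sketched with scikit-learn's `LeaveOneGroupOut` splitter. The three-site synthetic cohort, the logistic regression model, and the helper name `leave_one_site_out_auc` are illustrative assumptions, not part of the cited framework.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import LeaveOneGroupOut


def leave_one_site_out_auc(X, y, site):
    """Develop on all sites but one and test on the held-out site, for each site."""
    results = {}
    for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups=site):
        model = LogisticRegression(max_iter=1000).fit(X[train_idx], y[train_idx])
        predicted = model.predict_proba(X[test_idx])[:, 1]
        held_out = str(site[test_idx][0])
        results[held_out] = float(roc_auc_score(y[test_idx], predicted))
    return results


# Synthetic cohort with three hypothetical sites (A, B, C).
rng = np.random.default_rng(0)
X_demo = rng.normal(size=(600, 4))
site_demo = np.repeat(np.array(["A", "B", "C"]), 200)
y_demo = rng.binomial(1, 1 / (1 + np.exp(-(X_demo[:, 0] - 0.5))))

site_auc = leave_one_site_out_auc(X_demo, y_demo, site_demo)
for name, auc in site_auc.items():
    print(f"held-out site {name}: AUROC={auc:.3f}")
```

Large differences in held-out performance between sites would point to limited geographical validity and are worth inspecting before pooling results.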

Protocol 2: External Validation for Semi-Automated Surveillance Models

- Define validation context: temporal (same institution, later time), geographical (different institution), or domain (different clinical context)

- Obtain appropriate dataset: Ensure sufficient sample size and outcome incidence in validation cohort

- Apply original model: Use identical predictor definitions and pre-processing steps

- Assess performance: Calculate discrimination (AUROC), calibration (calibration plot, intercept, slope), and clinical utility (Net Benefit)

- Compare performance: Evaluate degradation from development performance

- Document limitations: Report any operational barriers encountered
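The performance assessment step of this protocol can be illustrated with a NumPy-only sketch. It assumes a binary outcome and a vector of predicted risks from the original model. The calibration intercept is estimated with the slope fixed at 1 (logit of the prediction used as an offset), the slope is estimated freely, and Net Benefit uses the standard decision-curve formula. All function names and the synthetic cohort are assumptions made for illustration.

```python
import numpy as np


def auroc(y, p):
    """Share of (event, non-event) pairs ranked correctly; ties count 0.5."""
    diff = p[y == 1][:, None] - p[y == 0][None, :]
    return (np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / diff.size


def _logistic_newton(design, y, offset, n_iter=25):
    """Unpenalised logistic regression with an offset, fitted by Newton-Raphson."""
    beta = np.zeros(design.shape[1])
    for _ in range(n_iter):
        mu = 1.0 / (1.0 + np.exp(-(design @ beta + offset)))
        gradient = design.T @ (y - mu)
        hessian = design.T @ (design * (mu * (1.0 - mu))[:, None])
        beta = beta + np.linalg.solve(hessian, gradient)
    return beta


def calibration_intercept_slope(y, p, eps=1e-6):
    p = np.clip(p, eps, 1.0 - eps)
    lp = np.log(p / (1.0 - p))  # logit of the predicted risk
    ones = np.ones((len(y), 1))
    # Intercept: slope fixed at 1 by entering lp as an offset.
    intercept = _logistic_newton(ones, y, offset=lp)[0]
    # Slope: intercept and slope both estimated, no offset.
    slope = _logistic_newton(np.column_stack([ones, lp]), y,
                             offset=np.zeros(len(y)))[1]
    return float(intercept), float(slope)


def net_benefit(y, p, threshold):
    """Net Benefit = TP/n - FP/n * pt / (1 - pt) at risk threshold pt."""
    flagged = p >= threshold
    n = len(y)
    true_pos = np.sum(flagged & (y == 1))
    false_pos = np.sum(flagged & (y == 0))
    return float(true_pos / n - false_pos / n * threshold / (1.0 - threshold))


# Synthetic validation cohort in which the model is well calibrated by construction.
rng = np.random.default_rng(1)
lp_true = rng.normal(-1.0, 1.0, size=5000)
p_hat = 1.0 / (1.0 + np.exp(-lp_true))
y_obs = rng.binomial(1, p_hat)

auc = float(auroc(y_obs, p_hat))
cal_intercept, cal_slope = calibration_intercept_slope(y_obs, p_hat)
nb_20 = net_benefit(y_obs, p_hat, threshold=0.20)
print(f"AUROC={auc:.3f} intercept={cal_intercept:.3f} slope={cal_slope:.3f} NB@0.20={nb_20:.3f}")
```

Because the synthetic predictions equal the true risks, the intercept should land near 0 and the slope near 1; in a real external validation, departures from those values quantify miscalibration.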

Performance Metrics for Model Validation

Table 2: Key Performance Metrics for Clinical Prediction Model Validation

| Metric | Interpretation | Target Value |
|---|---|---|
| Area Under ROC (AUROC) | Overall discrimination ability | >0.7 (acceptable), >0.8 (good), >0.9 (excellent) |
| Area Under PRC (AUPRC) | Precision-recall balance, valuable for imbalanced outcomes | Context-dependent; higher is better |
| Sensitivity | Proportion of true positives detected | Depends on clinical context; high for critical outcomes |
| Specificity | Proportion of true negatives correctly identified | Balanced against sensitivity based on application |
| Negative Predictive Value (NPV) | Probability that no outcome occurs when the prediction is negative | High for ruling-out applications |
| Calibration Intercept | Agreement between mean predicted risk and observed outcome frequency | Close to 0 indicates good mean calibration |
| Calibration Slope | Agreement across the range of predicted risks | Close to 1; a slope below 1 suggests predictions that are too extreme |
| Brier Score | Mean squared difference between predicted probability and observed outcome (lower is better) | <0.25 generally acceptable, but the benchmark depends on outcome incidence |
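Why the Brier score benchmark depends on outcome incidence can be seen from a short derivation. As a sketch, consider a non-informative model that assigns every patient the outcome prevalence $\pi$ (the symbols $N$, $p_i$, $y_i$, and $\pi$ are introduced here for illustration and do not come from the cited sources):

$$
\mathrm{BS} = \frac{1}{N}\sum_{i=1}^{N}\left(p_i - y_i\right)^2,
\qquad
p_i = \pi \;\Rightarrow\;
\mathrm{BS}_{\text{ref}} = \pi(1-\pi)^2 + (1-\pi)\pi^2 = \pi(1-\pi).
$$

At $\pi = 0.5$ this reference value is $0.25$, but at $\pi = 0.05$ it is only $0.0475$. A model for a rare outcome can therefore score far below 0.25 and still be no better than predicting the prevalence for everyone, so the Brier score is best read against $\pi(1-\pi)$ for the cohort at hand.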

Case Studies in Model Validation

Semi-Automated Surgical Site Infection Surveillance

A 2025 study developed machine learning and rule-based models for semi-automated SSI detection in 3,931 surgical patients [3]. The best-performing ML models (Naïve Bayes and dense neural network) achieved sensitivity up to 0.90, AUROC up to 0.968, and workload reduction over 90% at a 0.5 decision threshold [3]. The rule-based model demonstrated perfect sensitivity (1.000) but lower workload reduction (70%) [3].

Validation Approach: Internal validation showed no significant performance decrease between training and validation datasets, suggesting no substantial overfitting [3]. Feature importance analysis using SHAP values revealed that the Naïve Bayes model prioritized microbiological data (cultures), while the DNN relied more on contextual characteristics (contamination class, implant presence) [3].

Hospital-Induced Delirium Prediction Model Protocol

A 2023 protocol outlines development of prediction models for hospital-induced delirium using structured and unstructured EHR data [4]. The validation strategy employs geographical validation, leveraging data from two academic medical centers—using one for training and the other for testing [4].

Validation Metrics: The protocol specifies evaluation of both discriminative ability (AUROC, balanced accuracy, sensitivity, specificity) and calibration (Brier score) [4]. This comprehensive approach addresses common limitations in prediction model studies where calibration is often overlooked [5].

Implementation Considerations and Model Updating

Despite the importance of validation, implementation of clinical prediction models often proceeds without full adherence to prediction modeling best practices. A systematic review found that only 27% of implemented models underwent external validation, and just 13% were updated following implementation [5].

Common implementation approaches include [5]:

- Integration into hospital information systems (63%)

- Web applications (32%)

- Patient decision aid tools (5%)

When models demonstrate performance degradation in new settings, several updating approaches can be employed:

- Recalibration: Adjusting intercept or slope to improve calibration

- Revision: Re-estimating a subset of predictor effects

- Extension: Adding new predictors or interaction terms

- Complete rebuilding: Developing a new model for the specific setting
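The simplest of these updating approaches, recalibration, can be sketched as logistic recalibration: a new intercept `a` and slope `b` are estimated in the new setting so that logit(updated risk) = a + b × logit(original risk). The example below is a NumPy-only illustration under assumed synthetic data in which the original model over-predicts and is too extreme; the function names are hypothetical.

```python
import numpy as np


def _logit(p, eps=1e-6):
    p = np.clip(np.asarray(p, dtype=float), eps, 1.0 - eps)
    return np.log(p / (1.0 - p))


def fit_recalibration(y, p_original, n_iter=25):
    """Estimate intercept a and slope b on the logit of the original predictions."""
    lp = _logit(p_original)
    design = np.column_stack([np.ones_like(lp), lp])
    beta = np.zeros(2)
    for _ in range(n_iter):  # Newton-Raphson for unpenalised logistic regression
        mu = 1.0 / (1.0 + np.exp(-(design @ beta)))
        gradient = design.T @ (y - mu)
        hessian = design.T @ (design * (mu * (1.0 - mu))[:, None])
        beta = beta + np.linalg.solve(hessian, gradient)
    return float(beta[0]), float(beta[1])


def recalibrate(p_original, a, b):
    """Apply the fitted intercept and slope to original predictions."""
    return 1.0 / (1.0 + np.exp(-(a + b * _logit(p_original))))


# Synthetic new setting: the original model's logits are 1 + 2 * (true logit),
# so the ideal correction is a = -0.5, b = 0.5.
rng = np.random.default_rng(7)
lp_true = rng.normal(-1.0, 1.0, size=4000)
y_new = rng.binomial(1, 1.0 / (1.0 + np.exp(-lp_true)))
p_old = 1.0 / (1.0 + np.exp(-(1.0 + 2.0 * lp_true)))

a_hat, b_hat = fit_recalibration(y_new, p_old)
p_new = recalibrate(p_old, a_hat, b_hat)
print(f"a={a_hat:.2f} b={b_hat:.2f} "
      f"mean risk before={p_old.mean():.3f} after={p_new.mean():.3f} observed={y_new.mean():.3f}")
```

Recalibration leaves the ranking of patients, and therefore discrimination, unchanged; revision, extension, or rebuilding are the options when discrimination itself has degraded.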

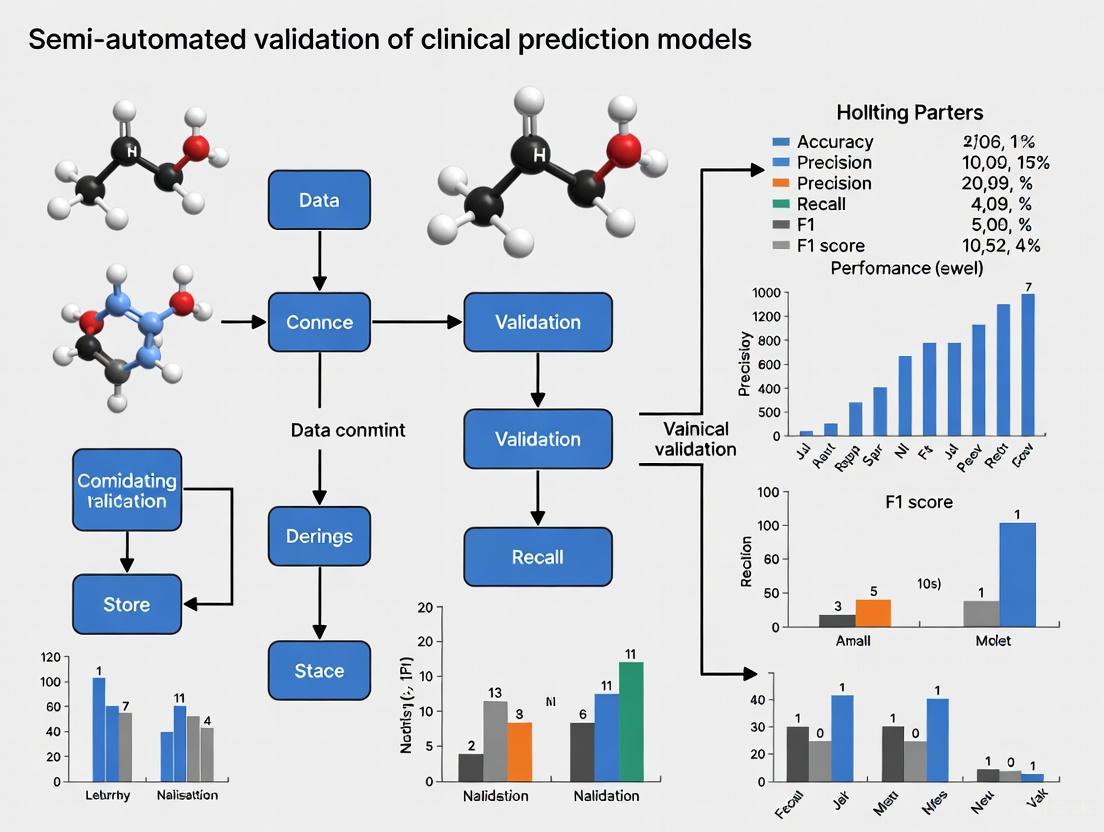

Figure 1: Clinical Prediction Model (CPM) Validation and Implementation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Clinical Prediction Model Validation

| Resource Category | Specific Tools/Methods | Function in Validation |
|---|---|---|
| Reporting Guidelines | TRIPOD, TRIPOD-AI [2] | Standardized reporting of prediction model studies |
| Risk of Bias Assessment | PROBAST [1] | Structured assessment of methodological quality |
| Statistical Software | R, Python with scikit-learn | Implementation of validation techniques |
| Internal Validation Methods | Bootstrapping, k-fold cross-validation [1] | Optimism-correction and stability assessment |
| Performance Measures | AUROC, calibration plots, Brier score [3] [4] | Comprehensive performance quantification |
| Clinical Utility Assessment | Decision curve analysis, Net Benefit [2] | Evaluation of clinical value beyond statistical performance |
| Data Extraction Tools | Natural language processing, structured query tools [4] | Processing of unstructured EHR data for validation |

Validation constitutes a fundamental component in the development and implementation of clinical prediction models, particularly for semi-automated surveillance systems. The presented protocols provide a structured framework for establishing model credibility across different temporal, geographical, and clinical contexts. Through rigorous application of these validation techniques, researchers can ensure that clinical prediction models deliver reliable, generalizable performance that translates to genuine improvements in patient care and clinical decision-making.

The integration of artificial intelligence and machine learning into clinical medicine has opened new possibilities for enhancing diagnostic accuracy and therapeutic decision-making [6]. Within this landscape, clinical prediction models (CPMs) have emerged as crucial tools for estimating the probability of patients experiencing specific health outcomes. However, the pathway from model development to routine clinical implementation is fraught with systematic barriers. Recent evidence indicates that a significant gap persists between the creation of evidence-based tools and their tangible adoption in healthcare settings [7]. This application note examines the primary constraints hindering the routine validation of CPMs—time limitations, expertise deficits, and resource scarcity—within the context of semi-automated validation workflows. By synthesizing current research and empirical findings, we provide structured frameworks and practical protocols to identify and mitigate these barriers, thereby accelerating the translation of predictive models from research artifacts to clinically impactful tools.

The challenge of validation is particularly acute in clinical environments where traditional resource constraints intersect with the novel demands of AI integration. Studies reveal that while over 80% of healthcare administrators endorse support for evidence-based tools, only 30-45% of frontline practitioners report regularly utilizing them in clinical practice [7]. This implementation gap underscores the critical importance of addressing validation barriers systematically. The emergence of semi-automated validation approaches offers promising pathways to overcome these constraints, but requires careful methodological consideration and strategic resource allocation. This document provides researchers, scientists, and drug development professionals with actionable frameworks to navigate these challenges while maintaining rigorous validation standards.

Quantitative Analysis of Validation Barriers

Understanding the prevalence and impact of different validation barriers enables targeted resource allocation and strategic planning. The following data synthesis, drawn from recent empirical studies across healthcare and validation sciences, quantifies the most significant constraints affecting clinical prediction model validation.

Table 1: Prevalence and Impact of Primary Validation Barriers

| Barrier Category | Specific Challenge | Reported Prevalence | Impact Level |
|---|---|---|---|
| Time Constraints | Insufficient staffing and time resources | 66% of teams report increased workloads [8] | High |
| Time Constraints | Manual validation processes | 58% adoption of digital systems remains incomplete [8] | Medium-High |
| Expertise Deficits | Lack of AI/ML validation knowledge | 42% of professionals have 6-15 years experience (mid-career gap) [8] | High |
| Expertise Deficits | Methodological gaps in time-to-event modeling | 86% of prediction publications show high risk of bias [5] | High |
| Resource Limitations | Computational infrastructure costs | Limited external validation (27% of models) [5] | Medium-High |
| Resource Limitations | Data accessibility and quality | Bias toward high healthcare utilizers in training data [9] | Medium |
| Organizational Factors | Audit readiness pressures | Primary concern for 69% of organizations [8] | Medium |
| Organizational Factors | Siloed workflows and documentation | Only 13% integrate validation with project tools [8] | Medium |

The data reveal systematic challenges across the validation ecosystem. Time constraints manifest most significantly through overwhelming workloads, with 66% of validation teams reporting increased responsibilities without proportional resource expansion [8]. This is compounded by persistent manual processes: adoption of digital systems, reported at 58%, remains incomplete, and 42% of professionals fall in the 6-15 years' experience band, a mid-career gap that can slow validation workflows [8]. Expertise deficits are particularly concerning, with a high risk of bias observed in 86% of clinical prediction publications, indicating widespread issues with model development and validation rigor [5]. The high risk of bias primarily stems from inadequate handling of temporal relationships, poor calibration assessment, and limited external validation practices.

Resource limitations further constrain validation activities, with computational infrastructure representing a significant barrier, particularly for memory-intensive large language models (LLMs) being adapted for clinical prediction tasks [9]. Organizational factors complete the challenge landscape, with audit readiness emerging as the primary concern for 69% of organizations, potentially diverting resources from substantive validation activities to documentation compliance [8]. The integration gap between validation systems and project management tools (only 13% integration rate) creates significant workflow inefficiencies and data reconciliation challenges throughout the validation lifecycle.

Experimental Protocols for Barrier Identification and Mitigation

Protocol 1: Barrier Assessment Matrix for Validation Workflows

Purpose: To systematically identify and prioritize organization-specific barriers to CPM validation using a structured assessment framework.

Materials:

- Digital survey platform (e.g., REDCap, Qualtrics)

- Structured interview guides for stakeholder engagement

- Data synthesis software (NVivo, Excel with advanced analytical tools)

- Validation process mapping templates

Procedure:

Stakeholder Identification and Recruitment:

- Identify key participants across the validation lifecycle, including data scientists (3-5), clinical domain experts (2-3), validation specialists (2-3), and end-users (3-5)

- Secure participation commitments through formal invitations outlining time requirements (45-60 minutes for interviews, 20 minutes for surveys)

Multi-Method Data Collection:

- Administer structured surveys quantifying perceived barriers using 5-point Likert scales assessing frequency and impact of specific constraints

- Conduct semi-structured interviews exploring experiential dimensions of barriers using open-ended questions about resource allocation, workflow interruptions, and skill gaps

- Facilitate process mapping sessions visualizing current-state validation workflows to identify bottleneck areas

Data Synthesis and Analysis:

- Employ inductive coding techniques for qualitative data, identifying emergent themes related to time, expertise, and resource constraints

- Calculate quantitative barrier scores by multiplying frequency and impact ratings to establish priority rankings

- Triangulate findings across data sources to validate identified barriers and understand their interconnected nature

Barrier Prioritization Matrix Development:

- Plot identified barriers on a 2x2 matrix assessing implementability versus impact

- Categorize barriers as "Quick Wins" (high impact, high implementability), "Strategic Projects" (high impact, low implementability), "Fill-Ins" (low impact, high implementability), or "Thankless Tasks" (low impact, low implementability)

- Develop specific mitigation strategies for barriers in the "Quick Wins" and "Strategic Projects" quadrants
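The scoring and prioritization steps above can be sketched in a few lines of Python. The barrier names and ratings below are hypothetical, and the rule that a rating above the 5-point scale midpoint of 3 counts as "high" is an assumption made for illustration; the protocol itself does not fix a cutoff.

```python
from dataclasses import dataclass


@dataclass
class Barrier:
    name: str
    frequency: float         # mean 5-point Likert rating
    impact: float            # mean 5-point Likert rating
    implementability: float  # assumed 1-5 rating of how feasible mitigation is

    @property
    def score(self) -> float:
        # Priority score: frequency multiplied by impact.
        return self.frequency * self.impact


def quadrant(barrier: Barrier, cutoff: float = 3.0) -> str:
    """Place a barrier on the 2x2 implementability-versus-impact matrix."""
    high_impact = barrier.impact > cutoff
    high_implementability = barrier.implementability > cutoff
    if high_impact and high_implementability:
        return "Quick Win"
    if high_impact:
        return "Strategic Project"
    if high_implementability:
        return "Fill-In"
    return "Thankless Task"


# Hypothetical survey results.
barriers = [
    Barrier("Manual data extraction", frequency=5, impact=4, implementability=4),
    Barrier("Limited ML validation expertise", frequency=4, impact=5, implementability=2),
    Barrier("Inconsistent file naming", frequency=3, impact=2, implementability=5),
    Barrier("Legacy report templates", frequency=2, impact=2, implementability=1),
]

ranked = sorted(barriers, key=lambda b: b.score, reverse=True)
placement = {b.name: quadrant(b) for b in barriers}
for b in ranked:
    print(f"{b.name}: score={b.score}, quadrant={placement[b.name]}")
```

Keeping the score (frequency × impact) separate from the quadrant (impact versus implementability) mirrors the protocol: the score ranks barriers, while the quadrant decides which ones receive a mitigation plan first.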

Validation Measures:

- Establish inter-rater reliability for qualitative coding (target Cohen's κ > 0.8)

- Calculate internal consistency for survey instruments (target Cronbach's α > 0.7)

- Conduct member checking with participants to verify interpretation accuracy
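Both reliability targets can be checked with a few lines of standard-library Python. The sketch below uses the textbook formulas for Cohen's κ (two raters, nominal codes) and Cronbach's α (sample variances); the coded excerpts and survey responses are made-up illustrative data.

```python
from collections import Counter
from statistics import variance


def cohens_kappa(codes_a, codes_b):
    """Chance-corrected agreement between two raters' nominal codes."""
    n = len(codes_a)
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    return (observed - expected) / (1 - expected)


def cronbach_alpha(responses):
    """Internal consistency; rows are respondents, columns are survey items."""
    k = len(responses[0])
    item_variances = [variance(column) for column in zip(*responses)]
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Two coders assigning interview excerpts to barrier themes (illustrative).
rater_a = ["time", "time", "expertise", "resource", "time",
           "expertise", "resource", "resource", "time", "expertise"]
rater_b = ["time", "time", "expertise", "resource", "time",
           "expertise", "resource", "expertise", "time", "expertise"]

# Five respondents answering three Likert items (illustrative).
likert = [[4, 5, 4], [3, 3, 2], [5, 5, 5], [2, 2, 3], [4, 4, 4]]

kappa = cohens_kappa(rater_a, rater_b)
alpha = cronbach_alpha(likert)
print(f"kappa={kappa:.3f} (target > 0.8), alpha={alpha:.3f} (target > 0.7)")
```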

This protocol enables organizations to move beyond anecdotal understanding of validation constraints to evidence-based prioritization of mitigation efforts. The multi-method approach addresses both the quantitative prevalence and qualitative impact of barriers, providing a comprehensive foundation for resource allocation decisions.

Protocol 2: Semi-Automated Validation Implementation Framework

Purpose: To establish a structured approach for implementing semi-automated validation techniques that address time, expertise, and resource constraints while maintaining methodological rigor.

Materials:

- Digital validation platforms with API capabilities

- Version control systems (Git)

- Containerization tools (Docker, Singularity)

- Continuous integration/continuous deployment (CI/CD) pipelines

- Synthetic test datasets representing various clinical scenarios

Procedure:

Validation Process Deconstruction:

- Map existing manual validation workflows into discrete, standardized components

- Identify automation candidates through complexity-impact analysis, prioritizing high-frequency, rule-based tasks

- Establish validation checkpoints requiring human expert oversight, particularly for clinical relevance assessment

Tool Selection and Configuration:

- Evaluate digital validation platforms based on interoperability with existing systems, scalability, and audit trail capabilities

- Implement version control for validation protocols, enabling traceability and collaborative development

- Configure containerized environments to ensure computational reproducibility across different infrastructure setups

Workflow Integration and Hybrid Validation:

- Develop API-based connections between validation systems and clinical data repositories (e.g., EHR systems, OMOP CDM databases)

- Implement automated data quality checks and preprocessing validation routines

- Establish human-in-the-loop checkpoints for clinical concept validation, model output interpretation, and context-specific performance assessment

Performance Benchmarking:

- Execute parallel validation using both traditional and semi-automated approaches on historical CPMs with known performance characteristics

- Compare time-to-completion, resource utilization, and identified issues across approaches

- Assess reproducibility through repeated executions with varying team compositions

Continuous Validation Monitoring:

- Implement automated performance drift detection comparing model outputs against established baselines

- Configure alert systems for performance metric deviations beyond predetermined thresholds

- Establish scheduled re-validation triggers based on data drift, concept drift, or clinical practice changes
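A minimal sketch of the drift-detection and alerting steps follows, assuming that labelled outcomes become available per monitoring window and that AUROC is the monitored metric. The baseline value, the 0.05 tolerance, and the window labels are placeholders, not recommended settings.

```python
def auroc(labels, scores):
    """Share of (event, non-event) pairs ranked correctly; ties count 0.5."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("window needs at least one event and one non-event")
    wins = sum((sp > sn) + 0.5 * (sp == sn) for sp in positives for sn in negatives)
    return wins / (len(positives) * len(negatives))


def check_drift(baseline_auc, windows, max_drop=0.05):
    """Return (window label, AUROC) for each window that falls too far below baseline."""
    alerts = []
    for label, outcomes, predictions in windows:
        current = auroc(outcomes, predictions)
        if baseline_auc - current > max_drop:
            alerts.append((label, current))
    return alerts


# Two hypothetical monitoring windows: (label, observed outcomes, predicted risks).
windows = [
    ("2024-Q1", [0, 0, 1, 1], [0.1, 0.2, 0.8, 0.9]),
    ("2024-Q2", [0, 0, 1, 1], [0.1, 0.6, 0.4, 0.9]),
]

alerts = check_drift(baseline_auc=0.90, windows=windows, max_drop=0.05)
for label, value in alerts:
    print(f"ALERT {label}: AUROC {value:.2f} is more than 0.05 below baseline")
```

In practice each window would hold far more patients, and an alert would feed the scheduled re-validation trigger rather than change the model automatically, keeping the human-in-the-loop checkpoint intact.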

Validation Metrics:

- Process efficiency: Time reduction compared to manual validation (target: ≥40% improvement)

- Resource utilization: Personnel hours required per validation cycle (target: ≥35% reduction)

- Quality indicators: Issues identified, false negative/positive rates in validation detection

- Reproducibility score: Consistency across repeated validation cycles (target: ≥90% agreement)

This protocol provides a structured pathway for organizations to incrementally introduce automation while maintaining necessary human oversight. The hybrid approach balances efficiency gains with clinical safety requirements, addressing both time constraints and expertise limitations through strategic task allocation.

Visualization of Semi-Automated Validation Workflows

The following diagrams illustrate key workflows and relationships in semi-automated validation of clinical prediction models, highlighting how strategic automation addresses common barriers while maintaining rigorous oversight.

Semi-Automated Validation Workflow with Barrier Mitigation

The workflow visualization demonstrates how strategic automation insertion addresses specific validation barriers while preserving essential human oversight. Automated components (green) target time-intensive, repetitive tasks like data quality verification and test execution, directly addressing time constraints. The centralized expert review phase (red) ensures clinical relevance assessment receives appropriate specialized attention, mitigating expertise deficits through focused resource allocation. Documentation automation further addresses resource limitations by reducing manual effort while maintaining audit trail completeness.

Research Reagent Solutions for Validation Research

Implementing effective semi-automated validation requires specific tools and platforms that address the identified constraints. The following table catalogs essential solutions with demonstrated applicability to clinical prediction model validation.

Table 2: Essential Research Reagent Solutions for Validation Constraints

| Solution Category | Specific Tool/Platform | Primary Function | Constraint Addressed |
|---|---|---|---|
| Digital Validation Platforms | Kneat Gx | Electronic validation management with automated audit trails | Time constraints through workflow efficiency |
| Digital Validation Platforms | Custom-built solutions with API integration | Interoperability between validation and clinical systems | Resource limitations through connected infrastructure |
| Data Quality & Processing | OMOP CDM with standardized vocabularies | Harmonized data structure for reproducible validation | Expertise deficits through standardization |
| Data Quality & Processing | Synthetic Public Use Files (SynPUF) | Representative test data for validation pipeline development | Resource limitations through accessible test data |
| Computational Environments | Docker/Singularity containers | Reproducible computational environments across systems | Expertise deficits through environment consistency |
| Computational Environments | Git version control systems | Protocol versioning and collaborative development | Time constraints through change management efficiency |
| AI & Automation Tools | LLMs (GPT-4, Llama3) for concept mapping | Automated criteria transformation to database queries | Time constraints through task automation |
| AI & Automation Tools | Custom scripts for automated testing | Batch execution of validation test cases | Time constraints and resource limitations |
| Analysis & Reporting | R/Python validation frameworks | Statistical assessment of model performance | Expertise deficits through standardized metrics |
| Analysis & Reporting | Automated documentation generators | Report generation from structured validation results | Time constraints through reduced manual effort |

The reagent solutions highlighted above provide practical approaches to addressing the three core barriers. Digital validation platforms demonstrate particular effectiveness for time constraints, with early adopters reporting 50% faster cycle times and 63% of organizations meeting or exceeding ROI expectations [8]. For expertise deficits, standardized frameworks like the OMOP Common Data Model create consistent validation approaches across organizations, while containerization tools address the "it worked on my machine" reproducibility challenge that often plagues validation efforts. Resource limitations are mitigated through synthetic datasets that enable validation pipeline development without requiring extensive real-world data access during early stages, and automated documentation tools that reduce manual effort while maintaining comprehensive audit trails.

The routine validation of clinical prediction models faces significant barriers related to time constraints, expertise deficits, and resource limitations, but systematic approaches using semi-automated methodologies offer promising pathways forward. The protocols, visualizations, and tooling solutions presented in this application note provide researchers and drug development professionals with actionable frameworks to address these challenges. By strategically implementing targeted automation while preserving essential human oversight, organizations can accelerate validation cycles without compromising scientific rigor or patient safety.

Successful implementation requires organizational commitment to both technological adoption and cultural shift. The transition from document-centric to data-centric validation models represents a fundamental paradigm change that demands reskilling initiatives and governance framework updates [8]. Organizations should prioritize solutions that offer immediate efficiency gains while building toward long-term, sustainable validation ecosystems. Through the structured application of these principles and protocols, the research community can overcome current validation constraints and fully realize the potential of clinical prediction models to improve patient care and treatment outcomes.

Semi-automated validation represents a pragmatic methodology that strategically combines automated computational procedures with expert researcher oversight to assess the performance and reliability of clinical prediction models. This hybrid approach is particularly valuable in healthcare research, where complete automation may be unsuitable due to the complexity of clinical data, the need for domain expertise, and the critical importance of validation accuracy. By leveraging specialized software tools to handle repetitive computational tasks while retaining human judgment for strategic decisions and interpretation, semi-automated validation creates an efficient bridge between entirely manual processes and fully automated systems [10].

The fundamental value proposition of this approach lies in its balanced efficiency. Research demonstrates that semi-automated validation can achieve nearly identical statistical results to traditional manual methods while significantly reducing the time and specialized programming expertise required. For instance, in validation studies of breast cancer prediction models, differences between semi-automated and manual validation for key calibration metrics (intercepts and slopes) ranged from 0 to 0.03, which was determined not clinically relevant, while discrimination metrics (AUCs) were identical between methods [10]. This comparable performance, combined with substantial time savings and improved accessibility for researchers without advanced programming backgrounds, positions semi-automated validation as a compelling methodology for accelerating the validation of clinical prediction models.

Comparative Analysis of Validation Approaches

Characterizing the Validation Spectrum

The validation of clinical prediction models exists along a continuum from fully manual to completely automated processes, each with distinct characteristics, advantages, and limitations. Understanding these differences is essential for selecting the appropriate validation strategy for a specific research context.

Table 1: Comparison of Validation Approaches for Clinical Prediction Models

| Feature | Manual Validation | Semi-Automated Validation | Fully Automated Validation |
|---|---|---|---|
| Implementation Process | Custom statistical programming (R, Python, Stata) | Pre-built platforms with researcher input (Evidencio) | End-to-end automated systems |
| Time Requirements | High (weeks to months) | Moderate (days to weeks) | Low (hours to days) |
| Statistical Expertise Needed | Advanced programming skills | Basic to intermediate skills | Minimal skills |
| Flexibility & Customization | Highly customizable | Moderately customizable | Limited customization |
| Transparency & Reproducibility | Variable, depends on documentation | High with platform consistency | High but often "black box" |
| Error Risk | Prone to coding errors | Reduced through automation | Systematic error potential |
| Ideal Use Case | Novel methodologies, complex adjustments | Routine validation, multi-model testing | High-volume, standardized tasks |

Quantitative Performance Comparison

Research directly comparing manual and semi-automated approaches demonstrates remarkably similar performance outcomes. A comprehensive 2019 study examining four breast cancer prediction models (CancerMath, INFLUENCE, PPAM, and PREDICT v.2.0) found that discrimination metrics (AUCs) were identical between semi-automated and manual validation methods. Calibration metrics showed minimal, clinically irrelevant differences, with intercepts and slopes varying by only 0 to 0.03 between approaches [10]. This negligible variation confirms that semi-automated validation maintains statistical integrity while offering efficiency advantages.

Beyond statistical equivalence, semi-automated validation addresses a critical bottleneck in clinical prediction research: the scarcity of external validations. Despite hundreds of prediction models being developed annually across various medical domains, only a small fraction undergo proper external validation in the target populations where they would be implemented [11]. This validation gap represents a significant patient safety concern, as unvalidated models may perform poorly in new populations due to differences in disease severity, patient demographics, or clinical practices. Semi-automated approaches directly address this problem by making validation more accessible and less resource-intensive.

Semi-Automated Validation in Practice: Protocols and Applications

Implementation Framework and Workflow

The implementation of semi-automated validation follows a structured workflow that integrates researcher expertise at critical decision points while automating computational tasks. This process can be visualized through the following workflow:

Experimental Protocol: Model Validation Using the Evidencio Platform

Purpose: To externally validate clinical prediction models using a semi-automated approach that maintains statistical rigor while improving efficiency.

Materials and Reagents:

- Validation Dataset: Clinical registry data (e.g., Netherlands Cancer Registry) formatted according to model requirements [10]

- Semi-Automated Validation Platform: Evidencio platform or equivalent (version 2.5 or newer) [10]

- Statistical Software: R (version 3.4.0 or newer) or Python for supplementary analyses [10]

- Data Anonymization Tool: Secure data de-identification software compliant with local regulations

Procedure:

Dataset Preparation and Harmonization

- Obtain dataset from relevant clinical registry (e.g., Netherlands Cancer Registry)

- Apply inclusion/exclusion criteria matching original development population

- Harmonize variable definitions and coding with model requirements

- Handle missing data according to pre-specified rules (e.g., set to 'unknown' or exclude) [10]

- Anonymize dataset to ensure patient privacy

Platform Configuration

- Upload prediction model to Evidencio platform (formula, coefficients, or source code)

- Configure validation parameters (discrimination, calibration, clinical utility)

- Map dataset variables to model requirements

- Set outcome definitions and time horizons

Validation Execution

- Upload anonymized dataset to platform

- Execute automated validation procedures

- Generate performance metrics (calibration intercept/slope, AUC, Brier score)

- Produce visualizations (calibration plots, ROC curves)

Expert Review and Interpretation

- Compare performance metrics with manual validation results if available

- Assess clinical relevance of performance differences

- Evaluate calibration across risk strata

- Identify potential dataset or model issues

Reporting

- Document validation methodology according to TRIPOD guidelines [11]

- Report performance metrics with confidence intervals

- Contextualize findings relative to clinical application

Troubleshooting:

- Model Specification Issues: Verify all model components are correctly specified in platform

- Data Quality Flags: Investigate unexpected missing data patterns or outliers

- Performance Discrepancies: Compare with manual validation to identify potential platform issues
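When performance discrepancies need to be traced, the discrimination and overall-accuracy metrics named in the Validation Execution step can be recomputed by hand as a cross-check on platform output. The sketch below is a minimal, dependency-free illustration and not the Evidencio implementation; the function names and the toy data are assumptions introduced here.

```python
from typing import Sequence


def auc_rank(y_true: Sequence[int], y_prob: Sequence[float]) -> float:
    """AUC as the probability that a randomly chosen event case receives a
    higher predicted risk than a randomly chosen non-event case.
    Ties count as one half (Mann-Whitney formulation)."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one event and one non-event")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def brier_score(y_true: Sequence[int], y_prob: Sequence[float]) -> float:
    """Mean squared difference between predicted risk and observed outcome."""
    if len(y_true) != len(y_prob) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


# Toy data (hypothetical): observed outcomes and predicted risks.
y_obs = [0, 0, 1, 1]
y_pred = [0.1, 0.4, 0.35, 0.8]

example_auc = auc_rank(y_obs, y_pred)
example_brier = brier_score(y_obs, y_pred)
print(f"AUC={example_auc:.3f}, Brier={example_brier:.4f}")
```

The pairwise AUC loop is quadratic in sample size, which is acceptable for a spot check but would normally be replaced by a rank-based or library routine on registry-scale data.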

Domain-Specific Applications

The semi-automated validation approach has demonstrated utility across multiple healthcare domains with varying methodological requirements:

Table 2: Domain-Specific Applications of Semi-Automated Validation

| Clinical Domain | Application Example | Technical Approach | Key Outcomes |

|---|---|---|---|

| Oncology | Validation of breast cancer prediction models (CancerMath, PREDICT) [10] | Logistic regression, Cox models, Kaplan-Meier estimates | Near-identical performance to manual validation (AUC differences: 0) |

| Critical Care | Prediction of interventions in community-acquired pneumonia [12] | Tree-based machine learning models | Strong discrimination for mechanical ventilation, vasopressor use |

| Medical Imaging | Analysis of shear wave elastography clips in muscle tissue [13] | Image processing algorithm with manual segmentation option | Excellent correlation with manual measurements (Spearman's ρ > 0.99) |

| Vascular Medicine | Detection of active bleeding in DSA images [14] | Color-coded parametric imaging with deep learning | Improved diagnostic efficiency for hemorrhage detection (P < 0.001) |

The Scientist's Toolkit: Essential Research Reagents and Platforms

Implementing semi-automated validation requires specific computational tools and platforms designed to streamline the validation process while maintaining methodological rigor. The following toolkit represents essential resources for researchers conducting semi-automated validation of clinical prediction models:

Table 3: Research Reagent Solutions for Semi-Automated Validation

| Tool Category | Specific Tools | Primary Function | Implementation Considerations |

|---|---|---|---|

| Specialized Validation Platforms | Evidencio [10] | Online platform for prediction model validation and sharing | Handles various model types; provides performance metrics and visualizations |

| Data Validation Libraries | Pydantic, Pandera [15] | Data quality assurance and schema validation | Pydantic for type annotations; Pandera for dataframe-specific validation |

| Statistical Analysis Environments | R, Python with scikit-learn, caret [10] | Statistical computing and model evaluation | Extensive validation package ecosystems; customizable analyses |

| Imaging Analysis Tools | Custom MATLAB algorithms [13] | Medical image processing and quantification | Specialized for DICOM format; enables batch processing of image clips |

| Reporting Frameworks | TRIPOD checklist [11] | Standardized reporting of prediction model studies | Ensures transparent and complete methodology reporting |
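To make the "data quality assurance and schema validation" row concrete, the following sketch checks a validation dataset against a declared schema before it is uploaded. It deliberately uses only the Python standard library as a stand-in and does not reproduce the Pydantic or Pandera APIs; the column names, ranges, and categories are hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical schema: column -> (expected type, value check).
SCHEMA: Dict[str, Tuple[type, Callable[[Any], bool]]] = {
    "age": (int, lambda v: 18 <= v <= 110),
    "tumor_size_mm": (float, lambda v: 0 < v <= 300),
    "nodal_status": (str, lambda v: v in {"negative", "positive", "unknown"}),
    "event": (int, lambda v: v in (0, 1)),
}


def validate_rows(rows: List[Dict[str, Any]]) -> List[str]:
    """Return one human-readable message per schema violation."""
    errors: List[str] = []
    for i, row in enumerate(rows):
        for col, (expected_type, check) in SCHEMA.items():
            if col not in row:
                errors.append(f"row {i}: missing column '{col}'")
                continue
            value = row[col]
            if not isinstance(value, expected_type):
                errors.append(
                    f"row {i}: '{col}' should be {expected_type.__name__}, "
                    f"got {type(value).__name__}"
                )
            elif not check(value):
                errors.append(f"row {i}: '{col}' has out-of-range value {value!r}")
    return errors


good_row = {"age": 57, "tumor_size_mm": 22.0, "nodal_status": "negative", "event": 0}
bad_row = {"age": 140, "tumor_size_mm": 15.0, "nodal_status": "N1", "event": 1}

schema_errors = validate_rows([good_row, bad_row])
for message in schema_errors:
    print(message)
```

Running such a check before upload surfaces harmonization problems (for example, a nodal status coded differently from the model's expected categories) while they are still cheap to fix.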

Semi-automated validation represents a methodological advancement that successfully bridges the gap between labor-intensive manual processes and potentially opaque fully automated systems. By strategically distributing tasks according to their requirements for human judgment versus computational efficiency, this approach maintains statistical rigor while addressing practical implementation barriers. The demonstrated equivalence in performance metrics between semi-automated and manual approaches, combined with significant efficiency gains, supports broader adoption of this methodology across clinical prediction model research [10].

Future developments in semi-automated validation will likely focus on enhanced integration with electronic health record systems, more sophisticated handling of temporal validation challenges, and improved methods for assessing model fairness and generalizability across diverse populations. As these tools evolve, maintaining the crucial balance between automation efficiency and expert oversight will remain essential for ensuring that clinical prediction models are both statistically sound and clinically applicable.

The development and implementation of Clinical Prediction Models (CPMs) have seen significant activity, yet key validation and updating processes remain underutilized. The following table summarizes the current state of CPM implementation and validation practices based on a systematic review of 56 prediction models [5].

| Aspect | Metric | Value/Percentage |

|---|---|---|

| Model Development & Internal Validation | Models assessed for calibration | 32% |

| External Validation | Models undergoing external validation | 27% |

| Implementation Platform | Hospital Information System (HIS) | 63% |

| Implementation Platform | Web Application | 32% |

| Implementation Platform | Patient Decision Aid Tool | 5% |

| Post-Implementation | Models updated after implementation | 13% |

| Risk of Bias | Publications with high overall risk of bias | 86% |

Experimental Protocol for Semi-Automated External Validation

This protocol provides a detailed methodology for performing semi-automated external validation of a clinical prediction model, facilitating the assessment of model performance in a new patient population [16].

Pre-Validation Preparatory Phase

- Objective: To prepare the target dataset and the model for the validation procedure.

- Step 1: Model Selection and Formulae Acquisition

- Select a prediction model with a clinically relevant outcome.

- Obtain the complete underlying formulae, coefficients, or source code from the original publication or authors.

- Confirm the availability of all required predictor variables in the target dataset.

- Step 2: Target Dataset Curation

- Obtain a registry or cohort dataset (e.g., from a cancer registry) for validation.

- Align the inclusion and exclusion criteria of the validation population with the original model's development population.

- Handle missing data according to the model's requirements, either by:

- Setting specific variables to 'unknown' if the model can handle weighted averages for missing covariates.

- Excluding patients with one or more missing values if the model cannot accommodate them.

- Ensure the dataset is fully anonymized to guarantee patient privacy.

Semi-Automated Validation Execution Phase

- Objective: To execute the validation process using a semi-automated platform and compute performance metrics.

- Step 3: Platform Setup and Data Input

- Access a semi-automated validation platform (e.g., Evidencio, https://www.evidencio.com).

- Upload the underlying formula of the model to the platform, if required.

- Input or upload the prepared target dataset into the validation tool.

- Step 4: Performance Metric Calculation

- Execute the validation run within the platform.

- The tool automatically calculates key performance metrics:

- Discrimination: Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve.

- Calibration: Calibration-in-the-large (intercept) and calibration slope.

Analysis and Interpretation Phase

- Objective: To interpret the validation results and assess the model's transportability.

- Step 5: Results Comparison and Reporting

- Compare the computed AUC, intercept, and slope from the semi-automated process against values from manual validation, if available. In the reference comparison, differences of 0 to 0.03 in intercepts and slopes were not considered clinically relevant [16].

- Report the final validation metrics, concluding on the model's performance and generalizability in the target population.

Workflow for Semi-Automated Validation of Clinical Prediction Models

The following diagram illustrates the logical workflow for the semi-automated validation of clinical prediction models, from initial model selection to the final assessment of clinical utility.

Research Reagent Solutions for Prediction Model Validation

The following table details key "research reagents" — essential datasets, software tools, and platforms — required for conducting robust validation studies of clinical prediction models.

| Research Reagent | Type | Function / Application |

|---|---|---|

| Registry Data (e.g., Netherlands Cancer Registry) | Dataset | Provides large-scale, real-world patient data for external validation; inclusion and exclusion criteria are applied so that the validation population matches the original development cohort. [16] |

| Semi-Automated Validation Platform (e.g., Evidencio) | Software Tool | Partly automates the validation procedure, calculating discrimination and calibration metrics, saving time and reducing the need for advanced statistical programming. [16] [17] |

| Statistical Software (e.g., R, Stata) | Software Tool | Used for manual validation comparisons, data cleaning, and advanced statistical analyses not covered by automated platforms. [16] |

| Model Coefficients & Formulae | Information | The exact mathematical representation of the prediction model, essential for performing any form of external validation, whether manual or semi-automated. [16] |

| TRIPOD+AI Statement | Reporting Guideline | Provides updated guidance for transparently reporting clinical prediction models that use regression or machine learning, improving reproducibility. [18] |

The rapid proliferation of clinical prediction models (CPMs) has created a significant validation gap in healthcare research, with evidence suggesting most models carry high risk of bias and insufficient validation. Bibliometric analyses reveal an estimated 248,431 CPM development articles were published by 2024, with notable acceleration from 2010 onward [19]. This surge in model development has far outpaced rigorous validation efforts, creating a substantial mismatch between model creation and implementation readiness. The healthcare research community now faces a critical challenge: while new models continue to be developed at an accelerating pace, most lack the robust validation necessary for safe clinical deployment.

This application note documents the systemic gaps in current CPM validation practices and presents semi-automated protocols to address these deficiencies. The validation gap is quantifiable and substantial: across all medical fields, only 27% of implemented models undergo external validation, and a mere 13% are updated following implementation [5]. This shortfall is particularly concerning given that 86% of published prediction models demonstrate high risk of bias when assessed using standardized tools like PROBAST (Prediction model Risk Of Bias ASsessment Tool) [20]. The consequences of this validation gap directly impact patient care, potentially introducing algorithmic biases that disproportionately affect marginalized populations and undermining the reliability of clinical decision support systems [21].

Quantitative Evidence of Systemic Gaps

Proliferation of Clinical Prediction Models

Table 1: Bibliometric Analysis of Clinical Prediction Model Publications (1950-2024)

| Category | Estimated Publications | 95% Confidence Interval | Key Characteristics |

|---|---|---|---|

| Regression-based CPM Development Articles | 156,673 | 123,654 - 189,692 | Linear, proportional hazards, or logistic regression |

| Non-regression-based CPM Development Articles | 91,758 | 76,321 - 107,195 | Machine learning, scoring rules based on multiple unadjusted bivariate associations |

| Total CPM Development Articles | 248,431 | 207,832 - 289,030 | All medical fields, diagnostic and prognostic models |

| Annual Acceleration Pattern | Marked increase from 2010 onward | N/A | Consistent upward trajectory in publications |

The massive scale of CPM development demonstrated in Table 1 highlights the impracticality of addressing validation gaps exclusively through manual methods. This proliferation necessitates more scalable, semi-automated approaches to validation [19].

Validation Status of Implemented Prediction Models

Table 2: Implementation and Validation Status of Clinical Prediction Models

| Implementation Aspect | Frequency (%) | Examples/Tools | Clinical Implications |

|---|---|---|---|

| Overall High Risk of Bias | 86% of models | PROBAST assessment tool | Compromised reliability for clinical decision-making |

| External Validation Performance | 27% of models | Epic Deterioration Index (EDI) | Limited generalizability to diverse populations |

| Post-Implementation Updating | 13% of models | National Early Warning Score (NEWS) | Model drift and performance degradation over time |

| Hospital Information System Integration | 63% of models | Electronic Cardiac Arrest Risk Triage (eCART) | Wider deployment despite validation gaps |

| Web Application Implementation | 32% of models | Various risk calculators | Accessibility without sufficient validation |

The data presented in Table 2 reveal systemic weaknesses throughout the model lifecycle, from development through implementation and maintenance [5]. These gaps are particularly concerning for early warning systems widely used in nursing practice, such as the Modified Early Warning Score (MEWS) and the Electronic Cardiac Arrest Risk Triage (eCART), where biased predictions can directly impact patient safety [22].

Experimental Protocols for Gap Assessment

Protocol 1: Standardized Risk of Bias Assessment Using PROBAST

Purpose: To systematically evaluate methodological quality and risk of bias in clinical prediction model studies.

Materials:

- PROBAST assessment tool (domains: participants, predictors, outcome, analysis)

- Study manuscripts for evaluation

- Standardized data extraction forms

Procedure:

- Domain Identification: Categorize assessment into four PROBAST domains: participants, predictors, outcome, and analysis.

- Signaling Questions: For each domain, answer specific signaling questions to identify potential biases.

- Risk Judgments: Assign risk of bias ratings (high/low/unclear) for each domain.

- Overall Assessment: Synthesize domain-level judgments into overall risk of bias rating.

- Consensus Building: Conduct structured consensus meetings to resolve discrepant ratings between assessors.

Validation Notes: Interrater reliability (IRR), measured with the prevalence-adjusted bias-adjusted kappa (PABAK), is higher for overall risk of bias judgments (0.78-0.82) than for domain-level judgments. Consensus discussions primarily lead to item-level improvements but rarely change overall risk of bias ratings [20].
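For readers unfamiliar with PABAK, the statistic depends only on the observed proportion of agreement and the number of rating categories: PABAK = (k · p_o − 1) / (k − 1), which reduces to 2 · p_o − 1 for two categories. The sketch below applies this general form to two assessors' ratings; the ratings are invented, and whether the cited study used the two-category or the multi-category form is not stated here, so the choice of k = 3 is an assumption.

```python
from typing import Sequence


def pabak(rater_a: Sequence[str], rater_b: Sequence[str], n_categories: int) -> float:
    """Prevalence-adjusted bias-adjusted kappa from two raters' labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    if n_categories < 2:
        raise ValueError("need at least two rating categories")
    # Observed proportion of studies on which both assessors agree.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)
    return (n_categories * p_o - 1) / (n_categories - 1)


# Hypothetical overall risk-of-bias ratings for ten studies.
assessor_1 = ["high", "high", "low", "high", "unclear",
              "high", "low", "high", "high", "high"]
assessor_2 = ["high", "high", "low", "high", "unclear",
              "high", "low", "high", "high", "low"]

pabak_example = pabak(assessor_1, assessor_2, n_categories=3)
print(f"PABAK = {pabak_example:.2f}")
```

With nine of ten ratings in agreement and three categories, the observed agreement of 0.9 corresponds to a PABAK of 0.85.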

Protocol 2: External Validation Assessment Framework

Purpose: To evaluate model performance across diverse, independent populations not used in model development.

Materials:

- Independent dataset with representative population characteristics

- Model performance metrics calculator (discrimination, calibration)

- Fairness assessment framework

Procedure:

- Dataset Characterization: Document demographic composition, clinical settings, and temporal factors of external validation dataset.

- Performance Metrics Calculation:

- Discrimination: Area under curve (AUC) with confidence intervals

- Calibration: Calibration plots and statistics

- Clinical utility: Decision curve analysis

- Fairness Assessment: Evaluate performance disparities across demographic subgroups (race, ethnicity, sex, socioeconomic status).

- Comparative Analysis: Compare performance between development and validation cohorts.

- Generalizability Judgment: Determine suitability for broader clinical implementation.

Application Context: This protocol is particularly relevant for digital pathology-based AI models for lung cancer diagnosis, where external validation remains limited despite numerous developed models [23].
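The clinical utility and fairness steps of this protocol can be sketched together. Decision curve analysis summarizes utility as net benefit at a chosen risk threshold, NB = TP/n − (FP/n) · pt/(1 − pt), and the same quantity can be recomputed within each demographic subgroup to look for disparities. The code below is a minimal illustration under those standard definitions; the function names, the subgroup labels, and the data are hypothetical.

```python
from typing import Dict, Sequence


def net_benefit(y: Sequence[int], p: Sequence[float], threshold: float) -> float:
    """Net benefit of treating patients whose predicted risk >= threshold."""
    if not 0 < threshold < 1:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(y)
    tp = sum(1 for yi, pi in zip(y, p) if pi >= threshold and yi == 1)
    fp = sum(1 for yi, pi in zip(y, p) if pi >= threshold and yi == 0)
    return tp / n - (fp / n) * threshold / (1 - threshold)


def net_benefit_by_group(
    y: Sequence[int], p: Sequence[float], groups: Sequence[str], threshold: float
) -> Dict[str, float]:
    """Recompute net benefit separately within each subgroup."""
    result: Dict[str, float] = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        result[g] = net_benefit([y[i] for i in idx], [p[i] for i in idx], threshold)
    return result


# Hypothetical validation data: outcome, predicted risk, subgroup label.
y_val = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
p_val = [0.9, 0.6, 0.2, 0.7, 0.3, 0.1, 0.1, 0.05, 0.05, 0.2]
g_val = ["A"] * 5 + ["B"] * 5
pt = 0.25

nb_model = net_benefit(y_val, p_val, pt)
nb_treat_all = net_benefit(y_val, [1.0] * len(y_val), pt)  # everyone treated
nb_groups = net_benefit_by_group(y_val, p_val, g_val, pt)
print(nb_model, nb_treat_all, nb_groups)
```

Comparing the model against the treat-all strategy (and against treat-none, whose net benefit is 0) across a range of thresholds yields the decision curve; large differences between subgroups at clinically relevant thresholds would warrant closer review.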

Protocol 3: Semi-Automated Bias Assessment Using Large Language Models

Purpose: To accelerate risk of bias assessments while maintaining accuracy through LLM assistance.

Materials:

- Large language model (Claude 3.5 Sonnet or equivalent)

- Structured prompts for RoB2 assessment

- Validation dataset of previously assessed studies

Procedure:

- Prompt Development: Create structured prompts based on RoB2 assessment framework.

- Information Extraction: Guide LLM to identify and extract key information relevant to each signaling question.

- Judgment Generation: LLM responds to signaling questions and makes domain judgments.

- Rationale Documentation: LLM provides basis for judgments to enable verification.

- Human Verification: Experienced reviewers validate LLM-generated assessments.

- Iterative Refinement: Optimize prompts based on performance feedback.

Performance Metrics: LLMs demonstrate 65-70% accuracy against human reviewers for domain judgments, completing assessments in 1.9 minutes versus 31.5 minutes for human reviewers [24].
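The Human Verification step amounts to comparing LLM domain judgments with reviewer judgments and routing disagreements back to a person. The sketch below shows one way this bookkeeping might look; it does not call any LLM API, the judgment lists are invented, and only the two timing figures come from the text above.

```python
from typing import List, Sequence, Tuple


def agreement(human: Sequence[str], llm: Sequence[str]) -> Tuple[float, List[int]]:
    """Return (proportion of matching judgments, indices needing human review)."""
    if len(human) != len(llm) or not human:
        raise ValueError("judgment lists must be non-empty and of equal length")
    disagreements = [i for i, (h, m) in enumerate(zip(human, llm)) if h != m]
    accuracy = 1 - len(disagreements) / len(human)
    return accuracy, disagreements


# Hypothetical RoB2 domain judgments for ten study-domain pairs.
human_judgments = ["low", "low", "high", "some concerns", "low",
                   "high", "low", "some concerns", "low", "high"]
llm_judgments = ["low", "low", "some concerns", "some concerns", "low",
                 "low", "low", "some concerns", "high", "high"]

llm_accuracy, to_review = agreement(human_judgments, llm_judgments)

# Timing figures reported in the text: minutes per assessment.
HUMAN_MINUTES = 31.5
LLM_MINUTES = 1.9
speedup = HUMAN_MINUTES / LLM_MINUTES

print(f"accuracy={llm_accuracy:.0%}, review indices={to_review}, speedup={speedup:.1f}x")
```

Because roughly one in three domain judgments may differ from a human reviewer at the reported accuracy, keeping the disagreement indices (or sampling agreed items as well) preserves the human check that the protocol relies on.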

Visualization of Systemic Gaps and Solutions

Systemic Gaps and Solutions Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Semi-Automated Model Validation

| Tool/Resource | Primary Function | Application Context | Implementation Considerations |

|---|---|---|---|

| PROBAST Tool | Standardized risk of bias assessment | Critical appraisal of prediction model studies | Requires trained assessors; consensus meetings improve reliability |

| RoB2 Framework | Revised risk of bias tool for randomized trials | Bias assessment in clinical trials | Complex signaling questions; LLM assistance reduces time from 31.5 to 1.9 minutes |

| TRIPOD+AI Guidelines | Reporting standards for AI prediction models | Ensuring transparent model reporting | Mandates fairness assessment reporting |

| ASReview Tool | Semi-automated literature screening | Accelerating systematic review process | Workload reduction of 32.4-59.7% for prognosis reviews |

| OMOP Common Data Model | Standardized data structure for observational data | Enabling large-scale validation across datasets | Facilitates federated validation across institutions |

| LLM-Assisted Assessment (Claude 3.5) | Automated signaling question response | Scaling risk of bias assessments | 65-70% accuracy against human reviewers |

The evidence for systemic gaps in clinical prediction model validation is compelling and quantifiable. Addressing the triad of high risk of bias (86%), insufficient external validation (27%), and limited model updating (13%) requires a fundamental shift from model development to validation and implementation science. The semi-automated protocols presented in this application note provide practical pathways to address these gaps at scale.

Implementation of this framework requires coordinated effort across multiple stakeholders. Researchers should prioritize validation of existing models over new model development, journal editors and peer reviewers should enforce stricter validation standards, and healthcare organizations should establish continuous monitoring systems for implemented models. The integration of LLM-assisted tools presents a promising approach to scaling validation efforts without compromising methodological rigor, potentially reducing assessment time from 31.5 minutes to 1.9 minutes per study while maintaining acceptable accuracy [24].

Future directions should focus on developing standardized implementation frameworks for model updating, creating fairness-aware validation protocols, and establishing real-world performance monitoring systems. By addressing these systemic gaps through semi-automated, scalable approaches, the research community can transform the current landscape from one of proliferation to one of reliable, clinically useful prediction tools.

Implementing Semi-Automated Validation: Platforms, Techniques, and Real-World Workflows

Clinical prediction models are essential for diagnosing diseases, forecasting prognoses, and guiding treatment decisions in modern healthcare. Their reliability, however, is not universal and depends heavily on performance within the specific target population where they are applied. External validation is therefore a critical step to evaluate model performance—specifically its calibration (the agreement between predicted and observed risks) and discrimination (the ability to distinguish between different outcomes)—before clinical implementation [16]. Traditionally, this process is a manual, time-consuming, and statistically intensive task, which acts as a significant barrier to the widespread and routine validation of models. This bottleneck can delay the adoption of robust models into clinical practice and hinder the identification of models that perform poorly in new settings.

Semi-automated validation platforms have emerged to address this challenge. These tools, such as Evidencio, aim to streamline and accelerate the validation process. By partially automating the statistical computations and providing a structured framework, they make validation more accessible to researchers and clinicians, potentially increasing the number of models that are properly validated and ensuring that clinical decisions are based on predictions that are accurate for the local patient population [16].

Evidencio is an online platform designed to host, use, share, and validate medical algorithms. Its core mission is to improve the accessibility, reliability, and transparency of prediction models used in healthcare [25] [16].

Key Functionalities and Services

The platform operates on two main levels: as a service and as a platform [25].

- Evidencio as a Service: This facet provides support for algorithm developers, including:

- Development and Validation Support: Offering certification-aware services to aid in the algorithm development and validation process.

- CE Certification and Legal Manufacturing: Specializing in certifying medical algorithms as medical devices (focusing on Class IIa MDR and Class B IVDR devices) and acting as the Legal Manufacturer to accelerate market access for innovators.

- Integration and Distribution: Providing API-based integration (including JSON, XML, HL7 FHIR) to embed algorithms into third-party software, electronic medical records (EMRs), and other healthcare applications.

- Evidencio as a Platform: This aspect constitutes the community-facing toolkit, featuring:

- Algorithm Library: Hosting a library of over 8,000 medical algorithms that can be configured, published, used, and validated.

- Validation Tools: Providing free tools for the scientific community to perform algorithm validations, facilitating external validation by researchers across different institutions and populations [25] [16].

For algorithm developers, using a specialized legal manufacturer like Evidencio offers key benefits such as significantly reduced time to market (9-12 months for Class IIa devices compared to 2+ years alone) and lower certification costs (typically less than 50% of a self-managed approach) [26].

Performance Evaluation of Semi-Automated Validation

A pivotal 2019 study directly compared the performance of Evidencio's semi-automated validation tool against traditional manual validation methods. The study focused on four distinct breast cancer prediction models with different underlying statistical structures: CancerMath (a Kaplan-Meier based calculator), INFLUENCE (a time-dependent logistic regression model), PPAM (a logistic regression model), and PREDICT v.2.0 (a Cox regression model) [16].

Quantitative Outcomes

The following table summarizes the comparative results of semi-automated versus manual validation for key performance metrics across the four models.

Table 1: Comparison of Semi-Automated and Manual Validation Performance for Breast Cancer Prediction Models

| Model Name | Underlying Model Type | Validation Metric | Semi-Automated Result | Manual Result | Difference |

|---|---|---|---|---|---|

| CancerMath | Kaplan-Meier | Calibration Intercept | Not Reported | Not Reported | 0.00 |

| CancerMath | Kaplan-Meier | Calibration Slope | Not Reported | Not Reported | 0.00 |

| CancerMath | Kaplan-Meier | Discrimination (AUC) | Identical | Identical | 0.00 |

| INFLUENCE | Logistic Regression | Calibration Intercept | Not Reported | Not Reported | 0.00 |

| INFLUENCE | Logistic Regression | Calibration Slope | Not Reported | Not Reported | 0.03 |

| INFLUENCE | Logistic Regression | Discrimination (AUC) | Identical | Identical | 0.00 |

| PPAM | Logistic Regression | Calibration Intercept | Not Reported | Not Reported | 0.02 |

| PPAM | Logistic Regression | Calibration Slope | Not Reported | Not Reported | 0.01 |

| PPAM | Logistic Regression | Discrimination (AUC) | Identical | Identical | 0.00 |

| PREDICT v.2.0 | Cox Regression | Calibration Intercept | Not Reported | Not Reported | 0.02 |

| PREDICT v.2.0 | Cox Regression | Calibration Slope | Not Reported | Not Reported | 0.01 |

| PREDICT v.2.0 | Cox Regression | Discrimination (AUC) | Identical | Identical | 0.00 |

Data adapted from van Steenbeek et al. (2019) [16]. AUC: Area Under the Curve.

The study concluded that the differences in calibration measures (intercepts and slopes) between the two methods were minimal, ranging from 0 to 0.03, and were not considered clinically relevant. Most importantly, discrimination (AUC) was identical across all models for both validation methods. This demonstrates that the semi-automated process reliably replicated the results of the manual statistical calculations [16].
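A comparison of this kind reduces to checking absolute differences between two sets of metrics against a tolerance. The sketch below uses 0.03, the largest difference observed in the study, as an illustrative tolerance rather than a universal threshold; the metric values themselves are invented because the source table reports only the differences.

```python
from typing import Dict, List

TOLERANCE = 0.03  # assumption: largest difference reported in the study


def compare_metrics(
    semi_automated: Dict[str, float],
    manual: Dict[str, float],
    tolerance: float = TOLERANCE,
) -> List[str]:
    """Return the names of metrics whose absolute difference exceeds the tolerance."""
    flagged: List[str] = []
    for name in sorted(semi_automated.keys() & manual.keys()):
        # Round to avoid flagging differences that exceed the tolerance
        # only through floating-point representation error.
        diff = round(abs(semi_automated[name] - manual[name]), 6)
        if diff > tolerance:
            flagged.append(name)
    return flagged


# Hypothetical metric values for one model.
semi = {"auc": 0.71, "intercept": 0.02, "slope": 0.98}
manual_ok = {"auc": 0.71, "intercept": 0.00, "slope": 0.95}
manual_off = {"auc": 0.71, "intercept": 0.00, "slope": 0.90}

flags_ok = compare_metrics(semi, manual_ok)
flags_off = compare_metrics(semi, manual_off)
print(flags_ok, flags_off)
```

A flagged metric does not by itself indicate a platform error; it marks a difference that should be traced to its source, for example differing handling of missing values or censoring.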

User Experience and Qualitative Benefits

Beyond statistical accuracy, the study reported significant qualitative benefits:

- User-Friendliness: The validation tool was found to be intuitive and easy to use.

- Time Efficiency: It saved researchers a substantial amount of time compared to the manual validation process.

- Accessibility: The tool reduces the barrier to entry for researchers who may not have advanced statistical programming expertise, thereby facilitating more widespread model validation [16].

Experimental Protocol for Semi-Automated External Validation

This protocol outlines the steps to perform an external validation of a clinical prediction model using a semi-automated platform like Evidencio.

Pre-Validation Preparations

Step 1: Model and Data Specification

- Identify Prediction Model: Select the clinical prediction model to be validated. Ensure that the model's underlying formula, coefficients, and all necessary input variables are available.

- Define Target Dataset: Obtain a dataset from the target population for validation. This dataset must contain all variables required by the model and the outcome variable being predicted.

- Data Preparation: Clean the dataset according to the model's original inclusion and exclusion criteria. Handle missing values as specified by the model (e.g., set to 'unknown' if the model supports it, or exclude the record).
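The missing-value rule in the Data Preparation step can be expressed as a small function: a missing value is recoded to 'unknown' when the model accepts that category for the variable, and the record is excluded otherwise. This is a sketch under that reading of the rule; the variable names and the set of variables that accept 'unknown' are hypothetical and would come from the model specification in practice.

```python
from typing import Any, Dict, List, Sequence, Set, Tuple


def prepare_records(
    records: Sequence[Dict[str, Any]],
    required: Sequence[str],
    supports_unknown: Set[str],
) -> Tuple[List[Dict[str, Any]], int]:
    """Apply the missing-data rule; return (kept records, number excluded)."""
    kept: List[Dict[str, Any]] = []
    excluded = 0
    for record in records:
        row = dict(record)  # copy so the source data are not modified
        drop = False
        for var in required:
            if row.get(var) is None:
                if var in supports_unknown:
                    row[var] = "unknown"
                else:
                    drop = True
                    break
        if drop:
            excluded += 1
        else:
            kept.append(row)
    return kept, excluded


# Hypothetical registry extract.
registry = [
    {"age": 55, "grade": None, "er_status": "positive"},
    {"age": None, "grade": 2, "er_status": "negative"},
    {"age": 61, "grade": 3, "er_status": "positive"},
]

kept_records, n_excluded = prepare_records(
    registry, required=["age", "grade", "er_status"], supports_unknown={"grade"}
)
print(len(kept_records), n_excluded)
```

Reporting the number of excluded records alongside the validation results helps readers judge how far the analyzed population departs from the full registry cohort.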

Step 2: Platform Setup

- Upload or Select Model: If the model is not already on the platform, it may need to be implemented. This involves entering the model's formula, coefficients, and variable definitions into the platform. For pre-existing models on the platform, simply select the model to validate.

Validation Execution Workflow

The workflow for conducting the validation involves a structured sequence of data and model handling, as illustrated below.

Post-Validation Analysis

Step 3: Interpretation and Reporting

- Analyze Results: Review the platform-generated validation report. Key metrics to assess include:

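- Calibration: Examine the calibration intercept (ideal = 0) and slope (ideal = 1). An intercept away from 0 indicates systematic over- or underestimation of risk; a slope <1 indicates predictions that are too extreme (too high for high-risk and too low for low-risk patients), which suggests the model may need recalibration or updating.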

- Discrimination: Evaluate the Area Under the ROC Curve (AUC). An AUC of 0.5 indicates no discrimination, while 1.0 indicates perfect discrimination.

- Report Findings: Document the validation performance in the context of the target population. The report should inform whether the model is fit for clinical use in that specific setting.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Semi-Automated Validation of Clinical Prediction Models

| Item | Function in Validation |

|---|---|

| Target Validation Dataset | A dataset from the intended patient population, containing all model variables and the outcome. It serves as the ground truth for testing the model's performance. |

| Model Specification | The complete mathematical formula, coefficients, and variable definitions of the prediction model. This is the "reagent" being tested. |

| Semi-Automated Validation Platform (e.g., Evidencio) | The core tool that automates statistical computations for calibration and discrimination, generating a performance report and saving time. |

| Statistical Analysis Software (e.g., R, Stata) | Used for manual validation comparisons and any additional, non-automated statistical analyses required for the study. |

| Clinical Domain Expertise | Necessary for interpreting the clinical relevance of statistical findings (e.g., is a change in calibration slope clinically significant?). |

Dedicated validation platforms like Evidencio represent a significant advancement in the field of clinical prediction models. The evidence demonstrates that semi-automated validation is a statistically reliable substitute for manual methods, producing nearly identical results for key metrics like calibration and discrimination. The primary advantages of this approach are its accessibility, efficiency, and potential to increase the throughput of model validations. By lowering the technical and time barriers, these tools empower researchers and healthcare organizations to more easily ensure that the prediction models they use are accurate and reliable for their specific patient populations, ultimately supporting better, evidence-based clinical decision-making.

Automated Machine Learning (AutoML) for Model Development and Validation

The development and validation of clinical prediction models are being transformed by Automated Machine Learning (AutoML). In clinical research, AutoML addresses critical challenges such as high-dimensional data, clinical heterogeneity, and the need for rapid, reproducible model development. By automating the processes of feature selection, algorithm selection, and hyperparameter tuning, AutoML frameworks streamline the creation of robust prediction models while maintaining methodological rigor essential for clinical applications. This automation is particularly valuable in dynamic clinical environments where model performance must be sustained despite evolving medical practices, patient populations, and data collection methods.

Recent evidence demonstrates AutoML's successful application in critical care settings. For instance, an interpretable AutoML framework developed for delirium prediction in emergency polytrauma patients achieved an area under the receiver operating characteristic curve (ROC-AUC) of 0.9690 on the training set and 0.8929 on the test set, significantly outperforming conventional prediction models [27]. This performance highlights AutoML's potential to enhance clinical decision-making while addressing the complexities of real-world medical data.

Experimental Design and Workflow

Core AutoML Framework Architecture

A robust AutoML framework for clinical prediction models integrates multiple interconnected components that automate the end-to-end modeling pipeline. The architecture typically encompasses data preprocessing, feature engineering, model selection, hyperparameter optimization, and model validation, all while maintaining compliance with clinical research standards.

Table 1: Core Components of an AutoML Framework for Clinical Prediction Models

| Component | Function | Clinical Research Considerations |
|---|---|---|
| Data Preprocessing | Handles missing values, outlier detection, data normalization | Preserves clinical meaning during transformation; manages censored data |
| Feature Engineering | Automated creation, selection, and transformation of predictive variables | Incorporates clinical knowledge; manages high-dimensional biomarker data |
| Model Selection | Algorithm comparison and selection from a predefined library | Prioritizes interpretability alongside performance; includes clinical validation |
| Hyperparameter Optimization | Efficient search for optimal model settings | Balances computational efficiency with model performance |
| Model Validation | Performance assessment using appropriate metrics | Implements temporal validation; assesses generalizability across populations |

The workflow initiates with comprehensive data preprocessing, where clinical data undergoes cleaning, transformation, and normalization. In the referenced delirium prediction study, researchers addressed missing data through median replacement for continuous variables and mode substitution for categorical variables, achieving a 97.43% completeness rate across 956 polytrauma patients [27]. Subsequent feature engineering cycles leverage both automated selection and clinical expertise to identify the most predictive variables.
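
The imputation rule described above (median for continuous variables, mode for categorical variables) can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the study's code: the function names `impute` and `completeness`, the column names, and the toy records are all assumptions made for the example.

```python
from statistics import median, mode

def impute(rows, continuous, categorical):
    """Fill None values: median for continuous columns, mode for categorical ones."""
    fill = {}
    for col in continuous:
        fill[col] = median([r[col] for r in rows if r[col] is not None])
    for col in categorical:
        fill[col] = mode([r[col] for r in rows if r[col] is not None])
    return [
        {k: (fill[k] if v is None and k in fill else v) for k, v in r.items()}
        for r in rows
    ]

def completeness(rows, columns):
    """Share of non-missing cells across the given columns."""
    cells = [r[c] for r in rows for c in columns]
    return sum(v is not None for v in cells) / len(cells)

# Hypothetical toy records; column names and values are illustrative only.
patients = [
    {"lactate": 2.1, "gcs": 14, "sex": "F"},
    {"lactate": None, "gcs": 9, "sex": "M"},
    {"lactate": 4.0, "gcs": None, "sex": "M"},
    {"lactate": 3.0, "gcs": 15, "sex": None},
]
columns = ["lactate", "gcs", "sex"]
before = completeness(patients, columns)
imputed = impute(patients, ["lactate", "gcs"], ["sex"])
after = completeness(imputed, columns)
print(f"completeness before: {before:.2%}, after: {after:.2%}")
```

In a real pipeline the fill values would normally be estimated on the training split only and then reused on the test split, so that information from held-out patients does not leak into model development.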

AutoML Experimental Workflow

[Figure: Standardized protocol for AutoML-based clinical prediction model development]

Validation Framework and Performance Metrics

Comprehensive Validation Protocol

Robust validation is paramount for clinical prediction models. The proposed framework incorporates multiple validation stages to ensure model reliability and clinical applicability:

Temporal Validation addresses dataset shift in clinical environments where patient characteristics, treatments, and documentation practices evolve. A diagnostic framework for temporal validation evaluates performance across different time periods, characterizing the evolution of patient outcomes and features, and assessing model longevity through sliding window experiments [28]. This approach is particularly crucial in oncology, where rapid evolution of clinical pathways necessitates continuous model monitoring.
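
One way to picture a sliding-window check is to score a frozen model's predictions in overlapping time windows and watch how discrimination changes. The sketch below assumes records arrive as `(year, outcome, predicted_risk)` tuples; the function names, the tuple layout, and the toy data are hypothetical, and the cited framework may retrain models per window rather than only re-scoring them.

```python
def roc_auc(y_true, y_score):
    """ROC-AUC as the probability that a random event outranks a random non-event."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        return None  # undefined when a window lacks one of the classes
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def sliding_window_auc(records, window, step):
    """records: (year, outcome, predicted_risk). Returns {(start, end): auc}."""
    years = sorted({r[0] for r in records})
    results = {}
    for start in range(years[0], years[-1] - window + 2, step):
        end = start + window - 1
        chunk = [r for r in records if start <= r[0] <= end]
        results[(start, end)] = roc_auc([r[1] for r in chunk], [r[2] for r in chunk])
    return results

# Hypothetical predictions from one frozen model, applied to later years.
records = [
    (2019, 1, 0.9), (2019, 0, 0.2),
    (2020, 1, 0.8), (2020, 0, 0.3),
    (2021, 1, 0.6), (2021, 0, 0.5),
    (2022, 1, 0.4), (2022, 0, 0.6),
]
auc_by_window = sliding_window_auc(records, window=2, step=1)
for (start, end), auc in auc_by_window.items():
    print(start, end, auc)
```

A falling AUC in the later windows is the kind of signal that would prompt recalibration or retraining.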

Discrimination and Calibration Metrics provide complementary insights into model performance. Beyond traditional ROC-AUC and precision-recall AUC (PR-AUC), clinical models require calibration assessment to ensure predicted probabilities align with observed event rates. Decision Curve Analysis further evaluates clinical utility across different risk thresholds [29].
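
Two of these quantities are simple enough to compute by hand. The sketch below shows calibration-in-the-large (mean predicted risk minus observed event rate) and the net benefit used in Decision Curve Analysis, which at threshold probability p_t equals TP/N minus FP/N times p_t / (1 - p_t). The function names and the toy data are assumptions for illustration.

```python
def calibration_in_the_large(y_true, y_prob):
    """Mean predicted risk minus observed event rate (0 means calibrated on average)."""
    return sum(y_prob) / len(y_prob) - sum(y_true) / len(y_true)

def net_benefit(y_true, y_prob, threshold):
    """Net benefit of treating every patient whose predicted risk is >= threshold."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    return tp / n - fp / n * threshold / (1 - threshold)

def net_benefit_treat_all(y_true, threshold):
    """Reference strategy: treat everyone regardless of predicted risk."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1 - prevalence) * threshold / (1 - threshold)

# Hypothetical outcomes and predicted risks for ten patients.
y = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
p = [0.9, 0.7, 0.3, 0.6, 0.2, 0.2, 0.1, 0.1, 0.1, 0.1]

citl = calibration_in_the_large(y, p)
for t in (0.1, 0.3, 0.5):
    print(t, round(net_benefit(y, p, t), 3), round(net_benefit_treat_all(y, t), 3))
```

A model is useful at a given threshold when its net benefit exceeds both the treat-all value and zero, the net benefit of treating no one.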

Fairness and Equity Assessment examines model performance across patient subgroups defined by demographics, socioeconomic status, or clinical characteristics. This includes evaluating potential algorithmic bias by monitoring outcomes for discordance between patient subgroups and ensuring equitable access to AI solutions [29].
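
A basic form of this subgroup check compares error rates between groups at a fixed decision threshold. The following sketch is one possible approach, not a method prescribed by the cited framework; the group labels, the 0.5 threshold, and the function names `subgroup_rates` and `max_gap` are assumptions.

```python
from collections import defaultdict

def subgroup_rates(records, threshold):
    """records: (group, outcome, predicted_risk). Returns per-group sensitivity and FPR."""
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
    for group, y, p in records:
        flagged = p >= threshold
        if y == 1:
            key = "tp" if flagged else "fn"
        else:
            key = "fp" if flagged else "tn"
        counts[group][key] += 1
    rates = {}
    for group, c in counts.items():
        pos, neg = c["tp"] + c["fn"], c["fp"] + c["tn"]
        rates[group] = {
            "sensitivity": c["tp"] / pos if pos else None,
            "fpr": c["fp"] / neg if neg else None,
        }
    return rates

def max_gap(rates, metric):
    """Largest between-group difference for one metric."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values)

# Hypothetical predictions for two patient subgroups.
records = [
    ("A", 1, 0.8), ("A", 1, 0.7), ("A", 0, 0.2), ("A", 0, 0.6),
    ("B", 1, 0.8), ("B", 1, 0.3), ("B", 0, 0.1), ("B", 0, 0.2),
]
rates = subgroup_rates(records, threshold=0.5)
print(rates, max_gap(rates, "sensitivity"))
```

Large gaps in sensitivity or false positive rate would flag the discordance between subgroups that the assessment is meant to detect; what counts as an acceptable gap is a clinical judgment.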

Performance Benchmarking

Table 2: Performance Comparison of AutoML vs. Conventional Models for Delirium Prediction

| Model Type | Training ROC-AUC | Test ROC-AUC | Training PR-AUC | Test PR-AUC | Key Predictors Identified |
|---|---|---|---|---|---|
| AutoML (IFLA-enhanced) | 0.9690 | 0.8929 | 0.9611 | 0.8487 | GCS, Lactate, CFS, BMI, FDP |
| Logistic Regression | 0.8512 | 0.8124 | 0.8233 | 0.7615 | Not specified |
| Support Vector Machine | 0.8835 | 0.8347 | 0.8512 | 0.7893 | Not specified |
| XGBoost | 0.9218 | 0.8652 | 0.9024 | 0.8216 | Not specified |
| LightGBM | 0.9341 | 0.8719 | 0.9187 | 0.8295 | Not specified |

The AutoML framework achieved the highest test ROC-AUC (0.8929) and test PR-AUC (0.8487) in this comparison, which suggests that it can capture complex clinical interactions. The model identified five key predictors: Glasgow Coma Scale (GCS) score, lactate level, Clinical Frailty Scale (CFS), body mass index (BMI), and fibrin degradation products (FDP) [27]. These predictors span physiological scores, laboratory biomarkers, and clinical assessments, illustrating AutoML's capacity to integrate diverse data types.

Implementation Protocols

Optimization Algorithm Enhancement

Advanced optimization algorithms significantly enhance AutoML performance in clinical applications. The Improved Flood Algorithm (IFLA) integrates sine mapping initialization and Cauchy mutation perturbations to improve optimization efficiency [27]. The implementation protocol involves:

- Sine Mapping Initialization: Generates diverse initial populations using chaotic sequences to improve search space coverage

- Cauchy Mutation Perturbation: Introduces strategic perturbations to escape local optima during the optimization process

- Fitness Evaluation: Assesses solution quality using predefined objective functions (e.g., AUC maximization)

- Population Update: Iteratively refines solutions based on fitness evaluation and perturbation

This enhanced algorithm demonstrated significant performance improvements on 12 standard test functions, including multimodal, hybrid, and composite functions from the IEEE CEC-2017 test suite [27].
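
The two named ingredients, chaotic sine-map initialization and Cauchy mutation, can be illustrated without reproducing the flood algorithm itself. The sketch below is an assumption-laden stand-in: it uses the common sine map x <- sin(pi * x), Cauchy noise generated by the inverse-CDF formula tan(pi * (u - 0.5)), a greedy replacement rule in place of IFLA's own population update, and a sphere function in place of the CEC-2017 suite. The defaults (dimension 10, population 30, 500 iterations) mirror the settings reported in [27].

```python
import math
import random

def sine_map_population(pop_size, dim, lower, upper, seed_value=0.7):
    """Fill a population from the chaotic sequence x <- sin(pi * x), scaled to the bounds."""
    x, population = seed_value, []
    for _ in range(pop_size):
        individual = []
        for _ in range(dim):
            x = math.sin(math.pi * x)  # stays within [0, 1] for x in [0, 1]
            individual.append(lower + x * (upper - lower))
        population.append(individual)
    return population

def cauchy_mutation(individual, lower, upper, rng, scale=0.1):
    """Perturb each coordinate with heavy-tailed Cauchy noise, clipped to the bounds."""
    mutated = []
    for value in individual:
        step = scale * (upper - lower) * math.tan(math.pi * (rng.random() - 0.5))
        mutated.append(min(upper, max(lower, value + step)))
    return mutated

def sphere(x):
    """Toy objective to minimise; not part of the CEC-2017 suite."""
    return sum(v * v for v in x)

def optimise(objective, pop_size=30, dim=10, lower=-100.0, upper=100.0,
             iterations=500, seed=0):
    rng = random.Random(seed)
    population = sine_map_population(pop_size, dim, lower, upper)
    fitness = [objective(ind) for ind in population]
    for _ in range(iterations):
        for i, ind in enumerate(population):
            candidate = cauchy_mutation(ind, lower, upper, rng)
            score = objective(candidate)
            if score < fitness[i]:  # greedy replacement, a stand-in for the IFLA update
                population[i], fitness[i] = candidate, score
    best = min(range(pop_size), key=fitness.__getitem__)
    return population[best], fitness[best]

best_x, best_f = optimise(sphere)
print(best_f)
```

To maximize a quantity such as cross-validated AUC, the objective passed to `optimise` would return its negative. The heavy tails of the Cauchy distribution occasionally produce very large steps, which is what lets a candidate jump out of a local optimum.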

Model Interpretability and Explanation

The integration of explainability techniques is essential for clinical adoption of AutoML models. The SHapley Additive exPlanations (SHAP) framework quantifies predictor contributions, enabling transparent interpretation of model decisions [27]. The implementation protocol includes:

- Feature Importance Calculation: Computes SHAP values for each feature across all predictions

- Global Interpretability: Visualizes overall feature importance and relationships

- Local Interpretability: Explains individual predictions for clinical decision support

- Interaction Effects: Identifies and quantifies feature interactions

This explanatory framework facilitates clinical validation by domain experts and builds trust in model recommendations.
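
The quantity behind SHAP, the Shapley value, can be computed exactly for a small model by enumerating feature subsets. The sketch below does this by brute force, replacing "absent" features with a baseline value; the toy risk function, the baseline of zeros, and the function name `shapley_values` are assumptions. The SHAP library itself relies on faster estimators and a background dataset rather than this exponential enumeration.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values; features outside a coalition take their baseline value."""
    n = len(x)

    def value(subset):
        return predict([x[i] if i in subset else baseline[i] for i in range(n)])

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

def toy_risk(f):
    """Hypothetical score with one interaction between features 0 and 2."""
    return 0.5 * f[0] + 2.0 * f[1] + f[0] * f[2]

patient = [3.0, 1.0, 2.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_risk, patient, baseline)
print(phi, sum(phi), toy_risk(patient) - toy_risk(baseline))
```

The contributions sum to the difference between the patient's prediction and the baseline prediction, and the interaction term is split evenly between the two features involved. This additivity is what makes local explanations readable for a single patient.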

The Scientist's Toolkit

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Computational Tools for AutoML Implementation

| Tool/Category | Function | Implementation Example |
|---|---|---|
| Optimization Algorithms | Hyperparameter tuning and feature selection | Improved Flood Algorithm (IFLA) with sine mapping and Cauchy mutation [27] |
| Model Interpretation | Explain model predictions and feature importance | SHapley Additive exPlanations (SHAP) framework [27] |
| Temporal Validation | Assess model performance over time | Diagnostic framework for temporal consistency [28] |
| Clinical Codelist Generation | Standardize clinical concepts for analysis | Generalised Codelist Automation Framework (GCAF) [30] |
| Performance Assessment | Evaluate discrimination, calibration, and clinical utility | Decision Curve Analysis, calibration plots, ROC/PR-AUC [29] |

Results and Performance Benchmarking

Optimization Algorithm Performance

The enhanced optimization algorithm demonstrated superior performance across benchmark functions. When validated on 12 standard test functions from the IEEE CEC-2017 suite, the Improved Flood Algorithm (IFLA) significantly outperformed conventional optimization approaches [27]. The tests used a variable dimension of 10, a population size of 30, and a maximum of 500 iterations, with 30 independent runs per function for statistical robustness.

Clinical Implementation and Workflow Integration

Successful clinical implementation requires seamless integration into existing workflows. The referenced delirium prediction study implemented a MATLAB-based clinical decision support system (CDSS) for real-time risk stratification [27]. The system demonstrated clinical utility with net benefit across risk thresholds, highlighting the translational potential of properly validated AutoML frameworks.

The FAIR-AI (Framework for the Appropriate Implementation and Review of AI) evaluation framework provides guidance for responsible implementation, emphasizing validation, usefulness, transparency, and equity [29]. This includes assessing net benefit by weighing benefits and risks while considering workflows that mitigate risks, and evaluating factors such as resource utilization, time savings, ease of use, and workflow integration.

Leveraging Large Language Models (LLMs) for Criteria Transformation and Data Processing

In the semi-automated validation of clinical prediction models (CPMs), reliably transforming unstructured clinical criteria into structured, queryable data is a critical foundational step. Semi-automated validation platforms have demonstrated reliability in reproducing manual validation results for CPMs, increasing accessibility and adoption [10]. However, their effectiveness depends on the quality and structure of the input data. Large Language Models (LLMs) offer a way to automate the conversion of free-text clinical information, such as eligibility criteria from trial protocols, into the structured formats required for robust validation and analysis [31]. This protocol details methodologies for leveraging LLMs to enhance data processing pipelines for CPM research.

Application Note: LLMs for Criteria Transformation to OMOP CDM

Background and Rationale

Assessing CPM performance across diverse populations requires efficient querying of real-world data (RWD) repositories. The Observational Medical Outcomes Partnership Common Data Model (OMOP CDM) provides a standardized structure for such data, but converting free-text eligibility criteria from clinical trials or cohort studies into executable Structured Query Language (SQL) queries remains a manual, time-consuming bottleneck [31]. LLMs can automate this transformation, accelerating the feasibility assessments that underpin external validation of CPMs.
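
To make the target of this transformation concrete, the sketch below runs one hand-written query of the kind an LLM would be asked to produce, against a tiny in-memory subset of the OMOP CDM. The `person` and `condition_occurrence` tables and their columns follow the CDM, but the patients, the free-text criterion, and the reference year are invented for illustration, and the concept IDs (201826 for type 2 diabetes mellitus, 316866 for hypertensive disorder) should be verified against the vocabulary in use.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE person (person_id INTEGER PRIMARY KEY, year_of_birth INTEGER);
CREATE TABLE condition_occurrence (
    person_id INTEGER,
    condition_concept_id INTEGER,
    condition_start_date TEXT
);
INSERT INTO person VALUES (1, 1950), (2, 1990), (3, 1960);
INSERT INTO condition_occurrence VALUES
    (1, 201826, '2015-03-01'),
    (2, 201826, '2020-06-10'),
    (3, 316866, '2018-01-05');
""")

# Hypothetical free-text criterion:
#   "Aged 50 years or older in 2020, with type 2 diabetes mellitus"
query = """
SELECT DISTINCT p.person_id
FROM person p
JOIN condition_occurrence co ON co.person_id = p.person_id
WHERE co.condition_concept_id = ?
  AND 2020 - p.year_of_birth >= 50
ORDER BY p.person_id
"""
eligible = [row[0] for row in conn.execute(query, (201826,))]
print(eligible)
```

A production query would usually expand the condition to its descendant concepts through the `concept_ancestor` table instead of matching a single concept ID.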

A systematic evaluation of eight LLMs was conducted for converting free-text eligibility criteria into OMOP CDM-compatible SQL queries [31]. The study employed a three-stage preprocessing pipeline (segmentation, filtering, and simplification) that achieved a 58.2% reduction in tokens while preserving clinical semantics. Performance was measured based on the rate of effectively generated SQL and the frequency of model "hallucinations" – the generation of non-existent medical concept identifiers.
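
The three preprocessing stages can be sketched with simple rules to show how token reduction arises. Everything in the example is an assumption: the study's actual segmentation, filtering, and simplification rules are not given here, the phrase lists are invented, and whitespace-separated words stand in for the LLM tokenizer.

```python
import re

def segment(text):
    """Stage 1: split a criteria block into single criteria on line breaks and semicolons."""
    parts = re.split(r"[\n;]+", text)
    bullet = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s*")
    return [bullet.sub("", part).strip() for part in parts if part.strip()]

# Hypothetical phrases marking criteria that cannot be checked in structured data.
NON_QUERYABLE = ("informed consent", "willing to", "able to comply")

def filter_criteria(criteria):
    """Stage 2: drop section headers and criteria with no structured-data equivalent."""
    kept = []
    for criterion in criteria:
        low = criterion.lower()
        if low.rstrip(":") in ("inclusion criteria", "exclusion criteria"):
            continue
        if any(phrase in low for phrase in NON_QUERYABLE):
            continue
        kept.append(criterion)
    return kept

FILLER = re.compile(
    r"\b(?:patients?|subjects?|participants?) (?:must|should) (?:have|be)\s+", re.I
)

def simplify(criterion):
    """Stage 3: strip boilerplate phrasing and collapse whitespace."""
    return re.sub(r"\s+", " ", FILLER.sub("", criterion)).strip(" .")

def token_count(text):
    return len(text.split())  # whitespace tokens, a stand-in for the model tokenizer

raw = """Inclusion Criteria:
- Patients must have type 2 diabetes mellitus
- Age >= 18 years; HbA1c > 7.0%
- Willing to provide written informed consent
"""
simplified = [simplify(c) for c in filter_criteria(segment(raw))]
reduction = 1 - token_count(" ".join(simplified)) / token_count(raw)
print(simplified, f"{reduction:.1%}")
```

Most of the savings in this toy case come from the filtering stage, which removes criteria that no SQL query could evaluate.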

Table 1: Performance Comparison of Selected LLMs for SQL Query Generation

| Model | Effective SQL Rate | Hallucination Rate | Key Finding |
|---|---|---|---|
| llama3:8b (Open-source) | 75.8% | 21.1% | Achieved the highest effective SQL rate |
| GPT-4 | 45.3% | 33.7% | Lower effective SQL rate despite strong concept mapping |
| Overall (8 models) | - | 32.7% (avg) | Wrong domain assignments (34.2%) were the most common error |
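
Both metrics in this table lend themselves to automated checks. The sketch below shows one plausible way to operationalize them, which may differ from the study's own definitions: a query counts as executable if the database can compile it, and as hallucinated if it references a concept ID missing from the vocabulary. The toy vocabulary, the regular expression, and the function names are assumptions.

```python
import re
import sqlite3

# Toy vocabulary; a real check would look IDs up in the OMOP concept table.
KNOWN_CONCEPT_IDS = {201826, 316866}

def extract_concept_ids(sql):
    """Collect integer literals compared against *_concept_id columns."""
    ids = set()
    pattern = r"_concept_id\s*(?:=|IN)\s*\(?([\d,\s]+)\)?"
    for match in re.finditer(pattern, sql, re.I):
        ids.update(int(token) for token in re.findall(r"\d+", match.group(1)))
    return ids

def hallucinated_ids(sql, vocabulary):
    """Concept IDs used in the query that do not exist in the vocabulary."""
    return extract_concept_ids(sql) - vocabulary

def is_executable(sql, conn):
    """True if the database can compile the query (EXPLAIN parses without running it)."""
    try:
        conn.execute("EXPLAIN " + sql)
        return True
    except sqlite3.Error:
        return False

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE condition_occurrence (person_id INTEGER, condition_concept_id INTEGER)"
)

# Hypothetical LLM outputs: one clean, one with an invented ID, one with a misspelled table.
generated = [
    "SELECT person_id FROM condition_occurrence WHERE condition_concept_id = 201826",
    "SELECT person_id FROM condition_occurrence WHERE condition_concept_id IN (201826, 999999999)",
    "SELECT person_id FROM condition_occurence WHERE condition_concept_id = 201826",
]
executable_rate = sum(is_executable(q, conn) for q in generated) / len(generated)
hallucination_rate = sum(
    bool(hallucinated_ids(q, KNOWN_CONCEPT_IDS)) for q in generated
) / len(generated)
print(executable_rate, hallucination_rate)
```

Checks like these catch invented identifiers and malformed queries, but not the wrong-domain assignments noted in the table, which require comparing each concept's domain with the table being queried.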

In a related concept mapping task, which is fundamental to accurate criteria transformation, GPT-4 outperformed the rule-based USAGI system, with an overall accuracy of 48.5% versus 32.0% [31].

Table 2: Concept Mapping Accuracy (GPT-4 vs. USAGI)

| System | Overall Accuracy | Domain-Specific Accuracy (Range) |
|---|---|---|
| GPT-4 | 48.5% | 38.3% (Measurement) to 72.7% (Drug) |
| USAGI | 32.0% | - |

Experimental Protocol: Automated Criteria-to-SQL Transformation

Objective: To automatically convert free-text clinical trial eligibility criteria from ClinicalTrials.gov into OMOP CDM-compatible SQL queries using a structured LLM pipeline.

Materials:

- Source Data: Eligibility criteria from the Aggregate Analysis of ClinicalTrials.gov (AACT) database.

- Validation Data: OMOP CDM-formatted database (e.g., Asan Medical Center database with ~5M patients or the synthetic SynPUF dataset).

- LLM Options: GPT-4 (via API) or open-source alternatives like Llama3:8b.

- Computing Environment: Standard workstation with internet access for API calls or sufficient memory for local model deployment.

Methodology:

Preprocessing Module: