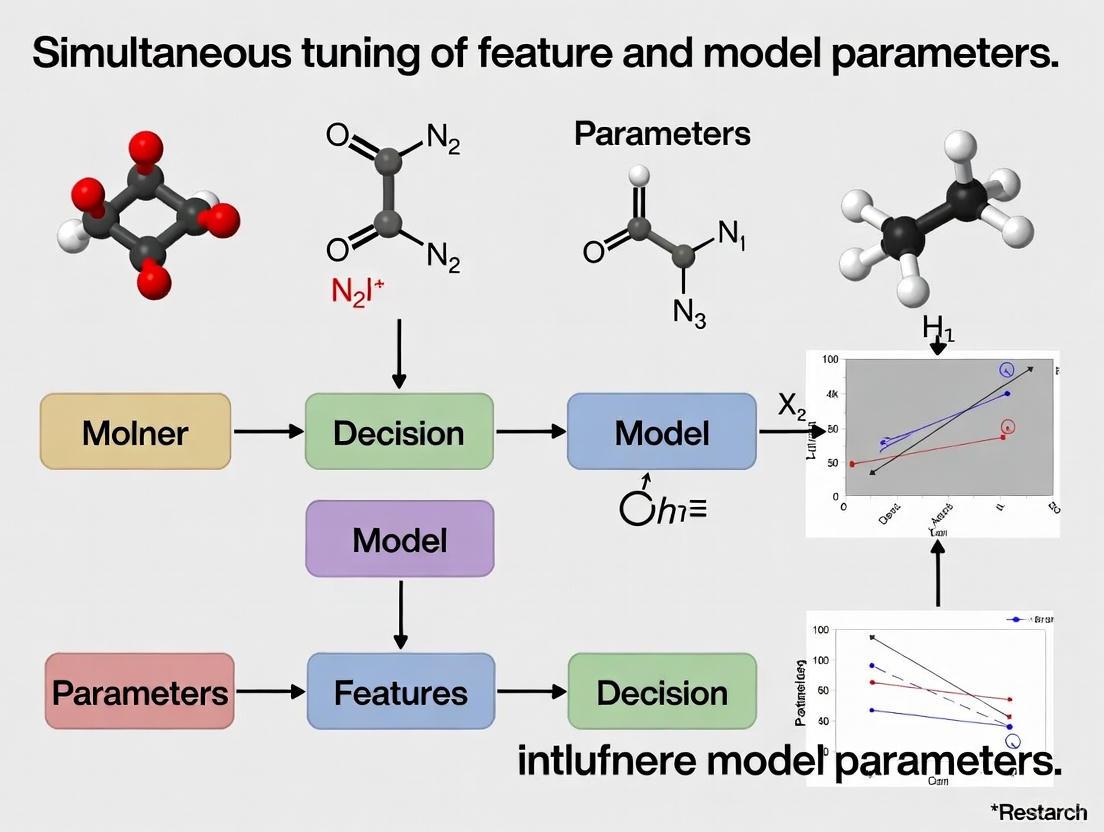

Simultaneous Tuning of Features and Model Parameters: An Integrated Framework for Enhanced Predictive Performance in Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the integrated tuning of feature selection and model hyperparameters. It explores the foundational principles that make simultaneous optimization necessary, detailing methodological approaches like Bayesian optimization and hierarchical Bayesian models. The content addresses common troubleshooting challenges and optimization strategies, and presents rigorous validation and comparative frameworks. Through application-focused insights from computational drug discovery, including drug-target affinity prediction and dynamic treatment regimens, this article serves as a practical resource for building more accurate, robust, and generalizable predictive models in biomedical research.

The Critical Need for Simultaneous Tuning: Overcoming Limitations of Sequential Approaches in Biomedical Data

In the pursuit of robust predictive models for drug discovery, researchers must navigate a complex tuning landscape involving three distinct parameter classes: feature parameters that govern input data selection, model hyperparameters that control the learning algorithm's behavior, and calibration parameters that ensure predicted probabilities reflect true empirical likelihoods. The simultaneous optimization of these parameters presents both a formidable challenge and a significant opportunity for increasing model reliability and performance in critical pharmaceutical applications. This guide provides troubleshooting and methodological support for researchers undertaking this integrated optimization process.

Core Parameter Definitions & Troubleshooting

What is the fundamental difference between feature parameters, model hyperparameters, and calibration parameters?

Understanding the distinct roles of each parameter type is crucial before attempting simultaneous optimization.

- Feature Parameters: These control the selection and preprocessing of input variables for your model. A common example is the percentile of features to keep in a filter-based selection step.

- Model Hyperparameters: These are configuration settings for the machine learning algorithm itself that must be specified before training begins. They control the learning process and model structure [1] [2]. Examples include the regularization parameter `C` in Support Vector Machines or the learning rate for a neural network [3] [2].

- Calibration Parameters: These are adjusted after a model is trained to ensure that its predicted probabilities align with true observed frequencies. For instance, the `temperature` parameter in Temperature Scaling is a calibration parameter that adjusts a model's output probabilities without changing the predicted class labels [4].

The table below summarizes the key distinctions:

Table 1: Core Parameter Types in Machine Learning

| Parameter Type | Definition | Set By | Examples |

|---|---|---|---|

| Feature Parameters | Control input data selection and preprocessing | Practitioner before training | percentile in SelectPercentile, feature subset [5] |

| Model Hyperparameters | Govern the model's learning process and structure [1] [2] | Practitioner before training | SVM's C and gamma, Random Forest's max_depth [3] [2] |

| Calibration Parameters | Adjust output probabilities to match empirical likelihoods [6] [4] | Practitioner after training | temperature in Temperature Scaling [4] |

What is the most common error when building a pipeline for simultaneous tuning, and how can I fix it?

The most frequent error is incorrect parameter naming when defining the search space for a pipeline that combines feature selection and model estimation [5].

- Error Scenario: You create a scikit-learn `Pipeline` with a feature selection step (`'anova'`) and a classifier step (`'svc'`). You then try to tune this pipeline using `GridSearchCV` or `HalvingGridSearchCV` with a parameter grid defined as `{"C": [0.1, 1, 10]}`, resulting in a `ValueError` stating that `C` is not a valid parameter for the pipeline [5].

- Root Cause: The tuner is trying to find a parameter `"C"` for the overall `Pipeline` object, rather than for the specific `'svc'` estimator within it.

- Solution: Use the double-underscore (`__`) syntax to specify which pipeline step a parameter belongs to. The correct parameter name in the grid should be `"svc__C"` [5]. All hyperparameters for your model, and any parameters for the feature selector, must be specified this way.
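The naming rule can be checked directly on a pipeline before running any search. The snippet below is a minimal sketch, assuming scikit-learn is installed and reusing the step names `'anova'` and `'svc'` from the scenario above. The choice of `SelectPercentile` with `f_classif` as the selector is an assumption made for illustration.

```python
from sklearn.feature_selection import SelectPercentile, f_classif
from sklearn.pipeline import Pipeline
from sklearn.svm import SVC

# Two-step pipeline with the step names used in the scenario above.
pipe = Pipeline([
    ("anova", SelectPercentile(score_func=f_classif)),
    ("svc", SVC()),
])

# A bare "C" is not a parameter of the Pipeline object, so it is rejected.
try:
    pipe.set_params(C=1.0)
    bare_name_rejected = False
except ValueError:
    bare_name_rejected = True

# Prefixed names route each value to the intended step.
pipe.set_params(svc__C=1.0, anova__percentile=50)

# get_params() lists every name that is valid in a search grid.
valid_names = sorted(name for name in pipe.get_params() if "__" in name)
print(bare_name_rejected)
print([n for n in valid_names if n in ("svc__C", "anova__percentile")])
```

Printing `pipe.get_params().keys()` before defining a grid is a quick way to confirm the exact spelling of every tunable name.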

How can I diagnose if my model is poorly calibrated, and what are my remediation options?

A model is poorly calibrated if its predicted probabilities do not match the observed event rates. In a well-calibrated model, about 70% of the instances that receive a predicted probability of 70% actually belong to the positive class [6].

- Diagnosis with Calibration Plots: The primary diagnostic tool is the calibration plot (or reliability diagram) [6] [4]. These plots group predictions into bins based on their predicted probability and plot the bin's mean predicted probability against the bin's actual observed event rate. A well-calibrated model will have points close to the diagonal line.

- Remediation with Post-Processing: If diagnostics reveal poor calibration, you can post-process the predictions.

  - Platt Scaling: Uses logistic regression to map model outputs to calibrated probabilities [6].

  - Isotonic Regression: A non-parametric approach that can learn more complex, non-sigmoid calibration mappings [4].

  - Temperature Scaling: A single-parameter variant of Platt Scaling commonly used for neural networks. It divides the logits by one learned temperature before the softmax; a temperature above 1 softens the output distribution and produces smoother probability estimates [4].
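The binning behind a calibration plot and the effect of a temperature can both be sketched in a few lines of plain Python. This is an illustrative sketch only: the predictions, labels, and logits are invented, and the function names `reliability_bins` and `softmax_with_temperature` are hypothetical helpers rather than part of any library.

```python
import math

def reliability_bins(probs, labels, n_bins=5):
    """Return (mean predicted probability, observed event rate, count) per non-empty bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes into the last bin
        bins[idx].append((p, y))
    rows = []
    for members in bins:
        if members:
            mean_p = sum(p for p, _ in members) / len(members)
            rate = sum(y for _, y in members) / len(members)
            rows.append((mean_p, rate, len(members)))
    return rows

def softmax_with_temperature(logits, temperature=1.0):
    """Softmax of logits / temperature; a temperature above 1 flattens the distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Invented predictions from an overconfident toy model.
probs = [0.95, 0.90, 0.92, 0.85, 0.10, 0.15, 0.05, 0.25]
labels = [1, 0, 1, 0, 0, 0, 0, 1]
rows = reliability_bins(probs, labels)
for mean_p, rate, n in rows:
    print(f"predicted {mean_p:.2f}  observed {rate:.2f}  n={n}")

# Temperature changes confidence but not the ranking of classes.
logits = [2.0, 0.5, -1.0]
sharp = softmax_with_temperature(logits, temperature=1.0)
soft = softmax_with_temperature(logits, temperature=2.0)
```

Points in `rows` where the observed rate sits far from the mean predicted probability correspond to bins that would fall off the diagonal in a calibration plot.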

Integrated Tuning Methodologies

Experimental Protocol: Simultaneous Feature Selection and Hyperparameter Tuning with scikit-learn

This protocol outlines the steps to integrate feature selection parameters and model hyperparameters within a single tuning process using scikit-learn pipelines.

- Step 1: Construct the Pipeline. Create a `Pipeline` object that sequentially combines your feature selection method and your estimator. This ensures the feature selection is performed correctly for each cross-validation fold during tuning.

- Step 2: Define the Integrated Parameter Grid. Specify the parameter grid for the search. Remember to use the `step_name__parameter` syntax for all parameters, including those for the feature selector.

- Step 3: Execute the Tuning Process. Use a search strategy like `HalvingGridSearchCV` or `GridSearchCV` to find the best combination of parameters across the entire pipeline.

- Step 4: Analyze Results. The best found combination of feature selection percentile and model hyperparameters is available in `search.best_params_`.
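The four steps can be sketched end to end as follows. This is a minimal example under stated assumptions: the data is synthetic (`make_classification`), the grid values are arbitrary, and `GridSearchCV` is used for simplicity. It is not a recommended configuration for real assay data.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectPercentile, f_classif
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.svm import SVC

# Synthetic stand-in data: 200 samples, 50 features, 5 of them informative.
X, y = make_classification(
    n_samples=200, n_features=50, n_informative=5, random_state=0
)

# Step 1: feature selection and classifier in one pipeline, so selection is
# refit inside every cross-validation fold.
pipe = Pipeline([
    ("anova", SelectPercentile(score_func=f_classif)),
    ("svc", SVC()),
])

# Step 2: one grid covering both the selector and the model.
param_grid = {
    "anova__percentile": [10, 25, 50],
    "svc__C": [0.1, 1, 10],
    "svc__gamma": ["scale", 0.01],
}

# Step 3: search over the whole pipeline.
search = GridSearchCV(pipe, param_grid, cv=5, scoring="accuracy")
search.fit(X, y)

# Step 4: inspect the jointly selected settings.
print(search.best_params_)
print(round(search.best_score_, 3))
```

Because the selector sits inside the pipeline, each fold computes its ANOVA scores from its own training portion only, which avoids leaking validation data into feature selection.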

Advanced Bayesian Integration Framework

For complex scientific computing and computer experiments, a hierarchical Bayesian framework can be employed to simultaneously determine tuning parameters (which have no physical meaning, akin to feature/hyperparameters) and calibration parameters (which have true, unknown values in the physical system) [7]. This method uses a Gaussian stochastic process model and Markov Chain Monte Carlo (MCMC) simulation to draw from the posterior distribution of the calibration parameters while identifying optimal tuning settings [7]. This approach is particularly valuable in drug design for tasks like predicting molecular binding affinity where it is critical to quantify uncertainty for multiple parameter types simultaneously [8] [7].

Essential Research Reagents & Computational Tools

Table 2: Key Software Tools for Integrated Parameter Tuning

| Tool / Reagent | Function / Purpose | Application in Tuning |

|---|---|---|

| scikit-learn (Python) | Provides machine learning algorithms and utilities. | Core library for building pipelines, implementing feature selection, and performing hyperparameter tuning via GridSearchCV and RandomizedSearchCV [1] [5]. |

| probably (R) | A specialized package for post-processing classification models. | Creates calibration plots (e.g., cal_plot_breaks, cal_plot_windowed) for diagnosing probability miscalibration [6]. |

| Bayesian Optimization Libraries (e.g., scikit-optimize) | Implements smart, sequential model-based optimization. | Efficiently navigates the hyperparameter space by modeling the performance as a probabilistic function, requiring fewer evaluations than grid or random search [1]. |

| Gaussian Process Model | A statistical model for modeling unknown functions. | Serves as a surrogate model in Bayesian optimization and in advanced Bayesian calibration frameworks for computer experiments [7]. |

| MCMC Sampler (e.g., PyMC3, Stan) | Performs Markov Chain Monte Carlo simulation. | Used in advanced hierarchical Bayesian frameworks to draw samples from the posterior distribution of calibration parameters [7]. |

Frequently Asked Questions (FAQs)

Why should I tune feature and model parameters simultaneously instead of sequentially?

Sequential tuning (e.g., first selecting the best features, then freezing them to tune the model) can lead to suboptimal models. This is because the "best" set of features can be dependent on the model's hyperparameters and vice-versa [9]. Simultaneous tuning ensures the selected features are optimized in the context of the model's overall structure, leading to a better-performing and more robust final model [9].
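A toy example makes the interaction concrete. The validation scores below are invented purely to illustrate the argument; `sequential_search` and `joint_search` are hypothetical helper names.

```python
# Invented validation scores for (feature percentile, C) pairs.
# The best percentile depends on C, which is the interaction described above.
scores = {
    (10, 0.1): 0.70, (10, 1): 0.72, (10, 10): 0.71,
    (50, 0.1): 0.74, (50, 1): 0.73, (50, 10): 0.69,
    (90, 0.1): 0.60, (90, 1): 0.68, (90, 10): 0.80,
}
percentiles = [10, 50, 90]
c_values = [0.1, 1, 10]

def sequential_search(default_c=0.1):
    """Pick the percentile at a fixed default C, freeze it, then tune C."""
    best_p = max(percentiles, key=lambda p: scores[(p, default_c)])
    best_c = max(c_values, key=lambda c: scores[(best_p, c)])
    return (best_p, best_c), scores[(best_p, best_c)]

def joint_search():
    """Evaluate every (percentile, C) pair together."""
    best = max(scores, key=scores.get)
    return best, scores[best]

print("sequential:", sequential_search())
print("joint:     ", joint_search())
```

In this table the sequential route freezes the 50th percentile early and never evaluates the combination that scores highest, while the joint search does.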

My simultaneous tuning process is computationally expensive. What are my options?

This is a common challenge. Consider these strategies:

- Switch from Grid Search: Replace `GridSearchCV` with `RandomizedSearchCV`, which often finds a good combination much faster by sampling a fixed number of parameter settings from distributions [1] [2].

- Use Successive Halving: Employ `HalvingGridSearchCV` or `HalvingRandomSearchCV`. These methods quickly weed out poor parameter combinations by allocating more resources to promising candidates [5].

- Adopt Bayesian Optimization: This is a more efficient, model-based search strategy that uses past evaluation results to choose the next hyperparameters to evaluate, significantly reducing the number of iterations needed [1] [2].
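The idea behind successive halving can be sketched without any library. The version below is a simplified illustration, not scikit-learn's implementation: the objective function is invented, its noise is assumed to shrink as the budget grows, and `successive_halving` is a hypothetical helper name.

```python
import math
import random

rng = random.Random(0)

def evaluate(candidate, budget):
    """Invented noisy score: best near percentile=30, C=1; noise shrinks with budget."""
    percentile, c = candidate
    quality = 1.0 - abs(percentile - 30) / 100 - 0.1 * abs(math.log10(c))
    return quality + rng.gauss(0, 0.05 / budget)

def successive_halving(candidates, evaluate, min_budget=1, factor=2):
    """Each round keeps the top 1/factor of candidates and multiplies the budget by factor."""
    survivors = list(candidates)
    budget = min_budget
    history = []
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget), reverse=True)
        history.append((budget, len(survivors)))
        survivors = ranked[: max(1, len(ranked) // factor)]
        budget *= factor
    return survivors[0], history

candidates = [(p, c) for p in (10, 30, 50, 70) for c in (0.1, 1, 10, 100)]
winner, history = successive_halving(candidates, evaluate)
print(winner)
print(history)  # (budget, number of candidates evaluated) per round
```

Only two candidates are ever evaluated at the largest budget, which is where the savings over an exhaustive grid come from.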

In the context of drug design, how does this tuning relate to "selectivity"?

In drug discovery, "selectivity" refers to a compound's ability to interact with a primary target while minimizing interactions with off-targets [8]. In machine learning terms:

- Narrow Selectivity is like precise feature selection—identifying and exploiting small, critical differences (e.g., a single methyl group in a binding pocket) to make a model highly specific to a single target [8]. This can be controlled by feature parameters.

- Broad Selectivity/Promiscuity is managed by the model's regularization hyperparameters (like `C` in SVM). A model with high regularization (for an SVM, a smaller `C`) might be more "general," avoiding overfitting to a single target's noise, much like a drug designed to be robust against multiple mutant strains [8].

Tuning these parameters effectively helps achieve the desired balance between specificity and generality in predictive models for drug activity.

What is sequential tuning in the context of model development?

Sequential tuning refers to the practice of repeatedly adjusting model hyperparameters or checking experimental results at multiple interim points during training or evaluation. Unlike approaches that set parameters once, sequential methods involve an iterative process where decisions are based on cumulative, repeatedly-measured results. In drug development, this is analogous to repeatedly analyzing clinical trial data as new patient data arrives, rather than just once at the trial's conclusion [10] [11].

How does sequential tuning differ from simultaneous parameter tuning?

While sequential tuning adjusts parameters in a step-wise manner, often focusing on one parameter type at a time, simultaneous tuning optimizes all feature and model parameters concurrently. Simultaneous approaches typically employ more sophisticated optimization techniques like Bayesian optimization to find the global optimum across all parameters at once, whereas sequential methods risk suboptimal solutions by fixing one set of parameters before moving to the next [12] [13].

Troubleshooting Guides

How can I detect if false discoveries are inflating my model's performance?

False discoveries often manifest as performance metrics that degrade significantly when the model encounters truly unseen data, particularly in "cold start" scenarios with novel data structures.

Table 1: Indicators of False Discovery Inflation

| Observation | Potential Cause | Diagnostic Check |

|---|---|---|

| Large performance gap between training/validation sets | Overfitting to training data | Compare performance on holdout set with completely unseen data types |

| High variance in performance across different data splits | Data leakage or over-optimistic validation | Implement block cross-validation to account for experimental effects |

| Performance drops significantly with novel compound scaffolds or cell lines | Poor generalizability to new entities | Test model on data with different scaffolds/clusters than training set |

| Inconsistent results when adding minor data variations | High sensitivity to data perturbations | Conduct sensitivity analysis with bootstrapped or synthetic data |

To diagnose, systematically evaluate your model under different scenarios. For drug response prediction, this means testing under warm start (similar compounds/cell lines) versus cold start (novel compounds/cell lines) conditions. Research shows that performance metrics like Pearson Correlation can drop from 0.9362 (warm start) to 0.4146 (cold scaffold) when models face truly novel data, indicating false discoveries during development [14].

What strategies prevent error accumulation during sequential tuning?

Error accumulation occurs when early tuning decisions based on imperfect metrics constrain later optimization potential, creating a cascade of suboptimal choices.

Implementation Protocol:

- Employ Bayesian Optimization: Use sequential model-based optimization (SMBO) that builds a probabilistic model of the objective function. This approach uses prior evaluation results to select the most promising hyperparameters for future evaluations, reducing random exploration [12] [13].

- Implement Strict Data Separation: Maintain rigorous separation between training, validation, and test sets throughout the entire tuning process. Never reuse test data for model selection decisions [15].

- Use Block Cross-Validation: When your data has inherent groupings (e.g., different cell lines, experimental batches, or time periods), implement block cross-validation where entire groups are held out together during validation. This prevents artificially inflated performance from data leakage [15].

- Set Early Stopping Criteria: Define objective performance thresholds before beginning tuning. Use methods like the YEAST sequential test that control false discovery rates despite repeated checks, allowing for early termination of unpromising experiments without inflating Type I error rates [10].

Diagram 1: Sequential Tuning Safeguards Workflow

Quantitative Analysis of Sequential Tuning Pitfalls

How significant is the generalizability degradation in sequential approaches?

Research demonstrates that sequential tuning methods exhibit substantial performance degradation when models encounter data distributions different from training sets.

Table 2: Performance Degradation in Cold Start Scenarios

| Scenario | Performance Metric | Warm Start Performance | Cold Start Performance | Performance Drop |

|---|---|---|---|---|

| Cold Drug | Pearson Correlation | 0.9362 ± 0.0014 | 0.5467 ± 0.1586 | 41.6% |

| Cold Scaffold | Pearson Correlation | 0.9362 ± 0.0014 | 0.4816 ± 0.1433 | 48.5% |

| Cold Cell & Scaffold | Pearson Correlation | 0.9362 ± 0.0014 | 0.4146 ± 0.1825 | 55.7% |

| Cold Cell (10 clusters) | Root Mean Square Error | 0.9703 ± 0.0102 | ~1.34 (estimated) | ~38.1% (increase in error) |

Data derived from TransCDR drug response prediction studies showing how model generalizability degrades under different cold start conditions [14].

What is the impact of repeated interim checks on false discovery rates?

Sequential testing without proper statistical correction substantially inflates false discovery rates. In A/B testing scenarios, repeatedly checking results after each new observation can dramatically increase Type I error rates beyond the nominal 5% threshold. Methods like YEAST (Yet Another Sequential Test) have been developed specifically to control false discovery rates in continuous monitoring scenarios by "inverting" bounds on threshold crossing probabilities derived from maximal inequalities [10].
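The inflation is easy to reproduce with a small simulation. The sketch below draws data under the null hypothesis (no true effect, unit variance assumed known) and counts how often an uncorrected z-test crosses the 1.96 threshold at any of several interim looks. All settings are illustrative assumptions, and `false_positive_rate` is a hypothetical helper name.

```python
import math
import random

def false_positive_rate(n_looks, n_per_look=20, n_trials=2000, z_crit=1.96, seed=0):
    """Fraction of null experiments declared significant at any of n_looks checks."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_trials):
        total, n = 0.0, 0
        for _ in range(n_looks):
            for _ in range(n_per_look):
                total += rng.gauss(0.0, 1.0)  # null: the true mean is zero
            n += n_per_look
            z = total / math.sqrt(n)  # z-statistic for the running mean
            if abs(z) > z_crit:  # uncorrected check at this look
                hits += 1
                break
    return hits / n_trials

single = false_positive_rate(n_looks=1)
peeking = false_positive_rate(n_looks=10)
print(f"one final check: {single:.3f}")
print(f"ten interim checks: {peeking:.3f}")
```

With a single check the rate stays near the nominal 5%, while stopping at the first of ten uncorrected looks that appears significant pushes it well above that level.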

Experimental Protocols for Mitigating Sequential Tuning Pitfalls

Protocol: Implementing false discovery rate control in sequential tuning

This protocol adapts statistical methods from clinical trial monitoring to machine learning tuning processes.

Materials: Experimental data divided into training, validation, and test sets; statistical software capable of implementing sequential testing procedures.

Procedure:

- Define maximum sample size or tuning iterations based on computational constraints

- Set alpha-spending function that determines how Type I error rate is allocated across interim checks

- Implement sequential boundaries using methods like YEAST or group sequential tests

- At each interim check, compute test statistics and compare to sequential boundaries

- Stop tuning early if boundaries are crossed, indicating statistical significance

- For final analysis, use only the data and tuning decisions up to the stopping point [10]
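The alpha-spending step can be illustrated with the two classical Lan-DeMets spending functions. These are standard group sequential tools swapped in for illustration; they are not the YEAST procedure. The helper `incremental_alpha` is a hypothetical name, and equally spaced checks are assumed.

```python
import math
from statistics import NormalDist

def obrien_fleming_spend(t, alpha=0.05):
    """O'Brien-Fleming-type spending: cumulative alpha used at information fraction t."""
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return 2 * (1 - NormalDist().cdf(z / math.sqrt(t)))

def pocock_spend(t, alpha=0.05):
    """Pocock-type spending: cumulative alpha used at information fraction t."""
    return alpha * math.log(1 + (math.e - 1) * t)

def incremental_alpha(spend, n_checks, alpha=0.05):
    """Alpha budget newly released at each of n_checks equally spaced interim checks."""
    fractions = [(k + 1) / n_checks for k in range(n_checks)]
    cumulative = [spend(t, alpha) for t in fractions]
    return [cumulative[0]] + [b - a for a, b in zip(cumulative, cumulative[1:])]

obf = incremental_alpha(obrien_fleming_spend, n_checks=5)
poc = incremental_alpha(pocock_spend, n_checks=5)
for k, (a, b) in enumerate(zip(obf, poc), start=1):
    print(f"check {k}: O'Brien-Fleming {a:.5f}  Pocock {b:.5f}")
```

Both schedules spend exactly the total alpha by the final check. The O'Brien-Fleming-type function releases very little early, which makes early stopping hard, whereas the Pocock-type function spreads the budget more evenly. Turning these increments into critical values for the test statistic requires the joint distribution across checks and is not shown here.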

Validation: Apply tuned model to completely held-out test set that wasn't used for any tuning decisions. Compare performance with models tuned using traditional approaches.

Protocol: Cross-validation strategy to preserve generalizability

Proper cross-validation is crucial for obtaining realistic performance estimates during sequential tuning.

Materials: Dataset with documented experimental blocks or natural groupings; machine learning framework with cross-validation capabilities.

Procedure:

- Identify inherent data groupings (e.g., experimental batches, cell line families, compound scaffolds)

- Implement block cross-validation where entire groups are held out together in validation folds

- Ensure no data from the same group appears in both training and validation splits

- Perform hyperparameter tuning within each cross-validation fold separately

- Aggregate performance across all folds to select optimal hyperparameters

- Validate selected hyperparameters on completely independent test set [15]

Troubleshooting: If performance varies dramatically across folds, this indicates high model sensitivity to specific data groups and potential generalizability issues.
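The grouping constraint in the procedure above can be sketched in plain Python. The scaffold labels are invented, and `group_k_fold` is a hypothetical helper; scikit-learn's `GroupKFold` offers the same guarantee for use inside a search.

```python
from collections import defaultdict

def group_k_fold(groups, n_splits=3):
    """Yield (train_idx, val_idx) so each group appears in exactly one validation fold."""
    members = defaultdict(list)
    for idx, g in enumerate(groups):
        members[g].append(idx)
    # Assign the largest groups first, each to the currently smallest fold.
    folds = [[] for _ in range(n_splits)]
    for g in sorted(members, key=lambda g: -len(members[g])):
        min(folds, key=len).extend(members[g])
    for k in range(n_splits):
        val = sorted(folds[k])
        train = sorted(i for j, fold in enumerate(folds) if j != k for i in fold)
        yield train, val

# Invented scaffold label for each of nine compounds.
scaffolds = ["A", "A", "B", "B", "B", "C", "D", "D", "E"]
splits = list(group_k_fold(scaffolds, n_splits=3))
for train, val in splits:
    held_out = sorted({scaffolds[i] for i in val})
    print(f"validation scaffolds {held_out}  train size {len(train)}")
```

No scaffold ever appears on both sides of a split, so the validation score reflects performance on chemistry the model has not seen during that fold.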

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Robust Sequential Tuning

| Research Reagent | Function | Application Context |

|---|---|---|

| YEAST Sequential Test | Controls false discovery rates in continuous monitoring | Statistical validation of interim results during sequential tuning |

| Bayesian Optimization | Probabilistic model-based hyperparameter optimization | Simultaneous tuning of multiple parameter types while managing uncertainty |

| Block Cross-Validation | Performance estimation while accounting for data groupings | Prevents over-optimistic performance estimates from data leakage |

| TransCDR Framework | Transfer learning for improved generalizability | Enhancing performance on novel compounds/scaffolds in drug response prediction |

| Adam Optimizer | Adaptive moment estimation for stable training | Optimization algorithm with hyperparameters (learning rate, beta1, beta2) that require tuning |

| Pharmacokinetic-Pharmacodynamic (PK-PD) Models | Mathematical framework for drug effect modeling | Integrating multiple data sources for more robust parameter estimation in drug development [11] |

Advanced Methodologies: Integrating Transfer Learning to Enhance Generalizability

How can transfer learning address sequential tuning pitfalls?

Transfer learning mitigates sequential tuning issues by leveraging knowledge from large-scale source domains to reduce the parameter space requiring tuning in target domains.

Implementation Protocol:

- Select Pre-trained Encoders: Choose encoders pre-trained on large, diverse datasets (e.g., ChemBERTa for molecular structures, GIN models for graph data)

- Freeze Early Layers: Keep lower-level layers fixed to preserve general features learned from source domain

- Sequential Unfreezing: Gradually unfreeze and fine-tune higher-level layers with a decreasing learning rate

- Multi-modal Integration: Fuse multiple data representations (e.g., SMILES strings, molecular graphs, ECFPs) using attention mechanisms

- Progressive Evaluation: Systematically evaluate generalizability across warm start, cold drug, cold cell, and cold scaffold scenarios [14]

This approach was validated in drug response prediction, where TransCDR significantly outperformed models trained from scratch, particularly in cold start scenarios with novel compound scaffolds or cell line clusters [14].

Diagram 2: Transfer Learning for Generalizability

Frequently Asked Questions (FAQs)

Why does my model perform well during tuning but poorly in real application?

This discrepancy typically stems from improper validation strategies during tuning. Common issues include:

- Data leakage: Information from the test set indirectly influencing training decisions

- Over-optimistic cross-validation: Using simple random splits instead of block cross-validation for grouped data

- Insufficient scenario testing: Only evaluating on warm start scenarios without testing cold start conditions

- False discoveries: Inflated performance metrics due to repeated testing without proper statistical correction [15] [14]

How many sequential tuning iterations are typically safe before error accumulation becomes problematic?

There's no universal safe number, as it depends on your dataset size, model complexity, and statistical correction methods. However, research indicates that:

- Without proper statistical correction, error rates inflate rapidly after just 5-10 interim checks

- Methods like YEAST sequential testing allow unlimited checks while controlling false discovery rates

- Bayesian optimization typically requires fewer iterations than grid or random search, reducing cumulative error potential [10] [12] [13]

In drug development applications, what specific sequential tuning pitfalls should I be most concerned about?

For drug development, the highest risks include:

- Poor generalizability to novel compounds: Models that work well for known chemical scaffolds but fail for novel structures

- Overfitting to specific cell lines: Models that don't translate to new biological systems

- Integration errors: Failure to properly integrate multi-omics data (genomics, transcriptomics, proteomics) during sequential tuning

- Translation failures: Models that show promising in silico performance but fail in clinical applications due to tuning artifacts [11] [14]

In computational research, the traditional approach to building models often involves a sequential, isolated process: first selecting features, then tuning model parameters. However, a paradigm shift is underway toward joint optimization, where these steps are performed simultaneously. This integrated methodology leverages synergistic interactions between feature subsets and model hyperparameters, often yielding superior performance, enhanced robustness, and more parsimonious models. This article explores the theoretical foundations of joint optimization and provides a practical technical support guide for researchers implementing these advanced techniques in fields like drug discovery and biomarker identification.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What is the fundamental advantage of jointly optimizing feature selection and model parameters?

The core advantage lies in escaping local optima that plague sequential methods. When features are selected in isolation, the chosen subset may be optimal for a simple baseline model but suboptimal for the final, more complex model's architecture and hyperparameters. Joint optimization allows the algorithm to evaluate feature subsets in the context of the specific model that will use them, leading to a more globally optimal solution. This is because the relevance of a feature can be dependent on the model's inductive bias [16] [17].

Q2: Our joint optimization process is computationally expensive. What strategies can mitigate this?

Computational intensity is a common challenge. Several strategies can help:

- Employ Efficient Algorithms: Use frameworks designed for efficiency, such as LightGBM, which utilizes histogram-based algorithms and grows trees leaf-wise to accelerate training [16].

- Leverage Bayesian Optimization: For hyperparameter tuning, Bayesian optimization is more sample-efficient than grid or random search, intelligently selecting the most promising hyperparameters to evaluate next [16].

- Incorporate Dimensionality Reduction: As a preprocessing step, techniques like Principal Component Analysis (PCA) can compress high-dimensional data into a lower-dimensional form, reducing the feature space the joint optimizer must explore [16].

Q3: How can we prevent overfitting when the number of features is much larger than the number of samples?

Overfitting in high-dimensional spaces is a critical risk. Joint optimization frameworks address this by embedding sparsity constraints directly into the objective function.

- Use Regularization: Integrate L1 regularization (Lasso) or group Lasso penalties. These penalties push the coefficients of less important features toward zero, effectively performing feature selection within the model training process. This embedded regularization is a hallmark of robust joint optimization [18] [17].

- Cross-Validation: Rigorously use cross-validation to tune the strength of the regularization parameter, ensuring that the selected features generalize to unseen data [19].
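The way an L1 penalty drives coefficients to exactly zero can be seen in its proximal (soft-thresholding) step, which coordinate-descent and proximal-gradient Lasso solvers apply repeatedly. The coefficients below are invented, and `n_selected` is a hypothetical helper name.

```python
def soft_threshold(w, lam):
    """Proximal operator of lam * |w|: shrink toward zero, small values become exactly zero."""
    if w > lam:
        return w - lam
    if w < -lam:
        return w + lam
    return 0.0

def n_selected(coefs, lam):
    """Number of coefficients that remain non-zero after one shrinkage step."""
    return sum(1 for w in coefs if soft_threshold(w, lam) != 0.0)

# Invented unpenalized coefficients for five features.
coefs = [2.5, -0.3, 0.05, -1.2, 0.4]
for lam in (0.1, 0.5, 1.5):
    shrunk = [soft_threshold(w, lam) for w in coefs]
    print(f"lambda={lam}: {n_selected(coefs, lam)} features kept -> {shrunk}")
```

A larger penalty keeps fewer features, which is why the regularization strength acts as a feature-selection parameter and a model hyperparameter at once, and why it is tuned by cross-validation.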

Q4: In multi-stage decision problems, why is simultaneous optimization across all stages preferred?

In sequential methods like Q-learning for dynamic treatment regimens, performing variable selection at each stage independently allows false discovery errors to accumulate over time. A feature unimportant at one stage might be critical at another. A joint framework, such as the L1 multistage ramp loss (L1-MRL), uses a single, unified optimization problem across all stages with a group penalty. This identifies variables that are unimportant across all stages, leading to more reliable and parsimonious decision rules and controlling error propagation [17].
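The effect of a group penalty can be illustrated with the group soft-thresholding step used by group-lasso solvers. This is a generic sketch, not the L1-MRL algorithm of [17]: the variable names, the stage-wise coefficients, and the penalty value are all invented.

```python
import math

def group_soft_threshold(group, lam):
    """Shrink a whole coefficient group; zero it entirely if its norm is at most lam."""
    norm = math.sqrt(sum(w * w for w in group))
    if norm <= lam:
        return [0.0] * len(group)
    scale = 1 - lam / norm
    return [scale * w for w in group]

# One group per variable; entries are its coefficients at stages 1, 2, 3.
stage_coefs = {
    "biomarker_a": [0.9, 0.0, 0.7],     # matters at stages 1 and 3
    "biomarker_b": [0.05, 0.10, 0.02],  # weak at every stage
    "age": [0.0, 1.1, 0.0],             # matters only at stage 2
}
shrunk = {name: group_soft_threshold(c, lam=0.3) for name, c in stage_coefs.items()}
dropped = [name for name, c in shrunk.items() if all(w == 0.0 for w in c)]
print(dropped)
```

The variable that is weak at every stage is removed as a whole, while a variable that matters at only one stage survives, which a stage-by-stage selection could have discarded.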

Experimental Protocols & Methodologies

Protocol 1: Three-Segment Dynamic Feature Optimization for Spectral Data

This protocol, adapted from a study on authenticating Chinese medicinal materials, outlines a joint framework for high-dimensional spectral data [16].

1. Objective: To identify a minimal set of spectral features that maximize the accuracy of a geographical origin classification model.

2. Methodology:

   - Data Preprocessing: Perform integrity checks and outlier correction on mid-infrared spectral data (e.g., using a five-point moving average interpolation).

   - Feature Stratification: Use the minimum Redundancy Maximum Relevance (mRMR) algorithm to rank all spectral features by their importance. Dynamically divide them into three segments:

     - Retention Segment: Top-ranked features with high relevance and low redundancy.

     - Dimensionality Reduction Segment: Features with intermediate relevance, compressed using Principal Component Analysis (PCA).

     - Deletion Segment: Low-relevance features discarded as noise.

   - Joint Optimization with Bayesian Search: Use Bayesian optimization to jointly determine the optimal thresholds for the three segments and the hyperparameters of a LightGBM classifier.

3. Outcome: The proposed mRMR-PCA-LightGBM model achieved 90.9% accuracy, significantly outperforming control models by strategically capturing origin-specific information while eliminating noise [16].
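The segmentation step can be sketched as a function of two thresholds. The parameterization by `retain_frac` and `reduce_frac` is an assumption made for illustration and may differ from the thresholds used in [16]; the feature names are invented and `split_into_segments` is a hypothetical helper.

```python
def split_into_segments(ranked_features, retain_frac, reduce_frac):
    """Split a relevance-ranked list into retention, reduction, and deletion segments."""
    if retain_frac < 0 or reduce_frac < 0 or retain_frac + reduce_frac > 1:
        raise ValueError("fractions must be non-negative and sum to at most 1")
    n = len(ranked_features)
    n_retain = round(n * retain_frac)
    n_reduce = round(n * reduce_frac)
    retain = ranked_features[:n_retain]                        # kept as-is
    reduce = ranked_features[n_retain:n_retain + n_reduce]     # would be compressed with PCA
    delete = ranked_features[n_retain + n_reduce:]             # discarded as noise
    return retain, reduce, delete

# Invented feature names, assumed to be sorted by mRMR rank already.
ranked = [f"wavenumber_{i}" for i in range(10)]
retain, reduce, delete = split_into_segments(ranked, retain_frac=0.2, reduce_frac=0.5)
print(len(retain), len(reduce), len(delete))
```

In a joint search, the two fractions would be treated as additional search dimensions next to the classifier's hyperparameters, so each candidate evaluated by the optimizer fixes both the segmentation and the model settings.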

Protocol 2: Joint Molecule Generation and Property Prediction with a Transformer Model

This protocol uses a joint model for de novo molecular design, where generation and prediction are learned simultaneously [20].

1. Objective: To develop a single model capable of both generating novel molecular structures and predicting their properties with high accuracy.

2. Methodology:

   - Model Architecture: Implement the Hyformer, a transformer-based model that blends an autoregressive decoder (for generation) and a bidirectional encoder (for prediction) with shared parameters.

   - Training Scheme: Use an alternating training scheme, switching between causal attention (for generation) and bidirectional attention (for prediction) to prevent gradient interference between the two objectives.

   - Learning Task: The model is trained to learn the joint distribution $p(\mathbf{x}, y)$ of molecules ($\mathbf{x}$) and their properties ($y$), enabling it to perform unconditional generation, property prediction, and conditional generation (creating molecules with desired properties).

3. Outcome: The Hyformer demonstrates synergistic benefits, including improved control over the generative process, robust property prediction for out-of-distribution molecules, and high-quality molecular representations [20].
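The three capabilities named in the learning task follow from standard probability identities for a joint distribution. The factorization below is a general illustration and is not taken from the Hyformer paper; how the model parameterizes each term is not specified in this guide.

```latex
\begin{align}
p(\mathbf{x}, y) &= p(\mathbf{x})\, p(y \mid \mathbf{x})
  && \text{unconditional generation, then property prediction} \\
p(\mathbf{x} \mid y) &= \frac{p(\mathbf{x}, y)}{p(y)}
  \;\propto\; p(\mathbf{x})\, p(y \mid \mathbf{x})
  && \text{conditional generation for a target property } y
\end{align}
```

Read this way, the generative term $p(\mathbf{x})$ and the predictive term $p(y \mid \mathbf{x})$ are two views of one joint model, which is consistent with sharing parameters between the decoder and encoder modes.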

The following table summarizes quantitative evidence from studies employing joint optimization strategies.

Table 1: Performance Comparison of Joint vs. Isolated Optimization Methods

| Application Domain | Joint Optimization Method | Key Performance Metrics | Comparison to Isolated Processes |

|---|---|---|---|

| Origin Identification of Medicinal Materials [16] | mRMR-PCA-LightGBM with Bayesian Optimization | Accuracy: 90.9%; F1-Score: 0.91; Cohen's Kappa: 0.90 | "Markedly better" than five tested control models using isolated feature selection and model tuning. |

| Dynamic Treatment Regimens (DTRs) [17] | L1 Multistage Ramp Loss (L1-MRL) | Model Sparsity & False Discovery Control | Outperforms sequential stage-wise variable selection methods, which suffer from accumulating false discovery errors. |

| Molecule Generation & Property Prediction [20] | Hyformer Transformer Model | Generation Quality & Prediction Robustness | Demonstrates synergistic benefits over separate models, especially in conditional sampling and out-of-distribution prediction. |

Workflow Visualization

The diagram below illustrates the integrated workflow of a joint optimization process for feature selection and model tuning.

Joint Optimization Workflow for Feature Selection and Model Tuning

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Joint Optimization Experiments

| Tool / Reagent | Type | Primary Function in Joint Optimization |

|---|---|---|

| mRMR Algorithm [16] | Feature Selection Filter | Ranks features based on their relevance to the target and redundancy with each other, providing a foundation for dynamic segmentation. |

| LightGBM [16] | Gradient Boosting Framework | An efficient machine learning model whose hyperparameters (e.g., num_leaves, learning_rate) are jointly tuned with feature selection thresholds. |

| Bayesian Optimization [16] | Hyperparameter Tuning | Intelligently navigates the combined space of feature selection parameters and model hyperparameters to find the global optimum. |

| L1 (Lasso) / Group Lasso Penalty [18] [17] | Regularization Technique | Embedded in the model's loss function to perform feature selection by shrinking irrelevant feature coefficients to zero during training. |

| Transformer (Hyformer) [20] | Deep Learning Architecture | Serves as a joint model backbone that can be alternately configured for both generative and predictive tasks, sharing parameters. |

| Variational Autoencoder (VAE) [21] [22] | Generative Model | Used in active learning cycles to generate novel molecular structures; its parameters are optimized based on feedback from property prediction oracles. |
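To make the idea of a combined search space concrete, the sketch below draws a feature-count threshold and a model hyperparameter together in every trial. It is a deliberately simplified stand-in, not the pipeline from [16]: a correlation ranking replaces mRMR, closed-form ridge regression replaces LightGBM, and random search replaces Bayesian optimization. The synthetic data and all names are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data: 20 features, only the first 3 carry signal (illustrative assumption).
n, p = 200, 20
X = rng.normal(size=(n, p))
y = X[:, 0] * 2.0 - X[:, 1] * 1.5 + X[:, 2] + rng.normal(scale=0.5, size=n)
X_tr, X_va, y_tr, y_va = X[:150], X[150:], y[:150], y[150:]

def rank_features(X, y):
    """Rank features by absolute correlation with the target (relevance-only filter)."""
    scores = [abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(X.shape[1])]
    return np.argsort(scores)[::-1]

def validation_mse(k, lam):
    """Fit ridge regression on the top-k features and score it on the validation split."""
    cols = rank_features(X_tr, y_tr)[:k]          # ranking uses training data only
    A = X_tr[:, cols]
    w = np.linalg.solve(A.T @ A + lam * np.eye(k), A.T @ y_tr)
    return float(np.mean((X_va[:, cols] @ w - y_va) ** 2))

# Joint search: each trial samples the feature count AND the penalty strength together.
trials = [(int(rng.integers(1, p + 1)), float(10 ** rng.uniform(-3, 2))) for _ in range(60)]
results = [(validation_mse(k, lam), k, lam) for k, lam in trials]
best_mse, best_k, best_lam = min(results)
```

Because both settings are scored through the same validation metric, the search can trade a larger feature set against a stronger penalty, which a two-step procedure (select features first, tune afterwards) cannot do.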

Frequently Asked Questions

Q1: What is the fundamental difference between a tuning parameter and a calibration parameter?

- A: A tuning parameter is a numerical quantity present only in a computer simulation that controls a numerical algorithm (e.g., mesh density, load discretization). It has no physical analogue in the real-world experiment. In contrast, a calibration parameter is an input to the simulation that has a physical meaning in the real-world experiment, but whose true value is unknown or unmeasured during the physical trial (e.g., friction coefficient, initial position) [7].

Q2: What are the observable symptoms in my results if I have mistakenly treated a tuning parameter as a calibration parameter?

- A: A key symptom is obtaining implausible or highly uncertain posterior distributions for the parameters. For instance, the posterior distribution may become bimodal or exhibit an extremely large variance, making it impossible to determine a definitive value. This was demonstrated in a finite element analysis case study, where this treatment led to a bimodal posterior for a tuning parameter, rendering the results uninterpretable for practical use [7].

Q3: Why can't I use a standard Bayesian calibration approach for all unknown parameters?

- A: Standard Bayesian calibration frameworks are designed to infer the distribution of physically meaningful calibration parameters. When tuning parameters (which are purely numerical) are included in this process, they can "absorb" discrepancy in the model that should be attributed to the bias function or the calibration parameters themselves. This leads to biased estimations and misrepresentation of the uncertainty associated with the true calibration parameters [7].

Q4: Are there methodologies designed to handle these parameters simultaneously?

- A: Yes, advanced statistical methodologies have been proposed for this purpose. These often involve a hybrid approach: tuning parameters are set by minimizing a discrepancy measure, while the distribution of calibration parameters is determined using a hierarchical Bayesian model. This method views the output as a realization of a Gaussian stochastic process with hyperpriors, and draws from the posterior distribution are obtained via Markov chain Monte Carlo (MCMC) simulation [7].

Q5: How does the confusion between tuning and calibration relate to other concepts like volatility and stochasticity?

- A: The core challenge is similar: dissociating different sources of noise that have opposite effects on learning. In computational learning, volatility (rapid changes in a hidden state) demands a higher learning rate, while stochasticity (moment-to-moment observation noise) requires a lower learning rate. Confusing them leads to suboptimal predictions. Similarly, confusing tuning and calibration parameters, which have different ontological statuses, leads to suboptimal and biased model fitting [23].
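The opposite pull of the two noise sources on the learning rate can be checked with a scalar Kalman filter that tracks a random-walk state. The sketch below is illustrative only; the function name and the numeric settings are assumptions and are not taken from [23].

```python
def steady_state_gain(volatility, stochasticity, n_iter=500):
    """Return the settled Kalman gain (learning rate) for a scalar random-walk state.

    volatility    -- variance of the hidden state's step-to-step drift
    stochasticity -- variance of the observation noise
    """
    posterior_var = 1.0  # arbitrary starting uncertainty; the limit does not depend on it
    gain = 0.0
    for _ in range(n_iter):
        prior_var = posterior_var + volatility           # drift inflates uncertainty
        gain = prior_var / (prior_var + stochasticity)   # share of each error absorbed
        posterior_var = (1.0 - gain) * prior_var         # uncertainty left after the update
    return gain

baseline = steady_state_gain(volatility=0.1, stochasticity=1.0)
more_volatile = steady_state_gain(volatility=1.0, stochasticity=1.0)
more_noisy = steady_state_gain(volatility=0.1, stochasticity=10.0)
```

Raising the volatility pushes the gain up, while raising the observation noise pushes it down, so a learner that attributes noise to the wrong source moves its learning rate in the wrong direction.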

Troubleshooting Guides

Problem: Unidentifiable or Highly Uncertain Parameters After Model Calibration

Description: After running a calibration process, the posterior distributions for your parameters are bimodal, flat, or otherwise uninterpretable. You cannot determine a definitive value for the parameters, and the associated uncertainty is unreasonably large.

Diagnostic Steps:

- Audit Your Parameters: Create a table listing every unknown parameter in your simulation. For each, ask: "Does this have a direct physical meaning in the real-world experiment?" If the answer is "no," it is a tuning parameter.

- Check for Symptomatic Output: Compare your results to the documented case study [7]. Look for bimodality in trace plots or a lack of convergence in your MCMC chains, particularly for parameters you suspect might be tuning parameters.

- Review the Experimental Protocol: Verify the data sources. The table below contrasts the nature of data from computer versus physical experiments, which is fundamental to correctly defining the problem [7].

Table: Characteristics of Computer and Physical Experiments

| Aspect | Computer Experiment | Physical Experiment |

|---|---|---|

| Input Variables | Control, Tuning, and Calibration parameters | Control variables only |

| Primary Goal | Improve representativeness to a physical phenomenon | Study relationship between response and control variables |

| Data Output | Simulated response | Physical measurement |

| Key Challenge | Managing lengthy run times and different parameter types | Measurement error and uncontrolled environmental variables |

Solution:

- Implement a Simultaneous Determination Methodology: Do not use a standard calibration tool for all parameters. Adopt a methodology that explicitly separates the treatment of tuning and calibration parameters [7].

- Adopt a Hierarchical Bayesian Model: Use a framework where tuning parameters are set to minimize discrepancy, while calibration parameters are inferred probabilistically. This often involves modeling the system output as a Gaussian stochastic process [7].

- Validate with a Known Case: Test your new methodology on a simplified or well-understood case where the true values of calibration parameters are known, to ensure it recovers them accurately.

The following workflow diagram illustrates the core methodology for simultaneously determining tuning and calibration parameters, providing a corrective action to the single-problem treatment.

Problem: Model Fails to Generalize or Predicts Poorly on New Data

Description: Your model fits the calibration data well but performs poorly when making predictions for new input conditions, indicating overfitting or an incorrect representation of model discrepancy.

Diagnostic Steps:

- Check Parameter Compensation: Investigate if the tuning parameters are being adjusted to over-fit the noise in the calibration data, rather than to improve the fundamental numerical solution. This is a classic sign that a tuning parameter is being used as a "fudge factor."

- Examine the Bias Function: In Bayesian calibration frameworks, if tuning parameters are incorrectly specified, the bias (or discrepancy) function cannot be accurately estimated, leading to poor extrapolation.

Solution:

- Reframe the Learning Problem: Recognize that distinguishing parameter types is analogous to dissociating volatility and stochasticity in a learning algorithm. Your model needs to separate the "numerical noise" (tuning) from the "physical uncertainty" (calibration) [23].

- Incorporate a Structured Bias Function: Ensure your Bayesian model includes a non-parametric bias function that is independent of the tuning parameters. This prevents the tuning parameters from absorbing systematic errors that belong to the model itself.

The Scientist's Toolkit

Table: Essential Reagents and Computational Tools for Simultaneous Tuning and Calibration Research

| Research Reagent / Tool | Type | Function in the Experiment |

|---|---|---|

| Gaussian Stochastic Process Model | Statistical Model | Serves as a surrogate for the computer code, enabling predictions at untried inputs and quantifying uncertainty [7]. |

| Hierarchical Bayesian Model | Modeling Framework | Provides the structure for simultaneously handling different types of parameters and data sources, with priors that capture uncertainty at multiple levels [7]. |

| Markov Chain Monte Carlo (MCMC) | Computational Algorithm | Used to draw samples from the complex posterior distribution of the parameters, facilitating Bayesian inference [7]. |

| Kalman Filter | Computational Algorithm | An optimal Bayesian estimator for systems with Gaussian noise; its theoretical principles inform how learning rates should adapt to different noise types (volatility vs. stochasticity) [23]. |

| Sparse Distributed Representation | Computational Concept | A neural-inspired tuning strategy that activates specific neuron subsets for different tasks, improving efficiency in multi-task learning and avoiding interference [24]. |

| NSL-KDD Dataset | Benchmark Data | A standard dataset for evaluating intrusion detection systems, used here as an analogy for testing the robustness of ML classifiers under feature selection, similar to testing model robustness under parameter uncertainty [25]. |

Integrated Methodologies: From Bayesian Frameworks to Regularized Machine Learning for Simultaneous Optimization

Hierarchical Bayesian Models for Tuning and Calibration with Gaussian Stochastic Processes

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using a Hierarchical Bayesian Model (HBM) over traditional calibration for multi-source data?

HBMs offer significant advantages when calibrating models using data from multiple specimens, tests, or environmental conditions. Unlike traditional methods that either pool all data (obscuring specimen-to-specimen variability) or analyze datasets separately (losing population-level insights), HBMs explicitly separate and quantify different uncertainty types [26]. They model parameters for individual experiments as stemming from a common population distribution, whose hyperparameters are also inferred. This structure simultaneously quantifies epistemic uncertainty (from limited data) and aleatory uncertainty (inherent specimen-to-specimen variability), providing a full uncertainty representation for reliable predictions of new specimens [27] [26].

Q2: My Gaussian Process (GP) surrogate is computationally expensive to train. How can HBM and multi-fidelity approaches help?

Integrating HBM with multi-fidelity modeling creates a powerful strategy to overcome computational bottlenecks. A Gaussian Process-based Multi-Fidelity Bayesian Optimization (GP-MFBO) framework can be employed, which builds a hierarchical model combining low-fidelity (fast, approximate) and high-fidelity (slow, accurate) data sources [28]. This allows the model to leverage the information from abundant low-fidelity simulations to inform the high-fidelity model, drastically reducing the number of expensive high-fidelity evaluations needed for reliable calibration and uncertainty quantification [28].

Q3: The predictive distributions from my calibrated GP seem overconfident. How can I improve calibration?

Miscalibration of GP predictive distributions is a known issue when hyperparameters are estimated from data. The calGP method addresses this by retaining the GP posterior mean but recalibrating the predictive variance [29]. It models the normalized prediction error using a generalized normal distribution, whose parameters are tuned via a posterior sampling strategy guided by Probability Integral Transform (PIT)-based metrics. This post-processing step improves the tail behavior and calibration of confidence intervals without retraining the underlying GP, leading to more reliable uncertainty estimates for decision-making [29].

Q4: In the context of drug discovery, how can I ensure my bioactivity model's uncertainty estimates are reliable?

For high-stakes fields like drug discovery, model calibration is paramount. It is recommended to use accuracy and calibration scores together for hyperparameter tuning, as they often optimize different model properties [30]. Furthermore, employing train-time uncertainty quantification methods, such as Hamiltonian Monte Carlo for Bayesian Last Layers (HBLL), can significantly improve the reliability of uncertainty estimates. These methods treat model parameters as random variables, providing a principled estimate of epistemic uncertainty. For best results, these can be combined with post-hoc calibration methods like Platt scaling [30].

Troubleshooting Guides

Problem: Prohibitively High Computational Cost of HBM Inference

Issue: A direct ("vanilla") computational approach to HBM inference is often intractable for complex models due to high dimensionality [27].

Solution: Implement a dimension-reduction strategy by marginalizing over individual experiment parameters.

- Step 1: Offline Emulator Construction: Before MCMC sampling, build a computationally efficient emulator (surrogate model) for the response of each individual experiment. This replaces expensive physics-based simulations (e.g., Finite Element Analysis) during sampling [27].

- Step 2: Approximate Marginal Likelihood: Derive an approximate likelihood for the hyperparameters by marginalizing over the individual parameters. For each experiment, this involves integrating out the specimen-specific parameters using Bayesian Quadrature, leveraging the pre-built emulator [27].

- Step 3: Efficient MCMC Sampling: Perform MCMC sampling only on the hyperparameters (e.g., population means and variances). Since the dimension of hyperparameters is much lower than the full parameter set, this makes the inference computationally feasible [27].

Problem: Poor Calibration of Predictive Uncertainty

Issue: The model's confidence scores do not match the actual frequency of correct predictions (e.g., a predicted probability of 0.9 should correspond to a 90% chance of being correct) [30].

Solution: Apply a combination of train-time Bayesian methods and post-hoc calibration.

- Step 1: Implement a Bayesian Method: Replace deterministic neural network layers with Bayesian ones to capture epistemic uncertainty. For example, use a Bayesian last layer where weights are treated as distributions sampled via Hamiltonian Monte Carlo (HMC) [30].

- Step 2: Apply a Post-Hoc Calibrator:

- Split your data to create a hold-out calibration set.

- On this set, fit a calibrator like Platt Scaling (a logistic regression) to map your model's raw output scores to well-calibrated probabilities [30].

- Apply this mapping to your test predictions.

- Step 3: Validate with Calibration Diagnostics:

- Plot a Calibration Curve (reliability diagram). A well-calibrated model will have a curve close to the diagonal.

- Calculate the Expected Calibration Error (ECE). A lower ECE indicates better calibration [30].
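Steps 2 and 3 can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the procedure of [30]: the "model" is a synthetic classifier whose logits are three times too large, Platt scaling is fitted by simple gradient descent, and the ECE shown is the binary variant that compares the predicted positive probability with the observed positive rate in each bin. All function names are hypothetical.

```python
import math
import random

def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def fit_platt(scores, labels, lr=0.1, n_iter=300):
    """Fit p = sigmoid(a * score + b) by gradient descent on the log loss."""
    a, b = 1.0, 0.0
    n = len(scores)
    for _ in range(n_iter):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            err = sigmoid(a * s + b) - y
            grad_a += err * s
            grad_b += err
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a, b

def expected_calibration_error(probs, labels, n_bins=10):
    """Bin-size-weighted mean gap between predicted probability and observed positive rate."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))
    ece = 0.0
    for members in bins:
        if members:
            mean_p = sum(p for p, _ in members) / len(members)
            pos_rate = sum(y for _, y in members) / len(members)
            ece += len(members) / len(probs) * abs(mean_p - pos_rate)
    return ece

def make_split(n, rng):
    """Synthetic overconfident classifier: raw logits are 3x too large (assumption)."""
    scores, labels = [], []
    for _ in range(n):
        p_true = rng.uniform(0.05, 0.95)
        labels.append(1 if rng.random() < p_true else 0)
        scores.append(3.0 * math.log(p_true / (1.0 - p_true)))
    return scores, labels

rng = random.Random(0)
cal_scores, cal_labels = make_split(1000, rng)    # hold-out calibration set
test_scores, test_labels = make_split(1000, rng)  # untouched test set

a, b = fit_platt(cal_scores, cal_labels)
ece_raw = expected_calibration_error([sigmoid(s) for s in test_scores], test_labels)
ece_platt = expected_calibration_error([sigmoid(a * s + b) for s in test_scores], test_labels)
```

The calibrator is fitted on the hold-out set only and then applied unchanged to the test scores, which mirrors the split described in Step 2.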

Problem: Handling Coupled Multi-Physics Systems in Calibration

Issue: Standard multi-fidelity methods, designed for single-physics problems, perform poorly on systems with coupled physics (e.g., temperature and humidity in a calibration chamber) [28].

Solution: Use a dedicated multi-fidelity framework for coupled systems.

- Step 1: Decoupled Design: Formulate independent candidate spaces and objective functions for each physical field (e.g., temperature and humidity). This avoids interference during optimization [28].

- Step 2: Three-Layer Multi-Fidelity Modeling: Build a hierarchical model that integrates:

- Layer 1: Fast, physical analytical models for each field.

- Layer 2: Computational Fluid Dynamics (CFD) numerical simulations.

- Layer 3: High-fidelity experimental verification data [28].

- Step 3: Adaptive Acquisition: Use an acquisition function that balances the uncertainty penalty for each field and evaluates the information gain across all fidelity levels. This strategically selects the next best point and fidelity level to evaluate [28].

Experimental Protocols & Data Presentation

Protocol: HBM for Constitutive Material Model Calibration

This protocol details the calibration of a material model (e.g., the Giuffré-Menegotto-Pinto model for steel) using cyclic test data from multiple coupons [26].

- Data Collection: Perform experimental tests (e.g., cyclic stress-strain tests) on \( N_s \) nominally identical material specimens (coupons). The dataset is \( Y = \{ y_1, y_2, \ldots, y_{N_s} \} \), where \( y_i \) is the stress response of the i-th specimen [26].

- Model Parameterization: Define the constitutive model \( M \) with a parameter vector \( \theta_i \) for each specimen \( i \) [26].

- Hierarchical Model Specification:

- Likelihood: \( y_i \mid \theta_i \sim N( M(\theta_i), \Sigma_{\text{error}} ) \)

- Prior for individual parameters: \( \theta_i \mid \phi \sim \text{LogNormal}( \mu_{\theta}, \Sigma_{\theta} ) \) (or another suitable distribution). The hyperparameters are \( \phi = \{ \mu_{\theta}, \Sigma_{\theta} \} \).

- Hyper-priors: Place weakly informative priors on \( \mu_{\theta} \) and \( \Sigma_{\theta} \) (e.g., Normal for means, Inverse-Gamma for variances) [26].

- Posterior Inference: Use sampling-based methods (e.g., MCMC, Hamiltonian Monte Carlo) to compute the joint posterior distribution of all parameters and hyperparameters, \( P(\theta_1, \ldots, \theta_{N_s}, \phi \mid Y ) \) [26].

- Validation: Use the posterior predictive distribution to simulate the response of a new, unseen specimen and compare it against fresh experimental data [26].
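The hierarchical specification above can be written down as an unnormalized log posterior that any MCMC sampler could target. The sketch below is a scalar toy version under stated assumptions: each specimen has a single parameter, a linear response stands in for the constitutive model, the error variance is treated as known, and the hyper-prior settings are arbitrary choices rather than values from [26].

```python
import math
import random

STRAINS = [1.0, 2.0, 3.0, 4.0, 5.0]   # hypothetical load steps shared by all specimens
SIGMA_ERROR = 0.1                      # assumed known measurement-noise standard deviation

def model(theta):
    """Toy stand-in for M(theta): a linear response with stiffness theta."""
    return [theta * e for e in STRAINS]

def log_normal_pdf(x, mean, sd):
    return -0.5 * math.log(2 * math.pi) - math.log(sd) - 0.5 * ((x - mean) / sd) ** 2

def log_lognormal_pdf(x, mu, sd):
    return log_normal_pdf(math.log(x), mu, sd) - math.log(x)

def log_inverse_gamma_pdf(x, shape, scale):
    return shape * math.log(scale) - math.lgamma(shape) - (shape + 1) * math.log(x) - scale / x

def log_joint(thetas, mu_theta, var_theta, data):
    """Unnormalized log posterior of (theta_1..theta_Ns, mu_theta, var_theta) given data."""
    if var_theta <= 0 or any(t <= 0 for t in thetas):
        return -math.inf
    total = log_normal_pdf(mu_theta, 0.0, 10.0)            # hyper-prior on the mean
    total += log_inverse_gamma_pdf(var_theta, 2.0, 0.1)     # hyper-prior on the variance
    sd_theta = math.sqrt(var_theta)
    for theta_i, y_i in zip(thetas, data):
        total += log_lognormal_pdf(theta_i, mu_theta, sd_theta)   # population-level prior
        for pred, obs in zip(model(theta_i), y_i):                # specimen-level likelihood
            total += log_normal_pdf(obs, pred, SIGMA_ERROR)
    return total

# Simulate Ns = 4 specimens from the same generative model.
rng = random.Random(0)
true_mu, true_var = math.log(2.0), 0.01
true_thetas = [rng.lognormvariate(true_mu, math.sqrt(true_var)) for _ in range(4)]
data = [[m + rng.gauss(0.0, SIGMA_ERROR) for m in model(t)] for t in true_thetas]

lp_true = log_joint(true_thetas, true_mu, true_var, data)
lp_wrong = log_joint([1.5 * t for t in true_thetas], true_mu, true_var, data)
```

The three additive blocks (hyper-priors, population-level prior, specimen-level likelihood) correspond one-to-one to the three levels of the specification; with vector-valued parameters the scalar densities would be replaced by their multivariate counterparts.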

Quantitative Comparison of Calibration Methods

The table below summarizes key performance metrics from recent studies, useful for selecting a calibration approach.

Table 1: Performance comparison of various calibration and optimization methods across different applications.

| Method | Application Context | Key Performance Metrics | Results |

|---|---|---|---|

| GP-MFBO [28] | Calibration chamber optimization | Temperature uniformity score, Humidity uniformity score, Confidence interval coverage | Temp. score: 0.149 (within 4.5% of theoretical optimum), Humidity score: 2.38 (within 3.6% of optimum), Coverage: 94.2% |

| Residual Bayesian Attention (RBA) [31] | Engineering optimization & time-series forecasting | Coefficient of Determination (R²), Expected Calibration Error (ECE), Prediction Interval Normalized Average Width (PINAW) | R²: 0.972, ECE: 0.1877, PINAW: 0.180 |

| HMC Bayesian Last Layer (HBLL) [30] | Drug-target interaction prediction | Calibration Error (CE), Brier Score | Improved calibration and accuracy over baseline models; effective combination with Platt scaling |

| calGP [29] | Calibration of GP predictive distributions | Kolmogorov-Smirnov PIT (KS-PIT) distance | Better calibration than standard GP, with controllable conservativeness |

Research Reagent Solutions: Computational Tools

This table lists essential computational components for implementing the discussed methodologies.

Table 2: Key computational components and their functions in HBM and GP calibration workflows.

| Research Reagent (Component) | Function in the Experiment |

|---|---|

| Markov Chain Monte Carlo (MCMC) Sampler | Generates samples from the complex posterior distribution of parameters and hyperparameters [26]. |

| Gaussian Process (GP) Surrogate / Emulator | Replaces a computationally expensive physical model or simulation during inference and optimization [27] [28]. |

| Bayesian Quadrature | Approximates the intractable integral when marginalizing over individual parameters in an HBM [27]. |

| Multi-Fidelity Model Architecture | Integrates data of varying cost and accuracy to achieve reliable predictions with fewer high-fidelity evaluations [28]. |

| Information-Theoretic Acquisition Function | Guides sequential data collection by quantifying the expected information gain at candidate points [32]. |

Workflow and Conceptual Diagrams

HBM for Multi-Specimen Calibration

Multi-Fidelity Bayesian Optimization

Bayesian Optimization as a Unified Framework for Black-Box Tuning of Complex Pipelines

Frequently Asked Questions

What makes Bayesian Optimization (BO) well-suited for tuning complex, multi-stage pipelines?

BO is ideal for optimizing black-box systems where the relationship between inputs and outputs is unknown, and each evaluation is expensive [33]. For multi-stage pipelines, specialized algorithms like Lazy Modular Bayesian Optimization (LaMBO) can exploit the sequential structure to dramatically reduce costs. LaMBO minimizes "switching costs" by being passive with early-stage module variables, as changing these requires re-running all subsequent modules. In one neuroimaging application, LaMBO achieved 95% optimality in 1.4 hours compared to 5.6 hours for the best alternative method [34].

My BO algorithm is not converging well. What could be wrong?

Poor BO performance can often be traced to a few common pitfalls [35]:

- Incorrect Prior Width: The prior (specified by the kernel and its hyperparameters) may be mis-specified. An overly narrow prior can prevent the model from fitting the data, while an overly wide one can lead to over-exploration.

- Over-smoothing: The kernel's lengthscale might be too large, causing the surrogate model to oversmooth the objective function and miss important, sharper features.

- Inadequate Acquisition Maximization: The process of finding the maximum of the acquisition function itself is an optimization problem. If this inner optimization is not performed thoroughly, good candidate points may be missed.

How can I make my tuning workflow more robust to failures?

When tuning complex systems, individual evaluations can fail due to issues like non-convergence, memory limits, or unseen data categories. To handle this [36]:

- Use Encapsulation: Configure your learner to encapsulate errors during training and prediction, preventing a single failure from crashing the entire tuning process.

- Set a Fallback Learner: Specify a simple, reliable learner (e.g., a featureless classifier) to be used automatically if the primary learner fails during resampling.

- Implement Timeouts: Set timeouts for training and prediction to prevent the process from hanging indefinitely on a single configuration.
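The encapsulation and fallback ideas are not tied to one toolkit. The sketch below shows the pattern in plain Python; it is not the mlr3 API referenced in [36], and the function names, the failing learner, and the fallback score are hypothetical. Timeouts are left out because enforcing them reliably depends on how evaluations are executed (threads, processes, or a job scheduler).

```python
def safe_evaluate(evaluate, config, fallback_score):
    """Run one tuning evaluation; on any exception return a fallback score and the error."""
    try:
        return evaluate(config), None
    except Exception as exc:   # encapsulate the failure instead of crashing the run
        return fallback_score, repr(exc)

def fragile_learner(config):
    """Hypothetical learner that fails to converge for large learning rates."""
    if config["learning_rate"] > 1.0:
        raise RuntimeError("did not converge")
    return 1.0 - abs(config["learning_rate"] - 0.1)

grid = [{"learning_rate": lr} for lr in (0.01, 0.1, 0.5, 5.0)]
results = [safe_evaluate(fragile_learner, c, fallback_score=0.0) for c in grid]
best_score, _ = max(results, key=lambda r: r[0])
failures = [err for _, err in results if err is not None]
```

Recording the error alongside the fallback score keeps the failed configurations visible for later inspection while letting the tuner continue.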

What should I do if my parameter optimization needs to consider multiple, competing objectives?

This is known as multi-objective optimization. In such cases, the goal is not to find a single best configuration, but a set of non-dominated solutions known as the Pareto front. A configuration is Pareto-efficient if no other configuration is at least as good in every objective and strictly better in at least one. BO frameworks can be extended to handle multiple measures and identify this Pareto set [36].
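As a small illustration of what a non-dominated set looks like, the sketch below filters a handful of configurations scored on two objectives. The scores are invented for the example, and both objectives are oriented so that larger is better.

```python
def dominates(a, b):
    """True if a is at least as good as b in every objective and strictly better in one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Keep the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# (accuracy, negative calibration error): illustrative values only
configs = [(0.90, -0.08), (0.88, -0.03), (0.85, -0.02), (0.87, -0.05), (0.90, -0.10)]
front = pareto_front(configs)
```

Here `(0.87, -0.05)` is removed because `(0.88, -0.03)` is better on both objectives, and `(0.90, -0.10)` is removed because `(0.90, -0.08)` matches its accuracy with a lower calibration error. The three remaining configurations represent genuine trade-offs.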

Are there any pre-built services or packages to help me implement Bayesian Optimization?

Yes, several packages can significantly reduce the implementation burden. A prominent example is OpenBox, an open-source system that supports a wide range of functionalities, including [33]:

- Single and multi-objective optimization.

- Optimization with constraints.

- Multiple parameter types (Float, Integer, Ordinal, Categorical).

- Transfer learning and distributed parallel evaluation.

Troubleshooting Guides

Problem: Optimization is Slow to Converge or Makes Poor Choices

Potential Causes and Solutions:

Check Surrogate Model Hyperparameters

- Issue: The Gaussian Process (GP) kernel hyperparameters (like lengthscale and output scale) may be poorly fit, leading to a bad surrogate model [35].

- Action: Ensure the GP's hyperparameters are being optimized by maximizing the marginal likelihood. Visualize the surrogate model's mean and uncertainty to see if it reasonably fits your observed data [37].
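One way to sanity-check the surrogate is to compute the GP log marginal likelihood for a few candidate lengthscales and confirm that the fitted value is not sitting at an implausible extreme. The sketch below assumes a zero-mean GP with a unit-variance RBF kernel, a known noise variance, and synthetic data; a grid search stands in for the gradient-based optimizer that libraries typically use.

```python
import numpy as np

def log_marginal_likelihood(X, y, lengthscale, noise=1e-2):
    """Log evidence of a zero-mean GP with a unit-variance RBF kernel."""
    d2 = (X[:, None] - X[None, :]) ** 2
    K = np.exp(-0.5 * d2 / lengthscale ** 2) + noise * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    data_fit = -0.5 * float(y @ alpha)
    complexity = -float(np.sum(np.log(np.diag(L))))   # equals -0.5 * log|K|
    return data_fit + complexity - 0.5 * len(X) * np.log(2 * np.pi)

rng = np.random.default_rng(1)
X = np.linspace(0.0, 1.0, 25)
y = np.sin(2 * np.pi * X) + rng.normal(scale=0.1, size=X.size)

grid = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0]
scores = {ls: log_marginal_likelihood(X, y, ls) for ls in grid}
best_ls = max(scores, key=scores.get)
```

A very small lengthscale treats neighbouring points as unrelated, and a very large one cannot follow the curve, so both extremes score poorly on this data.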

Review Your Acquisition Function

- Issue: The balance between exploration and exploitation may be off.

- Action: Understand the behavior of your acquisition function. For example, Probability of Improvement (PI) can be made more exploratory by increasing its `ϵ` parameter, but setting it too high can lead to purely random search [38]. Expected Improvement (EI) is often a more robust default choice as it considers both the probability and magnitude of improvement [35] [38].
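The difference between the two acquisition functions is easiest to see on two candidate points with a Gaussian posterior. The sketch below uses the standard closed forms for a maximization problem; the candidate values and the name `eps` for the PI margin are illustrative assumptions.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_improvement(mu, sigma, best, eps=0.0):
    """P(f(x) > best + eps) under the surrogate's Gaussian posterior."""
    return normal_cdf((mu - best - eps) / sigma)

def expected_improvement(mu, sigma, best):
    """E[max(f(x) - best, 0)] under the same posterior."""
    z = (mu - best) / sigma
    return (mu - best) * normal_cdf(z) + sigma * normal_pdf(z)

best_so_far = 1.0
safe_bet = dict(mu=1.05, sigma=0.05)    # slightly better mean, little uncertainty
long_shot = dict(mu=0.80, sigma=0.60)   # worse mean, large uncertainty

pi_safe = probability_of_improvement(best=best_so_far, **safe_bet)
pi_long = probability_of_improvement(best=best_so_far, **long_shot)
pi_safe_eps = probability_of_improvement(best=best_so_far, eps=0.5, **safe_bet)
pi_long_eps = probability_of_improvement(best=best_so_far, eps=0.5, **long_shot)
ei_safe = expected_improvement(best=best_so_far, **safe_bet)
ei_long = expected_improvement(best=best_so_far, **long_shot)
```

With `eps = 0`, PI prefers the safe candidate; with a large margin it flips to the uncertain one. EI favours the uncertain candidate here without any extra parameter, because it weighs how large the improvement could be as well as how likely it is.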

Validate the Optimization of the Acquisition Function

- Issue: The inner-loop optimization that finds the maximum of the acquisition function may be getting stuck in local optima or not searching thoroughly enough [35].

- Action: Increase the number of starting points for this inner optimizer or try a more robust optimization method for this sub-problem.

Problem: Tuning Run Crashes or Exhausts Memory

Potential Causes and Solutions:

Implement Error Encapsulation

- Issue: Your learner fails on specific hyperparameter combinations or data splits, crashing the entire tuning run [36].

- Action: Activate encapsulation for your learner. This isolates errors during training and prediction stages, allowing the tuning process to continue.

Manage Memory Usage

- Issue: The tuning experiment consumes too much memory, especially during nested resampling.

- Action: Disable the storage of non-essential objects [36]:

- Set `store_models = FALSE` (this is often the biggest saving).

- Set `store_benchmark_result = FALSE` (this disables storing predictions).

- Set `store_tuning_instance = FALSE` (but note this limits some post-hoc analysis).

Experimental Protocols & Methodologies

Protocol 1: Tuning a Modular Pipeline with Lazy Modular BO (LaMBO)

This protocol is designed for systems where inputs are processed through a sequence of modules, and changing an early-stage variable is costly because it requires re-running all downstream modules [34].

- System Modeling: Define your pipeline as a sequence of `M` modules. Let the parameters of module `m` be `x_m`. The total cost of a query is the cost to run the system from the first module where a parameter has changed.

- Algorithm Selection: Implement the Lazy Modular Bayesian Optimization (LaMBO) algorithm. The key idea is to use a tree-based structure to group parameters and apply a conditional sampling strategy that encourages "laziness" by minimizing changes to early-stage parameters.

- Optimization Loop:

- Maintain a probability distribution over the parameter space.

- At each iteration, conditional on the previous selection, sample a new configuration, favoring parameters close to the previous one in the tree structure.

- Update the surrogate model (Gaussian Process) and the probability distribution using a multiplicative update rule based on the observed performance.

- Validation: The algorithm achieves sublinear regret regularized with the accumulated switching cost, providing a theoretical guarantee of performance [34].
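The cost model from the System Modeling step can be written as a short function. This sketch covers only the switching-cost bookkeeping and does not implement LaMBO itself; the module run times and parameter values are hypothetical.

```python
def query_cost(previous, proposed, module_costs):
    """Cost of evaluating `proposed` when intermediate results for `previous` are cached.

    previous, proposed -- one parameter tuple per module, in pipeline order
    module_costs       -- run time of each module
    Everything from the first module whose parameters changed must be re-run.
    """
    for m, (old, new) in enumerate(zip(previous, proposed)):
        if old != new:
            return sum(module_costs[m:])
    return 0.0  # nothing changed, so the cached output can be reused

module_costs = [60.0, 20.0, 5.0]               # hypothetical minutes per module
current = ((0.5,), (3, "relu"), (0.01,))

late_change = ((0.5,), (3, "relu"), (0.02,))    # only the last module differs
early_change = ((0.7,), (3, "relu"), (0.01,))   # the first module differs

cost_late = query_cost(current, late_change, module_costs)
cost_early = query_cost(current, early_change, module_costs)
```

Changing only the last module costs 5 minutes, whereas touching the first module costs the full 85 minutes, which is the asymmetry that a "lazy" strategy exploits.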

Protocol 2: Optimizing a Black-Box Function using Standard BO with Gaussian Processes

This is a standard protocol for a general black-box optimization problem [37] [38].

- Initialization: Define the search space (the hyperparameters and their ranges) and select an initial set of points (e.g., via Latin Hypercube Sampling) to evaluate.

- Surrogate Modeling: Fit a Gaussian Process (GP) to the set of evaluated points `{x_i, y_i}`. The GP provides a posterior mean `μ(x)` and variance `σ²(x)` for any point `x` in the search space.

- Acquisition Maximization: Use the GP posterior to calculate an acquisition function `α(x)` across the search space (e.g., Expected Improvement). Find the point `x_next` that maximizes `α(x)`.

- Evaluation and Update: Evaluate the black-box function at `x_next`, record the result `y_next`, and add the new observation `(x_next, y_next)` to the dataset.

- Termination: Repeat from the Surrogate Modeling step (step 2) until a predefined budget (e.g., number of iterations) is exhausted or the improvement falls below a threshold.
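The protocol can be condensed into a short one-dimensional loop. The sketch below makes several simplifying assumptions: a cheap quadratic stands in for the expensive black box, the GP uses a fixed RBF lengthscale rather than fitted hyperparameters, and the acquisition function is maximized over a fixed grid of candidates instead of with an inner optimizer.

```python
import math
import numpy as np

def objective(x):
    """Stand-in for an expensive black box; its maximum is at x = 0.7 (assumption)."""
    return -(x - 0.7) ** 2

def rbf_kernel(a, b, lengthscale=0.2):
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / lengthscale ** 2)

def gp_posterior(x_obs, y_obs, x_new, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean, unit-variance GP."""
    K = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))
    K_s = rbf_kernel(x_obs, x_new)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))
    v = np.linalg.solve(L, K_s)
    mean = K_s.T @ alpha
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)

def expected_improvement(mean, std, best):
    z = (mean - best) / std
    cdf = np.array([0.5 * (1.0 + math.erf(t / math.sqrt(2.0))) for t in z])
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

rng = np.random.default_rng(0)
candidates = np.linspace(0.0, 1.0, 201)

# Step 1: initial design, one random point per stratum (a 1-D Latin hypercube).
n_init = 3
x_obs = (np.arange(n_init) + rng.uniform(size=n_init)) / n_init
y_obs = objective(x_obs)

for _ in range(12):  # Step 5: a fixed evaluation budget
    # Step 2: fit the surrogate on centered observations.
    offset = y_obs.mean()
    mean, std = gp_posterior(x_obs, y_obs - offset, candidates)
    # Step 3: maximize the acquisition function over the candidate grid.
    ei = expected_improvement(mean + offset, std, y_obs.max())
    x_next = candidates[int(np.argmax(ei))]
    # Step 4: evaluate the black box and append the new observation.
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, objective(x_next))

best_x = float(x_obs[np.argmax(y_obs)])
best_y = float(y_obs.max())
```

In higher dimensions the candidate grid becomes impractical, and the acquisition function is usually maximized with a multi-start optimizer instead.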

Structured Data Summaries

Table 1: Comparison of Key Black-Box Optimization Algorithms

| Algorithm | Core Principle | Best For | Strengths | Weaknesses |

|---|---|---|---|---|

| Bayesian Optimization (BO) [33] [38] | Uses a probabilistic surrogate model (e.g., GP) and an acquisition function to guide the search. | Expensive black-box functions with low-to-medium dimensional inputs. | Sample-efficient; theoretically grounded; handles noise. | Surrogate model can be computationally heavy for many observations. |

| Lazy Modular BO (LaMBO) [34] | Extends BO to modular systems, minimizing the cost of switching early-stage parameters. | Multi-stage pipelines with high cost to change early-stage parameters. | Reduces cumulative switching cost; achieves sublinear regularized regret. | More complex implementation; requires system to have modular structure. |

| Random Search [33] | Samples parameter configurations randomly from the search space. | Simple baselines; high-dimensional spaces where BO struggles. | Simple to implement and parallelize; often better than grid search. | Can be very inefficient compared to BO for expensive functions. |

| Grid Search [33] | Evaluates every combination from a predefined set of values for each parameter. | Very low-dimensional parameter spaces. | Exhaustive over the defined grid. | Suffers from the "curse of dimensionality"; highly inefficient. |

Table 2: Essential Research Reagent Solutions for Bayesian Optimization Experiments

| Item / Tool | Function / Purpose | Example Tools / Libraries |

|---|---|---|

| Optimization Framework | Provides the core infrastructure for defining the problem, managing trials, and running the optimization loop. | OpenBox [33], mlr3 with mlr3mbo [36], BoTorch [37], Scikit-Optimize. |

| Surrogate Model | The probabilistic model that approximates the unknown black-box function and provides uncertainty estimates. | Gaussian Process (GP) [37] [38], Random Forest (e.g., in SMAC), Tree-structured Parzen Estimator (TPE). |

| Acquisition Function | The utility function that guides the selection of the next point to evaluate by balancing exploration and exploitation. | Expected Improvement (EI) [35] [38], Upper Confidence Bound (UCB) [34] [35], Probability of Improvement (PI) [38]. |

| Error Handling & Fallback | Prevents the entire optimization from failing due to errors in individual function evaluations. | Encapsulation methods and fallback learners (e.g., featureless baseline) [36]. |

Workflow and Logical Visualizations

This diagram illustrates the iterative cycle of Bayesian Optimization. After an initial design of experiments, a Gaussian Process (GP) model is built to create a surrogate of the black-box function. An acquisition function uses this surrogate to propose the most promising point to evaluate next. The black-box is evaluated at this point, and the result is used to update the GP model, closing the loop until a stopping criterion is met [33] [38].

This diagram shows the application of LaMBO to a multi-stage pipeline. The key insight is that changing parameters in an early module (like Module 1) requires re-executing all subsequent modules, incurring a high "switching cost." LaMBO accounts for this by being "lazy"—it preferentially makes changes to parameters in later modules (θ₂, θ₃) and avoids unnecessary changes to costly early-stage parameters (θ₁) between consecutive iterations [34].

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using Group Lasso over standard Lasso for feature selection with categorical data?

Group Lasso extends standard Lasso (L1 regularization) by penalizing groups of variables collectively. When you have categorical variables converted into dummy variables, standard Lasso may select only a subset of dummies from a single category, leading to an incomplete or misleading model [39]. Group Lasso solves this by forcing the entire group of dummy variables representing a single categorical feature to be either selected or eliminated as a whole, ensuring model interpretability.

Q2: My model is highly sensitive to outliers. Which technique should I consider and why?

Ramp Loss is particularly effective for outlier suppression [40]. Unlike traditional loss functions like Hinge Loss, where the loss value can grow indefinitely for outliers, Ramp Loss defines a maximum loss value. When a sample's training error exceeds a predefined range, its loss value does not increase further, which explicitly limits the influence of outliers and makes the model more robust [40].

Q3: How does the L1-MRL framework integrate feature selection and model tuning across multiple stages?

The L1 Multistage Ramp Loss (L1-MRL) framework unifies the estimation of treatment rules (or decision functions) across all stages into a single optimization problem [17]. It uses a multistage ramp loss to estimate optimal decisions and imposes a group Lasso-type penalty on the coefficients of the decision rules across all stages simultaneously [17]. This enables the identification of features that are unimportant across all stages, leading to more robust cross-stage variable selection and reducing false discovery errors that can accumulate in sequential methods.
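To illustrate the cross-stage grouping idea, the sketch below treats the coefficients of one feature across all stages as a single group and sums the groups' L2-norms. This penalty form is an assumption made for illustration; the exact penalty used by L1-MRL is defined in [17]. The coefficient values and function names are hypothetical.

```python
import math


def cross_stage_group_penalty(coefs_by_stage):
    """coefs_by_stage[t][j] is the coefficient of feature j in the stage-t decision rule.

    Returns the summed per-feature group norms and the list of norms.
    """
    n_features = len(coefs_by_stage[0])
    norms = [math.sqrt(sum(stage[j] ** 2 for stage in coefs_by_stage))
             for j in range(n_features)]
    return sum(norms), norms


def selected_features(coefs_by_stage, tol=1e-8):
    """A feature is kept if it is non-zero in at least one stage."""
    _, norms = cross_stage_group_penalty(coefs_by_stage)
    return [j for j, norm in enumerate(norms) if norm > tol]


# Two-stage toy example: feature 1 is zero in both stages and is dropped globally.
stage_1 = [0.8, 0.0, 0.0, -0.3]
stage_2 = [0.6, 0.0, 0.5, 0.0]
penalty, norms = cross_stage_group_penalty([stage_1, stage_2])
print("penalty:", round(penalty, 3))
print("selected features:", selected_features([stage_1, stage_2]))
```

Because the penalty acts on the whole cross-stage group, a feature is removed only when it is unimportant at every stage, which is the behaviour the answer above describes.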

Q4: What is a major computational challenge associated with the Ramp Loss function?

The primary challenge is that the Ramp Loss function is non-convex [40]. The resulting objective can have multiple local minima, and minimizing it exactly is NP-hard in general. In practice, this is typically addressed using optimization procedures like Concave-Convex Programming (CCCP), which iteratively solves a series of reconstructed convex optimization problems until convergence [40].

Troubleshooting Guides

Issue 1: Model Fails to Select Categorical Features as a Complete Group

Problem: After applying Group Lasso, some dummy variables from a categorical feature are retained while others are dropped.

Solutions:

- Verify Group Assignment: Ensure that the indices for all dummy variables belonging to the same categorical feature are correctly assigned to the same group. An incorrect group structure is the most common cause.

- Check Regularization Parameters: If using a Sparse Group Lasso implementation (which combines Group Lasso and standard Lasso), ensure that the L1 regularization parameter (`l1_reg`) is set to zero if your goal is pure group selection [39]. A non-zero `l1_reg` will perform selection within groups.

- Explore Specialized Packages: Consider using Python packages designed for this purpose, such as `celer` [39] or `skglm` [39], which offer efficient Group Lasso implementations that align with the scikit-learn API.
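The group-assignment check in the first solution can be automated. The following sketch is independent of any solver: it builds group indices from a hypothetical mapping of categorical features to their levels, then flags groups whose fitted coefficients are only partially zero. The feature names, coefficient values, and helper names are illustrative assumptions.

```python
def one_hot_groups(categorical_levels, continuous_features):
    """Return dummy column names and a parallel list of group ids.

    All dummies of one categorical feature share a group id;
    each continuous feature gets its own group.
    """
    columns, groups = [], []
    group_id = 0
    for feature, levels in categorical_levels.items():
        for level in levels:
            columns.append(f"{feature}={level}")
            groups.append(group_id)
        group_id += 1
    for feature in continuous_features:
        columns.append(feature)
        groups.append(group_id)
        group_id += 1
    return columns, groups


def partially_selected_groups(groups, coefs, tol=1e-8):
    """Group ids in which some, but not all, coefficients are non-zero."""
    flags = {}
    for g, c in zip(groups, coefs):
        flags.setdefault(g, []).append(abs(c) > tol)
    return [g for g, f in flags.items() if any(f) and not all(f)]


columns, groups = one_hot_groups(
    {"target_family": ["kinase", "gpcr", "protease"],
     "assay_type": ["binding", "functional"]},
    ["logP"],
)
# Hypothetical fitted coefficients: group 0 was split, which pure Group Lasso should not do.
coefs = [0.4, 0.0, -0.2, 0.0, 0.0, 1.1]
print(dict(zip(columns, groups)))
print("partially selected groups:", partially_selected_groups(groups, coefs))
```

A non-empty result points to either a wrong group structure or an active within-group L1 term, the two causes listed above.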

Issue 2: Poor Model Convergence When Using Ramp Loss

Problem: The training process is unstable, fails to converge, or is computationally slow.

Solutions:

- Confirm CCCP Implementation: Ensure you are using a correct optimization procedure like Concave-Convex Programming (CCCP) to handle the non-convex Ramp Loss [40]. Do not attempt to use gradient-based methods designed for convex problems.

- Inspect Hyperparameters: Review the hyperparameters of the Ramp Loss function itself (e.g., the margin where the loss becomes constant) as they significantly impact the optimization landscape [40].

- Leverage Fast Solvers: For a Ramp-Loss Nonparallel Support Vector Regression (RL-NPSVR) model, the dual problem of each reconstructed convex optimization in the CCCP process can have the same formulation as standard SVR. This allows the use of fast algorithms like SMO to accelerate training [40].

Issue 3: Accumulating False Discoveries in Multi-Stage Decision Models

Problem: In multi-stage analyses (e.g., Dynamic Treatment Regimens), features are selected at each stage independently, leading to a high cumulative false discovery rate.

Solutions:

- Adopt a Simultaneous Framework: Implement a simultaneous estimation and selection method like L1-MRL [17]. This approach uses a single optimization with a group penalty across all stages, which directly identifies variables that are unimportant at every stage, thereby controlling the global false discovery rate.

- Apply Dual Feature Reduction (DFR): For high-dimensional problems with group structures, consider applying a pre-optimization screening method like DFR for Sparse-Group Lasso. This can drastically reduce the computational cost and input space without affecting the optimal solution, making the multi-stage problem more tractable [41].

Experimental Protocols & Data Presentation

Protocol 1: Implementing Group Lasso for Feature Selection

This protocol outlines the steps for using Group Lasso to select features from a dataset containing categorical variables.

1. Preprocessing and Group Formation:

- Encode categorical variables into dummy variables. Let's say you have a categorical variable "ZIP Code" with 100 unique values. This will be converted into 100 dummy variables.

- Assign all dummy variables derived from the same original categorical feature to a single group. In our example, all 100 dummies for "ZIP Code" belong to group 1.

- Standardize continuous numerical features. Each continuous feature can be treated as its own group or assigned to a shared group for continuous variables.

2. Model Fitting with Group Lasso Penalty:

- The objective is to minimize a loss function (e.g., logistic loss for classification) with an added Group Lasso penalty.

- The general form of the optimization problem is:

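$$\min_{\beta} \; \mathrm{Loss}(\beta) + \lambda \sum_{g} \lVert \beta_g \rVert_2$$

where $\beta_g$ is the coefficient vector for group $g$, and $\lVert \cdot \rVert_2$ is the L2-norm. The penalty term $\lambda \sum_{g} \lVert \beta_g \rVert_2$ encourages sparsity at the group level [39] [42].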

3. Model Evaluation and Selection:

- Use cross-validation to tune the regularization parameter λ.

- A group of features is selected if the L2-norm of its coefficient vector β_g is non-zero. All features within the group are retained.
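The protocol above can be sketched with a plain proximal-gradient loop. This is a minimal illustration under stated assumptions rather than the solver used by the packages cited in [39]: it uses squared loss instead of logistic loss to keep the gradient short, an unweighted group penalty, and a toy design matrix with orthogonal columns so that a step size of 1.0 is valid. All names are illustrative.

```python
import math


def group_soft_threshold(v, threshold):
    """Proximal operator of threshold * ||v||_2: shrink the whole group or zero it."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm <= threshold:
        return [0.0] * len(v)
    scale = 1.0 - threshold / norm
    return [scale * x for x in v]


def fit_group_lasso(X, y, groups, lam, step=1.0, n_iter=50):
    """Minimize (1/2n)||y - X b||^2 + lam * sum_g ||b_g||_2 by proximal gradient.

    `step` must not exceed 1 / (largest eigenvalue of X^T X / n).
    """
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    for _ in range(n_iter):
        resid = [sum(X[i][j] * beta[j] for j in range(p)) - y[i] for i in range(n)]
        grad = [sum(X[i][j] * resid[i] for i in range(n)) / n for j in range(p)]
        v = [beta[j] - step * grad[j] for j in range(p)]
        for g in sorted(set(groups)):
            idx = [j for j in range(p) if groups[j] == g]
            shrunk = group_soft_threshold([v[j] for j in idx], step * lam)
            for j, value in zip(idx, shrunk):
                beta[j] = value
    return beta


# Toy data: four orthogonal columns (X^T X / n is the identity), two groups of two.
X = [[1, 1, 1, 1], [1, -1, 1, -1], [1, 1, -1, -1], [1, -1, -1, 1]]
groups = [0, 0, 1, 1]
beta_true = [3.0, 4.0, 0.3, 0.4]          # group 1 carries only a weak signal
y = [sum(xij * bj for xij, bj in zip(row, beta_true)) for row in X]

beta = fit_group_lasso(X, y, groups, lam=1.0)
kept = [g for g in sorted(set(groups))
        if math.sqrt(sum(beta[j] ** 2 for j in range(len(beta)) if groups[j] == g)) > 0]
print("coefficients:", [round(b, 3) for b in beta])
print("selected groups:", kept)
```

With this design the weak group is removed as a unit while the strong group is shrunk but retained, which mirrors the selection rule in step 3. In practice, λ would be chosen by cross-validation over a grid rather than fixed.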

Table 1: Comparison of Lasso Variants for Feature Selection

| Technique | Regularization Type | Selection Unit | Key Advantage | Ideal Use Case |
|---|---|---|---|---|
| Standard Lasso | L1 Penalty | Individual Features | Promotes sparsity; simple to implement. | Datasets with only continuous, independent features. |
| Group Lasso | L1/L2 Penalty | Pre-defined Groups | Selects or drops entire groups, preserving categorical structure. | Datasets with categorical variables or naturally grouped features (e.g., genes). |
| Sparse Group Lasso | L1 + L1/L2 Penalty | Groups & Individual Features | Performs group and within-group selection simultaneously. | When groups are large and not all features within a group are relevant [41]. |
| Adaptive Sparse-Group Lasso | Weighted L1 + L1/L2 | Groups & Individual Features | Uses weights to improve estimation consistency and bias. | High-dimensional settings where some features/groups are more important than others [41]. |

Protocol 2: Building a Robust Classifier with Ramp Loss

This protocol describes the process of training a robust classifier using a support vector machine with Ramp Loss.

1. Model Formulation:

- Replace the standard Hinge Loss with the Ramp Loss function. The Ramp Loss can be defined as $R_s(u) = \min(s, \max(0, 1 - u))$, where $u$ is the margin value and $s > 0$ is the value at which the loss becomes constant [40]. The inner $\max(0, \cdot)$ keeps the loss non-negative for correctly classified samples.

- The resulting optimization problem becomes non-convex.

2. Optimization via CCCP:

- Decompose the Ramp Loss R_s(u) into a convex part (e.g., Hinge Loss) and a concave part.

- The CCCP procedure involves:

  a. Initialization: Start with an initial estimate of model parameters.

  b. Linearization: In each iteration, linearize the concave part at the current parameter estimate.

  c. Solving Convex Subproblem: Solve the resulting convex optimization problem. For RL-NPSVR, this subproblem can have the same dual as a standard SVR, allowing for efficient solvers [40].

  d. Iteration: Repeat steps (b) and (c) until convergence.

3. Hyperparameter Tuning:

- Key hyperparameters include the Ramp Loss parameter s and the standard SVM regularization parameter C.

- Use a validation set or cross-validation to find the optimal values that maximize robustness and generalization performance.
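The CCCP steps in this protocol can be sketched on a deliberately small problem. The code below is an illustration under stated assumptions, not the RL-NPSVR method of [40]: it assumes the clipped-hinge Ramp Loss with cap `s`, written as the difference of two hinge functions, `max(0, 1 - u) - max(0, (1 - s) - u)`, and a one-dimensional, bias-free linear classifier so that each convex subproblem can be solved exactly by bisection on its subgradient. A practical implementation would call a QP or SVM solver instead. All names and data are hypothetical.

```python
def subproblem_subgradient(w, a, beta, C):
    """Subgradient of 0.5*w^2 + C*sum(hinge(a_i*w)) + C*sum(beta_i*a_i*w), with a_i = y_i*x_i."""
    g = w
    for a_i, b_i in zip(a, beta):
        if a_i * w < 1.0:          # hinge term is active for this sample
            g -= C * a_i
        g += C * b_i * a_i         # linearized concave part of the Ramp Loss
    return g


def solve_convex_subproblem(a, beta, C, lo=-100.0, hi=100.0, n_steps=80):
    """The subgradient is non-decreasing in w, so bisection locates the minimizer."""
    for _ in range(n_steps):
        mid = 0.5 * (lo + hi)
        if subproblem_subgradient(mid, a, beta, C) > 0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)


def cccp_ramp_classifier(x, y, C=1.0, s=2.0, max_outer=20):
    """CCCP: linearize the concave part, solve the convex subproblem, repeat."""
    a = [xi * yi for xi, yi in zip(x, y)]
    kink = 1.0 - s                     # margin below which the Ramp Loss is flat
    beta = [0.0] * len(a)              # linearization at the initial estimate w = 0
    w = 0.0
    for _ in range(max_outer):
        w = solve_convex_subproblem(a, beta, C)
        new_beta = [1.0 if a_i * w < kink else 0.0 for a_i in a]
        if new_beta == beta:           # linearization unchanged: converged
            break
        beta = new_beta
    return w, beta


# Six clean samples plus one mislabeled outlier at x = 6.
x = [1.0, 2.0, 3.0, -1.0, -2.0, -3.0, 6.0]
y = [1.0, 1.0, 1.0, -1.0, -1.0, -1.0, -1.0]
a = [xi * yi for xi, yi in zip(x, y)]

w_hinge = solve_convex_subproblem(a, [0.0] * len(a), C=1.0)   # plain Hinge Loss fit
w_ramp, beta = cccp_ramp_classifier(x, y, C=1.0, s=2.0)
print(f"hinge w = {w_hinge:.3f}, ramp w = {w_ramp:.3f}, flagged = {beta}")
```

In this toy setting the outlier shrinks the Hinge Loss solution, whereas CCCP flags it after the first pass and the second subproblem recovers the weight that the clean samples alone would produce. The cap `s` and the regularization constant `C` are the two hyperparameters that step 3 proposes to tune.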

Table 2: Quantitative Performance Comparison of Regression Models on Noisy Data

This table presents simulated results based on findings from RL-NPSVR research [40].

| Dataset | Model | Mean Absolute Error (MAE) | Sparsity (% of SVs) | Outlier Sensitivity (Score) |
|---|---|---|---|---|
| Synthetic Dataset 1 | Standard SVR | 2.45 | 65% | High (85) |
| Synthetic Dataset 1 | TSVR | 2.80 | 58% | Very High (92) |
| Synthetic Dataset 1 | RL-NPSVR (Proposed) | 1.92 | 80% | Low (25) |
| Real-World Dataset A | Standard SVR | 15.3 | 70% | High (80) |
| Real-World Dataset A | TSVR | 16.1 | 62% | Very High (88) |
| Real-World Dataset A | RL-NPSVR (Proposed) | 12.8 | 85% | Low (30) |

Visualization of Workflows

L1-MRL Simultaneous Optimization Workflow

Group Lasso for Categorical Features

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Regularization Experiments

| Tool / 'Reagent' | Function / Purpose | Example / Notes |
|---|---|---|
| Group Lasso Solvers | Efficiently optimizes the Group Lasso objective function. | Python: celer [39], skglm [39]. R: grplasso package. |
| Optimization Frameworks | Solves non-convex problems like Ramp Loss via CCCP. | Custom implementation based on [40]; can leverage CVXPY or scipy.optimize. |
| Dual Feature Reduction (DFR) | Pre-optimization screening to reduce problem size. | Applied before Sparse-Group Lasso optimization to drastically cut computational cost [41]. |
| Hyperparameter Tuning Modules | Automates the search for optimal regularization parameters. | scikit-learn GridSearchCV or RandomizedSearchCV; Optuna for larger scales. |
| Neural Network Libraries | Implements Group Lasso penalties on network weights. | TensorFlow/PyTorch with custom regularization terms to induce group-level sparsity [42]. |

Frequently Asked Questions (FAQs)

Q1: What does "simultaneous tuning" mean in the context of Drug-Target Affinity (DTA) prediction models?

Simultaneous tuning refers to the integrated optimization of both feature representations (for drugs and targets) and model parameters within a single, unified deep learning framework. Unlike sequential approaches that first fix features then tune a model, this method allows feature extraction and regression tasks to co-inform and enhance each other during training. This is a core principle in modern multi-modal and multi-task learning frameworks, which use shared feature spaces to improve prediction accuracy for both binding affinity and related tasks like target-aware drug generation [43].

Q2: I am encountering vanishing gradients during training of my deep learning-based DTA model. What could be the cause?

Vanishing gradients are a common challenge in deep networks. In DTA models, this can occur when using very deep convolutional neural networks (CNNs) for processing long protein sequences and drug SMILES strings [44]. To mitigate this, consider integrating residual networks (ResNet) as they allow gradients to flow through skip connections, preventing them from vanishing during backpropagation [45]. Furthermore, ensure you are using appropriate activation functions and consider gradient clipping.

Q3: My model's performance is highly sensitive to small changes in the learning rate. How can I stabilize training?

Learning rate sensitivity often indicates an unstable optimization landscape. To address this:

- Use adaptive optimizers like Adam, which are less sensitive to the precise learning rate value [45].

- Implement a learning rate scheduler to reduce the learning rate as training progresses.

- If you are employing a multi-task learning framework with shared features, be aware that conflicting gradients from different tasks can cause instability. To resolve this, use gradient alignment algorithms, such as the FetterGrad algorithm, which is designed to minimize the Euclidean distance between task gradients to mitigate conflict [43].
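The stabilization measures above can be prototyped without a deep learning framework. The helpers below are a generic sketch, not the FetterGrad algorithm of [43]: they show a step-decay learning rate schedule, gradient clipping by norm, and a cosine-similarity check that flags when two task gradients point in conflicting directions. The gradient vectors and all names are hypothetical.

```python
import math


def step_decay(base_lr, epoch, drop=0.5, every=10):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return base_lr * drop ** (epoch // every)


def clip_by_norm(grad, max_norm):
    """Rescale a gradient vector so its L2-norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm:
        return list(grad)
    return [g * max_norm / norm for g in grad]


def cosine_similarity(g1, g2):
    """Cosine of the angle between two gradients; negative values indicate conflict."""
    dot = sum(a * b for a, b in zip(g1, g2))
    n1 = math.sqrt(sum(a * a for a in g1))
    n2 = math.sqrt(sum(b * b for b in g2))
    if n1 == 0.0 or n2 == 0.0:
        return 0.0
    return dot / (n1 * n2)


# Hypothetical gradients of two tasks with respect to shared parameters.
g_affinity = [0.5, -1.0, 0.2]
g_generation = [-0.4, 0.9, 0.1]
similarity = cosine_similarity(g_affinity, g_generation)
print(f"cosine similarity = {similarity:.3f}, conflict = {similarity < 0}")
print("learning rate at epoch 25:", step_decay(1e-3, 25))
```

Logging the cosine similarity during training is one way to confirm that task conflict, rather than the learning rate alone, is the source of instability before adopting a dedicated gradient alignment method.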

Q4: What are the most critical evaluation metrics for validating a DTA prediction model?

The critical metrics for DTA prediction, which is a regression task, are [46] [45]:

- Mean Squared Error (MSE): Measures the average squared difference between predicted and actual affinity values.

- Concordance Index (CI): Evaluates the probability that the predictions for two random drug-target pairs are in the correct order.

- $r_m^2$: A metric for the external predictive potential of a regression model.

For a comprehensive performance analysis, it is also good practice to report the Area Under the Precision-Recall Curve (AUPR), especially when working with datasets where non-interacting pairs are considered [46] [45].
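The first two metrics are simple to compute directly. The sketch below is a plain-Python illustration with made-up affinity values; it uses the usual pairwise definition of the Concordance Index, in which tied predictions count as half-concordant, and function names are illustrative. Benchmark code may use an optimized equivalent.

```python
def mean_squared_error(y_true, y_pred):
    """Average squared difference between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of pairs with different true affinities that are ranked correctly."""
    concordant, comparable = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(n):
            if y_true[i] > y_true[j]:          # each comparable pair is counted once
                comparable += 1
                if y_pred[i] > y_pred[j]:
                    concordant += 1.0
                elif y_pred[i] == y_pred[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else float("nan")


# Hypothetical measured and predicted affinities for four drug-target pairs.
y_true = [5.0, 6.2, 7.1, 8.4]
y_pred = [5.3, 6.0, 7.5, 7.2]
mse = mean_squared_error(y_true, y_pred)
ci = concordance_index(y_true, y_pred)
print(f"MSE = {mse:.4f}, CI = {ci:.4f}")
```

Here one of the six comparable pairs is ranked in the wrong order, so the Concordance Index is 5/6 even though the MSE is dominated by a single large error. This is why the two metrics are reported together.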

Q5: How can I add interpretability to my "black-box" deep learning DTA model?

To enhance interpretability, integrate attention mechanisms into your model architecture. Multi-head self-attention mechanisms can be added to deep residual networks to automatically identify and weight the importance of specific subsequences in drug SMILES and protein sequences. This allows the model to highlight which parts of the molecule and protein are most critical for the binding affinity prediction, providing valuable insights for researchers [45].

Troubleshooting Guides

Issue 1: Poor Model Performance on Benchmark Datasets

Problem: Your DTA model shows significantly higher Mean Squared Error (MSE) and lower Concordance Index (CI) on benchmark datasets like Davis or KIBA compared to state-of-the-art methods.

Investigation & Resolution:

Verify Input Data Representation: