SMILES vs Graphs vs Fingerprints: A 2025 Guide to Molecular Representations in AI-Driven Drug Discovery

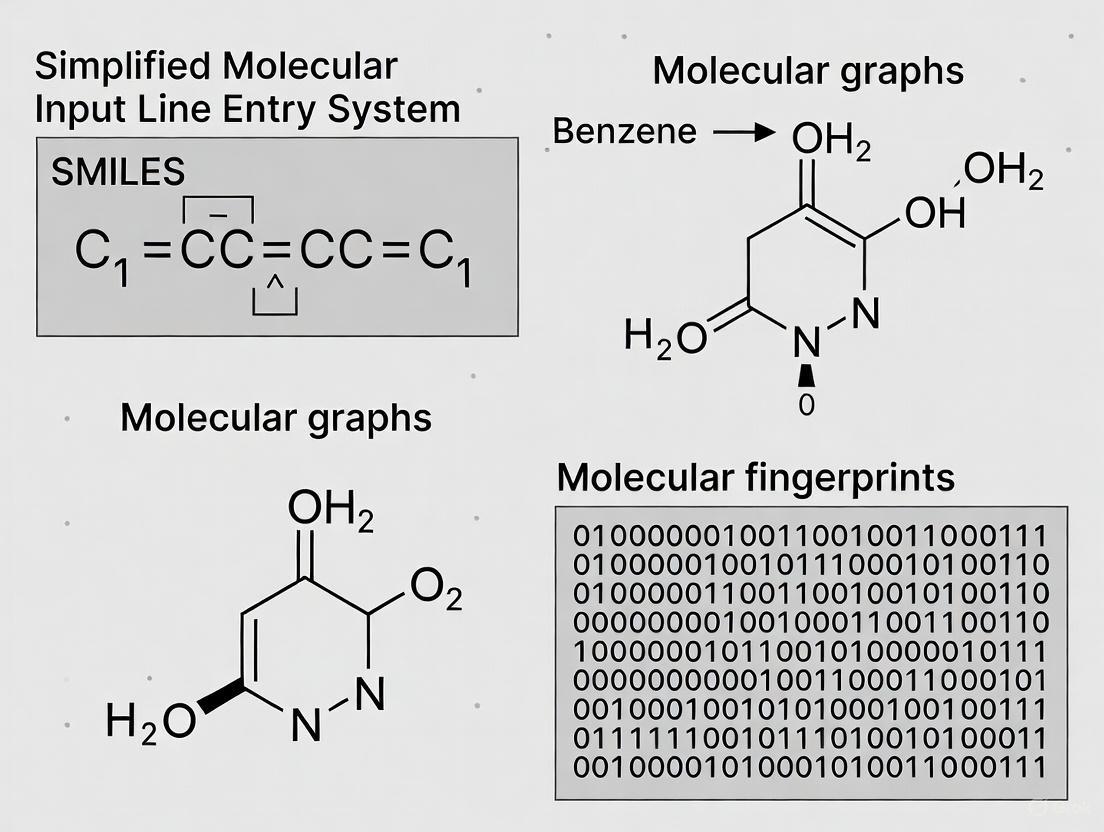


Abstract

This article provides a comprehensive guide to the three pillars of molecular representation—SMILES, Graphs, and Fingerprints—tailored for researchers and professionals in drug development. It explores the foundational concepts behind each method, delves into modern AI-driven applications from property prediction to scaffold hopping, addresses critical challenges like data robustness and model interpretability, and offers a comparative analysis for method validation. By synthesizing the latest advancements, this review serves as a practical resource for selecting and optimizing molecular representations to accelerate the drug discovery pipeline.

The Three Pillars of Cheminformatics: Deconstructing SMILES, Molecular Graphs, and Fingerprints

What is a Molecular Representation? Bridging Chemical Structures and Computational Models

Molecular representation is the bridge between chemical structures and computational models, and it is what makes artificial intelligence applicable to modern drug discovery. This guide examines representation methods from traditional approaches to AI-driven techniques. It covers the principles, comparative advantages, and practical use of the key formats (SMILES, molecular fingerprints, and graph-based representations), with particular emphasis on property prediction, virtual screening, and scaffold hopping. The aim is to give researchers and drug development professionals both the theoretical grounding and the practical methods needed to choose an appropriate representation for a given drug discovery scenario.

Molecular representation forms the critical infrastructure that translates chemical structures into computationally tractable formats, serving as the essential bridge between molecular reality and algorithmic analysis [1]. In the context of drug discovery, where researchers must navigate virtually infinite chemical spaces to identify viable compounds, effective molecular representation enables the transformation of structural information into predictive models for biological activity, physicochemical properties, and binding affinity [1] [2].

The core challenge in molecular representation lies in capturing sufficient structural and chemical information to enable accurate property prediction while maintaining computational efficiency for high-throughput screening and machine learning applications [2]. This balance becomes increasingly critical as drug discovery tasks grow more sophisticated, requiring representations that can capture subtle structure-function relationships beyond what traditional methods can provide [1]. The choice of representation significantly influences model performance, interpretability, and applicability across different domains, from small molecule drugs to biomolecules and metabolomes [3] [4].

Within this comparison of SMILES, graphs, and fingerprints, the following sections first establish the fundamental principles and evolution of molecular representation methods, then turn to detailed technical comparisons and applications.

Theoretical Framework: The Molecular Representation Landscape

Core Principles and Definitions

Molecular representation refers to the process of converting chemical structures into mathematical or computational formats that algorithms can process to model, analyze, and predict molecular behavior [1]. An effective representation must fulfill several key criteria: ability to represent local molecular structure, efficient encoding and decoding capabilities, feature independence, and sufficient information content for the intended application [2].

The fundamental challenge stems from the need to represent nearly infinite chemical complexity within finite computational constraints. Small-molecule chemicals typically comprise 20-30 non-hydrogen atoms with four bond types (single, double, triple, or aromatic), but the connectivity and steric patterns create a druglike molecule space estimated at 10^60 compounds [2]. Molecular representations compress this complexity into consistent input formats suitable for machine learning and similarity analysis.

Historical Evolution and Paradigm Shifts

The development of molecular representation has evolved through distinct phases, from early structural keys to contemporary AI-driven embeddings as illustrated in Table 1.

Table 1: Historical Evolution of Molecular Representation Methods

| Era | Dominant Methods | Key Innovations | Limitations |
|---|---|---|---|
| Pre-1980s | IUPAC nomenclature, Wiswesser Line Notation (WLN) | Standardized chemical naming, linear notation | Human-readable but not machine-optimized |
| 1980s-2000s | SMILES, Molecular descriptors, Structural fingerprints | Graph-based linearization, predefined substructural keys | Limited capture of complex structural relationships |
| 2000s-2010s | Extended-connectivity fingerprints (ECFP), Atom-pair fingerprints | Circular substructures, topological descriptors | Handcrafted features requiring expert knowledge |
| 2010s-Present | Graph neural networks, Transformer-based models, Multimodal representations | AI-learned features, end-to-end learning | Data hunger, computational intensity, interpretability challenges |

The initial paradigm established molecular representations as human-readable strings or predefined feature sets, while the contemporary paradigm has shifted toward data-driven representations that learn features directly from molecular data [1]. This evolution reflects the broader transformation in cheminformatics from expert-defined rules to machine-learned patterns, enabling more nuanced capture of structure-property relationships.

Traditional Molecular Representation Methods

String-Based Representations: SMILES and Beyond

The Simplified Molecular Input Line Entry System (SMILES), introduced by David Weininger in the 1980s, has become one of the most widely adopted string-based molecular representations [5]. It encodes molecular graphs as linear strings of short ASCII sequences according to specific grammatical rules:

- Atoms: Represented by standard element symbols, with atoms in the "organic subset" (B, C, N, O, P, S, F, Cl, Br, I) typically written without brackets when they have no formal charge and standard valence [5] [6].

- Bonds: Single bonds are implied by adjacency and typically omitted, double bonds represented by '=', triple bonds by '#', and aromatic bonds by ':' [5].

- Branches: Specified using parentheses to denote side chains from the main molecular backbone [5] [7].

- Cyclic structures: Represented by breaking ring bonds and assigning numerical labels to connection points [5].

- Stereochemistry: Specified using '/' and '\' for double bond geometry and '@' symbols for tetrahedral chirality [6] [7].

Weininger's canonical SMILES algorithm (CANGEN) generates a unique string for each molecule in two steps: the CANON step assigns canonical labels to atoms based on invariant structural properties, and the GENES step generates the unique string from those labels [7]. The atomic invariants are the number of connections, the number of non-hydrogen bonds, atomic number, sign of charge, absolute charge, and number of attached hydrogens [7].
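To make invariant-based canonical labeling concrete, here is a simplified sketch. It is not CANGEN: it ranks atoms by their invariant tuples and then splits ties using the sorted ranks of each atom's neighbors until the partition stops changing, whereas CANON combines neighbor ranks through products of primes and then breaks the remaining ties between symmetry-equivalent atoms. The function name `refine_ranks` and the hand-written invariant tuples for 1-propanol and propane are assumptions for illustration.

```python
from typing import Dict, List, Tuple

Invariant = Tuple[int, ...]


def _dense_ranks(keys: List[tuple]) -> List[int]:
    """Map each key to its position among the sorted distinct keys (0, 1, 2, ...)."""
    lookup = {key: rank for rank, key in enumerate(sorted(set(keys)))}
    return [lookup[key] for key in keys]


def refine_ranks(invariants: List[Invariant], adjacency: Dict[int, List[int]]) -> List[int]:
    """Simplified CANON-style refinement: start from invariant ranks, then split
    ties by the sorted ranks of neighbors until no new classes appear.
    Symmetry-equivalent atoms keep equal ranks (no tie-breaking in this sketch)."""
    ranks = _dense_ranks(invariants)
    while True:
        keys = [
            (ranks[atom], tuple(sorted(ranks[nbr] for nbr in adjacency[atom])))
            for atom in range(len(ranks))
        ]
        new_ranks = _dense_ranks(keys)
        if len(set(new_ranks)) == len(set(ranks)):
            return new_ranks
        ranks = new_ranks


# Hand-written invariant tuple per heavy atom:
# (connections, non-H bonds, atomic number, sign of charge, |charge|, attached H)
# 1-propanol heavy atoms: C0H3-C1H2-C2H2-O3H
propanol_invariants = [
    (1, 1, 6, 0, 0, 3),
    (2, 2, 6, 0, 0, 2),
    (2, 2, 6, 0, 0, 2),
    (1, 1, 8, 0, 0, 1),
]
propanol_adjacency = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}

# Propane heavy atoms: C0H3-C1H2-C2H3 (the two ends are symmetry-equivalent)
propane_invariants = [(1, 1, 6, 0, 0, 3), (2, 2, 6, 0, 0, 2), (1, 1, 6, 0, 0, 3)]
propane_adjacency = {0: [1], 1: [0, 2], 2: [1]}

print(refine_ranks(propanol_invariants, propanol_adjacency))  # [0, 2, 3, 1]
print(refine_ranks(propane_invariants, propane_adjacency))    # [0, 1, 0]
```

In 1-propanol the two CH2 carbons share identical invariants and are only separated once their neighbors' ranks are taken into account; in propane the terminal carbons stay tied, which is exactly the situation CANON's tie-breaking step exists to resolve.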

Table 2: Comparative Analysis of String-Based Molecular Representations

| Representation | Key Characteristics | Advantages | Limitations |
|---|---|---|---|
| SMILES | Depth-first traversal of molecular graph | Human-readable, compact, widespread support | Multiple valid strings per molecule, syntax violations possible |
| Canonical SMILES | Unique representation via canonical atom ordering | Standardized representation, database indexing | Computational overhead for complex molecules |
| InChI | IUPAC standard, layered structure | Standardization, open algorithm | Less human-readable, complex representation |
| SELFIES | Grammar-based, guaranteed validity | No invalid strings, better for generation | Lower performance in some ML benchmarks [8] |

Despite its widespread adoption, SMILES has inherent limitations including the generation of multiple valid strings for the same molecule and sensitivity to small string changes that can produce invalid syntax or significantly different structures [1] [8]. These limitations have motivated development of alternative representations better suited for AI applications.

Molecular Fingerprints: Structural and Circular

Molecular fingerprints encode molecular structures as fixed-length bit vectors or numerical arrays, enabling efficient similarity comparison and machine learning applications. They range from predefined structural keys to circular, topological, and hybrid fingerprints, as detailed in Table 3.

Table 3: Classification and Applications of Molecular Fingerprints

| Fingerprint Type | Representative Examples | Generation Method | Optimal Applications |
|---|---|---|---|
| Structural Keys | MACCS, PubChem fingerprints | Predefined structural patterns mapped to fixed bit positions | Rapid substructure search, high-throughput screening |
| Circular Fingerprints | ECFP, FCFP, Morgan fingerprints | Circular atom environments generated iteratively around each atom | QSAR, similarity searching, activity prediction |
| Topological Fingerprints | Atom pairs, Topological torsions | Atom path enumeration with distance information | Scaffold hopping, shape similarity |
| Advanced Hybrids | MAP4, MHFP6 | MinHashing of circular or atom-pair shingles | Cross-domain applications, biomolecules |

Structural key fingerprints, such as the 166-bit MACCS keys, use predefined structural patterns in which each bit position corresponds to a specific chemical feature or substructure [9]. The presence or absence of each feature sets the corresponding bit, yielding a binary fingerprint that supports rapid similarity assessment with metrics such as the Tanimoto coefficient [2] [9].
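For two binary fingerprints, the Tanimoto coefficient is the number of bits set in both divided by the number of bits set in either. In RDKit the same computation is available as `DataStructs.TanimotoSimilarity` on fingerprint objects; the pure-Python sketch below only makes the formula explicit, treating each fingerprint as a hypothetical set of "on" bit indices.

```python
from typing import AbstractSet


def tanimoto(fp_a: AbstractSet[int], fp_b: AbstractSet[int]) -> float:
    """Tanimoto similarity of two binary fingerprints given as sets of 'on' bit indices.

    T = c / (a + b - c), where a and b are the bit counts of each fingerprint
    and c is the number of bits set in both.
    """
    if not fp_a and not fp_b:
        return 0.0  # convention chosen for this sketch; toolkits may treat empty inputs differently
    common = len(fp_a & fp_b)
    return common / (len(fp_a) + len(fp_b) - common)


# Hypothetical "on" bits for two structural-key fingerprints
query = {1, 5, 9, 42}
candidate = {1, 5, 17}
print(tanimoto(query, candidate))  # 2 / (4 + 3 - 2) = 0.4
```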

Circular fingerprints, particularly extended-connectivity fingerprints (ECFP), generate molecular features dynamically rather than relying on predefined dictionaries [2]. The ECFP algorithm operates through an iterative process:

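- Initialization: Assign an initial integer identifier to each atom from its atom invariants (properties such as atomic number, number of heavy-atom neighbors, charge, number of attached hydrogens, and ring membership)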

- Iteration: Update each atom identifier by combining with neighbors' identifiers

- Hashing: Convert structural identifiers to integer indices within the fixed-length fingerprint

- Finalization: Aggregate all hashed identifiers to form the final fingerprint [2]
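The four steps can be sketched in plain Python on a hand-built molecular graph. This is a simplified illustration, not the published ECFP algorithm: it hashes atom invariant tuples with SHA-1 (an arbitrary choice), ignores bond orders when combining neighbor identifiers, does not remove duplicate substructure environments, and folds identifiers into `n_bits` positions with a modulo. The function name `ecfp_like` and the toy invariants are assumptions.

```python
import hashlib
from typing import Dict, List, Set, Tuple


def _stable_hash(value: object) -> int:
    """Deterministic 32-bit hash of a value's repr (built-in str hashing is salted per run)."""
    return int.from_bytes(hashlib.sha1(repr(value).encode()).digest()[:4], "little")


def ecfp_like(
    atom_invariants: List[Tuple[int, ...]],
    adjacency: Dict[int, List[int]],
    radius: int = 2,
    n_bits: int = 1024,
) -> Set[int]:
    # Initialization: one identifier per atom from its invariants
    identifiers = [_stable_hash(inv) for inv in atom_invariants]
    collected = set(identifiers)
    # Iteration: combine each atom's identifier with its neighbors' identifiers;
    # sorting the neighbor identifiers makes the result independent of atom numbering
    for _ in range(radius):
        identifiers = [
            _stable_hash((identifiers[atom], tuple(sorted(identifiers[n] for n in adjacency[atom]))))
            for atom in range(len(identifiers))
        ]
        collected.update(identifiers)
    # Hashing/folding and finalization: map every collected identifier to a bit position
    return {identifier % n_bits for identifier in collected}


# Ethanol heavy atoms with toy invariants (atomic number, attached H): CH3-CH2-OH
ethanol = ecfp_like([(6, 3), (6, 2), (8, 1)], {0: [1], 1: [0, 2], 2: [1]})
# The same molecule with atoms numbered in reverse order: HO-CH2-CH3
ethanol_reordered = ecfp_like([(8, 1), (6, 2), (6, 3)], {0: [1], 1: [0, 2], 2: [1]})
print(ethanol == ethanol_reordered)  # True: the bits do not depend on atom order
```

With `radius=2` the largest environment spans a diameter of four bonds, which is the convention behind the name ECFP4.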

The MAP4 (MinHashed Atom-Pair fingerprint) represents a recent advancement that combines substructure and atom-pair concepts by creating "atom-pair shingles" where circular substructures around each atom in a pair are written as SMILES and combined with their topological distance [3]. These shingles are then MinHashed to form the final fingerprint, creating a representation effective for both small molecules and biomolecules [3].
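MinHashing itself can be sketched in a few lines: each of `num_perm` seeded hash functions keeps the minimum hash over a molecule's shingle set, and the fraction of matching signature positions estimates the Jaccard similarity of two shingle sets. The shingle strings below are hypothetical placeholders in a `substructure|distance|substructure` style; MAP4 generates its shingles from real molecules and uses its own MinHash implementation and parameters. Using BLAKE2b with a per-seed salt as the family of hash functions is an assumption made here for reproducibility.

```python
import hashlib
from typing import Iterable, List


def minhash_signature(shingles: Iterable[str], num_perm: int = 64) -> List[int]:
    """For each seeded hash function, keep the minimum 64-bit hash over all shingles."""
    encoded = [s.encode() for s in set(shingles)]
    if not encoded:
        raise ValueError("MinHash needs at least one shingle")
    signature = []
    for seed in range(num_perm):
        salt = seed.to_bytes(8, "little")  # a distinct salt acts as a distinct hash function
        signature.append(min(
            int.from_bytes(hashlib.blake2b(data, digest_size=8, salt=salt).digest(), "little")
            for data in encoded
        ))
    return signature


def estimated_jaccard(sig_a: List[int], sig_b: List[int]) -> float:
    """Fraction of signature positions where the two minima agree."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


# Hypothetical atom-pair shingles for two related molecules
mol_a = {"CC|1|CO", "CC|2|O", "CO|1|O"}
mol_b = {"CC|1|CO", "CC|2|O", "CN|1|N"}
sig_a, sig_b = minhash_signature(mol_a), minhash_signature(mol_b)
print(estimated_jaccard(sig_a, sig_b))  # approximates the true Jaccard similarity, 2/4 = 0.5
```

More permutations reduce the variance of the estimate at the cost of a longer signature.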

Modern AI-Driven Molecular Representations

Graph-Based Representations

Graph-based representations conceptualize molecules as graphs with atoms as nodes and bonds as edges, preserving the inherent topology of molecular structures [1] [4]. This approach naturally aligns with chemical intuition and enables direct application of graph neural networks (GNNs) for molecular property prediction.

Table 4: Graph Representation Types and Characteristics

| Graph Type | Node Definition | Edge Definition | Advantages | Implementation |
|---|---|---|---|---|
| Atom Graph | Atoms | Chemical bonds | Natural topology, comprehensive structure | Message-passing neural networks |
| Pharmacophore Graph | Pharmacophoric features | Spatial relationships | Activity-focused, binding relevance | Extended reduced graphs (ErG) |
| Junction Tree | Molecular fragments | Fragment connections | Captures key substructures | Tree decomposition |
| Functional Group Graph | Functional groups | Inter-group connections | Chemically intuitive | Subpattern identification |

Atom-level graphs represent the most direct mapping where nodes correspond to atoms with feature vectors encoding atomic properties (element, charge, hybridization), while edges represent bonds with features such as bond type and conjugation [4]. Reduced molecular graphs abstract atom groups into single nodes, creating higher-level representations that capture pharmacophoric features or functional groups [4].
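An atom-level graph and one round of message passing can be sketched without any deep learning library. Assumptions for this illustration: node features are a one-hot element encoding plus formal charge, edges carry the bond order as a scalar weight, and the update is a plain sum, h_i' = h_i + Σ_j w_ij h_j. Real message-passing neural networks use learned weight matrices, edge-feature networks, and nonlinearities, so treat this only as a sketch of the data flow.

```python
from typing import Dict, List, Tuple

ELEMENTS = ["C", "N", "O"]  # toy element vocabulary for the one-hot encoding


def atom_features(element: str, formal_charge: int = 0) -> List[float]:
    """One-hot element encoding followed by the formal charge."""
    return [1.0 if element == e else 0.0 for e in ELEMENTS] + [float(formal_charge)]


def message_passing_step(
    node_feats: List[List[float]], edges: Dict[Tuple[int, int], float]
) -> List[List[float]]:
    """h_i' = h_i + sum over bonded neighbors j of (bond order * h_j); bonds are undirected."""
    dim = len(node_feats[0])
    messages = [[0.0] * dim for _ in node_feats]
    for (i, j), bond_order in edges.items():
        for k in range(dim):
            messages[i][k] += bond_order * node_feats[j][k]
            messages[j][k] += bond_order * node_feats[i][k]
    return [[h + m for h, m in zip(h_i, m_i)] for h_i, m_i in zip(node_feats, messages)]


# Acetic acid heavy atoms: C0 (methyl), C1 (carboxyl), O2 (=O), O3 (-OH)
nodes = [atom_features("C"), atom_features("C"), atom_features("O"), atom_features("O")]
bonds = {(0, 1): 1.0, (1, 2): 2.0, (1, 3): 1.0}
updated = message_passing_step(nodes, bonds)
print(updated[1])  # [2.0, 0.0, 3.0, 0.0]: the carboxyl carbon now reflects its oxygen neighbors
```

Stacking several such steps lets information from atoms further away reach each node, which is the graph analogue of increasing the radius of a circular fingerprint.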

The MMGX (Multiple Molecular Graph eXplainable discovery) framework demonstrates how integrating multiple graph representations (Atom, Pharmacophore, JunctionTree, and FunctionalGroup) can enhance both model performance and interpretability [4]. This multi-view approach provides complementary structural perspectives that address limitations of individual representations.

Language Model-Based Representations

Inspired by natural language processing, language model-based approaches treat molecular string representations (particularly SMILES) as a specialized chemical language [1]. These methods adapt transformer architectures to learn molecular embeddings through techniques such as:

- Tokenization: SMILES strings are decomposed into tokens representing atoms, bonds, and structural indicators

- Embedding: Each token is mapped to a continuous vector representation

- Contextual processing: Transformer models process token sequences to capture long-range dependencies and structural patterns [1]
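The tokenization step is often done with a regular expression that keeps bracket atoms, two-letter organic-subset atoms (Cl, Br), and two-digit ring labels (%nn) as single tokens. The pattern below follows a widely used form of such a SMILES regex; treat it as a sketch rather than the tokenizer of any particular model, since published models differ in their vocabularies.

```python
import re
from typing import List

# Bracket atoms | Br/B | Cl/C | other organic-subset and aromatic atoms | branches, bonds,
# stereo and miscellaneous symbols | two-digit ring labels | single-digit ring labels
SMILES_TOKEN_PATTERN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles: str) -> List[str]:
    tokens = SMILES_TOKEN_PATTERN.findall(smiles)
    # Reject strings containing characters the pattern cannot account for
    if "".join(tokens) != smiles:
        raise ValueError(f"could not fully tokenize {smiles!r}")
    return tokens


print(tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin, one token per character here
print(tokenize_smiles("ClC[NH3+]"))  # ['Cl', 'C', '[NH3+]']
```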

Unlike traditional fingerprints that encode predefined substructures, language model-based representations learn contextual embeddings that capture complex structural relationships through self-supervised pretraining objectives such as masked token prediction [1].
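Training examples for masked token prediction can be prepared along these lines. This simplified sketch replaces each token with a `<mask>` token with probability `mask_prob` and records the original token as the prediction target. BERT-style recipes usually add refinements (for example, sometimes keeping or randomizing a selected token instead of masking it), and the masking rate, the `<mask>` symbol, and the function name are assumptions.

```python
import random
from typing import List, Optional, Tuple

MASK_TOKEN = "<mask>"


def mask_tokens(
    tokens: List[str], mask_prob: float = 0.15, seed: int = 0
) -> Tuple[List[str], List[Optional[str]]]:
    """Return (model input, targets); a target is None where no prediction is required."""
    rng = random.Random(seed)  # seeded for reproducibility
    inputs: List[str] = []
    targets: List[Optional[str]] = []
    for token in tokens:
        if rng.random() < mask_prob:
            inputs.append(MASK_TOKEN)
            targets.append(token)  # the model must recover this token from its context
        else:
            inputs.append(token)
            targets.append(None)
    return inputs, targets


# Pre-tokenized SMILES for ethyl acetate, CCOC(C)=O
tokens = ["C", "C", "O", "C", "(", "C", ")", "=", "O"]
masked, targets = mask_tokens(tokens, mask_prob=0.3, seed=1)
print(masked)
print(targets)
```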

Experimental Protocols and Methodologies

Performance Benchmarking Framework

Comprehensive evaluation of molecular representations employs standardized benchmarking frameworks that assess performance across diverse chemical tasks and datasets. The experimental protocol typically involves:

Dataset Curation:

- Collection of benchmark datasets from sources like MoleculeNet covering various property prediction tasks

- Pharmaceutical endpoint datasets with known structural patterns for knowledge verification

- Synthetic datasets with ground truth annotations for explanation validation [4]

Representation Generation:

- Implementation of different molecular representations using toolkits such as RDKit

- Parameter optimization for each representation type (e.g., radius for circular fingerprints)

- Feature standardization and normalization where appropriate [10]

Model Training and Evaluation:

- Application of consistent machine learning models across representations

- Rigorous cross-validation protocols to prevent data leakage

- Performance metrics aligned with task objectives (AUROC for classification, RMSE for regression) [10]
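Writing the two metrics out makes their definitions explicit. The sketch computes RMSE from its formula and AUROC as the probability that a randomly chosen positive receives a higher score than a randomly chosen negative (ties count one half), which is the standard ROC-area interpretation. In practice `sklearn.metrics.roc_auc_score` and `sklearn.metrics.mean_squared_error` are the usual choices; the O(P×N) pairwise loop here is only meant for small examples.

```python
import math
from typing import Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root-mean-square error for regression tasks."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve as P(score of a positive > score of a negative), ties counted as 1/2."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            wins += 1.0 if p > n else (0.5 if p == n else 0.0)
    return wins / (len(positives) * len(negatives))


print(auroc([1, 0, 1, 0], [0.9, 0.8, 0.3, 0.1]))  # 0.75
print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))      # sqrt(4/3), about 1.1547
```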

Statistical Analysis:

- Comparative statistical testing to identify significant performance differences

- Analysis of performance patterns across chemical space and task types

- Computational efficiency assessment including training and inference times [10]

Experimental Insights and Comparative Performance

Benchmarking studies reveal that molecular representation performance is highly task-dependent. Molecular descriptors generally excel at physical property prediction, while fingerprints show advantages in activity classification tasks [10]. Surprisingly, despite their simplicity, MACCS fingerprints demonstrate robust performance across diverse tasks, while more complex representations like graph neural networks achieve competitive but not universally superior performance [10].

The MAP4 fingerprint significantly outperformed other fingerprints on an extended benchmark that combines small-molecule tasks with a peptide benchmark measuring the recovery of BLAST analogs from scrambled or point-mutated sequences [3]. This result underscores that a representation should be selected according to the molecular domain and the specific application requirements.

Visualization and Interpretation

Molecular Representation Workflow

The following diagram illustrates the complete workflow from chemical structure to computational representation, highlighting the key transformation stages and representation types:

Molecular Representation Workflow: This diagram illustrates the transformation of chemical structures into computational representations through multiple pathways, culminating in AI-driven embeddings and direct application in computational models.

Multi-View Graph Representation

The integration of multiple molecular graph representations provides complementary structural perspectives that enhance both model performance and interpretability:

Multi-View Graph Representation: This diagram illustrates the MMGX framework approach of integrating multiple graph representations to provide complementary structural perspectives that enhance prediction accuracy and interpretation credibility.

Table 5: Essential Software Tools and Resources for Molecular Representation

| Tool/Resource | Type | Key Functionality | Application Context |
|---|---|---|---|
| RDKit | Open-source cheminformatics toolkit | SMILES parsing, fingerprint generation, graph representation | General-purpose molecular representation and manipulation |
| Daylight Toolkit | Commercial cheminformatics platform | SMILES canonicalization, fingerprint implementation | Production cheminformatics systems |
| DeepChem | Deep learning library | Graph neural networks, molecular feature representations | AI-driven drug discovery applications |
| ChemAxon | Commercial chemistry toolkit | Extended SMILES (CXSMILES), structure canonicalization | Pharmaceutical research and development |
| MayaChemTools | Open-source cheminformatics | Fingerprint calculation, diversity analysis | Computational chemistry and screening |

Molecular representation serves as the critical translation layer between chemical structures and computational models, enabling modern AI-driven drug discovery. The evolution from traditional string-based representations to contemporary graph-based and learned embeddings reflects a paradigm shift from expert-defined features to data-driven representations that capture complex structure-property relationships.

The optimal choice of molecular representation depends significantly on the specific application context, with different methods excelling in tasks ranging from virtual screening to property prediction. The emerging trend toward multi-view representations that integrate complementary structural perspectives shows particular promise for enhancing both predictive performance and model interpretability.

As molecular representation continues to evolve, the integration of domain knowledge with data-driven approaches will likely yield increasingly powerful representations that bridge the gap between chemical intuition and computational efficiency, ultimately accelerating therapeutic discovery and development.

Table of Contents

- Introduction

- SMILES String and Syntax

- Advanced and Isomeric Notation

- SMILES in Machine Learning and AI

- Comparative Analysis of Molecular Representations

- Experimental Protocols in SMILES-Based Research

- The Scientist's Toolkit

The Simplified Molecular-Input Line-Entry System (SMILES) is a line notation for describing the structure of chemical species using short ASCII strings [5]. Developed in the 1980s by David Weininger and funded by the US Environmental Protection Agency, SMILES has become a cornerstone of chemical informatics [5]. It serves as a bridge between a molecule's graphical structure and computer-readable data, enabling efficient storage, retrieval, and analysis of chemical information [11]. This technical guide details the SMILES syntax, its role in modern artificial intelligence (AI) research for drug discovery, and provides a comparative analysis with other molecular representations like graphs and fingerprints, framed within the context of molecular representation research.

SMILES String and Syntax

The SMILES language is built upon a small set of rules for encoding atoms, bonds, branches, and cyclic structures into a single text string without spaces [11].

Atoms

- Standard Atoms: Atoms are represented by their atomic symbols. Elements in the "organic subset" (B, C, N, O, P, S, F, Cl, Br, I) can typically be written without brackets, with hydrogen atoms implied by standard valence assumptions [5] [11]. For example, the SMILES C represents a carbon atom carrying its implicit hydrogens, that is, methane.

- Atoms in Brackets: All other elements, atoms with non-standard valences, formal charges, or explicit hydrogen counts must be enclosed in square brackets (e.g., [Au] for gold, [NH4+] for ammonium) [5] [11].

Bonds

- Bond Types: Bonds are represented by specific symbols. Single, double, triple, and aromatic bonds are denoted by -, =, #, and :, respectively [5] [6].

- Implied Bonds: A single bond between two aliphatic atoms, or an aromatic bond between two aromatic atoms, can be omitted; the bond is then assumed from adjacency in the string [5] [6]. For example, ethanol is most simply written as CCO rather than C-C-O.

- Disconnection: A period (.) indicates that components are not bonded to each other, as in ionic compounds (e.g., [Na+].[Cl-] for sodium chloride) [5] [6].

Table 1: SMILES Bond Type Representations

| Bond Type | Symbol | Example SMILES | Example Molecule |
|---|---|---|---|
| Single | - (often omitted) | CCO | Ethanol |
| Double | = | O=C=O | Carbon Dioxide |
| Triple | # | C#N | Hydrogen Cyanide |
| Aromatic | : (usually implied between lower-case aromatic atoms) | c1ccccc1 | Benzene |
| Non-Bond | . | [Na+].[Cl-] | Sodium Chloride |

Branches

Branches from a parent chain are specified by enclosing them in parentheses. The connection point is always to the immediate left of the parenthesis. Branches can be nested or stacked [5] [11]. For example, isobutyric acid is written as CC(C)C(=O)O [11].

Cyclic Structures

Ring structures are encoded by breaking one single or aromatic bond in the ring and assigning a numerical ring closure label to the two atoms involved [5] [11]. For example, cyclohexane is written as C1CCCCC1, where the 1 after the first and last carbon atoms indicates a bond between them. A single atom can have multiple ring closures, as in cubane: C12C3C4C1C5C4C3C25 [11]. For ring numbers 10 and above, the label is preceded by a % (e.g., C1%12%24) [5].
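Branch and ring-closure handling can be checked with a small parser for a restricted SMILES subset. This is a teaching sketch, not a conforming SMILES parser: it accepts only unbracketed organic-subset atoms (including Cl, Br, and lower-case aromatic atoms), the bond symbols -, =, #, and :, parentheses, and ring labels written as a single digit or %nn, and it records an omitted bond as an empty string. The function name is hypothetical. Applied to the cubane string above, it recovers 8 atoms and 12 bonds, with every carbon bonded to three others.

```python
from typing import Dict, List, Optional, Tuple

ORGANIC_SUBSET = {"B", "C", "N", "O", "P", "S", "F", "Cl", "Br", "I", "b", "c", "n", "o", "p", "s"}


def parse_smiles_subset(smiles: str) -> Tuple[List[str], List[Tuple[int, int, str]]]:
    """Return (atoms, bonds); each bond is (atom_i, atom_j, symbol), '' meaning implicit."""
    atoms: List[str] = []
    bonds: List[Tuple[int, int, str]] = []
    open_rings: Dict[int, Tuple[int, str]] = {}  # ring label -> (opening atom, bond symbol)
    branch_stack: List[Optional[int]] = []
    prev: Optional[int] = None  # atom that the next atom or ring label attaches to
    pending_bond = ""
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if ch in "-=#:":
            pending_bond = ch
            i += 1
        elif ch == "(":
            branch_stack.append(prev)  # a branch starts from the preceding atom
            i += 1
        elif ch == ")":
            prev = branch_stack.pop()  # resume the main chain at the branch point
            i += 1
        elif ch.isdigit() or ch == "%":
            if prev is None:
                raise ValueError("ring label before any atom")
            if ch == "%":
                label, i = int(smiles[i + 1:i + 3]), i + 3
            else:
                label, i = int(ch), i + 1
            if label in open_rings:  # second occurrence closes the ring
                partner, opening_bond = open_rings.pop(label)
                bonds.append((partner, prev, pending_bond or opening_bond))
            else:  # first occurrence opens it
                open_rings[label] = (prev, pending_bond)
            pending_bond = ""
        else:
            symbol = smiles[i:i + 2] if smiles[i:i + 2] in ("Cl", "Br") else ch
            if symbol not in ORGANIC_SUBSET:
                raise ValueError(f"unsupported character {ch!r} at position {i}")
            atoms.append(symbol)
            if prev is not None:
                bonds.append((prev, len(atoms) - 1, pending_bond))
            prev, pending_bond = len(atoms) - 1, ""
            i += len(symbol)
    if open_rings:
        raise ValueError(f"unclosed ring labels: {sorted(open_rings)}")
    return atoms, bonds


atoms, bonds = parse_smiles_subset("C12C3C4C1C5C4C3C25")  # cubane
degree = [sum(a in (i, j) for i, j, _ in bonds) for a in range(len(atoms))]
print(len(atoms), len(bonds), degree)  # 8 12 [3, 3, 3, 3, 3, 3, 3, 3]
```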

Aromaticity

Aromaticity can be represented in different ways. A common and concise method is to represent aromatic atoms using lower-case atomic symbols (e.g., c, n, o). This defines aromatic bonds implicitly, without the need for explicit bond symbols [5]. For example, benzene can be written as c1ccccc1 [5].

The following diagram illustrates the logical workflow for interpreting and generating a SMILES string.

Diagram 1: SMILES Generation Workflow

Advanced and Isomeric Notation

SMILES can encode stereochemical and isotopic information, creating "isomeric SMILES" [5] [11].

Tetrahedral Chirality

Configuration at tetrahedral centers is specified by the symbols @ and @@ immediately following the atomic symbol [6] [11]. Looking from the first neighbor toward the chiral atom, @ means the remaining neighbors, in the order they are written, are arranged anticlockwise, and @@ means they are arranged clockwise. For example, N[C@@H](C)C(=O)O and N[C@H](C)C(=O)O represent the L- and D-enantiomers of alanine, respectively [11].
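Because @ and @@ are defined relative to the order in which neighbors are written, the same stereocenter can carry different tags in different SMILES strings. The underlying rule can be sketched as follows: an even permutation of the neighbor order keeps the tag, and an odd permutation flips it. The neighbor lists below are written by hand, following the OpenSMILES convention that an implicit hydrogen inside the brackets counts as the neighbor immediately after the preceding atom; the function names are hypothetical.

```python
from typing import Sequence


def permutation_parity(reference: Sequence[str], other: Sequence[str]) -> int:
    """0 if `other` is an even permutation of `reference`, 1 if odd."""
    position = {item: k for k, item in enumerate(reference)}
    perm = [position[item] for item in other]
    swaps = 0
    for i in range(len(perm)):
        while perm[i] != i:  # each swap moves one element into its final slot
            j = perm[i]
            perm[i], perm[j] = perm[j], perm[i]
            swaps += 1
    return swaps % 2


def same_stereocenter(order_a, tag_a, order_b, tag_b) -> bool:
    """True if the two (neighbor order, @/@@ tag) pairs describe the same configuration."""
    if permutation_parity(order_a, order_b) == 1:
        tag_b = "@" if tag_b == "@@" else "@@"  # re-express tag_b relative to order_a
    return tag_a == tag_b


# N[C@@H](C)C(=O)O: neighbors in written order are N, H, methyl C, carboxyl C
order_1 = ["N", "H", "CH3", "COOH"]
# C[C@@H](C(=O)O)N: neighbors in written order are methyl C, H, carboxyl C, N
order_2 = ["CH3", "H", "COOH", "N"]
print(same_stereocenter(order_1, "@@", order_2, "@@"))  # True
print(same_stereocenter(order_1, "@", order_2, "@@"))   # False
```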

Double Bond Stereochemistry

Geometry around double bonds is specified using the directional bond symbols / and \ to indicate the relative orientation of adjacent bonds [5] [6]. For example, the E- and Z- isomers of difluoroethene are written as F/C=C/F and F/C=C\F, respectively [11].

Isotopes

Isotopic specifications are indicated by placing the isotope mass number immediately before the atomic symbol within brackets. For example, deuterium oxide is [2H]O[2H] and uranium-235 is [235U] [11].

SMILES in Machine Learning and AI

SMILES strings are treated as sentences in a chemical language, enabling the application of Natural Language Processing (NLP) techniques for molecular property prediction and drug discovery [12].

Feature Extraction with N-grams

A novel NLP-based method involves using N-grams (contiguous sequences of N characters) to extract interpretable features from drug SMILES strings [12]. This approach captures local and global associations among atoms in the sequence, resulting in sparse, explainable feature vectors that can be used to build machine learning models for tasks like personalized drug screening (PDS) [12].
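A character-level version of the N-gram idea fits in a few lines. This sketch slides a window of N characters over the raw SMILES string, counts every N-gram for each requested N, and stores each molecule as a sparse column-to-count mapping over a shared vocabulary. The method in [12] may tokenize or weight N-grams differently, and the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def smiles_ngrams(smiles: str, n: int) -> Counter:
    """Count every contiguous run of n characters in the SMILES string."""
    return Counter(smiles[i:i + n] for i in range(len(smiles) - n + 1))


def ngram_features(
    smiles_list: Sequence[str], n_values: Sequence[int] = (1, 2, 3)
) -> Tuple[List[str], List[Dict[int, int]]]:
    """Build a shared N-gram vocabulary and one sparse count vector per molecule."""
    per_molecule = []
    for smiles in smiles_list:
        counts: Counter = Counter()
        for n in n_values:
            counts.update(smiles_ngrams(smiles, n))
        per_molecule.append(counts)
    vocab = sorted(set().union(*per_molecule))
    column = {gram: k for k, gram in enumerate(vocab)}
    rows = [{column[gram]: count for gram, count in counts.items()} for counts in per_molecule]
    return vocab, rows


vocab, rows = ngram_features(["CCO", "CO"], n_values=(1, 2))
print(vocab)                    # ['C', 'CC', 'CO', 'O']
print(sorted(rows[0].items()))  # [(0, 2), (1, 1), (2, 1), (3, 1)]
```

Storing only non-zero columns keeps the vectors sparse, and each column maps back to a readable substring, which is where the interpretability of this kind of feature comes from.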

Deep Learning Models

Various deep learning architectures are used to process SMILES strings:

- RNN-based models: Models such as Seq2seq fingerprint and SMILES2vec use Recurrent Neural Networks (RNNs), for example LSTMs, to learn vector representations of SMILES strings [12].

- Transformer-based models: Models such as SMILES-Transformer, SMILES-BERT, and CHEM-BERT leverage the transformer architecture to capture complex patterns in SMILES sequences, often producing rich learned molecular fingerprints [12].

A significant challenge in this domain is the interpretability of model predictions. Explainable AI (XAI) techniques calculate attribution scores for SMILES tokens (both atoms and non-atom characters like [, ]), which can be difficult to map back to the molecular structure [13]. Tools like XSMILES provide interactive visualizations to explore these attributions by coordinating a bar chart of the SMILES string with a highlighted 2D molecular diagram, facilitating model interpretation [13].

Comparative Analysis of Molecular Representations

In AI-based drug discovery, SMILES is one of several molecular representations. The table below compares it with graph-based representations and molecular fingerprints.

Table 2: Comparison of Molecular Representations in AI

| Feature | SMILES | Molecular Graph | Molecular Fingerprints (e.g., Morgan) |
|---|---|---|---|
| Core Principle | 1D string notation; depth-first traversal of molecular graph [5] [14]. | Explicit graph with atoms as nodes and bonds as edges [14]. | Bit-vector representing the presence/absence of specific substructures [12]. |
| Handling of Valence | Focused on molecules whose bonds fit the 2-electron valence model [14]. | Can be extended to represent multicenter or coordinative bonds with specialized coding [14]. | Implicitly handled by the fingerprint generation algorithm. |
| Stereochemistry | Limited array of types (tetrahedral, double bond); specified with @, /, \ [6] [14]. | Requires additional node/bond parameters; can be extended to complex types but is non-trivial [14]. | Often not directly encoded; may require a separate representation. |
| Aromaticity | No single standard; depends on implementation (e.g., lower-case atoms vs. Kekulé form) [5] [14]. | Aromaticity model must be defined; can be explicit bond type or inferred from connectivity [14]. | Aromatic rings are common components in the hashed substructures. |
| Canonicalization | No universal standard; unique SMILES generation is algorithm-dependent (e.g., CANGEN has known flaws) [5]. | Canonical atom ordering can be applied (e.g., using the InChI algorithm) [14]. | The generation process is typically deterministic and canonical. |
| Use in ML | Treated as a sequence for NLP models (RNNs, Transformers) [12]. | Processed by Graph Neural Networks (GNNs) like Graph Convolutional Networks [14]. | Used as direct input for traditional ML models (e.g., Random Forests, SVMs). |

The diagram below conceptualizes the relationships and trade-offs between these representations in a research context.

Diagram 2: Molecular Representations Relationship Framework

Experimental Protocols in SMILES-Based Research

The following is a detailed methodology for a typical experiment comparing SMILES-derived features to other representations, as cited in the literature [12].

Protocol: Building a Personalized Drug Screening (PDS) Model

1. Objective: To build a machine learning model that predicts drug efficacy (measured as LN(IC50), the natural log of the half-maximal inhibitory concentration) based on patient gene expression (GE) data, cancer type, and drug structural features derived from SMILES strings [12].

2. Data Preparation

- Input Data:

  - Drug Features: Generate NLP-based features from drug SMILES strings using the N-gram method [12]. As a comparator, generate 512-bit and 1024-bit Morgan fingerprints from the same SMILES strings using a toolkit like RDKit [12].

  - Biological Context: Collect patient-derived Gene Expression (GE) data from a database like GDSC (Genomics of Drug Sensitivity in Cancer) for 657 genes, along with the cancer type [12].

  - Target Variable: Obtain experimentally determined LN(IC50) values for drug-cell line pairs [12].

- Data Integration: Merge the drug features (NLP-based or Morgan fingerprints) with the GE data and cancer type to create a complete feature vector for each drug-cell line combination [12].

3. Model Training and Validation

- Data Splitting: Divide the integrated dataset into a training set (80%) and a hold-out test set (20%) [12].

- Model Building: Treat the problem as a regression task. Train a model (e.g., Gradient Boosting) on the training set.

- Cross-Validation: Perform 10-fold cross-validation on the training data to optimize hyperparameters and prevent overfitting [12].

- Evaluation: Predict LN(IC50) values on the test set. Evaluate model performance using metrics like Mean Absolute Error (MAE), Mean Squared Error (MSE), and R-squared (R²) [12].
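The split, cross-validation folds, and metrics in step 3 can be sketched in plain Python. Assumptions: a seeded random 80/20 split over row indices, interleaved 10-fold partitions of the training indices, and the textbook definitions of MAE, MSE, and R². Libraries such as scikit-learn provide equivalents (`train_test_split`, `KFold`, `mean_absolute_error`, `mean_squared_error`, `r2_score`); model fitting itself is left out, and the function names are hypothetical.

```python
import random
from typing import Dict, Iterator, List, Sequence, Tuple


def train_test_split_indices(n: int, test_fraction: float = 0.2, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Shuffle row indices once and hold out the first test_fraction of them."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_test = round(n * test_fraction)
    return indices[n_test:], indices[:n_test]


def k_fold(indices: Sequence[int], k: int = 10) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, validation) pairs; every index is validated exactly once."""
    folds = [list(indices[f::k]) for f in range(k)]
    for f in range(k):
        train = [idx for g, fold in enumerate(folds) if g != f for idx in fold]
        yield train, folds[f]


def regression_metrics(y_true: Sequence[float], y_pred: Sequence[float]) -> Dict[str, float]:
    """MAE, MSE, and R^2 = 1 - SS_res / SS_tot."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "MAE": sum(abs(r) for r in residuals) / n,
        "MSE": ss_res / n,
        "R2": 1.0 - ss_res / ss_tot,
    }


train_idx, test_idx = train_test_split_indices(100)
print(len(train_idx), len(test_idx))  # 80 20
print(sum(len(val) for _, val in k_fold(train_idx)))  # 80: each training row is validated once
print(regression_metrics([1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 5.0]))
```

The test indices are never passed to `k_fold`, so hyperparameter tuning only ever sees the training portion, which is what keeps the final hold-out estimate free of leakage.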

4. Expected Results and Analysis: As demonstrated in a pan-cancer case study, models using NLP-based SMILES features can achieve performance comparable to those using Morgan fingerprints (e.g., R² ≈ 0.82) [12]. The key advantage often lies in the sparsity and interpretability of the NLP-based features, which can highlight distinct functional groups relevant to the model's prediction [12].

The Scientist's Toolkit

The following table lists key software tools and libraries essential for working with SMILES in a research setting.

Table 3: Essential Research Reagents and Software for SMILES-Based Research

| Tool / Library | Type | Primary Function |
|---|---|---|
| RDKit | Open-Source Cheminformatics Library | Parsing, validating, and generating SMILES strings; canonicalization; calculating molecular fingerprints; generating 2D molecular diagrams from SMILES [13]. |
| Daylight Toolkit | Commercial Cheminformatics API | One of the original implementations of SMILES; provides robust algorithms for canonical SMILES generation and chemical information management [5] [11]. |
| Marvin (ChemAxon) | Commercial Cheminformatics Suite | Importing, exporting, and drawing chemical structures with support for SMILES, CXSMILES, and stereochemistry rules [6]. |
| Chemistry Development Kit (CDK) | Open-Source Cheminformatics Library | A Java library for bio- and chemo-informatics that supports SMILES I/O and a wide range of molecular algorithms [5]. |
| Python N-gram Library | Custom Python Library | Feature extraction from drug SMILES strings using N-grams for building machine learning models, as described in the literature [12]. |
| XSMILES | Interactive Visualization Tool | JavaScript-based tool for visualizing and interpreting explainable AI (XAI) attribution scores on both SMILES strings and 2D molecule diagrams [13]. |

In computational drug discovery, representing molecular structures in a format amenable to machine analysis is a foundational challenge. Among the various representation schemes, the molecular graph paradigm, in which atoms serve as nodes and chemical bonds as edges, has emerged as an intuitive structural blueprint that closely mirrors chemical reality. This representation contrasts with string-based formats such as SMILES (Simplified Molecular-Input Line-Entry System) and with fingerprint-based approaches that encode molecular substructures as fixed-length vectors [1]. Whereas SMILES strings represent molecules as linear text sequences and fingerprints capture the presence or absence of specific substructures, molecular graphs explicitly preserve the topological relationships and connectivity patterns that define a molecule's identity and properties [10].

The molecular graph approach provides several distinct advantages for modern computational chemistry applications. By directly representing the non-Euclidean structure of molecules, graphs naturally capture the inherent symmetries and functional relationships that are often obscured in string-based representations [1] [15]. This structural fidelity makes graph representations particularly valuable for predicting complex molecular properties, generating novel drug candidates, and understanding structure-activity relationships at an atomic level [16] [17]. As drug discovery increasingly relies on artificial intelligence, molecular graphs have become the foundation for advanced deep learning architectures that learn directly from structural information, enabling more accurate prediction of biological activity, toxicity, and pharmacokinetic properties [1] [18].

Molecular Representations: A Comparative Framework

The Representation Landscape

Molecular representations can be broadly categorized into three principal classes: string-based, fingerprint-based, and graph-based representations. Each employs distinct strategies for encoding chemical structure and possesses characteristic strengths and limitations for various applications in cheminformatics and drug discovery.

SMILES (Simplified Molecular-Input Line-Entry System) provides a compact string representation in which atoms are denoted by elemental symbols and bonds by specific characters (= for double, # for triple). While computationally efficient and human-readable, SMILES representations have several critical limitations. Connectivity is encoded only implicitly, through atom order, parentheses for branches, and ring-closure digits, rather than as an explicit structure. The same molecule can have multiple valid SMILES strings unless a canonicalization algorithm is applied. Minor string alterations can also produce chemically invalid structures [1] [17].
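The non-uniqueness and validity issues can be seen directly with a toolkit. The sketch below assumes RDKit is installed and uses its `Chem.MolFromSmiles` and `Chem.MolToSmiles` functions; the helper name `canonical` is illustrative. Three different strings for ethanol collapse to one canonical form, while deleting a single ring-closure digit from cyclohexane makes the string unparseable.

```python
from rdkit import Chem, RDLogger

RDLogger.DisableLog("rdApp.*")  # silence the parser's error message for the invalid example

def canonical(smiles):
    """Return RDKit's canonical SMILES, or None if the string cannot be parsed."""
    mol = Chem.MolFromSmiles(smiles)
    return None if mol is None else Chem.MolToSmiles(mol)

# Three different, equally valid SMILES strings for ethanol
variants = ["CCO", "OCC", "C(O)C"]
print({s: canonical(s) for s in variants})

# Removing one ring-closure digit leaves an unclosed ring, so parsing fails
print(canonical("C1CCCCC1"), canonical("C1CCCCC"))
```

Canonicalization fixes the many-strings-per-molecule problem for storage and lookup, but it does not change the fact that a generative model emitting characters one at a time can still produce strings like the second one.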

Molecular fingerprints encode molecular substructures as fixed-length binary or count vectors. These can be classified as substructural (detecting predefined patterns) or hashed (using hash functions to map subgraphs to vector positions). Extended Connectivity Fingerprints (ECFP) are particularly widely used for similarity searching and structure-activity modeling [19] [10]. Though highly efficient for database screening, fingerprints capture only predefined features and may miss novel structural patterns.

Molecular graphs represent atoms as nodes (with features like element type, charge) and bonds as edges (with features like bond type, conjugation). This explicit representation of connectivity allows molecular graphs to naturally capture the structural determinants of molecular function and activity [20] [16].

Quantitative Comparison of Representation Performance

Table 1: Performance comparison of molecular representations across benchmark tasks

| Representation Type | Structural Information | Interpretability | Performance in Property Prediction | Performance in Novel Scaffold Identification |
|---|---|---|---|---|
| SMILES/SELFIES | Low (sequential) | Moderate | Variable; struggles with complex properties | Limited by syntax constraints |
| Molecular Fingerprints | Medium (substructure-based) | High | Strong on traditional QSAR tasks [10] | Limited to chemical space of predefined features |
| Molecular Graphs | High (topological) | High | Excellent for complex bioactivity prediction [16] | Superior for exploring novel chemical space [1] |
| 3D Molecular Graphs | Very High (structural + spatial) | High | State-of-the-art for binding affinity prediction [15] | Advanced for structure-based drug design |

Table 2: Computational efficiency comparison across representations

| Representation | Training Speed | Inference Speed | Data Requirements | Hardware Demands |
|---|---|---|---|---|
| MACCS Fingerprints | Fast | Very Fast | Low | Low |
| ECFP Fingerprints | Fast | Very Fast | Low | Low |
| SMILES-based Models | Medium | Medium | High | Medium |
| 2D Graph Models | Medium to Slow | Medium | Medium to High | Medium to High |
| 3D Graph Models | Slow | Slow | High | High |

Molecular Graph Construction and Feature Encoding

Fundamental Construction Principles

The process of constructing molecular graphs begins with the fundamental principle of representing atoms as nodes and bonds as edges [20]. Each atom node is characterized by a feature vector that typically includes atomic number, degree, formal charge, hybridization, aromaticity, and other atomic properties. Similarly, bond edges are characterized by features such as bond type (single, double, triple, aromatic), conjugation, and stereochemistry [19] [16].

The resulting graph structure G = (V, E) consists of:

- V = {v₁, v₂, ..., vₙ} is the set of n atoms, where each atom vᵢ carries an a-dimensional feature vector xᵢ ∈ ℝᵃ

- E ⊆ V × V is the set of m bonds, where each bond (vᵢ, vⱼ) ∈ E carries a b-dimensional feature vector eᵢⱼ ∈ ℝᵇ

This explicit representation preserves the complete topological structure of the molecule, including cyclic systems, branching patterns, and functional group arrangements that are critical for determining molecular properties and biological activity [20] [16].
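As a minimal sketch of this construction, assuming RDKit as the parser, the hypothetical helper `mol_to_graph` reads a SMILES string and returns a node-feature list, a directed edge list, and per-edge features. The specific features chosen here (atomic number, degree, formal charge, hybridization, aromaticity; bond order, conjugation, ring membership) are one illustrative choice, not a standard featurization.

```python
from rdkit import Chem

def mol_to_graph(smiles):
    """Convert a SMILES string into (node_features, edges, edge_features).

    Hydrogens stay implicit, so nodes are heavy atoms only.
    """
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Invalid SMILES: {smiles}")
    # Node features x_i: a small vector of atomic properties per atom
    nodes = [
        [
            atom.GetAtomicNum(),
            atom.GetDegree(),
            atom.GetFormalCharge(),
            int(atom.GetHybridization()),   # enum value, e.g. SP2 or SP3
            int(atom.GetIsAromatic()),
        ]
        for atom in mol.GetAtoms()
    ]
    edges, edge_feats = [], []
    for bond in mol.GetBonds():
        i, j = bond.GetBeginAtomIdx(), bond.GetEndAtomIdx()
        # Edge features e_ij: bond order (1.5 for aromatic), conjugation, ring membership
        feat = [bond.GetBondTypeAsDouble(), int(bond.GetIsConjugated()), int(bond.IsInRing())]
        # Store both directions so the graph is undirected for message passing
        edges += [(i, j), (j, i)]
        edge_feats += [feat, feat]
    return nodes, edges, edge_feats

nodes, edges, edge_feats = mol_to_graph("CC(=O)O")  # acetic acid
```

Each bond is stored in both directions so the edge list can feed a message-passing layer directly; for acetic acid this gives 4 nodes and 6 directed edges.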

Advanced Feature Encoding Strategies

Beyond basic atom and bond features, molecular graphs can incorporate increasingly sophisticated encoding strategies:

Geometric and Spatial Information: 3D molecular graphs extend the basic 2D topology by incorporating spatial coordinates, bond lengths, angles, and torsion angles, which are critical for modeling molecular interactions and binding conformations [15].

Electronic Properties: Some graph representations include atomic-level electronic properties such as partial charges, polarizability, and electronegativity, which influence intermolecular interactions and reactivity [16].

Knowledge-Enhanced Features: Approaches like KANO (Knowledge graph-enhanced molecular contrastive learning with functional prompt) enrich molecular graphs with external chemical knowledge from structured databases, creating connections between atoms that share chemical relationships beyond direct bonding [16].

Diagram Title: Molecular Graph Construction Workflow

Computational Architectures for Molecular Graph Processing

Graph Neural Networks (GNNs)

Graph Neural Networks have emerged as the primary architecture for learning from molecular graph representations. Most GNNs for molecular applications follow a message-passing framework where information is exchanged between connected atoms and aggregated at each layer [19]. The fundamental message-passing operation can be described as:

- Message Function: For each edge (i,j), compute a message mᵢⱼ = M(hᵢ, hⱼ, eᵢⱼ) where hᵢ, hⱼ are node features and eᵢⱼ are edge features

- Aggregation Function: For each node i, aggregate messages from its neighbors N(i): aᵢ = A({mᵢⱼ | j ∈ N(i)})

- Update Function: Update node features: hᵢ' = U(hᵢ, aᵢ)

After multiple message-passing layers, a readout function generates graph-level representations by aggregating node-level features, typically using sum, mean, or attention-weighted pooling [19].
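The message, aggregation, update, and readout functions can be made concrete with a small NumPy sketch. The specific choices are assumptions for illustration: M is a linear map of the concatenated [hᵢ, hⱼ, eᵢⱼ], A is a sum, U is a linear map of [hᵢ, aᵢ] followed by ReLU, and the readout is sum pooling. The random weights stand in for learned parameters.

```python
import numpy as np

def message_passing_layer(h, edges, e, W_msg, W_upd):
    """One message-passing round.

    h: (n, d) node states; edges: directed pairs (i, j), read as "j sends to i";
    e: (len(edges), b) edge features; W_msg: (2d + b, d); W_upd: (2d, d).
    """
    n, d = h.shape
    agg = np.zeros((n, d))
    for k, (i, j) in enumerate(edges):
        # Message function M(h_i, h_j, e_ij): a linear map of the concatenation
        m_ij = np.concatenate([h[i], h[j], e[k]]) @ W_msg
        # Aggregation function A: sum of incoming messages
        agg[i] += m_ij
    # Update function U(h_i, a_i): linear map of [h_i, a_i] followed by ReLU
    return np.maximum(0.0, np.concatenate([h, agg], axis=1) @ W_upd)

def readout(h):
    """Graph-level vector by sum pooling over atoms."""
    return h.sum(axis=0)

# Toy 3-atom chain 0-1-2 with random features and weights
rng = np.random.default_rng(0)
d, b = 4, 2
h = rng.normal(size=(3, d))
edges = [(0, 1), (1, 0), (1, 2), (2, 1)]
e = np.repeat(rng.normal(size=(2, b)), 2, axis=0)  # both directions of a bond share features
W_msg = rng.normal(size=(2 * d + b, d))
W_upd = rng.normal(size=(2 * d, d))
graph_vec = readout(message_passing_layer(h, edges, e, W_msg, W_upd))
```

Because both the aggregation and the readout are sums, relabeling the atoms leaves the graph-level vector unchanged, which is the permutation invariance a molecular representation needs.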

Several specialized GNN architectures have been developed for molecular graphs:

Graph Isomorphism Networks (GIN): Proven to be as expressive as the Weisfeiler-Lehman graph isomorphism test, making them particularly powerful for capturing molecular topology [19].

Graph Transformer Networks: Incorporate self-attention mechanisms to capture both local and global dependencies in molecular structures, and have been reported to outperform message-passing GNNs on some complex property prediction tasks [19].
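For GIN specifically, the published update rule is hᵢ' = MLP((1 + ε)·hᵢ + Σⱼ∈N(i) hⱼ). The NumPy sketch below implements that rule with a two-layer MLP; the weights, the adjacency-matrix input format, and the function name `gin_layer` are assumptions for illustration. Sum aggregation is what lets GIN tell apart neighborhoods that mean or max pooling would conflate, such as one neighbor versus two identical neighbors.

```python
import numpy as np

def gin_layer(H, A, eps, W1, b1, W2, b2):
    """GIN update: h_i' = MLP((1 + eps) * h_i + sum over neighbors j of h_j).

    H: (n, d) node features; A: (n, n) symmetric 0/1 adjacency matrix without self-loops.
    """
    Z = (1.0 + eps) * H + A @ H                     # sum aggregation over neighbors
    return np.maximum(0.0, Z @ W1 + b1) @ W2 + b2    # two-layer MLP with ReLU

# Two toy graphs: the central node has one neighbor vs. two identical neighbors
A_pair = np.array([[0, 1], [1, 0]], dtype=float)
A_star = np.array([[0, 1, 1], [1, 0, 0], [1, 0, 0]], dtype=float)
I1, z1 = np.eye(1), np.zeros(1)                     # identity MLP weights for readability
out_pair = gin_layer(np.ones((2, 1)), A_pair, 0.0, I1, z1, I1, z1)
out_star = gin_layer(np.ones((3, 1)), A_star, 0.0, I1, z1, I1, z1)
# The mean of neighbor features is 1.0 in both graphs; the sum-based GIN output differs
print(out_pair[0, 0], out_star[0, 0])
```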

Knowledge-Enhanced Graph Learning

The KANO framework demonstrates how external chemical knowledge can enhance molecular graph learning through several innovative components [16]:

ElementKG Construction: A comprehensive knowledge graph incorporating element properties from the periodic table, functional groups, and their relationships, providing fundamental chemical knowledge as a prior.

Element-Guided Graph Augmentation: Unlike traditional augmentation techniques that may violate chemical semantics (e.g., random node dropping or edge perturbation), KANO uses element knowledge to create chemically meaningful augmented views by connecting atoms that share chemical relationships beyond direct bonding.

Functional Prompting: During fine-tuning, task-specific prompts based on functional group information evoke relevant chemical knowledge acquired during pre-training, bridging the gap between pre-training objectives and downstream applications.

Diagram Title: Knowledge-Enhanced Molecular Graph Learning

Experimental Protocols and Benchmarking

Molecular Property Prediction Protocols

Comprehensive evaluation of molecular graph representations requires rigorous benchmarking across diverse property prediction tasks. Standard experimental protocols include:

Dataset Splitting: Both random splits and more challenging scaffold splits (where molecules in test sets have core structures not seen during training) are used to assess generalization capability [19] [10].

Evaluation Metrics: Common metrics include ROC-AUC and PR-AUC for classification tasks, RMSE and MAE for regression tasks, with careful statistical significance testing [19].

Baseline Comparisons: Molecular graph models are typically compared against traditional fingerprint-based methods (ECFP, MACCS) and SMILES-based approaches to establish performance advantages [10].
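A scaffold split can be sketched as follows, assuming RDKit's Bemis-Murcko helper `MurckoScaffold.MurckoScaffoldSmiles`. The greedy rule used here (largest scaffold groups fill the training set first, rarer scaffolds go to the test set) is one common convention rather than a fixed standard, and the function name `scaffold_split` is illustrative. Acyclic molecules all share the empty scaffold and therefore end up in the same partition.

```python
from collections import defaultdict
from rdkit.Chem.Scaffolds import MurckoScaffold

def scaffold_split(smiles_list, frac_train=0.8):
    """Deterministic Bemis-Murcko scaffold split returning (train_idx, test_idx).

    Molecules sharing a scaffold always land in the same partition,
    so test-set scaffolds are never seen during training.
    """
    groups = defaultdict(list)
    for idx, smi in enumerate(smiles_list):
        scaffold = MurckoScaffold.MurckoScaffoldSmiles(smiles=smi, includeChirality=False)
        groups[scaffold].append(idx)
    # Largest groups first; sorted() is stable, so ties keep first-seen order
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_train = int(frac_train * len(smiles_list))
    train, test = [], []
    for group in ordered:
        (train if len(train) + len(group) <= n_train else test).extend(group)
    return train, test
```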

Recent benchmarking studies have revealed surprising insights about molecular representation performance. One extensive comparison of 25 pretrained molecular embedding models across 25 datasets found that nearly all neural models showed negligible or no improvement over the baseline ECFP molecular fingerprint, with only specialized models incorporating strong chemical inductive bias performing competitively [19].

Case Study: MultiFG Framework for Side Effect Prediction

The Multi Fingerprint and Graph Embedding model (MultiFG) demonstrates a sophisticated integration of graph-based and fingerprint representations for predicting drug side effect frequencies [20]. The experimental methodology includes:

Dataset Preparation: The dataset comprises 743 drugs and 994 side effects, with frequency information mapped to five levels (very rare to very frequent); the 36,895 known drug-side effect pairs form a sparse association matrix [20].

Multi-view Feature Integration:

- Drug fingerprint features (MACCS, Morgan, RDKIT, ErG) representing different molecular properties

- Drug graph embedding features capturing topological structure

- Similarity features derived from known drug-side effect associations

Architecture Design:

- Attention-enhanced convolutional networks to capture local to global molecular features

- Multi-head attention with side effect features as query and drug features as keys/values

- Kolmogorov-Arnold Networks (KAN) as prediction layers to capture complex relationships
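The query/key/value roles described for MultiFG can be illustrated with a generic multi-head cross-attention sketch in NumPy. This is not MultiFG's implementation: the dimensions, the random projection matrices, and the idea of stacking one row per drug feature view (for example, one per fingerprint type plus a graph embedding) are assumptions made for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract max for numerical stability
    ex = np.exp(x)
    return ex / ex.sum(axis=axis, keepdims=True)

def cross_attention(query, views, Wq, Wk, Wv, n_heads):
    """Multi-head scaled dot-product attention with a single query vector.

    query: (d,) side-effect embedding; views: (m, d) drug feature views.
    Returns the (d,) attended drug representation and (n_heads, m) attention weights.
    """
    d = query.shape[0]
    assert d % n_heads == 0, "model width must be divisible by the number of heads"
    dh = d // n_heads
    q = (query @ Wq).reshape(n_heads, dh)            # query from the side effect
    k = (views @ Wk).reshape(-1, n_heads, dh)        # keys from drug views
    v = (views @ Wv).reshape(-1, n_heads, dh)        # values from drug views
    scores = np.einsum("hd,mhd->hm", q, k) / np.sqrt(dh)
    weights = softmax(scores, axis=-1)               # per head, weights over views sum to 1
    out = np.einsum("hm,mhd->hd", weights, v)
    return out.reshape(d), weights

rng = np.random.default_rng(0)
d, n_views, n_heads = 16, 5, 4
side_effect = rng.normal(size=d)              # hypothetical side-effect embedding
drug_views = rng.normal(size=(n_views, d))    # hypothetical: one row per drug feature view
Wq, Wk, Wv = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(3))
context, attn = cross_attention(side_effect, drug_views, Wq, Wk, Wv, n_heads)
```

Using the side effect as the query means each side effect can weight the fingerprint and graph views differently, which is one plausible reading of why this arrangement suits multi-view inputs.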

Evaluation Results: MultiFG achieved an AUC of 0.929 and significant improvements in precision (7.8%) and recall (30.2%) over previous state-of-the-art methods, demonstrating the power of integrated graph-fingerprint representations [20].

Case Study: MolEM for 3D Molecular Graph Generation

MolEM addresses the critical challenge of sequentializing 3D molecular graphs for generation by introducing a variational expectation-maximization framework that jointly learns molecular structures and their generative orders [15]. The key methodological innovations include:

Likelihood Formulation: Deriving a tight evidence lower bound (ELBO) for the exact graph likelihood, which involves marginalizing over all possible sequential orders (factorial in graph size).

Variational EM Framework:

- E-step: Inferring the posterior distribution over sequential orders using an ordering generator

- M-step: Updating the molecule generator parameters using orders from the E-step
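For orientation, the generic form of such a bound follows from Jensen's inequality when the generation order π is treated as a latent variable with a variational posterior q_φ(π | G) (the ordering generator). This is the standard construction, not necessarily MolEM's exact objective:

```latex
\log p_\theta(G) \;=\; \log \sum_{\pi \in \Pi(G)} p_\theta(G, \pi)
\;\ge\; \mathbb{E}_{q_\phi(\pi \mid G)}\!\left[\log p_\theta(G, \pi) - \log q_\phi(\pi \mid G)\right]
```

Here Π(G) is the set of admissible generation orders, which can grow as n! for a graph with n atoms. The bound becomes tight when q_φ(π | G) equals the true posterior p_θ(π | G); the E-step moves q_φ toward that posterior, and the M-step maximizes the bound with respect to the molecule generator parameters θ.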

Molecular Docking Integration: Incorporating QuickVina 2 for binding pose generation without using docking scores as direct supervision, ensuring realistic binding conformations.

Experimental results demonstrated that MolEM significantly outperformed baseline models in generating molecules with high binding affinities and realistic structures, while efficiently approximating the true marginal graph likelihood [15].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential computational tools for molecular graph research

| Tool/Category | Function | Application Context |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit | Molecular graph construction, feature calculation, fingerprint generation [20] [10] |
| Graph Neural Networks (GIN, GCN, GAT) | Deep learning on graph-structured data | Molecular property prediction, representation learning [19] |
| Molecular Fingerprints (ECFP, MACCS) | Substructure pattern detection | Baseline comparisons, hybrid models [20] [10] |
| Knowledge Graphs (ElementKG) | External knowledge integration | Chemically-aware pre-training, explainable AI [16] |
| Molecular Docking (QuickVina 2) | Binding pose prediction | 3D structure generation, binding affinity estimation [15] |
| Discrete Diffusion Models | Generative modeling | Molecular graph generation, structure-based drug design [17] |

Future Directions and Challenges

Despite significant advances in molecular graph representations, several challenges remain unresolved. The generalization capability of graph-based models beyond their training distributions requires continued improvement, particularly for novel scaffold prediction and out-of-domain chemical spaces [19] [10]. The integration of 3D structural information while maintaining computational efficiency presents another significant challenge, as accurate conformation generation remains computationally expensive [15].

Future research directions likely to shape the field include:

Multimodal Molecular Representation: Frameworks like UTGDiff that unify text and graph modalities within single transformer architectures show promise for instruction-based molecule generation and editing [17].

Explainable AI Integration: Approaches like KANO that provide chemically sound explanations for predictions will be crucial for building trust and facilitating scientist-in-the-loop drug discovery [16].

Scalable Generation Methods: New paradigms for molecular graph generation that avoid the combinatorial complexity of sequential ordering while maintaining structural validity, as demonstrated by MolEM [15].

As molecular graphs continue to evolve as the intuitive structural blueprint for computational chemistry, their capacity to bridge the gap between structural representation and predictive performance is likely to expand, helping to accelerate the discovery of novel therapeutic agents and materials.

Molecular fingerprints are foundational tools in cheminformatics, serving as simplified vector representations that encode chemical structures for rapid computational analysis. They address a core challenge in the field: the quantification of molecular similarity. Because the underlying data structure of a molecule is a graph, comparing molecules directly amounts to solving a subgraph isomorphism problem, which is NP-complete and therefore computationally intensive [21]. Fingerprints reduce this problem to the comparison of vectors, enabling the application of efficient approximation methods and heuristics [21]. In the context of a broader investigation into molecular representations, fingerprints offer a critical midpoint between the sequential simplicity of SMILES (Simplified Molecular-Input Line-Entry System) and the structural completeness of molecular graphs. While SMILES strings provide a compact, line-entry format and graphs offer an explicit atomic connectivity map, fingerprints excel at high-speed similarity searches, virtual screening, and the mapping of chemical space, which are essential for modern drug discovery and the exploration of complex chemical datasets [1] [22].

The evolution of molecular representation has progressed from traditional, rule-based descriptors to advanced, data-driven learning paradigms [1]. Early methods relied on predefined molecular descriptors or structural keys. However, as drug discovery tasks have grown more sophisticated, these conventional methods often struggle to capture the intricate relationships between molecular structure and function. This has spurred the development of AI-driven techniques, including deep learning models that learn continuous, high-dimensional feature embeddings directly from large datasets [1]. Within this landscape, fingerprints remain a cornerstone due to their computational efficiency and proven utility in tasks such as quantitative structure-activity relationship (QSAR) modeling and ligand-based virtual screening [22].

Core Concepts and Fingerprint Typologies

Molecular fingerprints can be broadly categorized based on their method of feature generation and the type of information they encode.

Fundamental Types of Fingerprints

- Substructure-based Fingerprints: These fingerprints, such as MACCS keys and PubChem fingerprints, use a predefined dictionary of molecular fragments. Each bit in the fingerprint vector signals the presence or absence of a specific substructure within the molecule [22].

- Circular Fingerprints: Unlike substructure-based fingerprints, circular fingerprints generate features dynamically from the molecular graph without a predefined fragment library. The most prominent example is the Extended-Connectivity Fingerprint (ECFP). ECFP works by iteratively updating a numeric identifier for each atom based on its own properties and those of its neighbors within an increasing radius. The resulting identifiers, which represent circular substructures, are then hashed into a fixed-length bit vector [21] [22].

- Path-based Fingerprints: These algorithms, such as Daylight-style fingerprints, generate features by enumerating all linear paths of bonded atoms up to a specified length within the molecular graph [22].

- Atom-Pair Fingerprints: These encode the topological distance between all pairs of atoms in a molecule, often combined with atom type information. This provides an excellent perception of global molecular shape and size, making them suitable for scaffold hopping and describing large molecules like peptides [23].

- String-based Fingerprints: These operate directly on the SMILES string of a compound. For example, LINGO fragments the SMILES into fixed-size substrings, while MinHash Fingerprints (MHFP) apply natural language processing techniques to circular substructures represented as SMILES strings [22].
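Several of these families are available in RDKit. The sketch below, assuming a recent RDKit release that provides the `rdFingerprintGenerator` API, computes one representative of each family for paracetamol. Morgan radius 2 is RDKit's ECFP4-like fingerprint; the 2048-bit sizes are arbitrary choices, and the string-based LINGO and MHFP fingerprints are not shown.

```python
from rdkit import Chem
from rdkit.Chem import MACCSkeys, rdFingerprintGenerator

mol = Chem.MolFromSmiles("CC(=O)Nc1ccc(O)cc1")  # paracetamol

fps = {
    # Substructure-based: predefined MACCS key dictionary (167 bits; bit 0 is unused)
    "MACCS": MACCSkeys.GenMACCSKeys(mol),
    # Circular: Morgan radius 2, comparable to ECFP4
    "Morgan r=2": rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048).GetFingerprint(mol),
    # Path-based: Daylight-style paths (and, by default, branched subgraphs) up to 7 bonds
    "RDKit path": rdFingerprintGenerator.GetRDKitFPGenerator(maxPath=7, fpSize=2048).GetFingerprint(mol),
    # Atom-pair: atom types plus the topological distance between each pair of atoms
    "Atom pair": rdFingerprintGenerator.GetAtomPairGenerator(fpSize=2048).GetFingerprint(mol),
}
for name, fp in fps.items():
    print(f"{name:12s} bits={fp.GetNumBits():5d} on={fp.GetNumOnBits()}")
```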

Information Encoding and Similarity Measurement

Fingerprints can also be characterized by how they represent features within the vector [22]:

- Binary Fingerprints: Indicate the presence (1) or absence (0) of a molecular pattern.

- Count-based Fingerprints: Use integer values to represent the number of occurrences of a specific fragment.

- Categorical Fingerprints: Use numerical identifiers to describe chemical motifs, as seen in MinHashed fingerprints.

The most common metric for comparing binary and count-based fingerprints is the Jaccard-Tanimoto similarity. For two sets A and B (where a set can be the list of features present in a molecule), the Jaccard similarity coefficient is calculated as J(A, B) = |A ∩ B| / |A ∪ B| [21]. For categorical fingerprints, a modified version of this metric considers two bits as a match only if they contain exactly the same integer [22].
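These similarity measures fit in a few lines of plain Python. For count vectors, the sketch uses the common generalization Σmin / Σmax; returning 1.0 for two empty fingerprints is a convention chosen here, not part of the definition, and the function names are illustrative.

```python
def tanimoto_sets(a, b):
    """Jaccard-Tanimoto on sets of 'on' features: |A ∩ B| / |A ∪ B|."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0  # convention for two empty fingerprints

def tanimoto_counts(x, y):
    """Count-based generalization: sum of element-wise minima over sum of maxima."""
    num = sum(min(p, q) for p, q in zip(x, y))
    den = sum(max(p, q) for p, q in zip(x, y))
    return num / den if den else 1.0

def categorical_similarity(x, y):
    """For MinHash-style vectors: a position matches only if both integers are identical."""
    return sum(p == q for p, q in zip(x, y)) / len(x)

print(tanimoto_sets({1, 2, 3}, {2, 3, 4}))               # 2 shared of 4 distinct features
print(tanimoto_counts([2, 0, 1], [1, 1, 1]))             # (1 + 0 + 1) / (2 + 1 + 1)
print(categorical_similarity([5, 7, 9, 11], [5, 8, 9, 12]))
```

All three calls above evaluate to 0.5.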

Technical Deep Dive: Hashed Substructure Fingerprints

The Extended-Connectivity Fingerprint (ECFP)

The ECFP is a circular fingerprint that has become a de facto standard in small molecule drug discovery. It encodes circular substructures with a high level of detail, which accounts for its superior performance in benchmarking studies focused on drug analog recovery [21].

Experimental Protocol for ECFP Generation:

- Atom Initialization: Assign an initial numeric identifier to each non-hydrogen atom based on a set of atomic invariants (e.g., atomic number, connectivity, valence, atomic mass).

- Iterative Update (Radius Expansion): For each iteration (radius), update each atom's identifier by hashing its current identifier together with the identifiers of its immediate neighbors and the connecting bond types. This process effectively captures the molecular environment within a growing diameter around each atom.

- Hashing and Folding: The identifiers produced at every iteration, which are integers in a large hash space, are pooled and duplicates are removed. They are then mapped (or "folded") into a fixed-length bit vector using a modulo operation [21].
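The three steps can be sketched in plain Python on a hand-built graph. This is a simplified, ECFP-style illustration rather than the reference algorithm: the invariant tuple, the SHA-1-based helper `_hash`, and the omission of details such as removing structurally duplicate environments are all assumptions. Sorting each atom's (bond order, neighbor identifier) pairs makes the result independent of atom numbering.

```python
import hashlib

def _hash(values):
    """Hash a sequence of ints to a 32-bit int (SHA-1 keeps results reproducible)."""
    data = ",".join(str(v) for v in values).encode()
    return int.from_bytes(hashlib.sha1(data).digest()[:4], "little")

def ecfp_like(atoms, bonds, radius=2, n_bits=1024):
    """Return the set of 'on' bit positions of an ECFP-style fingerprint.

    atoms: per-atom invariant tuples, e.g. (atomic_number, heavy_degree, charge);
    bonds: (i, j, bond_order) triples between heavy atoms.
    """
    neighbors = [[] for _ in atoms]
    for i, j, order in bonds:
        neighbors[i].append((order, j))
        neighbors[j].append((order, i))

    # 1. Atom initialization: identifier from atomic invariants
    ids = [_hash(inv) for inv in atoms]
    features = set(ids)

    # 2. Iterative update: own id plus sorted (bond order, neighbor id) pairs
    for _ in range(radius):
        new_ids = []
        for i in range(len(atoms)):
            env = sorted((order, ids[j]) for order, j in neighbors[i])
            new_ids.append(_hash([ids[i]] + [x for pair in env for x in pair]))
        ids = new_ids
        features.update(ids)

    # 3. Folding: map every identifier into a fixed-length vector by modulo
    return {f % n_bits for f in features}

# Ethanol (C-C-O) described with two different atom numberings
fp_a = ecfp_like([(6, 1, 0), (6, 2, 0), (8, 1, 0)], [(0, 1, 1), (1, 2, 1)])
fp_b = ecfp_like([(8, 1, 0), (6, 2, 0), (6, 1, 0)], [(0, 1, 1), (1, 2, 1)])
```

With `radius=2` the identifiers describe environments of diameter up to four bonds, matching the "4" in ECFP4; `fp_a` and `fp_b` come out identical because only sorted neighbor information enters each hash.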

A key limitation of ECFP is the curse of dimensionality. To perform well, it requires high-dimensional representations (typically ≥ 1024 dimensions). This makes nearest neighbor searches in very large databases like PubChem or ZINC computationally expensive and slow [21].

MinHash Fingerprint (MHFP)

The MHFP fingerprint was developed to combine the detailed substructure encoding of ECFP with the computational advantages of the MinHash technique, a locality sensitive hashing (LSH) scheme borrowed from natural language processing [21].

Experimental Protocol for MHFP6 Generation:

- Molecular Shingling: For each atom in the molecule, extract all circular substructures up to a diameter of six bonds (radius 3, analogous to ECFP6). However, instead of converting them to numeric identifiers, each substructure is written as a canonical, rooted SMILES string. This collection of SMILES strings is termed the "molecular shingling."

- Hashing: Apply a hash function (e.g., SHA-1) to each SMILES string in the shingling, converting them into a set of integers.

- MinHashing: The core of MHFP. A family of k different hash functions is applied to the set of integer hashes. For each hash function, the minimum value in the set is recorded. These k minimum values form the final MHFP vector of dimension k [21].
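The hashing and MinHash steps can be sketched in plain Python. Following a common construction (an assumption here, not necessarily the exact MHFP code), each of the k hash functions is a random affine map (a·x + b) mod p with p = 2⁶¹ − 1, truncated to 32 bits. The shingle strings in the usage line are placeholders rather than real rooted SMILES, and all function names are illustrative.

```python
import hashlib
import random

PRIME = (1 << 61) - 1      # Mersenne prime used for the affine hash family
MAX_HASH = (1 << 32) - 1   # keep 32-bit hash values

def shingle_hashes(shingles):
    """Hash each shingle string (e.g. a rooted SMILES) to a 32-bit integer via SHA-1."""
    return {int.from_bytes(hashlib.sha1(s.encode()).digest()[:4], "little") for s in shingles}

def make_hash_family(k, seed=42):
    """k random affine hash functions x -> ((a * x + b) mod PRIME) & MAX_HASH."""
    rng = random.Random(seed)
    return [(rng.randint(1, PRIME - 1), rng.randint(0, PRIME - 1)) for _ in range(k)]

def minhash(shingles, family):
    """For each hash function, keep the minimum value over the hashed shingling."""
    hashed = shingle_hashes(shingles)
    return [min(((a * x + b) % PRIME) & MAX_HASH for x in hashed) for a, b in family]

def signature_similarity(sig1, sig2):
    """Fraction of positions with identical integers: an estimate of Jaccard similarity."""
    return sum(p == q for p, q in zip(sig1, sig2)) / len(sig1)

family = make_hash_family(k=256)
sig = minhash({"placeholder-shingle-1", "placeholder-shingle-2"}, family)
```

The fraction of positions at which two signatures agree estimates the Jaccard similarity of the underlying shingle sets, which is why the modified Tanimoto for categorical fingerprints counts exact integer matches.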

The primary advantage of MHFP is its use of MinHash, which allows for the direct application of Locality Sensitive Hashing (LSH) Forest algorithms for approximate nearest neighbor searching. LSH Forest creates self-tuning indices that enable very fast similarity searches in large databases, effectively circumventing the curse of dimensionality that plagues ECFP [21]. Benchmarking studies have shown that MHFP6 outperforms ECFP4 in analog recovery tasks [21].
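LSH Forest builds self-tuning prefix trees over MinHash values; the simpler banding scheme sketched below is explicitly a substitute, not LSH Forest, and is shown only to illustrate the underlying idea. Signatures are cut into b bands of r values, and any molecule sharing at least one identical band with the query becomes a candidate, so only a small fraction of the database needs an exact comparison. The class name `BandedLSHIndex` is hypothetical.

```python
from collections import defaultdict

class BandedLSHIndex:
    """Banding LSH over MinHash signatures (a simpler relative of LSH Forest)."""

    def __init__(self, bands, rows):
        self.bands, self.rows = bands, rows
        # One hash table per band: band tuple -> set of molecule keys
        self.tables = [defaultdict(set) for _ in range(bands)]

    def _band_keys(self, sig):
        if len(sig) != self.bands * self.rows:
            raise ValueError("signature length must equal bands * rows")
        return [tuple(sig[b * self.rows:(b + 1) * self.rows]) for b in range(self.bands)]

    def add(self, key, sig):
        for table, band in zip(self.tables, self._band_keys(sig)):
            table[band].add(key)

    def query(self, sig):
        """Return candidate keys; re-rank them afterwards with the exact signature similarity."""
        hits = set()
        for table, band in zip(self.tables, self._band_keys(sig)):
            hits |= table.get(band, set())
        return hits

index = BandedLSHIndex(bands=2, rows=2)
index.add("m1", [1, 2, 3, 4])
index.add("m2", [1, 2, 9, 9])
index.add("m3", [7, 7, 8, 8])
candidates = index.query([1, 2, 0, 0])   # shares the first band with m1 and m2
```

For two signatures whose positions agree with probability s, the chance of becoming candidates is 1 − (1 − sʳ)ᵇ, so b and r set the trade-off between recall and the number of candidates to re-rank.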

Figure 1: The MHFP6 generation workflow, from molecular shingling to the final fingerprint vector enabling LSH-based searching.

MinHashed Atom-Pair Fingerprint (MAP4)

The MAP4 fingerprint was designed to create a universal representation suitable for both small molecules and large biomolecules like peptides. It achieves this by hybridizing the concepts of circular substructures and atom-pair fingerprints [23].

Experimental Protocol for MAP4 Generation:

- Circular Substructure Generation: For each non-hydrogen atom j, generate the canonical SMILES of the circular substructure at radii 1 and 2 (diameter of 4 bonds), denoted as CSᵣ(j).

- Topological Distance Calculation: Calculate the minimum topological distance TPⱼₖ for every atom pair (j,k) in the molecule.

- Atom-Pair Shingling: For each atom pair and each radius, create an "atom-pair shingle" in the format: CSᵣ(j) | TPⱼₖ | CSᵣ(k), where the two SMILES strings are placed in lexicographical order.

- Hashing and MinHashing: Hash the entire set of atom-pair shingles to a set of integers, then apply the MinHash procedure (as in MHFP) to form the final MAP4 fingerprint [23].
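The shingling step is what distinguishes MAP4 from MHFP, so it is sketched here in plain Python. The circular-substructure SMILES CSᵣ(j) are assumed to be precomputed by a toolkit and passed in as strings; topological distances come from a breadth-first search over the bond list. The helper names are hypothetical, and the resulting set would then go through the same hashing and MinHash procedure as MHFP.

```python
from collections import deque
from itertools import combinations

def topological_distances(n_atoms, bonds):
    """All-pairs shortest path lengths in bonds, via BFS from every atom (-1 = unreachable)."""
    adj = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        adj[i].append(j)
        adj[j].append(i)
    dist = []
    for start in range(n_atoms):
        d = [-1] * n_atoms
        d[start] = 0
        queue = deque([start])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if d[v] < 0:
                    d[v] = d[u] + 1
                    queue.append(v)
        dist.append(d)
    return dist

def atom_pair_shingles(circular_smiles, bonds):
    """circular_smiles: {radius: [CS_r(j) for each atom j]}, assumed precomputed.

    Returns the set of 'CS_r(j)|TP_jk|CS_r(k)' shingles with the two SMILES
    placed in lexicographic order.
    """
    n_atoms = len(next(iter(circular_smiles.values())))
    tp = topological_distances(n_atoms, bonds)
    shingles = set()
    for cs in circular_smiles.values():
        for j, k in combinations(range(n_atoms), 2):
            left, right = sorted((cs[j], cs[k]))
            shingles.add(f"{left}|{tp[j][k]}|{right}")
    return shingles

# Placeholder labels for a C-C-O chain (real CS_r strings would be rooted SMILES)
shingles = atom_pair_shingles({1: ["C", "C", "O"]}, [(0, 1), (1, 2)])
```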

MAP4 significantly outperforms ECFP in small molecule virtual screening and surpasses other atom-pair fingerprints in a peptide benchmark designed to recover BLAST analogs. Its ability to effectively describe a wide range of molecules, from drugs to metabolites, makes it a strong candidate for a universal fingerprint [23].

Figure 2: The MAP4 fingerprint generation process, which combines circular substructures with atom-pair information.

Performance Benchmarking and Quantitative Comparison

The performance of molecular fingerprints is typically evaluated using benchmarks for ligand-based virtual screening and, increasingly, on their ability to handle diverse molecular classes, including natural products and peptides.

Table 1: Benchmarking performance of key molecular fingerprints across different molecular classes.

| Fingerprint | Type | Small Molecule (Drug-like) Performance | Peptide & Biomolecule Performance | Natural Products Performance | Key Characteristic |
|---|---|---|---|---|---|
| ECFP4 [21] [23] [22] | Circular | Excellent | Poor | Good, but can be outperformed | De facto standard for small molecules; suffers from curse of dimensionality |
| MHFP6 [21] [22] | Circular (String-based) | Outperforms ECFP4 | Moderate (better than ECFP) | Good | Enables fast LSH searches; avoids folding |
| MAP4 [23] [22] | Hybrid (Atom-Pair & Circular) | Excellent, matches or outperforms ECFP4 | Superior to ECFP and other atom-pair fingerprints | Good universal performance | Universal fingerprint for small and large molecules |
| Atom-Pair (AP) [23] | Path-based / Topological | Poor compared to ECFP | Excellent | Varies | Excellent perception of molecular shape and size |
| MACCS Keys [9] [22] | Substructure-based | Good for similarity search | Limited | Varies | Predefined structural keys; computationally efficient |

Table 2: Technical summary of fingerprint calculation methodologies and properties.

| Fingerprint | Feature Generation Method | Information Encoded | Typical Dimension | Similarity Metric |
|---|---|---|---|---|
| ECFP4 [21] | Iterative atomic identifier update and hashing | Local circular substructures | 1024 - 2048 (folded) | Jaccard-Tanimoto |
| MHFP6 [21] | MinHash of circular SMILES shingles | Local circular substructures | 1024 - 2048 (unfolded) | Jaccard-Tanimoto (modified) |
| MAP4 [23] | MinHash of atom-pair SMILES shingles | Local environments + global topology | 1024 - 2048 (unfolded) | Jaccard-Tanimoto (modified) |
| PubChem Fingerprint [9] [22] | Predefined substructure dictionary | Presence of 881 specific substructures | 881 | Jaccard-Tanimoto |
| MACCS Keys [9] | Predefined substructure dictionary | Presence of 166 specific structural patterns | 166 | Jaccard-Tanimoto |

A 2024 study on the effectiveness of fingerprints for exploring the chemical space of natural products (NPs) highlighted that different encodings can provide fundamentally different views of the NP chemical space [22]. While ECFP is often the default choice for drug-like compounds, the study found that other fingerprints, particularly MAP4 and other string-based or atom-pair fingerprints, can match or outperform ECFP for bioactivity prediction of NPs. This underscores the importance of evaluating multiple fingerprinting algorithms for optimal performance on specific chemical classes [22].

Essential Research Reagents and Computational Tools

Table 3: Key software tools and resources for molecular fingerprint calculation and application.

| Tool / Resource | Type | Function in Research | Example Fingerprints Supported |
|---|---|---|---|
| RDKit [23] | Open-Source Cheminformatics Library | Core library for molecule handling, fingerprint calculation, and cheminformatics workflows. | ECFP, Atom-Pair, MACCS, Pharmacophore |
| MHFP [21] | Specialized Python Package | Calculates MinHash fingerprints from molecular shingling. | MHFP6 |
| MAP4 [23] | Specialized Python Package | Calculates MinHashed Atom-Pair fingerprints. | MAP4 (and variants MAP2, MAP6) |
| LSH Forest Algorithms [21] | Indexing Algorithm | Enables fast approximate nearest neighbor searches in high-dimensional spaces. | Native support for MinHash-based fingerprints (MHFP, MAP4) |
| PubChem Database [9] [24] | Chemical Database | Source of compounds for benchmarking; provides its own predefined fingerprint. | PubChem Fingerprint |
| COCONUT/CMNPD [22] | Natural Product Databases | Specialized databases for benchmarking fingerprint performance on natural products. | Various (for research purposes) |

Molecular fingerprints that leverage hashed substructures and bit vectors, such as ECFP, MHFP, and MAP4, are indispensable for rapid similarity searching in cheminformatics. Their development represents a continuous effort to balance structural detail with computational efficiency. The evolution from hashed circular fingerprints like ECFP to MinHash-based approaches like MHFP6 addresses critical limitations in searching large databases, while hybrid fingerprints like MAP4 demonstrate a move towards universal representations capable of spanning the entire size spectrum of chemical space, from small drugs to large biomolecules.

Future research in molecular fingerprints is likely to be influenced by several key trends. The rise of AI-driven representations, including graph neural networks and transformer models, offers a complementary paradigm that learns continuous molecular embeddings directly from data [1]. Furthermore, the need to handle diverse chemical classes, as highlighted by benchmarking studies on natural products and peptides, will drive the development and adoption of more robust and universal fingerprints like MAP4 [23] [22]. Finally, innovative applications such as visual fingerprinting—bypassing SMILES or graph reconstruction to generate fingerprints directly from chemical images—represent an emerging frontier for extracting molecular information from scientific literature and patents [24]. In this evolving landscape, traditional hashed fingerprints will remain a vital tool due to their interpretability, computational speed, and proven success in powering drug discovery.

The process of drug discovery is notoriously time-intensive and costly, driving the continual development of new computational methods to accelerate development [1]. A fundamental prerequisite for these methods is the translation of molecules into a computer-readable format, a process known as molecular representation [1]. This representation serves as the bridge between chemical structures and their biological, chemical, or physical properties, forming the cornerstone of computational chemistry and drug design [1].

The evolution of these representations mirrors the technological capabilities of their time. This document traces the journey from early, human-readable notations to modern, AI-ready formats that enable machines to not only store, but also to learn from and generate molecular structures. This progression is critical for understanding the current landscape of molecular representation within cheminformatics research, particularly in the context of comparing SMILES, graphs, and fingerprints.

The Era of Human-Readable Notations

Before computers could process chemical information, the primary challenge was developing concise, unambiguous systems that humans could use to communicate complex structures.

IUPAC Nomenclature

The IUPAC (International Union of Pure and Applied Chemistry) name was first introduced by the International Chemical Congress in Geneva in 1892 and established by the IUPAC to provide a systematic and standardized method for naming chemical compounds [1]. While precise and universally accepted, its verbose and complex nature makes it poorly suited for direct computational processing and large-scale data storage.

Wiswesser Line Notation (WLN)

In 1949, William J. Wiswesser invented the Wiswesser Line Notation (WLN), which was the first line notation capable of precisely describing complex molecules [25]. It became a serious contender to replace IUPAC nomenclature before being superseded by later digital formats [26].

- Design Philosophy: WLN was designed to mirror the way chemists think about chemistry, giving central roles to functional groups, carbon chains, and rings [26]. It uses a limited character set (uppercase letters, numbers, and a few symbols) to create compact strings [27].

- Key Features and Examples: WLN condenses common functional groups into single characters. For instance, a saturated one-carbon chain (methyl group) is "1", and a carbonyl group is "V" [26].

  - Acetone is represented as `1V1` (two methyl groups connected by a carbonyl).

  - Diethyl ether is `2O2` (two ethyl groups connected by an oxygen).

  - Benzene is represented by the symbol `R`. Thus, acetophenone is `1VR` [26].

- Canonicalization: WLN uses a simple alphanumeric order for canonicalization, with priority increasing from symbols, to numbers, to letters (with `R` for benzene having the lowest priority) [26].

- Decline and Legacy: WLN's reliance on a limited character set and manual encoding led to its decline. However, its conceptual influence persists. Modern parsers and finite state machines have been developed to extract and convert historical WLN data into contemporary formats like SMILES, rescuing valuable chemical information from obscurity [27].

The Shift to Machine-Oriented and AI-Ready Formats

The advent of digital computing necessitated representations that were not only machine-readable but also efficient for storage, retrieval, and algorithmic processing.

The SMILES Revolution and Its Ecosystem

The Simplified Molecular Input Line Entry System (SMILES), introduced by David Weininger in 1988, represented a paradigm shift [1]. It encodes molecular graphs as compact ASCII strings using a small set of simple rules [28].

- Basic Syntax:

  - Atoms: Represented by atomic symbols (e.g., `C`, `N`, `O`). Square brackets are required in two cases: atoms outside the organic subset (B, C, N, O, P, S, F, Cl, Br, I), and atoms with an explicit charge, isotope, or hydrogen count (e.g., `[Na+]`).

  - Bonds: Single (`-`, usually omitted), double (`=`), triple (`#`); aromatic bonds are implied by lowercase atom symbols (`c1ccccc1` for benzene).

  - Branches: Enclosed in parentheses (e.g., `CC(=O)O` for acetic acid).

  - Ring closures: Indicated by matching numbers (e.g., `C1CCCCC1` for cyclohexane).

  - Stereochemistry: Tetrahedral chirality is specified with the `@` and `@@` symbols (e.g., `[C@H]`) [28]; double-bond geometry is specified with `/` and `\`.

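- Challenges: A key limitation is that SMILES is not unique: the same molecule can be written as many valid strings. Ethanol, for example, is both `CCO` and `OCC`, so toolkits apply canonicalization algorithms to obtain one standard form. SMILES also lacks 3D spatial information. In addition, small syntactic errors, such as an unclosed ring or branch, produce strings that do not correspond to valid structures [28].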

- Extensions: The SMILES ecosystem has expanded to include:

  - SMARTS (SMILES Arbitrary Target Specification), for substructure searching.

  - CXSMILES (ChemAxon Extended SMILES), which appends extra information such as atomic coordinates.

- Machine Learning Application: SMILES became the de facto standard for early AI in chemistry because its sequential form is analogous to natural language.

  - Tokenization: SMILES strings are split into chemically meaningful tokens (atoms, brackets, bonds) using regex-based tokenizers, a step that is crucial for model comprehension [28].

  - Embeddings: These tokens are mapped to numerical vectors (embeddings), either learned jointly with RNN/Transformer models or taken from pre-trained models such as ChemBERTa, allowing models to learn chemical semantics [28].
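
The tokenization step can be sketched in a few lines of Python. The regular expression below is an assumption modeled on the pattern popularized by the Molecular Transformer work of Schwaller et al. It is not taken from the cited source [28]. It covers:

- the organic-subset atoms;
- bracket atoms;
- bonds and branches;
- stereo marks;
- ring-closure labels.

`tokenize_smiles` is a hypothetical helper name.

```python
import re

# Regex in the style popularized by the Molecular Transformer (an assumption,
# not the cited source's tokenizer). Bracket atoms ([C@H], [Na+]), two-letter
# halogens (Cl, Br) and two-digit ring-closure labels (%12) stay whole.
SMILES_TOKEN_PATTERN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles: str) -> list[str]:
    """Split a SMILES string into chemically meaningful tokens."""
    tokens = SMILES_TOKEN_PATTERN.findall(smiles)
    # findall silently skips characters it cannot match, so verify coverage.
    if "".join(tokens) != smiles:
        raise ValueError(f"Could not fully tokenize SMILES: {smiles!r}")
    return tokens


if __name__ == "__main__":
    print(tokenize_smiles("ClC[C@H](Br)N"))
    # ['Cl', 'C', '[C@H]', '(', 'Br', ')', 'N']
```

A purely character-level split would instead break `Cl` into `C` and `l`, and `[C@H]` into five separate characters. This is why chemistry-aware tokenization matters before building an embedding vocabulary.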

Molecular Fingerprints

Molecular fingerprints are a fundamentally different approach, designed not to reconstruct the structure but to encode its key features for rapid comparison and similarity searching [1].

- Concept: Fingerprints encode substructural information as fixed-length binary strings or numerical vectors [1]. In a binary fingerprint, each bit records the presence or absence of a specific substructure or property. In hashed fingerprints such as ECFP, several different substructures can map to the same bit.

- Applications: They are exceptionally effective for similarity searches, clustering, and as input features for Quantitative Structure-Activity Relationship (QSAR) modeling and machine learning classifiers [1]. For example, they have been used to build robust prediction frameworks for ADMET properties and molecular sweetness [1].

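- Examples: Extended-connectivity fingerprints (ECFP) are among the most widely used, representing local atomic environments in a circular manner [1]. Each atom's neighborhood is encoded up to a chosen radius; ECFP4, for instance, corresponds to a radius of 2 bonds.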
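
Two ideas underpin fingerprints: folding substructure features into a fixed-length bit vector, and comparing vectors with the Tanimoto coefficient. Both can be illustrated with a standard-library toy.

This is not ECFP. The feature strings are hypothetical stand-ins for the atom environments that ECFP enumerates iteratively. `fold_features` and `tanimoto` are illustrative helpers, and the 2048-bit length is an illustrative choice. In practice, RDKit's Morgan fingerprints (its ECFP-like implementation) together with `DataStructs.TanimotoSimilarity` would be used instead.

```python
import hashlib

N_BITS = 2048  # illustrative fingerprint length


def fold_features(features: set[str], n_bits: int = N_BITS) -> set[int]:
    """Hash substructure identifiers into a fixed-length bit vector.

    Returns the indices of the 'on' bits. Distinct features can collide on
    the same bit, one reason hashed fingerprints are lossy.
    """
    on_bits = set()
    for feature in features:
        # hashlib gives a stable hash; Python's built-in hash() is salted per run.
        digest = hashlib.sha1(feature.encode("utf-8")).digest()
        on_bits.add(int.from_bytes(digest[:4], "big") % n_bits)
    return on_bits


def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto coefficient: size of the intersection over size of the union."""
    union = a | b
    # Two empty fingerprints are treated as dissimilar, a convention of this sketch.
    return len(a & b) / len(union) if union else 0.0


# Hypothetical feature strings standing in for ECFP atom environments.
ethanol = fold_features({"C", "O", "C-C", "C-O", "C-C-O"})
methanol = fold_features({"C", "O", "C-O"})
print(tanimoto(ethanol, methanol))
```

The methanol features are a subset of the ethanol features, so the methanol bits are also a subset of the ethanol bits. The similarity is therefore simply the ratio of their on-bit counts.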

The Rise of Graph-Based Representations

Graph-based representations are arguably the most natural computational abstraction of a molecule, which makes them particularly powerful for modern deep learning applications [1] [29].

- Representation Schema: Atoms are represented as nodes, and chemical bonds are represented as edges [29]. This structure allows AI models to natively learn from molecular topology.

- AI Application: Graph Neural Networks (GNNs) operate directly on this graph structure. For instance, the 'Edge Set Attention' model developed at Cambridge leverages attention mechanisms on chemical bonds (edges) instead of just atoms (nodes), achieving state-of-the-art results on molecular property prediction benchmarks [29]. This approach allows the AI to identify the most relevant functional groups or atoms for a given task [29].
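
The nodes-as-atoms, edges-as-bonds schema can be sketched with plain Python data structures. This is a minimal illustration under stated assumptions:

- the atom and bond lists are written out by hand;
- the feature vocabularies (`ATOM_TYPES`, `BOND_TYPES`) are deliberately tiny;
- `build_graph` and `MolGraph` are hypothetical names.

With RDKit, the same lists could be read from a `Chem.MolFromSmiles` molecule via `GetAtoms()`, `GetSymbol()`, `GetBonds()`, `GetBeginAtomIdx()`, `GetEndAtomIdx()`, and `GetBondType()`.

```python
from dataclasses import dataclass

# Illustrative feature vocabularies; real featurizers use many more categories.
ATOM_TYPES = ["C", "N", "O", "other"]
BOND_TYPES = ["SINGLE", "DOUBLE", "TRIPLE", "AROMATIC"]


def one_hot(value: str, choices: list[str]) -> list[int]:
    """One-hot encode value; raises ValueError if it is not in choices."""
    index = choices.index(value)
    return [int(i == index) for i in range(len(choices))]


@dataclass
class MolGraph:
    node_features: list[list[int]]      # one row per atom: atom type + degree
    edge_index: list[tuple[int, int]]   # directed (source, target) pairs
    edge_features: list[list[int]]      # one row per directed edge


def build_graph(atoms: list[str], bonds: list[tuple[int, int, str]]) -> MolGraph:
    """Atoms become nodes, bonds become edges (hydrogens left implicit)."""
    degree = [0] * len(atoms)
    edge_index, edge_features = [], []
    for i, j, bond_type in bonds:
        degree[i] += 1
        degree[j] += 1
        feat = one_hot(bond_type, BOND_TYPES)
        # Each undirected bond is stored as two directed edges, a common GNN convention.
        edge_index += [(i, j), (j, i)]
        edge_features += [feat, list(feat)]
    node_features = [
        one_hot(sym if sym in ATOM_TYPES else "other", ATOM_TYPES) + [deg]
        for sym, deg in zip(atoms, degree)
    ]
    return MolGraph(node_features, edge_index, edge_features)


# Acetic acid, CC(=O)O, written out by hand.
acetic_acid = build_graph(
    ["C", "C", "O", "O"],
    [(0, 1, "SINGLE"), (1, 2, "DOUBLE"), (1, 3, "SINGLE")],
)
print(acetic_acid.node_features[1])  # [1, 0, 0, 0, 3]
```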

Table 1: Comparative Analysis of Molecular Representation Methods

| Representation Format | Primary Focus | Key Advantages | Primary Limitations | Ideal Use Cases |
|---|---|---|---|---|
| IUPAC Name | Human Communication | Standardized, precise, universal | Verbose, not machine-optimized | Systematic literature, education |
| Wiswesser Line Notation (WLN) | Human & Early Machine | Compact, functional-group oriented | Obsolete, requires special training | Historical data mining [27] |
| SMILES | Machine Storage & Processing | Compact, simple syntax, widely supported | Non-unique, lacks spatial data, syntactic errors | Sequence-based AI (LSTMs, Transformers) [28] |
| Molecular Fingerprints | Similarity & Comparison | Fast similarity search, good for QSAR/ML | Lossy; cannot reconstruct structure | Virtual screening, clustering, classic ML [1] |
| Graph Representation | Structural Topology | Native molecular abstraction, powerful for DL | Computationally intensive, complex models | Graph Neural Networks, property prediction [29] |

Experimental Protocols for Modern Molecular Representation Research

This section outlines key methodologies for conducting research involving modern molecular representations and AI.

Protocol 1: Building a SMILES-Based Property Predictor

Aim: To train a model to predict molecular properties (e.g., solubility, toxicity) from SMILES strings.

- Data Curation & Canonicalization: Acquire a dataset (e.g., from ChEMBL or PubChem) with associated properties. Use a toolkit like RDKit to convert all SMILES into a single, canonical form to ensure consistency and remove duplicates [28].

- Tokenization: Implement a regex-based tokenizer to split SMILES strings into chemically meaningful tokens (e.g., atoms, bonds, branches). This prevents multi-character atoms such as `Cl` from being split into separate characters (`C` and `l`) [28].

- Vocabulary and Embedding Generation: Create a vocabulary of all unique tokens. Initialize an embedding layer that maps each token to a dense, continuous vector of a specified dimension (e.g., 256) [28].

- Model Architecture: Employ a sequence model. Recurrent Neural Networks (RNNs) such as LSTMs or GRUs can process the embedded token sequences. Alternatively, Transformer-based models (e.g., ChemBERTa) use self-attention, which is generally better at capturing long-range dependencies between distant tokens [28].

- Training & Evaluation: Train the model in a supervised manner to map the input sequence to the target property. Evaluate performance on a held-out test set using metrics like Mean Squared Error (MSE) for regression or AUC-ROC for classification.
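
The data-handling parts of this protocol can be sketched without any deep learning framework:

- deduplication after canonicalization;
- vocabulary construction with padding;
- a seeded hold-out split;
- the MSE metric.

The sketch rests on several assumptions:

- The SMILES are already canonical (for example via RDKit's `Chem.MolToSmiles(Chem.MolFromSmiles(s))`) and already tokenized.
- All function names are hypothetical.
- The labels in the usage lines are placeholders, not measured properties.
- Keeping the first label for a duplicate is a simplification; averaging replicates is a common alternative.

```python
import random

PAD, UNK = "<pad>", "<unk>"


def deduplicate(records: list[tuple[str, float]]) -> list[tuple[str, float]]:
    """Keep the first label seen for each (already canonical) SMILES string."""
    first_seen: dict[str, float] = {}
    for smiles, label in records:
        first_seen.setdefault(smiles, label)
    return list(first_seen.items())


def build_vocab(token_lists: list[list[str]]) -> dict[str, int]:
    """Map every token to an integer id; ids 0 and 1 are reserved."""
    vocab = {PAD: 0, UNK: 1}
    for tokens in token_lists:
        for tok in tokens:
            vocab.setdefault(tok, len(vocab))
    return vocab


def encode(tokens: list[str], vocab: dict[str, int], max_len: int) -> list[int]:
    """Convert tokens to ids, truncating or right-padding to max_len."""
    ids = [vocab.get(tok, vocab[UNK]) for tok in tokens[:max_len]]
    return ids + [vocab[PAD]] * (max_len - len(ids))


def train_test_split(items: list, test_fraction: float = 0.2, seed: int = 0):
    """Random hold-out split, seeded for reproducibility."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def mean_squared_error(y_true: list[float], y_pred: list[float]) -> float:
    """MSE for regression targets such as solubility."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


data = deduplicate([("CCO", 0.5), ("CCO", 0.7), ("c1ccccc1", 1.2)])  # placeholder labels
vocab = build_vocab([["C", "C", "O"], ["c", "1", "c"]])
print(encode(["C", "O", "Br"], vocab, max_len=5))  # [2, 3, 1, 0, 0]
```

Here `Br` maps to `<unk>` (id 1) because it never appeared when the vocabulary was built. Reserving id 0 for padding matches the `padding_idx` argument accepted by embedding layers such as PyTorch's `torch.nn.Embedding`, which would consume these id lists in the model-architecture step.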

Protocol 2: Graph Neural Network for Molecular Property Prediction

Aim: To leverage a graph-based representation for advanced property prediction.

- Graph Construction: Use RDKit or OpenBabel to convert molecular structures (e.g., from SMILES) into graph objects. Nodes (atoms) are featurized with properties like atom type, degree, and hybridization. Edges (bonds) are featurized with bond type and conjugation [29].

- Model Architecture: Implement a Graph Neural Network (GNN). The 'Edge Set Attention' model is a recent innovation that applies attention mechanisms directly to the bonds (edges), allowing the model to focus on the most important molecular interactions for the prediction task [29].

- Readout Function: After the GNN processes the graph, a readout function (or graph pooling) aggregates the updated node/edge features into a single, graph-level representation. The development of adaptive readout functions has been shown to significantly improve performance, unlocking transfer learning for graph-structured data [29].

- Multi-Fidelity Learning: For real-world drug discovery, train the model on large, low-fidelity datasets (e.g., primary screening data) and fine-tune it on smaller, high-fidelity datasets (e.g., detailed secondary assays). This transfer learning approach makes better use of expensive, resource-intensive high-fidelity measurements [29].
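
The message-passing and readout steps can be illustrated with a single GNN layer written in plain Python. This is a generic sum-aggregation layer, not the Edge Set Attention architecture or the adaptive readouts of [29]. It also makes simplifying assumptions:

- The weight matrices are untrained placeholders; a real model would learn them, for example in PyTorch.
- Node features are toy 2-D vectors.
- All function names are hypothetical.

Two readouts are shown for contrast: a fixed sum, and a soft-attention pooling whose scoring vector would normally be learned.

```python
import math

Vector = list[float]
Matrix = list[list[float]]


def matvec(W: Matrix, x: Vector) -> Vector:
    """Matrix-vector product."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def message_passing_step(h: list[Vector], edges: list[tuple[int, int]],
                         W_self: Matrix, W_nbr: Matrix) -> list[Vector]:
    """One layer: h_v' = ReLU(W_self h_v + W_nbr * sum of neighbor states h_u)."""
    n, d = len(h), len(h[0])
    messages = [[0.0] * d for _ in range(n)]
    for u, v in edges:  # each directed edge u -> v sends h_u to node v
        messages[v] = [m + x for m, x in zip(messages[v], h[u])]
    updated = []
    for v in range(n):
        z = [a + b for a, b in zip(matvec(W_self, h[v]), matvec(W_nbr, messages[v]))]
        updated.append([max(0.0, zi) for zi in z])
    return updated


def sum_readout(h: list[Vector]) -> Vector:
    """Permutation-invariant pooling: sum the node embeddings."""
    return [sum(column) for column in zip(*h)]


def attention_readout(h: list[Vector], score_vec: Vector) -> Vector:
    """Soft-attention pooling: nodes weighted by softmax(score_vec . h_v)."""
    scores = [sum(s * x for s, x in zip(score_vec, hv)) for hv in h]
    top = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * hv[k] for w, hv in zip(weights, h)) / total for k in range(len(h[0]))]


identity = [[1.0, 0.0], [0.0, 1.0]]
h0 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # three-atom chain 0-1-2
edges = [(0, 1), (1, 0), (1, 2), (2, 1)]    # both directions per bond
h1 = message_passing_step(h0, edges, identity, identity)
print(h1)               # [[1.0, 1.0], [2.0, 2.0], [1.0, 2.0]]
print(sum_readout(h1))  # [4.0, 5.0]
```

Both readouts are invariant to node order, which is what lets the model produce one graph-level vector regardless of how the atoms are numbered.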

Visualization of Molecular Representation Evolution and AI Workflow

Diagram 1: Evolution of molecular representations and their pathways to AI models.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Software Tools and Datasets for Molecular Representation Research

| Item Name | Type | Primary Function | Relevance to Research |
|---|---|---|---|
| RDKit | Software Library | Cheminformatics toolkit | Core functionality for reading/writing SMILES, generating molecular graphs, fingerprint calculation, and molecular visualization [28]. |
| OpenBabel | Software Library | Chemical file format converter | Supports conversion between a vast array of chemical formats, including legacy notations like WLN [27]. |
| PyTorch / TensorFlow | Software Library | Deep Learning Framework | Provides the foundation for building, training, and deploying custom AI models (RNNs, Transformers, GNNs) for molecular data. |
| ChemBERTa / MolBERT | Pre-trained Model | Molecular Language Model | Offers chemically informed embeddings for SMILES tokens, giving models a head start in training [28]. |
| ChEMBL / PubChem | Database | Public Chemical Repository | Primary sources for large-scale, annotated molecular data for training and benchmarking AI models [27]. |
| WLN Parser (e.g., from GitHub) | Specialized Tool | Legacy Format Converter | Extracts and converts Wiswesser Line Notation from historical documents and databases into modern formats [27]. |
| Adaptive Readout Functions | AI Component | Graph-Level Pooling | Advanced function in GNNs that improves the aggregation of node/edge features into a molecular representation, boosting prediction accuracy [29]. |
| Edge Set Attention | AI Architecture | Graph Neural Network | A state-of-the-art GNN component that applies attention mechanisms to bonds (edges), improving model performance and interpretability [29]. |

The evolution from IUPAC and WLN to SMILES, fingerprints, and graph representations reflects a clear trajectory: from human-centric communication to computational efficiency, and now to AI-native understanding. SMILES remains a vital standard for its simplicity and compactness. Meanwhile, graph-based representations, which model molecular topology directly, increasingly power the most advanced AI applications in drug discovery. Fingerprints continue to offer exceptional speed for similarity searching.

The future of molecular representation is likely multimodal, combining the strengths of these formats—perhaps by aligning sequence-based (SMILES), graph-based, and 3D structural information—to create richer, more powerful models. Furthermore, the principles of data readiness—ensuring data is cleaned, standardized, and formatted for scalable AI training—are becoming as critical as the AI models themselves, especially when dealing with leadership-scale datasets [30]. As AI continues to evolve, so too will the languages we use to describe the molecular world, driving forward innovations in scaffold hopping, lead optimization, and the entire drug discovery pipeline.

From Data to Drugs: How AI Harnesses Different Representations for Discovery

The Simplified Molecular Input Line Entry System (SMILES) is a line notation method that encodes the structure of chemical molecules as strings of ASCII characters, representing atoms, bonds, branches, and ring structures [31]. Inspired by remarkable successes in natural language processing (NLP), transformer-based language models have been extensively adapted to learn from SMILES strings, treating molecules as sequential data analogous to sentences [32]. These chemical language models (CLMs) leverage vast amounts of unlabeled molecular data through self-supervised pre-training, demonstrating powerful capabilities for molecular property prediction and de novo molecular design [32] [33]. Within the broader context of molecular representations, SMILES strings offer a unique balance between structural expressiveness and sequential simplicity, competing with graph-based representations that explicitly encode atom connectivity and traditional molecular fingerprints that capture predefined substructural patterns [19].
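
One widely used self-supervised objective for chemical language models is masked language modeling (MLM), used in BERT and its chemistry adaptations. A minimal sketch of the input-corruption step follows, working on an already tokenized SMILES string. The 15% selection rate and the 80/10/10 replacement split follow the original BERT recipe. They are assumptions here, not settings taken from the cited works [32] [33]. `mask_tokens` is a hypothetical helper; a model would then be trained to predict the original tokens at the selected positions.

```python
import random
from typing import Optional

MASK = "<mask>"


def mask_tokens(tokens: list[str], vocab_tokens: list[str],
                mask_prob: float = 0.15, seed: Optional[int] = None):
    """BERT-style masking of a tokenized SMILES string.

    Each position is selected with probability mask_prob. A selected token is
    replaced by MASK 80% of the time, by a random vocabulary token 10% of the
    time, and kept unchanged 10% of the time. labels holds the original token
    at selected positions and None elsewhere (None = excluded from the loss).
    """
    rng = random.Random(seed)
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            labels.append(tok)
            r = rng.random()
            if r < 0.8:
                inputs.append(MASK)
            elif r < 0.9:
                inputs.append(rng.choice(vocab_tokens))
            else:
                inputs.append(tok)
        else:
            labels.append(None)
            inputs.append(tok)
    return inputs, labels


tokens = ["C", "C", "(", "=", "O", ")", "O"]  # acetic acid, pre-tokenized
inputs, labels = mask_tokens(tokens, vocab_tokens=sorted(set(tokens)), seed=0)
print(inputs)
print(labels)
```

Because the targets come from the molecules themselves, this objective needs no property labels. That is what lets CLMs exploit large unlabeled SMILES collections before fine-tuning on a specific task.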