Smoothness Analysis in Computational Models: Enhancing Predictiveness in Drug Development

Abstract

This article provides a comprehensive guide to smoothness analysis for computational model outputs, tailored for researchers and professionals in drug development. It explores the foundational role of smoothness as a marker of robust, predictive models, covering key methodologies from signal processing and machine learning. The content details practical applications in analyzing kinetic data and model outputs, addresses common troubleshooting and optimization challenges, and presents rigorous validation and comparative frameworks. By synthesizing these areas, the article serves as a strategic resource for leveraging smoothness analysis to improve the reliability and translation of computational findings into successful clinical outcomes.

What is Smoothness Analysis? Core Concepts and Importance in Computational Modeling

In computational research, the smoothness assumption is a foundational principle stating that if two data points are close in a high-density region of the input space, their corresponding outputs should be similar. Conversely, points separated by a low-density region may have differing outputs [1]. This principle enables generalization from finite training data to unseen examples and is enforced through regularization techniques that penalize abrupt changes, thereby promoting continuity in function representations [1]. In practical applications, from image processing to weather forecasting, smoothness is not just a mathematical ideal but a property that can be quantified, measured, and optimized to improve model performance and interpretability.

Troubleshooting Guides and FAQs

This section addresses common challenges researchers face when defining, measuring, and applying smoothness in computational models.

Frequently Asked Questions

Q1: What does the "smoothness assumption" mean in the context of machine learning? The smoothness assumption posits that for two closely located data points in a high-density region of the input space, their corresponding labels or outputs should be similar. This assumption allows models to generalize from a limited training set to a broader set of unseen test examples by leveraging the inherent structure of the data [1].

Q2: My model's output appears "noisy" and lacks smoothness. What are the primary methods to enforce smoother outputs? Enforcing smoothness is typically achieved through regularization. This involves adding a penalty term to your model's objective function that discourages complex, non-smooth solutions. Common stabilizers include penalizing the magnitude of the model's gradients or using higher-order differential operators like the Laplacian [1].

Q3: How can I quantify the smoothness of a function or model output mathematically? Smoothness can be quantified using measures of differentiability. A key method is the Sobolev norm, which aggregates the norms of a function's derivatives. For derivatives up to order N, it is expressed as \( \|g\|_{W^{N,2}}^2 = \sum_{k=0}^{N} \|g^{(k)}\|_{L^2}^2 \), where \( g^{(k)} \) is the k-th derivative of the function \( g \) [1].
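The Sobolev norm can be approximated numerically from samples on a uniform grid. The sketch below approximates the derivatives with finite differences and each \( L^2 \) norm with a Riemann sum. Both are illustrative choices and are not taken from [1]. The example shows why noise inflates the norm: differencing amplifies it.

```python
import numpy as np


def sobolev_norm_sq(g, dx, order=2):
    """Approximate ||g||^2 in W^{order,2} from samples g on a uniform grid.

    The k-th derivative is approximated by applying np.diff k times (dividing
    by dx each time); each squared L2 norm is a Riemann sum: sum(values**2) * dx.
    """
    deriv = np.asarray(g, dtype=float)
    total = 0.0
    for _ in range(order + 1):
        total += float(np.sum(deriv ** 2) * dx)  # adds ||g^(k)||_{L2}^2
        deriv = np.diff(deriv) / dx              # next derivative, one sample shorter
    return total


# A smooth signal versus the same signal with small additive noise.
x = np.linspace(0.0, 2.0 * np.pi, 2001)
dx = x[1] - x[0]
smooth = np.sin(x)
noisy = smooth + 0.05 * np.random.default_rng(0).standard_normal(x.size)

print(sobolev_norm_sq(smooth, dx))  # close to 3*pi: ||sin||^2 + ||cos||^2 + ||sin||^2
print(sobolev_norm_sq(noisy, dx))   # orders of magnitude larger: differencing amplifies noise
```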

Q4: What are the limitations of enforcing global smoothness on data with inherent discontinuities, like images? Global smoothness stabilizers often fail at boundaries, such as edges in images or sudden shifts in time-series data, leading to oversmoothing. This occurs because the stabilizer cannot distinguish between noise (which should be smoothed) and genuine, important discontinuities (which should be preserved) [1].

Q5: What advanced techniques can preserve edges while smoothing homogeneous regions? To handle discontinuities, several advanced approaches have been developed:

- Nonquadratic Stabilizers: Using penalty functions that are less severe on high gradients, thereby preserving edges.

- Controlled-Continuity Stabilizers: Explicitly introducing a line process to mark and allow for discontinuities.

- Variational Techniques: Optimizing functionals that model the interaction between a smooth intensity field and a set of unknown discontinuities.

- Adaptive Filtering: Modifying algorithm parameters locally based on a pixel's neighborhood to reduce noise without blurring edges [1].
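Comparing two penalties on the same rise shows why quadratic stabilizers blur edges while nonquadratic ones can preserve them. The sketch scores a sharp step and a gradual ramp that climb the same total height. It uses total variation (the sum of absolute differences) as one example of a nonquadratic stabilizer. The choice of example and the toy signals are assumptions for illustration.

```python
import numpy as np


def quadratic_penalty(u):
    """Discrete first-order quadratic stabilizer: sum of squared first differences."""
    return float(np.sum(np.diff(u) ** 2))


def tv_penalty(u):
    """Total variation: sum of absolute first differences (a nonquadratic stabilizer)."""
    return float(np.sum(np.abs(np.diff(u))))


h, n = 1.0, 10
step = np.concatenate([np.zeros(20), np.full(20, h)])  # one jump of height h
ramp = np.concatenate([np.zeros(15), np.linspace(0.0, h, n + 1), np.full(14, h)])  # same rise over n steps

print(quadratic_penalty(step), quadratic_penalty(ramp))  # 1.0 vs about 0.1 (= h**2 / n)
print(tv_penalty(step), tv_penalty(ramp))                # 1.0 vs about 1.0 (= h in both cases)
```

The quadratic penalty charges the sharp jump about n times more than the ramp, so minimizing it favors spreading edges out. Total variation charges both signals the same, so it has no such preference.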

Common Computational Problems and Solutions

| Problem Category | Specific Issue | Proposed Solution |
|---|---|---|
| Model Output | Noisy or non-generalized predictions. | Apply regularization with a Sobolev seminorm stabilizer. Balance data fidelity and smoothness using a positive regularization parameter (λ) [1]. |
| Model Output | Oversmoothing across critical boundaries and edges. | Implement edge-preserving techniques such as nonquadratic or controlled-continuity stabilizers instead of global smoothness enforcers [1]. |
| Optimization | Algorithm fails to converge or converges slowly. | Verify that the objective function is L-smooth (its gradient does not change too rapidly). Ensure step sizes (e.g., γ ≤ 1/L) are set appropriately for gradient-based methods [1]. |
| Data Preprocessing | High-frequency noise obscuring the signal of interest. | Apply a linear denoising filter (e.g., Savitzky-Golay filter, Wiener filter) which uses a moving window to average nearby data points, effectively smoothing the signal [1]. |
| Spatial Verification | High computational complexity when smoothing fields on a global spherical domain (e.g., in climate science). | Utilize specialized methodologies for fast smoothing on the sphere that account for variable grid point areas and can handle missing data, enabling metrics like the Fraction Skill Score (FSS) to be calculated globally [2]. |
| Vision-Language Models | Brief, unsustained attention to key objects in an image ("advantageous attention decay"), leading to errors in attribute and relation understanding. | Implement Cross-Layer Vision Smoothing (CLVS), which uses a vision memory to maintain smooth attention distributions on key objects across model layers, terminating the process once visual understanding is complete [3]. |
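The step-size rule in the optimization row can be checked on a least-squares objective, where the L-smoothness constant has a closed form. This is a minimal sketch under that assumption, using random synthetic data and a fixed iteration count. It is not a general-purpose optimizer.

```python
import numpy as np


def gradient_descent_ls(A, b, n_iter=500):
    """Minimize f(x) = 0.5 * ||A x - b||^2 by gradient descent with step 1/L.

    grad f(x) = A^T (A x - b) is Lipschitz with constant L = largest eigenvalue
    of A^T A, so f is L-smooth and the fixed step gamma = 1/L guarantees that
    f decreases at every iteration.
    """
    L = float(np.linalg.eigvalsh(A.T @ A).max())
    gamma = 1.0 / L
    x = np.zeros(A.shape[1])
    for _ in range(n_iter):
        x = x - gamma * (A.T @ (A @ x - b))
    return x


rng = np.random.default_rng(1)
A = rng.standard_normal((50, 5))
b = rng.standard_normal(50)
x_gd = gradient_descent_ls(A, b)
x_ref, *_ = np.linalg.lstsq(A, b, rcond=None)  # direct least-squares solution for comparison
print(np.allclose(x_gd, x_ref))
```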

Experimental Protocols and Methodologies

This section provides detailed methodologies for key experiments and concepts cited in this guide.

Protocol: Enforcing Smoothness via Regularization

Objective: To obtain a smooth function g that approximates a set of noisy data points.

- Define the Functional: Minimize a least-squares functional that includes a smoothness stabilizer:

  \( E[g] = \sum_i \big(y_i - g(x_i)\big)^2 + \lambda\, S[g] \), where \( y_i \) is the observation at location \( x_i \) and \( S[g] \) is the stabilizer.

- Choose a Stabilizer:

  - First-Order Smoothness: \( S[g] = \int \|\nabla g(x)\|^2 \, dx \). This penalizes large gradients.

  - Higher-Order Smoothness: Use the Laplacian \( \Delta \) or other higher-order differential operators, e.g., \( S[g] = \int (\Delta g(x))^2 \, dx \).

- Set Regularization Parameter (λ): A positive λ > 0 balances the trade-off between data fidelity and smoothness. A larger λ results in a smoother output.

- Numerical Optimization: Solve the minimization problem using an appropriate numerical optimization algorithm, such as a gradient-based method [1].
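When the data lie on a uniform 1-D grid, a discrete version of this protocol fits in a few lines. The sketch below assumes that setting and replaces the integrals with sums of squared finite differences. This formulation is sometimes called a Whittaker smoother. The resulting functional is quadratic, so a single linear solve finds the minimizer and no iterative optimizer is needed here. The function name `penalized_smooth` is hypothetical.

```python
import numpy as np


def penalized_smooth(y, lam, order=1):
    """Minimize sum_i (y_i - g_i)^2 + lam * sum_j ((D g)_j)^2 on a uniform grid.

    D is the order-th finite-difference matrix: order=1 is a discrete gradient
    penalty, order=2 a discrete second-derivative (1-D Laplacian) penalty.
    The functional is quadratic in g, so its minimizer solves (I + lam D^T D) g = y.
    """
    y = np.asarray(y, dtype=float)
    n = y.size
    D = np.diff(np.eye(n), n=order, axis=0)  # shape (n - order, n)
    return np.linalg.solve(np.eye(n) + lam * D.T @ D, y)


rng = np.random.default_rng(2)
t = np.linspace(0.0, 1.0, 200)
y = np.sin(2 * np.pi * t) + 0.3 * rng.standard_normal(t.size)
for lam in (1.0, 100.0):
    g = penalized_smooth(y, lam)
    print(lam, np.sum(np.diff(g) ** 2))  # roughness shrinks as lam grows
```

For long signals, a sparse solver would replace the dense `np.linalg.solve` call.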

Protocol: Cross-Layer Vision Smoothing (CLVS) for LVLMs

Objective: To enhance visual understanding in Large Vision-Language Models (LVLMs) by sustaining attention on key objects throughout the model's layers.

- Initialization (First Layer): Normalize the positional indices of all visual tokens to a single, unified index to remove initial positional bias in the model's attention [3]. Initialize a vision memory with this unbiased visual attention distribution.

- Iterative Smoothing (Subsequent Layers): For each new layer, the model's visual attention is computed as a joint consideration of the current input and the vision memory from the previous layer.

- Memory Update: Update the vision memory iteratively using a smoothing factor, which blends the previous memory state with the new attention distribution. This ensures that attention to key objects is maintained across layers.

- Termination: Use an uncertainty-based criterion to determine when the visual understanding process is complete. Once this threshold is reached, the cross-layer smoothing process is terminated [3].
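The memory update and termination steps can be sketched as an exponential moving average over per-layer attention distributions. This is a hypothetical illustration, not the CLVS authors' implementation. The blending rule, the smoothing factor `alpha`, and the entropy-based stopping threshold `entropy_stop` are all assumptions standing in for the details given in [3].

```python
import numpy as np


def entropy(p):
    """Shannon entropy (nats) of a discrete distribution."""
    p = np.clip(np.asarray(p, dtype=float), 1e-12, None)
    return float(-(p * np.log(p)).sum())


def cross_layer_smoothing(layer_attn, alpha=0.5, entropy_stop=1.0):
    """Hypothetical CLVS-style smoothing of per-layer visual attention.

    layer_attn: list of 1-D arrays, one attention distribution over visual
    tokens per layer. Assumed update: memory <- alpha * memory + (1 - alpha) * attn,
    renormalized. Assumed stop rule: entropy(memory) < entropy_stop.
    Returns the smoothed attention for each processed layer and the stop layer.
    """
    memory = np.asarray(layer_attn[0], dtype=float)
    memory = memory / memory.sum()                      # layer 0 initializes the vision memory
    smoothed = [memory]
    for layer, attn in enumerate(layer_attn[1:], start=1):
        attn = np.asarray(attn, dtype=float) / np.sum(attn)
        memory = alpha * memory + (1.0 - alpha) * attn  # blend memory with current layer
        memory = memory / memory.sum()
        smoothed.append(memory)
        if entropy(memory) < entropy_stop:              # low uncertainty: stop smoothing
            return smoothed, layer
    return smoothed, len(layer_attn) - 1


# Toy attention over 4 visual tokens; token 0 is the "key object", and raw attention to it decays.
raw = [np.array([0.7, 0.1, 0.1, 0.1]), np.array([0.4, 0.2, 0.2, 0.2]), np.full(4, 0.25)]
smoothed, stop = cross_layer_smoothing(raw)
print(stop, smoothed[-1][0])   # memory keeps more attention on token 0 than the raw last layer
```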

Workflow: Global Spatial Smoothing for Verification

Objective: To calculate spatial verification metrics, such as the Fraction Skill Score (FSS), on high-resolution global fields.

- Grid Handling: Account for the non-equidistant and irregular nature of grids on a spherical domain (e.g., latitude-longitude).

- Area Weighting: Incorporate the variability of grid point area sizes into the smoothing calculation to ensure accuracy.

- Smoothing Operation: Apply one of two novel, computationally efficient methodologies designed specifically for smoothing in a global domain.

- Metric Calculation: Compute the desired smoothing-based spatial metric (e.g., FSS) on the processed field to verify forecast performance [2].
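The area-weighting and metric steps can be illustrated with a brute-force computation on a coarse latitude-longitude grid. The sketch makes three simplifying assumptions: cell areas are proportional to cos(latitude), neighborhoods are defined by great-circle distance, and missing data are not handled. It is deliberately simple and is not one of the fast methodologies from [2]. The FSS follows its usual definition, 1 - MSE(Pf, Po) / mean(Pf² + Po²), computed here with area-weighted means.

```python
import numpy as np


def central_angle(lat1, lon1, lat2, lon2):
    """Great-circle angle (radians) between points given in radians (haversine formula)."""
    a = (np.sin((lat2 - lat1) / 2) ** 2
         + np.cos(lat1) * np.cos(lat2) * np.sin((lon2 - lon1) / 2) ** 2)
    return 2.0 * np.arcsin(np.sqrt(np.clip(a, 0.0, 1.0)))


def neighborhood_fraction(event, lat, lon, radius):
    """Area-weighted fraction of event points within `radius` (radians) of each grid point.

    event: (nlat, nlon) boolean array; lat, lon: 1-D cell-center coordinates in radians.
    On a regular lat-lon grid a cell's area is proportional to cos(latitude).
    Brute force, O(N^2): fine for a toy grid, far too slow for global high resolution.
    """
    LAT, LON = np.meshgrid(lat, lon, indexing="ij")
    la, lo = LAT.ravel(), LON.ravel()
    w = np.cos(la)
    v = event.ravel().astype(float)
    frac = np.empty_like(v)
    for i in range(v.size):
        near = central_angle(la[i], lo[i], la, lo) <= radius
        frac[i] = np.sum(w[near] * v[near]) / np.sum(w[near])
    return frac.reshape(event.shape), w.reshape(event.shape)


def fraction_skill_score(fcst, obs, lat, lon, radius, threshold):
    """FSS = 1 - MSE(Pf, Po) / mean(Pf^2 + Po^2), with area-weighted means."""
    pf, w = neighborhood_fraction(fcst >= threshold, lat, lon, radius)
    po, _ = neighborhood_fraction(obs >= threshold, lat, lon, radius)
    mse = np.sum(w * (pf - po) ** 2) / np.sum(w)
    ref = np.sum(w * (pf ** 2 + po ** 2)) / np.sum(w)
    return float("nan") if ref == 0 else float(1.0 - mse / ref)


lat = np.deg2rad(np.arange(-85.0, 90.0, 10.0))   # 18 cell-center latitudes
lon = np.deg2rad(np.arange(0.0, 360.0, 10.0))    # 36 longitudes
f = np.random.default_rng(6).random((lat.size, lon.size))
o = np.roll(f, 2, axis=1)                         # "observation" = forecast displaced 20 degrees east
print(fraction_skill_score(f, o, lat, lon, radius=0.3, threshold=0.7))
```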

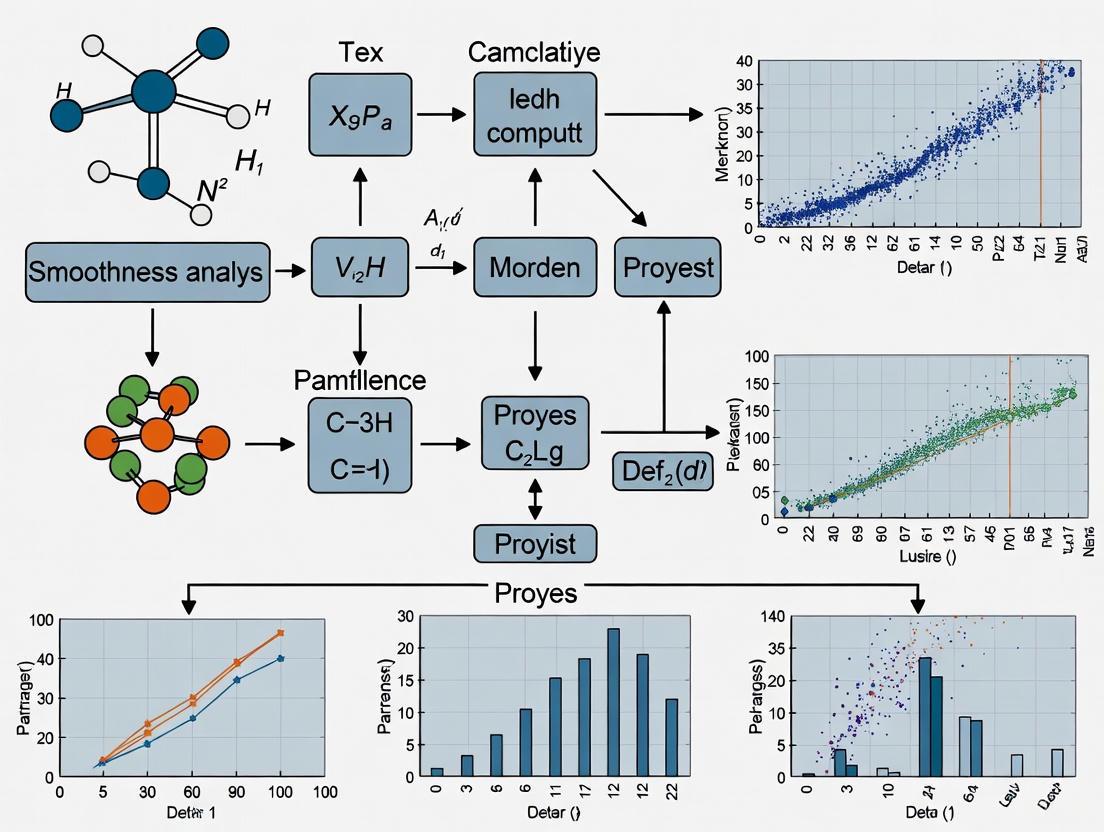

Visualizing Smoothness Concepts and Workflows

Core Smoothness Workflow

The following diagram illustrates the general decision-making process for applying and adjusting smoothness in a computational model.

Title: Smoothness Analysis Workflow

CLVS Method Architecture

This diagram outlines the architecture of the Cross-Layer Vision Smoothing method for maintaining visual attention.

Title: Cross-Layer Vision Smoothing (CLVS)

The Scientist's Toolkit: Research Reagents & Essential Materials

This table details key computational "reagents" and tools essential for experiments in smoothness analysis.

| Research Reagent / Tool | Function / Explanation |
|---|---|
| Sobolev Norm | A mathematical measure used to quantify the smoothness of a function by aggregating the norms of its derivatives [1]. |
| Regularization Parameter (λ) | A hyperparameter that controls the trade-off between fitting the training data accurately and achieving a smooth solution. A higher λ imposes greater smoothness [1]. |
| L-Smoothness Constant (L) | A constant L > 0 that bounds the rate of change of a function's gradient (the gradient is L-Lipschitz continuous). Critical for guaranteeing convergence in gradient-based optimization algorithms [1]. |
| Savitzky-Golay Filter | A digital filter that can smooth data without heavily distorting the signal by fitting a low-degree polynomial, by least squares, to adjacent points in a moving window [1]. |
| Controlled-Continuity Stabilizer | A type of stabilizer that explicitly models discontinuities, allowing smoothness constraints to be relaxed at boundaries, thus preventing oversmoothing [1]. |
| Vision Memory (in CLVS) | A memory module that retains attention distributions from previous layers, enabling sustained focus on key objects throughout a model's forward pass [3]. |
| Global Smoothing Methodology | Specialized algorithms designed to efficiently smooth data on spherical geometries (like Earth's climate system), accounting for irregular grids and missing data [2]. |

Smoothness as a Marker of Robust and Predictive Models

Frequently Asked Questions (FAQs)

Q1: What is the fundamental connection between model smoothness and robustness?

A1: Model smoothness, often related to concepts like Lipschitz continuity, implies that small changes in the input do not lead to large, erratic changes in the output. This property directly enhances model robustness by making the model less sensitive to noise and small adversarial perturbations in the input data. A novel metric called TopoLip bridges topological data analysis and Lipschitz continuity, providing a unified framework for theoretical and empirical robustness comparisons. Studies using this metric have demonstrated that attention-based models, which typically exhibit smoother transformations, show greater robustness compared to convolution-based models [4].

Q2: My convolutional neural network is prone to noise in medical image data. How can smoothing techniques help? A2: Spatial smoothing methods, such as adding blur layers to your network, can significantly improve performance. These methods work by spatially ensembling neighboring feature maps, which stabilizes the features and leads to a smoother loss landscape. This not only improves accuracy but also enhances the model's uncertainty estimation and robustness to input perturbations. This approach is effective for both Bayesian neural networks (BNNs) and canonical deterministic networks [5].

Q3: In signal processing for biosensors, how do I choose the right smoothing technique for my spectral data? A3: The optimal technique depends on your specific signal characteristics and the balance you wish to strike between noise suppression and feature preservation. The table below summarizes four common advanced curve smoothing techniques used in fields like Surface Plasmon Resonance (SPR) biosensor analysis [6]:

| Technique | Principle | Best Use Cases |
|---|---|---|
| Gaussian Filter | Convolves the data with a Gaussian (normal-distribution) kernel, assigning greater weight to data points closer to the point being smoothed. Effective for linear and nonlinear systems. | General noise reduction; preserving overall data structure with a smooth transition [6]. |
| Savitzky-Golay Filter | Performs local polynomial regression to preserve higher-order moments of the data distribution. | Preserving important spectral features like peak heights and widths while smoothing [6]. |
| Smoothing Splines | Fits a piecewise polynomial (spline) by minimizing the residual sum of squares plus a penalty on the integrated squared second derivative; the smoothing parameter controls the trade-off between fit and smoothness. | Creating a smooth curve that closely follows the trend of noisy data [7] [6]. |
| Exponentially Weighted Moving Average (EWMA) | Applies weighting factors that decrease exponentially, giving more importance to recent observations. | Real-time smoothing of data streams; tracking trends in sequential data [6]. |
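Three of these filters can be written with NumPy alone, which makes their behavior easy to inspect. The sketch below is illustrative only. For Savitzky-Golay filtering, SciPy's `scipy.signal.savgol_filter` is the usual production choice. The window sizes and the synthetic peak are assumptions, chosen to show the peak-preservation difference described in the table.

```python
import numpy as np


def gaussian_smooth(y, sigma):
    """Convolve with a normalized Gaussian kernel truncated at 3 sigma (reflective edges)."""
    r = max(1, int(3 * sigma))
    t = np.arange(-r, r + 1)
    k = np.exp(-0.5 * (t / sigma) ** 2)
    k /= k.sum()
    return np.convolve(np.pad(y, r, mode="reflect"), k, mode="valid")


def savgol_smooth(y, half_window, polyorder):
    """Savitzky-Golay: value at the window center of a least-squares polynomial fit."""
    j = np.arange(-half_window, half_window + 1)
    A = np.vander(j, polyorder + 1, increasing=True)  # columns j**0 ... j**polyorder
    c = np.linalg.pinv(A)[0]                            # weights giving the fit at j = 0
    padded = np.pad(y, half_window, mode="reflect")
    return np.convolve(padded, c[::-1], mode="valid")   # reversed because np.convolve flips

def ewma(y, alpha):
    """s_0 = y_0, s_t = alpha * y_t + (1 - alpha) * s_{t-1}."""
    s = np.empty(len(y))
    s[0] = y[0]
    for t in range(1, len(y)):
        s[t] = alpha * y[t] + (1 - alpha) * s[t - 1]
    return s


# Narrow synthetic peak (height 1, width 3 samples) centered at index 50.
x = np.arange(101)
peak = np.exp(-0.5 * ((x - 50) / 3.0) ** 2)
print(gaussian_smooth(peak, 3.0)[50])   # noticeably below 1: the peak is flattened
print(savgol_smooth(peak, 5, 4)[50])    # close to 1: peak height largely preserved
```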

Q4: The process of manually selecting smoothing parameters is slow and subjective. Can this be automated? A4: Yes, deep learning approaches can automate this. One study trained a Convolutional Neural Network (CNN) to classify plots of smoothed equating curves and select the optimal smoothing parameter. The trained network achieved a 71% agreement rate with human expert choices, demonstrating significant potential for automating this traditionally manual and subjective process, thereby increasing scalability and consistency [8].

Q5: How can smoothing be incorporated into training reinforcement learning models for better performance? A5: In the context of reinforcement learning for clinical decision support, a technique called reward smoothing has been developed. This involves a custom attention-weighted reward function that filters out noise in the model's output. This smoothing mechanism enhances training stability and leads to continuous improvement in the model's reasoning capabilities [9].

Troubleshooting Guides

Issue 1: Poor Model Robustness to Adversarial Attacks or Noisy Inputs

Problem: Your model's performance degrades significantly when presented with slightly perturbed or noisy data.

Diagnosis Steps:

- Evaluate Smoothness: Quantify your model's smoothness using a metric like TopoLip [4]. Compare it to known robust architectures to establish a baseline.

- Analyze Feature Maps: Visualize the feature maps of your convolutional layers. High-frequency, noisy patterns may indicate instability that smoothing could address.
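Local smoothness can also be probed directly by measuring how far the output moves under small random input perturbations. This empirical Lipschitz estimate is a swapped-in technique and is much cruder than TopoLip [4]. Here `model` is assumed to be any callable on NumPy arrays. The perturbation size and sample count are arbitrary.

```python
import numpy as np


def empirical_lipschitz(model, x, eps=1e-3, n_samples=200, seed=0):
    """Largest observed ||f(x + d) - f(x)|| / ||d|| over random d with ||d|| = eps.

    This only explores random directions, so it is a lower estimate of the
    local Lipschitz constant at x; a larger value means a less smooth model there.
    """
    rng = np.random.default_rng(seed)
    fx = model(x)
    best = 0.0
    for _ in range(n_samples):
        d = rng.standard_normal(x.shape)
        d *= eps / np.linalg.norm(d)          # rescale the direction to norm eps
        best = max(best, float(np.linalg.norm(model(x + d) - fx)) / eps)
    return best


# Toy "model": a linear map whose true Lipschitz constant is its largest singular value, 3.
W = np.array([[3.0, 0.0], [0.0, 0.5]])
print(empirical_lipschitz(lambda v: W @ v, np.array([1.0, -1.0])))
```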

Solutions:

- Architecture Change: Consider switching to or incorporating attention-based layers, as they have been shown to exhibit inherently smoother transformations and greater robustness [4].

- Add Spatial Smoothing: Integrate spatial blur layers (e.g., Gaussian blur) into your existing convolutional network. Start by adding them after the first convolutional layer and before the final output layer. This acts as an implicit ensemble, stabilizing feature maps [5].

- Regularize the Loss Landscape: Apply regularization techniques that explicitly promote a smoother loss function, making the model less sensitive to small input variations.

Issue 2: Choosing an Optimal Smoothing Parameter

Problem: You are using a smoothing technique but are unsure how to select the parameter (e.g., bandwidth h, smoothing parameter S) that best balances smoothness and fidelity.

Diagnosis Steps:

- Visual Inspection: Plot your original data alongside smoothed curves with a range of parameter values. Look for the value where the curve appears smooth but does not deviate systematically from the original data points [7] [8].

- Check Moments: Compare the moments (e.g., mean, variance) of the smoothed data with those of the original data. A large discrepancy indicates that the smoothing may be too aggressive [8].

Solutions:

- Manual Grid Search: Experiment with a grid of different smoothing parameters. Use a quantitative metric like Root Mean Square Deviation (RMSD) between the smoothed and original data, alongside visual inspection, to make a choice [8].

- Automate with Deep Learning: If you have a large number of datasets, follow the methodology in [8] to train a CNN on human-classified smoothing plots to predict the optimal parameter.

- Use Established Formulae: For methods like LOESS, the span parameter can be set to a proportion (e.g., 0.5) that determines the fraction of data points used in each local fit [7].
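RMSD between the smoothed and original data always decreases as smoothing weakens, so it cannot choose the parameter on its own. Generalized cross-validation (GCV) is one standard way to balance fit against complexity. It is swapped in here and is not taken from [7] or [8]. The sketch assumes a penalized least-squares smoother with a second-difference penalty. The grid of candidate values and the synthetic data are illustrative.

```python
import numpy as np


def hat_matrix(n, lam, order=2):
    """H = (I + lam D^T D)^{-1}, mapping data y to the penalized fit H @ y."""
    D = np.diff(np.eye(n), n=order, axis=0)
    return np.linalg.inv(np.eye(n) + lam * D.T @ D)


def grid_search(y, lams, order=2):
    """Return (lam, RMSD, GCV) for each candidate; GCV = n * RSS / (n - trace(H))^2."""
    y = np.asarray(y, dtype=float)
    n = y.size
    rows = []
    for lam in lams:
        H = hat_matrix(n, lam, order)
        rss = float(np.sum((y - H @ y) ** 2))
        rows.append((lam, np.sqrt(rss / n), n * rss / (n - np.trace(H)) ** 2))
    return rows


rng = np.random.default_rng(3)
t = np.linspace(0.0, 2 * np.pi, 200)
y = np.sin(t) + 0.2 * rng.standard_normal(t.size)
lams = [1e-2, 1e0, 1e2, 1e4, 1e6]
rows = grid_search(y, lams)
for lam, rmsd, gcv in rows:
    print(f"lam={lam:g}  RMSD={rmsd:.3f}  GCV={gcv:.4f}")
best_lam = min(rows, key=lambda r: r[2])[0]   # candidate with the lowest GCV score
```

RMSD rises steadily with λ, while GCV is minimized at an intermediate value. This is why a visual check is still worthwhile near the selected candidate.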

Issue 3: Over-Smoothing and Loss of Critical Signal Information

Problem: After applying smoothing, your model or analysis has lost important features (e.g., sharp peaks in spectral data, fine-grained details in an image).

Diagnosis Steps:

- Compare Extreme Parameters: Smooth your data with a very high smoothing parameter. If the resulting curve is a straight line or a featureless blob, you are likely in the over-smoothing regime [8].

- Calculate Bias: Quantify the systematic error (bias) introduced by smoothing. A sharp increase in bias is a hallmark of over-smoothing.
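Bias can only be measured directly when the true signal is known, for example on a synthetic test signal with features like those in your data. Linear smoothers satisfy E[smooth(y)] = smooth(E[y]), so the expected bias is simply smooth(truth) - truth. The sketch assumes a Gaussian smoother and a narrow synthetic peak; both are illustrative choices.

```python
import numpy as np


def gaussian_smooth(y, sigma):
    """Normalized Gaussian kernel truncated at 3 sigma, reflective edges."""
    r = max(1, int(3 * sigma))
    t = np.arange(-r, r + 1)
    k = np.exp(-0.5 * (t / sigma) ** 2)
    k /= k.sum()
    return np.convolve(np.pad(y, r, mode="reflect"), k, mode="valid")


# Known, noise-free "truth": a narrow peak (height 1, width 3 samples) at index 100.
x = np.arange(201)
truth = np.exp(-0.5 * ((x - 100) / 3.0) ** 2)

sigmas = (0.5, 1.0, 2.0, 4.0, 8.0)
for sigma in sigmas:
    bias = gaussian_smooth(truth, sigma) - truth   # expected bias of the linear smoother
    print(f"sigma={sigma}  peak bias={bias[100]:+.3f}  RMS bias={np.sqrt(np.mean(bias ** 2)):.4f}")
```

A peak bias that grows sharply from one setting to the next marks the onset of over-smoothing.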

Solutions:

- Select a Less Aggressive Parameter: Reduce the smoothing parameter (e.g., a smaller bandwidth in bin smoothing, a smaller S in cubic splines) [7] [8].

- Switch Smoothing Method: Change to a method better at preserving feature shapes. The Savitzky-Golay filter is specifically designed to preserve higher-order moments like peak width and height, making it superior for such cases compared to a Gaussian filter [6].

- Use a Hybrid Approach: Apply a mild smoothing overall and then use a separate, targeted algorithm to detect and protect known critical features.

Experimental Protocols

Protocol 1: Assessing Model Robustness via the TopoLip Metric

This protocol outlines how to use the TopoLip metric to compare the robustness of different models [4].

1. Purpose: To quantitatively evaluate and compare the smoothness and inherent robustness of different machine learning models (e.g., CNN vs. Transformer) in a unified framework.

2. Materials

- Pre-trained models to be evaluated.

- A calibration dataset (e.g., a subset of the model's training or test data).

- Implementation of the TopoLip metric, which combines Topological Data Analysis (TDA) and Lipschitz continuity [4].

3. Procedure

   1. Model Preparation: Load the pre-trained models and ensure they are in evaluation mode.
   2. Data Sampling: Sample a batch of data from the calibration dataset.
   3. Layer-wise Activation Extraction: For each model, run the batch through the network and extract the activation maps from each layer.
   4. Topological Analysis: For each layer's activations, use TDA to construct a persistence diagram that captures the topological features (e.g., connected components, loops).
   5. Lipschitz Constant Estimation: Calculate a stability measure from the persistence diagrams, which relates to the Lipschitz constant of the layer's transformation.
   6. Compute TopoLip: Aggregate the layer-wise stability measures to compute the final TopoLip score for the model. A higher TopoLip score indicates a smoother, more robust model.

4. Analysis: Compare the TopoLip scores of the different models. The model with a consistently higher TopoLip score is expected to demonstrate better empirical robustness under adversarial attacks or noisy inputs [4].
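Step 4 of the procedure can be illustrated for the simplest topological features, connected components (0-dimensional homology). For a Vietoris-Rips filtration, their finite death times are exactly the edge lengths of a minimum spanning tree, which the sketch computes with Kruskal's algorithm. This covers only that one step. It does not reproduce the TopoLip stability measure or its aggregation, and dedicated libraries (e.g., GUDHI or Ripser) compute full persistence diagrams. Rows of `points` could be, for instance, the flattened layer activations for each sample.

```python
import numpy as np


def h0_persistence(points):
    """Death times of 0-dimensional features in a Vietoris-Rips filtration.

    Every point is born at scale 0; two components merge (one dies) when the
    filtration reaches the length of the edge joining them. Processing edges
    in increasing length with union-find (Kruskal's algorithm) yields the
    minimum-spanning-tree edge lengths as the finite death times.
    """
    pts = np.asarray(points, dtype=float)
    n = len(pts)
    dist = np.linalg.norm(pts[:, None, :] - pts[None, :, :], axis=-1)
    edges = sorted((dist[i, j], i, j) for i in range(n) for j in range(i + 1, n))
    parent = list(range(n))

    def find(a):
        while parent[a] != a:
            parent[a] = parent[parent[a]]   # path halving
            a = parent[a]
        return a

    deaths = []
    for d, i, j in edges:
        ri, rj = find(i), find(j)
        if ri != rj:                         # edge merges two components: one dies at d
            parent[ri] = rj
            deaths.append(float(d))
    return deaths                            # n - 1 finite deaths; one component never dies


rng = np.random.default_rng(4)
cluster_a = rng.normal(0.0, 0.1, size=(20, 2))
cluster_b = rng.normal(5.0, 0.1, size=(20, 2))
deaths = h0_persistence(np.vstack([cluster_a, cluster_b]))
print(len(deaths), max(deaths))   # the longest-lived merge spans the gap between the clusters
```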

Protocol 2: Implementing Spatial Smoothing in a Convolutional Neural Network

This protocol describes how to add spatial smoothing layers to a CNN to improve its accuracy, uncertainty estimation, and robustness [5].

1. Purpose: To stabilize the feature maps and smooth the loss landscape of a CNN by integrating spatial smoothing layers, thereby making it more robust and accurate.

2. Materials

- A defined CNN architecture (e.g., PyTorch or TensorFlow model).

- Training and validation datasets.

- Standard deep learning training hardware (GPU).

3. Procedure

   1. Identify Insertion Points: Choose where to add the smoothing layers. Common strategies include:
      - After the first convolutional layer to smooth initial features.
      - Before the final classification layer to stabilize high-level features.
   2. Select Smoothing Operation: Choose a spatial smoothing operation, such as a 2D Gaussian blur layer or an average pooling layer.
   3. Integrate Layers: Modify the CNN architecture to include the smoothing layers at the chosen points.
   4. Train/Finetune the Model: Train the model from scratch or finetune the existing model with the new smoothing layers included. The smoothing operation is differentiable and allows for end-to-end training.
   5. Evaluate Performance: Test the model on clean and perturbed validation sets to measure improvements in accuracy and robustness.

4. Analysis: Compare the accuracy, uncertainty calibration (e.g., via Brier score), and adversarial robustness of the model with and without spatial smoothing. The model with spatial smoothing should show improved performance across these metrics [5].
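The smoothing operation itself can be sketched independently of any deep learning framework. Below, a fixed, normalized 3x3 binomial kernel is applied separately to each channel of a (channels, height, width) feature map. The kernel is a small Gaussian approximation and is an assumption, not the kernel used in [5]. In PyTorch or TensorFlow, the same operation would typically be a depthwise convolution with fixed weights (e.g., `torch.nn.Conv2d` with `groups` equal to the number of channels).

```python
import numpy as np


def blur_feature_maps(x, kernel=None):
    """Depthwise spatial smoothing of feature maps x with shape (channels, H, W).

    Every channel is filtered with the same normalized kernel (default: the
    binomial kernel outer([1, 2, 1], [1, 2, 1]) / 16). Edges are replicated so
    the output keeps the input's spatial size. The kernel is symmetric, so
    correlation and convolution coincide.
    """
    if kernel is None:
        k1 = np.array([1.0, 2.0, 1.0])
        kernel = np.outer(k1, k1) / 16.0
    kh, kw = kernel.shape
    ph, pw = kh // 2, kw // 2
    padded = np.pad(x, ((0, 0), (ph, ph), (pw, pw)), mode="edge")
    out = np.zeros_like(x, dtype=float)
    H, W = x.shape[1:]
    for di in range(kh):
        for dj in range(kw):
            out += kernel[di, dj] * padded[:, di:di + H, dj:dj + W]  # weighted shifted copy
    return out


fmap = np.zeros((2, 5, 5))
fmap[0, 2, 2] = 1.0            # a single "spike" in channel 0
out = blur_feature_maps(fmap)
print(out[0, 1:4, 1:4])        # the spike is spread into a 3x3 binomial footprint
```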

Workflow Visualization

The following diagram illustrates a generalized workflow for analyzing model smoothness and integrating smoothing techniques to enhance robustness.

Smoothness Analysis and Enhancement Workflow

Research Reagent Solutions

The table below lists key computational "reagents" (algorithms, models, and metrics) essential for experiments in model smoothness and robustness.

| Item | Function/Application |
|---|---|
| TopoLip Metric | A unified metric for theoretical and empirical robustness comparisons across model architectures by bridging TDA and Lipschitz continuity [4]. |
| Spatial Smoothing (Blur Layers) | A method to improve CNN accuracy, uncertainty, and robustness by spatially ensembling neighboring feature maps [5]. |
| Convolutional Neural Network (CNN) | A baseline architecture for image processing that can be made more robust through the integration of spatial smoothing layers [5] [4]. |
| Attention-Based Model (e.g., Transformer) | An architecture that typically exhibits smoother transformations and greater inherent robustness compared to CNNs, as measured by TopoLip [4]. |
| Savitzky-Golay Filter | A smoothing algorithm ideal for preserving important spectral features (e.g., peak shapes) in signal data like biosensor outputs [6]. |
| Cubic Spline Postsmoothing | A smoothing method for score equating in psychometrics; its parameter selection can be automated using a trained CNN [8]. |
| Reward Smoothing (in RL) | A custom function used in reinforcement learning to filter noise in model outputs, enhancing training stability and reasoning capability [9]. |

FAQs and Troubleshooting Guides

Pharmacokinetics-Pharmacodynamics (PK/PD) Modeling

Q1: What is PK/PD modeling and why is it critical in early drug discovery?

PK/PD describes the relationship between drug concentration in the systemic circulation and the pharmacological response it elicits [10]. It serves as a crucial connector between the administered dose and the clinical outcome [10]. Implementing PK/PD thinking early in discovery, rather than just before clinical trials, helps guide target commitment and informs medicinal chemistry on how to best deploy resources by determining whether the biology is driven by Cmin (minimum concentration) or AUC (Area Under the Curve) [10].
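A textbook one-compartment model with IV bolus dosing and first-order elimination makes the Cmin-versus-AUC question concrete. This model is an assumption, chosen because its steady-state expressions have closed forms. The parameter values in the usage lines are hypothetical. Splitting the same daily dose into two administrations leaves the daily AUC unchanged but raises Cmin. Only a Cmin-driven (or time-above-threshold-driven) effect would therefore benefit from more frequent dosing.

```python
import math


def steady_state_metrics(dose, volume, clearance, tau):
    """Steady-state exposure for repeated IV bolus doses in a one-compartment model.

    With elimination rate k = CL / V and dosing interval tau:
      Cmax_ss = (dose / V) / (1 - exp(-k * tau))
      Cmin_ss = Cmax_ss * exp(-k * tau)
      AUC over one interval = dose / CL (independent of tau)
    """
    k = clearance / volume
    decay = math.exp(-k * tau)
    cmax = dose / volume / (1.0 - decay)
    return {"Cmax": cmax, "Cmin": cmax * decay, "AUC_tau": dose / clearance}


# Hypothetical drug: V = 50 L, CL = 5 L/h, same total daily dose given two ways.
qd = steady_state_metrics(dose=100.0, volume=50.0, clearance=5.0, tau=24.0)   # once daily
bid = steady_state_metrics(dose=50.0, volume=50.0, clearance=5.0, tau=12.0)   # twice daily
print("daily AUC:", qd["AUC_tau"], 2 * bid["AUC_tau"])       # equal: 20.0 and 20.0 mg*h/L
print("Cmin:", round(qd["Cmin"], 3), round(bid["Cmin"], 3))  # twice daily keeps a higher trough
```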

Q2: How can researchers overcome the challenge of limited in vivo data for building early PK/PD models?

When dedicated in vivo animal models are unavailable or resource-prohibitive, a knowledge-driven approach is recommended [10]. Instead of relying solely on project-specific in vivo data, leverage information from multiple sources:

- Physiological knowledge from scientific literature about the time course of the biological processes involved.

- Data from previous in vitro or in vivo studies on related targets or systems.

Bridging knowledge gaps with well-designed, focused in vitro studies can provide the necessary parameters to build a useful hybrid model without initial large-scale in vivo experimentation [10].

Q3: Our PK/PD model predictions do not match experimental results. What are common sources of error?

- Imperfect PK Surrogate: Using systemic PK concentration as a direct surrogate for target site concentration can be misleading, especially for novel modalities like PROTACs, covalent inhibitors, or biologics where target engagement is complex [10].

- Unmodeled Biology: Downstream biological effects like feedback loops, feedforward mechanisms, and pathway redundancies can create a disconnect between target engagement and the final pharmacological effect [10].

- Incorrect Driver Assumption: The model may be built on a wrong assumption about the PK driver (Cmin, Cmax, AUC, or time above a certain threshold). Re-evaluate this fundamental principle [10].

Systems Pharmacology

Q4: What is Quantitative and Systems Pharmacology (QSP) and how does it differ from traditional PK/PD?

Quantitative and Systems Pharmacology (QSP) is an integrative approach that combines physiology and pharmacology to analyze the dynamic interactions between drugs and a biological system as a whole [11]. Its key advantage is the simultaneous "horizontal integration" (considering multiple receptors, cell types, and pathways) and "vertical integration" (spanning multiple time and space scales, from molecular to whole-body levels) [11]. Unlike traditional PK/PD, QSP uses mechanistic, mathematical models (often Ordinary Differential Equations) to represent pathophysiological details and perform "what-if" experiments in silico [11].
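Real QSP models couple many ODEs, but a single mechanistic equation is enough to show the "what-if" workflow. The sketch below uses an indirect-response (turnover) model, a classic PK/PD structure swapped in for illustration, in which drug concentration inhibits production of a response variable. The function name and all parameter values are hypothetical. The system is integrated with a fixed-step fourth-order Runge-Kutta scheme.

```python
import math


def simulate_indirect_response(dose, V, CL, kin, kout, imax, ic50, t_end=48.0, dt=0.01):
    """Turnover model with drug-inhibited production of a response R.

    PK: single IV bolus, one compartment: C(t) = (dose / V) * exp(-(CL / V) t).
    PD: dR/dt = kin * (1 - imax * C / (ic50 + C)) - kout * R, starting from the
    baseline R0 = kin / kout. Integrated with classical fourth-order Runge-Kutta.
    """
    k = CL / V

    def conc(t):
        return dose / V * math.exp(-k * t)

    def dRdt(t, R):
        c = conc(t)
        return kin * (1.0 - imax * c / (ic50 + c)) - kout * R

    R = kin / kout                      # baseline response
    t = 0.0
    times, resp = [t], [R]
    for _ in range(int(round(t_end / dt))):
        k1 = dRdt(t, R)
        k2 = dRdt(t + dt / 2, R + dt / 2 * k1)
        k3 = dRdt(t + dt / 2, R + dt / 2 * k2)
        k4 = dRdt(t + dt, R + dt * k3)
        R += dt / 6.0 * (k1 + 2 * k2 + 2 * k3 + k4)
        t += dt
        times.append(t)
        resp.append(R)
    return times, resp


# "What-if" experiment: how deep is the response nadir at different doses?
params = dict(V=10.0, CL=5.0, kin=10.0, kout=0.5, imax=0.9, ic50=1.0)
for dose in (0.0, 100.0, 200.0):
    _, resp = simulate_indirect_response(dose, **params)
    print(dose, round(min(resp), 2), round(resp[-1], 3))
```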

Q5: How can QSP assist in designing combination therapies for complex diseases?

QSP is particularly valuable for understanding Traditional Chinese Medicine (TCM) and other multi-compound therapies [12]. It helps:

- Identify bioactive compounds and predict their targets from a complex mixture [12].

- Illustrate the molecular mechanisms of action from a network perspective [12].

- Analyze synergistic effects and dissect the contribution of individual components in a formula, moving beyond over-reliance on practitioner experience [12].

Q6: A graph-based network model used in QSP suffers from "over-smoothing." How can it be resolved?

Over-smoothing is a phenomenon where node representations in a network become indistinguishable, hindering predictive performance [13]. This is common in Graph Convolutional Networks (GCNs) used for structured data when the model uses a uniform strategy to aggregate information from neighbors [13].

Solution: Implement a graph disentanglement framework [13]. This technique:

- Separates the complex graph into multiple latent factors (e.g., different reasons for connections in a social network).

- Uses a multi-channel message-passing layer where each channel aggregates features related to only one specific factor.

- Prevents chaotic information fusion and helps maintain distinct node characteristics, thereby relieving over-smoothing [13].
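The over-smoothing phenomenon itself is easy to reproduce. The sketch below applies repeated neighbor averaging to a small path graph and tracks how far node representations lie from their centroid. The averaging is the aggregation step of a GCN with self-loops, with learned weights and nonlinearities deliberately omitted. The graph and features are arbitrary. The sketch illustrates the problem only and does not implement the disentanglement framework from [13].

```python
import numpy as np


def mean_aggregate(adj, features, n_layers):
    """n_layers of H <- D^{-1} (A + I) H: neighborhood averaging with self-loops,
    without learned weights or nonlinearities, to isolate the smoothing effect."""
    a_hat = adj + np.eye(adj.shape[0])
    p = a_hat / a_hat.sum(axis=1, keepdims=True)   # row-normalized: each row averages neighbors
    h = np.array(features, dtype=float)
    for _ in range(n_layers):
        h = p @ h
    return h


def node_spread(h):
    """Mean Euclidean distance of node representations from their centroid."""
    return float(np.linalg.norm(h - h.mean(axis=0), axis=1).mean())


# Path graph 0-1-2-3-4-5 with random 3-dimensional node features.
adj = np.zeros((6, 6))
for i in range(5):
    adj[i, i + 1] = adj[i + 1, i] = 1.0
feats = np.random.default_rng(5).standard_normal((6, 3))
for layers in (0, 2, 10, 50):
    print(layers, node_spread(mean_aggregate(adj, feats, layers)))  # spread collapses toward 0
```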

Virtual Screening

Q7: What are the main types of virtual screening methods?

Table: Virtual Screening Method Categories

| Method Type | Description | Key Techniques |
|---|---|---|
| Ligand-Based [14] [15] | Relies on the similarity of query compounds to known active molecules. Used when the 3D structure of the target is unknown. | Pharmacophore modeling, 2D/3D shape similarity (e.g., ROCS), quantitative structure-activity relationship (QSAR), machine learning models [14]. |
| Structure-Based [14] [15] | Requires the 3D structure of the target protein. Focuses on the complementarity of compounds with the binding site. | Molecular docking, structure-based pharmacophore prediction, molecular dynamics simulations [14]. |
| Hybrid Methods [14] | Combines ligand- and structure-based approaches to overcome the limitations of each. | Methods like PoLi use global structural similarity of proteins and ligand similarity metrics to find new binders [14]. |

Q8: The virtual screening hit rate is low, or hits are structurally similar. How to improve diversity?

- Employ a Hierarchical Workflow: Use a multi-stage VS workflow where different methods act as sequential filters. This combines the strengths of various methods (e.g., fast ligand-based pre-screening followed by more computationally expensive structure-based docking) [15].

- Use Multiple Query Compounds: In ligand-based screening, using multiple, structurally diverse known active compounds as queries, rather than a single reference structure, leads to more accurate performance and identifies a more diverse set of hits [14].

- Leverage Active Learning: In ultra-large library screening, use AI-accelerated platforms that employ active learning. These platforms simultaneously train a target-specific neural network during docking to intelligently select promising and diverse compounds for further expensive calculations, efficiently exploring the chemical space [16].
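The benefit of multiple query compounds can be sketched with Tanimoto similarity on binary fingerprints and a MAX fusion rule, which scores each library compound by its best similarity to any query. The fingerprints below are hypothetical sets of on-bit indices. In practice they would come from a cheminformatics toolkit (for example, RDKit fingerprints). MAX fusion is one of several possible fusion rules and is an assumption here.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def max_fusion_scores(library, queries):
    """Score each compound by its highest Tanimoto similarity to any query (MAX fusion)."""
    return {name: max(tanimoto(fp, q) for q in queries) for name, fp in library.items()}


# Hypothetical fingerprints (sets of on-bit indices).
query_1 = {1, 2, 3, 4}
query_2 = {10, 11, 12, 13}        # a structurally different known active
library = {"A": {1, 2, 3, 5}, "B": {10, 11, 12, 14}, "C": {20, 21}}

single = max_fusion_scores(library, [query_1])
multi = max_fusion_scores(library, [query_1, query_2])
print(sorted(multi, key=multi.get, reverse=True))   # B now ranks alongside A
```

With a single query, compound B scores zero even though it closely resembles the second active. Adding that active as a query recovers B without lowering A's score.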

Q9: A compound identified by virtual screening failed experimental validation. What could have gone wrong?

- Inadequate Conformer Sampling: The computational generation of 3D molecular conformations may have missed the bioactive conformation. Ensure a sufficiently broad yet energetically reasonable set of conformers is generated for each compound using robust algorithms (e.g., OMEGA, ConfGen, RDKit's ETKDG) [15].

- Improper Molecular Preparation: The protonation states, tautomers, or stereochemistry of the test compound or the known actives were not correctly defined during library preparation. Use standardization software (e.g., Standardizer, MolVS) [15].

- Overlooked SAR Data: The selected compound might possess functional groups previously reported in SAR studies to be detrimental to activity. Always integrate available SAR knowledge into the final hit selection process [15].

- Limitations of Retrospective Benchmarks: Be cautious of over-relying on retrospective benchmark performance. These are not always good predictors of real-world prospective performance, which is the true test of a method [14].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagents for Featured Computational Fields

| Reagent / Material | Function / Application | Field |
|---|---|---|
| Tool Compound [10] | A pharmacologically characterized molecule used to first establish and validate a PK/PD relationship in an animal model before testing novel compounds. | PK/PD Modeling |
| Validated Crystallographic Structure [15] | A high-quality 3D protein structure from the PDB, validated for reliability (especially in the binding site), is crucial for structure-based virtual screening and docking. | Virtual Screening |
| Known Active Ligands & Decoys [14] [15] | A set of confirmed active molecules and assumed inactives (decoys) used to develop, validate, and benchmark the performance of virtual screening workflows. | Virtual Screening |
| Virtual Compound Library [14] [15] | A large collection of small molecules in a computable format (e.g., SDF, SMILES) from commercial or in-house sources, representing the chemical space to be screened. | Virtual Screening |
| Multi-Omics Datasets | Integrated genomic, proteomic, and metabolomic data used to build and constrain the biological networks within QSP models, enhancing their physiological relevance. | Systems Pharmacology |

Experimental Protocols & Workflows

Protocol 1: Standard Workflow for Structure-Based Virtual Screening

This protocol is adapted from established practices and the OpenVS platform for screening ultra-large libraries [16] [15].

- Bibliographic Research & Data Collection: Research the target's biological function, natural ligands, and any known inhibitors or SAR studies. Retrieve all relevant protein structures (PDB) and validate them with software like VHELIBS [15].

- Library Preparation: Obtain the small molecule library (e.g., from ZINC or in-house collections). Generate 3D conformations, protonation states, and tautomers for each molecule using a conformer generator (e.g., OMEGA, RDKit) and standardization tools (e.g., MolVS) [15].

- Hierarchical Screening:

- Step A (Fast Pre-screening): Use a rapid docking mode (e.g., RosettaVS's VSX mode) or ligand-based similarity search to reduce the library size [16].

- Step B (High-Precision Docking): Subject the top hits from Step A to a more accurate, flexible docking protocol (e.g., RosettaVS's VSH mode) that allows for side-chain and limited backbone movement [16].

- Hit Selection & Analysis: Rank the final compounds using an improved scoring function (e.g., RosettaGenFF-VS) that combines enthalpy and entropy estimates. Visually inspect top-ranked complexes and cross-reference with SAR data before selecting compounds for experimental testing [16] [15].

Protocol 2: Building a Mechanistic QSP Model

This protocol outlines the "learn and confirm" paradigm for QSP model development, using a glucose regulation model as an exemplar [11].

- Establish Project Objectives and Scope: Define the specific question the model should answer. For example: "Describe the return to baseline plasma glucose levels after an intravenous glucose injection" [11].

- Diagram the Biological Mechanism: Create a visual representation of the system's key "states" (e.g., plasma glucose, plasma insulin) and the flows between them (e.g., glucose input from liver, insulin-dependent clearance). This forms the "mental model" [11].

- Formulate Mathematical Equations: Translate the diagram into a set of Ordinary Differential Equations (ODEs). Define the relationships between states mathematically, incorporating parameters from physiology (e.g., rates of insulin secretion, glucose uptake) [11].

- Parameterization and Integration: Populate the model with data from diverse sources, using a "top-down" (clinical data like HbA1c) and "bottom-up" (cellular data like insulin secretion rates) approach [11].

- Model Refinement and "What-If" Testing: Run simulations to test if the model reproduces known behavior. Use the model to generate new, testable hypotheses (e.g., predict the effect of a drug combination) and refine the model with subsequent experimental findings [11].
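The equation-formulation and "what-if" steps can be made concrete with a toy version of the glucose example. The sketch below is a minimal illustration, not the model from [11]: it borrows the structure of the Bergman minimal model (plasma glucose G and a remote insulin action X) and adds a hypothetical glucose-stimulated insulin secretion term. Every parameter value is an assumption chosen only to produce plausible-looking dynamics, and the system is integrated with SciPy's `solve_ivp`.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical parameter values, chosen for illustration only (NOT fitted to data).
GB = 90.0     # basal plasma glucose (mg/dL)
IB = 10.0     # basal plasma insulin (uU/mL)
S_G = 0.02    # insulin-independent glucose clearance (1/min)
P2 = 0.025    # decay rate of remote insulin action (1/min)
P3 = 2e-6     # gain from plasma insulin to remote insulin action
N_CLR = 0.1   # insulin clearance rate (1/min)
K_SEC = 0.05  # hypothetical glucose-stimulated secretion gain


def rhs(t, y):
    """States: plasma glucose G, remote insulin action X, plasma insulin I."""
    G, X, I = y
    dG = -(S_G + X) * G + S_G * GB                   # clearance pulls G back to basal
    dX = -P2 * X + P3 * (I - IB)                     # insulin action lags plasma insulin
    dI = -N_CLR * (I - IB) + K_SEC * max(G - GB, 0)  # secretion only above basal glucose
    return [dG, dX, dI]


# Intravenous glucose bolus: glucose starts 200 mg/dL above basal.
y0 = [GB + 200.0, 0.0, IB]
t_eval = np.linspace(0.0, 180.0, 181)  # one sample per minute
sol = solve_ivp(rhs, (0.0, 180.0), y0, t_eval=t_eval, rtol=1e-6)

G = sol.y[0]
for t in (0, 30, 60, 120, 180):
    print(f"t={t:3d} min  G={G[t]:6.1f} mg/dL")
```

A "what-if" test then amounts to changing one parameter (for example, doubling `P3` to mimic an insulin sensitizer) and comparing the simulated glucose trajectories.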

The Critical Link Between Model Smoothness and Biological Plausibility

In computational biology and drug development, the smoothness of a model's output is not merely an aesthetic concern; it is a fundamental determinant of biological plausibility and predictive reliability. Model smoothness refers to the stability and gradual progression of a model's predictions in response to changes in input parameters. Excessive roughness in model outputs often signals overfitting to noise in experimental data, leading to biologically implausible predictions that fail to generalize to new experimental conditions. Conversely, appropriately smooth models typically generalize better and align more closely with the continuous nature of biological systems, from gradual dose-response relationships in pharmacology to the continuous dynamics of signaling pathways.

The relationship between smoothness and plausibility is particularly crucial in high-stakes applications like drug discovery, where computational models guide expensive experimental campaigns. This section provides practical guidance for researchers navigating the intersection of technical model optimization and biological fidelity.
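One simple way to put a number on "roughness" is the mean squared discrete second derivative of a model's predictions evaluated on a dense, uniform input grid. The sketch below is an illustration under stated assumptions: a hypothetical Hill-type inhibition curve stands in for a smooth model, and the same curve with a small high-frequency wiggle stands in for a model that has partly fit noise. The helper name `roughness` is hypothetical.

```python
import numpy as np


def roughness(y, x):
    """Mean squared discrete second derivative of predictions y on a uniform grid x."""
    h = x[1] - x[0]
    d2 = np.diff(y, n=2) / h**2
    return float(np.mean(d2**2))


log_conc = np.linspace(-3, 1, 401)  # hypothetical log-concentration grid

# Smooth sigmoidal response: hypothetical log IC50 = -1, Hill slope = 1.
smooth = 100 / (1 + 10 ** (log_conc - (-1.0)))
# Same curve plus a small high-frequency wiggle, mimicking a model that has fit noise.
rough = smooth + 2.0 * np.sin(40 * log_conc)

print(f"smooth model roughness: {roughness(smooth, log_conc):.3g}")
print(f"rough model roughness:  {roughness(rough, log_conc):.3g}")
```

Although the wiggle changes the response by at most 2 % of the full scale, it raises the roughness score by orders of magnitude, which is why second-derivative penalties are a common way to regularize toward smooth outputs.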

Troubleshooting Guides

Guide 1: Diagnosing Biological Implausibility from Rough Model Outputs

| Symptom | Potential Causes | Diagnostic Steps | Biological Impact |
|---|---|---|---|
| Erratic dose-response curves | Overfitting, insufficient regularization, inappropriate smoothing parameters | Check learning curves; validate on holdout dataset; perform sensitivity analysis | Poor translation from in silico to in vitro; inaccurate IC₅₀ predictions |
| Inconsistent mechanism-of-action predictions | Lack of mechanistic constraints in model architecture | Analyze feature importance; check alignment with known pathways | Misplaced target engagement hypotheses; failed clinical trials |
| High variance in binding affinity predictions (>1 log unit) | Noisy training data, inadequate feature engineering | Compute confidence intervals; assess data quality at the point of failure | Wasted resources on synthesizing low-potency compounds |
| Unstable classification of active/inactive compounds | Class imbalance, poorly calibrated classification thresholds | Plot ROC curves; calculate precision-recall metrics | Inaccurate virtual screening; missed lead compounds |

Guide 2: Smoothing Parameter Selection for Biological Data

| Smoothing Method | Optimal For | Parameter Selection Guide | Biological Considerations |
|---|---|---|---|
| Gaussian Filter [6] | Spectral data (e.g., SPR biosensors) | Start with σ = 1-2 data point widths; adjust based on known peak separation | Preserves actual binding kinetics while reducing high-frequency noise |
| Savitzky-Golay [6] | Preserving higher moments of distributions | Use polynomial order 2-4; window size 5-15% of data points | Maintains true shape of pharmacological response curves |
| Smoothing Splines [6] [17] | Irregularly sampled biological measurements | Use generalized cross-validation or marginal likelihood to estimate λ | Balances fidelity to experimental data with physical constraints |
| Exponentially Weighted Moving Average (EWMA) [6] | Time-series biological data | Set smoothing factor based on expected biological response time | Respects temporal dynamics of cellular responses |
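The four methods in the table can be compared side by side on data where the true curve is known. The sketch below assumes a synthetic association-phase curve (a hypothetical single-exponential binding signal sampled every 0.5 s) with known Gaussian noise, and uses starting parameters taken from the table's guidance. The spline's residual budget `s = n·sd²` assumes the noise level is known; the EWMA is written out by hand so the recursion is visible.

```python
import numpy as np
from scipy.interpolate import UnivariateSpline
from scipy.ndimage import gaussian_filter1d
from scipy.signal import savgol_filter

rng = np.random.default_rng(0)
t = np.arange(200) * 0.5                 # seconds, uniform sampling
truth = 1.0 - np.exp(-0.05 * t)          # hypothetical association-phase curve
noise_sd = 0.05
y = truth + rng.normal(0.0, noise_sd, t.size)


def ewma(x, alpha):
    """Exponentially weighted moving average: out_i = alpha*x_i + (1-alpha)*out_{i-1}."""
    out = np.empty_like(x)
    out[0] = x[0]
    for i in range(1, x.size):
        out[i] = alpha * x[i] + (1 - alpha) * out[i - 1]
    return out


smoothed = {
    "gaussian (sigma=2 pts)": gaussian_filter1d(y, sigma=2.0),
    "savitzky-golay (w=15, p=3)": savgol_filter(y, window_length=15, polyorder=3),
    "spline (s = n*sd^2)": UnivariateSpline(t, y, k=3, s=t.size * noise_sd**2)(t),
    "ewma (alpha=0.3)": ewma(y, alpha=0.3),
}

raw_rmse = float(np.sqrt(np.mean((y - truth) ** 2)))
rmse = {name: float(np.sqrt(np.mean((s - truth) ** 2))) for name, s in smoothed.items()}
print(f"raw data: {raw_rmse:.4f}")
for name, err in rmse.items():
    print(f"{name}: {err:.4f}")
```

For the EWMA row, one common way to tie the smoothing factor to a response time τ is α = Δt/(τ + Δt), which makes the EWMA a discrete first-order low-pass filter with time constant τ. When the noise level is unknown, recent SciPy versions also provide `scipy.interpolate.make_smoothing_spline`, which can choose the spline penalty by generalized cross-validation.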

Frequently Asked Questions (FAQs)

Q: How can I determine if my model is appropriately smooth or oversmoothed?

A: The optimal smoothness preserves meaningful biological variation while eliminating experimental noise. Use a two-step validation: First, technical validation through cross-checking multiple smoothing techniques (Gaussian, Savitzky-Golay, splines) and comparing their performance using metrics like Akaike Information Criterion (AIC) [17]. Second, biological validation by testing whether smoothed predictions align with established biological mechanisms and demonstrate coherence with existing knowledge [18] [19]. Oversmoothing typically eliminates real biological signal, manifesting as failure to capture known biphasic responses or threshold effects.

Q: What are the best practices for integrating biological plausibility directly into smoothing procedures?

A: Theory-guided smoothing incorporates biological constraints directly into the smoothing process. For drug discovery applications, this means enforcing monotonicity in dose-response relationships where biologically justified, constraining parameters to physiologically plausible ranges, and incorporating mechanistic regularizers that penalize biochemically impossible predictions [20] [21]. For example, when smoothing binding curves, apply constraints based on the law of mass action to maintain plausible dissociation constant ranges.
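A minimal sketch of the binding-curve example, assuming a one-site law-of-mass-action model B = Bmax·L/(Kd + L) and hypothetical plausibility bounds (Kd between 1 pM and 1 mM, Bmax no more than twice the largest observed signal). The model is monotone by construction, and the bounds are enforced through the `bounds` argument of SciPy's `curve_fit`; the data are synthetic.

```python
import numpy as np
from scipy.optimize import curve_fit


def one_site(L, bmax, kd):
    """Law-of-mass-action one-site binding; monotone increasing in ligand L for bmax, kd > 0."""
    return bmax * L / (kd + L)


rng = np.random.default_rng(1)
L = np.logspace(-1, 3, 12)                                 # ligand concentration (nM), hypothetical
y = one_site(L, 100.0, 10.0) + rng.normal(0.0, 3.0, L.size)

# Hypothetical plausibility bounds: Kd from 1 pM (1e-3 nM) to 1 mM (1e6 nM),
# Bmax non-negative and at most twice the largest observed signal.
lower = [0.0, 1e-3]
upper = [2 * y.max(), 1e6]
popt, pcov = curve_fit(one_site, L, y, p0=[y.max(), np.median(L)], bounds=(lower, upper))
bmax_hat, kd_hat = popt
print(f"Bmax = {bmax_hat:.1f}, Kd = {kd_hat:.2f} nM")
```

Because the fitted curve comes from a mechanistic model rather than a free-form smoother, it cannot develop non-monotone wiggles or a Kd outside the stated range, whatever the noise.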

Q: How can deep learning help with smoothing parameter selection while maintaining biological relevance?

A: Deep learning approaches, particularly convolutional neural networks (CNNs), can automate smoothing parameter selection by learning from human expert classifications of what constitutes optimal smoothness [8]. These systems can be trained on datasets where smoothing parameters have been validated against biological outcomes, capturing expert intuition while scaling to large datasets. However, ensure the training data includes biological validation metrics alongside technical smoothness assessments to maintain plausibility.

Q: My smoothed model fits training data well but fails in experimental validation. What might be wrong?

A: This discrepancy often indicates a generalisability problem in your smoothing approach [18]. The smoothing parameters may be tuned too closely to your training dataset's noise characteristics. Re-evaluate using domain adaptation techniques and ensure your smoothing approach accounts for inter-individual variability and differences in experimental conditions. Where possible, incorporate multi-scale validation that compares predictions at molecular, cellular, and tissue levels [20].

Experimental Protocols for Validating Model Smoothness and Biological Plausibility

Protocol 1: Systematic Smoothness-Plausibility Validation for Drug Response Models

Purpose: To establish an optimal smoothing threshold that balances noise reduction with preservation of biologically meaningful signal in dose-response modeling.

Workflow:

- Data Preparation: Collect concentration-response data with sufficient replicates to estimate experimental variability

- Multi-Method Smoothing: Apply Gaussian, Savitzky-Golay, and spline smoothing across a range of parameters [6]

- Goodness-of-Fit Assessment: Calculate AIC, BIC, and cross-validation error for each smoothed model [17]

- Biological Fidelity Testing:

- Compare smoothed predictions to known mechanisms of action

- Test prediction of established positive and negative controls

- Validate against orthogonal assay data where available

- Iterative Refinement: Select parameters that optimize both statistical and biological validity
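The multi-method smoothing and goodness-of-fit steps can be sketched for one smoothing family, a cubic smoothing spline, by using K-fold cross-validation to compare smoothing strengths. Assumptions: synthetic single-replicate inhibition data on a log-concentration grid, SciPy's `UnivariateSpline` with its residual budget `s` as the smoothing parameter, and a noise level known in advance (in practice it would come from the replicate variability estimated during data preparation). The hypothetical helper `kfold_cv_error` holds out every fifth point so that each training set still spans the full concentration range.

```python
import numpy as np
from scipy.interpolate import UnivariateSpline

rng = np.random.default_rng(2)
log_conc = np.linspace(-9, -4, 40)                 # log10 molar, hypothetical
truth = 100 / (1 + 10 ** (log_conc + 6.5))         # hypothetical IC50 = 10^-6.5 M
noise_sd = 5.0
resp = truth + rng.normal(0.0, noise_sd, log_conc.size)


def kfold_cv_error(x, y, s, k=5):
    """Mean squared prediction error with interleaved folds (every k-th point held out)."""
    errs = []
    for fold in range(k):
        test = np.arange(x.size) % k == fold
        n_train = (~test).sum()
        # Scale the residual budget to the number of training points.
        spl = UnivariateSpline(x[~test], y[~test], k=3, s=s * n_train / x.size)
        errs.append(np.mean((spl(x[test]) - y[test]) ** 2))
    return float(np.mean(errs))


# Candidate smoothing strengths as multiples of n * noise variance.
s_grid = np.array([0.1, 0.3, 1.0, 3.0, 10.0]) * log_conc.size * noise_sd**2
cv = {s: kfold_cv_error(log_conc, resp, s) for s in s_grid}
best_s = min(cv, key=cv.get)
for s, err in cv.items():
    print(f"s = {s:8.1f}  CV MSE = {err:7.2f}")
print(f"selected s = {best_s:.1f}")
```

The same loop can be repeated for Gaussian and Savitzky-Golay settings; the statistically preferred candidates then go forward to the biological fidelity tests rather than being accepted on CV error alone.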

Protocol 2: Mechanistic Consistency Check for Smoothed Predictive Models

Purpose: To ensure smoothing procedures maintain consistency with established biological mechanisms.

Workflow:

- Pathway Mapping: Diagram known biological pathways relevant to your prediction

- Constraint Implementation: Enforce pathway-derived constraints during smoothing:

- Maintain hierarchical relationships in signaling cascades

- Preserve known feedback loop dynamics

- Respect thermodynamic constraints on binding energies

- Perturbation Testing: Introduce simulated pathway perturbations; verify smoothed models respond plausibly

- Expert Review: Domain experts evaluate smoothed outputs for biological realism [22]

Essential Visualizations

Diagram 1: Smoothness Validation Workflow for Biological Models

Diagram 2: Biological Plausibility Assessment Framework

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Category | Specific Examples | Function in Smoothness Analysis | Biological Validation Role |
|---|---|---|---|
| Smoothing Algorithms | Gaussian filter, Savitzky-Golay, smoothing splines, EWMA [6] | Reduce high-frequency noise in experimental data | Preserve meaningful biological variation while eliminating technical artifacts |
| Model Evaluation Metrics | AIC, BIC, cross-validation error, precision, recall [22] [17] | Quantify trade-off between smoothness and fit quality | Ensure models generalize to new biological contexts |
| Biological Plausibility Frameworks | Bradford Hill criteria [19], mechanistic toxicology | Assess causal evidence strength for exposure-disease relationships | Ground computational predictions in established biological principles |
| Deep Learning for Automation | Convolutional Neural Networks (CNNs), Reinforcement Learning [8] [23] | Automate smoothing parameter selection | Scale expert-level biological validation to large datasets |
| Multi-scale Modeling Platforms | Cardiac electrophysiology models [20], Physiome project tools | Integrate smoothness constraints across biological scales | Ensure predictions remain plausible from molecular to organism levels |

A Practical Toolkit: Key Smoothing Techniques and Their Implementation

Frequently Asked Questions

Q1: What is the primary reason for applying smoothing to computational model outputs in research? Smoothing is primarily used to increase the signal-to-noise ratio in data. It suppresses high-frequency noise while preserving the low-frequency signal, making it easier to identify the underlying trends and patterns that are crucial for analyzing experimental results [24].

Q2: My Gaussian-filtered image looks overly blurred and has lost important details. How can I fix this? Over-blurring occurs when the standard deviation (σ) of the Gaussian kernel is too large. To fix this, use a smaller σ value, which results in a narrower kernel and preserves more detail. The kernel size should be large enough to adequately represent the Gaussian; a common rule is to set the kernel width to about 3 standard deviations on each side of the center [25] [26].
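A minimal sketch of the 3σ rule, assuming a 64×64 random test image: it builds a normalized 2-D Gaussian kernel whose half-width is about 3σ (as the outer product of two 1-D kernels, since the Gaussian is separable) and checks it against SciPy's `gaussian_filter` with `truncate=3.0` (SciPy's default is 4σ). The helper name `gaussian_kernel_2d` is hypothetical; the radius formula `int(truncate * sigma + 0.5)` is the rounding rule used here to match SciPy's kernel size.

```python
import numpy as np
from scipy.ndimage import gaussian_filter
from scipy.signal import convolve2d


def gaussian_kernel_2d(sigma, truncate=3.0):
    """Normalized 2-D Gaussian kernel with half-width of about truncate*sigma pixels."""
    radius = int(truncate * sigma + 0.5)
    x = np.arange(-radius, radius + 1)
    g1 = np.exp(-0.5 * (x / sigma) ** 2)
    g1 /= g1.sum()
    return np.outer(g1, g1)  # separable: outer product of two 1-D kernels


rng = np.random.default_rng(3)
image = rng.random((64, 64))
kernel = gaussian_kernel_2d(sigma=2.0)  # 13 x 13 for sigma = 2

# Zero padding in both implementations so the results are directly comparable.
manual = convolve2d(image, kernel, mode="same", boundary="fill", fillvalue=0.0)
reference = gaussian_filter(image, sigma=2.0, truncate=3.0, mode="constant", cval=0.0)
print(kernel.shape, np.abs(manual - reference).max())
```

Halving σ halves the kernel radius, which is the direct lever for recovering detail in an over-blurred image.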

Q3: When using a Savitzky-Golay filter, the smoothed data at the very beginning and end of my dataset appears distorted. Why does this happen? The Savitzky-Golay filter operates by fitting a polynomial to a window of points. At the edges of the dataset, there are insufficient points on one side to form a complete symmetric window, leading to inaccurate polynomial fits. This is a known limitation called the edge effect [27] [28].
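The edge behavior depends on how the filter pads the signal. In SciPy's `savgol_filter`, the default `mode="interp"` fits a polynomial to the last full window at each edge instead of padding, whereas `mode="constant"` pads with `cval`. The sketch below uses a noise-free quadratic (an assumption that makes the correct answer known): with `polyorder=2` the interpolating edge handling reproduces it exactly, while zero padding visibly distorts the first few points.

```python
import numpy as np
from scipy.signal import savgol_filter

x = np.arange(50, dtype=float)
y = 10.0 + 0.5 * x + 0.01 * x**2  # noise-free quadratic: a correct fit reproduces it

interp = savgol_filter(y, window_length=11, polyorder=2, mode="interp")
const = savgol_filter(y, window_length=11, polyorder=2, mode="constant", cval=0.0)

edge = slice(0, 5)  # first half-window, where a symmetric window runs off the data
err_interp = np.abs(interp[edge] - y[edge]).max()
err_const = np.abs(const[edge] - y[edge]).max()
print(f"max edge error, mode='interp':   {err_interp:.2e}")
print(f"max edge error, mode='constant': {err_const:.2f}")
```

The other padding modes (`"mirror"`, `"nearest"`, `"wrap"`) trade edge bias in different ways; none removes the underlying problem that the edge estimate rests on a one-sided window.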

Q4: Can I use the Kalman filter for real-time, online smoothing of data streams? Yes, the Kalman filter is ideally suited for real-time applications. It is a recursive algorithm, meaning it produces an updated estimate each time a new measurement arrives. It only requires the most recent measurement and the previous state estimate, making it computationally efficient for live data streams [29] [30] [31].
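The recursive structure is easiest to see in the scalar case. The sketch below assumes a random-walk state model (x_t = x_{t-1} + process noise, z_t = x_t + observation noise) with variances `q` and `r`; the class name `ScalarKalman` is hypothetical. Each call to `update` uses only the new measurement and the stored mean and variance, which is what makes the filter suitable for streaming data. Strictly, this is filtering; a smoother (for example, Rauch-Tung-Striebel) adds a backward pass over stored estimates.

```python
import numpy as np


class ScalarKalman:
    """Recursive Kalman filter for a scalar random-walk state (model is an assumption).

    x_t = x_{t-1} + w_t,  w_t ~ N(0, q)   (process / transition noise)
    z_t = x_t + v_t,      v_t ~ N(0, r)   (observation noise)
    """

    def __init__(self, q, r, x0=0.0, p0=1.0):
        self.q, self.r = q, r
        self.x, self.p = x0, p0

    def update(self, z):
        # Predict: a random walk keeps the mean and inflates the variance by q.
        p_pred = self.p + self.q
        # Update: blend prediction and measurement using the Kalman gain.
        k = p_pred / (p_pred + self.r)
        self.x = self.x + k * (z - self.x)
        self.p = (1.0 - k) * p_pred
        return self.x


rng = np.random.default_rng(4)
true_state = np.cumsum(rng.normal(0.0, 0.05, 500))      # slowly drifting signal
measurements = true_state + rng.normal(0.0, 1.0, 500)

kf = ScalarKalman(q=0.05**2, r=1.0)
estimates = np.array([kf.update(z) for z in measurements])  # one measurement at a time

rmse_raw = float(np.sqrt(np.mean((measurements - true_state) ** 2)))
rmse_kf = float(np.sqrt(np.mean((estimates - true_state) ** 2)))
print(f"raw RMSE {rmse_raw:.3f}, filtered RMSE {rmse_kf:.3f}")
```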

Q5: How does the Savitzky-Golay filter preserve sharp features in a signal better than a Gaussian filter? The Savitzky-Golay filter fits a low-degree polynomial to a window of data points. This local least-squares regression can follow the local slope and curvature of the signal, so it better preserves peak heights and widths (the higher moments of a peak). In contrast, a Gaussian filter is a weighted average that tends to blur sharp peaks and rapid transitions [27] [31].
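A small comparison makes the difference visible. The sketch assumes a noise-free Gaussian peak of unit height and width 3 samples, filtered once with a quartic Savitzky-Golay filter over 11 points and once with a Gaussian filter of σ = 2 samples; these settings are illustrative choices, not recommendations.

```python
import numpy as np
from scipy.ndimage import gaussian_filter1d
from scipy.signal import savgol_filter

x = np.arange(-50, 51)
peak = np.exp(-0.5 * (x / 3.0) ** 2)  # narrow noise-free peak, height 1.0, width 3 samples

sg = savgol_filter(peak, window_length=11, polyorder=4)
gauss = gaussian_filter1d(peak, sigma=2.0)

print(f"Savitzky-Golay peak height: {sg.max():.3f}")
print(f"Gaussian-filter peak height: {gauss.max():.3f}")
```

The Gaussian filter widens the peak (for Gaussian shapes, the widths add in quadrature), so its height drops to roughly 3/√13 ≈ 0.83, whereas the local quartic fit keeps the height close to 1.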

Troubleshooting Guides

Issue 1: Choosing the Smoothing Parameters for a Gaussian Filter

- Problem: The smoothed output is either too noisy (under-smoothed) or has lost critical features like peaks and edges (over-smoothed).

- Diagnosis: This is caused by an incorrect selection of the kernel's standard deviation, σ.

- Solution:

- Understand the Parameter: The σ parameter controls the width of the Gaussian kernel. A larger σ produces a wider kernel and more aggressive smoothing [25] [26].

- Experimental Protocol:

- Start with a small σ (e.g., 1.0) and visually inspect the result.

- Gradually increase σ until the noise is acceptably reduced, but stop before important features begin to visibly diminish in sharpness or amplitude.

- For images, you can use the `imgaussfilt` function in MATLAB or its equivalent in other languages, trying a scalar σ for isotropic smoothing or a 2-element vector for direction-dependent (anisotropic) smoothing [26].
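The σ sweep in the protocol can be quantified rather than judged only by eye. The sketch below assumes a synthetic 1-D signal: a narrow peak (the feature to keep) plus white noise (what to remove). Because Gaussian filtering is linear, smoothing the sum equals the sum of the smoothed parts, so the peak and the noise are filtered separately to measure feature loss and noise reduction at each σ.

```python
import numpy as np
from scipy.ndimage import gaussian_filter1d

rng = np.random.default_rng(5)
n = 2000
x = np.arange(n)
peak = np.exp(-0.5 * ((x - n // 2) / 4.0) ** 2)  # feature to preserve (width 4 samples)
noise = rng.normal(0.0, 0.2, n)                   # noise to suppress

# Linearity: smooth(peak + noise) == smooth(peak) + smooth(noise).
sigmas = [0.5, 1.0, 2.0, 4.0, 8.0]
peak_height = [float(gaussian_filter1d(peak, s).max()) for s in sigmas]
residual_noise = [float(gaussian_filter1d(noise, s).std()) for s in sigmas]

for s, h, r in zip(sigmas, peak_height, residual_noise):
    print(f"sigma={s:4.1f}  peak height={h:.3f}  residual noise sd={r:.3f}")
```

A reasonable stopping point is the largest σ at which the peak height is still within whatever tolerance the downstream analysis requires.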

Issue 2: Optimizing Savitzky-Golay Filter Parameters to Avoid Overfitting or Over-smoothing

- Problem: The smoothed signal either still appears noisy and follows random fluctuations (overfitting) or appears too "stiff" and misses genuine trends (over-smoothing).

- Diagnosis: This is due to an imbalance between the window size (w) and the polynomial order (p).

- Solution:

- Understand the Parameters: The window size determines how many adjacent data points are used for each local fit. The polynomial degree controls the flexibility of the curve used to approximate the data in that window [27] [28].

- Experimental Protocol:

- A good starting point is a 2nd (quadratic) or 3rd (cubic) order polynomial [27].

- The window size should be larger than the polynomial degree. A useful rule of thumb is to set the window size large enough to encompass the primary width of the features you wish to preserve.

- Systematically test different combinations. For example, fit a polynomial of order 2, 3, and 4 with window sizes of 5, 11, and 21. Visually compare the results to the original data. The optimal parameters preserve the shape of the signal while effectively reducing random noise [27] [31].

- Use the `savgol_filter` function from `scipy.signal` in Python for implementation [28].
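The parameter grid in the protocol (orders 2, 3, 4 by windows 5, 11, 21) can be scored systematically when a reference signal is available. The sketch below assumes a synthetic two-peak signal with known truth so that each combination can be scored by RMSE; with real data the truth is unknown, and the visual comparison, residual inspection, or held-out points would take its place.

```python
import numpy as np
from scipy.signal import savgol_filter

rng = np.random.default_rng(6)
t = np.linspace(0, 10, 301)
truth = np.exp(-0.5 * ((t - 4) / 0.4) ** 2) + 0.5 * np.exp(-0.5 * ((t - 6.5) / 0.8) ** 2)
y = truth + rng.normal(0.0, 0.05, t.size)

results = {}
for polyorder in (2, 3, 4):
    for window in (5, 11, 21):
        if window <= polyorder:  # savgol_filter requires polyorder < window_length
            continue
        fit = savgol_filter(y, window_length=window, polyorder=polyorder)
        results[(window, polyorder)] = float(np.sqrt(np.mean((fit - truth) ** 2)))

for (window, polyorder), err in sorted(results.items()):
    print(f"window={window:2d} order={polyorder}  RMSE={err:.4f}")
best = min(results, key=results.get)
print("best (window, order):", best)
```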

Issue 3: Tuning a Kalman Filter for a Noisy Sensor Data Application

- Problem: The Kalman filter output either lags significantly behind the true signal or is too jittery and does not suppress enough noise.

- Diagnosis: This is typically caused by incorrect tuning of the process and observation noise covariance parameters.

- Solution:

- Understand the Parameters: The filter balances trust between its internal process model (which predicts the next state) and the new measurements it receives. High trust in the model makes the filter smoother but increases lag; high trust in measurements makes it more responsive but noisier [29] [30].

- Experimental Protocol:

- If the filter is too smooth and laggy, it trusts its internal model too much. Increase the process noise (the `transition_std` parameter or equivalent) or decrease the observation noise (`observation_std`), telling the filter that its internal model is less reliable relative to the measurements [31].

- If the filter is too jittery and noisy, it trusts the measurements too much. Increase the observation noise (the `observation_std` parameter or equivalent) or decrease the process noise (`transition_std`), telling the filter that the measurements are less reliable relative to its internal model [31].

- This tuning process is often iterative. Use a segment of data where the true signal is known or can be reasonably estimated to calibrate these parameters effectively.
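The lag-versus-jitter trade-off can be measured directly. The sketch below assumes a scalar random-walk Kalman filter with process variance `q` and observation variance `r` (the squares of the `transition_std` and `observation_std` ideas above); the function name `kalman_random_walk` is hypothetical. Because the gains do not depend on the data and the filter starts at zero, it is linear, so a clean step and pure noise are filtered separately: the step gives the lag, the noise gives the jitter.

```python
import numpy as np


def kalman_random_walk(z, q, r, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter; q = process variance, r = observation variance."""
    x, p = x0, p0
    out = np.empty(len(z))
    for i, zi in enumerate(z):
        p = p + q               # predict
        k = p / (p + r)         # gain: a larger q/r means more trust in measurements
        x = x + k * (zi - x)    # update
        p = (1 - k) * p
        out[i] = x
    return out


n = 800
step = np.where(np.arange(n) >= 100, 5.0, 0.0)        # clean step at sample 100
noise = np.random.default_rng(7).normal(0.0, 1.0, n)

lags, jitters = [], []
for q in (1e-4, 1e-2, 1e-1):
    resp = kalman_random_walk(step, q=q, r=1.0)
    lag = int(np.nonzero(resp >= 4.5)[0][0]) - 100    # samples to reach 90% of the step
    jitter = float(kalman_random_walk(noise, q=q, r=1.0)[300:].std())
    lags.append(lag)
    jitters.append(jitter)
    print(f"q/r={q:g}: lag={lag:4d} samples, steady-state jitter sd={jitter:.3f}")
```

Raising q/r shortens the lag and raises the jitter; lowering it does the opposite, which is the trade-off the two tuning rules above describe.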

Algorithm Comparison & Selection

The table below summarizes the key characteristics of the three smoothing algorithms to aid in selection.

| Algorithm | Primary Mechanism | Key Parameters | Best Used For | Major Considerations |
|---|---|---|---|---|
| Gaussian Filter [25] [26] [24] | 2-D convolution with a bell-shaped (Gaussian) kernel for weighted averaging. | Standard Deviation (σ), Kernel Size. | General-purpose blurring and noise reduction; pre-processing for edge detection. | Can blur sharp edges; the kernel should extend about 3σ on each side of the center for an accurate representation [25]. |
| Savitzky-Golay Filter [32] [27] [28] | Local least-squares polynomial fitting within a sliding window. | Window Size, Polynomial Degree. | Preserving higher-order signal features (e.g., peak heights and widths) while reducing noise. | Sensitive to parameter choice; suffers from edge effects [27] [28]. |
| Kalman Smoother [29] [30] [31] | Recursive probabilistic estimation using a system's dynamic model and noisy measurements. | Process Noise, Observation Noise. | Real-time sensor data fusion, systems with known dynamics, and handling missing data. | Requires a model of the system dynamics; parameter tuning can be complex [29] [31]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

This table lists key computational "reagents" and tools essential for implementing the discussed smoothing algorithms in a research environment.

| Item / Software Library | Primary Function | Key Utility in Smoothing Analysis |
|---|---|---|
| SciPy Signal Library (Python) [27] [28] | Provides signal processing functions, including savgol_filter. | Direct implementation of Savitzky-Golay filtering and other related signal operations. |
| Image Processing Toolbox (MATLAB) [26] | Offers comprehensive functions for image analysis, including imgaussfilt. | Application of Gaussian smoothing filters to 2D and 3D image data with control over σ. |
| NumPy & SciPy (Python) [24] | Foundational libraries for numerical computation and linear algebra. | Enables custom implementation of convolution operations (e.g., for Gaussian kernels) and matrix manipulations required for Kalman filters. |
| PyKalman Library (Python) | A dedicated library for Kalman filtering and smoothing. | Simplifies the implementation and tuning of Kalman filters for time-series data. |

Experimental Workflow Visualization

The diagram below illustrates a general decision-making workflow for selecting and applying a smoothing algorithm to computational model outputs.

Smoothing Algorithm Selection Workflow

The following diagram details the core computational process of the Savitzky-Golay filter, which involves fitting a local polynomial to the data within a sliding window.

Savitzky-Golay Filter Mechanism

This final diagram illustrates the recursive predict-update cycle that forms the core of the Kalman filter algorithm.

Kalman Filter Predict-Update Cycle

FAQs and Troubleshooting Guide

Q1: What is the core principle behind Cross-Layer Vision Smoothing (CLVS) in Large Vision-Language Models (LVLMs)?

A1: The core principle of CLVS is to mitigate "advantageous attention decay," a phenomenon where an LVLM's focus on key objects in an image is accurate but very brief [3]. CLVS introduces a vision memory that smooths the visual attention distribution across the model's layers [3] [33]. This ensures that once the model identifies a crucial object, it maintains a sustained focus on it, rather than letting its attention drift in subsequent layers. This sustained focus leads to more robust visual understanding, particularly for object attributes and relations [3].

Q2: My LVLM model suffers from hallucinations, especially describing attributes or relations inaccurately. Could CLVS help?

A2: Yes. Experiments show that CLVS is particularly effective at reducing hallucinations related to object attributes and relations [3]. By maintaining smooth attention on key objects throughout the processing layers, the model has a more consistent and reliable "view" of the objects it needs to describe, leading to more accurate and grounded outputs [3].

Q3: How does CLVS handle potential positional biases in visual attention?

A3: CLVS explicitly addresses positional bias in its initial step. In the first layer of the model, it unifies the positional indices of all image tokens to a single, unbiased index [3]. This position-unbiased visual attention is then used to initialize the vision memory, ensuring the smoothing process starts from a neutral foundation and is not skewed towards certain areas of the image, such as the bottom-right corner [3].

Q4: At what point in the model's processing does the vision smoothing occur?

A4: The smoothing process is applied from the second layer onwards and is terminated once the model's visual understanding is deemed complete [3]. CLVS uses the model's internal uncertainty as an indicator to decide when to stop the smoothing, preventing unnecessary computation in later layers where visual understanding is typically finalized [3].

Q5: How does CLVS differ from other methods that try to improve visual attention?

A5: Many existing approaches enhance visual attention independently within each layer [3]. In contrast, CLVS is distinctive because it specifically manages the evolution of visual attention across different layers [3]. It is a training-free method that focuses on the cross-layer dynamics of attention, ensuring consistency over depth rather than just boosting attention weights at a single point [3].

Experimental Protocols and Data Presentation

The following table summarizes the key components of the CLVS methodology as described in the research [3].

Table 1: Core Components of the Cross-Layer Vision Smoothing (CLVS) Protocol

| Protocol Component | Description | Function |
|---|---|---|
| Unified Visual Positions | Normalizing positional indices of all image tokens to a single, unbiased index in the first layer. | Initializes the model with position-unbiased perception, countering inherent positional biases [3]. |
| Vision Memory Initialization | The vision memory is initialized with the unbiased visual attention from the first layer. | Provides the initial state for the cross-layer smoothing process [3]. |
| Visual Attention Smoothing | In subsequent layers, the current visual attention is interpolated with the vision memory, which is then updated iteratively. | Ensures attention to key objects is maintained smoothly across layers, preventing advantageous attention decay [3]. |
| Uncertainty-Based Termination | The smoothing process is halted when the model's uncertainty indicates visual understanding is complete. | Optimizes computational efficiency by stopping the process in the early or middle layers where visual understanding primarily occurs [3]. |
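To make the memory-update and termination logic concrete, here is a hypothetical NumPy sketch operating on precomputed per-layer attention distributions. It is not the authors' implementation: the interpolation weight `lam`, the threshold `stop_below`, and the per-layer `layer_uncertainty` scores (standing in for the model's actual uncertainty signal) are all assumptions, and the position-unification step is omitted. In a real LVLM this logic would run inside the forward pass, for example via attention hooks.

```python
import numpy as np


def smooth_attention_across_layers(layer_attn, layer_uncertainty, lam=0.5, stop_below=0.2):
    """Hypothetical cross-layer attention smoothing with a vision memory.

    layer_attn: (num_layers, num_image_tokens); each row is a distribution over image tokens.
    layer_uncertainty: per-layer scalar standing in for the model's uncertainty signal.
    lam: assumed weight on the memory when interpolating with the current layer.
    """
    attn = np.asarray(layer_attn, dtype=float)
    out = attn.copy()
    memory = attn[0].copy()                    # first layer initializes the memory
    for layer in range(1, attn.shape[0]):
        if layer_uncertainty[layer] < stop_below:
            break                              # understanding judged complete: stop smoothing
        mixed = lam * memory + (1.0 - lam) * attn[layer]
        mixed /= mixed.sum()                   # keep a valid probability distribution
        out[layer] = mixed
        memory = mixed                         # iterative memory update
    return out


# Toy example: layer 1 focuses on token 2, later layers drift to uniform attention.
num_layers, num_tokens = 6, 8
raw = np.full((num_layers, num_tokens), 1.0 / num_tokens)
raw[0] = 0.05
raw[0, 2] = 0.65
uncertainty = [1.0, 0.9, 0.7, 0.5, 0.1, 0.05]

smoothed = smooth_attention_across_layers(raw, uncertainty)
print("raw attention on token 2:     ", np.round(raw[:, 2], 3))
print("smoothed attention on token 2:", np.round(smoothed[:, 2], 3))
```

In the toy run, focus on the key token decays gradually over layers 2 to 4 instead of vanishing after layer 1, and layers after the termination point keep their original attention.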

The effectiveness of CLVS was validated across multiple benchmarks and models. The table below provides a simplified summary of its performance impact.

Table 2: Performance Impact of CLVS on LVLMs

| Evaluation Aspect | Impact of CLVS | Interpretation |
|---|---|---|
| Overall Visual Understanding | Achieves state-of-the-art performance across a variety of tasks [3] [33]. | CLVS generally enhances the model's ability to understand and reason about visual content. |
| Attribute & Relation Understanding | Significant improvements noted, with reduced hallucinations [3]. | Sustained focus on objects allows for more accurate inference of their properties and interactions. |
| Image Captioning | Attains comparable results to leading approaches [33]. | The method is competitive in generating descriptive and coherent textual summaries of images. |
| Generalizability | Effective across three different LVLMs and four benchmarks [3]. | The approach is not model-specific and can be generalized to various architectures. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a CLVS "Experiment"

| Item | Function in the "Experimental" Setup |
|---|---|
| Transformer-based LVLM | The base model (e.g., LLaVA) whose internal attention mechanisms are being smoothed and analyzed [3]. |
| Input Image & Text Query | The raw multimodal input that triggers the model's visual and linguistic processing [3]. |
| Vision Memory Module | The core "reagent" that stores and updates the smoothed attention distribution across layers [3]. |
| Uncertainty Quantification Metric | The "assay" used to determine when the visual understanding process is complete and smoothing can terminate [3]. |
| Position Unification Algorithm | The pre-processing step applied to visual tokens in the first layer to remove positional bias [3]. |

CLVS Workflow and Signaling

The following diagram illustrates the logical workflow and data flow of the Cross-Layer Vision Smoothing process.

CLVS Workflow Diagram

Comparative Analysis of Cross-Layer Methods

The table below contrasts CLVS with another advanced cross-layer attention method, Consistent Cross-layer Regional Alignment (CCRA), to highlight different technical approaches.

Table 4: Comparison of Cross-Layer Attention Methods

| Feature | Cross-Layer Vision Smoothing (CLVS) | Consistent Cross-layer Regional Alignment (CCRA) |
|---|---|---|
| Primary Goal | Sustain focus on key objects to prevent attention decay [3]. | Coordinate diverse attention mechanisms for fine-grained regional-semantic alignment [34]. |
| Core Mechanism | A single vision memory that is iteratively updated across layers [3]. | Progressive Attention Integration (PAI) applying three attention types in sequence [34]. |
| Key Innovation | Uncertainty-based termination of the smoothing process [3]. | Layer-Patch-Wise Cross Attention (LPWCA) for joint regional-semantic weighting [34]. |
| Handling of Layers | Smooths attention via memory across all layers until understanding is complete [3]. | Explicitly models layer and patch indices in a unified attention space [34]. |
| Reported Outcome | Reduced hallucinations; improved attribute/relation understanding [3]. | State-of-the-art performance on diverse benchmarks; enhanced interpretability [34]. |

Implementing Smoothness Analysis for Time-Series and High-Dimensional Data

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between time series analysis and time series forecasting? Time series analysis is a method used for analysing data to extract meaningful statistical information. In contrast, time series forecasting is focused on predicting future values based on previously observed values over time [35].

FAQ 2: My high-dimensional state-space model is suffering from the "curse of dimensionality." What are my options? The curse of dimensionality refers to the problem where the number of particles or samples required for accurate smoothing increases exponentially with the dimension of the hidden state, making traditional methods computationally prohibitive. One advanced method to address this is the Space–Time Forward Smoothing (STFS) algorithm, which uses a polynomial cost structure (e.g., O(N²d²T)) to make smoothing more feasible for high-dimensional problems. This is particularly applicable for models with local interactions [36].

FAQ 3: How do I choose the right smoothing factor (α) for Exponential Smoothing? The smoothing factor (α) in Single Exponential Smoothing controls the exponential decrease of weights assigned to past data points and can vary between 0 and 1. A larger α means past data points have less weight, resulting in less smoothing. The optimal α can be found manually or through optimization methods available in statistical software packages [37].
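A minimal sketch of single exponential smoothing and a grid search for α, assuming a synthetic series (a slow random walk plus measurement noise) and one-step-ahead squared error as the selection criterion. The helper names are hypothetical. In practice, statsmodels' `SimpleExpSmoothing` class can estimate α by numerical optimization instead of a grid.

```python
import numpy as np


def simple_exp_smoothing(x, alpha):
    """s_0 = x_0; s_t = alpha * x_t + (1 - alpha) * s_{t-1}."""
    s = np.empty(len(x))
    s[0] = x[0]
    for t in range(1, len(x)):
        s[t] = alpha * x[t] + (1 - alpha) * s[t - 1]
    return s


def one_step_sse(x, alpha):
    """Sum of squared one-step-ahead errors; s_{t-1} is the forecast for x_t."""
    s = simple_exp_smoothing(x, alpha)
    return float(np.sum((x[1:] - s[:-1]) ** 2))


rng = np.random.default_rng(8)
x = 20 + np.cumsum(rng.normal(0, 0.3, 300)) + rng.normal(0, 1.0, 300)

alphas = np.linspace(0.05, 0.95, 19)
sse = [one_step_sse(x, a) for a in alphas]
best_alpha = float(alphas[int(np.argmin(sse))])
print(f"alpha minimizing one-step SSE: {best_alpha:.2f}")
```

With α = 1 the smoothed series equals the raw data; smaller values average over more of the past and produce a visibly smoother curve.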

FAQ 4: Why am I getting null values at the start and end of my smoothed time series? This is a common issue with methods like the centered moving average, where the time window extends beyond the available data. Many tools offer a parameter (e.g., "Apply shorter time window at start and end") that, when enabled, truncates the window at the series boundaries and performs smoothing with the available values, thus preventing null results [38].
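A minimal NumPy sketch of both behaviors, assuming an odd window length; the function name and the `shrink_at_edges` flag are hypothetical. In pandas, `Series.rolling(window, center=True, min_periods=1).mean()` gives the same truncated-window result.

```python
import numpy as np


def centered_moving_average(x, window, shrink_at_edges=True):
    """Centered moving average over an odd-length window.

    With shrink_at_edges=True the window is truncated at the series boundaries and the
    mean of the available values is used; otherwise edge positions are left as NaN.
    """
    if window % 2 == 0:
        raise ValueError("window must be odd for a centered average")
    half = window // 2
    x = np.asarray(x, dtype=float)
    out = np.full(x.size, np.nan)
    for i in range(x.size):
        lo, hi = i - half, i + half + 1
        if lo < 0 or hi > x.size:
            if not shrink_at_edges:
                continue  # full window unavailable: leave NaN
            lo, hi = max(lo, 0), min(hi, x.size)
        out[i] = x[lo:hi].mean()
    return out


series = [1, 2, 3, 4, 5, 6, 7]
print(centered_moving_average(series, 5, shrink_at_edges=False))  # NaN at both ends
print(centered_moving_average(series, 5))                         # truncated windows at ends
```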

FAQ 5: What is the "path degeneracy" problem in Sequential Monte Carlo (SMC) smoothing? Path degeneracy is a severe drawback of SMC methods. As the algorithm progresses through many time steps, the number of unique particles representing the initial states decreases with every resampling step. Consequently, approximations of the smoothed state distribution for early time points can become poor, as they rely on very few unique particle trajectories [36].
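Path degeneracy can be observed directly by tracking which initial particle each current trajectory descends from. The sketch below assumes a bootstrap particle filter for a 1-D Gaussian random walk observed with Gaussian noise, using multinomial resampling at every step. The count of distinct time-0 ancestors can only stay the same or shrink after each resampling, which is why smoothed estimates for early time points end up resting on very few trajectories.

```python
import numpy as np

rng = np.random.default_rng(9)
N, T = 200, 60        # particles, time steps
q, r = 1.0, 1.0       # random-walk and observation variances (assumed model)

# Simulate data from the assumed model: x_t = x_{t-1} + N(0, q), y_t = x_t + N(0, r).
x_true = np.cumsum(rng.normal(0.0, np.sqrt(q), T))
y = x_true + rng.normal(0.0, np.sqrt(r), T)

particles = rng.normal(0.0, 1.0, N)
origin = np.arange(N)  # index of the time-0 particle each current path descends from
unique_origins = []
for t in range(T):
    particles = particles + rng.normal(0.0, np.sqrt(q), N)   # propagate
    logw = -0.5 * (y[t] - particles) ** 2 / r                # Gaussian log-likelihood
    w = np.exp(logw - logw.max())
    w /= w.sum()
    idx = rng.choice(N, size=N, p=w)                         # multinomial resampling
    particles, origin = particles[idx], origin[idx]
    unique_origins.append(int(np.unique(origin).size))

print("distinct time-0 ancestors every 10 steps:", unique_origins[::10])
```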

Troubleshooting Guides

Issue 1: Poor Forecast Accuracy After Smoothing

Problem Description: After applying smoothing techniques to a time series, the resulting forecasts are inaccurate, failing to capture the true trends or seasonality.

Diagnostic Steps:

- Decompose the Series: Visually or statistically decompose your time series into its core components: Trend, Seasonality, and Residual noise [35]. This helps identify which component your smoothing method might be incorrectly handling.

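- Check Method Suitability: Verify that the chosen smoothing method is appropriate for your data's structure. Single exponential smoothing assumes no trend or seasonality, double (Holt) exponential smoothing adds a trend component, and triple (Holt-Winters) exponential smoothing adds seasonality.

- Parameter Tuning: Use optimization functions (e.g., `fit(method="basinhopping")` in `statsmodels`) to find the optimal smoothing factors (α, β, γ) instead of relying on manual guesswork [37].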

Solution: Select a smoothing method that matches your data's components. For a series with trend and seasonality, implement Triple Exponential Smoothing and use automated parameter optimization.
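A minimal sketch of additive triple exponential smoothing with automated parameter selection, written in plain NumPy so that each update is visible. Assumptions: a synthetic monthly series with a linear trend and a 12-period seasonal cycle, a simple initialization from the first two seasons, and a coarse grid search over (α, β, γ) minimizing one-step-ahead squared error. The function name is hypothetical. In practice, statsmodels' `ExponentialSmoothing(..., trend="add", seasonal="add", seasonal_periods=12).fit()` performs the optimization with a proper numerical optimizer.

```python
import itertools

import numpy as np


def holt_winters_additive(y, m, alpha, beta, gamma, horizon=0):
    """Additive Holt-Winters. Returns (one-step SSE over y[m:], `horizon` forecasts)."""
    y = np.asarray(y, dtype=float)
    level = y[:m].mean()
    trend = (y[m:2 * m].mean() - y[:m].mean()) / m
    season = y[:m] - level          # season[t % m] holds s_{t-m}
    sse = 0.0
    for t in range(m, y.size):
        s_prev = season[t % m]
        sse += (y[t] - (level + trend + s_prev)) ** 2
        new_level = alpha * (y[t] - s_prev) + (1 - alpha) * (level + trend)
        trend = beta * (new_level - level) + (1 - beta) * trend
        season[t % m] = gamma * (y[t] - new_level) + (1 - gamma) * s_prev
        level = new_level
    n = y.size
    forecasts = np.array([level + h * trend + season[(n - 1 + h) % m]
                          for h in range(1, horizon + 1)])
    return sse, forecasts


rng = np.random.default_rng(10)
m = 12
t = np.arange(120)
truth = 10 + 0.5 * t + 5 * np.sin(2 * np.pi * t / m)
y = truth + rng.normal(0.0, 1.0, t.size)
train, test = y[:108], truth[108:]

grid = np.linspace(0.05, 0.95, 7)
best = min(itertools.product(grid, grid, grid),
           key=lambda p: holt_winters_additive(train, m, *p)[0])
_, forecast = holt_winters_additive(train, m, *best, horizon=12)
mae = float(np.mean(np.abs(forecast - test)))
print("alpha, beta, gamma =", np.round(best, 2), f" 12-step MAE = {mae:.2f}")
```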

Issue 2: Inability to Handle High-Dimensional Data

Problem Description: Standard smoothing algorithms (e.g., basic SMC) become computationally intractable or fail completely when applied to high-dimensional data, such as spatial-temporal fields or models with many hidden variables.

Diagnostic Steps:

- Assess Model Structure: Determine whether your high-dimensional state-space model assumes only local interactions, where the dynamics of one component depend only on its neighbors [36].

- Evaluate Algorithm Cost: Check if the computational cost of your current smoother scales exponentially with the state dimension (d), a clear sign of the curse of dimensionality [36].

Solution: For high-dimensional models with local interactions, implement specialized algorithms designed to mitigate the curse of dimensionality.

- Blocked Forward Smoothing Algorithm: This algorithm uses a blocking strategy, breaking down the high-dimensional state into smaller, overlapping blocks. It then applies smoothing to these blocks in parallel, significantly reducing the computational cost compared to full-dimensional smoothing [36].

- Space–Time Forward Smoothing (STFS) Algorithm: This is another advanced method that efficiently calculates smoothed additive functionals in an online manner for a specific family of high-dimensional state-space models. Its cost is polynomial in the state dimension (d), making it more scalable than traditional methods [36].
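The sketch below shows why full-dimensional particle weighting breaks down as d grows, and why localizing the weights helps. It does not implement the Blocked Forward Smoothing or STFS algorithms of [36]. Instead, it uses a simplified, hypothetical setup: particles drawn from a standard normal prior are weighted against a single noisy observation in d dimensions. The sketch then reports the effective sample size (ESS) either for one full-dimensional weight or averaged over per-block weights.

```python
import math
import random


def ess(log_w):
    """Effective sample size (sum w)^2 / sum w^2 from unnormalised log-weights."""
    top = max(log_w)
    w = [math.exp(lw - top) for lw in log_w]
    return sum(w) ** 2 / sum(x * x for x in w)


def mean_ess(d, n_particles=500, block_size=None, seed=0):
    """Average ESS when weighting prior particles against y = x + noise in d dims.

    block_size=None weights each particle by its full d-dimensional likelihood;
    a smaller block_size computes a separate (localised) weight per block.
    """
    rng = random.Random(seed)
    truth = [rng.gauss(0, 1) for _ in range(d)]
    obs = [x + rng.gauss(0, 1) for x in truth]
    particles = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n_particles)]
    # Per-coordinate Gaussian log-likelihood with unit observation noise
    ll = [[-0.5 * (obs[k] - p[k]) ** 2 for k in range(d)] for p in particles]
    b = block_size or d
    blocks = [range(i, min(i + b, d)) for i in range(0, d, b)]
    values = [ess([sum(row[k] for k in blk) for row in ll]) for blk in blocks]
    return sum(values) / len(values)


print(mean_ess(50), mean_ess(50, block_size=1))
```

With full weighting in 50 dimensions, the ESS collapses to a few particles out of 500, so the particle count needed grows exponentially with d. Per-block weights keep the ESS high. The price is the approximation error that blocking methods must control at block boundaries.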

Issue 3: Choosing the Right Smoothing Method

Problem Description: A researcher is unsure which smoothing method to use for their specific time series data, which has characteristics like noise, trend, and seasonality.

Diagnostic Steps:

- Identify Data Components: Analyze the time series to confirm the presence or absence of Trend (long-term increase/decrease) and Seasonality (regular, periodic fluctuations) [35].

- Assess Data Volume: Determine if you have a sufficient volume of historical data, as some methods (like neural networks or complex exponential smoothing) require more data than others [35].

- Define Requirement: Decide if you need a simple, fast method for visualization, or a sophisticated one for forecasting.
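To make the "Identify Data Components" step concrete, here is a minimal sketch of classical additive decomposition (y = trend + seasonal + residual). The trend is a centered moving average (a 2×m average for even periods), and the seasonal pattern is the mean detrended value per phase. Assumptions: the period is known and at least two full periods are available. A library route is `statsmodels.tsa.seasonal.seasonal_decompose`.

```python
def classical_decompose(y, period):
    """Classical additive decomposition; trend/residual are None at the ends."""
    n = len(y)
    half = period // 2
    trend = [None] * n
    for t in range(half, n - half):
        window = y[t - half:t + half + 1]
        if period % 2:  # odd period: simple centered average of `period` points
            trend[t] = sum(window) / period
        else:  # even period: 2 x m average, half weight on the two end points
            trend[t] = (sum(window) - 0.5 * (window[0] + window[-1])) / period
    # Average the detrended values for each seasonal phase
    sums, counts = [0.0] * period, [0] * period
    for t, value in enumerate(y):
        if trend[t] is not None:
            sums[t % period] += value - trend[t]
            counts[t % period] += 1
    if 0 in counts:
        raise ValueError("need at least two full periods of data")
    raw = [s / c for s, c in zip(sums, counts)]
    mean_raw = sum(raw) / period
    pattern = [r - mean_raw for r in raw]  # centre the seasonal indices on zero
    seasonal = [pattern[t % period] for t in range(n)]
    residual = [None if tr is None else v - tr - s
                for v, tr, s in zip(y, trend, seasonal)]
    return trend, seasonal, residual


series = [10 + 0.5 * t + [2.0, -1.0, -3.0, 2.0][t % 4] for t in range(40)]
trend, seasonal, residual = classical_decompose(series, period=4)
print(seasonal[:4])
```

A rough reading: if the seasonal indices are small relative to the spread of the residuals, seasonality is weak. If the trend values change little across the series, there is no clear trend.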

Solution: Select the algorithm based on the components present in your data and your end goal. The table below summarizes the primary methods.

| Time Series Characteristics | Recommended Algorithm | Key Parameters | Notes |
|---|---|---|---|
| No clear trend or seasonality | Single Exponential Smoothing [37] | Smoothing Factor (α) | Simple and fast for stationary data. |
| Trend but no seasonality | Double Exponential Smoothing [37] | α, Trend Smoothing (β) | Handles additive (linear) or multiplicative (exponential) trends. |
| Trend and seasonality | Triple Exponential Smoothing (Holt-Winters) [37] | α, β, Seasonal Smoothing (γ) | The most sophisticated exponential smoothing method. |
| Little noise; highlighting a long-term trend | Moving Average [38] [37] | Window Size (k) | Simple but cannot handle seasonality well. Values at series ends can be problematic. |
| Complex trends, variable smoothing | Adaptive Bandwidth Local Linear Regression [38] | (Bandwidth estimated by tool) | Automatically adjusts the smoothing window; excellent for visualization. |
| High-dimensional state-space models | Blocked Forward Smoothing / STFS Algorithm [36] | Number of Particles (N), Block Size | Designed to overcome the "curse of dimensionality." |

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" – algorithms, models, and standards – essential for conducting smoothness analysis research.

| Research Reagent | Function / Purpose | Key Considerations |
|---|---|---|
| Functional Autoregressive (FAR) Model [39] | Models curve evolution over time in a functional data framework; useful for high-dimensional functional time series (FTS). | A lightweight prediction system; shown to outperform some machine learning techniques for temperature forecasting [39]. |
| Sequential Monte Carlo (SMC) [36] | A class of methods (particle filters) that use weighted samples to approximate complex smoothing distributions in non-linear, non-Gaussian state-space models. | Suffers from path degeneracy in its basic form, where approximations of early states become poor over long series [36]. |
| CDISC Standards (SDTM/ADaM) [40] | Standardized data structures for clinical and preclinical data. | Facilitates consistent reporting and easy aggregation of data from multiple studies for integrated analysis; crucial for regulatory submissions [40]. |
| Forward Filtering Backward Smoothing (FFBS) Recursion [36] | A numerical scheme that substantially improves the asymptotic variance of the estimator for smoothed additive functionals compared to the basic path space method [36]. | Computationally more expensive than the path space method but mitigates the path degeneracy problem [36]. |
| Benefit Risk Action Team (BRAT) Framework [40] | A structured framework of processes and tools for selecting, organizing, and interpreting benefit-risk data. | Increasingly important in regulatory submissions as a standardized platform for benefit-risk assessment [40]. |

Troubleshooting Guides

Guide 1: Addressing Computational Complexity in Global Field Smoothing

Problem: Smoothing high-resolution global forecast fields is computationally prohibitive, taking too long to complete.

Explanation: Using a naive explicit summation method for smoothing on a spherical geometry has a time complexity of O(n²), which becomes intractable for models with millions of grid points (e.g., ~6.5 million points in an O1280 octahedral reduced Gaussian grid) [41].

Solution: Implement efficient, area-size-informed smoothing methodologies designed for spherical domains.

- Step 1: Verify your grid geometry and area size data. Ensure your input data includes the area size (e.g., in km²) for each grid point, as this is essential for accurate, area-size-informed smoothing [41].

- Step 2: Choose and apply a computationally efficient smoothing algorithm suitable for your grid. The formula for area-size-informed smoothing is [41]:

$$f_i'(R) = \frac{\sum_{j} f_j \, a_j}{\sum_{j} a_j}$$

where both sums run over all grid points j within a great-circle distance R of point i, f_j is the field value and a_j the area size of grid point j.

- Step 3: For regular equidistant grids, leverage the summed-fields approach (O(n) complexity) or Fast-Fourier-Transform-based convolution (O(n log n) complexity) for maximum efficiency [41].
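For Step 3, the sketch below assumes that a "summed-fields" approach works like a summed-area table (integral image). This is an assumption about how [41] implements it. After one O(n) cumulative-sum pass, the mean over any rectangular neighbourhood costs O(1). It also assumes equal-area grid points, so the area weights cancel, and a square (not circular) neighbourhood truncated at the grid edges with no longitude wrap-around.

```python
def summed_area_table(field):
    """S[i][j] = sum of field[r][c] for r < i and c < j (one extra row/column)."""
    rows, cols = len(field), len(field[0])
    S = [[0.0] * (cols + 1) for _ in range(rows + 1)]
    for i in range(rows):
        running = 0.0  # running sum along row i
        for j in range(cols):
            running += field[i][j]
            S[i + 1][j + 1] = S[i][j + 1] + running
    return S


def box_smooth(field, half_width):
    """Mean over a (2*half_width+1)^2 square, truncated at the grid edges."""
    rows, cols = len(field), len(field[0])
    S = summed_area_table(field)
    out = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        r0, r1 = max(0, i - half_width), min(rows, i + half_width + 1)
        for j in range(cols):
            c0, c1 = max(0, j - half_width), min(cols, j + half_width + 1)
            # Four lookups give the sum over rows r0..r1-1 and columns c0..c1-1
            total = S[r1][c1] - S[r0][c1] - S[r1][c0] + S[r0][c0]
            out[i][j] = total / ((r1 - r0) * (c1 - c0))
    return out


print(box_smooth([[1, 2, 3], [4, 5, 6], [7, 8, 9]], 1))
```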

Guide 2: Handling Signal Noise in Gravitational Wave Data

Problem: Experimental noise in data (e.g., from sensors) obscures the true signal, making accurate resonance or feature detection difficult.

Explanation: All experimental data contains noise, which can be exacerbated by environmental variations and the inherent sensitivity of the instrumentation. Effective smoothing balances noise suppression with preserving critical signal features [6].

Solution: Apply appropriate curve-smoothing techniques and evaluate their performance.

- Step 1: Import your experimental data into an analysis environment (e.g., Python, MATLAB) [6].

- Step 2: Select and apply one or more smoothing techniques from the table below, adjusting parameters to find the optimal fit for your data.

- Step 3: Visually compare the smoothed curve to the raw data to ensure key features have not been distorted. The optimal filter minimizes noise without altering the signal's peak or baseline characteristics [6].
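A minimal Gaussian-filter sketch supports Step 2 and a simple numeric companion to the visual check in Step 3: compare the peak height before and after smoothing. The kernel is truncated at 4σ and the signal is clamped at its edges; both are assumptions comparable to `scipy.ndimage.gaussian_filter1d` with `mode="nearest"`. The synthetic peak is illustrative only.

```python
import math


def gaussian_kernel(sigma, truncate=4.0):
    """Normalised Gaussian weights on integer offsets in [-radius, radius]."""
    radius = max(1, int(truncate * sigma + 0.5))
    weights = [math.exp(-0.5 * (k / sigma) ** 2) for k in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def gaussian_smooth(y, sigma):
    """Convolve y with a Gaussian kernel, clamping indices at the edges."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2
    n = len(y)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)  # repeat edge samples
            acc += w * y[j]
        out.append(acc)
    return out


peak = [math.exp(-0.5 * ((i - 50) / 2.0) ** 2) for i in range(101)]
for sigma in (1.0, 4.0):
    # Peak height after smoothing: a drop signals that the feature is being blunted
    print(sigma, max(gaussian_smooth(peak, sigma)))
```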

Frequently Asked Questions (FAQs)

Q1: Why is standard smoothing insufficient for global forecast fields? Standard smoothing algorithms often assume a planar, equidistant grid. Global domains have spherical geometry with non-equidistant, irregular grids and variable grid point area sizes. Applying planar methods can distort spatial integrals, for instance, by altering the total precipitation volume in a domain [41].

Q2: My smoothed signal appears distorted, and critical peaks are blunted. What should I do? This indicates your smoothing parameters are too aggressive. To resolve this:

- For Gaussian Filters: Reduce the standard deviation (σ) parameter [6].

- For Savitzky-Golay Filters: Decrease the window size or increase the polynomial order [6].

Always start with milder settings and increase strength gradually, using a visual comparison to ensure signal integrity is maintained.
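To see the effect of the Savitzky-Golay advice above, here is a minimal NumPy sketch of the filter built from its definition: a least-squares polynomial fit over each window, evaluated at the window centre. It pads the signal with edge values, which is an assumption. `scipy.signal.savgol_filter` is the usual library route, but its default edge handling differs.

```python
import numpy as np


def savgol_coefficients(window, order):
    """Convolution weights giving the centre value of a least-squares polynomial fit."""
    if window % 2 == 0 or window <= order:
        raise ValueError("window must be odd and larger than order")
    half = window // 2
    offsets = np.arange(-half, half + 1)
    # Design matrix with columns offsets**0, offsets**1, ..., offsets**order
    A = np.vander(offsets, order + 1, increasing=True)
    # Row 0 of the pseudo-inverse maps window samples to the fitted value at offset 0
    return np.linalg.pinv(A)[0]


def savgol_smooth(y, window, order):
    c = savgol_coefficients(window, order)
    half = window // 2
    padded = np.pad(np.asarray(y, dtype=float), half, mode="edge")
    # np.convolve flips its kernel, so flip c back to apply it as a correlation
    return np.convolve(padded, c[::-1], mode="valid")


t = np.arange(101)
peak = np.exp(-0.5 * ((t - 50) / 2.0) ** 2)
for window in (5, 21):
    print(window, savgol_smooth(peak, window, 2).max())  # wide window blunts the peak
```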

Q3: How do I choose the best smoothing technique for my specific dataset? There is no universal best technique. The choice depends on your data's noise structure and the features you need to preserve. The following table provides a comparison of common methods to guide your selection.

Table 1: Comparison of Common Smoothing Techniques

| Technique | Primary Use Case | Key Parameters | Strengths | Weaknesses |
|---|---|---|---|---|
| Gaussian Filter [6] | General-purpose noise reduction | Standard Deviation (σ) | Simple, effective for Gaussian noise; provides smooth output. | Can oversmooth sharp features and peaks. |
| Savitzky-Golay Filter [6] | Preserving higher-order moments & peak shapes | Window Size, Polynomial Order | Excellent at preserving signal shape and features like peak width and height. | Less effective on signals with very high noise levels. |
| Smoothing Splines [6] | Flexible fitting for irregular data | Smoothing Parameter | Highly flexible; can fit complex, non-uniform data very well. | Computationally more intensive; risk of overfitting. |
| Exponentially Weighted Moving Average (EWMA) [6] | Real-time, streaming data | Decay Factor | Simple and efficient for on-line data processing; gives more weight to recent data. | Can lag behind rapid changes in the signal. |
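The EWMA row can be illustrated with a minimal streaming sketch that shows both its on-line nature and its lag. Here the weight on the newest sample is called `alpha`. Some tools instead parameterize the "decay factor" as the weight on the previous value, i.e. 1 - alpha; that naming is an assumption to check against your tool. Seeding with the first sample is one common choice.

```python
def ewma(stream, alpha):
    """Yield s_t = alpha * x_t + (1 - alpha) * s_{t-1}, seeded with the first sample."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    s = None
    for x in stream:
        s = x if s is None else alpha * x + (1 - alpha) * s
        yield s  # one update per incoming sample, O(1) memory


# A unit step: the smoothed output approaches 1 only gradually (the lag)
print(list(ewma([0, 0, 1, 1, 1, 1], alpha=0.5)))
```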

Experimental Protocols

Protocol 1: Area-Size-Informed Smoothing for Global Precipitation Data

Objective: To smooth a high-resolution global precipitation field using an area-size-informed method to enable accurate spatial verification.

Materials:

- NetCDF file containing global precipitation data (e.g., from ECMWF IFS), including lat-lon coordinates and grid point area sizes [41].

- Computational environment with sufficient memory (e.g., Python with NumPy, SciPy).

Methodology:

- Data Preparation: Load the precipitation field and the corresponding area size for each grid point [41].

- Parameter Selection: Define the smoothing radius (R), typically based on the spatial scale of interest (e.g., 50 km, 100 km) [41].

- Smoothing Execution: For each grid point i in the domain:
  a. Identify all points j where the great-circle distance from i to j is less than R [41].
  b. Calculate the smoothed value using the formula $f_i'(R) = \sum_j f_j a_j / \sum_j a_j$ for all j in the neighborhood [41].

- Output: A new, smoothed global field where the spatial integral of precipitation is preserved.
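The smoothing-execution step can be sketched as the naive O(n²) explicit summation. This is fine for small test domains but not for an O1280 grid. The sketch assumes a spherical Earth of radius 6371 km and the haversine great-circle distance. Inputs are flat lists of values, area sizes, latitudes and longitudes in degrees. Reading these arrays from the NetCDF file is not shown.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumed spherical Earth


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine great-circle distance in km between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    h = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, h)))


def area_informed_smooth(values, areas, lats, lons, radius_km):
    """f_i'(R) = sum(f_j * a_j) / sum(a_j) over all j closer than radius_km to i."""
    if radius_km <= 0:
        raise ValueError("radius_km must be positive")
    n = len(values)
    out = []
    for i in range(n):
        num = den = 0.0
        for j in range(n):  # explicit O(n^2) summation
            if great_circle_km(lats[i], lons[i], lats[j], lons[j]) < radius_km:
                num += values[j] * areas[j]
                den += areas[j]
        out.append(num / den)  # den > 0 because point i is its own neighbour
    return out


print(area_informed_smooth([1.0, 3.0], [1.0, 3.0], [0.0, 0.0], [0.0, 0.5], 100.0))
```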

Protocol 2: Noise Reduction for Biosensor Spectral Data

Objective: To reduce experimental noise in a spectral data curve to accurately determine the resonance angle in a surface plasmon resonance (SPR) biosensor experiment.

Materials:

- Experimental spectral data (e.g., reflectance vs. incidence angle) [6].

- Data analysis software (e.g., MATLAB, Python with SciPy).

Methodology:

- Data Import: Load the raw experimental data into the analysis environment [6].

- Technique Selection: Choose a smoothing technique from Table 1. The Savitzky-Golay filter is often a good starting point for preserving resonance peaks [6].

- Parameter Optimization: Apply the filter with an initial, conservative parameter set (e.g., a small window size). Iteratively adjust parameters while visually inspecting the result to ensure the resonance dip is not distorted [6].

- Validation: Identify the resonance angle (θ_min) from the smoothed curve as the angle of minimum reflectance. Compare the clarity of this minimum against the raw data to confirm improvement [6].
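The validation step can be sketched as locating θ_min in an already-smoothed reflectance curve. The sketch goes beyond the protocol in two ways: it adds a three-point parabolic refinement for sub-sample precision, and it assumes uniformly spaced angles. The synthetic parabolic dip is illustrative only.

```python
def resonance_angle(angles, reflectance):
    """Angle of minimum reflectance, refined by a parabola through 3 samples."""
    i = min(range(len(reflectance)), key=reflectance.__getitem__)
    if 0 < i < len(reflectance) - 1:
        r0, r1, r2 = reflectance[i - 1], reflectance[i], reflectance[i + 1]
        denom = r0 - 2 * r1 + r2
        if denom != 0:
            step = angles[i + 1] - angles[i]  # assumes uniform angle spacing
            # Vertex of the parabola through (-1, r0), (0, r1), (1, r2)
            return angles[i] + 0.5 * (r0 - r2) / denom * step
    return angles[i]  # minimum at the edge: no refinement possible


angles = [70.0 + 0.5 * k for k in range(10)]
dip = [(a - 71.3) ** 2 for a in angles]
print(resonance_angle(angles, dip))  # refines the 71.5° grid minimum toward 71.3°
```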

Workflow Visualization

[Figures: Smoothing Analysis Workflow; Computational Smoothing Pipeline]

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions and Materials

| Item | Function/Application |
|---|---|
| UncertainSCI Software [42] | An open-source Python software suite for uncertainty quantification. It uses polynomial chaos emulators to non-intrusively probe parametric variability and uncertainty in biomedical and other simulations. |
| Global Forecast Data (e.g., ECMWF IFS) [41] | Provides high-resolution, operational global model data (e.g., precipitation on an O1280 grid) essential for developing and testing spatial verification metrics like the Fraction Skill Score (FSS). |
| Surface Plasmon Resonance (SPR) Biosensor [6] | An optical sensor used for label-free, real-time detection of molecular interactions (e.g., antigen-antibody binding). Its characterization requires precise smoothing of spectral data to find the resonance angle. |
| Savitzky-Golay Filter [6] | A digital smoothing filter that fits successive data subsets with a low-degree polynomial via linear least squares. It is critical for preserving signal shape and peak integrity when denoising data. |
| Area-Size-Informed Smoothing Algorithm [41] | A specific methodology for smoothing data on spherical geometries that accounts for the variable area sizes of grid points, preventing distortion of spatial integrals in global domains. |

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving Baseline Issues

Problem: Baseline drift. The baseline (signal in the absence of analyte) is unstable or drifting [43].

- Solution Checklist [43]:

- Buffer Preparation: Ensure the buffer is properly degassed to eliminate air bubbles, which can cause signal fluctuations.