Solving Class Imbalance in Molecular Property Classification: Advanced Strategies for Robust AI in Drug Discovery


Abstract

Class imbalance is a pervasive challenge in molecular machine learning, where inactive compounds vastly outnumber active ones, leading to models biased toward the majority class. This article provides a comprehensive guide for researchers and drug development professionals to tackle this issue. We explore the roots of data imbalance in chemical datasets and its impact on predictive accuracy. The core of the article details a suite of proven solutions—from data-level resampling and algorithm-level adjustments to advanced geometric deep learning and multi-task training schemes. We also establish a rigorous framework for evaluating model performance with imbalanced data, moving beyond misleading metrics like accuracy. Finally, we present real-world case studies and benchmarking results, offering practical insights for developing reliable and generalizable predictive models in cheminformatics and AI-driven drug discovery.

The Class Imbalance Problem: Why Molecular Property Prediction is Inherently Skewed

Frequently Asked Questions

Q1: What defines a "class-imbalanced" dataset in molecular property prediction? A class-imbalanced dataset in molecular property prediction is one where the number of samples belonging to one class (the majority class, e.g., inactive compounds) significantly outweighs the number of samples in another class (the minority class, e.g., active compounds) [1] [2]. In real-world chemical contexts like drug discovery, this imbalance is pervasive. In high-throughput screening (HTS) data, for instance, inactive compounds can outnumber active ones by more than 80 to 1 (active:inactive ratios beyond 1:80) [3]. This skew makes it difficult for standard machine learning models to learn the characteristics of the minority class, as they become biased toward predicting the majority class [1] [2].

Q2: Why are standard machine learning models problematic for my imbalanced chemical data? Most standard machine learning algorithms, including Random Forests (RF) and Support Vector Machines (SVM), implicitly assume a relatively uniform distribution of classes [2]: by default, they treat every misclassification as equally costly. When this assumption is violated:

- Training batches may lack minority samples: With a severe imbalance and standard batch sampling, many training batches might contain no examples of the minority class, preventing the model from learning its features [1].

- Models optimize for the majority class: The training process minimizes overall error, which is most easily achieved by focusing on the more frequent class. This results in models with high overall accuracy but poor performance at predicting the rare, often critical, minority class (e.g., active drugs or toxic molecules) [2] [4].

Q3: Which performance metrics should I use instead of accuracy for imbalanced chemical datasets? Accuracy is a misleading metric for imbalanced datasets. You should use metrics that are sensitive to the performance on the minority class [3]. Common and recommended metrics include:

- Balanced Accuracy: The average of recall obtained on each class.

- F1-Score: The harmonic mean of precision and recall, providing a single metric that balances both concerns.

- Matthews Correlation Coefficient (MCC): A correlation coefficient between observed and predicted classifications that is generally regarded as a robust measure for imbalanced datasets [4] [3].

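- Area Under the Receiver Operating Characteristic Curve (ROC-AUC): Measures the model's ability to distinguish between classes [3]. Because the ROC curve does not depend on class prevalence, ROC-AUC can look optimistic under severe imbalance, so it is best reported alongside the area under the precision-recall curve (PR-AUC).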
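The following sketch shows why accuracy misleads by computing the metrics above side by side with scikit-learn. It is illustrative only. The helper imbalance_report is hypothetical, and the data are an invented screen of 10 actives among 900 compounds (label 1 = active). The "model" is degenerate and calls every compound inactive.

```python
import numpy as np
from sklearn.metrics import (
    accuracy_score,
    average_precision_score,
    balanced_accuracy_score,
    f1_score,
    matthews_corrcoef,
    roc_auc_score,
)


def imbalance_report(y_true, y_pred, y_score=None):
    """Imbalance-aware summary of a binary classifier (1 = active/minority class)."""
    report = {
        "accuracy": accuracy_score(y_true, y_pred),
        "balanced_accuracy": balanced_accuracy_score(y_true, y_pred),
        # zero_division=0 avoids a warning when no compound is predicted active
        "f1": f1_score(y_true, y_pred, zero_division=0),
        "mcc": matthews_corrcoef(y_true, y_pred),
    }
    if y_score is not None:
        # Ranking metrics need continuous scores (e.g. probabilities), not hard labels
        report["roc_auc"] = roc_auc_score(y_true, y_score)
        report["pr_auc"] = average_precision_score(y_true, y_score)
    return report


# Hypothetical screen: 10 actives among 900 compounds (roughly 1:89)
y_true = np.array([1] * 10 + [0] * 890)
# Degenerate "model" that labels every compound inactive
y_pred = np.zeros_like(y_true)

print(imbalance_report(y_true, y_pred))
```

Accuracy comes out near 0.99. Balanced accuracy, however, is 0.5, and both F1 and MCC are 0. Together these show that the model has learned nothing about the actives.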

Q4: What are the most effective techniques to handle class imbalance in molecular data? No single technique is universally best, and the optimal choice often depends on your specific dataset. Effective approaches can be categorized as follows:

- Data-Level Methods: Adjust the training data to create a more balanced distribution.

- Random Undersampling (RUS): Randomly removes samples from the majority class. Studies show this can be highly effective for severe imbalances, sometimes outperforming more complex techniques [3].

- Oversampling: Creates additional copies or synthetic samples of the minority class. The Synthetic Minority Over-sampling Technique (SMOTE) and its variants (e.g., Borderline-SMOTE, Safe-level-SMOTE) generate new synthetic examples [2] [4].

- Algorithm-Level Methods: Modify the learning algorithm to account for the imbalance.

- Weighted Loss Functions: Assign a higher penalty for misclassifying minority class samples during training, forcing the model to pay more attention to them [4].

- Advanced Frameworks: Novel training schemes like Adaptive Checkpointing with Specialization (ACS) for multi-task learning can mitigate "negative transfer," where updates from data-rich tasks harm the performance of data-poor tasks [5].

- Hybrid and Advanced Methods: Combine multiple approaches or use specialized architectures.

- Adversarial Augmentation: Methods like AAIS (Adversarial Augmentation to Influential Sample) identify and augment data points that most influence the model's decision boundary, improving robustness [6].

- Few-Shot Learning Frameworks: Frameworks like MolFeSCue use pre-trained models and a dynamic contrastive loss function to excel in data-scarce and imbalanced situations [7].

Q5: How does the "imbalance ratio" affect model performance, and is there an optimal ratio? The imbalance ratio (IR) has a significant impact, and simply balancing to a 1:1 ratio is not always optimal. Recent research suggests that for highly imbalanced drug discovery datasets (e.g., with original IRs from 1:82 to 1:104), a moderately balanced ratio of 1:10 (minority to majority) can be more effective than a perfect 1:1 balance [3]. This "adjusted imbalance ratio" can lead to a better trade-off between true positive and false positive rates, improving metrics like F1-score and MCC on external validation sets [3].
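Below is a minimal NumPy sketch of undersampling to a chosen ratio rather than to 1:1. It assumes label 1 marks the minority (active) class. The helper name undersample_to_ratio is hypothetical. In imbalanced-learn, RandomUnderSampler(sampling_strategy=0.1) expresses the same 1:10 target. In either case, the resampling should touch only the training split.

```python
import numpy as np


def undersample_to_ratio(X, y, ratio=0.1, minority_label=1, seed=0):
    """Randomly drop majority-class rows until n_minority / n_majority is about `ratio`.

    ratio=0.1 corresponds to a 1:10 minority:majority split; ratio=1.0 to 1:1.
    """
    rng = np.random.default_rng(seed)
    minority_idx = np.flatnonzero(y == minority_label)
    majority_idx = np.flatnonzero(y != minority_label)
    # Never request more majority rows than actually exist
    n_keep = min(len(majority_idx), int(round(len(minority_idx) / ratio)))
    kept_majority = rng.choice(majority_idx, size=n_keep, replace=False)
    idx = np.concatenate([minority_idx, kept_majority])
    rng.shuffle(idx)  # avoid a block of all-minority rows at the start
    return X[idx], y[idx]


# Hypothetical training set: 50 actives vs 4,500 inactives (1:90)
rng = np.random.default_rng(42)
X = rng.normal(size=(4550, 16))
y = np.array([1] * 50 + [0] * 4500)

for r in (1.0, 0.1, 0.04):  # 1:1, 1:10, 1:25
    X_r, y_r = undersample_to_ratio(X, y, ratio=r)
    print(r, np.bincount(y_r))  # counts of [inactive, active]
```

Each candidate ratio then becomes one training run. The ratio to keep is the one whose model scores best on the validation metrics.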

Troubleshooting Guides

Issue 1: Model Is Biased Toward the Majority Class

Symptoms:

- High overall accuracy but recall or precision for the active/toxic class is near zero.

- The model fails to identify any true positive hits in validation.

Solution Steps:

- Diagnose with Correct Metrics: Immediately stop using accuracy. Calculate MCC, F1-score, and balanced accuracy for a true picture of performance [4] [3].

- Apply a Data-Level Technique:

- For severely imbalanced datasets (IR of 1:80 or more extreme), start with Random Undersampling (RUS). Evidence shows it can significantly boost recall and MCC in such scenarios [3].

- For milder imbalances or when you must avoid losing majority class data, try SMOTE or Random Oversampling (ROS). Be cautious, as ROS can lead to overfitting [2] [3].

- Implement an Algorithm-Level Technique: If resampling does not suffice, use a weighted loss function. This is often simpler than resampling and directly informs the model of the class imbalance [4]. Most modern deep learning libraries allow easy implementation of class weights.
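The next sketch shows what a weighted loss does, using NumPy binary cross-entropy. Errors on actives are multiplied by pos_weight. The function name is hypothetical. Setting pos_weight to the negative-to-positive ratio is a common heuristic, not a rule. In PyTorch, torch.nn.BCEWithLogitsLoss(pos_weight=...) applies the same positive-class weighting to logits.

```python
import numpy as np


def weighted_bce(y_true, p_pred, pos_weight=1.0, eps=1e-7):
    """Binary cross-entropy in which errors on positives (actives) count pos_weight times."""
    y_true = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p_pred, dtype=float), eps, 1 - eps)  # keep log() finite
    per_sample = -(pos_weight * y_true * np.log(p) + (1 - y_true) * np.log(1 - p))
    return per_sample.mean()


# Heuristic weight: number of inactives per active
n_pos, n_neg = 10, 900
pos_weight = n_neg / n_pos

# Equally confident mistakes: a missed active (p = 0.1) vs a false alarm (p = 0.9)
missed_active = weighted_bce([1], [0.1], pos_weight)
false_alarm = weighted_bce([0], [0.9], pos_weight)
print(missed_active / false_alarm)  # about pos_weight, i.e. roughly 90
```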

Experimental Protocol: Comparing Resampling Techniques

- Select Dataset: Use a benchmark molecular dataset like those from MoleculeNet (e.g., Tox21, SIDER) [7] [5].

- Choose Model: Train a standard model like a Graph Neural Network (GNN) or Random Forest as a baseline [4].

- Apply Techniques: Train identical models on datasets preprocessed with RUS, ROS, and SMOTE. Also, train a model using a weighted loss function without resampling.

- Evaluate: Compare the models using MCC, F1-score, and balanced accuracy on a held-out test set. A sample result structure is shown below [3]:

Table 1: Example Performance Comparison on an HIV Bioassay Dataset (IR 1:90)

| Technique | ROC-AUC | Balanced Accuracy | MCC | F1-Score |
|---|---|---|---|---|
| Original Data (Baseline) | 0.72 | 0.51 | -0.04 | 0.10 |
| Random Oversampling (ROS) | 0.75 | 0.65 | 0.15 | 0.25 |
| Random Undersampling (RUS) | 0.79 | 0.72 | 0.31 | 0.45 |
| SMOTE | 0.73 | 0.58 | 0.12 | 0.22 |
| Weighted Loss Function | 0.76 | 0.68 | 0.22 | 0.38 |
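The comparison protocol can be prototyped in a few dozen lines. The sketch below rests on several assumptions. It uses synthetic features from make_classification as a stand-in for fingerprints, because no MoleculeNet data are loaded here. It uses a Random Forest instead of a GNN. Its resamplers are simple hypothetical helpers. SMOTE is left out to keep the sketch dependency-free; with imbalanced-learn installed, SMOTE().fit_resample(X_tr, y_tr) could be added as another entry. The numbers it prints come from synthetic data and say nothing about Table 1.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import balanced_accuracy_score, f1_score, matthews_corrcoef, roc_auc_score
from sklearn.model_selection import train_test_split


def random_oversample(X, y, rng):
    """Duplicate random minority rows until both classes have equal counts."""
    pos, neg = np.flatnonzero(y == 1), np.flatnonzero(y == 0)
    extra = rng.choice(pos, size=len(neg) - len(pos), replace=True)
    idx = np.concatenate([neg, pos, extra])
    return X[idx], y[idx]


def random_undersample(X, y, ratio, rng):
    """Keep every minority row and about len(minority) / ratio majority rows."""
    pos, neg = np.flatnonzero(y == 1), np.flatnonzero(y == 0)
    keep = rng.choice(neg, size=min(len(neg), int(round(len(pos) / ratio))), replace=False)
    idx = np.concatenate([pos, keep])
    return X[idx], y[idx]


def compare_strategies(seed=0):
    # Synthetic stand-in for fingerprint features with roughly 1:90 imbalance
    X, y = make_classification(n_samples=9100, n_features=64, n_informative=12,
                               weights=[0.989], flip_y=0.0, random_state=seed)
    # Stratified split keeps the original imbalance in the test set;
    # only the training set is resampled
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, stratify=y,
                                              random_state=seed)
    rng = np.random.default_rng(seed)
    setups = {
        "baseline": (X_tr, y_tr, None),
        "ROS": (*random_oversample(X_tr, y_tr, rng), None),
        "RUS 1:10": (*random_undersample(X_tr, y_tr, 0.1, rng), None),
        "weighted loss": (X_tr, y_tr, "balanced"),
    }
    results = {}
    for name, (X_fit, y_fit, class_weight) in setups.items():
        clf = RandomForestClassifier(n_estimators=100, class_weight=class_weight,
                                     random_state=seed, n_jobs=-1)
        clf.fit(X_fit, y_fit)
        pred = clf.predict(X_te)
        score = clf.predict_proba(X_te)[:, 1]
        results[name] = {
            "roc_auc": roc_auc_score(y_te, score),
            "balanced_accuracy": balanced_accuracy_score(y_te, pred),
            "mcc": matthews_corrcoef(y_te, pred),
            "f1": f1_score(y_te, pred, zero_division=0),
        }
    return results


results = compare_strategies()
for name, metrics in results.items():
    print(f"{name:14s}", {k: round(float(v), 3) for k, v in metrics.items()})
```

The same held-out test set is used for every strategy. Differences in the printed metrics therefore come only from how the training data were treated.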

Issue 2: Handling Multi-Task Property Prediction with Task Imbalance

Symptoms:

- Model performance is strong on tasks with abundant data but poor on tasks with very few labeled samples.

- Training on multiple tasks simultaneously leads to worse performance than training separate models (negative transfer).

Solution Steps:

- Adopt a Specialized MTL Framework: Use the Adaptive Checkpointing with Specialization (ACS) training scheme [5].

- Architecture Setup: Employ a shared graph neural network (GNN) backbone with task-specific multi-layer perceptron (MLP) heads.

- Checkpointing: During training, monitor the validation loss for every task independently. Save a checkpoint of the model (both shared backbone and task-specific head) each time a task achieves a new minimum validation loss.

- Final Model: After training, each task uses its best-performing checkpoint, which represents a point where the shared representations were most beneficial for it, thereby mitigating negative transfer.
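The sketch below covers only the per-task checkpoint-selection logic described in the steps above. It is not the ACS authors' implementation. It assumes that each epoch yields one validation loss per task. The plain dictionaries stand in for what a real run would pass, namely backbone.state_dict() for the shared GNN and head.state_dict() for each MLP head. The loss curves are simulated.

```python
import copy


class PerTaskCheckpointer:
    """For each task, keep the snapshot (shared backbone + that task's head) with its lowest validation loss."""

    def __init__(self, task_names):
        self.best_loss = {t: float("inf") for t in task_names}
        self.best_epoch = {t: None for t in task_names}
        self.best_state = {t: None for t in task_names}

    def update(self, epoch, val_losses, backbone_state, head_states):
        """Call once per epoch with per-task validation losses and current weights."""
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_epoch[task] = epoch
                # Deep copies so later training steps cannot mutate the saved snapshot
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }


# Simulated run: the data-rich task keeps improving, while the data-poor task
# starts to degrade after epoch 2 (negative transfer)
val_curves = {
    "rich_task": [0.90, 0.70, 0.55, 0.45, 0.40, 0.38],
    "poor_task": [0.80, 0.60, 0.52, 0.58, 0.66, 0.75],
}
ckpt = PerTaskCheckpointer(val_curves)
for epoch in range(6):
    backbone_state = {"epoch": epoch}  # placeholder for backbone.state_dict()
    head_states = {t: {"epoch": epoch} for t in val_curves}  # placeholders for head weights
    losses = {t: curve[epoch] for t, curve in val_curves.items()}
    ckpt.update(epoch, losses, backbone_state, head_states)

print(ckpt.best_epoch)  # {'rich_task': 5, 'poor_task': 2}
```

At inference time, each task loads its own best_state. The data-poor task therefore uses the backbone from epoch 2, before the later, rich-task-dominated updates degraded it.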

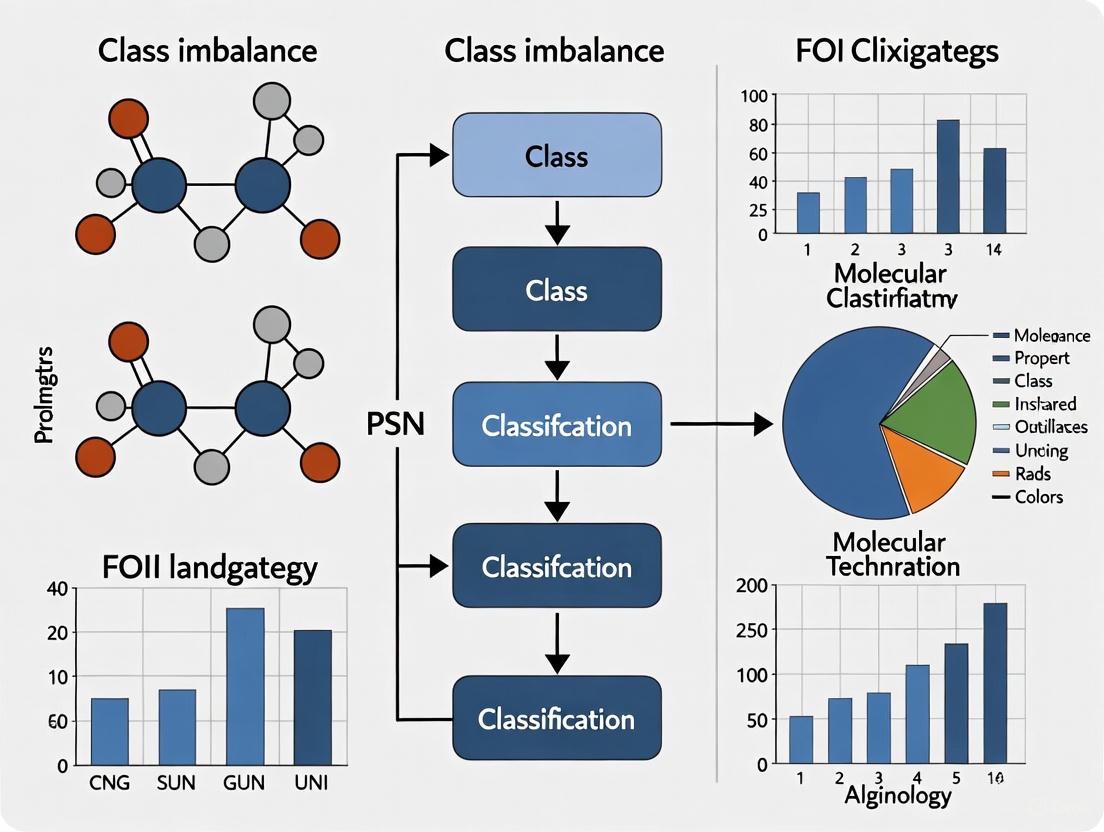

The workflow below illustrates the ACS process for mitigating negative transfer in multi-task learning.

Issue 3: Model Fails to Generalize After Balancing

Symptoms:

- Performance on the training set is excellent, but performance on the test set or external validation set remains poor.

- The model has overfitted to the synthetic samples or the specific examples retained during undersampling.

Solution Steps:

- Try Advanced Augmentation: Use adversarial augmentation (AAIS) instead of simple random or SMOTE-based oversampling. AAIS identifies and augments "influential samples" near the decision boundary, which helps flatten the decision boundary and improves generalization [6].

- Leverage Pre-trained Models and Few-Shot Learning: Utilize frameworks like MolFeSCue, which combines pre-trained molecular models with a few-shot learning setup. The built-in dynamic contrastive loss helps learn robust representations even from limited and imbalanced data [7].

- Optimize the Imbalance Ratio: Do not default to a 1:1 balance. Experiment with different imbalance ratios (e.g., 1:10, 1:25), select the ratio that gives the best MCC on a validation set, and then confirm that choice once on a held-out test set [3].

The Scientist's Toolkit

Table 2: Key Research Reagents & Computational Solutions

| Item Name | Function in Solving Class Imbalance | Example Context |
|---|---|---|
| SMOTE & Variants [2] | Generates synthetic samples for the minority class to balance the dataset. | Used in materials design to predict polymer properties and in catalyst design to screen hydrogen evolution reaction candidates. |
| Random Undersampling (RUS) [3] | Reduces majority class samples to a specified ratio, improving the probability of the model learning minority features. | Effective in anti-pathogen activity prediction, with an optimal imbalance ratio (IR) often found around 1:10. |
| Weighted Loss Function [4] | A cost-sensitive method that assigns a higher penalty for errors on the minority class during model training. | Commonly applied in Graph Neural Network (GNN) training for molecular property prediction to improve sensitivity to active compounds. |
| ACS Framework [5] | A multi-task learning scheme that uses adaptive checkpointing to prevent negative transfer from data-rich to data-poor tasks. | Used for predicting multiple physicochemical properties of molecules simultaneously in ultra-low data regimes (e.g., with only 29 labeled samples). |
| MolFeSCue Framework [7] | A few-shot learning framework that employs pre-trained models and a dynamic contrastive loss to handle data scarcity and imbalance. | Evaluated on benchmarks like Tox21 and SIDER for molecular property prediction, demonstrating superior performance in imbalanced settings. |
| Adversarial Augmentation (AAIS) [6] | Augments influential data points near the decision boundary to flatten it and improve model robustness and generalization. | Applied to graph-level tasks for molecular property prediction, boosting AUC and F1-scores on imbalanced datasets. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the root causes of class imbalance in molecular property classification? Class imbalance in molecular datasets primarily stems from two sources: naturally occurring skewed distributions in chemical space and human-introduced selection biases during data collection [2].

- Natural Molecular Distributions: In nature and established compound libraries, certain molecular structures are inherently more abundant. For instance, in drug discovery, inactive compounds significantly outnumber active drug molecules due to fundamental constraints of cost, safety, and the rarity of a molecule possessing the desired biological activity [2].

- Selection Bias: This occurs during the experimental process. In High-Throughput Screening (HTS), biases can be introduced through priorities in experimental design, technical limitations of assays, or the over-representation of specific, well-studied molecular families in commercial screening libraries [2]. A common technical bias is spatial bias within microtiter plates, where systematic errors from factors like reagent evaporation or liquid handling errors create location-dependent patterns of activity (e.g., false positives or negatives on plate edges) [8].

FAQ 2: Why is class imbalance a critical problem for AI in drug discovery? Most standard machine learning (ML) algorithms, including random forests and support vector machines, implicitly assume a relatively uniform distribution of classes [2]. When trained on imbalanced data, these models become biased toward the majority class (e.g., inactive compounds). They achieve high overall accuracy by correctly predicting the majority class, yet they fail to identify the minority class (e.g., active compounds), which is often the most critical for discovery. The result is a model with poor robustness and applicability that cannot reliably predict underrepresented classes, which ultimately limits its real-world utility in screening campaigns [2].

FAQ 3: How can I identify if my HTS data is affected by spatial bias? Spatial bias can be identified through statistical analysis and visualization of the screening data across the plates. Researchers typically examine assay plates for systematic row or column effects. The presence of signals that form specific patterns (e.g., all wells on the top row showing elevated activity) rather than a random distribution can indicate spatial bias [8]. Using robust Z-scores and applying statistical tests like the Mann-Whitney U test or the Kolmogorov-Smirnov test on plate measurements can help objectively detect these biases [8].
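The next sketch illustrates one of these checks. It computes robust Z-scores for a plate, then runs a Mann-Whitney U test of each row against all other wells. Everything here is an assumption for illustration: the plate is simulated (16 x 24, as in a 384-well format) with an artificially elevated top row, and the helper names are hypothetical. Columns can be checked the same way on plate.T, and scipy.stats.ks_2samp provides the Kolmogorov-Smirnov alternative.

```python
import numpy as np
from scipy.stats import mannwhitneyu


def robust_z(values):
    """Robust Z-score: distance from the median in units of scaled MAD."""
    med = np.median(values)
    # 1.4826 * MAD estimates the standard deviation for normally distributed data
    mad = 1.4826 * np.median(np.abs(values - med))
    return (values - med) / mad


def row_bias_pvalues(plate):
    """Two-sided Mann-Whitney U p-value for each row versus all other wells on the plate."""
    z = robust_z(plate)
    pvals = []
    for r in range(plate.shape[0]):
        rest = np.delete(z, r, axis=0).ravel()
        pvals.append(mannwhitneyu(z[r, :], rest, alternative="two-sided").pvalue)
    return np.array(pvals)


# Simulated plate with an elevated top row (e.g. evaporation); values are illustrative
rng = np.random.default_rng(1)
plate = rng.normal(loc=100.0, scale=10.0, size=(16, 24))
plate[0, :] += 30.0

p = row_bias_pvalues(plate)
print(p.argmin(), p[0])  # the top row stands out with a very small p-value
```

Because the U test works on ranks, the robust Z transform does not change its p-values. The Z-scores still matter, however, when values are compared across plates. With one test per row, a multiple-testing correction is advisable before a row is flagged.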

FAQ 4: What are the most effective strategies to correct for spatial bias in HTS data? The correction method depends on whether the bias is additive or multiplicative [8].

- Additive Bias Model: Corrected Value = Raw Value - (Row Effect + Column Effect)

- Multiplicative Bias Model: Corrected Value = Raw Value / (Row Effect * Column Effect)

Algorithms like the Plate Model Pattern (PMP) correction, followed by normalization using robust Z-scores, have been shown to effectively minimize both assay-specific and plate-specific spatial biases, leading to higher true positive rates and fewer false positives/negatives during hit identification [8]. The table below summarizes the core approaches.

Table 1: Methods for Correcting Spatial Bias in HTS Data

| Method | Core Principle | Best For |
|---|---|---|
| B-score [8] | A plate-specific correction method using median polish to remove row and column effects. | Traditional HTS data analysis. |
| Well Correction [8] | An assay-specific technique that removes systematic error from biased well locations across all plates in an assay. | Correcting errors persistent in specific well positions (e.g., all corner wells). |
| PMP with Robust Z-scores [8] | A two-step method that first corrects plate-specific bias (additive or multiplicative) and then normalizes the entire assay. | Complex datasets with a mix of assay-wide and plate-specific bias patterns. |
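The additive and multiplicative models can be sketched with Tukey's median polish, which is the row/column smoother at the core of the B-score. This is a generic sketch, not the PMP algorithm from [8], and the function names are hypothetical. Applying the same polish to log-signals turns the multiplicative model, Raw = overall × row × column × residual, into an additive one. The plates are simulated.

```python
import numpy as np


def median_polish(plate, n_iter=10):
    """Tukey median polish: plate = overall + row[:, None] + col[None, :] + resid."""
    resid = np.asarray(plate, dtype=float).copy()
    overall = 0.0
    row = np.zeros(resid.shape[0])
    col = np.zeros(resid.shape[1])
    for _ in range(n_iter):
        r_med = np.median(resid, axis=1)  # sweep row medians into the row effects
        resid -= r_med[:, None]
        row += r_med
        delta = np.median(col)
        col -= delta
        overall += delta
        c_med = np.median(resid, axis=0)  # sweep column medians into the column effects
        resid -= c_med[None, :]
        col += c_med
        delta = np.median(row)
        row -= delta
        overall += delta
    return overall, row, col, resid


def b_score(plate):
    """Residuals after removing additive row/column effects, scaled by their MAD."""
    _, _, _, resid = median_polish(plate)
    mad = 1.4826 * np.median(np.abs(resid - np.median(resid)))
    return resid / mad


def multiplicative_correct(plate):
    """Raw / (row effect * column effect), with effects estimated on the log scale."""
    _, row, col, _ = median_polish(np.log(plate))  # requires strictly positive signals
    return plate / (np.exp(row)[:, None] * np.exp(col)[None, :])


# Simulated 16 x 24 plates with a biased top row (illustrative values)
rng = np.random.default_rng(7)
additive = 100.0 + rng.normal(0.0, 5.0, size=(16, 24))
additive[0, :] += 40.0
multiplicative = 100.0 * np.exp(rng.normal(0.0, 0.05, size=(16, 24)))
multiplicative[0, :] *= 1.5

overall, row, col, resid = median_polish(additive)
print(round(row[0] - np.median(row[1:]), 1))  # close to the injected +40
corrected = multiplicative_correct(multiplicative)
print(round(corrected[0].mean() / corrected[1:].mean(), 2))  # close to 1.0
```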

Troubleshooting Guides

Issue: High False Positive/Negative Rates in HTS Workflow

Problem: The primary HTS campaign identifies many hits that fail confirmation or misses known active compounds. This is often traced to class imbalance and spatial bias.

Solution: Implement a rigorous data preprocessing and validation pipeline.

Table 2: Troubleshooting Steps for HTS Data Quality

| Step | Action | Protocol & Details |
|---|---|---|
| 1. Pilot Study | Run a small-scale pilot to validate the assay before full-scale HTS. | Use a representative subset of compounds and control compounds (positive/negative) to determine the Z'-factor, a statistical parameter that assesses assay quality. A Z'-factor > 0.5 is generally considered excellent [9]. |
| 2. Bias Detection | Analyze raw HTS data for spatial bias. | Protocol: For each plate, visualize the raw signal intensity or activity as a heatmap. Statistically, fit both additive and multiplicative models to the plate data and use tests (e.g., Mann-Whitney U test) to determine the presence and type of bias [8]. |
| 3. Bias Correction | Apply an appropriate correction algorithm. | Protocol: Based on the detection results, apply a method like the PMP algorithm. For example, if a multiplicative bias is detected in a 384-well plate, use: Corrected Value = Raw Value / (Row Effect * Column Effect). Follow this with robust Z-score normalization across the entire assay to standardize the data [8]. |
| 4. Hit Confirmation | Use a multi-stage process to confirm initial "hits." | Protocol: Do not rely on a single-shot primary assay alone [10]. Active compounds from the primary screen should undergo (1) confirmatory screening: re-testing at the same concentration to check reproducibility; (2) dose-response screening: testing over a range of concentrations to determine potency (IC50/EC50); and (3) orthogonal screening: using a different, unrelated assay technology to confirm the activity and rule out technology-specific artifacts [10]. |
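The Z'-factor used in the pilot step compares the spread of the positive and negative controls with the gap between their means: Z' = 1 - 3(σp + σn) / |μp - μn|. Below is a minimal sketch. The function name and the control values are hypothetical, and using sample standard deviations (ddof=1) is one reasonable choice among several.

```python
import numpy as np


def z_prime(pos_controls, neg_controls):
    """Z'-factor = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    pos = np.asarray(pos_controls, dtype=float)
    neg = np.asarray(neg_controls, dtype=float)
    spread = 3.0 * (pos.std(ddof=1) + neg.std(ddof=1))  # sample standard deviations
    window = abs(pos.mean() - neg.mean())
    return 1.0 - spread / window


# Toy control wells from a hypothetical pilot plate
print(z_prime([90.0, 100.0, 110.0], [5.0, 10.0, 15.0]))  # 0.5
```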

The following workflow diagram illustrates the key stages of a robust HTS campaign that incorporates checks for data imbalance and bias.

Issue: Building Predictive ML Models with Imbalanced Molecular Data

Problem: An ML model for molecular property prediction shows high overall accuracy but fails to predict the rare, critical class (e.g., toxic compounds or active drugs).

Solution: Apply techniques specifically designed for imbalanced data learning. These can be categorized into data-level, algorithm-level, and hybrid approaches [2] [11].

Table 3: Strategies for Mitigating Class Imbalance in ML Models

| Category | Method | Experimental Protocol & Application |
|---|---|---|
| Data Re-balancing (Oversampling) | SMOTE [2] | Protocol: 1. Select a sample from the minority class. 2. Find its k nearest neighbors (k-NN) among the other minority-class samples. 3. Create a synthetic sample at a random point on the line segment joining the original sample and one of those neighbors. Application: Used with XGBoost to improve prediction of mechanical properties in polymer materials [2]. |
| | Borderline-SMOTE [2] | Protocol: A variant of SMOTE that generates synthetic samples only from "borderline" minority instances, i.e., those whose nearest neighbors are mostly (but not all) majority-class samples and which therefore lie near the decision boundary. Application: Effectively used with CNN models to predict protein-protein interaction sites, a task with severe class imbalance [2]. |
| Data Re-balancing (Undersampling) | NearMiss [2] | Protocol: Reduces the majority class by keeping only the majority samples that lie closest to minority samples in feature space (NearMiss-1, for example, keeps those with the smallest average distance to their nearest minority neighbors). Application: Applied in protein acetylation site prediction to significantly improve model accuracy [2]. |
| Algorithmic Approach | Cost-Sensitive Learning [12] | Protocol: Modify the learning algorithm to assign a higher misclassification cost (penalty) for errors made on the minority class. This forces the model to pay more attention to the minority class. Application: Can be integrated into ensemble methods like Cost-Sensitive Random Forests. |
| Hybrid Method | Ensemble + Sampling [2] [11] | Protocol: Combine data-level sampling (e.g., SMOTE) with ensemble learning algorithms (e.g., Random Forests). For example, generate multiple balanced training sets and train a classifier on each, then aggregate the predictions. Application: An RF-SMOTE model demonstrated superior performance in identifying new HDAC8 inhibitors in drug discovery [2]. |
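The hybrid protocol in Table 3 ("generate multiple balanced training sets, train a classifier on each, then aggregate") is sketched below. This version builds each balanced set by undersampling rather than SMOTE. It uses shallow decision trees, and the class name is hypothetical. imbalanced-learn ships maintained versions of this idea as EasyEnsembleClassifier and BalancedRandomForestClassifier.

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier


class UnderBaggingEnsemble:
    """One model per balanced random subset of the training data; scores are averaged."""

    def __init__(self, n_models=10, seed=0):
        self.n_models = n_models
        self.rng = np.random.default_rng(seed)
        self.models = []

    def fit(self, X, y):
        pos, neg = np.flatnonzero(y == 1), np.flatnonzero(y == 0)
        self.models = []
        for _ in range(self.n_models):
            # Each subset keeps every active plus an equal-sized random draw of inactives
            neg_sample = self.rng.choice(neg, size=len(pos), replace=False)
            idx = np.concatenate([pos, neg_sample])
            model = DecisionTreeClassifier(max_depth=5,
                                           random_state=int(self.rng.integers(1_000_000)))
            model.fit(X[idx], y[idx])
            self.models.append(model)
        return self

    def predict_proba(self, X):
        # Soft voting: mean active-class probability across ensemble members
        return np.mean([m.predict_proba(X)[:, 1] for m in self.models], axis=0)

    def predict(self, X, threshold=0.5):
        return (self.predict_proba(X) >= threshold).astype(int)


# Hypothetical data: 2,000 inactives and 40 shifted actives in 8 dimensions
rng = np.random.default_rng(3)
X = np.vstack([rng.normal(0.0, 1.0, size=(2000, 8)), rng.normal(2.0, 1.0, size=(40, 8))])
y = np.array([0] * 2000 + [1] * 40)

ens = UnderBaggingEnsemble(n_models=15).fit(X, y)
scores = ens.predict_proba(X)
print(scores[y == 1].mean(), scores[y == 0].mean())
```

Every member sees all the actives but a different slice of the inactives. Averaging the members therefore recovers information that a single undersampled model would discard.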

The diagram below maps the logical decision process for selecting an appropriate technique to handle class imbalance.

The Scientist's Toolkit

Table 4: Essential Research Reagents & Solutions for HTS and Imbalance Correction

| Item | Function & Rationale |
|---|---|
| Diverse Compound Library [10] | A high-quality, curated library of chemical compounds is the foundation of HTS. Diversity ensures broad coverage of chemical space, increasing the chance of finding novel hits. Evotec's library, for example, contains >850,000 compounds selected for diversity and drug-likeness [10]. |
| Control Compounds (Positive/Negative) | Essential for validating assay performance (Z'-factor), normalizing data, and setting activity thresholds. They serve as a baseline for distinguishing true signals from noise [10]. |
| Robust Z-Score Normalization [8] | A statistical method used to normalize HTS data by expressing each data point's distance from the median in units of the (scaled) median absolute deviation rather than the standard deviation. It is more robust to outliers than mean-based standardization and is critical for correcting assay-wide spatial bias [8]. |
| SMOTE Algorithm [2] [11] | A computational tool to synthetically generate new examples for the minority class, balancing the dataset before training an ML model. It helps prevent model bias toward the majority class. |
| B-score / PMP Algorithms [8] | Statistical tools specifically designed for plate-based assays. They model and remove row and column effects from HTS data, correcting for spatial bias and reducing false positives/negatives [8]. |
| Orthogonal Assay Reagents [10] | A separate set of reagents and materials for a secondary, functionally different assay. This is used for hit confirmation to rule out false positives caused by interference with the primary assay's chemistry or readout [10]. |

FAQs on Imbalanced Data in Molecular Property Classification

This section addresses common challenges researchers face when working with imbalanced chemical datasets.

Why does my model achieve high accuracy but fails to predict the rare molecular property I'm interested in?

High accuracy on imbalanced data is often misleading. When one class (e.g., "inactive compounds") significantly outnumbers another (e.g., "active compounds"), models tend to become biased toward the majority class. They may achieve high accuracy by simply always predicting the common class, while completely failing to learn the characteristics of the rare, but often scientifically critical, minority class [1] [13]. In drug discovery, for instance, active drug molecules are often vastly outnumbered by inactive ones, causing models to neglect the active compounds [13].

What evaluation metrics should I use instead of accuracy?

Accuracy is not a reliable metric for imbalanced datasets. Instead, you should use a suite of metrics that provide a more nuanced view of model performance, especially for the minority class [14]. The table below summarizes key metrics to use.

| Metric | Description | Why It's Useful for Imbalance |
|---|---|---|
| Confusion Matrix | A table showing true positives, false positives, true negatives, and false negatives [14]. | Helps visualize where the model is making errors, particularly the number of false negatives for the minority class. |
| Precision | The proportion of correct positive predictions (e.g., how many predicted active compounds are truly active) [14]. | Measures the model's reliability when it predicts the minority class. |
| Recall (Sensitivity) | The proportion of actual positives correctly identified (e.g., what percentage of truly active compounds are found) [14]. | Measures the model's ability to find all relevant minority class instances. |
| F1-Score | The harmonic mean of precision and recall [14]. | Provides a single balanced score when both precision and recall are important. |
| AUC-PR | The area under the Precision-Recall curve [14]. | More informative than AUC-ROC for imbalanced data as it focuses directly on the performance for the positive (minority) class. |

My dataset is very small. How can I possibly balance it without collecting more data?

Data-level techniques like oversampling can generate synthetic samples for your minority class, effectively creating a larger, balanced dataset from your existing data [13]. One advanced method is the Synthetic Minority Over-sampling Technique (SMOTE), which creates new, synthetic examples of the minority class in the feature space, rather than just duplicating existing data [13] [14]. This has been successfully applied in chemistry for tasks like predicting polymer material properties and screening catalysts [13].

How can I make my existing algorithm pay more attention to the minority class?

You can use algorithm-level solutions that directly adjust the learning process. A key strategy is cost-sensitive learning, which imposes a higher penalty on the model when it misclassifies a minority class example than a majority class one [14]. In practice, this is often implemented by setting class_weight='balanced' in algorithms like Logistic Regression and Random Forest, or by using a weighted loss function in neural networks [14].
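The sketch below shows what class_weight='balanced' computes: each class receives the weight n_samples / (n_classes × count_in_class). For binary data, the minority weight is therefore total_samples / (2 × count_minority_samples). The labels are hypothetical. The XGBoost value shown follows the common negative-to-positive heuristic for scale_pos_weight; it is not a tuned setting.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical labels: 30 actives (1) and 970 inactives (0)
y = np.array([1] * 30 + [0] * 970)
classes = np.array([0, 1])

# 'balanced' weights: n_samples / (n_classes * count_per_class)
weights = compute_class_weight(class_weight="balanced", classes=classes, y=y)
manual = len(y) / (len(classes) * np.bincount(y))
print(weights)  # small weight for inactives, large weight for actives

# scikit-learn estimators accept the same setting directly (fit on your features later)
clf = RandomForestClassifier(n_estimators=100, class_weight="balanced", random_state=0)

# XGBoost has no class_weight argument; scale_pos_weight is commonly set to n_neg / n_pos
scale_pos_weight = (y == 0).sum() / (y == 1).sum()
```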

Troubleshooting Guide: Solving Imbalance in Molecular Datasets

This guide provides a step-by-step methodology for diagnosing and mitigating class imbalance.

Problem: Model is biased towards the majority class and has poor generalization for rare properties.

Solution: A Multi-Pronged Approach to Rebalance Data and Learning.

Step 1: Diagnose the Imbalance and Establish a Performance Baseline

- Action: Before applying any fixes, use the evaluation metrics listed in the table above (like F1-Score and AUC-PR) to establish a performance baseline for your model on the raw, imbalanced data. This allows you to quantitatively measure the improvement from subsequent techniques [14].

Step 2: Implement and Compare Mitigation Strategies

Two primary pathways exist, and they can be used in combination. The following workflow outlines the process for experimenting with these solutions.

Path A: Data-Level Solutions (Resampling)

- Action: Balance your dataset before training the model.

- Detailed Protocol for SMOTE:

- Preprocess Your Data: Represent your molecules as feature vectors (e.g., using molecular descriptors or fingerprints).

- Split the Data: Perform a stratified train-validation-test split so that each subset preserves the original class ratio. Crucially, apply SMOTE only to the training set to avoid data leakage and over-optimistic performance on the validation/test sets.

- Apply SMOTE: Use a library like imbalanced-learn (imblearn) in Python. SMOTE works by:

- Selecting a random sample from the minority class.

- Finding its k nearest neighbors among the other minority-class samples (typically k=5).

- Creating a new synthetic point at a random location along the line segment connecting the original sample and one of its neighbors [13].

- Train Model: Train your classifier (e.g., Random Forest, XGBoost) on the resampled training set.
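The three SMOTE steps can be written out in NumPy to make the mechanics concrete. This is a teaching sketch: the function name is hypothetical, and it computes all pairwise distances, so memory grows quadratically with the minority-class size. In practice, imbalanced-learn's SMOTE(k_neighbors=5).fit_resample(X_train, y_train) is the usual choice. Note that interpolating binary fingerprints produces fractional bit values.

```python
import numpy as np


def smote(X_min, n_synthetic, k=5, seed=0):
    """Create n_synthetic points by interpolating between minority samples and
    one of their k nearest minority-class neighbours (Euclidean distance)."""
    rng = np.random.default_rng(seed)
    X_min = np.asarray(X_min, dtype=float)
    k = min(k, len(X_min) - 1)
    # Pairwise distances within the minority class only
    dists = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=-1)
    np.fill_diagonal(dists, np.inf)  # a sample is not its own neighbour
    neighbours = np.argsort(dists, axis=1)[:, :k]
    synthetic = np.empty((n_synthetic, X_min.shape[1]))
    for i in range(n_synthetic):
        a = rng.integers(len(X_min))          # 1. pick a random minority sample
        b = neighbours[a, rng.integers(k)]    # 2. pick one of its k nearest neighbours
        gap = rng.random()                    # 3. random point on the segment a -> b
        synthetic[i] = X_min[a] + gap * (X_min[b] - X_min[a])
    return synthetic


# Hypothetical training split only (validation/test data are never resampled)
rng = np.random.default_rng(0)
X_train = np.vstack([rng.normal(0, 1, size=(500, 4)), rng.normal(3, 1, size=(20, 4))])
y_train = np.array([0] * 500 + [1] * 20)

X_min = X_train[y_train == 1]
n_needed = int((y_train == 0).sum() - (y_train == 1).sum())
X_new = smote(X_min, n_synthetic=n_needed, k=5)

X_bal = np.vstack([X_train, X_new])
y_bal = np.concatenate([y_train, np.ones(len(X_new), dtype=int)])
print(np.bincount(y_bal))  # [500 500]
```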

Path B: Algorithm-Level Solutions (Cost-Sensitive Learning)

- Action: Modify the learning algorithm to be more sensitive to the minority class.

- Detailed Protocol for Weighted Loss/Random Forest:

- For scikit-learn models (e.g., Random Forest, Logistic Regression): Set the class_weight parameter to 'balanced'. This automatically adjusts weights inversely proportional to class frequencies. XGBoost has no class_weight parameter; use its scale_pos_weight parameter instead, commonly set to the ratio of negative to positive examples [14].

- For Neural Networks: Use a weighted loss function. For example, in a binary classification task, you can calculate the class weight for the minority class as total_samples / (2 * count_minority_samples) and apply it through your framework's class-weighting mechanism, such as the class_weight argument of Keras's Model.fit or the weight argument of PyTorch's CrossEntropyLoss [14].

Step 3: Explore Advanced and Combined Techniques

- Action: If the above methods are insufficient, consider more advanced strategies.

- Ensemble Methods: Use algorithms like Balanced Random Forest or EasyEnsemble which internally combine bagging with data sampling to handle imbalance [14].

- Two-Step Technique (Downsampling + Upweighting): This method, described in Google's Machine Learning Crash Course, involves:

- Downsampling: Artificially reducing the number of majority class examples in the training set to create a more balanced dataset. This helps the model learn the features of the minority class more effectively [1].

- Upweighting: Applying a weight to the downsampled majority class examples in the loss function to compensate for their reduced count. This weight is typically the factor by which you downsampled, correcting the bias introduced by downsampling and teaching the model the true class distribution [1].
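Below is a minimal sketch of the two-step technique. The majority class is downsampled by an integer factor, and each kept majority row receives that factor as its sample weight. The helper name and data are hypothetical. The returned weights can be passed to most scikit-learn estimators through fit(X, y, sample_weight=w).

```python
import numpy as np


def downsample_and_upweight(X, y, factor, majority_label=0, seed=0):
    """Keep 1/factor of the majority rows and give each kept majority row weight `factor`."""
    rng = np.random.default_rng(seed)
    majority = np.flatnonzero(y == majority_label)
    minority = np.flatnonzero(y != majority_label)
    kept = rng.choice(majority, size=len(majority) // factor, replace=False)
    idx = np.concatenate([minority, kept])
    weights = np.where(y[idx] == majority_label, float(factor), 1.0)
    return X[idx], y[idx], weights


# Hypothetical data: 100 actives, 5,000 inactives; downsample inactives 25x
rng = np.random.default_rng(0)
X = rng.normal(size=(5100, 8))
y = np.array([1] * 100 + [0] * 5000)

X_ds, y_ds, w = downsample_and_upweight(X, y, factor=25)
print(np.bincount(y_ds))                       # [200 100]
print(w[y_ds == 0].sum(), w[y_ds == 1].sum())  # 5000.0 100.0
```

Batches now contain actives far more often. Meanwhile, the summed weight of the inactives still equals their original count, which preserves the true class distribution in the loss.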

The Scientist's Toolkit: Research Reagents & Computational Solutions

This table lists key computational "reagents" and tools essential for tackling data imbalance in molecular research.

| Tool / Technique | Function / Explanation | Example Use Case in Chemistry |
|---|---|---|
| SMOTE | Generates synthetic samples for the minority class to balance the dataset, reducing overfitting compared to random oversampling [13]. | Balancing datasets of active vs. inactive compounds in virtual screening for drug discovery [13]. |
| Class Weights | A cost-sensitive learning method that makes the algorithm penalize misclassifications of the minority class more heavily [14]. | Training a model to predict rare toxicants in environmental chemistry, ensuring these rare but critical compounds are not ignored. |
| Precision-Recall (PR) Curve | A diagnostic plot that shows the trade-off between precision and recall for different probability thresholds; more informative than ROC for imbalanced data [14]. | Evaluating the performance of a model tasked with identifying a rare, therapeutic protein-protein interaction. |
| Ensemble Methods (e.g., XGBoost) | Advanced algorithms that can be configured with parameters like scale_pos_weight to natively handle class imbalance during training [14]. | Building a robust predictive model for material properties where successful examples are scarce (e.g., high-efficiency catalysts) [13]. |
| Meta-Learning | A framework for "learning to learn," where a model is trained on a variety of tasks so it can quickly adapt to new tasks with very little data [15]. | Few-shot molecular property prediction, where labeled data for a new, desired property is extremely limited [15]. |

Troubleshooting Guides

FAQ: Addressing Common Experimental Challenges

Q: My model achieves high overall accuracy but fails to predict the minority class (e.g., active drug molecules). What is wrong?

A: This is a classic symptom of class imbalance. Your model is biased toward the majority class. To address this:

- Diagnose the issue: Calculate metrics like sensitivity, specificity, and F1-score for each class, not just overall accuracy [13].

- Apply resampling: Use techniques like SMOTE (Synthetic Minority Over-sampling Technique) to generate synthetic samples for the minority class and balance your dataset [13] [16].

- Use algorithmic approaches: Implement models like XGBoost with built-in mechanisms to handle imbalance, or use cost-sensitive learning to assign higher penalties for misclassifying minority class samples [16].

Q: How can I validate my model effectively when working with a small, imbalanced dataset?

A: Standard validation can be misleading with imbalance. Employ these strategies:

- Use stratified sampling: Ensure that each cross-validation fold preserves the same class distribution as the full dataset [16].

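- Focus on relevant metrics: Report the area under the receiver operating characteristic curve (AUC-ROC) and the area under the precision-recall curve (AUC-PR) instead of accuracy [16]. When positives are rare, AUC-PR is usually the more informative of the two, because it does not use true negatives and so is not inflated by the large inactive class.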

- Consider meta-learning: For extreme few-shot scenarios, context-informed meta-learning frameworks that extract both property-shared and property-specific molecular features can improve predictive accuracy with limited data [15].

Q: What are the best practices for reporting results to ensure my work on imbalanced data is credible?

A: Transparency is key. Your reports should include:

- A clear description of the dataset, including the exact number of samples in each class [13] [16].

- A full suite of metrics, including sensitivity, specificity, balanced accuracy, and AUC for all classes [16].

- Details of the technique used to mitigate imbalance (e.g., "SMOTE was applied to the training set") [13].

Experimental Protocols & Methodologies

Detailed Methodology: XGBoost with Ensemble Mapping for hERG Toxicity Prediction

This protocol details a robust approach to building a classification model for predicting hERG channel blockade, a critical cardiotoxicity endpoint in drug discovery, while explicitly addressing severe class imbalance [16].

1. Dataset Curation and Partitioning

- Source: Use the largest public dataset of hERG inhibitory activity (e.g., from Sato et al., containing 291,219 molecules) [16].

- Curation: Implement a multi-step curation protocol:

- Remove structures with erroneous representations or inorganic atoms.

- Standardize chemotypes, tautomeric forms, and neutralize charges using defined chemical transformation rules.

- Remove duplicate molecules and curate experimental data for consistency [16].

- Partitioning:

- Set aside a pre-defined external test set (ET I, ~30%) for final model evaluation.

- Split the remaining 70% (Modeling set) into a training set (90% of Modeling set) and an internal test set (10% of Modeling set) [16].
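The partitioning scheme can be sketched with scikit-learn's train_test_split. The source uses a pre-defined external set; here, as an assumption, a stratified random split stands in for it, and random toy data replaces the real descriptors. The helper name partition_modeling_data is hypothetical.

```python
import numpy as np
from sklearn.model_selection import train_test_split

def partition_modeling_data(X, y, seed=0):
    """Hypothetical helper: external test (~30%), then a 90/10 training/internal-test split."""
    # Hold out ~30% as an external test set, stratified so it keeps the class ratio.
    X_model, X_ext, y_model, y_ext = train_test_split(
        X, y, test_size=0.30, stratify=y, random_state=seed
    )
    # Split the remaining modeling set into training (90%) and internal test (10%).
    X_train, X_int, y_train, y_int = train_test_split(
        X_model, y_model, test_size=0.10, stratify=y_model, random_state=seed
    )
    return (X_train, y_train), (X_int, y_int), (X_ext, y_ext)

# Toy data with ~3.4% positives, roughly the hERG inhibitor fraction (9,900 / 291,219).
rng = np.random.default_rng(0)
X = rng.random((10_000, 16))
y = (rng.random(10_000) < 0.034).astype(int)

train, internal, external = partition_modeling_data(X, y)
print(len(train[1]), len(internal[1]), len(external[1]))
```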

2. Molecular Descriptor Calculation

- Compute diverse 2D molecular representations using tools like the RDKit plugin in KNIME or alvaDesc. Include:

- Physicochemical properties (e.g., ESOL, molecular weight).

- Topological indices (e.g., Kappa, MATS1i).

- Fingerprints (e.g., Morgan, MACCS) [16].

3. Handling Class Imbalance with Balanced Training & XGBoost

- The full training set is highly imbalanced (e.g., ~9,900 inhibitors vs. ~281,000 non-inhibitors) [16].

- Strategy: Develop an XGBoost consensus model. Create multiple balanced training sets from the full training set via sampling. Train separate XGBoost models on these balanced sets [16].

- XGBoost is particularly suitable due to its inherent robustness to class imbalance and superior predictive performance [16].

4. Isometric Stratified Ensemble (ISE) Mapping

- Use ISE mapping on the internal test set to estimate the model's Applicability Domain (AD) and stratify predictions into confidence levels (e.g., High, Medium, Low). This improves prediction confidence evaluation for new compounds [16].

5. Variable Selection and Model Interpretation

- Perform recursive feature selection to identify the most important molecular descriptors for hERG inhibition, enhancing model interpretability [16].
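The source does not specify the feature-selection implementation. As an assumption, this sketch uses scikit-learn's RFECV with a class-weighted random forest standing in for XGBoost, synthetic data standing in for molecular descriptors, and PR-AUC as the score that decides how many features to keep.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFECV
from sklearn.model_selection import StratifiedKFold

# Synthetic descriptor matrix: 25 "descriptors", only 5 informative, ~10% positives.
X, y = make_classification(
    n_samples=800, n_features=25, n_informative=5, weights=[0.9, 0.1], random_state=0
)

selector = RFECV(
    estimator=RandomForestClassifier(n_estimators=100, class_weight="balanced", random_state=0),
    step=2,  # drop the 2 least important features per iteration
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring="average_precision",  # rank feature subsets by PR-AUC rather than accuracy
)
selector.fit(X, y)
selected = selector.get_support(indices=True)
print(f"{selector.n_features_} features kept:", selected)
```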

Table 1: Key Performance Metrics for hERG Toxicity Prediction Model Using XGBoost and ISE Mapping

| Metric | Value | Interpretation |
|---|---|---|
| Sensitivity (Recall) | 0.83 | Model correctly identifies 83% of actual hERG inhibitors. |
| Specificity | 0.90 | Model correctly identifies 90% of non-inhibitors. |
| Balanced Approach | Achieved | Good balance between identifying toxic compounds (sensitivity) and avoiding false alarms (specificity). |

Detailed Methodology: SMOTE for Imbalanced Data in Catalyst and Material Design

This protocol uses the Synthetic Minority Over-sampling Technique (SMOTE) to rebalance imbalanced datasets in materials science and catalysis [13].

1. Problem Identification and Data Preparation

- Catalyst Design Example: Collect data for heteroatom-doped arsenenes. Using a threshold on the hydrogen adsorption Gibbs free energy (e.g., |ΔG_H| > 0.2 eV), divide the data into two imbalanced categories (e.g., 88 in one class, 38 in the other) [13].

- Polymer Material Example: Collect experimental data and use an algorithm like Nearest Neighbor Interpolation (NNI) for initial data expansion. Then, cluster the data (e.g., using K-means) to identify minority clusters [13].

2. Application of SMOTE

- Apply the SMOTE algorithm to the identified minority class(es). SMOTE generates synthetic samples by interpolating between existing minority class instances in feature space, effectively balancing the class distribution [13].
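To make the interpolation step concrete, here is a minimal from-scratch sketch of SMOTE-style oversampling in NumPy and scikit-learn. It is an illustration, not imbalanced-learn's implementation: each synthetic point is x_i + λ(x_nn − x_i), with x_nn one of the k nearest minority neighbours of x_i and λ drawn uniformly from [0, 1). The random minority data is assumed; generating 50 points would bring an 88 vs. 38 split like the arsenene example to parity.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors

def smote_sketch(X_min, n_new, k=5, seed=0):
    """Illustrative SMOTE-style oversampler (hypothetical helper, not a library API)."""
    rng = np.random.default_rng(seed)
    # Ask for k + 1 neighbours: when querying the fitted points, each point is its own nearest neighbour.
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    _, neigh = nn.kneighbors(X_min)
    synthetic = np.empty((n_new, X_min.shape[1]))
    for i in range(n_new):
        base = rng.integers(len(X_min))            # random minority sample x_i
        nb = neigh[base, rng.integers(1, k + 1)]   # one of its k neighbours (column 0 is itself)
        lam = rng.random()                         # interpolation factor in [0, 1)
        synthetic[i] = X_min[base] + lam * (X_min[nb] - X_min[base])
    return synthetic

# 38 assumed minority samples with 4 features; 50 synthetic points would balance 88 vs. 38.
X_min = np.random.default_rng(1).normal(size=(38, 4))
X_syn = smote_sketch(X_min, n_new=50)
```

Because every synthetic point lies on a segment between two real minority samples, SMOTE never extrapolates beyond the minority class's existing feature ranges, which is also why it cannot invent genuinely new chemistry.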

3. Model Training and Validation

- Train machine learning models (e.g., XGBoost, Random Forest) on the newly balanced dataset.

- Validate model performance using stratified cross-validation and report metrics relevant to all classes [13].

Table 2: Application of SMOTE in Chemistry Domains

| Chemistry Domain | Imbalance Challenge | SMOTE Application & Outcome |
|---|---|---|
| Catalyst Design [13] | Uneven data for hydrogen evolution reaction catalysts. | SMOTE balanced data distribution, improving model prediction and candidate screening. |
| Polymer Material Design [13] | Clustered data with minority sample boundaries after K-means clustering. | Borderline-SMOTE was used to interpolate along minority cluster boundaries, generating balanced clusters. |

Workflow Visualization

Experimental Workflow for Imbalanced Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Tackling Class Imbalance in Molecular Property Classification

| Tool / Resource | Function | Application Example |
|---|---|---|
| SMOTE & Variants [13] | Algorithmic oversampling to generate synthetic minority class samples. | Balancing active vs. inactive compounds in drug discovery [13] [16]. |
| XGBoost [16] | A gradient boosting framework robust to class imbalance, often used with balanced training sets. | Predicting hERG toxicity with high sensitivity and specificity [16]. |
| Stratified K-Fold Cross-Validation [16] | Data partitioning method that preserves class distribution in each fold. | Ensuring reliable performance estimation on imbalanced datasets. |
| Meta-Learning Frameworks [15] | Few-shot learning approach that leverages property-shared and property-specific knowledge. | Accurate molecular property prediction when labeled data is very limited. |
| ISE Mapping [16] | Defines the model's Applicability Domain (AD) and stratifies prediction confidence. | Identifying reliable predictions and guiding compound selection in early drug discovery [16]. |

A Practical Toolkit: Data, Algorithm, and Model Solutions for Imbalance

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between oversampling and undersampling techniques?

Oversampling and undersampling are data-level approaches to handle class imbalance, but they operate in opposite ways. Oversampling increases the number of minority class instances by generating new synthetic samples (like SMOTE and ADASYN) or duplicating existing ones. This helps the model better learn the characteristics of the minority class without losing any information from the original dataset [2]. Undersampling, such as Random Undersampling (RUS), reduces the number of majority class samples by randomly removing instances to balance the class distribution. While this can reduce computational cost and mitigate bias, it carries the risk of discarding potentially important information from the majority class [17] [2].

Q2: When should I use SMOTE over Random Undersampling in my molecular property prediction project?

The choice depends on your dataset size, computational resources, and the specific problem. Use SMOTE when your dataset is not extremely large and preserving all majority class information is crucial. It generates synthetic minority samples to help the model learn better decision boundaries [2]. However, be cautious with high-dimensional data, as SMOTE can sometimes bias classifiers like k-NN towards the minority class if no variable selection is performed [18]. Use Random Undersampling when dealing with very large datasets where computational efficiency is a priority, or when the majority class contains many redundant samples. Studies in drug discovery have shown RUS can significantly boost recall and F1-score for highly imbalanced bioassay data [3].

Q3: Why does my model performance sometimes decrease after applying ADASYN?

ADASYN adaptively generates minority samples based on learning difficulty, focusing more on boundary regions that are harder to learn [19]. This can sometimes lead to overfitting on noisy regions if the dataset contains many outliers or noisy samples, as the method will aggressively generate synthetic samples in these problematic areas [20] [19]. To address this, consider implementing a noise-filtering step before applying ADASYN, such as using the Tukey criterion to remove outliers or employing Edited Nearest Neighbors (ENN) to clean the data [20].

Q4: How do I handle extreme class imbalance (e.g., >1:100 ratio) in drug discovery datasets?

For extreme imbalance scenarios common in drug discovery (where active compounds are rare), consider these strategies:

- Adjust the imbalance ratio (IR) rather than aiming for perfect 1:1 balance. Research has shown that a moderate IR of 1:10 can significantly enhance model performance while maintaining better generalization than perfect balance [3].

- Combine multiple approaches: use hybrid methods like SMOTE-ENN that both generate minority samples and clean the resulting dataset, or employ ensemble methods with built-in sampling like RUSBoost [19].

- Consider algorithm-level solutions such as cost-sensitive learning, which assigns higher misclassification costs to minority samples [3] [19].

Q5: What evaluation metrics should I use instead of accuracy when working with resampled imbalanced data?

When working with resampled imbalanced data, avoid using accuracy, as it can be misleading. Instead, employ metrics that better capture minority class performance:

- F1-score: the harmonic mean of precision and recall, providing a balanced view [3] [21].

- Matthews Correlation Coefficient (MCC): considers all four confusion matrix categories and works well on imbalanced data [22] [19].

- Area Under the Precision-Recall Curve (PR-AUC): more informative than ROC-AUC for imbalanced data [19].

- G-mean: the geometric mean of sensitivity and specificity [20].

These metrics provide a more comprehensive view of model performance on both majority and minority classes.
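A small helper that computes these four metrics with scikit-learn and NumPy, shown on ten hand-made predictions (assumed toy values). The helper name imbalance_report is hypothetical, and label 1 is assumed to be the minority (active) class.

```python
import numpy as np
from sklearn.metrics import (average_precision_score, confusion_matrix,
                             f1_score, matthews_corrcoef)

def imbalance_report(y_true, y_pred, y_score):
    """Minority-aware metrics; label 1 is assumed to be the minority (active) class."""
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "f1": f1_score(y_true, y_pred),
        "mcc": matthews_corrcoef(y_true, y_pred),
        # Average precision: scikit-learn's standard step-wise PR-AUC estimate.
        "pr_auc": average_precision_score(y_true, y_score),
        "g_mean": float(np.sqrt(sensitivity * specificity)),
    }

# 8 inactives and 2 actives; one false positive and one false negative.
y_true = np.array([0, 0, 0, 0, 0, 0, 0, 0, 1, 1])
y_pred = np.array([0, 0, 0, 0, 0, 0, 1, 0, 1, 0])
y_score = np.array([0.1, 0.2, 0.1, 0.3, 0.2, 0.1, 0.7, 0.4, 0.9, 0.45])
report = imbalance_report(y_true, y_pred, y_score)
print(report)
```

Here accuracy would be 0.8, while F1 (0.5), MCC (0.375), and G-mean (about 0.66) make the missed active visible.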

Troubleshooting Guides

Problem: Model shows high accuracy but poor recall for minority class after SMOTE

Diagnosis: This often indicates the synthetic samples generated by SMOTE are not effectively improving learning of the minority class characteristics, potentially due to noisy samples or improper parameter tuning.

Solution:

- Pre-clean your data using noise filtering techniques like Tomek Links or Edited Nearest Neighbors before applying SMOTE [17] [20].

- Try Borderline-SMOTE which focuses specifically on minority samples near the decision boundary rather than all minority samples [19].

- Adjust the k-neighbors parameter in SMOTE (default is 5) - smaller values might be needed for very small minority classes [18].

- Combine with undersampling using SMOTE-Tomek or SMOTE-ENN hybrid approaches, which remove noisy or overlapping samples near the class boundary that might be interfering with classification [20] [19].

Problem: Model overfits after applying ADASYN

Diagnosis: ADASYN's adaptive nature may have over-generated samples in noisy regions, causing the model to learn artificial patterns rather than true minority class characteristics.

Solution:

- Implement noise detection prior to ADASYN using methods like the Tukey criterion for outlier removal [20].

- Reduce the sampling strategy parameter to generate fewer synthetic samples than full balance (e.g., sampling_strategy=0.5 in imbalanced-learn, which targets a 1:2 minority-to-majority ratio instead of 1:1) [3].

- Apply stronger regularization in your classifier to prevent overfitting to the synthetic samples.

- Switch to SVM-SMOTE which generates samples considering the decision boundary learned by an SVM classifier, potentially creating more meaningful synthetic samples [20].

Problem: Significant information loss after Random Undersampling

Diagnosis: Important patterns from the majority class may have been removed during random selection, reducing model performance.

Solution:

- Use informed undersampling instead of random, such as NearMiss which selects majority samples based on their distance to minority samples [17] [2].

- Apply ensemble undersampling - create multiple balanced subsets with different majority samples and ensemble the results [19].

- Try the Cluster Centroids method which undersamples by generating representative cluster centroids rather than removing instances, preserving distribution characteristics [17].

- Adjust the imbalance ratio - instead of 1:1 balance, try moderate ratios like 1:10 or 1:25 which retain more majority samples while still reducing imbalance [3].

Problem: Poor generalization on test data after successful resampling

Diagnosis: The resampling process may have created artificial patterns that don't represent the true population, or the synthetic samples may differ significantly from real minority instances.

Solution:

- Ensure proper validation - use strict train-validation-test splits where resampling is applied only to training data, never to validation or test sets.

- Try domain-specific augmentation instead of generic resampling. For molecular data, consider SMILES enumeration or structure-based augmentation [2].

- Use hybrid approaches like SMOTE-ENN that include cleaning steps to remove unrealistic synthetic samples [20] [19].

- Implement cross-validation correctly by applying resampling within each fold rather than to the entire dataset before splitting.

Performance Comparison of Resampling Techniques

Table 1: Comparative Performance of Resampling Methods Across Different Domains

| Method | Best For | Advantages | Limitations | Reported Performance |
|---|---|---|---|---|
| SMOTE | General-purpose use; Moderate imbalance | Reduces overfitting vs. ROS; Widely implemented | Can generate noisy samples; Struggles with high-dimensional data | F1: 0.73, MCC: 0.70 in financial distress prediction [19] |
| ADASYN | Complex boundaries; Hard-to-learn samples | Adaptive to learning difficulty; Focuses on boundary regions | Can overfit on noisy regions; Computationally intensive | Accuracy: 0.717, MCC: 0.512 in Caco-2 permeability classification [23] |
| Random Undersampling | Large datasets; Computational efficiency | Fast training; Simple implementation | Loses potentially useful majority information | High recall (0.85) but lower precision (0.46) in financial prediction [19] |
| Borderline-SMOTE | Datasets with clear decision boundaries | Focuses on critical boundary samples; Improves class separation | Sensitive to parameter tuning; May ignore safe minority samples | Better recall than standard SMOTE in financial applications [19] |
| SMOTE-Tomek | Noisy datasets; Quality-focused applications | Combines creation and cleaning; Better sample quality | More complex implementation; Higher computational cost | Enhanced recall with slight precision sacrifice [19] |
| SMOTE-ENN | Very noisy data; Quality over quantity | Aggressive cleaning; High-quality output | Can remove useful samples; May over-clean | Effective for genotoxicity data in hybrid approach [21] |

Table 2: Algorithm-Specific Recommendations for Molecular Property Classification

| Classifier Type | Recommended Resampling | Considerations | Reported Outcome |
|---|---|---|---|
| Tree-Based (RF, XGBoost) | SMOTE or SMOTE-ENN | Handles synthetic samples well; Benefits from boundary emphasis | MACCS-GBT-SMOTE: Best F1 score in genotoxicity prediction [21] |
| k-Nearest Neighbors | Random Undersampling or SMOTE with variable selection | Sensitive to high-dimensional noise; Requires careful preprocessing | SMOTE beneficial only with variable selection in high-dimensional data [18] |
| Support Vector Machines | Borderline-SMOTE or SVM-SMOTE | Benefits from boundary-focused sampling; Works with class weights | SVM-SMOTE generates samples along decision boundary [20] |
| Neural Networks | ADASYN or Moderate RUS | Can handle complex patterns; Benefits from adaptive sampling | ADASYN with XGBoost: Best for multiclass permeability prediction [23] |
| Ensemble Methods | Hybrid approaches (SMOTE-Tomek) | Multiple learners handle synthetic and cleaned data effectively | Bagging-SMOTE: Balanced performance (AUC 0.96, F1 0.72) in financial prediction [19] |

Experimental Protocols

Protocol 1: Standard SMOTE Implementation for Molecular Data

Purpose: To generate synthetic minority samples for imbalanced molecular classification datasets.

Materials:

- Imbalanced dataset (features and labels)

- Python with imbalanced-learn library

- Computing environment with sufficient memory

Procedure:

- Data Preprocessing: Clean your molecular dataset, handle missing values, and perform feature scaling as SMOTE is sensitive to distance metrics.

- Train-Test Split: Split data into training and test sets, ensuring the imbalance ratio is preserved in both splits.

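- Apply SMOTE Only to Training Data: Fit and resample the training split alone; the test split must keep its original class distribution.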

- Train Classifier: Use the resampled training data to train your chosen classifier.

- Evaluate on Original Test Data: Test the model on the untouched test set using appropriate metrics (F1, MCC, G-mean).

Validation: Compare performance against the same classifier trained on the original imbalanced data using cross-validation. The SMOTE approach is expected to improve minority-class recall and F1-score while keeping overall performance reasonable [2] [21].

Protocol 2: Random Undersampling for High-Ratio Imbalance

Purpose: To address extreme class imbalance (e.g., >1:50) by reducing majority class samples.

Materials:

- Highly imbalanced dataset

- Computational resources for potential multiple iterations

Procedure:

- Data Preparation: Clean and preprocess data as usual.

- Determine Optimal Imbalance Ratio: Rather than defaulting to 1:1, test different ratios (1:10, 1:25, 1:50) to find the optimal balance between performance and information retention [3].

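- Implement Controlled Undersampling: Randomly remove majority-class samples from the training data only, until the chosen ratio is reached.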

- Ensemble Approach (Optional): Create multiple undersampled datasets with different random states and ensemble the resulting models.

- Validate Extensively: Use comprehensive metrics and external validation sets to ensure generalization.

Validation: The approach should significantly improve minority class recall while maintaining acceptable precision. For bioactivity prediction, optimal results have been observed with moderate ratios around 1:10 rather than perfect balance [3].

Protocol 3: Hybrid SMOTE-Tomek for Noisy Molecular Datasets

Purpose: To generate synthetic samples while cleaning noisy instances that could hinder classification.

Materials:

- Noisy imbalanced dataset

- Imbalanced-learn library

- Domain knowledge for noise validation

Procedure:

- Initial Data Preparation: Standard preprocessing of molecular features and labels.

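- Apply SMOTE-Tomek Hybrid: Oversample the minority class of the training data with SMOTE, then remove Tomek links (pairs of samples from opposite classes that are each other's nearest neighbors).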

- Inspect Removed Samples: Examine which samples were identified as Tomek links to understand the noise pattern.

- Train Classifier: Proceed with standard training on the cleaned and balanced dataset.

- Comparative Evaluation: Test against standard SMOTE and no resampling approaches.

Validation: This approach is expected to yield better precision than standard SMOTE while maintaining good recall, because removing Tomek links eliminates ambiguous boundary samples that could cause misclassification [20] [19].

Workflow Visualization

Resampling Strategy Selection Workflow

Research Reagent Solutions

Table 3: Essential Computational Tools for Resampling Experiments

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| imbalanced-learn | Python library | Provides implementation of SMOTE, ADASYN, RUS, and hybrid methods | General resampling experiments; Supports scikit-learn compatibility [17] |
| scikit-learn | Python library | Machine learning algorithms; Base functionality for custom resampling | Model training and evaluation; Feature preprocessing |
| KNIME Analytics | Workflow platform | Visual workflow for data preprocessing and resampling | Genotoxicity prediction; Data balancing workflows [21] |
| RDKit | Cheminformatics library | Molecular fingerprint generation; Chemical descriptor calculation | Molecular property prediction; Feature engineering [21] |
| XGBoost | Algorithm | Gradient boosting with handling of imbalanced data | Financial distress prediction; Molecular classification [19] [23] |
| Tukey Criterion | Statistical method | Identification and removal of outliers in data | Noise filtering prior to resampling [20] |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: In a cost-sensitive learning experiment for drug-target interaction prediction, my model's recall for the active class (minority) is still very low, even after assigning a higher misclassification cost. What could be going wrong?

A1: Several factors could be at play. First, verify that your cost matrix is properly scaled. A common issue is that the assigned cost for false negatives, while higher than for false positives, is still not sufficient to overcome the extreme class imbalance [24] [25]. The theoretical optimal threshold for classification might not be at the default 0.5; you should calculate and adjust the decision threshold based on your cost matrix [26]. Furthermore, in high-dimensional molecular data, the combination of many features and class imbalance can degrade performance. Consider integrating feature selection with your cost-sensitive learning to reduce noise and improve model focus on the most predictive features [27].

Q2: When using ensemble methods like Random Forest on an imbalanced molecular dataset, the overall accuracy is high, but the model fails to predict most active compounds. How can I adapt the ensemble to fix this?

A2: High overall accuracy with poor minority class performance is a classic sign of a model biased toward the majority class [28] [29]. You can adapt ensemble methods in several ways. For bagging-based ensembles like Random Forest, leverage class weighting by setting class_weight='balanced' in your implementation, which adjusts the algorithm's objective function to penalize minority class misclassifications more heavily [29]. Alternatively, use specialized ensemble algorithms designed for imbalance, such as RUSBoost, which combines random undersampling of the majority class with the boosting process, forcing the model to focus on the minority class in successive iterations [29]. Another effective strategy is to build an ensemble of cost-sensitive classifiers, where each base learner (e.g., an SVM) is trained with a custom cost matrix to address the imbalance [28].
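The sketch below shows what class_weight='balanced' computes, using scikit-learn's compute_class_weight on assumed toy labels (90 inactive, 10 active). The weights are n_samples / (n_classes × count per class); RandomForestClassifier applies the same weights internally when given class_weight="balanced".

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.utils.class_weight import compute_class_weight

# Toy labels: 90 inactive (0) and 10 active (1) compounds, with random features.
y = np.array([0] * 90 + [1] * 10)
X = np.random.default_rng(0).normal(size=(100, 8))

# "balanced" weights are n_samples / (n_classes * count of each class).
weights = compute_class_weight(class_weight="balanced", classes=np.array([0, 1]), y=y)
print({cls: round(float(w), 3) for cls, w in zip([0, 1], weights)})  # {0: 0.556, 1: 5.0}

# With class_weight="balanced", each active sample carries nine times the weight
# of an inactive sample in the forest's split criterion.
rf = RandomForestClassifier(n_estimators=300, class_weight="balanced", random_state=0).fit(X, y)
```

For the specialized ensembles mentioned above, imbalanced-learn provides RUSBoostClassifier and BalancedRandomForestClassifier with the same fit/predict interface.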

Q3: For a molecular property prediction task, when should I choose a cost-sensitive learning approach over a data-level method like SMOTE?

A3: The choice depends on your data characteristics and computational goals. Cost-sensitive learning is often preferable when you have a clear understanding of the real-world economic or clinical costs associated with different types of prediction errors [25]. It is also a good choice when you want to avoid the potential overfitting that can be introduced by synthetic data generation or the information loss from undersampling [3] [27]. Conversely, data-level methods like SMOTE can be more suitable when the class imbalance is moderate and you are using a simple, off-the-shelf classifier that does not natively support instance or class weights [2]. In many real-world applications, a hybrid approach that uses a moderate level of data resampling (e.g., adjusting the imbalance ratio to 1:10 instead of a perfectly balanced 1:1) combined with a cost-sensitive algorithm has been shown to yield the best balance between true positive and false positive rates [3].

Troubleshooting Common Experimental Issues

Problem: High Variance in Model Performance During Cross-Validation

- Symptoms: Sensitivity or F1-score for the minority class varies widely across different folds of cross-validation.

- Potential Causes and Solutions:

- Cause 1: Insufficient minority class examples. With very few minority samples, splitting them into folds can lead to some folds having unrepresentative data.

- Solution: Use stratified cross-validation to ensure the relative class frequencies are preserved in each fold [29]. Consider using a repeated cross-validation strategy to obtain more stable performance estimates.

- Cause 2: Small disjuncts within the minority class. The minority class may consist of several sub-concepts, some of which are very small and get missed in some folds [24].

- Solution: Apply clustering analysis on the minority class to identify these sub-concepts. Techniques like informed oversampling (e.g., generating synthetic samples for the smallest clusters) can help reinforce these small disjuncts [24].

Problem: Cost-Sensitive Model Performs Well on Validation Data but Poorly on External Test Set

- Symptoms: Strong performance on held-out validation data from the same source, but a significant drop in recall and precision on a truly external test set (e.g., from a different bioassay or literature source).

- Potential Causes and Solutions:

- Cause 1: Dataset shift and activity cliffs. The chemical space of the external set may differ from the training data, and molecules with high similarity but different activity (activity cliffs) can severely impact predictions [30].

- Solution: Analyze the chemical similarity between your training and external test sets. Investigate misclassified active compounds to see if they reside near activity cliffs. Incorporate domain-aware features or use models that can better generalize across chemical space [3] [30].

- Cause 2: Over-optimization on the validation set. The cost matrix or model hyperparameters may have been tuned too specifically to the validation set's particular distribution.

- Solution: Perform a more robust validation process, such as nested cross-validation. Simplify the model and avoid overly complex cost matrices that may not generalize.
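A minimal sketch of the similarity analysis from Cause 1: for each external-test molecule, compute its highest Tanimoto similarity to any training molecule. Fingerprints are assumed to be precomputed 0/1 bit vectors (for example, Morgan fingerprints generated with RDKit); random bit vectors stand in for them here, and the 0.4 cut-off is an arbitrary illustrative value, not a recommendation.

```python
import numpy as np

def max_tanimoto_to_train(fp_test, fp_train):
    """Highest Tanimoto similarity of each test fingerprint to any training fingerprint.

    Both inputs are 0/1 arrays of shape (n_molecules, n_bits).
    """
    a = fp_test.astype(np.float64)
    b = fp_train.astype(np.float64)
    common = a @ b.T                                         # |A ∩ B| for every test/train pair
    union = a.sum(1)[:, None] + b.sum(1)[None, :] - common   # |A ∪ B|
    sim = np.divide(common, union, out=np.zeros_like(common), where=union > 0)
    return sim.max(axis=1)

rng = np.random.default_rng(0)
fp_train = (rng.random((200, 1024)) < 0.05).astype(np.uint8)
# Three test molecules copied from training, three unrelated random ones.
fp_test = np.vstack([fp_train[:3], (rng.random((3, 1024)) < 0.05).astype(np.uint8)])

nearest = max_tanimoto_to_train(fp_test, fp_train)
# Low nearest-neighbour similarity flags molecules outside the training chemical space.
out_of_domain = nearest < 0.4
print(nearest.round(3), out_of_domain)
```

Misclassified actives that fall in the out-of-domain group point to dataset shift; misclassified actives with very similar training neighbours of the opposite label point to activity cliffs.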

Experimental Protocols and Methodologies

Protocol 1: Implementing Cost-Sensitive Learning for a Binary Classifier

This protocol outlines the steps to implement a cost-sensitive Support Vector Machine (SVM) for imbalanced molecular property prediction, based on a study that achieved 79.5% sensitivity in a medical screening task [28].

Define the Cost Matrix: Construct a 2x2 cost matrix where the rows represent the true class and the columns represent the predicted class. For a binary problem with "Active" as the minority (positive) class and "Inactive" as the majority (negative) class, a typical structure is:

- Cost(True Active, Predicted Active) = C_TP = 0

- Cost(True Active, Predicted Inactive) = C_FN (false negative cost)

- Cost(True Inactive, Predicted Active) = C_FP (false positive cost)

- Cost(True Inactive, Predicted Inactive) = C_TN = 0

C_FN should be set higher than C_FP to reflect the greater penalty for missing an active compound [26] [28].

Integrate Costs into the Classifier: In the SVM formulation, this is typically achieved by assigning a different penalty parameter C to each class, with a larger C for the minority class. In libraries like scikit-learn, this is done using the class_weight parameter: set it to 'balanced' to adjust weights inversely proportional to class frequencies, or pass a dictionary like {'Active': 10, 'Inactive': 1} for manual control [26] [28].

Adjust the Classification Threshold (Optional but Recommended): After training a model that outputs probabilities, you can move the decision threshold away from the default 0.5 to minimize expected cost. The theoretical optimal threshold t* can be calculated from the cost matrix [26]:

$$t^* = \frac{C_{FP} - C_{TN}}{C_{FP} - C_{TP} + C_{FN} - C_{TN}}$$

Since the costs for correct classifications (C_TP, C_TN) are usually 0, this simplifies to:

$$t^* = \frac{C_{FP}}{C_{FP} + C_{FN}}$$

This threshold assumes calibrated probabilities from a model trained without cost weighting; applying it on top of class-weighted training would account for the costs twice, so use either class weights or threshold moving, not both at full strength.

Validate with Cost-Sensitive Metrics: Do not rely on accuracy. Use metrics like Sensitivity (Recall), Precision, F1-Score, and the Matthews Correlation Coefficient (MCC) to evaluate performance, particularly on the minority class [28] [29].
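A sketch of both routes with scikit-learn's SVC, under assumed illustrative costs C_FP = 1 and C_FN = 10 and synthetic data. Route A encodes the costs through class_weight; route B trains an unweighted SVC with Platt-scaled probabilities (probability=True) and moves the threshold to t* = 1/11. The helper optimal_threshold is hypothetical; the two routes are kept separate so the costs are not counted twice.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

def optimal_threshold(c_fp, c_fn, c_tp=0.0, c_tn=0.0):
    """t* = (C_FP - C_TN) / (C_FP - C_TP + C_FN - C_TN)."""
    return (c_fp - c_tn) / (c_fp - c_tp + c_fn - c_tn)

C_FP, C_FN = 1.0, 10.0  # illustrative: missing an active is 10x worse than a false alarm
t_star = optimal_threshold(C_FP, C_FN)  # 1 / 11, about 0.091

X, y = make_classification(n_samples=1500, n_features=20, weights=[0.93, 0.07], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# Route A: cost-sensitive training; class_weight multiplies C for each class.
cs_svm = SVC(kernel="rbf", class_weight={0: C_FP, 1: C_FN}).fit(X_train, y_train)
pred_weighted = cs_svm.predict(X_test)

# Route B: unweighted SVM with Platt-scaled probabilities, then threshold moving to t*.
plain_svm = SVC(kernel="rbf", probability=True, random_state=0).fit(X_train, y_train)
pred_thresh = (plain_svm.predict_proba(X_test)[:, 1] >= t_star).astype(int)
```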

Protocol 2: Building a Hybrid Ensemble for Severe Class Imbalance

This protocol describes the construction of an ensemble that integrates undersampling, cost-sensitive learning, and bagging, mirroring a method that achieved 82.8% sensitivity for screening a rare cardiovascular disease [28].

Feature Selection: Perform statistical analysis (e.g., significance tests like chi-square or t-test) on the features to select the most relevant ones for the classification task. This reduces dimensionality and can help the model focus on the most important signals, especially with limited minority class data [28] [27].

Assign Misclassification Costs: Define a cost matrix for your base classifier, as detailed in Protocol 1.

Create Balanced Subsets via Undersampling: Randomly select a subset of the majority class instances without replacement. The size of this subset can be set to match the size of the minority class (1:1 ratio) or to a less aggressive ratio (e.g., 1:10) which has been shown to be effective without excessive information loss [3].

Train an Ensemble of Cost-Sensitive Classifiers: For each of the N balanced subsets created in step 3, train a cost-sensitive weak classifier (e.g., a cost-sensitive SVM). Each classifier is trained on a different subset of the majority class combined with all the minority class instances [28].

Aggregate Predictions: To make a final prediction for a new molecule, aggregate the predictions from all weak classifiers in the ensemble. For class labels, use majority voting; for probability outputs, average the probabilities and then apply a threshold [28] [29].
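A minimal sketch of steps 2 to 5 (feature selection omitted), assuming synthetic data with about 49 actives, a 1:10 undersampling ratio, a minority-class weight of 5, and 15 RBF SVMs; all of these values, and the helper names, are hypothetical choices for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.svm import SVC

def train_hybrid_ensemble(X, y, n_models=10, majority_per_minority=1, c_fn=5.0, seed=0):
    """Hypothetical helper: cost-sensitive SVMs, each on all actives plus a random inactive subset."""
    rng = np.random.default_rng(seed)
    minority_idx = np.flatnonzero(y == 1)
    majority_idx = np.flatnonzero(y == 0)
    n_major = min(len(majority_idx), majority_per_minority * len(minority_idx))
    models = []
    for _ in range(n_models):
        # Undersample the majority class without replacement (a different subset per model).
        subset = rng.choice(majority_idx, size=n_major, replace=False)
        idx = np.concatenate([minority_idx, subset])
        # Cost-sensitive base learner: errors on actives (class 1) are penalised c_fn times more.
        clf = SVC(kernel="rbf", class_weight={0: 1.0, 1: c_fn})
        models.append(clf.fit(X[idx], y[idx]))
    return models

def predict_majority_vote(models, X):
    votes = np.stack([m.predict(X) for m in models])   # shape (n_models, n_samples)
    return (votes.mean(axis=0) >= 0.5).astype(int)     # ties go to the active class

X, y = make_classification(n_samples=3300, n_features=20, weights=[0.985, 0.015],
                           flip_y=0, random_state=0)
models = train_hybrid_ensemble(X, y, n_models=15, majority_per_minority=10)
y_pred = predict_majority_vote(models, X[:100])
```

imbalanced-learn's BalancedBaggingClassifier packages a similar undersample-then-bag scheme if you prefer not to maintain the loop yourself.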

Implementation Workflow for a Hybrid Ensemble Model

Data Presentation: Performance Comparison of Algorithm-Level Methods

Table 1: Summary of Algorithm-Level Approaches and Their Reported Performance on Imbalanced Datasets

| Method Category | Specific Technique | Dataset / Application Context | Key Performance Results | Advantages | Limitations |
|---|---|---|---|---|---|
| Cost-Sensitive Learning | Cost-Sensitive SVM [28] | Aortic Dissection Screening (Ratio 1:65) | Sensitivity: 79.5%, Specificity: 73.4% | Directly incorporates domain knowledge of error costs; No risk of overfitting from synthetic data. | Requires estimation of misclassification costs, which may not always be known. |
| Hybrid Ensemble | Ensemble of Cost-Sensitive SVMs with Undersampling & Bagging [28] | Aortic Dissection Screening (Ratio 1:65) | Sensitivity: 82.8%, Specificity: 71.9%, Low variance in CV. | Combines strengths of multiple approaches; Robust and stable performance. | Higher computational cost for training multiple models. |
| Cost-Sensitive + Feature Selection | Cost-Sensitive Random Forest with Feature Selection [27] | High-Dimensional Genomic Datasets | Improved MCC and F1-score compared to using either method alone. | Reduces noise from high-dimensional data; Improves model interpretability and focus. | Performance depends on the choice of feature selection heuristic. |
| Data-Level + Algorithm-Level | Random Undersampling (to 1:10 ratio) with various ML/DL models [3] | HIV Bioassay Prediction (Original Ratio 1:90) | RUS outperformed ROS and synthetic methods, enhancing ROC-AUC, Balanced Accuracy, and F1-score. | Simpler than complex ensembles; A moderate imbalance ratio can be sufficient for good performance. | Can still lead to loss of potentially useful information from the majority class. |

The Scientist's Toolkit: Key Research Reagents and Computational Solutions

Table 2: Essential Tools and Algorithms for Implementing Algorithm-Level Solutions

| Item Name | Type | Function in Experimentation | Example Implementations / Libraries |
|---|---|---|---|
| Cost Matrix | Conceptual Framework | Defines the penalty for each type of classification error, formally encoding the research priority on the minority class. | Custom-defined in code (e.g., a Python dictionary or 2D array). |
| Class Weighting | Algorithmic Modifier | A common way to inject cost-sensitivity into standard algorithms by weighting each class's contribution to the loss function. | class_weight='balanced' in scikit-learn (e.g., SVC, RandomForestClassifier). |
| Ensemble Frameworks | Algorithmic Infrastructure | Provides the structure to combine multiple weak learners, which can be individually adapted for class imbalance. | scikit-learn (BaggingClassifier), imbalanced-learn (RUSBoostClassifier, BalancedBaggingClassifier, EasyEnsembleClassifier). |
| Threshold Moving | Post-processing Technique | Adjusts the decision threshold from the default 0.5 to a value derived from the cost matrix, optimizing for cost minimization. | setThreshold() in the R package mlr, or a custom implementation using predict_proba(). |
| Performance Metrics | Evaluation Tools | Provides a true picture of model performance on imbalanced data, focusing on minority class detection and cost. | Sensitivity/Recall, Precision, F1-Score, MCC, AUC-PR (available in scikit-learn). |
| Molecular Representations | Data Input | The fundamental encoding of a chemical compound that the algorithm learns from. Different representations can significantly impact performance [31] [30]. | Extended-Connectivity Fingerprints (ECFP), Molecular Graphs, SMILES strings. |
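The class-weighting and threshold-moving rows can be made concrete with a short sketch. It rests on these assumptions:

- The cost matrix is 2x2 and indexed as `cost[true_class, predicted_class]`.
- Correct predictions cost zero.
- The 1:9 false-positive to false-negative cost ratio is a hypothetical example, not a recommended value.

Under these assumptions, predicting "active" minimizes expected cost whenever the predicted probability p satisfies p · C_FN > (1 − p) · C_FP. Rearranged, the condition is p > C_FP / (C_FP + C_FN).

```python
import numpy as np
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical cost matrix indexed as COST[true_class, predicted_class]:
# a missed active (false negative) is assumed to be 9x as costly as a false alarm.
COST = np.array([[0.0, 1.0],   # true inactive: correct, false positive
                 [9.0, 0.0]])  # true active: false negative, correct


def balanced_class_weights(y):
    """Per-class weights n_samples / (n_classes * n_c), as used by class_weight='balanced'."""
    classes = np.unique(y)
    weights = compute_class_weight("balanced", classes=classes, y=y)
    return dict(zip(classes.tolist(), weights))


def cost_threshold(cost):
    """Expected-cost-minimizing threshold when correct predictions cost 0.

    Predict active when p * C_FN > (1 - p) * C_FP, i.e. p > C_FP / (C_FP + C_FN).
    """
    c_fp, c_fn = cost[0, 1], cost[1, 0]
    return c_fp / (c_fp + c_fn)


def apply_threshold(proba_pos, threshold):
    """Convert positive-class probabilities (e.g. from predict_proba) into labels."""
    return (np.asarray(proba_pos) >= threshold).astype(int)


# Usage: a 1:9 imbalanced training set and three predicted probabilities
y_train = np.array([0] * 90 + [1] * 10)
weights = balanced_class_weights(y_train)   # 100/(2*90) for class 0, 100/(2*10) = 5.0 for class 1
threshold = cost_threshold(COST)            # 1 / (1 + 9) = 0.1
labels = apply_threshold([0.05, 0.10, 0.60], threshold)
```

The weight dictionary can be passed as `class_weight=weights` to scikit-learn estimators that accept it, or used as per-class loss weights in a neural network.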

Technical Support Center

Troubleshooting Guides

Guide 1: My GNN Model Fails to Learn on Molecular Data

Problem: The training loss does not decrease, or decreases very slowly, when training a Graph Neural Network on molecular property prediction tasks.

Solution:

- Verify Code is Bug-Free: Check for common programming errors in your GNN implementation. Ensure that weight updates are correctly applied, gradient expressions are correct, and that your loss function is appropriate for the task (e.g., do not use categorical cross-entropy for a regression problem) [32].

- Scale Your Data: Neural networks are highly sensitive to the scale of input data. Standardize your node and edge features to have a mean of 0 and unit variance, or scale them to a small interval like [-0.5, 0.5]. This acts as a pre-conditioning step and can dramatically improve training [32].

- Start with a Simple Model: Before using a complex architecture, build a simple GNN with a single hidden layer and verify it works. Incrementally add complexity (e.g., more layers, attention mechanisms) only after the simple model trains successfully [32].

- Check Your Data Pipeline: Inspect your data for NaN or Inf values. Ensure that when using a train/test split, the test data is scaled using the statistics from the training set, not its own. Visualize a batch of data to confirm the features and labels are correctly paired [33] [32].

- Overfit a Single Batch: A powerful diagnostic heuristic is to try to overfit your model to a very small batch of data (e.g., 5-10 examples). If the model cannot drive the training loss on this batch close to zero, it strongly indicates a fundamental bug in your model or data pipeline [33].
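The pipeline checks can be sketched for graph data in two stages: reject non-finite values, then fit the scaler on training-set node features only and reuse it for the test molecules. The sketch makes these assumptions:

- Each molecule's node features are a NumPy array of shape `(n_atoms, n_features)`.
- `check_finite`, `fit_node_scaler`, and `transform_graphs` are hypothetical helpers built on scikit-learn's `StandardScaler`.
- Every column is scaled for simplicity. In practice, one-hot atom-type columns are often left unscaled.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler


def check_finite(name, arr):
    """Raise if an array contains NaN or +/-Inf, reporting the offending rows."""
    bad = ~np.isfinite(arr)
    if bad.any():
        rows = np.unique(np.nonzero(bad)[0])
        raise ValueError(f"{name}: {int(bad.sum())} non-finite values in rows {rows[:10].tolist()}")


def fit_node_scaler(train_graph_feats):
    """Fit a scaler on the stacked node features of the TRAINING molecules only."""
    stacked = np.vstack(train_graph_feats)
    check_finite("train node features", stacked)
    return StandardScaler().fit(stacked)


def transform_graphs(scaler, graph_feats):
    """Scale each molecule's node features with the training-set statistics."""
    out = []
    for i, feats in enumerate(graph_feats):
        check_finite(f"graph {i}", feats)
        out.append(scaler.transform(feats))
    return out


# Usage with synthetic node features: 30 training and 5 test molecules
rng = np.random.default_rng(0)
train_graphs = [rng.normal(3.0, 2.0, size=(int(rng.integers(5, 20)), 4)) for _ in range(30)]
test_graphs = [rng.normal(3.0, 2.0, size=(10, 4)) for _ in range(5)]
scaler = fit_node_scaler(train_graphs)
train_scaled = transform_graphs(scaler, train_graphs)
test_scaled = transform_graphs(scaler, test_graphs)  # reuses the training mean and variance
```

The split should be made at the molecule level before stacking, so that atoms from test molecules never contribute to the scaling statistics.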

Guide 2: Addressing Data Imbalance in Molecular Property Regression

Problem: The model performs poorly on molecules with rare, but critically valuable, properties (e.g., high potency), which occupy sparse regions of the target label space [34].

Solution:

- Apply Targeted Data Augmentation: Use spectral-domain augmentation frameworks like SPECTRA to generate realistic synthetic molecular graphs tailored to underrepresented label regions. This method interpolates Laplacian eigenvalues/eigenvectors and node features of matched molecule pairs to create chemically plausible intermediates with interpolated property targets [34].

- Implement Rarity-Aware Sampling: Derive a budgeting scheme from a kernel density estimation of your labels. This concentrates augmentation efforts where data is scarcest, densifying these regions without distorting the global molecular topology [34].

- Leverage Advanced Architectures: Integrate Kolmogorov-Arnold Networks (KANs) into your GNN. KA-GNNs replace standard MLP components in node embedding, message passing, and readout functions with Fourier-based KAN modules. This enhances expressivity and parameter efficiency, which can be particularly beneficial for learning from limited data in rare property ranges [35].

- Use Appropriate Loss Functions and Regularization: Instead of optimizing for average error across the entire label distribution, consider cost-sensitive learning that increases the loss weight for under-represented, high-value samples. Techniques like RankSim can also regularize the latent space by aligning distances in the label and feature spaces [34].
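The rarity-aware budgeting and cost-sensitive loss ideas can be illustrated together. SPECTRA's actual budgeting rule is described in [34]; this sketch only shows the general idea under assumptions:

- Rarity is taken as the inverse of a Gaussian KDE density of the labels.
- Synthetic samples are allocated to seed molecules with a multinomial draw in proportion to rarity.
- The same rarity scores weight a mean-squared-error loss.
- The function names are hypothetical.

The spectral augmentation itself is not implemented here.

```python
import numpy as np
from scipy.stats import gaussian_kde


def rarity_scores(y, bw_method=None):
    """Inverse KDE density of each label, normalized to mean 1 (rare labels get large scores)."""
    y = np.asarray(y, dtype=float)
    density = gaussian_kde(y, bw_method=bw_method)(y)
    scores = 1.0 / np.maximum(density, 1e-12)
    return scores / scores.mean()


def augmentation_budget(y, n_new, seed=0):
    """Hypothetical budget: split n_new synthetic samples across seed molecules
    in proportion to their rarity, so sparse label regions get most of them."""
    p = rarity_scores(y)
    p = p / p.sum()
    return np.random.default_rng(seed).multinomial(n_new, p)


def weighted_mse(y_true, y_pred, weights):
    """Cost-sensitive regression loss: squared errors scaled by per-sample weights."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sum(weights * (y_pred - y_true) ** 2) / np.sum(weights))


# Usage with hypothetical potency labels: 200 typical compounds, 10 highly potent ones
rng = np.random.default_rng(1)
y = np.concatenate([rng.normal(5.0, 0.5, 200), rng.normal(9.0, 0.3, 10)])
w = rarity_scores(y)
budget = augmentation_budget(y, n_new=500)  # synthetic samples to derive from each molecule
```

Passing `w` to `weighted_mse` during training increases the penalty on errors for the sparse high-potency region.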

Frequently Asked Questions (FAQs)

FAQ 1: What are the core components of a standard GNN architecture?

A standard GNN architecture is built from three fundamental layers [36]:

- Permutation Equivariant Layers: These layers (e.g., message passing layers) map a graph to an updated representation of the same graph. Nodes update their representations by aggregating information from their neighbors.

- Local Pooling Layers: These coarsen the graph via downsampling, increasing the receptive field of the GNN.

- Global Pooling (Readout) Layers: These provide a fixed-size representation of the entire graph and must be permutation invariant (e.g., using element-wise sum, mean, or maximum) [36].
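To make the equivariance and invariance requirements concrete, here is a NumPy sketch of a simple message-passing layer and a global readout. The layer combines a self term with a sum over neighbors and then applies ReLU. This update rule is an illustrative assumption rather than a specific published architecture, and local pooling is omitted. Permuting the atom order permutes the layer's output rows in the same way, while the pooled graph vector is unchanged.

```python
import numpy as np


def message_passing_layer(A, H, W_self, W_neigh):
    """Permutation-equivariant layer: h_i' = ReLU(W_self h_i + sum over j in N(i) of W_neigh h_j).

    A: (n, n) adjacency matrix, H: (n, d) node features.
    """
    return np.maximum(0.0, H @ W_self + A @ H @ W_neigh)


def readout(H, mode="sum"):
    """Permutation-invariant global pooling to a fixed-size graph vector."""
    if mode == "sum":
        return H.sum(axis=0)
    if mode == "mean":
        return H.mean(axis=0)
    return H.max(axis=0)


# Usage: a 5-atom chain, and the same graph with its atoms reordered
rng = np.random.default_rng(0)
n, d, k = 5, 3, 4
A = np.zeros((n, n))
for i, j in [(0, 1), (1, 2), (2, 3), (3, 4)]:
    A[i, j] = A[j, i] = 1.0
H = rng.normal(size=(n, d))
W_s, W_n = rng.normal(size=(d, k)), rng.normal(size=(d, k))
P = np.eye(n)[[3, 0, 4, 1, 2]]  # permutation matrix
out = message_passing_layer(A, H, W_s, W_n)
out_perm = message_passing_layer(P @ A @ P.T, P @ H, W_s, W_n)
```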

FAQ 2: My model trains well but doesn't generalize to the test set. What should I check?

This is a classic sign of overfitting. Focus on these areas:

- Data Integrity: Ensure there is no data leakage, that is, that you have not accidentally included features or information in the training set that would not be available at test time. Verify that your training and test sets are from the same distribution and were split correctly [37] [32].

- Regularization: Introduce or increase regularization techniques. For GNNs, this can include dropout on node features or attention weights, and graph normalization techniques [33].

- Model Complexity: Your model may be too complex for the amount of training data. Simplify the architecture by reducing the number of GNN layers or hidden units, which also shrinks the receptive field and can prevent over-smoothing [37].

FAQ 3: How can I represent a molecule as a graph for a GNN?

In molecular graphs [38] [39]:

- Nodes represent atoms.

- Edges represent covalent bonds between atoms.

Each node and edge can store feature information. Node features may include the atom type, charge, or other atomic properties. Edge features can include bond type (e.g., single, double) and bond length [38].
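As a minimal sketch, the graph for acetaldehyde (CH3-CHO, hydrogens implicit) can be written out by hand. In practice, a toolkit such as RDKit would parse a SMILES string and supply the atoms and bonds. Here the atom vocabulary, the formal-charge feature, and the `build_graph` helper are illustrative assumptions. Each bond is stored in both directions, a common convention for undirected graphs in GNN libraries. Bond length is omitted because it requires 3D coordinates.

```python
import numpy as np

ATOM_TYPES = ["C", "N", "O"]                           # assumed atom vocabulary
BOND_TYPES = ["SINGLE", "DOUBLE", "TRIPLE", "AROMATIC"]


def one_hot(value, choices):
    vec = np.zeros(len(choices))
    vec[choices.index(value)] = 1.0
    return vec


def build_graph(atoms, bonds):
    """atoms: list of (symbol, formal_charge); bonds: list of (i, j, bond_type)."""
    # Node features: one-hot atom type followed by formal charge
    x = np.stack([np.concatenate([one_hot(sym, ATOM_TYPES), [charge]]) for sym, charge in atoms])
    src, dst, feats = [], [], []
    for i, j, bond_type in bonds:
        for a, b in ((i, j), (j, i)):                  # store each bond in both directions
            src.append(a)
            dst.append(b)
            feats.append(one_hot(bond_type, BOND_TYPES))
    edge_index = np.array([src, dst], dtype=np.int64)  # shape (2, n_directed_edges)
    edge_attr = np.stack(feats)                        # one bond-type row per directed edge
    return x, edge_index, edge_attr


# Acetaldehyde heavy atoms: C0 - C1 single bond, C1 = O2 double bond
atoms = [("C", 0), ("C", 0), ("O", 0)]
bonds = [(0, 1, "SINGLE"), (1, 2, "DOUBLE")]
x, edge_index, edge_attr = build_graph(atoms, bonds)
```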

FAQ 4: What are some common GNN architectures used in molecular property prediction?

Two widely used architectures are:

- Graph Convolutional Networks (GCNs): These layers perform a first-order approximation of spectral graph convolution. A node's representation is updated by aggregating the transformed features of its neighbors [36] [39].

- Graph Attention Networks (GATs): These layers use self-attention mechanisms to compute a weighted average of a node's neighbors' features. This allows the model to assign different levels of importance to different neighbors [36].
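The two update rules can be written in a few lines of NumPy:

- The GCN layer uses the symmetric normalization H' = ReLU(D^(-1/2) (A + I) D^(-1/2) H W), where D is the degree matrix of A + I.
- The GAT layer is a single attention head. It scores node pairs with LeakyReLU and applies a softmax over each node's neighbors, including the node itself.

These are simplified dense-matrix sketches suited to small molecules, not library implementations. Multi-head attention, bias terms, and dropout are omitted.

```python
import numpy as np


def gcn_layer(A, H, W):
    """GCN propagation: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    A_hat = A + np.eye(A.shape[0])                 # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    A_norm = d_inv_sqrt[:, None] * A_hat * d_inv_sqrt[None, :]
    return np.maximum(0.0, A_norm @ H @ W)


def gat_layer(A, H, W, a, negative_slope=0.2):
    """Single-head graph attention; a has length 2 * W.shape[1]."""
    Z = H @ W                                      # shared linear transform
    f = Z.shape[1]
    # e_ij = LeakyReLU(a^T [z_i || z_j]), split into a node term and a neighbor term
    e = (Z @ a[:f])[:, None] + (Z @ a[f:])[None, :]
    e = np.where(e > 0, e, negative_slope * e)
    # Attend only over neighbors and the node itself
    mask = (A + np.eye(A.shape[0])) > 0
    e = np.where(mask, e, -np.inf)
    e = e - e.max(axis=1, keepdims=True)           # numerical stability
    alpha = np.exp(e)
    alpha /= alpha.sum(axis=1, keepdims=True)      # softmax over each row
    return alpha @ Z, alpha


# Usage: a three-atom chain 0-1-2
rng = np.random.default_rng(0)
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
H = rng.normal(size=(3, 4))
W = rng.normal(size=(4, 2))
a = rng.normal(size=4)
h_gcn = gcn_layer(A, H, W)
h_gat, alpha = gat_layer(A, H, W, a)
```

Each row of `alpha` holds one node's learned importance weights over its neighbors. Non-neighbors receive exactly zero weight.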

Experimental Protocols & Data

| Methodology | Core Principle | Key Advantage | Reported Performance (Example) |
|---|---|---|---|
| SPECTRA [34] | Spectral Target-Aware Graph Augmentation; interpolates graphs in the spectral domain (Laplacian eigenspace). | Generates structurally coherent, chemically plausible molecules for rare property ranges. | Improves error on rare compounds without degrading overall MAE on benchmarks. |
| KA-GNN [35] | Integration of Kolmogorov-Arnold Networks (KANs) into GNN components (embedding, message passing, readout). | Enhanced expressivity and parameter efficiency; improved interpretability by highlighting substructures. | Consistently outperforms conventional GNNs in accuracy and efficiency across 7 molecular benchmarks. |
| GraphKAN/GKAN [35] | Replaces MLPs in GNNs with KANs using B-spline basis functions. | Aims to improve the function approximation capability within the message-passing framework. | Enhanced performance compared to their original base models. |

Table 2: Essential Research Reagent Solutions

| Reagent / Component | Function in GNN Experimentation |
|---|---|
| Graph Convolutional Network (GCN) [36] | A foundational GNN architecture that performs convolutional operations on graphs, suitable for building baseline models. |
| Graph Attention Network (GAT) [36] | An architecture that uses attention mechanisms to assign different importance to different neighbors, beneficial for tasks where certain connections matter more. |
| Fourier-based KAN Layer [35] | A novel layer that uses Fourier series as learnable activation functions; it can be integrated into GNNs to capture both low- and high-frequency patterns in graph data. |
| Spectral Graph Augmentation (SPECTRA) [34] | A methodology for generating synthetic molecular graphs in the spectral domain to address label imbalance in regression tasks. |
| Message Passing Neural Network (MPNN) [36] [39] | A general framework that encapsulates many GNN architectures; useful for understanding and designing custom message-passing schemes. |
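A Fourier-based KAN layer replaces fixed activations with learnable univariate functions, one per input-output pair. Each function is a truncated Fourier series:

y_o = Σ_i Σ_{k=1..K} (a_oik cos(k x_i) + b_oik sin(k x_i)) + c_o

Low-k terms capture smooth trends, and higher-k terms capture rapidly varying patterns. The forward pass below sketches that general idea under these assumptions:

- Coefficients use random-normal initialization.
- K equals `n_freq` frequencies.
- There is no training loop.

It is not the KA-GNN authors' code [35].

```python
import numpy as np


class FourierKANLayer:
    """Forward pass of a Fourier-series KAN layer (illustrative sketch).

    y_o = sum_i sum_k [a_oik cos(k x_i) + b_oik sin(k x_i)] + c_o, for k = 1..n_freq
    """

    def __init__(self, in_dim, out_dim, n_freq=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(in_dim * n_freq)   # keeps initial outputs at a moderate scale
        self.k = np.arange(1, n_freq + 1)        # frequencies 1..K
        self.a = rng.normal(0.0, scale, size=(out_dim, in_dim, n_freq))
        self.b = rng.normal(0.0, scale, size=(out_dim, in_dim, n_freq))
        self.c = np.zeros(out_dim)

    def __call__(self, x):
        # x: (batch, in_dim) -> angles k * x_i with shape (batch, in_dim, n_freq)
        kx = x[..., None] * self.k
        return (np.einsum("bik,oik->bo", np.cos(kx), self.a)
                + np.einsum("bik,oik->bo", np.sin(kx), self.b)
                + self.c)


# Usage: map 3 node features to 2 outputs for a batch of 5 nodes
layer = FourierKANLayer(in_dim=3, out_dim=2, n_freq=4)
x = np.random.default_rng(1).normal(size=(5, 3))
y = layer(x)
```

In a KA-GNN-style model, a layer like this would stand in for the MLP blocks used in node embedding, message passing, or readout.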

Methodologies and Workflows

Diagram 1: SPECTRA Augmentation Workflow

Diagram 2: KA-GNN High-Level Architecture

Diagram 3: Troubleshooting GNN Learning Failure

Harnessing Transfer Learning and Δ-ML for Low-Data Regimes

Frequently Asked Questions & Troubleshooting Guides

This technical support resource addresses common challenges in molecular property prediction, focusing on transfer learning and class imbalance issues critical for research in drug development and materials science.

Data and Model Selection

Q1: How can I select a good source model for transfer learning to avoid negative transfer on my specific target property?

Negative transfer occurs when a source task unrelated to your target task degrades performance. To quantify transferability before fine-tuning, use the Principal Gradient-based Measurement (PGM) [40].

- Experimental Protocol: Principal Gradient-based Measurement (PGM)

- Objective: Quantify the transferability between a source molecular property dataset and your target dataset.

- Method:

- Initialize a model with parameters θ.

- For both your source (S) and target (T) datasets, compute the "principal gradient." This is done by performing a forward pass on each dataset, calculating the gradient of the loss, and then taking the expectation of these gradients. This principal gradient approximates the direction of model optimization for that dataset.

- Calculate the distance (e.g., cosine distance) between the principal gradient of the source (gS) and the target (gT). A smaller distance indicates higher task relatedness and a lower risk of negative transfer [40].

- Interpretation: Use the resulting transferability map to select the most suitable source dataset from available options (e.g., PCBA, MUV, Tox21) for your target task.
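The PGM steps can be illustrated with a simplified sketch. It assumes a logistic-regression model on binary fingerprint bits and a shared initialization θ. The principal gradient is taken as the mean of mini-batch loss gradients, and tasks are compared by cosine distance. The model, batching scheme, and function names are assumptions for illustration. The published measurement [40] may differ both in the model and in how the expectation is computed.

```python
import numpy as np


def bce_gradient(theta, X, y):
    """Mean binary cross-entropy gradient for a logistic model p = sigmoid(X @ theta)."""
    p = 1.0 / (1.0 + np.exp(-(X @ theta)))
    return X.T @ (p - y) / len(y)


def principal_gradient(theta, X, y, n_batches=10, seed=0):
    """Approximate the expected gradient at theta by averaging mini-batch gradients."""
    idx = np.random.default_rng(seed).permutation(len(y))
    grads = [bce_gradient(theta, X[b], y[b]) for b in np.array_split(idx, n_batches)]
    return np.mean(grads, axis=0)


def pgm_distance(g_source, g_target):
    """Cosine distance between principal gradients (smaller = more related tasks)."""
    cos = g_source @ g_target / (np.linalg.norm(g_source) * np.linalg.norm(g_target))
    return 1.0 - cos


# Usage with synthetic 32-bit fingerprints; the target label is bit 0
rng = np.random.default_rng(0)


def fingerprints(n):
    return rng.integers(0, 2, size=(n, 32)).astype(float)


X_t, X_s1, X_s2, X_s3 = (fingerprints(2000) for _ in range(4))
theta0 = rng.normal(0.0, 0.01, size=32)           # shared initialization
g_t = principal_gradient(theta0, X_t, X_t[:, 0])
d_related = pgm_distance(principal_gradient(theta0, X_s1, X_s1[:, 0]), g_t)
d_unrelated = pgm_distance(principal_gradient(theta0, X_s2, X_s2[:, 1]), g_t)
d_inverted = pgm_distance(principal_gradient(theta0, X_s3, 1.0 - X_s3[:, 0]), g_t)
```

A source task labeled by the same bit as the target lands near distance 0. A source labeled by an unrelated bit lands near 1, and one with inverted labels approaches 2. Ranking candidate sources by this distance mirrors the selection step described above.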

Table 1: Example PGM Distances for Target Property 'BBBP' [40]

| Source Property | PGM Distance to BBBP | Expected Transfer Performance |
|---|---|---|
| PCBA | Low | High |
| MUV | Medium | Medium |
| Tox21 | High | Low (Risk of Negative Transfer) |

Q2: My dataset has a severe class imbalance. Which performance metrics should I use instead of accuracy?

Traditional metrics like accuracy are misleading for imbalanced datasets, as a model can achieve high accuracy by always predicting the majority class. Instead, use metrics that are sensitive to the performance on the minority class [41].

- Recommended Metrics:

- Precision: Measures the reliability of positive predictions.

- Recall (Sensitivity): Measures the model's ability to find all positive samples.

- F1 Score: The harmonic mean of precision and recall, providing a single balanced metric [41].

- Area Under the Precision-Recall Curve (AUPRC): Often more informative than the ROC curve for imbalanced datasets, as it focuses directly on the performance of the positive (minority) class [22] [41].
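The following scikit-learn sketch contrasts an always-inactive classifier with a simple model on a hypothetical screen of 5 actives among 100 compounds. Both reach 0.95 accuracy, and only recall, precision, F1, and AUPRC separate them. The `imbalance_report` helper and the toy labels and scores are illustrative assumptions. `zero_division=0` suppresses the warning scikit-learn raises when a classifier predicts no positives.

```python
import numpy as np
from sklearn.metrics import (accuracy_score, average_precision_score, f1_score,
                             precision_score, recall_score)


def imbalance_report(y_true, y_pred, y_score=None):
    """Collect imbalance-aware metrics; AUPRC needs continuous scores."""
    report = {
        "accuracy": accuracy_score(y_true, y_pred),
        "precision": precision_score(y_true, y_pred, zero_division=0),
        "recall": recall_score(y_true, y_pred, zero_division=0),
        "f1": f1_score(y_true, y_pred, zero_division=0),
    }
    if y_score is not None:
        report["auprc"] = average_precision_score(y_true, y_score)
    return report


# Hypothetical screen: compounds 0-4 are active, 5-99 inactive
y_true = np.array([1] * 5 + [0] * 95)
y_trivial = np.zeros(100, dtype=int)   # always predicts "inactive"
y_model = np.zeros(100, dtype=int)
y_model[:4] = 1                        # 4 actives found (TP = 4, FN = 1)
y_model[5:9] = 1                       # 4 inactives flagged (FP = 4, TN = 91)
y_score = np.where(y_model == 1, 0.8, 0.1)
y_score[4] = 0.3                       # the missed active still outranks most inactives

trivial = imbalance_report(y_true, y_trivial)
model = imbalance_report(y_true, y_model, y_score)
```

The trivial classifier has recall 0. The model reaches precision 0.5, recall 0.8, and F1 ≈ 0.615. Its AUPRC (about 0.51) is best read against the no-skill baseline, which is roughly the fraction of actives (0.05 here).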

Table 2: Key Metrics for Imbalanced Classification

| Metric | Formula (Conceptual) | Focus |
|---|---|---|
| Accuracy | (TP+TN)/(TP+TN+FP+FN) | Overall correctness, misleading when classes are imbalanced. |
| Precision | TP/(TP+FP) | How many of the predicted positives are truly positive. |
| Recall | TP/(TP+FN) | How many of the actual positives were correctly identified. |