Statistical Methods and Machine Learning: Revolutionizing Computational Chemistry in Drug Discovery

Abstract

This article explores the transformative role of statistical techniques and machine learning in modern computational chemistry, with a specific focus on accelerating drug discovery. It provides a comprehensive analysis for researchers and drug development professionals, covering foundational statistical theories, core methodological applications like QSAR and molecular docking, strategies for troubleshooting and optimizing computational models, and rigorous validation frameworks. By synthesizing the latest advancements, including the integration of artificial intelligence and the analysis of ultra-large chemical libraries, this review outlines how data-driven approaches are streamlining the identification and optimization of therapeutic candidates, reducing reliance on costly experimental methods, and reshaping the entire drug development pipeline.

The Statistical Bedrock: From Quantum Mechanics to Data-Driven Predictions

The Role of Density Functional Theory (DFT) in Predicting Molecular Properties

Density Functional Theory (DFT) has established itself as a cornerstone of modern computational chemistry, providing an unparalleled balance between accuracy and computational cost for predicting molecular properties. This quantum mechanical modeling method revolutionized the field by demonstrating that all properties of a multi-electron system can be determined using electron density rather than dealing with the complex many-electron wavefunction [1] [2]. The theoretical foundation laid by Hohenberg, Kohn, and Sham in the 1960s, which earned Walter Kohn the Nobel Prize in Chemistry in 1998, allows researchers to investigate the electronic structure of atoms, molecules, and condensed phases with remarkable efficiency [3] [2].

In pharmaceutical and materials research, DFT serves as a vital tool for elucidating molecular interactions, reaction mechanisms, and physicochemical properties that are often difficult or time-consuming to determine experimentally. By solving the Kohn-Sham equations with precision up to 0.1 kcal/mol, DFT enables accurate electronic structure reconstruction, providing theoretical guidance for optimizing molecular systems across diverse applications from drug formulation to catalyst design [4]. The method's versatility and predictive power have made it the "workhorse" of computational chemistry, supporting investigations into molecular structures, reaction energies, barrier heights, and spectroscopic properties with exceptional effort-to-insight ratios [5].

Theoretical Foundations and Key Concepts

Fundamental Principles of DFT

The theoretical framework of DFT rests upon two fundamental theorems introduced by Hohenberg and Kohn. The first theorem establishes that all ground-state properties of a many-electron system are uniquely determined by its electron density distribution, n(r) [1]. This revolutionary concept reduces the problem of 3N spatial coordinates (for N electrons) to just three spatial coordinates, dramatically simplifying the computational complexity. The second theorem defines an energy functional for the system and proves that the ground-state electron density minimizes this energy functional [1].

The practical implementation of DFT is primarily achieved through the Kohn-Sham equations, which introduce a fictitious system of non-interacting electrons that experiences an effective potential, Veff, encompassing electron-electron interactions [4] [1]. This approach separates the total energy functional into several components:

E[n] = Tₛ[n] + V[n] + J[n] + Eₓc[n]

where Tₛ[n] is the kinetic energy of the non-interacting electrons, V[n] is the energy of interaction with the external potential, J[n] is the classical Coulomb (Hartree) repulsion, and Eₓc[n] is the exchange-correlation functional, which collects all quantum mechanical effects not captured by the other terms [1]. The accuracy of a DFT calculation depends critically on the approximation chosen for this exchange-correlation functional, which has led to several classes of functionals with different trade-offs between accuracy and computational cost.
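For reference, minimizing this energy functional leads to the standard Kohn-Sham working equations, written here in atomic units. The symbols φᵢ (Kohn-Sham orbitals), εᵢ (orbital energies), and v_ext (external potential) are notation introduced for this sketch and do not appear in the text above.

```latex
\begin{aligned}
\left[-\tfrac{1}{2}\nabla^2 + V_{\text{eff}}(\mathbf{r})\right]\phi_i(\mathbf{r})
  &= \varepsilon_i\,\phi_i(\mathbf{r}), \\
n(\mathbf{r}) &= \sum_{i}^{\text{occ}} \left|\phi_i(\mathbf{r})\right|^2, \\
V_{\text{eff}}(\mathbf{r}) &= v_{\text{ext}}(\mathbf{r})
  + \int \frac{n(\mathbf{r}')}{\left|\mathbf{r}-\mathbf{r}'\right|}\,d\mathbf{r}'
  + \frac{\delta E_{xc}[n]}{\delta n(\mathbf{r})}.
\end{aligned}
```

Because V_eff depends on the density that the orbitals themselves generate, the equations are solved iteratively until the density is self-consistent.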

Exchange-Correlation Functionals: The Jacob's Ladder Hierarchy

The development of approximate exchange-correlation functionals is often described in terms of "Jacob's Ladder," which classifies functionals in a hierarchical structure based on their ingredients and sophistication [3]:

Local Density Approximation (LDA): The simplest functional that depends only on the local electron density. While suitable for metallic systems and crystal structures, LDA has limitations in describing hydrogen bonding and van der Waals forces [4].

Generalized Gradient Approximation (GGA): Incorporates both the local electron density and its gradient, providing improved accuracy for molecular properties, hydrogen bonding systems, and surface studies [4].

Meta-GGA: Further enhances accuracy by including the kinetic energy density in addition to the density and its gradient, offering better descriptions of atomization energies and chemical bond properties [4].

Hybrid Functionals: Mix a portion of exact Hartree-Fock exchange with GGA or meta-GGA exchange, with popular examples including B3LYP and PBE0. These are widely employed for studying reaction mechanisms and molecular spectroscopy [4] [5].

Double Hybrid Functionals: Incorporate second-order perturbation theory corrections, substantially improving the accuracy of excited-state energies and reaction barrier calculations [4].

The selection of an appropriate functional depends on the specific research context and the properties of interest, requiring careful consideration of the trade-offs between accuracy, robustness, and computational efficiency [5].

DFT Applications in Molecular Property Prediction

Electronic Properties and Reactivity Descriptors

DFT provides powerful insights into electronic properties that govern chemical reactivity and molecular stability. Key electronic descriptors obtainable through DFT calculations include:

Frontier Molecular Orbitals: The Highest Occupied Molecular Orbital (HOMO) and Lowest Unoccupied Molecular Orbital (LUMO) energies and their spatial distributions provide crucial information about a molecule's reactivity, optical properties, and electron transport capabilities [6]. The HOMO-LUMO gap serves as an important indicator of kinetic stability and chemical reactivity.

Molecular Electrostatic Potential (MEP): MEP maps visualize the regional charge distribution in molecules, revealing electrophilic and nucleophilic sites critical for understanding intermolecular interactions and reaction mechanisms [4].

Fukui Functions: These reactivity indices, derived from DFT calculations, identify regions within a molecule most susceptible to nucleophilic, electrophilic, or radical attacks, enabling precise prediction of reaction sites [4].

Partial Atomic Charges: DFT-derived charge distributions facilitate understanding of polarity, binding interactions, and spectroscopic properties through analysis of electron density partitioning among atoms [6].

In pharmaceutical applications, these electronic descriptors enable rational drug design by predicting how potential drug molecules interact with biological targets, calculating binding energies, and elucidating electronic distributions that influence pharmacological activity [4] [2].
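As a minimal sketch of how such descriptors are derived once a calculation has finished, the snippet below computes the HOMO-LUMO gap and two simple reactivity indices from a list of orbital energies, plus condensed Fukui indices from partial charges. It assumes a closed-shell molecule and uses the common frontier-orbital approximations for hardness and electronegativity; the function names and the input numbers are hypothetical and are not taken from any specific DFT package.

```python
def frontier_descriptors(orbital_energies_ev, n_electrons):
    """Return HOMO, LUMO, gap and frontier-orbital reactivity indices (eV).

    Assumption: closed-shell molecule, two electrons per spatial orbital.
    """
    if n_electrons % 2 != 0:
        raise ValueError("closed-shell sketch: n_electrons must be even")
    energies = sorted(orbital_energies_ev)
    n_occ = n_electrons // 2
    if not 0 < n_occ < len(energies):
        raise ValueError("need at least one occupied and one virtual orbital")
    homo = energies[n_occ - 1]
    lumo = energies[n_occ]
    gap = lumo - homo
    return {
        "homo": homo,
        "lumo": lumo,
        "gap": gap,
        "hardness": gap / 2.0,                      # eta ~ (E_LUMO - E_HOMO) / 2
        "electronegativity": -(homo + lumo) / 2.0,  # chi ~ -(E_HOMO + E_LUMO) / 2
    }


def condensed_fukui(q_neutral, q_anion, q_cation):
    """Condensed Fukui indices per atom from partial atomic charges.

    f_plus  = q(N) - q(N+1): susceptibility to nucleophilic attack
    f_minus = q(N-1) - q(N): susceptibility to electrophilic attack
    """
    f_plus = [qn - qa for qn, qa in zip(q_neutral, q_anion)]
    f_minus = [qc - qn for qn, qc in zip(q_neutral, q_cation)]
    return f_plus, f_minus


# Hypothetical orbital energies (eV) for a four-electron toy system.
print(frontier_descriptors([-10.0, -7.0, -1.0, 2.0], n_electrons=4))
```

The three charge sets passed to `condensed_fukui` would come from separate calculations on the neutral, anionic, and cationic species at the same geometry.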

Thermodynamic and Spectroscopic Properties

DFT calculations provide accurate predictions of thermodynamic properties essential for understanding molecular stability and reaction energetics:

Reaction Energies and Barrier Heights: DFT enables precise calculation of reaction energies, activation barriers, and transition state structures, offering quantitative insights into reaction feasibility and kinetics [2].

Vibrational Frequencies and IR Spectra: Through molecular vibrational analysis, DFT predicts infrared spectra, normal modes, and vibrational frequencies that facilitate experimental spectrum interpretation and molecular identification [6].

Thermodynamic Quantities: By constructing partition functions from the computed vibrational frequencies, DFT yields entropy, heat capacity, free energy, and other thermodynamic parameters, enabling evaluation of thermodynamic stability at finite temperatures [6].

Zero-Point Vibrational Energies and Thermal Corrections: DFT-derived vibrational frequencies enable calculation of zero-point energy and thermal energy corrections crucial for accurate thermodynamic predictions [7].
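The vibrational contributions listed above can be sketched in a few lines once harmonic frequencies are available. The example below assumes the harmonic-oscillator model and frequencies given as wavenumbers in cm⁻¹; the function names are hypothetical, and the three input values are only rough, water-like numbers chosen for illustration.

```python
import math

H = 6.62607015e-34      # Planck constant, J s
C_CM = 2.99792458e10    # speed of light, cm/s
K_B = 1.380649e-23      # Boltzmann constant, J/K
N_A = 6.02214076e23     # Avogadro constant, 1/mol


def zero_point_energy_kj_mol(wavenumbers_cm):
    """Harmonic zero-point energy, (1/2) * sum(h c nu), in kJ/mol."""
    return 0.5 * sum(wavenumbers_cm) * H * C_CM * N_A / 1000.0


def vibrational_entropy_j_mol_k(wavenumbers_cm, temperature=298.15):
    """Harmonic-oscillator vibrational entropy in J/(mol K)."""
    gas_constant = K_B * N_A
    total = 0.0
    for nu in wavenumbers_cm:
        x = H * C_CM * nu / (K_B * temperature)  # reduced energy h c nu / (k_B T)
        # S/R per mode = x / (e^x - 1) - ln(1 - e^-x)
        total += x / math.expm1(x) - math.log1p(-math.exp(-x))
    return gas_constant * total


# Illustrative, water-like wavenumbers (cm^-1); not taken from the article.
modes = [1595.0, 3657.0, 3756.0]
print(zero_point_energy_kj_mol(modes), vibrational_entropy_j_mol_k(modes))
```

Stiff modes such as these contribute almost nothing to the entropy at room temperature, whereas low-frequency modes dominate it, which is why soft modes deserve extra scrutiny in practice.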

For chemotherapy drugs, DFT-based QSPR models incorporating topological indices have successfully predicted essential thermodynamical attributes and biological activities, with curvilinear regression models significantly enhancing prediction capability for analyzing drug properties [7].

Structural Properties and Intermolecular Interactions

DFT excels at determining molecular geometries and quantifying intermolecular forces:

Equilibrium Geometries: Structural optimization through DFT calculations yields accurate bond lengths, angles, and dihedral angles that closely match experimental crystal structures [2].

Intermolecular Interaction Energies: DFT quantifies hydrogen bonding, van der Waals forces, π-π stacking, and other non-covalent interactions crucial for understanding molecular recognition, supramolecular assembly, and materials properties [4].

Binding Energies and Affinities: Calculations of interaction energies between molecules and their targets provide critical insights for drug design, catalyst development, and materials science [3].

In drug formulation design, DFT clarifies the electronic driving forces governing API-excipient co-crystallization, predicting reactive sites and guiding stability-oriented crystal engineering [4]. For nanodelivery systems, DFT optimizes carrier surface charge distribution through van der Waals interactions and π-π stacking energy calculations, thereby enhancing targeting efficiency [4].

Experimental Protocols and Best Practices

DFT Calculation Workflow

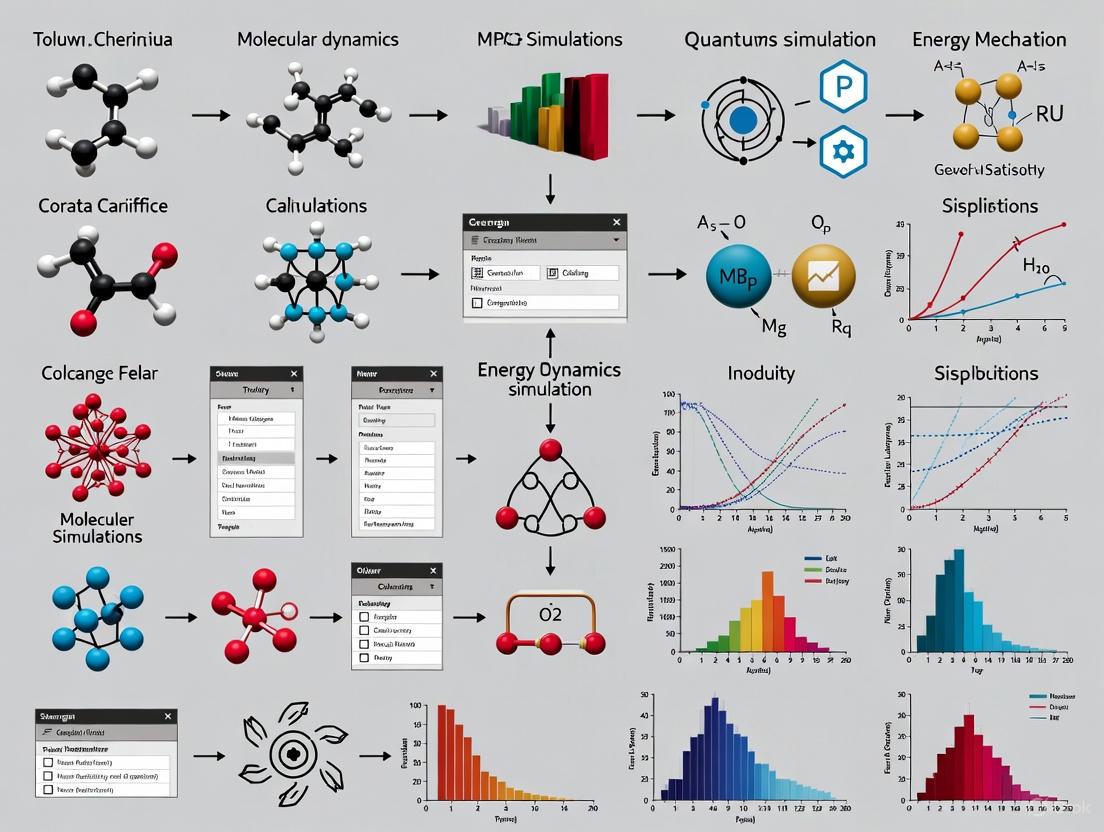

The following diagram illustrates a standardized workflow for conducting DFT calculations in molecular property prediction:

Diagram 1: Standardized DFT calculation workflow for molecular property prediction

Recommended Computational Protocols

Based on extensive benchmarking studies and empirical validation, the following protocols represent current best practices for DFT calculations of molecular properties:

Table 1: Recommended DFT Method Combinations for Different Chemical Applications

| Application Area | Recommended Functional | Basis Set | Dispersion Correction | Solvation Model |
|---|---|---|---|---|
| General Thermochemistry | r²SCAN-3c [5] | def2-mTZVPP [5] | D4 [5] | COSMO-RS [4] |
| Reaction Mechanisms | PBE0 [4] [8] | def2-TZVP [5] | D3(BJ) [5] | SMD [5] |
| Non-covalent Interactions | ωB97M-V [8] | def2-QZVP [5] | Included in functional | PCM [5] |
| Spectroscopic Properties | B3LYP [7] | 6-311+G(d,p) [5] | D3(0) [5] | COSMO [4] |
| Solid-State Systems | PBE [1] | Plane waves [6] | TS [5] | - |

Table 2: Essential Computational Tools for DFT-Based Molecular Property Prediction

| Tool Category | Representative Examples | Primary Function | Application Context |
|---|---|---|---|
| DFT Software Packages | Gaussian [2], ORCA [8], VASP [2], Quantum ESPRESSO [2] | Electronic structure calculation | Performing DFT calculations with various functionals and basis sets |
| Visualization Tools | GaussView, VESTA, ChemCraft | Molecular structure visualization | Preparing input structures and analyzing computational results |
| Wavefunction Analysis | Multiwfn, Bader Analysis, NBO [5] | Electron density analysis | Calculating topological indices [7] and charge distribution |
| Solvation Models | COSMO [4], SMD [5], PCM [5] | Implicit solvation treatment | Simulating solvent effects on molecular properties and reactions |
| Force Field Methods | GFN-FF, UFF, DREIDING | Molecular mechanics calculations | ONIOM QM/MM simulations [4] and conformational sampling |
| Machine Learning Extensions | Skala [3], ANI [8], MLIPs [8] | Enhanced sampling/property prediction | Accelerating discovery and improving accuracy of DFT predictions |

Advanced Methodologies and Integration with Statistical Approaches

DFT in Quantitative Structure-Property Relationship (QSPR) Modeling

The integration of DFT with QSPR modeling represents a powerful paradigm for predicting molecular properties and biological activities. DFT provides accurate electronic structure descriptors that serve as robust predictors in QSPR models, enabling the correlation of molecular structure with physicochemical properties and biological activities [7]. Key descriptors derived from DFT calculations include:

Quantum Chemical Descriptors: HOMO/LUMO energies, band gaps, dipole moments, polarizabilities, and electrostatic potential-derived parameters [7] [6].

Topological Indices: Wiener index, Gutman index, and other distance-based topological descriptors that can be correlated with DFT-derived thermodynamical attributes [7].

Surface Properties: Molecular surface areas, volume descriptors, and polar surface areas that influence solubility, permeability, and intermolecular interactions [7].
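To make the topological descriptors above concrete, here is a minimal sketch of the Wiener index, defined as the sum of shortest-path (bond-count) distances over all pairs of heavy atoms in the hydrogen-suppressed molecular graph. The adjacency-list input format and the function name are assumptions made for this illustration.

```python
from collections import deque


def wiener_index(adjacency):
    """Sum of shortest-path distances over all unordered pairs of atoms.

    `adjacency` maps each atom label to a list of bonded neighbours
    (hydrogen-suppressed graph, every bond counted as length 1).
    """
    total = 0
    for source in adjacency:
        # Breadth-first search gives bond-count distances from `source`.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adjacency[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        if len(dist) != len(adjacency):
            raise ValueError("molecular graph must be connected")
        total += sum(dist.values())
    return total // 2  # every pair was counted from both ends


n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}
print(wiener_index(n_butane), wiener_index(isobutane))
```

The more compact, branched isomer gets the smaller index, which is the kind of structural signal such descriptors feed into a QSPR model.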

In chemotherapy drug development, DFT-based QSPR models employing curvilinear regression have demonstrated remarkable predictive capability for essential thermodynamical properties and biological activities. Studies show that curvilinear regression models, especially those with quadratic and cubic curve fitting, markedly enhance prediction accuracy for analyzing drug properties, with the Wiener index and Gutman index exhibiting superior performance among topological descriptors [7].
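A curvilinear fit of this kind can be sketched without external libraries as an ordinary least-squares polynomial regression of a property on a single topological index. The data below are synthetic, generated from a known quadratic purely to show the mechanics; they are not values from [7], and the helper names are hypothetical.

```python
def polyfit(x, y, degree):
    """Least-squares coefficients [c0, c1, ...] for y ~ sum_k c_k * x**k."""
    n = degree + 1
    # Normal equations A c = b with A[j][k] = sum x^(j+k), b[j] = sum y * x^j.
    a = [[sum(xi ** (j + k) for xi in x) for k in range(n)] for j in range(n)]
    b = [sum(yi * xi ** j for xi, yi in zip(x, y)) for j in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for row in reversed(range(n)):
        s = sum(a[row][k] * coeffs[k] for k in range(row + 1, n))
        coeffs[row] = (b[row] - s) / a[row][row]
    return coeffs


def r_squared(x, y, coeffs):
    """Coefficient of determination of the fitted polynomial."""
    pred = [sum(c * xi ** k for k, c in enumerate(coeffs)) for xi in x]
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - pi) ** 2 for yi, pi in zip(y, pred))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


# Wiener indices of the linear alkanes C2..C7; the property values are SYNTHETIC.
wiener = [1, 4, 10, 20, 35, 56]
prop = [2.0 + 3.0 * w - 0.02 * w ** 2 for w in wiener]
for degree in (1, 2):
    c = polyfit(wiener, prop, degree)
    print(degree, [round(v, 4) for v in c], round(r_squared(wiener, prop, c), 6))
```

Comparing R² on the training data always favors the higher-degree model, so in practice the linear, quadratic, and cubic fits should be compared on cross-validated or held-out error instead.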

Multiscale Modeling and Machine Learning Integration

The combination of DFT with molecular mechanics and machine learning approaches has achieved computational breakthroughs, overcoming individual method limitations:

ONIOM Multiscale Framework: This approach employs DFT for high-precision calculations of drug molecule core regions while using molecular mechanics force fields to model protein environments, significantly enhancing computational efficiency without sacrificing accuracy [4].

Machine Learning-Augmented DFT: Deep learning models are increasingly used to approximate kinetic energy density functionals and improve exchange-correlation functionals. For instance, the Skala functional developed by Microsoft Research employs machine-learned nonlocal features of electron density to achieve hybrid-level accuracy at substantially reduced computational cost [3].

Machine Learning Interatomic Potentials (MLIPs): MLIPs trained on large DFT datasets enable molecular dynamics simulations at quantum mechanical accuracy for systems containing thousands of atoms, bridging the gap between accuracy and scale [8].

The integration of DFT with geometric deep learning models has shown particular promise in pharmaceutical applications. David F. Nippa's team utilized DFT-derived atomic charges to develop datasets for training graph neural networks that successfully predicted reaction yields and regioselectivity of drug molecules, achieving an average absolute error of 4-5% for yield prediction and 67% regioselectivity accuracy for major products across 23 commercial drug molecules [4].

Current Challenges and Future Perspectives

Limitations and Methodological Constraints

Despite its widespread success, DFT faces several challenges that impact its predictive power for molecular properties:

Exchange-Correlation Functional Approximations: The absence of a universal exchange-correlation functional means that no single functional performs optimally across all chemical systems, requiring careful functional selection for specific applications [1] [2].

Treatment of Weak Interactions: Standard DFT functionals struggle with accurate description of van der Waals forces and dispersion interactions, though modern empirical corrections (e.g., D3, D4) have substantially improved this limitation [5].

Dynamic Processes and Solvent Effects: Current approximations in solvation modeling often fail to accurately represent the effects of polar environments, particularly for dynamic non-equilibrium processes [4].

Strongly Correlated Systems: DFT faces challenges in accurately describing systems with strong electron correlation, such as transition metal complexes and certain radical species, which may require multi-reference approaches [1].

Accuracy of Forces: Recent studies have revealed unexpectedly large uncertainties in DFT forces in several popular molecular datasets, which can impact the training of machine learning interatomic potentials and geometry optimization reliability [8].

Emerging Trends and Future Directions

The future of DFT in molecular property prediction is being shaped by several promising developments:

Data-Driven Functional Development: The integration of machine learning with DFT is leading to a new generation of data-driven functionals trained on highly accurate wavefunction reference data, such as the Skala functional which reaches experimental accuracy for atomization energies of main group molecules [3].

High-Throughput Screening: Automated pipelines combining DFT with AI are enabling the screening of millions of compounds for applications in catalysis, photovoltaics, and pharmaceutical development, dramatically accelerating materials and drug discovery [2] [9].

Advanced Dynamics and Spectroscopy: The combination of DFT with molecular dynamics and enhanced sampling techniques allows for more realistic simulation of chemical processes under experimental conditions, including finite temperature and pressure effects [10].

Quantum Computing Integration: Future quantum computers may complement DFT by solving electronic structures with greater accuracy for challenging systems, potentially addressing current limitations in strongly correlated electron systems [2].

As these advancements mature, DFT is poised to become an even more powerful tool for predictive molecular property calculation, potentially enabling fully automated discovery platforms that accelerate breakthroughs across energy, healthcare, and sustainability research [2].

Linking Microscopic Models to Macroscopic Observables with Statistical Mechanics

Statistical mechanics provides the essential mathematical framework that connects the behavior of atoms and molecules to the macroscopic properties observed in the laboratory. For computational chemistry research, it forms the theoretical foundation that enables the prediction of bulk material properties from first-principles calculations [11]. This connection is achieved through the concept of ensembles—large collections of virtual systems representing possible microscopic states—and the partition function, which serves as the bridge between the quantum mechanical description of molecular systems and their thermodynamic observables [12].

The core challenge in computational chemistry is that directly simulating every microscopic interaction in a macroscopic sample remains computationally intractable. Statistical mechanics resolves this through probabilistic methods, allowing researchers to calculate macroscopic properties as weighted averages over accessible microscopic states [13] [12]. This approach is particularly valuable in drug development, where predicting binding affinities, solubility, and thermodynamic parameters of molecular interactions is crucial for compound optimization.
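The idea of a macroscopic property as a weighted average over microscopic states can be sketched for a small set of conformers. The example assumes each conformer is a single state with a known relative energy in kcal/mol and weights it by its Boltzmann factor; the energies, property values, and function names are hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol K)


def boltzmann_weights(energies_kcal, temperature=298.15):
    """Normalized Boltzmann populations p_i = exp(-E_i / RT) / Z."""
    beta = 1.0 / (R_KCAL * temperature)
    e_min = min(energies_kcal)  # shift energies for numerical stability
    factors = [math.exp(-beta * (e - e_min)) for e in energies_kcal]
    z = sum(factors)            # partition function relative to e_min
    return [f / z for f in factors]


def ensemble_average(values, weights):
    """Population-weighted average of a per-state property."""
    return sum(v * w for v, w in zip(values, weights))


# Hypothetical conformer energies (kcal/mol) and per-conformer dipole moments (D).
energies = [0.0, 0.5, 2.0]
dipoles = [1.2, 2.5, 4.0]
weights = boltzmann_weights(energies)
print(weights, ensemble_average(dipoles, weights))
```

Shifting all energies by the minimum leaves the populations unchanged, because the common factor cancels between numerator and partition function.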

Fundamental Concepts: Microstates, Macrostates, and Ensembles

Definitions and Relationships

- Microstates: Represent the complete microscopic description of a system, including the precise positions and momenta of all particles [13]. For a quantum system, this corresponds to a specific wavefunction satisfying the Schrödinger equation.

- Macrostates: Describe the bulk, observable properties of a system, such as temperature (T), pressure (P), volume (V), and energy (E) [13]. These are the measurable quantities researchers aim to predict.

- Statistical Weight: The number of microstates (Ω) corresponding to a single macrostate [13]. Systems evolve toward macrostates with the highest statistical weight.

The Ergodic Hypothesis

The ergodic hypothesis posits that the time average of a mechanical property in a system equals the ensemble average over all accessible microstates [13]. This fundamental principle justifies replacing impractical dynamical simulations with statistical ensemble calculations, enabling efficient computation of equilibrium properties.

Table 1: Fundamental Concepts in Statistical Mechanics

| Concept | Mathematical Representation | Physical Significance |
|---|---|---|
| Microstate | Specific configuration (qᵢ, pᵢ) | Complete microscopic description |
| Macrostate | Set of variables (E, V, N) | Observable bulk properties |
| Entropy (Boltzmann) | S = k_B ln Ω | Measure of disorder/multiplicity |
| Partition Function | Z = Σ e^(-βEᵢ) | Bridge to thermodynamics |

Key Ensemble Theories and Methodologies

Statistical ensembles represent the cornerstone of applying statistical mechanics to computational systems. Each ensemble corresponds to specific experimental conditions, making different ensembles appropriate for different research scenarios [12].

Table 2: Comparison of Primary Statistical Ensembles

| Ensemble Type | Fixed Parameters | Fluctuating Quantity | Partition Function | Primary Applications |
|---|---|---|---|---|
| Microcanonical (NVE) | N, V, E | Temperature | Ω(E,V,N) | Isolated systems, fundamental derivations |
| Canonical (NVT) | N, V, T | Energy | Z = Σ e^(-βEᵢ) | Systems in thermal equilibrium |
| Grand Canonical (μVT) | μ, V, T | Energy & Particle Number | Ξ = Σ e^(-β(Eᵢ-μNᵢ)) | Open systems, adsorption studies |

Computational Protocol 1: Calculating Thermodynamic Properties using the Canonical Ensemble

This protocol details the methodology for deriving macroscopic thermodynamic properties from microscopic energy levels using the canonical ensemble, which is appropriate for systems at constant temperature and volume.

Materials and Computational Requirements

- Quantum Chemistry Software: Packages such as Gaussian, GAMESS, ORCA, or NWChem for electronic structure calculations [11].

- Energy Calculation Method: Hartree-Fock, Density Functional Theory (DFT), or coupled cluster theory for determining electronic energy levels [11].

- Statistical Mechanics Code: Custom scripts or specialized software to compute the partition function and thermodynamic derivatives.

Procedure

System Preparation

- Define molecular geometry and electronic structure

- Select appropriate basis set and theoretical method (e.g., B3LYP/6-31G*)

- Ensure method validation against known experimental or theoretical benchmarks

Energy Level Calculation

- Compute the ground electronic state energy (E₀)

- Calculate vibrational frequencies and normal modes

- Determine rotational constants from molecular geometry

Partition Function Evaluation

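- Calculate translational partition function: \[ q_{\text{trans}} = \left( \frac{2\pi m k_B T}{h^2} \right)^{3/2} V \]

- Calculate rotational partition function (for linear molecules): \[ q_{\text{rot}} = \frac{8\pi^2 I k_B T}{\sigma h^2} \]

- Calculate vibrational partition function (for each normal mode i): \[ q_{\text{vib},i} = \frac{e^{-h\nu_i / 2 k_B T}}{1 - e^{-h\nu_i / k_B T}} \]

- Calculate electronic partition function: \[ q_{\text{elec}} = g_{e0} + g_{e1} e^{-\Delta E_{01} / k_B T} + \cdots \]

- Compute total molecular partition function: \( q = q_{\text{trans}} \cdot q_{\text{rot}} \cdot q_{\text{vib}} \cdot q_{\text{elec}} \), where \( q_{\text{vib}} = \prod_i q_{\text{vib},i} \). For N indistinguishable, non-interacting molecules, the canonical partition function used in the thermodynamic formulas is \( Z = q^N / N! \).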
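The partition function expressions in this step translate almost directly into code. The sketch below assumes SI units, vibrational frequencies supplied as wavenumbers in cm⁻¹, and the high-temperature rigid-rotor form for linear molecules; the function names are hypothetical, and the numerical inputs in the usage lines are approximate, N₂-like values chosen for illustration.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
H = 6.62607015e-34        # Planck constant, J s
C_CM = 2.99792458e10      # speed of light, cm/s
AMU = 1.66053906660e-27   # atomic mass unit, kg


def q_trans(mass_kg, temperature, volume_m3):
    """Translational partition function of a particle in volume V."""
    return (2.0 * math.pi * mass_kg * K_B * temperature / H ** 2) ** 1.5 * volume_m3


def q_rot_linear(moment_of_inertia, temperature, sigma=1):
    """High-temperature rotational partition function of a linear rigid rotor."""
    return 8.0 * math.pi ** 2 * moment_of_inertia * K_B * temperature / (sigma * H ** 2)


def q_vib(wavenumbers_cm, temperature):
    """Product of harmonic-oscillator partition functions (energy zero at well bottom)."""
    q = 1.0
    for nu in wavenumbers_cm:
        x = H * C_CM * nu / (K_B * temperature)
        q *= math.exp(-x / 2.0) / (1.0 - math.exp(-x))
    return q


def q_elec(degeneracies, excitation_energies_j, temperature):
    """Electronic partition function from level degeneracies and energies (J)."""
    return sum(g * math.exp(-e / (K_B * temperature))
               for g, e in zip(degeneracies, excitation_energies_j))


# Approximate, N2-like inputs (illustrative only).
T = 298.15
V = K_B * T / 101325.0                  # volume per molecule of an ideal gas at 1 atm
q_total = (q_trans(28.0 * AMU, T, V)
           * q_rot_linear(1.40e-46, T, sigma=2)
           * q_vib([2359.0], T)
           * q_elec([1], [0.0], T))
print(q_total)
```

The symmetry number `sigma` is 2 for homonuclear diatomics and 1 for heteronuclear ones; getting it wrong shifts the rotational entropy by R ln 2.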

Thermodynamic Property Calculation

- Internal energy: \( U = k_B T^2 \left( \frac{\partial \ln Z}{\partial T} \right)_{N,V} \)

- Helmholtz free energy: \( A = -k_B T \ln Z \)

- Entropy: \( S = k_B T \left( \frac{\partial \ln Z}{\partial T} \right)_{N,V} + k_B \ln Z \)

- Chemical potential: \( \mu = -k_B T \left( \frac{\partial \ln Z}{\partial N} \right)_{T,V} \)
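A quick way to check these relations is to evaluate them numerically for a system whose partition function is known in closed form. The sketch below assumes ln Z is available as a callable of temperature and uses a central finite difference for the derivative; it works in reduced units (k_B = 1) with a two-level system, and all names are hypothetical.

```python
import math


def thermo_from_ln_z(ln_z, temperature, k_b=1.0, d_t=1e-4):
    """Return (U, A, S) from a callable ln_z(T) via a central finite difference."""
    dlnz_dt = (ln_z(temperature + d_t) - ln_z(temperature - d_t)) / (2.0 * d_t)
    u = k_b * temperature ** 2 * dlnz_dt                       # internal energy
    a = -k_b * temperature * ln_z(temperature)                 # Helmholtz free energy
    s = k_b * temperature * dlnz_dt + k_b * ln_z(temperature)  # entropy
    return u, a, s


def two_level_ln_z(epsilon, k_b=1.0):
    """ln Z for a single two-level system with energies 0 and epsilon."""
    return lambda t: math.log(1.0 + math.exp(-epsilon / (k_b * t)))


u, a, s = thermo_from_ln_z(two_level_ln_z(1.0), temperature=1.0)
print(u, a, s, u - 1.0 * s)  # the last value should reproduce A = U - T S
```

For the two-level system the internal energy has the analytic form ε e^(-ε/k_BT) / (1 + e^(-ε/k_BT)), which gives a direct check on the finite-difference step size.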

Computational Protocol 2: Free Energy Perturbation for Binding Affinity Calculation

This protocol employs statistical mechanics principles to compute binding free energies, a crucial parameter in drug design and development.

Materials and Computational Requirements

- Molecular Dynamics Software: Packages such as AMBER, GROMACS, or NAMD for sampling configurations.

- Force Field Parameters: Specifically parameterized for the drug candidate and target protein.

- High-Performance Computing Resources: Significant computational resources for adequate sampling.

Procedure

System Setup

- Prepare protein structure with co-crystallized ligand or docking pose

- Solvate the system in explicit water molecules

- Add counterions to neutralize system charge

- Energy minimization to remove steric clashes

Equilibration Protocol

- Position-restrained MD with gradually decreasing restraints

- Constant particle number, volume, and temperature (NVT) ensemble equilibration to stabilize the temperature

- Constant particle number, pressure, and temperature (NPT) ensemble equilibration to stabilize the density

- Monitor system stability through RMSD, potential energy, and temperature

Free Energy Calculation using Thermodynamic Integration

- Define coupling parameter λ (0 → 1) for alchemical transformation

- For each λ window, run MD simulation to collect configurations

- Compute ∂H/∂λ at each λ value

- Integrate to obtain free energy difference: \[ \Delta G = \int_0^1 \left\langle \frac{\partial H(\lambda)}{\partial \lambda} \right\rangle_\lambda \, d\lambda \]
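The final integration step can be sketched with the trapezoidal rule over the λ windows. This assumes the ensemble averages of ∂H/∂λ have already been extracted from each window's trajectory; the function name and the numbers in the usage lines are hypothetical, and production workflows often use finer λ spacing or other quadrature schemes.

```python
def thermodynamic_integration(lambdas, dhdl_means):
    """Trapezoidal estimate of Delta G = integral of <dH/dlambda> from 0 to 1.

    `lambdas` must be sorted in increasing order; `dhdl_means` holds the
    per-window ensemble averages in the desired energy unit.
    """
    if len(lambdas) != len(dhdl_means) or len(lambdas) < 2:
        raise ValueError("need matching lists with at least two lambda windows")
    delta_g = 0.0
    for i in range(len(lambdas) - 1):
        width = lambdas[i + 1] - lambdas[i]
        if width <= 0:
            raise ValueError("lambda values must be strictly increasing")
        delta_g += 0.5 * width * (dhdl_means[i] + dhdl_means[i + 1])
    return delta_g


# Hypothetical window averages (kcal/mol), for illustration only.
lambdas = [0.0, 0.25, 0.5, 0.75, 1.0]
dhdl = [-12.0, -6.5, -2.0, 1.5, 4.0]
print(thermodynamic_integration(lambdas, dhdl))
```

If the integrand changes sharply near the end points, adding windows there usually helps more than lengthening every simulation.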

Error Analysis

- Perform block averaging to assess statistical uncertainty

- Repeat calculations with different initial conditions where feasible

- Compare forward and backward transformations for hysteresis assessment
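Block averaging, the first item above, can be sketched as follows. The time series is split into contiguous blocks, and the scatter of the block means gives a standard error that is less biased by time correlation than the naive estimate, provided each block is longer than the correlation time. The function name is hypothetical, and trailing samples that do not fill a block are simply discarded.

```python
import math


def block_average(samples, n_blocks):
    """Return (mean, standard_error) estimated from contiguous block means."""
    block_size = len(samples) // n_blocks
    if n_blocks < 2 or block_size < 1:
        raise ValueError("need at least two blocks with one sample each")
    means = [
        sum(samples[i * block_size:(i + 1) * block_size]) / block_size
        for i in range(n_blocks)
    ]
    grand_mean = sum(means) / n_blocks
    # Sample variance of the block means, then standard error of their mean.
    variance = sum((m - grand_mean) ** 2 for m in means) / (n_blocks - 1)
    return grand_mean, math.sqrt(variance / n_blocks)


mean, err = block_average([1, 2, 3, 4, 5, 6, 7, 8], n_blocks=4)
print(mean, err)
```

Repeating the estimate with progressively fewer, longer blocks and looking for a plateau in the standard error is a common way to judge whether the blocks are long enough.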

The Scientist's Toolkit: Essential Research Reagents and Computational Materials

Table 3: Key Computational Resources for Statistical Mechanics Applications

| Resource Category | Specific Examples | Function in Research |
|---|---|---|
| Electronic Structure Methods | DFT (B3LYP, PBE), MP2, Coupled Cluster | Calculate molecular energies and properties from first principles [11] |
| Force Fields | AMBER, CHARMM, OPLS-AA | Parameterize classical interaction potentials for molecular simulations |
| Molecular Dynamics Engines | GROMACS, NAMD, AMBER, OpenMM | Sample configurations from statistical ensembles |
| Quantum Chemistry Packages | Gaussian, ORCA, GAMESS, NWChem | Solve electronic Schrödinger equation for energy levels [11] |
| Free Energy Methods | FEP, TI, MM/PBSA | Calculate free energy differences for binding and solvation |
| Analysis Tools | MDAnalysis, VMD, PyMOL | Process simulation trajectories and visualize results |

Advanced Applications in Drug Development

Solvation Free Energy Calculations

Solvation free energy represents a critical property in pharmaceutical research, influencing drug solubility, distribution, and membrane permeability. Statistical mechanics approaches, particularly those employing implicit and explicit solvent models, enable accurate prediction of this key parameter through rigorous treatment of solute-solvent interactions.

Protein-Ligand Binding Affinities

The calculation of binding free energies is one of the most valuable applications of statistical mechanics in drug discovery. For well-behaved systems, modern alchemical approaches can approach chemical accuracy (errors of roughly 1 kcal/mol) through advanced sampling techniques and rigorous treatment of entropic and enthalpic contributions, providing crucial guidance for lead optimization before compounds are synthesized.

Workflow: From Microscopic Models to Macroscopic Observables

Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) are computational modeling techniques that mathematically correlate the structures of chemical compounds with their biological activities (QSAR) or physicochemical properties (QSPR) [14]. These methodologies operate on the fundamental principle that molecular structure determines all properties and activities of a compound, enabling researchers to predict behavior without costly and time-consuming laboratory experiments [14].

The development of QSAR began in the 1960s with Corwin Hansch's pioneering work on Hansch analysis, which quantified relationships using physicochemical parameters like lipophilicity, electronic properties, and steric effects [14]. Over subsequent decades, the field has evolved dramatically—from using few interpretable descriptors and simple linear models to employing thousands of chemical descriptors and complex machine learning algorithms [14]. This evolution has positioned QSAR/QSPR as powerful tools across multiple disciplines, including drug discovery, materials science, toxicology, and environmental chemistry [14] [15].

Molecular Descriptors: The Foundation of QSAR/QSPR

Molecular descriptors are mathematical representations of molecular structures that convert chemical information into numerical values [14] [16]. These descriptors serve as the independent variables in QSAR/QSPR models, quantitatively encoding structural features that influence the property or activity being studied.

Effective descriptors must meet several criteria: they should comprehensively represent molecular properties, correlate with the target activity, be computationally feasible, possess clear chemical meaning, and be sensitive enough to capture subtle structural variations [14]. The accuracy and relevance of selected descriptors directly determine the predictive power and stability of QSAR/QSPR models [14].

Table 1: Categories and Examples of Molecular Descriptors

| Descriptor Category | Representative Examples | Structural Information Encoded | Typical Applications |
|---|---|---|---|
| Topological Indices | Atom Bond Connectivity (ABC) Index, Zagreb Indices, Wiener Index [16] | Molecular branching, connectivity patterns, overall compactness | Predicting stability, solubility of silicate structures [16] |
| Geometric Descriptors | Molecular volume, Surface area, Principal moments of inertia [17] | Three-dimensional size and shape | Porin permeability studies [17] |
| Electronic Descriptors | Partial atomic charges, Dipole moment, HOMO/LUMO energies [18] | Charge distribution, electronegativity, reactivity | Antimalarial drug design [18] |
| Constitutional Descriptors | Molecular weight, Atom counts, Bond counts [19] | Basic composition and bonding | Biofuel property prediction [19] |
| Physicochemical Parameters | LogP (lipophilicity), Polar surface area, Hydrogen bonding capacity [20] | Solubility, permeability, intermolecular interactions | Bioavailability prediction of phytochemicals [20] |

Computational Protocols and Workflows

General QSAR/QSPR Modeling Workflow

The development of robust QSAR/QSPR models follows a systematic workflow comprising several critical stages. The process begins with data collection and preparation, followed by molecular descriptor calculation, model building, validation, and finally application for prediction [14].

Protocol 1: Feature Selection for Predictive Modeling

Feature selection represents a critical step in QSAR/QSPR model development to minimize collinearity and enhance model interpretability without sacrificing predictive accuracy [19].

Materials and Software Requirements:

- Chemical structures in SMILES format

- Molecular descriptor calculation software (PaDEL-Descriptor, alvaDesc, or RDKit)

- Programming environment (Python with scikit-learn, TPOT)

- Dataset of compounds with known property/activity values

Procedure:

- Calculate Molecular Descriptors: Generate a comprehensive set of molecular descriptors from chemical structures using descriptor calculation software [20].

- Data Preprocessing: Handle missing values, normalize descriptor values, and remove constant descriptors.

- Collinearity Analysis: Calculate correlation matrix between all descriptor pairs and remove highly correlated descriptors (typically |r| > 0.95) [19].

- Feature Importance Ranking: Use tree-based algorithms (Random Forest, XGBoost) to rank descriptors by importance.

- Iterative Model Building: Build models with increasing numbers of top-ranked descriptors and evaluate performance via cross-validation.

- Optimal Feature Set Selection: Identify the point where additional descriptors no longer significantly improve model performance.

- Validation: Confirm selected features on hold-out test set and through domain expertise.

This protocol has been successfully applied to develop interpretable models for predicting melting point, boiling point, flash point, and other properties with mean absolute percent error ranging from 3.3% to 10.5% [19].
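The collinearity step of the procedure above can be sketched with NumPy. This is a minimal illustration on synthetic data, not the implementation used in [19]: the function name `drop_collinear`, the greedy keep-first rule, and the descriptor values are all assumptions made for the example.

```python
import numpy as np

def drop_collinear(X, names, threshold=0.95):
    """Greedily keep descriptors whose |r| with every already-kept descriptor
    is at or below the threshold. Constant descriptors should be removed
    beforehand, because their correlation is undefined (NaN)."""
    corr = np.abs(np.corrcoef(X, rowvar=False))  # descriptor-by-descriptor |r|
    kept = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= threshold for k in kept):
            kept.append(j)
    return X[:, kept], [names[j] for j in kept]

# Synthetic descriptor matrix: 40 compounds, 3 descriptors (illustrative only).
rng = np.random.default_rng(0)
mw = rng.normal(300.0, 50.0, size=40)                      # "molecular weight"
n_heavy = mw / 13.0 + rng.normal(0.0, 0.1, size=40)        # nearly a rescaled copy of mw
logp = rng.normal(2.0, 1.0, size=40)                       # independent descriptor
X = np.column_stack([mw, n_heavy, logp])

X_reduced, kept_names = drop_collinear(X, ["MW", "nHeavy", "LogP"])
print(kept_names)
```

The greedy rule keeps whichever descriptor of a correlated pair appears first, so the column order matters; ranking descriptors by importance before filtering is one way to make that choice deliberate.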

Protocol 2: Mixture Descriptor Calculation Using CombinatorixPy

Many real-world materials involve multiple components, presenting challenges for traditional QSAR/QSPR approaches. CombinatorixPy provides a method to derive numerical representations for multi-component systems using a combinatorial approach [21].

Materials and Software Requirements:

- Python environment with CombinatorixPy package installed

- Molecular descriptors for individual components

- Structural information for all mixture components

Procedure:

- Individual Component Characterization: Calculate molecular descriptors for each individual component in the mixture system.

- Combinatorial Matrix Generation: Compute the Cartesian product over sets of descriptors of constituents using CombinatorixPy [21].

- Interaction Modeling: Apply combinatorial mixing rules that assume intermolecular interactions in the mixture, calculating all possible interactions between different components [21].

- Descriptor Aggregation: Generate mixture descriptors by aggregating combinatorial descriptors according to mixture composition.

- Model Implementation: Utilize generated mixture descriptors in machine learning-based QSAR and QSPR models for predictive tasks.

This approach has enabled QSAR modeling of complex multi-component materials and polymers by representing them as mixture systems, significantly expanding the application domain of computational chemistry [21].
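The combinatorial idea behind the procedure can be illustrated with the standard library alone. The sketch below does not use the CombinatorixPy API; the function `mixture_descriptors`, the product mixing rule weighted by mole fractions, and the descriptor values are hypothetical choices made only to show how a Cartesian product over component descriptor sets yields mixture descriptors.

```python
from itertools import product

def mixture_descriptors(components):
    """components: list of (label, mole_fraction, {descriptor: value}) tuples.
    Returns one mixture descriptor per element of the Cartesian product of the
    components' descriptor sets, using an assumed product mixing rule."""
    weight = 1.0
    for _, fraction, _ in components:
        weight *= fraction  # composition weighting (assumption)
    labelled_sets = [
        [(f"{label}.{name}", value) for name, value in descriptors.items()]
        for label, _, descriptors in components
    ]
    mixture = {}
    for combo in product(*labelled_sets):
        key = "*".join(name for name, _ in combo)
        value = weight
        for _, v in combo:
            value *= v
        mixture[key] = value
    return mixture

# Two-component mixture with made-up descriptor values.
components = [
    ("A", 0.5, {"MW": 50.0, "TPSA": 20.0}),
    ("B", 0.5, {"MW": 20.0, "TPSA": 10.0}),
]
mix = mixture_descriptors(components)
for key, value in mix.items():
    print(key, value)
```

With two descriptors per component, the product yields four interaction terms; the number of mixture descriptors grows multiplicatively with the number of descriptors per component, which is why feature selection usually follows this step.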

Advanced Applications and Case Studies

Case Study 1: Predicting Uranium Complex Stability Constants

Objective: Develop a QSAR model to predict stability constants of uranium coordination complexes for designing novel uranium adsorbents [15].

Experimental Design:

- Dataset: 108 uranium complexes with known stability constants

- Descriptors: Physicochemical properties, coordination numbers of ligands, molecular charge, number of water molecules

- Model: CatBoost regressor with hyperparameter optimization

- Validation: External test set with applicability domain analysis

Results: The model achieved R² = 0.75 on the external test set, successfully predicting stability constants from molecular composition alone. This provides a valuable tool for efficient design of uranium adsorption materials, potentially improving uranium collection processes from wastewater and seawater [15].

Case Study 2: Bioavailability Prediction for Phytochemicals

Objective: Develop QSPR models to predict bioavailability indicators of phytochemicals using Caco-2 cell assay data [20].

Experimental Design:

- Dataset: 84 phytochemicals with measured bioavailability indicators (TEER, Papp, efflux ratio)

- Descriptor Calculation: 40 molecular descriptors derived from isomeric SMILES using PaDEL-Descriptor and alvaDesc

- Model: Random Forest regressor

- Validation: Train-test split with principal component analysis and Williams plot for applicability domain assessment

Results: The models showed moderate to strong predictive performance, with test-set R² values of 0.63 (TEER), 0.91 (Papp), and 0.85 (efflux ratio). The gap between training and test R² for TEER (0.86 versus 0.63, Table 2) indicates weaker generalization for that endpoint. This prediction system contributes to advancements in discovering functional ingredients and drugs by efficiently screening phytochemical bioavailability [20].

Table 2: Performance Metrics for Bioavailability Prediction Models

| Bioavailability Indicator | R² Training | RMSE Training | R² Test | RMSE Test |

|---|---|---|---|---|

| Transepithelial Electrical Resistance (TEER) | 0.86 | 55.25 | 0.63 | 74.77 |

| Apparent Permeability (Papp) | 0.95 | 4.54×10⁻⁶ | 0.91 | 6.23×10⁻⁶ |

| Efflux Ratio | 0.92 | 0.39 | 0.85 | 0.71 |

Case Study 3: Machine Learning for PBT Chemical Screening

Objective: Develop QSAR models for predicting Persistent, Bioaccumulative, and Toxic (PBT) properties of chemicals using machine learning [22].

Experimental Design:

- Dataset: Assembled dataset of PBT and non-PBT chemicals

- Descriptor Calculation: Molecular descriptors derived from SMILES representations

- Models: Eight machine learning models including Random Forest, XGBoost, and Multi-Layer Perceptron

- Validation: Accuracy and AUC metrics with cross-validation

Results: Random Forest demonstrated the best predictive ability, highlighting the potential of machine learning for high-throughput screening of hazardous chemicals. This approach supports regulatory decision-making and environmental risk assessment by efficiently identifying PBT compounds [22].
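The two validation metrics named in the experimental design can be computed from first principles. The following is a minimal sketch with made-up labels and scores, not data from [22]; the function names are illustrative, and the AUC is computed through its rank interpretation (the probability that a randomly chosen positive outscores a randomly chosen negative).

```python
def accuracy(y_true, y_pred):
    """Fraction of compounds whose predicted class matches the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly;
    tied scores count as half a correct pair."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# 1 = PBT, 0 = non-PBT; scores are hypothetical model probabilities.
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

acc = accuracy(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(f"accuracy = {acc:.3f}, AUC = {auc:.3f}")
```

Accuracy depends on the 0.5 decision threshold, whereas AUC summarizes ranking quality over all thresholds, which is why the two are often reported together for imbalanced screening sets.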

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Essential Computational Tools for QSAR/QSPR Research

| Tool Name | Type/Function | Application in QSAR/QSPR | Access |

|---|---|---|---|

| CombinatorixPy | Python package | Calculates mixture descriptors for multi-component materials [21] | Open source |

| PaDEL-Descriptor | Descriptor calculation software | Calculates molecular descriptors from chemical structures [20] | Free for academic use |

| alvaDesc | Descriptor calculation software | Computes molecular descriptors and fingerprints [20] | Commercial |

| RDKit | Cheminformatics library | Generates molecular fingerprints (ECFP4, MHFP6) and descriptors [23] | Open source |

| TPOT | Automated machine learning | Optimizes machine learning pipelines for feature selection [19] | Open source |

| CatBoost | Machine learning algorithm | Gradient boosting for regression and classification tasks [15] | Open source |

Emerging Methodologies and Future Directions

The field of QSAR/QSPR continues to evolve with several emerging trends. Adaptive Topological Regression (AdapToR) represents a recent innovation that maps distances in the chemical domain to distances in the activity domain, demonstrating predictive performance comparable to state-of-the-art deep learning models while maintaining interpretability and computational efficiency [23]. When evaluated on the NCI60 GI50 dataset containing over 50,000 drug responses, AdapToR outperformed competing models including Transformer CNN and Graph Transformer with significantly lower computational cost [23].

The integration of machine learning, particularly deep learning algorithms, has profoundly impacted QSAR/QSPR methodologies [14]. Artificial Neural Networks and Random Forest models can learn complex, non-linear relationships between molecular descriptors and properties, enabling more accurate predictions of physicochemical parameters and biological activities [18]. These advancements are accompanied by growing dataset sizes and more sophisticated molecular descriptors, continuously expanding the applicability domain of QSAR/QSPR models [14].

Future development focuses on creating universal QSAR models capable of predicting activities across diverse molecular classes, which requires larger and higher-quality datasets, more precise molecular descriptors, and powerful yet interpretable mathematical models [14]. As these elements continue to improve, QSAR/QSPR will play an increasingly important role in molecular design across various scientific and industrial fields.

The Evolution from Physical Principles to Machine Learning in Chemistry

The field of computational chemistry is undergoing a profound transformation, evolving from a discipline rooted exclusively in first-principles physical theories to one that increasingly leverages statistical techniques and machine learning (ML). This evolution addresses a fundamental challenge: while ab initio quantum chemistry methods predict molecular properties solely from fundamental physical constants and system composition, they often come with prohibitive computational costs that limit their application to realistically complex systems [24]. The integration of machine learning has created a powerful synergy, where physical principles provide the foundational truth for training models, and statistical methods enable the rapid exploration of chemical space. This paradigm shift is particularly impactful for researchers and drug development professionals who require accurate predictions of molecular behavior without the time and resource constraints of traditional computational methods.

The core of this evolution lies in building ML models that are trained on high-quality quantum mechanical data, enabling them to achieve near-ab initio accuracy at a fraction of the computational cost [25]. This approach maintains the rigor of physical theory while overcoming the scaling limitations of conventional methods. As these trained models can provide predictions thousands of times faster than the density functional theory (DFT) calculations used to train them, they unlock the ability to simulate large atomic systems that have always been out of reach for traditional computational approaches [26]. This document details the protocols, applications, and resources driving this transformation, providing a framework for researchers to implement these advanced techniques in their computational chemistry workflows.

Theoretical Foundation: From Quantum Mechanics to Machine Learning Potentials

The accuracy of machine learning in chemistry is fundamentally dependent on the physical principles embedded within its training data. The hierarchy of computational methods rests on an interdependent framework of physical theories, each contributing essential concepts while introducing inherent approximations [24].

The Physical Theory Hierarchy

Table 1: Foundational Physical Theories in Computational Chemistry

| Physical Theory | Key Contribution to Computational Chemistry | Representative Computational Methods |

|---|---|---|

| Quantum Mechanics | Provides the fundamental description of molecular systems via the Schrödinger equation; determines electronic structure, energies, and properties. | Schrödinger Equation, Wavefunction Methods [27] [24] |

| Classical Mechanics | Enables the Born-Oppenheimer approximation, separating nuclear and electronic motion to simplify quantum calculations. | Molecular Mechanics, Force Fields [24] |

| Classical Electromagnetism | Establishes the form of the molecular Hamiltonian, describing Coulombic interactions between charged particles. | Density Functional Theory (DFT) [26] [24] |

| Thermodynamics & Statistical Mechanics | Provides the critical link between microscopic quantum states and macroscopic observables via the partition function. | Thermodynamic Property Prediction [24] |

| Relativity | Mandatory for accurate treatment of heavy elements, governed by the Dirac equation; affects orbital contraction and spin-orbit coupling. | Relativistic DFT [24] |

| Quantum Field Theory | Provides the second quantization formalism underpinning high-accuracy methods like Coupled Cluster theory. | Coupled Cluster (CCSD(T)) [28] [24] |

The Machine Learning Bridge

Machine learning creates a bridge from these physical theories to practical application. The core concept involves using high-accuracy quantum chemical calculations (e.g., DFT or CCSD(T)) to generate reference data, which is then used to train Machine Learned Interatomic Potentials (MLIPs). These MLIPs learn the relationship between atomic structure and potential energy surfaces, allowing them to predict properties for new, unseen structures with high fidelity and speed [26] [25]. The usefulness of an MLIP is directly determined by the amount, quality, and chemical diversity of the data it was trained on, making the generation of comprehensive datasets a critical research focus [26].

Quantitative Landscape of Modern Chemical Datasets

The data-driven approach to computational chemistry relies on extensive, high-quality datasets for training robust models. Recent efforts have produced datasets of unprecedented scale and diversity, systematically covering vast regions of chemical space.

Table 2: Comparative Analysis of Major Quantum Chemistry Datasets for ML

| Dataset Name | Calculation Method & Data Volume | System Size & Chemical Diversity | Key Computed Properties |

|---|---|---|---|

| Open Molecules 2025 (OMol25) [26] | DFT (100+ million 3D snapshots) | Up to 350 atoms; broad periodic table coverage including heavy elements and metals. | Total energies and forces on atoms. |

| QCML Dataset [29] | 33.5M DFT calculations; 14.7B Semi-empirical calculations | Small molecules (≤8 heavy atoms); large fraction of periodic table; different electronic states. | Energies, forces, multipole moments, Kohn-Sham matrices. |

| QM9 [29] | DFT (133,885 molecules) | Small organic molecules (up to 9 heavy atoms: C, N, O, F). | Atomization energies, dipole moments, HOMO/LUMO energies. |

| PubChemQC [29] | DFT (86 million molecules) | Equilibrium structures for 93.7% of PubChem molecules. | Equilibrium structure properties. |

| ANI-1 [29] | DFT (>20 million conformations) | ~60k organic molecules; off-equilibrium conformations. | Energies and forces for molecular dynamics. |

The scale of computational effort required for these datasets is staggering. For instance, the OMol25 dataset consumed six billion CPU hours, a computation that would take over 50 years on 1,000 typical laptops [26]. This investment is justified by the resulting capabilities, as MLIPs trained on such data can provide predictions of DFT-level caliber approximately 10,000 times faster, making large-scale molecular simulations practical on standard computing resources [26].
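The laptop comparison can be unpacked with back-of-the-envelope arithmetic. The sketch below assumes that one CPU hour means one core running for one hour and that the laptops run continuously; the implied per-laptop core count is derived here and is not a figure from [26].

```python
CPU_HOURS = 6e9             # total compute reported for OMol25
LAPTOPS = 1_000
HOURS_PER_YEAR = 24 * 365

# Years needed if each laptop contributed a single core around the clock.
years_single_core = CPU_HOURS / (LAPTOPS * HOURS_PER_YEAR)

# Core-equivalents per laptop at which the job would take exactly 50 years.
cores_for_50_years = years_single_core / 50

print(f"{years_single_core:.0f} years at one core per laptop")
print(f"{cores_for_50_years:.1f} core-equivalents per laptop for a 50-year run")
```

Under these assumptions the job takes roughly 685 years at one core per laptop, so "over 50 years" holds for any laptop delivering fewer than about 14 core-equivalents of sustained throughput.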

Experimental Protocols for ML-Driven Discovery

Protocol 1: Developing a Machine-Learned Force Field (MLFF)

Application Note: This protocol describes the process of creating an MLFF to run accurate molecular dynamics simulations at a fraction of the computational cost of traditional ab initio methods.

Materials & Data Requirements:

- Reference Data: A curated dataset of molecular structures with corresponding energies and forces, typically from DFT or CCSD(T) calculations (e.g., from the QCML or OMol25 datasets) [26] [29].

- Computing Resources: Access to high-performance computing (HPC) clusters for model training, though inference can be run on standard systems.

- Software: ML training frameworks (e.g., PyTorch, TensorFlow) and specialized architectures like E(3)-equivariant graph neural networks [28].

Procedure:

- Data Generation and Curation: Select a diverse set of molecular structures representing the chemical space of interest. This includes both equilibrium and off-equilibrium conformations to ensure the model generalizes well [29].

- Reference Calculation: Perform high-level quantum chemistry calculations (e.g., DFT with a suitable functional) for each structure to obtain the target properties: total energy, atomic forces, and optionally other electronic properties [26] [29].

- Model Selection and Architecture: Choose a suitable ML model architecture. Graph Neural Networks (GNNs), particularly E(3)-equivariant variants, are state-of-the-art as they naturally represent molecular structures and respect physical symmetries [28].

- Model Training: Train the model to learn the mapping from atomic structure (atomic numbers and positions) to the target quantum chemical properties. The training involves minimizing the loss function, which measures the difference between the model's predictions and the reference data.

- Validation and Evaluation: Test the trained model on a held-out set of molecules not seen during training. Evaluate its performance on key benchmarks, such as energy and force errors, and its ability to reproduce known physical phenomena [26].

- Deployment in Simulation: Integrate the validated MLFF into molecular dynamics simulation packages (e.g., LAMMPS, OpenMM) to run large-scale and long-time-scale simulations.
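The training and validation steps can be illustrated on a deliberately small problem. In the sketch below, a Morse potential for a diatomic stands in for the quantum chemical reference, and a linear model in inverse powers of the bond length stands in for the neural network; both substitutions are assumptions made to keep the example self-contained, and all names and parameter values are hypothetical. What carries over to real MLFFs is the structure of the loss, which penalizes energy and force errors jointly, and the evaluation on held-out geometries.

```python
import numpy as np

D_E, ALPHA, R_EQ = 1.0, 1.5, 1.0   # Morse parameters in arbitrary units (illustrative)
POWERS = np.arange(0, 9)           # basis functions r^-k for k = 0..8

def reference(r):
    """Stand-in for the reference calculation: Morse energy and force F = -dE/dr."""
    x = np.exp(-ALPHA * (r - R_EQ))
    return D_E * (1.0 - x) ** 2 - D_E, -2.0 * D_E * ALPHA * x * (1.0 - x)

def energy_features(r):
    return r[:, None] ** (-POWERS.astype(float))

def force_features(r):
    # For E = sum_k c_k r^-k, the force -dE/dr is sum_k c_k * k * r^-(k+1).
    return POWERS * r[:, None] ** (-(POWERS + 1.0))

def train(r, energy, force, force_weight=1.0):
    """Minimize sum (E_pred - E_ref)^2 + force_weight * sum (F_pred - F_ref)^2."""
    w = np.sqrt(force_weight)
    design = np.vstack([energy_features(r), w * force_features(r)])
    target = np.concatenate([energy, w * force])
    coeffs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coeffs

def rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

r_train = np.linspace(0.8, 2.5, 30)    # training geometries (bond lengths)
r_test = np.linspace(0.83, 2.47, 17)   # held-out geometries inside the training range
coeffs = train(r_train, *reference(r_train))

e_ref, f_ref = reference(r_test)
energy_rmse = rmse(energy_features(r_test) @ coeffs, e_ref)
force_rmse = rmse(force_features(r_test) @ coeffs, f_ref)
print(f"energy RMSE = {energy_rmse:.2e}, force RMSE = {force_rmse:.2e}")
```

Because the model is linear in its coefficients, the joint loss has a closed-form least-squares solution; a neural network would minimize the same kind of loss by gradient descent. The held-out geometries lie within the training range, so this checks interpolation only.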

Protocol 2: Predicting Reaction Outcomes with Physical Constraints

Application Note: This protocol uses the FlowER (Flow matching for Electron Redistribution) model to predict the products of chemical reactions while strictly adhering to physical laws like conservation of mass and electrons [30].

Materials & Data Requirements:

- Reaction Data: A dataset of known chemical reactions with reactants and products, such as those derived from the U.S. Patent Office database [30].

- Representation Scheme: The bond-electron matrix representation from Ugi theory, which tracks atoms, bonds, and lone electron pairs [30].

- Software: The open-source FlowER implementation available on GitHub [30].

Procedure:

- Data Preprocessing: Represent all reactions in the dataset using the bond-electron matrix. This matrix explicitly represents the electrons in a reaction, with nonzero values for bonds or lone pairs and zeros otherwise [30].

- Model Training: Train the FlowER model, a generative AI approach using flow matching, on the preprocessed reaction data. The model learns the probability distribution of electron rearrangements that connect reactants to products.

- Reaction Prediction: For a new set of reactants, the model generates potential products by sampling the learned distribution of electron flows. The use of the bond-electron matrix as a foundation ensures that all predicted products automatically conserve mass and electrons [30].

- Validation: Compare the model's predictions against experimentally known outcomes or high-level computational results to assess its accuracy and reliability. The model has been shown to match or outperform existing approaches while guaranteeing physically valid outputs [30].
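The conservation property of the bond-electron matrix can be checked by hand on a small reaction. The sketch below is not the FlowER code; it assumes the usual Ugi convention in which diagonal entries hold an atom's non-bonding valence electrons and off-diagonal entries hold formal bond orders, and it uses the protonation of water (H2O + H+ to H3O+) as a hypothetical worked example.

```python
def total_electrons(be_matrix):
    """Sum of all entries: each bond of order 1 appears twice (b_ij and b_ji),
    accounting for its two electrons, plus the non-bonding electrons on the diagonal."""
    return sum(sum(row) for row in be_matrix)

def is_symmetric(be_matrix):
    n = len(be_matrix)
    return all(be_matrix[i][j] == be_matrix[j][i] for i in range(n) for j in range(n))

# Atom order: O, H1, H2, H3 (H3 is the incoming proton).
reactants = [
    [4, 1, 1, 0],   # O: two lone pairs (4 electrons), bonded to H1 and H2
    [1, 0, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],   # bare proton: no electrons, no bonds
]
products = [
    [2, 1, 1, 1],   # O: one lone pair left, now bonded to H1, H2, and H3
    [1, 0, 0, 0],
    [1, 0, 0, 0],
    [1, 0, 0, 0],
]

# Reaction matrix R = products - reactants describes the electron redistribution.
reaction = [[p - r for p, r in zip(p_row, r_row)]
            for p_row, r_row in zip(products, reactants)]

print("valence electrons:", total_electrons(reactants), "->", total_electrons(products))
print("net change:", total_electrons(reaction))
```

Because the same atoms index both matrices, mass is conserved by construction, and any reaction matrix whose entries sum to zero conserves electrons; a model that predicts only such redistributions cannot create or destroy either.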

Workflow Visualization: From Physical Data to Chemical Prediction

The following diagram illustrates the integrated workflow for developing and applying machine learning models in computational chemistry, synthesizing the key steps from the protocols above.

Diagram Title: ML in Chemistry Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Computational Tools and Resources for ML-Driven Chemistry

| Tool/Resource Name | Type | Primary Function in Research |

|---|---|---|

| OMol25 Dataset [26] | Reference Data | Training MLIPs on diverse, large-system chemistry; provides benchmark evaluations. |

| QCML Dataset [29] | Reference Data | Training foundation models for quantum chemistry across a wide elemental range. |

| FlowER Model [30] | Software/Model | Predicting chemical reaction outcomes with guaranteed physical constraints (mass/electron conservation). |

| MEHnet Architecture [28] | Software/Model (Multi-task) | Predicting multiple electronic properties (energy, dipole, polarizability) simultaneously with high accuracy. |

| Stereoelectronics-Infused Molecular Graphs (SIMGs) [31] | Molecular Representation | Enhancing ML models with quantum-chemical orbital interactions for better accuracy on small datasets. |

| Universal Model (from Meta FAIR) [26] | Software/Model | A pre-trained, general-purpose MLIP for "out-of-the-box" atomistic simulations. |

| Coupled Cluster Theory (CCSD(T)) [28] | Computational Method | Generating the "gold standard" reference data for training high-accuracy models on small molecules. |

| Density Functional Theory (DFT) [26] | Computational Method | The workhorse method for generating large-scale reference data for training MLIPs. |

The evolution from physical principles to machine learning in chemistry represents a fundamental shift in scientific methodology. By grounding statistical models in the rigorous data produced by ab initio theories, researchers can now navigate chemical space with unprecedented speed and accuracy. This synergy is not a replacement for physical understanding but rather its amplification, creating a powerful, scalable tool for discovery.

The future of this field lies in several key directions: expanding the breadth of chemical elements and reaction types covered by models, particularly for catalysis and heavy elements [30] [28]; improving the interpretability of ML models to extract new chemical insights [25] [31]; and the development of more sophisticated multi-task models that can predict a wide range of properties from a single architecture [28]. Furthermore, the creation of extensive, open-access datasets and standardized benchmarks will continue to drive progress, fostering community-wide innovation [26] [29]. As these tools mature and become more integrated into automated workflows and autonomous laboratories [25], they will profoundly accelerate the design of new drugs, materials, and energy solutions, firmly establishing a new paradigm for scientific discovery in chemistry.

Core Techniques in Action: QSAR, Docking, and AI-Driven Discovery

Quantitative Structure-Activity Relationship (QSAR) Modeling with Machine Learning Algorithms

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational chemistry and drug discovery, enabling the prediction of biological activity from molecular structure. The integration of machine learning (ML) algorithms has revolutionized QSAR, facilitating the modeling of complex, non-linear relationships in high-dimensional chemical data. This protocol details the application of ML-augmented QSAR methodologies, from foundational principles and descriptor calculation to advanced model construction, validation, and application within drug development pipelines. Adherence to these protocols allows researchers to build robust, predictive models that accelerate virtual screening, lead optimization, and toxicity prediction, while ensuring regulatory compliance and interpretability.

Quantitative Structure-Activity Relationship (QSAR) models are regression or classification models that relate the physicochemical properties or theoretical molecular descriptors of chemicals to a biological activity [32]. The fundamental principle posits that a mathematical relationship exists between molecular structure and biological output, expressed as Activity = f (physicochemical properties and/or structural properties) [33] [32]. The integration of machine learning (ML) has transformed QSAR from classical linear models to sophisticated frameworks capable of navigating complex chemical spaces and capturing non-linear patterns [34]. This shift is critical for modern drug discovery, where ML-powered QSAR facilitates the virtual screening of billion-compound libraries, de novo drug design, and the multi-parametric optimization of lead compounds, ultimately reducing the time and cost associated with experimental hit-to-lead progression [34] [35].

Successful ML-QSAR modeling requires a suite of computational "reagents." The following table details key components.

Table 1: Essential Research Reagent Solutions for ML-QSAR Modeling

| Category | Item | Function and Explanation |

|---|---|---|

| Software & Platforms | KNIME, scikit-learn, RDKit, PaDEL-Descriptor | Provides integrated environments for data preprocessing, machine learning model construction (e.g., AutoQSAR), and molecular descriptor calculation [34] [32]. |

| Molecular Descriptors | Dragon Descriptors, Topological Indices (e.g., Wiener, Zagreb), Quantum Chemical Descriptors (e.g., HOMO-LUMO) | Numerical representations encoding chemical, structural, or physicochemical properties. Topological indices quantify molecular connectivity and shape, while quantum descriptors capture electronic properties crucial for bioactivity [34] [18]. |

| Machine Learning Algorithms | Random Forest (RF), Support Vector Machines (SVM), Graph Neural Networks (GNNs) | Algorithms for constructing predictive models. RF is prized for robustness and handling noisy data; GNNs operate directly on molecular graphs to learn hierarchical features without manual descriptor engineering [34] [35]. |

| Validation Tools | SHAP (SHapley Additive exPlanations), Y-Scrambling, Applicability Domain (AD) Analysis | Methods for model interpretation and validation. SHAP explains feature contributions, Y-scrambling tests for chance correlations, and AD defines the chemical space where the model is reliable [34] [32]. |

| Data Resources | Public Cheminformatics Databases (e.g., ChemSpider), Cloud-Based Platforms (e.g., OrbiTox) | Sources of chemical structures, bioactivity data, and curated models. Platforms like OrbiTox provide vast data points and built-in predictors for regulatory submissions [36] [18]. |

Protocol 1: Foundational Workflow for ML-QSAR Modeling

The following diagram illustrates the standard end-to-end workflow for developing and deploying a validated ML-QSAR model.

Data Set Curation and Preprocessing

- Objective: Assemble a high-quality, congeneric set of molecules with known biological activities (e.g., IC₅₀, EC₅₀) [33].

- Procedure:

- Source Data: Obtain structures and corresponding bioactivity data from public databases (e.g., ChEMBL, PubChem) or proprietary corporate libraries.

- Curate Structures: Standardize chemical structures (e.g., neutralize charges, remove duplicates, specify tautomers) using toolkits like RDKit.

- Handle Activity Data: Convert biological activities to a uniform scale, typically molar units, and express potencies as pIC₅₀ or pEC₅₀ (that is, -log₁₀ of the molar IC₅₀ or EC₅₀), a logarithmic scale that is approximately linear in binding free energy.

- Address Inactives: Include confirmed inactive compounds to improve model classification performance and reduce the risk of false positives.
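The activity conversion in the procedure above amounts to a unit change followed by a negative base-10 logarithm. A minimal sketch, assuming activities arrive as a value plus a unit string; the function name `to_pic50` and the unit table are illustrative.

```python
import math

# Conversion factors from common concentration units to mol/L.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}

def to_pic50(value, unit):
    """Convert an IC50 (or EC50) given in `unit` to pIC50 = -log10(IC50 in mol/L)."""
    if value <= 0:
        raise ValueError("IC50 must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])

for value, unit in [(1.0, "nM"), (250.0, "nM"), (3.2, "uM")]:
    print(f"IC50 = {value} {unit} -> pIC50 = {to_pic50(value, unit):.2f}")
```

On this scale a tenfold gain in potency adds exactly one unit, so a 1 nM compound (pIC50 = 9) sits three units above a 1 µM compound (pIC50 = 6).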

Molecular Descriptor Calculation and Selection

- Objective: Generate numerical representations of the molecular structures and select the most informative features to avoid overfitting [34] [32].

- Procedure:

- Calculate Descriptors: Use software like PaDEL-Descriptor, DRAGON, or RDKit to compute a wide array of descriptors. These can range from simple 1D/2D descriptors (e.g., molecular weight, logP, topological indices) to 3D descriptors (e.g., molecular surface area, volume) and quantum chemical descriptors (e.g., HOMO-LUMO energy) [34] [18].

- Preprocess Descriptors: Remove descriptors with zero or near-zero variance. Impute missing values if necessary or remove the corresponding descriptors/compounds.

- Select Features: Apply feature selection algorithms such as Recursive Feature Elimination (RFE), LASSO (Least Absolute Shrinkage and Selection Operator), or mutual information ranking to identify a subset of descriptors most relevant to the biological activity [34]. This step is crucial for enhancing model interpretability and generalizability.

Dataset Splitting and Model Training

- Objective: Partition the data and train a machine learning model to learn the relationship between descriptors and activity.

- Procedure:

- Data Splitting: Split the curated dataset into a training set (typically 70-80%) for model development and a hold-out test set (20-30%) for final model evaluation. This split should be stratified if dealing with classification tasks to maintain class distribution.

- Algorithm Selection: Choose an appropriate ML algorithm based on the problem, for example Random Forest, Support Vector Machines, or Graph Neural Networks (see Table 1).

- Hyperparameter Optimization: Use techniques like grid search or Bayesian optimization with cross-validation on the training set to find the optimal model parameters.
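The stratified split described in the procedure above can be written in a few lines of standard-library Python. This is a sketch under simple assumptions (class labels in a list, a fixed random seed); the function name `stratified_split` is illustrative, and library routines for the same task exist in common ML toolkits.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices) with each class represented in the
    test set in roughly the same proportion as in the full dataset."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = max(1, round(len(indices) * test_fraction))
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)

# Imbalanced toy dataset: 20 actives (1) and 80 inactives (0).
labels = [1] * 20 + [0] * 80
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx), sum(labels[i] for i in test_idx))
```

Splitting within each class keeps the 20% active rate in both partitions; a purely random split of a small, imbalanced set can leave the test set with too few actives to evaluate on.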

Model Validation and Interpretation

- Objective: Rigorously assess the model's predictive performance and reliability, and interpret its decisions.

- Procedure:

- Internal Validation: Use k-fold cross-validation (e.g., 5-fold or 10-fold) on the training set to assess model robustness, reporting metrics like Q² (cross-validated R²) [34] [32].

- External Validation: Use the hold-out test set, which was not involved in model training or parameter tuning, to evaluate the model's generalizability. Report statistics like R²ₑₓₜ, RMSEₑₓₜ, and accuracy.

- Interpretability Analysis: Employ model-agnostic interpretation tools like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to determine which molecular descriptors are driving the predictions, moving the model from a "black box" to an actionable hypothesis generator [34].
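The internal and external validation steps can be sketched as follows. To stay self-contained, the example swaps in ordinary least squares for the ML algorithm and uses synthetic descriptors; the function names and data are assumptions, and Q² is computed here as 1 - PRESS/SS_tot using the overall mean of the training responses.

```python
import numpy as np

def fit_ols(X, y):
    design = np.column_stack([np.ones(len(X)), X])   # add intercept column
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

def predict_ols(coef, X):
    return np.column_stack([np.ones(len(X)), X]) @ coef

def r2(y_true, y_pred):
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return float(1.0 - ss_res / ss_tot)

def rmse(y_true, y_pred):
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def q2_kfold(X, y, k=5, seed=0):
    """Cross-validated R^2: every compound is predicted by a model that never saw it."""
    idx = np.random.default_rng(seed).permutation(len(y))
    y_cv = np.empty_like(y)
    for fold in np.array_split(idx, k):
        train = np.setdiff1d(idx, fold)
        y_cv[fold] = predict_ols(fit_ols(X[train], y[train]), X[fold])
    return r2(y, y_cv)

# Synthetic data: 100 compounds, 3 descriptors, noisy linear activity.
rng = np.random.default_rng(1)
X = rng.normal(size=(100, 3))
y = X @ np.array([1.0, -0.5, 0.25]) + rng.normal(scale=0.3, size=100)
X_train, y_train, X_test, y_test = X[:80], y[:80], X[80:], y[80:]

q2 = q2_kfold(X_train, y_train)                      # internal validation
coef = fit_ols(X_train, y_train)
r2_ext = r2(y_test, predict_ols(coef, X_test))       # external validation
rmse_ext = rmse(y_test, predict_ols(coef, X_test))
print(f"Q2 = {q2:.2f}, R2_ext = {r2_ext:.2f}, RMSE_ext = {rmse_ext:.2f}")
```

The test set is touched only once, after cross-validation on the training set, which mirrors the separation between internal and external validation in the procedure.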

Protocol 2: Advanced Integration with Structural Biology

ML-QSAR models can be significantly enhanced by integrating structural information from molecular docking, providing a hybrid ligand- and structure-based approach.

Consensus Docking and QSAR Refinement

- Objective: Reduce the high false-positive rate of individual molecular docking programs by combining results from multiple programs and refining them with QSAR [35].

- Procedure:

- Perform Consensus Docking: Dock a library of compounds against a target protein using at least two different docking programs (e.g., AutoDock Vina and DOCK6) with optimized scoring functions.

- Generate Docking Consensus: Cross-examine results to identify compounds consistently ranked as top hits across different programs. This reduces false positives but may lower the success rate of identifying all true actives [35].

- Build a Hybrid ML-QSAR Model: Use docking scores and interaction fingerprints from the consensus docking results as additional descriptors in the ML-QSAR model. This integrates energetic and structural interaction data with ligand-based physicochemical properties.

- Validate Experimentally: As demonstrated in a beta-lactamase inhibitor study, this hybrid approach can restore a high success rate (e.g., 70%) while maintaining a low false positive rate (e.g., 21%), outperforming consensus docking or QSAR alone [35].

Table 2: Key Metrics from an Advanced ML-QSAR/Consensus Docking Study on Beta-Lactamase Inhibitors [35]

| Method | Success Rate (Identification of Actives) | False Positive Rate | Key Insight |

|---|---|---|---|

| Single Docking (DOCK6) | 70% | Not Specified (High) | Optimized scoring function is critical for performance. |

| Consensus Docking (Vina + DOCK6) | 50% | 16% | Reduces false positives but also lowers success rate. |

| Consensus Docking + RF-QSAR | 70% | ~21% | Restores high success rate while keeping false positives low. |

| Consensus Docking + Logistic Regression QSAR | <70% | >21% | Highlights superiority of non-linear ML (RF) over linear models. |
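The consensus step can be sketched as a set intersection over per-program top-ranked compounds. The example below is not the workflow of [35]: the scores are made up, the function names are hypothetical, and it assumes that more negative scores are better and that both programs scored the same compounds. Ranks are compared instead of raw scores because different programs report scores on different scales.

```python
def top_fraction(scores, fraction):
    """IDs of the best-scoring compounds (most negative score first)."""
    n_top = max(1, int(len(scores) * fraction))
    ranked = sorted(scores, key=scores.get)
    return set(ranked[:n_top])

def consensus_hits(score_tables, fraction=0.25):
    """Compounds that fall in the top fraction for every docking program."""
    return set.intersection(*(top_fraction(s, fraction) for s in score_tables))

# Hypothetical docking scores from two programs for the same eight compounds.
vina = {"cpd1": -9.1, "cpd2": -8.7, "cpd3": -7.9, "cpd4": -7.2,
        "cpd5": -6.8, "cpd6": -6.1, "cpd7": -5.5, "cpd8": -4.9}
dock6 = {"cpd1": -58.0, "cpd2": -41.0, "cpd3": -39.5, "cpd4": -36.0,
         "cpd5": -61.2, "cpd6": -30.1, "cpd7": -44.3, "cpd8": -28.7}

strict = consensus_hits([vina, dock6], fraction=0.25)
relaxed = consensus_hits([vina, dock6], fraction=0.5)
print(sorted(strict), sorted(relaxed))
```

Tightening the cutoff shrinks the consensus list, which illustrates the trade-off in Table 2: fewer false positives, but also fewer recovered actives. In a hybrid model, the per-program scores or ranks would then be appended to the ligand descriptors as extra features.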

Data Analysis and Model Validation Standards

A robust ML-QSAR model must be validated both internally and externally, and its applicability domain must be defined.

Validation Techniques

- Internal Cross-Validation: Assess model stability using Leave-One-Out (LOO) or k-fold cross-validation. A commonly reported metric is Q² (the cross-validated coefficient of determination, R²). A Q² > 0.5 is generally considered acceptable [34] [32].

- External Validation: The gold standard for evaluating predictive power. The model is used to predict the activity of the external test set compounds. The squared correlation coefficient (R²ₑₓₜ) between predicted and observed activities for these compounds should be greater than 0.6 [32].

- Y-Scrambling: Test for the presence of chance correlations. The response variable (Y) is randomly shuffled multiple times, and new models are built. A valid model should have significantly lower performance metrics in the scrambled models than in the real one [32].
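Y-scrambling is straightforward to sketch. The example below uses ordinary least squares on synthetic descriptors as a stand-in for the QSAR model, and compares the fitted R² on the real responses with the distribution obtained after shuffling them; the function names, data, and number of rounds are assumptions.

```python
import numpy as np

def r2_of_fit(X, y):
    """Fitted R^2 of an ordinary least squares model with intercept."""
    design = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residual = y - design @ coef
    return float(1.0 - np.sum(residual ** 2) / np.sum((y - y.mean()) ** 2))

def y_scrambling(X, y, n_rounds=100, seed=0):
    """R^2 values of models refitted after randomly permuting the responses."""
    rng = np.random.default_rng(seed)
    return np.array([r2_of_fit(X, rng.permutation(y)) for _ in range(n_rounds)])

# Synthetic data with a genuine descriptor-activity relationship.
rng = np.random.default_rng(2)
X = rng.normal(size=(60, 3))
y = X @ np.array([1.0, -0.5, 0.25]) + rng.normal(scale=0.3, size=60)

r2_real = r2_of_fit(X, y)
r2_scrambled = y_scrambling(X, y)
print(f"real R2 = {r2_real:.2f}, "
      f"scrambled mean = {r2_scrambled.mean():.2f}, max = {r2_scrambled.max():.2f}")
```

If scrambled models score close to the real one, the apparent performance is probably a chance correlation, which becomes more likely as the number of descriptors approaches the number of compounds.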

Defining the Applicability Domain (AD)

- Definition: The Applicability Domain is the region of chemical space defined by the training set molecules and model descriptors. Predictions are reliable only for new compounds that fall within this domain [32].

- Methods: The AD can be defined using approaches such as:

  - Leverage (Hat Matrix): Identifies compounds whose descriptor values are extreme relative to the training set.

  - Distance-Based Methods: Use metrics such as Euclidean or Mahalanobis distance to the nearest training set compound. Prediction error generally increases with distance from the training set [37].
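A distance-based applicability domain check can be illustrated as follows. This is a sketch under stated assumptions: descriptors are taken to be already scaled to comparable ranges, the cutoff rule (mean plus `z` standard deviations of the training set's nearest-neighbor distances) is one common heuristic rather than the method of the cited work, and the function names and toy descriptor values are hypothetical.

```python
import math
import statistics

def nn_distance(point, others):
    """Euclidean distance from `point` to its nearest neighbor in `others`."""
    return min(math.dist(point, other) for other in others)

def ad_cutoff(training, z=2.0):
    """Cutoff = mean + z * std of nearest-neighbor distances within the training set.

    `z` is a tuning parameter chosen here for illustration only.
    """
    dists = [
        nn_distance(p, training[:i] + training[i + 1:])
        for i, p in enumerate(training)
    ]
    return statistics.mean(dists) + z * statistics.pstdev(dists)

def in_domain(query, training, cutoff):
    """True if the query lies within the cutoff distance of a training compound."""
    return nn_distance(query, training) <= cutoff

# Toy two-descriptor training set (assumed to be pre-scaled).
training = [(1.0, 0.2), (1.2, 0.1), (0.9, 0.4), (1.1, 0.3), (1.4, 0.2)]
cutoff = ad_cutoff(training)

inside = in_domain((1.15, 0.25), training, cutoff)   # close to the training data
outside = in_domain((4.0, 3.0), training, cutoff)    # far from the training data
print(f"cutoff={cutoff:.3f}, inside={inside}, outside={outside}")
```

Predictions for compounds flagged as outside the domain should be reported with a warning or withheld, since the model has no nearby training examples to support them.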

The following diagram summarizes the critical steps and decision points in the model development and validation cycle.

Application Notes in Drug Discovery

ML-QSAR models have demonstrated significant impact across various stages of the drug discovery pipeline, as evidenced by recent case studies.

Table 3: Representative Applications of ML-QSAR in Modern Drug Discovery

| Therapeutic Area / Target | ML-QSAR Approach | Reported Outcome and Impact |
|---|---|---|
| Beta-Lactamase Inhibitors [35] | Random Forest-based QSAR combined with consensus docking (DOCK6 & Vina). | Restored success rate to 70% with a low false-positive rate (~21%), identifying three new inhibitors from an in-house library. |
| Estrogen Receptor (ERα) Binding [38] | 3D-QSAR models using RF, SVM, and Multilayer Perceptron (MLP). | ML-based 3D-QSAR models (especially MLP) outperformed traditional VEGA models in accuracy and sensitivity for predicting endocrine disruption. |
| SARS-CoV-2 Main Protease (Mpro) [34] | Combined ML approaches and QSAR to analyze inhibitors. | Accelerated the virtual screening and identification of potential anti-COVID-19 drug candidates by modeling the structure-activity relationship. |
| Antimalarial Drug Development [18] | QSPR analysis using Artificial Neural Networks (ANN) and RF with topological indices. | Predicted physicochemical properties of antimalarial compounds, supporting the rational design of new therapeutic candidates with improved properties. |
| Alzheimer's Disease (BACE-1 Inhibitors) [34] | 2D-QSAR, docking, ADMET prediction, and Molecular Dynamics (MD). | Enabled the design of blood-brain barrier permeable BACE-1 inhibitors, streamlining the lead optimization process. |

Troubleshooting and Technical Notes

- Low Predictive Performance on Test Set: This is often a sign of overfitting or a poorly defined Applicability Domain. Solutions include increasing the training set size, implementing more aggressive feature selection, using simpler models, or applying stronger regularization [34] [37].

- Model is a "Black Box": To enhance interpretability for regulatory submissions and hypothesis generation, integrate post-hoc explanation tools like SHAP or LIME. These tools quantify the contribution of each descriptor to an individual prediction, revealing structure-activity relationships [34].

- Inability to Generalize: If a model performs well on the training set but fails on new chemical series, it may be learning dataset-specific artifacts. Y-scrambling can reveal whether the apparent performance stems from chance correlations. In addition, confirm that new compounds fall within the model's pre-defined Applicability Domain [32] [37].

- Handling Categorical and Mixed Data: For classification tasks (e.g., active/inactive), use metrics like accuracy, sensitivity, specificity, and ROC-AUC. For datasets with imbalanced activity classes, use a stratified split so that the class distribution is preserved in both the training and test sets.
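The class-preserving split and two of the classification metrics mentioned above can be sketched as follows. The example is illustrative only: the 20/80 active/inactive labels and the small prediction vectors are made up, and `stratified_split` and `sensitivity_specificity` are hypothetical helper names.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with class proportions preserved."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        # Take the same fraction of every class for the test set.
        n_test = max(1, round(len(indices) * test_fraction))
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)

def sensitivity_specificity(y_true, y_pred):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)

# Imbalanced toy dataset: 20 actives (1) and 80 inactives (0).
labels = [1] * 20 + [0] * 80
train_idx, test_idx = stratified_split(labels)
test_actives = sum(labels[i] for i in test_idx)

# Made-up predictions for eight test compounds.
sens, spec = sensitivity_specificity(
    [1, 1, 1, 0, 0, 0, 0, 0],
    [1, 1, 0, 0, 0, 0, 1, 0],
)
print(f"test set: {len(test_idx)} compounds, {test_actives} actives")
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Reporting sensitivity and specificity alongside accuracy matters for imbalanced sets, where a model that labels everything inactive can still reach high accuracy.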

Molecular Docking and Virtual High-Throughput Screening (vHTS) of Ultra-Large Libraries

Virtual High-Throughput Screening (vHTS) of ultra-large libraries represents a paradigm shift in early drug discovery, enabling researchers to computationally screen billions of readily available compounds from make-on-demand chemical libraries. The chemical space for drug-like molecules is estimated to contain up to 10^60 possible compounds, presenting both an unprecedented opportunity and a substantial computational challenge for hit identification [39]. Traditional vHTS approaches become prohibitively expensive when applied to libraries containing billions of molecules, especially when incorporating essential molecular flexibility. Ultra-large library screening addresses this challenge through advanced algorithms that efficiently explore combinatorial chemical space without exhaustively enumerating all possible molecules [39]. This approach leverages the fundamental structure of make-on-demand libraries, which are constructed from lists of substrates and robust chemical reactions, enabling the virtual exploration of synthetically accessible compounds that can be rapidly obtained for experimental validation [39].

The statistical foundation of these methods lies in their ability to sample chemical space efficiently, prioritizing regions most likely to contain high-affinity binders through evolutionary algorithms, machine learning, and other optimization techniques. The implementation of these statistical sampling methods has demonstrated improvements in hit rates by factors between 869 and 1622 compared to random selection, making ultra-large library screening one of the most efficient approaches for drug discovery in vast chemical spaces [39].

Key Methodologies and Algorithms

Evolutionary Algorithms for Library Screening

Evolutionary algorithms have emerged as powerful statistical optimization techniques for navigating ultra-large chemical spaces. The RosettaEvolutionaryLigand (REvoLd) protocol implements an evolutionary algorithm specifically designed for screening combinatorial make-on-demand libraries [39]. REvoLd explores the vast search space of combinatorial libraries for protein-ligand docking with full ligand and receptor flexibility through RosettaLigand, employing selection, mutation, and crossover operations inspired by natural evolution [39].

The algorithm begins with a random population of 200 ligands, from which the top 50 scoring individuals are selected to advance to the next generation. Through iterative generations, the protocol applies multiple reproduction mechanisms:

- Crossover operations that recombine well-performing molecular fragments from high-scoring ligands

- Mutation steps that switch single fragments to low-similarity alternatives while preserving well-performing parts of promising molecules

- Reaction switching mutations that change the reaction scheme of a molecule and search for similar fragments within the new reaction group [39]

This approach typically requires only 30 generations of optimization to identify promising compounds, with each run docking between 49,000 and 76,000 unique molecules while exploring chemical spaces containing over 20 billion compounds [39]. The statistical strength of this method lies in its balance between exploitation of high-scoring regions and exploration of novel chemical space, which helps prevent premature convergence to local minima.

Conventional Docking Approaches and Their Limitations

Traditional virtual screening relies on exhaustive docking of compound libraries using various conformational search algorithms. These can be broadly categorized into systematic and stochastic methods:

Table 1: Conformational Search Methods in Molecular Docking

| Method Type | Specific Approach | Representative Software | Key Characteristics |
|---|---|---|---|
| Systematic | Systematic Search | Glide, FRED | Rotates all rotatable bonds by fixed intervals; computationally expensive for flexible molecules [40] |
| Systematic | Incremental Construction | FlexX, DOCK | Fragments molecules and docks rigid components first before assembling complete molecules [40] |
| Stochastic | Monte Carlo | Glide | Uses random sampling with Boltzmann-weighted acceptance criteria [40] |
| Stochastic | Genetic Algorithm | AutoDock, GOLD | Employs selection, crossover, and mutation operations on ligand conformations [40] |

While these methods have proven effective for small to medium-sized libraries (thousands to millions of compounds), they face significant challenges when applied to ultra-large libraries containing billions of molecules. The computational cost becomes prohibitive, and the approximations required for practical screening times (particularly rigid receptor docking) can reduce accuracy and increase false-positive rates [39] [40].
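To make the "Boltzmann-weighted acceptance" entry in the table concrete, the following sketch shows the standard Metropolis criterion used in Monte Carlo conformational searches. It is a generic illustration, not the implementation of any program named above; the function name, the energy change, and the value of `kT` (roughly 0.59 kcal/mol near room temperature) are assumptions chosen for the example.

```python
import math
import random

def metropolis_accept(delta_e, kT, rng):
    """Accept a trial move with probability min(1, exp(-delta_e / kT)).

    delta_e: energy (or score) change of the trial pose; negative is an improvement.
    kT: thermal energy in the same units as delta_e.
    """
    if delta_e <= 0:
        return True  # downhill moves are always accepted
    return rng.random() < math.exp(-delta_e / kT)

# Uphill moves of exactly kT should be accepted about exp(-1) = 37% of the time.
rng = random.Random(0)
kT = 0.593  # kcal/mol, approximate value near 298 K
n_trials = 10_000
acceptance_rate = sum(metropolis_accept(kT, kT, rng) for _ in range(n_trials)) / n_trials
print(f"acceptance rate for delta_e = kT: {acceptance_rate:.3f}")
```

Occasionally accepting uphill moves is what allows a stochastic search to escape local minima that a purely greedy search would be trapped in.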

AI-Enhanced Screening Approaches

Artificial intelligence has dramatically transformed molecular representation and screening methodologies. Traditional molecular representations like Simplified Molecular-Input Line-Entry System (SMILES) strings and molecular fingerprints are increasingly being supplemented or replaced by AI-driven approaches that learn continuous, high-dimensional feature embeddings directly from large datasets [41]. These include:

- Language model-based representations that treat molecular sequences as chemical language

- Graph neural networks that directly operate on molecular graph structures

- Multimodal and contrastive learning frameworks that integrate multiple representation types [41]

These AI-enhanced representations have shown particular utility in scaffold hopping—the identification of novel core structures that retain biological activity—which is crucial for exploring diverse chemical space and overcoming patent limitations [41]. Methods such as variational autoencoders and generative adversarial networks can design entirely new scaffolds absent from existing chemical libraries while tailoring molecules to possess desired properties [41].

Experimental Protocols and Workflows

Protocol for REvoLd Screening

The REvoLd protocol implements a sophisticated evolutionary algorithm for ultra-large library screening:

- Initialization: Generate a random population of 200 ligands from the combinatorial library

- Evaluation: Dock all ligands using flexible protein-ligand docking with RosettaLigand

- Selection: Select the top 50 scoring individuals based on docking scores

- Reproduction:

  - Perform crossover operations between high-fitness molecules

  - Apply mutation steps to introduce fragment substitutions

  - Implement reaction scheme mutations to explore new chemical spaces

- Iteration: Repeat the evaluation-selection-reproduction cycle for 30 generations

- Diversity Maintenance: Include secondary crossover and mutation rounds excluding the fittest molecules to preserve genetic diversity [39]

This protocol typically identifies promising hit candidates after 15 generations, with optimal performance observed at 30 generations. For comprehensive coverage of chemical space, multiple independent runs with different random seeds are recommended, as each run explores different regions of the chemical landscape [39].
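The evaluation-selection-reproduction cycle described above can be illustrated with a toy evolutionary search. This sketch is not REvoLd and does not call Rosetta: the two-fragment library, the `toy_score` function standing in for a docking score, and the mutation rate are all assumptions made for illustration. Mutation here picks a random replacement fragment rather than a low-similarity one, and reaction switching and the secondary diversity rounds are omitted.

```python
import random

# Hypothetical combinatorial library: every (fragment A, fragment B) pair is one product.
N_FRAG_A, N_FRAG_B = 2000, 2000

def toy_score(mol):
    """Stand-in for a docking score (lower is better); not a real scoring function."""
    a, b = mol
    return abs(a - 1234) / N_FRAG_A + abs(b - 567) / N_FRAG_B

def evolve(pop_size=200, n_select=50, n_generations=30, mutation_rate=0.3, seed=0):
    rng = random.Random(seed)
    cache = {}  # each unique molecule is scored ("docked") only once

    def score(mol):
        if mol not in cache:
            cache[mol] = toy_score(mol)
        return cache[mol]

    population = [(rng.randrange(N_FRAG_A), rng.randrange(N_FRAG_B))
                  for _ in range(pop_size)]
    history = []
    for _ in range(n_generations):
        # Selection: keep the best-scoring unique molecules.
        parents = sorted(set(population), key=score)[:n_select]
        history.append(score(parents[0]))
        children = []
        while len(children) < pop_size - len(parents):
            p1, p2 = rng.sample(parents, 2)
            child = (p1[0], p2[1])  # crossover: recombine fragments of two parents
            if rng.random() < mutation_rate:  # mutation: swap one fragment
                if rng.random() < 0.5:
                    child = (rng.randrange(N_FRAG_A), child[1])
                else:
                    child = (child[0], rng.randrange(N_FRAG_B))
            children.append(child)
        population = parents + children
    best = min(population, key=score)
    return best, score(best), len(cache), history

best, best_score, n_scored, history = evolve()
print(f"best={best}, score={best_score:.4f}, "
      f"scored {n_scored} of {N_FRAG_A * N_FRAG_B} molecules")
```

Because the selected parents are carried into the next generation, the best score never gets worse from one generation to the next, and only a small part of the library is ever scored. Changing `seed` mimics the independent runs recommended above.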

Automated Virtual Screening Pipeline

For conventional large-scale docking, automated pipelines provide standardized workflows:

- Library Preparation: Generate compound libraries in PDBQT format using tools like jamlib, including energy minimization of all structures [42]

- Receptor Preparation: Convert protein structures to PDBQT format, identify binding pockets using fpocket, and define docking grid boxes [42]

- Docking Execution: Perform high-throughput docking with tools like QuickVina 2, supporting execution on HPC clusters [42]

- Result Analysis: Rank docking results using multiple scoring methods to identify promising hits [42]

This modular approach ensures reproducibility and scalability, making it accessible for both beginners and experts in structure-based drug discovery [42].

Control Procedures for Validation

Best practices in large-scale docking recommend implementing control procedures to validate screening protocols:

- Known ligand validation: Dock compounds with known activity to verify the protocol can reproduce experimental results

- Decoy discrimination: Test the ability to distinguish known binders from non-binders

- Enrichment calculations: Quantify performance through enrichment factors comparing hit rates against random selection [43]

These controls are essential given the approximations inherent in docking simulations, including limited conformational sampling and inaccurate absolute binding energy predictions [43].
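The enrichment calculation mentioned above compares the hit rate among top-ranked compounds with the hit rate of the whole library. The sketch below uses made-up data and assumes that lower docking scores are better, as in many docking programs; the function name and the 1% cutoff are illustrative choices.

```python
def enrichment_factor(scores, is_active, top_fraction=0.01):
    """EF = (hit rate in the top-ranked fraction) / (hit rate in the whole library).

    Assumes lower scores are better.
    """
    ranked = sorted(zip(scores, is_active), key=lambda pair: pair[0])
    n_top = max(1, round(len(ranked) * top_fraction))
    hits_top = sum(active for _, active in ranked[:n_top])
    hit_rate_top = hits_top / n_top
    hit_rate_all = sum(is_active) / len(is_active)
    return hit_rate_top / hit_rate_all

# Made-up benchmark: 1000 compounds with 10 known actives, 5 of them ranked in the top 10.
scores = list(range(1000))  # the score equals the rank here, for simplicity
active_positions = {0, 1, 2, 3, 4, 500, 600, 700, 800, 900}
is_active = [1 if i in active_positions else 0 for i in range(1000)]

ef_1pct = enrichment_factor(scores, is_active, top_fraction=0.01)
print(f"EF at 1%: {ef_1pct:.1f}")
```

An enrichment factor of 1 corresponds to random selection, so values well above 1 on known ligands and decoys indicate that the protocol is ranking binders ahead of non-binders.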

Workflow Visualization

Ultra Large Library Screening Workflow - This diagram illustrates the integrated workflow combining conventional library preparation with evolutionary algorithm screening for ultra-large chemical libraries.

Performance Metrics and Benchmarking

Quantitative Performance Assessment

Table 2: Performance Metrics for Ultra-Large Library Screening

| Method | Library Size | Compounds Docked | Hit Rate Improvement | Computational Requirements |
|---|---|---|---|---|
| REvoLd | 20 billion | 49,000-76,000 | 869-1622x vs random | ~30 generations, 50 individuals/generation [39] |
| Traditional vHTS | 100 million+ | 100% of library | Baseline | Massive computational resources, often requiring specialized infrastructure [39] |
| Deep Docking | Billions | Tens to hundreds of millions | Varies | Combines conventional docking with neural network pre-screening [39] |
| V-SYNTHES | Billions | Fragment-based | Varies | Incremental construction from docked fragments [39] |

The exceptional enrichment factors demonstrated by evolutionary algorithms like REvoLd highlight the statistical efficiency of these approaches. By docking only a tiny fraction (0.00025-0.00038%) of the total chemical space, these methods can identify the majority of high-potential compounds through intelligent sampling guided by evolutionary principles [39].
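The sampled fraction quoted above follows directly from the reported numbers. As a quick check, assuming a library of exactly 20 billion compounds (the source says "over 20 billion", so the true fractions are slightly smaller):

```python
library_size = 20_000_000_000       # assumed exact size for this calculation
docked_per_run = (49_000, 76_000)   # range of unique molecules docked per run [39]

percent_docked = [100 * n / library_size for n in docked_per_run]
for n, pct in zip(docked_per_run, percent_docked):
    print(f"{n:,} docked -> {pct:.6f}% of the library")
```

Both values are on the order of a few ten-thousandths of a percent, consistent with the range given in the text.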

Diversity and Scaffold Hopping Performance