Strategies for Reducing Overfitting in Machine Learning: A Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on addressing the critical challenge of overfitting in computational models. Covering foundational concepts to advanced applications, it explores how overfitting compromises model generalizability, particularly in high-stakes fields like drug discovery. The content details proven methodological solutions—from regularization and data augmentation to ensemble techniques—and offers a practical troubleshooting framework for optimizing model performance. By integrating validation strategies and comparative analysis of real-world case studies, such as drug-target interaction (DTI) prediction, this resource equips practitioners with the knowledge to build more reliable, robust, and clinically translatable machine learning models.

Understanding Overfitting: Why Your Model Fails on New Data

Frequently Asked Questions (FAQs)

1. What is overfitting in machine learning? Overfitting occurs when a machine learning model learns the training data too closely, including its noise and random fluctuations, instead of the underlying pattern. This results in a model that performs exceptionally well on its training data but fails to generalize effectively to new, unseen data [1] [2] [3]. It is akin to a student memorizing the answers to practice questions without understanding the concept, causing them to fail when questions are presented differently [4].

2. How can I tell if my model is overfitted? The primary indicator of an overfit model is a significant performance gap between the training data and a validation or test dataset [4] [2] [3]. For instance, you might observe a very high R² (e.g., >95%) or accuracy on the training data, but a much lower R² or accuracy on the validation data [1]. Techniques like k-fold cross-validation are specifically designed to help detect overfitting [2] [3].

3. What are the common causes of overfitting? Several factors can lead to overfitting:

- Excessively complex model: The model has too much capacity, allowing it to learn noise as if it were a true signal [4] [5].

- Insufficient training data: There isn't enough data for the model to discern the true pattern from random variations [4] [2].

- Too many training epochs: The model is trained for so long that it transitions from learning the pattern to memorizing the examples [4].

- Many weakly correlated features or outliers: A large number of irrelevant features or extreme data points can cause the model to learn meaningless patterns [1] [2].

4. What is the difference between overfitting and underfitting? Overfitting and underfitting are two opposite ends of the model performance spectrum. The table below summarizes their key differences:

| Feature | Overfitting | Underfitting |
|---|---|---|
| Model Complexity | Too complex [5] | Too simple [5] |
| Performance on Training Data | Very high [2] [3] | Poor [4] [5] |
| Performance on New Data | Poor [2] [3] | Poor [4] [5] |
| Error Source | High variance [5] | High bias [5] |
| Analogy | Memorizing the textbook [4] | Only reading the summary [4] |

5. How can we prevent overfitting? Multiple proven strategies exist to prevent overfitting:

- Collect more training data: More data makes it harder for the model to memorize noise and easier to generalize [4] [2].

- Reduce model complexity: Simplify the model architecture to match the true complexity of the problem [5].

- Apply regularization: Techniques like L1 (Lasso) and L2 (Ridge) regularization add a penalty on the size of the model's coefficients to the loss function, discouraging overly complex fits [4] [5] [3].

- Use dropout: In neural networks, randomly disabling neurons during training prevents over-reliance on any single neuron [4].

- Implement early stopping: Halt the training process as soon as performance on a validation set stops improving [4] [2].

- Perform feature selection: Identify and eliminate redundant or irrelevant features from the training set [2] [3].
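The training-versus-validation gap check from FAQ 2 can be automated with scikit-learn's `cross_validate`, which reports both training and validation scores for every fold when `return_train_score=True`. This is a sketch under stated assumptions: it uses synthetic, noisy regression data and an unconstrained decision tree purely for illustration, `train_validation_gap` is a hypothetical helper name, and what counts as a "large" gap is problem-specific.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.model_selection import cross_validate
from sklearn.tree import DecisionTreeRegressor


def train_validation_gap(model, X, y, k=5):
    """Return mean training R^2, mean validation R^2 and their gap across k folds."""
    results = cross_validate(model, X, y, cv=k, scoring="r2", return_train_score=True)
    train_r2 = float(np.mean(results["train_score"]))
    val_r2 = float(np.mean(results["test_score"]))
    return train_r2, val_r2, train_r2 - val_r2


# Synthetic, noisy regression data (an assumption for illustration only).
X, y = make_regression(n_samples=200, n_features=10, noise=25.0, random_state=0)

# A decision tree with no depth limit can memorise each training fold exactly.
deep_tree = DecisionTreeRegressor(random_state=0)
train_r2, val_r2, gap = train_validation_gap(deep_tree, X, y)
print(f"train R^2 = {train_r2:.2f}, validation R^2 = {val_r2:.2f}, gap = {gap:.2f}")
```

Here the training R² is essentially perfect while the validation R² is clearly lower, which is the signature described in FAQ 2.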

Troubleshooting Guide: Identifying and Resolving Overfitting

This guide provides a structured approach to diagnose and fix overfitting in your machine learning experiments.

Step 1: Diagnose the Problem

- Action: Plot your model's learning curves, showing performance metrics (e.g., loss, accuracy) for both the training and validation sets over time (training epochs).

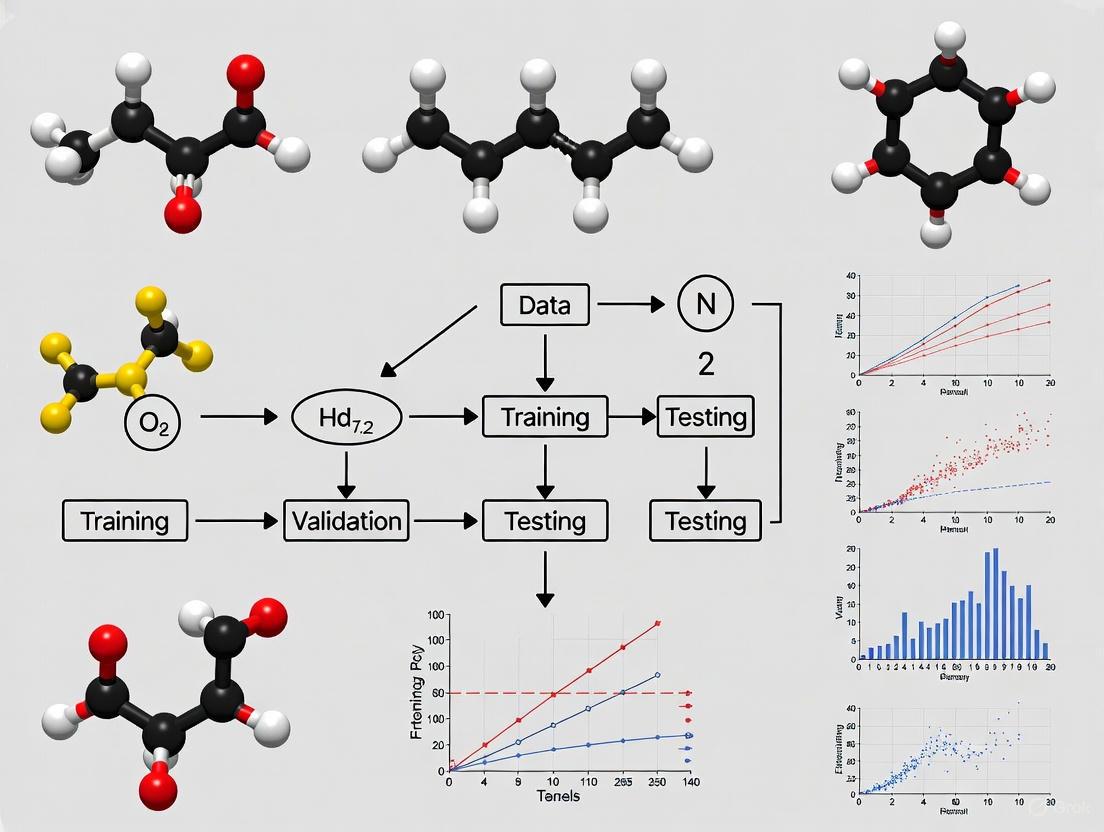

- Interpretation: If the training performance continues to improve while the validation performance stops improving or starts to degrade, your model is likely overfitting [4] [3]. The diagram below illustrates this key relationship and the ideal stopping point.

Step 2: Apply Corrective Measures Based on your diagnosis, select and implement one or more of the following remediation protocols.

Protocol A: Implementing Early Stopping

- Partition your training data into a training set and a validation set (e.g., 80/20 split).

- Train your model iteratively (e.g., epoch by epoch).

- Evaluate the model's performance on the validation set after each iteration.

- Monitor the validation performance. When it stops improving for a pre-defined number of iterations (patience), halt the training process [4] [2]. This "sweet spot" balances bias and variance [3].
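Protocol A can be sketched as a framework-agnostic loop. This is a minimal illustration, not any library's API: `train_one_epoch` and `validation_loss` are hypothetical callbacks standing in for your own training and evaluation code, and `min_delta` (the smallest change that counts as an improvement) is an added assumption. Deep-learning frameworks provide equivalents, such as Keras's `EarlyStopping` callback with its `patience` argument.

```python
from typing import Callable, List, Tuple


def train_with_early_stopping(
    train_one_epoch: Callable[[], None],
    validation_loss: Callable[[], float],
    max_epochs: int = 100,
    patience: int = 5,
    min_delta: float = 0.0,
) -> Tuple[int, List[float]]:
    """Train until validation loss has not improved for `patience` consecutive epochs.

    Returns the 0-based index of the best epoch and the validation-loss history.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    history: List[float] = []

    for epoch in range(max_epochs):
        train_one_epoch()               # one pass over the training split (hypothetical hook)
        loss = validation_loss()        # score on the validation split only (hypothetical hook)
        history.append(loss)

        if loss < best_loss - min_delta:
            # Meaningful improvement: remember it and reset the patience counter.
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
            # A real training loop would checkpoint the model weights here.
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break                   # patience exhausted: halt training

    return best_epoch, history


# Simulated run: validation loss falls, bottoms out at index 4, then rises.
simulated_losses = iter([1.0, 0.8, 0.6, 0.5, 0.45, 0.47, 0.5, 0.55, 0.6, 0.7, 0.8])
best_epoch, history = train_with_early_stopping(
    train_one_epoch=lambda: None,
    validation_loss=lambda: next(simulated_losses),
    patience=3,
)
print(best_epoch, len(history))  # best epoch index, number of epochs actually run
```

In the simulated run, validation loss is lowest at epoch index 4 and training halts three epochs later, once patience is exhausted. Restoring the weights saved at the best epoch, rather than keeping the final ones, is the usual choice.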

Protocol B: Applying Regularization

- Identify the type of model you are using (e.g., linear regression, neural network).

- Select a regularization method:

- L1 (Lasso): Adds a penalty equal to the absolute value of the magnitude of coefficients. This can shrink some coefficients to zero, effectively performing feature selection [3].

- L2 (Ridge): Adds a penalty equal to the square of the magnitude of coefficients. This shrinks coefficients but does not zero them out [5] [3].

- Tune the regularization hyperparameter (e.g., λ or alpha), typically via cross-validation, to find the optimal strength that reduces overfitting without causing underfitting.
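As a sketch of Protocol B, the snippet below assumes scikit-learn and synthetic data in which only 5 of 50 features carry signal. `LassoCV` and `RidgeCV` choose the penalty strength (`alpha`, the λ above) by 5-fold cross-validation over a log-spaced grid; features are standardized first because both penalties are sensitive to feature scale.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LassoCV, RidgeCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data: 50 features, only 5 of which carry signal (illustrative assumption).
X, y = make_regression(n_samples=150, n_features=50, n_informative=5,
                       noise=10.0, random_state=0)

alphas = np.logspace(-3, 3, 30)  # candidate regularization strengths (lambda)

# L1 (Lasso): adds alpha * sum(|w_j|) to the loss; can drive coefficients exactly to zero.
lasso = make_pipeline(StandardScaler(), LassoCV(alphas=alphas, cv=5))
# L2 (Ridge): adds alpha * sum(w_j ** 2) to the loss; shrinks coefficients, keeps them non-zero.
ridge = make_pipeline(StandardScaler(), RidgeCV(alphas=alphas, cv=5))

lasso.fit(X, y)
ridge.fit(X, y)

lasso_coef = lasso[-1].coef_   # last pipeline step is the fitted regressor
ridge_coef = ridge[-1].coef_
print("Lasso alpha:", lasso[-1].alpha_, "non-zero coefficients:", int(np.sum(lasso_coef != 0)))
print("Ridge alpha:", ridge[-1].alpha_, "non-zero coefficients:", int(np.sum(ridge_coef != 0)))
```

On data like this, the Lasso typically sets many of the uninformative coefficients exactly to zero, while Ridge keeps all 50 non-zero but smaller. Note that scikit-learn scales the data-fit term differently in the two estimators, so their `alpha` values are not directly comparable.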

Protocol C: Data Augmentation

- Analyze your dataset to determine if its size and diversity are insufficient.

- Apply moderate, label-preserving transformations to your existing data to artificially expand your dataset.

- Example: For image data, use transformations such as rotation, flipping, translation, or slight color variations [2]. For numerical data, adding small amounts of noise can be effective.
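For the numerical-data case, a minimal NumPy sketch of noise-based augmentation follows. The noise scale (5% of each feature's standard deviation) and the number of copies are illustrative assumptions to tune for your data, and `augment_with_noise` is a hypothetical helper. Apply it only to the training split, after partitioning, so that perturbed copies of validation or test rows never leak into training.

```python
import numpy as np


def augment_with_noise(X, y, n_copies=2, noise_scale=0.05, seed=0):
    """Return X, y expanded with `n_copies` jittered replicas of every row.

    Each feature is perturbed with Gaussian noise whose standard deviation is
    `noise_scale` times that feature's standard deviation, so features on
    different scales are jittered proportionally. Labels are copied unchanged,
    which assumes such small perturbations preserve the label.
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    feature_std = X.std(axis=0, keepdims=True)

    noisy_copies = [X + rng.normal(0.0, 1.0, size=X.shape) * noise_scale * feature_std
                    for _ in range(n_copies)]
    X_aug = np.vstack([X, *noisy_copies])           # originals first, then the copies
    y_aug = np.concatenate([y] * (n_copies + 1))    # labels repeated in the same order
    return X_aug, y_aug


X = np.array([[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]])
y = np.array([0, 1, 1])
X_aug, y_aug = augment_with_noise(X, y, n_copies=2)
print(X_aug.shape, y_aug.shape)
```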

Research Reagent Solutions: A Toolkit for Mitigating Overfitting

The following table details key methodological "reagents" used in experiments to combat overfitting, along with their primary function in the research workflow.

| Research Reagent | Function & Purpose |
|---|---|
| K-Fold Cross-Validation | Divides data into K subsets; model is trained on K-1 folds and validated on the remaining fold. This process repeats K times, providing a robust estimate of model generalization and helping detect overfitting [2] [3]. |
| L1 / L2 Regularization | Mathematical techniques that apply a "penalty" to the model's coefficients during training, discouraging complexity and preventing the model from fitting noise [2] [5] [3]. |
| Dropout | A regularization technique for neural networks that randomly "drops out" (ignores) a subset of neurons during training, forcing the network to learn redundant representations and preventing over-reliance on any single neuron [4]. |
| Validation Set | A held-out subset of data not used during training, reserved solely for evaluating model performance during and after training. It is the primary source of truth for detecting overfitting [4] [3]. |
| Pruning / Feature Selection | The process of identifying and eliminating less important features (in general models) or nodes (in decision trees) to simplify the model and reduce its tendency to overfit [2] [5]. |

Experimental Protocol: K-Fold Cross-Validation

This protocol provides a detailed methodology for implementing k-fold cross-validation, a gold-standard technique for assessing model generalizability and detecting overfitting [2] [3].

Objective: To obtain a reliable estimate of a model's performance on unseen data and of its susceptibility to overfitting.

Procedure:

- Dataset Preparation: Start with a cleaned and pre-processed dataset. Ensure the data is shuffled randomly to avoid order effects.

- Partitioning: Split the entire dataset into k consecutive folds of approximately equal size. A common value for k is 5 or 10.

- Iterative Training and Validation: For each unique fold i (where i ranges from 1 to k):

  - Designate fold i as the validation set.

  - Use the remaining k-1 folds as the training set.

  - Train a new, untrained instance of your model on the training set.

  - Evaluate the trained model on the validation set (fold i) and record the performance score (e.g., accuracy, R²).

- Result Aggregation: Once all k iterations are complete, calculate the average of the k recorded performance scores. This average is the final, robust estimate of your model's predictive performance.
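The procedure above maps directly onto a few lines of NumPy. This sketch assumes a scikit-learn-style estimator (anything with `fit`/`predict`, copied with `sklearn.base.clone` so each fold starts untrained), accuracy as the score, and synthetic data; `k_fold_scores` is a hypothetical helper name.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score


def k_fold_scores(model, X, y, k=5, seed=0):
    """Manual k-fold cross-validation: one validation score per fold."""
    rng = np.random.default_rng(seed)
    indices = rng.permutation(len(X))      # shuffle once to avoid order effects
    folds = np.array_split(indices, k)     # k folds of approximately equal size

    scores = []
    for i in range(k):
        val_idx = folds[i]                                                  # fold i validates
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])  # other k-1 folds train
        fold_model = clone(model)                                           # fresh, untrained copy
        fold_model.fit(X[train_idx], y[train_idx])
        scores.append(accuracy_score(y[val_idx], fold_model.predict(X[val_idx])))
    return np.array(scores)


X, y = make_classification(n_samples=300, n_features=20, random_state=0)
scores = k_fold_scores(LogisticRegression(max_iter=1000), X, y, k=5)
print(f"per-fold accuracy: {np.round(scores, 3)}; mean = {scores.mean():.3f}")
```

In practice, passing `KFold(n_splits=5, shuffle=True, random_state=0)` as the `cv` argument of scikit-learn's `cross_val_score` runs the same loop. Looking at the spread of the per-fold scores, not just their mean, also shows how stable the estimate is.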

The workflow for a single iteration (k=5) is visualized below.

The Bias-Variance Tradeoff

At the heart of the overfitting vs. underfitting problem is the bias-variance tradeoff [5] [3]. A well-generalized model finds the optimal balance between these two sources of error. The following table summarizes the characteristics of this tradeoff.

| Concept | Description | Relationship to Model Error |
|---|---|---|
| Bias | Error from erroneous assumptions in the learning algorithm. A high-bias model is too simple and underfits the data [5]. | High bias leads to inaccurate predictions on both training and new data because the model fails to capture relevant patterns [5]. |
| Variance | Error from sensitivity to small fluctuations in the training set. A high-variance model is too complex and overfits the data [5]. | High variance leads to accurate predictions on training data but poor performance on new data because the model learned the noise [5]. |
| Trade-off | Decreasing bias (by making the model more complex) will typically increase variance, and vice versa. The goal is to find the model complexity that minimizes total error [5]. | The ideal model has low bias and low variance, capturing the true pattern without being overly sensitive to noise. |
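For squared-error loss, the "total error" in the table has a standard decomposition. Assuming data generated as y = f(x) + ε, where the noise ε has mean zero and variance σ², and writing f̂ for a model fitted on a randomly drawn training set, the expected prediction error at a point x is:

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
$$

The expectations are taken over training sets and noise. The σ² term is a floor that no model can beat; changing model complexity only shifts error between the bias and variance terms. This exact decomposition holds for squared error; other losses behave analogously but do not split this cleanly.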

Troubleshooting Guides

Model Diagnosis Guide: Are You Overfitting or Underfitting?

Q: How can I quickly diagnose if my model is overfitting or underfitting?

A: The most direct method is to compare your model's performance on the training data versus a held-out validation or test set [6] [7]. Monitor key metrics like loss and accuracy during training to identify the specific issue.

Diagnosis Table:

| Symptom | Training Data Performance | Validation/Test Data Performance | Likely Diagnosis |
|---|---|---|---|
| Symptom A | Poor [5] [6] | Poor [5] [6] | Underfitting (High Bias) |
| Symptom B | Very Good / Excellent [4] [8] | Significantly Worse [4] [8] | Overfitting (High Variance) |
| Symptom C | Good and stable | Good and stable | Well-Fit Model |

Additional signs of overfitting include an overly complex decision boundary that adapts to noise [6] and a learning curve where training loss decreases while validation loss increases [6]. Signs of underfitting include systematic patterns in prediction residuals, indicating the model is missing key relationships in the data [6].
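The diagnosis table can be encoded as a simple rule of thumb. In this sketch, scores are "higher is better" metrics such as accuracy, and the thresholds `good_score` and `max_gap` are hypothetical defaults that must be set per problem; `diagnose_fit` is an illustrative name, not a library function.

```python
def diagnose_fit(train_score: float, val_score: float,
                 good_score: float = 0.8, max_gap: float = 0.1) -> str:
    """Map training and validation scores to the diagnoses in the table above.

    `good_score` and `max_gap` are hypothetical, problem-specific thresholds.
    """
    gap = train_score - val_score
    if gap > max_gap:
        return "Overfitting (High Variance)"   # Symptom B: validation much worse than training
    if train_score < good_score:
        return "Underfitting (High Bias)"      # Symptom A: poor on training, so poor on both
    return "Well-Fit Model"                    # Symptom C: good and close together


print(diagnose_fit(0.60, 0.58))
print(diagnose_fit(0.99, 0.70))
print(diagnose_fit(0.92, 0.90))
```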

Guide to Fixing an Overfit Model

Q: My model has high variance and is overfitting. What specific steps can I take? [4] [8]

A: Overfitting occurs when a model is too complex and learns the noise in the training data [5]. The goal is to simplify the model and reduce its sensitivity to noise.

Experimental Protocol for Mitigating Overfitting:

| Method | Brief Description & Function | Key Hyperparameters / Considerations |
|---|---|---|
| 1. Regularization [4] [6] | Adds a penalty to the loss function to discourage complex models. | L1 (Lasso): can shrink coefficients exactly to zero, performing feature selection. L2 (Ridge): shrinks all coefficients toward zero without eliminating them. Penalty strength is set by λ. |
| 2. Data Augmentation [8] [2] | Artificially expands the training set by creating modified versions of existing data. | Apply realistic transformations (e.g., rotation, flipping for images; synonym replacement for text). |
| 3. Dropout (for Neural Networks) [4] [9] | Randomly "drops out" a fraction of neurons during training to prevent co-adaptation. | dropout_rate: The probability of dropping a neuron. |
| 4. Early Stopping [4] [9] | Halts training when validation performance stops improving. | patience: How many epochs to wait after the last improvement before stopping. |
| 5. Simplify Model Architecture [4] [7] | Reduce the model's capacity to learn noise. | Reduce the number of layers or neurons (NN), lower tree depth (Decision Trees), or use fewer features. |
| 6. Increase Training Data [4] [8] | Provide more data for the model to learn the true underlying pattern. | The most effective but often most expensive solution. |
| 7. Ensemble Methods: Bagging [6] [2] | Trains many high-variance base models (e.g., the deep decision trees in a Random Forest) on bootstrap samples and averages their predictions to reduce variance. | n_estimators: The number of base models to combine. |
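To show what the dropout rate controls, here is a minimal NumPy sketch of inverted dropout, the variant used by PyTorch and Keras. The function name and signature are illustrative assumptions; in practice you would use a built-in layer such as PyTorch's `torch.nn.Dropout(p=...)` or Keras's `keras.layers.Dropout(rate)`.

```python
import numpy as np


def dropout(activations, rate=0.5, training=True, rng=None):
    """Inverted dropout: zero each unit with probability `rate` during training.

    Surviving units are scaled by 1 / (1 - rate) so the expected activation is
    unchanged, which lets the layer pass inputs through untouched at inference.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return activations                                # inference: dropout is a no-op
    rng = np.random.default_rng() if rng is None else rng
    keep_mask = rng.random(activations.shape) >= rate     # True with probability 1 - rate
    return activations * keep_mask / (1.0 - rate)


h = np.ones((4, 8))
h_train = dropout(h, rate=0.5, training=True, rng=np.random.default_rng(0))
h_eval = dropout(h, rate=0.5, training=False)
print(h_train)   # each entry is either 0.0 (dropped) or 2.0 (kept and rescaled)
```

Because a different random subset of units is dropped at every training step, no unit can rely on the presence of any particular other unit, which is the co-adaptation the table refers to.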

Guide to Fixing an Underfit Model

Q: My model has high bias and is underfitting. What specific steps can I take? [4] [8]

A: Underfitting happens when a model is too simple to capture the underlying trend of the data [5]. The goal is to increase the model's learning capacity and provide it with better information.

Experimental Protocol for Mitigating Underfitting:

| Method | Brief Description & Function | Key Hyperparameters / Considerations |
|---|---|---|
| 1. Increase Model Complexity [4] [5] | Use a more powerful model architecture capable of learning complex patterns. | Add more layers/neurons (NN), use a non-linear model (e.g., SVM with kernel), or increase tree depth. |
| 2. Feature Engineering [5] [6] | Provide more informative features to the model. | Add new features, interaction terms, or polynomial features to help the model discover patterns. |
| 3. Reduce Regularization [4] [8] | Lower the constraints that are preventing the model from learning. | Decrease the value of the lambda (λ) parameter in L1/L2 regularization. |
| 4. Increase Training Time [4] [6] | Allow the model more time to learn from the data. | Increase the number of training epochs. Useful if the model converged too early. |
| 5. Address Data Quality [6] | Ensure the data itself is clean and relevant. | Remove irrelevant noise from the data and ensure features are properly scaled [5]. |
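Feature engineering (method 2) is often the quickest fix for underfitting. In this sketch, which assumes scikit-learn and a synthetic, noise-free quadratic target, a plain linear model cannot follow the curve, while adding a squared term with `PolynomialFeatures` lets the same linear learner fit it.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

# Synthetic data with a quadratic relationship (an illustrative assumption).
x = np.linspace(-3, 3, 61).reshape(-1, 1)
y = x.ravel() ** 2

# Too simple: a straight line cannot bend to follow y = x^2.
linear = LinearRegression().fit(x, y)
# Same learner, richer features: [x, x^2] makes the relationship linear in the features.
quadratic = make_pipeline(PolynomialFeatures(degree=2, include_bias=False),
                          LinearRegression()).fit(x, y)

linear_r2 = linear.score(x, y)        # training R^2
quadratic_r2 = quadratic.score(x, y)  # training R^2
print(f"linear R^2 = {linear_r2:.3f}, quadratic R^2 = {quadratic_r2:.3f}")
```

Both scores are training R², which is where underfitting shows up. Once the training fit is adequate, check validation performance before adding further complexity, to avoid swinging into overfitting.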

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between bias and variance?

- Bias is the error due to erroneous assumptions in the learning algorithm. A high-bias model is too simple and makes strong assumptions, leading to underfitting [10]. In practice, it shows up as high error on the training data itself [11].

- Variance is the error due to the model's sensitivity to small fluctuations in the training set. A high-variance model is too complex and learns the noise, leading to overfitting [10]. In practice, it shows up as test error that is much higher than training error [11].

Q2: Can a model be both overfit and underfit at the same time? Not for a given state, but its state changes during training: a model typically underfits in early epochs and, if trained for too long, begins to overfit. This is why monitoring validation performance throughout training is crucial to catch the model at its most generalized state [4].

Q3: Why does collecting more data help with overfitting? More data provides a better representation of the true underlying data distribution. This makes it harder for the model to memorize noise and irrelevant details, forcing it to learn the genuine patterns that generalize to new data [4] [8].

Q4: What is Early Stopping and how does it work? Early stopping is a technique that ends the training process before the model begins to memorize the training data. It works by monitoring the model's performance on a validation set after each training epoch (or iteration) and halting training once the validation performance stops improving for a pre-defined number of epochs ("patience") [4] [9].

Q5: How does k-fold cross-validation help in diagnosing model fit? K-fold cross-validation splits the data into 'k' subsets (folds). The model is trained on k-1 folds and validated on the remaining fold, repeating the process 'k' times [6] [2]. This provides a more robust estimate of model performance and generalization error than a single train-test split. A large performance gap across different folds can indicate model instability or overfitting [12].

Visualizing the Bias-Variance Tradeoff

The following diagram illustrates the core relationship between model complexity, error, and the goal of finding the optimal balance.

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table details key computational "reagents" and methodologies for managing model fit in machine learning research.

| Research Reagent / Solution | Function & Purpose | Typical Use-Case in Experimentation |
|---|---|---|
| L1 / L2 Regularization [4] [6] | Function: Adds a penalty term to the loss function to constrain model weights. Prevents overfitting by discouraging model complexity. | Added as a term in the optimization objective. L1 (Lasso) can zero out weights for feature selection; L2 (Ridge) shrinks all weights toward zero without zeroing them. |
| Validation Set [4] [6] | Function: A subset of data not used for training, reserved for unbiased evaluation of model performance and tuning hyperparameters. | Used to monitor for overfitting during training and to decide when to apply early stopping. Essential for model selection. |
| K-Fold Cross-Validation [6] [2] | Function: A resampling procedure used to evaluate models on limited data. Provides a robust estimate of model generalization performance. | The dataset is split into K folds. The model is trained and validated K times, each time on a different fold, with results averaged. |
| Dropout [4] [9] | Function: A regularization technique for neural networks that randomly ignores nodes during training, preventing over-reliance on any single node. | Implemented as a layer within a neural network architecture. A dropout_rate hyperparameter controls the fraction of neurons to drop. |
| Data Augmentation Pipeline [8] [2] | Function: Artificially increases the size and diversity of the training dataset by applying realistic transformations, teaching the model to be invariant to irrelevant variations. | Used in the data pre-processing/preparation stage. For images, this includes rotations, flips, and crops. For other data, it could involve adding noise or synonyms. |

FAQs: Understanding and Troubleshooting Overfitting

FAQ 1: What is overfitting and how does it specifically impact AI-driven drug discovery?

In machine learning, overfitting occurs when a model learns the training data too well, including its underlying noise and random fluctuations, but fails to generalize its predictions to new, unseen data [8] [2] [13]. In the context of drug discovery, this means a model might appear perfectly accurate during internal testing but will generate unreliable predictions when used for new compound screening, target validation, or clinical outcome forecasting [14]. This can lead researchers down unproductive paths, wasting critical time and resources on drug candidates that are unlikely to succeed in real-world settings [15] [16].

FAQ 2: What are the practical signs that my drug discovery model is overfitting?

You can identify a potential overfitting problem by watching for these key signs [8] [13] [3]:

- Discrepancy between Training and Validation Performance: The model achieves high accuracy (e.g., 99%) on its training data but performs poorly (e.g., 55% accuracy) on a separate validation or test set [13].

- High Variance in Predictions: The model's outputs are highly sensitive to small changes in the input data [8].

- Unrealistic Performance Claims: If a tool promises to solve complex biological problems with near-perfect accuracy, it may be overfitting to limited or noisy datasets, a phenomenon often described as the "AI hype" in the pharmaceutical industry [14].

FAQ 3: Our AI model identified a promising drug target, but wet-lab experiments failed to validate it. Could overfitting be the cause?

Yes, this is a classic real-world consequence of overfitting. An overfit model may have "memorized" spurious correlations or noise in the high-throughput screening data or genomic datasets used for training, rather than learning the true biological signal [14] [16]. For instance, the model might have associated a specific but irrelevant data artifact with a positive outcome. When this artifact is absent in a real biological system, the prediction fails. This underscores the critical need for robust validation and the integration of human expertise to interpret AI-generated findings [14] [17].

FAQ 4: What are the most effective strategies to prevent overfitting in our clinical prediction models?

Preventing overfitting requires a multi-faceted approach [8] [2] [13]:

- Use More High-Quality Data: The most effective method is to train your model with larger, diverse, and well-curated datasets that accurately represent the biological problem [8] [2].

- Apply Regularization Techniques: Methods like L1 (Lasso) and L2 (Ridge) regularization penalize model complexity, forcing the model to focus on the most important features [8] [3].

- Implement Cross-Validation: Use k-fold cross-validation to ensure your model's performance is consistent across different subsets of your data [8] [13].

- Simplify the Model: Reduce model complexity by using fewer parameters or employing feature selection to eliminate redundant inputs [8] [13].

- Utilize Early Stopping: Halt the training process when the model's performance on a validation set stops improving, preventing it from learning noise in the training data [2] [3].

- Employ Data Augmentation: Artificially increase the size and diversity of your training set by applying realistic transformations to the existing data [8] [2].

Table 1: Quantitative Impact of Overfitting Mitigation Techniques in Model Development

| Mitigation Technique | Reported Benefit | Key Function |
|---|---|---|
| K-fold Cross-Validation | Standard for reliable performance estimation [8] | Provides a robust estimate of model generalizability |
| Early Stopping | Can stop training 32% earlier than naive stopping [18] | Prevents model from over-optimizing on training data |
| Regularization (L1/L2) | Fundamental technique to reduce variance [8] [3] | Penalizes model complexity to discourage overfitting |
| Data Augmentation | Increases effective dataset size and diversity [8] [2] | Teaches model to be invariant to irrelevant variations |

Troubleshooting Guides

Guide 1: Diagnosing and Fixing an Overfit Model in a Target Identification Pipeline

Problem: Your model for predicting novel oncology targets performs excellently in silico but fails consistently in subsequent in vitro assays.

Diagnostic Steps:

- Split Your Data: Ensure you have a clean hold-out test set that was not used in any part of the model training process. Evaluate the model on this set [13] [3].

- Plot Learning Curves: Graph the model's training loss and validation loss over each training epoch (see diagram below). A growing gap between the two curves is a clear indicator of overfitting [18].

- Check for Data Leakage: Verify that information from the validation or test set has not accidentally been used during the training process, which can create a false sense of model accuracy [14].
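Leakage often enters through preprocessing rather than through the test set itself. The sketch below, which assumes scikit-learn and uses pure-noise synthetic data, contrasts selecting features on the full dataset before cross-validation (leaky) with performing the selection inside a `Pipeline`, so that it is refit on each training fold only. Because the labels are random, any accuracy well above 50% is an artifact.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
# Pure noise: 60 samples, 5,000 features, labels unrelated to the features.
X = rng.normal(size=(60, 5000))
y = np.array([0, 1] * 30)
rng.shuffle(y)

# LEAKY: the 20 "best" features are chosen using ALL samples, including
# the ones that later serve as validation folds.
X_selected = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_selected, y, cv=5).mean()

# CORRECT: selection is a pipeline step, so it is refit on each training fold only.
pipeline = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
honest = cross_val_score(pipeline, X, y, cv=5).mean()

print(f"leaky CV accuracy = {leaky:.2f}, honest CV accuracy = {honest:.2f}")
```

The same rule applies to scaling, imputation, and any other step that learns from data: fit it inside the training portion of each split.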


Frequently Asked Questions

1. What are the key indicators of an overfit model? The primary indicators are a large and growing performance gap between the training and validation sets, and a specific pattern on the generalization curve. You will typically observe the training error (e.g., loss) continuing to decrease, while the validation error decreases to a point and then begins to increase again [20]. The model performs well on the training data but fails to generalize to new, unseen data [2] [21].

2. What is the difference between a generalization curve and a learning curve? A learning curve is a plot that shows a model's learning performance (e.g., loss or accuracy) over experience (e.g., epochs or amount of training data) [20]. When this graph shows two or more loss curves, typically for training and validation sets, it is called a generalization curve [21]. Therefore, a generalization curve is a specific type of learning curve used to diagnose how well a model generalizes.

3. My model has a high accuracy on the training set but poor accuracy on the test set. Is this always overfitting? While this is the classic sign of overfitting [5], it is important to rule out other issues. One critical factor is ensuring your training and test datasets are statistically similar and representative of the real-world data distribution [21]. If the test set comes from a different distribution than the training set, or is systematically harder, the performance gap might not be due to overfitting alone [20].

4. Can a model be too accurate on its training data? Yes. In fact, if your model achieves a training accuracy that is suspiciously high (e.g., near 100%) while the validation accuracy is significantly lower, it is a strong indicator that the model has overfit by memorizing the training data, including its noise and irrelevant details, rather than learning the underlying pattern [22].

5. How can I detect overfitting if I don't have a separate validation set? Without a hold-out validation set, techniques like k-fold cross-validation are essential [2] [22]. This method involves splitting your training data into k folds, iteratively training on k-1 folds and validating on the remaining fold. If the model's performance varies significantly across the folds or is much worse than the apparent performance on the entire dataset, it suggests overfitting [23].

Diagnostic Guide: Using Learning Curves

Learning curves are your primary tool for visualizing overfitting. The table below summarizes what to look for in these curves.

| Model Status | Training Loss/Error | Validation Loss/Error | Gap Between Curves |
|---|---|---|---|
| Well-Fitted | Decreases to a point of stability [20]. | Decreases to a point of stability [20]. | Small, stable gap [20] [24]. |
| Overfitting | Continues to decrease [20] [24]. | Decreases then begins to increase after a point [20]. | Large and growing gap [20] [22]. |
| Underfitting | Remains high; may be flat or decrease slowly [20]. | Remains high and is similar to training error [20] [24]. | Very small, but both errors are high [5]. |

The following workflow outlines the systematic process for diagnosing overfitting using these curves.

Experimental Protocol: Generating a Learning Curve

This protocol provides a detailed methodology for creating and analyzing learning curves to diagnose model fit.

Objective: To diagnose overfitting and underfitting by visualizing model performance on training and validation datasets over successive training epochs.

Materials & Setup:

- Dataset: Split your data into three parts: Training Set (e.g., 70%), Validation Set (e.g., 15%), and Test Set (e.g., 15%). Ensure the splits are shuffled and representative [21].

- Model: Your machine learning model in a framework like PyTorch or TensorFlow.

- Metrics: Define a loss function (e.g., Cross-Entropy, MSE) and a performance metric (e.g., Accuracy, RMSE) [20].

Procedure:

- Initialization: Initialize your model with fixed hyperparameters and a fixed random seed so that runs are reproducible.

- Training Loop: For each epoch (training iteration):

  a. Train the model on the entire training set.

  b. Calculate and record the prediction error (loss/metric) on the training set.

  c. Without updating the model, calculate and record the prediction error on the validation set.

- Repetition: Repeat the Training Loop for a predetermined number of epochs or until training has stably converged.

- Visualization: Plot the errors recorded in the Training Loop against the epoch number. This creates the generalization curve with two lines: training error and validation error.

Data Analysis:

- Compare the resulting plot to the patterns described in the Diagnostic Guide table above.

- Identify the inflection point where the validation error stops improving and begins to degrade—this is often the optimal point to stop training to prevent overfitting [20] [23].

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational and data "reagents" essential for diagnosing and preventing overfitting.

| Tool / Technique | Category | Primary Function in Diagnosing/Preventing Overfitting |
|---|---|---|
| Generalization Curves [20] [21] | Diagnostic Tool | Provides a visual representation of the performance gap between training and validation sets, which is the key indicator of overfitting. |
| Validation Set [20] [23] | Data Strategy | A held-out subset of data used to evaluate the model's generalization during training, enabling the creation of generalization curves. |
| K-Fold Cross-Validation [2] [22] | Data Strategy | A robust validation technique that uses multiple train/validation splits to provide a more reliable estimate of model generalization and detect overfitting. |
| Early Stopping [23] [22] | Training Algorithm | Monitors the validation loss and automatically halts training when it begins to increase, preventing the model from overfitting to the training data. |
| Regularization (L1/L2) [23] [5] | Optimization Technique | Adds a penalty to the loss function that constrains model complexity, discouraging the model from learning noise and fine details in the training data. |
| Dropout [23] [5] | Model Technique | Randomly "drops" a subset of neurons during training, preventing complex co-adaptations and forcing the network to learn more robust features. |
| Data Augmentation [23] [22] | Data Strategy | Artificially expands the size and diversity of the training set by applying realistic transformations, helping the model learn invariant features and reduce overfitting. |

Next Steps and Corrective Actions

Once overfitting is diagnosed, the following diagram maps the logical path from detection to resolution using the tools listed above.

In machine learning, Root Cause Analysis (RCA) is a systematic process for identifying the fundamental reasons behind model failures, such as poor generalization or inaccurate predictions [25]. For researchers in drug development, where models guide critical decisions from target validation to clinical trial analysis, applying RCA is essential for ensuring model reliability and reproducibility [26]. This guide provides practical troubleshooting frameworks to diagnose and remediate common issues like overfitting, often stemming from model complexity, insufficient data, and noisy datasets [27] [2].

Frequently Asked Questions (FAQs)

1. What are the primary symptoms of an overfit model in a drug discovery pipeline? An overfit model typically shows a significant performance disparity between training and validation/test sets. It may achieve high accuracy on training data (e.g., bioactivity data used for training) but performs poorly on new, unseen experimental data [2]. This behavior indicates the model has learned the noise and specific patterns in the training set rather than the underlying biological relationships, compromising its utility for predicting new drug candidates [26].

2. How can I determine if my dataset is too small for building a robust predictive model? While the required data volume depends on problem complexity, a clear sign of insufficient data is consistent underfitting or high variance in model performance across different data splits [28]. Techniques like learning curves can diagnose this. In drug discovery, where acquiring labeled data is costly, a dataset might be considered "too small" if model performance fails to stabilize or meet a minimum predictive accuracy threshold (e.g., AUC < 0.7) necessary for generating plausible hypotheses [26].

3. What is the most effective way to handle noisy, high-dimensional data from transcriptomic studies? The key is robust preprocessing and regularization. Start with rigorous data cleaning to handle missing values and outliers [28]. Then, reduce dimensionality with feature extraction (e.g., PCA) or feature selection (e.g., univariate selection) to focus on the most informative signal [28] [26]. Finally, use regularization methods (L1/L2) or models like Random Forests that are inherently more robust to noise [27] [26].

4. Can automated RCA be applied to machine learning pipelines in a manufacturing or lab setting? Yes. Automated RCA systems use machine learning to predict the root causes of failures. They work by aggregating data from various sources (e.g., logs, metrics, traces), converting them into standardized feature vectors, and then using trained classifiers to pinpoint the most likely cause [29] [30]. This approach has been successfully implemented in complex manufacturing environments, resolving thousands of issues with high accuracy [30].
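The learning-curve diagnostic from question 2 can be sketched with scikit-learn's learning_curve. This is a minimal illustration that assumes synthetic data in place of real bioactivity labels and uses a Random Forest scored by ROC AUC. Both choices are arbitrary and only serve the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, learning_curve

# Synthetic stand-in for a small labeled bioactivity set (hypothetical data).
X, y = make_classification(n_samples=300, n_features=40, n_informative=8, random_state=0)

# Score the model at five training-set sizes, each with 5-fold cross-validation.
train_sizes, train_scores, val_scores = learning_curve(
    RandomForestClassifier(n_estimators=100, random_state=0),
    X, y,
    train_sizes=np.linspace(0.2, 1.0, 5),
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring="roc_auc",
)

for n, tr, va in zip(train_sizes, train_scores, val_scores):
    # Validation AUC still climbing at the largest size suggests more data would help;
    # a large spread across folds (std) signals high variance.
    print(f"n={int(n):4d}  train AUC={tr.mean():.3f}  "
          f"val AUC={va.mean():.3f} (std {va.std():.3f})")
```

If the validation curve has flattened well below the training curve, adding data alone is unlikely to close the gap, and regularization or a simpler model becomes the better lever.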

Troubleshooting Guides

Issue 1: Model Overfitting

Symptoms:

- High accuracy on training data but low accuracy on validation/test data.

- The model performs poorly when making predictions on new external datasets.

- Extreme parameter weights or reliance on seemingly irrelevant features.

Root Causes & Solutions:

Excessive Model Complexity

- Cause: Using a model with too many parameters (e.g., a very deep neural network) for a simple problem or small dataset, allowing it to memorize noise [27].

- Solution: Simplify the model. Start with a simpler algorithm (e.g., Logistic Regression before a DNN). For complex models, apply regularization (L1/Lasso, L2/Ridge) to penalize large weights and reduce complexity [27] [2]. Pruning can be used for decision trees to remove non-critical branches [2].
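The effect of pruning on the train/validation gap can be sketched with scikit-learn's cost-complexity pruning (ccp_alpha). The synthetic data and its injected label noise are assumptions. The value ccp_alpha=0.01 is an arbitrary illustration and would normally be tuned by cross-validation.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=400, n_features=20, n_informative=5,
                           flip_y=0.1, random_state=0)  # flip_y injects label noise
X_tr, X_val, y_tr, y_val = train_test_split(X, y, test_size=0.3, random_state=0)

models = {
    # Unpruned: grows until every leaf is pure, so it can memorize the label noise.
    "unpruned": DecisionTreeClassifier(random_state=0),
    # Cost-complexity pruning removes branches whose impurity reduction does not
    # justify the extra leaves (illustrative ccp_alpha value).
    "pruned": DecisionTreeClassifier(ccp_alpha=0.01, random_state=0),
}
results = {}
for name, model in models.items():
    model.fit(X_tr, y_tr)
    tr, va = model.score(X_tr, y_tr), model.score(X_val, y_val)
    results[name] = (tr, va, model.get_n_leaves())
    print(f"{name:9s} train={tr:.3f} val={va:.3f} gap={tr - va:.3f} "
          f"leaves={model.get_n_leaves()}")
```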

Insufficient Training Data

- Cause: The dataset is too small for the model to learn the general underlying patterns [2].

- Solution: Collect more data if feasible. If not, use data augmentation techniques (e.g., adding noise, geometric transformations for images) to artificially expand your dataset [2]. Cross-validation is also crucial to maximize the utility of limited data and provide a realistic performance estimate [27].

Training for Too Long

- Cause: In iterative algorithms like neural networks, continuous training on the same data can lead to learning the noise [2].

- Solution: Implement early stopping. Monitor the model's performance on a validation set during training and halt the process once validation performance begins to degrade, even if training performance is still improving [27] [2].
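A minimal early-stopping loop might look like the following. It assumes a linear model trained epoch by epoch with scikit-learn's SGDRegressor.partial_fit and synthetic data. The patience of 10 epochs and the learning rate are illustrative values. The loop checkpoints the weights at the best validation loss and rolls back to them when training stops.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import SGDRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=120, n_features=80, n_informative=10,
                       noise=20.0, random_state=0)
X_tr, X_val, y_tr, y_val = train_test_split(X, y, test_size=0.3, random_state=0)
scaler = StandardScaler().fit(X_tr)             # scaling statistics from training data only
X_tr, X_val = scaler.transform(X_tr), scaler.transform(X_val)

model = SGDRegressor(learning_rate="constant", eta0=0.001, random_state=0)
patience = 10                                   # epochs to wait for a new best validation loss
best_loss, best_epoch, wait = np.inf, -1, 0
best_weights = None

for epoch in range(1000):
    model.partial_fit(X_tr, y_tr)               # one pass over the training data
    val_loss = mean_squared_error(y_val, model.predict(X_val))
    if val_loss < best_loss:                    # improvement: checkpoint the weights
        best_loss, best_epoch, wait = val_loss, epoch, 0
        best_weights = (model.coef_.copy(), model.intercept_.copy())
    else:
        wait += 1
        if wait >= patience:                    # no improvement for `patience` epochs
            break

# Roll back to the checkpoint with the lowest validation loss.
model.coef_, model.intercept_ = best_weights
print(f"stopped at epoch {epoch}, best epoch {best_epoch}, best val MSE {best_loss:.1f}")
```

Deep learning frameworks offer the same pattern as callbacks, but the logic is the same: monitor a held-out metric, wait a fixed number of epochs, and restore the best checkpoint.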

The following workflow visualizes a systematic diagnostic and remediation process for overfitting.

Issue 2: Poor Data Quality

Symptoms:

- Model performance is poor even on training data.

- The model fails to converge or shows unstable learning.

- Predictions are inconsistent and lack a clear rationale.

Root Causes & Solutions:

Noisy Data (Irrelevant Information)

- Cause: The dataset contains a large amount of irrelevant information or errors (e.g., incorrect labels, corrupted images, instrument measurement errors) that obscure the true signal [2] [28].

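- Solution: Perform data cleaning to identify and remove or correct outliers and errors. Feature selection drops irrelevant features outright, while dimensionality reduction (e.g., PCA) compresses many noisy, correlated features into fewer components; both limit the influence of irrelevant inputs [28].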
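These cleaning, selection, and regularization steps can be chained so that each one is refit inside every cross-validation fold. Below is a minimal scikit-learn sketch. It assumes a synthetic "transcriptomic-like" matrix with about 1% missing values. The values k=100 and C=0.5 are illustrative rather than recommended.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical matrix: 120 samples x 2,000 genes, with signal in only the first 5 genes.
X = rng.normal(size=(120, 2000))
y = (X[:, :5].sum(axis=1) + rng.normal(scale=1.0, size=120) > 0).astype(int)
X[rng.random(X.shape) < 0.01] = np.nan          # sprinkle ~1% missing values

pipe = make_pipeline(
    SimpleImputer(strategy="median"),           # cleaning: fill missing values
    StandardScaler(),                           # put genes on a common scale
    SelectKBest(f_classif, k=100),              # univariate feature selection
    LogisticRegression(penalty="l1", solver="liblinear", C=0.5),  # L1 regularization
)
# Every step is refit inside each fold, so selection never sees the held-out samples.
scores = cross_val_score(pipe, X, y, cv=5, scoring="roc_auc")
print(f"CV AUC: {scores.mean():.3f} (std {scores.std():.3f})")
```

Running feature selection on the full dataset before cross-validation would leak label information into the validation folds and inflate the estimate. That is why the selection step sits inside the pipeline.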

Missing Values

- Cause: Incomplete data for certain features or instances can introduce bias and reduce the effective dataset size [28].

- Solution: For minor missing data, use imputation techniques (mean, median, mode, or model-based imputation). If a feature has a high percentage of missing values, it may be better to remove it entirely [28].
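A minimal sketch of both options with pandas and scikit-learn's SimpleImputer follows. The assay table is hypothetical, and the 50% cut-off for dropping a column is an illustrative choice, not a standard.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

# Hypothetical assay table with missing measurements.
df = pd.DataFrame({
    "mol_weight": [320.4, 410.2, np.nan, 298.7, 505.1, 350.0],
    "logP":       [2.1, np.nan, 3.4, 1.8, np.nan, 2.9],
    "assay_x":    [np.nan, np.nan, np.nan, 0.7, np.nan, np.nan],  # mostly missing
})

max_missing = 0.5                       # illustrative cut-off
missing_frac = df.isna().mean()         # fraction of missing values per column
kept = df.loc[:, missing_frac <= max_missing]
print("dropped:", list(df.columns[missing_frac > max_missing]))

# Median imputation for the remaining gaps. In a real pipeline, fit the imputer on
# the training split only and reuse the fitted medians on validation/test data.
imputer = SimpleImputer(strategy="median")
X = imputer.fit_transform(kept)
```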

Imbalanced Data

- Cause: The dataset is skewed towards one class (e.g., 90% "inactive" compounds vs. 10% "active" compounds), causing the model to be biased toward the majority class [28].

- Solution: Use resampling techniques (oversampling the minority class or undersampling the majority class) or algorithmic techniques (assigning higher class weights) to balance the dataset [28].
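The class-weighting option can be sketched with scikit-learn. The labels below are hypothetical and mirror the 90/10 split in the example above. With class_weight="balanced", each class is weighted by n_samples / (n_classes × class count).

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical labels: 90 inactive (0) and 10 active (1) compounds.
y = np.array([0] * 90 + [1] * 10)
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5)) + y[:, None] * 0.8    # weak signal for actives

# "balanced" weights: n_samples / (n_classes * count_per_class).
weights = compute_class_weight(class_weight="balanced", classes=np.unique(y), y=y)
print(weights)  # 100/(2*90) ≈ 0.556 for inactives, 100/(2*10) = 5.0 for actives

# The same weights are applied inside the loss, so an error on an active compound
# costs 9x more than an error on an inactive one.
clf = LogisticRegression(class_weight="balanced").fit(X, y)
```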

Inconsistent Feature Scales

- Cause: Features have vastly different ranges and magnitudes (e.g., molecular weight vs. IC50 values), which can bias certain algorithms [28].

- Solution: Apply feature normalization (scaling to a [0,1] range) or standardization (scaling to have zero mean and unit variance) to bring all features to a comparable scale [28].
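The two scalers can be compared with a small hypothetical example. Both are fit on the training data only and then reused on new data, so no information from the test set leaks into the scaling statistics.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, StandardScaler

# Hypothetical features on very different scales: molecular weight (Da) and IC50 (nM).
X_train = np.array([[320.0, 15.0], [410.0, 250.0], [298.0, 4.0], [505.0, 1200.0]])
X_test = np.array([[350.0, 80.0]])

minmax = MinMaxScaler().fit(X_train)      # normalization: training min/max mapped to [0, 1]
standard = StandardScaler().fit(X_train)  # standardization: training mean 0, variance 1

# Reuse the training-set statistics on new data; never refit on the test set.
print(minmax.transform(X_test))
print(standard.transform(X_test))
```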

Issue 3: Training-Serving Skew

Symptoms:

- The model performs well offline but fails in production.

- Predictions are based on different feature distributions than those encountered during training.

Root Causes & Solutions:

Divergent Data Preprocessing

- Cause: Inconsistent application of preprocessing steps (e.g., normalization, imputation) between the training and serving pipelines [31].

- Solution: Encapsulate and reuse the same preprocessing code in both training and serving environments. Thoroughly test and validate that features are computed identically in both pipelines [31].
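One way to guarantee identical preprocessing is to persist preprocessing and model as a single fitted object. The sketch below uses a scikit-learn Pipeline saved with joblib. The data and the temporary file location are illustrative assumptions, and a real serving stack may use a different artifact format.

```python
import os
import tempfile

import joblib
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 4))
y_train = (X_train[:, 0] > 0).astype(int)

# The fitted imputation medians and scaling statistics travel with the model, so the
# serving side never re-implements preprocessing by hand.
pipe = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("clf", LogisticRegression()),
]).fit(X_train, y_train)

path = os.path.join(tempfile.mkdtemp(), "model_pipeline.joblib")  # hypothetical location
joblib.dump(pipe, path)                       # training side
served = joblib.load(path)                    # serving side loads the same artifact

X_new = np.array([[0.5, np.nan, -1.0, 2.0]])  # raw input, missing value included
print(served.predict_proba(X_new))
```

A simple parity test, such as asserting that the served artifact reproduces the training-time predictions on a fixed sample, catches many skew bugs before deployment.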

Data Source Changes

- Cause: The data distribution in production "drifts" from the static data used for training (e.g., new experimental protocols, different sensor calibrations) [31].

- Solution: Implement continuous monitoring of feature distributions and model performance in production. Retrain models periodically with fresh data that reflects the current environment [31].
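Feature-distribution monitoring needs a drift statistic. The source does not prescribe one. The sketch below uses the Population Stability Index (PSI), one common choice, implemented in NumPy with bins at training-data quantiles. The simulated mean shift stands in for a recalibrated instrument. Any alert threshold is a judgment call to calibrate per feature.

```python
import numpy as np

def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """PSI of one feature: training sample (expected) vs. production sample (actual)."""
    # Interior bin edges at training-data quantiles, so each bin holds ~equal training mass.
    edges = np.quantile(expected, np.linspace(0, 1, n_bins + 1)[1:-1])
    # searchsorted maps values below/above the training range into the outer bins.
    e_counts = np.bincount(np.searchsorted(edges, expected, side="right"), minlength=n_bins)
    a_counts = np.bincount(np.searchsorted(edges, actual, side="right"), minlength=n_bins)
    e_frac = np.clip(e_counts / len(expected), eps, None)   # eps avoids log(0)
    a_frac = np.clip(a_counts / len(actual), eps, None)
    return float(np.sum((a_frac - e_frac) * np.log(a_frac / e_frac)))

rng = np.random.default_rng(0)
train_feature = rng.normal(0.0, 1.0, 5000)
psi_same = population_stability_index(train_feature, rng.normal(0.0, 1.0, 5000))
psi_shift = population_stability_index(train_feature, rng.normal(0.5, 1.0, 5000))
print(f"no drift: {psi_same:.3f}   shifted mean: {psi_shift:.3f}")
```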

Quantitative Data on ML-Driven RCA Performance

The effectiveness of a structured, data-driven approach to RCA is demonstrated by its application in industrial settings. The table below summarizes performance metrics from a real-world case study where a Machine Learning-based RCA system was implemented in a complex manufacturing environment [30].

Table 1: Performance Metrics of a Big Data-Driven RCA System in Manufacturing [30]

| Metric | Performance | Contextual Information |
|---|---|---|
| Analysis Volume | >12,000 quality problems | The system was capable of analyzing a massive number of issues simultaneously. |
| Analysis Speed | Within seconds | Time required after the model was trained, enabling real-time diagnostics. |
| Prediction Accuracy | Up to 90% | Accuracy rate in correctly identifying the root cause of quality problems. |

Experimental Protocol: Implementing an ML-Powered RCA System

This protocol outlines the methodology for building a machine learning system to automatically predict the root causes of failures, adapted from a successful implementation in high-tech manufacturing [30].

Objective: To create a supervised classification model that maps problem descriptions (features) to their known root causes (labels).

Materials and Reagents:

Table 2: Research Reagent Solutions for ML-Based RCA

| Item | Function |
|---|---|
| Historical Data | Labeled examples of past incidents, including their features and confirmed root causes. Serves as the training ground for the model. |
| Feature Extraction Library (e.g., Scikit-learn) | Provides tools for text vectorization (TF-IDF), dimensionality reduction (PCA), and feature selection. |
| ML Classifier Algorithms (e.g., Random Forest, XGBoost) | The core models that learn the relationship between the extracted features and the root cause labels. |
| Validation Framework (e.g., Cross-Validation) | Essential for assessing model generalizability and preventing overfitting during the training phase. |

Methodology:

Problem Identification & Feature Library Construction:

- Gather data from all relevant sources: structured databases (ERP, lab equipment logs), textual reports (lab notebooks, quality reports), and expert knowledge [30].

- Convert heterogeneous data into a standardized feature vector. For text data (e.g., incident reports), use techniques like TF-IDF (Term Frequency-Inverse Document Frequency) to extract meaningful features [30].

- Create a unified feature library that provides a consistent way to describe any problem.

Root Cause Identification (Model Training):

- Label your historical data with the verified root causes.

- Treat root cause prediction as a supervised classification task [30].

- Train multiple classifier models (e.g., Random Forest, Gradient Boosting) on the feature vectors and their corresponding root cause labels.

- Use cross-validation to tune hyperparameters and select the best-performing model [27] [28].
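The training step can be sketched as a TF-IDF plus Random Forest pipeline evaluated with stratified cross-validation. The incident reports and root-cause labels are invented for illustration. A real system would use far more labeled history and would also tune hyperparameters inside the cross-validation loop.

```python
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Hypothetical historical incident reports with verified root-cause labels.
reports = [
    "pipette volume drift detected during plate preparation",
    "liquid handler calibration overdue, dispense volume drift",
    "tip clog caused low dispense volume in column 3",
    "plate reader fluorescence saturated in high signal wells",
    "detector gain set too high, reader signal saturated",
    "wrong excitation filter selected on plate reader",
    "reagent lot expired before assay run",
    "expired buffer lot used in assay preparation",
    "substrate degraded after repeated freeze thaw of reagent",
]
labels = ["liquid_handling"] * 3 + ["reader_settings"] * 3 + ["reagent_quality"] * 3

# TF-IDF converts free text into standardized feature vectors; the classifier maps
# those vectors to root-cause labels.
rca_model = make_pipeline(
    TfidfVectorizer(ngram_range=(1, 2)),
    RandomForestClassifier(n_estimators=200, random_state=0),
)
cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
scores = cross_val_score(rca_model, reports, labels, cv=cv)
print(f"CV accuracy: {scores.mean():.2f}")

rca_model.fit(reports, labels)
print(rca_model.predict(["dispense volume drift on the liquid handler"]))
```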

Validation and Deployment:

- Hold back a portion of the historical data as a test set to evaluate the final model's accuracy.

- Deploy the model into a user-friendly application. When a new problem occurs, the system converts it into a feature vector and the model predicts the most probable root cause(s) [30].

- Establish a feedback loop where the outcomes of new analyses are used to continuously retrain and improve the model.

The workflow for this automated RCA system, from data ingestion to actionable output, is illustrated below.

Proven Techniques to Combat Overfitting in Computational Models

Troubleshooting Guides

Guide 1: Addressing Overfitting Through Data Augmentation

User Issue: My model shows a significant gap between high training accuracy and low validation accuracy, indicating overfitting. I have a limited dataset and cannot collect more samples easily.

Diagnosis: This is a classic case of overfitting, where the model has memorized the noise and specific patterns in the training data instead of learning to generalize. This is common with small datasets [8] [4] [2].

Solution: Implement a data augmentation strategy to artificially expand your training set.

- For Image Data: Apply random but realistic transformations to your existing images, such as rotations, flips, crops, or brightness and contrast changes. This teaches the model to be robust to variations.

- For Clinical or Tabular Data (e.g., Questionnaire Responses):

- Synthetic Data Generation: Use methods that follow the probability distribution of your original data. One approach is to generate "hybrid-synthetic correlated discrete multinomial variants" of each data item [33].

- Determine the Optimal Augmentation Ratio: Systematically test different sizes of augmented data. Research suggests that augmenting to four times the original dataset size can be an optimal starting point [33].
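The study cited above uses its own synthetic-variant method [33], which is not reproduced here. As a much simpler stand-in that only illustrates the mechanics of a fixed augmentation ratio, the hypothetical helper below adds perturbed copies of ordinal questionnaire data. It assumes items scored 0-3, and each response moves one step up or down with a small probability. Augment only the training split and keep the test set real.

```python
import numpy as np

def augment_likert(X, y, factor=4, p_shift=0.1, low=0, high=3, seed=0):
    """Hypothetical helper: return the original rows plus (factor - 1) perturbed copies.

    Each item response moves one step up or down with probability p_shift and is
    clipped to the scale range [low, high]; labels are copied unchanged.
    """
    rng = np.random.default_rng(seed)
    X_parts, y_parts = [X], [y]
    for _ in range(factor - 1):
        shift = rng.choice([-1, 1], size=X.shape) * (rng.random(X.shape) < p_shift)
        X_parts.append(np.clip(X + shift, low, high))
        y_parts.append(y)
    return np.vstack(X_parts), np.concatenate(y_parts)

rng = np.random.default_rng(1)
X = rng.integers(0, 4, size=(89, 47))     # 89 respondents x 47 items, assumed 0-3 scale
y = rng.integers(0, 2, size=89)
X_aug, y_aug = augment_likert(X, y, factor=4)
print(X_aug.shape)                        # (356, 47)
```

To find the ratio empirically, loop over several factor values, retrain, and compare scores on the same untouched test set.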

Validation: After augmentation, retrain your model. A successful reduction in overfitting will show a decreased performance gap between training and validation sets while maintaining or improving validation accuracy [34].

Guide 2: Fixing Underfitting Caused by Poor Data Quality

User Issue: My model performs poorly on both training and test data. It fails to capture the underlying patterns.

Diagnosis: This is underfitting, often caused by a model that is too simple or data that is insufficient in quality or features [8] [4].

Solution: Focus on data cleaning and feature engineering to provide the model with a stronger signal.

- Add More Relevant Features: The model may lack the necessary inputs to detect patterns. Create new features from existing data that have a stronger relationship with the output variable [8].

- Clean Noisy Data: Identify and correct errors or irrelevant information in your training set. An overfit model learns this noise, but an underfit model may fail to learn anything useful because of it [8] [2].

- Reduce Overly Aggressive Regularization: If you are using techniques like L1 or L2 regularization to prevent overfitting, the penalty might be too strong, oversimplifying the model. Try decreasing the regularization strength [8] [4].
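Over-regularization shows up as low scores on both training and validation folds at strong penalties. A minimal sketch with scikit-learn's validation_curve and Ridge on synthetic data follows. Note that Ridge's alpha is a penalty strength, whereas LogisticRegression's C is its inverse, so "decrease the regularization" means a smaller alpha or a larger C.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import validation_curve

X, y = make_regression(n_samples=150, n_features=30, n_informative=10,
                       noise=5.0, random_state=0)
alphas = np.logspace(-2, 4, 7)            # weak to very strong L2 penalty

train_scores, val_scores = validation_curve(
    Ridge(), X, y, param_name="alpha", param_range=alphas, cv=5, scoring="r2"
)
for a, tr, va in zip(alphas, train_scores.mean(axis=1), val_scores.mean(axis=1)):
    # Low R2 on BOTH training and validation folds at large alpha indicates
    # underfitting from over-regularization; lowering alpha should lift both.
    print(f"alpha={a:10.2f}  train R2={tr:.3f}  val R2={va:.3f}")
```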

Validation: After implementing these changes, the model's training accuracy should significantly improve. If performance on a separate validation set also rises, you have successfully addressed the underfitting.

Guide 3: Managing Imbalanced Datasets in Clinical Classification

User Issue: My model for classifying patient outcomes has high overall accuracy but fails to identify the minority class (e.g., patients with a rare disease).

Diagnosis: This is caused by an imbalanced dataset, where one class has far fewer samples than others. The model becomes biased toward the majority class [34].

Solution: Employ resampling techniques to create a more balanced class distribution.

- Upsampling the Minority Class: Increase the number of samples in the underrepresented class.

- Downsampling the Majority Class: Randomly remove samples from the overrepresented class to balance the dataset.

- Caution: This method discards data and should only be used if you have a sufficiently large initial dataset [34].
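Both resampling options can be sketched with scikit-learn's resample utility. The data below are hypothetical and stand in for a training split. Resample only after the train/test split, so that duplicated minority rows cannot leak into the evaluation data.

```python
import numpy as np
from sklearn.utils import resample

rng = np.random.default_rng(0)
# Hypothetical TRAINING split: 180 controls (0) and 20 rare-disease patients (1).
X = rng.normal(size=(200, 6))
y = np.array([0] * 180 + [1] * 20)
X_min, X_maj = X[y == 1], X[y == 0]

# Upsampling: draw minority rows with replacement until the classes match.
X_min_up = resample(X_min, replace=True, n_samples=len(X_maj), random_state=0)
X_up = np.vstack([X_maj, X_min_up])
y_up = np.array([0] * len(X_maj) + [1] * len(X_min_up))

# Downsampling: keep a random subset of majority rows (this discards data).
X_maj_down = resample(X_maj, replace=False, n_samples=len(X_min), random_state=0)
X_down = np.vstack([X_maj_down, X_min])
y_down = np.array([0] * len(X_maj_down) + [1] * len(X_min))

print(np.bincount(y_up), np.bincount(y_down))   # [180 180] [20 20]
```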

Validation: Do not rely on accuracy alone. Use metrics that are robust to class imbalance, such as the F1-score, AUC_weighted, precision, and recall. These provide a better picture of model performance across all classes [34].
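The following hypothetical example shows how accuracy can hide a failing model. The predictions are invented so that the model catches only one of five positive cases, and the metrics are computed with scikit-learn.

```python
import numpy as np
from sklearn.metrics import (accuracy_score, f1_score, precision_score,
                             recall_score, roc_auc_score)

# Hypothetical outputs: 95 negatives, 5 positives; the model flags only one positive.
y_true = np.array([0] * 95 + [1] * 5)
y_pred = np.array([0] * 95 + [1] + [0] * 4)
y_score = np.concatenate([np.linspace(0.0, 0.4, 95), [0.9, 0.35, 0.3, 0.2, 0.1]])

acc = accuracy_score(y_true, y_pred)      # 0.96, looks excellent
prec = precision_score(y_true, y_pred)    # 1.0, the single flagged case is correct
rec = recall_score(y_true, y_pred)        # 0.2, misses 4 of 5 cases
f1 = f1_score(y_true, y_pred)             # ≈ 0.33
auc = roc_auc_score(y_true, y_score)      # threshold-free ranking quality
print(f"accuracy={acc:.2f} precision={prec:.2f} recall={rec:.2f} F1={f1:.2f} AUC={auc:.2f}")
```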

Frequently Asked Questions (FAQs)

Q1: What is the simplest way to know if my model is overfitting? A1: The most straightforward sign is a large performance gap. If your model's accuracy (or other relevant metrics) is very high on the training data but significantly worse on a separate validation or test dataset, it is likely overfitting [4] [2] [34].

Q2: I work with clinical data. Is synthetic data generation scientifically valid? A2: Yes, when done and validated correctly. A 2025 scoping review of 118 studies found that data augmentation and synthetic data generation are established methods, particularly in imaging and for addressing data scarcity in rare diseases. The key is to ensure the generated data is biologically plausible and rigorously validated against real-world outcomes [32].

Q3: How much should I augment my dataset? A3: The optimal ratio is problem-dependent. A study using the RCADS-47 clinical scale found that augmenting the dataset to four times its original size yielded the best results for a Random Forest model. We recommend running a systematic experiment, gradually increasing the dataset size and evaluating model performance on a held-out test set to find your project's sweet spot [33].

Q4: Besides augmentation, what are other data-centric ways to prevent overfitting? A4: Several best practices are highly effective:

- Collect More Real Data: This is often the most effective solution [8] [34].

- Data Cleaning: Remove irrelevant information (noise) from your training set [8] [2].

- Prevent Target Leakage: Ensure your features do not contain information that would not be available at the time of prediction in a real-world scenario [34].

- Cross-Validation: Use techniques like k-fold cross-validation to get a more reliable estimate of your model's performance on unseen data [8] [2] [34].

Q5: What is the "data-centric" shift mentioned in regulatory guidelines? A5: Regulators like the ICH are moving from a document-centric to a data-centric approach. This means the focus is on the quality, reliability, and reusability of the data itself, rather than on static documents. This shift, embedded in guidelines like ICH E6(R3) and ICH M11, enables digital data flow—creating data once and using it everywhere—which reduces silos and improves efficiency [35].

Experimental Protocols & Data

Table 1: Data Augmentation Impact on Model Performance

This table summarizes quantitative findings from a study that used data augmentation to predict depression and anxiety, demonstrating its effect on mitigating overfitting. [33]

| Model | Original Dataset Size | Augmented Dataset Size | Macro Average Accuracy (Original) | Macro Average Accuracy (Augmented) |
|---|---|---|---|---|
| Random Forest | 89 cases | 356 cases (4x) | Not Reported | 81% |
| Support Vector Machine | 89 cases | 356 cases (4x) | Not Reported | Lower than Random Forest |
| Logistic Regression | 89 cases | 356 cases (4x) | Not Reported | Lower than Random Forest |

Table 2: Research Reagent Solutions for Data-Centric AI

A toolkit of essential "reagents" or methodologies for building robust, data-centric machine learning models. [8] [33] [2]

| Solution / Method | Function | Application Context |
|---|---|---|
| K-Fold Cross-Validation | A testing method that splits data into K subsets (folds) to provide a robust performance estimate and reduce the chance of overfitting. | General ML model validation. |
| L1 / Lasso Regularization | Adds a penalty equal to the absolute value of coefficient magnitude; can shrink less important feature coefficients to zero, performing feature selection. | Preventing overfitting, especially when you suspect many features are irrelevant. |
| L2 / Ridge Regularization | Adds a penalty equal to the square of coefficient magnitude; forces weights to be small but rarely zero. | General prevention of overfitting by penalizing model complexity. |
| Synthetic Data Generation (Deep Generative Models) | Creates entirely new, synthetic data samples by learning the underlying distribution of the original dataset. | Expanding small datasets in rare diseases [32] or creating balanced classes. |
| Data Augmentation (Classical) | Artificially expands training data by creating modified copies of existing data points (e.g., rotating an image). | Computer vision, and increasingly for clinical/omics data [32]. |
| Early Stopping | Halts the model training process when performance on a validation set stops improving. | Preventing overfitting during the training of iterative models like neural networks. |

Workflow Visualization

Data-Centric Overfitting Solution Workflow

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why does my Lasso model select different features when I re-run the experiment with a slightly different dataset?

This is a known stability issue with Lasso, particularly when predictors are highly correlated [36]. Lasso tends to pick one feature from a correlated group and ignore the others, and this choice can be unstable across different data samples [36]. If you need to retain groups of correlated variables, consider switching to Elastic Net (with l1_ratio < 1) or Ridge Regression, as these methods provide more stable coefficient estimates and group retention [36] [37].

Q2: Should I standardize my data before using Lasso, Ridge, or Elastic Net?

Yes, standardize your predictors (e.g., to zero mean and unit variance) before applying these regularization methods [36]. If features are on different scales, the same penalty (λ) applies unequally. A large-scale feature needs only a small coefficient and is barely penalized, while a small-scale feature needs a large coefficient and is shrunk hardest, which biases selection toward large-scale features. Also center the response variable, or fit an unpenalized intercept (the default in scikit-learn), which has the same effect.

Q3: How do I choose the right regularization parameters (e.g., alpha, l1_ratio)?

The canonical method is K-fold cross-validation over a log-spaced grid of λ (often called alpha in software) values [36]. For Elastic Net, you must also tune the l1_ratio parameter that balances the L1 and L2 penalties.

- Use the LassoCV, RidgeCV, or ElasticNetCV classes in scikit-learn for built-in cross-validation.

- The "one-standard-error" (1-SE) rule is a good practice: select the most parsimonious model (highest λ) whose performance is within one standard error of the best-performing model [36].
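LassoCV selects the alpha with the minimum mean CV error but does not apply the 1-SE rule itself. The sketch below derives the 1-SE alpha from the fitted mse_path_ (shape n_alphas × n_folds) and alphas_ (sorted from largest to smallest) on synthetic wide data. For brevity, the scaler is fit on all rows. A full protocol would refit it within each fold.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, LassoCV
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=100, n_features=200, n_informative=10,
                       noise=10.0, random_state=0)
X = StandardScaler().fit_transform(X)   # simplification: fit on all rows

cv_model = LassoCV(cv=5, max_iter=10000).fit(X, y)

# Mean and standard error of the CV error for each alpha.
mean_mse = cv_model.mse_path_.mean(axis=1)
se_mse = cv_model.mse_path_.std(axis=1, ddof=1) / np.sqrt(cv_model.mse_path_.shape[1])
best = np.argmin(mean_mse)
# 1-SE rule: the largest alpha whose mean CV error is within one SE of the minimum.
alpha_1se = cv_model.alphas_[mean_mse <= mean_mse[best] + se_mse[best]].max()

sparse_model = Lasso(alpha=alpha_1se, max_iter=10000).fit(X, y)
print(f"alpha_min={cv_model.alpha_:.3f} ({np.sum(cv_model.coef_ != 0)} features), "
      f"alpha_1se={alpha_1se:.3f} ({np.sum(sparse_model.coef_ != 0)} features)")
```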

Q4: My regularized linear model is underperforming. What are potential causes?

- Overly aggressive regularization: Your alpha value might be too high, overshrinking coefficients and introducing bias. Retune hyperparameters over a wider range.

- Incorrect feature preprocessing: Failure to standardize data will lead to suboptimal models [36]. Re-check your preprocessing pipeline.

- True relationship is non-linear: Regularized linear models assume a linear relationship. If this is violated, consider tree-based models or neural networks.

Q5: How can I perform valid statistical inference (e.g., get p-values) for a model fitted with Lasso?

You cannot naively apply classical statistical inference to Lasso coefficients because the variable selection process introduces selection bias [36]. Standard p-values and confidence intervals will be invalid. For valid post-selection inference, you need specialized methods and packages like selectiveInference in R [36].

Troubleshooting Common Implementation Issues

Problem: Lasso Regression is Too Slow or Fails to Converge on High-Dimensional Data

- Explanation: The coordinate descent algorithm used for Lasso can struggle with convergence on very wide datasets (where the number of features p is much larger than the number of samples n) or with certain hyperparameters.

- Solution:

- Increase the maximum iterations: Set the max_iter parameter to a higher value (e.g., 5000 or 10000) [36].

- Adjust the tolerance: Tightening the tol (tolerance) parameter can improve precision but may require more iterations; loosening it lets the solver stop sooner at the cost of precision.

- Use warm starts: Fitting the model along a path of decreasing alpha values using warm_start=True can speed up convergence.

- Pre-screening features: For extremely high-dimensional data (e.g., in genomics), consider an initial univariate feature screening to reduce dimensionality before applying Lasso [38].
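The warm-start strategy can be sketched as follows. The data are synthetic and wide (p >> n), and the alpha grid is illustrative. Each fit along the decreasing-alpha path starts from the previous solution, and n_iter_ shows how many coordinate-descent iterations each step needed.

```python
import numpy as np
from sklearn.linear_model import Lasso

rng = np.random.default_rng(0)
n, p = 80, 2000                                  # wide data: p >> n
X = rng.normal(size=(n, p))
y = X[:, :10] @ rng.normal(size=10) + rng.normal(size=n)

alphas = np.logspace(0, -1, 10)                  # decreasing penalty strengths
# warm_start=True reuses the previous coefficients as the starting point of the next fit.
model = Lasso(warm_start=True, max_iter=10000, tol=1e-4)
path = []
for a in alphas:
    model.set_params(alpha=a)
    model.fit(X, y)
    path.append((a, model.n_iter_, int(np.sum(model.coef_ != 0))))

for a, iters, nnz in path:
    print(f"alpha={a:.3f}  iterations={iters}  nonzero={nnz}")
```

scikit-learn's lasso_path and LassoCV compute such a path internally with the same warm-starting idea, so a manual loop is mainly useful when you need custom logic between steps.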

Problem: Lasso Selects Too Many Features, Hurting Interpretability

- Explanation: In high-dimensional settings, Lasso's variable selection can be inconsistent, often selecting many irrelevant features to achieve good prediction error [38].

- Solution:

- Tune alpha using the 1-SE rule: This favors simpler models [36].

- Explore modern alternatives: The recently developed uniLasso algorithm is designed to achieve prediction performance similar to Lasso but with significantly sparser models, enhancing interpretability [38].

- Consider non-convex penalties: Methods like SCAD or MCP can be less prone to including irrelevant features, though they come with their own computational challenges [38].

Problem: Model Performance is Poor Due to Highly Correlated Features

- Explanation: Lasso arbitrarily selects one feature from a correlated group, which can be unstable and discard useful information [36]. Ridge shrinks coefficients for correlated features toward each other but keeps all of them.

- Solution:

- Use Elastic Net: It is explicitly designed for the "messy middle" scenario of correlated predictors, combining the sparsity of Lasso with the group retention of Ridge [36] [37].

- Use Ridge Regression: If feature selection is not required and all features are theoretically important, Ridge often provides better predictive performance in the presence of multicollinearity [36] [39].
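The contrast between Lasso and Elastic Net on a correlated group can be sketched on synthetic data. Five near-duplicate predictors share one latent signal, and five further predictors are pure noise. The alpha and l1_ratio values are illustrative.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 200
z = rng.normal(size=n)                                   # shared latent signal
group = z[:, None] + 0.05 * rng.normal(size=(n, 5))      # 5 highly correlated predictors
noise_feats = rng.normal(size=(n, 5))                    # 5 irrelevant predictors
X = StandardScaler().fit_transform(np.hstack([group, noise_feats]))
y = 3 * z + rng.normal(size=n)

lasso = Lasso(alpha=0.1).fit(X, y)
enet = ElasticNet(alpha=0.1, l1_ratio=0.5).fit(X, y)

# Lasso tends to concentrate weight on a subset of the correlated group; Elastic Net's
# L2 term spreads it across the group (the "grouping effect").
print("Lasso group coefs:      ", np.round(lasso.coef_[:5], 2))
print("Elastic Net group coefs:", np.round(enet.coef_[:5], 2))
```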

Comparative Analysis of Regularization Methods

The table below summarizes the key characteristics of L1, L2, and Elastic Net regularization to guide method selection.

Table 1: Comparison of L1, L2, and Elastic Net Regularization Methods

| Aspect | L1 (Lasso) | L2 (Ridge) | Elastic Net |
|---|---|---|---|
| Penalty Term | λ∥β∥₁ (Absolute value) [40] [41] | λ∥β∥₂² (Squared value) [42] [40] | λ(α∥β∥₁ + (1-α)∥β∥₂²) [37] |
| Effect on Coefficients | Shrinks and sets some coefficients to exactly zero [36] [41] | Shrinks coefficients toward zero but rarely sets them to zero [36] [42] | Shrinks and can set coefficients to zero, but less aggressively than Lasso [37] |
| Key Property | Sparsity and Feature Selection [41] | Dense coefficients, Handles Multicollinearity [36] [39] | Balances sparsity and group handling [37] |
| Geometry | Diamond-shaped constraint (hits corners) [36] | Circle-shaped constraint (no corners) [36] | A hybrid of diamond and circle shapes |
| Best Use Case | Creating simple, interpretable models; automated feature selection [36] [41] | Predictive accuracy with correlated features; when you believe all features are relevant [36] [39] | "Messy middle": correlated features, but you still desire some sparsity [36] [37] |

Experimental Protocols for Drug Response Prediction

The following workflow and table outline a standard experimental setup for applying regularization methods in a drug response prediction (DRP) context, a common application in computational biology [43] [44].

Figure 1: A standard workflow for implementing regularized regression in high-dimensional biological data analysis.

Table 2: Research Reagent Solutions for a Drug Response Prediction Pipeline

| Component | Function / Explanation | Example from Literature |
|---|---|---|
| Genomic Data (e.g., GDSC, CCLE) | Provides the high-dimensional input features (e.g., gene expression) and drug sensitivity labels (e.g., IC₅₀) for training models [43] [44]. | GDSC database: 969 cancer cell lines, 297 compounds [44]. CCLE database: 1,094 cell lines [43]. |
| Feature Reduction Method | Reduces the dimensionality of genomic data (often >20,000 genes) to mitigate overfitting and improve interpretability [43]. | Knowledge-based: Landmark genes (L1000), Pathway activities [43]. Data-driven: LASSO, Top principal components (PCs) [43]. |
| StandardScaler | A preprocessing step that standardizes features to have zero mean and unit variance. Essential for regularized models to ensure penalties are applied fairly across features [36]. | StandardScaler from scikit-learn is commonly used in a pipeline before the regressor [36]. |
| Scikit-learn Regressors | Python library providing efficient implementations of Lasso, Ridge, and ElasticNet, integrated with cross-validation tools [36] [44]. | LassoCV, RidgeCV, ElasticNetCV for automated hyperparameter tuning [36]. |
| Cross-Validation Framework | Robustly evaluates model performance and tunes hyperparameters without data leakage, crucial for small sample sizes typical in bioinformatics [36] [43]. | 5-fold or 10-fold cross-validation is standard. Repeated random sub-sampling (e.g., 100 splits) is also used [43]. |

Detailed Methodology: Benchmarking Regularized Models

This protocol is based on a large-scale comparative evaluation of feature reduction and machine learning methods for DRP [43].

Data Acquisition and Splitting:

- Obtain your dataset (e.g., gene expression matrix and drug response values). For a robust evaluation, consider both cell line data (for cross-validation) and clinical tumor data (for external validation) [43].

- Perform a train-test split (e.g., 80%-20%) on the cell line data. Hold out the test set completely until the final model evaluation.

Feature Preprocessing and Reduction:

- On the training set only, apply your chosen feature reduction method (see Table 2). For example, select the top 1,000 most variable genes or calculate pathway activity scores.

- Standardize the reduced features (zero mean, unit variance) based on the training set statistics. Apply the same transformation to the test set.

Hyperparameter Tuning via Nested Cross-Validation:

- Use the training set for model selection. To avoid overfitting during tuning, implement a nested cross-validation scheme [43].

- In the outer loop, perform K-fold cross-validation (e.g., K=5) on the training set.

- In each outer fold, use an inner loop of cross-validation (e.g., 5-fold) on the outer training fold to tune the regularization strength (alpha) and, for Elastic Net, the l1_ratio.

- A log-spaced grid of alpha values (e.g., np.logspace(-3, -1, 7)) is recommended [36].

Model Training and Final Evaluation:

- After identifying the best hyperparameters, refit the model on the entire training set using these parameters.

- Evaluate the final model's performance on the held-out test set using relevant metrics, such as Pearson's Correlation Coefficient (PCC) or Mean Squared Error (MSE) [43].
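The four protocol steps can be condensed into one scikit-learn sketch. The expression matrix and response are synthetic stand-ins, and the sizes (150 samples, 500 genes, top 100 kept) are scaled down for speed. The alpha grid follows the example above, while the l1_ratio values are an illustrative assumption.

```python
import numpy as np
from sklearn.linear_model import ElasticNet
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical expression matrix (cell lines x genes); the first 50 genes are highly variable.
X = rng.normal(size=(150, 500))
X[:, :50] *= 2.0
y = X[:, :10] @ rng.normal(size=10) + rng.normal(scale=1.0, size=150)  # e.g., log IC50

# 1. 80/20 split; the test set stays untouched until the final evaluation.
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.2, random_state=0)

# 2. Unsupervised reduction on the training set only: keep the 100 most variable genes.
top = np.argsort(X_tr.var(axis=0))[::-1][:100]
X_tr, X_te = X_tr[:, top], X_te[:, top]

# Scaling sits inside the pipeline, so it is refit on every inner and outer training fold.
pipe = Pipeline([("scale", StandardScaler()), ("enet", ElasticNet(max_iter=10000))])
grid = {"enet__alpha": np.logspace(-3, -1, 7), "enet__l1_ratio": [0.5, 0.9]}

# 3. Nested CV: GridSearchCV is the inner tuning loop; cross_val_score is the outer loop.
inner = KFold(n_splits=5, shuffle=True, random_state=1)
outer = KFold(n_splits=5, shuffle=True, random_state=2)
search = GridSearchCV(pipe, grid, cv=inner, scoring="neg_mean_squared_error")
outer_mse = -cross_val_score(search, X_tr, y_tr, cv=outer, scoring="neg_mean_squared_error")
print(f"nested-CV MSE: {outer_mse.mean():.2f} (std {outer_mse.std():.2f})")

# 4. Refit the tuned model on the whole training set, then evaluate once on the test set.
search.fit(X_tr, y_tr)
pred = search.predict(X_te)
test_mse = mean_squared_error(y_te, pred)
pcc = np.corrcoef(y_te, pred)[0, 1]
print("best params:", search.best_params_)
print(f"test MSE={test_mse:.2f}  PCC={pcc:.3f}")
```

A supervised reduction such as LASSO-based selection would have to move inside the pipeline, because selecting features on the whole training set would leak label information into the CV folds.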

Figure 2: Nested cross-validation workflow for unbiased hyperparameter tuning and performance estimation.

Frequently Asked Questions (FAQs)

Q1: What is dropout regularization and why is it needed in drug development research? Dropout regularization is a technique that randomly "drops out" or deactivates a proportion of neurons in a neural network during training to prevent overfitting [45] [46]. In drug development, where datasets are often limited and models complex, overfitting is a significant concern. Dropout helps create more robust models that generalize better to new, unseen molecular or clinical data, leading to more reliable predictions in drug discovery and development pipelines [47].

Q2: How do I choose the appropriate dropout rate for my deep learning model? Selecting dropout rates depends on your network architecture and data. Start with these research-tested defaults [46]:

- Input layers: 0.1-0.2 (lower to preserve raw feature information)

- Hidden layers: 0.3-0.5 (moderate to encourage robustness)

- Output layer: Typically no dropout (to preserve final predictions)

For convolutional networks, use 0.2-0.5 in fully connected layers, while for RNNs/LSTMs, use 0.1-0.3 as sequential data is more sensitive [46]. Systematically test values through grid search and monitor validation performance.

Q3: Why does my model's training accuracy decrease when I add dropout? This expected behavior indicates dropout is working correctly. By preventing the network from memorizing training samples, dropout reduces training accuracy slightly while typically improving validation accuracy and generalization [48]. If training accuracy drops significantly, your dropout rate might be too high—reduce it gradually until you find a balance where validation performance improves without excessively compromising training performance.

Q4: Should I use dropout with batch normalization in my deep neural network? Batch normalization can sometimes provide similar regularization effects to dropout [45]. When using both techniques, evaluate model performance with and without dropout. In many modern architectures, especially convolutional networks, batch normalization has largely replaced dropout, though dropout remains valuable in fully connected layers [48]. Test empirically to determine the optimal combination for your specific research problem.

Q5: How does dropout prevent overfitting in deep learning models? Dropout combats overfitting through three primary mechanisms [46] [49]:

- It prevents complex co-adaptations by forcing neurons to work independently

- It creates an ensemble effect by training multiple different subnetworks simultaneously

- It encourages redundant representations by making the network learn features that are useful across various neuronal combinations

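Q6: Why does my model show inconsistent results between training and testing when using dropout? This occurs because dropout behaves differently during training versus inference. During training, neurons are randomly dropped; during testing, all neurons are active. To keep the expected activation the same in both phases, the original formulation scales outputs at test time by the retention probability (1 minus the dropout rate) [50], while PyTorch and Keras use "inverted dropout", scaling the surviving activations by 1/(1 - rate) during training so that no test-time scaling is needed. Ensure you're properly disabling dropout during evaluation by setting your model to evaluation mode (model.eval() in PyTorch) or passing training=False when calling a model or layer in TensorFlow/Keras.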
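The train/inference difference can be shown with a from-scratch NumPy sketch of inverted dropout. This is an illustration of the scaling logic, not the code that any framework actually runs. In training mode, survivors are scaled up so the mean activation is preserved. In inference mode, the layer is the identity.

```python
import numpy as np

def dropout(x, rate, training, rng):
    """Inverted dropout (from-scratch sketch)."""
    if not training or rate == 0.0:
        return x                                    # inference: identity, no rescaling
    keep = rng.random(x.shape) >= rate              # keep each unit with prob. 1 - rate
    return np.where(keep, x / (1.0 - rate), 0.0)    # rescale survivors during training

rng = np.random.default_rng(0)
x = np.ones((1000, 50))
train_out = dropout(x, rate=0.5, training=True, rng=rng)
eval_out = dropout(x, rate=0.5, training=False, rng=rng)
print(train_out.mean(), np.unique(train_out))   # mean ≈ 1.0, values 0.0 and 2.0
print(np.array_equal(eval_out, x))              # True
```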

Troubleshooting Guide

Problem: Model Performance Decreased After Adding Dropout

Possible Causes and Solutions:

- Excessively high dropout rate: Lower the dropout probability, especially in later hidden layers [46]

- Insufficient training time: Models with dropout typically require longer training—increase epochs by 20-50% [48]

- Improper learning rate: With dropout, use a higher learning rate and momentum; the original paper suggests roughly 10-100x the learning rate that was optimal without dropout and momentum of about 0.95-0.99 instead of the common 0.9 [51]

Problem: Training Instability or Diverging Loss

Possible Causes and Solutions:

- Missing weight constraints: Implement max-norm constraints (weight constraint of 3) as suggested in the original dropout paper [51]

- Extreme dropout rates: Avoid rates above 0.7, which may remove too much capacity [49]

- Combination with other regularizers: Reduce L2 regularization strength when using dropout [52]

Problem: Inconsistent Results Between Runs

Possible Causes and Solutions:

- Unset random seeds: Set random seeds for reproducibility across training sessions [46]

- Different dropout masks: Ensure consistent initialization and data loading procedures

- Hardware variations: The same model may exhibit slight performance differences across GPU/CPU platforms

Experimental Protocols & Implementation

Standardized Dropout Implementation Protocol

Objective: Systematically evaluate dropout efficacy in deep neural networks for biological data analysis.

Materials:

- Deep learning framework (PyTorch, TensorFlow/Keras)

- Target dataset (e.g., molecular activity, clinical outcomes)

- Computational resources (GPU recommended)

Methodology:

Baseline Model Establishment:

- Train reference model without dropout

- Record training/validation performance and overfitting gap

- Ensure model capacity is sufficient for the task

Progressive Dropout Integration:

- Implement dropout sequentially across layers

- Begin with input layer (0.1-0.2 dropout rate)

- Add dropout to hidden layers incrementally

- Monitor performance impact at each stage

Hyperparameter Optimization:

- Conduct grid search over dropout rates (0.1, 0.3, 0.5)

- Combine with learning rate adjustments

- Implement weight constraints as needed

Validation and Testing:

- Use k-fold cross-validation (typically k=10)

- Compare final model against baseline

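- Perform statistical significance testing (e.g., a paired comparison of per-fold scores against the baseline, keeping in mind that cross-validation folds share training data and are not fully independent)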

PyTorch Implementation Template:
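One possible shape for such a template is sketched below, assuming full-batch training on synthetic tabular data; DropoutMLP, train_and_evaluate, the layer sizes, and the data are illustrative choices rather than a prescribed setup. It mirrors the protocol above: a no-dropout baseline, input dropout of 0.2, a grid over hidden-layer rates, SGD with momentum, and model.eval() for evaluation.

```python
import torch
from torch import nn


class DropoutMLP(nn.Module):
    """Feedforward classifier with separate input- and hidden-layer dropout rates."""

    def __init__(self, n_features, n_hidden=64, n_classes=2, p_input=0.2, p_hidden=0.5):
        super().__init__()
        self.net = nn.Sequential(
            nn.Dropout(p_input),  # input-layer dropout
            nn.Linear(n_features, n_hidden),
            nn.ReLU(),
            nn.Dropout(p_hidden),  # hidden-layer dropout
            nn.Linear(n_hidden, n_hidden),
            nn.ReLU(),
            nn.Dropout(p_hidden),
            nn.Linear(n_hidden, n_classes),  # no dropout on the output layer
        )

    def forward(self, x):
        return self.net(x)


def accuracy(model, X, y):
    model.eval()  # disable dropout for evaluation
    with torch.no_grad():
        return (model(X).argmax(dim=1) == y).float().mean().item()


def train_and_evaluate(p_input, p_hidden, X_tr, y_tr, X_val, y_val, epochs=100, lr=0.05):
    model = DropoutMLP(X_tr.shape[1], p_input=p_input, p_hidden=p_hidden)
    opt = torch.optim.SGD(model.parameters(), lr=lr, momentum=0.9)
    loss_fn = nn.CrossEntropyLoss()
    for _ in range(epochs):
        model.train()  # enable dropout for the update
        opt.zero_grad()
        loss = loss_fn(model(X_tr), y_tr)
        loss.backward()
        opt.step()
    train_acc, val_acc = accuracy(model, X_tr, y_tr), accuracy(model, X_val, y_val)
    return {"train": train_acc, "val": val_acc, "gap": train_acc - val_acc}


# Hypothetical synthetic data standing in for, e.g., molecular descriptors.
torch.manual_seed(0)
X = torch.randn(400, 20)
y = (X[:, 0] + 0.5 * X[:, 1] > 0).long()
X_tr, y_tr, X_val, y_val = X[:300], y[:300], X[300:], y[300:]

# Baseline (no dropout), then a small grid over hidden-layer rates with input dropout 0.2.
results = {(0.0, 0.0): train_and_evaluate(0.0, 0.0, X_tr, y_tr, X_val, y_val)}
for p_hidden in (0.1, 0.3, 0.5):
    results[(0.2, p_hidden)] = train_and_evaluate(0.2, p_hidden, X_tr, y_tr, X_val, y_val)

for (p_in, p_hid), r in results.items():
    print(f"input={p_in:.1f} hidden={p_hid:.1f} "
          f"train={r['train']:.3f} val={r['val']:.3f} gap={r['gap']:.3f}")
```

For the validation step, train_and_evaluate would be called inside each cross-validation fold and the per-fold gaps compared against the baseline.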

Quantitative Analysis Framework

Performance Metrics Table:

| Model Variant | Training Accuracy | Validation Accuracy | Generalization Gap | Training Time (epochs) |
|---|---|---|---|---|
| Baseline (No Dropout) | 98.7% | 82.3% | 16.4% | 100 |
| Input Dropout Only (0.2) | 96.2% | 85.1% | 11.1% | 120 |
| Hidden Layer Dropout (0.5) | 94.8% | 88.7% | 6.1% | 150 |
| Combined Dropout (0.2/0.5) | 93.5% | 90.2% | 3.3% | 180 |

Optimal Dropout Rates by Architecture:

| Network Type | Input Layer | Hidden Layers | Output Layer | Recommended Use Cases |
|---|---|---|---|---|
| Feedforward DNN | 0.1-0.2 | 0.3-0.5 | 0.0 | Molecular property prediction, clinical risk models |
| Convolutional Neural Network | 0.1-0.2 | 0.2-0.5 (FC only) | 0.0 | Medical imaging, protein structure analysis |
| Recurrent Neural Network | 0.1-0.2 | 0.1-0.3 | 0.0 | Sequence analysis, time-series clinical data |
| Transformer Architecture | 0.1 | 0.1-0.2 (attention) | 0.0 | Chemical language models, biomedical text mining |

Architectural Visualizations

Dropout Mechanism During Training vs. Testing

Ensemble Effect of Dropout Regularization

Research Reagent Solutions

| Research Tool | Function in Dropout Research | Implementation Example |
|---|---|---|
| PyTorch Framework | Provides nn.Dropout module for implementation | self.dropout = nn.Dropout(0.5) [46] [48] |
| TensorFlow/Keras | Offers Dropout layer for model integration | model.add(Dropout(0.5)) [49] [51] |
| Weight Constraints | Prevents weight explosion with dropout | kernel_constraint=MaxNorm(3) [51] |
| Optimizer Settings | Applies the higher learning rate and momentum recommended for dropout training | SGD(learning_rate=0.1, momentum=0.9) [51] |
| Cross-Validation Framework | Evaluates dropout efficacy reliably | StratifiedKFold(n_splits=10) [51] |
| Bernoulli Distribution | Underlying mechanism for random neuron selection | Random binary masks [46] |

Troubleshooting Guides

Guide 1: Resolving Overfitting Despite Using Cross-Validation

Problem: Your model shows a significant performance gap between high training accuracy and lower validation accuracy, even when using k-fold cross-validation. This indicates the model is memorizing the training data rather than learning generalizable patterns [3] [4].

Solution: Implement a robust early stopping routine within your cross-validation framework.

- Procedure:

- Split Data for Early Stopping: For each fold in your k-fold cross-validation, further split the training fold into a new training set and a validation set (e.g., 80-20 split). This validation set is dedicated to guiding the early stopping decision [53].

- Train with Monitoring: Train your model on the new training set. After each epoch (or a set number of iterations), evaluate the model's performance on the dedicated validation set.

- Set Stopping Criterion: Define a patience parameter, which is the number of epochs to continue training without improvement on the validation set before stopping.

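- Stop and Record: Once the stopping criterion is met, halt training and record the epoch with the best validation score for that fold, which precedes the stopping epoch by up to the patience window. Keep the weights from that epoch rather than the final ones, since the later epochs may already reflect memorization [3] [4].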

- Repeat per Fold: Repeat this process for all k-folds. The optimal number of stopping epochs may vary between folds [53].
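The procedure above can be sketched in NumPy for one simple model, linear regression trained by gradient descent; EarlyStopper, fit_fold, the patience of 10 epochs, and the synthetic data are assumptions for illustration. Each outer training fold is split 80-20, the inner 20% drives early stopping, and the weights from the best epoch (not the final epoch) are scored on the held-out outer fold.

```python
import numpy as np


class EarlyStopper:
    """Patience-based early stopping on a validation loss (lower is better)."""

    def __init__(self, patience=10, min_delta=1e-4):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def fit_fold(X, y, rng, lr=0.01, max_epochs=1000, val_frac=0.2, patience=10):
    """Linear regression by gradient descent, early-stopped on an inner validation split."""
    perm = rng.permutation(len(y))
    n_val = int(round(val_frac * len(y)))
    val, tr = perm[:n_val], perm[n_val:]  # 80-20 split of the outer training fold
    w = np.zeros(X.shape[1])
    best_w = w.copy()
    stopper = EarlyStopper(patience=patience)
    for epoch in range(max_epochs):
        grad = 2.0 * X[tr].T @ (X[tr] @ w - y[tr]) / len(tr)
        w = w - lr * grad
        val_loss = float(np.mean((X[val] @ w - y[val]) ** 2))
        stop = stopper.step(epoch, val_loss)
        if stopper.best_epoch == epoch:
            best_w = w.copy()  # keep the weights from the best epoch, not the last one
        if stop:
            break
    return best_w, stopper.best_epoch


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
y = X @ np.array([1.5, -2.0, 0.0, 0.5, 0.0]) + rng.normal(scale=0.5, size=200)

k = 5
folds = np.array_split(rng.permutation(len(y)), k)
best_epochs, test_mses = [], []
for i, test_idx in enumerate(folds):
    train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
    w, best_epoch = fit_fold(X[train_idx], y[train_idx], rng)
    best_epochs.append(best_epoch)  # may differ from fold to fold
    test_mses.append(float(np.mean((X[test_idx] @ w - y[test_idx]) ** 2)))
```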

Diagram: Early Stopping within a Cross-Validation Fold

Guide 2: Addressing High Variance in Cross-Validation Results

Problem: You observe widely different performance metrics across different folds of cross-validation, making it difficult to estimate your model's true generalization error.

Solution: Ensure your data splitting strategy is appropriate and consider using repeated or stratified cross-validation.

- Procedure:

- Stratified Splits: For classification problems, use stratified k-fold cross-validation. This ensures each fold has the same proportion of class labels as the entire dataset, leading to more reliable performance estimates [54].

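- Increase Folds: Consider increasing the value of k (e.g., from 5 to 10). This is computationally more expensive, but each model is trained on a larger share of the data, which reduces the pessimistic bias of the estimate [54]. Each test fold also becomes smaller, however, so individual fold scores may still vary; repeating the cross-validation is the more direct way to stabilize the estimate.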

- Repeated CV: Perform repeated k-fold cross-validation, where the entire process is run multiple times with different random splits of the data. The average of these runs provides a more stable performance estimate.

- Check Data Integrity: Investigate the data for inconsistencies, outliers, or data leaks between training and validation splits that might cause the high variance.
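A NumPy sketch of repeated stratified k-fold cross-validation follows. stratified_kfold, the nearest-centroid classifier, and the imbalanced synthetic data are hypothetical stand-ins; in practice, scikit-learn's StratifiedKFold and RepeatedStratifiedKFold provide the same splitting. The standard deviation of the fold scores is returned alongside the mean, so high variance is reported rather than hidden in a single number.

```python
import numpy as np


def stratified_kfold(y, k, rng):
    """Yield (train_idx, test_idx) pairs whose test folds preserve class proportions."""
    fold_of = np.empty(len(y), dtype=int)
    for cls in np.unique(y):
        cls_idx = rng.permutation(np.flatnonzero(y == cls))
        fold_of[cls_idx] = np.arange(len(cls_idx)) % k  # deal each class round-robin
    for f in range(k):
        yield np.flatnonzero(fold_of != f), np.flatnonzero(fold_of == f)


def nearest_centroid_accuracy(X_tr, y_tr, X_te, y_te):
    """Toy classifier: assign each test point to the closest class mean."""
    classes = np.unique(y_tr)
    centroids = np.stack([X_tr[y_tr == c].mean(axis=0) for c in classes])
    dists = ((X_te[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return float((classes[dists.argmin(axis=1)] == y_te).mean())


def repeated_stratified_cv(X, y, k=5, n_repeats=10, seed=0):
    """Mean and standard deviation of fold scores over n_repeats reshuffled k-fold runs."""
    rng = np.random.default_rng(seed)
    scores = [
        nearest_centroid_accuracy(X[tr], y[tr], X[te], y[te])
        for _ in range(n_repeats)
        for tr, te in stratified_kfold(y, k, rng)  # fresh shuffle on every repeat
    ]
    return float(np.mean(scores)), float(np.std(scores, ddof=1)), len(scores)


# Hypothetical imbalanced data: 90 negatives, 30 positives.
rng = np.random.default_rng(1)
y = np.array([0] * 90 + [1] * 30)
X = rng.normal(size=(120, 4)) + y[:, None] * 1.0
mean_acc, sd_acc, n_scores = repeated_stratified_cv(X, y)
```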

Diagram: High-Level k-Fold Cross-Validation Workflow

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between overfitting and underfitting?

- Overfitting occurs when a model is too complex and learns the noise and random fluctuations in the training data in addition to the underlying pattern. It performs well on training data but poorly on new, unseen data [3] [4].

- Underfitting occurs when a model is too simple to capture the underlying trend in the data. It performs poorly on both the training data and new data [3] [4].

FAQ 2: How can I detect if my model is overfitting during training?

The primary indicator is a large and growing performance gap. You will see a very high accuracy (or low error) on your training dataset, but a significantly worse accuracy when the model is evaluated on a separate validation or test set that it was not trained on [3] [4].

FAQ 3: Can I use the same validation set for both early stopping and hyperparameter tuning?

This is not recommended. Using the same data to make decisions about when to stop training and to select hyperparameters can lead to information "leaking" from the validation set into the model, causing optimistic performance estimates and potential overfitting to the validation set. It is better to use a separate holdout set for early stopping within the training data [53].

FAQ 4: My model training is very slow. How can early stopping and cross-validation be made more efficient?

You can implement aggressive early stopping within the cross-validation folds. Research shows that stopping the evaluation of a hyperparameter configuration after the first fold if its performance is worse than the current best model can save significant computational resources. This allows the search algorithm to explore more configurations within a fixed time budget [55].
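The first-fold rule can be sketched in plain Python. The exact criterion here, abandoning a configuration when its first fold score is already below the best mean cross-validation score seen so far, is one reading of the description above and an assumption of this sketch; toy_fold_score is a hypothetical stand-in for training and scoring a real model on one fold.

```python
def early_stopped_cv_search(configs, n_folds, evaluate_fold):
    """Select the configuration with the best mean CV score (higher is better).

    Assumed rule: after a configuration's first fold, abandon it if that single
    fold score is already below the best mean CV score found so far.
    """
    best_config, best_mean = None, float("-inf")
    folds_run = 0
    for config in configs:
        scores = []
        for fold in range(n_folds):
            scores.append(evaluate_fold(config, fold))
            folds_run += 1
            if fold == 0 and scores[0] < best_mean:
                break  # skip the remaining folds for this configuration
        else:  # runs only if every fold was evaluated
            mean = sum(scores) / len(scores)
            if mean > best_mean:
                best_config, best_mean = config, mean
    return best_config, best_mean, folds_run


def toy_fold_score(config, fold):
    """Hypothetical stand-in for training on one fold and returning validation accuracy."""
    return 0.9 - 0.05 * abs(config - 3) + 0.01 * (fold % 2)


best, best_score, folds_run = early_stopped_cv_search([1, 2, 3, 4, 5], 5, toy_fold_score)
print(best, round(best_score, 3), folds_run)  # 3 0.904 17 (full CV would run 25 folds)
```

The saving comes from configurations 4 and 5, each discarded after a single fold. The trade-off is that a configuration with an unluckily poor first fold can be discarded even if its mean score would have been competitive.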

FAQ 5: Is some degree of overfitting always unacceptable?

While significant overfitting generally indicates a model that will not perform well in real-world use, a small degree of overfitting might be acceptable in some applications, depending on the cost of errors and the requirements for model performance. The goal is to find a practical balance [4].

Table 1: Impact of Early Stopping Cross-Validation on Model Selection Efficiency

This data is derived from a study on early stopping for cross-validation during model selection, comparing traditional k-fold CV to methods that stop evaluation early [55].

| Metric | Traditional k-Fold CV | Early Stopped CV | Improvement with Early Stopping |
|---|---|---|---|
| Time to Convergence | Baseline | Converged faster in 94% of datasets | 214% faster on average |
| Configurations Evaluated | Baseline | Explored more configurations within a 1-hour budget | +167% more configurations on average |
| Overall Performance | Baseline | Obtained better final model performance | Improved performance in many cases |

Table 2: Comparison of Common Cross-Validation Techniques

A comparison of different validation methods to help select the right strategy for your project [54].

| Feature | K-Fold Cross-Validation | Holdout Method |
|---|---|---|
| Data Split | Dataset divided into k folds; each used once as a test set. | Dataset split once into training and testing sets. |
| Bias & Variance | Lower bias and variance; every sample is used for testing exactly once, giving a more reliable performance estimate. | Higher variance, because the estimate depends on a single split, and biased if that split is not representative. |
| Execution Time | Slower, as the model is trained k times. | Faster, with only one training and testing cycle. |
| Best Use Case | Small to medium datasets where accurate estimation is critical. | Very large datasets or when a quick evaluation is needed. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software Tools for Model Training Controls

This table lists key software libraries and frameworks used to implement the training controls discussed in this guide.

| Tool Name | Function | Key Features |
|---|---|---|
| Scikit-learn | A comprehensive machine learning library for Python. | Provides easy-to-use implementations for k-fold cross-validation, stratified splits, and various metrics [26]. |
| TensorFlow / Keras | Open-source libraries for deep learning. | Include callbacks like EarlyStopping to automatically halt training when validation performance stops improving [26]. |
| PyTorch | An open-source deep learning framework. | Offers flexibility for building custom training loops, allowing for manual implementation of early stopping and cross-validation logic [26]. |
| Automated ML (AutoML) Systems | Systems that automate the machine learning workflow. | Can handle hyperparameter tuning and cross-validation efficiently, with some now incorporating early stopping for CV to save time [55]. |

Troubleshooting Guides

Guide 1: Addressing Persistent Overfitting Despite Using Ensemble Methods

Problem: Your ensemble model shows excellent performance on training data but poor generalization on validation/test sets, indicating overfitting.

Diagnosis & Solutions:

Verify Ensemble Complexity: Increasing the number of base learners (ensemble complexity) beyond optimal levels can cause overfitting in boosting algorithms [56]. Monitor performance on a validation set as complexity increases.

- Action: For boosting, if performance (e.g., accuracy) plateaus and then decreases on the validation set after adding more learners, reduce the number of estimators or introduce stronger regularization [56].

- Action: For bagging, performance typically plateaus with increasing complexity but rarely decreases. Stop adding learners once performance stabilizes [56].
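The validation-monitoring step can be illustrated with a small NumPy gradient-boosting sketch for squared-error regression, using decision stumps (depth-1 trees) as weak learners. fit_stump, boost_with_validation, and the synthetic data are hypothetical; the learning rate (shrinkage) and subsample fraction correspond to the boosting parameters discussed below. In scikit-learn, the analogous GradientBoostingRegressor and GradientBoostingClassifier parameters are n_estimators, learning_rate, subsample, and max_depth, and staged_predict yields predictions after each stage so the same validation curve can be traced.

```python
import numpy as np


def fit_stump(X, r):
    """Depth-1 regression tree: best single threshold split for residuals r."""
    best = (np.inf, 0, 0.0, r.mean(), r.mean())  # (sse, feature, threshold, left, right)
    for j in range(X.shape[1]):
        for t in np.quantile(X[:, j], np.linspace(0.05, 0.95, 19)):
            left = X[:, j] <= t
            if left.all() or not left.any():
                continue
            lv, rv = r[left].mean(), r[~left].mean()
            sse = ((r[left] - lv) ** 2).sum() + ((r[~left] - rv) ** 2).sum()
            if sse < best[0]:
                best = (sse, j, t, lv, rv)
    return best[1:]


def boost_with_validation(X_tr, y_tr, X_val, y_val, n_stages=300, lr=0.1, subsample=0.8, seed=0):
    """Squared-loss gradient boosting; returns validation MSE after every stage."""
    rng = np.random.default_rng(seed)
    pred_tr = np.full(len(y_tr), y_tr.mean())
    pred_val = np.full(len(y_val), y_tr.mean())
    val_mse = []
    for _ in range(n_stages):
        # Stochastic boosting: fit each stump on a random fraction of the training rows.
        rows = rng.choice(len(y_tr), size=int(subsample * len(y_tr)), replace=False)
        j, t, lv, rv = fit_stump(X_tr[rows], (y_tr - pred_tr)[rows])
        # Shrinkage: each learner contributes only lr times its prediction.
        pred_tr += lr * np.where(X_tr[:, j] <= t, lv, rv)
        pred_val += lr * np.where(X_val[:, j] <= t, lv, rv)
        val_mse.append(float(np.mean((y_val - pred_val) ** 2)))
    best_n = int(np.argmin(val_mse)) + 1  # number of learners to keep
    return best_n, val_mse


rng = np.random.default_rng(0)
X = rng.uniform(-1, 1, size=(300, 2))  # feature 1 is pure noise
y = np.sin(3 * X[:, 0]) + rng.normal(scale=0.3, size=300)
best_n, curve = boost_with_validation(X[:200], y[:200], X[200:], y[200:])
```

best_n is the number of learners at which validation error was lowest; learners added beyond that point are candidates for removal.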

Adjust Model-Specific Parameters:

- For Boosting (AdaBoost, Gradient Boosting):

- Learning Rate: Decrease the learning rate to shrink the contribution of each subsequent learner, making the learning process more conservative [57].

- Tree Depth: Reduce the maximum depth of decision trees used as weak learners to increase bias and prevent overfitting [58].

- Subsampling: Use stochastic boosting (subsample < 1.0) to train base learners on random fractions of the data, which reduces variance and improves generalization [57].

- For Bagging (Random Forest):


Implement Cross-Validation: Use nested cross-validation for unbiased hyperparameter tuning and model evaluation [61].

- Action: For small or imbalanced classification datasets, use stratified k-fold cross-validation to maintain the class distribution in each fold and avoid skewed performance estimates [61].
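A minimal NumPy sketch of nested cross-validation, using ridge (L2-regularized) linear regression as the model and its penalty strength alpha as the tuned hyperparameter; the helper names and synthetic data are assumptions. The inner loop sees only the outer training fold, so the outer test folds are never used to pick alpha.

```python
import numpy as np


def ridge_fit(X, y, alpha):
    """Closed-form ridge (L2) solution; assumes centred data, so no intercept."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)


def mse(X, y, w):
    return float(np.mean((X @ w - y) ** 2))


def kfold_split(n, k, rng):
    return np.array_split(rng.permutation(n), k)


def cv_mse(X, y, alpha, folds):
    """Mean validation MSE of ridge with a given alpha over precomputed folds."""
    scores = []
    for i, val in enumerate(folds):
        tr = np.concatenate([f for j, f in enumerate(folds) if j != i])
        scores.append(mse(X[val], y[val], ridge_fit(X[tr], y[tr], alpha)))
    return float(np.mean(scores))


def nested_cv(X, y, alphas, k_outer=5, k_inner=3, seed=0):
    """Outer loop estimates performance; the inner loop alone chooses alpha."""
    rng = np.random.default_rng(seed)
    outer_folds = kfold_split(len(y), k_outer, rng)
    outer_scores, chosen = [], []
    for i, test in enumerate(outer_folds):
        train = np.concatenate([f for j, f in enumerate(outer_folds) if j != i])
        X_tr, y_tr = X[train], y[train]
        inner_folds = kfold_split(len(y_tr), k_inner, rng)  # built from the outer training fold only
        inner_scores = [cv_mse(X_tr, y_tr, a, inner_folds) for a in alphas]
        best_alpha = alphas[int(np.argmin(inner_scores))]
        # Refit on the whole outer training fold, then score once on the untouched test fold.
        outer_scores.append(mse(X[test], y[test], ridge_fit(X_tr, y_tr, best_alpha)))
        chosen.append(best_alpha)
    return float(np.mean(outer_scores)), chosen


rng = np.random.default_rng(0)
X = rng.normal(size=(60, 20))
w_true = np.zeros(20)
w_true[:3] = [2.0, -1.0, 0.5]
y = X @ w_true + rng.normal(size=60)
alphas = [0.01, 0.1, 1.0, 10.0, 100.0]
outer_mse, chosen_alphas = nested_cv(X, y, alphas)
```

In scikit-learn the same pattern is usually written by passing a GridSearchCV estimator to cross_val_score. Different outer folds may select different alpha values, which is expected: the outer mean estimates the performance of the whole tuning procedure rather than of one fixed alpha.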

Apply Regularization:

- L1/L2 Regularization: If using linear models as base learners, add an L1 (Lasso) or L2 (Ridge) penalty to the loss function to discourage large coefficients and overly complex models [62].

- Dropout (for Neural Networks): While not typical in bagging/boosting, if using neural nets as base models, apply dropout to prevent co-adaptation of neurons [63].