Structural vs. Parametric Uncertainty: A Framework for Robust Models in Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on distinguishing and managing structural and parametric uncertainties in biomedical models. We explore the foundational definitions, where structural uncertainty stems from model simplifications and incomplete knowledge of underlying processes, while parametric uncertainty arises from imprecise input parameters. The piece details methodological frameworks for quantification, including Bayesian updating and ensemble modeling, and addresses troubleshooting strategies for when structural deficiencies limit parametric optimization. Finally, we cover validation and comparative techniques to assess model credibility, synthesizing key takeaways to enhance the reliability of clinical decision-making and accelerate robust therapeutic development.

Demystifying Uncertainty: Defining Structural and Parametric Sources in Biomedical Models

Frequently Asked Questions (FAQs)

Q1: What is structural uncertainty, and how is it different from parametric uncertainty?

Structural uncertainty arises from errors, simplifications, or missing processes in the mathematical representation of a real-world system. Parametric uncertainty, in contrast, stems from not knowing the exact values of the parameters within a chosen model structure. In climate modeling, for example, structural uncertainty comes from the inability of parameterizations to perfectly represent small-scale processes like cloud convection, while parametric uncertainty arises from fixing model parameters to values based on limited empirical studies [1]. In hydrological models, structural uncertainty reflects errors in the mathematical representation of real-world hydrological processes, whereas parametric uncertainty results from the combined effect of structural error, measurement error, and limited calibration data [2].

Q2: Why is it important to account for structural uncertainty in computational models?

Accounting for structural uncertainty is crucial for building reliable models and making credible predictions. It facilitates the rejection of deficient model structures and helps identify whether the model structure or the input measurements need to be improved to reduce the total output uncertainty [2]. In Land Use Cover Change (LUCC) modeling, different model software packages conceptualize the same system in different ways, leading to different simulation outputs. Ignoring this structural uncertainty means overlooking a significant source of potential error in your results [3].

Q3: How can I identify if my model has significant structural uncertainty?

A key indicator is a consistent, unexplained bias between your model simulations and observed data, even after thorough calibration. This bias is a direct effect of structural uncertainty that introduces error into parameter estimation [2]. Furthermore, if you obtain significantly different simulation outputs from different model software packages applied to the same geographic area and dataset, this is a strong signal of structural uncertainty inherent in the model designs [3].

Q4: What are some common strategies for managing structural uncertainty?

A common approach is to use multi-model ensembles, running several different model structures to see the range of possible outcomes [4]. Another strategy is to use Bayesian frameworks to learn biases and adjust parameterizations, effectively converting the problem of structural uncertainty into learning a sparse solution of unknown coefficients for basis functions and stochastic processes [1]. User intervention and the provision of several options for each modeling step in software packages also allow for the management of structural uncertainty [3].

Q5: In drug development, what are the main sources of uncertainty?

While this article focuses on computational models, workshops with companies and regulatory agencies have identified that uncertainties in medicine development arise from clinical, regulatory, and Health Technology Assessment (HTA) drivers. Key strategies involve managing or mitigating these uncertainties either during development or post-approval to facilitate decision-making [5].

Troubleshooting Guides

Problem: Model consistently produces a biased prediction, even with "optimal" parameters.

- Potential Cause: This is a classic symptom of structural error, where the model's equations do not fully capture the underlying system's behavior [2].

- Solution:

- Examine Residuals: Analyze the pattern of differences (residuals) between observed and simulated values. Non-random, structured residuals suggest a missing process or incorrect relationship in the model structure.

- Model Selection/Ensemble: Consider testing alternative model structures. If multiple models are available, using an ensemble of models can provide a more robust prediction and quantify the range of uncertainty [4].

- Model Enhancement: If possible, introduce new terms or processes into your model based on domain knowledge to address the identified bias.
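The residual check described above can be sketched in a few lines. This is a minimal illustration, not a method taken from the cited work: the saturating "true" system, the straight-line model, and the choice of lag-1 autocorrelation and sign changes as diagnostics are all assumptions made for the example.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def lag1_autocorrelation(residuals):
    """Lag-1 autocorrelation; values near 0 are consistent with random residuals."""
    n = len(residuals)
    mean = sum(residuals) / n
    centered = [r - mean for r in residuals]
    denom = sum(c * c for c in centered)
    if denom == 0.0:
        return 0.0
    return sum(centered[i] * centered[i + 1] for i in range(n - 1)) / denom


# Hypothetical data: the system saturates, but the fitted model is a straight line.
times = [float(t) for t in range(1, 21)]
observed = [10.0 * t / (5.0 + t) for t in times]

# "Optimal" parameters for the (structurally wrong) linear model.
intercept, slope = fit_line(times, observed)
residuals = [y - (intercept + slope * t) for t, y in zip(times, observed)]

rho = lag1_autocorrelation(residuals)
sign_changes = sum(1 for a, b in zip(residuals, residuals[1:]) if a * b < 0)
print(f"lag-1 autocorrelation = {rho:.2f}, sign changes = {sign_changes}")
```

A strongly positive autocorrelation together with very few sign changes means the residuals drift in long runs above and below zero. That is the structured pattern that points to a missing process rather than to poorly chosen parameter values.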

Problem: Two different models yield vastly different forecasts for the same scenario.

- Potential Cause: The models have different underlying structural assumptions, leading to divergent conceptualizations of the system [3] [4].

- Solution:

- Compare Model Assumptions: Systematically compare the fundamental equations and processes represented in each model. Identify key differences in how they represent system dynamics.

- Global Sensitivity Analysis (GSA): Use GSA to understand how changes in input parameters influence model outputs in each structure. This helps identify which processes drive the differences [6].

- Use as an Uncertainty Bound: The range of predictions itself is a valuable measure of structural uncertainty. Report this range rather than relying on a single model's output.

Problem: Quantifying structural and measurement uncertainties separately is impossible from residuals alone.

- Potential Cause: The residual time series is an aggregate of structural and measurement uncertainties. For a fixed model parameter set, it is impossible to separate the two without additional information [2].

- Solution:

- Independent Uncertainty Estimation: Obtain an estimate of measurement uncertainty before model calibration. For streamflow, this can be done via rating-curve analysis.

- Pseudo Repeated Sampling: In environmental modeling, use machine learning algorithms like Random Forest as a "pseudo repeated sampler" to identify similar events across different watersheds and estimate measurement uncertainty [2].

Problem: High computational cost of model runs prevents robust uncertainty quantification.

- Potential Cause: Classical uncertainty quantification methods, like Monte Carlo, require thousands of model runs, which is infeasible for complex, high-fidelity models [6] [1].

- Solution:

- Surrogate Modeling: Develop a surrogate model (or emulator) using machine learning. This fast-to-evaluate model is trained on a limited set of full model runs and can be used for extensive uncertainty analysis [1].

- Model Reduction: Use projection-based model reduction techniques like Proper Orthogonal Decomposition to create a lower-dimensional, computationally cheaper surrogate that retains the physics of the full model [6].
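As a rough sketch of the surrogate idea, the example below trains a cheap emulator on a handful of "expensive" runs and then uses it for a large Monte Carlo sample. Everything here is hypothetical: `expensive_simulator` is a stand-in for a costly model, the parameter distribution is invented, and a piecewise-linear interpolant replaces the machine-learning emulator that a real application would likely use.

```python
import bisect
import math
import random


def expensive_simulator(k):
    """Stand-in for a costly model run (hypothetical): output as a function of a rate k."""
    return 5.0 * math.exp(-4.0 * k)


def build_emulator(param_grid, outputs):
    """Return a piecewise-linear interpolant through the training runs."""
    def emulate(k):
        i = bisect.bisect_right(param_grid, k) - 1
        i = max(0, min(i, len(param_grid) - 2))  # stay on a valid interval
        x0, x1 = param_grid[i], param_grid[i + 1]
        w = (k - x0) / (x1 - x0)
        return (1.0 - w) * outputs[i] + w * outputs[i + 1]
    return emulate


# Only 12 full model runs are used for training.
train_k = [0.05 + 0.05 * i for i in range(12)]
train_y = [expensive_simulator(k) for k in train_k]
emulate = build_emulator(train_k, train_y)

# Assumed parameter uncertainty, clamped to the range covered by the training runs.
rng = random.Random(0)
samples = [min(max(rng.gauss(0.3, 0.08), 0.05), 0.60) for _ in range(10000)]

# 10,000 emulator evaluations instead of 10,000 full model runs.
emulated = [emulate(k) for k in samples]
mean_output = sum(emulated) / len(emulated)

# Spot-check emulator accuracy against the full model on a small subset.
max_abs_error = max(abs(emulate(k) - expensive_simulator(k)) for k in samples[:200])
print(f"mean output = {mean_output:.3f}, max emulator error = {max_abs_error:.4f}")
```

The spot check matters: an emulator is only useful for uncertainty quantification if its own approximation error is small relative to the output spread being estimated.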

Key Experimental Protocols and Data

Protocol: Comparing Model Structures to Illustrate Structural Uncertainty

This protocol is adapted from methodologies used in hydrology to demonstrate the impact of model choice [4].

Objective: To evaluate how different model structures affect simulation outputs for a given system.

Materials:

- A case study system (e.g., a watershed, a land-use map).

- Input data for the system (e.g., precipitation, initial conditions).

- At least two different model software packages or structures (e.g., MARRMoT toolbox models [4], LUCC models like CA_Markov, Dinamica EGO, etc. [3]).

- Computing environment with necessary software licenses.

Methodology:

- Data Preparation: Prepare a consistent set of input data for all models.

- Calibration: Calibrate each model on the same historical period of the case study system. Use the same objective function for calibration where possible.

- Simulation: Run each calibrated model for an identical simulation period.

- Validation & Comparison:

- Quantitatively compare simulation outputs against observed data using validation metrics.

- Qualitatively compare the outputs of the different models against each other.

Expected Output: A range of simulation results, visually and quantitatively demonstrating the uncertainty introduced solely by the choice of model structure.
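The protocol can be illustrated with a toy comparison. The sketch below is an assumption-laden stand-in for real model packages: the data are synthetic, and the two "structures" (a linear and a square-root response) are chosen only because both can be calibrated with the same least-squares objective.

```python
import math


def fit_ols(x, y):
    """Least-squares calibration of y = a + b * x; returns (a, b)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    b = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sum(
        (xi - mean_x) ** 2 for xi in x
    )
    return mean_y - b * mean_x, b


def rmse(predicted, observed):
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))


# Hypothetical observations with a small deterministic perturbation.
t_all = [float(t) for t in range(1, 16)]
obs_all = [2.0 + 3.0 * math.sqrt(t) + 0.05 * (-1) ** int(t) for t in t_all]

# Same calibration period and same validation period for every structure.
t_cal, obs_cal = t_all[:10], obs_all[:10]
t_val, obs_val = t_all[10:], obs_all[10:]

# Two competing structures, each expressed as a transform of time.
structures = {"linear": lambda t: t, "square_root": math.sqrt}

results = {}
for name, transform in structures.items():
    a, b = fit_ols([transform(t) for t in t_cal], obs_cal)
    predictions = [a + b * transform(t) for t in t_val]
    results[name] = {"rmse": rmse(predictions, obs_val), "forecast": predictions[-1]}

forecasts = [r["forecast"] for r in results.values()]
structural_spread = max(forecasts) - min(forecasts)

for name, r in results.items():
    print(f"{name}: validation RMSE = {r['rmse']:.3f}, final forecast = {r['forecast']:.2f}")
print(f"spread between structures = {structural_spread:.2f}")
```

Both structures are calibrated on identical data with an identical objective, so the spread in their forecasts is attributable to the structural choice alone.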

Quantitative Data from LUCC Model Comparison

The table below summarizes findings from a study comparing four common Land Use Cover Change (LUCC) models, highlighting aspects of structural uncertainty [3].

Table 1: Comparison of Structural Uncertainty in LUCC Model Software Packages

| Model Software Package | Key Finding on Structural Uncertainty | Simulation Accuracy | Repeatability |
|---|---|---|---|
| CA_Markov | Conceptualizes the system differently from other models, leading to different outputs. | Varies by case study | Varies by case study |
| Dinamica EGO | Conceptualizes the system differently from other models, leading to different outputs. | Varies by case study | Varies by case study |
| Land Change Modeler | Conceptualizes the system differently from other models, leading to different outputs. | Varies by case study | Varies by case study |
| Metronamica | Conceptualizes the system differently from other models, leading to different outputs. | Varies by case study | Varies by case study |
| Overall Conclusion | No single "best" modeling approach; each entails different uncertainties and limitations. | Statistical/automatic models did not provide better scores than user-driven models. | Statistical/automatic models did not provide higher repeatability than user-driven models. |

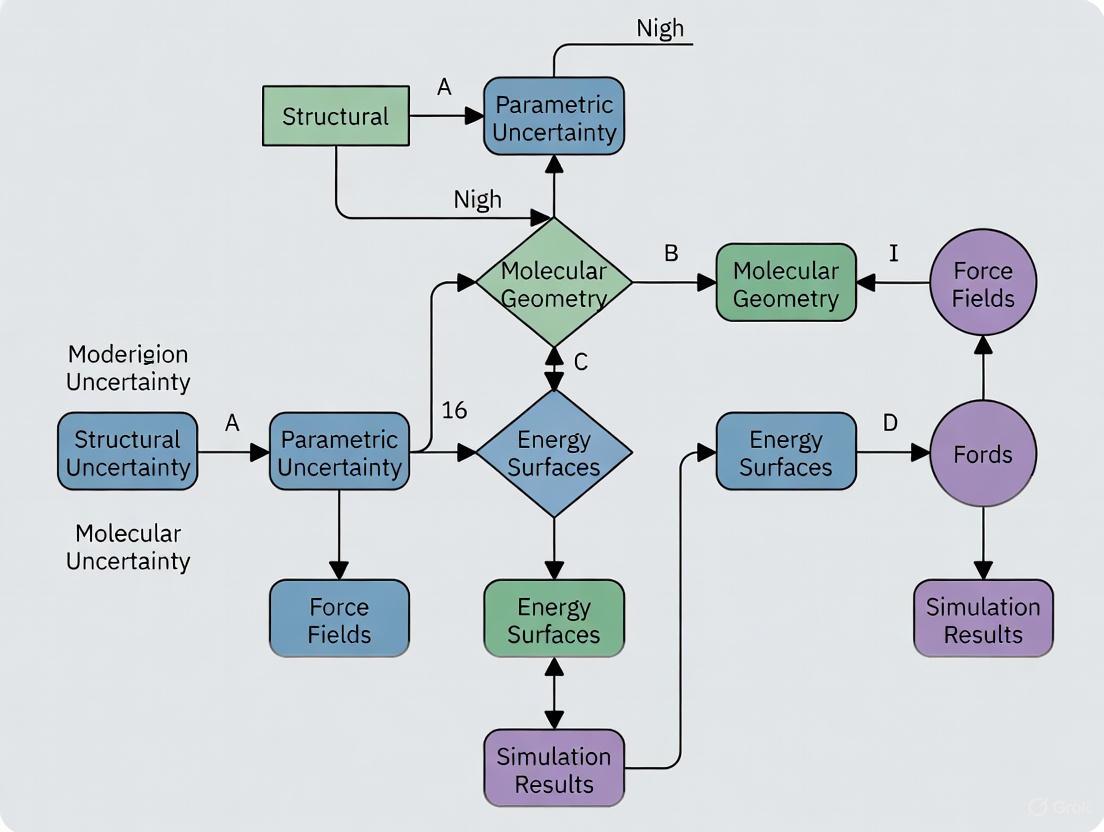

Workflow and Conceptual Diagrams

Structural Uncertainty Identification Workflow

Uncertainty Quantification Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential "Reagents" for Structural Uncertainty Research

| Tool / Solution | Function in Research | Field of Application |
|---|---|---|
| Multi-Model Ensembles | Runs multiple model structures to quantify the range of predictions and formally represent structural uncertainty. | Hydrology [4], Climate Science [1], Land Use Modeling [3] |
| Global Sensitivity Analysis (GSA) | Identifies which input parameters or model processes have the greatest influence on output uncertainty, helping to pinpoint structural weaknesses. | General Computational Models [6] |
| Bayesian Inverse Problems Framework | Provides a mathematical framework to refine prior distributions of inputs (parameters) into data-consistent posterior distributions, quantifying parameter uncertainty. | Climate Modeling [1] |
| Surrogate Models / Emulators | Machine-learning-based models that approximate the behavior of complex, computationally expensive simulators, enabling extensive uncertainty quantification runs. | Climate Modeling [1], Engineering [6] |
| Model Reduction Techniques | Creates lower-dimensional, computationally cheaper surrogate models that retain the physics of the full model, making UQ feasible. | Engineering [6] |

Frequently Asked Questions

Q1: What is the fundamental difference between parametric and structural uncertainty? A: Parametric uncertainty refers to uncertainty about the numerical values of parameters within a chosen mathematical model, while structural uncertainty concerns the model form itself, such as the choice of clinical states in a Markov model or how transition probabilities are defined [7]. Parametric uncertainty is often quantified with probabilistic ranges for parameters, whereas structural uncertainty involves choosing between plausible model architectures.

Q2: My model fitting is computationally expensive. What are efficient methods for parameter estimation and uncertainty quantification? A: For complex models, profile likelihood-based methods are often an efficient choice [8]. This approach uses optimization to find maximum likelihood estimates and explores parameter identifiability by profiling one parameter at a time. Alternatively, fully Bayesian methods using Markov Chain Monte Carlo (MCMC) posterior sampling can formally propagate parameter uncertainty to model outputs, accounting for correlations between parameters [7] [9].

Q3: How can I visually communicate the parametric uncertainty in my model's predictions? A: Several methods are available. For a lay audience, frequency framing or quantile dotplots are effective, as they create a strong intuitive impression of uncertainty by showing discrete possible outcomes [10]. For more technical audiences, error bars indicate confidence intervals for point estimates, and confidence bands show uncertainty around regression curves or other functional outputs [10] [11].

Troubleshooting Common Problems

Problem: Poor model convergence or non-identifiable parameters during estimation. Diagnosis: This often occurs when multiple parameter combinations produce an equally good fit to the data, rendering the model non-identifiable. Solution:

- Profile Likelihood Analysis: Use this method to systematically assess parameter identifiability. A flat profile likelihood indicates that a parameter is non-identifiable or only poorly identifiable with the available data [8].

- Structured Inference: If some parameters have a known, simple effect on the model output (e.g., linear scaling), use a structured inference approach. This method partitions parameters into "inner" and "outer" sets, reducing the dimensionality of the optimization problem and computational cost [8].

Problem: Model predictions fail to account for full parameter uncertainty, leading to overconfident results. Diagnosis: Using only point estimates (e.g., maximum likelihood estimates) for parameters ignores the range of plausible values and their correlation. Solution:

- Probabilistic Propagation: Use methods like MCMC [7] or Monte Carlo simulation [12] to sample from the joint posterior distribution of your parameters. Run your model with these samples to generate a distribution of outputs that fully reflects parametric uncertainty.

- Model Averaging: If structural uncertainty is also present, account for it by averaging posterior distributions from competing models using weights based on model adequacy measures (e.g., deviance information criterion) [7].
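A minimal sketch of probabilistic propagation follows. The one-compartment concentration model and the lognormal draws are illustrative assumptions; in practice the parameter samples would come from the joint posterior (for example, MCMC output), which also preserves correlations that the independent draws used here ignore.

```python
import math
import random


def concentration(dose, volume, k_elim, t):
    """Hypothetical one-compartment model: concentration at time t after a bolus dose."""
    return dose / volume * math.exp(-k_elim * t)


def percentile(sorted_values, q):
    """Percentile by linear interpolation for q in [0, 1]."""
    pos = q * (len(sorted_values) - 1)
    lo = int(math.floor(pos))
    hi = min(lo + 1, len(sorted_values) - 1)
    frac = pos - lo
    return sorted_values[lo] * (1.0 - frac) + sorted_values[hi] * frac


rng = random.Random(0)

# Stand-in for posterior samples of (volume, elimination rate); values are invented.
parameter_samples = [
    (rng.lognormvariate(math.log(20.0), 0.15), rng.lognormvariate(math.log(0.2), 0.25))
    for _ in range(5000)
]

# Run the model once per sample to obtain a distribution of outputs.
outputs = sorted(concentration(100.0, v, k, 4.0) for v, k in parameter_samples)
lower, median, upper = (percentile(outputs, q) for q in (0.025, 0.5, 0.975))

# The point-estimate prediction carries no information about this spread.
point_estimate = concentration(100.0, 20.0, 0.2, 4.0)
print(f"point estimate = {point_estimate:.2f}")
print(f"95% interval = [{lower:.2f}, {upper:.2f}], median = {median:.2f}")
```

Reporting the interval alongside the point estimate is what guards against the overconfidence described in the diagnosis.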

Problem: Inefficient parameter estimation for high-dimensional or complex models. Diagnosis: Optimization routines that approximate gradients by finite differences become slow for models with many parameters, because each gradient evaluation requires at least one additional model simulation per parameter. Solution: Employ gradient-based optimization methods that obtain gradients through sensitivity analysis.

- Adjoint Sensitivity Analysis: This method is efficient for large systems of ODEs, as it computes the gradient of an objective function with respect to parameters by solving a single backward integration problem [9].

- Forward Sensitivity Analysis: This augments the original ODE system with sensitivity equations to compute exact gradients. It is effective for models with a moderate number of parameters [9].
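To make forward sensitivity analysis concrete, the sketch below augments a one-state decay model, dx/dt = -k x, with the sensitivity s = dx/dk, which obeys ds/dt = -x - k s with s(0) = 0. The model, the parameter values, and the fixed-step Runge-Kutta integrator are assumptions chosen so the result can be checked against the analytical solution.

```python
import math


def augmented_rhs(state, k):
    """Right-hand side of the original ODE plus its sensitivity equation."""
    x, s = state
    dx_dt = -k * x          # original model
    ds_dt = -x - k * s      # d/dt of s = dx/dk, from differentiating the ODE w.r.t. k
    return (dx_dt, ds_dt)


def integrate_rk4(state, k, t_end, n_steps):
    """Classical fourth-order Runge-Kutta integration with a fixed step."""
    h = t_end / n_steps
    for _ in range(n_steps):
        k1 = augmented_rhs(state, k)
        k2 = augmented_rhs(tuple(y + 0.5 * h * d for y, d in zip(state, k1)), k)
        k3 = augmented_rhs(tuple(y + 0.5 * h * d for y, d in zip(state, k2)), k)
        k4 = augmented_rhs(tuple(y + h * d for y, d in zip(state, k3)), k)
        state = tuple(
            y + h / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for y, a, b, c, d in zip(state, k1, k2, k3, k4)
        )
    return state


x0, rate, t_end = 10.0, 0.3, 5.0
x_final, sensitivity = integrate_rk4((x0, 0.0), rate, t_end, n_steps=500)

# Analytical reference: x(t) = x0 * exp(-k t), so dx/dk = -t * x0 * exp(-k t).
x_exact = x0 * math.exp(-rate * t_end)
sensitivity_exact = -t_end * x_exact
print(f"x(T) = {x_final:.6f} (exact {x_exact:.6f})")
print(f"dx/dk = {sensitivity:.6f} (exact {sensitivity_exact:.6f})")
```

One integration of the augmented system yields both the state and its gradient. With p parameters the augmented system grows by one sensitivity equation per state per parameter, which is why the forward approach suits a moderate number of parameters.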

Experimental Protocols for Parameter Inference

Protocol 1: Profile Likelihood Workflow for Practical Identifiability [8]

This protocol is useful for determining which parameters can be uniquely identified from your data and for quantifying their uncertainty.

- Formulate the Likelihood Function: Define a likelihood function \( L(\theta) \) that measures the probability of your observed data given the model parameters \( \theta \).

- Find Maximum Likelihood Estimates (MLE): Solve \( \theta_{\text{MLE}} = \mathrm{argmax}_{\theta} L(\theta) \) using an optimization algorithm.

- Compute Profile Likelihoods: For each parameter of interest \( \theta_i \):

- Fix \( \theta_i \) at a value around its MLE.

- Optimize the likelihood over all other parameters \( \theta_{j \neq i} \).

- Repeat across a range of values for \( \theta_i \) to build its profile.

- Assess Identifiability: A parameter is practically identifiable if its profile likelihood forms a well-defined peak. A flat profile suggests non-identifiability.

- Construct Confidence Intervals: Use the likelihood ratio test to determine confidence intervals from the profile.
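The workflow above can be sketched for a two-parameter decay model, y = a exp(-b t), with Gaussian noise of known standard deviation. The model, data, grid, and noise level are all assumptions for illustration. Because the amplitude a enters linearly, its optimum for a fixed b has a closed form, which stands in for the inner optimization step; a general model would need a numerical optimizer there.

```python
import math

SIGMA = 0.1        # assumed known measurement standard deviation
CHI2_95_1DOF = 3.841  # 95% quantile of the chi-squared distribution, 1 degree of freedom

# Hypothetical data: a = 5, b = 0.4, plus a small deterministic perturbation.
times = [0.5 * i for i in range(16)]
observed = [5.0 * math.exp(-0.4 * t) + 0.05 * (-1) ** i for i, t in enumerate(times)]


def profile_loglik(b):
    """Log-likelihood (up to a constant) maximized over a, for a fixed decay rate b."""
    basis = [math.exp(-b * t) for t in times]
    a_hat = sum(y * g for y, g in zip(observed, basis)) / sum(g * g for g in basis)
    sse = sum((y - a_hat * g) ** 2 for y, g in zip(observed, basis))
    return -sse / (2.0 * SIGMA ** 2)


# Profile b over a grid around the expected optimum.
b_grid = [0.2 + 0.002 * i for i in range(201)]
profile = [profile_loglik(b) for b in b_grid]

ll_max = max(profile)
b_mle = b_grid[profile.index(ll_max)]

# Likelihood-ratio confidence interval: keep every b whose profile is close to the maximum.
inside = [b for b, ll in zip(b_grid, profile) if 2.0 * (ll_max - ll) <= CHI2_95_1DOF]
ci_low, ci_high = min(inside), max(inside)

print(f"b_MLE = {b_mle:.3f}, 95% CI = [{ci_low:.3f}, {ci_high:.3f}]")
```

A well-defined peak produces a bounded interval, as here. If the interval ran to the edges of the grid, that would be the flat-profile signature of a parameter that the data do not identify.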

Protocol 2: Bayesian Parameter Inference and Uncertainty Propagation with MCMC [7]

This protocol is suitable for formally quantifying parameter uncertainty and propagating it to model predictions.

- Specify Model and Priors: Define your mathematical model and assign prior probability distributions to all unknown parameters.

- Define Posterior Distribution: The posterior distribution is proportional to the likelihood of the data times the prior distributions.

- Sample from the Posterior: Use MCMC sampling algorithms (e.g., implemented in software like WinBUGS or PyBioNetFit) to generate a large number of samples from the joint posterior distribution of the parameters.

- Check Convergence: Ensure the MCMC chains have converged to the target posterior distribution.

- Propagate Uncertainty: Use the sampled parameter values to run the model repeatedly, generating a distribution of outputs that incorporates parameter uncertainty.
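The five steps can be sketched with a hand-written random-walk Metropolis sampler. This is a teaching example under stated assumptions, not the WinBUGS or PyBioNetFit workflow: the decay model, the uniform prior, the known noise level, the synthetic data, and the tuning constants are all hypothetical.

```python
import math
import random

SIGMA = 0.2      # assumed known measurement standard deviation
INITIAL = 10.0   # assumed known initial value of the signal

times = [float(t) for t in range(10)]
# Hypothetical observations: true rate 0.3 plus a small deterministic perturbation.
observed = [INITIAL * math.exp(-0.3 * t) + 0.1 * (-1) ** i for i, t in enumerate(times)]


def model(k, t):
    return INITIAL * math.exp(-k * t)


def log_posterior(k):
    """Uniform prior on 0 < k < 2 plus Gaussian log-likelihood, up to a constant."""
    if not 0.0 < k < 2.0:
        return -math.inf
    sse = sum((y - model(k, t)) ** 2 for t, y in zip(times, observed))
    return -sse / (2.0 * SIGMA ** 2)


def metropolis(n_steps, start, step_sd, seed):
    rng = random.Random(seed)
    current, lp_current = start, log_posterior(start)
    chain = []
    for _ in range(n_steps):
        proposal = current + rng.gauss(0.0, step_sd)
        lp_proposal = log_posterior(proposal)
        # Accept with probability min(1, posterior ratio).
        if math.log(1.0 - rng.random()) < lp_proposal - lp_current:
            current, lp_current = proposal, lp_proposal
        chain.append(current)
    return chain


chain = metropolis(n_steps=20000, start=0.5, step_sd=0.01, seed=0)
posterior = chain[2000:]  # discard burn-in

# Crude convergence check: both halves of the retained chain should agree.
half = len(posterior) // 2
mean_first = sum(posterior[:half]) / half
mean_second = sum(posterior[half:]) / (len(posterior) - half)

# Propagate: one model prediction per posterior sample.
predictions = sorted(model(k, 12.0) for k in posterior)
lower = predictions[int(0.025 * len(predictions))]
upper = predictions[int(0.975 * len(predictions))]
posterior_mean = sum(posterior) / len(posterior)

print(f"posterior mean of k = {posterior_mean:.3f}")
print(f"95% prediction interval at t = 12: [{lower:.3f}, {upper:.3f}]")
```

Comparing two halves of one chain is only a rough convergence check. Running several chains from dispersed starting points and comparing them is the more common practice.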

Research Reagent Solutions

The table below lists key software tools and their functions for parameter estimation and uncertainty quantification.

| Tool/Reagent Name | Primary Function | Key Application Context |
|---|---|---|
| PyBioNetFit [9] | Parameter inference for biological models | Supports rule-based modeling languages (BNGL) and SBML; performs parameter estimation and uncertainty analysis. |
| AMICI/PESTO [9] | Parameter estimation toolbox for ODE models | Efficiently handles high-dimensional models using advanced sensitivity analysis (adjoint/forward). |
| WinBUGS [7] | Bayesian inference Using Gibbs Sampling | Performs MCMC sampling for Bayesian models, useful for probabilistic sensitivity analysis in health economic models. |
| Profile Likelihood Workflow [8] | Identifiability analysis and uncertainty quantification | An optimization-based method for practical identifiability, estimation, and prediction uncertainty. |
| Structured Inference [8] | Efficient parameter inference | Reduces computational cost by exploiting known parameter relationships (e.g., linear scaling). |
| PINN-UU [12] | Uncertainty quantification in PDE models | Physics-Informed Neural Network for solving PDEs with uncertain parameters, alternative to Monte Carlo. |

Visualizing Uncertainty

Effective visualization is key to communicating parametric uncertainty. The table below summarizes common approaches.

| Visualization Type | Description | Best Use Cases |
|---|---|---|
| Error Bars [10] | Bars extending from a point estimate to show a confidence interval. | Communicating uncertainty of a point estimate (e.g., a mean) in a compact, space-efficient way. |
| Confidence Bands [10] | A shaded region around a line (e.g., a regression curve) to show uncertainty. | Displaying uncertainty in a functional output over a continuous domain. |
| Quantile Dotplots [10] | A series of dots where each dot represents a quantile of the predictive distribution. | Intuitive communication of a full probability distribution for a lay audience; makes uncertainty tangible. |
| Hypothetical Outcome Plots (HOPs) [11] | An animation that cycles through different possible outcomes from the predictive distribution. | Creating an intuitive sense of uncertainty and variability, though requires dynamic media. |

Workflow for Parameter Inference and Uncertainty

The diagram below outlines a general workflow for dealing with parametric uncertainty, from model specification to prediction.

Differentiating Uncertainty Types

The following diagram illustrates the key differences between parametric and structural uncertainty in the modeling process.

In scientific research, particularly in drug development, effectively troubleshooting failed experiments requires more than just technical skill; it demands a deep understanding of the different types of uncertainty inherent in any model or experimental system. The core challenge often lies in distinguishing between parametric uncertainty (uncertainty about the numerical values within a model) and structural uncertainty (uncertainty about the model's fundamental equations and assumptions) [2] [1]. Misdiagnosing the type of uncertainty can lead research teams down a path of futile parameter adjustments when what is truly needed is a re-evaluation of the underlying experimental hypothesis or model framework.

This guide provides a structured approach and toolkit to help researchers correctly identify and resolve these distinct forms of uncertainty.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Our cell-based assay is producing results with unexpectedly high variance and inconsistent signals. We've repeated the experiment with different cell passage numbers, but the problem persists. Is this a parametric or structural issue? A: This is a classic scenario where the symptoms point to parametric uncertainty (e.g., cell viability, concentration levels), but the root cause may be structural. A key structural factor often overlooked is the experimental protocol itself. For instance, an imprecise washing technique during an MTT assay can lead to the accidental aspiration of cells, introducing high variability that is not resolved by simply changing biological reagents [13]. The problem is not the model's parameters but the foundational steps of the method.

Q2: We are developing a new climate model, and its predictions for extreme precipitation events are highly sensitive to small changes in a few parameters. How can we have more confidence in our forecasts? A: This high sensitivity indicates significant parametric uncertainty. The solution is to move from using single, fixed parameter values to adopting a Bayesian inference framework [1]. This involves:

- Defining a prior distribution for the sensitive parameters.

- Using observational data (e.g., from satellites) to calibrate the model and update these distributions.

- Generating a posterior distribution of both parameters and predictions, which provides a robust, probabilistic forecast that quantifies the uncertainty, as shown in climate modeling research [1].

Q3: In a hydrological model, how can we separate the total error into components stemming from structural vs. measurement uncertainty? A: Separation is challenging because residuals aggregate both uncertainties. The only reliable method is to obtain an independent estimate of measurement uncertainty before model calibration [2]. One innovative approach is to use a machine learning algorithm like Random Forest as a "pseudo repeated sampler." By identifying similar rainfall-runoff events across different watersheds, these events can be treated as approximate repeated experiments under identical conditions, providing an estimate of measurement uncertainty that can be isolated from the structural error [2].

Troubleshooting Guide: A Step-by-Step Diagnostic Framework

| Observed Symptom | Initial Hypothesis (Often Parametric) | Deeper Investigation for Structural Causes | Recommended Action |
|---|---|---|---|
| High variability & inconsistent results | Reagent concentration, cell line health, incubation time [13]. | Scrutinize fundamental techniques: pipetting accuracy, washing steps, equipment calibration, protocol fidelity [13]. | Control the protocol: Review video recordings of technique, use calibrated equipment, and strictly adhere to a documented protocol. |
| Systematic bias; model consistently over/under-predicts | Incorrect baseline parameter values. | Flawed model assumptions; missing a key variable or relationship; an oversimplified representation of the biology [2] [1]. | Challenge the model: Design experiments to test core assumptions. Consider adding new variables or using a different mechanistic framework. |
| High sensitivity to tiny parameter changes | The parameters are inherently highly sensitive. | The model structure may be ill-posed or overly simplistic for the system's complexity, making it brittle [1]. | Quantify uncertainty: Employ Bayesian calibration to represent parameters as distributions, not fixed values. This quantifies and propagates parametric uncertainty [1]. |

The Scientist's Toolkit

Key Research Reagent Solutions

| Item | Function in Uncertainty Analysis |
|---|---|
| Bayesian Inference Framework | A mathematical approach to update the probability for a hypothesis (or parameter values) as more evidence or data becomes available. It is the cornerstone for formally quantifying both parametric and structural uncertainty [2] [1]. |
| Random Forest Algorithm | A machine learning method that can be used as a "pseudo repeated sampler" to approximate measurement uncertainty by leveraging similar experimental events across different datasets [2]. |
| Surrogate Model (Emulator) | A computationally cheap model (often machine learning-based) trained to mimic the behavior of a complex, expensive simulation. It allows for rapid exploration of parameter spaces and uncertainty quantification without the high computational cost [1]. |
| High-Resolution Simulation Data | Detailed simulations of small-scale processes (e.g., cloud convection, protein folding) used as "ground truth" data to calibrate and assess the structural adequacy of larger-scale models [1]. |

Experimental Protocol: The Calibrate, Emulate, Sample (CES) Method for Quantifying Parametric Uncertainty

This state-of-the-art methodology, developed for climate modeling and applicable to complex biological systems, provides a rigorous protocol for quantifying parametric uncertainty [1].

- Calibrate: Run an ensemble of simulations with your model, varying the parameters of interest across a wide prior distribution. Use an ensemble-based data assimilation scheme to locate the region of parameter space that produces realistic outcomes.

- Emulate: Train a machine learning-based surrogate model (the emulator) on the input-output data collected from the ensemble simulations. This emulator learns to predict model outcomes for any given parameter set almost instantaneously.

- Sample: Use the trained emulator to run large-scale statistical sampling (e.g., via Markov Chain Monte Carlo), refining the prior parameter distribution into a posterior distribution that is consistent with observed data. This posterior distribution formally represents the quantified parametric uncertainty.

Workflow Visualization: Diagnosing Uncertainty in Experimental Research

The following diagram outlines the logical workflow for diagnosing and addressing different types of uncertainty in a research project.

In clinical predictions, structural uncertainty and parametric uncertainty represent two fundamental classes of unknowns that affect the reliability of model-based conclusions. Structural uncertainty, also known as model inadequacy, arises from incomplete knowledge about the model equations themselves, such as the choice of clinical states in a Markov model or the mathematical form of a growth relationship [7] [14]. Parametric uncertainty refers to imperfect knowledge of the fixed, underlying parameters in a chosen model, even if the model structure is correct [7] [15]. Distinguishing between these is critical because they originate from different sources of limited knowledge and often require distinct methodologies for quantification and mitigation. In health economic evaluations and clinical decision-making, failing to account for these uncertainties can lead to overconfident predictions and suboptimal resource allocation [7].

The following table summarizes the core characteristics of these two uncertainty types:

| Characteristic | Structural Uncertainty | Parametric Uncertainty |
|---|---|---|
| Definition | Uncertainty about the model structure or equations [14]. | Uncertainty about the fixed parameter values within a chosen model [7]. |
| Origin | Choice of clinical states, permitted transitions, model complexity, data choice [7]. | Natural variation, measurement error, limited sample size in experimental data [14]. |
| Nature | Epistemic (reducible through better knowledge) [16]. | Often aleatory (irreducible inherent variation) or epistemic [14]. |
| Common Handling | Model averaging, model comparison, sensitivity analysis [7]. | Probabilistic sensitivity analysis, Bayesian inference, profile likelihood [7] [15]. |

Experimental Protocols for Quantifying Uncertainty

Protocol for Assessing Parametric Uncertainty Using Profile Likelihood

Objective: To quantify the identifiability and uncertainty of parameters in a computational model, using a profile likelihood approach [15].

- Model Definition: Define your mathematical model and a likelihood function \( L(\theta) \), which measures the probability of observing the experimental data given a set of model parameters \( \theta \) [15].

- Maximum Likelihood Estimation (MLE): Find the parameter values \( \theta_{\text{MLE}} \) that maximize the likelihood function: \( \theta_{\text{MLE}} = \mathrm{argmax}_{\theta} L(\theta) \) [15].

- Profiling a Parameter of Interest:

- Select a single parameter of interest, \( \psi \), from the full parameter vector \( \theta = (\psi, \phi) \), where \( \phi \) represents all other parameters.

- Define a series of fixed values for \( \psi \) across a plausible range.

- For each fixed value of \( \psi \), optimize the likelihood function over all other parameters \( \phi \).

- Calculate Profile Likelihood: The profile likelihood for \( \psi \) is the value of the optimized likelihood at each fixed value of \( \psi \): \( L_p(\psi) = \max_{\phi} L(\psi, \phi) \) [15].

- Uncertainty Quantification: Calculate confidence intervals for \( \psi \) based on the profile likelihood and a chi-squared distribution [15].

- Iterate: Repeat steps 3-5 for all parameters in the model to understand the uncertainty and identifiability of each one.
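The Uncertainty Quantification step can be written out explicitly. The form below is the standard likelihood-ratio construction for a single parameter of interest; it is given here as a worked statement of that step and should be checked against the procedure in [15] before use.

\[
\mathrm{CI}_{1-\alpha}(\psi) = \left\{ \psi \;:\; 2\left[ \log L(\theta_{\text{MLE}}) - \log L_p(\psi) \right] \le \chi^2_{1,\,1-\alpha} \right\}
\]

Here \( \chi^2_{1,\,1-\alpha} \) is the \( (1-\alpha) \) quantile of the chi-squared distribution with one degree of freedom. For a 95% interval, \( \chi^2_{1,\,0.95} \approx 3.84 \), so the interval contains every value of \( \psi \) whose profile log-likelihood lies within about 1.92 of its maximum. A profile that never drops below this threshold yields an unbounded interval, which is how a flat profile translates into practical non-identifiability.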

Protocol for Assessing Structural Uncertainty in a Growth Model

Objective: To identify the most suitable model structure for characterizing the progression of a clinical condition, using total Geographic Atrophy (GA) growth as an example [16].

- Data Collection: Collect longitudinal, time-series data from patient presentations in a clinical setting. For GA, this involves fundus autofluorescence (FAF) images from at least three clinical review visits over a minimum of two years [16].

- Image Analysis: Use a semi-automated software algorithm (e.g., RegionFinder) for image segmentation and quantification of the clinical measure (e.g., total GA area) [16].

- Model Candidate Selection: Propose a set of plausible competing model structures (e.g., linear, exponential, quadratic) based on anecdotal clinical observations or previous literature [16].

- Model Fitting & Comparison: Fit each candidate model to the data and compare the fits using predefined comparison criteria.

- Model Selection/Averaging: Select the single best model based on the comparison criteria, or use model averaging to produce combined predictions that formally account for the structural uncertainty [7] [16].
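For the averaging option, one commonly used weighting scheme converts information-criterion differences into model weights. It is shown here as an assumption for illustration; the cited studies may use a different adequacy measure or weighting rule. With \( \mathrm{IC}_m \) denoting the information criterion of candidate model \( m \) (lower is better) among \( M \) candidates:

\[
\Delta_m = \mathrm{IC}_m - \min_{j} \mathrm{IC}_j, \qquad
w_m = \frac{\exp\left(-\tfrac{1}{2}\Delta_m\right)}{\sum_{j=1}^{M} \exp\left(-\tfrac{1}{2}\Delta_j\right)}, \qquad
\hat{y}_{\text{avg}}(t) = \sum_{m=1}^{M} w_m \, \hat{y}_m(t)
\]

As a worked example, suppose two candidate growth models differ by 2 units in the criterion. Then \( \Delta = (0, 2) \), the unnormalized weights are \( 1 \) and \( e^{-1} \approx 0.368 \), and the normalized weights are approximately \( 0.73 \) and \( 0.27 \). The averaged prediction therefore leans toward the better-supported structure while still carrying the alternative.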

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and computational tools used in uncertainty analysis for clinical models.

| Reagent/Tool | Function in Uncertainty Analysis |
|---|---|
| WinBUGS | Software for Bayesian inference Using Gibbs Sampling; enables fully Bayesian model fitting and cost-effectiveness prediction via MCMC methods, formally propagating parameter uncertainty to model outputs [7]. |
| Profile Likelihood Workflow | An optimization-based method for parameter inference, identifiability analysis, and uncertainty quantification; more computationally efficient than sampling-based methods for many problems [15]. |
| Fundus Autofluorescence (FAF) Imaging | An ophthalmic imaging technique to capture geographic atrophy as hypoautofluorescent areas; provides the longitudinal, high-reproducibility data required to assess model structure for disease progression [16]. |
| RegionFinder Software | A semi-automated algorithm for segmenting and quantifying lesions in longitudinal FAF images; used to generate the precise area measurements needed to fit and compare growth models [16]. |
| Markov Chain Monte Carlo (MCMC) | A computational algorithm used to sample from probability distributions; applied in Bayesian analysis to sample from the posterior distribution of model parameters, accounting for parameter uncertainty [7]. |

Troubleshooting Guides and FAQs

FAQ 1: How do I know if my model's parameters are identifiable, and what can I do if they are not?

Answer: Parameter non-identifiability occurs when different parameter combinations yield an equally good fit to the data, leading to large uncertainties. This can be detected using a profile likelihood analysis [15]. If the profile likelihood for a parameter is flat, the parameter is non-identifiable.

- Troubleshooting Steps:

- Check for Structural Identifiability: Reformulate your model to remove redundant parameters.

- Incorporate Prior Information: Use a Bayesian framework to include informative prior distributions from previous studies, which can help constrain parameter values [7] [17].

- Collect More Informative Data: Design new experiments that provide information specifically about the non-identifiable parameters.

- Consider a Structured Inference Approach: If some parameters have a simple, known effect on the model output (e.g., linear scaling), use methods that exploit this structure to reduce the dimensionality of the optimization problem [15].
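
A flat profile is easiest to recognize on a toy case. The sketch below assumes a hypothetical model y = a * b * x, in which only the product a * b affects the output. Profiling over a (re-optimizing b each time) gives the same residual sum of squares for every fixed a, which is the signature of a structurally non-identifiable parameter. Data and names are illustrative only.

```python
def rss(a, b, x, y):
    """Residual sum of squares for the toy model y = a * b * x."""
    return sum((yi - a * b * xi) ** 2 for xi, yi in zip(x, y))

def profile_rss_over_a(a_values, x, y):
    """For each fixed a, minimize the RSS over b (closed form) and return the minima."""
    sxy = sum(xi * yi for xi, yi in zip(x, y))
    sxx = sum(xi * xi for xi in x)
    minima = []
    for a in a_values:
        b_hat = sxy / (a * sxx)          # best b for this fixed a
        minima.append(rss(a, b_hat, x, y))
    return minima

x = [1.0, 2.0, 3.0, 4.0]                 # hypothetical inputs
y = [2.1, 3.9, 6.2, 7.8]                 # hypothetical observations
profile = profile_rss_over_a([0.5, 1.0, 2.0, 4.0], x, y)
spread = max(profile) - min(profile)
print("profile RSS:", [round(v, 6) for v in profile], "spread:", spread)
```

Reformulating the model in terms of the single parameter k = a * b removes the redundancy, which corresponds to the first troubleshooting step above.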

FAQ 2: My model fits the calibration data well but performs poorly in validation. Is this a structural or parametric issue?

Answer: Poor predictive performance despite good calibration is a classic sign of structural uncertainty or model inadequacy [14]. Your chosen model structure may not capture the true underlying biological or clinical process, even with optimally fitted parameters.

- Troubleshooting Steps:

- Model Comparison: Systematically compare a set of alternative, biologically plausible model structures using metrics like the deviance information criterion (DIC) or pseudo-marginal-likelihood (PML) [7].

- Model Averaging: Instead of relying on a single "best" model, use Bayesian model averaging to combine predictions from multiple models, weighted by their evidential support. This formally incorporates structural uncertainty into your predictions [7].

- Review Model Assumptions: Critically re-examine the core assumptions of your model (e.g., linearity, independence) in the context of the system's known biology.

FAQ 3: How can I visually communicate the impact of uncertainty to a clinical audience?

Answer: Effective uncertainty visualization is key. Avoid relying on single-value predictions and instead show distributions.

- Troubleshooting Steps:

- For Parametric Uncertainty: Use error bars or confidence bands around model predictions to show the range of possible outcomes [18] [19].

- For Structural Uncertainty: Plot predictions from multiple candidate models on the same axes, or display a model-averaged prediction with a credible interval that incorporates both parametric and structural uncertainty [7] [19].

- Use Interactive Visualizations: Allow clinicians to explore how predictions change under different model assumptions or parameter values, which can build trust and understanding [18].

FAQ 4: My computational model is too slow for standard uncertainty quantification methods. What are my options?

Answer: This is a common challenge with complex models. Several efficiency-focused strategies exist.

- Troubleshooting Steps:

- Structured Inference: If your model has parameters with a known, simple effect (e.g., multiplicative scaling factors), use a structured inference approach. This nests the optimization, reducing the number of expensive model evaluations required [15].

- Surrogate Modeling: Replace your slow simulator with a fast, approximate statistical model (e.g., a Gaussian process emulator) trained on a limited set of simulator runs. Perform UQ on the surrogate.

- Sensitivity Analysis: First, conduct a global sensitivity analysis to identify the parameters that contribute most to output uncertainty. Focus your UQ efforts on these high-impact parameters, fixing less sensitive ones to nominal values [14].

Workflow and Pathway Visualizations

Diagram 1: Integrated workflow for handling parametric and structural uncertainty in clinical predictions.

Diagram 2: A taxonomy of uncertainties affecting computational clinical models, adapted from [14].

Quantification in Action: Methodologies for Managing Both Uncertainty Types

Frequently Asked Questions

Q1: What is the fundamental difference between structural and parametric uncertainty in models?

Structural uncertainty arises from errors or simplifications in the mathematical representation of real-world processes. In contrast, parametric uncertainty exists due to errors in model parameters, often stemming from structural issues, measurement errors, and limited calibration data [2]. While measurement and parametric uncertainties have been widely studied, research on quantifying structural uncertainty remains less developed, making it a critical area for improving model reliability [2].

Q2: When should researchers choose ensemble modeling over a single best model approach?

Ensemble modeling should be prioritized when dealing with high-stakes predictions where model deficiencies could lead to real-world environmental or societal harm. Research on hydrological indicators shows that outcomes within a single historical scenario can range from "very low to very high ecological condition based solely on a simple set of modeling choices" [20]. Ensembles help manage this structural sensitivity by combining multiple models to balance parsimony and realism [20].

Q3: What are the practical limitations of multi-model averaging (MMA) approaches?

While MMA improves on simple model selection by implementing a form of shrinkage estimation, it has significant limitations [21]. MMA can produce overconfident, overly narrow confidence intervals and performs poorly with correlated variables, where it may bias estimates of weak effects upward and strong effects downward [21]. Other shrinkage estimators like penalized regression or Bayesian hierarchical models with regularizing priors are often more computationally efficient and better supported theoretically [21].

Q4: How can I determine if my model suffers from significant structural uncertainty?

Structural uncertainty manifests as persistent bias that cannot be eliminated through parameter calibration alone. In hydrological modeling, this bias propagates through the modeling process, affecting predictions even at ungauged locations [2]. Testing multiple model structures and comparing their predictions is essential for identifying this uncertainty, as relationship shape, aggregation functions, and assessment timeframes can all be highly influential factors [20].

Troubleshooting Guides

Problem: Poor Model Performance Despite Extensive Parameter Calibration

Symptoms: Persistent systematic errors, inability to match observed data across different conditions, high sensitivity to minor structural changes.

Diagnosis Steps:

- Test different relationship shapes (e.g., linear vs. nonlinear) in your model components [20].

- Vary choices of aggregation methods in space, time, and ecological groupings [20].

- Compare outcomes across multiple competing model structures rather than relying on a single formulation [20].

Solutions:

- Immediate Fix: Implement a comprehensive ensemble approach that builds multi-subject diversified models and combines them through second-level meta-learning [22].

- Long-term Strategy: Develop a model of structural uncertainty that can quantify total uncertainty at ungauged locations by compensating for structural bias [2].

Problem: Overconfident Predictions with Unrealistically Narrow Confidence Intervals

Symptoms: Model predictions frequently fall outside stated confidence bounds, performance degrades significantly on validation data.

Diagnosis Steps:

- Check if you're using multi-model averaging (MMA), which commonly produces overconfident intervals [21].

- Verify whether correlated predictors are causing MMA to shrink estimates toward each other [21].

Solutions:

- Immediate Fix: Use full (maximal) statistical models with principled, a priori decisions about model complexity, possibly with Bayesian priors [21].

- Alternative Approach: Employ penalized regression or Bayesian hierarchical models with regularizing priors instead of MMA for more reliable uncertainty quantification [21].

Problem: Inconsistent Model Performance Across Different Data Types or Contexts

Symptoms: Model works well on daily data but fails on hourly data, performs inconsistently across different watersheds or biological assays.

Diagnosis Steps:

- Assess whether structural uncertainty manifests differently across temporal scales or spatial contexts [2].

- Evaluate if the model structure adequately represents the fundamental processes across all application domains.

Solutions:

- Immediate Fix: Use grouping by type of ecological response (e.g., threshold vs. linear) to balance parsimony and realism [20].

- Comprehensive Solution: Implement pseudo repeated sampling using machine learning algorithms like random forest to identify similar processes across different contexts [2].

Experimental Protocols & Methodologies

Protocol 1: Comprehensive Ensemble Development for QSAR Prediction

This methodology details the comprehensive ensemble approach for Quantitative Structure-Activity Relationship (QSAR) prediction in drug discovery, which consistently outperformed 13 individual models across 19 bioassay datasets [22].

Table 1: Molecular Representations for QSAR Modeling

| Representation Type | Format | Compatible Learning Methods | Key Characteristics |
|---|---|---|---|
| PubChem Fingerprint | Binary vector | RF, SVM, GBM, NN | Retrieved from PubChemPy, non-sequential form [22] |
| ECFP (Extended-Connectivity Fingerprint) | Binary vector | RF, SVM, GBM, NN | Retrieved from SMILES using RDKit, non-sequential form [22] |
| MACCS Fingerprint | Binary vector | RF, SVM, GBM, NN | Retrieved from SMILES using RDKit, non-sequential form [22] |
| SMILES | Sequential string | 1D-CNN, RNN | Simplified Molecular-Input Line-Entry System, requires specialized architectures [22] |

Implementation Details:

- Dataset Division: Randomly divide data into training (75%) and testing (25%) sets [22].

- Cross-Validation: Partition training data into five portions (one for validation, four for training) [22].

- Feature Processing: Use PubChemPy to retrieve SMILES and PubChem fingerprints; use RDKit for ECFP and MACCS fingerprints [22].

- Ensemble Combination: Use second-level meta-learning to combine predictions from multiple models and representations [22].
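
The data-handling steps above (75/25 division, then five-way partitioning of the training portion) can be sketched with index lists alone. This is a minimal standard-library illustration, not the pipeline of [22]; the function names, the seed, and the dataset size of 200 are assumptions.

```python
import random

def train_test_split(n_items, test_fraction=0.25, seed=0):
    """Randomly divide item indices into training and testing index lists."""
    idx = list(range(n_items))
    random.Random(seed).shuffle(idx)
    n_test = round(n_items * test_fraction)
    return idx[n_test:], idx[:n_test]

def k_fold(indices, k=5):
    """Yield (train_idx, val_idx) pairs; each portion is the validation set once."""
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, val

train_idx, test_idx = train_test_split(200)      # 200 compounds (hypothetical)
splits = list(k_fold(train_idx, k=5))
for fold_no, (tr, va) in enumerate(splits, start=1):
    print(f"fold {fold_no}: {len(tr)} training, {len(va)} validation")
```

In a stacked ensemble, the validation-fold predictions of each first-level model would typically become the input features of the second-level learner, so that the meta-learner is never trained on predictions made for data a base model has already seen.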

Protocol 2: Hepatotoxicity Prediction Using Ensemble Methods

This protocol describes an ensemble approach integrating machine learning and deep learning for hepatotoxicity prediction, achieving 80.26% accuracy and 82.84% AUC [23].

Table 2: Ensemble Method Performance Comparison for Hepatotoxicity Prediction

| Ensemble Method | Prediction Accuracy | AUC | Recall | Key Strengths |
|---|---|---|---|---|
| Voting Ensemble Classifier | 80.26% | 82.84% | >93% | Optimal performance, excellent recall [23] |
| Bagging Ensemble Classifier | (Lower than Voting) | (Lower than Voting) | (Lower than Voting) | Good alternative to voting ensemble [23] |
| Stacking Ensemble Classifier | (Lower than Voting) | (Lower than Voting) | (Lower than Voting) | Effective combination method [23] |

Validation Framework:

- External Test Set: Verify model performance on completely separate data [23].

- 10-Fold Cross-Validation: Robust internal validation using multiple data partitions [23].

- Benchmark Training: Compare against published models to establish superiority [23].
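
To make the voting idea concrete, the sketch below combines binary predictions from three classifiers in two common ways: majority (hard) voting on labels and averaged-probability (soft) voting. Which variant [23] used is not stated here, so both are shown as assumptions; the prediction values are hypothetical.

```python
from collections import Counter

def hard_vote(predictions):
    """Majority label across classifiers for each sample.

    predictions: one list of labels per classifier, all of equal length.
    An odd number of classifiers avoids ties for binary labels.
    """
    return [Counter(labels).most_common(1)[0][0] for labels in zip(*predictions)]

def soft_vote(probabilities, threshold=0.5):
    """Average the positive-class probabilities, then apply a threshold."""
    n_models = len(probabilities)
    return [int(sum(p) / n_models >= threshold) for p in zip(*probabilities)]

# Hypothetical outputs of three classifiers on four compounds (1 = hepatotoxic)
labels = [[1, 0, 1, 1], [1, 1, 0, 1], [0, 0, 1, 1]]
probs = [[0.9, 0.2, 0.6, 0.8], [0.7, 0.6, 0.4, 0.9], [0.4, 0.1, 0.7, 0.6]]
hard = hard_vote(labels)
soft = soft_vote(probs)
print("hard vote:", hard)
print("soft vote:", soft)
```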

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Ensemble Modeling

| Tool/Resource | Function | Application Context |
|---|---|---|
| RDKit | Generate molecular fingerprints (ECFP, MACCS) from SMILES strings [22] | Cheminformatics, drug discovery, QSAR modeling |
| PubChemPy | Retrieve PubChem chemical IDs, SMILES strings, and molecular descriptors [22] | Access to PubChem database, chemical property retrieval |
| Keras Library | Implement neural network architectures (1D-CNN, RNN) for sequential data [22] | Deep learning models, end-to-end feature extraction |
| Scikit-learn Library | Conventional machine learning methods (RF, SVM, GBM) and model evaluation [22] | Traditional ML implementation, performance metrics |
| Random Forest Algorithm | Pseudo repeated sampling for uncertainty estimation in environmental data [2] | Measurement uncertainty quantification, hydrological modeling |
| Comprehensive Ensemble Framework | Multi-subject model diversification with second-level meta-learning [22] | QSAR prediction, structural uncertainty mitigation |

Critical Limitations and Considerations

Structural Sensitivity in Environmental Management: Research on Murray-Darling Basin management models revealed that structural sensitivity appears at many steps in complex modeling processes. Common default choices like linear relationships and arithmetic means were found to be "not conservative and may inflate risk" [20]. Even scenario comparison, while helpful, only partially reduces this sensitivity [20].

Conceptual Limitations of Multi-Model Approaches: Multi-model averaging represents an unnecessary discretization of a continuous model space. As noted in critical assessments, "If we do not have particular, a priori discrete hypotheses about our system, why does so much of our data-analytic effort go into various ways to test between, or combine and reconcile, multiple discrete models?" [21]. This reflects an "XY problem" where researchers focus on making multimodel approaches work rather than addressing the fundamental challenge of understanding multifactorial systems [21].

Recommendations for Robust Practice:

- Explicitly test and report structural uncertainty for science-based environmental management [20].

- Use grouping by ecological response type rather than default mathematical conveniences [20].

- Consider full models with Bayesian priors instead of MMA for more reliable confidence intervals [21].

- Report several competing structures rather than uncritically using a single model, which represents the highest risk of poor decision-making [20].

Core Concept Definitions

What is a probability-box (p-box) and how does it relate to parametric uncertainty?

A probability-box (p-box) is a mathematical structure used to represent epistemic uncertainty in the probability distribution of a random variable. It is defined by upper and lower bounds on the cumulative distribution function (CDF) that enclose all possible distributions consistent with available information. Unlike precise probability distributions that require exact parameter specification, p-boxes accommodate parametric uncertainty by defining a family of distributions bounded by two CDFs, thus capturing uncertainty about distribution parameters themselves [24].

How does Probabilistic Sensitivity Analysis (PSA) complement p-box analysis?

Probabilistic Sensitivity Analysis quantifies how uncertainty in model inputs (including distribution parameters) affects model outputs. While traditional PSA often assumes precise input distributions, when combined with p-boxes, it evaluates how the entire range of possible distributions impacts output uncertainty. This allows analysts to determine which uncertain distribution parameters contribute most to output variance and to compute bounds on failure probabilities or other reliability metrics [25] [26].

What is the fundamental difference between parametric and distribution-free p-boxes?

Parametric p-boxes constrain the family of possible distributions to a specific distribution family (e.g., all normal distributions with mean in [1, 3] and standard deviation in [0.5, 1.5]). Distribution-free p-boxes only specify upper and lower CDF bounds without assuming an underlying distribution family, thus accommodating a wider class of possible distributions [24].
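
The parametric example just quoted (normal family, mean in [1, 3], standard deviation in [0.5, 1.5]) can be turned into explicit CDF bounds with a few lines. The sketch relies on one property of the normal CDF: it is monotone in the mean and, for a fixed mean, monotone in the standard deviation, so its extremes over the parameter box sit at the four corners. Function names are illustrative.

```python
import math

def normal_cdf(x, mu, sigma):
    """CDF of a normal distribution evaluated at x."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def normal_pbox(x, mu_bounds, sigma_bounds):
    """Lower and upper CDF bounds at x for a normal family with interval parameters.

    Because the normal CDF is monotone in mu and, for fixed mu, monotone in sigma,
    it is enough to evaluate the four corners of the parameter box.
    """
    corners = [normal_cdf(x, m, s) for m in mu_bounds for s in sigma_bounds]
    return min(corners), max(corners)

for x in (0.0, 2.0, 4.0):
    low, high = normal_pbox(x, (1.0, 3.0), (0.5, 1.5))
    print(f"x={x:.1f}: {low:.4f} <= F(x) <= {high:.4f}")
```

Any single normal distribution with parameters inside the box, such as mean 2 and standard deviation 1, has a CDF that lies between these two bounds at every x. The corner shortcut does not carry over to arbitrary distribution families, where the bounds may have to be found by optimization.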

Troubleshooting Common P-Box Implementation Issues

Table 1: Common Computational Challenges in P-Box Analysis

| Problem | Symptoms | Recommended Solutions |
|---|---|---|
| Excessive Computational Demand | Long processing times; inability to complete analysis with complex models | Use single-loop methods like Bayesian Updating BDRM; Implement surrogate modeling (Kriging); Apply dimension reduction techniques [27] [28] |
| Overly Wide Result Bounds | Uninformatively broad probability bounds; Limited practical utility of results | Incorporate additional data to constrain bounds; Use pinching analysis to identify most influential parameters; Apply dependence constraints between parameters [29] |
| Difficulty Constructing P-Box from Data | Uncertainty in selecting appropriate bounds; Disagreement among experts on parameter ranges | Use confidence intervals on distribution parameters; Employ Kolmogorov-Smirnov confidence bounds; Combine multiple data sources with random set theory [24] |
| Propagation Errors | Inconsistent results; Violation of probability bounds during computation | Verify monotonicity assumptions; Use guaranteed enclosure methods; Implement double-loop approaches for validation [29] [28] |

Why are my p-box computations so resource-intensive and how can I optimize them?

P-box propagation traditionally requires nested calculations (double-loop methods) where the outer loop explores distribution parameter space and the inner loop performs probabilistic analysis. This computational burden is particularly challenging for complex engineering models with implicit limit state functions [28]. Recent advances suggest several optimization approaches:

Single-loop methods like the Bayesian Updating Bivariate Dimension Reduction Method (BU-BDRM) reuse a single set of model evaluations, dramatically reducing computational requirements while maintaining accuracy [28].

Surrogate modeling constructs approximate relationships between interval variables and failure probabilities using techniques like Kriging, which requires minimal training data while providing uncertainty quantification [27].

Sparse polynomial chaos expansions create efficient meta-models specifically designed for uncertainty propagation with p-box inputs [28].
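
For reference, the double-loop baseline that these methods try to avoid can be sketched as follows. The outer loop scans a grid over the interval-valued mean and standard deviation of a normal input, and the inner loop estimates a failure probability by Monte Carlo. The limit state, the parameter box, and the grid scan (rather than a formal optimizer) are assumptions made for illustration; a grid does not guarantee that the true extremes are found.

```python
import random

def failure_probability(mu, sigma, z_samples, limit_state):
    """Inner loop: Monte Carlo estimate of P(limit_state(X) < 0) for X ~ N(mu, sigma)."""
    failures = sum(1 for z in z_samples if limit_state(mu + sigma * z) < 0.0)
    return failures / len(z_samples)

def double_loop_bounds(mu_bounds, sigma_bounds, limit_state,
                       n_grid=5, n_mc=20000, seed=1):
    """Outer loop: scan the parameter box and return (min, max) failure probability."""
    rng = random.Random(seed)
    # Common random numbers: the same standard-normal draws serve every grid point.
    z_samples = [rng.gauss(0.0, 1.0) for _ in range(n_mc)]

    def linspace(lo, hi):
        return [lo + (hi - lo) * i / (n_grid - 1) for i in range(n_grid)]

    estimates = [
        failure_probability(mu, sigma, z_samples, limit_state)
        for mu in linspace(*mu_bounds)
        for sigma in linspace(*sigma_bounds)
    ]
    return min(estimates), max(estimates)

def g(x):
    """Hypothetical limit state: failure when the load x exceeds a capacity of 5."""
    return 5.0 - x

pf_low, pf_high = double_loop_bounds((1.0, 3.0), (0.5, 1.5), g)
print(f"failure probability bounds: [{pf_low:.5f}, {pf_high:.5f}]")
```

The cost is the number of grid points times the number of inner samples, here 25 x 20,000 limit-state evaluations, which is what makes the approach impractical when each evaluation is an expensive simulation.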

How can I determine which uncertain distribution parameters contribute most to my output uncertainty?

The "pinching" method provides a systematic approach for sensitivity analysis within p-box frameworks. By fixing specific input parameters to precise values (one at a time) and observing the reduction in output uncertainty, analysts can rank parameters by their influence on overall uncertainty. This approach identifies which parameters would benefit most from additional data collection or more precise estimation [29].
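
A minimal pinching sketch, under stated assumptions: the output of interest is the exceedance probability P(X > t) for a normal X whose mean and standard deviation are only known as intervals, each parameter is pinched to the midpoint of its interval, and the bounds are found by a grid scan. The threshold, intervals, and names are hypothetical, and other pinching targets (for example the interval endpoints) are equally legitimate.

```python
import math

def exceedance(t, mu, sigma):
    """P(X > t) for X ~ N(mu, sigma)."""
    return 0.5 * math.erfc((t - mu) / (sigma * math.sqrt(2.0)))

def bounds_width(t, mu_bounds, sigma_bounds, n_grid=21):
    """Width of the exceedance-probability interval over the parameter box."""
    def grid(lo, hi):
        if lo == hi:
            return [lo]                       # pinched parameter: single value
        return [lo + (hi - lo) * i / (n_grid - 1) for i in range(n_grid)]
    values = [exceedance(t, m, s) for m in grid(*mu_bounds) for s in grid(*sigma_bounds)]
    return max(values) - min(values)

def pinching_ranking(t, box):
    """Pinch each parameter in turn and report the relative reduction in width."""
    base = bounds_width(t, box["mu"], box["sigma"])
    reductions = {}
    for name, (lo, hi) in box.items():
        mid = 0.5 * (lo + hi)
        pinched = dict(box)
        pinched[name] = (mid, mid)
        width = bounds_width(t, pinched["mu"], pinched["sigma"])
        reductions[name] = 1.0 - width / base
    return reductions

box = {"mu": (1.0, 3.0), "sigma": (0.5, 1.5)}
reductions = pinching_ranking(t=4.0, box=box)
for name, r in sorted(reductions.items(), key=lambda kv: -kv[1]):
    print(f"pinching {name}: output interval shrinks by {100 * r:.0f}%")
```

The parameter with the largest reduction is the one for which better data would tighten the result most.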

Methodological Protocols & Workflows

Protocol 1: P-Box Construction from Limited Data

Objective: Construct a parametric p-box when only range information on distribution parameters is available.

Materials: Parameter bounds data, computational software with interval analysis capabilities.

Procedure:

- Identify the appropriate distribution family based on physical knowledge of the phenomenon

- Determine interval bounds for each distribution parameter (e.g., mean, standard deviation)

- Define the p-box as the set of all distributions within the specified parameter bounds

- Validate that the constructed bounds enclose all empirical data points

- Perform sensitivity analysis to assess the impact of parameter bound selections [24]

Protocol 2: Monte Carlo Sensitivity Analysis (MCSA) for Misclassification Adjustment

Objective: Account for uncertainty in bias parameters when adjusting for misclassification in epidemiological studies.

Materials: Observed data (dataset or 2x2 table), statistical software with probabilistic sampling capabilities.

Procedure:

- Input observed data and specify the type of misclassification to be adjusted

- Specify probability distributions for sensitivity/specificity parameters based on literature or expert opinion

- Set the number of replications (typically 10,000-100,000)

- For each replication:

  - Sample sensitivity/specificity values from their distributions

  - Back-calculate expected true cases using: A = [a - (1-S₀) × N]/[S₁ - (1-S₀)]

  - Compute positive and negative predictive values (PPV, NPV)

  - Reclassify each individual via Bernoulli trials using uniform random numbers

  - Compute effect estimates from bias-adjusted datasets

- Calculate simulation intervals for the adjusted estimates [30]
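
The procedure can be sketched for a case-control 2x2 table with non-differential exposure misclassification. Several points are assumptions rather than content from [30]: S₁ is read as sensitivity and S₀ as specificity, both are drawn from triangular distributions with made-up limits, the counts are hypothetical, and only 1,000 replications are run to keep the example fast (the protocol suggests 10,000 to 100,000).

```python
import random

def back_calculate(a, n, se, sp):
    """Expected true exposed count: A = [a - (1 - Sp) N] / [Se - (1 - Sp)]."""
    return (a - (1.0 - sp) * n) / (se - (1.0 - sp))

def reclassify(a, n, se, sp, rng):
    """Draw one bias-adjusted exposed count via record-level Bernoulli trials."""
    true_exposed = back_calculate(a, n, se, sp)
    if not 0.0 < true_exposed < n:
        return None                                   # Se/Sp incompatible with the data
    ppv = se * true_exposed / a                       # P(truly exposed | observed exposed)
    npv = sp * (n - true_exposed) / (n - a)           # P(truly unexposed | observed unexposed)
    kept = sum(rng.random() < ppv for _ in range(a))
    gained = sum(rng.random() < 1.0 - npv for _ in range(n - a))
    return kept + gained

def mcsa_odds_ratio(cases, controls, n_reps=1000, seed=42):
    """Return (median, 2.5th, 97.5th percentile) of the bias-adjusted odds ratio.

    cases and controls are (observed exposed, group total) pairs.
    """
    rng = random.Random(seed)
    ratios = []
    for _ in range(n_reps):
        se = rng.triangular(0.75, 0.95, 0.85)         # hypothetical sensitivity prior
        sp = rng.triangular(0.85, 0.99, 0.95)         # hypothetical specificity prior
        a1 = reclassify(cases[0], cases[1], se, sp, rng)
        a0 = reclassify(controls[0], controls[1], se, sp, rng)
        if a1 is None or a0 is None:
            continue
        b1, b0 = cases[1] - a1, controls[1] - a0
        if min(a1, a0, b1, b0) == 0:
            continue                                  # odds ratio undefined
        ratios.append((a1 * b0) / (b1 * a0))
    ratios.sort()

    def pick(q):
        return ratios[int(q * (len(ratios) - 1))]

    return pick(0.5), pick(0.025), pick(0.975)

observed_or = (120 * 220) / (180 * 80)                # crude odds ratio from observed counts
median_or, low_or, high_or = mcsa_odds_ratio(cases=(120, 300), controls=(80, 300))
print(f"observed OR={observed_or:.2f}, adjusted median={median_or:.2f}, "
      f"95% simulation interval=({low_or:.2f}, {high_or:.2f})")
```

The simulation interval reflects uncertainty in the bias parameters and the reclassification step only; conventional random error would need to be added separately.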

Figure 1: Monte Carlo Sensitivity Analysis Workflow for Bias Adjustment

Protocol 3: Efficient Reliability Analysis with Parametric P-Boxes

Objective: Compute bounds on failure probabilities for structural systems with parametric p-box uncertainties.

Materials: Limit state function model, parameter bounds, computational software with reliability analysis capabilities.

Procedure:

- Employ Fractional Exponential Moments-based Maximum Entropy Method (FEM-MEM) with Bivariate Dimension Reduction Method (BDRM) for initial reliability assessment

- Perform a single round of limit state function evaluations at integration points

- Apply Bayesian Updating to adjust BDRM weights in response to changes in input distribution parameters

- Recompute failure probabilities with updated weights without additional model evaluations

- Use Kriging modeling to construct surrogate relationships between interval variables and failure probabilities

- Calculate precise bounds on failure probabilities using the surrogate model [27] [28]

Computational Implementation Framework

Figure 2: Probability-Box Analysis Framework for Parametric Uncertainty

Table 2: Research Reagent Solutions for P-Box and PSA Implementation

| Tool/Method | Primary Function | Implementation Considerations |
|---|---|---|
| Bayesian Updating BDRM | Efficient reliability analysis with single-loop evaluation | Requires initial integration points; Updates weights with parameter changes [28] |
| Kriging Surrogate Modeling | Approximates relationship between interval variables and failure probabilities | Reduces computational cost; Provides uncertainty quantification for predictions [27] |
| Fractional Exponential Moments MEM | Accurately computes failure probabilities from limited evaluations | Works with BDRM integration points; Captures distribution tail behavior [28] |
| Monte Carlo Sensitivity Analysis | Propagates uncertainty in bias parameters through models | Requires specification of parameter distributions; Computationally intensive but parallelizable [30] |
| Pinching Analysis | Identifies influential uncertain parameters by fixing them to precise values | Systematic approach; Provides parameter importance ranking [29] |
| Double-Loop Sampling | Reference method for p-box propagation with guaranteed bounds | Computationally expensive; Useful for validation of approximate methods [24] |

Advanced Integration Scenarios

How can p-boxes be integrated with traditional probabilistic analysis in drug development?

In health technology assessment, p-boxes can enhance probabilistic sensitivity analysis by accommodating epistemic uncertainty in distribution parameters. For instance, when a cost-effectiveness analysis requires input parameters with limited data, p-boxes can represent the uncertainty in the distributional form itself, while PSA propagates this uncertainty to cost-effectiveness outcomes. The National Institute for Health and Care Excellence (NICE) has demonstrated that technologies with higher probabilities of being cost-effective (typically above 40% at relevant thresholds) are more likely to receive positive recommendations, highlighting the importance of comprehensively accounting for all sources of uncertainty [25].

What strategies exist for mixing precise probabilities, intervals, and p-boxes in the same analysis?

Real-world analyses often combine different uncertainty representations. P-boxes naturally accommodate this heterogeneity through their position in the uncertainty representation hierarchy. Precise probabilities become special cases of p-boxes where upper and lower bounds coincide. Interval analysis represents scenarios where only range information is available without probabilistic content. The joint propagation of these mixed uncertainties can be achieved through random set interpretation or Dempster-Shafer structures, which provide a consistent mathematical framework for combining different forms of uncertain information [29] [24].

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of using Kriging over other surrogate models in Bayesian updating?

Kriging offers several distinct benefits that make it particularly suitable for Bayesian model updating:

- Exact Interpolation and Uncertainty Quantification: Kriging provides exact predictions at training data points and, uniquely, gives an estimate of the prediction variance at untested locations. This built-in error estimation is invaluable for quantifying surrogate model uncertainty in reliability analysis [31] [32].

- Adaptive Active Learning: The model can be efficiently refined using active learning functions that leverage the predicted variance to sequentially add sample points in regions of interest, such as near a failure boundary or in high-probability density regions of parameters [31] [33].

- Handling Different Data Types: Advanced Kriging frameworks can competently approximate responses of different natures and dimensions (e.g., combining modal frequencies and mode shapes in structural dynamics) by using techniques like multi-dimensional scaling factors in an affine-invariant sampling space [31].

Q2: In the context of treating structural vs. parametric uncertainty, how can Bayesian updating help distinguish between them?

Bayesian methods provide a framework to quantify and separate these uncertainties:

- Parametric Uncertainty is handled directly within the Bayesian updating process. The posterior probability density of the model parameters is estimated, reflecting the updated belief about their values after incorporating observational data [31] [34].

- Identifying Structural Deficiencies: When a model consistently fails to match multiple observational constraints despite parameter adjustments, it indicates structural errors. Workflows exist that use Bayesian inference on perturbed parameter ensembles to reveal such inconsistencies that no combination of parameters can resolve, thereby diagnosing potential structural model deficiencies [35] [36]. For instance, a study on an aerosol model found that structural inconsistencies prevented simultaneous consistency with multiple observations, limiting parametric uncertainty reduction [35].

Q3: Our computational models are very expensive. What strategies can make Bayesian updating feasible?

Integrating surrogate models, specifically Kriging, with advanced sampling algorithms is a highly effective strategy:

- Surrogate-Assisted MCMC: Construct a Kriging model to approximate the computationally expensive system (e.g., a finite element model). This surrogate is then used in place of the original model during the thousands of iterations required by MCMC sampling, drastically reducing computational time. One study on a high-rise building reported a reduction to 1/8 of the time required by the standard approach [34].

- Active Learning Frameworks: Instead of building a surrogate once, use active learning (e.g., with an Expected Improvement-Least Improvement Function, AK-EI-LIF) to iteratively and efficiently train the Kriging model. This focuses computational resources on refining the surrogate in regions most critical for accurately determining the posterior distribution [31].

- Ensemble of Surrogates: To overcome the challenge of selecting a single best surrogate model, combine Kriging with other models like Artificial Neural Networks (ANNs). Use local weighting schemes to leverage the strengths of each model across different parts of the parameter space, improving robustness and accuracy [33].
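
The surrogate-assisted MCMC idea can be sketched in a few lines. Everything specific here is an assumption: the "expensive" model is a cheap cubic stand-in, the surrogate is a piecewise-linear lookup table used in place of Kriging, the prior is a normal truncated to the tabulated range, and the sampler is a plain random-walk Metropolis algorithm rather than the adaptive or transitional schemes cited above.

```python
import math
import random

calls = {"expensive": 0}

def expensive_model(theta):
    """Stand-in for a costly simulator; the call counter tracks its usage."""
    calls["expensive"] += 1
    return theta ** 3 + theta

def build_surrogate(lo, hi, n_nodes):
    """Tabulate the expensive model once and interpolate linearly between nodes."""
    step = (hi - lo) / (n_nodes - 1)
    values = [expensive_model(lo + i * step) for i in range(n_nodes)]

    def surrogate(theta):
        pos = (theta - lo) / step
        i = min(int(pos), n_nodes - 2)
        frac = pos - i
        return values[i] * (1.0 - frac) + values[i + 1] * frac

    return surrogate

def metropolis(log_post, start, n_steps, step_sd, seed=0):
    """Random-walk Metropolis sampler for a one-dimensional log-posterior."""
    rng = random.Random(seed)
    theta, lp = start, log_post(start)
    chain = []
    for _ in range(n_steps):
        proposal = theta + rng.gauss(0.0, step_sd)
        lp_new = log_post(proposal)
        if rng.random() < math.exp(min(0.0, lp_new - lp)):
            theta, lp = proposal, lp_new
        chain.append(theta)
    return chain

LO, HI = -3.0, 3.0
Y_OBS, NOISE_SD = 2.0, 0.2                    # hypothetical observation and noise level
surrogate = build_surrogate(LO, HI, 61)

def log_posterior(theta):
    """Normal(0, 2) prior truncated to [LO, HI], Gaussian likelihood on the surrogate."""
    if not LO <= theta <= HI:
        return -math.inf
    log_prior = -0.5 * (theta / 2.0) ** 2
    log_like = -0.5 * ((Y_OBS - surrogate(theta)) / NOISE_SD) ** 2
    return log_prior + log_like

chain = metropolis(log_posterior, start=0.0, n_steps=6000, step_sd=0.1)
samples = chain[1000:]                        # discard burn-in
post_mean = sum(samples) / len(samples)
print(f"posterior mean={post_mean:.3f}, expensive model calls={calls['expensive']}")
```

The sampler evaluates the likelihood 6,001 times, yet the expensive model is called only for the 61 table nodes. The accuracy of the posterior then depends on the accuracy of the surrogate, which is the motivation for the active learning strategies described above.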

Q4: What are common signs of convergence failure in MCMC sampling for Bayesian updating, and how can they be addressed?

Common symptoms of convergence failure, together with their remedies, are:

- Poor Mixing (High Autocorrelation): The chains move slowly through the parameter space, getting stuck in local regions. Solution: Use advanced sampling techniques like the affine-invariant Transitional MCMC (TMCMC), which is designed to handle complex, high-dimensional posterior distributions more effectively [31].

- Lack of Precision in Surrogate Model: If the Kriging model is not accurate enough, it can misguide the MCMC sampler. Solution: Employ stricter stopping criteria for active learning, such as ensuring the failure probability uncertainty or the learning function value falls below a target threshold before proceeding with final MCMC on the surrogate [32].

- Inefficiency with Complex Posteriors: Traditional MCMC can be inefficient. Solution: Implement adaptive MCMC algorithms that use sub-steps and parallel computing to enhance sampling efficiency [34].
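
Two widely used numerical checks can help detect the symptoms listed above before a remedy is chosen. They are not taken from the cited studies: the Gelman-Rubin statistic compares several independent chains, and the lag-1 autocorrelation flags slow mixing within one chain. The synthetic chains below are hypothetical.

```python
import math
import random
import statistics

def gelman_rubin(chains):
    """Potential scale reduction factor for equal-length chains of one parameter."""
    n = len(chains[0])
    means = [statistics.fmean(c) for c in chains]
    within = statistics.fmean([statistics.variance(c) for c in chains])
    between = n * statistics.variance(means)
    var_hat = (n - 1) / n * within + between / n
    return math.sqrt(var_hat / within)

def lag1_autocorrelation(chain):
    """Lag-1 sample autocorrelation; values near 1 indicate poor mixing."""
    mean = statistics.fmean(chain)
    num = sum((x - mean) * (y - mean) for x, y in zip(chain, chain[1:]))
    den = sum((x - mean) ** 2 for x in chain)
    return num / den

rng = random.Random(3)
# Four chains that all sample the same N(0, 1) target
mixed = [[rng.gauss(0.0, 1.0) for _ in range(500)] for _ in range(4)]
# Four chains stuck around two different modes
stuck = [[rng.gauss(offset, 1.0) for _ in range(500)] for offset in (0.0, 0.0, 3.0, 3.0)]
# One slowly moving chain (first-order autoregressive with coefficient 0.95)
slow = [0.0]
for _ in range(1999):
    slow.append(0.95 * slow[-1] + rng.gauss(0.0, 1.0))

r_mixed = gelman_rubin(mixed)
r_stuck = gelman_rubin(stuck)
rho_fast = lag1_autocorrelation(mixed[0])
rho_slow = lag1_autocorrelation(slow)
print(f"R-hat mixed={r_mixed:.3f}, R-hat stuck={r_stuck:.3f}")
print(f"lag-1 autocorrelation: independent={rho_fast:.3f}, slow chain={rho_slow:.3f}")
```

A factor close to 1 is a necessary but not sufficient sign of convergence; values clearly above 1, or autocorrelations near 1, suggest longer runs or a better sampler.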

Troubleshooting Guides

Problem: The Kriging surrogate model is inaccurate, leading to biased posterior distributions.

Diagnosis: This occurs when the surrogate model has not been sufficiently trained in the regions of high probability density of the parameters.

Solution: Implement an Active Learning Framework.

- Step 1: Begin with an initial Design of Experiments (DOE), such as Latin Hypercube Sampling, to build a preliminary Kriging model.

- Step 2: Use an active learning function to identify the most valuable new point(s) to add to your training data. A powerful function is the Expected Improvement within Least Improvement Function (AK-EI-LIF) [31].

  - The LIF aims to improve the surrogate where it matters most for the posterior. The EI component makes its evaluation computationally efficient.

  - Select the next sample point by maximizing the AK-EI-LIF function: \( \arg\max_{x} \, \text{AK-EI-LIF}(x) \).

- Step 3: Run your full computational model at the selected point(s) and update the Kriging model.

- Step 4: Check the stopping criterion. This could be a threshold on the maximum learning function value or a minimal change in the estimated parameters over several iterations.

- Step 5: Once stopped, use the highly accurate Kriging surrogate for rapid MCMC sampling to obtain the posterior distribution.
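
The loop in Steps 1 to 4 can be sketched with a small, self-contained Kriging implementation. Several simplifications are assumptions and are not the method of [31]: the learning function is the plain predictive variance (a stand-in for AK-EI-LIF), the kernel is a squared exponential with a fixed length scale and zero prior mean, the initial design is three fixed points rather than a Latin Hypercube sample, and the "expensive" model is a cheap analytic function.

```python
import math

def kernel(a, b, length=0.15):
    """Squared-exponential covariance with unit prior variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(a, b):
    """Solve a small linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[p] = m[p], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for k in range(c, n + 1):
                m[r][k] -= f * m[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][k] * x[k] for k in range(r + 1, n))) / m[r][r]
    return x

def fit_kriging(xs, ys, nugget=1e-8):
    """Return the covariance matrix and the weights K^{-1} y."""
    k = [[kernel(a, b) + (nugget if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    return k, solve(k, ys)

def predict(x, xs, k, alpha):
    """Kriging mean and variance at x."""
    kx = [kernel(x, xi) for xi in xs]
    mean = sum(a * kv for a, kv in zip(alpha, kx))
    var = max(0.0, 1.0 - sum(kv * vi for kv, vi in zip(kx, solve(k, kx))))
    return mean, var

def expensive_model(x):
    """Cheap analytic stand-in for a costly simulator."""
    return math.sin(6.0 * x) + x

def active_learning(candidates, initial, max_iter=12, tol=1e-2):
    """Add the highest-variance candidate until the variance drops below tol."""
    xs = list(initial)
    ys = [expensive_model(x) for x in xs]
    for _ in range(max_iter):
        k, alpha = fit_kriging(xs, ys)
        variances = [predict(c, xs, k, alpha)[1] for c in candidates]
        best = max(range(len(candidates)), key=lambda i: variances[i])
        if variances[best] < tol:                # stopping criterion (Step 4)
            break
        xs.append(candidates[best])              # run the full model there (Step 3)
        ys.append(expensive_model(candidates[best]))
    k, alpha = fit_kriging(xs, ys)
    return xs, ys, k, alpha

candidates = [i / 100 for i in range(101)]
xs, ys, k, alpha = active_learning(candidates, [0.0, 0.5, 1.0])
max_err = max(abs(predict(c, xs, k, alpha)[0] - expensive_model(c)) for c in candidates)
print(f"{len(xs)} model runs, max surrogate error on candidates = {max_err:.4f}")
```

A posterior-aware learning function would concentrate the added points in high-probability regions instead of spreading them over the whole range, which is the refinement that AK-EI-LIF is described as providing.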

Problem: The computational cost of quantifying surrogate model uncertainty is prohibitive.

Diagnosis: Directly propagating the uncertainty of the Kriging prediction through the reliability analysis can be complex and expensive.

Solution: Adopt a dedicated surrogate model uncertainty quantification (UQ) method.

- Step 1: Construct your Kriging surrogate model as usual.

- Step 2: Instead of a traditional indicator function, use a Probabilistic Classification Function.

- Step 3: Quantify the impact of surrogate model uncertainty on failure probability estimation by integrating the difference between the traditional indicator function and the probabilistic classification function. This metric is known as Failure Probability Uncertainty (FPU) [32].

- Step 4: Use this FPU as a stopping criterion for your active learning process. You can stop adding samples once the FPU falls below an acceptable tolerance, ensuring the failure probability is estimated with sufficient precision given the surrogate's accuracy.
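One way to read Steps 2 to 4 is sketched below. It assumes the Kriging surrogate returns a predictive mean and standard deviation of a limit-state function g(x) at each Monte Carlo sample, that failure corresponds to g(x) <= 0, and that the probabilistic classification function is the Gaussian probability of failure at each sample. The exact FPU definition in [32] may differ; the function name and the averaging of absolute differences are illustrative assumptions.

```python
import math

def normal_cdf(z):
    # Standard normal cumulative distribution function.
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def failure_probability_with_fpu(means, sds):
    """Estimate failure probability and an FPU-style uncertainty measure.

    means, sds: surrogate predictive means and standard deviations of the
    limit-state function at Monte Carlo samples (failure assumed when g <= 0).
    """
    n = len(means)
    # Traditional indicator function: hard classification from the mean.
    indicator = [1.0 if m <= 0.0 else 0.0 for m in means]
    # Probabilistic classification: probability that g <= 0 given mean and sd.
    prob_class = [normal_cdf(-m / s) if s > 0.0 else ind
                  for m, s, ind in zip(means, sds, indicator)]
    pf = sum(indicator) / n
    # Monte Carlo average of the difference between the two classifications.
    fpu = sum(abs(i - p) for i, p in zip(indicator, prob_class)) / n
    return pf, fpu

# Hypothetical surrogate predictions at four samples.
pf, fpu = failure_probability_with_fpu([-2.0, 3.0, 0.1, -0.05],
                                       [0.1, 0.1, 1.0, 0.0])
print(pf, round(fpu, 4))
```

Samples far from the limit state contribute almost nothing to the measure, while samples near g = 0 with large surrogate uncertainty dominate it, which is why it can serve as a stopping criterion.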

Problem: My problem involves high-dimensional parameters and highly non-linear responses, and a single surrogate model struggles.

Diagnosis: No single surrogate model may be optimal for the entire parameter space and response surface.

Solution: Use a locally weighted ensemble of surrogates.

- Step 1: Construct multiple types of surrogate models (e.g., Kriging and an Artificial Neural Network) using the same initial training data [33].

- Step 2: For any candidate point x in the parameter space, assess the local goodness (accuracy) of each surrogate model. This can be done using cross-validation or Jackknife techniques to estimate local prediction errors [33].

- Step 3: Use a Local Weighted Average Surrogate (LWAS) or select the Local Best Surrogate (LBS) for that specific point x.

- Step 4: In the active learning loop, select new sample points that have the largest predicted error from the ensemble model and are close to the limit state (e.g., the failure boundary). This approach has been shown to be more efficient than using a single surrogate model or globally weighted ensembles [33].
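The local combination in Step 3 can be sketched as follows. The inverse-squared-error weighting is an assumption chosen for simplicity, and the function names are hypothetical; [33] may define the local weights differently. The inputs are each surrogate's prediction at a point x and an estimate of its local error there (for example from cross-validation).

```python
def local_weights(local_errors, eps=1e-12):
    # Weights inversely proportional to the squared local error estimate.
    inverse = [1.0 / (e * e + eps) for e in local_errors]
    total = sum(inverse)
    return [v / total for v in inverse]

def lwas_predict(predictions, local_errors):
    # Local Weighted Average Surrogate: blend all surrogates at this point.
    weights = local_weights(local_errors)
    return sum(w * p for w, p in zip(weights, predictions))

def lbs_predict(predictions, local_errors):
    # Local Best Surrogate: use only the surrogate with the smallest local error.
    best = min(range(len(predictions)), key=lambda i: local_errors[i])
    return predictions[best]

# Hypothetical example: Kriging predicts 1.0 (local error 0.1),
# an ANN predicts 2.0 (local error 0.3) at the same point x.
print(lwas_predict([1.0, 2.0], [0.1, 0.3]))
print(lbs_predict([1.0, 2.0], [0.1, 0.3]))
```

Because the weights are recomputed at every point, a surrogate that is poor globally can still dominate in the regions where it is locally accurate.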

The following table summarizes quantitative results from recent studies applying these advanced techniques.

Table 1: Performance of Advanced Bayesian and Kriging Methods in Various Applications

| Application Domain | Method Used | Key Performance Metric | Result | Source |
|---|---|---|---|---|
| High-rise Building Model Updating | Gaussian Process Regression (GPR) Surrogate with MCMC | Computational Time | Reduced to 1/8 of traditional MCMC time. | [34] |
| Reliability Analysis | Ensemble of Kriging & ANN with Local Weighting (LWAS) | Computational Efficiency | More efficient than single surrogate (AK-MCS) and global ensembles in high-dimension/rare event problems. | [33] |
| Laminated Composite Shell Optimization | Adaptive Hybrid Correlation Kriging | Vibration Displacement & Fundamental Frequency | Achieved effective uncertainty optimization for conflicting objectives. | [37] |
| Structural Model Updating | Active Kriging with EI-LIF (AK-EI-LIF) | Parameter Estimation Accuracy | Improved accuracy and efficiency at various noise levels compared to existing approaches. | [31] |

Detailed Methodology: AK-EI-LIF for Bayesian Model Updating

This protocol outlines the steps for implementing the AK-EI-LIF method as described in [31].

Problem Definition:

- Define the parameter space for the model parameters θ to be updated.

- Identify the observational data D (e.g., frequencies, mode shapes).

Initial Design and Surrogate Construction:

- Generate an initial set of sample points θ_i using a space-filling DOE (e.g., Latin Hypercube Sampling).

- For each θ_i, run the high-fidelity model (e.g., FEM) to compute the corresponding responses G(θ_i).

- Construct an initial Kriging model to approximate the posterior probability density function p(θ|D).

Active Learning Loop:

- Calculate Learning Function: For a large set of candidate points, evaluate the AK-EI-LIF learning function. This function modifies the computationally intensive LIF by using an Expected Improvement technique to find the point where adding a sample would most improve the surrogate's accuracy in representing the posterior [31].

- Select and Run: Find the candidate point θ* that maximizes the AK-EI-LIF function. Run the high-fidelity model at θ* to get G(θ*).

- Update and Check: Add (θ*, G(θ*)) to the training set and update the Kriging model. Check the stopping criterion (e.g., maximum learning function value is below a threshold, or the change in posterior estimates is negligible).

Final Sampling and Analysis:

- Once the stopping criterion is met, use the final, accurate Kriging surrogate with a TMCMC sampler to draw samples from the posterior distribution p(θ|D).

- Analyze the posterior samples to obtain estimates (mean, median) and uncertainties (credible intervals) for the updated parameters.

The Scientist's Toolkit

Table 2: Essential Research Reagents & Computational Tools

| Item / Technique | Function in the Research Process |
|---|---|
| Transitional MCMC (TMCMC) | An advanced sampling algorithm effective for sampling from complex, high-dimensional posterior distributions. It is often paired with affine-invariance to handle parameters of different natures [31]. |
| Kriging (Gaussian Process) | A surrogate modeling technique that provides both a prediction and an uncertainty estimate at any point in the parameter space, forming the backbone of active learning [31] [32]. |
| Active Learning Function (e.g., U, EFF, LIF, AK-EI-LIF) | A criterion used to intelligently select the next most informative sample point to run the expensive computational model, maximizing the efficiency of surrogate training [31] [33]. |
| Affine-Invariant Sampler | A sampling technique that accounts for the different scales and natures of parameters and multi-dimensional responses (e.g., frequencies vs. mode shapes), improving the robustness of MCMC [31]. |
| Ensemble of Surrogates | A framework that combines multiple surrogate models (e.g., Kriging + ANN) with local weighting to improve robustness and accuracy, especially when the best single model is unknown [33]. |
| Finite Element Model (FEM) | The high-fidelity computational model (e.g., of a structure or physical system) that the surrogate is built to emulate. It is the primary source of computational expense [31] [34]. |

Technical Support Center: FAQs on Uncertainty in PK/PD

FAQ 1: What is the core difference between structural and parameter uncertainty in my PK/PD model, and why does it matter?

Understanding this distinction is fundamental to diagnosing and fixing model issues. Parameter uncertainty arises from imprecise knowledge of the numerical values in your model equations, such as clearance (CL) or volume of distribution (Vss). It reflects a lack of information and can often be reduced by collecting more or higher-quality data [38]. In contrast, structural uncertainty results from an imperfect representation of the underlying biology—the model's equations themselves may be an oversimplification or contain the wrong relationships [1] [38]. For example, using a simple one-compartment model when the drug's disposition is truly multi-compartmental is a source of structural uncertainty [39].

It matters because the mitigation strategies differ. Parameter uncertainty is addressed through better experimental design and statistical methods, while structural uncertainty requires a fundamental re-evaluation of the model's assumptions and may involve comparing different model structures [39] [38].

FAQ 2: My model fits the data well but makes poor predictions. Could uncertainty be the cause?

Yes, this is a classic symptom. A model might produce a good fit to a specific dataset by over-relying on a single, uncertain parameter value or an incorrect structural assumption. This is often an issue of identifiability, where multiple parameter combinations can explain the observed data equally well, leading to unreliable predictions [40]. To troubleshoot:

- Check for high correlation between parameter estimates, which suggests identifiability issues.

- Perform a visual predictive check to see if model simulations capture the variability in your data [41].

- Quantify parameter uncertainty using methods like Markov Chain Monte Carlo (MCMC) to see if a wide range of parameter values are plausible [42].

FAQ 3: How can I visually communicate the impact of uncertainty to project teams and decision-makers?

Static plots of model predictions are often insufficient. Use interactive visualization to answer "what-if" questions in real-time during team meetings [41]. For instance, use tools that can instantly simulate the percentage of patients achieving a target effect across a range of doses, while overlaying the variability from multiple simulation runs [41]. This helps teams understand not just the most likely outcome, but the range of possible outcomes and associated risks, enabling more robust decision-making on dose selection and trial design.
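A minimal sketch of the kind of simulation behind such a display is shown below. It assumes a simple Emax dose-response model with log-normal between-patient variability in ED50; the parameter values (`emax`, `ed50_typical`, `omega`) and the function name are hypothetical and chosen only for illustration. For each dose it reports the median, minimum, and maximum fraction of virtual patients reaching the target effect across repeated simulation runs.

```python
import math
import random
import statistics

def simulate_target_attainment(doses, target_effect, n_patients=500,
                               n_runs=20, seed=1):
    rng = random.Random(seed)
    # Hypothetical typical values and between-patient variability (log scale).
    emax, ed50_typical, omega = 100.0, 50.0, 0.4
    # Sample each run's virtual patients once and reuse them for every dose,
    # so differences between doses are not blurred by resampling noise.
    runs = [[ed50_typical * math.exp(rng.gauss(0.0, omega))
             for _ in range(n_patients)]
            for _ in range(n_runs)]
    summary = {}
    for dose in doses:
        fractions = [
            sum(emax * dose / (ed50 + dose) >= target_effect
                for ed50 in patients) / n_patients
            for patients in runs
        ]
        summary[dose] = (statistics.median(fractions),
                         min(fractions), max(fractions))
    return summary

for dose, (med, low, high) in simulate_target_attainment(
        [10, 50, 200], target_effect=50.0).items():
    print(f"dose {dose}: median {med:.2f}, range {low:.2f} to {high:.2f}")
```

Plotting the median with the run-to-run range as a band gives the team both the expected response and a sense of how much it could vary.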

FAQ 4: What are the main quantitative sources of uncertainty in human dose prediction from preclinical data?

The table below summarizes key quantitative uncertainties you must account for when translating from animal models to humans [39].

Table 1: Key Sources of Uncertainty in Preclinical to Human Translation

| PK Parameter | Key Sources of Uncertainty | Typical Prediction Performance |
|---|---|---|
| Clearance (CL) | Species differences in metabolism and excretion; choice of scaling method (allometry vs. in vitro-in vivo extrapolation). | ~60% of compounds predicted within 2-fold of true human value for best allometric methods [39]. |
| Volume of Distribution (Vss) | Interspecies differences in physiology and tissue binding; reliance on physicochemical properties. | Often falls within 3-fold of the true human value [39]. |
| Bioavailability (F) | Interspecies differences in intestinal physiology and gut metabolism; difficult to predict for low-solubility or low-permeability compounds. | Physiologically based pharmacokinetic models tend to underpredict; highly variable between species [39]. |

Experimental Protocols for Uncertainty Quantification

Protocol: Quantifying Parameter Uncertainty using Markov Chain Monte Carlo (MCMC)

Objective: To estimate the posterior distribution of PK/PD model parameters, thereby fully characterizing parameter uncertainty.

Background: Parameter uncertainty means that the true value of a model parameter (e.g., clearance) is not a single number but a distribution of plausible values [42]. MCMC is a powerful sampling technique that allows for this distribution to be characterized, even for complex, high-dimensional models [42].

Methodology:

- Define Priors: Specify a prior probability distribution for each model parameter based on existing knowledge (e.g., from literature or in vitro studies).

- Construct Likelihood: Define a function that calculates the probability of observing your experimental data given a specific set of parameter values.

- Run MCMC Sampling: Use an algorithm (e.g., Metropolis-Hastings, Hamiltonian Monte Carlo) to draw thousands of samples from the joint posterior distribution of the parameters [42]. The choice of sampling algorithm largely determines the sampling efficiency and the reliability of the uncertainty analysis [42].

- Diagnose Convergence: Ensure the sampling algorithm has stabilized by using diagnostic tools (e.g., trace plots, Gelman-Rubin statistic).

- Analyze Output: The collected samples form the posterior distribution for each parameter. Summarize these distributions using means, medians, and credible intervals (e.g., 95% CrI).
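The steps above can be sketched with a random-walk Metropolis sampler for a one-compartment IV bolus model. This is a minimal illustration under stated assumptions, not a production workflow: the dose, sampling times, "true" values (CL = 2, V = 10), priors, residual error, and step size are all hypothetical, the observations are synthetic and noise-free, and convergence diagnostics such as running several chains are left out.

```python
import math
import random
import statistics

DOSE = 100.0                                # hypothetical IV bolus dose
TIMES = [0.5, 1.0, 2.0, 4.0, 8.0, 12.0]     # hypothetical sampling times

def log_conc(log_cl, log_v, t):
    # One-compartment IV bolus: C(t) = Dose / V * exp(-CL / V * t).
    return math.log(DOSE) - log_v - math.exp(log_cl - log_v) * t

# Synthetic observations generated from assumed "true" values CL = 2, V = 10.
LOG_OBS = [log_conc(math.log(2.0), math.log(10.0), t) for t in TIMES]

def log_posterior(log_cl, log_v, sigma=0.1):
    # Priors: normal on the log scale, centred on assumed prior guesses.
    lp = -0.5 * (log_cl - math.log(1.5)) ** 2
    lp += -0.5 * (log_v - math.log(12.0)) ** 2
    # Likelihood: log-normal residual error with known sigma.
    for t, y in zip(TIMES, LOG_OBS):
        lp += -0.5 * ((y - log_conc(log_cl, log_v, t)) / sigma) ** 2
    return lp

def metropolis(n_iter=6000, burn_in=1000, step=0.05, seed=42):
    rng = random.Random(seed)
    current = [math.log(1.5), math.log(12.0)]
    current_lp = log_posterior(*current)
    samples, accepted = [], 0
    for i in range(n_iter):
        # Symmetric random-walk proposal on the log scale.
        proposal = [p + rng.gauss(0.0, step) for p in current]
        proposal_lp = log_posterior(*proposal)
        # Metropolis acceptance rule.
        if rng.random() < math.exp(min(0.0, proposal_lp - current_lp)):
            current, current_lp = proposal, proposal_lp
            accepted += 1
        if i >= burn_in:
            samples.append((math.exp(current[0]), math.exp(current[1])))
    return samples, accepted / n_iter

def summarize(values):
    # Posterior mean, median, and an approximate 95% credible interval.
    s = sorted(values)
    interval = (s[int(0.025 * len(s))], s[int(0.975 * len(s)) - 1])
    return statistics.mean(s), statistics.median(s), interval

samples, acceptance = metropolis()
print("CL:", summarize([cl for cl, _ in samples]))
print("V: ", summarize([v for _, v in samples]))
print("acceptance rate:", round(acceptance, 2))
```

Sampling on the log scale keeps CL and V positive without explicit constraints. For real models, established tools with Hamiltonian Monte Carlo and built-in diagnostics are usually preferable to a hand-written sampler.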

MCMC Workflow for Parameter Uncertainty

Protocol: Evaluating Structural Uncertainty with Model Averaging

Objective: To account for the uncertainty introduced by not knowing the single "true" model structure.

Background: In PK/PD modeling the "true" model structure is rarely known, for example whether an Emax model or a linear model best describes the concentration-effect relationship [1] [38]. Ignoring this structural uncertainty can lead to overconfident predictions.

Methodology:

- Develop Candidate Models: Propose a set of plausible model structures (e.g., 1-, 2-, and 3-compartment PK models; linear, Emax, and sigmoid Emax PD models).

- Estimate Model Probabilities: Fit all candidate models to the data and calculate a model performance metric for each, e.g., the Akaike Information Criterion (AIC) or minus twice the log-likelihood (-2LL) [40].

- Calculate Model Weights: Transform the performance metrics into model weights, which represent the probability of each model being the best among the set.

- Generate Averaged Predictions: For any prediction (e.g., future concentration), generate the prediction from each model and compute a weighted average based on the model weights. The variance of this averaged prediction will more honestly reflect the total uncertainty.
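The weighting and averaging steps can be sketched as follows, assuming AIC is the performance metric and using Akaike weights, one common way to turn AIC differences into model weights. The AIC values, predictions, and variances in the example are hypothetical, and the total variance uses the standard mixture formula (within-model variance plus between-model spread).

```python
import math

def akaike_weights(aic_values):
    # w_i = exp(-delta_i / 2) / sum_j exp(-delta_j / 2), delta_i = AIC_i - min AIC.
    best = min(aic_values)
    relative = [math.exp(-0.5 * (a - best)) for a in aic_values]
    total = sum(relative)
    return [r / total for r in relative]

def model_average(predictions, variances, weights):
    # Weighted average prediction across candidate models.
    avg = sum(w * p for w, p in zip(weights, predictions))
    # Total variance: within-model variance plus between-model disagreement.
    total_var = sum(w * (v + (p - avg) ** 2)
                    for w, p, v in zip(weights, predictions, variances))
    return avg, total_var

# Hypothetical AIC values for 1-, 2-, and 3-compartment models, with each
# model's predicted concentration and prediction variance at one time point.
weights = akaike_weights([100.0, 102.0, 110.0])
avg, total_var = model_average([10.0, 12.0, 15.0], [1.0, 1.0, 1.0], weights)
print([round(w, 3) for w in weights], round(avg, 2), round(total_var, 2))
```

The between-model term is what makes the averaged variance larger than any single model's variance when the candidates disagree.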

Model Averaging for Structural Uncertainty

The Scientist's Toolkit: Key Reagents & Methods for UQ

Table 2: Essential Tools for PK/PD Uncertainty Quantification

| Tool / Method | Function in Uncertainty Quantification | Relevant Uncertainty Type |

|---|---|---|