Taming Ill-Conditioning in Pharmaceutical Parameter Sweeps: From Theory to Regulatory-Grade Application


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the pervasive challenge of numerical ill-conditioning in parameter sweep studies. It covers foundational concepts, practical methodologies for regularization and constraint handling, optimization techniques for robust parameter selection, and rigorous validation frameworks based on ASME V&V40 standards. By integrating computational techniques with pharmaceutical applications, this resource aims to enhance the reliability and credibility of computational models in drug discovery and development, ultimately supporting more efficient and predictive in silico analyses.

Understanding Numerical Ill-Conditioning: Why Parameter Sweeps Fail in Pharmaceutical Modeling

Core Concepts: Ill-Conditioning and Condition Numbers

What is an ill-conditioned system and why does it matter in computational research?

An ill-conditioned system is one where small changes in the input data lead to large changes in the solution. This sensitivity poses significant challenges in scientific computing and drug development research, particularly in parameter sweep studies where system stability across parameter variations is crucial. The condition number quantifies this sensitivity, with large values indicating ill-conditioning [1] [2].

How is the condition number mathematically defined for matrices?

For a matrix A, the condition number is defined as cond(A) = ‖A‖·‖A⁻¹‖, where ‖·‖ represents a matrix norm. When the condition number is significantly greater than 1, the matrix is considered ill-conditioned. For singular matrices, the condition number is infinite [1] [2].
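As a minimal sketch, the definition can be checked numerically with NumPy. The 2×2 matrix below is an arbitrary illustration (not taken from any study); its rows are nearly parallel, so the condition number is large.

```python
import numpy as np

# Illustrative 2x2 matrix with nearly parallel rows (values are arbitrary).
A = np.array([[1.0, 2.0],
              [1.0, 2.0001]])

# cond(A) = ||A|| * ||A^-1||, here in the spectral (2-) norm.
cond_from_definition = np.linalg.norm(A, 2) * np.linalg.norm(np.linalg.inv(A), 2)

# NumPy's built-in uses the ratio of the extreme singular values by default,
# which is the same quantity for the 2-norm.
cond_builtin = np.linalg.cond(A)

print(f"definition: {cond_from_definition:.3e}, built-in: {cond_builtin:.3e}")
```

Forming the explicit inverse is done here only to mirror the definition; in practice the built-in function is preferable.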

What are the practical implications of high condition numbers in pharmaceutical research?

High condition numbers lead to amplified numerical errors, unreliable parameter estimates in model fitting, and compromised reproducibility in sensitivity analyses. In drug development, this can manifest as unstable predictions of drug efficacy or toxicity based on computational models [2].

Table 1: Common Matrix Norms and Their Applications

| Norm Type | Calculation Method | Primary Research Application |

|---|---|---|

| Frobenius Norm | ‖A‖_F = √(ΣΣAᵢⱼ²) | General purpose; least squares problems |

| Spectral Norm | ‖A‖₂ = σ_max(A) (largest singular value) | Error analysis in linear systems |

| Maximum Absolute Row-Sum | ‖A‖∞ = maxᵢ Σⱼ \|Aᵢⱼ\| | Stability analysis of algorithms |

| Maximum Absolute Column-Sum | ‖A‖₁ = maxⱼ Σᵢ \|Aᵢⱼ\| | Input error propagation studies |
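The four norms in the table map directly onto `numpy.linalg.norm`. The following sketch uses a small made-up matrix so the results can be checked by hand.

```python
import numpy as np

A = np.array([[1.0, -2.0],
              [3.0,  4.0]])   # arbitrary example matrix

frobenius = np.linalg.norm(A, "fro")   # sqrt of the sum of squared entries
spectral = np.linalg.norm(A, 2)        # largest singular value
row_sum = np.linalg.norm(A, np.inf)    # maximum absolute row sum
col_sum = np.linalg.norm(A, 1)         # maximum absolute column sum

print(frobenius, spectral, row_sum, col_sum)
```

The same norm choices can be passed to `numpy.linalg.cond(A, p)` for square matrices, so the condition number can be reported in whichever norm suits the error analysis.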

Diagnostic Methods: Detecting Ill-Conditioning

How can I detect ill-conditioning in my experimental data analysis?

The most direct approach is computing the condition number of your system matrix. Most computational environments provide built-in functions: numpy.linalg.cond(A) in Python or cond(A) in MATLAB. Additionally, these indicators suggest potential ill-conditioning [1] [2]:

- Large spread in singular values (σmax/σmin ≫ 1)

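- Near-singular matrices (a very small determinant can be a hint, but it depends on how the matrix is scaled, so it is not a reliable indicator on its own)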

- Significant numerical instability during iterative refinement

- Inconsistent results when using different algorithmic approaches
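The indicators above can be probed directly. The sketch below uses the Hilbert matrix, a standard textbook example of ill-conditioning chosen here as a stand-in for a real system matrix, and measures how a tiny relative change in the right-hand side propagates to the solution.

```python
import numpy as np

n = 8
# Hilbert matrix: entries 1 / (i + j + 1) with zero-based indices.
i, j = np.indices((n, n))
A = 1.0 / (i + j + 1)

singular_values = np.linalg.svd(A, compute_uv=False)
spread = singular_values[0] / singular_values[-1]   # equals the 2-norm condition number

x_true = np.ones(n)
b = A @ x_true

# Perturb the right-hand side by a relative amount of 1e-8.
rng = np.random.default_rng(0)
delta = rng.standard_normal(n)
b_perturbed = b + 1e-8 * np.linalg.norm(b) * delta / np.linalg.norm(delta)

x = np.linalg.solve(A, b)
x_perturbed = np.linalg.solve(A, b_perturbed)
relative_change = np.linalg.norm(x_perturbed - x) / np.linalg.norm(x)

print(f"sigma_max / sigma_min = {spread:.2e}")
print(f"relative input change 1e-08 -> relative output change {relative_change:.2e}")
```

An output change many orders of magnitude larger than the input change is the practical signature of an ill-conditioned system.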

What experimental factors contribute to ill-conditioning in pharmaceutical research?

Ill-conditioning frequently arises from these common scenarios [3] [4]:

- Multicollinearity in regression models where predictor variables are highly correlated

- Poorly designed experiments with inadequate variation in independent variables

- Inappropriate parameter scaling creating large disparities in numerical values

- Over-parameterized models relative to available data points

Table 2: Condition Number Interpretation Guide

| Condition Number Range | System Classification | Impact on Solution Accuracy |

|---|---|---|

| 1-10² | Well-conditioned | Reliable solutions |

| 10²-10⁶ | Moderately ill-conditioned | Progressive loss of precision |

| 10⁶-10¹² | Seriously ill-conditioned | Significant numerical errors |

| >10¹² | Numerically singular | Unreliable or no solution |

Mitigation Strategies: Practical Solutions

What computational approaches can mitigate ill-conditioning effects?

Regularization Methods introduce additional constraints to stabilize solutions. Tikhonov regularization addresses ill-conditioned systems by solving (AᵀA + αI)x = Aᵀb, where α > 0 is a regularization parameter. Because the eigenvalues of AᵀA + αI are σᵢ² + α, the 2-norm condition number drops from cond(AᵀA) = σmax²/σmin² to (σmax² + α)/(σmin² + α), at the cost of a small bias in the solution [3].
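A minimal sketch of Tikhonov regularization follows. The design matrix, the noise level, and the value of `alpha` are assumptions chosen for illustration; in a real analysis the regularization strength has to be tuned to the problem.

```python
import numpy as np

rng = np.random.default_rng(1)

# Illustrative design matrix with two nearly collinear columns.
t = np.linspace(0.0, 1.0, 50)
A = np.column_stack([t, t + 1e-6 * rng.standard_normal(t.size), np.ones_like(t)])
b = A @ np.array([1.0, 1.0, 0.5]) + 1e-3 * rng.standard_normal(t.size)

alpha = 1e-4  # assumed regularization strength; tune per problem

# Solve (A^T A + alpha I) x = A^T b.
AtA = A.T @ A
x_ridge = np.linalg.solve(AtA + alpha * np.eye(A.shape[1]), A.T @ b)

# Condition numbers before and after, from the singular values of A.
s = np.linalg.svd(A, compute_uv=False)
cond_before = (s[0] / s[-1]) ** 2
cond_after = (s[0] ** 2 + alpha) / (s[-1] ** 2 + alpha)

print(f"cond(A^T A) = {cond_before:.2e}, cond(A^T A + alpha I) = {cond_after:.2e}")
print("regularized solution:", x_ridge)
```

In this example the two collinear coefficients cannot be separated reliably, but their sum remains well determined, which is typical of what regularization can and cannot recover.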

Robust Regression Techniques provide alternatives to ordinary least squares that are less sensitive to outliers and ill-conditioning. M-estimation, MM-estimation, and Least Trimmed Squares (LTS) offer different trade-offs between robustness and efficiency [3] [4].

How can experimental design prevent ill-conditioning issues?

Proper experimental design significantly reduces ill-conditioning risks [3] [5]:

- Ensure adequate variation in independent variables

- Maintain appropriate scaling of parameters

- Include replication to improve numerical stability

- Use design of experiments (DoE) principles to optimize data collection

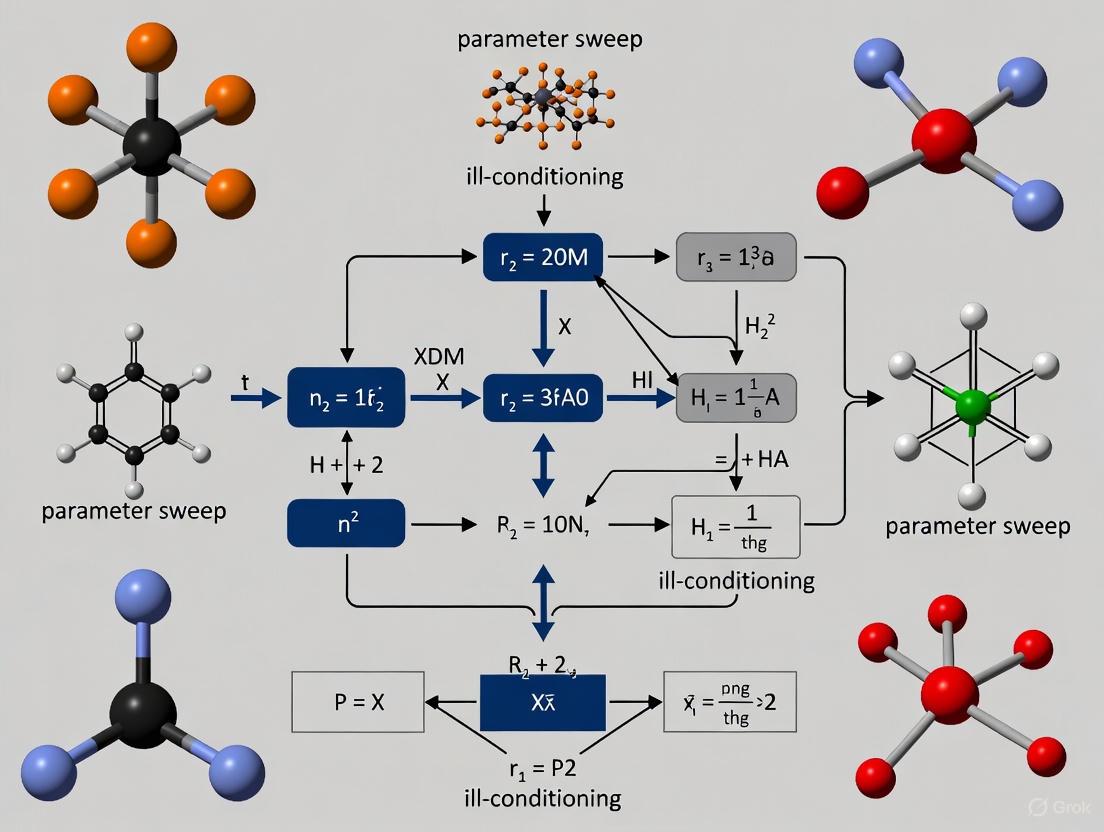

Figure 1: Diagnostic and Mitigation Workflow for Ill-Conditioned Systems

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Resources for Handling Ill-Conditioning

| Resource Category | Specific Tools/Functions | Primary Application |

|---|---|---|

| Condition Analysis | numpy.linalg.cond(), scipy.linalg.norm() | Matrix condition assessment |

| Regularization Methods | scipy.sparse.linalg.lsqr(), Ridge regression | Stabilizing ill-conditioned systems |

| Robust Regression | sklearn.linear_model.RANSACRegressor, M-estimators | Outlier-resistant modeling |

| Matrix Decomposition | SVD, QR, LU factorization | Alternative solution approaches |

| High-Precision Arithmetic | mpmath, gmpy2 libraries | Reducing roundoff errors |

Experimental Protocols: Case Study

Protocol: Assessing and Addressing Ill-Conditioning in Formulation Stability Modeling

This protocol adapts high-throughput screening methodologies for detecting and resolving numerical instability in pharmaceutical formulation models [5].

Step 1: Problem Formulation and Data Collection

- Define the system of equations representing your formulation model

- Collect experimental data with appropriate replication

- Format data into the matrix equation Ax = b

Step 2: Condition Number Computation
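One way this step might look in Python is sketched below. The design matrix is hypothetical (the column meanings and values are invented for illustration), and the sketch also shows why forming the normal equations is risky: it squares the condition number.

```python
import numpy as np

# Hypothetical design matrix for a formulation model: columns are
# excipient concentration (mg/mL), pH, and storage temperature (K).
A = np.array([
    [10.0, 5.5, 298.0],
    [20.0, 6.0, 298.0],
    [10.0, 6.5, 313.0],
    [20.0, 7.0, 313.0],
    [15.0, 6.2, 303.0],
])

cond_A = np.linalg.cond(A)              # 2-norm condition number of A
cond_normal = np.linalg.cond(A.T @ A)   # condition number of the normal equations

print(f"cond(A)     = {cond_A:.3e}")
print(f"cond(A^T A) = {cond_normal:.3e}  (approximately cond(A)^2)")
```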

Step 3: Diagnostic Assessment

- Compare computed condition numbers against thresholds in Table 2

- Perform singular value decomposition to analyze the singular value distribution

- Check for multicollinearity using variance inflation factors (VIF)
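The diagnostic checks above can be sketched with plain NumPy. The helper `variance_inflation_factors` is a hypothetical name written for this example, and the synthetic predictors are assumptions; a VIF above roughly 5 to 10 is a common rule of thumb for problematic multicollinearity.

```python
import numpy as np

def variance_inflation_factors(X):
    """Return the VIF of each column of X (an intercept is added internally)."""
    n_samples, n_features = X.shape
    vifs = np.empty(n_features)
    for k in range(n_features):
        y = X[:, k]
        # Regress column k on all other columns plus an intercept.
        others = np.column_stack([np.ones(n_samples), np.delete(X, k, axis=1)])
        coef, *_ = np.linalg.lstsq(others, y, rcond=None)
        residual = y - others @ coef
        r_squared = 1.0 - (residual @ residual) / np.sum((y - y.mean()) ** 2)
        vifs[k] = 1.0 / (1.0 - r_squared)
    return vifs

rng = np.random.default_rng(2)
x1 = rng.standard_normal(200)
x2 = x1 + 0.05 * rng.standard_normal(200)   # nearly a copy of x1
x3 = rng.standard_normal(200)               # independent predictor
X = np.column_stack([x1, x2, x3])

vifs = variance_inflation_factors(X)
singular_values = np.linalg.svd(X, compute_uv=False)

print("VIF per predictor:", np.round(vifs, 1))
print("singular values:  ", np.round(singular_values, 3))
```

The two correlated predictors show large VIFs and produce one singular value far smaller than the others, while the independent predictor stays near a VIF of 1.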

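Step 4: Mitigation Implementation

For ill-conditioned systems (cond(A) > 10⁴), apply one or more of the mitigation strategies described above, such as parameter rescaling or Tikhonov regularization.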
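A minimal sketch of such a mitigation step is given here. The function name `solve_with_mitigation`, the default threshold, and the default `alpha` are assumptions made for this example; the sketch also assumes that no column of A is entirely zero.

```python
import numpy as np

def solve_with_mitigation(A, b, cond_threshold=1e4, alpha=1e-8):
    """Solve Ax ~ b, rescaling columns and adding a ridge term only if needed."""
    if np.linalg.cond(A) <= cond_threshold:
        x, *_ = np.linalg.lstsq(A, b, rcond=None)
        return x

    # 1) Column scaling: give every column unit Euclidean norm.
    scale = np.linalg.norm(A, axis=0)
    A_scaled = A / scale

    # 2) If scaling alone is not enough, add Tikhonov (ridge) regularization.
    if np.linalg.cond(A_scaled) > cond_threshold:
        n = A_scaled.shape[1]
        y = np.linalg.solve(A_scaled.T @ A_scaled + alpha * np.eye(n), A_scaled.T @ b)
    else:
        y, *_ = np.linalg.lstsq(A_scaled, b, rcond=None)

    return y / scale  # map back to the original parameter units

# Usage: two parameters whose columns differ by six orders of magnitude.
A = np.array([[1.0, 1.0e6], [1.0, 2.0e6], [1.0, 3.0e6]])
x_true = np.array([2.0, 3.0e-6])
x = solve_with_mitigation(A, A @ x_true)
print(x)
```

Trying the cheaper fix (rescaling) before regularization avoids introducing bias when the ill-conditioning is purely a units problem.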

Step 5: Validation and Cross-verification

- Compare solutions from different methods

- Assess solution sensitivity to small input perturbations

- Verify biological/pharmaceutical plausibility of results
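Cross-verification can be as simple as solving the same least-squares problem with several algorithms and comparing the answers. The polynomial basis below is an illustrative stand-in for an ill-conditioned model matrix, not data from a real formulation study.

```python
import numpy as np

t = np.linspace(0.0, 1.0, 30)
A = np.vander(t, 10, increasing=True)   # monomial basis: ill-conditioned on [0, 1]
x_true = np.ones(10)
b = A @ x_true

# Method 1: normal equations (squares the condition number).
x_normal = np.linalg.solve(A.T @ A, A.T @ b)

# Method 2: QR factorization.
Q, R = np.linalg.qr(A)
x_qr = np.linalg.solve(R, Q.T @ b)

# Method 3: SVD-based least squares.
x_svd, *_ = np.linalg.lstsq(A, b, rcond=None)

def rel_diff(u, v):
    """Relative difference between two solution vectors."""
    return np.linalg.norm(u - v) / np.linalg.norm(v)

print(f"cond(A) = {np.linalg.cond(A):.2e}")
print(f"normal equations vs truth: {rel_diff(x_normal, x_true):.2e}")
print(f"QR vs truth:               {rel_diff(x_qr, x_true):.2e}")
print(f"SVD vs truth:              {rel_diff(x_svd, x_true):.2e}")
```

Large disagreement between methods that should give the same answer is a warning sign that the reported parameters are dominated by numerical error.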

Frequently Asked Questions

Why do I get different solutions when using different algorithms for the same problem?

This is a classic symptom of ill-conditioning. Different algorithms propagate numerical errors differently, leading to disparate solutions. The condition number bounds how strongly such errors can be amplified: the higher its value, the more algorithm-dependent variation to expect [1] [2].

How does ill-conditioning affect parameter sweep studies in drug development?

In parameter sweep research, ill-conditioning manifests as extreme sensitivity to minor parameter variations, making results unreproducible and biologically implausible. This is particularly problematic when optimizing combination therapies or formulation parameters where reliable interpolation between tested points is essential [6].

Can increasing computational precision solve ill-conditioning problems?

While higher precision arithmetic (e.g., quadruple precision) can help with moderate ill-conditioning, it cannot fix fundamentally ill-posed problems. As the condition number grows, eventually any fixed precision will prove inadequate. Addressing the mathematical root cause through regularization or experimental redesign is more effective [2].
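The effect of working precision can be illustrated with a small experiment. A common rule of thumb is that roughly log₁₀(cond(A)) significant digits are lost when solving a linear system; the sketch below solves the same system (a 5×5 Hilbert matrix, chosen as an illustrative example) in single and double precision.

```python
import numpy as np

n = 5
i, j = np.indices((n, n))
A64 = 1.0 / (i + j + 1)          # Hilbert matrix, cond ~ 4.8e5 for n = 5
x_true = np.ones(n)
b64 = A64 @ x_true

errors = {}
for dtype in (np.float32, np.float64):
    A = A64.astype(dtype)
    b = b64.astype(dtype)
    x = np.linalg.solve(A, b)    # NumPy keeps the input precision here
    errors[np.dtype(dtype).name] = float(
        np.linalg.norm(x - x_true) / np.linalg.norm(x_true)
    )

digits_lost = np.log10(np.linalg.cond(A64))
print(errors)
print(f"approximate digits lost: {digits_lost:.1f}")
```

Single precision carries about 7 decimal digits and double precision about 16, so the same condition number leaves very different amounts of usable accuracy.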

What is the relationship between outliers in experimental data and ill-conditioning?

Outliers can severely exacerbate ill-conditioning problems by distorting the covariance structure and inflating the condition number. Robust regression methods (M-estimation, MM-estimation, Least Trimmed Squares) specifically address this issue by reducing outlier influence [3] [4].

Figure 2: Interrelationships Between Ill-Conditioning Causes and Solutions

Key Takeaways for Researchers

Understanding condition numbers and error propagation in linear systems is fundamental for reliable computational research in pharmaceutical development. The most effective approach combines:

- Proactive assessment of condition numbers during experimental design

- Appropriate mitigation strategies tailored to your specific numerical challenges

- Robust validation using multiple computational approaches

- Documentation of numerical stability alongside scientific results

By implementing these practices, researchers can significantly improve the reliability and reproducibility of computational findings in drug development and formulation optimization.

Frequently Asked Questions (FAQs)

FAQ 1: What does an "ill-conditioned problem" mean in practical terms for my pharmaceutical simulation? An ill-conditioned problem is one where small changes in your input data or model parameters cause large, often unrealistic, variations in the output or solution. This high sensitivity means that tiny numerical errors, which are always present in computational work, can be greatly amplified, leading to unreliable and inaccurate results. It is characterized by a high condition number; the higher this number, the more sensitive the system is to perturbations [7].

FAQ 2: Why does my molecular dynamics simulation sometimes produce non-physical or unstable results? This instability can be a direct manifestation of ill-conditioning in the force fields or the integration algorithms. For instance, some Machine Learning Potentials (MLPs), while fast, can exhibit instabilities such as forming amorphous solid phases instead of correct liquid water structures or showing non-physical energy minima during dynamics [8]. These issues often stem from limitations in the training data or model architecture, causing the simulation to explore unrealistic molecular configurations.

FAQ 3: My parameter sweep for a process optimization shows wildly inconsistent outcomes. Could ill-conditioning be the cause? Yes. In processes like topical drug manufacturing, critical parameters (e.g., mixing speed, temperature, flow rates) are often interdependent [9]. If your parameter sweep varies one factor while ignoring others, it can probe regions of the parameter space where the system is highly sensitive, making the results seem chaotic and non-reproducible. This is a classic sign of an ill-conditioned optimization problem.

FAQ 4: Are there specific stages in drug development that are particularly prone to ill-conditioned problems? Yes, several key areas are prone to these issues:

- Molecular Dynamics (MD): When simulating slow conformational changes or using certain machine-learning force fields [10] [8].

- Process Optimization: When scaling up a manufacturing process, where small changes in mixing time or temperature can lead to batch failures, like changes in viscosity or precipitation [9].

- Population Pharmacokinetic (PK) Modeling: When trying to estimate model parameters from sparse or noisy clinical data, where the condition number of the estimation problem can be high [11].

FAQ 5: What are the most effective strategies to mitigate ill-conditioning in computational drug discovery? A multi-pronged approach is most effective, as summarized in the table below.

Table 1: Strategies for Mitigating Ill-Conditioning

| Strategy | Description | Application Example |

|---|---|---|

| Regularization | Adds a constraint to stabilize the solution of an ill-posed problem. | Tikhonov regularization for inverse problems [7]. |

| Preconditioning | Transforms the problem to a better-conditioned system with a lower condition number. | Diagonal scaling of a matrix before solving a linear system [7]. |

| Enhanced Sampling | Algorithmically improves the exploration of conformational space in MD. | Metadynamics, parallel tempering, and weighted ensemble methods [10]. |

| Quality by Design (QbD) | Systematically understands the impact of process parameters on product quality. | Using Design of Experiments (DOE) to identify robust manufacturing conditions [9]. |

| Advanced Hardware | Uses specialized computing to achieve longer, more stable simulations. | GPUs and purpose-built ASICs (e.g., Anton supercomputer) for MD [10]. |
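The preconditioning row can be made concrete with symmetric diagonal (Jacobi) scaling. The matrix below is an invented symmetric positive definite example whose variables differ in scale by several orders of magnitude.

```python
import numpy as np

# Illustrative SPD system with badly mismatched scales.
A = np.array([[4.0e6, 1.0e3,  0.0],
              [1.0e3, 3.0,    1.0e-3],
              [0.0,   1.0e-3, 2.0e-6]])
x_true = np.ones(3)
b = A @ x_true

# Symmetric Jacobi scaling: D^{-1/2} A D^{-1/2} with D = diag(A).
d = 1.0 / np.sqrt(np.diag(A))
A_scaled = A * d[:, None] * d[None, :]

y = np.linalg.solve(A_scaled, d * b)   # solve the scaled system
x = d * y                              # undo the scaling: x = D^{-1/2} y

print(f"cond before: {np.linalg.cond(A):.2e}, after: {np.linalg.cond(A_scaled):.2e}")
```

Scaling of this kind only removes ill-conditioning caused by units; if the scaled matrix is still ill-conditioned, the problem is structural and calls for regularization or redesign.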

Troubleshooting Guides

Guide 1: Troubleshooting Ill-Conditioning in Molecular Dynamics Simulations

Symptoms: Simulation crashes, non-physical bond lengths/angles, failure to reproduce known experimental properties (e.g., radial distribution functions), or a model that "explodes."

Table 2: MD Troubleshooting Guide

| Symptom | Possible Cause | Solution |

|---|---|---|

| Simulation crash or 'explosion' | Unstable Machine Learning Potential (MLP); inaccurate force field parameters. | Conduct basic stability tests on the MLP, such as gas-phase simulations and normal mode analysis on simple molecules [8]. |

| Incorrect liquid structure (e.g., water forms ice/glass) | MLP has non-physical energy minima. | Validate the potential against experimental data like radial distribution functions; consider switching to a more robust model architecture [8]. |

| Inadequate sampling of relevant protein conformations | Standard MD is trapped in local energy minima; slow dynamics. | Employ enhanced sampling techniques (e.g., metadynamics, replica exchange) to overcome energy barriers [10]. |

| Poor ligand-binding predictions | Using a single, static protein structure from a tool like AlphaFold. | Generate a conformational ensemble by running short MD simulations to refine sidechain positions or using modified AlphaFold pipelines [10]. |

Workflow for Stable MD Setup: The following diagram outlines a protocol to prevent and diagnose instability in MD simulations.

Guide 2: Troubleshooting Ill-Conditioning in Manufacturing Process Optimization

Symptoms: In a parameter sweep or optimization of a unit operation (e.g., mixing for a topical cream), results are highly sensitive, non-reproducible, or fail scale-up despite working in the lab.

Table 3: Process Optimization Troubleshooting Guide

| Symptom | Possible Cause | Solution |

|---|---|---|

| Drastic viscosity drop or phase separation upon scale-up. | Over-mixing: High shear breaks down polymer structure (e.g., in gels) or causes emulsion separation [9]. | Identify the minimum required mixing time and maximum tolerable shear. Use a recirculation loop instead of increasing mixer speed [9]. |

| Precipitation of solubilized ingredients. | Incorrect temperature control: Excess cooling can cause precipitation; insufficient heat can lead to batch failure [9]. | Tightly control heating/cooling rates. Avoid rapid cooling which can increase viscosity or cause crystallization [9]. |

| Inhomogeneous product (e.g., 'fish eyes' in gels). | Improper ingredient addition: Polymers added too quickly or without proper dispersion [9]. | Add shear-sensitive thickeners (e.g., carbomer) slowly. Use a slurry in a non-solvent (e.g., glycerin) or an eductor for dispersion [9]. |

| Failed quality tests despite being in-spec during R&D. | Poorly controlled Critical Process Parameters (CPPs). | Implement a QbD approach. Use Design of Experiments (DOE) to understand the relationship between CPPs and product Critical Quality Attributes (CQAs) [9]. |

Experimental Protocol: Design of Experiments (DOE) for Process Robustness This methodology helps systematically identify and control ill-conditioning in process parameters.

- Define Objective: State the goal (e.g., "Maximize final product viscosity").

- Identify Factors: Select the critical process parameters (CPPs) to study (e.g., Mixing Speed, Mixing Time, Temperature).

- Design Experiment: Create a matrix (e.g., a full factorial design) that varies all factors simultaneously across a defined range.

- Execute and Measure: Run the experiments and measure the Critical Quality Attributes (CQAs) like viscosity.

- Analyze Data: Use statistical analysis to build a model and identify which factors and interactions have the most significant impact on the CQAs. The goal is to find a robust "sweet spot" where the CQA is insensitive to small variations in the CPPs [9].
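The link between experimental design and conditioning can be seen by comparing model matrices. In the sketch below, a coded two-level full factorial design for three hypothetical CPPs is compared with an invented sweep in which two factors were changed together; the factor names and the confounded design are assumptions for illustration.

```python
import itertools
import numpy as np

def model_matrix(design):
    """Main-effects model: intercept plus one column per factor."""
    return np.column_stack([np.ones(len(design)), design])

# Coded levels (-1 = low, +1 = high) for mixing speed, mixing time, temperature.
levels = [-1.0, 1.0]
full_factorial = np.array(list(itertools.product(levels, repeat=3)))
cond_factorial = np.linalg.cond(model_matrix(full_factorial))

# Poorly designed sweep (hypothetical): mixing time tracks mixing speed in
# every run except for a small deviation in the last one.
confounded = full_factorial.copy()
confounded[:, 1] = confounded[:, 0] + np.array([0, 0, 0, 0, 0, 0, 0, 0.1])
cond_confounded = np.linalg.cond(model_matrix(confounded))

print(f"full factorial: cond = {cond_factorial:.2f}")
print(f"confounded:     cond = {cond_confounded:.2f}")
```

The full factorial design has mutually orthogonal columns, so its model matrix is perfectly conditioned, whereas the confounded sweep cannot separate the effects of speed and time.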

The Scientist's Toolkit

Table 4: Essential Research Reagents and Solutions for Featured Fields

| Item | Function / Description |

|---|---|

| Enhanced Sampling Software | Software plugins (e.g., for GROMACS, NAMD) that implement metadynamics or replica exchange to overcome sampling limitations in MD [10]. |

| Machine Learning Potentials (MLPs) | Neural network-based force fields like ANI-2x or MACE that aim for near quantum-mechanical accuracy at much lower computational cost, though they require stability validation [8]. |

| Programmable Logic Controller (PLC) | A process control tool used in manufacturing vessels to provide reliable and accurate control of temperature, pressure, and mixing parameters [9]. |

| In-line Homogenizer & Powder Eductor | Equipment for ensuring uniform dispersion and hydration of ingredients (e.g., polymers) during manufacturing, which requires optimized flow rates [9]. |

| Condition Number Estimator | Algorithms (e.g., via singular value decomposition in linear algebra packages) to assess the potential sensitivity and stability of a numerical problem [7]. |

Frequently Asked Questions (FAQs)

Q1: What does "ill-conditioning" mean in the context of drug discovery simulations? Ill-conditioning is a numerical property of a mathematical problem where small changes in the input data (e.g., experimental measurements or initial parameter guesses) lead to large, often unstable, changes in the solution. In drug discovery, this frequently occurs in systems of linear equations used for tasks like predicting drug-target binding affinity or estimating kinetic parameters from experimental data. It can cause algorithms to converge slowly, fail entirely, or produce unreliable, non-reproducible results [12] [13].

Q2: How can I tell if my binding affinity prediction or parameter estimation problem is ill-conditioned? A key indicator is a high condition number for the matrices involved in your computation. In practice, you may observe:

- Your optimization algorithm fails to converge or is highly sensitive to the initial starting point.

- Small perturbations in your experimental data lead to wildly different parameter estimates or affinity predictions.

- You encounter numerical overflow or underflow errors during calculations [13] [14].

Diagnostic tools include computing the condition number of your system matrix (available in numerical libraries) and performing sensitivity analyses.

Q3: What practical steps can I take to mitigate ill-conditioning in kinetic parameter estimation? A combination of strategies is often most effective:

- Use Global Optimization Methods: Employ metaheuristics (e.g., scatter search, genetic algorithms) or multi-start of local methods to better navigate the complex, multi-modal objective functions common in kinetic models [13].

- Improve Parameter Scaling: Ensure your model parameters are scaled to have similar orders of magnitude, which can improve the conditioning of the estimation problem.

- Leverage Domain Decomposition: For problems involving partial differential equations, splitting the computational domain into smaller, well-conditioned subdomains can stabilize the solution process [12].

- Utilize Adjoint-Based Sensitivities: These can provide efficient and accurate gradient information, which helps guide optimization algorithms more reliably [13].

Q4: For binding affinity prediction, do newer AI models like Boltz-2 overcome the limitations of ill-conditioned traditional methods? To a large extent, yes. Boltz-2, for example, is a foundation model that jointly predicts protein-ligand complex structures and their binding affinities. It has been reported to approach the accuracy of physics-based Free Energy Perturbation (FEP) methods while being over 1000 times faster [15]. Because such models learn complex, non-linear relationships from large datasets rather than solving explicit linear systems, they sidestep some of the numerical instability issues of traditional linear algebra-based approaches [16] [15]. They can still be sensitive to inputs that differ strongly from their training data, so validation remains necessary.

Q5: My collocation method for solving PDEs in pharmacokinetics uses an ill-conditioned differentiation matrix. Why does it still sometimes give accurate results? This touches on a subtle point in numerical analysis. A high condition number provides a worst-case error bound. The accuracy you achieve in practice depends on the specific data (the "right-hand side" of your equation). If the data primarily aligns with the well-conditioned parts of the matrix (the larger singular values), the solution can remain accurate. Conversely, if the data has significant components in the direction of the ill-conditioned modes, errors will be large [14]. Therefore, for specific problems, a method can be ill-conditioned in theory but still provide useful results in practice.

Troubleshooting Guides

Problem: Parameter Estimation for Kinetic Models is Unstable or Non-Reproducible

| Observation | Potential Cause | Solution |

|---|---|---|

| Estimates change drastically with different initial guesses. | Objective function is multi-modal; optimization is stuck in local minima. | Use a hybrid metaheuristic that combines a global search (e.g., scatter search) with a gradient-based local optimizer [13]. |

| Model fits well to one dataset but fails to generalize. | Overfitting and/or ill-conditioning amplifying noise in the data. | Implement regularization techniques (e.g., Tikhonov regularization) to penalize unrealistic parameter values and stabilize the solution [12] [13]. |

| Optimization is prohibitively slow for models with many parameters. | High computational cost of evaluating the objective function and its gradients. | Use adjoint-based methods for efficient gradient calculation, which can accelerate local searches within a multi-start or hybrid framework [13]. |

Problem: Inaccurate or Unreliable Binding Affinity Predictions

| Observation | Potential Cause | Solution |

|---|---|---|

| Poor predictive performance on new protein or compound families. | Model lacks generalization, often due to limited training data or simplistic features. | Use a method like DCGAN-DTA that employs a semi-supervised learning approach with generative adversarial networks to leverage unlabeled data for better feature learning [16]. |

| Predictions are sensitive to small changes in the input protein sequence or compound SMILES string. | The underlying model architecture is sensitive and potentially ill-conditioned for certain inputs. | Adopt state-of-the-art models like Boltz-2 that are specifically designed for robust affinity prediction and have been validated against rigorous benchmarks [15]. |

| Difficulty in interpreting which features drive the affinity prediction. | "Black-box" nature of complex models like deep neural networks. | Choose methods that offer explainability, such as MMGX, which uses multiple molecular graphs to provide insights into model decisions from various perspectives [17]. |

Experimental Protocols & Methodologies

Protocol 1: Robust Workflow for Kinetic Parameter Estimation

This protocol outlines a best-practice methodology for estimating parameters in systems biology models, designed to mitigate the effects of ill-conditioning [13].

- Problem Formulation: Define your ODE model and the objective function (e.g., sum of squared errors between model predictions and experimental data).

- Data Preprocessing: Ensure data is properly normalized and parameter bounds are set based on biological knowledge.

- Selection of Optimization Strategy:

- Recommended: Employ a hybrid metaheuristic. For example, use a global scatter search to explore the parameter space, followed by an interior-point method with adjoint-based sensitivities for local refinement.

- Alternative: Perform a multi-start of a local gradient-based optimizer (e.g., Levenberg-Marquardt) from many random starting points within the bounds.

- Validation: Cross-validate the estimated parameters on a hold-out dataset not used for training. Perform a sensitivity analysis to check the robustness of the solution.
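The multi-start alternative in this protocol can be sketched without any specialized library. Everything below is an assumption made for illustration: the one-exponential decay model, the synthetic data, the parameter bounds, and the deliberately basic Levenberg-Marquardt loop. It does not implement scatter search or adjoint sensitivities.

```python
import numpy as np

def model(params, t):
    amplitude, rate = params
    return amplitude * np.exp(-rate * t)

def jacobian(params, t):
    amplitude, rate = params
    e = np.exp(-rate * t)
    return np.column_stack([e, -amplitude * t * e])

def levenberg_marquardt(p0, t, y, n_iter=200, damping=1e-2):
    """Basic damped Gauss-Newton iteration (illustrative, not production grade)."""
    p = np.asarray(p0, dtype=float)
    cost = np.sum((model(p, t) - y) ** 2)
    for _ in range(n_iter):
        r = model(p, t) - y
        J = jacobian(p, t)
        step = np.linalg.solve(J.T @ J + damping * np.eye(p.size), -J.T @ r)
        trial = p + step
        trial_cost = np.sum((model(trial, t) - y) ** 2)
        if trial_cost < cost:      # accept the step and reduce the damping
            p, cost, damping = trial, trial_cost, damping * 0.5
        else:                      # reject the step and increase the damping
            damping *= 4.0
    return p, cost

rng = np.random.default_rng(4)
t = np.linspace(0.0, 10.0, 40)
y = model([2.0, 0.3], t) + 0.01 * rng.standard_normal(t.size)   # synthetic data

# Multi-start: random initial guesses inside assumed parameter bounds.
lower, upper = np.array([0.1, 0.01]), np.array([10.0, 2.0])
starts = lower + (upper - lower) * rng.random((20, 2))
fits = [levenberg_marquardt(p0, t, y) for p0 in starts]
best_params, best_cost = min(fits, key=lambda fit: fit[1])

print("best parameters:", best_params, "cost:", best_cost)
```

Inspecting how many starts reach the same minimum is itself a useful diagnostic: widely scattered end points suggest a multi-modal or poorly identifiable problem.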

The workflow for this protocol is summarized in the following diagram:

Protocol 2: Benchmarking Binding Affinity Prediction Methods

This protocol provides a standard procedure for evaluating and comparing different affinity prediction methods, ensuring a fair assessment of their performance and robustness [16] [17].

- Dataset Selection: Use recently updated, curated benchmark datasets such as BindingDB or PDBbind [16]. The CARA benchmark offers specialized train-test splitting schemes to eliminate biases [17].

- Data Splitting Strategy: Go beyond simple random splits. Use cold-start splitting strategies:

- Protein Cold-Start: Test proteins are not present in the training set.

- Compound Cold-Start: Test compounds are not present in the training set. This tests the model's ability to generalize to novel targets or drugs [16].

- Model Training & Evaluation:

- Adversarial Control: Conduct experiments with "straw models" or negative controls to validate that the prediction performance is genuine and not an artifact of the experimental setup [16].

The logical flow of the benchmarking process is as follows:

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and resources that are essential for conducting research in this field.

| Tool / Resource Name | Type | Primary Function | Relevance to Ill-Conditioning |

|---|---|---|---|

| AutoDock-GPU | Docking Software | Accelerated molecular docking for virtual screening [18]. | Provides a fast, Lamarckian Genetic Algorithm for conformational search, which can be more robust to rough energy landscapes than pure gradient-based methods. |

| Boltz-2 | Foundation AI Model | Joint prediction of biomolecular structures and binding affinities [15]. | Bypasses traditional numerical linear algebra issues by using a deep learning approach, achieving FEP-level accuracy without the same conditioning problems. |

| DCGAN-DTA | Deep Learning Model | Predicts drug-target binding affinity from sequence and SMILES data [16]. | Uses a semi-supervised GAN framework to learn features from unlabeled data, which can improve robustness when labeled data is limited—a common source of ill-posed problems. |

| RSCD3 | Online Resource | A centralized portal for structure-based computational drug discovery tools and documentation [18]. | Offers access to multiple docking engines (like AutoDockFR for flexible receptors) and tutorials, allowing researchers to choose the right tool for their specific problem. |

| FEP+/OpenFE | Physics-Based Simulation | Computes binding affinities using free energy perturbation calculations [15]. | Considered a gold standard but is computationally expensive. While numerically complex, it is based on statistical mechanics and is less prone to the same form of ill-conditioning as simpler empirical methods. |

Troubleshooting Guides

Troubleshooting Ill-Conditioned Systems in Alchemical Free Energy Calculations

Problem: Large variance in free energy estimates or simulation instability during alchemical transformation (e.g., in FEP or TI calculations).

Explanation: As a ligand is alchemically transformed, the system can pass through states with high energy or poor conformational sampling, leading to numerical instability and imprecise free energy estimates [19].

Solution:

- Implement Soft-Core Potentials: Use a soft-core potential to avoid singularities when atoms are created or annihilated. This prevents atoms from getting too close and causing large energy values that destabilize calculations [19].

- Optimize the λ-Schedule: Increase the number of intermediate λ windows, especially in regions where the system changes rapidly (e.g., when charged groups appear or disappear). A finer schedule improves sampling and reduces variance [20].

- Extend Simulation Time: Run longer simulations at problematic λ values to improve sampling of rare events and ensure ergodicity [21] [20].

- Inspect Intermediate States: Check for high energy fluctuations, poor overlap between consecutive λ windows, or abnormal structural changes in the trajectory analysis [20].
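To illustrate why soft-core potentials remove the singularity, the sketch below compares a standard Lennard-Jones potential with one commonly used soft-core functional form (a Beutler-type expression). The exact form and the parameter `alpha` differ between MD packages, so this is an assumed example in reduced units rather than the implementation of any specific code.

```python
import numpy as np

def lj(r, epsilon=1.0, sigma=1.0):
    """Standard 12-6 Lennard-Jones potential (diverges as r -> 0)."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 ** 2 - sr6)

def softcore_lj(r, lam, epsilon=1.0, sigma=1.0, alpha=0.5):
    """Soft-core LJ. lam = 1: full interaction; lam = 0: fully decoupled."""
    denom = alpha * (1.0 - lam) ** 2 + (r / sigma) ** 6
    return 4.0 * epsilon * lam * (1.0 / denom ** 2 - 1.0 / denom)

r_close = 1e-3
print(f"standard LJ at r = {r_close}:     {lj(r_close):.3e}")
print(f"soft-core (lam = 0.5) at r = 0: {softcore_lj(0.0, 0.5):.3f}")
print(f"soft-core (lam = 1) at r = 1.2: {softcore_lj(1.2, 1.0):.6f} vs LJ {lj(1.2):.6f}")
```

At full coupling the soft-core form reduces to the standard potential, while at intermediate λ the energy stays finite even for overlapping atoms.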

Troubleshooting Poor Convergence in Binding Free Energy Estimation

Problem: In methods like MM/PBSA or MM/GBSA, the calculated binding free energy fails to converge or shows high sensitivity to minor changes in the simulation protocol.

Explanation: This ill-conditioning can arise from inadequate sampling of the conformational space, the use of an inappropriate internal dielectric constant, or the neglect of entropy contributions [19].

Solution:

- Improve Conformational Sampling: Use enhanced sampling techniques (e.g., metadynamics, GaMD) to overcome energy barriers and achieve better convergence [21] [19].

- Tune Dielectric Constants: For membrane proteins or specific systems, adjust the internal dielectric constant (e.g., to 2.0-4.0 for soluble proteins or 7.0-20.0 for membrane-bound proteins) to better represent the electrostatic environment [19].

- Account for Entropy: Incorporate entropy estimates, such as through interaction entropy (IE) or normal mode analysis, though careful validation is needed as its effectiveness can be system-dependent [19].

- Validate with Experimental Data: Correlate computational results with experimental binding affinities (e.g., IC50, Kd) to identify systematic biases and calibrate the model [21] [19].

Troubleshooting Ill-Conditioned Linear Systems in Continuum Solvent Models

Problem: When solving the Poisson-Boltzmann (PB) equation numerically, the resulting linear system (Ax = b) is ill-conditioned, leading to large errors in electrostatic solvation energy calculations.

Explanation: The discretization of the PB equation can yield a system matrix A where the ratio of its largest to smallest singular value (the condition number) is very large. Small perturbations in the right-hand side b (e.g., from charge assignments or mesh generation) can then cause large errors in the solution x [22].

Solution:

- Apply Regularization Techniques:

- Tikhonov Regularization (TR): Solves a modified problem ( (A^T A + \lambda I)x = A^T b ) to penalize large, unstable solutions. The regularization parameter

λcontrols the trade-off between stability and accuracy [22]. - Truncated Singular Value Decomposition (TSVD): Removes contributions from the smallest singular values of

A, which are the primary source of ill-conditioning [22]. - Combined T-TR Method: A hybrid approach that can more effectively filter out high-frequency noise caused by the smallest singular vectors [22].

- Tikhonov Regularization (TR): Solves a modified problem ( (A^T A + \lambda I)x = A^T b ) to penalize large, unstable solutions. The regularization parameter

- Choose an Optimal Regularization Parameter: For stability-focused applications, the parameter \( \lambda = \sigma_{\text{max}} \sigma_{\text{min}} \) (the product of the largest and smallest singular values) can be effective. The "sensitivity index" is another criterion that can help select a parameter balancing both stability and accuracy [22].

- Use Effective Condition Number: Monitor the effective condition number \( \text{Cond}_{\text{eff}}(A) = \frac{\|b\|}{\sigma_{\text{min}} \|x\|} \), which is often more informative than the traditional condition number when data errors in b are the dominant concern [22].
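As a minimal sketch of the Tikhonov step above, the snippet below solves \( (A^T A + \lambda I)x = A^T b \) through the SVD of A and uses the stability-oriented choice \( \lambda = \sigma_{\text{max}} \sigma_{\text{min}} \). The Hilbert test matrix, the noise level, and the helper name `tikhonov_solve` are illustrative assumptions; they do not come from [22] or from any Poisson-Boltzmann solver.

```python
import numpy as np

def tikhonov_solve(A, b, lam):
    """Solve (A^T A + lam I) x = A^T b using the SVD of A."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    # Each singular component is scaled by s / (s^2 + lam) instead of 1 / s
    coeffs = (s / (s**2 + lam)) * (U.T @ b)
    return Vt.T @ coeffs

# Hypothetical ill-conditioned test problem: 8x8 Hilbert matrix
n = 8
idx = np.arange(n)
A = 1.0 / (idx[:, None] + idx[None, :] + 1.0)
x_true = np.ones(n)
b = A @ x_true

# Small perturbation of the right-hand side (assumed noise level)
rng = np.random.default_rng(0)
b_noisy = b + 1e-6 * rng.standard_normal(n)

s = np.linalg.svd(A, compute_uv=False)
lam = s[0] * s[-1]  # product of largest and smallest singular values

x_naive = np.linalg.solve(A, b_noisy)
x_tr = tikhonov_solve(A, b_noisy, lam)

err_naive = np.linalg.norm(x_naive - x_true)
err_tr = np.linalg.norm(x_tr - x_true)
print(f"naive error: {err_naive:.3e}, Tikhonov error: {err_tr:.3e}")
```

The regularized solution trades a small bias for a much weaker response to the perturbation in b; how well this particular λ works on a real PB system would need to be checked case by case.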

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary sources of ill-conditioning in drug-target binding free energy calculations? Ill-conditioning arises from multiple sources: (1) Energetic Decomposition: The binding free energy is a small difference between large numbers (the energy of the complex minus the energies of the protein and ligand), making it sensitive to small errors in these large terms [19]. (2) Inadequate Sampling: The failure to sample rare but critical conformational states, a problem known as non-ergodicity, leads to biased and unstable estimates [21]. (3) Numerical Discretization: The numerical solution of underlying equations, such as the Poisson-Boltzmann equation in MM/PBSA, can generate ill-conditioned linear systems [22]. (4) Alchemical Transformations: The creation or annihilation of atoms in FEP/TI can cause singularities and poor overlap between intermediate states [19].

FAQ 2: How can I diagnose an ill-conditioned problem in my binding affinity calculations? Key indicators include: (1) High Variance: Large standard errors or significant jumps in free energy estimates between independent simulations or small changes in the λ-schedule [20]. (2) Poor Overlap: Low phase space overlap between consecutive alchemical states in FEP [19]. (3) Large Condition Numbers: A high condition number or effective condition number in the underlying numerical problem (e.g., in a linear solver) [22]. (4) Lack of Convergence: The calculated free energy does not plateau as simulation time increases [21] [19].

FAQ 3: What is the practical difference between the traditional condition number and the effective condition number?

The traditional condition number \( \text{Cond}(A) = \sigma_{\text{max}} / \sigma_{\text{min}} \) reflects the worst-case sensitivity of the solution x to perturbations in A or b for any possible vector b. The effective condition number \( \text{Cond}_{\text{eff}}(A) = \|b\| / ( \sigma_{\text{min}} \|x\| ) \) describes the sensitivity for the specific vector b in your problem. In many practical applications where the data b has inherent noise, the effective condition number provides a more realistic and often less severe measure of instability [22].
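To make the distinction concrete, here is a small sketch that evaluates both quantities for the same matrix and two different right-hand sides. The 2×2 diagonal matrix and the two vectors are hypothetical values chosen so the arithmetic can be followed by hand.

```python
import numpy as np

def condition_numbers(A, b):
    """Return (Cond(A), Cond_eff(A)) for the system A x = b."""
    s = np.linalg.svd(A, compute_uv=False)
    x = np.linalg.solve(A, b)
    cond = s[0] / s[-1]
    cond_eff = np.linalg.norm(b) / (s[-1] * np.linalg.norm(x))
    return cond, cond_eff

A = np.diag([1.0, 1e-8])

# b along the dominant singular direction: the effective value hits the worst case
cond, eff_worst = condition_numbers(A, np.array([1.0, 0.0]))

# b along the weakest singular direction: x is large relative to b,
# so relative errors in b are barely amplified
_, eff_best = condition_numbers(A, np.array([0.0, 1e-8]))

print(f"Cond(A) = {cond:.1e}")
print(f"Cond_eff (worst-case b) = {eff_worst:.1e}")
print(f"Cond_eff (favourable b) = {eff_best:.1e}")
```

The same matrix therefore spans the full range from 1 to Cond(A), depending only on which b is being solved for.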

FAQ 4: When should I prioritize stability over accuracy in my calculations? Stability should be the primary concern when (1) the condition number of your system is so high that results are nonsensical or change drastically with minimal protocol adjustments, or (2) the goal is a robust, qualitative ranking of compounds (e.g., in early-stage virtual screening) rather than a highly precise affinity prediction. Applying regularization, even if it introduces a small bias, is often necessary to obtain stable, interpretable results from an otherwise intractable ill-conditioned system [22].

FAQ 5: My MM/PBSA results are unstable. Should I switch to a more advanced method like FEP? Not necessarily. While FEP+ is generally more rigorous and can provide higher accuracy, it is computationally expensive and also susceptible to ill-conditioning if the alchemical pathway is poorly designed [19] [20]. First, try to stabilize your MM/PBSA calculations by improving conformational sampling (e.g., with longer MD trajectories or enhanced sampling), tuning dielectric constants, and using entropy corrections [19]. If high precision is critical for congeneric series and resources allow, then FEP+ is an excellent choice, but it requires expertise to set up and troubleshoot properly [20].

Quantitative Data Tables

Table 1: Comparison of Computational Methods for Binding Free Energy Estimation

| Method | Theoretical Basis | Typical Computational Cost | Key Strengths | Common Sources of Ill-Conditioning |

|---|---|---|---|---|

| MM/PB(GB)SA [19] | End-state approximation using molecular mechanics and implicit solvation. | Medium | Fast; good for virtual screening; no need for a predefined pathway. | Inadequate sampling; choice of internal dielectric constant; decomposition into large energetic terms. |

| Free Energy Perturbation (FEP) [19] [20] | Alchemical transformation using statistical mechanics. | High | High accuracy for congeneric series; rigorous theoretical foundation. | Poor λ-window overlap; soft-core parameter choice; insufficient sampling of slow degrees of freedom. |

| Thermodynamic Integration (TI) [19] | Alchemical transformation, integrating the derivative of the Hamiltonian. | High | Avoids endpoint singularities; can be more stable than FEP. | Same as FEP, plus numerical integration errors from the dE/dλ curve. |

| Deep Learning (e.g., DrugForm-DTA) [23] | Data-driven prediction using neural networks. | Low (after training) | Very fast prediction; does not require 3D protein structure. | Ill-conditioned weight matrices in the network; poor model generalizability if training data is limited. |

Table 2: Regularization Techniques for Ill-Conditioned Linear Systems

| Technique | Principle | Key Parameter(s) | Pros | Cons |

|---|---|---|---|---|

| Tikhonov Regularization (TR) [22] | Adds a penalty term (\( \lambda I \)) that constrains the solution norm. | Regularization parameter (λ) | Stabilizes the solution; relatively simple to implement. | Introduces bias; choice of optimal λ is critical and non-trivial. |

| Truncated Singular Value Decomposition (TSVD) [22] | Removes singular components below a threshold. | Truncation threshold | Removes source of noise directly; intuitive. | Abrupt truncation can discard useful information. |

| T-TR (Combined Approach) [22] | Combines TSVD and TR for enhanced filtering. | Truncation threshold and λ | Can remove high-frequency noise more effectively than either method alone. | Increased complexity with two parameters to choose. |

Experimental Protocols

Protocol: Relative Binding Free Energy Calculation Using FEP+

This protocol outlines the steps for performing a relative binding free energy calculation between a reference ligand and a set of similar compounds using Schrödinger's FEP+ methodology [20].

Objective: To accurately predict the change in binding free energy (\( \Delta \Delta G \)) for a series of congeneric ligands, enabling lead optimization.

Required Software/Materials:

- Schrödinger Suite (Maestro, FEP+)

- Prepared protein structure (e.g., from PDB)

- 3D structures of ligand pairs for transformation


Detailed Steps:

- Structure Preparation:

- Protein Preparation: Use the Protein Preparation Wizard in Maestro. This involves adding hydrogens, assigning bond orders, filling in missing side chains, and optimizing the hydrogen-bonding network. A critical step is to assess the overall structure quality [20].

- Ligand Preparation: Prepare all ligands using LigPrep to generate low-energy 3D structures with correct ionization states at the target pH (e.g., 7.0 ± 2.0) [20].

- Ligand Alignment and FEP Map Generation:

- Align the ligands to be mutated into a common core structure within the binding pocket. This defines the "core" region that remains unchanged during the alchemical transformation.

- Generate the FEP map, which is a graph defining all the pairwise transformations that will be simulated. The map should connect ligands in a way that minimizes the perturbation size for each edge [20].

- FEP+ Setup:

- In the FEP+ panel, load the prepared protein and the FEP map.

- Set simulation parameters. The default is often a good starting point: 6-12 ns per window, with a λ-spacing that is denser near end-states (λ=0 and λ=1). Consider enabling "pKa correction" if ligands have different ionization states [20].

- Define the REST (replica exchange with solute tempering) region. This is typically the binding site residues within a certain distance (e.g., 5-8 Å) of the ligands, which are simulated at a higher effective temperature to enhance sampling [20].

- Run Simulation:

- Submit the FEP+ job to a suitable computing resource (e.g., a GPU cluster). Monitor the job for errors.

- Results Analysis:

- Upon completion, analyze the results within Maestro's FEP+ dashboard.

- Check key metrics:

- Overlap Statistics: Ensure sufficient phase space overlap between neighboring λ windows (values > 0.5 are generally good).

- Convergence: Plot \( \Delta G \) as a function of simulation time to see if it has plateaued.

- Correlation with Experiment: Generate a plot of predicted vs. experimental \( \Delta G \) or activity data to assess predictive accuracy [20].

- Troubleshooting: If results are poor (high uncertainty, poor correlation), consider: extending simulation time, adjusting the λ-schedule, modifying the core definition, or adding key protein residues to the REST region [20].

Protocol: Assessing and Mitigating Ill-Conditioning in a Linear System

This protocol provides a general procedure for diagnosing and treating an ill-conditioned linear system (Ax = b), as encountered in continuum electrostatics or other numerical problems in computational biology [22].

Objective: To solve the linear system Ax = b in a stable and accurate manner when the matrix A is ill-conditioned.

Required Software/Materials:

- Computational environment with linear algebra capabilities (e.g., Python/NumPy/SciPy, MATLAB).

- The matrix A and vector b.


Detailed Steps:

- Diagnose Conditioning:

- Compute the singular values of A, in particular \( \sigma_{\text{max}} \) and \( \sigma_{\text{min}} \).

- Calculate the traditional condition number: \( \text{Cond}(A) = \sigma_{\text{max}} / \sigma_{\text{min}} \). A very large number (e.g., > 10^6) indicates potential ill-conditioning.

- Calculate the effective condition number: \( \text{Cond}_{\text{eff}}(A) = \|b\| / (\sigma_{\text{min}} \|x\|) \), where x is a naive solution (e.g., from a direct solver). This may provide a more favorable but problem-specific stability measure [22].

- Select and Apply Regularization Method:

- Tikhonov Regularization (TR): Solve \( (A^T A + \lambda I)x = A^T b \). This is a good general-purpose method [22].

- Truncated Singular Value Decomposition (TSVD): Construct a solution using only the k largest singular values and their vectors, discarding the rest. This directly removes the contribution from small, noisy singular values [22].

- Combined T-TR Method: First apply TSVD to remove the most problematic singular components, then apply TR to the truncated system for further stabilization [22].

- Choose Regularization Parameter (λ):

- For Stability: The parameter \( \lambda = \sigma_{\text{max}} \sigma_{\text{min}} \) can be a good starting point for TR, as it aims to significantly reduce the condition number [22].

- Sensitivity Index: Use the sensitivity index, which incorporates both stability (condition number) and solution accuracy (error), to find an optimal λ. The λ with the minimal sensitivity index is often a good balance [22].

- L-Curve: Plot the solution norm \( \|x_{\lambda}\| \) against the residual norm \( \|A x_{\lambda} - b\| \). The optimal λ is often near the "corner" of this L-shaped curve. The sensitivity index can also be applied here to automate the selection of \( \lambda_{\text{L-curve}} \) [22].

- Validate the Solution:

- Check the physical plausibility of the result x.

- If possible, validate against a known benchmark solution or experimental data.

- Compare the residual error \( \|A x - b\| \) and the stability of x under small perturbations in A or b to ensure the solution is both accurate and stable.
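The L-curve criterion from the parameter-selection step can be sketched as follows. The code scans a grid of λ values, records the log residual norm and log solution norm, and picks the grid point of maximum curvature as the corner. The test matrix, noise level, λ grid, and the function name `l_curve_corner` are assumptions made for illustration; the sensitivity index of [22] is not implemented here.

```python
import numpy as np

def l_curve_corner(A, b, lams):
    """Locate the L-curve corner for (A^T A + lam I) x = A^T b.

    Returns (lam_corner, rho, eta), where rho is the log residual norm and
    eta is the log solution norm on the grid `lams` (ascending order).
    """
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    beta = U.T @ b
    rho = np.empty(len(lams))
    eta = np.empty(len(lams))
    for i, lam in enumerate(lams):
        x = Vt.T @ ((s / (s**2 + lam)) * beta)
        rho[i] = np.log(np.linalg.norm(A @ x - b))
        eta[i] = np.log(np.linalg.norm(x))
    # Signed curvature of the curve (rho(t), eta(t)) with t = log(lam)
    t = np.log(lams)
    d_rho, d_eta = np.gradient(rho, t), np.gradient(eta, t)
    dd_rho, dd_eta = np.gradient(d_rho, t), np.gradient(d_eta, t)
    kappa = (d_rho * dd_eta - dd_rho * d_eta) / (d_rho**2 + d_eta**2) ** 1.5
    return lams[np.argmax(kappa)], rho, eta

# Hypothetical test problem: noisy 8x8 Hilbert system
n = 8
idx = np.arange(n)
A = 1.0 / (idx[:, None] + idx[None, :] + 1.0)
rng = np.random.default_rng(1)
b = A @ np.ones(n) + 1e-4 * rng.standard_normal(n)

lams = np.logspace(-12, 0, 60)
lam_corner, rho, eta = l_curve_corner(A, b, lams)
print(f"L-curve corner at lambda ~ {lam_corner:.2e}")
```

Finite-difference curvature on a coarse grid is only a rough corner estimate, so it is worth plotting `eta` against `rho` and confirming the selected point visually before relying on it.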

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Drug-Target Binding Studies

| Item | Function in Research | Example Use-Case |

|---|---|---|

| Molecular Dynamics (MD) Software (e.g., AMBER, GROMACS, NAMD, OpenMM) | Simulates the time-dependent motion of atoms in a molecular system, providing trajectories for analyzing dynamics and energetics. | Used to generate conformational ensembles for MM/PBSA or to run alchemical simulations for FEP/TI [21] [19]. |

| Enhanced Sampling Algorithms (e.g., Metadynamics, GaMD, Umbrella Sampling) | Accelerates the sampling of rare events (e.g., ligand binding/unbinding) by biasing the simulation along pre-defined collective variables (CVs). | Applied to directly compute binding free energies and kinetics (kon, koff) that are otherwise inaccessible on standard MD timescales [21] [19]. |

| Free Energy Calculation Tools (e.g., FEP+, AMBER FEP, PLUMED) | Specialized software or plugins designed to set up, run, and analyze alchemical free energy calculations. | Used for lead optimization by predicting relative binding affinities of congeneric ligand series with high accuracy [19] [20]. |

| Continuum Solvation Solvers (e.g., APBS, DelPhi) | Numerically solves the Poisson-Boltzmann equation to calculate electrostatic solvation energies. | Provides the polar solvation term (and sometimes the non-polar term) in MM/PBSA calculations [19]. |

| Deep Learning Platforms & Models (e.g., PyTorch/TensorFlow, DrugForm-DTA, ESM-2) | Data-driven models that predict binding affinity or generate molecules based on sequence or structural information. | Predicts drug-target affinity (DTA) without requiring 3D protein structures, useful for high-throughput screening [23]. |

| Regularization Software Libraries (e.g., SciPy, NumPy, MATLAB) | Provides implemented numerical routines for SVD, Tikhonov regularization, and other linear algebra operations. | Used to stabilize ill-conditioned linear systems arising in continuum electrostatics or other numerical problems [22]. |

Frequently Asked Questions (FAQs)

1. What is a condition number, and why is it important in computational research? The condition number of a function measures how much its output value can change for a small change in the input argument. It quantifies how sensitive a function is to changes or errors in the input, and how much error in the output results from an error in the input. A problem with a low condition number is well-conditioned, while a problem with a high condition number is ill-conditioned. In practical terms, an ill-conditioned problem is one where a small change in the inputs leads to a large change in the answer, making the correct solution hard to find [1]. It is a crucial property for assessing the reliability of numerical solutions.

2. What is the fundamental difference between traditional and effective condition numbers?

The traditional condition number provides an upper bound on the relative error for the worst-case perturbation across all possible inputs. In contrast, the effective condition number provides an error bound for a specific given input vector [24]. For a specific problem (a given b in the linear system Ax=b), the true relative errors may be much smaller than the worst-case scenario predicted by the traditional condition number. The effective condition number captures this specific sensitivity.

3. When should I use the effective condition number instead of the traditional one? Use the effective condition number when you want to understand the error sensitivity for your particular problem instance and dataset, especially when the traditional condition number is pessimistically large. The effective condition number is particularly valuable when the worst-case error bound is too conservative for practical decision-making, and a more tailored error estimate is needed for a given set of inputs [25] [24].

4. How can ill-conditioning affect parameter sweep studies? In a parameter sweep, you solve a sequence of problems by varying parameters of interest [26]. If the underlying model is ill-conditioned, the solutions for different parameter values can exhibit extreme and non-physical variations, even if the parameter changes are very small. This can make it difficult to discern true trends from numerical artifacts, leading to incorrect conclusions about the system's behavior [27].

5. Are there any simple rules of thumb for interpreting condition number values?

As a rule of thumb, if the condition number κ(A) = 10^k, then you may lose up to k digits of accuracy in your solution [1]. For the traditional condition number measured in the 2-norm (κ(A)), any value close to 1 (like that of an orthogonal matrix) is excellent, while values greatly exceeding 1 indicate potential trouble. The identity matrix, for example, has a perfect condition number of 1 [28].
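The digits-lost rule of thumb can be checked numerically. The sketch below solves Hilbert systems of increasing size in double precision (roughly 16 significant digits) and prints log10 of the condition number next to the observed relative error. The Hilbert matrices and the sizes 4, 8, and 12 are illustrative choices, not taken from the cited sources.

```python
import numpy as np

def hilbert(n):
    """n x n Hilbert matrix, a standard ill-conditioned test case."""
    i = np.arange(n)
    return 1.0 / (i[:, None] + i[None, :] + 1.0)

sizes = [4, 8, 12]
conds, rel_errs = [], []
for n in sizes:
    A = hilbert(n)
    x_true = np.ones(n)
    x = np.linalg.solve(A, A @ x_true)
    conds.append(np.linalg.cond(A))
    rel_errs.append(np.linalg.norm(x - x_true) / np.linalg.norm(x_true))
    print(f"n={n:2d}  log10(cond)={np.log10(conds[-1]):5.1f}  "
          f"rel. error={rel_errs[-1]:.1e}")
```

The rule gives an upper bound, so the observed error is usually somewhat smaller than 10^(k-16), but it grows in step with the condition number.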

Troubleshooting Guide: Diagnosing and Addressing Ill-Conditioning

Symptom: Large oscillations in results during a parameter sweep despite smooth parameter changes.

Diagnosis:

This is a classic sign of an ill-conditioned system. The traditional condition number (e.g., cond(A) in MATLAB) for your system matrix will likely be very large [1] [28]. The high sensitivity amplifies tiny numerical rounding errors, causing the unstable output.

Resolution:

- Calculate the Effective Condition Number: Compute the effective condition number for your specific results. If it is significantly lower than the traditional one, it indicates that your particular solution is less sensitive than the worst-case scenario, and the results in the sweep might be more reliable than they initially appear [24].

- Reformulate the Problem: Often, ill-conditioning stems from the problem's formulation. Consider changing units to normalize the scale of your variables or implement a preconditioning technique to transform the system into a better-conditioned one.
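As one simple example of reformulation, the sketch below rescales the columns of a matrix whose unknowns are expressed in very different units. Column scaling is a basic diagonal preconditioner; the matrix values and variable names are hypothetical.

```python
import numpy as np

# Hypothetical system: the second unknown is expressed in much smaller units,
# so its column is about a million times larger than the first
A = np.array([[1.0, 2.0e6],
              [3.0, 4.0e6]])
b = np.array([1.0, 2.0])

# Scale each column to unit Euclidean norm: A_scaled = A D
col_norms = np.linalg.norm(A, axis=0)
D = np.diag(1.0 / col_norms)
A_scaled = A @ D

# Solve the scaled system for y, then map back to the original unknowns
y = np.linalg.solve(A_scaled, b)
x = D @ y

cond_orig = np.linalg.cond(A)
cond_scaled = np.linalg.cond(A_scaled)
print(f"cond before scaling: {cond_orig:.2e}")
print(f"cond after scaling:  {cond_scaled:.2e}")
```

Scaling only removes ill-conditioning that comes from mismatched units; near linear dependence between columns survives it and needs one of the other remedies.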

Symptom: A solver fails to converge or issues a warning about matrix singularity during a parameter sweep.

Diagnosis: The system matrix is either singular or so close to singular that the solver cannot handle it. A singular matrix has an infinite condition number [1] [28].

Resolution:

- Identify Linear Dependencies: Use tools like the gurobi-modelanalyzer or similar diagnostics to find rows or columns in your basis matrix that are nearly linearly dependent. These tools can filter the matrix to pinpoint the source of ill-conditioning, which is often more helpful than just knowing the condition number [29].

- Check for Collinearity: In regression models, collinearity between predictor variables is a common cause of ill-conditioning. Use regression diagnostics (like Variance Inflation Factors) to identify and remove highly collinear parameters [30].
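A Variance Inflation Factor check can be sketched with plain NumPy: each predictor is regressed on the others, and VIF = 1 / (1 - R²). The synthetic data and the helper name `variance_inflation_factors` are assumptions for illustration; dedicated statistics packages provide equivalent routines.

```python
import numpy as np

def variance_inflation_factors(X):
    """VIF for each column of X, regressing it on the remaining columns."""
    n, p = X.shape
    vifs = np.empty(p)
    for j in range(p):
        y = X[:, j]
        others = np.delete(X, j, axis=1)
        design = np.column_stack([np.ones(n), others])  # include intercept
        coef, *_ = np.linalg.lstsq(design, y, rcond=None)
        resid = y - design @ coef
        r2 = 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)
        vifs[j] = 1.0 / (1.0 - r2)
    return vifs

# Synthetic predictors: x3 is almost an exact combination of x1 and x2
rng = np.random.default_rng(4)
n = 200
x1 = rng.standard_normal(n)
x2 = rng.standard_normal(n)
x3 = x1 + x2 + 0.01 * rng.standard_normal(n)
x4 = rng.standard_normal(n)  # independent predictor
X = np.column_stack([x1, x2, x3, x4])

vifs = variance_inflation_factors(X)
print(np.round(vifs, 2))
```

A commonly quoted guideline treats VIF values above roughly 5 to 10 as a sign of problematic collinearity, although the appropriate threshold depends on the application.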

Symptom: Small perturbations in input data lead to large changes in the final result.

Diagnosis: The problem is ill-conditioned. The relative error in the output is bounded by the condition number multiplied by the relative error in the input [28].

Resolution:

- Component-wise Analysis: Calculate the component-wise condition number, which can sometimes be much smaller than the norm-wise condition number, offering a less pessimistic view of the error for your specific data [25].

- Increase Precision: If feasible, conduct computations using higher precision arithmetic (e.g., quadruple instead of double). This can mitigate the amplification of rounding errors, though it does not fix the underlying ill-conditioning.
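To illustrate the component-wise analysis, the sketch below compares the norm-wise condition number with a component-wise one. It assumes the Skeel definition, ‖ |A⁻¹| |A| |x| ‖∞ / ‖x‖∞; reference [25] may use a different variant, and the badly row-scaled diagonal matrix is a hypothetical example.

```python
import numpy as np

def skeel_condition(A, x):
    """Component-wise (Skeel) condition number of A x = b at the solution x."""
    A_inv = np.linalg.inv(A)
    num = np.linalg.norm(np.abs(A_inv) @ np.abs(A) @ np.abs(x), ord=np.inf)
    return num / np.linalg.norm(x, ord=np.inf)

# Rows on wildly different scales: norm-wise analysis is very pessimistic
A = np.diag([1.0, 1e-10])
x = np.array([1.0, 1.0])

kappa_norm = np.linalg.cond(A, np.inf)
kappa_comp = skeel_condition(A, x)
print(f"norm-wise condition number:      {kappa_norm:.1e}")
print(f"component-wise condition number: {kappa_comp:.1e}")
```

Forming the explicit inverse is acceptable for a small diagnostic like this but would not be advisable for large systems.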

Comparison of Condition Number Types

The table below summarizes the key characteristics of traditional and effective condition numbers.

| Feature | Traditional Condition Number | Effective Condition Number |

|---|---|---|

| Definition | κ(A) = ‖A‖·‖A⁻¹‖ for a matrix A [1] [28]. | Defined for a specific input b; multiple formulas exist [24]. |

| Error Bound | Worst-case bound for all possible inputs [24]. | Bound for a specific given input b [24]. |

| Interpretation | Measure of how close the matrix is to being singular [28]. | Measure of sensitivity for a particular problem instance. |

| Typical Use | General assessment of a problem's stability. | Detailed diagnosis for a specific experimental dataset. |

| Value Range | ≥ 1 [28]. | Can be much smaller than the traditional κ(A) for a given b [24]. |

Experimental Protocol: Condition Number Analysis in a Parameter Sweep

This protocol provides a detailed methodology for diagnosing ill-conditioning during a parameter sweep study, common in fields like computational physics and engineering [27] [26].

1. Problem Setup

- Define the System: Formulate the mathematical model (e.g., a system of linear equations A(p)x = b(p)), where p is the parameter being swept.

- Parameter Selection: Identify the parameter p and its range of values (p₁, p₂, ..., pₙ) for the sweep.

2. Execution of the Parameter Sweep

- Automated Solving: Use a computational environment (e.g., COMSOL [26], Rescale [27], or custom scripts) to solve the system A(pᵢ)x = b(pᵢ) for each parameter value pᵢ.

- Solution Storage: Save the solution vector xᵢ for each parameter value.

3. Condition Number Diagnostics

- Compute Traditional κ: For each unique system matrix A(pᵢ), compute the traditional condition number κ(A(pᵢ)) using a consistent norm (e.g., the 2-norm) [1] [28].

- Compute Effective Condition Number: For each specific solution xᵢ (solving A(pᵢ)xᵢ = b(pᵢ)), compute the effective condition number Cond_eff using formulas from literature [24].

- Visualization: Create plots of the solution xᵢ, the traditional κ, and the effective Cond_eff across the parameter range.

4. Analysis and Interpretation

- Correlate Instability: Identify parameter regions where the solution xᵢ shows large oscillations. Check if these regions correspond to high traditional and/or effective condition numbers.

- Assess Practical Impact: If the effective condition number is low in a region where the traditional one is high, it suggests your specific results may still be reliable there.
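The four steps above can be sketched as a short custom script. The 2×2 model `system(p)`, which becomes nearly singular as p approaches zero, and the chosen right-hand side are hypothetical; the effective condition number uses the form ‖b‖ / (σ_min ‖x‖), which is one of several formulas in the literature.

```python
import numpy as np

def system(p):
    """Hypothetical swept model A(p) x = b(p); nearly singular as p -> 0."""
    A = np.array([[1.0, 1.0],
                  [1.0, 1.0 + p]])
    b = np.array([1.0, -1.0])
    return A, b

params = np.logspace(-8, 0, 9)  # swept values p_1 ... p_n
kappas, cond_effs, solutions = [], [], []

for p in params:
    A, b = system(p)
    x = np.linalg.solve(A, b)              # step 2: solve and store
    s = np.linalg.svd(A, compute_uv=False)
    kappas.append(s[0] / s[-1])            # step 3: traditional kappa (2-norm)
    cond_effs.append(np.linalg.norm(b) / (s[-1] * np.linalg.norm(x)))
    solutions.append(x)

kappas = np.array(kappas)
cond_effs = np.array(cond_effs)

# Step 4: tabulate both diagnostics across the sweep
for p, k, ke in zip(params, kappas, cond_effs):
    print(f"p={p:.0e}  kappa={k:.2e}  Cond_eff={ke:.2f}")
```

For this particular right-hand side the effective condition number stays close to 1 across the sweep while the traditional one grows like 4/p, which is the situation described under "Assess Practical Impact".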

The following workflow diagram illustrates the diagnostic process for a parameter sweep:

The Scientist's Toolkit: Key Research Reagents

The table below lists essential computational "reagents" for diagnosing and analyzing condition numbers.

| Tool / Concept | Function / Purpose |

|---|---|

| Matrix Norm (‖·‖) | Measures the "size" of a matrix or vector. Essential for calculating the traditional condition number κ(A) = ‖A‖·‖A⁻¹‖ [1] [28]. |

| Singular Value Decomposition (SVD) | A matrix factorization that reveals the singular values of a matrix. The 2-norm condition number is the ratio of the largest to smallest singular value [1]. |

| Effective Condition Number Formula | Provides a problem-specific sensitivity measure. For a linear system, it often depends on the right-hand side vector b and the matrix A [24]. |

| Parameter Sweep Software | Tools like COMSOL [26] or Rescale [27] that automate solving a problem over a range of parameter values. |

| Ill-Conditioning Explainer Tools | Specialized software (e.g., gurobi-modelanalyzer [29]) that filters ill-conditioned matrices to pinpoint the source of linear dependencies. |

| Linear Regression Diagnostics | Metrics like Variance Inflation Factors (VIFs) used to detect multicollinearity, a common cause of ill-conditioning in regression models [30]. |

Practical Regularization Techniques and Constrained Optimization for Stable Parameter Estimation

FAQ: Core Concepts and Method Selection

What is the fundamental difference between TSVD and Tikhonov regularization?

Both methods stabilize ill-conditioned problems but employ different mathematical strategies. Truncated Singular Value Decomposition (TSVD) directly removes unstable components by discarding singular vectors associated with the smallest singular values. In contrast, Tikhonov regularization applies a continuous damping function to all singular components, progressively diminishing the influence of more unstable components [31] [32].

| Characteristic | TSVD | Tikhonov |

|---|---|---|

| Filtering Approach | Hard truncation | Continuous damping |

| Parameter Meaning | Number of retained singular values (k) | Regularization weight (λ) |

| Solution Stability | High for retained components | Moderate for all components |

| Computational Cost | Lower (once SVD computed) | Higher (requires solving linear system) |
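The difference in filtering can be written compactly with filter factors. Assuming the SVD \( A = \sum_i \sigma_i u_i v_i^T \) and the Tikhonov form \( (A^T A + \lambda I)x = A^T b \) used elsewhere in this article (many references write the penalty as \( \lambda^2 \) instead), both regularized solutions share one expression and differ only in \( f_i \):

```latex
x_{\text{reg}} = \sum_{i=1}^{n} f_i \, \frac{u_i^{T} b}{\sigma_i} \, v_i,
\qquad
f_i^{\text{TSVD}} =
\begin{cases}
1, & i \le k \\
0, & i > k
\end{cases},
\qquad
f_i^{\text{Tikhonov}} = \frac{\sigma_i^{2}}{\sigma_i^{2} + \lambda}
```

For \( \sigma_i^2 \gg \lambda \) the Tikhonov factor is close to 1, and for \( \sigma_i^2 \ll \lambda \) it falls off as \( \sigma_i^2 / \lambda \), which is the continuous damping contrasted with hard truncation in the table.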

When should I prefer TSVD over Tikhonov regularization in pharmaceutical research?

TSVD is particularly advantageous when your parameter space is well-understood and you can clearly interpret the discarded components. For example, in Physiologically-Based Pharmacokinetic (PBPK) modeling, TSVD helps identify and remove parameters with excessive uncertainty that cannot be reliably estimated from available data [33]. Tikhonov is preferable when you need to preserve all model dimensions but require smoother stabilization across all parameters.

How do I interpret the regularization parameters in the context of ill-conditioned problems?

The regularization parameter fundamentally represents a trade-off between solution bias and stability. In TSVD, the truncation index k determines how many singular components are retained. In Tikhonov, the parameter λ controls the penalty on solution norm. For PBPK models with complex biological mechanisms, this translates to balancing physiological relevance against numerical stability [33].

FAQ: Implementation and Parameter Selection

What practical criteria help select optimal regularization parameters for drug development applications?

For TSVD, the L-curve method effectively identifies the optimal truncation point by locating the corner in the plot of solution norm versus residual norm [31]. For Tikhonov in pharmacological contexts, Generalized Cross-Validation (GCV) can automatically determine parameters that minimize prediction error, which is crucial for reliable PBPK model predictions [32]. Recent advances like Double Generalized Cross-Validation (D-GCV) further improve automated parameter selection by considering spatial projection characteristics of measurement information [32].
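A minimal sketch of standard GCV for Tikhonov regularization is shown below, assuming the form \( (A^T A + \lambda I)x = A^T b \). It evaluates the GCV score on a grid of λ values using the SVD. The polynomial-fitting test matrix, the noise level, and the function name `gcv_tikhonov` are illustrative assumptions; D-GCV from [32] is not implemented here.

```python
import numpy as np

def gcv_tikhonov(A, b, lams):
    """Pick lam minimizing GCV(lam) = ||A x_lam - b||^2 / (m - sum f_i)^2."""
    m = A.shape[0]
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    beta = U.T @ b
    perp2 = max(b @ b - beta @ beta, 0.0)  # part of b outside range(A)
    scores = np.empty(len(lams))
    for i, lam in enumerate(lams):
        f = s**2 / (s**2 + lam)            # Tikhonov filter factors
        resid2 = np.sum(((1.0 - f) * beta) ** 2) + perp2
        scores[i] = resid2 / (m - np.sum(f)) ** 2
    lam_best = lams[np.argmin(scores)]
    x = Vt.T @ ((s / (s**2 + lam_best)) * beta)
    return lam_best, x, scores

# Hypothetical overdetermined problem: degree-7 polynomial fit on 30 points
rng = np.random.default_rng(2)
t = np.linspace(0.0, 1.0, 30)
A = np.vander(t, 8, increasing=True)
x_true = np.ones(8)
b = A @ x_true + 1e-3 * rng.standard_normal(t.size)

lams = np.logspace(-10, 0, 50)
lam_gcv, x_gcv, scores = gcv_tikhonov(A, b, lams)

x_ls = np.linalg.lstsq(A, b, rcond=None)[0]
res_gcv = np.linalg.norm(A @ x_gcv - b)
res_ls = np.linalg.norm(A @ x_ls - b)
print(f"GCV lambda = {lam_gcv:.2e}")
print(f"residual: GCV {res_gcv:.3e}, least squares {res_ls:.3e}")
```

The regularized residual is never smaller than the least-squares residual; GCV accepts that increase in exchange for a solution that is less sensitive to noise.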

Why does my regularized solution show unexpected oscillations despite high residual accuracy?

This typically happens when the regularization is too weak, so noise-contaminated components dominate the solution even though the residual is small. In iterative methods the same effect appears as semi-convergence: the iterates first approach the true solution and then drift away as noise is amplified [34]. In antibody disposition PBPK models, this might manifest as unphysiological oscillations in tissue concentration profiles. Implement relaxed iterated Tikhonov regularization with non-decreasing relaxation parameters to achieve more stable convergence [34].

How can I verify that my regularization approach is appropriate for my specific PBPK model?

Implement a comprehensive verification workflow including time-step convergence analysis, smoothness analysis, and parameter sweep analysis [35]. For stochastic models, assess consistency across different random seeds and determine appropriate sample sizes. In drug delivery system modeling, verify that regularized solutions maintain mass balance – a critical physiological constraint that serves as an excellent validation metric [33] [35].

Experimental Protocols and Data Analysis

Protocol 1: Comparative Analysis of Regularization Methods

Objective: Systematically evaluate TSVD and Tikhonov regularization for stabilizing ill-conditioned parameter estimation in PBPK models.

Materials:

- Computational Environment: Python with NumPy, SciPy, and specialized regularization tools (e.g., Model Verification Tools) [35]

- Test Problem: Ill-conditioned system from PBPK model with known ground truth

- Noise Model: Additive Gaussian noise at multiple signal-to-noise ratios

Procedure:

- Problem Formulation: Extract the sensitivity matrix from your PBPK model at nominal parameter values

- Condition Assessment: Compute the condition number and SVD spectrum to characterize ill-conditioning

- TSVD Implementation:

- Perform SVD on the system matrix

- Apply L-curve analysis to identify optimal truncation index

- Compute solutions for multiple truncation levels

- Tikhonov Implementation:

- Apply GCV method for automatic parameter selection

- Compute solutions for multiple regularization parameters

- Implement spatial projection regularization for enhanced performance [32]

- Solution Evaluation:

- Quantify solution error relative to known ground truth

- Assess residual norms and solution stability

- Compute conditioning metrics for regularized solutions

Expected Outcomes: The protocol generates quantitative comparisons of regularization effectiveness, identifying method superiority based on specific problem characteristics.

Protocol 2: Regularization Parameter Selection Study

Objective: Determine optimal regularization parameters for specific classes of pharmacological problems.

Materials:

- Test Suite: Multiple ill-conditioned problems from different pharmacological applications

- Parameter Selection Methods: L-curve, GCV, D-GCV, discrepancy principle

- Evaluation Metrics: Solution error, residual norm, stability metrics

Procedure:

- Problem Selection: Curate a diverse set of ill-conditioned problems from:

- Parameter Sweep: For each method and problem, compute solutions across the full range of possible parameters

- Optimality Assessment: Identify parameters that minimize solution error for each method

- Methodology Evaluation: Compare selected parameters against optimal values

- Robustness Testing: Evaluate sensitivity to noise level and problem structure

Expected Outcomes: Guidelines for method selection based on problem characteristics, with quantitative performance assessments.
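Of the selection methods listed in this protocol, the discrepancy principle is the simplest to sketch: choose the largest λ whose residual stays within τ·δ, where δ is an estimate of the noise norm. The test problem, the safety factor τ = 1.1, and the function name `discrepancy_lambda` are assumptions for illustration; in practice δ has to be estimated from the measurement process.

```python
import numpy as np

def discrepancy_lambda(A, b, lams, delta, tau=1.1):
    """Largest lam on the grid with ||A x_lam - b|| <= tau * delta."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    beta = U.T @ b
    best = None
    for lam in np.sort(lams):
        x = Vt.T @ ((s / (s**2 + lam)) * beta)
        if np.linalg.norm(A @ x - b) <= tau * delta:
            best = (lam, x)  # residual grows with lam, so keep the latest hit
    if best is None:
        # No admissible value: fall back to the weakest regularization
        lam = np.min(lams)
        best = (lam, Vt.T @ ((s / (s**2 + lam)) * beta))
    return best

# Hypothetical noisy polynomial-fitting problem with known noise norm
rng = np.random.default_rng(3)
t = np.linspace(0.0, 1.0, 30)
A = np.vander(t, 8, increasing=True)
noise = 1e-3 * rng.standard_normal(t.size)
b = A @ np.ones(8) + noise

delta = np.linalg.norm(noise)
tau = 1.1
lams = np.logspace(-12, 0, 60)
lam_dp, x_dp = discrepancy_lambda(A, b, lams, delta, tau)
res_dp = np.linalg.norm(A @ x_dp - b)
print(f"lambda = {lam_dp:.2e}, residual = {res_dp:.3e}, "
      f"tau*delta = {tau * delta:.3e}")
```

Comparing this λ with the values chosen by the L-curve and GCV on the same problem gives the kind of side-by-side assessment the protocol calls for.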

Troubleshooting Common Experimental Issues

Unexpected Sensitivity to Noise in Regularized Solutions

| Symptom | Potential Cause | Solution |

|---|---|---|

| High solution variance with minor noise changes | Insufficient regularization | Increase regularization parameter or reduce truncation index |

| Large bias even with minimal regularization | Over-regularization | Reduce regularization strength; verify problem scaling |

| Inconsistent performance across similar problems | Inappropriate parameter selection method | Implement D-GCV for automated parameter selection [32] |

| Semi-convergence in iterative methods | Lack of relaxation in iteration | Apply relaxed iterated Tikhonov with non-decreasing relaxation parameters [34] |

Computational Challenges in Large-Scale Problems

| Symptom | Potential Cause | Solution |

|---|---|---|

| Excessive memory requirements | Full matrix storage | Implement matrix-free methods; use iterative SVD |

| Prohibitive computation time | O(n³) complexity of direct methods | Apply preconditioned iterative methods [36] |

| Numerical instability in regularization | Poor numerical conditioning | Employ Cholesky decomposition with pivoting [37] |

| Convergence failure in iterative methods | Severe ill-conditioning | Implement improved preconditioned iterative integration-exponential methods [36] |

Research Reagent Solutions: Computational Tools

| Tool / Method | Function | Application Context |

|---|---|---|

| Model Verification Tools (MVT) | Automated verification of computational models | Deterministic verification of PBPK models [35] |

| Spatial Projection Regularization (SPR) | Vector space truncation based on projection amplitude | Acoustic inverse problems with general applicability [32] |

| Relaxed Iterated Tikhonov | Semi-convergence elimination with relaxation parameters | Problems requiring stable convergence [34] |

| Preconditioned IIE Method | Exponential integration for ill-conditioned systems | Large-scale sparse problems [36] |

| Double Generalized Cross-Validation | Automated parameter selection | Optimal balance between information preservation and noise suppression [32] |

| Cholesky Decomposition | Efficient matrix inversion | Pseudoinverse-based reconstruction [37] |

Advanced Technical Reference

Quantitative Performance Metrics

Condition Number Analysis: Regularization methods directly impact the effective condition number of treated systems. Effective condition numbers for regularized solutions can be significantly lower than traditional condition numbers, explaining their stabilization properties [31].

| Method | Condition Number Improvement | Error Bound Characteristics |

|---|---|---|

| TSVD | Direct control via truncation | Controlled but discontinuous |

| Tikhonov | Progressive improvement | Smooth but potentially biased |

| SPR | Optimal projection-based control | Balanced preservation and suppression [32] |

Computational Complexity Comparison: Understanding computational requirements is essential for method selection in large-scale pharmacological problems.

| Method | Setup Cost | Per-Solution Cost | Memory Requirements |

|---|---|---|---|

| TSVD | O(mn²) for full SVD | O(kn) for solution | O(mn) for matrix storage |

| Tikhonov | O(n³) for direct inversion | O(n²) for solution | O(n²) for matrix storage |

| Iterative Methods | O(1) for setup | O(iter·nnz) for solution | O(nnz) for sparse storage |

The selection of appropriate regularization methods fundamentally impacts the reliability of parameter estimation in ill-conditioned pharmacological models. Through systematic implementation of the protocols and troubleshooting approaches outlined here, researchers can significantly enhance the robustness of their computational findings in drug development applications.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental advantage of combining TSVD and Tikhonov regularization (T-TR) over using either method alone?

The T-TR hybrid approach leverages the complementary strengths of both methods: Truncated Singular Value Decomposition (TSVD) effectively handles the ill-conditioning of the system matrix by removing noise-amplifying components, while Tikhonov regularization imposes smoothness constraints on the solution, preventing artificial peaks and discontinuities [38]. This combination is particularly effective for severely ill-posed problems where the singular values of the system matrix decay rapidly to zero. The TSVD component acts as a preconditioning strategy, reducing computational cost and improving conditioning, after which Tikhonov regularization further stabilizes the solution [38] [22]. This synergy often results in more accurate and stable solutions compared to either method used independently.
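A minimal sketch of the combination described above, assuming the unconstrained case (no non-negativity constraint) so that the solution has a closed form in terms of the SVD. The function name `ttr_solve`, the Gaussian test kernel, the noise level, and the values of `k` and `alpha` are illustrative assumptions, not taken from [38] or [22].

```python
import numpy as np

def ttr_solve(K, s, k, alpha):
    """Unconstrained T-TR sketch: keep k singular triplets, then Tikhonov-filter them."""
    U, sigma, Vt = np.linalg.svd(K, full_matrices=False)
    U_k, sigma_k, Vt_k = U[:, :k], sigma[:k], Vt[:k, :]
    # Closed-form minimizer of ||K_k f - s||^2 + alpha * ||f||^2 (no sign constraint)
    coeffs = sigma_k / (sigma_k**2 + alpha) * (U_k.T @ s)
    return Vt_k.T @ coeffs

# Hypothetical test problem: Gaussian smoothing kernel on [0, 1]
n = 40
x = np.linspace(0.0, 1.0, n)
K = np.exp(-((x[:, None] - x[None, :]) ** 2) / (2 * 0.05**2)) / n
f_true = np.sin(np.pi * x)
rng = np.random.default_rng(0)
s = K @ f_true + 1e-4 * rng.standard_normal(n)

f_naive = np.linalg.lstsq(K, s, rcond=None)[0]   # no regularization
f_ttr = ttr_solve(K, s, k=8, alpha=1e-6)

err_naive = np.linalg.norm(f_naive - f_true)
err_ttr = np.linalg.norm(f_ttr - f_true)
print(f"naive error: {err_naive:.3e}, T-TR error: {err_ttr:.3e}")
```

Truncation discards the components whose singular values fall below the k-th one, and the factor `sigma_k / (sigma_k**2 + alpha)` damps the retained components that are still small relative to `alpha`.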

Q2: How do I select the optimal regularization parameters (truncation index and Tikhonov parameter) for the T-TR method?

Parameter selection is critical for T-TR performance. Research indicates several effective strategies:

- Discrete Picard Condition (DPC): This method can be used to jointly select the SVD truncation parameter and the Tikhonov regularization parameter [38]. It analyzes the decay of singular values relative to the Fourier coefficients of the data to guide parameter choice.

- Sensitivity Index: This criterion indicates the severity of ill-conditioning via solution accuracy and can be used to select the regularization parameter by finding the minimal sensitivity index [22].

- Analytical Formula: For some linear algebraic systems, an optimal Tikhonov regularization parameter has been derived as λ = σ_max · σ_min, where σ_max and σ_min are the maximal and minimal singular values of the system matrix, respectively. This choice aims to significantly reduce the condition number, thereby improving stability [22].
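The effect of the analytical choice can be checked numerically. The sketch below assumes the penalty form ‖Ax − b‖² + λ‖x‖², so that the regularized normal matrix is AᵀA + λI; the convention used in [22] may differ. Under that assumption, the condition number of the normal matrix drops from (σ_max/σ_min)² to σ_max/σ_min. The test matrix is a hypothetical one built from prescribed singular values.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical ill-conditioned matrix with singular values from 1 down to 1e-5
n = 6
Q1, _ = np.linalg.qr(rng.standard_normal((n, n)))
Q2, _ = np.linalg.qr(rng.standard_normal((n, n)))
A = Q1 @ np.diag(np.logspace(0, -5, n)) @ Q2.T

sv = np.linalg.svd(A, compute_uv=False)
lam = sv.max() * sv.min()                      # analytical choice: sigma_max * sigma_min

# Eigenvalues of A^T A + lam*I are sigma_i^2 + lam, so with lam = sigma_max * sigma_min:
#   (sigma_max^2 + lam) / (sigma_min^2 + lam) = sigma_max / sigma_min
cond_normal = np.linalg.cond(A.T @ A)                  # about (sigma_max / sigma_min)^2
cond_reg = np.linalg.cond(A.T @ A + lam * np.eye(n))   # about sigma_max / sigma_min

print(f"unregularized: {cond_normal:.3e}, regularized: {cond_reg:.3e}")
```

This only illustrates the stability argument; as noted in Table 2, a parameter that improves conditioning is not necessarily the most accurate one.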

Q3: My T-TR solution exhibits unexpected artifacts or oversmoothing. What could be the cause?

This issue typically stems from inappropriate regularization parameters or problem-specific characteristics:

- Over-regularization: If the Tikhonov parameter (α) is too large or the TSVD truncation index (k) is too low, the solution may be oversmoothed, losing important details [38] [39].

- Under-regularization: Conversely, an α that is too small or a k that is too high may fail to suppress noise adequately, leading to noisy solutions with artificial peaks [38].

- Mismatched Regularization: The standard T-TR uses L2-norm regularization, which promotes smoothness. If your true solution is expected to be sparse or have sharp edges (common in imaging), consider hybrid approaches that combine Tikhonov with edge-preserving regularizers like Total Variation (TV) [40].

- Inaccurate Problem Modeling: Ensure the system matrix accurately represents the underlying physical process. Errors in the forward model can introduce artifacts that regularization cannot correct.

Q4: Can the T-TR method be applied to large-scale problems, such as those in 3D medical imaging?

Yes, but computational efficiency requires special implementations. For problems with a separable kernel structure (like some 2D NMR relaxometry problems), the Kronecker product structure of the system matrix can be exploited. Instead of computing the SVD of the large composite matrix, you can compute SVDs of the much smaller individual kernel matrices, dramatically reducing computational cost and memory requirements [38]. For other large-scale problems, iterative solvers (like Conjugate Gradient) are often used within the optimization framework, especially when enforcing non-negativity constraints via methods like the Newton Projection method [38].

Q5: How does the performance of the T-TR method compare to the established VSH algorithm in NMR applications?

Studies evaluating a T-TR method for 2D NMR data inversion have shown it can produce relaxation time distributions of improved quality compared to using separate TSVDs of the individual kernels (as in the VSH method), without a significant increase in computational complexity. The key is using the exact TSVD of the full composite kernel matrix (when computationally feasible), which can be done efficiently by exploiting the Kronecker product structure, rather than approximating it via separate TSVDs [38].

Troubleshooting Guides

Issue 1: Poor Solution Stability or High Sensitivity to Noise

Symptoms: Small changes in input data lead to large fluctuations in the solution. The reconstructed distribution is dominated by noise or exhibits unphysical oscillations.

Diagnosis and Resolution:

- Verify Ill-Conditioning: Check the singular value spectrum of your system matrix. A rapid decay to zero confirms severe ill-posedness, justifying the need for T-TR [38] [39].

- Re-evaluate Parameter Choice:

  - Method: Use a more robust parameter selection criterion. The L-curve method can help visualize the trade-off between solution norm and residual norm, but for stability, methods based on the condition number or sensitivity index might be preferable [22].

  - Implementation: Ensure your parameter search covers a sufficiently wide range. A logarithmic sweep of the Tikhonov parameter (α) is often effective.

- Increase Truncation Level: If the TSVD truncation is too aggressive (very small k), it might remove important solution components. Slightly increase k and re-tune α [38].

- Consider Constraints: Implement non-negativity constraints (f ≥ 0) in the Tikhonov minimization problem, which is common in applications like NMR where the solution represents a physical distribution [38]. This can significantly improve stability and physical plausibility.
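The logarithmic sweep mentioned above can be sketched as follows. A single SVD is reused for every α, and the residual and solution norms that make up the L-curve are evaluated in closed form. This assumes the unconstrained problem; the function name `lcurve_points`, the Hilbert test matrix, and the sweep range are illustrative assumptions.

```python
import numpy as np

def lcurve_points(K, s, alphas, k=None):
    """Residual and solution norms of (truncated) Tikhonov solutions for each alpha."""
    U, sigma, _ = np.linalg.svd(K, full_matrices=False)
    if k is not None:
        U, sigma = U[:, :k], sigma[:k]
    beta = U.T @ s
    # Energy of s outside the retained left singular subspace; no alpha can fit it
    r_perp2 = max(float(s @ s - beta @ beta), 0.0)
    res, sol = [], []
    for a in alphas:
        denom = sigma**2 + a
        res.append(np.sqrt(np.sum((a * beta / denom) ** 2) + r_perp2))
        sol.append(np.sqrt(np.sum((sigma * beta / denom) ** 2)))
    return np.array(res), np.array(sol)

# Hypothetical ill-conditioned test problem: 10 x 10 Hilbert matrix
n = 10
i = np.arange(n)
K = 1.0 / (i[:, None] + i[None, :] + 1)
rng = np.random.default_rng(0)
s = K @ np.ones(n) + 1e-8 * rng.standard_normal(n)

alphas = np.logspace(-14, 0, 29)          # logarithmic sweep over 14 decades
res, sol = lcurve_points(K, s, alphas, k=8)
for a, r, x in zip(alphas[::7], res[::7], sol[::7]):
    print(f"alpha={a:.1e}  residual={r:.3e}  solution norm={x:.3e}")
```

Plotting `sol` against `res` on log-log axes gives the L-curve; locating its corner is left out here because, as Table 2 notes, the corner can be ill-defined.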

Issue 2: Excessive Computational Time

Symptoms: The inversion process takes impractically long, especially for 2D or 3D problems.

Diagnosis and Resolution:

- Exploit Problem Structure: For problems with separable kernels, use the Kronecker product property to avoid building the full system matrix. Compute SVDs of the smaller constituent matrices K₁ and K₂, then combine them as U = U₂ ⊗ U₁, Σ = Σ₂ ⊗ Σ₁, V = V₂ ⊗ V₁ [38].

- Use Iterative Solvers: For the resulting optimization problem (e.g., min_{f ≥ 0} ‖Kf − s‖² + α‖f‖²), employ iterative solvers like the Conjugate Gradient method within a Newton Projection framework, which is more efficient for large problems than direct inversion [38].

- Data Compression: Project the measured data onto the dominant column subspace of the regularized kernel to reduce problem size before solving [38].
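The Kronecker shortcut can be verified on a small example. In the sketch below, `K1` and `K2` are hypothetical random stand-ins for the two kernel matrices, and the dense product is formed only to check the result. The products of singular values come out unsorted, so they are ordered before the k largest are selected.

```python
import numpy as np

rng = np.random.default_rng(0)
K1 = rng.standard_normal((6, 4))   # hypothetical kernel along the first dimension
K2 = rng.standard_normal((5, 3))   # hypothetical kernel along the second dimension

U1, s1, V1t = np.linalg.svd(K1, full_matrices=False)
U2, s2, V2t = np.linalg.svd(K2, full_matrices=False)

# Singular values of K = K2 (x) K1 are all products s2[i] * s1[j], in unsorted order
sigma = np.kron(s2, s1)
order = np.argsort(sigma)[::-1]    # descending, so truncation keeps the largest

k = 5
keep = order[:k]
# Index pairs (i, j) identifying which factor triplets form each retained triplet
i2, i1 = np.unravel_index(keep, (s2.size, s1.size))

# Dense check, feasible only because the example is small
K = np.kron(K2, K1)
sigma_dense = np.linalg.svd(K, compute_uv=False)
print(np.allclose(sigma[order], sigma_dense))

# Retained singular vectors are Kronecker products of the factor vectors
u = np.kron(U2[:, i2[0]], U1[:, i1[0]])
v = np.kron(V2t[i2[0]], V1t[i1[0]])
print(np.allclose(K @ v, sigma[keep[0]] * u))
```

Only the two small SVDs are needed in practice; the index pairs in `i2` and `i1` are enough to apply the truncated operator without ever storing the composite matrix.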

Issue 3: Inaccurate Solution Despite Regularization

Symptoms: The solution lacks key features, is overly smooth, or does not match validation data.

Diagnosis and Resolution:

- Check the Discrete Picard Condition: This condition helps determine if the TSVD and Tikhonov parameters are chosen to sufficiently filter the noise while retaining signal components. Violation of this condition suggests poor parameter choice [38].

- Validate Forward Model: Ensure the kernel matrix K in Kf = s accurately models the underlying physics. An incorrect forward model will yield an inaccurate solution regardless of the regularization.

- Explore Hybrid Regularization: If your solution is known to have piecewise-constant regions or sharp edges, standard T-TR (L2-norm) will cause blurring. Consider switching to or incorporating Total Variation (TV) regularization (L1-norm on gradients) to preserve edges [40]. An adaptive weighting between Tikhonov and TV can be automated based on local image gradients.
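A Picard-style check can be sketched by comparing the data coefficients |uᵢᵀs| with the singular values σᵢ. The truncation rule in `suggest_truncation` (keep leading terms whose coefficient stays above a multiple of an assumed noise level) is a simple heuristic introduced here for illustration; it is not the joint selection procedure of [38]. The Hilbert test matrix, noise level, and `factor` are likewise assumptions.

```python
import numpy as np

def picard_table(K, s):
    """Singular values, data coefficients |u_i^T s|, and their ratios."""
    U, sigma, _ = np.linalg.svd(K, full_matrices=False)
    coeff = np.abs(U.T @ s)
    return sigma, coeff, coeff / sigma

def suggest_truncation(coeff, noise_level, factor=3.0):
    """Heuristic (assumption): count leading coefficients above factor * noise_level."""
    below = np.nonzero(coeff < factor * noise_level)[0]
    return int(below[0]) if below.size else int(coeff.size)

# Hypothetical ill-conditioned test problem: 12 x 12 Hilbert matrix
n = 12
i = np.arange(n)
K = 1.0 / (i[:, None] + i[None, :] + 1)
noise_level = 1e-6
rng = np.random.default_rng(0)
s = K @ np.ones(n) + noise_level * rng.standard_normal(n)

sigma, coeff, ratio = picard_table(K, s)
k = suggest_truncation(coeff, noise_level)
for idx in range(n):
    print(f"{idx:2d}  sigma={sigma[idx]:.2e}  |u^T s|={coeff[idx]:.2e}  ratio={ratio[idx]:.2e}")
print("suggested truncation index:", k)
```

Where the coefficients level off at the noise floor while the singular values keep decaying, the ratio grows rapidly; those terms amplify noise and are candidates for truncation or stronger damping.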

Experimental Protocols & Data Presentation

Table 1: Comparison of Regularization Methods for Ill-Posed Inverse Problems

This table summarizes key characteristics of different regularization approaches, helping researchers select the most appropriate method.

| Method | Key Mechanism | Primary Advantage | Primary Disadvantage | Typical Application Context |

|---|---|---|---|---|

| TSVD | Truncates the SVD expansion, removing small singular values. | Simple, direct control over noise amplification. | Can lead to discontinuous solutions; choice of truncation index is critical. | Moderate ill-conditioning; when a clear singular value gap exists [38]. |

| Tikhonov | Adds a penalty term (α‖f‖²) to the least-squares problem. | Promotes smoothness, stable solutions. | Can oversmooth solutions, blurring edges; bias introduced [38] [40]. | Problems where smooth solutions are expected. |

| T-TR (Hybrid) | First applies TSVD, then Tikhonov to the reduced problem. | Combines noise removal of TSVD with smoothing of Tikhonov; reduces sensitivity to α [38] [22]. | More complex parameter selection (both k and α). | Severely ill-posed problems like 2D NMR relaxometry [38]. |

| Total Variation (TV) | Uses L1-norm on gradients (α‖∇f‖₁) as a penalty. | Excellent at preserving edges and sharp features. | Can lead to "staircasing" effects in smooth regions; harder to solve [40]. | Image reconstruction with edges (e.g., ERT [40]). |

Table 2: Regularization Parameter Selection Strategies

A guide to common methods for choosing the crucial parameters in T-TR.

| Method | Principle | Key Strength | Key Weakness |

|---|---|---|---|

| Discrete Picard Condition (DPC) | Analyzes the decay of singular values versus Fourier coefficients to determine where noise dominates. | Provides a joint criterion for selecting both truncation k and Tikhonov parameter α [38]. | Can be complex to implement and interpret. |

| L-curve | Plots solution norm vs. residual norm for a range of parameters; chooses the corner. | Intuitive visualization of the trade-off. | Corner can be ill-defined; computationally expensive [22] [39]. |

| Sensitivity Index | Selects parameters that minimize an index quantifying ill-conditioning effects on accuracy. | Directly ties parameter choice to solution accuracy [22]. | Requires defining and computing a problem-specific sensitivity metric. |

| Analytical (σ_max · σ_min) | Sets Tikhonov parameter as λ = σ_max · σ_min. | Very simple to compute; effective at reducing condition number for stability [22]. | May not be optimal for all problems, particularly regarding accuracy. |

Detailed Methodology: T-TR for 2D NMR Relaxometry

This protocol is adapted from the hybrid inversion method for 2D Nuclear Magnetic Resonance relaxometry [38].

1. Problem Discretization:

- The continuous integral equation relating the measured signal S(t₁, t₂) to the unknown relaxation time distribution F(T₁, T₂) is discretized onto a grid of M₁ × M₂ measurement times and N₁ × N₂ relaxation times.

- This yields a linear system: Kf + e = s, where K = K₂ ⊗ K₁ is the Kronecker product of the discretized kernel matrices, f is the unknown distribution vector, s is the measured data vector, and e represents noise.

2. Compute Truncated SVD (TSVD):

- Compute the singular value decomposition of the full system matrix: K = UΣVᵀ. This can be done efficiently by exploiting the Kronecker structure: U = U₂ ⊗ U₁, Σ = Σ₂ ⊗ Σ₁, V = V₂ ⊗ V₁ [38]. The diagonal of Σ₂ ⊗ Σ₁ is generally not in descending order, so sort the products of singular values before truncating.

- Select a truncation parameter k. This defines a truncated matrix K_k = U_k Σ_k V_kᵀ, which is a better-conditioned approximation of K.

3. Formulate and Solve the T-TR Problem:

- Solve the hybrid regularized problem: min_{f ≥ 0} ‖K_k f − s‖² + α‖f‖².

- The non-negativity constraint (f ≥ 0) is essential as the solution represents a physical distribution.

- This large-scale non-negative least squares problem can be solved using algorithms like the Newton Projection (NP) method with an inner Conjugate Gradient (CG) solver [38].
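For small problems, the constrained minimization in this step can be illustrated with plain projected gradient descent. This is a deliberately simpler substitute for the Newton Projection method with inner CG used in [38], chosen only because it fits in a few lines. The function name `ttr_nonneg`, the fixed iteration count, the Gaussian test kernel, and the parameter values are assumptions.

```python
import numpy as np

def ttr_nonneg(K, s, k, alpha, n_iter=5000):
    """Projected-gradient sketch for min_{f >= 0} ||K_k f - s||^2 + alpha * ||f||^2."""
    U, sigma, Vt = np.linalg.svd(K, full_matrices=False)
    K_k = (U[:, :k] * sigma[:k]) @ Vt[:k, :]      # rank-k truncated kernel
    # Step 1/L, where L = sigma_1^2 + alpha bounds the curvature of half the objective
    step = 1.0 / (sigma[0] ** 2 + alpha)
    f = np.zeros(K.shape[1])
    for _ in range(n_iter):
        grad = K_k.T @ (K_k @ f - s) + alpha * f  # gradient of half the objective
        f = np.maximum(f - step * grad, 0.0)      # project onto f >= 0
    return f

# Hypothetical test problem: Gaussian kernel and a non-negative bump as the true solution
n = 40
x = np.linspace(0.0, 1.0, n)
K = np.exp(-((x[:, None] - x[None, :]) ** 2) / (2 * 0.05**2)) / n
f_true = np.exp(-((x - 0.4) ** 2) / (2 * 0.08**2))
rng = np.random.default_rng(0)
s = K @ f_true + 1e-4 * rng.standard_normal(n)

f_nn = ttr_nonneg(K, s, k=10, alpha=1e-6)
print("min of solution:", f_nn.min())
print("relative error:", np.linalg.norm(f_nn - f_true) / np.linalg.norm(f_true))
```

Projected gradient converges slowly when K_k is still poorly conditioned, and the fixed iteration count here does not guarantee convergence; that slow convergence is one reason a Newton-type method is preferred at scale.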

4. Parameter Selection via Discrete Picard Condition: