Taming Linear Dependencies in Diffuse Basis Sets: A Guide for Accurate Biomolecular Simulation

Abstract

Diffuse basis sets are essential for achieving high accuracy in quantum chemical calculations, particularly for modeling non-covalent interactions critical to drug discovery and biomolecular systems. However, their use introduces significant challenges, including severe linear dependencies that jeopardize computational stability and SCF convergence. This article provides a comprehensive framework for researchers and drug development professionals to understand, diagnose, and resolve these issues. It covers the foundational trade-off between accuracy and stability, presents robust methodological solutions like the pivoted Cholesky decomposition, offers practical troubleshooting protocols for popular quantum chemistry software, and validates alternative strategies to maintain accuracy while ensuring computational robustness.

The Diffuse Basis Set Conundrum: Balancing Accuracy and Computational Stability

Frequently Asked Questions

1. What is a linear dependency in a basis set? A linear dependency occurs when one or more basis functions in a quantum chemistry calculation can be represented, exactly or to within numerical precision, as a linear combination of other functions in the same set. This makes the overlap matrix singular or nearly singular, as indicated by very small eigenvalues, preventing the SCF calculation from proceeding correctly [1].

2. Why do diffuse functions specifically cause linear dependencies? Diffuse basis functions have very small exponents, meaning they are spread over a large spatial volume. When added to a basis set, their significant overlap with other functions, including those on neighboring atoms in a molecule, creates near-duplicate descriptions of the electron cloud. This redundancy is the root cause of linear dependencies [2].
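To make the distance argument concrete, the sketch below uses the closed-form overlap of two normalized s-type Gaussian primitives, S = (2√(ab)/(a+b))^{3/2} · exp(−ab R²/(a+b)), with exponents a and b in bohr⁻² and center separation R in bohr. The three exponents and the 1.8 bohr separation (roughly an O-H bond) are illustrative assumptions, not values taken from any published basis set. Real basis functions are also contracted and include higher angular momenta.

```python
import math

def s_overlap(a: float, b: float, r: float) -> float:
    """Overlap of two normalized s-type Gaussians with exponents a, b (bohr^-2)
    whose centers are r bohr apart."""
    p = a + b
    prefactor = (2.0 * math.sqrt(a * b) / p) ** 1.5
    return prefactor * math.exp(-a * b / p * r * r)

r_bond = 1.8  # bohr; roughly an O-H bond length (illustrative)
for alpha in (1.0, 0.3, 0.05):  # hypothetical valence-like -> diffuse-like exponents
    print(f"alpha = {alpha:5.2f}  overlap with the same function on the neighbor = "
          f"{s_overlap(alpha, alpha, r_bond):.3f}")
```

The printed overlaps rise from about 0.20 to about 0.92 as the exponent shrinks. A diffuse function on one atom is therefore nearly the same function as its counterpart on the neighboring atom.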

3. How can I identify problematic basis functions before running a calculation?

While not always foolproof, a preliminary check involves comparing the exponents of your basis functions. The pairs of exponents that are most similar to each other percentage-wise are often the culprits. For example, in a documented case, exponents of 94.8087090 and 92.4574853342 were identified as the primary source of a linear dependency [1].
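A minimal sketch of this pre-check follows. It assumes you have already extracted the exponents of one angular-momentum shell of one element into a list. The function name and the definition of percentage difference, 100·|a−b|/min(a, b), are illustrative choices. Here it is applied only to the four exponents quoted in this article, which are treated as a single shell for illustration.

```python
from itertools import combinations

def rank_similar_exponent_pairs(exponents, top=3):
    """Return the `top` most similar exponent pairs as (percent_difference, a, b),
    where percent_difference = 100 * |a - b| / min(a, b)."""
    ranked = sorted(
        (100.0 * abs(a - b) / min(a, b), a, b)
        for a, b in combinations(exponents, 2)
    )
    return ranked[:top]

# Exponents quoted in the documented case [1], treated here as one shell.
shell = [94.8087090, 92.4574853342, 45.4553660, 52.8049100131]
for pct, a, b in rank_similar_exponent_pairs(shell):
    print(f"{a:>14} vs {b:>14}: {pct:6.2f} %")
```

With these values the closest pair differs by about 2.5 % and the next by about 16 %. These are the same two pairs whose removal is reported in Protocol 1.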

4. My calculation failed due to linear dependencies. What is the first thing I should check? Review the output of your electronic structure program for warnings about the overlap matrix. It will typically report the number of eigenvalues found below a certain tolerance. Then, inspect your basis set, paying close attention to the most diffuse functions and any sets of exponents that are very close in value [1] [2].

5. Are some types of calculations more susceptible to this problem? Yes, calculations on large molecules and systems with anions are particularly prone. For anions, diffuse functions are essential for a correct description, but they simultaneously increase the risk of linear dependencies. Calculations using very large, high-zeta basis sets (e.g., cc-pV5Z, cc-pV6Z) are also at higher risk [3] [2].

Troubleshooting Guide: Resolving Linear Dependencies

Problem: Your calculation fails or produces warnings about near-linear-dependencies in the basis set.

| Step | Action | Technical Details & Purpose |
|---|---|---|
| 1. Diagnosis | Check the program output for the smallest eigenvalues of the overlap matrix. | If eigenvalues are below the default tolerance (often ~1e-7), linear dependencies are detected [2]. |
| 2. Manual Inspection | Identify and remove one function from the pair of basis set exponents that are most similar percentage-wise. | This directly removes the mathematical redundancy. Example: Removing one from 94.8087090 and 92.4574853342 [1]. |
| 3. Algorithmic Solution | Use a pivoted Cholesky decomposition to automatically filter out linearly dependent functions. | This is a robust, general solution implemented in programs like ERKALE, Psi4, and PySCF that cures the problem by construction [1]. |
| 4. Adjusting Thresholds | Increase the linear dependency threshold (Sthresh in ORCA) with caution. | Purpose: Instructs the program to discard the near-singular combinations of basis functions. Warning: Use carefully in geometry optimizations, where a change in the number of discarded functions between geometries can cause discontinuities [2]. |
| 5. Basis Set Choice | Use a more compact, locally-complete basis set or the CABS singles correction. | This avoids the "curse of sparsity" and reduces non-locality, thereby minimizing the risk of dependencies from the start [3]. |
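The pivoted Cholesky idea in step 3 can be sketched in a few lines of NumPy. This is a simplified illustration of the approach described in [1], not the code used by ERKALE, Psi4, or PySCF. The sketch assumes an overlap matrix S with unit diagonal (normalized functions) and works greedily:

- At each step it picks the function with the largest part not yet spanned by those already chosen.
- It stops once every remaining function is, to within the tolerance `tol`, a linear combination of the chosen ones.

The returned indices are the functions to keep. The 3×3 example uses hypothetical same-center s functions with exponents 1.0, 1.0000001, and 0.3.

```python
import numpy as np

def pivoted_cholesky_keep(S: np.ndarray, tol: float = 1e-7) -> list[int]:
    """Indices of basis functions retained by a pivoted Cholesky factorization of S.

    d[i] holds the squared norm of the part of function i that is not yet
    spanned by the already selected functions (the Schur-complement diagonal)."""
    n = S.shape[0]
    d = np.diag(S).astype(float).copy()
    L = np.zeros((n, n))
    keep: list[int] = []
    for k in range(n):
        j = int(np.argmax(d))
        if d[j] < tol:            # every remaining function is (nearly) redundant
            break
        keep.append(j)
        L[:, k] = (S[:, j] - L[:, :k] @ L[j, :k]) / np.sqrt(d[j])
        d -= L[:, k] ** 2
        d[keep] = 0.0             # selected functions are fully spanned
    return sorted(keep)

def same_center_s_overlap(exponents):
    """Overlap matrix of normalized s-type Gaussians sharing one center."""
    a = np.asarray(exponents, dtype=float)
    S = (2.0 * np.sqrt(np.outer(a, a)) / (a[:, None] + a[None, :])) ** 1.5
    np.fill_diagonal(S, 1.0)      # exact normalization on the diagonal
    return S

S = same_center_s_overlap([1.0, 1.0000001, 0.3])  # hypothetical near-duplicate pair
print(pivoted_cholesky_keep(S))
```

Unlike a plain eigenvalue threshold, this drops specific functions: one member of the near-duplicate pair is removed, and the 0.3 function is kept.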

Experimental Protocols & Data

Protocol 1: Diagnosing and Manually Removing Linear Dependencies

This protocol is based on a real-world example with a water molecule and a large, uncontracted basis set [1].

- Gather Basis Set Data: Compile the full list of exponents for your basis set. For an oxygen atom, this might include exponents from aug-cc-pV9Z and supplementary "tight" functions from cc-pCV7Z.
- Identify Similar Exponents: Calculate the percentage difference between all pairs of exponents within the same angular momentum channel. The pairs with the smallest percentage difference are the most likely to cause linear dependencies.
- Remove Functions: For each pair of highly similar exponents, manually remove one function from the basis set input file. In the documented case, removing one exponent from each of the pairs (94.8087090, 92.4574853342) and (45.4553660, 52.8049100131) successfully resolved two linear dependencies.
- Re-run Calculation: Execute your calculation with the modified, smaller basis set. A successful run with no linear dependency warnings and a lower (more stable) Hartree-Fock energy confirms the issue is resolved.
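The effect of the removal step can be checked numerically before editing any input file. The sketch below models the functions as same-center, normalized s-type primitives, an assumption made only for illustration. It uses the four exponents quoted above and compares the smallest overlap eigenvalue before and after dropping one exponent from each similar pair. Which member of each pair is dropped here is an arbitrary choice.

```python
import numpy as np

def same_center_s_overlap(exponents):
    """Overlap matrix of normalized s-type Gaussians sharing one center."""
    a = np.asarray(exponents, dtype=float)
    S = (2.0 * np.sqrt(np.outer(a, a)) / (a[:, None] + a[None, :])) ** 1.5
    np.fill_diagonal(S, 1.0)  # exact normalization on the diagonal
    return S

original = [94.8087090, 92.4574853342, 45.4553660, 52.8049100131]
pruned = [92.4574853342, 45.4553660]  # one exponent removed from each pair

for label, exps in (("original", original), ("pruned", pruned)):
    smallest = np.linalg.eigvalsh(same_center_s_overlap(exps))[0]
    print(f"{label:>8}: smallest overlap eigenvalue = {smallest:.2e}")
```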

Protocol 2: Using Built-in Program Features to Handle Dependencies

This method uses the electronic structure program's internal safeguards [2].

- Locate the Threshold Parameter: In your input file, find the keyword for the linear dependency threshold (e.g., Sthresh in ORCA).
- Adjust the Threshold: Increase the value cautiously. The default is often 1e-7; try 1e-6 or 5e-6 if linear dependencies persist.
- Run with TightSCF: Combine this with a TightSCF or similar keyword to ensure the SCF procedure is stringent enough to handle the modified basis.
- Verify Results: Check the output to ensure the calculation converges and that the number of basis functions removed is reasonable. Be aware that this can introduce small discontinuities in potential energy surfaces.
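One way to picture what a threshold such as Sthresh does is canonical orthogonalization, the standard textbook way of dropping near-linear dependencies. It works in three steps:

1. Diagonalize S.
2. Discard eigenvectors whose eigenvalues fall below the threshold.
3. Scale the remaining eigenvectors by s^(-1/2).

This is a generic sketch under that assumption, not ORCA's implementation. The 3×3 matrix is a hypothetical overlap matrix with one eigenvalue of 1e-9.

```python
import numpy as np

def canonical_orthogonalization(S: np.ndarray, sthresh: float = 1e-7):
    """Return (X, n_removed) with X.T @ S @ X = I over the retained subspace.

    Eigenvectors of S with eigenvalues below sthresh are discarded; the rest
    are scaled by s**-0.5, so X has fewer columns than S when any are dropped."""
    s, U = np.linalg.eigh(S)
    keep = s >= sthresh
    X = U[:, keep] / np.sqrt(s[keep])
    return X, int(np.count_nonzero(~keep))

# Hypothetical overlap matrix: functions 0 and 1 are almost identical.
S = np.array([[1.0, 1.0 - 1e-9, 0.5],
              [1.0 - 1e-9, 1.0, 0.5],
              [0.5, 0.5, 1.0]])
X, n_removed = canonical_orthogonalization(S, sthresh=1e-7)
print(n_removed, X.shape)  # 1 (3, 2)
```

The discarded directions are mixtures of basis functions rather than specific functions. The number of eigenvalues below the threshold can also change as atoms move, which is one source of the small potential-energy-surface discontinuities mentioned in the last step.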

Table 1: Impact of Basis Set Size and Diffuse Functions on Accuracy and Computational Cost

This data, derived from calculations on the ASCDB benchmark, shows why diffuse functions are necessary despite the challenges they introduce [3].

| Basis Set | RMSD (B) [kJ/mol] | NCI RMSD (B) [kJ/mol] | Time [s] |
|---|---|---|---|
| def2-SVP | 30.84 | 31.33 | 151 |
| def2-TZVP | 5.50 | 7.75 | 481 |
| def2-TZVPPD (with diffuse) | 1.82 | 0.73 | 1440 |
| cc-pVTZ | 9.13 | 12.46 | 573 |
| aug-cc-pVTZ (with diffuse) | 3.90 | 1.23 | 2706 |

Note: RMSD (B) is the basis set error for the entire benchmark. NCI RMSD (B) is the error specifically for non-covalent interactions, where diffuse functions are most critical. The increased time for diffuse basis sets is due to reduced sparsity and increased integral evaluation effort [3].

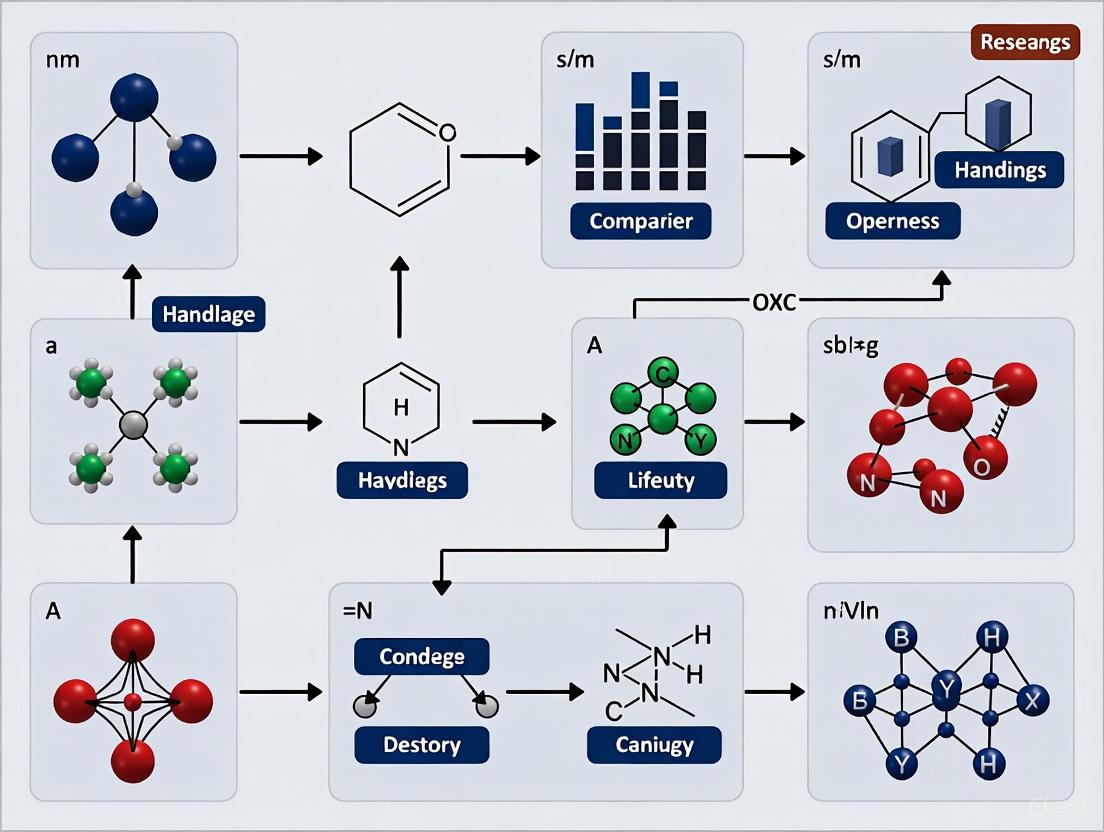

Visualization: From Diffuse Functions to Linear Dependencies

The following diagram illustrates the logical pathway of how the addition of diffuse functions leads to the problem of linear dependencies in electronic structure calculations.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Computational "Reagents" for Basis Set Studies

| Item / Basis Set | Function & Application | Key Characteristic |
|---|---|---|
| Pople Basis Sets (e.g., 6-31G) | Foundational split-valence basis sets for general-purpose calculations. | Somewhat old-fashioned and less consistent across the periodic table than modern alternatives [2]. |
| Dunning's cc-pVXZ | Correlation-consistent basis sets, ideal for systematic studies and extrapolation to the basis set limit [4]. | Designed to recover correlation energy, but can yield poor SCF energies for their size [2]. |
| Karlsruhe def2 Series (e.g., def2-SVP, def2-TZVP) | Modern, consistent basis sets recommended for general non-relativistic calculations across the periodic table [2]. | Excellent balance of cost and accuracy for both SCF and correlated calculations. |
| Augmented/Diffuse Functions (e.g., aug-cc-pVXZ, def2-TZVPPD) | Essential for accurate description of anions, excited states, and non-covalent interactions (NCIs) [3]. | Low exponents cause large spatial extent, reducing locality and increasing risk of linear dependencies [3] [2]. |
| Effective Core Potentials (ECPs) (e.g., SDD, LANL2DZ) | Replace core electrons with a potential, reducing computational cost for heavier elements [4] [2]. | Offer some savings, but all-electron relativistic calculations usually give better geometries and energies and are preferable for properties [2]. |
| Pivoted Cholesky Decomposer (e.g., in ERKALE, Psi4) | An algorithmic tool that automatically identifies and removes linear dependencies from the basis set during the calculation [1]. | Provides a robust, general solution to the linear dependency problem. |

Non-covalent interactions (NCIs) are fundamental forces that govern molecular recognition, protein folding, drug-receptor binding, and material assembly. Unlike covalent bonds, these interactions—including hydrogen bonding, van der Waals forces, and π-π stacking—are weak and highly dependent on the accurate description of the electron distribution in the outer regions of molecules. Diffuse basis functions, which are atomic orbitals with small exponents that decay slowly with distance from the nucleus, are essential for capturing these delicate electronic effects [5] [3].

The inclusion of diffuse functions presents a fundamental conundrum in computational chemistry: they are a blessing for accuracy but a curse for computational efficiency [5] [3]. This technical support guide addresses this paradox within the context of thesis research on handling linear dependencies in large, diffuse basis sets. We provide targeted troubleshooting and methodological guidance to help researchers navigate these challenges without sacrificing the accuracy critical for studying non-covalent interactions.

Troubleshooting Guides

Addressing the Sparsity and Linear Dependency Conundrum

Problem Statement: My calculations with diffuse basis sets (e.g., aug-cc-pVXZ) are failing due to linear dependencies, or the density matrix has become unexpectedly dense, causing severe performance degradation and convergence issues.

Root Cause Analysis: This is a direct manifestation of the "curse of sparsity" associated with diffuse basis sets [5]. The one-particle density matrix (1-PDM) loses its sparsity because the inverse overlap matrix (𝐒⁻¹) becomes significantly less local when diffuse functions are added. Furthermore, the inherent local incompleteness of the basis set—where basis functions on one atom cannot adequately represent the electron density on a nearby atom—forces the electronic structure code to use functions from distant atoms, destroying locality. This problem is exacerbated in larger, more diffuse basis sets and is a major source of linear dependencies [5] [3].
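The claim that S⁻¹ is less local than S, and that diffuseness makes this worse, can be reproduced with a toy model. The model places one normalized s Gaussian on each site of a one-dimensional chain with 2 bohr spacing. The chain, the spacing, and the two exponents (1.0 as "compact", 0.1 as "diffuse") are hypothetical choices for illustration, not a molecule. The sketch counts how many entries in the middle row of S and of S⁻¹ exceed 10⁻⁵ of that row's largest magnitude.

```python
import numpy as np

def chain_overlap(alpha: float, n_sites: int = 40, spacing: float = 2.0) -> np.ndarray:
    """Overlap matrix for one normalized s Gaussian (exponent alpha) per chain site.
    For equal exponents the two-center overlap reduces to exp(-alpha * r**2 / 2)."""
    x = spacing * np.arange(n_sites)
    r2 = (x[:, None] - x[None, :]) ** 2
    return np.exp(-0.5 * alpha * r2)

def significant_entries(row: np.ndarray, rel_tol: float = 1e-5) -> int:
    """Number of entries larger than rel_tol times the row's largest magnitude."""
    mags = np.abs(row)
    return int(np.count_nonzero(mags > rel_tol * mags.max()))

for alpha in (1.0, 0.1):
    S = chain_overlap(alpha)
    S_inv = np.linalg.inv(S)
    mid = S.shape[0] // 2
    print(f"alpha = {alpha}: significant entries in S row = "
          f"{significant_entries(S[mid])}, in S^-1 row = {significant_entries(S_inv[mid])}")
```

In this model S⁻¹ already has more significant entries than S for the compact exponent. For the diffuse exponent, the significant entries of S⁻¹ span essentially the whole 40-site chain.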

Solution Pathway: The following workflow outlines a systematic approach to diagnose and resolve issues related to linear dependencies and sparsity.

Detailed Resolution Steps:

- Diagnose and Confirm: Verify that your basis set is the source of the problem. Check the basis set name for conventions that indicate diffuse functions: the aug- prefix (Dunning series), a trailing D (Karlsruhe series, e.g., def2-SVPD, def2-TZVPPD), or + and ++ (Pople series, e.g., 6-31+G(d)) [3].
- Implement Solution A: CABS Singles Correction: This is a promising approach highlighted in recent literature [5]. Instead of explicitly adding diffuse functions, use a more compact basis set (e.g., cc-pVTZ) and recover the accuracy for non-covalent interactions by applying the Complementary Auxiliary Basis Set (CABS) singles correction. This method perturbatively accounts for the effect of diffuse functions without explicitly including them in the main basis, thereby mitigating linear dependence and sparsity issues.
- Implement Solution B: Systematic Basis Set Increase: If you must use traditional diffuse basis sets, avoid starting with a very large, diffuse basis. For initial geometry optimizations, use a medium-sized basis without diffuse functions (e.g., def2-TZVP or cc-pVTZ). Then, for the final single-point energy calculation, which is critical for NCI accuracy, switch to a larger, augmented basis (e.g., aug-cc-pVTZ or def2-TZVPPD) [3].
- Implement Solution C: Robust SCF Solver and Thresholds: When linear dependencies are mild, use the built-in options in your quantum chemistry package to handle them. In Gaussian, this can involve using SCF=NoVarAcc or IOp(3/32=2). In other codes, increasing the electron density fitting threshold or using a more robust diagonalizer can help.
- Test and Validate: Always test your chosen solution on a smaller, representative fragment of your system (e.g., a single base pair from a DNA oligomer) to confirm that it resolves the instability and provides the desired accuracy before proceeding to the full, costly production calculation.
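The naming check in the first step is easy to automate when screening many input files. The helper below is a hypothetical, heuristic sketch. It covers only the aug- prefix, Pople + signs, and Karlsruhe names ending in D (such as def2-TZVPD). It will miss other diffuse families, so treat a False result as "not flagged" rather than as proof that a basis is compact.

```python
def looks_diffuse(basis_name: str) -> bool:
    """Heuristic flag for common diffuse-augmentation naming conventions."""
    name = basis_name.strip().lower()
    if name.startswith(("aug-", "d-aug-")):              # Dunning: aug-cc-pVTZ, d-aug-cc-pVDZ
        return True
    if "+" in name:                                      # Pople: 6-31+G(d), 6-311++G(d,p)
        return True
    if name.startswith("def2-") and name.endswith("d"):  # Karlsruhe: def2-SVPD, def2-TZVPPD
        return True
    return False

for name in ("aug-cc-pVTZ", "def2-TZVPPD", "6-311++G**", "def2-TZVP", "cc-pVTZ"):
    print(f"{name:<12} {looks_diffuse(name)}")
```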

Achieving Accurate Non-Covalent Interaction Energies

Problem Statement: My computed binding energies, interaction energies, or relative conformer energies for systems dominated by non-covalent interactions (e.g., drug-binding complexes, supramolecular assemblies) are inaccurate compared to experimental data.

Root Cause Analysis: This inaccuracy is likely due to an inadequately described basis set, which fails to capture the subtle electron correlation effects in the intermolecular region. Standard basis sets without diffuse functions cannot model the weak but critical interactions in the low-electron-density regions between molecules [3] [6]. The electron density and its derivatives in these regions are essential for correctly characterizing NCIs [6].

Solution Pathway: The workflow below guides you through the process of selecting a basis set that provides the best trade-off between accuracy and computational cost for your specific project phase.

Detailed Resolution Steps:

- Select a Minimally Sufficient Basis Set: For quantitative studies of NCIs, augmented triple-zeta basis sets are the de facto standard. As shown in Table 1, basis sets like aug-cc-pVTZ and def2-TZVPPD achieve a combined method and basis set error low enough for most applications [3]. Do not use unaugmented double-zeta basis sets (e.g., cc-pVDZ) for final NCI energy reporting, as they introduce large errors (Table 1 lists NCI errors above 30 kJ/mol for def2-SVP and cc-pVDZ) [3].
- Employ a Multi-Stage Computational Protocol:
  - Geometry Optimization: Perform initial geometry sampling and optimization with a medium-sized, non-diffuse basis set (e.g., cc-pVTZ) to reduce cost.
  - Final Single-Point Energy Calculation: On the optimized geometry, perform a high-level single-point energy calculation using a large, augmented basis set (e.g., aug-cc-pVTZ or aug-cc-pVQZ). This two-step process is standard practice for achieving high accuracy efficiently.

- Apply Counterpoise Correction: To correct for Basis Set Superposition Error (BSSE)—a spurious attractive interaction caused by the use of incomplete basis sets—always perform the Counterpoise Correction (CP) when calculating interaction energies. This involves calculating the energy of each monomer in the complex using the full basis set of the complex.

- Validate with Benchmark Data: Compare your methodology and results against known benchmark datasets like the ASCDB [3] or other well-established benchmarks in the literature to ensure your level of theory is appropriate.
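The counterpoise recipe described above can be written compactly. In the notation below, which is introduced here for illustration, a subscript names the system whose energy is computed and a superscript names the basis set used. The label (AB) indicates that every energy is evaluated at the geometry of the complex.

$$
\Delta E_{\mathrm{int}}^{\mathrm{CP}} = E_{AB}^{AB}(AB) - E_{A}^{AB}(AB) - E_{B}^{AB}(AB)
$$

The BSSE estimate compares each monomer in its own basis with the same monomer in the full complex basis. It is usually positive, because the borrowed functions lower the monomer energies:

$$
\delta_{\mathrm{BSSE}} = \left[E_{A}^{A}(AB) - E_{A}^{AB}(AB)\right] + \left[E_{B}^{B}(AB) - E_{B}^{AB}(AB)\right]
$$

Adding this estimate to the uncorrected interaction energy, $E_{AB}^{AB}(AB) - E_{A}^{A}(AB) - E_{B}^{B}(AB)$, recovers $\Delta E_{\mathrm{int}}^{\mathrm{CP}}$ term by term.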

Frequently Asked Questions (FAQs)

Q1: Why are diffuse functions so critical for studying non-covalent interactions, and can I simply use a larger standard basis set instead?

A1: Diffuse functions are essential because they describe the outer regions of the electron density, which are paramount for capturing the weak electrostatic, polarization, and dispersion effects that constitute non-covalent interactions [3] [6]. A larger standard basis set (e.g., cc-pV5Z) without diffuse functions primarily adds higher angular momentum functions to describe the electron density closer to the nuclei, which does little to improve the description of the intermolecular region. The data is clear: for the ASCDB benchmark, the error for NCIs with cc-pV5Z is 1.40 kJ/mol, which is reduced to 0.09 kJ/mol with aug-cc-pV5Z [3]. The augmentation with diffuse functions is non-negotiable for high accuracy.

Q2: My system is very large (e.g., a protein or DNA fragment). Using a diffuse basis set for the entire system is computationally impossible. What are my options?

A2: For large systems, a multi-level or "dual-basis" approach is recommended:

- ONIOM-type Methods: Use a high-level method with a diffuse basis set on the chemically active region (e.g., the drug binding site) and a lower-level method with a compact basis set on the rest of the protein environment.

- Fragment-Based Methods: Utilize methods like Fragment Molecular Orbital (FMO) or Energy Decomposition Analysis (EDA), which can leverage diffuse basis sets on specific interacting fragments.

- CABS Correction: As a newer alternative, explore the use of the CABS singles correction with a compact basis set, which has shown promise for recovering NCI accuracy without the full cost of diffuse functions [5].

Q3: What are the best practices for visualizing and analyzing the non-covalent interactions that my diffuse-basis calculation has revealed?

A3: The NCI (Non-Covalent Interactions) analysis tool is specifically designed for this purpose [7] [6]. It uses the electron density (\(\rho\)) and its derivatives to compute the reduced density gradient (RDG). The NCI method identifies interactions by locating low-RDG regions at low densities and colors them based on the sign of the second eigenvalue of the density Hessian (\(\lambda_2\)):

- Blue Isosurfaces: Strong attractive interactions (e.g., hydrogen bonds).

- Green Isosurfaces: Weak van der Waals interactions.

- Red Isosurfaces: Non-bonding, steric repulsions.

Software like NCIPLOT [7] and ChemTools [6] can perform this analysis and generate visualizations. For analyzing Molecular Dynamics trajectories, PyContact is a specialized tool [8].
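The sketch below illustrates what this kind of analysis computes. It evaluates the standard reduced density gradient, s = |∇ρ| / (2(3π²)^{1/3} ρ^{4/3}), and sign(λ₂)ρ on a uniform Cartesian grid using finite differences. It is not NCIPLOT or ChemTools code. The grid, the spacing, and the model density are assumptions chosen so that the result can be checked analytically. The model density is a hydrogen-like 1s density, ρ = e^{−2r}/π in atomic units, for which s = ρ^{−1/3}/(3π²)^{1/3}.

```python
import numpy as np

RDG_PREFACTOR = 1.0 / (2.0 * (3.0 * np.pi**2) ** (1.0 / 3.0))

def reduced_density_gradient(rho: np.ndarray, h: float) -> np.ndarray:
    """s = |grad rho| / (2 (3 pi^2)^(1/3) rho^(4/3)) on a uniform 3D grid with spacing h."""
    gx, gy, gz = np.gradient(rho, h)
    grad_norm = np.sqrt(gx**2 + gy**2 + gz**2)
    return RDG_PREFACTOR * grad_norm / np.maximum(rho, 1e-30) ** (4.0 / 3.0)

def sign_lambda2_rho(rho: np.ndarray, h: float) -> np.ndarray:
    """sign(lambda_2) * rho, with lambda_2 the middle eigenvalue of the density Hessian."""
    first = np.gradient(rho, h)
    hess = np.empty(rho.shape + (3, 3))
    for i in range(3):
        second = np.gradient(first[i], h)
        for j in range(3):
            hess[..., i, j] = second[j]
    hess = 0.5 * (hess + np.swapaxes(hess, -1, -2))  # symmetrize finite-difference noise
    lam = np.linalg.eigvalsh(hess)                   # ascending eigenvalues per grid point
    return np.sign(lam[..., 1]) * rho

# Model density on a small grid (atomic units).
h = 0.1
axis = np.arange(-3.0, 3.0 + h / 2, h)
X, Y, Z = np.meshgrid(axis, axis, axis, indexing="ij")
rho = np.exp(-2.0 * np.sqrt(X**2 + Y**2 + Z**2)) / np.pi
s = reduced_density_gradient(rho, h)
```

For a real system, rho would come from your quantum chemistry code as a cube-style grid. Points with small s at low density are the candidates that NCI analysis renders as isosurfaces, colored by sign(λ₂)ρ.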

Quantitative Data for Basis Set Selection

The following table summarizes key performance metrics for common basis sets, providing a critical reference for making informed decisions that balance accuracy and computational cost. The data is based on results from the ASCDB benchmark using the ωB97X-V functional [3].

Table 1: Basis Set Performance for Non-Covalent Interactions (NCI) and Computational Cost

| Basis Set | Type | RMSD (NCI) B+M (kJ/mol) | Time (s) | Recommended Use |
|---|---|---|---|---|
| def2-SVP | Standard Double-ζ | 31.51 | 151 | Preliminary Scans |
| cc-pVDZ | Standard Double-ζ | 30.31 | 178 | Preliminary Scans |
| def2-TZVP | Standard Triple-ζ | 8.20 | 481 | Geometry Optimization |
| cc-pVTZ | Standard Triple-ζ | 12.73 | 573 | Geometry Optimization |
| def2-SVPD | Diffuse Double-ζ | 7.53 | 521 | Small Systems NCI |
| aug-cc-pVDZ | Diffuse Double-ζ | 4.83 | 975 | Small Systems NCI |
| def2-TZVPPD | Diffuse Triple-ζ | 2.45 | 1440 | Production NCI (Recommended) |
| aug-cc-pVTZ | Diffuse Triple-ζ | 2.50 | 2706 | Production NCI (Recommended) |
| aug-cc-pVQZ | Diffuse Quadruple-ζ | 2.40 | 7302 | High-Accuracy Benchmarking |

The Scientist's Toolkit: Essential Research Reagents

This table lists key computational tools and resources essential for conducting research involving diffuse basis sets and non-covalent interactions.

Table 2: Key Research Reagents and Software Solutions

| Item Name | Function / Purpose | Relevance to Research |
|---|---|---|
| Dunning's cc-pVXZ | Correlation-consistent basis sets in tiered qualities (X=D,T,Q,5,6). | The gold-standard family for systematic convergence studies towards the complete basis set (CBS) limit [3]. |
| Augmented Basis Sets (aug-cc-pVXZ) | Standard cc-pVXZ basis sets with added diffuse functions for each angular momentum. | Critical for achieving quantitative accuracy in NCI energies and electronic properties [3]. |
| Karlsruhe (def2) Basis Sets | Popular, efficient basis sets of segmented contracted type (e.g., def2-SVP, def2-TZVP). | Widely used in chemistry, with diffuse-augmented versions (def2-SVPD, def2-TZVPPD) offering excellent performance [3]. |
| Basis Set Exchange (BSE) | Online repository and download tool for basis sets. | Essential resource for finding, downloading, and citing standard and specialized basis sets for your calculations [3]. |
| NCIplot | Program for visualization of non-covalent interactions from quantum chemistry output. | Directly visualizes the interactions your diffuse basis sets are capturing, via reduced density gradient (RDG) isosurfaces [7] [6]. |
| PyContact | Tool for analyzing non-covalent interactions in Molecular Dynamics (MD) trajectories. | Complements static quantum calculations by analyzing NCI stability and dynamics over time in large biosystems [8]. |
| CABS Singles Correction | A computational correction that accounts for the effect of diffuse functions without explicitly adding them. | A potential solution to the linear dependence and sparsity problems caused by large, diffuse basis sets [5]. |

Frequently Asked Questions (FAQs)

Q1: Why does my calculation time drastically increase when I use a diffuse basis set like aug-cc-pVTZ? The primary reason is the severe loss of sparsity in the one-particle density matrix (1-PDM). While the electronic structure of insulators is inherently local ("nearsighted"), diffuse functions introduce a basis set artifact that causes significant off-diagonal elements in the 1-PDM, forcing algorithms to process vastly more data and pushing the onset of low-scaling regimes to much larger system sizes [3].

Q2: I need accurate interaction energies for non-covalent interactions (NCIs). Is avoiding diffuse functions a good solution? No, because this sacrifices essential accuracy. Diffuse basis sets are a blessing for accuracy and are indispensable for correctly describing NCIs [3]. The solution is not to avoid them but to adopt strategies that mitigate their detrimental effects, such as the CABS singles correction with compact basis sets [3].

Q3: The sparsity problem persists even when I represent the density on a real-space grid. Why? This observation is key to understanding the problem. The "curse of sparsity" is not just an artifact of the atomic orbital basis representation. It persists in real-space projections because the root cause is the low locality of the contra-variant basis functions, which is quantified by the inverse overlap matrix, \(\mathbf{S}^{-1}\). This matrix is inherently less sparse than the overlap matrix \(\mathbf{S}\) itself [3].

Q4: Are some basis sets more prone to causing this issue than others? Yes. The problem is most pronounced for basis sets that are both small and diffuse. The exponential decay of the 1-PDM becomes slower as the basis set becomes more diffuse and more locally incomplete, so small, diffuse sets are affected most strongly [3].

Q5: How do I know if my matrix problem is ill-conditioned due to the basis set? A key indicator is the condition number of the overlap matrix or other core matrices. Ill-conditioned problems (those with a high condition number) are highly sensitive to tiny perturbations, such as rounding errors in floating-point arithmetic [9]. Diffuse functions can worsen conditioning, making computations numerically unstable.

Troubleshooting Guides

Diagnosing Sparsity and Stability Issues

Use the following table to identify the symptoms and underlying causes of problems related to diffuse functions.

Table 1: Common Issues and Diagnostic Checks

| Observed Symptom | Potential Root Cause | Diagnostic Check |
|---|---|---|
| Drastic increase in computation time & memory usage for medium-to-large systems. | Severe loss of sparsity in the 1-PDM due to diffuse functions [3]. | Plot the decay of off-diagonal elements of the 1-PDM or inspect the number of non-zero elements. |
| Erratic convergence of self-consistent field (SCF) cycles or large numerical errors. | Ill-conditioning of the overlap matrix (\(\mathbf{S}\)) leading to numerical instability [9]. | Calculate the condition number, \(\kappa(\mathbf{S})\). A high value indicates instability. |
| Inaccurate non-covalent interaction energies despite using a large basis set. | Combined error from method and basis set; diffuse functions may be needed [3]. | Consult benchmark studies (e.g., ASCDB) to ensure your basis set (e.g., aug-cc-pVTZ or def2-TZVPPD) is adequate for NCIs [3]. |
| Slow convergence or failure of linear-scaling algorithms. | The "late onset" of the low-scaling regime due to the non-locality introduced by \(\mathbf{S}^{-1}\) [3]. | Analyze the sparsity pattern of \(\mathbf{S}^{-1}\) compared to \(\mathbf{S}\). |

Diagram 1: A diagnostic workflow for identifying common problems arising from the use of diffuse basis sets.

A Protocol for Mitigating the "Curse of Sparsity"

This protocol outlines a step-by-step approach to achieve accurate results while managing the challenges posed by diffuse basis sets.

Objective: To obtain accurate interaction energies (particularly for non-covalent interactions) while mitigating the detrimental impact of diffuse functions on matrix sparsity and numerical stability.

Background: The protocol is based on the analysis that the non-locality stems from the contra-variant basis functions (\(\mathbf{S}^{-1}\)) and is worst for small, diffuse sets [3]. The solution involves a combination of method and basis set selection.

Table 2: Step-by-Step Mitigation Protocol

| Step | Action | Rationale & Technical Details |
|---|---|---|
| 1. Problem Assessment | Determine if non-covalent interactions (NCIs) are critical for your system. | If NCIs are not central, a compact basis set (e.g., def2-SVP) may be sufficient, avoiding the problem entirely [3]. |
| 2. Basis Set Selection | For NCI accuracy, select a basis set with diffuse functions, but be strategic. | Basis sets like def2-TZVPPD or aug-cc-pVTZ are often the smallest sufficient for NCI convergence [3]. Avoid using very small, diffuse sets. |
| 3. Numerical Stabilization | Implement techniques to improve conditioning and control error propagation. | Use higher precision arithmetic for critical operations [9]; apply iterative refinement to improve the accuracy of solutions to linear systems [9]; employ robust pivoting strategies (e.g., in linear solvers) to enhance numerical stability [9]. |
| 4. Advanced Correction | For production calculations, consider the CABS singles correction. | This approach, combined with compact, low l-quantum-number basis sets, has been shown to offer a promising solution to the conundrum, providing good accuracy while alleviating sparsity issues [3]. |
| 5. Validation | Always benchmark your chosen protocol against a reliable dataset. | Use databases like ASCDB to verify that the combined method and basis set error is acceptable for your application [3]. |
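Iterative refinement, listed in step 3, is a general numerical-analysis technique [9] rather than a quantum-chemistry-specific feature. The minimal mixed-precision sketch below first solves in single precision. It then repeatedly computes the residual in double precision and solves for a correction.

For brevity it calls np.linalg.solve on every pass. A production version would factor the matrix once (for example with scipy.linalg.lu_factor and lu_solve) and reuse the factors. Refinement converges only when the matrix is not too ill-conditioned relative to the working precision, so it complements, rather than replaces, removing linear dependencies.

```python
import numpy as np

def solve_with_refinement(A: np.ndarray, b: np.ndarray, iters: int = 3) -> np.ndarray:
    """Mixed-precision iterative refinement for A x = b.

    The solves use a float32 copy of A; residuals r = b - A x are formed in float64,
    so each pass removes most of the error left by the low-precision solve."""
    A32 = A.astype(np.float32)
    x = np.linalg.solve(A32, b.astype(np.float32)).astype(np.float64)
    for _ in range(iters):
        r = b - A @ x                                   # float64 residual
        dx = np.linalg.solve(A32, r.astype(np.float32))
        x += dx.astype(np.float64)
    return x

rng = np.random.default_rng(0)
M = rng.standard_normal((50, 50))
A = M @ M.T + 50.0 * np.eye(50)       # well-conditioned symmetric test matrix
x_true = rng.standard_normal(50)
b = A @ x_true
x_plain = np.linalg.solve(A.astype(np.float32), b.astype(np.float32)).astype(np.float64)
x_refined = solve_with_refinement(A, b)
print(np.linalg.norm(x_plain - x_true), np.linalg.norm(x_refined - x_true))
```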

Diagram 2: A strategic protocol for mitigating the impact of diffuse functions, from problem assessment to final validation.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational "Reagents" for Handling Diffuse Basis Sets

| Tool / Resource | Function / Purpose | Notes |
|---|---|---|
| Basis Set Exchange | Repository to obtain standard diffuse basis sets (e.g., aug-cc-pVXZ, def2-XVPPD) [3]. | Critical for ensuring the correct and consistent use of published basis sets. |
| Complementary Auxiliary Basis Set (CABS) | Used in the CABS singles correction to improve accuracy with a more compact primary basis, alleviating sparsity [3]. | A proposed solution to the conundrum of balancing accuracy and sparsity. |
| Linear Scaling SCF Algorithms | Algorithms designed to exploit sparsity in the 1-PDM for large systems. | These methods struggle most with diffuse basis sets, highlighting the importance of this research topic [3]. |
| Condition Number Estimator | A numerical routine to compute \(\kappa(\mathbf{S})\) to diagnose potential instability [9]. | Available in most linear algebra libraries (e.g., MATLAB, NumPy). |
| Iterative Refinement Routine | A numerical technique to improve the accuracy of a computed solution to a linear system [9]. | Helps to compensate for rounding errors introduced during computation. |
| Benchmark Databases (e.g., ASCDB) | A collection of reference data to validate the accuracy of computed properties like interaction energies [3]. | Essential for verifying that a chosen method/basis set combination is fit for purpose. |

Frequently Asked Questions (FAQs)

Q1: What does an unusually small eigenvalue of the overlap matrix indicate in my calculation? An unusually small eigenvalue of the overlap matrix (S) is a primary indicator of linear dependence or near-linear dependence within your atomic orbital basis set [3]. This occurs when diffuse functions are used, as their large spatial extent causes significant overlap with functions on distant atoms, making the basis set overcomplete. The condition number of S (the ratio of its largest to smallest eigenvalue) becomes very large, and the matrix becomes ill-conditioned, which can cause numerical instability in the self-consistent field (SCF) procedure [3].

Q2: Why do my calculations with large, diffuse basis sets become numerically unstable and suffer from a "curse of sparsity"? This "curse of sparsity" is a direct consequence of the low locality of the contravariant basis functions, quantified by the inverse overlap matrix, S⁻¹ [3]. While the electronic structure itself is local (nearsighted), the mathematical representation in a diffuse basis set is not. The matrix S⁻¹ is significantly less sparse than its covariant dual, meaning the one-particle density matrix (1-PDM) remains dense even for large, insulating systems. This loss of sparsity increases computational cost and can lead to erratic cutoff errors [3].

Q3: Are there any solutions that offer both accuracy and computational tractability? Yes, one promising solution is the use of the complementary auxiliary basis set (CABS) singles correction in combination with compact, low angular momentum (low l-quantum-number) basis sets [3]. This approach can provide accurate results for non-covalent interactions without the severe sparsity degradation associated with large, diffuse basis sets [3].

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Linear Dependence

Problem: SCF calculation fails to converge or warns of a non-positive definite overlap matrix.

Diagnosis: This is typically caused by the linear dependence of basis functions. To confirm, follow this diagnostic workflow:

Experimental Protocol: Eigenvalue Analysis of the Overlap Matrix

- Compute the Overlap Matrix: In your quantum chemistry code, calculate the full overlap matrix S for the system.

- Diagonalize the Matrix: Perform a full diagonalization of S to obtain all its eigenvalues, λᵢ.

- Analyze Eigenvalue Spectrum: Sort the eigenvalues in ascending order and analyze their magnitudes. The presence of eigenvalues near or below the numerical zero threshold (e.g., 10⁻⁷) indicates linear dependence.

- Quantify the Condition Number: Calculate the condition number κ(S) = λmax / λmin. A very large condition number (e.g., > 10¹⁰) confirms the matrix is ill-conditioned.
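The four diagnostic steps above can be sketched in a few lines of Python with NumPy. To keep the example self-contained, it does not read S from a quantum chemistry package. Instead it builds S analytically for normalized s-type Gaussians, which is a deliberate simplification: real basis sets also contain p, d, and higher functions. The toy system (a hypothetical chain of 20 centres spaced 2 bohr apart) and the exponents 1.0 and 0.01 bohr⁻² are illustrative assumptions, not values from the cited work.

```python
import numpy as np


def s_overlap(exps, centers):
    """Overlap matrix of normalized s-type Gaussians exp(-a |r - A|^2).

    Uses S_ab = (2 sqrt(ab) / (a + b))^(3/2) * exp(-ab / (a + b) * |A - B|^2).
    """
    a = np.asarray(exps, dtype=float)
    R = np.asarray(centers, dtype=float)
    a_sum = a[:, None] + a[None, :]
    a_prod = a[:, None] * a[None, :]
    d2 = np.sum((R[:, None, :] - R[None, :, :]) ** 2, axis=-1)
    return (2.0 * np.sqrt(a_prod) / a_sum) ** 1.5 * np.exp(-a_prod / a_sum * d2)


def diagnose_overlap(S, zero_thresh=1e-7):
    """Eigenvalue spectrum, condition number and count of near-zero eigenvalues."""
    evals = np.linalg.eigvalsh(S)  # symmetric solver, ascending order
    # A non-positive smallest eigenvalue means S is numerically singular.
    kappa = np.inf if evals[0] <= 0.0 else evals[-1] / evals[0]
    n_small = int(np.count_nonzero(evals < zero_thresh))
    return evals, kappa, n_small


# Hypothetical toy system: 20 centres spaced 2 bohr apart along x.
centers = [(2.0 * i, 0.0, 0.0) for i in range(20)]

# "Compact" basis: one s function (exponent 1.0) per centre.
S_compact = s_overlap([1.0] * 20, centers)
# "Augmented" basis: add one very diffuse s function (exponent 0.01) per centre.
S_aug = s_overlap([1.0] * 20 + [0.01] * 20, centers + centers)

for label, S in [("compact", S_compact), ("augmented", S_aug)]:
    evals, kappa, n_small = diagnose_overlap(S)
    print(f"{label:9s} lambda_min={evals[0]:.2e} kappa={kappa:.2e} "
          f"n(lambda < 1e-7)={n_small}")
```

With these inputs the compact basis stays well conditioned, while adding one very diffuse function per centre drives the smallest eigenvalue to essentially zero, so κ(S) far exceeds the 10¹⁰ guideline.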

Resolution:

- Apply a Threshold: Most quantum chemistry packages allow you to set a linear dependence threshold. Eigenvectors corresponding to eigenvalues below this threshold are removed from the basis before the SCF calculation.

- Use a More Robust Basis: If the problem persists, switch to a less diffuse basis set. The following table compares the performance of different basis set types.

Table 1: Comparison of Basis Set Types and Their Properties

| Basis Set Type | Example | Typical Use Case | Robustness to Linear Dependence | Accuracy for NCIs |
|---|---|---|---|---|
| Minimal | STO-3G [10] | Preliminary calculations | High | Very Poor |
| Split-Valence | 6-31G [10] | General purpose chemistry | High | Poor |
| Polarized | 6-31G(d) [10] | Molecular geometry & bonding | Medium | Medium |
| Diffuse/Augmented | aug-cc-pVDZ [3] [10] | Anions, NCIs, spectroscopy | Low | Very Good |
| Compact with CABS | Proposed Solution [3] | Accurate NCIs with stability | Medium-High | Good to Excellent |
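The thresholding step under Resolution can be illustrated with canonical orthogonalization, one standard way of discarding eigenvectors of S whose eigenvalues fall below a cutoff. The sketch below is a generic illustration, not the exact procedure of any particular package; the toy overlap matrix (s-type Gaussians on a hypothetical 20-centre chain, with one tight and one diffuse exponent per centre) and the threshold of 10⁻⁶ are assumptions chosen for demonstration.

```python
import numpy as np


def s_overlap(exps, centers):
    """Overlap matrix of normalized s-type Gaussians (toy model, s functions only)."""
    a = np.asarray(exps, dtype=float)
    R = np.asarray(centers, dtype=float)
    a_sum = a[:, None] + a[None, :]
    a_prod = a[:, None] * a[None, :]
    d2 = np.sum((R[:, None, :] - R[None, :, :]) ** 2, axis=-1)
    return (2.0 * np.sqrt(a_prod) / a_sum) ** 1.5 * np.exp(-a_prod / a_sum * d2)


def canonical_orthogonalization(S, thresh=1e-6):
    """Return X with X.T @ S @ X = I, dropping eigenvectors with eigenvalue < thresh."""
    evals, evecs = np.linalg.eigh(S)
    keep = evals >= thresh
    # Scale each retained eigenvector by 1/sqrt(eigenvalue).
    X = evecs[:, keep] / np.sqrt(evals[keep])
    n_removed = int(np.count_nonzero(~keep))
    return X, n_removed


# Hypothetical toy system: 20 centres, exponents 1.0 (tight) and 0.01 (diffuse).
centers = [(2.0 * i, 0.0, 0.0) for i in range(20)]
S = s_overlap([1.0] * 20 + [0.01] * 20, centers + centers)
X, n_removed = canonical_orthogonalization(S, thresh=1e-6)
print(f"{S.shape[0]} AO functions -> {X.shape[1]} orthonormal functions "
      f"({n_removed} combinations projected out)")
```

Raising `thresh` projects out more combinations and stabilizes the SCF, at the cost of discarding some variational flexibility; this is the usual accuracy-versus-stability trade-off of threshold-based removal.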

Guide 2: Restoring Sparsity in the One-Particle Density Matrix

Problem: Calculations with diffuse basis sets are computationally prohibitive for large systems due to low sparsity of the 1-PDM, delaying the onset of linear-scaling regimes.

Diagnosis: The loss of sparsity is an inherent artifact of using diffuse basis functions. Investigate this by plotting the decay of the 1-PDM matrix elements with distance.

Experimental Protocol: Quantifying 1-PDM Locality

- Converge the SCF Calculation: Obtain the converged 1-PDM, P, for your system using the diffuse basis set.

- Compute Spatial Decay: For each matrix element Pμν (corresponding to basis functions χμ and χν), calculate the real-space distance between the centers of the two basis functions.

- Plot and Analyze: Create a scatter plot of |Pμν| versus the inter-function distance. For a system with high locality (nearsightedness), the matrix elements should decay exponentially with distance. With diffuse basis sets, this decay is significantly slower [3].
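The distance analysis in this protocol amounts to binning |Pμν| by the distance between basis-function centres and fitting an exponential decay rate. The sketch below assumes you have already exported P and one centre coordinate per basis function as NumPy arrays; the function names are hypothetical, and the synthetic density matrix used in the demonstration (Pμν = exp(-1.5 r)) is a stand-in, not output from a real calculation.

```python
import numpy as np


def pdm_decay_profile(P, centers, bin_width=1.0):
    """Largest |P_mu_nu| in each bin of inter-centre distance."""
    R = np.asarray(centers, dtype=float)
    dist = np.linalg.norm(R[:, None, :] - R[None, :, :], axis=-1)
    bins = np.floor(dist / bin_width).astype(int)
    profile = np.zeros(bins.max() + 1)
    # Unbuffered in-place maximum: profile[b] = max(profile[b], |P|) per element.
    np.maximum.at(profile, bins.ravel(), np.abs(P).ravel())
    left_edges = np.arange(profile.size) * bin_width
    return left_edges, profile


def decay_rate(left_edges, profile, floor=1e-14):
    """Fit log|P| ~ -gamma * r over non-negligible bins and return gamma."""
    mask = profile > floor  # skips empty bins (profile == 0)
    slope = np.polyfit(left_edges[mask], np.log(profile[mask]), 1)[0]
    return -slope


# Synthetic stand-in: 10 centres 1 bohr apart with P_mu_nu = exp(-1.5 * r).
centers = np.array([(float(i), 0.0, 0.0) for i in range(10)])
r = np.linalg.norm(centers[:, None, :] - centers[None, :, :], axis=-1)
P = np.exp(-1.5 * r)
edges, profile = pdm_decay_profile(P, centers)
print(f"fitted decay rate: {decay_rate(edges, profile):.3f} per bohr")
```

Running the same two functions on P from a compact and from a diffuse calculation should give a markedly smaller fitted rate for the diffuse basis, which is the slower decay described in the protocol.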

Resolution:

- Employ CABS Correction: As proposed in [3], using the CABS singles correction with a compact basis can reduce the need for highly diffuse functions to achieve accuracy, thereby preserving more sparsity [3].

- Explore Localized Representations: Transform the molecular orbitals into localized orbitals (e.g., Boys, Pipek-Mezey). This can improve sparsity but may not fully overcome the fundamental non-locality introduced by S⁻¹ in a diffuse basis.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Handling Basis Set Overcompleteness

| Item / "Reagent" | Function in Research | Key Considerations |
|---|---|---|
| Overlap Matrix (S) | Core matrix whose eigenvalues diagnose linear dependence [3]. | Must be analyzed before SCF. Condition number predicts stability. |
| Basis Set Libraries (e.g., Basis Set Exchange [3]) | Source for standardized basis sets (Pople, Dunning cc-pVXZ, Karlsruhe def2-X) [10]. | Choose based on target property. Augmented sets (e.g., aug-cc-pVXZ) are for NCIs but cause instability [3]. |
| Linear Dependence Threshold | Numerical parameter that removes eigenvectors of S with negligible eigenvalues. | A necessary stabilization step. Too aggressive a threshold can reduce accuracy. |
| Condition Number Monitor | Metric (κ = λmax/λmin) to assess the stability of the inverse S⁻¹ [11] [3]. | Track this value during basis set selection. A large κ signals impending numerical issues. |
| CABS Singles Correction | A computational method that improves accuracy without relying on highly diffuse basis functions [3]. | A promising solution to the accuracy-sparsity trade-off, especially for NCIs [3]. |

Identifying Systems and Properties Most at Risk (e.g., Large Biomolecules, Anions, Excited States)

In computational chemistry, the use of large, diffuse basis sets presents a significant conundrum. While they are essential for achieving high accuracy, particularly for properties like non-covalent interactions and electron affinities, they simultaneously introduce substantial computational challenges. The core issue is that diffuse functions drastically reduce the sparsity of the one-particle density matrix (1-PDM), which is foundational for linear-scaling electronic structure methods. This problem is acutely manifested in specific systems, including large biomolecules and anions, where diffuse functions are non-negotiable for accuracy but can make calculations prohibitively expensive or even numerically unstable.

Troubleshooting Guide: Systems at High Risk

FAQ: Which specific systems are most vulnerable to problems with diffuse basis sets?

Several key systems are particularly susceptible to the challenges posed by diffuse basis sets. The table below summarizes the primary systems at risk, the nature of their vulnerability, and the underlying physical reason.

Table: Systems and Properties at High Risk from Diffuse Basis Sets

| System/Property | Specific Risk | Physical Reason for Vulnerability |
|---|---|---|
| Large Biomolecules (e.g., DNA fragments) | Severe loss of sparsity in the 1-PDM, eliminating computational benefits of linear-scaling algorithms [3]. | The electronic structure itself remains "nearsighted", but the long-range tails of diffuse functions make its representation in the atomic orbital basis non-local, even for spatially local systems [3]. |
| Non-Covalent Interactions (NCIs) | Highly inaccurate interaction energies if diffuse functions are omitted [3]. | NCIs (e.g., van der Waals, dispersion, hydrogen bonding) are governed by subtle long-range electron correlation effects that require a diffuse basis for correct description [3]. |
| Anions | Pronounced linear dependence in the basis set, leading to numerical instability and SCF convergence failures. | The electron is loosely bound in a large, diffuse orbital, requiring an expansive basis set for an accurate description, which often overlaps excessively with the core basis functions of other atoms [12]. |

FAQ: How does the choice of basis set quantitatively impact accuracy and sparsity?

The conflict between accuracy and computational feasibility is stark. For instance, on a DNA fragment (16 base pairs, 1052 atoms), moving from a minimal STO-3G basis to a medium-sized diffuse basis (def2-TZVPPD) essentially eliminates all usable sparsity in the 1-PDM [3]. Concurrently, the accuracy for non-covalent interactions critically depends on these same diffuse functions.

Table: Impact of Basis Set on Accuracy and Computational Cost

| Basis Set | NCI RMSD (kJ/mol) [3] | Relative Computational Time (for a 260-atom system) [3] |
|---|---|---|
| def2-SVP | 31.51 | 1.0x (Baseline) |
| def2-TZVP | 8.20 | 3.2x |
| def2-TZVPPD | 2.45 | 9.5x |
| aug-cc-pVTZ | 2.50 | 17.9x |

This data demonstrates that while unaugmented basis sets like def2-TZVP are faster, they fail to provide accurate NCI energies. The use of augmented, diffuse basis sets like def2-TZVPPD or aug-cc-pVTZ is essential for accuracy but comes at a significant computational cost, partly due to the loss of sparsity [3].

Experimental Protocols and Diagnostics

Protocol 1: Diagnosing the "Sparsity Curse" in Large Systems

Objective: To quantify the loss of sparsity in the one-particle density matrix (1-PDM) when using a diffuse basis set on a large, structured system like a biomolecule.

- System Preparation: Obtain the molecular geometry of your system (e.g., a protein or DNA fragment from a database like PDB).

- Calculation Setup: Perform two single-point energy calculations at the HF or DFT level using a quantum chemistry package like PSI4.

- Calculation A: Use a compact basis set (e.g., STO-3G or def2-SVP).

- Calculation B: Use a diffuse-augmented basis set (e.g., def2-TZVPPD or aug-cc-pVTZ).

- Data Extraction: After convergence, extract the full 1-PDM for each calculation.

- Sparsity Analysis: For each 1-PDM, apply a threshold (e.g., $10^{-5}$) to determine the number of significant elements. Calculate sparsity as: $\text{Sparsity} = 1 - \dfrac{\text{Number of significant elements}}{\text{Total number of elements}}$

- Comparison: Compare the sparsity and the spatial distribution of significant elements between the two calculations. A dramatic reduction in sparsity is expected with the diffuse basis set [3].
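The sparsity measure from the Sparsity Analysis step can be computed directly from a dense copy of each 1-PDM. In the sketch below, `pdm_sparsity` is a hypothetical helper name, and the two synthetic matrices (exponential decay with a fast and a slow rate on a hypothetical 200-function chain) only mimic the qualitative contrast between Calculations A and B; they are not real density matrices.

```python
import numpy as np


def pdm_sparsity(P, thresh=1e-5):
    """Fraction of elements with |P_mu_nu| below thresh (1.0 = fully sparse)."""
    n_significant = np.count_nonzero(np.abs(P) >= thresh)
    return 1.0 - n_significant / P.size


# Synthetic stand-ins on a 1D chain; r plays the role of inter-centre distance.
idx = np.arange(200)
r = np.abs(idx[:, None] - idx[None, :]).astype(float)
P_local = np.exp(-2.0 * r)    # fast decay, mimicking a compact-basis 1-PDM
P_diffuse = np.exp(-0.2 * r)  # slow decay, mimicking a diffuse-basis 1-PDM
print(f"sparsity, fast decay: {pdm_sparsity(P_local):.3f}")
print(f"sparsity, slow decay: {pdm_sparsity(P_diffuse):.3f}")
```

For production-sized systems the same count can be taken block by block, so the full matrix never has to be held in memory at once.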

Protocol 2: Checking for Linear Dependence in a Basis Set

Objective: To identify potential numerical instability in the basis set, a common risk with anions and diffuse functions.

- Basis Set Overlap Matrix: In your calculation, form the atomic orbital overlap matrix, $\mathbf{S}$, whose elements are $S_{\mu\nu} = \langle \chi_\mu | \chi_\nu \rangle$.

- Diagonalization: Diagonalize $\mathbf{S}$ to obtain its eigenvalues, $\lambda_i$.

- Analysis:

- An orthonormal basis would have all eigenvalues equal to 1; any linearly independent basis has strictly positive eigenvalues.

- In practice, eigenvalues close to zero indicate near-linear dependence.

- A common rule of thumb is that the condition number of $\mathbf{S}$ (the ratio of the largest to the smallest eigenvalue) should not be too large (e.g., $< 10^7$), and the smallest eigenvalue should be above $\sim 10^{-7}$.

- Troubleshooting: If linear dependence is detected, most quantum chemistry software (e.g., PSI4) can automatically remove linearly dependent functions. Alternatively, using a slightly contracted basis set or adjusting the molecule's geometry can mitigate the issue.

The workflow below outlines the diagnostic steps and potential solutions for this issue.

The Scientist's Toolkit: Essential Research Reagents

Table: Key Computational "Reagents" for Handling Diffuse Basis Sets

| Tool/Reagent | Function/Purpose | Example Use-Case |
|---|---|---|
| Complementary Auxiliary Basis Set (CABS) | Corrects for basis set incompleteness error without the sparsity cost of a fully diffuse basis; a proposed solution to the conundrum [3]. | Achieving accurate non-covalent interaction energies with a more compact primary basis set, thus preserving better sparsity [3]. |
| Compact, Low-L Basis Sets | Reduce the number of diffuse and high-angular-momentum basis functions, lowering basis size and the risk of linear dependence. | Initial scans or calculations on very large systems where full basis sets are computationally prohibitive. |
| BLAS/LAPACK Libraries | Provides highly optimized linear algebra routines (matrix multiplication, diagonalization) essential for handling the dense matrices resulting from diffuse basis sets [13]. | Used in all major quantum chemistry codes (e.g., PSI4) for efficient SCF cycles and matrix operations [13]. |
| Condition Number Analysis | A diagnostic tool to quantify the severity of linear dependence in the basis set. | Checking the stability of a calculation for an anion before running a long, expensive simulation. |
| Correlation-Consistent Basis Sets (cc-pVXZ) | A hierarchical family of basis sets that allows for systematic convergence studies towards the complete basis set (CBS) limit [14]. | Extrapolating to the CBS limit for highly accurate thermochemical data; studying the convergence behavior of Hartree-Fock and correlation energies [14]. |

Workflow for Mitigating Risk in Sensitive Systems

The following diagram illustrates a recommended workflow for researchers to identify, diagnose, and mitigate the risks associated with using diffuse basis sets on sensitive systems.

Practical Strategies and Algorithms for Resolving Linear Dependencies

In quantum chemical calculations, the use of large, diffuse basis sets is essential for achieving high accuracy, particularly for properties such as electron affinities, excited states, and non-covalent interactions [15]. However, these expansive basis sets introduce a significant computational challenge: linear dependence. Linear dependence occurs when some basis functions become numerically redundant, that is, (nearly) expressible as linear combinations of the others, leading to an overcomplete description of the molecular system. This can make the overlap matrix singular or nearly singular, resulting in SCF convergence failures, erratic optimization behavior, and ultimately the premature termination of calculations [16] [1]. For researchers relying on software like Q-Chem, ORCA, and Gaussian, managing linear dependence is a critical skill. This guide provides specific protocols for diagnosing and resolving these issues in research that employs large diffuse basis sets.

Understanding Linear Dependence and Its Detection

The Fundamental Issue

Linear dependence in a basis set arises when one basis function can be represented as a linear combination of other functions in the set. In practice, near-linear dependence is more common, where functions are very similar but not perfectly redundant. This is a particular problem with:

- Diffuse basis functions: Functions with small exponents (e.g., in the aug-cc-pVnZ families) have long tails and can become numerically similar [17] [15].

- Large basis sets: As the basis set size increases, the probability of functional overlap grows [1].

- Systems with many atoms: In large molecules, basis functions on atoms that are far apart can still create linear dependencies [18].

Quantum chemistry programs detect linear dependencies by analyzing the eigenvalue spectrum of the overlap matrix. A perfectly linearly independent basis set has all eigenvalues greater than zero. Eigenvalues very close to zero indicate near-linear dependencies that must be managed to ensure numerical stability [16] [19].

A Priori Identification

While programs typically detect linear dependencies during the SCF process, researchers can proactively identify potential issues. One method involves analyzing the similarity of Gaussian exponents. A study showed that identifying pairs of exponents with the smallest percentage difference and removing one function from each pair successfully cured linear dependence issues. For example, in a water calculation, the exponent pairs 94.8087090/92.4574853342 and 45.4553660/52.8049100131 were identified as the primary culprits for two near-linear dependencies [1].
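The exponent-similarity heuristic can be automated with a short helper. Two assumptions are made here: "percentage difference" is taken as the ratio of the larger to the smaller exponent minus one, and only neighbouring exponents in sorted order are considered as candidate pairs (the single closest pair by this measure is always a neighbouring pair). The helper name is hypothetical; the input is the set of four oxygen exponents quoted above.

```python
import numpy as np


def closest_exponent_pairs(exponents, n_pairs):
    """Return the n_pairs most similar neighbouring exponents and their % difference."""
    e = np.sort(np.asarray(exponents, dtype=float))
    ratios = e[1:] / e[:-1]  # every ratio >= 1 because e is sorted
    order = np.argsort(ratios)[:n_pairs]
    return [(float(e[i]), float(e[i + 1]), 100.0 * (ratios[i] - 1.0)) for i in order]


# Oxygen exponents identified in the water example as near-dependent pairs.
oxygen_exps = [94.8087090, 92.4574853342, 45.4553660, 52.8049100131]
for small, large, pct in closest_exponent_pairs(oxygen_exps, n_pairs=2):
    print(f"{small:.6f} / {large:.6f}: {pct:.1f}% apart -> drop one of them")
```

Because the ratio is scale-invariant, the same threshold on "percent apart" can be applied to tight and diffuse exponents alike.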

A more robust, general solution involves using the pivoted Cholesky decomposition of the overlap matrix. This method can be implemented to either customize the basis set by removing redundant shells before the calculation or to modify the orthonormalization procedure. This approach is versatile and also works for systems with "unphysically" close nuclei [1].

Software-Specific Protocols and Thresholds

Q-Chem: Controlling the Linear Dependence Threshold

Q-Chem automatically checks for linear dependence in the basis set by examining the eigenvalues of the overlap matrix. It projects out vectors corresponding to eigenvalues below a defined threshold [16].

Key Configuration Variable:

BASIS_LIN_DEP_THRESH: This $rem variable sets the threshold for determining linear dependence [16].

- Type: Integer [16]

- Default: 6 (corresponding to a threshold of 10⁻⁶) [16]

- Options: The integer n sets the threshold to 10⁻ⁿ [16]

- Recommendation: If you encounter a poorly behaved SCF and suspect linear dependence, set this value to 5 or smaller (i.e., a threshold of 10⁻⁵ or larger). Be aware that lower values (larger thresholds) may affect the accuracy of the calculation by removing more basis functions [16].

Troubleshooting Workflow:

- Symptom: SCF is slow to converge or behaves erratically; you see warnings about linear dependence [16] [18].

- Initial Action: Check the output for the message "Linear dependence detected in AO basis" and note the number of orthogonalized atomic orbitals versus the total number of basis functions [18].

- Solution: Add BASIS_LIN_DEP_THRESH <n> to the $rem section of your input file. Because the threshold is 10⁻ⁿ, the direction of the change matters: raising n (e.g., to 7 or 8) projects out only the most severe dependencies, whereas lowering n (e.g., to 5) projects out more functions. If the SCF remains poorly behaved, lower n rather than raising it further [16] [18].

- Additional Measures: For diffuse basis sets, using tighter screening thresholds (S2THRESH > 12 and THRESH = 14) can also help with SCF convergence issues related to linear dependence [18].

ORCA: Managing Dependencies and Auxiliary Bases

The sources cited here do not describe an ORCA keyword equivalent to Q-Chem's BASIS_LIN_DEP_THRESH. Linear dependence is instead discussed as a known side effect of using diffuse basis sets, which the program handles internally [17] [15].

Common Scenarios and Solutions:

- Diffuse Basis Sets: ORCA explicitly warns that using diffuse functions from the aug-cc-pVnZ family or adding diffuse functions to the def2 family can result in linear dependencies and severe SCF problems [15].

- Auxiliary Basis Sets: The !AutoAux keyword, which automatically generates auxiliary basis sets, can occasionally produce a linearly dependent basis, leading to errors such as 'Error in Cholesky Decomposition of V Matrix' [15].

- Recommended Basis Sets: To avoid these issues, ORCA's input library recommends using minimally augmented def2 basis sets (e.g., ma-def2-SVP) for calculations requiring diffuse functions, as they are designed to be less prone to linear dependencies while still delivering good performance for properties like electron affinities [15].

Troubleshooting Steps:

- Symptom: SCF convergence problems, especially when using aug-cc-pVnZ or other diffuse basis sets [17] [15].

- Initial Action: Visualize your molecular structure and verify that the charge and multiplicity are correct. Unreasonable coordinates or an incorrect electronic state can exacerbate numerical issues [17].

- Primary Solution: Switch from a fully diffuse basis set to a minimally augmented one (e.g., from aug-cc-pVTZ to ma-def2-TZVP) [15].

- Alternative Solution: If you must use a diffuse basis, tighten the integration grid (e.g., use !DefGrid2 or !DefGrid3) and, if using RIJCOSX, tighten the COSX grid to reduce numerical noise that can interact poorly with a nearly linearly dependent basis [17].

Gaussian: General Workflow and Considerations

The sources cited in this guide do not cover linear-dependence thresholds in Gaussian. Users facing this issue should consult the official Gaussian documentation for keywords related to basis set handling, integral accuracy, and SCF convergence.

Table 1: Software-specific controls for managing linear dependence.

| Software | Primary Control | Default Value | How to Adjust | Associated Risks/Considerations |
|---|---|---|---|---|
| Q-Chem | BASIS_LIN_DEP_THRESH | 6 (10⁻⁶) | Set n in the $rem section: lower n (e.g., 5) removes more functions; higher n (e.g., 7 or 8) removes fewer | Setting too large a threshold (low n) may remove necessary functions, affecting accuracy [16]. |
| ORCA | (No keyword described in the cited sources) | (Internal) | Use less diffuse basis sets (e.g., ma-def2-SVP); tighten grids [15]. | Using !AutoAux or highly diffuse basis sets like aug-cc-pVnZ can induce linear dependencies [15]. |
| Gaussian | (Not covered in the cited sources) | (Not covered in the cited sources) | Consult the official Gaussian documentation | (Not covered in the cited sources) |

Troubleshooting FAQs

How can I predict linear dependencies before starting a long calculation?

You can run a preliminary analysis on a smaller version of your system, or analyze the basis set exponents directly.

- Exponent Analysis: For a given atom, list all Gaussian exponents. Identify the N pairs of exponents that are most similar to each other percentage-wise. Removing one function from each of these N pairs can often cure N near-linear dependencies [1].

- Overlap Matrix Calculation: For a small model system (e.g., a dimer or a fragment of your large molecule), calculate the overlap matrix and its eigenvalues. This is computationally inexpensive and can reveal problematic function pairs before a full calculation [1].

- Cholesky Decomposition: Use a method based on the pivoted Cholesky decomposition of the overlap matrix, which can be implemented to preemptively remove redundant basis functions [1].

My calculation failed with a linear dependence error. What is my step-by-step recovery plan?

- Confirm the Error: Check your output file for keywords like "linear dependence detected" (Q-Chem) or "Error in Cholesky Decomposition" (ORCA), and note how many basis functions were removed [16] [15].

- Increase the Threshold (Q-Chem): If using Q-Chem, add BASIS_LIN_DEP_THRESH 5 to the $rem section and restart the calculation. Because the threshold is 10⁻ⁿ, this raises it from the default 10⁻⁶ to 10⁻⁵ and projects out more of the near-linear dependencies [16].

- Change Basis Set (ORCA): If using ORCA, switch to a less diffuse basis set, such as the ma-def2 series (e.g., ma-def2-TZVP) [15].

- Tighten Numerical Grids: In DFT calculations, increase the integration grid size (e.g., !DefGrid3 in ORCA) and, if applicable, the COSX grid. This reduces numerical noise that can exacerbate problems from a nearly dependent basis [17].

- Manual Basis Set Pruning: As a last resort, manually inspect and remove basis functions with very similar exponents, as described in the FAQ above [1].

Why did my calculation become linearly dependent when I added diffuse functions?

Diffuse functions have small exponents, meaning they extend far from the atomic nucleus. When added to a basis set, they significantly increase the extent of the electron density description. In large molecules, diffuse functions on atoms separated by long distances can have substantial overlap, creating numerical redundancies. Furthermore, within a single atom, the most diffuse functions can have exponents that are too close to each other, leading to near-linear dependence in the atomic basis itself [1] [15].
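The distance argument can be made concrete with the analytic overlap of two normalized s-type Gaussians with equal exponent α separated by R, which reduces to S(R) = exp(-αR²/2). The sketch compares a tight exponent (1.0 bohr⁻²) with a diffuse one (0.03 bohr⁻²); both values, and the helper names, are illustrative assumptions rather than exponents from a specific basis set, and shells of higher angular momentum decay somewhat differently.

```python
import math


def s_overlap_equal(alpha, r):
    """Overlap of two normalized s Gaussians, equal exponent alpha, distance r (bohr)."""
    return math.exp(-0.5 * alpha * r * r)


def overlap_cutoff_radius(alpha, tol=1e-6):
    """Distance (bohr) beyond which the overlap above drops below tol."""
    return math.sqrt(2.0 * math.log(1.0 / tol) / alpha)


BOHR_TO_ANGSTROM = 0.529177
for alpha in (1.0, 0.03):
    r_cut = overlap_cutoff_radius(alpha)
    print(f"alpha={alpha:<5} S(10 bohr)={s_overlap_equal(alpha, 10.0):.1e} "
          f"overlap < 1e-6 beyond {r_cut:.1f} bohr ({r_cut * BOHR_TO_ANGSTROM:.1f} A)")
```

With these assumed exponents the diffuse pair still overlaps appreciably at 10 bohr (about 5 Å), whereas the tight pair is negligible, which is why diffuse functions on well-separated atoms can become nearly redundant.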

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key computational tools and their functions in managing linear dependence.

| Item | Function/Purpose | Example Use Case |
|---|---|---|
| Minimally-Augmented Basis Sets | Provides diffuse functions necessary for anion/excited state calculations with a lower risk of linear dependence than fully augmented sets [15]. | ma-def2-TZVP for calculating accurate electron affinities without SCF convergence failures [15]. |
| Linear Dependence Threshold | Directly controls the sensitivity for detecting and removing redundant basis functions (Q-Chem) [16]. | BASIS_LIN_DEP_THRESH 5 (threshold 10⁻⁵ instead of the default 10⁻⁶) to stabilize an SCF calculation struggling with a large, diffuse basis on a big molecule [16]. |
| Tight Integration Grids | Reduces numerical noise in the calculation of exchange-correlation integrals in DFT, preventing this noise from interacting with a nearly linearly dependent basis [17]. | !DefGrid3 in ORCA to eliminate small imaginary frequencies in a frequency calculation caused by numerical noise [17]. |
| Pivoted Cholesky Decomposition | A robust mathematical procedure to identify and remove linear dependencies from a basis set a priori [1]. | Generating a customized, non-redundant basis set for a system with unphysically close nuclei or a heavily augmented standard basis [1]. |

Experimental Workflow for Handling Linear Dependence

The following diagram outlines a logical decision-making process for diagnosing and resolving linear dependence issues in quantum chemical calculations.

Diagram Title: Troubleshooting Linear Dependence in Q-Chem and ORCA

Frequently Asked Questions

What is the primary numerical symptom of basis set overcompleteness that Pivoted Cholesky addresses?

The primary symptom is the failure of the standard Cholesky decomposition, which reports that the matrix is not positive definite. For example, R's chol() stops with an error such as: Error in chol.default(corrMat) : the leading minor of order 61 is not positive definite [20]. When this happens for the overlap matrix of your molecular system, it signifies that the matrix is numerically rank-deficient, i.e., the basis is (nearly) linearly dependent.

My standard Cholesky solver failed. How does Pivoted Cholesky provide a solution? The standard Cholesky decomposition requires a strictly positive definite matrix. In contrast, the pivoted Cholesky algorithm incorporates a pivoting (row/column swapping) strategy that identifies and prioritizes the most numerically significant components of the matrix [20]. This process provides a stable, low-rank approximation of the original matrix, effectively pruning away the overcompleteness that causes the linear dependencies [21].

The output of a pivoted Cholesky function includes a 'pivot' vector. What is its purpose, and is the resulting factor usable for simulations? Yes, the factor is usable but requires correct interpretation. The pivot vector indicates the new order in which the matrix's rows and columns were processed to ensure numerical stability [20]. The output Cholesky factor is for this permuted matrix. To use it in subsequent calculations, such as Monte Carlo simulations, you must either apply the same permutation to your other data or reverse the permutation on the Cholesky factor to align it with your original matrix's ordering [20].

I'm using a JAX backend and encountering a static index error with pivoted_cholesky. How can I resolve this?

This is a known issue in specific implementations, where the JIT compiler requires static array indices but the pivoting algorithm is inherently dynamic [22]. A practical workaround is to execute the pivoted Cholesky decomposition outside of a JIT-compiled function, for instance, using TensorFlow Probability's implementation, and then convert the result back into a JAX array [22].

Troubleshooting Guides

Issue: Dense Overlap Matrix Leading to Computational Bottlenecks

- Problem Description: Despite the system being physically "nearsighted," the one-particle density matrix (1-PDM) remains dense when using diffuse basis sets, crippling linear-scaling algorithms [3].

- Diagnosis: This is a classic manifestation of the "conundrum of diffuse basis sets." While essential for accuracy, diffuse functions cause severe linear dependencies and destroy matrix sparsity [3].

- Solution:

- Apply Pivoted Cholesky Decomposition: Use it to decompose the overlap matrix (S). This provides a numerically stable way to identify a linearly independent subset of the basis functions [21].

- Construct a Pruned Basis: The pivot indices from the decomposition indicate which basis functions to keep so that the retained set is numerically linearly independent yet still spans the original functions to within the chosen tolerance. You can generate custom, reduced basis sets for each atom, leading to significant cost reductions in subsequent electronic structure calculations [21].

Table: Blessing and Curse of Diffuse Basis Sets

| Basis Set Characteristic | Impact on Accuracy (The Blessing) | Impact on Computation (The Curse) |
|---|---|---|
| Small, non-diffuse sets (e.g., STO-3G) | Poor description of non-covalent interactions, electron affinity, etc. | High sparsity in the 1-PDM; faster computations. |
| Large, diffuse sets (e.g., aug-cc-pVTZ) | Essential for chemical accuracy in non-covalent interactions [3]. | Generates linear dependencies; destroys sparsity; leads to high computational cost and ill-conditioned matrices [3]. |

Issue: Handling a Non-Positive Definite Matrix in a Script

- Problem Description: A script that works for small systems fails with a Cholesky error for a larger, more complex system.

- Diagnosis: The script likely uses the standard chol() function. As system size and basis set diffuseness increase, the likelihood of numerical rank-deficiency rises, causing this failure.

- Solution: Modify your code to use the pivoted version (in R, chol(S, pivot = TRUE)) and handle the output correctly: read the returned pivot and rank attributes, and account for the permutation when using the factor.
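A comparable pattern in Python is sketched below: try the standard factorization first and, if NumPy reports that the matrix is not positive definite, fall back to a diagonal-pivoted Cholesky decomposition and undo the permutation so the factor lines up with the original basis-function order. NumPy has no built-in pivoted Cholesky, so `pivoted_cholesky` is a hand-written textbook version; `robust_factor`, the tolerance, and the 3×3 test matrix (which deliberately contains an exact duplicate function) are hypothetical.

```python
import numpy as np


def pivoted_cholesky(A, tol=1e-8):
    """Diagonal-pivoted Cholesky: A[piv][:, piv] ~= L @ L.T, L has k columns."""
    A = np.array(A, dtype=float)
    n = A.shape[0]
    piv = np.arange(n)
    L = np.zeros((n, n))
    d = np.diag(A).copy()  # residual diagonal, indexed by original function
    k = 0
    while k < n:
        j = k + int(np.argmax(d[piv[k:]]))  # largest remaining residual
        if d[piv[j]] <= tol:
            break  # everything left is numerically dependent
        piv[[k, j]] = piv[[j, k]]
        L[[k, j], :k] = L[[j, k], :k]
        L[k, k] = np.sqrt(d[piv[k]])
        rows = piv[k + 1:]
        L[k + 1:, k] = (A[rows, piv[k]] - L[k + 1:, :k] @ L[k, :k]) / L[k, k]
        d[rows] -= L[k + 1:, k] ** 2
        k += 1
    return L[:, :k], piv, k


def robust_factor(S, tol=1e-8):
    """Hypothetical helper: return (F, kept) with S ~= F @ F.T in ORIGINAL order."""
    try:
        return np.linalg.cholesky(S), np.arange(S.shape[0])
    except np.linalg.LinAlgError:
        L, piv, k = pivoted_cholesky(S, tol)
        F = np.empty_like(L)
        F[piv] = L  # undo the permutation: row i belongs to function piv[i]
        return F, piv[:k]


# Toy overlap matrix: functions 0 and 1 are identical, so S is singular.
s = (2.0 * np.sqrt(0.5) / 1.5) ** 1.5  # overlap of s Gaussians, exponents 1.0 and 0.5
S = np.array([[1.0, 1.0, s],
              [1.0, 1.0, s],
              [s, s, 1.0]])
F, kept = robust_factor(S)
print("kept functions:", sorted(kept.tolist()))
print("max reconstruction error:", np.abs(F @ F.T - S).max())
```

Because the fallback returns only k columns, F can have fewer columns than S has rows; downstream code must work with this reduced factor rather than assume a square triangular matrix.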

Experimental Protocols

Protocol: Pruning an Overcomplete Basis Set Using Pivoted Cholesky

This protocol details the core methodology for curing basis set overcompleteness, as proposed by Lehtola [21].

1. Objective: To generate a numerically stable, reduced basis set from an overcomplete one, enabling accurate and efficient electronic structure calculations.

2. Materials and Inputs:

- Molecular Geometry: The atomic coordinates of the system under study.

- Overcomplete Atomic Orbital Basis Set: A large, diffuse basis set (e.g., aug-cc-pVXZ) known to cause linear dependencies [3].

- Software Capability: A computational environment with a linear algebra library that provides a pivoted Cholesky decomposition routine (e.g., chol(..., pivot = TRUE) in R).

3. Step-by-Step Workflow:

1. Compute the Overlap Matrix: Calculate the real, symmetric overlap matrix $S$ for the molecular system using the chosen overcomplete basis set.

2. Perform Pivoted Cholesky Decomposition: Execute chol(S, pivot = TRUE).

3. Extract Pivot Indices: The function returns a pivot vector. The first k elements of this vector (where k is the numerical rank returned by the function) are the indices of the basis functions that form the maximally linearly independent set.

4. Construct Pruned Basis: Use these k indices to select the corresponding basis functions from the original set, creating a new, pruned basis. This new basis spans every original function to within the decomposition tolerance but is free of the numerical instability caused by overcompleteness [21].
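Steps 1 to 4 can be sketched end to end in Python. This is a minimal illustration of the idea, not Lehtola's reference implementation: the overlap matrix comes from a toy model (normalized s-type Gaussians with one tight and one diffuse exponent on each centre of a hypothetical 20-centre chain), the pivoted Cholesky routine is hand-written because NumPy does not provide one, and the tolerance of 10⁻⁷ is an assumed value.

```python
import numpy as np


def s_overlap(exps, centers):
    """Overlap matrix of normalized s-type Gaussians exp(-a |r - A|^2)."""
    a = np.asarray(exps, dtype=float)
    R = np.asarray(centers, dtype=float)
    a_sum = a[:, None] + a[None, :]
    a_prod = a[:, None] * a[None, :]
    d2 = np.sum((R[:, None, :] - R[None, :, :]) ** 2, axis=-1)
    return (2.0 * np.sqrt(a_prod) / a_sum) ** 1.5 * np.exp(-a_prod / a_sum * d2)


def pivoted_cholesky(A, tol):
    """Diagonal-pivoted Cholesky: A[piv][:, piv] ~= L @ L.T, L has k columns."""
    A = np.array(A, dtype=float)
    n = A.shape[0]
    piv = np.arange(n)
    L = np.zeros((n, n))
    d = np.diag(A).copy()  # residual diagonal, indexed by original function
    k = 0
    while k < n:
        j = k + int(np.argmax(d[piv[k:]]))
        if d[piv[j]] <= tol:
            break
        piv[[k, j]] = piv[[j, k]]
        L[[k, j], :k] = L[[j, k], :k]
        L[k, k] = np.sqrt(d[piv[k]])
        rows = piv[k + 1:]
        L[k + 1:, k] = (A[rows, piv[k]] - L[k + 1:, :k] @ L[k, :k]) / L[k, k]
        d[rows] -= L[k + 1:, k] ** 2
        k += 1
    return L[:, :k], piv, k


# Step 1: overlap matrix of an (intentionally) overcomplete toy basis.
centers = [(2.0 * i, 0.0, 0.0) for i in range(20)]
exps = [1.0] * 20 + [0.01] * 20
S = s_overlap(exps, centers + centers)

# Steps 2-3: pivoted Cholesky; the first k pivots index the retained functions.
L, piv, k = pivoted_cholesky(S, tol=1e-7)
kept = np.sort(piv[:k])

# Step 4: the pruned basis is the corresponding subset of the original functions.
S_pruned = S[np.ix_(kept, kept)]
print(f"{S.shape[0]} functions -> {k} retained")
print(f"smallest overlap eigenvalue: full {np.linalg.eigvalsh(S)[0]:.1e}, "
      f"pruned {np.linalg.eigvalsh(S_pruned)[0]:.1e}")
```

The retained indices refer to individual functions, so they can be mapped back to atoms and exponents to write out the reduced basis used in the subsequent calculation.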

The following diagram illustrates the logical workflow of this protocol:

Protocol: Stabilizing the Solution of Kernel Systems

This protocol is based on the work by Liu & Matthies, which merges pivoted Cholesky with Cross Approximation for solving large, ill-conditioned kernel systems [23].

1. Objective: To obtain a stable and efficient solution to large, ill-conditioned kernel systems $Kx = b$ without resorting to ad hoc regularization.

2. Key Methodology: The algorithm tunes a Cross Approximation (CA) technique to the kernel matrix, leveraging the advantages of pivoted Cholesky. This hybrid approach can solve large kernel systems two orders of magnitude more efficiently than regularization-based methods [23].

3. Workflow Overview:

1. Input: A large, ill-conditioned, positive semi-definite kernel matrix $K$.

2. Diagonal-Pivoted Cross Approximation: A CA algorithm with diagonal pivoting is applied to the kernel matrix. This step is mathematically aligned with the objectives of pivoted Cholesky.

3. Low-Rank Approximation: The process yields a low-rank factorization $K \approx LL^T$.

4. Efficient System Solution: Use this low-rank factorization to solve the linear system efficiently and stably.

Table: Comparison of Solution Methods for Ill-Conditioned Systems

| Method | Key Principle | Stability for Rank-Deficient Matrices | Computational Efficiency |
|---|---|---|---|
| Standard Cholesky | Requires positive definiteness | Fails | High (when it works) |
| Tikhonov Regularization | Adds a constant to the diagonal | Stable (with tuned parameter) | Medium (introduces bias) |
| Pivoted Cholesky [20] | Selects independent components via pivoting | Stable | High |
| PCD by Cross Approximation [23] | Merges pivoting with cross-approximation | Highly Stable | Very High |

The Scientist's Toolkit

Table: Essential Research Reagents and Computational Solutions

| Item Name | Function/Brief Explanation | Context of Use |
|---|---|---|
| Diffuse Basis Sets (e.g., aug-cc-pVXZ, def2-SVPD) | Augment standard basis sets with diffuse functions to accurately model electron density tails and non-covalent interactions [3]. | Essential for calculations involving anions, excited states, and van der Waals complexes. The source of the "blessing" of accuracy. |
| Pivoted Cholesky Algorithm | A numerical linear algebra procedure that performs Cholesky decomposition with row/column pivoting. | The core "cure" for diagnosing and resolving linear dependencies induced by diffuse basis sets [20] [21]. |
| Overlap Matrix ($S$) | A matrix whose elements represent the inner products between basis functions in a molecule. Its rank deficiency signals linear dependence. | The primary input for the pivoted Cholesky decomposition to detect overcompleteness [21] [3]. |
| Complementary Auxiliary Basis Set (CABS) Singles Correction | An approach that reduces basis set incompleteness error and improves accuracy without using large, diffuse basis sets. | A proposed solution to use alongside basis set pruning, allowing for compact basis sets while maintaining accuracy [3]. |

## Frequently Asked Questions (FAQs)

1. What causes linear dependence in a basis set, and why is it a problem? Linear dependence occurs when basis functions are too similar, making the basis set over-complete. This leads to a near-singular overlap matrix with very small eigenvalues, causing numerical instabilities. The Self-Consistent Field (SCF) procedure may converge slowly, behave erratically, or fail entirely. It is a common issue when using very large basis sets, especially those with many diffuse functions, or when studying large molecules [24].

2. How can I identify if my calculation has linear dependency issues? Most electronic structure programs, like Q-Chem, automatically check for linear dependence by analyzing the eigenvalues of the overlap matrix. A warning is typically printed if eigenvalues fall below a predefined threshold (e.g., 10⁻⁶). Inspect your output file for the smallest overlap matrix eigenvalue; if it is below 10⁻⁵, numerical issues are likely [24].

3. Which basis functions should I consider removing first? A practical first step is to identify and remove one function from pairs of primitive Gaussian exponents that are very similar in value. Research has shown that removing functions from the pair of exponents that are closest percentage-wise can effectively cure linear dependencies. For example, in a case with an oxygen atom, removing one function from the pairs (94.8087090, 92.4574853342) and (45.4553660, 52.8049100131) successfully resolved two near-linear-dependencies [1].

4. Are there automated methods for pruning a basis set? Yes, advanced methods exist. The pivoted Cholesky decomposition (pCD) can be used to project out near-degeneracies automatically. Another algorithm, BDIIS (Basis-set Direct Inversion in the Iterative Subspace), optimizes basis set exponents and contraction coefficients while minimizing the total energy and controlling the condition number of the overlap matrix to prevent linear dependence [1] [25].

5. Can tightening the integral threshold help with SCF convergence problems from linear dependence?

Yes, surprisingly, tightening the integral threshold (e.g., setting THRESH = 14) can sometimes help. For large molecules with diffuse basis sets, this can reduce the number of SCF cycles significantly, leading to a faster solution despite a modest increase in cost per cycle [24].

6. Is manual pruning always safe? The manual procedure of removing functions with similar exponents has been shown to work for systems like water. However, for more complex geometries, the relationship between exponents and linear dependencies may be less straightforward. Automated, mathematically robust methods like pivoted Cholesky decomposition are generally more reliable for complex systems [1].
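The automated route in FAQ 4 can be illustrated with a textbook pivoted Cholesky decomposition of the overlap matrix. The sketch below is not the ERKALE, Psi4, or PySCF implementation. It assumes normalized s-type Gaussians on a single center (whose overlap has a simple closed form, given in the code), a hypothetical exponent list, and a cutoff `tol = 1e-6` chosen to mirror the 10⁻⁶ threshold discussed above. Functions never selected as pivots are, to within `tol`, linear combinations of the kept ones.

```python
import numpy as np


def s_overlap(exponents):
    """Overlap of normalized same-center s Gaussians exp(-a r^2):
    S_ij = (2 sqrt(a_i a_j) / (a_i + a_j))**1.5."""
    a = np.asarray(exponents, dtype=float)
    S = (2.0 * np.sqrt(np.outer(a, a)) / np.add.outer(a, a)) ** 1.5
    np.fill_diagonal(S, 1.0)  # exact normalization on the diagonal
    return S


def pivoted_cholesky_keep(S, tol=1e-6):
    """Indices of basis functions kept by pivoted Cholesky of the overlap S."""
    n = S.shape[0]
    resid = S.diagonal().copy()       # residual self-overlap of each function
    L = np.zeros((n, n))
    kept = []
    for k in range(n):
        p = int(np.argmax(resid))
        if resid[p] < tol:            # everything left is (nearly) redundant
            break
        kept.append(p)
        L[:, k] = (S[:, p] - L[:, :k] @ L[p, :k]) / np.sqrt(resid[p])
        resid -= L[:, k] ** 2
        resid[p] = 0.0
    return sorted(kept)


# Hypothetical exponents with one near-duplicate pair (10.0 and 9.99).
exps = [10.0, 9.99, 1.0, 0.1]
kept = pivoted_cholesky_keep(s_overlap(exps))
print([exps[i] for i in kept])
```

Unlike the manual protocol, the pivot order here is driven by the residual overlap rather than by comparing exponent values, which is what makes the approach geometry-agnostic.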

## Troubleshooting Guide: Resolving Linear Dependence Errors

### Symptoms and Diagnostics

If you encounter the following issues, your calculation may be suffering from basis set linear dependence:

- SCF Convergence Failure: The SCF process is slow to converge or behaves erratically [24].

- Program Warnings: The output contains warnings about small eigenvalues in the overlap matrix or near-linear-dependencies [24] [1].

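- Unexpected Energy Shifts: The final energy obtained with a larger basis set is higher than the energy obtained with a smaller one, contrary to the expectation that enlarging the basis should lower (or at least not raise) a variational energy [1].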

Diagnostic Step: Locate the smallest eigenvalue of the overlap matrix in your output file. The table below outlines the interpretation of its value.

Table 1: Diagnosing Linear Dependence from the Overlap Matrix's Smallest Eigenvalue

| Eigenvalue Range | Interpretation & Recommended Action |
|---|---|
| Larger than 10⁻⁵ | Likely no issues. |
| Between 10⁻⁶ and 10⁻⁵ | Caution; numerical issues may occur. Monitor SCF convergence. |
| Smaller than 10⁻⁶ | Linear dependency is causing problems. Action is required [24]. |
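The diagnostic step can be mirrored in a short script that computes the smallest overlap eigenvalue and maps it onto the three ranges of the diagnostic table above. As a simplifying assumption, the overlap is built only for normalized s-type Gaussians on one center with hypothetical exponents; an electronic structure program assembles the full overlap matrix over all shells and atoms itself. The function name `diagnose` is illustrative.

```python
import numpy as np


def s_overlap(exponents):
    """Overlap of normalized same-center s Gaussians exp(-a r^2)."""
    a = np.asarray(exponents, dtype=float)
    S = (2.0 * np.sqrt(np.outer(a, a)) / np.add.outer(a, a)) ** 1.5
    np.fill_diagonal(S, 1.0)
    return S


def diagnose(S):
    """Smallest overlap eigenvalue and the matching row of the diagnostic table."""
    lam_min = float(np.linalg.eigvalsh(S)[0])   # eigvalsh sorts ascending
    if lam_min > 1e-5:
        verdict = "likely no issues"
    elif lam_min >= 1e-6:
        verdict = "caution: monitor SCF convergence"
    else:
        verdict = "linear dependency: action required"
    return lam_min, verdict


# Hypothetical exponent sets: even-tempered (ratio 3) versus a set with a
# near-duplicate pair (10.0 / 9.99).
for exps in ([27.0, 9.0, 3.0, 1.0], [10.0, 9.99, 1.0, 0.1]):
    lam_min, verdict = diagnose(s_overlap(exps))
    print(exps, f"{lam_min:.2e}", verdict)
```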

### Step-by-Step Manual Pruning Protocol

This protocol provides a detailed method for manually identifying and removing redundant primitive Gaussian functions, based on a successful application for a water molecule calculation [1].

Step 1: Generate a List of Exponents Compile a complete list of all primitive Gaussian exponents for the atom causing the linear dependency, including those from the primary basis set (e.g., aug-cc-pV9Z) and any supplemental sets (e.g., cc-pCV7Z "tight" functions) [1].

Step 2: Calculate Pairwise Percentage Differences For all possible pairs of exponents within the same angular momentum shell (s, p, d, etc.), calculate the percentage difference between the two exponents; the values in Table 2 correspond to the absolute difference divided by the mean of the pair. A smaller percentage difference indicates higher similarity and a greater chance of causing linear dependence.

Step 3: Rank and Select Function Pairs Rank the pairs from the smallest percentage difference to the largest. The pairs with the smallest percentage difference are the most redundant.

Table 2: Example of Redundant Exponent Identification in an Oxygen Atom

| Exponent 1 | Exponent 2 | Percentage Difference | Action |
|---|---|---|---|
| 94.8087090 | 92.4574853342 | ~2.5% | Remove one function |
| 45.4553660 | 52.8049100131 | ~15.0% | Remove one function |
| 0.90164000 | 0.04456 | ~181% | Retain both |
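Steps 2 and 3 can be scripted. The percentage difference used below (absolute difference divided by the mean of the two exponents) is an assumption, chosen because it reproduces the values in Table 2 (about 2.5%, 15.0%, and 181%). The shell labels are hypothetical: the Table 2 exponents are placed in a single "s" list purely for illustration, next to an invented "p" list that is never compared with it.

```python
from itertools import combinations


def percent_difference(a, b):
    """|a - b| as a percentage of the mean of the two exponents."""
    return abs(a - b) / ((a + b) / 2.0) * 100.0


def rank_pairs(shells):
    """Rank same-shell exponent pairs, most similar (most redundant) first.

    shells maps an angular-momentum label to a list of primitive exponents;
    exponents in different shells are never compared.
    """
    ranked = [(percent_difference(a, b), label, a, b)
              for label, exps in shells.items()
              for a, b in combinations(exps, 2)]
    ranked.sort(key=lambda item: item[0])
    return ranked


# Hypothetical shell assignment built from the Table 2 exponents.
shells = {
    "s": [94.8087090, 92.4574853342, 52.8049100131, 45.4553660,
          0.90164000, 0.04456],
    "p": [52.0, 0.5],
}
for pd, label, a, b in rank_pairs(shells)[:3]:
    print(f"{label}: {a:<14} {b:<14} {pd:6.1f} %")
```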

Step 4: Remove Functions and Re-test Create a new, pruned basis set by removing one function from each of the N most similar pairs (where N is the number of linear dependencies detected). Run a new calculation with this modified basis set and check if the linear dependency warnings disappear and if the energy is physically reasonable [1].
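Step 4 can be prototyped before editing a production input. The sketch models the overlap with normalized s-type Gaussians on one center (a simplifying assumption), uses a hypothetical exponent list with two near-duplicate pairs, and takes N as the number of overlap eigenvalues below 10⁻⁶. The protocol does not say which member of a pair to remove, so dropping the smaller exponent is an arbitrary choice here; all function names are illustrative.

```python
import numpy as np
from itertools import combinations


def s_overlap(exps):
    """Overlap of normalized same-center s Gaussians exp(-a r^2)."""
    a = np.asarray(exps, dtype=float)
    S = (2.0 * np.sqrt(np.outer(a, a)) / np.add.outer(a, a)) ** 1.5
    np.fill_diagonal(S, 1.0)
    return S


def count_small_eigs(exps, thresh=1e-6):
    """Number of overlap eigenvalues below thresh (detected linear dependencies)."""
    return int(np.sum(np.linalg.eigvalsh(s_overlap(exps)) < thresh))


def prune_most_similar(exps, n_remove):
    """Remove one exponent from each of the n_remove most similar pairs.

    The smaller exponent of each pair is dropped (an arbitrary choice).
    """
    pairs = sorted(combinations(exps, 2),
                   key=lambda p: abs(p[0] - p[1]) / ((p[0] + p[1]) / 2.0))
    dropped = set()
    for a, b in pairs:
        if len(dropped) == n_remove:
            break
        if a in dropped or b in dropped:
            continue  # pair already broken up by an earlier removal
        dropped.add(min(a, b))
    return [e for e in exps if e not in dropped]


# Hypothetical exponent list with two near-duplicate pairs.
exps = [100.0, 99.9, 10.0, 9.99, 1.0, 0.1]
n_dep = count_small_eigs(exps)            # N in Step 4
pruned = prune_most_similar(exps, n_dep)
print(n_dep, pruned, count_small_eigs(pruned))
```

The re-test is the second call to `count_small_eigs`: if the pruned set still shows small eigenvalues, the next most similar pairs are the natural candidates.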

### The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Parameters for Basis Set Pruning

| Item | Function / Description | Example / Default Value |
|---|---|---|
| Overlap Matrix Eigenvalue Analysis | Primary diagnostic for linear dependence. Small eigenvalues indicate problems. | Smallest eigenvalue < 10⁻⁶ [24] |
| BASIS_LIN_DEP_THRESH (Q-Chem) | A $rem variable that sets the threshold for determining linear dependence. | Default: 6 (threshold = 10⁻⁶). Can be set to 5 (threshold = 10⁻⁵, a larger threshold) if the SCF is poorly behaved [24]. |
| Integral Threshold (THRESH) | Tightening this threshold can, counterintuitively, improve convergence for large molecules with diffuse basis sets. | Setting THRESH = 14 is recommended in warnings [24]. |
| Pivoted Cholesky Decomposition (pCD) | An automated mathematical method to project out linear dependencies and generate customized basis sets. | Implemented in ERKALE, Psi4, and PySCF [1]. |
| BDIIS Algorithm | An optimization method that minimizes total energy and controls the overlap condition number to prevent linear dependence. | Used in the CRYSTAL code for solids [25]. |

Leveraging Complementary Auxiliary Basis Sets (CABS) as a Potential Solution

FAQs on CABS and Linear Dependencies

1. What is a Complementary Auxiliary Basis Set (CABS) and why is it used in explicitly correlated (F12) calculations? In explicitly correlated methods (e.g., MP2-F12, CCSD-F12), the CABS is a specialized auxiliary basis set used for the resolution of the identity (RI) in F12 theory. Its primary role is to represent the products of orbitals that appear in the formalism, which leads to dramatically faster basis set convergence of correlation energies. In addition to the standard orbital basis set (OBS), the CABS, together with the RI-MP2 and RI-JK auxiliary basis sets, is essential for the practical application of F12 methods in quantum chemistry codes like MOLPRO, ORCA, and Turbomole [26] [27].

2. How can diffuse basis sets lead to linear dependency, and how does CABS help? Diffuse basis functions are essential for accurately modeling non-covalent interactions and anion states, but they severely impact the sparsity of the one-particle density matrix and can lead to numerical instabilities and linear dependencies [3]. This occurs because the inverse overlap matrix (S⁻¹) becomes significantly less sparse, and the basis functions become less local. The CABS singles correction has been proposed as one solution to this conundrum. When used in combination with compact, low l-quantum-number basis sets, it can help achieve accuracy while mitigating the detrimental effects of highly diffuse functions [3].

3. What does the "Error in Cholesky Decomposition of V Matrix" typically indicate, and how is it resolved?

This error often signals a problem with the auxiliary basis sets used in an RI calculation, typically a linearly dependent auxiliary basis set. One solution is to use the AutoAux feature in ORCA, which automatically generates a robust auxiliary basis set to minimize the RI error [15]. If you use a predefined CABS, ensuring it is properly designed for your specific orbital basis set (e.g., an autoCABS-generated set) can prevent this issue [26] [27].

4. My calculation fails with a diffuse basis set due to linear dependencies. What are my options? You have several options to address this [15]:

- Use Minimally Augmented Basis Sets: For DFT calculations, consider Truhlar's minimally augmented def2-XVP basis sets (e.g., ma-def2-SVP). These are the standard def2 basis sets augmented with a single set of diffuse s- and p-functions whose exponents are set to 1/3 of the lowest exponent in the standard basis. This provides a more economical and numerically stable path for including diffuse functions.
- Decontract the Basis Set: In the ORCA %basis block, use DecontractAux true or DecontractCABS true. Decontraction can help eliminate linear dependencies that arise from the general contraction scheme of the basis set.
- Employ the CABS Singles Correction: As identified in recent research, using the CABS singles correction with a more compact orbital basis can achieve high accuracy for non-covalent interactions without the severe sparsity and linear dependency problems associated with large, diffuse basis sets [3].
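The minimal-augmentation rule quoted above is simple enough to state in code. The exponents below are hypothetical placeholders, not actual def2-SVP values, and the function names are illustrative; in ORCA the ma-def2 basis sets can be requested by name rather than built by hand. The last printed value is the normalized s-s overlap between the new diffuse function and its parent (a same-center closed form, assumed here), which stays well below 1 because the two exponents differ by a factor of 3.

```python
import math


def minimal_augmentation(s_exponents, p_exponents):
    """Diffuse s and p exponents: one third of the lowest exponent of each shell."""
    return min(s_exponents) / 3.0, min(p_exponents) / 3.0


def s_overlap(a, b):
    """Overlap of two normalized same-center s Gaussians exp(-a r^2), exp(-b r^2)."""
    return (2.0 * math.sqrt(a * b) / (a + b)) ** 1.5


# Hypothetical valence exponents for one atom (not real def2-SVP data).
s_exps = [12.0, 2.25, 0.75]
p_exps = [6.0, 1.5]
diffuse_s, diffuse_p = minimal_augmentation(s_exps, p_exps)
print(diffuse_s, diffuse_p, round(s_overlap(min(s_exps), diffuse_s), 3))
```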

Troubleshooting Guides

Issue: SCF Convergence Failures with Diffuse Basis Sets

| Symptom | Potential Cause | Solution |
|---|---|---|
| SCF cycles oscillating or diverging; warning of linear dependence. | Overly diffuse functions causing near-linear dependencies in the basis set. | 1. Switch to a minimally augmented basis set (e.g., ma-def2-TZVP). 2. Use the AutoAux keyword to generate a more compatible auxiliary basis [15]. 3. In the %scf block, increase the LevelShift parameter to stabilize the initial cycles. |

Issue: Errors in F12 Calculations Due to an Incompatible or Missing CABS

| Symptom | Potential Cause | Solution |
|---|---|---|
| Calculation terminates with an error about a missing CABS or shows slow basis set convergence in F12 energy. | The CABS is not specified or is unavailable for your chosen orbital basis set and element. | 1. Explicitly specify a CABS in the input. For cc-pVnZ-F12 orbital basis sets, use the corresponding cc-pVnZ-F12-CABS [28]. 2. If a purpose-built CABS is unavailable, use an automated tool like autoCABS to generate one from your orbital basis set [26] [27]. |

Issue: Linear Dependencies When Using Decontracted or General Basis Sets

| Symptom | Potential Cause | Solution |
|---|---|---|
| "Error in Cholesky Decomposition" or similar linear algebra failures during the initial integral evaluation. | Decontraction or the general contraction scheme of the basis set has created redundant primitive Gaussians. | 1. ORCA automatically removes duplicate primitives from generally contracted sets. Verify this with PrintBasis [28]. 2. If problems persist, avoid full decontraction and use DecontractAuxC true to decontract only the correlation auxiliary basis, which can be sufficient to reduce the RI error without introducing instability. |

Performance Data for CABS-Generated Sets

The autoCABS algorithm automatically generates CABS basis sets comparable to manually optimized ones. The table below summarizes performance data for total atomization energies (TAEs) on the W4-08 benchmark, demonstrating that the auto-generated sets are suitable for production use [27].

Table 1: Performance of AutoCABS vs. OptRI for MP2-F12/cc-pVnZ-F12 on W4-08 TAEs

| Orbital Basis Set | CABS Type | MAE (relative to OptRI) | Notes |
|---|---|---|---|
| cc-pVDZ-F12 | OptRI | Reference | Purpose-optimized baseline [27] |
| cc-pVDZ-F12 | autoCABS | Comparable | Slightly larger error than OptRI, but negligible for n ≥ T [27] |
| cc-pVTZ-F12 | OptRI | Reference | |
| cc-pVTZ-F12 | autoCABS | Nearly identical | Quality difference becomes negligible [27] |
| cc-pVQZ-F12 | OptRI | Reference | |
| cc-pVQZ-F12 | autoCABS | Nearly identical | Quality difference becomes negligible [27] |

Experimental Protocol: Generating and Using an AutoCABS

This protocol details how to generate a CABS basis set using the autoCABS algorithm for an orbital basis set that lacks a pre-defined one [26] [27].

1. Obtain the autoCABS Script:

- The Python script is available under a free license from GitHub at https://github.com/msemidalas/autoCABS.git.

2. Prepare the Input:

- The script requires your orbital basis set as input, preferably in ORCA or MOLPRO format.

3. Generate the CABS:

- Run the script from the command line. It will deterministically generate a hierarchy of CABS basis sets (e.g.,