Targeted Validation for Clinical Prediction Models: A Practical Framework to Bridge the Gap Between Development and Clinical Implementation

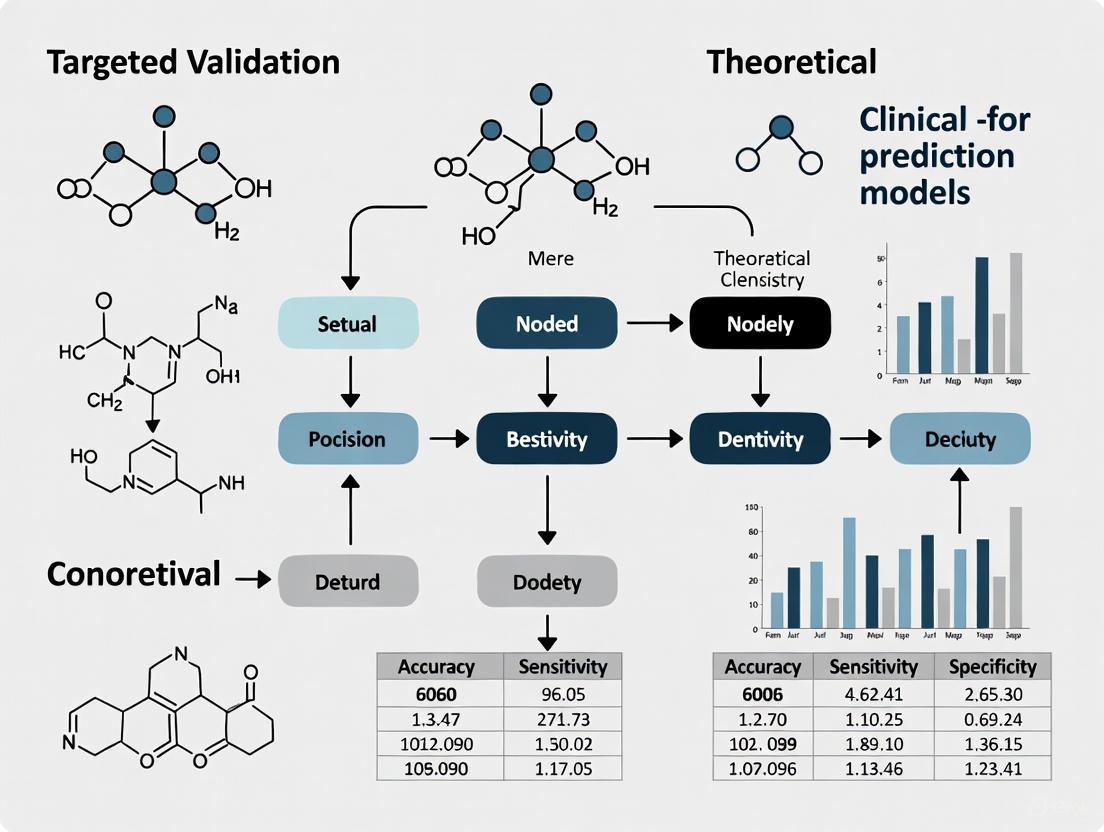


Abstract

This article provides a comprehensive framework for the targeted validation of clinical prediction models (CPMs), addressing a critical gap between model development and real-world clinical application. Aimed at researchers, scientists, and drug development professionals, it synthesizes current methodologies and evidence to guide the appropriate evaluation of CPMs in their specific intended populations and settings. The content spans from foundational concepts defining targeted validation and the 'validation gap,' to methodological guidance on executing temporal, geographical, and domain validations. It further addresses troubleshooting common pitfalls like data drift and poor calibration, and culminates in strategies for comparative evaluation and impact assessment. The goal is to equip practitioners with the knowledge to enhance model trustworthiness, reduce research waste, and facilitate the successful implementation of robust CPMs in clinical practice.

Why Targeted Validation is the Cornerstone of Trustworthy Clinical Prediction Models

Targeted validation represents a paradigm shift in clinical prediction model (CPM) evaluation by emphasizing validation within specific intended populations and settings rather than relying on convenience samples or generic external validation. This approach ensures that performance metrics accurately reflect real-world clinical utility, addressing critical limitations in traditional validation methodologies that often lead to research waste and potentially misleading conclusions. By aligning validation datasets with precisely defined target populations, researchers and drug development professionals can obtain meaningful estimates of model performance, improve calibration accuracy, and facilitate more effective implementation across diverse healthcare settings. This article establishes comprehensive protocols for designing and executing targeted validation studies, incorporating methodological considerations for electronic health record data, sample size requirements, and practical implementation frameworks.

Conceptual Foundation

Targeted validation is defined as the process of estimating how well a clinical prediction model performs within its intended population and clinical setting [1]. This concept sharpens the focus on the intended use of a model, which may increase the applicability of developed models, avoid misleading conclusions, and reduce research waste [1] [2]. Unlike traditional external validation, which often utilizes arbitrary datasets chosen for convenience rather than relevance, targeted validation requires careful matching between the validation dataset and the specific context where the model will ultimately be deployed [1]. This approach acknowledges that model performance is highly dependent on population characteristics and clinical setting, making context-specific validation essential for meaningful performance assessment.

The foundation of targeted validation rests on recognizing that CPM performance is significantly influenced by case mix (distributions of patient characteristics), baseline risk, and predictor-outcome associations, all of which vary across populations and settings [1] [3]. Consequently, a model demonstrating excellent performance in one context may perform poorly in another, making general claims about model "validity" potentially misleading without precise specification of the intended use context [1]. Targeted validation addresses this limitation by requiring explicit definition of the target population and setting before validation, ensuring that performance estimates directly inform deployment decisions.

The Validation Spectrum: From Internal to Targeted Approaches

Traditional CPM validation has primarily focused on the distinction between internal and external validation, with internal validation examining performance within the development dataset (with appropriate optimism correction) and external validation assessing performance in different datasets [1] [4]. However, this binary classification fails to capture critical nuances in validation objectives. Targeted validation introduces a more refined framework that recognizes different types of external validation studies based on their relationship to the intended use context [1]:

- Reproducibility assessment: Validation in populations/settings similar to the development context

- Transportability assessment: Validation in different populations/settings than the development context

- Generalisability assessment: Validation across multiple relevant populations/settings

- Arbitrary validation: Validation in convenience samples bearing little relevance to any target population

Targeted validation explicitly prioritizes the first three types while discouraging arbitrary validation, which has limited relevance to clinical deployment decisions [1]. This framework also shows that external validation is not always necessary: when the intended population matches the development population, robust internal validation may suffice, particularly with large development datasets [1].

Table 1: Comparison of Validation Approaches

| Validation Type | Primary Objective | Dataset Relationship to Target | Key Limitations |
|---|---|---|---|
| Internal Validation | Assess and correct for overfitting | Same as development data | May not reflect performance in new samples from same population |
| Traditional External Validation | Assess performance in different data | Often arbitrary convenience samples | May not inform performance in intended setting |
| Targeted Validation | Assess performance in intended use context | Precisely matches target population and setting | Requires careful dataset identification and may need multiple validations |

Methodological Framework for Targeted Validation

Core Principles and Definitions

Targeted validation operates according to several fundamental principles that distinguish it from conventional validation approaches. First, it requires that a CPM be developed with a clearly defined intended use and population specification—including when predictions are to be made, in whom, and for what purpose [1]. Validation should then be specifically designed to show how well the CPM performs at that defined task [1]. This principle emphasizes that model validity is not an intrinsic property but rather context-dependent, with models being "valid for" specific populations and settings rather than "valid" in general [1].

The key components of targeted validation include:

- Population specification: Precise definition of the patient group under consideration, including demographic, clinical, and contextual characteristics [1]

- Setting specification: Clear description of the clinical environment where the model would be used (e.g., primary care, emergency department, intensive care unit) [1]

- Intended use case: Detailed description of the clinical decision the model is intended to inform and the timing of that decision within the care pathway

- Performance benchmarks: Establishment of context-specific performance thresholds for determining whether model performance is adequate for clinical use

A critical insight from the targeted validation framework is that performance in one target population gives little indication of performance in another [1] [3]. This performance heterogeneity across populations and settings necessitates separate validation exercises for each distinct intended use context, particularly when models are deployed across different healthcare systems, levels of care, or patient subgroups [1].

Protocol for Designing Targeted Validation Studies

Population and Setting Specification

The initial step in targeted validation involves precisely defining the target population and setting. This requires specification of inclusion and exclusion criteria that reflect the intended use context, including demographic factors, clinical characteristics, healthcare setting characteristics, and temporal factors [1] [3]. For example, a model intended for use in secondary care settings must be validated using data from secondary care populations, which often have fundamentally different case mixes compared to tertiary care populations where models are frequently developed [3].

When defining the target population, researchers should consider:

- Clinical characteristics: Disease severity, comorbidity profiles, prior treatments

- Demographic factors: Age, sex, ethnicity, socioeconomic factors

- Healthcare system factors: Type of facility, geographic location, referral patterns

- Temporal considerations: Time period, seasonal variations, changes in practice patterns

This detailed specification enables identification of appropriate validation datasets that adequately represent the intended use context, avoiding the "validation gap" that occurs when suitable datasets are unavailable [3].
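The specification step can be made concrete by writing the target context down as data and applying it as an explicit eligibility filter. The following is a minimal sketch under stated assumptions: the `TargetContext` class, its field names, and the example records are hypothetical illustrations, not part of any published protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TargetContext:
    """Hypothetical container for a pre-specified target population and setting."""
    setting: str      # e.g. "secondary_care"
    min_age: int
    max_age: int
    first_year: int   # start of the temporal window of intended use
    last_year: int    # end of the temporal window of intended use

    def includes(self, record: dict) -> bool:
        """Return True if a patient record falls inside the target context."""
        return (
            record["setting"] == self.setting
            and self.min_age <= record["age"] <= self.max_age
            and self.first_year <= record["year"] <= self.last_year
        )


def select_cohort(records, target):
    """Keep records matching the target context and count the exclusions."""
    kept = [r for r in records if target.includes(r)]
    return kept, len(records) - len(kept)


# Illustrative records with made-up values.
records = [
    {"setting": "secondary_care", "age": 67, "year": 2021},
    {"setting": "tertiary_care", "age": 59, "year": 2021},
    {"setting": "secondary_care", "age": 16, "year": 2022},
    {"setting": "secondary_care", "age": 72, "year": 2015},
]
target = TargetContext("secondary_care", 18, 110, 2019, 2023)
cohort, n_excluded = select_cohort(records, target)
print(f"{len(cohort)} eligible record(s), {n_excluded} excluded")
```

Fixing the criteria in code before any performance is computed makes it harder to adjust the cohort definition after seeing results, and the exclusion count can be reported alongside the validation findings.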

Dataset Requirements and Quality Assessment

Targeted validation requires validation datasets that closely match the specified target population and setting. Electronic health records (EHRs) offer a promising data source for targeted validation, particularly for secondary care settings, but present specific methodological challenges [3]. When using EHR data for targeted validation, researchers should implement three additional practical steps alongside standard validation checklists:

- Involve local EHR experts: Include clinicians, nurses, or other healthcare professionals in the data extraction process to ensure appropriate interpretation of clinical documentation and context [3]

- Perform comprehensive validity checks: Assess data quality, completeness, and accuracy through systematic validation procedures [3]

- Provide detailed metadata: Document how variables were constructed from EHRs to ensure transparency and replicability [3]

Additionally, EHR data often requires transformation of unstructured clinical text into structured formats using natural language processing (NLP) techniques, introducing potential limitations related to semantic understanding, context interpretation, and information extraction accuracy [3]. These limitations must be carefully addressed during dataset preparation to ensure validation results accurately reflect model performance.

Table 2: Data Source Considerations for Targeted Validation

| Data Source Type | Advantages | Limitations | Quality Assurance Strategies |
|---|---|---|---|
| Prospective Cohort Studies | High data quality, pre-specified variables | Costly, time-consuming, potential selection bias | Protocol adherence monitoring, completeness audits |
| Electronic Health Records | Large sample sizes, real-world clinical context | Missing data, ascertainment bias, variability in documentation | Clinical expert involvement, validity checks, metadata documentation |
| Clinical Trial Data | Standardized data collection, detailed phenotyping | Selective eligibility, limited generalizability | Transportability assessment, case-mix evaluation |
| Disease Registries | Comprehensive coverage, longitudinal data | Variable data quality across sites | Harmonization procedures, quality metrics |

Sample Size Considerations

Appropriate sample size is critical for precise estimation of model performance during targeted validation. Recent methodological advances have moved beyond traditional rules of thumb, such as 10 events per predictor parameter, toward more rigorous approaches [4]. Riley et al. have proposed a comprehensive system for sample size determination that addresses multiple requirements simultaneously [4]:

For continuous outcomes:

- Small optimism in predictor effect estimates (shrinkage factor ≥0.9)

- Small absolute difference (≤0.05) in apparent and adjusted R²

- Precise estimation (margin of error ≤10% of true value) of the model's residual standard deviation

- Precise estimation of the mean predicted outcome value

For binary and time-to-event outcomes:

- Small optimism in predictor effect estimates (shrinkage factor ≥0.9)

- Small absolute difference (≤0.05) in apparent and adjusted Nagelkerke's R²

- Precise estimation of the overall risk in the population

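These criteria were formulated for model development, but the same logic carries over to targeted validation: the sample must be large enough to estimate performance with sufficient precision to inform deployment decisions, particularly given the performance heterogeneity across different populations and settings.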
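Two of the criteria for binary outcomes have simple closed forms, which the sketch below evaluates. It assumes the commonly published expressions: for the shrinkage criterion, n = P / ((S - 1) ln(1 - R²cs / S)) with P candidate parameters, target shrinkage S, and an anticipated Cox-Snell R²; for the overall-risk criterion, n = (1.96 / margin)² φ(1 - φ) with anticipated outcome proportion φ. The function names and the input values are illustrative assumptions, and the Nagelkerke R² criterion is not covered.

```python
import math


def n_for_shrinkage(n_params: int, r2_cs: float, shrinkage: float = 0.9) -> int:
    """Minimum sample size for an expected uniform shrinkage factor >= `shrinkage`.

    r2_cs is the anticipated Cox-Snell R-squared of the model (an input assumption).
    """
    if not 0 < r2_cs < shrinkage:
        raise ValueError("r2_cs must lie strictly between 0 and the shrinkage target")
    return math.ceil(n_params / ((shrinkage - 1) * math.log(1 - r2_cs / shrinkage)))


def n_for_overall_risk(prevalence: float, margin: float = 0.05, z: float = 1.96) -> int:
    """Minimum sample size to estimate the overall risk within +/- `margin`."""
    if not 0 < prevalence < 1:
        raise ValueError("prevalence must lie strictly between 0 and 1")
    return math.ceil((z / margin) ** 2 * prevalence * (1 - prevalence))


# Illustrative inputs: 24 candidate parameters, anticipated R2cs of 0.288,
# anticipated outcome proportion of 0.174.
n_shrink = n_for_shrinkage(n_params=24, r2_cs=0.288)
n_risk = n_for_overall_risk(prevalence=0.174)
print("shrinkage criterion:", n_shrink)
print("overall-risk criterion:", n_risk)
print("required n (largest of the criteria evaluated):", max(n_shrink, n_risk))
```

The required sample size is the largest value across all criteria, so a full calculation would also include the criterion on the difference between apparent and adjusted R².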

Implementation Protocols

Workflow for Targeted Validation

The following workflow provides a structured approach for conducting targeted validation studies:

[Figure: Targeted Validation Workflow]

Performance Assessment Methodology

Targeted validation requires comprehensive assessment of model performance using appropriate metrics and statistical methods. The core components of performance assessment include:

Discrimination Evaluation:

- Calculate the c-index (area under the ROC curve) for binary outcomes

- Assess time-dependent discrimination measures for survival outcomes

- Evaluate discrimination across relevant clinical subgroups to identify performance heterogeneity
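For a binary outcome, the c-statistic is the proportion of event/non-event pairs in which the patient with the event received the higher predicted risk. The sketch below computes it directly from that definition and repeats the calculation within subgroups. It is a plain-Python illustration with made-up data; the function names are assumptions, and the pairwise loop is quadratic, so a dedicated library would be preferable for large cohorts.

```python
from collections import defaultdict


def c_statistic(y_true, y_prob):
    """Share of event/non-event pairs ranked correctly; ties count as 0.5."""
    events = [p for y, p in zip(y_true, y_prob) if y == 1]
    non_events = [p for y, p in zip(y_true, y_prob) if y == 0]
    if not events or not non_events:
        raise ValueError("need at least one event and one non-event")
    concordant = 0.0
    for p_event in events:
        for p_non in non_events:
            if p_event > p_non:
                concordant += 1.0
            elif p_event == p_non:
                concordant += 0.5
    return concordant / (len(events) * len(non_events))


def c_statistic_by_subgroup(y_true, y_prob, groups):
    """Compute the c-statistic separately within each subgroup label."""
    buckets = defaultdict(lambda: ([], []))
    for y, p, g in zip(y_true, y_prob, groups):
        buckets[g][0].append(y)
        buckets[g][1].append(p)
    return {g: c_statistic(ys, ps) for g, (ys, ps) in buckets.items()}


# Made-up outcomes, predicted risks, and subgroup labels.
y = [0, 1, 0, 1]
p = [0.2, 0.7, 0.6, 0.4]
sex = ["f", "f", "m", "m"]
overall = c_statistic(y, p)
by_sex = c_statistic_by_subgroup(y, p, sex)
print(overall, by_sex)
```

In this toy example the overall value hides opposite behaviour in the two subgroups, which is the kind of heterogeneity the subgroup analysis is meant to expose.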

Calibration Assessment:

- Assess calibration-in-the-large by comparing average predicted risk to observed outcome incidence

- Estimate the calibration slope to assess agreement across the risk spectrum

- Create calibration plots with smoothed curves using loess or similar methods

- Quantify calibration accuracy using metrics like Emax and Eavg
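Calibration-in-the-large and the calibration slope can be sketched as follows. The slope is obtained by regressing the observed outcome on the logit of the predicted risk with logistic regression, fitted here by Newton-Raphson in plain Python so that the example has no dependencies. Function names and data are illustrative assumptions; a statistical package would normally be used in practice and would also supply standard errors.

```python
import math


def logit(p, eps=1e-12):
    """Log-odds of a probability, clipped away from 0 and 1."""
    p = min(max(p, eps), 1 - eps)
    return math.log(p / (1 - p))


def expit(x):
    """Inverse of the logit function."""
    return 1 / (1 + math.exp(-x))


def calibration_in_the_large(y_true, y_prob):
    """Return observed incidence, mean predicted risk, and their O/E ratio."""
    observed = sum(y_true) / len(y_true)
    expected = sum(y_prob) / len(y_prob)
    return observed, expected, observed / expected


def calibration_intercept_slope(y_true, y_prob, max_iter=50, tol=1e-10):
    """Fit logit(P(y=1)) = a + b * logit(p) by Newton-Raphson; return (a, b)."""
    lp = [logit(p) for p in y_prob]
    if len(set(lp)) < 2:
        raise ValueError("predictions must take at least two distinct values")
    a, b = 0.0, 1.0  # start at perfect calibration
    for _ in range(max_iter):
        mu = [expit(a + b * x) for x in lp]
        # Score vector (gradient of the log-likelihood).
        g0 = sum(y - m for y, m in zip(y_true, mu))
        g1 = sum((y - m) * x for y, m, x in zip(y_true, mu, lp))
        # Information matrix entries.
        w = [m * (1 - m) for m in mu]
        h00 = sum(w)
        h01 = sum(wi * x for wi, x in zip(w, lp))
        h11 = sum(wi * x * x for wi, x in zip(w, lp))
        det = h00 * h11 - h01 * h01
        da = (h11 * g0 - h01 * g1) / det
        db = (h00 * g1 - h01 * g0) / det
        a, b = a + da, b + db
        if abs(da) + abs(db) < tol:
            break
    return a, b


# Made-up data: three risk groups whose observed event rates equal the predictions.
y = [1] * 2 + [0] * 8 + [1] * 5 + [0] * 5 + [1] * 8 + [0] * 2
p = [0.2] * 10 + [0.5] * 10 + [0.8] * 10
observed, expected, oe_ratio = calibration_in_the_large(y, p)
intercept, slope = calibration_intercept_slope(y, p)
print(observed, expected, oe_ratio, intercept, slope)
```

A slope below 1 indicates predictions that are too extreme, which is typical of overfitted models, while a slope above 1 indicates predictions that are not extreme enough.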

Clinical Utility Analysis:

- Conduct decision curve analysis to evaluate net benefit across clinically relevant risk thresholds

- Assess potential clinical impact using classification measures (sensitivity, specificity) at operational thresholds

- Evaluate reclassification metrics (NRI, IDI) when comparing multiple models
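Decision curve analysis rests on the net benefit at a risk threshold pt, defined as TP/n - (FP/n) × pt / (1 - pt), compared against the default strategies of treating everyone and treating no one (net benefit 0). The following minimal sketch evaluates these quantities over a few thresholds; the function names, data, and thresholds are illustrative assumptions.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit of treating patients whose predicted risk is >= threshold."""
    if not 0 < threshold < 1:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    return tp / n - fp / n * threshold / (1 - threshold)


def net_benefit_treat_all(y_true, threshold):
    """Net benefit of treating every patient regardless of predicted risk."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1 - prevalence) * threshold / (1 - threshold)


# Made-up outcomes and predicted risks.
y = [1, 1, 0, 0, 0]
p = [0.9, 0.6, 0.7, 0.2, 0.1]
for pt in (0.1, 0.3, 0.5):
    print(
        f"threshold={pt:.1f}",
        f"model={net_benefit(y, p, pt):.3f}",
        f"treat_all={net_benefit_treat_all(y, pt):.3f}",
        "treat_none=0.000",
    )
```

A model is clinically useful at a given threshold only if its net benefit exceeds both default strategies, so the comparison should be restricted to thresholds that are plausible for the intended decision.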

For each performance measure, precision should be quantified using appropriate confidence intervals (e.g., bootstrap confidence intervals) to communicate estimation uncertainty. Performance should be compared against pre-specified benchmarks that reflect minimum requirements for clinical deployment in the specific intended use context.
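A percentile bootstrap is one simple way to attach a confidence interval to any of these measures. The sketch below resamples patients with replacement and takes empirical quantiles of the recomputed metric, using the Brier score as the example metric. The function names, the number of resamples, and the data are illustrative assumptions, and other interval types (for example bias-corrected intervals) may be preferable in small samples.

```python
import random


def brier_score(y_true, y_prob):
    """Mean squared difference between predicted risk and observed outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def bootstrap_ci(y_true, y_prob, metric, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for metric(y_true, y_prob)."""
    rng = random.Random(seed)  # fixed seed for reproducibility
    n = len(y_true)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [y_true[i] for i in idx]
        ps = [y_prob[i] for i in idx]
        try:
            estimates.append(metric(ys, ps))
        except (ValueError, ZeroDivisionError):
            continue  # skip resamples in which the metric is undefined
    if not estimates:
        raise ValueError("metric was undefined in every bootstrap resample")
    estimates.sort()
    lower = estimates[int(alpha / 2 * len(estimates))]
    upper = estimates[min(len(estimates) - 1, int((1 - alpha / 2) * len(estimates)))]
    return lower, upper


# Made-up outcomes and predicted risks.
y = [1, 0, 1, 0, 0, 1, 0, 0, 1, 0]
p = [0.8, 0.3, 0.6, 0.2, 0.4, 0.7, 0.1, 0.5, 0.9, 0.3]
point = brier_score(y, p)
lower, upper = bootstrap_ci(y, p, brier_score)
print(f"Brier score {point:.3f} (95% CI {lower:.3f} to {upper:.3f})")
```

Any metric with the signature `metric(y_true, y_prob)` can be passed in, so the same routine can be reused for discrimination and calibration measures.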

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Targeted Validation

| Tool Category | Specific Methods/Techniques | Primary Function | Implementation Considerations |
|---|---|---|---|
| Dataset Quality Assessment | PROBAST applicability domain [1], EHR validity checks [3] | Evaluate relevance of validation dataset to target population | Requires clinical expertise for appropriate assessment |
| Performance Metrics | C-index, calibration plots, decision curve analysis [4] | Quantify model discrimination, calibration, and clinical utility | Should be selected based on clinical context and model purpose |
| Statistical Software | R (rms, pmsampsize, riskRegression packages) [4], Python (scikit-survival, predictiveness curves) | Implement validation methodologies and performance estimation | Package selection depends on model type and performance measures |
| Sample Size Planning | pmsampsize package [4], Riley et al. criteria [4] | Determine required sample size for precise performance estimation | Should account for anticipated performance heterogeneity |
| NLP Tools for EHR Data | CTcue, Amazon Comprehend Medical [3] | Extract structured variables from unstructured clinical text | Requires validation of extraction accuracy for critical variables |

Advanced Applications and Special Considerations

Addressing the Validation Gap in Secondary Care

A significant challenge in targeted validation is the "validation gap" that occurs when models developed in tertiary care settings are intended for deployment in secondary care, but appropriate validation datasets from secondary care are scarce [3]. This gap is particularly problematic because CPMs often have the greatest potential utility in secondary care, where patient case mixes are broad and practitioners need efficient triage tools [3]. However, case mix differences between tertiary and secondary care populations frequently lead to poor model performance, especially miscalibration, when tertiary-developed models are applied in secondary care without appropriate validation [3].

To address this validation gap, researchers can leverage EHR data from secondary care settings, but must account for specific limitations including ascertainment bias, missing data, and documentation variability [3]. The three-step approach described previously—involving clinical experts in data extraction, performing comprehensive validity checks, and providing detailed metadata—is particularly important for secondary care validation studies [3]. Additionally, researchers should consider focused validation studies specifically designed to address known performance concerns, such as calibration in specific risk ranges or discrimination in clinically important subgroups.

Model Updating and Adaptation Strategies

When targeted validation reveals inadequate performance in the intended setting, model updating or adaptation may be necessary before deployment. Several strategies exist for improving model performance in new populations:

Simple Recalibration:

- Intercept adjustment: Modify the model intercept to match the overall event rate in the target population

- Slope adjustment: Recalibrate the model slope to improve agreement across the risk spectrum

- Intercept and slope adjustment: Combine both approaches for comprehensive recalibration
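The simplest of these updates, intercept adjustment, can be sketched as follows. The idea is to find the constant shift on the logit scale that makes the mean updated prediction equal the observed event rate, which is the maximum likelihood intercept when the original linear predictor is held fixed as an offset. The function names and data are illustrative assumptions, and an updated model would still need validation in data not used for the update.

```python
import math


def logit(p, eps=1e-12):
    """Log-odds of a probability, clipped away from 0 and 1."""
    p = min(max(p, eps), 1 - eps)
    return math.log(p / (1 - p))


def expit(x):
    """Inverse of the logit function."""
    return 1 / (1 + math.exp(-x))


def estimate_intercept_shift(y_true, y_prob, max_iter=100, tol=1e-10):
    """Newton-Raphson estimate of the logit-scale shift with slope fixed at 1."""
    events = sum(y_true)
    if events == 0 or events == len(y_true):
        raise ValueError("need both events and non-events to estimate the shift")
    lp = [logit(p) for p in y_prob]
    delta = 0.0
    for _ in range(max_iter):
        mu = [expit(x + delta) for x in lp]
        gradient = events - sum(mu)              # observed minus expected events
        information = sum(m * (1 - m) for m in mu)
        step = gradient / information
        delta += step
        if abs(step) < tol:
            break
    return delta


def recalibrate(y_prob, delta, slope=1.0):
    """Apply an intercept shift (and optionally a slope) on the logit scale."""
    return [expit(delta + slope * logit(p)) for p in y_prob]


# Made-up example: the model predicts 50% risk, but only 2 of 10 patients have the event.
y = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
p = [0.5] * 10
delta = estimate_intercept_shift(y, p)
p_updated = recalibrate(p, delta)
print(f"shift={delta:.4f}, mean updated risk={sum(p_updated) / len(p_updated):.3f}")
```

Passing a `slope` other than 1 to `recalibrate` applies a combined intercept and slope update, provided both values were estimated jointly in the target data.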

Model Revision:

- Predictor effect updating: Re-estimate some or all predictor coefficients while retaining the original predictor set

- Extended model updating: Add new predictors specifically relevant to the target population

- Model refitting: Completely redevelop the model using data from the target population

The choice among these strategies depends on the magnitude of performance issues identified during targeted validation, the availability of sufficient data from the target population, and the practical constraints of implementation. In all cases, the updating process should be clearly documented, and the updated model should undergo subsequent validation to ensure adequate performance.

Implementation and Impact Assessment

Successful targeted validation should lead to clinical implementation when performance benchmarks are met. Implementation strategies vary, with common approaches including integration into hospital information systems (63% of implemented models), web applications (32%), and patient decision aids (5%) [5]. However, current implementation practices often deviate from prediction modeling best practices, with only 27% of implemented models undergoing external validation and only 13% being updated following implementation [5].

To improve implementation success, targeted validation should be followed by:

- Impact assessment: Evaluation of whether model use improves clinical processes or patient outcomes

- Implementation monitoring: Ongoing assessment of model usage patterns, data quality, and adherence to intended use protocols

- Performance surveillance: Continuous or periodic revalidation to detect performance degradation over time

- Model updating: Systematic processes for incorporating new data and refining models based on clinical feedback

These steps ensure that models remain effective throughout their deployment lifecycle and continue to provide value in evolving clinical environments.
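Performance surveillance can start with something as simple as tracking the ratio of observed to expected events per calendar period and flagging periods that leave a tolerance band. The sketch below illustrates this; the record layout, the function name, and the band limits of 0.8 and 1.25 are hypothetical choices that would need to be set for the specific use context.

```python
from collections import defaultdict


def oe_by_period(records, lower=0.8, upper=1.25):
    """Observed/expected event ratio per period, with an out-of-band alert.

    records: iterable of (period, outcome, predicted_risk) tuples.
    lower, upper: hypothetical tolerance band for the O/E ratio.
    """
    observed = defaultdict(float)
    expected = defaultdict(float)
    for period, outcome, risk in records:
        observed[period] += outcome
        expected[period] += risk
    report = {}
    for period in sorted(observed):
        if expected[period] > 0:
            ratio = observed[period] / expected[period]
        else:
            ratio = float("nan")
        # A NaN ratio fails the band check and therefore raises an alert.
        report[period] = {"oe": ratio, "alert": not (lower <= ratio <= upper)}
    return report


# Made-up monitoring data.
records = [
    ("2023Q1", 1, 0.5),
    ("2023Q1", 0, 0.5),
    ("2023Q2", 1, 0.2),
    ("2023Q2", 1, 0.2),
]
report = oe_by_period(records)
for period, row in report.items():
    print(period, f"O/E={row['oe']:.2f}", "ALERT" if row["alert"] else "ok")
```

An alert is a prompt for a fuller revalidation rather than evidence of failure on its own, since O/E ratios from small periods are noisy.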

Targeted validation represents a fundamental shift in CPM evaluation by emphasizing context-specific performance assessment rather than generic validation approaches. By aligning validation datasets with precisely defined intended use contexts, researchers and drug development professionals can obtain meaningful performance estimates that directly inform deployment decisions. The methodological framework and implementation protocols outlined in this article provide a structured approach for designing, executing, and interpreting targeted validation studies across diverse clinical settings. As CPMs become increasingly integrated into clinical practice, adopting targeted validation principles will be essential for ensuring that models deliver reliable, clinically useful predictions in their specific contexts of use. Future work should focus on standardizing targeted validation methodologies, developing efficient approaches for leveraging real-world data sources, and establishing context-specific performance benchmarks that reflect clinically meaningful requirements.

The Critical Problem of the 'Validation Gap' in Model Implementation

The implementation of Clinical Prediction Models (CPMs) in real-world healthcare settings is critically hindered by a pervasive issue known as the validation gap. This gap represents the disconnect between the populations and settings in which a CPM is developed and validated versus the specific clinical environments where it is ultimately intended for use [6]. In contemporary clinical research, it is common for validation studies to be conducted with arbitrary datasets chosen for convenience rather than true relevance to the model's intended application [2] [1]. This practice creates a fundamental mismatch that can severely compromise model performance, clinical utility, and patient safety when the model is deployed in practice.

The concept of targeted validation has emerged as a crucial framework for addressing this challenge. Targeted validation emphasizes that how and in what data to validate a CPM should depend explicitly on the model's intended use [2] [1]. This approach requires researchers to precisely define the intended population, setting, and purpose of a CPM before conducting validation studies specifically designed to estimate performance in that target context. By focusing validation efforts on datasets that accurately represent the intended deployment environment, targeted validation provides meaningful evidence about how a model will perform in actual clinical practice [2].

The consequences of ignoring the validation gap are substantial and well-documented. CPMs developed in tertiary care settings, for instance, often demonstrate poor calibration and misleading risk predictions when applied in secondary care populations due to differences in case mix, baseline risk, and predictor-outcome associations [6]. Such performance degradation can directly impact clinical decision-making, potentially leading to inappropriate treatment decisions, false patient expectations, and ultimately, patient harm [6]. The growing recognition of these issues has positioned the validation gap as a central challenge in clinical prediction modeling, particularly as artificial intelligence and machine learning models become more prevalent in healthcare.

Quantifying the Validation Gap: Evidence and Implications

Empirical Evidence of the Problem

The validation gap manifests concretely through measurable deficiencies in model performance when CPMs are applied outside their development contexts. Substantial empirical evidence demonstrates how differences in patient case mix, outcome prevalence, and healthcare settings significantly impact model performance [6]. The following table summarizes key quantitative findings that highlight the scope and consequences of the validation gap:

Table 1: Empirical Evidence of the Validation Gap in Clinical Prediction

| Evidence Type | Findings | Implications |
|---|---|---|
| CPM Performance Across Care Settings | CPMs developed in tertiary care often perform poorly in secondary care; example shows severe overestimation of event probabilities in secondary care population [6] | Inaccurate risk stratification and potential clinical misuse when models are applied outside development context |
| AI-Enabled Medical Device Recalls | Analysis of 950 FDA-authorized AI medical devices found 60 devices associated with 182 recall events; 43% of recalls occurred within one year of authorization [7] | Many AI devices enter market with limited clinical evaluation, especially those using 510(k) pathway without prospective human testing requirements |
| Recall Root Causes | Diagnostic/measurement errors and functionality delay/loss were most common recall causes; vast majority of recalled devices lacked clinical trials [7] | Inadequate pre-market clinical validation directly linked to post-market performance failures and safety issues |
| Manufacturer Factors | Publicly traded companies accounted for ~53% of recalls but >90% of recall events and 98.7% of recalled units [7] | Investor-driven pressure for faster market launches may contribute to inadequate validation practices |

Methodological Deficiencies in Current Validation Practices

Beyond the empirical evidence, systematic reviews of validation studies reveal persistent methodological shortcomings that exacerbate the validation gap. A comprehensive review of methodological guidance for CPM evaluation identified consistent problems in how validation studies are designed and reported [8]. These include insufficient attention to calibration measures, continued use of suboptimal performance metrics, and failure to properly assess clinical usefulness [8]. The absence of standardized approaches for evaluating model performance across diverse populations further compounds these issues.

The PROBAST risk of bias tool for systematic reviews of CPMs includes an 'applicability' domain that specifically checks whether validation studies consider the same setting and population as the review question, highlighting the importance of context-specific validation [2]. Despite this, validation studies frequently fail to adequately report on the representativeness of their datasets for intended target populations [6] [8]. This reporting gap makes it difficult for potential users to determine whether an existing validation study provides meaningful evidence for their specific clinical context.

Targeted Validation Framework: Bridging the Gap

Core Principles of Targeted Validation

Targeted validation represents a paradigm shift in how researchers approach the validation of clinical prediction models. This framework emphasizes that validation should not be a one-time activity conducted with conveniently available datasets, but rather a deliberate process designed to evaluate model performance specifically within the intended context of use [2] [1]. The core principles of targeted validation include:

- Context-Specific Performance Estimation: The primary goal of targeted validation is to estimate how well a CPM performs within its intended population and setting, rather than making broad claims about general validity [2].

- Explicit Intended Use Specification: Targeted validation requires researchers to precisely define the intended use, population, and setting of a CPM before conducting validation studies [1].

- Appropriate Dataset Selection: Validation datasets must be carefully selected to match the intended deployment context, avoiding arbitrary or convenient datasets that do not represent the target population [2] [1].

- Situational Validation Requirements: The necessary validation approach depends on the intended use; in some cases, robust internal validation may suffice, while in others, multiple external validations are required [2].

The fundamental insight of targeted validation is that a model can only be considered "validated for" specific populations and settings where its performance has been empirically assessed [2]. This contrasts with the common practice of referring to models as simply "valid" or "validated" without specifying the contexts in which this holds true.

Practical Implementation Framework

Implementing targeted validation requires a structured approach to ensure that validation activities directly address the intended use of a CPM. The following workflow diagram illustrates the key decision points and processes in applying targeted validation principles:

[Figure: Targeted Validation Workflow]

This framework reveals that when the intended population for a model matches the population used for development, a robust internal validation may be sufficient—especially if the development dataset was large and appropriate methods were used to correct for overfitting [2] [1]. However, when a model is intended for use in new populations or settings, targeted validation in each distinct context becomes essential.

Protocol for Targeted Validation of Clinical Prediction Models

Comprehensive Validation Protocol

Implementing a rigorous targeted validation requires a structured methodology. The following protocol provides detailed steps for conducting targeted validation of clinical prediction models, with particular attention to addressing the validation gap.

Table 2: Comprehensive Targeted Validation Protocol for Clinical Prediction Models

| Protocol Stage | Key Activities | Methodological Considerations |
|---|---|---|
| 1. Define Validation Context | Precisely specify intended population, setting, and use case; define performance requirements for clinical utility; identify relevant existing validation studies | Document inclusion/exclusion criteria that match intended use; define minimum acceptable performance thresholds [2] [1] |
| 2. Select Validation Dataset | Identify data sources representative of target population; assess case mix compatibility with intended use; evaluate data quality and completeness | Ensure dataset reflects the spectrum of disease severity, comorbidities, and demographic characteristics expected in target population [6] |
| 3. Statistical Performance Assessment | Evaluate discrimination using the C-statistic; assess calibration using calibration plots, calibration slope, and calibration-in-the-large; calculate overall performance measures | Compare performance to existing models or clinical standards; use bootstrapping for confidence intervals [8] |
| 4. Clinical Usefulness Assessment | Perform decision curve analysis to evaluate net benefit; assess potential clinical impact across risk thresholds; compare to alternative decision strategies | Focus on whether model improves decisions versus current practice; avoid overreliance on statistical significance [8] |
| 5. Heterogeneity Evaluation | Examine performance across patient subgroups; assess transportability to relevant subpopulations; identify contexts where model performs poorly | Evaluate whether performance is consistent across age, sex, ethnicity, disease severity, and clinical centers [2] |
| 6. Model Updating (if needed) | Apply recalibration methods (intercept, slope); consider model revision or extension; evaluate need for context-specific refitting | Use closed-testing procedures to avoid overfitting during updating; validate updated model performance [8] |

Electronic Health Record Data Extraction Protocol

A significant challenge in targeted validation is obtaining appropriate datasets that represent the intended population and setting. Electronic Health Records (EHRs) offer a potential solution but require careful methodology to ensure data quality. The following protocol outlines a systematic approach for extracting validation datasets from EHRs:

[Figure: EHR Data Extraction Protocol]

This protocol emphasizes three critical enhancements to standard data extraction processes [6]:

- Include Clinical EHR Experts: Involve clinicians, nurses, or healthcare professionals in the data extraction process. These experts possess firsthand knowledge of patient conditions, treatments, and histories that may not be well-documented in the EHR, including informal diagnoses or uncoded symptoms [6].

- Implement Rigorous Validity Checks: Perform comprehensive data quality assessments to identify ascertainment bias, missingness, and documentation inconsistencies. This is particularly important for unstructured data where semantic and context understanding are required for accurate classification [6].

- Provide Comprehensive Metadata: Document precisely how each variable was constructed from the EHR, including definitions, extraction methods, and any transformations applied. This metadata is essential for interpreting validation results and replicating the methodology in other settings [6].

Research Reagent Solutions for Validation Studies

Essential Methodological Tools

Conducting rigorous targeted validation studies requires both methodological expertise and appropriate analytical tools. The following table details key "research reagents" – essential methodological approaches and tools – for implementing comprehensive validation protocols:

Table 3: Research Reagent Solutions for Targeted Validation Studies

| Tool Category | Specific Methods/Tools | Application in Targeted Validation |

|---|---|---|

| Performance Assessment Tools | C-statistic, Calibration plots, Brier score, Decision Curve Analysis | Quantify model discrimination, calibration, overall performance, and clinical usefulness in target population [8] |

| Validation Study Design | Bootstrapping, Cross-validation, Internal-external validation | Estimate and correct for overfitting; assess performance in development dataset with optimism correction [8] |

| Model Updating Methods | Intercept recalibration, Slope adjustment, Model revision, Model extension | Adjust existing models for new populations or settings without complete redevelopment [8] |

| EHR Data Extraction | Natural Language Processing (NLP), CTcue, Amazon Comprehend Medical | Transform unstructured clinical notes into structured data for validation cohorts; extract specific predictors from free text [6] |

| Bias Assessment Tools | PROBAST, TRIPOD statement | Evaluate risk of bias and applicability of validation studies; ensure comprehensive reporting [2] [8] |

| Clinical Impact Assessment | Net Benefit, Quality-Adjusted Life Years (QALYs), Cost-effectiveness analysis | Evaluate whether model implementation improves patient outcomes and represents efficient resource use [8] |

Implementation Considerations

Successfully implementing these methodological reagents requires careful attention to several practical considerations. Bootstrapping techniques are generally preferred over data splitting for internal validation, as they provide more precise estimates of predictive performance without reducing sample size [8]. For EHR-based validation studies, natural language processing tools are essential for leveraging the approximately 70% of EHR data stored as free text, but these require validation of their own accuracy for specific clinical concepts [6].

When applying model updating methods, the choice between simple recalibration and more extensive revision should be guided by the degree of performance degradation observed in the target population [8]. In all cases, validation workflows should incorporate continuous quality monitoring to ensure that models maintain their performance over time as clinical practices and patient populations evolve [9].

The validation gap represents a critical challenge in clinical prediction model implementation, with demonstrated consequences for patient care and medical device safety. Targeted validation provides a principled framework for addressing this gap by emphasizing context-specific performance evaluation and appropriate dataset selection. The protocols and methodologies outlined in this document offer a roadmap for researchers and drug development professionals to implement targeted validation approaches in their work.

As the field of clinical prediction modeling continues to evolve, with increasing use of artificial intelligence and machine learning techniques, the importance of rigorous, context-aware validation will only grow. By adopting targeted validation principles and methodologies, researchers can help ensure that clinical prediction models deliver on their promise to improve patient care while avoiding the pitfalls of inadequate validation. Future work should focus on standardizing targeted validation approaches, developing more efficient methods for multi-context validation, and establishing clearer standards for context-specific model performance.

Clinical prediction models (CPMs) are algorithms that compute the risk of a diagnostic or prognostic outcome to guide patient care [10] [11]. The healthcare environment is fundamentally dynamic, with changes in demographics, disease prevalence, clinical practices, and health policies occurring over time and space [10]. These changes lead to data distribution shifts, particularly case-mix shifts where the distribution of individual predictors [P(X)] changes while the conditional probability of the outcome given the predictors [P(Y|X)] remains unchanged [10]. This phenomenon poses significant challenges for CPM performance when deployed in new populations or settings, creating an urgent need for targeted validation approaches that explicitly account for these population differences [1].

Targeted validation emphasizes that CPMs must be validated within their intended population and setting to provide meaningful performance estimates [1]. The traditional pipeline of CPM production—development followed by arbitrary external validation using conveniently available datasets—often fails to account for population heterogeneity, leading to performance degradation and research waste [12] [1]. This application note provides researchers with a comprehensive framework for understanding and addressing case-mix impacts on CPM performance through targeted validation strategies.

Quantitative Evidence: The Scale and Impact of Population Heterogeneity

Proliferation of Clinical Prediction Models

The expansion of CPM development has been substantial across medical fields, with estimates indicating that nearly 250,000 articles reporting the development of CPMs had been published through 2024 [13]. The table below summarizes the publication trends and their implications for validation practice.

Table 1: Publication Trends for Clinical Prediction Models

| Category | Statistical Estimate | Time Period | Implications for Validation |

|---|---|---|---|

| Regression-based CPM Development Articles | 82,772 (95% CI 65,313-100,231) [13] | 1995-2020 | Significant number of models requiring validation |

| Total CPM Articles (including non-regression) | 147,714 (95% CI 125,201-170,226) [13] | 1995-2020 | Extensive proliferation beyond traditional methods |

| Projected Total CPM Articles | 248,431 [13] | 1950-2024 | Accelerating growth, particularly from 2010 onward |

This proliferation creates a substantial validation gap, as systematic reviews of CPMs frequently cannot identify sufficient external validation or impact studies to assess clinical utility [12]. The scarcity of proper validation hinders the emergence of critical, well-founded knowledge about CPMs' clinical value and contributes to research waste [12].

Documented Performance Variation Across Populations

Case-mix shifts significantly impact model performance metrics, particularly calibration (how well predicted probabilities match observed frequencies) and discrimination (the model's ability to distinguish between cases and non-cases) [10]. The following table summarizes performance variations under different case-mix shift scenarios based on empirical research.

Table 2: Impact of Case-Mix Shift on Model Performance Metrics

| Case-Mix Scenario | Model Development Approach | Performance Metric | Result | Interpretation |

|---|---|---|---|---|

| Partial case-mix shift with insufficient target sample size | Membership-based weighting [10] | Optimism-adjusted calibration slope | 0.98 | Superior performance in correcting for shift |

| Partial case-mix shift with sufficient target sample size | Unweighted on target data only [10] | Optimism-adjusted calibration slope | 0.95 | Better than Membership-based (0.92) with adequate data |

| Complete case-mix shift with insufficient target sample size | Membership-based vs. Unweighted target [10] | Optimism-adjusted calibration slope | 0.77 (both) | Similar performance when target data is limited |

| Complete case-mix shift with sufficient target sample size | Membership-based vs. Unweighted target [10] | Optimism-adjusted calibration slope | 0.94 (both) | Adequate correction with sufficient target data |

Beyond calibration, discrimination also varies across populations and model specifications. For instance, models predicting in-hospital mortality with different feature combinations achieved a mean AUROC of 0.811 across feature sets, rising to 0.832 for the best-performing feature set [14]. This heterogeneity underscores that model performance depends on the specific population and setting, necessitating targeted validation approaches [1].

Methodological Protocols for Targeted Validation

Membership-Based Method for Case-Mix Correction

The membership-based method addresses case-mix shifts by re-weighting data samples from the source set (before case-mix shift) to more closely match the target set (after case-mix shift) [10]. This protocol assumes the target set reflects the population in which the model will be implemented.

Table 3: Experimental Protocol for Membership-Based Case-Mix Correction

| Step | Procedure | Specifications | Application Notes |

|---|---|---|---|

| 1. Data Partitioning | Divide development dataset into source (before shift) and target (after shift) subsets [10] | Source size: s; Target size: n | Assume latest distribution shift reflects deployment population |

| 2. Membership Model Development | Develop binary logistic regression model with membership in target set as outcome [10] | Outcome: 1 for target set, 0 for source set; Predictors: K relevant variables | Use same predictors intended for CPM development |

| 3. Propensity Score Calculation | Estimate membership propensity score for each individual in source set [10] | Conditional probability of target membership given predictors | $PS = P(R = 1 \mid X)$, where $R = 1$ indicates target set membership |

| 4. Weight Assignment | Calculate individual weights for source set samples [10] | $w_i = \frac{PS_i}{1 - PS_i} \times \frac{n}{s}$ | Weights capped at 1 to prevent overoptimistic standard errors |

| 5. Weighted Model Development | Develop CPM using weighted source data [10] | Apply calculated weights during model training | Combines information from both sets while correcting for shift |

This method is particularly valuable when the target set sample size is insufficient for robust model development, as it leverages information from the source set while correcting for distributional differences [10]. The approach shows promise for accounting for case-mix shifts during CPM development, especially when deployment population data is limited.
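The weight-assignment step in Table 3 can be sketched in a few lines. This is a minimal illustration, not the implementation used in [10]: it assumes the membership propensity scores have already been estimated, and the function name `membership_weights` and the `cap` argument are hypothetical. The formula and the cap of 1 are taken directly from the table.

```python
def membership_weights(propensity_scores, n_target, n_source, cap=1.0):
    """Weights for source-set individuals, following Table 3.

    weight_i = PS_i / (1 - PS_i) * (n / s), truncated at `cap`.
    `propensity_scores` are P(R=1 | X) values estimated elsewhere.
    """
    if n_target <= 0 or n_source <= 0:
        raise ValueError("set sizes must be positive")
    weights = []
    for ps in propensity_scores:
        if not 0.0 <= ps < 1.0:
            raise ValueError("propensity scores must lie in [0, 1)")
        odds = ps / (1.0 - ps)  # odds of belonging to the target set
        weights.append(min(odds * (n_target / n_source), cap))
    return weights


# Hypothetical propensity scores for four source-set patients
example_weights = membership_weights([0.1, 0.25, 0.5, 0.8],
                                     n_target=200, n_source=800)
```

Source-set patients who resemble the target population receive larger weights, and the cap keeps any single patient from dominating the weighted fit.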

Dynamic Model Updating Pipeline

Dynamic model updating provides a systematic approach for maintaining CPM performance through periodic updates with new information [15]. The protocol includes two primary pipeline types:

Table 4: Dynamic Updating Pipeline Protocol

| Pipeline Type | Update Trigger | Candidate Model Testing | Update Decision Criteria |

|---|---|---|---|

| Proactive Updating [15] | Any time new data becomes available | Continuous evaluation of potential updates | Predictive performance measures in new data |

| Reactive Updating [15] | Performance degradation detected or model structure changes | Only when triggered by performance decline | Significant degradation in calibration or discrimination |

The implementation workflow involves:

- Performance Monitoring: Track calibration and discrimination metrics over time in new data

- Candidate Model Generation: Create potential updates using methods like model recalibration, coefficient updating, or complete model refitting

- Update Selection: Choose the best-performing candidate based on validation metrics

- Implementation: Deploy the selected update following change management protocols

This systematic approach helps guard against performance degradation while ensuring the updating process is principled and data-driven [15]. In practical applications, such as 5-year survival prediction in cystic fibrosis, dynamic updating pipelines have maintained calibration and discrimination better than static models [15].
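The monitoring and selection steps of the workflow above can be sketched as two small functions. This is only an illustrative sketch: the function names, the tolerance values, and the use of the Brier score as the selection criterion are assumptions made for the example and are not specified in [15].

```python
def reactive_update_triggered(calibration_slope, c_statistic, baseline_c_statistic,
                              slope_tolerance=0.2, max_c_drop=0.05):
    """Flag degradation in monitored metrics (thresholds are hypothetical)."""
    slope_off = abs(calibration_slope - 1.0) > slope_tolerance
    c_dropped = (baseline_c_statistic - c_statistic) > max_c_drop
    return slope_off or c_dropped


def select_update(candidate_brier_scores):
    """Pick the candidate with the lowest Brier score in the new data.

    `candidate_brier_scores` maps a candidate name to its Brier score.
    """
    return min(candidate_brier_scores, key=candidate_brier_scores.get)


# Hypothetical monitoring result and candidate comparison
triggered = reactive_update_triggered(0.70, 0.78, baseline_c_statistic=0.80)
chosen = select_update({"original": 0.201, "recalibrated": 0.182, "refit": 0.175})
```

In a proactive pipeline the selection step would run whenever new data arrive. In a reactive pipeline it would run only when the trigger fires.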

Diagram 1: Dynamic updating pipeline showing proactive and reactive paths for maintaining CPM performance.

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Methodological Reagents for Targeted Validation Research

| Research Reagent | Function | Application Context | Implementation Considerations |

|---|---|---|---|

| Membership Propensity Score [10] | Estimates probability of belonging to target population for sample weighting | Case-mix shift correction during model development | Requires sufficient overlap between source and target distributions |

| Inverse-Odds Weights [10] | Transforms source distribution to resemble target distribution | Re-weighting training data to match deployment population | Limit weights to 1 to prevent overoptimistic standard errors |

| Calibration Slopes [10] | Measures agreement between predicted and observed risks | Performance assessment under population shift | Values closer to 1.0 indicate better calibration |

| TRIPOD+AI Guidelines [12] | Reporting framework for prediction model studies | Ensuring transparent development and validation reporting | Critical for reproducibility and clinical adoption |

| PROBAST Tool [1] | Risk of bias assessment for prediction model studies | Systematic reviews of prediction models | Includes applicability domain for targeted validation |

| Dynamic Updating Pipeline [15] | Systematic process for maintaining model performance | Countering performance degradation over time | Can be proactive or reactive based on update triggers |

Implementation Framework for Targeted Validation

Conceptual Framework for Targeted Validation

Targeted validation emphasizes that validation studies must be carefully designed to match the intended population and setting of the CPM, rather than using arbitrary datasets chosen for convenience [1]. The framework includes several critical components:

Diagram 2: Decision framework for selecting appropriate validation strategies based on intended CPM use.

Target Population Specification: Clearly define the population in which the CPM is intended for use, including demographic, clinical, and temporal characteristics [1]. For example, a model developed for predicting acute myocardial infarction should be validated in emergency department patients with chest pain, not general populations [1].

Setting Definition: Precisely specify the clinical setting where predictions will be made, such as primary care, emergency departments, or intensive care units [1]. Performance in one setting provides little indication of performance in another due to differences in case mix, baseline risk, and predictor-outcome associations [1].

Dataset Selection: Identify validation datasets that closely match the intended population and setting. When the development data adequately represents the target population, robust internal validation may be sufficient, especially with large sample sizes and appropriate optimism correction techniques [1].

Practical Implementation Guidelines

Pre-Validation Assessment

- Conduct a thorough analysis of case-mix differences between development and potential validation populations

- Evaluate the clinical relevance of the CPM for the target population before proceeding with validation

- Ensure sufficient sample size for precise performance estimation using established methods [12]
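One simple way to begin the case-mix comparison in the first item above is the standardized mean difference (SMD) of each predictor between the development and candidate validation samples. The sketch below is an assumption about how such a check might look, not a method prescribed by the cited sources. The function name and the example ages are hypothetical.

```python
import math
import statistics


def standardized_mean_difference(development_values, validation_values):
    """SMD for one continuous predictor, using the pooled sample variance."""
    mean_dev = statistics.mean(development_values)
    mean_val = statistics.mean(validation_values)
    pooled_sd = math.sqrt(
        (statistics.variance(development_values)
         + statistics.variance(validation_values)) / 2.0
    )
    return (mean_dev - mean_val) / pooled_sd


# Hypothetical ages in a development and a validation sample
smd_age = standardized_mean_difference([61, 64, 58, 70, 66], [72, 75, 69, 80, 77])
```

Large absolute SMDs on important predictors suggest that the candidate dataset differs in case mix from the development population and may not represent the same target.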

Performance Metrics Selection

- Include both discrimination (e.g., C-statistic, AUROC) and calibration measures (e.g., calibration slope, calibration plots)

- Consider clinical utility measures such as decision curve analysis when appropriate

- Report confidence intervals for all performance metrics to quantify uncertainty
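Confidence intervals for a performance metric can be obtained with a percentile bootstrap, sketched below using only the Python standard library. The Brier score serves as the example metric, and the function names, the number of resamples, and the toy data are assumptions for illustration. Any metric function taking outcomes and predicted probabilities could be passed in.

```python
import random


def brier_score(y_true, y_prob):
    """Mean squared difference between predicted risk and observed outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def bootstrap_ci(metric, y_true, y_prob, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for `metric`, resampling patients."""
    rng = random.Random(seed)
    n = len(y_true)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # sample with replacement
        estimates.append(metric([y_true[i] for i in idx],
                                [y_prob[i] for i in idx]))
    estimates.sort()
    lower = estimates[int((alpha / 2) * n_boot)]
    upper = estimates[min(n_boot - 1, int((1 - alpha / 2) * n_boot))]
    return lower, upper


# Hypothetical outcomes and predicted risks for ten patients
y_obs = [0, 0, 1, 0, 1, 1, 0, 1, 0, 0]
y_hat = [0.1, 0.3, 0.7, 0.2, 0.6, 0.9, 0.4, 0.5, 0.2, 0.1]
lower, upper = bootstrap_ci(brier_score, y_obs, y_hat, n_boot=500, seed=42)
```

With a discrimination metric such as the C-statistic, resamples containing only events or only non-events would need to be skipped or redrawn.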

Validation Gap Analysis

- Systematically identify differences between validation populations and intended target populations

- Document limitations in generalizability resulting from these differences

- Prioritize validation studies that address the most critical gaps for clinical implementation

The framework emphasizes that CPMs cannot be considered "validated" in general—they can only be considered validated for specific populations and settings where this has been rigorously assessed [1]. This approach reduces research waste by focusing validation efforts on contexts where the CPM has potential for clinical implementation.

Case-mix and setting differences profoundly impact CPM performance, necessitating a shift from convenience-based validation to targeted approaches. The documented effects on calibration and discrimination metrics underscore the importance of population-aware validation strategies. The methodologies presented—including membership-based case-mix correction, dynamic updating pipelines, and targeted validation frameworks—provide researchers with practical tools to address these challenges. As the proliferation of CPMs continues, with an estimated 250,000 development articles published to date [13], focused efforts on targeted validation rather than new model development will be essential for advancing clinically useful prediction tools. Future directions should include standardized reporting of population characteristics, development of validation-specific sample size methods, and increased emphasis on impact studies assessing whether CPM use actually improves patient outcomes in target populations.

The Proliferation of New Models vs. The Scarcity of Proper Validation

The field of clinical prediction model (CPM) research is characterized by a fundamental paradox: an incessant proliferation of newly developed models alongside a critical scarcity of proper validation. This discrepancy represents a significant challenge to advancing personalized medicine, where reliable risk stratification is crucial for informed clinical decision-making. Despite widespread recognition that validation is essential for ensuring models are fit for purpose, most models never progress beyond the initial development stage [8]. This validation gap persists across healthcare domains, with reviews identifying redundant models competing to address the same clinical problems—exemplified by approximately 60 models for breast cancer prognostication and over 300 models predicting cardiovascular disease risk, most featuring similar predictor sets [8].

The consequences of this validation scarcity are far-reaching. Without rigorous evaluation, models may demonstrate inadequate performance when applied to new populations, potentially leading to misguided clinical decisions. This issue gained prominence during the COVID-19 pandemic, when hundreds of prediction models were rapidly developed but most were deemed useless because they were insufficiently validated and their calibration was not assessed [8]. This article examines the roots of this validation gap and provides structured methodological guidance for strengthening validation practices, thereby enhancing the reliability and clinical applicability of CPMs.

Quantitative Evidence of the Validation Gap

Systematic Assessment of Current Practices

Empirical evidence consistently reveals substantial deficiencies in prediction model evaluation. A systematic review of 56 implemented prediction models found that only 27% underwent external validation before implementation, and merely 32% were assessed for calibration during development and internal validation [16]. Perhaps most strikingly, only 13% of implemented models have been updated following deployment, indicating that most models remain static despite evolving clinical practices and patient populations [16].

The implications of poor validation are clearly demonstrated in a recent external validation study of cisplatin-associated acute kidney injury (C-AKI) prediction models. When the Motwani and Gupta models, both originally developed for US populations, were applied to a Japanese cohort of 1,684 patients, both exhibited poor calibration despite retaining some discriminatory ability (AUROC 0.613 for Motwani and 0.616 for Gupta) [17]. This miscalibration necessitated recalibration specifically for the Japanese population, highlighting the essential role of geographic validation [17].

Table 1: Evidence of Validation Gaps from Systematic Reviews

| Validation Aspect | Finding | Reference |

|---|---|---|

| External Validation | Only 27% of implemented models underwent external validation | [16] |

| Calibration Assessment | Only 32% of models were assessed for calibration during development | [16] |

| Model Updating | Only 13% of models have been updated following implementation | [16] |

| Model Redundancy | ~60 competing models for breast cancer prognostication with similar predictors | [8] |

Comparative Performance in External Validation

The C-AKI model validation study further illustrates how performance varies when models are applied to new populations. While the Gupta model demonstrated better discrimination for severe C-AKI (AUROC: 0.674 vs. 0.594; p=0.02), both models required recalibration to achieve acceptable performance in the Japanese cohort [17]. This underscores that discriminatory ability alone is insufficient without proper calibration—the agreement between predicted probabilities and observed event rates.

Table 2: Performance of C-AKI Prediction Models in External Validation

| Model | AUROC for C-AKI | AUROC for Severe C-AKI | Calibration Status | Post-Recalibration Improvement |

|---|---|---|---|---|

| Gupta et al. | 0.616 | 0.674 | Poor | Significant |

| Motwani et al. | 0.613 | 0.594 | Poor | Significant |

Methodological Framework for Model Validation

Core Validation Principles and Terminology

Validation constitutes the process of assessing model performance in specific settings, encompassing both internal and external approaches [8]. Internal validation evaluates reproducibility in subjects from the same data source as the derivation data, while external validation assesses generalizability to different populations or settings [8]. Without these validation steps, models risk overfitting—where they perform well on development data but poorly on new data—and lack demonstrated transportability across diverse clinical environments.

The key dimensions of model performance include:

- Discrimination: The model's ability to distinguish between patients who do and do not experience the outcome, typically measured using the area under the receiver operating characteristic curve (AUROC) or C-statistic [8]

- Calibration: The agreement between predicted probabilities and observed event rates, assessed through calibration-in-the-large, calibration slope, and visual calibration plots [8]

- Clinical usefulness: The potential impact on clinical decision-making, evaluated using decision-analytic measures like Net Benefit rather than simplistic classification metrics [8]
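The discrimination and calibration measures above can be illustrated with a short standard-library sketch. It computes the C-statistic by comparing every event with every non-event, and a simple mean-calibration summary (observed rate, mean predicted risk, and their ratio). The function names and toy data are assumptions. Note that calibration-in-the-large in the strict sense is usually estimated as a logistic regression intercept with the linear predictor as an offset, which this sketch only approximates through the observed-to-expected ratio.

```python
def c_statistic(y_true, y_prob):
    """Proportion of event/non-event pairs ranked correctly (ties count 0.5)."""
    events = [p for y, p in zip(y_true, y_prob) if y == 1]
    non_events = [p for y, p in zip(y_true, y_prob) if y == 0]
    if not events or not non_events:
        raise ValueError("need at least one event and one non-event")
    concordant = 0.0
    for pe in events:
        for pn in non_events:
            if pe > pn:
                concordant += 1.0
            elif pe == pn:
                concordant += 0.5
    return concordant / (len(events) * len(non_events))


def mean_calibration(y_true, y_prob):
    """Return (observed event rate, mean predicted risk, O/E ratio)."""
    observed = sum(y_true) / len(y_true)
    expected = sum(y_prob) / len(y_prob)
    return observed, expected, observed / expected


# Hypothetical outcomes and predicted risks
y_obs = [0, 0, 1, 1]
y_hat = [0.1, 0.4, 0.35, 0.8]
c_stat = c_statistic(y_obs, y_hat)
observed, expected, oe_ratio = mean_calibration(y_obs, y_hat)
```

An O/E ratio above 1 indicates that the model underestimates risk on average in the sample, and a ratio below 1 indicates overestimation.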

Comprehensive Validation Workflow

The following workflow outlines a systematic approach to model validation, from initial planning through to implementation decisions:

Experimental Protocols for Model Validation

Protocol 1: External Validation Study Design

Objective: To evaluate the performance of an existing prediction model in a new population or setting different from the development data.

Materials and Data Requirements:

- Representative sample from target population with sufficient outcome events

- Dataset containing all predictor variables required by the model

- Outcome measurements consistent with original model definition

- Ethical approval for use of clinical data

Methodology:

- Cohort Selection: Define inclusion/exclusion criteria ensuring representation of target population

- Sample Size Calculation: Ensure adequate number of events (minimum of 100-200 total events recommended)

- Data Collection: Extract predictor variables and outcomes, documenting any missing data

- Risk Score Calculation: Apply original model to calculate predicted probabilities for each patient

- Performance Assessment:

  - Discrimination: Calculate AUROC/C-statistic with confidence intervals

  - Calibration: Assess calibration-in-the-large and calibration slope; create calibration plots

  - Clinical utility: Perform decision curve analysis to evaluate net benefit across threshold probabilities

- Comparison: If multiple models exist, compare performance using recommended metrics

Analysis Considerations:

- Handle missing data appropriately (multiple imputation recommended over complete-case analysis)

- Account for dataset clustering if applicable (e.g., center effects)

- Present performance metrics with precision estimates (confidence intervals)

The C-AKI validation study exemplifies this approach, applying both Motwani and Gupta models to a Japanese cohort of 1,684 patients and evaluating discrimination, calibration, and net benefit [17].
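The net benefit calculation that underlies decision curve analysis can be sketched as follows. The standard definition is used: true positives per patient minus false positives per patient weighted by the odds of the threshold probability. The function names and example data are hypothetical.

```python
def net_benefit(y_true, y_prob, threshold):
    """Net benefit of treating patients whose predicted risk >= threshold."""
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    n = len(y_true)
    true_pos = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    false_pos = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    return true_pos / n - (false_pos / n) * threshold / (1.0 - threshold)


def net_benefit_treat_all(y_true, threshold):
    """Net benefit of the reference strategy that treats every patient."""
    prevalence = sum(y_true) / len(y_true)
    return prevalence - (1.0 - prevalence) * threshold / (1.0 - threshold)


# Hypothetical outcomes and predicted risks for five patients
y_obs = [1, 1, 0, 0, 0]
y_hat = [0.9, 0.3, 0.6, 0.2, 0.1]
nb_model = net_benefit(y_obs, y_hat, threshold=0.2)
nb_all = net_benefit_treat_all(y_obs, threshold=0.2)
```

A decision curve repeats this calculation over a range of clinically plausible thresholds and compares the model with the treat-all and treat-none (net benefit 0) strategies.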

Protocol 2: Model Recalibration Methods

Objective: To adjust an existing model's predictions to better align with observed outcomes in a specific population.

Materials: Validation dataset with observed outcomes, statistical software (R/Python/Stata)

Methodology:

- Assess Original Model: Apply original model to validation data and evaluate calibration

- Recalibration Approaches:

  - Intercept Adjustment: Modify baseline risk while keeping predictor effects constant

  - Logistic Calibration: Re-estimate the intercept and slope of the linear predictor using validation data

  - Model Extension: Add new predictors or interactions if needed

- Implementation:

  - For intercept adjustment: new intercept = original intercept + calibration-in-the-large

  - For logistic calibration: re-estimate slope and intercept on linear predictor

- Validation: Assess performance of recalibrated model using bootstrap validation

In the C-AKI study, recalibration significantly improved both models' performance, particularly for severe AKI prediction where the Gupta model demonstrated highest clinical utility after adjustment [17].
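The two simplest recalibration approaches in Protocol 2 can be sketched with Newton-Raphson updates in plain Python. This is an illustrative sketch under stated assumptions, not the procedure used in [17]: the function names and toy data are hypothetical, the original model's linear predictor is recovered from its predicted risks, and in practice a standard logistic regression routine in R, Python, or Stata would normally be used.

```python
import math


def expit(z):
    return 1.0 / (1.0 + math.exp(-z))


def logit(p):
    return math.log(p / (1.0 - p))


def intercept_update(y, lin_pred, n_iter=50, tol=1e-10):
    """Intercept correction: logistic fit with the linear predictor as offset."""
    delta = 0.0
    for _ in range(n_iter):
        p = [expit(v + delta) for v in lin_pred]
        score = sum(yi - pi for yi, pi in zip(y, p))
        info = sum(pi * (1.0 - pi) for pi in p)
        step = score / info
        delta += step
        if abs(step) < tol:
            break
    return delta


def logistic_recalibration(y, lin_pred, n_iter=50, tol=1e-10):
    """Re-estimate intercept a and slope b in logit(risk) = a + b * lin_pred."""
    a, b = 0.0, 1.0
    for _ in range(n_iter):
        p = [expit(a + b * v) for v in lin_pred]
        w = [pi * (1.0 - pi) for pi in p]
        g0 = sum(yi - pi for yi, pi in zip(y, p))
        g1 = sum((yi - pi) * v for yi, pi, v in zip(y, p, lin_pred))
        h00 = sum(w)
        h01 = sum(wi * v for wi, v in zip(w, lin_pred))
        h11 = sum(wi * v * v for wi, v in zip(w, lin_pred))
        det = h00 * h11 - h01 * h01
        da = (h11 * g0 - h01 * g1) / det
        db = (h00 * g1 - h01 * g0) / det
        a, b = a + da, b + db
        if max(abs(da), abs(db)) < tol:
            break
    return a, b


# Hypothetical original predictions and observed outcomes
y_obs = [0, 0, 1, 0, 0, 1, 1, 0, 1, 0, 1, 1]
y_hat = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.2, 0.4, 0.6]
lin_pred = [logit(p) for p in y_hat]
delta = intercept_update(y_obs, lin_pred)
a, b = logistic_recalibration(y_obs, lin_pred)
```

After the intercept update, the mean recalibrated risk matches the observed event rate. The fitted slope `b` is the calibration slope, with values below 1 suggesting predictions that are too extreme.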

The following diagram illustrates the recalibration decision process based on validation results:

Table 3: Key Methodological Resources for Prediction Model Validation

| Resource Category | Specific Tool/Method | Function/Purpose | Implementation Considerations |

|---|---|---|---|

| Discrimination Metrics | AUROC/C-statistic | Measures model's ability to distinguish between outcome groups | Interpret with confidence intervals; context-dependent acceptable values |

| Calibration Assessment | Calibration plots | Visualizes agreement between predicted and observed risks | Smoothing methods (loess) often needed for continuous representation |

| Calibration Statistics | Calibration-in-the-large, Calibration slope | Quantifies average prediction accuracy and predictor effects | Calibration-in-the-large near 0 and calibration slope near 1.0 indicate good calibration; significant deviations require adjustment |

| Clinical Utility | Decision Curve Analysis (DCA) | Evaluates clinical value across decision thresholds | Superior to classification metrics as incorporates clinical consequences |

| Internal Validation | Bootstrapping | Assesses internal validity and overfitting | Preferred over data splitting as maintains sample size |

| Model Updating | Recalibration methods | Adjusts model predictions for new populations | Range from simple intercept adjustment to model extension |

| Reporting Guidelines | TRIPOD Statement | Standardized reporting of prediction model studies | Ensures transparent and complete methodology reporting |

Emerging Challenges and Future Directions

The Impact of Large Language Models and AI

The rapid emergence of large language models (LLMs) and artificial intelligence approaches presents new validation challenges. While demonstrating promise in processing multimodal electronic health record data and supporting multi-outcome predictions [18], these models introduce unique methodological concerns. LLMs frequently show poor calibration, with high confidence in incorrect predictions posing potential safety risks in clinical settings [18]. Additionally, their "black box" nature complicates explainability, and their two-step development process (pretraining followed by fine-tuning) creates novel challenges for proper data splitting to prevent overfitting [18].

The implementation of an AI-based prediction model for colorectal cancer surgery decision support demonstrates a comprehensive approach addressing these challenges [19]. This model underwent rigorous development and validation using data from 18,403 patients, followed by implementation assessment in a prospective clinical cohort [19]. The model achieved an AUROC of 0.79 in external validation and demonstrated significant improvement in clinical outcomes, with complication rates dropping from 28.0% to 19.1% after implementation [19].

Methodological Recommendations for Advancing Validation Science

To address the persistent validation gap, researchers should prioritize the following approaches:

Adopt Decision-Analytic Frameworks: Move beyond traditional performance metrics to assess clinical usefulness through measures like Net Benefit, which incorporates clinical consequences of decisions [8]

Implement Dynamic Updating Strategies: Develop protocols for continuous model monitoring and updating to maintain performance as clinical practices and populations evolve [8]

Address Fairness and Bias Systematically: Evaluate model performance across relevant subgroups to identify potential disparities, particularly important for LLMs which may amplify biases in training data [18]

Promote Model Updating Over De Novo Development: When possible, refine and update existing models rather than developing new ones, conserving research resources and building on prior knowledge [8]

The field must shift from emphasizing novel model development to prioritizing robust validation and implementation science. Only through this paradigm shift can clinical prediction models fulfill their potential to enhance patient care and clinical decision-making.

The development and implementation of clinical prediction models (CPMs) hold immense promise for enhancing patient care through stratified medicine and improved clinical decision-making [20]. However, the potential benefits of these models are entirely contingent upon their rigorous validation and methodological soundness. Poor validation practices introduce significant bias, undermine model reliability, and can lead to two critical negative outcomes: substantial research waste and direct patient harm [5] [20]. This article, framed within a broader thesis on targeted validation for CPM research, details the consequences of inadequate validation and provides application notes and protocols to uphold the highest standards in model development and evaluation.

The Scale of the Problem: Quantitative Evidence

Systematic reviews of the prediction model literature reveal a pervasive issue of insufficient validation and high risk of bias. The data below summarizes findings from recent analyses of CPMs, including those for self-harm and suicide.

Table 1: Evidence of Poor Validation Practices in Clinical Prediction Model Research

| Metric | Findings | Source |
|---|---|---|
| Overall Risk of Bias | 86% of publications in a general CPM review were at high risk of bias [5]. All model development studies in a suicide/self-harm review were at high risk of bias [20]. | [5] [20] |
| External Validation | Only 27% of implemented models underwent external validation [5]. Only 8% of developed suicide/self-harm models were externally validated [20]. | [5] [20] |
| Calibration Assessment | Only 32% of models were assessed for calibration during development/internal validation [5]. Calibration was assessed for only 9% of suicide/self-harm models in development [20]. | [5] [20] |
| Model Updating | Only 13% of implemented models were updated after deployment [5]. | [5] |
| Model Presentation | Only 17% of suicide/self-harm models were presented in a format enabling use or validation by others [20]. | [20] |
| Common Bias Drivers | Inappropriate evaluation of predictive performance (92%), insufficient sample size (77%), inappropriate handling of missing data (66%), and not accounting for overfitting (63%) [20]. | [20] |

Consequences of Poor Validation

Research Waste

The field is characterized by an "oversupply of unvalidated prediction models," which dilutes research efforts and resources [20]. When models are developed without subsequent external validation or transparent reporting, they cannot be reliably used or built upon by the scientific community. This constitutes a significant waste of research funding, time, and data, stifling genuine progress in the field.

Compromised Clinical Decision-Making and Patient Harm

A model with high bias and poor calibration may provide inaccurate risk estimates. For example, a model that systematically underestimates the risk of self-harm or suicide could lead to the under-treatment of vulnerable individuals, with potentially fatal consequences [20]. Conversely, overestimation of risk could lead to unnecessary interventions, causing patient anxiety and incurring avoidable healthcare costs. Implementing models that do not fully adhere to best practices therefore directly threatens patient safety [5].

Experimental Protocols for Model Validation

To mitigate these consequences, the following protocols for validation are essential.

Protocol for External Validation of a Clinical Prediction Model

1. Objective: To assess the performance and transportability of an existing CPM in a new participant sample.

2. Essential Materials & Reagents:

Table 2: Research Reagent Solutions for Validation Studies

| Item | Function | Example/Note |
|---|---|---|
| Validation Dataset | A dataset distinct from the development data, with the same predictors and outcome, used to test model performance. | Should be representative of the intended target population [20]. |
| Statistical Software (R, Python) | To perform statistical analyses, including discrimination and calibration metrics. | Packages: rms in R, scikit-learn in Python. |
| PROBAST Tool | A structured tool to assess the risk of bias and applicability of the prediction model study [20]. | Ensures standardized critical appraisal. |

3. Methodology:

- Data Extraction: Extract participant data from the chosen validation cohort, ensuring variables match the definitions in the original model.

  - Predictor Variables: Harmonize the predictors from your dataset with those required by the model.

  - Outcome: Determine the outcome status (e.g., occurrence of self-harm) for each participant within the specified prediction horizon.

- Risk Calculation: Apply the original model's algorithm (e.g., regression formula) to calculate a predicted probability for each participant.

- Performance Assessment:

  - Discrimination: Calculate the C-statistic (AUC) to evaluate the model's ability to distinguish between participants with and without the outcome [20].

  - Calibration: Assess calibration by plotting observed outcomes against predicted probabilities (calibration plot) and performing a calibration test. A model is well-calibrated if predictions match observed event rates across the risk spectrum [20].

- Reporting: Report the C-statistic with confidence intervals and all calibration metrics transparently.
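The risk calculation and performance assessment steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a reference implementation: the intercept, coefficients, and eight-participant cohort are invented for the example, the observed/expected ratio stands in for a fuller calibration assessment, and the percentile bootstrap is only one of several ways to obtain a confidence interval. In practice the packages listed in Table 2 would normally be used.

```python
import math
import random

def predict_risk(intercept, coefs, x):
    """Apply a logistic regression formula to one participant's predictor values."""
    lp = intercept + sum(b * v for b, v in zip(coefs, x))
    return 1.0 / (1.0 + math.exp(-lp))

def c_statistic(y, p):
    """Share of event/non-event pairs where the event has the higher risk (ties count 0.5)."""
    events = [pi for yi, pi in zip(y, p) if yi == 1]
    non_events = [pi for yi, pi in zip(y, p) if yi == 0]
    if not events or not non_events:
        raise ValueError("both events and non-events are required")
    score = sum(1.0 if e > n else 0.5 if e == n else 0.0
                for e in events for n in non_events)
    return score / (len(events) * len(non_events))

def observed_expected_ratio(y, p):
    """Simple calibration-in-the-large summary: observed events over expected events."""
    return sum(y) / sum(p)

def calibration_table(y, p, n_groups=4):
    """Mean predicted risk vs observed event rate per risk-ordered group (data for a calibration plot)."""
    pairs = sorted(zip(p, y))
    size = math.ceil(len(pairs) / n_groups)
    rows = []
    for start in range(0, len(pairs), size):
        chunk = pairs[start:start + size]
        rows.append((sum(pi for pi, _ in chunk) / len(chunk),
                     sum(yi for _, yi in chunk) / len(chunk)))
    return rows

def bootstrap_ci(y, p, stat, n_boot=500, alpha=0.05, seed=0):
    """Percentile bootstrap interval for a performance statistic."""
    rng = random.Random(seed)
    n = len(y)
    values = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        yb = [y[i] for i in idx]
        pb = [p[i] for i in idx]
        if 0 < sum(yb) < n:  # skip resamples that lack one outcome class
            values.append(stat(yb, pb))
    values.sort()
    lower = values[int(alpha / 2 * len(values))]
    upper = values[min(len(values) - 1, int((1 - alpha / 2) * len(values)))]
    return lower, upper

# Hypothetical model and toy validation cohort (illustrative values only).
INTERCEPT, COEFS = -2.0, [0.8, 0.5]
cohort_x = [[0, 1], [1, 0], [2, 1], [3, 2], [0, 0], [1, 2], [2, 0], [3, 1]]
cohort_y = [0, 1, 1, 1, 0, 0, 0, 1]

risks = [predict_risk(INTERCEPT, COEFS, x) for x in cohort_x]
auc = c_statistic(cohort_y, risks)
ci_low, ci_high = bootstrap_ci(cohort_y, risks, c_statistic)
oe_ratio = observed_expected_ratio(cohort_y, risks)
print(f"C-statistic {auc:.3f} (95% CI {ci_low:.3f} to {ci_high:.3f}), O/E {oe_ratio:.2f}")
for mean_pred, obs_rate in calibration_table(cohort_y, risks):
    print(f"mean predicted {mean_pred:.2f}  observed {obs_rate:.2f}")
```

With a cohort this small the interval is very wide, which illustrates why the validation dataset needs an adequate sample size before the reported metrics can be trusted.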

Protocol for Model Updating After Implementation

1. Objective: To modify and recalibrate a previously implemented CPM that shows performance decay in a new setting or over time.

2. Methodology:

- Performance Monitoring: Continuously or periodically monitor model performance (discrimination and calibration) in the implementation environment (e.g., within a Hospital Information System) [5].

- Trigger for Updating: A significant drop in calibration (calibration-in-the-large) is a common trigger.

- Updating Methods:

  - Intercept Update: Adjust the model's intercept to correct for overall over- or under-prediction.

  - Logistic Calibration: Re-estimate the intercept and slope of the linear predictor to correct for uniform miscalibration.

  - Model Revision: Re-estimate the coefficients of individual predictors or add new predictors, though this requires a larger sample size and more caution.
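The first two updating methods can be illustrated with a short standard-library Python sketch. It assumes the original model is a logistic regression whose predicted risks are available for the new cohort, and it fits the recalibration model with a hand-written Newton-Raphson routine purely to keep the example self-contained; the function names and the twelve-participant dataset are hypothetical. Model revision is not shown because it requires the individual predictor values.

```python
import math

def logit(p):
    return math.log(p / (1.0 - p))

def expit(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_recalibration(y, lp, update_slope=True, n_iter=50, tol=1e-10):
    """Fit outcome ~ a + b * lp by Newton-Raphson.

    update_slope=False keeps b fixed at 1 (intercept update, lp used as an offset);
    update_slope=True re-estimates both a and b (logistic calibration).
    """
    a, b = 0.0, 1.0
    for _ in range(n_iter):
        p = [expit(a + b * l) for l in lp]
        w = [pi * (1.0 - pi) for pi in p]
        g_a = sum(yi - pi for yi, pi in zip(y, p))       # score for the intercept
        h_aa = sum(w)
        if not update_slope:
            step_a, step_b = g_a / h_aa, 0.0
        else:
            g_b = sum((yi - pi) * l for yi, pi, l in zip(y, p, lp))  # score for the slope
            h_ab = sum(wi * l for wi, l in zip(w, lp))
            h_bb = sum(wi * l * l for wi, l in zip(w, lp))
            det = h_aa * h_bb - h_ab ** 2
            step_a = (h_bb * g_a - h_ab * g_b) / det
            step_b = (h_aa * g_b - h_ab * g_a) / det
        a += step_a
        b += step_b
        if max(abs(step_a), abs(step_b)) < tol:
            break
    return a, b

def apply_update(p_old, a, b):
    """Recalibrated risks from the original predicted risks."""
    return [expit(a + b * logit(p)) for p in p_old]

# Hypothetical monitoring data: the deployed model over-predicts (5.55 expected vs 4 observed events).
p_old = [0.10, 0.15, 0.20, 0.30, 0.35, 0.40, 0.50, 0.55, 0.60, 0.70, 0.80, 0.90]
y_new = [0, 0, 0, 0, 1, 0, 0, 0, 1, 0, 1, 1]
lp_old = [logit(p) for p in p_old]

a_int, b_int = fit_recalibration(y_new, lp_old, update_slope=False)
a_cal, b_cal = fit_recalibration(y_new, lp_old, update_slope=True)
p_int = apply_update(p_old, a_int, b_int)
p_cal = apply_update(p_old, a_cal, b_cal)
print(f"intercept update: a={a_int:.3f}, b={b_int:.1f}")
print(f"logistic calibration: a={a_cal:.3f}, b={b_cal:.3f}")
```

After either update the sum of the recalibrated risks equals the observed number of events in the new cohort, which is the sense in which overall over- or under-prediction is corrected. An estimated slope below 1 would indicate predictions that are too extreme, and a slope above 1 predictions that are too moderate.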

The workflow for developing, validating, and maintaining a robust CPM is summarized below.

- PROBAST (Prediction model Risk Of Bias ASsessment Tool): A critical appraisal tool for systematic reviews of prediction model studies to assess risk of bias and applicability [20].

- TRIPOD (Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis): A reporting guideline that ensures complete and transparent reporting of prediction model studies, facilitating replication and validation [5] [20].

- ColorBrewer: An interactive tool for selecting colorblind-safe qualitative, sequential, and diverging color schemes for data visualization [21].

- Color Oracle: A free color blindness simulator that allows designers to check their visuals in real-time for common types of color vision deficiency [21].

Executing Targeted Validation: A Step-by-Step Methodological Guide

Defining the intended use is the critical first step in clinical prediction model (CPM) research, forming the bedrock upon which all subsequent validation efforts are built. A precisely mapped intended use scope—encompassing the target population, healthcare setting, and clinical task—ensures that a model is developed and validated for a specific, realistic clinical scenario. This precision is a primary defense against model failure in real-world deployment. Research indicates that a significant majority of published prediction models suffer from a high risk of bias, often stemming from unclear definition and validation of their intended use context [5] [16]. This application note provides a structured framework to address this gap, guiding researchers in explicitly defining these core elements to enhance the validity, usability, and ultimate clinical impact of their CPMs within a targeted validation paradigm.

Core Concepts and Definitions

The intended use of a CPM is a multi-faceted concept that must be explicitly defined before model development begins. The following components are essential:

- Target Population: The specific group of patients for whom the model is designed to provide predictions. This requires clear eligibility criteria (e.g., patients with a first diagnosis of relapsing-remitting multiple sclerosis) [22].

- Health Outcome: The endpoint that the model is designed to predict, which must be clinically relevant and precisely defined (e.g., 5-year overall survival, disease progression within 12 months) [22].

- Healthcare Setting: The specific clinical environment where the model is intended to be deployed (e.g., primary care, tertiary hospital emergency department) [22].

- Clinical Task and User: The specific medical decision the model is meant to inform (e.g., initiating a treatment, ordering a diagnostic test) and the healthcare professional (e.g., nurse, general practitioner, specialist) who will act upon its output [22] [23].

Quantitative Landscape of Current Practice and Gaps

A systematic review of implemented CPMs reveals significant gaps in the current adherence to best practices in defining and validating intended use. The following table summarizes key quantitative findings from recent research:

Table 1: Deficiencies in Current Clinical Prediction Model Practice Based on a Systematic Review

| Aspect of Practice | Finding | Implication for Intended Use |
|---|---|---|
| Overall Risk of Bias | 86% of publications were at high risk of bias [5] [16] | Undermines confidence in the model's intended application. |
| Calibration Assessment | Only 32% of models assessed calibration during development/validation [5] [16] | Limits trust in the accuracy of predicted probabilities for the target population. |
| External Validation | Performed for only 27% of models [5] [16] | Raises questions about generalizability and transportability to new settings and populations. |
| Post-Implementation Updating | Only 13% of models were updated after implementation [5] [16] | Suggests a lack of ongoing validation for the intended use in a dynamic clinical environment. |

These findings underscore a critical need for a more rigorous and structured approach to defining the intended use from the outset, as this foundational work directly impacts the potential for successful validation and implementation.

Experimental Protocols for Defining Intended Use

Protocol 1: Multi-Stakeholder Team Assembly and Scoping

Objective: To form an interdisciplinary team and collaboratively define the preliminary scope of the CPM's intended use.

Background: The development of a fit-for-purpose CPM requires a collaborative and interdisciplinary effort. This team is responsible for defining the aim and ensuring the model is grounded in clinical reality [22]. Engaging end-users from the beginning is crucial for later adoption, as models must support, not supplant, critical clinical thinking and integrate into existing decision-making processes [23].

Methodology:

- Team Formation: Assemble a team that includes, at a minimum:

  - Clinicians with content expertise on the medical condition.

  - Methodologists (e.g., biostatisticians, data scientists).

  - Intended End-Users (e.g., nurses, general practitioners) to provide workflow insights.

  - Patients or individuals with lived experience to ensure patient-centered outcomes.

- Preliminary Scoping Session: Conduct a structured meeting to draft initial answers to the following:

  - What is the unmet clinical need or decision-making gap?

  - What is the precise health outcome of interest?

  - Who is the typical patient that would trigger the use of this model?

  - In what physical and digital environment will the model be used?

- Literature Review: Systematically review existing models and clinical guidelines to justify the need for a new model and to learn from the intended use definitions of previous efforts [12].

Deliverables: A project charter document that records the consensus on the preliminary intended use, including the clinical rationale and the list of stakeholders.

Protocol 2: Operationalizing the Core Elements of Intended Use

Objective: To translate the preliminary scope into a precise, operationalized definition for the target population, setting, and clinical task.

Background: Vague definitions lead to models that are not reproducible or transportable. A model intended for "all cancer patients" will fail; a model for "postmenopausal women in Western Europe with a first diagnosis of hormone receptor-positive breast cancer" is specific and testable [22]. This precision is necessary for a robust validation strategy.

Methodology:

- Define the Target Population using PICOT Framework:

  - P (Population): Define eligibility criteria (e.g., age range, disease stage, comorbidities) and exclusion criteria.

  - I (Intervention/Indicator): The act of applying the prediction model.

  - C (Comparison): The standard of care without the model (for later impact assessment).

  - O (Outcome): The health outcome to be predicted, including the time horizon (e.g., mortality within 30 days of surgery).

  - T (Time): The timeframe for prediction and follow-up.

- Specify the Healthcare Setting:

  - Describe the level of care (primary, secondary, tertiary), geographic location, and specific workflow step where the model will be integrated (e.g., "at patient discharge from an intensive care unit in a digitally mature metropolitan hospital").

- Articulate the Clinical Task and Decision Threshold:

  - State the actionable decision the model will inform (e.g., "to decide on initiating prophylactic treatment").

  - Discuss, with clinical partners, potential probability thresholds that might trigger different actions, acknowledging that exact thresholds may be refined later [4].

Deliverables: A finalized protocol section that unambiguously defines the intended use, which will guide data selection, model development, and most importantly, the validation strategy.
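One way to keep such a definition unambiguous is to record it in a machine-readable form that can later be applied to candidate validation data. The sketch below is an illustration only: the `IntendedUse` class, its field names, the age limits, the exclusion, and the thresholds are all hypothetical choices, and the example values merely echo the illustrations used in this protocol.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class IntendedUse:
    """Operationalized intended-use definition (hypothetical structure)."""
    population: str                  # P: plain-language description
    min_age: int                     # P: eligibility criteria
    max_age: int
    excluded_conditions: frozenset   # P: exclusion criteria
    comparison: str                  # C: standard of care without the model
    outcome: str                     # O: health outcome to be predicted
    horizon_days: int                # T: prediction time horizon
    setting: str                     # healthcare setting and workflow step
    clinical_task: str               # decision the model informs
    candidate_thresholds: tuple      # provisional, to be refined with clinicians

    def is_eligible(self, patient):
        """True if a patient record falls inside the defined target population and setting."""
        if not (self.min_age <= patient["age"] <= self.max_age):
            return False
        if patient["setting"] != self.setting:
            return False
        return not (self.excluded_conditions & set(patient["conditions"]))

# Illustrative values only.
spec = IntendedUse(
    population="adults discharged from intensive care after surgery",
    min_age=18,
    max_age=90,
    excluded_conditions=frozenset({"palliative_care"}),
    comparison="clinical judgment without the model",
    outcome="all-cause mortality",
    horizon_days=30,
    setting="icu_discharge",
    clinical_task="decide on initiating prophylactic treatment",
    candidate_thresholds=(0.05, 0.10, 0.20),
)

candidates = [
    {"id": 1, "age": 67, "setting": "icu_discharge", "conditions": ["diabetes"]},
    {"id": 2, "age": 16, "setting": "icu_discharge", "conditions": []},
    {"id": 3, "age": 54, "setting": "primary_care", "conditions": []},
    {"id": 4, "age": 80, "setting": "icu_discharge", "conditions": ["palliative_care"]},
]

# Keep only records that match the intended use before any validation analysis.
validation_cohort = [p for p in candidates if spec.is_eligible(p)]
print([p["id"] for p in validation_cohort])
```

Writing the criteria down this way makes it explicit which records belong in a targeted validation dataset and which do not, so the same filter can be reused when a new site or time period is evaluated.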

Protocol 3: Workflow Integration and Contextual Requirement Analysis

Objective: To model how the CPM will integrate into the clinical workflow and identify the contextual requirements for successful implementation.

Background: Even a statistically perfect model will fail if it disrupts workflow or provides non-actionable outputs. Studies show that clinicians want models that assist in generating and testing diagnostic hypotheses, not those that replace critical thinking or mandate rigid protocols [23].

Methodology:

- Workflow Mapping: Create a detailed process map of the current clinical workflow for the targeted task (e.g., managing a deteriorating patient). Identify the exact point where the model's prediction will be introduced and which user will receive it.

- Contextual Inquiry: Through interviews or focus groups with end-users, determine:

- What other sources of information are used for this task?