The Ultimate Guide to Benchmarking Machine Learning Models on MoleculeNet Datasets (2025)


Abstract

This article provides a comprehensive resource for researchers and drug development professionals on benchmarking machine learning models using the MoleculeNet ecosystem. It covers foundational knowledge of MoleculeNet's structure and datasets, explores advanced methodologies including representation learning and foundation models, addresses critical troubleshooting for data quality and experimental design, and offers a rigorous framework for model validation and performance comparison. By synthesizing the latest research and practical insights, this guide aims to establish robust, reproducible, and clinically relevant benchmarking practices in molecular machine learning.

Understanding MoleculeNet: The Foundational Benchmark for Molecular Machine Learning

MoleculeNet is a cornerstone benchmark suite in molecular machine learning, introduced to standardize the evaluation of algorithms predicting molecular properties. This guide explores its history, core components, and impact, providing a balanced comparison of its datasets and the methodologies for using them.

A Benchmark is Born: The History and Purpose of MoleculeNet

The field of molecular machine learning has been maturing rapidly, with improved methods and larger datasets enabling increasingly accurate predictions of molecular properties [1]. However, prior to 2017, algorithmic progress was hampered by the lack of a standard benchmark. Researchers benchmarked new methods on different datasets, making it challenging to gauge the true quality and improvement of proposed techniques [1]. MoleculeNet was created to fill this void.

Following in the footsteps of WordNet and ImageNet, MoleculeNet was introduced as a large-scale benchmark for molecular machine learning [1]. Its primary purpose was to curate multiple public datasets, establish standardized metrics for evaluation, and provide high-quality, open-source implementations of previously proposed molecular featurization and learning algorithms, released as part of the DeepChem library [1]. By providing this platform, the creators aimed to stimulate the same kind of breakthroughs in molecular machine learning that ImageNet triggered in computer vision [1].

Inside MoleculeNet: A Technical Breakdown of the Benchmark Suite

MoleculeNet provides a systematic framework for benchmarking, integrating datasets, featurization methods, and learning algorithms into a cohesive system.

Core Datasets and Categorization

MoleculeNet curates over 700,000 compounds, with properties subdivided into four key categories that cover different levels of molecular properties [1] [2]. The table below summarizes the primary datasets available in the original MoleculeNet suite.

Table: Original MoleculeNet Dataset Categories and Examples

| Category | Description | Example Datasets |
|---|---|---|
| Quantum Mechanics | Calculated quantum chemical properties of molecules, often including 3D structures [1]. | QM7, QM7b, QM8, QM9 [1] [2] |
| Physical Chemistry | Measured values for fundamental physicochemical properties [1]. | ESOL (solubility), FreeSolv (solvation energy), Lipophilicity [1] [2] |
| Biophysics | Datasets exploring protein-ligand binding and other biochemical interactions [1] [3]. | PCBA, MUV, HIV, BACE [1] [2] |
| Physiology | Data on physiological effects and toxicology in biological systems [1] [3]. | BBBP (blood-brain barrier penetration), Tox21, SIDER, ClinTox [1] [4] |

Key Components of the Benchmarking System

A typical MoleculeNet benchmarking workflow, accessible via DeepChem, involves several critical components [1]:

- Featurization: The process of transforming a molecule (e.g., from a SMILES string) into a numerical representation suitable for machine learning, such as a fixed-length vector or a molecular graph. MoleculeNet implements numerous featurization methods, from simple fingerprints to learnable representations [1].

- Splitting Methods: Defining how a dataset is divided into training, validation, and test sets is crucial for a realistic performance assessment. MoleculeNet provides various splitting mechanisms, including random, scaffold, and stratified splits, to assess a model's ability to generalize to new, structurally distinct molecules [1].

- Metrics: Standardized evaluation metrics (e.g., Mean Absolute Error (MAE) for regression, ROC-AUC for classification) are defined for each dataset to ensure consistent and fair comparisons between different algorithms [1].

The MoleculeNet Workflow: From Data to Model

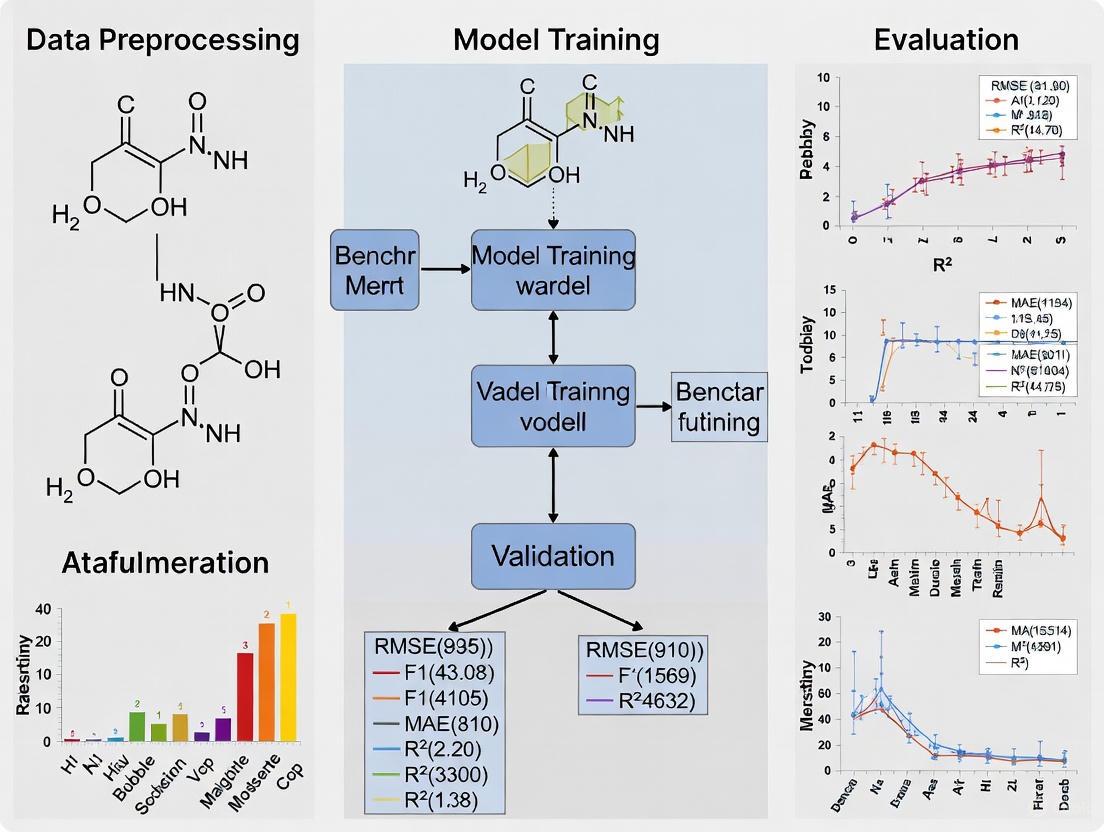

The following diagram illustrates the standard workflow for conducting a benchmark experiment using the MoleculeNet suite.

To effectively use MoleculeNet, researchers rely on a suite of software tools and libraries that handle data loading, molecular manipulation, and model implementation.

Table: Essential Tools for Working with MoleculeNet

| Tool / Resource | Function | Key Feature |
|---|---|---|
| DeepChem Library | The primary platform for loading MoleculeNet datasets and running benchmarks [1] [2]. | Provides dc.molnet.load_* functions for easy dataset access and integration with models [2]. |
| PyTorch Geometric | A library for deep learning on graphs and irregular structures. | Includes a MoleculeNet class for direct access to several datasets in a graph format [4]. |
| RDKit | Open-source cheminformatics toolkit. | Used for parsing SMILES, standardizing chemical structures, and calculating molecular descriptors. |
| SMILES Strings | A line notation for representing molecular structures [1]. | The standard textual representation for molecules in most MoleculeNet datasets [1]. |

Critical Analysis: Strengths and Limitations of MoleculeNet as a Benchmark

While MoleculeNet has become a standard, a critical analysis reveals both its profound impact and significant limitations, necessitating careful usage.

Impact and Key Findings

MoleculeNet's establishment as a common benchmark has enabled meaningful progress. Its early benchmarking demonstrated that learnable representations are powerful tools that often offer the best performance [1]. However, it also revealed important caveats: these representations can struggle with complex tasks under data scarcity and highly imbalanced classification. Furthermore, for quantum mechanical and biophysical datasets, the use of physics-aware featurizations can be more important than the choice of a particular learning algorithm [1].

Documented Limitations and Criticisms

Despite its utility, MoleculeNet has been criticized for several issues that can affect benchmarking results [3]:

- Data Quality and Validity: Some datasets contain invalid chemical structures, such as SMILES strings with uncharged tetravalent nitrogen atoms that cannot be parsed by standard toolkits like RDKit [3].

- Stereochemistry Ambiguity: A significant portion of molecules in datasets like BACE have undefined stereocenters. Since stereoisomers can have vastly different biological activities, this ambiguity makes it challenging to know what is being modeled [3].

- Inconsistent Measurements: Datasets aggregated from numerous sources (e.g., BACE data from 55 papers) may suffer from a lack of experimental consistency, introducing noise and making it difficult to distinguish true model performance from experimental variance [3].

- Task Relevance and Data Leakage: Some benchmark tasks, like FreeSolv, were designed for specific computational physics evaluations and may not be directly relevant to real-world drug discovery applications [3]. Furthermore, duplicate structures with conflicting labels in datasets like BBBP undermine label reliability [3], and duplicates that end up in both the training and test sets can leak information across splits.

The following diagram maps the logical relationship between these criticisms and their implications for benchmarking.

MoleculeNet has undeniably shaped the field of molecular machine learning by providing an essential, standardized benchmarking platform. It has enabled researchers to compare methods directly and has driven progress in algorithmic development. However, users must be aware of its documented limitations regarding data quality, chemical accuracy, and task relevance. The future of benchmarking in this field lies in the community-driven development of more rigorously curated, application-relevant datasets that build upon the foundation MoleculeNet provided.

The development of robust machine learning (ML) models for chemical and biological sciences requires standardized benchmarks to enable meaningful comparison between proposed methods. MoleculeNet, introduced in 2017, addresses this critical need by providing a large-scale benchmark for molecular machine learning that has been cited in over 1,800 publications [1] [3]. This comprehensive collection consists of multiple public datasets, established evaluation metrics, and high-quality open-source implementations of molecular featurization and learning algorithms, all released as part of the DeepChem library [1]. Unlike previous chemical databases that were researcher-oriented with web portals for browsing, MoleculeNet is specifically designed for machine learning development, providing prescribed data splits and evaluation metrics that enable direct comparison between different algorithmic approaches [1].

Molecular machine learning presents unique challenges that distinguish it from other ML domains. Data acquisition requires specialized instruments and expert supervision, resulting in typically smaller datasets than those available in fields like computer vision or natural language processing [1]. Furthermore, the properties of interest for molecules can range from quantum mechanical characteristics to measured impacts on the human body, requiring models capable of predicting an extremely broad range of properties from inputs that have arbitrary size, variable connectivity, and complex three-dimensional conformers [1]. MoleculeNet aims to facilitate methodological progress by providing a standardized platform that encompasses this diversity while addressing the key issues of limited data, heterogeneous outputs, and appropriate learning algorithms [1].

This guide provides a comprehensive navigation of MoleculeNet's dataset taxonomy, focusing on its four primary categories—quantum mechanics, physical chemistry, biophysics, and physiology—to assist researchers in selecting appropriate benchmarks for their molecular machine learning projects. Within the context of benchmarking machine learning models, understanding the characteristics, appropriate use cases, and limitations of each dataset category is essential for producing meaningful evaluations and advancing the field.

MoleculeNet Dataset Taxonomy and Characteristics

The Four Primary Dataset Categories

MoleculeNet organizes its datasets into four primary categories that span different levels of molecular properties, ranging from molecular-level quantum characteristics to macroscopic physiological impacts on the human body [1] [3]. This hierarchical organization reflects the fundamental principles of chemical and biological systems, where properties at each level emerge from interactions at lower levels.

Quantum Mechanics: These datasets contain calculated quantum mechanical properties for organic molecules derived from the GDB (Generated Database) databases [1] [3]. The properties in these datasets are derived from quantum chemical calculations rather than experimental measurements, making them particularly valuable for benchmarking models intended to approximate computational chemistry methods.

Physical Chemistry: This category aggregates experimental measurements of fundamental physicochemical properties including aqueous solubility, hydration free energy, and lipophilicity [1] [5]. These properties represent crucial parameters in drug discovery and environmental chemistry that influence compound behavior in biological and environmental systems.

Biophysics: Datasets in this category explore various aspects of protein-ligand binding and biomolecular interactions [1] [3]. These benchmarks are essential for evaluating models designed to predict molecular recognition events central to drug discovery and molecular biology.

Physiology: This grouping includes datasets measuring complex physiological endpoints such as blood-brain barrier penetration and various toxicological readouts [1] [3]. These properties represent higher-level biological responses that emerge from complex interactions within biological systems.

Quantitative Dataset Comparison

The following table provides a comprehensive overview of key datasets across MoleculeNet's primary categories, including task types, data sizes, and recommended evaluation metrics:

Table 1: MoleculeNet Dataset Characteristics by Category

| Category | Dataset | Task Type | Compounds | Recommended Split | Recommended Metric |
|---|---|---|---|---|---|
| Quantum Mechanics | QM7 | Regression | 7,165 | Stratified | MAE |
| | QM7b | Regression | 7,211 | Random | MAE |
| | QM8 | Regression | 21,786 | Random | MAE |
| | QM9 | Regression | 133,885 | Random | MAE |
| Physical Chemistry | ESOL (Delaney) | Regression | 1,128 | Random | RMSE |
| | FreeSolv (SAMPL) | Regression | 643 | Random | RMSE |
| | Lipophilicity | Regression | 4,200 | Random | RMSE |
| Biophysics | BACE | Classification/Regression | 1,513 | Scaffold | ROC-AUC/MAE |
| | HIV | Classification | ~40,000 | Scaffold | ROC-AUC |
| | PCBA | Classification | ~400,000 | Random | PRC-AUC |
| | MUV | Classification | ~90,000 | Random | PRC-AUC |
| | PDBBind | Regression | 4,852-12,800 | Time | MAE/RMSE |
| Physiology | BBBP | Classification | ~2,000 | Scaffold | ROC-AUC |
| | Tox21 | Classification | ~8,000 | Random | ROC-AUC |
| | SIDER | Classification | 1,427 | Random | ROC-AUC |
| | ClinTox | Classification | 1,484 | Random | ROC-AUC |

Beyond the original MoleculeNet collection, the benchmark suite has expanded significantly over time. The current DeepChem implementation includes approximately 46 different dataset loaders, encompassing new categories such as chemical reactions, molecular catalogs, structural biology, microscopy, and materials properties [2]. This expansion reflects the evolving needs of the molecular machine learning community and the growing recognition of MoleculeNet as a central benchmarking resource.

Dataset Taxonomy and Relationships

The following diagram illustrates the hierarchical organization and relationships between datasets within the MoleculeNet taxonomy:

Experimental Protocols for Benchmarking on MoleculeNet

Standardized Evaluation Framework

Benchmarking machine learning models on MoleculeNet requires strict adherence to standardized experimental protocols to ensure fair comparisons between different approaches. The DeepChem library provides a consistent framework for this evaluation process, encompassing dataset loading, featurization, splitting, transformation, and model assessment [1] [2]. A typical benchmarking workflow follows these essential stages:

Dataset Selection and Loading: Researchers select appropriate datasets from MoleculeNet's collection using dedicated loader functions (e.g., dc.molnet.load_delaney() for ESOL or dc.molnet.load_bace_classification() for BACE classification) [2]. These loaders return a tuple containing task names, datasets (already split into training, validation, and test sets), and any necessary data transformers [2].

Featurization: Molecular structures in SMILES format or 3D coordinates must be converted to fixed-length numerical representations using featurization methods. MoleculeNet supports diverse featurization approaches including Extended-Connectivity Fingerprints (ECFP), Graph Convolutions, Coulomb Matrices, and many others [1].

Data Splitting: Appropriate dataset splitting is critical for meaningful evaluation. MoleculeNet provides multiple splitting methods including random splits, scaffold splits (grouping molecules based on common molecular substructures), and stratified splits [1]. The choice of split significantly impacts performance estimates, particularly for assessing model generalization to novel chemical structures.

Model Training and Evaluation: Models are trained on the training set, with hyperparameter optimization performed using the validation set. Final evaluation occurs on the held-out test set using dataset-specific metrics [1].
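As a minimal sketch of the loading stage described above, the function below wraps the dc.molnet.load_delaney() loader and unpacks the (tasks, datasets, transformers) tuple it returns. The wrapper name load_esol_benchmark is hypothetical, and the featurizer and splitter arguments reflect the loader's commonly documented keyword options, so they should be checked against the installed DeepChem version.

```python
def load_esol_benchmark(splitter="scaffold"):
    """Load ESOL through DeepChem and return tasks, splits, and transformers.

    Hypothetical helper for illustration; not part of DeepChem itself.
    """
    # Imported lazily so the sketch can be imported without DeepChem installed.
    import deepchem as dc

    # One call featurizes the SMILES, splits the data, and fits transformers.
    tasks, (train, valid, test), transformers = dc.molnet.load_delaney(
        featurizer="ECFP",   # circular fingerprints as a baseline featurization
        splitter=splitter,   # e.g. "random" or "scaffold"
    )
    return tasks, (train, valid, test), transformers
```

Calling load_esol_benchmark() downloads the dataset on first use; the returned train, valid, and test objects expose arrays such as train.X and train.y, and the transformers are needed later to map predictions back to the original units.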

MoleculeNet Benchmarking Workflow

The following diagram illustrates the standard experimental workflow for benchmarking machine learning models on MoleculeNet datasets:

Critical Considerations in Experimental Design

When benchmarking models on MoleculeNet datasets, researchers must address several critical considerations that significantly impact the validity and interpretation of results:

Data Leakage Prevention: The splitting strategy must align with the dataset's characteristics and the real-world scenario being modeled. Scaffold splitting assigns all molecules that share a core scaffold to the same subset, so the scaffolds in the test set are never seen during training. This provides a more challenging but more realistic assessment of a model's ability to generalize to novel chemotypes than random splitting [1] [3].

Evaluation Metric Selection: Each MoleculeNet dataset includes recommended metrics appropriate for its task type and label distribution. For classification tasks with class imbalance, area under the receiver operating characteristic curve (ROC-AUC) or precision-recall curve (PRC-AUC) are typically recommended, while regression tasks commonly use mean absolute error (MAE) or root mean square error (RMSE) [1].

Statistical Significance: Due to the often small size of many molecular datasets, performance comparisons should include statistical significance testing, ideally through multiple random splits or cross-validation, rather than relying on single split results [3].

Reproducibility: Benchmarking scripts should specify random seeds for all stochastic processes and document all hyperparameters to ensure result reproducibility. The DeepChem framework facilitates this through standardized dataset loading and processing functions [2] [6].
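The statistical-significance and reproducibility points above can be sketched as a loop over fixed seeds that reports a mean and standard deviation rather than a single number. Here evaluate_once is a hypothetical stand-in that returns a synthetic score; in a real benchmark it would re-split the data, train a model, and return the test metric for that seed.

```python
import random
import statistics


def evaluate_once(seed):
    """Hypothetical stand-in for one split/train/evaluate run (synthetic score)."""
    rng = random.Random(seed)  # a fixed seed makes the run repeatable
    return 0.85 + rng.uniform(-0.02, 0.02)


def summarize_runs(seeds, run=evaluate_once):
    """Run the benchmark once per seed and summarize the scores."""
    scores = [run(seed) for seed in seeds]
    return statistics.mean(scores), statistics.stdev(scores), scores


mean_score, std_score, scores = summarize_runs(seeds=[0, 1, 2, 3, 4])
print(f"score = {mean_score:.3f} +/- {std_score:.3f} over {len(scores)} seeds")
```

Reporting the seed list alongside the mean and spread lets other researchers rerun exactly the same splits and judge whether a difference between two methods exceeds run-to-run variation.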

Comparative Analysis of Dataset Categories

Performance Patterns Across Categories

Extensive benchmarking conducted in the original MoleculeNet study and subsequent research has revealed distinct performance patterns across the four dataset categories. These patterns provide insights into the relative strengths and limitations of different molecular representations and learning algorithms:

Quantum Mechanics Datasets: Learnable representations, particularly deep neural networks operating on 3D molecular structures or graph representations, generally achieve the best performance on quantum mechanical property prediction [1]. However, these methods require sufficient training data, with performance degrading significantly under data scarcity conditions. For these datasets, physics-aware featurizations such as Coulomb matrices can be more important than the choice of specific learning algorithm [1].

Physical Chemistry Datasets: Traditional machine learning methods using extended-connectivity fingerprints often compete effectively with more complex deep learning approaches on these datasets, particularly given their relatively small sizes [1]. The performance gap between different methods tends to be narrower for physical chemistry datasets compared to other categories.

Biophysics Datasets: Deep learning methods typically outperform traditional approaches on biophysical datasets, particularly for binding affinity prediction [1]. However, these datasets frequently exhibit significant class imbalance, presenting challenges for all methods. Multi-task learning, where models are trained simultaneously on related tasks, has demonstrated particular utility for biophysical prediction [1].

Physiology Datasets: Complex endpoints like toxicity and blood-brain barrier penetration present the greatest challenges for all methods, with absolute performance metrics typically lower than for other categories [1] [3]. Scaffold splitting often reveals substantial performance degradation compared to random splitting, indicating limited generalization to novel chemical scaffolds.

Comparative Performance Across Algorithms and Representations

Table 2: Typical Performance Ranges by Dataset Category and Method

| Category | Dataset | Traditional ML with ECFP | Graph Neural Networks | Physics-Informed Featurizations | Key Challenges |
|---|---|---|---|---|---|
| Quantum Mechanics | QM9 (MAE) | ~20-30% higher error | State-of-the-art | Competitive with GNNs | Data scarcity for larger molecules |
| Physical Chemistry | ESOL (RMSE) | 0.58-0.68 log mol/L | 0.50-0.60 log mol/L | 0.55-0.65 log mol/L | Limited dataset size |
| Biophysics | BACE (ROC-AUC) | 0.80-0.85 | 0.85-0.90 | 0.75-0.82 | Class imbalance, undefined stereochemistry |
| Physiology | BBBP (ROC-AUC) | 0.85-0.90 | 0.89-0.93 | 0.80-0.87 | Invalid structures, duplicate entries |

Impact of Dataset-Specific Considerations

Each dataset category presents unique considerations that significantly influence benchmarking outcomes:

Data Quality and Standardization: Particularly for physiology datasets, issues with chemical structure representation, stereochemistry definition, and inconsistent experimental measurements across sources can substantially impact model performance and interpretability [3]. For example, the BBBP dataset contains invalid SMILES strings with uncharged tetravalent nitrogen atoms and 59 duplicate structures, including 10 pairs with conflicting labels [3].

Experimental Variability: Aggregated data from multiple sources introduces experimental noise that limits achievable prediction accuracy. For the BACE dataset, which combines results from 55 different publications, approximately 45% of values for the same molecule measured in different papers differed by more than 0.3 logs, exceeding typical experimental error thresholds [3].

Task Relevance and Dynamic Range: Some datasets exhibit dynamic ranges that don't reflect realistic application scenarios. The ESOL solubility dataset spans more than 13 logs, while most pharmaceutical compounds fall within a narrow 2.5-3 log range, potentially inflating perceived model performance [3].
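A duplicate check of the kind described for BBBP above can be sketched in a few lines. The sketch assumes each record's structure key has already been canonicalized (for example, canonical SMILES produced by a toolkit such as RDKit); the toy records and the function name find_label_conflicts are illustrative only.

```python
from collections import defaultdict


def find_label_conflicts(records):
    """Return (duplicated, conflicting) structure keys with their labels.

    records: iterable of (structure_key, label) pairs, where structure_key is
    assumed to be an already-canonicalized identifier such as canonical SMILES.
    """
    labels_by_key = defaultdict(list)
    for key, label in records:
        labels_by_key[key].append(label)
    # Keys that occur more than once are duplicates.
    duplicated = {k: v for k, v in labels_by_key.items() if len(v) > 1}
    # Duplicates whose labels disagree are conflicts.
    conflicting = {k: v for k, v in duplicated.items() if len(set(v)) > 1}
    return duplicated, conflicting


# Toy data: one consistent duplicate and one conflicting duplicate.
records = [("CCO", 1), ("CCO", 1), ("c1ccccc1", 0), ("c1ccccc1", 1), ("CCN", 0)]
duplicated, conflicting = find_label_conflicts(records)
print(sorted(duplicated))   # ['CCO', 'c1ccccc1']
print(sorted(conflicting))  # ['c1ccccc1']
```

Running such a check before splitting makes it possible to drop or reconcile conflicting entries, and to keep exact duplicates from landing on both sides of a train/test split.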

The Scientist's Toolkit: Essential Research Reagents

Computational Frameworks and Libraries

Successful benchmarking experiments on MoleculeNet datasets require familiarity with several essential computational tools and libraries:

Table 3: Essential Research Tools for MoleculeNet Benchmarking

| Tool/Library | Primary Function | Usage in MoleculeNet Research |
|---|---|---|
| DeepChem | Primary ML framework for molecular data | Provides MoleculeNet dataset loaders, featurization methods, and model implementations [2] [6] |
| RDKit | Cheminformatics toolkit | Handles molecular standardization, descriptor calculation, and substructure operations [3] |
| PyTorch Geometric | Graph neural network library | Implements graph-based models for molecular data with MoleculeNet integration [4] |
| TensorFlow | Machine learning framework | Backend for DeepChem models and custom neural network architectures [1] |
| Scikit-Learn | Traditional machine learning | Provides implementations of Random Forests, SVMs, and other baseline models [1] |

Critical Software Components

Beyond the major frameworks, several specialized components are essential for rigorous MoleculeNet benchmarking:

MoleculeNet Loaders: These specialized functions within DeepChem (e.g., load_bace_classification(), load_delaney()) provide standardized access to datasets, returning consistent splits and transformations [2] [6]. All loaders follow the pattern of returning a tuple containing (tasks, datasets, transformers) where datasets contains (train, valid, test) splits [2].

Featurization Methods: Different molecular representations capture complementary chemical information. MoleculeNet supports diverse featurization approaches including Circular Fingerprints (ECFPs), Graph Convolutions, Weave Featurizations, Coulomb Matrices, and Grid Featurizations for spatial data [1] [6].

Splitting Strategies: The choice of data splitting method significantly impacts performance estimates. MoleculeNet provides implementations of random splitting, scaffold splitting (grouping by Bemis-Murcko scaffolds), stratified splitting (maintaining class balance), and index-based splits for predefined divisions [1].

Validation Metrics: Appropriate metric selection is task-dependent. MoleculeNet specifies recommended metrics for each dataset, including ROC-AUC for balanced classification, PRC-AUC for imbalanced classification, RMSE for regression with normal error distributions, and MAE for regression with potential outliers [1] [6].
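To make the PRC-AUC recommendation for imbalanced classification more concrete, the sketch below computes average precision, one common summary of the precision-recall curve. It is an illustration in plain Python, assumes binary 0/1 labels and distinct scores, and is not necessarily the exact estimator that DeepChem uses for PRC-AUC.

```python
def average_precision(y_true, y_score):
    """Average of the precision values at the rank of each positive example."""
    n_pos = sum(y_true)
    if n_pos == 0:
        raise ValueError("average precision is undefined without positives")
    # Rank examples from highest to lowest predicted score.
    order = sorted(range(len(y_true)), key=lambda i: y_score[i], reverse=True)
    hits = 0
    total = 0.0
    for rank, i in enumerate(order, start=1):
        if y_true[i] == 1:
            hits += 1
            total += hits / rank  # precision among the top `rank` predictions
    return total / n_pos


# Two positives among ten molecules, ranked 1st and 4th by the model.
y_true = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
y_score = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
print(average_precision(y_true, y_score))  # (1/1 + 2/4) / 2 = 0.75
```

Because every term is a precision value, the score drops quickly when inactive compounds outrank the few actives, which is why precision-recall summaries are informative on heavily imbalanced screening data.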

Critical Assessment and Limitations

Despite its widespread adoption, researchers must recognize several important limitations and criticisms of MoleculeNet datasets when interpreting benchmarking results:

Data Quality Issues: Multiple MoleculeNet datasets contain fundamental data quality problems including invalid chemical structures, undefined stereochemistry, duplicate entries with conflicting labels, and aggregation artifacts [3]. For example, in the BACE dataset, 71% of molecules have at least one undefined stereocenter, with some molecules containing up to 12 undefined stereocenters, creating significant ambiguity in structure-activity relationships [3].

Task Relevance Concerns: Some datasets included in MoleculeNet have limited relevance to practical applications in drug discovery and chemical research. The FreeSolv dataset, designed to evaluate molecular dynamics simulations for solvation free energy calculation, represents a quantity rarely used in isolation in practical settings [3].

Benchmarking Misuse: The original quantum mechanical datasets (QM7, QM8, QM9) are frequently misused in benchmarking studies [3]. These properties are conformation-dependent, yet many studies utilize them without consideration of molecular geometry, potentially leading to inflated performance metrics that don't reflect real-world utility.

Experimental Noise: The aggregation of data from multiple sources without adequate standardization introduces experimental noise that limits achievable prediction accuracy and complicates method comparison [3]. For endpoints like IC50 measurements, variations in experimental protocols across laboratories can produce significant discrepancies.

These limitations highlight the importance of critical dataset selection and careful interpretation of benchmarking results. Researchers should supplement MoleculeNet evaluations with domain-specific validation on internally consistent datasets that reflect realistic application scenarios.

MoleculeNet provides an essential benchmarking resource for the molecular machine learning community, offering standardized datasets across quantum mechanics, physical chemistry, biophysics, and physiology domains. Its taxonomy reflects the hierarchical organization of chemical and biological systems, enabling comprehensive evaluation of machine learning methods across different property types and complexity levels. The integrated implementation within DeepChem ensures consistent data processing, featurization, and evaluation, facilitating direct comparison between different algorithmic approaches.

For researchers benchmarking new machine learning methods, careful consideration of dataset characteristics within each category is essential for meaningful experimental design and result interpretation. The selection of appropriate data splits, evaluation metrics, and baseline comparisons must align with both the technical specifics of each dataset and the practical applications being targeted. While MoleculeNet has significantly advanced molecular machine learning research, critical awareness of its limitations—including data quality issues, task relevance concerns, and experimental noise—is necessary for proper use and interpretation.

The ongoing evolution of MoleculeNet, with an expanding collection that now includes approximately 46 datasets across broader categories such as chemical reactions, materials science, and microscopy, reflects its growing role as a community resource [2] [7]. Future developments will likely address current limitations through improved data curation, standardized splitting protocols, and the inclusion of more application-relevant benchmarks. By providing both a comprehensive taxonomy of molecular datasets and a standardized benchmarking framework, MoleculeNet continues to facilitate the development of more capable and robust machine learning methods for chemical and biological sciences.

The benchmarking of machine learning models for molecular property prediction requires standardized evaluation protocols to ensure fair comparison and reproducible results. MoleculeNet, a widely adopted benchmark suite in cheminformatics, provides such a framework by curating multiple public datasets, establishing evaluation metrics, and offering standardized data splitting techniques [1]. This guide examines the key metrics, data splitting methodologies, and performance indicators essential for rigorous evaluation of molecular machine learning models, providing researchers and drug development professionals with a comprehensive framework for model assessment.

MoleculeNet Benchmarking Framework

MoleculeNet serves as a standardized benchmark for molecular machine learning, addressing critical challenges in the field including limited dataset sizes, heterogeneous data types, and diverse prediction tasks [1]. The benchmark consolidates over 700,000 compounds with properties spanning quantum mechanics, physical chemistry, biophysics, and physiology, enabling comprehensive evaluation of machine learning algorithms across different domains of chemical research [1] [6].

The framework provides high-quality implementations of molecular featurization methods and learning algorithms through its integration with the DeepChem library [1]. This standardization allows researchers to focus on algorithmic development rather than data preprocessing, facilitating direct comparison between different approaches.

Dataset Categorization

MoleculeNet datasets can be categorized into four primary domains based on the nature of the molecular properties being predicted:

- Quantum Mechanics: Includes datasets such as QM7, QM7b, QM8, and QM9 containing calculated quantum mechanical properties for small organic molecules [1]

- Physical Chemistry: Covers experimental physicochemical properties including solubility (ESOL), hydration free energy (FreeSolv), and lipophilicity (Lipophilicity) [1]

- Biophysics: Contains bioactivity and binding data such as BACE inhibition, HIV replication suppression, and the PCBA and MUV screening collections [1] [6]

- Physiology: Includes pharmaceutically relevant properties such as blood-brain barrier penetration (BBBP), toxicity (Tox21, ToxCast), and clinical toxicity (ClinTox) [1] [6]

Key Evaluation Metrics

Regression Metrics

For regression tasks predicting continuous molecular properties, MoleculeNet primarily employs two evaluation metrics:

- Mean Absolute Error (MAE): Recommended for quantum mechanics datasets, MAE measures the average magnitude of errors without considering their direction [1]

- Root Mean Squared Error (RMSE): Preferred for physical chemistry datasets, RMSE penalizes larger errors more heavily through squaring [1]
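The difference between the two regression metrics is easiest to see on a toy example. The sketch below implements both in plain Python and compares two hypothetical prediction sets with the same total absolute error, one spread evenly and one concentrated in a single outlier; the numbers are illustrative and not drawn from any MoleculeNet dataset.

```python
import math


def mean_absolute_error(y_true, y_pred):
    """Average magnitude of the errors, ignoring their sign."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def root_mean_squared_error(y_true, y_pred):
    """Square root of the mean squared error; large errors dominate."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return math.sqrt(mse)


y_true = [0.0, 0.0, 0.0, 0.0]
even_errors = [1.0, 1.0, 1.0, 1.0]   # every prediction is off by 1
one_outlier = [0.0, 0.0, 0.0, 4.0]   # a single prediction is off by 4

print(mean_absolute_error(y_true, even_errors))      # 1.0
print(mean_absolute_error(y_true, one_outlier))      # 1.0
print(root_mean_squared_error(y_true, even_errors))  # 1.0
print(root_mean_squared_error(y_true, one_outlier))  # 2.0
```

MAE cannot distinguish the two cases, while RMSE doubles for the outlier case, so the choice of metric determines how strongly a few badly predicted molecules affect the reported score.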

Classification Metrics

For classification tasks involving categorical molecular properties:

- Area Under the Receiver Operating Characteristic Curve (ROC-AUC): Measures the model's ability to distinguish between classes across all classification thresholds [8] [9]

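- Area Under the Precision-Recall Curve (PRC-AUC): Recommended by MoleculeNet for highly imbalanced datasets such as PCBA and MUV, where ROC-AUC can look deceptively high [1]

- Balanced Accuracy: A supplementary metric, not part of MoleculeNet's recommended set, sometimes reported for imbalanced datasets so that each class contributes equally to the score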
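The sketch below shows one way these classification metrics can be computed from first principles. ROC-AUC is written as the fraction of positive/negative pairs that the model ranks correctly, and balanced accuracy as the mean of the per-class recalls. The function names and the toy labels and scores are assumptions for illustration; in practice a library such as scikit-learn would normally be used.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def balanced_accuracy(labels: Sequence[int], predictions: Sequence[int]) -> float:
    """Mean of the recall on the positive class and the recall on the negative class."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("both classes must be present")
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    tn = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 0)
    return 0.5 * (tp / n_pos + tn / n_neg)


# Toy imbalanced task: 1 active among 10 compounds (illustrative values).
labels = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]
scores = [0.4, 0.1, 0.2, 0.3, 0.5, 0.1, 0.2, 0.1, 0.3, 0.2]
auc = roc_auc(labels, scores)  # the active outranks 8 of the 9 inactives

# A trivial model that always predicts "inactive".
always_inactive = [0] * len(labels)
plain_accuracy = sum(y == p for y, p in zip(labels, always_inactive)) / len(labels)
bal_acc = balanced_accuracy(labels, always_inactive)
```

In this toy case the trivial model reaches a plain accuracy of 0.9 but a balanced accuracy of only 0.5, which is why plain accuracy is rarely reported on imbalanced molecular datasets.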

Table 1: Primary Evaluation Metrics in MoleculeNet

| Task Type | Key Metrics | Primary Datasets | Interpretation |
|---|---|---|---|
| Regression | Mean Absolute Error (MAE) | QM7, QM7b, QM8, QM9 | Average absolute difference between predicted and actual values |
| Regression | Root Mean Squared Error (RMSE) | ESOL, FreeSolv, Lipophilicity | Square root of the average squared difference; penalizes large errors more heavily |
| Classification | ROC-AUC | BACE, HIV, Tox21, SIDER, ClinTox | Ability to rank positives above negatives across all thresholds |
| Classification | PRC-AUC | PCBA, MUV | Precision-recall trade-off; more informative than ROC-AUC under heavy class imbalance |
| Classification | Balanced Accuracy (supplementary) | Imbalanced datasets | Mean of per-class recall; adjusts accuracy for class imbalance |

Data Splitting Methodologies

Splitting Strategies

The method used to split data into training, validation, and test sets significantly impacts model evaluation. MoleculeNet implements multiple splitting strategies:

- Random Splitting: Divides datasets randomly while preserving distribution, suitable for large datasets with diverse structures [1]

- Scaffold Splitting: Groups molecules by their Bemis-Murcko scaffolds, separating structurally distinct molecules to test generalization [1] [8]

- Stratified Splitting: Maintains class distribution across splits, particularly important for imbalanced classification tasks [1]

- Time-Based Splitting: Orders compounds chronologically to simulate real-world discovery settings [1]
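A scaffold split can be sketched in a few lines once each molecule has been assigned a scaffold string. The sketch below assumes those strings were computed beforehand (for example, Bemis-Murcko scaffolds from a cheminformatics toolkit such as RDKit) and uses a common greedy scheme: the largest scaffold groups fill the training set first, and the remaining groups spill into validation and test. The function name, default fractions, and example data are illustrative assumptions, not a reproduction of any particular library's splitter.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def scaffold_split(
    scaffolds: Sequence[str],
    frac_train: float = 0.8,
    frac_valid: float = 0.1,
) -> Tuple[List[int], List[int], List[int]]:
    """Assign whole scaffold groups to train, valid, or test (never split a group)."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest groups first; ties broken by first index so the result is deterministic.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n = len(scaffolds)
    train_cutoff = frac_train * n
    valid_cutoff = (frac_train + frac_valid) * n

    train: List[int] = []
    valid: List[int] = []
    test: List[int] = []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        elif len(train) + len(valid) + len(group) <= valid_cutoff:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


# Ten molecules described only by their (precomputed) scaffold SMILES.
scaffolds = (
    ["c1ccccc1"] * 5            # benzene scaffold
    + ["c1ccncc1"] * 3          # pyridine scaffold
    + ["c1ccc2[nH]ccc2c1"]      # indole scaffold
    + ["c1ccoc1"]               # furan scaffold
)
train_idx, valid_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, valid_idx, test_idx)
```

Because rare scaffolds end up in the validation and test sets, the held-out molecules tend to be structurally unlike the training data, which is the property that makes this split harder than a random one.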

Recommended Splitting Protocols

MoleculeNet provides dataset-specific splitting recommendations based on chemical domain knowledge:

Table 2: Recommended Data Splits for Select MoleculeNet Datasets

| Dataset | Data Type | Task Type | Recommended Split | Rationale |
|---|---|---|---|---|
| QM7 | SMILES, 3D | Regression | Stratified | Ensures representation across chemical space |
| BACE | Molecules | Classification/Regression | Scaffold | Tests generalization to novel molecular scaffolds |
| ESOL | SMILES | Regression | Random | Sufficient size and diversity for random splitting |
| FreeSolv | SMILES | Regression | Random | Moderate dataset size with diverse structures |
| HIV | Molecules | Classification | Scaffold | Critical for generalizing to novel compound classes |
| ClinTox | Molecules | Classification | Scaffold | Ensures evaluation on structurally distinct molecules |
| BBBP | Molecules | Classification | Scaffold | Tests model on novel blood-brain barrier penetrators |

Scaffold splitting is particularly recommended for bioactivity and toxicity prediction tasks (e.g., BACE, HIV, ClinTox, BBBP) as it provides a rigorous test of model generalizability to structurally novel compounds [1] [8]. This approach better simulates real-world drug discovery scenarios where models must predict properties for compounds with novel scaffolds.

Experimental Protocols and Workflows

Standard Benchmarking Workflow

Diagram: The standard MoleculeNet benchmarking workflow as implemented in DeepChem.

Implementation Example

DeepChem implements the benchmarking process through standardized loading, featurization, splitting, and evaluation functions. This standardizes the evaluation process and ensures consistent featurization and splitting across different models [6].
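As a library-agnostic illustration of this workflow, the sketch below chains featurization, splitting, training, and evaluation behind one function. Every name in it (`run_benchmark`, `count_heavy_atoms`, `fixed_split`, `fit_mean_baseline`) is hypothetical and does not correspond to DeepChem's API; the SMILES strings and target values are made up. The point is only that holding the featurizer, split, and metric fixed while swapping the `fit` callable is what makes results comparable.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Features = List[float]
Predictor = Callable[[Features], float]


@dataclass
class BenchmarkResult:
    valid_score: float
    test_score: float


def run_benchmark(
    smiles: Sequence[str],
    targets: Sequence[float],
    featurize: Callable[[str], Features],
    split: Callable[[int], Tuple[List[int], List[int], List[int]]],
    fit: Callable[[List[Features], List[float]], Predictor],
    metric: Callable[[Sequence[float], Sequence[float]], float],
) -> BenchmarkResult:
    """Featurize, split, train on the training subset, then score valid and test."""
    features = [featurize(s) for s in smiles]
    train_idx, valid_idx, test_idx = split(len(smiles))
    model = fit([features[i] for i in train_idx], [targets[i] for i in train_idx])

    def score(indices: List[int]) -> float:
        y_true = [targets[i] for i in indices]
        y_pred = [model(features[i]) for i in indices]
        return metric(y_true, y_pred)

    return BenchmarkResult(valid_score=score(valid_idx), test_score=score(test_idx))


# --- Toy components (all hypothetical, for illustration only) ---
def count_heavy_atoms(smiles_string: str) -> Features:
    # Crude stand-in for a real featurizer: counts letters in the SMILES string.
    return [float(sum(ch.isalpha() for ch in smiles_string))]


def fixed_split(n: int) -> Tuple[List[int], List[int], List[int]]:
    # First four molecules train, the fifth validates, the rest test.
    return list(range(4)), [4], list(range(5, n))


def fit_mean_baseline(X: List[Features], y: List[float]) -> Predictor:
    # Baseline that ignores the features and predicts the training mean.
    mean = sum(y) / len(y)
    return lambda _features: mean


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


smiles = ["C", "CC", "CCC", "CCO", "CCCC", "CCCO"]
targets = [1.0, 2.0, 3.0, 3.0, 4.0, 5.0]  # made-up property values
result = run_benchmark(smiles, targets, count_heavy_atoms, fixed_split,
                       fit_mean_baseline, mae)
print(result)
```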

Performance Indicators and Interpretation

Benchmarking Results and Comparative Performance

Reported results vary considerably across model architectures and datasets, as the following tables show:

Table 3: Comparative Model Performance on MoleculeNet Classification Tasks (ROC-AUC)

| Model | BBBP | ClinTox | Tox21 | HIV | BACE | SIDER |
|---|---|---|---|---|---|---|
| MLM-FG (RoBERTa, 100M) | 0.946 | 0.942 | 0.858 | 0.893 | 0.887 | 0.675 |
| MLM-FG (MoLFormer, 100M) | 0.938 | 0.931 | 0.851 | 0.884 | 0.879 | 0.668 |
| Graphormer | 0.723 | 0.902 | 0.791 | 0.807 | 0.841 | 0.629 |
| EGNN | 0.698 | 0.814 | 0.772 | 0.754 | 0.796 | 0.601 |
| GIN | 0.685 | 0.801 | 0.763 | 0.742 | 0.788 | 0.592 |

Table 4: Performance on Regression Tasks (MAE/RMSE)

| Model | FreeSolv (RMSE) | ESOL (RMSE) | QM9 (MAE) | Lipophilicity (RMSE) |
|---|---|---|---|---|
| MLM-FG | 0.796 | 0.521 | 0.038 | 0.545 |
| MoleCLIP | 0.832 | 0.558 | 0.041 | 0.578 |
| Graph Neural Networks | 0.871 | 0.612 | 0.045 | 0.621 |
| Traditional ML | 0.943 | 0.684 | 0.052 | 0.693 |

Transformer-based models like MLM-FG, which use functional group-aware pretraining on SMILES sequences, have shown superior performance across multiple MoleculeNet benchmarks, outperforming both graph-based models and traditional machine learning approaches [8]. Recent frameworks like MoleCLIP, which adapt a general-purpose vision foundation model to molecular images, demonstrate competitive performance with significantly less molecular pretraining data [10].

Critical Considerations in Performance Interpretation

When interpreting model performance on MoleculeNet benchmarks, several factors require careful consideration:

- Dataset Characteristics: Performance can vary significantly based on dataset size, balance, and structural diversity [1]

- Splitting Strategy Impact: Models typically show degraded performance under scaffold splitting compared to random splitting, highlighting the importance of rigorous evaluation [8]

- Domain Specificity: Model performance is often task-dependent, with different architectures excelling in different domains [9]

Research Reagent Solutions

Essential Tools and Libraries

Table 5: Key Research Tools for Molecular Machine Learning

| Tool/Library | Function | Application in Benchmarking |
|---|---|---|
| DeepChem | Primary framework for molecular ML | Provides MoleculeNet dataset loaders, featurizers, and splitting methods [1] [6] |
| RDKit | Cheminformatics toolkit | Molecular descriptor calculation, image generation, and structural manipulation [10] |
| Graphviz | Graph visualization | Molecular structure depiction and workflow visualization [11] [12] |
| Scikit-Learn | Traditional ML algorithms | Baseline model implementation and metrics calculation [1] |
| TensorFlow/PyTorch | Deep learning frameworks | Neural network model development and training [1] |
| OpenAI CLIP | Vision foundation model | Backbone for molecular image representation learning (MoleCLIP) [10] |

Key Data Resources

- ChEMBL: Large-scale bioactivity data used for pretraining molecular representation learning models [10]

- PubChem: Publicly accessible database from which purchasable drug-like compounds are drawn for pretraining [8]

- ZINC: Database of commercially available compounds for virtual screening [9]

- QM9: Quantum chemical properties for 134,000 stable small organic molecules [1] [9]

Emerging Trends and Future Directions

The field of molecular property prediction continues to evolve with several emerging trends:

- Foundation Model Integration: Leveraging large-scale pretrained models (e.g., CLIP, GPT) as backbones for molecular representation learning [10]

- Multi-modal Fusion: Combining molecular structure with textual expert knowledge using cross-attention mechanisms [13]

- 3D-Aware Modeling: Incorporating geometric and spatial information through equivariant graph neural networks [9]

- Data Efficiency: Developing methods that achieve competitive performance with limited labeled data through improved pretraining strategies [10] [8]

These advances are progressively addressing key challenges in molecular machine learning, particularly around data scarcity, model generalizability, and interpretation, paving the way for more reliable and impactful applications in drug discovery and materials science.

The reliability of machine learning (ML) models in chemistry is fundamentally constrained by the data on which they are trained. Public chemical databases such as ChEMBL, PubChem, and ChemSpider provide vast repositories of compound-to-protein relationships and bioactivity data, and they serve as the primary data sources for model development [14] [15]. However, these resources are populated using different curation rules, standardization protocols, and inclusion criteria, leading to significant discordance in their content. For instance, a detailed comparison revealed that sources nominally in common across PubChem, UniChem, and ChemSpider can have substantially different structure counts, often due to differences in loading dates and structural standardization [15]. This variability presents a major challenge for ML. The field addresses it through standardized benchmarks like MoleculeNet, which curate and harmonize data from these primary sources to provide a consistent and fair basis for evaluating algorithm performance [16]. This guide explores the journey from raw, heterogeneous data sources to polished benchmarks, objectively comparing their content and highlighting the experimental methodologies essential for building reliable molecular ML models.

Comparative Analysis of Major Public Chemical Databases

Quantitative Comparison of Database Content

The table below summarizes the core statistics and primary focus of five key databases, highlighting their distinct niches and scale.

Table 1: Key Characteristics of Major Chemical Databases (2011-2018 Timespan)

| Database | Reported Size (Compounds/Targets) | Primary Focus | Key Characteristics |
|---|---|---|---|
| ChEMBL | 1,254,575 compounds; 9,570 targets (2013) [14] | Bioactive molecules, SAR data | Curated from medicinal chemistry literature; extensive bioactivity annotations (e.g., IC50, Ki). |
| PubChem | 95 million distinct structures (2018) [15] | Aggregated chemical information | Largest public repository; aggregates data from over 500 sources, including vendors and patents. |
| ChemSpider | 63 million structures (2018) [15] | Curated chemical structures | Focuses on chemical structure integration and validation from over 280 sources. |
| DrugBank | 6,516 drug entries; 4,233 protein IDs (2013) [14] | Drug & drug-target data | Detailed information on FDA-approved and experimental drugs, mechanisms, and pharmacologic data. |
| Human Metabolome Database (HMDB) | 40,437 metabolite entries (2013) [14] | Human metabolism | Comprehensive data on human metabolites with linked enzymatic pathways. |

A comparative study of ChEMBL, DrugBank, HMDB, and the Therapeutic Target Database (TTD) underscored their "expanding complementarity," meaning their contents overlap but also contain significant unique elements, driven by their different curation goals [14]. For example, while DrugBank is the definitive source for approved drug information, ChEMBL offers a much broader set of SAR data from journal articles. This complementarity extends to the larger trio of PubChem, ChemSpider, and UniChem. Although they subsume many of the same primary sources, a 2018 analysis found that their coverage is "significantly different" across 587, 282, and 38 contributing sources, respectively [15]. Consequently, a query for the same compound (e.g., aspirin) can return different associated metadata and annotations depending on the database, directly impacting the data quality for ML tasks.

Experimental Protocols for Database Comparison and Curation

To ensure consistency and reproducibility when working with these databases, researchers employ standardized protocols for data comparison and curation.

Protocol 1: Chemical Structure Standardization and Overlap Analysis

This methodology is used to quantify the unique and overlapping chemistry between different databases [14].

- Data Acquisition: Download structural data files (e.g., SDF files) from each database source.

- Structure Normalization: Process all structures using a cheminformatics toolkit (e.g., CACTVS) to normalize stereochemistry, charges, tautomers, and remove counter-ions. This step is critical, as differences in standardization rules are a primary cause of discordance between databases [15].

- Identifier Generation: Calculate unique hash codes or identifiers (e.g., FICTS, FICuS, uuuuu) at different levels of normalization (e.g., ignoring stereochemistry, tautomers, or salts). The IUPAC International Chemical Identifier (InChI) and InChIKey are also calculated for cross-referencing [14].

- Set Comparison: Use the generated identifiers to perform set operations (unions, intersections) between the normalized structure sets from each database. This identifies structures unique to each source and those shared between them.
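The set-comparison step of this protocol reduces to ordinary set algebra once every structure has been mapped to a normalized identifier. The sketch below assumes that mapping has already been done upstream; the function name `overlap_report` is hypothetical, and the single-letter keys are placeholders standing in for real identifiers such as InChIKeys.

```python
from typing import Dict, Set


def overlap_report(databases: Dict[str, Set[str]]) -> Dict[str, object]:
    """Summarize shared and unique identifiers across several databases."""
    all_sets = list(databases.values())
    union = set().union(*all_sets)
    shared_by_all = set.intersection(*all_sets)
    unique = {}
    for name, ids in databases.items():
        # Identifiers found in any database other than the current one.
        others = set().union(*(v for k, v in databases.items() if k != name))
        unique[name] = ids - others
    return {"union": union, "shared_by_all": shared_by_all, "unique": unique}


# Placeholder identifiers, not real InChIKeys or real database contents.
databases = {
    "ChEMBL": {"A", "B", "C"},
    "PubChem": {"B", "C", "D", "E"},
    "ChemSpider": {"C", "E", "F"},
}
report = overlap_report(databases)
print(len(report["union"]), report["shared_by_all"], report["unique"])
```

Running the same comparison at several normalization levels (for example, with and without stereochemistry) shows how much of the apparent disagreement between sources comes from standardization rules rather than from genuinely different chemistry.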

Protocol 2: Constructing a Standardized ML Benchmark from Multiple Sources

This protocol outlines the process used to create benchmarks like MoleculeNet [16].

- Dataset Selection: Curate multiple public datasets spanning diverse molecular properties (e.g., quantum mechanics, biophysics, physiology).

- Data Unification and Curation: Establish a consistent data format. This includes:

  - Structural Standardization: Apply a uniform standard to all molecules (e.g., using the RDKit library).

  - Duplicate Removal: Identify and remove duplicate entries based on standardized structures.

  - Activity/Value Annotation: Ensure property labels are consistent and correctly mapped.

- Data Splitting: Partition each dataset into predefined training, validation, and test sets. Implement multiple splitting strategies (e.g., random, scaffold-based) to evaluate model performance under different conditions and avoid overfitting to specific molecular scaffolds.

- Featurization: Provide high-quality, open-source implementations of various molecular featurization methods (e.g., molecular fingerprints, graph representations, 3D descriptors) to ensure fair comparison between different ML algorithms.

- Evaluation Metrics: Establish standard metrics (e.g., ROC-AUC, RMSE, MAE) for evaluating model performance across all tasks in the benchmark.

The Path to Standardized Benchmarking: MoleculeNet and Beyond

The Role of MoleculeNet as a Consensus Benchmark

MoleculeNet was introduced to address the critical lack of a standard benchmark for comparing molecular machine learning methods [16]. It serves as a large-scale benchmark that curates multiple public datasets, establishes evaluation metrics, and offers high-quality open-source implementations of featurization and learning algorithms. By providing this standardized framework, MoleculeNet allows researchers to objectively gauge the quality of new algorithms, a process that was previously challenging as most were benchmarked on different datasets [16]. Key findings from the MoleculeNet benchmark demonstrate that learnable representations (e.g., graph neural networks) are generally powerful but struggle with data-scarce or highly imbalanced tasks. It also showed that for quantum mechanical and biophysical datasets, the choice of a physics-aware featurization can be more impactful than the choice of the learning algorithm itself [16].

An Emerging Focus on Fine-Grained Reasoning: FGBench

While MoleculeNet operates primarily at the molecular level, new benchmarks are emerging that focus on finer-grained chemical information. FGBench is a dataset designed for molecular property reasoning at the functional group (FG) level [17]. It contains 625,000 problems that require understanding how specific functional groups (e.g., hydroxyl, carboxylic acid) impact molecular properties. This moves beyond molecule-level prediction to probe a model's ability to understand the structure-activity relationships (SAR) that underlie chemical properties, mimicking the reasoning process of a chemist [17]. Benchmarking state-of-the-art LLMs on FGBench has revealed that they currently struggle with FG-level property reasoning, highlighting a key area for future development in the field [17].

Diagram: The workflow from raw chemical data to model evaluation, showing the critical role of the curation and standardization pipeline.

Table 2: Key Research Reagent Solutions for Molecular Machine Learning

| Resource / Solution | Function in Research |
|---|---|
| ChEMBL | Provides a primary source of curated bioactive molecules with compound-target relationships and structure-activity relationship (SAR) data for model training [14]. |
| PubChem | Serves as a massive aggregator of chemical structures and bioassay data from hundreds of sources, useful for large-scale data mining and validation [15]. |
| MoleculeNet | Offers a standardized benchmark suite for the fair comparison of machine learning algorithms across diverse molecular tasks [16]. |
| FGBench | Provides a benchmark for evaluating fine-grained reasoning capabilities of models at the functional group level, linking structure to property [17]. |
| DeepChem Library | An open-source toolkit that provides high-quality implementations of featurizers and model architectures tailored to molecular machine learning [16]. |
| InChI/InChIKey | A standardized chemical identifier critical for deduplication and cross-referencing compounds across different databases [14]. |
| CACTVS Toolkit | A cheminformatics toolkit used for structural normalization, descriptor calculation, and identifier generation, essential for data preprocessing [14]. |

The journey from vast, heterogeneous public databases like ChEMBL and PubChem to rigorously curated benchmarks like MoleculeNet and FGBench is fundamental to progress in molecular machine learning. Objective comparisons reveal significant differences in the content and focus of primary data sources, driven by their distinct curation philosophies. These differences necessitate robust experimental protocols for data standardization and benchmarking. As the field evolves, benchmarks are increasingly focusing on finer-grained chemical reasoning, pushing models beyond simple property prediction toward a deeper, more interpretable understanding of chemical structure-activity relationships. This ongoing refinement of data sources and benchmarks ensures that ML models can be fairly evaluated and reliably applied to accelerate scientific discovery and drug development.

The Role of MoleculeNet in Advancing Molecular Property Prediction

Table of Contents

- Introduction to MoleculeNet

- MoleculeNet as a Benchmarking Platform

- Performance Comparison of ML Models

- Experimental Protocols in MoleculeNet Benchmarking

- Critical Analysis and Limitations

- Essential Research Toolkit

- Conclusion and Future Directions

Introduction to MoleculeNet

MoleculeNet is a large-scale benchmark for molecular machine learning, established to address the critical challenge of standardizing the evaluation of algorithms designed to predict molecular properties. Before its introduction, the field was hampered by a lack of standard benchmarks; new algorithms were typically evaluated on different datasets, making it difficult to gauge true performance improvements and slowing overall progress [1]. MoleculeNet consolidates multiple public datasets, establishes consistent metrics, and provides high-quality open-source implementations of various molecular featurization and learning algorithms through the DeepChem library [1] [16]. This collection encompasses over 700,000 compounds and covers a wide spectrum of molecular properties, ranging from quantum mechanical characteristics to physiological effects [1] [2]. By serving as a centralized, standardized testing ground, similar to the role of ImageNet in computer vision, MoleculeNet has become a foundational resource that facilitates reproducible, comparable, and rigorous assessment of molecular machine learning models, thereby accelerating innovation in the field [1].

MoleculeNet as a Benchmarking Platform

MoleculeNet's structure is designed to provide a comprehensive evaluation framework for machine learning models. Its core components include curated datasets, predefined data splitting methods, evaluation metrics, and integrated featurization techniques.

1. Dataset Curation and Categorization

MoleculeNet datasets are systematically organized into categories based on the nature and scale of the molecular properties they represent. The table below outlines the primary categories and their representative datasets.

Table 1: Categories of Datasets in MoleculeNet

| Category | Description | Example Datasets | Task Type |
|---|---|---|---|
| Quantum Mechanics | Calculated quantum chemical properties of small molecules [1]. | QM7, QM8, QM9 [1] [6] | Regression |
| Physical Chemistry | Measured physicochemical properties like solubility and lipophilicity [1]. | ESOL, FreeSolv, Lipophilicity [6] [5] | Regression |
| Biophysics | Biomolecular interaction data, such as protein-ligand binding [1]. | BACE, HIV, PCBA, MUV [6] [2] | Classification/Regression |
| Physiology | Complex physiological endpoints and toxicity in organisms [1]. | BBBP, Tox21, ClinTox, SIDER [6] [2] | Classification |

2. Data Splitting and Evaluation Metrics

A key contribution of MoleculeNet is its emphasis on appropriate data splitting strategies. Because random splitting can lead to overly optimistic performance estimates, MoleculeNet advocates scaffold splitting for many datasets [1] [18]. This method groups molecules by their two-dimensional structural frameworks (scaffolds) and assigns each scaffold group to a single subset, so that the validation and test sets contain core structures absent from the training set. This provides a more realistic and challenging estimate of a model's ability to generalize to novel chemical structures [1]. For each dataset, MoleculeNet also recommends specific evaluation metrics, such as Mean Absolute Error (MAE) or Root Mean Squared Error (RMSE) for regression tasks and Area Under the Receiver Operating Characteristic Curve (ROC-AUC) or Average Precision (AP) for classification tasks [1] [18].

3. Featurization Methods

MoleculeNet, via DeepChem, supports a diverse array of molecular featurization techniques that convert raw molecular structures (e.g., SMILES strings) into numerical representations suitable for machine learning models. These include:

- Fixed-Length Fingerprints: Such as Extended-Connectivity Fingerprints (ECFP), which are vector representations of molecular substructures [1].

- Learnable Representations: Such as graph convolutional networks, which learn feature representations directly from the molecular graph structure of atoms and bonds [1] [6].

Diagram: The standard workflow for benchmarking a model on MoleculeNet.

Performance Comparison of ML Models

MoleculeNet has been instrumental in objectively comparing the performance of diverse machine learning approaches. Benchmarks run on its datasets have yielded key insights into the relative strengths of different algorithms and representations.

Table 2: Comparative Performance of Model Types on Select MoleculeNet Tasks

| Model Type | Example Model | Dataset (Task) | Performance Metric | Result | Key Insight |
|---|---|---|---|---|---|
| Learnable Graph-Based | Graph Convolutional Network (GCN) [19] | ClinTox (Classification) | ROC-AUC (%) | 62.5 ± 2.8 [19] | Learnable representations generally offer strong performance [1]. |
| Learnable Graph-Based | Graph Isomorphism Network (GIN) [19] | Tox21 (Classification) | ROC-AUC (%) | 74.0 ± 0.8 [19] | |
| SMILES-based Language Model | MLM-FG (RoBERTa) [8] | ClinTox (Classification) | ROC-AUC | 0.945 [8] | Can outperform graph and 3D models by leveraging functional group context [8]. |
| 3D Graph Model | SchNet [19] | Tox21 (Classification) | ROC-AUC (%) | 77.2 ± 2.3 [19] | Physics-aware featurizations can be critical for quantum tasks [1]. |
| Advanced MTL GNN | ACS [19] | ClinTox (Classification) | ROC-AUC (%) | 85.0 ± 4.1 [19] | Effective MTL mitigates negative transfer in low-data regimes [19]. |

The benchmarks demonstrate that learnable representations, such as those from Graph Neural Networks (GNNs) and molecular language models, are powerful tools that often deliver top-tier performance [1]. For instance, the MLM-FG model, which uses a novel pre-training strategy of masking functional groups in SMILES strings, outperformed existing SMILES- and graph-based models on 9 out of 11 MoleculeNet tasks, sometimes even surpassing models that use explicit 3D structural information [8]. However, the results also highlight important caveats. Learnable representations can struggle with complex tasks under conditions of data scarcity and highly imbalanced classification [1]. Furthermore, for certain tasks like those in quantum mechanics, the use of physics-aware featurizations can be more impactful than the choice of the learning algorithm itself [1].

Experimental Protocols in MoleculeNet Benchmarking

To ensure fair and reproducible comparisons, benchmarking studies on MoleculeNet follow a standardized experimental protocol.

1. Dataset and Split Selection

Researchers select one or more datasets from the MoleculeNet suite relevant to their target property prediction domain. The recommended data splitting method (e.g., scaffold split) is typically used to ensure a rigorous assessment of generalizability [1] [8].

2. Model Training and Evaluation

The chosen model is trained on the training set, and its performance is monitored on the validation set. Hyperparameter tuning is conducted based on validation performance. Finally, the model is evaluated only once on the held-out test set using the pre-defined metric (e.g., ROC-AUC, MAE). It is critical to avoid making any decisions based on the test set to prevent information leakage.
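The "tune on validation, test once" discipline can be sketched as follows. The function `select_then_test` and the configuration names are hypothetical, and the scores are illustrative numbers rather than measured results. The example deliberately includes a configuration with a better test score than the selected one, to show that the selection must not be revisited after the test set has been touched.

```python
from typing import Callable, Dict, Iterable, List, Tuple


def select_then_test(
    configs: Iterable[str],
    validation_score: Callable[[str], float],
    test_score: Callable[[str], float],
    higher_is_better: bool = True,
) -> Tuple[str, float, float]:
    """Pick the best config on validation, then evaluate only that one on test."""
    valid_scores: Dict[str, float] = {c: validation_score(c) for c in configs}
    choose = max if higher_is_better else min
    best = choose(valid_scores, key=valid_scores.get)
    return best, valid_scores[best], test_score(best)


# Illustrative ROC-AUC values, not measured results.
VALID = {"lr=1e-2": 0.81, "lr=1e-3": 0.86, "lr=1e-4": 0.84}
TEST = {"lr=1e-2": 0.79, "lr=1e-3": 0.83, "lr=1e-4": 0.85}
test_calls: List[str] = []


def lookup_test(config: str) -> float:
    test_calls.append(config)  # record every access to the test set
    return TEST[config]


best, best_valid, final_test = select_then_test(VALID, VALID.get, lookup_test)
print(best, best_valid, final_test, test_calls)
```

Reporting 0.85 for `lr=1e-4` here would mean choosing a hyperparameter with test-set knowledge, which is exactly the leakage the protocol is designed to prevent.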

3. Addressing Data Challenges

- Multi-Task Learning (MTL): For datasets with multiple related prediction tasks (e.g., Tox21, SIDER), MTL can be employed. A recent advancement, Adaptive Checkpointing with Specialization (ACS), uses a shared GNN backbone with task-specific heads. It checkpoints the best model for each task individually during training, effectively mitigating "negative transfer", where learning one task harms another. This approach has been shown to match or surpass state-of-the-art methods on benchmarks like ClinTox, SIDER, and Tox21 [19].

- Low-Data Regimes: Techniques like ACS are particularly valuable in ultra-low data regimes, enabling accurate predictions even with as few as 29 labeled samples, as demonstrated in predicting sustainable aviation fuel properties [19].
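The per-task checkpointing idea described above can be illustrated with a small bookkeeping sketch. This is a simplified illustration of the selection logic only, under the assumption that a validation loss is available per task per epoch; it is not the ACS authors' implementation, which would also store and restore model weights. The function names, task names, and loss values are all hypothetical.

```python
from typing import Dict, List, Tuple


def best_epoch_per_task(history: List[Dict[str, float]]) -> Dict[str, Tuple[int, float]]:
    """For each task, find the epoch with the lowest validation loss."""
    best: Dict[str, Tuple[int, float]] = {}
    for epoch, losses in enumerate(history):
        for task, loss in losses.items():
            if task not in best or loss < best[task][1]:
                best[task] = (epoch, loss)
    return best


def best_shared_epoch(history: List[Dict[str, float]]) -> int:
    """Conventional alternative: one checkpoint chosen by mean loss over tasks."""
    mean_losses = [sum(losses.values()) / len(losses) for losses in history]
    return min(range(len(history)), key=mean_losses.__getitem__)


# Hypothetical validation losses for two tasks over four epochs.
history = [
    {"task_a": 0.70, "task_b": 0.90},
    {"task_a": 0.55, "task_b": 0.80},
    {"task_a": 0.60, "task_b": 0.72},
    {"task_a": 0.65, "task_b": 0.75},
]
per_task = best_epoch_per_task(history)
shared = best_shared_epoch(history)
print(per_task, shared)
```

In this toy history a single shared checkpoint lands on epoch 2, where `task_a` has already started to degrade, whereas per-task selection keeps epoch 1 for `task_a` and epoch 2 for `task_b`.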

Diagram: The architecture of a modern MTL GNN model such as ACS.

Critical Analysis and Limitations

Despite its widespread adoption and utility, MoleculeNet is not without limitations, and a critical understanding of these is necessary for proper interpretation of benchmarking results.

Data Quality and Standardization: Some datasets within MoleculeNet contain technical issues, such as invalid chemical structures (e.g., uncharged tetravalent nitrogens in the BBBP dataset) and a lack of consistent stereochemistry definition (e.g., in the BACE dataset, where many molecules have undefined stereocenters) [3]. Inconsistent representation of chemical groups (e.g., carboxylic acid represented as protonated, anionic, or salt forms) within a single dataset can also confound model learning [3].

Experimental Consistency: Many datasets are aggregated from multiple literature sources, leading to potential inconsistencies in experimental conditions and measurement protocols. For example, the BACE dataset was collected from 55 different papers, and combining IC₅₀ data from different assays can introduce significant noise, with values for the same molecule sometimes differing by more than 0.3 logs between studies [3].

Task Relevance and Dynamic Range: The practical relevance of some benchmarks has been questioned. The ESOL solubility dataset spans over 13 logs, which is much wider than the typical 2-3 log range of interest in pharmaceutical development, potentially leading to inflated performance estimates [3]. Similarly, classification cutoffs, such as the 200 nM cutoff in the BACE classification task, may not reflect realistic scenarios in drug discovery for screening hits or lead optimization [3].
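The interaction between assay noise and a hard classification cutoff can be checked with a few lines of arithmetic. The sketch below converts IC₅₀ values in nM to pIC₅₀ and applies a 200 nM cutoff. The two measurements for the same compound are hypothetical, and the convention that values at or below the cutoff count as active is an assumption made for illustration.

```python
import math


def pic50_from_nm(ic50_nm: float) -> float:
    """pIC50 = -log10(IC50 in mol/L) = 9 - log10(IC50 in nM)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)


def is_active(ic50_nm: float, cutoff_nm: float = 200.0) -> bool:
    # Assumed convention: more potent than (or equal to) the cutoff means active.
    return ic50_nm <= cutoff_nm


cutoff_pic50 = pic50_from_nm(200.0)  # about 6.70

# Hypothetical measurements of one compound in two different assays.
assay_a_nm, assay_b_nm = 150.0, 300.0
log_gap = abs(pic50_from_nm(assay_a_nm) - pic50_from_nm(assay_b_nm))  # about 0.30
labels = (is_active(assay_a_nm), is_active(assay_b_nm))
print(round(cutoff_pic50, 2), round(log_gap, 2), labels)
```

A twofold difference between assays is only about 0.3 log units, yet it is enough to move this hypothetical compound across the cutoff, so the same molecule could be labeled active or inactive depending on which paper it was taken from.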

Data Leakage and Splitting: While MoleculeNet proposes splitting strategies, errors in source data can undermine them. The BBBP dataset, for instance, contains duplicate structures, some with conflicting labels, which can lead to data leakage if not identified and handled [3].
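A simple audit for this problem is sketched below. It assumes each record already carries a canonical structure key (for example, a canonical SMILES string or InChIKey produced by a cheminformatics toolkit), and it adopts one possible policy: collapse consistent duplicates to a single record and drop structures whose labels conflict. The function names, the policy, and the example labels are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Record = Tuple[str, int]  # (canonical structure key, binary label)


def audit_duplicates(records: List[Record]) -> Tuple[Set[str], Set[str]]:
    """Return (keys that occur more than once, duplicated keys with conflicting labels)."""
    counts: Dict[str, int] = defaultdict(int)
    labels: Dict[str, Set[int]] = defaultdict(set)
    for key, label in records:
        counts[key] += 1
        labels[key].add(label)
    duplicated = {k for k, c in counts.items() if c > 1}
    conflicting = {k for k in duplicated if len(labels[k]) > 1}
    return duplicated, conflicting


def deduplicate(records: List[Record]) -> List[Record]:
    """Keep the first copy of consistent duplicates; drop conflicting structures entirely."""
    _, conflicting = audit_duplicates(records)
    seen: Set[str] = set()
    cleaned: List[Record] = []
    for key, label in records:
        if key in conflicting or key in seen:
            continue
        seen.add(key)
        cleaned.append((key, label))
    return cleaned


# Made-up records; the labels are for illustration only.
records = [
    ("CCO", 1), ("CCO", 1),          # consistent duplicate
    ("c1ccccc1", 0),
    ("CC(=O)O", 1), ("CC(=O)O", 0),  # duplicate with conflicting labels
    ("CCN", 0),
]
duplicated, conflicting = audit_duplicates(records)
cleaned = deduplicate(records)
print(duplicated, conflicting, cleaned)
```

Running such a check before splitting matters because a duplicate that survives can land in both the training and the test set, regardless of which splitting strategy is used.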

These limitations underscore that while MoleculeNet is an invaluable tool for methodological comparison, performance on its benchmarks should not be over-interpreted as a direct guarantee of performance in real-world, prospective drug discovery applications.

Essential Research Toolkit

To conduct research and benchmarking in molecular property prediction, scientists rely on a core set of software tools and data resources. The following table details key components of the modern research toolkit.

Table 3: Essential Toolkit for Molecular Property Prediction Research

| Tool / Resource | Type | Primary Function | Relevance to MoleculeNet |
|---|---|---|---|
| DeepChem [1] [6] | Software Library | Provides end-to-end tools for molecular ML, including data loading, featurization, model building, and training. | The primary library that hosts and provides access to the MoleculeNet datasets. |
| RDKit [18] | Cheminformatics Toolkit | Handles chemical informatics tasks: parsing SMILES, generating molecular fingerprints, calculating descriptors, and substructure searching. | Used for molecule parsing, standardization, and featurization (e.g., ECFP generation). Critical for graph construction in OGB [18]. |
| OGB (Open Graph Benchmark) [18] | Benchmarking Suite | Provides standardized, large-scale graph learning benchmarks. | Includes pre-processed MoleculeNet datasets (e.g., ogbg-molhiv, ogbg-molpcba) as graph objects, ensuring consistent comparison. |
| PyTorch / TensorFlow | Machine Learning Frameworks | Provide flexible, low-level environments for building and training complex deep learning models. | Used for implementing custom neural network architectures, including GNNs and transformers. |
| PyTorch Geometric (PyG) / DGL | Library Extensions | Provide specialized, efficient implementations of graph neural network layers and operations. | Essential for building and training GNN models on molecular graph data from MoleculeNet and OGB. |
| SMILES [8] | Data Format | A line notation for representing molecular structures as text. | The standard string-based representation for molecules in many MoleculeNet datasets and for training language models like MLM-FG [8]. |

Conclusion and Future Directions

MoleculeNet has played a pivotal role in advancing the field of molecular machine learning by providing a standardized, large-scale benchmarking platform. It has enabled rigorous and reproducible comparison of diverse algorithms, from traditional fingerprints with random forests to sophisticated graph neural networks and transformer-based language models. The benchmarks run on MoleculeNet have yielded critical insights, establishing the power of learnable representations while also revealing their limitations in low-data scenarios and highlighting the enduring importance of physics-aware featurizations for certain tasks.

Looking forward, the evolution of molecular benchmarking is progressing in several key directions. There is a growing emphasis on incorporating finer-grained structural information, as seen with datasets like FGBench that annotate functional groups to enable more interpretable and structure-aware reasoning in large language models [20]. Another significant trend is the development of more robust learning paradigms like Adaptive Checkpointing with Specialization (ACS) that effectively manage the challenges of multi-task learning and extreme data scarcity [19]. Furthermore, the community continues to refine benchmarks to address known limitations, moving towards higher-quality, more relevant, and more rigorously curated datasets that better reflect the real-world challenges of molecular design and drug discovery. Through these continued efforts, building upon the foundation laid by MoleculeNet, the field is poised to develop more powerful, reliable, and impactful predictive models for molecular science.

Advanced Methodologies: From Molecular Representations to Foundation Models

Molecular representation learning (MRL) has catalyzed a paradigm shift in computational chemistry and drug discovery, moving the field from manually engineered descriptors to features extracted automatically by deep learning [21]. This transition enables data-driven prediction of molecular properties, inverse design of compounds, and accelerated discovery of chemical and crystalline materials [21]. The choice of molecular representation (graph-based, string-based, image-based, or 3D structural) fundamentally influences model performance in predicting chemical properties. In the context of benchmarking on MoleculeNet datasets, this section compares the dominant representation paradigms, their performance characteristics, and their implementation protocols, to help researchers, scientists, and drug development professionals select an approach suited to their application.

Molecular representation learning encompasses diverse approaches to encoding chemical structures into computationally tractable formats. Each paradigm offers distinct advantages and limitations for capturing relevant chemical information.

Graph-based representations explicitly model molecules as graphs with atoms as nodes and bonds as edges, naturally capturing molecular topology and connectivity patterns [21] [22]. Popular implementations include Message-Passing Neural Networks (MPNNs), Graph Attention Networks (GATs), and Graph Convolutional Networks (GCNs), which operate on 2D molecular structures but can be extended to 3D configurations [22].
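To make the atoms-as-nodes, bonds-as-edges picture concrete, here is a minimal sketch in plain Python. The ethanol graph is written out by hand, and the `readout` function is a deliberately simple stand-in for a learned GNN layer (an assumption for illustration, not any model from [22]). It shows why a neighbor-aggregation step followed by an order-independent readout does not depend on how atoms are numbered.

```python
from collections import Counter

# Heavy-atom graph of ethanol (SMILES "CCO"), written by hand for illustration.
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]  # undirected bonds as pairs of atom indices


def neighbor_lists(n_atoms, bonds):
    """Build an adjacency list; each undirected bond is stored in both directions."""
    nbrs = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        nbrs[i].append(j)
        nbrs[j].append(i)
    return nbrs


def readout(atoms, bonds):
    """One round of neighbor aggregation followed by an order-independent readout.

    Each atom is described by its element plus the sorted elements of its
    neighbors. The molecule-level result is a multiset of those descriptions,
    so it cannot depend on atom numbering.
    """
    nbrs = neighbor_lists(len(atoms), bonds)
    environments = [
        (atoms[i], tuple(sorted(atoms[j] for j in nbrs[i])))
        for i in range(len(atoms))
    ]
    return Counter(environments)


def permute(atoms, bonds, perm):
    """Renumber atoms so that old index i becomes perm[i]."""
    new_atoms = [None] * len(atoms)
    for old, new in enumerate(perm):
        new_atoms[new] = atoms[old]
    new_bonds = [(perm[i], perm[j]) for i, j in bonds]
    return new_atoms, new_bonds


original = readout(atoms, bonds)
shuffled = readout(*permute(atoms, bonds, [2, 0, 1]))
print(original == shuffled)
```

Real GNN layers replace the element labels with learned feature vectors and the multiset with a sum or mean, but the invariance argument is the same.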

String-based representations leverage textual encodings of molecular structures, with SMILES (Simplified Molecular-Input Line-Entry System) and SELFIES (SELF-referencing Embedded Strings) being the most prominent [23]. These sequential representations are compatible with natural language processing architectures but vary in their robustness for generative tasks, with SELFIES demonstrating particular advantages by guaranteeing semantically valid molecular representations [23].
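As an illustration of how a SMILES string becomes a token sequence, the sketch below splits a string into atom-level tokens with a regular expression. The pattern is an assumption written for this example: it covers bracket atoms, the organic-subset elements, ring-closure digits, and common bond and branch symbols, and it is not the tokenizer used in [23].

```python
import re

# Illustrative pattern: bracket atoms first, then two-letter halogens,
# then one-letter organic-subset atoms, ring closures, bonds and branches.
TOKEN_PATTERN = re.compile(
    r"\[[^\]]+\]|Br|Cl|[BCNOPSFI]|[bcnops]|%\d{2}|\d|[=#\-()/\\.:~*$]"
)


def tokenize_smiles(smiles):
    """Split a SMILES string into atom-level tokens.

    Raises ValueError if some character is not covered by the pattern,
    so silent information loss is impossible.
    """
    tokens = TOKEN_PATTERN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


print(tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin
print(tokenize_smiles("[Na+].[Cl-]"))
```

Keeping `Cl`, `Br`, and bracket atoms such as `[Na+]` as single tokens is the main difference from naive character-level splitting, which would break them into chemically meaningless pieces.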

Image-based representations render molecular structures as 2D images, enabling the application of computer vision models and foundation architectures like CLIP for molecular property prediction [24]. This approach facilitates knowledge transfer from pre-trained vision models, potentially reducing data requirements for effective molecular representation learning [24].

3D structural representations capture spatial atomic arrangements, molecular geometry, and conformational properties that are critical for understanding molecular interactions and stereochemistry [25] [21]. These representations can incorporate physical symmetries and constraints, such as SE(3) equivariance, to enhance model robustness and physical consistency [25].
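The symmetry requirement can be checked numerically. The sketch below uses made-up coordinates (not a real conformer) and plain Python to show two things: interatomic distances are unchanged by a rotation plus translation, and they are also unchanged by a mirror reflection, whereas a signed volume flips sign. That second point is one way to see why distance-only features cannot separate mirror-image molecules, while chirality-aware models need orientation-sensitive quantities.

```python
import math


def rigid_motion(coords, angle, shift):
    """Rotate each point about the z-axis by `angle` (radians), then translate."""
    c, s = math.cos(angle), math.sin(angle)
    return [
        (c * x - s * y + shift[0], s * x + c * y + shift[1], z + shift[2])
        for x, y, z in coords
    ]


def pairwise_distances(coords):
    """All interatomic distances, in a fixed pair order."""
    return [math.dist(a, b) for i, a in enumerate(coords) for b in coords[i + 1:]]


def signed_volume(a, b, c, d):
    """Triple product (b-a) . ((c-a) x (d-a)); its sign encodes handedness."""
    u = [b[k] - a[k] for k in range(3)]
    v = [c[k] - a[k] for k in range(3)]
    w = [d[k] - a[k] for k in range(3)]
    cross = (
        v[1] * w[2] - v[2] * w[1],
        v[2] * w[0] - v[0] * w[2],
        v[0] * w[1] - v[1] * w[0],
    )
    return sum(u[k] * cross[k] for k in range(3))


# Hypothetical four-atom geometry, chosen only so that the volume is non-zero.
coords = [(0.0, 0.0, 0.0), (1.1, 0.0, 0.0), (1.6, 1.2, 0.3), (-0.5, 0.9, -0.8)]
moved = rigid_motion(coords, angle=0.7, shift=(3.0, -2.0, 5.0))
mirrored = [(x, y, -z) for x, y, z in coords]

same_distances = all(
    math.isclose(p, q, abs_tol=1e-9)
    for p, q in zip(pairwise_distances(coords), pairwise_distances(moved))
)
print(same_distances)
print(signed_volume(*coords), signed_volume(*moved), signed_volume(*mirrored))
```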

Performance Benchmarking on MoleculeNet

Comparative evaluation across representation paradigms reveals distinct performance profiles across different chemical property prediction tasks. The following table synthesizes experimental findings from rigorous benchmarking studies.

Table 1: Performance Comparison of Molecular Representation Learning Paradigms

| Representation Type | Sample Model Architectures | Key Strengths | Performance Highlights (MoleculeNet Tasks) | Computational Considerations |
|---|---|---|---|---|
| Graph-Based | MPNN, GAT, GCN [22] | Natural encoding of molecular topology; Permutation invariance [22] | State-of-the-art in many classification tasks [22]; Optimal with bidirectional message-passing & attention [22] | Moderate computational cost; 2D graphs reduce cost by >50% vs 3D [22] |
| SMILES/SELFIES | ChemBERTa, SMILES Transformer [23] | Compact representation; Leverages NLP advances [23] | ROC-AUC: 0.803 (HIV), 0.858 (Tox21), 0.916 (BBBP) with optimal tokenization [23] | Low computational cost; Tokenization strategy critical [23] |
| 3D Structures | SE(3)-encoder, Uni-Mol, 3D Infomax [25] [21] | Captures stereochemistry & spatial interactions [25] | Superior on chirality-aware tasks [25]; Enhanced prediction for spatially-dependent properties [21] | High computational cost; Conformational generation required [25] |
| Multi-Modal | MMSA, MolFusion, OmniMol [25] [26] | Integrates complementary information; Robust to distribution shifts [26] | 1.8% to 9.6% avg. ROC-AUC improvement over single modalities [26]; SOTA in 47/52 ADMET tasks [25] | High computational cost; Complex training protocols [25] [26] |
| Image-Based | MoleCLIP [24] | Leverages vision foundation models; Data efficient [24] | Competitive with SOTA models using less pretraining data [24]; Robust to distribution shifts [24] | Moderate computational cost; Transfer learning from vision models [24] |
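Most of the classification figures quoted in this section are ROC-AUC values. As a reminder of what that number measures, the sketch below computes it directly from its pairwise definition: the fraction of (positive, negative) pairs in which the positive compound receives the higher score, with ties counted as half. The labels and scores are invented for illustration, and the quadratic loop is meant for clarity rather than speed.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the probability that a positive outranks a negative."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Toy example: 3 active and 3 inactive compounds with model scores.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
print(roc_auc(labels, scores))
```

Because the metric depends only on ranking, it is insensitive to class imbalance in the way plain accuracy is not, which is one reason it is common on imbalanced tasks such as HIV.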

Table 2: Tokenization Method Performance for String-Based Representations

| Representation | Tokenization Method | HIV (ROC-AUC) | Tox21 (ROC-AUC) | BBBP (ROC-AUC) | Key Findings |
|---|---|---|---|---|---|
| SMILES | Byte Pair Encoding (BPE) | 0.781 | 0.841 | 0.901 | Standard approach for sub-word tokenization [23] |
| SMILES | Atom Pair Encoding (APE) | 0.803 | 0.858 | 0.916 | Preserves chemical integrity; Superior performance [23] |
| SELFIES | Byte Pair Encoding (BPE) | 0.772 | 0.839 | 0.902 | Robust representation; fewer invalid outputs [23] |
| SELFIES | Atom Pair Encoding (APE) | 0.793 | 0.851 | 0.910 | Improved over BPE but slightly behind SMILES+APE [23] |
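The size of the APE-over-BPE gain is easier to judge as a difference than as two columns of raw scores. The short script below recomputes the per-task and mean gains from the values in Table 2; the dictionary layout and function names are just a convenient choice for this example.

```python
# ROC-AUC values copied from Table 2.
results = {
    ("SMILES", "BPE"): {"HIV": 0.781, "Tox21": 0.841, "BBBP": 0.901},
    ("SMILES", "APE"): {"HIV": 0.803, "Tox21": 0.858, "BBBP": 0.916},
    ("SELFIES", "BPE"): {"HIV": 0.772, "Tox21": 0.839, "BBBP": 0.902},
    ("SELFIES", "APE"): {"HIV": 0.793, "Tox21": 0.851, "BBBP": 0.910},
}


def ape_gain(representation):
    """Per-task ROC-AUC difference (APE minus BPE) for one representation."""
    ape = results[(representation, "APE")]
    bpe = results[(representation, "BPE")]
    return {task: round(ape[task] - bpe[task], 3) for task in ape}


def mean_gain(representation):
    """Average of the per-task gains."""
    gains = ape_gain(representation)
    return sum(gains.values()) / len(gains)


for rep in ("SMILES", "SELFIES"):
    print(rep, ape_gain(rep), round(mean_gain(rep), 4))
```

On these numbers the gain ranges from 0.015 to 0.022 ROC-AUC for SMILES and from 0.008 to 0.021 for SELFIES. Table 2 does not report variance across seeds or splits, so whether gaps of this size are statistically meaningful cannot be judged from the table alone.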

Experimental Protocols and Methodologies

Graph-Based Representation Learning

The strongest reported results in graph-based molecular representation learning come from simplified message-passing architectures. These implementations use bidirectional message-passing with attention mechanisms, applied to minimalist message formulations that exclude redundant self-perception components [22]. Experimental findings indicate that convolution normalization factors do not consistently benefit predictive power across diverse datasets [22]. Spatial features can also be incorporated without giving up computational efficiency: 2D molecular graphs supplemented with carefully chosen 3D descriptors preserve predictive performance while reducing computational cost by over 50% relative to full 3D graphs, which is a significant advantage for high-throughput screening campaigns [22].
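The following sketch shows one attention-weighted message-passing step in plain Python. It is a generic dot-product attention written for illustration, not the formulation evaluated in [22]: every bond carries messages in both directions, the weights over a node's neighbors come from a softmax, and the update uses neighbor features only, with no self term. The one-hot features and the three-atom graph are assumptions chosen to keep the arithmetic easy to follow.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    top = max(values)
    exps = [math.exp(v - top) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def message_passing_step(features, bonds):
    """One bidirectional, attention-weighted aggregation over neighbors."""
    n = len(features)
    nbrs = [[] for _ in range(n)]
    for i, j in bonds:  # each bond sends messages both ways
        nbrs[i].append(j)
        nbrs[j].append(i)

    updated = []
    for i in range(n):
        if not nbrs[i]:  # isolated atom: nothing to aggregate, keep features
            updated.append(list(features[i]))
            continue
        weights = softmax([dot(features[i], features[j]) for j in nbrs[i]])
        aggregated = [0.0] * len(features[i])
        for w, j in zip(weights, nbrs[i]):
            for k, value in enumerate(features[j]):
                aggregated[k] += w * value  # neighbor message only, no self term
        updated.append(aggregated)
    return updated


# Ethanol heavy atoms (C, C, O) with one-hot [is_C, is_O] features.
features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]
print(message_passing_step(features, bonds))
```

In a trained model the raw features would first pass through learned linear maps, and several such steps would be stacked before a readout.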

String Representation and Tokenization Strategies

String-based representation learning depends critically on the tokenization strategy. Recent research introduces Atom Pair Encoding (APE), a tokenization approach designed specifically for chemical languages, and reports that it outperforms traditional Byte Pair Encoding (BPE) by better preserving structural integrity and contextual relationships among chemical elements [23]. Experimental protocols typically involve training BERT-based models with masked language modeling objectives on large molecular datasets (e.g., 77 million SMILES for ChemBERTa), followed by fine-tuning on specific MoleculeNet benchmark tasks [23]. Across the HIV, Tox21, and BBBP datasets, APE tokenization with SMILES representations achieves the highest ROC-AUC of the four combinations compared in Table 2 [23].
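For readers unfamiliar with sub-word tokenization, the sketch below implements the generic BPE merge-learning loop on sequences of symbols: count adjacent pairs, merge the most frequent one, repeat. It is not the APE algorithm of [23], whose details are not given here. The point it illustrates is only that the starting alphabet matters: if the input sequences are atom-level tokens rather than characters, every learned token is a concatenation of whole atoms. The toy corpus and function names are assumptions for this example.

```python
from collections import Counter


def most_frequent_pair(corpus):
    """Return the most common adjacent symbol pair, or None if there is none."""
    counts = Counter()
    for seq in corpus:
        counts.update(zip(seq, seq[1:]))
    if not counts:
        return None
    # Break ties by the pair itself so the result is deterministic.
    return max(counts.items(), key=lambda item: (item[1], item[0]))[0]


def merge_pair(seq, pair):
    """Replace every non-overlapping occurrence of `pair` with one merged symbol."""
    merged, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
            merged.append(seq[i] + seq[i + 1])
            i += 2
        else:
            merged.append(seq[i])
            i += 1
    return merged


def learn_merges(corpus, n_merges):
    """Learn up to `n_merges` merge rules; return the rules and the merged corpus."""
    corpus = [list(seq) for seq in corpus]
    merges = []
    for _ in range(n_merges):
        pair = most_frequent_pair(corpus)
        if pair is None:
            break
        merges.append(pair)
        corpus = [merge_pair(seq, pair) for seq in corpus]
    return merges, corpus


# Toy corpus already split into atom-level tokens (hand-written, illustrative).
atom_corpus = [["C", "C", "O"], ["C", "C", "N"], ["Cl", "C", "C", "Cl"]]
merges, merged_corpus = learn_merges(atom_corpus, n_merges=1)
print(merges, merged_corpus)
```

Starting the same loop from raw characters would treat `C` and `l` as separate symbols and could learn merges that cut across atom boundaries.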

3D and Geometry-Aware Learning

3D molecular representation learning incorporates spatial geometry through specialized architectures and training strategies. The OmniMol framework uses an SE(3)-encoder to respect physical symmetry, combining equilibrium conformation supervision, recursive geometry updates, and scale-invariant message passing to support learning-based conformational relaxation [25]. The reported experiments show state-of-the-art property prediction, strong results on chirality-aware tasks, and improved explainability of molecule-property relationships [25]. Training typically draws on large-scale DFT datasets such as Open Molecules 2025 (OMol25), which contains over 100 million density functional theory calculations covering a wide range of elemental, chemical, and structural diversity [27].

Multi-Modal and Fusion Approaches

Multi-modal molecular representation methods integrate information from different modalities (images, 2D/3D topologies) to create unified molecular embeddings. The MMSA framework employs a structure-awareness module that enhances molecular representation by constructing hypergraph structures to model higher-order correlations between molecules [26]. This approach incorporates a memory mechanism for storing typical molecular representations and aligning them with memory anchors to integrate invariant knowledge, improving model generalization ability [26]. Experimental results demonstrate that MMSA achieves state-of-the-art performance on MoleculeNet benchmarks, with average ROC-AUC improvements ranging from 1.8% to 9.6% over baseline methods [26].

Image-Based Representation Learning

Image-based molecular representation leverages computer vision foundation models for chemical property prediction. MoleCLIP adapts OpenAI's vision foundation model CLIP as a backbone for molecular image representation learning, employing a stratified pretraining workflow that requires significantly less molecular pretraining data to match state-of-the-art performance [24]. Experimental protocols involve rendering molecular structures as standardized 2D images, followed by transfer learning from pre-trained vision models and fine-tuning on target property prediction tasks [24]. This approach demonstrates particular robustness to distribution shifts and effectively adapts to varied tasks and datasets, highlighting the potential of foundation model innovations to advance synthetic chemistry applications [24].

Workflow Visualization

Diagram 1: Molecular Representation Learning Workflow. This diagram illustrates the comprehensive pipeline from molecular structures through different representation paradigms and training strategies to property prediction.

Research Reagent Solutions

Table 3: Essential Research Tools for Molecular Representation Learning

| Tool/Category | Specific Examples | Function & Application | Key Features |
|---|---|---|---|
| Molecular Datasets | MoleculeNet, OMol25, ADMETLab 2.0 [27] [25] | Benchmarking & model training | Curated property labels; Diverse chemical space [27] |
| Graph Neural Network Frameworks | MPNN, GAT, GCN implementations [22] | Graph-based representation learning | Bidirectional message-passing; Attention mechanisms [22] |
| Chemical Tokenizers | Atom Pair Encoding (APE), Byte Pair Encoding (BPE) [23] | Processing string representations | Preserves chemical integrity; Contextual relationships [23] |
| 3D Structure Tools | SE(3)-encoder, RDKit modules [25] [22] | 3D molecular representation | Chirality awareness; Conformational generation [25] |
| Multi-Modal Fusion Architectures | MMSA, OmniMol, MolFusion [26] [25] | Integrating multiple representations | Hypergraph structures; Task-adaptive outputs [26] [25] |
| Foundation Model Adapters | MoleCLIP [24] | Leveraging pre-trained vision models | Transfer learning; Reduced data requirements [24] |

The benchmarking analysis of molecular representation learning paradigms reveals a complex performance landscape where optimal approach selection depends significantly on specific task requirements, available computational resources, and target chemical properties. Graph-based representations provide strong all-around performance with natural molecular topology encoding, while string-based approaches offer computational efficiency when paired with advanced tokenization strategies like Atom Pair Encoding. 3D representations excel in chirality-aware tasks and spatially-dependent properties but incur higher computational costs. Multi-modal approaches consistently achieve state-of-the-art performance by integrating complementary information sources, though with increased implementation complexity. For researchers working with limited data, image-based representations leveraging vision foundation models demonstrate remarkable data efficiency and robustness to distribution shifts. As the field advances, the integration of physical principles, improved explainability, and more efficient fusion strategies will further enhance the predictive power and practical utility of molecular representation learning in drug discovery and materials science applications.

The application of deep learning in chemistry and drug discovery hinges on the ability to create powerful molecular representations. Pretraining strategies—including contrastive learning, masked modeling, and multi-task objectives—have emerged as pivotal techniques for learning these general-purpose representations from unlabeled molecular data. These approaches aim to capture fundamental chemical principles and structural patterns, enabling models to perform effectively on downstream tasks with limited labeled data. This guide provides a comparative analysis of these pretraining paradigms, evaluating their performance, experimental methodologies, and practical implementation within the context of molecular machine learning, with a specific focus on benchmarking against MoleculeNet datasets.
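As a concrete picture of the masked-modeling objective, the sketch below corrupts a token sequence by replacing a random subset of tokens with a mask symbol and records the original tokens as prediction targets. It is a simplified illustration: the 15% default follows the rate popularized by BERT, the `[MASK]` string and function name are assumptions, and refinements such as sometimes substituting random tokens instead of the mask are omitted.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Return (masked_tokens, labels) for a masked-modeling training example.

    labels[i] holds the original token where a mask was applied and None
    elsewhere, so a loss is computed only at masked positions.
    """
    rng = random.Random(seed)  # seeded for reproducible corruption
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(token)
        else:
            masked.append(token)
            labels.append(None)
    return masked, labels


# Atom-level tokens for ethyl acetate, written out by hand.
tokens = ["C", "C", "(", "=", "O", ")", "O", "C", "C"]
masked, labels = mask_tokens(tokens, mask_prob=0.3, seed=1)
print(masked)
print(labels)
```

The same corruption idea carries over to graphs, where atom or bond attributes are hidden instead of string tokens.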