Traditional vs. Deep Learning for Molecular Property Prediction: A Comprehensive Comparison for Drug Discovery

This article provides a systematic comparison of traditional machine learning and modern deep learning methods for molecular property prediction, a critical task in drug discovery and materials science.

Abstract

This article provides a systematic comparison of traditional machine learning and modern deep learning methods for molecular property prediction, a critical task in drug discovery and materials science. Aimed at researchers and development professionals, it explores the foundational principles of expert-crafted features like molecular fingerprints and descriptors versus the end-to-end learning capabilities of Graph Neural Networks and Transformers. The content delves into practical applications, addresses key challenges such as data scarcity and model interpretability, and offers a rigorous validation framework based on benchmark datasets and performance metrics. By synthesizing the latest research, this guide serves as a strategic resource for selecting and optimizing predictive models to accelerate scientific innovation.

From Expert Features to Learned Representations: The Evolution of Molecular Property Prediction

Molecular property prediction is a computational task that uses a molecule's structure to predict its physical, chemical, or biological characteristics. It is a cornerstone of modern research, accelerating the design of new drugs and materials by providing a fast, in silico alternative to costly and time-consuming lab experiments [1] [2]. The field is currently defined by the comparison between traditional machine learning methods and emerging deep learning techniques.

Experimental Comparison of Prediction Methods

The performance of different molecular property prediction methods has been rigorously evaluated across multiple public benchmarks. The following table summarizes key quantitative results from recent, comprehensive studies.

Table 1: Performance Comparison of Molecular Property Prediction Methods on Benchmark Datasets

| Method Category | Specific Model/Representation | Dataset(s) | Key Performance Metrics | Experimental Setup & Notes |
|---|---|---|---|---|
| Traditional Machine Learning | Random Forest (RF) with RDKit Descriptors [3] | CATMoS (NT & VT) [3] | Balanced Accuracy: ~0.785 [3] | Mondrian conformal prediction framework; performance is strong on balanced datasets [3]. |
| Traditional Machine Learning | RF with CDDD (Autoencoder) Descriptors [3] | CATMoS (NT & VT) [3] | Balanced Accuracy: ~0.785 [3] | Autoencoder-generated descriptors; performs similarly to physico-chemical descriptors for this task [3]. |
| Deep Learning (Descriptor-Based) | Random Forest with CDDD [3] | CATMoS (NT & VT) [3] | Efficiency (Class 0/1): 0.879 / 0.855 [3] | Used as a baseline in comparison with descriptor-free deep learning methods [3]. |
| Deep Learning (Graph-Based) | Directed-MPNN (D-MPNN) [4] [5] | Multiple MoleculeNet benchmarks [5] | Matches or surpasses recent supervised models [5] | A robust graph-based architecture often used as a strong baseline; outperforms other node-centric message passing models by 11.5% on average [5]. |
| Deep Learning (Sequence-Based) | MolBERT (SMILES-based) [3] | CATMoS (Very Toxic) [3] | Balanced Accuracy: 0.86-0.87; Sensitivity/Specificity: 0.86-0.87 [3] | Pre-trained model; outperformed other methods on a highly imbalanced dataset without needing over-sampling [3]. |
| Deep Learning (Geometric) | Geometric D-MPNN [4] | Novel thermochemistry datasets [4] | Achieves "chemical accuracy" (<1 kcal mol⁻¹ error) [4] | Incorporates 3D molecular information; meets stringent accuracy requirements for thermochemistry predictions [4]. |
| Multimodal Fusion | MMFRL [6] | 11 MoleculeNet tasks [6] | Significantly outperforms existing methods in accuracy and robustness [6] | Integrates multiple data modalities (e.g., graph, NMR, image) via relational learning; best performance with intermediate fusion [6]. |
| Multi-Task Learning | ACS (Adaptive Checkpointing) [5] | ClinTox, SIDER, Tox21 [5] | Outperforms Single-Task Learning (STL) by 8.3% on average [5] | Effectively mitigates "negative transfer" in multi-task learning; excels in ultra-low data regimes (e.g., 29 samples) [5]. |
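
Several rows above report balanced accuracy, sensitivity, and specificity rather than plain accuracy. The following minimal sketch, using made-up labels rather than data from any cited study, shows why that choice matters on an imbalanced toxicity set: a model that predicts "non-toxic" for everything still reaches 80% accuracy, but only 0.5 balanced accuracy.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fn, tn, fp) for binary labels where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp, fn, tn, fp


def balanced_accuracy(y_true, y_pred):
    """Return (sensitivity, specificity, balanced accuracy)."""
    tp, fn, tn, fp = confusion_counts(y_true, y_pred)
    sensitivity = tp / (tp + fn)   # recall on the positive (toxic) class
    specificity = tn / (tn + fp)   # recall on the negative (non-toxic) class
    return sensitivity, specificity, (sensitivity + specificity) / 2


# Toy imbalanced set: 2 toxic compounds (1) among 10; the model predicts all non-toxic.
y_true = [1, 1] + [0] * 8
y_all_negative = [0] * 10

accuracy = sum(t == p for t, p in zip(y_true, y_all_negative)) / len(y_true)
sens, spec, bal_acc = balanced_accuracy(y_true, y_all_negative)
print(accuracy, sens, spec, bal_acc)
```

Balanced accuracy is the mean of the two per-class recalls, so it cannot be inflated by the majority class alone.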

Detailed Experimental Protocols

To ensure reproducibility and provide context for the data in Table 1, here are the detailed methodologies from key cited experiments.

Table 2: Detailed Experimental Protocols from Key Studies

| Study Component | Protocol Description |
|---|---|
| Comparative Analysis (CATMoS) [3] | Dataset: CATMoS acute toxicity data (Very Toxic VT, Non-Toxic NT). Data Splits: Used original training/evaluation sets; random splits for training, validation, and conformal prediction calibration. Feature Generation: Standardized SMILES strings; calculated 96 RDKit physico-chemical descriptors and 512 CDDD autoencoder descriptors. Models & Training: Compared Random Forest (RDKit/CDDD) vs. deep learning MolBERT/Molecular-graph-BERT. Used Mondrian conformal prediction for valid, efficient outcomes and to handle class imbalance without sampling. |
| Systematic Model Evaluation [7] | Dataset Scope: Extensive evaluation on MoleculeNet, opioids-related datasets, and molecular descriptor datasets. Experimental Scale: Trained 62,820 models total (50,220 on fixed representations, 4,200 on SMILES, 8,400 on molecular graphs). Representations Compared: Fixed descriptors (e.g., ECFP, RDKit2D), SMILES strings, and molecular graphs. Key Focus: Investigated impact of dataset size, activity cliffs, and statistical rigor on model performance. |
| Geometric Deep Learning [4] | Data: Novel quantum-chemical datasets (ThermoG3, ThermoCBS) of ~124,000 molecules. Model Architecture: Geometric Directed Message Passing Neural Networks (D-MPNN) that incorporate 3D molecular coordinates. Accuracy Goal: Aimed for "chemical accuracy" (≈1 kcal mol⁻¹ for thermochemistry). Techniques: Used Δ-ML (learning the difference between high/low-fidelity data) and transfer learning to enhance accuracy. |
| Low-Data Regime Multi-Task Learning [5] | Method: Adaptive Checkpointing with Specialization (ACS). Architecture: A shared GNN backbone with task-specific heads. Training Mechanism: Monitors validation loss per task; checkpoints the best model parameters for each task individually when it hits a new minimum. Purpose: Designed to mitigate "negative transfer" in multi-task learning, especially when tasks have imbalanced data (ultra-low data regimes). |
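
The Δ-ML idea mentioned in the geometric deep learning protocol (learning the difference between high- and low-fidelity data) can be illustrated with a deliberately small sketch. Everything here is an assumption for illustration: the numbers are invented, the single descriptor (heavy-atom count) is arbitrary, and a one-variable least-squares line stands in for the neural network used in the cited work.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical training data (not from the cited study): one descriptor per
# molecule plus low- and high-fidelity enthalpies in kcal/mol.
n_heavy_atoms = [2, 3, 4, 5]
low_fidelity = [-20.0, -25.0, -30.0, -35.0]
high_fidelity = [-21.0, -26.5, -32.0, -37.5]

# The model is trained on the correction, not on the property itself.
deltas = [h - l for h, l in zip(high_fidelity, low_fidelity)]
slope, intercept = fit_line(n_heavy_atoms, deltas)


def predict_high_fidelity(n_atoms, low_value):
    """Cheap low-fidelity value plus the learned correction."""
    return low_value + slope * n_atoms + intercept


estimate = predict_high_fidelity(6, -40.0)
print(estimate)
```

The design point is that the correction is usually smoother and smaller in magnitude than the property itself, so it tends to be easier to learn from limited high-fidelity data.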

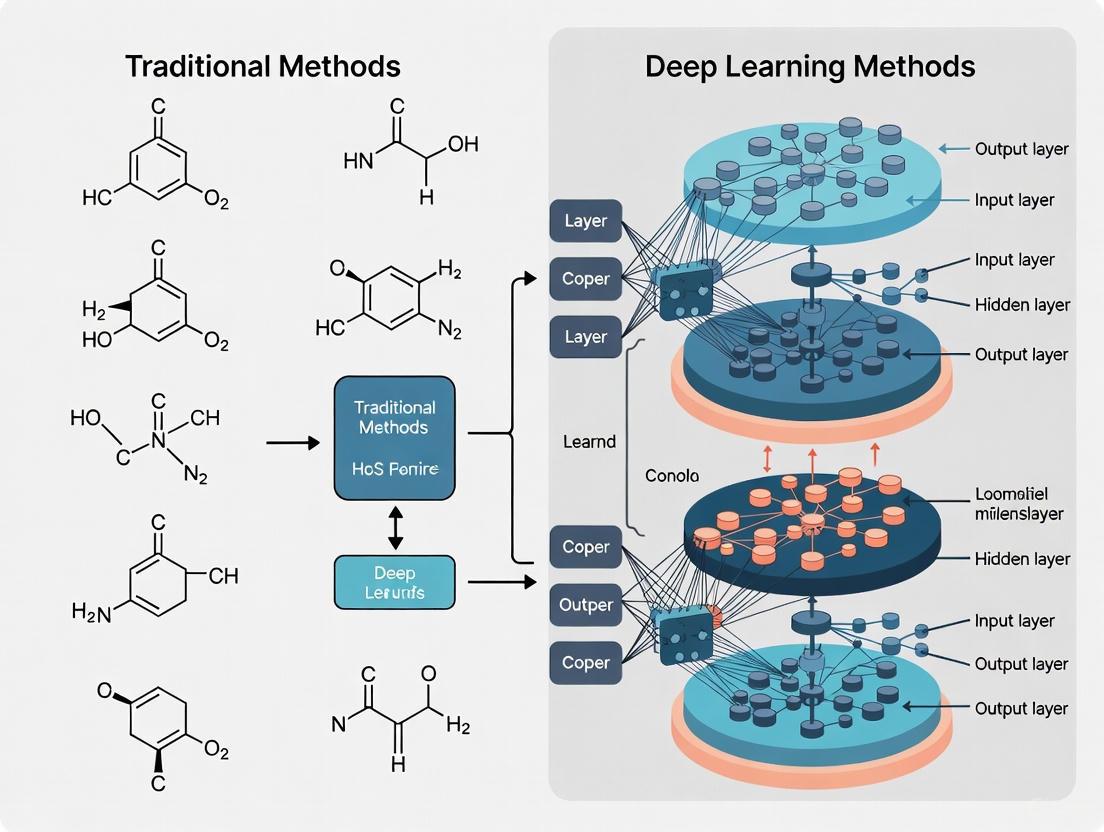

Visualizing the Method Comparison Workflow

The logical workflow for comparing traditional and deep learning methods, as derived from the experimental protocols, can be visualized as follows.

Diagram Title: Molecular Property Prediction Workflow

The following table details key computational "reagents" (datasets, software, and molecular representations) that are essential for conducting molecular property prediction research.

Table 3: Key Research Reagent Solutions for Molecular Property Prediction

| Tool Name/Type | Function/Description | Relevance to Experimentation |
|---|---|---|
| CATMoS Dataset [3] | A benchmark dataset for computational toxicology, specifically for acute toxicity prediction. | Used to train and compare models for predicting toxic vs. non-toxic compounds, as shown in Table 1. |
| MoleculeNet Benchmark [7] [6] [5] | A standardized collection of multiple datasets for molecular machine learning. | Serves as the primary benchmark for objectively comparing the performance of new algorithms against existing ones. |
| RDKit [3] [7] | An open-source cheminformatics toolkit. | Used to calculate 2D/3D molecular descriptors, generate fingerprints, and standardize structures in numerous studies. |
| Conformal Prediction [3] | A statistical framework that produces predictions with valid confidence measures. | Used to ensure model predictions are reliable and to define an "applicability domain" for the model. |
| SMILES/String Representations [3] [1] [8] | A line notation for representing molecular structures using ASCII strings. | The input for sequence-based models (e.g., BERT, RNNs); requires tokenization before processing. |
| Molecular Graph (2D/3D) [4] [7] [6] | A representation where atoms are nodes and bonds are edges; can include 3D coordinates. | The standard input for Graph Neural Networks (GNNs) like D-MPNN, capturing structural connectivity and spatial geometry. |
| ChemXploreML [2] | A user-friendly, offline desktop application for molecular property prediction. | Democratizes access to state-of-the-art ML models for chemists without deep programming expertise. |
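
The conformal prediction entry above, and the Mondrian variant used in the CATMoS study, can be sketched in a few lines. This is a minimal illustration of class-conditional (Mondrian) inductive conformal classification under stated assumptions: the nonconformity score is taken as one minus the predicted class probability, and the calibration probabilities are invented rather than produced by any real model.

```python
def mondrian_p_values(calibration, test_probs):
    """calibration: list of (true_label, {label: prob}) pairs from a held-out set.
    test_probs: {label: prob} for one new compound.
    Each label's p-value uses only calibration examples of that same label."""
    p_values = {}
    for label, prob in test_probs.items():
        # Nonconformity score: 1 - probability assigned to the label.
        cal_scores = [1.0 - probs[label] for true, probs in calibration if true == label]
        test_score = 1.0 - prob
        n_at_least = sum(1 for s in cal_scores if s >= test_score)
        p_values[label] = (n_at_least + 1) / (len(cal_scores) + 1)
    return p_values


def prediction_set(p_values, significance):
    """Labels that cannot be rejected at the chosen significance level."""
    return {label for label, p in p_values.items() if p > significance}


# Invented calibration outputs: label 1 = toxic, label 0 = non-toxic.
calibration = [
    (1, {0: 0.1, 1: 0.9}), (1, {0: 0.3, 1: 0.7}),
    (1, {0: 0.4, 1: 0.6}), (1, {0: 0.8, 1: 0.2}),
    (0, {0: 0.95, 1: 0.05}), (0, {0: 0.9, 1: 0.1}),
    (0, {0: 0.7, 1: 0.3}), (0, {0: 0.6, 1: 0.4}),
]
new_compound = {0: 0.2, 1: 0.8}

p_values = mondrian_p_values(calibration, new_compound)
labels = prediction_set(p_values, significance=0.25)
print(p_values, labels)
```

Because each class is calibrated separately, the minority class gets its own error guarantee, which is one reason the approach is used on imbalanced data without resampling. A prediction set with exactly one label is what the "efficiency" figures in Table 1 count.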

The experimental data reveals a nuanced landscape. Traditional methods like Random Forest with expert-curated descriptors remain strong, computationally efficient baselines, particularly when data is limited [7]. However, deep learning approaches, especially graph-based (D-MPNN) and geometric models, have demonstrated superior ability to capture complex structure-property relationships, achieving chemically accurate results on challenging thermochemical tasks [4].

The frontier of research is moving beyond simple model comparisons toward sophisticated strategies like multimodal fusion (MMFRL) [6] and specialized multi-task learning (ACS) [5], which are setting new performance standards. Furthermore, the development of accessible tools like ChemXploreML [2] is crucial for translating these advanced computational techniques into practical tools for researchers in drug discovery and materials science.

In the field of molecular property prediction, traditional machine learning (ML) paradigms have long relied on expert-engineered representations to map chemical structures to computationally tractable data. These representations—primarily molecular descriptors and molecular fingerprints—serve as the critical input features for statistical models that predict properties ranging from pharmacological activity to environmental toxicity. Molecular descriptors are typically numerical values that quantify specific physicochemical properties (e.g., molecular weight, logP) or topological features of a molecule. In contrast, molecular fingerprints are binary or count vectors that encode the presence or absence of specific structural patterns or substructures within a molecule, providing a structural signature for similarity searching and machine learning applications [9] [10] [11]. For decades, these hand-crafted features have formed the foundation of Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) models, enabling significant advancements in drug discovery and materials science. This guide provides an objective comparison of these traditional representations, detailing their performance, methodological protocols, and inherent limitations within the evolving landscape of molecular property prediction.
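As a small illustration of the similarity searching mentioned above, the sketch below computes the Tanimoto coefficient between binary fingerprints stored as sets of on-bit indices. The bit indices are arbitrary placeholders, not fingerprints of real molecules.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    if not bits_a and not bits_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)


# Placeholder fingerprints: each integer is the index of a bit that is set.
fp_query = {1, 4, 9, 17}
fp_close = {1, 4, 9, 23}
fp_far = {2, 30}

print(tanimoto(fp_query, fp_close))  # 3 shared bits out of 5 distinct bits
print(tanimoto(fp_query, fp_far))    # no shared bits
```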

Comparative Performance Analysis of Traditional Molecular Representations

To objectively evaluate the efficacy of different traditional representations, we synthesized data from multiple benchmarking studies that compared molecular descriptors and fingerprints across various property prediction tasks. The table below summarizes key performance metrics.

Table 1: Performance Comparison of Molecular Feature Representations in Predictive Modeling

| Feature Representation | Description | Best-Performing Model Pairing | Representative Performance Metrics | Key Strengths |
|---|---|---|---|---|
| Morgan Fingerprints (ECFP) [12] [10] | Circular fingerprints capturing atomic environments and connectivity within a specific radius. | XGBoost [12] | AUROC: 0.828, AUPRC: 0.237 on multi-label odor prediction [12] | Superior performance in bioactivity and odor prediction tasks; excels at capturing structural cues [12] [10]. |
| Molecular Descriptors (1D & 2D) [9] [10] | Predefined physicochemical (e.g., MolWt, LogP) and topological (e.g., TPSA) properties. | XGBoost [9] | Superior for ADME-Tox targets and physical property prediction compared to fingerprints [9] [10]. | Direct encoding of human-understandable properties; often better for predicting physical and ADME-Tox properties [9]. |
| MACCS Fingerprints [10] | Structural key-based fingerprints with 166 predefined chemical substructures. | Not Specified | Competitive overall performance in broad benchmarking studies [10]. | Simplicity, interpretability, and robust performance across diverse tasks despite lower dimensionality [10]. |
| AtomPair Fingerprints [9] [7] | Encodes molecules based on the presence of atom pairs and their topological distances. | RPropMLP Neural Network [9] | Performance varies significantly by dataset and target [9]. | Captures information on molecular size and shape [7]. |

The comparative analysis reveals a lack of a single universally superior representation. The choice depends heavily on the specific prediction task: Morgan fingerprints demonstrate a notable advantage in complex perception tasks like odor prediction [12], while traditional 1D and 2D molecular descriptors can be more effective for specific physical property and ADME-Tox predictions [9] [10]. Furthermore, simpler fingerprints like MACCS remain highly competitive, challenging the assumption that more complex representations are always better [10].

Experimental Protocols and Methodologies

The performance data presented in the previous section are derived from rigorous, standardized experimental protocols. This section details the common workflows and methodologies employed in benchmarking studies to ensure fair and reproducible comparisons.

Common Benchmarking Workflow

The following diagram illustrates the standard pipeline for evaluating molecular representations in predictive modeling.

Diagram 1: Molecular Representation Benchmarking Pipeline

Detailed Methodological Components

Dataset Curation and Preprocessing: Benchmarking studies typically employ multiple publicly available datasets, such as those from MoleculeNet or specialized collections (e.g., a curated set of 8,681 odorants from ten expert sources) [12] [9] [7]. Standard preprocessing includes removing duplicates and salts, standardizing chemical structures, and applying filters based on heavy atom counts and allowed elements [9].

Feature Extraction:

- Molecular Descriptors: These are calculated using software such as RDKit or PaDEL-Descriptor and include a range of 1D and 2D properties such as molecular weight (MolWt), topological polar surface area (TPSA), number of rotatable bonds, and molecular logP (MolLogP) [12] [9].

- Fingerprints: The most common is the Morgan fingerprint (RDKit's implementation of Extended Connectivity Fingerprints, ECFP), generated using the Morgan algorithm. The algorithm iteratively updates atom identifiers so that each identifier encodes a circular atomic neighborhood up to a specified radius [12] [7]. Other fingerprints such as MACCS and AtomPair are also generated using standard cheminformatics toolkits [9] [7].
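
The iterative identifier update behind circular fingerprints can be sketched on a toy graph. This is a simplified illustration and not RDKit's implementation: it assumes atoms are described only by element symbol and neighbor count, ignores bond orders, charges, and duplicate-substructure removal, and uses `zlib.crc32` as a stand-in hash.

```python
import zlib


def stable_hash(obj):
    """Deterministic 32-bit hash of a Python literal (stand-in for the real hash)."""
    return zlib.crc32(repr(obj).encode("utf-8"))


def circular_identifiers(atoms, neighbors, radius=2):
    """atoms: list of element symbols; neighbors: adjacency list of atom indices.
    Returns the set of identifiers seen at every radius from 0 to `radius`."""
    # Radius 0: each atom is described by its element and number of neighbors.
    current = [stable_hash((symbol, len(neighbors[i]))) for i, symbol in enumerate(atoms)]
    seen = set(current)
    for _ in range(radius):
        updated = []
        for i in range(len(atoms)):
            # Combine the atom's own identifier with its neighbors' identifiers;
            # sorting makes the result independent of neighbor order.
            env = (current[i], tuple(sorted(current[j] for j in neighbors[i])))
            updated.append(stable_hash(env))
        current = updated
        seen.update(current)
    return seen


# Heavy-atom graphs only: ethanol is C-C-O, dimethyl ether is C-O-C.
ethanol = (["C", "C", "O"], [[1], [0, 2], [1]])
ether = (["C", "O", "C"], [[1], [0, 2], [1]])

ethanol_ids = circular_identifiers(*ethanol)
ether_ids = circular_identifiers(*ether)
print(len(ethanol_ids & ether_ids), "shared identifiers")
```

The two isomers share the terminal-carbon identifier at radius 0 but diverge once neighborhoods are taken into account, which is the behavior the fingerprint relies on.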

Model Training and Evaluation: The extracted features are used to train various traditional ML models. Tree-based ensembles like Random Forest (RF) and eXtreme Gradient Boosting (XGBoost) are particularly popular due to their robustness and performance [12] [9]. A critical step is rigorous validation, often using fivefold cross-validation on an 80:20 train-test split, with stratification to maintain the positive-to-negative ratio in each fold. Performance is assessed using metrics like Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) [12].
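One reading of the stratified fivefold setup described above is that each fold holds out 20% of the data while preserving the class ratio, so every round trains on 80% and tests on 20%. The sketch below implements that reading with the standard library only; the labels are synthetic and the model-fitting step is left out.

```python
import random


def stratified_folds(labels, n_folds=5, seed=0):
    """Assign each sample index to a fold so every fold keeps roughly the same class ratio."""
    rng = random.Random(seed)
    folds = [[] for _ in range(n_folds)]
    by_class = {}
    for index, label in enumerate(labels):
        by_class.setdefault(label, []).append(index)
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of one class round-robin across the folds.
        for position, index in enumerate(indices):
            folds[position % n_folds].append(index)
    return folds


labels = [1] * 10 + [0] * 40  # synthetic data with 20% positives
folds = stratified_folds(labels)

for held_out in range(len(folds)):
    test_idx = folds[held_out]
    train_idx = [i for k, fold in enumerate(folds) if k != held_out for i in fold]
    # A real pipeline would fit on train_idx and score on test_idx here.
    print(held_out, len(train_idx), len(test_idx))
```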

Key Limitations of Traditional Paradigms

Despite their proven utility, traditional molecular representations suffer from several inherent limitations that constrain their application and performance.

Table 2: Key Limitations of Traditional Molecular Representations

| Limitation | Description | Impact on Predictive Modeling |
|---|---|---|
| Fixed Representational Capacity | The features are pre-defined and static, unable to adapt or learn from data beyond their initial design [11] [13]. | Limits the model's ability to discover novel, complex, or task-specific structural patterns that are not explicitly encoded by experts. |
| Poor Out-of-Distribution (OOD) Generalization | Models built on these representations struggle to make accurate predictions for molecules that are structurally different from those in the training set [14] [15]. | A major hurdle for molecule discovery, which requires extrapolating to new regions of chemical space. OOD error can be 3x larger than in-distribution error [15]. |
| Dependence on Dataset Size | The performance of models using traditional features is highly dependent on the size and quality of the labeled dataset [7] [5]. | They are often ineffective in "ultra-low data regimes," which are common in real-world discovery projects for novel targets or properties [5]. |
| Information Bottleneck | Complex molecular structures must be compressed into a fixed-length vector, which can lead to information loss. For example, ECFPs can suffer from "bit collisions" due to the hashing step [13]. | The representation may fail to capture critical stereochemical, conformational, or electronic information necessary for accurate property prediction [11]. |
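
The "bit collisions" named in the last row arise when many substructure identifiers are folded into a short bit vector. The following sketch assumes simple modulo folding and uses arbitrary integers as identifiers; it only demonstrates the effect and does not reproduce any particular toolkit's hashing.

```python
def fold_to_bits(identifiers, n_bits):
    """Map each integer identifier to a bit position with modulo folding."""
    return {identifier % n_bits for identifier in identifiers}


# Hypothetical substructure identifiers (arbitrary integers, for illustration only).
identifiers = {11, 27, 1035, 2048, 4099}

wide = fold_to_bits(identifiers, 2048)
narrow = fold_to_bits(identifiers, 16)

# A collision happens whenever two distinct identifiers land on the same bit.
collisions_wide = len(identifiers) - len(wide)
collisions_narrow = len(identifiers) - len(narrow)
print(collisions_wide, collisions_narrow)
```

With 16 bits, three of the five identifiers share one position, so the model can no longer tell those substructures apart. Longer vectors reduce collisions at the cost of sparser, higher-dimensional input.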

These limitations are driving the exploration of deep learning approaches, which aim to learn optimal representations directly from data. However, it is crucial to note that recent large-scale benchmarks have shown that deep representation learning models do not consistently outperform traditional expert-based representations across diverse molecular property prediction tasks [7].

The Scientist's Toolkit: Essential Research Reagents and Solutions

The experimental workflows for implementing traditional ML paradigms rely on a core set of software tools and libraries. The following table details these essential "research reagents."

Table 3: Key Research Reagent Solutions for Traditional ML Modeling

| Tool / Library | Type | Primary Function in Workflow |
|---|---|---|
| RDKit [12] [9] [7] | Open-Source Cheminformatics Library | The workhorse for chemical informatics; used for reading molecules, calculating molecular descriptors (e.g., MolWt, logP, TPSA), and generating fingerprints (e.g., Morgan, AtomPair). |
| PaDEL-Descriptor [10] | Molecular Descriptor Calculation Software | Used to calculate a comprehensive suite of 1D, 2D, and 3D molecular descriptors for QSAR modeling. |
| XGBoost [12] [9] | Machine Learning Library | A leading gradient-boosting framework frequently used as the predictive model due to its high performance with structured, tabular data derived from descriptors and fingerprints. |
| Random Forest [12] [9] | Machine Learning Algorithm | A robust ensemble method commonly benchmarked against other models for its interpretability and performance on fingerprint data. |
| Python (scikit-learn) [10] | Programming Language & ML Library | Provides the ecosystem for data preprocessing, model training, hyperparameter tuning, and evaluation (e.g., cross-validation, metric calculation). |
| DeepChem [15] [13] | Deep Learning Library for Chemistry | Offers standardized implementations of dataset loaders, molecular featurizers (including traditional fingerprints), and model architectures for benchmarking. |

The field of molecular property prediction is undergoing a fundamental transformation, moving from traditional machine learning methods that rely on human-engineered features toward deep learning approaches that learn directly from molecular structure. This revolution of end-to-end learning is reshaping how researchers and drug development professionals predict molecular behavior, enabling more accurate, generalizable, and insightful computational models. Where traditional methods required domain experts to manually design feature representations such as molecular fingerprints and descriptors, modern deep learning architectures automatically learn relevant features from raw molecular representations, uncovering complex structure-property relationships that previously eluded manual feature engineering. This comprehensive analysis compares the performance, methodological approaches, and practical applications of these competing paradigms, providing researchers with evidence-based guidance for selecting appropriate methodologies across different pharmaceutical and materials science contexts.

Performance Comparison: Traditional Machine Learning vs. Deep Learning Approaches

Quantitative Benchmarking Across Multiple Molecular Properties

Table 1: Performance Comparison of Traditional ML and Deep Learning Models on Benchmark Tasks

| Model Category | Specific Model | Key Features/Representation | Performance Metrics | Dataset |
|---|---|---|---|---|
| Traditional ML | Morgan-FP + XGBoost | Morgan structural fingerprints | AUROC: 0.828, AUPRC: 0.237, Accuracy: 97.8% | Odor Perception (8,681 compounds) [12] |
| Traditional ML | Molecular Descriptors + XGBoost | Classical molecular descriptors | AUROC: 0.802, AUPRC: 0.200 | Odor Perception [12] |
| Traditional ML | Functional Group + XGBoost | Functional group fingerprints | AUROC: 0.753, AUPRC: 0.088 | Odor Perception [12] |
| Deep Learning | DLF-MFF | Multi-type feature fusion (2D/3D graph, image, fingerprints) | SOTA on 6 benchmark datasets | Molecular Property Benchmarks [16] |
| Deep Learning | ACS (Multi-task GNN) | Adaptive checkpointing with specialization | Accurate prediction with only 29 labeled samples | Sustainable Aviation Fuels [5] |
| Deep Learning | DeepDTAGen | Multitask: affinity prediction + drug generation | MSE: 0.146, CI: 0.897, r²m: 0.765 | KIBA [17] |
| Deep Learning | Molecular Property Foundation Models | Pre-training on large unlabeled data | Strong in-context learning, variable OOD performance | BOOM Benchmark [14] |

Out-of-Distribution Generalization Capabilities

A critical challenge in molecular property prediction is model performance on out-of-distribution (OOD) data, which tests true generalization capability. The BOOM benchmark (Benchmarks for Out-Of-distribution Molecular property predictions) evaluated over 140 model-task combinations and found that neither traditional nor deep learning models consistently achieve strong OOD generalization across all tasks [14]. Even the top-performing model exhibited an average OOD error 3 times larger than its in-distribution error. Classical machine learning models with high inductive bias can perform well on OOD tasks with simple, specific properties, while current chemical foundation models show promising in-context learning but lack strong OOD extrapolation capabilities [14].

The relationship between in-distribution (ID) and OOD performance varies significantly based on the splitting strategy used to create test sets. For scaffold splitting, the correlation between ID and OOD performance is strong (Pearson r ∼ 0.9), whereas for the more challenging cluster-based splitting (using K-means clustering on ECFP4 fingerprints), this correlation decreases significantly (Pearson r ∼ 0.4) [18]. This indicates that model selection based solely on ID performance may be insufficient for applications requiring strong OOD generalization.
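Both splitting strategies above come down to keeping whole groups of related molecules on one side of the split. The sketch below shows that idea under stated assumptions: the group keys (scaffold names here, but cluster IDs from K-means would work the same way) are assumed to be precomputed by a cheminformatics toolkit, and the largest-groups-to-train ordering is one common convention rather than the procedure of any cited benchmark.

```python
from collections import defaultdict


def group_split(group_keys, test_fraction=0.2):
    """Split sample indices so that no group spans both train and test.
    Larger groups are placed in train first; groups that no longer fit go to test."""
    groups = defaultdict(list)
    for index, key in enumerate(group_keys):
        groups[key].append(index)
    ordered = sorted(groups.values(), key=lambda members: (-len(members), members[0]))
    n_train_target = len(group_keys) * (1 - test_fraction)
    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical scaffold labels, one per molecule.
scaffolds = ["benzene"] * 5 + ["pyridine"] * 3 + ["indole", "furan"]
train_idx, test_idx = group_split(scaffolds)

train_groups = {scaffolds[i] for i in train_idx}
test_groups = {scaffolds[i] for i in test_idx}
print(train_groups, test_groups)
```

Because the test set contains only scaffolds never seen in training, the resulting score is a rough proxy for OOD performance rather than in-distribution performance.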

Experimental Protocols and Methodologies

Traditional Machine Learning Workflow

Experimental Protocol for Fingerprint-Based Models (as described in odor prediction study [12]):

- Dataset Curation: Unified dataset of 8,681 unique odorants from ten expert-curated sources with 200 candidate odor descriptors

- Feature Extraction:

  - Morgan Fingerprints: Structural fingerprints derived using Morgan algorithm from MolBlock representations

  - Functional Group Features: Generated by detecting predefined substructures using SMARTS patterns

  - Molecular Descriptors: Calculated using RDKit library including molecular weight, hydrogen donors/acceptors, topological polar surface area, logP, rotatable bonds, heavy atom count, and ring count

- Model Training: Benchmarking of Random Forest, XGBoost, and LightGBM with fivefold cross-validation on 80:20 train:test split

- Evaluation Metrics: Accuracy, AUROC, AUPRC, Specificity, Precision, and Recall with multi-label classification setup
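
Two of the evaluation metrics listed above, AUROC and AUPRC, can be computed by hand for a single label as in the sketch below. The labels and scores are invented, and average precision is used here as a common estimate of AUPRC; the cited study may compute it differently.

```python
def auroc(y_true, scores):
    """Probability that a random positive is scored above a random negative (ties count half)."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


def average_precision(y_true, scores):
    """Mean of the precision values at the rank of each positive example."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    hits, precisions = 0, []
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / hits  # assumes at least one positive example


# Invented scores for one odor label: 2 positives among 8 molecules.
y_true = [1, 0, 0, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.7, 0.2, 0.1, 0.4, 0.05]

roc = auroc(y_true, scores)
ap = average_precision(y_true, scores)
print(round(roc, 3), round(ap, 3))
```

AUPRC is more sensitive to false positives among rare labels, which is consistent with the large gap between the AUROC and AUPRC values reported in Table 1 for the multi-label odor task.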

Deep Learning End-to-End Approaches

Experimental Protocol for Multi-type Features Fusion (DLF-MFF) [16]:

- Multi-representation Input:

  - Molecular fingerprints (expert knowledge)

  - 2D molecular graph (structural information)

  - 3D molecular graph (spatial topology)

  - Molecular image (global perspective)

- Feature Extraction Backbones:

  - Fully Connected Neural Network for fingerprint features

  - Graph Convolutional Network for 2D molecular graphs

  - Equivariant Graph Neural Network for 3D molecular graphs

  - Convolutional Neural Network for molecular images

- Feature Fusion: Integration of four final feature vectors through concatenation

- Prediction Layer: Fully connected layers for final molecular property prediction
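
The fusion and prediction steps listed above amount to concatenating the four backbone outputs and passing the result through fully connected layers. The sketch below shows only that data flow, with placeholder two-dimensional embeddings and a single linear unit with arbitrary weights; the backbones themselves and the real layer sizes are outside its scope.

```python
def concatenate(*vectors):
    """Join several feature vectors into one flat list."""
    fused = []
    for vector in vectors:
        fused.extend(vector)
    return fused


def linear(inputs, weights, bias):
    """One fully connected output unit: dot(weights, inputs) + bias."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias


# Placeholder embeddings standing in for the outputs of the four backbones.
fingerprint_vec = [0.2, 0.1]
graph_2d_vec = [0.5, -0.3]
graph_3d_vec = [0.0, 0.4]
image_vec = [0.7, 0.6]

fused = concatenate(fingerprint_vec, graph_2d_vec, graph_3d_vec, image_vec)
prediction = linear(fused, weights=[0.1] * len(fused), bias=0.0)
print(len(fused), prediction)
```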

Experimental Protocol for Ultra-Low Data Regime (ACS) [5]:

- Architecture: Shared GNN backbone with task-specific multi-layer perceptron heads

- Training Scheme: Adaptive checkpointing with specialization monitoring validation loss for each task

- Negative Transfer Mitigation: Checkpointing best backbone-head pair when validation loss reaches new minimum

- Evaluation: Testing under severe task imbalance conditions with as few as 29 labeled samples
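
The per-task checkpointing step above can be sketched as a training loop that snapshots the model whenever a given task reaches a new validation minimum. This is a schematic under stated assumptions, not the authors' code: `train_one_epoch` and `validation_loss` are hypothetical callbacks, the model is a plain dictionary, and the loss curves are scripted to mimic one task degrading while another keeps improving.

```python
import copy


def train_with_task_checkpoints(n_epochs, tasks, train_one_epoch, validation_loss, model):
    """Keep, for every task, a copy of the model from the epoch where that
    task's validation loss was lowest."""
    best_loss = {task: float("inf") for task in tasks}
    best_model = {}
    for epoch in range(n_epochs):
        train_one_epoch(model, epoch)
        for task in tasks:
            loss = validation_loss(model, task)
            if loss < best_loss[task]:
                best_loss[task] = loss
                best_model[task] = copy.deepcopy(model)  # snapshot for this task only
    return best_model, best_loss


# Scripted validation curves: "tox" is best at epoch 1, "solubility" at epoch 3.
curves = {"tox": [0.9, 0.5, 0.6, 0.7], "solubility": [0.8, 0.7, 0.4, 0.3]}
model = {"epoch": None}


def train_one_epoch(model, epoch):
    model["epoch"] = epoch  # stand-in for a real gradient update


def validation_loss(model, task):
    return curves[task][model["epoch"]]


best_model, best_loss = train_with_task_checkpoints(
    4, list(curves), train_one_epoch, validation_loss, model
)
print({task: snapshot["epoch"] for task, snapshot in best_model.items()})
```

Each task ends up with the parameters from its own best epoch, so later updates that help one task cannot overwrite the best state of another.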

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Computational Tools for Molecular Property Prediction

| Tool/Resource | Type | Function | Applicable Paradigm |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular descriptor calculation, fingerprint generation, SMILES processing | Both traditional ML and deep learning |
| Morgan Fingerprints | Structural Representation | Circular fingerprints capturing molecular substructures | Primarily traditional ML |
| PyTorch Geometric | Deep Learning Library | Graph neural networks for molecular structures | Deep learning |
| SMILES | Molecular Representation | String-based molecular representation | Both paradigms |
| Molecular Graphs | Graph Representation | Atoms as nodes, bonds as edges for GNN input | Deep learning |
| ChemXploreML | Desktop Application | User-friendly ML without programming expertise | Traditional ML |
| Multi-task GNNs | Neural Architecture | Simultaneous prediction of multiple molecular properties | Deep learning |
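
Sequence models consume the SMILES representation listed above as a list of tokens rather than raw characters. A minimal regex tokenizer might look like the sketch below. The pattern is a simplified assumption that covers common SMILES syntax, not a full grammar: it accepts any single letter as an atom and does not validate chemistry.

```python
import re

SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"            # bracket atoms such as [NH4+] or [C@@H]
    r"|Br|Cl"                # two-letter atoms, tried before single letters
    r"|%\d{2}"               # two-digit ring-closure labels
    r"|[A-Za-z]"             # single-letter atoms, including aromatic lowercase
    r"|\d"                   # single-digit ring closures
    r"|[=#\-+\\/().:~*$@]"   # bonds, branches, dots, and other symbols
)


def tokenize_smiles(smiles):
    """Split a SMILES string into tokens; raise if any character is left over."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


print(tokenize_smiles("CC(=O)Cl"))
print(tokenize_smiles("c1ccccc1Br"))
```

Treating `Cl` and `Br` as single tokens keeps the model from confusing chlorine with a carbon followed by an unknown symbol.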

Specialized Solutions for Challenging Scenarios

Ultra-Low Data Regime: The ACS (Adaptive Checkpointing with Specialization) method enables effective learning with as few as 29 labeled samples by combining shared task-agnostic backbones with task-specific heads, dynamically checkpointing parameters to prevent negative transfer [5].

Multi-task Prediction: DeepDTAGen provides a unified framework for both drug-target affinity prediction and target-aware drug generation using shared feature spaces, addressing gradient conflicts through the novel FetterGrad algorithm [17].

Out-of-Distribution Generalization: The BOOM benchmark suite provides systematic evaluation protocols for assessing model performance on OOD data, crucial for real-world applications where chemical space differs from training data [14].

Integration of Large Language Models and Knowledge Enhancement

A recent innovation in molecular property prediction involves integrating knowledge extracted from Large Language Models (LLMs) with structural features from pre-trained molecular models. This approach, exemplified by Zhou et al., prompts LLMs (GPT-4o, GPT-4.1, and DeepSeek-R1) to generate both domain-relevant knowledge and executable code for molecular vectorization [19]. The resulting knowledge-based features are fused with structural representations, creating a hybrid model that leverages both human prior knowledge and learned structural relationships. This integration addresses the limitation of pure LLM-based approaches, which suffer from knowledge gaps and hallucinations for less-studied molecular properties, while simultaneously overcoming the data hunger of pure structure-based deep learning models [19].

The revolution in molecular property prediction is characterized by a shift from manual feature engineering to end-to-end learning from molecular structure. However, rather than completely replacing traditional methods, the evidence suggests a more nuanced landscape where each approach excels in different scenarios:

- Traditional ML with expert-crafted features (particularly Morgan fingerprints with XGBoost) delivers strong performance on well-defined problems with sufficient training data and remains more interpretable [12]

- Deep learning approaches excel in low-data regimes, multi-task settings, and when handling raw molecular representations without manual feature engineering [5] [16]

- Hybrid approaches that combine structural information with external knowledge from LLMs represent a promising direction for enhancing prediction accuracy [19]

For researchers and drug development professionals, selection criteria should consider data availability, property complexity, interpretability requirements, and generalization needs. Traditional methods provide robust baselines and interpretability, while deep learning approaches offer superior performance in challenging scenarios including ultra-low data regimes, multi-task prediction, and complex structure-property relationship modeling. As the field evolves, the integration of large language models and specialized architectures for out-of-distribution generalization will likely expand the boundaries of predictive capability in molecular property prediction.

The pursuit of accurate molecular property prediction is a cornerstone of modern computational chemistry and drug discovery. The choice of how a molecule is digitally represented—its data structure—profoundly influences the performance and applicability of artificial intelligence (AI) models. While traditional machine learning (ML) models often relied on handcrafted molecular descriptors, the rise of deep learning (DL) has shifted the paradigm towards learned representations from raw molecular inputs. The three predominant data representations are SMILES strings, molecular graphs, and 3D conformations, each with distinct trade-offs in structural fidelity, computational cost, and informational completeness.

This guide provides an objective comparison of these core representations, framing them within the broader thesis of traditional versus deep learning methodologies. We summarize quantitative performance benchmarks across standardized tasks, detail experimental protocols from key studies, and provide essential resources to inform the selection of appropriate representations for specific research goals in molecular property prediction.

Performance Comparison of Molecular Representations

The following tables synthesize experimental results from recent benchmark studies, comparing the performance of models utilizing different molecular representations across various property prediction tasks.

Table 1: Performance on Quantum Chemical and Physical Property Prediction Tasks

| Representation | Model Example | Dataset | Key Metric | Performance | Key Advantage |
|---|---|---|---|---|---|
| 3D Conformation | Uni-Mol+ [20] | PCQM4MV2 (HOMO-LUMO gap) | Mean Absolute Error (MAE) | State-of-the-Art (0.0079 improvement over prior SOTA) [20] | Captures spatial, quantum properties |
| 3D Conformation | Uni-Mol+ [20] | Open Catalyst 2020 (IS2RE) | MAE (eV) | Competitive State-of-the-Art [20] | Models catalyst relaxation energy |
| 2D Graph | GROVER [21] | Various MoleculeNet Tasks | AUC-ROC / MAE | Strong General Performance [21] | Balances structure and data efficiency |
| SMILES | MLM-FG [22] | BBBP (MoleculeNet) | AUC-ROC | 0.939 [22] | Effective for permeability prediction |
| SMILES | MLM-FG [22] | ClinTox (MoleculeNet) | AUC-ROC | 0.944 [22] | Accurately flags drug toxicity |

Table 2: Performance on Bioactivity and Olfaction Prediction Tasks

| Representation | Model Example | Task/Dataset | Key Metric | Performance | Key Advantage |

|---|---|---|---|---|---|

| Molecular Fingerprints (2D) | XGBoost [12] | Odor Prediction | AUROC | 0.828 [12] | Superior for complex perceptual properties |

| 2D Graph (3D-guided) | SCAGE [21] | 9 Molecular Property Benchmarks | Varies | Significant Improvements [21] | Identifies activity cliffs & functional groups |

| SMILES | MLM-FG [22] | HIV (MoleculeNet) | AUC-ROC | 0.824 [22] | Effective for antiviral activity prediction |

| Molecular Descriptors | XGBoost [12] | Odor Prediction | AUROC | 0.802 [12] | Interpretable, classic cheminformatics |

| Functional Group Fingerprints | XGBoost [12] | Odor Prediction | AUROC | 0.753 [12] | Simple, chemically intuitive |

Experimental Protocols and Model Methodologies

3D Conformation-Based Models

Protocol for Uni-Mol+ [20]: Uni-Mol+ addresses the dependency of quantum chemical (QC) properties on refined 3D equilibrium conformations. Its methodology is a two-step process:

- Initial Conformation Generation: A raw 3D conformation is generated from a 1D SMILES string or 2D graph using fast, cheap methods like RDKit's ETKDG algorithm, with optimization via the MMFF94 force field. This step costs approximately 0.01 seconds per molecule.

- Iterative Conformation Refinement: The raw conformation is iteratively updated towards the target Density Functional Theory (DFT) equilibrium conformation using a deep learning model. The model backbone is a two-track transformer that maintains separate atom and pair representation tracks, enhanced with outer product and triangular update operators inspired by AlphaFold2 to bolster 3D geometric information.

A novel training strategy involves sampling conformations from a pseudo trajectory between the RDKit conformation and the DFT equilibrium conformation, using a mixture of Bernoulli and Uniform distributions. This provides diverse training examples and ensures the model learns an accurate mapping to the final QC properties [20].
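The sampling idea described above can be sketched in a few lines. This is a minimal illustration rather than the Uni-Mol+ implementation: it assumes a linear pseudo trajectory between the two conformations, and the function names and mixture probabilities (`p_endpoint`, `p_rdkit`) are hypothetical.

```python
import random

def sample_q(rng, p_endpoint=0.5, p_rdkit=0.8):
    """Sample an interpolation coefficient q in [0, 1].

    With probability p_endpoint, q comes from a Bernoulli variable
    (q = 0 is the RDKit end, q = 1 the DFT end); otherwise q ~ Uniform(0, 1).
    Both probabilities are illustrative assumptions.
    """
    if rng.random() < p_endpoint:
        return 0.0 if rng.random() < p_rdkit else 1.0
    return rng.random()

def interpolate(rdkit_xyz, dft_xyz, q):
    """Point on the (assumed linear) pseudo trajectory: (1 - q) * rdkit + q * dft."""
    return [
        tuple((1.0 - q) * r + q * d for r, d in zip(ra, da))
        for ra, da in zip(rdkit_xyz, dft_xyz)
    ]

rng = random.Random(0)
rdkit_xyz = [(0.0, 0.0, 0.0), (1.6, 0.0, 0.0)]  # toy two-atom "raw" conformation
dft_xyz = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]    # toy "equilibrium" conformation
training_inputs = [interpolate(rdkit_xyz, dft_xyz, sample_q(rng)) for _ in range(4)]
```

Mixing endpoint samples with uniformly drawn intermediate points exposes the model both to the raw starting conformation it will see at inference time and to partially refined conformations along the way.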

Protocol for Structure-Based Drug Design with Chem3DLLM [23]: For generative tasks in drug design, Chem3DLLM tackles the challenge of representing 3D structures within a discrete token space. The methodology involves:

- Reversible Text Encoding: A novel encoding scheme using run-length compression converts 3D molecular structures into a format compatible with Large Language Models (LLMs), achieving a 3x size reduction while preserving structural information.

- Multimodal Integration: This encoding allows for the seamless integration of 3D molecular geometry and protein pocket features within a single LLM architecture.

- Reinforcement Learning Optimization: The model is further refined using reinforcement learning with stability-based rewards to optimize for chemical validity and desired biophysical properties like binding affinity [23].
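To make the idea of a reversible, run-length-compressed encoding concrete, the sketch below applies generic run-length encoding to a toy token stream. The actual Chem3DLLM encoding scheme is not specified here, so the token alphabet and both function names are assumptions chosen only to show that the compression is lossless.

```python
from itertools import groupby

def rle_encode(tokens):
    """Collapse runs of identical tokens into (token, run_length) pairs."""
    return [(tok, len(list(run))) for tok, run in groupby(tokens)]

def rle_decode(pairs):
    """Invert rle_encode exactly, so no information is lost."""
    return [tok for tok, count in pairs for _ in range(count)]

tokens = ["C", "C", "C", "C", "O", "O", "N", "C", "C", "C"]  # toy token stream
encoded = rle_encode(tokens)
```

Because decoding reproduces the original sequence token for token, the compressed form can be fed to a language model without discarding structural information.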

2D Graph and SMILES-Based Models

Protocol for MLM-FG (SMILES) [22]: MLM-FG is a transformer-based model that enhances SMILES representation learning through a specialized pre-training task:

- Functional Group-Aware Masking: Instead of randomly masking individual tokens in a SMILES string, the model identifies subsequences corresponding to chemically significant functional groups (e.g., carboxylic acid "-COOH").

- Pre-training Objective: The model is then trained to predict these masked functional groups, forcing it to learn the chemical context and the relationship between molecular substructures and properties. This approach incorporates implicit structural awareness without needing explicit 3D structural data [22].
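The masking step can be illustrated with a deliberately simplified sketch. It assumes functional groups can be found by plain substring search over a hypothetical pattern list; a real pipeline would rely on proper substructure matching, and none of the names below come from the MLM-FG code.

```python
# Hypothetical functional-group patterns written as SMILES substrings.
# Plain string search is a simplification: "C(=O)O" matches acids and esters alike.
FUNCTIONAL_GROUPS = ["C(=O)O", "C(=O)N", "S(=O)(=O)"]
MASK = "[MASK]"

def mask_functional_groups(smiles, patterns=FUNCTIONAL_GROUPS):
    """Replace each matched functional-group substring with one mask token.

    Returns the masked string and the masked substrings, i.e. the targets
    the model would be trained to reconstruct.
    """
    targets = []
    masked = smiles
    for pattern in patterns:
        while pattern in masked:
            masked = masked.replace(pattern, MASK, 1)
            targets.append(pattern)
    return masked, targets

masked, targets = mask_functional_groups("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
```

Masking a whole group at once, rather than single characters, means the model cannot recover the answer from the neighbouring characters of the same group and has to use the wider molecular context.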

Protocol for SCAGE (2D Graph with 3D Guidance) [21]: SCAGE is a self-conformation-aware graph transformer that leverages 3D information to guide 2D graph representation learning. Its multi-task pre-training framework, M4, includes:

- Multiscale Conformational Learning (MCL): A module that guides the model to understand atomic relationships at different conformational scales.

- Multi-Task Pre-training: The model is trained on four tasks simultaneously: molecular fingerprint prediction, functional group prediction, 2D atomic distance prediction, and 3D bond angle prediction. This ensures the model learns comprehensive semantics from molecular structures to functions.

- Dynamic Adaptive Multitask Learning: A strategy that automatically balances the contribution of the four pre-training tasks to the total loss, leading to more robust and generalized molecular representations [21].
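One generic way to balance several pre-training losses is to weight each task inversely to its running loss, so that no single task dominates the total. The sketch below shows that idea only; it is not the SCAGE balancing rule, and the function names, task names, and loss values are assumptions.

```python
def adaptive_weights(running_losses, eps=1e-8):
    """Weight each task inversely to its running loss, normalised so the
    weights sum to the number of tasks."""
    inverse = {task: 1.0 / (loss + eps) for task, loss in running_losses.items()}
    scale = len(inverse) / sum(inverse.values())
    return {task: scale * value for task, value in inverse.items()}

def total_loss(task_losses, weights):
    """Weighted sum of the per-task losses."""
    return sum(weights[task] * loss for task, loss in task_losses.items())

# Made-up running losses for the four pre-training tasks.
losses = {
    "fingerprint": 0.70,
    "functional_group": 0.35,
    "distance_2d": 1.40,
    "bond_angle_3d": 2.80,
}
weights = adaptive_weights(losses)
combined = total_loss(losses, weights)
```

In a real training loop the weights would be recomputed periodically from smoothed loss values and treated as constants during backpropagation.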

Workflow and Relationship Diagrams

The following diagram illustrates the logical relationship between the core molecular representations and the modeling approaches they enable, culminating in their primary predictive applications.

The experiments and models discussed rely on a suite of software tools and data resources that form the essential "reagent solutions" for modern molecular property prediction research.

Table 3: Key Research Reagents for Molecular Representation Studies

| Tool / Resource Name | Type | Primary Function | Relevance to Representations |

|---|---|---|---|

| RDKit [20] [12] | Cheminformatics Software | Generation of 2D/3D molecular structures and fingerprints. | Core tool for generating initial 3D conformations (via MMFF94/ETKDG) and calculating molecular descriptors. |

| PubChem [22] | Chemical Database | Public repository of chemical structures, properties, and bioactivity data. | Primary source of large-scale molecular data (SMILES) for pre-training models. |

| PCQM4MV2 [20] | Benchmark Dataset | Quantum chemical property (HOMO-LUMO gap) prediction. | Standard benchmark for evaluating 3D conformation-based models on quantum mechanical tasks. |

| Open Catalyst 2020 (OC20) [20] | Benchmark Dataset | Catalyst relaxation energy and structure prediction. | Challenging benchmark for 3D models on catalyst systems. |

| MoleculeNet [22] | Benchmark Suite | Collection of datasets for molecular property prediction. | Standard for broad evaluation across SMILES, graph, and descriptor-based models (e.g., BBBP, ClinTox, HIV). |

| MMFF94 [21] [12] | Force Field | Energy minimization and conformation optimization. | Used to generate stable, low-energy 3D conformations for model input. |

| Transformer Architecture [23] [22] | Neural Network Model | Core backbone for sequence and multimodal learning. | Foundation for modern SMILES-based LLMs (e.g., MLM-FG) and multimodal 3D models (e.g., Chem3DLLM). |

| Graph Neural Network (GNN) [21] [24] | Neural Network Model | Learning directly from graph-structured data. | Foundation for models that process 2D molecular graphs (e.g., GROVER, SCAGE). |

The benchmark results above point to a trade-off between how much structural information a representation carries and how much it costs to obtain and model. SMILES strings are efficient and scalable but encode structure only implicitly, though pre-training strategies such as functional group masking [22] have narrowed this gap. 2D molecular graphs strike a robust balance, offering a direct encoding of molecular topology that is sufficient for a wide range of bioactivity and property prediction tasks [21] [12]. For properties governed by quantum mechanics and spatial complementarity (such as HOMO-LUMO gaps, catalyst energies, and protein-ligand binding affinity), 3D conformational representations are the most informative modality and deliver the strongest reported results [23] [20].

The frontier of research is increasingly focused on hybrid and multimodal approaches. Models like SCAGE inject 3D conformational knowledge into 2D graph learning [21], while frameworks like Chem3DLLM and 3DSMILES-GPT integrate 3D structural data into the flexible architecture of large language models [23] [24]. This convergence, coupled with physics-informed learning to ensure generated structures are physically plausible [25], points to a future where the distinctions between these representations blur, giving rise to holistic, context-aware models that can seamlessly leverage all available molecular information for accelerated scientific discovery.

A Practical Guide to Methodologies: From Random Forests to Graph Neural Networks

In the rapidly evolving field of molecular property prediction, deep learning approaches often dominate contemporary research discourse. However, traditional machine learning (ML) methods employing molecular fingerprints remain indispensable tools for researchers and drug development professionals. These "traditional workhorses", namely Random Forest (RF), eXtreme Gradient Boosting (XGBoost), and Support Vector Machines (SVM), continue to deliver competitive and sometimes best-in-class performance across diverse prediction tasks while offering computational efficiency and operational transparency.

A 2023 comprehensive evaluation noted that despite the current prosperity of representation learning, fixed molecular representations consistently achieve competitive performance, with dataset size being a critical factor for success [7]. This guide provides an objective comparison of RF, XGBoost, and SVM with molecular fingerprints, presenting experimental data from recent studies to inform method selection for molecular property prediction tasks.

Performance Comparison: Quantitative Benchmarking Across Studies

Predictive Performance Metrics

Table 1: Performance comparison of traditional ML methods with molecular fingerprints across different prediction tasks

| Study Focus | Dataset | Best Model | Key Metric | Performance | Comparative Performance |

|---|---|---|---|---|---|

| Odor Prediction [12] | 8,681 compounds, 200 odor descriptors | XGBoost with Morgan fingerprints | AUROC | 0.828 | XGB > RF > SVM |

| | | | AUPRC | 0.237 | |

| Reproductive Toxicity [26] | 1,823 compounds | Ensemble (RF+XGB+SVM) | Accuracy | 86.33% | Ensemble > Individual Models |

| | | | AUC | 0.937 | |

| Kinase Profiling [27] | 141,086 compounds, 354 kinases | Random Forest | Average AUC | 0.807-0.825 | RF > XGB > SVM |

| General Molecular Properties [28] | 11 public datasets | SVM (Regression) | RMSE (varies by endpoint) | Best on 7/11 datasets | SVM (Regression) > RF ≈ XGB (Classification) |

| | | RF/XGBoost (Classification) | AUC/Accuracy | Best on 8/11 datasets | |

Computational Efficiency and Training Time

Table 2: Computational characteristics and resource requirements

| Algorithm | Training Speed | Memory Usage | Hyperparameter Sensitivity | Scalability to Large Datasets |

|---|---|---|---|---|

| Random Forest | Fast | Moderate | Low | Excellent |

| XGBoost | Moderate to Fast | Moderate | High | Excellent |

| SVM | Slow for large datasets | High | High | Limited |

According to large-scale benchmarking, tree-based ensembles like RF and XGBoost demonstrate remarkable computational efficiency, often requiring only "a few seconds to train a model even for a large dataset" [28]. In a 2023 comparison of gradient boosting implementations, LightGBM was noted as requiring the least training time for larger datasets, though XGBoost generally achieved the best predictive performance [29].

Molecular Fingerprints: The Critical Input Features

Fingerprint Types and Their Applications

Molecular fingerprints encode chemical structures as numerical vectors, enabling machine learning algorithms to identify patterns correlating with molecular properties. The most commonly employed fingerprints include:

Extended Connectivity Fingerprints (ECFP): Circular fingerprints capturing atomic environments within specific radii (typically ECFP4 with radius=2 or ECFP6 with radius=3) [7]. These have become the "de facto standard circular fingerprint" in drug discovery [7].

MACCS Keys: Structural keys encoding the presence or absence of 166 predefined chemical substructures [27].

Atom-Pair Fingerprints: Capture atomic pairwise relationships emphasizing molecular size and shape [7].

Functional Group Fingerprints: Encode presence of specific functional groups using SMARTS patterns [12].

RDKit 2D Descriptors: 200+ molecular features including molar refractivity, topological polar surface area, and fragment counts [7].
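The circular-fingerprint idea behind ECFP can be shown with a toy, standard-library-only sketch. It is not the RDKit or original ECFP algorithm: the atom invariants (element symbol only), the CRC32 hashing, and the 64-bit length are simplifying assumptions, and the function names are hypothetical.

```python
import zlib

def toy_circular_fingerprint(atoms, bonds, radius=2, n_bits=64):
    """Toy ECFP-style fingerprint.

    atoms: list of element symbols; bonds: list of (i, j) index pairs.
    Each atom identifier is repeatedly re-hashed together with the sorted
    identifiers of its neighbours; every identifier seen sets one bit.
    """
    neighbours = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbours[i].append(j)
        neighbours[j].append(i)

    def stable_hash(text):
        return zlib.crc32(text.encode("utf-8"))  # deterministic across runs

    identifiers = [stable_hash(symbol) for symbol in atoms]
    bits = set(ident % n_bits for ident in identifiers)
    for _ in range(radius):
        identifiers = [
            stable_hash(f"{identifiers[i]}|{sorted(identifiers[j] for j in neighbours[i])}")
            for i in range(len(atoms))
        ]
        bits.update(ident % n_bits for ident in identifiers)
    return [1 if b in bits else 0 for b in range(n_bits)]

def tanimoto(fp_a, fp_b):
    """Shared on-bits divided by total on-bits in either fingerprint."""
    both = sum(a & b for a, b in zip(fp_a, fp_b))
    either = sum(a | b for a, b in zip(fp_a, fp_b))
    return both / either if either else 1.0

ethanol = toy_circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
propane = toy_circular_fingerprint(["C", "C", "C"], [(0, 1), (1, 2)])
similarity = tanimoto(ethanol, propane)
```

With `radius=2` each atom's final identifier summarizes its environment up to two bonds away, which corresponds to the ECFP4 convention mentioned above.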

Research Reagent Solutions: Essential Computational Tools

Table 3: Essential software tools and their functions in molecular property prediction

| Tool Name | Function | Application Notes |

|---|---|---|

| RDKit | Molecular descriptor calculation and fingerprint generation | Open-source; calculates 200+ 2D descriptors and multiple fingerprint types [7] |

| PaDEL-Descriptor | Molecular descriptor and fingerprint calculation | Generates 9+ fingerprint types; suitable for high-throughput screening [26] |

| XGBoost | Gradient boosting implementation | Regularized learning objective; often top performer in benchmarks [29] |

| Scikit-learn | Machine learning library | Implements RF, SVM, and other traditional ML algorithms [28] |

| SHAP | Model interpretation | Explains feature importance in descriptor-based models [28] |

Experimental Protocols: Methodologies from Key Studies

Benchmarking Workflow for Method Comparison

Dataset Preparation and Curation

Rigorous dataset preparation is fundamental to reliable model performance. The odor prediction study [12] illustrates this with a multi-step refinement process:

- Data Sourcing: Unified ten expert-curated sources containing 8,681 unique odorants

- Descriptor Standardization: Standardized inconsistent odor descriptors to a controlled set of 201 labels

- Structure Processing: Retrieved canonical SMILES via PubChem's PUG-REST API

- Feature Extraction: Generated Morgan fingerprints, functional group fingerprints, and molecular descriptors

Similarly, the kinase profiling study [27] implemented stringent data processing: standardizing molecular structures, removing duplicates, filtering by molecular weight (<1000 Da), and labeling actives and inactives using a consistent threshold (pKi/pKd/pIC50/pEC50 ≥ 6).
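The activity threshold and molecular weight filter translate directly into code. The sketch below assumes potencies are reported in nanomolar, so the negative log in molar units is 9 minus log10 of the value; the compound names, records, and function names are made up for illustration.

```python
import math

def p_activity(value_nm):
    """Convert a potency in nM (Ki, Kd, IC50 or EC50) to -log10 of the molar value."""
    return 9.0 - math.log10(value_nm)

def label_compound(value_nm, mol_weight, threshold=6.0, max_weight=1000.0):
    """Return 'active', 'inactive', or None when the weight filter removes the compound."""
    if mol_weight >= max_weight:
        return None
    return "active" if p_activity(value_nm) >= threshold else "inactive"

records = [
    ("cmpd_a", 50.0, 412.5),     # 50 nM, pIC50 about 7.3
    ("cmpd_b", 25000.0, 388.4),  # 25 uM, pIC50 about 4.6
    ("cmpd_c", 10.0, 1250.0),    # potent, but above the weight cut-off
]
labels = {name: label_compound(value, weight) for name, value, weight in records}
```

A threshold of 6 on the negative log scale corresponds to a potency of 1 µM, a common cut-off for separating actives from inactives.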

Model Training and Evaluation Protocols

Comprehensive benchmarking studies employ rigorous evaluation methodologies:

- Cross-Validation: Stratified 5-fold cross-validation maintaining positive:negative ratios in each fold [12] [26]

- Hyperparameter Optimization: Systematic tuning of algorithm-specific parameters

- Evaluation Metrics: Multiple metrics including AUROC, AUPRC, accuracy, specificity, precision, and recall [12]

- Statistical Testing: Repeated runs with different random seeds to ensure result stability [7]
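Stratified cross-validation is available in standard libraries, but a small standard-library sketch makes the mechanics explicit. The function name and the toy label vector are assumptions for illustration.

```python
import random
from collections import defaultdict

def stratified_k_fold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs whose class ratios mirror the full set."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)  # deal each class round-robin

    for test_fold in range(k):
        test_idx = sorted(folds[test_fold])
        train_idx = sorted(i for f, fold in enumerate(folds) if f != test_fold for i in fold)
        yield train_idx, test_idx

labels = [1] * 20 + [0] * 80  # toy data set with a 1:4 positive:negative ratio
splits = list(stratified_k_fold(labels, k=5))
```

Repeating the procedure with several values of `seed` gives the repeated runs needed to judge result stability.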

Algorithm-Specific Considerations

Random Forest Implementation

Random Forest operates by constructing multiple decision trees through bagging and random feature selection [30]. Key advantages include:

- Robustness to Overfitting: Natural regularization through ensemble diversity [30]

- High-Dimensional Data Handling: Effective with thousands of fingerprint features without performance degradation [30]

- Missing Data Tolerance: Can manage missing values without complex imputation [30]

Critical hyperparameters include the number of trees (n_estimators), the maximum number of features considered per split (max_features), and the minimum number of samples per leaf (min_samples_leaf) [30].

XGBoost Implementation

XGBoost's superior performance often stems from its regularized learning objective and optimization approach [29]. The algorithm introduces:

- Regularization: L1 (Lasso) and L2 (Ridge) regularization terms in the objective function to prevent overfitting [29]

- Newton Descent: Second-order optimization for faster convergence [29]

- Handling Sparse Data: Efficient management of sparse fingerprint representations [31]

Essential hyperparameters include learning rate, maximum tree depth, regularization terms (lambda, alpha), and subsampling ratios [29].
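For reference, the regularized objective and the second-order (Newton-style) leaf update can be written as below. The notation follows the standard XGBoost formulation rather than [29]: T is the number of leaves in a tree, w the vector of leaf weights, I_j the set of training instances falling in leaf j, and g_i, h_i the first and second derivatives of the loss with respect to the previous prediction. The closed-form leaf weight is shown for the L2-only case.

```latex
\mathcal{L} = \sum_{i} l\left(y_i, \hat{y}_i\right) + \sum_{k} \Omega(f_k),
\qquad
\Omega(f) = \gamma T + \tfrac{1}{2}\lambda \lVert w \rVert_2^2 + \alpha \lVert w \rVert_1

\tilde{\mathcal{L}}^{(t)} \approx \sum_{i} \left[ g_i f_t(x_i) + \tfrac{1}{2} h_i f_t(x_i)^2 \right] + \Omega(f_t),
\qquad
g_i = \frac{\partial\, l\left(y_i, \hat{y}_i^{(t-1)}\right)}{\partial\, \hat{y}_i^{(t-1)}},
\quad
h_i = \frac{\partial^2 l\left(y_i, \hat{y}_i^{(t-1)}\right)}{\partial \left(\hat{y}_i^{(t-1)}\right)^2}

w_j^{*} = -\frac{\sum_{i \in I_j} g_i}{\sum_{i \in I_j} h_i + \lambda}
\qquad (\alpha = 0)
```

The λ term in the denominator shrinks leaf weights toward zero, which is how the L2 penalty limits overfitting at the level of individual leaves.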

SVM Implementation

Support Vector Machines seek optimal separating hyperplanes in high-dimensional feature space [28]. For molecular fingerprints:

- Kernel Selection: Radial basis function (RBF) kernels typically perform best for complex structure-activity relationships [28]

- Feature Scaling: Critical for stable performance with fingerprint inputs [28]

- Class Imbalance Adjustment: Class weighting strategies for unbalanced bioactivity data [26]

Key hyperparameters include regularization (C), kernel coefficient (gamma), and class weights [28].
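The hyperparameters named for the three algorithms can be collected into search spaces for systematic tuning. In the sketch below the keys follow the scikit-learn and XGBoost (scikit-learn-style) parameter names, while the candidate values are illustrative assumptions and not settings reported in the cited studies.

```python
from itertools import product

# Illustrative search spaces; the values are assumptions, not published settings.
SEARCH_SPACES = {
    "random_forest": {
        "n_estimators": [200, 500],
        "max_features": ["sqrt", "log2"],
        "min_samples_leaf": [1, 3],
    },
    "xgboost": {
        "learning_rate": [0.05, 0.1],
        "max_depth": [4, 6],
        "reg_lambda": [1.0, 5.0],
        "reg_alpha": [0.0, 0.5],
        "subsample": [0.8, 1.0],
    },
    "svm": {
        "C": [0.1, 1.0, 10.0],
        "gamma": ["scale", 0.01],
        "class_weight": [None, "balanced"],
    },
}

def expand_grid(space):
    """Enumerate every parameter combination in a search space as a dict."""
    names = list(space)
    return [dict(zip(names, values)) for values in product(*(space[n] for n in names))]

grids = {model: expand_grid(space) for model, space in SEARCH_SPACES.items()}
```

Even these small spaces yield 8, 32, and 12 configurations respectively, which illustrates why XGBoost tuning tends to demand more compute than Random Forest tuning.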

Case Studies: Experimental Evidence

Odor Prediction with Morgan Fingerprints

A 2025 comparative study on odor decoding provides compelling evidence for XGBoost's performance advantage [12]. Using a curated dataset of 8,681 compounds, researchers benchmarked RF, XGBoost, and LightGBM across three feature sets. The Morgan-fingerprint-based XGBoost model achieved superior discrimination (AUROC 0.828, AUPRC 0.237), consistently outperforming descriptor-based models. The study concluded that "structure-derived fingerprints are highly effective in capturing olfactory cues, and that gradient-boosted decision trees—particularly XGB—are well suited to leveraging this information for accurate multi-label odor prediction" [12].

Kinase Profiling Prediction

A large-scale 2024 comparison of machine learning methods for kinase inhibitor selectivity revealed Random Forest's strong performance [27]. After evaluating 12 ML and deep learning methods on 141,086 unique compounds and 216,823 bioassay data points across 354 kinases, the study found that "RF as an ensemble learning approach displays the overall best predictive performance" among conventional methods [27]. The RF::AtomPairs + FP2 + RDKitDes fusion model achieved the highest average AUC value of 0.825 on test sets.

Reproductive Toxicity Prediction

A 2021 study on reproductive toxicity prediction demonstrated the power of ensemble approaches combining all three traditional workhorses [26]. Using nine molecular fingerprint types with SVM, RF, and XGBoost on 1,823 compounds, their Ensemble-Top12 model achieved accuracy of 86.33% and AUC of 0.937 in 5-fold cross-validation. The research highlighted that ensemble learning "can sufficiently fuse model predictions together" and "usually produces higher accuracy than individual models because it can manage the strengths and weaknesses of each base learner" [26].
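A common way to fuse predictions from several base learners is soft voting, that is, averaging their predicted probabilities. The sketch below shows that generic technique; it is not claimed to be the Ensemble-Top12 procedure from [26], and the probabilities are made up.

```python
def soft_vote(probabilities_by_model, threshold=0.5):
    """Average per-compound probabilities across models, then threshold."""
    n_models = len(probabilities_by_model)
    n_compounds = len(next(iter(probabilities_by_model.values())))
    averaged = [
        sum(probs[i] for probs in probabilities_by_model.values()) / n_models
        for i in range(n_compounds)
    ]
    return averaged, [int(p >= threshold) for p in averaged]

predictions = {  # made-up toxicity probabilities for three compounds
    "svm": [0.75, 0.25, 0.50],
    "rf": [0.50, 0.25, 0.25],
    "xgboost": [1.00, 0.25, 0.75],
}
averaged, labels = soft_vote(predictions)
```

Averaging lets a confident model outvote an uncertain one, which is one mechanism by which an ensemble can offset the weaknesses of individual learners.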

Decision Framework: Method Selection Guidelines

Based on extensive benchmarking evidence, the following guidelines emerge for method selection:

- Choose XGBoost when prioritizing ultimate predictive performance, with sufficient computational resources for hyperparameter tuning [29] [12]

- Select Random Forest when seeking robust performance with minimal hyperparameter tuning, enhanced interpretability, or working with mixed data types [27] [30]

- Employ SVM when dealing with smaller datasets (<5,000 compounds) where its capacity to find complex boundaries in high-dimensional space excels [28] [26]

- Consider Ensemble Approaches combining multiple algorithms when the highest possible accuracy is required for critical applications [26]

Traditional machine learning methods with molecular fingerprints remain powerful, efficient tools for molecular property prediction. The experimental evidence demonstrates that RF, XGBoost, and SVM each have distinct strengths and application scenarios where they excel. While deep learning approaches continue to advance, these traditional workhorses offer compelling advantages in computational efficiency, interpretability, and robust performance across diverse prediction tasks—securing their ongoing relevance in drug discovery and molecular sciences.

Graph Neural Networks (GNNs) have revolutionized molecular property prediction by directly learning from topological structures, surpassing traditional descriptor-based methods. This guide objectively compares three fundamental GNN architectures—Graph Isomorphism Network (GIN), Graph Convolutional Network (GCN), and Graph Attention Network (GAT)—within molecular research contexts. We present consolidated performance metrics across standardized benchmarks, detail experimental methodologies for fair evaluation, and visualize architectural mechanisms. Experimental data reveal that GIN achieves superior accuracy on topology-sensitive tasks like molecular symmetry prediction (92.7% accuracy), while attention-based mechanisms in GAT enhance node-specific representation learning. These GNN architectures consistently outperform conventional machine learning models that rely on hand-crafted molecular fingerprints, establishing end-to-end deep learning as a transformative paradigm for computational chemistry and drug discovery.

Molecular property prediction has traditionally relied on machine learning models using hand-crafted descriptors or fingerprints, which often overlook intricate topological and chemical structures [32]. Graph Neural Networks represent a paradigm shift by enabling direct learning from molecular graphs, where atoms constitute nodes and bonds form edges, eliminating the need for manual feature engineering [32]. This end-to-end learning approach captures both local atomic environments and global molecular structure more effectively than traditional methods.

Among GNN architectures, GCN, GAT, and GIN have emerged as foundational models with distinct mechanistic approaches to topological structure learning. GCN applies a first-order approximation of spectral graph convolutions in each layer, GAT introduces attention-based neighborhood aggregation, and GIN uses injective aggregation functions to match the discriminative power of the Weisfeiler-Lehman graph isomorphism test. Their complementary strengths make them suitable for different molecular prediction tasks, from quantum chemical property estimation to bioactivity classification.

Architectural Comparison and Molecular Applications

Core Architectural Mechanisms

Graph Convolutional Network (GCN): Operates through a layer-wise, first-order approximation of spectral graph convolutions. Each node's representation is updated by a degree-normalized average of its own and its neighbors' features, followed by a linear transformation and a non-linear activation. Because the neighbor weights are fixed by node degree rather than learned, the model is computationally efficient but potentially limited in discriminative power for heterogeneous molecular structures.

Graph Attention Network (GAT): Incorporates self-attention mechanisms into the propagation rule, computing hidden representations by attending over neighbor nodes. The attention coefficients are learned through a shared parametric function, enabling differentiated importance weighting for different neighbors within the aggregation process. This proves particularly valuable for molecular graphs where certain atomic interactions or functional groups dominate property outcomes.

Graph Isomorphism Network (GIN): Designed to be as discriminative as a message-passing GNN can be, matching the power of the Weisfeiler-Lehman (1-WL) graph isomorphism test. GIN uses a sum aggregator, which can represent a multiset of neighbor features injectively, and updates node representations with a multi-layer perceptron (MLP). This architectural choice makes GIN particularly powerful for capturing subtle topological differences in molecular graphs.
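The practical difference between the three aggregation schemes shows up even on scalar toy features. In the sketch below, a plain mean stands in for GCN-style averaging (a simplification that ignores degree normalization), a softmax-weighted sum stands in for GAT, and a plain sum stands in for GIN; all names and values are illustrative.

```python
import math

def mean_aggregate(neighbour_features):
    """Average of neighbour features (simplified GCN-style aggregation)."""
    return sum(neighbour_features) / len(neighbour_features)

def sum_aggregate(neighbour_features):
    """Sum of neighbour features (GIN-style aggregation)."""
    return sum(neighbour_features)

def attention_aggregate(neighbour_features, scores):
    """Softmax-normalise raw scores, then take the weighted sum (GAT-style)."""
    exps = [math.exp(s) for s in scores]
    total = sum(exps)
    return sum((e / total) * x for e, x in zip(exps, neighbour_features))

# Two different neighbourhoods built from identical atoms (scalar feature 1.0).
two_neighbours = [1.0, 1.0]
three_neighbours = [1.0, 1.0, 1.0]

mean_same = mean_aggregate(two_neighbours) == mean_aggregate(three_neighbours)
sum_differs = sum_aggregate(two_neighbours) != sum_aggregate(three_neighbours)
weighted = attention_aggregate([1.0, 3.0], scores=[0.0, 0.0])  # equal scores reduce to the mean
```

The mean cannot tell an atom with two identical neighbours from one with three, whereas the sum can, which is the intuition behind GIN's stronger structural discrimination.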

Performance Benchmarking on Molecular Tasks

Experimental evaluations across standardized molecular benchmarks demonstrate the complementary strengths of each architecture. The table below summarizes quantitative performance metrics for key molecular property prediction tasks.

Table 1: Performance comparison of GNN architectures on molecular property prediction

| Architecture | QM9 (HOMO-LUMO gap) MAE | Molecular Point Group Prediction Accuracy | OGB-MolHIV (ROC-AUC) | logKow Prediction MAE |

|---|---|---|---|---|

| GIN | - | 92.7% [33] | - | - |

| GCN | 0.12 eV (test) / 0.8 eV (gen) [34] | - | - | - |

| GAT | - | - | - | - |

| Graphormer | - | - | 0.807 [32] | 0.18 [32] |

Table 2: Environmental fate prediction performance (MAE)

| Architecture | logKaw | logK_d |

|---|---|---|

| GIN | - | - |

| EGNN | 0.25 [32] | 0.22 [32] |

| Graphormer | - | - |

GIN demonstrates exceptional capability in symmetry-related prediction tasks, achieving 92.7% accuracy in molecular point group prediction from 2D topological graphs, significantly surpassing other GNN-based methods [33]. This superior performance stems from GIN's ability to capture both local connectivity and global structural information essential for symmetry detection.

For quantum chemical properties such as the HOMO-LUMO gap, GCN-based proxies trained on the QM9 dataset achieve an MAE of 0.12 eV on test data, though performance degrades (MAE ≈ 0.8 eV) on generated out-of-distribution molecules [34]. This highlights the generalization challenges even for powerful GNN architectures.

Environmental fate prediction benchmarks reveal that geometrically-aware models like EGNN achieve superior performance for partition coefficients (logKaw MAE=0.25, logK_d MAE=0.22), though GIN and Graphormer maintain competitive accuracy, with Graphormer achieving the best performance on logKow prediction (MAE=0.18) [32].
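The two metrics that recur in these comparisons, MAE for regression and ROC-AUC for imbalanced classification, are simple to compute by hand. The sketch below uses the pairwise-ranking definition of ROC-AUC, which is quadratic in the number of samples and meant for illustration only; the example values are made up.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between targets and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def roc_auc(y_true, scores):
    """Probability that a random positive is scored above a random negative
    (ties count one half)."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))

mae = mean_absolute_error([1.0, 2.0, 4.0], [1.5, 2.0, 3.0])
auc = roc_auc([1, 0, 1, 0, 0], [0.9, 0.8, 0.7, 0.3, 0.1])
```

Because ROC-AUC depends only on how positives rank relative to negatives, it stays informative when actives are rare, which is why it is preferred over accuracy for tasks such as OGB-MolHIV.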

Experimental Protocols and Methodologies

Dataset Preparation and Preprocessing

Standardized molecular benchmarks ensure fair architectural comparison:

QM9 Dataset: Contains approximately 134,000 stable small organic molecules with quantum chemical properties computed using DFT [34] [32]. Standard splitting protocols (80/10/10 train/validation/test) ensure comparable evaluations. Molecular graphs are constructed with atoms as nodes and bonds as edges, with node features including atomic number, hybridization, and valence state.

OGB-MolHIV: Part of the Open Graph Benchmark containing over 41,000 molecules for binary classification of HIV replication inhibition [32]. This represents a real-world biological activity prediction task with significant class imbalance, requiring careful metric selection (ROC-AUC).

Molecular Symmetry Dataset: Derived from QM9 but with point group labels annotated for the most stable 3D conformations [33]. This challenges models to predict 3D symmetry from 2D topological graphs alone.

Preprocessing typically involves node feature normalization, graph normalization, and optionally edge feature incorporation. For GCN and GAT, self-loops are commonly added so that nodes incorporate their own features during aggregation; GIN instead includes the central node through its (1 + ε)-weighted self term.

Model Training and Evaluation Protocols

Consistent training methodologies enable meaningful architecture comparisons:

Regularization Techniques: Dropout (rate=0.2-0.5), batch normalization, and graph size normalization are standard. For molecular tasks, data augmentation via canonical SMILES rotation or virtual adversarial training improves generalization.

Optimization: Adam optimizer with initial learning rate 0.001-0.01 and reduce-on-plateau scheduling. Early stopping based on validation loss prevents overfitting.

Evaluation Metrics: Task-dependent metrics include Mean Absolute Error (MAE) for regression, Accuracy/F1-score for classification, and ROC-AUC for binary classification with class imbalance.

Reproducibility: Fixed random seeds, cross-validation (typically 5-fold), and multiple runs with different initializations make it possible to report variability and judge whether differences between architectures are statistically meaningful.
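The interplay between reduce-on-plateau scheduling and early stopping can be seen by replaying a sequence of validation losses. The sketch below is a simplified stand-in for what deep learning frameworks provide; the patience values, the reduction factor, and the loss curve are assumptions.

```python
def train_with_plateau_control(val_losses, lr=0.001, factor=0.5,
                               lr_patience=2, stop_patience=4):
    """Replay validation losses and record when the learning rate would be
    reduced and when training would stop."""
    best = float("inf")
    epochs_since_best = 0
    history = []
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best, epochs_since_best = loss, 0
        else:
            epochs_since_best += 1
            if epochs_since_best % lr_patience == 0:
                lr *= factor  # reduce-on-plateau step
        history.append((epoch, loss, lr))
        if epochs_since_best >= stop_patience:
            break  # early stopping
    return best, history

val_losses = [0.90, 0.70, 0.65, 0.66, 0.67, 0.66, 0.68, 0.64]
best, history = train_with_plateau_control(val_losses)
```

In this toy run training halts before the final, better loss of 0.64 is reached, a reminder that an overly short patience can stop a model that was still improving.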

Architectural Workflows

The diagram below illustrates the fundamental differences in how GCN, GAT, and GIN process molecular graph information during message passing.

GNN Architecture Comparison: Information aggregation mechanisms in GCN, GAT, and GIN

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential computational tools for GNN research in molecular property prediction

| Resource | Type | Function | Example Applications |

|---|---|---|---|

| QM9 Dataset | Molecular Dataset | Quantum chemical properties for small organic molecules [34] [33] [32] | HOMO-LUMO gap prediction, molecular energy estimation |

| OGB-MolHIV | Benchmark Dataset | Bioactivity classification for HIV inhibition [32] | Drug discovery screening, molecular activity prediction |

| PyTorch Geometric | Deep Learning Library | GNN implementation and training [34] | Model prototyping, molecular graph processing |

| RDKit | Cheminformatics Library | Molecular graph construction and descriptor calculation | SMILES to graph conversion, molecular feature extraction |

| Density Functional Theory (DFT) | Computational Method | Ground-truth property calculation for validation [34] | Verification of ML-predicted molecular properties |

Discussion and Research Implications

The empirical evidence demonstrates that GIN, GCN, and GAT each occupy distinct niches within the molecular property prediction landscape. GIN's superior performance on symmetry-related tasks [33] and structural discrimination makes it ideal for conformation analysis and materials design. GAT's attention mechanism offers interpretability advantages for drug discovery, where understanding specific atomic contributions to bioactivity is crucial. GCN remains a computationally efficient baseline for large-scale virtual screening.

These GNN architectures collectively represent a significant advancement over traditional descriptor-based machine learning methods, which often overlook intricate topological relationships [32]. The ability to learn directly from molecular graph structure enables more accurate modeling of complex structure-property relationships, particularly for quantum chemical properties and bioactivity endpoints.

Future research directions include developing geometry-aware GNNs that incorporate 3D molecular conformations without sacrificing computational efficiency, creating better regularization techniques to address the distribution shifts between training and generated molecules [34], and improving interpretability to build trust in predictive models for critical applications like toxicity assessment and drug candidate prioritization.

The field of molecular property prediction has undergone a significant transformation, moving from traditional descriptor-based machine learning methods to sophisticated deep learning architectures capable of learning directly from molecular structure. Traditional approaches relied heavily on hand-crafted molecular descriptors or fingerprints, which often overlooked intricate topological and chemical information [32]. The advent of Graph Neural Networks (GNNs) marked a pivotal shift by enabling direct learning from molecular graphs, where atoms are represented as nodes and bonds as edges, eliminating the need for manual feature engineering [32].

Within this evolution, two advanced architectural paradigms have emerged as particularly powerful: Equivariant Graph Neural Networks (EGNNs) that explicitly incorporate 3D molecular geometry, and Graph Transformer models like Graphormer that capture global dependencies through attention mechanisms. These architectures address fundamental limitations of earlier GNNs that were restricted to 2D topologies and lacked spatial knowledge of molecular geometry, which is crucial for accurately predicting properties influenced by 3D conformation and long-range interactions [32]. This guide provides a comprehensive comparison of these advanced architectures, their performance characteristics, and practical implementation considerations for molecular property prediction in drug discovery and environmental chemistry applications.

Equivariant Graph Neural Networks (EGNNs)

Equivariant Graph Neural Networks incorporate a crucial physical inductive bias by design: they preserve Euclidean symmetries under translation, rotation, and reflection. This means that rotating or translating a molecule in 3D space does not change the model's scalar predictions (e.g., energy, toxicity), while vector and tensor outputs transform consistently with the input [35]. This property is fundamental for molecular systems where properties are invariant to orientation in space.

The core innovation of EGNNs lies in their direct integration of 3D atomic coordinates into the learning process. Unlike traditional GNNs that operate solely on topological connections, EGNNs update both atomic features and their coordinates through equivariant operations. For example, the Equivariant Transformer (ET) in TorchMD-NET implements E(n)-equivariant layers that update atom representations using vectorial features (e.g., direction and distance) between atoms in 3D space [35]. This allows the network to learn representations that respect the physical symmetries of molecular systems, making them particularly suitable for predicting quantum-mechanical properties, toxicity, and other geometry-sensitive molecular characteristics.
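The equivariance property described above can be checked numerically. The following is a minimal sketch, not the TorchMD-NET implementation: it assumes a simplified coordinate update in the style of E(n)-equivariant GNNs, with a fixed toy function `phi` standing in for a learned network, and a rotation about the z-axis as the test transformation. All function names are illustrative.

```python
import math

def rotate_z(p, theta):
    """Rotate a 3D point about the z-axis by theta radians."""
    x, y, z = p
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y, z)

def translate(p, t):
    """Shift a 3D point by the vector t."""
    return (p[0] + t[0], p[1] + t[1], p[2] + t[2])

def sq_dist(a, b):
    """Squared Euclidean distance, an invariant of rotation and translation."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def phi(d2):
    """Toy stand-in (assumption) for a learned scalar function of squared distance."""
    return 1.0 / (1.0 + d2)

def egnn_step(coords):
    """Simplified update: x_i <- x_i + mean_j (x_i - x_j) * phi(|x_i - x_j|^2)."""
    n = len(coords)
    updated = []
    for i, xi in enumerate(coords):
        shift = [0.0, 0.0, 0.0]
        for j, xj in enumerate(coords):
            if i == j:
                continue
            w = phi(sq_dist(xi, xj))
            for k in range(3):
                shift[k] += (xi[k] - xj[k]) * w
        updated.append(tuple(xi[k] + shift[k] / (n - 1) for k in range(3)))
    return updated

def invariant_readout(coords):
    """Scalar output built only from pairwise distances, hence invariant."""
    return sum(phi(sq_dist(a, b)) for i, a in enumerate(coords) for b in coords[i + 1:])

# Toy three-atom geometry (illustrative values only).
coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
theta, offset = 0.7, (1.5, -2.0, 0.3)
moved = [translate(rotate_z(p, theta), offset) for p in coords]

# Equivariance: transform-then-update should equal update-then-transform.
a = egnn_step(moved)
b = [translate(rotate_z(p, theta), offset) for p in egnn_step(coords)]
max_diff = max(abs(u - v) for p, q in zip(a, b) for u, v in zip(p, q))

# Invariance: the scalar readout should not change under the transformation.
energy_gap = abs(invariant_readout(coords) - invariant_readout(moved))
print(max_diff < 1e-9, energy_gap < 1e-9)
```

Because the update only uses coordinate differences weighted by a function of distance, the rotation and translation pass straight through it; a model that consumed raw coordinates would not have this guarantee.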

Graphormer: Global Attention for Graph-Structured Data

Graphormer adapts the powerful Transformer architecture, which has revolutionized natural language processing, to graph-structured data. The key innovation lies in replacing the local message-passing paradigm of conventional GNNs with a global attention mechanism that enables direct information exchange between all nodes in the graph [32]. This allows Graphormer to capture long-range dependencies that might be crucial for molecular properties but are often diluted in multiple layers of message passing.

The architecture incorporates several graph-specific modifications to the standard Transformer, including spatial encoding based on shortest path distances between nodes, and edge encoding that incorporates bond information into the attention mechanism [32]. Rather than being limited to local neighborhoods, each node can attend to all other nodes in the graph, with the attention weights modulated by structural information. This global receptive field makes Graphormer particularly effective for tasks requiring an integrated understanding of the entire molecular structure, such as predicting partition coefficients and bioactivity [32].
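The spatial encoding idea can be illustrated with a small sketch. It assumes a single attention head, raw scores supplied by the caller, and a hand-picked table of per-distance biases; in a trained model these biases would be learned, and edge encoding would add a further term that is omitted here. Function names are hypothetical.

```python
import math
from collections import deque

def shortest_path_lengths(n, edges):
    """All-pairs shortest path distances in hops, via BFS from every node."""
    adj = [[] for _ in range(n)]
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    dist = [[-1] * n for _ in range(n)]  # -1 marks unreachable pairs
    for s in range(n):
        dist[s][s] = 0
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if dist[s][v] < 0:
                    dist[s][v] = dist[s][u] + 1
                    queue.append(v)
    return dist

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def biased_attention(scores, spd, bias_by_distance):
    """Add a scalar bias indexed by shortest-path distance, then normalise rows."""
    last = len(bias_by_distance) - 1

    def bias(d):
        # Unreachable pairs (d < 0) and very distant pairs share the last bucket.
        return bias_by_distance[last if d < 0 or d > last else d]

    return [softmax([s + bias(d) for s, d in zip(score_row, spd_row)])
            for score_row, spd_row in zip(scores, spd)]

# Heavy-atom graph of a three-atom chain (e.g. C-C-O), atoms indexed 0..2.
edges = [(0, 1), (1, 2)]
spd = shortest_path_lengths(3, edges)
scores = [[0.0] * 3 for _ in range(3)]   # uniform raw scores for clarity
bias_table = [0.0, -0.5, -1.0]           # illustrative values, not learned
attn = biased_attention(scores, spd, bias_table)
print(spd)
print([round(w, 3) for w in attn[0]])
```

Every node still attends to every other node, but the bias lets the model weight pairs differently depending on how many bonds separate them.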

Exphormer: Bridging Efficiency and Global Attention

A significant challenge with graph transformers is that full attention scales quadratically with the number of nodes. Exphormer addresses this limitation through a sparse attention framework that combines three components: local attention along the edges of the input graph, global attention via virtual nodes, and expander edges that ensure rapid information mixing [36]. Because each component adds only a bounded number of attention pairs per node, the cost stays linear in graph size while the benefits of global attention are preserved, enabling application to larger molecular graphs [36].
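To make the scaling argument concrete, the sketch below builds a sparse attention pattern from the three components and compares its size with dense attention. It is an illustration under assumptions, not the Exphormer code: the expander-like edges are approximated by a union of random permutations, a single virtual node is used, and the function name and parameters are hypothetical.

```python
import random

def exphormer_style_edges(n, graph_edges, degree=4, seed=0):
    """Return undirected attention pairs: local + expander-like + one virtual node."""
    rng = random.Random(seed)
    # Local attention: the bonds of the input graph.
    pairs = {tuple(sorted(e)) for e in graph_edges}
    # Expander-like edges: each random permutation adds at most n pairs.
    for _ in range(degree // 2):
        perm = list(range(n))
        rng.shuffle(perm)
        for u, v in zip(range(n), perm):
            if u != v:
                pairs.add((min(u, v), max(u, v)))
    # Global attention: every real node is linked to virtual node index n.
    virtual = n
    pairs.update((u, virtual) for u in range(n))
    return pairs

for n in (50, 200, 800):
    chain = [(i, i + 1) for i in range(n - 1)]   # toy chain-shaped molecule
    sparse = len(exphormer_style_edges(n, chain))
    dense = n * (n - 1) // 2
    print(n, sparse, dense)
```

With `degree=4` the sparse pattern contains at most roughly `4 * n` pairs for a chain, whereas the dense count grows with `n` squared.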

Figure: Architecture comparison between Equivariant GNN and Graphormer.

Performance Comparison: Quantitative Benchmarking

Environmental Fate Prediction

Partition coefficients are crucial for understanding how chemicals behave in the environment, including their solubility, volatility, and degradation pathways. Benchmarking studies reveal distinct performance patterns across architectures for predicting these environmentally significant properties [32].

| Architecture | log Kow (MAE) | log Kaw (MAE) | log Kd (MAE) | Key Strengths |
|---|---|---|---|---|
| Graphormer | 0.18 | 0.31 | 0.29 | Superior on octanol-water partitioning; global attention captures complex molecular interactions |
| EGNN | 0.24 | 0.25 | 0.22 | Best performance on geometry-sensitive properties (volatility, soil adsorption) |
| GIN (2D Baseline) | 0.31 | 0.38 | 0.35 | Competitive baseline for topology-driven properties |
| Traditional ML | 0.35-0.42 | 0.41-0.49 | 0.39-0.47 | Outperformed by all GNN architectures |

Comparative performance on environmental partition coefficient prediction (MAE = Mean Absolute Error; lower is better). Data sourced from benchmark studies [32].

Quantum Property and Bioactivity Prediction

The comparative advantages of each architecture become more pronounced when examining performance across diverse molecular benchmark datasets spanning quantum properties, drug-like molecules, and real-world bioactivity.

| Architecture | QM9 (Quantum, MAE) | ZINC (Drug-like, MAE) | OGB-MolHIV (ROC-AUC) | Computational Efficiency |
|---|---|---|---|---|
| Graphormer | 0.021 | 0.085 | 0.807 | Moderate (quadratic scaling, optimizations available) |
| EGNN | 0.015 | 0.092 | 0.784 | High (linear scaling, parallelizable) |
| GIN (2D Baseline) | 0.038 | 0.121 | 0.762 | High (linear scaling, simple architecture) |
| Exphormer | - | - | - | Very High (linear scaling, large graph capability) |

Performance comparison across standard molecular benchmarks (MAE: lower is better; ROC-AUC: higher is better). EGNN achieves the lowest error on QM9, while Graphormer leads on ZINC and on OGB-MolHIV bioactivity classification [32] [36].

Toxicity Prediction

For toxicity prediction, EGNNs show particular promise because they can use 3D molecular conformations. In evaluations of the Equivariant Transformer (ET) on eleven toxicity datasets from MoleculeNet, TDCommons, and ToxBenchmark, the model learned 3D representations that correlate with toxicity activity and reached accuracies comparable to state-of-the-art models [35]. Access to 3D geometry is especially valuable for distinguishing stereoisomers such as cisplatin and transplatin, which share the same atoms and bonds but have markedly different toxicological profiles [35].
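The stereoisomer point can be made concrete with a toy geometry. The sketch below assumes an idealized square-planar arrangement with unit bond lengths, treats each ammine ligand as a single `N` site, and uses hypothetical helper names; it is meant only to show that a bond list cannot separate the two isomers while a simple 3D-derived distance can.

```python
import math

def square_planar(ligands, bond_length=1.0):
    """Place four ligands at 0/90/180/270 degrees around a metal at the origin."""
    coords = {"Pt": (0.0, 0.0)}
    for k, name in enumerate(ligands):
        angle = k * math.pi / 2
        coords[f"{name}{k}"] = (bond_length * math.cos(angle),
                                bond_length * math.sin(angle))
    return coords

def bond_multiset(coords):
    """Connectivity view: which element types are bonded to Pt, ignoring geometry."""
    return sorted(name.rstrip("0123456789") for name in coords if name != "Pt")

def cl_cl_distance(coords):
    """Geometry view: distance between the two chloride ligands."""
    cl = [p for name, p in coords.items() if name.startswith("Cl")]
    return math.dist(cl[0], cl[1])

cis = square_planar(["Cl", "Cl", "N", "N"])     # chlorides on adjacent sites
trans = square_planar(["Cl", "N", "Cl", "N"])   # chlorides on opposite sites

print(bond_multiset(cis) == bond_multiset(trans))   # same connectivity
print(cl_cl_distance(cis), cl_cl_distance(trans))   # different geometry
```

In this idealized setting the Cl-Cl separation is the bond length times the square root of two for the cis arrangement and twice the bond length for the trans arrangement, which is exactly the kind of signal a distance-aware model can use.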

Experimental Protocols and Methodologies

Standardized Benchmarking Approaches

Robust evaluation of molecular property prediction models requires standardized datasets, splitting strategies, and evaluation metrics. The experimental protocols commonly employed in benchmarking studies include:

Dataset Curation and Preprocessing: Models are typically evaluated on established molecular benchmarks including QM9 (quantum mechanical properties of small organic molecules), ZINC (drug-like molecules), OGB-MolHIV (bioactivity classification), and MoleculeNet (environmental partition coefficients) [32]. For 3D-aware models like EGNN, high-quality molecular conformers are generated using tools like CREST with the GFN2-xTB semiempirical method or extracted from databases like GEOM [35].

Training-Testing Splits: Standardized data splits ensure fair comparison, typically employing 80/20 training-test splits with stratified sampling to maintain class balance in classification tasks [32]. For molecular datasets, scaffold splits that separate structurally distinct molecules provide a more challenging evaluation of generalizability.

Evaluation Metrics: Regression tasks utilize Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE), while classification tasks employ ROC-AUC and accuracy metrics [32]. These metrics provide complementary insights into model performance across different error characteristics and classification thresholds.
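The splitting and metric steps above can be sketched in a few lines. This is an illustrative outline under assumptions: scaffold identifiers are taken as precomputed strings (in practice they might be Murcko scaffold SMILES produced by a cheminformatics toolkit), the greedy largest-group-first assignment is one common heuristic rather than a prescribed protocol, and all function names are hypothetical.

```python
import math
from collections import defaultdict

def scaffold_split(scaffolds, test_fraction=0.2):
    """Group-aware split: molecules sharing a scaffold never straddle train and test."""
    groups = defaultdict(list)
    for idx, key in enumerate(scaffolds):
        groups[key].append(idx)
    # Largest scaffold groups are assigned first, so rare scaffolds tend to land in test.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_cap = (1.0 - test_fraction) * len(scaffolds)
    train, test = [], []
    for group in ordered:
        target = train if len(train) + len(group) <= train_cap else test
        target.extend(group)
    return train, test

def mae(y_true, y_pred):
    """Mean Absolute Error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root Mean Squared Error; penalises large errors more than MAE."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def roc_auc(labels, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

# Placeholder scaffold labels for ten molecules (illustrative only).
scaffolds = ["benzene"] * 4 + ["pyridine"] * 3 + ["indole"] * 2 + ["furan"]
train_idx, test_idx = scaffold_split(scaffolds)
print(sorted(train_idx), sorted(test_idx))

print(mae([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```

The pairwise `roc_auc` is quadratic in the number of samples and is written for clarity; a rank-based implementation would be preferable on large datasets.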

Ablation Studies and Sensitivity Analysis

Rigorous experimentation includes ablation studies to isolate the contribution of specific architectural components. For EGNNs, this involves testing the importance of equivariant constraints by comparing against non-equivariant baselines [35]. For Graph Transformers, studies examine the impact of different encoding strategies and attention sparsification approaches [36] [37].

Hyperparameter sensitivity analysis is crucial for both architecture types. EGNN performance depends on choices related to coordinate update mechanisms, representation dimensionality, and interaction cutoffs [35]. Graph Transformer performance is sensitive to attention heads, positional encoding strategies, and depth-width tradeoffs [37].

Figure: Standard experimental workflow for benchmarking molecular property prediction models.

Implementation Considerations: The Researcher's Toolkit

Successful implementation of advanced GNN architectures requires both computational resources and specialized software tools. The following table outlines key components of the research toolkit for developing and deploying these models.

| Resource Category | Specific Tools & Platforms | Application Context |
|---|---|---|
| Deep Learning Frameworks | PyTorch, PyTorch Geometric, JAX | Core model implementation and training |
| Equivariant GNN Libraries | TorchMD-NET, e3nn, SE(3)-Transformers | 3D molecular representation learning |
| Graph Transformer Implementations | Graphormer, Exphormer, GraphGPS | Global attention models for graphs |
| Molecular Conformer Generation | CREST (GFN2-xTB), RDKit, GEOM database | 3D structure preparation for EGNNs |
| Benchmark Datasets | MoleculeNet, OGB, QM9, ZINC, ToxBenchmark | Standardized model evaluation |
| Computational Infrastructure | GPU clusters (NVIDIA A100/H100), Cloud computing | Handling 3D molecular graphs and attention mechanisms |

Integration Strategies and Best Practices

Based on empirical results, researchers can optimize their architectural choices through several strategic considerations:

Architecture Selection Guidance: For properties dominated by 3D geometry, stereochemistry, or quantum effects (e.g., energy, spectral properties, and conformation-dependent toxicity), EGNNs are the natural first choice: they lead on QM9 and on the geometry-sensitive partition coefficients above, and reach accuracy comparable to state-of-the-art models on toxicity [35]. For tasks requiring integrated understanding of global molecular structure (e.g., bioactivity, octanol-water partitioning), Graphormer and its variants excel [32]. In resource-constrained environments or with large graphs, Exphormer provides an efficient compromise with linear complexity [36].

Hybrid and Ensemble Approaches: Promising research directions include hybrid models that incorporate both equivariant layers and global attention mechanisms. The GraphGPS framework demonstrates the potential of combining message passing with graph transformers, achieving state-of-the-art results across multiple benchmarks [36]. Ensemble approaches that leverage both 2D and 3D representations can capture complementary molecular characteristics.

Interpretability and Explainability: Both architectures offer pathways for model interpretation. EGNNs allow visualization of important atomic contributions through attention weight analysis in 3D space [35]. Graph Transformers can highlight important molecular substructures and long-range interactions through attention maps, providing chemical insights alongside predictions [37].

The comparative analysis of Equivariant GNNs and Graphormer architectures reveals a nuanced landscape where architectural alignment with molecular property characteristics drives performance. EGNNs demonstrate clear advantages for geometry-sensitive properties by incorporating physical priors and 3D structural information, while Graphormer excels at capturing global dependencies crucial for complex molecular interactions.

Future research directions include developing more efficient equivariant operations to reduce computational overhead, creating hybrid architectures that combine the strengths of both approaches, and advancing transfer learning techniques to leverage molecular representation across property prediction tasks. The ongoing development of sparse transformers like Exphormer addresses scalability limitations, enabling application to larger biomolecules and materials [36]. As these architectures mature, they promise to significantly accelerate drug discovery, environmental chemistry, and materials design through more accurate and efficient molecular property prediction.

For researchers implementing these technologies, the key recommendation is to match architectural selection to both the molecular characteristics most relevant to the target property and the computational constraints of the research environment. By leveraging the complementary strengths of these advanced architectures, the scientific community can continue to advance the frontiers of molecular property prediction.

The field of molecular property prediction stands at a pivotal juncture, marked by a transition from traditional quantitative structure-activity relationship (QSAR) models and expert-crafted descriptors toward sophisticated deep learning approaches. The recent emergence of Large Language Models (LLMs) represents a transformative development, offering a new paradigm for understanding and predicting chemical behavior. These models, initially designed for natural language processing, are now being adapted to interpret the complex "languages" of chemistry—from SMILES strings and molecular graphs to scientific literature. This integration promises to accelerate drug discovery and materials science by bridging the gap between computational prediction and experimental validation. As researchers and drug development professionals navigate this rapidly evolving landscape, understanding the comparative performance, methodologies, and practical applications of these tools becomes essential. This guide provides a systematic comparison of traditional and LLM-based approaches, grounded in current experimental data and evaluation frameworks.

Performance Benchmarking: Traditional Methods vs. Modern LLMs

Table 1: Comparative Performance of Molecular Property Prediction Approaches

| Model Category | Representative Examples | Key Features | Reported Performance | Primary Applications |
|---|---|---|---|---|
| Traditional Fixed Representations | ECFP Fingerprints, RDKit 2D Descriptors [7] [38] | Expert-defined molecular features; fast computation. | Strong performance on small datasets (<1000 molecules) [38]; outperformed by learned representations on larger datasets. | Baseline QSAR models, virtual screening. |
| Deep Learning (Graph-Based) | D-MPNN [38], GCNs, 3D-GCN [16] | Learns features directly from molecular graph structure. | Consistently matches or outperforms fingerprint models on public/industrial datasets [38]. | Drug discovery, molecular property classification & regression. |