Training Set vs. Validation Set: A Machine Learning Guide for Biomedical Research

This article provides a comprehensive guide to the critical roles of training and validation sets in machine learning, tailored for researchers and professionals in drug development and biomedical sciences.

Abstract

This article provides a comprehensive guide to the critical roles of training and validation sets in machine learning, tailored for researchers and professionals in drug development and biomedical sciences. We cover the foundational concepts of how a model learns from a training set and is tuned using a validation set, practical methodologies for data splitting specific to biomedical datasets like clinical trials and omics data, strategies to troubleshoot common pitfalls like overfitting and data leakage, and a comparative analysis of evaluation metrics. The goal is to equip practitioners with the knowledge to build robust, generalizable, and clinically relevant predictive models.

Core Concepts: How Models Learn and Are Evaluated

In machine learning, the division of a dataset into training, validation, and test sets constitutes a foundational protocol for developing models that generalize effectively to new, unseen data. This separation is crucial for mitigating overfitting, enabling unbiased model selection, and providing a faithful estimate of real-world performance. Within the context of a broader thesis on the validation set versus the training set, this article delineates the distinct roles of these three data partitions, underscoring the critical function of the validation set in hyperparameter tuning and model refinement—a process entirely separate from the core learning that occurs on the training set. We provide structured quantitative guidelines, detailed experimental protocols, and visual workflows tailored for researchers and scientists in fields like drug development, where robust, generalizable models are paramount.

The primary objective of a supervised machine learning model is to learn patterns from a known dataset that allow it to make accurate predictions on unknown data. This capability is known as generalization [1]. Using a single dataset for both training and evaluation leads to overoptimistic and misleading performance estimates, as the model may simply memorize the training data, including its noise and irrelevant features, a phenomenon known as overfitting [1] [2]. Consequently, the established practice is to partition the available data into three distinct subsets: the training set, the validation set, and the test set [3] [4]. Each serves a unique and critical purpose in the model development lifecycle, forming a rigorous methodology for creating reliable and assessable predictive algorithms.

Core Concepts and Definitions

The following table summarizes the distinct purposes and characteristics of the three data subsets.

Table 1: Distinctive Roles of Training, Validation, and Test Sets

| Feature | Training Set | Validation Set | Test Set |

|---|---|---|---|

| Primary Purpose | Model learning and parameter fitting [5] | Model tuning and hyperparameter optimization [6] [5] | Final, unbiased model evaluation [7] [2] |

| Usage in Workflow | Directly used to train the model [2] | Indirectly used for model selection during training [2] | Used only once, after all tuning is complete [2] |

| Impact on Model | The model's internal parameters (e.g., weights) are adjusted. | The model's hyperparameters (e.g., architecture, learning rate) are tuned. | The model and its configuration are fixed; no tuning occurs. |

| Analogy in Research | Laboratory experimental data for hypothesis generation. | Internal peer-review for protocol refinement. | Final publication and independent replication of results. |

| Risk of Overfitting | High if the model is too complex or the set is too small [2] | Medium; used to signal overfitting via early stopping [8] | Low, provided it is never used for any training decisions [2] |

The Training Set

The training set is the foundational dataset from which the model learns [3]. It consists of input data (features) and the corresponding correct output (labels or targets). During the training process, the model's algorithm analyzes these examples and iteratively adjusts its internal parameters (e.g., the weights in a neural network) to minimize the difference between its predictions and the true labels [1] [9]. The quality, quantity, and representativeness of the training set directly determine the model's ability to learn underlying patterns. A larger and more diverse training set typically leads to better model performance [2].

The Validation Set

The validation set (also called the development set or "dev set") is a separate subset of data used to provide an unbiased evaluation of a model's performance during the training phase [1] [8]. Its core function is hyperparameter tuning and model selection. Hyperparameters are the adjustable configuration settings of a model (e.g., the number of layers in a neural network, the learning rate, or the regularization strength) that are not learned directly from the training data [6]. By evaluating different models or configurations on the validation set, practitioners can choose the best-performing one and optimize its hyperparameters without touching the test set [5]. This set is also crucial for implementing early stopping, a regularization technique that halts training when performance on the validation set begins to degrade, a key indicator of overfitting [1] [6].
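The distinction between hyperparameters and learned parameters can be made concrete with a small sketch. It assumes scikit-learn is available and uses a synthetic dataset and a logistic regression model purely for illustration; neither is prescribed by this article.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Synthetic stand-in data (an assumption for illustration only).
X, y = make_classification(n_samples=200, n_features=4, random_state=0)

# Hyperparameter: the regularization strength C is chosen before fitting.
# Different values of C would be compared on the validation set.
model = LogisticRegression(C=0.5, max_iter=1000)

# Parameters: the weights and intercept are learned from the training data.
model.fit(X, y)

print("hyperparameter C:", model.get_params()["C"])
print("learned weight matrix shape:", model.coef_.shape)
print("learned intercept shape:", model.intercept_.shape)
```

Here `C` is never modified by `fit`, while `coef_` and `intercept_` exist only after fitting.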

The Test Set

The test set is the final, held-out portion of the data. It is used only once, after the model has been fully trained and tuned [7] [2]. It serves as a proxy for real-world, unseen data and provides an honest assessment of the model's generalization ability [10]. The performance metrics calculated on the test set (e.g., accuracy, precision, F1-score) are considered the best available estimate of how the model will perform in production. Crucially, the test set must never be used for any form of training or model selection; using it for such purposes leads to data leakage and an optimistic bias in the performance estimate, defeating its primary purpose [10].

Experimental Protocols and Data Splitting Methodologies

A rigorous protocol for splitting data is essential for the integrity of the machine learning pipeline.

Standard Hold-Out Method

The most straightforward protocol involves a single, random partition of the dataset. The typical split ratio is 60/20/20 for training, validation, and testing, respectively, though this can vary with dataset size and model complexity [2] [4]. The following workflow diagram illustrates this process.

Diagram 1: Workflow for a standard 60-20-20 train-validation-test split.

Protocol Steps:

- Preprocessing and Randomization: Begin by shuffling the raw dataset randomly to avoid any order-related biases [4]. All necessary cleaning and feature engineering steps should be planned and their parameters (e.g., imputation values, scaling parameters) learned from the training fold only to prevent data leakage [10].

- Initial Split: Perform the first split to isolate the training set. For a 60/20/20 target, keep 60% for training and set aside the remaining 40% as a temporary held-out set.

- Secondary Split: Split the temporary held-out set into two equal parts (50/50) to create the final validation and test sets. This results in a 60/20/20 final distribution [3].

Code Implementation with Scikit-Learn

The following Python code demonstrates the implementation of the standard hold-out method using the train_test_split function from scikit-learn.
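A minimal sketch of a 60/20/20 hold-out split is given here. The synthetic arrays `X` and `y`, the sample count, and the seed value are assumptions for illustration; `train_test_split` only produces two subsets per call, so it is applied twice.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Synthetic stand-in data: 1,000 samples, 5 features, binary labels.
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 5))
y = rng.integers(0, 2, size=1000)

# Step 1: keep 60% for training; the other 40% is a temporary held-out set.
X_train, X_temp, y_train, y_temp = train_test_split(
    X, y, test_size=0.40, shuffle=True, stratify=y, random_state=42
)

# Step 2: split the temporary set in half: 20% validation, 20% test.
X_val, X_test, y_val, y_test = train_test_split(
    X_temp, y_temp, test_size=0.50, stratify=y_temp, random_state=42
)

print(len(X_train), len(X_val), len(X_test))  # 600 200 200
```

Passing `stratify` keeps the class proportions similar across the three subsets, and a fixed `random_state` makes the partition reproducible.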

Advanced Protocol: k-Fold Cross-Validation

For smaller datasets, a single train-validation split might be unstable. k-Fold Cross-Validation is a robust alternative, especially for the model tuning stage [4].

Protocol Steps:

- Hold Out Test Set: First, set aside the test set (e.g., 20% of the data). Do not use it for any further steps.

- Split Training Data: The remaining data (the training fold from the initial split) is divided into k equal-sized folds (e.g., k=5).

- Iterative Training and Validation: For each of the k iterations:

  - Train the model on k-1 folds.

  - Validate the model on the remaining 1 fold.

- Average Performance: Calculate the average performance across all k validation folds. This average provides a more reliable estimate of model performance for hyperparameter tuning.

- Final Training and Test: After selecting the best hyperparameters, train the final model on the entire training fold (all k folds) and evaluate it on the held-out test set.
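The protocol steps above can be sketched as follows. This is an illustrative example under stated assumptions: the synthetic dataset, the logistic regression model, and the candidate values for the regularization strength `C` are all placeholders chosen for brevity.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, train_test_split

X, y = make_classification(n_samples=500, n_features=10, random_state=0)

# Hold out the test set first; it plays no part in tuning.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=0
)

candidate_C = [0.01, 0.1, 1.0, 10.0]  # hypothetical hyperparameter grid
kfold = KFold(n_splits=5, shuffle=True, random_state=0)

mean_scores = {}
for C in candidate_C:
    fold_scores = []
    for train_idx, val_idx in kfold.split(X_dev):
        # Train on k-1 folds, validate on the remaining fold.
        model = LogisticRegression(C=C, max_iter=1000)
        model.fit(X_dev[train_idx], y_dev[train_idx])
        fold_scores.append(model.score(X_dev[val_idx], y_dev[val_idx]))
    # Average validation accuracy across the k folds.
    mean_scores[C] = float(np.mean(fold_scores))

best_C = max(mean_scores, key=mean_scores.get)

# Final training on all k folds, then a single evaluation on the test set.
final_model = LogisticRegression(C=best_C, max_iter=1000).fit(X_dev, y_dev)
test_accuracy = final_model.score(X_test, y_test)
print("best C:", best_C, "test accuracy:", round(test_accuracy, 3))
```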

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Methods for Data Splitting and Model Validation

| Tool / Method | Function | Key Considerations |

|---|---|---|

| Scikit-learn train_test_split | A Python function that randomly splits a dataset into two subsets; applying it twice yields training, validation, and test sets [3]. | The random_state parameter ensures reproducibility. The stratify parameter maintains class distribution in splits. |

| Stratified Sampling | A splitting method that ensures each subset maintains the same proportion of class labels as the original dataset [7] [4]. | Critical for imbalanced datasets (e.g., rare disease identification) to prevent skewed performance estimates. |

| k-Fold Cross-Validation | A resampling procedure used to evaluate models on limited data, providing a robust estimate of model performance [4]. | Computationally expensive but reduces the variance of the performance estimate. The test set is still held out from this process. |

| Early Stopping | A regularization method that halts model training once performance on the validation set stops improving [1] [6]. | Effectively prevents overfitting by using the validation set performance as a stopping criterion. |

| Data Augmentation | A technique to artificially expand the size and diversity of the training set by creating modified versions of existing data points [10]. | Must be applied only to the training set. Applying it before splitting can cause data leakage into the validation/test sets [10]. |

Common Pitfalls and Best Practices

- Data Leakage: The most critical error is allowing information from the test or validation set to influence the training process [10]. This can occur during global preprocessing (e.g., scaling the entire dataset before splitting) or feature engineering. Always fit preprocessing transformers (like scalers) on the training set and then use them to transform the validation and test sets.

- Overfitting the Validation Set: Repeatedly tuning hyperparameters based on the validation set can lead to a model that is over-optimized for that specific partition, reducing its generalizability [10]. The validation set should be used for guidance, not for excessive fine-tuning. The final arbiter of performance must be the test set.

- Insufficient Data: For very small datasets, a strict 60-20-20 split may leave too little data for effective training. In such cases, k-fold cross-validation on the training fold is a strongly recommended strategy [2] [4].
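The data leakage point above can be illustrated with a short sketch. It assumes a synthetic feature matrix and uses `StandardScaler` as a representative preprocessing step; the same fit-on-train, transform-everywhere pattern applies to imputers and other transformers.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (an assumption for illustration only).
rng = np.random.default_rng(1)
X = rng.normal(loc=5.0, scale=2.0, size=(300, 4))
y = rng.integers(0, 2, size=300)

# Split BEFORE any preprocessing statistics are computed.
X_train, X_temp, y_train, y_temp = train_test_split(
    X, y, test_size=0.4, random_state=1
)
X_val, X_test, y_val, y_test = train_test_split(
    X_temp, y_temp, test_size=0.5, random_state=1
)

scaler = StandardScaler()
# Means and standard deviations are learned from the training set only.
X_train_scaled = scaler.fit_transform(X_train)
# Validation and test sets reuse the training statistics unchanged.
X_val_scaled = scaler.transform(X_val)
X_test_scaled = scaler.transform(X_test)

print("training means used for all sets:", scaler.mean_.round(2))
```

Calling `fit_transform` on the full dataset before splitting would let validation and test statistics influence the training features, which is the leakage described above.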

The disciplined partitioning of data into training, validation, and test sets is a non-negotiable practice in rigorous machine learning research. Each subset plays a distinct and vital role: the training set for learning, the validation set for unbiased tuning and model selection, and the test set for the final, honest evaluation of generalization performance. For scientists in high-stakes fields like drug development, adhering to these protocols, along with the visualization and methodologies outlined herein, is fundamental to building models that are not only powerful on paper but also reliable and effective when deployed in the real world. A clear understanding of the distinction between the validation set and the training set is, therefore, central to any thesis on building generalizable machine learning models.

In machine learning, the training set is the foundational dataset used to fit a model, enabling it to learn the underlying patterns and relationships within the data [1]. This process involves adjusting the model's internal parameters based on the input data and the corresponding target answers, a method known as supervised learning [1] [11]. The ultimate goal is to produce a trained model that can generalize well to new, unseen data [1]. The training set operates in conjunction with two other critical data subsets: the validation set, used for unbiased evaluation and hyperparameter tuning during training, and the test set, which provides a final, unbiased assessment of the model's generalization ability [1] [12]. This tripartite division is essential for developing robust and reliable models, particularly in scientific and pharmaceutical domains where model accuracy and reproducibility are paramount [13].

Core Concepts: The Triad of Data Partitioning

Distinct Roles in Model Development

The training, validation, and test sets serve distinct and crucial purposes in the machine learning workflow [14] [11]:

Training Set: This is the primary dataset from which the model learns. The model sees and learns from this data through an iterative process of adjusting its parameters (e.g., weights and biases in a neural network) to minimize the discrepancy between its predictions and the actual target values [1] [3]. This process often uses optimization algorithms like gradient descent or stochastic gradient descent [1].

Validation Set: This set provides an unbiased evaluation of a model fit on the training data while tuning the model's hyperparameters (e.g., the number of hidden layers in a neural network, learning rate) [1] [15]. It acts as a hybrid set—training data used for testing—that helps in model selection and preventing overfitting by signaling when training should stop (early stopping) before the model overfits the training data [1] [16].

Test Set: Also called a holdout set, this is a completely independent dataset that follows the same probability distribution as the training data but is never used during the training or validation phases [1] [12]. Its sole purpose is to offer a final, unbiased estimate of the model's performance on unseen data, simulating how the model will perform in a real-world, operational environment [11] [12].

Table 1: Core Functions of Training, Validation, and Test Sets

| Dataset | Primary Function | Used for Parameter/Hyperparameter Tuning? | Impact on Model |

|---|---|---|---|

| Training Set | Model fitting and learning underlying data patterns [1] [3] | Yes, for model parameters (e.g., weights) [1] | Directly determines the model's learned mappings |

| Validation Set | Model selection and hyperparameter tuning [1] [14] | Yes, for model hyperparameters (e.g., architecture) [1] | Guides model configuration; indirectly influences the final model |

| Test Set | Final, unbiased evaluation of model generalization [1] [12] | No [11] | Provides a performance metric; no influence on the model itself |

The Iterative Learning and Validation Workflow

The relationship between the training and validation sets is inherently iterative. A model undergoes multiple training cycles (epochs) on the training data. After each cycle or at specific intervals, its performance is assessed on the validation set. This validation performance provides feedback that can be used to adjust hyperparameters or even halt training, creating a continuous loop aimed at optimizing model performance without overfitting [1] [12]. The test set remains entirely separate from this iterative process.

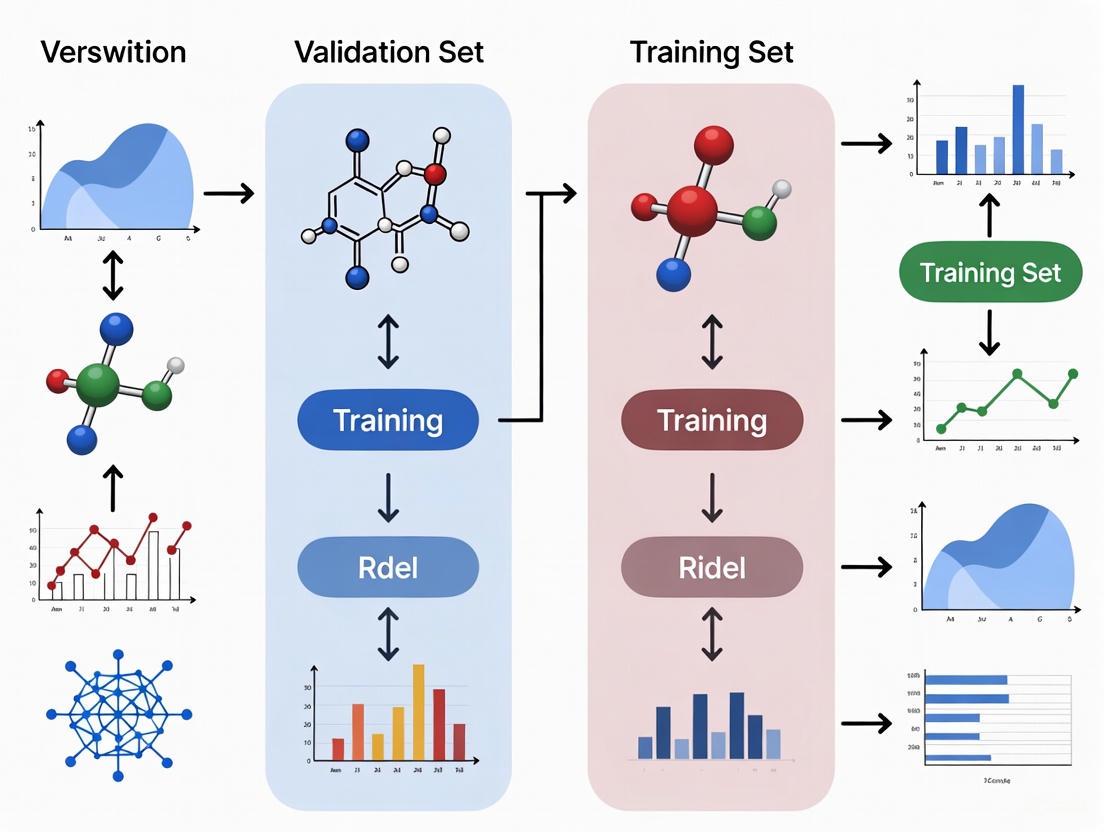

Figure 1: Workflow of model development showing the distinct roles of training, validation, and test sets. The iterative loop between training and validation continues until model performance on the validation set is satisfactory.
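The iterative loop between training and validation can be sketched with a simple early-stopping routine. This is one possible illustration, not a prescribed implementation: the linear `SGDClassifier`, the synthetic data, the `patience` of 5 epochs, and the use of validation accuracy as the monitored metric are all assumptions.

```python
import copy

import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import SGDClassifier
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=1000, n_features=20, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, random_state=0
)

model = SGDClassifier(random_state=0)
classes = np.unique(y_train)

patience = 5        # epochs to wait for an improvement (hypothetical value)
max_epochs = 200
best_val_score = -np.inf
best_model = None
epochs_without_improvement = 0
history = []

for epoch in range(max_epochs):
    # One pass over the training data updates the model parameters.
    model.partial_fit(X_train, y_train, classes=classes)
    # The validation set is only used to monitor performance.
    val_score = model.score(X_val, y_val)
    history.append(val_score)
    if val_score > best_val_score:
        best_val_score = val_score
        best_model = copy.deepcopy(model)  # snapshot of the best epoch
        epochs_without_improvement = 0
    else:
        epochs_without_improvement += 1
        if epochs_without_improvement >= patience:
            break  # validation performance has stopped improving

print("epochs run:", len(history), "best validation accuracy:", best_val_score)
```

The test set does not appear anywhere in this loop; it would be evaluated once, using `best_model`, after all tuning is finished.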

Experimental Protocols for Effective Data Splitting

Determining Data Split Ratios

There is no universally optimal split ratio; the ideal partitioning depends on the size and nature of the dataset, the model's complexity, and the number of hyperparameters [14] [13]. The following table summarizes common split strategies based on dataset size.

Table 2: Recommended Data Split Ratios Based on Dataset Size

| Dataset Size | Typical Training Ratio | Typical Validation Ratio | Typical Test Ratio | Key Considerations |

|---|---|---|---|---|

| Large (e.g., >10,000 samples) | 70% [13] | 15% [13] | 15% [13] | Smaller relative validation/test sizes are sufficient for statistical significance [14]. |

| Medium (e.g., 1,000-10,000 samples) | 60% [13] | 20% [13] | 20% [13] | Balances the need for ample training data with robust validation and testing. |

| Small (e.g., <1,000 samples) | 70% [13] | - | 30% [13] | Use cross-validation (e.g., k-fold) instead of a separate validation set to maximize training data utility [1] [13]. |

| General Practice | 50-80% [15] [3] | 10-25% [3] | 10-25% [3] | A typical starting point is 70/15/15 or 80/10/10 [14] [11]. |
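To make the ratios in the table concrete, the sketch below converts fractions into sample counts. The helper name `split_sizes` and the rounding rule (any remainder goes to the training set) are assumptions made for illustration.

```python
def split_sizes(n_samples, ratios=(0.70, 0.15, 0.15)):
    """Return (n_train, n_val, n_test) for the given fractions.

    Validation and test counts are rounded; the remainder goes to training.
    """
    train_frac, val_frac, test_frac = ratios
    if abs(train_frac + val_frac + test_frac - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    n_val = int(round(n_samples * val_frac))
    n_test = int(round(n_samples * test_frac))
    n_train = n_samples - n_val - n_test
    return n_train, n_val, n_test


print(split_sizes(20000, (0.70, 0.15, 0.15)))  # (14000, 3000, 3000)
print(split_sizes(5000, (0.60, 0.20, 0.20)))   # (3000, 1000, 1000)
print(split_sizes(800, (0.70, 0.0, 0.30)))     # (560, 0, 240)
```

The last call mirrors the small-dataset row: no separate validation set is carved out, and cross-validation on the 560 training samples takes its place.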

Protocol: Implementing a Standard Train-Validation-Test Split

This protocol describes a methodological approach for splitting a dataset into training, validation, and test sets, which is critical for building generalizable machine learning models.

Principle: The dataset must be partitioned in a way that ensures the model is trained on one subset, its hyperparameters are tuned on a second, and its final performance is evaluated on a third, entirely unseen subset. This prevents overfitting and provides an honest assessment of generalization ability [1] [12].

Research Reagent Solutions (Computational Tools)

Table 3: Essential Computational Tools for Data Splitting and Model Training

| Tool / Component | Function | Example in Protocol |

|---|---|---|

| Programming Language | Provides the environment for data manipulation and algorithm execution. | Python 3.x |

| Data Manipulation Library | Handles data structures and operations on numerical tables and arrays. | pandas, numpy |

| Machine Learning Library | Provides functions for data splitting and model building. | scikit-learn (sklearn) |

| Dataset | The raw data to be partitioned, typically a feature matrix (X) and target vector (y). | Custom dataset |

Procedure

Data Preparation and Shuffling:

- Load your dataset, ensuring it is cleaned and preprocessed.

- Shuffle the data randomly to ensure that the splits are representative of the overall data distribution. This helps prevent bias that could arise from the original order of the data [14].

- For datasets with class imbalance, use stratified sampling to maintain consistent class distributions across all subsets [14] [11].

Initial Split (Training vs. Temporary Set):

- Perform the first split to isolate the training set from the remaining data, for example with train_test_split from scikit-learn. A common initial split is 80% for training and 20% for the temporary set, though this can be adjusted based on Table 2.

Secondary Split (Validation vs. Test Set):

- Split the temporary set (X_temp, y_temp) from the previous step into the final validation and test sets. A 50-50 split of the temporary set is typical, resulting in 10% validation and 10% test of the original data.

- The random_state parameter ensures the split is reproducible.

Verification:

- Print the shapes of the resulting sets to confirm the split aligns with expectations.
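The procedure above can be sketched end to end as follows. The imbalanced synthetic labels (10% positives), the sample count, and the seed are assumptions for illustration; the variable names follow those used in the procedure.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Synthetic, imbalanced stand-in data: 1,000 samples, 10% positive class.
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 8))
y = np.zeros(1000, dtype=int)
y[:100] = 1

# Initial split: 80% training, 20% temporary set (shuffled and stratified).
X_train, X_temp, y_train, y_temp = train_test_split(
    X, y, test_size=0.20, shuffle=True, stratify=y, random_state=42
)

# Secondary split: temporary set divided 50-50 into validation and test.
X_val, X_test, y_val, y_test = train_test_split(
    X_temp, y_temp, test_size=0.50, stratify=y_temp, random_state=42
)

# Verification: shapes and class balance of each subset.
for name, features, labels in [
    ("train", X_train, y_train),
    ("val", X_val, y_val),
    ("test", X_test, y_test),
]:
    print(name, features.shape, "positive fraction:", round(labels.mean(), 3))
```

With stratification, the positive fraction printed for each subset should stay close to the 10% of the full dataset.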

Interpretation and Troubleshooting: After splitting, the training set (X_train, y_train) is used for model fitting. The validation set (X_val, y_val) is used for hyperparameter tuning and model selection during training. The test set (X_test, y_test) is stored securely and not used until the final model is selected, at which point it provides an unbiased performance metric [1] [12]. A significant performance drop from validation to test sets may indicate that the model was overfitted to the validation set during excessive tuning [15] [12].

The Scientist's Toolkit: Key Considerations for Robust Training

Advanced Techniques for Small Datasets and Complex Models

When dealing with limited data or models with many hyperparameters, simple splitting may be insufficient.

K-Fold Cross-Validation: This technique is a powerful alternative to using a single, static validation set, especially for small datasets [11] [13]. The training data is randomly partitioned into k equal-sized folds (e.g., k=5 or 10). The model is trained k times, each time using k-1 folds for training and the remaining one fold as the validation set. The performance is averaged over the k trials, providing a more robust estimate of model performance and reducing the variance of the validation estimate [1] [11].

Nested Cross-Validation: For both model selection and hyperparameter tuning, nested cross-validation provides an almost unbiased estimate of the true test error. It involves an outer k-fold loop for assessing model performance and an inner k-fold loop for selecting the best hyperparameters, effectively simulating a train-validation-test split within the constraints of a single dataset.

Critical Pitfalls and Mitigation Strategies

Data Leakage: A primary threat to model validity is data leakage, which occurs when information from the test set inadvertently influences the training process [14]. This can happen if the test set is used for feature selection, normalization, or during the iterative tuning process. To prevent this, the test set must be kept in a "vault" and only brought out for the final evaluation [15] [12]. All preprocessing steps (e.g., scaling, imputation) should be fit on the training data and then applied to the validation and test sets without recalculating parameters [14].

Overfitting and Underfitting: The training and validation sets are instrumental in diagnosing these fundamental issues.

- Overfitting: Occurs when the model performs well on the training set but poorly on the validation set. This indicates the model has learned the noise and specific details of the training data rather than generalizable patterns [1] [16]. Mitigation strategies include simplifying the model, applying regularization, or using early stopping based on validation performance [1].

- Underfitting: Occurs when the model performs poorly on both the training and validation sets. This suggests the model is too simple to capture the underlying structure of the data and may require a more complex model or additional features [11].
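A rough way to apply these two definitions is to compare training and validation scores directly. The sketch below is only a heuristic: the helper `diagnose` and its thresholds (`min_score`, `max_gap`) are hypothetical and would need to be set per problem, and the decision trees of varying depth are just a convenient way to produce simple and complex models.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier


def diagnose(train_score, val_score, min_score=0.80, max_gap=0.10):
    """Label a fit using illustrative, problem-dependent thresholds."""
    if train_score - val_score > max_gap:
        return "overfitting"      # good on training data, poor on validation
    if train_score < min_score:
        return "underfitting"     # poor on both sets
    return "reasonable fit"


X, y = make_classification(
    n_samples=600, n_features=20, n_informative=5, flip_y=0.1, random_state=0
)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, random_state=0
)

# Compare a very shallow, a moderate, and an unconstrained tree.
for depth in (1, 4, None):
    tree = DecisionTreeClassifier(max_depth=depth, random_state=0)
    tree.fit(X_train, y_train)
    train_acc = tree.score(X_train, y_train)
    val_acc = tree.score(X_val, y_val)
    print(depth, round(train_acc, 3), round(val_acc, 3),
          diagnose(train_acc, val_acc))
```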

The training set is the cornerstone upon which all machine learning models are built, serving as the primary source from which patterns and relationships are learned. Its effective use, however, is inextricably linked to the disciplined employment of validation and test sets. The validation set acts as a crucial guide during the training process, enabling unbiased hyperparameter tuning and model selection, which is the central theme of the broader thesis on the training-validation dynamic. Finally, the test set stands as the ultimate arbiter of model quality, providing an unbiased estimate (not a guarantee) of performance on unseen data. Adhering to rigorous data splitting protocols, understanding the iterative workflow between training and validation, and mitigating common pitfalls like data leakage are non-negotiable practices for researchers and scientists aiming to develop reliable, generalizable, and compliant predictive models in demanding fields like drug development.

In machine learning, a model's performance on its training data is often a poor indicator of its real-world effectiveness. This discrepancy arises from overfitting, where a model learns the noise and specific patterns in the training data rather than the underlying generalizable relationships [1]. The validation set functions as a crucial, unbiased checkpoint during the model development process. It provides a hybrid dataset that is used for testing, but neither as part of the low-level training nor as part of the final testing [1]. Within the context of scientific and drug development research, where model decisions can impact clinical outcomes, the rigorous use of a validation set is non-negotiable for building trustworthy and reliable predictive models.

This document outlines the formal protocols and application notes for the proper deployment of validation sets, framing them as the essential tool for model tuning and hyperparameter optimization in a research environment. The core distinction lies in the data's purpose: the training set is used for learning model parameters, the validation set for tuning the model's architecture and hyperparameters, and the test set for the final, unbiased evaluation of the fully-specified model [2]. Adherence to this separation is a foundational principle for rigorous machine learning research.

Core Concepts and Quantitative Comparisons

Distinguishing Between Data Partitions

The following table summarizes the distinct roles and characteristics of the three primary data sets in a machine learning workflow.

Table 1: Roles and Characteristics of Training, Validation, and Test Sets

| Feature | Training Set | Validation Set | Test Set |

|---|---|---|---|

| Primary Purpose | Model learning and parameter fitting [2] | Model tuning and hyperparameter optimization [2] | Final model evaluation [2] |

| Usage Phase | Model training phase [2] | Model validation phase [2] | Final testing phase [2] |

| Exposure to Model | Directly used for learning [2] | Indirectly used for guiding tuning [2] | Never used during training or tuning [2] |

| Impact on Model | Determines the model's internal weights [1] | Influences the choice of hyperparameters (e.g., learning rate, network layers) [1] | Provides an unbiased estimate of generalization error [1] |

| Risk of Overfitting | High if the set is too small or overused [2] | Medium; overfitting to the validation set is possible without a final test set [1] | Low, provided it remains completely untouched until the final assessment [2] |

Quantitative Data Splitting Strategies

The division of available data is problem-dependent, but standard practices provide a starting point. The following table offers common splitting strategies, which can be adjusted based on dataset size and model complexity [2].

Table 2: Common Data Set Splitting Strategies

| Dataset Size | Recommended Split (Train/Val/Test) | Rationale and Considerations |

|---|---|---|

| Large (e.g., >1M samples) | 98%/1%/1% or similar | Very large datasets can dedicate a small percentage to validation and testing while still having millions of samples for training and robust evaluation. |

| Medium (e.g., 10,000 samples) | 60%/20%/20% or 70%/15%/15% | A balanced split ensures sufficient data for training while retaining enough for reliable validation and testing [2]. |

| Small (e.g., <1,000 samples) | Use Nested Cross-Validation | Simple splits may be unstable; cross-validation uses data more efficiently by creating multiple train/validation splits [1] [17]. |

For small datasets, the holdout method can be problematic, and techniques like cross-validation and bootstrapping are recommended [1]. In k-fold cross-validation, the original data is randomly partitioned into k equal-sized folds. Of the k folds, a single fold is retained as the validation set, and the remaining k-1 folds are used as the training set. This process is repeated k times, with each of the k folds used exactly once as the validation data [17].
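The fold mechanics described above can be checked directly. This small sketch assumes a toy dataset of ten samples and uses scikit-learn's `KFold`; it counts how often each sample lands in a validation fold.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(10).reshape(-1, 1)  # ten toy samples
kfold = KFold(n_splits=5, shuffle=True, random_state=0)

validation_counts = np.zeros(len(X), dtype=int)
for fold, (train_idx, val_idx) in enumerate(kfold.split(X)):
    # k-1 folds form the training set; the remaining fold is for validation.
    validation_counts[val_idx] += 1
    print(f"fold {fold}: train={len(train_idx)} samples, validation={val_idx}")

# Every sample is used for validation exactly once across the k folds.
print(validation_counts)
```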

Experimental Protocols for Model Tuning and Validation

Protocol 1: Holdout Method for Hyperparameter Tuning

This protocol describes the standard procedure for using a single, held-out validation set to tune model hyperparameters.

3.1.1 Workflow Diagram

3.1.2 Step-by-Step Procedure

- Data Preparation: Begin with a cleaned and pre-processed dataset. Shuffle the data randomly to avoid any inherent ordering biases [2].

- Data Partitioning: Split the data into three subsets:

  - Training Set (e.g., 60%): Used to fit the model for each candidate hyperparameter set.

  - Validation Set (e.g., 20%): Used to evaluate and compare the performance of the models trained with different hyperparameters.

  - Test Set (e.g., 20%): Held back and completely isolated from the tuning process [2].

- Hyperparameter Grid Definition: Define the set of hyperparameters to be explored (e.g., learning rate, number of layers in a neural network, tree depth in a random forest) and their candidate values [1].

- Model Training and Validation Loop: For each combination of hyperparameters in the defined grid:

  - Train a new model from scratch using only the Training Set.

  - Use the trained model to predict outcomes for the Validation Set.

  - Calculate the chosen performance metric(s) (e.g., accuracy, F1-score, mean squared error) on the Validation Set.

- Model Selection: Compare the validation set performance metrics across all hyperparameter combinations. Select the hyperparameter set that yielded the model with the best validation performance.

- Final Assessment: Train a final model on the combined Training and Validation Sets using the selected optimal hyperparameters. Obtain the final, unbiased performance estimate by evaluating this model on the untouched Test Set [1].
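The procedure above can be sketched as follows. The decision tree model, the two-hyperparameter grid, the F1-score metric, and the synthetic data are assumptions chosen to keep the example short; any estimator and metric could be substituted.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.metrics import f1_score
from sklearn.model_selection import ParameterGrid, train_test_split
from sklearn.tree import DecisionTreeClassifier

# Data preparation and 60/20/20 partitioning (shuffled, stratified).
X, y = make_classification(n_samples=1000, n_features=15, random_state=0)
X_train, X_temp, y_train, y_temp = train_test_split(
    X, y, test_size=0.4, stratify=y, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_temp, y_temp, test_size=0.5, stratify=y_temp, random_state=0
)

# Hyperparameter grid (hypothetical candidate values).
grid = ParameterGrid({"max_depth": [2, 4, 8], "min_samples_leaf": [1, 5, 20]})

# Training and validation loop: fit on the training set only,
# score each candidate on the validation set.
results = []
for params in grid:
    model = DecisionTreeClassifier(random_state=0, **params)
    model.fit(X_train, y_train)
    results.append((f1_score(y_val, model.predict(X_val)), params))

# Model selection: best validation score wins.
best_val_f1, best_params = max(results, key=lambda r: r[0])

# Final assessment: refit on training + validation, evaluate once on test.
X_trainval = np.concatenate([X_train, X_val])
y_trainval = np.concatenate([y_train, y_val])
final_model = DecisionTreeClassifier(random_state=0, **best_params)
final_model.fit(X_trainval, y_trainval)
test_f1 = f1_score(y_test, final_model.predict(X_test))

print("selected:", best_params, "validation F1:", round(best_val_f1, 3))
print("test F1:", round(test_f1, 3))
```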

Protocol 2: Nested Cross-Validation for Algorithm Selection

This protocol is used when both the model family (e.g., SVM vs. Random Forest) and its hyperparameters need to be selected. It provides a robust, nearly unbiased estimate of the model's performance by preventing information leakage from the model selection process into the performance evaluation.

3.2.1 Workflow Diagram

3.2.2 Step-by-Step Procedure

- Define Outer and Inner Loops: The process involves two layers of cross-validation.

  - Outer Loop: Splits the data into K folds (e.g., K=5) for estimating generalization error.

  - Inner Loop: Splits the training data from the outer loop into L folds (e.g., L=3) for model and hyperparameter selection.

- Outer Loop Iteration: For each fold i in the K outer folds:

  - Set aside fold i as the outer test set.

  - Use the remaining K-1 folds as the data for the inner loop.

- Inner Loop Model Selection: On the K-1 folds from the outer loop, perform a standard cross-validation (the inner loop) to select the best model and hyperparameters. This involves trying different algorithms and hyperparameters, training on L-1 folds, and validating on the L-th fold, repeating for all L inner folds.

- Train and Evaluate Final Model: Once the best model and hyperparameters are identified via the inner loop, train a final model on the entire set of K-1 folds using this optimal configuration. Evaluate this model on the held-out outer test set (fold i) and record the performance metric.

- Aggregation: After iterating through all K outer folds, aggregate the performance metrics from each outer test set (e.g., by computing the mean and standard deviation). This aggregated metric provides a robust estimate of how the selected modeling process will generalize to unseen data [18].
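One common way to express this protocol in scikit-learn is to wrap a `GridSearchCV` (the inner loop) inside `cross_val_score` (the outer loop). The sketch below is illustrative only: the synthetic data, the two candidate algorithms, and their small grids are assumptions, and the single-step `Pipeline` is simply a device for letting the grid swap the estimator itself.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, KFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.svm import SVC

X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# Outer loop (K=5) estimates generalization; inner loop (L=3) selects models.
outer_cv = KFold(n_splits=5, shuffle=True, random_state=0)
inner_cv = KFold(n_splits=3, shuffle=True, random_state=0)

# The "clf" step is a placeholder that the grid replaces per candidate.
pipeline = Pipeline([("clf", SVC())])
param_grid = [
    {"clf": [SVC()], "clf__C": [0.1, 1.0, 10.0]},
    {
        "clf": [RandomForestClassifier(n_estimators=50, random_state=0)],
        "clf__max_depth": [3, None],
    },
]

# Inner loop: choose algorithm and hyperparameters, then refit on the
# full outer-training folds (refit=True is the GridSearchCV default).
search = GridSearchCV(pipeline, param_grid=param_grid, cv=inner_cv)

# Outer loop: each outer test fold is scored by a freshly tuned model.
outer_scores = cross_val_score(search, X, y, cv=outer_cv)

print("outer-fold accuracies:", outer_scores.round(3))
print("mean:", round(outer_scores.mean(), 3),
      "std:", round(outer_scores.std(), 3))
```

The aggregated mean and standard deviation describe the whole selection procedure rather than one fixed model, since a different configuration may win in each outer fold.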

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Computational Tools and Libraries for Model Validation

| Tool / Reagent | Function and Description | Example Uses in Protocol |

|---|---|---|

| scikit-learn (Python) | A comprehensive open-source machine learning library. | Provides utilities for train_test_split, cross_val_score, GridSearchCV, and various model implementations, enabling the execution of all protocols described above [17]. |

| TensorFlow/PyTorch | Open-source libraries for building and training deep learning models. | Used to define complex model architectures (hyperparameters) and perform efficient gradient-based optimization during the training phases of the protocols. |

| Stratified Sampling | A sampling technique that ensures each data split maintains the same proportion of class labels as the original dataset. | Critical for splitting imbalanced datasets in classification tasks to prevent biased training or validation sets [2]. |

| Hyperparameter Optimization Suites (e.g., Optuna, Weka) | Advanced software tools designed to automate the search for optimal hyperparameters beyond simple grid search. | Used in the inner loop of Protocol 2 to efficiently navigate a large hyperparameter space using methods like Bayesian optimization. |

| Data Visualization Libraries (e.g., Matplotlib, Seaborn) | Libraries for creating static, animated, and interactive visualizations. | Essential for plotting learning curves (training vs. validation loss over time) to diagnose overfitting and underfitting visually. |

Application in Drug Development: A Case for Rigorous Validation

The principles of model validation are critically important in drug development, where AI and machine learning models are increasingly used for tasks ranging from target identification to clinical trial optimization [19] [20]. Regulatory bodies like the U.S. FDA emphasize the need for a risk-based framework and robust validation of AI components in regulatory submissions [20].

A key challenge in this domain is the gap between retrospective validation on curated datasets and prospective performance in real-world clinical settings. Models that perform well on static, historical data may fail when making forward-looking predictions in dynamic clinical environments [19]. Therefore, the validation set in this context serves as a proxy during development for the ultimate test: prospective clinical validation. For AI tools claiming clinical benefit, this often necessitates rigorous validation through randomized controlled trials (RCTs) to demonstrate safety and clinical utility, meeting the same evidence standards expected of therapeutic interventions [19]. This rigorous approach is essential for securing regulatory approval, reimbursement, and, ultimately, trust from clinicians and patients [19].

In machine learning research, particularly in high-stakes fields like drug development, the journey from a conceptual model to a deployable solution hinges on a rigorous evaluation protocol. This process relies on partitioning available data into three distinct subsets: the training set, the validation set, and the test set. Each serves a unique and critical purpose in the model development lifecycle. The training set is the foundational dataset used to teach the model by allowing it to learn patterns and relationships [21]. Following this, the validation set is used to tune the model's hyperparameters and make iterative adjustments during the development phase [22] [23]. However, it is the test set—used for a single, final evaluation—that provides the definitive, unbiased measure of a model's ability to generalize to new, unseen data [4] [23].

Confusing the role of the validation set with that of the test set is a common pitfall that can lead to overly optimistic performance estimates and models that fail in real-world applications. This article delineates the distinct purposes of these datasets and provides detailed protocols to ensure that the test set remains the non-negotiable cornerstone for final model evaluation, a practice paramount for researchers and scientists aiming to build reliable and generalizable models.

Conceptual Foundations: The Distinct Roles of Validation and Test Sets

The Protocol Workflow: From Data to Deployable Model

The following diagram illustrates the standard machine learning workflow, highlighting the strict separation between the model development phase and the final evaluation phase. This separation is crucial for preventing information leakage and obtaining an unbiased assessment.

Comparative Analysis of Dataset Roles

The table below summarizes the core functions and characteristics of each dataset, underscoring their unique contributions to the machine learning pipeline.

Table 1: Core Functions and Characteristics of Data Subsets

| Data Subset | Primary Function | Stage of Use | Informs Decisions On | Common Splitting Ratio |

|---|---|---|---|---|

| Training Set | To fit the model parameters; the model learns underlying patterns from this data [5] [21]. | Training Phase | Internal model parameters (e.g., weights in a neural network). | ~70% |

| Validation Set | To tune hyperparameters and select the best model architecture; provides an intermediate check for overfitting [5] [22] [23]. | Development Phase | Hyperparameters (e.g., learning rate, network depth, regularization strength). | ~15% |

| Test Set | To provide an unbiased final evaluation of the fully-trained model's generalization error [5] [4]. | Final Reporting Phase | Final model performance and expected real-world behavior. | ~15% |

The cardinal rule in this workflow is that the test set must be used only once, at the very end of the entire development process [23]. Using the test set for iterative tuning or model selection causes data leakage, as the test set information implicitly influences the model design. This leads to overfitting to the test set, producing a performance estimate that is optimistically biased and not representative of true generalization ability [22] [24]. The validation set, in contrast, is designed for this iterative feedback loop during development.

Experimental Protocols for Data Splitting and Evaluation

Protocol 1: Simple Hold-Out Validation Split

This is the most straightforward method for creating the essential data subsets and is suitable for large datasets.

Procedure:

- Initial Partitioning: Randomly shuffle the entire dataset, then perform an initial split, allocating a portion (e.g., 20-30%) to serve as the final test set. This set is sealed and not used further in development.

- Secondary Partitioning: The remaining data (70-80%) is then split again to create the training set (e.g., 85% of the remainder) and the validation set (e.g., 15% of the remainder).

- Iterative Development: The model is trained on the training set. After each training epoch, its performance is evaluated on the validation set to guide hyperparameter tuning and model selection.

- Final Assessment: After the model is fully tuned and the final model is selected, its performance is evaluated exactly once on the sealed test set.

Python Code Snippet:

Code adapted from common practices illustrated in [4].
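As a minimal sketch of the partitioning steps in Protocol 1 (an illustration under stated assumptions, not necessarily the snippet from [4]), the two-stage split can be written with scikit-learn's train_test_split. The random data, the 20% test fraction, and the 85/15 secondary split are example values taken from the ranges given in the procedure.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Placeholder data (assumption): 1,000 samples, 5 features, binary labels.
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 5))
y = rng.integers(0, 2, size=1000)

# Initial partitioning: shuffle and seal off 20% as the final test set.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.20, shuffle=True, random_state=42
)

# Secondary partitioning: split the remaining 80% into 85% training
# and 15% validation.
X_train, X_val, y_train, y_val = train_test_split(
    X_dev, y_dev, test_size=0.15, random_state=42
)

print("train/val/test sizes:", len(X_train), len(X_val), len(X_test))
```

Model fitting and tuning would then use only X_train and X_val; X_test is touched once, after the final model has been chosen.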

Protocol 2: K-Fold Cross-Validation for Robust Validation

For smaller datasets, a simple hold-out validation might be unstable. K-fold cross-validation provides a more robust use of the available data for the training/validation process, while still requiring a separate test set for final evaluation.

Procedure:

- Reserve Test Set: Begin by holding out a separate test set (e.g., 20% of the data).

- Create Folds: The remaining data (the training/validation pool) is randomly partitioned into k equal-sized subsets (folds).

- Iterative Training and Validation: For k iterations, a different fold is used as the validation set, and the remaining k-1 folds are combined to form the training set. The model is trained and validated each time, resulting in k performance estimates.

- Model Selection: The average performance across all k folds is used to compare and select the best model or hyperparameter set.

- Final Assessment: The selected model is retrained on the entire training/validation pool and evaluated exactly once on the held-out test set.
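The five steps of Protocol 2 can be sketched as follows. This is a hedged example: the synthetic dataset, the logistic regression model, and the three candidate values of the regularization parameter C are assumptions made for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, cross_val_score, train_test_split

# Synthetic stand-in for a small dataset (assumption).
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# Reserve test set: hold out 20% before any cross-validation.
X_pool, X_test, y_pool, y_test = train_test_split(
    X, y, test_size=0.20, random_state=0
)

# Create folds: k = 5 random, equal-sized folds over the training/validation pool.
folds = KFold(n_splits=5, shuffle=True, random_state=0)

# Iterative training and validation + model selection: average the k
# validation scores for each hypothetical candidate and keep the best.
cv_means = {}
for C in (0.01, 1.0, 100.0):
    model = LogisticRegression(C=C, max_iter=1000)
    cv_means[C] = cross_val_score(model, X_pool, y_pool, cv=folds).mean()
best_C = max(cv_means, key=cv_means.get)

# Final assessment: retrain on the whole pool, evaluate once on the test set.
final_model = LogisticRegression(C=best_C, max_iter=1000).fit(X_pool, y_pool)
test_accuracy = final_model.score(X_test, y_test)
print(f"best C = {best_C}, test accuracy = {test_accuracy:.3f}")
```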

The following diagram visualizes this robust process, emphasizing that the test set remains isolated from the cross-validation cycle.

The Scientist's Toolkit: Essential Research Reagents

In the context of machine learning research, "research reagents" refer to the fundamental software tools and libraries that enable the implementation of the protocols described above.

Table 2: Essential Tools for Model Evaluation and Validation

| Tool / Library | Function | Application in Protocol |

|---|---|---|

| scikit-learn | A comprehensive machine learning library for Python. | Provides the train_test_split function for data splitting and various modules for cross-validation, model training, and performance metric calculation [4]. |

| TensorFlow/PyTorch | Open-source libraries for building and training deep learning models. | Used to define model architecture, perform gradient-based optimization during training, and implement custom training loops with validation checkpoints. |

| XGBoost | An optimized gradient boosting library. | Useful as a robust model that can handle missing data natively, mitigating a common pre-modeling pitfall [24]. |

| Pandas & NumPy | Foundational libraries for data manipulation and numerical computation. | Used for data cleaning, preprocessing, feature engineering, and managing dataframes and arrays before splitting. |

| Matplotlib/Seaborn | Libraries for creating static, animated, and interactive visualizations. | Essential for plotting learning curves (training vs. validation loss) to diagnose overfitting and underfitting [22]. |

Adhering to a strict separation between validation and test sets is not a mere technical formality but a fundamental requirement for scientific rigor in machine learning. The validation set is a tool for development, while the test set is the instrument for unbiased final evaluation. By implementing the detailed protocols and best practices outlined in this document—particularly the non-negotiable rule of using the test set only once—researchers and drug development professionals can ensure their models are truly evaluated for their generalizability, leading to more reliable and trustworthy applications in critical scientific domains.

In machine learning research, the core objective is to develop models that generalize effectively—making accurate predictions on new, unseen data. The integrity of this process hinges on a fundamental practice: partitioning available data into distinct subsets for training, validation, and testing [1] [12]. This protocol prevents the critical failure of overfitting, where a model performs well on its training data but fails to generalize [1] [11]. Within the broader context of comparing validation and training sets, it is essential to understand that these sets are not rivals but complementary components of a rigorous, iterative model development workflow. The training set is used for parameter estimation, while the validation set provides an unbiased evaluation for model selection and hyperparameter tuning during this iterative process [1] [5]. This document outlines detailed application notes and protocols for implementing this workflow, with a focus on applications relevant to researchers and drug development professionals.

Core Concepts and Definitions

The Triad of Data Subsets

The machine learning workflow employs three distinct data subsets, each serving a unique and critical function in the model development pipeline. Their primary purposes and characteristics are summarized in Table 1.

Table 1: Primary Purposes and Characteristics of Training, Validation, and Test Sets

| Data Subset | Primary Purpose | Used to Adjust | Frequency of Interaction with Model | Typical Proportion of Data |

|---|---|---|---|---|

| Training Set | Fit the model; enable learning of underlying patterns [1] [2] | Model parameters (e.g., weights in a neural network) [1] | Repeatedly, throughout the training process [25] | 60% - 80% [26] [25] |

| Validation Set | Model selection and hyperparameter tuning; prevent overfitting [1] [2] [5] | Model hyperparameters (e.g., learning rate, number of layers) [1] [5] | Periodically, during the training process [25] | 10% - 20% [26] [25] |

| Test Set | Final, unbiased evaluation of the fully-trained model's performance [1] [2] [5] | Nothing; provides a final performance metric [2] | Once, after all training and tuning is complete [25] | 10% - 20% [26] [25] |

The Critical Distinction: Validation vs. Test Set

A common point of confusion lies in the distinct roles of the validation and test sets. The validation set is an integral part of the training loop; it is used repeatedly to evaluate the model after various training epochs or hyperparameter adjustments. This feedback guides the researcher to select the best model architecture and hyperparameters [1] [5]. In contrast, the test set must be held in a "vault" and used only at the end of the entire development process [5]. Its sole purpose is to provide a statistically rigorous, unbiased estimate of the model's real-world performance on truly unseen data, ensuring that the model has not inadvertently been tuned to the peculiarities of the validation set [5] [12].

Experimental Protocols for Data Splitting

The method for partitioning data is not one-size-fits-all and must be chosen based on dataset size and characteristics. Below are detailed protocols for different scenarios.

The Standard Hold-Out Method

This is the most common approach, suitable for large datasets with hundreds of thousands or millions of samples.

Protocol:

- Shuffle: Randomly shuffle the entire dataset to minimize any inherent ordering bias [2].

- Split: Partition the data into training, validation, and test sets according to a pre-defined ratio. A typical starting ratio is 60/20/20, but 70/15/15 or 80/10/10 are also common, depending on data size [2] [26].

- Ensure Independence: Verify that no examples are duplicated across the splits. Remove any duplicates in the validation or test set that appear in the training set to ensure a fair evaluation [12].
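The independence check in the last step can be sketched with pandas. The column names, the synthetic data, and the helper drop_rows_seen_in are hypothetical; the example treats two rows as duplicates when their feature values match, which is one possible definition among several.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

# Hypothetical dataset with low-cardinality features so duplicates occur.
rng = np.random.default_rng(0)
df = pd.DataFrame({
    "feature_a": rng.integers(0, 20, size=200),
    "feature_b": rng.integers(0, 20, size=200),
    "label": rng.integers(0, 2, size=200),
})
feature_cols = ["feature_a", "feature_b"]

# Shuffle and split 60/20/20.
train_df, temp_df = train_test_split(df, test_size=0.40, shuffle=True, random_state=0)
val_df, test_df = train_test_split(temp_df, test_size=0.50, random_state=0)

def drop_rows_seen_in(reference, candidate, cols):
    """Return rows of `candidate` whose `cols` values never occur in `reference`."""
    seen = reference[cols].drop_duplicates()
    merged = candidate.merge(seen, on=cols, how="left", indicator=True)
    return merged.loc[merged["_merge"] == "left_only"].drop(columns="_merge")

# Remove validation/test rows that duplicate a training example.
val_clean = drop_rows_seen_in(train_df, val_df, feature_cols)
test_clean = drop_rows_seen_in(train_df, test_df, feature_cols)
print(len(val_df), "->", len(val_clean), "validation rows after de-duplication")
```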

Cross-Validation for Small Datasets

For smaller datasets, where a single hold-out validation set would be too small to provide reliable feedback, k-fold cross-validation is the preferred protocol [2] [27]. This method maximizes data usage for both training and validation.

Protocol:

- Shuffle and Partition: Randomly shuffle the dataset and split it into k equal-sized folds (a common choice is k=5 or k=10) [27].

- Iterative Training and Validation:

- For each iteration i (where i ranges from 1 to k), use the i-th fold as the validation set and the remaining k-1 folds as the training set.

- Train the model on the training folds and evaluate it on the validation fold.

- Record the performance metric from each iteration.

- Average Results: Calculate the average performance across all k iterations to produce a single, more robust estimate of model performance [27].

- Final Model Training: After using cross-validation for model selection and hyperparameter tuning, train the final model on the entire dataset (excluding the final test set).

- Final Evaluation: Evaluate this final model on the held-out test set for an unbiased performance estimate [5].
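To make the iteration explicit, the loop below writes out the k-fold procedure by hand rather than relying on a convenience function. It is a sketch under assumptions: the decision tree, the synthetic data, and k=5 are illustrative choices, and the separate test set is omitted for brevity.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.metrics import accuracy_score
from sklearn.model_selection import KFold
from sklearn.tree import DecisionTreeClassifier

# Small synthetic dataset (assumption).
X, y = make_classification(n_samples=150, n_features=6, random_state=0)

k = 5
kfold = KFold(n_splits=k, shuffle=True, random_state=0)  # shuffle, then partition

fold_scores = []
validated = np.zeros(len(X), dtype=int)  # counts how often each sample is validated
for i, (train_idx, val_idx) in enumerate(kfold.split(X), start=1):
    # Fold i is the validation set; the other k-1 folds form the training set.
    model = DecisionTreeClassifier(max_depth=3, random_state=0)
    model.fit(X[train_idx], y[train_idx])
    score = accuracy_score(y[val_idx], model.predict(X[val_idx]))
    fold_scores.append(score)
    validated[val_idx] += 1
    print(f"fold {i}: validation accuracy = {score:.3f}")

# Average the k per-fold metrics into one estimate.
mean_score = float(np.mean(fold_scores))
print(f"mean accuracy over {k} folds = {mean_score:.3f}")
```

Every sample serves as validation data exactly once and as training data k-1 times, which is why the method uses a small dataset more efficiently than a single hold-out split.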

Stratified Splitting for Imbalanced Datasets

In classification problems with imbalanced class distributions (e.g., a rare disease subtype is present in only 2% of samples), random splitting can create subsets that are not representative of the overall class distribution.

Protocol:

- Identify Classes: Determine the different classes within the dataset.

- Stratify: Perform the split in such a way that the relative proportion of each class is preserved in the training, validation, and test sets [27]. This ensures the model is evaluated on a realistic distribution of classes.

Workflow Visualization and Logical Relationships

The interaction between the training, validation, and test sets is a dynamic, iterative process. The following diagrams, generated with Graphviz, illustrate the logical flow and decision points.

High-Level Model Development Workflow

Diagram 1: High-level model development workflow.

The Model Tuning Loop

This diagram details the iterative cycle between training and validation, which is the core of model optimization.

Diagram 2: The model tuning loop.

The Scientist's Toolkit: Essential Research Reagents

In machine learning, the "reagents" are the datasets, algorithms, and evaluation metrics. For a rigorous experimental protocol, the following tools are essential.

Table 2: Key Research Reagent Solutions for ML Experiments

| Reagent / Solution | Function / Purpose | Example Instances |

|---|---|---|

| Training Data | The foundational substrate for model learning. Used to fit model parameters via optimization algorithms [1] [21]. | Labeled examples (e.g., chemical compound structures with associated bioactivity [21]). |

| Validation Data | The internal quality control. Provides an unbiased evaluation for model selection and hyperparameter tuning during development [1] [5]. | A held-out set from the original dataset, not used for initial parameter training [1]. |

| Test Data | The final validation assay. Provides an unbiased estimate of the model's generalization error on unseen data [1] [5]. | A completely held-out dataset, kept in a "vault" until the very end of the research project [5]. |

| Optimization Algorithm | The mechanism that drives parameter learning. Minimizes a loss function between predictions and true labels on the training set [1]. | Gradient Descent, Stochastic Gradient Descent (SGD), Adam [1]. |

| Performance Metrics | The measurement instruments. Quantify model performance on validation and test sets to guide decision-making [1] [2]. | Accuracy, Precision, Recall, F1-Score, Mean Squared Error, Area Under the Curve (AUC) [1] [2]. |

Common Pitfalls and Mitigation Strategies

- Inadequate Sample Size: Ensure training, validation, and test sets are large enough for their respective purposes [27] [12]. A small test set may not provide a reliable performance estimate [2].

- Data Leakage: Strictly separate training, validation, and test sets [27]. Information from the test set must never influence training or tuning [12]. Mitigation includes removing duplicate examples across splits [12].

- Overfitting to the Validation Set: Repeated use of the validation set for tuning can cause the model to overfit to it [5] [12]. The test set exists to detect this. If resources allow, periodically refresh validation and test sets with new data [12].

- Improper Shuffling: Always shuffle data before splitting to avoid introducing bias from the original data order [2] [27].

Implementing Data Splits in Biomedical Research

In machine learning research, the division of data into training, validation, and test sets forms the cornerstone of robust model development and reliable performance validation. This protocol details the implementation of common data splitting strategies, specifically focusing on the 70-15-15 and 60-20-20 ratios, within the context of scientific research and drug development. We provide a comparative analysis of these partitioning schemes, experimental protocols for their application, and visual workflows to guide researchers in selecting appropriate strategies to optimize model generalization and prevent overfitting, thereby enhancing the reliability of predictive models in critical research applications.

In supervised learning, a model's ability to generalize to unseen data is the ultimate measure of its success [27]. The central thesis of modern machine learning validation hinges on the critical separation of data used for training versus data used for validation and testing [28]. This separation is not merely a procedural formality but a fundamental requirement for building models that perform reliably in real-world scenarios, such as drug discovery and clinical development [29].

The practice of splitting a dataset into three distinct subsets—training, validation, and test—addresses a core challenge in model development: the need for multiple, independent data assessments [30]. The training set is used to fit model parameters; the validation set provides an unbiased evaluation for hyperparameter tuning and model selection during training; and the test set is held back for a final, unbiased assessment of the fully-trained model's generalization capability [27] [31]. Using the same data for both training and evaluation leads to overoptimistic performance metrics and models that fail in production environments, a pitfall known as overfitting [4] [32].

This document frames data splitting methodologies within the broader research thesis of "validation set versus training set," exploring how different partitioning ratios balance the competing needs of sufficient training data and statistically reliable validation.

Comparative Analysis of Common Splitting Ratios

Selecting an appropriate data split ratio is a trade-off between providing enough data for the model to learn effectively and retaining sufficient data for robust validation and testing [33]. The optimal balance depends on factors including dataset size, model complexity, and the required confidence in performance metrics [32].

Table 1: Characteristics of Common Data Split Ratios

| Split Ratio (Train-Valid-Test) | Typical Use Case | Advantages | Limitations |

|---|---|---|---|

| 70-15-15 | Medium-sized datasets; Models requiring moderate hyperparameter tuning [34]. | Balanced allocation for both training and evaluation; Sufficient validation data for reliable tuning. | Training data might be insufficient for very complex models. |

| 60-20-20 | Scenarios requiring extensive hyperparameter tuning or robust performance validation [34]. | Larger validation and test sets provide more reliable performance estimates. | Smaller training set may lead to higher variance in parameter estimates [33]. |

| 80-10-10 | Large datasets (e.g., >1M samples) [31] [33]. | Maximizes data for training; 1-10% of large datasets is sufficient for evaluation. | Smaller evaluation sets may have higher variance in performance metrics [33]. |

| 98-1-1 | Very large-scale datasets (e.g., millions of samples) [31]. | Absolute number of evaluation samples is still statistically significant. | Requires extremely large initial dataset to be viable. |

Table 2: Data Split Ratio Selection Guide

| Dataset Characteristic | Recommended Split Strategy | Rationale |

|---|---|---|

| Small Sample Size | Cross-Validation (e.g., 5-fold or 10-fold) [28] [34]. | Avoids reducing the training set size further; provides more robust performance estimate. |

| Class Imbalance | Stratified Split (e.g., Stratified 70-15-15) [27] [31] [32]. | Preserves the class distribution in all subsets, preventing biased training or evaluation. |

| Temporal Dependence | Time-based Split (e.g., Chronological 70-15-15) [31] [34]. | Prevents data leakage from the future; ensures realistic evaluation on future unseen data. |

| Grouped Data | Group Split (e.g., Grouped 60-20-20) [31]. | Keeps all data from a single group (e.g., patient) in one set; prevents over-optimistic estimates. |
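For the grouped-data row, a minimal sketch using scikit-learn's GroupShuffleSplit is shown below. The patient identifiers and data are synthetic assumptions. Note that the fractions apply to groups (patients), so the sample-level ratio is only approximately 60-20-20 when patients contribute different numbers of records.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

# Synthetic example (assumption): 500 records from up to 50 patients.
rng = np.random.default_rng(0)
n_samples = 500
patient_ids = rng.integers(0, 50, size=n_samples)
X = rng.normal(size=(n_samples, 4))
y = rng.integers(0, 2, size=n_samples)

# First cut: roughly 20% of patients go to the test set.
outer = GroupShuffleSplit(n_splits=1, test_size=0.20, random_state=0)
dev_idx, test_idx = next(outer.split(X, y, groups=patient_ids))

# Second cut: 25% of the remaining patients (about 20% overall) go to validation.
inner = GroupShuffleSplit(n_splits=1, test_size=0.25, random_state=0)
train_rel, val_rel = next(
    inner.split(X[dev_idx], y[dev_idx], groups=patient_ids[dev_idx])
)
train_idx, val_idx = dev_idx[train_rel], dev_idx[val_rel]

# No patient may appear in more than one subset.
train_p, val_p, test_p = (set(patient_ids[idx]) for idx in (train_idx, val_idx, test_idx))
print("patients per subset:", len(train_p), len(val_p), len(test_p))
```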

The 70-15-15 and 60-20-20 ratios are particularly relevant for medium-sized datasets common in early-stage research, where the total number of samples may be in the thousands or tens of thousands [33]. A key consideration is the absolute size of the validation and test sets. While a 20% test set might be appropriate for a dataset of 10,000 samples (yielding 2,000 test samples), the same 20% would be excessive for a dataset of 1,000,000 samples, where a smaller percentage (e.g., 1% or 10,000 samples) can provide a statistically reliable performance estimate while reserving more data for training [31] [33].
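The point about absolute evaluation-set size can be made concrete with a rough calculation. The sketch below uses the binomial standard error of an accuracy estimate, sqrt(p(1-p)/n); the 90% accuracy figure is a hypothetical value, and the formula assumes independent test samples.

```python
import math

def accuracy_standard_error(accuracy, n_samples):
    """Binomial standard error of an accuracy estimate from n independent samples."""
    return math.sqrt(accuracy * (1.0 - accuracy) / n_samples)

assumed_accuracy = 0.90  # hypothetical model accuracy, for illustration only

for total, test_fraction in [(10_000, 0.20), (1_000_000, 0.20), (1_000_000, 0.01)]:
    n_test = round(total * test_fraction)
    se = accuracy_standard_error(assumed_accuracy, n_test)
    print(f"total={total:>9,}  test={n_test:>7,}  approx. standard error={se:.4f}")
```

Under these assumptions, a 1% test split of a million-sample dataset yields a smaller standard error than a 20% split of a ten-thousand-sample dataset, which is why the percentage alone is a poor guide.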

Experimental Protocols

Protocol 1: Implementing a 70-15-15 Split using Scikit-Learn

This protocol outlines the steps for a standard 70-15-15 random split, a common starting point for model development.

Research Reagent Solutions

- Python Programming Environment: A configured environment (e.g., Jupyter Notebook) for executing code.

- Scikit-Learn Library (v1.0+): Provides the train_test_split function for efficient data partitioning [4] [34].

- NumPy/Pandas Libraries: For data manipulation and handling.

- Dataset: A labeled dataset in a suitable format (e.g., CSV, NumPy array).

Methodology

- Data Preparation: Load the dataset and separate the feature matrix (X) from the target variable vector (y).

- Primary Split (Train vs. Temp): Perform the first split to isolate the training data (70%), leaving a temporary set (30%). Setting random_state ensures reproducibility of the split [4].

- Secondary Split (Validation vs. Test): Split the temporary set in half to form the final validation and test sets. This two-step process achieves the 70-15-15 ratio [4].

- Verification: Check the shapes of the resulting sets to confirm the split ratios.
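A minimal sketch of this methodology follows, assuming the temporary set is the 30% that is then divided evenly between validation and test. The random placeholder data and the seed value are illustrative assumptions.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Data preparation: placeholder feature matrix X and target vector y (assumption).
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 10))
y = rng.integers(0, 2, size=1000)

# Primary split: 70% training, 30% temporary.
X_train, X_temp, y_train, y_temp = train_test_split(
    X, y, test_size=0.30, random_state=42
)

# Secondary split: divide the temporary set in half (15% validation, 15% test).
X_val, X_test, y_val, y_test = train_test_split(
    X_temp, y_temp, test_size=0.50, random_state=42
)

# Verification: check the shapes of the resulting sets.
for name, part in [("train", X_train), ("validation", X_val), ("test", X_test)]:
    print(f"{name}: {part.shape} ({len(part) / len(X):.0%})")
```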

Protocol 2: Implementing a Stratified 60-20-20 Split

This protocol is essential for imbalanced datasets, ensuring proportional representation of classes in all subsets.

Research Reagent Solutions

- Python Programming Environment: As in Protocol 1.

- Scikit-Learn Library: For train_test_split with the stratify parameter.

- Imbalanced Dataset: A classification dataset with skewed class distributions.

Methodology

- Data Preparation: Load and separate features (X) and targets (y).

- Primary Stratified Split: Isolate the test set while preserving class distribution. Passing stratify=y ensures the class distribution in X_temp and X_test mirrors that of y [31] [32].

- Secondary Stratified Split: Create the training and validation sets from the temporary set.

- Verification: Examine the class distribution in each subset (e.g., using np.unique(y_train, return_counts=True)).
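The stratified 60-20-20 methodology can be sketched as below. The 90/10 class imbalance and the random features are assumptions for illustration; the secondary split uses test_size=0.25 because 25% of the remaining 80% equals 20% of the full dataset.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Imbalanced placeholder data (assumption): 900 negatives, 100 positives.
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 5))
y = np.array([0] * 900 + [1] * 100)

# Primary stratified split: isolate 20% as the test set.
X_temp, X_test, y_temp, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=42
)

# Secondary stratified split: 25% of the remainder becomes the validation set.
X_train, X_val, y_train, y_val = train_test_split(
    X_temp, y_temp, test_size=0.25, stratify=y_temp, random_state=42
)

# Verification: class counts per subset.
class_counts = {}
for name, labels in [("train", y_train), ("validation", y_val), ("test", y_test)]:
    classes, counts = np.unique(labels, return_counts=True)
    class_counts[name] = dict(zip(classes.tolist(), counts.tolist()))
    print(name, class_counts[name])
```

Each subset should show the same 9:1 class ratio as the full dataset.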

Protocol 3: Performance Validation via Learning Curves

This protocol provides a methodology for empirically validating the adequacy of a chosen split ratio by diagnosing variance and bias.

Research Reagent Solutions

- Trained Model: A candidate model (e.g., SVM, Random Forest).

- Computational Resources: Sufficient for multiple model training iterations.

- Plotting Library: Matplotlib or Seaborn for visualization.

Methodology

- Subsample Training Data: Create progressively larger random subsets of the training set (e.g., 20%, 40%, 60%, 80%, 100%).

- Iterative Training and Validation: For each training subset:

- Train the model.

- Record the performance score on the training subset.

- Record the performance score on the validation set.

- Plot Learning Curves: Plot both training and validation scores against the training set size.

- Analysis:

- High Bias (Underfitting): Both training and validation scores converge to a low value. Indicates a need for more features or a more complex model.

- High Variance (Overfitting): A large gap between training and validation scores, with the training score being significantly higher. Suggests a need for more training data or regularization [33] [32].
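The learning-curve protocol can be sketched by computing the two score series directly. The random forest, the synthetic data, and the 75/25 train/validation split are assumptions; plotting is left out so the example stays dependency-light, but sizes, train_scores, and val_scores can be passed straight to a Matplotlib line plot.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic data and a fixed validation set (assumptions).
X, y = make_classification(n_samples=600, n_features=12, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# One fixed random ordering, so each larger subset contains the smaller ones.
order = np.random.default_rng(0).permutation(len(X_train))

sizes, train_scores, val_scores = [], [], []
for fraction in (0.2, 0.4, 0.6, 0.8, 1.0):
    n = int(round(fraction * len(X_train)))
    idx = order[:n]
    model = RandomForestClassifier(n_estimators=50, random_state=0)
    model.fit(X_train[idx], y_train[idx])
    sizes.append(n)
    train_scores.append(model.score(X_train[idx], y_train[idx]))  # score on the subset
    val_scores.append(model.score(X_val, y_val))                  # score on validation set

for n, tr, va in zip(sizes, train_scores, val_scores):
    print(f"n_train={n:4d}  train={tr:.3f}  validation={va:.3f}  gap={tr - va:.3f}")
```

A gap that stays large as n_train grows points toward high variance, while two low, converged curves point toward high bias.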

The Scientist's Toolkit

Table 3: Essential Reagents and Tools for Data Splitting Experiments

| Item | Function / Purpose | Example / Specification |

|---|---|---|

| Scikit-Learn | Primary library for data splitting and model evaluation. | train_test_split, StratifiedKFold, cross_val_score [4] [34]. |

| Stratification Parameter | Ensures proportional class representation in all data splits for classification tasks. | stratify=y in train_test_split [31]. |

| Random State Seed | Ensures the reproducibility of random splits for robust, repeatable research. | random_state=42 (or any integer) [4]. |

| Cross-Validation | A robust alternative to single split for small datasets or enhanced performance estimation. | KFold(n_splits=5), StratifiedKFold [27] [29] [34]. |

| Encord Active / Lightly | Platforms for curating and managing dataset splits, especially for computer vision. | Used for filtering data based on quality metrics before splitting [27] [31]. |

Critical Considerations and Best Practices

Avoiding Common Pitfalls

- Data Leakage: A critical error where information from the validation or test set inadvertently influences the training process [27] [34]. This can occur through improper preprocessing (e.g., scaling before splitting) or feature engineering. Prevention: Always split data first, then perform any preprocessing, fitting the scaler/transformer on the training set only and applying it to the validation and test sets [34].

- Overfitting the Validation Set: Repeatedly tuning hyperparameters based on the validation set can cause the model to overfit to the validation set, compromising its role as an unbiased proxy for unseen data [31]. Prevention: Use the test set only for the final evaluation and never for decision-making during model development [30] [32].

- Inadequate Sample Size: If the validation or test set is too small, the performance statistic will have high variance, making it an unreliable measure of true model performance [27] [33]. Prevention: Ensure the absolute size of the evaluation sets is statistically meaningful, which may require adjusting ratios for smaller datasets or employing cross-validation.
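The leakage-prevention rule for preprocessing can be sketched as follows: split first, fit the scaler on the training set only, then apply it unchanged to the other subsets. The data and the choice of StandardScaler are assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Placeholder data (assumption).
rng = np.random.default_rng(0)
X = rng.normal(loc=5.0, scale=2.0, size=(500, 3))
y = rng.integers(0, 2, size=500)

# Split BEFORE any preprocessing (70-15-15).
X_train, X_temp, y_train, y_temp = train_test_split(X, y, test_size=0.30, random_state=0)
X_val, X_test, y_val, y_test = train_test_split(X_temp, y_temp, test_size=0.50, random_state=0)

# Fit the scaler on the training set only...
scaler = StandardScaler().fit(X_train)

# ...then apply the same fitted transformation to every subset.
X_train_scaled = scaler.transform(X_train)
X_val_scaled = scaler.transform(X_val)
X_test_scaled = scaler.transform(X_test)

print("training mean after scaling:  ", X_train_scaled.mean(axis=0).round(3))
print("validation mean after scaling:", X_val_scaled.mean(axis=0).round(3))
```

The validation and test means will generally not be exactly zero, which is expected: their statistics were never seen by the scaler.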

Advanced Techniques: Cross-Validation

For smaller datasets, a single train-validation-test split may be inefficient or unreliable. K-Fold Cross-Validation is a preferred advanced technique in such scenarios [27] [34]. The dataset is partitioned into K equal folds. The model is trained K times, each time using K-1 folds for training and the remaining fold for validation. The final validation performance is the average across all K runs. This method maximizes data usage for both training and validation and provides a more robust performance estimate, directly addressing the core thesis of optimizing validation reliability against limited training data [29].

The division of a dataset into distinct subsets represents a foundational step in the machine learning (ML) pipeline, directly impacting the reliability and validity of model evaluation. Within the broader thesis context of validation set versus training set dynamics, this protocol examines the mechanistic roles of these splits: the training set facilitates model parameter learning, the validation set enables hyperparameter tuning and model selection without bias, and the test set provides a final, unbiased assessment of generalization performance [32] [14] [27]. Improper splitting leads to overfitting, where a model excels on its training data but fails on unseen data, or underfitting, where it fails to capture underlying data patterns [32] [35]. This document outlines standardized protocols for two core splitting methodologies—random shuffling and stratified sampling—to ensure robust model validation, particularly for scientific and drug development applications where generalizability is paramount.

Core Concepts and Quantitative Guidelines

The Purpose of Each Data Subset

- Training Set: This is the largest subset, used directly to fit the model and optimize its internal parameters (weights) through exposure to data patterns [32] [31]. Its composition dictates the features and relationships the model will learn.

- Validation Set: A separate set of data used to evaluate the model periodically during training [32] [27]. It provides an unbiased evaluation for tuning hyperparameters (e.g., learning rate, regularization strength) and guides model architecture decisions, thus preventing the model from overfitting to the training data [14] [31].

- Test Set: This set is held out entirely until the final stage of model development [14] [31]. It is used exactly once to assess the performance of the final, tuned model on completely unseen data, simulating real-world application and providing a key metric for generalization capability [32] [27].

Determining the Data Split Ratio

The optimal split ratio is not universal but depends on dataset size, model complexity, and the specific use case. The core trade-off is between the variance of parameter estimates (benefiting from more training data) and the variance of performance statistics (benefiting from larger validation/test sets) [33]. The following table summarizes common split ratios and their applications:

Table 1: Common Data Split Ratios and Their Applications

| Split Ratio (Train/Val/Test) | Typical Dataset Size | Rationale and Best Use Context |

|---|---|---|

| 70/15/15, 60/20/20 [4] [31] | Medium-sized datasets (e.g., thousands of samples) | A balanced approach that provides substantial data for both training and evaluation. A good starting point for many research applications. |

| 80/10/10 [31] | Medium to large datasets | Allocates more data to training, which can be beneficial for complex models, while still reserving enough samples for a reliable evaluation. |

| 98/1/1 [31] | Very large datasets (e.g., millions of samples) | For massive datasets, even a small percentage (e.g., 1%) provides a sufficiently large and representative validation and test set for reliable evaluation. |

| N/A (K-Fold Cross-Validation) [32] [4] | Small datasets | Replaces a single validation split. The data is divided into k folds; the model is trained on k-1 folds and validated on the remaining fold, repeated k times. This maximizes data use for both training and validation. |
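
The trade-off behind these ratios can be made concrete with a little arithmetic. The sketch below is illustrative only: the helper names `split_sizes` and `accuracy_standard_error` are hypothetical, the 85% accuracy is an assumed value, and the binomial standard error assumes independent evaluation samples.

```python
import math


def split_sizes(n_samples, train_frac, val_frac):
    """Return (n_train, n_val, n_test); the test set receives the remainder."""
    n_train = round(n_samples * train_frac)
    n_val = round(n_samples * val_frac)
    n_test = n_samples - n_train - n_val
    return n_train, n_val, n_test


def accuracy_standard_error(accuracy, n_eval):
    """Binomial standard error of an accuracy estimated on n_eval independent samples."""
    return math.sqrt(accuracy * (1.0 - accuracy) / n_eval)


# Two rows of Table 1: a medium dataset at 70/15/15 and a very large one at 98/1/1.
scenarios = [(2_000, 0.70, 0.15), (1_000_000, 0.98, 0.01)]
for n_samples, train_frac, val_frac in scenarios:
    n_train, n_val, n_test = split_sizes(n_samples, train_frac, val_frac)
    # Assumed (hypothetical) true accuracy of 0.85, used only to show the scale of the error.
    se = accuracy_standard_error(0.85, n_val)
    print(f"n={n_samples}: train={n_train}, val={n_val}, test={n_test}, SE(acc)={se:.4f}")
```

Under these assumptions, a 300-sample validation set leaves an uncertainty of roughly two percentage points on accuracy, whereas 1% of a million samples already brings it well below half a point, which is why very large datasets can afford a 98/1/1 split.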

Sampling Methodologies: Protocols and Workflows

Method 1: Random Shuffling and Split

Principle: This method involves randomly permuting the entire dataset before partitioning it into subsets. It assumes the data is independent and identically distributed (i.i.d.) and that a random subset will be representative of the whole [4] [36].

Best For: Large, well-balanced datasets where all classes or categories of interest are approximately equally represented [36]. It is a simple and efficient default.

Protocol:

- Preprocessing: Ensure the dataset is cleaned and formatted.

- Randomization: Shuffle the data randomly to eliminate any order-dependent biases [4] [35]. Critical step: Set a random seed for reproducibility.

- Partitioning: Split the shuffled data according to the chosen ratio (see Table 1).
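
The protocol above can be sketched with scikit-learn's `train_test_split`, applied twice to obtain three subsets. This is a minimal illustration rather than a prescribed implementation: the synthetic feature matrix, the 70/15/15 ratio, and the seed value are assumptions chosen for the example.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Synthetic stand-in for a cleaned, formatted dataset (assumption for illustration).
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 20))
y = rng.integers(0, 2, size=1000)

SEED = 42  # fixed seed so the shuffle, and therefore the split, is reproducible

# Step 1: shuffle and hold out 30% of the data for validation + test.
X_train, X_holdout, y_train, y_holdout = train_test_split(
    X, y, test_size=0.30, shuffle=True, random_state=SEED
)

# Step 2: divide the held-out portion equally into validation and test sets.
X_val, X_test, y_val, y_test = train_test_split(
    X_holdout, y_holdout, test_size=0.50, shuffle=True, random_state=SEED
)

print(len(X_train), len(X_val), len(X_test))
```

Re-running the script with the same seed reproduces the same partition, which makes results comparable across experiments.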


Method 2: Stratified Sampling

Principle: Stratified splitting ensures that the distribution of a critical categorical variable (most often the target variable for classification) is preserved across all data subsets [32] [36]. This is crucial for imbalanced datasets where a random split might by chance exclude rare classes from the training or validation sets.

Best For: Imbalanced datasets, clinical trial data with rare outcomes, and any scenario where maintaining the proportion of key strata is critical for model fairness and performance [37] [35] [36].

Protocol:

- Identify Stratification Variable: Select the variable to stratify by, typically the target label (e.g., "disease" vs. "control").

- Calculate Proportions: Determine the proportion of each class within the entire dataset.

- Stratified Split: Perform the data split in a way that preserves these proportions in the training, validation, and test sets [32] [36].
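
A minimal sketch of this protocol, assuming a binary label with 10% positive ("disease") cases and a 70/15/15 split, uses the `stratify` argument of scikit-learn's `train_test_split`. The data are synthetic and the ratio is only an example.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Synthetic imbalanced dataset: 100 "disease" (1) and 900 "control" (0) samples (assumption).
rng = np.random.default_rng(0)
n_samples = 1000
X = rng.normal(size=(n_samples, 10))
y = np.zeros(n_samples, dtype=int)
y[:100] = 1

# Step 1: stratify on the label while holding out 30% for validation + test.
X_train, X_holdout, y_train, y_holdout = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=42
)

# Step 2: stratify again when dividing the held-out portion in half.
X_val, X_test, y_val, y_test = train_test_split(
    X_holdout, y_holdout, test_size=0.50, stratify=y_holdout, random_state=42
)

# The positive-class proportion should be (approximately) identical in every subset.
for name, labels in [("train", y_train), ("val", y_val), ("test", y_test)]:
    print(f"{name}: n={len(labels)}, positive fraction={labels.mean():.3f}")
```

Printing the per-subset class proportions, as done here, is a cheap sanity check worth keeping in any splitting script.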


The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software and Libraries for Data Splitting

| Tool / Reagent | Function / Application | Key Utility in Research |

|---|---|---|

| Scikit-learn (train_test_split, StratifiedShuffleSplit) [4] [36] | A core Python library for machine learning. Provides functions for both random and stratified data splitting. | The industry standard for prototyping and implementing robust data splitting protocols with minimal code. |

| Skmultilearn [38] | A Scikit-learn extension for multi-label classification. | Enables multi-stratified splitting when dealing with complex datasets with multiple, interdependent categorical targets. |

| Encord Active [27] | A platform for managing computer vision datasets. | Provides tools for visualizing and curating data splits, ensuring balanced coverage of features like image quality, brightness, and object density. |

| Lightly [31] | A data-centric AI platform for computer vision. | Helps curate the most representative and diverse samples for each split, ensuring balanced class distributions and reducing biases. |

Advanced Topics and Best Practices

Cross-Validation: A Robust Alternative

For smaller datasets or to obtain more robust performance estimates, K-Fold Cross-Validation is a superior alternative to a single validation split [32] [4]. The dataset is randomly partitioned into k equal-sized folds. The model is trained k times, each time using k-1 folds for training and the remaining fold for validation. The final performance is the average of the k validation scores [32]. Stratified K-Fold Cross-Validation further refines this by preserving the class distribution in each fold, which is especially important for imbalanced data [32].
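
To make the mechanics explicit, the loop below writes out k-fold cross-validation by hand using scikit-learn's `KFold`. It is a sketch on synthetic data; the classifier, k=5, and the accuracy metric are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold

# Synthetic binary classification data (assumption for illustration).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

kfold = KFold(n_splits=5, shuffle=True, random_state=0)
fold_scores = []

for train_idx, val_idx in kfold.split(X):
    # A fresh model is trained on k-1 folds in every iteration...
    model = LogisticRegression(max_iter=1000)
    model.fit(X[train_idx], y[train_idx])
    # ...and scored on the single fold that was left out.
    fold_scores.append(model.score(X[val_idx], y[val_idx]))

# The reported performance is the average over the k validation folds.
print(f"mean accuracy = {np.mean(fold_scores):.3f}, std = {np.std(fold_scores):.3f}")
```

Replacing `KFold` with `StratifiedKFold` (and calling `split(X, y)`) gives the stratified variant described above.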

Critical Pitfalls to Avoid

- Data Leakage: This occurs when information from the test set inadvertently influences the model training process [35] [27]. To prevent this, all preprocessing steps (e.g., feature scaling, imputation) must be fit only on the training data and then applied to the validation and test sets [14] [35]. The test set must remain completely untouched until the final evaluation.

- Ignoring Temporal Structure: For time-series data (e.g., patient longitudinal data, sensor readings), a standard random split is invalid. Instead, a time-based split must be used, where the model is trained on past data and validated/tested on future data to simulate real-world forecasting and prevent leakage [31].

- Inadequate Sample Size: While ratios provide a guideline, the absolute size of the validation and test sets matters. Too few samples lead to high-variance performance estimates that are unreliable [33]. Ensure these sets are large enough that the uncertainty in your evaluation metrics (e.g., the width of a confidence interval) is acceptable for the decision at hand.
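
The first two pitfalls can be illustrated together. The sketch below assumes a hypothetical longitudinal dataset whose rows are already sorted by time; it splits chronologically (no shuffling) and fits the scaler on the training portion only. The 70/15/15 boundaries are an example, not a recommendation.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Hypothetical longitudinal measurements, assumed to be sorted from oldest to newest.
rng = np.random.default_rng(0)
X = rng.normal(loc=5.0, scale=2.0, size=(500, 4))

# Time-based split: earliest 70% for training, next 15% validation, latest 15% test.
n_train, n_val = 350, 75
X_train = X[:n_train]
X_val = X[n_train:n_train + n_val]
X_test = X[n_train + n_val:]

# Fit preprocessing on the training data ONLY, so that no statistics
# from the validation or test period leak into model development.
scaler = StandardScaler().fit(X_train)

# Apply the already-fitted transformation to every subset.
X_train_scaled = scaler.transform(X_train)
X_val_scaled = scaler.transform(X_val)
X_test_scaled = scaler.transform(X_test)

print(X_train_scaled.mean(axis=0).round(3))  # close to zero by construction
print(X_val_scaled.mean(axis=0).round(3))    # generally not exactly zero
```

The validation and test means are not exactly zero after scaling, and that is expected: they were transformed with training-set statistics, just as new patient data would be at deployment.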

In biomedical machine learning research, the central challenge of model validation lies in accurately estimating a model's performance on unseen data, thereby ensuring its clinical utility and generalizability. The conventional approach of a single, static split into training and validation sets creates a fundamental tension: it is a straightforward mechanism for evaluation, but it often fails to provide a robust estimate of performance, particularly with the small or unique datasets common in biomedical contexts. This limitation is especially problematic in healthcare applications, where models developed using simplistic validation approaches may appear to perform well during development but fail to generalize to real-world clinical populations, potentially compromising patient safety and decision support.

Cross-validation emerges as a powerful alternative that addresses these limitations by systematically partitioning the available data to maximize both model development and validation efficacy. Unlike the single holdout method, cross-validation utilizes the entire dataset for both training and validation through iterative partitioning, providing a more reliable estimate of model performance while mitigating the risks of overfitting. This approach is particularly valuable for biomedical research, where data collection is often constrained by privacy concerns, rare conditions, and the substantial costs associated with biomedical data acquisition [39]. By embracing cross-validation methodologies, researchers can achieve more reliable model evaluation, enhance reproducibility, and ultimately accelerate the translation of machine learning innovations into clinically meaningful applications.

Cross-Validation Techniques: A Comparative Analysis

Various cross-validation techniques offer distinct advantages and disadvantages, making them differentially suitable for specific biomedical research scenarios. The choice of technique involves careful consideration of dataset characteristics, computational constraints, and the specific goals of model evaluation.

K-Fold Cross-Validation represents the most widely adopted approach, where the dataset is partitioned into k equal-sized folds. The model is trained on k-1 folds and validated on the remaining fold, repeating this process k times such that each fold serves as the validation set exactly once [40] [41]. The final performance metric is calculated as the average across all iterations. This method typically uses k=5 or k=10, providing a reasonable balance between bias and variance [42]. The primary advantage of k-fold cross-validation is its demonstrated ability to provide a more reliable and stable performance estimate compared to a single holdout validation set [42].

Stratified K-Fold Cross-Validation introduces a crucial refinement for classification problems with imbalanced class distributions, a common characteristic of biomedical datasets where disease populations may be underrepresented. This technique ensures that each fold maintains approximately the same percentage of samples of each target class as the complete dataset, thus preventing the creation of folds with unrepresentative class distributions that could skew performance evaluation [40] [42]. For highly imbalanced classes, stratified cross-validation is considered essential rather than merely recommended [39].

Leave-One-Out Cross-Validation (LOOCV) represents the extreme case of k-fold cross-validation where k equals the number of samples in the dataset (n). In each iteration, a single sample is used for validation while the remaining n-1 samples form the training set [42]. Although LOOCV is nearly unbiased and maximizes training data usage, it becomes computationally prohibitive for large datasets because it requires building n models. Consequently, 5- or 10-fold cross-validation is generally preferred over LOOCV, based on empirical evidence that these settings offer a better bias-variance tradeoff [42].
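
As a rough illustration of the cost, the sketch below runs LOOCV on a deliberately tiny synthetic cohort with scikit-learn's `LeaveOneOut`. The dataset size of 30 and the classifier are assumptions; the point is that one model is fitted per sample.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import LeaveOneOut, cross_val_score

# Tiny synthetic cohort (assumption): 30 samples, 5 features.
X, y = make_classification(
    n_samples=30, n_features=5, n_informative=3, n_redundant=0, random_state=0
)

loo = LeaveOneOut()
# One fit per sample: each score is the accuracy on a single held-out case (0.0 or 1.0).
scores = cross_val_score(
    LogisticRegression(max_iter=1000), X, y, cv=loo, scoring="accuracy"
)

print(f"models fitted: {len(scores)}, LOOCV accuracy: {scores.mean():.3f}")
```

Because every individual score is either 0 or 1, only the mean is informative; per-fold standard deviations are not meaningful in this setting.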

Nested Cross-Validation addresses the critical issue of optimistic bias that arises when the same data is used for both hyperparameter tuning and model evaluation. This sophisticated approach implements two layers of cross-validation: an inner loop for parameter optimization and an outer loop for performance estimation [39]. While nested cross-validation provides an almost unbiased performance estimate, it comes with substantial computational demands, requiring the model to be trained numerous times [39].
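
One common way to express the two loops in scikit-learn is to pass a `GridSearchCV` object (inner loop) to `cross_val_score` (outer loop). The sketch below is an assumption-laden example: the data are synthetic, and the grid over the regularization strength `C`, the fold counts, and the scoring metric are illustrative choices.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data standing in for a biomedical dataset (assumption).
X, y = make_classification(n_samples=200, n_features=15, random_state=0)

pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
param_grid = {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]}  # illustrative grid

# Inner loop: chooses hyperparameters using only the outer-training portion.
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
search = GridSearchCV(pipeline, param_grid, cv=inner_cv, scoring="balanced_accuracy")

# Outer loop: estimates the performance of the whole tuning procedure.
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="balanced_accuracy")

print(f"nested CV balanced accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

With 4 grid points, 3 inner folds, and 5 outer folds, this already fits 5 x (4 x 3 + 1) = 65 models, which shows where the computational cost comes from.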

Table 1: Comparative Analysis of Cross-Validation Techniques

| Technique | Best For | Key Advantage | Key Disadvantage | Recommended Use in Biomedicine |

|---|---|---|---|---|

| K-Fold | Small to medium datasets [40] | More reliable than holdout; balanced bias-variance [42] | Performance varies with choice of K [43] | General-purpose internal validation |

| Stratified K-Fold | Imbalanced classification problems [40] [42] | Preserves class distribution in folds [40] | Only applicable to classification tasks | Rare disease classification, clinical outcome prediction |

| Leave-One-Out (LOOCV) | Very small datasets (<50 samples) [42] | Low bias, maximal training data usage [42] | Computationally expensive; high variance [42] | Extremely limited patient cohorts (e.g., rare diseases) |

| Nested CV | Hyperparameter tuning & unbiased performance estimation [39] | Reduces optimistic bias in performance reports [39] | Significant computational cost [39] | Final model evaluation before external validation |

Table 2: Impact of Cross-Validation Configuration Choices

| Configuration Factor | Impact on Model Comparison & Evaluation | Practical Recommendation |

|---|---|---|

| Number of Folds (K) | Higher K increases chance of detecting "significant" differences between models even when none exist [43] | Use consistent K (5 or 10) for comparable studies; avoid arbitrary changes |

| Number of Repetitions (M) | Repeated CV (M>1) with different random seeds increases false positive rate for model superiority claims [43] | Use M=1 for standard K-Fold; use repeated CV only with statistical correction |

| Subject-wise vs Record-wise Splitting | Record-wise splitting with correlated measurements can cause data leakage and overoptimistic performance [39] | Use subject-wise splitting for patient-level predictions; ensure no patient appears in both the training and test sets |
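
Subject-wise splitting can be sketched with scikit-learn's `GroupKFold`, which keeps all records sharing a group label in the same fold. The example assumes a hypothetical dataset with 40 patients and 5 records each; the `patient_ids` array is an illustrative name.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

# Hypothetical repeated-measures data: 40 patients with 5 records each (assumption).
rng = np.random.default_rng(0)
n_patients, records_per_patient = 40, 5
patient_ids = np.repeat(np.arange(n_patients), records_per_patient)
X = rng.normal(size=(len(patient_ids), 8))
y = rng.integers(0, 2, size=len(patient_ids))

group_kfold = GroupKFold(n_splits=5)
overlaps = []

for train_idx, val_idx in group_kfold.split(X, y, groups=patient_ids):
    # Count patients that appear on both sides of the split; this must be zero.
    shared = set(patient_ids[train_idx]) & set(patient_ids[val_idx])
    overlaps.append(len(shared))

print(overlaps)
```

A record-wise splitter such as plain `KFold` would scatter each patient's records across folds, which is the leakage scenario described in the table.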

Experimental Protocols and Implementation

Protocol 1: Implementing K-Fold Cross-Validation for Clinical Phenotype Classification

Background: This protocol details the application of k-fold cross-validation for developing a classifier that predicts clinical phenotypes from high-dimensional biomedical data, such as neuroimaging features or genomic markers.

Materials and Reagents:

- Computing Environment: Python 3.7+ with scikit-learn 1.0+ ecosystem

- Biomedical Dataset: Tabular format with samples as rows and features as columns

- Classification Algorithm: Logistic Regression (or other appropriate classifier)

Procedure:

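- Data Preprocessing: Standardize features by removing the mean and scaling to unit variance; for neuroimaging data, preprocessing might also include intensity normalization. Wrap the scaler and the classifier in a single pipeline so that the scaling statistics are estimated from the training folds only.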

- Stratified K-Fold Splitting: Initialize the stratified k-fold splitter with k=5 or 10 and a fixed random state for reproducibility.

- Model Training & Validation: For each fold, the pipeline is fitted on the training folds and used to predict the held-out validation fold.

- Performance Aggregation: Calculate the mean and standard deviation of the performance metric (e.g., accuracy) across all folds.
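
The procedure can be sketched as follows. This is a minimal example on synthetic data, not the definitive implementation: the dataset shape, class weights, k=5, and the random seeds are assumptions, and `make_classification` stands in for real neuroimaging or genomic features.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic high-dimensional data: samples as rows, features as columns (assumption).
X, y = make_classification(
    n_samples=250, n_features=50, n_informative=10, weights=[0.7, 0.3], random_state=0
)

# Step 1: scaler and classifier in one pipeline, so scaling is re-fitted per training fold.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Step 2: stratified splitter with a fixed random state for reproducibility.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

# Step 3: fit on the training folds and score on each held-out fold.
scores = cross_val_score(pipeline, X, y, cv=cv, scoring="accuracy")

# Step 4: aggregate across folds.
print(f"accuracy: {scores.mean():.3f} +/- {scores.std():.3f}")
```

Changing the `scoring` argument is enough to switch to another metric without touching the rest of the code.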

Troubleshooting Tips:

- For highly imbalanced datasets, use `scoring='balanced_accuracy'` or `scoring='f1_macro'` instead of accuracy.

- If convergence warnings occur, increase the `max_iter` parameter in LogisticRegression.

Protocol 2: Nested Cross-Validation for Hyperparameter Tuning with Electronic Health Record Data

Background: This protocol describes the use of nested cross-validation for hyperparameter optimization and unbiased performance estimation when working with Electronic Health Record (EHR) data, which often contains correlated patient records.