Uncertainty Quantification in Computational Chemistry: Building Trust in AI for Drug Discovery and Materials Design

Abstract

This article provides a comprehensive guide to uncertainty quantification (UQ) in computational chemistry, tailored for researchers and drug development professionals. As artificial intelligence and machine learning models become central to molecular design, assessing their reliability is crucial. We explore the fundamental sources of uncertainty—aleatoric and epistemic—and detail state-of-the-art UQ methods, including ensemble, Bayesian, and similarity-based approaches. The article further addresses practical challenges in optimizing UQ for real-world applications, compares the performance of different techniques, and validates their impact through case studies in drug discovery and materials science, offering a roadmap for implementing trustworthy computational models.

What is Uncertainty in Computational Models? Core Concepts and Sources of Error

In computational chemical data research, the reliability of machine learning (ML) models is paramount for accelerating discovery, particularly in high-stakes fields like drug development. Uncertainty Quantification (UQ) has thus emerged as a critical discipline, enabling researchers to gauge the confidence of model predictions and make more informed decisions [1]. Without effective UQ, predictions of molecular properties or drug candidate viability can lead to costly failed experiments and misguided research directions [2]. The foundation of robust UQ lies in distinguishing between two fundamental types of uncertainty: aleatoric and epistemic.

Aleatoric uncertainty (from the Latin alea, meaning "dice") refers to the inherent randomness or noise intrinsic to the data itself, while epistemic uncertainty (from the Greek epistēmē, meaning "knowledge") stems from a model's lack of knowledge [2] [3]. This distinction is not merely philosophical; it provides a diagnostic framework for researchers to understand the sources of error in their models and determine the most effective strategies for improvement—whether by refining experimental protocols to reduce noise or by collecting more data in underrepresented regions of chemical space to enhance model knowledge [3]. This guide provides an in-depth technical examination of these concepts, their mathematical foundations, quantification methodologies, and practical applications within computational chemistry research.

Theoretical Foundations and Definitions

Aleatoric Uncertainty: The Irreducible Stochastic Component

Aleatoric uncertainty captures the innate stochasticity of a system. It arises from the natural variability in data generation processes, such as random measurement errors, inherent biological stochasticity, or the unpredictable fluctuations in experimental conditions [2] [4]. A key characteristic of aleatoric uncertainty is its irreducibility; it cannot be diminished by collecting more data or refining the model architecture, as it is an inherent property of the data-generating process itself [1] [3].

In a regression model, this is often represented mathematically as:

y = f(x) + ε, where ε ~ N(0, σ²)

Here, the noise term ε, assumed to follow a Gaussian distribution with variance σ², represents the aleatoric uncertainty [1]. Aleatoric uncertainty can be further categorized as:

- Homoscedastic: The uncertainty σ² is constant for all input data points [5].

- Heteroscedastic: The uncertainty σ² varies as a function of the input x, which is more reflective of reality in most chemical systems, where noise may depend on the specific molecular context or experimental setup [5].

In drug discovery, aleatoric uncertainty can manifest as the inherent variability in measuring molecular binding affinities due to biological stochasticity or human intervention in experimental protocols [4].

Epistemic Uncertainty: The Reducible Knowledge Gap

Epistemic uncertainty arises from a model's incomplete knowledge or ignorance about the system. This type of uncertainty is attributable to insufficient training data, model limitations, or a fundamental lack of understanding of the underlying processes [6] [7]. In contrast to aleatoric uncertainty, epistemic uncertainty is reducible. It can be mitigated by incorporating more high-quality training data, especially in regions of the chemical space where the model is currently uncertain, or by improving the model's architecture and training procedures [2] [3].

From a Bayesian perspective, epistemic uncertainty is quantified by placing a probability distribution over the model's parameters, θ. Before observing data, this belief is encoded in the prior distribution, p(θ). After observing data D, this belief is updated to form the posterior distribution, p(θ|D), using Bayes' theorem:

p(θ|D) = [p(D|θ) p(θ)] / p(D)

The spread of this posterior distribution reflects the epistemic uncertainty; a wider spread indicates greater uncertainty about the correct model parameters [1]. In practical terms, a model will exhibit high epistemic uncertainty when making predictions for molecules that are structurally dissimilar to those in its training set, effectively operating outside its "applicability domain" (AD) [2].

Table 1: Core Characteristics of Aleatoric and Epistemic Uncertainty

| Feature | Aleatoric Uncertainty | Epistemic Uncertainty |
|---|---|---|
| Origin | Inherent randomness in data [2] | Model's lack of knowledge [6] |
| Reducibility | Irreducible [3] | Reducible [3] |
| Primary Cause | Measurement noise, biological stochasticity [4] | Lack of training data, model limitations [6] [2] |
| Mathematical Representation | Variance of the noise term ε in y=f(x)+ε [1] | Spread of the parameter posterior p(θ|D), seen as variation in predictions across plausible models [1] |
| Context in Drug Discovery | Inherent unpredictability of molecular interactions [4] | Predictions for novel scaffolds outside the model's training domain [2] |

Mathematical Frameworks for Uncertainty Quantification

Quantifying both types of uncertainty typically involves probabilistic models that output a distribution instead of a single, deterministic value.

Quantifying Aleatoric Uncertainty

For aleatoric uncertainty, the model directly learns to predict the parameters of a distribution. In regression, a common approach is Mean-Variance Estimation, where a neural network has two output neurons: one for the predicted mean, μ(x), and another for the predicted variance, σ²(x), which represents the heteroscedastic aleatoric uncertainty [5] [3]. The model is trained by minimizing the Gaussian negative log-likelihood (NLL) loss, written here for a single sample (x, y):

L_NLL(θ) = (1/2) log(2πσ²_θ(x)) + (y - μ_θ(x))² / (2σ²_θ(x))

This loss function encourages the model to assign high uncertainty (large σ²) to predictions with large errors, because the squared error is divided by σ², while the logarithmic term penalizes inflating σ² everywhere. In this way the model learns the inherent noise in the data.
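As a minimal sketch of the per-sample loss above, the following standard-library Python evaluates the Gaussian NLL for one prediction. The function name `gaussian_nll` and the numeric values are illustrative assumptions, not taken from the cited works.

```python
import math

def gaussian_nll(y, mu, var):
    """Per-sample Gaussian negative log-likelihood for a predicted mean and variance."""
    if var <= 0:
        raise ValueError("predicted variance must be positive")
    return 0.5 * math.log(2 * math.pi * var) + (y - mu) ** 2 / (2 * var)

# Same prediction error (y - mu = 2.0), two different predicted variances.
overconfident = gaussian_nll(y=3.0, mu=1.0, var=0.1)  # small variance, large error
matched = gaussian_nll(y=3.0, mu=1.0, var=4.0)        # variance equals the squared error

print(f"overconfident: {overconfident:.3f}, matched: {matched:.3f}")
```

For a fixed error, the loss is smallest when the predicted variance equals the squared error, which is why an overconfident prediction is penalized more heavily than a well-matched one.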

Quantifying Epistemic Uncertainty

For epistemic uncertainty, the goal is to estimate uncertainty over the model parameters. Bayesian Neural Networks (BNNs) are a fundamental approach, where the model weights are treated as probability distributions rather than fixed values [2] [1]. Performing inference in a BNN involves marginalizing over the posterior distribution of the weights, that is, evaluating the posterior predictive integral:

p(y|x, D) = ∫ p(y|x, θ) p(θ|D) dθ

This integral is typically intractable and is approximated using techniques like Monte Carlo (MC) Dropout or Markov Chain Monte Carlo (MCMC) methods [1]. In MC Dropout, for example, dropout is kept active at test time, and multiple stochastic forward passes are performed. The variance across these different predictions provides an estimate of the epistemic uncertainty [1].
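The MC Dropout idea can be illustrated with a toy example. The sketch below assumes a tiny one-input network with hypothetical fixed weights (`W1`, `B1`, `W2`, `B2` are invented for illustration); a real application would use a trained model in a deep learning framework.

```python
import random
import statistics

# Hypothetical fixed weights of a tiny 1-input, 4-hidden-unit regression network.
W1 = [0.8, -0.5, 1.2, 0.3]
B1 = [0.1, 0.2, -0.3, 0.0]
W2 = [0.6, -0.4, 0.9, 0.5]
B2 = 0.05

def forward(x, rng, p_drop=0.5):
    """One stochastic forward pass with dropout kept active on the hidden layer."""
    out = B2
    for w1, b1, w2 in zip(W1, B1, W2):
        h = max(0.0, w1 * x + b1)           # ReLU hidden unit
        if rng.random() >= p_drop:          # unit survives the dropout mask
            out += w2 * h / (1.0 - p_drop)  # inverted-dropout rescaling
    return out

def mc_dropout_predict(x, n_passes=200, seed=0):
    """Return the mean and variance of predictions over stochastic forward passes."""
    rng = random.Random(seed)
    samples = [forward(x, rng) for _ in range(n_passes)]
    return statistics.fmean(samples), statistics.pvariance(samples)

mean, epistemic_var = mc_dropout_predict(1.5)
print(f"mean = {mean:.3f}, epistemic variance = {epistemic_var:.3f}")
```

The variance across passes is the quantity used as the epistemic estimate; the dropout rate and number of passes are tuning choices, not values prescribed by the text.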

Ensemble Methods for Combined Quantification

A highly effective and widely used practical alternative is ensemble learning [2]. Multiple models (e.g., neural networks with different random initializations) are trained on the same task. The disagreement or variance in the predictions of these individual models serves as a measure of epistemic uncertainty, while the average of their predicted variances captures the aleatoric uncertainty [3] [8]. Ensembling is known to be a reliable tool for quantifying uncertainty and improving model performance, specifically by reducing the variance component of epistemic uncertainty [3].

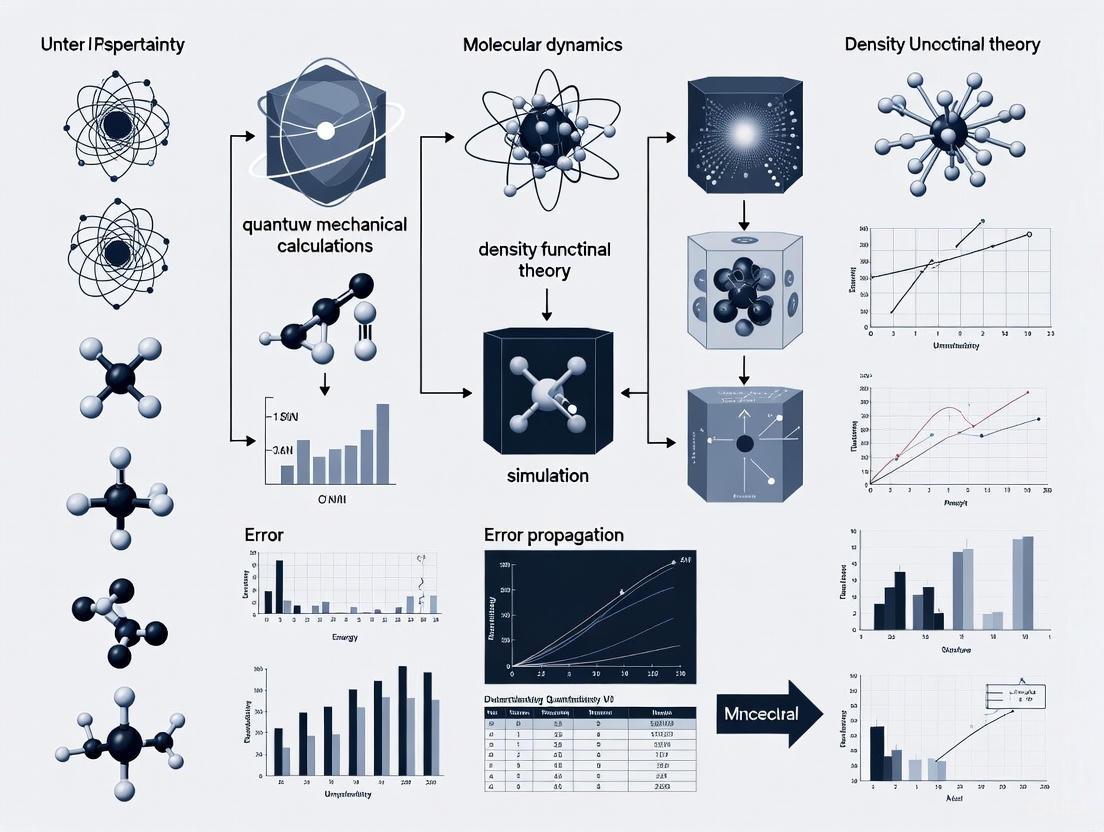

Diagram 1: Ensemble UQ Workflow. An input molecule is passed through an ensemble of models. The mean of the predicted variances (⟨σ²ᵢ⟩) quantifies aleatoric uncertainty, while the variance of the predicted means (Var(μᵢ)) quantifies epistemic uncertainty.
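The decomposition described in the ensemble workflow can be sketched in a few lines. The ensemble outputs below are hypothetical numbers for a single molecule, and the use of the population variance (rather than the sample variance) is an assumption made here for simplicity.

```python
import statistics

def decompose_ensemble(means, variances):
    """Split ensemble outputs for one molecule into aleatoric and epistemic parts."""
    prediction = statistics.fmean(means)
    aleatoric = statistics.fmean(variances)  # mean of predicted variances
    epistemic = statistics.pvariance(means)  # variance of predicted means
    return prediction, aleatoric, epistemic, aleatoric + epistemic

# Hypothetical outputs of a 4-member ensemble for one molecule.
prediction, aleatoric, epistemic, total = decompose_ensemble(
    means=[1.0, 1.2, 0.8, 1.0],
    variances=[0.04, 0.05, 0.03, 0.04],
)
print(f"prediction={prediction:.2f} aleatoric={aleatoric:.3f} "
      f"epistemic={epistemic:.3f} total={total:.3f}")
```

Summing the two terms gives the total predictive variance, which is what is usually reported as a single error bar.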

Experimental Protocols in Computational Chemistry

The theoretical concepts of aleatoric and epistemic uncertainty are best understood through their manifestation in practical experimental settings. The following protocols outline standard methodologies for characterizing these uncertainties in chemical data research.

Protocol 1: Characterizing Uncertainty with Censored Data in Drug Discovery

Objective: To enhance uncertainty quantification in molecular property prediction (e.g., binding affinity) by incorporating censored experimental data, which provides thresholds rather than precise values [4].

Background: In early drug discovery, assays often have a limited measurement range. If a compound shows no activity within the tested concentration range, the result is censored—the exact half-maximal inhibitory concentration (IC₅₀) is unknown, but it is known to be above a certain threshold. Standard ML models typically discard this partial information [4].

Methodology:

Data Preparation:

- Precise Labels: Data points with directly measured quantitative values (e.g., IC₅₀ = 10 nM).

- Censored Labels: Data points where the measurement is only known to be above (right-censored) or below (left-censored) a specific threshold (e.g., IC₅₀ > 100 μM) [4].

Model Adaptation:

- Adapt ensemble-based, Bayesian, or Gaussian models to learn from both precise and censored labels using the Tobit model from survival analysis [4].

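- The loss function (e.g., Gaussian NLL or MSE) is modified to be one-sided for censored data points. For a right-censored observation with threshold C, the per-sample loss becomes the negative log-probability that the true value exceeds the threshold: -log ∫_C^∞ N(y | μ(x), σ²(x)) dy = -log[1 - Φ((C - μ(x)) / σ(x))], where Φ is the standard normal cumulative distribution function [4].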
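A one-sided Gaussian loss of this kind can be sketched as follows. This is a minimal illustration assuming a Gaussian predictive distribution on whatever scale the labels use; the function names `normal_sf` and `tobit_nll` are hypothetical, and left-censoring would be handled symmetrically with Φ in place of 1 - Φ.

```python
import math

def normal_sf(z):
    """Survival function 1 - Phi(z) of the standard normal distribution."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))

def tobit_nll(y, mu, sigma, censored_right=False):
    """Gaussian NLL for a precise label, or -log P(Y > y) for a right-censored one."""
    if censored_right:
        # Here y is the threshold C: only "the true value exceeds C" is known.
        return -math.log(normal_sf((y - mu) / sigma))
    return 0.5 * math.log(2 * math.pi * sigma ** 2) + (y - mu) ** 2 / (2 * sigma ** 2)

# A censored label "> 4.0": predicting well above the threshold costs little,
# predicting well below it is penalized.
print(tobit_nll(4.0, mu=6.0, sigma=1.0, censored_right=True))
print(tobit_nll(4.0, mu=2.0, sigma=1.0, censored_right=True))
```

Unlike discarding censored points, this keeps them in the training signal: the model is pushed to place probability mass on the correct side of the threshold without being told an exact value.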

Uncertainty Decomposition:

Evaluation:

- Compare the predictive performance (e.g., RMSE, calibration) and the quality of uncertainty estimates (e.g., correlation between uncertainty and prediction error) between models trained with and without censored data [4].

Table 2: Key Research Reagents for Censored Data Analysis

| Reagent / Tool | Function in Protocol |
|---|---|
| Internal Bioassay Data (e.g., IC₅₀/EC₅₀ from target or ADME-T assays) | Provides the experimental data containing both precise and censored labels for model training and validation [4]. |
| Tobit Regression Model | A statistical model from survival analysis that forms the basis for adapting standard loss functions to handle censored regression labels [4]. |
| Ensemble of Neural Networks | A practical modeling framework that can be adapted with a censored data loss function to disentangle aleatoric and epistemic uncertainty [4] [3]. |
| Temporal Data Splitting | A realistic data splitting strategy that approximates the true predictive performance in a drug discovery pipeline by evaluating on data generated after the training data was collected [4]. |

Protocol 2: Systematic Error Analysis in Molecular Property Prediction

Objective: To systematically dissect the total prediction error of an ML model for a molecular property (e.g., enthalpy) into contributions from data noise (aleatoric), model bias, and model variance (both epistemic) [3].

Background: Optimizing a model requires understanding the primary source of its error. A large bias suggests a need for architectural change, while large variance suggests a need for more data or regularization [3].

Methodology:

Controlled Data Set Construction:

- Use a synthetic, noise-free data set, such as one built using group additivity principles for molecular enthalpy, to establish a ground-truth baseline [3].

- Systematically introduce controlled levels of Gaussian noise to the target values to simulate aleatoric uncertainty.

- Vary the training set size to study its impact on epistemic uncertainty.

Model Training and Evaluation:

- Train multiple model architectures (e.g., Graph Neural Networks vs. Random Forests) and molecular representations (e.g., fingerprints vs. graphs) on the data sets from step 1 [3].

- For a given architecture, create an ensemble of models trained with different random seeds.

Error Decomposition:

- Total Error: Mean Squared Error (MSE) on a held-out test set.

- Aleatoric Uncertainty: Estimated as the mean of the predicted variances from the ensemble.

- Epistemic Uncertainty - Model Variance: Computed as the variance of the predicted means across the ensemble members [3].

- Epistemic Uncertainty - Model Bias: Estimated as the residual error: Bias² ≈ Total Error - (Aleatoric Uncertainty + Model Variance). This captures the error due to the model's architectural limitations [3].
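The residual-based decomposition in the list above can be sketched as follows. This is an illustration under stated assumptions rather than the cited study's code: the total error is taken as the test MSE averaged over individual ensemble members (so that the bias-variance identity applies), the bias term is clipped at zero, and all numbers are hypothetical.

```python
import statistics

def decompose_error(y_true, member_means, member_vars):
    """member_means[m][i] and member_vars[m][i]: member m's output for test molecule i."""
    n_mol = len(y_true)
    # Total error: test MSE averaged over the individual ensemble members.
    total = statistics.fmean(
        [(m[i] - y_true[i]) ** 2 for m in member_means for i in range(n_mol)]
    )
    # Aleatoric: mean of all predicted variances.
    aleatoric = statistics.fmean([v for m in member_vars for v in m])
    # Model variance: spread of the member means, averaged over molecules.
    variance = statistics.fmean(
        [statistics.pvariance([m[i] for m in member_means]) for i in range(n_mol)]
    )
    # Bias^2: what remains after removing noise and variance (clipped at zero).
    bias_sq = max(0.0, total - (aleatoric + variance))
    return {"total": total, "aleatoric": aleatoric,
            "variance": variance, "bias_sq": bias_sq}

# Hypothetical noise-free test set with a two-member ensemble.
result = decompose_error(
    y_true=[1.0, 2.0],
    member_means=[[1.4, 2.6], [1.6, 2.4]],
    member_vars=[[0.0, 0.0], [0.0, 0.0]],
)
print(result)
```

In this toy case the ensemble mean is off by 0.5 on both molecules, so nearly all of the error is attributed to bias, which by the guidelines that follow would point toward a change of architecture or representation rather than more data.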

Interpretation and Model Improvement Guidelines [3]:

- High Aleatoric Dominance: The model has learned the data's inherent noise. Further model improvement is unlikely to reduce error; focus on improving data quality (e.g., repeat experiments).

- High Model Variance Dominance: The model is sensitive to small changes in the training data. Mitigate by increasing training data, using ensembles, or applying regularization.

- High Model Bias Dominance: The model is too simple to capture the underlying relationship. Address by using a more complex model architecture, a more informative molecular representation, or extended training.

Diagram 2: Error Decomposition Protocol. An ensemble of models is trained on a molecular data source. Their combined predictions are used to calculate the total error, which is then decomposed into aleatoric uncertainty and the epistemic components of model variance and model bias.

Application Scenarios and Case Studies

Case Study 1: Uncertainty in Quantum Chemical Reference Data

Context: When training neural networks on potential energy surfaces (PESs), the reference data from quantum chemical calculations contain both aleatoric and epistemic errors [9].

- Aleatoric Errors: Statistical noise introduced by convergence thresholds in self-consistent field (SCF) iterations [9].

- Epistemic Errors: Systematic errors due to specific choices, such as the basis set used in the calculation, which limit the completeness of the theoretical model [9].

Findings: A study on H₂CO and HONO molecules found that for chemically "simple" cases like H₂CO (a single-reference problem), the effect of noise from standard single-point calculations did not significantly deteriorate the quality of the final PES. However, for molecules like HONO with significant multi-reference character, a clear correlation was found between model quality and the degree of multi-reference character (measured by the T1 amplitude). This highlights that epistemic errors arising from an insufficient theoretical model (e.g., using a single-reference method for a multi-reference system) require careful attention and can introduce substantial uncertainty [9].

Case Study 2: Active Learning for Efficient Drug Discovery

Context: The drug discovery process is resource-intensive, and deciding which compounds to synthesize and test next is a major challenge.

Application: An active learning loop uses epistemic uncertainty as a selection criterion.

Workflow:

- An initial model is trained on a small set of labeled compounds.

- The model screens a large virtual library and predicts the properties of all compounds.

- The compounds for which the model has the highest epistemic uncertainty (i.e., they are most different from the training set) are selected for experimental testing [2].

- The new experimental data is added to the training set, and the model is retrained.

- This loop repeats, strategically reducing the model's epistemic uncertainty and expanding its applicability domain with each iteration, thereby maximizing the informational gain per experiment [2].
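The selection step of this loop can be sketched as a ranking by ensemble disagreement. The compound names and predictions below are hypothetical, and using the variance of ensemble predictions as the epistemic score is one common choice among several acquisition functions.

```python
import statistics

def select_most_uncertain(candidates, ensemble_predictions, batch_size):
    """Return the candidates whose ensemble predictions disagree the most."""
    scored = [
        (statistics.pvariance(preds), name)
        for name, preds in zip(candidates, ensemble_predictions)
    ]
    scored.sort(reverse=True)  # highest epistemic variance first
    return [name for _, name in scored[:batch_size]]

# Hypothetical virtual library with predictions from a 3-member ensemble.
library = ["mol_A", "mol_B", "mol_C"]
predictions = [
    [5.0, 5.1, 4.9],  # members agree: low epistemic uncertainty
    [6.0, 7.5, 4.0],  # members disagree strongly
    [5.5, 5.9, 5.2],
]
print(select_most_uncertain(library, predictions, batch_size=2))
```

The selected compounds would then be tested experimentally, added to the training set, and the ensemble retrained before the next round.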

The explicit distinction between aleatoric and epistemic uncertainty provides a powerful and necessary framework for advancing computational chemical data research. As demonstrated, aleatoric uncertainty defines the fundamental limit of predictability imposed by irreducible noise, while epistemic uncertainty serves as a diagnosable and actionable measure of a model's ignorance. The systematic quantification and decomposition of these uncertainties, through methods like ensembling, Bayesian inference, and tailored experimental protocols, enable researchers to make more reliable predictions, strategically guide resource-intensive experiments, and ultimately build more trustworthy AI models for drug discovery and materials design. Embracing this distinction is not just an academic exercise; it is a practical prerequisite for developing robust, efficient, and credible computational pipelines that can truly accelerate scientific discovery.

In the high-stakes landscape of drug discovery, decisions regarding which experiments to pursue are heavily influenced by computational models for quantitative structure-activity relationships (QSAR). These decisions are critical due to the time-consuming and expensive nature of wet-lab experiments, where missteps can cost millions of dollars and years of development time. The central challenge is that computational methods for QSAR modeling often suffer from limited data and sparse experimental observations, creating a trust deficit in model predictions [10].

Within this context, Uncertainty Quantification (UQ) has emerged as a transformative approach for assessing prediction reliability. UQ provides a statistical framework that not only delivers predictions but also quantifies the confidence in those predictions, enabling researchers to distinguish between reliable and unreliable results. This is particularly vital when exploring expansive chemical spaces where models must operate beyond their training data, a common scenario in molecular design [11].

Perhaps the most significant advancement in UQ involves leveraging previously underutilized information—censored labels. In pharmaceutical settings, approximately one-third or more of experimental labels are censored, providing thresholds rather than precise values of observations. Traditional machine learning approaches discard this partial information, but modern UQ frameworks can now incorporate it to significantly enhance reliability [10].

The Fundamentals of Uncertainty Quantification

Defining Uncertainty in Computational Chemical Data

Uncertainty in drug design manifests in two primary forms:

- Epistemic uncertainty: arises from limited data and knowledge, affecting model predictions for molecules structurally different from those in the training set.

- Aleatoric uncertainty: stems from inherent noise in experimental measurements, which is particularly relevant when dealing with censored data or stochastic biological assays.

The integration of UQ becomes essential when models guide exploration of broad chemical spaces. Without accurate uncertainty estimates, optimization algorithms may become trapped in false maxima or pursue chemically unrealistic molecules [11].

Current UQ Methodologies in Computational Chemistry

| Method Category | Key Examples | Strengths | Limitations |
|---|---|---|---|
| Ensemble Methods | Deep Ensemble D-MPNN | Simple implementation; high scalability | Computationally intensive; requires multiple models |
| Bayesian Approaches | Bayesian Neural Networks | Theoretical foundations; coherent uncertainty estimates | Complex implementation; computationally demanding |
| Gaussian Processes | GPR, Kriging models | Accurate uncertainty estimates; non-parametric | O(n³) computational complexity; limited to smaller datasets |
| Hybrid Methods | UQ-enhanced GNNs | Scalable with large datasets; balances accuracy with efficiency | Requires specialized implementation [11] |

Each methodology offers distinct advantages for pharmaceutical applications. Ensemble methods train multiple models and measure disagreement as uncertainty, while Bayesian approaches infer probability distributions over model parameters. Gaussian process regression provides theoretically grounded uncertainty estimates but becomes computationally prohibitive with large datasets [11].

Advanced UQ Implementation: Methodologies and Protocols

Learning from Censored Data with the Tobit Model

An important advance in UQ for drug discovery involves adapting ensemble-based, Bayesian, and Gaussian models to learn from censored labels using the Tobit model from survival analysis. This approach allows partial information that was previously discarded to contribute to model training [10].

Experimental Protocol for Censored Regression:

- Data Preparation: Collect experimental measurements with identified censored regions (e.g., solubility values reported as ">10μM" due to detection limits)

- Model Adaptation: Implement Tobit likelihood function within chosen UQ framework (ensemble, Bayesian, or Gaussian)

- Training Procedure: Optimize parameters using maximum likelihood estimation accounting for both precise and censored observations

- Uncertainty Calibration: Validate uncertainty estimates against holdout set with known outcomes

The critical innovation lies in modifying the loss function to handle censored data. For right-censored data (common when compounds exceed detection limits), the model maximizes the probability that the true value exceeds the censoring threshold, rather than treating these observations as missing data [10].

UQ-Enhanced Graph Neural Networks for Molecular Design

The integration of UQ with Graph Neural Networks (GNNs), particularly Directed Message Passing Neural Networks (D-MPNNs), marks a significant advance in computer-aided molecular design (CAMD) [11].

Experimental Workflow for UQ-Enhanced GNNs:

Figure 1: UQ-Enhanced Molecular Design Workflow

This workflow demonstrates how uncertainty estimates directly influence molecular optimization decisions. The Probabilistic Improvement Optimization (PIO) method quantifies the likelihood that candidate molecules will exceed predefined property thresholds, enabling more reliable exploration of chemical space [11].
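The core quantity behind a probabilistic-improvement score can be sketched under a Gaussian assumption: given a predicted mean μ and standard deviation σ, the probability of exceeding a threshold t is 1 - Φ((t - μ)/σ). The code below is an illustrative sketch, not the implementation from [11]; the function names are hypothetical, and combining objectives by multiplying probabilities assumes the properties are independent.

```python
import math

def prob_exceeds(mu, sigma, threshold):
    """P(Y > threshold) for a Gaussian prediction N(mu, sigma^2)."""
    return 0.5 * math.erfc((threshold - mu) / (sigma * math.sqrt(2.0)))

def joint_probability(probabilities):
    """Multi-objective score, assuming independent properties (an assumption)."""
    return math.prod(probabilities)

# Two hypothetical candidates scored against a threshold of 7.0.
confident = prob_exceeds(mu=7.2, sigma=0.2, threshold=7.0)
uncertain = prob_exceeds(mu=7.5, sigma=1.5, threshold=7.0)
print(f"confident: {confident:.3f}, uncertain: {uncertain:.3f}")
```

The candidate with the lower predicted mean but tighter uncertainty scores higher here, which is the behavior that distinguishes an uncertainty-aware objective from ranking on the predicted mean alone.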

Detailed Protocol for UQ-GNN Implementation:

- Molecular Representation: Convert molecular structures into graph representations with atoms as nodes and bonds as edges

- D-MPNN Architecture: Implement directed message passing to capture complex molecular interactions

- Uncertainty Quantification: Employ ensemble methods by training multiple GNNs with different initializations

- Genetic Algorithm Integration: Use uncertainty estimates to guide mutation and crossover operations

- Multi-objective Optimization: Apply probabilistic improvement to balance competing property objectives

This approach has demonstrated particular effectiveness in multi-objective tasks, where it balances competing objectives and outperforms uncertainty-agnostic approaches [11].

Benchmarking and Validation: Quantitative Evidence

Performance Metrics for UQ in Drug Discovery

Robust evaluation is essential for validating UQ methodologies. Key metrics include:

- Calibration: How well predicted confidence intervals match empirical frequencies

- Sharpness: The tightness of prediction intervals (should be minimized subject to calibration)

- Temporal Performance: Model accuracy and uncertainty reliability under dataset shift over time

Temporal evaluation is particularly crucial, as drug discovery projects evolve over time, and models must maintain reliability as chemical space exploration expands [10].
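Calibration and sharpness can be checked with a simple interval-coverage calculation. The sketch below assumes Gaussian predictive distributions and uses hypothetical predictions; the function name `coverage_and_sharpness` is illustrative.

```python
import statistics

def coverage_and_sharpness(y_true, mu, sigma, level=0.95):
    """Empirical coverage and mean width of central Gaussian prediction intervals."""
    z = statistics.NormalDist().inv_cdf(0.5 + level / 2.0)
    hits = [abs(y - m) <= z * s for y, m, s in zip(y_true, mu, sigma)]
    coverage = sum(hits) / len(hits)                  # calibration check
    sharpness = statistics.fmean([2.0 * z * s for s in sigma])  # mean interval width
    return coverage, sharpness

# Hypothetical held-out measurements and model outputs.
coverage, sharpness = coverage_and_sharpness(
    y_true=[1.0, 2.0, 3.0, 4.0],
    mu=[1.1, 2.5, 2.9, 6.0],
    sigma=[0.2, 0.3, 0.2, 0.5],
)
print(f"coverage = {coverage:.2f} (nominal 0.95), mean width = {sharpness:.2f}")
```

A well-calibrated model has empirical coverage close to the nominal level; among calibrated models, narrower intervals are preferred. Repeating the calculation on time-ordered test splits gives a view of how both quantities behave under dataset shift.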

Benchmarking Results Across Pharmaceutical Applications

| Application Domain | Dataset | Without UQ | With UQ | Improvement with Censored Data |
|---|---|---|---|---|
| Organic Emitter Design | Tartarus OLED | 62% success | 78% success | +12% success rate |
| Protein Ligand Design | Tartarus Docking | 55% success | 72% success | +14% success rate |
| Reaction Substrate Design | Tartarus Reaction | 58% success | 75% success | +11% success rate |
| Multi-objective Optimization | GuacaMol Suite | 47% success | 68% success | +21% success rate |

Table 2: Performance comparison of UQ methods across pharmaceutical design tasks. Data synthesized from benchmark studies [11].

The tabulated results demonstrate that UQ integration substantially improves optimization success rates across diverse pharmaceutical applications. The most significant improvement occurs in multi-objective optimization tasks, where UQ methods better balance competing constraints [11].

The value of censored data is particularly notable in real pharmaceutical settings, where approximately one-third or more of experimental labels are censored. Models that incorporate this previously discarded information show significantly enhanced reliability in uncertainty estimation [10].

Practical Implementation: The Scientist's Toolkit

Essential Research Reagent Solutions

| Tool/Category | Specific Examples | Function in UQ for Drug Design |
|---|---|---|
| Computational Frameworks | Chemprop, PyTorch, TensorFlow Probability | Implements D-MPNN and Bayesian neural networks for molecular property prediction |
| UQ Methodologies | Ensemble Methods, Bayesian NNs, Gaussian Processes | Quantifies prediction uncertainty for reliable decision-making |
| Optimization Algorithms | Genetic Algorithms, Probabilistic Improvement Optimization | Guides exploration of chemical space using uncertainty estimates |
| Data Handling Tools | Tobit Model, Survival Analysis Extensions | Enables learning from censored experimental data |
| Benchmarking Platforms | Tartarus, GuacaMol | Provides standardized evaluation across diverse drug discovery tasks |

Table 3: Essential computational tools for implementing UQ in drug design workflows

Implementation Workflow for Pharmaceutical Research Teams

Figure 2: Practical UQ Implementation Protocol

The integration of Uncertainty Quantification into computational drug design represents a fundamental shift from point-estimate predictions to confidence-aware forecasting. By systematically quantifying uncertainty, particularly through innovative approaches that leverage censored data, pharmaceutical researchers can make more informed decisions, reduce costly experimental failures, and accelerate the discovery of novel therapeutics.

The evidence from rigorous benchmarking demonstrates that UQ-enhanced methods, particularly those combining graph neural networks with probabilistic optimization frameworks, significantly improve success rates in molecular optimization tasks. As the field advances, the adoption of these uncertainty-aware approaches will become increasingly critical for navigating the complex trade-offs between exploration and exploitation in vast chemical spaces.

Trust in computational predictions is no longer a qualitative notion but a quantifiable property that can be optimized, validated, and integrated into the strategic planning of drug discovery campaigns. The organizations that embrace this paradigm will possess a decisive advantage in the efficient translation of computational insights into tangible therapeutic breakthroughs.

The Applicability Domain (AD) of a predictive model defines the boundaries within which the model's predictions are considered reliable and accurate [12]. It represents the chemical, structural, or biological space encompassed by the training data used to develop the model [12]. In the context of computational chemistry and quantitative structure-activity relationship (QSAR) modeling, establishing a well-defined AD is a fundamental principle for ensuring predictions are used appropriately and safely, particularly for regulatory decision-making [13] [12].

The core premise is that predictive models are primarily valid for interpolation within the chemical space of their training data rather than for extrapolation beyond it [12]. When a new compound falls outside a model's AD, its predictions become less reliable, and using them could lead to incorrect conclusions with significant consequences, especially in fields like drug development and toxicological safety assessment [14]. The Organisation for Economic Co-operation and Development (OECD) mandates that a defined AD is a necessary condition for a QSAR model to be considered valid for regulatory purposes [12].

The Critical Need for a Defined Applicability Domain

The Problem of Model Over-Extrapolation

Without a clear understanding of its Applicability Domain, any predictive model can be misapplied to compounds or materials for which it was never designed, leading to severe performance degradation. This degradation can manifest as high prediction errors and/or unreliable uncertainty estimates [15]. In computational chemistry and materials science, where machine learning (ML) is increasingly used for property prediction, the exponential growth of publications makes the rigorous assessment of model domain a prerequisite for trustworthy science [15].

Consequences in Drug Discovery and Development

The stakes for defining model limits are exceptionally high in drug development. Alzheimer's disease drug development, for instance, has a failure rate of over 99% [16]. While this high attrition is due to many factors, the pursuit of biologically unvalidated targets is a significant contributor [16]. This context underscores the importance of "the right target", a critical aspect of the "rights" of precision drug development [16]. Computational models used for target validation, lead compound identification, and toxicity prediction must therefore be used within their well-characterized domains to avoid costly late-stage failures. The process from target identification to approved drug can take over 12 years and cost an average of $2.6 billion, making early, reliable predictions from computational models invaluable [17].

Table: The "Rights" of Precision Drug Development Aligned with Applicability Domain Concepts

| The "Right" Principle | Description | Connection to Applicability Domain |
|---|---|---|
| Right Target | Identifying the appropriate biologic process for a therapeutic intervention. | Ensures models are built on a relevant biological and chemical space. |
| Right Drug | A molecule with well-understood PK/PD properties, BBB penetration, and acceptable toxicity. | Confirms a candidate molecule is within the AD of property prediction models (e.g., for solubility, toxicity). |
| Right Participant | Selecting patients in the correct phase of the disease who are most likely to respond. | Defines the population for which clinical outcome models are applicable. |
| Right Trial | A well-conducted trial with appropriate clinical and biomarker outcomes. | Establishes the boundaries for extrapolating trial results to the broader patient population. |

Methodological Approaches for Defining the Applicability Domain

There is no single, universally accepted algorithm for defining an AD [12]. Instead, multiple methods are commonly employed to characterize the interpolation space of a model, each with its own strengths and weaknesses [13] [12]. These methods can be broadly classified into several categories.

Range-Based and Geometrical Methods

These are among the simplest approaches. The bounding box method defines the AD as the multidimensional space within the minimum and maximum values of each descriptor in the training set. A new compound is considered within the domain only if all its descriptor values fall within these ranges [13] [12]. While simple to implement, this method can include large, empty regions of chemical space where no training data exists.
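A bounding-box check is short enough to sketch directly. The descriptor values below are hypothetical two-dimensional points chosen to expose the weakness just described: a query can satisfy every per-descriptor range while sitting far from any training compound.

```python
def fit_bounding_box(train):
    """Per-descriptor minimum and maximum over the training set."""
    columns = list(zip(*train))
    return [min(c) for c in columns], [max(c) for c in columns]

def in_bounding_box(x, lower, upper):
    """True only if every descriptor of x lies within the training range."""
    return all(lo <= v <= hi for v, lo, hi in zip(x, lower, upper))

# Hypothetical training descriptors lying along a diagonal.
train = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
lower, upper = fit_bounding_box(train)

print(in_bounding_box((1.0, 1.0), lower, upper))  # on top of training data
print(in_bounding_box((0.0, 2.0), lower, upper))  # inside the box, but in an empty corner
print(in_bounding_box((3.0, 1.0), lower, upper))  # outside the range of descriptor 1
```

The second query passes the check even though no training compound is nearby, which is the motivation for the distance- and density-based methods.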

The convex hull method defines a geometrical boundary that encompasses all training compounds in the descriptor space. A prediction is considered reliable if the new compound falls within this hull [12]. A limitation is that the convex hull may include vast regions with no training data, and it is computationally intensive to calculate in high-dimensional spaces.

Distance-Based Methods

These methods assess the similarity of a new compound to the training set based on distance metrics in the descriptor space.

- Leverage Approach: For regression-based QSAR models, the leverage of a compound is calculated from the hat matrix of the molecular descriptors. A commonly used diagnostic is the Williams plot, which plots standardized residuals against leverage values. A warning threshold (typically h* = 3p/n, where p is the number of model parameters and n is the number of training compounds) is used to flag high-leverage compounds, which are structurally influential if they belong to the training set or outside the AD if they are new [13] [12].

- Euclidean and Mahalanobis Distance: The average Euclidean distance to the k-nearest neighbors in the training set is a simple measure. The Mahalanobis distance, which accounts for the correlation between descriptors, is another measure used to determine if a new compound is too distant from the training set mean [13] [15].
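The two distance-based checks above can be sketched as follows. This is an illustrative NumPy implementation under stated assumptions: an intercept column is added so that p counts all model parameters, a pseudo-inverse is used for numerical safety, and the function names and synthetic data are hypothetical.

```python
import numpy as np

def leverages(X_train, X_new):
    """Leverage h = x^T (X^T X)^{-1} x for each new compound, plus the
    warning threshold h* = 3p/n (p includes the intercept here)."""
    Xt = np.column_stack([np.ones(len(X_train)), X_train])
    Xn = np.column_stack([np.ones(len(X_new)), X_new])
    xtx_inv = np.linalg.pinv(Xt.T @ Xt)
    h = np.einsum("ij,jk,ik->i", Xn, xtx_inv, Xn)
    n, p = Xt.shape
    return h, 3.0 * p / n

def mahalanobis_to_mean(X_train, X_new):
    """Mahalanobis distance of each new compound to the training-set mean."""
    mu = X_train.mean(axis=0)
    cov_inv = np.linalg.pinv(np.cov(X_train, rowvar=False))
    d = X_new - mu
    return np.sqrt(np.einsum("ij,jk,ik->i", d, cov_inv, d))

# Synthetic descriptors: 50 training compounds, 3 descriptors (illustrative only).
rng = np.random.default_rng(0)
X_train = rng.normal(size=(50, 3))
X_new = np.array([[0.0, 0.0, 0.0],      # close to the training mean
                  [10.0, 10.0, 10.0]])  # far from all training compounds
h, h_star = leverages(X_train, X_new)
dist = mahalanobis_to_mean(X_train, X_new)
outside_ad = h > h_star
```

Both measures summarize the training set by a single center and covariance, so they implicitly assume a roughly ellipsoidal training distribution.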

Probability Density and Kernel-Based Methods

Kernel Density Estimation (KDE) estimates the probability density of the training data in feature space and offers several advantages over other approaches. It provides a density value that acts as a dissimilarity measure (low density means the compound is unlike the training data), naturally accounts for data sparsity, and can handle arbitrarily complex geometries of data and in-domain (ID) regions without being limited to a single, pre-defined shape like a convex hull [15]. KDE-based methods have been shown to effectively differentiate data points that are inside the domain (with low residuals and reliable uncertainties) from those that are outside (with high errors and unreliable uncertainty estimates) [15].

Comparison of Key AD Methods

Table: Comparison of Applicability Domain Definition Methods

| Method | Brief Description | Advantages | Limitations |

|---|---|---|---|

| Bounding Box | Defines AD based on min/max values of each descriptor. | Simple to implement and interpret. | Can include large, empty regions of chemical space; sensitive to outliers. |

| Convex Hull | Creates a geometrical boundary encompassing all training data. | Provides a well-defined interpolation region. | Computationally intensive in high dimensions; includes empty spaces within the hull. |

| Leverage | Uses the hat matrix to identify influential/remote compounds. | Standardized approach in QSAR; easy to visualize (Williams plot). | Limited to linear model frameworks. |

| k-Nearest Neighbors (k-NN) | Measures distance (e.g., Euclidean) to the k-nearest training compounds. | Intuitive; accounts for local data density. | Choice of k and distance metric can affect results; suffers from the "curse of dimensionality." |

| Kernel Density Estimation (KDE) | Estimates the probability density distribution of the training data. | Handles complex data distributions and multiple ID regions; accounts for sparsity. | Choice of kernel and bandwidth can impact results. |

The following diagram illustrates the logical workflow for determining the Applicability Domain of a model and deciding on a prediction for a new compound.

A Formal Framework: Decomposing the Applicability Domain

The variety of methodologies has led to confusion among end-users. To address this, a formal framework proposes that the AD is not a monolithic concept but can be broken down into three distinct sub-domains [18]:

- Model Domain: This defines the chemical space where the model is, in principle, applicable. It is determined solely by the information from the training set and the model's descriptors.

- Prediction Domain: This assesses the confidence for a specific prediction. A compound can be within the model's global domain, but if it lies in a sparsely populated region of the feature space, the confidence for that specific prediction may be low.

- Decision Domain: This incorporates regulatory or business context, defining the level of prediction confidence required for a particular decision (e.g., prioritization for screening vs. regulatory submission).

This separation provides a more nuanced and actionable understanding of model reliability, moving beyond a simple binary "in/out" classification [18].

Experimental Protocols for AD Determination and Validation

Protocol 1: Defining Domain with Kernel Density Estimation (KDE)

This protocol is based on a recent general approach for determining the AD of machine learning models [15].

- Feature Space Preparation: Standardize the features (descriptors) of the training set. Dimensionality reduction (e.g., PCA) may be applied to simplify the density estimation.

- KDE Model Fitting: Fit a Kernel Density Estimation model to the preprocessed training data. The kernel type (e.g., Gaussian) and bandwidth must be selected, often via cross-validation.

- Density Threshold Determination: Calculate the density value for every training compound using the KDE model. Establish a density threshold, T, below which a compound is considered out-of-domain. A common method is to set T as a low percentile (e.g., the 5th percentile) of the density distribution of the training set.

- Validation: Apply the trained KDE model and the threshold T to an external test set. The protocol should confirm that test compounds with KDE likelihoods below T are chemically dissimilar to the training set and are associated with higher prediction errors and/or unreliable uncertainty estimates.
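The steps of Protocol 1 can be sketched in a few lines. To stay dependency-light, this example uses a hand-written isotropic Gaussian KDE in NumPy rather than a library estimator (scikit-learn's `KernelDensity` could be substituted). The fixed bandwidth of 0.5, the synthetic descriptors, and the function name are assumptions for illustration; in practice the bandwidth would be chosen by cross-validation as the protocol states.

```python
import numpy as np

def gaussian_kde_logpdf(X_train, X_query, bandwidth):
    """Log-density of an isotropic Gaussian KDE fitted to X_train, evaluated at X_query."""
    n, d = X_train.shape
    sq = ((X_query[:, None, :] - X_train[None, :, :]) ** 2).sum(axis=2)
    log_k = -0.5 * sq / bandwidth**2
    m = log_k.max(axis=1, keepdims=True)               # log-sum-exp for stability
    lse = m[:, 0] + np.log(np.exp(log_k - m).sum(axis=1))
    return lse - np.log(n) - 0.5 * d * np.log(2 * np.pi * bandwidth**2)

# Step 1: standardize synthetic training descriptors (values are illustrative).
rng = np.random.default_rng(0)
X_train_raw = rng.normal(loc=[1.0, 300.0], scale=[0.5, 40.0], size=(200, 2))
mean, std = X_train_raw.mean(axis=0), X_train_raw.std(axis=0)
X_train = (X_train_raw - mean) / std

# Steps 2-3: fit the KDE and set T to the 5th percentile of training log-densities.
bandwidth = 0.5  # assumed value; select by cross-validation in practice
train_logp = gaussian_kde_logpdf(X_train, X_train, bandwidth)
T = np.percentile(train_logp, 5)

# Step 4: flag new compounds whose log-density falls below T as out-of-domain.
X_new = (np.array([[1.1, 310.0], [6.0, 900.0]]) - mean) / std
in_domain = gaussian_kde_logpdf(X_train, X_new, bandwidth) >= T
```

Thresholding the log-density is equivalent to thresholding the density itself, since the logarithm is monotonic. A full validation would additionally compare prediction errors for compounds above and below T.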

Protocol 2: Validation of Prediction Uncertainty in the Calibration-Sharpness Framework

This protocol is essential for validating that the uncertainty estimates for a model's predictions are themselves reliable, which is a key aspect of understanding a model's limits [19].

- Data Collection: For a set of N predictions (e.g., from a test set), collect the triples (y_i, ŷ_i, u_i), where y_i is the true value, ŷ_i is the predicted value, and u_i is the predicted uncertainty (e.g., standard deviation).

- Calibration Check: A model is well-calibrated if, for example, a 95% prediction interval contains the true value about 95% of the time. This can be assessed graphically by plotting the observed versus predicted confidence levels, or by calculating metrics like the root mean square calibration error (RMSCE).

- Sharpness Check: Sharpness evaluates how narrow the prediction intervals are. A model with tighter intervals is more informative, provided it is well-calibrated. Sharpness can be measured by the average width of the prediction intervals.

- Interpretation: The ideal model is both well-calibrated and sharp. Poor calibration indicates that the model's uncertainty estimates are not trustworthy, which is a critical failure for assessing the applicability domain.
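The calibration and sharpness checks in Protocol 2 can be sketched as below. The example assumes Gaussian predictive distributions with standard deviation u_i, and it computes RMSCE as the root mean squared gap between observed and nominal coverage over a few central intervals. Other RMSCE definitions exist, so treat this as one plausible reading rather than the definition used in [19]. The function name and the synthetic data are hypothetical.

```python
import numpy as np
from statistics import NormalDist

def calibration_and_sharpness(y, y_hat, u, levels=(0.5, 0.8, 0.9, 0.95)):
    """Observed coverage per nominal level, RMSCE, and mean 95% interval width."""
    y, y_hat, u = (np.asarray(a, dtype=float) for a in (y, y_hat, u))
    z = np.abs(y - y_hat) / u                      # absolute standardized errors
    std_normal = NormalDist()
    # Half-width multiplier of the central interval with nominal coverage p
    k = np.array([std_normal.inv_cdf(0.5 + p / 2.0) for p in levels])
    observed = np.array([np.mean(z <= ki) for ki in k])
    rmsce = float(np.sqrt(np.mean((observed - np.asarray(levels)) ** 2)))
    sharpness = float(np.mean(2.0 * std_normal.inv_cdf(0.975) * u))
    return observed, rmsce, sharpness

# Synthetic test set: true errors have unit standard deviation.
rng = np.random.default_rng(0)
y_hat = np.zeros(5000)
y = rng.normal(size=5000)
_, rmsce_good, sharp_good = calibration_and_sharpness(y, y_hat, np.ones(5000))
_, rmsce_over, sharp_over = calibration_and_sharpness(y, y_hat, 0.5 * np.ones(5000))
```

The second call mimics an overconfident model: its intervals are sharper (narrower), but the calibration error is much larger, which is why sharpness is only meaningful once calibration has been established.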

The Scientist's Toolkit: Key Reagents and Computational Tools

Table: Essential "Reagents" for Applicability Domain Research

| Tool / Reagent | Type | Primary Function in AD Analysis |

|---|---|---|

| Molecular Descriptors | Software-Derived Metrics | Quantify chemical structure and properties to define the feature space for models (e.g., logP, polar surface area, topological indices). |

| Training Set Compounds | Chemical Library | The set of molecules used to build the predictive model; defines the initial chemical space of the AD. |

| External Test Set Compounds | Chemical Library | An independent set of molecules used to validate the model's performance and the robustness of its defined AD. |

| KDE Software Library | Computational Tool | (e.g., scikit-learn in Python) Used to estimate the probability density of the training data in feature space, serving as a distance measure. |

| PCA Software Library | Computational Tool | (e.g., scikit-learn in Python) Used for dimensionality reduction to simplify the feature space before AD analysis. |

| Reference Compounds | Chemical Standards | Well-characterized compounds, often including those known to be structurally distinct from the training set, used to test the boundaries of the AD. |

Applications in Computational Chemistry and Drug Discovery

Role in QSAR and Nano-QSAR

In QSAR modeling, the AD is crucial for estimating the uncertainty of a prediction for a new chemical based on its similarity to the chemicals used in model development [13]. The concept has also expanded into nanotechnology and nanoinformatics. For nano-QSARs, which predict the properties or toxicity of engineered nanomaterials, assessing the AD helps determine if a new nanomaterial is sufficiently similar to those in the training set to warrant a reliable prediction, thereby addressing challenges of data scarcity and heterogeneity [12].

Supporting Target Validation in Drug Discovery

A critical step in drug discovery is target validation—determining that a biological target is relevant to a disease and can be modulated to provide a therapeutic effect [17] [20]. Computational models are often used to predict the activity of compounds against a novel target. Using these models within their strict AD increases confidence that a predicted "hit" is a true positive, helping to de-risk the expensive and long process of drug development. This is particularly important for complex diseases like Alzheimer's, where the failure rate for drug candidates is exceptionally high [16] [20]. The following diagram summarizes how AD integrates into the broader drug discovery workflow.

Artificial Intelligence (AI) has ushered in a transformative era for computational chemical data research, offering unprecedented capabilities in predicting molecular properties, optimizing reactions, and accelerating drug discovery. However, a critical challenge threatens to undermine its scientific value: AI overconfidence. This phenomenon occurs when models produce confident, incorrect predictions without appropriate uncertainty quantification, potentially leading research down costly and unproductive paths [21] [22].

The consequences of overconfident AI are particularly acute in drug development, where decisions based on faulty predictions can compromise patient safety, waste extensive resources, and delay life-saving therapies. This technical guide examines the roots and repercussions of AI overconfidence within computational chemistry, providing researchers with methodologies to detect, quantify, and mitigate these risks in their scientific workflows. Understanding and addressing this uncertainty is not merely a technical exercise but a fundamental requirement for responsible AI adoption in chemical sciences [23].

The High Stakes: Consequences in Drug Development

In the high-risk domain of pharmaceutical research, overconfident AI predictions manifest with particular severity across several critical areas.

Toxicity Prediction Failures

AI-driven toxicity prediction has emerged as a promising alternative to traditional methods, which are often hampered by high costs, low throughput, and uncertain cross-species extrapolation [24]. However, when these models are overconfident, they produce misleading results with serious consequences:

- Misleading Safety Profiles: Overconfident models may generate incorrect toxicity classifications with high certainty, leading to the advancement of toxic compounds while potentially discarding safe candidates based on flawed predictions.

- Clinical Trial Risks: Compounds with unanticipated toxicity profiles can progress to clinical stages, exposing trial participants to preventable risks and resulting in late-stage failures that cost billions of dollars [24].

- Resource Misdirection: Research efforts may be channeled toward optimizing compound series that appear promising according to flawed AI predictions but ultimately fail due to unanticipated toxicity issues.

Table 1: Quantitative Impact of AI Toxicity Prediction Errors

| Error Type | Development Phase | Estimated Cost Impact | Timeline Impact |

|---|---|---|---|

| False Negative (Toxic compound advanced) | Preclinical | $5-15 million in wasted research | 6-18 months lost |

| False Positive (Safe compound discarded) | Early Discovery | $1-3 million in missed opportunity | 3-9 months for replacement |

| Late-Stage Toxicity Failure | Clinical Phase II/III | $100-500 million total costs | 2-4 years delay to market |

Regulatory and Compliance Challenges

The regulatory landscape for AI in drug development remains complex and evolving. Overconfident models that lack proper validation create significant regulatory hurdles [23]:

- Validation Deficits: Regulatory agencies require demonstrated reliability through rigorous validation processes. Overconfident models that cannot quantify uncertainty properly fail to meet these standards.

- Explainability Gaps: The "black box" nature of many advanced AI systems obscures their reasoning, making it difficult to justify predictions to regulatory bodies such as the FDA, which emphasizes transparency in its evolving AI/ML frameworks [23].

- Intellectual Property Risks: Overconfident predictions based on improperly trained models may inadvertently utilize copyrighted or proprietary data, creating legal exposure as seen in cases against major AI developers [21].

Technical Roots of AI Overconfidence

Understanding the technical foundations of overconfidence is essential for developing effective countermeasures.

Model Architecture Limitations

Current AI architectures, particularly large language models, exhibit fundamental limitations that contribute to overconfidence:

- Surface-Level Pattern Matching: Research indicates that models often rely on statistical patterns in training data rather than genuine comprehension or reasoning capabilities. A 2025 Apple study found that large reasoning models suffer from "complete accuracy collapse" once problem complexity exceeds a certain threshold [21].

- Lack of Actual Comprehension: These models statistically predict likely sequences without understanding content or context, generating confident-sounding answers that are fundamentally incorrect [21].

- Architectural Blind Spots: Standard neural network architectures often lack built-in uncertainty quantification mechanisms, treating all predictions with equal confidence regardless of the model's actual knowledge about a specific chemical domain.

Data Quality and Bias Issues

The foundation of any AI system—its training data—introduces multiple pathways to overconfidence:

- Unrepresentative Training Data: Models trained on limited chemical spaces or biased compound libraries develop skewed confidence boundaries, performing poorly on novel structural classes outside their training distribution.

- Data Scraping Controversies: Many AI models are trained on data scraped from diverse sources without sufficient oversight, transparency, or consent, potentially incorporating low-quality or problematic data that undermines reliability [21].

- Ecological Fallacies: The probability structure of the training environment may not match real-world chemical spaces, leading to systematic miscalibration when models encounter compounds with different properties from their training sets [25].

Detection and Quantification Methods

Researchers must employ rigorous methodologies to identify and measure overconfidence in AI systems for chemical data.

Calibration Techniques

Proper calibration ensures that a model's confidence scores align with its actual accuracy:

- Temperature Scaling: A popular calibration method that adjusts a model's confidence by dividing its output logits by a single scalar temperature parameter before the softmax. The MIT "Thermometer" approach builds a smaller, auxiliary model that runs on top of a primary model to automatically predict the optimal temperature for new tasks without requiring labeled validation data [22].

- Universal Calibration: Unlike traditional machine learning models calibrated for specific tasks, large chemical AI models require calibration approaches that work across diverse prediction tasks, from toxicity endpoints to physicochemical properties [22].

- Confidence Interval Validation: Models should be tested to ensure that their 90% confidence intervals actually contain the true value approximately 90% of the time, addressing the tendency toward overconfidence observed in AI forecasting [26].
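To make temperature scaling concrete, the sketch below fits a single temperature on labeled validation logits by minimizing the negative log-likelihood with a grid search. This is plain temperature scaling, not the Thermometer method, which predicts the temperature without labels. The function names, the grid, and the synthetic overconfident classifier are assumptions for illustration.

```python
import numpy as np

def softmax(logits):
    z = logits - logits.max(axis=1, keepdims=True)  # stabilize the exponentials
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def nll(logits, labels, temperature):
    """Mean negative log-likelihood of the true labels after scaling."""
    p = softmax(logits / temperature)
    return float(-np.mean(np.log(p[np.arange(len(labels)), labels] + 1e-12)))

def fit_temperature(val_logits, val_labels, grid=None):
    """Pick the temperature that minimizes validation NLL (simple grid search)."""
    grid = np.linspace(0.25, 10.0, 400) if grid is None else grid
    losses = [nll(val_logits, val_labels, t) for t in grid]
    return float(grid[int(np.argmin(losses))])

# Synthetic overconfident classifier: labels follow softmax(z),
# but the model reports logits 3 * z, so the ideal temperature is about 3.
rng = np.random.default_rng(0)
z = rng.normal(scale=1.5, size=(2000, 3))
labels = np.array([rng.choice(3, p=p) for p in softmax(z)])
val_logits = 3.0 * z
T = fit_temperature(val_logits, labels)
calibrated_probs = softmax(val_logits / T)
```

Dividing by a temperature above 1 softens the probabilities without changing the predicted class, so accuracy is preserved while confidence is reduced.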

Table 2: Experimental Protocols for Detecting AI Overconfidence

| Method | Experimental Protocol | Key Metrics | Interpretation |

|---|---|---|---|

| Confidence Calibration | 1. Split data into training/validation/test sets; 2. Train model on training set; 3. Measure confidence vs. accuracy on validation set; 4. Apply calibration method; 5. Verify on test set | Expected Calibration Error (ECE); Maximum Calibration Error (MCE); Brier Score | Lower ECE/MCE indicates better calibration; lower Brier score indicates better overall accuracy |

| Out-of-Distribution Testing | 1. Train model on primary chemical library; 2. Test on structurally distinct compound library; 3. Compare confidence scores between libraries | Confidence Drop Ratio; Out-of-Distribution AUC; Selectivity Index | Significant confidence drop indicates proper uncertainty awareness |

| Adversarial Validation | 1. Generate slight perturbations to molecular structures; 2. Measure confidence change; 3. Assess robustness of predictions | Confidence Stability Metric; Adversarial Robustness Score | High stability indicates reliable confidence estimates |
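The calibration metrics named in the table can be computed as in the following sketch, which uses equal-width confidence bins for ECE and MCE and the binary form of the Brier score. The bin count of 10, the function names, and the toy inputs are assumptions chosen for illustration.

```python
import numpy as np

def calibration_errors(conf, correct, n_bins=10):
    """Expected and maximum calibration error over equal-width confidence bins."""
    conf = np.asarray(conf, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bin_ids = np.digitize(conf, edges[1:-1])   # bin index in 0 .. n_bins-1
    ece, mce = 0.0, 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            gap = abs(correct[mask].mean() - conf[mask].mean())
            ece += mask.mean() * gap           # weight by fraction of samples in bin
            mce = max(mce, gap)
    return ece, mce

def brier_score(prob_positive, outcome):
    """Mean squared difference between predicted probability and binary outcome."""
    p = np.asarray(prob_positive, dtype=float)
    y = np.asarray(outcome, dtype=float)
    return float(np.mean((p - y) ** 2))

# Toy example: five predictions at 0.95 confidence (4 correct),
# five at 0.55 confidence (1 correct).
conf = [0.95] * 5 + [0.55] * 5
correct = [1, 1, 1, 1, 0] + [1, 0, 0, 0, 0]
ece, mce = calibration_errors(conf, correct)
```

In this toy case both bins are overconfident: accuracy is 0.8 where confidence is 0.95, and 0.2 where confidence is 0.55.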

Uncertainty Quantification Frameworks

Implementing robust uncertainty quantification is essential for trustworthy AI predictions:

- Epistemic vs. Aleatoric Uncertainty: Distinguishing between uncertainty from the model itself (epistemic) and inherent data noise (aleatoric) provides clearer insights into the sources of unreliability.

- Bayesian Neural Networks: These architectures provide natural uncertainty estimates by maintaining distributions over weights rather than point estimates, though they require significant computational resources.

- Ensemble Methods: Multiple models with different architectures or training data subsets can yield confidence estimates through prediction variance, offering a practical approach to uncertainty quantification without architectural changes.

Mitigation Strategies for Research Applications

Implementing targeted strategies can effectively reduce AI overconfidence in chemical data research.

Technical Solutions

- The "Thermometer" Approach: This method, developed by MIT researchers, provides efficient calibration for large models across diverse tasks without extensive retraining or significant computational overhead, preserving model accuracy while improving reliability [22].

- Federated Learning Systems: These approaches enable collaborative model training without centralizing sensitive chemical data, addressing privacy concerns while expanding the diversity of training compounds to reduce biased confidence estimates [23].

- Explainable AI (XAI) Integration: Implementing XAI techniques provides transparency into model reasoning, allowing researchers to understand the basis for predictions and identify unjustified confidence. The FDA emphasizes XAI in its evolving regulatory frameworks for AI/ML-based medical products [23].

Process and Validation Improvements

- Comprehensive Benchmarking: Regular testing against diverse chemical databases ensures models maintain appropriate confidence boundaries across different compound classes and properties [24].

- Human-in-the-Loop Validation: Maintaining expert chemical oversight for high-stakes predictions creates essential safeguards against automated overconfidence, particularly for critical decisions like compound advancement [27].

- Continuous Monitoring and Updating: Implementing systems to track prediction accuracy versus confidence over time enables early detection of emerging overconfidence patterns as models encounter novel chemical spaces.

Table 3: Research Reagent Solutions for AI Overconfidence Mitigation

| Reagent / Resource | Type | Primary Function | Application in Overconfidence Mitigation |

|---|---|---|---|

| TOXRIC Database | Toxicity Database | Provides comprehensive toxicity data for compounds | Benchmarking AI predictions against established toxicity endpoints |

| ChEMBL Database | Bioactivity Database | Manually curated database of bioactive molecules | Training and validating models on reliable bioactivity data |

| DrugBank Database | Pharmaceutical Knowledge Base | Detailed drug and drug target information | Grounding predictions in established pharmaceutical knowledge |

| OCHEM Platform | Modeling Environment | Enables building QSAR models for chemical properties | Implementing and testing calibration methods |

| FAERS Database | Adverse Event Reporting System | FDA database of adverse drug reactions | Validating safety predictions against real-world outcomes |

| Thermometer Calibration | Software Method | MIT-developed calibration technique for LLMs | Adjusting confidence scores to align with actual accuracy |

| Differential Privacy | Mathematical Framework | Provides formal privacy guarantees | Enabling secure data sharing for model training |

Future Directions and Research Agenda

Addressing AI overconfidence requires ongoing research and development across multiple fronts.

Emerging Technical Approaches

- Hybrid AI-Quantum Systems: UK-based Riverlane is developing quantum error correction systems that could enable more stable quantum computing platforms, potentially overcoming current data generation limitations that constrain AI training for novel chemical spaces [28].

- Causal Reasoning Integration: Moving beyond correlation-based pattern matching to incorporate causal reasoning frameworks would address fundamental limitations in current AI architectures, potentially reducing unjustified confidence in spurious relationships.

- Adaptive Confidence Boundaries: Developing models that dynamically adjust confidence thresholds based on chemical domain complexity and data quality would provide more nuanced uncertainty quantification.

Regulatory and Standards Evolution

The regulatory landscape for AI in drug development continues to evolve, with significant implications for confidence calibration:

- FDA Framework Development: The FDA is actively working on regulatory frameworks for evaluating AI/ML-based medical products, emphasizing validation processes, transparency, and accountability [23].

- Global Regulatory Alignment: Disparities between regulatory approaches (EU AI Act, US state-level laws, Canada's AIDA) create compliance challenges for global pharmaceutical companies, necessitating harmonized standards for AI validation [21].

- Independent Oversight Mechanisms: Implementing third-party auditing and certification of AI systems for chemical prediction would establish trustworthiness standards similar to other validated scientific instruments.

Overconfident AI predictions represent a critical vulnerability in modern computational chemical research, with potential consequences ranging from minor inefficiencies to serious clinical risks. By understanding the technical roots of this overconfidence and implementing rigorous detection, quantification, and mitigation strategies, researchers can harness AI's transformative potential while maintaining scientific integrity.

The path forward requires a fundamental shift from treating AI as an oracle to approaching it as a tool—one with remarkable capabilities but significant limitations. Through improved calibration techniques, robust uncertainty quantification, human oversight, and evolving regulatory frameworks, the research community can develop AI systems that not only predict but also know the boundaries of their knowledge. This nuanced understanding of uncertainty will ultimately enable more reliable, trustworthy, and impactful AI applications across drug discovery and development.

How to Quantify Uncertainty: A Guide to Modern UQ Methods and Their Applications

In computational chemical data research, the ability to quantify the confidence of a prediction is as critical as the prediction itself. Decisions in drug discovery—such as selecting a compound for costly synthesis or a protein target for further validation—are inherently risky and resource-intensive. Ensemble methods, which leverage committees of models, have emerged as a powerful paradigm for providing reliable confidence scores alongside these predictions. By combining the predictions of multiple individual models, ensemble approaches mitigate the limitations of any single model and provide a natural framework for uncertainty quantification (UQ). The variance in the predictions of committee members directly estimates the epistemic uncertainty in a model, arising from a lack of knowledge, while the inherent noise in the data is captured as aleatoric uncertainty [29] [30]. In drug discovery, where data is often scarce, noisy, and subject to distribution shifts, this quantified uncertainty becomes an indispensable tool for prioritizing experiments and allocating resources efficiently [10] [31].

Theoretical Foundations of Ensemble-Based Uncertainty

Aleatoric vs. Epistemic Uncertainty

In the context of ensemble methods for molecular property prediction, it is essential to distinguish between the two fundamental types of uncertainty:

- Aleatoric Uncertainty: This is the uncertainty inherent in the data itself. It stems from measurement errors, experimental noise, or stochastic processes. Aleatoric uncertainty is considered irreducible because collecting more data of the same type will not eliminate it. In ensemble models, it is often quantified by the average predictive variance of the individual models [29] [32].

- Epistemic Uncertainty: This uncertainty arises from a lack of knowledge in the model. It is caused by insufficient training data in certain regions of the chemical space or by model limitations. Epistemic uncertainty is reducible by gathering more relevant data or improving the model architecture. In an ensemble, it is quantified by the statistical dispersion (e.g., variance) among the predictions of the different committee members [29] [33] [30].

The total predictive uncertainty is a combination of these two components. A well-designed ensemble can disentangle and quantify both, providing deep insight into the potential sources of error for a given prediction [29].
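One common way to write this combination, assuming each of the M committee members outputs a predictive mean and a predictive variance and the ensemble is treated as a uniform mixture, follows from the law of total variance. The symbols M, \mu_m, and \sigma_m^2 are introduced here for illustration and are not taken from the cited sources.

```latex
\bar{\mu}(x) = \frac{1}{M}\sum_{m=1}^{M}\mu_m(x), \qquad
\sigma^2_{\text{total}}(x)
  = \underbrace{\frac{1}{M}\sum_{m=1}^{M}\sigma_m^2(x)}_{\text{aleatoric}}
  + \underbrace{\frac{1}{M}\sum_{m=1}^{M}\bigl(\mu_m(x)-\bar{\mu}(x)\bigr)^2}_{\text{epistemic}}
```

The first term is the average predictive variance of the individual models, and the second is the dispersion among their mean predictions, matching the two definitions above.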

The Ensemble Paradigm for Uncertainty Quantification

The core principle of ensemble-based UQ is to train multiple models that exhibit diversity. This diversity can be introduced through various mechanisms, such as different model initializations, different subsets of the training data, or even different model architectures. For a given input molecule, each model in the committee produces a prediction. The committee's final prediction is typically the mean of these individual predictions for regression tasks, or the average probability for classification tasks.

The confidence score is derived from the spread of these individual predictions. A large variance indicates high epistemic uncertainty, suggesting the input is unlike what the models encountered during training. A consensus among models, indicated by low variance, suggests high confidence. The mathematical representation of this paradigm often treats the final predictive distribution as a mixture of the distributions from the individual models, allowing for a principled estimation of both types of uncertainty [29].

Implementing Ensemble Committees: Architectures and Methods

Common Ensemble Techniques

Several practical methods exist for constructing model committees. The table below summarizes the most prominent ones used in computational chemistry and drug discovery.

Table 1: Common Ensemble Methods for Uncertainty Quantification

| Method | Key Mechanism | Uncertainty Type Captured | Key Advantages |

|---|---|---|---|

| Deep Ensembles [29] | Train multiple models independently with different random initializations. | Both Epistemic and Aleatoric | Simple, highly effective, considered a strong baseline. |

| Bootstrap Ensembles [34] [33] | Train multiple models on different random subsets (with replacement) of the training data. | Primarily Epistemic | Captures uncertainty due to data sampling variability. |

| Monte Carlo (MC) Dropout [31] [32] | Apply dropout during both training and inference; multiple stochastic forward passes act as an ensemble. | Epistemic | Computationally efficient, requires only a single model. |

| Snapshot Ensembles [33] | Collect multiple models (snapshots) from different local minima along a single training trajectory. | Epistemic | Lower training cost than full deep ensembles. |

| Divergent Ensemble Networks (DEN) [30] | A single network with a shared base and multiple independent output branches. | Both Epistemic and Aleatoric | More parameter-efficient than independent deep ensembles. |

Advanced Architectures: The Divergent Ensemble Network (DEN)

To address the computational overhead of traditional ensembles, novel architectures like the Divergent Ensemble Network (DEN) have been proposed. DEN uses a shared input layer to learn a common representation of the molecule, which is then processed by multiple independent branching networks. This design balances efficiency with diversity: the shared layer reduces redundant parameter usage, while the independent branches maintain the prediction variance necessary for robust uncertainty estimation [30]. This is particularly advantageous for large-scale virtual screening or real-time prediction scenarios.

Experimental Protocol for Benchmarking Ensembles

To reliably compare the performance of different ensemble methods, a standardized evaluation protocol is essential. The following methodology outlines key steps for a robust benchmark, drawing from practices in recent literature [10] [33] [11].

- Data Partitioning: Split the dataset into training, calibration (optional), validation, and test sets. A temporal split, where the test set comes from a later time period than the training set, is highly recommended for drug discovery applications to simulate real-world performance degradation and assess model robustness to distribution shift [10].

- Model Training: For each ensemble method (e.g., Deep Ensembles, MC Dropout), train the required number of models or configure the network as specified. Ensure diversity is introduced via the method's specific mechanism (e.g., random initialization, bootstrapping, dropout).

- Uncertainty Quantification:

- For regression tasks, predict the mean (µ) and variance (σ²) for each molecule. The total uncertainty can be derived from the ensemble's predictive variance.

- For classification, use the average predicted probability from the ensemble. The uncertainty can be quantified via the entropy of the predictive distribution or the variance of the predicted probabilities.

- Evaluation Metrics:

- Predictive Accuracy: Standard metrics like Root Mean Squared Error (RMSE) for regression or Area Under the Curve (AUC) for classification.

- Calibration: Measure how well the predicted confidence scores align with actual accuracy. Use Expected Calibration Error (ECE) or plot reliability diagrams [31].

- Uncertainty Quality: Assess if uncertainty estimates are higher for incorrect predictions and for out-of-distribution (OOD) data [33].
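The uncertainty quantification step of the protocol above can be sketched as follows for both task types. The array shapes, function names, and toy numbers are assumptions. The regression part presumes each ensemble member returns a mean and a variance per molecule, and the classification part uses the entropy of the averaged probabilities.

```python
import numpy as np

def regression_uncertainty(means, variances):
    """means, variances: arrays of shape (M members, N molecules)."""
    mu = means.mean(axis=0)               # ensemble prediction
    aleatoric = variances.mean(axis=0)    # average predicted noise variance
    epistemic = means.var(axis=0)         # disagreement among members
    return mu, aleatoric, epistemic, aleatoric + epistemic

def classification_uncertainty(probs):
    """probs: array of shape (M members, N molecules, C classes)."""
    p_mean = probs.mean(axis=0)
    entropy = -(p_mean * np.log(p_mean + 1e-12)).sum(axis=1)
    return p_mean, entropy

# Two members, two molecules: members agree on the first, disagree on the second.
means = np.array([[1.0, 2.0], [1.0, 4.0]])
variances = np.array([[0.1, 0.2], [0.3, 0.2]])
mu, alea, epi, total = regression_uncertainty(means, variances)

probs = np.array([[[1.0, 0.0], [1.0, 0.0]],
                  [[1.0, 0.0], [0.0, 1.0]]])
p_mean, entropy = classification_uncertainty(probs)
```

In both cases the second molecule, where the members disagree, receives the higher uncertainty, which is the behavior the "Uncertainty Quality" metric is meant to verify at scale.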

Practical Applications in Drug Discovery

Leveraging Uncertainty for Decision-Making

Quantified uncertainty directly informs critical decision-making processes in the drug discovery pipeline. The table below summarizes key applications.

Table 2: Applications of Ensemble-Based Uncertainty in Drug Discovery

| Application | Description | Impact |
|---|---|---|
| Compound Prioritization | Rank candidates not just by predicted activity, but by a utility function that balances high predicted potency with low uncertainty [10] [11]. | Focuses experimental resources on promising and reliable predictions, increasing the success rate of hit identification. |
| Active Learning | Use epistemic uncertainty to identify which compounds, if experimentally tested, would provide the most information to the model [29]. | Dramatically reduces the number of wet-lab experiments needed to explore a vast chemical space. |
| Out-of-Distribution (OOD) Detection | Flag predictions with high epistemic uncertainty as potentially OOD, indicating novel chemotypes not well-represented in the training data [33]. | Prevents over-reliance on predictions for unfamiliar chemical structures, alerting researchers to potential model extrapolation. |
| Model Diagnostics and Explainability | Attribute uncertainty estimates to specific atoms or substructures within a molecule, providing chemical insight into unreliable predictions [29] [32]. | Helps chemists understand model failures and guides the design of better compounds or the curation of more informative training data. |

Uncertainty-Guided Molecular Design and Optimization

In computer-aided molecular design (CAMD), ensemble uncertainty is integrated directly into the optimization loop. For instance, a Genetic Algorithm (GA) can use a fitness function based not only on the predicted property but also on the associated uncertainty. One effective approach is Probabilistic Improvement Optimization (PIO), which calculates the likelihood that a candidate molecule will exceed a predefined property threshold, given the model's prediction and its uncertainty [11]. This strategy encourages exploration of chemically diverse regions with reliable property estimates, leading to more robust and successful optimization, particularly in multi-objective tasks where balancing competing properties is essential.
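To make the threshold-exceedance idea concrete, the sketch below computes the probability that a property exceeds a threshold, assuming the model's predictive distribution is Gaussian with mean `mu` and standard deviation `sigma`. The function names are hypothetical, and the multi-objective score (a product of per-property probabilities, which treats the properties as independent) is an illustrative assumption rather than the formulation used in [11].

```python
import math


def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def probability_of_improvement(mu, sigma, threshold):
    """P(property > threshold) under a Gaussian predictive N(mu, sigma^2)."""
    if sigma <= 0.0:
        # No uncertainty: the prediction either clears the threshold or not.
        return 1.0 if mu > threshold else 0.0
    return 1.0 - normal_cdf((threshold - mu) / sigma)


def multi_objective_score(predictions, thresholds):
    """Product of per-property probabilities (assumes independent properties)."""
    score = 1.0
    for (mu, sigma), threshold in zip(predictions, thresholds):
        score *= probability_of_improvement(mu, sigma, threshold)
    return score
```

Two candidates with the same predicted value of 8.0 against a threshold of 7.0 receive different scores when their uncertainties differ: the candidate with `sigma = 0.5` scores higher than the one with `sigma = 2.0`, so a fitness function built this way favors predictions that are both favorable and reliable.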

The Scientist's Toolkit: Essential Research Reagents

Implementing and applying ensemble methods requires a suite of computational tools and conceptual "reagents." The following table details key components of the modern UQ toolkit for computational chemists.

Table 3: Key "Research Reagent Solutions" for Ensemble Modeling

| Item / Tool | Function / Description | Relevance to Ensemble Methods |
|---|---|---|
| Deep Learning Frameworks (PyTorch, TensorFlow) | Flexible libraries for building and training neural network models. | Essential for implementing custom ensemble architectures, loss functions, and training loops. |
| UQ-Specialized Libraries (Chemprop, KLIFF) | Domain-specific software with built-in support for UQ methods. | Chemprop provides D-MPNN models with ensemble UQ for molecules [11]. KLIFF supports UQ for interatomic potentials [33]. |
| Censored Regression Labels [10] | Data points where the precise value is unknown, but a threshold (e.g., ">10 μM") is known. | Specialized techniques (e.g., Tobit model) allow ensembles to learn from this abundant, imperfect data, improving uncertainty estimates. |
| Post-Hoc Calibration (e.g., Platt Scaling) [31] | A method to adjust the output probabilities of a classifier to better match true frequencies. | Corrects for over- or under-confidence in ensemble models, ensuring that an "80% confidence" prediction is correct 80% of the time. |
| Graph Neural Networks (GNNs) | Neural networks that operate directly on graph-structured data, such as molecular graphs. | The primary architecture for modern molecular property prediction. Ensembles of GNNs are a standard for high-performance, uncertainty-aware modeling [11]. |
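As an illustration of how censored labels can enter a training objective, the following sketch implements a Tobit-style negative log-likelihood for a Gaussian predictive distribution. It is a simplified assumption-laden example: only right-censored labels ("true value is above the threshold" on whatever scale the model predicts) are handled, the function names are hypothetical, and it is not taken from the method described in [10].

```python
import math

_LOG_SQRT_2PI = 0.5 * math.log(2.0 * math.pi)


def gaussian_nll(y, mu, sigma):
    """Negative log-density of an exactly measured label y under N(mu, sigma^2)."""
    z = (y - mu) / sigma
    return 0.5 * z * z + math.log(sigma) + _LOG_SQRT_2PI


def right_censored_nll(threshold, mu, sigma):
    """-log P(Y > threshold): the label only says the value lies above the threshold."""
    survival = 0.5 * math.erfc((threshold - mu) / (sigma * math.sqrt(2.0)))
    return -math.log(max(survival, 1e-300))  # guard against log(0) underflow


def tobit_nll(labels, mus, sigmas):
    """Average loss over a batch; labels is a list of (value, is_right_censored)."""
    total = 0.0
    for (value, censored), mu, sigma in zip(labels, mus, sigmas):
        if censored:
            total += right_censored_nll(value, mu, sigma)
        else:
            total += gaussian_nll(value, mu, sigma)
    return total / len(labels)
```

A censored label contributes almost no loss when the prediction sits well above the threshold and a large loss when it sits well below, so the model is penalized only for contradicting what the measurement actually established.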

Visualizing Workflows and Architectures

Standard Ensemble Workflow for Molecular Property Prediction

The following diagram illustrates the end-to-end process of applying ensemble methods for uncertainty-aware prediction in drug discovery.

Figure: Standard Ensemble Workflow for Molecular Property Prediction

Divergent Ensemble Network (DEN) Architecture

The DEN architecture provides a computationally efficient alternative to traditional ensembles by sharing lower-level representations.

Figure: Divergent Ensemble Network (DEN) Architecture

Ensemble methods represent a mature and powerful approach for deriving confidence scores from computational chemical models. By leveraging model committees, researchers can move beyond single-point predictions to obtain a probabilistic understanding of a forecast's reliability. This is paramount in drug discovery, where well-informed decision-making under uncertainty directly impacts the efficiency and success of bringing new therapeutics to market. As the field progresses, the integration of ensemble UQ into automated design platforms, coupled with advances in model calibration and explainability, will further solidify its role as a cornerstone of reliable, data-driven molecular research.

In computational chemistry and drug development, deep neural networks (DNNs) have emerged as powerful tools for predicting molecular properties, binding affinities, and reaction outcomes. However, traditional DNNs trained via maximum a posteriori (MAP) estimation provide only point estimates of their predictions, lacking crucial uncertainty quantification. This limitation poses significant risks in scientific applications where understanding the confidence of predictions informs downstream experimental decisions [35] [36]. Bayesian Neural Networks (BNNs) address this fundamental limitation by treating network weights as probability distributions rather than fixed values, naturally providing uncertainty estimates that are essential for reliable scientific applications [36] [37].

The inherent flexibility of conventional neural networks makes them particularly susceptible to overfitting, especially when working with the small, noisy datasets common in experimental materials science and chemistry [36]. The problem is rooted in the optimization itself: standard training minimizes a loss function (L(D, w)) with respect to the weights (w) given a dataset (D = \{(x_i, y_i)\}), which for a negative log-likelihood loss is equivalent to maximum likelihood estimation. This approach finds a single set of weights that performs well on the training data but may generalize poorly to test data [36]. BNNs reformulate this learning paradigm through Bayesian inference, replacing the single weight estimate with a distribution over weights and thereby enabling researchers to distinguish between reliable and uncertain predictions when exploring new chemical spaces or molecular structures [37].

Theoretical Foundations of Bayesian Neural Networks

From Deterministic to Probabilistic Deep Learning

In a conventional neural network, the mapping (y \approx f(x, w)) is deterministic once the weights (w) are learned through optimization. In contrast, a BNN represents the weights as probability distributions, transforming the network into a probabilistic model [38]. This probabilistic formulation enables BNNs to naturally quantify uncertainty in their predictions, making them particularly valuable for scientific applications where understanding reliability is crucial [36].

The Bayesian framework defines a prior distribution (p(w)) over the weights, representing our initial beliefs about plausible parameter values before observing data. After collecting data (D), Bayes' theorem is used to compute the posterior distribution over the weights:

[ p(w | D) = \frac{p(D|w)p(w)}{p(D)} = \frac{p(D|w)p(w)}{\int_{w'} p(D|w')p(w') dw'} ]

This posterior distribution captures updated beliefs about the weights after considering the evidence provided by the data [36]. For prediction, BNNs use the posterior predictive distribution:

[ p(\hat{y}(x) | D) = \int_{w} p(\hat{y}(x) | w) p(w | D) dw = \mathbb{E}_{p(w|D)}[p(\hat{y}(x) | w)] ]

which can be interpreted as an infinite ensemble of networks, with each network's contribution weighted by the posterior probability of its weights [36] [38].
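In practice the integral is approximated with a finite number of posterior samples. A standard Monte Carlo estimate can be written as follows, where the sample count S and the superscript notation for individual weight samples are introduced here for illustration:

```latex
p(\hat{y}(x) \mid D) \approx \frac{1}{S} \sum_{s=1}^{S} p(\hat{y}(x) \mid w^{(s)}),
\qquad w^{(s)} \sim p(w \mid D)
```

Read this way, S networks drawn from the posterior form a finite, equally weighted ensemble that approaches the infinite ensemble as S grows.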

Categorizing Uncertainty in Bayesian Neural Networks

BNNs naturally disentangle two fundamental types of uncertainty that are crucial for scientific applications:

Epistemic uncertainty (model uncertainty) arises from uncertainty in the model parameters themselves. This uncertainty reflects limited knowledge about the true data-generating process and can be reduced by collecting more data. In materials science, this might manifest when predicting properties for molecular structures far from the training distribution [37].

Aleatoric uncertainty (data uncertainty) stems from inherent noise or stochasticity in the observations. This uncertainty cannot be reduced by collecting more data. In experimental chemical data, this might include measurement errors or intrinsic variability in experimental conditions [37].

The predictive variance (U_{post}) naturally combines both epistemic and aleatoric uncertainty, providing a comprehensive measure of predictive uncertainty [37].
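One common way to separate the two contributions, assuming each posterior sample of the weights yields a Gaussian prediction with its own mean and noise variance, is the law of total variance: the average of the per-sample noise variances is read as aleatoric, and the variance of the per-sample means as epistemic. The sketch below is illustrative and the function name is hypothetical.

```python
from statistics import fmean, pvariance


def decompose_uncertainty(sample_means, sample_variances):
    """Split predictive variance for one input into aleatoric and epistemic parts.

    sample_means[s] and sample_variances[s] are the predicted mean and noise
    variance obtained with the s-th posterior sample of the weights.
    """
    aleatoric = fmean(sample_variances)  # expected data noise
    epistemic = pvariance(sample_means)  # disagreement between weight samples
    return {
        "aleatoric": aleatoric,
        "epistemic": epistemic,
        "total": aleatoric + epistemic,
    }
```

If the weight samples agree closely on the mean but each predicts substantial noise, the total is dominated by the aleatoric term and more data is unlikely to help; the opposite pattern points to a region of chemical space where additional measurements would be informative.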

Computational Approaches for Bayesian Inference

The posterior distribution (p(w|D)) is typically intractable for deep neural networks due to the high-dimensional integral in the denominator of Bayes' rule. Several approximation methods have been developed:

Table 1: Computational Methods for Bayesian Neural Networks

| Method | Key Principle | Advantages | Limitations |
|---|---|---|---|
| Markov Chain Monte Carlo (MCMC) | Generates samples from the posterior using stochastic sampling | Asymptotically exact, theoretical guarantees | Computationally intensive for large networks [38] [37] |
| Variational Inference (VI) | Approximates posterior with parameterized distribution (q_\phi(w)) | Faster than MCMC, scalable to larger networks | May underestimate uncertainty [36] [37] |
| Monte Carlo Dropout | Approximates Bayesian inference through dropout at test time | Easy implementation, minimal computational overhead | Less accurate uncertainty estimates [35] |
| Stochastic Variational Inference | Combines variational inference with stochastic optimization | Scalable to large datasets, compatible with standard optimizers | Requires careful selection of approximate posterior [36] |

For molecular property prediction, advanced MCMC methods such as Hamiltonian Monte Carlo (HMC) and its extension, the No-U-Turn Sampler (NUTS), have shown particular promise. These methods efficiently explore the posterior distribution of neural network parameters in high-dimensional spaces without significant manual tuning [37].
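To show the mechanics of HMC on a problem small enough to check by hand, the sketch below samples the posterior of a single weight in a toy linear model with Gaussian noise and a Gaussian prior. Everything here is an illustrative assumption (the model, the fixed step size and trajectory length, the function names); NUTS differs in that it chooses the trajectory length adaptively, and real BNN posteriors are far higher-dimensional.

```python
import math
import random


def make_log_posterior(xs, ys, noise_sd=0.5, prior_sd=1.0):
    """Log-posterior (up to a constant) and its gradient for y = w * x + noise."""
    def log_post(w):
        log_lik = -sum((y - w * x) ** 2 for x, y in zip(xs, ys)) / (2 * noise_sd ** 2)
        return log_lik - w * w / (2 * prior_sd ** 2)

    def grad(w):
        g = sum((y - w * x) * x for x, y in zip(xs, ys)) / noise_sd ** 2
        return g - w / prior_sd ** 2

    return log_post, grad


def hmc_sample(log_post, grad, w0=0.0, n_samples=2000, step=0.04, n_leapfrog=7, seed=0):
    """Plain HMC with a fixed step size and a fixed number of leapfrog steps."""
    rng = random.Random(seed)
    w, samples = w0, []
    for _ in range(n_samples):
        p0 = rng.gauss(0.0, 1.0)              # resample the momentum
        w_new, p = w, p0
        p += 0.5 * step * grad(w_new)         # initial half step for momentum
        for i in range(n_leapfrog):
            w_new += step * p                 # full step for position
            if i < n_leapfrog - 1:
                p += step * grad(w_new)       # full step for momentum
        p += 0.5 * step * grad(w_new)         # final half step for momentum
        h_old = -log_post(w) + 0.5 * p0 * p0  # Hamiltonian before the trajectory
        h_new = -log_post(w_new) + 0.5 * p * p
        if rng.random() < math.exp(min(0.0, h_old - h_new)):
            w = w_new                         # Metropolis acceptance
        samples.append(w)
    return samples
```

Because the toy posterior is Gaussian, the sampled mean and standard deviation can be compared against the closed-form values, which is a useful sanity check before moving to models where no closed form exists.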

Experimental Framework and Implementation Protocols

Workflow for Bayesian Neural Network Implementation

The following diagram illustrates the complete workflow for implementing and applying Bayesian Neural Networks in computational chemical research:

Protocol 1: Implementing a Basic Bayesian Neural Network with Pyro

For molecular property prediction, the following protocol implements a BNN with Gaussian priors using the Pyro probabilistic programming language [38]:

Materials and Experimental Setup:

- Software Environment: Python 3.7+, PyTorch 1.8+, Pyro 1.7+

- Hardware: GPU-enabled system for accelerated sampling (recommended)

- Data Requirements: Molecular descriptors or features with associated property values

Step-by-Step Procedure:

Network Architecture Definition:

Posterior Sampling with MCMC:

Predictive Distribution Calculation:

This protocol provides full posterior distributions over both network weights and predictive outputs, enabling comprehensive uncertainty quantification for molecular property predictions [38].

Protocol 2: Partially Bayesian Neural Networks for Efficient Uncertainty Quantification

For large-scale chemical datasets or applications requiring frequent retraining, partially Bayesian neural networks (PBNNs) offer a computationally efficient alternative [37]:

Rationale: PBNNs transform only selected layers to be probabilistic while keeping others deterministic, significantly reducing computational cost while maintaining accurate uncertainty estimates.

Implementation Workflow:

Step-by-Step Procedure:

Deterministic Pre-training:

- Train a conventional neural network on available chemical data

- Apply Stochastic Weight Averaging (SWA) to enhance robustness against noisy training objectives

- Regularize using a Gaussian MAP prior to prevent overfitting

Probabilistic Layer Selection:

- Identify critical layers for uncertainty propagation (typically later layers)

- Common configurations include making only the final layer probabilistic or alternating probabilistic and deterministic layers

Bayesian Fine-tuning:

- Initialize prior distributions using pre-trained weights from selected layers

- Freeze deterministic layers

- Apply HMC/NUTS sampling only to probabilistic layers

Predictive Combination:

- Combine samples from probabilistic layers with frozen deterministic layers

- Compute predictive mean and variance using Monte Carlo integration [37]
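The "final layer only" configuration can be illustrated with a deliberately tiny model: one frozen hidden feature and a single probabilistic output weight whose prior is centered on the pre-trained value. To stay short and dependency-free, this sketch replaces the HMC/NUTS step with the closed-form Gaussian posterior available in this one-weight case; the function names, feature map, and noise level are assumptions for illustration.

```python
import math
import random
from statistics import fmean, pvariance


def frozen_features(x, a=1.5, b=0.0):
    """Deterministic (frozen) hidden layer: phi(x) = tanh(a * x + b)."""
    return math.tanh(a * x + b)


def final_layer_posterior(xs, ys, w_pretrained, prior_sd=1.0, noise_sd=0.2):
    """Gaussian posterior over the single output weight.

    The prior N(w_pretrained, prior_sd^2) is centered on the pre-trained weight.
    """
    phis = [frozen_features(x) for x in xs]
    precision = 1.0 / prior_sd ** 2 + sum(p * p for p in phis) / noise_sd ** 2
    mean = (w_pretrained / prior_sd ** 2
            + sum(p * y for p, y in zip(phis, ys)) / noise_sd ** 2) / precision
    return mean, math.sqrt(1.0 / precision)


def predict(x, post_mean, post_sd, noise_sd=0.2, n_samples=2000, seed=0):
    """Monte Carlo predictive mean and variance: sampled weight, frozen features."""
    rng = random.Random(seed)
    phi = frozen_features(x)
    preds = [rng.gauss(post_mean, post_sd) * phi for _ in range(n_samples)]
    return fmean(preds), pvariance(preds) + noise_sd ** 2
```

The example also exposes a limitation of this configuration: all epistemic uncertainty must pass through the frozen features, so at an input where the feature is zero the predictive variance collapses to the noise term regardless of how little data the model has seen.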

Table 2: Performance Comparison of Fully Bayesian vs. Partially Bayesian Neural Networks on Materials Science Datasets

| Model Architecture | Predictive Accuracy (RMSE) | Uncertainty Calibration | Computational Cost (Hours) | Recommended Use Case |
|---|---|---|---|---|
| Fully Bayesian NN | 0.124 ± 0.015 | Excellent | 48.2 | Small datasets (< 1,000 samples), high-stakes applications |
| PBNN (All Hidden Layers) | 0.131 ± 0.018 | Very Good | 24.7 | Medium datasets, balanced accuracy/efficiency needs |
| PBNN (Final Layer Only) | 0.145 ± 0.022 | Good | 8.3 | Large datasets (> 10,000 samples), screening applications |
| Deterministic NN | 0.152 ± 0.035 | Poor | 4.1 | Baseline comparison only, not recommended for active learning (AL) |