Validating Density Functional Theory: Best Practices, Common Pitfalls, and Applications in Biomedical Research

Abstract

This article provides a comprehensive framework for validating Density Functional Theory (DFT) calculations against experimental data, a critical step for ensuring reliability in research and drug development. It explores the foundational principles of DFT validation, outlines methodological protocols for accurate computation across molecular and solid-state systems, and addresses common troubleshooting scenarios including grid errors, SCF convergence, and low-frequency modes. Featuring comparative case studies from structural biology, materials science, and spectroscopy, the content synthesizes current best practices to help researchers critically assess DFT performance, optimize computational workflows, and confidently apply these methods to predict molecular properties, drug-target interactions, and material behaviors relevant to biomedical and clinical applications.

The Foundation of Trust in DFT: Principles and Importance of Experimental Validation

Density Functional Theory (DFT) has established itself as a cornerstone of modern computational materials science and drug discovery, providing a balance between computational cost and accuracy for predicting electronic structures and properties. However, the true value of DFT calculations emerges only when their predictions are rigorously validated against experimental data. This process of DFT validation transforms abstract computational results into reliable insights that can guide research and development. Validation serves as a critical bridge, ensuring that theoretical models accurately reflect reality, thereby enabling researchers to make confident decisions based on computational findings.

The integration of computational and experimental approaches has become increasingly crucial for designing and optimizing functional materials and pharmaceutical compounds. As highlighted in recent studies, this combined approach allows researchers to not only interpret experimental observations but also to predict new properties and behaviors with greater confidence. For instance, in magnetic materials development, this synergy has proven essential for understanding complex electronic interactions and their relationship to macroscopic properties. This guide examines the current landscape of DFT validation, providing researchers with a comprehensive framework for evaluating computational predictions against experimental reality.

Understanding DFT Software and Capabilities

DFT software packages vary significantly in their target applications, capabilities, and computational requirements. The selection of appropriate software represents the foundational first step in establishing a reliable validation workflow. These packages can be broadly categorized into those designed for solid systems (such as metals, semiconductors, and periodic structures) and those optimized for molecular systems (including individual molecules and molecular clusters) [1].

Solid-system software typically employs periodic boundary conditions to model infinitely extended structures, making it ideal for calculating properties of crystals, surfaces, and bulk materials. In contrast, molecular-system software generally treats systems in vacuum, though implicit solvation models can account for solvent effects. The choice between these categories depends fundamentally on the research question and the nature of the system under investigation [1].

Beyond this fundamental distinction, software packages differ in their supported physical properties, computational efficiency, and compatibility with experimental data types. Common properties accessible through DFT calculations include structural parameters (lattice constants, equilibrium geometries), electronic properties (band structure, density of states, molecular orbitals), thermodynamic properties (formation energy, free energy), and various response functions (optical properties, vibrational frequencies) [1]. Understanding these capabilities is essential for designing appropriate validation studies.

Representative DFT Software Comparison

The table below summarizes major DFT software packages, their primary applications, and key characteristics:

Table 1: Representative DFT Software Packages and Their Characteristics

| Software | Main Target System | Key Features | License Type | Common Visualization Tools |
|---|---|---|---|---|
| VASP [1] | Solid | Industry standard for solid-state/periodic systems | Paid | p4vasp, VESTA |
| Quantum ESPRESSO [1] [2] | Solid | Free, open-source platform for materials modeling | Free | VESTA |
| SIESTA [1] | Solid | Numerical atomic-orbital basis sets of adjustable size for efficiency | Free | VESTA |
| Gaussian [1] | Molecular | Industry standard for molecular systems, GUI available | Paid | GaussView, Avogadro |
| GAMESS [1] | Molecular | Free, actively developed features | Free | MacMolPlt, Avogadro |
| ORCA [1] | Molecular | Strong capabilities for optical properties and high-precision calculations | Free for academic use; paid for commercial use | Avogadro, ChimeraX, Chemcraft |
| Jaguar [3] | Molecular | Pseudospectral DFT for speed, specialized workflows | Paid | Integrated in Maestro |

Quantitative Validation: Comparing Computational Methods with Experimental Data

The accuracy of DFT predictions must be quantitatively assessed against experimental measurements to establish their reliability. Recent comprehensive studies have benchmarked various computational methods, including traditional DFT functionals and emerging machine-learning approaches, against experimental datasets for key electronic properties.

Reduction Potential Prediction Accuracy

Reduction potential is a critical property in electrochemical studies and drug metabolism research. The following table compares the performance of various computational methods in predicting experimental reduction potentials for main-group and organometallic species:

Table 2: Method Performance for Reduction Potential Prediction (Values in Volts) [4]

| Method | System Type | Mean Absolute Error (MAE) | Root Mean Square Error (RMSE) | Coefficient of Determination (R²) |
|---|---|---|---|---|
| B97-3c | Main-Group | 0.260 | 0.366 | 0.943 |
| B97-3c | Organometallic | 0.414 | 0.520 | 0.800 |
| GFN2-xTB | Main-Group | 0.303 | 0.407 | 0.940 |
| GFN2-xTB | Organometallic | 0.733 | 0.938 | 0.528 |
| UMA-S (OMol25) | Main-Group | 0.261 | 0.596 | 0.878 |
| UMA-S (OMol25) | Organometallic | 0.262 | 0.375 | 0.896 |
| UMA-M (OMol25) | Main-Group | 0.407 | 1.216 | 0.596 |
| UMA-M (OMol25) | Organometallic | 0.365 | 0.560 | 0.775 |
| eSEN-S (OMol25) | Main-Group | 0.505 | 1.488 | 0.477 |
| eSEN-S (OMol25) | Organometallic | 0.312 | 0.446 | 0.845 |

The data reveal several important trends. For main-group systems, B97-3c gives the lowest errors (MAE = 0.260 V, R² = 0.943), and GFN2-xTB is only slightly worse (MAE = 0.303 V, R² = 0.940). The UMA-S neural network potential trained on the OMol25 dataset matches the main-group MAE of B97-3c (0.261 V) but has a larger RMSE (0.596 V versus 0.366 V), which is consistent with a small number of large outliers. For organometallic systems, UMA-S outperforms B97-3c (MAE = 0.262 V versus 0.414 V), suggesting that machine-learning approaches can compete with traditional DFT for certain applications despite not explicitly incorporating charge-based physics [4].

Electron Affinity Prediction Accuracy

Electron affinity represents another fundamental electronic property with implications for reactivity and charge transfer processes. The following table summarizes computational method performance for predicting experimental electron affinities:

Table 3: Method Performance for Electron Affinity Prediction (Values in eV) [4]

| Method | System Type | Mean Absolute Error (MAE) | Root Mean Square Error (RMSE) | Coefficient of Determination (R²) |
|---|---|---|---|---|
| r2SCAN-3c | Main-Group | 0.171 | 0.219 | 0.966 |
| ωB97X-3c | Main-Group | 0.175 | 0.226 | 0.964 |
| g-xTB | Main-Group | 0.259 | 0.330 | 0.924 |
| GFN2-xTB | Main-Group | 0.266 | 0.355 | 0.911 |
| UMA-S (OMol25) | Main-Group | 0.242 | 0.324 | 0.929 |
| UMA-M (OMol25) | Main-Group | 0.246 | 0.323 | 0.930 |
| eSEN-S (OMol25) | Main-Group | 0.267 | 0.348 | 0.916 |
| r2SCAN-3c | Organometallic | 0.330 | 0.402 | 0.826 |
| ωB97X-3c | Organometallic | 0.381 | 0.479 | 0.768 |
| UMA-S (OMol25) | Organometallic | 0.284 | 0.370 | 0.877 |

For main-group systems, r2SCAN-3c and ωB97X-3c functionals demonstrate the highest accuracy for electron affinity prediction (MAE = 0.171-0.175 eV, R² = 0.964-0.966), while the OMol25-trained neural network potentials show slightly reduced but still respectable performance [4]. Notably, for organometallic systems, the UMA-S model outperformed traditional DFT functionals, achieving a lower MAE (0.284 eV) and higher R² value (0.877) compared to r2SCAN-3c (MAE = 0.330 eV, R² = 0.826) and ωB97X-3c (MAE = 0.381 eV, R² = 0.768) [4].
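The three statistics reported in the tables above can be computed from any paired list of predicted and experimental values. The sketch below is one minimal way to do so in plain Python. The function name `validation_metrics` is hypothetical, the sample numbers are invented for illustration, and R² is taken here to be the squared Pearson correlation coefficient, which is an assumption since the benchmark's exact definition is not stated in this article.

```python
import math


def validation_metrics(predicted, experimental):
    """Return MAE, RMSE and R^2 for paired predicted/experimental values."""
    n = len(predicted)
    if n != len(experimental) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")

    errors = [p - e for p, e in zip(predicted, experimental)]
    mae = sum(abs(err) for err in errors) / n
    rmse = math.sqrt(sum(err * err for err in errors) / n)

    # Assumption: R^2 is the squared Pearson correlation coefficient.
    mean_p = sum(predicted) / n
    mean_e = sum(experimental) / n
    cov = sum((p - mean_p) * (e - mean_e) for p, e in zip(predicted, experimental))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_e = sum((e - mean_e) ** 2 for e in experimental)
    if var_p == 0.0 or var_e == 0.0:
        raise ValueError("R^2 is undefined when either series is constant")
    r2 = cov * cov / (var_p * var_e)

    return {"mae": mae, "rmse": rmse, "r2": r2}


# Made-up reduction potentials in volts, for illustration only.
computed = [-1.95, -0.42, 0.31, 1.08]
measured = [-2.10, -0.50, 0.25, 1.30]
print(validation_metrics(computed, measured))
```

Reporting MAE and RMSE together is useful because an RMSE much larger than the MAE signals that a few large outliers dominate the error.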

Experimental Protocols and Methodologies for DFT Validation

Robust validation of DFT predictions requires carefully designed experimental protocols and systematic comparison methodologies. The following sections outline common experimental approaches used to validate computational predictions across different material systems and properties.

Structural and Magnetic Property Validation

In studies of magnetic materials such as Mn-substituted Co-Zn ferrites, researchers typically employ a combination of structural and magnetic characterization techniques [2]. The experimental protocol generally includes:

Material Synthesis: Samples are prepared using controlled methods such as auto-combustion synthesis to ensure phase purity and precise compositional control [2].

Structural Characterization: X-ray diffraction (XRD) with Rietveld refinement confirms phase formation, quantifies lattice parameters, and identifies any structural distortions or impurities.

Magnetic Measurements: Vibrating sample magnetometry (VSM) provides quantitative data on saturation magnetization (Ms) and coercivity (Hc) across different doping concentrations and temperature conditions.

Electronic Structure Analysis: X-ray photoelectron spectroscopy (XPS) may be employed to determine oxidation states and chemical environments.

The corresponding DFT calculations typically involve density of states (DOS) analysis, band structure calculations, and Bader charge analysis to understand the effects of elemental substitution on electronic structure and magnetic interactions [2]. Validation occurs through direct comparison of calculated versus experimental lattice parameters, magnetic moments, and trends in magnetic properties with composition.
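The direct comparison of calculated and experimental lattice parameters is usually reported as a signed percentage deviation. The following sketch shows that arithmetic. The function names and the numerical values are hypothetical placeholders, not data from the ferrite study.

```python
def percent_deviation(calculated, experimental):
    """Signed deviation of a calculated value from experiment, in percent."""
    if experimental == 0:
        raise ValueError("experimental reference must be non-zero")
    return 100.0 * (calculated - experimental) / experimental


def compare_lattice_parameters(calculated, experimental):
    """Return {parameter name: percent deviation} for every experimental entry."""
    return {
        name: percent_deviation(calculated[name], value)
        for name, value in experimental.items()
    }


# Hypothetical cubic-cell values in angstrom, for illustration only.
dft_cell = {"a": 8.45}
xrd_cell = {"a": 8.40}
print(compare_lattice_parameters(dft_cell, xrd_cell))
```

A positive value means the calculation overestimates the lattice parameter, and a negative value means it underestimates it.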

Catalytic Property Validation

For catalytic systems such as Fe-doped CoMn₂O₄ for selective catalytic reduction (SCR) of NOx, validation protocols focus on catalytic performance metrics [5]:

Catalyst Synthesis: Sol-gel and impregnation methods prepare catalysts with controlled doping levels and surface properties.

Surface Characterization: Techniques such as temperature-programmed reduction (TPR), Brunauer-Emmett-Teller (BET) surface area analysis, and chemisorption probes quantify active sites and surface properties.

Performance Testing: Reactor systems measure NOx conversion efficiency as a function of temperature, space velocity, and gas composition.

Adsorption Studies: Calorimetric or spectroscopic methods quantify reactant adsorption energies and surface coverage.

Complementary DFT calculations model adsorption geometries, reaction pathways, energy barriers, and electronic structure modifications due to doping [5]. Validation focuses on correlating calculated adsorption energies with experimental performance metrics and connecting reduced energy barriers to enhanced catalytic activity.

Sorption Property Validation

For sorbent materials such as graphene-based CO₂ capture systems, validation protocols typically include [6]:

Material Preparation: Synthesis of graphene materials with controlled defect density, functionalization, and porosity.

Structural Analysis: Raman spectroscopy, XPS, and transmission electron microscopy characterize material structure and surface chemistry.

Sorption Measurements: Volumetric or gravimetric analysis quantifies gas uptake capacities under varying pressure and temperature conditions.

In Situ Characterization: Spectroscopic techniques monitor gas-surface interactions under operational conditions.

DFT calculations in these systems model interaction energies, binding configurations, and electronic charge transfer during gas adsorption [6]. Molecular dynamics (MD) simulations may complement DFT to study structural dynamics and ensemble behaviors. Validation emphasizes correlating calculated interaction energies with experimental uptake capacities and linking electronic structure modifications to sorption performance.

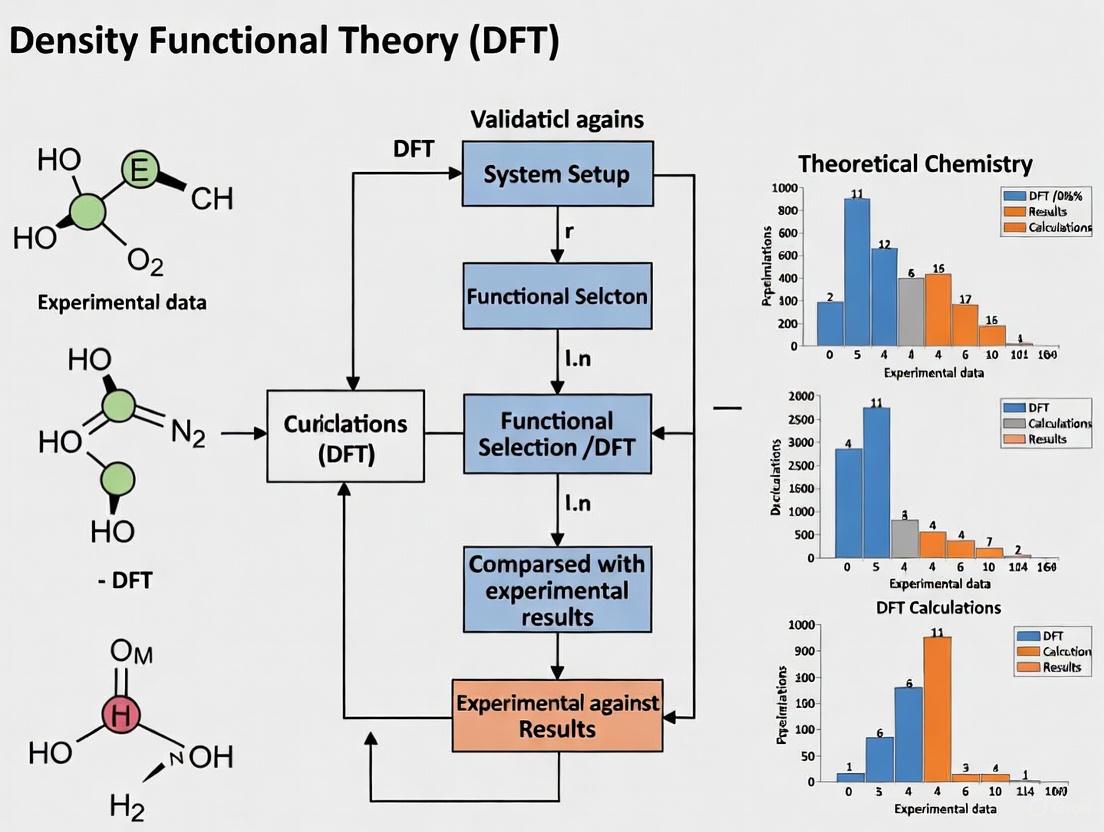

Visualization of DFT Validation Workflows

The following diagram illustrates the integrated computational-experimental workflow for DFT validation:

DFT Validation Workflow: Integrated computational and experimental approach.

Emerging approaches leverage artificial intelligence to automate and enhance DFT validation processes. The DREAMS framework exemplifies this trend with a multi-agent system for autonomous materials simulation:

AI-Enhanced DFT Framework: Multi-agent system for automated simulation.

Successful DFT validation requires access to specialized software tools, computational resources, and experimental databases. The following table catalogs essential resources for researchers conducting DFT validation studies:

Table 4: Essential Research Resources for DFT Validation

| Resource Category | Specific Tools | Primary Function | Access Information |
|---|---|---|---|
| DFT Software [1] | VASP, Quantum ESPRESSO, Gaussian, ORCA | Electronic structure calculation | Commercial licenses, free academic versions |
| Visualization Tools [1] | VESTA, Avogadro, GaussView | Structure modeling and result visualization | Free and commercial options |
| Experimental Databases [7] | JARVIS, Materials Project | Reference data for validation | Publicly accessible |
| Benchmark Datasets [4] | OMol25, Experimental redox data | Method validation and benchmarking | Publicly accessible |
| Computational Environments [1] | High-performance computing clusters, Cloud services | Execution of demanding calculations | Institutional resources, commercial cloud |
| Python Libraries [1] | PySCF, Psi4 | Workflow integration and customization | Open source |

The validation of DFT predictions against experimental data remains an essential process in computational chemistry and materials science. Based on the current analysis, several best practices emerge:

Method Selection Should Match System Type: Traditional DFT functionals like B97-3c excel for main-group systems, while neural network potentials such as UMA-S show particular promise for organometallic complexes, especially for charge-related properties [4].

Multiple Validation Properties Enhance Reliability: Successful validation studies typically compare computational predictions with multiple experimental observables (structural, electronic, magnetic, catalytic) to build comprehensive confidence in the computational models [2] [5].

Integrated Workflows Improve Efficiency: Combining computational and experimental approaches from the initial research design phase creates a virtuous cycle of prediction, validation, and refinement that accelerates materials discovery and optimization [2] [5] [6].

Emerging AI Technologies Show Promise: Frameworks like DREAMS demonstrate that AI-enhanced DFT approaches can achieve expert-level accuracy while reducing reliance on human intervention, potentially democratizing access to high-fidelity computational materials science [8].

As computational power increases and methodological innovations continue to emerge, the integration between DFT predictions and experimental validation will likely strengthen further. This synergy promises to accelerate the discovery and development of novel materials and pharmaceutical compounds while deepening our fundamental understanding of matter at the atomic scale.

Density Functional Theory (DFT) has become a cornerstone computational method across chemistry, materials science, and drug development. However, the predictive power of any DFT calculation depends critically on the chosen functional, basis set, and the specific physical properties being modeled. This guide provides an objective comparison of DFT performance against experimental data for three core physical properties: geometric structure, energy, and spectroscopic parameters. By synthesizing recent validation studies, we aim to equip researchers with practical benchmarks for selecting appropriate computational methods for their specific applications, from drug design to materials engineering.

The reliability of DFT predictions varies significantly across different molecular systems and properties. While some functionals excel at predicting molecular geometries, others may perform better for energy-related properties or spectroscopic simulations. This comparative analysis draws on direct experimental validation to highlight these performance differences, providing a framework for assessing computational results against empirical evidence across diverse chemical spaces.

Geometric Structure Validation

Performance Benchmarks for Molecular Systems

The accuracy of DFT in predicting molecular geometry is routinely validated against experimental X-ray crystallography data. Performance varies significantly across functionals and basis sets, with hybrid functionals generally providing superior agreement with experimental structures.

Table 1: Performance of DFT Functionals for Geometric Structure Prediction of Triclosan

| Functional | Basis Set | Mean Absolute Error (Bond Lengths, Å) | Best Performing Bonds |
|---|---|---|---|
| M06-2X | 6-311++G(d,p) | 0.0353 | C3-O10, O22-H23 |
| CAM-B3LYP | LANL2DZ | 0.0360 | C12-C11, C3-C4 |
| LSDA | LANL2DZ | 0.0367 | C12-Cl24, C6-Cl20 |
| B3LYP | LANL2DZ | 0.0453 | - |
| PBEPBE | LANL2DZ | 0.0514 | - |

In a comprehensive study of the triclosan molecule, the M06-2X functional coupled with the 6-311++G(d,p) basis set demonstrated superior performance in predicting bond lengths, achieving the lowest mean absolute deviation from experimental values (0.0353 Å) [9]. The CAM-B3LYP functional also performed well, particularly for predicting C12-C11 and C3-C4 bond distances [9]. The local spin-density approximation (LSDA) functional surprisingly outperformed B3LYP and PBEPBE for certain chlorine-containing bonds (C12-Cl24 and C6-Cl20), though it was less accurate for oxygen-hydrogen bonds [9].

For periodic systems like SiO₂ polymorphs, dispersion-corrected functionals are essential for accurate structure prediction. A broad assessment of 27 semi-local approaches found that the best-performing functionals achieved mean unsigned errors of approximately 0.2 tetrahedral (T) atoms per 1000 ų for framework densities when validated against experimental data [10].
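Framework density is the number of tetrahedrally coordinated (T) atoms per 1000 ų of unit-cell volume, so it follows directly from the lattice parameters. The sketch below uses the general triclinic cell-volume formula. The function names are hypothetical, and the α-quartz cell (a = b ≈ 4.913 Å, c ≈ 5.405 Å, γ = 120°, three Si per cell) is an approximate textbook example rather than data from the cited assessment.

```python
import math


def cell_volume(a, b, c, alpha_deg, beta_deg, gamma_deg):
    """Unit-cell volume in cubic angstrom from lattice parameters (angles in degrees)."""
    ca = math.cos(math.radians(alpha_deg))
    cb = math.cos(math.radians(beta_deg))
    cg = math.cos(math.radians(gamma_deg))
    return a * b * c * math.sqrt(1.0 - ca * ca - cb * cb - cg * cg + 2.0 * ca * cb * cg)


def framework_density(n_t_atoms, volume):
    """T atoms per 1000 cubic angstrom."""
    return 1000.0 * n_t_atoms / volume


# Approximate alpha-quartz cell, used only as an illustration.
volume = cell_volume(4.913, 4.913, 5.405, 90.0, 90.0, 120.0)
print(round(framework_density(3, volume), 2))
```

Evaluating the same function on the DFT-optimized and the experimental cell, then taking the absolute difference, gives the per-structure error that a mean unsigned error averages over.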

Experimental Protocols for Geometric Validation

Standard experimental protocols for geometric validation typically involve single-crystal X-ray diffraction analysis. For the triclosan study, experimental molecular geometry parameters were obtained from crystallographic data and used as reference values for assessing computational predictions [9]. Similarly, structural validation of 5-(4-chlorophenyl)-2-amino-1,3,4-thiadiazole utilized single-crystal X-ray diffraction, with the compound crystallizing in the orthorhombic space group Pna2₁ with eight asymmetric molecules in the unit cell [11]. The experimental bond lengths and angles were directly compared with DFT-optimized geometries using root-mean-square deviation and mean absolute error as quantitative accuracy metrics.
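A bond-length comparison of this kind can be sketched in a few lines: measure each bond in the optimized geometry, subtract the crystallographic value, and summarize the deviations. The function name, atom indices, and coordinates below are hypothetical and do not correspond to triclosan or the thiadiazole compound.

```python
import math


def bond_length_errors(calc_coords, expt_bond_lengths):
    """Compare bond lengths from an optimized geometry with experimental ones.

    calc_coords: list of (x, y, z) tuples in angstrom.
    expt_bond_lengths: dict mapping (i, j) atom-index pairs to experimental
        bond lengths in angstrom.
    Returns (signed deviations per bond, mean absolute error, RMS deviation).
    """
    if not expt_bond_lengths:
        raise ValueError("at least one reference bond is required")

    deviations = {}
    for (i, j), expt in expt_bond_lengths.items():
        calc = math.dist(calc_coords[i], calc_coords[j])
        deviations[(i, j)] = calc - expt

    n = len(deviations)
    mae = sum(abs(d) for d in deviations.values()) / n
    rmsd = math.sqrt(sum(d * d for d in deviations.values()) / n)
    return deviations, mae, rmsd


# Hypothetical three-atom fragment, for illustration only.
optimized = [(0.0, 0.0, 0.0), (1.40, 0.0, 0.0), (1.40, 1.10, 0.0)]
xray = {(0, 1): 1.38, (1, 2): 1.08}
print(bond_length_errors(optimized, xray))
```

Keeping the signed deviations alongside the summary statistics makes it easy to see whether a functional systematically lengthens or shortens a particular bond type.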

Energy Property Validation

Reduction Potential and Electron Affinity Benchmarks

Energy-related properties such as reduction potentials and electron affinities present particular challenges for DFT methods due to their dependence on accurate electron correlation and charge distribution. Recent benchmarking studies reveal significant performance variations across computational methods.

Table 2: Performance of Computational Methods for Reduction Potential Prediction (Volts)

| Method | System Type | Mean Absolute Error (V) | Root Mean Square Error (V) | R² |
|---|---|---|---|---|
| B97-3c | Main-group (OROP) | 0.260 | 0.366 | 0.943 |
| B97-3c | Organometallic (OMROP) | 0.414 | 0.520 | 0.800 |
| GFN2-xTB | Main-group (OROP) | 0.303 | 0.407 | 0.940 |
| GFN2-xTB | Organometallic (OMROP) | 0.733 | 0.938 | 0.528 |
| UMA-S (NNP) | Main-group (OROP) | 0.261 | 0.596 | 0.878 |
| UMA-S (NNP) | Organometallic (OMROP) | 0.262 | 0.375 | 0.896 |

For reduction potential prediction, the B97-3c functional demonstrated strong performance for main-group species (MAE = 0.260 V) but showed reduced accuracy for organometallic systems (MAE = 0.414 V) [4]. Interestingly, the Universal Model for Atoms Small (UMA-S) neural network potential showed more consistent performance across both main-group and organometallic species, with MAEs of 0.261 V and 0.262 V respectively [4]. The semiempirical GFN2-xTB method performed reasonably for main-group molecules but exhibited significantly poorer accuracy for organometallic complexes (MAE = 0.733 V) [4].

For electron affinity calculations, the r2SCAN-3c and ωB97X-3c functionals provided the best agreement with experimental data for main-group organic and inorganic species, while the UMA-S potential gave lower errors than both functionals for organometallic coordination complexes (MAE = 0.284 eV versus 0.330 eV and 0.381 eV) [4]. These findings highlight the importance of method selection based on the specific chemical system under investigation.

Point Defect Formation Energy Validation

In materials science applications, predicting point defect formation energies represents another critical energy validation metric. Semi-local DFT functionals with a-posteriori corrections are often employed for high-throughput screening of defect properties, though their quantitative accuracy remains limited compared to hybrid functional approaches.

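The formation energy of a defect X in charge state q is calculated as:

$$E^{f}(X^{q}, \varepsilon_F) = E_{\mathrm{tot}}(X^{q}) - E_{\mathrm{tot}}(\mathrm{bulk}) - \sum_i n_i \mu_i + q\,\varepsilon_F + E_{\mathrm{corr}}$$

where $n_i$ is the number of atoms of species $i$ added to ($n_i > 0$) or removed from ($n_i < 0$) the supercell, $\mu_i$ is the corresponding chemical potential, $\varepsilon_F$ is the Fermi level, and the correction term $E_{\mathrm{corr}}$ addresses spurious periodic image interactions and potential alignment issues [12]. Benchmarking against 245 "gold standard" hybrid calculations revealed that while semi-local DFT methods can provide useful qualitative trends for materials screening applications, their quantitative accuracy for defect transition levels and formation energies remains limited, particularly for systems with significant charge localization effects [12].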
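The formation-energy expression above translates directly into a short function. This is a minimal sketch: the function and argument names are hypothetical, the numbers are invented, and the Fermi level is passed exactly as it appears in the formula, so any reference to the valence-band maximum has to be folded in by the caller.

```python
def defect_formation_energy(e_defect, e_bulk, delta_n, mu, charge,
                            fermi_level, e_corr=0.0):
    """Formation energy (eV) of a charged point defect.

    e_defect, e_bulk: total energies of the defective and pristine supercells (eV).
    delta_n: {species: atoms added (+) or removed (-)}.
    mu: {species: chemical potential in eV}.
    charge: defect charge state q.
    fermi_level: Fermi level in eV, in the convention of the formula above.
    e_corr: finite-size and potential-alignment correction (eV).
    """
    exchange = sum(count * mu[species] for species, count in delta_n.items())
    return e_defect - e_bulk - exchange + charge * fermi_level + e_corr


# Invented numbers for a doubly charged vacancy, for illustration only.
energy = defect_formation_energy(
    e_defect=-995.0,
    e_bulk=-1000.0,
    delta_n={"O": -1},   # one oxygen atom removed
    mu={"O": -4.5},
    charge=2,
    fermi_level=0.5,
    e_corr=0.2,
)
print(round(energy, 3))
```

Scanning `fermi_level` across the band gap for several charge states and locating the crossing points of the resulting lines gives the defect transition levels mentioned in the benchmark.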

Experimental Protocols for Energy Validation

Experimental reduction potential values are typically determined through electrochemical measurements in appropriate solvent systems. The benchmarking study by Neugebauer et al. compiled experimental reduction potential data for 193 main-group species and 120 organometallic species, with geometries optimized using GFN2-xTB and solvent corrections applied using the Extended Conductor-like Polarizable Continuum Solvation Model (CPCM-X) [4].

For electron affinity validation, experimental gas-phase values were obtained from established literature for 37 simple main-group organic and inorganic species [4]. For organometallic systems, electron affinities were derived from experimental ionization energies of coordination complexes by reversing the sign of the reported values [4]. All DFT computations in these benchmark studies were conducted with strict convergence criteria, including a (99, 590) integration grid with robust pruning and an integral tolerance of 10⁻¹⁴ to ensure numerical accuracy [4].
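On the computational side, an electron affinity is obtained as the total-energy difference between the neutral species and its anion. The sketch below shows that arithmetic with a unit conversion from hartree to eV. The function name and the energies are hypothetical, and whether the result is a vertical or an adiabatic electron affinity depends on which geometries the two energies were computed at, which this sketch leaves to the caller.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA 2018 value


def electron_affinity_ev(e_neutral_hartree, e_anion_hartree):
    """Electron affinity in eV; positive when the anion is more stable."""
    return (e_neutral_hartree - e_anion_hartree) * HARTREE_TO_EV


# Invented total energies in hartree, for illustration only.
ea = electron_affinity_ev(-100.000, -100.050)
print(round(ea, 4))
```

A positive value indicates that attaching an electron lowers the total energy, which matches the sign convention used for experimental gas-phase electron affinities.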

Spectroscopic Parameter Validation

Vibrational Frequency Prediction

The accuracy of DFT in predicting vibrational frequencies is commonly assessed through comparison with experimental infrared and Raman spectroscopy data. Performance varies significantly with the choice of functional and basis set, with different combinations excelling for different molecular systems.

Table 3: Performance of DFT Methods for Vibrational Spectroscopy

| System | Optimal Method | Key Metrics | Correlation with Experiment |
|---|---|---|---|
| Triclosan | LSDA/6-311G | Best vibrational frequency prediction among the functionals tested | - |
| 5-(4-chlorophenyl)-2-amino-1,3,4-thiadiazole | B3LYP/6-31+G(d,p) | Vibrational frequencies | R² = 0.998 |
| Graphene/GO | B3LYP/6-311G | Longitudinal optical mode | 1585 cm⁻¹ (graphene), 1582 cm⁻¹ (graphene oxide) |
| Corannulene/Coronene | B3LYP/6-311G | IR and Raman intensity | Aligns with theoretical predictions |

For triclosan, the LSDA functional with the 6-311G basis set predicted vibrational spectra more accurately than the other functionals tested, including hybrid methods [9]. The study employed the wavenumber-linear scaling (WLS) method to correct the systematic overestimation of calculated harmonic frequencies, which arises from the neglect of anharmonicity and the incomplete treatment of electron correlation [9].
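In wavenumber-linear scaling, the scale factor itself decreases linearly with the calculated wavenumber, so high-frequency stretches are scaled down more strongly than low-frequency modes. The sketch below implements that form. The function name is hypothetical, and the default coefficients (1.0087 and 1.63 × 10⁻⁵ cm) are values commonly quoted in the literature for B3LYP/6-311+G(d,p); they are an assumption here, not the parameters of the triclosan study, and should be refit for other methods.

```python
def wls_scale(wavenumber_cm1, intercept=1.0087, slope=1.63e-5):
    """Apply wavenumber-linear scaling to a calculated harmonic wavenumber.

    scaled = calc * (intercept - slope * calc)
    The default coefficients are assumed literature values for
    B3LYP/6-311+G(d,p), not parameters from the study discussed above.
    """
    if wavenumber_cm1 < 0:
        raise ValueError("imaginary modes should be handled before scaling")
    return wavenumber_cm1 * (intercept - slope * wavenumber_cm1)


# Scale a few calculated harmonic wavenumbers (cm^-1), for illustration only.
for calc in (500.0, 1600.0, 3000.0):
    print(calc, round(wls_scale(calc), 1))
```

Compared with a single uniform scale factor, this form needs two fitted parameters but avoids over-correcting the fingerprint region when the factor is tuned to X-H stretches.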

For 5-(4-chlorophenyl)-2-amino-1,3,4-thiadiazole, DFT calculations at the B3LYP/6-31+G(d,p) level showed excellent correlation with experimental vibrational frequencies (R² = 0.998) [11]. The B3LYP functional with the 6-311G basis set also successfully predicted the longitudinal optical (LO) vibration mode in graphene-based systems, yielding values of 1585 cm⁻¹ for graphene and 1582 cm⁻¹ for graphene oxide that aligned well with theoretical predictions [13].

Electronic Spectroscopy and Properties

DFT calculations also facilitate the prediction of electronic spectroscopic properties, including UV-Vis absorption spectra and electronic transition energies. For 5-(4-chlorophenyl)-2-amino-1,3,4-thiadiazole, computational analysis revealed n→π* UV absorption characteristics and a significant first-order hyperpolarizability, suggesting potential applications in nonlinear optics [11].

The electronic properties of corannulene (C₂₀, C₂₀O) and coronene (C₂₄, C₂₄O) systems, including HOMO-LUMO energy levels and band gaps, have been successfully modeled using DFT with the B3LYP hybrid functional and the 6-311G basis set [13]. The calculated band gaps, between 2.1 eV and 3.7 eV for the corannulene systems and between 1.68 eV and 3.5 eV for the coronene systems, provided insights into their electronic structures and reactivity [13].
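A HOMO-LUMO gap is read off from the list of orbital energies that any DFT code prints. The sketch below assumes a closed-shell system, where each occupied orbital holds two electrons. The function name and the orbital energies are hypothetical, not values from the corannulene or coronene calculations.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA 2018 value


def homo_lumo_gap_ev(orbital_energies_hartree, n_electrons):
    """Return (HOMO, LUMO, gap) in eV for a closed-shell system."""
    if n_electrons <= 0 or n_electrons % 2 != 0:
        raise ValueError("this sketch assumes a positive, even electron count")

    energies = sorted(orbital_energies_hartree)
    homo_index = n_electrons // 2 - 1  # doubly occupied orbitals
    if homo_index + 1 >= len(energies):
        raise ValueError("no virtual orbital available for the LUMO")

    homo = energies[homo_index] * HARTREE_TO_EV
    lumo = energies[homo_index + 1] * HARTREE_TO_EV
    return homo, lumo, lumo - homo


# Invented orbital energies in hartree, for illustration only.
homo, lumo, gap = homo_lumo_gap_ev([-0.50, -0.30, -0.25, -0.10, 0.05], 6)
print(round(gap, 3))
```

The Kohn-Sham gap obtained this way is not the same quantity as a measured optical gap, so comparisons with UV-Vis data are better made through TD-DFT excitation energies.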

Experimental Protocols for Spectroscopic Validation

Experimental vibrational validation typically employs Fourier-transform infrared (FT-IR) spectroscopy with samples prepared as KBr pellets for solid compounds [11]. Raman spectroscopy complements IR measurements, with spectra recorded across appropriate wavenumber ranges (e.g., 500-3500 cm⁻¹) [9]. For electronic spectroscopy, UV-Vis absorption spectra are measured in suitable solvents using spectrophotometers, with comparison to time-dependent DFT (TD-DFT) calculations for assignment of electronic transitions [11].

NMR spectroscopy provides additional validation through comparison of calculated chemical shifts with experimental ¹H and ¹³C NMR data [11]. High-resolution mass spectrometry (HRMS) serves to verify molecular ion peaks and confirm elemental compositions [11].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Computational Tools for DFT Validation

| Item | Function | Application Examples |
|---|---|---|
| Gaussian 09W/16 | Quantum chemistry software package | Geometry optimization, frequency calculations [9] |
| CP2K | DFT code specializing in solid-state and periodic systems | SiO₂ polymorph studies, zeolite frameworks [10] |
| Quantum ESPRESSO | Open-source DFT package for periodic systems | Magnetic ferrite simulations [2] |
| GFN2-xTB | Semiempirical quantum mechanical method | Initial geometry optimization, conformer searching [4] |
| B3LYP functional | Hybrid density functional | General-purpose geometry and frequency calculations [11] [9] |
| M06-2X functional | Hybrid meta-GGA density functional | Non-covalent interactions, precise geometry optimization [9] |
| 6-311++G(d,p) basis set | Triple-zeta valence basis set with polarization and diffuse functions | Accurate geometry prediction for organic molecules [9] |
| def2-TZVPD basis set | Triple-zeta valence basis set with polarization and diffuse functions | High-level reference calculations [4] |
| CPCM-X | Implicit solvation model | Solvent correction for reduction potential calculations [4] |

This comparative analysis demonstrates that DFT validation against experimental data remains system- and property-dependent. For geometric structure prediction, M06-2X/6-311++G(d,p) gave the lowest bond-length error for triclosan, while dispersion-corrected functionals are essential for periodic systems. For energy-related properties like reduction potentials, the B97-3c functional excels for main-group species, while neural network potentials like UMA-S show promising consistency across diverse chemical spaces. For spectroscopic validation, the optimal functional varies, with LSDA unexpectedly outperforming hybrid functionals for vibrational frequency prediction in some systems.

These findings underscore the importance of method validation for specific applications rather than relying on universal recommendations. Researchers should prioritize establishing validation protocols relevant to their target molecular systems and properties of interest. As computational methods continue to evolve, particularly with the emergence of machine-learning potentials, validation against robust experimental data will remain essential for ensuring predictive accuracy in drug development and materials design.

The validation of Density Functional Theory (DFT) against experimental crystallographic data represents a cornerstone of modern computational chemistry and materials science. This guide provides an objective comparison of various computational methods, with a specific focus on their performance in predicting molecular geometries and crystal packing, benchmarked against high-quality X-ray crystallographic data. The reliability of computational predictions is paramount for researchers in drug development and materials science, where in silico models are routinely used to predict molecular behavior, stability, and interactions before synthesis. We systematically evaluate the accuracy of multiple DFT functionals, semi-empirical methods, and machine learning potentials against experimental benchmarks for bond lengths, angles, and crystal packing arrangements, providing a clear framework for selecting appropriate computational tools based on specific research requirements.

Methodologies for Computational Validation

Experimental Crystallographic Benchmarking

The primary methodology for establishing ground-truth molecular geometries relies on single-crystal X-ray diffraction (SCXRD). This technique provides unambiguous three-dimensional structural information by measuring the diffraction pattern produced when X-rays interact with a crystalline sample [14]. The resulting electron density maps allow for precise determination of atomic positions, from which bond lengths, bond angles, and torsional angles can be derived with high accuracy. For organic compounds and small molecules, modern SCXRD can achieve precision in bond lengths of approximately 0.002 Å for non-hydrogen atoms [15], establishing it as the gold standard for structural validation.

When utilizing crystallographic data for benchmarking, several critical factors must be considered. The resolution of the diffraction data directly impacts model reliability, with higher resolution (typically <1.0 Å) providing greater atomic positioning accuracy [16]. Additionally, the completeness of the diffraction data and the clashscore of the refined model serve as important quality indicators. Researchers must also distinguish between equilibrium bond lengths (re) and vibrationally-averaged bond lengths (r0), as computational methods typically predict the former while experimental results from rotational spectra often provide the latter [17].

Computational Approaches for Geometry Prediction

Density Functional Theory (DFT) calculations represent the most widely used quantum mechanical approach for predicting molecular geometries. In a typical benchmarking study, computational methods are assessed by comparing predicted bond lengths, bond angles, and sometimes dihedral angles with their experimentally determined counterparts from crystallographic studies. The calculations involve geometry optimization of the target molecule, starting from either the experimental coordinates or a computationally generated structure, until a local energy minimum is located on the potential energy surface [15] [18].

For crystalline materials, more advanced approaches involve periodic DFT calculations that include the full crystal lattice parameters in the optimization process. This method allows for assessment of not only intramolecular geometry but also intermolecular interactions and crystal packing effects. In such cases, the root-mean-square Cartesian displacement (RMSD) between the experimental and optimized structures serves as a key metric, with values below 0.25 Å generally indicating correct structures [18].

Semi-empirical quantum mechanical methods such as GFN2-xTB offer a middle ground between accuracy and computational cost, enabling exhaustive conformational sampling of large molecular sets [19]. Recent advances also include machine learning potentials (MLPs) that can approach DFT-level accuracy at significantly reduced computational expense, making them increasingly valuable for crystal structure prediction [20].

Performance Comparison of Computational Methods

Accuracy in Bond Length Prediction

The performance of various computational methods was evaluated using creatininium cation structures as a benchmark system, with results compared against high-precision X-ray crystallographic data (Table 1) [15].

Table 1: Performance of Computational Methods for Bond Length Prediction

| Method | Type | Mean Bond Length Error (Å) | Rank |
|---|---|---|---|
| MPW1B95 | HMGGA | 0.0126 | 1 |
| PBEh | HGGA | 0.0129 | 2 |
| mPW1PW | HGGA | 0.0133 | 3 |
| SVWN5 | LSDA | 0.0142 | 4 |
| B97-2 | HGGA | 0.0144 | 5 |
| B3LYP | HGGA | 0.0178 | 16 |
| SCC-DFTB | SEMO | ~0.03 | >16 |

Note: HMGGA = Hybrid Meta Generalized Gradient Approximation; HGGA = Hybrid Generalized Gradient Approximation; LSDA = Local Spin Density Approximation; SEMO = Semiempirical Molecular Orbital

The data reveal significant variation in performance among DFT functionals. The top-performing functionals (MPW1B95, PBEh, mPW1PW) achieve mean bond length errors of approximately 0.013 Å, which is small in absolute terms but still several times larger than the experimental precision of about 0.002 Å [15]. Notably, the popular B3LYP functional performed less favorably, with an error of 0.0178 Å, ranking 16th among the 21 tested functionals. The semi-empirical SCC-DFTB method showed a substantially larger error of approximately 0.03 Å, highlighting the superior accuracy of DFT methods for geometric predictions [15].
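The ranking in Table 1 rests on a simple statistic: the mean absolute deviation between calculated and crystallographic bond lengths. The sketch below shows one way to compute and rank it. The function names and the bond lengths are illustrative assumptions, not values or code from the cited study.

```python
from statistics import mean

def mean_absolute_error(calculated, experimental):
    """Mean absolute deviation between paired bond lengths (in Å)."""
    if len(calculated) != len(experimental):
        raise ValueError("bond lists must have the same length")
    return mean(abs(c - e) for c, e in zip(calculated, experimental))

def rank_methods(predictions, experimental):
    """Return (method, MAE) pairs sorted from lowest to highest error."""
    errors = {name: mean_absolute_error(values, experimental)
              for name, values in predictions.items()}
    return sorted(errors.items(), key=lambda item: item[1])

# Made-up bond lengths (Å) for illustration only; NOT data from reference [15].
experimental = [1.335, 1.372, 1.458, 1.224]
predictions = {
    "functional_A": [1.340, 1.380, 1.470, 1.215],
    "functional_B": [1.350, 1.395, 1.480, 1.205],
}

for method, mae in rank_methods(predictions, experimental):
    print(f"{method}: MAE = {mae:.4f} Å")
```

In a real benchmark the same bonds must be paired in the same order for every method, and hydrogen-containing bonds are often treated separately because X-ray positions for hydrogen are less reliable.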

Conformer Generation and Torsional Angle Prediction

Beyond bond lengths, the accurate prediction of molecular conformations and torsional preferences represents another critical benchmarking area. Large-scale studies comparing over 3 million compounds have revealed that quantum chemical methods like GFN2-xTB can generate conformer ensembles that closely match experimental crystallographic geometries, particularly for molecules with fewer rotatable bonds [19].

Table 2: Performance of Conformer Generation Methods

| Method | Basis | RMSD for Small Molecules | RMSD for Protein Ligands |
|---|---|---|---|
| CREST/GFN2 | Quantum | ~0.2-0.5 Å (COD) | Higher RMSD (Platinum) |
| ETKDG | Crystallographic | Higher RMSD (COD) | Lower RMSD (Platinum) |

For small molecules from the Crystallography Open Database (COD), CREST/GFN2 ensembles demonstrated lower root-mean-square deviation (RMSD) values compared to the knowledge-based ETKDG method, particularly for molecules with zero to approximately 3-4 rotatable bonds [19]. This improved performance stems from better treatment of nonbonded interactions and electrostatics in the quantum mechanical method. However, for protein-bound ligands from the Platinum diverse set, ETKDG outperformed CREST/GFN2, suggesting that crystallographic data may better capture the extended conformations stabilized in binding sites [19].

Crystal Structure Prediction and Packing

The accurate prediction of complete crystal structures represents the most challenging benchmark, requiring correct reproduction of both molecular geometry and intermolecular packing. Recent evaluations of 13 state-of-the-art crystal structure prediction (CSP) algorithms revealed that performance remains far from satisfactory, with most algorithms struggling to identify correct space groups [20].

Machine learning potential-based CSP algorithms have achieved competitive performance compared to traditional DFT-based approaches, with success strongly dependent on the quality of the neural potentials and the global optimization algorithms employed [20]. For organic crystal structures, dispersion-corrected DFT (d-DFT) methods have demonstrated remarkable accuracy, with full energy minimization including unit-cell parameters producing average RMS Cartesian displacements of only 0.095 Å compared to experimental structures [18].

Figure 1: Workflow for Validating Computational Methods Against Crystallographic Data

Experimental Protocols

Crystallographic Data Collection and Processing

High-quality benchmarking requires meticulous attention to crystallographic data collection and processing protocols. Single crystals of suitable size and quality are mounted on diffractometers, and X-ray diffraction data are collected at appropriate temperatures (typically 100-293 K) [14]. The raw diffraction images are processed using specialized software to determine unit cell parameters and generate intensity data. Structure solution is typically achieved through direct methods or intrinsic phasing, followed by iterative least-squares refinement against F² values [14] [18].

Critical quality indicators must be monitored throughout this process, including data completeness, Rmerge, and the final R-factor values (Rwork and Rfree) [16]. For the deposited models of glutamate transporters, for instance, Rwork/Rfree values typically range from 21-30%, reflecting the moderate resolution (2.5-4.5 Å) of these membrane protein structures [16]. For small organic molecules, these values are generally significantly lower, reflecting higher precision.
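For readers less familiar with these indicators, the conventional crystallographic R-factor is R = Σ| |F_obs| − |F_calc| | / Σ|F_obs|, with Rwork evaluated on the reflections used in refinement and Rfree on a held-out test set. The sketch below illustrates that definition. It assumes the amplitudes are already on a common scale, and the function names and numbers are hypothetical.

```python
def r_factor(f_obs, f_calc):
    """Conventional R-factor: sum(| |Fobs| - |Fcalc| |) / sum(|Fobs|)."""
    numerator = sum(abs(abs(o) - abs(c)) for o, c in zip(f_obs, f_calc))
    denominator = sum(abs(o) for o in f_obs)
    return numerator / denominator

def r_work_and_free(f_obs, f_calc, free_flags):
    """Split reflections by the free flag and return (Rwork, Rfree)."""
    work = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if not free]
    test = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if free]
    r_work = r_factor(*zip(*work))
    r_free = r_factor(*zip(*test))
    return r_work, r_free

# Made-up structure-factor amplitudes, for illustration only.
f_obs = [100.0, 50.0, 25.0, 25.0]
f_calc = [90.0, 55.0, 25.0, 30.0]
free_flags = [False, False, False, True]

r_work, r_free = r_work_and_free(f_obs, f_calc, free_flags)
print(f"Rwork = {r_work:.3f}, Rfree = {r_free:.3f}")
```

Refinement programs also apply scale factors and resolution-dependent corrections before computing these values, which this sketch omits.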

Computational Benchmarking Procedures

Standardized computational protocols are essential for meaningful method comparisons. For molecular geometry assessments, researchers typically:

- Extract molecular structures from crystallographic data, removing solvent molecules and counterions if necessary [19]

- Perform geometry optimization using various computational methods, starting from the experimental coordinates [15]

- Calculate root-mean-square deviations (RMSD) between experimental and optimized structures [18]

- Statistically analyze differences in specific geometric parameters (bond lengths, angles, dihedrals) [15]

For crystal packing validation, more sophisticated approaches are required:

- Energy minimization of the complete crystal structure including unit cell parameters using dispersion-corrected DFT methods [18]

- Comparison of experimental and optimized structures using RMS Cartesian displacements [18]

- Analysis of intermolecular interactions and packing motifs before and after optimization [18]

RMSD values below 0.25 Å generally indicate correct structures, while higher values may signal problems with either the experimental model or the computational method [18].
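A minimal sketch of the RMS Cartesian displacement check follows. It assumes the two structures list the same atoms in the same order and are already expressed in a common Cartesian frame, so no alignment or unit-cell handling is performed. The function names and coordinates are hypothetical; only the 0.25 Å threshold comes from the text above.

```python
import math

def rms_cartesian_displacement(coords_a, coords_b):
    """Root-mean-square displacement (Å) between matched atom positions."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("need two non-empty coordinate lists of equal length")
    total = 0.0
    for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b):
        total += (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
    return math.sqrt(total / len(coords_a))

def is_consistent(rmsd, threshold=0.25):
    """Apply the 0.25 Å rule of thumb quoted in the text."""
    return rmsd < threshold

# Made-up coordinates (Å), for illustration only.
experimental = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (0.0, 1.2, 0.0)]
optimized = [(0.1, 0.0, 0.0), (1.5, 0.1, 0.0), (0.0, 1.2, 0.1)]

rmsd = rms_cartesian_displacement(experimental, optimized)
print(f"RMSD = {rmsd:.3f} Å, consistent: {is_consistent(rmsd)}")
```

For periodic structures, the comparison would additionally need to account for changes in the unit cell and for the choice of which atoms to include.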

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources

| Resource | Type | Function | Example Sources |
|---|---|---|---|
| Crystallographic Databases | Data | Source of experimental reference structures | Crystallography Open Database (COD), Cambridge Structural Database (CSD) [19] |
| Quantum Chemistry Software | Computational | Molecular geometry optimization | ORCA, Gaussian, CREST [19] [15] |
| DFT Functionals | Computational | Electron exchange-correlation approximation | MPW1B95, PBEh, B3LYP [15] |
| Semi-empirical Methods | Computational | Rapid conformational sampling | GFN2-xTB, AM1, PM3 [19] [15] |
| CSP Algorithms | Computational | Crystal structure prediction | CALYPSO, USPEX, GNOA [20] |
| Benchmark Platforms | Validation | Performance assessment of methods | CSPBench, CCCBDB [20] [17] |

This comparison guide demonstrates that careful benchmarking against crystallographic data remains essential for validating computational methods in chemical research. DFT methods, particularly hybrid functionals like MPW1B95 and PBEh, provide good agreement with experimental bond lengths, with mean errors of roughly 0.013 Å. For conformational sampling, quantum chemical methods like GFN2-xTB outperform knowledge-based approaches for small molecules in the gas phase, while crystallography-derived methods maintain advantages for protein-bound ligands. In crystal structure prediction, machine learning potentials are achieving competitive performance with traditional DFT-based approaches, though significant challenges remain. Researchers should select computational methods based on their specific needs, considering the trade-offs between accuracy, computational cost, and applicability to their chemical systems of interest. As computational power increases and methods evolve, ongoing benchmarking against experimental crystallographic data will continue to be essential for methodological advancement and reliable application in drug development and materials design.

Density Functional Theory (DFT) has become an indispensable tool in computational chemistry, enabling the prediction of molecular properties by solving the fundamental equations of quantum mechanics. A critical validation of its performance lies in its ability to reproduce experimental spectroscopic data, particularly Nuclear Magnetic Resonance (NMR) parameters. This guide provides an objective comparison of DFT methodologies for predicting NMR chemical shifts and scalar coupling constants (J-couplings), benchmarking performance against experimental data and higher-level computational methods to establish reliability and identify limitations in the context of pharmaceutical and materials research.

Performance Comparison of DFT Methodologies

Accuracy of Chemical Shift Predictions

Extensive benchmarking studies have established the typical accuracy levels achievable with modern DFT approaches for predicting NMR chemical shifts. The table below summarizes the performance of various methodologies for a complex drug molecule, (R)-ispinesib, and small organic molecules.

Table 1: Accuracy of DFT for Predicting NMR Chemical Shifts in Drug Molecules and Small Organic Molecules

| Method | Basis Set | Nucleus | Mean Absolute Error (MAE) | System Studied |
|---|---|---|---|---|
| O3LYP [21] | DGDZVP [21] | ¹H | 0.174 ppm [21] | (R)-ispinesib [21] |
| O3LYP [21] | DGDZVP [21] | ¹³C | 3.972 ppm [21] | (R)-ispinesib [21] |
| B3LYP [22] | 6-31G(d) [22] | ¹H | 0.185 ppm [22] | Small Organic Molecules (NMRShiftDB2) [22] |
| B3LYP [22] | 6-31G(d) [22] | ¹³C | 0.944 ppm [22] | Small Organic Molecules (NMRShiftDB2) [22] |
| B3LYP [22] | 6-31G(d) [22] | ¹H | 0.078 ppm [22] | Small Organic Molecules (CHESHIRE) [22] |
| B3LYP [22] | 6-31G(d) [22] | ¹³C | 0.504 ppm [22] | Small Organic Molecules (CHESHIRE) [22] |
| Not Specified [21] | 6-31++G(d,p) [21] | ¹H | ~0.2 ppm [21] | Complex Drug Molecules [21] |
| Not Specified [21] | 6-31++G(d,p) [21] | ¹³C | <~6.0 ppm [21] | Complex Drug Molecules [21] |

The data demonstrate that DFT can achieve high accuracy for ¹H chemical shifts, with MAE values often below 0.2 ppm, which is sufficient for distinguishing between many different chemical environments [21]. For ¹³C nuclei, errors are larger but remain chemically insightful, typically under 6 ppm for complex drug molecules and below 1 ppm for optimized small-molecule datasets [22] [21]. The choice of basis set is crucial, with double-ζ basis sets like DGDZVP and 6-31++G(d,p) often providing an optimal balance of accuracy and computational cost, sometimes outperforming larger triple-ζ sets [21].

Accuracy of Scalar Coupling Constant (J) Predictions

The prediction of scalar coupling constants presents a greater challenge for DFT than chemical shifts. J-couplings, especially the dominant Fermi contact term, are highly sensitive to the electron density at the nucleus, requiring high-quality wavefunctions [23].

Table 2: Performance of Computational Methods for Scalar Coupling Constants

| Method | Type of Coupling | Performance / Key Findings |
|---|---|---|
| DFT (General) [23] | Multiple types (¹J, ²J, ³J) | More demanding than chemical shift calculations due to sensitivity to wavefunction near the nucleus [23]. |
| Graph Angle-Attention Neural Network (GAANN) [24] | Multiple types (¹J, ²J, ³J) | Prediction accuracy log(MAE) = -2.52, close to DFT accuracy but much faster [24]. |
| DFT for Enantiospecificity | J-couplings between enantiomers | Fails to explain reported enantiospecific NMR responses; differences between enantiomers are negligible and attributable to numerical noise [25]. |
| DFT/FPT (B3PW91/6-311G) [26] | Hydrogen-bond couplings (e.g., \( ^{h2}J_{\mathrm{N-H\cdots N}} \)) | Can successfully calculate J-couplings through hydrogen bonds and correlate them with H-bond distances [26]. |

A significant finding from recent research is that standard DFT calculations are parity-conserving, meaning they predict identical J-couplings for two enantiomers (mirror-image molecules) [25]. Reported enantiospecific differences in cross-polarization NMR experiments are likely due to variations in sample conditions (purity, crystallinity) rather than calculable differences in J-couplings themselves [25]. For standard applications, machine learning models like the Graph Angle-Attention Neural Network (GAANN) now offer accuracy close to DFT calculations at a fraction of the computational cost, highlighting a growing trend in the field [24].

Experimental and Computational Protocols

Standard Protocol for Chemical Shift Calculation

A robust workflow for calculating NMR chemical shifts involves multiple steps to ensure accuracy and reliability, from initial structure generation to final calculation.

Diagram 1: Chemical shift calculation workflow.

1. Initial Structure and Conformer Generation: The process begins with a 2D molecular representation (e.g., SMILES or InChI). For flexible molecules, multiple low-energy 3D conformers are generated using algorithms like ETKDG and force fields like MMFF94 [22] [27]. The lowest-energy conformer is typically selected, or chemical shifts are Boltzmann-averaged across several low-energy conformers [27].

2. Geometry Optimization: This is a critical step. The initial 3D structure must be optimized using DFT to locate a true energy minimum. Complete geometry optimization is essential for achieving the highest accuracy in both ¹H and ¹³C chemical shifts [21]. This can be performed in the gas phase or, more accurately, using an implicit solvation model (e.g., PCM, SMD, or COSMO) to mimic the experimental solvent environment [21] [27].

3. NMR Calculation with GIAO: The chemical shielding tensor (σ) is calculated for the optimized geometry using the Gauge-Including Atomic Orbital (GIAO) method, which ensures results are independent of the coordinate system origin [21] [28]. This calculation is performed at a consistent level of theory (functional and basis set).

4. Referencing and Linear Regression: The isotropic shielding constant (σ_i) for each nucleus is converted to a chemical shift (δ_i) by referencing against a standard like tetramethylsilane (TMS): δ_i = σ_ref − σ_i + δ_ref, where σ_ref is the shielding of the reference compound computed at the same level of theory and δ_ref for TMS is 0 ppm [27]. For greater accuracy, empirical linear regression (scaling) between calculated shieldings and experimental shifts of a training set is often applied [22].
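The post-processing in steps 1 and 4 can be sketched in a few lines: Boltzmann-weight the conformers, average their shieldings, then convert to shifts either by direct referencing or by a fitted linear scaling. All function names and numerical values below are illustrative assumptions. The shieldings themselves would come from a GIAO calculation in a quantum chemistry package.

```python
import math

GAS_CONSTANT = 0.0019872041  # kcal/(mol*K)

def boltzmann_weights(relative_energies, temperature=298.15):
    """Conformer weights from relative energies in kcal/mol."""
    factors = [math.exp(-e / (GAS_CONSTANT * temperature))
               for e in relative_energies]
    total = sum(factors)
    return [f / total for f in factors]

def average_shieldings(conformer_shieldings, weights):
    """Weighted average of per-nucleus shieldings across conformers."""
    n_nuclei = len(conformer_shieldings[0])
    return [sum(w * conf[i] for w, conf in zip(weights, conformer_shieldings))
            for i in range(n_nuclei)]

def reference_shifts(shieldings, sigma_ref, delta_ref=0.0):
    """Direct referencing: delta_i = sigma_ref - sigma_i + delta_ref."""
    return [sigma_ref - s + delta_ref for s in shieldings]

def fit_linear_scaling(shieldings, experimental_shifts):
    """Least-squares fit of delta_exp = slope * sigma + intercept."""
    n = len(shieldings)
    mean_s = sum(shieldings) / n
    mean_d = sum(experimental_shifts) / n
    sxx = sum((s - mean_s) ** 2 for s in shieldings)
    sxy = sum((s - mean_s) * (d - mean_d)
              for s, d in zip(shieldings, experimental_shifts))
    slope = sxy / sxx
    return slope, mean_d - slope * mean_s

# Made-up numbers: two conformers, two 1H nuclei, shieldings in ppm.
energies = [0.0, 0.5]
shieldings = [[30.10, 24.80], [30.30, 24.60]]

weights = boltzmann_weights(energies)
sigma = average_shieldings(shieldings, weights)
print(reference_shifts(sigma, sigma_ref=31.50))
```

With a training set in hand, the slope and intercept returned by `fit_linear_scaling` replace the single reference value, which absorbs part of the systematic error of the chosen functional and basis set.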

Protocol for Scalar Coupling Constants

The calculation of J-couplings is more specialized. The Fermi contact (FC) term is usually dominant and requires a high-quality basis set capable of describing the wavefunction correctly at the atomic nucleus [23]. The finite perturbation theory (FPT) approach is commonly used within DFT frameworks [26] [23]. As with chemical shifts, using a well-optimized geometry is paramount. It is critical to use identical, mirror-image conformations when comparing enantiomers, as conformational differences (e.g., dihedral angles) can induce large apparent variations in J-couplings via the Karplus relationship, which are easily mistaken for genuine enantiospecific effects [25].
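The conformational sensitivity mentioned above can be illustrated with the general Karplus form, J(φ) = A cos²φ + B cos φ + C. The coefficients in this sketch are placeholders of a plausible magnitude for vicinal couplings, not values taken from the cited work, and real applications require coefficients parameterized for the specific coupling type.

```python
import math

def karplus(dihedral_deg, a=7.8, b=-1.0, c=1.4):
    """Karplus-type estimate of a vicinal coupling (Hz).

    The default coefficients are illustrative placeholders only.
    """
    cos_phi = math.cos(math.radians(dihedral_deg))
    return a * cos_phi ** 2 + b * cos_phi + c

# Mirror-image conformations have dihedral angles of opposite sign.
j_plus = karplus(60.0)
j_minus = karplus(-60.0)
# A modest conformational mismatch between the two enantiomer models.
j_shifted = karplus(70.0)

print(f"J(+60°) = {j_plus:.2f} Hz, J(-60°) = {j_minus:.2f} Hz")
print(f"J(70°)  = {j_shifted:.2f} Hz, difference = {j_plus - j_shifted:.2f} Hz")
```

Because the cosine is an even function, exact mirror-image geometries give identical couplings in this model, whereas a 10° mismatch in one dihedral already shifts the estimate by a sizeable fraction of a hertz.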

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Software, Functionals, and Basis Sets for DFT-NMR Validation

| Tool Name | Type | Primary Function & Application Notes |
|---|---|---|
| Gaussian [21] | Software Suite | Industry-standard software for quantum chemistry calculations, widely used for NMR parameter prediction via GIAO [21]. |
| ORCA [25] | Software Suite | Quantum chemistry package, free of charge for academic use, featuring advanced methods (e.g., X2C) for relativistic NMR calculations [25]. |
| NWChem [27] | Software Suite | High-performance computational chemistry software used for automating chemical shift calculations on large molecule sets [27]. |
| B3LYP [22] [21] | Hybrid Functional | The dominant hybrid functional for NMR calculations, offering a reliable balance of accuracy for various systems [22] [21]. |
| PBE0/BP86 [29] | Functional | BP86 is a popular GGA functional and PBE0 is a hybrid functional built on PBE; both are often used for geometry optimization and property calculations [29]. |
| 6-31G(d) / 6-31++G(d,p) [21] | Basis Set | Pople-style basis sets; double-ζ with polarization and diffuse functions offer a good cost-accuracy balance for NMR [21]. |
| def2-TZVP [25] | Basis Set | Ahlrichs-style triple-ζ basis set with polarization, used for higher-accuracy calculations [25]. |
| DGDZVP [21] | Basis Set | Double-ζ basis set specifically developed for DFT, often excellent for NMR chemical shift prediction [21]. |
| IGLO-III [23] | Basis Set | Historically significant basis set designed for NMR property calculations (IGLO = Individual Gauge for Localized Orbitals) [23]. |

DFT has matured into a highly reliable tool for predicting NMR chemical shifts, with accuracy often sufficient to guide structural assignment and elucidation in complex drug molecules and organic compounds. Its performance for scalar coupling constants is more nuanced; while it successfully predicts J-couplings in hydrogen-bonded systems and for conformational analysis, it cannot explain purported enantiospecific couplings, a limitation rooted in its parity-conserving nature. The synergy between experimental NMR spectroscopy and DFT calculations, when protocols are carefully followed, provides a powerful framework for validating molecular structures, with emerging machine learning methods offering a promising path for rapid, large-scale predictions.

The Critical Role of Validated Datasets for Method Development and Benchmarking

Density Functional Theory (DFT) serves as the workhorse of modern quantum mechanics calculations for molecular and periodic structures, yet its predictive reliability depends critically on the quality of validation against experimental data. Despite countless studies demonstrating DFT's accuracy across various systems, few have comprehensively targeted industrially-relevant materials or provided clear guidance on functional selection, expected deviation from experimental values, or pseudopotential performance [30]. This validation gap becomes particularly problematic as researchers increasingly rely on computational data to train machine learning interatomic potentials (MLIPs), where errors in underlying DFT calculations propagate and potentially amplify through trained models. The foundational question remains: how can practitioners distinguish between methodological limitations and numerical errors in their computational workflows?

The emergence of large-scale DFT datasets has attempted to address this challenge, but recent investigations reveal surprising inconsistencies in even widely-used benchmark data. This analysis examines the critical importance of validated datasets for robust method development and benchmarking in computational chemistry, providing a structured comparison of available resources and their experimental validation status to guide researchers in selecting appropriate datasets for their specific applications.

The Current Landscape of DFT Datasets

The proliferation of DFT datasets has created both opportunities and challenges for computational chemists. These datasets vary dramatically in chemical diversity, numerical quality, and experimental validation, factors that directly impact their utility for method development and benchmarking.

Table 1: Key Characteristics of Major Molecular DFT Datasets

| Dataset | Size (Configurations) | Level of Theory | Chemical Diversity | Reported Validation |
|---|---|---|---|---|
| OMol25 [31] | 100 million | ωB97M-V/def2-TZVPD | 83 elements (H-Bi); biomolecules, metal complexes, electrolytes | Extensive baseline MLIP benchmarks; internal consistency checks |
| ANI-1x [32] | 5.0 million (6-31G*), 4.6 million (def2-TZVPP) | ωB97x/6-31G* and ωB97x/def2-TZVPP | Organic molecules and drug-like compounds | Comparison between basis sets; limited experimental validation |
| SPICE [32] | 2.0 million | ωB97M-D3(BJ)/def2-TZVPPD | Small molecules and peptides | Intended for biomolecular force field development |
| ANI-1xbb [32] | 13.1 million | B97-3c | Diverse organic molecules | Focus on broad coverage rather than high accuracy |
| QCML [32] | 33.5 million | PBE0 | Focused chemical space | High-throughput screening oriented |
| Transition1x [32] | 9.6 million | ωB97x/6-31G(d) | Reaction transition states | Chemical reaction benchmarking |

Recent analyses have uncovered significant quality concerns in several popular datasets. A 2025 study examining net forces in DFT datasets found that the ANI-1x, Transition1x, AIMNet2, and SPICE datasets contain unexpectedly large nonzero net forces, indicating suboptimal DFT settings and numerical errors [32]. When compared with forces recomputed using more reliable DFT settings at the same level of theory, the average force errors ranged from 1.7 meV/Å in the SPICE dataset to 33.2 meV/Å in the ANI-1x dataset [32]. Such errors are particularly concerning given that general-purpose MLIP force mean absolute errors are now approaching 10 meV/Å, meaning that errors in training data may fundamentally limit model accuracy.

Diagram 1: The dataset development and validation workflow. The red node highlights quality control as a critical checkpoint, while green indicates experimental validation - the essential step often minimized in practice.

Case Studies: Dataset Validation in Practice

OMol25: A High-Precision Benchmark

The OMol25 dataset represents a significant advancement in dataset quality, incorporating rigorous quality control measures and extensive chemical diversity. With over 100 million calculations at the ωB97M-V/def2-TZVPD level, OMol25 spans 83 elements (H through Bi) and includes biomolecules, metal complexes, and electrolytes [31]. The dataset implements stringent numerical precision protocols, including the DEFGRID3 setting in ORCA 6.0.0 with 590 angular points for exchange-correlation and 302 for COSX, specifically designed to mitigate numerical noise between energy gradients and forces [31]. This attention to numerical detail results in negligible net forces throughout the dataset, addressing a key limitation of earlier resources.

OMol25's validation approach includes comprehensive baseline evaluations using state-of-the-art equivariant graph neural network architectures (eSEN, GemNet-OC, MACE) with explicit reporting of out-of-distribution test errors [31]. The dataset also includes specialized benchmarking tasks such as conformer ensemble ranking, protein-ligand interaction energies, and spin-gap calculations, providing multifaceted validation metrics beyond simple energy comparisons.

Experimental Validation in Materials Science

The National Institute of Standards and Technology (NIST) addresses DFT validation through targeted studies on industrially-relevant material systems [30]. Their work focuses on three critical domains: pure and alloy solids for CALPHAD method development, metal-organic frameworks (MOFs) for carbon capture applications, and metallic nanoparticles for catalytic applications. In each domain, systematic comparisons between different functionals, pseudopotentials, and basis sets provide practical guidance for method selection.

A particularly insightful finding from NIST's work concerns the sensitivity of MOF adsorption properties to the choice of partial charge calculation scheme [30]. Despite using identical DFT methodologies, different charge derivation approaches produced significantly different equilibrium properties for MOF-adsorbate systems, highlighting how methodological choices beyond the core DFT calculation can impact predictive accuracy and experimental agreement.

Machine Learning Correction of DFT Errors

A 2025 study demonstrated a machine learning approach to correct systematic errors in DFT-calculated formation enthalpies for alloys [33]. By training neural network models on the discrepancy between DFT-calculated and experimentally measured enthalpies for binary and ternary alloys, researchers achieved significant improvements in phase stability predictions. The model utilized structured feature sets including elemental concentrations, atomic numbers, and interaction terms to capture key chemical effects, then applied these corrections to the Al-Ni-Pd and Al-Ni-Ti systems relevant for high-temperature aerospace applications [33].

This approach highlights a pragmatic middle ground: rather than seeking perfect DFT functionals, researchers can develop targeted error correction models trained on well-validated experimental data, effectively bridging the accuracy gap for specific applications.

Quantitative Benchmarking of Computational Methods

Recent comparative studies provide valuable insights into the relative performance of different computational methods when validated against experimental data.

Table 2: Method Performance on Experimental Reduction Potentials (Mean Absolute Error in V) [4]

| Method | Main-Group Species (OROP) | Organometallic Species (OMROP) | Validation Approach |
|---|---|---|---|
| B97-3c | 0.260 | 0.414 | Experimental reduction potentials in solvent |
| GFN2-xTB | 0.303 | 0.733 | Experimental reduction potentials in solvent |
| eSEN-S (OMol25) | 0.505 | 0.312 | Experimental reduction potentials in solvent |
| UMA-S (OMol25) | 0.261 | 0.262 | Experimental reduction potentials in solvent |
| UMA-M (OMol25) | 0.407 | 0.365 | Experimental reduction potentials in solvent |

The benchmarking results reveal several noteworthy patterns. First, the performance of methods varies significantly between main-group and organometallic species, highlighting the domain dependence of computational accuracy [4]. Surprisingly, certain neural network potentials (UMA-S) achieved accuracy comparable to or better than traditional DFT for organometallic systems despite not explicitly incorporating charge-based physics in their architecture. Second, method performance is not necessarily correlated with computational cost, with the smaller UMA-S model outperforming the larger UMA-M model on both chemical domains [4].

For electron affinity predictions, the same study found that OMol25-trained NNPs performed comparably to low-cost DFT and semiempirical quantum mechanical methods for main-group species but showed advantages for organometallic complexes, suggesting that data-driven approaches may offer particular benefits for chemically complex systems [4].

Experimental Protocols for DFT Validation

Force Validation Protocol

The 2025 force accuracy study established a rigorous protocol for validating DFT forces [32]:

- Sample Selection: Randomly select 1000 configurations from the target dataset

- Reference Computation: Recompute forces using the same functional and basis set but with tighter numerical settings

- Error Metric Calculation: Compute root mean square errors (RMSE) for force components

- Net Force Analysis: Calculate the magnitude of net forces per atom as an initial error indicator

This protocol identified that disabling the RIJCOSX approximation in older ORCA versions eliminated significant net forces in several datasets, providing both a specific remediation for existing data and a warning for future dataset generation [32].
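The two error metrics in this protocol are straightforward to compute once forces are available. In an isolated molecule without external fields, the atomic forces should sum to zero, so a nonzero net force signals numerical error. The sketch below assumes forces are stored as lists of (fx, fy, fz) tuples in meV/Å. The function names and values are hypothetical and do not reproduce the code of the cited study.

```python
import math

def net_force_per_atom(forces):
    """Magnitude of the summed force vector divided by the atom count."""
    sx = sum(f[0] for f in forces)
    sy = sum(f[1] for f in forces)
    sz = sum(f[2] for f in forces)
    return math.sqrt(sx * sx + sy * sy + sz * sz) / len(forces)

def force_component_rmse(forces, reference_forces):
    """RMSE over all Cartesian force components of a configuration."""
    squared = [(a - b) ** 2
               for f, r in zip(forces, reference_forces)
               for a, b in zip(f, r)]
    return math.sqrt(sum(squared) / len(squared))

# Made-up forces (meV/Å) for a three-atom configuration.
dataset_forces = [(12.0, -3.0, 0.0), (-10.0, 1.0, 0.0), (1.0, 2.0, 3.0)]
# Hypothetical forces recomputed with tighter settings; these sum to zero.
reference_forces = [(11.0, -3.0, 0.0), (-11.0, 1.0, 0.0), (0.0, 2.0, 0.0)]

print(f"net force per atom: {net_force_per_atom(dataset_forces):.2f} meV/Å")
print(f"component RMSE:     "
      f"{force_component_rmse(dataset_forces, reference_forces):.2f} meV/Å")
```

The net-force check needs no reference calculation, which is why it serves as a cheap first screen before any forces are recomputed.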

Thermodynamic Property Validation

The ML correction study for formation enthalpies implemented this validation workflow [33]:

- Data Curation: Filter experimental formation enthalpies to exclude unreliable values

- Feature Engineering: Create structured input features including elemental concentrations, weighted atomic numbers, and interaction terms

- Model Training: Train neural network regressors to predict DFT-experiment discrepancies

- Cross-Validation: Apply leave-one-out and k-fold cross-validation to prevent overfitting

- Application: Apply corrected enthalpies to phase diagram calculations

This approach demonstrated that ML corrections could significantly improve predictive accuracy for ternary phase stability while maintaining computational efficiency [33].
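The cross-validation step can be illustrated with a deliberately simplified stand-in. The sketch below replaces the neural network regressors of the study with a one-feature linear fit and uses made-up numbers, so it shows only the leave-one-out logic, not the published model. All names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def leave_one_out_mae(xs, ys):
    """Refit with each point held out and average the held-out errors."""
    errors = []
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        slope, intercept = fit_line(train_x, train_y)
        errors.append(abs(slope * xs[i] + intercept - ys[i]))
    return sum(errors) / len(errors)

# Made-up data: feature = solute concentration (atomic fraction),
# target = DFT-minus-experiment enthalpy discrepancy (kJ/mol).
concentration = [0.10, 0.25, 0.40, 0.50, 0.75]
discrepancy = [1.2, 2.6, 4.1, 4.9, 7.6]

slope, intercept = fit_line(concentration, discrepancy)
print(f"correction model: {slope:.2f} * x + {intercept:.2f}")
print(f"leave-one-out MAE: "
      f"{leave_one_out_mae(concentration, discrepancy):.3f} kJ/mol")
```

A corrected enthalpy is then the DFT value minus the predicted discrepancy. The held-out error, rather than the training error, is the figure to compare against the uncorrected DFT deviation.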

Essential Research Reagents and Computational Tools

Table 3: Key Computational Tools for DFT Validation

| Tool/Resource | Function | Application in Validation |
|---|---|---|
| Quantum ESPRESSO [34] | Plane-wave DFT code | Electronic structure, mechanical properties |
| ORCA [32] | Quantum chemistry package | Molecular DFT with various functionals |
| NIST CCCBDB [30] | Computational chemistry database | Experimental comparison data |
| DFT Material Properties Simulator [34] | Web-based simulation tool | Educational validation exercises |
| MSR-ACC/TAE25 [35] | Accurate thermochemistry dataset | High-level reference data |

The selection of appropriate computational tools significantly impacts validation outcomes. For example, the DFT Material Properties Simulator provides an accessible platform for novice users to compute electronic band structures, density of states, and mechanical properties while maintaining advanced options for experienced researchers [34]. Such tools lower the barrier to entry for DFT validation while maintaining methodological rigor.

Future Directions and Recommendations

The evolving landscape of DFT validation suggests several critical directions for future development:

Standardized Validation Metrics: The field would benefit from community-agreed standards for dataset quality metrics, particularly for force accuracy, energy consistency, and experimental agreement.

Specialized Benchmark Sets: Rather than universal datasets, purpose-built benchmark sets for specific chemical domains (organometallics, biomolecules, materials interfaces) would provide more targeted validation.

ML-Augmented Validation: Machine learning approaches show promise for both error correction and quality assessment, potentially identifying problematic calculations before they enter training datasets.

Transparent Reporting: Dataset creators should provide comprehensive documentation of numerical settings, convergence criteria, and known limitations to enable appropriate use.

Experimental Collaboration: Stronger partnerships between computational and experimental groups would ensure validation against reliable, well-characterized reference data.

As datasets grow in size and diversity, rigorous validation against experimental data becomes increasingly important. By adopting the protocols, metrics, and resources outlined here, researchers can make informed decisions about dataset selection and method development, ultimately enhancing the predictive reliability of computational chemistry across scientific and industrial applications.

Computational Protocols and Applications: Achieving Accurate DFT Results for Biomolecules and Materials

Density Functional Theory (DFT) has become an indispensable computational tool for researchers investigating molecular and material properties across chemical, biological, and physical sciences. The practical application of DFT requires careful selection of two fundamental components: the exchange-correlation functional and the atomic basis set. These choices create a complex landscape where accuracy, computational cost, and applicability to specific chemical systems are often in direct competition. The proliferation of available functionals and basis sets necessitates evidence-based protocols to guide researchers in selecting optimal combinations for their specific tasks. This guide provides a comparative analysis of contemporary DFT approaches, validating methodologies against experimental data to establish robust protocols for diverse research applications in drug development and materials science.

Table 1: Common Basis Set Families and Their Characteristics

| Basis Set Family | Key Examples | General Characteristics | Recommended Use Cases |
|---|---|---|---|
| Pople | 6-31G(d), 6-311+G(d,p) [36] | Split-valence, widely used, good balance of speed/accuracy [37] | General organic molecules; 6-31G(d) is a common default [37] |
| Dunning's cc-pVXZ | cc-pVDZ, cc-pVTZ, cc-pVQZ [36] | Correlation-consistent, systematic convergence to complete basis set limit [36] | High-accuracy post-Hartree-Fock and DFT calculations [37] |
| Ahlrichs/Karlsruhe (def2) | def2-SVP, def2-TZVP, def2-QZVP [36] [38] | Balanced performance, widely used in modern composite methods [36] | General-purpose DFT, especially with transition metals [36] |
| Jensen (pcseg) | pcseg-1, pcseg-2, aug-pcseg-2 [37] | Polarization-consistent, often outperform Pople sets at similar cost [37] | Highly recommended for DFT calculations [37] |
| Specialized (vDZP) | vDZP [38] | Recently developed, minimizes basis set superposition error (BSSE) [38] | Efficient, low-cost calculations with various functionals [38] |

Comparative Analysis of Density Functionals

The Benchmarking Landscape and Functional Performance

The development of robust DFT protocols relies on comprehensive benchmarking against reliable experimental data and high-level theoretical references. The GMTKN55 database, an expansive collection of main-group thermochemistry benchmarks, has become a standard for quantifying functional accuracy [38]. Performance on such benchmarks reveals that no single functional excels universally, but their strengths and weaknesses can be mapped to specific chemical properties and systems.

The ubiquitous B3LYP functional serves as a common starting point in many studies. However, benchmarks show it has specific limitations: it performs reasonably well for basic properties and barrier heights but is "one of the worst overall for reaction energies" and can over-stabilize high-spin states in open-shell 3d transition metal complexes [39]. Its performance improves significantly when augmented with an empirical dispersion correction, such as D3(BJ) [39]. Modern functionals like ωB97X-D, PW6B95, and M06-2X often outperform B3LYP across broader benchmark sets [39]. The machine-learning-aided development of functionals is an emerging frontier, showing promise for creating more universal exchange-correlation approximations trained on high-quality quantum data [40].

Task-Specific Functional Recommendations

- Reaction Energies and Thermochemistry: Functionals like PW6B95 and double-hybrid functionals (e.g., DSD-BLYP) generally show superior performance for reaction energies and isomerizations [39]. B3LYP is not recommended for this domain due to its high errors [39].

- Non-Covalent Interactions: Accurate modeling of dispersion forces (London dispersion, π-π stacking) requires functionals with built-in dispersion corrections or the explicit addition of empirical corrections like D3(BJ) or D4 [39] [38]. The ωB97X-D functional is a strong performer in this category [39].

- Transition Metal Chemistry: Standard hybrid functionals like B3LYP are known to over-stabilize high-spin states. Using a modified version with 15% Hartree-Fock exchange (B3LYP*) or opting for functionals like TPSSh can mitigate this issue [39].

- Band Structures and Solid-State Properties: For periodic systems, meta-GGAs like SCAN and r²SCAN offer a good balance of accuracy and cost, often outperforming GGAs and hybrids for bulk properties [38].
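
The recommendations above can be captured in a small lookup table so that a workflow script selects candidate functionals by task rather than hard-coding one default. This is a minimal sketch: the task keys, the `RECOMMENDED_FUNCTIONALS` name, and the `suggest_functionals` helper are hypothetical and not part of any quantum chemistry package. The entries only restate the list above.

```python
# Illustrative lookup restating the task-specific recommendations above.
# Task keys and helper names are hypothetical, not from any library.
RECOMMENDED_FUNCTIONALS = {
    "reaction_energies": ["PW6B95", "DSD-BLYP"],
    "noncovalent": ["ωB97X-D"],          # or another functional plus D3(BJ)/D4
    "transition_metals": ["B3LYP*", "TPSSh"],
    "solid_state": ["SCAN", "r²SCAN"],
}


def suggest_functionals(task: str) -> list[str]:
    """Return candidate functionals for a task, or raise if the task is unknown."""
    try:
        return list(RECOMMENDED_FUNCTIONALS[task])
    except KeyError:
        known = ", ".join(sorted(RECOMMENDED_FUNCTIONALS))
        raise ValueError(f"Unknown task {task!r}; expected one of: {known}") from None


print(suggest_functionals("transition_metals"))
```

Failing loudly on an unknown task is deliberate: silently falling back to a default functional is the habit the recommendations above are meant to break.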

Basis Set Selection Strategies

Basis Set Types and Systematic Convergence

Basis sets expand molecular orbitals as linear combinations of atom-centered functions, and their quality generally improves with the number of basis functions assigned to each valence orbital (the ζ-level). Minimal basis sets (e.g., STO-3G) are the cheapest option but suffer from significant incompleteness error [38]. Double-ζ basis sets (e.g., 6-31G(d), def2-SVP, pcseg-1) offer a better balance and are common for geometry optimizations and frequency calculations [37]. For higher accuracy, triple-ζ basis sets (e.g., 6-311+G(d,p), def2-TZVP, cc-pVTZ) provide results closer to the complete basis set limit but at a substantially higher computational cost, often more than five times that of double-ζ sets [38].
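
For reference, the expansion described above is usually written in the standard linear-combination form below. The symbols are assumptions introduced here for illustration: ψ_i is a molecular orbital, χ_μ are the N atom-centered basis functions, and c_μi are the expansion coefficients determined in the SCF procedure.

```latex
\[
\psi_i(\mathbf{r}) = \sum_{\mu=1}^{N} c_{\mu i}\, \chi_\mu(\mathbf{r})
\]
```

Moving from a double-ζ to a triple-ζ set increases N, which improves the flexibility of each ψ_i but also increases the cost of every integral and matrix operation.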

The recent vDZP basis set demonstrates that specialized double-ζ sets can achieve accuracy approaching that of conventional triple-ζ basis sets. In benchmarks, vDZP combined with various functionals (B3LYP-D4, M06-2X, B97-D3BJ, r²SCAN-D4) yielded results "only moderately worse" than the much larger (aug)-def2-QZVP basis set, making it a highly efficient choice for a wide range of functionals without need for reparameterization [38].

Practical Basis Set Recommendations

- Geometry Optimizations and Frequency Calculations: Double-ζ basis sets with polarization functions, such as 6-31G(d) (also called 6-31G*), def2-SVP, or the highly recommended pcseg-1, offer a good compromise between cost and accuracy [37].

- Accurate Energy Calculations (Single-Points): For final energy evaluations on pre-optimized structures, use larger triple-ζ basis sets like 6-311+G(d,p), def2-TZVP, or cc-pVTZ(seg-opt) [37]. The "seg-opt" variant of Dunning's basis sets is recommended for improved computational efficiency [37].

- Non-Covalent Interactions and Anionic Systems: Include diffuse functions (e.g., 6-311++G(d,p), aug-cc-pVDZ) to properly describe electron densities far from the nucleus [36] [37].

- High-Throughput and Large Systems: The vDZP basis set or polarized minimal basis sets like MIDI! provide maximum speed while retaining useful accuracy [37] [38].

Table 2: Functional/Basis Set Performance on GMTKN55 Benchmark (Weighted Total Mean Absolute Deviation (WTMAD2) in kcal/mol) [38]

| Functional | Large Quadruple-ζ Basis Set | vDZP Basis Set | Performance Gap |
|---|---|---|---|
| B97-D3BJ | 8.42 (def2-QZVP) | 9.56 | +1.14 |
| r²SCAN-D4 | 7.45 (def2-QZVP) | 8.34 | +0.89 |
| B3LYP-D4 | 6.42 (def2-QZVP) | 7.87 | +1.45 |
| M06-2X | 5.68 (def2-QZVP) | 7.13 | +1.45 |
| ωB97X-D4 | 3.73 (def2-QZVP) | 5.57 | +1.84 |
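
The performance gap in Table 2 is the vDZP WTMAD2 minus the def2-QZVP WTMAD2. The short sketch below recomputes that gap from the tabulated values and also reports it relative to the large-basis result, which the table does not show. The `basis_set_gap` helper and the `WTMAD2` dictionary name are hypothetical.

```python
# WTMAD2 values (kcal/mol) copied from Table 2: (def2-QZVP, vDZP).
WTMAD2 = {
    "B97-D3BJ": (8.42, 9.56),
    "r²SCAN-D4": (7.45, 8.34),
    "B3LYP-D4": (6.42, 7.87),
    "M06-2X": (5.68, 7.13),
    "ωB97X-D4": (3.73, 5.57),
}


def basis_set_gap(large: float, small: float) -> tuple[float, float]:
    """Return (absolute gap in kcal/mol, gap as % of the large-basis value)."""
    gap = small - large
    return round(gap, 2), round(100.0 * gap / large, 1)


for name, (qz, vdzp) in WTMAD2.items():
    abs_gap, rel_gap = basis_set_gap(qz, vdzp)
    print(f"{name:10s} gap = {abs_gap:+.2f} kcal/mol ({rel_gap:+.1f}%)")
```

Read this way, the absolute gap is largest for ωB97X-D4, which also has the lowest baseline error, so the relative penalty of the smaller basis is greatest for the most accurate functional in the table.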

Experimentally Validated Case Study: Dopamine Sensing with CuO–ZnO Nanocomposites

Experimental Protocol and Methodology

A practical example integrating DFT with experimental validation involves developing a high-sensitivity sensor for the neurotransmitter dopamine (DA) using CuO–ZnO nanocomposites [41]. Researchers synthesized four distinct CuO–ZnO composites via a one-step hydrothermal method by varying the mass fraction of CuCl₂ precursor (1%, 3%, 5%, 7%) [41]. The composite formed with 3% CuCl₂ developed a unique nanoflower morphology composed of intersecting nanorods, which exhibited superior catalytic performance [41].

DFT simulations revealed the origin of this enhanced activity: the CuO–ZnO nanoflower structure reduced the reaction energy barrier for dopamine oxidation to 0.54 eV and modified the electronic structure (projected density of states), bringing the d-band center of Cu closer to the Fermi level [41]. This theoretical insight guided the construction of an electrochemical sensor, which demonstrated a low limit of detection (LOD) of 6.3 nM for dopamine and a high sensitivity of 34467.3 µA·mM⁻¹·cm⁻² in biological fluids such as human serum and urine [41]. The agreement between the predicted catalytic enhancement and the measured performance supports the DFT model's predictive value for materials design.
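
The d-band center mentioned above is commonly defined as the first moment of the d-projected density of states. The sketch below shows that calculation with plain trapezoidal integration. It is an illustration only: the source does not state how the d-band center was evaluated in [41], the `d_band_center` function is hypothetical, the example data are synthetic, and conventions differ on the integration window (all d states versus occupied states only).

```python
def d_band_center(energies, pdos):
    """First moment of a d-projected DOS via trapezoidal integration.

    energies: energies in eV relative to the Fermi level, in ascending order.
    pdos:     d-projected DOS values at those energies.
    """
    if len(energies) != len(pdos) or len(energies) < 2:
        raise ValueError("energies and pdos must have equal length >= 2")
    weighted = 0.0  # integral of E * rho_d(E) dE
    total = 0.0     # integral of rho_d(E) dE
    for i in range(len(energies) - 1):
        step = energies[i + 1] - energies[i]
        weighted += 0.5 * step * (
            energies[i] * pdos[i] + energies[i + 1] * pdos[i + 1]
        )
        total += 0.5 * step * (pdos[i] + pdos[i + 1])
    if total == 0.0:
        raise ValueError("projected DOS integrates to zero")
    return weighted / total


# Synthetic, symmetric peak centered at -2 eV (not data from the study).
energies = [-3.0, -2.0, -1.0]
pdos = [0.0, 1.0, 0.0]
print(d_band_center(energies, pdos))
```

A value closer to zero on this energy scale corresponds to a d-band center closer to the Fermi level, which is the shift the study associates with improved catalytic activity.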

Table 3: Key Research Reagent Solutions for Electrochemical Sensor Development [41]

| Material/Reagent | Function/Role in Experiment |
|---|---|
| ZnCl₂ | Primary zinc precursor for ZnO formation in hydrothermal synthesis |
| CuCl₂ | Copper dopant precursor; concentration variation (1–7 wt%) controls composite morphology |
| NaOH | Hydroxide source for metal oxide precipitation and crystal growth |
| PEG-400/Water Solution | Solvent medium (1:1 v/v) for hydrothermal synthesis; PEG acts as a stabilizing agent |
| Dopamine (DA) | Target analyte for electrochemical sensing and catalytic performance validation |
| Human Serum and Urine | Complex biological matrices for validating sensor practicality and selectivity |

Integrated Protocols for Robust Computational Chemistry

Consolidated Best-Practice Recommendations

- Define the Objective First: Select the functional and basis set based on the target property (e.g., reaction energy, geometry, non-covalent interaction). Avoid using a single combination for all problems.

- Employ a Multi-Level Approach: Optimize molecular geometries with a moderate-cost method (e.g., B3LYP-D3(BJ)/def2-SVP or pcseg-1) then perform single-point energy calculations with a higher-level method (e.g., DSD-BLYP/def2-TZVP or ωB97X-D/cc-pVTZ) on the optimized structure.

- Always Include Dispersion Corrections: Unless the functional already accounts for dispersion (as ωB97X-D does), add an empirical dispersion correction (e.g., D3(BJ) or D4), as it dramatically improves the description of non-covalent interactions at negligible computational cost [39] [38].

- Consider Composite Methods: For non-specialists, established composite methods (e.g., B97-3c, ωB97X-3c, r²SCAN-3c) provide robust, well-balanced performance because they combine a pre-defined functional, basis set, and empirical corrections [38].

- Benchmark Where Possible: For systems with known experimental data or high-level theoretical references, conduct a limited benchmark of 2-3 functionals to identify the best-performing one for your specific chemical system.
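
The limited benchmark recommended in the last item can be as simple as ranking a few candidate functionals by their mean absolute error (MAE) against reference values. The sketch below assumes the computed and reference values are already available as plain lists in the same units. The function names and the numbers are placeholders for illustration, not real benchmark data.

```python
from statistics import mean


def mean_absolute_error(computed, reference):
    """MAE between computed and reference values given in the same order and units."""
    if len(computed) != len(reference) or not reference:
        raise ValueError("computed and reference must be non-empty and equal length")
    return mean(abs(c - r) for c, r in zip(computed, reference))


def rank_functionals(results, reference):
    """Return (functional, MAE) pairs sorted from lowest to highest error."""
    scored = [
        (name, mean_absolute_error(values, reference))
        for name, values in results.items()
    ]
    return sorted(scored, key=lambda pair: pair[1])


# Placeholder numbers for illustration only; not real benchmark data.
reference = [10.0, -5.0, 2.5]
results = {
    "functional_A": [11.0, -4.0, 3.5],
    "functional_B": [10.5, -5.5, 2.0],
    "functional_C": [13.0, -5.0, 2.5],
}

for name, mae in rank_functionals(results, reference):
    print(f"{name}: MAE = {mae:.2f}")
```

MAE alone can hide a single large outlier, as with the placeholder `functional_C`, so it is worth inspecting the per-system errors before settling on a functional.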

Future Directions in DFT Development

The field of DFT continues to evolve rapidly. Key trends include the rise of machine-learned functionals, which are trained on high-quality quantum data to discover more universal exchange-correlation approximations while keeping computational costs low [40]. The development of novel, efficient basis sets like vDZP that minimize BSSE and approach triple-ζ accuracy at double-ζ cost is also progressing [38]. Furthermore, the increased integration of DFT with molecular dynamics (DFT-MD) allows for the simulation of materials and chemical processes under realistic conditions, as demonstrated in studies of graphene-CO₂ interactions [6]. These advancements promise to further narrow the gap between computational prediction and experimental reality, solidifying DFT's role as a cornerstone of modern molecular research and drug development.

Density Functional Theory (DFT) has become the most widely utilized first-principles method for theoretically modeling materials at the electronic level because it provides a reasonable balance between accuracy and computational cost [42]. Within the Kohn-Sham approach to DFT, the most complex electron interactions are collected into an exchange-correlation (XC) energy functional \(E_{xc}\), whose exact form remains unknown and must be approximated [42]. The accuracy of DFT predictions therefore hinges upon the choice of XC functional used to model electron-electron interactions [42] [43].

Perdew and coworkers proposed an illustrative hierarchy known as Jacob's ladder, which classifies XC functionals in ascending order of theoretical rigor and complexity [42]. This ladder provides a framework for understanding the relationships between different functional types:

- Local Density Approximation (LDA): Occupies the first rung, depending only on the electron density ρ

- Generalized Gradient Approximation (GGA): The second rung incorporates both ρ and its gradient ∇ρ

- Meta-GGA: The third rung adds dependency on the orbital kinetic energy density τ

- Hybrid functionals: The fourth rung incorporates a fraction of exact Hartree-Fock exchange

- Double hybrids: The fifth rung includes both Hartree-Fock exchange and perturbative correlation
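
The rungs listed above can be summarized by the ingredients each functional form depends on. The expressions below are schematic and use notation introduced here as an assumption: f is a generic energy density, DFA denotes a semilocal density functional approximation, and a and b are mixing parameters. The hybrid and double-hybrid lines show the simplest one- and two-parameter forms; specific functionals such as B3LYP use more parameters.

```latex
\begin{align}
E_{xc}^{\mathrm{LDA}} &= \int f\big(\rho(\mathbf{r})\big)\, d\mathbf{r} \\
E_{xc}^{\mathrm{GGA}} &= \int f\big(\rho(\mathbf{r}), \nabla\rho(\mathbf{r})\big)\, d\mathbf{r} \\
E_{xc}^{\mathrm{meta\text{-}GGA}} &= \int f\big(\rho(\mathbf{r}), \nabla\rho(\mathbf{r}), \tau(\mathbf{r})\big)\, d\mathbf{r} \\
E_{xc}^{\mathrm{hybrid}} &= a\,E_{x}^{\mathrm{HF}} + (1-a)\,E_{x}^{\mathrm{DFA}} + E_{c}^{\mathrm{DFA}} \\
E_{xc}^{\mathrm{DH}} &= a\,E_{x}^{\mathrm{HF}} + (1-a)\,E_{x}^{\mathrm{DFA}} + (1-b)\,E_{c}^{\mathrm{DFA}} + b\,E_{c}^{\mathrm{PT2}}
\end{align}
```

Each rung adds one ingredient to the one below it, which is why cost generally rises with position on the ladder.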