Validating Multi-Level Quantum Chemistry Workflows: A 2025 Roadmap from Theory to Clinical Application

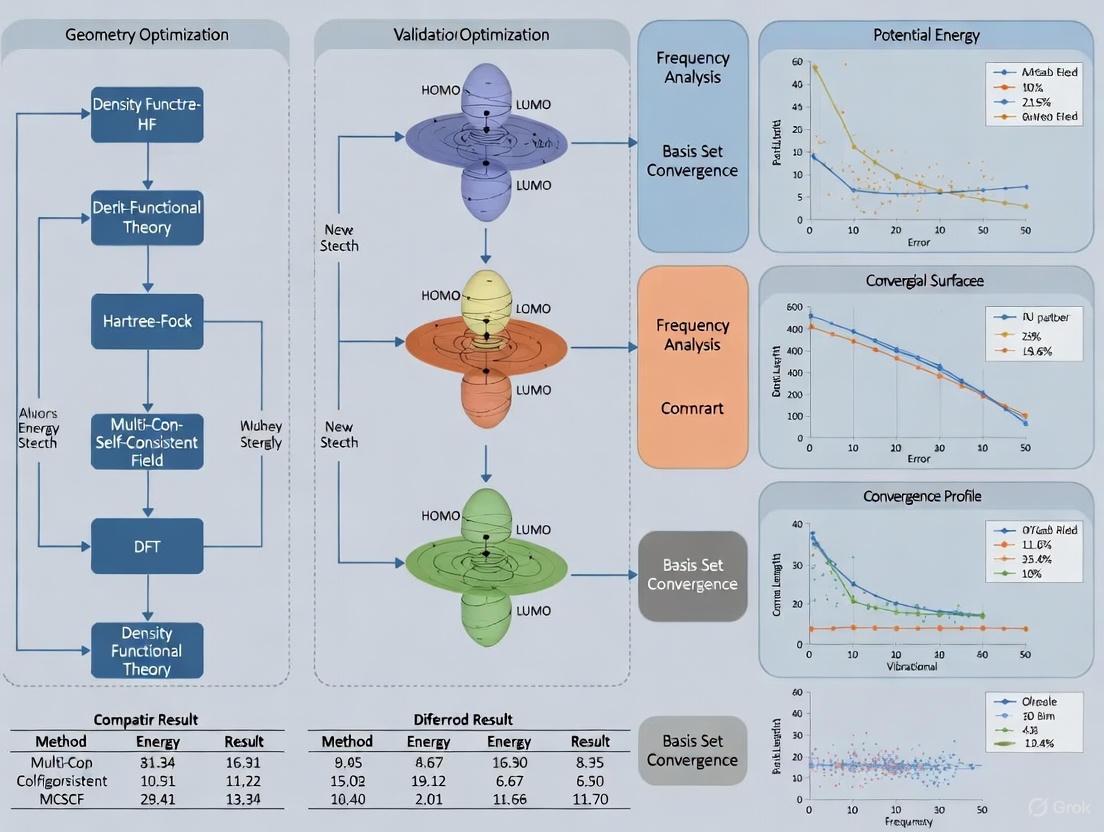



Abstract

This article provides a comprehensive framework for the validation and comparison of multi-level quantum chemistry workflows, a critical frontier in computational drug discovery. We explore the foundational principles of quantum computing hardware and algorithms, detail the construction of hybrid quantum-classical methods for simulating molecules and proteins, and address the pivotal challenges of noise and error correction in current NISQ-era devices. Through a systematic analysis of benchmarking strategies and real-world case studies, we present a rigorous validation protocol. Designed for researchers and drug development professionals, this review synthesizes the latest 2025 breakthroughs to guide the practical integration of quantum computing into pharmaceutical R&D pipelines.

Quantum Computing Foundations: Core Principles and Hardware for Chemical Simulation

The pursuit of practical quantum computing is being advanced through several competing hardware platforms, each with distinct strengths and weaknesses. For researchers in quantum chemistry and drug development, the choice of platform involves critical trade-offs between qubit connectivity, gate fidelity, operational speed, and scalability. This guide provides a detailed, objective comparison of the three leading modalities—superconducting, trapped-ion, and neutral-atom qubits—focusing on their performance in validated multi-level quantum chemistry workflows. Recent breakthroughs in error correction and logical qubit creation in 2025 have substantially accelerated the timeline for achieving quantum advantage in molecular simulation, making this comparison particularly timely for scientific professionals.

The following table summarizes the core physical principles and current technical specifications of the three leading quantum computing platforms.

Table 1: Core Characteristics of Leading Quantum Computing Platforms

| Feature | Superconducting Qubits | Trapped-Ion Qubits | Neutral-Atom Qubits |

|---|---|---|---|

| Qubit Physical Unit | Superconducting electronic circuits (e.g., transmons) [1] | Individual, charged atoms (ions) held in electromagnetic traps [2] | Neutral atoms (e.g., Rubidium) held by optical tweezers [2] |

| Operating Temperature | Near absolute zero (≈10 mK) [2] | Room-temperature enclosure for apparatus; ions are laser-cooled [2] | Room-temperature enclosure; atoms are laser-cooled [2] |

| Native Qubit Connectivity | Sparse, nearest-neighbor in fixed 2D architecture [2] | All-to-all connectivity within a trapping module [3] | Configurable via atom shuttling; can be long-range [2] |

| Typical Gate Speed (Two-Qubit) | Nanoseconds to microseconds (very fast) [2] | Hundreds of microseconds (slower) [2] [4] | Microseconds to milliseconds (varies) [4] |

| Dominant Error Correction Approach | Surface codes; Quantum Low-Density Parity-Check (qLDPC) [5] | Surface codes enabled by mid-circuit measurement [3] | Surface codes with machine-learning decoders [4] |

Performance Benchmarking & Experimental Data

Performance benchmarks are critical for evaluating a platform's suitability for quantum chemistry applications, where circuit depth and coherence are paramount.

Table 2: Performance Benchmarks for Quantum Hardware (2024-2025 Data)

| Benchmark | Superconducting Qubits | Trapped-Ion Qubits | Neutral-Atom Qubits |

|---|---|---|---|

| Reported Best Coherence Time | Up to 0.6 milliseconds [5] | Minutes (enabling extended computations) [2] | Information persists for extended periods [2] |

| Best Single-Qubit Gate Fidelity | 99.98 - 99.99% [1] | Exceeds 99.99% (among the highest) [3] | Not reported in the cited sources |

| Best Two-Qubit Gate Fidelity | 99.8 - 99.9% [1] | Approximately 99.7% [1] [3] | Not reported in the cited sources |

| System Size (Physical Qubits) | 1,000+ qubits demonstrated (e.g., IBM Condor) [6] | Dozens of qubits (e.g., 36-qubit IonQ Forte Enterprise) [7] [8] | 288+ qubits for error correction experiments [4] |

| Logical Qubit Progress | IBM roadmap targets 200 logical qubits by 2029 [5] | Demonstration of real-time error correction [3] | 48 logical qubits demonstrated on a 448-atom processor [2] [4] |

Relevance to Quantum Chemistry Workflows

The true test of a quantum hardware platform is its performance in real-world scientific applications. The following experimental data highlights progress in quantum chemistry simulations.

Table 3: Documented Applications in Molecular Simulation and Quantum Chemistry

| Experiment / Application | Platform | Key Performance Result | Implication for Drug Development |

|---|---|---|---|

| Medical Device Fluid Simulation [5] [8] | Trapped-Ion (IonQ) | Outperformed classical HPC by 12% in speed. | Enables faster, more complex biomedical engineering simulations. |

| Cytochrome P450 Enzyme Simulation [5] | Superconducting (Google) | Simulated with greater efficiency and precision than traditional methods. | Could significantly accelerate prediction of drug metabolism and toxicity. |

| Molecular Geometry Calculation [5] | Superconducting (Google) | Created a "molecular ruler" for measuring longer distances than traditional methods. | Provides a new tool for understanding molecular structures in drug design. |

| General Chemistry Simulations [8] | Trapped-Ion (IonQ) | Surpassed classical methods in certain chemistry simulations. | Indicates growing utility for a range of quantum chemistry problems. |

Experimental Protocols for Validation

To ensure the validity and reproducibility of results in multi-level quantum chemistry workflows, researchers must adhere to rigorous experimental protocols. The following methodologies are cited from recent, key demonstrations.

Protocol for Error-Corrected Quantum Simulation (Neutral-Atom)

This protocol is adapted from the 2025 study demonstrating repeatable error correction on a neutral-atom processor [4].

System Initialization:

- Atom Array Preparation: Initialize a 2D array of up to 288 Rubidium-87 atoms using dynamic optical tweezers.

- Laser Cooling: Cool the atoms to their motional ground state using resolved-sideband cooling techniques.

- State Initialization: Prepare all data qubits in the |0⟩ state via optical pumping.

Quantum Error Correction (QEC) Cycle:

- Stabilizer Measurement: Execute multiple rounds of syndrome extraction using the surface code architecture. This involves:

  - Entangling Gates: Apply laser pulses to perform Rydberg-based entangling gates between data and ancillary qubits.

  - Ancilla Readout: Measure the ancillary qubits to obtain syndrome data without collapsing the data qubits' state.

- Classical Decoding: Feed the syndrome data in real-time to a machine-learning-based decoder (e.g., a neural network running on a GPU) to identify likely error locations and types.

- Correction Application: Apply a predicted correction operation to the data qubits in subsequent quantum gates or via classical tracking (Pauli frame).

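The cycle above (syndrome extraction, classical decoding, Pauli-frame tracking) can be illustrated with a deliberately small classical toy model. The sketch below swaps the surface code and machine-learning decoder of the protocol for a 3-qubit bit-flip repetition code with a lookup-table decoder; the names `SYNDROME_TABLE`, `extract_syndrome`, and `run_cycles` are hypothetical and chosen only for this illustration.

```python
# Toy model only: 3-qubit bit-flip repetition code with lookup-table decoding.
# It stands in for the surface code + ML decoder described in the protocol.

# Syndrome = (parity of qubits 0,1 ; parity of qubits 1,2) -> qubit most likely flipped
SYNDROME_TABLE = {
    (0, 0): None,  # no error detected
    (1, 0): 0,
    (1, 1): 1,
    (0, 1): 2,
}


def extract_syndrome(bits):
    """Return the two parity checks without revealing the encoded value."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


def run_cycles(error_events):
    """Run one QEC cycle per entry of error_events (lists of flipped qubit indices).

    Corrections are never applied physically; they are accumulated in a
    classical Pauli frame and folded in at readout.
    """
    data = [0, 0, 0]   # encodes logical 0
    frame = [0, 0, 0]  # Pauli frame: pending X corrections
    for flips in error_events:
        for q in flips:
            data[q] ^= 1
        # Syndrome of the data as interpreted through the current frame
        effective = [d ^ f for d, f in zip(data, frame)]
        target = SYNDROME_TABLE[extract_syndrome(effective)]
        if target is not None:
            frame[target] ^= 1
    corrected = [d ^ f for d, f in zip(data, frame)]
    return int(sum(corrected) >= 2)  # majority-vote logical readout


# Single flips in separate cycles are corrected; two flips in one cycle are not.
print(run_cycles([[0], [2], []]))  # logical 0 preserved
print(run_cycles([[0, 1]]))        # logical error
```

The second call shows why code distance matters: a distance-3 code can only absorb one error per cycle, so two simultaneous flips are decoded to the wrong logical value.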

Logical Gate Execution:

- Transversal Gates: Perform logical operations, such as a CNOT gate, by applying physical gates across corresponding qubits in different logical code blocks.

- Lattice Surgery: Execute mergers and splits of logical qubits by measuring operators on the boundaries between surface-code patches.

Data Readout and Validation:

- Logical Qubit Readout: Perform a projective measurement on the entire logical qubit.

- Post-Selection: Use "superchecks" and erasure information to identify and discard runs with atom loss.

- Fidelity Calculation: Compare the experimental outcome probabilities with theoretical expectations to compute the logical state fidelity.
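The readout and validation steps above can be sketched in a few lines. The source does not specify which fidelity measure was used, so this example assumes the classical (Bhattacharyya-type) fidelity between outcome distributions; the shot format `(bitstring, loss_flag)` and all function names are hypothetical.

```python
import math


def postselect(shots):
    """Discard shots flagged with atom loss. Each shot is (bitstring, loss_flag)."""
    return [bits for bits, lost in shots if not lost]


def empirical_distribution(outcomes):
    """Convert a list of measured bitstrings into outcome probabilities."""
    counts = {}
    for outcome in outcomes:
        counts[outcome] = counts.get(outcome, 0) + 1
    total = len(outcomes)
    return {outcome: c / total for outcome, c in counts.items()}


def classical_fidelity(p, q):
    """(sum_x sqrt(p_x * q_x))^2 between two outcome distributions (assumed metric)."""
    keys = set(p) | set(q)
    return sum(math.sqrt(p.get(k, 0.0) * q.get(k, 0.0)) for k in keys) ** 2


# Synthetic example: a logical Bell-type state read out as "00" or "11".
shots = (
    [("00", False)] * 45
    + [("11", False)] * 45
    + [("01", False)] * 10
    + [("00", True)] * 20  # runs with detected atom loss, to be discarded
)
ideal = {"00": 0.5, "11": 0.5}

kept = postselect(shots)
measured = empirical_distribution(kept)
print(len(kept), classical_fidelity(measured, ideal))
```

When reporting results obtained this way, the retained fraction (here 100 of 120 shots) should be stated alongside the fidelity, since post-selection trades sample efficiency for accuracy.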

The workflow for this protocol is summarized in the following diagram:

Protocol for Quantum Advantage Validation (Superconducting)

This protocol is based on Google's "Quantum Echoes" algorithm benchmark, which demonstrated a verifiable speedup over classical supercomputers [5] [8].

Algorithm Specification:

- Algorithm: Implement the out-of-time-order correlator (OTOC) algorithm (Quantum Echoes).

- Circuit Compilation: Compile the algorithm into native gates (e.g., single-qubit rotations and two-qubit iSWAP-like gates) for the specific superconducting processor (e.g., Google's Willow chip).

Classical Baseline Establishment:

- Hardware Selection: Run the same computational task on the world's fastest classical supercomputer (e.g., El Capitan or Fugaku).

- Optimized Code: Use the most efficient known classical algorithm for the problem.

- Runtime Measurement: Record the total time-to-solution for the classical machine.

Quantum Execution:

- Calibration: Ensure the superconducting processor is properly calibrated, with qubit frequencies and gate parameters tuned.

- Circuit Execution: Run the compiled quantum circuit on the quantum hardware multiple times to gather sufficient statistics.

- Runtime Measurement: Record the total quantum computation time, including any necessary classical post-processing.

Verification and Comparison:

- Result Cross-Check: Verify that the quantum and classical computations produce the same result within an acceptable margin of error.

- Speedup Calculation: Compare the time-to-solution. Google's benchmark showed the quantum computer completed the task 13,000 times faster than the classical supercomputer [5] [8].

- Peer Review: Make the algorithm and benchmarking methodology public to allow for independent verification by the scientific community.
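The cross-check and speedup steps reduce to simple arithmetic once the runs are complete. The following is a minimal sketch, assuming the quantum result is an average over repeated circuit executions and that agreement within three standard errors counts as "an acceptable margin"; that tolerance and the function names are assumptions, not part of the cited benchmark.

```python
import statistics


def cross_check(quantum_samples, classical_value, n_sigma=3.0):
    """Return (agrees, mean, standard_error) for repeated quantum estimates."""
    mean = statistics.fmean(quantum_samples)
    sem = statistics.stdev(quantum_samples) / len(quantum_samples) ** 0.5
    return abs(mean - classical_value) <= n_sigma * sem, mean, sem


def speedup(classical_seconds, quantum_seconds):
    """Ratio of classical to quantum time-to-solution (post-processing included)."""
    return classical_seconds / quantum_seconds


# Synthetic numbers for illustration only.
agrees, mean, sem = cross_check([0.50, 0.52, 0.48, 0.51, 0.49], classical_value=0.505)
print(agrees, round(mean, 3), round(sem, 4))
print(speedup(classical_seconds=13000.0, quantum_seconds=1.0))
```

A speedup figure is only meaningful if `agrees` is true for the same problem instance, which is why the protocol places the cross-check before the timing comparison.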

Essential Research Reagent Solutions

The following table details key resources and tools required for conducting advanced experiments on these platforms, as referenced in the protocols and commercial offerings.

Table 4: The Scientist's Toolkit for Quantum Chemistry Hardware Research

| Tool / Resource | Function | Example Platforms / Vendors |

|---|---|---|

| Cloud Quantum Access Services | Provides remote, on-demand access to quantum hardware without capital investment. Essential for algorithm testing and validation across modalities. | Amazon Braket [9] [6], Microsoft Azure Quantum [3], IBM Quantum [6] |

| Quantum Programming SDKs | Frameworks for designing, simulating, and compiling quantum algorithms. | Qiskit (IBM) [6], CUDA-Q (Nvidia) [8], Braket SDK (Amazon) [7] [6] |

| Classical Simulators & HPC | Provides a baseline for verifying quantum results and simulating quantum circuits that remain within classical reach. | State Vector Simulators (e.g., SV1 on Braket) [6], Tensor Network Simulators (e.g., TN1) [6], Fugaku Supercomputer [8] |

| Machine Learning Decoders | Classical software for real-time interpretation of error correction syndrome data, crucial for fault-tolerant experiments. | Custom neural networks deployed on high-performance GPUs [4] |

| Optical Tweezer Arrays | Technology for trapping, moving, and individually addressing neutral atoms or ions. The core of reconfigurable atom-based processors. | Systems used by QuEra (neutral atoms) [2] [4], AQT & IonQ (trapped ions) [7] [9] |

The quantum hardware landscape in 2025 is characterized by rapid, parallel advancement across multiple modalities. For the quantum chemistry and drug development professional, the optimal platform is highly dependent on the specific research problem. Superconducting platforms offer speed and scale but face challenges in connectivity and infrastructure. Trapped-ion systems provide unparalleled fidelity and connectivity, though at slower operational speeds. Neutral-atom architectures present a compelling balance of inherent uniformity, configurable connectivity, a clear path to scaling logical qubits, and room-temperature operation, making them an increasingly viable candidate for the deep-circuit calculations required for molecular simulation. The demonstrated experimental protocols and available toolkits now provide a concrete foundation for researchers to rigorously validate and compare these platforms within their own multi-level quantum chemistry workflows.

This guide provides an objective comparison of three fundamental quantum algorithms for computational chemistry: the Variational Quantum Eigensolver (VQE), Quantum Phase Estimation (QPE), and the Quantum Approximate Optimization Algorithm (QAOA). Framed within multi-level quantum chemistry workflow validation research, it details their principles, performance, and suitability for near-term applications.

The following diagram illustrates the core procedural differences and logical relationships between VQE, QPE, and QAOA.

Comparative Analysis of Algorithmic Performance

The table below summarizes the core characteristics, resource requirements, and typical performance of VQE, QPE, and QAOA based on current research and hardware implementations.

| Feature | VQE (Variational Quantum Eigensolver) | QPE (Quantum Phase Estimation) | QAOA (Quantum Approximate Optimization Algorithm) |

|---|---|---|---|

| Primary Objective | Find approximate ground state energy of a Hamiltonian [10] [11] | Find exact energy eigenvalues of a Hamiltonian with high precision [10] [11] | Solve combinatorial optimization problems [10] [12] |

| Computational Paradigm | Hybrid quantum-classical [11] | Purely quantum (can be standalone) [11] | Hybrid quantum-classical [10] |

| Key Principle | Variational principle; parameterized quantum circuits (ansatz) [11] | Quantum Fourier Transform & controlled unitary operations [10] [11] | Alternating application of cost and mixer Hamiltonians [10] [12] |

| Circuit Depth/Complexity | Low to moderate (NISQ-friendly) [11] | High (requires fault tolerance) [13] [11] | Moderate (NISQ-friendly, but depth scales with layers) [12] |

| Resource Requirements | Shallow circuits, resilient to some noise [11] | Deep circuits, high qubit coherence, error correction [13] [11] [14] | Moderate, but performance gains may need error detection [12] [15] |

| Error Resilience | More resilient to noise on NISQ devices [11] | Highly susceptible to noise; requires robust error correction [11] | Moderately resilient; benefits from error detection in practice [12] [15] |

| Typical Accuracy | Limited by ansatz and noise; can struggle for chemical accuracy [16] | High precision (theoretically exact) [11] | Good for approximation; outperforms classical in some cases with error detection [12] [15] |

| Maturity & Demonstration | Demonstrated on multiple NISQ devices [16] | Demonstrated with error correction on small molecules [14] | Scalable demonstrations with error detection on ~20 logical qubits [12] [15] |

Detailed Experimental Protocols and Validation

VQE Protocol for Molecular Ground States

The Variational Quantum Eigensolver (VQE) employs a hybrid quantum-classical workflow to find the ground state energy of molecular systems [11]. The protocol involves preparing a parameterized trial wavefunction (ansatz) on a quantum processor, measuring the energy expectation value, and using a classical optimizer to minimize this energy [11]. Adaptive variants like ADAPT-VQE and GGA-VQE iteratively construct system-tailored ansätze to improve accuracy and reduce circuit depth, though they face challenges with measurement noise on real hardware [16]. A key challenge is the "barren plateau" phenomenon, where gradients vanish exponentially with system size [11]. Knowledge Distillation Inspired VQE (KD-VQE) has shown improved convergence for the Fermi-Hubbard model by using a collection of trial wavefunctions [11].
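The hybrid loop described above can be sketched on a single qubit in pure Python. This is an illustrative toy, not a molecular calculation: the Hamiltonian H = Z + 0.5·X, the Ry ansatz, the learning rate, and the function names are all assumptions, and the expectation value is computed exactly rather than estimated from measurement shots as it would be on hardware.

```python
import math

# Toy Hamiltonian H = C_Z * Z + C_X * X (coefficients assumed for illustration)
C_Z, C_X = 1.0, 0.5


def energy(theta):
    """<psi|H|psi> for the ansatz psi = Ry(theta)|0> = (cos(theta/2), sin(theta/2))."""
    a, b = math.cos(theta / 2), math.sin(theta / 2)
    exp_z = a * a - b * b  # <Z>
    exp_x = 2 * a * b      # <X>
    return C_Z * exp_z + C_X * exp_x


def parameter_shift_gradient(theta):
    """Exact gradient from two shifted energy evaluations (parameter-shift rule)."""
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))


def run_vqe(theta=0.1, learning_rate=0.4, steps=200):
    """Classical outer loop: gradient descent on the ansatz parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_vqe = run_vqe()
e_exact = -math.sqrt(C_Z**2 + C_X**2)  # lowest eigenvalue of the 2x2 Hamiltonian
print(e_vqe, e_exact)
```

Because the ansatz can reach every real single-qubit state, the variational minimum coincides with the exact ground energy here. For molecular Hamiltonians the ansatz is generally not exact, which is the accuracy limitation noted in the comparison table.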

QPE Protocol for High-Precision Energy Calculations

Quantum Phase Estimation (QPE) is a cornerstone algorithm for fault-tolerant quantum computation, designed to determine the eigenvalues of a unitary operator with high precision [13] [11]. The standard protocol involves an auxiliary register of qubits for the phase kickback, controlled time evolutions, and an inverse Quantum Fourier Transform (QFT) [11]. Recent experimental work has demonstrated a complete quantum chemistry simulation using QPE with quantum error correction on Quantinuum's H2-2 trapped-ion quantum computer to calculate the ground-state energy of molecular hydrogen [14]. This implementation used a seven-qubit color code for logical qubits and inserted mid-circuit error correction routines, producing an energy estimate within 0.018 hartree of the exact value [14]. To overcome the challenges of deep circuits, "control-free" QPE variants that leverage classical signal processing and phase retrieval are being developed [11].
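To make the role of the ancilla register concrete, the sketch below evaluates the ideal, noiseless readout distribution of textbook QPE for a known eigenphase, using the standard closed-form amplitude after the inverse QFT. It does not model error correction or the cited hardware experiment; the function names are hypothetical. For a unitary U = exp(-iHτ), an estimated phase φ corresponds to an energy E = -2πφ/τ, defined modulo 2π/τ.

```python
import cmath
import math


def qpe_distribution(phase, n_ancilla):
    """Probability of each ancilla readout k for an eigenphase `phase` in [0, 1)."""
    size = 2**n_ancilla
    probs = []
    for k in range(size):
        # Amplitude after the inverse QFT: (1/2^t) * sum_j exp(2*pi*i*j*(phase - k/2^t))
        amp = sum(
            cmath.exp(2j * math.pi * j * (phase - k / size)) for j in range(size)
        ) / size
        probs.append(abs(amp) ** 2)
    return probs


def estimate_phase(phase, n_ancilla):
    """Most likely readout, converted back to a phase estimate."""
    probs = qpe_distribution(phase, n_ancilla)
    k_best = max(range(len(probs)), key=probs.__getitem__)
    return k_best / 2**n_ancilla


# A phase that is exactly representable with 3 bits is recovered with certainty.
print(estimate_phase(0.25, 3))
# Otherwise the estimate lands on the nearest grid point; more ancillas refine it.
print(estimate_phase(0.3, 4), estimate_phase(0.3, 8))
```

Each additional ancilla qubit halves the grid spacing but doubles the longest controlled evolution, which is the source of the deep-circuit requirement listed in the comparison table.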

QAOA Protocol for Combinatorial Optimization

The Quantum Approximate Optimization Algorithm (QAOA) solves combinatorial problems by preparing a parameterized state through a sequence of layers that alternate between a cost Hamiltonian (encoding the problem) and a mixing Hamiltonian [12] [15]. The parameters are tuned to maximize the expected value of the cost function over the measured bitstrings. A significant recent demonstration involved a partially fault-tolerant implementation of QAOA using the [[k+2, k, 2]] "Iceberg" quantum error detection code on the Quantinuum H2-1 trapped-ion quantum computer [12] [15]. This experiment solved MaxCut problems, showing that error detection improved the approximation ratio for problems with up to 20 logical qubits compared to unencoded circuits [12] [15]. The study proposed a model to predict code performance, identifying regimes where error detection is beneficial and outlining conditions for QAOA to potentially outperform the classical Goemans-Williamson algorithm on future hardware [12] [15].
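A single-layer (p = 1) instance of the alternating structure can be simulated exactly on a small graph. The sketch below is an unencoded, noiseless state-vector toy on a 4-node ring and does not model the Iceberg code or the cited experiment; the graph, the grid-search optimizer, and the function names are assumptions for illustration.

```python
import cmath
import math

EDGES = [(0, 1), (1, 2), (2, 3), (3, 0)]  # 4-node ring (assumed toy instance)
N = 4


def cut_value(z):
    """Number of edges cut by the bit assignment encoded in integer z."""
    return sum(((z >> u) & 1) != ((z >> v) & 1) for u, v in EDGES)


def qaoa_expectation(gamma, beta):
    """Expected cut for one cost layer exp(-i*gamma*C) and one mixer layer exp(-i*beta*X)."""
    dim = 2**N
    state = [1 / math.sqrt(dim)] * dim  # uniform superposition |+>^N
    # Cost layer: diagonal phase depending on the cut value of each bitstring
    state = [a * cmath.exp(-1j * gamma * cut_value(z)) for z, a in enumerate(state)]
    # Mixer layer: apply exp(-i*beta*X) to every qubit in turn
    c, s = math.cos(beta), -1j * math.sin(beta)
    for q in range(N):
        mask = 1 << q
        for z in range(dim):
            if not z & mask:
                a0, a1 = state[z], state[z | mask]
                state[z], state[z | mask] = c * a0 + s * a1, s * a0 + c * a1
    return sum(abs(a) ** 2 * cut_value(z) for z, a in enumerate(state))


def grid_search(steps=16):
    """Brute-force the two angles on a coarse grid; returns (value, gamma, beta)."""
    grid = [k * math.pi / steps for k in range(steps)]
    return max((qaoa_expectation(g, b), g, b) for g in grid for b in grid)


best_value, best_gamma, best_beta = grid_search()
max_cut = max(cut_value(z) for z in range(2**N))
print(best_value, max_cut, best_value / max_cut)  # last number: approximation ratio
```

The approximation ratio printed at the end is the same figure of merit that the cited experiment compares between encoded and unencoded circuits.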

The Scientist's Toolkit: Essential Research Reagents

The table below lists key software, hardware, and methodological "reagents" essential for experimental work in quantum algorithms for chemistry.

| Research Reagent | Type | Primary Function | Relevance to Algorithms |

|---|---|---|---|

| Software Development Kits (SDKs) | Software | Provides high-level programming languages, circuit construction libraries, compilers, and interfaces to QPUs [11]. | All algorithms (VQE, QPE, QAOA); essential for translation from theory to executable code [11]. |

| Parameterized Quantum Circuits (Ansätze) | Methodological | A sequence of parameterized gates that prepare a trial quantum state; can be fixed or adaptive [10] [11]. | Core to VQE and QAOA; the ansatz choice critically determines performance and accuracy [11] [16]. |

| Classical Optimizers | Software/Method | Algorithms that adjust quantum circuit parameters to minimize a cost function [11]. | VQE, QAOA; handles the classical loop in hybrid algorithms [11] [16]. |

| Quantum Error Correction/Detection (QEC/D) Codes | Methodological/Software | Techniques to protect logical quantum information from noise by encoding it into multiple physical qubits [14]. | Essential for QPE [14]; shown to benefit QAOA performance on current hardware [12] [15]. |

| Trapped-Ion Quantum Computers | Hardware | A type of quantum hardware known for high-fidelity gates, all-to-all connectivity, and native mid-circuit measurement [12] [14]. | Platform for advanced demonstrations of all algorithms, particularly those requiring error correction or detection [12] [14]. |

| Operator Pools | Methodological/Software | A pre-selected set of unitary operators from which an ansatz is adaptively constructed [16]. | Critical for adaptive VQE protocols (e.g., ADAPT-VQE) for building compact, problem-specific circuits [16]. |

VQE currently offers the most practical pathway for experimentation on NISQ devices, while QPE remains the gold standard for precise, fault-tolerant simulation. QAOA presents a promising hybrid approach for optimization problems relevant to chemistry, with recent advances in error detection enabling more scalable implementations. The trajectory of the field points toward a co-design paradigm, where algorithms, software, and hardware evolve synergistically to tackle scientifically meaningful quantum chemistry problems [11] [17]. The emerging 25–100 logical qubit regime is poised to be a pivotal transitional window, enabling quantum utility in chemistry through polynomial-scaling phase estimation and direct simulation of quantum dynamics [17].

Quantum computing holds transformative potential for fields such as drug development and materials science, where it could dramatically accelerate the simulation of molecular interactions. However, the physical qubits that form the foundation of these computers are highly susceptible to errors from environmental noise, thermal fluctuations, and control inaccuracies, leading to rapid information loss through a process called decoherence [18]. Unlike classical bits, quantum bits (qubits) can experience both bit-flip and phase-flip errors, making error correction considerably more challenging [18].

Quantum Error Correction (QEC) addresses this fragility by encoding a single, more reliable logical qubit across multiple physical qubits. This redundancy allows the system to detect and correct errors without directly measuring and collapsing the quantum information it is protecting [19] [18]. Achieving fault-tolerant quantum computation—where reliable operations are possible even with imperfect components—is a critical milestone for the field. Recent experimental breakthroughs have fundamentally shifted QEC from a theoretical pursuit to the central engineering challenge shaping hardware roadmaps and national quantum strategies [20]. This guide provides researchers with a comparative analysis of current QEC approaches, detailing the experimental protocols and performance data that underpin this rapid progress.

Core Concepts: Physical Qubits, Logical Qubits, and the Threshold Theorem

A physical qubit is a physical device, such as a superconducting circuit or a trapped ion, that behaves as a two-state quantum system [19]. Their individual error rates are currently too high to sustain meaningful computations. A logical qubit is an encoded information unit, constructed from many physical qubits, designed to be error-resistant [19]. The collective state of these physical qubits is used to infer and correct errors affecting the logical information.

The fundamental principle of QEC is the threshold theorem, which states that if the physical error rate (p) is below a certain critical value (p_thr), the logical error rate (ε_d) can be suppressed exponentially by increasing the code distance (d). The code distance, an odd integer, is a measure of the code's error-correcting power [21] [22]. This relationship is captured by the equation:

$$\varepsilon_{d} \propto \left(\frac{p}{p_{\mathrm{thr}}}\right)^{(d+1)/2}$$

When the physical error rate is below this threshold, increasing the number of physical qubits per logical qubit yields a dramatic improvement in logical fidelity. Operating "below threshold" has been a primary goal for experimental quantum computing for nearly three decades [23].
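As a short worked step, and assuming the same proportionality constant applies at both distances, taking the ratio of the relation at distance d and at distance d+2 gives the error suppression factor Λ used in the comparison table later in this section:

$$\Lambda \equiv \frac{\varepsilon_{d}}{\varepsilon_{d+2}} = \frac{\left(p/p_{\mathrm{thr}}\right)^{(d+1)/2}}{\left(p/p_{\mathrm{thr}}\right)^{(d+3)/2}} = \frac{p_{\mathrm{thr}}}{p}$$

Under this idealized model, Λ > 1 exactly when p is below p_thr, and each increase of the code distance by two divides the logical error rate by the same factor Λ.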

Comparative Analysis of Quantum Error Correction Implementations

The table below summarizes the performance of recent landmark QEC demonstrations across different hardware platforms and code architectures.

Table 1: Comparative Performance of Recent Quantum Error Correction Implementations

| Organization/ Platform | Code Type | Key Performance Metrics | Logical Error Rate | Error Suppression Factor (Λ) |

|---|---|---|---|---|

| Google (Willow), Superconducting [21] [23] | Surface Code | Distance-7 code (101 qubits); 1.1 μs cycle time; Real-time decoding (63 μs average latency, demonstrated at distance 5) | 0.143% ± 0.003% per cycle | 2.14 ± 0.02 |

| Google (Willow), Superconducting [21] | Repetition Code | Tested up to distance 29 to probe error floors | Limited by rare events (~1/hour) | - |

| Quantinuum, Trapped-Ion [24] | Concatenated Symplectic Double Code | High-rate code with "SWAP-transversal" gates; Roadmap: 10⁻⁸ logical error rate by 2029 | - | - |

| IBM, Superconducting [19] | Quantum Low-Density Parity-Check (QLDPC) Codes | Protected 12 logical qubits for ~1 million cycles using 288 physical qubits | - | - |

| Microsoft & Quantinuum, Trapped-Ion [19] | Active Syndrome Extraction | Created 4 logical qubits using 30 physical qubits via qubit virtualization | - | - |

Key Findings from Comparative Data

- Exponential Suppression Achieved: Google's Willow processor provides the first definitive experimental evidence of exponential error suppression with the surface code, a cornerstone theoretical prediction of QEC. Increasing the code distance from d=3 to d=5 to d=7 reduced the logical error rate by a factor of Λ = 2.14 ± 0.02 with each step [21] [23].

- Beyond Breakeven: The distance-7 surface code on Willow achieved a logical lifetime of 291 ± 6 μs, which is a factor of 2.4 ± 0.3 longer than the lifetime of its best constituent physical qubit. This "breakeven" milestone proves that error correction can genuinely extend quantum information longevity [21].

- Architectural Diversity for Scaling: Alternative codes are being pursued to improve qubit efficiency. IBM's QLDPC codes and Quantinuum's concatenated codes aim for a higher encoding rate (more logical qubits per physical qubit), which is crucial for reducing the massive physical qubit overhead required for large-scale algorithms [24] [19].
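The reported suppression factor allows a rough extrapolation to larger codes. The sketch below assumes that Λ stays constant as the distance grows, which the repetition-code results discussed later show cannot hold indefinitely; the function names are hypothetical, and the inputs are the Willow figures from the table above.

```python
import math


def projected_error(eps_ref, d_ref, lam, d):
    """Extrapolated logical error per cycle at distance d, assuming constant Lambda."""
    return eps_ref / lam ** ((d - d_ref) / 2)


def distance_for_target(eps_ref, d_ref, lam, target):
    """Smallest distance (in steps of 2 from d_ref) whose projected error meets target."""
    steps = math.ceil(math.log(eps_ref / target) / math.log(lam))
    return d_ref + 2 * max(steps, 0)


EPS_7 = 1.43e-3  # 0.143% per cycle at distance 7
LAMBDA = 2.14

for d in (7, 11, 15, 27):
    print(d, projected_error(EPS_7, 7, LAMBDA, d))

print(distance_for_target(EPS_7, 7, LAMBDA, target=1e-6))  # -> 27
```

Because the projection is exponential in distance, small changes in Λ shift the required distance considerably, so hardware improvements that raise Λ reduce qubit overhead far more than linearly.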

Experimental Protocols: Methodologies for QEC Validation

The validation of QEC performance requires carefully designed experiments to measure the stability of logical quantum information over time.

Surface Code Memory Experiment

This is the standard protocol for benchmarking a quantum memory's stability.

- 1. Logical State Initialization: The experiment begins by preparing the data qubits of the surface code lattice in a product state that corresponds to a known logical eigenstate (e.g., |0_L⟩ or |1_L⟩) [21].

- 2. Syndrome Extraction Cycles: A rapid, repeated sequence of operations, termed a "cycle," is performed. Each cycle involves entangling measure qubits with their neighboring data qubits and then reading out the measure qubits. These measurements, known as syndromes, provide parity information about errors on the data qubits without collapsing their quantum state. A single cycle on Google's Willow processor takes 1.1 microseconds [21].

- 3. Real-Time Decoding: The stream of syndrome data is fed to a decoder—a classical algorithm that diagnoses the most likely errors to have occurred. In advanced setups, this decoding happens in real-time with an average latency of 63 microseconds for a distance-5 code [21].

- 4. Logical Measurement and Final Correction: After a variable number of cycles (e.g., up to 250), the data qubits are measured. The decoder uses the complete history of syndromes to interpret the final data qubit measurements and determine the logical output. The experiment is a success if the final, corrected logical outcome matches the initial logical state [21].

- 5. Logical Error Rate Calculation: The experiment is repeated thousands of times. The logical error per cycle (ε_d) is characterized by fitting the decay of the logical state's survival probability over the number of cycles [21].
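Step 5 can be sketched as a linear fit. The example assumes the commonly used decay model F(n) = ½[1 + A(1 − 2ε)ⁿ], where F is the probability that the decoded outcome matches the initial state after n cycles and A absorbs state-preparation and measurement errors; the source does not spell out its fitting procedure, so both the model and the function name are assumptions.

```python
import math


def fit_logical_error_per_cycle(cycles, survival):
    """Least-squares line through ln(2F - 1) versus cycle count.

    The slope equals ln(1 - 2*eps); the intercept absorbs SPAM errors.
    Requires every survival probability to exceed 0.5.
    """
    ys = [math.log(2 * f - 1) for f in survival]
    n = len(cycles)
    mean_x, mean_y = sum(cycles) / n, sum(ys) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(cycles, ys)) / sum(
        (x - mean_x) ** 2 for x in cycles
    )
    return (1 - math.exp(slope)) / 2


# Synthetic data generated from the model itself (not experimental data).
true_eps, spam = 1.43e-3, 0.98
cycles = [10, 50, 100, 150, 200, 250]
survival = [0.5 * (1 + spam * (1 - 2 * true_eps) ** n) for n in cycles]

print(fit_logical_error_per_cycle(cycles, survival))
```

With real data, each survival probability carries binomial sampling noise, so a weighted fit and a bootstrap over shots would be a natural refinement.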

Repetition Code for Probing Error Floors

To probe the ultimate limits of error correction, researchers use repetition codes. These codes only protect against bit-flip errors, allowing them to reach much lower logical error rates and identify rare, correlated error events that could set a "floor" for logical performance. On Willow, repetition codes were run for up to 3 billion cycles, revealing that logical performance was limited by rare correlated errors occurring approximately once every hour [21] [23].
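For intuition on why repetition codes reach such low error rates, the sketch below computes the logical error of a distance-d repetition code under majority voting, assuming independent bit flips with probability p and perfect syndrome measurement. This simplified model is an assumption made for illustration; by construction it contains no correlated events, which is exactly the effect the experiment above was designed to expose.

```python
from math import comb


def repetition_logical_error(p, d):
    """Probability that more than half of d independently flipped bits are wrong."""
    return sum(
        comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(d // 2 + 1, d + 1)
    )


for d in (3, 5, 7, 29):
    print(d, repetition_logical_error(0.01, d))
```

In this idealized model the logical error keeps falling with distance. A measured error rate that stops improving at large distance therefore points to error sources outside the independent-flip assumption.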

The following diagram illustrates the workflow of a surface code memory experiment, integrating both quantum and classical processing components.

Diagram 1: Surface code memory experiment workflow, showing the integration of quantum and classical processing for real-time error correction.

The Scientist's Toolkit: Essential Research Reagents for QEC

The following table details key components, both hardware and software, that are essential for executing and analyzing QEC experiments.

Table 2: Essential "Research Reagent Solutions" for Quantum Error Correction

| Tool / Component | Category | Function in QEC Research |

|---|---|---|

| High-Coherence Physical Qubits | Hardware | The foundational component; improved coherence times (e.g., T₁) directly lower the physical error rate (p), enabling operation below the QEC threshold [21] [23]. |

| Surface Code Lattice | Code Architecture | A 2D array of physical qubits (data and measure qubits) that provides protection against all local errors. It is the most mature and experimentally validated code for scalable QEC [21]. |

| Real-Time Decoder | Classical Software | A classical algorithm (e.g., neural network, minimum-weight perfect matching) that processes syndrome data during computation to identify errors. Low latency is critical to keep pace with the quantum processor [21]. |

| Leakage Removal Units | Hardware/Software | Specialized operations that reset qubits that have leaked into non-computational states (e.g., \|2⟩), preventing the spread of this error type throughout the quantum processor [21]. |

| Repetition Code | Diagnostic Code | A simpler code used as a diagnostic tool to probe specific error channels (bit-flips) and identify rare, correlated error events that set the ultimate error floor for a system [21] [23]. |

Implications for Multi-Level Quantum Chemistry Workflows

The progression in QEC has direct implications for quantum chemistry applications relevant to drug development. Predictive simulation of molecular systems and surface interactions requires "gold standard" coupled-cluster methods, which have prohibitive computational costs on classical computers for large molecules [25]. Reliable quantum computers promise to overcome this barrier.

The experimental validation of below-threshold operation means that the exponential suppression of errors is now a practical tool. For quantum chemistry workflows, this translates to a clear, scalable path toward achieving the required logical error rates (e.g., below 10⁻¹⁰) for complex simulations. The ability to run real-time decoding ensures that these long computations can proceed without being bottlenecked by classical processing [21]. Furthermore, the development of high-rate codes (e.g., by Quantinuum and IBM) is critical for reducing the immense physical resource overhead, making the simulation of large, pharmacologically relevant molecules more feasible [24] [19]. As error rates continue to improve exponentially with advances in hardware and codes, quantum computers are poised to become a reliable component in the multi-level validation of quantum chemical models.

Defining the Path to Quantum Advantage in Chemistry and Drug Discovery

The field of quantum computing for chemistry and drug discovery has transitioned from theoretical promise to demonstrable milestones in 2024-2025. This comparative analysis examines the current landscape where verifiable quantum advantage has been achieved in specific, constrained molecular simulations, while the broader path to universal fault-tolerant quantum computing for pharmaceutical applications continues to evolve. The emergence of quantum-classical hybrid architectures has enabled practical workflows that leverage quantum processors for specific computational bottlenecks while maintaining classical infrastructure for validation and data management. This guide objectively compares the performance, methodologies, and experimental protocols across leading quantum computing platforms, providing researchers with a framework for evaluating this rapidly advancing technological landscape.

Performance Benchmarking: Quantum vs. Classical Approaches

Molecular Simulation Performance Metrics

Table 1: Comparative performance of quantum algorithms versus classical supercomputers for molecular simulations

| System/Algorithm | Provider/Platform | Problem Scale | Performance Advantage | Accuracy Metrics |

|---|---|---|---|---|

| Quantum Echoes Algorithm [26] [27] | Google Willow | 15-atom & 28-atom molecules | 13,000x faster than classical supercomputers | Matched traditional NMR results |

| Error-Corrected QPE [14] | Quantinuum H2-2 | Molecular hydrogen | Energy within 0.018 hartree of exact value | Above the chemical accuracy threshold (0.0016 hartree) |

| FAST-VQE Algorithm [28] | Kvantify/IQM Sirius & Garnet | Butyronitrile (20-qubit system) | Beyond classical simulation capacity | Consistent error trends with simulator |

| Quantum-Enhanced Screening [29] | IBM Eagle | Protein-ligand systems | 47x speedup in binding simulations | Verified against Summit/Frontier supercomputers |
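
The two energy figures quoted for the error-corrected QPE row can be compared directly. The short sketch below converts them to kcal/mol and checks the reported error against the threshold. The conversion factor is the standard hartree to kcal/mol value, and the function name is a hypothetical helper.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # standard conversion factor
CHEMICAL_ACCURACY_HARTREE = 0.0016  # threshold quoted in the table


def meets_chemical_accuracy(error_hartree, threshold=CHEMICAL_ACCURACY_HARTREE):
    """True when the absolute energy error is within the chemical accuracy threshold."""
    return abs(error_hartree) <= threshold


qpe_error_hartree = 0.018  # error reported for the error-corrected QPE run
within_threshold = meets_chemical_accuracy(qpe_error_hartree)
error_kcal = qpe_error_hartree * HARTREE_TO_KCAL_PER_MOL
ratio_to_threshold = qpe_error_hartree / CHEMICAL_ACCURACY_HARTREE

print(within_threshold, round(error_kcal, 1), round(ratio_to_threshold, 2))
```

The reported error is roughly an order of magnitude larger than the threshold, so this result is best read as a demonstration of error-corrected execution rather than of chemically accurate energies.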

Algorithm-Specific Performance Characteristics

Table 2: Quantum algorithm performance across chemical applications

| Algorithm Type | Best Demonstrated Application | Hardware Requirements | Current Limitations | Error Mitigation Approach |

|---|---|---|---|---|

| Quantum Echoes (OTOC) [27] | Molecular structure determination | 105-qubit Willow chip | Specialized application | Quantum verifiability through repetition |

| Quantum Phase Estimation [14] | Ground-state energy calculation | 22-qubit trapped-ion with QEC | Resource-intensive for small molecules | Mid-circuit error correction routines |

| Variational Quantum Eigensolver [28] [29] | Potential energy surface mapping | 16-20 qubit superconducting | Requires many iterations | Zero-noise extrapolation, probabilistic cancellation |

| Quantum Machine Learning [29] | Compound binding affinity prediction | 29-qubit trapped-ion systems | Limited training data | Hybrid classical-quantum architecture |
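
Zero-noise extrapolation, listed above for VQE, can be illustrated with a minimal sketch: the same expectation value is estimated at several artificially amplified noise levels and a fitted curve is extrapolated back to zero noise. The linear noise model, the synthetic data, and the function name `linear_zne` are assumptions for illustration. Production tools typically offer richer fits (Richardson, exponential).

```python
def linear_zne(scale_factors, expectations):
    """Least-squares straight-line fit; returns the value extrapolated to zero noise."""
    n = len(scale_factors)
    mean_x = sum(scale_factors) / n
    mean_y = sum(expectations) / n
    sxx = sum((x - mean_x) ** 2 for x in scale_factors)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(scale_factors, expectations))
    slope = sxy / sxx
    return mean_y - slope * mean_x  # intercept at scale factor 0


# Synthetic data (assumption): ideal value -1.0, degraded by 0.08 per unit of noise.
ideal_value = -1.0
scales = [1.0, 2.0, 3.0]
noisy_values = [ideal_value + 0.08 * s for s in scales]

zne_estimate = linear_zne(scales, noisy_values)
print(noisy_values[0], zne_estimate)
```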

Experimental Protocols and Methodologies

Quantum Echoes Protocol for Molecular Structure Determination

The Quantum Echoes algorithm, demonstrated on Google's Willow processor, implements a four-step process for molecular structure analysis [27]:

- System Initialization: Prepare a 105-qubit array in a known quantum state, with qubits representing aspects of the molecular system.

- Forward Evolution: Apply a carefully crafted sequence of quantum operations to evolve the system forward in time.

- Qubit Perturbation: Introduce a controlled disturbance to a specific qubit in the system.

- Reverse Evolution and Measurement: Precisely reverse the forward evolution sequence and measure the resulting "quantum echo."

This protocol functions as a molecular ruler, with the amplified echo signal providing enhanced sensitivity to molecular geometry. The methodology was validated in partnership with UC Berkeley on molecules containing 15 and 28 atoms, with results cross-referenced against traditional Nuclear Magnetic Resonance (NMR) data [27].
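
The four steps can be mimicked on a toy scale with a state-vector simulation. The sketch below is a simplified echo on three simulated qubits using a random unitary as the forward evolution. It is not Google's circuit or its higher-order correlator, and all names (`random_unitary`, `on_qubit`, `echo_signal`) and the seed are assumptions chosen for illustration.

```python
import numpy as np


def random_unitary(dim, rng):
    """Random unitary taken from the QR decomposition of a complex Gaussian matrix."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, _ = np.linalg.qr(z)
    return q


def on_qubit(gate, target, n_qubits):
    """Embed a single-qubit gate on `target` into the full n-qubit space."""
    full = np.eye(1)
    for k in range(n_qubits):
        full = np.kron(full, gate if k == target else np.eye(2))
    return full


def echo_signal(forward, perturbation, observable, psi0):
    state = forward @ psi0                 # step 2: forward evolution
    state = perturbation @ state           # step 3: perturb one qubit
    state = forward.conj().T @ state       # step 4: reverse evolution
    return float(np.real(np.vdot(state, observable @ state)))  # measure the echo


n = 3
rng = np.random.default_rng(7)
U = random_unitary(2 ** n, rng)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
psi0 = np.zeros(2 ** n, dtype=complex)
psi0[0] = 1.0                              # step 1: known initial state |000>

measure = on_qubit(Z, n - 1, n)
baseline = echo_signal(U, np.eye(2 ** n), measure, psi0)   # no perturbation
perturbed = echo_signal(U, on_qubit(X, 0, n), measure, psi0)
print(baseline, perturbed)
```

Without the perturbation the reverse evolution undoes the forward evolution exactly and the measured value returns to 1. The drop in the perturbed echo reflects how far the disturbance on one qubit has spread to the measured qubit.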

Error-Corrected Quantum Chemistry Protocol

Quantinuum's implementation of quantum error correction (QEC) for chemistry calculations establishes a benchmark for fault-tolerant quantum simulations [14]:

- Logical Qubit Encoding: Encode each logical qubit using a seven-qubit color code across physical qubits on the H2-2 trapped-ion processor.

- Circuit Compilation: Compile quantum phase estimation circuits using both fault-tolerant and partially fault-tolerant methods to balance error protection with resource overhead.

- Mid-Circuit Correction: Insert QEC routines between quantum operations to detect and correct errors as they occur during computation.

- Noise Characterization: Utilize numerical simulations with tunable noise models to identify memory noise as the dominant error source.

- Dynamical Decoupling: Apply dynamical decoupling techniques to mitigate idle qubit errors during computation.

This protocol demonstrated the first complete quantum chemistry simulation using quantum error correction on real hardware, calculating the ground-state energy of molecular hydrogen with increased accuracy despite added circuit complexity [14].
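
The detect-and-correct step can be sketched classically. The seven-qubit color code is equivalent to the Steane code, whose stabilizers follow the parity checks of the [7,4] Hamming code, so a single bit-flip error can be located from a three-bit syndrome. The sketch below only tracks the error pattern and is not Quantinuum's decoder. The function names are hypothetical.

```python
# Parity-check matrix of the [7,4] Hamming code: column j (1-indexed) is j in binary.
H = [
    [0, 0, 0, 1, 1, 1, 1],
    [0, 1, 1, 0, 0, 1, 1],
    [1, 0, 1, 0, 1, 0, 1],
]


def syndrome(error):
    """Three parity bits for a length-7 bit-flip pattern (1 marks a flipped qubit)."""
    return tuple(sum(h * e for h, e in zip(row, error)) % 2 for row in H)


def correct_single_flip(error):
    """Apply the correction implied by the syndrome; exact for at most one flip."""
    s = syndrome(error)
    position = 4 * s[0] + 2 * s[1] + s[2]  # 0 means no error detected
    fixed = list(error)
    if position:
        fixed[position - 1] ^= 1
    return fixed


flip_on_qubit_5 = [0, 0, 0, 0, 1, 0, 0]
print(syndrome(flip_on_qubit_5), correct_single_flip(flip_on_qubit_5))
```

The same table applies to phase-flip errors with the roles of the X-type and Z-type checks exchanged. Two simultaneous flips are miscorrected, which is why logical error rates depend so strongly on the physical error rate.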

Hybrid Quantum-Classical Chemistry Workflow

The FAST-VQE algorithm developed by Kvantify exemplifies the modern hybrid approach to quantum computational chemistry [28]:

- Classical Preprocessing: Use classical computers to generate molecular Hamiltonians and select active spaces using realistic basis sets (e.g., PCSEG-2).

- Hardware-Efficient Execution: Run adaptive operator selection directly on quantum hardware (IQM's Sirius and Garnet processors) for each geometry point in the chemical reaction path.

- High-Performance Simulation: Employ chemistry-specific state vector simulators to handle the optimization steps of the variational algorithm.

- Iterative Convergence: Execute 60 adaptive iterations per geometry point, requiring 2-3 seconds of quantum runtime per iteration.

- Reference Validation: Compare results against exact CASCI references calculated in the same orbital space to quantify accuracy.

This workflow was successfully applied to study the dissociation of butyronitrile, a molecule with applications in battery and solar cell research, scaling to 20 qubits and demonstrating consistent error trends between hardware and simulator [28].
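
The variational loop and the reference validation step can be illustrated with a deliberately small example. The sketch below is not FAST-VQE: it uses a fixed one-parameter ansatz on a one-qubit toy Hamiltonian H = A·Z + B·X, with the analytic `energy` function standing in for a hardware energy evaluation, and compares the optimized energy with the exact lowest eigenvalue. The coefficients, learning rate, and step count are assumptions.

```python
import math

A, B = 0.5, 0.3  # hypothetical coefficients of the toy Hamiltonian H = A*Z + B*X


def energy(theta):
    """Stand-in for a QPU evaluation: <H> for the state RY(theta)|0>."""
    return A * math.cos(theta) + B * math.sin(theta)


def parameter_shift_gradient(theta):
    """Exact gradient from two shifted energy evaluations."""
    return 0.5 * (energy(theta + math.pi / 2) - energy(theta - math.pi / 2))


def run_vqe(theta=0.1, learning_rate=0.4, steps=200):
    """Classical optimizer loop: plain gradient descent on the ansatz parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_vqe = run_vqe()
e_exact = -math.hypot(A, B)  # lowest eigenvalue of the 2x2 Hamiltonian
error = abs(e_vqe - e_exact)
print(f"VQE {e_vqe:.6f}  exact {e_exact:.6f}  error {error:.2e}")
```

In a real workflow the exact reference would come from a CASCI calculation in the same orbital space, and the energy evaluations would carry shot noise and hardware error.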

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key platforms and algorithms for quantum computational chemistry

| Tool/Platform | Provider | Function/Role | Current Specifications | Access Model |

|---|---|---|---|---|

| Willow Quantum Chip [27] | Google Quantum AI | Runs Quantum Echoes algorithm for molecular structure | 105-qubit processor, verifiable quantum advantage | Not publicly available |

| H2-2 Quantum Computer [14] | Quantinuum | Error-corrected chemistry calculations | Trapped-ion architecture, all-to-all connectivity | Cloud access via partnership |

| Kvantify Chemistry QDK [28] | Kvantify | Quantum chemistry development kit | FAST-VQE algorithm, hardware-efficient | Cloud access via IQM Resonance |

| eSEN Neural Network Potentials [30] | Meta FAIR | Classical AI alternative for molecular modeling | Trained on OMol25 dataset (100M+ calculations) | Open source via HuggingFace |

| OMol25 Dataset [30] | Meta FAIR | Training data for molecular AI models | 100M+ calculations, 6B CPU-hours generated | Publicly available dataset |

Comparative Analysis: Performance Across Molecular System Complexity

Table 4: Performance across varying molecular system complexity

| Molecular System Complexity | Leading Quantum Approach | Classical Alternative | Current Advantage Status | Key Limiting Factors |

|---|---|---|---|---|

| Small Molecules (≤30 atoms) [26] [27] | Quantum Echoes, QPE with QEC | High-accuracy DFT (ωB97M-V/def2-TZVPD) | Demonstrated: 13,000x speedup for specific tasks | Qubit fidelity, error correction overhead |

| Reaction Pathways [28] | FAST-VQE with realistic basis sets | CASSCF/CASCI methods | Emerging: Beyond classical simulation capacity | Circuit depth, iterative convergence |

| Protein-Ligand Binding [29] | Quantum-enhanced screening | Classical molecular dynamics | Limited: 47x speedup in specific cases | System size, noise susceptibility |

| Large Biomolecules [30] | Classical NNPs (eSEN/UMA) | Traditional force fields | Classical AI Advantage: Better than affordable DFT | Training data diversity, transferability |

The experimental data and performance comparisons presented in this analysis demonstrate that quantum advantage in chemistry and drug discovery is no longer theoretical but remains highly context-dependent. Google's Quantum Echoes algorithm has achieved verifiable advantage for specific molecular structure problems [27], while hybrid approaches like Kvantify's FAST-VQE are pushing beyond classical simulation capabilities for reaction pathway analysis [28]. Simultaneously, error correction milestones from Quantinuum show that fault-tolerant quantum chemistry is progressively becoming practical [14].

However, the landscape is nuanced: for many practical drug discovery applications, classical AI approaches like Meta's eSEN models trained on massive datasets (OMol25) currently offer more accessible performance gains for molecular modeling [30]. The path to broad quantum advantage will require co-evolution of hardware capabilities, error mitigation strategies, and algorithmic innovations, with hybrid quantum-classical architectures serving as the transitional framework. Researchers should strategically integrate quantum computing into their workflows for specific problem classes where current demonstrations show measurable advantage, while maintaining classical and AI approaches for the majority of computational chemistry tasks.

Building Hybrid Workflows: Integrating Quantum and Classical Computing for Practical Chemistry

The pursuit of solving problems beyond the reach of classical computing is driving a fundamental shift in high-performance computing (HPC) architecture. With Moore's Law slowing, the integration of quantum processing units (QPUs) with state-of-the-art supercomputers represents the next disruptive wave in computational science [31] [32]. This co-design effort aims not to replace classical HPC but to create hybrid quantum-classical systems where each platform handles the tasks for which it is best suited. For researchers in quantum chemistry and drug development, this integration promises to unlock new capabilities in molecular simulation and materials design by providing access to unprecedented computational power.

The industry is rapidly moving from theoretical research to tangible commercial reality. By 2025, the global quantum computing market has reached an inflection point, with market size estimates ranging from $1.8 billion to $3.5 billion and projections suggesting growth to $20.2 billion by 2030 [5]. This growth is fueled by breakthroughs in hardware performance, error correction, and the emergence of practical applications demonstrating real-world quantum advantage in specific domains [5] [33]. This guide provides an objective comparison of current approaches to quantum-HPC integration, focusing on their implications for computational chemistry and drug development workflows.

Industry Landscape and Strategic Initiatives

Market Dynamics and Investment Trends

The financial landscape for quantum computing reflects unprecedented investor confidence. Venture capital funding surged, with over $2 billion invested in quantum startups during 2024, a 50% increase from 2023 [5]. The first three quarters of 2025 alone saw $1.25 billion in quantum computing investments, more than doubling the previous year's figures. Major institutional players have signaled their commitment to the sector, with JPMorgan Chase announcing a $10 billion investment initiative specifically naming quantum computing as a strategic technology [5]. Governments worldwide invested $3.1 billion in 2024, primarily linked to national security and competitiveness objectives [5].

International competition in quantum computing has intensified significantly. China's national venture fund has committed RMB 1 trillion (approximately $140 billion) for quantum technology development, while Europe advances through the Quantum Flagship Program coordinating research across member states [5]. The U.S. National Quantum Initiative has invested $2.5 billion in programs between 2019 and 2024, establishing Quantum Leap Challenge Institutes and the National Quantum Virtual Laboratory as national resources for quantum research and development [5].

Key Hardware Platforms and Performance Metrics

Table 1: Comparative Analysis of Leading Quantum Hardware Platforms (2025)

| Provider | Qubit Technology | Key System | Qubit Count | Key Performance Metrics | Error Correction Approach |

|---|---|---|---|---|---|

| IBM | Superconducting | Nighthawk | 120 qubits | 57/176 couplings with <0.1% error rate; 330,000 CLOPS | Square topology; Quantum Low-Density Parity Check (qLDPC) codes |

| Google | Superconducting | Willow | 105 qubits | Calculation completed in 5 minutes vs. 10^25 years classically | Exponential error reduction as qubit counts increase |

| IonQ | Trapped Ion | Forte Enterprise | 36 algorithmic qubits | High-fidelity operations | Clifford Noise Reduction (CliNR) technique |

| Atom Computing | Neutral Atom | - | - | 28 logical qubits encoded onto 112 atoms | - |

| Alice & Bob | Superconducting (cat qubits) | Graphene (planned) | Target: 100 logical qubits | Built-in bit-flip error suppression | Cat qubit design reduces error correction overhead |

| Microsoft | Topological | Majorana 1 | - | 1,000-fold error rate reduction | Novel four-dimensional geometric codes |

Table 2: Quantum-as-a-Service (QaaS) Platform Comparison

| Platform | Hardware Providers | Key Features | Target Users |

|---|---|---|---|

| Amazon Braket | Rigetti, Oxford Quantum Circuits, QuEra, IonQ, D-Wave, Xanadu | Pay-as-you-go; Direct reservation program; Educational resources | Enterprises exploring quantum applications |

| IBM Quantum | IBM systems only | Qiskit Runtime; Quantum System Two; Hardware-aware optimization | Quantum developers and researchers |

| Azure Quantum | Multiple | Integration with Microsoft AI services; Hybrid quantum-classical workflows | Enterprise developers |

Architectural Frameworks for Quantum-HPC Integration

System-Level Integration Approaches

A foundational study by Oak Ridge National Laboratory (ORNL) has proposed a comprehensive software architecture for integrating emerging quantum computers with the world's fastest supercomputing systems [32]. The ORNL approach emphasizes a unified resource management system that efficiently coordinates quantum and classical resources, addressing the fundamental challenge of combining two distinct computing paradigms [32]. This architecture includes a flexible quantum programming interface that abstracts hardware-specific details, allowing future designs to be included without fundamentally changing the programming model.

The proposed framework positions quantum computers as accelerators rather than equal partners to supercomputers in the near term [32]. A quantum controller would connect the two machines and act as an interpreter device, translating between quantum and classical computations. The team proposes a specific quantum platform management interface that would simplify this integration and translation, making a variety of combinations easy to deploy [32]. Most of the software would operate on the classical side, with the quantum machine functioning similarly to how GPUs accelerate specific computational tasks in current HPC systems.

The Quantum Framework for Hybrid Workflows

Recent research has demonstrated the practical implementation of hybrid quantum-HPC workflows through the Quantum Framework (QFw), a modular and HPC-aware orchestration layer [34]. This framework integrates multiple local backends (Qiskit Aer, NWQ-Sim, QTensor, and TN-QVM) and cloud-based quantum hardware (IonQ) under a unified interface, enabling researchers to execute both non-variational and variational workloads across diverse simulators and hardware backends [34].

The QFw approach addresses the critical challenge that no single simulator offers the best performance for every circuit type. Simulation efficiency depends strongly on circuit structure, entanglement, and depth, making a flexible and backend-agnostic execution model essential for fair benchmarking, informed platform selection, and ultimately the identification of quantum advantage opportunities [34]. Empirical results highlight workload-specific backend advantages: Qiskit Aer's matrix product state (MPS) method excels for large Ising models, while NWQ-Sim leads on large-scale entanglement and Hamiltonian simulations and also shows the benefits of executing subproblems concurrently in a distributed manner for optimization problems [34].
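
A backend-agnostic execution model of this kind can be sketched as a small dispatch layer. The code below is not the QFw API: the class, the backend keys, the stub runners, and the routing rules are all hypothetical. The rules only loosely follow the workload-to-backend pairings reported for the QFw benchmarks in this section.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class CircuitProfile:
    workload: str            # e.g. "ising", "hamiltonian", "optimization"
    num_qubits: int
    high_entanglement: bool


Runner = Callable[[CircuitProfile], str]


def _stub(name: str) -> Runner:
    """Placeholder runner; a real one would submit the circuit to the backend."""
    return lambda p: f"{name} ran {p.workload} on {p.num_qubits} qubits"


# Hypothetical registry: one entry per backend behind a single interface.
BACKENDS: Dict[str, Runner] = {
    name: _stub(name) for name in ("aer_mps", "nwq_sim", "qtensor")
}


def choose_backend(profile: CircuitProfile) -> str:
    """Illustrative routing rules based on the circuit characterization."""
    if profile.workload == "optimization":
        return "qtensor"
    if profile.high_entanglement or profile.workload == "hamiltonian":
        return "nwq_sim"
    return "aer_mps"


def run(profile: CircuitProfile) -> str:
    return BACKENDS[choose_backend(profile)](profile)


print(run(CircuitProfile("ising", 100, high_entanglement=False)))
```

Keeping the routing decision separate from the runners means a new simulator or QPU can be registered without touching the workflow code, which is the property that makes cross-backend benchmarking fair.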

Experimental Protocols and Performance Benchmarks

Methodologies for Quantum-HPC Workflow Validation

Experimental validation of quantum-HPC workflows requires rigorous benchmarking across multiple dimensions. The extended Quantum Framework (QFw) study implemented a methodology focusing on performance portability and backend-agnostic execution [34]. The experimental protocol involved:

- Circuit Characterization: Each quantum circuit was analyzed for structure, entanglement depth, and operational complexity to determine the most suitable backend.

- Multi-Backend Execution: Identical circuits were executed across Qiskit Aer, NWQ-Sim, QTensor, TN-QVM, and IonQ hardware to collect comparative performance data.

- Hybrid Workflow Orchestration: Complex workflows combining classical pre/post-processing with quantum computations were managed through QFw's distributed task scheduling.

- Performance Metrics Collection: Execution times, fidelity measures, and resource utilization metrics were systematically recorded for each backend and workload type.

For variational workloads, researchers implemented a hybrid approach where classical HPC resources handled parameter optimization while quantum resources executed the circuit evaluations [34]. This co-design pattern leverages the strengths of both platforms—classical systems excel at optimization while quantum systems can explore complex state spaces more efficiently.

Empirical Performance Data

Table 3: Quantum-HPC Workflow Performance Benchmarks

| Workload Type | Backend | System Scale | Performance Metric | Comparative Advantage |

|---|---|---|---|---|

| Ising Model Simulation | Qiskit Aer (MPS) | 100+ qubits | 25% more accurate results with dynamic circuits | Best for large-scale spin systems with limited entanglement |

| Hamiltonian Simulation | NWQ-Sim | 80+ qubits | 58% reduction in two-qubit gates | Superior for strongly correlated systems |

| Optimization Problems | QTensor | 50-70 qubits | Efficient concurrent subproblem execution | Optimal for QAOA and combinatorial optimization |

| Chemical Simulation | IonQ Hardware | 36 algorithmic qubits | Outperformed classical HPC by 12% on medical device simulation | Early quantum advantage for specific chemistry problems |

Recent breakthroughs have demonstrated tangible performance gains in practical applications. In March 2025, IonQ and Ansys achieved a significant milestone by running a medical device simulation on IonQ's 36-qubit computer that outperformed classical high-performance computing by 12%, one of the first documented cases of quantum computing delivering practical advantage over classical methods in a real-world application [5]. Google announced the Quantum Echoes algorithm breakthrough, demonstrating the first-ever verifiable quantum advantage by running the out-of-time-order correlator (OTOC) algorithm, which runs 13,000 times faster on Willow than on classical supercomputers [5].

Quantum Chemistry Applications and Validation

Advancements in Quantum Chemistry Simulation

The co-design of quantum and HPC systems has yielded particularly promising results in quantum chemistry, where simulating molecular systems remains computationally challenging for classical computers. Recent research has developed a multi-resolution quantum embedding scheme that enables "gold standard" coupled-cluster with single, double, and perturbative triple excitations (CCSD(T)) calculations for extended surface chemistry problems [25]. This approach achieves linear computational scaling up to 392 atoms, demonstrating the importance of converging to extended system sizes for accurate simulation of molecular interactions at surfaces [25].

In one benchmark study, researchers applied this method to the interaction of water on a graphene surface, systematically enlarging the substrate size to eliminate finite-size errors [25]. The results provided a definitive benchmark for water-graphene interaction that clarified the preference for water orientations at the graphene interface. For the largest systems containing more than 11,000 orbitals, the gap between open and periodic boundary conditions was reduced to just 5 meV, effectively eliminating finite-size errors that had plagued previous computational studies [25].

The release of Meta's Open Molecules 2025 (OMol25) dataset represents a significant development for both classical and quantum computational chemistry. This massive dataset comprises over 100 million quantum chemical calculations that took over 6 billion CPU-hours to generate, providing an unprecedented resource for training and validating quantum chemistry models [30]. The dataset covers diverse chemical structures with particular focus on biomolecules, electrolytes, and metal complexes, all calculated at the ωB97M-V/def2-TZVPD level of theory [30].

Coupled with Neural Network Potentials (NNPs) like the eSEN and Universal Model for Atoms (UMA) architectures, these resources enable rapid molecular simulations that approach quantum chemical accuracy [30]. User feedback indicates that these models provide "much better energies than the DFT level of theory I can afford" and "allow for computations on huge systems that I previously never even attempted to compute" [30]. Such resources are invaluable for validating quantum computing approaches to chemical problems and establishing reliable benchmarks for quantum advantage claims.

Essential Research Reagent Solutions

Table 4: Critical Research Tools for Quantum-HPC Workflow Development

| Tool/Category | Representative Examples | Primary Function | Application in Research |

|---|---|---|---|

| Quantum Programming SDKs | Qiskit, CUDA-Q, PennyLane | Circuit design, compilation, and execution | Provides abstraction layer for quantum algorithm development |

| Hybrid Workflow Orchestrators | Quantum Framework (QFw), Qiskit Runtime, Amazon Braket Hybrid Jobs | Manage execution across quantum and classical resources | Enables complex workflows dividing tasks between HPC and QPU |

| Error Mitigation Tools | Q-CTRL Fire Opal, Samplomatic, Probabilistic Error Cancellation (PEC) | Reduce impact of noise and errors in quantum computations | Improves result quality on current noisy quantum devices |

| Quantum Simulators | Qiskit Aer, NWQ-Sim, QTensor | Emulate quantum circuits on classical HPC | Algorithm validation and benchmarking without QPU access |

| Quantum-HPC Integration APIs | Qiskit C++ API, ORNL Quantum Platform Management Interface | Enable deep integration between quantum and classical codes | Facilitates tight coupling of quantum and classical compute resources |

| Performance Analysis Tools | Quantum Advantage Tracker, Circuit Profilers | Monitor and evaluate quantum system performance | Objective assessment of quantum utility and advantage claims |

The co-design of quantum and high-performance computing systems has evolved from theoretical concept to practical engineering challenge. Current evidence suggests that hybrid quantum-classical architectures represent the most viable path toward practical quantum advantage in the near term [5] [32] [34]. The emerging tiered workflow paradigm—where classical HPC systems handle large-scale data processing and quantum resources accelerate specific computationally intensive subproblems—leverages the complementary strengths of both platforms.

For researchers in quantum chemistry and drug development, these architectural advances promise to significantly expand the scope of addressable problems. Materials science and quantum chemistry have been identified as the fields most likely to benefit from early fault-tolerant quantum computers (eFTQC) [31]. As algorithmic advances continue to reduce quantum resource requirements and hardware performance improves, the integration of quantum accelerators into existing HPC infrastructures will create unprecedented opportunities for scientific discovery.

The coming years will see increased focus on developing standardized interfaces, performance portability tools, and application libraries that abstract the underlying complexity of hybrid systems. Success in this endeavor will require continued collaboration between the quantum computing and HPC communities, with domain scientists playing a crucial role in identifying the applications where quantum acceleration can deliver maximum impact.

The accurate prediction of molecular properties represents a fundamental challenge in chemistry, materials science, and drug discovery. Traditional computational approaches, ranging from force fields to high-level quantum chemistry, often face a difficult trade-off between accuracy and computational cost. The emergence of quantum machine learning (QML), particularly Hybrid Quantum Neural Networks (HQNNs), promises to reshape this landscape by harnessing the unique capabilities of quantum mechanics to enhance computational efficiency and predictive accuracy. HQNNs represent a class of algorithms that strategically integrate parameterized quantum circuits with classical deep learning architectures, creating synergistic systems that leverage the strengths of both paradigms. For molecular property prediction, this hybrid approach offers the potential to capture complex quantum chemical relationships more effectively than purely classical models, while requiring fewer parameters and offering potential computational advantages. This guide provides an objective comparison of HQNN performance against established classical alternatives, detailing experimental protocols, benchmarking results, and the essential tools required to implement these cutting-edge approaches in scientific research.

HQNN Architectures and Comparative Performance

Hybrid Quantum Neural Networks typically function by using classical neural networks for initial feature extraction from molecular structures, which are then processed by a parameterized quantum circuit—often called a quantum node or variational quantum circuit. This quantum component leverages phenomena like superposition and entanglement to model complex, non-linear relationships in the data. The output from the quantum circuit is then fed back into a classical network for final prediction [35] [36]. This architecture is particularly suited for molecular problems where the underlying physics is inherently quantum mechanical.

Recent empirical studies across diverse molecular prediction tasks demonstrate that HQNNs can match or exceed the performance of state-of-the-art classical models, often with significantly greater parameter efficiency. The table below summarizes key quantitative findings from published studies:

Table 1: Performance Comparison of HQNNs vs. Classical Models in Molecular Property Prediction

| Study & Application | Classical Model Performance (R²/MAE) | HQNN Model Performance (R²/MAE) | Parameter Efficiency |

|---|---|---|---|

| CO2-Capturing Amine Solvent QSPR [35] | Classical MLP/GNN: Baseline | Fine-tuned HQNN (9 qubits): Highest ranking accuracy across pKa, viscosity, boiling/melting points, and vapor pressure | Not specified |

| Protein-Ligand Binding Affinity Prediction [37] | Classical DeepDTAF: Baseline | HQDeepDTAF: Comparable or superior performance | HQNN achieved similar performance with fewer parameters |

| General Molecular Property Prediction [38] | Classical NN with Classical Data Augmentation: Baseline | HQNN with QGAN Augmentation: Performance improvement using QGAN vs. classical augmentation | QGAN achieved similar performance to DCGAN with 50% fewer parameters |

Analysis of Comparative Performance

The consolidated results indicate a consistent trend: HQNNs are capable of achieving competitive, and in some cases superior, predictive accuracy compared to their classical counterparts. A key advantage emerging across multiple studies is enhanced parameter efficiency [38] [37]. This means HQNNs can achieve similar results with smaller model sizes, which can lead to faster training times and reduced computational resource requirements. Furthermore, simulations have demonstrated that HQNNs maintain robustness even in the presence of quantum hardware noise, a critical property for practical applications on today's noisy intermediate-scale quantum (NISQ) devices [35]. It is critical to interpret these results with the understanding that the quantum advantage is often measured in terms of resource efficiency and learning capability on specific problem classes, rather than a universal speedup over all classical algorithms.

Experimental Protocols and Methodologies

Protocol for HQNN-based QSPR Modeling

The following protocol is derived from a study enhancing Quantitative Structure-Property Relationship (QSPR) models for CO2-capturing amines [35] [39]:

- Data Collection and Curation: Collect experimental data for target properties (e.g., basicity/pKa, viscosity, boiling point). Data should be sourced from literature and validated databases. Critical pre-processing includes log-scale transformation for properties like viscosity and vapor pressure, and min-max scaling of target values for model training.

- Molecular Featurization: Generate multiple molecular fingerprint representations for each compound. The protocol specifies concatenating MACCS keys (166 bits) with other fingerprints like Avalon, ECFP6, and Morgan (1024 bits each) to create a diverse and comprehensive feature set.

- Classical Feature Compression: The high-dimensional fingerprint vectors are processed by a classical multi-layer perceptron (MLP) or Graph Neural Network (GNN). The role of this network is to non-linearly compress the features into a lower-dimensional vector suitable for encoding into a quantum circuit.

- Quantum Circuit Processing: The compressed feature vector is mapped to the quantum circuit via angle embedding. A variational quantum circuit with a specified number of qubits (e.g., 9) and layers is used. The circuit consists of repeated layers of rotational gates and entangling gates. The quantum state is measured, and the expectation values are used as the output.

- Hybrid Training: The entire classical-quantum network is trained end-to-end using a classical optimizer (e.g., Adam). The loss function (e.g., Mean Absolute Error) is minimized via gradient-based optimization, where gradients for the quantum circuit are computed using techniques like the parameter-shift rule.
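
The quantum circuit processing and gradient steps above can be sketched with a two-qubit state-vector simulation in NumPy. This is a minimal illustration, not the circuit used in the cited study: the gate layout (RY angle embedding, one layer of trainable RY rotations, one CNOT, a Z measurement on the second qubit) and all function names are assumptions.

```python
import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)


def ry(angle):
    c, s = np.cos(angle / 2), np.sin(angle / 2)
    return np.array([[c, -s], [s, c]])


def quantum_layer(features, weights):
    """Angle-embed two features, apply trainable rotations and a CNOT, return <Z>."""
    state = np.zeros(4)
    state[0] = 1.0
    state = np.kron(ry(features[0]), ry(features[1])) @ state  # angle embedding
    state = np.kron(ry(weights[0]), ry(weights[1])) @ state    # rotational gates
    state = CNOT @ state                                       # entangling gate
    return float(state @ np.kron(I2, Z) @ state)               # expectation value


def parameter_shift_grad(features, weights):
    """Gradient of the output with respect to each weight via +/- pi/2 shifts."""
    grad = np.zeros_like(weights)
    for k in range(len(weights)):
        shift = np.zeros_like(weights)
        shift[k] = np.pi / 2
        grad[k] = 0.5 * (quantum_layer(features, weights + shift)
                         - quantum_layer(features, weights - shift))
    return grad


features = np.array([0.3, 1.1])   # stand-in for the compressed fingerprint vector
weights = np.array([0.5, -0.2])   # trainable circuit parameters
output = quantum_layer(features, weights)
gradient = parameter_shift_grad(features, weights)
print(output, gradient)
```

In an end-to-end model these gradients would be chained with the classical network's gradients and passed to an optimizer such as Adam. Libraries like PennyLane automate that coupling.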

Protocol for Protein-Ligand Binding Affinity Prediction

This protocol outlines the methodology for the HQDeepDTAF model [37]:

- Multi-Module Input Processing: The model processes three separate inputs concurrently:

  - Protein Sequence: The entire protein sequence is fed into an embedding layer and a classical 1D convolutional network.

  - Local Protein Pocket: The amino acid sequence of the binding pocket is processed similarly.

  - Ligand SMILES: The Simplified Molecular-Input Line-Entry System string of the ligand is tokenized and processed through an embedding layer and a 1D convolutional network.

- Hybrid Quantum-Classical Feature Learning: The flattened feature vectors from each of the three classical convolutional modules are not directly concatenated. Instead, each is passed into its own separate Hybrid Quantum Neural Network (HQNN) block. This is a key difference from simpler architectures.

- HQNN Block Design: Each HQNN block uses a data re-uploading strategy, where the classical feature vector is encoded multiple times into the quantum circuit interleaved with variational layers. This enhances the model's expressive power without requiring an exponential number of qubits.

- Classical Regression Head: The outputs from the three HQNN blocks are concatenated and passed to a final classical fully connected regression layer to produce the predicted binding affinity value.

- Noise-Aware Training and Evaluation: The model is trained on noiseless simulations, and its feasibility for NISQ devices is explicitly evaluated by testing its performance under simulated quantum hardware noise.
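
The data re-uploading idea in the HQNN block design can be shown on a single simulated qubit. The sketch below is not the HQDeepDTAF circuit: it alternates an RY encoding of one scalar feature with a trainable RZ and RY pair, and the gate choice, weight layout, and function names are assumptions for illustration.

```python
import numpy as np

Z = np.diag([1.0, -1.0]).astype(complex)


def ry(angle):
    c, s = np.cos(angle / 2), np.sin(angle / 2)
    return np.array([[c, -s], [s, c]], dtype=complex)


def rz(angle):
    return np.array([[np.exp(-1j * angle / 2), 0],
                     [0, np.exp(1j * angle / 2)]], dtype=complex)


def reupload_expectation(x, weights):
    """<Z> after repeatedly encoding x, interleaved with trainable rotations.

    `weights` has shape (layers, 2): one (RZ angle, RY angle) pair per layer.
    """
    state = np.array([1.0, 0.0], dtype=complex)
    for w_rz, w_ry in weights:
        state = ry(x) @ state                  # encode the same feature again
        state = ry(w_ry) @ rz(w_rz) @ state    # variational layer
    return float(np.real(np.vdot(state, Z @ state)))


x = 0.4
single_upload = reupload_expectation(x, np.zeros((1, 2)))
triple_upload = reupload_expectation(x, np.zeros((3, 2)))
print(single_upload, triple_upload)
```

With all weights set to zero, one upload yields cos(x) while three uploads yield cos(3x). Repeating the encoding gives the circuit access to higher-frequency functions of the input without adding qubits, and the trainable rotations then mix those frequencies.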

Diagram: Workflow for Hybrid Quantum Neural Network (HQNN) in Molecular Property Prediction

Implementing HQNNs for molecular property prediction requires a suite of computational tools, datasets, and platforms. The following table details key resources that form the foundation for this research.

Table 2: Essential Research Reagents and Resources for HQNN-based Molecular Prediction

| Category | Resource Name | Description and Function |
|---|---|---|
| Datasets | OMol25 [30] | A massive dataset from Meta FAIR with over 100 million high-accuracy (ωB97M-V/def2-TZVPD) quantum chemical calculations. Provides a robust benchmark for training and evaluating molecular property prediction models. |
| Datasets | Halo8 [40] | A comprehensive dataset focusing on halogen chemistry (F, Cl, Br), containing ~20 million calculations from 19,000 reaction pathways. Essential for testing model generalizability and performance on underrepresented elements. |
| Software & Libraries | Qiskit / PennyLane | Open-source quantum computing SDKs. They provide the essential toolkit for constructing, simulating, and optimizing variational quantum circuits that are integrated into HQNNs. |
| Software & Libraries | RDKit [35] [40] | An open-source cheminformatics toolkit. Its primary function is to generate molecular fingerprints (e.g., MACCS, Morgan) and handle molecular structure input (e.g., SMILES) for featurization. |
| Software & Libraries | PyTorch / TensorFlow | Standard classical deep learning frameworks. They are used to build the classical neural network components of the HQNN and manage the end-to-end gradient-based training of the hybrid model. |
| Hardware Platforms | IBM Quantum Systems [35] | Provider of cloud-accessible quantum processors. Used for running quantum circuits and for evaluating the robustness of HQNN models under real hardware noise conditions. |
| Benchmarking Tools | QuantumBench [41] | A specialized benchmark comprising ~800 multiple-choice questions on quantum science. Useful for evaluating the quantum domain knowledge of LLMs used in automated research workflows. |

The experimental data and protocols presented in this guide indicate that Hybrid Quantum Neural Networks are an emerging and credible contender in molecular property prediction. The current evidence, while promising, suggests that the primary advantage of HQNNs in the NISQ era lies not in outperforming classical models outright, but in their parameter efficiency and in their ability to embed quantum circuits within a hybrid classical-quantum framework. For researchers and drug development professionals, this translates to a new tool that can be integrated into multi-level validation workflows. As quantum hardware matures, with higher qubit counts and improved fidelity, and as QML algorithms become more sophisticated, a decisive quantum advantage for HQNNs in practical drug discovery and materials science applications appears increasingly attainable.

Simulating complex biomolecules requires a computational approach that bridges different levels of theory, from highly accurate but expensive quantum mechanical methods to efficient classical and machine learning potentials. This multi-level workflow is essential for tackling real-world biological problems in drug discovery and enzyme modeling, where system size and chemical complexity present significant challenges. The core challenge lies in accurately capturing key interactions (electrostatics, dispersion, and polarization) while maintaining computational feasibility for biologically relevant systems and timescales.

The validation of this multi-level workflow depends on high-quality benchmark datasets and standardized assessment protocols. Recent advances have produced massive datasets like the Splinter dataset for protein-ligand interactions [42] and Meta's OMol25 dataset [30], which provide crucial reference data for method development and validation. Simultaneously, best practices have emerged for constructing meaningful benchmarks and preparing systems for reliable free energy calculations [43]. This guide examines and compares the current computational methodologies through the lens of these developing standards, focusing on their application to protein-ligand interactions and cytochrome P450 modeling.

Performance Comparison of Computational Methodologies

Quantitative Performance Metrics Across Methods

Table 1: Performance Comparison of Biomolecular Simulation Methods

| Methodology | Accuracy Range | Computational Cost | System Size Limit | Key Interactions Captured | Primary Applications |
|---|---|---|---|---|---|
| SAPT0 | High (reference for NCIs) | Very High | ~100s of atoms | Electrostatics, exchange, induction, dispersion [42] | Benchmarking, force field development [42] |
| Neural Network Potentials (OMol25-trained) | Near-DFT accuracy [30] | Medium (after training) | 1000s+ of atoms | Full QM potential energy surface [30] | Large biomolecules, MD simulations [30] |
| Alchemical FEP | ~1-1.2 kcal/mol MUE for RBFE [43] | High | 100,000s of atoms | Effective pairwise potentials | Lead optimization, relative binding [43] |
| MM/PBSA | Moderate (>2 kcal/mol MUE) | Medium | 100,000s of atoms | Approximate solvation & electrostatics | Binding affinity screening [43] |
| Quantum SAPT(VQE) | Theoretical, developing [44] | Very High (quantum) | Small active sites | Electrostatics, exchange [44] | Multi-reference systems [44] |

Table 2: Dataset Characteristics for Method Development and Validation

| Dataset | Size | Level of Theory | System Types | Key Features |
|---|---|---|---|---|
| Splinter | ~1.6M configurations [42] | SAPT0/cc-pVDZ [42] | Protein/ligand fragments | SAPT energy decomposition [42] |
| OMol25 | 100M+ calculations [30] | ωB97M-V/def2-TZVPD [30] | Biomolecules, electrolytes, metal complexes | Unprecedented diversity [30] |
| Protein-Ligand Benchmark | Curated set [43] | Experimental affinities [43] | Drug targets | Standardized benchmarking [43] |

Performance Analysis and Interpretation

The quantitative data reveal a clear trade-off between accuracy and computational cost across methodologies. SAPT0 provides the most rigorous decomposition of noncovalent interactions but remains prohibitively expensive for full-scale biomolecular systems [42]. Alchemical FEP methods strike a practical balance, approaching chemical accuracy (MUE of roughly 1-1.2 kcal/mol) for congeneric series in lead optimization, though they struggle with significant scaffold changes and changes in ligand charge [43].

The emergence of neural network potentials trained on massive datasets like OMol25 represents a paradigm shift, offering near-DFT accuracy for systems containing thousands of atoms [30]. These models effectively interpolate the quantum mechanical potential energy surface while avoiding the explicit calculation cost of traditional QM methods.

Specialized challenges in biomolecular simulation, such as cytochrome P450 modeling, require careful method selection. CYP enzymes often feature complex electronic structures and metal centers that may benefit from multi-reference methods, though homology modeling and docking have successfully guided mutagenesis studies and substrate specificity predictions [45].

Experimental Protocols and Methodologies

SAPT-Based Workflow for Protein-Ligand Interactions

The Splinter dataset provides a comprehensive protocol for studying fundamental protein-ligand interactions [42]. The methodology begins with monomer preparation, selecting chemical fragments representing common protein side chains and drug-like ligands. These fragments undergo geometry optimization at the B3LYP level with correlation-consistent basis sets (cc-pVDZ for neutral/cationic, aug-cc-pVDZ for anionic systems) [42].

Interaction site definition is crucial for systematic sampling. For each monomer, researchers define sets of three noncollinear points: primary interaction points centered on key functional groups, plus secondary points to define angular relationships. These sites are categorized as general, hydrogen bond donor, hydrogen bond acceptor, or Lewis acid/base sites, enabling comprehensive sampling of relevant chemical space [42].
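To illustrate why three noncollinear points are needed, the sketch below builds an orthonormal local frame from such a triple and rejects degenerate input. It is a geometric illustration only; the function name `local_frame` and the tolerance are assumptions and are not taken from the Splinter tooling.

```python
import numpy as np


def local_frame(p0, p1, p2, tol: float = 1e-8) -> np.ndarray:
    """Build an orthonormal frame (rows e1, e2, e3) from three points.

    p0 plays the role of the primary interaction point; p1 and p2 are the
    secondary points that fix the orientation around it.
    """
    p0, p1, p2 = (np.asarray(p, dtype=float) for p in (p0, p1, p2))
    u = p1 - p0
    v = p2 - p0
    normal = np.cross(u, v)
    # A vanishing cross product means the points lie on one line, so the
    # rotation about that line would be undefined (absolute tolerance).
    if np.linalg.norm(normal) < tol:
        raise ValueError("points are collinear and do not define an orientation")
    e1 = u / np.linalg.norm(u)
    e3 = normal / np.linalg.norm(normal)
    e2 = np.cross(e3, e1)  # completes a right-handed frame
    return np.stack([e1, e2, e3])


frame = local_frame([0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0])
print(frame)
```

With a frame attached to each monomer's site, a dimer configuration can be specified by a distance and relative angles between the two frames, which is what makes systematic sampling possible.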

Configuration sampling employs a dual strategy: ~1.5 million random configurations sample the complete potential energy surface, including unfavorable regions, while ~80,000 minimized structures provide local and global minima. This approach ensures broad coverage while emphasizing chemically relevant regions [42].

The electronic structure analysis utilizes SAPT0 with two basis sets, decomposing interaction energies into physically meaningful components: electrostatics, exchange-repulsion, induction, and dispersion. This decomposition provides invaluable insight for force field development and machine learning approaches [42].
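For reference, the four components correspond to the following terms in the standard SAPT0 expression. The grouping shown is the conventional one (as used, for example, in Psi4 output) and is given here as an assumption about how the dataset's components are assembled, not as a statement taken from [42]:

$$
E_{\text{int}}^{\text{SAPT0}} =
\underbrace{E_{\text{elst}}^{(10)}}_{\text{electrostatics}}
+ \underbrace{E_{\text{exch}}^{(10)}}_{\text{exchange-repulsion}}
+ \underbrace{E_{\text{ind,resp}}^{(20)} + E_{\text{exch-ind,resp}}^{(20)} + \delta E_{\text{HF}}^{(2)}}_{\text{induction}}
+ \underbrace{E_{\text{disp}}^{(20)} + E_{\text{exch-disp}}^{(20)}}_{\text{dispersion}}
$$

The superscripts give the perturbation order in the intermolecular interaction and in intramonomer correlation, respectively; SAPT0 neglects intramonomer correlation, which is why the second index is always zero.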

Figure 1: SAPT-Based Workflow for Protein-Ligand Interactions [42]

Neural Network Potential Training with OMol25

The OMol25 training protocol represents a massive-scale approach to developing transferable neural network potentials [30]. Dataset construction begins with collecting diverse molecular structures from multiple domains: biomolecules (protein-ligand complexes, nucleic acids), electrolytes (aqueous solutions, ionic liquids), and metal complexes with combinatorially generated ligands and spin states [30].

Quantum chemical calculations employ the ωB97M-V functional with the def2-TZVPD basis set and a large pruned (99,590) integration grid (99 radial and 590 angular points), ensuring consistent high-quality reference data across diverse chemical space. This level of theory balances accuracy and feasibility for large systems [30].

For biomolecular systems specifically, the protocol includes extensive preparation: extracting structures from RCSB PDB and BioLiP2 databases, generating random docked poses with smina, sampling protonation states and tautomers with Schrödinger tools, and running restrained molecular dynamics to sample different poses [30].

The model training utilizes the eSEN architecture, which incorporates equivariant spherical harmonic representations and transformer-style components. A key innovation is the two-phase training scheme: initial training with direct-force prediction followed by fine-tuning for conservative forces, reducing training time by 40% while improving accuracy [30].

Alchemical Free Energy Calculation Protocol

Standardized benchmarking protocols for alchemical free energy calculations emphasize careful system preparation and validation [43]. Benchmark curation requires high-quality experimental data with reliable structures and binding affinities. Systems should represent the methodology's domain of applicability while challenging it with realistic complexity [43].

Structure preparation must address critical factors: protein preparation (protonation states, missing residues), ligand parameterization (partial charges, force field assignment), and solvation model selection. The protocol emphasizes consistency across perturbations, particularly for charged ligands [43].

Simulation methodology involves careful setup of transformation pathways, sufficient equilibration, and monitoring for sampling adequacy. The recommended best practices include using overlapping lambda windows, monitoring Hamiltonian exchange in replica exchange simulations, and ensuring convergence through extended sampling and multiple independent runs [43].
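The role of overlapping lambda windows can be made concrete with the simplest free energy estimator. The sketch below uses exponential averaging (the Zwanzig relation) between adjacent windows and sums the results; this estimator is swapped in for brevity, whereas production workflows typically rely on BAR or MBAR. The function names and the input layout are hypothetical.

```python
import numpy as np

# k_B * T in kcal/mol at 298.15 K (gas constant in kcal/(mol K))
KT_KCAL_PER_MOL = 0.0019872041 * 298.15


def exp_free_energy(delta_u, kt: float = KT_KCAL_PER_MOL) -> float:
    """Zwanzig estimate of the free energy change between adjacent windows.

    delta_u holds samples of U(lambda_{i+1}) - U(lambda_i), in kcal/mol,
    evaluated on configurations drawn from window i.
    """
    x = -np.asarray(delta_u, dtype=float) / kt
    x_max = x.max()
    # log-mean-exp, shifted by the maximum for numerical stability
    log_mean = x_max + np.log(np.mean(np.exp(x - x_max)))
    return float(-kt * log_mean)


def total_free_energy(per_window_delta_u, kt: float = KT_KCAL_PER_MOL) -> float:
    """Sum the window-to-window estimates along the alchemical path."""
    return sum(exp_free_energy(du, kt) for du in per_window_delta_u)


# Toy input: four windows, each with a constant energy difference of 0.5 kcal/mol
windows = [np.full(100, 0.5) for _ in range(4)]
print(total_free_energy(windows))
```

The exponential average is dominated by rare low-energy samples, so it converges poorly when neighboring windows overlap weakly. That sensitivity is one reason the best practices call for closely spaced windows, overlap monitoring, and repeated independent runs.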

Statistical analysis requires appropriate error assessment, using measures like mean unsigned error (MUE) with confidence intervals, and avoiding statistically deficient analyses that overstate performance. The community-standard "arsenic" toolkit provides standardized assessment methodologies [43].
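As an illustration of reporting MUE with a confidence interval, the sketch below uses a percentile bootstrap over ligands. It is a generic NumPy example, not the API of the arsenic toolkit; the function name `mue_with_bootstrap_ci`, the resample count, and the toy affinities are assumptions.

```python
import numpy as np


def mue_with_bootstrap_ci(predicted, experimental, n_boot: int = 2000,
                          level: float = 0.95, seed: int = 0):
    """Return (MUE, lower, upper) using a percentile bootstrap over data points."""
    predicted = np.asarray(predicted, dtype=float)
    experimental = np.asarray(experimental, dtype=float)
    abs_err = np.abs(predicted - experimental)

    rng = np.random.default_rng(seed)
    # Resample the ligands with replacement and recompute the MUE each time
    idx = rng.integers(0, abs_err.size, size=(n_boot, abs_err.size))
    boot_mue = abs_err[idx].mean(axis=1)

    alpha = (1.0 - level) / 2.0
    lower, upper = np.quantile(boot_mue, [alpha, 1.0 - alpha])
    return float(abs_err.mean()), float(lower), float(upper)


# Toy binding free energies in kcal/mol (made-up values for illustration)
pred = [-9.1, -7.8, -10.4, -8.0]
expt = [-8.5, -8.6, -9.9, -8.3]
mue, lo, hi = mue_with_bootstrap_ci(pred, expt)
print(f"MUE = {mue:.2f} kcal/mol, 95% CI [{lo:.2f}, {hi:.2f}]")
```

With only a handful of ligands the interval is wide, which is the point: two methods whose intervals overlap heavily cannot be ranked on the basis of their point estimates alone.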

Cytochrome P450 Modeling Approach

The modeling of cytochrome P450 enzymes, particularly CYP2D6, demonstrates a specialized protocol combining homology modeling, docking, and experimental validation [45]. Template selection progressed from bacterial CYP structures (sharing <25% sequence identity) to mammalian CYP2C5 and eventually to human CYP crystal structures as they became available [45].

Active site modeling focuses on key functional features, particularly the identification of Asp301 as the critical residue for salt bridge formation with basic nitrogen atoms of substrates. This prediction came initially from modeling and was later confirmed by crystal structures [45].

Model validation employs a cycle of hypothesis-driven mutagenesis and functional assays, creating CYP2D6 mutants with novel activities (testosterone hydroxylation, converting quinidine from inhibitor to substrate) to test and refine structural predictions [45].

Figure 2: Cytochrome P450 Modeling Workflow [45]

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Computational Tools for Biomolecular Simulation

| Tool Category | Specific Tools/Resources | Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Packages | Psi4 [42] | SAPT and DFT calculations | Interaction energy decomposition [42] |
| Neural Network Potentials | eSEN models, UMA [30] | Fast QM-accurate energy evaluation | Large biomolecular systems [30] |
| Free Energy Platforms | PMX/Gromacs, Schrödinger FEP [43] | Alchemical binding free energy calculations | Lead optimization [43] |
| Benchmarking Tools | Protein-ligand-benchmark, arsenic [43] | Standardized performance assessment | Method validation [43] |
| Homology Modeling | Modeller [45] | Protein structure prediction | CYP450 modeling before crystal structures [45] |
| Quantum Computing Hybrid | SAPT(VQE) [44] | Interaction energies for multi-reference systems | Specialized applications [44] |