Validating SCF Convergence Protocols for p-Block Elements: A Quantum Chemistry Benchmarking Guide



Abstract

Accurate self-consistent field (SCF) convergence for p-block elements is critical for reliable quantum chemical predictions in drug design and materials science. This article provides a comprehensive guide for researchers, establishing the foundational challenges of modeling p-block systems, detailing robust methodological protocols, offering advanced troubleshooting for convergence failure, and presenting a validation framework against high-level benchmark data. By synthesizing insights from cutting-edge studies on inorganic heterocycles and color centers, we deliver practical strategies for selecting functionals, basis sets, and convergence algorithms to achieve predictive accuracy for systems involving heavier p-block elements, ultimately enhancing the reliability of computational models in biomedical research.

The p-Block Conundrum: Unveiling Unique Electronic Structure Challenges

Comparative Performance of p-Block Element-Based Electrocatalysts

p-Block elements are emerging as high-performance, cost-effective catalysts for clean energy applications, demonstrating capabilities that rival or even surpass traditional transition metal catalysts in specific reactions such as hydrogen evolution (HER), hydrogen oxidation (HOR), and nitrogen reduction (NRR) [1] [2] [3]. Their tunable electronic structures make them particularly suitable for achieving exceptional activity and selectivity.

The table below summarizes the experimentally determined and computationally predicted performance metrics of selected p-block element-based catalysts for key energy conversion reactions.

Table 1: Performance Comparison of p-Block Element-Based Catalysts

| Catalyst Material | Reaction | Key Performance Metric | Reported Value | Reference/System |

|---|---|---|---|---|

| C-doped Bismuthine | N₂ Reduction (NRR) | Limiting Potential (Uₗ(NRR)) | -0.46 V | 2D Bismuthine Nanosheets [3] |

| C-doped Bismuthine | N₂ Reduction (NRR) | Selectivity [Uₗ(NRR) - Uₗ(HER)] | +1.15 V | 2D Bismuthine Nanosheets [3] |

| Si-doped Bismuthine | N₂ Reduction (NRR) | Limiting Potential (Uₗ(NRR)) | -0.68 V | 2D Bismuthine Nanosheets [3] |

| Si-doped Bismuthine | N₂ Reduction (NRR) | Selectivity [Uₗ(NRR) - Uₗ(HER)] | +0.13 V | 2D Bismuthine Nanosheets [3] |

| p-block modified PGM | Alkaline HER/HOR | Kinetic Rate | Enhanced by orders of magnitude | Pt-group metal hybrids [1] |

Performance Analysis and Key Differentiators

The data indicate that p-block element-based catalysts, particularly doped low-dimensional materials like bismuthine nanosheets, can achieve notable NRR activity together with strong suppression of the competing HER. This selectivity, quantified by the positive difference between the NRR and HER limiting potentials, is a critical advantage over many transition metal catalysts, on which the HER competes strongly with the NRR [3]. For alkaline HER/HOR on platinum group metals (PGMs), the rate enhancement from introducing p-block elements is attributed to p-d orbital hybridization and optimized intermediate adsorption behavior [1].

Experimental and Computational Protocols

Validating the performance of p-block element materials requires a combination of advanced computational modeling and precise experimental characterization. Reliable self-consistent field (SCF) convergence in electronic structure calculations is foundational to accurate prediction of catalytic properties.

Computational Protocol for p-Block Catalyst Screening

High-throughput theoretical screening is a powerful method for discovering new p-block catalysts. A recent protocol for identifying NRR catalysts on doped bismuthine nanosheets involved the following steps [3]:

- Descriptor Identification: A symbolic regression algorithm was used to identify simple, explainable descriptors composed of inherent atomic properties (e.g., p-orbital electron number, electron affinity, electronegativity, atomic radius), independent of complex Density Functional Theory (DFT) calculations.

- Stability Assessment: The formation energy of 40 different p-block element-doped and adsorbed bismuthine structures was calculated to evaluate thermodynamic stability.

- Activity & Selectivity Calculation: The Gibbs free energy change for NRR steps and the hydrogen adsorption energy (as a proxy for HER competition) were computed using DFT to determine the limiting potential (Uₗ(NRR)) and the selectivity metric [Uₗ(NRR) - Uₗ(HER)].

- Validation: The predictive power of the descriptors was tested via multi-task regression, confirming that descriptors from doped systems could accurately forecast the performance of adsorbed systems, and vice versa.
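The activity and selectivity step above can be sketched in a few lines of Python. This is a minimal illustration, assuming the usual computational hydrogen electrode convention in which the limiting potential is the negative of the largest step free energy (in eV per transferred electron); the source does not spell out the convention used in [3]. The step energies are placeholders chosen only so that the result mirrors the C-doped bismuthine row of Table 1, and the function names are hypothetical.

```python
def limiting_potential(step_free_energies_ev):
    """Return U_L in volts as -max(dG)/e for step free energies given in eV.

    Assumes one electron is transferred per step (computational hydrogen
    electrode convention); this is an assumption, not taken from the source.
    """
    return -max(step_free_energies_ev)


def selectivity(ul_nrr, ul_her):
    """Selectivity metric U_L(NRR) - U_L(HER); positive values favor NRR."""
    return ul_nrr - ul_her


# Placeholder step free energies in eV (illustrative only, not published data).
nrr_steps = [0.46, -0.30, 0.12, -0.55, 0.05, -0.40]
her_steps = [1.61, -1.61]  # H adsorption step, then H2 release

ul_nrr = limiting_potential(nrr_steps)
ul_her = limiting_potential(her_steps)
print(f"U_L(NRR) = {ul_nrr:.2f} V")
print(f"U_L(HER) = {ul_her:.2f} V")
print(f"Selectivity = {selectivity(ul_nrr, ul_her):+.2f} V")
```

A positive selectivity value means the HER needs a more negative potential than the NRR, which is the situation the screening protocol looks for.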

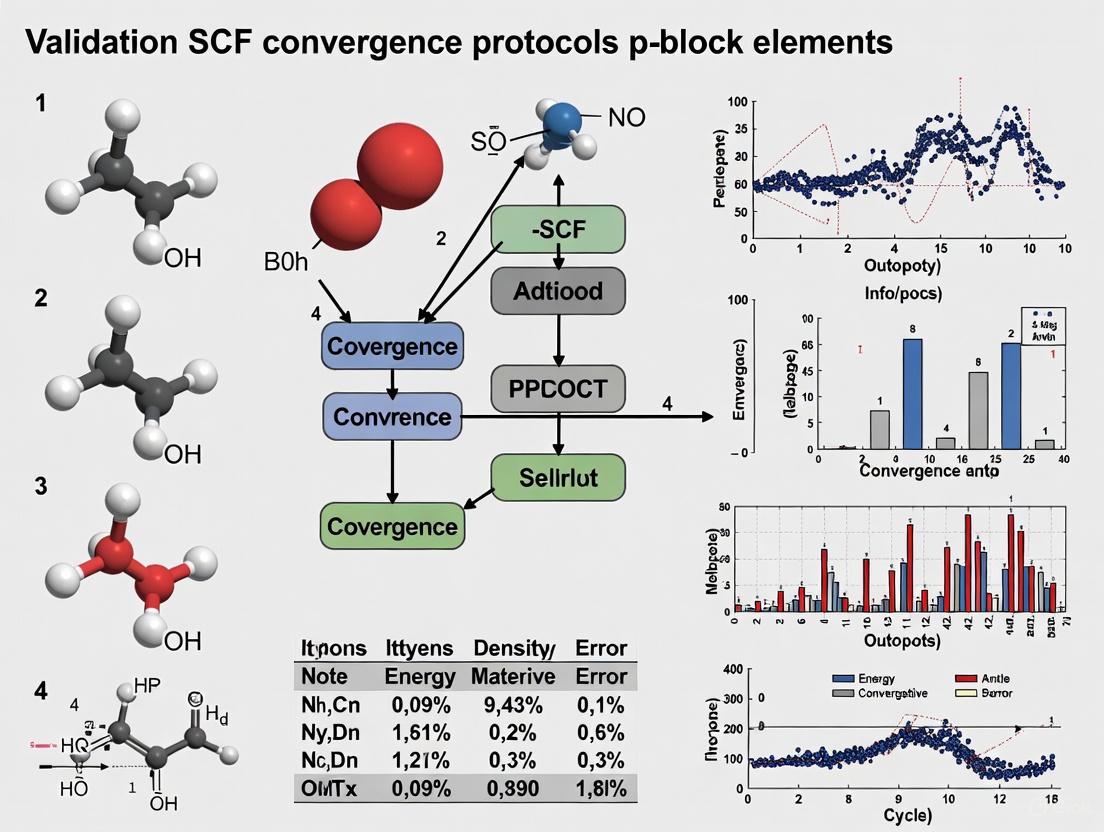

SCF Convergence Protocol for p-Block Systems

Accurate DFT calculations for p-block elements, especially in low-dimensional or open-shell configurations, can present SCF convergence challenges. The following protocol, synthesized from computational chemistry manuals and best practices, ensures robust convergence [4] [5] [6].

Table 2: SCF Convergence Troubleshooting Protocol

| Step | Action | Typical ORCA Input/Setting | Rationale |

|---|---|---|---|

| 1 | Increase Default Precision | ! TightSCF or ! VeryTightSCF | Reduces error tolerances for energy and density (e.g., TolE 1e-8) [4]. |

| 2 | Modify SCF Algorithm | ! SlowConv or ! KDIIS | Switches to more stable, damped algorithms or alternative accelerators [6]. |

| 3 | Adjust DIIS Parameters | %scf DIISMaxEq 25 end | Increasing the number of remembered Fock matrices (15-40) stabilizes convergence in difficult cases [6]. |

| 4 | Utilize Second-Order Methods | ! TRAH | Enables the robust but expensive trust-radius augmented Hessian method [6]. |

| 5 | Apply Level Shifting or Electron Smearing | %scf Shift Shift 0.1 ErrOff 0.1 end end | Level shifting (shown) or a finite electron temperature helps overcome small HOMO-LUMO gaps [5]. |
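The escalation in Table 2 can be scripted so that each retry uses the next, more conservative setting. The sketch below only renders the keyword header of an ORCA input for the first four steps; the function name, the step list, and the `r2SCAN-3c` method line are illustrative assumptions, and the level-shift step is left out because its block syntax should be checked against the manual of the ORCA version in use.

```python
# Escalation ladder built from the keywords listed in Table 2.
# Each entry: (simple-input keywords, optional %scf block).
ESCALATION_STEPS = [
    ("TightSCF", ""),
    ("TightSCF SlowConv", ""),
    ("TightSCF SlowConv", "%scf\n  DIISMaxEq 25\nend"),
    ("TightSCF TRAH", ""),
]


def render_orca_header(method_line, step_index):
    """Return the keyword header of an ORCA input for one escalation step.

    method_line is the user's functional/basis choice (hypothetical here);
    geometry and charge/multiplicity lines are intentionally not generated.
    """
    keywords, scf_block = ESCALATION_STEPS[step_index]
    lines = [f"! {method_line} {keywords}"]
    if scf_block:
        lines.append(scf_block)
    return "\n".join(lines)


for index in range(len(ESCALATION_STEPS)):
    print(f"--- attempt {index + 1} ---")
    print(render_orca_header("r2SCAN-3c", index))
```

In practice a driver script would run the first header, inspect the output for a convergence failure, and only then move to the next entry, so that the expensive second-order method is used as a last resort.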

Experimental Characterization Protocol: ToF-SIMS Quantification

Quantifying surface composition of p-block elements in alloys or composite materials is critical. Time-of-Flight Secondary Ion Mass Spectrometry (ToF-SIMS) can be enhanced for quantification via gas flooding to reduce the matrix effect [7].

- Sample Preparation: Polish and clean metal or alloy samples to ensure a consistent surface.

- Gas Environment Selection: Conduct analysis under three environments for comparison:

  - Ultra-high vacuum (UHV) as a baseline.

  - H₂ atmosphere (∼10⁻⁵ mbar), which shows the most significant improvement for quantifying transition metals.

  - O₂ atmosphere (∼10⁻⁵ mbar).

- Data Acquisition: Collect positive and negative secondary ion spectra for elements of interest.

- Quantitative Analysis: Calculate atomic ratios from ion intensities. The study found that maximum deviations from true atomic ratios for transition metals were reduced to 46% in H₂ atmosphere, compared to 228% in UHV [7].

Visualization of Workflows and Relationships

p-Block Catalyst Design and Validation Workflow

The following diagram illustrates the integrated computational and experimental pathway for developing and validating p-block element-based catalysts, emphasizing the critical role of SCF convergence.

The p-Orbital Reactivity Model

The catalytic activity of p-block elements is governed by the electronic structure of their p orbitals, which can be understood through a unified "p-band model" [2]. The diagram below illustrates the key parameters controlling this reactivity.

The Scientist's Toolkit: Essential Research Reagents and Materials

This table details key materials and computational tools used in the research on p-block elements for catalysis and drug design.

Table 3: Key Reagent Solutions and Research Materials

| Item Name | Function/Application | Relevance to Field |

|---|---|---|

| 2D Bismuthine Nanosheets | A foundational material for constructing electrocatalysts. | Serves as a tunable substrate for doping with p-block elements to study N₂ reduction reactivity [3]. |

| p-Block Dopants (C, Si, etc.) | Elements used to modify the electronic structure of host materials. | Introducing these atoms induces p-d hybridization with metals or activates p-orbitals, enhancing catalytic activity [1] [3]. |

| ORCA / ADF Software | Electronic structure modeling software packages. | Used for DFT calculations to predict catalytic properties, optimize geometries, and compute electronic descriptors; robust SCF protocols are essential [4] [5]. |

| Spectrophotometer | Instrument for precise color measurement. | Critical for quality assurance in color-coded pharmaceutical packaging, ensuring color consistency for patient safety and adherence [8]. |

| ToF-SIMS with Gas Inlet | Surface analysis instrument for elemental and molecular mapping. | Enables quantification of surface composition in alloys and materials; H₂ or O₂ gas flooding reduces matrix effects, improving accuracy [7]. |

Accurately modeling the electronic structure of p-block elements is foundational to advancements in catalysis, materials science, and drug development. These systems present a unique triad of challenges: significant electron correlation effects, non-negligible relativistic influences, and often, pronounced multireference character. These features are particularly prevalent in systems featuring stretched bonds, open-shell configurations, or heavy p-block elements. The journey toward a converged Self-Consistent Field (SCF) solution is intrinsically linked to how these challenges are managed. This guide objectively compares the performance of various electronic structure methods and protocols in addressing this triad, providing a framework for researchers to validate their computational approaches and achieve reliable results.

Quantifying and Managing Electron Correlation

Electron correlation, the error introduced by the mean-field approximation in Hartree-Fock theory, is often partitioned into dynamic and static (nondynamical) components. Static correlation, also known as multireference character, is a particularly pressing problem for p-block chemistry, as it arises when multiple electronic configurations contribute significantly to the wavefunction.

Modern Correlation Diagnostics

Robust computational research requires diagnostics to identify multireference character before investing in high-level methods. Natural orbital occupancy (NOO)-based metrics offer a universal and intuitive approach [9].

- ( I_{\text{max}}^{\text{ND}} ) Diagnostic: This diagnostic is defined as the maximum deviation from idempotency of the natural orbital occupancies, ( I_{\text{max}}^{\text{ND}} = \max(2 - n_i, n_i) ) for closed-shell systems, where ( n_i ) are the natural orbital occupations [9]. It focuses on the single most strongly correlated orbital, making it an excellent multireference diagnostic. It has been shown to correlate well with established diagnostics like D2 [9].

- ( \bar{I}^{\text{ND}} ) Diagnostic: This measure represents the average deviation from idempotency and is more sensitive to overall dynamic correlation [9]. For a closed-shell system, it is calculated as ( \bar{I}^{\text{ND}} = \frac{2}{N} \sum_{i} n_i (1 - n_i) ), where ( N ) is the number of electrons [9].

The table below summarizes the proposed thresholds for these diagnostics at the MP2 and CCSD levels of theory [9].

Table 1: Thresholds for Natural Orbital-Based Correlation Diagnostics

| Diagnostic | Theory Level | Single-Reference Threshold | Multireference Caution Threshold |

|---|---|---|---|

| ( I_{\text{max}}^{\text{ND}} ) | MP2 | < 0.034 | > 0.034 |

| ( I_{\text{max}}^{\text{ND}} ) | CCSD | < 0.030 | > 0.030 |

| ( \bar{I}^{\text{ND}} ) | MP2 | < 0.007 | > 0.007 |

| ( \bar{I}^{\text{ND}} ) | CCSD | < 0.005 | > 0.005 |
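The thresholds in the table lend themselves to a small lookup helper for screening many systems. The following is a minimal sketch: the dictionary keys and the function name are hypothetical, and a value exactly equal to a threshold is treated as single-reference, which is an assumption because the table leaves that case open.

```python
# Thresholds copied from the table above: (diagnostic, theory level) -> value.
THRESHOLDS = {
    ("I_max_ND", "MP2"): 0.034,
    ("I_max_ND", "CCSD"): 0.030,
    ("I_bar_ND", "MP2"): 0.007,
    ("I_bar_ND", "CCSD"): 0.005,
}


def classify(diagnostic, level, value):
    """Label a diagnostic value relative to the tabulated threshold."""
    threshold = THRESHOLDS[(diagnostic, level)]
    if value > threshold:
        return "multireference caution"
    return "single-reference"


# Illustrative values only; they are not taken from any published calculation.
print(classify("I_max_ND", "MP2", 0.012))
print(classify("I_max_ND", "CCSD", 0.051))
print(classify("I_bar_ND", "CCSD", 0.004))
```

Keeping the theory level in the lookup key avoids comparing an MP2-derived value against a CCSD threshold by accident.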

Performance of Methods for Strong Correlation

The performance of electronic structure methods deteriorates as multireference character increases. This can be systematically demonstrated by stretching molecular bonds, which gradually increases static correlation.

A benchmark study on hydrocarbons constructed multidimensional potential energy curves by simultaneously scaling all bond lengths. CCSDTQ/CBS reference data revealed that [10]:

- Pure (non-hybrid) DFT functionals (e.g., B97-D, TPSS) and double-hybrid DFT methods are more robust toward multireference effects than standard hybrid GGAs.

- Hybrid meta-GGA functionals with low percentages of exact exchange (e.g., TPSSh) also show improved performance.

- The deterioration of DFT performance worsens with increasing electronic structure complexity: Methane (σ bonds) → Ethane (C–C single bond) → Ethylene (C=C double bond) → Acetylene (C≡C triple bond) [10].

For severe cases, advanced methods like hybrid Kohn-Sham/1-electron Reduced Density Matrix Functional Theory (DFA 1-RDMFT) have been developed to capture strong correlation at a mean-field computational cost. Systematic benchmarking of nearly 200 exchange-correlation functionals within this framework has identified optimal functionals for this approach [11].

Accounting for Relativistic Effects

For p-block elements beyond the third period, relativistic effects become non-negligible and can significantly impact molecular geometries, bond energies, and spectroscopic properties.

Relativistic Hamiltonians and Quantification

The best practice for quantifying relativistic effects involves comparing results obtained with a relativistic Hamiltonian to those from a non-relativistic calculation [12].

- Recommended Hamiltonian: The eXact 2-Component (X2C) Hamiltonian is considered state-of-the-art. It is an infinite-order method that is computationally efficient and superior to older Douglas-Kroll (DK) approaches [12].

- Quantification Protocol: The relativistic contribution to a property is quantified as the difference between the relativistic and non-relativistic results: ( \Delta E_{\text{rel}} = E(\text{X2C}) - E(\text{Non-Rel}) ). To ensure a fair comparison and maximize error cancellation, both calculations should use the identical basis set and computational model [12].

SCF Convergence Protocols for Challenging Systems

SCF convergence is a pressing problem; poor convergence increases computation time linearly with the number of iterations and can prevent obtaining a result altogether [4]. This is particularly acute for open-shell transition metal and p-block complexes.

Convergence Criteria and Thresholds

Setting appropriate convergence criteria (tolerances) is critical. Tighter thresholds generally lead to more accurate results but require more SCF cycles. ORCA provides compound keywords that set a group of tolerances to predefined levels [4].

Table 2: Standard SCF Convergence Tolerances in ORCA (Selected) [4]

| Criterion | Description | TightSCF Values | VeryTightSCF Values |

|---|---|---|---|

| TolE | Energy change between cycles | 1e-8 E_h | 1e-9 E_h |

| TolRMSP | RMS density change | 5e-9 | 1e-9 |

| TolMaxP | Maximum density change | 1e-7 | 1e-8 |

| TolErr | DIIS error vector | 5e-7 | 1e-8 |

| TolG | Orbital gradient | 1e-5 | 2e-6 |

The ConvCheckMode keyword controls the rigor of the convergence check. The default ConvCheckMode=2 offers a balanced approach, checking the change in both total and one-electron energy [4].
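To make the role of the individual tolerances concrete, the sketch below compares per-cycle changes against the TightSCF and VeryTightSCF values from Table 2 and reports which criteria are still unmet. It is deliberately the strictest possible check (every criterion must pass) and is not a reimplementation of ORCA's ConvCheckMode logic; the function name and the sample changes are hypothetical.

```python
# Tolerances copied from Table 2 (selected ORCA criteria).
ORCA_TOLERANCES = {
    "TightSCF": {
        "TolE": 1e-8, "TolRMSP": 5e-9, "TolMaxP": 1e-7,
        "TolErr": 5e-7, "TolG": 1e-5,
    },
    "VeryTightSCF": {
        "TolE": 1e-9, "TolRMSP": 1e-9, "TolMaxP": 1e-8,
        "TolErr": 1e-8, "TolG": 2e-6,
    },
}


def unmet_criteria(changes, level="TightSCF"):
    """Return the names of all criteria whose magnitude exceeds the tolerance."""
    tolerances = ORCA_TOLERANCES[level]
    return [name for name, limit in tolerances.items()
            if abs(changes[name]) > limit]


# Illustrative changes from one SCF cycle (placeholder numbers).
cycle_changes = {
    "TolE": -3e-9, "TolRMSP": 2e-9, "TolMaxP": 5e-8,
    "TolErr": 1e-7, "TolG": 1e-6,
}

print("TightSCF unmet:", unmet_criteria(cycle_changes, "TightSCF"))
print("VeryTightSCF unmet:", unmet_criteria(cycle_changes, "VeryTightSCF"))
```

The same cycle passes every TightSCF criterion but still fails several VeryTightSCF ones, which illustrates why tighter thresholds cost additional SCF cycles.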

Advanced SCF Strategies

When standard DIIS fails, alternative strategies are required. The MultiSecant method (or similar "MultiStepper" methods) can be more robust for problem cases at no extra cost per cycle [13]. Other powerful techniques include:

- Damping: Reducing the `Mixing` parameter (e.g., from 0.2 to 0.05) stabilizes oscillations by limiting the step size between cycles [13].

- Fermi-Smearing: Applying a small electronic temperature (`ElectronicTemperature`) via the `Degenerate` keyword smears orbital occupations around the Fermi level, helping to escape metastable states and resolve near-degeneracies [13].

- Initial Guess Manipulation: Using `StartWithMaxSpin` or `VSplit` breaks initial spin symmetry, which can help converge open-shell or broken-symmetry solutions [13]. For antiferromagnetic coupling, `SpinFlip` allows for a specific initial spin arrangement on different atoms [13].

- Stability Analysis: After SCF convergence, a stability analysis should be performed to verify that the solution found is a true local minimum and not a saddle point in the orbital rotation space [4].
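The effect of damping can be seen on a toy problem. The sketch below applies linear mixing to a scalar fixed-point iteration that stands in for the density update of an SCF cycle; the update function is an artificial, strongly oscillating map chosen for illustration, and none of the names correspond to keywords of a real program.

```python
def damped_fixed_point(update, x0, mixing, max_cycles=200, tol=1e-8):
    """Iterate x <- (1 - mixing) * x + mixing * update(x).

    Returns (x, cycles) on convergence or (x, None) if max_cycles is reached.
    """
    x = x0
    for cycle in range(1, max_cycles + 1):
        x_new = (1.0 - mixing) * x + mixing * update(x)
        if abs(x_new - x) < tol:
            return x_new, cycle
        x = x_new
    return x, None


def toy_update(x):
    """Artificial map with fixed point x = 1 and a steep negative slope,
    mimicking an SCF that overshoots and oscillates between cycles."""
    return -12.0 * x + 13.0


for mixing in (0.2, 0.05):
    value, cycles = damped_fixed_point(toy_update, x0=0.0, mixing=mixing)
    status = f"converged in {cycles} cycles" if cycles else "did not converge"
    print(f"mixing = {mixing}: {status}")
```

With the larger mixing value each step overshoots the fixed point by more than the previous error, so the iteration oscillates with growing amplitude; the smaller value shrinks the step enough for the oscillation to die out. Real SCF damping acts on density or Fock matrices, but the stabilizing mechanism is the same.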

Experimental Protocols for Method Benchmarking

Protocol: Multidimensional Potential Energy Curves

This protocol benchmarks a method's performance against strong correlation [10].

- System Preparation: Select a molecule of interest (e.g., a p-block hydride or dimer).

- Geometry Generation: Start from an equilibrium geometry. Generate a series of structures by scaling all Cartesian coordinates by a factor ( f ), typically from 0.8 (compression) to 1.5 (stretch), preserving molecular symmetry [10].

- Reference Calculation: Perform single-point energy calculations at each geometry using a high-accuracy, composite method (e.g., CCSDTQ/CBS or W4-type) to establish benchmark values [10].

- Test Method Calculation: Perform the same single-point calculations using the methods under investigation (e.g., various DFT functionals, MP2, CCSD(T)).

- Analysis: Calculate errors relative to the benchmark. Correlate the error with multireference diagnostics (( I_{\text{max}}^{\text{ND}} ), ( T_1 ), ( D_1 )) computed at each geometry.
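The geometry generation step of this protocol is simple to script. The sketch below scales every Cartesian coordinate by a common factor, which multiplies all interatomic distances by that factor and leaves the point-group symmetry unchanged. The 0.1 step between scaling factors, the function names, and the two-atom placeholder geometry are assumptions made for illustration.

```python
def scale_geometry(atoms, factor):
    """Scale all Cartesian coordinates of (symbol, x, y, z) tuples by factor."""
    return [(symbol, x * factor, y * factor, z * factor)
            for symbol, x, y, z in atoms]


def scan_factors(start=0.8, stop=1.5, step=0.1):
    """Return evenly spaced scaling factors from start to stop inclusive."""
    count = round((stop - start) / step)
    return [round(start + i * step, 10) for i in range(count + 1)]


# Placeholder geometry in angstrom (not an optimized structure).
equilibrium = [("P", 0.0, 0.0, 0.0), ("H", 0.0, 0.0, 1.4)]

for factor in scan_factors():
    scaled = scale_geometry(equilibrium, factor)
    bond_length = scaled[1][3] - scaled[0][3]
    print(f"f = {factor:.1f}  bond length = {bond_length:.2f} angstrom")
```

Each scaled structure would then be passed to both the reference method and the methods under test, so that errors can be tabulated as a function of the scaling factor.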

Protocol: Quantifying Relativistic Effects

This protocol isolates the contribution of relativistic effects to a molecular property [12].

- System Preparation: Optimize the molecular geometry at the desired level of theory, preferably using a relativistic Hamiltonian.

- Basis Set Selection: Choose a sufficiently large, decontracted basis set. For light systems, an uncontracted standard basis can be used. For heavier elements, a purpose-built relativistic basis (e.g., aug-cc-pVQZ-X2C) is recommended.

- Non-Relativistic Calculation: Calculate the target property (e.g., bond energy, dipole moment) using a non-relativistic Hamiltonian and the selected basis set.

- Relativistic Calculation: Calculate the same property using the X2C Hamiltonian and the identical basis set and computational model.

- Calculation: The relativistic effect is ( \Delta P_{\text{rel}} = P(\text{X2C}) - P(\text{Non-Rel}) ).

The following workflow diagram illustrates the logical decision process for tackling a p-block element calculation, integrating the challenges and protocols discussed.

Diagram 1: A decision workflow for electronic structure calculations on challenging p-block systems, integrating checks for relativistic effects, multireference character, and SCF convergence protocols.

The Scientist's Toolkit: Key Research Reagents

This section details essential computational "reagents" for electronic structure studies of p-block elements.

Table 3: Essential Computational Tools for p-Block Electronic Structure Research

| Tool / Solution | Function / Purpose | Example Use Case |

|---|---|---|

| Multireference Diagnostics (e.g., ( I_{\text{max}}^{\text{ND}} ), ( T_1 )) | Quantifies static correlation; identifies systems requiring multireference methods. | Screening a series of catalysts for strong correlation before selecting a computational method [9]. |

| Relativistic Effective Core Potentials (ECPs) | Replaces core electrons with a potential, implicitly including relativistic effects; reduces computational cost. | Studying heavy p-block elements like bismuth in catalytic sites (e.g., BiN₄ SACs) [14] [15]. |

| Robust DFT Functionals (e.g., Double-Hybrids) | Provides improved performance for systems with moderate multireference character at a reasonable cost. | Calculating accurate bond dissociation energies for hydrocarbons with stretched bonds [10]. |

| Advanced SCF Algorithms (e.g., MultiSecant) | Enhances convergence stability for difficult systems where standard DIIS fails. | Converging the SCF for an open-shell, antiferromagnetically coupled p-block dimer [13]. |

| Composite Ab Initio Methods (e.g., W4, CCSDTQ/CBS) | Provides gold-standard benchmark energies by approximating the full CI/CBS limit. | Generating reference data for assessing the accuracy of more efficient methods [10]. |

| Stability Analysis | Verifies that a converged SCF solution is a true minimum on the energy surface, not a saddle point. | Checking a converged DFT solution for a singlet biradical to ensure it is stable [4]. |

Navigating the electronic structure triad of correlation, relativity, and multireference character in p-block elements requires a methodical and validated approach. This guide has provided a comparative overview of the available methods, diagnostics, and protocols. Key takeaways include: the superiority of NOO-based diagnostics like ( I_{\text{max}}^{\text{ND}} ) for universal multireference assessment; the recommendation of the X2C Hamiltonian for relativistic calculations; and the necessity of robust SCF convergence protocols like MultiSecant and Fermi-smearing for challenging cases. By integrating these tools and validation protocols into their workflow, researchers in catalysis and materials science can make informed computational choices, ensuring the reliability and predictive power of their calculations on p-block systems.

p-Block elements, spanning main groups III to VI in the periodic table, are increasingly pivotal in developing new catalysts, optoelectronic materials, and frustrated Lewis pairs (FLPs) [16] [2]. However, their theoretical description poses a significant challenge for quantum chemistry. The electronic structures of heavier p-block elements involve large electron correlation contributions, substantial core–valence correlation effects, and notoriously slow basis set convergence [17]. Compounding this problem is a severe lack of high-quality, reliable benchmark data to assess the performance of approximate computational methods like Density Functional Theory (DFT) for these systems [16]. Popular comprehensive thermochemistry databases, such as GMTKN55, heavily underrepresent systems with heavier p-block elements, often masking the specific interactions between these elements with large organic substituents [16]. This benchmarking gap hinders the development and validation of robust, transferable quantum chemical methods, including semi-empirical approaches and emerging machine-learning techniques that require vast amounts of reliable training data [16]. The IHD302 benchmark set was created to address this critical need, providing a specialized test designed to challenge contemporary computational methods with a large number of spatially close p-element bonds that are underrepresented elsewhere [17].

The IHD302 Benchmark Set: Composition and Design Rationale

The IHD302 (Inorganic Heterocycle Dimerizations 302) set is a new, carefully curated benchmark consisting of 604 dimerization energies derived from 302 unique neutral, planar six-membered heterocyclic monomers composed exclusively of non-carbon p-block elements from boron to polonium [16]. The set was inspired by experimentally accessible parent "inorganic benzenes" [16].

- Elemental Composition: The set includes all main group III, IV, V, and VI elements (excluding carbon), with an average of 53 compounds per element. Elements like lead that strongly deviate from planarity were excluded to maintain comparability [16].

- Monomer Combinations: Monomers are categorized into three primary combinations based on their formal bonding: [Eᴵᴵᴵ₃Eⱽᴵ₃]H₃, [Eᴵᴵᴵ₃Eⱽ₃]H₆, and [Eᴵⱽ₃Eⱽ₃]H₃ [16].

- Dimerization Types: The benchmark is divided into two distinct, chemically relevant subsets as shown in Table 1, posing different challenges for computational methods [17] [16].

Table 1: Subsets of the IHD302 Benchmark Set

| Subset Name | Interaction Type | Description | Key Challenge |

|---|---|---|---|

| Covalent Dimers (COV) | Covalent Bonding | Result from subsequent geometry optimization of dimer structures [16]. | Accurate description of covalent (short-range) electron correlation [16]. |

| Weaker Donor-Acceptor Dimers (WDA) | Non-covalent / Donor-Acceptor | Generated by simple monomer rotation and displacement; represent strongly bound van der Waals complexes [16]. | Interplay of covalent correlation and London dispersion interactions [16]. |

Performance Comparison of Quantum Chemical Methods

Based on reliable reference data generated using a state-of-the-art explicitly correlated local coupled cluster protocol (PNO-LCCSD(T)-F12/cc-VTZ-PP-F12(corr.) with a basis set correction) [17], the performance of 26 DFT functionals, three dispersion corrections, five composite DFT approaches, and five semi-empirical quantum mechanical methods was assessed [16].
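A performance assessment of this kind reduces to computing dimerization energies for each method and comparing them with the reference values. The sketch below assumes that each dimer is formed from two identical monomers, so that the dimerization energy is E(dimer) minus twice E(monomer), and uses the mean absolute deviation as the error measure; the source does not state which statistic was reported. All energies shown are placeholders, not values from the IHD302 set.

```python
HARTREE_TO_KCAL_MOL = 627.509474


def dimerization_energy(e_dimer_hartree, e_monomer_hartree):
    """Dimerization energy in kcal/mol for a dimer of two identical monomers."""
    return (e_dimer_hartree - 2.0 * e_monomer_hartree) * HARTREE_TO_KCAL_MOL


def mean_absolute_deviation(method_values, reference_values):
    """Mean absolute deviation between two equally long lists of energies."""
    if len(method_values) != len(reference_values):
        raise ValueError("method and reference lists must have equal length")
    deviations = [abs(m - r) for m, r in zip(method_values, reference_values)]
    return sum(deviations) / len(deviations)


# Placeholder total energies in hartree: (dimer, monomer) per system.
reference_totals = [(-200.030, -100.000), (-300.012, -150.000)]
method_totals = [(-199.532, -99.750), (-299.410, -149.700)]

reference = [dimerization_energy(d, m) for d, m in reference_totals]
method = [dimerization_energy(d, m) for d, m in method_totals]
print(f"MAD = {mean_absolute_deviation(method, reference):.2f} kcal/mol")
```

Because only energy differences enter the statistic, large offsets in the total energies between methods cancel, and what remains is the error in the binding itself.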

Top-Performing DFT Functionals

For the critical task of calculating covalent dimerization energies, several functionals delivered superior performance across different functional classes, as summarized in Table 2.

Table 2: Best-Performing DFT Functionals for Covalent Dimerizations in the IHD302 Set

| Functional Class | Functional Name | Key Characteristics |

|---|---|---|

| Meta-GGA | r2SCAN-D4 [17] | A modern meta-GGA with the D4 dispersion correction. |

| Hybrid | r2SCAN0-D4 [17] | A hybrid variant of r2SCAN with D4 dispersion correction. |

| Hybrid | ωB97M-V [17] | A range-separated hybrid meta-GGA functional. |

| Double-Hybrid | revDSD-PBEP86-D4 [17] | A double-hybrid functional with D4 dispersion correction. |

The Critical Role of Basis Sets and Pseudopotentials

The study revealed a critical technical issue: the common def2 basis sets can introduce significant errors (up to 6 kcal mol⁻¹) for molecules containing 4th-period p-block elements because they lack associated relativistic pseudopotentials [17]. A significant improvement was achieved by employing ECP10MDF pseudopotentials along with newly introduced re-contracted aug-cc-pVQZ-PP-KS basis sets, highlighting the importance of a properly matched basis set and pseudopotential combination for accurate results [17].

Experimental Protocols and Computational Methodologies

Reference Data Generation Protocol

Generating reliable benchmark data for the IHD302 set is challenging. The protocol established and used in the study is a multi-step process [17]:

- High-Level Correlation Treatment: The core reference energies are computed using the `PNO-LCCSD(T)-F12` method with a `cc-VTZ-PP-F12` basis set; the explicitly correlated (F12) treatment addresses the slow basis set convergence [17].

- Basis Set Correction: A separate correction is applied at the `PNO-LMP2-F12` level using a larger `aug-cc-pwCVTZ` basis set to account for core-valence correlation effects [17].

System-Specific Considerations for SCF Convergence

Achieving SCF convergence is a prerequisite for any successful calculation, and it can be particularly difficult for open-shell systems and complexes involving transition metals or p-block elements with delicate electronic structures [4]. The ORCA software package provides a graded set of convergence criteria, and selecting an appropriate threshold is vital for balancing accuracy and computational cost [4]. For instance, using !TightSCF (which sets TolE to 1e-8, TolRMSP to 5e-9, and TolMaxP to 1e-7) is often recommended for challenging systems like transition metal complexes [4]. Furthermore, verifying the stability of the converged solution is crucial, especially for open-shell singlets where achieving a correct broken-symmetry solution can be difficult [4].

Diagram 1: Workflow for Constructing and Using the IHD302 Benchmark Set

Essential Research Reagent Solutions

The rigorous evaluation of computational methods for p-block elements relies on a suite of software tools and theoretical models. The following table details key "research reagents" essential for working in this field.

Table 3: Key Research Reagent Solutions for p-Block Computational Chemistry

| Tool / Model | Type | Primary Function | Relevance to IHD302/p-Block |

|---|---|---|---|

| ORCA [4] [16] | Software Package | A versatile quantum chemistry package for electronic structure calculations. | Used for geometry optimizations (with r2SCAN-3c) and SCF calculations; provides advanced SCF convergence controls [4] [16]. |

| PNO-LCCSD(T)-F12 [17] | Ab Initio Wavefunction Method | A highly accurate coupled cluster method for generating reference data. | The core method used to produce reliable benchmark energies for the IHD302 set [17]. |

| DFT-D4 [17] [18] | Dispersion Correction | An atomic-charge dependent London dispersion correction. | Applied to assessed DFT functionals (e.g., r2SCAN-D4) to model long-range interactions [17] [18]. |

| r2SCAN-3c [16] | Composite DFT Method | A composite DFT method known for providing excellent molecular structures. | Employed for generating the covalent dimer geometries in the IHD302 set [16]. |

| xtb/CREST [18] | Semi-empirical Program & Conformer Sampler | Fast semi-empirical calculations and conformer sampling. | Represents the class of fast methods (SQM) assessed against the IHD302 benchmark [16] [18]. |

The IHD302 benchmark set fills a critical void in the computational chemist's toolkit by providing specialized, high-quality reference data for the energetics of p-block element interactions. Its comprehensive performance assessment reveals that while modern functionals like r2SCAN-D4, r2SCAN0-D4, ωB97M-V, and revDSD-PBEP86-D4 show promising accuracy for covalent dimerizations, the entire field of quantum chemistry is challenged by the complex electronic structure of these inorganic compounds. The set underscores the profound impact of technical choices, such as the selection of pseudopotentials and basis sets, on achieving quantitatively correct results. By enabling the targeted development and validation of more robust and transferable computational methods, the IHD302 set serves as an essential foundation for advancing research in catalysis, materials science, and main-group chemistry.

Understanding Slow Basis Set Convergence and Core-Valence Effects

The accurate theoretical description of p-block elements presents a significant challenge in computational chemistry, particularly due to slow basis set convergence and substantial core-valence correlation effects. These elements, spanning groups III to VI of the periodic table from boron to polonium, are crucial in diverse chemical applications including frustrated Lewis pairs (FLPs) and optoelectronics [17]. However, high-quality benchmark data for assessing approximate quantum chemical methods have been sparse, creating a gap in reliable computational protocols for these systems [17] [16].

The core challenge lies in the electronic structure of p-block elements, where generating reliable reference data requires addressing large electron correlation contributions, core-valence correlation effects, and particularly slow basis set convergence [17]. This phenomenon is especially pronounced for second-row elements and heavier p-block elements, where additional "tight" (high-exponent) basis functions are necessary for accurate descriptions [19]. The IHD302 benchmark set, comprising 604 dimerization energies of 302 "inorganic benzenes" composed exclusively of non-carbon p-block elements, has been developed to address this gap and provides a robust platform for method assessment [17] [16].

The Computational Challenge of p-Block Elements

Electronic Structure Complexities

p-Block elements exhibit unique electronic behaviors that complicate their computational treatment. The dividing line between metals and nonmetals crosses the p-block diagonally, resulting in diverse chemical properties even within individual groups [20]. Group 13 elements, for example, have ns²np¹ valence electron configurations and tend to lose their three valence electrons to form compounds in the +3 oxidation state, though the heavier members can also form compounds in the +1 oxidation state [20].

A critical factor in their computational description is the inert-pair effect, where the tendency of the two s-electrons to remain unreacted increases descending each group [20]. This effect, combined with the decreasing tendency to form multiple bonds for heavier elements, creates complex bonding scenarios that challenge standard computational approaches [16].

Specific Convergence Issues

Slow basis set convergence manifests differently across the periodic table. For second-row elements (Al to Ar; "row" here counts from Li to Ne as the first row, so it is one less than the period number), this phenomenon has been rationalized by the low-lying 3d orbital, which sinks lower as oxidation state increases, becoming available for back-donation from chalcogen and halogen lone pairs [19]. This creates hypersensitivity to high-exponent d functions, as demonstrated by the 8 kcal/mol increase in atomization energy for SO₂ when adding a third set of d functions [19].

For fourth and fifth-row heavy p-block elements, a similar phenomenon occurs but with tight f functions enhancing the description of low-lying 4f and 5f Rydberg orbitals, respectively [19]. This requirement is less pronounced in third-row elements where 4f orbitals are too high in energy while 4d orbitals are adequately covered by standard basis functions [19].
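Slow basis set convergence is often quantified by extrapolating correlation energies to the complete basis set (CBS) limit. The sketch below assumes the widely used inverse-cubic form E(X) = E_CBS + A/X³ for cardinal numbers X; this formula is not part of the protocols cited here, and the energies are made-up values for illustration only.

```python
HARTREE_TO_KCAL_MOL = 627.509474  # kcal/mol per hartree


def cbs_two_point(e_small: float, x_small: int, e_large: float, x_large: int) -> float:
    """Extrapolate correlation energies assuming E(X) = E_CBS + A / X**3."""
    if x_large <= x_small:
        raise ValueError("x_large must exceed x_small")
    w_small = x_small ** 3
    w_large = x_large ** 3
    return (w_large * e_large - w_small * e_small) / (w_large - w_small)


# Hypothetical correlation energies (hartree) for triple- and quadruple-zeta sets.
e_tz, e_qz = -0.500, -0.520
e_cbs = cbs_two_point(e_tz, 3, e_qz, 4)
residual = (e_qz - e_cbs) * HARTREE_TO_KCAL_MOL
print(f"Estimated CBS correlation energy: {e_cbs:.4f} Eh")
print(f"Remaining quadruple-zeta error: {residual:.2f} kcal/mol")
```

A large residual at the quadruple-zeta level is one practical symptom of the slow convergence discussed above, and it is a hint that tight functions or explicitly correlated methods may be needed.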

Benchmark Systems and Assessment Protocols

The IHD302 Benchmark Set

The IHD302 (Inorganic Heterocycle Dimerizations 302) benchmark set was specifically developed to address the underrepresentation of p-block elements in comprehensive thermochemistry databases [17] [16]. This set comprises:

- 302 neutral six-membered heterocycles and their corresponding dimers in singlet ground states [16]

- Two dimer classes: covalently bound structures and weaker donor-acceptor (WDA) interacting dimers [17]

- Element coverage: all non-carbon p-block elements of main groups III to VI up to polonium [17]

- Molecular combinations: [Eᴵᴵᴵ₃Eⱽᴵ₃]H₃, [Eᴵᴵᴵ₃Eⱽ₃]H₆, and [Eᴵⱽ₃Eⱽ₃]H₃ motifs [16]

The set deliberately excludes carbon as "a typically saturated organic element with less pronounced donor-acceptor chemistry" [16], focusing specifically on the challenging inorganic bonding motifs.
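The quantity benchmarked in such a set can be sketched as follows, assuming each dimer is formed from two identical monomers so that the dimerization energy is E(dimer) − 2·E(monomer). The total energies are hypothetical placeholders, not values from IHD302.

```python
HARTREE_TO_KCAL_MOL = 627.509474  # kcal/mol per hartree


def dimerization_energy(e_dimer: float, e_monomer: float) -> float:
    """Return E(dimer) - 2*E(monomer) in kcal/mol from energies in hartree."""
    return (e_dimer - 2.0 * e_monomer) * HARTREE_TO_KCAL_MOL


# Hypothetical total energies (hartree) for one monomer and its two dimer classes.
e_mono = -100.000000
e_cov_dimer = -200.030000  # covalently bound dimer
e_wda_dimer = -200.010000  # weaker donor-acceptor dimer

print(f"COV: {dimerization_energy(e_cov_dimer, e_mono):.2f} kcal/mol")
print(f"WDA: {dimerization_energy(e_wda_dimer, e_mono):.2f} kcal/mol")
```

Negative values indicate that the dimer is lower in energy than two separated monomers.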

High-Level Reference Protocol

Generating reliable reference data for these systems requires sophisticated computational approaches due to the significant electron correlation effects. The established protocol involves:

- Primary method: PNO-LCCSD(T)-F12/cc-VTZ-PP-F12(corr.) - explicitly correlated local coupled cluster theory [17]

- Basis set correction: PNO-LMP2-F12/aug-cc-pwCVTZ level [17]

- Relativistic effects: Handled through pseudopotentials, particularly important for heavier elements [17]

This protocol accounts for the slow basis set convergence through explicitly correlated methods and addresses core-valence correlation effects through appropriate basis set selection.

Performance Assessment of Computational Methods

DFT Functional Performance

Based on the IHD302 benchmark data, 26 DFT methods were assessed in combination with three different dispersion corrections and the def2-QZVPP basis set [17]. The performance varies significantly between different functional classes:

Table 1: Performance of DFT Functionals on IHD302 Benchmark Set

| Functional Class | Best Performing Functional | Performance Characteristics |

|---|---|---|

| meta-GGA | r2SCAN-D4 | Excellent for covalent dimerizations [17] |

| Hybrid | r2SCAN0-D4, ωB97M-V | Top performers for covalent dimerizations [17] |

| Double-Hybrid | revDSD-PBEP86-D4 | Best-performing double-hybrid for covalent dimerizations [17] |

The study revealed significant errors (up to 6 kcal mol⁻¹) in covalent dimerization energies for molecules containing fourth-period p-block elements when using def2 basis sets not associated with relativistic pseudopotentials [17]. Substantial improvements were achieved using ECP10MDF pseudopotentials with re-contracted aug-cc-pVQZ-PP-KS basis sets [17].
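Functional rankings of this kind rest on simple error statistics relative to the reference energies. A minimal sketch of those statistics is given below; the function name and the sample numbers are illustrative assumptions, not data from the benchmark.

```python
from math import sqrt


def error_statistics(computed, reference):
    """Return a dict with MSE (signed), MAE, RMSE, and MaxAE in the input units."""
    if len(computed) != len(reference) or not computed:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [c - r for c, r in zip(computed, reference)]
    n = len(errors)
    return {
        "MSE": sum(errors) / n,                      # mean signed error
        "MAE": sum(abs(e) for e in errors) / n,      # mean absolute error
        "RMSE": sqrt(sum(e * e for e in errors) / n),
        "MaxAE": max(abs(e) for e in errors),        # largest single outlier
    }


# Hypothetical dimerization energies in kcal/mol (DFT vs. reference).
dft = [-18.2, -5.1, -30.4]
ref = [-19.0, -4.5, -28.0]
for name, value in error_statistics(dft, ref).items():
    print(f"{name}: {value:.2f}")
```

Reporting the maximum absolute error alongside the MAE helps expose element-specific outliers such as the fourth-period cases described above.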

Basis Set Dependencies

The performance of basis sets for p-block elements shows distinct patterns across the periodic table:

Table 2: Basis Set Requirements Across the Periodic Table

| Element Group | Basis Set Challenge | Recommended Solution |

|---|---|---|

| Second-Row | Hypersensitivity to high-exponent d functions | cc-pV(n+d)Z, aug-cc-pV(n+d)Z [19] |

| Fourth-Row Heavy p-Block | Need for tight f functions | aug-cc-pVnZ-PP with tight f functions [19] |

| Fifth-Row Heavy p-Block | Need for tight f functions | aug-cc-pVnZ-PP with tight f functions [19] |

For core-electron spectroscopy calculations, specific basis set considerations apply. For first-row elements, relatively small basis sets can accurately reproduce core-electron binding energies, with IGLO basis sets performing particularly well [21]. For K-edge calculations of second-row elements, the pcSseg-2 basis set shows excellent performance, while correlation-consistent basis sets require core-valence correlation functions (cc-pCVTZ) for accurate results [21].

Methodological Recommendations and Protocols

Recommended Computational Strategies

Based on comprehensive benchmarking, the following strategies are recommended for p-block element calculations:

- For covalent dimerizations: r2SCAN-D4 (meta-GGA), r2SCAN0-D4 and ωB97M-V (hybrids), or revDSD-PBEP86-D4 (double-hybrid) provide optimal performance [17]

- For systems with 4th-period p-block elements: Use ECP10MDF pseudopotentials with re-contracted aug-cc-pVQZ-PP-KS basis sets to address significant errors [17]

- For second-row compounds: Ensure basis sets include additional "tight" d functions (cc-pV(n+d)Z) [19]

- For heavy p-block elements (4th-5th row): Include tight f functions for proper description of low-lying Rydberg orbitals [19]
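The recommendations above can be encoded as a small lookup to flag elements that need special treatment before a calculation is set up. This is a sketch only: the function name is hypothetical, the element sets are restricted to groups III to VI, and assigning In to Te and Tl to Po to the "4th-5th row" category is an assumption based on the row convention of the basis set discussion.

```python
# Element groupings (assumed; restricted to main groups III to VI).
SECOND_ROW = {"Al", "Si", "P", "S"}
FOURTH_PERIOD = {"Ga", "Ge", "As", "Se"}
HEAVY_P_BLOCK = {"In", "Sn", "Sb", "Te", "Tl", "Pb", "Bi", "Po"}


def basis_recommendations(elements):
    """Map each element symbol to the recommendation listed above, if any."""
    notes = {}
    for el in elements:
        if el in SECOND_ROW:
            notes[el] = "add tight d functions, e.g. cc-pV(n+d)Z"
        elif el in FOURTH_PERIOD:
            notes[el] = "ECP10MDF with re-contracted aug-cc-pVQZ-PP-KS"
        elif el in HEAVY_P_BLOCK:
            notes[el] = "pseudopotential basis set with tight f functions"
        else:
            notes[el] = "no special recommendation in this guide"
    return notes


for element, note in basis_recommendations(["B", "S", "Se", "Te"]).items():
    print(f"{element}: {note}")
```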

Research Reagent Solutions

Table 3: Essential Computational Tools for p-Block Element Research

| Research Reagent | Function/Application | Key Features |

|---|---|---|

| IHD302 Benchmark Set | Reference data for method validation | 604 dimerization energies, 302 inorganic benzenes [17] |

| PNO-LCCSD(T)-F12 | High-level reference method | Explicitly correlated, accounts for slow basis set convergence [17] |

| def2-QZVPP | Standard basis set | Used for DFT assessment; errors of up to 6 kcal mol⁻¹ were observed for 4th-period elements, for which it is not paired with relativistic pseudopotentials [17] |

| ECP10MDF pseudopotentials | Relativistic effects handling | Essential for heavier p-block elements [17] |

| aug-cc-pV(n+d)Z | Basis set for second-row | Addresses slow d-function convergence [19] |

| cc-pCVXZ basis sets | Core-valence correlation | Additional tight functions for core-electron properties [21] |

The challenges of slow basis set convergence and core-valence effects in p-block elements represent a significant frontier in computational chemistry. The development of specialized benchmark sets like IHD302 and methodological protocols addressing the need for tight basis functions and proper treatment of core-valence correlation has enabled more reliable computations for these systems.

The performance assessment of various DFT functionals reveals that modern density functionals, particularly when combined with appropriate dispersion corrections and basis sets, can achieve reasonable accuracy for many applications. However, the systematic errors observed for certain element groups, particularly fourth-period elements, highlight the ongoing need for method development and careful protocol validation.

Future methodological advances should focus on improving the description of the unique electronic structure features of p-block elements, particularly the complex bonding scenarios and relativistic effects in heavier congeners. The continued development and expansion of benchmark sets covering diverse chemical spaces will be crucial for guiding these advances and ensuring robust computational protocols for p-block element research.

Experimental Workflow and Method Relationships

Diagram 1: Computational protocol development workflow for p-block elements, showing relationships between benchmark sets, methodological challenges, and computational approaches.

The computational characterization of p-block elements is paramount in modern chemistry, driving innovations in areas ranging from catalysis to materials science. However, accurately modeling these systems, particularly those involving complex bonding interactions like inorganic heterocycle dimerizations, presents a significant challenge for quantum chemical methods. This case study uses the recent IHD302 benchmark set, comprising 604 dimerization energies of 302 inorganic benzenes, as a testbed to compare the performance of various density functional theory (DFT) approaches and to underscore the importance of robust self-consistent field (SCF) convergence protocols. The findings reveal that the reliability of any functional is contingent upon the precise technical setup, including basis sets and pseudopotentials, especially for heavier p-block elements [17].

The IHD302 Benchmark Set and Its Computational Challenges

The p-Block Benchmarking Gap

The p-block elements of groups III to VI are integral to numerous chemical applications, including frustrated Lewis pairs (FLPs) and optoelectronics [17]. Despite their importance, a scarcity of high-quality benchmark data has made it difficult to assess the performance of approximate computational methods like DFT for these systems. The IHD302 test set was developed to fill this gap, providing a rigorous platform for evaluation [17]. This set is particularly challenging because it contains a large number of spatially close p-element bonds, a feature underrepresented in other benchmark sets, and includes structures formed by both covalent bonding and weaker donor-acceptor interactions [17].

Inherent Difficulties in Reference Calculations

Generating reliable reference data for these systems with ab initio methods is fraught with challenges:

- Significant Electron Correlation: The dimerization energies are heavily influenced by large electron correlation contributions.

- Core-Valence Effects: Core-valence correlation effects are non-negligible and must be accounted for.

- Slow Basis Set Convergence: The slow convergence of energies with respect to the basis set size necessitates the use of large, high-quality basis sets [17].

To overcome these hurdles, the creators of the IHD302 set employed a highly accurate protocol using explicitly correlated local coupled cluster theory, specifically PNO-LCCSD(T)-F12, with a tailored basis set correction [17]. This provided the robust reference data needed for a meaningful assessment of more approximate methods.

Experimental & Computational Methodology

Benchmarking Workflow

The following diagram illustrates the comprehensive workflow used to generate the benchmark data and evaluate the DFT methods.

Reference Protocol in Detail

- Method: PNO-LCCSD(T)-F12, a state-of-the-art explicitly correlated local coupled cluster method.

- Basis Set: cc-VTZ-PP-F12(corr.) for the main calculation.

- Basis Set Correction: An additional correction was applied at the PNO-LMP2-F12 level with an aug-cc-pwCVTZ basis set to ensure results close to the complete basis set limit [17].

- Core Treatment: The protocol included core-valence correlation effects, which are crucial for accuracy.

DFT Assessment Parameters

The assessed methods were evaluated with the following consistent parameters:

- Basis Set: The def2-QZVPP basis set was primarily used.

- Element-Specific Consideration: For systems containing 4th-period p-block elements, significant errors were observed with standard def2 basis sets. A critical improvement was achieved by employing ECP10MDF pseudopotentials alongside re-contracted aug-cc-pVQZ-PP-KS basis sets, whose contraction coefficients were derived from atomic DFT (PBE0) calculations [17].

Comparative Performance of Quantum Chemical Methods

Top-Performing DFT Functionals

The assessment identified several DFT functionals that performed robustly across the covalent dimerization reactions in the IHD302 set. The results are summarized in the table below.

| Functional Class | Functional Name | Key Performance Findings |

|---|---|---|

| Meta-GGA | r2SCAN-D4 | One of the best-performing meta-GGA functionals for covalent dimerizations [17]. |

| Hybrid | r2SCAN0-D4 | Top-performing hybrid functional, recommended for robust quantification of Lewis acid/base interactions [22]. |

| Hybrid | ωB97M-V | Excellent hybrid functional performance for covalent dimerizations [17]. |

| Double-Hybrid | revDSD-PBEP86-D4 | Best-performing double-hybrid functional for the evaluated set [17]. |

The Critical Role of SCF Convergence

The success of any DFT functional depends on achieving a fully converged SCF solution. Inadequate convergence can lead to energies that are not representative of the true electronic state, rendering even the best functional unreliable.

- Convergence Tolerances: For high-accuracy studies on challenging systems like p-block dimers, tight SCF convergence criteria are essential. The TightSCF keyword in ORCA, for instance, sets stringent tolerances (e.g., TolE of 1e-8 for the energy change and TolRMSP of 5e-9 for the RMS density change) [4].

- Convergence Check Rigor: The default ConvCheckMode (2) in ORCA, which checks the change in total energy and one-electron energy, offers a good balance. For maximum rigor, ConvCheckMode=0 can be used, which requires all convergence criteria to be satisfied [4].

- Stability Analysis: For open-shell systems or those with potential symmetry breaking, performing an SCF stability analysis is recommended to ensure the solution found is a true local minimum and not a saddle point [4].
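The settings discussed above can be collected into an input file programmatically. The helper below is a hypothetical sketch that writes an ORCA-style single-point input. The functional, dispersion, and basis set keywords on the first line, the STABPerform option, and the MaxIter value are assumptions that should be checked against the manual for the ORCA version in use.

```python
def orca_input(xyz_block: str, charge: int = 0, multiplicity: int = 1,
               strict_check: bool = True, stability: bool = False) -> str:
    """Build a single-point ORCA-style input with tight SCF settings."""
    lines = ["! r2SCAN D4 def2-QZVPP TightSCF", "%scf"]
    if strict_check:
        lines.append("  ConvCheckMode 0   # require all convergence criteria")
    if stability:
        lines.append("  STABPerform true  # request an SCF stability analysis")
    lines += [
        "  MaxIter 300",
        "end",
        f"* xyz {charge} {multiplicity}",
        xyz_block.strip(),
        "*",
    ]
    return "\n".join(lines) + "\n"


# Placeholder geometry (angstrom); replace with the real structure.
geometry = """
H 0.000 0.000 0.000
H 0.000 0.000 0.740
"""
print(orca_input(geometry, stability=True))
```

Generating inputs from one function keeps the convergence settings identical across all monomers and dimers of a benchmark, so that differences in dimerization energies are not an artifact of inconsistent thresholds.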

This section details the key computational tools and protocols referenced in this case study, which are essential for conducting reliable research on p-block element systems.

| Tool/Resource | Function & Application | Key Consideration |

|---|---|---|

| r2SCAN-3c Composite Method | A composite DFT method recommended for robust quantification of Lewis acid/base binding enthalpies against experimental data [22]. | Particularly useful when experimental calibration is available. |

| PNO-LCCSD(T)-F12 | A high-level ab initio method used to generate benchmark-quality reference data where experiment is unavailable [17]. | Computationally expensive; used for generating references, not for high-throughput screening. |

| ECP10MDF Pseudopotential | A relativistic pseudopotential used with re-contracted basis sets for accurate calculations on 4th-period p-block elements (e.g., Ga, Ge, As, Se) [17]. | Critical for avoiding significant errors (up to 6 kcal mol⁻¹) in dimerization energies for these elements. |

| TightSCF Protocol | An SCF convergence protocol setting stringent tolerances for energy and density changes [4]. | Necessary for achieving reliable results in difficult-to-converge systems like transition metal complexes or large p-block assemblies. |

| SCF Stability Analysis | A post-SCF procedure to verify that the converged wavefunction is a true minimum and not an unstable saddle point [4]. | Should be used in cases of suspected symmetry breaking or for open-shell singlets. |

This case study on the IHD302 benchmark set yields several critical lessons for computational research on p-block elements. First, no single DFT functional is universally superior, but functionals like r2SCAN-D4, r2SCAN0-D4, ωB97M-V, and revDSD-PBEP86-D4 demonstrate strong, reliable performance for complex bonding interactions in inorganic heterocycles. Second, methodological rigor is paramount; the choice of basis set and the application of appropriate relativistic pseudopotentials for heavier elements are as consequential as the choice of functional itself. Finally, these findings cement the necessity of robust SCF convergence protocols. A poorly converged calculation invalidates the accuracy of any functional, making the technical execution of the calculation a cornerstone of reliable and reproducible computational chemistry research. The IHD302 set thus serves as a valuable challenge for the continued development of more robust and transferable quantum chemical methods.

Building Robust Protocols: SCF Methods and Computational Approaches

Quantum chemical methods form the cornerstone of computational chemistry, enabling the prediction of molecular structure, reactivity, and properties from first principles. These methods can be broadly categorized into density functional theory (DFT) and wavefunction-based approaches, each with distinct theoretical foundations and practical applications. DFT revolutionized quantum chemistry by using the electron density as the fundamental variable rather than the many-electron wavefunction, dramatically reducing computational cost while maintaining reasonable accuracy for many chemical systems [23]. In contrast, wavefunction-based methods, often called post-Hartree-Fock methods, systematically approach the exact solution of the Schrödinger equation but at significantly higher computational expense. The performance of all these methods relies critically on the self-consistent field (SCF) procedure, an iterative algorithm that seeks consistency between the computed electron density and the potential it generates [13]. Recent research has highlighted particular challenges in applying these methods to systems containing p-block elements, where complex electronic structures and diverse bonding motifs demand robust validation of computational protocols [16].

Theoretical Foundations

Density Functional Theory (DFT)

DFT is grounded in the Hohenberg-Kohn theorems, which establish that all ground-state properties of a many-electron system are uniquely determined by its electron density [23]. This revolutionary insight reduced the problem of 3N spatial coordinates (for N electrons) to just three coordinates, making computational studies of complex systems feasible. The practical implementation of DFT primarily uses the Kohn-Sham approach, which introduces a system of non-interacting electrons that generate the same density as the real, interacting system [23] [24]. The total energy in Kohn-Sham DFT can be decomposed into several components:

- Ion-electron potential energy: Attraction between electrons and nuclei

- Ion-ion potential energy: Repulsion between nuclei (classical)

- Hartree energy: Classical electron-electron repulsion

- Kinetic energy: Of the non-interacting Kohn-Sham system

- Exchange-correlation energy: The quantum mechanical components encompassing exchange (from the Pauli principle) and correlation (from electron-electron interactions)

The critical approximation in DFT is the exchange-correlation functional, for which the exact form remains unknown. The simplest approximation is the Local Density Approximation (LDA), which uses the exchange-correlation energy of a uniform electron gas [24]. More sophisticated Generalized Gradient Approximations (GGA) incorporate the density gradient, while meta-GGAs additionally include the kinetic energy density. Hybrid functionals mix a portion of exact Hartree-Fock exchange with DFT exchange-correlation, and double-hybrids further incorporate perturbative correlation components.
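The energy components listed above, and the way a global hybrid modifies the exchange part, can be written compactly. The notation is a standard textbook choice and an assumption of this sketch rather than that of the cited sources: \(T_s\) is the kinetic energy of the non-interacting system, \(v_\text{ext}\) the potential of the nuclei, \(E_\text{H}\) the Hartree energy, \(E_\text{nn}\) the nuclear repulsion, and \(a\) the fraction of exact exchange.

\[
E_\text{KS}[\rho] = T_s[\rho] + \int v_\text{ext}(\mathbf{r})\,\rho(\mathbf{r})\,d\mathbf{r} + E_\text{H}[\rho] + E_\text{xc}[\rho] + E_\text{nn}
\]

\[
E_\text{xc}^\text{hybrid} = a\,E_\text{x}^\text{HF} + (1-a)\,E_\text{x}^\text{DFA} + E_\text{c}^\text{DFA}
\]

Setting \(a = 0\) recovers the underlying semilocal functional, which is why a hybrid and its parent meta-GGA can be compared directly in benchmark studies.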

Wavefunction Theory

Wavefunction-based methods approach the many-electron problem more directly by solving approximations of the Schrödinger equation. These methods form a hierarchy of increasing accuracy and computational cost:

- Hartree-Fock (HF) Theory: The starting point, which treats electrons as moving in an average field but incorporates exchange exactly through the antisymmetry of the wavefunction. It neglects electron correlation entirely [24].

- Post-Hartree-Fock Methods: These include:

  - Møller-Plesset Perturbation Theory (particularly MP2): Adds electron correlation effects as a perturbation to the HF solution

  - Coupled Cluster (CC) Methods: Especially CCSD(T), often called the "gold standard" for single-reference systems

  - Configuration Interaction (CI): Systematically includes excited configurations

For systems with heavy p-block elements, explicitly correlated methods (e.g., F12) significantly accelerate basis set convergence, while local correlation techniques (e.g., PNO-LCCSD(T)-F12) make high-level calculations on larger systems feasible by exploiting the short-range nature of correlation effects [16].

The Self-Consistent Field (SCF) Procedure

The SCF procedure is the iterative heart of most quantum chemical calculations, seeking convergence between the input and output electron densities [13]. The SCF error is typically measured as the root-mean-square difference between input and output densities: \(\text{err}=\sqrt{\int dx \; (\rho_\text{out}(x)-\rho_\text{in}(x))^2 }\) [13]. Convergence is declared when this error falls below a predefined threshold, which often depends on the desired numerical quality and system size [13].
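The error measure defined above can be illustrated on a toy one-dimensional grid. The sketch assumes a uniform grid spacing dx and approximates the integral by a simple sum; the densities and the threshold are invented for illustration.

```python
from math import sqrt


def scf_density_error(rho_in, rho_out, dx):
    """Approximate sqrt(integral (rho_out - rho_in)^2 dx) on a uniform 1D grid."""
    if len(rho_in) != len(rho_out):
        raise ValueError("densities must be sampled on the same grid")
    return sqrt(sum((o - i) ** 2 for i, o in zip(rho_in, rho_out)) * dx)


# Toy input and output densities on five grid points.
rho_in = [0.0, 0.5, 1.0, 0.5, 0.0]
rho_out = [0.0, 0.6, 0.9, 0.5, 0.0]
err = scf_density_error(rho_in, rho_out, dx=0.1)
threshold = 1e-6  # illustrative convergence criterion
print(f"err = {err:.4f}, converged = {err < threshold}")
```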

Several algorithms exist to accelerate SCF convergence:

- Damping: Simple mixing of input and output densities (\(F = \text{mix}\, F_{n} + (1-\text{mix})\, F_{n-1}\)) [25]

- DIIS (Direct Inversion in Iterative Subspace): Extrapolates new Fock matrices from previous iterations [13] [25]

- LIST (Linear-Expansion Shooting Technique): Family of methods developed in Wang's group [25]

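- ADIIS (Augmented DIIS): Chooses the extrapolation coefficients by minimizing the augmented Roothaan-Hall energy function; often combined with standard Pulay DIIS (SDIIS) [25]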

Modern implementations often use sophisticated hybrid approaches like MESA (Multiple Eigenvalue Shifting Algorithm), which combines several acceleration methods and adaptively switches between them based on convergence behavior [25].
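The effect of damping, the simplest of the schemes listed above, can be seen on a scalar toy problem. In the sketch below a single number stands in for the Fock matrix and a linear map stands in for one SCF cycle; both are illustrative assumptions, not an electronic structure calculation.

```python
def damped_fixed_point(update, x0, mix, tol=1e-10, max_iter=200):
    """Iterate x <- mix*update(x) + (1 - mix)*x until the change drops below tol."""
    x = x0
    for iteration in range(1, max_iter + 1):
        x_new = mix * update(x) + (1.0 - mix) * x
        if abs(x_new - x) < tol:
            return x_new, iteration
        x = x_new
    return None, max_iter  # not converged within max_iter


def toy_update(x):
    """Linear map with fixed point x = 1 and slope -1.5 (oscillates and diverges undamped)."""
    return -1.5 * x + 2.5


for mix in (1.0, 0.3):
    solution, n_iter = damped_fixed_point(toy_update, x0=0.0, mix=mix)
    status = "no convergence" if solution is None else f"x = {solution:.6f}"
    print(f"mix = {mix}: {status} after {n_iter} iterations")
```

With mix = 1.0 the iterates overshoot the fixed point by a growing amount on each cycle, which mimics an oscillating SCF; reducing the mixing parameter turns the same update into a contraction at the cost of more iterations than an extrapolation method such as DIIS would typically need.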

Performance Assessment for p-Block Elements

The IHD302 Benchmark Set

Recent research has highlighted the particular challenges quantum chemical methods face when applied to p-block elements. The IHD302 benchmark set was specifically developed to address this, containing 604 dimerization energies of 302 "inorganic benzenes" composed of all non-carbon p-block elements from main groups III to VI up to polonium [16]. This set is divided into two subsets:

- Covalent dimerizations (COV): Formed by direct covalent bonding

- Weaker donor-acceptor interactions (WDA): Characterized as strongly bound van der Waals complexes on the path to covalent bonding

This benchmark presents a particular challenge due to the large number of spatially close p-element bonds underrepresented in other benchmark sets, and the partial covalent bonding character of the WDA interactions [16]. Generating reliable reference data for these systems requires addressing substantial electron correlation contributions, core-valence correlation effects, and slow basis set convergence.

Reference Data Generation

In the IHD302 assessment, reference values were computed using a sophisticated protocol: PNO-LCCSD(T)-F12/cc-VTZ-PP-F12(corr.) with a basis set correction at the PNO-LMP2-F12/aug-cc-pwCVTZ level [16]. This approach combines explicitly correlated coupled cluster theory with local correlation (PNO) to handle the large electron correlation effects while maintaining computational feasibility. The use of pseudopotentials (PP) is essential for heavier elements to account for relativistic effects.

Comparative Performance of Quantum Chemical Methods

Table 1: Performance of Selected DFT Methods for Covalent Dimerizations of p-Block Elements (IHD302 Benchmark)

| Functional Class | Best-Performing Methods | Mean Absolute Error (kcal mol⁻¹) | Key Characteristics |

|---|---|---|---|

| meta-GGA | r2SCAN-D4 | Among lowest MAE | No exact exchange, improved nonlocality |

| Hybrid | r2SCAN0-D4, ωB97M-V | Among lowest MAE | 25% exact exchange (r2SCAN0); range-separated exchange with nonlocal correlation (ωB97M-V) |

| Double-Hybrid | revDSD-PBEP86-D4 | Among lowest MAE | MP2-like correlation, >50% exact exchange |

| Standard GGA | PBE-D3, BLYP-D3 | Higher MAE | Semilocal, economical but less accurate |

Table 2: Performance of Wavefunction and Composite Methods for p-Block Elements

| Method Class | Specific Method | Application/Performance | Computational Cost |

|---|---|---|---|

| Local Coupled Cluster | PNO-LCCSD(T)-F12 | Reference method for IHD302 | Very high but feasible for medium systems |

| Composite DFT | r2SCAN-3c | Excellent structures for IHD302 | Moderate with geometrical corrections |

| Standard CC | CCSD(T) with large basis | Would be accurate but prohibitive | Extreme for 4th period elements |

| MP2 | DLPNO-MP2 | Reasonable with F12 correction | Moderate with local approximations |

The assessment revealed that for covalent dimerizations, the r2SCAN-D4 meta-GGA, r2SCAN0-D4 and ωB97M-V hybrids, and revDSD-PBEP86-D4 double-hybrid functionals delivered the best performance among 26 evaluated DFT methods [16]. Importantly, the study identified significant errors (up to 6 kcal mol⁻¹) for molecules containing 4th-period p-block elements when using standard def2 basis sets without proper relativistic pseudopotentials [16]. This highlights the critical importance of basis set selection and relativistic treatments for heavier elements, where the use of ECP10MDF pseudopotentials with re-contracted basis sets provided substantial improvements [16].

SCF Convergence Protocols

Convergence Criteria and Thresholds

SCF convergence is typically controlled by multiple thresholds that determine when self-consistency is achieved. Different quantum chemistry packages offer various convergence presets:

Table 3: SCF Convergence Criteria in ORCA for Different Precision Levels

| Convergence Level | TolE (Energy) | TolRMSP (RMS Density) | TolMaxP (Max Density) | TolErr (DIIS Error) |

|---|---|---|---|---|

| Loose | 1e-5 | 1e-4 | 1e-3 | 5e-4 |

| Medium | 1e-6 | 1e-6 | 1e-5 | 1e-5 |

| Strong | 3e-7 | 1e-7 | 3e-6 | 3e-6 |

| Tight | 1e-8 | 5e-9 | 1e-7 | 5e-7 |

| VeryTight | 1e-9 | 1e-9 | 1e-8 | 1e-8 |

These criteria can be applied in different convergence check modes:

- Mode 0: All convergence criteria must be satisfied

- Mode 1: Only one criterion needs to be met (risky)

- Mode 2: Check change in total energy and one-electron energy (default in ORCA) [4]
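The difference between requiring all criteria and accepting any single criterion can be made concrete with the tabulated thresholds. The sketch below is not ORCA's implementation: it covers only the four tolerances listed in the table, and it omits mode 2 because the one-electron energy threshold is not tabulated here.

```python
# Thresholds copied from the table above: (TolE, TolRMSP, TolMaxP, TolErr).
PRESETS = {
    "Loose": (1e-5, 1e-4, 1e-3, 5e-4),
    "Medium": (1e-6, 1e-6, 1e-5, 1e-5),
    "Strong": (3e-7, 1e-7, 3e-6, 3e-6),
    "Tight": (1e-8, 5e-9, 1e-7, 5e-7),
    "VeryTight": (1e-9, 1e-9, 1e-8, 1e-8),
}


def is_converged(delta_e, rms_p, max_p, diis_err, preset="Tight", mode=0):
    """Apply mode 0 (all criteria) or mode 1 (any criterion) to one SCF iteration."""
    tol_e, tol_rmsp, tol_maxp, tol_err = PRESETS[preset]
    checks = [
        abs(delta_e) < tol_e,
        rms_p < tol_rmsp,
        max_p < tol_maxp,
        diis_err < tol_err,
    ]
    if mode == 0:
        return all(checks)
    if mode == 1:
        return any(checks)
    raise ValueError("only modes 0 and 1 are sketched here")


# Hypothetical iteration: energy is settled but the RMS density change is not.
sample = dict(delta_e=5e-9, rms_p=2e-8, max_p=5e-8, diis_err=1e-7)
print("mode 0:", is_converged(**sample, mode=0))
print("mode 1:", is_converged(**sample, mode=1))
```

The sample iteration passes under mode 1 but fails under mode 0, which is the situation in which a permissive check can report convergence while the density is still changing.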

SCF Convergence Challenges with p-Block Elements

Systems containing p-block elements, particularly those with heavy atoms and open-shell configurations, present distinctive challenges for SCF convergence:

- Near-degeneracy effects: Common in systems with partially filled p shells or closely spaced lone-pair and frontier orbitals

- Charge sloshing: Electron density oscillating between different regions of the molecule

- Slow convergence: Particularly problematic for systems with small HOMO-LUMO gaps

- Spin contamination: In open-shell systems, leading to incorrect solutions

For transition metal complexes and heavy p-block elements, convergence may be particularly difficult, requiring specialized techniques beyond default settings [4].

Strategies for Difficult SCF Convergence

When standard SCF procedures fail, several strategies can be employed:

- Initial density selection: Starting from a superposition of atomic densities (rho) or from an initial eigenvector guess (psi) [13]

- Occupational smearing: Applying fractional occupations near the Fermi level using the ElectronicTemperature or Degenerate keys [13]

- Mixing adjustments: Reducing the Mixing parameter (default 0.075 in BAND) to damp oscillations [13]

- DIIS enhancements: Increasing the number of DIIS vectors (DIIS N > 10) or using alternative methods like LIST [25]

- Level shifting: Applying the Lshift keyword to virtual orbitals to prevent occupancy swapping [25]

- Spin manipulation: Using StartWithMaxSpin or VSplit to break initial symmetry between alpha and beta spins [13]
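Of the strategies above, occupational smearing is easy to illustrate in isolation. The sketch below assigns Fermi-Dirac occupations to a set of orbital energies and finds the chemical potential by bisection. It is a generic illustration under assumed orbital energies and an assumed electronic temperature, not the implementation used by any of the programs mentioned.

```python
from math import exp


def fermi_occupations(orbital_energies, n_electrons, kt, tol=1e-12):
    """Return (occupations between 0 and 2 summing to n_electrons, chemical potential)."""
    def occupation(eps, mu):
        x = (eps - mu) / kt
        if x > 50.0:    # far above the Fermi level: empty, avoids overflow in exp
            return 0.0
        if x < -50.0:   # far below the Fermi level: doubly occupied
            return 2.0
        return 2.0 / (1.0 + exp(x))

    def total(mu):
        return sum(occupation(e, mu) for e in orbital_energies)

    lo, hi = min(orbital_energies) - 10.0, max(orbital_energies) + 10.0
    while hi - lo > tol:  # bisection on the chemical potential
        mid = 0.5 * (lo + hi)
        if total(mid) < n_electrons:
            lo = mid
        else:
            hi = mid
    mu = 0.5 * (lo + hi)
    return [occupation(e, mu) for e in orbital_energies], mu


# Hypothetical orbital energies (hartree) with a nearly degenerate frontier pair.
energies = [-0.50, -0.30, -0.29, 0.10]
occ, mu = fermi_occupations(energies, n_electrons=4, kt=0.01)
print("occupations:", [round(f, 3) for f in occ])
print(f"chemical potential: {mu:.4f} Eh")
```

Spreading the electrons over the two nearly degenerate orbitals removes the abrupt occupation swaps that can make an SCF oscillate; the smearing should be reduced or switched off for the final energy.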

Research Toolkit for p-Block Element Calculations

Table 4: Essential Computational Tools for p-Block Element Research

| Tool Category | Specific Solutions | Primary Function | Application Notes |

|---|---|---|---|

| DFT Functionals | r2SCAN-D4, ωB97M-V, revDSD-PBEP86-D4 | Accurate energetics for covalent bonding | Include dispersion corrections for WDA interactions |

| Wavefunction Methods | PNO-LCCSD(T)-F12, DLPNO-CCSD(T) | High-level reference data | Required for benchmark-quality results |

| Basis Sets | aug-cc-pVQZ-PP, cc-VTZ-PP-F12 | Atomic orbital expansion | Use pseudopotential-adapted sets for >3rd period |

| Pseudopotentials | ECP10MDF, effective core potentials | Relativistic effects for heavy elements | Essential for 4th period and heavier |

| SCF Accelerators | ADIIS+SDIIS, LIST, MESA | Converging difficult systems | MESA combines multiple methods |

| Electronic Structure Codes | ORCA, ADF, BAND | Quantum chemical calculations | Varying SCF implementation and controls |

The comprehensive assessment of quantum chemical methods for p-block elements reveals a complex landscape where method performance strongly depends on the specific elements and bonding situations. While modern DFT functionals like r2SCAN-D4 and ωB97M-V deliver impressive accuracy for many systems, careful method selection remains crucial, particularly for heavier elements where relativistic effects and core-valence interactions become significant. The SCF convergence protocol represents a critical component of successful calculations, with p-block systems often requiring specialized techniques beyond default settings.

Future method development will likely focus on improving robustness and transferability across the periodic table, with benchmark sets like IHD302 providing essential validation data. The integration of machine learning techniques with traditional quantum chemistry shows promise for accelerating calculations while maintaining accuracy, though these approaches will require extensive training data encompassing diverse p-block element chemistry. For researchers investigating p-block elements, the recommended protocol involves using robust hybrid or double-hybrid functionals with appropriate dispersion corrections and basis sets, coupled with careful validation of SCF convergence and, where possible, comparison with high-level wavefunction methods for critical system components.

The selection of an appropriate density functional approximation (DFA) is a critical step in the application of Density Functional Theory (DFT) to chemical problems, particularly in research involving p-block elements. The performance of a functional can vary significantly depending on the chemical system and property under investigation. This guide provides an objective comparison of the performance of meta-GGAs, hybrids, and double-hybrids across diverse chemical domains, presenting quantitative benchmarking data to inform functional selection. Framed within the broader context of validating self-consistent field (SCF) convergence protocols, this review underscores the importance of matching methodological choices to specific research goals, from main-group thermochemistry to excited-state properties of complex dyes and solid-state defects.

Functional Categories and Theoretical Background

Density functionals are systematically categorized by their ingredients and methodology into a hierarchy known as "Jacob's Ladder," which provides a useful framework for understanding their evolution and expected accuracy.

Figure 1. The "Jacob's Ladder" hierarchy of density functionals, illustrating the progression from simplest to most theoretically sophisticated approximations. Higher rungs generally provide improved accuracy at increased computational cost.

- meta-GGAs: These functionals incorporate the kinetic energy density or other local ingredients in addition to the electron density and its gradient, offering improved accuracy for atomization energies and structural properties without the computational cost of hybrid functionals. Examples include SCAN and B97M-V.

- Hybrids: This class mixes a portion of exact Hartree-Fock exchange with exchange from semi-local functionals. Global hybrids like B3LYP and PBE0 typically include 20-25% exact exchange at all interelectronic distances, while range-separated hybrids like ωB97X-V and ωB97M-V increase the fraction of exact exchange with distance, reaching full exact exchange at long range.

- Double Hybrids: The most advanced rung on the ladder, these functionals combine Hartree-Fock exchange with a perturbative correlation correction, offering superior accuracy for many properties. Examples include B2GP-PLYP, mPW2-PLYP, and spin-scaled variants like SOS-ωB2GP-PLYP.
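For orientation, the simplest (B2PLYP-type) double-hybrid energy expression is shown below. The symbols are an assumption of this sketch: \(a_\text{x}\) is the fraction of exact exchange and \(a_\text{c}\) the fraction of second-order perturbative (PT2) correlation. Spin-scaled variants weight the same-spin and opposite-spin parts of the PT2 term separately.

\[
E_\text{xc}^\text{DH} = a_\text{x}\,E_\text{x}^\text{HF} + (1-a_\text{x})\,E_\text{x}^\text{DFA} + (1-a_\text{c})\,E_\text{c}^\text{DFA} + a_\text{c}\,E_\text{c}^\text{PT2}
\]

Because the PT2 term depends on the virtual orbitals, double hybrids inherit some of the slow basis set convergence of wavefunction methods, which matters for the heavier p-block elements discussed in this guide.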

Performance Comparison Across Chemical Systems

Main-Group Thermochemistry and Kinetics

The Gold-Standard Chemical Database 137 (GSCDB137) provides a comprehensive benchmark for evaluating functional performance across main-group chemistry, comprising 137 datasets and 8377 individual data points [26].

Table 1: Performance of Selected Functionals on Main-Group Chemistry (GSCDB137 Database)

| Functional | Type | Overall Performance | Strengths | Key Limitations |
|---|---|---|---|---|
| ωB97X-V | Hybrid GGA | Most balanced hybrid GGA | Excellent for diverse properties | Moderate cost for large systems |
| ωB97M-V | Hybrid meta-GGA | Most balanced hybrid meta-GGA | Non-covalent interactions, kinetics | Higher computational cost |
| B97M-V | Meta-GGA | Leads meta-GGA class | Solid overall performance | Less accurate for specific barriers |
| revPBE-D4 | GGA | Leads GGA class | Good efficiency | Limited accuracy for complex systems |
| B2GP-PLYP | Double Hybrid | ~25% lower errors vs. best hybrids | Excellent for reaction energies [27] | Requires careful treatment [26] |

Transition Metal Thermochemistry

For 3d transition-metal-containing molecules, the performance of 13 density functionals was evaluated for predicting gas-phase enthalpies of formation [28].

Table 2: Performance for 3d Transition Metal Thermochemistry

| Functional | Type | Mean Absolute Deviation (kcal mol⁻¹) | Performance Notes |
|---|---|---|---|
| B97-1 | Hybrid | 7.2 | Best overall performance; promising for coordination complexes & metal carbonyls |
| mPW2-PLYP | Double Hybrid | 7.3 | Best for larger molecules; excellent for single-reference systems |
| B98 | Hybrid | Not specified (similar to B97-1) | Excellent for diatomics and triatomics |
| B2-PLYP | Double Hybrid | Not specified (among best) | Excellent for single-reference molecules |
| ωB97X | Hybrid | Not specified (among best) | Excellent for single-reference molecules |

Excited-State Properties: BODIPY Dyes

Time-dependent DFT (TD-DFT) methods systematically overestimate electronic excitation energies in boron-dipyrromethene (BODIPY) dyes. A 2025 benchmarking study assessed 28 TD-DFT methods, revealing that spin-scaled double hybrids with long-range correction overcome this overestimation problem [29].

Table 3: Top-Performing Functionals for BODIPY Absorption Energies (SBYD31 Set)

| Functional | Type | Performance | Key Features |
|---|---|---|---|
| SOS-ωB2GP-PLYP | Spin-scaled double hybrid with long-range correction | Top performer; meets chemical accuracy (0.1 eV) | Solves TD-DFT blueshifting problem |
| SCS-ωB2GP-PLYP | Spin-scaled double hybrid with long-range correction | Second best performer | Robust for solvatochromic dyes |
| SOS-ωB88PP86 | Spin-scaled double hybrid with long-range correction | Third best performer | Accurate for intramolecular charge transfer |
| Conventional TD-DFT | Global hybrids, meta-GGAs | Systematic overestimation (blueshift) | Fails to meet accuracy thresholds |

Solid-State Defects and Multireference Systems

The accurate description of in-gap states of point defects in semiconductors with significant multideterminant character presents challenges for standard DFT methods. The NV⁻ center in diamond exemplifies a system where wavefunction theory (WFT) approaches like CASSCF-NEVPT2 provide superior accuracy [30].

Table 4: Method Performance for Solid-State Defects (NV⁻ Center in Diamond)

| Method | Type | Applicability | Key Strengths | Computational Cost |
|---|---|---|---|---|
| CASSCF-NEVPT2 | Wavefunction theory | High accuracy for multireference systems | Quantitative agreement with experiment; handles static & dynamic correlation | Very high; limited to small clusters |
| Hybrid DFT | Hybrid functional | Routine defect screening | Reasonable structures and energies | Moderate for periodic systems |
| Standard DFT (LDA, GGA) | Semi-local functional | Preliminary studies | Computational efficiency | Low; but often inaccurate |

Silicon Chemistry and Specialized Systems

For Si–O–C–H molecular species, different functionals excel for specific properties according to CCSD(T) benchmarks [27].

Table 5: Performance for Si–O–C–H Molecular Species

| Functional | Type | Enthalpy of Formation (MAE) | Vibrational Frequencies (MAE) | Reaction Energies |
|---|---|---|---|---|
| M06-2X | Hybrid meta-GGA | Lowest MAE | Moderate accuracy | Good performance |
| SCAN | Meta-GGA | Moderate accuracy | Lowest MAE | Good performance |
| B2GP-PLYP | Double Hybrid | Not specified | Not specified | Smallest errors |
| PW6B95 | Hybrid meta-GGA | Consistent overall performance | Consistent overall performance | Most balanced across properties |

Detailed Experimental Protocols

Benchmarking Thermochemical Accuracy (GSCDB137 Protocol)

The GSCDB137 protocol represents the current gold standard for assessing functional performance across diverse chemical domains [26].

- Reference Data Selection: Curate high-quality reference values from coupled-cluster theory [CCSD(T)] or active thermochemical tables, removing spin-contaminated or multireference cases.

- Systematic Calculations: Perform single-point energy calculations on optimized geometries using target functionals with appropriate basis sets.

- Error Analysis: Compute mean absolute errors (MAEs) and root-mean-square errors (RMSEs) for each functional across all 137 datasets.

- Functional Ranking: Rank functionals by overall performance and within specific chemical domains (barrier heights, noncovalent interactions, etc.).
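The error-analysis and ranking steps above can be sketched in a few lines of Python. This is a minimal illustration for a single dataset, not the GSCDB137 tooling itself: the function names `error_stats` and `rank_functionals` are hypothetical, and the numbers are made-up placeholders rather than benchmark values.

```python
import math


def error_stats(computed, reference):
    """Return (MAE, RMSE) for paired computed and reference values."""
    if len(computed) != len(reference) or not computed:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [c - r for c, r in zip(computed, reference)]
    mae = sum(abs(e) for e in errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return mae, rmse


def rank_functionals(results, reference):
    """Rank functionals by MAE, lowest (best) first."""
    stats = {name: error_stats(vals, reference) for name, vals in results.items()}
    return sorted(stats.items(), key=lambda item: item[1][0])


# Placeholder values in kcal/mol, for illustration only (not benchmark data).
reference = [10.0, -5.0, 22.5, 3.0]
results = {
    "functional_A": [11.0, -4.0, 21.5, 4.0],
    "functional_B": [10.5, -5.5, 22.0, 3.5],
}

ranking = rank_functionals(results, reference)
for name, (mae, rmse) in ranking:
    print(f"{name}: MAE = {mae:.2f}, RMSE = {rmse:.2f}")
```

In a full benchmark the same statistics would be computed per dataset and then aggregated, so that large datasets do not dominate the overall ranking.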

Excited-State Benchmarking for Molecular Dyes

The SBYD31 protocol specifically addresses the challenge of predicting excitation energies in BODIPY dyes [29].

- Benchmark Set Construction: Compile experimental λmax values for 23 BODIPY dyes with 31 excitation energies measured in different solvents.

- Computational Methodology: Employ time-dependent double hybrids with spin-component scaling and long-range correction (e.g., SOS-ωB2GP-PLYP).

- Solvent Treatment: Include implicit solvation models to account for solvatochromic effects.

- Validation: Compare calculated vertical excitation energies directly with experimental λmax values, targeting chemical accuracy (0.1 eV).
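The validation step compares energies in eV with experimental absorption maxima reported as wavelengths, so a unit conversion is needed first. The sketch below shows that conversion and the 0.1 eV check; the helper names and the sample numbers are hypothetical and are not taken from the SBYD31 set.

```python
HC_EV_NM = 1239.841984  # Planck constant times speed of light, in eV*nm


def wavelength_to_ev(lambda_nm):
    """Convert an absorption wavelength in nm to a photon energy in eV."""
    if lambda_nm <= 0:
        raise ValueError("wavelength must be positive")
    return HC_EV_NM / lambda_nm


def excitation_errors(calc_ev, exp_lambda_nm):
    """Signed errors (calculated minus experimental) in eV."""
    return [c - wavelength_to_ev(lam) for c, lam in zip(calc_ev, exp_lambda_nm)]


def mean_abs_error_check(errors, threshold_ev=0.1):
    """Return (MAE, True if MAE is within the threshold)."""
    mae = sum(abs(e) for e in errors) / len(errors)
    return mae, mae <= threshold_ev


# Placeholder data: calculated vertical excitations (eV) vs. experimental maxima (nm).
errors = excitation_errors([2.55, 2.05], [500.0, 620.0])
mae, ok = mean_abs_error_check(errors)
print(f"MAE = {mae:.3f} eV, within 0.1 eV: {ok}")
```

Positive signed errors correspond to the blueshift (overestimation) discussed above, so keeping the sign is useful for spotting systematic bias before taking absolute values.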

Wavefunction Protocol for Solid-State Defects

The CASSCF-NEVPT2 protocol addresses multireference character in defect centers like the NV⁻ center in diamond [30].

- Cluster Model Construction: Create hydrogen-terminated cluster models of increasing size to simulate the defect environment.

- Active Space Selection: Identify defect-localized molecular orbitals for the CASSCF procedure (e.g., CAS(6e,4o) for NV⁻ center).

- State-Specific Optimization: Perform state-specific CASSCF geometry optimization for each electronic state of interest.

- Dynamic Correlation Treatment: Apply NEVPT2 on top of CASSCF wavefunctions to incorporate dynamic correlation effects of the surrounding lattice.

- Property Calculation: Compute energy levels, fine structures, and zero-phonon lines from the correlated wavefunctions.
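To give a feel for why the active space in the second step stays tractable, the number of Slater determinants in a complete active space can be counted combinatorially. The sketch below is a generic counting formula, not part of the cited protocol; `cas_determinant_count` is a hypothetical helper, and `two_ms` denotes twice the spin projection M_S.

```python
from math import comb


def cas_determinant_count(n_electrons, n_orbitals, two_ms=0):
    """Number of determinants with fixed M_S in CAS(n_electrons, n_orbitals)."""
    if (n_electrons + two_ms) % 2 != 0:
        raise ValueError("n_electrons and two_ms must have the same parity")
    n_alpha = (n_electrons + two_ms) // 2
    n_beta = n_electrons - n_alpha
    if not (0 <= n_alpha <= n_orbitals and 0 <= n_beta <= n_orbitals):
        raise ValueError("electron count does not fit in the active orbitals")
    # Alpha and beta strings are chosen independently.
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


# CAS(6e,4o), as quoted above for the NV- center.
print(cas_determinant_count(6, 4, two_ms=0))  # M_S = 0 block
print(cas_determinant_count(6, 4, two_ms=2))  # M_S = 1 block
```

The CI expansion itself is therefore tiny for this active space; the cost of the protocol comes from the size of the surrounding cluster and the NEVPT2 step rather than from the active-space diagonalization.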

Figure 2. Decision workflow for selecting density functionals based on research problem, system characteristics, and computational resources. This protocol integrates performance data from multiple benchmarking studies.

Table 6: Key Computational Resources for Density Functional Calculations

| Resource | Type | Function | Application Examples |
|---|---|---|---|
| GSCDB137 | Benchmark Database | Comprehensive validation of functional performance across diverse chemistry | Assessing new functionals; method selection for specific problems [26] |
| OMol25 | Training Dataset | Massive dataset of ωB97M-V calculations for machine learning potentials | Training neural network potentials; reference data [31] |
| def2-TZVPD | Basis Set | Balanced quality basis set for accurate DFT calculations | General-purpose molecular calculations [31] |
| aug-cc-pV(X+d)Z | Basis Set Family | Correlation-consistent basis sets with diffuse functions and additional tight d functions | High-accuracy CCSD(T) benchmarks; anionic systems [27] |
| ωB97M-V | Density Functional | State-of-the-art range-separated hybrid meta-GGA for reference calculations | Generating training data for ML potentials; accurate single-point energies [31] |
| CASSCF-NEVPT2 | Wavefunction Method | High-level treatment of multireference systems with dynamic correlation | Solid-state color centers; open-shell systems [30] |

The performance of density functionals varies significantly across chemical domains, necessitating careful selection based on the specific research application. For general main-group thermochemistry and kinetics, ωB97M-V and ωB97X-V provide exceptional balance between accuracy and cost, while B97M-V leads the meta-GGA class. For transition metal thermochemistry, B97-1 and mPW2-PLYP deliver superior performance, with the latter particularly effective for larger coordination complexes. Excited-state calculations for challenging systems like BODIPY dyes benefit dramatically from modern spin-scaled double hybrids with long-range correction such as SOS-ωB2GP-PLYP, which solve the characteristic overestimation problem of conventional TD-DFT. For strongly correlated systems with multireference character, including solid-state defects, CASSCF-NEVPT2 provides benchmark accuracy where DFT methods struggle. When selecting functionals for p-block element research, researchers should prioritize those validated against comprehensive benchmarks like GSCDB137 and consider the specific electronic structure challenges presented by their systems of interest.
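The recommendations in the preceding paragraph can be encoded as a simple lookup, which is convenient when scripting batches of calculations. This is only a sketch of the summary above: the category keys and the function name `suggest_methods` are hypothetical labels chosen for illustration, and the mapping is not a substitute for checking the benchmark relevant to a given system.

```python
# Method suggestions taken from the summary paragraph above.
# The dictionary keys are illustrative labels, not standard terminology.
RECOMMENDATIONS = {
    "main_group_thermochemistry": ["ωB97M-V", "ωB97X-V", "B97M-V"],
    "transition_metal_thermochemistry": ["B97-1", "mPW2-PLYP"],
    "bodipy_excitations": ["SOS-ωB2GP-PLYP"],
    "multireference_defects": ["CASSCF-NEVPT2"],
}


def suggest_methods(problem_type):
    """Return the suggested methods for a problem category."""
    try:
        return list(RECOMMENDATIONS[problem_type])
    except KeyError:
        known = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"unknown problem type {problem_type!r}; known: {known}")


print(suggest_methods("main_group_thermochemistry"))
```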

Basis Set Selection and the Role of Pseudopotentials for Heavier Elements

Accurately modeling p-block elements, particularly third-row and heavier atoms, presents distinct challenges in computational chemistry. As the number of core electrons grows, the way they are treated has an increasing influence on both cost and accuracy, which makes the choice of basis set and the choice between explicit and effective core-electron treatment central methodological decisions. This guide objectively compares the performance of all-electron methods against pseudopotential approaches within the specific context of validating Self-Consistent Field (SCF) convergence protocols for p-block element research. We focus on quantitative benchmarks, particularly for calculating core-electron binding energies (CEBEs), a property highly sensitive to core electron treatment and SCF stability [32].

The selection between all-electron (AE) and pseudopotential (PP) methods involves a critical trade-off between computational tractability and physical completeness. AE methods explicitly treat all electrons but face challenges like variational collapse when modeling core-excited states required for ΔSCF CEBE calculations. Pseudopotentials, by freezing core electrons, offer enhanced numerical stability and computational efficiency for large systems, including surfaces and periodic materials [32]. This guide synthesizes recent findings to help researchers navigate these choices.

Theoretical Background and Key Concepts

The ΔSCF Method for Core-Electron Binding Energies

The Δ Self-Consistent Field (ΔSCF) method is a widely used density-functional theory (DFT) approach for calculating Core-Electron Binding Energies (CEBEs) [32]. It calculates the binding energy as the total energy difference between the initial ground state (N electrons) and the final core-ionized state (N-1 electrons), in which one core electron has been removed:

\[ E_b = E_{N-1}[n_F] - E_N[n_I] \]

Here, \(E_N[n_I]\) is the total energy of the initial ground state, and \(E_{N-1}[n_F]\) is the total energy of the final state with a core-hole [32]. The accuracy of this method depends critically on the ability to achieve converged SCF solutions for both states, a process fraught with challenges for core-excited states.
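Once both SCF calculations have converged, the binding energy is a simple difference of two total energies, usually reported in eV. The sketch below illustrates that arithmetic only; the function name `delta_scf_cebe` is hypothetical, and the two total energies are placeholder numbers rather than results from any calculation.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA conversion factor


def delta_scf_cebe(e_ground_hartree, e_core_hole_hartree):
    """Binding energy E_b = E_{N-1}[n_F] - E_N[n_I], returned in eV."""
    return (e_core_hole_hartree - e_ground_hartree) * HARTREE_TO_EV


# Placeholder total energies in Hartree, for illustration only.
e_initial = -76.40  # N-electron ground state
e_final = -56.57    # (N-1)-electron state with a core hole

cebe = delta_scf_cebe(e_initial, e_final)
print(f"CEBE = {cebe:.2f} eV")
```

A negative or implausibly small value from this difference is a practical warning sign that the final-state SCF collapsed to a valence-ionized solution instead of retaining the core hole.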

Basis Set Families for p-Block Elements

Basis sets form the mathematical basis for expanding electron orbitals. For heavier p-block elements, the choice of basis set is critical, as it must adequately describe both valence and core regions.

- Pople-style Basis Sets: Examples include 6-31G and 6-311G, developed decades ago primarily for Hartree-Fock calculations. Sets like 6-31G(d,p) are considered to be of polarized double-zeta quality. A key limitation is that many combinations are inherently unbalanced, and the 6-311G basis is argued to be only of double-zeta quality despite its triple-zeta naming [33].

- Correlation-Consistent Basis Sets (Dunning): The cc-pVnZ family (e.g., cc-pVDZ, cc-pVTZ) is designed for wavefunction-based correlation methods. They are systematically convergent and balanced, with the highest angular momentum function defining the quality [33].

- Polarization-Consistent Basis Sets (Jensen): The pcseg-n family (e.g., pcseg-1, pcseg-2) is optimized specifically for DFT methods. They offer significantly lower basis set errors than Pople-style sets of formally similar quality. For instance, pcseg-1 has a basis set error roughly three times lower than 6-31G(d,p) [33].

Pseudopotentials for Heavier Elements

Pseudopotentials (PPs), or effective core potentials, simplify calculations by replacing core electrons with an effective potential, thereby reducing the number of electrons explicitly treated. For heavier elements, this is not just a computational convenience but often a necessity.
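The reduction in explicitly treated electrons is easy to quantify. The sketch below assumes the small-core Stuttgart-type effective core potentials commonly paired with the def2 basis sets, which replace 28 core electrons for iodine and 60 for bismuth; these core sizes are stated here as an assumption and should be checked against the basis set library of the program actually used. The helper name `explicit_electrons` is hypothetical.

```python
# Atomic numbers for two heavier p-block elements.
ATOMIC_NUMBER = {"I": 53, "Bi": 83}

# Assumed ECP core sizes (small-core Stuttgart-type ECPs used with def2 sets).
# Verify these against your program's basis set library before relying on them.
ECP_CORE_ELECTRONS = {"I": 28, "Bi": 60}


def explicit_electrons(symbol, charge=0, use_ecp=True):
    """Electrons treated explicitly for an atom or atomic ion."""
    core = ECP_CORE_ELECTRONS[symbol] if use_ecp else 0
    return ATOMIC_NUMBER[symbol] - core - charge


for symbol in ("I", "Bi"):
    all_electron = explicit_electrons(symbol, use_ecp=False)
    with_ecp = explicit_electrons(symbol)
    print(f"{symbol}: {all_electron} electrons all-electron, {with_ecp} with ECP")
```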