Validating the Future: A Comprehensive Guide to Machine Learning Approaches in Computational Chemistry

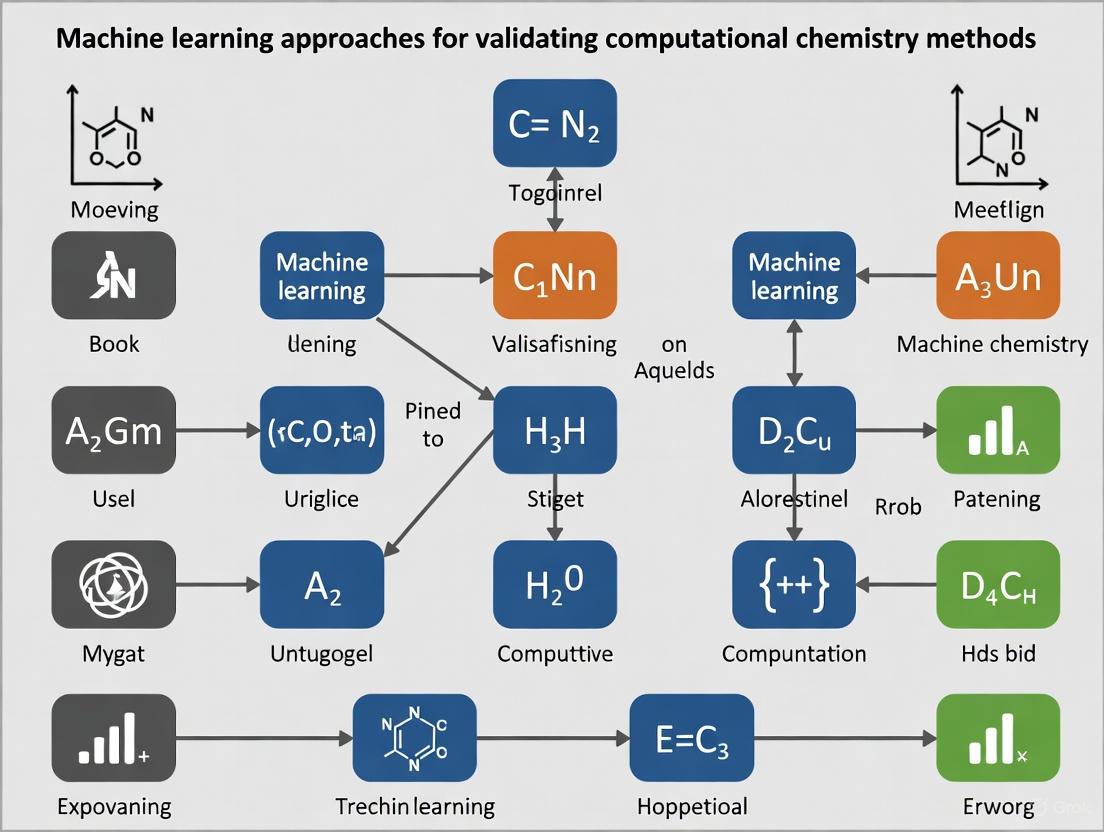



Abstract

This article provides a comprehensive overview of machine learning (ML) validation frameworks within computational chemistry, tailored for researchers and drug development professionals. It explores the foundational principles underscoring the necessity of robust validation for model generalizability, moving into a detailed examination of methodological applications from quantum chemistry to materials science. The content addresses critical troubleshooting and optimization strategies for overcoming common pitfalls like data imbalance and hyperparameter tuning. Finally, it presents a comparative analysis of validation techniques, establishing best practices for benchmarking ML models to ensure predictive reliability in biomedical and clinical research applications.

The Critical Role of Validation in Chemical Machine Learning

Why Validation is the Cornerstone of Reliable Chemical Models

In the disciplines of computational chemistry and machine learning (ML), models are developed to predict molecular properties, chemical reactivity, and biological activity. However, the practical utility of these models is determined not by their complexity but by their demonstrated reliability and predictive accuracy when applied to new, unseen data. Validation serves as the critical bridge between theoretical innovation and practical application, ensuring that model predictions can inform real-world decision-making in areas like drug discovery and materials science [1] [2]. This document outlines the essential protocols, metrics, and tools for establishing robust validation practices, framed within the context of computational chemistry and ML.

Core Principles of Model Validation

Effective validation is governed by several foundational principles that guard against over-optimism and model failure.

- Premise of Real-World Performance: The primary goal of validation is to estimate a method's performance in its operational context, predicting properties that are unknown at the time of application. Models must be evaluated on data that was not used in the training process to ensure they capture underlying patterns rather than memorizing the dataset [1].

- Data Sharing and Reproducibility: For results to be credible, studies must provide usable primary data in routinely parsable formats. This includes atomic coordinates for proteins and ligands, with full proton positions and bond order information. Reproducibility is a cornerstone of the scientific method, and sharing data enables independent verification and direct comparison of methods [1].

- "Fit-for-Purpose" Approach: The validation strategy must be aligned with the model's intended application, or its Context of Use (COU). A model designed for virtual screening requires a different validation approach than one designed for predicting binding affinity or synthetic accessibility. The questions of interest and the potential risk of model error dictate the necessary stringency of the validation process [3].

Quantitative Metrics for Model Evaluation

Selecting the appropriate quantitative metrics is essential for an accurate assessment of model performance. The choice of metric depends on the type of task (classification or regression) and the specific costs associated with different types of prediction errors.

Table 1: Key Metrics for Classification Models in Chemical Applications

| Metric | Formula | Interpretation | Ideal Use Case in Chemistry |
|---|---|---|---|
| Accuracy | $(TP + TN) / (TP+TN+FP+FN)$ | Overall proportion of correct predictions | Initial assessment for balanced datasets; can be misleading for imbalanced data [4] [5]. |
| Precision | $TP / (TP + FP)$ | Purity of positive predictions; how many selected compounds are truly active | When the cost of false positives (FP) is high (e.g., prioritizing compounds for expensive synthesis) [4] [5]. |
| Recall (Sensitivity) | $TP / (TP + FN)$ | Completeness of positive predictions; how many active compounds were found | When the cost of false negatives (FN) is high (e.g., toxicity prediction, where missing a toxic compound is unacceptable) [4] [5]. |
| F1-Score | $2 \times (Precision \times Recall) / (Precision + Recall)$ | Harmonic mean of precision and recall | A balanced measure for imbalanced datasets where both FP and FN are important [4] [5]. |
| Area Under the ROC Curve (AUC-ROC) | Area under the TPR vs. FPR curve | Overall model performance across all classification thresholds | Evaluating the model's ability to rank active compounds above inactives in virtual screening [5]. |
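As a small illustration of the formulas in Table 1, the sketch below computes accuracy, precision, recall, and F1 from confusion-matrix counts. The function name and the example counts are hypothetical and chosen only to show how accuracy can look strong on an imbalanced screen while precision stays low.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute the Table 1 metrics from confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical screen: 10 true actives among 1000 compounds.
metrics = classification_metrics(tp=5, tn=980, fp=10, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these made-up counts the accuracy is 0.985 even though only one in three predicted actives is real, which is the situation the table warns about for imbalanced data.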

Table 2: Key Metrics for Regression Models in Chemical Applications

| Metric | Formula | Interpretation |
|---|---|---|
| Mean Absolute Error (MAE) | $\frac{1}{N} \sum_{j=1}^{N} \lvert y_j - \hat{y}_j \rvert$ | Average magnitude of error, robust to outliers. Easy to interpret [5]. |
| Root Mean Squared Error (RMSE) | $\sqrt{\frac{1}{N} \sum_{j=1}^{N} (y_j - \hat{y}_j)^2}$ | Average magnitude of error, but penalizes larger errors more heavily than MAE [5]. |
| Coefficient of Determination (R²) | $1 - \frac{\sum_{j=1}^{N} (y_j - \hat{y}_j)^2}{\sum_{j=1}^{N} (y_j - \bar{y})^2}$ | Proportion of variance in the dependent variable that is predictable from the independent variables [5]. |
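The regression metrics in Table 2 can be sketched in a few lines of plain Python. The function name and the toy values are illustrative assumptions; the single large error in the example is there to show how RMSE reacts more strongly than MAE.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return (MAE, RMSE, R^2) for paired true and predicted values."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(r) for r in residuals) / n
    ss_res = sum(r * r for r in residuals)
    rmse = math.sqrt(ss_res / n)
    mean_y = sum(y_true) / n
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    # R^2 is undefined when all true values are identical.
    r2 = 1.0 - ss_res / ss_tot if ss_tot else float("nan")
    return mae, rmse, r2


# Toy data: three perfect predictions and one large miss.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 8.0]
mae, rmse, r2 = regression_metrics(y_true, y_pred)
print(f"MAE={mae:.2f} RMSE={rmse:.2f} R2={r2:.2f}")
```

Here the one outlier doubles RMSE relative to MAE and pushes R² below zero, meaning the predictions are worse than simply predicting the mean.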

Experimental Validation Protocols

Protocol 1: K-Fold Cross-Validation for Robust Performance Estimation

Purpose: To obtain a reliable and stable estimate of model performance, reducing the variance associated with a single train/test split [6].

Workflow:

- Data Preparation: The entire dataset is randomly shuffled.

- Splitting: The data is split into k equal-sized folds (commonly k=5 or 10).

- Iterative Training and Validation: The model is trained and validated k times. In each iteration:
  - A different fold is held out as the validation set.
  - The remaining k-1 folds are used as the training set.
  - The model is trained on the training set and evaluated on the validation fold, generating a performance score (e.g., AUC, RMSE).

- Performance Averaging: The final reported performance is the average of the k individual scores. The standard deviation of these scores indicates the model's consistency [6].

[Diagram: iterative k-fold cross-validation workflow]
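The k-fold workflow in Protocol 1 can be sketched without any external library. In the sketch below, `fit_and_score` is a hypothetical callback standing in for whatever model training and scoring routine is used; it receives training and validation indices and returns one score. In practice a library routine such as scikit-learn's `cross_val_score`, mentioned later in Table 3, would usually replace this.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs for shuffled k-fold splitting."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Striding gives k folds whose sizes differ by at most one.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, val


def cross_validate(n_samples, k, fit_and_score, seed=0):
    """Average the k fold scores and report their standard deviation."""
    scores = [fit_and_score(train, val)
              for train, val in k_fold_indices(n_samples, k, seed)]
    mean = sum(scores) / k
    std = (sum((s - mean) ** 2 for s in scores) / k) ** 0.5
    return mean, std, scores


# Placeholder scorer: a real one would train a model on `train`
# and return a metric such as AUC or RMSE measured on `val`.
mean, std, scores = cross_validate(20, 5, lambda train, val: len(val) / 20)
print(mean, std, scores)
```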

Protocol 2: Rigorous Benchmark Dataset Preparation for Virtual Screening

Purpose: To create a benchmark dataset for evaluating virtual screening (VS) methods that accurately reflects the challenges of real-world application, thereby preventing inflated performance estimates [1].

Workflow:

- Define the Objective: Clearly state the goal of the VS experiment (e.g., "enrichment of novel kinase inhibitors").

- Select Active Compounds: Compile a set of experimentally confirmed active compounds (actives) for the target.
  - Best Practice: Include chemically diverse actives to ensure the model generalizes beyond obvious analogs [1].

- Select Decoy Compounds: Compile a set of compounds presumed to be inactive (decoys).
  - Best Practice: Decoys should be "hard negatives" (pharmacophorically similar but functionally inactive) to avoid creating a trivial discrimination task. Property-matched decoys from databases like the Directory of Useful Decoys (DUD) are commonly used [1].

- Data Curation:
  - Standardize Structures: Apply consistent rules for protonation, tautomerism, and stereochemistry.
  - Critical Step: Ensure that knowledge of the active compounds' bound states or properties does not "leak" into the preparation of the protein structure or decoy set. For docking, this means avoiding optimizing the protein structure with the cognate ligand before the test [1].

- Performance Evaluation: Use the prepared benchmark to run the VS workflow. Evaluate performance using metrics like Enrichment Factor (EF) and AUC-ROC, and report the curve to show performance across the entire ranking [1] [5].
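The Enrichment Factor mentioned in the evaluation step is commonly defined as the hit rate among the top-ranked fraction divided by the hit rate in the whole library. The sketch below assumes that definition, assumes higher scores mean "more likely active", and uses a made-up ranking; the function name is illustrative.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at a given fraction: hit rate in the top slice / overall hit rate.

    scores: one score per compound, higher = predicted more active.
    labels: 1 for a known active, 0 for a decoy.
    """
    n = len(scores)
    n_top = max(1, int(round(n * fraction)))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    total_actives = sum(labels)
    if total_actives == 0:
        raise ValueError("EF is undefined without any actives")
    # Same quantity as (hits_top / n_top) / (total_actives / n).
    return hits_top * n / (n_top * total_actives)


# Made-up benchmark: 100 compounds, 5 actives, 3 of them ranked in the top 10.
scores = [100 - i for i in range(100)]
labels = [1 if i in {0, 3, 7, 50, 90} else 0 for i in range(100)]
print(enrichment_factor(scores, labels, fraction=0.10))
```

An EF of 1 corresponds to random ranking, so values well above 1 at small fractions indicate useful early enrichment.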

Protocol 3: Experimental Validation of Computational Predictions

Purpose: To provide ultimate confirmation of a model's practical utility through wet-lab experimentation, moving from in silico prediction to real-world verification [2].

Workflow:

- Model Prediction and Compound Selection: The computational model is used to generate predictions (e.g., a novel drug candidate with high predicted efficacy, a catalyst for a specific reaction, or a molecule with a desired property). A shortlist of top candidates is generated.

- Synthesis and Characterization: The selected compounds are synthesized. Their chemical structures and purity are confirmed using analytical techniques (e.g., NMR, LC-MS).

- In Vitro / Biochemical Testing: The synthesized compounds are tested in relevant biochemical or cell-based assays to measure the property of interest (e.g., binding affinity, inhibitory concentration (IC50), or reaction yield and enantioselectivity).

- Data Comparison and Model Refinement: The experimental results are quantitatively compared to the computational predictions. Statistical analysis (e.g., correlation coefficients, error metrics from Table 2) is used to assess the agreement. Discrepancies inform model refinement and future cycles of research [7] [2].

The iterative nature of this process is key to robust model development.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational and Experimental Resources

| Category | Item | Function in Validation |
|---|---|---|
| Computational Tools | Cross-Validation Software (e.g., Scikit-learn `cross_val_score`) | Implements robust performance estimation protocols to prevent overfitting [6]. |
| | Benchmark Datasets (e.g., PDBbind, DUD) | Provides standardized, curated datasets for fair comparison of different computational methods [1]. |
| | Confusion Matrix Analysis | Provides a detailed breakdown of prediction vs. reality for classification tasks, enabling calculation of precision, recall, etc. [4] [5]. |
| Data Resources | Protein Data Bank (PDB) | Source of 3D protein structures for docking studies; requires careful preparation (adding protons, assigning bond orders) [1]. |
| | PubChem/ChEMBL | Repositories of bioactivity data for training and testing ligand-based models and for comparing generated molecules to existing ones [2]. |
| Experimental Assays | Cell-Based Viability Assays (e.g., MTT, CellTiter-Glo) | Measures cytotoxicity, a key endpoint for toxicity prediction model validation [8]. |
| | Binding Assays (e.g., SPR, FRET) | Quantifies molecular interactions (e.g., protein-ligand binding) to validate affinity predictions [1]. |
| | Analytical Chemistry Tools (e.g., HPLC, NMR) | Determines purity, identity, and enantiomeric excess of synthesized compounds, crucial for validating generative models [7]. |

In machine learning for computational chemistry, generalization refers to a model's ability to make accurate predictions on new, unseen molecular data beyond the compounds it was trained on. The generalization gap—the performance difference between training data and unseen data—serves as a critical indicator of overfitting and prediction reliability in drug discovery applications [9]. This gap quantifies the disparity between a model's empirical performance (on training data) and its expected performance on the true data-generating distribution, which is particularly important when predicting molecular properties, binding affinities, or reaction outcomes [9] [10].

In the context of computational chemistry validation research, understanding and controlling the generalization gap is essential because the ultimate goal is to develop models that reliably predict experimental outcomes for novel chemical structures. The gap encompasses both intrinsic error from finite-sample effects and external error due to shifts in data distribution between training compounds and new chemical spaces being explored [9]. As machine learning plays an increasingly transformative role in accelerating drug discovery by enhancing precision and reducing timelines, ensuring models generalize effectively to real-world scenarios becomes paramount for reducing costly late-stage failures [11].

Theoretical Foundations and Quantitative Metrics

Formal Definitions

The generalization gap is formally defined as the absolute difference between a model's empirical risk and its expected statistical risk. In supervised learning for chemical applications, this is expressed as:

$\text{Generalization Gap} = \left| \frac{1}{n} \sum_{i=1}^{n} \ell(\theta, x_i, y_i) - \mathbb{E}_{(x,y) \sim D}[\ell(\theta, x, y)] \right|$ [9]

Where $\ell$ is the loss function, $\theta$ represents the model parameters, $(x_i, y_i)$ are the $n$ training examples (e.g., molecular structures and target properties), and $D$ is the true data distribution encompassing the broader chemical space of interest.

Table: Components of Generalization Error in Chemical ML

| Error Type | Description | Impact in Chemistry Context |
|---|---|---|
| Intrinsic Error | Finite-sample effects and overfitting to training data | Model overfits to specific molecular patterns in training set |
| External Error | Performance degradation from distribution shifts | Model encounters novel structural scaffolds or property ranges |

Quantitative Metrics for Generalization Assessment

Table: Metrics for Quantifying Generalization Gap in Chemical ML

| Metric Category | Specific Measures | Application Context in Chemistry |
|---|---|---|
| Performance Discrepancy | Difference in training vs. test RMSE, MAE, R² | Prediction of molecular properties, binding energies |
| Statistical Bounds | Rademacher complexity, PAC-Bayes bounds | Theoretical guarantees for model reliability |
| Diagnostic Measures | Consistency, Instability, Functional Variance | Practical assessment of model robustness [9] |

For molecular property prediction, the generalization gap often manifests as unexpectedly high errors when models encounter structurally novel compounds or physicochemical properties outside the training distribution. Research indicates that in adversarial training scenarios common for robust molecular models, the generalization gap decomposes into adversarial bias (dominating and growing with perturbation radius) and adversarial variance (exhibiting unimodal dependence) [9].

Experimental Protocols for Generalization Assessment

Protocol: Train-Test Splitting for Chemical Data

Objective: Implement data splitting strategies that realistically simulate real-world generalization challenges in chemical applications.

Procedure:

- Random Splitting
  - Shuffle entire dataset randomly
  - Allocate 70-80% for training, 10-15% for validation, 10-15% for testing
  - Applicable for homogeneous chemical datasets with similar compounds

- Scaffold-Based Splitting
  - Group compounds by molecular scaffold (core structure)
  - Assign different scaffolds to training and test sets
  - Tests model ability to generalize to novel chemotypes

- Temporal Splitting
  - Split data based on publication or discovery date
  - Train on older compounds, test on newer ones
  - Simulates real-world deployment where new compounds are designed after model development

- Property-Based Splitting
  - Split based on specific molecular properties (e.g., molecular weight, logP)
  - Ensures test set covers different regions of chemical space
  - Assesses extrapolation capability beyond training property ranges
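A scaffold-based split can be sketched as a grouping problem once each compound has a scaffold identifier. The sketch below assumes those identifiers were computed beforehand (for example with a cheminformatics toolkit such as RDKit) and are passed in as plain strings. The greedy "largest groups go to training first" rule is one common heuristic, not the only option, and the function name is hypothetical.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split compound indices so that no scaffold appears in both sets.

    scaffolds: one precomputed scaffold identifier per compound.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Fill training with the most populated scaffolds; rarer scaffolds
    # fall into the test set, which makes the test chemotypes novel.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_target = len(scaffolds) * (1 - test_fraction)
    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical scaffold labels for ten compounds.
scaffolds = ["benzene"] * 5 + ["indole"] * 3 + ["pyridine", "quinoline"]
train_idx, test_idx = scaffold_split(scaffolds)
print("train scaffolds:", sorted({scaffolds[i] for i in train_idx}))
print("test scaffolds:", sorted({scaffolds[i] for i in test_idx}))
```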

Validation Metrics:

- Calculate performance metrics (RMSE, MAE, R²) separately for training and test sets

- Compute generalization gap as absolute difference: |Training Metric - Test Metric|

- Track consistency across multiple splitting iterations with different random seeds
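The gap calculation and the repeated-seed check above amount to simple bookkeeping, sketched below. The training/test RMSE pairs are invented for illustration, and the function names are hypothetical.

```python
import statistics


def generalization_gap(train_metric, test_metric):
    """Absolute difference between a training and a test metric."""
    return abs(train_metric - test_metric)


def summarize_gaps(runs):
    """Mean and standard deviation of the gap over repeated splits.

    runs: list of (train_metric, test_metric) pairs, one per random seed.
    """
    gaps = [generalization_gap(train, test) for train, test in runs]
    spread = statistics.stdev(gaps) if len(gaps) > 1 else 0.0
    return statistics.mean(gaps), spread


# Invented (train RMSE, test RMSE) pairs from three random seeds.
runs = [(0.40, 0.90), (0.42, 1.00), (0.38, 0.80)]
mean_gap, gap_std = summarize_gaps(runs)
print(f"gap = {mean_gap:.2f} +/- {gap_std:.2f}")
```

A large spread relative to the mean suggests the gap estimate depends heavily on which compounds land in the test set.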

Protocol: Cross-Validation with Chemical Constraints

Objective: Provide robust estimate of generalization performance while respecting chemical relationships.

Procedure:

- Stratified k-Fold Cross-Validation
  - Partition data into k folds while preserving distribution of key properties
  - Ensure each fold represents overall chemical space diversity

- Group k-Fold Cross-Validation
  - Group compounds by shared scaffolds or functional groups
  - Ensure all compounds from same group remain in same fold
  - Prevents information leakage between training and validation

- Time-Series Cross-Validation
  - For data with temporal component, maintain chronological order
  - Expanding window: fixed initial training set, gradually add data
  - Rolling window: fixed size training window that moves through time

Calculation:

- Generalization gap calculated as average performance difference between training and validation folds

- Report mean and standard deviation across folds to assess consistency
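The two time-series schemes in the procedure differ only in whether the training window grows or slides. The sketch below assumes the samples are already sorted from oldest to newest; the function names and the parameters `min_train`, `window`, and `horizon` are illustrative choices rather than terms from the protocol.

```python
def expanding_window_splits(n_samples, n_splits, min_train):
    """Training set starts at min_train samples and grows each split."""
    fold_size = (n_samples - min_train) // n_splits
    if fold_size < 1:
        raise ValueError("not enough samples for the requested splits")
    for i in range(n_splits):
        train_end = min_train + i * fold_size
        yield (list(range(train_end)),
               list(range(train_end, train_end + fold_size)))


def rolling_window_splits(n_samples, window, horizon):
    """Fixed-size training window that slides forward by `horizon`."""
    start = 0
    while start + window + horizon <= n_samples:
        train_end = start + window
        yield (list(range(start, train_end)),
               list(range(train_end, train_end + horizon)))
        start += horizon


for train, val in expanding_window_splits(10, n_splits=3, min_train=4):
    print("expanding", train, val)
for train, val in rolling_window_splits(10, window=4, horizon=2):
    print("rolling  ", train, val)
```

In both schemes every validation index is later than every training index, which is what prevents future compounds from leaking into training.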

Protocol: Out-of-Distribution Generalization Testing

Objective: Systematically evaluate model performance under distribution shifts relevant to drug discovery.

Procedure:

- Define Distribution Shifts
  - Identify potential shifts: structural scaffolds, property ranges, assay conditions
  - Curate specialized test sets representing each shift scenario

- Progressive Difficulty Assessment
  - Create test sets with increasing distance from training distribution
  - Quantify distance using molecular similarity or distance metrics (e.g., Tanimoto similarity on fingerprints, RMSD for 3D structures)

- Performance Monitoring
  - Evaluate model on each specialized test set separately
  - Compare performance degradation patterns across shift types
  - Identify model weaknesses for specific chemical domains

Analysis:

- Calculate generalization gaps for each distribution shift scenario

- Rank model sensitivity to different types of shifts

- Inform data collection strategies to address largest gaps
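One way to build test sets of increasing difficulty is to bin test compounds by their highest Tanimoto similarity to any training compound. The sketch below represents fingerprints as Python sets of "on" bit indices, which is an assumption made to keep the example dependency-free. The bin edges 0.4 and 0.7 are arbitrary illustrative thresholds.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as bit-index sets."""
    if not fp_a and not fp_b:
        return 1.0
    return len(fp_a & fp_b) / len(fp_a | fp_b)


def bin_by_similarity(test_fps, train_fps, edges=(0.4, 0.7)):
    """Group test compounds by nearest-neighbour similarity to the training set."""
    bins = {"far": [], "medium": [], "near": []}
    for idx, fp in enumerate(test_fps):
        nearest = max(tanimoto(fp, train_fp) for train_fp in train_fps)
        if nearest < edges[0]:
            bins["far"].append(idx)
        elif nearest < edges[1]:
            bins["medium"].append(idx)
        else:
            bins["near"].append(idx)
    return bins


# Toy fingerprints (sets of on-bit indices), not real molecules.
train_fps = [{1, 2, 3, 4}, {5, 6, 7}]
test_fps = [{1, 2, 3, 4}, {1, 2, 3, 9}, {10, 11}]
print(bin_by_similarity(test_fps, train_fps))
```

Evaluating the model separately on the "near", "medium", and "far" bins then gives one performance-versus-distance curve per shift type.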

Visualization of Generalization Assessment Workflows

[Figure: Chemical ML Validation Pipeline]

[Figure: Generalization Gap Diagnostic Framework]

Research Reagent Solutions for Generalization Research

Table: Essential Computational Tools for Generalization Studies

| Tool Category | Specific Solutions | Function in Generalization Research |
|---|---|---|
| ML Frameworks | TensorFlow, PyTorch, Scikit-learn | Flexible model implementation and experimentation [10] |
| Chemical Libraries | RDKit, OpenChem, DeepChem | Molecular featurization and chemical-aware ML [10] |
| Visualization Tools | Matplotlib, Plotly, RDKit Visualization | Performance analysis and error pattern identification |
| Specialized Architectures | Graph Neural Networks (GNNs), Transformers | Domain-appropriate models for molecular data [10] |
| Generalization Metrics | Custom implementations of consistency, instability | Quantification of generalization behavior [9] |

Mitigation Strategies for Computational Chemistry

Data-Centric Approaches

Chemical Data Augmentation:

- Generate realistic molecular variations while preserving activity

- Apply controlled noise to molecular descriptors and features

- Use generative models (GANs, VAEs) to expand chemical diversity [11]

Strategic Data Collection:

- Identify gaps in chemical space coverage

- Prioritize compounds that maximize diversity and reduce extrapolation distance

- Implement active learning approaches for targeted data acquisition

Model-Centric Approaches

Regularization Techniques:

- Apply L1/L2 regularization to control model complexity

- Implement dropout in neural networks [10]

- Use early stopping to prevent overfitting to training data

Architecture Selection:

- Choose model complexity appropriate for available data

- Utilize domain-specific architectures (GNNs for molecular graphs)

- Implement ensemble methods to reduce variance and improve robustness

Invariant Representation Learning:

- Develop representations robust to irrelevant molecular variations

- Learn features invariant to specific transformation groups

- Minimize representation distance across different environmental conditions [9]

Protocol: Regularization Optimization for Chemical ML

Objective: Systematically identify optimal regularization strategy to minimize generalization gap.

Procedure:

- Regularization Screening
  - Test L1, L2, and elastic net regularization across reasonable parameter ranges
  - Evaluate dropout rates (0.1-0.5) for neural network architectures
  - Assess early stopping patience parameters

- Performance Monitoring
  - Track both training and validation performance across regularization strengths
  - Identify point where generalization gap is minimized without significant underfitting
  - Document trade-offs between bias and variance

- Cross-Validation
  - Optimize regularization parameters using nested cross-validation
  - Ensure chemical splits are respected during parameter tuning
  - Select parameters that generalize across different chemical subspaces
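The early-stopping patience parameter from the screening step can be illustrated on a recorded validation-loss curve. The sketch below is framework-independent: it only decides at which epoch training would have stopped and which epoch's weights would be kept. The function name, the `min_delta` parameter, and the loss values are illustrative assumptions.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once `patience` epochs pass without the validation
    loss improving on the best value by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Invented validation curve: improves, then starts to overfit.
val_losses = [1.0, 0.8, 0.7, 0.72, 0.75, 0.9, 1.1]
for patience in (1, 3):
    best, stop = early_stopping_epoch(val_losses, patience=patience)
    print(f"patience={patience}: keep epoch {best}, stop at epoch {stop}")
```

Running the same curve through several patience values is a cheap way to see how sensitive the selected checkpoint is to that setting before committing to a full nested cross-validation.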

Case Study: Generalization in Quantum Property Prediction

A practical example from recent literature demonstrates the critical importance of generalization assessment in computational chemistry. When developing machine learning potentials for quantum chemical calculations, researchers observed that models achieving exceptional accuracy on training molecules (RMSE < 1 kcal/mol) showed significantly degraded performance (RMSE > 5 kcal/mol) on novel molecular scaffolds not represented in training data [12].

Intervention Strategy:

- Implemented scaffold-based splitting during model development

- Applied extensive data augmentation through conformer generation

- Utilized graph neural networks with built-in physical constraints

- Incorporated transfer learning from larger molecular datasets

Results: The systematic approach to generalization reduced the gap between training and test performance by 60%, while maintaining competitive accuracy on both familiar and novel molecular structures. This case highlights that in computational chemistry, controlling generalization gap is not merely a statistical concern but a practical necessity for developing useful predictive models.

Emerging Frontiers and Future Directions

The field of generalization in chemical ML is rapidly evolving with several promising research directions:

Causal Representation Learning: Developing molecular representations that capture causal relationships rather than superficial correlations to improve out-of-distribution generalization.

Foundation Models for Chemistry: Leveraging large-scale pre-trained models that learn general chemical principles transferable across diverse tasks and domains.

Uncertainty Quantification: Advanced methods for predicting model uncertainty, particularly for novel compounds where generalization is most challenging.

Federated Learning: Approaches that enable learning from distributed chemical data while preserving privacy and intellectual property.

As machine learning continues to transform computational chemistry and drug discovery, the systematic assessment and control of generalization gap will remain essential for building models that deliver reliable real-world performance [11]. The protocols and methodologies outlined here provide a foundation for researchers to develop more robust and generalizable predictive models in chemical sciences.

In the field of machine learning for computational chemistry, three interconnected challenges consistently impede the development of robust and predictive models: over-fitting, data scarcity, and incomplete chemical space coverage. Over-fitting occurs when models learn noise and patterns from limited training data that do not generalize to new datasets, leading to poor predictive performance in real-world applications. Data scarcity, particularly for specific molecular properties or understudied target classes, restricts the amount of high-quality labeled data available for training, which is a fundamental requirement for most supervised learning algorithms. Furthermore, the chemical space of synthesizable molecules is astronomically vast, estimated to exceed 10^60 compounds, making comprehensive exploration and representation in training datasets practically impossible [13] [14]. These challenges are not independent; data scarcity exacerbates over-fitting, and both prevent adequate coverage of the relevant chemical space. This document outlines practical protocols and application notes to help researchers diagnose, mitigate, and overcome these core challenges within computational chemistry validation research.

Addressing Data Scarcity and Imbalance

Data scarcity is a pervasive obstacle, especially when predicting novel molecular properties or working with newly emerging experimental data. A common manifestation is task imbalance in multi-task learning (MTL), where different predicted properties have vastly different amounts of available labeled data.

Protocol: Adaptive Checkpointing with Specialization (ACS) for Multi-Task Learning

The ACS protocol is designed to mitigate negative transfer in MTL, a phenomenon where learning from data-rich tasks degrades performance on data-scarce tasks [15].

- Objective: To train a single multi-task graph neural network (GNN) that provides specialized models for each task, protecting data-scarce tasks from detrimental parameter updates.

Materials:

- A multi-task dataset with imbalanced labels (e.g., the ClinTox dataset [15]).

- A Graph Neural Network architecture with a shared backbone and task-specific heads.

- Standard deep learning framework (e.g., PyTorch, TensorFlow).

Procedure:

- Model Architecture Setup: Construct a GNN with a shared message-passing backbone. Attach separate, task-specific multi-layer perceptron (MLP) heads to this backbone for each property being predicted.

- Training Loop: Train the entire model on all tasks simultaneously. Use a masked loss function to account for missing labels across tasks.

- Validation and Checkpointing: During training, continuously monitor the validation loss for each individual task. For a given task, when its validation loss reaches a new minimum, checkpoint the shared backbone parameters in combination with that task's specific MLP head.

- Specialization: After training is complete, for each task, the final model is the checkpointed backbone-head pair that achieved its lowest validation loss. This provides each task with a model whose shared parameters were captured at their most beneficial state for that specific task.

Validation: On the ClinTox dataset, ACS demonstrated a 15.3% improvement over single-task learning and a 10.8% improvement over standard MTL without checkpointing, effectively mitigating the negative transfer from the data-rich task to the data-scarce one [15].
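The checkpointing logic of the procedure can be sketched independently of any deep learning framework. The code below is a simplified illustration, not the implementation from [15]: `epoch_states` stands in for the model parameters after each epoch, and the task names and loss values are invented.

```python
import copy


def acs_checkpoints(epoch_states, val_losses):
    """Keep, per task, the backbone/head pair from its best validation epoch.

    epoch_states: per-epoch dicts {"backbone": ..., "heads": {task: ...}}.
    val_losses:   dict mapping task -> list of per-epoch validation losses.
    """
    best = {}
    for epoch, state in enumerate(epoch_states):
        for task, losses in val_losses.items():
            if task not in best or losses[epoch] < best[task]["val_loss"]:
                # New minimum for this task: snapshot the shared backbone
                # together with this task's own head.
                best[task] = {
                    "epoch": epoch,
                    "val_loss": losses[epoch],
                    "backbone": copy.deepcopy(state["backbone"]),
                    "head": copy.deepcopy(state["heads"][task]),
                }
    return best


# Placeholder "parameters": strings tagged with the epoch they come from.
tasks = ("data_rich", "data_scarce")
epoch_states = [
    {"backbone": f"backbone@{e}", "heads": {t: f"{t}_head@{e}" for t in tasks}}
    for e in range(4)
]
val_losses = {
    "data_rich": [0.90, 0.60, 0.50, 0.45],    # keeps improving
    "data_scarce": [0.80, 0.55, 0.70, 0.90],  # degrades after epoch 1
}
for task, ckpt in acs_checkpoints(epoch_states, val_losses).items():
    print(task, ckpt["epoch"], ckpt["backbone"], ckpt["head"])
```

In this toy run the data-scarce task keeps the backbone from epoch 1, before continued training on the data-rich task starts to hurt it, while the data-rich task keeps the final epoch.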

Application Note: Data Augmentation and Resampling Techniques

For single-task learning, data augmentation techniques are essential for expanding small datasets. The table below summarizes common approaches.

Table 1: Data Augmentation and Resampling Techniques for Imbalanced Chemical Data

| Technique | Description | Application Context | Considerations |
|---|---|---|---|
| SMOTE [16] | Synthetic Minority Over-sampling Technique. Generates new synthetic samples for the minority class in feature space. | Polymer property prediction [16], catalyst design [16]. | Can introduce noisy samples if the minority class is not well clustered. |
| Borderline-SMOTE [16] | A variant of SMOTE that only oversamples minority instances near the decision boundary. | Identifying HDAC8 inhibitors where active compounds are the minority [16]. | Focuses on strengthening the decision boundary, which can be more effective than SMOTE. |
| Functional Group-Based Coarse-Graining [17] | Represents molecules as graphs of functional groups rather than atoms, reducing dimensionality and data requirements. | Designing adhesive polymer monomers with limited labeled data (~600 samples) [17]. | Leverages chemical knowledge, leading to highly data-efficient models. Achieved >92% accuracy with small datasets. |
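The core idea of SMOTE, interpolating between a minority sample and one of its nearest minority neighbours in feature space, fits in a short sketch. This is a minimal illustration on numeric descriptor vectors with hypothetical names and toy data; a maintained implementation (for example in the imbalanced-learn package) would normally be used instead.

```python
import random


def smote(minority, n_synthetic, k=3, seed=0):
    """Generate synthetic minority samples by neighbour interpolation.

    minority: list of feature vectors (lists of floats), at least two.
    """
    rng = random.Random(seed)

    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    synthetic = []
    for _ in range(n_synthetic):
        base = rng.choice(minority)
        # k nearest minority neighbours of the chosen sample (excluding itself).
        neighbours = sorted((p for p in minority if p is not base),
                            key=lambda p: sq_dist(base, p))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> neighbour
        synthetic.append([b + gap * (n - b) for b, n in zip(base, neighbour)])
    return synthetic


# Toy 2D descriptors for four minority-class (e.g. active) compounds.
actives = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for point in smote(actives, n_synthetic=3, k=2):
    print([round(c, 3) for c in point])
```

Because every synthetic point lies on a segment between two real minority samples, poorly clustered minority classes can yield points inside majority-class regions, which is the caveat noted in the table.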

Mitigating Over-fitting

Over-fitting is a critical risk when working with high-dimensional molecular data and complex models like deep neural networks. The following protocol provides a robust workflow to prevent it.

Protocol: Conformal Prediction for Robust Model Generalization

Conformal Prediction (CP) is a framework that quantifies the uncertainty of predictions, allowing researchers to set a desired confidence level and control error rates [13].

- Objective: To train a machine learning classifier that can screen vast chemical libraries and output prediction sets with a guaranteed error rate.

Materials:

Procedure:

- Data Splitting: Split the labeled data (e.g., 1 million compounds with docking scores) into a proper training set (80%) and a calibration set (20%).

- Model Training: Train a classifier (e.g., CatBoost) on the proper training set to distinguish between "active" and "inactive" compounds based on a predefined score threshold.

- Calibration: Use the calibration set to compute non-conformity scores, which measure how unusual a new example is compared to the training set.

- Prediction with Confidence: For a new, unlabeled molecule, the CP framework produces a p-value for each possible class (active/inactive). The user selects a significance level (ε, e.g., 0.10). The predictor then outputs the set of classes for which the p-value exceeds ε.

- Interpretation: A prediction set containing only "active" indicates high confidence that the molecule is active. A set containing both labels signifies higher uncertainty, and an empty set indicates the molecule is not like the training data.

Validation: This workflow was applied to screen a 3.5 billion-compound library for GPCR ligands. The CP framework reduced the number of compounds requiring explicit docking by over 1,000-fold while successfully identifying bioactive ligands, demonstrating high generalization capability [13].
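The calibration and prediction steps can be sketched for a binary classifier as follows. The sketch assumes a class-conditional (Mondrian) inductive conformal predictor with nonconformity score `1 - predicted probability`, which is one common choice and not necessarily the exact setup of [13]. All probabilities are invented, and the small calibration set means the smallest attainable p-value is 0.2, so the example uses ε values of 0.25 and 0.10 to show both outcomes.

```python
def conformal_p_values(cal_probs, cal_labels, test_probs):
    """Class-conditional conformal p-values for one test molecule.

    cal_probs:  per-calibration-compound dicts {class: predicted probability}.
    cal_labels: true class of each calibration compound.
    test_probs: dict {class: predicted probability} for the new molecule.
    """
    p_values = {}
    for cls, prob in test_probs.items():
        # Nonconformity of calibration compounds that truly belong to cls.
        cal_scores = [1.0 - p[cls]
                      for p, y in zip(cal_probs, cal_labels) if y == cls]
        test_score = 1.0 - prob
        n_at_least = sum(1 for s in cal_scores if s >= test_score)
        p_values[cls] = (n_at_least + 1) / (len(cal_scores) + 1)
    return p_values


def prediction_set(p_values, epsilon):
    """Classes whose p-value exceeds the significance level epsilon."""
    return {cls for cls, p in p_values.items() if p > epsilon}


# Invented calibration data: four actives and four inactives.
cal_probs = [{"active": a, "inactive": 1 - a}
             for a in (0.9, 0.8, 0.7, 0.6, 0.05, 0.15, 0.25, 0.35)]
cal_labels = ["active"] * 4 + ["inactive"] * 4

p = conformal_p_values(cal_probs, cal_labels, {"active": 0.85, "inactive": 0.15})
print(p)
print(prediction_set(p, epsilon=0.25))  # single-label set
print(prediction_set(p, epsilon=0.10))  # stricter error rate, larger set
```

Lowering ε demands a lower error rate, and the predictor pays for that by returning larger (less informative) prediction sets.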

Conformal Prediction Workflow: This diagram illustrates the process of using conformal prediction to generate predictions with a guaranteed error rate, enhancing model reliability.

Navigating Vast Chemical Space

The ultimate goal is to design novel, optimal molecules, which requires efficiently exploring the vast chemical space. Generative AI models, when properly optimized, are key to this endeavor.

Protocol: Goal-Directed Molecular Generation with Reinforcement Learning

This protocol uses reinforcement learning (RL) to optimize generative models for specific chemical properties [19].

- Objective: To train a generative model to design novel molecules that maximize a multi-objective reward function (e.g., high target binding, low off-target activity, good drug-likeness).

Materials:

- A generative model (e.g., Graph Convolutional Policy Network - GCPN [19]).

- Property prediction models (e.g., for binding affinity, solubility).

- A defined reward function.

Procedure:

- Agent and Environment Setup: The generative model is the agent. The action space is the set of possible chemical steps (add/remove atom/bond). The state is the current molecular graph.

- Reward Function Design: Define a composite reward function, $R(m)$. Example components include:
  - $R_{\text{binding}}(m)$: Predicted binding affinity to the primary target.
  - $R_{\text{off-target}}(m)$: Penalty for predicted binding to off-targets.
  - $R_{\text{SA}}(m)$: Reward for high synthetic accessibility.
  - $R_{\text{similarity}}(m)$: Penalty for being too dissimilar from a known active scaffold.

- Training Loop: The agent (generator) produces a molecule step-by-step. After each episode (a complete molecule is generated), the reward R(m) is calculated. The agent's policy is updated using a policy gradient method to maximize the expected cumulative reward.

- Sampling: After training, the agent is used to sample new molecules, which are biased towards high rewards.

Validation: The DeepGraphMolGen framework employed this strategy to generate molecules with strong binding affinity for dopamine transporters while minimizing affinity for norepinephrine transporters, successfully producing candidates optimized for this complex multi-objective profile [19].
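A composite reward of the kind described in the procedure is often written as a weighted sum of its components. The sketch below assumes that form, assumes every property has already been predicted and scaled to the range 0 to 1, and uses made-up weights and a made-up similarity threshold. It is not the reward used in [19].

```python
DEFAULT_WEIGHTS = {"binding": 1.0, "off_target": 1.0, "sa": 0.5, "similarity": 0.5}


def composite_reward(props, weights=None, similarity_floor=0.3):
    """Weighted multi-objective reward R(m) for one generated molecule.

    props: dict with predicted, 0-1 scaled values for
           "binding", "off_target", "sa" (higher = easier to make)
           and "similarity" (to a known active scaffold).
    """
    w = weights or DEFAULT_WEIGHTS
    components = {
        "binding": props["binding"],            # reward on-target binding
        "off_target": -props["off_target"],     # penalize off-target binding
        "sa": props["sa"],                      # reward synthetic accessibility
        # Penalize only molecules that drift below the similarity floor.
        "similarity": -max(0.0, similarity_floor - props["similarity"]),
    }
    return sum(w[name] * value for name, value in components.items())


# Two hypothetical molecules with the same potency but different liabilities.
focused = {"binding": 0.8, "off_target": 0.2, "sa": 0.6, "similarity": 0.5}
exotic = {"binding": 0.8, "off_target": 0.6, "sa": 0.2, "similarity": 0.1}
print(round(composite_reward(focused), 3), round(composite_reward(exotic), 3))
```

The weights encode how the objectives trade off against each other, so they are usually worth tuning and reporting alongside the generated candidates.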

Reinforcement Learning for Molecular Generation: This workflow shows the iterative process of training a generative model with reinforcement learning to design molecules that maximize a multi-objective reward function.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Software, Databases, and Models for Computational Chemistry Validation

| Tool Name | Type | Primary Function | Application in Addressing Core Challenges |

|---|---|---|---|

| ZINC / ChEMBL [18] | Database | Provides access to millions of commercially available compounds with annotated bioactivity and physicochemical data. | Foundation for virtual screening and model training; improves chemical space coverage. |

| CatBoost [13] | Software Library | A gradient boosting algorithm that works effectively with categorical features (like molecular fingerprints). | Used in high-throughput virtual screening workflows for its speed and accuracy, mitigating data scarcity. |

| RDKit [17] | Software Library | Open-source cheminformatics toolkit for working with molecular structures and descriptors. | Essential for generating molecular fingerprints, descriptors, and functional-group decomposition. |

| DeepGraphMolGen [19] | Model/Algorithm | A graph-based generative model optimized with reinforcement learning. | Navigates chemical space to design novel molecules with tailored multi-property profiles. |

| ACS Framework [15] | Training Scheme | Adaptive Checkpointing with Specialization for multi-task graph neural networks. | Directly addresses data scarcity and negative transfer in multi-task property prediction. |

| Conformal Predictors [13] | Statistical Framework | Provides predictions with valid, user-specified confidence levels. | Mitigates over-fitting by quantifying model uncertainty and controlling error rates on new data. |

In computational chemistry, the promise of machine learning (ML) to accelerate molecular design and predict chemical properties is tempered by a critical challenge: ensuring that models perform reliably on new, unseen chemical data. The massive search spaces inherent to chemistry, such as the estimated 10^60 feasible small organic molecules, make robust validation not just a technical step, but a fundamental requirement for scientific credibility [20]. A model's performance is only as reliable as the validation workflow that measures it. This document outlines a rigorous validation workflow, from initial data splitting to final blind testing, providing application notes and protocols tailored for researchers, scientists, and drug development professionals working at the intersection of ML and chemistry.

Data Splitting Strategies

The foundation of any robust ML model is a data splitting strategy that accurately assesses its ability to generalize. The choice of strategy should mirror the real-world application of the model.

Table: Data Splitting Strategies for Chemical Data

| Strategy | Methodology | Best-Suited For | Advantages | Limitations |

|---|---|---|---|---|

| Random Split | Random assignment of molecules to training, validation, and test sets. | Homogeneous datasets with simple property prediction tasks. | Simple to implement; maximizes data usage. | High risk of data leakage with structurally similar molecules; unrealistic performance estimates. |

| Scaffold Split | Separation based on molecular scaffold (core structure). | Virtual screening and activity prediction where generalization to new chemotypes is key. | Tests generalization to novel core structures; prevents optimistic bias. | Can be overly challenging; may exclude entire activity classes from training. |

| Butina Split | Cluster molecules by structural similarity (e.g., using fingerprints), then split clusters. | Balancing similarity and diversity between sets. | Ensures similar molecules are in the same set; more realistic than random splits. | Performance depends on clustering parameters and cutoff. |

| Stratified Split | Maintains the distribution of a key property (e.g., active/inactive ratio) across all splits. | Highly imbalanced datasets (e.g., active vs. inactive compounds). | Preserves class distribution; prevents splits lacking minority class. | Does not address structural data leakage. |

| Time Split | Chronological split, training on older data and testing on newer data. | Modeling evolving data, like prospective experimental results or patent data. | Simulates real-world deployment and temporal drift. | Requires timestamped data. |

Protocol: Implementing a Scaffold Split

Objective: To partition a dataset of molecules into training, validation, and test sets such that molecules sharing a common Bemis-Murcko scaffold are contained within a single split. This tests a model's ability to generalize to entirely new molecular scaffolds.

Materials:

- Input Data: A file containing molecular structures (e.g., SMILES strings).

- Software: RDKit (Python package).

Methodology:

- Scaffold Generation:

- For each molecule in the dataset, generate its Bemis-Murcko scaffold using RDKit's GetScaffoldForMol function. This scaffold represents the core ring system with attached linkers, excluding side chains.

- Scaffold Grouping:

- Group all molecules by their identical scaffolds. Each unique scaffold defines a cluster of molecules.

- Sorting and Assignment:

- Sort the scaffold clusters by their size (number of molecules) in descending order.

- Iterate through the sorted list of scaffolds, assigning every molecule of a given scaffold to exactly one set. Cycle through the training, validation, and test sets in round-robin fashion, skipping any set that has already reached its target size (e.g., 70% train, 15% validation, 15% test). This approach helps maintain a balanced distribution of scaffold frequencies across splits.
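The three steps above can be sketched as follows. This is one possible reading of the protocol rather than a reference implementation: the function names, the `scaffold_fn` parameter, and the rule for skipping sets that are already full are assumptions of this sketch. RDKit is imported lazily so that the grouping logic can also be exercised with a stand-in scaffold function.

```python
from collections import defaultdict


def murcko_scaffold(smiles):
    """Return the Bemis-Murcko scaffold as a SMILES string (requires RDKit)."""
    from rdkit import Chem
    from rdkit.Chem.Scaffolds import MurckoScaffold

    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles}")
    return Chem.MolToSmiles(MurckoScaffold.GetScaffoldForMol(mol))


def scaffold_split(smiles_list, fractions=(0.7, 0.15, 0.15), scaffold_fn=murcko_scaffold):
    """Split molecule indices so that each scaffold lands in exactly one set."""
    # Scaffold grouping: map each scaffold to the indices of its molecules.
    groups = defaultdict(list)
    for index, smiles in enumerate(smiles_list):
        groups[scaffold_fn(smiles)].append(index)

    # Sorting: largest scaffold clusters first.
    ordered = sorted(groups.values(), key=len, reverse=True)

    # Assignment: round-robin over the sets, skipping sets that are already full.
    targets = [fraction * len(smiles_list) for fraction in fractions]
    splits = [[] for _ in fractions]
    turn = 0
    for members in ordered:
        for offset in range(len(splits)):
            chosen = (turn + offset) % len(splits)
            if len(splits[chosen]) < targets[chosen]:
                break
        else:
            chosen = 0  # every set is full (rounding effects): fall back to the first set
        splits[chosen].extend(members)
        turn = (chosen + 1) % len(splits)
    return tuple(splits)
```

Calling `scaffold_split(list_of_smiles)` returns three lists of indices (train, validation, test). Because whole scaffold groups are assigned at once, the realized set sizes only approximate the requested fractions.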

Model Training and Hyperparameter Tuning

With data splits established, the model training and tuning phase begins. A critical best practice is the strict separation of the validation and test sets.

Protocol: k-Fold Cross-Validation with Hyperparameter Tuning

Objective: To reliably estimate model performance and optimize model hyperparameters without using the final test set.

Materials:

- Training set (from Data Splitting phase).

- Validation set (from Data Splitting phase).

Methodology:

- Define Hyperparameter Space: Specify the hyperparameters to be optimized and their value ranges (e.g., learning rate, number of layers in a neural network, dropout rate).

- k-Fold Splitting: Split the training set into k subsets (folds) of approximately equal size. For imbalanced datasets, use stratified k-fold to preserve the class distribution in each fold [21].

- Iterative Training and Validation:

- For each unique combination of hyperparameters:

- For each of the k folds:

- Designate the current fold as the validation fold.

- Train the model on the remaining k-1 folds.

- Use the validation fold to compute the chosen evaluation metric(s) (e.g., F1 score, ROC-AUC).

- Calculate the average performance metric across all k folds for this hyperparameter set.


- Model Selection: Select the hyperparameter set that yields the highest average performance.

- Final Training: Train a final model using the selected optimal hyperparameters on the entire training set.

- Validation Set Check: Evaluate this final model on the held-out validation set to get a final pre-deployment performance estimate. The test set remains completely unused at this stage.
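The loop structure of this protocol can be sketched in plain Python. The nearest-neighbour classifier, the toy one-dimensional data, and all function names below are placeholders chosen so that the example is self-contained; in practice a library implementation and a stratified fold generator would normally take their place.

```python
import itertools
import random
import statistics


def k_fold_indices(n_samples, k, seed=0):
    """Shuffle the sample indices and deal them into k folds of near-equal size."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def grid_search_cv(X, y, param_grid, fit, score, k=5, seed=0):
    """Return the best hyperparameters and the mean CV score of every combination."""
    folds = k_fold_indices(len(X), k, seed)
    names = sorted(param_grid)
    results = {}
    for values in itertools.product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        fold_scores = []
        for i, val_idx in enumerate(folds):
            # Train on the other k-1 folds, validate on the current fold.
            train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
            model = fit([X[j] for j in train_idx], [y[j] for j in train_idx], **params)
            fold_scores.append(score(model, [X[j] for j in val_idx], [y[j] for j in val_idx]))
        results[tuple(params.items())] = statistics.mean(fold_scores)
    best = dict(max(results, key=results.get))
    return best, results


def fit_knn(X, y, n_neighbors):
    """Toy 1-D k-nearest-neighbour classifier, returned as a predict function."""
    data = list(zip(X, y))

    def predict(x):
        nearest = sorted(data, key=lambda pair: abs(pair[0] - x))[:n_neighbors]
        votes = sum(label for _, label in nearest)
        return int(2 * votes > len(nearest))

    return predict


def accuracy(model, X, y):
    return sum(model(x) == target for x, target in zip(X, y)) / len(y)


# Toy training set: the label is 1 when the single descriptor is at least 10.
X = [float(i) for i in range(20)]
y = [int(x >= 10) for x in X]
best_params, cv_results = grid_search_cv(X, y, {"n_neighbors": [1, 3]}, fit_knn, accuracy)
final_model = fit_knn(X, y, **best_params)  # final training on the full training set
```

The held-out validation and test sets never enter `grid_search_cv`, which is what keeps the later estimates unbiased.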

Visualization: k-Fold Cross-Validation Workflow

Model Evaluation Metrics

Selecting the right evaluation metrics is crucial for a truthful assessment of model performance, especially given the prevalence of imbalanced datasets in chemistry, such as those for toxicity prediction where active compounds are rare [22].

Table: Key Model Evaluation Metrics for Classification

| Metric | Formula | Interpretation & Use-Case |

|---|---|---|

| Accuracy | (TP + TN) / (TP + TN + FP + FN) | Overall correctness. Use with caution: misleading for imbalanced data (e.g., a model that labels every compound inactive reaches 99% accuracy when only 1% of compounds are active) [23] [21]. |

| Precision | TP / (TP + FP) | Measures the model's reliability when it predicts a positive. Crucial when false positives are costly (e.g., with toxicity as the positive class, wrongly flagging a safe compound as toxic and discarding a viable candidate) [24] [25]. |

| Recall (Sensitivity) | TP / (TP + FN) | Measures model's ability to find all positives. Crucial when false negatives are costly (e.g., failing to identify a toxic compound) [24] [25]. |

| F1 Score | 2 × (Precision × Recall) / (Precision + Recall) | Harmonic mean of precision and recall. Provides a single balanced metric when both false positives and negatives are important [24] [25]. |

| AUC-ROC | Area Under the Receiver Operating Characteristic Curve | Measures the model's ability to distinguish between classes across all thresholds. A value of 0.5 is random, 1.0 is perfect. Insensitive to the class ratio, but can appear optimistic on heavily imbalanced data, where precision-recall analysis is a useful complement [24] [25]. |

| MCC (Matthews Correlation Coefficient) | (TP×TN - FP×FN) / √((TP+FP)(TP+FN)(TN+FP)(TN+FN)) | A balanced metric that considers all four confusion matrix categories. Good for imbalanced datasets as it produces a high score only if the model performs well on all classes [25]. |
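As a worked example of the formulas in the table, the sketch below computes each threshold-based metric from confusion-matrix counts. The counts are invented for illustration (1,000 compounds, 1% active), and returning 0 when a denominator is zero is a convention adopted here, not something the table prescribes.

```python
import math


def classification_metrics(tp, fp, fn, tn):
    """Compute the threshold-based metrics from the table for one confusion matrix."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denominator if denominator else 0.0
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "mcc": mcc,
    }


# A model that labels every compound inactive: 10 actives missed, 990 inactives correct.
always_inactive = classification_metrics(tp=0, fp=0, fn=10, tn=990)

# A model that finds 8 of the 10 actives at the cost of 2 false positives.
useful_model = classification_metrics(tp=8, fp=2, fn=2, tn=988)
```

The first model scores 99% accuracy with zero recall and an MCC of 0, while the second has only slightly higher accuracy but an MCC of about 0.80. This is why accuracy alone is a poor guide on imbalanced chemical data.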

Blind Testing and Prospective Validation

The most rigorous test of a model is its performance on a truly external, blind test set. This is the final step that simulates real-world performance.

Protocol: Establishing a Blind Test Set

Objective: To conduct an unbiased final evaluation of the model's generalizability using data that was completely withheld during the entire model development process.

Materials:

- Test set (withheld from the initial Data Splitting phase).

- Final model trained on the full training set with optimized hyperparameters.

Methodology:

- Data Integrity: Ensure the blind test set has no overlapping molecules or scaffolds with the training or validation sets. For temporal splits, confirm all test data is from a later time period.

- Final Evaluation: Run the final trained model on the blind test set.

- Metric Calculation: Calculate all relevant evaluation metrics (see Model Evaluation Metrics) exclusively on the blind test set. The resulting report is the most honest estimate of the model's performance.

- Performance Analysis: Compare performance on the training/validation sets to the blind test set. A significant drop in performance on the blind test suggests overfitting or poor generalization to the chemistry represented in the test set.
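The data-integrity and performance-analysis steps can be automated with a small audit function. The sketch below is illustrative only: the record keys (`smiles`, `scaffold`), the assumption that SMILES strings are already canonicalized, and the `max_drop` threshold of 0.05 are hypothetical choices, not values taken from the protocol.

```python
def shared_items(reference, blind):
    """Return items that occur in both collections, sorted for readable reports."""
    return sorted(set(reference) & set(blind))


def audit_blind_test(dev_records, test_records, dev_score, test_score, max_drop=0.05):
    """Check a blind test set for leakage and summarise the performance gap.

    Each record is a dict with the hypothetical keys 'smiles' and 'scaffold'.
    """
    gap = dev_score - test_score
    return {
        "shared_molecules": shared_items(
            [r["smiles"] for r in dev_records], [r["smiles"] for r in test_records]
        ),
        "shared_scaffolds": shared_items(
            [r["scaffold"] for r in dev_records], [r["scaffold"] for r in test_records]
        ),
        "performance_gap": gap,
        "flag_possible_overfitting": gap > max_drop,
    }


# Placeholder records: training/validation data versus the blind test set.
development = [
    {"smiles": "c1ccccc1O", "scaffold": "c1ccccc1"},
    {"smiles": "c1ccncc1C", "scaffold": "c1ccncc1"},
]
blind = [{"smiles": "c1ccccc1N", "scaffold": "c1ccccc1"}]
report = audit_blind_test(development, blind, dev_score=0.90, test_score=0.78)
```

Here no molecule is duplicated, but the benzene scaffold appears on both sides, so the test set would not qualify as scaffold-disjoint. The 0.12 drop in score is also flagged for review.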

The Scientist's Toolkit: Essential Research Reagents & Datasets

The reliability of an ML model in computational chemistry is contingent on the quality and diversity of the data it is trained and tested on.

Table: Key Datasets for Training and Validating Chemistry ML Models

| Resource Name | Domain & Content | Key Features & Utility in Validation |

|---|---|---|

| Halo8 [26] | Reaction pathways with halogenated molecules. ~20M quantum chemical calculations from 19k reactions. | Provides critical data for validating models on halogen-specific chemistry, a key gap in previous datasets. Essential for testing generalizability to pharmaceuticals and materials. |

| QM9 [27] | Small organic molecules (up to 9 heavy atoms). 134k molecules with stable structures and quantum properties. | A benchmark dataset for validating model predictions of quantum mechanical properties like energy and dipole moments. |

| ANI-1x / ANI-2x [27] | Small organic molecules. Millions of DFT calculations, including halogens in ANI-2x. | Extensive dataset for training and validating ML potentials. Useful for testing model accuracy on conformational and chemical space sampling. |

| Transition1x [26] | Chemical reaction pathways. Focus on C, N, O heavy atoms. | Benchmark for validating models on reaction kinetics and transition state prediction, a challenging task for ML. |

| MoleculeNet [27] | Curated collection of datasets for molecular property prediction (e.g., solubility, toxicity). | Provides standardized benchmarks (like ESOL, FreeSolv, Tox21) for fair comparison of models across multiple chemical property tasks. |

| CLAPE-SMB [22] | Protein-small molecule binding site prediction using sequence data. | A specialized tool for validating models in structure-based drug discovery, demonstrating performance comparable to methods using 3D structural data. |

Integrated Validation Workflow

A robust validation pipeline integrates all previously described components into a single, coherent process. The following diagram illustrates the sequential flow of data and the critical checkpoints that ensure the integrity of the final model evaluation.

Visualization: End-to-End Validation Workflow

A Toolkit of ML Methods and Their Chemical Applications

The accurate prediction of molecular and material properties represents a cornerstone in the advancement of computational chemistry, with profound implications for drug development and sustainable energy solutions. Traditional methods for determining properties such as aqueous solubility and catalyst stability often rely on empirical observations and resource-intensive experimental studies, creating bottlenecks in research and development pipelines [28]. The integration of supervised machine learning (ML) approaches has emerged as a transformative paradigm, enabling the development of predictive models that can accelerate the design of novel pharmaceuticals and catalytic materials. This article explores the application of supervised learning techniques for predicting two critical properties: solubility of organic compounds in drug development and stability of catalysts in energy applications, providing a comprehensive framework for researchers seeking to implement these approaches within a computational chemistry validation framework.

Supervised Learning for Aqueous Solubility Prediction

Fundamental Principles and Challenges

Aqueous solubility prediction remains a critical challenge in drug development due to its direct impact on a drug's bioavailability and therapeutic outcomes [29]. The dissolution process involves complex interactions between solute-solute and solute-solvent molecules, governed by the balance between overcoming attractive forces within the compound and disrupting hydrogen bonds between the solid phase and the solvent [30]. These complexities, combined with often unreliable experimental solubility data affected by measurement techniques and purity variations, have historically complicated accurate prediction [28] [30].

Data Curation and Molecular Representation Strategies

The foundation of any robust ML model lies in high-quality, diverse datasets. For solubility prediction, researchers have employed various curation strategies, including:

- Multi-source Data Integration: Combining data from established databases such as Vermeire's (11,804 datapoints), Boobier's (901 datapoints), and Delaney's (1,145 datapoints), followed by removal of non-unique measures and noisy data to create unique datasets of over 8,400 compounds [28].

- Quality Filtering: Implementing curation workflows that remove redundant and conflicting records, control experimental conditions (25±5°C, pH 7±1), and focus on neutral solutes in single-component solvents [30] [29].

- Molecular Weight Consideration: Typically excluding compounds with MW > 500 to maintain relevance to drug discovery intermediates while keeping computational costs reasonable [30].

Molecular representation significantly impacts model performance. Three representation types are compared below:

Table 1: Comparison of Molecular Representation Approaches for Solubility Prediction

| Representation Type | Description | Key Features | Performance (R²) |

|---|---|---|---|

| Descriptor-Based | Uses physicochemical properties and structural features | Mordred package generates 2D descriptors; requires feature selection and correlation filtering [28] | 0.88 [28] |

| Circular Fingerprints | Encodes molecular structure as binary strings | Morgan fingerprints (ECFP4) with 2,048 bits; captures functional groups and connectivity [28] | 0.81 [28] |

| Electrostatic Potential Maps | Derived from DFT calculations | Captures 3D molecular shape and charge distribution; requires geometry optimization [29] | 0.918 (with XGBoost) [29] |

Algorithm Selection and Model Performance

Multiple machine learning algorithms have been successfully applied to solubility prediction, with tree-based ensembles and deep learning approaches demonstrating particular efficacy:

- Random Forest Models: Achieved test R² values of 0.88 with molecular descriptors and 0.81 with fingerprint representations on datasets of ~6,750 training compounds [28].

- XGBoost with Tabular Features: Demonstrated superior performance with MAE of 0.458, RMSE of 0.613, and R² of 0.918 when combined with feature selection [29].

- Comparative Studies: Research comparing eight ML methods (ANN, SVM, RF, ExtraTrees, Bagging, GP, MLR, PLS) found that non-linear models consistently outperformed linear regression approaches. Across different solvent datasets, 60-80% of predictions fell within ±0.7 LogS units of the experimental value (%LogS ± 0.7) and 74-90% fell within ±1.0 LogS units (%LogS ± 1.0) [30].
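The regression metrics quoted above (MAE, RMSE, R², and the percentage of predictions within a LogS tolerance) can be computed as in the following sketch. The five experimental and predicted LogS values are invented purely to make the arithmetic easy to follow, and the dictionary keys are naming choices of this example.

```python
import math


def regression_report(y_true, y_pred, tolerances=(0.7, 1.0)):
    """Return MAE, RMSE, R² and the percentage of predictions within each tolerance."""
    n = len(y_true)
    errors = [pred - true for true, pred in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((true - mean_true) ** 2 for true in y_true)
    report = {
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": math.sqrt(ss_res / n),
        "r2": 1.0 - ss_res / ss_tot,
    }
    for tol in tolerances:
        # Share of compounds whose absolute error is at most `tol` LogS units.
        report[f"pct_within_{tol}"] = 100 * sum(abs(e) <= tol for e in errors) / n
    return report


# Invented LogS values (log mol/L) for five compounds.
experimental = [-1.0, -2.0, -3.0, -4.0, -5.0]
predicted = [-1.2, -2.5, -2.2, -4.1, -6.5]
solubility_report = regression_report(experimental, predicted)
```

For these values three of five predictions fall within ±0.7 LogS units (60%) and four of five within ±1.0 (80%), even though one large error of 1.5 units dominates the RMSE.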

Experimental Protocol: Solubility Prediction Workflow

Materials and Software Requirements:

- RDKit for molecular structure manipulation [29]

- Mordred descriptor calculator [28]

- Gaussian 16 for DFT calculations (for ESP maps) [29]

- Random Forest or XGBoost implementations in Python

Step-by-Step Procedure:

Data Collection and Preprocessing

- Collect solubility data from curated sources (ESOL, AQUA, PHYS, OCHEM)

- Standardize SMILES representations and remove duplicates

- Apply selection criteria (retain neutral compounds with MW ≤ 500)

Molecular Representation Generation

- For descriptor-based models: Calculate 2D descriptors using Mordred, apply correlation filtering (threshold ~0.1), and remove highly correlated descriptors [28]

- For fingerprint models: Generate ECFP4 fingerprints with diameter of 4 and 2,048 bits [28]

- For ESP maps: Perform DFT geometry optimization at the B3LYP/6-311++G(d,p) level with the SMD solvation model [29]

Model Training and Validation

- Split data into training (80%) and test (20%) sets, maintaining LogS distribution

- Optimize hyperparameters using cross-validation

- Train multiple algorithms (RF, XGBoost, ANN) for ensemble approaches

- Validate using external datasets (e.g., Solubility Challenge 2019)

Model Interpretation and Explanation

- Apply SHAP (SHapley Additive exPlanations) analysis to identify feature importance [28]

- Validate physicochemical relationships between key descriptors and solubility
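One simple way to realize the 80/20 split "maintaining LogS distribution" from the workflow above is to sort compounds by LogS and send every fifth one to the test set. This is a sketch of that idea under stated assumptions, not the procedure used in the cited studies. The function name and the `test_every` and `offset` parameters are hypothetical.

```python
def stratified_regression_split(logs_values, test_every=5, offset=2):
    """Sort compounds by LogS and send every `test_every`-th one to the test set.

    Returns two lists of indices into `logs_values`: (train, test).
    """
    order = sorted(range(len(logs_values)), key=lambda i: logs_values[i])
    train, test = [], []
    for rank, index in enumerate(order):
        (test if rank % test_every == offset else train).append(index)
    return train, test


# Synthetic LogS values spanning -8.0 to 1.9 in steps of 0.1.
logs = [-8.0 + 0.1 * i for i in range(100)]
train_idx, test_idx = stratified_regression_split(logs)

mean_all = sum(logs) / len(logs)
mean_test = sum(logs[i] for i in test_idx) / len(test_idx)
```

Because the test compounds are spread evenly along the sorted LogS axis, both sets cover the full solubility range. The scheme does not prevent structurally similar molecules from landing on both sides of the split.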

Supervised Learning for Catalyst Stability Prediction

Unique Challenges in Catalyst Property Prediction

Predicting catalyst stability and activity presents distinct challenges compared to solubility prediction, primarily due to the complex compositional space, diverse catalyst types, and the critical influence of reaction conditions. Traditional catalyst development relies heavily on trial-and-error approaches, which are labor-intensive and time-consuming [31]. ML approaches must account for multiple catalyst categories (alloys, carbides, nitrides, oxides, phosphides, sulfides, perovskites) and their respective structural features [32].

The development of effective catalyst prediction models requires specialized data sources and careful feature selection:

- Catalysis-hub Database: Provides hydrogen evolution reaction free energies and corresponding adsorption structures from DFT calculations, encompassing various catalyst types [32].

- Feature Minimization Approaches: Successful models have utilized minimal feature sets (10-23 features) based on atomic structure and electronic information of catalyst active sites without requiring additional DFT calculations [32].

- Key Feature Identification: Energy-related features such as φ = Nd0²/ψ0 have shown strong correlation with HER free energy [32].

Table 2: Machine Learning Performance for Hydrogen Evolution Catalyst Prediction

| ML Model | Feature Count | R² Score | RMSE | Application Scope |

|---|---|---|---|---|

| Extremely Randomized Trees (ETR) | 10 | 0.922 | N/A | Multi-type HECs [32] |

| Random Forest Regression | 23 | 0.921 (reported for similar approach) | N/A | Multi-type HECs [32] |

| Artificial Neural Network | 62 | High correlation (specific R² not provided) | Low error | SCR NOx catalysts [31] |

| CatBoost Regression | 20 | 0.88 | 0.18 eV | Transition metal single-atom catalysts [32] |

Iterative Machine Learning Approaches

A significant advancement in catalyst prediction is the development of iterative ML-experimental approaches:

- Initial Model Training: Train ML model using existing literature data for relevant catalyst systems [31]

- Candidate Screening: Use genetic algorithms or similar optimization techniques to identify promising catalyst compositions [31]

- Experimental Synthesis and Characterization: Physically create and test predicted catalysts [31]

- Model Updating: Incorporate new experimental results into the training database [31]

- Iterative Refinement: Repeat candidate screening, experimental synthesis and characterization, and model updating until the desired catalyst performance is achieved [31]

This approach successfully identified novel Fe-Mn-Ni SCR NOx catalysts with high activity and wide temperature application ranges after four iterations [31].
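The iterative loop can be illustrated with a toy example. Everything in the sketch below is a stand-in: a one-dimensional "composition" variable replaces real catalyst formulations, a hidden analytic function replaces synthesis and testing, a nearest-neighbour average replaces the ANN, and random candidate sampling replaces the genetic algorithm described in [31].

```python
import random


def run_experiment(x):
    """Stand-in for synthesis and testing: a hidden activity curve peaking at x = 0.7."""
    return 1.0 - (x - 0.7) ** 2


def predict(dataset, x, k=2):
    """Surrogate model: mean activity of the k nearest measured compositions."""
    nearest = sorted(dataset, key=lambda record: abs(record[0] - x))[:k]
    return sum(activity for _, activity in nearest) / len(nearest)


def iterate(dataset, n_iterations=4, n_candidates=200, batch_size=3, seed=0):
    """Screen candidates, 'test' the top-ranked ones, and grow the dataset each round."""
    rng = random.Random(seed)
    dataset = list(dataset)
    for _ in range(n_iterations):
        # Candidate screening: rank random compositions by predicted activity.
        candidates = [rng.random() for _ in range(n_candidates)]
        ranked = sorted(candidates, key=lambda x: predict(dataset, x), reverse=True)
        # Experimental validation and model updating: the surrogate is
        # non-parametric, so appending new measurements is the "retraining" step.
        for x in ranked[:batch_size]:
            dataset.append((x, run_experiment(x)))
    return dataset


initial = [(x, run_experiment(x)) for x in (0.1, 0.5, 0.9)]
final = iterate(initial)
best_composition, best_activity = max(final, key=lambda record: record[1])
```

Each round adds `batch_size` new measurements to the training data, so four iterations grow the dataset from 3 to 15 points. A real campaign would also balance exploitation against exploration when choosing which candidates to synthesize.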

Experimental Protocol: Catalyst Stability Prediction

Materials and Software Requirements:

- Atomic Simulation Environment (ASE) for feature extraction [32]

- DFT calculation software (for validation)

- ETR, RFR, or ANN implementations in Python

- Catalyst synthesis equipment (round-bottom flask, filtration setup, vacuum oven, calcination furnace)

Step-by-Step Procedure:

Data Collection and Curation

- Extract catalyst structures and properties from Catalysis-hub or similar databases

- Filter data based on adsorption free energy ranges (typically −2 to 2 eV for HER)

- Validate data quality and remove unreasonable adsorption structures

Feature Extraction and Selection

- Use ASE Python module to identify adsorbed atoms and surface structures

- Extract electronic and elemental features for active sites and nearest neighbors

- Apply feature importance analysis to reduce dimensionality (from 23 to 10 features)

- Focus on key energy-related descriptors (φ = Nd0²/ψ0)

Model Building and Optimization

- Train multiple algorithms (ETR, RFR, GBR, XGBR, DTR, LGBMR) for comparison

- Optimize hyperparameters using cross-validation

- Validate against DFT calculations and experimental data where available

Iterative Experimental Validation

- Synthesize top candidate catalysts predicted by model (e.g., via coprecipitation)

- Characterize materials using XRD, TEM, and performance testing

- Update training dataset with experimental results

- Retrain model and identify improved candidates

Integrated Workflow and Visualization

The application of supervised learning for property prediction follows a structured workflow that integrates data curation, model development, and experimental validation. The following diagram illustrates this comprehensive approach:

Supervised Learning Workflow for Chemical Property Prediction

Table 3: Key Research Reagents and Computational Tools for Property Prediction

| Resource Category | Specific Tools/Databases | Function and Application |

|---|---|---|

| Computational Chemistry Software | Gaussian 16 [29] | Performs DFT calculations for geometry optimization and ESP map generation |

| Computational Chemistry Software | RDKit [28] [29] | Open-source cheminformatics for molecular descriptor calculation and fingerprint generation |

| Computational Chemistry Software | Mordred [28] | Calculates 1,613+ 2D molecular descriptors for feature-based models |

| Machine Learning Algorithms | Random Forest [28] [30] | Ensemble tree method robust to outliers and noise in chemical data |

| Machine Learning Algorithms | XGBoost [29] [32] | Gradient boosting framework with high performance on tabular chemical data |

| Machine Learning Algorithms | Extremely Randomized Trees [32] | Particularly effective for catalyst prediction with minimal features |

| Machine Learning Algorithms | Artificial Neural Networks [31] | Captures complex non-linear relationships in catalyst composition-activity maps |

| Specialized Datasets | Open Molecules 2025 (OMol25) [33] [34] | Massive DFT dataset of 100M+ molecular snapshots for training universal ML potentials |

| Specialized Datasets | Catalysis-hub [32] | Repository of catalyst structures and reaction energies for HER and other applications |

| Specialized Datasets | Curated Solubility Datasets [28] [30] [29] | High-quality solubility measurements (ESOL, AQUA, PHYS, OCHEM) for model training |

| Experimental Validation Tools | XRD [31] | Characterizes crystal structure of synthesized catalyst materials |

| Experimental Validation Tools | TEM [31] | Analyzes morphology and nanostructure of catalytic materials |

| Experimental Validation Tools | Performance Testing Reactors [31] | Evaluates catalytic activity under controlled conditions |

The integration of supervised learning approaches for predicting solubility and catalyst stability represents a paradigm shift in computational chemistry and materials science. The methodologies outlined in this article provide researchers with comprehensive protocols for implementing these techniques, from data curation and model selection to experimental validation and iterative improvement. As the field advances, larger datasets such as OMol25 [33] and more sophisticated algorithms like TabPFN [35] promise to further enhance predictive accuracy. By adopting these structured approaches, researchers can significantly accelerate the development of novel pharmaceuticals and sustainable energy solutions, bridging the gap between computational prediction and experimental realization.

Leveraging Neural Network Potentials (NNPs) for High-Accuracy Energy Surfaces

Neural network potentials represent a transformative advancement in computational chemistry, enabling highly accurate simulations of potential energy surfaces (PES) that approach quantum mechanical accuracy while dramatically reducing computational costs. Traditional quantum mechanical methods like density functional theory (DFT) provide reliable accuracy but remain computationally prohibitive for large systems and long timescales, while classical molecular mechanics force fields offer speed but lack quantum accuracy, particularly for describing bond formation and breaking. NNPs bridge this gap by using machine learning to approximate solutions to the Schrödinger equation, learning the complex relationship between atomic configurations and potential energy from quantum mechanical data [36].

The fundamental architecture of NNPs processes atomic numbers and coordinates to predict system energies, forces, and other electronic properties. Unlike traditional quantum methods that may take years to compute complex wavefunctions, trained NNPs can perform these calculations orders of magnitude faster, making them particularly valuable for molecular dynamics simulations, reaction pathway exploration, and materials property prediction [36]. Modern implementations have evolved from system-specific models to general-purpose potentials capable of handling diverse molecular systems with elements commonly found in organic and materials chemistry, notably C, H, N, and O [37].

Performance Benchmarks and Quantitative Validation

Rigorous validation against established quantum mechanical methods and experimental data demonstrates the capabilities of modern NNPs. The EMFF-2025 model, for instance, has shown exceptional accuracy in predicting structures, mechanical properties, and decomposition characteristics of high-energy materials while maintaining DFT-level precision [37]. Systematic evaluation across a wide temperature range shows energy prediction errors predominantly within ±0.1 eV/atom and force prediction errors predominantly within ±2 eV/Å [37].

Table 1: Performance Metrics of Representative Neural Network Potentials

| NNP Model | Elements Covered | Energy MAE (eV/atom) | Force MAE (eV/Å) | Key Applications | Reference |

|---|---|---|---|---|---|

| EMFF-2025 | C, H, N, O | < 0.1 | < 2.0 | High-energy materials decomposition, mechanical properties | [37] |

| ANI-1 | H, C, N, O | N/A | N/A | Small organic molecules, drug discovery | [36] |

| DP-CHNO-2024 | C, H, N, O | N/A | N/A | RDX, HMX, CL-20 explosives | [37] |

| MatterSim | Extensive (multi-element) | N/A | N/A | Broad materials screening | [38] |

Beyond energy and force predictions, NNPs have demonstrated remarkable accuracy in reproducing experimental observables. For instance, transfer learning approaches that build upon pre-trained models have enabled high-fidelity prediction of complex phenomena such as thermal decomposition pathways and mechanical properties under deformation [37] [39]. Incorporating stress terms into loss functions during training has proven essential for accurately predicting elastic constants and mechanical behavior, addressing limitations of models trained solely on energy and force data [40].

Experimental Protocols for NNP Development and Validation

Protocol 1: Development of a Specialized NNP via Transfer Learning

Purpose: To create an accurate, efficient NNP for a specific material system using transfer learning, minimizing the need for extensive DFT calculations.

Materials and Computational Resources:

- High-performance computing cluster with GPU acceleration

- Quantum chemistry software (e.g., CP2K, Quantum ESPRESSO, VASP) for reference calculations

- Pre-trained universal NNP (e.g., MatterSim, M3GNet, ANI)

- NNP training framework (e.g., DeePMD-kit, TorchANI)

Procedure:

Initial System Preparation:

- Generate diverse initial configurations encompassing relevant chemical spaces

- Include low-energy stable structures and high-energy transition states

- Ensure coverage of expected bonding environments and structural motifs

Reference Data Generation:

- Perform ab initio molecular dynamics (AIMD) simulations at multiple temperatures

- Conduct targeted DFT calculations for unique configurations identified through active learning

- Calculate energies, forces, and stress tensors for all configurations

- Limit DFT calculations to 100-500 structures through strategic sampling [38]

Knowledge Distillation Implementation:

- Utilize non-fine-tuned, off-the-shelf pre-trained NNP as teacher model

- Generate soft targets for diverse structures, including high-energy regions

- Train student model with soft targets from teacher model

- Fine-tune student model with limited DFT dataset (hard targets)

Model Training and Validation:

- Implement weighted loss function combining energy, force, and stress terms

- Apply transfer learning from pre-trained models using minimal new data

- Validate against hold-out DFT datasets and experimental measurements

- Test extrapolation capability for unseen configurations
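The weighted loss mentioned in the training step can be written as a sum of mean-squared-error terms. The sketch below shows one common form under explicit assumptions: the weights are hypothetical, the energy error is normalized per atom, forces are passed as a flat list of Cartesian components, and stress as six Voigt components. Training frameworks such as DeePMD-kit define their own loss conventions, which this example does not reproduce.

```python
def mean_squared_error(predicted, reference):
    """Mean of squared component-wise differences between two equal-length lists."""
    return sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference)


def nnp_loss(predicted, reference, n_atoms, w_energy=1.0, w_force=10.0, w_stress=0.1):
    """Weighted sum of energy, force, and stress errors for one configuration.

    `predicted` and `reference` are dicts with the keys 'energy' (eV),
    'forces' (flat list, eV/Å) and 'stress' (six Voigt components).
    The default weights are placeholders, not recommended values.
    """
    energy_term = ((predicted["energy"] - reference["energy"]) / n_atoms) ** 2
    force_term = mean_squared_error(predicted["forces"], reference["forces"])
    stress_term = mean_squared_error(predicted["stress"], reference["stress"])
    return w_energy * energy_term + w_force * force_term + w_stress * stress_term


# Two-atom toy configuration with made-up reference (DFT) and predicted values.
reference = {"energy": -10.0, "forces": [0.0] * 6, "stress": [0.0] * 6}
predicted = {
    "energy": -10.2,
    "forces": [0.1, 0.0, -0.1, 0.0, 0.0, 0.0],
    "stress": [0.5, 0.0, 0.0, 0.0, 0.0, 0.0],
}
loss_with_stress = nnp_loss(predicted, reference, n_atoms=2)
loss_without_stress = nnp_loss(predicted, reference, n_atoms=2, w_stress=0.0)
```

Setting `w_stress=0.0` recovers an energy-and-force-only objective. The difference between the two values is the contribution that pushes the model to reproduce stress, and with it elastic behavior.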

Expected Outcomes: A specialized NNP achieving DFT-level accuracy with significantly reduced computational cost (10x reduction in DFT calculations reported) and accelerated inference speed (up to 106x faster than teacher model) [38].

Protocol 2: Validation of NNP Predictive Capability for Reaction Pathways

Purpose: To assess NNP accuracy in predicting transition states and reaction mechanisms compared to high-level quantum chemical calculations.

Materials and Computational Resources:

- Reference structure and reaction databases (e.g., Transition1x for transition states; QM9, OC20, ODAC23 for equilibrium and relaxation data)

- Quantum chemistry software for benchmark calculations (e.g., Gaussian, ORCA)

- NNP-enabled molecular dynamics package (e.g., LAMMPS, ASE)

- Transition state search tools (e.g., ASE-NEB, DL-FIND)

Procedure:

System Setup:

- Select representative molecular systems with known reaction pathways

- Define reactant and product configurations for targeted reactions

- Generate initial guess structures for transition states

Transition State Location:

- Perform nudged elastic band (NEB) calculations using NNP-derived forces

- Refine transition states using dimer method or quasi-Newton approaches

- Validate transition states through frequency analysis (exactly one imaginary frequency)
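The frequency check in the last step amounts to counting negative eigenvalues of the Hessian at the candidate geometry, since each negative eigenvalue corresponds to one imaginary frequency. A minimal sketch follows, assuming the Hessian is already mass-weighted and that translational and rotational modes have been projected out or fall within the tolerance `tol` (an illustrative parameter).

```python
import numpy as np

def count_imaginary_modes(hessian, tol=1e-6):
    """Count negative eigenvalues of a symmetric Hessian.

    Eigenvalues with magnitude below `tol` are treated as zero modes.
    """
    symmetric = 0.5 * (hessian + hessian.T)  # remove numerical asymmetry
    eigenvalues = np.linalg.eigvalsh(symmetric)
    return int(np.sum(eigenvalues < -tol))

def is_first_order_saddle(hessian, tol=1e-6):
    """True when the geometry has exactly one imaginary mode."""
    return count_imaginary_modes(hessian, tol) == 1

# Toy surface E(x, y) = x**2 - y**2 has a saddle at the origin with Hessian diag(2, -2).
saddle = np.array([[2.0, 0.0], [0.0, -2.0]])
minimum = np.array([[2.0, 0.0], [0.0, 2.0]])
print(is_first_order_saddle(saddle), is_first_order_saddle(minimum))
```

A structure with zero negative eigenvalues is a minimum, and one with two or more is a higher-order saddle point. Neither is a valid transition state.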

Benchmarking Against Quantum Chemistry:

- Calculate activation energies and reaction energies using high-level theory (e.g., CCSD(T), DLPNO-CCSD(T))

- Compare NNP predictions with benchmark values

- Statistical analysis of errors across diverse reaction types
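The error statistics for this benchmarking step can be collected as in the sketch below. The function name, the choice of metrics, and the sample barrier values are illustrative assumptions; the 1 kcal/mol default is the conventional chemical-accuracy threshold.

```python
import numpy as np

def barrier_error_stats(predicted, reference, threshold=1.0):
    """Summarise NNP errors against benchmark barriers (same units for both)."""
    errors = np.asarray(predicted, dtype=float) - np.asarray(reference, dtype=float)
    abs_errors = np.abs(errors)
    return {
        "mae": float(np.mean(abs_errors)),
        "rmse": float(np.sqrt(np.mean(errors ** 2))),
        "max_abs": float(np.max(abs_errors)),
        # Fraction of reactions whose absolute error is below the threshold.
        "within_threshold": float(np.mean(abs_errors < threshold)),
    }

# Made-up activation energies in kcal/mol, for illustration only.
nnp_barriers = [10.5, 20.0, 31.5, 15.0]
benchmark_barriers = [10.0, 20.0, 30.0, 16.0]
stats = barrier_error_stats(nnp_barriers, benchmark_barriers)
print(stats)
```

Reporting the maximum error and the fraction within threshold alongside the MAE helps expose reaction classes where the model fails even when the average looks acceptable.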

Kinetic Parameter Extraction:

- Perform molecular dynamics simulations at multiple temperatures

- Calculate rate constants using transition state theory formalism

- Compare Arrhenius parameters with experimental and high-level computational data
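The kinetic steps above can be sketched with the Eyring equation and a linear Arrhenius fit. This sketch assumes a transmission coefficient of one and a unimolecular rate constant in s^-1. The function names and the synthetic input data are illustrative.

```python
import numpy as np

K_B = 1.380649e-23    # Boltzmann constant, J/K
H = 6.62607015e-34    # Planck constant, J*s
R = 8.314462618       # gas constant, J/(mol*K)

def eyring_rate(delta_g_kj_mol, temperature):
    """Transition state theory rate constant from an activation free energy in kJ/mol."""
    return (K_B * temperature / H) * np.exp(-delta_g_kj_mol * 1e3 / (R * temperature))

def arrhenius_fit(temperatures, rates):
    """Fit ln k against 1/T and return (pre-exponential factor, Ea in kJ/mol)."""
    inv_t = 1.0 / np.asarray(temperatures, dtype=float)
    slope, intercept = np.polyfit(inv_t, np.log(rates), 1)
    return float(np.exp(intercept)), float(-slope * R / 1e3)

# Synthetic rates generated from known Arrhenius parameters, then recovered by the fit.
temps = np.array([300.0, 350.0, 400.0, 450.0])
true_a, true_ea = 1.0e13, 80.0
rates = true_a * np.exp(-true_ea * 1e3 / (R * temps))
fitted_a, fitted_ea = arrhenius_fit(temps, rates)
print(f"A = {fitted_a:.3e} s^-1, Ea = {fitted_ea:.2f} kJ/mol")
```

Fitting rates computed from NNP barriers and from the benchmark method with the same routine gives directly comparable Arrhenius parameters.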

Expected Outcomes: Quantitative assessment of NNP performance for reaction barrier prediction, with successful models achieving chemical accuracy (< 1 kcal/mol error) for activation energies [41].

Workflow Visualization for NNP Implementation

The following diagram illustrates the complete workflow for developing and validating neural network potentials, integrating multiple protocols and validation steps:

Research Reagent Solutions: Computational Tools for NNP Development

Table 2: Essential Software and Data Resources for NNP Research

| Tool Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Software | CP2K, Quantum ESPRESSO, VASP, Gaussian, ORCA | Generate training data via DFT and post-Hartree-Fock methods | Reference energy/force calculations for NNP training |
| NNP Architectures | DeePMD, ANI, M3GNet, CHGNet | Neural network frameworks for PES approximation | Core NNP implementation and training |
| Molecular Dynamics Engines | LAMMPS, GROMACS, ASE | Perform simulations using trained NNPs | Property prediction and validation |
| Transition State Search Tools | ASE-NEB, DL-FIND, AutoNEB | Locate and characterize transition states | Reaction pathway analysis |
| Benchmark Datasets | QM9, Materials Project, Open Catalyst Project | Provide standardized training and test data | Model benchmarking and transfer learning |

Applications in Molecular Systems and Materials

NNPs have demonstrated particular utility in studying complex molecular transformations and material behaviors that challenge traditional computational methods. For high-energy materials (HEMs) containing C, H, N, and O elements, the EMFF-2025 model has revealed unexpected similarities in high-temperature decomposition mechanisms, challenging conventional views of material-specific behavior and enabling more predictive models for energetic material design [37]. By integrating principal component analysis and correlation heatmaps, researchers have mapped the chemical space and structural evolution of twenty HEMs across temperature gradients, providing insights into stability and reactivity patterns [37].

In catalytic systems, NNPs have enabled precise transition state prediction through specialized architectures like object-aware equivariant diffusion models and PSI-Net, reducing computation time from hours to seconds while maintaining high accuracy [41]. These advances are particularly valuable for sustainable chemical process development, where understanding reaction mechanisms and optimizing catalysts requires extensive exploration of potential energy surfaces. The application of transfer learning has further enhanced these capabilities, allowing models to approach coupled-cluster accuracy while retaining computational efficiency sufficient for high-throughput screening [40].

For drug discovery applications, NNPs face challenges in modeling solution-phase chemistry, but recent advances in implicit solvent corrections have significantly improved their utility. By combining NNPs with analytical linearized Poisson-Boltzmann (ALPB) implicit-solvent models and semiempirical quantum methods (GFN2-xTB), researchers can now model reactions more accurately than with gas-phase simulations [42]. This approach has proven particularly valuable for studying covalent inhibitor mechanisms like thia-Michael additions, where solvation effects dramatically influence reaction barriers and pathways [42].

Current Limitations and Future Directions

Despite significant advances, several challenges remain in the widespread adoption of NNPs for high-accuracy energy surface prediction. Data scarcity, particularly for transition states and excited electronic states, limits model generalizability across chemical space [41]. Current TS datasets remain sparse compared to molecular structure databases, constraining ML model training and validation [41]. Additionally, the treatment of solvent effects and complex electrochemical environments requires further development, though recent implicit solvent approaches show promise [42].

Future development trajectories include establishing comprehensive datasets encompassing both organic and inorganic chemistry, developing standardized validation frameworks, and improving model architectures to handle larger molecular systems [41]. Integration of multi-fidelity sampling strategies, combining low-cost quantum methods with high-accuracy calculations, will enhance data generation efficiency [40]. For drug discovery applications, incorporating explicit solvation models and improving scalability for biomolecular systems will be essential for studying protein-ligand interactions and biological reaction mechanisms.

As architectural innovations continue, particularly in graph neural networks and equivariant models, NNPs are poised to expand their applicability across increasingly complex chemical systems, potentially enabling fully automated reaction discovery and optimization pipelines that seamlessly integrate computational predictions with experimental validation.

Machine Learning in Transition State Searching and Reaction Pathway Exploration

The exploration of transition states (TSs)—transient molecular configurations at the energy barrier along the reaction pathway—is fundamental to understanding chemical reaction mechanisms and kinetics [41]. Due to their extremely short lifetimes (typically femtoseconds), TSs cannot be isolated experimentally, making computational methods indispensable [41]. Traditional computational approaches, including single-ended methods (e.g., Berny algorithm) and double-ended methods (e.g., nudged elastic band), have provided valuable insights but face significant limitations in computational cost and scalability [41]. These limitations become particularly apparent when dealing with large molecular systems or when rapid screening of multiple reaction pathways is required [41].

Machine learning (ML) has emerged as a powerful paradigm to overcome these challenges, dramatically reducing computational time by leveraging existing data and enabling rapid predictions for novel reactions based on learned chemical principles [41]. The field has evolved from traditional ML methods like random forest and kernel ridge regression to advanced deep learning architectures including graph neural networks (GNNs), tensor field networks, and generative models [41]. This evolution has accelerated significantly since 2020, with ML methods now capable of reducing TS computation time from hours to seconds while maintaining high accuracy [41].

Key Machine Learning Approaches and Their Performance

Categorization of ML Methods for TS Searching

Table 1: Machine Learning Approaches for Transition State Searching

| Method Category | Representative Algorithms | Key Input Requirements | Advantages | Limitations |
|---|---|---|---|---|
| Traditional ML | Random Forest, Support Vector Machine, Kernel Ridge Regression [41] | Structural and electronic descriptors | Interpretability, works with smaller datasets | Limited transferability, manual feature engineering |
| Graph Neural Networks | Basic GNNs, Equivariant GNNs (EGNN) [41] | Molecular graphs | Naturally encodes molecular topology, transferable | Requires aligned 3D geometries [43] |
| Generative Models | Diffusion models (TSDiff, OA-ReactDiff) [43] [41], GANs [41] | 2D molecular graphs or 3D reactant/product geometries | Can generate novel TS conformations, no need for pre-aligned inputs [43] | Higher computational cost during inference [43] |
| Reinforcement Learning | Custom frameworks [41] | Reaction environment | Optimizes for specific objectives | Complex implementation, training instability |

Quantitative Performance Comparison

Table 2: Performance Metrics of Representative ML Methods

| Method | Input Type | Accuracy Metric | Performance | Computational Speed | Reference |
|---|---|---|---|---|---|
| TSDiff | 2D molecular graphs [43] | Success rate in TS validation | 90.6% [43] | Seconds per reaction (5000 denoising steps) [43] | Nature Communications (2024) [43] |
| ColabReaction | 3D reactant and product geometries [44] | Comparison to QM scan-based approaches | ~2 orders of magnitude speedup [45] | Minutes (typically ~10 minutes) [45] | J. Chem. Inf. Model. (2025) [45] |
| OA-ReactDiff | 3D reactant and product geometries [43] | Geometry prediction accuracy | Outperforms previous ML models [43] | Not specified | Concurrent work [43] |
| WASP | Molecular geometries along reaction pathway [46] | Accuracy for transition metal catalysts | MC-PDFT level accuracy [46] | Months to minutes speedup [46] | PNAS (2025) [46] |

Detailed Experimental Protocols

Protocol 1: TSDiff for Transition State Prediction from 2D Molecular Graphs

Principle: TSDiff is a generative approach based on the stochastic diffusion method that learns a direct mapping between TS conformations and 2D molecular graphs, eliminating the need for 3D reactant and product geometries with proper orientation [43].

Materials and Software Requirements:

- Python environment with PyTorch

- RDKit for molecular graph handling

- Pre-trained TSDiff model

- Quantum chemistry software (e.g., Gaussian, ORCA) for validation

Procedure:

Input Preparation:

- Represent the reaction using a condensed reaction graph ($\mathcal{G}_{\text{rxn}}$) that captures bond changes in reactants and products [43]

- Construct molecular graphs for reactants ($\mathcal{G}_{R}$) and products ($\mathcal{G}_{P}$) from SMILES strings [43]

- Generate atom-mapping information to combine reactant and product graphs [43]
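The idea behind a condensed reaction graph can be sketched in plain Python: once reactant and product atoms share atom-map numbers, each bond is labelled by its order before and after the reaction. The function name and the dictionary-based bond representation are assumptions made for illustration. The actual TSDiff featurization in [43] may differ, and in practice the bond lists would come from RDKit rather than being typed by hand.

```python
def condensed_reaction_graph(reactant_bonds, product_bonds):
    """Label every bond by its order before and after the reaction.

    Each input maps a pair of atom-map numbers to a bond order.
    An order of 0 in the output means the bond is absent on that side.
    """
    def normalise(bonds):
        # Store each bond with the smaller atom-map number first.
        return {tuple(sorted(pair)): order for pair, order in bonds.items()}

    r, p = normalise(reactant_bonds), normalise(product_bonds)
    graph = {}
    for pair in sorted(set(r) | set(p)):
        before, after = r.get(pair, 0), p.get(pair, 0)
        if before == 0:
            change = "formed"
        elif after == 0:
            change = "broken"
        elif before != after:
            change = "order_changed"
        else:
            change = "unchanged"
        graph[pair] = (before, after, change)
    return graph

# Diels-Alder example (heavy atoms only): butadiene (atoms 1-4) plus ethylene (atoms 5-6).
reactants = {(1, 2): 2, (2, 3): 1, (3, 4): 2, (5, 6): 2}
products = {(1, 2): 1, (2, 3): 2, (3, 4): 1, (4, 5): 1, (5, 6): 1, (6, 1): 1}
crg = condensed_reaction_graph(reactants, products)
for pair, label in crg.items():
    print(pair, label)
```

The two "formed" edges are the new sigma bonds closing the ring, and the remaining edges record the shift in bond order around it.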

Model Inference:

Validation:

Troubleshooting:

- If validation fails, increase sampling rounds to explore alternative TS conformations [43]

- For reactions with heavy elements, verify the dataset included similar elements during training [43]

Figure 1: TSDiff Workflow for TS Prediction from 2D Graphs

Protocol 2: ColabReaction with Direct MaxFlux and ML Potentials

Principle: ColabReaction combines the double-ended Direct MaxFlux (DMF) method with machine learning potentials to achieve rapid TS searches, typically within minutes, implemented on Google Colaboratory for accessibility [44] [45].

Materials and Software Requirements:

- Google Colaboratory account with GPU access

- ColabReaction web interface (https://ColabReaction.net)

- 3D molecular structures of reactants and products in proper orientation

Procedure:

System Setup:

Machine Learning Potential Application:

Transition State Refinement:

Advantages:

- No coding required through web interface [44]

- Eliminates need for local computational resources [44]

- Cost-free solution particularly beneficial for students and experimental researchers [44]

Figure 2: ColabReaction DMF Workflow with ML Potentials

Protocol 3: WASP for Transition Metal Catalysts

Principle: The Weighted Active Space Protocol (WASP) integrates multireference quantum chemistry methods (MC-PDFT) with machine-learned potentials to accurately capture the electronic structure of transition metal catalysts while maintaining computational efficiency [46].

Materials and Software Requirements:

- WASP implementation (https://github.com/GagliardiGroup/wasp)

- Initial reaction pathway sampling using conventional methods

- High-performance computing resources for initial training data generation

Procedure:

Training Data Generation:

ML Potential Training:

Catalytic Dynamics Simulation:

Application Notes:

- Particularly valuable for transition metal catalysts with complex electronic structures [46]

- Enables simulation of catalytic systems under realistic conditions (temperature, pressure) [46]

- Demonstrated speedup: from months to minutes [46]

Table 3: Key Research Reagent Solutions for ML-Based TS Exploration

| Resource Name | Type | Function/Purpose | Access Information |
|---|---|---|---|
| OMol25 Dataset | Dataset | 100M+ 3D molecular snapshots with DFT properties for training ML potentials [33] | Publicly available dataset |