Verification and Validation in Computational Modeling: A Guide for Credible Drug Development

Abstract

This article provides a comprehensive guide to Verification, Validation, and Uncertainty Quantification (VVUQ) in computational modeling for biomedical research and drug development. It covers foundational concepts, practical methodologies, and optimization strategies essential for building model credibility. Readers will learn to apply VVUQ frameworks like ASME V&V 40 and integrate AI/ML tools to enhance decision-making, satisfy regulatory standards, and accelerate the delivery of new therapies to patients.

The Pillars of Model Credibility: Core Concepts of VVUQ

Verification, Validation, and Uncertainty Quantification (VVUQ) represents a systematic framework for establishing confidence in computational models by ensuring their mathematical correctness, physical accuracy, and statistical reliability. As computational modeling and simulation (CM&S) increasingly replace physical testing across engineering and biomedical sectors, VVUQ provides the essential methodology for assessing model credibility [1]. This trifecta approach has become particularly critical in fields such as medical device development and pharmaceutical research, where regulatory agencies now accept in silico evidence as part of marketing authorization submissions [2].

The fundamental definitions of VVUQ's components are clearly established in technical standards. Verification is "the process of determining that a computational model accurately represents the underlying mathematical model and its solution," essentially answering "Are we solving the equations correctly?" [3]. Validation determines "the degree to which a model is an accurate representation of the real world from the perspective of the intended uses of the model," answering "Are we solving the correct equations?" [3]. Uncertainty Quantification (UQ) is "the science of quantifying, characterizing, tracing, and managing uncertainty in computational and real world systems" [4].

The proper relationship between these components follows a logical sequence: verification must precede validation, which in turn provides context for uncertainty quantification [3]. This sequential approach separates implementation errors (verification) from model formulation shortcomings (validation) while systematically accounting for variabilities and uncertainties that affect predictive confidence [2] [4].

Core Concepts and Definitions

The VVUQ Framework

The VVUQ framework operates as an integrated system where each component addresses distinct aspects of model credibility. Verification ensures the numerical implementation correctly solves the mathematical formalism, validation assesses how well computational predictions match experimental observations of the real world, and uncertainty quantification characterizes the reliability of model predictions given inherent variabilities in inputs, parameters, and model form [4] [3].

This framework has evolved from quality management principles in computational fluid dynamics and solid mechanics, gradually expanding to encompass complex biological systems and computational biomechanics [3]. The American Society of Mechanical Engineers (ASME) has played a pivotal role in standardizing VVUQ terminology and methodologies through publications such as VVUQ 1-2022, which establishes consistent terminology across computational modeling and simulation applications [1].

Detailed Component Definitions

Table: The Three Components of VVUQ

| Component | Core Question | Focus | Key Activities |
|---|---|---|---|
| Verification | "Are we solving the equations correctly?" | Mathematics and code implementation [3] | Code verification, calculation verification, convergence studies [5] [4] |
| Validation | "Are we solving the right equations?" | Physical accuracy and real-world representation [3] | Comparison with experimental data, validation metrics, credibility assessment [2] [5] |
| Uncertainty Quantification | "How reliable are our predictions given uncertainties?" | Reliability and confidence bounds [6] [4] | Identifying uncertainty sources, propagation analysis, sensitivity analysis [4] |

Verification consists of two subordinate processes: code verification and calculation verification. Code verification ensures the computational algorithms correctly implement the mathematical model, typically through comparison with analytical solutions or manufactured problems [3]. Calculation verification focuses on estimating numerical errors introduced by discretization, iteration, and round-off, often assessed through mesh convergence studies [5] [3].

Validation constitutes an evidence-generating process that compares computational outputs with experimental data from the physical system being modeled [3]. This process is always context-dependent, as a model may be adequately validated for one intended use but insufficient for another. The ASME V&V 40 standard emphasizes that validation activities must be informed by the model's "context of use" (COU) and the potential risk associated with an incorrect prediction [2].

Uncertainty Quantification formally characterizes how uncertainties in inputs, parameters, and model form affect the quantity of interest. UQ distinguishes between aleatoric uncertainty (inherent variability irreducible by more data) and epistemic uncertainty (reducible through better information or knowledge) [4]. For computational models, key uncertainty sources include uncertain inputs, model form limitations, computational approximations, and physical testing variability [4].

Verification Methodologies and Protocols

Code Verification Techniques

Code verification methodologies ensure that the mathematical model is correctly implemented in software. The most rigorous approach employs comparison with analytical solutions for simplified problems with known exact answers [3]. When analytical solutions are unavailable for complex systems, the method of manufactured solutions provides an alternative by constructing an arbitrary solution function, deriving corresponding source terms, and verifying that the code reproduces the manufactured solution [3].
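The manufactured-solution procedure can be sketched in a few lines. The example below is a minimal illustration rather than a method taken from the cited sources. It assumes a 1D Poisson problem, -u'' = f on (0, 1) with u(0) = u(1) = 0, a second-order central-difference discretization, and the manufactured solution u(x) = sin(pi x). The function names (`solve_poisson`, `observed_orders`) are hypothetical. If the implementation is correct, the observed order of accuracy should approach the formal order of 2 as the grid is refined.

```python
import numpy as np


def solve_poisson(n, source):
    """Solve -u'' = source on (0, 1), u(0) = u(1) = 0, with n interior nodes."""
    h = 1.0 / (n + 1)
    x = np.linspace(h, 1.0 - h, n)
    # Second-order central-difference approximation of -d^2/dx^2
    A = (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2
    return x, np.linalg.solve(A, source(x))


def u_manufactured(x):
    """Chosen (manufactured) solution; it satisfies the boundary conditions."""
    return np.sin(np.pi * x)


def source_term(x):
    """Source obtained by substituting u_manufactured into -u''."""
    return np.pi**2 * np.sin(np.pi * x)


def observed_orders(node_counts):
    """Return max-norm errors and observed orders of accuracy between grids."""
    hs, errors = [], []
    for n in node_counts:
        x, u_h = solve_poisson(n, source_term)
        hs.append(1.0 / (n + 1))
        errors.append(np.max(np.abs(u_h - u_manufactured(x))))
    hs, errors = np.array(hs), np.array(errors)
    orders = np.log(errors[:-1] / errors[1:]) / np.log(hs[:-1] / hs[1:])
    return errors, orders


# Grid spacings h = 1/16, 1/32, 1/64, 1/128
errors, orders = observed_orders([15, 31, 63, 127])
print("max-norm errors:", errors)
print("observed orders:", orders)
```

An observed order well below the formal order would point to an implementation defect (or to a grid that is still outside the asymptotic range), which is exactly the kind of finding code verification is meant to surface.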

Software Quality Engineering (SQE) practices provide the foundation for reliable code verification through systematic code review, debugging, and version control [6]. These processes are particularly critical for in silico trials and medical device applications, where regulatory acceptance requires demonstrated software reliability [2] [6].

Calculation Verification Procedures

Calculation verification estimates numerical accuracy in specific simulations, primarily addressing discretization errors. The standard methodology involves mesh convergence studies, where successive mesh refinements demonstrate asymptotic approach to a continuum solution [3]. A common acceptance criterion requires that further mesh refinement changes the solution output by less than an established threshold (e.g., <5%) [3].
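A refinement loop with a relative-change criterion might look like the sketch below. The `simulate` function is a hypothetical stand-in for a real solver (here, a midpoint-rule integral whose exact value is known), and the 5% threshold simply mirrors the example figure quoted above.

```python
import numpy as np


def simulate(n_cells):
    """Hypothetical stand-in for a solver run on a mesh with n_cells cells.

    Returns a midpoint-rule estimate of the integral of exp(x) over [0, 2].
    """
    edges = np.linspace(0.0, 2.0, n_cells + 1)
    midpoints = 0.5 * (edges[:-1] + edges[1:])
    return float(np.sum(np.exp(midpoints)) * (2.0 / n_cells))


def refine_until_converged(solver, n_start=1, threshold=0.05, max_levels=12):
    """Double the mesh density until the output changes by less than threshold."""
    history = [(n_start, solver(n_start))]
    for _ in range(max_levels):
        n_prev, q_prev = history[-1]
        n_new = 2 * n_prev
        q_new = solver(n_new)
        history.append((n_new, q_new))
        rel_change = abs(q_new - q_prev) / abs(q_new)
        if rel_change < threshold:
            return history, rel_change
    raise RuntimeError("No convergence within the allowed refinement levels")


history, rel_change = refine_until_converged(simulate)
for n_cells, value in history:
    print(f"{n_cells:4d} cells -> {value:.5f}")
print(f"last relative change: {rel_change:.2%}")
```

A small change between two meshes is a necessary sign of convergence, not a proof of it; pairing this check with an observed order of convergence gives a stronger argument.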

Table: Calculation Verification Methods for Discretization Error Estimation

| Method | Procedure | Application Context | Acceptance Criteria |
|---|---|---|---|
| Grid Convergence Index | Systematic refinement of spatial/temporal discretization [3] | Finite element, finite volume, finite difference methods | Solution change < 5% with refinement [3] |
| Iterative Convergence | Monitoring solution evolution with iteration count [5] | Problems solved through iterative methods | Residual reduction to specified tolerance [5] |
| Time Step Convergence | Progressive reduction of time step size [5] | Transient, dynamic simulations | Insensitive response with further reduction [5] |

For complex biomechanical systems, verification must address multi-physics interactions and nonlinear material behaviors. Ionescu et al. provide an exemplar case where they verified a transversely isotropic hyperelastic constitutive model implementation against an analytical solution for equibiaxial stretch, achieving stress predictions within 3% of the theoretical values [3].

Validation Methodologies and Protocols

The Validation Experiment Framework

Validation requires carefully designed physical experiments that provide high-quality data for comparing with computational predictions. These experiments must capture the essential physics relevant to the model's context of use while providing comprehensive documentation of boundary conditions, initial conditions, and material properties [3]. Validation experiments differ from traditional research experiments through their specific design for computational comparison, requiring rigorous characterization of experimental uncertainties [5].

The validation process follows a structured workflow: (1) define the context of use and quantities of interest; (2) design experiments that isolate these quantities; (3) execute experiments with comprehensive uncertainty characterization; (4) perform corresponding simulations; (5) compare results using appropriate validation metrics; and (6) assess credibility relative to predefined acceptability thresholds [2] [5].

Validation Metrics and Acceptability Criteria

Validation metrics provide quantitative measures of agreement between computational results and experimental data. These range from simple difference measures for scalar quantities to multivariate metrics for field comparisons [5]. The ASME VVUQ 20.1-2024 standard specifically addresses "Multivariate Metric for Validation," providing methodologies for comparing complex data patterns [1].
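For a single scalar quantity, the simplest metric is the comparison error between simulation and experiment, judged against the combined uncertainty of both. The sketch below assumes the root-sum-square combination of numerical, input, and experimental standard uncertainties commonly associated with ASME V&V 20; treat that formula as an assumption here rather than a restatement of the cited standards. All numbers are hypothetical.

```python
import math


def validation_comparison(sim, exp, u_num, u_input, u_exp):
    """Return the comparison error E = S - D and the validation uncertainty.

    Assumes the three uncertainty contributions are independent standard
    uncertainties expressed in the same units as the quantity itself.
    """
    error = sim - exp
    u_val = math.sqrt(u_num**2 + u_input**2 + u_exp**2)
    return error, u_val


# Hypothetical peak stress values in kPa
E, u_val = validation_comparison(sim=105.0, exp=100.0,
                                 u_num=1.0, u_input=2.0, u_exp=2.0)
print(f"comparison error E = {E:.1f} kPa, validation uncertainty = {u_val:.1f} kPa")
if abs(E) <= u_val:
    print("Difference is within the combined uncertainty.")
else:
    print("Difference exceeds the combined uncertainty; model error is indicated.")
```

When the error exceeds the validation uncertainty, the discrepancy cannot be explained by numerical, input, or measurement noise alone, which points toward the model form.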

For regulatory applications, the ASME V&V 40-2018 standard introduces a risk-informed credibility framework that determines the required level of validation evidence based on model influence (how much the decision relies on the model) and decision consequence (potential impact of an incorrect prediction) [2]. This framework ensures validation rigor is proportionate to the model's role in decision-making, with higher-stakes applications requiring more extensive validation evidence.
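The way the two factors combine can be illustrated with a small lookup. The three-level ratings and the additive scoring below are hypothetical choices made for illustration; the standard itself leaves the gradations and their mapping to the practitioner, so this is a sketch of the idea rather than the V&V 40 procedure.

```python
LEVELS = ("low", "medium", "high")


def model_risk(model_influence, decision_consequence):
    """Map two qualitative ratings to a risk level from 1 (lowest) to 5.

    Hypothetical scheme: the level is one plus the sum of the two rating
    indices, so cells on the same anti-diagonal of the 3 x 3 matrix share
    a level.
    """
    for rating in (model_influence, decision_consequence):
        if rating not in LEVELS:
            raise ValueError(f"rating must be one of {LEVELS}, got {rating!r}")
    return LEVELS.index(model_influence) + LEVELS.index(decision_consequence) + 1


# Print the full matrix: rows are model influence, columns decision consequence
for influence in LEVELS:
    print(f"{influence:>6}", [model_risk(influence, c) for c in LEVELS])
```

A higher level would then translate into more demanding credibility goals, for example tighter validation thresholds or a broader set of validation conditions.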

Uncertainty Quantification Framework

Uncertainty in computational modeling arises from multiple sources, broadly categorized as aleatoric (irreducible randomness) and epistemic (reducible knowledge limitations) [4]. Aleatoric uncertainty includes inherent variabilities in material properties, operating conditions, and manufacturing tolerances, while epistemic uncertainty encompasses model form approximations, parameter estimation errors, and numerical approximations [4].

Table: Classification of Uncertainty Sources in Computational Modeling

| Uncertainty Category | Specific Sources | Representation Methods | Reduction Strategies |
|---|---|---|---|
| Aleatoric (Irreducible) | Natural material variability, environmental fluctuations, operational differences [4] | Probability distributions, random processes | Cannot be reduced; must be characterized [4] |
| Epistemic (Reducible) | Model form assumptions, simplified physics, unknown parameters [4] | Interval analysis, probability boxes, Bayesian methods | Improved models, additional data, expert knowledge [4] |
| Parametric | Imperfectly known material properties, boundary conditions [4] | Probability distributions, intervals | Experimental calibration, parameter estimation [3] |
| Numerical | Discretization error, iterative convergence, round-off [4] [3] | Error estimates, convergence studies | Mesh refinement, higher-order methods [3] |

Uncertainty Quantification Methods

Uncertainty quantification employs both non-probabilistic and probabilistic frameworks. Non-probabilistic methods include interval analysis and fuzzy sets, while probabilistic approaches dominate engineering applications through probability distributions and random field representations [4]. The UQ workflow typically involves: (1) identifying and classifying uncertainty sources; (2) quantifying input uncertainties; (3) propagating uncertainties through the computational model; (4) analyzing output uncertainties; and (5) performing sensitivity analysis to identify dominant uncertainty contributors [4].

Uncertainty propagation methods include sampling approaches (e.g., Monte Carlo, Latin Hypercube), expansion methods (e.g., polynomial chaos), and surrogate-based techniques [4]. Monte Carlo methods remain the reference for accuracy, but because their sampling error shrinks only in proportion to 1/√N for N model runs, they often prove computationally prohibitive for large-scale models. This cost motivates surrogate modeling techniques that approximate complex system responses with computationally efficient models [4].
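The two sampling approaches can be compared on a toy problem. The sketch below propagates two uncertain inputs through a hypothetical one-compartment relation, Css = F * R0 / CL, using plain Monte Carlo and Latin Hypercube sampling from `scipy.stats.qmc`. The model, distributions, and parameter values are all assumptions chosen for illustration, not values from the cited sources.

```python
import numpy as np
from scipy.stats import norm, qmc

DOSE_RATE = 10.0                  # mg/h, treated as known (hypothetical)
CL_MEDIAN, CL_SIGMA = 5.0, 0.2    # clearance: lognormal, L/h (hypothetical)
F_LOW, F_HIGH = 0.7, 0.9          # bioavailability: uniform (hypothetical)


def steady_state_conc(clearance, bioavailability):
    """Hypothetical one-compartment model: Css = F * R0 / CL (mg/L)."""
    return bioavailability * DOSE_RATE / clearance


def sample_monte_carlo(n, seed=0):
    """Draw independent pseudo-random samples of both inputs."""
    rng = np.random.default_rng(seed)
    cl = rng.lognormal(mean=np.log(CL_MEDIAN), sigma=CL_SIGMA, size=n)
    f = rng.uniform(F_LOW, F_HIGH, size=n)
    return cl, f


def sample_latin_hypercube(n, seed=0):
    """Draw stratified samples on the unit square and map via inverse CDFs."""
    u = qmc.LatinHypercube(d=2, seed=seed).random(n)
    cl = np.exp(np.log(CL_MEDIAN) + CL_SIGMA * norm.ppf(u[:, 0]))
    f = F_LOW + (F_HIGH - F_LOW) * u[:, 1]
    return cl, f


def summarize(css):
    """Mean and central 95% interval of the propagated output."""
    lo, hi = np.percentile(css, [2.5, 97.5])
    return {"mean": float(np.mean(css)), "p2.5": float(lo), "p97.5": float(hi)}


mc = summarize(steady_state_conc(*sample_monte_carlo(4000)))
lhs = summarize(steady_state_conc(*sample_latin_hypercube(4000)))
print("Monte Carlo:    ", mc)
print("Latin Hypercube:", lhs)
```

For an expensive simulation, the same sampling plans would typically be applied to a surrogate fitted to a limited number of full model runs.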

Implementation and Applications

Implementing comprehensive VVUQ requires both conceptual frameworks and practical tools. The researcher's toolkit includes standardized protocols, software solutions, and reference materials essential for executing rigorous VVUQ processes.

Table: Essential VVUQ Resources for Researchers

| Resource Category | Specific Tools/Standards | Application Context | Key Functions |
|---|---|---|---|
| Technical Standards | ASME VVUQ 1-2022 (Terminology) [1] | All computational modeling | Standardized definitions and concepts |
| Technical Standards | ASME V&V 10-2019 (Solid Mechanics) [1] | Structural analysis, biomechanics | Verification and validation protocols |
| Technical Standards | ASME V&V 20-2009 (CFD and Heat Transfer) [1] | Fluid dynamics, thermal analysis | Specific methodologies for CFD |
| Technical Standards | ASME V&V 40-2018 (Medical Devices) [1] [2] | Medical technology, in silico trials | Risk-informed credibility assessment |
| Software Capabilities | SmartUQ [4] | General engineering systems | Design of experiments, calibration, UQ |
| Software Capabilities | Custom UQ Tools [4] | Discipline-specific applications | Uncertainty propagation, sensitivity analysis |
| Experimental Protocols | Validation Experiment Design [5] [3] | Physical testing for validation | Controlled experiments with uncertainty characterization |
| Experimental Protocols | Mesh Convergence Studies [3] | Numerical simulation | Discretization error estimation |

Domain-Specific Applications

VVUQ methodologies have found particularly critical applications in medical domains where computational predictions inform safety and efficacy decisions. For medical devices, the ASME V&V 40 standard provides a risk-based framework for assessing model credibility, with implementation examples including fatigue analysis of tibial tray components [1] [2]. In pharmaceutical development, the Comprehensive in vitro Proarrhythmia Assay (CiPA) initiative employs cardiac electrophysiology models with high-throughput in vitro screening for drug safety assessment, requiring rigorous VVUQ for regulatory acceptance [2].

Digital twins represent an emerging frontier for VVUQ application, particularly in precision medicine where patient-specific models require continuous updating with real-time data [6]. Unlike traditional models, digital twins introduce unique VVUQ challenges related to frequent model updates and bidirectional physical-virtual information flow, necessitating dynamic validation approaches and continuous uncertainty monitoring [6].

The VVUQ trifecta represents an indispensable framework for establishing credibility in computational modeling research. By systematically addressing mathematical implementation (verification), physical accuracy (validation), and statistical reliability (uncertainty quantification), this methodology enables researchers to build confidence in their computational predictions. The rigorous application of VVUQ principles has become particularly crucial as computational models increasingly support high-consequence decisions in medical device regulation, pharmaceutical development, and personalized medicine.

The continuing evolution of VVUQ standards and methodologies reflects the growing sophistication of computational modeling across scientific disciplines. As digital twins and other advanced simulation technologies emerge, VVUQ frameworks must adapt to address new challenges in model updating, real-time validation, and dynamic uncertainty quantification. For computational modeling to fulfill its potential as a reliable tool for scientific discovery and engineering innovation, the principled application of verification, validation, and uncertainty quantification remains essential.

Why VVUQ is Non-Negotiable in Modern Drug Development and Regulatory Submissions

The adoption of Verification, Validation, and Uncertainty Quantification (VVUQ) represents a paradigm shift in modern drug development and regulatory submissions. Computational models have progressively moved from traditional engineering disciplines to critical applications in cell, tissue, and organ biomechanics, enabling unprecedented capabilities in predicting drug effects and medical device performance [7]. These models provide quantitative simulations of living systems that can yield stress and strain data across entire biological continua, offering insights where physical measurements are difficult or impossible to obtain [7]. The fundamental premise of VVUQ lies in establishing model credibility through a systematic framework that ensures mathematical implementation accuracy (verification), physical representation correctness (validation), and comprehensive error assessment (uncertainty quantification).

The regulatory landscape for medical products has evolved to recognize the value of computational modeling, with agencies like the FDA providing structured pathways for model submission and evaluation through programs such as the Q-Submission Program [8]. This program offers mechanisms for sponsors to obtain FDA feedback on computational models included in Investigational Device Exemption (IDE) applications, Premarket Approval (PMA) applications, and other regulatory submissions [8]. The growing acceptance of modeling and simulation in regulatory decision-making underscores why VVUQ has become non-negotiable—it provides the essential evidence base demonstrating that computer models yield results with sufficient accuracy for their intended use in pharmaceutical development and regulatory evaluation.

Core Concepts and Definitions

The VVUQ Framework

The VVUQ framework comprises three interconnected processes that together establish confidence in computational model predictions:

Verification: The process of determining that a model implementation accurately represents the conceptual description and solution to the mathematical model. In essence, verification addresses "solving the equations right" by ensuring that the computational algorithms correctly implement the intended mathematical model and that numerical solutions are obtained with sufficient accuracy [7] [9].

Validation: The process of assessing how well the computational model represents the underlying physical reality by comparing computational predictions with experimental data. Validation addresses "solving the right equations" by evaluating the modeling error through systematic comparison with gold-standard experimental measurements [7].

Uncertainty Quantification: The process of characterizing and assessing uncertainties in model inputs, parameters, and predictions, typically through statistical methods that propagate known sources of variability and error through the computational model to determine their impact on results [10].

Error and Accuracy in Computational Models

Understanding error and accuracy is fundamental to VVUQ implementation. Error represents the difference between a simulated or experimental value and the true value, while accuracy describes the closeness of agreement between a simulation/experimental value and its true value [7]. Errors in computational modeling can be categorized as:

Numerical errors: Result from computational solution techniques and include discretization error, incomplete grid convergence, and computer round-off errors [7].

Modeling errors: Arise from assumptions and approximations in the mathematical representation of the physical problem, including geometry simplifications, boundary condition idealizations, material property estimations, and governing equation approximations [7].

It is crucial to distinguish between error and uncertainty. An error is a recognizable deficiency that does not stem from lack of knowledge, such as a coding mistake or a known discretization error. An uncertainty is a potential deficiency that arises from lack of knowledge or from inherent variability [7]. The required level of accuracy for a particular model depends on its intended use in the drug development or regulatory process [7].

VVUQ Methodologies and Experimental Protocols

Model Verification Protocols

Verification ensures the computational model correctly solves the mathematical equations governing the physical system. The following table outlines key verification methodologies:

Table 1: Model Verification Methods and Protocols

| Method Category | Specific Techniques | Application Context | Acceptance Criteria |
|---|---|---|---|
| Code Verification | Method of Manufactured Solutions, Comparison with Analytical Solutions | Software development phase | Relative error < 1-5% for key outputs |
| Solution Verification | Grid Convergence Index (GCI), Richardson Extrapolation | Discrete model solutions | GCI < 3-5% for quantities of interest |
| Numerical Error Assessment | Residual evaluation, Iterative convergence monitoring | All simulation types | Residual reduction > 3-5 orders of magnitude |

Implementation of these verification protocols follows a structured approach. For code verification, the Method of Manufactured Solutions (MMS) involves assuming a solution function, substituting it into the governing equations to compute analytic source terms, solving the equations numerically with these source terms, and comparing the numerical solution with the assumed analytic solution [7]. For solution verification, grid convergence studies require performing simulations on three or more systematically refined meshes, calculating the apparent order of convergence, and applying the Grid Convergence Index to estimate discretization error [7].
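The three-mesh calculation described above can be sketched as follows. This is a minimal version assuming a constant refinement ratio r, monotonic convergence, and the customary safety factor of 1.25 for three-grid studies; the function name and the synthetic test data are hypothetical.

```python
import math


def grid_convergence_index(f_fine, f_medium, f_coarse, r, safety_factor=1.25):
    """Three-grid GCI estimate for a constant refinement ratio r > 1.

    Returns the observed order of convergence, the Richardson-extrapolated
    value, and the fine-grid GCI as a fraction of the fine-grid solution.
    """
    if f_medium == f_fine:
        raise ValueError("Fine and medium solutions are identical.")
    ratio = (f_coarse - f_medium) / (f_medium - f_fine)
    if ratio <= 1.0:
        raise ValueError("Solutions are not converging monotonically.")
    p = math.log(ratio) / math.log(r)
    f_extrapolated = f_fine + (f_fine - f_medium) / (r**p - 1.0)
    gci_fine = safety_factor * abs((f_medium - f_fine) / f_fine) / (r**p - 1.0)
    return p, f_extrapolated, gci_fine


def synthetic_output(h):
    """Hypothetical quantity of interest with a second-order error term."""
    return 1.0 + 0.5 * h**2


p, f_ext, gci = grid_convergence_index(
    f_fine=synthetic_output(0.1),
    f_medium=synthetic_output(0.2),
    f_coarse=synthetic_output(0.4),
    r=2.0,
)
print(f"observed order p = {p:.3f}")
print(f"Richardson-extrapolated value = {f_ext:.5f}")
print(f"fine-grid GCI = {gci:.2%}")
```

For this synthetic case the observed order recovers the second-order error term and the extrapolated value recovers the grid-independent solution, which makes it a convenient self-check before applying the function to real simulation output.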

Model Validation Protocols

Validation assesses how accurately the computational model represents physical reality through comparison with experimental data. The validation process requires carefully designed experimental protocols that mirror computational model scenarios:

Table 2: Model Validation Experimental Protocols

| Protocol Component | Description | Implementation Example |
|---|---|---|
| Validation Experiments | Specifically designed tests for model comparison | Bi-axial tissue testing with digital image correlation |
| Comparison Metrics | Quantitative measures for computational-experimental agreement | Strain field comparison, statistical confidence intervals |
| Accuracy Assessment | Evaluation of difference between prediction and measurement | ≤15% error for key output metrics (e.g., peak stress) |

A comprehensive validation protocol begins with identifying quantities of interest that are both clinically relevant and experimentally measurable. Validation experiments should be designed to provide detailed boundary conditions and material property data, not just outcome measurements [7]. For example, in validating a coronary stent model, validation experiments would measure not only arterial strain but also precise pressure boundary conditions and stent deployment parameters. Comparison between computational results and experimental data should use both global metrics (e.g., overall deformation, natural frequency) and local metrics (e.g., strain distributions, stress concentrations) with appropriate statistical confidence intervals [7].
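A global and a local metric can be computed side by side, as in the sketch below. The strain values are hypothetical, the choice of normalized RMSE as the local metric is an assumption made for illustration, and the 15% threshold is the example figure from Table 2.

```python
import numpy as np


def peak_percent_error(simulated, measured):
    """Global metric: relative error in the peak value."""
    return abs(np.max(simulated) - np.max(measured)) / abs(np.max(measured))


def normalized_rmse(simulated, measured):
    """Local metric: point-wise RMSE normalized by the measured range."""
    rmse = np.sqrt(np.mean((simulated - measured) ** 2))
    return rmse / (np.max(measured) - np.min(measured))


# Hypothetical strains at four matched locations (simulation vs. experiment)
strain_sim = np.array([0.010, 0.020, 0.030, 0.040])
strain_exp = np.array([0.011, 0.019, 0.032, 0.038])

peak_err = peak_percent_error(strain_sim, strain_exp)
nrmse = normalized_rmse(strain_sim, strain_exp)
THRESHOLD = 0.15  # example acceptance threshold from Table 2

print(f"peak error = {peak_err:.1%}, NRMSE = {nrmse:.1%}")
print("within threshold:", bool(peak_err <= THRESHOLD and nrmse <= THRESHOLD))
```

Reporting both matters because a model can match the peak value while misplacing it spatially, or match the field on average while missing the peak.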

Uncertainty Quantification Methods

Uncertainty Quantification (UQ) systematically accounts for variability and limited knowledge in computational models:

Parameter Uncertainty: Characterized through probability distributions for uncertain input parameters (e.g., material properties, boundary conditions) often derived from experimental measurements [7].

Sensitivity Analysis: Determines how variations in model inputs affect outputs and ranks inputs by their contribution to output variability. It typically builds on sampling or expansion methods such as Monte Carlo simulation, Latin Hypercube sampling, or polynomial chaos expansions [10].

Model Form Uncertainty: Assesses errors introduced by mathematical simplifications of physical processes, often evaluated through comparison of multiple model forms against experimental data [7].

Uncertainty quantification follows a structured process of identifying uncertain parameters, characterizing their variability (through literature review or targeted experiments), propagating uncertainties through the computational model using sampling techniques, and analyzing the resulting uncertainties in model predictions [10]. For regulatory submissions, uncertainty quantification should demonstrate that model predictions remain within acceptable bounds despite known sources of variability.
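The sensitivity-analysis step can be illustrated with a variance-based estimate. The sketch below computes first-order Sobol indices with a pick-freeze (Saltelli-type) estimator using NumPy only. The test model Y = 2*X1 + X2 with independent uniform inputs is a hypothetical choice whose analytical indices (0.8 and 0.2) are known, which makes the estimator easy to check.

```python
import numpy as np


def model(x):
    """Hypothetical test model with known indices: Y = 2*X1 + X2."""
    return 2.0 * x[:, 0] + x[:, 1]


def first_order_sobol(func, n_inputs, n_samples, seed=0):
    """Pick-freeze estimate of first-order Sobol indices for U(0, 1) inputs."""
    rng = np.random.default_rng(seed)
    a = rng.random((n_samples, n_inputs))
    b = rng.random((n_samples, n_inputs))
    y_a, y_b = func(a), func(b)
    total_variance = np.var(np.concatenate([y_a, y_b]))
    indices = np.empty(n_inputs)
    for i in range(n_inputs):
        ab_i = a.copy()
        ab_i[:, i] = b[:, i]  # sample A with only column i taken from B
        indices[i] = np.mean(y_b * (func(ab_i) - y_a)) / total_variance
    return indices


s = first_order_sobol(model, n_inputs=2, n_samples=100_000)
print("first-order indices:", s)  # analytical values for this model: 0.8 and 0.2
```

Each index estimates the fraction of output variance attributable to one input alone, so inputs with small indices are candidates for fixing at nominal values in later analyses.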

Figure: VVUQ Workflow in Computational Modeling

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of VVUQ requires specific computational and experimental resources. The following table details essential components of the VVUQ toolkit for drug development applications:

Table 3: Research Reagent Solutions for VVUQ Implementation

| Tool Category | Specific Tools/Reagents | Function in VVUQ Process |
|---|---|---|
| Computational Modeling Platforms | Finite Element Software (e.g., FEBio, Abaqus), CFD Solvers | Implementation and solution of mathematical models |
| Verification Tools | Code Verification Test Suites, Mesh Generation Tools | Assessment of numerical solution accuracy |
| Validation Experimental Systems | Bioreactors, Mechanical Testers, Imaging Systems | Generation of gold-standard experimental data |
| Biological Reagents | Engineered Tissues, Cell Cultures, Biomarkers | Experimental models for biological validation |
| Uncertainty Quantification Libraries | UQ Toolkits (e.g., Dakota, scipy.stats), Sensitivity Analysis Packages | Statistical analysis of parameter variability |
| Data Management Systems | Laboratory Information Management Systems (LIMS), Electronic Lab Notebooks | Tracking experimental metadata and provenance |

Each tool category plays a distinct role in the VVUQ process. Computational modeling platforms provide the environment for implementing mathematical models of biological systems, while verification tools help establish that these implementations solve the governing equations with acceptably small numerical error [7]. Validation experimental systems and biological reagents enable generation of high-quality experimental data for model validation, with particular attention to simulating in vivo conditions [7]. Uncertainty quantification libraries facilitate statistical analysis of parameter variability and its impact on model predictions [10]. Finally, data management systems ensure proper documentation and traceability throughout the VVUQ process, which is critical for regulatory submissions [11].

Regulatory Landscape and Submission Requirements

FDA Guidelines and Submission Pathways

The regulatory environment for computational modeling in drug development has evolved significantly, with explicit recognition of modeling and simulation in regulatory decision-making. The FDA's Q-Submission Program provides formal mechanisms for sponsors to obtain feedback on computational models included in regulatory submissions [8]. This program encompasses:

Pre-Submission (Pre-Sub) Meetings: Allow sponsors to discuss proposed VVUQ approaches for models used in support of regulatory applications.

Informational Meetings: Let sponsors share computational modeling strategies with the FDA early, generally without the expectation of formal feedback.

Submission Issue Requests: Mechanisms for addressing specific questions during the review process [8].

The FDA differentiates between filing issues (deficiencies that render an application unreviewable) and review issues (complex judgments requiring in-depth assessment) [12]. Inadequate VVUQ documentation can lead to filing issues, resulting in refuse-to-file letters that stop the review process before substantive evaluation [12]. This distinction underscores why comprehensive VVUQ is essential: it addresses potential filing issues related to model credibility.

Documentation Requirements for Regulatory Submissions

Successful regulatory submissions incorporating computational models must include detailed VVUQ documentation:

Model Description: Complete specification of governing equations, constitutive laws, boundary conditions, and initial conditions with scientific rationale for all modeling assumptions [7].

Verification Evidence: Documentation of code verification, solution verification, and numerical error estimation with acceptance criteria and results [7].

Validation Evidence: Comprehensive comparison with experimental data, including description of validation experiments, comparison metrics, quantitative assessment of agreement, and discussion of discrepancies [7].

Uncertainty Quantification: Characterization of parameter uncertainties, sensitivity analysis results, and assessment of how uncertainties impact model predictions relevant to the regulatory decision [10].

Context of Use Statement: Clear specification of the intended use of the model and the domain over which it has been validated, including any limitations on extrapolation beyond directly validated conditions [7] [8].

Documentation should enable regulatory reviewers to independently assess model credibility for the proposed context of use. This requires transparent reporting of all VVUQ activities, including both confirmatory results and identified limitations [8].
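One lightweight way to track these five elements during drafting is a simple completeness check. The sketch below is a hypothetical internal checklist, not a regulatory template; the class and field names are illustrative only.

```python
from dataclasses import dataclass, fields


@dataclass
class CredibilityDossier:
    """Hypothetical container for the five documentation elements listed above."""
    model_description: str = ""
    verification_evidence: str = ""
    validation_evidence: str = ""
    uncertainty_quantification: str = ""
    context_of_use: str = ""

    def missing_sections(self):
        """Return names of sections that are still empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


draft = CredibilityDossier(
    model_description="Governing equations, constitutive laws, BCs, assumptions",
    context_of_use="Predict peak strain under the specified loading range",
)
print("sections still to be written:", draft.missing_sections())
```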

Verification, Validation, and Uncertainty Quantification have become non-negotiable components of modern drug development and regulatory submissions due to their critical role in establishing model credibility. The framework provides a systematic approach to demonstrate that computational models are implemented correctly (verification), represent physical reality adequately (validation), and have quantified error bounds appropriate for regulatory decision-making (uncertainty quantification). As computational models assume increasingly important roles in drug development—from predicting pharmacokinetics to optimizing clinical trial design—rigorous VVUQ practices provide the essential foundation for regulatory acceptance. Implementation of comprehensive VVUQ protocols, coupled with early engagement with regulatory agencies through programs like the Q-Submission Program, represents a strategic imperative for modern drug development organizations seeking to leverage computational modeling while maintaining regulatory compliance.

In computational medicine, a model must travel a long path from research concept to a tool that informs critical decisions in drug development or patient care. Navigating that path requires a working understanding of three concepts: Context of Use (COU), Model Credibility, and Fit-for-Purpose (FFP). Together they form the basis for trust in computational models and simulations, ensuring that models are appropriately developed, evaluated, and applied. This section examines each concept in relation to verification and validation (V&V). V&V processes generate the evidence needed to demonstrate that a model is both technically sound (verification) and scientifically relevant (validation) for a specific purpose, thereby establishing its credibility within a defined COU [13].

Core Terminology and Definitions

Context of Use (COU) is a precise statement that defines the specific role, scope, and application of a computational model in addressing a particular question. It describes what will be modeled, how the model outputs will be used, and any additional information used alongside the model to answer the question of interest [14] [15]. The COU is the cornerstone for all subsequent credibility assessments.

Model Credibility refers to the trust in the predictive capability of a computational model for a specific COU [14] [16]. This trust is not absolute but is established through the collection of evidence, the rigor of which is determined by the model's risk.

Fit-for-Purpose (FFP) is a strategic principle dictating that the development, evaluation, and application of a model must be closely aligned with the specific Question of Interest (QOI) and COU [17]. An FFP approach ensures that the model's complexity, the quality of input data, and the thoroughness of V&V activities are proportionate to the model's intended use, avoiding both oversimplification and unjustified complexity [17].

Table: Core Terminology in Computational Modeling

| Term | Definition | Primary Reference |

|---|---|---|

| Context of Use (COU) | A statement defining the specific role and scope of a computational model used to address a question of interest. | [14] |

| Model Credibility | Trust, established through evidence, in the predictive capability of a computational model for a specific context of use. | [14] |

| Fit-for-Purpose (FFP) | A principle ensuring model development and evaluation are aligned with the question of interest and context of use. | [17] |

| Verification | The process of determining that a computational model accurately represents the underlying mathematical model and its solution. | [13] |

| Validation | The process of determining the degree to which a model is an accurate representation of the real world from the perspective of the intended uses. | [14] |

| Question of Interest (QOI) | The specific question, decision, or concern that is being addressed by the computational model and other evidence. | [14] |

The Verification and Validation Framework

Verification and Validation are the fundamental processes that provide the evidence required to establish model credibility.

Verification answers the question, "Are we building the model right?" It ensures that the computational model is implemented correctly in software and that numerical solutions are accurate [13]. This involves:

- Code Verification: Identifying and removing procedural errors in the source code through unit tests, integration tests, and quality assurance processes [13].

- Calculation Verification: Estimating numerical errors such as discretization and solver errors to ensure they are negligible relative to other uncertainties [13] [15].

Validation answers the question, "Are we building the right model?" It determines how accurately the model represents reality [14]. This is achieved by comparing model predictions with independent experimental or clinical data (comparator data) not used in model development [14] [16].

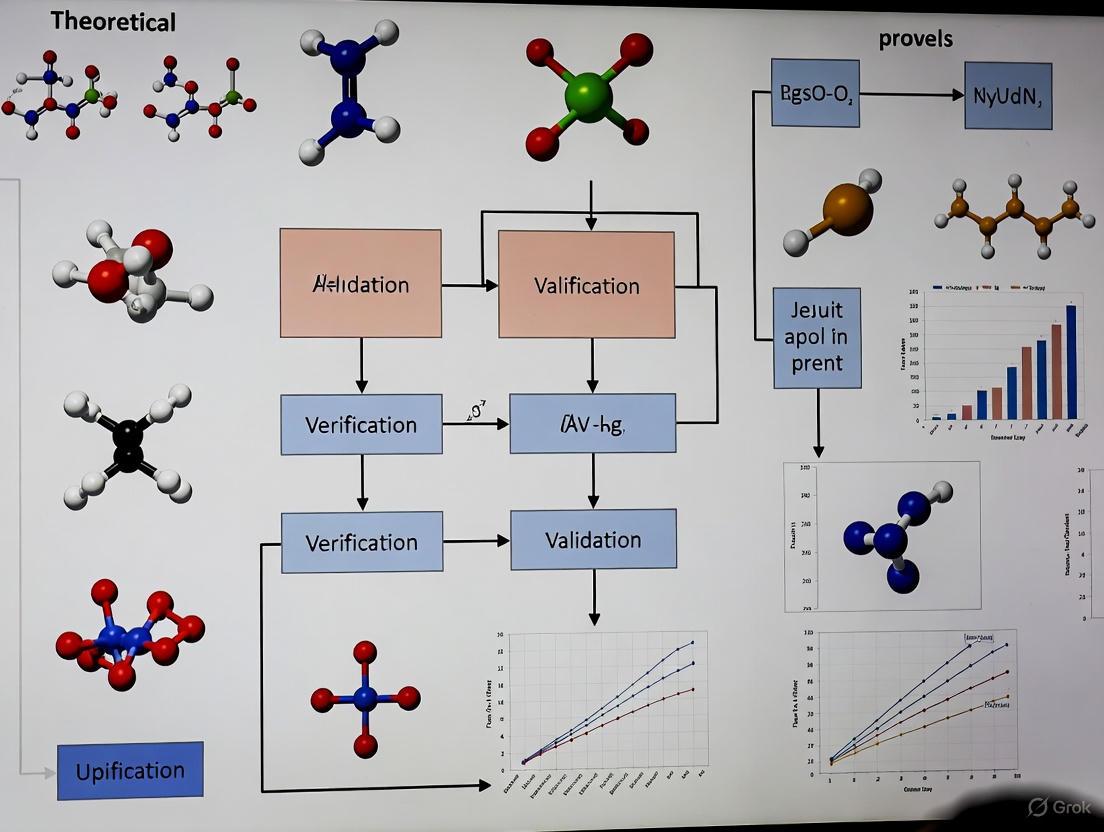

The following diagram illustrates the workflow for establishing model credibility, from defining the need to assessing the resulting evidence, highlighting the roles of V&V.

The Risk-Informed Credibility Assessment

The required level of model credibility is not one-size-fits-all; it is determined through a risk-informed assessment. This risk is a function of two factors [13] [15]:

- Model Influence: The contribution of the computational model relative to other evidence (e.g., clinical trial data, in vitro tests) in addressing the QOI. A model used as the primary evidence has higher influence than one used for supportive, exploratory analysis.

- Decision Consequence: The significance of an adverse outcome resulting from an incorrect decision based on the model. This considers patient safety, impact on public health, and the regulatory impact of the decision.

Table: Model Risk Assessment Matrix (Adapted from FDA Guidance and ASME VV-40)

| | Low Decision Consequence | Medium Decision Consequence | High Decision Consequence |

|---|---|---|---|

| High Model Influence | Medium Risk | High Risk | High Risk |

| Medium Model Influence | Low Risk | Medium Risk | High Risk |

| Low Model Influence | Low Risk | Low Risk | Medium Risk |

This risk assessment directly drives the rigor and extent of V&V activities needed. A high-risk model, such as one used as the primary evidence to waive a clinical trial for a life-saving drug, demands far more extensive credibility evidence than a low-risk model used for internal research and development decisions [14] [13].
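The matrix above can be encoded as a simple lookup. The following is a minimal sketch: the names `RISK_MATRIX` and `model_risk` are hypothetical, and the cell values are copied from the table rather than taken from any official implementation.

```python
# Hypothetical encoding of the risk matrix above.
# Keys are (model influence, decision consequence); values are the resulting model risk.
RISK_MATRIX = {
    ("high", "low"): "medium",
    ("high", "medium"): "high",
    ("high", "high"): "high",
    ("medium", "low"): "low",
    ("medium", "medium"): "medium",
    ("medium", "high"): "high",
    ("low", "low"): "low",
    ("low", "medium"): "low",
    ("low", "high"): "medium",
}


def model_risk(influence: str, consequence: str) -> str:
    """Return the model risk level for a given influence and consequence."""
    key = (influence.strip().lower(), consequence.strip().lower())
    if key not in RISK_MATRIX:
        raise ValueError(f"Unknown influence/consequence combination: {key}")
    return RISK_MATRIX[key]


# A model with high influence and medium decision consequence.
print(model_risk("High", "Medium"))
```

Keeping the matrix as data rather than nested conditionals makes it easy to review against the table and to adapt if an organization uses a different grading scheme.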

Methodologies and Experimental Protocols for Establishing Credibility

The Credibility Evidence Framework

The evidence generated to establish credibility can be categorized. The U.S. Food and Drug Administration (FDA) guidance outlines eight categories of credibility evidence, which provide a practical framework for planning and reporting [15].

Table: Categories of Credibility Evidence for Computational Models

| Category | Type of Evidence | Description and Methodological Purpose |

|---|---|---|

| 1 | Code Verification | Demonstrates the software implementation accurately reflects the underlying mathematical model. Method: Unit and integration testing, software quality assurance. |

| 2 | Model Calibration | Assessment of the model's fit against the data used to estimate its parameters. Note: This alone is insufficient for validation. |

| 3 | Bench Test Validation | Compares model predictions with data from controlled in vitro or bench-top experiments. |

| 4 | In Vivo Validation | Compares model predictions with data from animal studies (in vivo). |

| 5 | Population-based Validation | Compares population-level predictions (e.g., average response) with a clinical dataset, without individual-level comparisons. |

| 6 | Emergent Model Behaviour | Evidence that the model reproduces known real-world phenomena that were not explicitly built into it. |

| 7 | Model Plausibility | Rationale supporting the choice of governing equations, assumptions, and input parameters based on established scientific principles. |

| 8 | Calculation Verification & UQ | Quantifies numerical solution accuracy and uncertainty for the specific simulations run to address the COU. |

A Hypothetical Experimental Protocol: PBPK Model for Dosing

To illustrate the application of these concepts, consider a hypothetical experiment for a drug development project.

- Research Question: How should the investigational drug be dosed when co-administered with moderate CYP3A4 inhibitors?

- Context of Use: A PBPK model will be used to predict the effects of moderate CYP3A4 inhibitors on the pharmacokinetics of the investigational drug in adult patients. The simulations will inform the dosing recommendations in the drug label [14].

- Model Risk Assessment:

  - Model Influence: High, as the simulations may form the primary evidence for regulatory labeling, potentially waiving a dedicated clinical drug-drug interaction study.

  - Decision Consequence: Medium, as incorrect dosing could lead to reduced efficacy or increased side effects, but within a known therapeutic window.

  - Overall Risk: High [14].

Experimental and Credibility Protocol:

- Code and Calculation Verification: Provide evidence of software quality assurance and demonstrate that numerical errors are controlled [13].

- Model Calibration and Validation: The model must be built and validated in a step-wise manner.

  - Model Inputs: System-specific parameters (e.g., human physiology) and drug-specific parameters (e.g., in vitro metabolism data) are defined [14].

  - Model Calibration: The model is calibrated using observed clinical PK data from studies without CYP3A4 inhibitors.

  - Model Validation: The predictive capability is tested by comparing simulated DDI effects against observed data from a clinical study with a strong CYP3A4 inhibitor. This tests the model's ability to extrapolate [14].

- Applicability Assessment: Justify the relevance of the validation against a strong inhibitor to support predictions for moderate inhibitors, based on the shared mechanistic pathway [14].

- Uncertainty Quantification (UQ): Perform sensitivity analysis to identify parameters that most influence the DDI prediction and quantify the uncertainty in the simulated exposure metrics [13].
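To make the sensitivity-analysis step concrete, the sketch below uses a basic static competitive-inhibition equation as a stand-in for a full PBPK model, which is a deliberate simplification. The function names and all parameter values (`fm_cyp3a4`, `inhibitor_conc`, `ki`) are illustrative assumptions, not values from the protocol above.

```python
def auc_ratio(fm_cyp3a4: float, inhibitor_conc: float, ki: float) -> float:
    """AUC ratio (with inhibitor / without) from a basic static inhibition model.

    fm_cyp3a4: fraction of clearance mediated by CYP3A4 (0 to 1)
    inhibitor_conc, ki: inhibitor concentration and inhibition constant (same units)
    """
    if not 0.0 <= fm_cyp3a4 <= 1.0:
        raise ValueError("fm_cyp3a4 must lie between 0 and 1")
    if ki <= 0.0 or inhibitor_conc < 0.0:
        raise ValueError("ki must be positive and inhibitor_conc non-negative")
    inhibited_pathway = fm_cyp3a4 / (1.0 + inhibitor_conc / ki)
    return 1.0 / (inhibited_pathway + (1.0 - fm_cyp3a4))


def normalized_sensitivity(func, params: dict, name: str, rel_step: float = 0.01) -> float:
    """Central finite-difference estimate of d ln(output) / d ln(parameter)."""
    up, down = dict(params), dict(params)
    up[name] = params[name] * (1.0 + rel_step)
    down[name] = params[name] * (1.0 - rel_step)
    return (func(**up) - func(**down)) / (2.0 * rel_step * func(**params))


# Hypothetical nominal values, chosen only for illustration.
nominal = {"fm_cyp3a4": 0.8, "inhibitor_conc": 1.0, "ki": 0.5}
for parameter in nominal:
    s = normalized_sensitivity(auc_ratio, nominal, parameter)
    print(f"{parameter}: normalized sensitivity = {s:+.3f}")
```

A normalized sensitivity near zero suggests the parameter has little influence on the predicted interaction, while values with large magnitude flag parameters whose uncertainty deserves closer characterization.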

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Credibility Assessment

| Item / Reagent | Function in the Credibility Process |

|---|---|

| ASME VV-40:2018 Standard | Provides the foundational risk-informed framework for planning and assessing credibility activities [14] [13]. |

| FDA Guidance on CM&S | Offers a regulatory perspective and a nine-step process for assessing credibility in medical device submissions, extending ASME VV-40 concepts [16] [15]. |

| Software Verification Suite | A collection of unit and integration tests used for code verification to ensure the software is free of procedural errors [13]. |

| Comparator Datasets | High-quality experimental or clinical data (in vitro, in vivo, clinical) used as a benchmark for model validation [14]. |

| Uncertainty Quantification (UQ) Tools | Software and methodologies (e.g., sensitivity analysis, Monte Carlo simulation) to quantify numerical, parameter, and model form uncertainties [13]. |

The adoption of computational modeling in biomedical research and development hinges on a rigorous and systematic approach to building trust. The triad of Context of Use, Model Credibility, and the Fit-for-Purpose principle provides the conceptual framework for this endeavor. This framework is operationalized through the disciplined processes of Verification and Validation, the rigor of which is strategically calibrated by a risk-informed assessment. For researchers and drug development professionals, mastering these concepts is not merely an academic exercise. It is a critical competency that enables the development of credible, impactful models, facilitates clearer communication with regulators, and ultimately accelerates the delivery of safe and effective therapies to patients.

The Role of VVUQ in Model-Informed Drug Development (MIDD) Frameworks

Verification, Validation, and Uncertainty Quantification (VVUQ) constitute a rigorous framework for establishing the credibility of computational models used in scientific research and industrial applications. Within Model-Informed Drug Development (MIDD), these processes provide the foundational evidence that models are trustworthy for informing critical decisions about drug safety, efficacy, and dosing. Verification addresses the question "Are we building the model right?" by ensuring the computational implementation accurately represents the underlying mathematical model [18]. Validation addresses "Are we building the right model?" by determining how well the computational model represents the real-world biological system [18]. Uncertainty Quantification characterizes uncertainties in model inputs, parameters, and structure, and evaluates how these propagate to model outputs and predictions [18]. Robust VVUQ practices can improve efficiency and reduce risk in drug development, yet they remain underutilized in many communities [19].

Table: Core Components of VVUQ in MIDD

| Component | Definition | Key Question Answered | Primary Focus |

|---|---|---|---|

| Verification | Process of determining if a computational model is an accurate implementation of the underlying mathematical model [20] | "Are we building the model right?" [9] | Code and calculation accuracy [20] |

| Validation | Process of determining the extent to which a computational model is an accurate representation of the real-world system [20] | "Are we building the right model?" [9] | Agreement with experimental data [18] |

| Uncertainty Quantification | Process of characterizing uncertainties in model inputs and computing resultant uncertainty in model outputs [20] | "How reliable are the predictions?" | Predictive reliability and confidence [18] |

The VVUQ Process: Methodologies and Protocols

Verification Protocols

Verification encompasses two primary activities: code verification and calculation verification. Code verification tests for potential software bugs and implementation errors through methods such as unit testing, regression testing, and comparison with analytical solutions [20]. For complex physiological models in MIDD, this may involve verifying that ordinary differential equation solvers for pharmacokinetic models accurately compute drug concentration time courses. Calculation verification estimates numerical errors arising from spatial or temporal discretization [20]. In a whole-organ model simulation, this would involve demonstrating that numerical errors from mesh discretization are below an acceptable threshold for the context of use.

A robust verification protocol includes:

- Software Quality Assurance (SQA): Implementing full SQA adhering to established standards, including version control, systematic testing, and documentation [20].

- Numerical Code Verification: Testing numerical algorithms against problems with known analytical solutions to confirm proper implementation.

- Discretization Error Estimation: Using mesh refinement studies to quantify and control numerical errors in spatial and temporal discretization.
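As an illustration of numerical code verification and discretization error estimation, the sketch below solves a one-compartment elimination model, dC/dt = -k·C, with forward Euler and compares it against the analytical solution C(t) = C0·exp(-k·t). The solver choice, function names, and parameter values are assumptions made for this example only; halving the step size should roughly halve the error for a first-order method.

```python
import math


def euler_concentration(c0: float, k_el: float, t_end: float, n_steps: int) -> float:
    """Forward-Euler solution of dC/dt = -k_el * C, evaluated at t_end."""
    dt = t_end / n_steps
    c = c0
    for _ in range(n_steps):
        c += dt * (-k_el * c)
    return c


def observed_order(c0: float, k_el: float, t_end: float, n_steps: int, refinement: int = 2) -> float:
    """Observed order of accuracy from a coarse and a refined time step."""
    exact = c0 * math.exp(-k_el * t_end)
    err_coarse = abs(euler_concentration(c0, k_el, t_end, n_steps) - exact)
    err_fine = abs(euler_concentration(c0, k_el, t_end, n_steps * refinement) - exact)
    return math.log(err_coarse / err_fine) / math.log(refinement)


# Hypothetical values: 10 mg/L initial concentration, k = 0.1 per hour, 10 hours.
p = observed_order(c0=10.0, k_el=0.1, t_end=10.0, n_steps=100)
print(f"Observed order of accuracy: {p:.2f} (theoretical order for forward Euler is 1)")
```

An observed order that matches the theoretical order of the scheme is evidence that the solver is implemented correctly; a mismatch usually points to a coding error or to step sizes outside the asymptotic range.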

Validation Methodologies

Validation establishes the model's accuracy in representing reality by comparing model predictions to experimental data not used in model development. The ASME V&V40 Standard provides a risk-informed framework for validation activities, where the extent of validation required depends on the model's context of use (COU) and the decision's risk level [20]. For patient-specific models (PSMs) used in MIDD, special considerations include inter- and intra-user variability, multi-patient error estimation, and clinical validation across diverse populations [20].

Key validation factors include:

- Model Form Validation: Assessing whether the model structure, governing equations, and boundary conditions appropriately represent the biological system.

- Input Validation: Ensuring model inputs (e.g., drug properties, physiological parameters) are accurate and appropriate for the COU.

- Output Comparison: Quantifying agreement between model outputs and experimental data using appropriate metrics (e.g., mean absolute error, R-squared).
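The output-comparison metrics named above can be computed in a few lines. This is a minimal sketch with hypothetical function names and made-up concentration values; in practice the choice of metric and acceptance threshold should follow from the COU.

```python
def mean_absolute_error(observed, predicted):
    """Average absolute difference between observations and predictions."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("Inputs must be non-empty and of equal length")
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("Inputs must be non-empty and of equal length")
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    if ss_tot == 0.0:
        raise ValueError("R-squared is undefined when all observations are identical")
    return 1.0 - ss_res / ss_tot


# Made-up plasma concentrations (mg/L) for illustration only.
observed = [12.0, 8.5, 6.1, 4.2]
predicted = [11.4, 8.9, 5.8, 4.6]
print("MAE:", mean_absolute_error(observed, predicted))
print("R-squared:", r_squared(observed, predicted))
```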

Uncertainty Quantification Techniques

UQ systematically analyzes how uncertainties from multiple sources affect model predictions. In healthcare applications, sources of uncertainty include intrinsic variability (e.g., time-dependent changes in patient physiology), extrinsic variability (e.g., patient-specific genetics and lifestyle), measurement error, and model discrepancy [18]. UQ methodologies include:

- Sensitivity Analysis: Identifying which input parameters contribute most significantly to output uncertainty.

- Forward Uncertainty Propagation: Using sampling methods (e.g., Monte Carlo, Latin Hypercube) to propagate input uncertainties through the model to quantify output uncertainty.

- Inverse Uncertainty Quantification: Inferring parameter uncertainties from available data using Bayesian methods.
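Forward uncertainty propagation with Latin Hypercube sampling can be sketched as follows. The model here is the textbook relation AUC = dose / clearance for an intravenous bolus, and the input ranges, uniform distributions, and function names are assumptions chosen only to keep the example small.

```python
import random
import statistics


def latin_hypercube(n_samples: int, n_dims: int, rng: random.Random):
    """Return n_samples points in [0, 1)^n_dims with one point per stratum per dimension."""
    columns = []
    for _ in range(n_dims):
        # One uniform draw inside each of n_samples equal-width strata, then shuffle the order.
        column = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(column)
        columns.append(column)
    return [tuple(column[i] for column in columns) for i in range(n_samples)]


def scale(u: float, low: float, high: float) -> float:
    """Map a unit-interval sample onto a uniform range [low, high)."""
    return low + u * (high - low)


def auc_iv_bolus(dose_mg: float, clearance_l_per_h: float) -> float:
    """AUC (mg*h/L) for an intravenous bolus with linear elimination."""
    return dose_mg / clearance_l_per_h


rng = random.Random(0)  # fixed seed for reproducibility
samples = latin_hypercube(200, 2, rng)
# Hypothetical input uncertainty: dose 95 to 105 mg, clearance 4 to 6 L/h.
aucs = [auc_iv_bolus(scale(u_dose, 95.0, 105.0), scale(u_cl, 4.0, 6.0)) for u_dose, u_cl in samples]

cut_points = statistics.quantiles(aucs, n=40)  # cut points at 2.5% steps
lower, upper = cut_points[0], cut_points[-1]
print(f"Mean AUC: {statistics.fmean(aucs):.2f} mg*h/L")
print(f"Approximate 95% interval: {lower:.2f} to {upper:.2f} mg*h/L")
```

Compared with plain Monte Carlo sampling, the stratification spreads samples evenly across each input range, which often reduces the number of model runs needed for stable output statistics.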

Table: Sources of Uncertainty in MIDD Models

| Uncertainty Category | Specific Sources | Impact on MIDD Applications |

|---|---|---|

| Data-Related (Aleatoric) | Intrinsic variability (e.g., circadian blood pressure changes) [18] | Affects predictability of drug exposure and response time courses |

| Data-Related (Aleatoric) | Extrinsic variability (e.g., patient genetics, physiology) [18] | Impacts virtual population generation and subgroup analysis |

| Data-Related (Aleatoric) | Measurement error (e.g., analytical assay precision) [18] | Affects parameter estimation from preclinical and clinical data |

| Model-Related (Epistemic) | Model discrepancy (e.g., omitted genetics or disease interactions) [18] | Limits model applicability to specific patient subgroups or conditions |

| Model-Related (Epistemic) | Structural uncertainty (e.g., model assumptions) [18] | Affects extrapolation beyond studied conditions |

| Model-Related (Epistemic) | Initial/boundary conditions [18] | Impacts simulation of specific physiological or clinical scenarios |

| Coupling-Related | Geometry uncertainty (e.g., organ segmentation from medical images) [18] | Affects patient-specific dosimetry or biomechanical simulations |

| Coupling-Related | Scale transition uncertainty (e.g., cellular to tissue level) [18] | Impacts multiscale models linking cellular pharmacology to organ-level effects |

VVUQ Workflow and Implementation

The implementation of VVUQ follows a structured workflow that integrates these components throughout the model development and application lifecycle. The ASME V&V40 Standard provides a framework for credibility assessment that begins with defining the question of interest and context of use, followed by a risk assessment to determine the necessary level of VVUQ activities [20].

Figure: VVUQ Workflow in MIDD

For patient-specific models, which form the basis of digital twins in healthcare, additional considerations include managing inter- and intra-user variability, implementing multi-patient and "every-patient" error estimation, and addressing uncertainty in personalized versus non-personalized inputs [20]. The maturity of VVUQ practices in cardiac electrophysiological modeling provides an exemplar for other MIDD applications, demonstrating how complex multiscale models can be evaluated for credibility [20].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of VVUQ requires specific computational tools and methodologies tailored to MIDD applications. This toolkit enables researchers to execute the rigorous evaluation processes necessary for model credibility.

Table: Essential VVUQ Research Reagents and Computational Tools

| Tool/Resource | Function in VVUQ | Application in MIDD |

|---|---|---|

| Software Quality Assurance (SQA) Framework | Ensures code reliability through version control, systematic testing, and documentation [20] | Maintains integrity of model codebase throughout drug development lifecycle |

| Numerical Code Verification Tools | Tests computational implementation against analytical solutions [20] | Verifies differential equation solvers in PK/PD and systems pharmacology models |

| Mesh Generation/Refinement Tools | Supports calculation verification for spatial discretization [20] | Enables geometry-based simulations (e.g., organ-level distribution, tissue penetration) |

| Sensitivity Analysis Algorithms | Identifies parameters contributing most to output uncertainty [18] | Prioritizes experimental efforts for parameter refinement in quantitative systems pharmacology |

| Uncertainty Propagation Methods | Quantifies how input uncertainties affect model outputs [18] | Establishes confidence intervals for model-informed dose selection and trial designs |

| Model Validation Databases | Provides experimental data for comparison with model predictions [20] | Enables validation of drug exposure-response relationships across populations |

| Credibility Assessment Framework | Guides evaluation of model fitness for purpose [20] | Supports regulatory submissions through standardized credibility evidence |

VVUQ Application in MIDD: Case Examples and Impact

Cardiac Electrophysiology as an Exemplar

Cardiac electrophysiological (EP) modeling represents a mature application of VVUQ with direct relevance to drug safety assessment. These models simulate electrical activity from cellular to organ levels, requiring integration of disparate data sources and solving complex multiscale models [20]. In MIDD, cardiac EP models help assess drug-induced arrhythmia risk (e.g., Torsades de Pointes). The VVUQ process for these models includes:

- Verification: Ensuring proper implementation of ion channel models and numerical solvers for electrical propagation equations.

- Validation: Comparing model predictions of action potential duration and arrhythmia susceptibility to experimental data from pre-clinical assays and clinical studies.

- Uncertainty Quantification: Characterizing uncertainty in ion channel parameters and quantifying its impact on proarrhythmic risk predictions.

Credibility Assessment for Regulatory Decision-Making

The ASME V&V40 Standard provides a risk-informed framework for establishing model credibility that is increasingly recognized in regulatory submissions [20]. This approach links the required level of VVUQ evidence to the impact of the decision the model supports. For MIDD applications, this means:

- Low-Risk Decisions: Limited VVUQ may be sufficient for early research prioritization.

- High-Risk Decisions: Comprehensive VVUQ is required for model-informed regulatory decisions such as dose justification or virtual control arms.

Figure: Risk-Based Credibility Assessment

VVUQ provides the methodological foundation for establishing credibility of computational models in MIDD, enabling more reliable prediction of drug behavior in humans. As modeling sophistication increases with the development of patient-specific models and digital twins, robust VVUQ practices become increasingly critical [20] [21]. Future advancements will likely include standardized VVUQ protocols for specific MIDD applications, increased automation of VVUQ processes, and broader adoption of uncertainty quantification to characterize both variability and ignorance in drug development predictions [18]. By systematically implementing VVUQ, drug developers can enhance model reliability, regulatory acceptance, and ultimately, the quality of decisions that bring safe and effective medicines to patients.

In computational modeling research, the processes of verification and validation (V&V) are fundamental to establishing model credibility. Verification addresses the question "Are we solving the equations correctly?" by ensuring the computational model accurately represents the underlying mathematical description. Validation asks "Are we solving the correct equations?" by determining whether the model accurately represents real-world phenomena [1]. Within this V&V framework, Uncertainty Quantification (UQ) plays a critical role in assessing how variations in numerical and physical parameters affect simulation outcomes, forming the complete paradigm of Verification, Validation, and Uncertainty Quantification (VVUQ) [1].

A crucial aspect of UQ involves distinguishing between two fundamental types of uncertainty: aleatory and epistemic. Properly identifying and classifying these sources of error is essential for guiding model improvement, directing resource allocation, and honestly communicating the reliability of computational predictions to stakeholders in fields like drug development where decisions carry significant consequences [22] [23].

Theoretical Foundations of Uncertainty

Aleatory Uncertainty

Aleatory uncertainty (also known as stochastic, irreducible, or variability uncertainty) stems from the inherent randomness and natural variability of physical systems or observation processes [22] [23]. This type of uncertainty is characterized by its irreducible nature; collecting additional data may better characterize the variability but cannot eliminate it [23].

Examples in computational modeling include:

- Natural variations in material properties

- Stochastic processes in biological systems

- Random measurement noise in experimental apparatus

- Inherent variability in patient responses to pharmacological interventions

Epistemic Uncertainty

Epistemic uncertainty (also known as systematic, reducible, or model uncertainty) arises from incomplete knowledge or information about the system being modeled [22] [23] [24]. This uncertainty is reducible in principle through improved models, additional data collection, or enhanced measurements [23] [25].

Examples in computational modeling include:

- Uncertainty about the appropriate functional form of a model

- Uncertainty in model parameters due to limited experimental data

- Uncertainty from oversimplified model assumptions

- Lack of knowledge about relevant variables in drug mechanism of action

Comparative Analysis

Table 1: Fundamental Characteristics of Aleatory and Epistemic Uncertainty

| Characteristic | Aleatory Uncertainty | Epistemic Uncertainty |

|---|---|---|

| Origin | Inherent system variability | Incomplete knowledge |

| Reducibility | Irreducible | Reducible |

| Representation | Probability theory | Probability + alternative theories |

| Data Impact | More data characterizes but doesn't reduce | More data potentially reduces |

| Common Descriptors | Randomness, variability, stochasticity | Ignorance, approximation, simplification |

Table 2: Manifestations in Computational Modeling Contexts

| Modeling Context | Aleatory Manifestations | Epistemic Manifestations |

|---|---|---|

| Pharmacokinetics | Inter-patient variability in drug metabolism | Uncertainty in metabolic pathway parameters |

| Clinical Outcomes | Stochastic disease progression | Model simplification of biological processes |

| Material Science | Natural imperfections in crystalline structures | Uncertainty in constitutive model selection |

| Fluid Dynamics | Turbulent fluctuations | Uncertainty in boundary condition specification |

Methodologies for Uncertainty Identification and Quantification

Conceptual Framework for Uncertainty Classification

The following diagram illustrates the systematic process for identifying and classifying uncertainty sources within a computational modeling framework:

Experimental Protocols for Uncertainty Quantification

Protocol 1: Monte Carlo Dropout for Epistemic Uncertainty

Purpose: Estimate epistemic uncertainty in deep learning models used for computational modeling [22] [23].

Detailed Methodology:

- Model Preparation: Implement a neural network architecture with dropout layers inserted between hidden layers.

- Training Phase: Train the network with dropout active using standard optimization procedures.

- Inference Phase:

  - Keep dropout active during prediction (unlike standard practice)

  - Perform multiple (typically 100-1000) forward passes for the same input

  - Record the variation in outputs across different dropout masks

- Uncertainty Quantification:

  - Calculate predictive mean across all forward passes

  - Compute variance across predictions as epistemic uncertainty measure

  - For classification: entropy of the mean probability vector (this measures total predictive uncertainty; the mutual information between the prediction and the dropout mask isolates the epistemic part)

  - For regression: variance of output samples

Interpretation: Higher variance across forward passes indicates greater epistemic uncertainty, suggesting the model encounters regions of input space where it has limited knowledge [23].
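The inference phase of this protocol can be sketched without a deep learning framework. The example below uses a tiny one-hidden-layer regression network whose weights are hypothetical placeholders rather than trained values; only the repeated stochastic forward passes and the resulting mean and variance are the point of the illustration.

```python
import math
import random
import statistics

# Hypothetical "trained" weights for a 1-input, 4-hidden-unit, 1-output network.
W1 = [0.8, -0.5, 1.2, 0.3]
B1 = [0.0, 0.1, -0.2, 0.05]
W2 = [0.6, -0.4, 0.9, 0.2]
B2 = 0.1
DROP_P = 0.5  # dropout probability applied to each hidden unit


def forward(x: float, rng: random.Random, dropout_active: bool = True) -> float:
    """One forward pass; dropout stays on at inference when dropout_active is True."""
    output = B2
    for w1, b1, w2 in zip(W1, B1, W2):
        hidden = math.tanh(w1 * x + b1)
        if dropout_active:
            # Inverted dropout: zero the unit with probability DROP_P, rescale survivors.
            keep = rng.random() >= DROP_P
            hidden = hidden / (1.0 - DROP_P) if keep else 0.0
        output += w2 * hidden
    return output


def mc_dropout_predict(x: float, n_passes: int = 500, seed: int = 0):
    """Return (predictive mean, predictive variance) over stochastic forward passes."""
    rng = random.Random(seed)
    samples = [forward(x, rng) for _ in range(n_passes)]
    return statistics.fmean(samples), statistics.pvariance(samples)


mean, variance = mc_dropout_predict(1.0)
print(f"Predictive mean: {mean:.3f}, variance across dropout masks: {variance:.3f}")
```

In a real workflow the same loop would wrap a trained network in a framework of choice, with its dropout layers left in training mode during prediction.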

Protocol 2: Deep Ensembles for Predictive Uncertainty

Purpose: Quantify total predictive uncertainty by combining multiple models [22] [23].

Detailed Methodology:

- Ensemble Generation:

  - Train multiple (5-10) neural networks with identical architectures

  - Vary random initialization seeds for each model

  - Optionally use different training data subsets via bootstrapping

- Inference Procedure:

  - Pass each input through all ensemble members

  - Collect predictions from all models

- Uncertainty Decomposition:

  - Calculate mean prediction across ensemble: f̄(x) = (1/N) × Σᵢ fᵢ(x)

  - Compute total variance as the sum of the two components below: σ²_total(x) = (1/N) × Σᵢ σᵢ²(x) + (1/N) × Σᵢ (fᵢ(x) - f̄(x))², where fᵢ(x) and σᵢ²(x) are the mean and variance predicted by ensemble member i

  - For epistemic uncertainty: variation between model predictions, (1/N) × Σᵢ (fᵢ(x) - f̄(x))²

  - For aleatoric uncertainty: average of individual model variances, (1/N) × Σᵢ σᵢ²(x)

Interpretation: Disagreement between models (high inter-model variance) indicates epistemic uncertainty, while a large average predicted variance, or residual error that persists even where the models agree with one another, suggests aleatoric uncertainty [22].
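The decomposition step can be written as a short function based on the law of total variance. This sketch assumes each ensemble member returns both a predicted mean and a predicted variance for the input (for example, from a Gaussian output head); the function name and the numbers are hypothetical.

```python
import statistics


def decompose_ensemble_variance(member_means, member_variances):
    """Split total predictive variance into aleatoric and epistemic parts.

    aleatoric = average of the members' predicted variances
    epistemic = population variance of the members' predicted means
    """
    if len(member_means) != len(member_variances) or len(member_means) < 2:
        raise ValueError("Need matching lists with at least two ensemble members")
    aleatoric = statistics.fmean(member_variances)
    epistemic = statistics.pvariance(member_means)
    return {
        "mean": statistics.fmean(member_means),
        "aleatoric": aleatoric,
        "epistemic": epistemic,
        "total": aleatoric + epistemic,
    }


# Made-up outputs from a five-member ensemble for a single input.
result = decompose_ensemble_variance(
    member_means=[4.1, 3.9, 4.3, 4.0, 4.2],
    member_variances=[0.20, 0.25, 0.22, 0.18, 0.21],
)
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```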

Protocol 3: Bayesian Neural Networks for Uncertainty Quantification

Purpose: Implement principled uncertainty quantification through probabilistic modeling [22].

Detailed Methodology:

- Model Specification:

  - Treat network weights as probability distributions rather than point estimates

  - Define prior distributions over weights (typically Gaussian)

- Training Procedure:

  - Use variational inference or Markov Chain Monte Carlo (MCMC) methods

  - Learn posterior distribution over weights given training data

- Prediction Phase:

  - Generate predictions by marginalizing over weight posterior

  - Obtain predictive distribution through Bayesian model averaging

- Uncertainty Extraction:

  - Predictive variance captures both aleatoric and epistemic uncertainty

  - Use entropy of predictive distribution for classification tasks

  - Calculate credible intervals for regression outputs

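Interpretation: The spread of the predictive distribution incorporates both types of uncertainty, which can be separated, for example with the law of total variance (the expected noise variance under the weight posterior versus the variance of the predictive mean across weight samples) [22].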
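A full Bayesian neural network is too large for a short example, so the sketch below applies the same steps (prior, posterior sampling by MCMC, credible interval, variance decomposition) to a one-weight linear model, y = w·x + noise, sampled with random-walk Metropolis. The data, noise level, prior width, and sampler settings are all assumptions made for illustration.

```python
import math
import random
import statistics

# Made-up data for illustration; the true relationship is roughly y = 2x.
X = [1.0, 2.0, 3.0, 4.0, 5.0]
Y = [2.1, 3.9, 6.2, 7.8, 10.1]
NOISE_SD = 0.5   # assumed known observation noise (aleatoric)
PRIOR_SD = 10.0  # Gaussian prior N(0, PRIOR_SD^2) on the single weight


def log_posterior(w: float) -> float:
    """Unnormalized log posterior: Gaussian prior plus Gaussian likelihood."""
    log_prior = -0.5 * (w / PRIOR_SD) ** 2
    log_lik = -0.5 * sum(((y - w * x) / NOISE_SD) ** 2 for x, y in zip(X, Y))
    return log_prior + log_lik


def metropolis(n_samples: int = 20000, burn_in: int = 2000, step: float = 0.1, seed: int = 0):
    """Random-walk Metropolis sampler for the weight posterior."""
    rng = random.Random(seed)
    w = 0.0
    current = log_posterior(w)
    samples = []
    for i in range(n_samples + burn_in):
        proposal = w + rng.gauss(0.0, step)
        candidate = log_posterior(proposal)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            w, current = proposal, candidate
        if i >= burn_in:
            samples.append(w)
    return samples


samples = metropolis()
posterior_mean = statistics.fmean(samples)
cut_points = statistics.quantiles(samples, n=40)  # cut points at 2.5% steps
credible_interval = (cut_points[0], cut_points[-1])

# Decompose predictive variance at a new input.
x_new = 6.0
epistemic_var = statistics.pvariance([w * x_new for w in samples])
aleatoric_var = NOISE_SD ** 2
print(f"Posterior mean of w: {posterior_mean:.3f}")
print(f"Approximate 95% credible interval: {credible_interval[0]:.3f} to {credible_interval[1]:.3f}")
print(f"Epistemic variance at x={x_new}: {epistemic_var:.4f}, aleatoric variance: {aleatoric_var:.4f}")
```

For this conjugate toy problem the posterior is available in closed form, which makes it a convenient check on the sampler before moving to models where no analytical answer exists.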

Uncertainty Quantification Workflow

The following diagram illustrates the complete workflow for quantifying and decomposing uncertainty in computational models:

Practical Implementation Framework

Research Reagent Solutions for Uncertainty Quantification

Table 3: Essential Computational Tools for Uncertainty Analysis

| Tool/Reagent | Function | Application Context |

|---|---|---|

| Monte Carlo Simulation Engine | Propagates input uncertainties through model | General computational models with parameter uncertainty |

| Markov Chain Monte Carlo (MCMC) | Samples from complex posterior distributions | Bayesian parameter estimation and model calibration |

| Gaussian Process Regression | Creates surrogate models with built-in uncertainty | Emulation of computationally expensive simulations |

| Bayesian Neural Network Framework | Implements probabilistic deep learning | High-dimensional models with complex uncertainty structures |

| Conformal Prediction Library | Provides distribution-free prediction intervals | Model-agnostic uncertainty quantification with coverage guarantees |

| Ensemble Modeling Toolkit | Manages multiple model training and prediction | Committee-based uncertainty estimation |

| Sobol Sequence Generator | Implements quasi-random sampling | Efficient exploration of high-dimensional parameter spaces |

| Statistical Emulator | Creates fast approximations of complex models | Uncertainty propagation in computationally intensive models |

Decision Framework for Uncertainty Reduction

Table 4: Strategic Approaches for Managing Different Uncertainty Types

| Uncertainty Type | Characterization Methods | Reduction Strategies | Acceptance Measures |

|---|---|---|---|

| Aleatory | Statistical analysis of residuals, Variogram analysis, Noise decomposition | Improved measurement precision, Stratification of populations, Covariate inclusion | Uncertainty propagation, Robust design, Safety margins |

| Epistemic | Sensitivity analysis, Model discrepancy assessment, Bayesian model averaging | Additional targeted experiments, Model structure improvement, Domain expansion | Model averaging, Multiple model comparison, Conservative bounding |

Advanced Topics in Uncertainty Classification

Distinguishing Uncertainty in Complex Models

In practical applications, aleatory and epistemic uncertainties are often intertwined, requiring sophisticated decomposition approaches [25]. For computational models in drug development, this distinction becomes particularly important when:

- Extrapolating beyond clinical trial populations: aleatory variability between patients vs. epistemic uncertainty about population characteristics

- Predicting long-term drug effects: aleatory stochasticity in biological processes vs. epistemic uncertainty in mechanism of action

- Scaling from in vitro to in vivo results: aleatory experimental noise vs. epistemic uncertainty in translational relationships

Recent research demonstrates that linear probes trained on internal activations of large models can effectively distinguish epistemic from aleatoric uncertainty, even when evaluated on unseen data domains [25]. This suggests that neural representations may natively encode information about the nature of uncertainty, providing promising avenues for automated uncertainty classification.

Uncertainty in Model Selection and Development

Beyond parameter uncertainty, model uncertainty represents a significant epistemic challenge in computational modeling. As classified in recent literature, this encompasses [24]:

- Uncertainty about the true model - Concerning the appropriate functional form, variables, and distributional assumptions

- Model selection uncertainty - Reflecting how different data samples can lead to different selected models

- Model selection instability - Where slight changes in data yield different optimal models

Addressing these uncertainties requires techniques such as Bayesian Model Averaging (BMA) and Frequentist Model Averaging (FMA), which propagate model selection uncertainty into predictive uncertainty, providing more honest assessments of predictive reliability [24].
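As a rough illustration of how model averaging carries model selection uncertainty into a prediction, the sketch below weights candidate models by a BIC-based approximation to their posterior probabilities. This is a simplification of full Bayesian Model Averaging. The polynomial candidate models, the Gaussian noise assumption, and all function names are hypothetical choices made for the example.

```python
import numpy as np

def bic_weights(bics):
    """Approximate posterior model weights from BIC values (equal prior weights assumed)."""
    bics = np.asarray(bics, dtype=float)
    delta = bics - bics.min()          # subtract the minimum for numerical stability
    w = np.exp(-0.5 * delta)
    return w / w.sum()

def fit_poly_bic(x, y, degree):
    """Least-squares polynomial fit and its BIC under a Gaussian noise assumption."""
    coeffs = np.polyfit(x, y, degree)
    resid = y - np.polyval(coeffs, x)
    n = len(y)
    k = degree + 2                     # polynomial coefficients plus the noise variance
    sigma2 = np.mean(resid ** 2)       # maximum-likelihood noise variance
    log_lik = -0.5 * n * (np.log(2.0 * np.pi * sigma2) + 1.0)
    return coeffs, k * np.log(n) - 2.0 * log_lik

def bma_predict(x, y, x_new, degrees=(1, 2, 3)):
    """Weighted-average prediction plus the between-model variance at x_new."""
    fits = [fit_poly_bic(x, y, d) for d in degrees]
    weights = bic_weights([bic for _, bic in fits])
    preds = np.array([np.polyval(c, x_new) for c, _ in fits])
    mean = weights @ preds
    # Spread of the individual model predictions around the averaged prediction.
    between_var = weights @ (preds - mean) ** 2
    return mean, between_var, weights

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = np.linspace(0.0, 1.0, 40)
    y = 1.0 + 2.0 * x + rng.normal(0.0, 0.1, x.size)
    mean, var, w = bma_predict(x, y, np.array([0.5, 2.0]))
    print("weights:", w, "prediction:", mean, "between-model variance:", var)
```

The between-model variance is the term that a single selected model would silently drop. It typically grows when predicting outside the range of the data, where the candidate models disagree most.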

The rigorous distinction between aleatory and epistemic uncertainty provides a critical foundation for credible computational modeling in scientific research and drug development. By implementing the methodologies and frameworks presented in this guide, researchers can quantify both uncertainty sources, reduce the uncertainty that is reducible through targeted strategies, and communicate clearly the uncertainty that is not. This systematic approach to uncertainty classification strengthens the verification and validation process, ultimately supporting more reliable scientific inferences and safer, more effective therapeutic developments.

From Theory to Practice: Implementing VVUQ in Biomedical Research

Verification and Validation (V&V) are fundamental pillars of credible computational modeling research. Within a broader VVUQ (Verification, Validation, and Uncertainty Quantification) framework, they serve distinct but complementary purposes. Verification is the process of determining that a computational model accurately represents the underlying mathematical model and its solution ("solving the equations right"). Validation is the process of determining the degree to which a model is an accurate representation of the real world from the perspective of the intended uses of the model ("solving the right equations") [26]. This guide focuses exclusively on the first pillar: code and solution verification, providing researchers and drug development professionals with the methodologies to ensure their software solves equations correctly.

Core Concepts: Code Verification vs. Solution Verification

Verification is subdivided into two critical activities:

- Code Verification: The process of ensuring that the mathematical model and its solution algorithms are correctly implemented in software code, free of programming errors. It answers the question: "Is the code bug-free?"

- Solution Verification: The process of estimating the numerical accuracy of a specific computational solution obtained using a verified code. It quantifies the numerical errors introduced by the discretization of continuous equations and iterative solution methods [27] [26].

The following workflow outlines the typical stages of a verification process, from the foundational mathematical model to a final, credible solution.

Code Verification: Establishing a Bug-Free Code

The objective of code verification is to find and eliminate errors in the source code (e.g., mistakes in logic, syntax, or algorithm implementation). A key methodology is the Method of Manufactured Solutions (MMS).

Experimental Protocol: The Method of Manufactured Solutions (MMS)

The MMS is a rigorous technique for code verification that does not rely on pre-existing analytical solutions to the governing equations [26].

Detailed Methodology:

- Choose an Arbitrary Solution: Begin by choosing a smooth, non-trivial, but otherwise arbitrary function for the dependent variable(s). The function should be infinitely differentiable, with non-zero derivatives for every term in the governing equations, and it need not satisfy those equations.

- Derive Analytical Source Terms: Substitute the manufactured solution into the governing partial differential equations (PDEs). This substitution will result in a residual, as the chosen function is not a solution. This residual is then considered as an analytical source term that must be added to the PDE to make the manufactured solution an exact solution.

- Implement Source Terms in Code: Modify the code to include the derived analytical source terms.

- Run Simulation and Compare: Execute the simulation on a series of progressively refined grids (or meshes).

- Calculate Error and Order of Accuracy: Compute the error by comparing the numerical solution to the known manufactured solution. The key metric is the observed order of accuracy, which should match the formal order of the numerical discretization scheme (e.g., second-order convergence for a second-order method).
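The steps above can be sketched on a small model problem. The example below is a minimal illustration under stated assumptions, not a production verification suite: the governing equation (-u'' + u = 0 on the unit interval with zero boundary values), the manufactured solution sin(pi x), the second-order central-difference solver, and all function names are choices made for this sketch.

```python
import numpy as np

def manufactured_solution(x):
    """Step 1: a smooth function that does NOT satisfy -u'' + u = 0."""
    return np.sin(np.pi * x)

def manufactured_source(x):
    """Step 2: the residual -u_m'' + u_m, derived analytically, used as a source term."""
    return (np.pi ** 2 + 1.0) * np.sin(np.pi * x)

def solve_reaction_diffusion(n, source):
    """Step 3: solve -u'' + u = source(x) on (0, 1), u(0) = u(1) = 0, with n intervals.

    Uses second-order central differences, so the formal order of accuracy is 2.
    """
    h = 1.0 / n
    x = np.linspace(0.0, 1.0, n + 1)
    m = n - 1                                     # number of interior unknowns
    A = (np.diag(np.full(m, 2.0 / h ** 2 + 1.0))
         + np.diag(np.full(m - 1, -1.0 / h ** 2), 1)
         + np.diag(np.full(m - 1, -1.0 / h ** 2), -1))
    u = np.zeros(n + 1)                           # boundary values stay at zero
    u[1:-1] = np.linalg.solve(A, source(x[1:-1]))
    return x, u

def observed_orders(grid_sizes=(10, 20, 40, 80)):
    """Steps 4 and 5: refine the grid, measure the error, estimate the observed order."""
    errors = []
    for n in grid_sizes:
        x, u = solve_reaction_diffusion(n, manufactured_source)
        errors.append(np.max(np.abs(u - manufactured_solution(x))))
    errors = np.array(errors)
    sizes = np.array(grid_sizes, dtype=float)
    # p = ln(E_coarse / E_fine) / ln(r), with r the refinement factor between grids.
    orders = np.log(errors[:-1] / errors[1:]) / np.log(sizes[1:] / sizes[:-1])
    return errors, orders

if __name__ == "__main__":
    errors, orders = observed_orders()
    print("max errors:", errors)
    print("observed orders:", orders)
```

If the observed orders settle near the formal order of 2, the discretization is behaving as designed. A persistent shortfall, for example first-order convergence, would point to a coding or boundary-condition error worth investigating.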

The logical sequence of MMS, from creating a known solution to verifying the code's performance against it, is outlined below.

Solution Verification: Quantifying Numerical Error

Once the code is verified, solution verification is performed for each simulation to quantify numerical errors, primarily discretization error.

Discretization Error Estimation

Discretization error arises from approximating continuous PDEs with algebraic equations on a discrete grid. The following table summarizes common methods for estimating this error [26].

Table 1: Methods for Estimating Discretization Error in Solution Verification

| Method | Brief Description | Key Function | Pros & Cons |
|---|---|---|---|
| Richardson Extrapolation | Uses solutions on two or more systematically refined grids to estimate the exact solution and error on the finest grid. | Provides an error estimate and an improved solution estimate. | Pro: High-fidelity estimate. Con: Requires multiple, systematic grid refinements; can be costly. |
| Grid Convergence Index (GCI) | A standardized methodology based on Richardson Extrapolation that provides a consistent error band. | Reports a conservative confidence interval for the discretization error. | Pro: Allows for cross-study comparison; built-in safety factor. Con: Same cost as Richardson Extrapolation. |
| Residual Methods | Use the residual left when the numerical solution is substituted into the governing PDE, a quantity closely related to the local truncation error, as an error estimator. | Guides adaptive mesh refinement (AMR). | Pro: Can be computed from a single solution. Con: May not be as accurate as Richardson-based methods. |

Experimental Protocol: Conducting a Grid Convergence Study

A grid convergence study is the primary experimental protocol for solution verification.

Detailed Methodology:

- Generate a Sequence of Grids: Create a series of at least three systematically refined grids. A common practice is to double the number of grid points in each spatial direction for each refinement (i.e., a grid refinement factor of 2).

- Compute Solutions: Run the simulation to obtain a solution for each grid in the sequence.

- Calculate Key Quantities of Interest (QOIs): For each solution, compute the specific QOIs relevant to the study, such as drag coefficient, peak stress, or flow rate.

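- Analyze Convergence: Observe the behavior of the QOIs as the grid is refined. The QOIs should converge monotonically, with the differences between successive grids shrinking. Oscillatory or diverging behavior indicates that the grids are not yet in the asymptotic range, in which case Richardson-based estimates are unreliable.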

- Apply an Error Estimator: Use a method from Table 1, such as Richardson Extrapolation, to estimate the numerical error in the finest grid solution and the observed order of accuracy of the method.
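The final two steps can be sketched for a single QOI computed on three grids with a constant refinement factor. The function below is a minimal sketch assuming the commonly used three-grid form of Richardson Extrapolation and the GCI with a safety factor of 1.25. The function name, argument names, and the synthetic QOI values in the usage example are illustrative assumptions.

```python
import math

def grid_convergence(f_fine, f_medium, f_coarse, r=2.0, safety_factor=1.25):
    """Estimate observed order, extrapolated value, and fine-grid GCI for one QOI.

    Assumes a constant refinement factor r between the three grids and a
    non-zero fine-grid value (the GCI below is a relative error band).
    """
    d_fine = f_medium - f_fine        # change between the two finest grids
    d_coarse = f_coarse - f_medium    # change between the two coarsest grids
    if d_fine == 0.0 or d_coarse == 0.0:
        raise ValueError("QOI identical on successive grids; order cannot be estimated")

    ratio = d_fine / d_coarse
    if ratio <= 0.0:
        raise ValueError("oscillatory behavior; Richardson-based estimates do not apply")
    if ratio >= 1.0:
        raise ValueError("differences are not shrinking; solution is not converging")

    # Observed order of accuracy from the ratio of successive differences.
    p = math.log(d_coarse / d_fine) / math.log(r)

    # Richardson estimate of the grid-independent value.
    f_extrapolated = f_fine + (f_fine - f_medium) / (r ** p - 1.0)

    # Grid Convergence Index on the fine grid, as a fraction of the fine-grid value.
    relative_change = abs((f_medium - f_fine) / f_fine)
    gci_fine = safety_factor * relative_change / (r ** p - 1.0)

    return {
        "observed_order": p,
        "extrapolated_value": f_extrapolated,
        "gci_fine": gci_fine,
    }

if __name__ == "__main__":
    # Synthetic QOI following f(h) = 1 + 0.5 * h**2 at h = 0.1, 0.2, 0.4.
    print(grid_convergence(1.005, 1.02, 1.08, r=2.0))
```

The explicit checks on the convergence ratio mirror the "Analyze Convergence" step: the estimator refuses to return numbers when the three solutions do not converge monotonically, rather than reporting a misleading error band.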

The Scientist's Toolkit: Essential Research Reagents

The following table details key "research reagents" – the software tools and standards – essential for performing rigorous code and solution verification [28] [29] [30].

Table 2: Key Research Reagent Solutions for Verification

| Tool / Standard Category | Specific Tool / Standard | Function in Verification |
|---|---|---|
| Verification Standards | ASME V&V 10-2019, ASME VVUQ 1-2022, NASA STD 7009 | Provide standardized procedures, terminology, and methodologies for performing and reporting verification activities. Essential for ensuring consistency and credibility, especially in regulated industries [26]. |
| Multiphysics Simulation Platforms | ANSYS, COMSOL Multiphysics | Provide built-in tools for mesh refinement studies and some error estimation. Their solvers undergo rigorous code verification, allowing users to focus on solution verification and application [30]. |
| Mathematical & Scripting Environments | MATLAB & Simulink, Python (NumPy/SciPy) | Enable the implementation and automation of verification protocols, such as running MMS or processing results from grid convergence studies. Offer high flexibility for custom analysis [30]. |
| Open-Source Simulation Environments | OpenModelica | An open-source tool for equation-based modeling. Its transparency allows for direct inspection and verification of implementation, making it valuable for academic research and method development [30]. |
| Advanced Formal Methods Tools | VMCAI-related Research Tools (e.g., for Model Checking, Abstract Interpretation) | While more common in computer science, these tools (often presented at conferences like VMCAI) provide formal, mathematical proof of certain software properties, representing the highest level of code verification for critical systems [29]. |

Code and solution verification are non-negotiable steps in establishing the credibility of computational simulations. Code verification, through methods like the Method of Manufactured Solutions, provides strong evidence that the software implementation is free of errors that degrade its order of accuracy. Solution verification, primarily via grid convergence studies, quantifies the numerical errors in a specific computation. By systematically applying the protocols and tools detailed in this guide, researchers and scientists in drug development and other fields can provide evidence that their software is indeed "solving the equations correctly," thereby creating a solid foundation for subsequent model validation and decision-making.

Verification and Validation (V&V) are fundamental processes for establishing the credibility and reliability of computational models used in scientific research and drug development. Despite their complementary nature, they address distinct questions: Verification is a mathematical exercise that answers "Are we solving the equations correctly?" by checking for programming errors and numerical accuracy, while Validation is a scientific exercise that answers "Are we solving the correct equations?" by assessing how well the computational model represents physical reality [31] [7]. This distinction is crucial for researchers developing in silico models, as a model can be perfectly verified yet remain invalid for its intended purpose if it does not accurately capture the underlying biology [7].

The role of V&V has expanded significantly as high-quality computational modeling becomes available in more application areas [32]. In drug development, where modeling and simulation has progressed from a scientific nicety to a regulatory necessity, rigorous V&V provides the evidence base that allows researchers, clinicians, and regulators to trust model predictions [33]. The U.S. Food and Drug Administration's Project Optimus initiative and the 21st Century Cures Act explicitly recognize the importance of these in silico tools for improving drug development efficiency [34] [33]. This guide provides a comprehensive framework for designing effective validation experiments that bridge bench research and virtual patient applications.

Core Principles of Verification and Validation

Defining the V&V Framework

Verification consists of two complementary activities: code verification addresses coding mistakes and checks the consistency of discretization techniques, while solution verification estimates the numerical uncertainty of solutions when exact answers are unknown [31]. Code verification ensures the mathematical model is implemented correctly in software, whereas solution verification quantifies the numerical error in computed results [32].

Validation quantifies modeling errors by comparing computational predictions to experimental data, accounting for uncertainties in both computational and experimental results [31]. Unlike verification, validation is not a binary pass/fail determination but rather a process of assessing the degree to which a model is an accurate representation of the real world from the perspective of its intended uses [7]. The required level of validation rigor should be commensurate with the importance and needs of the application and decision context [32].
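One widely used way to make this comparison concrete is to compute a comparison error together with a validation uncertainty that pools the numerical, input, and experimental uncertainties. The sketch below follows that general pattern, in the style of ASME V&V 20, a standard this section does not itself cite. It assumes the three uncertainty sources are independent standard uncertainties, and the function name, argument names, and example values are hypothetical.

```python
import math

def validation_comparison(simulation, experiment, u_num, u_input, u_exp):
    """Compare a predicted QOI with a measured one, accounting for uncertainty.

    Assumes u_num (numerical), u_input (propagated input), and u_exp
    (experimental) are independent standard uncertainties in the QOI's units.
    """
    comparison_error = simulation - experiment
    # Root-sum-square combination, valid only under the independence assumption.
    u_val = math.sqrt(u_num ** 2 + u_input ** 2 + u_exp ** 2)
    # If |E| <= u_val, the model error cannot be resolved below the noise floor
    # of the comparison; if |E| is much larger, E mainly reflects model error.
    within_noise = abs(comparison_error) <= u_val
    return comparison_error, u_val, within_noise

if __name__ == "__main__":
    # Hypothetical example: predicted vs. measured peak concentration (ng/mL).
    error, u_val, within_noise = validation_comparison(
        simulation=105.0, experiment=100.0, u_num=1.0, u_input=4.0, u_exp=3.0
    )
    print(f"E = {error:.2f}, u_val = {u_val:.2f}, within noise: {within_noise}")
```

The output is a graded statement rather than a pass/fail flag. Whether a given comparison error is acceptable still depends on the intended use of the model and the decision it supports.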

Table 1: Key Definitions in Verification and Validation

| Term | Definition | Primary Question |

|---|---|---|

| Verification | Process of determining that a model implementation accurately represents the conceptual description and solution | "Are we solving the equations right?" |

| Validation | Process of comparing computational predictions to experimental data to assess modeling error | "Are we solving the right equations?" |

| Uncertainty Quantification | Process of characterizing and quantifying uncertainties in model inputs and their propagation to outputs | "What is the potential range of errors in our predictions?" |

| Code Verification | Checking for programming errors and consistency of discretization techniques | "Is the code implemented correctly?" |