Verification vs Validation: A Strategic Guide for Biomedical Model Credibility

Abstract

This article provides a comprehensive guide to model verification and validation (V&V), tailored for researchers and professionals in drug development and biomedical sciences. It clarifies the foundational distinction between 'building the model right' (verification) and 'building the right model' (validation) and explores their critical roles in ensuring model credibility for research and regulatory acceptance. The content spans from core definitions and methodological processes to advanced troubleshooting and quantitative validation techniques, concluding with best practices for implementing a rigorous V&V framework in biomedical and clinical research settings.

Verification and Validation Defined: Mastering the Core Concepts for Scientific Modeling

In scientific and industrial contexts, a model is a representation of a real-world process, created to understand relationships between input variables and outcomes [1]. These models can be mathematical, simulation-based, or physical, and they allow researchers to study, experiment, and predict system behaviors without directly intervening in the actual process [1]. As noted by statistician George E.P. Box, "Essentially, all models are wrong, but some are useful," highlighting that while no model can fully capture reality, a well-constructed model provides significant practical utility [1].

The development and refinement of a model follow a structured lifecycle to ensure its reliability. This begins with model formulation, where the model's structure and underlying assumptions are defined based on the problem context. Next comes parameter estimation and training, where the model is calibrated using available data. The two crucial stages that follow—verification and validation—serve distinct but complementary purposes in assessing model quality and form the core focus of this technical guide.

Defining Verification and Validation

What is Model Verification?

Model verification is the process of ensuring that a computational model is implemented correctly and functions as intended from a technical perspective [2]. It answers the question: "Have we built the model correctly?" according to its specifications [1]. Verification involves checking that the model's logic, algorithms, code, and calculations are error-free and consistent with its theoretical design [2]. This process does not assess whether the model accurately represents reality, but rather confirms that it operates correctly based on its defined parameters and relationships.

What is Model Validation?

Model validation evaluates whether the model accurately represents the real-world system it is intended to simulate [2] [1]. It answers the question: "Have we built the correct model?" [1]. Validation determines how well the model's predictions correspond to actual observed outcomes in the application domain, ensuring it achieves its intended purpose and is fit for use in decision-making [3] [4].

Core Differences Between Verification and Validation

The table below summarizes the fundamental distinctions between these two critical processes:

Table 1: Key Differences Between Model Verification and Validation

| Aspect | Verification | Validation |
|---|---|---|
| Primary Question | Are we building the model correctly? [1] | Are we building the correct model? [1] |
| Focus | Internal correctness, code implementation, algorithmic accuracy [2] | Correspondence to real-world phenomena, predictive accuracy [1] |
| Basis | Model specifications, design documents, theoretical requirements [2] | Empirical data, experimental results, real-world observations [1] |
| Methods | Code reviews, unit testing, walkthroughs, static analysis [5] [2] | Statistical tests, residual analysis, cross-validation, comparison with new data [3] [6] |
| When Performed | Throughout development, before validation [1] | After verification, using separate validation datasets [1] |
| Outcome | Error-free implementation that matches specifications [1] | Model that accurately represents reality within intended application domain [6] |

The Critical Importance of Verification and Validation

Why Verification Matters

Verification provides the essential foundation for model credibility by ensuring technical correctness. It identifies implementation errors early in the development process, when they are least costly to fix [2]. In complex pharmaceutical development models, verification catches calculation errors, logic flaws, and coding mistakes that could otherwise lead to fundamentally flawed results and misguided decisions [1]. For instance, in a simulation model of a distribution center, verification might reveal an incorrectly entered parameter where "15 minutes" was entered instead of "1.5 minutes" for machine processing time [1]. Regular verification throughout the modeling lifecycle prevents such errors from propagating and saves significant time and resources [2].

Why Validation is Essential

Validation provides the evidence that a model is not just mathematically sound but also scientifically meaningful and applicable to real-world scenarios. In pharmaceutical development and healthcare applications, model validation is particularly crucial as inaccurate predictions can have severe consequences [3] [4]. Proper validation ensures models can generalize beyond their training data to new, unseen instances, which is the ultimate goal of any predictive model [3] [4]. It helps prevent both overfitting (where a model learns noise rather than underlying patterns) and underfitting (where a model fails to capture important relationships), both of which render models unreliable for practical application [3] [4].

Consequences of Inadequate V&V

Neglecting either verification or validation risks substantial operational, financial, and safety consequences. In regulatory environments like pharmaceutical development, insufficient V&V can lead to non-compliance with FDA, EMA, and ICH guidelines [7]. More critically, unvalidated healthcare models may produce erroneous predictions affecting patient safety, while unvalidated manufacturing process models can result in failed production batches, product recalls, and significant financial losses [3] [4].

Methodologies and Experimental Protocols

Model Verification Techniques

Verification employs various systematic approaches to ensure model implementation matches specifications:

Code Inspections and Walkthroughs: Formal, systematic peer reviews of model code and documentation using checklists and responsibilities to identify errors before dynamic testing begins [5] [2]. Team members methodically trace through code logic to detect implementation flaws.

Static Analysis: Automated tools examine source code without execution to detect potential bugs, security vulnerabilities, maintainability issues, and adherence to coding standards [5].

Unit Testing: Isolated testing of individual model components or functions to verify they produce expected outputs for given inputs [5]. Developers create and run test cases to ensure each unit behaves as specified before integration.

Traceability Verification: Ensuring each model requirement has corresponding implementation and test coverage, typically using traceability matrices to map relationships between specifications, code, and tests [5].

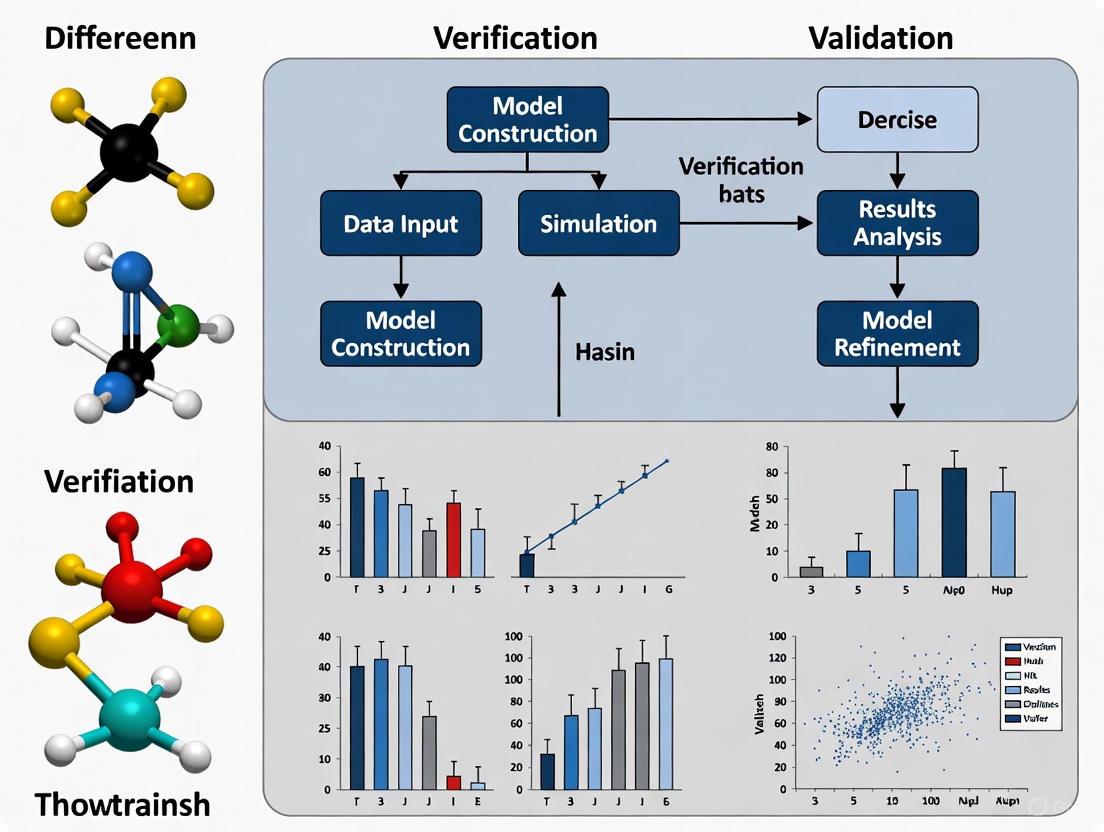

The verification workflow typically follows a structured process from requirements review through defect resolution, as illustrated below:

Diagram 1: Model Verification Workflow

Model Validation Techniques

Validation employs statistical and empirical methods to assess model performance against real-world data:

Residual Diagnostics: Analyzing differences between actual data and model predictions to check for patterns that indicate model flaws [6]. This includes creating:

- Residuals vs. Fitted Values Plot: Checks for constant variance and nonlinear patterns

- Normal Q-Q Plot: Assesses normality of residuals

- Scale-Location Plot: Evaluates homoscedasticity

- Residuals vs. Leverage Plot: Identifies influential data points
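The residual checks listed above are usually read from plots, but the same quantities can be computed directly. The following is a minimal sketch using only the Python standard library; the data values and the `spread_trend` summary (a crude numeric stand-in for the Scale-Location plot) are assumptions made for illustration and do not come from the cited sources.

```python
import statistics

def fit_line(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def pearson(a, b):
    """Pearson correlation; returns 0.0 if either input is constant."""
    mean_a, mean_b = statistics.fmean(a), statistics.fmean(b)
    cov = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    var_a = sum((ai - mean_a) ** 2 for ai in a)
    var_b = sum((bi - mean_b) ** 2 for bi in b)
    return cov / (var_a * var_b) ** 0.5 if var_a > 0 and var_b > 0 else 0.0

def residual_summary(x, y):
    """Fit a line and summarize its residuals."""
    intercept, slope = fit_line(x, y)
    fitted = [intercept + slope * xi for xi in x]
    residuals = [yi - fi for yi, fi in zip(y, fitted)]
    return {
        "slope": slope,
        # With an intercept in the model, this is zero up to rounding.
        "mean_residual": statistics.fmean(residuals),
        # A value far from 0 hints that residual spread changes with the
        # fitted value (possible heteroscedasticity).
        "spread_trend": pearson(fitted, [abs(r) for r in residuals]),
        "residuals": residuals,
    }

# Hypothetical data, invented for illustration only.
x = [1, 2, 3, 4, 5, 6, 7, 8]
y = [2.1, 3.9, 6.3, 7.6, 10.6, 11.2, 15.0, 14.8]
summary = residual_summary(x, y)
```

A numeric summary like this does not replace the plots; it is mainly useful as an automated first screen before a person inspects the diagnostics.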

Cross-Validation: A resampling technique that iteratively refits the model, each time leaving out a subset of data to test predictive performance on unseen samples [3] [6]. Common approaches include:

- k-Fold Cross-Validation: Data divided into k subsets; model trained on k-1 folds and tested on the remaining fold, repeated k times [3]

- Leave-One-Out Cross-Validation (LOOCV): Special case where k equals the number of data points [3]

- Stratified K-Fold: Preserves class distribution proportions in each fold [3]
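To make the fold mechanics concrete, here is a small sketch of k-fold index generation in plain Python. The mean-only baseline model and the response values are hypothetical; in practice a library routine (for example from scikit-learn) would normally be used instead of hand-rolled splitting.

```python
import random
import statistics

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, test_idx) pairs; each sample is tested exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx

def cross_validated_mse(y, k, seed=0):
    """Cross-validated MSE of a mean-only baseline model (illustrative)."""
    fold_mse = []
    for train_idx, test_idx in k_fold_indices(len(y), k, seed):
        # "Training" here is just taking the mean of the training responses.
        prediction = statistics.fmean(y[j] for j in train_idx)
        fold_mse.append(
            statistics.fmean((y[j] - prediction) ** 2 for j in test_idx)
        )
    return statistics.fmean(fold_mse)

y = [4.1, 3.8, 5.0, 4.6, 4.4, 3.9, 4.8, 4.2, 4.7, 4.0]  # hypothetical responses
mse_5fold = cross_validated_mse(y, k=5)
mse_loocv = cross_validated_mse(y, k=len(y))  # LOOCV: k equals the sample count
```

Setting `k` to the number of samples reproduces leave-one-out cross-validation, which is why LOOCV is described as a special case of k-fold.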

Holdout Validation: Splitting data into separate training and testing sets, with the testing set reserved exclusively for validation [3]. Common splits include 70-30 or 80-20 ratios.

External Validation: Testing model performance on completely new datasets not used during model development, providing the strongest evidence of generalizability [6].

The selection of appropriate validation techniques depends on the research context, data availability, and model purpose, as summarized below:

Table 2: Model Validation Methods Based on Research Context

| Research Context | Recommended Validation Methods | Key Considerations |
|---|---|---|
| Existing process with available data | Holdout validation, k-Fold Cross-Validation, Residual diagnostics | Ensure test data represents operational range; use multiple methods for robustness [6] |
| Existing process with limited data | Leave-One-Out Cross-Validation, Bootstrap validation, Bayesian methods | LOOCV computationally intensive with large datasets; consider prior distributions in Bayesian approaches [3] [6] |
| New process with known variable relationships | Correlation analysis, comparison to established theoretical relationships, expert judgment | Use Turing-type tests where experts distinguish between real data and model outputs [1] [6] |
| Time-series data | Time-series cross-validation, temporal holdout, autocorrelation analysis | Respect temporal order; don't use future data to predict past [3] |
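For the time-series row, the key constraint is that every test observation must come after every training observation. One common way to honor this is an expanding-window split, sketched below. The function name and parameters (`n_splits`, `min_train`) are hypothetical choices for this illustration.

```python
def expanding_window_splits(n_samples, n_splits, min_train):
    """Yield (train_idx, test_idx) pairs in which the test block always
    follows the training block in time."""
    test_size = (n_samples - min_train) // n_splits
    if test_size < 1:
        raise ValueError("not enough samples for the requested splits")
    for s in range(n_splits):
        train_end = min_train + s * test_size
        train_idx = list(range(train_end))
        test_idx = list(range(train_end, train_end + test_size))
        yield train_idx, test_idx

# 10 time-ordered observations, 3 splits, at least 4 training points.
ts_splits = list(expanding_window_splits(10, n_splits=3, min_train=4))
```

Unlike shuffled k-fold, no split here ever trains on observations that occur after the ones it is tested on.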

Quantitative Framework for V&V

Statistical Measures for Validation

Effective model validation employs quantitative metrics to assess performance objectively. The specific measures used depend on the model type (regression, classification, simulation) and application context:

Table 3: Key Quantitative Measures for Model Validation

| Metric Category | Specific Measures | Interpretation | Application Context |
|---|---|---|---|
| Bias Estimation | Mean difference, Bland-Altman difference, Regression-estimated bias | Measures systematic over/under prediction; should be minimal and consistent across measurement range [8] | Method comparisons, assay verification, instrument calibration |
| Precision Metrics | Standard deviation, %CV (Coefficient of Variation), Confidence Intervals | Quantifies random variation; smaller values indicate higher precision [8] | Replicate analyses, method robustness studies |
| Goodness-of-Fit | R-squared, Adjusted R-squared, Akaike Information Criterion (AIC) | R² is the proportion of variance explained by the model, so higher values indicate better fit; AIC trades fit against complexity, so lower values are preferred when comparing models [6] | Regression models, predictive model development |
| Error Metrics | Mean Squared Error (MSE), Root Mean Squared Error (RMSE), Mean Absolute Error (MAE) | Magnitude of prediction error; smaller values indicate better accuracy [3] | Predictive models, forecasting applications |
| Performance Thresholds | Sensitivity, Specificity, Accuracy, Precision-Recall | Classification performance; context-dependent optimal balances [3] | Binary classification, diagnostic tests |
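Several of the regression-type measures in the table can be computed in a few lines. The sketch below uses only the standard library and invented observed/predicted values. The sign convention (error = predicted minus observed) and the 1.96 multiplier for approximate 95% Bland-Altman limits of agreement are assumptions stated here rather than taken from the cited sources.

```python
import math
import statistics

def regression_metrics(observed, predicted):
    """Bias, error, and goodness-of-fit measures for paired values."""
    errors = [p - o for o, p in zip(observed, predicted)]
    n = len(errors)
    bias = statistics.fmean(errors)              # mean difference
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mean_obs = statistics.fmean(observed)
    ss_total = sum((o - mean_obs) ** 2 for o in observed)
    r_squared = 1.0 - (mse * n) / ss_total
    sd_diff = statistics.stdev(errors)           # sample SD of the differences
    return {
        "bias": bias,
        "mse": mse,
        "rmse": math.sqrt(mse),
        "mae": mae,
        "r_squared": r_squared,
        # Approximate 95% Bland-Altman limits of agreement.
        "limits_of_agreement": (bias - 1.96 * sd_diff, bias + 1.96 * sd_diff),
    }

# Hypothetical paired values, for illustration only.
observed = [10, 12, 14, 16, 18]
predicted = [11, 12, 15, 15, 19]
metrics = regression_metrics(observed, predicted)
```

With these numbers the bias is 0.4, MSE and MAE are both 0.8, and R² is 0.9. The classification measures in the last table row (sensitivity, specificity) need a confusion matrix and are not covered by this sketch.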

Experimental Design for Validation Studies

Proper experimental design is crucial for generating meaningful validation data. Design of Experiments (DOE) methodologies enable efficient evaluation of multiple factors simultaneously, providing more reliable information than one-factor-at-a-time approaches [7]. The pharmaceutical development example below illustrates a typical DOE application:

Table 4: Experimental Design for Pelletization Process Optimization

| Run Order | Binder (%) | Granulation Water (%) | Granulation Time (min) | Spheronization Speed (RPM) | Spheronization Time (min) | Yield (%) |
|---|---|---|---|---|---|---|
| 1 | 1.0 | 40 | 5 | 500 | 4 | 79.2 |
| 2 | 1.5 | 40 | 3 | 900 | 4 | 78.4 |
| 3 | 1.0 | 30 | 5 | 900 | 4 | 63.4 |
| 4 | 1.5 | 30 | 3 | 500 | 4 | 81.3 |
| 5 | 1.0 | 40 | 3 | 500 | 8 | 72.3 |
| 6 | 1.0 | 30 | 3 | 900 | 8 | 52.4 |
| 7 | 1.5 | 40 | 5 | 900 | 8 | 72.6 |
| 8 | 1.5 | 30 | 5 | 500 | 8 | 74.8 |

This fractional factorial design (2⁵⁻²) efficiently screens five factors at two levels each in only eight experimental runs, identifying significant factors affecting yield while minimizing resource requirements [7]. Statistical analysis of the results through ANOVA reveals that binder concentration, granulation water percentage, spheronization speed, and spheronization time account for over 98% of the variation in yield, enabling focused process optimization [7].
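The 98% figure can be checked approximately from the eight runs in Table 4. The sketch below computes each factor's main effect (mean yield at the high level minus mean yield at the low level) and its share of the total sum of squares. It is a main-effects-only calculation, not a reproduction of the ANOVA in [7]; in a design this small, main effects are aliased with two-factor interactions, so the shares should be read as screening estimates.

```python
import statistics

FACTORS = ["Binder", "Granulation Water", "Granulation Time",
           "Spheronization Speed", "Spheronization Time"]

# Rows copied from Table 4: five factor settings followed by yield (%).
RUNS = [
    (1.0, 40, 5, 500, 4, 79.2),
    (1.5, 40, 3, 900, 4, 78.4),
    (1.0, 30, 5, 900, 4, 63.4),
    (1.5, 30, 3, 500, 4, 81.3),
    (1.0, 40, 3, 500, 8, 72.3),
    (1.0, 30, 3, 900, 8, 52.4),
    (1.5, 40, 5, 900, 8, 72.6),
    (1.5, 30, 5, 500, 8, 74.8),
]

def main_effects(runs, factors):
    """Return {factor: (effect, share of total sum of squares)}."""
    yields = [run[-1] for run in runs]
    grand_mean = statistics.fmean(yields)
    ss_total = sum((y - grand_mean) ** 2 for y in yields)
    results = {}
    for j, name in enumerate(factors):
        high_level = max(run[j] for run in runs)
        high = [run[-1] for run in runs if run[j] == high_level]
        low = [run[-1] for run in runs if run[j] != high_level]
        effect = statistics.fmean(high) - statistics.fmean(low)
        # For a balanced two-level design with N runs: SS = N * effect^2 / 4.
        ss_effect = len(runs) * effect ** 2 / 4
        results[name] = (effect, ss_effect / ss_total)
    return results

effects = main_effects(RUNS, FACTORS)
share_of_four = sum(share for name, (_, share) in effects.items()
                    if name != "Granulation Time")
```

On these data the four named factors account for roughly 98.7% of the total variation, while granulation time contributes well under 1%, which is consistent with the conclusion quoted from [7].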

A Practical Workflow: Verification Precedes Validation

The relationship between verification and validation follows a logical sequence, with verification establishing technical correctness before validation assesses real-world relevance:

Diagram 2: Integrated V&V Workflow

This sequential approach ensures that fundamental implementation errors are corrected before assessing the model's relationship to reality, saving time and resources [1]. As shown in the workflow, both verification and validation may require multiple iterations before a model meets all requirements for deployment.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful model verification and validation in pharmaceutical development requires specific methodological tools and statistical approaches:

Table 5: Essential Research Reagents for Model V&V

| Tool/Category | Specific Examples | Function in V&V Process |
|---|---|---|
| Statistical Software | R, Python (scikit-learn, statsmodels), SAS, SPSS | Implement statistical validation methods, generate diagnostic plots, calculate performance metrics [6] |
| DOE Platforms | JMP, Minitab, SPC for Excel, Design-Expert | Design efficient experiments, analyze factorial designs, optimize process parameters [7] |
| Cross-Validation Methods | k-Fold, Leave-One-Out, Stratified K-Fold, Holdout | Assess model generalizability, detect overfitting, estimate performance on new data [3] [6] |
| Residual Diagnostics | Residual vs. Fitted plots, Q-Q plots, Scale-Location plots, ACF plots | Verify model assumptions, identify patterns in errors, detect heteroscedasticity and autocorrelation [6] |
| Reference Materials | Certified reference standards, quality control materials, spiked samples | Establish measurement accuracy, evaluate systematic bias, demonstrate method validity [8] |
| Data Management Systems | Electronic Lab Notebooks (ELNs), Laboratory Information Management Systems (LIMS) | Maintain data integrity, ensure traceability, document experimental parameters [8] |

Model verification and validation represent complementary but distinct processes that together ensure model reliability and relevance. Verification establishes that a model is implemented correctly according to its specifications, while validation confirms that the correct model was built for its intended real-world application [1]. Both processes are essential across scientific domains, but particularly crucial in regulated environments like pharmaceutical development where models inform critical decisions affecting product quality and patient safety [7].

A robust V&V strategy incorporates multiple techniques tailored to the specific research context, with verification preceding validation in an iterative workflow. Quantitative measures and statistical rigor provide the objective evidence needed to assess model performance, while proper experimental design ensures efficient generation of meaningful validation data. By adopting the comprehensive framework presented in this guide, researchers and drug development professionals can develop models that are not only technically sound but also scientifically meaningful and fit for their intended purpose.

In computational sciences, particularly in high-stakes fields like pharmaceutical development, the processes of verification and validation (V&V) are critical for ensuring model reliability and regulatory acceptance. Despite their intertwined nature, they address two fundamentally distinct questions: verification determines if a model has been implemented correctly according to its specifications ("building it right"), while validation assesses if the model is accurate and fit for its intended real-world purpose ("building the right thing") [1] [9]. This guide provides researchers and drug development professionals with a technical framework for implementing robust V&V practices, underpinned by experimental protocols, quantitative benchmarks, and regulatory considerations.

The creation of any computational model, from a simple pharmacokinetic equation to a complex AI-driven predictive tool, is an exercise in abstraction. All models are, by nature, approximations of reality. As statistician George E.P. Box famously noted, "Essentially, all models are wrong, but some are useful." [1] The journey from a "wrong" model to a "useful" one is navigated through rigorous verification and validation. These are not synonymous terms but complementary processes that form the bedrock of model credibility.

- Verification is the process of confirming that a model is correctly implemented with respect to its conceptual model and specifications. It answers the question: "Did we build the model right?" It is an internal process, checking for coding errors, logical consistency, and alignment with design documents, without reference to real-world data [1] [9].

- Validation is the process of substantiating that a model, within its domain of applicability, possesses a satisfactory range of accuracy consistent with its intended purpose. It answers the question: "Did we build the right model?" It is an external process, testing the model's output against data from the real-world system it aims to represent [1] [9].

The conflation of these two processes is a common pitfall that can lead to technically perfect models that are scientifically irrelevant or dangerously misleading. For drug development professionals, this distinction is not academic; it is a regulatory imperative. The U.S. Food and Drug Administration (FDA) now emphasizes a risk-based framework for establishing AI model credibility, requiring detailed disclosures about model architecture, data, training, and validation processes [10].

Foundational Concepts and Regulatory Context

A Tale of Two Processes: Definitions and Objectives

The core objectives of verification and validation are distinct, as summarized in the table below.

Table 1: Core Objectives of Verification and Validation

| Aspect | Verification ("Building it Right") | Validation ("Building the Right Thing") |
|---|---|---|
| Central Question | Does the model execute as designed? | Does the model accurately represent the real system? |
| Basis of Evaluation | Conceptual model, design specifications, software requirements. | Real-world system data and behavior [9]. |
| Primary Focus | Internal logic, code implementation, numerical accuracy, unit testing. | Model output accuracy, predictive power, fitness for purpose [9]. |
| Key Activity | Debugging, checking algorithms, ensuring calculations are error-free. | Comparing model predictions to empirical observations, sensitivity analysis [1]. |

A classic example illustrates this distinction. Consider a model built to predict waiting time (W) in a queue at an ice cream stand, based on the number of customers (X) and a constant service rate, resulting in the equation W = 3X [1].

- Verification involves testing the implementation: for inputs X=1, 2, 5, does the model output W=3, 6, 15? This confirms the model correctly executes the linear relationship defined by the modeler.

- Validation involves collecting real-world data: when Jessica arrives and finds 5 people in line, does she actually wait 15 minutes? The model may fail validation if real customers leave the line due to long waits, a behavior not captured in the oversimplified model [1].
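The ice cream example translates almost directly into code. In the sketch below, verification is a set of assertions against the specification W = 3X, while validation compares predictions with observed waits. The observed values are invented for illustration (the source gives no measurements), and the function names are hypothetical.

```python
def predicted_wait(customers_ahead):
    """Model under test: W = 3X, in minutes."""
    return 3 * customers_ahead

def verify_model():
    """Verification: does the implementation match the specification?"""
    for x, expected in [(1, 3), (2, 6), (5, 15)]:
        assert predicted_wait(x) == expected, f"W({x}) should be {expected}"
    return True

def validation_errors(observations):
    """Validation: observed wait minus predicted wait for each observation."""
    return [(x, waited - predicted_wait(x)) for x, waited in observations]

# Hypothetical field data: (customers ahead, minutes actually waited).
observed = [(1, 3.2), (2, 5.8), (5, 11.0), (8, 14.5)]

verified = verify_model()
errors = validation_errors(observed)
```

In this made-up data set the model passes verification but over-predicts long queues (for example by 4 minutes at X = 5), the kind of gap that would appear if customers abandon the line.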

The Regulatory Imperative in Drug Development

The pharmaceutical industry is undergoing a digital transformation, with Model-Informed Drug Development (MIDD) becoming a central paradigm. The FDA's evolving stance makes robust V&V non-negotiable.

- Shift to Continuous Lifecycle Validation: The FDA's Process Validation Guidance emphasizes a three-stage lifecycle: Process Design, Process Qualification, and Continued Process Verification (CPV), moving away from one-time validation to ongoing, data-driven monitoring [11].

- AI and Machine Learning Governance: The FDA's 2025 draft guidance outlines a risk-based framework for AI models used in drug development. "Model influence risk" and "decision consequence risk" determine the extent of required validation, with high-risk models (e.g., those impacting patient safety or clinical trial outcomes) requiring comprehensive disclosure of architecture, data sources, training methodologies, and performance metrics [10].

- Digital Compliance and Data Integrity: Paper-based validation is being phased out in favor of Digital Validation Platforms (DVPs) and compliance with electronic records standards (e.g., 21 CFR Part 11). Data must be secure, traceable, and tamper-proof [11].

The cost of neglecting proper V&V is high, not only in regulatory delays but also in operational inefficiency. Studies estimate that the use of MIDD yields "annualized average savings of approximately 10 months of cycle time and $5 million per program," savings that are only realized with credible, validated models [12].

Methodological Framework: A Practical Toolkit

This section details the experimental protocols and methodologies that form the backbone of a rigorous V&V strategy.

Model Verification Techniques

Verification ensures the computational integrity of the model. The following diagram and table summarize key activities and reagents for this phase.

Diagram 1: Model Verification Workflow

Table 2: Essential Research Reagents for Model Verification

| Reagent / Tool | Function in Verification |
|---|---|
| Unit Testing Framework (e.g., PyTest, JUnit) | Automates testing of individual functions and modules in isolation to ensure each component produces expected outputs for given inputs. |
| Static Code Analyzer (e.g., SonarQube, Pylint) | Scans source code without executing it to identify potential bugs, coding standard violations, and complex code segments prone to error. |
| Debugger (e.g., GDB, PDB) | Allows interactive tracing of code execution, inspection of variable states, and identification of logical errors. |
| Version Control System (e.g., Git) | Tracks all changes to the model code, enabling collaboration, reproducibility, and rollback to previous stable states. |
| Traceability Matrix | A document mapping model requirements and specifications to specific code components and test cases, ensuring full coverage. |

Model Validation Techniques

Validation tests the model's real-world relevance. The methodologies range from simple data splitting to complex statistical assessments.

Diagram 2: Model Validation Techniques

1. Data Splitting and Cross-Validation

These techniques assess a model's ability to generalize to unseen data [13] [14].

- Train-Test Split: The dataset is divided into a training set (e.g., 70-80%) and a held-out test set (e.g., 20-30%). The model is trained on the former and its performance is evaluated on the latter, providing an initial check for overfitting [13] [14].

- K-Fold Cross-Validation: The dataset is partitioned into k subsets (folds). The model is trained k times, each time using k-1 folds for training and the remaining fold for testing. The average performance across all k trials provides a robust estimate of generalizability, especially useful for smaller datasets [14].

- Stratified K-Fold Cross-Validation: A variant that preserves the original class distribution in each fold, crucial for imbalanced datasets common in medical applications (e.g., rare adverse event prediction) [14].
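Stratification matters most when one class is rare. The following sketch shows one simple way to build stratified folds with the standard library: indices are grouped by class, shuffled within each class, and dealt round-robin into folds. The 10% positive rate is a hypothetical stand-in for a rare adverse event, and the helper is illustrative rather than a replacement for a library implementation.

```python
import random
from collections import defaultdict

def stratified_k_fold(labels, k, seed=0):
    """Yield (train_idx, test_idx) pairs whose class mix mirrors the data."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for members in by_class.values():
        rng.shuffle(members)
        # Deal each class round-robin so every fold gets its share.
        for position, index in enumerate(members):
            folds[position % k].append(index)

    for f in range(k):
        test_idx = sorted(folds[f])
        train_idx = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train_idx, test_idx

# Hypothetical imbalanced outcome: 5 events among 50 subjects.
labels = [1] * 5 + [0] * 45
strat_splits = list(stratified_k_fold(labels, k=5))
events_per_fold = [sum(labels[i] for i in test) for _, test in strat_splits]
```

With 5 events and 5 folds, each test fold contains exactly one event; an unstratified split could easily leave some folds with none, making sensitivity impossible to estimate there.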

2. Input-Output Transformation Validation

This is the core of the validation effort, comparing the model's outputs to the real system's outputs for the same set of input conditions [9]. The Naylor and Finger three-step approach is a widely accepted framework [9]:

- Step 1: Face Validity: Experts knowledgeable about the real system examine the model's structure and output for reasonableness. Does the model "look right"?

- Step 2: Validation of Model Assumptions: All model assumptions are scrutinized. This includes:

  - Structural Assumptions: Are the rules and logic of the model correct? (e.g., the flow of a clinical trial simulation).

  - Data Assumptions: Is the input data reliable and are the assumed statistical distributions appropriate? Goodness-of-fit tests (e.g., Kolmogorov-Smirnov) are used here [9].

- Step 3: Compare Input-Output Transformations: The model is viewed as a black box. Historical or newly collected system data is used as input, and the model's output is statistically compared to the actual system output.

3. Statistical Methods for Input-Output Validation

- Hypothesis Testing: A t-test can be used to test the null hypothesis that the model's measure of performance (e.g., mean response) equals the system's measure of performance. Rejecting the null hypothesis suggests the model needs adjustment [9]. Failing to reject it means only that no significant difference was detected, not that the model has been proven correct.

- Confidence Intervals: The model's output is used to construct a confidence interval for the performance measure. If this interval contains the known system value, the model is considered valid for that measure. This approach acknowledges that a model does not need to be perfect, but "close enough" for its intended purpose [9].
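Both statistical checks can be illustrated with a one-sample comparison of replicated model outputs against a known system value. In the sketch below the ten model outputs and the system value of 15.0 are invented for illustration; the constant 2.262 is the two-sided 95% critical value of Student's t with 9 degrees of freedom and is hard-coded because the standard library has no t distribution.

```python
import math
import statistics

T_CRIT_95_DF9 = 2.262  # two-sided 95% critical value, Student's t, 9 df

def one_sample_t(model_outputs, system_value):
    """Return (mean, standard error, t statistic) for model vs. system."""
    n = len(model_outputs)
    mean = statistics.fmean(model_outputs)
    std_error = statistics.stdev(model_outputs) / math.sqrt(n)
    return mean, std_error, (mean - system_value) / std_error

def confidence_interval(mean, std_error, t_crit):
    """Two-sided confidence interval for the model's mean output."""
    return mean - t_crit * std_error, mean + t_crit * std_error

# Hypothetical outputs from 10 independent model replications.
model_outputs = [14.2, 15.1, 14.8, 15.6, 14.9, 15.3, 14.5, 15.0, 15.4, 14.7]
system_value = 15.0  # assumed known performance of the real system

mean, std_error, t_stat = one_sample_t(model_outputs, system_value)
ci_low, ci_high = confidence_interval(mean, std_error, T_CRIT_95_DF9)
reject_null = abs(t_stat) > T_CRIT_95_DF9
```

For these made-up numbers the t statistic is small and the interval contains the system value, so neither check flags a discrepancy. Whether the interval is narrow enough to be "close enough" remains a judgment tied to the model's intended use.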

4. Robustness and Explainability Validation

- Robustness Testing: The model is subjected to noisy, incomplete, or adversarially manipulated data to ensure it degrades gracefully and remains reliable under non-ideal, real-world conditions [14].

- Explainability Validation: Tools like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) are used to interpret model predictions, ensuring they are based on logically sound and clinically relevant features. This is critical for regulatory compliance and building trust with stakeholders [14].
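A basic form of robustness testing is to perturb the inputs and measure how far the outputs move. The sketch below does this for Gaussian input noise only. The linear `model` function is a hypothetical stand-in for a trained model, and incomplete or adversarial inputs would need separate tests that are not shown.

```python
import random
import statistics

def model(x):
    """Hypothetical stand-in for a trained model: a fixed linear response."""
    return 2.0 * x + 1.0

def mean_output_shift(inputs, noise_sd, seed=0):
    """Average absolute change in model output when Gaussian noise with
    standard deviation noise_sd is added to each input."""
    rng = random.Random(seed)
    shifts = []
    for x in inputs:
        noisy_x = x + rng.gauss(0.0, noise_sd)
        shifts.append(abs(model(noisy_x) - model(x)))
    return statistics.fmean(shifts)

inputs = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
shift_by_noise = {sd: mean_output_shift(inputs, sd) for sd in (0.0, 0.1, 1.0)}
```

A model that degrades gracefully shows output shifts that grow smoothly with the noise level, as this linear example does; abrupt jumps at small noise levels would be a warning sign.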

Table 3: Quantitative Comparison of Validation Methods

| Validation Method | Primary Use Case | Key Metric(s) | Advantages | Limitations |
|---|---|---|---|---|
| Train-Test Split | Initial model assessment, large datasets. | Accuracy, Precision, Recall, F1-Score. | Simple, fast to implement. | Results can be highly dependent on a single random split [13]. |
| K-Fold Cross-Validation | Small to medium datasets, robust performance estimation. | Mean Accuracy (± Std. Dev.) across folds. | Reduces variance in performance estimate, uses data efficiently. | Computationally intensive; assumes i.i.d. data, unsuitable for time series [14]. |
| Hypothesis Testing | Comparing model and system outputs. | t-statistic, p-value. | Provides a formal statistical basis for accepting/rejecting model validity. | Sensitive to sample size; risk of Type I/II errors [9]. |
| Confidence Intervals | Estimating model accuracy as a range. | Interval [a, b] for performance measure. | Quantifies the precision of the model's performance estimate. | Requires model output data to be approximately Normally Distributed [9]. |

Advanced Applications and Future Directions

The principles of V&V are being applied to increasingly complex and critical systems.

- Formal Verification and Model Checking: In security protocol and hardware design, formal methods use mathematical logic to exhaustively prove that a system model adheres to certain properties (e.g., "no secret key is ever disclosed"). Tools like the Murphi verification system are used for this purpose, though they often face state space explosion problems with complex systems [15] [16].

- AI/ML Model Validation in Pharma: The FDA's guidance necessitates a focus on the entire AI lifecycle. This includes monitoring for model drift (performance degradation over time due to changing real-world data), rigorous bias detection in training data, and maintaining comprehensive documentation for audit trails [11] [10]. Specialized platforms are emerging to automate aspects of this continuous validation [14].

- The Future: Predictive and Green Validation: Emerging trends include the use of AI to forecast process deviations before they occur ("predictive validation") and the development of sustainable, energy-efficient qualification methods [11].
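Monitoring for model drift, mentioned in the list above, can start with something as simple as comparing a rolling error against the error measured at validation time. The sketch below is one hypothetical way to do that; the window size, the 1.5x tolerance, and the error values are illustrative assumptions, not regulatory thresholds.

```python
def drift_alerts(abs_errors, baseline_mae, window=4, tolerance=1.5):
    """Return the indices at which the rolling mean absolute error over the
    last `window` predictions exceeds tolerance * baseline_mae."""
    alerts = []
    for end in range(window, len(abs_errors) + 1):
        rolling_mae = sum(abs_errors[end - window:end]) / window
        if rolling_mae > tolerance * baseline_mae:
            alerts.append(end - 1)
    return alerts

# Hypothetical absolute prediction errors: stable at first, then doubled.
abs_errors = [1.0] * 6 + [2.0] * 6
alerts = drift_alerts(abs_errors, baseline_mae=1.0)
```

Here the first alert fires at index 8, once three of the last four errors have doubled. In a real deployment the tolerance would be derived from the model's risk classification and documented alongside the validation evidence.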

The journey from a conceptual model to a credible, regulatory-approved tool is paved with rigorous verification and validation. "Building it right" (verification) through meticulous code review and testing is a prerequisite, but it is meaningless without "building the right thing" (validation) through relentless comparison to empirical reality. For researchers and drug development professionals, mastering this dichotomy is no longer just a technical skill but a strategic imperative. It is the bridge between computational innovation and real-world impact, ensuring that models are not only mathematically elegant but also clinically meaningful, reliable, and safe for patients. As the industry moves towards fully digital, AI-driven development, a robust, lifecycle-oriented V&V framework will be the cornerstone of success.

In scientific research and development, particularly in regulated fields like drug development, the concepts of verification and validation (V&V) represent critical, distinct steps in the model and product lifecycle. While often used interchangeably in casual conversation, they address fundamentally different questions. Verification is the process of confirming that a model or product has been built correctly, adhering to its design specifications: "Did we build the product right?" In contrast, validation is the process of confirming that the right model or product has been built, fulfilling its intended real-world purpose: "Did we build the right product?" [5] [17] [1]. For researchers and scientists, a rigorous application of V&V is not merely a regulatory hurdle; it is a cornerstone of scientific integrity, ensuring that computational models and software-based tools are both technically sound and fit for their intended purpose.

The consequences of neglecting this distinction are profound. A model can be perfectly verified yet fail validation, meaning it operates exactly as designed but does not achieve the desired outcome in a real-world setting. Conversely, a model might accidentally pass validation despite verification failures, but this success is likely unrepeatable and the model unreliable [18]. A clear understanding of V&V is especially crucial with the rise of Artificial Intelligence (AI) and machine learning (ML) in drug development. The U.S. Food and Drug Administration (FDA) now provides draft guidance outlining a risk-based framework for establishing AI model credibility, which heavily relies on robust verification and validation practices tailored to the model's context of use [10].

Core Concepts and Definitions

What is Verification?

Verification is a static process of checking documents, designs, and code without necessarily executing the software [19]. It is a systematic investigation that provides objective evidence that the specified requirements have been fulfilled [18]. In the context of modeling, verification ensures that the model is producing the predicted outcomes based on the relationships of input and output variables built into it. It confirms that the model is doing what the modeler intended from a technical perspective, without yet comparing it to real-world data [1]. For example, in software development for a medical device, verification would involve testing the algorithm that controls a dosage calculation to ensure it correctly follows the written specifications through code reviews and unit tests [17].

What is Validation?

Validation is a dynamic process that involves executing the software or model and checking its behavior against real-world scenarios and data [19]. It provides objective evidence that the requirements for a specific intended purpose have been fulfilled [18]. Validation answers the question of whether the correct model was built, ensuring it acts similarly to the real-world process so a team can be confident in using it to predict process behaviors [1]. Using the medical device software example, validation would involve testing the entire system in a clinical setting to ensure it functions correctly when managing actual patient data, which might include usability testing and clinical trials [17].

Table 1: High-Level Comparison of Verification and Validation

| Aspect | Verification | Validation |

|---|---|---|

| Core Question | "Are we building the product right?" [17] | "Are we building the right product?" [17] |

| Definition | Confirmation that specified requirements have been fulfilled [18] | Confirmation that requirements for a specific intended purpose are fulfilled [18] |

| Focus | Internal consistency; adherence to specifications and designs [5] [17] | External performance; meeting user needs in the real world [5] [17] |

| Basis | Comparison against design specifications and standards [19] | Comparison against stakeholder and user requirements [19] |

| Primary Testing Type | Primarily static testing (without code execution) [19] | Dynamic testing (with code execution) [19] |

Comparative Analysis: Verification vs. Validation

A detailed, side-by-side comparison elucidates the distinct roles that verification and validation play throughout the development and research lifecycle. This distinction is crucial for allocating resources effectively and meeting both technical and regulatory standards.

Table 2: Detailed Comparative Analysis: Focus, Methods, and Goals

| Characteristic | Verification | Validation |

|---|---|---|

| Focus & Scope | Examines documents, designs, code, and programs for correctness and compliance [19]. Ensures the product is built according to the initial plan and specifications [5]. | Examines and tests the actual product for functionality and usability [19]. Ensures the product works as expected and meets user needs in real-world scenarios [5]. |

| Methods & Techniques | Reviews, walkthroughs, inspections [5] [19]; desk-checking [19]; static code analysis [5]; evaluation of coding and design reviews [5]; unit testing (testing individual components) [5] | Functional, system, and integration testing [5]; User Acceptance Testing (UAT) [5]; usability testing [17]; clinical evaluations and performance trials [17] [18]; black box and non-functional testing [19] |

| Goals & Objectives | Bug prevention and early detection [5]; ensuring the software conforms to specifications [19]; application and software architecture correctness [19] | Detecting errors not found during verification [19]; ensuring the software meets customer requirements and expectations [19]; validating the actual product's real-world performance [19] |

| Timing in Lifecycle | Occurs during the development process, typically before validation [19]. A continuous process during the design and coding phases [5]. | Occurs after a development phase is complete or the system is fully developed [5]. Typically toward the end of the development process, before product release [17]. |

| Error Focus | Primarily for the prevention of errors by catching issues early in the lifecycle [19]. | Primarily for the detection of errors that have propagated to the final product [19]. |

| Personnel | Typically performed by the quality assurance (QA) team and developers [19]. | Typically performed by the testing team and involves real users or stakeholders [19]. |

Experimental and Methodological Protocols

Implementing robust verification and validation requires structured protocols. The following workflows provide a methodological foundation for researchers.

A Protocol for Verification Testing

The verification process is a sequential, quality-gated workflow designed to ensure a product is built correctly from the ground up.

Figure 1: A sequential workflow for the verification testing process.

- Requirements Analysis: The process begins with an analysis of software requirements to ensure they are complete, well-defined, and testable before any development proceeds [5].

- Planning and Verification Tasks: Define accountability, deadlines, and specific verification methods to be used (e.g., reviews, static analysis, unit testing) [5].

- Artifact Preparation: Gather or create the necessary items to be checked, such as requirement specifications, design documentation, and source code components [5].

- Check Accuracy: Perform the scheduled verification tasks, including reviews of requirements and designs, static code examination, walkthroughs, assessments, and unit testing [5].

- Results Documentation: Meticulously record any issues or discrepancies discovered and assign them to the appropriate team for remediation [5].

- Defect Resolution: Collaborate with the development team to fix the identified problems [5].

- Re-verification: Re-check the corrected artifacts to ensure they now function properly. This is an iterative loop back to the Check Accuracy step [5].

- Reporting and Sign-off: Once all defects are resolved, generate a verification report and obtain stakeholder consent to proceed to the next phase, such as validation or integration testing [5].

A Protocol for Validation Testing

Validation testing follows a more holistic path, focused on the integrated system and its real-world performance.

Figure 2: An iterative workflow for the validation testing process.

- Define Intended Use and Context: Clearly articulate the specific intended purpose of the product or model, the specified users, and the context of use. This is the foundational step per regulatory guidance [18].

- Develop Validation Strategy: Create a master validation plan that outlines the overall approach, resources, and timelines.

- Specify Test Scenarios: Based on the intended use, develop detailed test scenarios that cover both normal operating conditions and extreme or edge cases [1].

- Set Up Production-like Environment: Conduct validation in an environment that closely mirrors the real-world setting where the product will be deployed [17].

- Execute Tests: Run the specified tests, which may include functional testing, User Acceptance Testing (UAT), integration testing, performance testing, and usability testing [5] [18].

- Evaluate Against Real-World Data and User Needs: Compare the test results and performance metrics against data collected from the real world and the predefined user needs [1]. This may involve clinical evaluations for medical devices [18].

- Decision Point: The validation is successful only if it is objectively evidenced that the specified user can achieve the product's intended purpose in the specified context of use [18]. If it fails, the process iterates, requiring refinement of the model or even a reassessment of the intended purpose itself.

The Scientist's Toolkit: Essential Materials and Reagents for V&V

Beyond conceptual workflows, the practical execution of verification and validation relies on a suite of methodological tools and formalized documents.

Table 3: Essential Tools and Materials for Verification and Validation

| Tool / Material | Category | Primary Function in V&V |

|---|---|---|

| Traceability Matrix | Documentation | Provides end-to-end traceability by linking requirements, design inputs, risks, and test results, ensuring comprehensive coverage [20] [17]. |

| Static Code Analysis Tools | Software Tool | Automatically examines source code for bugs, security vulnerabilities, and maintainability issues without executing the program [5]. |

| Unit Testing Frameworks | Software Tool | Provides a structured environment for creating and running tests on individual units or components of code to ensure expected behavior [5]. |

| Risk Management File | Documentation | A centralized file that links risk assessments with design controls and test cases, ensuring identified risks are verified and validated [17]. |

| Style Guide & UI Mockups | Specification | Serves as an objective benchmark for verifying specified ergonomic features like font sizes and colors during usability verification [18]. |

| Clinical Data / Real-World Datasets | Data | Provides the objective, real-world evidence required to validate that a model or product performs as intended in its target environment [1] [10]. |

| Test Automation Suites | Software Tool | Streamlines verification (e.g., regression testing) and validation cycles, enabling frequent and repeatable testing [17]. |
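As an illustration of what a traceability matrix checks, the following minimal sketch links requirements to test results and reports coverage gaps. The requirement IDs, test IDs, and the `coverage_gaps` helper are hypothetical and are not taken from any cited tool or standard.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    description: str
    test_ids: list = field(default_factory=list)  # tests that trace to this requirement


def coverage_gaps(requirements, test_results):
    """Return IDs of requirements with no linked test or with any linked test not passing."""
    gaps = []
    for req in requirements:
        all_passed = all(test_results.get(t) == "pass" for t in req.test_ids)
        if not req.test_ids or not all_passed:
            gaps.append(req.req_id)
    return gaps


# Hypothetical requirements and results, for illustration only.
requirements = [
    Requirement("REQ-001", "Dose calculation follows the written specification", ["UT-01", "UT-02"]),
    Requirement("REQ-002", "Results are displayed in the specified units", ["UT-03"]),
    Requirement("REQ-003", "Audit log records every calculation", []),
]
test_results = {"UT-01": "pass", "UT-02": "pass", "UT-03": "fail"}

gaps = coverage_gaps(requirements, test_results)
print(gaps)  # REQ-002 has a failing test, REQ-003 has no linked test
```

In practice the same idea is usually maintained in a requirements management system rather than in ad hoc code; the sketch only shows the underlying check.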

V&V in Practice: A Drug Development Case Study

The FDA's draft guidance "Considerations for the Use of Artificial Intelligence To Support Regulatory Decision-Making for Drug and Biological Products" provides a critical, real-world framework for applying V&V to AI models in drug development [10].

The guidance proposes a risk-based framework where the required depth of V&V information is determined by two factors: the model influence risk (how much the AI model influences decision-making) and the decision consequence risk (the impact on patient safety or drug quality) [10]. For high-risk models—such as those used in clinical trial management or drug manufacturing—the FDA expects comprehensive details on the AI model’s architecture, data sources, training methodologies, validation processes, and performance metrics [10].

In this context, verification would ensure that the AI model's algorithm correctly implements its designed architecture and that its coding is error-free. Validation, however, would require demonstrating that the model's outputs are clinically relevant, generalizable, and reliable within the specific "context of use," such as selecting appropriate patients for a clinical trial or monitoring product quality in manufacturing [10]. This underscores the necessity of a rigorous V&V process to establish model credibility and ensure regulatory compliance.

In the rigorous world of research, drug development, and medical device engineering, verification and validation (V&V) are two distinct but complementary processes essential for ensuring quality, safety, and efficacy. While sometimes used interchangeably, they serve fundamentally different purposes. The sequence in which they are performed is not arbitrary but is critical to an efficient and effective product development lifecycle. This guide establishes a core principle: verification must precede validation [21] [22].

In simplest terms, verification asks, "Did we build the thing right?" while validation asks, "Did we build the right thing?" [21]. Verification is the process of confirming that design outputs match design inputs—that the system, model, or device adheres to its specified requirements. Validation, conversely, is the process of establishing that the final product conforms to user needs and its intended use in a real-world environment [22]. This foundational distinction dictates the logical sequence of these activities, forming a critical pathway from concept to proven product.

The Fundamental Differences: A Comparative Analysis

Understanding the sequence requires a clear grasp of the distinctions between verification and validation. The following table summarizes their core differences, which inherently dictate their order in the development process.

Table 1: Core Differences Between Verification and Validation

| Aspect | Verification | Validation |

|---|---|---|

| Core Question | Did we build the thing right? [21] | Did we build the right thing? [21] |

| Objective | Confirm design outputs meet design inputs [22] | Prove the product meets user needs and intended use [22] |

| Timing | During development [22] | At the end of development or on the final product [22] |

| Focus | Specifications, design documents, sub-system functionality [21] | User interaction, real-world performance, clinical efficacy [21] |

| Methods | Reviews, inspections, static analysis, bench testing [22] | Functional testing, clinical trials, usability studies [21] [22] |

This distinction is maintained across different regulatory frameworks. For medical devices, the FDA defines design verification as "confirmation by examination and provision of objective evidence that specified requirements have been fulfilled," while design validation is "establishing by objective evidence that device specifications conform with user needs and intended use(s)" [21]. Similarly, in pharmaceutical analytics, method validation demonstrates a procedure's suitability for its intended use, while method verification confirms a previously validated method works in a new lab setting [23] [24].

The Logical Imperative: Why Sequence Matters

The Foundational Layer of Verification

Verification serves as the essential first layer of quality assurance. It is an internal process used during development to ensure that the product is being built correctly according to the predefined plans and specifications [21]. By conducting verification activities—such as code reviews, unit testing, component bench testing, and design document analysis—development teams can identify and rectify issues early in the lifecycle [21] [22]. Catching a design flaw or a specification non-conformance during verification is significantly less costly and time-consuming than discovering it during a late-stage validation study, such as a clinical trial. Verification provides the objective evidence that the product's foundational building blocks are sound before its overall purpose is evaluated.

The System-Level Proof of Validation

Validation, performed later in the process, provides the ultimate proof of concept [22]. It tests the device or drug itself, or more specifically, its interaction with the end-user in a simulated or actual operational environment [21]. Attempting to validate a product that has not been first verified is a high-risk endeavor. If the product fails validation, it can be exceptionally difficult to determine whether the failure was due to an incorrect implementation of the design (a verification issue) or a fundamental flaw in the design concept itself (a user needs issue). A verified product provides a stable baseline, ensuring that any failures during validation can be more confidently attributed to the product's concept and its alignment with user needs, rather than underlying implementation errors.

Table 2: Typical Outputs and Artifacts from V&V Activities

| Activity | Typical Outputs | Primary Responsibility |

|---|---|---|

| Verification | Review reports, inspection records, static analysis reports, bench test results [22] | Development team [22] |

| Validation | Test and acceptance reports, clinical study reports, usability test reports [22] | Independent testing group / Quality Assurance [22] |

The sequence creates a defensible chain of evidence for regulatory submissions. Agencies like the FDA require documented evidence that design outputs meet design inputs (verification) before assessing evidence that the device meets user needs (validation) [22]. Presenting a logically sequenced V&V strategy demonstrates a systematic and scientifically sound approach to product development, which is a cornerstone of regulatory compliance.

Experimental Protocols and Methodologies

Protocol for Analytical Method Validation

In pharmaceutical research, analytical method validation is crucial for generating reliable data. The following protocol, based on ICH Q2(R1) guidelines, outlines the key experiments [23] [24].

Table 3: Performance Characteristics for Analytical Method Validation

| Performance Characteristic | Experimental Protocol & Methodology | Objective Data Output |

|---|---|---|

| Accuracy | Analyze a sample of known concentration (e.g., a reference standard) multiple times (n≥9 over 3 concentration levels). | Recovery percentage (e.g., 98-102%) measuring closeness to the true value [24]. |

| Precision | Repeatability: Analyze a homogeneous sample multiple times (n≥6) in one session. Intermediate Precision: Analyze on different days, by different analysts, or with different equipment. | Relative Standard Deviation (RSD) of the results. A lower RSD indicates higher precision [24]. |

| Specificity | Analyze the sample in the presence of likely interferences (e.g., impurities, degradants, matrix components). | Chromatogram or data plot demonstrating that the analyte response is unaffected by interferences [24]. |

| Linearity & Range | Prepare and analyze a series of samples at different concentrations (e.g., 5-8 levels) across the claimed range. | Correlation coefficient (R²) from a linearity plot. The range is the interval where linearity, accuracy, and precision are achieved [24]. |

| Detection Limit (LOD) / Quantitation Limit (LOQ) | LOD: Signal-to-noise ratio of 3:1. LOQ: Signal-to-noise ratio of 10:1 with demonstrated precision and accuracy. | The lowest concentration that can be detected (LOD) or reliably quantified (LOQ) [24]. |
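The data outputs in the table above reduce to a few short calculations. The sketch below computes recovery, relative standard deviation, and R² for a straight-line calibration. All measurement values are hypothetical, and the example is simplified: a full accuracy study would use at least nine determinations across three concentration levels, as the table states.

```python
import statistics


def recovery_percent(measured, nominal):
    """Accuracy: mean measured value as a percentage of the known (nominal) value."""
    return 100.0 * statistics.mean(measured) / nominal


def rsd_percent(values):
    """Precision: relative standard deviation (sample SD / mean), in percent."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def r_squared(x, y):
    """Linearity: coefficient of determination for a least-squares straight line."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy ** 2 / (sxx * syy)


# Hypothetical data: six replicate assays of a 100 ug/mL reference standard.
replicates = [99.1, 100.4, 99.8, 100.9, 99.5, 100.3]
# Hypothetical calibration series: concentration vs. detector response.
concentration = [10, 25, 50, 75, 100]
response = [20.5, 50.1, 101.2, 149.0, 201.3]

recovery = recovery_percent(replicates, nominal=100.0)
rsd = rsd_percent(replicates)
r2 = r_squared(concentration, response)
print(f"recovery = {recovery:.1f} %, RSD = {rsd:.2f} %, R^2 = {r2:.4f}")
```

Acceptance limits (for example the 98-102% recovery window mentioned in the table) are set in the validation protocol, not by the code.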

Protocol for Next-Generation Sequencing (NGS) Validation

For novel research methods like NGS in oncology, validation follows an error-based approach. A typical protocol involves [25]:

- Panel Design & Optimization: Define the intended use (e.g., solid tumors, hematological malignancies) and select genes and variant types (SNVs, indels, CNAs, fusions) to be detected [25].

- Familiarization Phase: Conduct pre-validation experiments to optimize sample preparation, library preparation (hybrid-capture or amplicon-based), sequencing, and bioinformatics parameters [25].

- Formal Validation Study: Utilize well-characterized reference cell lines and samples to establish performance metrics.

- Positive Percentage Agreement (Sensitivity): Test samples with known positive variants. Calculate as (True Positives / (True Positives + False Negatives)) * 100.

- Positive Predictive Value (PPV): Calculate as (True Positives / (True Positives + False Positives)) * 100 for each variant type.

- Precision: Assess repeatability and reproducibility by testing replicates across multiple runs, operators, and days [25].

- Establish Performance Specifications: Define minimum depth of coverage (e.g., 500x) and the minimum number of samples used to establish each performance characteristic [25].
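The two agreement formulas in the protocol above can be applied per variant type as follows. This is a minimal sketch; the true-positive, false-negative, and false-positive counts are hypothetical and only illustrate the arithmetic.

```python
def positive_percent_agreement(tp, fn):
    """Sensitivity: TP / (TP + FN) * 100."""
    return 100.0 * tp / (tp + fn)


def positive_predictive_value(tp, fp):
    """PPV: TP / (TP + FP) * 100."""
    return 100.0 * tp / (tp + fp)


# Hypothetical counts from a reference-material study, keyed by variant type.
counts = {
    "SNV": {"tp": 196, "fn": 4, "fp": 2},
    "indel": {"tp": 47, "fn": 3, "fp": 1},
}

metrics = {
    variant: {
        "ppa": positive_percent_agreement(c["tp"], c["fn"]),
        "ppv": positive_predictive_value(c["tp"], c["fp"]),
    }
    for variant, c in counts.items()
}

for variant, m in metrics.items():
    print(f"{variant}: PPA = {m['ppa']:.1f} %, PPV = {m['ppv']:.1f} %")
```

Reporting the metrics separately for each variant type matters because indels and structural variants typically perform differently from SNVs on the same panel.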

Visualizing the V&V Workflow and Its Logical Flow

The following diagram illustrates the critical sequence and the logical flow of activities from user needs to a validated product, highlighting why verification is a necessary precursor to validation.

Figure: A logical V&V workflow.

The diagram underscores that validation is a direct check against user needs, but it can only be meaningfully performed on a product that has first been verified to conform to its design inputs. Skipping verification would mean attempting to validate a product whose internal correctness is unknown, leading to ambiguous results and potential project risks.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials critical for conducting the verification and validation experiments described in this guide, particularly in pharmaceutical and biomedical research.

Table 4: Key Research Reagent Solutions for V&V Experiments

| Reagent / Material | Function in V&V Protocols |

|---|---|

| Certified Reference Standards | Provides a substance of known purity and identity, supplied with a certificate of analysis. Serves as the benchmark for establishing method accuracy, linearity, and precision during validation [24]. |

| Characterized Reference Cell Lines | Essential for NGS and molecular assay validation. These cell lines contain known genomic variants and are used to establish positive percentage agreement (sensitivity), specificity, and detection limits for bioanalytical methods [25]. |

| Matrix-Matched Quality Controls (QCs) | Control materials prepared in the same biological matrix as the test samples (e.g., plasma, tumor homogenate). Used during both validation and routine testing to monitor assay precision, accuracy, and robustness over time [24]. |

| Bioinformatics Pipelines & Software | Custom or commercial software for data analysis (e.g., variant calling in NGS). Their algorithms and parameters must be verified and validated to ensure they accurately interpret raw data and produce reliable results [25]. |

The sequence of verification before validation is a cornerstone of rigorous research and development, particularly in highly regulated fields like drug and medical device development. This order is not a matter of convention but of logical necessity. Verification provides the foundational confidence that a product has been built correctly according to its specifications, creating a stable and well-understood artifact upon which the critical question of validation can be posed: does this product truly meet the user's needs? Adhering to this critical sequence de-risks development, provides a clear audit trail for regulators, and ultimately ensures that resources are invested in validating a product that is fundamentally sound. It is a discipline that separates robust, reproducible science from mere aspiration.

Within the broader thesis on distinguishing model verification and validation, this guide provides a concrete framework for applying these concepts. Verification asks, "Are we building the model right?" (correctness of implementation), while Validation asks, "Are we building the right model?" (accuracy in representing reality). We use a simple biological system—a ligand-receptor binding assay—to demonstrate this critical distinction.

Core Concepts: Verification vs. Validation

Verification ensures the computational model of the assay is implemented without internal errors. It is a check of the model's code and mathematics against its own specifications. Validation assesses whether the model's predictions accurately reflect the behavior of the real-world biological system.

| Aspect | Verification | Validation |

|---|---|---|

| Question | Are we building the model right? | Are we building the right model? |

| Focus | Internal consistency, code, algorithms. | Correspondence to physical reality. |

| Basis | Model specification and design. | Experimental data from the real system. |

| Methods | Unit testing, code review, convergence analysis. | Comparison of model output to independent experimental data. |

The Simple System: Ligand-Receptor Binding Assay

This system measures the binding affinity (Kd) of a drug candidate (ligand) to its protein target (receptor). The computational model is based on the Langmuir isotherm.

Computational Model (Langmuir Isotherm):

Fraction_Bound = [L] / (Kd + [L])

Where [L] is the free ligand concentration.

Applying V&V to the Computational Model

Model Verification

The goal is to ensure the computational implementation is error-free.

Experimental Protocol: Verification via Unit Testing

- Test Case 1 (Baseline): Set [L] = 0. The model must return Fraction_Bound = 0.

- Test Case 2 (Saturation): Set [L] to a value 100x greater than Kd. The model must return Fraction_Bound ≈ 1.0.

- Test Case 3 (Half Saturation): Set [L] = Kd. The model must return Fraction_Bound = 0.5.

- Test Case 4 (Mass Conservation): Implement a check that the sum of bound and free ligand does not exceed the total added ligand.

Quantitative Verification Results:

| Test Case | Input [L] | Input Kd | Expected Output | Model Output | Pass/Fail |

|---|---|---|---|---|---|

| Baseline | 0 nM | 10 nM | 0.00 | 0.00 | Pass |

| Saturation | 1000 nM | 10 nM | ~1.00 | 0.990 | Pass |

| Half Saturation | 10 nM | 10 nM | 0.50 | 0.50 | Pass |
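The four test cases can be sketched as executable checks. The function names are illustrative. The mass-conservation check relies on an assumption that is not part of the one-line model above: 1:1 binding with ligand depletion, for which the complex concentration follows from a quadratic in the total ligand and total receptor concentrations.

```python
import math


def fraction_bound(ligand_nM, kd_nM):
    """Langmuir isotherm: fraction of receptor occupied at free ligand [L]."""
    if ligand_nM < 0 or kd_nM <= 0:
        raise ValueError("require [L] >= 0 and Kd > 0")
    return ligand_nM / (kd_nM + ligand_nM)


def bound_complex(l_total, r_total, kd):
    """Equilibrium complex concentration C from total ligand and receptor.

    Assumption: 1:1 binding, so C solves C^2 - (Lt + Rt + Kd) * C + Lt * Rt = 0.
    The smaller root is the physical one.
    """
    s = l_total + r_total + kd
    return (s - math.sqrt(s * s - 4.0 * l_total * r_total)) / 2.0


def run_verification(kd=10.0):
    # Test Case 1 (Baseline): no ligand, nothing bound.
    assert fraction_bound(0.0, kd) == 0.0
    # Test Case 2 (Saturation): 100 x Kd gives 100/101, close to but below 1.
    saturation = fraction_bound(100.0 * kd, kd)
    assert math.isclose(saturation, 100.0 / 101.0)
    assert 0.99 < saturation < 1.0
    # Test Case 3 (Half Saturation): [L] = Kd gives exactly one half.
    assert math.isclose(fraction_bound(kd, kd), 0.5)
    # Test Case 4 (Mass Conservation): hypothetical totals in nM.
    l_total, r_total = 50.0, 5.0
    bound = bound_complex(l_total, r_total, kd)
    free = l_total - bound
    assert 0.0 <= bound <= min(l_total, r_total)
    assert free >= 0.0
    # The depletion solution must agree with the isotherm at the free concentration.
    assert math.isclose(bound / r_total, fraction_bound(free, kd))


run_verification()
print("all verification checks passed")
```

Note that the saturation case returns 100/101 ≈ 0.990 rather than 1.0, so the test compares against the analytic value instead of an exact 1.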

Figure: V&V process flow.

Model Validation

The goal is to determine if the model's predicted binding curve matches empirical data.

Experimental Protocol: Validation via SPR Binding Assay

- Immobilization: The receptor protein is immobilized on a Surface Plasmon Resonance (SPR) biosensor chip.

- Ligand Injection: A series of ligand solutions at known concentrations ([L]) are flowed over the chip surface.

- Data Acquisition: The SPR signal (Response Units, RU) is measured in real-time, proportional to the amount of bound complex.

- Data Analysis: Equilibrium binding data (RU vs. [L]) is fitted to the Langmuir isotherm to determine the experimental Kd.

- Comparison: The experimental curve is compared to the curve predicted by the computational model.
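The data analysis step can be illustrated with a small least-squares fit. The sketch below is not the instrument vendor's algorithm: it uses a golden-section search over log10(Kd) as a dependency-free stand-in for a nonlinear fitting routine, and it assumes the SPR responses have already been normalised to fraction bound (RU divided by the saturating response). The data points are the experimental values reported in this guide's validation results table.

```python
import math


def fraction_bound(ligand_nM, kd_nM):
    return ligand_nM / (kd_nM + ligand_nM)


def sum_squared_error(kd, conc, observed):
    return sum((y - fraction_bound(c, kd)) ** 2 for c, y in zip(conc, observed))


def fit_kd(conc, observed, kd_min=1e-3, kd_max=1e5, tol=1e-8):
    """Golden-section search for the Kd (nM) that minimises the squared error.

    Searching on log10(Kd) keeps the bracket well scaled over many decades.
    Assumes the error has a single minimum inside [kd_min, kd_max].
    """
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = math.log10(kd_min), math.log10(kd_max)
    while b - a > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if sum_squared_error(10 ** c, conc, observed) < sum_squared_error(10 ** d, conc, observed):
            b = d  # minimum lies in [a, d]
        else:
            a = c  # minimum lies in [c, b]
    return 10 ** ((a + b) / 2.0)


# Equilibrium data: ligand concentration (nM) and normalised response.
conc_nM = [0.1, 1.0, 10.0, 100.0, 1000.0]
observed_fraction = [0.01, 0.09, 0.50, 0.91, 0.99]

kd_fit = fit_kd(conc_nM, observed_fraction)
print(f"fitted Kd = {kd_fit:.2f} nM")
```

With real sensorgrams the saturating response is usually fitted as a second parameter alongside Kd rather than fixed in advance.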

Quantitative Validation Results:

| Ligand Conc. [L] (nM) | Experimental Fraction Bound | Model-Predicted Fraction Bound | Residual (Exp - Model) |

|---|---|---|---|

| 0.1 | 0.01 | 0.01 | 0.00 |

| 1.0 | 0.09 | 0.09 | 0.00 |

| 10 | 0.50 | 0.50 | 0.00 |

| 100 | 0.91 | 0.91 | 0.00 |

| 1000 | 0.99 | 0.99 | 0.00 |
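The comparison in the table can be reproduced and summarised with a single goodness-of-fit number. The sketch below recomputes the model predictions with Kd = 10 nM (the value used in the verification table) and reports the residuals and their root-mean-square error. The acceptance limit `RMSE_LIMIT` is a hypothetical value; a real limit would come from the pre-specified validation criteria.

```python
import math

KD_NM = 10.0          # Kd used for the model predictions
RMSE_LIMIT = 0.02     # hypothetical acceptance criterion

conc_nM = [0.1, 1.0, 10.0, 100.0, 1000.0]
experimental = [0.01, 0.09, 0.50, 0.91, 0.99]

# Model prediction from the Langmuir isotherm at each concentration.
predicted = [c / (KD_NM + c) for c in conc_nM]
residuals = [e - p for e, p in zip(experimental, predicted)]
rmse = math.sqrt(sum(r * r for r in residuals) / len(residuals))

for c, e, p, r in zip(conc_nM, experimental, predicted, residuals):
    print(f"[L] = {c:7.1f} nM  exp = {e:.2f}  model = {p:.4f}  residual = {r:+.4f}")

validation_passed = rmse <= RMSE_LIMIT
print(f"RMSE = {rmse:.5f}, passed = {validation_passed}")
```

The residuals are not exactly zero at full precision; they round to 0.00 at the two-decimal resolution used in the table.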

Figure: SPR assay workflow.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |

|---|---|

| Biacore SPR System | A platform for label-free, real-time analysis of biomolecular interactions. |

| CM5 Sensor Chip | A carboxymethylated dextran sensor chip for covalent immobilization of proteins. |

| Amine Coupling Kit | Contains reagents (NHS/EDC) for covalently immobilizing the receptor protein to the chip surface. |

| HBS-EP Buffer | Running buffer providing a stable pH and ionic strength, and surfactant to minimize non-specific binding. |

| Recombinant Purified Receptor | The high-purity, correctly folded target protein for the assay. |

Advanced Application: Signaling Pathway Context

Validated binding models are often integrated into larger systems biology models of signaling pathways.

Figure: Simplified signaling pathway.

The V&V Process in Practice: Methodologies and Applications in Biomedical Research

In the context of model development for pharmaceutical research and drug development, Verification and Validation (V&V) represent two fundamentally distinct but complementary processes for ensuring model quality and reliability. The distinction between these processes forms the core thesis of effective model evaluation: verification answers "Are we building the model right?" while validation addresses "Are we building the right model?" [26] [1]. This distinction is not merely semantic but represents a critical methodological division that guides the entire evaluation workflow.

Verification ensures that a computational model correctly implements its intended mathematical representation and computational algorithms, focusing on technical correctness [27] [1]. In contrast, validation assesses whether the model accurately represents the real-world phenomena it purports to simulate, establishing its scientific credibility and predictive power [26] [27]. For drug development professionals, this distinction is particularly crucial as it separates technical implementation quality (verification) from biological and clinical relevance (validation).

The V&V workflow gains additional dimensions in precision medicine applications, where Uncertainty Quantification (UQ) joins verification and validation to form VVUQ [27]. UQ systematically tracks uncertainties throughout model calibration, simulation, and prediction, enabling the prescription of confidence bounds that demonstrate the degree of confidence researchers should have in the predictions. This triple framework ensures that digital twins and other computational models in pharmaceutical research meet the rigorous standards required for clinical applications and regulatory approval.

Foundational Concepts and Definitions

The V&V Distinction in Practice

The essential difference between verification and validation can be illustrated through practical examples. Consider a model predicting queuing behavior at an ice cream stand, where the modeler develops the equation W = 3X to predict waiting time (W) based on number of customers (X) [1]. Verification confirms that the model correctly calculates W as 3, 6, 15, 30, and 60 minutes when X = 1, 2, 5, 10, and 20 respectively, ensuring the mathematical implementation is correct. Validation, however, requires comparing these predictions against actual observed waiting times in the real system, which might differ due to unmodeled behaviors like customers leaving if waiting exceeds tolerance limits [1].
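The ice cream stand example can be written out to show how the two checks differ in code. Verification compares the implementation with the values the equation should produce. Validation compares the predictions with observations. The observed waiting times and the 10% tolerance below are hypothetical, chosen to mimic customers leaving when the queue grows long.

```python
def predicted_wait(customers):
    """Model under test: W = 3X, waiting time in minutes."""
    return 3 * customers


# Verification: does the code reproduce the specified equation?
specified = {1: 3, 2: 6, 5: 15, 10: 30, 20: 60}
verification_passed = all(predicted_wait(x) == w for x, w in specified.items())

# Validation: do predictions match the real system?
# Hypothetical observations in minutes; long queues shrink as customers leave.
observed = {1: 3.2, 2: 6.1, 5: 14.6, 10: 24.0, 20: 27.5}
TOLERANCE = 0.10  # hypothetical acceptable relative error

relative_error = {x: abs(predicted_wait(x) - w) / w for x, w in observed.items()}
validation_failures = [x for x, err in relative_error.items() if err > TOLERANCE]

print("verification passed:", verification_passed)
print("queue lengths failing validation:", validation_failures)
```

Here the model is verified, yet it fails validation for longer queues, which is exactly the situation the example describes.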

In pharmaceutical contexts, this distinction manifests differently. Process verification confirms that specific manufacturing batches meet predetermined specifications and quality attributes, while process validation establishes documented evidence that a process will consistently produce products meeting these specifications [28]. This lifecycle approach to validation has been emphasized in FDA guidance, which shifts from one-time validation events toward continued process verification [29] [28].

The V&V Relationship Framework

The fundamental relationship between verification and validation follows a specific logical sequence that must be maintained throughout the workflow.

Figure 1: The sequential relationship between verification and validation activities in pharmaceutical model development.

As illustrated in Figure 1, verification necessarily precedes validation in an effective workflow [1]. This sequence ensures that technical implementation errors are eliminated before assessing the model's real-world relevance, preventing the confounding of implementation defects with conceptual model flaws.

A Comprehensive V&V Workflow: Step-by-Step Methodology

Stage 1: Pre-V&V Planning and Scoping

Step 1.1: Define Model Purpose and Intended Use

Clearly articulate the research question and model purpose, including specific contexts of use and regulatory considerations. For drug development models, this includes defining the target product profile based on patient needs and identifying Critical Quality Attributes (CQAs) that must be controlled [28]. The intended use should specify whether the model will support basic research, inform clinical trial design, or serve as evidence for regulatory submissions.

Step 1.2: Establish Acceptance Criteria

Define quantitative and qualitative criteria for both verification and validation success. These criteria should include:

- Verification criteria: Numerical accuracy thresholds, code performance benchmarks, convergence requirements for mathematical discretization [27]

- Validation criteria: Accuracy in predicting clinical outcomes, statistical confidence levels, goodness-of-fit measures for experimental data

- Uncertainty thresholds: Acceptable levels for both aleatoric (natural variability) and epistemic (knowledge limitation) uncertainties [27]

Step 1.3: Develop V&V Protocol

Create a comprehensive protocol detailing methods, resources, timelines, and responsibilities. This should align with the FDA's process validation lifecycle approach, covering Process Design, Process Qualification, and Continued Process Verification stages [28]. The protocol should specify statistical methods for analyzing validation data, including sample size determination based on statistical power and capability analysis to quantify process performance [28].

Stage 2: Verification Methodology

Step 2.1: Code and Algorithm Verification

Implement rigorous verification processes for computational models:

- Software Quality Engineering (SQE): Apply established SQE practices to ensure code reliability and maintainability [27]

- Solution Verification: Assess convergence of mathematical model discretization, particularly for partial differential equations (PDEs) used in physiological modeling [27]

- Model Checking: For formal behavioral models, use a tool such as the SPIN model checker, with properties expressed in Linear Temporal Logic (LTL), to verify UML sequence diagrams or similar representations [30]
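Solution verification is often carried out as a step-refinement study: solve the same problem at two resolutions and check that the error shrinks at the rate the numerical scheme predicts. The sketch below uses a first-order elimination ODE with a known exact solution instead of a PDE to stay short. The model, the parameter values, and the function names are assumptions chosen for illustration.

```python
import math


def euler_decay(k, c0, t_end, n_steps):
    """Forward-Euler solution of dC/dt = -k*C, evaluated at t_end."""
    dt = t_end / n_steps
    c = c0
    for _ in range(n_steps):
        c += dt * (-k * c)
    return c


def observed_order(k=0.5, c0=10.0, t_end=4.0, n_coarse=100):
    """Estimate the order of accuracy by halving the step size once."""
    exact = c0 * math.exp(-k * t_end)
    err_coarse = abs(euler_decay(k, c0, t_end, n_coarse) - exact)
    err_fine = abs(euler_decay(k, c0, t_end, 2 * n_coarse) - exact)
    # For a scheme of order p, halving dt divides the error by about 2**p.
    return math.log2(err_coarse / err_fine)


p = observed_order()
print(f"observed order of accuracy: {p:.3f}")
```

Forward Euler is formally first order, so an observed order close to 1 supports the implementation. A value far from 1 would point to a coding error or to a step size outside the asymptotic range.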

Step 2.2: Mathematical Consistency Verification

Verify mathematical foundations:

- Unit consistency: Ensure dimensional homogeneity across all equations

- Boundary condition testing: Verify model behavior at operational boundaries

- Parameter sensitivity analysis: Identify parameters requiring precise estimation
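The boundary-condition and sensitivity checks above can be sketched on a one-compartment intravenous bolus model, C(t) = (dose/V)·exp(−(CL/V)·t). This model and its parameter values are assumptions used for illustration and are not taken from the cited sources. Unit consistency is not covered here.

```python
import math


def concentration(t, dose, cl, v):
    """One-compartment IV bolus: C(t) = dose/V * exp(-(CL/V) * t)."""
    if t < 0 or dose < 0 or cl < 0 or v <= 0:
        raise ValueError("t, dose and cl must be non-negative; v must be positive")
    return dose / v * math.exp(-cl / v * t)


def normalized_sensitivity(param, t, base, rel_step=1e-4):
    """Central-difference estimate of (dC/dp) * (p / C) for one parameter."""
    up = dict(base)
    up[param] *= 1 + rel_step
    down = dict(base)
    down[param] *= 1 - rel_step
    c = concentration(t, **base)
    dc = concentration(t, **up) - concentration(t, **down)
    return dc / (2 * rel_step * c)


base = {"dose": 100.0, "cl": 5.0, "v": 50.0}  # illustrative values

# Boundary-condition checks at the edges of the operating range.
assert math.isclose(concentration(0.0, **base), 2.0)          # C(0) = dose / V
assert concentration(1e6, **base) < 1e-12                     # decays towards zero
assert concentration(3.0, dose=0.0, cl=5.0, v=50.0) == 0.0    # no dose, no drug

# Local sensitivities at t = 5; larger magnitude = more influential parameter.
sens = {name: normalized_sensitivity(name, 5.0, base) for name in base}
print(sens)
```

For this model the sensitivities have closed forms (1 for dose, −(CL/V)·t for clearance, −1 + (CL/V)·t for volume), so the numerical estimates can themselves be verified against them.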

Table 1: Quantitative Verification Checks and Acceptance Criteria

| Verification Type | Method | Acceptance Criteria | Documentation |
|---|---|---|---|
| Code Verification | Software Quality Engineering (SQE) | Compliance with coding standards, zero critical defects | Software Verification Report [27] |
| Numerical Accuracy | Solution Verification for PDEs | Convergence below predetermined thresholds | Convergence Analysis Report [27] |
| Behavioral Consistency | Model Checking (e.g., SPIN) | No violations of specified LTL properties | Model Checking Report [30] |
| Data Integrity | ALCOA+ Principles | Complete, attributable, contemporaneous records | Data Integrity Audit Report [29] |

Stage 3: Validation Methodology

Step 3.1: Validation Experiment Design

Design validation experiments based on the model's intended use context:

- For existing processes with available data: Test the model under different operational conditions (normal and extreme) using historical data, comparing model outputs to known outcomes [1]

- For existing processes without data: Conduct observational studies comparing model behavior to real-world process behavior [1]

- For novel processes: Use correlation analysis to compare model input-output relationships to theoretically expected relationships [1]
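For the first scenario, comparing model outputs with known outcomes usually reduces to a few agreement metrics. The sketch below computes root-mean-square error and the coefficient of determination. The observed and predicted values are invented for illustration and do not come from any study.

```python
import math


def rmse(observed, predicted):
    """Root-mean-square error between paired observations and predictions."""
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Made-up concentrations (same units) for illustration only.
observed = [2.0, 1.6, 1.3, 1.0, 0.8]
predicted = [1.9, 1.65, 1.25, 1.05, 0.75]

fit_rmse = rmse(observed, predicted)
fit_r2 = r_squared(observed, predicted)
print(f"RMSE = {fit_rmse:.4f}, R^2 = {fit_r2:.4f}")
```

Both numbers only become evidence of validity once they are compared with the acceptance criteria fixed in Step 1.2, and when the data used were not also used for calibration.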

Step 3.2: Data Collection and Management

Implement rigorous data collection procedures adhering to ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, and Available) [29]. For pharmaceutical applications, this often includes:

- Process Analytical Technology (PAT): Implement real-time monitoring systems for continuous validation [29]

- Electronic batch records: Ensure complete and accurate data capture [29]

- Statistical process control: Establish monitoring protocols for continued process verification [28]
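A common form of statistical process control monitoring is an individuals control chart, where limits are derived from a baseline period and later observations are flagged if they fall outside them. The sketch below assumes that chart type and uses invented measurements. The constant 1.128 is the standard d2 factor for moving ranges of size two.

```python
from statistics import mean


def individuals_limits(baseline):
    """Return (LCL, center line, UCL) for an individuals chart."""
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma_hat = mean(moving_ranges) / 1.128  # d2 constant for n = 2
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


def out_of_control(values, lcl, ucl):
    """Indices of observations outside the control limits."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Illustrative data: a baseline period, then new observations to monitor.
baseline = [10.0, 10.2, 9.9, 10.1, 9.8, 10.0, 10.1, 9.9]
new_values = [10.1, 9.7, 10.9, 10.0]

lcl, center, ucl = individuals_limits(baseline)
flagged = out_of_control(new_values, lcl, ucl)
print(f"LCL={lcl:.3f} center={center:.3f} UCL={ucl:.3f} flagged={flagged}")
```

Only the simplest rule (a point beyond three sigma) is shown. Real monitoring protocols typically add run rules and define what investigation a flagged point triggers.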

Step 3.3: Validation Execution and Analysis

Execute validation protocols and analyze results:

- Comparison to acceptance criteria: Evaluate whether validation results meet predetermined criteria

- Uncertainty Quantification: Formalize the process of tracking uncertainties throughout model calibration, simulation, and prediction [27]

- Statistical analysis: Apply appropriate statistical tests, including capability analysis (Cp, Cpk) and hypothesis testing to confirm process consistency [28]
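The capability indices named above follow from the sample mean, the sample standard deviation, and the specification limits: Cp = (USL − LSL) / 6σ and Cpk = min(USL − μ, μ − LSL) / 3σ. A minimal sketch, with invented assay values and specification limits:

```python
from statistics import mean, stdev


def process_capability(samples, lsl, usl):
    """Return (Cp, Cpk) from samples and lower/upper specification limits."""
    mu = mean(samples)
    sigma = stdev(samples)  # sample standard deviation (n - 1 denominator)
    cp = (usl - lsl) / (6 * sigma)
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)
    return cp, cpk


# Illustrative assay results (% label claim) and hypothetical spec limits.
samples = [98.0, 100.0, 102.0, 100.0, 99.0, 101.0]
cp, cpk = process_capability(samples, lsl=95.0, usl=107.0)
print(f"Cp = {cp:.3f}, Cpk = {cpk:.3f}")
```

Cpk is lower than Cp here because the process mean sits closer to the lower limit than to the upper one. Cp ignores centering, while Cpk penalizes it. Both indices assume approximately normal, stable data, and six samples is far too few for a real capability study.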

Table 2: Validation Methods for Different Scenarios

| Modeling Scenario | Primary Validation Method | Key Metrics | Uncertainty Considerations |
|---|---|---|---|
| Existing process with available data | Comparison to historical data under normal and extreme conditions | Prediction accuracy, goodness-of-fit measures | Aleatoric uncertainty from natural process variation [27] [1] |
| Existing process without data | Observation of real-world process behavior | Behavioral consistency, pattern recognition | Epistemic uncertainty from incomplete knowledge [27] [1] |
| Novel process with known variable relationships | Correlation analysis of input-output relationships | Correlation strength, statistical significance | Model form uncertainty, parameter uncertainty [27] [1] |
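Parameter uncertainty of the kind listed in the last column can be propagated to a prediction by Monte Carlo sampling. The sketch below assumes a one-compartment intravenous bolus model and lognormal distributions for clearance and volume. The model, the distributions, and all numbers are hypothetical choices for illustration.

```python
import math
import random
import statistics


def concentration(t, dose, cl, v):
    """One-compartment IV bolus: C(t) = dose/V * exp(-(CL/V) * t)."""
    return dose / v * math.exp(-cl / v * t)


def monte_carlo_interval(t, dose, n_draws=5000, seed=0):
    """Sample CL and V, then return (2.5th percentile, median, 97.5th percentile)."""
    rng = random.Random(seed)  # fixed seed keeps the analysis reproducible
    draws = []
    for _ in range(n_draws):
        cl = rng.lognormvariate(math.log(5.0), 0.2)   # assumed spread on CL
        v = rng.lognormvariate(math.log(50.0), 0.1)   # assumed spread on V
        draws.append(concentration(t, dose, cl, v))
    cuts = statistics.quantiles(draws, n=40)  # 39 cut points in 2.5% steps
    return cuts[0], statistics.median(draws), cuts[-1]


nominal = concentration(5.0, 100.0, 5.0, 50.0)
lower, median, upper = monte_carlo_interval(t=5.0, dose=100.0)
print(f"nominal={nominal:.3f}  95% interval=({lower:.3f}, {upper:.3f})")
```

The width of the resulting interval is what would be compared with an uncertainty threshold. Model form uncertainty is not captured by this approach, since every draw uses the same equation.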

The Complete V&V Workflow

The entire verification and validation process follows an integrated pathway from initial concept through final documentation, with multiple decision points and potential iteration cycles.

Figure 2: Complete verification and validation workflow for pharmaceutical model development, showing key phases and decision points.

Essential Research Reagents and Tools

The experimental and computational toolkit for V&V in pharmaceutical research includes specialized reagents, software tools, and methodological frameworks.

Table 3: Research Reagent Solutions for V&V Experiments

| Tool/Category | Specific Examples | Function in V&V | Application Context |
|---|---|---|---|
| Model Checking Tools | SPIN model checker, FDR | Formal verification of behavioral properties | Verifying UML sequence diagrams, state machines [30] [31] |
| Simulation Platforms | Patient-specific cardiac EP models, Oncology growth models | Virtual representation for intervention simulation | Cardiology, oncology digital twins [27] |
| Statistical Analysis Tools | Design of Experiments (DOE), Statistical Process Control (SPC) | Designing validation studies, monitoring continued performance | Process validation, continued process verification [28] |
| Data Integrity Systems | Electronic batch records, PAT systems | Ensuring data quality for validation | Pharmaceutical manufacturing [29] |
| Uncertainty Quantification Frameworks | Bayesian methods, Sensitivity analysis | Quantifying confidence in predictions | Digital twin calibration [27] |

Documentation and Regulatory Considerations

V&V Documentation Framework

Comprehensive documentation is essential for regulatory submissions and scientific credibility. The documentation should include:

- V&V Protocol: Detailed methodology, acceptance criteria, and statistical methods

- Traceability Matrices: Linking requirements to architectural components and validation tests [32]

- Uncertainty Analysis Report: Documenting sources and quantification of uncertainties [27]

- Deviation Reports: Documenting and justifying any deviations from protocols

- Final Summary Report: Synthesizing all V&V activities, results, and conclusions
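A traceability matrix can be kept as plain data and checked automatically, so that a requirement with no linked test is caught before the summary report is written. The requirement identifiers, descriptions, and test names below are hypothetical.

```python
# Hypothetical requirements for a PK model.
requirements = {
    "REQ-001": "Concentration is never negative",
    "REQ-002": "Mass balance holds within numerical tolerance",
    "REQ-003": "Predicted Cmax agrees with observed data within criteria",
}

# Traceability matrix: requirement -> verification or validation tests.
trace = {
    "REQ-001": ["test_non_negative_concentration"],
    "REQ-002": ["test_mass_balance", "test_step_refinement"],
    "REQ-003": [],  # nothing linked yet
}


def untraced(requirements, trace):
    """Return requirement IDs that have no linked test."""
    return sorted(req for req in requirements if not trace.get(req))


def orphan_entries(requirements, trace):
    """Return matrix entries that refer to no known requirement."""
    return sorted(req for req in trace if req not in requirements)


missing = untraced(requirements, trace)
orphans = orphan_entries(requirements, trace)
print("requirements without tests:", missing)
print("entries without a requirement:", orphans)
```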

Continuous V&V in the Product Lifecycle

For pharmaceutical applications, validation is not a one-time event but a continuous process throughout the product lifecycle [28]. The FDA's three-stage approach includes:

- Process Design: Building quality into the process through development and scale-up

- Process Qualification: Evaluating the process design to confirm it is capable of reproducible commercial manufacturing

- Continued Process Verification: Maintaining the validated state through ongoing monitoring [28]

This approach aligns with modern quality management systems, particularly those influenced by Lean Six Sigma principles, emphasizing building quality into processes rather than inspecting it into finished products [28].

A rigorous, well-documented V&V workflow is essential for developing credible, reliable models in pharmaceutical research and drug development. By maintaining the critical distinction between verification ("building the model right") and validation ("building the right model"), researchers can systematically address both technical implementation quality and scientific relevance. The integrated workflow presented here, incorporating both traditional V&V and emerging uncertainty quantification methods, provides a comprehensive framework for establishing model credibility that meets regulatory standards and supports critical decisions in drug development.

In the rigorous framework of model verification and validation (V&V), verification addresses a fundamental question: "Am I building the model right?" [26] [1]. It is the process of ensuring that the computational model correctly implements its intended mathematical representation and that the software is free of coding errors. This contrasts with validation, which answers "Am I building the right model?" by assessing how accurately the model represents real-world phenomena [1] [27]. This guide focuses exclusively on verification, detailing the technical methodologies—code reviews, debugging, and solution accuracy checks—that researchers and scientists must employ to ensure the correctness and reliability of their computational models, particularly in high-stakes fields like drug development.

The criticality of robust verification is magnified in precision medicine, where digital twins and computational models inform clinical decisions. As noted in a 2025 perspective, Verification, Validation, and Uncertainty Quantification (VVUQ) are essential for building trust in these tools, with verification forming the foundational step to ensure software and systems perform as expected [27]. Without rigorous verification, underlying code defects can compromise model predictions, leading to erroneous conclusions and potential risks in translational research.

Core Verification Techniques

Code Reviews

Code review is a systematic examination of software source code, intended to find and fix errors overlooked in the initial development phase. In research settings, it ensures that the implementation faithfully translates the scientific model into code.

Structured Review Methodology: A formal code review process can be broken down into a standard workflow. The diagram below illustrates the key stages, from preparation to follow-up.

Comparison of Modern Code Review Tools: The following table summarizes key features of contemporary code review and analysis platforms relevant to research computing environments.

| Tool Name | Primary Analysis Method | Key Features for Verification | Integration & Workflow |
|---|---|---|---|
| SonarQube [33] | Static Code Analysis | Detects bugs, vulnerabilities, and code smells; AI Code Assurance; Customizable rules | CI/CD Pipelines, IDE Integrations |
| Codacy [34] [33] | Automated Code Review | Enforces coding standards; Security analysis (SAST, SCA); Test coverage monitoring | Integrates with 49+ SDLC ecosystems |
| Pylint [35] | Static Analysis | Checks for errors, enforces coding standards; Highly configurable for project needs | IDE, pre-commit hooks, CI/CD pipelines |
| Bandit [35] | AST-based Static Analysis | Scans specifically for Python security issues; Processes Abstract Syntax Tree (AST) | Fits into development lifecycle stages |
| MyPy [35] | Static Type Checking | Checks type annotations against code usage; Enforces type consistency | Popular IDE and editor integration |

Experimental Protocol for a Research Team Code Review:

- Preparation: The code author submits a pull request (PR) with a description linking to the scientific model or issue ticket. The PR description must detail the mathematical changes and expected impact on results.

- Automated Gates: The PR automatically triggers a CI/CD pipeline running integrated verification tools (e.g., Pylint for style, Bandit for security, MyPy for type consistency, and unit tests). The PR cannot be merged if these checks fail [34] [35].

- Reviewer Selection: Assign one to three reviewers who together cover domain knowledge (e.g., a pharmacometrician) and software architecture expertise.

- Structured Review: Reviewers use inline commenting to provide specific, actionable feedback on code logic, adherence to the mathematical model, potential off-by-one errors, boundary conditions, and error handling [34].

- Iteration and Merge: The author addresses all comments, pushing new commits. The CI/CD pipeline re-runs. Once approved, the code is merged, ensuring the main branch remains stable.
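The automated-gate step of this protocol amounts to running each tool and blocking the merge if any exits with a non-zero status. A minimal local sketch follows. The `src` directory and the exact command lines in `DEFAULT_GATES` are assumptions about project layout, and a real team would normally express this in its CI system's own configuration.

```python
import subprocess
import sys

# Assumed commands: pylint, bandit, mypy and pytest installed, code under src/.
DEFAULT_GATES = {
    "pylint": ["pylint", "src"],
    "bandit": ["bandit", "-r", "src"],
    "mypy": ["mypy", "src"],
    "pytest": ["pytest", "-q"],
}


def run_gates(gates):
    """Run each command; a gate passes only if its exit status is zero."""
    results = {}
    for name, command in gates.items():
        try:
            completed = subprocess.run(command, capture_output=True, text=True)
            results[name] = completed.returncode == 0
        except FileNotFoundError:
            results[name] = False  # tool not installed counts as a failed gate
    return results


def mergeable(results):
    """A pull request may merge only when every gate passed."""
    return all(results.values())


# Stand-in commands so the sketch runs without the real tools installed.
demo_gates = {
    "passing_check": [sys.executable, "-c", "raise SystemExit(0)"],
    "failing_check": [sys.executable, "-c", "raise SystemExit(1)"],
}
results = run_gates(demo_gates)
print(results, "mergeable:", mergeable(results))
```

Passing `DEFAULT_GATES` instead of `demo_gates` would run the real tools. Treating a missing tool as a failure is a deliberate choice here, so that a misconfigured environment cannot silently skip a check.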

Debugging

Debugging is the process of locating, analyzing, and correcting bugs in software. In scientific computing, this often involves isolating discrepancies between expected model behavior (based on theory) and actual simulation output.

Systematic Debugging Methodology: The following diagram outlines a high-level, iterative strategy for locating and fixing defects in research code.

Essential Debugging Tools and Techniques: The table below catalogs critical debugging tools and their specific applications in a research context.

| Tool / Technique | Primary Function | Application in Research Verification |
|---|---|---|
| GDB (GNU Debugger) [36] | Program Inspection & Control | Allows step-by-step execution of C/C++/Rust code; inspects memory and variables at breakpoints for mechanistic models. |
| Visual Studio Code Debugger [36] | Integrated Debugging | Visual debugging interface; supports run-and-debug within the editor for multiple languages (Python, R, Julia). |
| PyCharm Debugger [36] | Python-Specific Debugging | Visual debugging for Python; supports remote/container debugging and breakpoints in templates (e.g., Django). |
| Sentry [36] | Error Tracking & Monitoring | Captures detailed stack traces with local variables in production or testing environments; tracks error frequency. |
| Conditional Breakpoints | Targeted State Inspection | Pauses execution when a user-defined condition is met (e.g., when a variable drug_concentration > threshold). |
| Real-time State Inspection | Variable & Memory Examination | Examines the values of variables, arrays, and data structures while the program is paused to identify corrupt or unexpected states. |
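The conditional-breakpoint row can be reproduced in plain Python by guarding a call to the built-in `breakpoint()` with the condition of interest. The sketch below assumes a simple repeated-dosing simulation with hypothetical parameter values. The trigger is passed in as a callback so the same code can either pause in the debugger or just record the offending state.

```python
def pause_in_debugger(step, drug_concentration):
    """Default trigger: open pdb with step and drug_concentration in scope."""
    breakpoint()


def simulate(n_steps, dose, dose_interval, k_elim, dt, threshold,
             on_trigger=pause_in_debugger):
    """Repeated bolus dosing with forward-Euler first-order elimination."""
    drug_concentration = 0.0
    for step in range(n_steps):
        if step % dose_interval == 0:
            drug_concentration += dose  # bolus dose
        drug_concentration += dt * (-k_elim * drug_concentration)
        # Conditional breakpoint: act only when the condition is met.
        if drug_concentration > threshold:
            on_trigger(step, drug_concentration)
            break
    return drug_concentration


# Record the triggering state instead of pausing, e.g. for an automated run.
hits = []
final = simulate(
    n_steps=100, dose=1.0, dose_interval=10, k_elim=0.05, dt=1.0,
    threshold=2.0, on_trigger=lambda step, conc: hits.append((step, conc)),
)
print(hits)
```

Calling `simulate` without `on_trigger` drops into the interactive debugger at the first step where the threshold is exceeded, which is where real-time state inspection would begin.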

Experimental Protocol for Debugging a Pharmacokinetic (PK) Model: