Why Hyperparameter Tuning is Critical for Accurate and Generalizable Chemistry Models

Abstract

This article explores the pivotal role of hyperparameter tuning in developing robust machine learning models for chemical and pharmaceutical research. Aimed at researchers, scientists, and drug development professionals, it details how proper tuning moves models beyond theoretical potential to practical, reliable tools. We cover foundational concepts, key methodologies like Bayesian optimization and metaheuristics, strategies to overcome challenges like overfitting in small datasets, and rigorous validation techniques. The discussion synthesizes how automated tuning frameworks are transforming computational chemistry, leading to more efficient drug discovery, accurate molecular property prediction, and ultimately, more successful outcomes in biomedical research.

The Foundation: Why Model Performance in Chemistry Hinges on Hyperparameter Tuning

Defining Hyperparameters vs. Model Parameters in a Chemical Context

In the application of machine learning (ML) to chemical research, the distinction between model parameters and hyperparameters is not merely academic but fundamentally shapes model development, validation, and deployment. For researchers in drug development and materials science, understanding this distinction is crucial for building predictive models that accurately simulate molecular properties, reaction outcomes, and biological activities. Model parameters are the internal variables that the learning algorithm derives from the chemical training data, such as weights in a neural network predicting toxicity or coefficients in a model estimating binding affinity. In contrast, hyperparameters are external configuration variables whose values are set prior to the learning process and control the very nature of the training itself [1]. The careful tuning of these hyperparameters becomes particularly critical when working with complex chemical datasets characterized by high dimensionality, limited samples, and substantial noise, where improper settings can lead to either overfitting that compromises generalizability or underfitting that fails to capture essential structure-activity relationships.

Core Definitions and Conceptual Framework

Model Parameters: The Learned Chemical Representations

Model parameters constitute the internal knowledge that a machine learning model extracts from chemical training data. These values are not set manually but are learned automatically through optimization algorithms during the training process. In essence, they represent the patterns, relationships, and correlations discovered within the chemical data [1].

In different ML approaches applied to chemical problems, parameters manifest differently:

- In linear regression models predicting properties like logP or pKa, the coefficients for each molecular descriptor are the parameters [1].

- In neural networks used for toxicity prediction or molecular property estimation, the weights and biases connecting neurons across layers serve as parameters [1].

- In force field parameterization for molecular dynamics simulations, parameters include bond stiffness constants, equilibrium angles, and partial atomic charges derived from quantum mechanical calculations [2].

These parameters are optimized to minimize the difference between the model's predictions and experimental or high-fidelity computational reference data. The quality of these parameters directly determines the model's predictive accuracy on novel chemical structures.

Hyperparameters: The Architectural Controllers

Hyperparameters are configuration variables that govern the training process itself. They are set before learning begins and remain unchanged during training, acting as control knobs that influence how the model learns its parameters [1].

Key hyperparameters in chemical machine learning include:

- Learning rate in gradient descent optimization, controlling step size during parameter updates

- Number of training epochs, determining how many times the algorithm processes the entire training set

- Network architecture decisions including the number of layers and neurons per layer in neural networks

- Regularization parameters that control model complexity to prevent overfitting

- Cluster count (k) in k-means clustering of chemical structures [1]

Unlike parameters, hyperparameters cannot be learned directly from the data through standard optimization procedures and must be established through systematic experimentation and validation.
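The distinction can be made concrete with a minimal sketch. It assumes a one-descriptor ridge regression through the origin; the function name `fit_ridge_1d`, the toy descriptor values, and the penalty values are illustrative and are not taken from the cited work. The penalty `lam` is a hyperparameter fixed before fitting, while the slope `w` is the parameter learned from the data.

```python
def fit_ridge_1d(xs, ys, lam):
    """Learn the slope w of y ~ w * x under an L2 penalty of strength lam.

    lam is a hyperparameter: it is chosen before fitting and never updated.
    w is a model parameter: it is computed from the training data.
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    # Closed-form minimiser of sum((y - w*x)^2) + lam * w^2
    return sxy / (sxx + lam)


# Toy data (hypothetical): one descriptor value and one measured property per compound.
descriptor = [1.0, 2.0, 3.0]
measured = [2.0, 4.0, 6.0]

for lam in (0.0, 14.0):
    w = fit_ridge_1d(descriptor, measured, lam)
    print(f"lam={lam}: learned slope w={w:.2f}")
```

On this toy data the learned slope is 2.0 without a penalty and 1.0 with `lam = 14`: the same training data yield different parameters depending on a value that was never learned from the data.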

Comparative Analysis: Parameters versus Hyperparameters

Table 1: Fundamental distinctions between model parameters and hyperparameters in chemical machine learning

| Aspect | Model Parameters | Hyperparameters |
|---|---|---|
| Definition | Internal variables learned from data | External variables set before training |
| Role | Used for making predictions on new chemical structures | Used to control the learning process |
| Determination | Learned automatically via optimization algorithms (Gradient Descent, Adam) | Set via hyperparameter tuning (Grid Search, Metaheuristics) |
| Dependence | Dependent on training data and hyperparameter choices | Independent of model parameters; set manually |
| Examples in Chemistry | Weights in neural networks predicting toxicity; coefficients in QSAR models | Learning rate; number of neural network layers; number of epochs |

The Critical Importance of Hyperparameter Tuning in Chemical Research

Enhancing Predictive Performance and Generalizability

In chemical informatics and drug discovery, the performance of machine learning models heavily depends on both the dataset characteristics and the training algorithms. Hyperparameter tuning directly addresses this dependency by optimizing the learning process for specific chemical datasets [3]. Research has demonstrated that proper hyperparameter tuning can significantly improve model performance independent of dataset composition, enabling more reliable predictions for critical applications such as toxicity assessment, binding affinity prediction, and reaction yield optimization [3].

A particularly compelling advantage emerges in low-data regimes common in chemical research, where acquiring labeled experimental data is costly and time-consuming. Recent studies have shown that properly tuned and regularized non-linear models can perform on par with or even outperform traditional linear regression in data-limited scenarios [4]. This capability is crucial for domains like early-stage drug discovery where chemical data may be limited to dozens or hundreds of compounds rather than thousands.

Overcoming Computational and Methodological Challenges

Hyperparameter optimization is an NP-hard problem in machine learning, with complexity growing exponentially as the number of hyperparameters increases [3]. This challenge is particularly acute in chemical applications, where models must balance accuracy with computational feasibility. Blind search approaches such as Exhaustive Grid Search (EGS) become computationally prohibitive for complex models with multiple hyperparameters, especially when each model evaluation requires significant computational resources [3].
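The growth of an exhaustive grid is easy to quantify. The following sketch uses a hypothetical three-hyperparameter grid (the names and values are illustrative assumptions) to show that the number of model trainings is the product of the number of candidate values per hyperparameter.

```python
from itertools import product
from math import prod


def grid_size(grid):
    """Number of configurations an exhaustive grid search must evaluate."""
    return prod(len(values) for values in grid.values())


def grid_points(grid):
    """Yield every configuration in the grid as a dict."""
    names = list(grid)
    for combo in product(*(grid[name] for name in names)):
        yield dict(zip(names, combo))


# Hypothetical grid, for illustration only.
grid = {
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "num_layers": [1, 2, 3, 4, 5],
    "dropout": [0.0, 0.25, 0.5],
}

print(grid_size(grid))  # 3 * 5 * 3 = 45 model trainings
```

With five candidate values for each of six hyperparameters, the same calculation gives 5^6 = 15,625 trainings, each of which may itself involve k-fold cross-validation.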

Metaheuristic optimization approaches such as Grey Wolf Optimization (GWO) and Genetic Algorithms (GA) have demonstrated superior performance in hyperparameter tuning for chemical applications, converging faster to optimal configurations than blind search methods while achieving better performance [3]. These methods are particularly valuable for automating the tuning process for researchers who may not be experts in algorithm design, making advanced machine learning more accessible to chemical researchers focused on domain problems rather than methodological refinements.

Hyperparameter Tuning Methodologies for Chemical Applications

Established Tuning Protocols

Table 2: Hyperparameter tuning methods for chemical machine learning applications

| Method | Mechanism | Advantages | Limitations | Best-Suited Chemical Applications |
|---|---|---|---|---|
| Exhaustive Grid Search (EGS) | Evaluates all combinations in a predefined hyperparameter space | Guaranteed to find best combination within grid; simple implementation | Computationally expensive; discrete nature may miss optimal intermediate values | Small hyperparameter spaces; models with few hyperparameters |
| Metaheuristic (GWO, GA) | Uses optimization algorithms to explore hyperparameter space efficiently | Faster convergence; better performance than EGS; handles high-dimensional spaces | Complex implementation; requires parameterization of the metaheuristic itself | Complex models (DNNs); large hyperparameter spaces; computational chemistry applications |
| Bayesian Optimization | Builds probabilistic model of objective function to direct search | Efficient exploration of parameter space; balances exploration and exploitation | Computational overhead for model updates; complex implementation | Low-data regimes; expensive-to-evaluate models [4] |

Experimental Protocol for Metaheuristic Hyperparameter Tuning

For chemical machine learning applications, the following protocol adapts metaheuristic approaches for optimal hyperparameter tuning:

Problem Formulation:

- Define the hyperparameter search space based on the selected machine learning algorithm

- Establish the objective function (e.g., maximization of R² for regression, F1-score for classification)

- Incorporate overfitting penalties considering both interpolation and extrapolation performance [4]

Optimization Setup:

- Initialize population of candidate solutions (hyperparameter sets)

- For Grey Wolf Optimization: Initialize alpha, beta, and delta positions

- For Genetic Algorithm: Initialize population with random hyperparameter combinations

Iterative Evaluation:

- For each candidate solution, train the model with the proposed hyperparameters

- Evaluate model performance on validation set using k-fold cross-validation

- Calculate fitness score considering both accuracy and regularization terms

Solution Refinement:

- Update candidate solutions based on metaheuristic rules

- For GWO: Update positions based on alpha, beta, and delta wolves

- For GA: Apply selection, crossover, and mutation operations

Termination and Validation:

- Continue iterations until convergence or maximum iterations reached

- Validate best hyperparameter set on held-out test set

- Perform final model training with optimal hyperparameters on complete training set

This protocol has demonstrated statistically significant improvements (p = 2.6 × 10⁻⁵) over randomly chosen hyperparameters in biological and biomedical applications [3].
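The protocol steps can be sketched as a small genetic algorithm. This is a minimal illustration under stated assumptions, not the implementation used in [3]: the search space `BOUNDS`, the stand-in objective `toy_fitness`, and all operator settings (truncation selection, uniform crossover, Gaussian mutation, elitism) are hypothetical choices. In practice the fitness function would train the model and return a cross-validated score.

```python
import random

# Hypothetical search space: (low, high) bounds per hyperparameter.
BOUNDS = {"log10_lr": (-4.0, -2.0), "dropout": (0.0, 0.5)}


def toy_fitness(cfg):
    """Stand-in for a cross-validated score (higher is better).

    The optimum is placed arbitrarily at lr = 1e-3 and dropout = 0.2.
    """
    return -((cfg["log10_lr"] + 3.0) ** 2) - (cfg["dropout"] - 0.2) ** 2


def random_config(rng):
    return {k: rng.uniform(lo, hi) for k, (lo, hi) in BOUNDS.items()}


def crossover(a, b, rng):
    # Uniform crossover: each hyperparameter comes from either parent.
    return {k: (a[k] if rng.random() < 0.5 else b[k]) for k in BOUNDS}


def mutate(cfg, rng, rate=0.3, scale=0.1):
    out = {}
    for k, (lo, hi) in BOUNDS.items():
        value = cfg[k]
        if rng.random() < rate:
            value += rng.gauss(0.0, scale * (hi - lo))
        out[k] = min(hi, max(lo, value))  # clip back into the search space
    return out


def genetic_search(fitness, pop_size=20, generations=30, seed=0):
    rng = random.Random(seed)
    population = [random_config(rng) for _ in range(pop_size)]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[: pop_size // 2]  # truncation selection
        children = [population[0]]             # elitism: keep the best as-is
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = children
    return max(population, key=fitness), history


best, history = genetic_search(toy_fitness)
print(best, history[0], history[-1])
```

Because the best candidate is always carried over unchanged, the best fitness cannot decrease from one generation to the next. The final hyperparameter set should still be checked on a held-out test set, as the protocol specifies.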

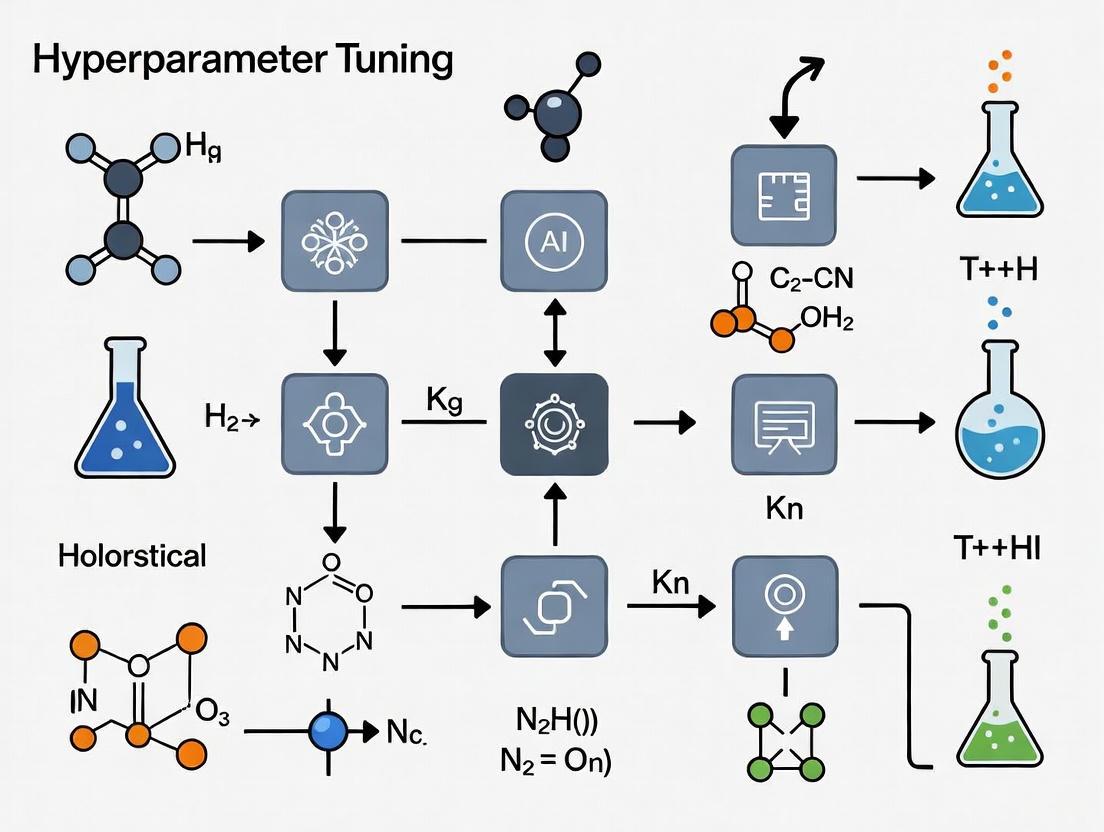

Visualizing Hyperparameter Optimization Workflows

Conceptual Relationship Diagram

Diagram 1: Relationship between chemical data, parameters, and hyperparameters

Metaheuristic Tuning Workflow

Diagram 2: Metaheuristic hyperparameter optimization process

Table 3: Research reagent solutions for parameter and hyperparameter management

| Tool/Resource | Function | Application Context |
|---|---|---|
| Force Field Toolkit (ffTK) | Facilitates parameterization of small molecules for molecular dynamics | Deriving CHARMM-compatible parameters from QM target data [2] |
| Metaheuristic Algorithms (GWO, GA) | Hyperparameter optimization for machine learning algorithms | Tuning complex models on biological and chemical datasets [3] |
| Bayesian Hyperparameter Optimization | Mitigates overfitting in low-data regimes | Automated workflows for non-linear models in chemical applications [4] |
| ParamChem Web Server | Automated parameter assignment by analogy to existing force fields | Initial parameter generation for novel chemical entities [2] |
| Quantum Mechanical Target Data | Provides reference values for parameter optimization | Deriving accurate parameters for force fields and molecular representations [2] |

The distinction between model parameters and hyperparameters is fundamental to developing robust, predictive models in chemical research. While model parameters encapsulate the learned relationships from chemical data, hyperparameters control the learning process itself, making their careful tuning essential for optimal performance. The strategic importance of hyperparameter optimization is particularly pronounced in chemical applications characterized by complex, high-dimensional data and often limited sample sizes. Advanced tuning methods, particularly metaheuristic approaches and Bayesian optimization, demonstrate significant improvements over default configurations or manual tuning, enabling more accurate predictions of molecular properties, biological activities, and reaction outcomes. As machine learning continues to transform chemical research and drug development, systematic approaches to hyperparameter tuning will play an increasingly critical role in ensuring these models achieve their full potential in accelerating discovery while maintaining scientific rigor.

The Direct Link Between Tuning and Predictive Accuracy in Molecular Property Prediction

Hyperparameter optimization (HPO) is a critical, yet often overlooked, step in building deep learning models for molecular property prediction (MPP). In domains such as drug discovery and materials science, where accurate prediction of properties like energy gaps and glass transition temperatures is paramount, the configuration of a model's hyperparameters can make the difference between a high-accuracy tool and an unreliable one. This technical guide synthesizes recent research to demonstrate that a systematic HPO strategy is not a mere incremental improvement but a fundamental requirement for developing efficient and accurate models. We show that advanced HPO algorithms, particularly Hyperband, enable researchers to navigate the complex hyperparameter spaces of deep neural networks and graph neural networks, leading to significant gains in predictive performance and, ultimately, more successful computational campaigns in chemistry research.

Machine learning, particularly deep learning, has become an indispensable tool in the acceleration of chemical research and development. Its applications span from de novo molecular design to the prediction of complex physicochemical properties, directly impacting the pace of drug discovery and materials science [5] [6]. In this context, a model's predictive accuracy is of utmost importance, as it directly influences the quality of scientific insights and decisions.

A machine learning model involves two distinct types of adjustable quantities: (1) model parameters, which are learned during the training process (e.g., weights and biases in a neural network), and (2) hyperparameters, which are set prior to training and control the learning process itself [5]. For deep neural networks (DNNs) and graph neural networks (GNNs) used in MPP, these hyperparameters can be categorized as:

- Structural hyperparameters: These define the architecture of the network, such as the number of layers, the number of neurons per layer, and the choice of activation function.

- Algorithmic hyperparameters: These are associated with the learning algorithm, such as the learning rate, batch size, number of training epochs, and parameters for regularization techniques like dropout [5].

Hyperparameter tuning is the systematic process of searching for the combination of these hyperparameters that maximizes a model's performance on a given task. Despite its proven importance, many prior applications of deep learning to MPP have paid only limited attention to HPO, resulting in models that deliver suboptimal predictions and hinder research progress [5]. This guide establishes the direct link between rigorous HPO and enhanced predictive accuracy, providing methodologies and best practices for chemistry researchers.

Quantitative Impact of HPO on Model Accuracy

The necessity of HPO is most convincingly demonstrated through quantitative comparisons. Controlled studies across various domains, including molecular property prediction, consistently show that tuned models significantly outperform their untuned counterparts.

Table 1: Performance Gains from Hyperparameter Tuning in Various Studies

| Domain / Model | Performance Metric | Baseline (No HPO) | With HPO | Reference |
|---|---|---|---|---|
| Molecular Property Prediction (Dense DNN) | Prediction Accuracy (Case-specific) | Suboptimal | Significant Improvement | [5] |
| Lightweight Image Models (ConvNeXt-T) | Top-1 Accuracy on ImageNet | 77.61% | 81.61% | [7] |
| Lightweight Image Models (MobileViT v2-S) | Top-1 Accuracy on ImageNet | 85.45% | 89.45% | [7] |
| Urban Building Energy Modeling (GBDT) | R² Score | 0.840 | 0.906 (after tuning) | [8] |
| Bridge Damage Identification | Mean Average Precision (mAP) | Baseline mAP | +2.9% improvement | [9] |

The impact of HPO is particularly critical in low-data regimes, which are common in experimental chemistry. A 2025 study introduced automated workflows that mitigate overfitting through Bayesian hyperparameter optimization. The objective function was specifically designed to account for performance in both interpolation and extrapolation, enabling non-linear models to perform on par with or even outperform traditional multivariate linear regression on datasets as small as 18 to 44 data points [10]. This demonstrates that with proper tuning and regularization, complex models can be effectively deployed even with limited data.

Comparative Analysis of HPO Methodologies

Selecting the right HPO algorithm is crucial for balancing computational efficiency with the quality of the final model. The main strategies move beyond naive manual search or exhaustive grid search.

Table 2: Comparison of Hyperparameter Optimization Algorithms

| Method | Core Principle | Advantages | Disadvantages | Best-Suited For |
|---|---|---|---|---|
| Grid Search [11] | Exhaustively searches over a predefined set of values for all hyperparameters. | Guaranteed to find the best combination within the grid; simple and transparent. | Computationally intractable for high-dimensional spaces; curse of dimensionality. | Small, well-understood hyperparameter spaces. |
| Random Search [8] [11] | Randomly samples hyperparameter combinations from predefined distributions. | More efficient than grid search; allows for a better coverage of the space with a fixed budget; highly parallelizable. | May still waste resources on poor hyperparameters; does not use information from past trials to inform next ones. | Moderately sized search spaces where parallel computing resources are available. |
| Bayesian Optimization [5] [10] [11] | Builds a probabilistic model (surrogate) of the objective function to direct the search towards promising regions. | Highly sample-efficient; requires fewer trials than random/grid search to find a good configuration. | Sequential nature can limit parallelization; higher computational overhead per trial. | Expensive-to-evaluate models (e.g., large DNNs) with a limited tuning budget. |
| Hyperband [5] | A multi-fidelity method that uses early stopping to aggressively screen a large number of configurations, then allocates more resources to the most promising ones. | High computational efficiency; can quickly discard underperforming configurations. | Does not use information from past configurations like Bayesian optimization. | Large-scale hyperparameter tuning problems, especially for deep learning. |
| BOHB (Bayesian Optimization + Hyperband) [5] | Combines the early-stopping mechanism of Hyperband with the informed search of Bayesian optimization. | Leverages the strengths of both Bayesian optimization and Hyperband. | More complex to implement and run. | Situations demanding both high efficiency and sample efficiency. |
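As a point of reference for the simplest non-exhaustive method in the table, random search can be sketched in a few lines. The sampling ranges and the stand-in objective `toy_loss` are illustrative assumptions; a real objective would train a model and return its validation loss. The learning rate is drawn log-uniformly so that each decade is sampled with equal probability.

```python
import math
import random


def sample_config(rng):
    """Draw one configuration; the ranges are illustrative assumptions."""
    return {
        # Log-uniform: equal probability mass per decade.
        "learning_rate": 10 ** rng.uniform(-4, -2),
        "num_layers": rng.randint(1, 5),
        "dropout": rng.uniform(0.0, 0.5),
    }


def random_search(objective, n_trials, seed=0):
    """Return the sampled configuration with the lowest objective value."""
    rng = random.Random(seed)
    trials = [sample_config(rng) for _ in range(n_trials)]
    return min(trials, key=objective)


def toy_loss(cfg):
    # Hypothetical stand-in for a validation loss.
    return (math.log10(cfg["learning_rate"]) + 3) ** 2 + (cfg["dropout"] - 0.2) ** 2


best = random_search(toy_loss, n_trials=50)
print(best, toy_loss(best))
```

Each trial is independent of the others, which is why the method parallelizes trivially and also why it cannot learn from earlier trials.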

For molecular property prediction, studies have concluded that the Hyperband algorithm is the most computationally efficient, providing optimal or nearly optimal prediction accuracy [5]. Its ability to rapidly discard poor performers makes it exceptionally well-suited for tuning deep neural networks, where a single training run can be computationally expensive.

Workflow of a Hyperband Optimization

[Figure: the iterative process of the Hyperband algorithm, which dynamically allocates resources to the most promising hyperparameter configurations.]
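The resource-allocation idea at the core of Hyperband is successive halving: evaluate many configurations on a small budget, keep the best fraction, and repeat with a larger budget. The sketch below shows a single successive-halving bracket only; full Hyperband runs several such brackets with different trade-offs between the number of configurations and the starting budget. The function `toy_loss`, the candidate values, and the reduction factor `eta=3` are hypothetical.

```python
def successive_halving(configs, evaluate, min_budget=1, eta=3):
    """Run one successive-halving bracket.

    evaluate(config, budget) returns a loss (lower is better).
    Returns the surviving configuration and the (n_configs, budget) schedule.
    """
    survivors = list(configs)
    budget = min_budget
    schedule = []
    while True:
        schedule.append((len(survivors), budget))
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget))
        if len(ranked) == 1:
            return ranked[0], schedule
        survivors = ranked[: max(1, len(ranked) // eta)]  # keep the top 1/eta
        budget *= eta                                     # give survivors more resources


def toy_loss(config, budget):
    # Hypothetical learning curve: loss shrinks as the budget (e.g. epochs) grows.
    return (config - 0.3) ** 2 + 1.0 / budget


candidates = [i / 26 for i in range(27)]
best, schedule = successive_halving(candidates, toy_loss)
print(schedule)  # [(27, 1), (9, 3), (3, 9), (1, 27)]
```

Only one of the 27 candidates ever receives the full budget, which is the source of the method's efficiency. The risk is that a configuration that learns slowly but ends well is discarded early.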

Experimental Protocols for HPO in Molecular Property Prediction

This section details specific methodologies from key studies, providing a reproducible template for researchers.

Protocol 1: HPO for Dense Deep Neural Networks (DNNs) using KerasTuner

This protocol is based on a case study for predicting the melt index of polymers and the glass transition temperature (Tg) [5].

- Base Model Architecture: A dense DNN with an input layer (9 nodes), three hidden layers (64 nodes each, ReLU activation), and an output layer (linear activation). The model was optimized using Adam and used Mean Squared Error (MSE) as the loss function.

- Hyperparameter Search Space:

  - Number of hidden layers: 1 to 5

  - Number of units per layer: 10 to 128

  - Learning rate: 1e-4 to 1e-2 (log scale)

  - Batch size: 16 to 128

  - Dropout rate: 0.0 to 0.5

- HPO Method: The study compared Random Search, Bayesian Optimization, and Hyperband algorithms within the KerasTuner library. Hyperband was found to be the most computationally efficient.

- Implementation Steps:

  - Data Preprocessing: Normalize the input features and split the data into training, validation, and test sets.

  - Model Definition: Create a function that builds the DNN model, taking the hyperparameters as input.

  - Tuner Setup: Instantiate a Hyperband tuner object in KerasTuner, specifying the model-building function, the objective (e.g., `val_loss`), and the maximum number of epochs.

  - Search Execution: Run the tuner, which will automatically manage the training and validation of multiple configurations.

  - Retrieval: Extract the best hyperparameter configuration and retrain the model on the full training data.
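The implementation steps might look roughly as follows in KerasTuner. This is a sketch under assumptions, not the code from [5]: the names `SEARCH_SPACE`, `build_model`, and `run_hyperband`, the directory and project names, and the default `max_epochs` are hypothetical, and data preprocessing is left to the caller. Batch size is omitted because tuning it in KerasTuner requires overriding the `fit` method of a `HyperModel` subclass. TensorFlow and KerasTuner are imported inside the functions so the search-space definition can be inspected without those packages installed.

```python
# Search space from the protocol (batch size omitted, see note above).
SEARCH_SPACE = {
    "num_layers": (1, 5),
    "units": (10, 128),
    "learning_rate": (1e-4, 1e-2),
    "dropout": (0.0, 0.5),
}
N_FEATURES = 9  # input width of the base model described in the protocol


def build_model(hp):
    """Build a dense regression network from a KerasTuner HyperParameters object."""
    from tensorflow import keras  # lazy import: heavy optional dependency

    model = keras.Sequential()
    model.add(keras.Input(shape=(N_FEATURES,)))
    for i in range(hp.Int("num_layers", *SEARCH_SPACE["num_layers"])):
        units = hp.Int(f"units_{i}", *SEARCH_SPACE["units"])
        model.add(keras.layers.Dense(units, activation="relu"))
        model.add(keras.layers.Dropout(hp.Float(f"dropout_{i}", *SEARCH_SPACE["dropout"])))
    model.add(keras.layers.Dense(1, activation="linear"))

    # Log sampling spreads trials evenly across orders of magnitude.
    lr = hp.Float("learning_rate", *SEARCH_SPACE["learning_rate"], sampling="log")
    model.compile(optimizer=keras.optimizers.Adam(learning_rate=lr), loss="mse")
    return model


def run_hyperband(x_train, y_train, x_val, y_val, max_epochs=50):
    """Search with Hyperband and return the best hyperparameters and a fresh model."""
    import keras_tuner as kt  # lazy import: optional dependency

    tuner = kt.Hyperband(
        build_model,
        objective="val_loss",
        max_epochs=max_epochs,
        factor=3,
        directory="hpo_runs",    # hypothetical output location
        project_name="dnn_mpp",  # hypothetical project name
    )
    tuner.search(x_train, y_train, validation_data=(x_val, y_val))
    best_hp = tuner.get_best_hyperparameters(num_trials=1)[0]
    # Rebuild an untrained model with the best settings for final retraining.
    return best_hp, tuner.hypermodel.build(best_hp)
```

The model returned by `run_hyperband` is untrained; it would then be fitted on the full training data and evaluated once on the held-out test set.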

Protocol 2: HPO for Non-Linear Models in Low-Data Regimes using ROBERT

This protocol addresses the challenge of overfitting in small chemical datasets (e.g., 18-44 data points) [10].

- Base Models: The workflow evaluates non-linear algorithms including Random Forests (RF), Gradient Boosting (GB), and Neural Networks (NN) against Multivariate Linear Regression (MVL).

- Objective Function: A combined Root Mean Squared Error (RMSE) calculated from different cross-validation (CV) methods is used as the objective for Bayesian Optimization.

  - Interpolation Performance: Assessed using a 10-times repeated 5-fold CV.

  - Extrapolation Performance: Assessed via a selective sorted 5-fold CV, where data is sorted by the target value and partitioned to test extrapolation to high and low values.

- HPO Method: Bayesian Optimization is used to minimize this combined RMSE score, which inherently penalizes overfitting.

- Implementation Steps:

  - Data Splitting: Reserve 20% of the data (or a minimum of four points) as an external test set, split evenly to ensure a balanced representation.

  - Optimization Loop: For each algorithm, Bayesian optimization iteratively explores the hyperparameter space, evaluating each candidate with the combined CV metric.

  - Model Selection: The model with the best combined RMSE is selected as the final model.

  - Final Evaluation: The held-out test set is used to provide an unbiased estimate of the model's generalization performance.
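The combined objective can be illustrated with a simplified sketch. It is one interpretation of the description above, not ROBERT's actual implementation: the interpolation term uses a single unshuffled 5-fold pass rather than a 10-times repeated CV, the extrapolation term holds out the lowest and highest fifth of the target-sorted data, and the equal weighting of the two terms is an assumption. All function names are hypothetical.

```python
import math


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def kfold_indices(n, k):
    """Plain k-fold: fold i takes every k-th index starting at i."""
    return [list(range(i, n, k)) for i in range(k)]


def sorted_extrapolation_folds(y, k=5):
    """Sort by target value and hold out the lowest and the highest block."""
    order = sorted(range(len(y)), key=lambda i: y[i])
    size = len(y) // k
    return [order[:size], order[-size:]]


def cv_rmse(x, y, folds, fit, predict):
    """Mean RMSE over the given test folds, training on the remaining points."""
    scores = []
    for test_idx in folds:
        held_out = set(test_idx)
        train_idx = [i for i in range(len(y)) if i not in held_out]
        model = fit([x[i] for i in train_idx], [y[i] for i in train_idx])
        preds = [predict(model, x[i]) for i in test_idx]
        scores.append(rmse([y[i] for i in test_idx], preds))
    return sum(scores) / len(scores)


def combined_rmse(x, y, fit, predict, k=5):
    interp = cv_rmse(x, y, kfold_indices(len(y), k), fit, predict)
    extrap = cv_rmse(x, y, sorted_extrapolation_folds(y, k), fit, predict)
    return (interp + extrap) / 2  # equal weighting is an assumption


# Demo model: ordinary least-squares line on one descriptor.
def fit_line(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(xs, ys)) / sum((a - mx) ** 2 for a in xs)
    return slope, my - slope * mx


def predict_line(model, x):
    slope, intercept = model
    return slope * x + intercept


x = [float(i) for i in range(20)]
y = [2.0 * v for v in x]
print(combined_rmse(x, y, fit_line, predict_line))
```

A hyperparameter optimizer would call `combined_rmse` once per candidate configuration and minimize the result. A model that only interpolates well is penalized by the extrapolation term, which is what discourages overfit configurations.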

Visualization of the Low-Data HPO Workflow

[Figure: the ROBERT program's workflow for optimizing models in low-data scenarios.]

Implementing effective HPO requires both software tools and methodological knowledge. The following table lists key "research reagents" for your tuning experiments.

Table 3: Essential Tools and Techniques for Hyperparameter Tuning

| Tool / Technique | Type | Function in HPO | Reference / Source |
|---|---|---|---|
| KerasTuner | Software Library | An intuitive, user-friendly Python library for hyperparameter tuning with Keras/TensorFlow models. Supports Random Search, Bayesian Optimization, and Hyperband. | [5] |
| Optuna | Software Library | A define-by-run Python library that supports a wide range of HPO algorithms, including the combination of Bayesian Optimization and Hyperband (BOHB). | [5] |
| ROBERT | Software Tool | A program that provides a fully automated workflow for data curation, hyperparameter optimization, and model selection, specifically designed for low-data regimes. | [10] |
| Bayesian Optimization | Algorithm | A sample-efficient HPO method that uses a probabilistic surrogate model to guide the search for optimal hyperparameters. | [10] [11] |
| Combined CV Metric | Methodological Technique | An objective function that incorporates both interpolation and extrapolation performance during HPO to rigorously combat overfitting. | [10] |
| Hyperband | Algorithm | A multi-fidelity HPO algorithm that uses early stopping to quickly discard poor hyperparameter configurations, maximizing efficiency. | [5] [12] |
| Graph Neural Networks (GNNs) | Model Architecture | A class of deep learning models that operate directly on graph-structured data, such as molecular graphs, making them particularly powerful for MPP. | [13] [6] |

In the pursuit of accurate and reliable molecular property predictors, hyperparameter tuning is not an optional refinement but a core component of the model development workflow. As evidenced by quantitative studies, neglecting HPO leads to suboptimal models that fail to realize the full potential of deep learning architectures. The adoption of modern, efficient HPO algorithms like Hyperband and Bayesian Optimization, facilitated by user-friendly software libraries, allows researchers in chemistry and drug development to systematically navigate complex hyperparameter spaces. This direct link between tuning and accuracy ensures that computational models are robust, generalizable, and capable of providing truly valuable insights for scientific discovery and innovation. Future work will likely focus on even more automated and adaptive tuning methods, further lowering the barrier to creating state-of-the-art predictive models in chemistry.

In the field of chemical and drug development research, machine learning models promise to accelerate molecular design, predict compound properties, and optimize synthetic pathways. However, the performance of these models hinges critically on an often-overlooked step: hyperparameter tuning. Hyperparameters are the configuration variables that govern the learning process itself, set before the model is trained on chemical data [14]. Unlike model parameters (e.g., weights in a neural network) that are learned from data, hyperparameters control aspects such as model complexity, learning rate, and regularization strength. Their careful selection determines whether a model will uncover meaningful chemical relationships or merely memorize experimental data.

Neglecting proper hyperparameter tuning poses a significant risk to the validity and utility of chemistry models. A survey of machine learning publications in political science found that over 75% failed to adequately report how they tuned their models, a practice that impedes scientific progress and reproducibility [14]. In chemical contexts, where models inform costly experimental decisions, such neglect can lead to two fundamental failures: overfitting and underfitting. An overfit model might appear perfectly accurate on its training set of known compounds but fail to predict the properties of newly designed molecules. An underfit model would be insufficiently powerful to capture the complex structure-activity relationships crucial for drug discovery. This technical guide examines the consequences of tuning neglect, provides methodologies for proper optimization, and frames these practices within the broader thesis that rigorous hyperparameter tuning is indispensable for building reliable, generalizable AI-driven chemistry models.

Core Concepts: Overfitting, Underfitting, and the Bias-Variance Tradeoff

Defining Model Fit and Misfit

The ultimate goal of any machine learning model in chemistry is generalization—the ability to make accurate predictions on new, unseen data based on patterns learned from training data [15]. For instance, a model should predict binding affinities for novel molecular structures not present in its training set. Three distinct outcomes define how well a model achieves this goal:

- Underfitting occurs when a model is too simple to capture the underlying patterns in the chemical data. It exhibits high error on both training and test data because it fails to learn the relevant relationships [16] [17]. Imagine using linear regression to model a complex, non-linear relationship between molecular descriptors and solubility; the oversimplified model would perform poorly even on the compounds it was trained on.

- Overfitting occurs when a model is excessively complex, learning not only the fundamental patterns but also the noise and random fluctuations specific to the training dataset [16] [15]. Such a model may achieve near-perfect accuracy on its training data but performs poorly on new data. In chemistry, this is analogous to a model memorizing specific molecular fingerprints in the training set instead of learning generalizable structure-property relationships.

- Appropriate Fitting represents the ideal balance, where the model captures the true underlying trends in the data without being swayed by noise [16]. A well-fit model will perform well on both training data and unseen validation/test data, indicating it has learned generalizable chemical knowledge.
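These three regimes can be reproduced with a single hyperparameter. The sketch below uses a one-descriptor k-nearest-neighbour regressor on hypothetical data (a smooth trend plus fixed alternating noise); the functions, data, and values of `k` are illustrative assumptions. The neighbour count `k` controls model complexity: `k = 1` memorizes the training set, while `k` equal to the training-set size predicts the global mean everywhere.

```python
def knn_predict(train_x, train_y, x, k):
    """Average the targets of the k training points nearest to x."""
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return sum(train_y[i] for i in nearest) / k


def mse(train_x, train_y, eval_x, eval_y, k):
    """Mean squared error of the k-NN model on the evaluation points."""
    errors = [(knn_predict(train_x, train_y, x, k) - y) ** 2
              for x, y in zip(eval_x, eval_y)]
    return sum(errors) / len(errors)


# Hypothetical data: trend y = x^2 with alternating +3 / -3 measurement noise.
train_x = [float(i) for i in range(10)]
train_y = [x * x + (3.0 if i % 2 == 0 else -3.0) for i, x in enumerate(train_x)]
# Unseen points lie between the training points and follow the noise-free trend.
test_x = [i + 0.5 for i in range(9)]
test_y = [x * x for x in test_x]

for k in (1, 3, len(train_x)):
    train_err = mse(train_x, train_y, train_x, train_y, k)
    test_err = mse(train_x, train_y, test_x, test_y, k)
    print(f"k={k:>2}  train MSE={train_err:8.2f}  test MSE={test_err:8.2f}")
```

On this toy data, `k = 1` gives zero training error but a worse test error than `k = 3` (overfitting), and `k = 10` gives a large error on both sets (underfitting). Which value of `k` is best depends on the dataset, which is why it has to be tuned rather than assumed.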

The Bias-Variance Tradeoff

The concepts of overfitting and underfitting are formalized through the bias-variance tradeoff, a fundamental concept guiding model complexity decisions [16] [17].

- Bias refers to the error introduced by approximating a real-world problem (e.g., a complex quantum mechanical property) by a simplified model. High-bias models make strong assumptions about the data, often leading to underfitting [16].

- Variance refers to the model's sensitivity to small fluctuations in the training data. High-variance models are excessively complex and prone to overfitting, as they learn the noise in addition to the signal [16] [17].

The following table summarizes the key characteristics of these concepts in a chemical context:

Table 1: Characteristics of Model Fit Conditions in Chemical Machine Learning

| Aspect | Underfitting (High Bias) | Appropriate Fitting | Overfitting (High Variance) |
|---|---|---|---|
| Model Complexity | Too simple | Balanced | Too complex |
| Training Data Performance | Poor | Good | Excellent/Perfect |
| Test/Validation Data Performance | Poor | Good | Poor |
| Chemical Interpretation | Fails to capture essential structure-activity relationships | Captures generalizable chemical patterns | Memorizes specific training compounds and noise |
| Example in Chemistry | Linear model for complex QSAR | Well-regularized neural network for toxicity prediction | Ultra-deep network fitting experimental noise |

The "tradeoff" emerges because decreasing bias (by increasing model complexity) typically increases variance, and vice versa [16]. The goal of hyperparameter tuning is to find the balance point at which the combined error from bias and variance is lowest, resulting in a model that generalizes well to new chemical data [16].
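For squared-error loss, the tradeoff can be written explicitly. Assuming data generated as $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and a fitted model $\hat{f}$ that varies with the training sample (this notation is introduced here for illustration and is not taken from the cited sources), the expected prediction error at a point $x$ decomposes as:

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible noise}}
$$

Hyperparameters that control complexity, such as regularization strength or network depth, shift error between the first two terms. The third term is set by the quality of the experimental data and cannot be reduced by tuning.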

Consequences of Neglecting Hyperparameter Tuning

Direct Impact on Model Performance and Generalization

When hyperparameter tuning is neglected in chemical model development, practitioners risk deploying models with serious flaws that can undermine research validity and lead to costly experimental dead-ends. The most immediate consequence is the failure to generalize beyond the training data. An overfit model, while appearing accurate retrospectively, provides false confidence when applied to new compound libraries or reaction spaces [18]. This occurs because the model has essentially memorized the training examples rather than learning the underlying chemical principles [15].

The following diagram illustrates the conceptual relationship between model complexity, error, and the optimal tuning zone that avoids both overfitting and underfitting:

For chemistry-specific applications, the consequences of poor tuning manifest in particularly critical ways:

- Molecular Property Prediction: An overfit QSAR model might perfectly predict activities for its training compounds but fail for structurally novel scaffolds, misleading medicinal chemistry efforts [17].

- Chemical Reaction Optimization: An underfit model could miss complex relationships between reaction conditions and yield, resulting in suboptimal synthetic recommendations.

- Materials Design: Overfit models for material properties may suggest non-viable candidates when applied to new chemical spaces, wasting computational and experimental resources.

Broader Implications for Chemical Research

Beyond immediate performance issues, neglecting hyperparameter tuning has serious implications for scientific integrity and resource allocation in chemical research:

- Reproducibility Crisis: Studies that fail to report tuning methods make it impossible to reproduce results, a critical concern when models inform drug discovery decisions [14].

- Misleading Structure-Activity Relationships: Overfit models may identify spurious molecular descriptors as "important," leading to incorrect hypotheses about the chemical basis of activity.

- Resource Misallocation: Poorly tuned models can direct synthetic chemistry efforts toward dead ends based on inaccurate predictions, wasting valuable laboratory time and materials.

- Hyperparameter Deception: When comparing multiple algorithms, insufficient tuning of baseline models can lead to incorrect conclusions about which method performs best for a given chemical prediction task [14].

Methodologies for Detecting and Addressing Fit Problems

Diagnostic Tools and Techniques

Detecting overfitting and underfitting requires both visual diagnostics and quantitative metrics. Learning curves are among the most valuable tools for diagnosing these issues [17] [15]. These plots show model performance (e.g., loss or error) on both training and validation sets against training iterations or model complexity.

The following experimental protocol can be implemented to diagnose fit problems in chemical models:

Table 2: Experimental Protocol for Diagnosing Model Fit Issues

| Step | Procedure | Chemical Application Example |
|---|---|---|
| 1. Data Partitioning | Split chemical dataset into training, validation, and test sets using stratified sampling if classes are imbalanced (e.g., active/inactive compounds) | Ensure all sets represent similar chemical space distributions; validate with chemical diversity metrics |
| 2. Model Training | Train model on training set while tracking performance on both training and validation sets across epochs | Monitor metrics relevant to chemical prediction (e.g., RMSE for property prediction, AUC for classification) |
| 3. Learning Curve Analysis | Plot training and validation performance against training iterations | Identify divergence points where validation performance plateaus or worsens while training performance improves |
| 4. Decision Boundary Examination | For lower-dimensional data, visualize how the model separates different classes | In chemical space, use PCA-projected views to see if separation boundaries are overly complex |
| 5. Cross-Validation | Perform k-fold cross-validation to assess performance stability across different data splits | Ensure model performance is consistent across different subsets of chemical space |
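Steps 2 and 3 of the protocol can be illustrated on synthetic data. The sketch below is not drawn from the cited sources: it uses a hand-written 1-D k-nearest-neighbour regressor as a stand-in for a chemical model, with the neighbour count `k` playing the role of model complexity, and a made-up noisy sine curve in place of real property data.

```python
import math
import random

def knn_predict(train, x, k):
    """Average the targets of the k training points nearest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def rmse(train, data, k):
    """Root mean squared error on `data` of a k-NN regressor built from `train`."""
    sq = [(knn_predict(train, x, k) - y) ** 2 for x, y in data]
    return math.sqrt(sum(sq) / len(sq))

def make_data(n, rng):
    """Synthetic noisy 1-D 'property' data: y = sin(x) + Gaussian noise."""
    xs = [rng.uniform(0.0, 6.0) for _ in range(n)]
    return [(x, math.sin(x) + rng.gauss(0.0, 0.3)) for x in xs]

rng = random.Random(0)
train, valid = make_data(60, rng), make_data(60, rng)

# Small k = flexible model (high variance); large k = rigid model (high bias).
curve = {k: (rmse(train, train, k), rmse(train, valid, k)) for k in (1, 5, 15, 60)}
for k, (tr, va) in curve.items():
    print(f"k={k:2d}  train RMSE={tr:.3f}  validation RMSE={va:.3f}")
```

At `k = 1` the training error is exactly zero because every training point is its own nearest neighbour, which is the memorization signature of overfitting. At `k = 60` the model predicts a single global mean, so training and validation errors are both large, which is the underfitting signature.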

Addressing Underfitting and Overfitting

Once diagnosed, specific techniques can be applied to address fit problems in chemical models:

To Remediate Underfitting:

- Increase model complexity by adding layers to neural networks or increasing tree depth in ensemble methods [16] [17]

- Enhance feature engineering to include more chemically relevant descriptors [17]

- Reduce regularization strength (L1/L2 parameters) that may be overly constraining the model [17]

- Increase training time (epochs) to allow more thorough learning [16]

- Remove noise from chemical data through appropriate preprocessing and outlier detection [16]

To Remediate Overfitting:

- Apply regularization techniques (L1/L2) to discourage over-reliance on specific features [16] [17]

- Implement dropout for neural networks to prevent co-adaptation of neurons [16]

- Increase training data size through additional experiments or data augmentation [16] [17]

- Simplify the model architecture by reducing parameters or layers [16] [17]

- Apply early stopping when validation performance plateaus [16]

- Use ensemble methods such as random forests, which reduce variance by averaging many decorrelated trees, while still tuning settings such as tree depth on small datasets [17]
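Of the remedies above, early stopping is the simplest to express in code. The following is a minimal sketch of a patience-based stopping rule; the function name, the `patience` and `min_delta` parameters, and the example loss values are illustrative assumptions, not taken from any cited framework.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve by more than
    `min_delta` for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    # Ran out of epochs before the patience budget was exhausted.
    return best_epoch, len(val_losses) - 1

# Hypothetical validation RMSE per epoch: improves, then drifts upward.
val_rmse = [1.20, 0.95, 0.81, 0.78, 0.80, 0.83, 0.85, 0.90]
best, stopped = early_stopping_epoch(val_rmse, patience=3)
print(best, stopped)
```

In practice the model weights saved at `best` would be restored, since the epochs between `best` and `stopped` only served to confirm that validation performance had stopped improving.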

Hyperparameter Optimization Strategies for Chemistry Models

Optimization Algorithms and Their Applications

Effective hyperparameter tuning requires systematic search strategies rather than manual guesswork. Several algorithmic approaches have been developed with varying computational efficiency and performance characteristics:

Table 3: Comparison of Hyperparameter Optimization Methods

| Method | Search Strategy | Computation Cost | Best for Chemical Applications |
|---|---|---|---|
| Grid Search | Exhaustive search over predefined parameter grid | High | Small parameter spaces with known optimal ranges |
| Random Search | Stochastic sampling of parameter combinations | Medium | Moderate-dimensional spaces where some parameters matter more than others |
| Bayesian Optimization | Probabilistic model-based sequential search | High per iteration, but typically needs fewer evaluations | Expensive chemical simulations where each evaluation is costly |
| Genetic Algorithms | Evolutionary approach with selection, crossover, mutation | Medium-High | Complex, high-dimensional spaces with interacting parameters |
| Grey Wolf Optimization | Swarm intelligence-based metaheuristic | Medium-High | Non-convex optimization landscapes common in chemical data |

Metaheuristic approaches like Genetic Algorithms (GAs) and Grey Wolf Optimization (GWO) are particularly valuable for chemical applications because they can efficiently navigate high-dimensional, complex search spaces [3] [19]. These methods are especially suitable when tuning multiple interacting hyperparameters, such as those in deep neural networks applied to molecular data.
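As a baseline against which the model-based and metaheuristic methods in Table 3 can be judged, random search takes only a few lines. The sketch below is illustrative: `toy_validation_error` is a hypothetical stand-in for "train a model and return its validation error", and the hyperparameter names and ranges are assumptions chosen for the example.

```python
import math
import random

def toy_validation_error(learning_rate, depth):
    """Hypothetical stand-in for 'train a model and return validation RMSE'.

    The minimum is placed at learning_rate = 1e-2 and depth = 4 purely for
    illustration; a real objective would train and score a model.
    """
    return (math.log10(learning_rate) + 2.0) ** 2 + 0.1 * (depth - 4) ** 2

def random_search(objective, n_trials, seed=0):
    """Sample configurations at random and keep the best one seen."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        config = {
            # Learning rates are sampled log-uniformly over 1e-5 .. 1e-1.
            "learning_rate": 10 ** rng.uniform(-5, -1),
            "depth": rng.randint(2, 8),
        }
        trials.append((objective(**config), config))
    return min(trials, key=lambda t: t[0]), trials

(best_error, best_config), trials = random_search(toy_validation_error, n_trials=50)
print(best_error, best_config)
```

Sampling the learning rate on a log scale is a deliberate choice: a uniform draw over 1e-5 to 1e-1 would place almost all trials in the top decade.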

Experimental Workflow for Hyperparameter Optimization

The following diagram illustrates a comprehensive workflow for hyperparameter optimization tailored to chemical machine learning projects:

This workflow emphasizes several critical considerations for chemical applications:

- Problem Definition: Clearly specify the chemical prediction task (classification, regression), relevant evaluation metrics, and success criteria.

- Search Space Definition: Establish sensible ranges for each hyperparameter based on prior knowledge or literature precedent for similar chemical datasets.

- Validation Strategy: Implement rigorous cross-validation that accounts for chemical clustering or temporal effects in data.

- Final Evaluation: Test the fully tuned model on a held-out test set that was not used during tuning to obtain unbiased performance estimates.
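The validation-strategy point above, cross-validation that respects chemical clustering, can be sketched as a group-aware k-fold splitter. This is a minimal stdlib-only illustration under the assumption that each compound already carries a cluster label (for example a scaffold ID); the function name and the greedy assignment rule are choices made here, not a description of any cited tool.

```python
from collections import defaultdict

def group_k_fold(groups, n_splits=5):
    """Yield (train_idx, test_idx) pairs that keep each group in one fold.

    `groups[i]` is the cluster label of sample i, e.g. a scaffold ID.
    Groups are assigned greedily, largest first, to the currently smallest fold.
    """
    members = defaultdict(list)
    for idx, g in enumerate(groups):
        members[g].append(idx)
    if len(members) < n_splits:
        raise ValueError("need at least as many groups as folds")

    folds = [[] for _ in range(n_splits)]
    for g in sorted(members, key=lambda g: len(members[g]), reverse=True):
        min(folds, key=len).extend(members[g])

    for test_idx in folds:
        held_out = set(test_idx)
        train_idx = [i for i in range(len(groups)) if i not in held_out]
        yield train_idx, sorted(test_idx)

# Hypothetical scaffold labels for ten compounds.
scaffolds = ["A", "A", "B", "C", "C", "C", "D", "E", "F", "F"]
splits = list(group_k_fold(scaffolds, n_splits=3))
for train_idx, test_idx in splits:
    print("test scaffolds:", sorted({scaffolds[i] for i in test_idx}))
```

Because no scaffold appears on both sides of a split, the validation score reflects performance on unseen chemotypes rather than on close analogues of training compounds.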

Implementing effective hyperparameter optimization requires both computational tools and methodological knowledge. The following table catalogs key resources mentioned in the literature:

Table 4: Research Reagent Solutions for Hyperparameter Optimization

| Tool/Resource | Type | Function in Optimization | Application Context |
|---|---|---|---|
| MetaGen [20] | Python Package | Provides framework for developing and evaluating metaheuristic algorithms | Flexible optimization across diverse chemical problems |
| Grey Wolf Optimization [3] | Metaheuristic Algorithm | Swarm intelligence approach for global optimization | Effective for high-dimensional problems with unknown structure |
| Genetic Algorithms [19] | Metaheuristic Algorithm | Evolutionary approach inspired by natural selection | Complex chemical spaces with interacting parameters |
| K-fold Cross-Validation [17] | Statistical Method | Robust performance estimation through data resampling | Preventing overfitting to specific compound clusters |
| Batch Normalization [21] | Neural Network Technique | Reduces internal covariate shift during training | Stabilizing training of deep networks for chemical data |

Hyperparameter tuning is not a mere technical refinement but a fundamental requirement for developing trustworthy machine learning models in chemistry and drug discovery. The consequences of neglecting this process—overfitting, underfitting, and ultimately poor generalization—directly undermine the scientific validity of computational findings and can misdirect experimental research. As machine learning plays an increasingly central role in chemical research, from molecular design to reaction optimization, the discipline must adopt rigorous tuning practices comparable to established experimental controls.

The broader thesis for chemistry models research is clear: hyperparameter tuning represents the bridge between theoretical algorithm and practical chemical application. Just as reaction conditions are optimized in the laboratory, learning algorithms require systematic optimization to extract meaningful patterns from chemical data. By embracing the methodologies outlined in this guide—diagnostic techniques, optimization algorithms, and rigorous validation—researchers can build models that genuinely generalize to new chemical spaces, accelerating discovery while maintaining scientific rigor. In an era of increasing model complexity and chemical data availability, sophisticated tuning must become standard practice rather than optional afterthought for all computational chemistry workflows.

The application of machine learning (ML) in chemistry represents a paradigm shift in scientific discovery, impacting diverse fields from drug development to materials science [22]. However, this data-driven revolution faces a fundamental obstacle: the unique and challenging nature of chemical data itself. Unlike data-rich domains like computer vision or natural language processing, chemical research often operates under severe data constraints due to the time, cost, ethical considerations, and technical limitations associated with experimental data acquisition [23]. These constraints result in the prevalence of small datasets, which are further complicated by high-dimensionality—where molecules are described by numerous features or complex graph structures—and significant noise originating from sensor inaccuracies, transmission errors, or human annotation mistakes [24]. These characteristics—small sample size, high-dimensionality, and noise—collectively define the core challenge of chemical informatics.

Within this context, hyperparameter tuning transitions from a routine ML step to a critical, non-trivial task essential for model success. Hyperparameters are the configuration settings of an algorithm (e.g., learning rate, network depth, regularization strength) that are not learned from the data but govern the learning process itself. In low-data regimes, the default hyperparameters of many complex models, such as Graph Neural Networks (GNNs) or transformers, are prone to causing overfitting, where a model memorizes the noise and limited samples in the training set instead of learning the underlying chemical relationship, leading to poor generalization on new, unseen data [10] [25]. Consequently, meticulous hyperparameter optimization (HPO) is not merely about maximizing performance; it is a fundamental safeguard for developing robust, reliable, and generalizable models that can truly accelerate scientific discovery in chemistry.

Deconstructing the Core Challenges

The Small Data Problem

In scientific fields, it is often challenging to obtain large labeled training samples due to various restrictions or limitations such as privacy, security, ethics, high cost, and time constraints [23]. When the number of training samples is very small, the ability of ML-based or DL-based models to learn from observed data sharply decreases, resulting in poor predictive performance [23]. This "small data challenge" is technically more severe for machine and deep learning studies than the oft-discussed "big data" problem [23]. For instance, in drug discovery, the discovery of properties of new molecules is constrained by multiple metrics, resulting in few records of successful clinical candidates for a given target [23]. Small datasets are acutely susceptible to both underfitting and overfitting, hindering a model's ability to generalize effectively [10].

High-Dimensionality and Molecular Representation

Molecules are inherently structured data, and representing them for ML models often results in high-dimensional feature spaces. Approaches range from traditional molecular descriptors to modern graph-based representations used by Graph Neural Networks (GNNs) [26]. Cheminformatics leverages computational tools to analyze chemical data, but traditional rule-based algorithms face challenges in scalability and adaptability [26]. GNNs have emerged as a powerful tool for modeling molecules in a manner that mirrors their underlying chemical structures [26]. However, the performance of GNNs is highly sensitive to architectural choices and hyperparameters, making optimal configuration selection a non-trivial task [26]. This high-dimensional representation, combined with small sample sizes, exacerbates the curse of dimensionality, where the data becomes sparse, making it difficult for models to learn meaningful patterns without careful regularization through HPO.

The Pervasiveness of Data Noise

Chemical data is frequently contaminated with two primary types of noise: attribute noise and label noise [24]. Attribute noise, or feature noise, arises from issues like sensor inaccuracies, transmission limitations, and noisy environments [24]. Label noise occurs when samples are annotated incorrectly, resulting from factors such as delayed data acquisition, inaccurate sensor signals, human errors, and unknown impact events [24]. In practice, datasets often exhibit both types of noise concurrently. Label noise is particularly harmful, as it can cause models to overfit to incorrect labels, significantly degrading performance [24]. The presence of noise in small datasets is especially damaging, as there are insufficient data points to average out its effect, making the model's learning process highly unstable.

Table 1: Taxonomy of Challenges in Chemical ML

| Challenge | Causes | Impact on Model Performance |
|---|---|---|
| Small Datasets | High experimental cost, time constraints, ethical limits, low clinical candidate yield [23] | Sharp decrease in predictive performance, high susceptibility to overfitting and underfitting [23] [10] |
| High-Dimensionality | Complex molecular representations (e.g., graphs, numerous descriptors) [26] | Data sparsity ("curse of dimensionality"), increased model complexity, need for strong regularization |
| Data Noise | Sensor inaccuracies, human annotation errors, transmission issues [24] | Overfitting to incorrect labels or features, reduced model robustness and generalization [24] |

Why Hyperparameter Tuning is a Cornerstone for Chemistry Models

Hyperparameter tuning is the process of systematically searching for the optimal combination of hyperparameters that results in the best-performing model. In the context of chemical data's unique challenges, its importance is magnified for several critical reasons.

Mitigating Overfitting in Low-Data Regimes

The most limiting factor in applying non-linear models to low-data regimes is overfitting [10]. A study on solubility prediction showed that extensive HPO did not always produce better models, likely because the models were evaluated with the same statistical measures that had been optimized during the search, so the search itself overfit to them [25]. In some cases, a preselected set of sensible hyperparameters matched the performance of extensive HPO while requiring about four orders of magnitude less computation, which shows that indiscriminate HPO can be counterproductive and computationally wasteful [25]. The goal of HPO in chemistry is therefore not just to maximize a metric, but to do so in a way that explicitly penalizes over-complexity and promotes generalization, often through cross-validation techniques that account for both interpolation and extrapolation [10].

Enabling the Use of Complex, High-Capacity Models

Non-linear ML algorithms like neural networks and gradient boosting have proven effective for handling large, complex datasets, but their effectiveness in low-data scenarios is often limited by sensitivity to overfitting and difficult interpretation [10]. These models require careful hyperparameter tuning and regularization techniques to generalize effectively [10]. Proper HPO makes it feasible to use these powerful models even with limited data. For example, benchmarking on eight diverse chemical datasets ranging from 18 to 44 data points demonstrated that when properly tuned and regularized, non-linear models could perform on par with or outperform traditional multivariate linear regression [10]. This opens the door to capturing more complex, non-linear structure-property relationships that simpler models might miss.

Navigating High-Dimensional Hyperparameter Spaces

The performance of advanced models like GNNs is highly sensitive to architectural choices and hyperparameters, making optimal configuration a non-trivial task [26]. Neural Architecture Search (NAS) and HPO are crucial for improving GNN performance, but the complexity and computational cost of these processes have traditionally hindered progress [26]. Automated HPO strategies, such as Bayesian optimization, are designed to efficiently navigate these high-dimensional hyperparameter spaces, balancing the exploration of unknown configurations with the exploitation of known promising ones [27]. This is analogous to the way these methods are used for optimizing real chemical reactions, where they explore vast condition spaces to find optimal parameter combinations [27].
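The exploration-exploitation balance is encoded in the acquisition function. The sources cited here do not say which acquisition function is used for model tuning, so the sketch below assumes expected improvement (EI), one common choice, written for a minimization problem. The candidate names and the surrogate means and standard deviations are made up for illustration.

```python
import math

def expected_improvement(mu, sigma, best_so_far):
    """Expected improvement for a minimization problem.

    mu, sigma: surrogate-model mean and standard deviation at a candidate.
    best_so_far: lowest validation error observed so far.
    """
    if sigma <= 0.0:
        # No uncertainty: the improvement is deterministic.
        return max(best_so_far - mu, 0.0)
    z = (best_so_far - mu) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (best_so_far - mu) * cdf + sigma * pdf

# Two hypothetical candidates: a near-certain small gain vs. an uncertain one.
candidates = {"exploit": (0.48, 0.01), "explore": (0.55, 0.20)}
best_so_far = 0.50
chosen = max(
    candidates,
    key=lambda name: expected_improvement(*candidates[name], best_so_far),
)
print(chosen)
```

With these numbers the uncertain candidate has the higher EI even though its predicted mean is worse than the incumbent, which is the exploratory behaviour described above. A smaller `sigma` for that candidate would tip the choice back toward exploitation.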

Table 2: Key Hyperparameter Optimization Algorithms and Their Applications in Chemistry

| Optimization Algorithm | Core Principle | Application in Chemical ML |
|---|---|---|
| Bayesian Optimization [10] [27] | Builds a probabilistic model of the objective function to balance exploration and exploitation. | Used for tuning model hyperparameters [10] and optimizing real chemical reactions [27]. |
| Evolutionary Algorithms (e.g., Paddy) [28] | A biologically inspired population-based method that propagates parameters without direct inference of the objective function. | Benchmarked for hyperparameter optimization of neural networks and targeted molecule generation [28]. Robust and avoids early convergence. |
| Training Performance Estimation (TPE) [29] | Accelerates HPO by predicting final model performance from early training epochs. | Reduced total time and compute budgets by up to 90% during HPO for large-scale chemical models [29]. |

Experimental Protocols and Workflows for Robust Model Development

An Automated Workflow for Low-Data Regimes: The ROBERT Framework

To address overfitting directly, dedicated workflows like the one implemented in the ROBERT software have been developed [10]. This workflow incorporates a specific objective function during Bayesian hyperparameter optimization that explicitly accounts for overfitting in both interpolation and extrapolation.

Detailed Methodology:

- Objective Function Definition: The hyperparameter optimization uses a combined Root Mean Squared Error (RMSE) calculated from different cross-validation (CV) methods [10].

- Interpolation Performance: Assessed using a 10-times repeated 5-fold CV.

- Extrapolation Performance: Assessed via a selective sorted 5-fold CV, which sorts data by the target value and considers the highest RMSE between the top and bottom partitions [10].

- Bayesian Optimization: The model's hyperparameters are systematically tuned using this combined RMSE metric as the objective function. This iterative process ensures the selected model minimizes overfitting as much as possible [10].

- Data Leakage Prevention: The workflow reserves 20% of the initial data (or a minimum of four data points) as an external test set, which is evaluated only after hyperparameter optimization is complete. The split is set to an "even" distribution to ensure a balanced representation of target values [10].
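The objective described above can be sketched in plain Python. This is not ROBERT's implementation: the way the two RMSE terms are combined (a simple average), the 1-D ridge model, the synthetic data, and all function names are assumptions made for illustration. Only the two CV schemes, a 10-times repeated 5-fold CV and a sorted 5-fold CV that takes the worse of the top and bottom partitions, follow the description in the text.

```python
import math
import random

def fit_ridge_1d(xs, ys, alpha):
    """Closed-form 1-D ridge regression (intercept not penalized)."""
    x_mean, y_mean = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / (sxx + alpha)
    return lambda x: y_mean + slope * (x - x_mean)

def holdout_rmse(data, test_idx, alpha):
    """Fit on everything outside `test_idx`, return RMSE on `test_idx`."""
    held_out = set(test_idx)
    train = [d for i, d in enumerate(data) if i not in held_out]
    model = fit_ridge_1d([x for x, _ in train], [y for _, y in train], alpha)
    errors = [(model(data[i][0]) - data[i][1]) ** 2 for i in test_idx]
    return math.sqrt(sum(errors) / len(errors))

def interpolation_rmse(data, alpha, k=5, repeats=10, seed=0):
    """Mean RMSE over `repeats` shuffled k-fold cross-validations."""
    rng = random.Random(seed)
    scores = []
    for _ in range(repeats):
        idx = list(range(len(data)))
        rng.shuffle(idx)
        scores += [holdout_rmse(data, idx[f::k], alpha) for f in range(k)]
    return sum(scores) / len(scores)

def extrapolation_rmse(data, alpha, k=5):
    """Sorted k-fold CV: worse RMSE of the lowest and highest target partitions."""
    order = sorted(range(len(data)), key=lambda i: data[i][1])
    size = len(order) // k
    return max(holdout_rmse(data, order[:size], alpha),
               holdout_rmse(data, order[-size:], alpha))

def combined_rmse(data, alpha):
    """Assumed combination: plain average of the two RMSE terms."""
    return 0.5 * (interpolation_rmse(data, alpha) + extrapolation_rmse(data, alpha))

# Synthetic 20-point dataset: y = 2x + noise (illustrative only).
rng = random.Random(1)
data = [(x, 2.0 * x + rng.gauss(0.0, 0.5)) for x in [i * 0.5 for i in range(20)]]
scores = {alpha: combined_rmse(data, alpha) for alpha in (0.0, 1.0, 100.0)}
best_alpha = min(scores, key=scores.get)
print(scores, best_alpha)
```

A Bayesian optimizer would call `combined_rmse` as its objective instead of looping over three fixed values of `alpha`; the external test set would be split off before any of this runs.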

A Scalable ML Framework for Reaction Optimization: The Minerva Framework

For applications like high-throughput experimentation (HTE), scalable ML frameworks are needed. Minerva is an ML framework designed for highly parallel multi-objective reaction optimization with automated HTE [27].

Detailed Methodology:

- Search Space Definition: The reaction condition space is represented as a discrete combinatorial set of plausible conditions, with automatic filtering of impractical combinations (e.g., temperatures exceeding solvent boiling points) [27].

- Initial Sampling: The workflow begins with algorithmic quasi-random Sobol sampling to select initial experiments, maximizing coverage of the reaction space [27].

- Model Training and Selection: A Gaussian Process (GP) regressor is trained on the experimental data to predict reaction outcomes and their uncertainties for all conditions [27].

- Batch Selection via Acquisition Function: A scalable multi-objective acquisition function (e.g., q-NParEgo, TS-HVI) evaluates all conditions to select the next most promising batch of experiments, balancing exploration and exploitation [27]. This process is repeated for multiple iterations.
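The first two steps, building a filtered combinatorial search space and drawing an initial batch, can be sketched as follows. This is not Minerva's code: the solvent list, temperature grid, catalyst loadings, and function name are illustrative assumptions, and a seeded uniform draw stands in for Sobol sampling because the Python standard library has no Sobol generator (SciPy's `scipy.stats.qmc.Sobol` is one existing implementation).

```python
import itertools
import random

# Approximate normal boiling points in degrees Celsius.
BOILING_POINT_C = {"THF": 66, "acetonitrile": 82, "toluene": 111, "DMF": 153}

def build_search_space(solvents, temperatures_c, catalyst_loadings_mol_pct):
    """Enumerate all condition combinations, dropping impractical ones."""
    space = []
    for solvent, temp, loading in itertools.product(
        solvents, temperatures_c, catalyst_loadings_mol_pct
    ):
        # Filter: reaction temperature must not exceed the solvent boiling point.
        if temp <= BOILING_POINT_C[solvent]:
            space.append({"solvent": solvent, "temp_c": temp, "loading": loading})
    return space

space = build_search_space(
    solvents=list(BOILING_POINT_C),
    temperatures_c=[25, 60, 100, 140],
    catalyst_loadings_mol_pct=[1, 5, 10],
)

# Stand-in for Sobol sampling: a seeded uniform draw without replacement.
initial_batch = random.Random(0).sample(space, k=8)
print(len(space), "feasible conditions;", len(initial_batch), "selected for the first plate")
```

Filtering before sampling means the surrogate model and acquisition function never have to score conditions that could not be run in the laboratory.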

A Novel Method for Noise Detection in Sequential Data

Addressing data quality, a novel method was proposed to detect both attribute and label noise in high-dimensional sequential data, which is common in industrial chemical processes [24].

Detailed Methodology:

- Model Construction: An Enhanced Variational Recurrent Prediction Model (EVRPM) is proposed, incorporating a label predictor and an auxiliary task into a Variational Recurrent Neural Network (VRNN) to model the log-likelihood of samples [24].

- Noise Indicator: The log-likelihood, log p(X, y), quantifies how well a sample aligns with the learned data distribution. Noisy samples (with either attribute or label noise) disrupt this distribution and yield lower log-likelihood values [24].

- Iterative Detection: An iterative process is adopted. The initially trained EVRPM detects noisy samples, which are removed. The model is then retrained on the refined dataset, enhancing detection accuracy in subsequent iterations. The process terminates when the prediction error on a validation set no longer decreases [24].
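The iterate-remove-refit idea can be shown with a much simpler density model. In the sketch below a 1-D Gaussian replaces the EVRPM, and the loop stops when no sample falls below the likelihood threshold rather than when validation error stops decreasing. Both substitutions, along with the function names, the cutoff, and the example values, are assumptions made to keep the example self-contained.

```python
import math
import statistics

def gaussian_log_likelihood(x, mean, std):
    """Log-density of x under a 1-D normal distribution."""
    return -0.5 * math.log(2.0 * math.pi * std ** 2) - (x - mean) ** 2 / (2.0 * std ** 2)

def iterative_noise_detection(values, z_cutoff=3.0, max_rounds=10):
    """Repeatedly fit a Gaussian and drop samples with very low log-likelihood.

    A sample is flagged when its log-likelihood is lower than that of a point
    `z_cutoff` standard deviations from the mean.
    Returns (clean, flagged) index lists.
    """
    clean = list(range(len(values)))
    flagged = []
    for _ in range(max_rounds):
        kept = [values[i] for i in clean]
        mean, std = statistics.fmean(kept), statistics.pstdev(kept)
        if std == 0.0:
            break
        threshold = gaussian_log_likelihood(mean + z_cutoff * std, mean, std)
        noisy = [i for i in clean
                 if gaussian_log_likelihood(values[i], mean, std) < threshold]
        if not noisy:  # nothing left to remove: stop iterating
            break
        flagged += noisy
        clean = [i for i in clean if i not in noisy]
    return clean, sorted(flagged)

# Hypothetical readings: 15 consistent values plus two corrupted ones.
readings = [1.00, 1.02, 0.98, 1.01, 0.99, 1.03, 0.97, 1.00, 1.02,
            0.98, 1.01, 0.99, 1.00, 1.01, 0.99, 1.60, 9.00]
clean, flagged = iterative_noise_detection(readings)
print(flagged)
```

The example shows why iteration matters: in the first round the extreme value 9.00 inflates the fitted standard deviation so much that 1.60 looks unremarkable, and 1.60 is only flagged after the model is refitted without 9.00.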

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Software and Algorithmic Tools for Chemical ML

| Tool / Algorithm | Function | Relevance to Chemical Data Challenges |
|---|---|---|
| ROBERT Software [10] | Automated workflow for ML model development from CSV files. | Specifically designed for low-data regimes, mitigates overfitting via a specialized HPO objective. |
| ChemProp [22] [25] | A GNN-based method for molecular property prediction. | A state-of-the-art method for modeling physico-chemical and ADMET properties; performance is highly sensitive to HPO. |
| TransformerCNN [25] | A representation learning method using NLP on SMILES strings. | Reported to provide higher accuracy than graph-based methods for solubility prediction with less computational effort. |
| Bayesian Optimization [10] [27] | A probabilistic approach for global optimization of black-box functions. | The core algorithm for efficient HPO and experimental design in chemistry, balancing exploration and exploitation. |
| Paddy Algorithm [28] | A biologically inspired evolutionary optimization algorithm. | Offers robust versatility and innate resistance to early convergence for various chemical optimization tasks. |
| Training Performance Estimation (TPE) [29] | A technique to predict final model performance from early training. | Accelerates HPO for large-scale chemical models by up to 90%, reducing immense computational costs. |

The unique trifecta of challenges presented by chemical data—small datasets, high-dimensionality, and pervasive noise—creates a modeling environment where the default settings of powerful machine learning algorithms are insufficient and often lead to failure. In this context, hyperparameter tuning is not a mere technicality but a fundamental component of the model development process. It is the primary mechanism for injecting domain-aware constraints (regularization) into models, forcing them to learn robust, generalizable patterns from limited and noisy data rather than memorizing artifacts. As the field advances with increasingly complex models and a greater emphasis on automation and scalability, the development of efficient, overfitting-aware HPO workflows—as exemplified by the tools and frameworks discussed—will be critical to unlocking the full potential of AI in accelerating chemical discovery and drug development.

In cheminformatics, the performance of Graph Neural Networks (GNNs) is highly sensitive to architectural choices and hyperparameters, making optimal configuration selection a non-trivial task [26]. Molecular structures present unique computational challenges that necessitate sophisticated tuning approaches beyond standard deep learning practices. The intricate relationship between molecular representation—where atoms correspond to nodes and chemical bonds to edges—and target chemical properties demands careful model configuration to capture complex structure-activity relationships [30] [31]. Without systematic tuning, GNNs may fail to generalize to out-of-distribution molecules or learn spurious correlations that diminish their predictive value in real-world drug discovery applications [13]. This case study examines how advanced tuning methodologies—including hyperparameter optimization (HPO), neural architecture search (NAS), and emerging prompt-based techniques—fundamentally enhance the capability of GNNs to accurately model molecular properties and accelerate chemical discovery.

Tuning Methodologies for Molecular GNNs

Hyperparameter Optimization and Neural Architecture Search

Traditional HPO and NAS algorithms provide foundational approaches for optimizing molecular GNNs. These techniques systematically search through spaces of architectural choices and training parameters to identify configurations that maximize predictive performance on validation metrics. For molecular property prediction, this process is particularly crucial because different properties (e.g., electronic properties versus bioactivity) may rely on distinct molecular features and require specialized architectural biases [26]. Automated optimization techniques have demonstrated potential to enhance model performance, scalability, and efficiency in key cheminformatics applications including drug-target interaction prediction, drug repurposing, and molecular property optimization [26] [31].

Prompt Tuning Strategies for Pre-trained GNNs

Recent advances in transfer learning have introduced prompt-based tuning as a parameter-efficient alternative to full model fine-tuning. Unlike conventional fine-tuning that updates all parameters of a pre-trained GNN, prompt tuning keeps the core model frozen and instead learns task-specific "prompts" that adapt the model to downstream tasks [32] [33].

Universal Prompt Tuning: Graph Prompt Feature (GPF) operates on the input graph's feature space and can theoretically achieve an equivalent effect to any form of prompting function, making it applicable to GNNs pre-trained with diverse strategies [33]. This approach has demonstrated average improvements of about 1.4% in full-shot scenarios and about 3.2% in few-shot scenarios compared to fine-tuning [33].

Edge-Level Prompt Tuning: EdgePrompt manipulates input graphs by learning additional prompt vectors for edges, which are incorporated during message passing in pre-trained GNNs [32]. This approach fundamentally differs from node-level prompt designs by explicitly modeling graph structural information, proving particularly valuable for molecular graphs where bond characteristics critically influence chemical properties [32].

Multi-View Conditional Tuning: For molecules represented with both 2D and 3D structural information, the Multi-View Conditional Information Bottleneck (MVCIB) framework maximizes shared information while minimizing irrelevant features from each view [34]. This approach uses one molecular view as a contextual condition to guide representation learning of its counterpart and aligns important substructures (e.g., functional groups) across views [34].

Table 1: Comparison of GNN Tuning Methodologies for Molecular Structures

| Methodology | Key Mechanism | Advantages | Representative Techniques |
|---|---|---|---|
| Hyperparameter Optimization | Systematic search of training parameters | Improves model performance and generalization | Bayesian optimization, grid search [26] |
| Neural Architecture Search | Automated discovery of optimal GNN architectures | Reduces manual design effort; discovers novel architectures | Reinforcement learning, evolutionary algorithms [26] |
| Prompt Tuning | Learns task-specific prompts for frozen pre-trained models | Parameter-efficient; reduces catastrophic forgetting | EdgePrompt, GPF [32] [33] |
| Multi-View Tuning | Aligns representations across multiple molecular views | Captures complementary structural information | MVCIB [34] |

Experimental Protocols and Performance Analysis

Direct Inverse Design Through Gradient Ascent

A particularly innovative application of tuned GNNs is direct molecular generation through gradient-based optimization. This approach leverages the differentiability of GNNs to perform gradient ascent directly on the molecular graph representation with respect to a target property [13].

Experimental Protocol:

- Model Setup: A GNN is pre-trained on molecular property prediction using datasets such as QM9, which contains quantum mechanical properties of small organic molecules [13].

- Graph Construction: The molecular graph is constructed with constraints that ensure chemical validity. The adjacency matrix is built from a weight vector that is symmetrized and rounded using a sloped rounding function to maintain differentiability while enforcing symmetry and zero trace [13].

- Feature Mapping: Atom types are determined by valence rules (sum of bond orders) with an additional weight matrix to differentiate elements with identical valence [13].

- Optimization Loop: Starting from a random graph or existing molecule, gradient ascent is performed on the graph representation while holding GNN weights fixed, with additional penalty terms to enforce chemical constraints such as maximum valence of four [13].

Performance Analysis: In generating molecules with target HOMO-LUMO gaps, this approach (DIDgen) achieved success rates comparable to or better than state-of-the-art genetic algorithms (JANUS), while consistently generating more diverse molecules [13]. The method generated in-target molecules in 2.1-12.0 seconds per molecule depending on the target difficulty, demonstrating computational efficiency [13].

Table 2: Performance Comparison of DIDgen vs. JANUS for Targeting HOMO-LUMO Gaps

| Target Gap | Method | Molecules within 0.5 eV of Target | Mean Absolute Distance from Target (eV) | Average Tanimoto Distance |
|---|---|---|---|---|
| 4.1 eV | DIDgen | 47 | 0.25 | 0.91 |
| 4.1 eV | JANUS | 42 | 0.27 | 0.89 |
| 6.8 eV | DIDgen | 52 | 0.19 | 0.93 |
| 6.8 eV | JANUS | 48 | 0.22 | 0.90 |
| 9.3 eV | DIDgen | 45 | 0.24 | 0.92 |
| 9.3 eV | JANUS | 43 | 0.26 | 0.88 |

Performance data adapted from [13]
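For readers unfamiliar with the diversity column, the following sketch shows one common way to compute a Tanimoto distance between fingerprints and average it over all pairs in a generated set. The source does not state how the averages in Table 2 were computed, so the pairwise averaging and the representation of a fingerprint as a set of "on" bit indices are assumptions made for illustration.

```python
from itertools import combinations

def tanimoto_distance(bits_a, bits_b):
    """1 - |A ∩ B| / |A ∪ B| for two fingerprints given as sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    if not union:
        return 0.0  # two empty fingerprints are treated as identical
    return 1.0 - len(a & b) / len(union)

def average_pairwise_distance(fingerprints):
    """Mean Tanimoto distance over all unordered pairs (assumed diversity measure)."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto_distance(a, b) for a, b in pairs) / len(pairs)

# Toy fingerprints; real ones would come from a cheminformatics toolkit.
fps = [{1, 2, 3}, {2, 3, 4}, {7, 8}]
diversity = average_pairwise_distance(fps)
```

Values close to 1, as in the table, indicate that the generated molecules share few substructure bits with one another.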

Explainable Drug Response Prediction

The XGDP framework demonstrates how tuning enhances both predictive accuracy and interpretability in drug response prediction [30].

Experimental Protocol:

- Data Preparation: Drug response data from GDSC database combined with gene expression data from CCLE, resulting in 133,212 drug-cell line pairs [30].

- Molecular Representation: Drugs represented as molecular graphs with enhanced node features computed using a circular algorithm inspired by Extended-Connectivity Fingerprints, considering both atoms and their surrounding environments [30].

- Model Architecture: GNN module for molecular graphs combined with CNN module for gene expression profiles, integrated through a cross-attention mechanism [30].

- Interpretation: Application of attribution methods including GNNExplainer and Integrated Gradients to identify salient molecular substructures and their interactions with significant genes [30].
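To make the attribution step more concrete, here is a minimal sketch of Integrated Gradients on a toy differentiable function. It is not the XGDP code: the quadratic `model`, its analytic gradient, and the all-zero baseline are hypothetical stand-ins for a trained network, its autodiff gradient, and a reference input.

```python
def integrated_gradients(grad_fn, x, baseline, steps=50):
    """Approximate IG_i = (x_i - b_i) * integral over alpha in [0, 1] of
    dF/dx_i evaluated at b + alpha * (x - b), using a midpoint Riemann sum."""
    diffs = [xi - bi for xi, bi in zip(x, baseline)]
    avg_grad = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [bi + alpha * d for bi, d in zip(baseline, diffs)]
        grads = grad_fn(point)
        avg_grad = [a + g / steps for a, g in zip(avg_grad, grads)]
    return [d * a for d, a in zip(diffs, avg_grad)]

# Hypothetical toy model: F(x) = sum_i w_i * x_i^2, with analytic gradient.
WEIGHTS = [0.5, -1.0, 2.0]

def model(x):
    return sum(w * xi * xi for w, xi in zip(WEIGHTS, x))

def model_grad(x):
    return [2.0 * w * xi for w, xi in zip(WEIGHTS, x)]

x = [1.0, 2.0, -1.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(model_grad, x, baseline)
```

A useful sanity check is the completeness property: the attributions should sum to `model(x) - model(baseline)`, which is what makes per-feature (or per-atom) scores interpretable as shares of the prediction.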

Performance Analysis: The tuned GNN approach outperformed previous methods in drug response prediction accuracy while providing mechanistic insights into drug-gene interactions [30]. The incorporation of chemically-informed node and edge features was critical to this success, demonstrating the importance of domain-specific tuning decisions [30].

Visualization of Tuning Approaches

Figure: GNN Tuning Methodology Landscape (diagram not reproduced)

Table 3: Essential Research Reagents and Computational Tools for Molecular GNN Tuning

| Resource | Function | Application in Tuning |
|---|---|---|
| QM9 Dataset | Quantum mechanical properties of ~134k small organic molecules | Benchmarking GNN performance on electronic property prediction [13] |
| GDSC/CCLE Data | Drug response data with gene expression profiles | Training and tuning models for drug sensitivity prediction [30] |
| BRICS Algorithm | Retrosynthetically feasible chemical substructure decomposition | Identifying chemically meaningful fragments for explanation and multi-view alignment [35] [34] |
| Substructure Mask Explanation (SME) | Model interpretation via chemically meaningful fragments | Validating tuned GNNs and identifying salient molecular motifs [35] |
| Sloped Rounding Function | Differentiable rounding for adjacency matrix optimization | Enforcing chemical validity during gradient-based molecular generation [13] |
| Edge-Prompt Vectors | Learnable parameters for edge features in pre-trained GNNs | Adapting frozen models to downstream tasks without full fine-tuning [32] |
| Multi-View Conditional Information Bottleneck | Framework for maximizing shared information across molecular views | Aligning 2D and 3D molecular representations during pre-training [34] |

Tuning methodologies represent a critical frontier in advancing GNN applications for molecular structures. As demonstrated across multiple case studies, carefully optimized GNNs consistently outperform their untuned counterparts in predictive accuracy, generalization capability, and practical utility in drug discovery pipelines [13] [30]. The emergence of sophisticated tuning approaches—from prompt-based adaptation to multi-view representation learning—signals a maturation of the field toward more data-efficient and chemically-aware model development [32] [34].

Future progress will likely focus on several key challenges identified in current research. Improving model interpretability remains paramount, with methods like Substructure Mask Explanation (SME) leading the way toward GNNs that provide chemically intuitive rationales for their predictions [35]. Scaling tuning approaches to leverage increasingly diverse molecular representations—including 3D geometric information and multi-omics data—will require continued algorithmic innovation [36] [34]. Furthermore, addressing the computational expense of extensive tuning through more efficient search strategies and transferable tuning policies represents an important direction for increasing accessibility of these methods to broader chemical research communities [26] [31].

As GNNs become increasingly embedded in automated discovery workflows, the role of systematic tuning will only grow in importance. The methodologies and case studies presented here provide both a foundation and future outlook for developing more powerful, reliable, and chemically insightful models to accelerate molecular design and optimization.

Methodologies in Action: A Guide to Hyperparameter Optimization Techniques for Chemistry

In the chemical sciences, where accurate prediction of molecular properties is paramount for drug discovery and materials design, hyperparameter tuning is more than a technical refinement: it is a fundamental step in ensuring model reliability and predictive power. Machine learning (ML) models for molecular property prediction (MPP) have advanced significantly, yet many applications pay only limited attention to hyperparameter optimization (HPO), resulting in suboptimal predictions and reduced scientific utility [5]. Recent research emphasizes that HPO is a key step in building ML models and can yield significant gains in performance, particularly for the deep neural networks and ensemble methods commonly employed in chemical informatics [5].

Chemical datasets present unique challenges that make rigorous hyperparameter tuning especially critical. These datasets often exhibit high dimensionality, inherent experimental noise (particularly heteroscedastic noise which is non-constant), and are typically expensive to acquire in terms of time and resources [37] [38]. Furthermore, the relationship between molecular structures and their properties often constitutes a complex "black box" function where gradient-based optimization methods may be inapplicable [38]. Within this context, selecting an appropriate hyperparameter optimization technique becomes essential for extracting meaningful insights while conserving valuable experimental resources.

Fundamentals of Hyperparameters in Machine Learning

In machine learning, hyperparameters are parameters whose values are set before the learning process begins, contrasting with model parameters that algorithms learn during training [5]. These hyperparameters can be categorized into two primary types:

- Structural hyperparameters that describe the architectural configuration of models, such as the number of layers in a neural network, number of units per layer, type of activation function, and number of filters in convolutional layers [5].

- Algorithmic hyperparameters associated with the learning process itself, including learning rate, number of iterations (epochs), batch size, loss functions, and regularization techniques like dropout [5].

Hyperparameter optimization is the process of efficiently identifying the combination of hyperparameter values that maximizes model performance on a given dataset within a reasonable timeframe [5]. For chemical applications, where models must generalize well to novel molecular structures, effective HPO becomes particularly crucial for developing robust predictive tools.

Core Hyperparameter Tuning Methods

Grid Search

Grid search represents the most fundamental approach to hyperparameter tuning, operating through an exhaustive search across a predefined discrete grid of hyperparameter values [39]. The method systematically evaluates every possible combination of values within this grid, typically using cross-validation to assess performance metrics for each configuration [39].

Table 1: Characteristics of Grid Search

| Aspect | Description |
|---|---|
| Approach | Exhaustive search across all specified parameter combinations |
| Computational Cost | High; increases exponentially with parameter dimensions |
| Best For | Small parameter spaces with limited dimensions |
| Key Advantage | Guaranteed to find optimal combination within grid |
| Key Limitation | Computationally prohibitive for high-dimensional spaces |

The primary strength of grid search lies in its comprehensive nature: it is guaranteed to find the best-scoring point within the specified grid [39], although the true optimum may lie between grid points. However, this advantage becomes a significant drawback in high-dimensional parameter spaces, where the number of possible combinations grows exponentially with the number of hyperparameters, a manifestation of the "curse of dimensionality" [37]. For example, five hyperparameters with four candidate values each already require 4^5 = 1,024 model fits, before multiplying by the number of cross-validation folds. This cost is particularly problematic in chemical applications where evaluating a single model configuration might require substantial computational resources or rely on expensive experimental data.
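A minimal sketch of exhaustive grid search is shown below. The `validation_error` function is a hypothetical stand-in for a cross-validated error; in a real workflow it would train and score a model for each configuration, and the grid values are illustrative assumptions.

```python
import itertools
import math

def validation_error(config):
    """Hypothetical stand-in for a cross-validated error; replace with real
    model training and scoring."""
    return ((math.log10(config["learning_rate"]) + 3.0) ** 2
            + 0.1 * (config["num_layers"] - 2) ** 2
            + (config["dropout"] - 0.2) ** 2)

def grid_search(grid, objective):
    """Evaluate every combination in `grid` and return the best one."""
    names = list(grid)
    results = []
    for values in itertools.product(*(grid[name] for name in names)):
        config = dict(zip(names, values))
        results.append((objective(config), config))
    best_error, best_config = min(results, key=lambda r: r[0])
    return best_error, best_config, len(results)

grid = {
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "num_layers": [1, 2, 3],
    "dropout": [0.0, 0.2, 0.5],
}
best_error, best_config, n_evaluated = grid_search(grid, validation_error)
```

Three values for each of three hyperparameters already give 27 evaluations; adding a fourth hyperparameter with three values would triple that, which is the exponential growth described above.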

Figure: Grid Search Algorithm Flowchart (diagram not reproduced)

Random Search

Random search addresses the computational inefficiency of grid search by evaluating a randomly selected subset of hyperparameter combinations rather than exhaustively searching the entire space [39]. The underlying principle is that randomly sampling parameter values can often identify high-performing configurations with significantly fewer evaluations than grid search [39].

Table 2: Characteristics of Random Search

| Aspect | Description |
|---|---|
| Approach | Random sampling from parameter distributions |
| Computational Cost | Moderate; determined by number of iterations |
| Best For | Medium to large parameter spaces |
| Key Advantage | Faster convergence for many practical problems |
| Key Limitation | No guarantee of finding optimal configuration |

In practice, random search has proven effective across applied ML problems, although the studies cited here come from outside chemistry. A recent study on urban building energy modeling found that random search "stands out for its effectiveness, speed, and flexibility" compared to other methods [8]. Similarly, in optimizing machine learning models for predicting high-need healthcare users, random search achieved performance comparable to more sophisticated methods while maintaining computational efficiency [40]. For chemical researchers working with large parameter spaces, random search is therefore often a reasonable balance between performance and computational demand.
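Random search can be sketched as follows. The sampling ranges, the log-uniform prior on the learning rate, and the `validation_error` stand-in are illustrative assumptions rather than recommendations from the cited studies.

```python
import math
import random

def validation_error(config):
    """Hypothetical stand-in for a cross-validated error; replace with real
    model training and scoring."""
    return ((math.log10(config["learning_rate"]) + 3.0) ** 2
            + 0.1 * (config["num_layers"] - 2) ** 2
            + (config["dropout"] - 0.2) ** 2)

def sample_config(rng):
    """Draw one configuration; the learning rate is sampled log-uniformly."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -1),
        "num_layers": rng.randint(1, 4),
        "dropout": rng.uniform(0.0, 0.5),
    }

def random_search(objective, n_iter, seed=0):
    """Evaluate `n_iter` random configurations and return the best one."""
    rng = random.Random(seed)  # fixed seed for reproducibility
    history = []
    for _ in range(n_iter):
        config = sample_config(rng)
        history.append((objective(config), config))
    best_error, best_config = min(history, key=lambda r: r[0])
    return best_error, best_config, history

best_error, best_config, history = random_search(validation_error, n_iter=20)
```

Unlike a grid, the evaluation budget (`n_iter`) is chosen directly and does not grow with the number of hyperparameters, and continuous ranges are sampled without committing to a fixed set of values.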

Bayesian Optimization

Bayesian optimization represents a more sophisticated approach that constructs a probabilistic model of the objective function to guide the search process efficiently [37]. This method is particularly valuable for optimizing expensive black-box functions, making it ideally suited for chemical applications where each evaluation might correspond to a costly experiment or computation [37].

The Bayesian optimization framework consists of two key components:

- A surrogate model, typically a Gaussian process, that approximates the objective function and provides estimates with uncertainty at unexplored points [37] [38].

- An acquisition function that uses the surrogate's predictions to balance exploration of uncertain regions with exploitation of known promising areas [37] [38].

Figure: Bayesian Optimization Cycle (diagram not reproduced)

In chemical research, Bayesian optimization has demonstrated remarkable effectiveness in various applications. A recent study on metabolic engineering showed that Bayesian optimization could identify optimal culture conditions for limonene production using only 22% of the experimental points required by traditional grid search [38]. Similarly, in molecular property prediction, Bayesian optimization has proven valuable for tuning deep neural networks, though it may be computationally heavier than some alternatives [5].
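The surrogate-plus-acquisition loop can be illustrated with a small one-dimensional sketch using NumPy. Everything specific here is an assumption made for illustration: the toy `objective` over log10(learning rate), the RBF kernel with a fixed length scale, expected improvement as the acquisition function, and a fixed candidate grid. Production work would normally rely on an established library rather than this hand-rolled Gaussian process.

```python
import math
import numpy as np

def objective(log_lr):
    """Hypothetical validation loss as a function of log10(learning rate)."""
    return (log_lr + 3.0) ** 2

def rbf_kernel(a, b, length_scale=0.5):
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_query, jitter=1e-6):
    """Zero-mean GP posterior mean and standard deviation at x_query."""
    k_train = rbf_kernel(x_train, x_train) + jitter * np.eye(len(x_train))
    k_cross = rbf_kernel(x_train, x_query)
    solved = np.linalg.solve(k_train, k_cross)
    mean = solved.T @ y_train
    var = 1.0 - np.sum(k_cross * solved, axis=0)  # prior variance is 1
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best_y):
    """Expected improvement for minimization."""
    z = (best_y - mean) / std
    cdf = 0.5 * (1.0 + np.array([math.erf(v / math.sqrt(2.0)) for v in z]))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (best_y - mean) * cdf + std * pdf

def bayesian_optimize(n_iter=10):
    candidates = np.linspace(-5.0, -1.0, 201)
    xs = [-5.0, -2.0, -1.0]  # initial design points
    ys = [objective(x) for x in xs]
    for _ in range(n_iter):
        x_arr, y_arr = np.array(xs), np.array(ys)
        # Standardize targets so the zero-mean, unit-variance prior is reasonable.
        y_scaled = (y_arr - y_arr.mean()) / (y_arr.std() or 1.0)
        mean, std = gp_posterior(x_arr, y_scaled, candidates)
        ei = expected_improvement(mean, std, y_scaled.min())
        x_next = float(candidates[int(np.argmax(ei))])
        xs.append(x_next)
        ys.append(objective(x_next))
    best = int(np.argmin(ys))
    return xs[best], ys[best], xs

best_x, best_y, evaluated = bayesian_optimize()
```

Each iteration spends extra computation fitting the surrogate, but every new evaluation is placed where the model expects the most improvement, which is why the approach suits objectives whose individual evaluations are expensive.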

Table 3: Characteristics of Bayesian Optimization

| Aspect | Description |
|---|---|
| Approach | Sequential model-based optimization using surrogate models |
| Computational Cost | High per iteration but fewer evaluations needed |
| Best For | Expensive black-box functions with limited evaluations |
| Key Advantage | Sample efficiency; balances exploration/exploitation |
| Key Limitation | Computational overhead for surrogate model maintenance |

Hyperband and Advanced Hybrid Methods

Hyperband represents an innovative approach that accelerates random search through a multi-armed bandit strategy, dynamically allocating resources to the most promising configurations [5]. This method has shown remarkable efficiency in chemical informatics applications, particularly for tuning deep neural networks for molecular property prediction [5].

The algorithm operates by:

- Sampling random configurations across different resource levels (typically training iterations).

- Progressively eliminating the worst-performing configurations at each resource level.

- Allocating increasing resources to the most promising candidates.
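The three steps above can be sketched as a compact Hyperband loop. This is a simplified illustration, not the implementation evaluated in [5]: `validation_loss` is a hypothetical function in which loss decreases as the epoch budget grows, the search space contains only a learning rate, and the number of survivors per round is computed as `len(configs) // eta`.

```python
import math
import random

def sample_config(rng):
    """Draw a random configuration (learning rate only, log-uniform)."""
    return {"learning_rate": 10 ** rng.uniform(-5, -1)}

def validation_loss(config, epochs):
    """Hypothetical loss that improves with more epochs; replace with
    real partial training of a model for `epochs` epochs."""
    return (math.log10(config["learning_rate"]) + 3.0) ** 2 + 1.0 / epochs

def hyperband(sample, evaluate, max_resource=27, eta=3, seed=0):
    rng = random.Random(seed)
    # Largest s with eta**s <= max_resource (integer loop avoids float logs).
    s_max = 0
    while eta ** (s_max + 1) <= max_resource:
        s_max += 1
    best_loss, best_config = math.inf, None
    # Each bracket trades off "many configs, small budget" vs "few configs, full budget".
    for s in range(s_max, -1, -1):
        n_configs = math.ceil((s_max + 1) * eta ** s / (s + 1))
        configs = [sample(rng) for _ in range(n_configs)]
        for i in range(s + 1):
            budget = max_resource * eta ** i / eta ** s
            losses = [evaluate(c, budget) for c in configs]
            order = sorted(range(len(configs)), key=losses.__getitem__)
            if losses[order[0]] < best_loss:
                best_loss, best_config = losses[order[0]], configs[order[0]]
            # Keep the top 1/eta of configurations for the next, larger budget.
            configs = [configs[j] for j in order[:max(1, len(configs) // eta)]]
    return best_loss, best_config

best_loss, best_config = hyperband(sample_config, validation_loss)
```

With `max_resource=27` and `eta=3`, the first bracket starts 27 configurations at one epoch each and promotes the best third at every round, while the last bracket trains only four configurations at the full budget.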

In a comprehensive comparison of HPO algorithms for molecular property prediction, Hyperband emerged as "most computationally efficient" while delivering "optimal or nearly optimal" prediction accuracy [5]. This combination of efficiency and effectiveness makes it particularly valuable for chemical researchers working with computationally intensive models.