Why Model Validation is the Non-Negotiable Foundation of Reliable Computational Science



Abstract

This article provides a comprehensive overview of model validation's critical role in computational science, particularly for researchers and professionals in drug development and biomedical fields. It explores the foundational principles that define validation and distinguish it from verification, then details a wide array of methodological approaches from basic train-test splits to advanced cross-validation techniques. The guide further covers essential troubleshooting and optimization strategies to combat overfitting and enhance generalizability, and concludes with rigorous validation frameworks and comparative metrics for quantitative model assessment. By synthesizing these elements, the article establishes a robust framework for building trustworthy computational models that can accelerate scientific discovery and inform critical decisions in clinical and biomedical research.

What is Model Validation and Why It's Indispensable in Computational Science

In computational science, the credibility of research and its subsequent application in critical fields like drug development hinge on a rigorous process known as model validation. It is a common misconception that a computationally efficient model that produces visually appealing results is sufficient. However, a model can be mathematically perfect yet physically irrelevant. This is where validation provides an essential "reality check," determining whether a model accurately represents the real-world phenomena it is intended to simulate [1] [2]. For researchers and scientists, particularly in high-stakes domains, embracing a culture of validation is not optional; it is fundamental to ensuring that computational predictions can be trusted to inform major decisions, from guiding laboratory experiments to designing clinical trials.

The distinction between verification and validation is the cornerstone of this process. As succinctly described by Roache, verification is "solving the equations correctly," while validation is "solving the correct equations" [3]. In other words, verification deals with the mathematics of the simulation, ensuring the code and numerical algorithms are correct and accurate. In contrast, validation deals with the physics (or biology, or chemistry) of the problem, assessing whether the selected mathematical model is a faithful representation of reality from the perspective of its intended uses [2] [3]. This relationship is foundational and can be visualized as a sequential process.

The V&V Process Flow

Core Principles: Verification vs. Validation

Understanding the nuanced yet critical difference between verification and validation is the first step toward building credible computational models. The following table breaks down the core distinctions that every computational researcher must internalize.

Table: Distinguishing Between Verification and Validation

| Aspect | Verification | Validation |
|---|---|---|
| Core Question | “Is the model solved correctly?” | “Does the model represent reality?” [2] |
| Primary Focus | Mathematical correctness and numerical accuracy [2] | Physical accuracy and relevance of the model itself [2] |
| Primary Methods | Mesh convergence studies, mathematical sanity checks (e.g., unit tests), code comparison [2] [3] | Comparison with experimental data, comparison with analytical solutions, benchmarking [2] |
| Analogy | Solving the equations right [3] | Solving the right equations [3] |

Verification is a prerequisite for validation: there is little value in validating a model whose numerical solution is known to be inaccurate. Verification ensures that the software correctly implements the intended algorithms and that numerical errors are quantified and acceptable [3]. Techniques include mesh refinement studies, which confirm that results do not change significantly with a finer mesh, and mathematical "sanity checks" such as applying a 1G load and verifying that the reaction forces equal the model's weight [2].

Validation, the main subject of this guide, moves beyond the mathematics. It asks whether the conceptual model—the set of equations and assumptions—is an adequate representation of the real world for the model's intended purpose [3]. It bridges the gap between the digital simulation and the physical laboratory, providing the evidence needed to trust a model's predictions when experimental data is unavailable or prohibitively expensive to obtain.

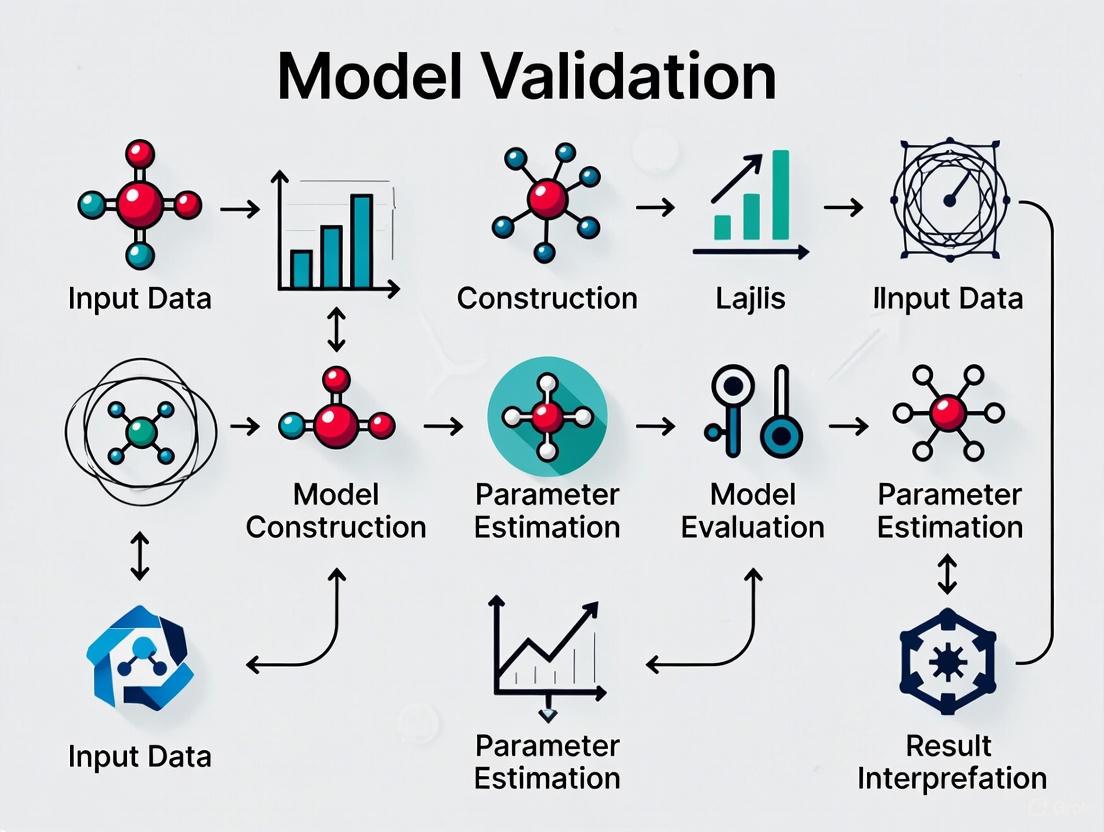

A Framework for Effective Model Validation

Executing a robust validation strategy requires a systematic, multi-faceted approach. The following workflow outlines the key stages, from data preparation to final analysis, which are expanded upon in the subsequent sections.

Model Validation Workflow

Data Validation and Conceptual Review

The foundation of any valid model is high-quality, relevant data and a sound conceptual framework.

Data Validation: Before a model can be validated, the data used for that validation must be trustworthy. This involves checking for and addressing missing values, outliers, and errors that could mislead the model [4]. Furthermore, the data must be a true representation of the underlying problem. In drug discovery, for instance, using cell-line data to validate a model predicting human in vivo efficacy requires careful consideration of the data's relevance and potential translational gaps [1]. It is also critical to assess data for bias, ensuring it has appropriate representation to avoid producing biased or inaccurate results [4].

Conceptual Review: This step involves a critical evaluation of the model's underlying logic and assumptions. Researchers must ask: Is the selected computational technique suitable for the biological or chemical problem at hand? Are the assumptions embedded in the model building—for example, about binding kinetics or cell behavior—justified and clearly understood? [4] Faulty assumptions can lead to a model that is conceptually elegant but practically useless.

Key Validation Techniques and Metrics

With a solid foundation in place, specific technical methods are employed to quantitatively and qualitatively assess the model's performance.

Comparison with Experimental Data: This is the "gold standard" for validation [2]. In practice, this means comparing the model's predictions against data obtained from controlled laboratory experiments. For example, a finite element analysis (FEA) prediction of strain in a material would be compared against measurements from physical strain gauges [2]. In computational drug design, a model predicting a compound's binding affinity must be validated against experimental data from sources like PubChem or the Cancer Genome Atlas, which provide empirical measurements on molecular structures and activities [1].

Benchmarking and Analytical Solutions: When direct experimental data is scarce or initial validation is needed, comparing model results against established analytical solutions or benchmark problems from scientific literature is a highly effective strategy [2] [3]. This provides a reality check against known results before venturing into novel predictions.

Data-Splitting Techniques: To avoid overfitting—where a model performs well on its training data but fails on new data—it is essential to test it on unseen data.

- Train/Test Split: The dataset is divided into a training set to develop the model and a separate testing set to assess its prediction accuracy on new observations [4]. Common split ratios are 70-30 or 80-20.

- Cross-Validation: A more robust approach. In k-fold cross-validation, the data are divided into k subsets (folds). The model is trained and evaluated k times, each time using a different fold as the test set and the remaining folds for training, and the k scores are averaged. This provides a more comprehensive assessment of model performance than a single split [4].
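
The k-fold procedure described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a reference implementation: the function names, the mean-predicting baseline model, and the measurement values are all hypothetical and chosen only to keep the example self-contained.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Shuffle the indices 0..n_samples-1 and deal them into k near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def cross_validate_mean_model(y, k=5, seed=0):
    """Return the per-fold MSE of a baseline that predicts the training mean."""
    scores = []
    for test_idx in k_fold_indices(len(y), k, seed):
        held_out = set(test_idx)
        train = [y[i] for i in range(len(y)) if i not in held_out]
        prediction = sum(train) / len(train)  # "fit" on the training folds only
        mse = sum((y[i] - prediction) ** 2 for i in test_idx) / len(test_idx)
        scores.append(mse)
    return scores


# Hypothetical measurements, for illustration only.
y = [2.1, 1.9, 2.4, 2.0, 2.2, 1.8, 2.3, 2.1, 1.7, 2.5]
fold_mse = cross_validate_mean_model(y, k=5)
print(f"mean MSE across {len(fold_mse)} folds: {sum(fold_mse) / len(fold_mse):.4f}")
```

Every sample is used for testing exactly once and never appears in the training data of the fold that tests it, which is the property that makes the averaged score a fairer estimate than a single split.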

Table: Key Performance Metrics for Model Validation

| Metric Category | Specific Metric | Definition and Application Context |
|---|---|---|
| Classification | Accuracy, Precision, Recall, F1-Score | Used for categorical outcomes (e.g., classifying a molecule as active/inactive) [4] |
| Regression | Mean Squared Error (MSE), R-squared | Quantifies the difference between predicted and actual continuous values (e.g., predicting binding affinity) [4] |
| Physical Sciences | Strain/Stress Correlation, Concentration Profile Match | Measures the agreement between simulated and experimentally measured physical quantities [2] |
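
For readers who want to see how the classification and regression metrics in the table are computed, the following sketch implements them from their standard definitions. The function names and the small label and value lists are hypothetical; in practice a statistics library would normally be used.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for a binary outcome."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


def regression_metrics(y_true, y_pred):
    """Mean squared error and R-squared (coefficient of determination)."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {"mse": ss_res / n, "r2": 1.0 - ss_res / ss_tot}


# Hypothetical active (1) / inactive (0) labels and predictions.
clf = classification_metrics([1, 0, 1, 1, 0, 0, 1, 0], [1, 0, 0, 1, 0, 1, 1, 0])
# Hypothetical continuous values (e.g., a predicted affinity score).
reg = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.5, 4.0])
print(clf)
print(reg)
```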

Practical Application: Validation in Scientific Disciplines

The principles of model validation are universal, but their application varies significantly across scientific domains, each with its own unique challenges and best practices.

Validation in Drug Discovery and Development

Computational models in drug development face unique validation challenges due to the complexity of biological systems and the long timelines of clinical experiments.

- Leveraging Public Data: Given that clinical experiments on drug candidates can take years, a primary validation strategy is to compare a proposed drug candidate to the structure, properties, and efficacy of existing drugs using vast public databases like the Cancer Genome Atlas and those from the National Library of Medicine [1]. This provides a critical benchmark for early-stage validation.

- Multi-scale Validation: A model must be validated at multiple levels. A molecular dynamics simulation might be validated against crystallographic data for protein-ligand binding, while a systems pharmacology model would require validation against in vitro or in vivo efficacy and toxicity data.

- Synthesizability and Validity: In molecular design and generation studies, computational findings must be demonstrated to have practical usability. Experimental data confirming the synthesizability and biological validity of newly generated molecules is a powerful form of validation [1].

Validation in the Physical Sciences

In fields like chemistry and materials science, there is often a community expectation that computational work is paired with an experimental component [1].

- Chemistry: For studies in molecular design, experimental data that confirms the synthesizability and validity of newly generated molecules is crucial for verifying computational findings [1]. Without this, claims that a new catalyst or drug candidate outperforms existing ones can be difficult to substantiate.

- Materials Science: If a theoretical prediction points to a new class of materials with exotic properties, then experimental synthesis, materials characterization, and tests within real devices are typically required to support the prediction [1]. The growing availability of experimental data through initiatives like the High Throughput Experimental Materials Database presents exciting opportunities for more effective validation.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagents and Tools for Experimental Validation

| Item / Solution | Primary Function in Validation |
|---|---|
| Strain Gauges | A reliable method for collecting physical deformation data to directly compare with FEA predictions of stress and strain [2] |
| PubChem / OSCAR Databases | Provide existing experimental data on molecular structures and properties for comparison with computational chemistry predictions [1] |
| Cancer Genome Atlas | A source of genomic, epigenomic, and clinical data used to validate bioinformatic models and computational findings in oncology [1] |
| Cell-based Assay Kits | Provide standardized biological readouts (e.g., viability, cytotoxicity) to validate models predicting biological activity in drug discovery |
| High-Throughput Experimental Materials Database | A source of empirical materials data used to validate predictions from computational materials science models [1] |

Model validation is not a one-time activity to be performed after a model is built; it is an integral, ongoing part of the computational research lifecycle. For researchers and drug development professionals, skipping rigorous validation carries significant risks, including false confidence in flawed designs, costly mistakes from decisions based on incorrect data, and a fundamental lack of credibility for their work, especially in regulated industries [2].

The ultimate goal of validation is to achieve model generalization—the ability of a model to make accurate predictions on new, unseen data [4]. This is the true test of a model's utility in a research or development setting. By moving beyond merely "solving the equations right" to rigorously determining that they are "solving the right equations," computational scientists can ensure their work is not just mathematically elegant, but physically meaningful and practically useful, thereby accelerating scientific discovery and innovation.

In computational science research, the integrity of a model determines the validity of its predictions. Models, whether mathematical, simulation-based, or physical, are representations of real-world processes used for studying, experimenting, or predicting real-world events [5]. However, as statistician George E.P. Box famously noted, "Essentially, all models are wrong, but some are useful" [5]. The utility of any scientific model is not inherent but must be rigorously demonstrated through systematic processes—verification and validation. These two distinct but complementary processes form the foundation of credible computational science, ensuring models are both technically correct and scientifically relevant.

The failure to distinguish between verification and validation represents a critical pitfall for many practitioners. Some use the terms interchangeably, while others perform one process and neglect the other [5]. This leads to unrealistic predictions, misguided results, and ultimately, a loss of model integrity. In fields such as drug development, where computational models increasingly inform critical decisions, the ramifications of using unverified or unvalidated models can be severe, potentially compromising research outcomes and patient safety. This guide examines the fundamental differences between verification and validation, provides detailed methodologies for their implementation, and frames their necessity within the broader context of scientific rigor in computational research.

Fundamental Concepts and Definitions

What is a Model?

A model is a simplified representation of a real-world process designed to study relationships between independent variables (inputs) and dependent variables (outcomes) [5]. Models serve as experimental platforms where researchers can observe system behavior without directly intervening in the actual process. In computational science, models typically fall into three categories:

- Mathematical models: Represent systems through equations and formulas (e.g., Little's Law in queuing theory)

- Simulation models: Implement mathematical representations in software to emulate system behavior over time

- Physical models: Scaled or analog representations of systems, common in engineering and architectural applications

Verification: Building the Model Right

Verification is the process of ensuring that a model correctly implements the intended relationships between input and output variables as conceived by the modeler [5]. It answers the fundamental question: "Was the model built correctly?"

Verification is an internal consistency check concerned with whether the computational model accurately solves the equations and implements the logic intended by its designers. It does not assess whether the model represents reality accurately, but rather whether it performs as its designers believe it should. For example, if a model is designed to return a rounded-up integer value of X1 divided by X2, verification confirms it returns 1 when X1=3 and X2=4, rather than 0.75 [5].

Validation: Building the Right Model

Validation is the process of determining whether a model accurately represents the real-world system it is intended to simulate [5]. It answers the fundamental question: "Was the correct model built?"

Validation ensures the model's outputs correspond to observed behaviors in the actual system through comparison with empirical data. It assesses the model's operational usefulness and predictive capability within its intended domain. As one comprehensive review of validation methods notes, validity in the social sciences "very generally refers to the question of whether measures actually measure what they are designed to measure," underpinning "the very essence of scientific progress" [6].

Table 1: Core Differences Between Verification and Validation

| Aspect | Verification | Validation |
|---|---|---|
| Primary Question | Was the model built correctly? | Was the correct model built? |
| Focus | Internal consistency and implementation | Correspondence to real-world behavior |
| Basis of Assessment | Model specifications and design | Empirical data from the actual system |
| Dependencies | Independent of real-world data | Heavily dependent on real-world data |
| Primary Methods | Code review, unit testing, convergence studies | Statistical comparison, hypothesis testing, expert judgment |
| Outcome | Error-free implementation that matches designer intent | Credible representation of the real system |

The Critical Distinction: Why Both Processes Are Essential

Verification and validation serve complementary but fundamentally different roles in model development. The relationship between these processes can be visualized as a sequential workflow in which each stage addresses a distinct aspect of model credibility.

Sequential Dependency and Iteration

Verification necessarily precedes validation in effective model development [5]. This sequence is logical—there is little value in comparing a model to real-world data if the model contains implementation errors that prevent it from executing as intended. However, the process is often iterative: validation may reveal issues that require returning to verification or even model redesign.

As highlighted in research on validation experiments, the design of validation activities should be directly relevant to the model's purpose—predicting a Quantity of Interest (QoI) at a prediction scenario [7]. This underscores the importance of aligning both verification and validation with the ultimate goals of the modeling effort.

Consequences of Confusion

The consequences of confusing verification and validation, or performing one without the other, are significant:

- Unverified but validated models may appear to match reality by compensating for implementation errors with incorrect parameter settings, but will fail when applied to new scenarios

- Verified but unvalidated models will precisely implement incorrect assumptions, providing false confidence in flawed representations

- Resource misallocation occurs when teams expend effort validating fundamentally flawed implementations or verifying models that represent the wrong system

Methodologies and Experimental Protocols

Verification Methodologies

Verification employs a suite of software and model engineering techniques to ensure correct implementation:

Code Review and Static Analysis

- Objective: Identify implementation errors through systematic examination

- Protocol: Structured walkthroughs, automated static analysis tools, peer review

- Metrics: Code coverage, complexity measures, standards compliance

Unit Testing and Algorithm Verification

- Objective: Verify individual components and algorithms in isolation

- Protocol: Develop tests for specific functions with known expected outcomes

- Example: For a rounding function, verify input-output pairs: (3/4)→1, (5/2)→3, etc. [5]
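
The rounding example above translates directly into a unit test. The sketch below assumes integer inputs and a positive divisor; the function name `rounded_up_quotient` is hypothetical. The test function follows the `test_` naming convention so that a test runner such as pytest would pick it up, and it is also called directly so the block runs on its own.

```python
def rounded_up_quotient(x1, x2):
    """Return x1 / x2 rounded up to the nearest integer (integers, x2 > 0)."""
    if x2 <= 0:
        raise ValueError("x2 must be positive")
    # Floor division of the negated numerator, negated again, rounds up.
    return -(-x1 // x2)


def test_rounded_up_quotient():
    # Input-output pairs with known expected outcomes.
    assert rounded_up_quotient(3, 4) == 1   # 0.75 rounds up to 1
    assert rounded_up_quotient(5, 2) == 3   # 2.5 rounds up to 3
    assert rounded_up_quotient(4, 2) == 2   # exact quotients are unchanged
    assert rounded_up_quotient(0, 4) == 0


test_rounded_up_quotient()
print("all verification checks passed")
```

Note that this test only checks the implementation against the designer's intent. Whether rounding up is the right behavior for the real system is a validation question.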

Solution Verification

- Objective: Ensure numerical solutions meet accuracy requirements

- Protocol: Convergence studies, grid independence tests, numerical error estimation

- Metrics: Residuals, convergence rates, error norms
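
A convergence study can be illustrated on a problem with a known answer. The sketch below is an assumption-laden toy rather than a solver from the source: it integrates sin(x) over [0, π] (exact value 2) with the composite trapezoidal rule, halves the step size repeatedly, and estimates the observed order of accuracy from successive errors. For this rule the theoretical order is 2, so an observed order close to 2 is evidence that the implementation behaves as designed.

```python
import math


def trapezoid(f, a, b, n):
    """Composite trapezoidal rule with n equal subintervals."""
    h = (b - a) / n
    interior = sum(f(a + i * h) for i in range(1, n))
    return h * (0.5 * f(a) + interior + 0.5 * f(b))


exact = 2.0  # integral of sin(x) from 0 to pi
resolutions = [10, 20, 40, 80]  # each refinement halves the step size
errors = [abs(trapezoid(math.sin, 0.0, math.pi, n) - exact) for n in resolutions]

# Observed order p from e(h) / e(h/2) = 2**p
orders = [math.log2(errors[i] / errors[i + 1]) for i in range(len(errors) - 1)]

for n, err in zip(resolutions, errors):
    print(f"n = {n:3d}  error = {err:.3e}")
print("observed orders:", [round(p, 3) for p in orders])
```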

Table 2: Verification Techniques and Their Applications

| Technique | Primary Application | Key Metrics | Limitations |
|---|---|---|---|
| Code Review | All model types | Compliance with standards, identified defects | Subject to human error, time-consuming |
| Unit Testing | Modular code structures | Test coverage, pass/fail rates | May not catch integration issues |
| Convergence Studies | Numerical models | Convergence rates, error estimates | Requires multiple model executions |
| Symbolic Verification | Mathematical models | Analytical equivalence | Limited to tractable mathematical representations |

Validation Methodologies

Validation methodologies compare model outputs with empirical data using rigorous statistical and expert-driven approaches:

Comparison with Experimental Data

- Objective: Quantify agreement between model predictions and observed system behavior

- Protocol:

  - Collect high-quality experimental data under controlled conditions

  - Run model with identical inputs and initial conditions

  - Compare outputs using statistical measures

- Metrics: Mean error, confidence intervals, validation metrics
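
As a rough sketch of the comparison step, the code below computes the mean prediction error and an approximate confidence interval for it. Several things here are assumptions: the function name, the data values (invented for illustration), and the use of a normal approximation for the interval. With only a handful of measurements, a t-distribution interval would be more appropriate.

```python
import math
from statistics import NormalDist, mean, stdev


def mean_error_with_ci(predicted, observed, confidence=0.95):
    """Mean of (predicted - observed) with a normal-approximation interval."""
    errors = [p - o for p, o in zip(predicted, observed)]
    center = mean(errors)
    standard_error = stdev(errors) / math.sqrt(len(errors))
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    return center, (center - z * standard_error, center + z * standard_error)


# Hypothetical model outputs and measurements under identical inputs.
predicted = [10.2, 11.8, 9.5, 12.1, 10.9]
observed = [10.0, 12.0, 9.9, 11.7, 11.0]

mean_err, (lower, upper) = mean_error_with_ci(predicted, observed)
print(f"mean error = {mean_err:+.3f}, 95% CI = [{lower:+.3f}, {upper:+.3f}]")
```

An interval that comfortably contains zero suggests no systematic bias at this sample size; it does not by itself show that individual prediction errors are acceptably small.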

Predictive Validation

- Objective: Assess model capability to predict system behavior not used in model development

- Protocol:

  - Reserve portion of experimental data for validation only

  - Develop model using remaining data

  - Compare predictions with validation dataset

- Metrics: Prediction error, confidence in predictions

Expert Assessment

- Objective: Leverage domain knowledge to assess model plausibility

- Protocol: Structured expert elicitation, Delphi methods, peer review

- Metrics: Qualitative assessment, plausibility ratings

As noted in a comprehensive review of topic modeling validation, there is a "notable absence of standardized validation practices" across computational social sciences [6]. This highlights the need for discipline-specific validation frameworks while maintaining scientific rigor.

Optimal Design of Validation Experiments

Advanced validation approaches emphasize designing validation experiments specifically tailored to the model's intended predictive purpose:

Influence Matrix Methodology

- Objective: Select validation scenarios most representative of prediction scenarios

- Protocol: Compute influence matrices characterizing response surfaces of model functionals, minimize distance between validation and prediction influence matrices [7]

- Application: Particularly valuable when prediction scenarios cannot be experimentally replicated

Sensitivity-Based Validation

- Objective: Ensure validation scenarios reflect sensitivities relevant to prediction

- Protocol: Use sensitivity indices (e.g., Sobol indices) to weight validation observations according to their relevance to QoI prediction [7]

- Application: Guides resource allocation to most influential validation activities
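
To make the idea of sensitivity indices concrete, the sketch below estimates first-order Sobol indices with a simple pick-freeze Monte Carlo scheme. It is not the method of [7]: the toy model Y = 2·X1 + X2 with independent uniform inputs on [0, 1] is an assumption chosen because its indices are known analytically (0.8 and 0.2), and the function names are hypothetical.

```python
import random


def first_order_sobol(model, n_inputs, n_samples=50_000, seed=0):
    """Pick-freeze Monte Carlo estimate of first-order Sobol indices
    for independent inputs that are uniform on [0, 1]."""
    rng = random.Random(seed)
    a = [[rng.random() for _ in range(n_inputs)] for _ in range(n_samples)]
    b = [[rng.random() for _ in range(n_inputs)] for _ in range(n_samples)]
    f_a = [model(row) for row in a]
    f_b = [model(row) for row in b]

    outputs = f_a + f_b
    mean_y = sum(outputs) / len(outputs)
    var_y = sum((y - mean_y) ** 2 for y in outputs) / len(outputs)

    indices = []
    for i in range(n_inputs):
        # Rows of A with only input i replaced by the value from B.
        f_ab = [model(ra[:i] + [rb[i]] + ra[i + 1:]) for ra, rb in zip(a, b)]
        partial_var = sum(fb * (fab - fa)
                          for fa, fb, fab in zip(f_a, f_b, f_ab)) / n_samples
        indices.append(partial_var / var_y)
    return indices


def toy_model(x):
    """Hypothetical model: the first input matters four times as much."""
    return 2.0 * x[0] + x[1]


sobol = first_order_sobol(toy_model, n_inputs=2)
print("estimated first-order indices:", [round(s, 2) for s in sobol])
```

Indices like these could then serve as weights, so that validation observations probing the most influential inputs count most toward the assessment of the QoI prediction.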

The relationship between model components, validation activities, and prediction goals can be visualized as an integrated system.

Case Studies and Applications

Case Study 1: Ice Cream Stand Queuing Model

A modeler builds a queuing model for an ice cream stand to predict customer waiting time (W) based on number of customers (X) in line [5].

Verification Process:

- Model implements equation W = 3X based on observed service rate of 3 minutes/customer

- Testing with inputs X=1,2,5,10,20 returns W=3,6,15,30,60 minutes respectively

- Verification confirms model correctly implements the linear relationship

Validation Process:

- Field observation of the waiting time of an actual customer, Jessica, across different queue lengths

- Real-system behavior diverges from model: customers leave when waiting exceeds tolerance

- Actual waiting times shorter than predicted linear relationship

- Model fails validation despite passing verification

Implication: A verified but invalid model produces quantitatively precise but practically useless predictions.
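
The two halves of this case study can be sketched in code. The verification part uses the inputs and outputs stated above. The validation part needs field data that the source does not give, so the sketch substitutes a hypothetical stand-in: waits are capped at an assumed 30-minute tolerance because customers leave beyond it. Both the cap and the 5-minute acceptance threshold are illustrative assumptions, not observations.

```python
def predicted_wait(customers_in_line, minutes_per_customer=3):
    """The model under test: W = 3X."""
    return minutes_per_customer * customers_in_line


# Verification: the implementation reproduces the intended linear relationship.
queue_lengths = [1, 2, 5, 10, 20]
assert [predicted_wait(x) for x in queue_lengths] == [3, 6, 15, 30, 60]


def stand_in_observed_wait(customers_in_line, tolerance_minutes=30):
    """HYPOTHETICAL stand-in for field data: waits never exceed the tolerance
    because customers abandon the line once it is reached."""
    return min(predicted_wait(customers_in_line), tolerance_minutes)


# Validation: compare predictions with the (stand-in) observations.
acceptance_threshold = 5  # minutes; assumed for illustration
discrepancies = [predicted_wait(x) - stand_in_observed_wait(x)
                 for x in queue_lengths]
passes_validation = all(d <= acceptance_threshold for d in discrepancies)

print("discrepancies (min):", discrepancies)
print("passes validation:", passes_validation)
```

Under these assumptions the model agrees with reality for short queues and diverges for long ones, which is the pattern the case study describes.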

Case Study 2: Distribution Center Simulation

An LSS team develops a simulation model for a distribution center with four product-sorting machines [5].

Initial Findings:

- Model shows excessive queue at Machine B despite equal product distribution

- Model passed verification but produces counterintuitive results

Root Cause Analysis:

- Verification confirmed parameter entry matched design

- Detailed review discovered data entry error: 15 minutes instead of 1.5 minutes for Machine B processing time

- Correction enabled accurate system representation

Implication: Without validation, implementation errors can remain undetected despite verification.

Case Study 3: Pollutant Transport Model

A study on optimal validation design examines a pollutant transport model [7].

Challenge: Predicting contaminant concentration at sensitive location (QoI) where direct measurement is impossible

Solution:

- Designed validation experiments using influence matrices to match prediction scenario sensitivities

- Selected observation locations and conditions that best represented QoI scenario

- Quantified predictive uncertainty based on validation under representative conditions

Implication: Strategic validation design enables confidence in predictions even when QoI cannot be directly measured.

Table 3: Research Reagent Solutions for Model Verification and Validation

| Tool Category | Specific Solutions | Function | Application Context |
|---|---|---|---|
| Verification Tools | Unit testing frameworks (e.g., pytest, JUnit), Static analysis tools (e.g., SonarQube), Continuous integration systems | Automated error detection, Regression testing, Code quality assessment | Software implementation verification |
| Validation Data Sources | Experimental data repositories, Historical system data, Sensor networks, Expert elicitation protocols | Provide empirical basis for comparison, Ground truth establishment | Model validation across domains |
| Statistical Comparison Tools | Statistical software (e.g., R, Python SciPy), Bayesian calibration tools, Uncertainty quantification libraries | Quantitative comparison of model and data, Uncertainty propagation, Validation metrics calculation | Quantitative validation assessment |
| Sensitivity Analysis Tools | Sobol index calculators, Morris method implementations, Active subspace methods | Identify influential parameters, Guide validation resource allocation, Understand model behavior | Validation experiment design |
| Domain-Specific Benchmarks | Community standard problems, Reference implementations, Analytical solutions | Provide known solutions for comparison, Establish minimum capability requirements | Discipline-specific verification and validation |

Verification and validation represent complementary but fundamentally different processes essential to credible computational science. Verification ensures models are built correctly according to specifications, while validation ensures the correct models are built to represent reality. This distinction is not merely academic—it underpins the scientific utility of computational models across disciplines from engineering to drug development.

As computational models increase in complexity and application to critical decisions, the rigorous implementation of both verification and validation becomes increasingly essential. The methodologies and case studies presented provide a framework for researchers to implement these processes systematically, while the visualization of their relationships offers conceptual clarity. By embracing both verification and validation as distinct but essential practices, the computational science community can advance both the credibility and utility of computational modeling for scientific discovery and practical application.

The broader thesis of model validation in computational science research affirms that without rigorous validation, even perfectly verified models remain potentially misleading abstractions. As models continue to inform critical decisions in drug development, public policy, and engineering design, the commitment to both verification and validation represents not merely technical diligence but scientific and ethical responsibility.

Model validation provides the critical foundation for trust and reliability in computational science, particularly in the high-stakes field of drug development. It serves as an essential quality assurance process that evaluates how well a predictive model performs on new, unseen data, confirming that it achieves its intended purpose [4]. In Model-Informed Drug Development (MIDD), a "fit-for-purpose" approach to validation is paramount, ensuring that models are well-aligned with the specific Question of Interest (QOI) and Context of Use (COU) at each development stage [8]. Without rigorous validation, models are prone to validity shrinkage (a significant reduction in predictive performance when applied to new datasets), which can lead to costly late-stage failures and inaccurate regulatory decisions [9]. This technical guide examines the methodologies, metrics, and practical applications of model validation that underpin robust computational research in pharmaceutical sciences.

The Critical Role of Model Validation in Computational Science

Model validation is the systematic process of assessing a trained model's performance on new or unseen data, moving beyond mere mathematical correctness to evaluate real-world applicability [4]. In computational science research, this process transforms a theoretical model into a validated tool for scientific discovery and decision-making.

The core challenge addressed by validation is overfitting, where a model learns not only the underlying signal in the training data but also the random noise, resulting in poor generalization to new data [4]. The phenomenon of validity shrinkage describes the nearly inevitable reduction in predictive ability when a model derived from one dataset is applied to another [9]. This occurs because algorithms adjust model parameters to optimize performance metrics, fitting both the true signal and idiosyncratic noise from measurement error and random sampling variance [9].
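
Overfitting and the resulting shrinkage can be demonstrated with a deliberately extreme example. The sketch below is an illustration under stated assumptions, not a method from the cited sources: the data are synthetic (y = 2x plus Gaussian noise), and the "model" is a one-nearest-neighbor predictor that simply memorizes its training points, noise included.

```python
import random


def make_data(n, rng, noise=0.5):
    """Synthetic data: y = 2x plus Gaussian noise (hypothetical process)."""
    xs = [rng.uniform(0.0, 10.0) for _ in range(n)]
    return [(x, 2.0 * x + rng.gauss(0.0, noise)) for x in xs]


def one_nn_predict(train, x):
    """Memorizing model: return the y of the closest training point."""
    return min(train, key=lambda point: abs(point[0] - x))[1]


def mse(train, data):
    """Mean squared error of the 1-NN model fitted on `train`, scored on `data`."""
    return sum((y - one_nn_predict(train, x)) ** 2 for x, y in data) / len(data)


rng = random.Random(0)
train = make_data(200, rng)
new_sample = make_data(200, rng)  # same process, independent draw

train_mse = mse(train, train)       # the model has memorized these points
new_mse = mse(train, new_sample)    # performance on unseen data

print(f"training MSE = {train_mse:.3f}")
print(f"new-data MSE = {new_mse:.3f}")
```

The training error is zero because every training point is its own nearest neighbor, while the error on the independent sample is clearly larger. The gap between the two is the shrinkage that validation on unseen data is meant to expose.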

The implications of unvalidated models in drug development are particularly severe. Without proper validation, researchers cannot justifiably rely on a model's predictions [4]. In critical domains, errors can have profound consequences, potentially leading to significant patient harm due to incorrect decisions made by models in real-world applications [4].

Table 1: Key Terminology in Model Validation

| Term | Definition |
|---|---|
| Validity Shrinkage | The reduction in predictive ability when a model moves from the data used for construction to a new, independent dataset [9]. |
| Stochastic Shrinkage | Validity shrinkage occurring due to variations from one finite sample to another [9]. |
| Generalizability Shrinkage | Validity shrinkage occurring when a model is applied to data from a different population than the one it was built in [9]. |
| Overfitting | When a model is overly adjusted to fit the training data and fails to predict new data accurately [4]. |
| Underfitting | When a model is too weak and cannot capture the true relationships in the data [4]. |
| Context of Use (COU) | A clearly defined description of how a model should be used and the specific purpose it serves [8]. |

Core Methodologies for Model Validation

Validation Techniques and Protocols

Multiple validation techniques have been developed to assess model performance across different data scenarios. The selection of an appropriate method depends on factors such as dataset size, data structure, and the specific modeling objectives.

Hold-out Methods represent the most fundamental approach to model validation. The Train-Test Split involves randomly dividing the dataset into two parts: one for training the model and a separate portion for testing its performance [10]. For smaller datasets (1,000-10,000 samples), an 80:20 ratio is typically recommended, while medium datasets (10,000-100,000 samples) may use a 70:30 ratio, and large datasets (over 100,000 samples) often employ a 90:10 ratio [10]. The Train-Validation-Test Split extends this approach by creating three distinct data partitions, with the validation set used for parameter tuning and the test set reserved for a single, final evaluation to provide an unbiased assessment of model performance [10].
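The three-way partition described above can be sketched in a few lines. This is a minimal, dependency-free illustration rather than a prescribed implementation: the function name `train_val_test_split`, the 70/15/15 proportions, and the fixed seed are assumptions made for the example.

```python
import random


def train_val_test_split(records, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle once, then cut the data into three disjoint partitions.

    The name, default fractions, and seed are illustrative assumptions.
    """
    if not 0 < val_frac + test_frac < 1:
        raise ValueError("val_frac + test_frac must lie strictly between 0 and 1")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_test = round(len(shuffled) * test_frac)
    n_val = round(len(shuffled) * val_frac)
    test = shuffled[:n_test]                 # touched once, for the final evaluation
    val = shuffled[n_test:n_test + n_val]    # used for parameter tuning
    train = shuffled[n_test + n_val:]        # used for model fitting
    return train, val, test


records = list(range(1000))  # stand-in for 1,000 samples
train, val, test = train_val_test_split(records)
print(len(train), len(val), len(test))
```

Fixing the seed matters in practice: if the split changes between runs, the test set is no longer a stable, untouched reference for the final evaluation.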

Cross-Validation Techniques offer more robust evaluation, particularly for limited datasets. K-Fold Cross-Validation divides the data into k subsets (folds), training the model k times while using a different fold as the test set each time and averaging the results [4]. This provides a more extensive analysis than simple hold-out methods [4]. Leave-One-Out Cross-Validation (LOOCV) represents an extreme case of k-fold cross-validation where k equals the number of data points, offering a comprehensive assessment at significant computational expense [4]. Stratified K-Fold Cross-Validation maintains the same ratio of classes/categories in each fold as the overall dataset, which is particularly valuable when dealing with imbalanced data where one class has significantly fewer instances [4].
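To make the fold mechanics concrete, the sketch below generates index splits for plain and stratified k-fold. It is a simplified illustration using only the standard library; the function names, the seed, and the 90/10 class mix are assumptions for the example, not part of the cited methods.

```python
import random
from collections import defaultdict


def _splits_from_folds(folds):
    """Yield (train_idx, test_idx), holding out one fold at a time."""
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx


def k_fold_indices(n_samples, k, seed=0):
    """Plain k-fold: shuffle the indices, then deal them into k folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]  # fold sizes differ by at most one
    return _splits_from_folds(folds)


def stratified_k_fold_indices(labels, k, seed=0):
    """Stratified k-fold: deal each class into the folds separately."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)
    folds = [[] for _ in range(k)]
    for members in by_class.values():
        rng.shuffle(members)
        for position, i in enumerate(members):
            folds[position % k].append(i)  # round-robin keeps class ratios even
    return _splits_from_folds(folds)


labels = [0] * 90 + [1] * 10  # hypothetical imbalanced outcome: 10% positives
for train_idx, test_idx in stratified_k_fold_indices(labels, k=5):
    positives = sum(labels[i] for i in test_idx)
    print(len(train_idx), len(test_idx), positives)  # 2 positives in every test fold
```

Setting `k` equal to the number of samples in `k_fold_indices` yields leave-one-out cross-validation, since every test fold then contains exactly one observation.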

Advanced and Specialized Methods address specific validation challenges. Nested Cross-Validation combines an outer loop for model evaluation with an inner loop for hyperparameter tuning, assessing how well the model generalizes while simultaneously optimizing parameters [4]. Time-Series Cross-Validation respects temporal dependencies in data by splitting datasets in a way that maintains chronological order, ensuring models are evaluated on future observations rather than randomly partitioned data [4].
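The chronological constraint of time-series cross-validation can be illustrated with an expanding-window splitter. This is a sketch of one common scheme only (it does not cover nested cross-validation); the function name and its parameters are assumptions for the example.

```python
def expanding_window_splits(n_samples, n_splits, test_size):
    """Yield (train_idx, test_idx) pairs that respect time order.

    Samples are assumed to be sorted chronologically. Each training window
    grows to include the previous test block, so every test index is later
    than every training index.
    """
    first_test_start = n_samples - n_splits * test_size
    if first_test_start <= 0:
        raise ValueError("not enough samples for the requested splits")
    for s in range(n_splits):
        test_start = first_test_start + s * test_size
        test_end = test_start + test_size
        yield list(range(test_start)), list(range(test_start, test_end))


for train_idx, test_idx in expanding_window_splits(n_samples=10, n_splits=3, test_size=2):
    print("train:", train_idx, "test:", test_idx)
```

Unlike a shuffled k-fold split, no future observation ever leaks into the training window, which is the property the evaluation depends on.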

The following workflow diagram illustrates the relationship between these key validation methodologies:

Performance Metrics and Quantitative Assessment

Selecting appropriate performance metrics is fundamental to meaningful model validation. These metrics must align with the specific problem type—classification, regression, or time-to-event analysis—and the clinical context of use.

Table 2: Essential Validation Metrics for Different Model Types

| Model Type | Key Metrics | Interpretation | Application Examples |
|---|---|---|---|
| Classification | Sensitivity (Recall) | Proportion of actual positives correctly identified [9] | Identifying liver fibrosis in hepatitis C patients [9] |
| Classification | Specificity | Proportion of actual negatives correctly identified [9] | Identifying risk for undiagnosed diabetes [9] |
| Classification | AUC (Area Under ROC Curve) | Overall measure of model's ability to distinguish classes [9] | Predicting obesity risk from genetic loci [9] |
| Classification | Positive Predictive Value (PPV) | Proportion of positive predictions that are correct [9] | Diabetes remission after gastric bypass [9] |
| Regression | R² (Coefficient of Determination) | Proportion of variance explained by the model [9] | Body composition prediction equations [9] |
| Regression | Adjusted R² | R² modified for number of predictors relative to sample size [9] | More reliable for multi-predictor models [9] |
| Regression | Mean Squared Error (MSE) | Average squared difference between predicted and actual values [9] | Calibration models for insulin sensitivity [9] |
| Regression | Shrunken R² | R² adjusted for expected validity shrinkage in new samples [9] | Provides conservative performance estimate [9] |
| Survival/Time-to-Event | Concordance Index (c-index) | Measures agreement between predicted and observed event orders [9] | Similar to AUC but for time-to-event data [9] |
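Several of the metrics in the table follow directly from a confusion matrix or a one-line formula. The sketch below computes sensitivity, specificity, and PPV for binary labels, plus the standard adjusted R² formula, 1 - (1 - R²)(n - 1)/(n - p - 1). The function names and the toy labels are assumptions for illustration, and the code assumes each denominator is non-zero.

```python
def classification_metrics(y_true, y_pred):
    """Sensitivity, specificity, and PPV for binary labels coded as 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "sensitivity": tp / (tp + fn),  # actual positives that were detected
        "specificity": tn / (tn + fp),  # actual negatives that were cleared
        "ppv": tp / (tp + fp),          # positive calls that were correct
    }


def adjusted_r2(r2, n_samples, n_predictors):
    """Penalize R² for the number of predictors relative to sample size."""
    return 1 - (1 - r2) * (n_samples - 1) / (n_samples - n_predictors - 1)


# Hypothetical labels: 4 true cases and 6 non-cases.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
print(adjusted_r2(r2=0.5, n_samples=21, n_predictors=4))  # 0.375
```

With only 21 samples and 4 predictors, an apparent R² of 0.5 drops to 0.375 after adjustment, which shows how quickly small samples inflate unadjusted fit statistics.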

The Researcher's Toolkit: Essential Reagents for Model Validation

Implementing robust model validation requires both computational tools and methodological frameworks. The following table outlines key components of the validation toolkit:

Table 3: Essential Research Reagent Solutions for Model Validation

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Stratified Sampling | Ensures representative distribution of classes in training/test splits | Prevents biased performance estimates with imbalanced data [4] |
| Bootstrap Methods | Estimates sampling distribution by drawing random sets with replacement | Quantifies uncertainty and expected validity shrinkage [9] |
| Hyperparameter Tuning | Optimizes model parameters not learned during training | Improves model performance via grid search or random search [4] |
| Statistical Tests (e.g., Wilcoxon Signed-Rank) | Compares performance between different models | Determines if performance differences are statistically significant [4] |
| Adjusted/Shrunken R² | Adjusts performance metrics for model complexity | Provides realistic expectation of performance in new data [9] |
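As an illustration of the bootstrap entry above, the following sketch estimates a percentile confidence interval for a model's accuracy by resampling per-sample outcomes with replacement. The function name, the 2,000 resamples, and the 80%-correct toy data are assumptions for the example; other bootstrap variants exist and may be preferable in practice.

```python
import random
from statistics import mean


def bootstrap_percentile_ci(values, statistic=mean, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for `statistic` over `values`."""
    rng = random.Random(seed)
    n = len(values)
    estimates = sorted(
        statistic([values[rng.randrange(n)] for _ in range(n)])  # one resample
        for _ in range(n_boot)
    )
    lower = estimates[int(n_boot * alpha / 2)]
    upper = estimates[int(n_boot * (1 - alpha / 2)) - 1]
    return lower, upper


# Hypothetical test-set results: 1 = correct prediction, 0 = error.
correct = [1] * 80 + [0] * 20
lower, upper = bootstrap_percentile_ci(correct)
print(f"accuracy = {mean(correct):.2f}, 95% CI = ({lower:.2f}, {upper:.2f})")
```

Reporting the interval alongside the point estimate makes clear how much of an apparent performance difference could be sampling variation.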

Model Validation in the Drug Development Pipeline

Model validation takes on critical importance in the pharmaceutical industry, where the Model-Informed Drug Development (MIDD) framework relies on quantitative models to accelerate hypothesis testing, assess drug candidates more efficiently, and reduce costly late-stage failures [8]. A "fit-for-purpose" approach ensures that validation strategies are closely aligned with the specific questions and contexts at each development stage [8].

The following diagram illustrates how validation activities integrate throughout the drug development lifecycle:

Validation Approaches Across Development Stages

Discovery Stage validation focuses on computational models like Quantitative Structure-Activity Relationship (QSAR) that predict biological activity based on chemical structure [8]. Validation at this stage typically involves leave-one-out cross-validation or external validation using separate chemical classes not included in model training.

Preclinical Research utilizes Physiologically Based Pharmacokinetic (PBPK) models and First-in-Human (FIH) dose algorithms [8]. Validation requires verifying that model predictions align with observed animal study results and can accurately extrapolate to human physiology.

Clinical Research employs Population Pharmacokinetics (PPK) and Exposure-Response (ER) models to explain variability in drug exposure and effects across individuals [8]. Validation uses k-fold cross-validation and bootstrap methods to estimate how well models will perform in broader patient populations.

Regulatory Review and Post-Market Monitoring require continuous validation as models are applied to larger, more diverse populations [8]. This includes monitoring model performance against real-world evidence and updating models when performance degrades.

Model validation is a fundamental discipline in computational science that bridges theoretical modeling and real-world application. In drug development, where decisions have profound implications for patient safety and therapeutic success, rigorous validation is not optional; it is ethically and scientifically essential. By implementing the methodologies, metrics, and frameworks outlined in this technical guide, from cross-validation techniques and performance metrics to fit-for-purpose approaches, researchers can build trustworthy models that reliably inform critical development decisions. As artificial intelligence and machine learning assume increasingly prominent roles in pharmaceutical research [8], the principles of model validation will remain the foundation upon which reliable, ethical, and effective drug development depends.

In computational science research, the integrity of model-based conclusions is paramount. A robust validation framework is the cornerstone of credible research, ensuring that computational models are not only mathematically sound but also scientifically meaningful and reliable in their predictions. This framework provides a structured defense against model risk—the potential for adverse consequences from decisions based on incorrect or misused model outputs [11]. Within regulated fields like drug development, a "fit-for-purpose" approach is increasingly emphasized, requiring that the validation process be closely aligned with the model's intended context of use and the key questions of interest it aims to address [8]. This guide details the three core components—Data, Conceptual, and Testing elements—that form the foundation of a rigorous validation protocol, providing researchers and drug development professionals with the methodologies to build trust in their computational tools.

Core Component 1: Data Validation

Data validation ensures the quality and relevance of the information used to build and test models, adhering to the principle that a model's output is only as reliable as its input data [12]. This component is critical for preventing the perpetuation of data errors and biases into the model's predictions.

Key Elements and Quantitative Checks

The following table summarizes the core elements of data validation and their associated quantitative checks:

| Data Validation Element | Description | Key Quantitative Checks & Methods |
|---|---|---|
| Data Quality | Ensuring data is accurate, complete, and free from errors that could skew model learning [4]. | Handle missing values (e.g., imputation or removal); detect and manage outliers to prevent skewed predictions [13]; perform data quality checks on sources, especially third-party data [12] |
| Data Relevance | Verifying the data is a true representation of the underlying problem the model is designed to solve [4]. | Confirm data represents the scenarios the model will encounter [13]; assess whether data sources are appropriate for the model's intended purpose [12] |
| Bias and Representation | Checking for appropriate representation to avoid reproducing biased or inaccurate results [4]. | Analyze data demographics; use unbiased sampling methods [4]; scrutinize data for accuracy, completeness, and bias; log treatment of missing values and proxies [12] |

Experimental Protocols for Data Validation

- Protocol for Handling Missing Data: Identify missing values through summary statistics and data visualization. Decide whether to remove records with missing values or to fill them in using techniques such as mean/median imputation for numerical data or mode imputation for categorical data. The choice must be documented, noting the potential impact on the model [13] [12].

- Protocol for Ensuring Representativeness: Compare the distributions of key variables in your dataset against the known distributions in the target population or against a reference dataset. Use statistical tests (e.g., Chi-square tests for categorical data, KS-test for continuous data) to identify significant deviations. For biased datasets, employ techniques like stratified sampling to ensure the training data is representative [4].

- Protocol for Bias Mitigation: Analyze model features to determine if any are proxies for protected class membership (e.g., race, gender). This involves evaluating the correlation between features and protected attributes. Techniques such as reweighting or adversarial debiasing can then be applied to mitigate identified biases [12].
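The missing-data protocol above can be sketched as a small helper that imputes a column and returns a log entry documenting the choice. This is a minimal illustration using the standard library; the function name, the log fields, and the toy columns are assumptions, and `None` is assumed to mark a missing value.

```python
from statistics import median, mode


def impute_column(column, strategy):
    """Fill None entries and return the filled column plus a documentation record.

    strategy: "median" for numerical data, "mode" for categorical data.
    """
    observed = [v for v in column if v is not None]
    if strategy == "median":
        fill_value = median(observed)
    elif strategy == "mode":
        fill_value = mode(observed)
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    filled = [fill_value if v is None else v for v in column]
    log = {
        "strategy": strategy,
        "fill_value": fill_value,
        "n_imputed": len(column) - len(observed),  # record the scale of the change
    }
    return filled, log


ages = [34, None, 51, 47, None, 29]        # hypothetical numerical column
sex = ["F", "M", None, "F", "F", "M"]      # hypothetical categorical column
ages_filled, ages_log = impute_column(ages, "median")
sex_filled, sex_log = impute_column(sex, "mode")
print(ages_filled, ages_log)
print(sex_filled, sex_log)
```

Returning the log alongside the data keeps the documentation step from being skipped, since the record of what was imputed travels with the result.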

Core Component 2: Conceptual Soundness

Conceptual soundness evaluation assesses the quality of the model's design and theoretical foundation. It ensures that the model's logic, assumptions, and construction are well-informed, carefully considered, and consistent with established scientific principles and the intended business or research objective [11] [12].

Foundational Elements

A conceptually sound model is built upon a logical design that is appropriate for the problem at hand. This involves a critical review of the chosen algorithms and techniques to ensure they are suitable [4]. Furthermore, the model's variables must be relevant and informative to the model's purpose; extraneous variables can lead to poor predictions, while omitting key variables can render the model ineffective [4]. A core aspect of this review is the explicit documentation and understanding of all assumptions embedded in the model's construction. Unchecked invalid assumptions can directly lead to inaccurate forecasts and model failure [4] [14].

Methodologies for Evaluation

- Review of Documentation and Empirical Evidence: Scrutinize the model's documentation to ensure it is sufficiently detailed to allow parties unfamiliar with the model to understand its operation, limitations, and key assumptions [11]. The documentation should provide empirical evidence and reference published research supporting the methods and variables selected.

- Expert-Logical Soundness Analysis: Engage subject matter experts to critically evaluate the model's logic and its alignment with domain knowledge [13]. This involves questioning whether the model's design and the relationships it posits are consistent with sound industry practice and scientific understanding [11].

- Benchmarking Against Established Models: Compare the model's design, theoretical foundation, and outputs to alternative models or established industry approaches. This helps verify that the model's methodology is sound and its results are reasonable within the context of existing knowledge [11].

Core Component 3: Testing and Ongoing Monitoring

Testing and ongoing monitoring provide empirical evidence of a model's performance and ensure its reliability throughout its lifecycle. This component moves from theoretical validation to practical verification under various conditions and over time.

Performance Metrics and Testing Techniques

Selecting the right performance metrics is essential to determine how well a model will perform on new data [13]. The choice of metrics depends on the model's purpose (e.g., classification, regression).

| Model Task | Key Performance Metrics | Description and Use Case |
|---|---|---|
| Classification | Accuracy, Precision, Recall, F1 Score, ROC-AUC | Measures the model's ability to correctly classify and distinguish between classes. F1 score combines precision and recall, while ROC-AUC evaluates performance across thresholds [13] [4]. |
| General | Outcomes Analysis (Back-testing) | Comparing model outputs to corresponding actual outcomes during a time period not used in model development [11]. |
| Stability & Robustness | Sensitivity Analysis, Stress Testing | Testing how model outputs change when inputs vary or are pushed to extreme values to assess stability and identify limitations [12]. |
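As one concrete form of the sensitivity analysis listed in the table, the sketch below perturbs each input of a model by a fixed percentage, one at a time, and reports the relative change in output. The toy model (steady-state concentration as dosing rate divided by clearance), the function names, and the 10% perturbation are assumptions chosen for illustration.

```python
def one_at_a_time_sensitivity(model, baseline_inputs, rel_change=0.10):
    """Relative change in model output when each input is raised by rel_change."""
    base_output = model(**baseline_inputs)
    effects = {}
    for name, value in baseline_inputs.items():
        perturbed = dict(baseline_inputs, **{name: value * (1 + rel_change)})
        effects[name] = (model(**perturbed) - base_output) / base_output
    return effects


def steady_state_concentration(dose_rate, clearance):
    """Toy pharmacokinetic model used only as an example input."""
    return dose_rate / clearance


effects = one_at_a_time_sensitivity(
    steady_state_concentration,
    {"dose_rate": 100.0, "clearance": 5.0},  # hypothetical baseline values
)
for name, effect in effects.items():
    print(f"{name}: {effect:+.1%}")
```

One-at-a-time perturbation ignores interactions between inputs, so it is a first screen rather than a full stress test.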

Key Testing Techniques:

- Train/Test Split & Cross-Validation: The dataset is divided into a training set to develop the model and a testing set to assess its prediction accuracy on new observations [4]. For a more robust evaluation, K-Fold Cross-Validation is preferred, where the data is divided into k subsets and the model is trained and evaluated k times, each time using a different fold as the test set [13] [4].

- Ongoing Monitoring: Model monitoring is not a one-time activity. It involves continuously tracking the model's performance and the data it receives to detect issues like model drift [13] [12]. Effective monitoring includes:

  - Population Stability: Tracking whether the input data remains consistent with the data used for development.

  - Performance Drift: Monitoring for decay in the model's predictive accuracy over time.

  - Data Quality Maintenance: Ensuring ongoing data meets the same quality standards as the training data [12].

Experimental Protocols for Testing

- Protocol for K-Fold Cross-Validation:

  - Randomly shuffle the dataset and partition it into k roughly equal-sized folds (commonly k=5 or k=10).

  - For each unique fold: a) Use the fold as the validation data (test set). b) Use the remaining k-1 folds as the training data. c) Fit a model on the training set and evaluate it on the test set. d) Retain the evaluation score.

  - Calculate the average of the k evaluation scores to produce a single robust estimation of model performance. This method provides a more comprehensive analysis than a single train/test split [13] [4].

- Protocol for Outcomes Analysis (Back-testing):

  - Reserve a portion of the dataset from a time period not used in the model's development.

  - At a frequency that matches the model's forecast horizon, compare the model's predictions against the actual, realized outcomes.

  - Use statistical tests to determine if the differences between predictions and outcomes are significant and fall outside the organization's predetermined thresholds of acceptability [11].

- Protocol for Monitoring Data Drift:

  - Establish a baseline distribution for key input variables from the model's training data.

  - At a regular cadence (e.g., daily, weekly), compute the distribution of the same variables from the live, incoming data.

  - Use a statistical distance metric (e.g., Population Stability Index (PSI), Kullback-Leibler divergence) to quantify the difference between the live data distribution and the baseline.

  - Trigger an alert and initiate a model review process if the metric exceeds a predefined threshold [12].
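The data drift protocol can be sketched with the Population Stability Index, computed as the sum over bins of (live share - baseline share) × ln(live share / baseline share). The code below is an illustrative, dependency-free version: the equal-width binning, the small `eps` floor for empty bins, and the alert threshold of 0.2 are all assumptions for the example and should be set by the organization's own policy.

```python
import math


def population_stability_index(baseline, live, n_bins=10, eps=1e-6):
    """PSI between a baseline sample and a live sample of one variable."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / n_bins

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            b = int((v - lo) / width) if width > 0 else 0
            counts[min(max(b, 0), n_bins - 1)] += 1  # clamp out-of-range values
        # eps avoids log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(live)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


ALERT_THRESHOLD = 0.2  # hypothetical threshold, not taken from the cited sources

baseline = [i / 100 for i in range(100)]          # training-time distribution
live_same = list(baseline)                        # no drift
live_shifted = [0.5 + i / 200 for i in range(100)]  # mass moved to the upper half

psi_same = population_stability_index(baseline, live_same)
psi_shifted = population_stability_index(baseline, live_shifted)
for label, psi in [("same", psi_same), ("shifted", psi_shifted)]:
    status = "ALERT: review model" if psi > ALERT_THRESHOLD else "ok"
    print(f"{label}: PSI = {psi:.3f} -> {status}")
```

The bin edges are fixed from the baseline so that successive monitoring runs remain comparable with one another.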

A successful validation process relies on a combination of statistical tools, software libraries, and governance frameworks. The table below details key resources essential for implementing a robust validation framework.

| Tool Category | Specific Examples | Function in Validation |
|---|---|---|
| Statistical & ML Libraries | Scikit-learn, TensorFlow, PyTorch [13] | Provide built-in functions for cross-validation, performance metrics (accuracy, precision, recall, F1-score), and model evaluation APIs. |
| Specialized Validation Platforms | Galileo [13] | Offer end-to-end solutions with advanced analytics, visualization, automated insights, and continuous monitoring for model drift detection. |
| Governance Frameworks | SR 11-7 Guidance on Model Risk Management [11] | Provides a regulatory-backed framework for model risk management, defining standards for development, validation, and governance. |
| Validation Checklists | FairPlay's Six-Step Model Validation Checklist [12] | Offers a practical, question-based framework for validating conceptual soundness, data quality, process, outcomes, and governance. |

The integration of rigorous data validation, conceptual soundness evaluation, and comprehensive testing forms an interdependent triad essential for any robust model validation framework in computational science. By adhering to this structured approach, researchers and drug development professionals can significantly mitigate model risk, enhance the credibility of their findings, and ensure their models are truly fit-for-purpose. As models grow in complexity and are applied in increasingly critical domains, a disciplined and documented validation process, supported by appropriate tools and checklists, transitions from a best practice to a non-negotiable standard of scientific rigor.

Model validation stands as a critical gatekeeper in computational science, ensuring that predictions translate reliably into real-world applications. In healthcare and biomedical research, where models inform diagnoses, treatment decisions, and therapeutic development, the stakes of inadequate validation are monumental. This whitepaper examines the severe consequences of validation failures, ranging from diagnostic inaccuracies and compromised patient safety to the erosion of trust in data-driven technologies. By synthesizing current research, we present a framework of rigorous validation methodologies and best practices designed to fortify computational models against failure, thereby safeguarding public health and accelerating the responsible deployment of artificial intelligence in medicine.

The integration of computational models and artificial intelligence (AI) into healthcare represents a paradigm shift in medical research and clinical practice. These technologies, built upon Medical Laboratory Data (MLD) and other complex datasets, hold the potential to revolutionize disease screening, diagnosis, and personalized medicine [15]. However, this potential is critically contingent on a foundational principle often overlooked in the rush to innovation: rigorous and comprehensive model validation. Validation is the multi-faceted process of evaluating a computational model to ensure its accuracy, reliability, and robustness for its intended purpose.

Within the context of computational science research, validation moves beyond a mere technicality; it is an ethical imperative. In fields such as drug development and clinical diagnostics, models guide decisions that directly impact human lives. A model that predicts patient response to a therapy, identifies malignant tissues in a radiological scan, or forecasts the spread of an infectious disease must be not only sophisticated but also demonstrably trustworthy. The consequences of inadequate validation are not merely statistical errors but can manifest as misdiagnoses, ineffective treatments, and significant patient harm. As noted in studies of model risk management, failures often stem from two broad sources: execution risk, where a model fails to perform its intended function, and conceptual errors, where incorrect assumptions or techniques are used in model development [16] [17]. This paper explores these high-stakes consequences and outlines the rigorous experimental protocols and validation frameworks necessary to mitigate them.

Consequences of Inadequate Model Validation

The failure to adequately validate computational models in healthcare can lead to a cascade of negative outcomes, which can be categorized into direct patient impacts, systemic research inefficiencies, and broader ethical and trust-related repercussions.

Diagnostic Inaccuracies and Patient Harm

The most immediate and severe consequence of model failure is the potential for direct harm to patients. Inaccurate models can lead to both false positives and false negatives, each with serious implications.

- Misdiagnosis and Delayed Treatment: AI models that fail to accurately identify diseases from medical laboratory data can lead to fatal delays in treatment. For instance, a model for early sepsis detection developed at the First Affiliated Hospital of Zhengzhou University demonstrated the high sensitivity (87%) and specificity (89%) required for reliable clinical use. An inadequately validated model with lower performance metrics would miss critical cases, delaying life-saving interventions [15].

- Inadequate Personalization of Therapy: Personalized medicine relies on models to tailor treatments based on individual patient data. A flawed model can lead to the selection of suboptimal or harmful therapeutic regimens. Research into models that analyze biomarkers like circulating tumor DNA has shown great promise in predicting cancer risk and monitoring progression. A validation failure in such a context could direct a patient toward an ineffective therapy while their disease continues to advance [15].

Compromised Data Quality and Research Integrity

The foundation of any reliable computational model is high-quality data. Inadequate validation protocols often fail to identify underlying data issues, corrupting the entire research process.

Table 1: Key Data Quality Dimensions and Consequences of Their Failure

| Data Quality Dimension | Description | Consequence of Inadequate Validation |
|---|---|---|
| Accuracy | The extent to which data are correct, reliable, and free from error [18]. | Leads to model predictions that are fundamentally misaligned with biological reality, causing misdiagnosis and treatment errors. |
| Completeness | The degree to which all required data is present [18]. | Introduces biases and reduces the statistical power of models, leading to unreliable and non-generalizable findings. |
| Reusability | The suitability of data for secondary use in different contexts, supported by metadata and documentation [18]. | Prevents the reproduction and independent verification of research findings, stalling scientific progress. |

Machine learning-based strategies have demonstrated the ability to significantly improve data quality, with one study achieving a rise in data completeness from 90.57% to nearly 100% through techniques like K-nearest neighbors (KNN) imputation [18]. Without validation processes that rigorously check for these dimensions, models are built on a fragile foundation.
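To show what KNN imputation does in principle, the sketch below fills each missing cell with the mean of that column over the k nearest complete rows and reports completeness before and after. It is a deliberately simplified illustration, not the procedure used in the cited study [18]: the function names, the Euclidean distance on observed features, and the toy data are assumptions, and at least one complete row is assumed to exist.

```python
import math


def completeness(rows):
    """Percentage of cells that are not missing (None)."""
    cells = [v for row in rows for v in row]
    return 100 * sum(v is not None for v in cells) / len(cells)


def knn_impute(rows, k=2):
    """Fill None cells using the k nearest rows that have no missing values."""
    complete = [row for row in rows if None not in row]
    imputed = []
    for row in rows:
        if None not in row:
            imputed.append(list(row))
            continue
        observed = [j for j, v in enumerate(row) if v is not None]

        def distance(other):
            # Euclidean distance over the features this row actually has
            return math.sqrt(sum((row[j] - other[j]) ** 2 for j in observed))

        neighbours = sorted(complete, key=distance)[:k]
        imputed.append([
            v if v is not None else sum(nb[j] for nb in neighbours) / len(neighbours)
            for j, v in enumerate(row)
        ])
    return imputed


# Hypothetical two-feature dataset with one missing measurement.
rows = [[1.0, 10.0], [1.2, 12.0], [5.0, 50.0], [5.1, 52.0], [1.1, None]]
filled = knn_impute(rows, k=2)
print(completeness(rows), "->", completeness(filled))
print(filled[-1])
```

Because the fill value is borrowed from similar records rather than from the global mean, the imputed cell stays consistent with the local structure of the data.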

Erosion of Trust and Hindered Innovation

Beyond immediate technical failures, inadequate validation has a corrosive effect on the broader ecosystem of computational biomedicine.

- Stifled Clinical Adoption: Clinicians are justifiably hesitant to adopt tools that lack transparent and proven validation records. The gap between AI model development and its deployment in clinical settings is largely attributable to a lack of a unified framework for applying AI to clinical decision-making processes [15].

- Reproducibility Crises: The inability to replicate published findings is a significant problem in computational science. As seen in other fields like Computational Fluid Dynamics (CFD), inconsistencies in documenting geometric fidelity, meshing strategy, and solver configuration can render published models useless for replication or validation, severely impeding scientific progress [19].

- Ethical and Regulatory Breaches: The use of poorly validated models can lead to violations of data privacy regulations (like GDPR and HIPAA) and ethical guidelines. Ensuring that models are fair, unbiased, and secure is an integral part of the validation process, and its failure can have legal and reputational consequences [15].

A Framework for Robust Validation: Methodologies and Protocols

To mitigate the severe risks outlined above, the biomedical research community must adopt a systematic and multi-layered approach to model validation. The following protocols provide a roadmap for ensuring model reliability.

Model Risk Management (MRM) and the Three Lines of Defense

A robust Model Risk Management (MRM) function, staffed by independent experts, is essential for governing a model's entire lifecycle. Best practices from financial risk management, which are highly applicable to healthcare, include [16] [17]:

- Model Tiering: Categorizing models based on their risk to the organization. High-tier models (e.g., those used for patient diagnosis or drug safety prediction) undergo the most rigorous validation, including comprehensive back-testing and full-scope revalidation every two to three years.

- Continuous Monitoring: MRM should not be a point-in-time exercise. Model performance must be continuously monitored through reports produced by model owners at intervals matching the frequency of the model's use [16].

- Three Lines of Defense:

  - First Line: Model developers and owners who own the risk and are responsible for initial quality control.

  - Second Line: The independent MRM function that reviews, challenges, and validates models.

  - Third Line: Internal audit functions that provide assurance to senior management on the effectiveness of the MRM framework [16].

Technical Validation Protocols and Workflows

The technical core of validation involves a set of experimental and computational protocols designed to stress-test the model.

Table 2: Experimental Protocols for Model Validation in Healthcare

| Protocol Category | Methodology | Key Performance Indicators (KPIs) |
|---|---|---|
| Data Quality Assessment | Missing Value Imputation: Apply K-nearest neighbors (KNN) imputation [18]. Anomaly Detection: Use ensemble techniques like Isolation Forest and Local Outlier Factor (LOF) [18]. Dimensionality Reduction: Perform Principal Component Analysis (PCA) to identify key predictors. | Completeness rate (%) pre- and post-imputation; number and type of anomalies detected and corrected; variance explained by principal components. |
| Performance Validation | Train-Test Split: Split data into training and hold-out test sets. Cross-Validation: Use k-fold cross-validation to assess stability. Comparison to Benchmarks: Compare model performance against established clinical standards or existing methods. | Accuracy, Sensitivity, Specificity; Area Under the Curve (AUC) of the ROC curve; statistical significance of performance improvements. |
| Clinical Validation | External Validation: Test the model on a completely independent dataset, ideally from a different institution [15]. Prospective Trials: Validate the model in a real-world clinical setting as part of a structured trial. | Sensitivity/Specificity on external validation set; impact on clinical workflow and patient outcomes. |

The following workflow diagram synthesizes these protocols into a coherent validation pipeline for a healthcare AI model.

The Scientist's Toolkit: Essential Research Reagent Solutions

A robust validation pipeline relies on a suite of computational and data management "reagents." The following table details key components.

Table 3: Key Research Reagent Solutions for Model Validation

| Tool Category | Specific Examples | Function in Validation |
|---|---|---|
| Data Imputation & Cleaning | K-Nearest Neighbors (KNN) Imputation [18] | Addresses missing data to ensure completeness and reduce bias. |
| Anomaly Detection | Isolation Forest, Local Outlier Factor (LOF) [18] | Identifies and corrects outliers and erroneous data points that can skew model performance. |
| Dimensionality Reduction | Principal Component Analysis (PCA) [18] | Simplifies complex data, identifies key predictive variables, and helps in visualizing data patterns for quality assessment. |
| Predictive Modeling | Random Forest, LightGBM [18] | Provides robust, benchmarked algorithms for constructing predictive models whose performance can be rigorously validated. |
| Model Risk Management (MRM) | MRM Framework [16] [17] | Provides the organizational structure and governance for independent model review, tiering, and continuous monitoring. |
| Data Standards | FAIR Principles, HL7, HIPAA [15] | Ensures data is Findable, Accessible, Interoperable, and Reusable, and that its handling complies with privacy and security regulations. |

The integration of computational models into healthcare is inevitable and holds immense promise. However, this promise cannot be realized without an unwavering commitment to rigorous model validation. The consequences of cutting corners are unacceptably high, directly impacting patient safety, research integrity, and the credibility of data science as a discipline. By adopting a structured framework that combines independent model risk management, transparent technical protocols, and a commitment to continuous monitoring, the biomedical research community can build trustworthy and impactful AI systems. The path forward requires a cultural shift where validation is not seen as a final hurdle but as an integral, ongoing component of the computational science research lifecycle, ensuring that innovation always aligns with the principle of "first, do no harm."

Methodologies in Action: A Practical Guide to Validation Techniques for Computational Models

In computational science research, the validity of predictive models determines the reliability of scientific findings and the success of their practical applications. This technical guide examines the foundational validation methodologies of in-sample and out-of-sample testing, providing a comprehensive framework for researchers to evaluate model performance and generalizability. Through detailed protocols, quantitative comparisons, and practical implementations focused on drug development applications, we establish rigorous standards for model validation that ensure computational findings translate effectively into real-world solutions, thereby enhancing research reproducibility and application success.

Model validation represents a critical phase in the computational research pipeline, serving as the definitive process for evaluating a model's performance and confirming it achieves its intended purpose [4]. In computational disciplines, particularly in high-stakes fields like drug development, validation provides the essential link between theoretical models and their reliable application to real-world problems. The core objective is to assess how well a trained model performs on new or unseen data, moving beyond mere data fitting to genuine pattern recognition [20].

Without robust validation, researchers risk building models that appear effective but fail catastrophically when deployed. This is especially crucial in domains like healthcare and drug discovery, where incorrect decisions driven by model errors can have severe consequences, including loss of life [4]. The validation process helps identify and mitigate potential biases, helps detect overfitting and underfitting, and ultimately increases confidence in model predictions by making model behavior more transparent and explainable [4].

Two foundational paradigms dominate validation methodology: in-sample and out-of-sample approaches. Understanding their philosophical and practical distinctions forms the cornerstone of reliable computational research and enables scientists to make informed decisions about model deployment in critical applications.

Defining the Paradigms: Core Concepts and Terminology

In-Sample Validation

In-sample validation assesses a model's accuracy using the same dataset it was trained on [21]. This approach involves training a model on a dataset and then using that same dataset to generate predictions and calculate performance metrics [22]. For example, if you fit a linear regression model to predict monthly sales using data from 2010 to 2020, in-sample forecasts would predict sales for those same years [21]. Metrics like R-squared or Mean Squared Error (MSE) calculated in-sample reflect how well the model fits the training data, but they cannot reveal overfitting, where a model memorizes noise or irrelevant patterns in the training data [21]. High in-sample accuracy does not guarantee that the model will perform well on new data [21].

Out-of-Sample Validation

Out-of-sample validation evaluates a model's performance on data it hasn't encountered during training [21]. This is typically accomplished by splitting the dataset into a training period (e.g., 2010-2018) and a test period (e.g., 2019-2020) before model development begins [21]. For time series data, the split must respect temporal order to avoid data leakage [21]. This method provides a more realistic estimation of how the model will perform in real-world scenarios on unseen data [22], helping identify overfitting and ensuring the model captures generalizable patterns rather than memorizing training artifacts [21].
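
The contrast between the two paradigms can be sketched in a few lines of Python. The example below is a minimal illustration, not a reference implementation: it fits a straight line by ordinary least squares to a training period and reports the MSE on both the training period (in-sample) and a later test period (out-of-sample). The yearly sales figures and the function names (`fit_line`, `mse`, `in_and_out_of_sample_mse`) are invented for illustration and do not come from any cited source.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def mse(xs, ys, slope, intercept):
    """Mean squared error of the fitted line on the given points."""
    return mean((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))


def in_and_out_of_sample_mse(years, sales, first_test_year):
    """Fit on years before first_test_year; score on both periods."""
    train = [(t, s) for t, s in zip(years, sales) if t < first_test_year]
    test = [(t, s) for t, s in zip(years, sales) if t >= first_test_year]
    train_x, train_y = zip(*train)
    test_x, test_y = zip(*test)
    # The model only ever sees the training period.
    slope, intercept = fit_line(train_x, train_y)
    return (mse(train_x, train_y, slope, intercept),
            mse(test_x, test_y, slope, intercept))


# Hypothetical yearly sales, invented purely for illustration.
years = list(range(2010, 2021))
sales = [100, 104, 109, 111, 118, 121, 127, 130, 134, 150, 158]

in_mse, out_mse = in_and_out_of_sample_mse(years, sales, first_test_year=2019)
print(f"in-sample MSE: {in_mse:.2f}, out-of-sample MSE: {out_mse:.2f}")
```

With this made-up series the trend steepens in the test period, so the out-of-sample error comes out larger than the in-sample error. That gap is exactly what in-sample metrics alone cannot show.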

Table 1: Fundamental Characteristics of Validation Approaches

| Characteristic | In-Sample Validation | Out-of-Sample Validation |
|---|---|---|
| Data Usage | Uses same data for training and testing [21] | Tests on unseen data not used during training [21] |
| Primary Function | Assess model fit to training data [22] | Evaluate model generalizability [23] |
| Overfitting Risk | High [21] | Lower [21] |
| Real-world Performance Estimate | Optimistic and potentially misleading [21] [24] | More realistic [21] [24] |
| Computational Demand | Generally efficient [22] | Can be intensive with cross-validation [22] |

Comparative Analysis: Advantages, Disadvantages, and Performance Metrics

Advantages and Disadvantages

The selection between in-sample and out-of-sample validation strategies involves balancing competing advantages and limitations based on research objectives, data characteristics, and application requirements.

In-sample validation offers computational efficiency, making it particularly valuable during initial model development phases when rapid iteration is necessary [22]. Because it evaluates the model on the same data used for training, it provides immediate feedback on the model's learning capacity, helping researchers identify whether their model architecture can capture the complexity present in the dataset [22].

However, in-sample validation cannot detect overfitting, where a model achieves high accuracy on training data but fails to generalize [21] [22]. This limitation is particularly problematic with complex models that can inadvertently memorize noise and outliers present in the training set rather than learning generalizable patterns [21]. Consequently, in-sample performance metrics often provide an overly optimistic and potentially misleading estimate of real-world performance [21] [24].

Out-of-sample validation addresses these limitations by providing a more accurate estimation of model performance on unseen data, effectively validating the model's effectiveness in real-world scenarios [22]. This approach represents the gold standard for detecting overfitting and verifying that the model has learned transferable patterns rather than training set specifics [21]. By testing on completely separate data, out-of-sample validation builds confidence in model deployments, particularly in critical applications like medical diagnosis or drug discovery [25] [4].

The primary disadvantages of out-of-sample validation include the requirement for a separate dataset for testing and potentially increased computational demands, especially when implementing multiple iterations or cross-validation techniques [22]. Additionally, proper out-of-sample validation requires careful experimental design, such as maintaining temporal sequences in time-series data, which adds complexity to the validation pipeline [21].

Quantitative Performance Comparison

Empirical studies across multiple domains consistently demonstrate the performance gap between in-sample and out-of-sample evaluations. In financial strategy development, quantitative analysis of 355 trading strategies revealed significant degradation in risk-adjusted returns when moving from in-sample to out-of-sample testing [26].

Table 2: Quantitative Performance Comparison of 355 Trading Strategies

| Performance Measure | In-Sample Results | Out-of-Sample Results | Absolute Change | Percentage Change |
|---|---|---|---|---|
| Average Sharpe Ratio | 1.574 | 1.049 | -0.525 | -33.37% |
| Median Sharpe Ratio | 1.180 | 0.662 | -0.518 | -43.90% |

This observed performance degradation aligns with findings across computational domains, where models typically exhibit superior performance on the data they were trained on compared to unseen data [26]. The magnitude of this gap serves as an important indicator of potential overfitting and model robustness, with smaller gaps generally indicating more generalizable models [26].
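
The gap columns in Table 2 follow from simple arithmetic: the absolute change is the out-of-sample value minus the in-sample value, and the percentage change divides that difference by the in-sample value. The short sketch below recomputes both from the rounded figures in the table; `performance_gap` is a hypothetical helper name, not something taken from the cited study.

```python
def performance_gap(in_sample, out_of_sample):
    """Return (absolute change, percentage change) relative to the in-sample value."""
    if in_sample == 0:
        raise ValueError("in-sample value must be non-zero")
    absolute = out_of_sample - in_sample
    percent = 100.0 * absolute / in_sample
    return absolute, percent


# Rounded Sharpe ratios as reported in Table 2.
table_2 = [
    ("Average Sharpe Ratio", 1.574, 1.049),
    ("Median Sharpe Ratio", 1.180, 0.662),
]

for label, in_sample, out_of_sample in table_2:
    absolute, percent = performance_gap(in_sample, out_of_sample)
    print(f"{label}: {absolute:+.3f} ({percent:+.2f}%)")
```

Recomputing from the rounded table entries reproduces the median figure (-43.90%) and gives roughly -33.35% for the average, slightly different from the reported -33.37%. The likely explanation, though this is an assumption, is that the source computed the percentage from unrounded Sharpe ratios.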

Methodological Implementation: Protocols and Workflows

Experimental Protocols for Out-of-Sample Validation

Implementing robust out-of-sample validation requires methodical experimental design. The following protocols ensure scientifically sound validation across different data environments:

Standard Holdout Protocol

- Data Partitioning: Split the dataset into training and testing subsets, choosing the ratio by dataset size; 70-30 and 80-20 are typical [4]. One guideline is 70-30 for smaller datasets (<10,000 samples), 80-20 for medium datasets (10,000-100,000 samples), and 90-10 for large datasets (>100,000 samples) [20].

- Temporal Preservation: For time-series data, maintain chronological order where training data precedes test data temporally to prevent data leakage [21].

- Single Evaluation: Evaluate the final model on the test set only once to prevent indirect optimization on the test set [20].
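
The holdout steps above can be sketched as follows. This is a minimal illustration under stated assumptions: the function names are hypothetical, the size thresholds simply encode the guideline listed in the protocol, and `records` is assumed to be already sorted from oldest to newest so that the split preserves temporal order.

```python
def suggested_test_fraction(n_samples):
    """Map dataset size to a test fraction using the guideline thresholds above."""
    if n_samples < 10_000:
        return 0.30  # smaller datasets: 70-30
    if n_samples <= 100_000:
        return 0.20  # medium datasets: 80-20
    return 0.10      # large datasets: 90-10


def chronological_holdout(records, test_fraction):
    """Split time-ordered records so every training record precedes every test record."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be strictly between 0 and 1")
    if len(records) < 2:
        raise ValueError("need at least two records to split")
    n_test = max(1, round(len(records) * test_fraction))
    cut = len(records) - n_test
    return records[:cut], records[cut:]


# Ten time-ordered observations; indices stand in for real records.
observations = list(range(10))
fraction = suggested_test_fraction(len(observations))
train, test = chronological_holdout(observations, fraction)
print(train, test)
```

For data with no temporal structure, the same function can be applied after shuffling the records once with a fixed random seed. In either case the test portion should be scored only once, after all modeling choices are final.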

K-Fold Cross-Validation Protocol

- Dataset Division: Partition data into k subsets (folds) of approximately equal size [4].

- Iterative Training: Train the model k times, each time using k-1 folds for training and the remaining fold for validation [4].

- Performance Aggregation: Calculate final performance metrics as the average across all k iterations [4].

- Stratification: For classification problems, maintain class distribution ratios in each fold through stratified cross-validation [4].
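
A compact sketch of the k-fold protocol with stratification is shown below. It uses only the Python standard library, and the names `stratified_k_fold` and `cross_validate` are hypothetical. The `score_fold` callback stands in for whatever training and scoring routine a project actually uses. In practice an established library implementation would normally be preferred over hand-rolled splitting.

```python
import random
from collections import defaultdict


def stratified_k_fold(labels, k, seed=0):
    """Yield (train_indices, validation_indices) for each of k stratified folds."""
    if k < 2 or k > len(labels):
        raise ValueError("k must be between 2 and the number of samples")
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    folds = [[] for _ in range(k)]
    offset = 0
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class round-robin so every fold keeps roughly the class ratio;
        # the running offset keeps overall fold sizes balanced.
        for position, index in enumerate(indices):
            folds[(offset + position) % k].append(index)
        offset += len(indices)

    for i in range(k):
        validation = sorted(folds[i])
        train = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train, validation


def cross_validate(labels, k, score_fold):
    """Average a user-supplied score over all k folds."""
    scores = [score_fold(train, validation)
              for train, validation in stratified_k_fold(labels, k)]
    return sum(scores) / len(scores)


# Imbalanced toy labels: eight negatives and four positives.
labels = [0] * 8 + [1] * 4
for train, validation in stratified_k_fold(labels, k=4):
    print(validation, [labels[i] for i in validation])
```

Each sample appears in exactly one validation fold, and each fold of this toy dataset holds two negatives and one positive, matching the 2:1 ratio of the full set.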

Temporal Cross-Validation Protocol for Time-Series Data

- Rolling Window: Use expanding or sliding time windows for training with subsequent periods for testing [21].

- Fixed Horizon: Maintain consistent forecast horizons across validation cycles [21].

- Multiple Test Periods: Validate across different temporal regimes to assess model consistency [21].
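
The rolling-window idea can be sketched as an index generator. The function below is illustrative only and its name and parameters are assumptions: `initial_train` is the size of the first training window, `horizon` is the fixed number of periods tested in each cycle, and `expanding` switches between an expanding window (all history so far) and a sliding window (a fixed-length window of the most recent history).

```python
def rolling_origin_splits(n_samples, initial_train, horizon, expanding=True):
    """Yield (train_indices, test_indices) for time-ordered samples 0..n_samples-1."""
    if initial_train < 1 or horizon < 1:
        raise ValueError("initial_train and horizon must be positive")
    start = initial_train
    while start + horizon <= n_samples:
        # Expanding: train on everything before the test block.
        # Sliding: train only on the most recent initial_train samples.
        train_begin = 0 if expanding else start - initial_train
        train = list(range(train_begin, start))
        test = list(range(start, start + horizon))
        yield train, test
        start += horizon  # advance by one forecast horizon


for train, test in rolling_origin_splits(10, initial_train=4, horizon=2):
    print("train:", train, "test:", test)
```

In every split the training indices precede the test indices, which preserves temporal order, and the test blocks cover successive periods, so the model is checked across several stretches of the series rather than a single one.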

Applications in Computational Drug Discovery and Development

The distinction between in-sample and out-of-sample validation carries particular significance in computational drug repurposing, where accurate prediction models can significantly accelerate therapeutic development while reducing costs [25]. A drug repurposing pipeline connects existing drugs to new disease indications based on features collected through biological experiments or clinical observations [25].

In this domain, computational validation often begins with in-sample approaches to identify potential drug-disease connections, followed by essential out-of-sample validation using independent information sources not utilized during the prediction phase [25]. These validation sources may include previous experimental/clinical studies, protein interaction data, gene expression data, or other independent resources that provide supporting evidence for repurposing hypotheses [25]. This rigorous validation process helps reduce false positives and builds confidence in repurposed drug candidates before committing to expensive clinical trials [25].

Validation Strategies in Computational Drug Repurposing

Research by Brown et al. identified several validation strategies specifically employed in computational drug repurposing, which can be categorized as computational and non-computational approaches [25]:

Computational Validation Methods

- Retrospective Clinical Analysis: Utilizing EHR or insurance claims data to examine off-label drug usage or searching existing clinical trials databases for drug-disease connections [25].

- Literature Support: Manual literature searches or text mining of biomedical literature to find relevant articles containing connections between existing drugs and new therapeutic uses [25].

- Public Database Search: Leveraging specialized databases containing drug-target interactions, side effect profiles, or genomic associations [25].

- Benchmark Dataset Testing: Evaluating model performance against established gold-standard datasets within the field [25].

Non-Computational Validation Methods

- In Vitro Experiments: Laboratory testing of predicted drug-disease relationships in controlled cellular environments [25].

- In Vivo Studies: Animal model testing to validate therapeutic efficacy in whole organisms [25].

- Clinical Trials: Prospective evaluation of repurposing hypotheses through phased clinical trials [25].

- Expert Review: Domain expert evaluation of predictions based on pharmacological and clinical knowledge [25].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Validation Experiments

| Reagent/Material | Function in Validation | Application Context |
|---|---|---|
| Binding Affinity Assays (e.g., ELISA) | Quantify molecular interactions between drug compounds and targets [27] | Initial hypothesis testing for drug repurposing predictions |
| Enzyme Activity Assays | Measure functional biochemical responses to drug treatments [27] | Mechanistic validation of predicted drug effects |
| Cell Viability Assays | Monitor cellular health and metabolic responses to compound exposure [27] | Toxicity screening and therapeutic efficacy assessment |
| Microfluidic Devices | Enable controlled environment drug testing on cells [27] | Mimic physiological conditions for more realistic validation |
| Biosensors | Detect specific analytes with high sensitivity and specificity [27] | Fine-tune assay conditions and monitor biological parameters |
| Automated Liquid Handling Systems | Increase assay throughput and reproducibility [27] | Standardize validation protocols across multiple experiments |

In computational science research, particularly in high-stakes fields like drug development, the distinction between in-sample and out-of-sample validation represents more than a technical formality—it constitutes a fundamental principle of rigorous scientific methodology. While in-sample validation provides initial insights into model behavior and training efficiency, out-of-sample testing remains the unequivocal standard for establishing genuine model generalizability and real-world applicability [21] [4].

The consistent performance degradation observed when moving from in-sample to out-of-sample evaluation across multiple domains [26] underscores the critical importance of this distinction and highlights the risks of relying solely on training data performance metrics. For computational researchers and drug development professionals, implementing robust out-of-sample validation protocols is not merely best practice but an ethical imperative when model predictions may influence therapeutic development decisions [25].